Reiner Hartenstein Reconfigurable Computing and the von Neumann Syndrome

Questions? (TU Kaiserslautern)
• Who is familiar with FPGAs? Is programming them easy?
• Who is familiar with systolic arrays?
• Duality: data streams vs. instruction streams?
• Programming a multicore microprocessor: will it be easy?
© 2007, reiner@hartenstein.de, http://hartenstein.de

Outline
• The Pervasiveness of FPGAs
• The Reconfigurable Computing Paradox
• The Gordon Moore gap
• The von Neumann syndrome
• We need a dual paradigm approach
• Conclusions

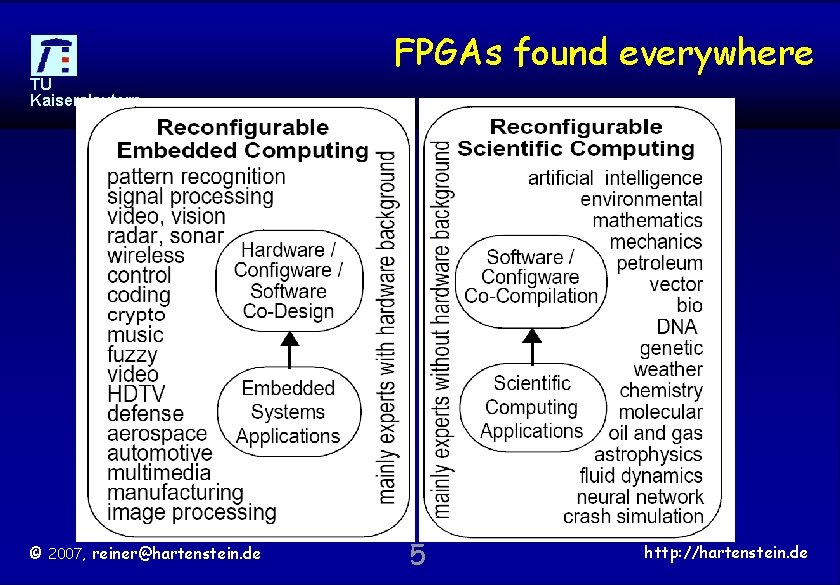

FPGAs found everywhere

Pervasiveness of RC
http://hartenstein.de/pervasiveness.html
http://www.fpl.uni-kl.de/RCeducation08/pervasiveness.html

RCeducation 2008
The 3rd International Workshop on Reconfigurable Computing Education, April 10, 2008, Montpellier, France
http://www.fpl.uni-kl.de/RCeducation08/

Outline: callouts
• the hardware / software chasm
• the configware / software chasm
• the instruction stream tunnel
• the overhead-prone paradigm

Outline: callouts
• instruction stream vs. data stream
• bridging the chasm: an old hat
• stubborn curriculum task forces

RC education
http://www.fpl.uni-kl.de/RCeducation/
http://www.fpl.uni-kl.de/RCeducation08/pervasiveness.html

Outline: callouts
• platform FPGAs, coarse-grained arrays
• saving energy

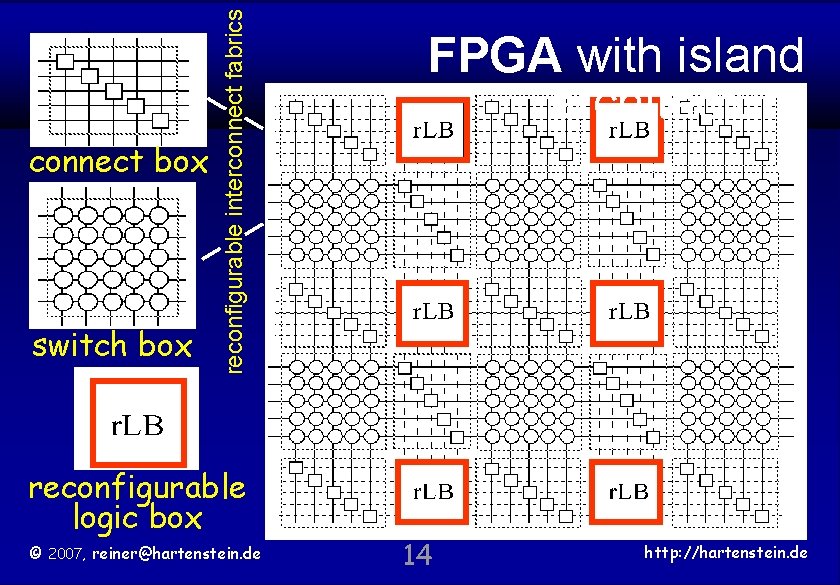

FPGA architecture: FPGA with island architecture
Figure labels: connect box, switch box, reconfigurable interconnect fabrics, reconfigurable logic box

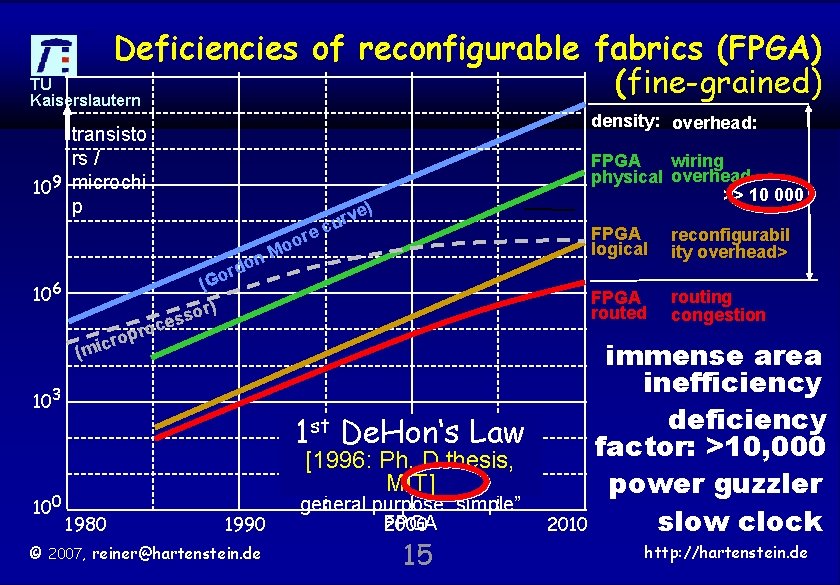

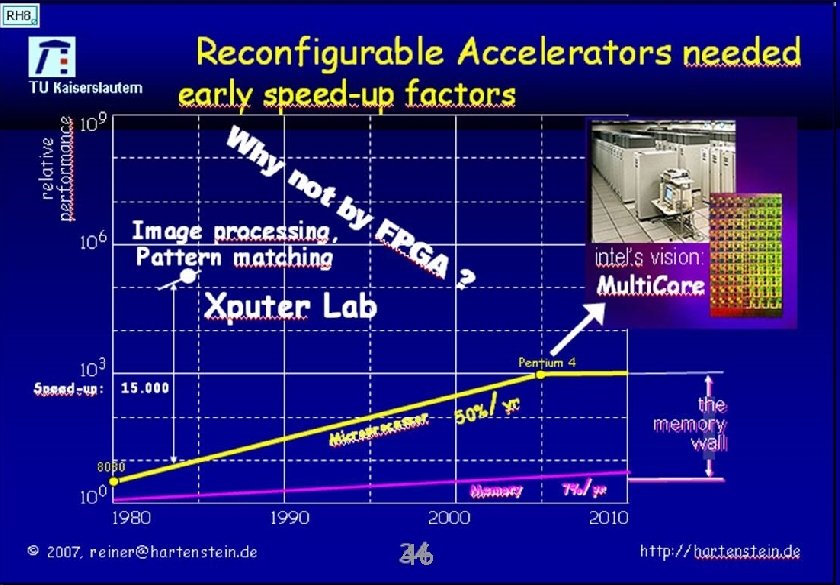

Deficiencies of reconfigurable fabrics (FPGA, fine-grained)
[Chart: transistors per microchip (10^0 to 10^9) vs. year (1980 to 2010); curves: Gordon Moore curve (microprocessor), FPGA physical, FPGA logical, FPGA routed]
• density overhead: wiring overhead, reconfigurability overhead, routing congestion
• immense area inefficiency; deficiency factor: >10,000 (1st DeHon's Law [1996: Ph.D. thesis, MIT])
• power guzzler
• slow clock
• general purpose "simple" FPGA

![Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005] TU Kaiserslautern 106 speedup Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005] TU Kaiserslautern 106 speedup](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-16.jpg)

Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005]
[Chart: speedup factor (log scale, 10^0 to 10^6) vs. year (1980 to 2010), with a ×2/yr reference line]
• application areas: image processing, pattern matching, multimedia; DSP and wireless; crypto; bioinformatics; astrophysics; oil and gas
• plotted examples: real-time face detection (6000), Reed-Solomon decoding, MAC, video-rate stereo vision, pattern recognition, SPIHT wavelet-based image compression, FFT, BLAST, protein identification, Viterbi decoding, Smith-Waterman pattern matching, molecular dynamics simulation, GRAPE, oil and gas (17)
• further plotted factors (as extracted, not matched to labels): 2400, 1000, 1000, 900, 730, 457, 400, 288, 100, 88, 52, 40, 20

![Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005] TU Kaiserslautern speedup factor Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005] TU Kaiserslautern speedup factor](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-18.jpg)

The RC paradox
Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005], annotated with:
• deficiency factor: >10,000
• speed-up factor: 6,000
• total discrepancy: >60,000

![Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005] TU Kaiserslautern speedup factor Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005] TU Kaiserslautern speedup factor](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-19.jpg)

![Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005] TU Kaiserslautern speedup factor Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005] TU Kaiserslautern speedup factor](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-20.jpg)

![Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005] TU Kaiserslautern These examples Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005] TU Kaiserslautern These examples](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-21.jpg)

Software-to-Configware (FPGA) Migration: some published speed-up factors [2003 – 2005]
• These examples worked fine with on-chip memory
• There are other algorithms that are more difficult to accelerate …
• … where d-caching might be useful (ASM)

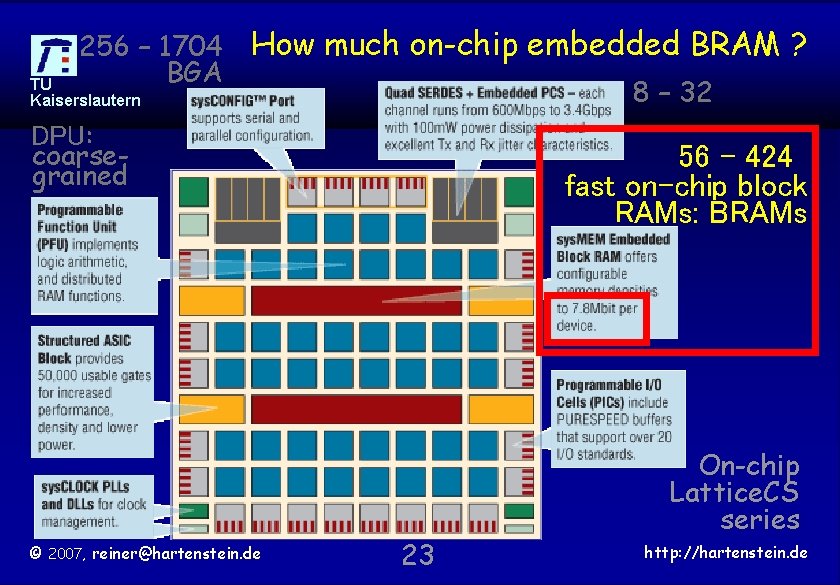

How much on-chip embedded BRAM? (LatticeCS series)
Figure labels, in extracted order: 256 – 1704 BGA; 8 – 32 DPU: coarse-grained; 56 – 424 fast on-chip block RAMs (BRAMs)

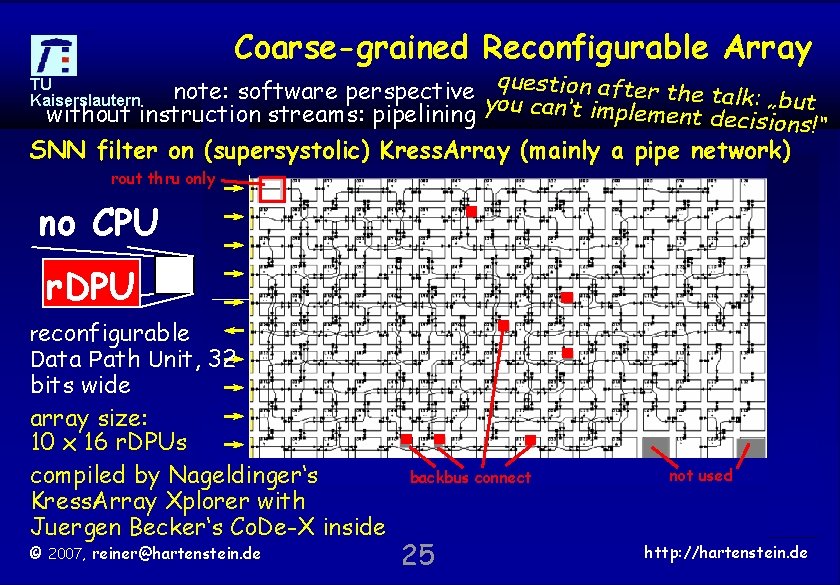

Coarse-grained Reconfigurable Array
SNN filter on (supersystolic) KressArray (mainly a pipe network)
• rDPU: reconfigurable Data Path Unit, 32 bits wide
• array size: 10 x 16 rDPUs
• no CPU
• compiled by Nageldinger's KressArray Xplorer with Juergen Becker's CoDe-X inside
• legend: rout thru only, backbus connect, not used
• note: software perspective by pipelining
• question after the talk: "but without instruction streams you can't implement decisions!"

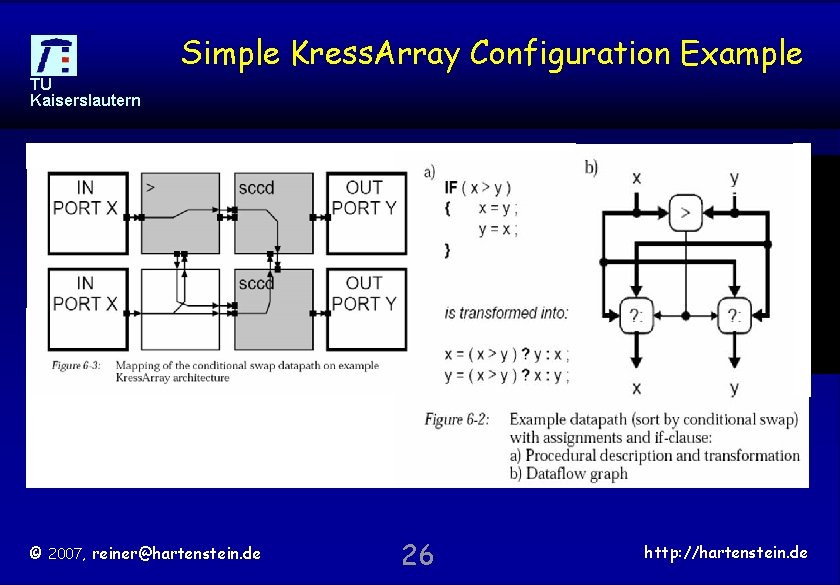

Simple KressArray Configuration Example

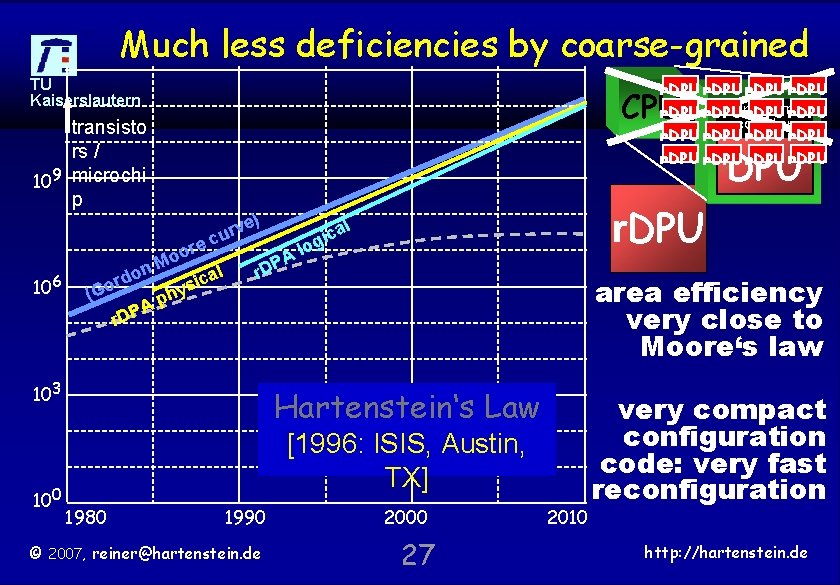

Far fewer deficiencies with coarse-grained arrays
[Chart: transistors per microchip (10^0 to 10^9) vs. year (1980 to 2010); curves: Gordon Moore curve, rDPA physical, rDPA logical]
• area efficiency very close to Moore's law
• Hartenstein's Law [1996: ISIS, Austin, TX]
• very compact configuration code: very fast reconfiguration
Diagram labels: CPU = DPU + program counter; rDPU array

![Software-to-Configware (FPGA) Migration: Oil and gas [2005] TU Kaiserslautern speedup factor 106 side effect: Software-to-Configware (FPGA) Migration: Oil and gas [2005] TU Kaiserslautern speedup factor 106 side effect:](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-29.jpg)

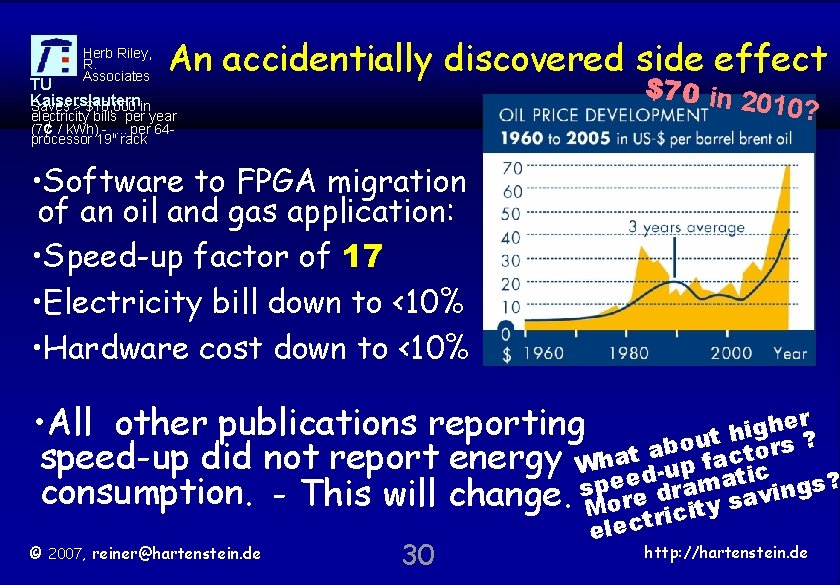

Software-to-Configware (FPGA) Migration: Oil and gas [2005]
[Chart: speedup factor (log scale, 10^0 to 10^6) vs. year (1980 to 2010), with a ×2/yr reference line; oil and gas: 17]
Side effect: slashing the electricity bill by more than an order of magnitude

An accidentally discovered side effect (Herb Riley, R. Associates)
• Software to FPGA migration of an oil and gas application:
• Speed-up factor of 17
• Electricity bill down to <10%
• Hardware cost down to <10%
• Saves > $10,000 in electricity bills per year (7¢ / kWh) per 64-processor 19" rack ($70 in 2010?)
• All other publications reporting speed-up did not report energy consumption. This will change.
• What about higher speed-up factors? More dramatic electricity savings?
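The figures on this slide can be turned into a rough estimate of the power a rack draws. The sketch below is only an illustration: it takes the slide's savings (> $10,000 per year), price (7¢ / kWh) and remaining bill (< 10%) at their boundary values, and it assumes the rack runs around the clock, which the slide does not state. The function and variable names are made up for this example.

```python
HOURS_PER_YEAR = 24 * 365  # assumption: the rack runs all year without pause


def implied_rack_power(savings_usd, price_usd_per_kwh, remaining_fraction):
    """Return (saved kWh per year, original yearly bill in USD, original average kW).

    savings_usd        -- yearly savings reported on the slide
    price_usd_per_kwh  -- electricity price reported on the slide
    remaining_fraction -- share of the bill left after migration (slide: < 10%)
    """
    saved_kwh = savings_usd / price_usd_per_kwh
    original_bill = savings_usd / (1.0 - remaining_fraction)
    original_kwh = original_bill / price_usd_per_kwh
    return saved_kwh, original_bill, original_kwh / HOURS_PER_YEAR


saved_kwh, original_bill, avg_kw = implied_rack_power(10_000, 0.07, 0.10)
print(f"saved energy:   {saved_kwh:,.0f} kWh per year")
print(f"original bill:  ${original_bill:,.0f} per year")
print(f"original power: {avg_kw:.1f} kW on average")
```

Under these assumptions the 64-processor rack would have drawn on the order of 18 kW before migration; the real figure depends on duty cycle and cooling, which the slide does not cover.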

![TU Kaiserslautern What’s Really Going On With Oil Prices? [Business. Week, January 29, 2007] TU Kaiserslautern What’s Really Going On With Oil Prices? [Business. Week, January 29, 2007]](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-31.jpg)

What's Really Going On With Oil Prices? [BusinessWeek, January 29, 2007]
• $52: price of delivery in February 2007 [New York Mercantile Exchange: Jan. 17]
• $200: minimum oil price in 2010, in a bet by investment banker Matthew Simmons

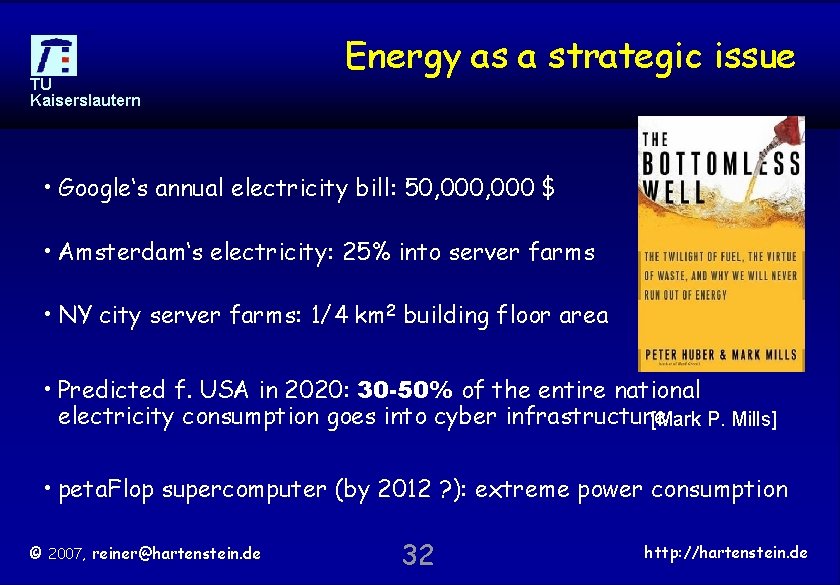

Energy as a strategic issue
• Google's annual electricity bill: 50,000 $
• Amsterdam's electricity: 25% into server farms
• NY city server farms: 1/4 km² building floor area
• Predicted for the USA in 2020: 30-50% of the entire national electricity consumption goes into cyber infrastructure [Mark P. Mills]
• petaFlop supercomputer (by 2012?): extreme power consumption

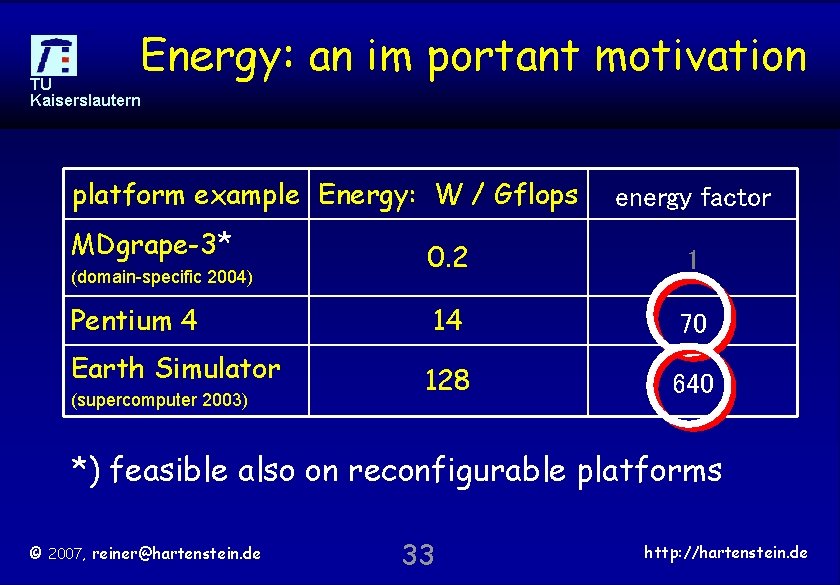

Energy: an important motivation

| platform example | energy: W / Gflops | energy factor |
|---|---|---|
| MDgrape-3* (domain-specific, 2004) | 0.2 | 1 |
| Pentium 4 | 14 | 70 |
| Earth Simulator (supercomputer, 2003) | 128 | 640 |

*) feasible also on reconfigurable platforms

Outline: callout
• The Gordon Moore gap & the multicore crisis

What is the reason for the paradox?
• The Gordon Moore curve does not indicate performance
• Moore's law is not applicable to all aspects of VLSI
• The peak clock frequency does not indicate performance
• the law of Gates

![Rapid Decline of Computational Density 175 DEC a lp [BWRC, UC Berkeley, 2004] ha Rapid Decline of Computational Density 175 DEC a lp [BWRC, UC Berkeley, 2004] ha](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-37.jpg)

Rapid Decline of Computational Density [BWRC, UC Berkeley, 2004]
[Chart: CPU computational density in SPECfp2000/MHz/Billion Transistors (0 to 200) vs. year (1990 to 2005); curves: DEC alpha, IBM, SUN, HP; "stolen from Bob Colwell"]
• alpha: down by 100 in 6 yrs
• IBM: down by 20 in 6 yrs
• primary design goal: avoiding a paradigm shift
• dramatic demo of the von Neumann Syndrome
• memory wall, caches, ...

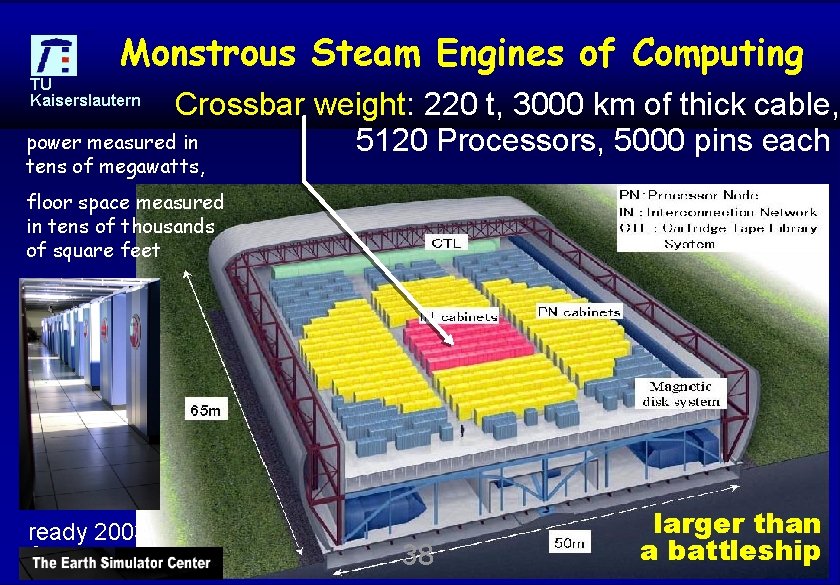

Monstrous Steam Engines of Computing
• 5120 processors, 5000 pins each
• crossbar weight: 220 t, 3000 km of thick cable
• power measured in tens of megawatts, floor space measured in tens of thousands of square feet
• ready 2003
• larger than a battleship

![Dead Supercomputer Society TU Kaiserslautern Research 1985 – 1995 [Gordon Bell, keynote ISCA 2000] Dead Supercomputer Society TU Kaiserslautern Research 1985 – 1995 [Gordon Bell, keynote ISCA 2000]](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-39.jpg)

Dead Supercomputer Society
Research 1985 – 1995 [Gordon Bell, keynote ISCA 2000]
• ACRI • Alliant • American Supercomputer • Ametek • Applied Dynamics • Astronautics • BBN • CDC • Convex • Cray Computer • Cray Research • Culler-Harris • Culler Scientific • Cydrome • Dana/Ardent/Stellar/Stardent
• DAPP • Denelcor • Elexsi • ETA Systems • Evans and Sutherland Computer • Floating Point Systems • Galaxy YH-1 • Goodyear Aerospace MPP • Gould NPL • Guiltech • ICL • Intel Scientific Computers • International Parallel Machines • Kendall Square Research • Key Computer Laboratories
• MasPar • Meiko • Multiflow • Myrias • Numerix • Prisma • Tera • Thinking Machines • Saxpy • Scientific Computer Systems (SCS) • Soviet Supercomputers • Supertek • Supercomputer Systems • Suprenum • Vitesse Electronics

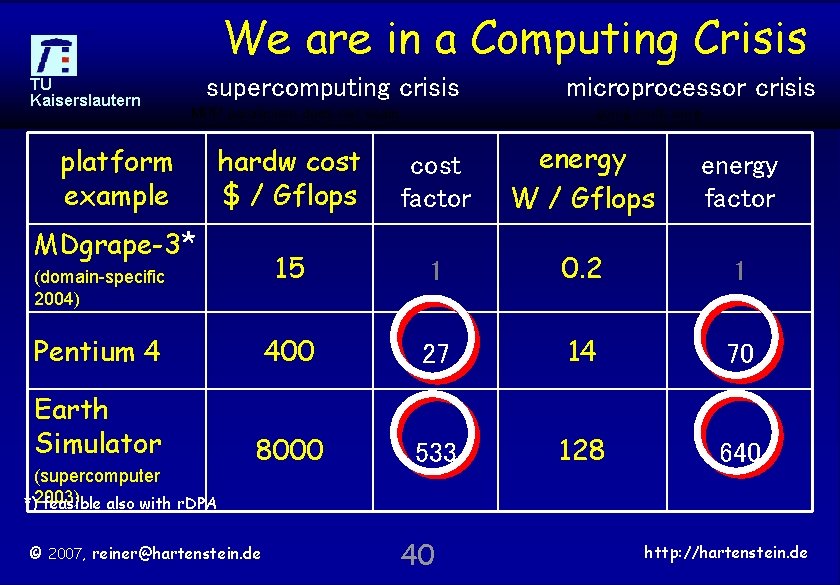

We are in a Computing Crisis
• supercomputing crisis: MPP parallelism does not scale
• microprocessor crisis: going multicore

| platform example | hardware cost: $ / Gflops | cost factor | energy: W / Gflops | energy factor |
|---|---|---|---|---|
| MDgrape-3* (domain-specific, 2004) | 15 | 1 | 0.2 | 1 |
| Pentium 4 | 400 | 27 | 14 | 70 |
| Earth Simulator (supercomputer, 2003) | 8000 | 533 | 128 | 640 |

*) feasible also with rDPA
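The cost and energy factors on this slide are ratios relative to MDgrape-3. The short sketch below recomputes them from the slide's raw $ / Gflops and W / Gflops figures; the dictionary layout and the function name are assumptions made for this illustration.

```python
# Raw figures as given on the slide.
platforms = {
    "MDgrape-3": {"usd_per_gflops": 15, "watt_per_gflops": 0.2},
    "Pentium 4": {"usd_per_gflops": 400, "watt_per_gflops": 14},
    "Earth Simulator": {"usd_per_gflops": 8000, "watt_per_gflops": 128},
}


def factors(table, baseline):
    """Return {platform: (cost factor, energy factor)}, rounded, relative to baseline."""
    base = table[baseline]
    return {
        name: (
            round(row["usd_per_gflops"] / base["usd_per_gflops"]),
            round(row["watt_per_gflops"] / base["watt_per_gflops"]),
        )
        for name, row in table.items()
    }


result = factors(platforms, "MDgrape-3")
for name, (cost_factor, energy_factor) in result.items():
    print(f"{name:16s} cost x{cost_factor:<4d} energy x{energy_factor}")
```

The rounded ratios reproduce the slide's factor columns (27 and 533 for cost, 70 and 640 for energy).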

The von Neumann Paradigm Trap
CS education got stuck in this paradigm trap, which stems from the technology of the 1940s. CS education's right eye is blind, and its left eye suffers from tunnel view. We need a dual paradigm approach.
The von Neumann machine [Burks, Goldstine, von Neumann; 1946]:
• RAM (memory cells have addresses ...)
• Program counter (auto-increment, jump, goto, branch)
• Datapath Unit with ALU etc.
• I/O unit, ...

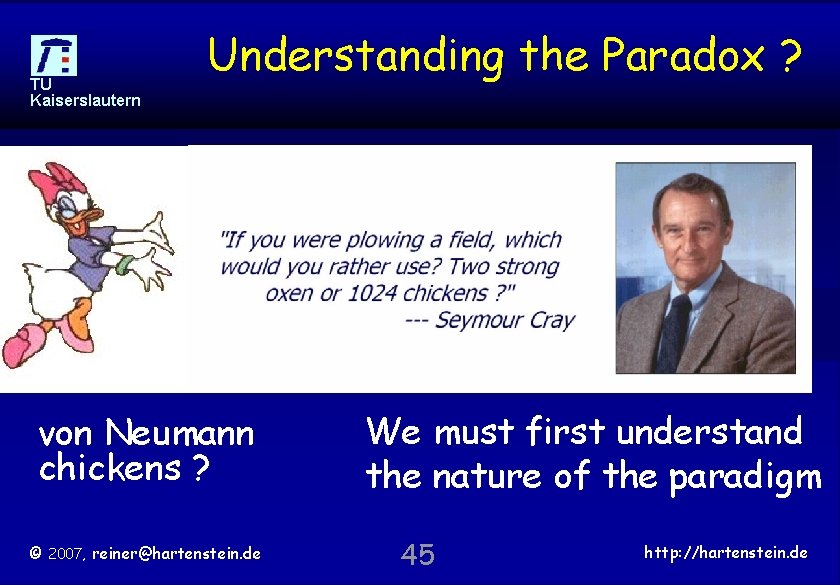

What is the reason for the paradox? The von Neumann Syndrome
• Results from decades of tunnel view in CS R&D and education
• basic mind set completely wrong: "CPU: most flexible platform"?
• >1000 CPUs running in parallel: the most inflexible platform
• The Law of More: drastically declining programmer productivity
• However, FPGA & rDPA are very flexible

Understanding the Paradox?
• Executive Summary doesn't help
• von Neumann chickens?
• We must first understand the nature of the paradigm

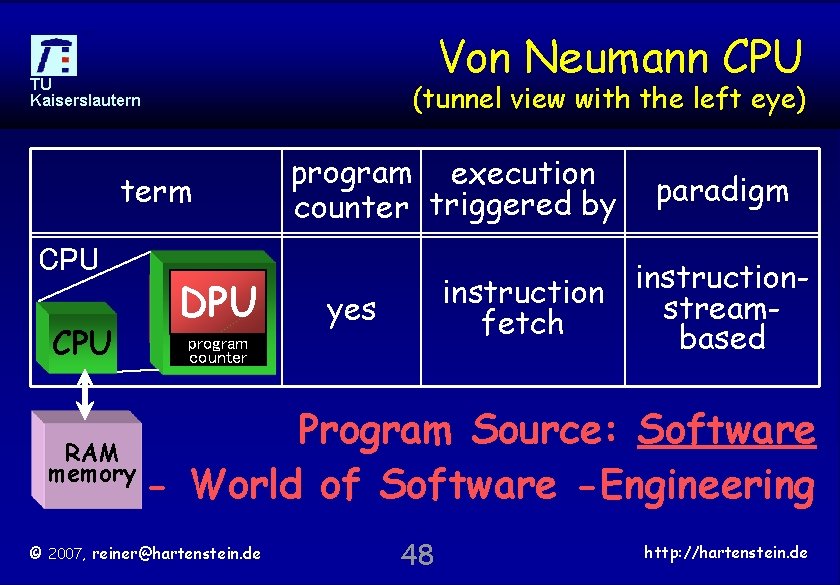

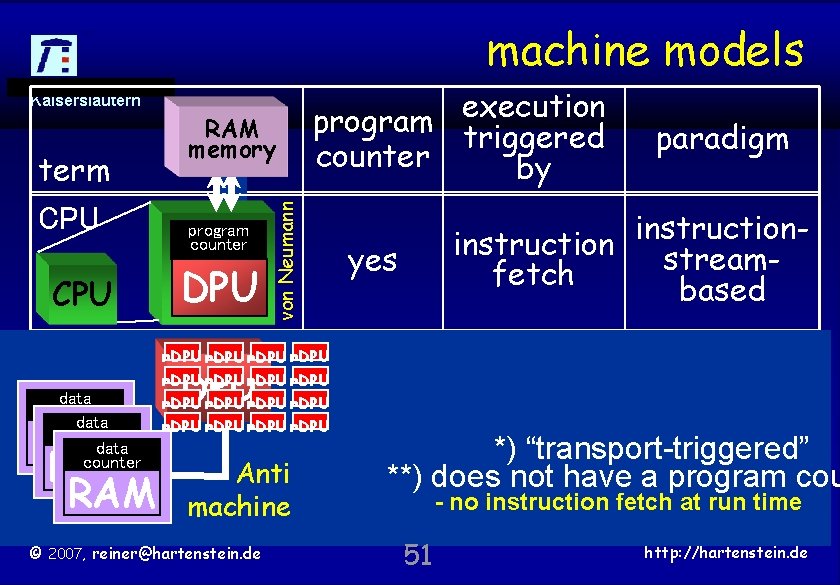

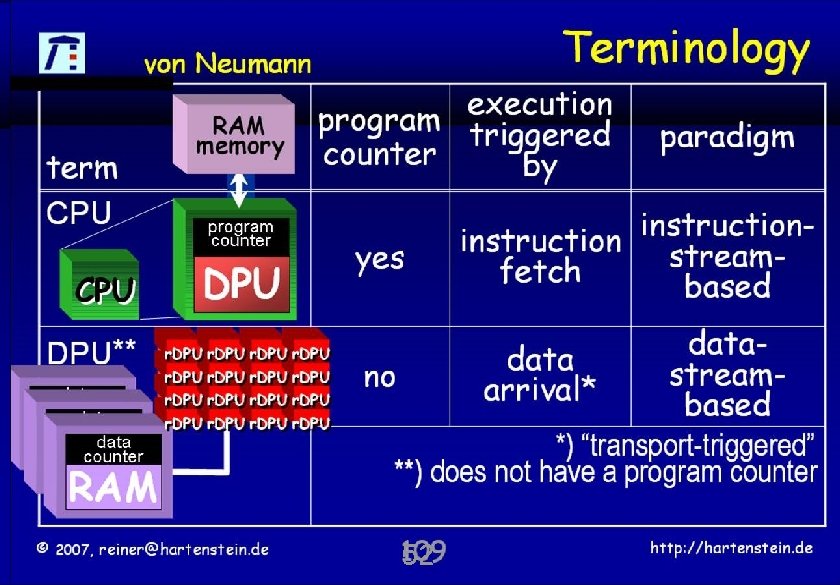

Von Neumann CPU (tunnel view with the left eye)
Diagram: CPU = DPU + program counter, attached to RAM memory

| term | program counter | execution triggered by | paradigm |
|---|---|---|---|
| CPU | yes | instruction fetch | instruction-stream-based |

Program source: Software (World of Software Engineering)

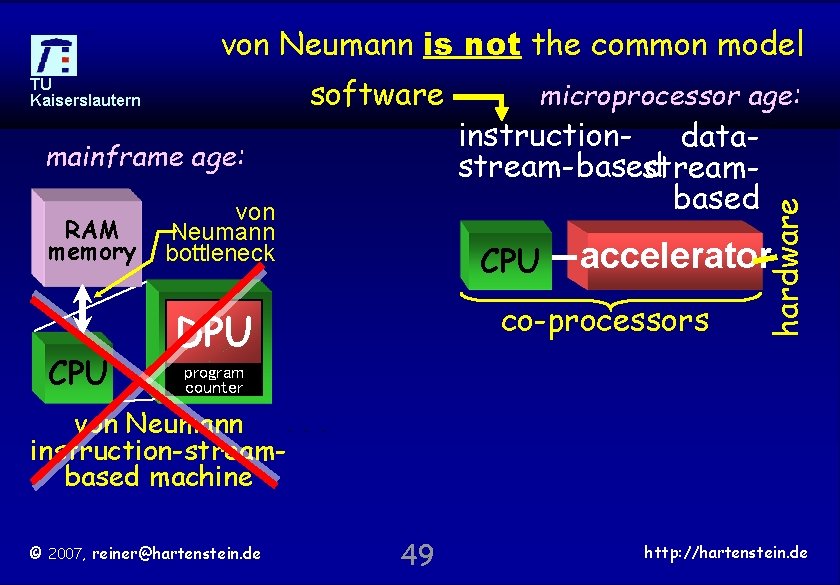

von Neumann is not the common model
• mainframe age: CPU (DPU + program counter) with RAM memory; von Neumann bottleneck; the von Neumann instruction-stream-based machine
• microprocessor age: CPU (software, instruction-stream-based) plus accelerator co-processors (hardware, data-stream-based)

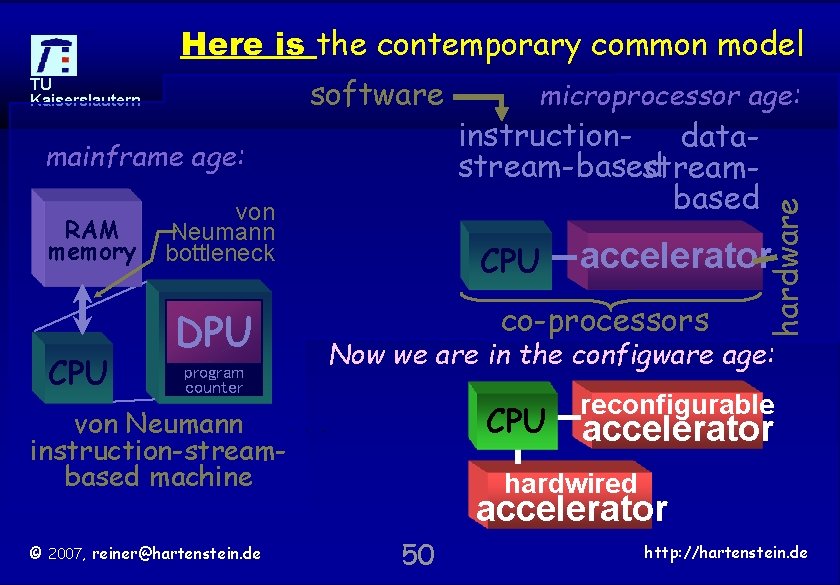

Here is the contemporary common model
• mainframe age: CPU (DPU + program counter) with RAM memory; von Neumann bottleneck; the von Neumann instruction-stream-based machine
• microprocessor age: CPU (software, instruction-stream-based) plus accelerator co-processors (hardware, data-stream-based)
• Now we are in the configware age: CPU plus hardwired accelerator and reconfigurable accelerator

Machine models
• von Neumann machine: CPU = DPU + program counter, with RAM memory
• Anti machine: DPU or rDPU array, with data counters located at the RAMs

| term | program counter | execution triggered by | paradigm |
|---|---|---|---|
| CPU | yes | instruction fetch | instruction-stream-based |
| DPU** | no | data arrival* | data-stream-based |

*) "transport-triggered"
**) does not have a program counter: no instruction fetch at run time
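The contrast between the two machine models can be made concrete with a toy simulation. The sketch below is not taken from the talk: the six-instruction program, the `3 * x + 1` kernel and both function names are invented for illustration. It computes the same result twice, once driven by a program counter that fetches every instruction at run time, and once by a data counter that streams operands through a datapath fixed before run time.

```python
def von_neumann(data):
    """Instruction-stream machine: the program counter fetches every instruction."""
    program = [
        ("load", None),   # acc <- data[i]
        ("mul", 3),       # acc <- acc * 3
        ("add", 1),       # acc <- acc + 1
        ("store", None),  # out[i] <- acc
        ("inc", None),    # i <- i + 1
        ("jlt", 0),       # if i < len(data): jump back to instruction 0
    ]
    out = [0] * len(data)
    pc = i = acc = fetches = 0
    while data and pc < len(program):
        op, arg = program[pc]   # instruction fetch at run time
        fetches += 1
        pc += 1
        if op == "load":
            acc = data[i]
        elif op == "mul":
            acc *= arg
        elif op == "add":
            acc += arg
        elif op == "store":
            out[i] = acc
        elif op == "inc":
            i += 1
        elif op == "jlt" and i < len(data):
            pc = arg
    return out, fetches


def anti_machine(data):
    """Data-stream machine: datapath configured once, driven by a data counter."""
    datapath = [lambda x: x * 3, lambda x: x + 1]  # "configware", set before run time
    out = []
    for address in range(len(data)):   # the data counter generates the addresses
        value = data[address]          # arrival of a data item triggers execution
        for stage in datapath:
            value = stage(value)
        out.append(value)
    return out, 0                      # no instruction fetches at run time


vn_out, vn_fetches = von_neumann([1, 2, 3, 4])
am_out, am_fetches = anti_machine([1, 2, 3, 4])
print(vn_out, vn_fetches)
print(am_out, am_fetches)
```

Both runs produce the same output stream; the difference is the number of run-time instruction fetches, which is the overhead the slide attributes to the instruction-stream-based paradigm.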

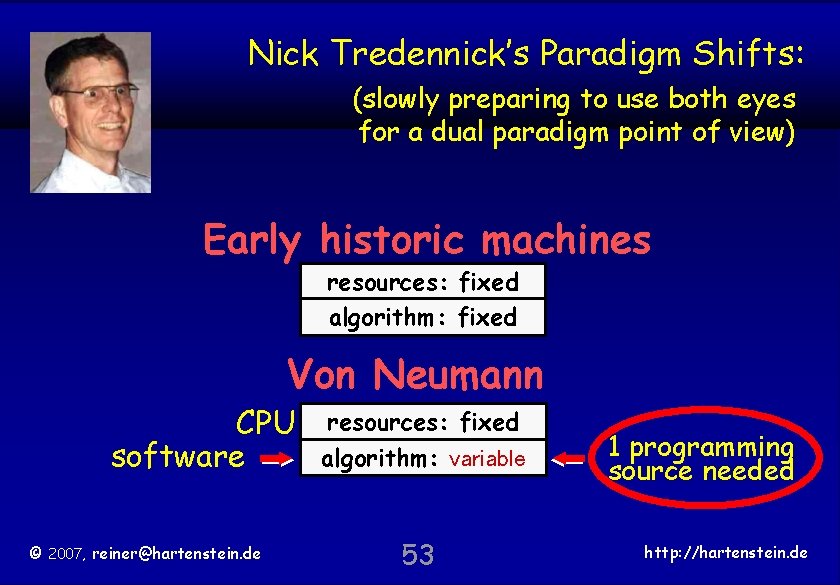

Nick Tredennick's Paradigm Shifts
(slowly preparing to use both eyes for a dual paradigm point of view)
• Early historic machines: resources fixed, algorithm fixed
• Von Neumann CPU: resources fixed, algorithm variable; 1 programming source needed: software

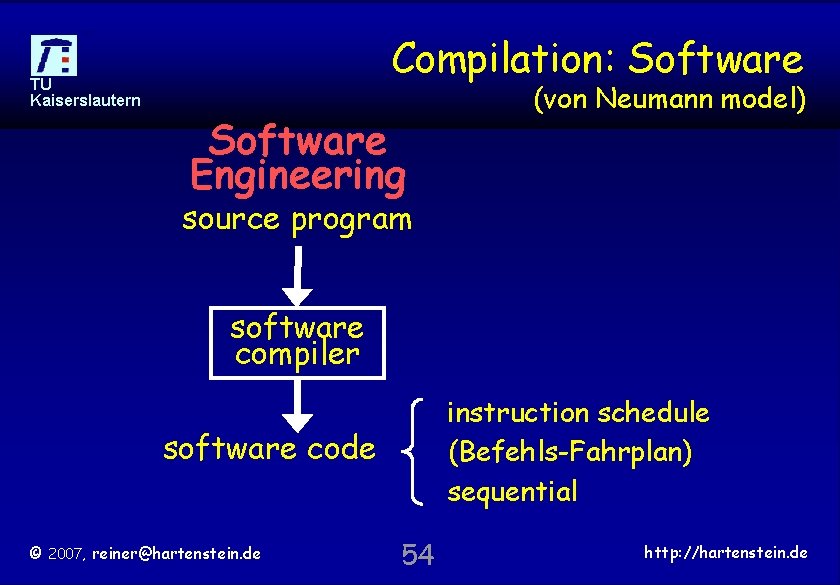

Compilation: Software
Software Engineering (von Neumann model): source program → software compiler → sequential software code (instruction schedule, German: Befehls-Fahrplan)

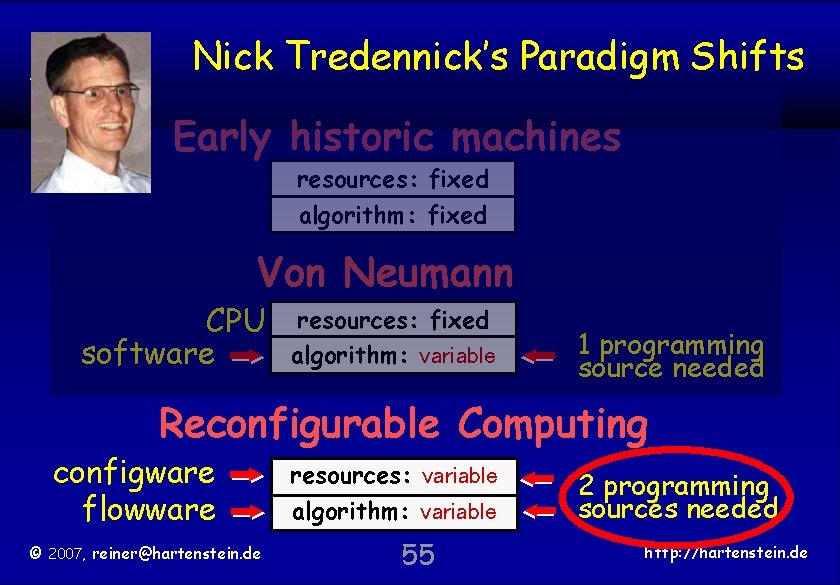

Nick Tredennick's Paradigm Shifts
• Early historic machines: resources fixed, algorithm fixed
• Von Neumann CPU: resources fixed, algorithm variable; 1 programming source needed: software
• Reconfigurable Computing: resources variable, algorithm variable; 2 programming sources needed: configware and flowware

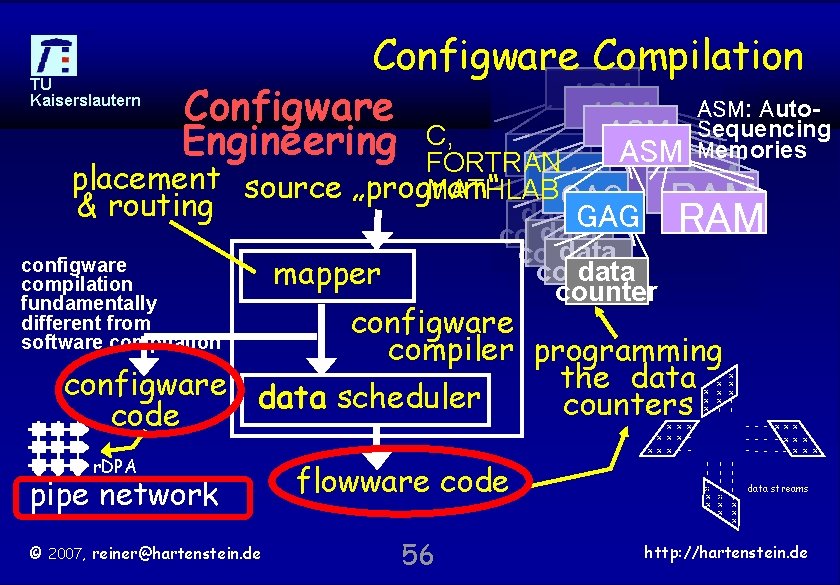

Configware Compilation (Configware Engineering)
Configware compilation is fundamentally different from software compilation.
• source "program": C, FORTRAN, MATLAB
• configware compiler: the mapper (placement & routing) produces configware code for the pipe network on the rDPA
• data scheduler: produces flowware code (programming the data counters) that defines the data streams
• ASM: Auto-Sequencing Memories, each consisting of GAG, RAM and data counter

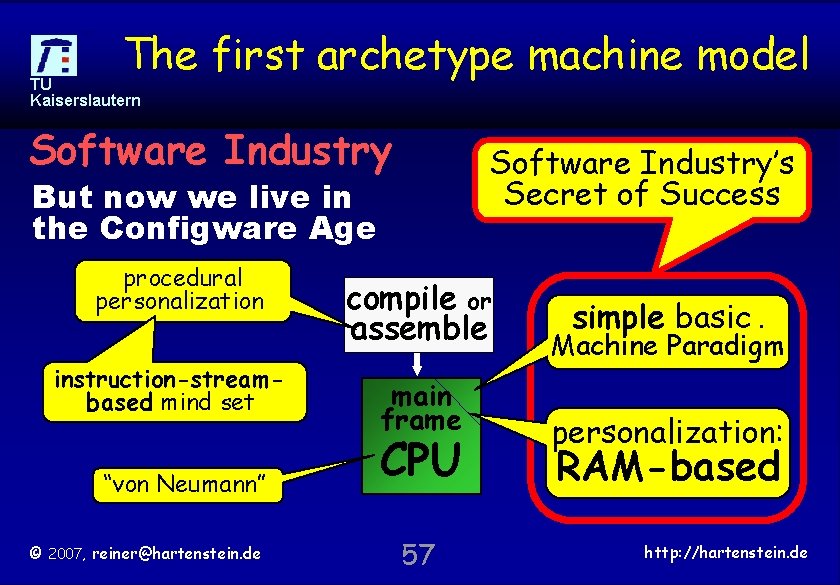

The first archetype machine model
Software Industry's Secret of Success:
• simple basic machine paradigm: "von Neumann"
• instruction-stream-based mind set
• procedural personalization: compile or assemble
• personalization: RAM-based
• mainframe CPU
But now we live in the Configware Age

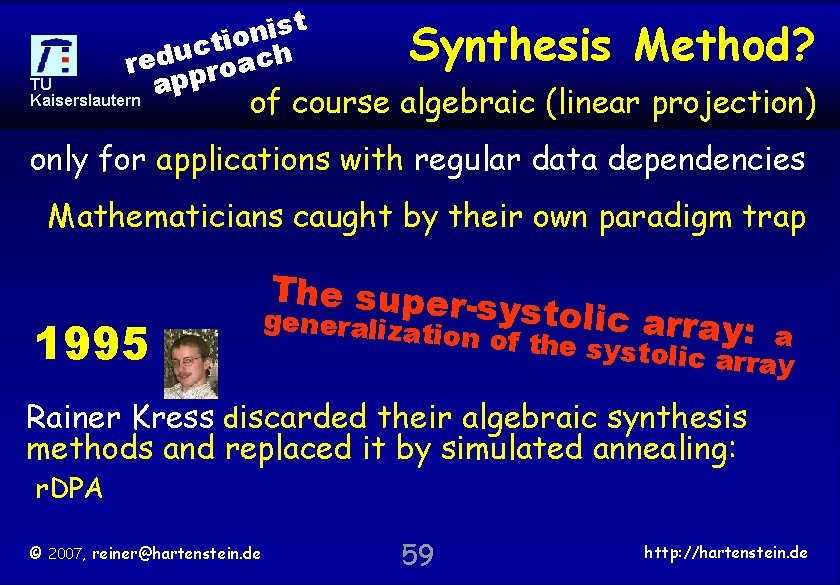

Synthesis Method? (reductionist approach)
• of course algebraic (linear projection)
• only for applications with regular data dependencies
• Mathematicians caught by their own paradigm trap
• 1995: Rainer Kress discarded their algebraic synthesis methods and replaced them by simulated annealing: rDPA
• The supersystolic array: a generalization of the systolic array

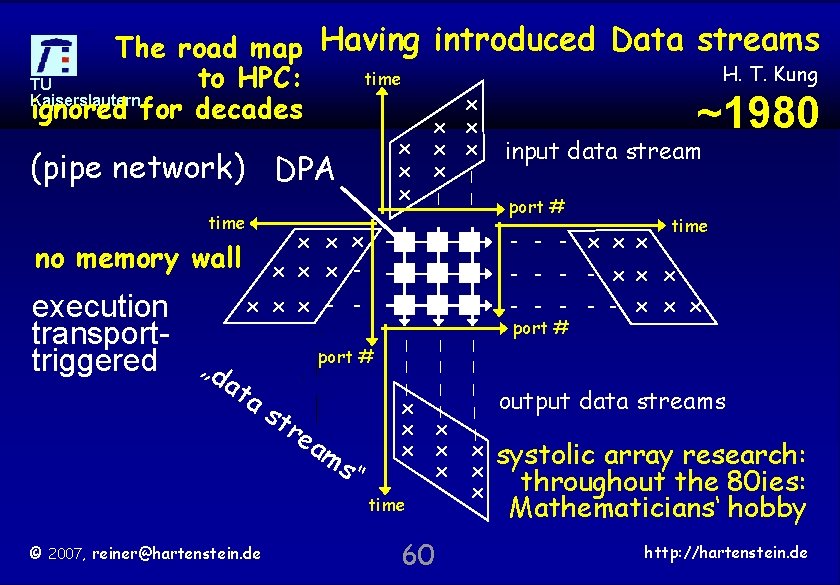

The road map to HPC: ignored for decades
• Data streams: introduced by H. T. Kung, ~1980
• [Figure: a DPA (pipe network) with skewed input data streams and output data streams, drawn over port # and time]
• execution transport-triggered
• no memory wall
• systolic array research throughout the 80ies: Mathematicians' hobby
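To make the picture of skewed data streams entering a pipe network more tangible, here is a minimal cycle-by-cycle simulation of a linear systolic array computing a matrix-vector product. It is a textbook-style sketch, not a design from the talk: the choice of one accumulating processing element (PE) per matrix row and the function name `systolic_matvec` are assumptions of this example.

```python
def systolic_matvec(A, x):
    """Simulate a linear systolic array computing y = A x.

    PE i accumulates y[i]. The x stream moves one PE to the right per time
    step, and matrix element A[i][j] arrives at PE i at time step i + j,
    so the matrix rows enter as skewed (staggered) data streams.
    """
    n_rows, n_cols = len(A), len(x)
    acc = [0] * n_rows        # one accumulator per PE
    pipe = [None] * n_rows    # x value currently held by each PE
    for t in range(n_rows + n_cols - 1):
        # Shift the x stream by one PE and feed the next element into PE 0.
        pipe = [x[t] if t < n_cols else None] + pipe[:-1]
        for i in range(n_rows):
            j = t - i         # column whose element reaches PE i right now
            if pipe[i] is not None and 0 <= j < n_cols:
                acc[i] += A[i][j] * pipe[i]
    return acc


y = systolic_matvec([[1, 2], [3, 4], [5, 6]], [10, 1])
print(y)
```

No PE ever addresses a shared memory or fetches an instruction; each one only reacts to the operands arriving at its ports, which is the "transport-triggered" execution named on the slide.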

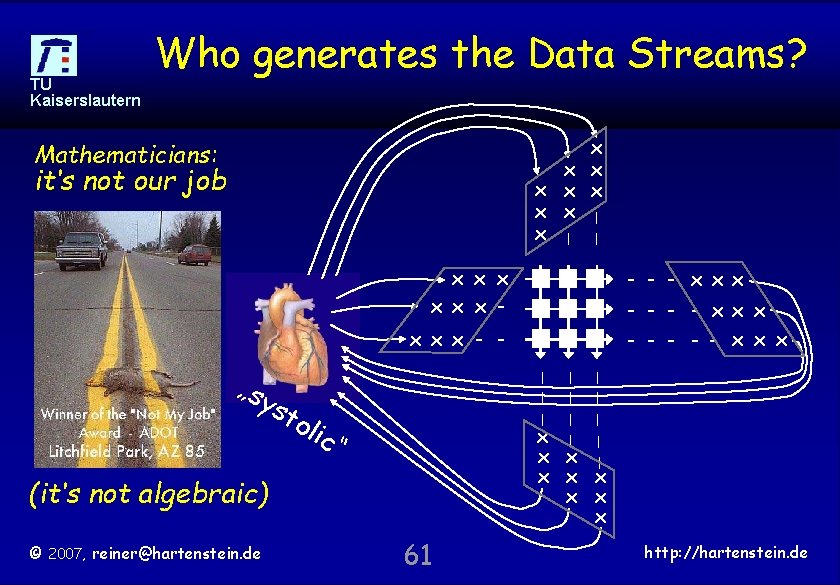

Who generates the Data Streams?
[Figure: "systolic" array with skewed data streams]
Mathematicians: it's not our job (it's not algebraic)

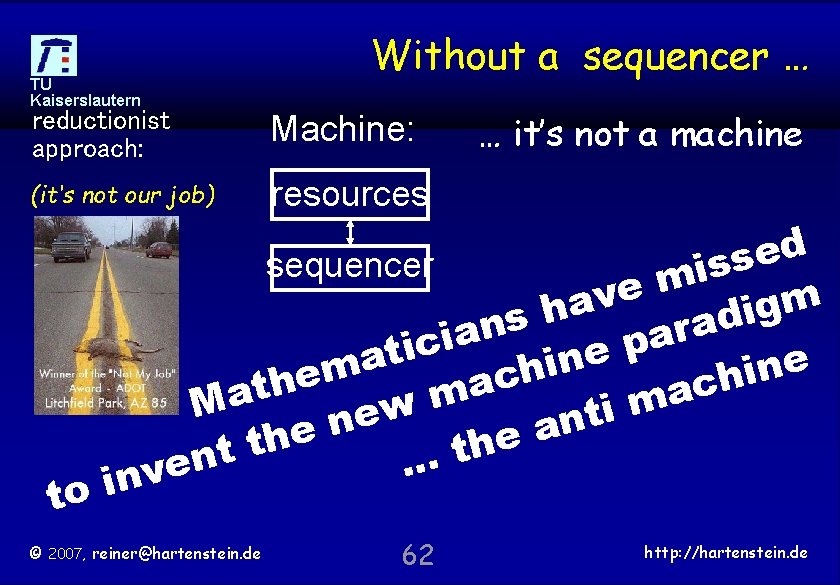

Without a sequencer … it's not a machine
• reductionist approach: resources only (the sequencer: "it's not our job")
• Machine: resources + sequencer
[rotated annotation about mathematicians and the machine paradigm, garbled in extraction]

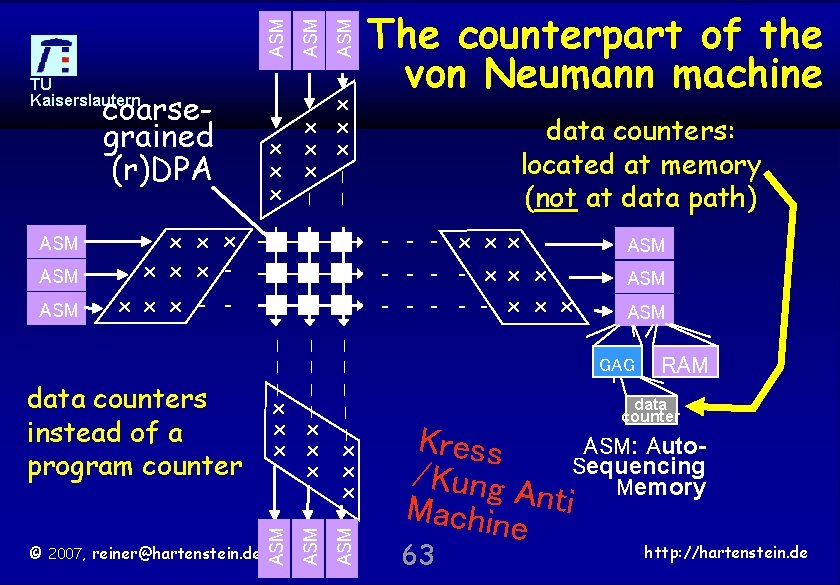

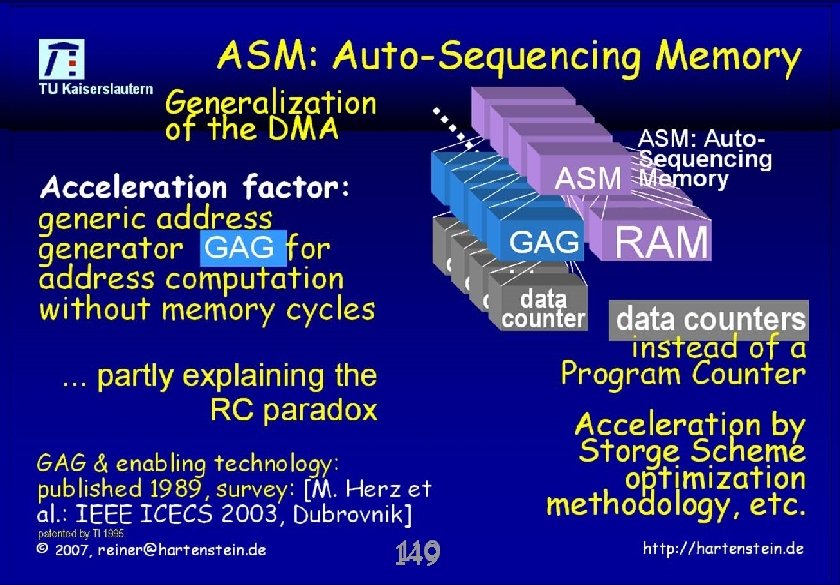

The counterpart of the von Neumann machine: the Kress/Kung Anti Machine
• data counters instead of a program counter
• data counters: located at memory (not at data path)
• coarse-grained (r)DPA, surrounded by ASMs that feed and collect its data streams
• ASM: Auto-Sequencing Memory = GAG + RAM + data counter

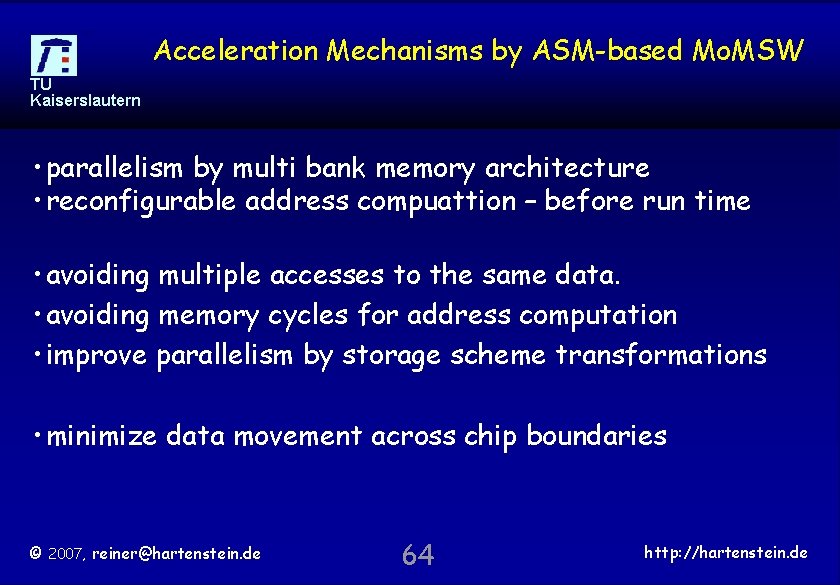

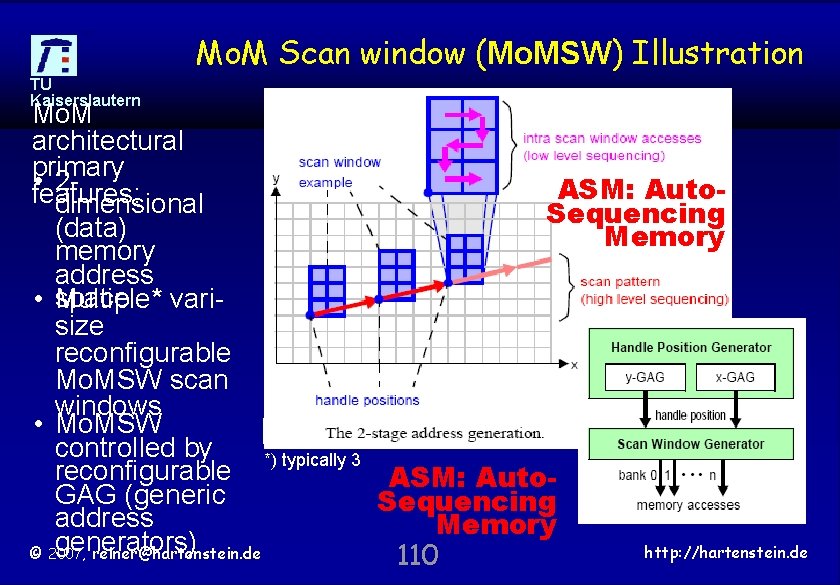

Acceleration Mechanisms by ASM-based MoMSW
• parallelism by multi-bank memory architecture
• reconfigurable address computation, before run time
• avoiding multiple accesses to the same data
• avoiding memory cycles for address computation
• improve parallelism by storage scheme transformations
• minimize data movement across chip boundaries

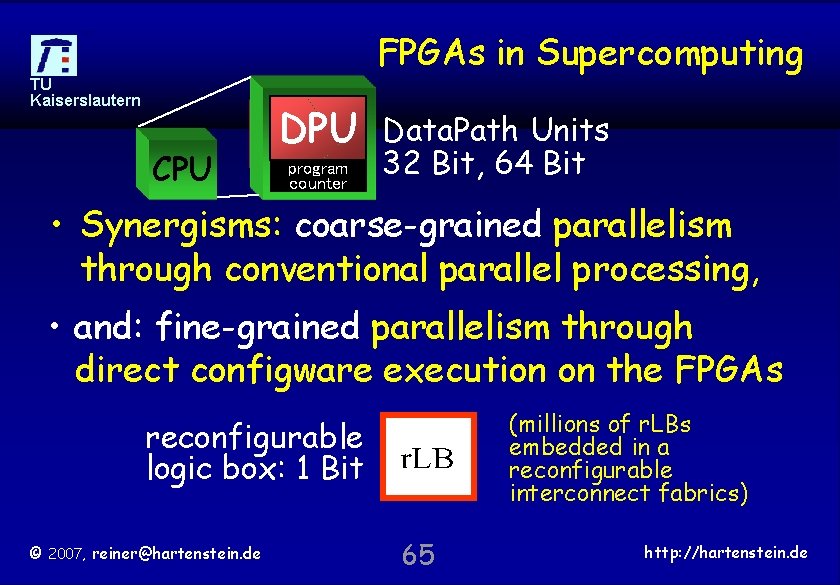

FPGAs in Supercomputing
• Synergisms: coarse-grained parallelism through conventional parallel processing,
• and: fine-grained parallelism through direct configware execution on the FPGAs (millions of rLBs embedded in a reconfigurable interconnect fabric)
Diagram labels: CPU = DPU + program counter; DataPath Units: 32 bit, 64 bit; reconfigurable logic box (rLB): 1 bit

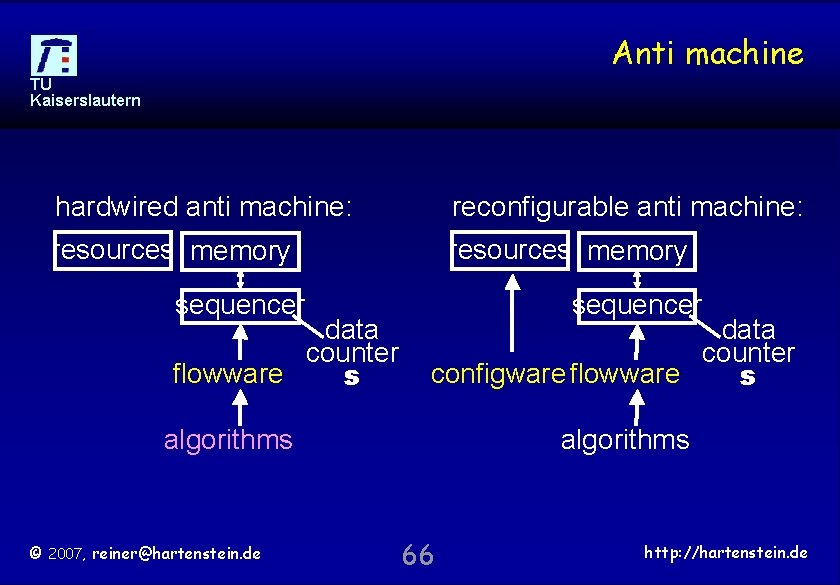

Anti machine
• hardwired anti machine: resources; memory; sequencer: data counter, programmed by flowware (algorithms)
• reconfigurable anti machine: resources, programmed by configware; sequencer: data counter, programmed by flowware (algorithms)

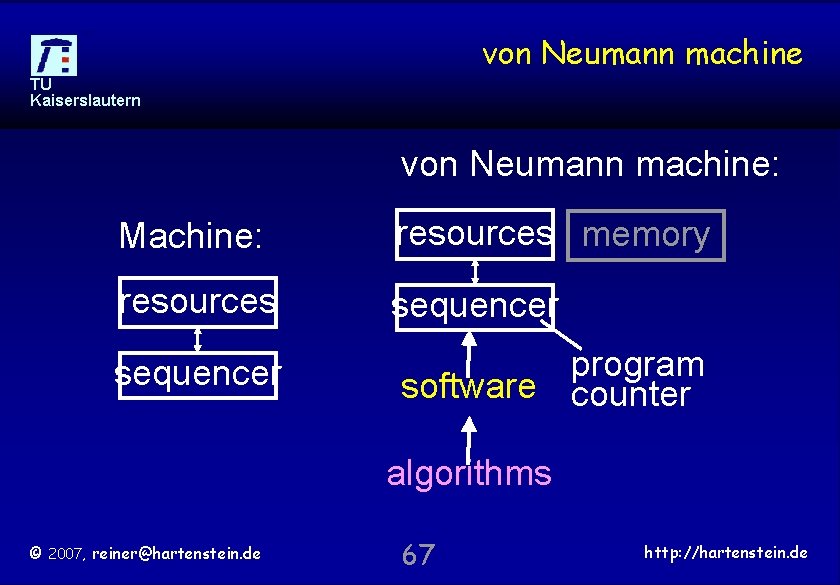

von Neumann machine
• Machine: resources + sequencer
• von Neumann machine: resources; memory; sequencer: program counter, programmed by software (algorithms)

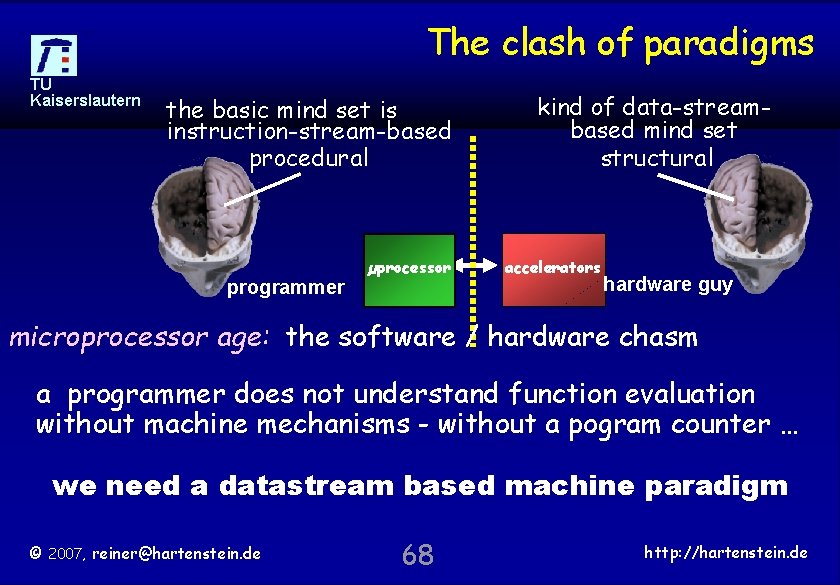

The clash of paradigms
• programmer (µprocessor): the basic mind set is instruction-stream-based; procedural
• hardware guy (accelerators): a kind of data-stream-based mind set; structural
• microprocessor age: the software / hardware chasm
• a programmer does not understand function evaluation without machine mechanisms, without a program counter …
• we need a data-stream-based machine paradigm

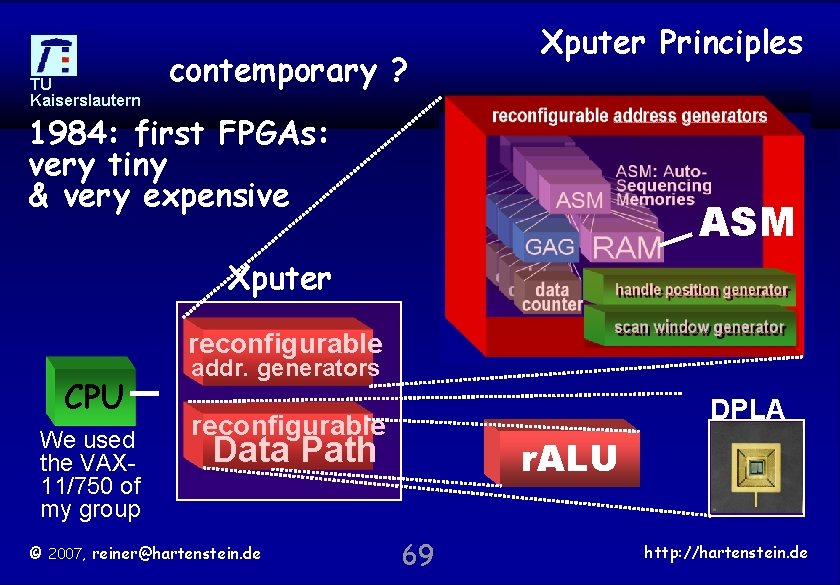

Xputer Principles: contemporary?
• 1984: first FPGAs: very tiny & very expensive
• We used the VAX 11/750 of my group
Diagram labels: Xputer; ASM with addr. generators; reconfigurable Data Path: rALU (DPLA); reconfigurable CPU

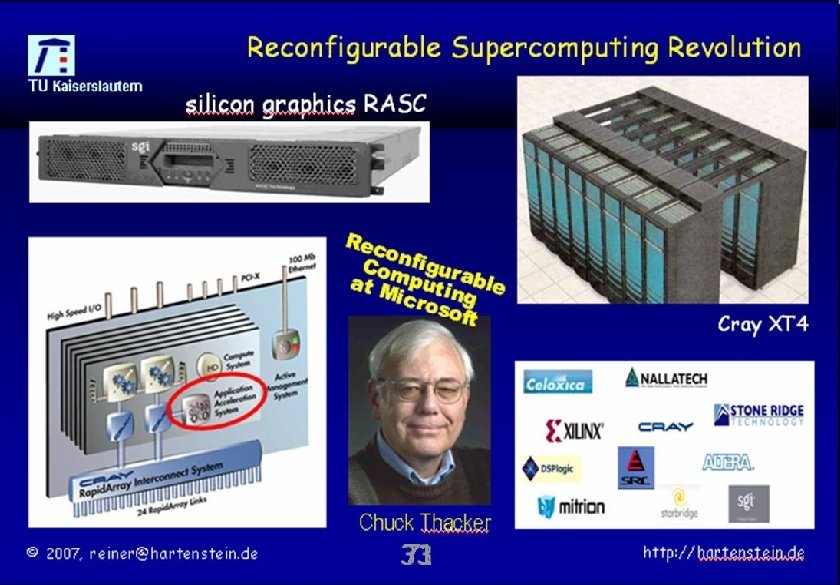

Outline
• The von Neumann Paradigm
• Accelerators and FPGAs
• The Reconfigurable Computing Paradox
• The new Paradigm
• Coarse-grained
• Bridging the Paradigm Chasm
• Conclusions

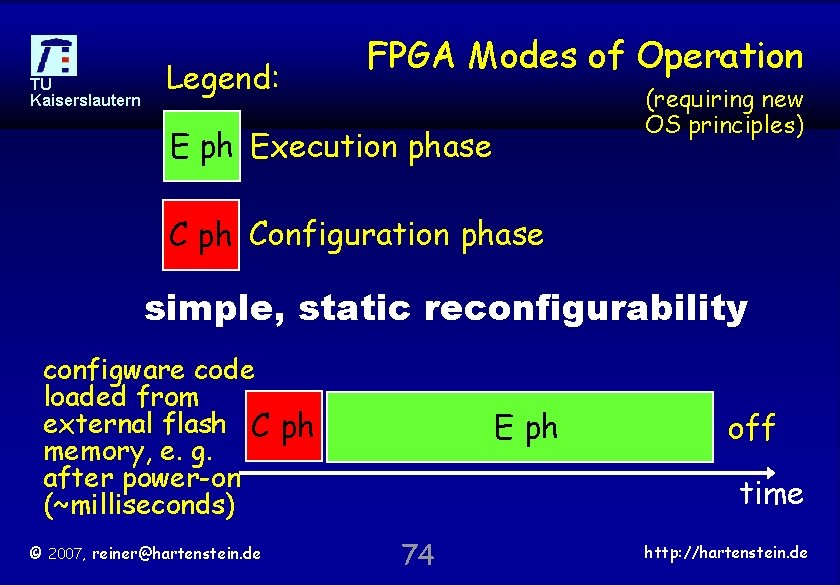

FPGA Modes of Operation (requiring new OS principles)
Legend: E ph = Execution phase; C ph = Configuration phase
• simple, static reconfigurability: configware code loaded from external flash memory, e.g. after power-on (~milliseconds)
• timeline: off, then C ph, then E ph

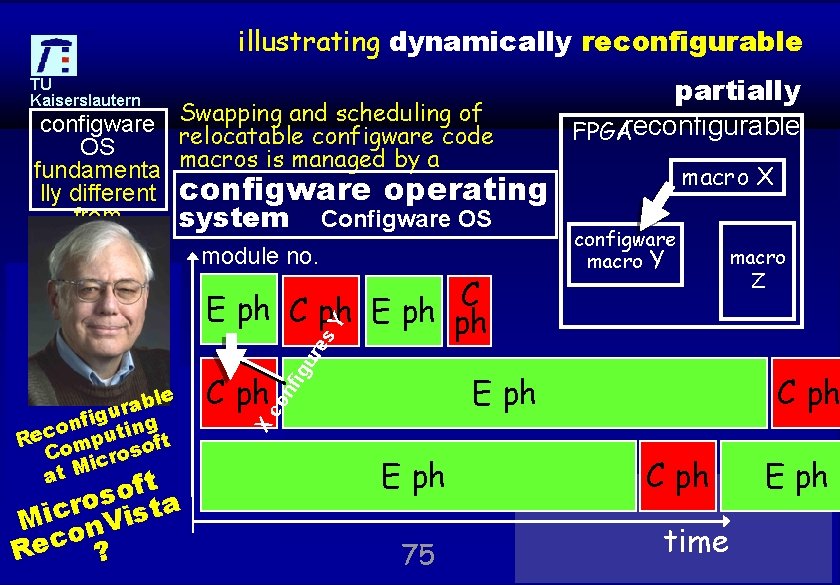

Illustrating dynamically reconfigurable operation: Configware OS
• Swapping and scheduling of relocatable configware code macros is managed by a configware operating system
• Configware OS: fundamentally different from software OS; established R&D area
• partially reconfigurable FPGA: modules X, Y, Z holding configware macros X, Y, Z
• timeline per module: alternating C ph and E ph
[rotated annotations mentioning Reconfigurable Computing and Microsoft are garbled in extraction]

Reconfigurable HPC
• This area is almost 10 years old

Have to re-think basic assumptions
• Instead of physical limits, fundamental misconceptions of algorithmic complexity theory limit the progress and will necessitate new breakthroughs.
• It is not processing that is costly, but moving data and messages.
• We have to re-think the basic assumptions behind computing.

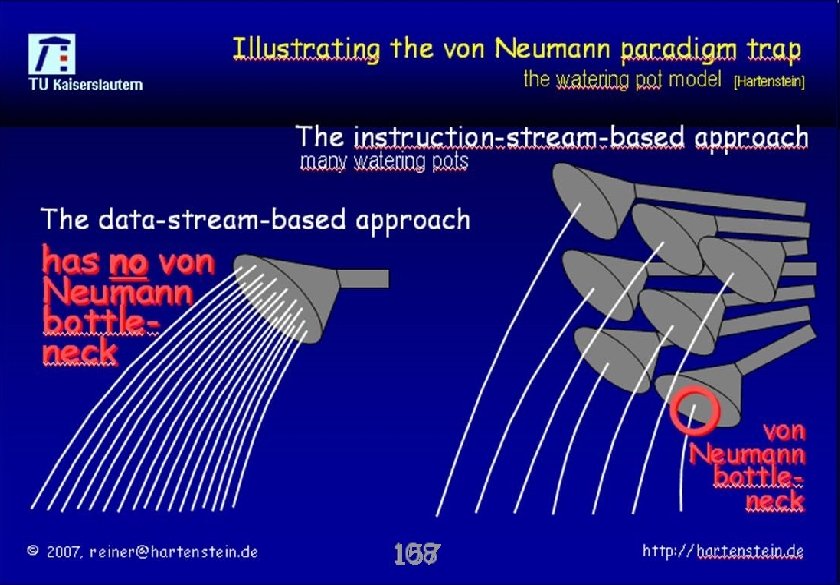

![Illustrating the von Neumann paradigm trap the watering pot model TU Kaiserslautern [Hartenstein] The Illustrating the von Neumann paradigm trap the watering pot model TU Kaiserslautern [Hartenstein] The](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-80.jpg)

Illustrating the von Neumann paradigm trap: the watering pot model [Hartenstein]
• The instruction-stream-based approach: many watering pots
• The data-stream-based approach has no von Neumann bottleneck

Outline
• The (non-v-N) anti-machine (Xputer)
• Speed-up by address generators
• Data-procedural Programming Language
• Generalization of the Systolic Array
• Partitioning Compilation Techniques
• Design Space Exploration
• Bridging the Paradigm Chasm

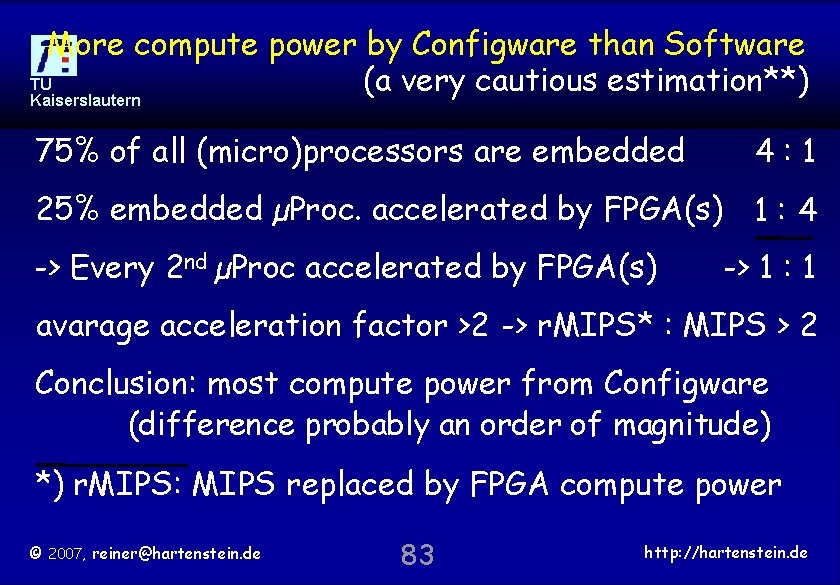

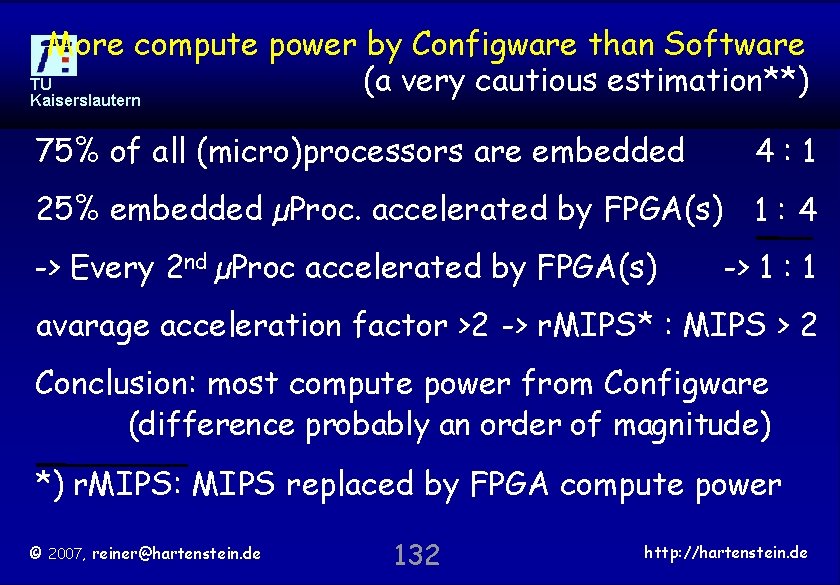

More compute power by Configware than Software (a very cautious estimation**)
• 75% of all (micro)processors are embedded -> 4 : 1
• 25% of embedded µProc. accelerated by FPGA(s) -> 1 : 4
• -> every 2nd µProc accelerated by FPGA(s) -> 1 : 1
• average acceleration factor >2 -> rMIPS* : MIPS > 2
• Conclusion: most compute power comes from Configware (difference probably an order of magnitude)
*) rMIPS: MIPS replaced by FPGA compute power

Xputer Lab (around 1990)

![Programming Language Paradigms Principle TU Kaiserslautern s of Mo PL [1994 ] ve ry Programming Language Paradigms Principle TU Kaiserslautern s of Mo PL [1994 ] ve ry](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-86.jpg)

Programming Language Paradigms
• Principles of MoPL [1994]
• very easy to learn
• multiple GAGs

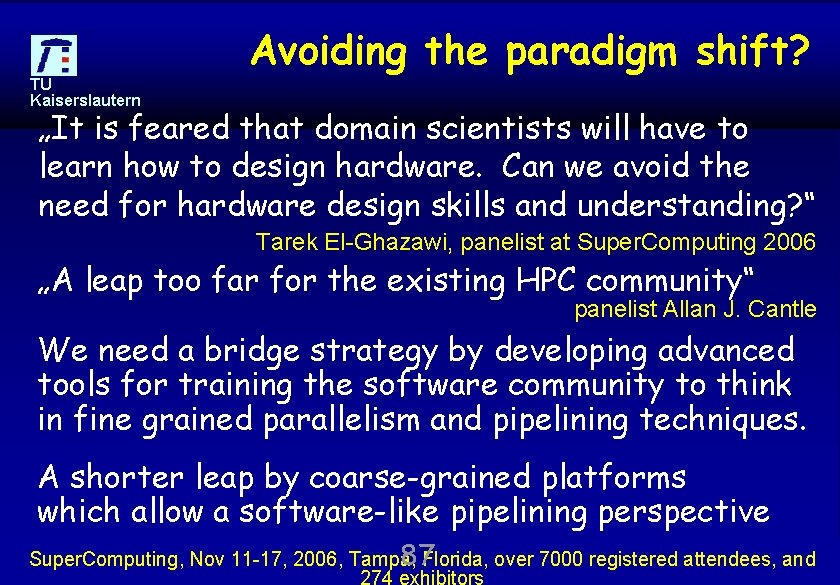

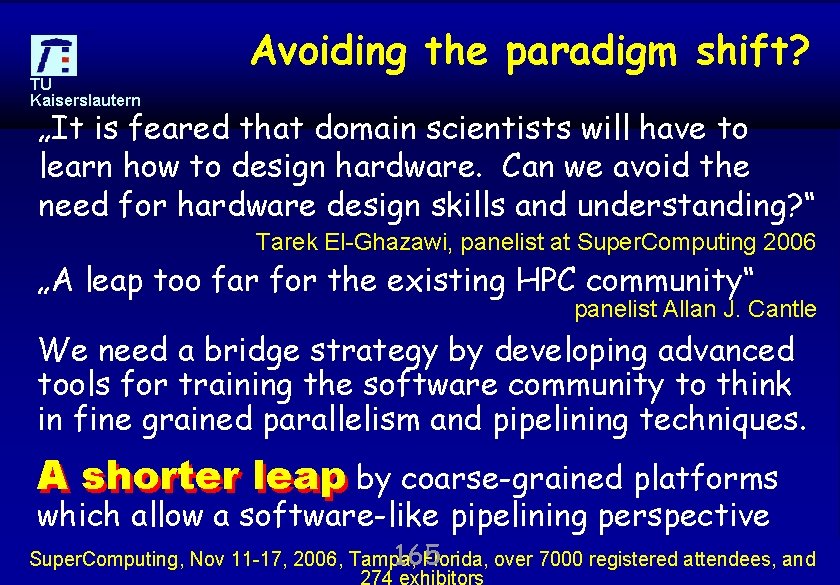

Avoiding the paradigm shift?
• "It is feared that domain scientists will have to learn how to design hardware. Can we avoid the need for hardware design skills and understanding?" (Tarek El-Ghazawi, panelist at SuperComputing 2006)
• "A leap too far for the existing HPC community" (panelist Allan J. Cantle)
• We need a bridge strategy: developing advanced tools for training the software community to think in fine-grained parallelism and pipelining techniques.
• A shorter leap by coarse-grained platforms, which allow a software-like pipelining perspective
SuperComputing 2006, Nov 11-17, Tampa, Florida: over 7000 registered attendees and 274 exhibitors

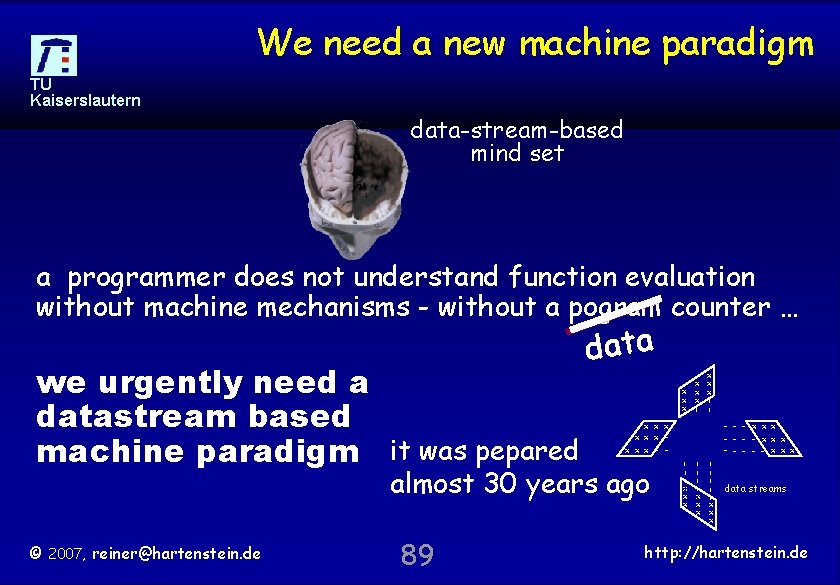

We need a new machine paradigm
• data-stream-based mind set
• a programmer does not understand function evaluation without machine mechanisms, without a program counter …
• we urgently need a data-stream-based machine paradigm
• it was prepared almost 30 years ago: data streams

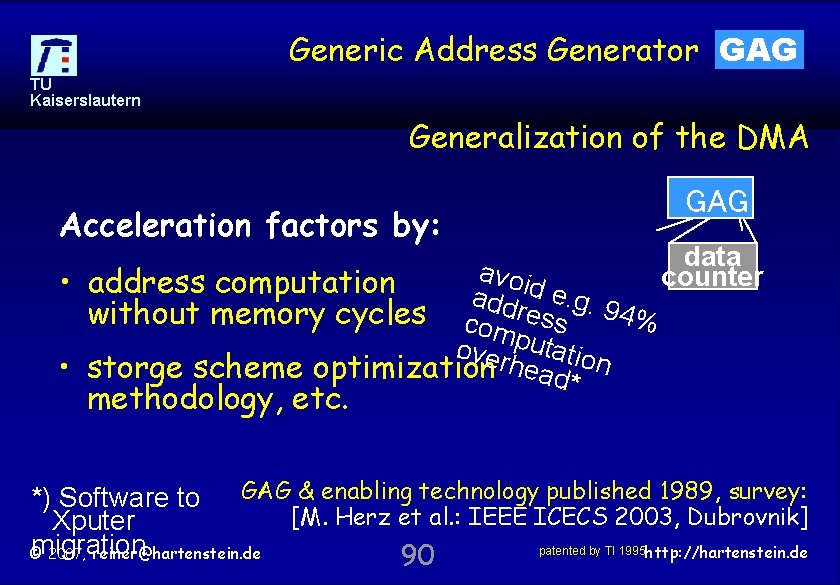

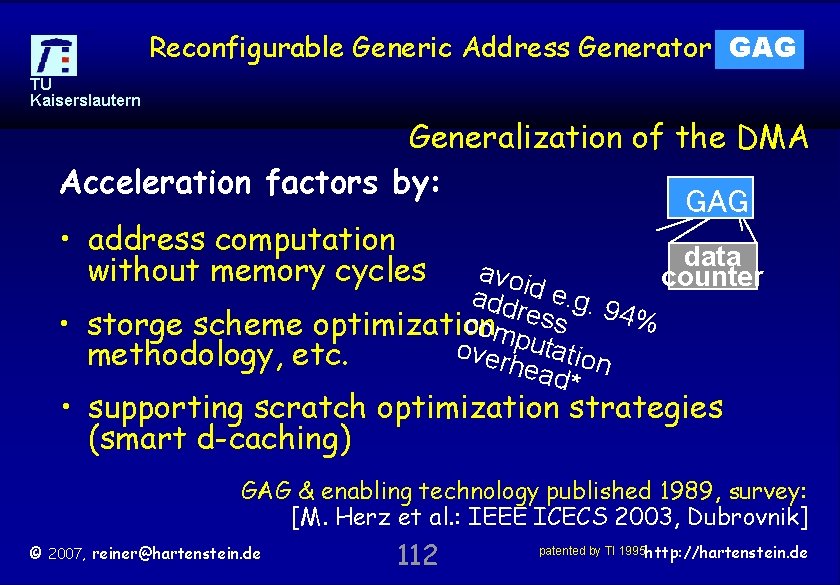

Generic Address Generator GAG
• Generalization of the DMA
• GAG: data counter
Acceleration factors by:
• address computation without memory cycles: avoids e.g. 94% address computation overhead*
• storage scheme optimization methodology, etc.
*) Software to Xputer migration
GAG & enabling technology published 1989, survey: [M. Herz et al.: IEEE ICECS 2003, Dubrovnik]; patented by TI 1995

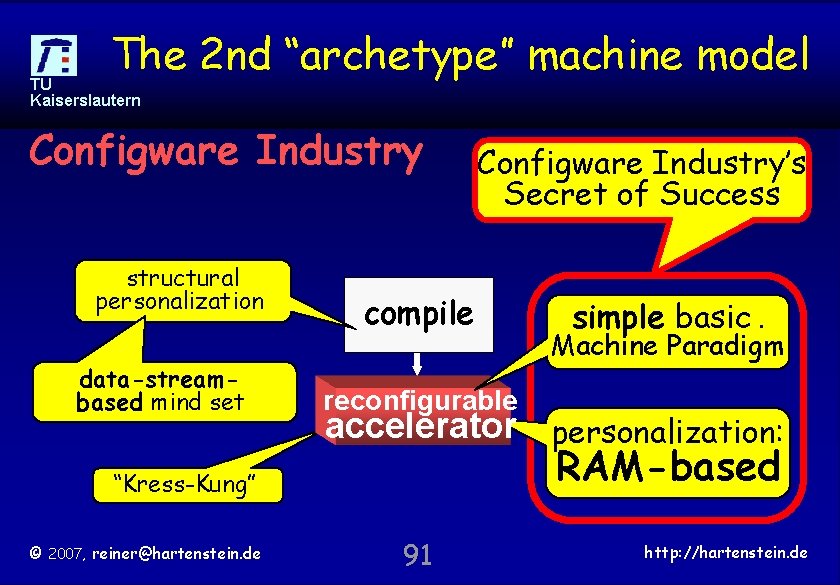

The 2nd "archetype" machine model
• data-stream-based mind set
• simple basic Machine Paradigm: "Kress-Kung"
• reconfigurable accelerator; personalization: RAM-based
• structural personalization: compile
• Configware Industry: Configware Industry's Secret of Success


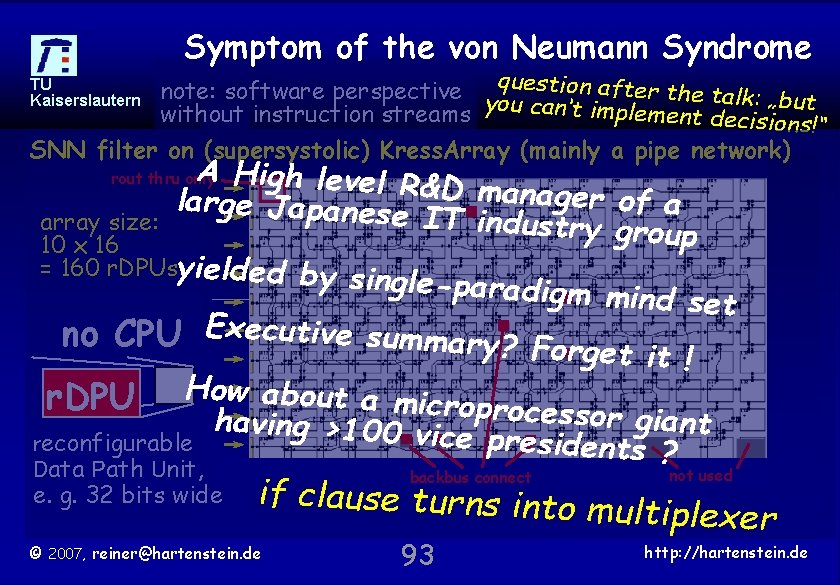

Symptom of the von Neumann Syndrome
• question after the talk: "but without instruction streams you can't implement decisions!" (a high-level R&D manager of a large Japanese IT industry group)
• note: software perspective, yielded by single-paradigm mind set
• the if clause turns into a multiplexer
• Executive summary? Forget it!
• How about a microprocessor giant having >100 vice presidents?
[Figure: SNN filter on (supersystolic) KressArray (mainly a pipe network); array size: 10 x 16 = 160 rDPUs; no CPU; rDPU: reconfigurable Data Path Unit, e.g. 32 bits wide; legend: rout thru only, not used, backbus connect]
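
The remark that an if clause turns into a multiplexer can be made concrete with a small sketch. The Python below is only an illustration under stated assumptions: the names `mux`, `branchy` and `piped`, and the example arithmetic, are hypothetical and not taken from the talk. In the data-stream view both arms are computed unconditionally, as two datapath units would do, and the condition only selects one of the results.

```python
def mux(select: bool, when_true: int, when_false: int) -> int:
    """Two-input multiplexer: both inputs already exist, select picks one."""
    return when_true if select else when_false


def branchy(x: int, limit: int) -> int:
    # Instruction-stream view: the branch decides which instructions execute.
    if x > limit:
        return x - limit
    return x + 1


def piped(x: int, limit: int) -> int:
    # Data-stream view: both arms are evaluated by separate datapath units ...
    arm_true = x - limit
    arm_false = x + 1
    # ... and the comparison result only steers a multiplexer.
    return mux(x > limit, arm_true, arm_false)
```

Both functions return the same value for every input; the second form simply has no control flow left that would need an instruction stream.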

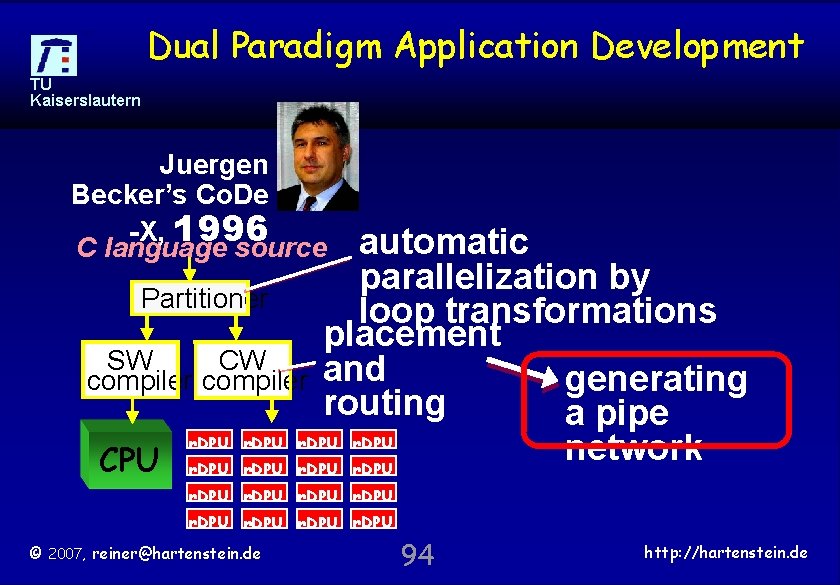

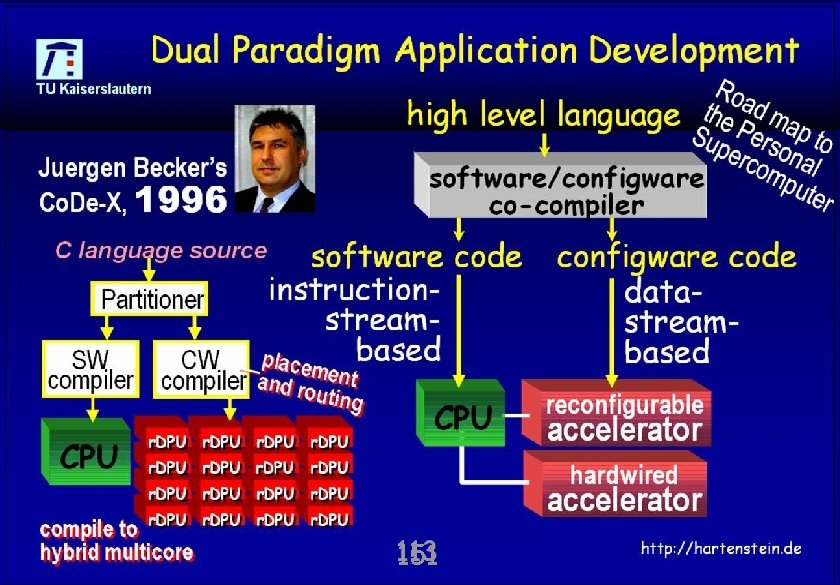

Dual Paradigm Application Development
Juergen Becker's CoDe-X, 1996:
• C language source
• Partitioner: automatic parallelization by loop transformations
• SW compiler for the CPU
• CW compiler: placement and routing, generating a pipe network of rDPUs

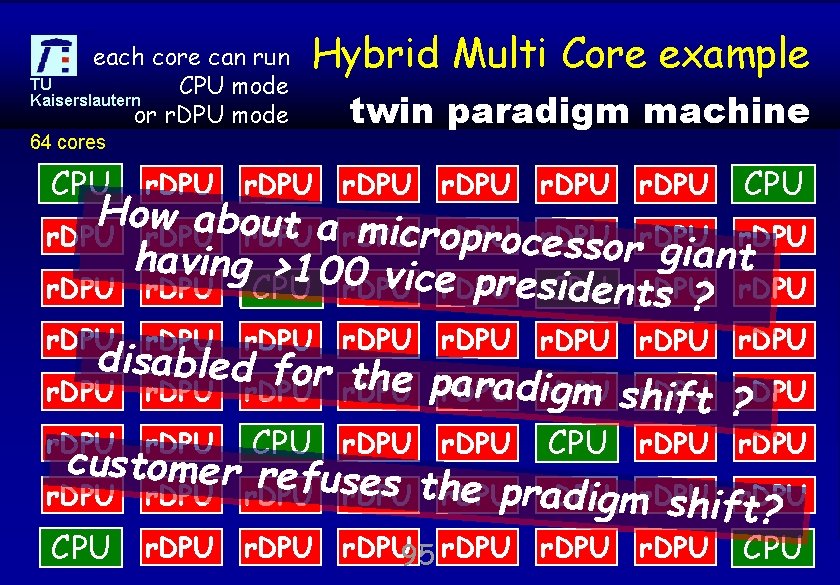

Hybrid Multi Core example
• 64 cores
• twin paradigm machine: each core can run CPU mode or rDPU mode
• How about a microprocessor giant having >100 vice presidents?
• disabled for the paradigm shift?
• customer refuses the paradigm shift?

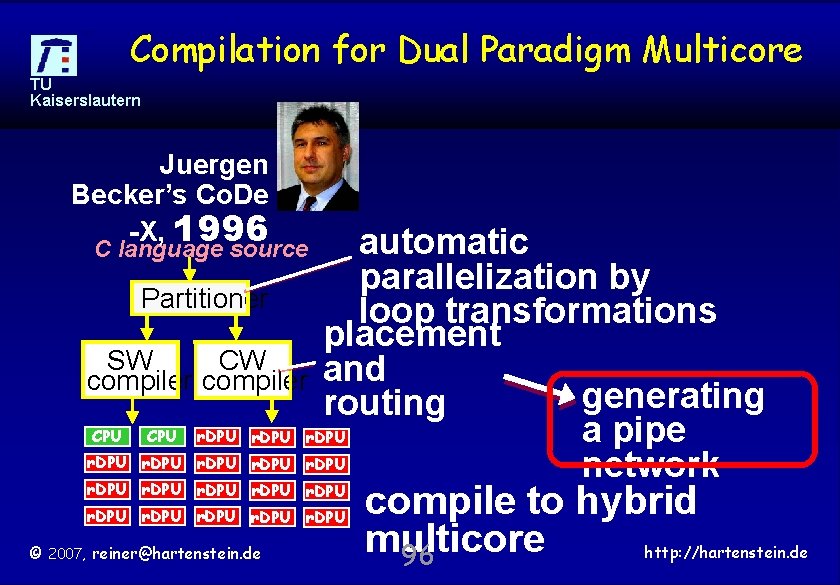

Compilation for Dual Paradigm Multicore
Juergen Becker's CoDe-X, 1996:
• C language source
• Partitioner: automatic parallelization by loop transformations
• SW compiler for the CPU
• CW compiler: placement and routing, generating a pipe network of rDPUs
• compile to hybrid multicore


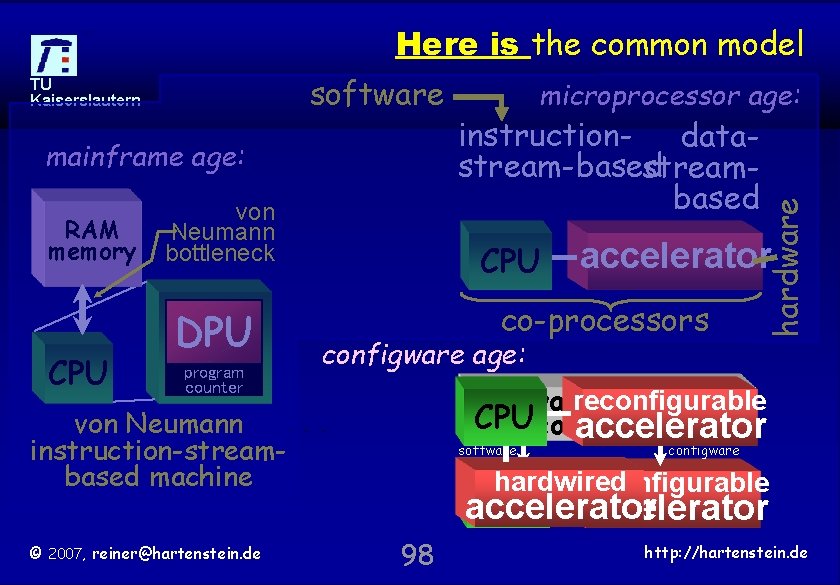

Here is the common model
• mainframe age: von Neumann instruction-stream-based machine (CPU with program counter, DPU, RAM memory, von Neumann bottleneck)
• microprocessor age: CPU (software, instruction-stream-based) plus hardwired accelerator co-processors (hardware, data-stream-based)
• configware age: CPU (software) plus reconfigurable accelerator (configware), with a software/configware co-compiler


Multi Core: Just more CPUs?
• Complexity and clock frequency of single-core microprocessors come to an end
• Multi-core microprocessor chips are emerging: soon 32 cores on an AMD chip, and 80 on an Intel chip
• Without a paradigm shift, just more CPUs on a chip lead to the dead ends known from supercomputing
• Multi-threading is not the silver bullet
• We have to rethink basic assumptions behind computing

Solution not expected from CS officers
• Progress of the joint task force on CS curriculum recommendations is extremely disillusioning
• It's more like a lobby: "my area is the most important"
• We need mutual efforts, like the EE/CS cooperation known from the Mead & Conway revolution
• The personal supercomputer: a far-ranging massive push of innovation in all areas of science and economy, by Reconfigurable Computing
• For RC, other motivations are similarly high-grade: growing cost and looming shortage of energy

Computing Sciences are in a severe Crisis
• We urgently need to shape the Reconfigurable Computing Revolution to move toward incredibly promising new horizons of affordable highest-performance computing
• This cannot be achieved with the classical software-based mind set
• We need a new dual paradigm approach
• Supercomputing titans may be your enemies
• Watch out not to get screwed!

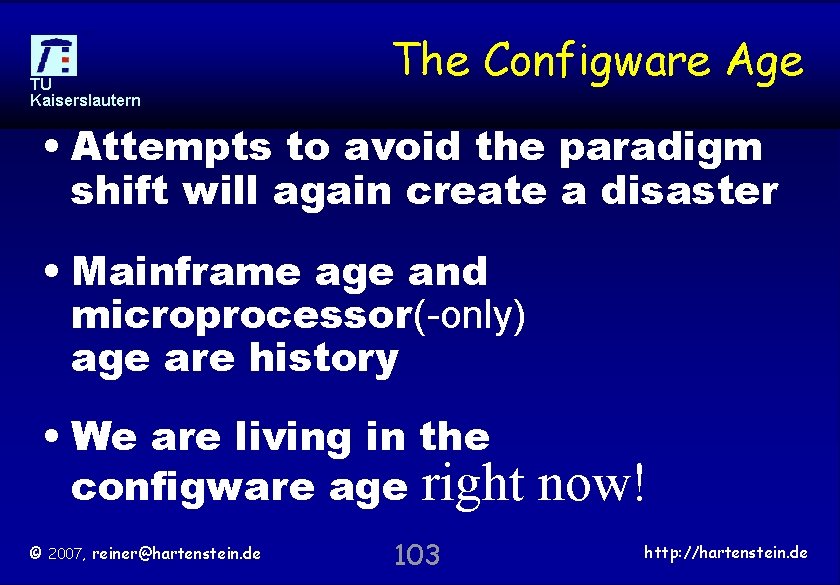

The Configware Age
• Attempts to avoid the paradigm shift will again create a disaster
• Mainframe age and microprocessor(-only) age are history
• We are living in the configware age right now!

TU Kaiserslautern thank you for your patience © 2007, reiner@hartenstein. de 104 http: //hartenstein. de

TU Kaiserslautern overhead © 2007, reiner@hartenstein. de 105 http: //hartenstein. de


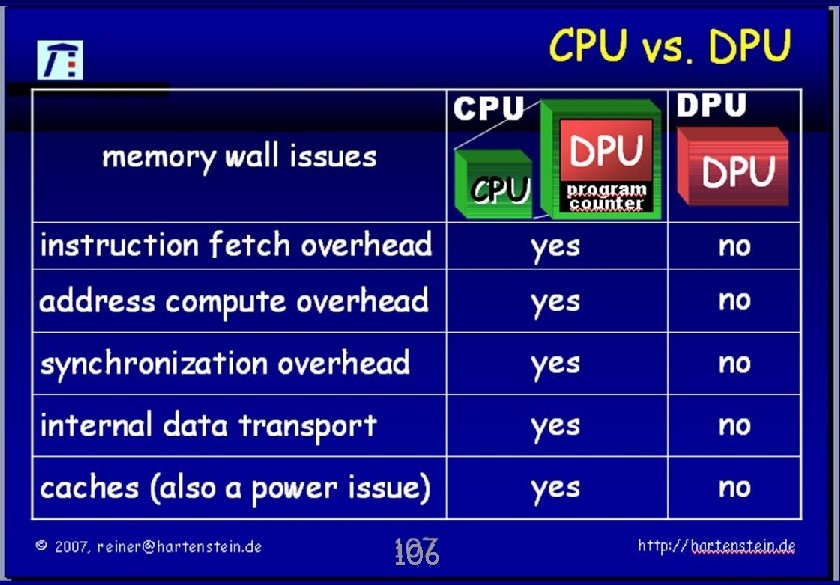

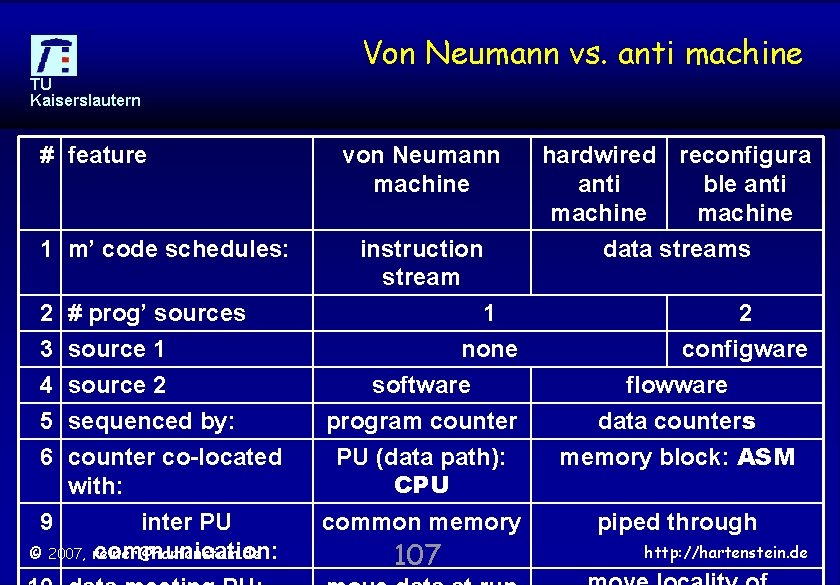

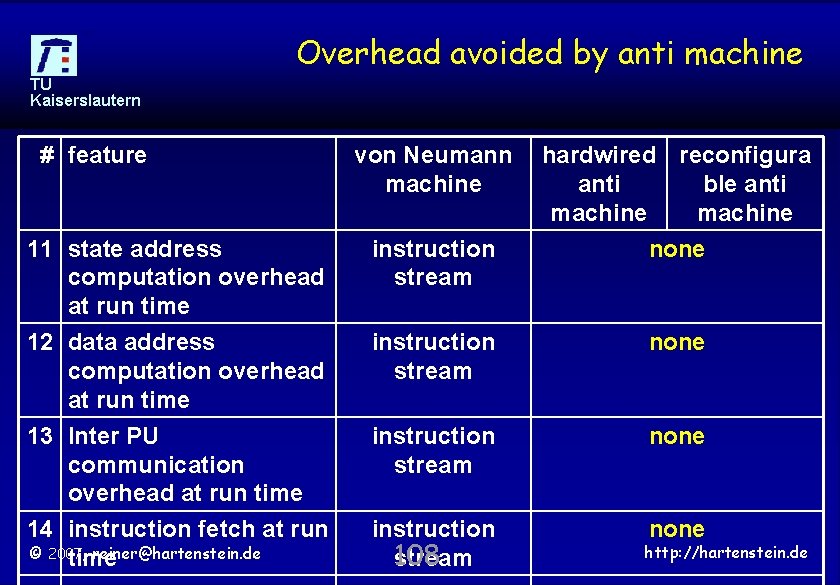

Von Neumann vs. anti machine

| # | feature | von Neumann machine | anti machine (hardwired / reconfigurable) |
|---|---------|---------------------|-------------------------------------------|
| 1 | m' code schedules: | instruction stream | data streams |
| 2 | # prog' sources | 1 | 2 |
| 3 | source 1 | software | configware |
| 4 | source 2 | none | flowware |
| 5 | sequenced by: | program counter | data counters |
| 6 | counter co-located with: | PU (data path): CPU | memory block: ASM |
| 9 | inter PU communication: | common memory | piped through |

Overhead avoided by anti machine

| # | feature | von Neumann machine | anti machine (hardwired / reconfigurable) |
|---|---------|---------------------|-------------------------------------------|
| 11 | state address computation overhead at run time | instruction stream | none |
| 12 | data address computation overhead at run time | instruction stream | none |
| 13 | inter PU communication overhead at run time | instruction stream | none |
| 14 | instruction fetch at run time | instruction stream | none |

TU Kaiserslautern GAG © 2007, reiner@hartenstein. de 109 http: //hartenstein. de

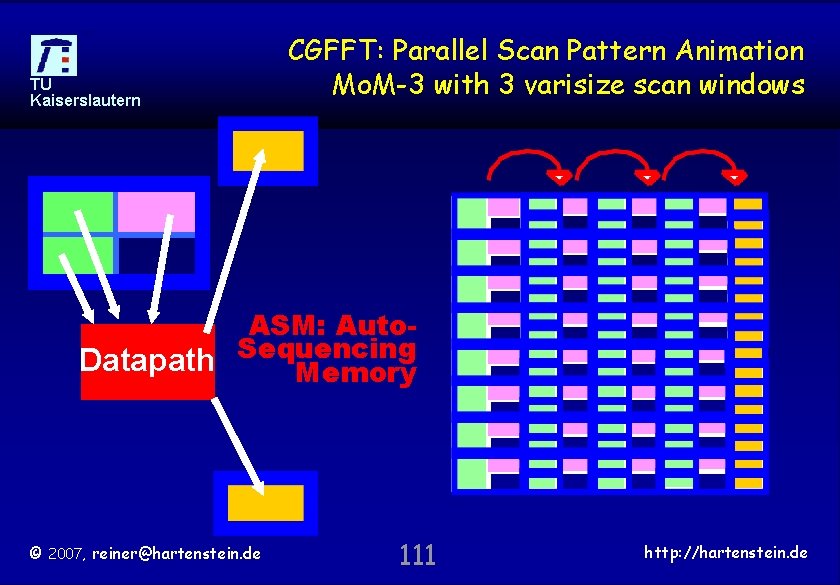

MoM Scan Window (MoMSW) Illustration
MoM architectural primary features:
• 2-dimensional (data) memory address space
• Multiple* varisize reconfigurable MoMSW scan windows
• MoMSW controlled by reconfigurable GAG (generic address generators)
*) typically 3
ASM: Auto-Sequencing Memory

CGFFT: Parallel Scan Pattern Animation
• MoM-3 with 3 varisize scan windows
[Figure labels: ASM (Auto-Sequencing Memory), Datapath]

Reconfigurable Generic Address Generator GAG
• Generalization of the DMA
• GAG: data counter
Acceleration factors by:
• address computation without memory cycles: avoids e.g. 94% address computation overhead*
• storage scheme optimization methodology, etc.
• supporting scratch pad optimization strategies (smart d-caching)
GAG & enabling technology published 1989, survey: [M. Herz et al.: IEEE ICECS 2003, Dubrovnik]; patented by TI 1995
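
To illustrate address computation without memory cycles, here is a minimal software sketch of a two-dimensional address generator. It is an assumption-laden model, not the GAG hardware: the function names `scan_addresses` and `stream_from_memory` and the parameters (start point, step widths, step counts) are hypothetical. The point it shows is that the address sequence is fixed by a few parameters set up before run time, so the consumer of the data stream never computes an address itself.

```python
from typing import Iterator, List, Tuple


def scan_addresses(x0: int, y0: int, dx: int, dy: int,
                   nx: int, ny: int) -> Iterator[Tuple[int, int]]:
    """Yield (x, y) addresses of a line-by-line scan.

    All six parameters are configured once, before the scan starts.
    """
    for j in range(ny):
        for i in range(nx):
            yield (x0 + i * dx, y0 + j * dy)


def stream_from_memory(memory: List[List[int]],
                       addresses: Iterator[Tuple[int, int]]) -> Iterator[int]:
    """Turn an address sequence into a data stream for the datapath."""
    for x, y in addresses:
        yield memory[y][x]


memory = [[1, 2, 3],
          [4, 5, 6]]
# The "datapath" (here just sum) only sees data, never addresses.
total = sum(stream_from_memory(memory, scan_addresses(0, 0, 1, 1, 3, 2)))
```

Changing the step widths, for example `dx=2`, yields a strided scan without touching the consumer code.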

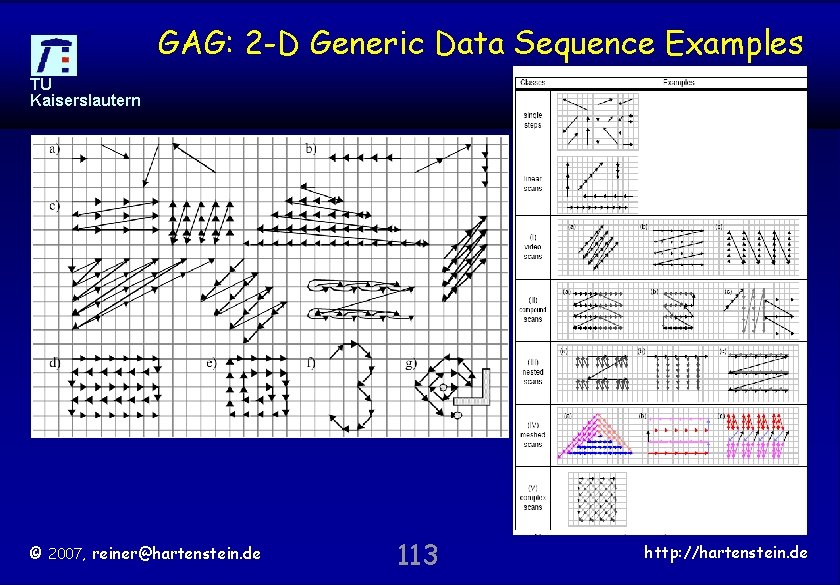

GAG: 2-D Generic Data Sequence Examples

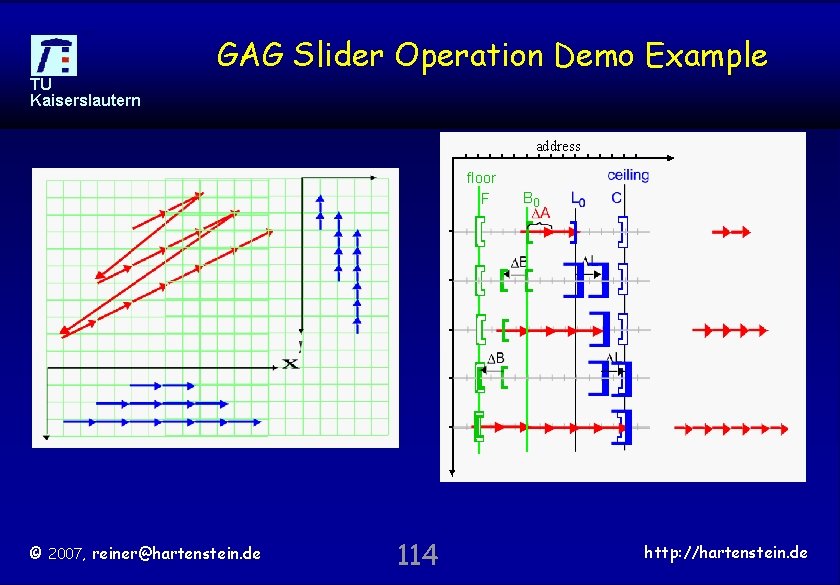

GAG Slider Operation Demo Example
[Figure labels: address, floor F, B0]

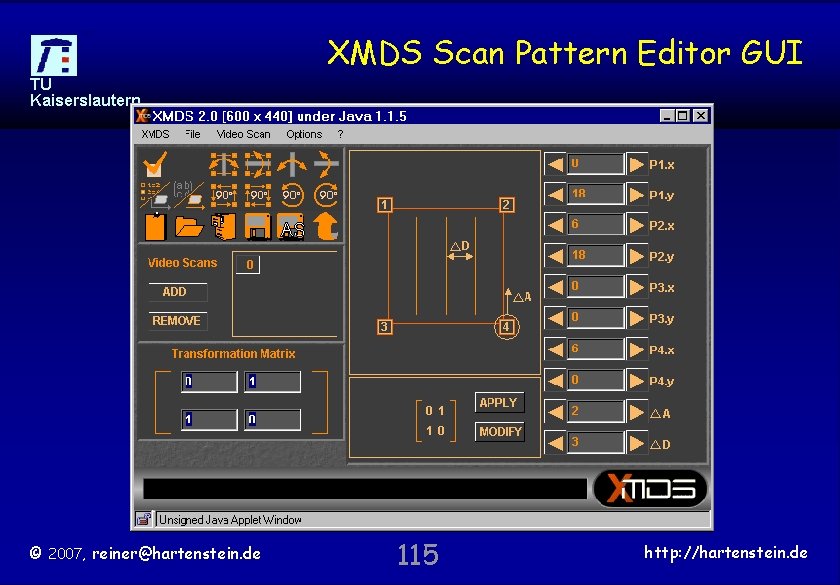

XMDS Scan Pattern Editor GUI

![JPEG zigzag scan pattern](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-116.jpg)

JPEG zigzag scan pattern
*> Declarations
EastScan is
    step by [1, 0]
end EastScan;
SouthScan is
    step by [0, 1]
end SouthScan;
NorthEastScan is
    loop 8 times until [*, 1]
        step by [1, -1]
    endloop
end NorthEastScan;
SouthWestScan is
    loop 8 times until [1, *]
        step by [-1, 1]
    endloop
end SouthWestScan;
HalfZigZag is
    EastScan
    loop 3 times
        SouthWestScan
        SouthScan
        NorthEastScan
    endloop
end HalfZigZag;
goto PixMap[1, 1]
HalfZigZag;
SouthWestScan
uturn (HalfZigZag)
[Figure labels: x data counter, y data counter; scan segments numbered 1 to 4]
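
For readers who want to see the address sequence such a scan pattern describes, the following sketch generates the conventional JPEG zigzag order in Python. It is not a translation of the MoPL program above; the function name `zigzag` and the diagonal-by-diagonal formulation are assumptions chosen for brevity. Coordinates are 1-based `[x, y]` pairs, matching the `PixMap[1, 1]` starting point, and every step is one of the four moves used on the slide: east `[1, 0]`, south `[0, 1]`, north-east `[1, -1]`, south-west `[-1, 1]`.

```python
from typing import List, Tuple


def zigzag(n: int = 8) -> List[Tuple[int, int]]:
    """Return the zigzag visiting order of an n-by-n block as 1-based (x, y)."""
    order = []
    for s in range(2 * n - 1):              # s = x + y (0-based) names one anti-diagonal
        xs = range(max(0, s - n + 1), min(s, n - 1) + 1)
        if s % 2 == 1:
            xs = reversed(xs)               # odd diagonals run south-west (x decreasing)
        for x in xs:                        # even diagonals run north-east (x increasing)
            order.append((x + 1, s - x + 1))
    return order
```

A data counter stepping through `zigzag(8)` visits all 64 positions of a block exactly once, without any address arithmetic in the consuming datapath.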

Significance of MoMSW Reconfigurable Scan Windows
• MoMSW scan windows have the potential to drastically reduce traffic to/from slow off-chip memory.
• No instruction streams are needed to implement scratch pad optimization strategies using fast on-chip memory
• MoMSW scan windows may contribute to speed-up by a factor of 10 and sometimes even much more
• MoMSW scan windows are the deterministic alternative ("d-caching") to (indeterministic and speculative) classical cache usage: performance can be well predicted
• For data-stream-based computing scan windows are highly effective, whereas classical caches are entirely useless
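
The traffic reduction claimed for scan windows can be sketched with a simple counting model. This is an illustrative assumption, not a model of the MoM hardware: the function `count_offchip_reads`, the k-by-k window and the per-scan-line reuse policy are all hypothetical. With reuse, a window that moves one pixel east keeps the overlapping pixels on chip and fetches only the new column.

```python
def count_offchip_reads(width: int, height: int, k: int, reuse: bool) -> int:
    """Count pixel fetches for a k-by-k window scanned over a width-by-height image."""
    reads = 0
    for top in range(height - k + 1):
        held = set()                        # pixels currently held in the on-chip window
        for left in range(width - k + 1):
            needed = {(x, y)
                      for x in range(left, left + k)
                      for y in range(top, top + k)}
            # With reuse only pixels not yet on chip are fetched.
            reads += len(needed - held) if reuse else len(needed)
            held = needed
    return reads


naive = count_offchip_reads(22, 11, 3, reuse=False)
windowed = count_offchip_reads(22, 11, 3, reuse=True)
```

The count is fully deterministic: it depends only on the image size and the scan pattern, which is the property the slide contrasts with speculative cache behavior. The numbers produced here are not the speed-up figures reported in the talk.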

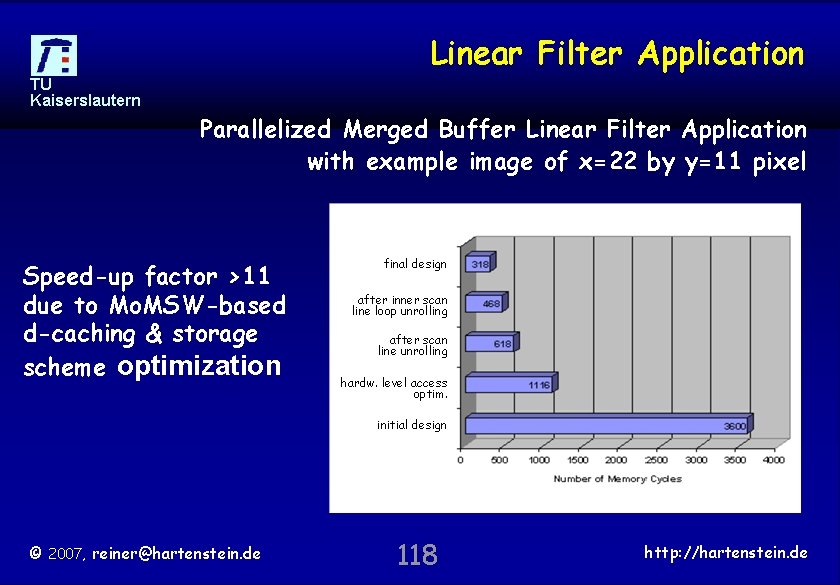

Linear Filter Application
• Parallelized Merged Buffer Linear Filter Application with example image of x=22 by y=11 pixels
• Speed-up factor >11 due to MoMSW-based d-caching & storage scheme optimization
[Figure labels: initial design; hardw. level access optim.; after scan line unrolling; after inner scan line loop unrolling; final design]

TU Kaiserslautern PISA-Mo. M © 2007, reiner@hartenstein. de 119 http: //hartenstein. de

![PISA DRC accelerator [ICCAD 1984]: processing 4-by-4 reference patterns](http://slidetodoc.com/presentation_image_h/e6b00b7c8fd38b5f8259ee7a0b93f83a/image-120.jpg)

PISA DRC accelerator [ICCAD 1984]: reconfigurable accelerator processing 4-by-4 reference patterns
• PISA: a forerunner of the MoM
• DPLA: fabricated by the E.I.S. Multi University Project
• Mead-&-Conway nMOS Design Rules: 256 4-by-4 reference patterns
• Mead-&-Conway CMOS Design Rules: >800 4-by-4 reference patterns
• Reference patterns automatically generated from Design Rules
• vN Software: some reference patterns can be skipped, depending on earlier patterns
• MoM: all reference patterns matched in a single clock cycle
• 1984: 1 DPLA replaces 256 FPGAs

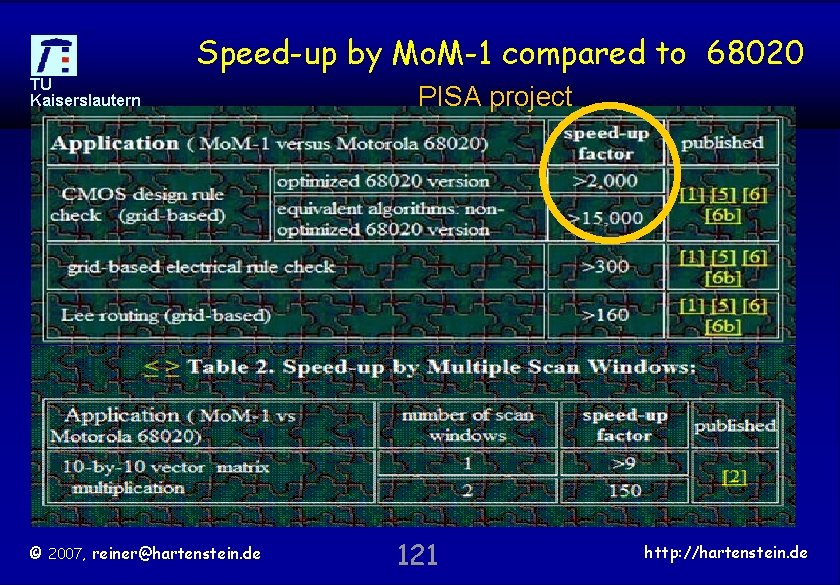

Speed-up by MoM-1 compared to 68020 (PISA project)

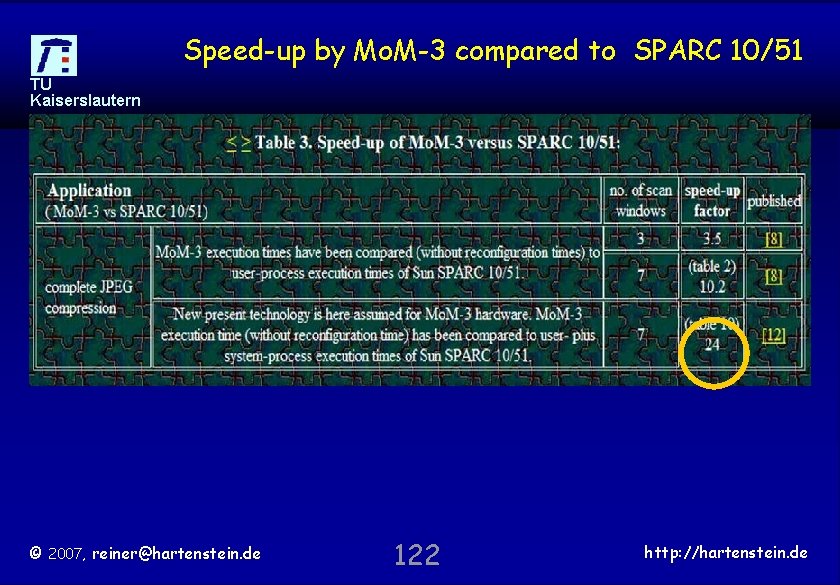

Speed-up by MoM-3 compared to SPARC 10/51

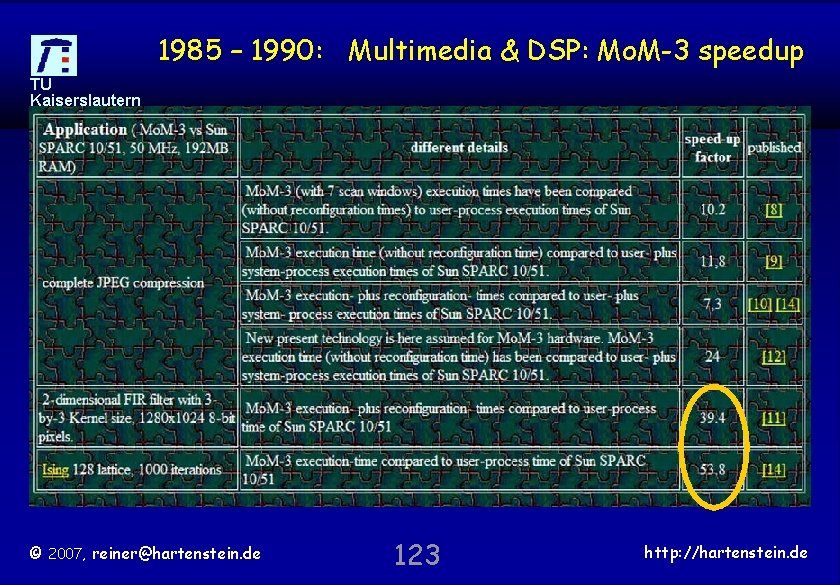

1985 – 1990: Multimedia & DSP: MoM-3 speedup

Outline
• The (non-v-N) anti-machine (Xputer)
• Speed-up by address generators
• Data-procedural Programming Language
• Generalization of the Systolic Array
• Partitioning Compilation Techniques
• Design Space Exploration
• Bridging the Paradigm Chasm

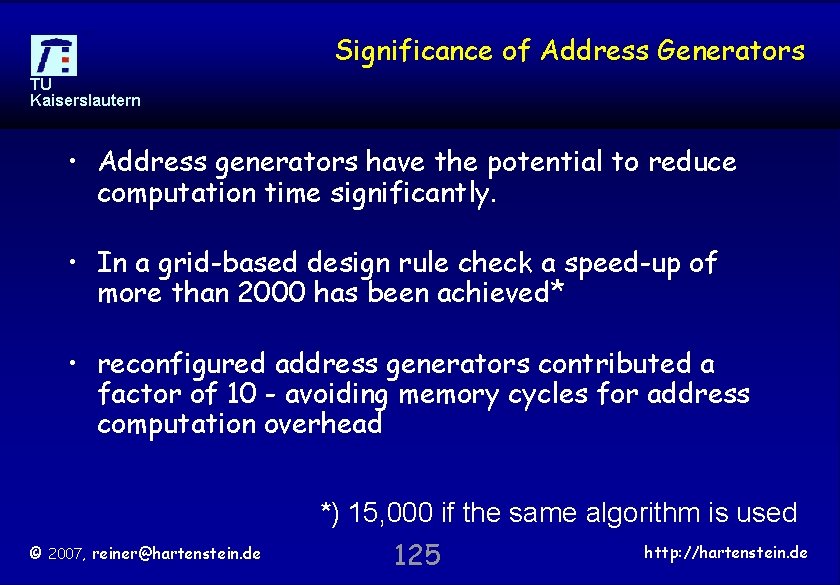

Significance of Address Generators
• Address generators have the potential to reduce computation time significantly.
• In a grid-based design rule check, a speed-up of more than 2000 has been achieved*
• Reconfigured address generators contributed a factor of 10 by avoiding memory cycles for address computation overhead
*) 15,000 if the same algorithm is used

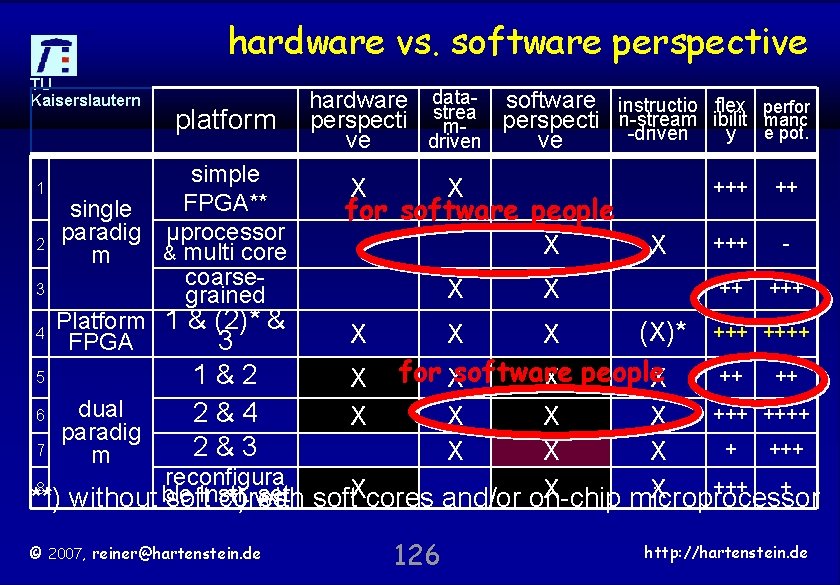

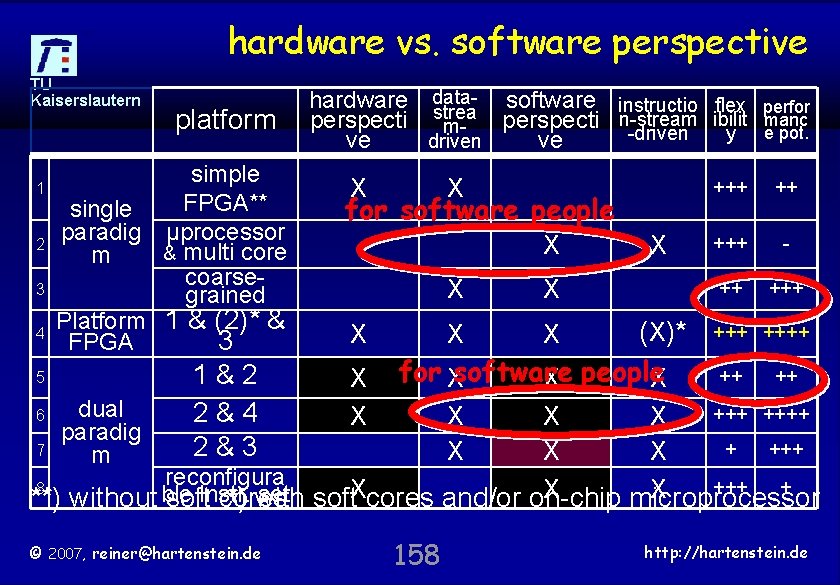

hardware vs. software perspective TU Kaiserslautern 1 2 3 platform simple FPGA** single paradig µprocessor & multi core m coarsegrained Platform 1 & (2)* & FPGA 3 hardware datasoftware instructio flex strea perspecti n-stream ibilit m-driven y driven ve ve X X for software people X X perfor manc e pot. +++ ++ +++ - ++ +++ (X)* ++++ X X 5 ++ ++ 1&2 X for Xsoftware X people X dual 6 ++++ 2&4 X X paradig 7 + +++ 2&3 X X X m reconfigura 8 +++ + X X set soft. Xcores and/or on-chip **) without ble softinstr. cores *) with microprocessor 4 © 2007, reiner@hartenstein. de X 126 http: //hartenstein. de

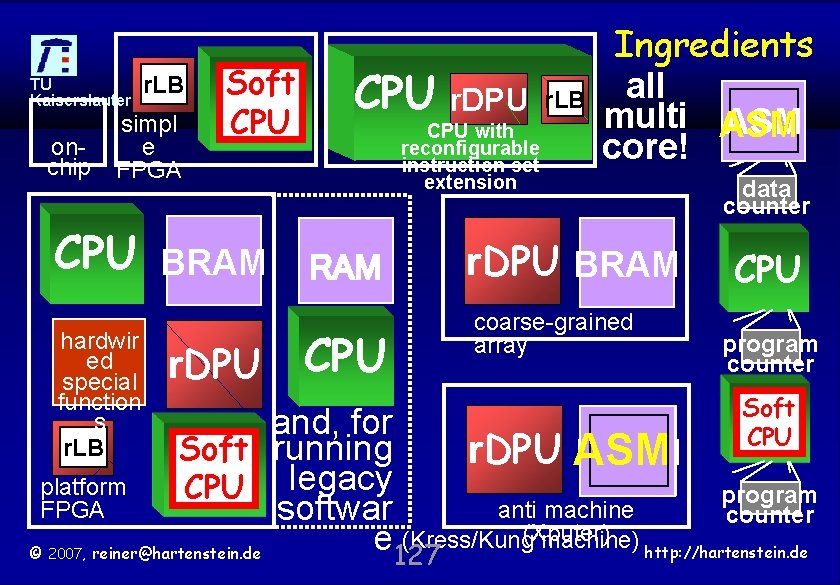

Ingredients: all multi core!
• simple FPGA: rLB, on-chip BRAM, hardwired special functions, soft CPU
• CPU with reconfigurable instruction set extension
• coarse-grained array of rDPUs (Kress/Kung machine)
• anti machine (Xputer): ASM with data counter
• CPU with program counter, for running legacy software
• platform FPGA

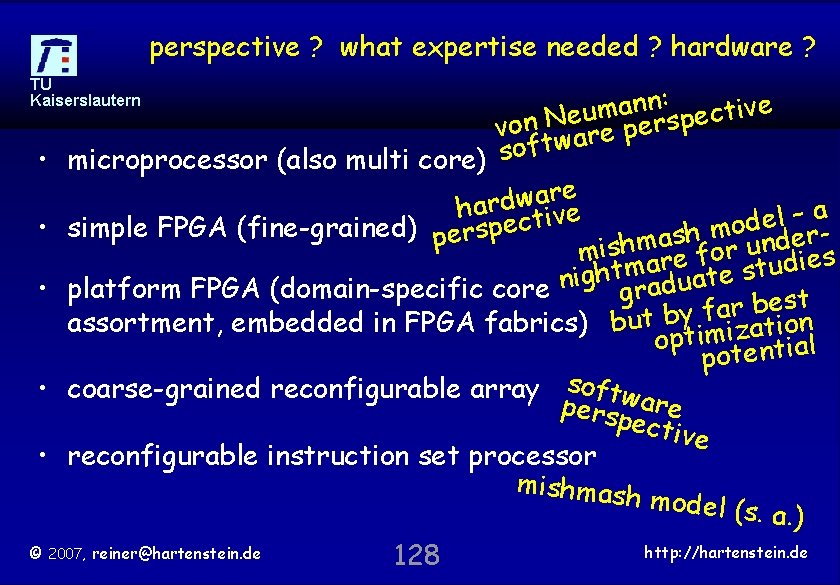

perspective? what expertise needed? hardware?
• microprocessor (also multi core)
• simple FPGA (fine-grained)
• platform FPGA (domain-specific core assortment, embedded in FPGA fabrics)
• coarse-grained reconfigurable array
• reconfigurable instruction set processor
[Rotated annotations in the slide: von Neumann: software perspective; hardware perspective; mishmash model; but by far best optimization potential; software perspective; mishmash model (s. a.)]

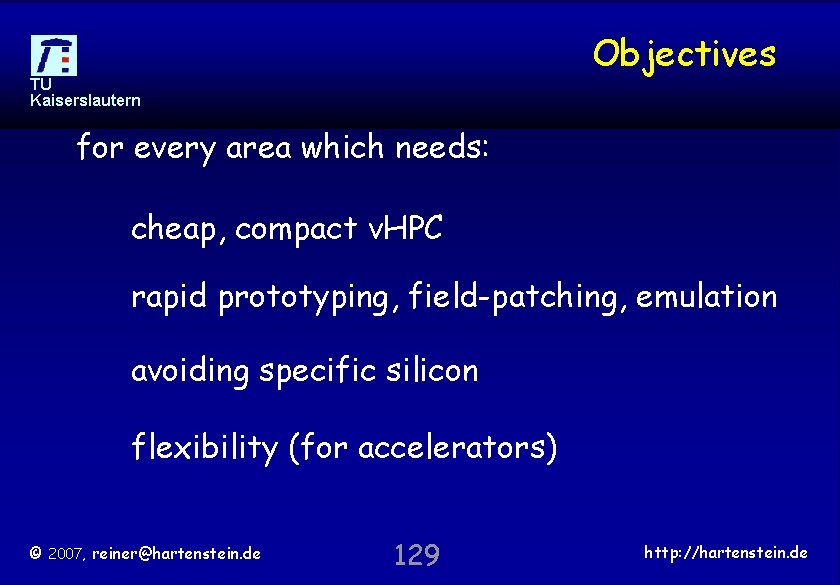

Objectives
For every area which needs:
• cheap, compact vHPC
• rapid prototyping, field-patching, emulation
• avoiding specific silicon
• flexibility (for accelerators)

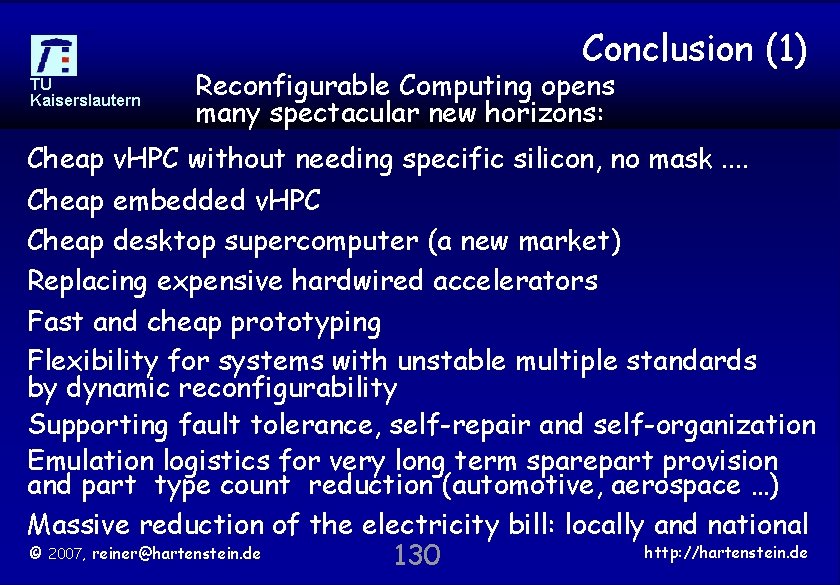

Conclusion (1)
Reconfigurable Computing opens many spectacular new horizons:
• Cheap vHPC without needing specific silicon, no mask ...
• Cheap embedded vHPC
• Cheap desktop supercomputer (a new market)
• Replacing expensive hardwired accelerators
• Fast and cheap prototyping
• Flexibility for systems with unstable multiple standards by dynamic reconfigurability
• Supporting fault tolerance, self-repair and self-organization
• Emulation logistics for very long term spare part provision and part type count reduction (automotive, aerospace …)
• Massive reduction of the electricity bill: locally and nationally

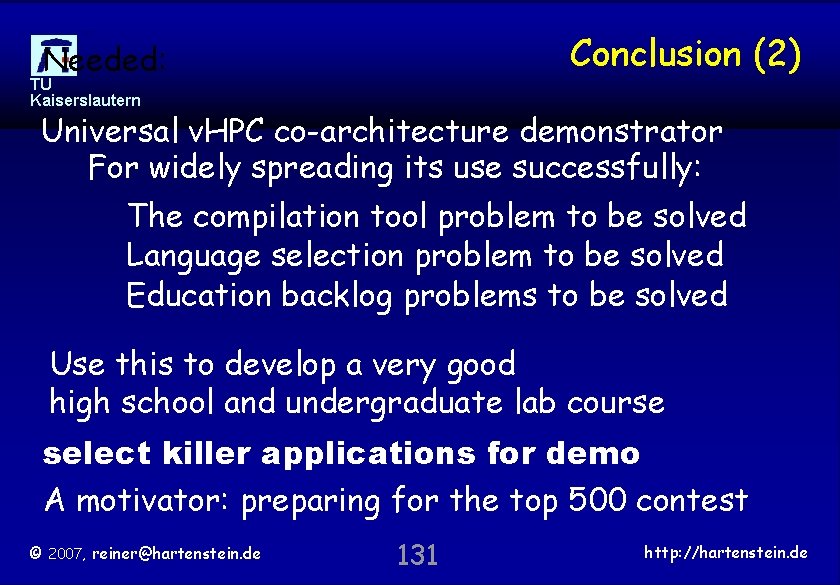

Conclusion (2)
Needed:
• Universal vHPC co-architecture demonstrator
For widely spreading its use successfully:
• The compilation tool problem to be solved
• Language selection problem to be solved
• Education backlog problems to be solved
• Use this to develop a very good high school and undergraduate lab course
• Select killer applications for demo
• A motivator: preparing for the top 500 contest

More compute power by Configware than Software (a very cautious estimation**)
• 75% of all (micro)processors are embedded (4 : 1)
• 25% embedded µProc. accelerated by FPGA(s) (1 : 4)
• -> Every 2nd µProc accelerated by FPGA(s) -> 1 : 1
• average acceleration factor >2 -> rMIPS* : MIPS > 2
Conclusion: most compute power from Configware (difference probably an order of magnitude)
*) rMIPS: MIPS replaced by FPGA compute power

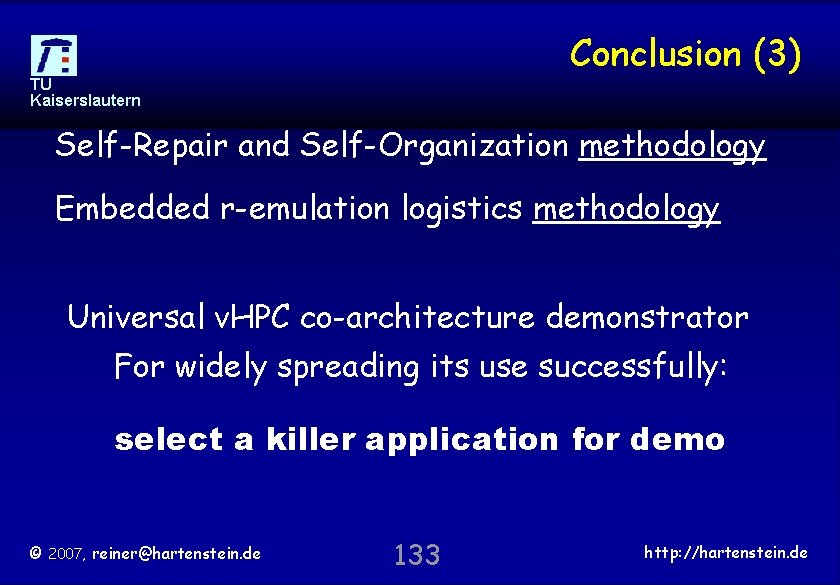

Conclusion (3)
• Self-Repair and Self-Organization methodology
• Embedded r-emulation logistics methodology
• Universal vHPC co-architecture demonstrator
For widely spreading its use successfully:
• select a killer application for demo

Some Goals
• Universal HPC co-architecture for:
  - embedded vHPC (nomadic, automotive, ...)
  - desktop vHPC (scientific computing ...)
• Application co-development environment for hardware non-experts, ...
• Acceptability by software-type users, ...
• Meet product lifetime >> embedded syst. life: FPGA emulation logistics from development down to maintenance and repair stations
• examples: automotive, aerospace, industrial, ...

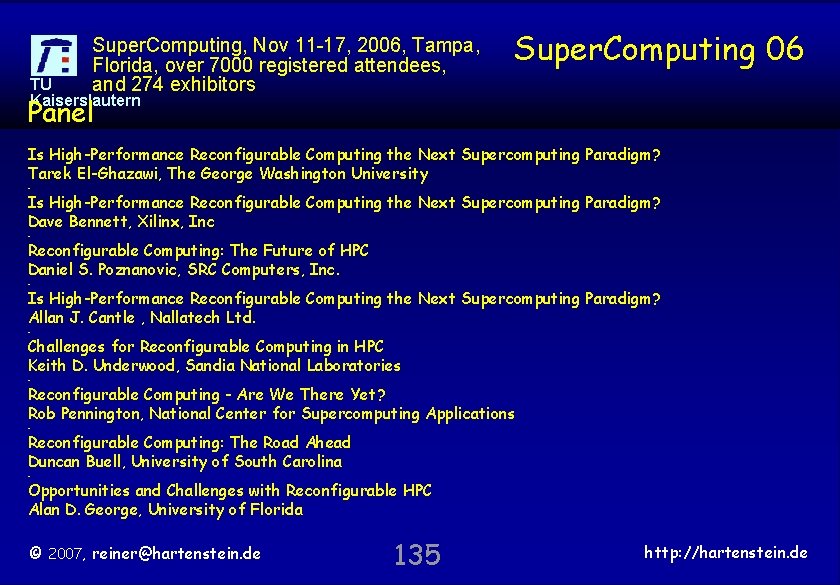

SuperComputing 06 Panel: Is High-Performance Reconfigurable Computing the Next Supercomputing Paradigm?
SuperComputing, Nov 11-17, 2006, Tampa, Florida: over 7000 registered attendees and 274 exhibitors
• Tarek El-Ghazawi, The George Washington University: Is High-Performance Reconfigurable Computing the Next Supercomputing Paradigm?
• Dave Bennett, Xilinx, Inc.: Reconfigurable Computing: The Future of HPC
• Daniel S. Poznanovic, SRC Computers, Inc.: Is High-Performance Reconfigurable Computing the Next Supercomputing Paradigm?
• Allan J. Cantle, Nallatech Ltd.: Challenges for Reconfigurable Computing in HPC
• Keith D. Underwood, Sandia National Laboratories: Reconfigurable Computing - Are We There Yet?
• Rob Pennington, National Center for Supercomputing Applications: Reconfigurable Computing: The Road Ahead
• Duncan Buell, University of South Carolina: Opportunities and Challenges with Reconfigurable HPC
• Alan D. George, University of Florida


Acceleration Mechanisms by ASM-based MoMSW
• parallelism by multi-bank memory architecture
• reconfigurable address computation, before run time
• avoiding multiple accesses to the same data
• avoiding memory cycles for address computation
• improve parallelism by storage scheme transformations
• minimize data movement across chip boundaries
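
How a storage scheme transformation can improve parallelism on a multi-bank memory is sketched below. The example uses row skewing, a textbook scheme chosen here as an assumption; the talk does not say which transformations the MoM tools apply, and the function names are hypothetical. An n-by-n array is spread over n banks, and a column can be read in one parallel access only if its elements lie in n different banks.

```python
from typing import Callable


def bank_plain(row: int, col: int, n_banks: int) -> int:
    # Plain scheme: the bank depends on the column index only.
    return col % n_banks


def bank_skewed(row: int, col: int, n_banks: int) -> int:
    # Skewed scheme: each row is rotated by one bank relative to the row above.
    return (row + col) % n_banks


def banks_hit_by_column(bank_of: Callable[[int, int, int], int],
                        col: int, n: int) -> int:
    """Number of distinct banks touched when reading one column of an n-by-n array."""
    return len({bank_of(row, col, n) for row in range(n)})
```

With the plain scheme a column access hits a single bank and is serialized; with the skewed scheme both row and column accesses spread over all banks.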


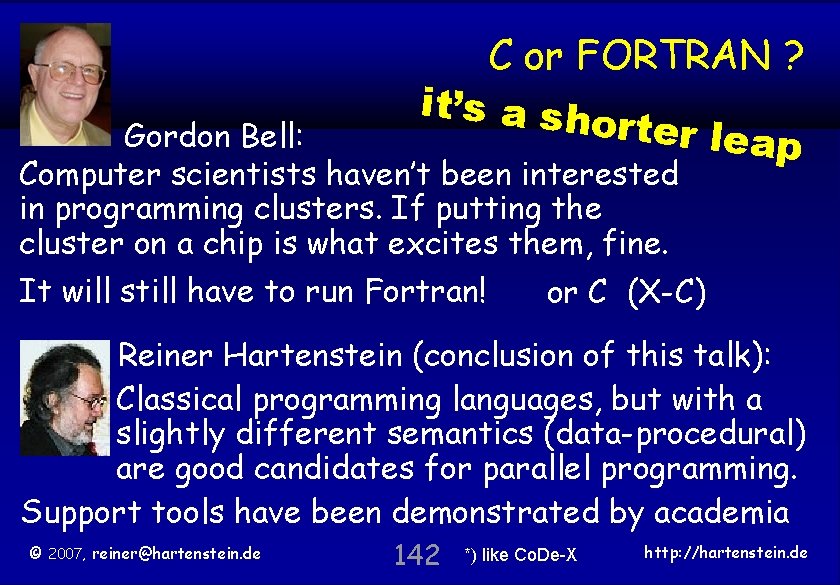

C or FORTRAN?
• Gordon Bell: Computer scientists haven't been interested in programming clusters. If putting the cluster on a chip is what excites them, fine. It will still have to run Fortran!
• or C (X-C): it's a shorter leap
• Reiner Hartenstein (conclusion of this talk): Classical programming languages, but with slightly different semantics (data-procedural), are good candidates for parallel programming. Support tools have been demonstrated by academia*
*) like CoDe-X

Newton's 1st Law
Newton's 1st Law à la Gordon Bell: Scientists do not change their direction

TU Kaiserslautern Edu defic © 2007, reiner@hartenstein. de 144 http: //hartenstein. de

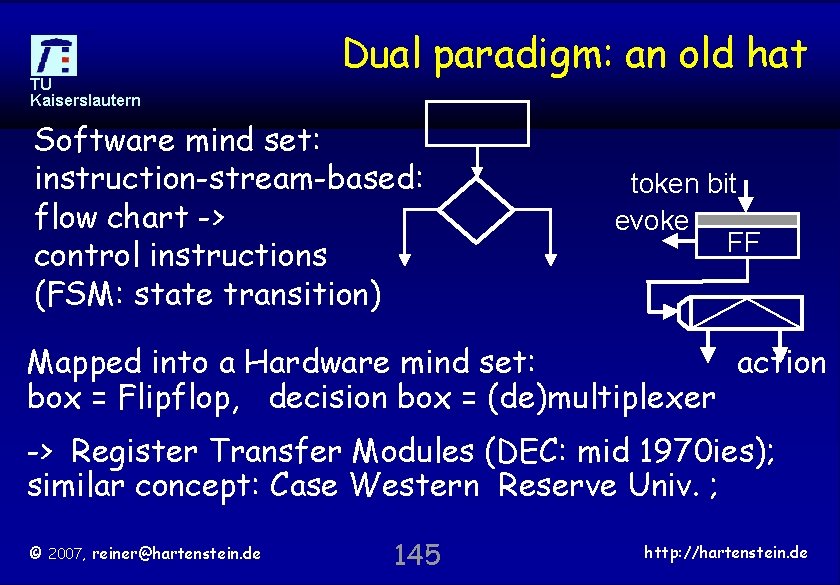

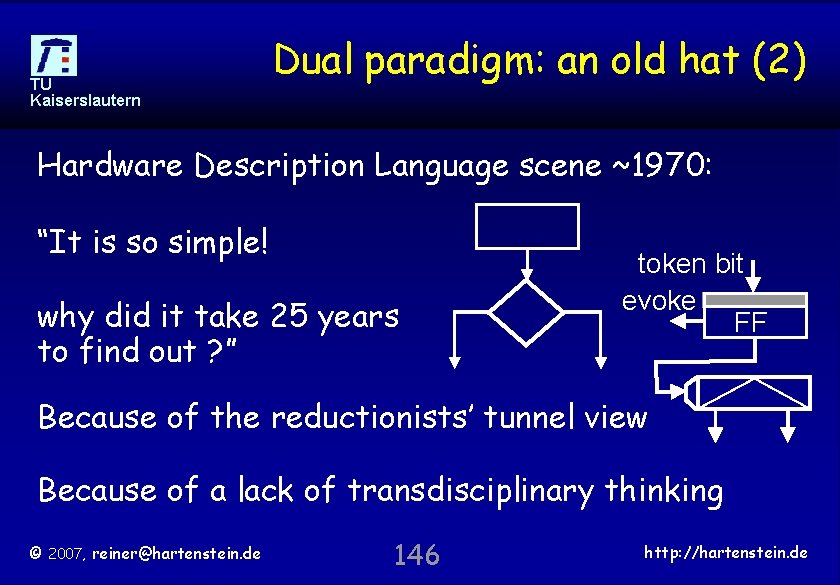

Dual paradigm: an old hat
• Software mind set: instruction-stream-based: flow chart -> control instructions (FSM: state transition)
• Mapped into a hardware mind set: action box = flip-flop, decision box = (de)multiplexer
• -> Register Transfer Modules (DEC: mid 1970s); similar concept: Case Western Reserve Univ.
[Figure labels: token bit, evoke, FF]
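
The mapping of a flow chart onto flip-flops and (de)multiplexers can be sketched as a one-hot token-passing controller. The three-box flow chart below (`test`, `work`, `done`) and the functions `tick` and `run` are hypothetical, made up for this illustration; they do not reproduce the Register Transfer Modules themselves. Each action box is modeled as one flip-flop holding a token bit, and the decision box steers the token like a demultiplexer.

```python
from typing import Dict, List


def tick(token: Dict[str, bool], cond: bool) -> Dict[str, bool]:
    """Advance the token by one clock.

    Flow chart: 'test' is followed by a decision on cond, 'work' returns
    to 'test', and 'done' keeps the token.
    """
    nxt = {"test": False, "work": False, "done": False}
    if token["test"]:
        nxt["work" if cond else "done"] = True   # decision box: demultiplexer
    if token["work"]:
        nxt["test"] = True                       # action box: flip-flop passes the token on
    if token["done"]:
        nxt["done"] = True
    return nxt


def run(conds: List[bool]) -> List[str]:
    """Return which box holds the token at each clock."""
    token = {"test": True, "work": False, "done": False}
    trace = []
    for cond in conds:
        trace.append(next(name for name, held in token.items() if held))
        token = tick(token, cond)
    return trace
```

At every clock exactly one flip-flop holds the token, so the control flow exists in space (which flip-flop is set) rather than in a program counter.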

Dual paradigm: an old hat (2)
• Hardware Description Language scene ~1970: "It is so simple! Why did it take 25 years to find out?"
• Because of the reductionists' tunnel view
• Because of a lack of transdisciplinary thinking
[Figure labels: token bit, evoke, FF]

Dual paradigm: an old hat (3)
• Hardware Description Languages: "procedure call" or function call: Module-name (parameters);
• Software: time domain
• Hardware description: space domain

TU Kaiserslautern ASM © 2007, reiner@hartenstein. de 148 http: //hartenstein. de


TU Kaiserslautern Co-comp © 2007, reiner@hartenstein. de 150 http: //hartenstein. de


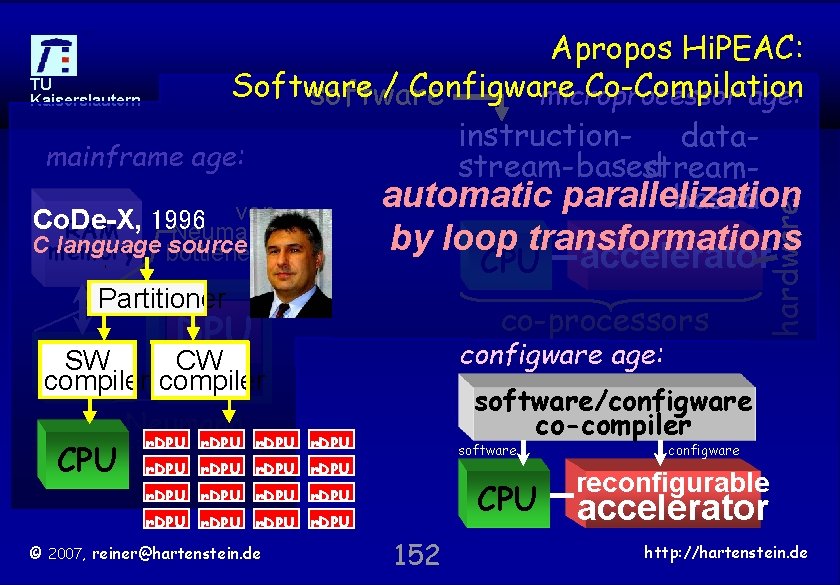

Apropos HiPEAC: Software / Configware Co-Compilation
• CoDe-X, 1996: C language source -> Partitioner (automatic parallelization by loop transformations) -> SW compiler (CPU) and CW compiler (rDPUs)
• mainframe age: von Neumann instruction-stream-based machine (CPU with program counter, DPU, RAM memory, von Neumann bottleneck)
• microprocessor age: CPU (software, instruction-stream-based) plus accelerator co-processors (hardware, data-stream-based)
• configware age: CPU (software) plus reconfigurable accelerator (configware), with a software/configware co-compiler

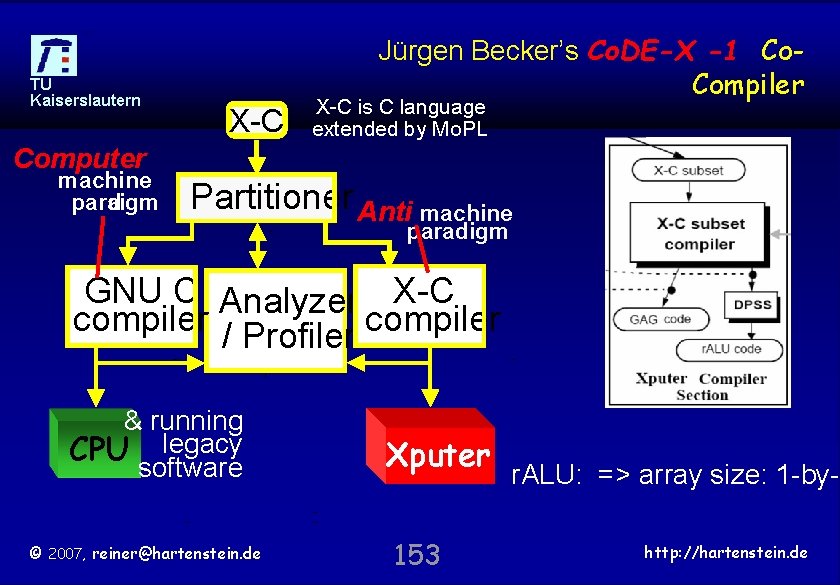

Jürgen Becker's CoDe-X Co-Compiler (1)
• X-C is C language extended by MoPL
• Partitioner with Analyzer / Profiler
• Computer machine paradigm: GNU C compiler -> CPU, running legacy software
• Anti machine paradigm: X-C compiler -> Xputer
• rALU => array size: 1-by-1

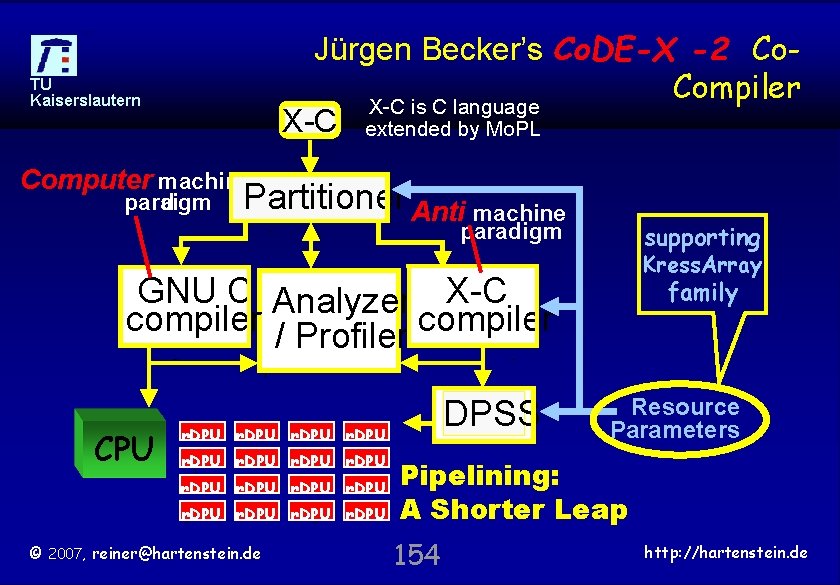

Jürgen Becker's CoDe-X Co-Compiler (2)
• X-C is C language extended by MoPL
• Partitioner with Analyzer / Profiler
• Computer machine paradigm: GNU C compiler -> CPU
• Anti machine paradigm: X-C compiler -> DPSS -> rDPUs, supporting the KressArray family (Resource Parameters)
• Pipelining: A Shorter Leap

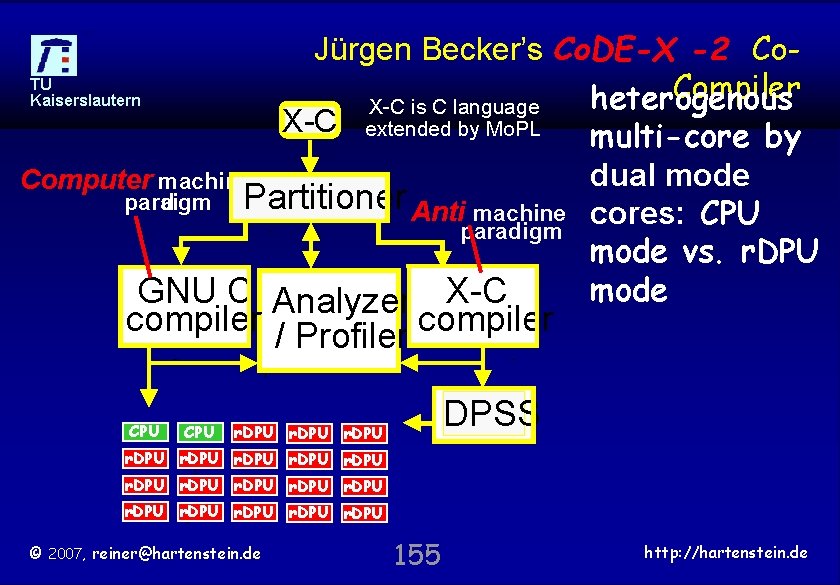

Jürgen Becker's CoDe-X Co-Compiler (2): heterogeneous multi-core
• X-C is C language extended by MoPL
• Partitioner with Analyzer / Profiler
• Computer machine paradigm: GNU C compiler -> CPU
• Anti machine paradigm: X-C compiler -> DPSS -> rDPUs
• heterogeneous multi-core by dual mode cores: CPU mode vs. rDPU mode

TU Kaiserslautern Why better © 2007, reiner@hartenstein. de 156 http: //hartenstein. de



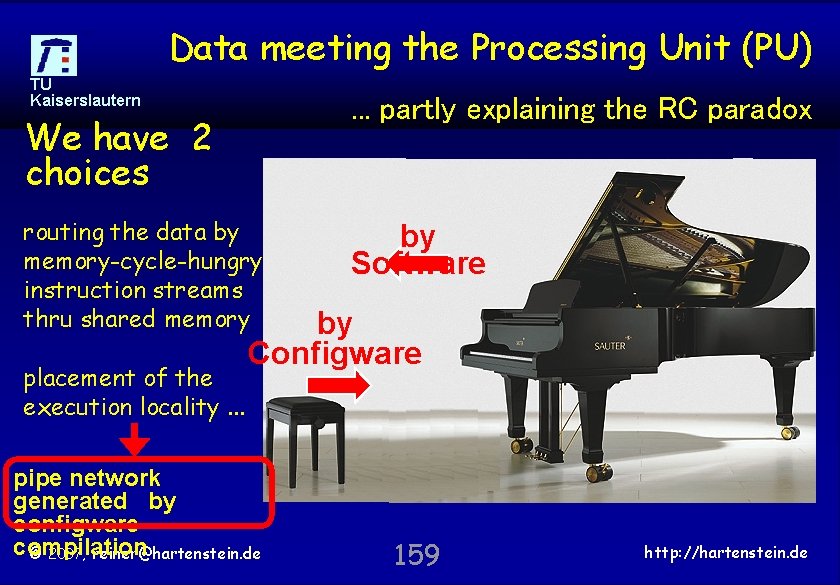

Data meeting the Processing Unit (PU)
... partly explaining the RC paradox
We have 2 choices:
• by Software: routing the data by memory-cycle-hungry instruction streams through shared memory
• by Configware: placement of the execution locality; pipe network generated by configware compilation
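
The two choices can be contrasted in a small software model. Everything in it is an assumption made for illustration: the class `CountingMemory`, the two toy stages (doubling, then incrementing) and the use of Python generators as stand-ins for a pipe network. In the first variant every intermediate result travels through a shared memory; in the second the stages are wired directly to each other.

```python
from typing import Iterable, Iterator, List


class CountingMemory:
    """Tiny shared memory that counts every read and write."""

    def __init__(self, size: int) -> None:
        self.cells = [0] * size
        self.accesses = 0

    def read(self, addr: int) -> int:
        self.accesses += 1
        return self.cells[addr]

    def write(self, addr: int, value: int) -> None:
        self.accesses += 1
        self.cells[addr] = value


def via_shared_memory(inputs: Iterable[int], mem: CountingMemory) -> List[int]:
    out = []
    for x in inputs:
        mem.write(0, x * 2)             # stage 1 stores its result in memory
        out.append(mem.read(0) + 1)     # stage 2 fetches it again
    return out


def stage_double(stream: Iterable[int]) -> Iterator[int]:
    for x in stream:
        yield x * 2


def stage_increment(stream: Iterable[int]) -> Iterator[int]:
    for x in stream:
        yield x + 1


def via_pipe_network(inputs: Iterable[int]) -> List[int]:
    # The stages are connected directly; no intermediate value touches memory.
    return list(stage_increment(stage_double(inputs)))
```

Both variants compute the same results, but the shared-memory variant pays two memory accesses per data item for the hand-over between the stages.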

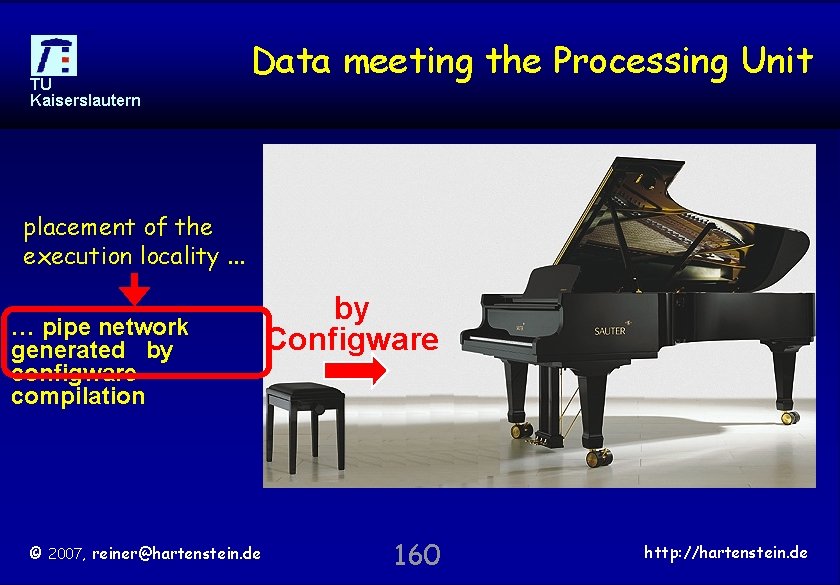

Data meeting the Processing Unit
• by Configware: placement of the execution locality
• pipe network generated by configware compilation

TU Kaiserslautern conclus © 2007, reiner@hartenstein. de 161 http: //hartenstein. de


TU Kaiserslautern END © 2007, reiner@hartenstein. de 163 http: //hartenstein. de


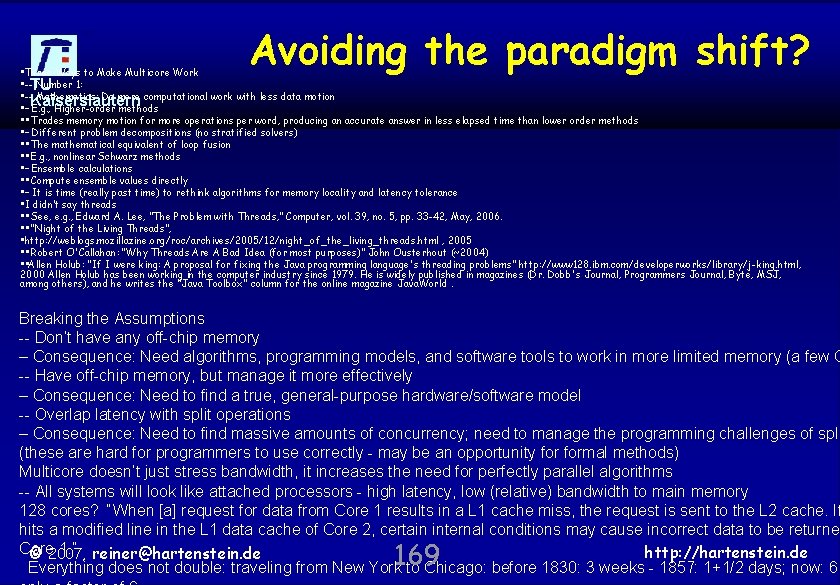


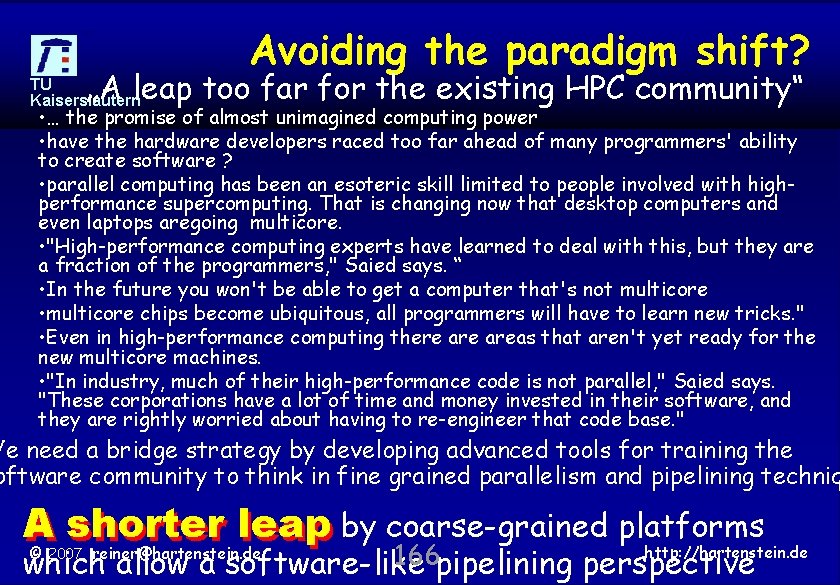

Avoiding the paradigm shift? "A leap too far for the existing HPC community"
• … the promise of almost unimagined computing power
• Have the hardware developers raced too far ahead of many programmers' ability to create software?
• Parallel computing has been an esoteric skill limited to people involved with high-performance supercomputing. That is changing now that desktop computers and even laptops are going multicore.
• "High-performance computing experts have learned to deal with this, but they are a fraction of the programmers," Saied says.
• In the future you won't be able to get a computer that's not multicore
• … multicore chips become ubiquitous, all programmers will have to learn new tricks.
• Even in high-performance computing there are areas that aren't yet ready for the new multicore machines.
• "In industry, much of their high-performance code is not parallel," Saied says. "These corporations have a lot of time and money invested in their software, and they are rightly worried about having to re-engineer that code base."
We need a bridge strategy by developing advanced tools for training the software community to think in fine-grained parallelism and pipelining techniques.
A shorter leap by coarse-grained platforms which allow a software-like pipelining perspective

Avoiding the paradigm shift?
• "Moore's Gap."
• Steve Kirsch, an engineering fellow for Raytheon Systems Co., says that multicore computing presents both the dream of infinite computing power and the nightmare of programming.
• "The real lesson here is that the hardware and software industries have to pay attention to each other," Kirsch says. "Their futures are tied together in a way that they haven't been in recent memory, and that will change the way both businesses will operate."
• … February, Intel released research details about a chip with 80 cores, a fingernail-sized chip that has the same processing power that in 1996 required a supercomputer with a 2,000-square-footprint and using …,000 times electrical power.
• … problem for those who depend on previously written software that has been steadily improving and evolving over decades. "Our legacy software is a real concern to us …
• … parallel programming for multicore computers may require new computer language …
• "Today we program in sequential language …

Avoiding the paradigm shift?
• "Our programming languages researchers are exploring new programming paradigms and models," Hambrusch says. "Our course on multicore architectures is also preparing students for future software development positions. Purdue is clearly playing a defining role in this critical technology."
• "… five or six years, laptop computers will have the same capabilities, and face the same obstacles, as today's supercomputers," Saied says. "This challenge will face people who program for desktop computers, … people who think they have nothing to do with supercomputers and parallel processing will find out that they need these skills, too."
• Remote Direct Memory Access (RDMA) is a technology that allows computers in a network to exchange data in main memory without involving the processor, cache, or operating system of either com… Like locally-based Direct Memory Access (DMA), RDMA improves throughput and performance because it frees up resources. RDM…

Avoiding the paradigm shift? • Three Ways to Make Multicore Work • --TU Number 1: • -- Mathematics: Do more computational work with less data motion Kaiserslautern • – E. g. , Higher-order methods • • Trades memory motion for more operations per word, producing an accurate answer in less elapsed time than lower order methods • – Different problem decompositions (no stratified solvers) • • The mathematical equivalent of loop fusion • • E. g. , nonlinear Schwarz methods • – Ensemble calculations • • Compute ensemble values directly • – It is time (really past time) to rethink algorithms for memory locality and latency tolerance • I didn’t say threads • • See, e. g. , Edward A. Lee, "The Problem with Threads, " Computer, vol. 39, no. 5, pp. 33 -42, May, 2006. • • “Night of the Living Threads”, • http: //weblogs. mozillazine. org/roc/archives/2005/12/night_of_the_living_threads. html , 2005 • • Robert O'Callahan: “Why Threads Are A Bad Idea (for most purposes)” John Ousterhout (~2004) • • Allen Holub: “If I were king: A proposal for fixing the Java programming language's threading problems” http: //www 128. ibm. com/developerworks/library/j-king. html, 2000 Allen Holub has been working in the computer industry since 1979. He is widely published in magazines (Dr. Dobb's Journal, Programmers Journal, Byte, MSJ, among others), and he writes the "Java Toolbox" column for the online magazine Java. World. 
Breaking the Assumptions
-- Don't have any off-chip memory
– Consequence: Need algorithms, programming models, and software tools to work in more limited memory (a few G…
-- Have off-chip memory, but manage it more effectively
– Consequence: Need to find a true, general-purpose hardware/software model
-- Overlap latency with split operations
– Consequence: Need to find massive amounts of concurrency; need to manage the programming challenges of split operations (these are hard for programmers to use correctly; may be an opportunity for formal methods)
Multicore doesn't just stress bandwidth, it increases the need for perfectly parallel algorithms
-- All systems will look like attached processors: high latency, low (relative) bandwidth to main memory
128 cores?
"When [a] request for data from Core 1 results in a L1 cache miss, the request is sent to the L2 cache. If [the request] hits a modified line in the L1 data cache of Core 2, certain internal conditions may cause incorrect data to be returned [to] Core 1."
Everything does not double: traveling from New York to Chicago: before 1830: 3 weeks; 1857: 1 1/2 days; now: 6 …
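"Overlap latency with split operations" means separating the start of a slow transfer from its completion, so that useful work can run in between. The following Python sketch illustrates the pattern under stated assumptions: `fetch_block` is a hypothetical stand-in for a high-latency read, and the single-worker `ThreadPoolExecutor` only simulates an asynchronous transfer engine. It is not meant as an endorsement of thread-based programming.

```python
from concurrent.futures import ThreadPoolExecutor
import time


def fetch_block(block_id, latency=0.01):
    """Hypothetical high-latency read (e.g. from off-chip memory)."""
    time.sleep(latency)
    return [block_id * 10 + i for i in range(4)]


def process_blocks(n_blocks):
    """Sum all blocks, prefetching block i+1 while block i is processed."""
    total = 0
    with ThreadPoolExecutor(max_workers=1) as engine:
        pending = engine.submit(fetch_block, 0)          # split op, phase 1: initiate
        for i in range(n_blocks):
            data = pending.result()                      # split op, phase 2: complete
            if i + 1 < n_blocks:
                pending = engine.submit(fetch_block, i + 1)  # start the next transfer
            total += sum(data)                           # compute while it is in flight
    return total
```

Even in this small sketch the programmer has to track which request is outstanding and when its result may be used, which hints at why the slide calls split operations hard to use correctly.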

in Memoriam …
in Memoriam Richard Newton
in Memoriam Stamatis Vassiliadis
1951 - 2007

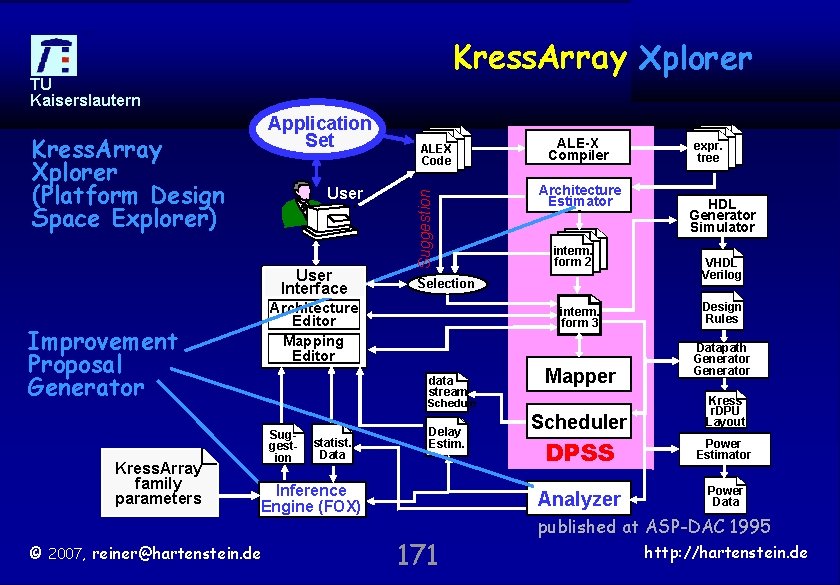

KressArray DPSS Xplorer
KressArray Xplorer (Platform Design Space Explorer), published at ASP-DAC 1995
[Block diagram. Tools shown: User Interface, ALE-X Compiler, Architecture Estimator, Architecture Editor, Mapping Editor, Improvement Proposal Generator, Inference Engine (FOX), data stream Mapper, Scheduler, Datapath Generator, HDL Generator (VHDL, Verilog), Simulator, Delay Estimator, Power Estimator, Analyzer, DPSS. Data shown: ALEX code, Application Set, KressArray family parameters, intermediate forms 2 and 3, expression tree, Selection, Suggestion, Schedule, statistical data, Design Rules, Power Data, Kress rDPU Layout.]

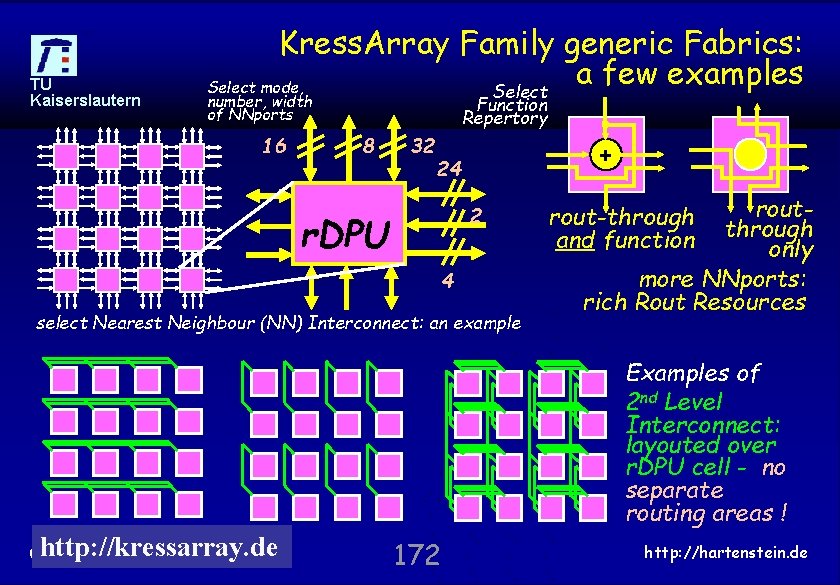

KressArray Family
Generic fabrics: a few examples
[Figure: rDPU fabric examples. Labels: select mode, number, and width of NN ports (numeric labels 2, 4, 8, 16, 24, 32); select Function Repertory.]
Nearest Neighbour (NN) Interconnect: an example
[Figure labels: route-through only; route-through and function; more NN ports: rich routing resources.]
Examples of 2nd Level Interconnect: laid out over the rDPU cell, no separate routing areas!
http://kressarray.de

- Slides: 172