
CS 730: Text Mining for Social Media & Collaboratively Generated Content Lecture 3: Parsing and Chunking

“Big picture” of the course
✓ Language models (word, n-gram, …)
✓ Classification and sequence models
✓ WSD, Part-of-speech tagging
➢ Syntactic parsing and tagging
• Next week: semantics
• The week after next: Info Extraction + Text Mining Intro
– Fall break
• Part II: Social media (research papers start)
9/20/2010 CS 730: Text Mining for Social Media, F 2010

Today’s Lecture Plan
• Phrase Chunking
• Syntactic Parsing
• Machine Learning-based Syntactic Parsing

Phrase Chunking 9/20/2010 CS 730: Text Mining for Social Media, F 2010 4

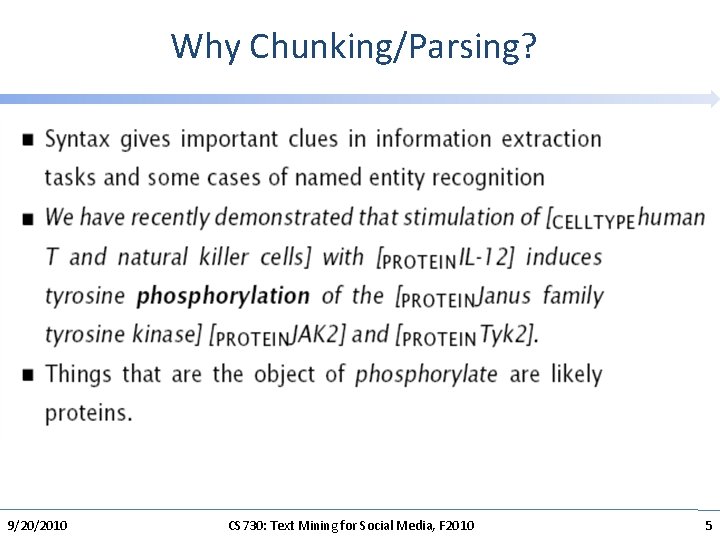

Why Chunking/Parsing? 9/20/2010 CS 730: Text Mining for Social Media, F 2010 5

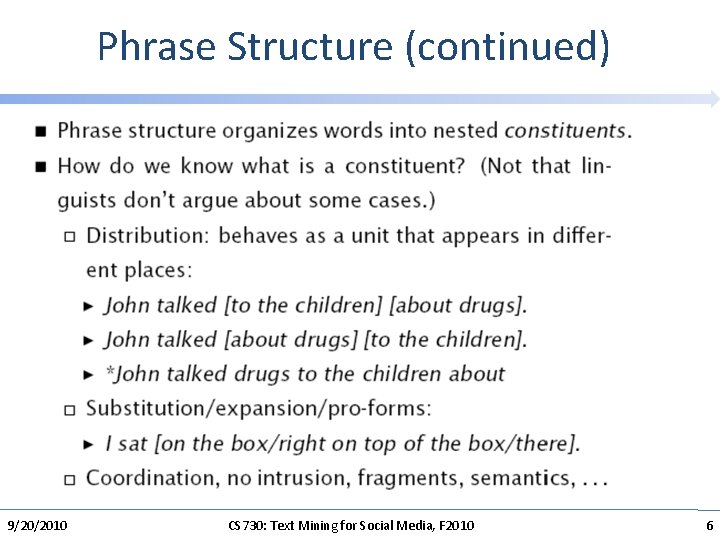

Phrase Structure (continued) 9/20/2010 CS 730: Text Mining for Social Media, F 2010 6

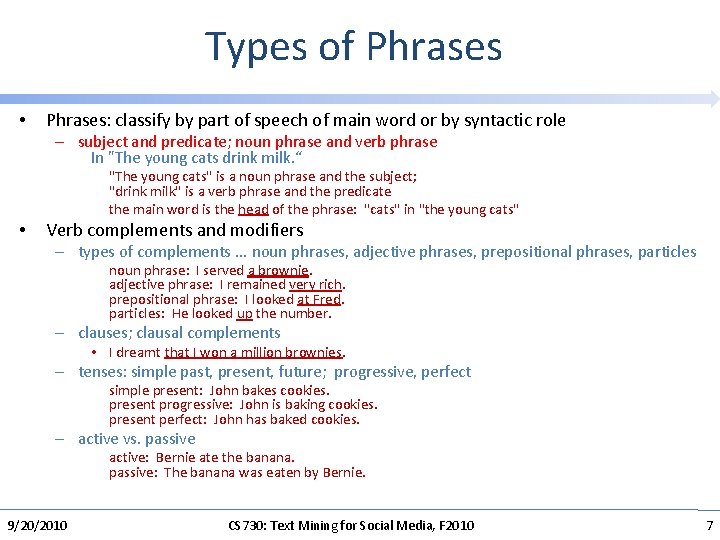

Types of Phrases
• Phrases: classify by part of speech of main word or by syntactic role
– subject and predicate; noun phrase and verb phrase
– In "The young cats drink milk.", "The young cats" is a noun phrase and the subject; "drink milk" is a verb phrase and the predicate
– the main word is the head of the phrase: "cats" in "the young cats"
• Verb complements and modifiers
– types of complements: noun phrases, adjective phrases, prepositional phrases, particles
  noun phrase: I served a brownie.
  adjective phrase: I remained very rich.
  prepositional phrase: I looked at Fred.
  particles: He looked up the number.
– clauses; clausal complements
  I dreamt that I won a million brownies.
– tenses: simple past, present, future; progressive, perfect
  simple present: John bakes cookies.
  present progressive: John is baking cookies.
  present perfect: John has baked cookies.
– active vs. passive
  active: Bernie ate the banana.
  passive: The banana was eaten by Bernie.

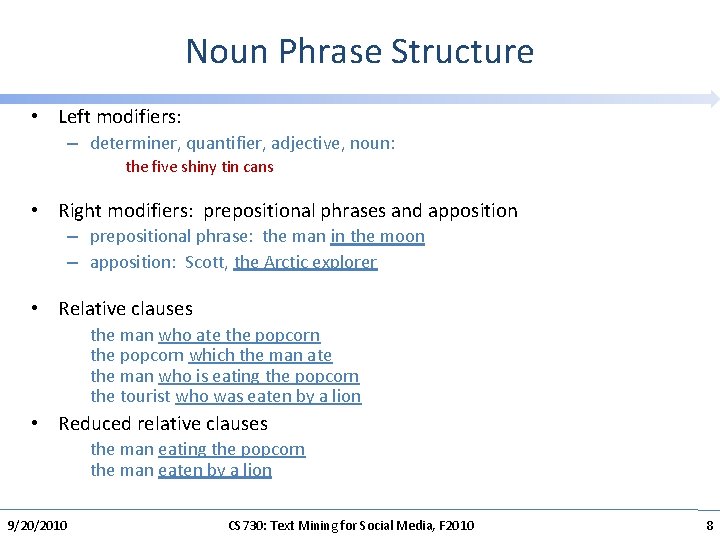

Noun Phrase Structure
• Left modifiers:
– determiner, quantifier, adjective, noun: the five shiny tin cans
• Right modifiers: prepositional phrases and apposition
– prepositional phrase: the man in the moon
– apposition: Scott, the Arctic explorer
• Relative clauses
– the man who ate the popcorn
– the popcorn which the man ate
– the man who is eating the popcorn
– the tourist who was eaten by a lion
• Reduced relative clauses
– the man eating the popcorn
– the man eaten by a lion

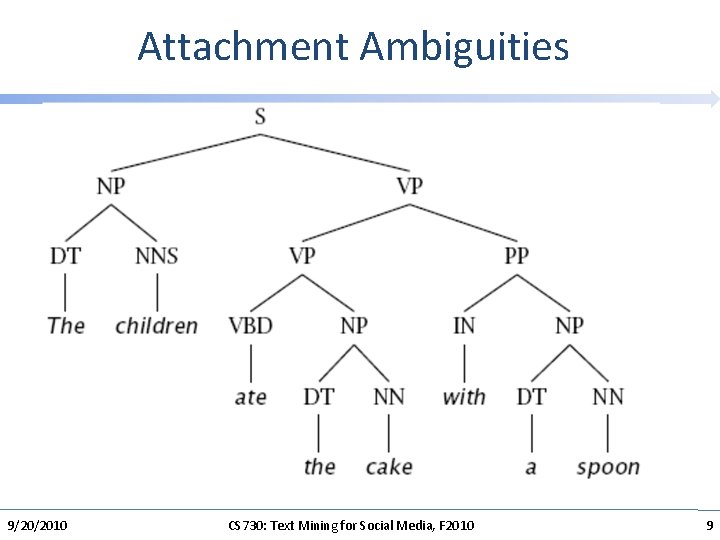

Attachment Ambiguities 9/20/2010 CS 730: Text Mining for Social Media, F 2010 9

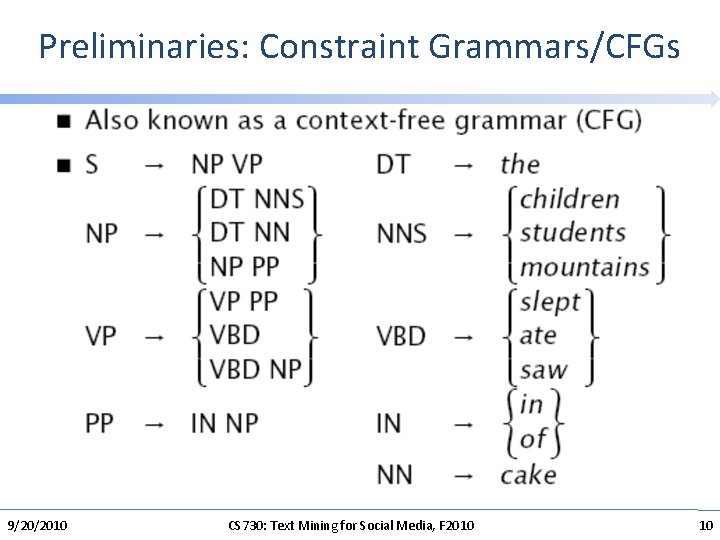

Preliminaries: Constraint Grammars/CFGs 9/20/2010 CS 730: Text Mining for Social Media, F 2010 10

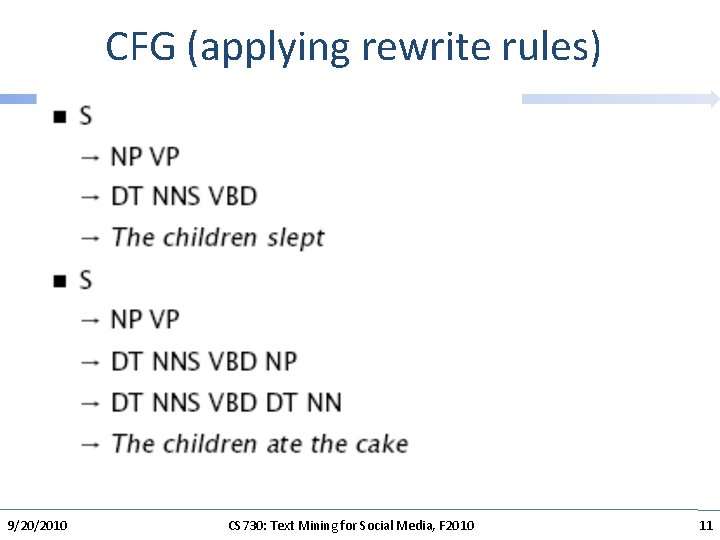

CFG (applying rewrite rules) 9/20/2010 CS 730: Text Mining for Social Media, F 2010 11

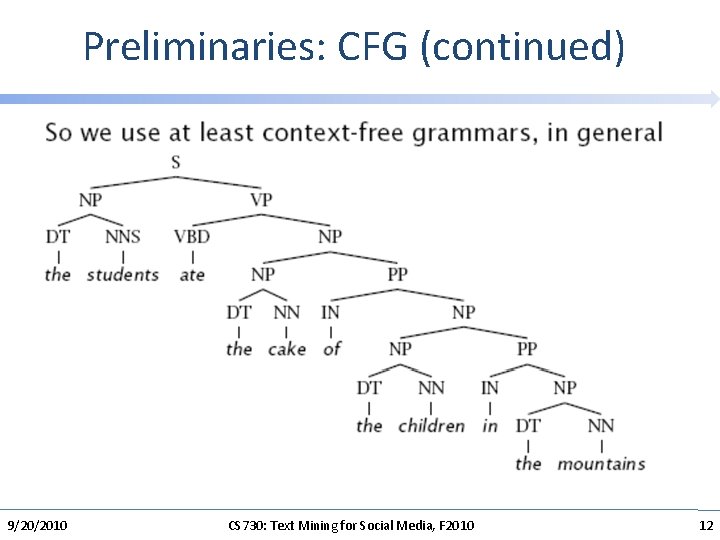

Preliminaries: CFG (continued) 9/20/2010 CS 730: Text Mining for Social Media, F 2010 12

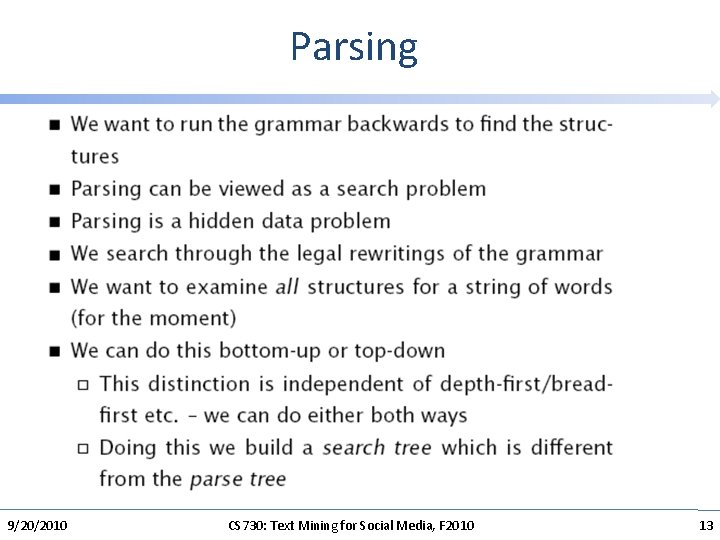

Parsing 9/20/2010 CS 730: Text Mining for Social Media, F 2010 13

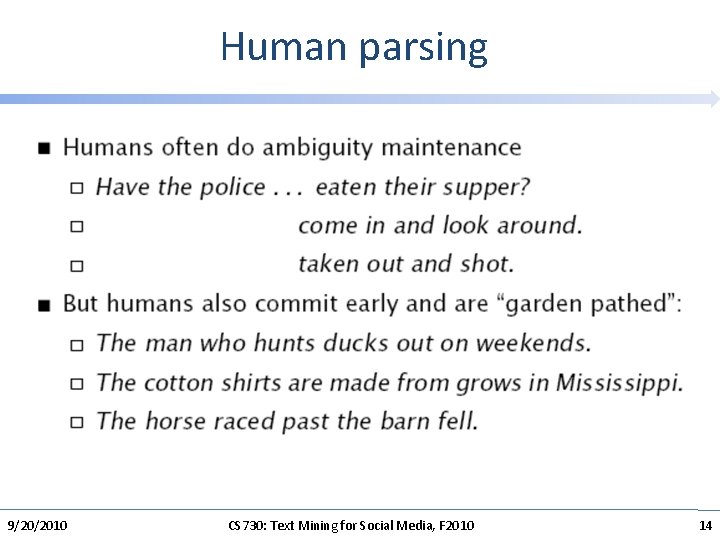

Human parsing 9/20/2010 CS 730: Text Mining for Social Media, F 2010 14

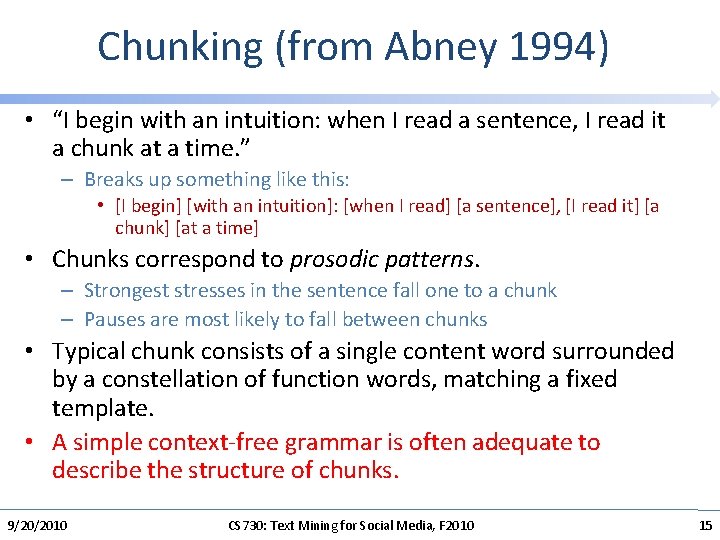

Chunking (from Abney 1994)
• “I begin with an intuition: when I read a sentence, I read it a chunk at a time.”
– Breaks up something like this:
  [I begin] [with an intuition]: [when I read] [a sentence], [I read it] [a chunk] [at a time]
• Chunks correspond to prosodic patterns.
– Strongest stresses in the sentence fall one to a chunk
– Pauses are most likely to fall between chunks
• A typical chunk consists of a single content word surrounded by a constellation of function words, matching a fixed template.
• A simple context-free grammar is often adequate to describe the structure of chunks.

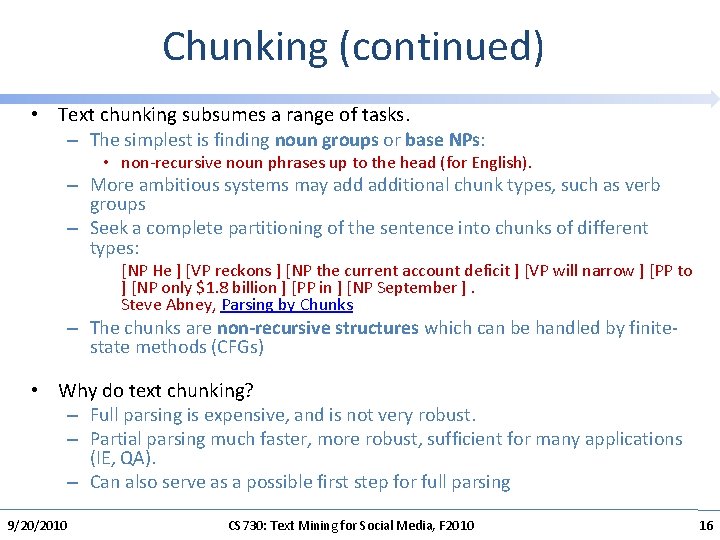

Chunking (continued)
• Text chunking subsumes a range of tasks.
– The simplest is finding noun groups or base NPs: non-recursive noun phrases up to the head (for English).
– More ambitious systems may add additional chunk types, such as verb groups.
– Or they may seek a complete partitioning of the sentence into chunks of different types:
  [NP He ] [VP reckons ] [NP the current account deficit ] [VP will narrow ] [PP to ] [NP only $1.8 billion ] [PP in ] [NP September ].
  (Steve Abney, Parsing by Chunks)
– The chunks are non-recursive structures which can be handled by finite-state methods (or simple CFGs).
• Why do text chunking?
– Full parsing is expensive, and is not very robust.
– Partial parsing is much faster, more robust, and sufficient for many applications (IE, QA).
– It can also serve as a possible first step for full parsing.

Chunking: Rule-based
• Quite high performance on NP chunking can be obtained with a small number of regular expressions.
• With a larger rule set, using Constraint Grammar rules, Voutilainen reports recall of 98%+ with precision of 95-98% for noun chunks.
– Atro Voutilainen, NPtool, a Detector of English Noun Phrases, WVLC 93.
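To make the regular-expression idea concrete, here is a minimal sketch of a base-NP chunker over part-of-speech tags. The single rule (optional determiner, adjectives, then nouns), the one-letter tag classes, and the function names are illustrative assumptions, not the rule set of any system cited above.

```python
import re

def tag_class(tag):
    """Collapse a Penn Treebank-style tag into a one-letter class."""
    if tag == "DT":
        return "D"
    if tag.startswith("JJ"):
        return "J"
    if tag.startswith("NN"):
        return "N"
    return "x"

# Hypothetical base-NP rule: optional determiner, adjectives, then nouns.
BASE_NP = re.compile(r"D?J*N+")

def chunk_base_nps(tagged):
    """Return base NPs (as word lists) from a list of (word, tag) pairs."""
    # One character per token, so regex offsets are token indices.
    classes = "".join(tag_class(tag) for _, tag in tagged)
    return [[word for word, _ in tagged[m.start():m.end()]]
            for m in BASE_NP.finditer(classes)]

sentence = [("The", "DT"), ("young", "JJ"), ("cats", "NNS"),
            ("drink", "VBP"), ("milk", "NN")]
print(chunk_base_nps(sentence))  # [['The', 'young', 'cats'], ['milk']]
```

A one-rule pattern like this misses pronouns, numbers, and possessives; a realistic rule set would need more patterns.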

Why can chunking be difficult?
• Two major sources of error (and these are also error sources for simple finite-state patterns for baseNP): participles and conjunction.
• Whether a participle is part of a noun phrase will depend on the particular choice of words:
– He enjoys writing letters.
– He sells writing paper.
• ... and sometimes it is genuinely ambiguous:
– He enjoys baking potatoes.
– He has broken bottles in the basement.
• The rules for conjoined NPs are complicated by the bracketing rules of the Penn Treebank.
– Conjoined prenominal nouns are generally treated as part of a single baseNP: "brick and mortar university" (with "brick and mortar" modifying "university").
– Conjoined heads with shared modifiers are also to be treated as a single baseNP: "ripe apples and bananas".
– If the modifier is not shared, there are two baseNPs: "ripe apples and cinnamon".
– Modifier sharing, however, is hard for people to judge and is not consistently annotated.

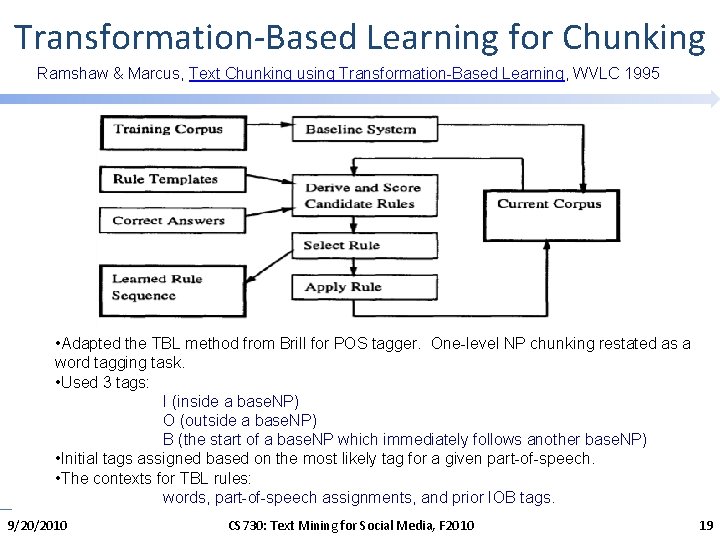

Transformation-Based Learning for Chunking
Ramshaw & Marcus, Text Chunking using Transformation-Based Learning, WVLC 1995
• Adapted the TBL method from Brill's POS tagger. One-level NP chunking is restated as a word tagging task.
• Used 3 tags:
– I (inside a baseNP)
– O (outside a baseNP)
– B (the start of a baseNP which immediately follows another baseNP)
• Initial tags are assigned based on the most likely tag for a given part-of-speech.
• The contexts for TBL rules: words, part-of-speech assignments, and prior IOB tags.
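The I/O/B encoding described above can be sketched as follows. The span representation (half-open token ranges) and the function names are assumptions made for illustration; the example reuses the sentence "I gave him my address", where "him" and "my address" are adjacent base NPs.

```python
def chunks_to_iob(tokens, np_spans):
    """Tag tokens I/O/B given sorted, non-overlapping base-NP spans [start, end)."""
    tags = ["O"] * len(tokens)
    prev_end = None
    for start, end in np_spans:
        for i in range(start, end):
            tags[i] = "I"
        # B is used only when this chunk immediately follows another chunk.
        if prev_end == start:
            tags[start] = "B"
        prev_end = end
    return tags

def iob_to_spans(tags):
    """Recover base-NP spans [start, end) from a sequence of I/O/B tags."""
    spans = []
    start = None
    for i, tag in enumerate(tags):
        if tag in ("O", "B") and start is not None:
            spans.append((start, i))  # the open chunk ends here
            start = None
        if tag in ("I", "B") and start is None:
            start = i  # a new chunk begins here
    if start is not None:
        spans.append((start, len(tags)))
    return spans

tokens = ["I", "gave", "him", "my", "address"]
spans = [(0, 1), (2, 3), (3, 5)]
tags = chunks_to_iob(tokens, spans)
print(tags)                # ['I', 'O', 'I', 'B', 'I']
print(iob_to_spans(tags))  # [(0, 1), (2, 3), (3, 5)]
```

Because the chunks are non-recursive, the tag sequence and the bracketing carry the same information, which is what lets a word tagger do the chunking.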

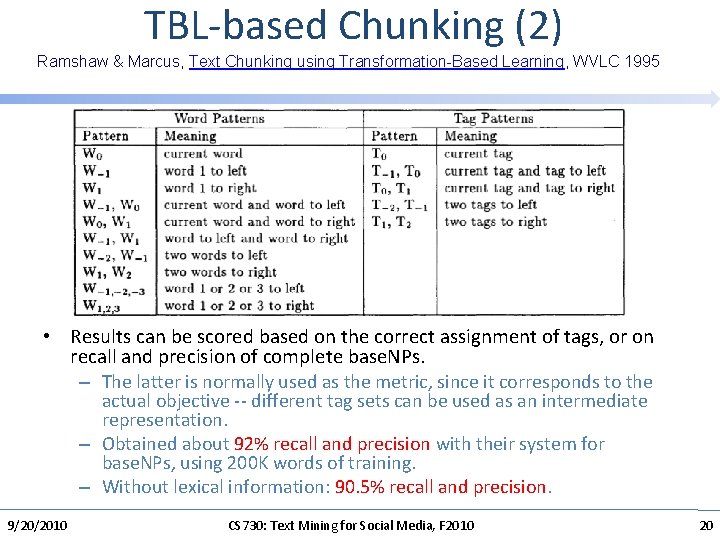

TBL-based Chunking (2)
Ramshaw & Marcus, Text Chunking using Transformation-Based Learning, WVLC 1995
• Results can be scored based on the correct assignment of tags, or on recall and precision of complete baseNPs.
– The latter is normally used as the metric, since it corresponds to the actual objective; different tag sets can be used as an intermediate representation.
– Obtained about 92% recall and precision with their system for baseNPs, using 200K words of training.
– Without lexical information: 90.5% recall and precision.
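Scoring on complete baseNPs, rather than on individual tags, can be sketched like this. A predicted chunk counts as correct only if both of its boundaries match a gold chunk; the span format and the function name are illustrative assumptions.

```python
def chunk_precision_recall(gold_spans, predicted_spans):
    """Precision and recall over complete chunks given as (start, end) spans."""
    gold, predicted = set(gold_spans), set(predicted_spans)
    correct = len(gold & predicted)  # exact boundary matches only
    precision = correct / len(predicted) if predicted else 0.0
    recall = correct / len(gold) if gold else 0.0
    return precision, recall

# Toy example: the system merges two adjacent gold chunks into one.
gold = [(0, 1), (2, 3), (3, 5)]
predicted = [(0, 1), (2, 5)]
precision, recall = chunk_precision_recall(gold, predicted)
print(precision, recall)
```

Here one of two predicted chunks is right (precision 0.5) and one of three gold chunks is found (recall 1/3), even though four of the five tokens would carry a correct tag.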

Chunking: Classification-based
• Classification task:
– NP or not NP?
• Using classifiers for chunking
– The best performance on the base NP and chunking tasks was obtained using a Support Vector Machine method.
– Kudo and Matsumoto obtained an accuracy of 94.22% with the small data set of Ramshaw and Marcus, and 95.77% by training on almost the entire Penn Treebank.
Taku Kudo; Yuji Matsumoto. Chunking with Support Vector Machines. Proc. NAACL 01.

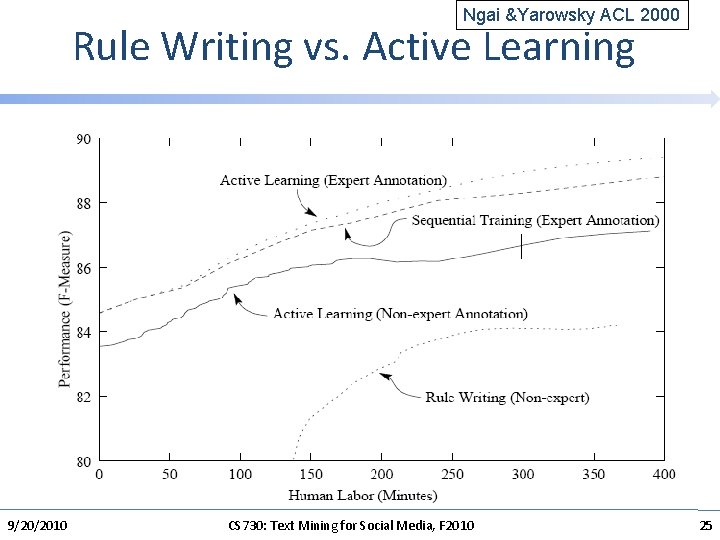

Hand-tuning vs. Machine Learning
• BaseNP chunking is a task for which people (with some linguistics training) can write quite good rules quickly.
• This raises the practical question of whether we should be using machine learning at all.
– If there is already a large relevant resource, it makes sense to learn from it.
– However, if we have to develop a chunker for a new language, is it cheaper to annotate some data or to write the rules directly?
• Ngai and Yarowsky addressed this question.
– They also considered selecting the data to be annotated.
– Traditional training is based on sequential text annotation: we just annotate a series of documents in sequence.
– Can we do better?
• Ngai, G. and D. Yarowsky, Rule Writing or Annotation: Cost-efficient Resource Usage for Base Noun Phrase Chunking. ACL 2000

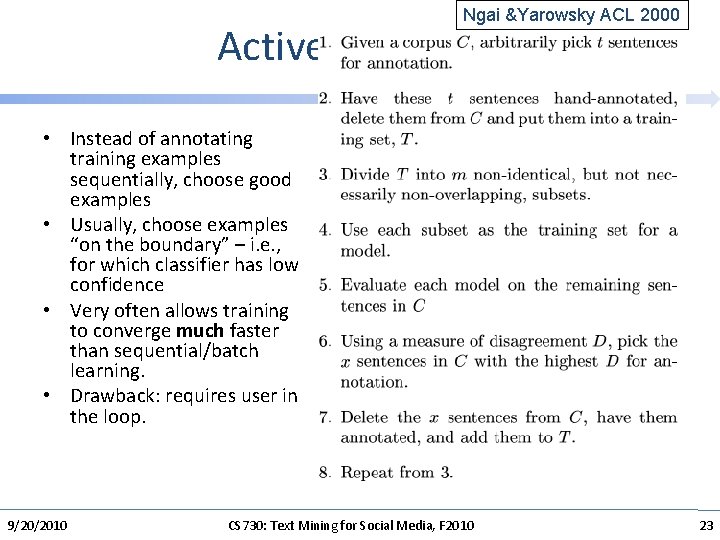

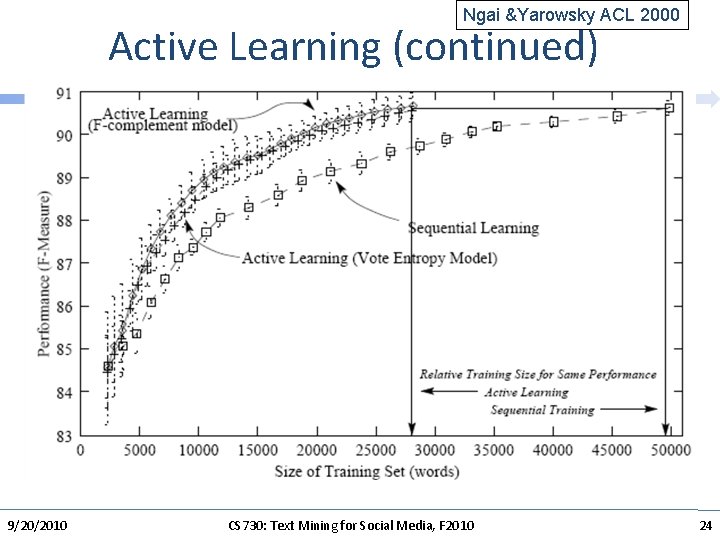

Ngai & Yarowsky ACL 2000
Active Learning
• Instead of annotating training examples sequentially, choose good examples
• Usually, choose examples “on the boundary”, i.e., for which the classifier has low confidence
• Very often allows training to converge much faster than sequential/batch learning.
• Drawback: requires a user in the loop.
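The "choose examples on the boundary" step can be sketched as plain uncertainty sampling. This is a generic illustration, not the selection procedure used by Ngai and Yarowsky; the `confidence` callback and the toy scores below are made-up placeholders standing in for a trained chunker.

```python
def select_for_annotation(unlabeled, confidence, k):
    """Pick the k unlabeled examples the current model is least sure about."""
    return sorted(unlabeled, key=confidence)[:k]

# Hypothetical confidence scores from a current model (invented numbers).
toy_confidence = {
    "He enjoys baking potatoes.": 0.51,
    "The young cats drink milk.": 0.98,
    "He sells writing paper.": 0.70,
}
pool = list(toy_confidence)
batch = select_for_annotation(pool, toy_confidence.get, k=2)
print(batch)
```

In a full loop, the annotator labels `batch`, the model is retrained, and the confidences are recomputed before the next selection, which is why a user has to stay in the loop.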

Ngai & Yarowsky ACL 2000 Active Learning (continued)

Ngai & Yarowsky ACL 2000 Rule Writing vs. Active Learning

Ngai & Yarowsky ACL 2000
Rule Writing vs. Annotation Learning
• Annotation:
– Can continue infinitely
– Can combine efforts of multiple annotators
– More consistent results
– Accuracy can be improved by better learning algorithms
• Rule writing:
– Must keep in mind rule interactions
– Difficult to combine rules from different experts
– Requires more skills
– Accuracy limited by the set of rules (will not improve ever)

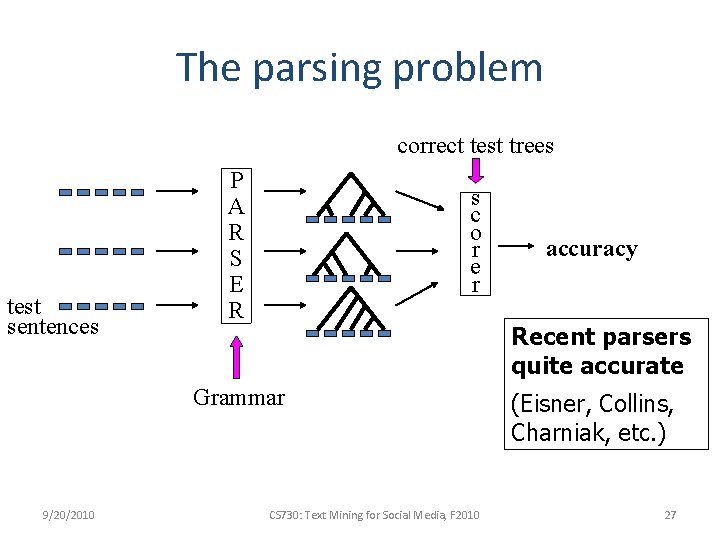

The parsing problem
• [Diagram] test sentences → PARSER (using a Grammar) → parses → scorer (compares against the correct test trees) → accuracy
• Recent parsers quite accurate (Eisner, Collins, Charniak, etc.)

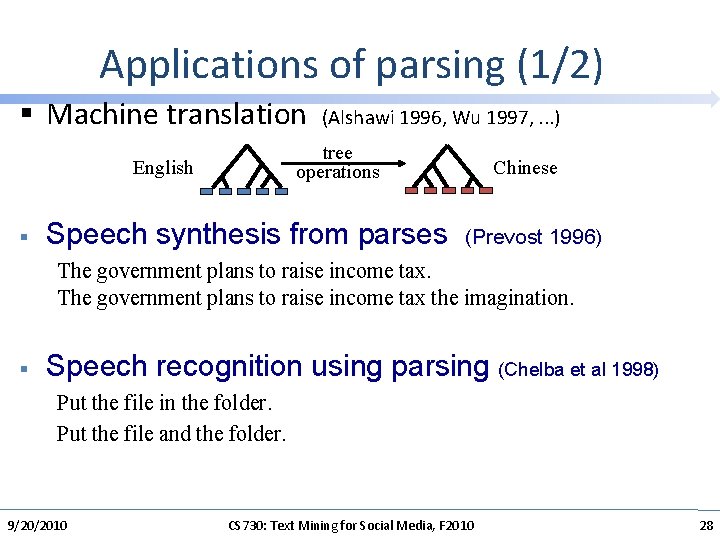

Applications of parsing (1/2)
§ Machine translation (Alshawi 1996, Wu 1997, ...): English → tree operations → Chinese
§ Speech synthesis from parses (Prevost 1996): The government plans to raise income tax the imagination.
§ Speech recognition using parsing (Chelba et al 1998): Put the file in the folder. Put the file and the folder.

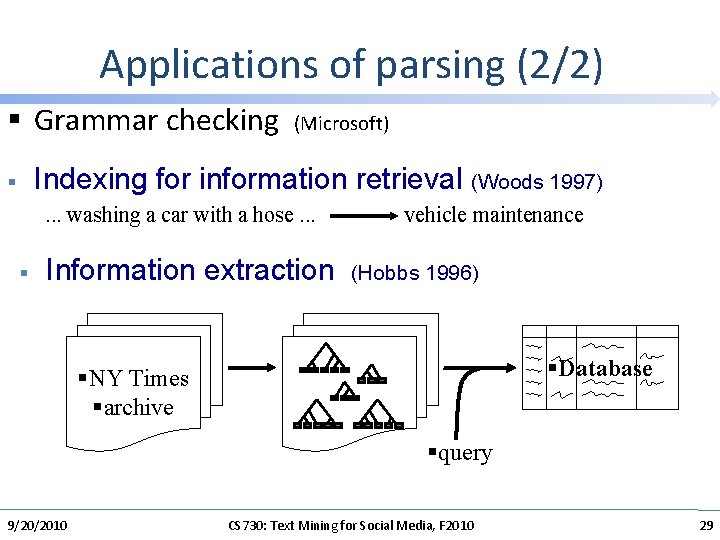

Applications of parsing (2/2)
§ Grammar checking (Microsoft)
§ Indexing for information retrieval (Woods 1997): ... washing a car with a hose ... → vehicle maintenance
§ Information extraction (Hobbs 1996): NY Times archive → Database ← query

Parsing for the Turing Test
§ Most linguistic properties are defined over trees.
§ One needs to parse to see subtle distinctions. E.g.:
– Sara dislikes criticism of her. (her Sara)
– Sara dislikes criticism of her by anyone. (her Sara)
– Sara dislikes anyone’s criticism of her. (her = Sara or her Sara)

What makes a good grammar
• Conjunctions must match
– I ate a hamburger and on the stove.
– I ate a cold hot dog and well burned.
– I ate the hot dog slowly and a hamburger.

Vanilla CFG not sufficient for NL
• Number agreement
– a men
• DET selection
– a apple
• Tense, mood, etc. agreement
• For now, let’s see what it would take to parse English with vanilla CFG

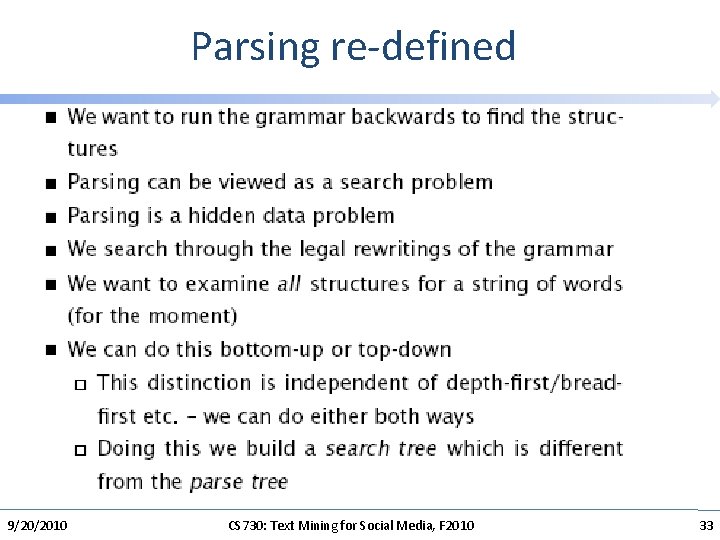

Parsing re-defined 9/20/2010 CS 730: Text Mining for Social Media, F 2010 33

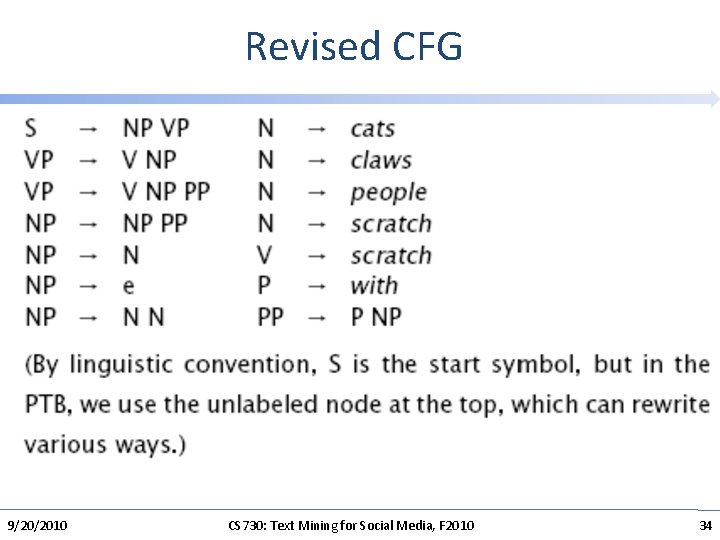

Revised CFG 9/20/2010 CS 730: Text Mining for Social Media, F 2010 34

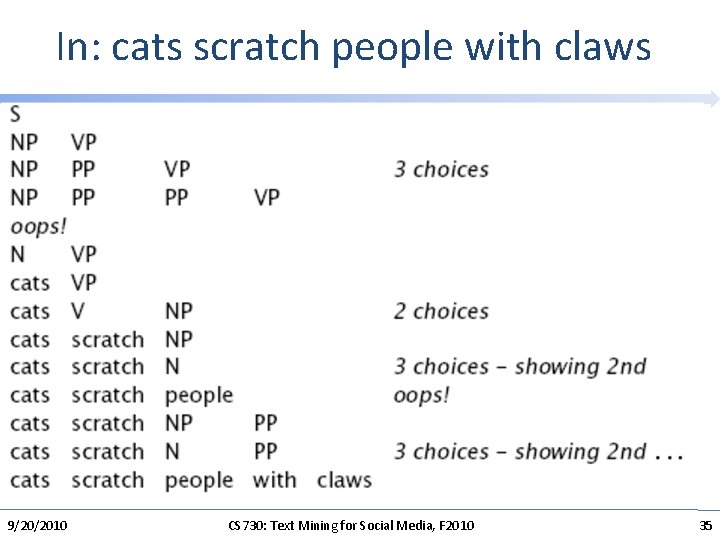

In: cats scratch people with claws 9/20/2010 CS 730: Text Mining for Social Media, F 2010 35

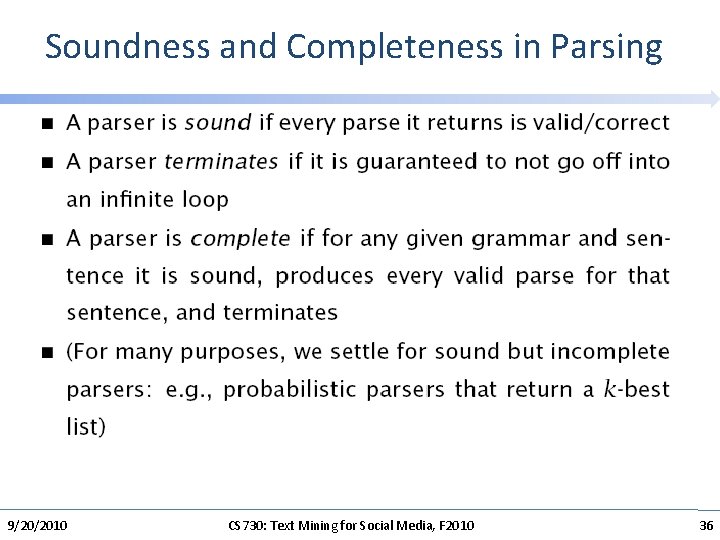

Soundness and Completeness in Parsing 9/20/2010 CS 730: Text Mining for Social Media, F 2010 36

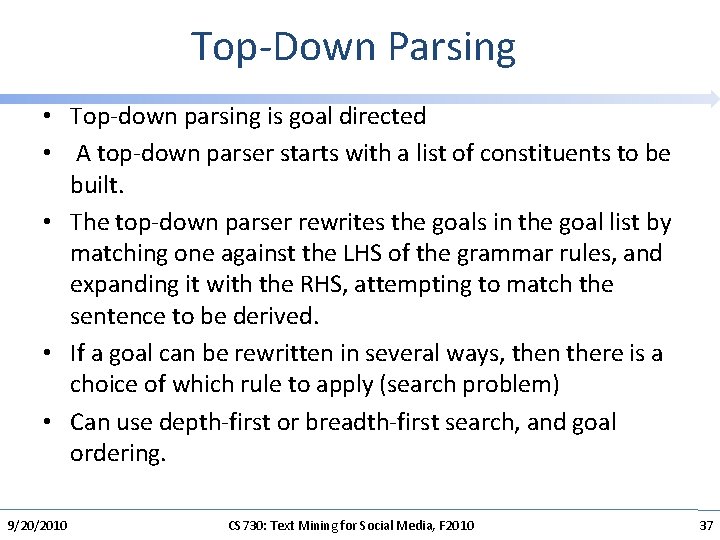

Top-Down Parsing
• Top-down parsing is goal directed.
• A top-down parser starts with a list of constituents to be built.
• The top-down parser rewrites the goals in the goal list by matching one against the LHS of the grammar rules, and expanding it with the RHS, attempting to match the sentence to be derived.
• If a goal can be rewritten in several ways, then there is a choice of which rule to apply (search problem).
• Can use depth-first or breadth-first search, and goal ordering.

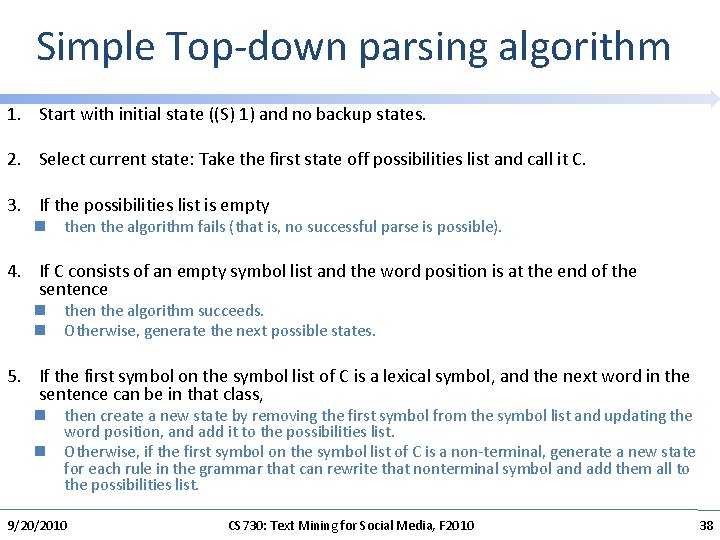

Simple Top-down parsing algorithm
1. Start with initial state ((S) 1) and no backup states.
2. Select current state: take the first state off the possibilities list and call it C.
3. If the possibilities list is empty, then the algorithm fails (that is, no successful parse is possible).
4. If C consists of an empty symbol list and the word position is at the end of the sentence, then the algorithm succeeds. Otherwise, generate the next possible states.
5. If the first symbol on the symbol list of C is a lexical symbol, and the next word in the sentence can be in that class, then create a new state by removing the first symbol from the symbol list and updating the word position, and add it to the possibilities list. Otherwise, if the first symbol on the symbol list of C is a non-terminal, generate a new state for each rule in the grammar that can rewrite that non-terminal symbol and add them all to the possibilities list.

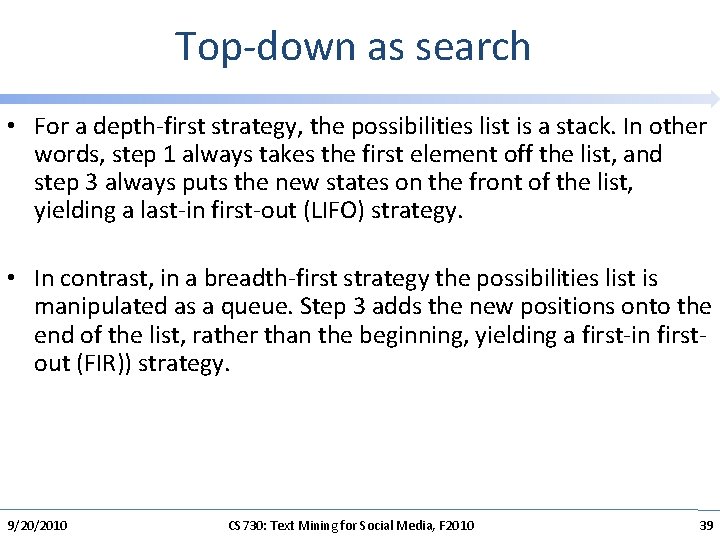

Top-down as search
• For a depth-first strategy, the possibilities list is a stack. In other words, the state-selection step always takes the first element off the list, and the state-generation step always puts the new states on the front of the list, yielding a last-in first-out (LIFO) strategy.
• In contrast, in a breadth-first strategy the possibilities list is manipulated as a queue. The state-generation step adds the new states onto the end of the list, rather than the beginning, yielding a first-in first-out (FIFO) strategy.

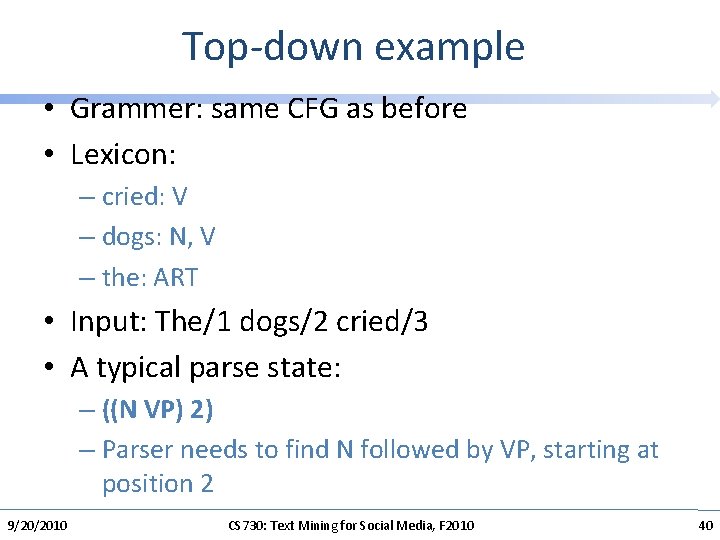

Top-down example
• Grammar: same CFG as before
• Lexicon:
– cried: V
– dogs: N, V
– the: ART
• Input: The/1 dogs/2 cried/3
• A typical parse state:
– ((N VP) 2)
– Parser needs to find N followed by VP, starting at position 2

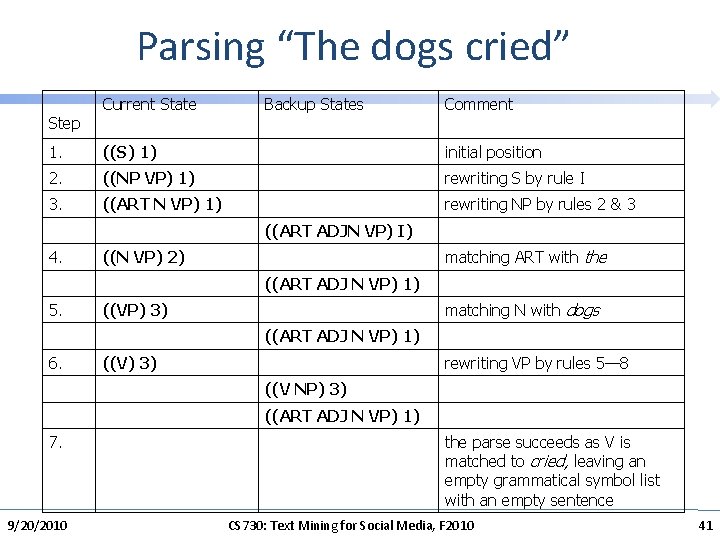

Parsing “The dogs cried”

| Step | Current State | Backup States | Comment |
|---|---|---|---|
| 1. | ((S) 1) | | initial position |
| 2. | ((NP VP) 1) | | rewriting S by rule 1 |
| 3. | ((ART N VP) 1) | ((ART ADJ N VP) 1) | rewriting NP by rules 2 & 3 |
| 4. | ((N VP) 2) | ((ART ADJ N VP) 1) | matching ART with "the" |
| 5. | ((VP) 3) | ((ART ADJ N VP) 1) | matching N with "dogs" |
| 6. | ((V) 3) | ((V NP) 3), ((ART ADJ N VP) 1) | rewriting VP by rules 5-8 |
| 7. | | | the parse succeeds as V is matched to "cried", leaving an empty grammatical symbol list with an empty sentence |
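The possibilities-list algorithm traced above can be sketched as a small recognizer. The lexicon and the 1-based word positions come from the slides; the grammar itself is shown only in an image, so the five rules below are an assumption, as are all function and variable names. One flag switches between the stack (depth-first) and queue (breadth-first) disciplines.

```python
from collections import deque

# Assumed grammar; the slide's own rule set is not reproduced in the text.
GRAMMAR = {
    "S": [["NP", "VP"]],
    "NP": [["ART", "N"], ["ART", "ADJ", "N"]],
    "VP": [["V"], ["V", "NP"]],
}
LEXICON = {"the": {"ART"}, "dogs": {"N", "V"}, "cried": {"V"}}
LEXICAL = {"ART", "ADJ", "N", "V"}

def top_down_recognize(words, depth_first=True):
    """Return True if the words can be derived from S."""
    # A state is (symbol list, word position), positions starting at 1.
    possibilities = deque([(("S",), 1)])
    while possibilities:
        symbols, pos = possibilities.popleft()
        if not symbols:
            if pos == len(words) + 1:
                return True  # all goals met and all words consumed
            continue
        first, rest = symbols[0], symbols[1:]
        new_states = []
        if first in LEXICAL:
            if pos <= len(words) and first in LEXICON.get(words[pos - 1].lower(), set()):
                new_states.append((rest, pos + 1))
        else:
            for rhs in GRAMMAR.get(first, []):
                new_states.append((tuple(rhs) + rest, pos))
        if depth_first:
            possibilities.extendleft(reversed(new_states))  # stack: push on front
        else:
            possibilities.extend(new_states)  # queue: append at end
    return False

print(top_down_recognize(["The", "dogs", "cried"]))                     # True
print(top_down_recognize(["The", "dogs", "cried"], depth_first=False))  # True
print(top_down_recognize(["The", "cried"]))                             # False
```

This sketch terminates because the assumed grammar has no left-recursive rules; with a rule such as NP → NP PP the depth-first variant would loop forever.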

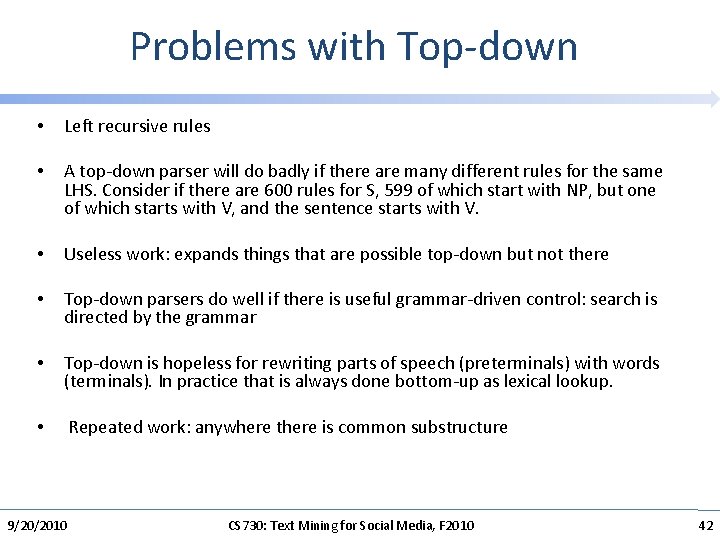

Problems with Top-down
• Left recursive rules
• A top-down parser will do badly if there are many different rules for the same LHS. Consider if there are 600 rules for S, 599 of which start with NP, but one of which starts with V, and the sentence starts with V.
• Useless work: expands things that are possible top-down but not there
• Top-down parsers do well if there is useful grammar-driven control: search is directed by the grammar
• Top-down is hopeless for rewriting parts of speech (preterminals) with words (terminals). In practice that is always done bottom-up as lexical lookup.
• Repeated work: anywhere there is common substructure

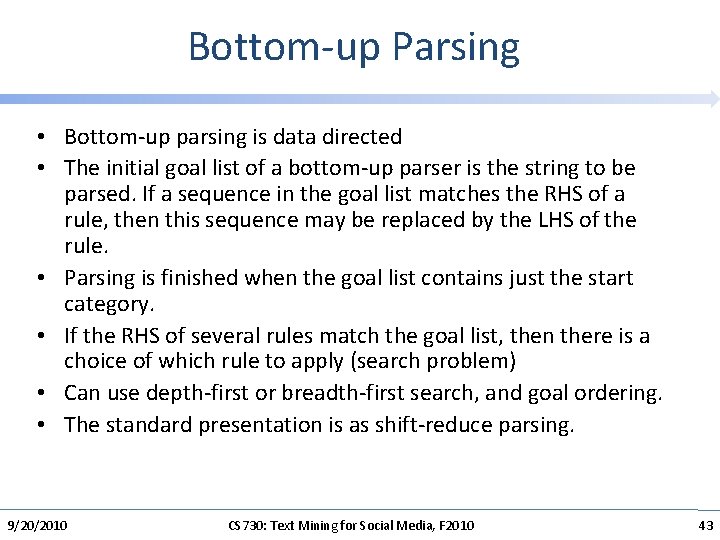

Bottom-up Parsing
• Bottom-up parsing is data directed.
• The initial goal list of a bottom-up parser is the string to be parsed. If a sequence in the goal list matches the RHS of a rule, then this sequence may be replaced by the LHS of the rule.
• Parsing is finished when the goal list contains just the start category.
• If the RHS of several rules match the goal list, then there is a choice of which rule to apply (search problem).
• Can use depth-first or breadth-first search, and goal ordering.
• The standard presentation is as shift-reduce parsing.

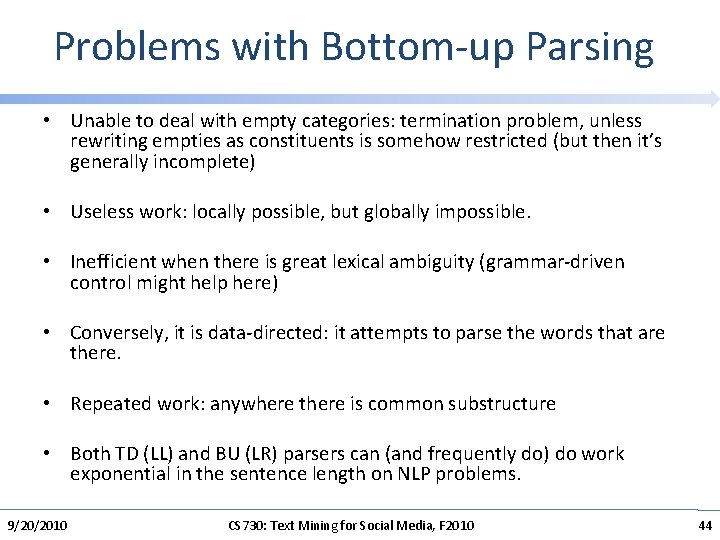

Problems with Bottom-up Parsing
• Unable to deal with empty categories: termination problem, unless rewriting empties as constituents is somehow restricted (but then it’s generally incomplete)
• Useless work: locally possible, but globally impossible.
• Inefficient when there is great lexical ambiguity (grammar-driven control might help here)
• Conversely, it is data-directed: it attempts to parse the words that are there.
• Repeated work: anywhere there is common substructure
• Both TD (LL) and BU (LR) parsers can (and frequently do) do work exponential in the sentence length on NLP problems.

Dynamic Programming for Parsing
• Systematically fill in tables of solutions to subproblems.
• Store subtrees for each of the various constituents in the input as they are discovered.
• Cocke-Kasami-Younger (CKY) algorithm, Earley’s algorithm, and chart parsing.

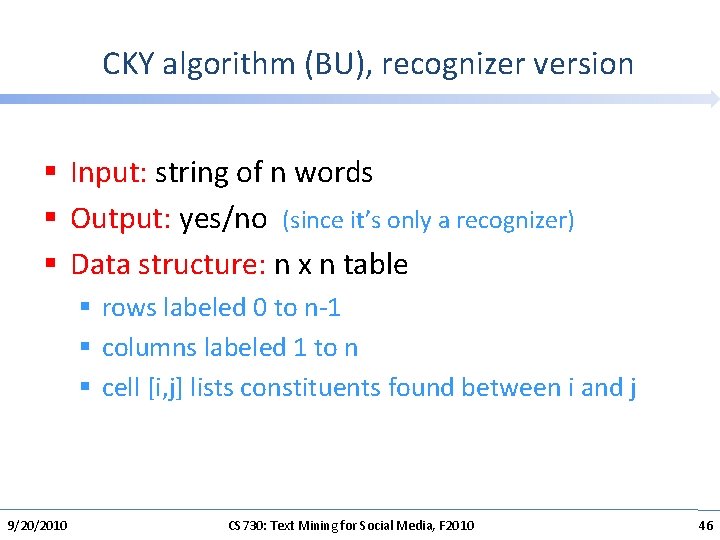

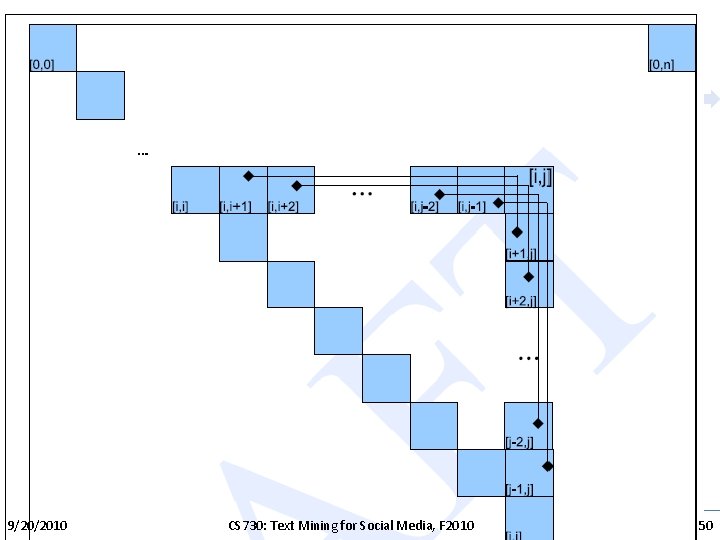

CKY algorithm (BU), recognizer version
§ Input: string of n words
§ Output: yes/no (since it’s only a recognizer)
§ Data structure: n x n table
– rows labeled 0 to n-1
– columns labeled 1 to n
– cell [i, j] lists constituents found between i and j
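A minimal sketch of the recognizer, using the table layout just described: cell [i, j] holds the categories found between positions i and j. The binary rules and lexicon are an assumed toy grammar in Chomsky normal form for "cats scratch people with claws", not the miniature grammar shown on the slides.

```python
from collections import defaultdict

# Assumed toy grammar in Chomsky normal form: (left, right) -> parents.
BINARY = {
    ("NP", "VP"): {"S"},
    ("V", "NP"): {"VP"},
    ("VP", "PP"): {"VP"},
    ("NP", "PP"): {"NP"},
    ("P", "NP"): {"PP"},
}
LEXICON = {"cats": {"NP"}, "people": {"NP"}, "claws": {"NP"},
           "scratch": {"V"}, "with": {"P"}}

def cky_recognize(words, start="S"):
    """Return True if the start symbol spans the whole input."""
    n = len(words)
    table = defaultdict(set)  # table[i, j] = categories spanning i..j
    for j in range(1, n + 1):              # fill one column at a time
        table[j - 1, j] |= LEXICON.get(words[j - 1], set())
        for i in range(j - 2, -1, -1):     # move up the column
            for k in range(i + 1, j):      # try every split point
                for left in table[i, k]:
                    for right in table[k, j]:
                        table[i, j] |= BINARY.get((left, right), set())
    return start in table[0, n]

print(cky_recognize("cats scratch people with claws".split()))  # True
print(cky_recognize("cats people scratch".split()))             # False
```

Each cell is computed once from smaller cells, so the work is cubic in the sentence length rather than exponential.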

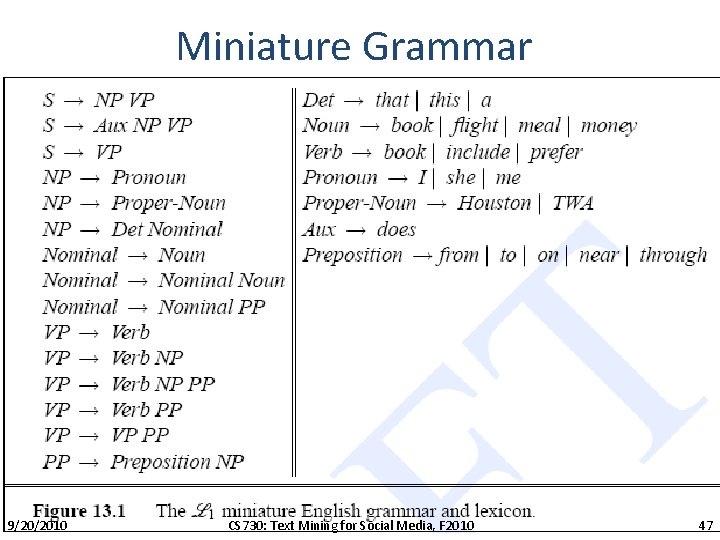

Miniature Grammar 9/20/2010 CS 730: Text Mining for Social Media, F 2010 47

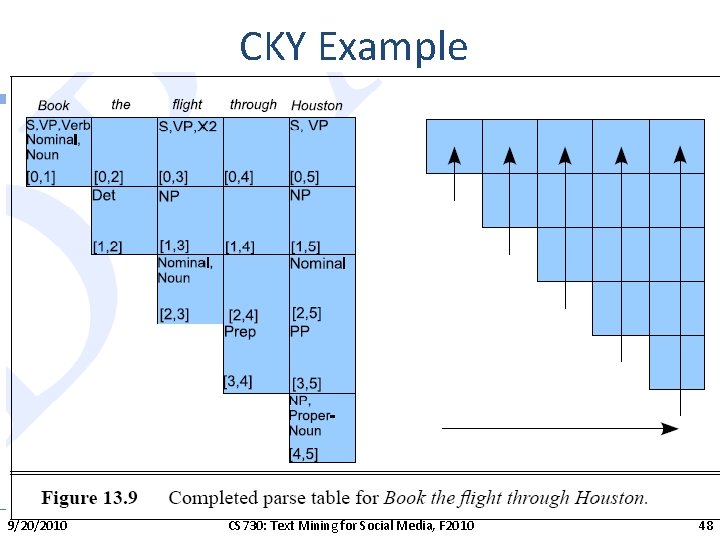

CKY Example 9/20/2010 CS 730: Text Mining for Social Media, F 2010 48

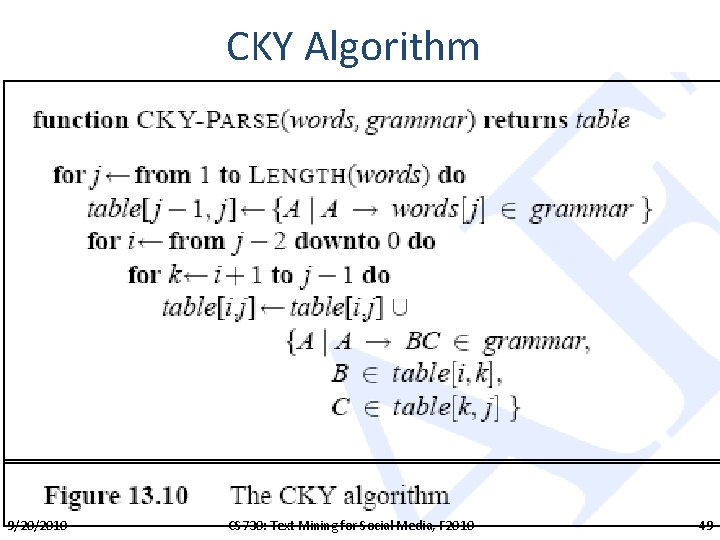

CKY Algorithm 9/20/2010 CS 730: Text Mining for Social Media, F 2010 49

9/20/2010 CS 730: Text Mining for Social Media, F 2010 50

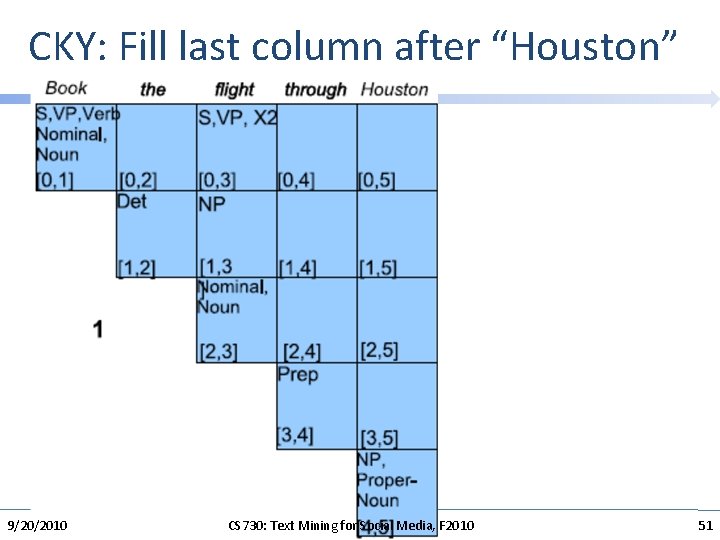

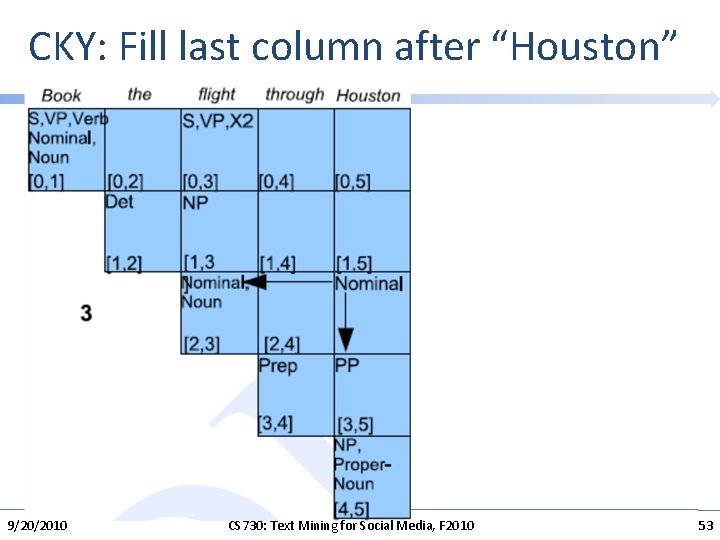

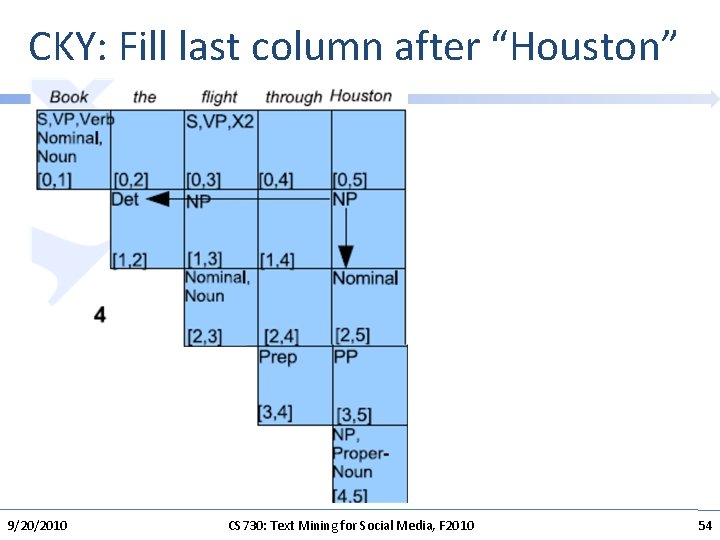

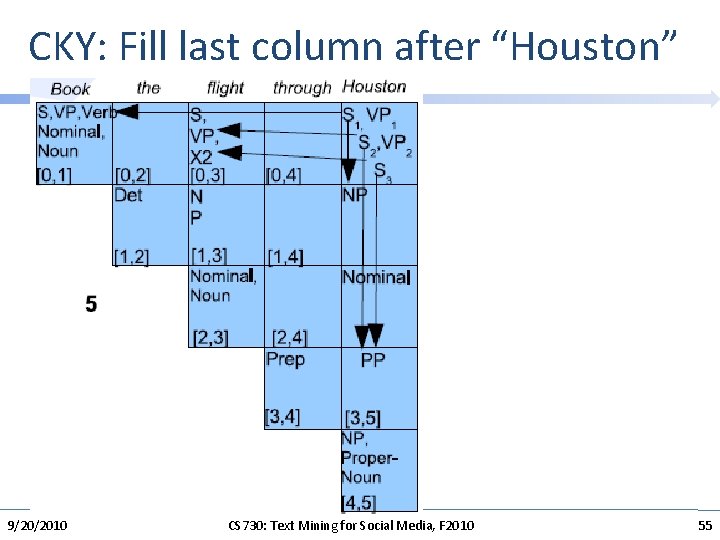

CKY: Fill last column after “Houston” 9/20/2010 CS 730: Text Mining for Social Media, F 2010 51

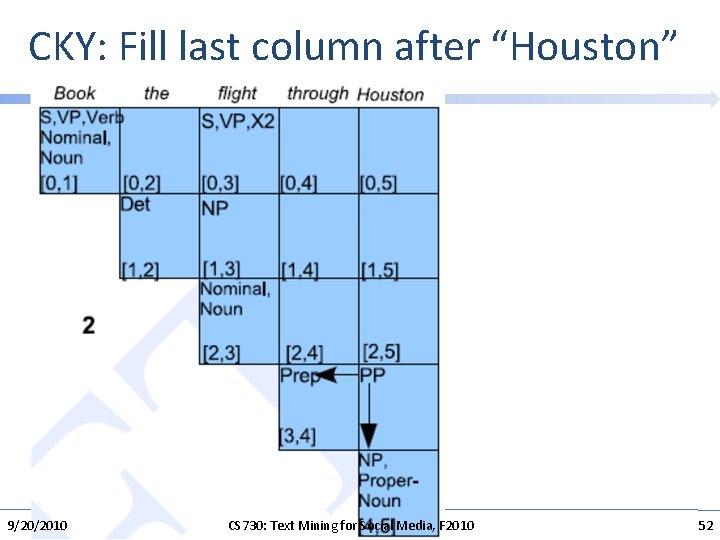

CKY: Fill last column after “Houston” 9/20/2010 CS 730: Text Mining for Social Media, F 2010 52

CKY: Fill last column after “Houston” 9/20/2010 CS 730: Text Mining for Social Media, F 2010 53

CKY: Fill last column after “Houston” 9/20/2010 CS 730: Text Mining for Social Media, F 2010 54

CKY: Fill last column after “Houston” 9/20/2010 CS 730: Text Mining for Social Media, F 2010 55

CKY Algorithm: Additional Information
• More formal algorithm analysis/description: http://www.cs.uiuc.edu/class/sp09/cs373/lectures/lect_15.pdf
• Online demo: http://homepages.uni-tuebingen.de/student/martin.lazarov/demos/cky.html

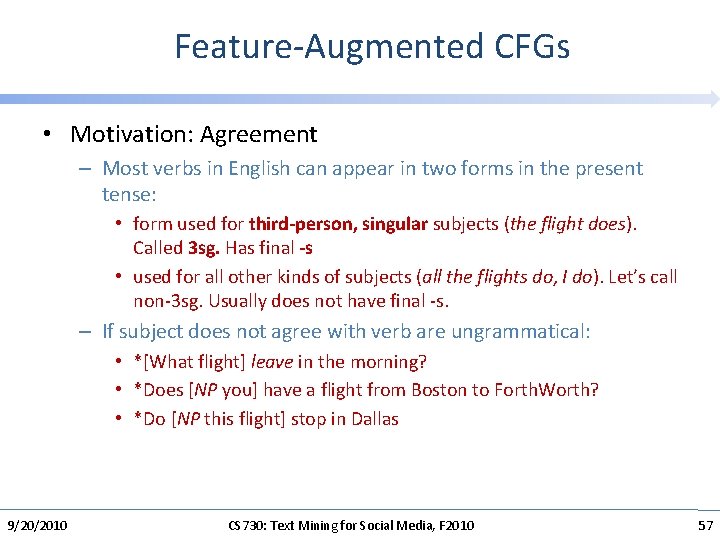

Feature-Augmented CFGs
• Motivation: Agreement
– Most verbs in English can appear in two forms in the present tense:
  • the form used for third-person singular subjects (the flight does), called 3sg; it has a final -s
  • the form used for all other kinds of subjects (all the flights do, I do), called non-3sg; it usually has no final -s
– Sentences in which the subject does not agree with the verb are ungrammatical:
  • *[What flight] leave in the morning?
  • *Does [NP you] have a flight from Boston to Fort Worth?
  • *Do [NP this flight] stop in Dallas?

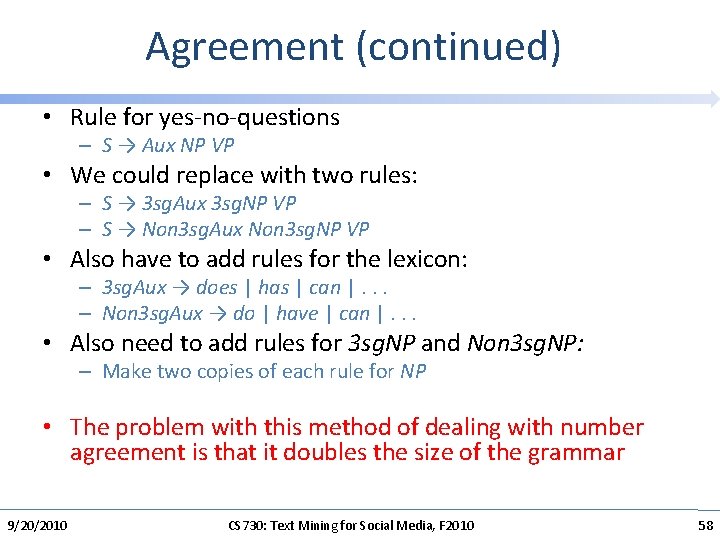

Agreement (continued)
• Rule for yes-no questions
– S → Aux NP VP
• We could replace it with two rules:
– S → 3sgAux 3sgNP VP
– S → Non3sgAux Non3sgNP VP
• Also have to add rules for the lexicon:
– 3sgAux → does | has | can | ...
– Non3sgAux → do | have | can | ...
• Also need to add rules for 3sgNP and Non3sgNP:
– Make two copies of each rule for NP
• The problem with this method of dealing with number agreement is that it doubles the size of the grammar

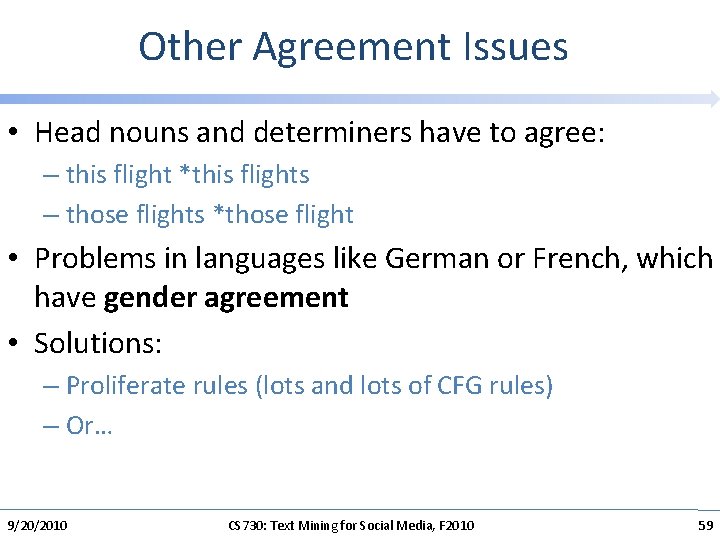

Other Agreement Issues
• Head nouns and determiners have to agree:
– this flight, *this flights
– those flights, *those flight
• Problems in languages like German or French, which have gender agreement
• Solutions:
– Proliferate rules (lots and lots of CFG rules)
– Or…


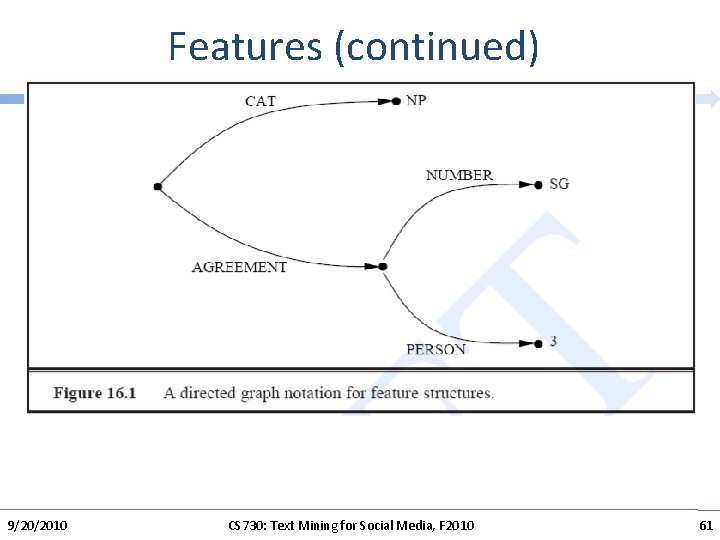

Feature-Augmented CFGs
• Number agreement features: [NUMBER SG]
• Adding an additional feature-value pair to capture person: [NUMBER SG, PERSON 3]
• Encode the grammatical category of the constituent: [CAT NP, NUMBER SG, PERSON 3]
– This represents the 3sgNP category of noun phrases.
• Corresponding plural version of this structure: [CAT NP, NUMBER PL, PERSON 3]

Features (continued)

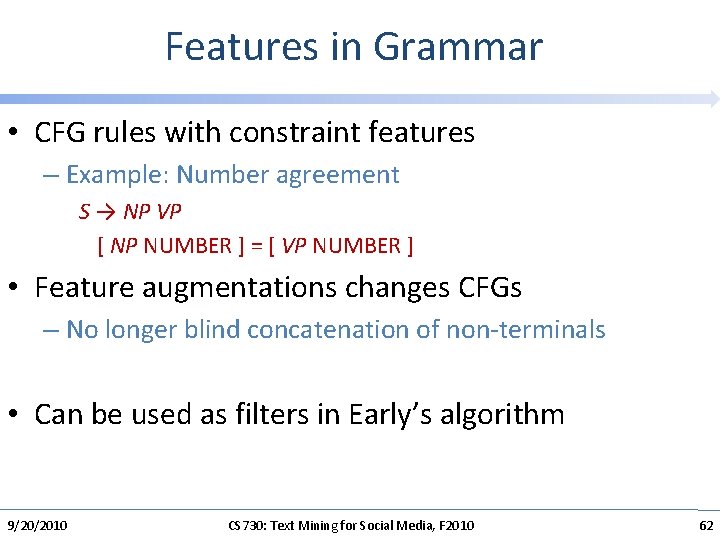

Features in Grammar
• CFG rules with constraint features
– Example: Number agreement
  S → NP VP
  [NP NUMBER] = [VP NUMBER]
• Feature augmentation changes CFGs
– No longer blind concatenation of non-terminals
• Can be used as filters in Earley’s algorithm

Feature Augmentation Key Ideas
• The elements of context-free grammar rules have feature-based constraints associated with them. This is a shift from atomic grammatical categories to more complex categories with properties.
• The constraints associated with individual rules can refer to, and manipulate, the feature structures associated with the parts of the rule to which they are attached.
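As a rough illustration of a rule with an attached constraint, the sketch below applies S → NP VP only when [NP NUMBER] = [VP NUMBER]. Feature structures are simplified to flat Python dictionaries, and `unify` and `apply_s_rule` are hypothetical helper names; real feature structures are nested and need full unification.

```python
def unify(a, b):
    """Unify two flat feature structures; return None on a value clash."""
    merged = dict(a)
    for feature, value in b.items():
        if feature in merged and merged[feature] != value:
            return None
        merged[feature] = value
    return merged

def apply_s_rule(np, vp):
    """S -> NP VP with the constraint [NP NUMBER] = [VP NUMBER]."""
    number = unify({"NUMBER": np["NUMBER"]}, {"NUMBER": vp["NUMBER"]})
    if number is None:
        return None  # agreement fails, so the rule is filtered out
    return {"CAT": "S", **number}

this_flight = {"CAT": "NP", "NUMBER": "SG", "PERSON": 3}
stops = {"CAT": "VP", "NUMBER": "SG"}
stop = {"CAT": "VP", "NUMBER": "PL"}

print(apply_s_rule(this_flight, stops))  # {'CAT': 'S', 'NUMBER': 'SG'}
print(apply_s_rule(this_flight, stop))   # None
```

One constrained rule replaces the pair of 3sg and non-3sg copies, which is how features avoid doubling the grammar.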

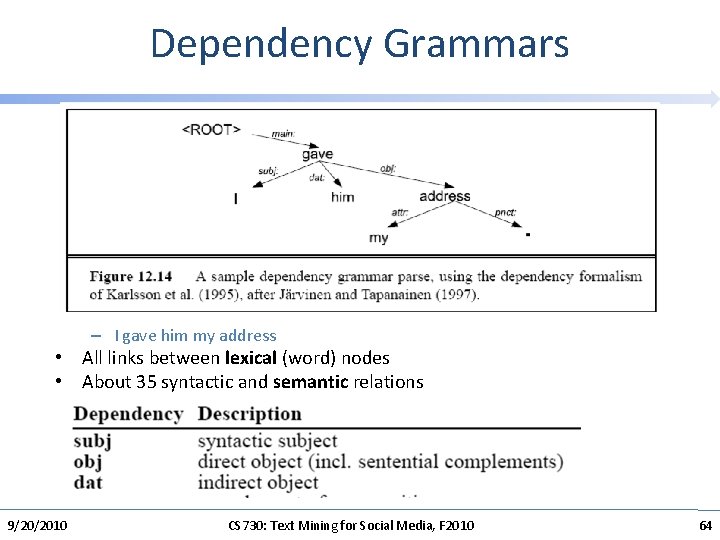

Dependency Grammars
– I gave him my address
• All links between lexical (word) nodes
• About 35 syntactic and semantic relations

Dependency Grammars (continued)
• Advantages:
– Free word order
• Implementations:
– Link parser (Sleator). Freely available

LEARNING TO PARSE

Parsing in the early 1990s
• The parsers produced detailed, linguistically rich representations
• Parsers had uneven and usually rather poor coverage
– E.g., 30% of sentences received no analysis
• Even quite simple sentences had many possible analyses
– Parsers either had no method to choose between them or a very ad hoc treatment of parse preferences
• Parsers could not be learned from data
• Parser performance usually wasn’t or couldn’t be assessed quantitatively and the performance of different parsers was often incommensurable

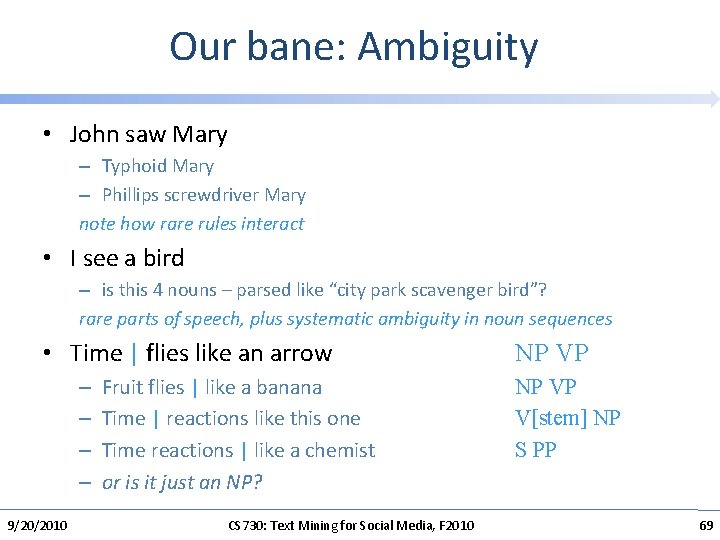

Ambiguity
• John saw Mary
– Typhoid Mary
– Phillips screwdriver Mary
– note how rare rules interact
• I see a bird
– see (noun): the official seat, center of authority, jurisdiction, or office of a bishop
– is this 4 nouns, parsed like “city park scavenger bird”?
– rare parts of speech, plus systematic ambiguity in noun sequences
• Time flies like an arrow
– Fruit flies like a banana
– Time reactions like this one
– Time reactions like a chemist
– or is it just an NP?

Our bane: Ambiguity
• The same examples, with the top-level split marked:
– Time | flies like an arrow (NP VP)
– Fruit flies | like a banana (NP VP)
– Time | reactions like this one (V[stem] NP)
– Time reactions | like a chemist (S PP)
– or is it just an NP?

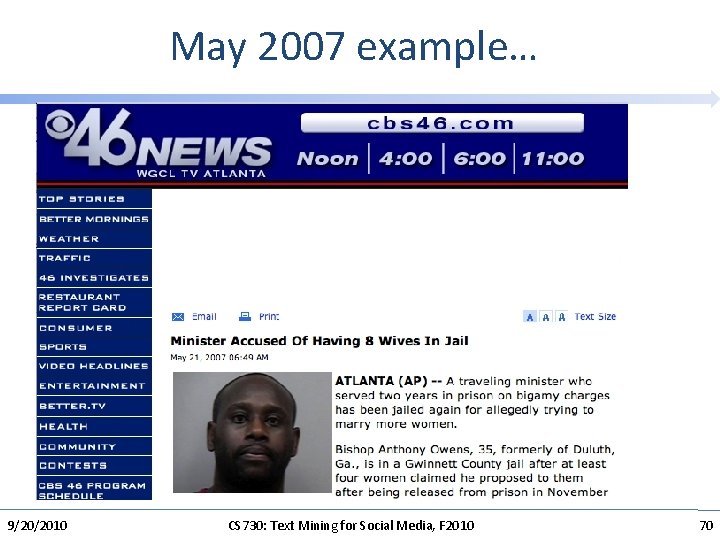

May 2007 example…

How to solve this combinatorial explosion of ambiguity?
1. First try parsing without any weird rules, throwing them in only if needed.
2. Better: every rule has a weight. A tree’s weight is the total weight of all its rules. Pick the overall lightest parse of the sentence.
3. Can we pick the weights automatically? Yes: Statistical Parsing

Statistical parsing
• Over the last 12 years statistical parsing has succeeded wonderfully!
• NLP researchers have produced a range of (often free, open source) statistical parsers, which can parse any sentence and often get most of it correct
• These parsers are now a commodity component
• The parsers are still improving.

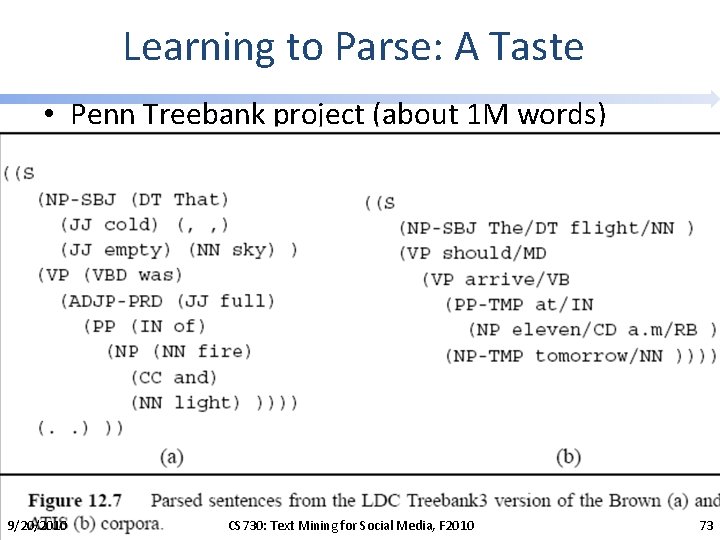

Learning to Parse: A Taste
• Penn Treebank project (about 1M words)

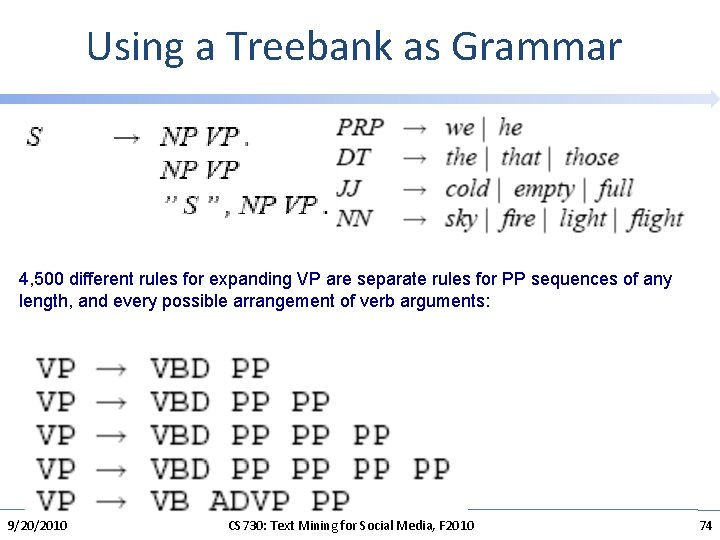

Using a Treebank as Grammar
• 4,500 different rules for expanding VP, including separate rules for PP sequences of any length and for every possible arrangement of verb arguments:

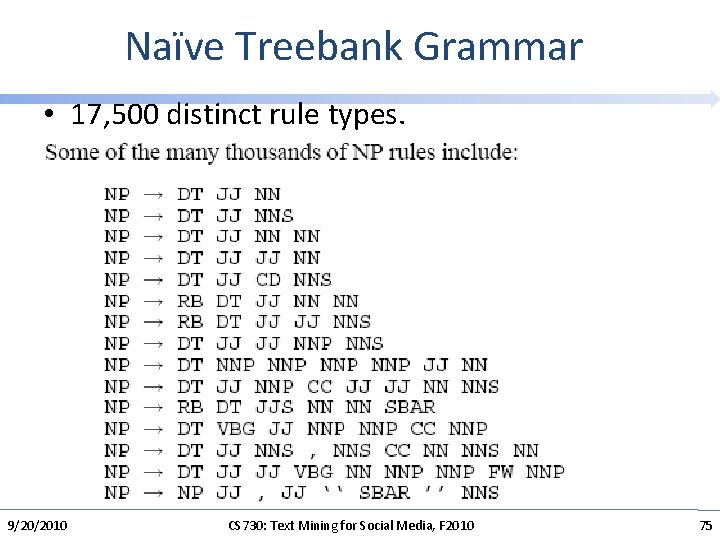

Naïve Treebank Grammar
• 17,500 distinct rule types.
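Reading a grammar off a treebank amounts to counting the local trees it contains. The sketch below parses bracketed trees and counts each phrase-level rule; the two trees are made-up toy examples in Penn Treebank-style notation, and the function names are illustrative.

```python
from collections import Counter

def parse_tree(text):
    """Parse a bracketed tree like "(S (NP ...) (VP ...))" into nested lists."""
    tokens = text.replace("(", " ( ").replace(")", " ) ").split()
    pos = 0

    def read():
        nonlocal pos
        token = tokens[pos]
        pos += 1
        if token != "(":
            return token  # a word at a leaf
        label = tokens[pos]
        pos += 1
        children = []
        while tokens[pos] != ")":
            children.append(read())
        pos += 1  # skip the closing bracket
        return [label] + children

    return read()

def count_rules(tree, counts):
    """Count one rule per phrase-level node (preterminal -> word is skipped)."""
    label, children = tree[0], tree[1:]
    if all(isinstance(child, list) for child in children):
        counts[(label, tuple(child[0] for child in children))] += 1
        for child in children:
            count_rules(child, counts)
    return counts

toy_treebank = [
    "(S (NP (DT the) (NN flight)) (VP (VBZ leaves)))",
    "(S (NP (DT the) (NNS dogs)) (VP (VBD cried)))",
]
counts = Counter()
for line in toy_treebank:
    count_rules(parse_tree(line), counts)
for (lhs, rhs), n in counts.items():
    print(lhs, "->", " ".join(rhs), n)
```

Even these two short trees yield five distinct rules, which hints at why a flat treebank grammar grows to thousands of rule types.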

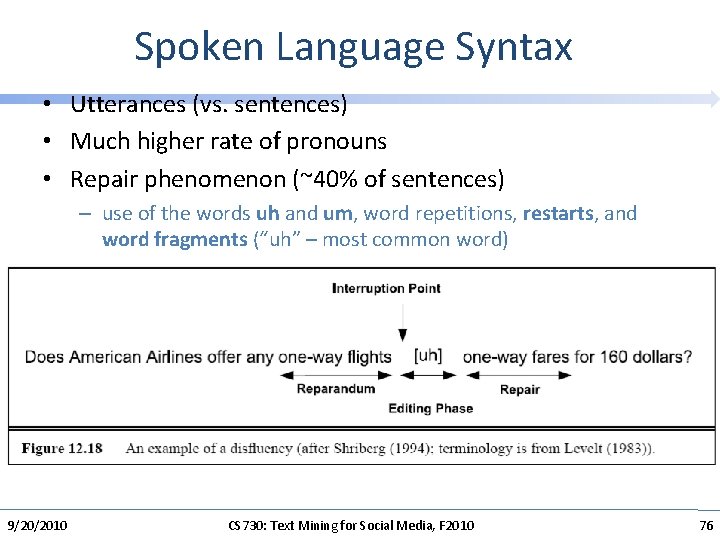

Spoken Language Syntax
• Utterances (vs. sentences)
• Much higher rate of pronouns
• Repair phenomena (~40% of sentences)
– use of the words uh and um, word repetitions, restarts, and word fragments (“uh” is the most common word)

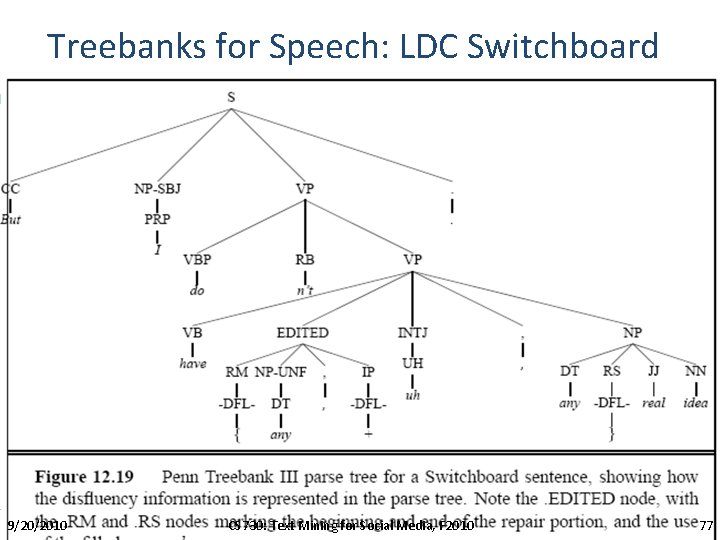

Treebanks for Speech: LDC Switchboard

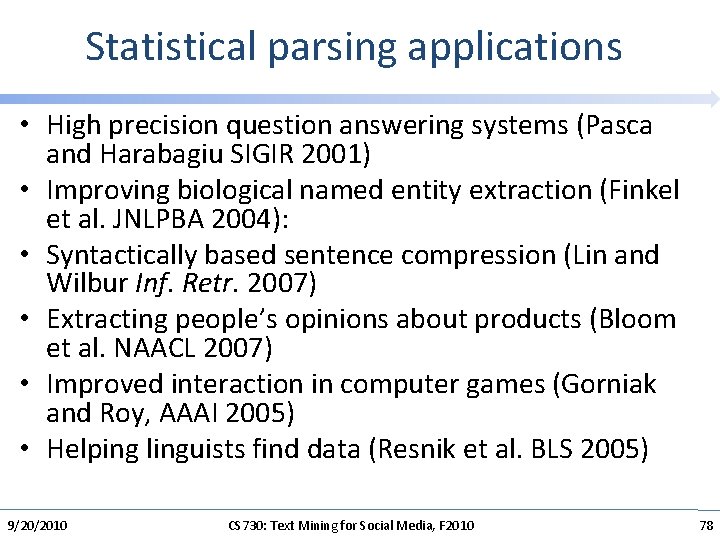

Statistical parsing applications
• High precision question answering systems (Pasca and Harabagiu SIGIR 2001)
• Improving biological named entity extraction (Finkel et al. JNLPBA 2004)
• Syntactically based sentence compression (Lin and Wilbur Inf. Retr. 2007)
• Extracting people’s opinions about products (Bloom et al. NAACL 2007)
• Improved interaction in computer games (Gorniak and Roy, AAAI 2005)
• Helping linguists find data (Resnik et al. BLS 2005)

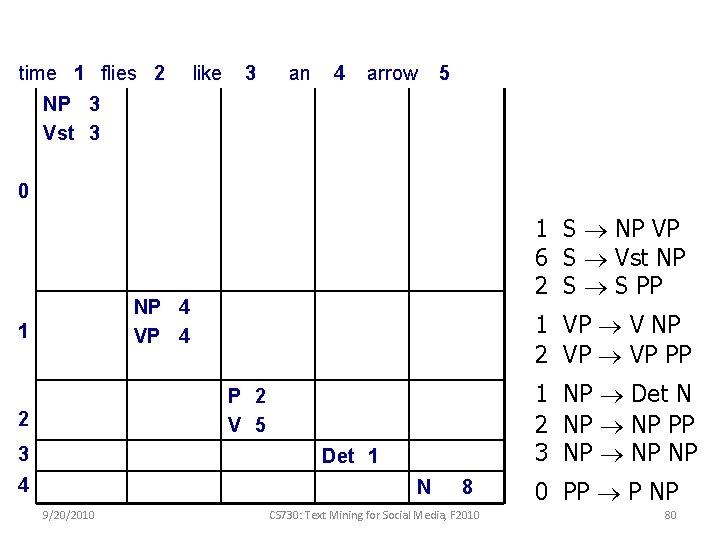

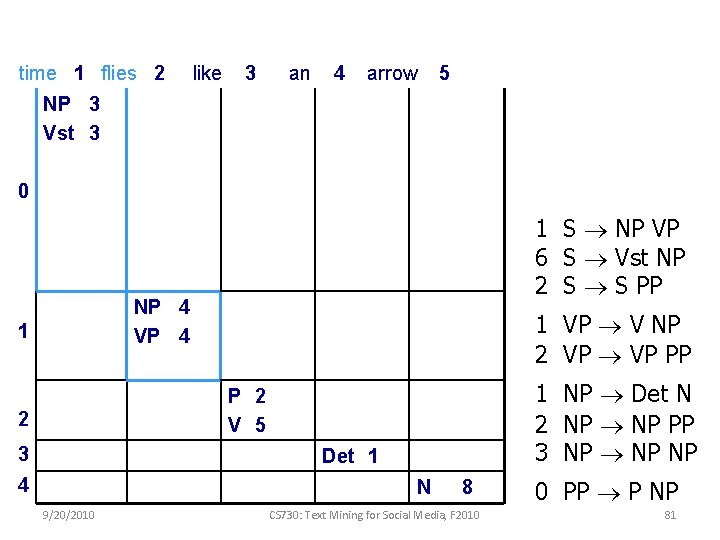

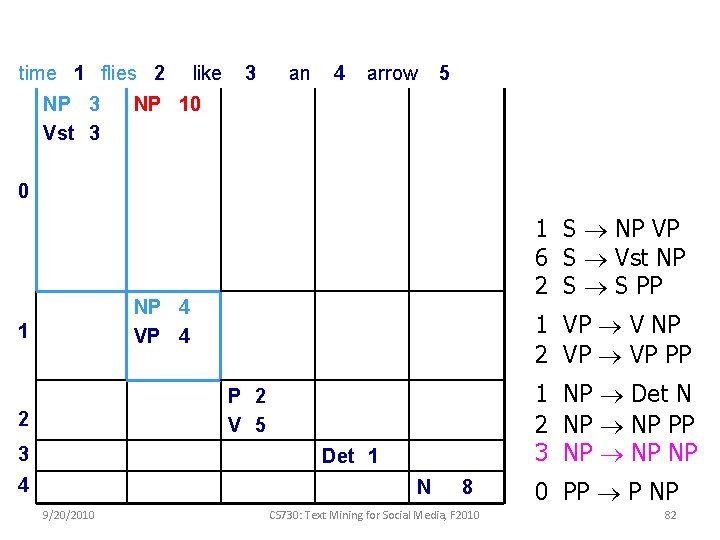

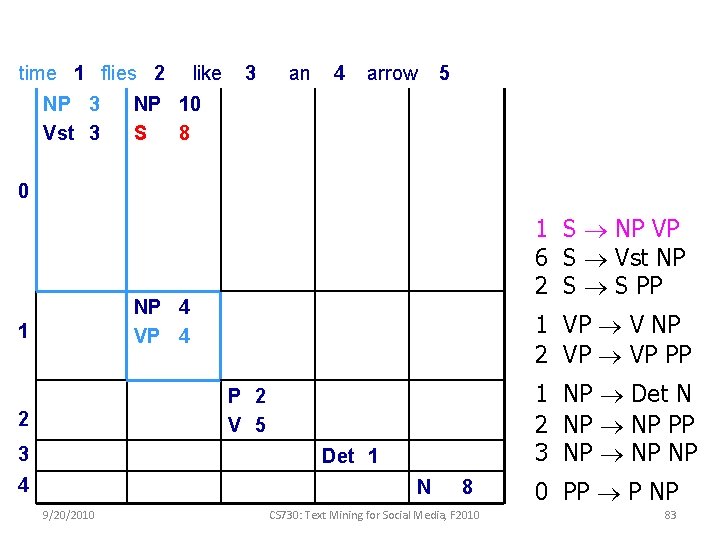

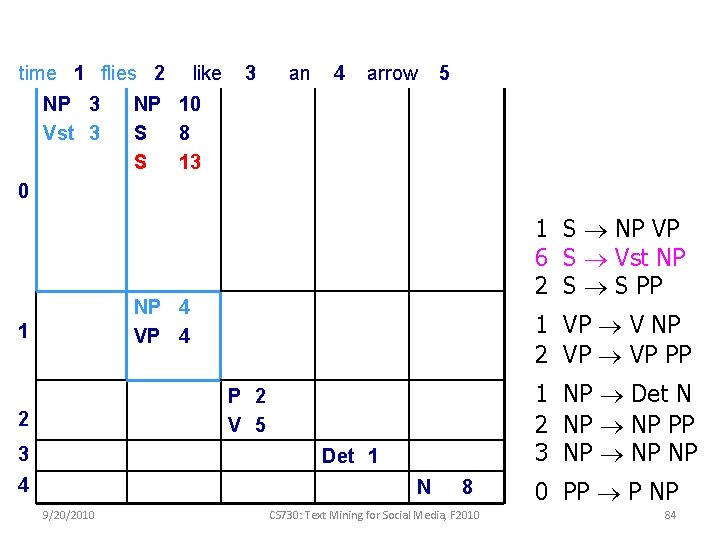

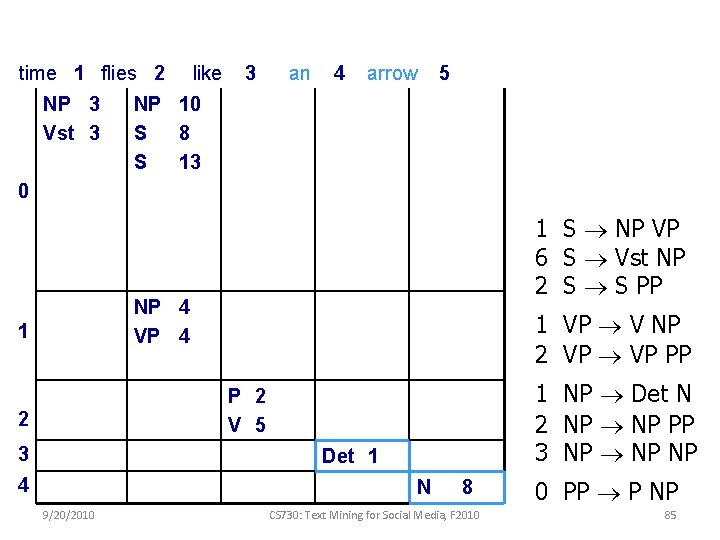

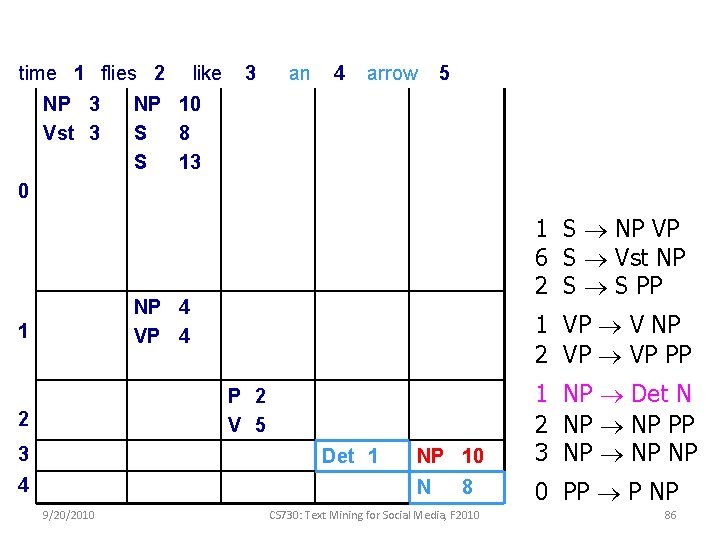

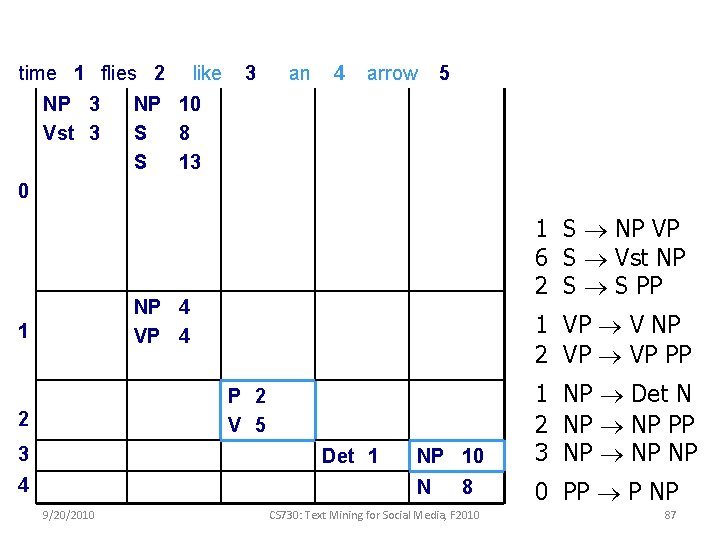

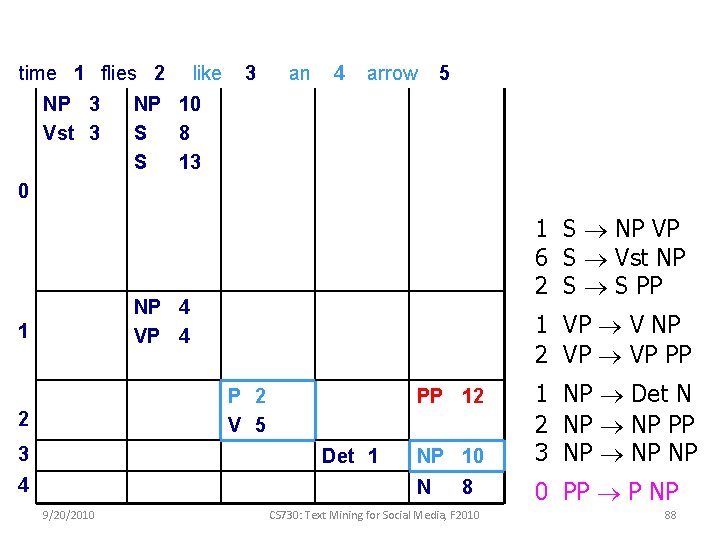

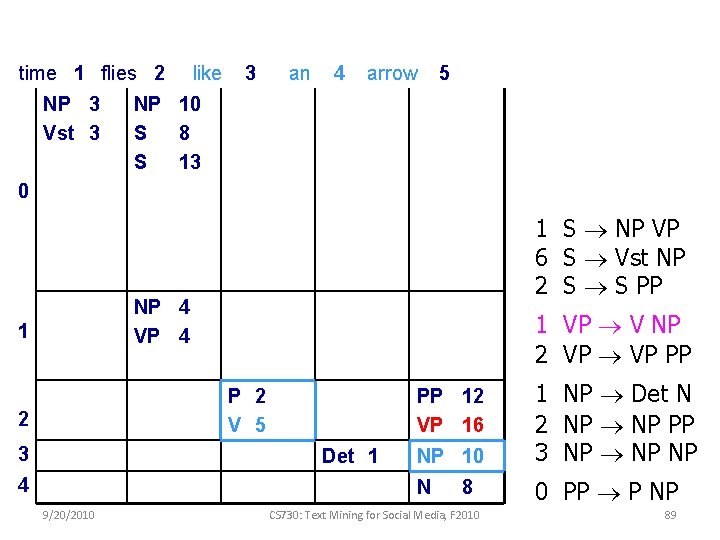

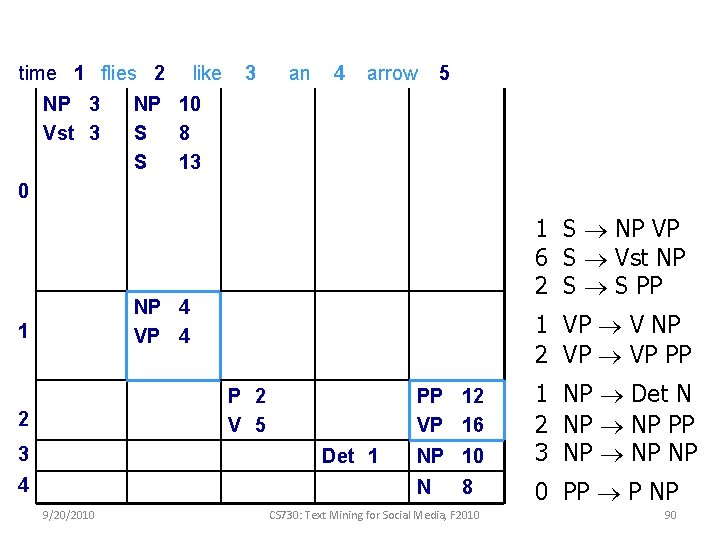

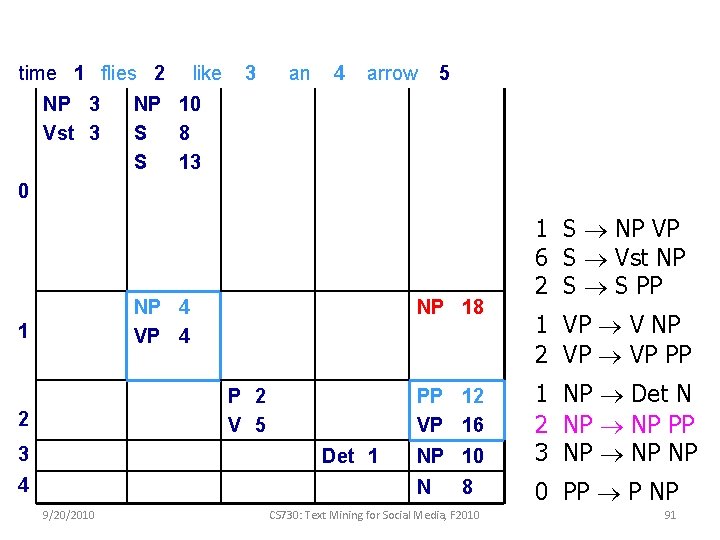

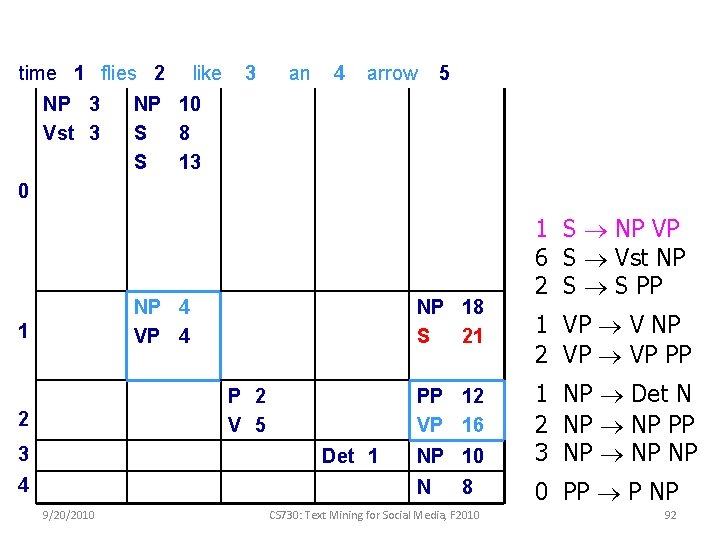

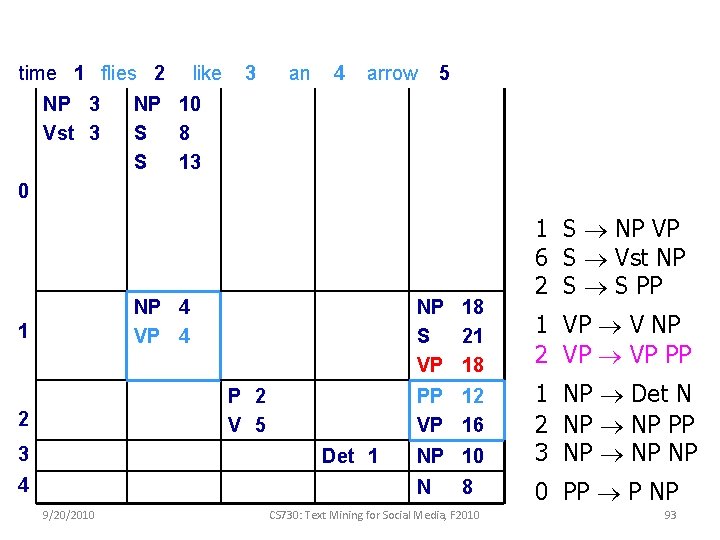

Probabilistic CKY

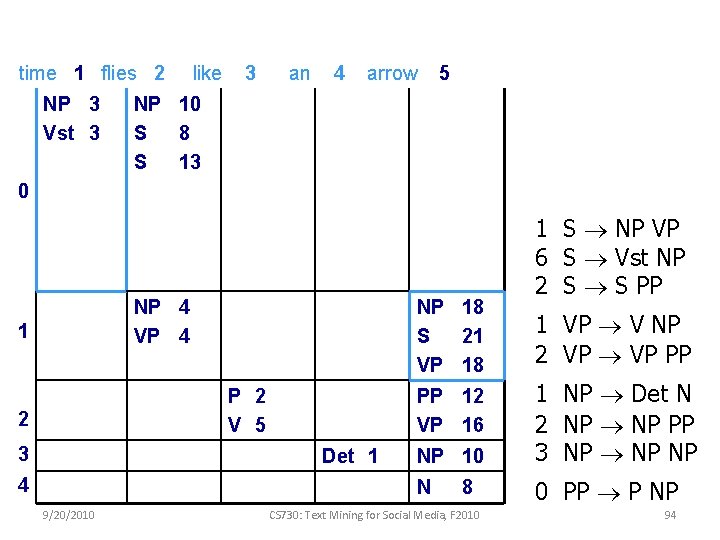

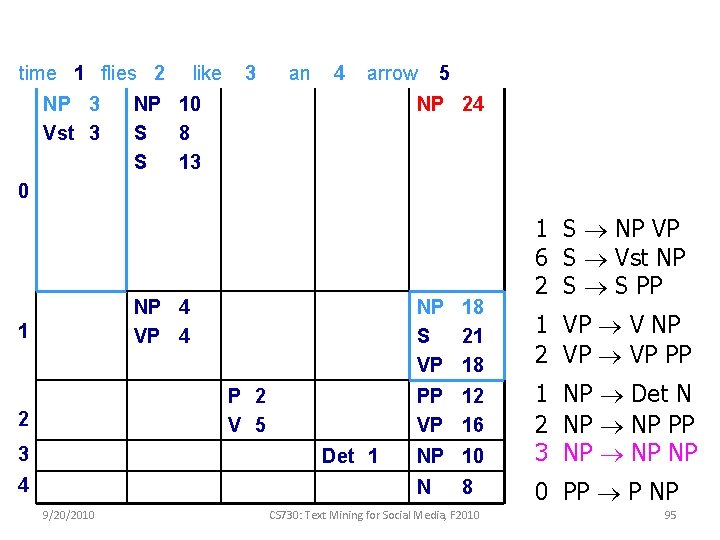

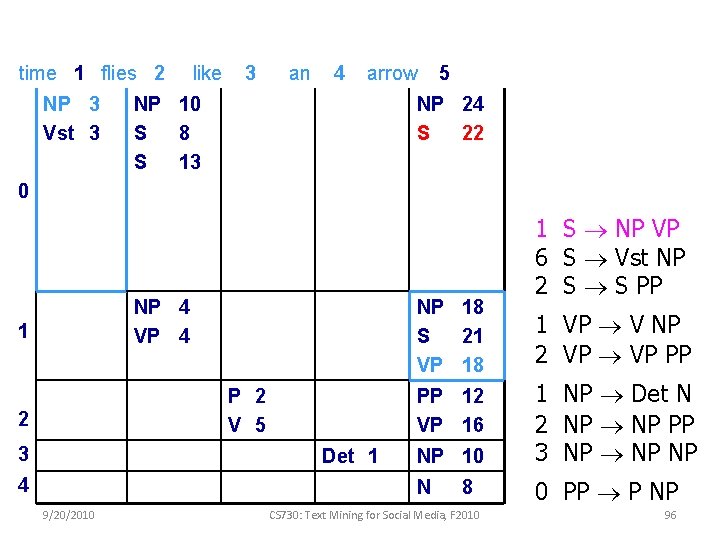

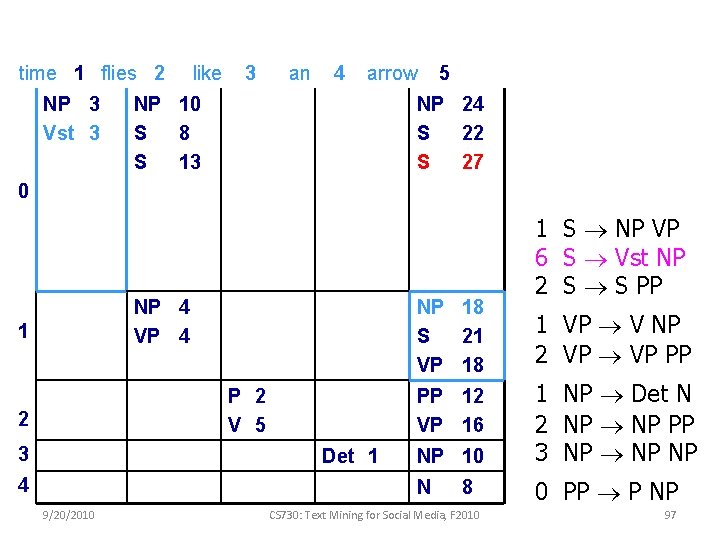

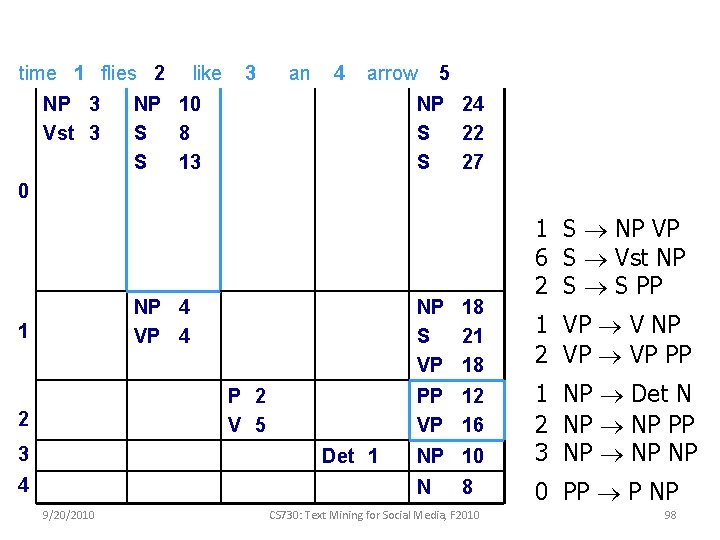

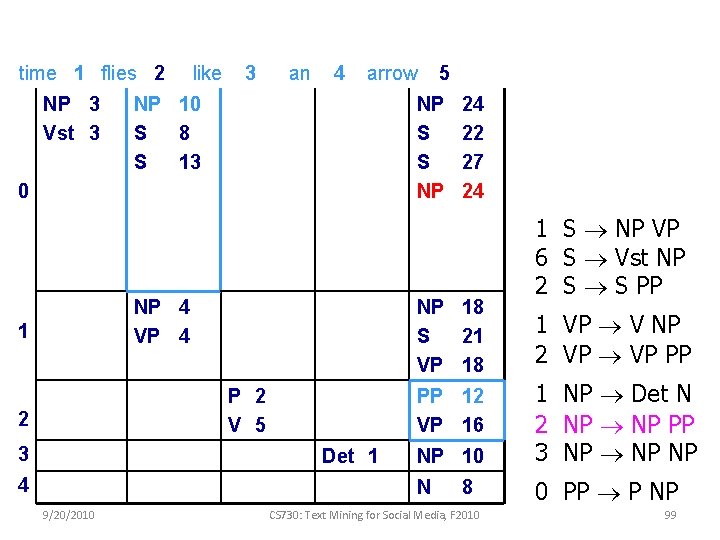

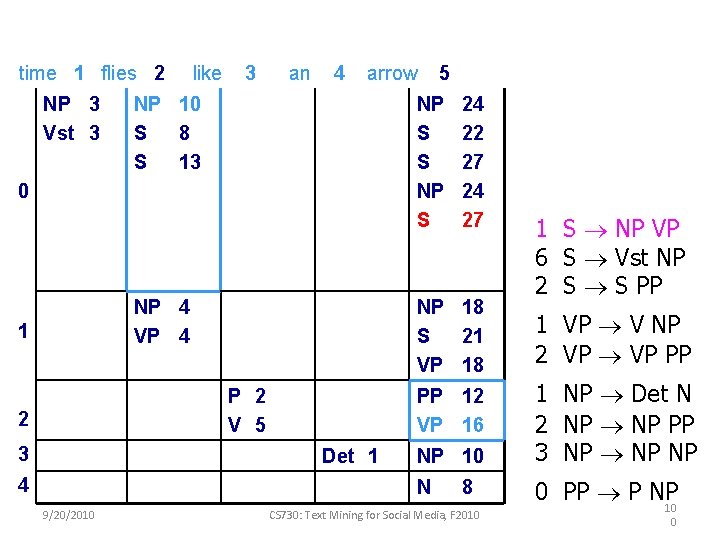

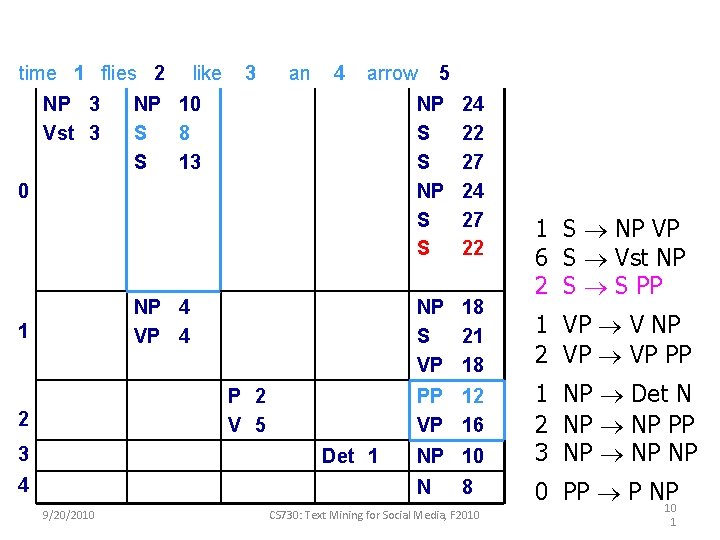

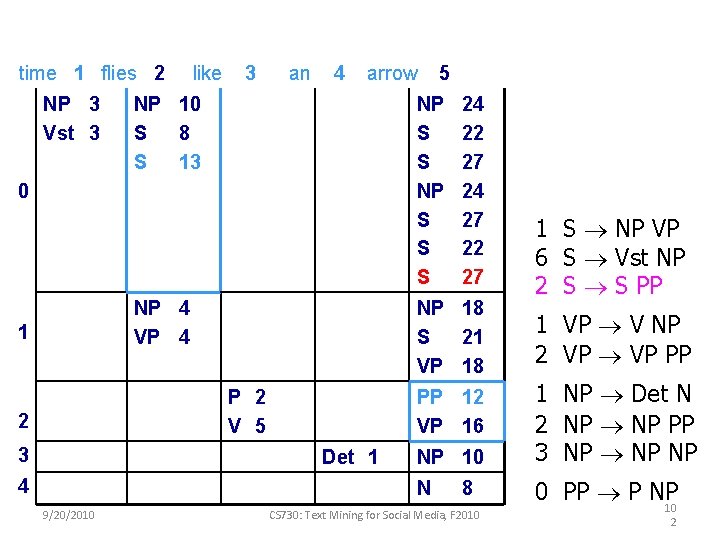

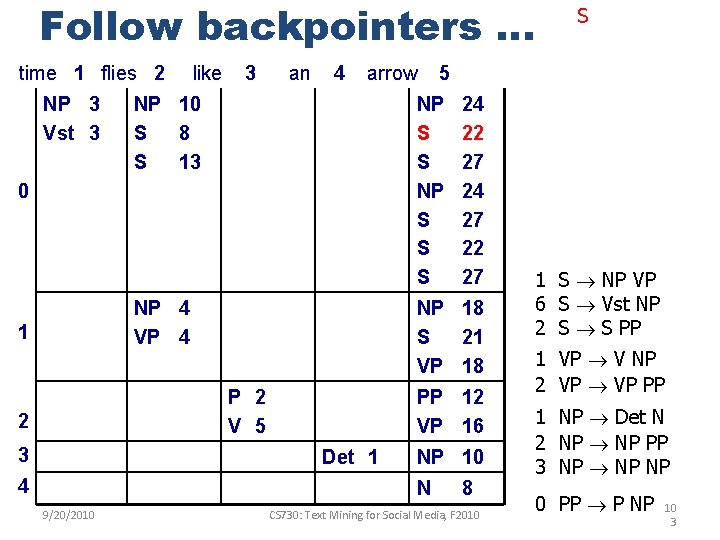

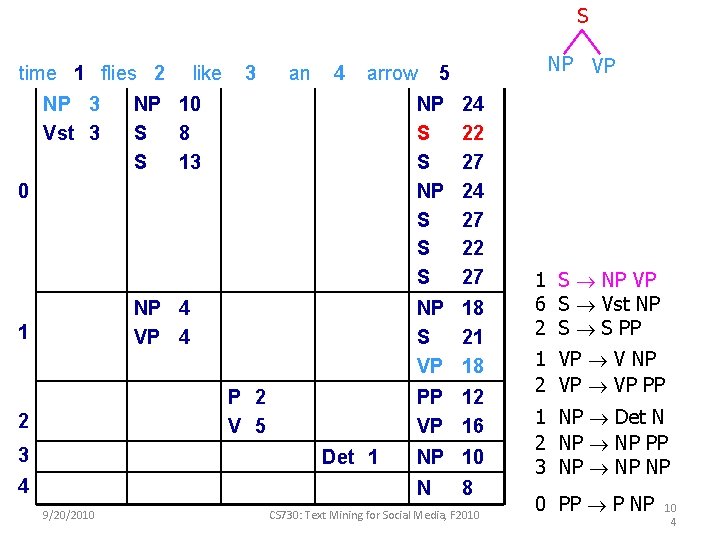

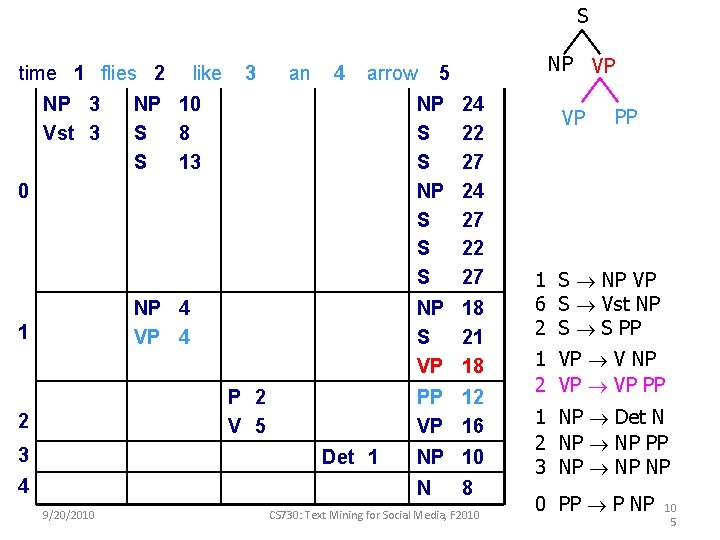

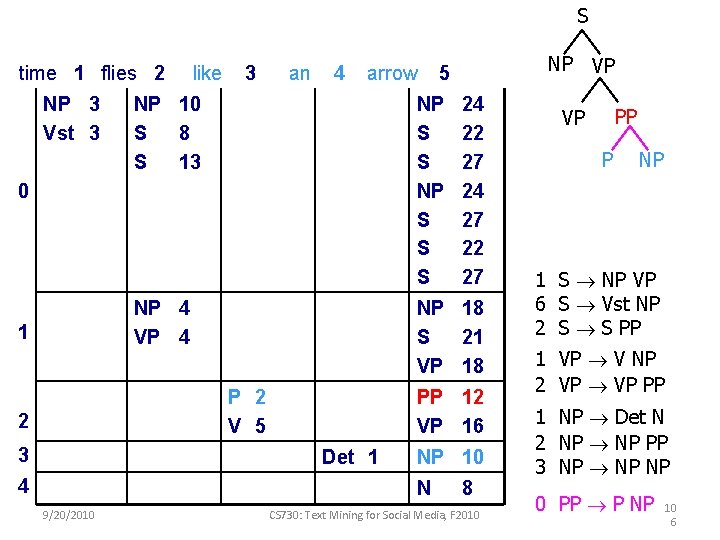

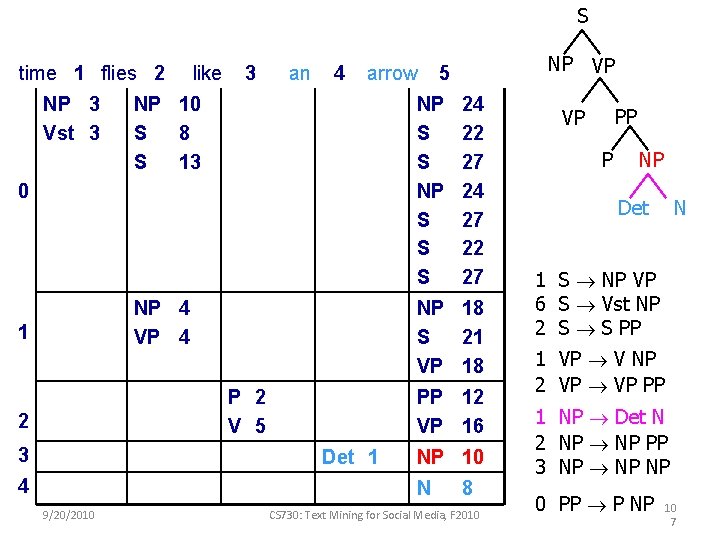

Probabilistic CKY example: “time flies like an arrow” (chart built up over successive slides)
• Weighted grammar (weight, rule): 1 S → NP VP; 6 S → Vst NP; 2 S → S PP; 1 VP → V NP; 2 VP → VP PP; 1 NP → Det N; 2 NP → NP PP; 3 NP → NP NP; 0 PP → P NP
• Lexical entries (word positions 0 to 5): time: NP 3, Vst 3; flies: NP 4, VP 4; like: P 2, V 5; an: Det 1; arrow: N 8
• Chart cells in the order they are filled:
– [0,2]: NP 10, S 8, S 13
– [3,5]: NP 10
– [2,5]: PP 12, VP 16
– [1,5]: NP 18, S 21, VP 18
– [0,5]: NP 24, S 22, S 27, NP 24, S 27, S 22, S 27
• Follow backpointers from the lightest S in cell [0,5] to recover the best parse.

S time 1 flies 2 NP 3 Vst 3 like 3 an 4 arrow NP 10 S 8 S 13 NP S S S 0 NP 4 VP 4 1 3 PP 12 VP 16 Det 1 4 NP 10 N 9/20/2010 24 22 27 24 27 22 27 NP 18 S 21 VP 18 P 2 V 5 2 NP VP 5 8 CS 730: Text Mining for Social Media, F 2010 1 S NP VP 6 S Vst NP 2 S S PP 1 VP V NP 2 VP PP 1 NP Det N 2 NP PP 3 NP NP 0 PP P NP 10 4

S time 1 flies 2 NP 3 Vst 3 like 3 an 4 arrow NP 10 S 8 S 13 NP S S S 0 NP 4 VP 4 1 3 PP 12 VP 16 Det 1 4 NP 10 N 9/20/2010 24 22 27 24 27 22 27 NP 18 S 21 VP 18 P 2 V 5 2 NP VP 5 8 CS 730: Text Mining for Social Media, F 2010 VP PP 1 S NP VP 6 S Vst NP 2 S S PP 1 VP V NP 2 VP PP 1 NP Det N 2 NP PP 3 NP NP 0 PP P NP 10 5

S time 1 flies 2 NP 3 Vst 3 like 3 an 4 arrow NP 10 S 8 S 13 NP S S S 0 NP 4 VP 4 1 3 PP 12 VP 16 Det 1 4 NP 10 N 9/20/2010 24 22 27 24 27 22 27 NP 18 S 21 VP 18 P 2 V 5 2 NP VP 5 8 CS 730: Text Mining for Social Media, F 2010 PP VP P NP 1 S NP VP 6 S Vst NP 2 S S PP 1 VP V NP 2 VP PP 1 NP Det N 2 NP PP 3 NP NP 0 PP P NP 10 6

S time 1 flies 2 NP 3 Vst 3 like 3 an 4 arrow NP 10 S 8 S 13 NP S S S 0 NP 4 VP 4 1 3 PP 12 VP 16 Det 1 4 NP 10 N 9/20/2010 24 22 27 24 27 22 27 NP 18 S 21 VP 18 P 2 V 5 2 NP VP 5 8 CS 730: Text Mining for Social Media, F 2010 PP VP P NP Det N 1 S NP VP 6 S Vst NP 2 S S PP 1 VP V NP 2 VP PP 1 NP Det N 2 NP PP 3 NP NP 0 PP P NP 10 7

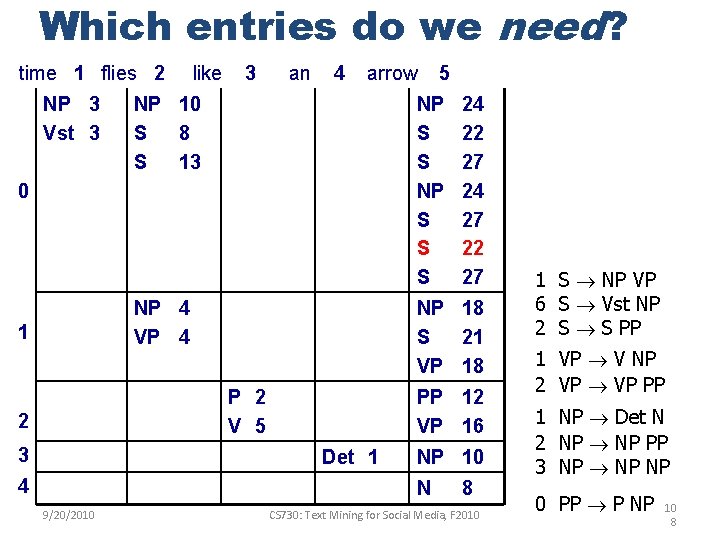

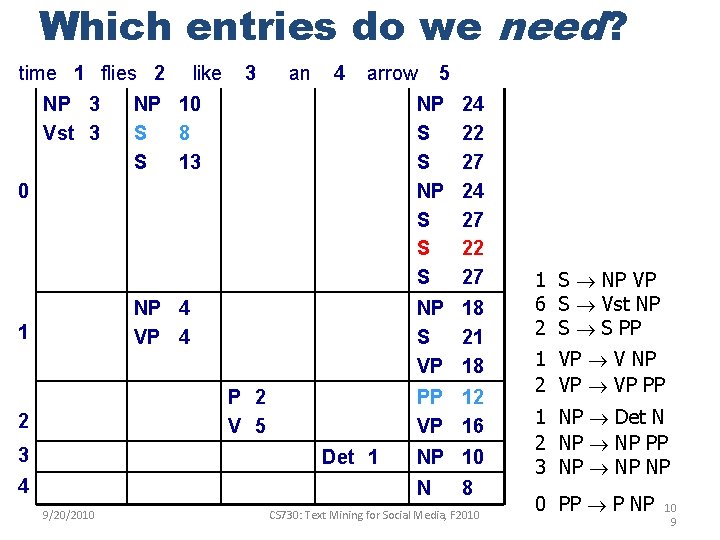

Which entries do we need?
• The cell for the whole sentence holds several competing entries with the same label: NP 24 (twice), S 22 (twice), and S 27 (three times).

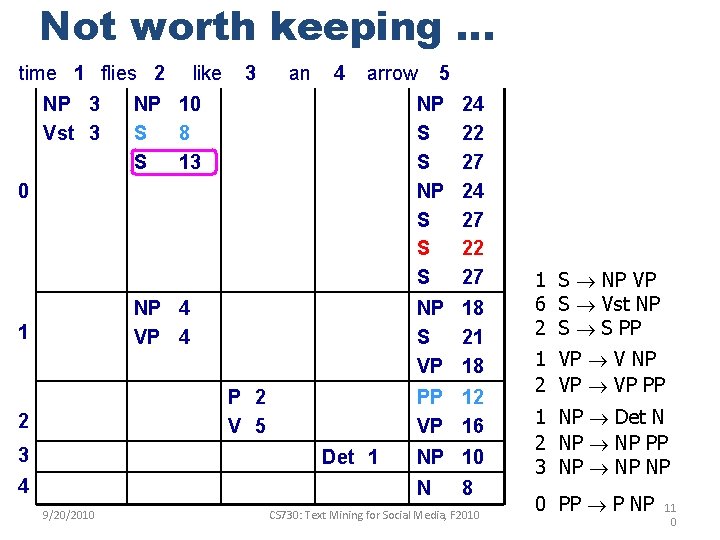

Not worth keeping …

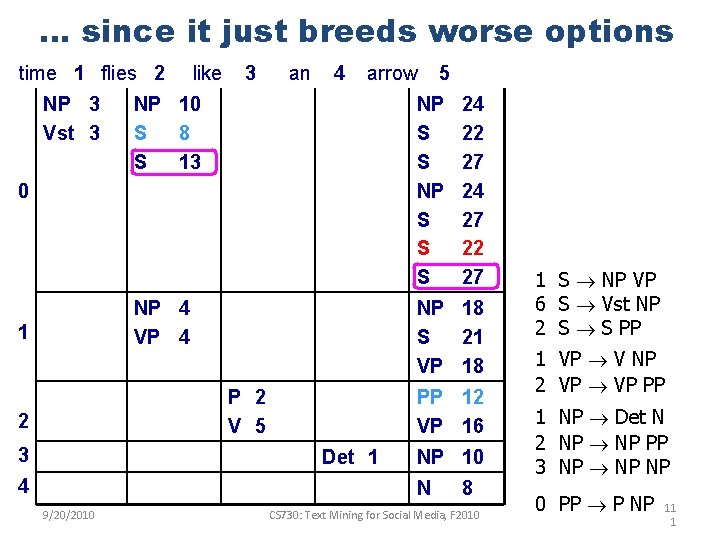

… since it just breeds worse options

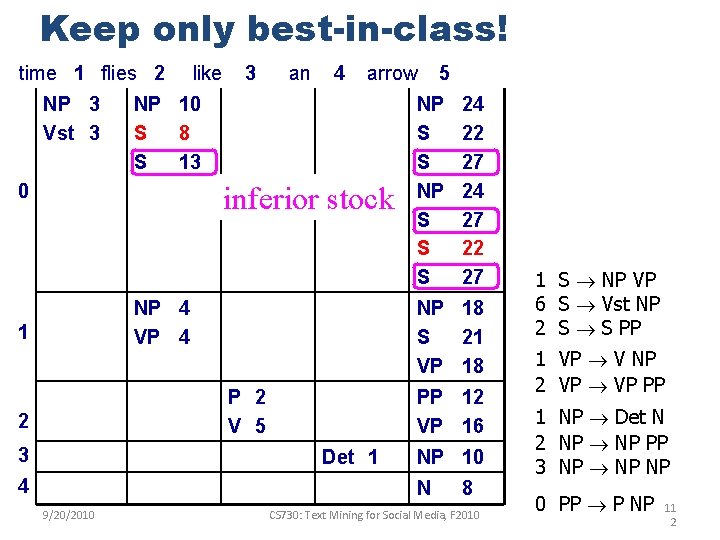

Keep only best-in-class!
• "Inferior stock": any entry that is heavier than another entry with the same label over the same span (for example, S 13 next to S 8 over "time flies").

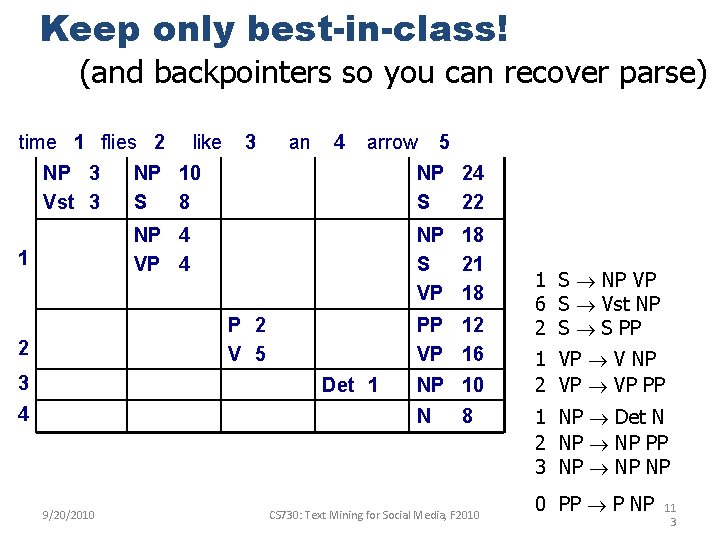

Keep only best-in-class! (and backpointers so you can recover parse)

Chart after keeping one entry per label per span:
• time [0,1]: NP 3, Vst 3; flies [1,2]: NP 4, VP 4; like [2,3]: P 2, V 5; an [3,4]: Det 1; arrow [4,5]: N 8
• [0,2]: NP 10, S 8
• [3,5]: NP 10
• [2,5]: PP 12, VP 16
• [1,5]: NP 18, S 21, VP 18
• [0,5]: NP 24, S 22
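The chart-filling procedure can be sketched in a few lines of Python. This is a minimal illustration rather than the course's reference implementation: the grammar weights and lexical entries are copied from the slides, while the names `BINARY`, `LEXICON`, `cky`, and `tree` are assumptions introduced here. Each cell keeps only the lightest entry per label, plus a backpointer.

```python
from collections import defaultdict

# Weighted grammar from the slides: (parent, left, right) -> weight.
BINARY = {
    ("S", "NP", "VP"): 1, ("S", "Vst", "NP"): 6, ("S", "S", "PP"): 2,
    ("VP", "V", "NP"): 1, ("VP", "VP", "PP"): 2,
    ("NP", "Det", "N"): 1, ("NP", "NP", "PP"): 2, ("NP", "NP", "NP"): 3,
    ("PP", "P", "NP"): 0,
}
# Lexical entries from the bottom row of the chart: word -> {label: weight}.
LEXICON = {
    "time": {"NP": 3, "Vst": 3}, "flies": {"NP": 4, "VP": 4},
    "like": {"P": 2, "V": 5}, "an": {"Det": 1}, "arrow": {"N": 8},
}

def cky(words):
    n = len(words)
    best = defaultdict(dict)  # (i, j) -> {label: lightest weight}
    back = {}                 # (i, j, label) -> word, or (split, left, right)
    for i, word in enumerate(words):
        for label, weight in LEXICON[word].items():
            best[i, i + 1][label] = weight
            back[i, i + 1, label] = word
    for width in range(2, n + 1):
        for i in range(n - width + 1):
            j = i + width
            for k in range(i + 1, j):
                for (parent, left, right), rule_weight in BINARY.items():
                    if left in best[i, k] and right in best[k, j]:
                        total = rule_weight + best[i, k][left] + best[k, j][right]
                        # Best-in-class: overwrite only if strictly lighter.
                        if total < best[i, j].get(parent, float("inf")):
                            best[i, j][parent] = total
                            back[i, j, parent] = (k, left, right)
    return best, back

def tree(back, i, j, label):
    """Follow backpointers to rebuild the lightest tree as a bracketed string."""
    entry = back[i, j, label]
    if isinstance(entry, str):
        return f"({label} {entry})"
    k, left, right = entry
    return f"({label} {tree(back, i, k, left)} {tree(back, k, j, right)})"

words = "time flies like an arrow".split()
best, back = cky(words)
print(best[0, len(words)]["S"])        # weight of the lightest S
print(tree(back, 0, len(words), "S"))  # the parse recovered from backpointers
```

Ties (here, two S derivations of weight 22) are broken in favor of the first one found, which is a choice of this sketch and not something the slides specify.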

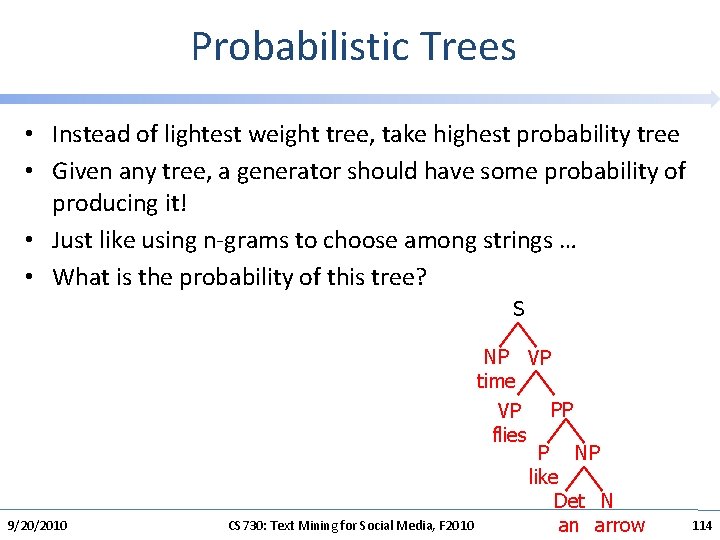

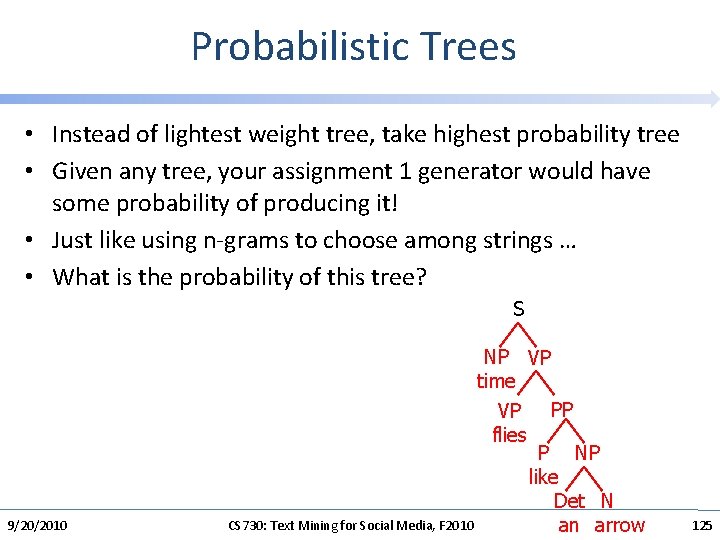

Probabilistic Trees
• Instead of lightest weight tree, take highest probability tree
• Given any tree, a generator should have some probability of producing it!
• Just like using n-grams to choose among strings …
• What is the probability of this tree?
  (S (NP time) (VP (VP flies) (PP (P like) (NP (Det an) (N arrow)))))

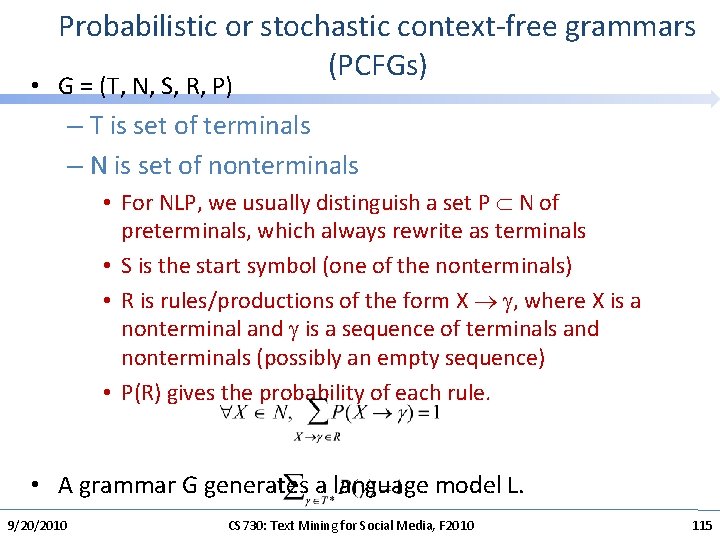

Probabilistic or stochastic context-free grammars (PCFGs)
• G = (T, N, S, R, P)
  – T is a set of terminals
  – N is a set of nonterminals
    • For NLP, we usually distinguish a set P ⊂ N of preterminals, which always rewrite as terminals
  – S is the start symbol (one of the nonterminals)
  – R is a set of rules/productions of the form X → γ, where X is a nonterminal and γ is a sequence of terminals and nonterminals (possibly an empty sequence)
  – P(R) gives the probability of each rule; the probabilities of all rules with the same left-hand side X sum to 1
• A grammar G generates a language model L.

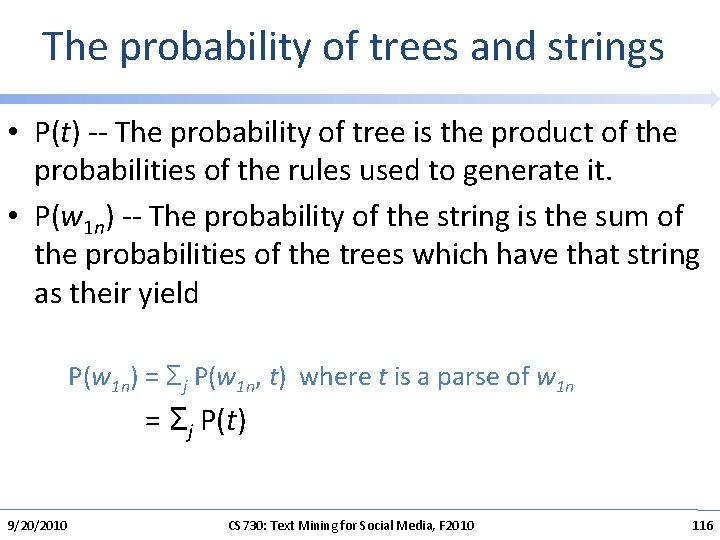

The probability of trees and strings
• $P(t)$: the probability of a tree is the product of the probabilities of the rules used to generate it.
• $P(w_{1n})$: the probability of a string is the sum of the probabilities of the trees that have that string as their yield:

$$P(w_{1n}) = \sum_j P(w_{1n}, t_j) = \sum_j P(t_j), \quad \text{where each } t_j \text{ is a parse of } w_{1n}$$

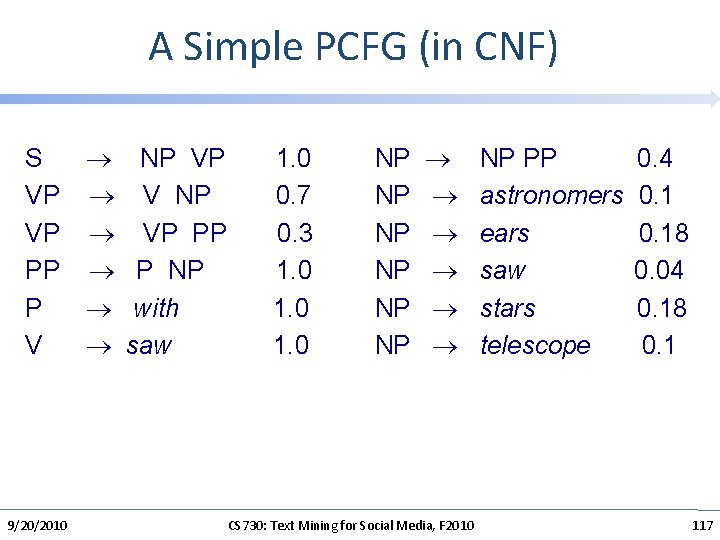

A Simple PCFG (in CNF)

| Rule | Probability |
|---|---|
| S → NP VP | 1.0 |
| VP → V NP | 0.7 |
| VP → VP PP | 0.3 |
| PP → P NP | 1.0 |
| P → with | 1.0 |
| V → saw | 1.0 |
| NP → NP PP | 0.4 |
| NP → astronomers | 0.1 |
| NP → ears | 0.18 |
| NP → saw | 0.04 |
| NP → stars | 0.18 |
| NP → telescope | 0.1 |

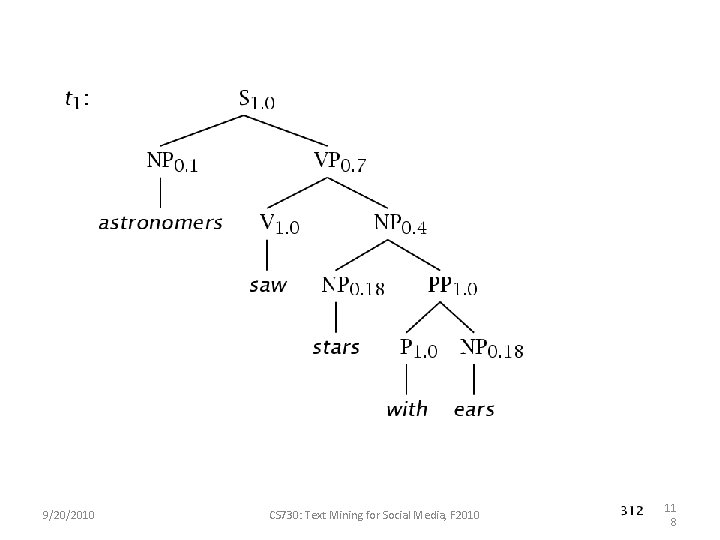


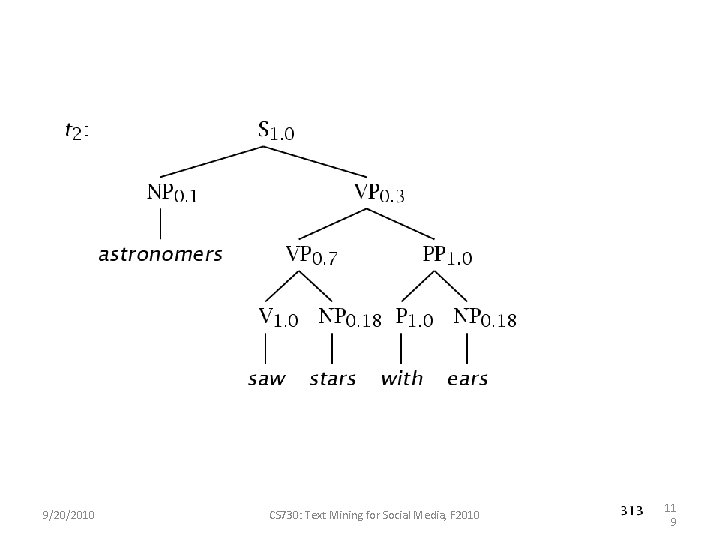


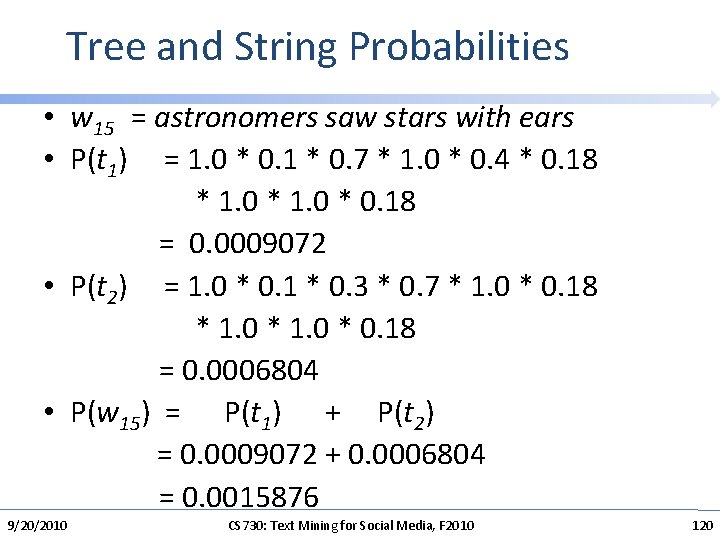

Tree and String Probabilities
• $w_{15}$ = astronomers saw stars with ears
• $P(t_1)$ = 1.0 * 0.1 * 0.7 * 1.0 * 0.4 * 0.18 * 1.0 * 1.0 * 0.18 = 0.0009072 (the PP attaches to the noun phrase, via NP → NP PP)
• $P(t_2)$ = 1.0 * 0.1 * 0.3 * 0.7 * 1.0 * 0.18 * 1.0 * 1.0 * 0.18 = 0.0006804 (the PP attaches to the verb phrase, via VP → VP PP)
• $P(w_{15}) = P(t_1) + P(t_2)$ = 0.0009072 + 0.0006804 = 0.0015876
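The arithmetic above can be checked mechanically. The following sketch assumes a tuple encoding of trees, `(label, child, ...)` with words as plain strings, and the names `RULES` and `tree_prob` are introduced here for illustration; the rule probabilities are those of the simple PCFG in this lecture.

```python
# Rule probabilities of the simple PCFG: (parent, children) -> probability.
RULES = {
    ("S", ("NP", "VP")): 1.0, ("VP", ("V", "NP")): 0.7, ("VP", ("VP", "PP")): 0.3,
    ("PP", ("P", "NP")): 1.0, ("P", ("with",)): 1.0, ("V", ("saw",)): 1.0,
    ("NP", ("NP", "PP")): 0.4, ("NP", ("astronomers",)): 0.1,
    ("NP", ("ears",)): 0.18, ("NP", ("saw",)): 0.04,
    ("NP", ("stars",)): 0.18, ("NP", ("telescope",)): 0.1,
}

def tree_prob(tree):
    """Multiply the probabilities of every rule used in the tree."""
    label, *children = tree
    child_labels = tuple(c if isinstance(c, str) else c[0] for c in children)
    prob = RULES[label, child_labels]
    for child in children:
        if not isinstance(child, str):
            prob *= tree_prob(child)
    return prob

pp = ("PP", ("P", "with"), ("NP", "ears"))
# t1: "with ears" modifies the noun phrase "stars".
t1 = ("S", ("NP", "astronomers"),
      ("VP", ("V", "saw"), ("NP", ("NP", "stars"), pp)))
# t2: "with ears" modifies the verb phrase "saw stars".
t2 = ("S", ("NP", "astronomers"),
      ("VP", ("VP", ("V", "saw"), ("NP", "stars")), pp))

p1, p2 = tree_prob(t1), tree_prob(t2)
print(p1, p2, p1 + p2)  # tree probabilities and the string probability
```

Summing over the two trees gives the string probability only because these are the only two parses the grammar assigns to this sentence.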

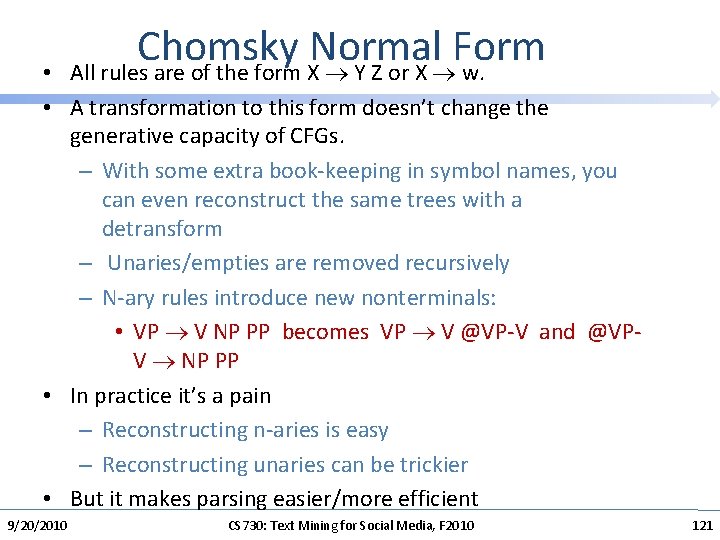

Chomsky Normal Form
• All rules are of the form X → Y Z or X → w.
• A transformation to this form doesn’t change the generative capacity of CFGs.
  – With some extra book-keeping in symbol names, you can even reconstruct the same trees with a detransform
  – Unaries/empties are removed recursively
  – N-ary rules introduce new nonterminals:
    • VP → V NP PP becomes VP → V @VP-V and @VP-V → NP PP
• In practice it’s a pain
  – Reconstructing n-aries is easy
  – Reconstructing unaries can be trickier
• But it makes parsing easier/more efficient
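The n-ary step can be sketched as follows. The helper-symbol naming (`@` + parent + `-` + the children already consumed, joined by `_`) extends the single `@VP-V` example on the slide and is an assumption beyond that one case; `binarize` is a name introduced here.

```python
def binarize(parent, children):
    """Split parent -> children into binary rules using @-prefixed helper symbols."""
    rules = []
    lhs = parent
    seen = []  # children already emitted, recorded in the helper symbol's name
    while len(children) > 2:
        first, *children = children
        seen.append(first)
        helper = f"@{parent}-{'_'.join(seen)}"
        rules.append((lhs, (first, helper)))
        lhs = helper
    rules.append((lhs, tuple(children)))
    return rules

for lhs, rhs in binarize("VP", ["V", "NP", "PP"]):
    print(lhs, "->", " ".join(rhs))
```

Because each helper name records which children were already consumed, the original n-ary rule can be rebuilt by splicing out every node whose label starts with `@`.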

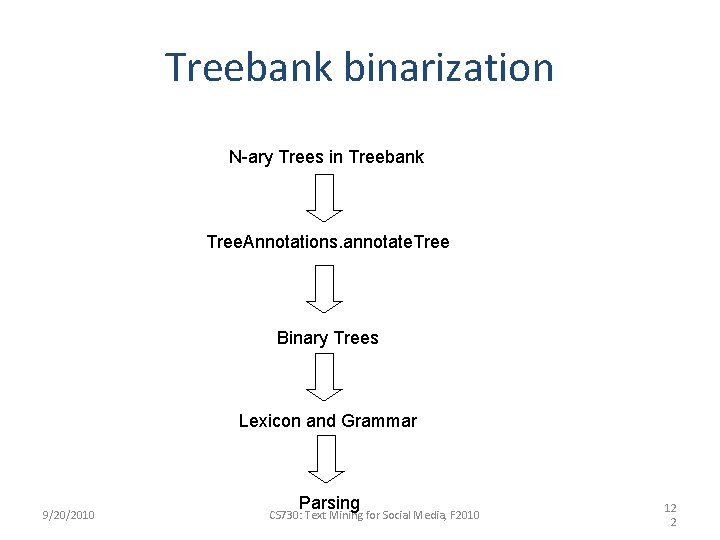

Treebank binarization
• Pipeline: N-ary Trees in Treebank → TreeAnnotations.annotateTree → Binary Trees → Lexicon and Grammar → Parsing

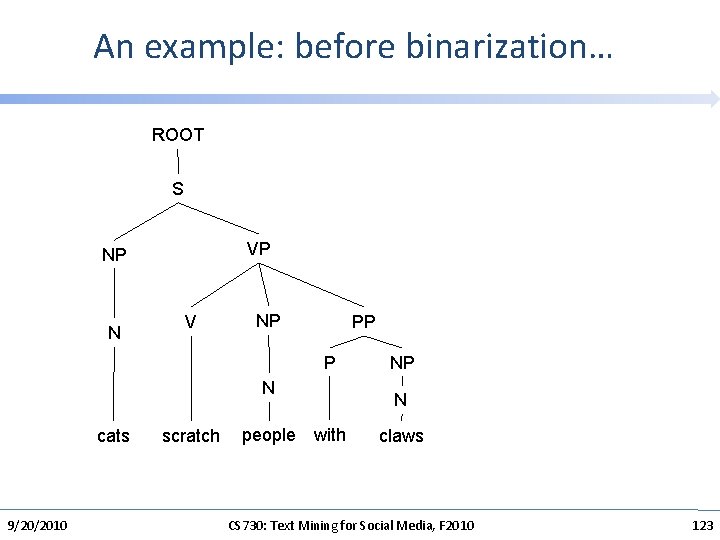

An example: before binarization…
  (ROOT (S (NP (N cats)) (VP (V scratch) (NP (N people)) (PP (P with) (NP (N claws))))))

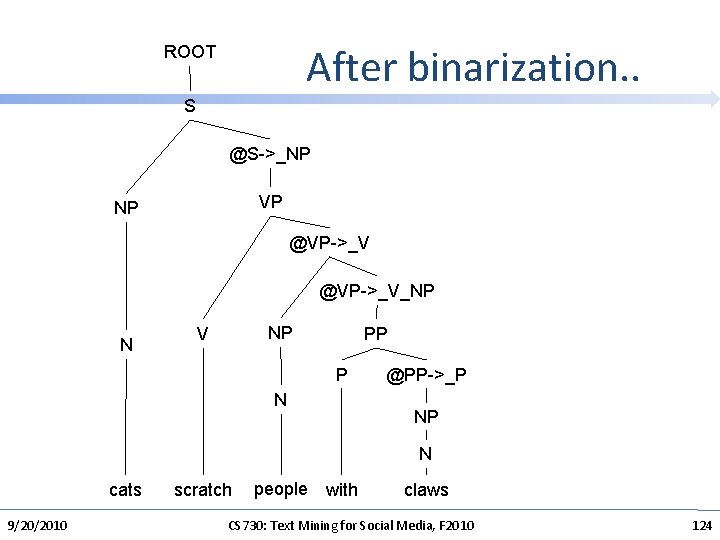

After binarization…
• Same sentence (cats scratch people with claws); the binarized tree adds intermediate nodes such as @S->_NP, @VP->_V_NP, and @PP->_P (tree figure).

Probabilistic Trees
• Instead of lightest weight tree, take highest probability tree
• Given any tree, your assignment 1 generator would have some probability of producing it!
• Just like using n-grams to choose among strings …
• What is the probability of this tree?
  (S (NP time) (VP (VP flies) (PP (P like) (NP (Det an) (N arrow)))))

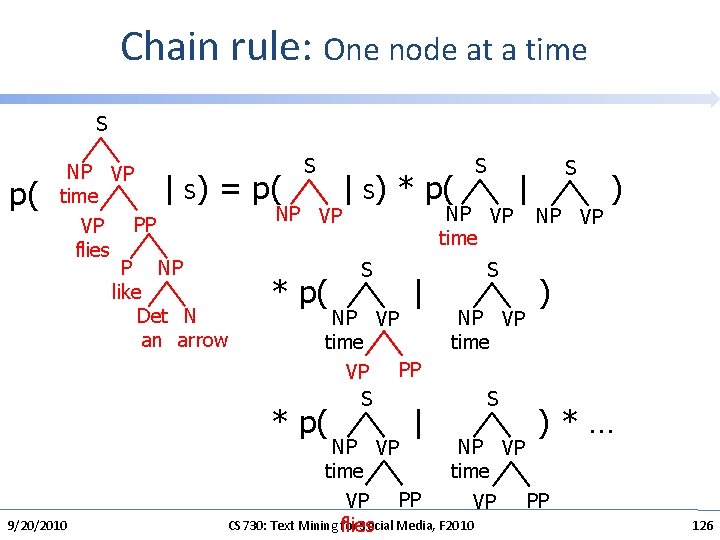

Chain rule: One node at a time
• Let t = (S (NP time) (VP (VP flies) (PP (P like) (NP (Det an) (N arrow))))).
• p(t | S) = p(S → NP VP | S) * p(NP → time | partial tree so far) * p(VP → VP PP | partial tree so far) * p(VP → flies | partial tree so far) * …
• Each factor adds one node, conditioned on the whole partial tree built before it.

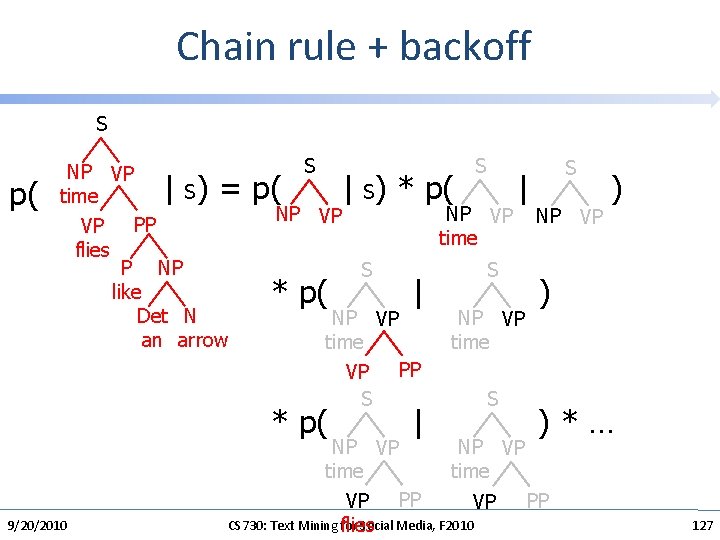

Chain rule + backoff
• Let t = (S (NP time) (VP (VP flies) (PP (P like) (NP (Det an) (N arrow))))).
• Same factorization, but each factor backs off to condition only on the nonterminal being expanded:
  p(t | S) = p(S → NP VP | S) * p(NP → time | NP) * p(VP → VP PP | VP) * p(VP → flies | VP) * …

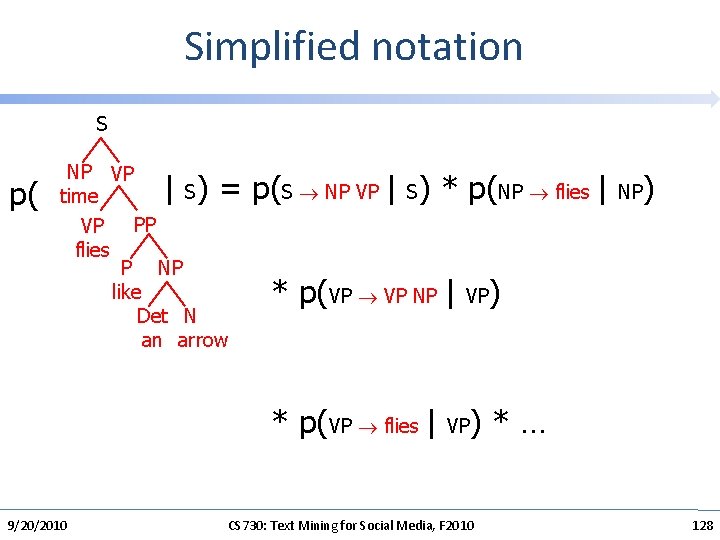

Simplified notation
• Let t = (S (NP time) (VP (VP flies) (PP (P like) (NP (Det an) (N arrow))))).
• p(t | S) = p(S → NP VP | S) * p(NP → time | NP) * p(VP → VP PP | VP) * p(VP → flies | VP) * …

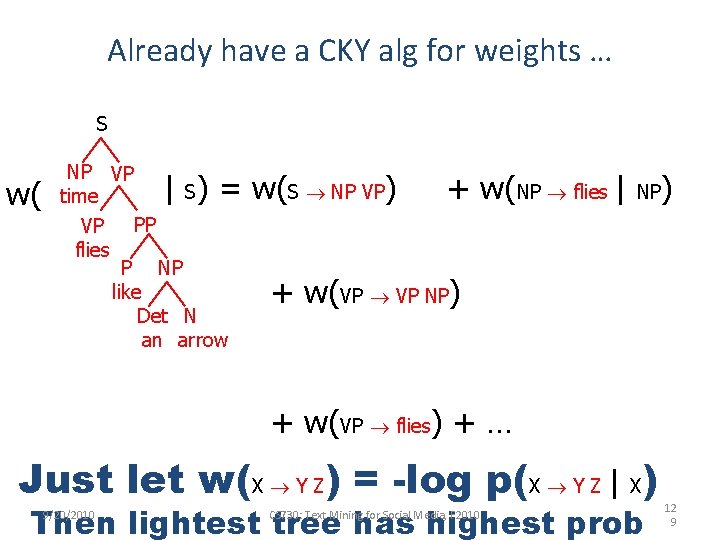

Already have a CKY alg for weights …
• Let t = (S (NP time) (VP (VP flies) (PP (P like) (NP (Det an) (N arrow))))).
• w(t) = w(S → NP VP) + w(NP → time) + w(VP → VP PP) + w(VP → flies) + …
• Just let w(X → Y Z) = -log p(X → Y Z | X)
• Then lightest tree has highest prob
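A quick numeric check of the weight/probability correspondence. The two lists below are the rule probabilities of the two "astronomers saw stars with ears" parses from the simple PCFG earlier in the lecture; `tree_weight` is a name introduced here for illustration.

```python
import math

def tree_weight(rule_probs):
    """Weight of a tree = sum of -log p over the rules it uses."""
    return sum(-math.log(p) for p in rule_probs)

# Rule probabilities used by the two competing parses.
t1 = [1.0, 0.1, 0.7, 1.0, 0.4, 0.18, 1.0, 1.0, 0.18]
t2 = [1.0, 0.1, 0.3, 0.7, 1.0, 0.18, 1.0, 1.0, 0.18]

w1, w2 = tree_weight(t1), tree_weight(t2)
# exp(-weight) recovers the tree probability, so minimizing weight
# and maximizing probability pick the same tree.
print(w1, math.exp(-w1), math.prod(t1))
print(w2, math.exp(-w2), math.prod(t2))
```

Working with sums of negative logs also avoids floating-point underflow when many small probabilities are multiplied.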

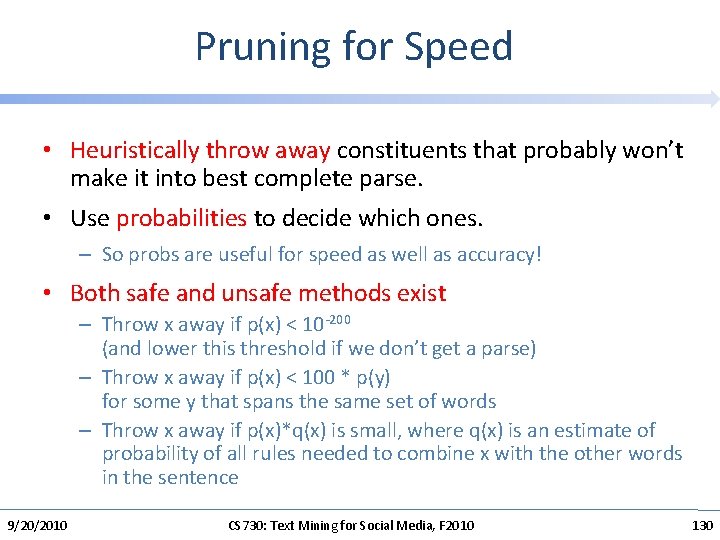

Pruning for Speed
• Heuristically throw away constituents that probably won’t make it into best complete parse.
• Use probabilities to decide which ones.
  – So probs are useful for speed as well as accuracy!
• Both safe and unsafe methods exist
  – Throw x away if $p(x) < 10^{-200}$ (and lower this threshold if we don’t get a parse)
  – Throw x away if $p(x) < p(y) / 100$ for some y that spans the same set of words, i.e. x is at least 100 times less probable than a competitor
  – Throw x away if $p(x) \cdot q(x)$ is small, where q(x) is an estimate of probability of all rules needed to combine x with the other words in the sentence
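The first two pruning rules can be sketched for a single chart cell. This is an illustrative sketch only: the function name `prune`, its parameters, and the dictionary representation of a cell are assumptions, and both rules are unsafe in the sense that they can discard a constituent the best parse needs.

```python
def prune(cell, ratio=100.0, floor=1e-200):
    """Prune one chart cell, given as {constituent label: probability}.

    Drops entries below an absolute floor, and entries that are more than
    `ratio` times less probable than the best entry over the same span.
    """
    if not cell:
        return {}
    best = max(cell.values())
    return {x: p for x, p in cell.items() if p >= floor and p * ratio >= best}

# Hypothetical probabilities for three constituents over the same span.
cell = {"NP": 1e-3, "S": 5e-4, "VP": 1e-6}
print(prune(cell))  # VP is 1000 times less probable than NP, so it is dropped
```

If pruning leaves no complete parse, the thresholds can be relaxed and the sentence reparsed, as the slide suggests for the absolute floor.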

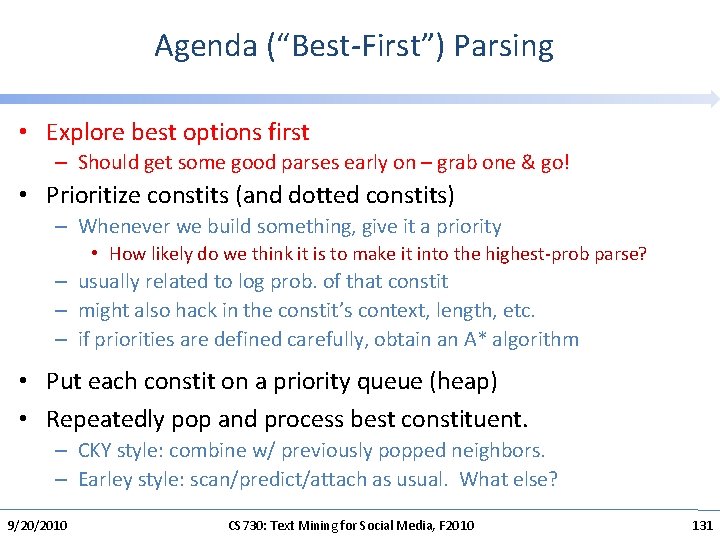

Agenda (“Best-First”) Parsing
• Explore best options first
  – Should get some good parses early on – grab one & go!
• Prioritize constits (and dotted constits)
  – Whenever we build something, give it a priority
    • How likely do we think it is to make it into the highest-prob parse?
  – usually related to log prob. of that constit
  – might also hack in the constit’s context, length, etc.
  – if priorities are defined carefully, obtain an A* algorithm
• Put each constit on a priority queue (heap)
• Repeatedly pop and process best constituent.
  – CKY style: combine w/ previously popped neighbors.
  – Earley style: scan/predict/attach as usual. What else?
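A minimal CKY-style agenda parser, as a sketch under stated assumptions: the priority is simply the constituent's weight so far (uniform-cost search, i.e. A* with a zero heuristic), and the grammar is the weighted "time flies like an arrow" grammar from earlier in the lecture. The names `BINARY`, `LEXICON`, and `agenda_parse` are introduced here.

```python
import heapq

# Weighted grammar and lexicon from the "time flies like an arrow" example.
BINARY = {
    ("S", "NP", "VP"): 1, ("S", "Vst", "NP"): 6, ("S", "S", "PP"): 2,
    ("VP", "V", "NP"): 1, ("VP", "VP", "PP"): 2,
    ("NP", "Det", "N"): 1, ("NP", "NP", "PP"): 2, ("NP", "NP", "NP"): 3,
    ("PP", "P", "NP"): 0,
}
LEXICON = {
    "time": {"NP": 3, "Vst": 3}, "flies": {"NP": 4, "VP": 4},
    "like": {"P": 2, "V": 5}, "an": {"Det": 1}, "arrow": {"N": 8},
}

def agenda_parse(words, goal="S"):
    """Return the weight of the lightest `goal` spanning all words, or None."""
    n = len(words)
    # Agenda entries are (weight, start, end, label); lightest pops first.
    agenda = [(wt, i, i + 1, label)
              for i, word in enumerate(words)
              for label, wt in LEXICON[word].items()]
    heapq.heapify(agenda)
    chart = {}  # (start, end, label) -> weight of popped constituents
    while agenda:
        wt, i, j, label = heapq.heappop(agenda)
        if (i, j, label) in chart:
            continue  # a lighter copy of this constituent was popped earlier
        chart[i, j, label] = wt
        if (i, j, label) == (0, n, goal):
            return wt  # grab one & go
        # CKY style: combine with previously popped neighbors on either side.
        for (i2, j2, other), wt2 in chart.items():
            for (parent, left, right), rule_wt in BINARY.items():
                if j == i2 and (left, right) == (label, other):
                    heapq.heappush(agenda, (rule_wt + wt + wt2, i, j2, parent))
                if j2 == i and (left, right) == (other, label):
                    heapq.heappush(agenda, (rule_wt + wt2 + wt, i2, j, parent))
    return None

print(agenda_parse("time flies like an arrow".split()))
```

Because all weights here are non-negative, a constituent is never lighter than its parts, so the first goal item popped is also the lightest one; with other priority functions that guarantee may not hold.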

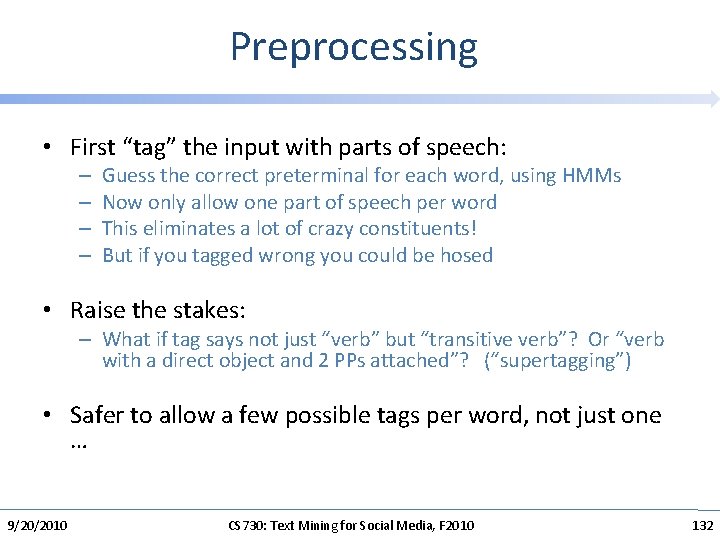

Preprocessing
• First “tag” the input with parts of speech:
  – Guess the correct preterminal for each word, using HMMs
  – Now only allow one part of speech per word
  – This eliminates a lot of crazy constituents!
  – But if you tagged wrong you could be hosed
• Raise the stakes:
  – What if tag says not just “verb” but “transitive verb”? Or “verb with a direct object and 2 PPs attached”? (“supertagging”)
• Safer to allow a few possible tags per word, not just one …

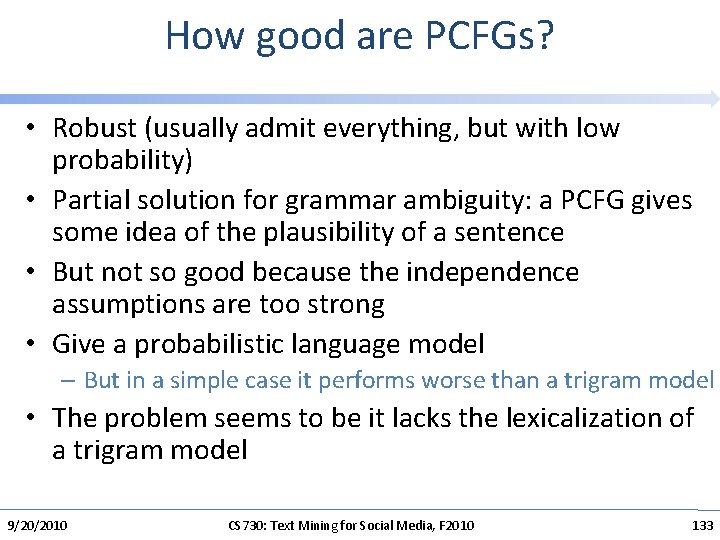

How good are PCFGs?
• Robust (usually admit everything, but with low probability)
• Partial solution for grammar ambiguity: a PCFG gives some idea of the plausibility of a sentence
• But not so good because the independence assumptions are too strong
• Give a probabilistic language model
  – But in a simple case it performs worse than a trigram model
• The problem seems to be it lacks the lexicalization of a trigram model

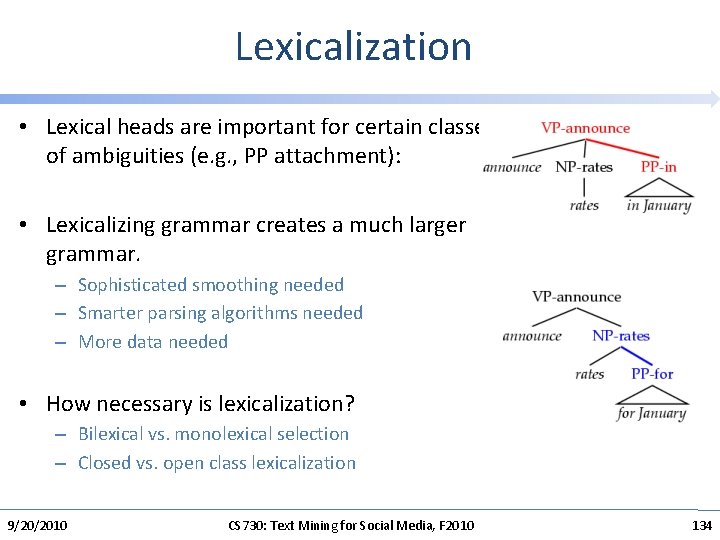

Lexicalization
• Lexical heads are important for certain classes of ambiguities (e.g., PP attachment)
• Lexicalizing grammar creates a much larger grammar.
  – Sophisticated smoothing needed
  – Smarter parsing algorithms needed
  – More data needed
• How necessary is lexicalization?
  – Bilexical vs. monolexical selection
  – Closed vs. open class lexicalization

Lexicalized Parsing
• peel the apple on the towel
  – ambiguous
• put the apple on the towel
  – on attached to put (is the other reading even possible?)
• put the apple on the towel in the box
• VP[head=put] → V[head=put] NP PP[head=on]
• study the apple on the towel
  – study dislikes on (how can the PCFG express this?)
• VP[head=study] PP[head=on]
• study it on the towel
  – it dislikes on even more
  – PP can’t attach to pronoun

Lexicalized Parsing
• the plan that Natasha would swallow
  – ambiguous between content of plan and relative clause
• the plan that Natasha would snooze
  – snooze dislikes a direct object (plan)
• the plan that Natasha would make
  – make likes a direct object (plan)
• the pill that Natasha would swallow
  – pill can’t express a content-clause the way plan does
  – pill is a probable direct object for swallow
• How to express these distinctions in a CFG or PCFG?

Putting words into PCFGs
• A PCFG uses the actual words only to determine the probability of parts-of-speech (the preterminals)
• In many cases we need to know about words to choose a parse
• The head word of a phrase gives a good representation of the phrase’s structure and meaning
  – Attachment ambiguities: The astronomer saw the moon with the telescope
  – Coordination: the dogs in the house and the cats
  – Subcategorization frames: put versus like

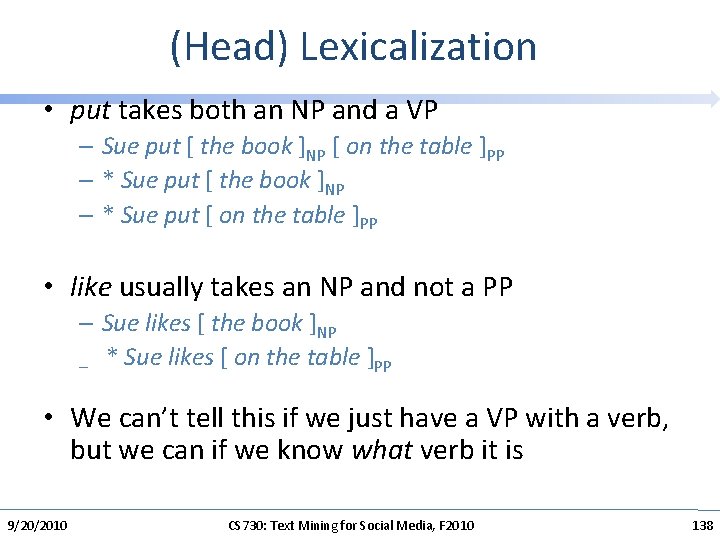

(Head) Lexicalization
• put takes both an NP and a PP
  – Sue put [ the book ]NP [ on the table ]PP
  – * Sue put [ the book ]NP
  – * Sue put [ on the table ]PP
• like usually takes an NP and not a PP
  – Sue likes [ the book ]NP
  – * Sue likes [ on the table ]PP
• We can’t tell this if we just have a VP with a verb, but we can if we know what verb it is

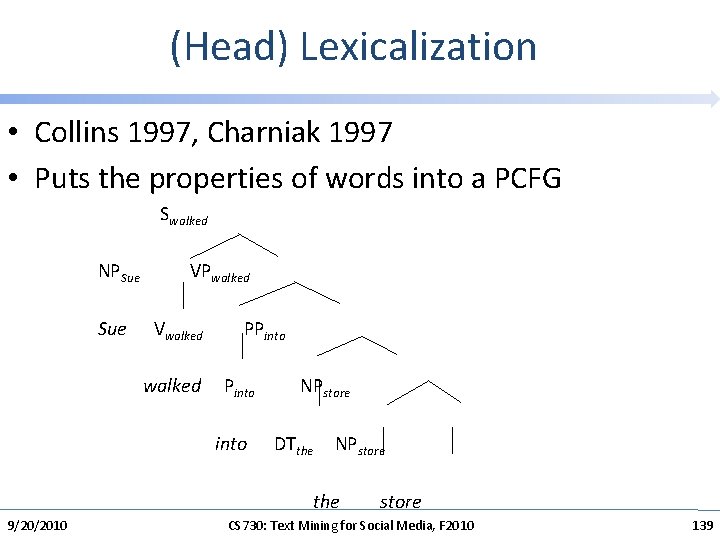

(Head) Lexicalization
• Collins 1997, Charniak 1997
• Puts the properties of words into a PCFG
  (S[walked] (NP[Sue] Sue) (VP[walked] (V[walked] walked) (PP[into] (P[into] into) (NP[store] (DT[the] the) (NP[store] store)))))
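Head annotation of this kind can be sketched as a bottom-up pass over the tree. The head table below is a toy assumption that covers only the labels in this example (it is not the Collins or Charniak head-rule set), and the tuple tree encoding and the names `HEAD_CHILD` and `lexicalize` are introduced here for illustration.

```python
# Hypothetical head table: for each parent label, the child label that supplies the head.
HEAD_CHILD = {"S": "VP", "VP": "V", "PP": "P", "NP": "NP"}

def lexicalize(tree):
    """Return (annotated_tree, head_word) for a tree (label, child, ...) or (label, word)."""
    label, *children = tree
    if len(children) == 1 and isinstance(children[0], str):
        word = children[0]
        return (f"{label}[{word}]", word), word  # a preterminal is headed by its word
    annotated, head = [], None
    for child in children:
        sub, word = lexicalize(child)
        annotated.append(sub)
        if child[0] == HEAD_CHILD[label]:
            head = word  # if several children match, the last one wins
    return (f"{label}[{head}]", *annotated), head

tree = ("S", ("NP", "Sue"),
        ("VP", ("V", "walked"),
         ("PP", ("P", "into"),
          ("NP", ("DT", "the"), ("NP", "store")))))
annotated, head = lexicalize(tree)
print(head)          # head word of the whole sentence
print(annotated[0])  # root label annotated with that head
```

Real head-finding rules are ordered priority lists per category with a search direction; the one-entry-per-label table here is only enough to reproduce the tree on the slide.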

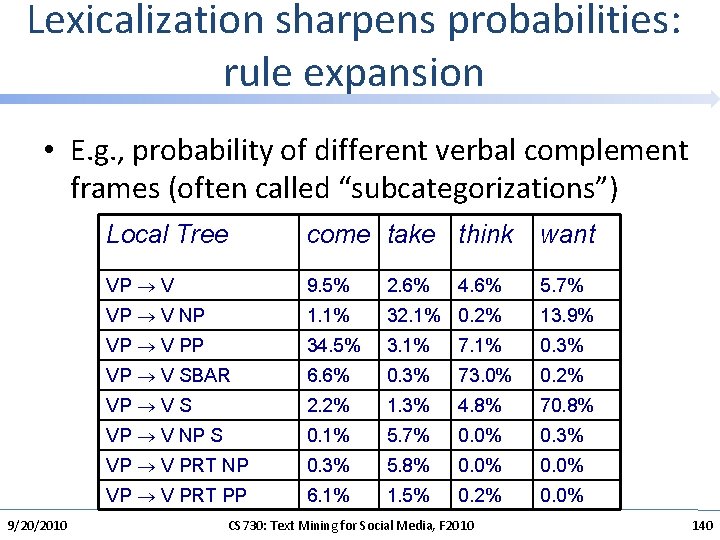

Lexicalization sharpens probabilities: rule expansion
• E.g., probability of different verbal complement frames (often called “subcategorizations”)

| Local Tree | come | take | think | want |
|---|---|---|---|---|
| VP → V | 9.5% | 2.6% | 4.6% | 5.7% |
| VP → V NP | 1.1% | 32.1% | 0.2% | 13.9% |
| VP → V PP | 34.5% | 3.1% | 7.1% | 0.3% |
| VP → V SBAR | 6.6% | 0.3% | 73.0% | 0.2% |
| VP → V S | 2.2% | 1.3% | 4.8% | 70.8% |
| VP → V NP S | 0.1% | 5.7% | 0.0% | 0.3% |
| VP → V PRT NP | 0.3% | 5.8% | 0.0% | 0.0% |
| VP → V PRT PP | 6.1% | 1.5% | 0.2% | 0.0% |

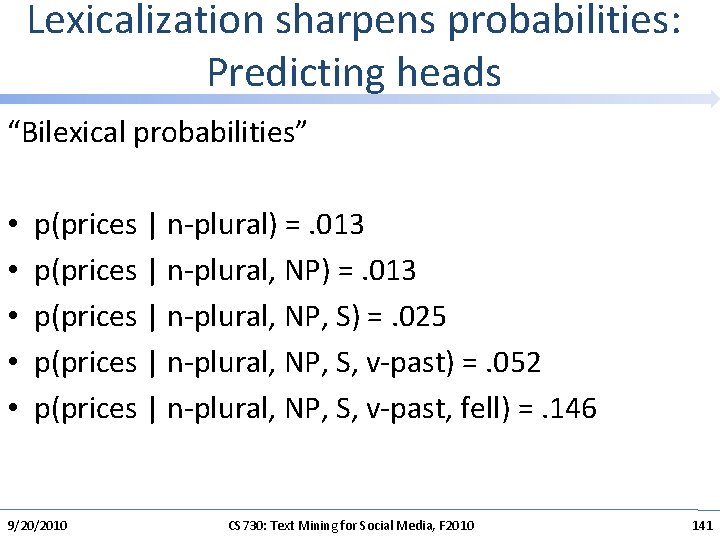

Lexicalization sharpens probabilities: Predicting heads
“Bilexical probabilities”
• p(prices | n-plural) = .013
• p(prices | n-plural, NP) = .013
• p(prices | n-plural, NP, S) = .025
• p(prices | n-plural, NP, S, v-past) = .052
• p(prices | n-plural, NP, S, v-past, fell) = .146

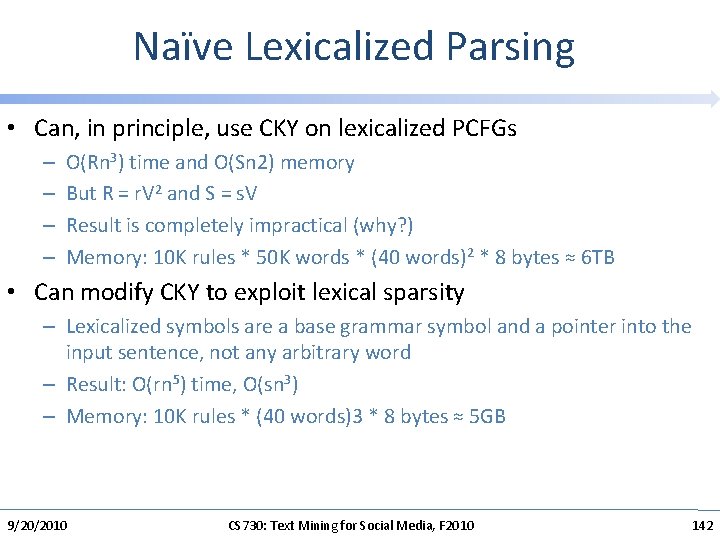

Naïve Lexicalized Parsing
• Can, in principle, use CKY on lexicalized PCFGs
  – $O(Rn^3)$ time and $O(Sn^2)$ memory
  – But $R = rV^2$ and $S = sV$ (r and s count the rules and symbols of the unlexicalized grammar; V is the vocabulary size)
  – Result is completely impractical (why?)
  – Memory: 10K rules * 50K words * (40 words)^2 * 8 bytes ≈ 6 TB
• Can modify CKY to exploit lexical sparsity
  – Lexicalized symbols are a base grammar symbol and a pointer into the input sentence, not any arbitrary word
  – Result: $O(rn^5)$ time, $O(sn^3)$ memory
  – Memory: 10K rules * (40 words)^3 * 8 bytes ≈ 5 GB
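The two memory estimates are plain multiplication and can be reproduced directly. The variable names are introduced here; the quantities are the ones on the slide.

```python
rules = 10_000          # 10K rules
vocab = 50_000          # 50K words
n = 40                  # sentence length in words
bytes_per_entry = 8

# Naive lexicalization: every rule paired with any vocabulary word, for every span.
naive_bytes = rules * vocab * n**2 * bytes_per_entry
# Exploiting sparsity: a head is one of the n sentence positions, not any word.
sparse_bytes = rules * n**3 * bytes_per_entry

print(naive_bytes / 1e12, "TB")
print(sparse_bytes / 1e9, "GB")
```

The saving comes entirely from replacing the vocabulary factor (50K) with a sentence-position factor (40).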

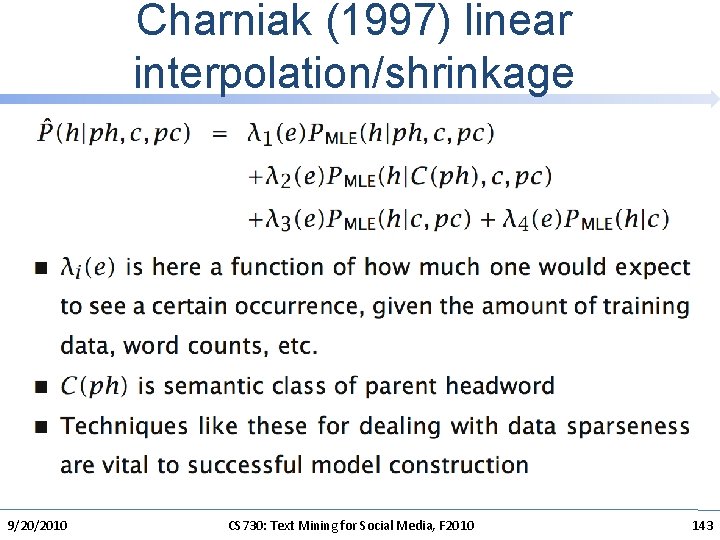

Charniak (1997) linear interpolation/shrinkage

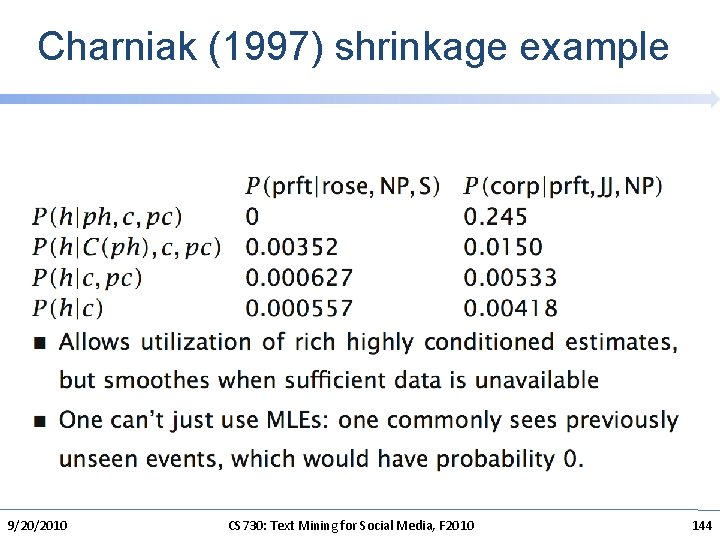

Charniak (1997) shrinkage example

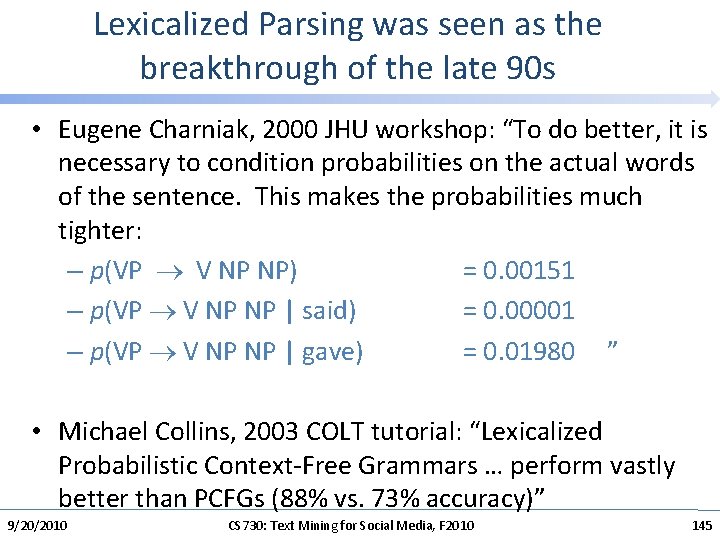

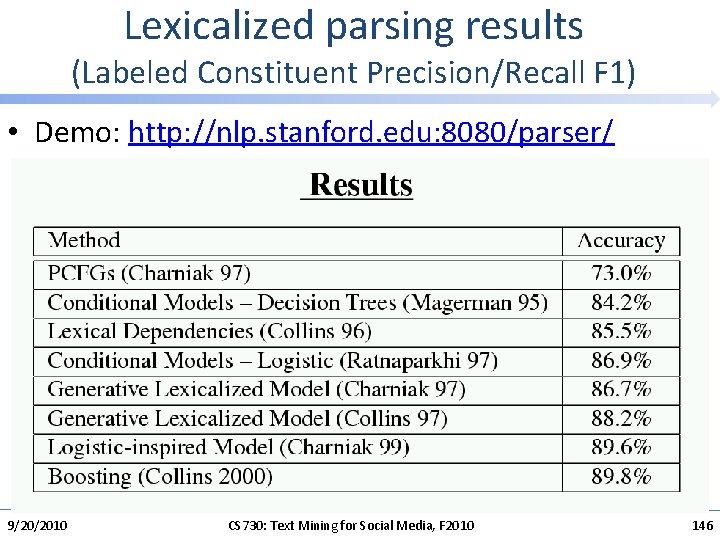

Lexicalized Parsing was seen as the breakthrough of the late 90s
• Eugene Charniak, 2000 JHU workshop: “To do better, it is necessary to condition probabilities on the actual words of the sentence. This makes the probabilities much tighter:
  – p(VP → V NP NP) = 0.00151
  – p(VP → V NP NP | said) = 0.00001
  – p(VP → V NP NP | gave) = 0.01980”
• Michael Collins, 2003 COLT tutorial: “Lexicalized Probabilistic Context-Free Grammars … perform vastly better than PCFGs (88% vs. 73% accuracy)”

Lexicalized parsing results (Labeled Constituent Precision/Recall F1)
• Demo: http://nlp.stanford.edu:8080/parser/

Sparseness & the Penn Treebank
• The Penn Treebank (1 million words of parsed English WSJ) has been a key resource (because of the widespread reliance on supervised learning)
• But 1 million words is like nothing:
  – 965,000 constituents, but only 66 WHADJP, of which only 6 aren’t how much or how many, but there is an infinite space of these
    • How clever/original/incompetent (at risk assessment and evaluation) …
• Most of the probabilities that you would like to compute, you can’t compute

Sparseness & the Penn Treebank (2)
• Many parse preferences depend on bilexical statistics: likelihoods of relationships between pairs of words (compound nouns, PP attachments, …)
• Extremely sparse, even on topics central to the WSJ:
  – stocks plummeted: 2 occurrences
  – stocks stabilized: 1 occurrence
  – stocks skyrocketed: 0 occurrences
  – #stocks discussed: 0 occurrences
• So far there has been very modest success in augmenting the Penn Treebank with extra unannotated materials or using semantic classes, once there is more than a little annotated training data.
  – Cf. Charniak 1997, Charniak 2000; but see McClosky et al. 2006

Motivating discriminative parsing
• In discriminative models, it is easy to incorporate different kinds of features
  – Often just about anything that seems linguistically interesting
• In generative models, it’s often difficult, and the model suffers because of false independence assumptions
• This ability to add informative features is the real power of discriminative models for NLP.

Discriminative Parsers
• Discriminative dependency parsing
  – Not as computationally hard (tiny grammar constant)
  – Explored considerably recently, e.g. McDonald et al. 2005
• Make parser action decisions discriminatively
  – E.g. with a shift-reduce parser
• Dynamic programming phrase structure parsing
  – Resource intensive! Most work on sentences of length <= 15
  – The need to be able to use dynamic programming limits the feature types you can use
• Post-processing: parse reranking
  – Just work with output of k-best generative parser
    1. Distribution-free methods
    2. Probabilistic model methods

Charniak and Johnson (ACL 2005): Coarse-to-fine n-best parsing and MaxEnt discriminative reranking
• Builds a maxent discriminative reranker over parses produced by (a slightly bugfixed and improved version of) Charniak (2000).
• Gets 50 best parses from Charniak (2000) parser
  – Doing this exploits the “coarse-to-fine” idea to heuristically find good candidates
• Maxent model for reranking uses heads, etc. as generative model, but also nice linguistic features:
  – Conjunct parallelism
  – Right branching preference
  – Heaviness (length) of constituents factored in
• Gets 91% LP/LR F1 (on all sentences of up to 80 words!)

Readings for Next Week
• FSNLP Chapters 11 and 12
