Text Mining and Sentiment Analysis in R

Brant Deppa
Professor of Statistics & Data Science
Winona State University
bdeppa@winona.edu

OUTLINE
• Overview of Unsupervised Learning (DSCI 415)
• Text Mining
• Setting up a Twitter API
• Summarizing Tweet Traits
• Cleaning Tweets/Text
• Storing Text Data
• Association Rules
• Clustering Tweets
• Sentiment Analysis
• Discussion

UNSUPERVISED LEARNING

Supervised Learning (DSCI 425) – The study of learning methods that seek a function of the inputs/variables that predicts the corresponding targets/responses. If the targets are classes, it is called a classification problem. If the target space is continuous, it is called a regression problem.

Unsupervised Learning (DSCI 415) – The study of learning methods that work only with a set of inputs/variables in order to find the structure, relationships, or differences among them.

UNSUPERVISED LEARNING METHODS
• Graphical exploration
• Clustering
• Dimension reduction methods
• Latent variable models
• Signal separation
• Association Rules/Market Basket Analysis
• Recommender Systems
• Text Mining
• Sentiment Analysis

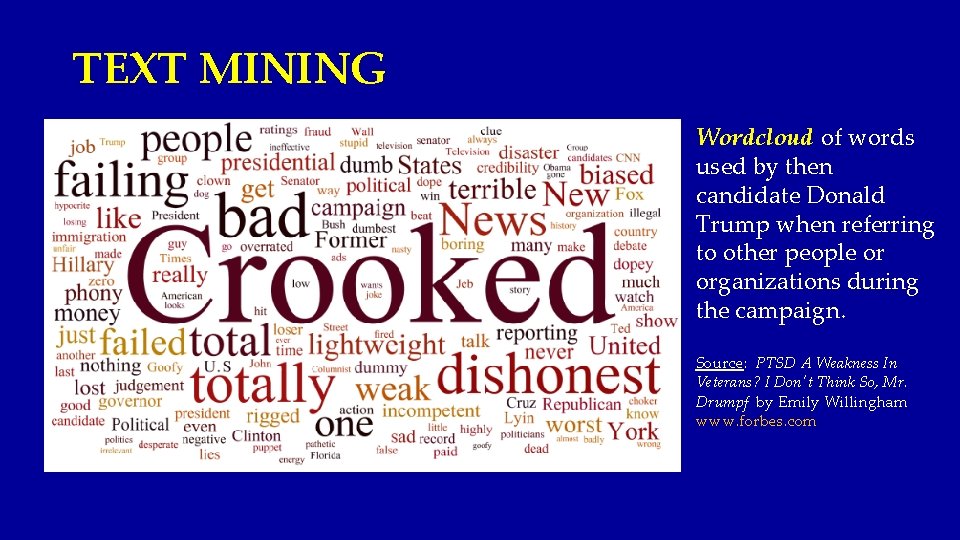

TEXT MINING
Wordcloud of words used by then-candidate Donald Trump when referring to other people or organizations during the campaign.
Source: "PTSD A Weakness In Veterans? I Don't Think So, Mr. Drumpf" by Emily Willingham, www.forbes.com

TEXT MINING & SENTIMENT ANALYSIS
• Obtaining Text Data from Twitter® Tweets
• Summarizing Tweet Content
• Cleaning the Text Content of Tweets
• Storing Text Data (Data Structures for Text Data)
• Summarizing Text Content – word counts, wordclouds
• Association Rules & Clustering
• Basics of Sentiment Analysis
• Sentiment Analysis Examples
• Challenges of Sentiment Analysis

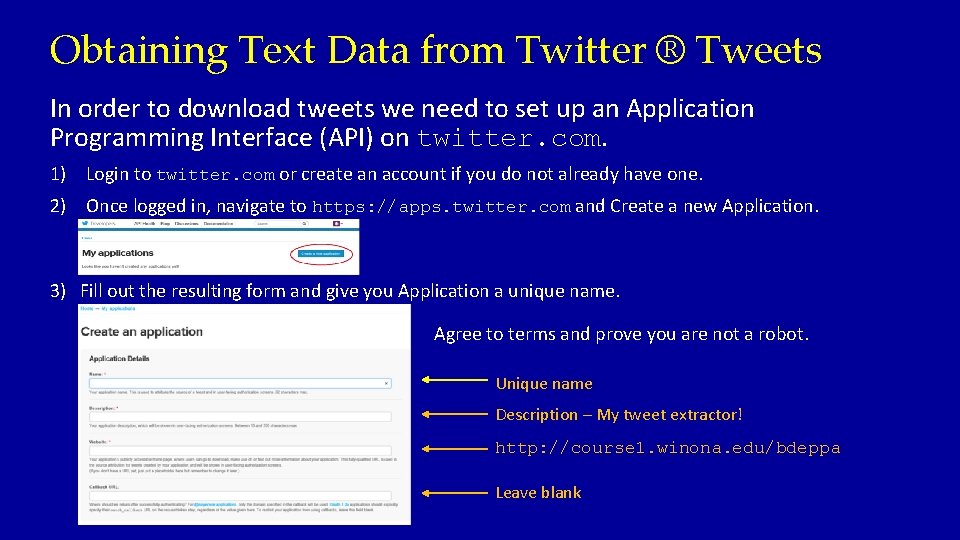

Obtaining Text Data from Twitter® Tweets
In order to download tweets we need to set up an Application Programming Interface (API) on twitter.com.
1) Log in to twitter.com, or create an account if you do not already have one.
2) Once logged in, navigate to https://apps.twitter.com and create a new application.
3) Fill out the resulting form and give your application a unique name. Agree to the terms and prove you are not a robot.
Form entries shown on the slide: a unique name; Description – My tweet extractor!; http://course1.winona.edu/bdeppa; leave the remaining field blank.

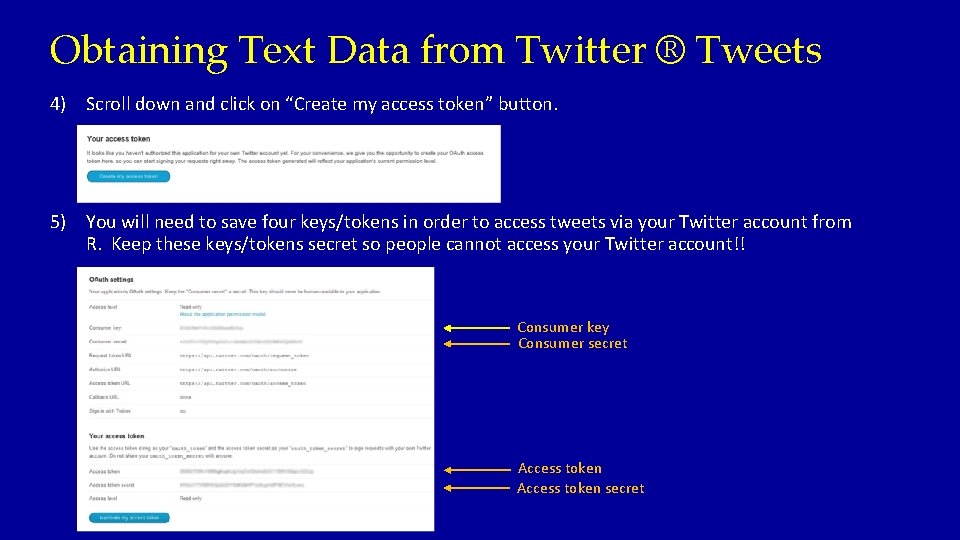

Obtaining Text Data from Twitter® Tweets
4) Scroll down and click on the “Create my access token” button.
5) You will need to save four keys/tokens in order to access tweets via your Twitter account from R. Keep these keys/tokens secret so people cannot access your Twitter account!
• Consumer key
• Consumer secret
• Access token
• Access token secret

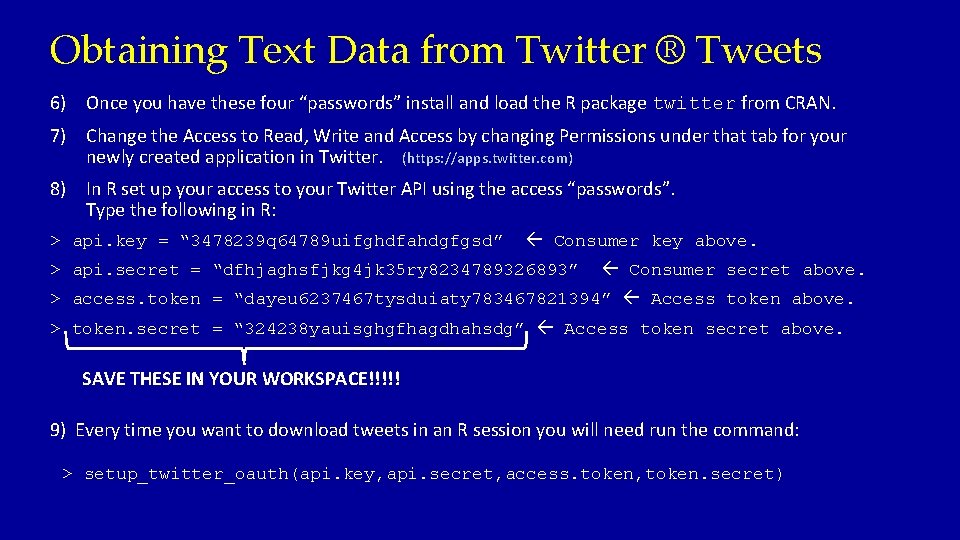

Obtaining Text Data from Twitter® Tweets
6) Once you have these four “passwords”, install and load the R package twitteR from CRAN.
7) Change the access level to "Read, Write and Access direct messages" under the Permissions tab for your newly created application in Twitter (https://apps.twitter.com).
8) In R, set up access to your Twitter API using the four “passwords”. Type the following in R:

    > api.key = "3478239q64789uifghdfahdgfgsd"               # Consumer key above
    > api.secret = "dfhjaghsfjkg4jk35ry8234789326893"        # Consumer secret above
    > access.token = "dayeu6237467tysduiaty783467821394"     # Access token above
    > token.secret = "324238yauisghgfhagdhahsdg"             # Access token secret above

SAVE THESE IN YOUR WORKSPACE!
9) Every time you want to download tweets in an R session you will need to run the command:

    > setup_twitter_oauth(api.key, api.secret, access.token, token.secret)

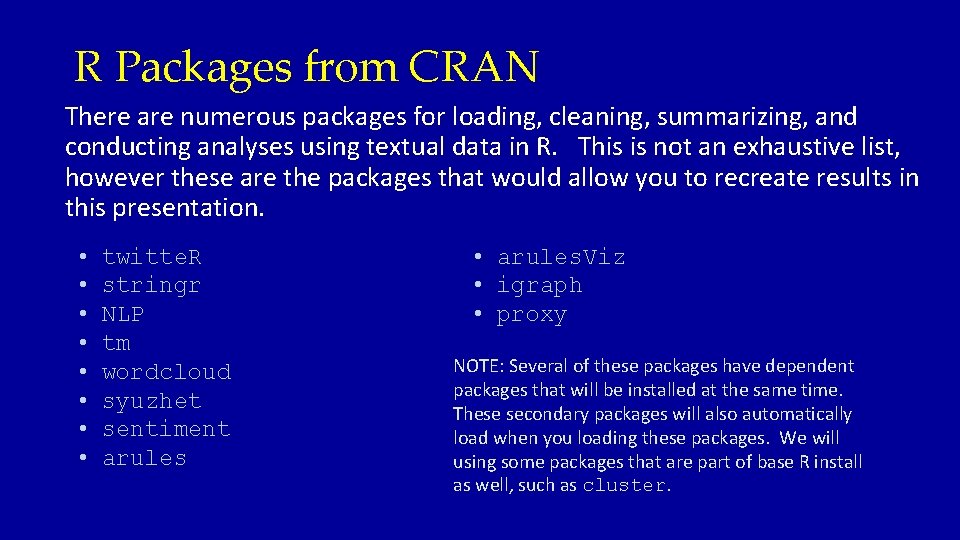

R Packages from CRAN
There are numerous packages for loading, cleaning, summarizing, and analyzing textual data in R. The list below is not exhaustive, but these are the packages needed to recreate the results in this presentation.
• twitteR
• stringr
• NLP
• tm
• wordcloud
• syuzhet
• sentiment
• arules
• arulesViz
• igraph
• proxy
NOTE: Several of these packages depend on other packages that are installed at the same time. These secondary packages also load automatically when you load the packages above. We will also use some packages that ship with the standard R installation, such as cluster.

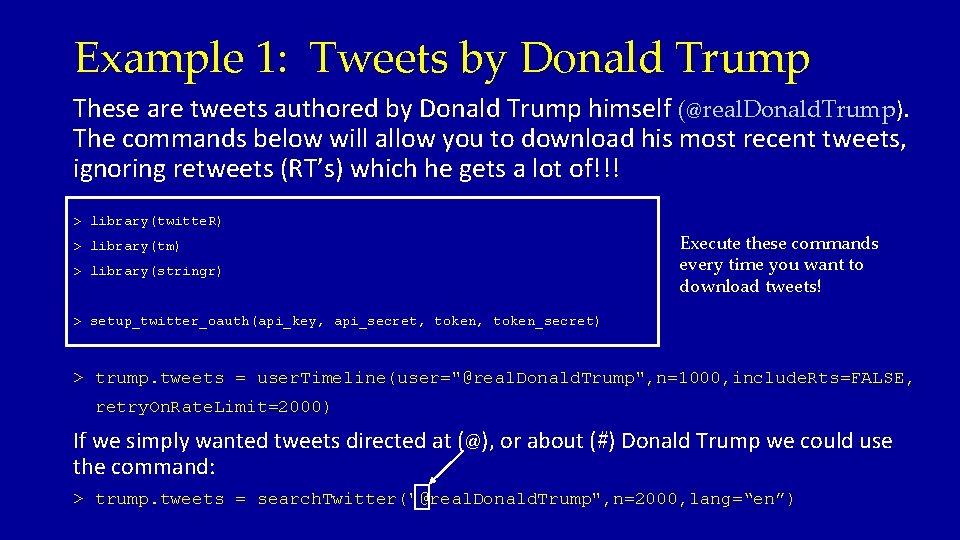

Example 1: Tweets by Donald Trump
These are tweets authored by Donald Trump himself (@realDonaldTrump). The commands below download his most recent tweets, ignoring retweets (RTs), which he gets a lot of!

    > library(twitteR)
    > library(tm)
    > library(stringr)

Execute these commands every time you want to download tweets!

    > setup_twitter_oauth(api.key, api.secret, access.token, token.secret)
    > trump.tweets = userTimeline(user="@realDonaldTrump", n=1000, includeRts=FALSE, retryOnRateLimit=2000)

If we simply wanted tweets directed at (@) or about (#) Donald Trump, we could use the command:

    > trump.tweets = searchTwitter("@realDonaldTrump", n=2000, lang="en")

![Example 1: Tweets by Donald Trump](http://slidetodoc.com/presentation_image_h2/495c014b3da87c4a5d465cfe8aa739f4/image-12.jpg)

Example 1: Tweets by Donald Trump

    > trump.tweets[1:4]
    [[1]]
    [1] "realDonaldTrump: Thank you Ohio! Together, we made history – and now, the real work begins. America will start winning again!… https://t.co/sVNSNJE7Uf"
    [[2]]
    [1] "realDonaldTrump: Heading to U.S. Bank Arena in Cincinnati, Ohio for a 7pm rally.\nJoin me! Tickets: https://t.co/HiWqZvHv6M"
    [[3]]
    [1] "realDonaldTrump: Getting ready to leave for the Great State of Indiana and meet the hard working and wonderful people of Carrier A.C."
    [[4]]
    [1] "realDonaldTrump: My thoughts and prayers are with those affected by the tragic storms and tornadoes in the Southeastern United States. Stay safe!"

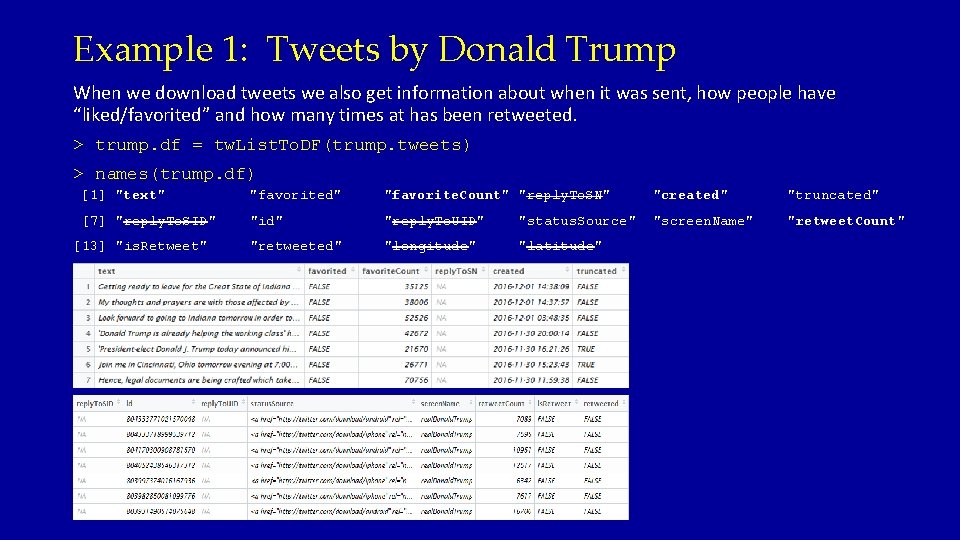

Example 1: Tweets by Donald Trump
When we download tweets we also get information about when each one was sent, how many people have “liked/favorited” it, and how many times it has been retweeted.

    > trump.df = twListToDF(trump.tweets)
    > names(trump.df)
     [1] "text"          "favorited"     "favoriteCount" "replyToSN"     "created"       "truncated"
     [7] "replyToSID"    "id"            "replyToUID"    "statusSource"  "screenName"    "retweetCount"
    [13] "isRetweet"     "retweeted"     "longitude"     "latitude"
    > View(trump.df)

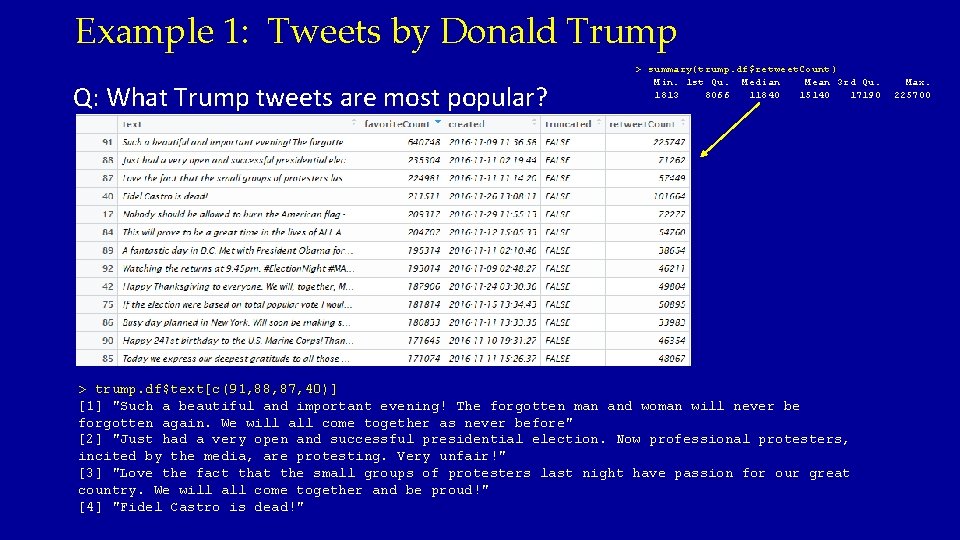

Example 1: Tweets by Donald Trump
Q: What Trump tweets are most popular?

    > summary(trump.df$retweetCount)
       Min. 1st Qu.  Median    Mean 3rd Qu.    Max.
       1813    8066   11840   15140   17190  225700
    > trump.df$text[c(91, 88, 87, 40)]
    [1] "Such a beautiful and important evening! The forgotten man and woman will never be forgotten again. We will all come together as never before"
    [2] "Just had a very open and successful presidential election. Now professional protesters, incited by the media, are protesting. Very unfair!"
    [3] "Love the fact that the small groups of protesters last night have passion for our great country. We will all come together and be proud!"
    [4] "Fidel Castro is dead!"
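The slide picks rows 91, 88, 87, and 40 without showing how they were found. One plausible way to locate the most-retweeted rows is to sort on the retweet count. The sketch below uses a made-up data frame (`toy.df`, a hypothetical stand-in for `trump.df`) so it runs without a Twitter connection.

```r
# Toy stand-in for trump.df (made-up values, not real tweet data)
toy.df <- data.frame(
  text = c("tweet a", "tweet b", "tweet c", "tweet d", "tweet e"),
  retweetCount = c(120, 9800, 45, 310, 2200),
  stringsAsFactors = FALSE
)

# Row indices ordered from most to least retweeted
by.retweets <- order(toy.df$retweetCount, decreasing = TRUE)

# Text of the three most-retweeted rows
top.three <- toy.df$text[by.retweets[1:3]]
print(top.three)
```

With the real data, the same `order()` call on `trump.df$retweetCount` would give the row numbers to pass to `trump.df$text[...]`.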

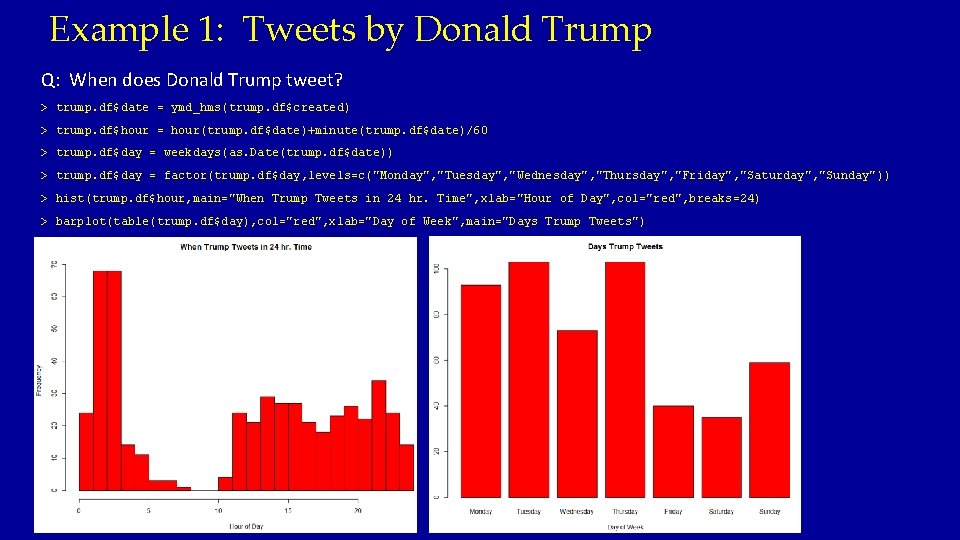

Example 1: Tweets by Donald Trump
Q: When does Donald Trump tweet?

    > library(lubridate)   # provides ymd_hms(), hour(), and minute()
    > trump.df$date = ymd_hms(trump.df$created)
    > trump.df$hour = hour(trump.df$date) + minute(trump.df$date)/60
    > trump.df$day = weekdays(as.Date(trump.df$date))
    > trump.df$day = factor(trump.df$day, levels=c("Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday"))
    > hist(trump.df$hour, main="When Trump Tweets in 24 hr. Time", xlab="Hour of Day", col="red", breaks=24)
    > barplot(table(trump.df$day), col="red", xlab="Day of Week", main="Days Trump Tweets")

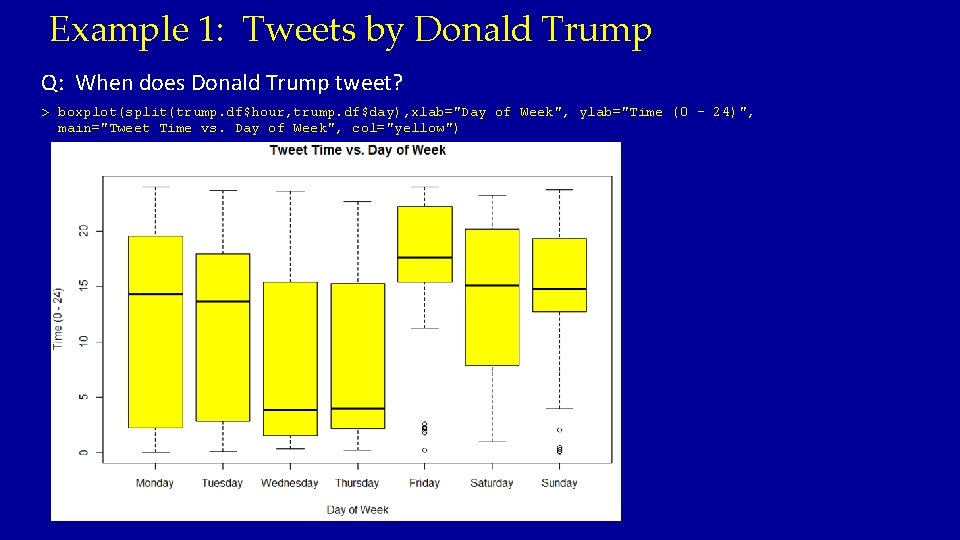

Example 1: Tweets by Donald Trump
Q: When does Donald Trump tweet?

    > boxplot(split(trump.df$hour, trump.df$day), xlab="Day of Week", ylab="Time (0 - 24)", main="Tweet Time vs. Day of Week", col="yellow")

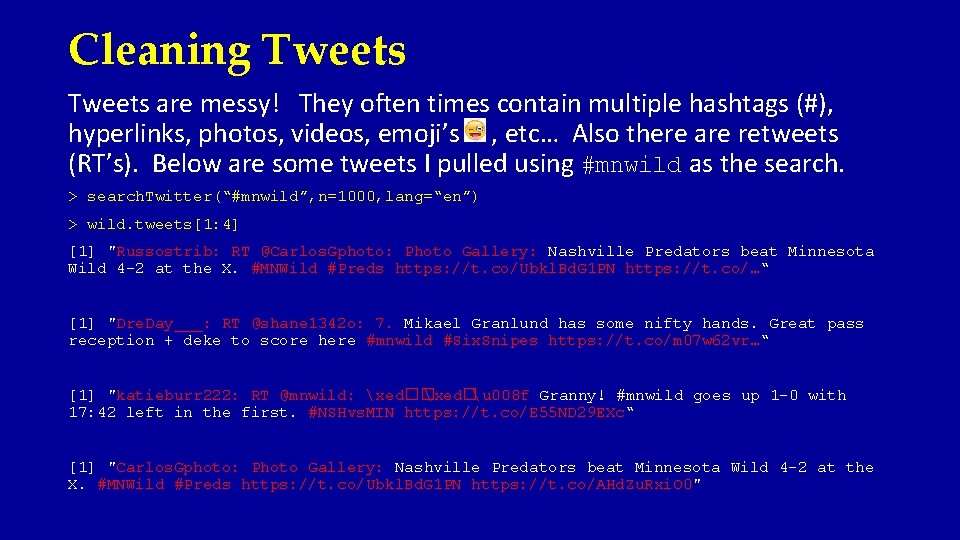

Cleaning Tweets
Tweets are messy! They often contain multiple hashtags (#), hyperlinks, photos, videos, emojis, etc. There are also retweets (RTs). Below are some tweets I pulled using #mnwild as the search.

    > wild.tweets = searchTwitter("#mnwild", n=1000, lang="en")
    > wild.tweets[1:4]
    [1] "Russostrib: RT @CarlosGphoto: Photo Gallery: Nashville Predators beat Minnesota Wild 4-2 at the X. #MNWild #Preds https://t.co/UbklBdG1PN https://t.co/…"
    [1] "DreDay___: RT @shane1342o: 7. Mikael Granlund has some nifty hands. Great pass reception + deke to score here #mnwild #SixSnipes https://t.co/m07w62vr…"
    [1] "katieburr222: RT @mnwild: [garbled emoji bytes] Granny! #mnwild goes up 1-0 with 17:42 left in the first. #NSHvsMIN https://t.co/E55ND29EXc"
    [1] "CarlosGphoto: Photo Gallery: Nashville Predators beat Minnesota Wild 4-2 at the X. #MNWild #Preds https://t.co/UbklBdG1PN https://t.co/AHdZuRxiO0"

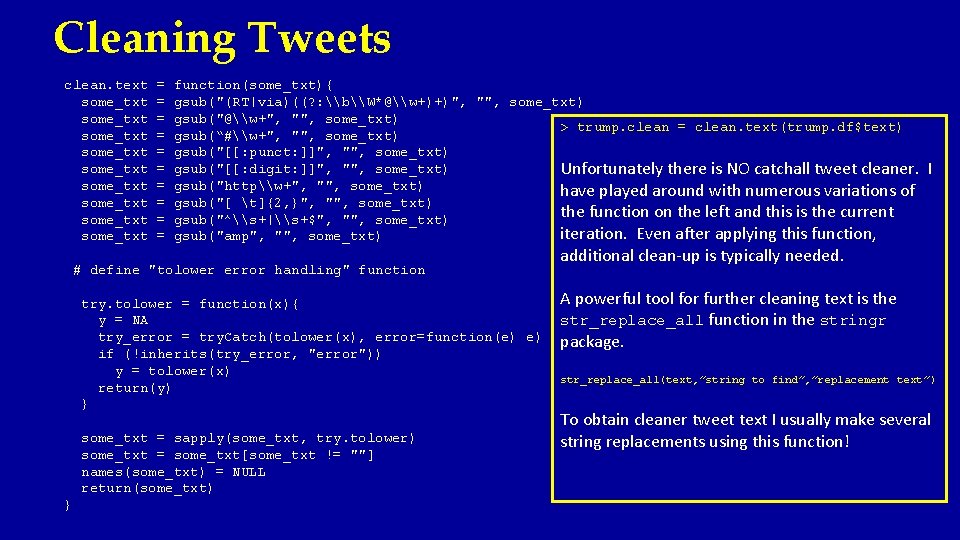

Cleaning Tweets

    clean.text = function(some_txt){
      # remove retweet markers, @mentions, and hashtags
      some_txt = gsub("(RT|via)((?:\\b\\W*@\\w+)+)", "", some_txt)
      some_txt = gsub("@\\w+", "", some_txt)
      some_txt = gsub("#\\w+", "", some_txt)
      # remove punctuation, digits, and links
      some_txt = gsub("[[:punct:]]", "", some_txt)
      some_txt = gsub("[[:digit:]]", "", some_txt)
      some_txt = gsub("http\\w+", "", some_txt)
      # remove runs of spaces/tabs, leading/trailing whitespace, and leftover "amp"
      some_txt = gsub("[ \t]{2,}", "", some_txt)
      some_txt = gsub("^\\s+|\\s+$", "", some_txt)
      some_txt = gsub("amp", "", some_txt)
      # define "tolower error handling" function
      try.tolower = function(x){
        y = NA
        try_error = tryCatch(tolower(x), error=function(e) e)
        if (!inherits(try_error, "error"))
          y = tolower(x)
        return(y)
      }
      some_txt = sapply(some_txt, try.tolower)
      some_txt = some_txt[some_txt != ""]
      names(some_txt) = NULL
      return(some_txt)
    }

    > trump.clean = clean.text(trump.df$text)

Unfortunately there is NO catchall tweet cleaner. I have played around with numerous variations of the function above and this is the current iteration. Even after applying this function, additional clean-up is typically needed.

A powerful tool for further cleaning text is the str_replace_all function in the stringr package.

    str_replace_all(text, "string to find", "replacement text")

To obtain cleaner tweet text I usually make several string replacements using this function!
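A run of follow-up replacements like the ones described above can be organized as a lookup of find/replace pairs applied in a loop. The sketch below is only an illustration: it swaps in base R's `gsub(..., fixed = TRUE)` for `str_replace_all` so that it runs without extra packages, and both the sample strings and the replacement pairs are hypothetical.

```r
# Hypothetical leftovers that survive a first cleaning pass
tweets <- c("obamacare is a disaster  repeal replace",
            "join me in ohio tomorrow at pm")

# Hypothetical find -> replace pairs; the names are the strings to find
fixes <- c("  " = " ", " at pm" = "")

for (target in names(fixes)) {
  # fixed = TRUE matches the literal string rather than a regular expression
  tweets <- gsub(target, fixes[[target]], tweets, fixed = TRUE)
}
print(tweets)
```

Keeping the pairs in one named vector makes it easy to add a new replacement each time another stray fragment turns up in the cleaned text.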

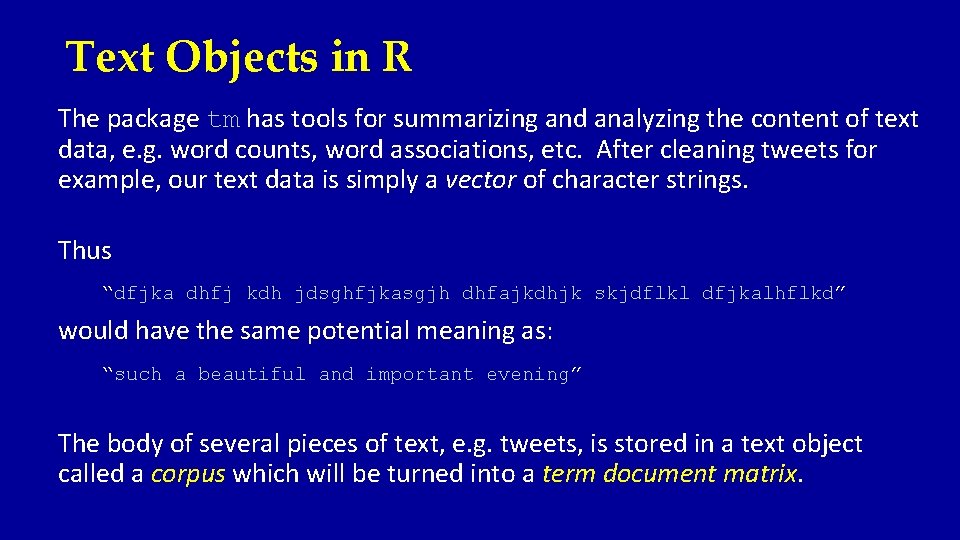

Text Objects in R
The package tm has tools for summarizing and analyzing the content of text data, e.g. word counts, word associations, etc.
After cleaning, our text data (tweets, for example) is simply a vector of character strings. As far as R is concerned, the string
“dfjka dhfj kdh jdsghfjkasgjh dhfajkdhjk skjdflkl dfjkalhflkd”
has the same potential meaning as
“such a beautiful and important evening”
The body of several pieces of text, e.g. tweets, is stored in a text object called a corpus, which is then turned into a term document matrix.

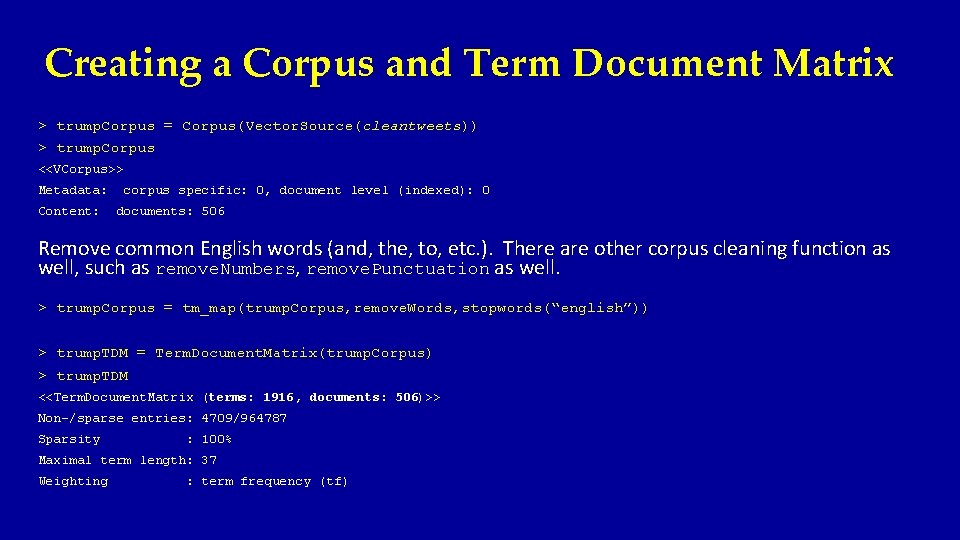

Creating a Corpus and Term Document Matrix

    > trump.Corpus = Corpus(VectorSource(cleantweets))
    > trump.Corpus
    <<VCorpus>>
    Metadata:  corpus specific: 0, document level (indexed): 0
    Content:  documents: 506

Remove common English words (and, the, to, etc.). There are other corpus cleaning functions as well, such as removeNumbers and removePunctuation.

    > trump.Corpus = tm_map(trump.Corpus, removeWords, stopwords("english"))
    > trump.TDM = TermDocumentMatrix(trump.Corpus)
    > trump.TDM
    <<TermDocumentMatrix (terms: 1916, documents: 506)>>
    Non-/sparse entries: 4709/964787
    Sparsity           : 100%
    Maximal term length: 37
    Weighting          : term frequency (tf)

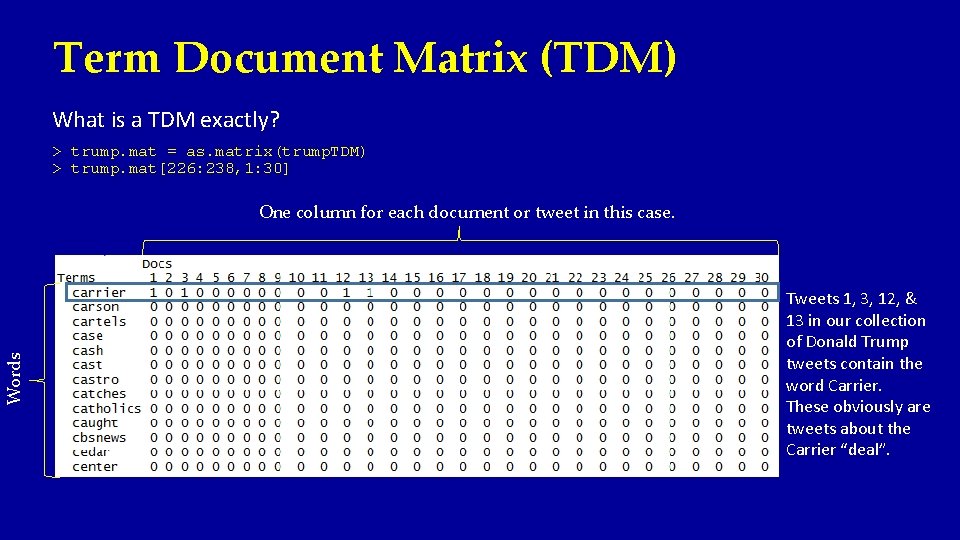

Term Document Matrix (TDM)
What is a TDM exactly?

    > trump.mat = as.matrix(trump.TDM)
    > trump.mat[226:238, 1:30]

Each row corresponds to a word, and there is one column for each document (a tweet in this case). Tweets 1, 3, 12, & 13 in our collection of Donald Trump tweets contain the word Carrier. These obviously are tweets about the Carrier “deal”.
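To make the structure concrete, here is a minimal sketch that builds a small term document matrix by hand in base R. It is not how tm constructs its matrix internally; the three documents and the names `docs`, `toy.tdm`, and `toy.freq` are made up for illustration.

```r
docs <- c("repeal and replace obamacare",
          "obamacare is a disaster",
          "join me in ohio")

# Split each document into words on whitespace
tokens <- strsplit(docs, "\\s+")

# Vocabulary: every distinct word, sorted alphabetically
terms <- sort(unique(unlist(tokens)))

# One row per term, one column per document; entries are word counts
toy.tdm <- sapply(tokens, function(words) table(factor(words, levels = terms)))
dimnames(toy.tdm) <- list(Terms = terms, Docs = seq_along(docs))
print(toy.tdm)

# Row sums give overall word frequencies across all documents
toy.freq <- sort(rowSums(toy.tdm), decreasing = TRUE)
print(head(toy.freq, 3))
```

Most entries are zero because any one document uses only a few of the words in the vocabulary, which is why the real matrix reports a sparsity near 100%.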

![Word Frequencies](http://slidetodoc.com/presentation_image_h2/495c014b3da87c4a5d465cfe8aa739f4/image-22.jpg)

Word Frequencies

    > word.freq = sort(rowSums(trump.mat), decreasing=T)
    > barplot(word.freq[1:20], xlab="Frequent Trump Tweet Words", cex.names=.7)

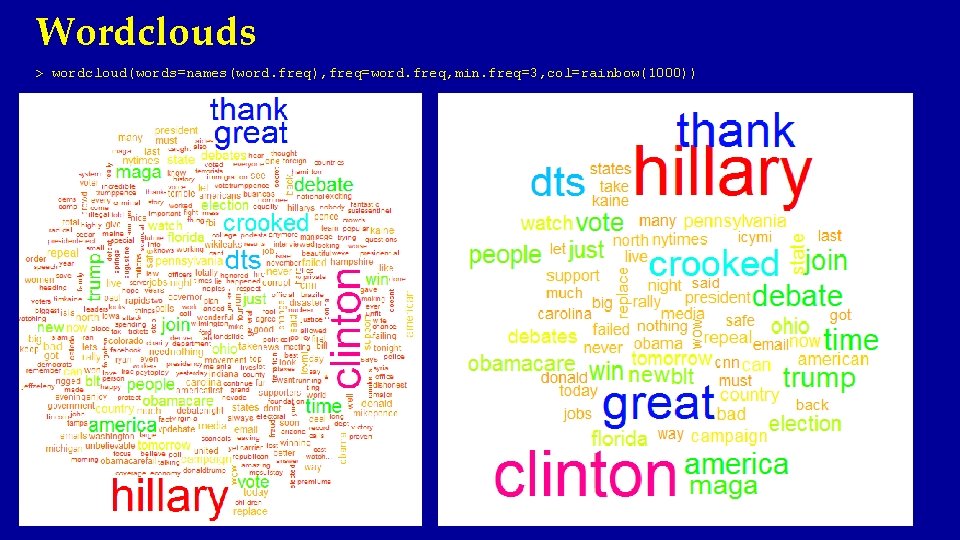

Wordclouds

    > wordcloud(words=names(word.freq), freq=word.freq, min.freq=3, col=rainbow(1000))

![Wordclouds](http://slidetodoc.com/presentation_image_h2/495c014b3da87c4a5d465cfe8aa739f4/image-24.jpg)

Wordclouds

    > wordcloud(words=names(word.freq[-c(1:4)]), freq=word.freq[-c(1:4)], min.freq=3, col=rainbow(1000))

This is the wordcloud after removing Hillary, Clinton, thank, and great, which were the four most frequent words in the text of Trump’s tweets.

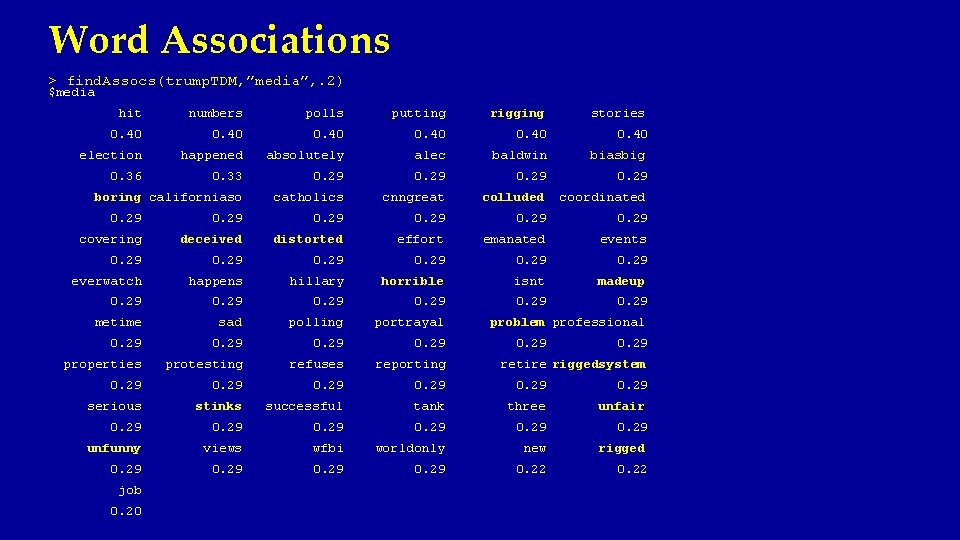

Word Associations

    > findAssocs(trump.TDM, "media", 0.2)

Terms returned under $media, i.e. those whose correlation with “media” is at least 0.2 (the correlations in the output range from 0.20 to 0.40): hit, numbers, polls, putting, rigging, stories, election, happened, absolutely, alec, baldwin, biasbig, boring, californiaso, catholics, cnngreat, colluded, coordinated, covering, deceived, distorted, effort, emanated, events, everwatch, happens, hillary, horrible, isnt, madeup, metime, sad, polling, portrayal, properties, protesting, refuses, reporting, serious, stinks, successful, tank, three, unfair, unfunny, views, wfbi, worldonly, new, rigged, job, problem, professional, retire, riggedsystem.
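The association scores are correlations between the row of counts for one term and the rows for the other terms in the term document matrix. The sketch below illustrates that idea in base R on a made-up matrix. `toy.assocs` is a hypothetical helper written for this example, not a function from tm.

```r
# Toy term document matrix: rows are terms, columns are documents (made-up counts)
toy.tdm <- rbind(
  media  = c(1, 1, 0, 0, 1, 0),
  rigged = c(1, 1, 0, 0, 0, 0),
  unfair = c(1, 0, 0, 0, 1, 0),
  ohio   = c(0, 0, 1, 1, 0, 1)
)

# Correlate the counts for one term with the counts for every other term
toy.assocs <- function(tdm, term, corlimit) {
  others <- setdiff(rownames(tdm), term)
  r <- sapply(others, function(w) cor(tdm[term, ], tdm[w, ]))
  # Keep associations at or above the limit, strongest first
  sort(r[r >= corlimit], decreasing = TRUE)
}

media.assocs <- toy.assocs(toy.tdm, "media", 0.2)
print(round(media.assocs, 2))
```

Here "rigged" and "unfair" tend to appear in the same documents as "media" and pass the 0.2 cutoff, while "ohio" never appears alongside it and is dropped.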

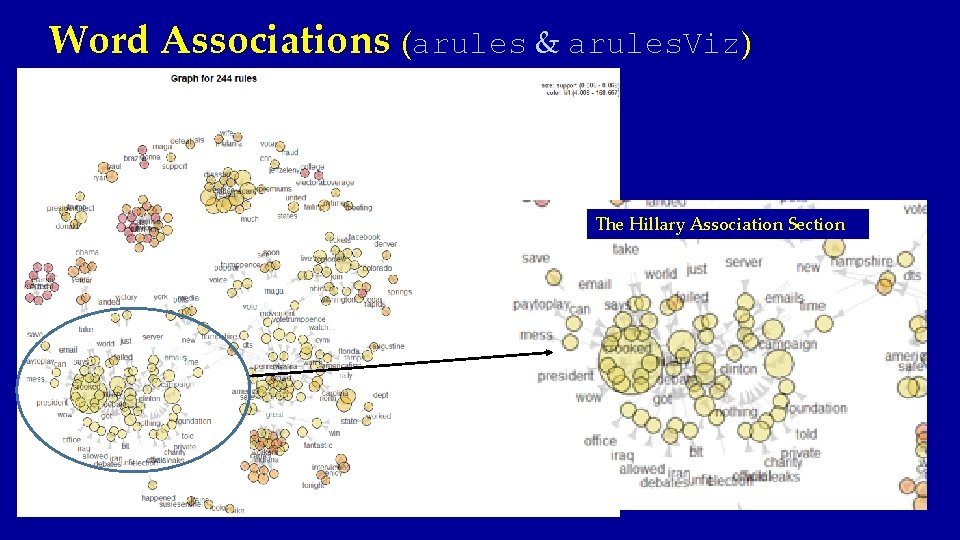

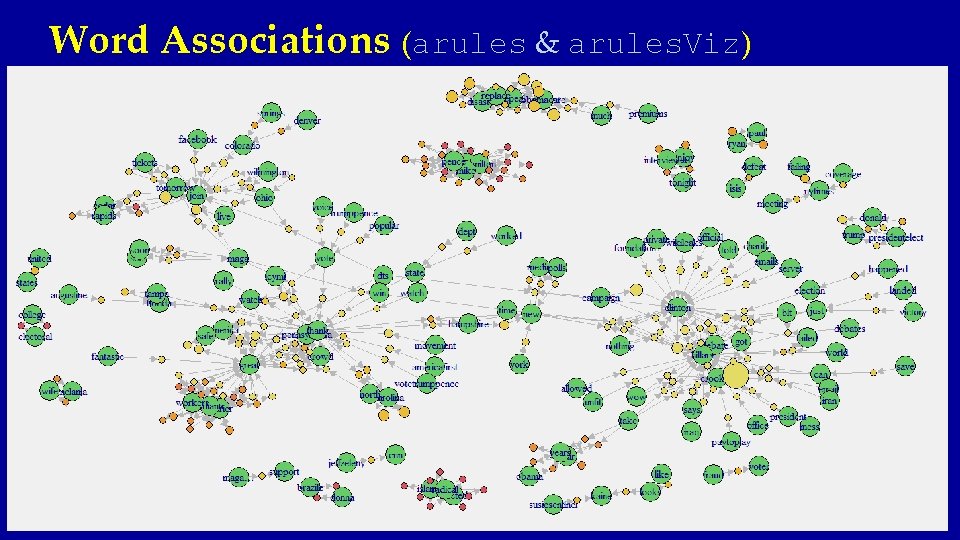

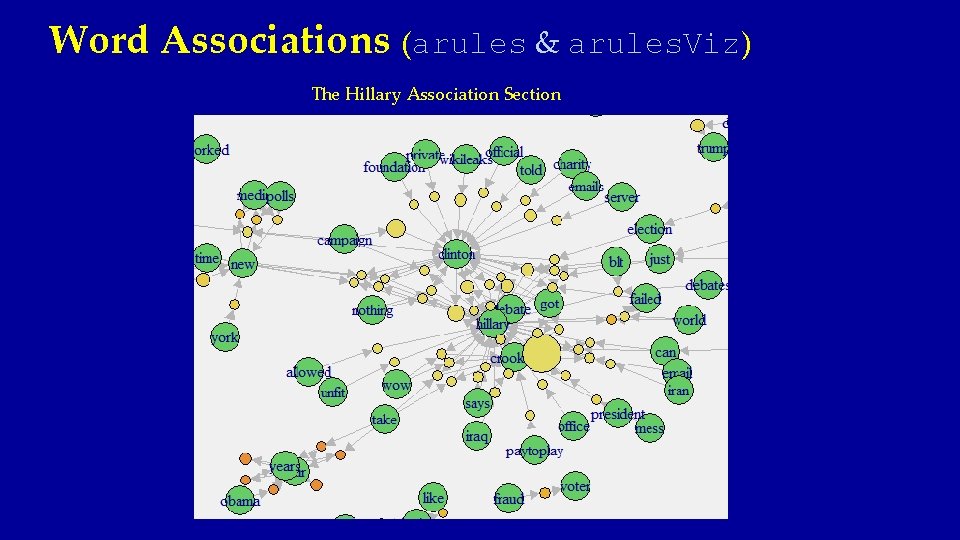

Word Associations (arules & arulesViz)
The Hillary Association Section

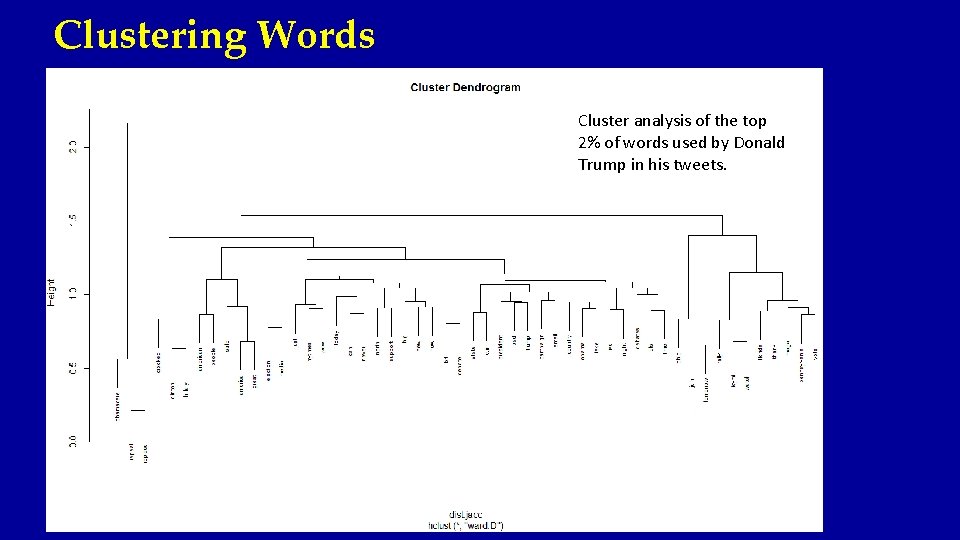

Clustering Words Cluster analysis of the top 2% of words used by Donald Trump in his tweets.

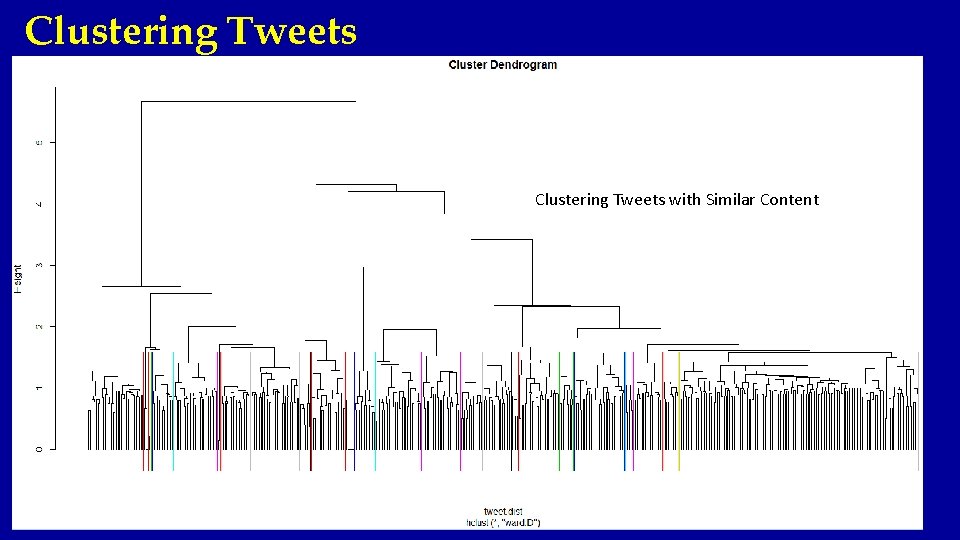

Clustering Tweets with Similar Content

![Clustering Tweets](http://slidetodoc.com/presentation_image_h2/495c014b3da87c4a5d465cfe8aa739f4/image-31.jpg)

Clustering Tweets

    > trump.clean6[tweet.grps==17]   # Repeal and replace Obamacare (ACA)
     [1] "obamacare is a total disaster hillary clinton wants to save it by making it even more expensive doesnt work i repeal replace"
     [2] "i am to repeal replace obamacare we have much less expensive much better healthcare with hillary costs triple"
     [3] "trump promises special session to repeal obamacare"
     [4] "people in arizona just got a taste of obamacare with a increase in premiums repeal replace"
     [5] "obamacare is a disaster we must repeal replace tired of the lies and want to dts out vote…"
     [6] "repeal and replace obamacare"
     [7] "repeal and replace Obamacare in three words"
     [8] "obamacare is a disaster as ive been saying from the beginning time to repeal replace obamacarefail"
     [9] "obamacare is a disaster time to repeal replace obamacarefail"
    [10] "obamacare premiums are about to skyrocket again crooked hillary only it worse we repeal replace"
    [11] "we have to repeal replace obamacare look at what is doing to people dts"
    [12] "i sign the first bill to repeal obamacare give americans many choices and much lower rates"
    [13] "we must repeal obamacare and replace it with a much more competitive comprehensive affordable system debate maga"

    > trump.clean6[tweet.grps==16]   # “Come see me” Tweets
    [1] "join me live in wilmington ohio"
    [2] "join me today in wilmington ohio at pm tomorrow tampa florida at am"
    [3] "join me in wilmington ohio tomorrow at pm it is time to dts tickets"
    [4] "join me live in toledo ohio time to dts maga"
    [5] "join me live in springfield ohio"
    [6] "join me in ohio tomorrow springfield pm toledo pm geneva pm…"
    [7] "join me in delaware ohio tomorrow at pm dts tickets"
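The slides do not show how `tweet.grps` was computed. One common way to obtain group labels of this kind is hierarchical clustering on word counts, sketched below in base R. The distance measure (Euclidean), the linkage (Ward), and the number of groups are assumptions made for illustration, and the four tweets are a tiny sample taken from the clusters above.

```r
tweets <- c("repeal and replace obamacare",
            "obamacare is a disaster repeal replace",
            "join me live in springfield ohio",
            "join me in delaware ohio tomorrow")

# Word counts: one row per tweet, one column per word
tokens <- strsplit(tweets, "\\s+")
terms  <- sort(unique(unlist(tokens)))
dtm    <- t(sapply(tokens, function(w) table(factor(w, levels = terms))))

# Hierarchical clustering on Euclidean distances between tweets
tree <- hclust(dist(dtm), method = "ward.D2")

# Cut the tree into two groups; toy.grps plays the role of tweet.grps
toy.grps <- cutree(tree, k = 2)
print(split(tweets, toy.grps))
```

Tweets that share many words sit close together, so the two Obamacare tweets land in one group and the two "join me" tweets in the other.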

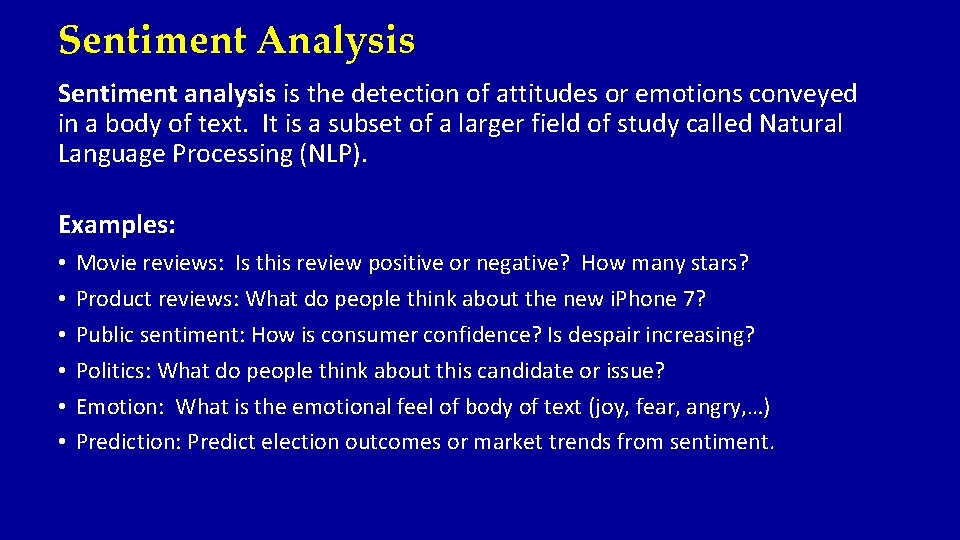

Sentiment Analysis
Sentiment analysis is the detection of attitudes or emotions conveyed in a body of text. It is a subset of a larger field of study called Natural Language Processing (NLP).
Examples:
• Movie reviews: Is this review positive or negative? How many stars?
• Product reviews: What do people think about the new iPhone 7?
• Public sentiment: How is consumer confidence? Is despair increasing?
• Politics: What do people think about this candidate or issue?
• Emotion: What is the emotional feel of a body of text (joy, fear, anger, …)?
• Prediction: Predict election outcomes or market trends from sentiment.

Sentiment Analysis Basic Idea: Search through the words in the body of text and find POSITIVE (+1) and NEGATIVE (-1) words. Many/most of the words in the text will not receive a score or be scored as zero. The sentiment score could simply be the sum of the scored words. Some words probably are more positive/negative than others. We may want to assign words a score ranging from -5 to 5. This assignment would be quite subjective, but clearly WORST is probably more negative than MEDIOCRE. Similarly BEST is probably more positive than GOOD. Using this type of scheme the sentiment score would be the weighted sum of the scored words in the body of the text.
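The weighted-sum idea can be sketched in a few lines of base R. The lexicon below is a hypothetical four-word list with made-up scores chosen only to mirror the WORST/MEDIOCRE and BEST/GOOD comparison. It is not taken from any published word list.

```r
# Hypothetical mini-lexicon on a -5 to 5 scale (illustrative values only)
toy.lexicon <- c(best = 5, good = 3, mediocre = -1, worst = -5)

weighted.score <- function(text, lexicon) {
  # Lowercase the text and split on anything that is not a letter
  words <- strsplit(tolower(text), "[^a-z]+")[[1]]
  # Words missing from the lexicon come back as NA and contribute nothing
  scores <- lexicon[words]
  sum(scores, na.rm = TRUE)
}

# "best" (+5) and "mediocre" (-1) are the only scored words
print(weighted.score("The best pizza in town, but mediocre service", toy.lexicon))
```

Setting every positive score to +1 and every negative score to -1 turns the same function into the simple unweighted count described first.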

Sentiment Analysis
There are several word lists that have been developed by NLP and computational linguistics researchers and are publicly available.
• Minqing Hu & Bing Liu (2005) – published a list of positive and negative words. (https://www.cs.uic.edu/~liub/FBS/sentiment-analysis.html#lexicon)
• Finn Årup Nielsen (2015) – published a list of words scored from -5 to 5, targeted towards sentiment analysis on short text such as one finds in social media like Twitter. (https://finnaarupnielsen.wordpress.com/2011/03/16/afinn-a-new-word-list-for-sentiment-analysis/)
• Saif Mohammad (2013) – published a list of words with their associations to the 8 basic emotions (anger, fear, anticipation, trust, surprise, sadness, joy, and disgust). He has also published positive-negative word lists. (http://saifmohammad.com/WebPages/meetsaif.htm)
There are several others…

![Sentiment Analysis: Examples of Positive Words (Hu & Liu)](http://slidetodoc.com/presentation_image_h2/495c014b3da87c4a5d465cfe8aa739f4/image-35.jpg)

Sentiment Analysis
Examples of Positive Words (Hu & Liu)

    > pos.words[sample(1:2006, 25)]
     [1] "outstandingly"  "calm"           "significant"    "gained"         "stunned"
     [6] "comely"         "satisfies"      "togetherness"   "righteously"    "spontaneous"
    [11] "good"           "spellbind"      "beautifully"    "trumpet"        "affirmative"
    [16] "thankful"       "famed"          "resounding"     "delightfulness" "accessible"
    [21] "leading"        "excellence"     "confidence"     "eagerly"        "wholeheartedly"

Examples of Negative Words (Hu & Liu)

    > neg.words[sample(1:4783, 25)]
     [1] "seriousness"     "disputable"      "occludes"        "exaggerate"
     [5] "hating"          "doldrums"        "cramped"         "intimidation"
     [9] "failures"        "hell-bent"       "spitefully"      "preoccupy"
    [13] "calumny"         "malevolently"    "sloooow"         "stab"
    [17] "wrestle"         "limp"            "humming"         "ridiculous"
    [21] "mysteriously"    "quarrellous"     "insubstantially" "oversimplified"
    [25] "chatter"

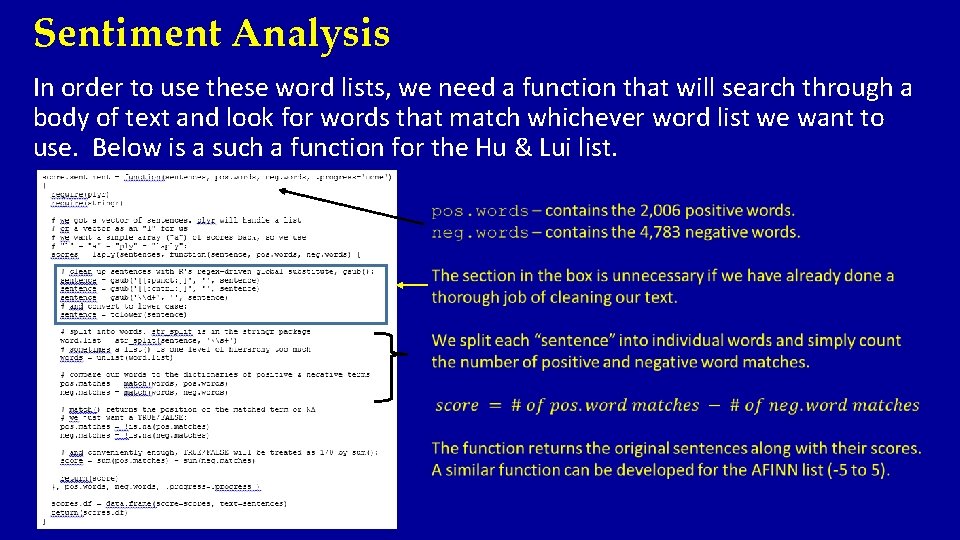

Sentiment Analysis
In order to use these word lists, we need a function that will search through a body of text and look for words that match whichever word list we want to use. Below is such a function for the Hu & Liu list.

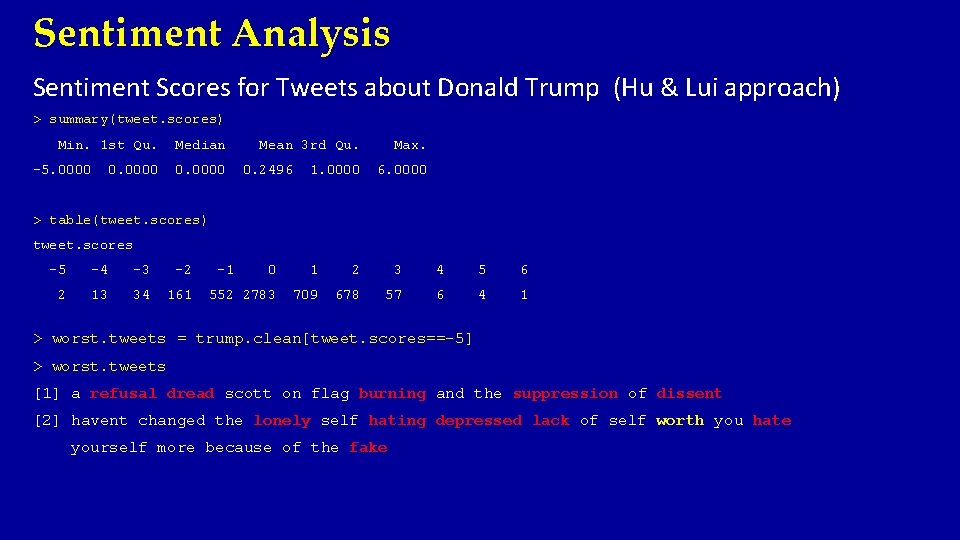
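For readers without the image, a matching function of this kind might look like the sketch below. It is not necessarily the function shown on the slide: `score.sentiment` and the two short word vectors are hypothetical stand-ins, and with the real lists one would pass `pos.words` and `neg.words` instead.

```r
score.sentiment <- function(texts, pos.words, neg.words) {
  sapply(texts, function(txt) {
    words <- unlist(strsplit(tolower(txt), "\\s+"))
    # match() returns NA for words not on a list, so !is.na() flags the hits
    n.pos <- sum(!is.na(match(words, pos.words)))
    n.neg <- sum(!is.na(match(words, neg.words)))
    # Score = number of positive words minus number of negative words
    n.pos - n.neg
  }, USE.NAMES = FALSE)
}

# Tiny stand-ins for the full positive and negative lists
toy.pos <- c("great", "good", "amazing", "positive")
toy.neg <- c("hate", "lonely", "depressed", "fake")

toy.scores <- score.sentiment(
  c("great job keep up your good work",
    "the lonely self hating depressed lack of self worth",
    "heading to ohio tomorrow"),
  toy.pos, toy.neg)
print(toy.scores)
```

Note that "hating" does not match "hate" here: exact matching only finds word forms that are literally on the list, which is one reason the published lists contain so many variants.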

Sentiment Analysis
Sentiment Scores for Tweets about Donald Trump (Hu & Liu approach)

    > summary(tweet.scores)
       Min. 1st Qu.  Median    Mean 3rd Qu.    Max.
    -5.0000  0.0000  0.0000  0.2496  1.0000  6.0000
    > table(tweet.scores)
    tweet.scores
      -5   -4   -3   -2   -1    0    1    2    3    4    5    6
       2   13   34  161  552 2783  709  678   57    6    4    1
    > worst.tweets = trump.clean[tweet.scores==-5]
    > worst.tweets
    [1] a refusal dread scott on flag burning and the suppression of dissent
    [2] havent changed the lonely self hating depressed lack of self worth you hate yourself more because of the fake

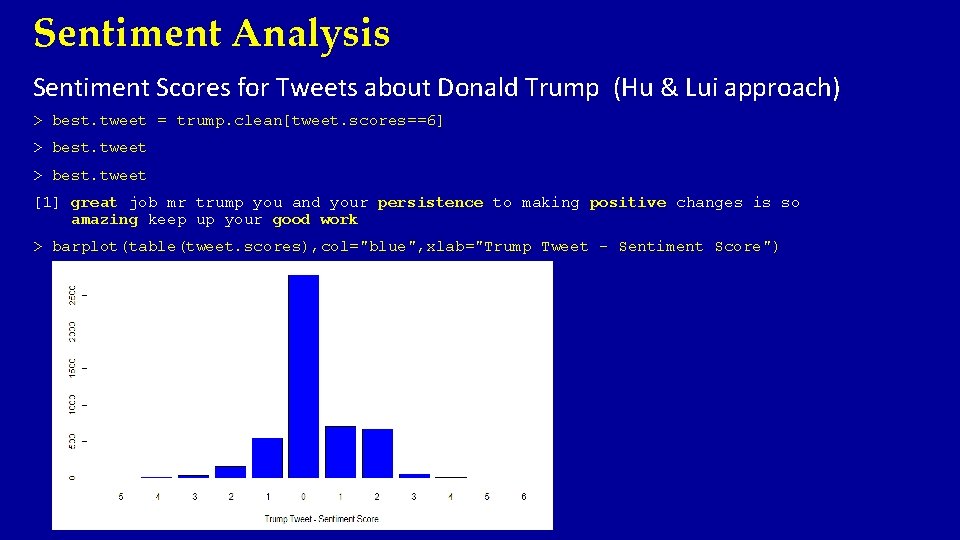

Sentiment Analysis
Sentiment Scores for Tweets about Donald Trump (Hu & Liu approach)

    > best.tweet = trump.clean[tweet.scores==6]
    > best.tweet
    [1] great job mr trump you and your persistence to making positive changes is so amazing keep up your good work
    > barplot(table(tweet.scores), col="blue", xlab="Trump Tweet - Sentiment Score")

Sentiment Analysis
Sentiment Scores for Tweets about Donald Trump (Hu & Liu approach)
Computing polarity (positive, negative, or neutral) of the Trump tweets.

    > table(tweet.scores>0)
    FALSE  TRUE
     3545  1455
    > table(tweet.scores<0)
    FALSE  TRUE
     4238   762
    > table(tweet.scores==0)
    FALSE  TRUE
     2217  2783

Positive Tweets (29.1%)
Negative Tweets (15.2%)
Neutral Tweets (55.7%)
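The three separate tables can be collapsed into one step by mapping each score to its sign. The sketch below rebuilds the 5,000 scores from the frequency table reported on the earlier slide, so it runs on its own. With the real data only the last two statements would be needed.

```r
# Rebuild the 5,000 scores from the reported frequency table
score.values <- -5:6
score.counts <- c(2, 13, 34, 161, 552, 2783, 709, 678, 57, 6, 4, 1)
tweet.scores <- rep(score.values, times = score.counts)

# sign() maps each score to -1, 0, or 1; label those as polarity classes
polarity <- factor(sign(tweet.scores), levels = c(-1, 0, 1),
                   labels = c("negative", "neutral", "positive"))

# Percentage of tweets in each class, rounded to one decimal place
polarity.pct <- round(100 * prop.table(table(polarity)), 1)
print(polarity.pct)
```

The resulting `polarity` factor can also be used to split the tweets by class, for example before drawing a separate wordcloud for each polarity.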

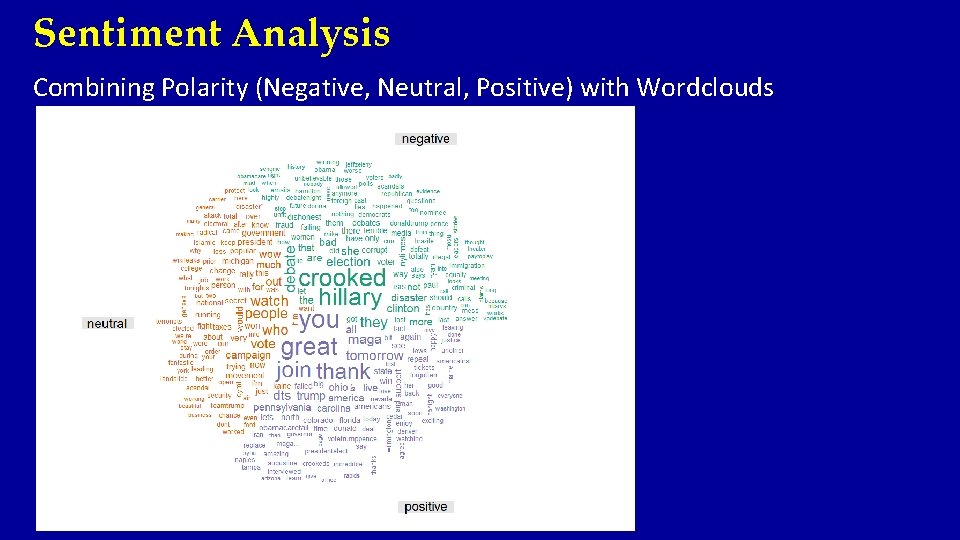

Sentiment Analysis Combining Polarity (Negative, Neutral, Positive) with Wordclouds

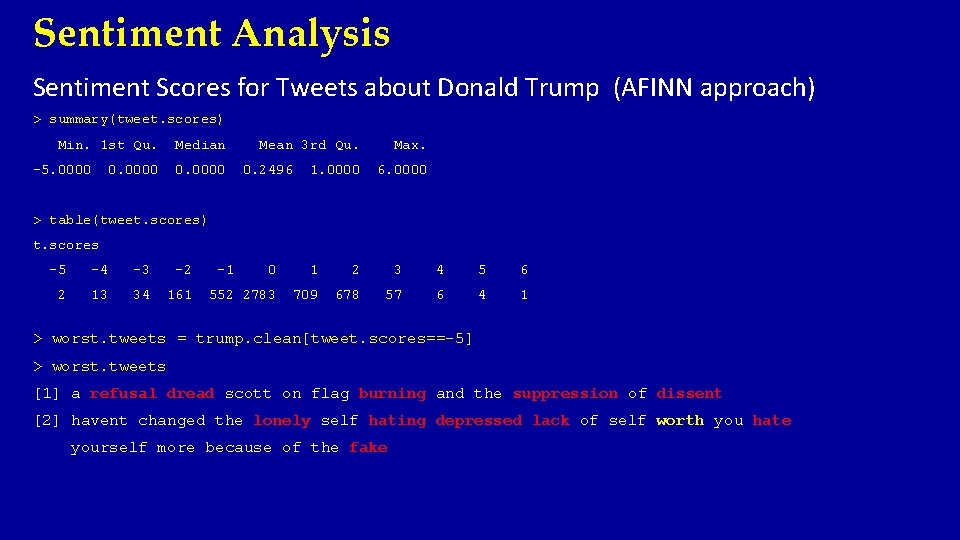

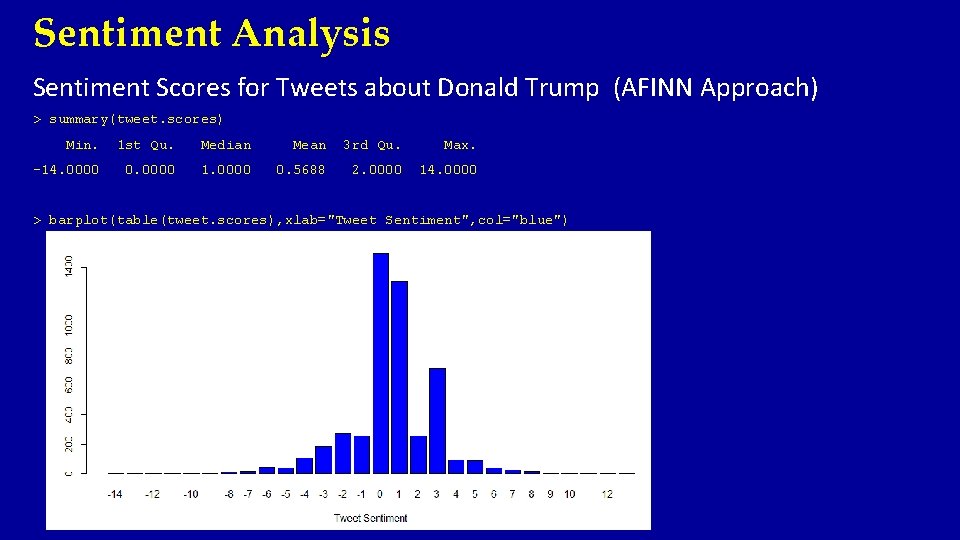

Sentiment Analysis
Sentiment Scores for Tweets about Donald Trump (AFINN Approach)

    > summary(tweet.scores)
        Min.  1st Qu.   Median     Mean  3rd Qu.     Max.
    -14.0000   0.0000   1.0000   0.5688   2.0000  14.0000
    > barplot(table(tweet.scores), xlab="Tweet Sentiment", col="blue")

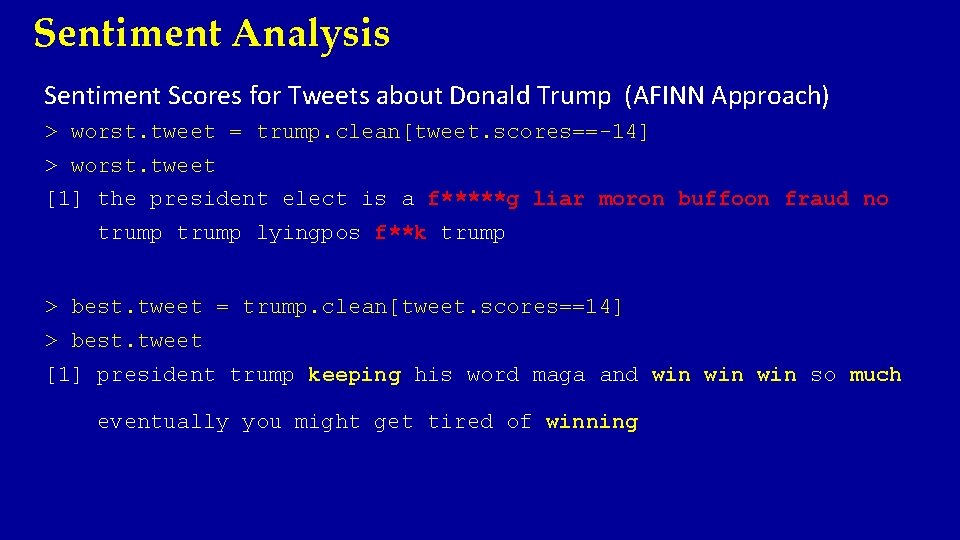

Sentiment Analysis
Sentiment Scores for Tweets about Donald Trump (AFINN Approach)

    > worst.tweet = trump.clean[tweet.scores==-14]
    > worst.tweet
    [1] the president elect is a f*****g liar moron buffoon fraud no trump lyingpos f**k trump
    > best.tweet = trump.clean[tweet.scores==14]
    > best.tweet
    [1] president trump keeping his word maga and win win so much eventually you might get tired of winning

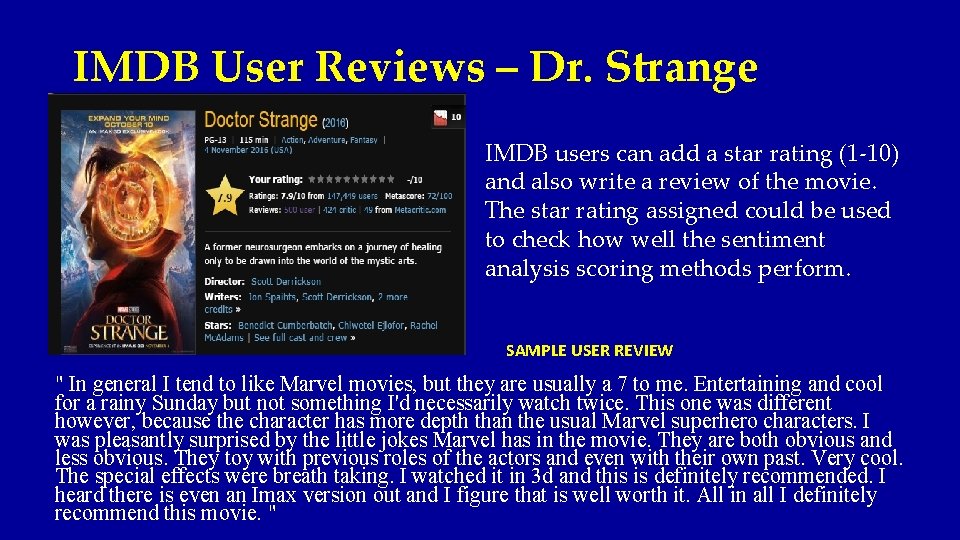

IMDB User Reviews – Dr. Strange
IMDB users can add a star rating (1-10) and also write a review of the movie. The star rating assigned could be used to check how well the sentiment analysis scoring methods perform.

SAMPLE USER REVIEW
"In general I tend to like Marvel movies, but they are usually a 7 to me. Entertaining and cool for a rainy Sunday but not something I'd necessarily watch twice. This one was different however, because the character has more depth than the usual Marvel superhero characters. I was pleasantly surprised by the little jokes Marvel has in the movie. They are both obvious and less obvious. They toy with previous roles of the actors and even with their own past. Very cool. The special effects were breath taking. I watched it in 3d and this is definitely recommended. I heard there is even an Imax version out and I figure that is well worth it. All in all I definitely recommend this movie."

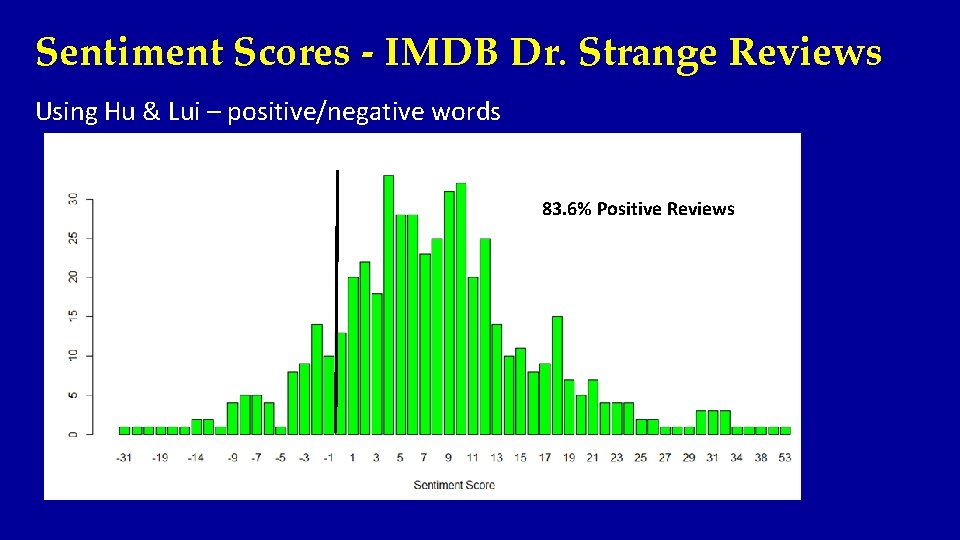

Sentiment Scores - IMDB Dr. Strange Reviews
Using Hu & Liu positive/negative words: 83.6% Positive Reviews

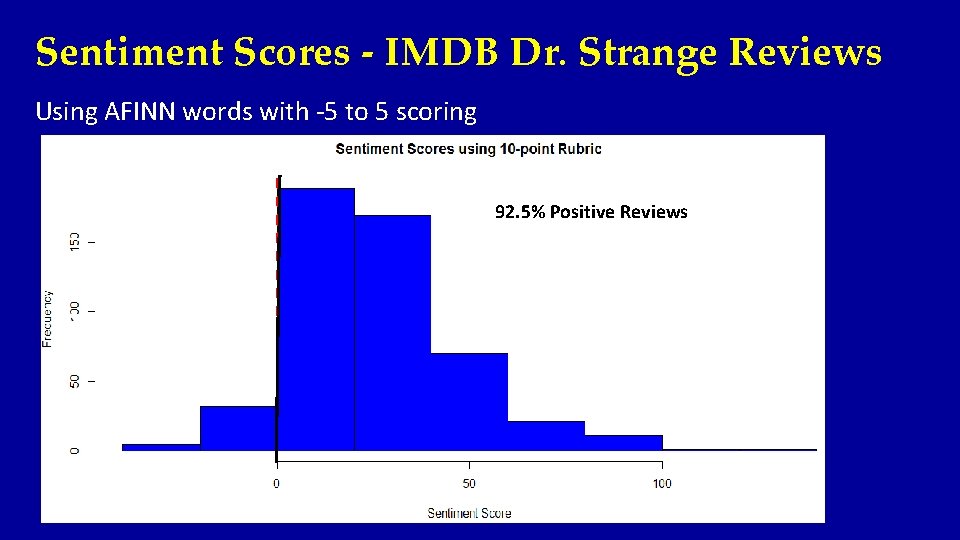

Sentiment Scores - IMDB Dr. Strange Reviews
Using AFINN words with -5 to 5 scoring: 92.5% Positive Reviews

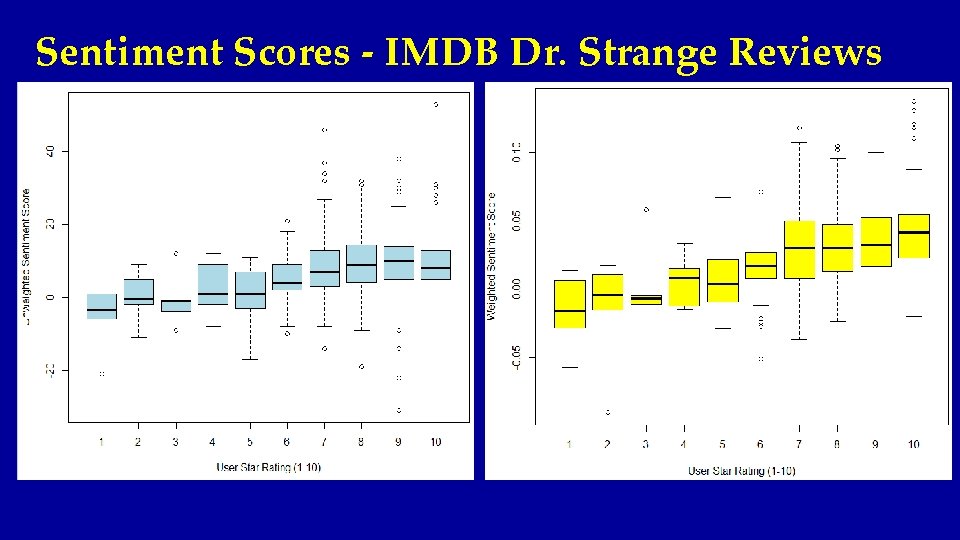

Sentiment Scores - IMDB Dr. Strange Reviews

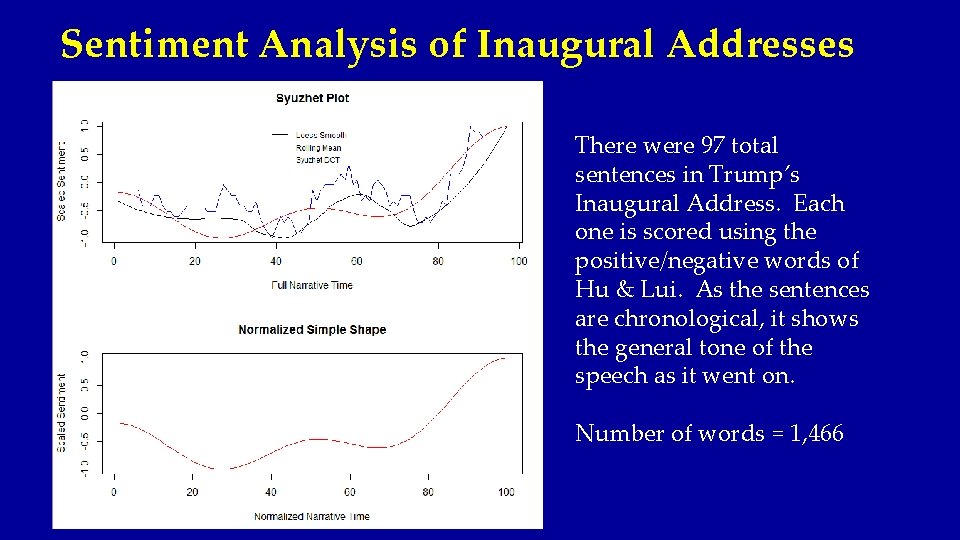

Sentiment Analysis of Inaugural Addresses
There were 97 total sentences in Trump’s Inaugural Address. Each one is scored using the positive/negative words of Hu & Liu. As the sentences are chronological, the plot shows the general tone of the speech as it went on.
Number of words = 1,466
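Sentence-by-sentence scoring can be sketched as two steps: split the text into sentences, then score each one in order. The passage and the short word lists below are made up for illustration (they are not from any inaugural address or published lexicon), and the splitting rule is a simple assumption that will miss cases such as abbreviations.

```r
passage <- "This is a great and wonderful day. The old system was a disaster. We will meet again tomorrow."
toy.pos <- c("great", "wonderful")
toy.neg <- c("disaster")

# Split wherever whitespace follows sentence-ending punctuation
sentences <- unlist(strsplit(passage, "(?<=[.!?])\\s+", perl = TRUE))

# Positive-word count minus negative-word count for each sentence
sentence.scores <- sapply(sentences, function(s) {
  words <- strsplit(tolower(s), "[^a-z]+")[[1]]
  sum(words %in% toy.pos) - sum(words %in% toy.neg)
}, USE.NAMES = FALSE)

# Scores stay in speaking order, so they trace the tone through the text
print(sentence.scores)
```

Passing `sentence.scores` to `barplot()` gives one bar per sentence in the order the sentences were spoken.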

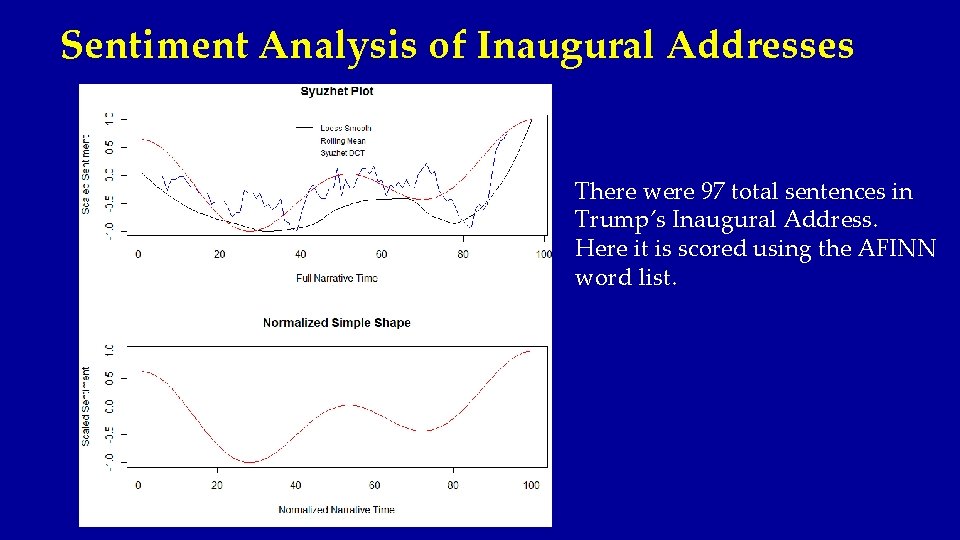

Sentiment Analysis of Inaugural Addresses There were 97 total sentences in Trump’s Inaugural Address. Here it is scored using the AFINN word list.

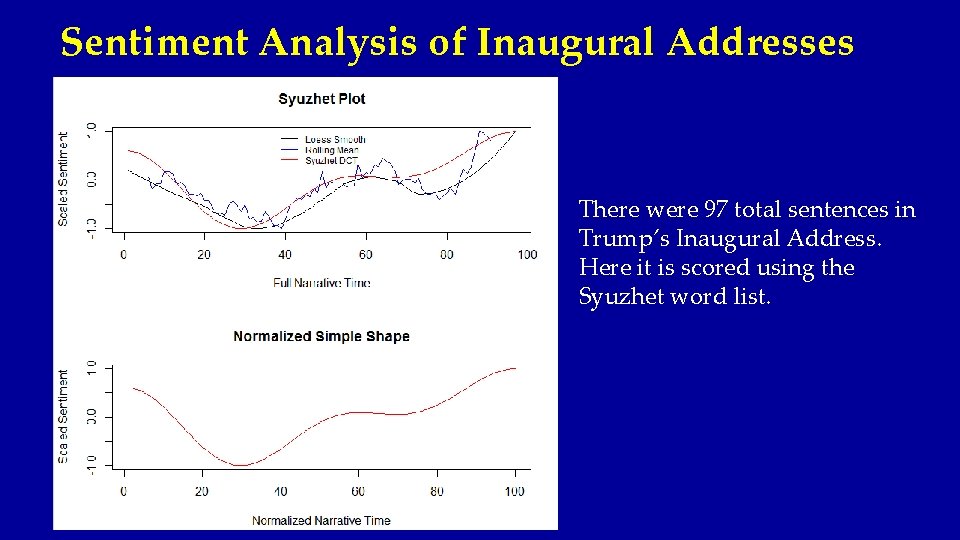

Sentiment Analysis of Inaugural Addresses There were 97 total sentences in Trump’s Inaugural Address. Here it is scored using the Syuzhet word list.

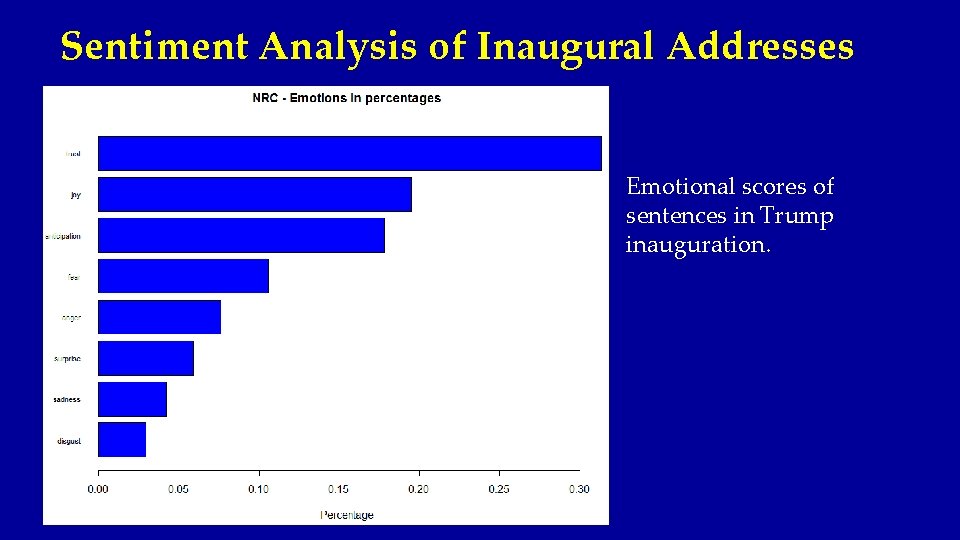

Sentiment Analysis of Inaugural Addresses Emotional scores of sentences in Trump inauguration.

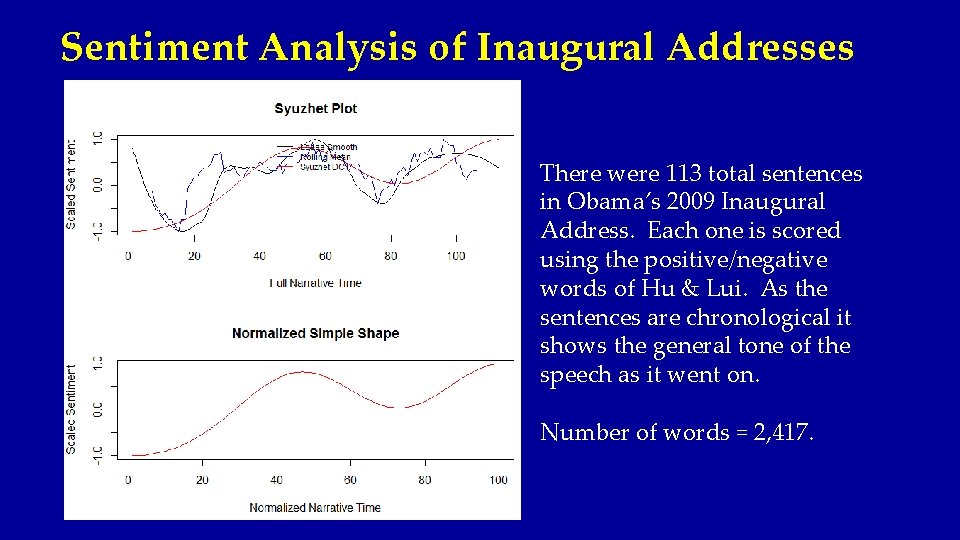

Sentiment Analysis of Inaugural Addresses
There were 113 total sentences in Obama’s 2009 Inaugural Address. Each one is scored using the positive/negative words of Hu & Liu. As the sentences are chronological, the plot shows the general tone of the speech as it went on.
Number of words = 2,417

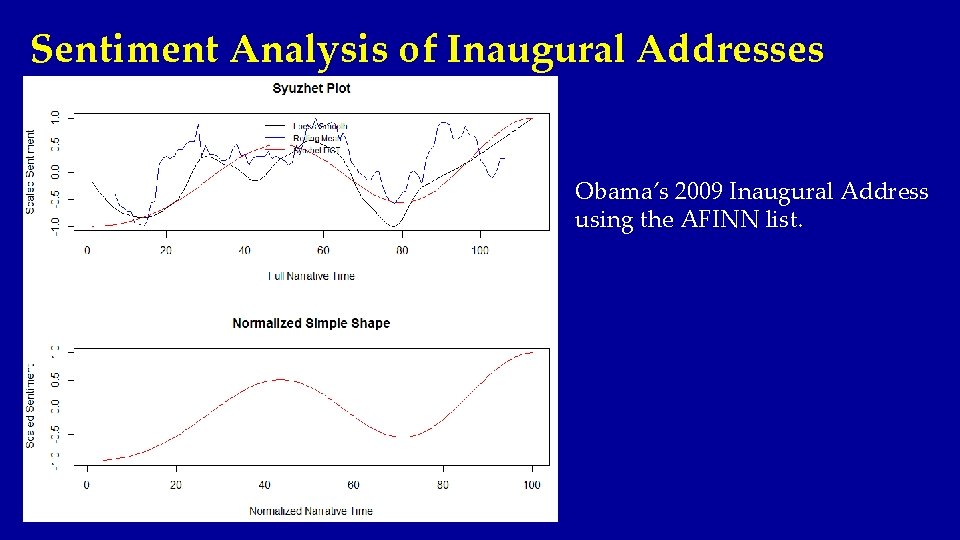

Sentiment Analysis of Inaugural Addresses Obama’s 2009 Inaugural Address using the AFINN list.

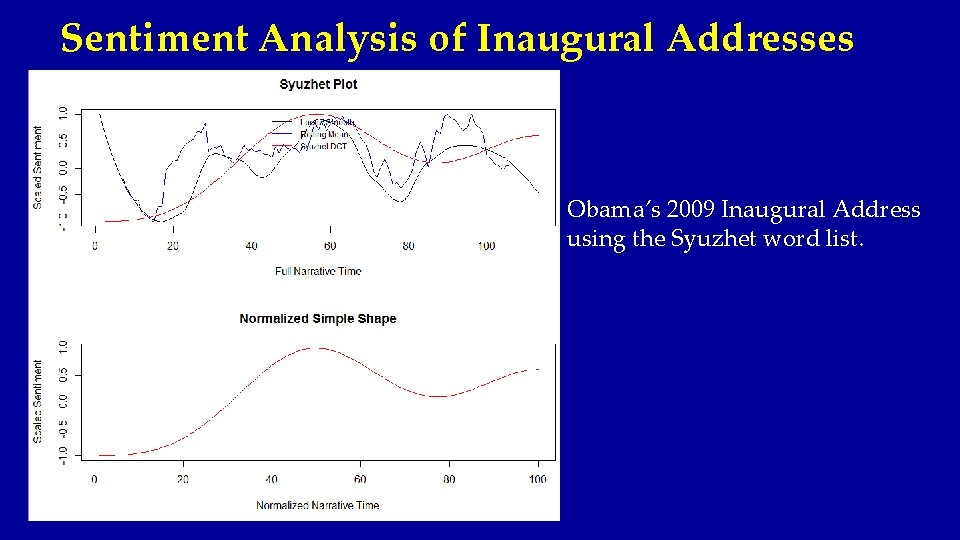

Sentiment Analysis of Inaugural Addresses Obama’s 2009 Inaugural Address using the Syuzhet word list.

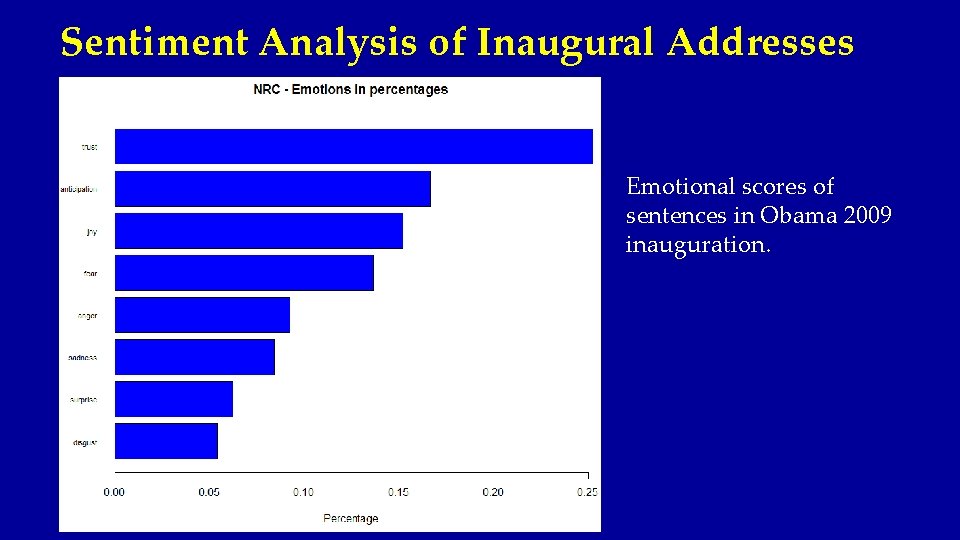

Sentiment Analysis of Inaugural Addresses Emotional scores of sentences in Obama 2009 inauguration.

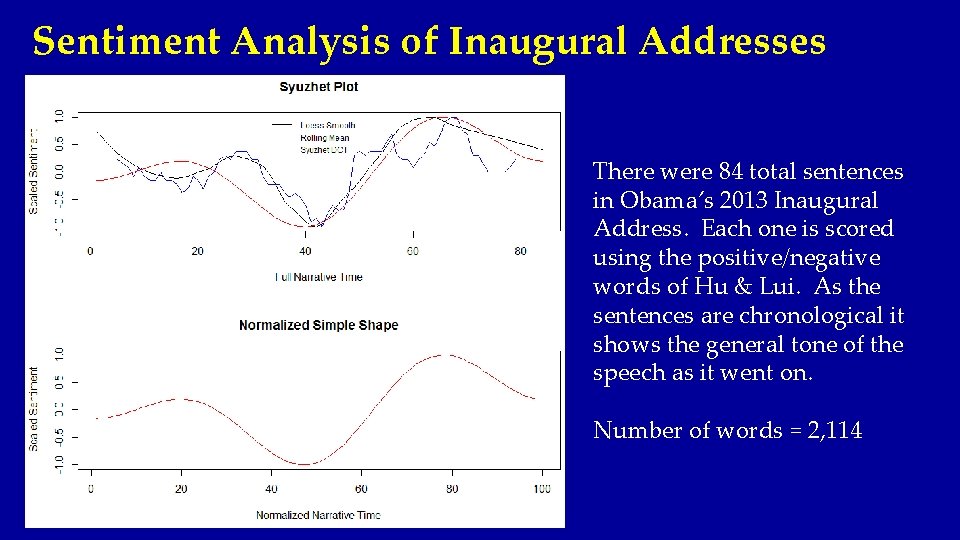

Sentiment Analysis of Inaugural Addresses
There were 84 total sentences in Obama’s 2013 Inaugural Address. Each one is scored using the positive/negative words of Hu & Liu. As the sentences are chronological, the plot shows the general tone of the speech as it went on.
Number of words = 2,114

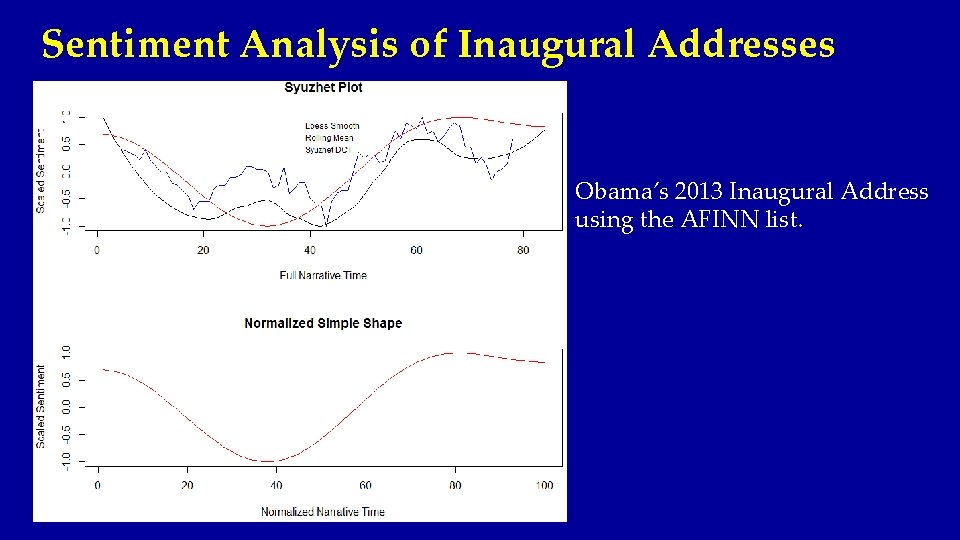

Sentiment Analysis of Inaugural Addresses Obama’s 2013 Inaugural Address using the AFINN list.

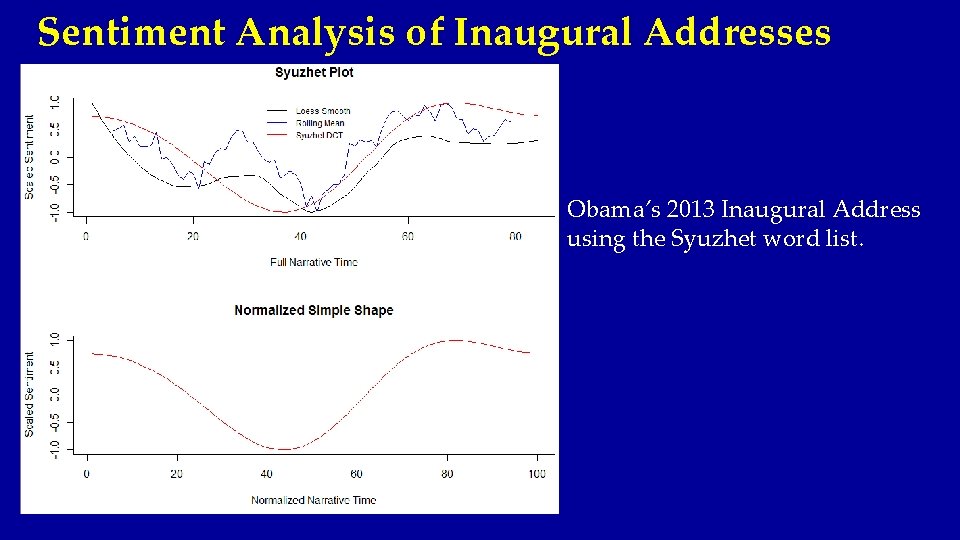

Sentiment Analysis of Inaugural Addresses Obama’s 2013 Inaugural Address using the Syuzhet word list.

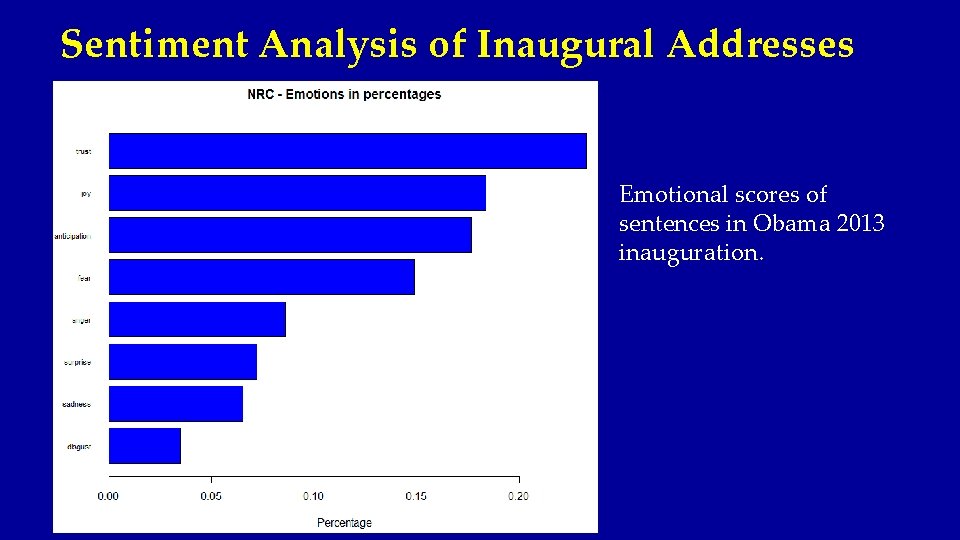

Sentiment Analysis of Inaugural Addresses Emotional scores of sentences in Obama 2013 inauguration.

Conclusion
• Textual data is everywhere and can be easily obtained!
• Cleaning textual data (particularly tweets or other scraped sources) can be time consuming and challenging.
• Assigning sentiment/opinion/emotion to a body of text is an area of active research (NLP), because the same word can carry a different sentiment in a different context, e.g.
  unpredictable plot (movie, good) vs. unpredictable steering (car, bad)
  flakey crust (pie, good) vs. flakey politician (bad)

References
• Sentiment Analysis by Bing Liu (2015) – Cambridge University Press
• Bing Liu’s website – https://www.cs.uic.edu/~liub/FBS/sentiment-analysis.html
• Basic Text Mining in R – https://rstudio-pubs-static.s3.amazonaws.com/31867_8236987cf0a8444e962ccd2aec46d9c3.html
• Intro to Text Analysis in R – https://www.r-bloggers.com/intro-to-text-analysis-with-r/
• RDM – R Data Mining – http://www.rdatamining.com/examples/text-mining
• First shot: Sentiment Analysis in R – http://andybromberg.com/sentiment-analysis/
• Twitter Sentiment Analysis with R – http://analyzecore.com/2014/04/28/twitter-sentiment-analysis/
• NLP Group at Stanford University – http://nlp.stanford.edu/
• Text Mining with R (A Tidy Approach) by Julia Silge and David Robinson (2016) – tidytextmining.com
• “Why Text Mining May Be The Next Big Thing” by Gary Belsky (2012) – Time Magazine
• BONUS REFERENCE: Caroline (Carr) Carrico’s paper “Twitter analysis of the orthodontic patient experience with braces vs. Invisalign” – http://www.angle.org/doi/pdf/10.2319/062816-508.1?code=angf-site (WSU Math/Stat Alum – Asst. Prof. VCU School of Dentistry)
