Machine Learning for Word Sense Disambiguation and CoReference

Machine Learning for Word Sense Disambiguation and (Co)Reference Resolution
Razvan C. Bunescu, Electrical Engineering and Computer Science, Ohio University, Athens, OH (bunescu@ohio.edu)
Joint work with Hui Shen (OU) and Rada Mihalcea (UNT)
Memphis, February 5, 2014

Question Answering
As the Earth is 4.5 billion years old, it would have lost its atmosphere by now if there were no protective magnetosphere … The atmosphere is composed of 78% nitrogen and 21% oxygen.
Q: What is the composition of Earth’s atmosphere?

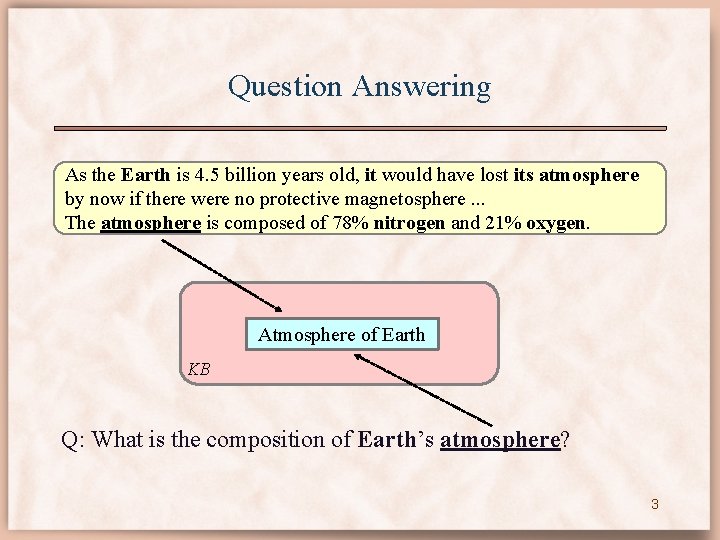

Question Answering
As the Earth is 4.5 billion years old, it would have lost its atmosphere by now if there were no protective magnetosphere … The atmosphere is composed of 78% nitrogen and 21% oxygen.
KB entry: Atmosphere of Earth
Q: What is the composition of Earth’s atmosphere?

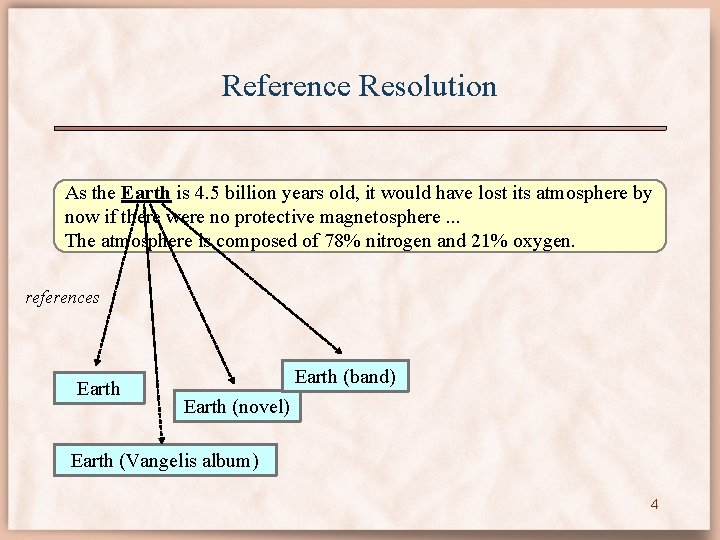

Reference Resolution
As the Earth is 4.5 billion years old, it would have lost its atmosphere by now if there were no protective magnetosphere … The atmosphere is composed of 78% nitrogen and 21% oxygen.
references: Earth (band), Earth (novel), Earth (Vangelis album)

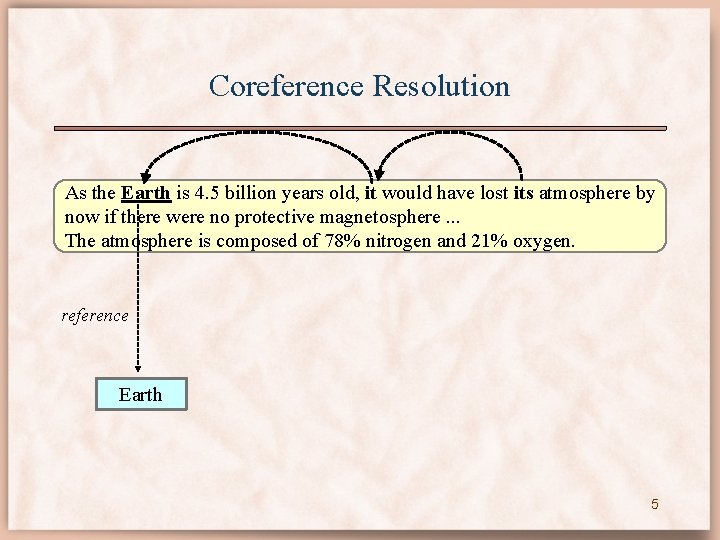

Coreference Resolution
As the Earth is 4.5 billion years old, it would have lost its atmosphere by now if there were no protective magnetosphere … The atmosphere is composed of 78% nitrogen and 21% oxygen.
reference: Earth

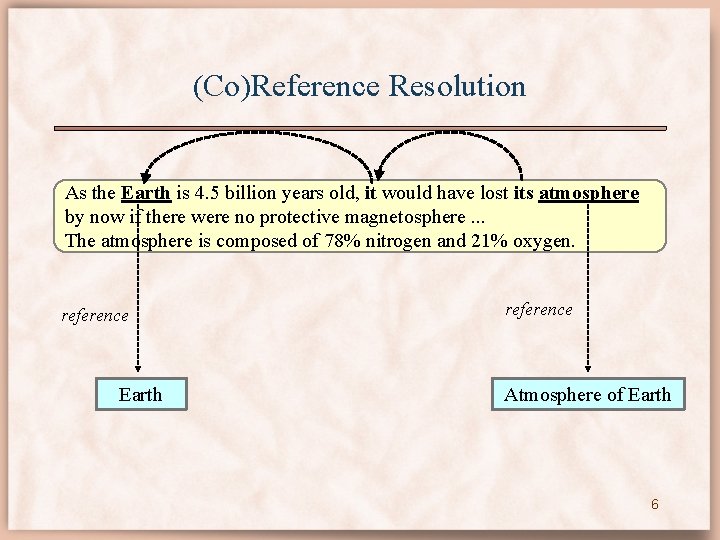

(Co)Reference Resolution
As the Earth is 4.5 billion years old, it would have lost its atmosphere by now if there were no protective magnetosphere … The atmosphere is composed of 78% nitrogen and 21% oxygen.
reference: Earth
reference: Atmosphere of Earth

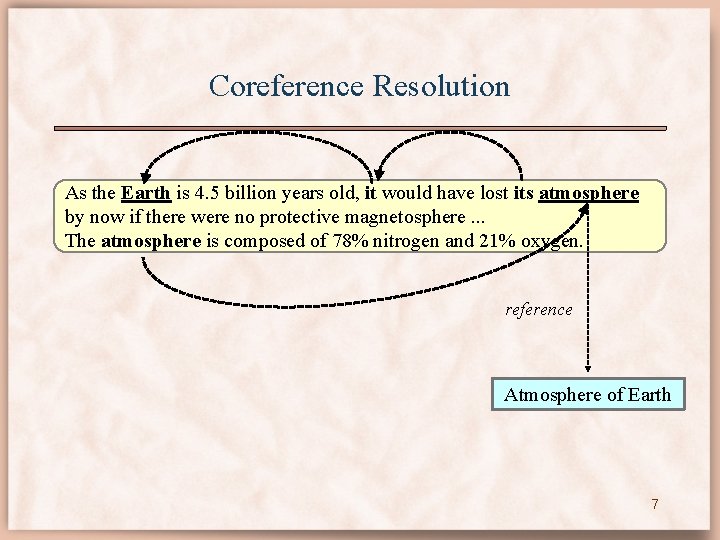

Coreference Resolution
As the Earth is 4.5 billion years old, it would have lost its atmosphere by now if there were no protective magnetosphere … The atmosphere is composed of 78% nitrogen and 21% oxygen.
reference: Atmosphere of Earth

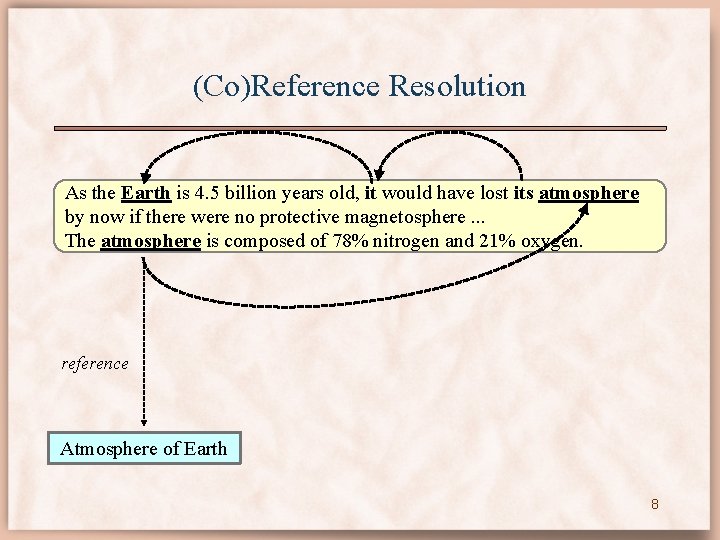

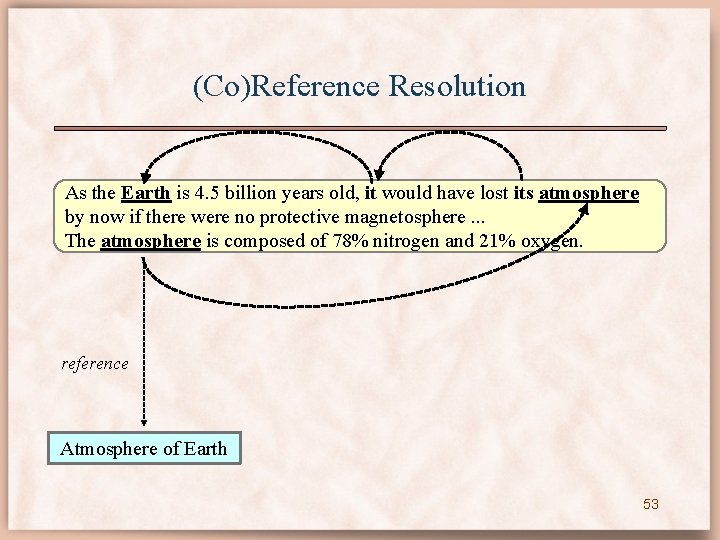

(Co)Reference Resolution
As the Earth is 4.5 billion years old, it would have lost its atmosphere by now if there were no protective magnetosphere … The atmosphere is composed of 78% nitrogen and 21% oxygen.
reference: Atmosphere of Earth

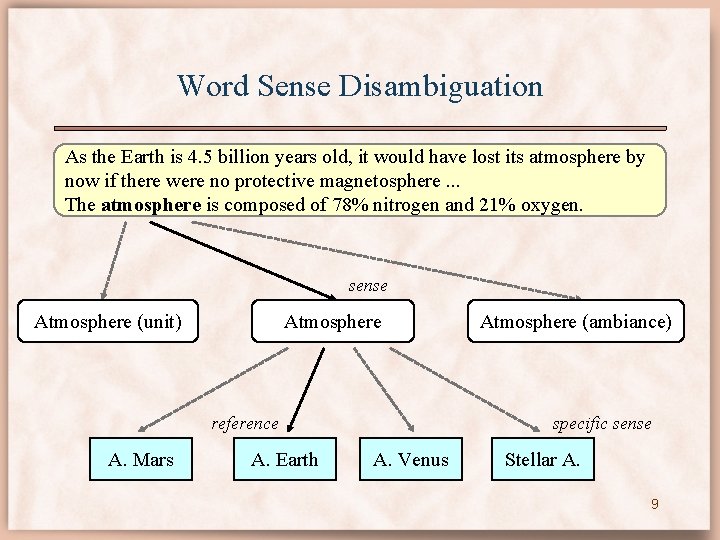

Word Sense Disambiguation
As the Earth is 4.5 billion years old, it would have lost its atmosphere by now if there were no protective magnetosphere … The atmosphere is composed of 78% nitrogen and 21% oxygen.
Figure labels: sense (Atmosphere, Atmosphere (unit), Atmosphere (ambiance)); specific sense / reference (A. Mars, A. Earth, A. Venus, Stellar A.)

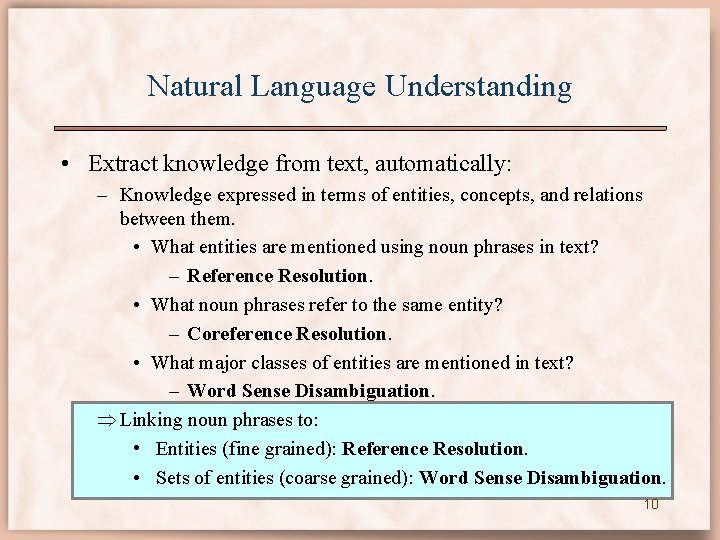

Natural Language Understanding
• Extract knowledge from text, automatically:
– Knowledge expressed in terms of entities, concepts, and relations between them.
• What entities are mentioned using noun phrases in text?
– Reference Resolution.
• What noun phrases refer to the same entity?
– Coreference Resolution.
• What major classes of entities are mentioned in text?
– Word Sense Disambiguation.
Linking noun phrases to:
• Entities (fine grained): Reference Resolution.
• Sets of entities (coarse grained): Word Sense Disambiguation.

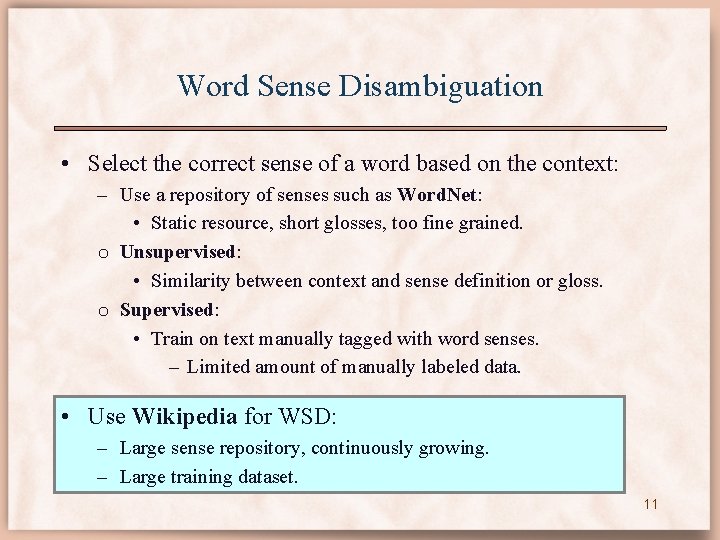

Word Sense Disambiguation
• Select the correct sense of a word based on the context:
– Use a repository of senses such as WordNet:
• Static resource, short glosses, too fine grained.
o Unsupervised:
• Similarity between context and sense definition or gloss.
o Supervised:
• Train on text manually tagged with word senses.
– Limited amount of manually labeled data.
• Use Wikipedia for WSD:
– Large sense repository, continuously growing.
– Large training dataset.

![Wikipedia for WSD](http://slidetodoc.com/presentation_image_h2/7491b74d6174e6f48517c5d9f1101bb4/image-12.jpg)

Wikipedia for WSD [COLING’12, *SEM’13]
wiki: '''Palermo''' is a city in [[Southern Italy]], the [[capital city | capital]] of the [[autonomous area | autonomous region]] of [[Sicily]].
html: Palermo is a city in Southern Italy, the capital of the autonomous region of Sicily.
Candidate senses of “capital”: capital (economics), capital (architecture), capital city, human capital, financial capital.

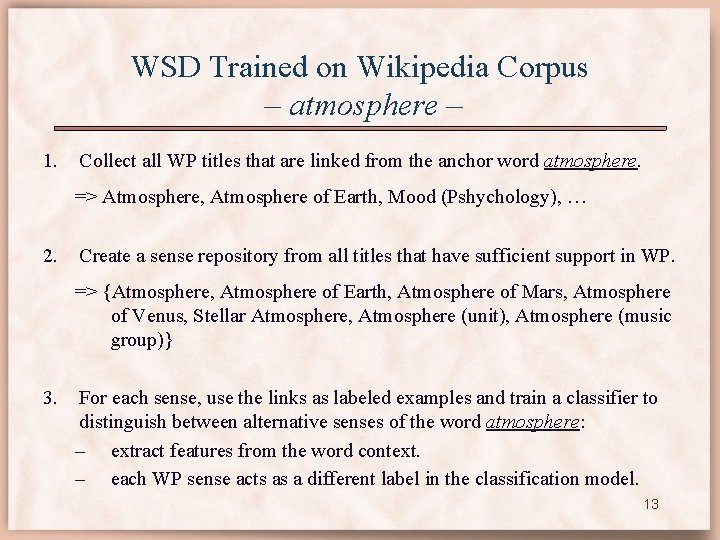

WSD Trained on Wikipedia Corpus (example word: atmosphere)
1. Collect all WP titles that are linked from the anchor word atmosphere.
=> Atmosphere, Atmosphere of Earth, Mood (Psychology), …
2. Create a sense repository from all titles that have sufficient support in WP.
=> {Atmosphere, Atmosphere of Earth, Atmosphere of Mars, Atmosphere of Venus, Stellar Atmosphere, Atmosphere (unit), Atmosphere (music group)}
3. For each sense, use the links as labeled examples and train a classifier to distinguish between alternative senses of the word atmosphere:
– extract features from the word context.
– each WP sense acts as a different label in the classification model.
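
Steps 1 and 2 above can be illustrated with a minimal Python sketch. It is an assumption-laden toy, not the authors' pipeline: the regular expression, the function names `collect_labeled_examples` and `sense_repository`, and the `min_support` threshold are all hypothetical, and the whole input text stands in for the word context.

```python
import re
from collections import Counter

# Matches [[Title]] and [[Title | anchor text]] wiki links (simplified pattern).
WIKI_LINK = re.compile(r"\[\[([^\]|]+)(?:\|([^\]]+))?\]\]")

def collect_labeled_examples(wikitext, word):
    """Step 1: return (title, context) pairs for links whose anchor text is `word`."""
    examples = []
    for match in WIKI_LINK.finditer(wikitext):
        title = match.group(1).strip()
        # A link without "|" uses its title as the anchor text.
        anchor = (match.group(2) or title).strip()
        if anchor.lower() == word.lower():
            examples.append((title, wikitext))  # whole text used as toy context
    return examples

def sense_repository(examples, min_support=1):
    """Step 2: keep titles with at least `min_support` links (hypothetical threshold)."""
    support = Counter(title for title, _ in examples)
    return {title for title, count in support.items() if count >= min_support}

text = ("'''Palermo''' is a city in [[Southern Italy]], the "
        "[[capital city | capital]] of the [[autonomous area | autonomous region]] "
        "of [[Sicily]].")
examples = collect_labeled_examples(text, "capital")
senses = sense_repository(examples)
```

Each collected title then serves as the class label for the context it was found in, which is the training signal used in step 3.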

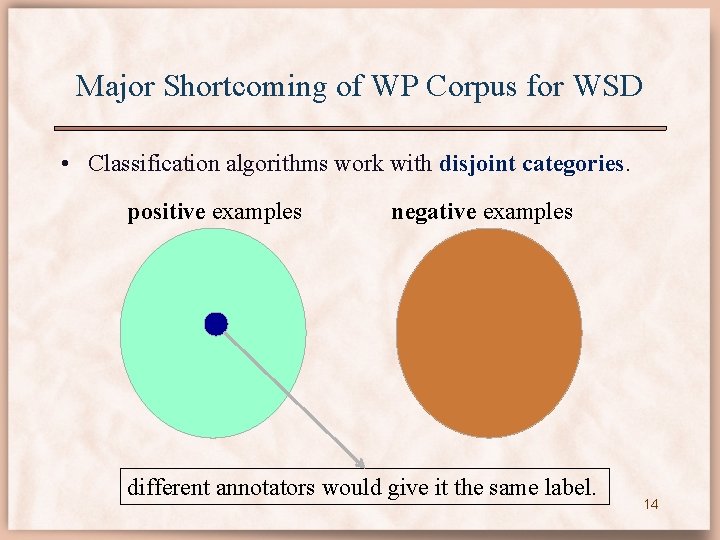

Major Shortcoming of WP Corpus for WSD • Classification algorithms work with disjoint categories. positive examples negative examples different annotators would give it the same label. 14

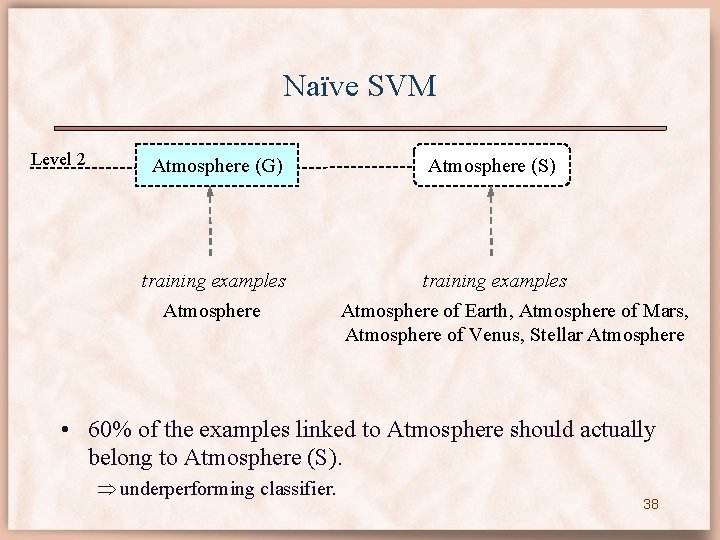

Major Shortcoming of WP Corpus for WSD
• Classification algorithms work with disjoint categories.
• Sense labels collected from WP are not disjoint!
– Many instances that are linked to Atmosphere could have been linked to more specific titles: Atmosphere of Earth or Atmosphere of Mars.
• Atmosphere category is ill defined.
=> The learning algorithm will underperform, since it tries to separate Atmosphere examples from Atmosphere of Earth examples.

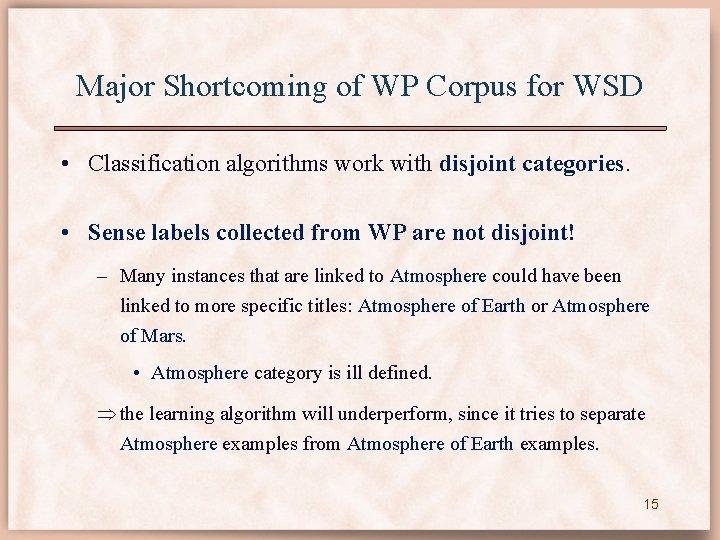

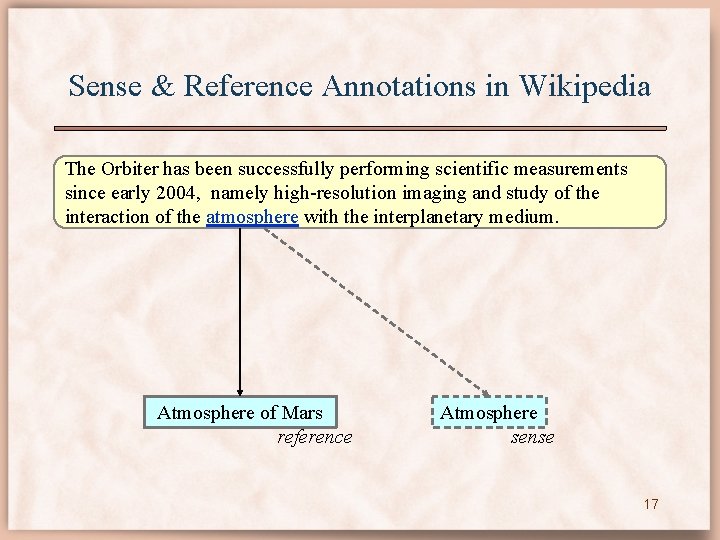

Sense & Reference Annotations in Wikipedia
The Beagle 2 lander objectives were to characterize the physical properties of the atmosphere and surface layers.
sense: Atmosphere; reference: Atmosphere of Mars

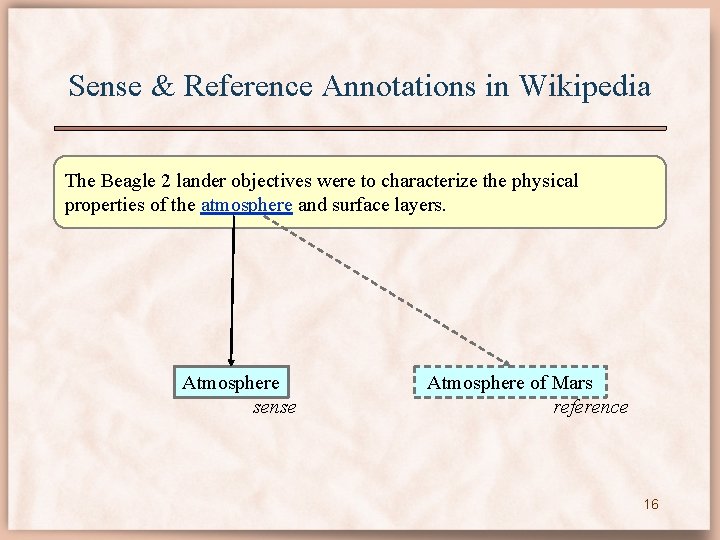

Sense & Reference Annotations in Wikipedia
The Orbiter has been successfully performing scientific measurements since early 2004, namely high-resolution imaging and study of the interaction of the atmosphere with the interplanetary medium.
reference: Atmosphere of Mars; sense: Atmosphere

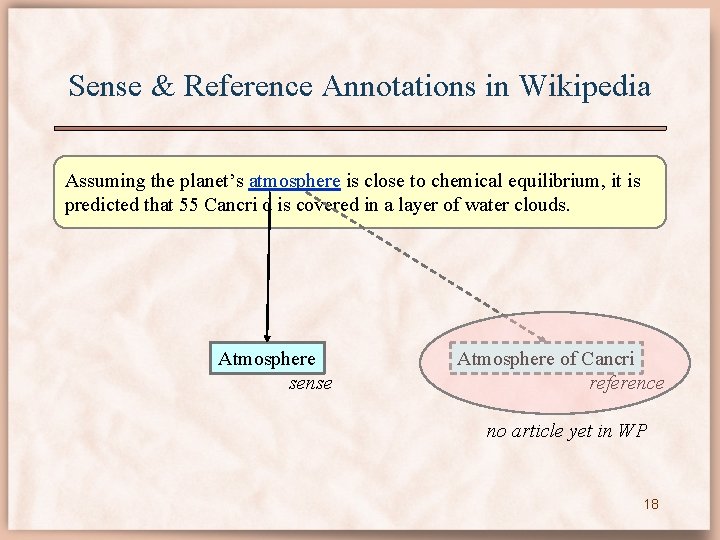

Sense & Reference Annotations in Wikipedia
Assuming the planet’s atmosphere is close to chemical equilibrium, it is predicted that 55 Cancri d is covered in a layer of water clouds.
sense: Atmosphere; reference: Atmosphere of Cancri (no article yet in WP)

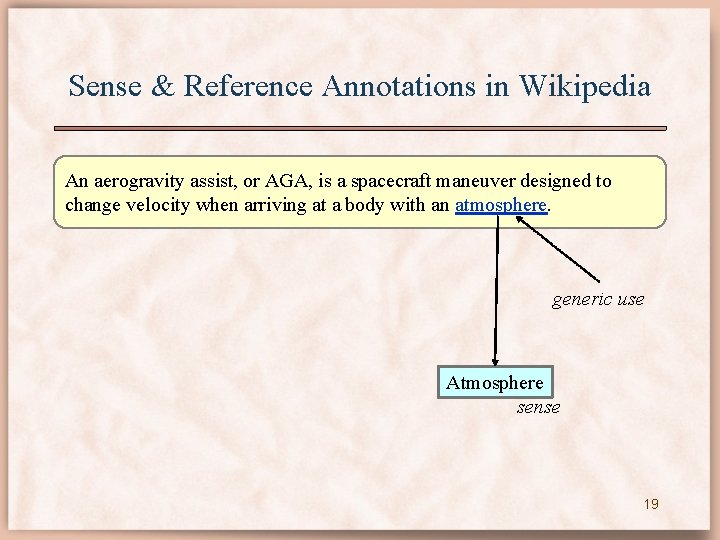

Sense & Reference Annotations in Wikipedia
An aerogravity assist, or AGA, is a spacecraft maneuver designed to change velocity when arriving at a body with an atmosphere.
generic use; sense: Atmosphere

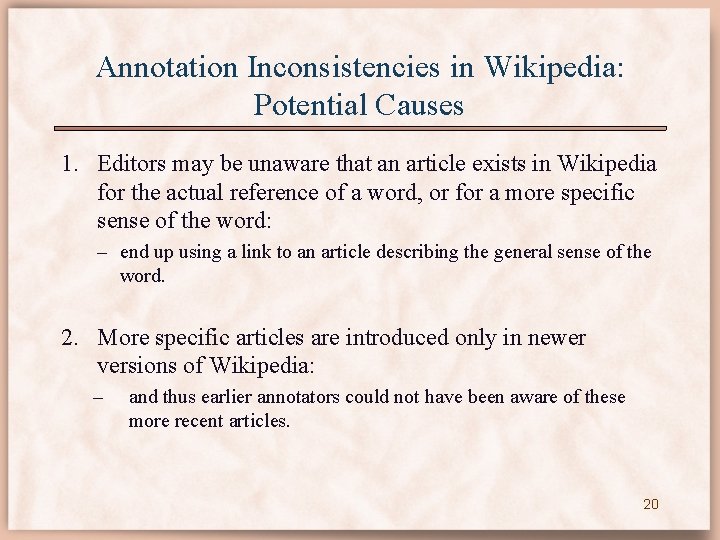

Annotation Inconsistencies in Wikipedia: Potential Causes
1. Editors may be unaware that an article exists in Wikipedia for the actual reference of a word, or for a more specific sense of the word:
– they end up using a link to an article describing the general sense of the word.
2. More specific articles are introduced only in newer versions of Wikipedia:
– earlier annotators could not have been aware of these more recent articles.

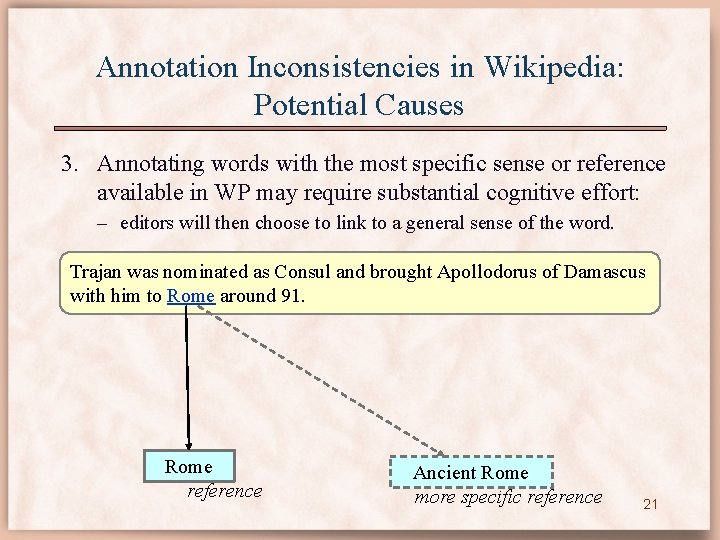

Annotation Inconsistencies in Wikipedia: Potential Causes
3. Annotating words with the most specific sense or reference available in WP may require substantial cognitive effort:
– editors will then choose to link to a general sense of the word.
Trajan was nominated as Consul and brought Apollodorus of Damascus with him to Rome around 91.
reference: Rome; more specific reference: Ancient Rome

An animal sleeps on the couch. A cat sleeps on the couch. A white Siamese cat sleeps on the couch.

A cat sleeps on the piece of furniture. A cat sleeps on the couch. A cat sleeps on the white leather couch.

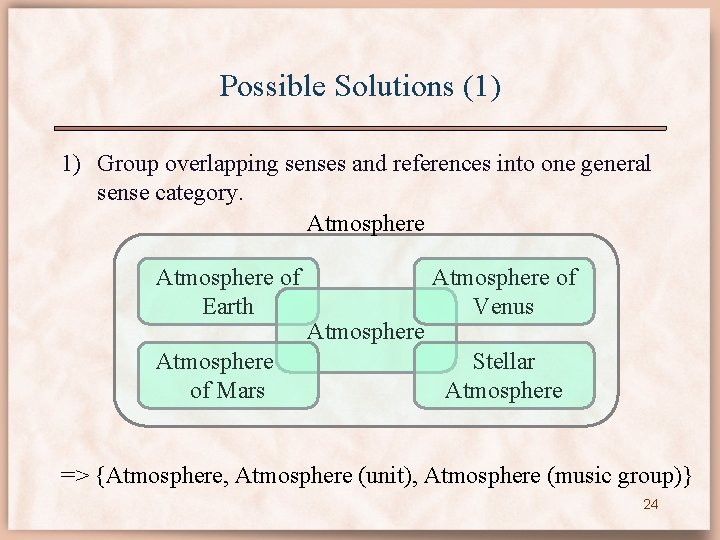

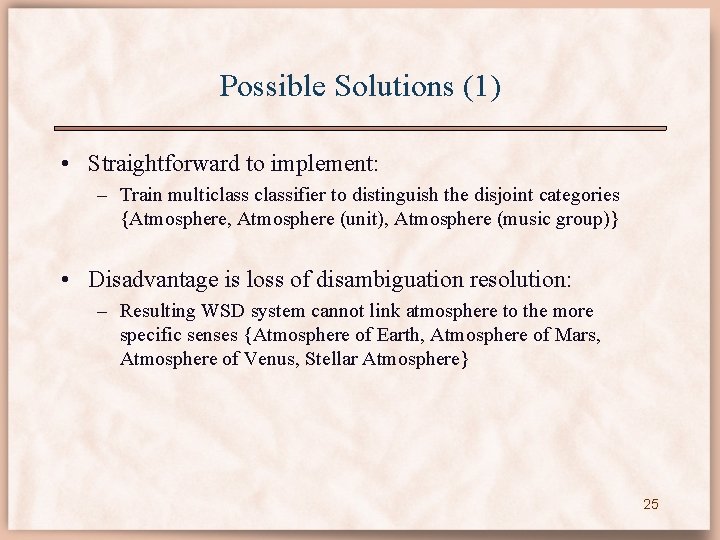

Possible Solutions (1)
1) Group overlapping senses and references into one general sense category.
Atmosphere of Earth, Atmosphere of Mars, Atmosphere of Venus, and Stellar Atmosphere are grouped under Atmosphere.
=> {Atmosphere, Atmosphere (unit), Atmosphere (music group)}

Possible Solutions (1)
• Straightforward to implement:
– Train a multiclass classifier to distinguish the disjoint categories {Atmosphere, Atmosphere (unit), Atmosphere (music group)}.
• Disadvantage is loss of disambiguation resolution:
– The resulting WSD system cannot link atmosphere to the more specific senses {Atmosphere of Earth, Atmosphere of Mars, Atmosphere of Venus, Stellar Atmosphere}.

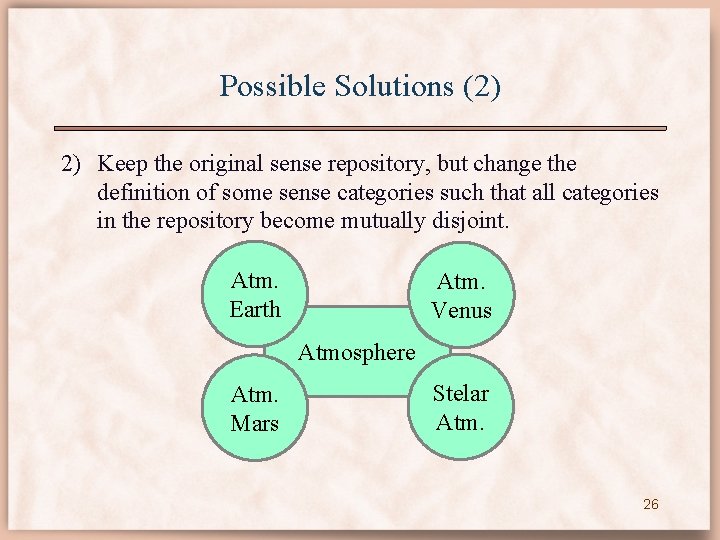

Possible Solutions (2)
2) Keep the original sense repository, but change the definition of some sense categories such that all categories in the repository become mutually disjoint.
Figure labels: Atmosphere, Atm. Earth, Atm. Mars, Atm. Venus, Stellar Atm.

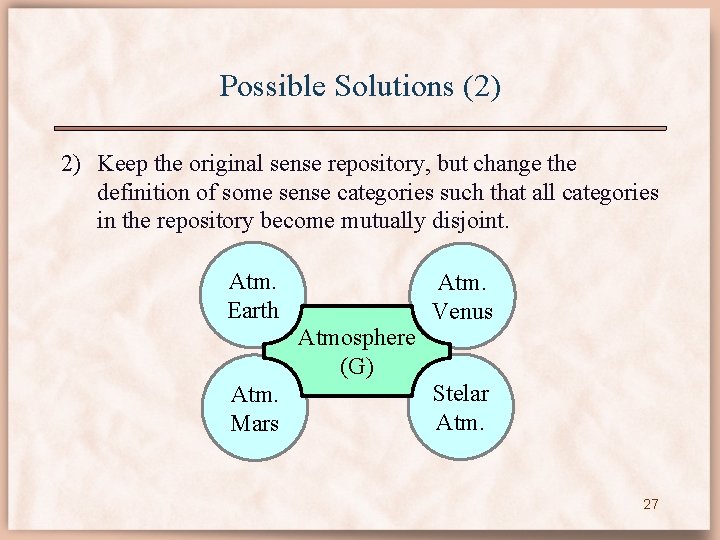

Possible Solutions (2)
2) Keep the original sense repository, but change the definition of some sense categories such that all categories in the repository become mutually disjoint.
Figure labels: Atmosphere (G), Atm. Earth, Atm. Mars, Atm. Venus, Stellar Atm.

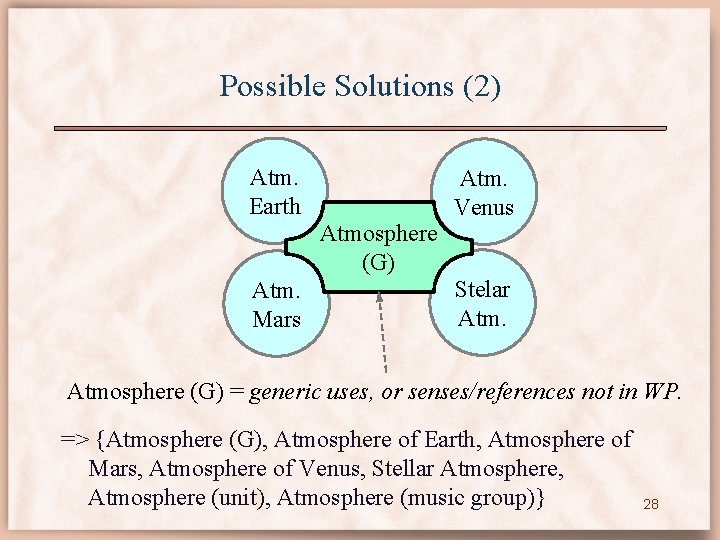

Possible Solutions (2)
Figure labels: Atmosphere (G), Atm. Earth, Atm. Mars, Atm. Venus, Stellar Atm.
Atmosphere (G) = generic uses, or senses/references not in WP.
=> {Atmosphere (G), Atmosphere of Earth, Atmosphere of Mars, Atmosphere of Venus, Stellar Atmosphere, Atmosphere (unit), Atmosphere (music group)}

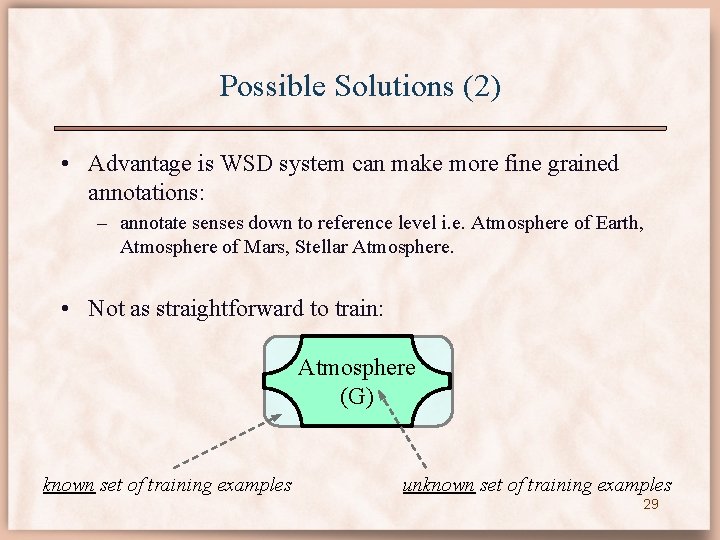

Possible Solutions (2)
• Advantage is that the WSD system can make more fine grained annotations:
– annotate senses down to reference level, i.e. Atmosphere of Earth, Atmosphere of Mars, Stellar Atmosphere.
• Not as straightforward to train:
Figure labels: Atmosphere (G); known set of training examples; unknown set of training examples.

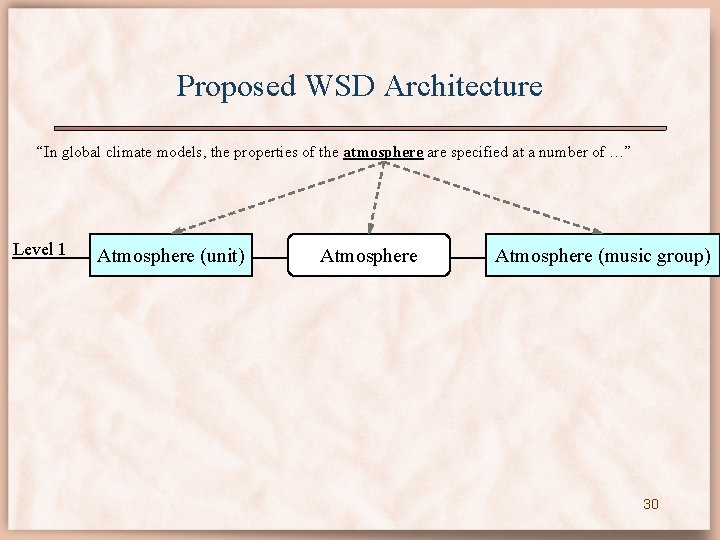

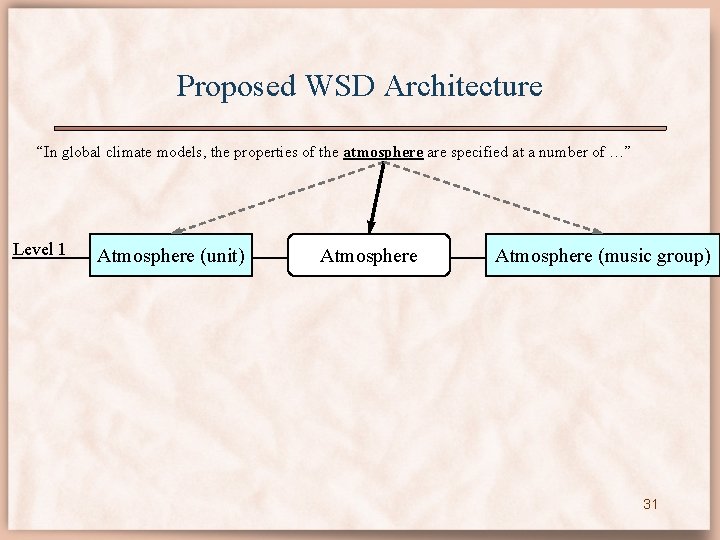

Proposed WSD Architecture
“In global climate models, the properties of the atmosphere are specified at a number of …”
Level 1: Atmosphere (unit), Atmosphere (music group)

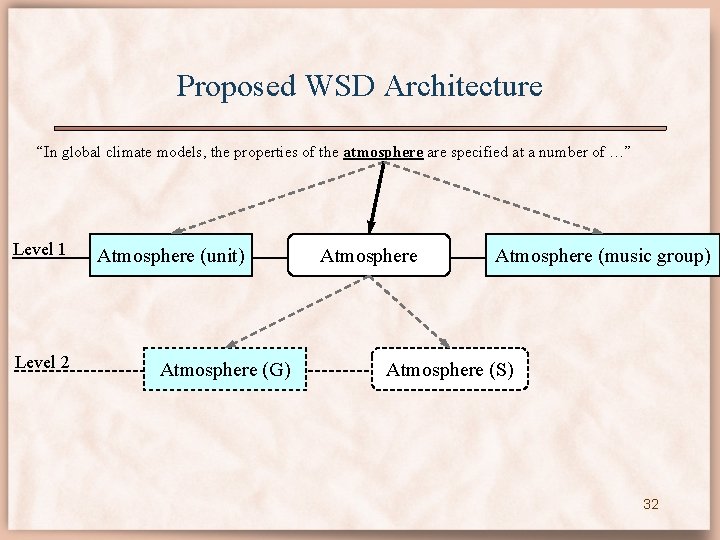

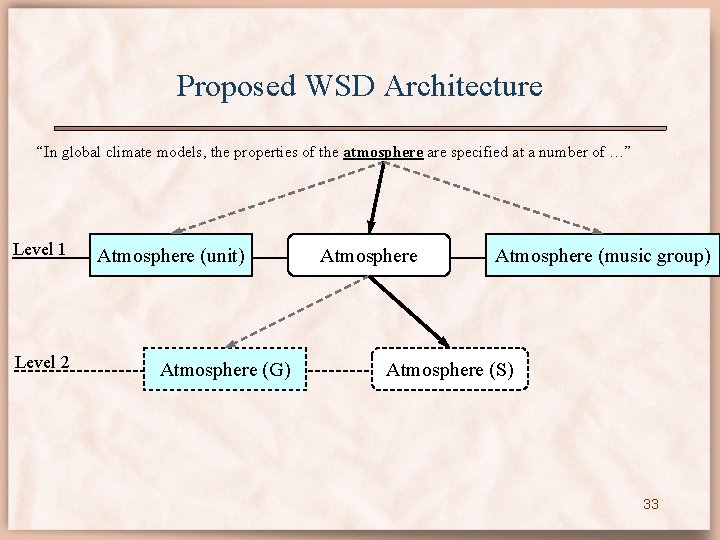

Proposed WSD Architecture
“In global climate models, the properties of the atmosphere are specified at a number of …”
Level 1: Atmosphere (unit), Atmosphere (music group)
Level 2: Atmosphere (G), Atmosphere (S)

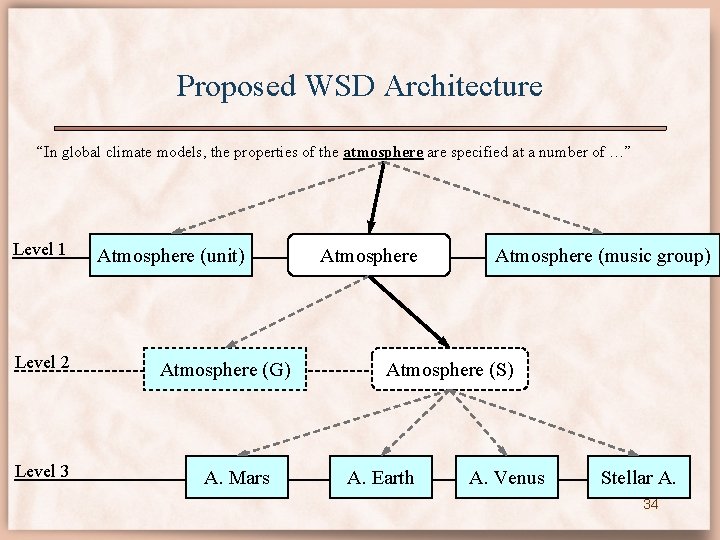

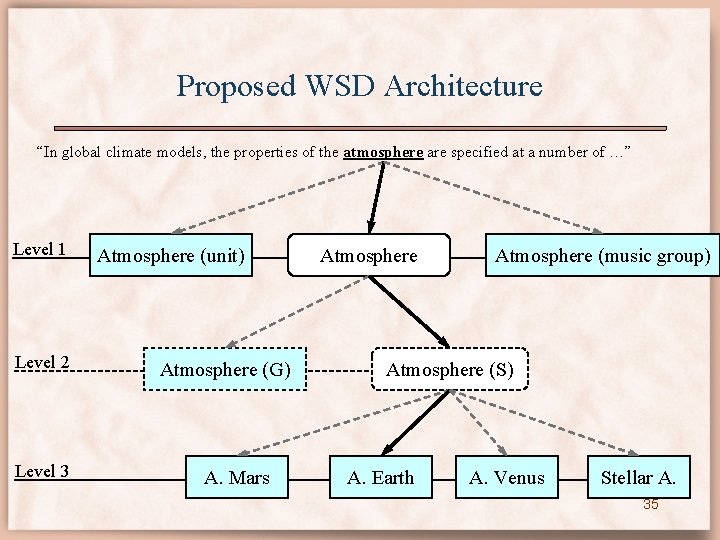

Proposed WSD Architecture
“In global climate models, the properties of the atmosphere are specified at a number of …”
Level 1: Atmosphere (unit), Atmosphere (music group)
Level 2: Atmosphere (G), Atmosphere (S)
Level 3: A. Mars, A. Earth, A. Venus, Stellar A.
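
The three-level architecture can be read as a cascade of classifiers. The sketch below is only an illustration under stated assumptions: the function `disambiguate`, the label string "Atmosphere" for the general Level 1 category, and the keyword-based stand-in classifiers are hypothetical and merely mimic trained models.

```python
def disambiguate(context, level1, level2, level3):
    """Route a context through the three levels and return a leaf sense."""
    label = level1(context)
    if label != "Atmosphere":
        # Level 1 picked a disjoint sense such as the unit or the music group.
        return label
    if level2(context) == "Atmosphere (G)":
        # Generic use, or a sense/reference that has no article in WP.
        return "Atmosphere (G)"
    # Atmosphere (S): pick the specific sense or reference at Level 3.
    return level3(context)

# Toy stand-ins for trained classifiers (assumptions, for illustration only).
def toy_level1(context):
    return "Atmosphere (unit)" if "pressure of" in context else "Atmosphere"

def toy_level2(context):
    specific_cues = ("climate", "Mars", "Venus")
    return "Atmosphere (S)" if any(c in context for c in specific_cues) else "Atmosphere (G)"

def toy_level3(context):
    if "Mars" in context:
        return "Atmosphere of Mars"
    if "Venus" in context:
        return "Atmosphere of Venus"
    return "Atmosphere of Earth"

sense = disambiguate(
    "In global climate models, the properties of the atmosphere are specified",
    toy_level1, toy_level2, toy_level3)
```

The leaves reachable by this routing form the final sense repository, which is why errors at Level 2 affect every Level 3 prediction.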

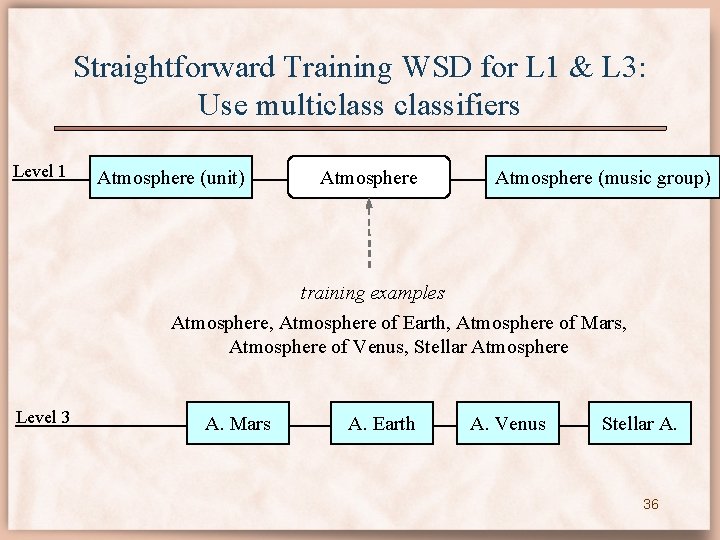

Straightforward Training
WSD for L1 & L3: use multiclass classifiers.
Level 1: Atmosphere (unit), Atmosphere (music group); training examples for the remaining category: Atmosphere, Atmosphere of Earth, Atmosphere of Mars, Atmosphere of Venus, Stellar Atmosphere.
Level 3: A. Mars, A. Earth, A. Venus, Stellar A.

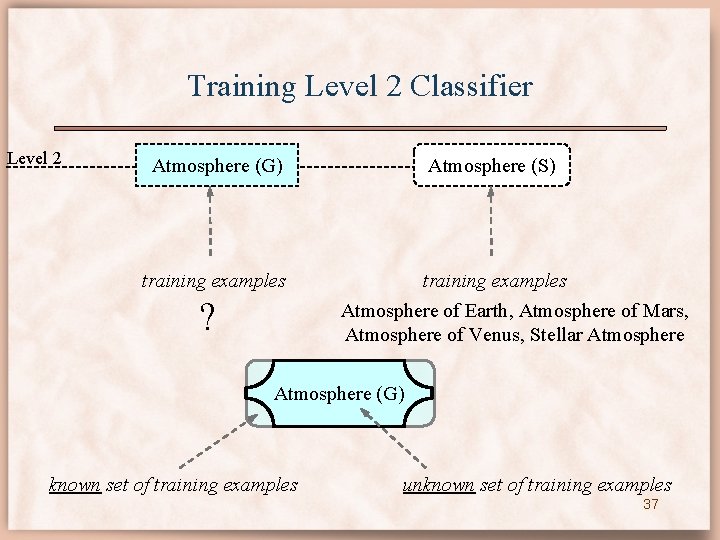

Training Level 2 Classifier
Level 2:
– Atmosphere (G): training examples ? (unknown set of training examples)
– Atmosphere (S): training examples Atmosphere of Earth, Atmosphere of Mars, Atmosphere of Venus, Stellar Atmosphere (known set of training examples)

Naïve SVM
Level 2:
– Atmosphere (G): training examples.
– Atmosphere (S): training examples Atmosphere of Earth, Atmosphere of Mars, Atmosphere of Venus, Stellar Atmosphere.
• 60% of the examples linked to Atmosphere should actually belong to Atmosphere (S).
=> Underperforming classifier.

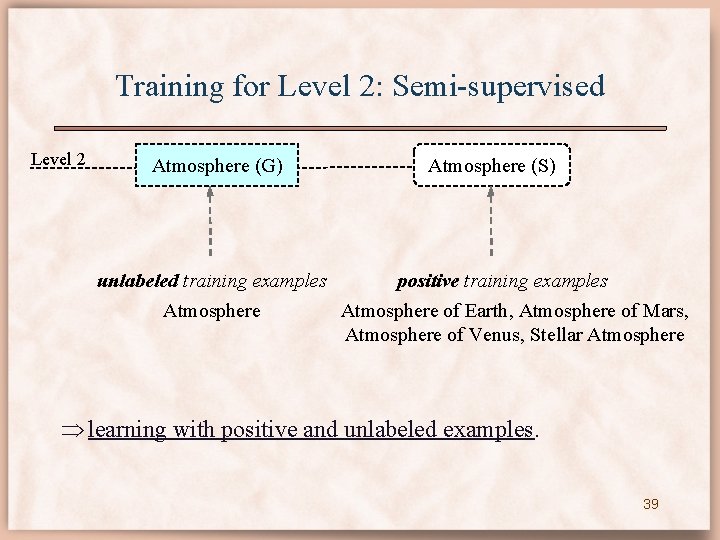

Training for Level 2: Semi-supervised
Level 2:
– Atmosphere (G): unlabeled training examples.
– Atmosphere (S): positive training examples Atmosphere of Earth, Atmosphere of Mars, Atmosphere of Venus, Stellar Atmosphere.
=> Learning with positive and unlabeled examples.

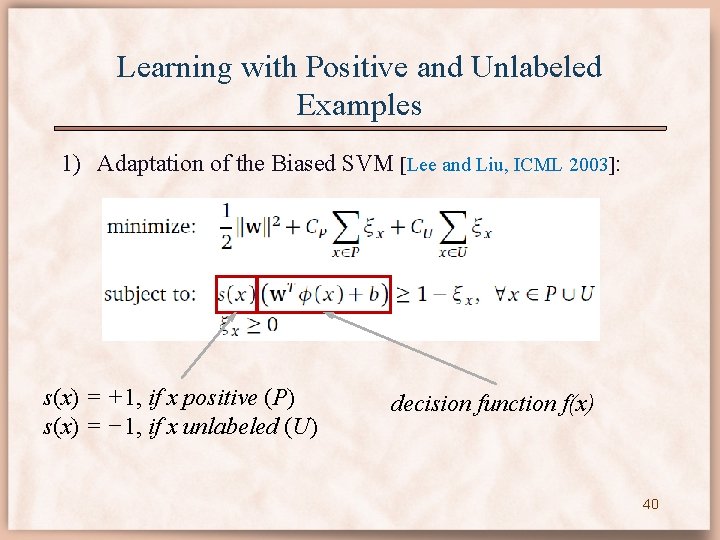

Learning with Positive and Unlabeled Examples
1) Adaptation of the Biased SVM [Lee and Liu, ICML 2003]:
s(x) = +1, if x is positive (P)
s(x) = −1, if x is unlabeled (U)
decision function f(x)

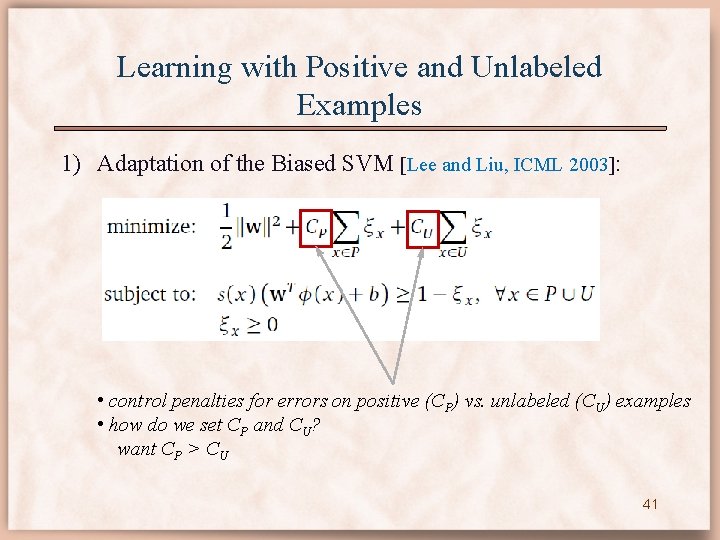

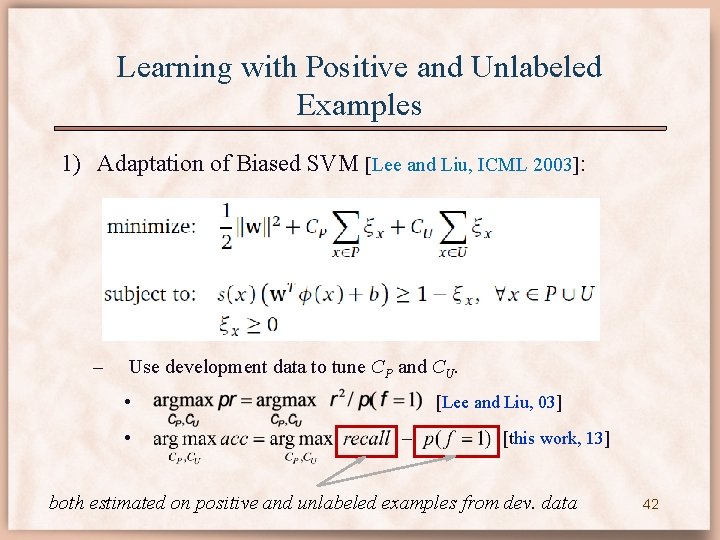

Learning with Positive and Unlabeled Examples
1) Adaptation of the Biased SVM [Lee and Liu, ICML 2003]:
• control penalties for errors on positive (CP) vs. unlabeled (CU) examples
• how do we set CP and CU?
• want CP > CU
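
For reference, a common way to write a biased SVM objective with separate penalties is given below. This is a sketch under assumptions: a linear decision function f(x) = wᵀx + b and slack variables ξ are assumed here, and the exact adaptation used in the talk may differ.

```latex
\min_{w,\,b,\,\xi}\quad \frac{1}{2}\lVert w \rVert^{2}
  + C_P \sum_{x_i \in P} \xi_i
  + C_U \sum_{x_i \in U} \xi_i
\qquad \text{s.t.}\quad s(x_i)\,\bigl(w^{\top} x_i + b\bigr) \ge 1 - \xi_i,
\quad \xi_i \ge 0 .
```

Setting CP > CU makes an error on a known positive example more costly than an error on an unlabeled example, since some unlabeled examples are in fact positive.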

Learning with Positive and Unlabeled Examples
1) Adaptation of Biased SVM [Lee and Liu, ICML 2003]:
– Use development data to tune CP and CU.
• Two tuning criteria (formulas shown as images on the slide): [Lee and Liu, 03] and [this work, 13], both estimated on positive and unlabeled examples from dev. data.

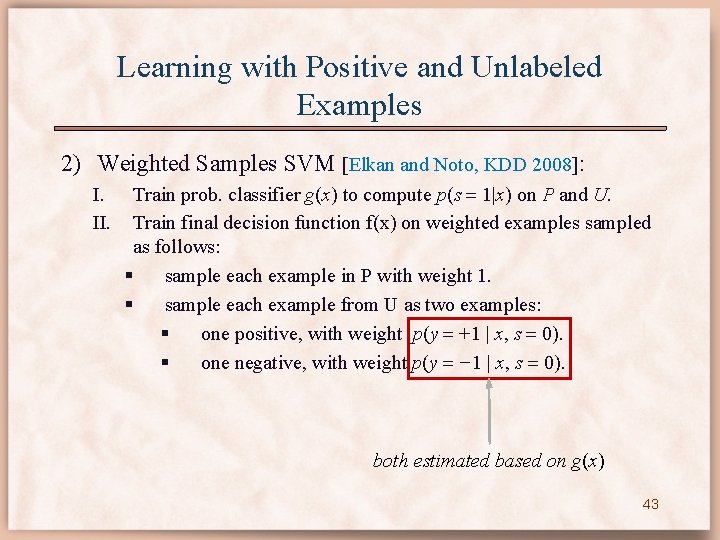

Learning with Positive and Unlabeled Examples
2) Weighted Samples SVM [Elkan and Noto, KDD 2008]:
I. Train a probabilistic classifier g(x) to compute p(s = 1 | x) on P and U.
II. Train the final decision function f(x) on weighted examples sampled as follows:
§ sample each example in P with weight 1.
§ sample each example from U as two examples:
§ one positive, with weight p(y = +1 | x, s = 0).
§ one negative, with weight p(y = −1 | x, s = 0).
Both weights are estimated based on g(x).
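
The weighting scheme in step II can be sketched in Python as follows. The weight formula p(y = +1 | x, s = 0) = ((1 − c) / c) · g(x) / (1 − g(x)), with c = p(s = 1 | y = +1) estimated as the average of g(x) over held-out positives, follows Elkan and Noto; the function names, the clipping to [0, 1], and the toy inputs are assumptions made for illustration.

```python
def estimate_c(g_on_positives):
    """Estimate c = p(s=1 | y=+1) as the mean of g(x) over held-out positives."""
    return sum(g_on_positives) / len(g_on_positives)

def unlabeled_weights(g_x, c):
    """Return (weight as positive, weight as negative) for one unlabeled example."""
    if g_x >= 1.0:
        return 1.0, 0.0  # guard against division by zero
    w_pos = (1.0 - c) / c * g_x / (1.0 - g_x)
    w_pos = min(max(w_pos, 0.0), 1.0)  # clip to a valid probability (assumption)
    return w_pos, 1.0 - w_pos

def weighted_training_set(positives, unlabeled, g, c):
    """Build (example, label, weight) triples for training the final classifier."""
    data = [(x, +1, 1.0) for x in positives]
    for x in unlabeled:
        w_pos, w_neg = unlabeled_weights(g(x), c)
        data.append((x, +1, w_pos))  # the unlabeled example counted as positive
        data.append((x, -1, w_neg))  # the same example counted as negative
    return data

c = estimate_c([0.4, 0.6])
w_pos, w_neg = unlabeled_weights(0.25, c)
triples = weighted_training_set(["p1"], ["u1"], lambda x: 0.25, c)
```

The resulting triples can be passed to any learner that accepts per-example weights.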

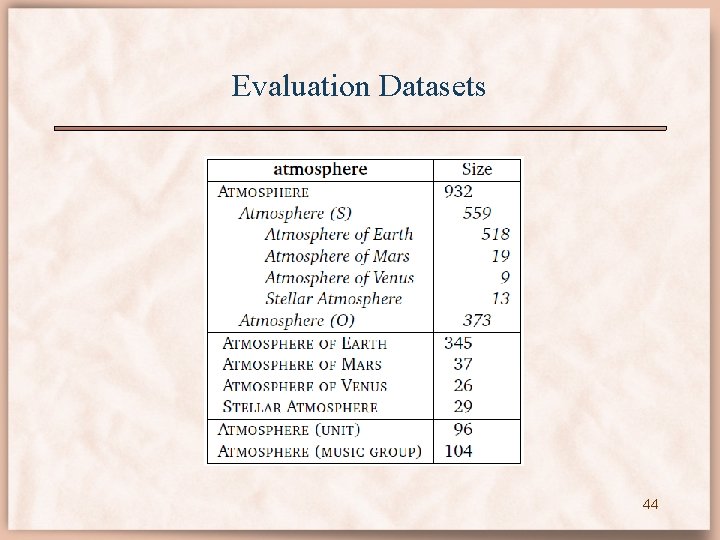

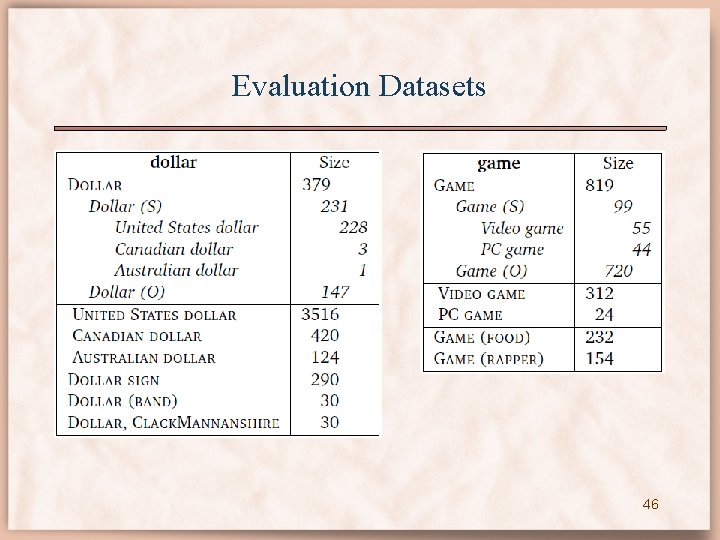

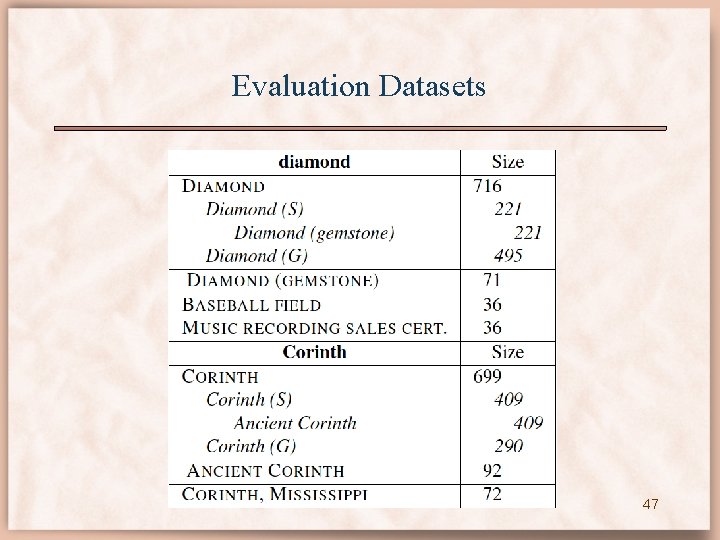

Evaluation Datasets

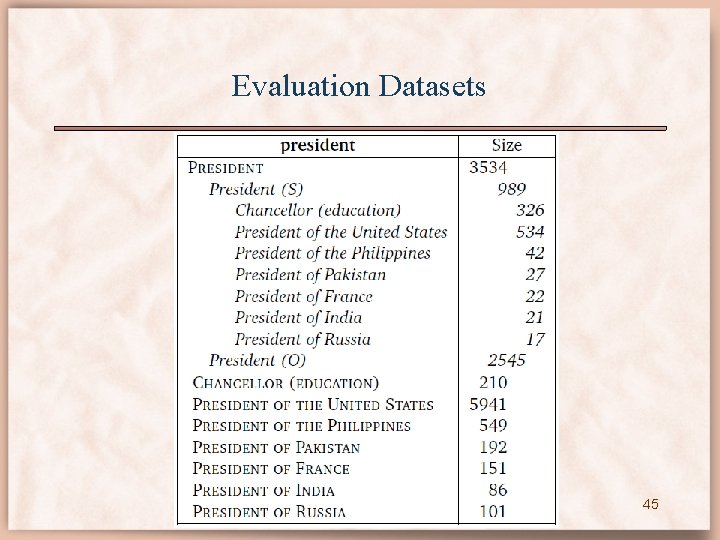

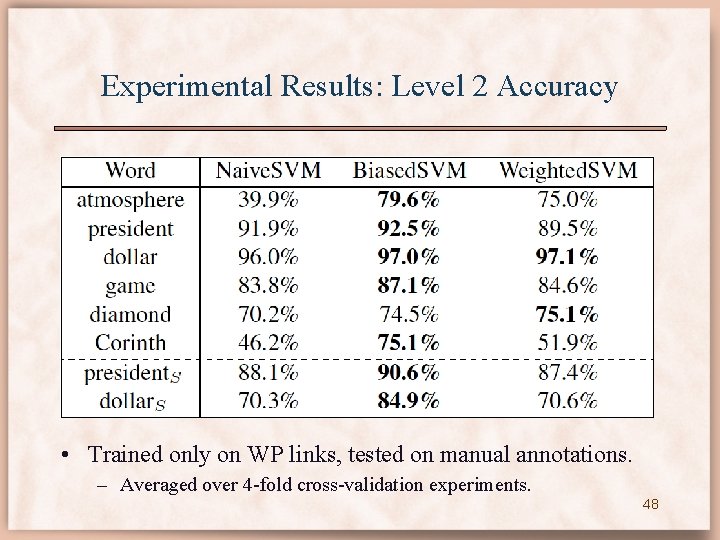

Experimental Results: Level 2 Accuracy
• Trained only on WP links, tested on manual annotations.
– Averaged over 4-fold cross-validation experiments.

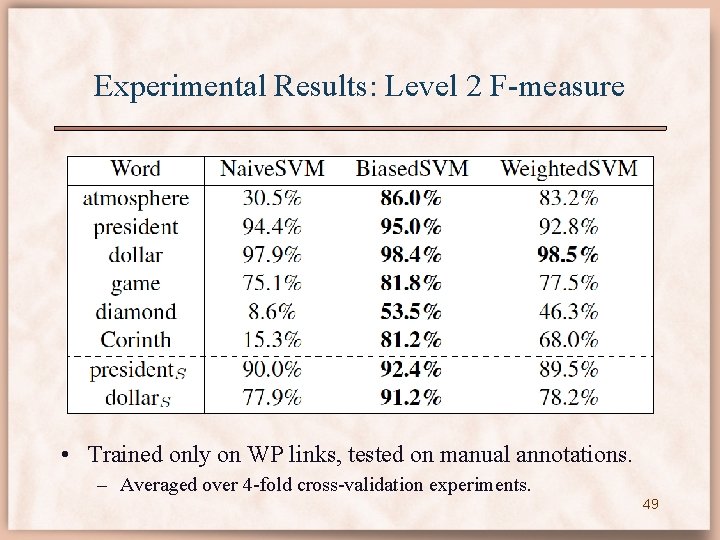

Experimental Results: Level 2 F-measure
• Trained only on WP links, tested on manual annotations.
– Averaged over 4-fold cross-validation experiments.

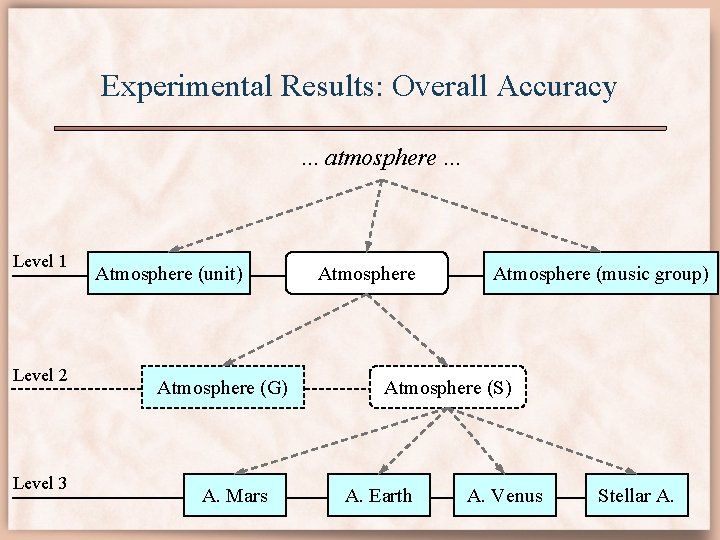

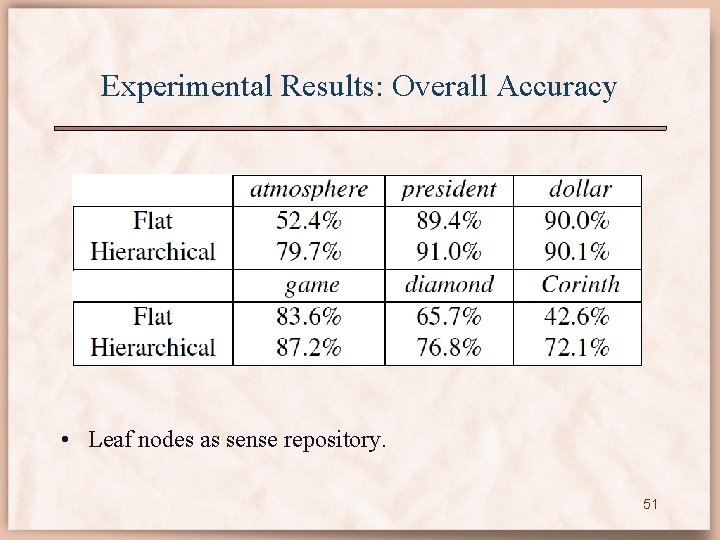

Experimental Results: Overall Accuracy
“… atmosphere …”
Level 1: Atmosphere (unit), Atmosphere (music group)
Level 2: Atmosphere (G), Atmosphere (S)
Level 3: A. Mars, A. Earth, A. Venus, Stellar A.

Experimental Results: Overall Accuracy • Leaf nodes as sense repository. 51

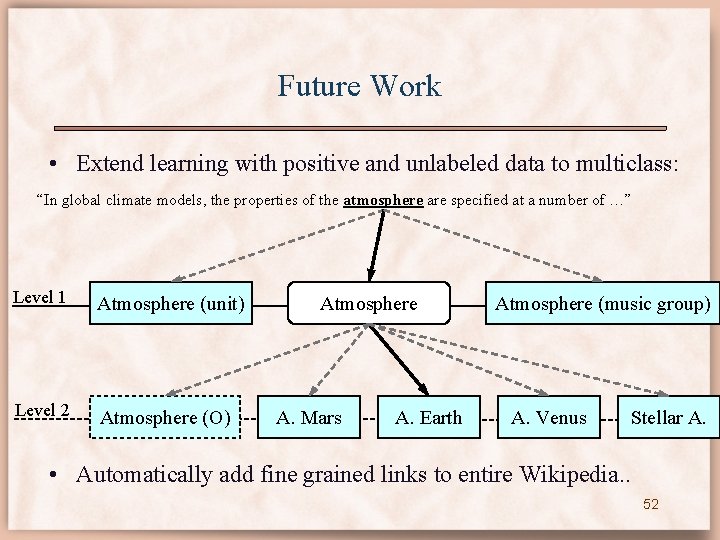

Future Work
• Extend learning with positive and unlabeled data to multiclass:
“In global climate models, the properties of the atmosphere are specified at a number of …”
Level 1: Atmosphere (unit), Atmosphere (music group)
Level 2: Atmosphere (O), Atmosphere, A. Mars, A. Earth, A. Venus, Stellar A.
• Automatically add fine grained links to entire Wikipedia.

(Co)Reference Resolution
As the Earth is 4.5 billion years old, it would have lost its atmosphere by now if there were no protective magnetosphere … The atmosphere is composed of 78% nitrogen and 21% oxygen.
reference: Atmosphere of Earth

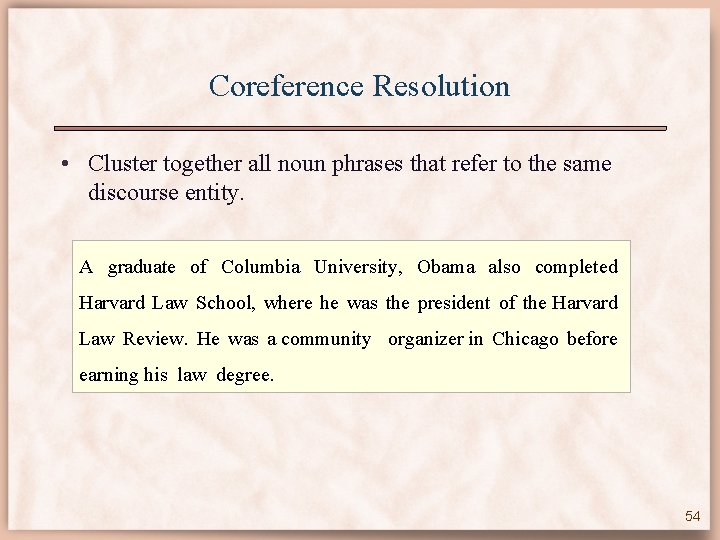

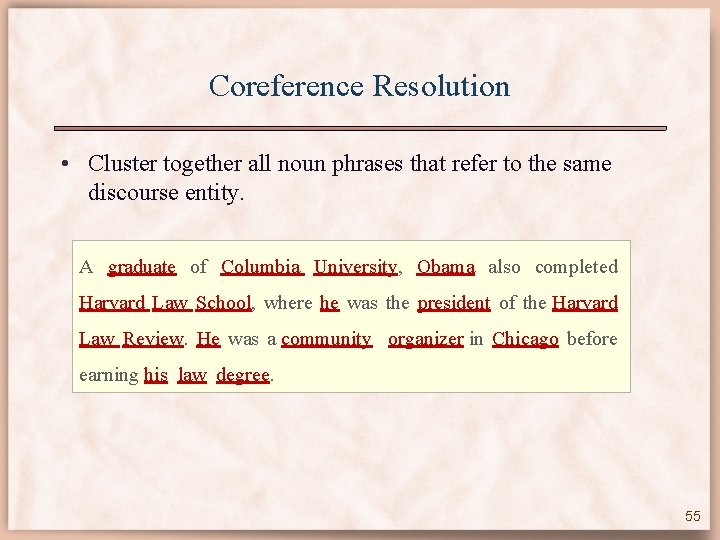

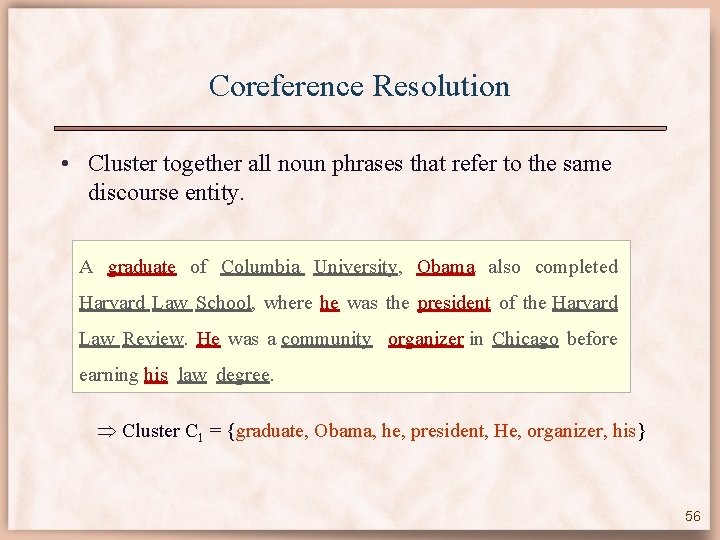

Coreference Resolution
• Cluster together all noun phrases that refer to the same discourse entity.
A graduate of Columbia University, Obama also completed Harvard Law School, where he was the president of the Harvard Law Review. He was a community organizer in Chicago before earning his law degree.

Coreference Resolution
• Cluster together all noun phrases that refer to the same discourse entity.
A graduate of Columbia University, Obama also completed Harvard Law School, where he was the president of the Harvard Law Review. He was a community organizer in Chicago before earning his law degree.
Cluster C1 = {graduate, Obama, he, president, He, organizer, his}

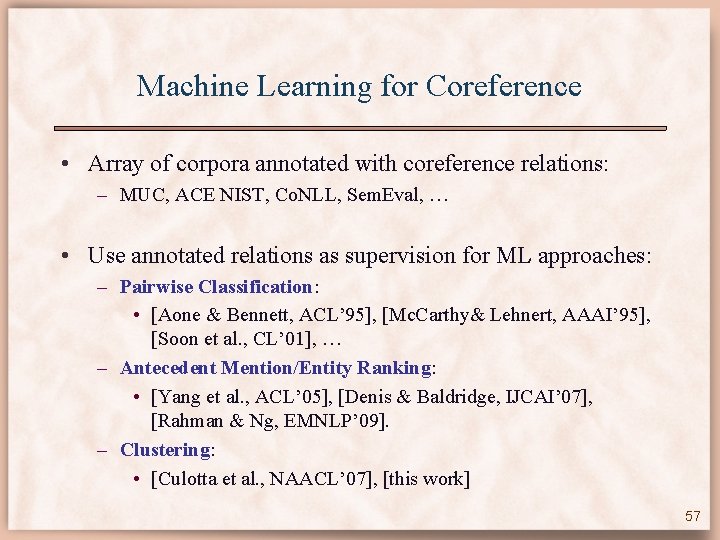

Machine Learning for Coreference
• Array of corpora annotated with coreference relations:
– MUC, ACE NIST, CoNLL, SemEval, …
• Use annotated relations as supervision for ML approaches:
– Pairwise Classification:
• [Aone & Bennett, ACL’95], [McCarthy & Lehnert, AAAI’95], [Soon et al., CL’01], …
– Antecedent Mention/Entity Ranking:
• [Yang et al., ACL’05], [Denis & Baldridge, IJCAI’07], [Rahman & Ng, EMNLP’09].
– Clustering:
• [Culotta et al., NAACL’07], [this work]

Rule-Based Coreference Resolution
• Expert rules are competitive with supervised machine learning approaches:
– [Haghighi & Klein, EMNLP’09]: “Simple coreference resolution with rich syntactic and semantic features”.
– [Raghunathan et al., EMNLP’10]: “A multi-pass sieve for coreference resolution”:
• [Lee et al., CoNLL’11]: “Stanford’s multi-pass sieve coreference resolution system at the CoNLL-2011 shared task”.
– best performing system.
Is there any utility in using ML for coreference resolution?

![Stanford’s Deterministic Sieves](http://slidetodoc.com/presentation_image_h2/7491b74d6174e6f48517c5d9f1101bb4/image-59.jpg)

Stanford’s Deterministic Sieves [Lee et al., CoNLL’11]
• Apply tiers of deterministic rules, from highest to lowest precision.
A graduate of Columbia University, Obama also completed Harvard Law School, where he was the president of the Harvard Law Review. He was a community organizer in Chicago before earning his law degree … Obama served three terms in the Illinois Senate.

![Stanford’s Deterministic Sieves](http://slidetodoc.com/presentation_image_h2/7491b74d6174e6f48517c5d9f1101bb4/image-60.jpg)

Stanford’s Deterministic Sieves [Lee et al., CoNLL’11], same example passage: sieve 2, Exact String Match.

![Stanford’s Deterministic Sieves](http://slidetodoc.com/presentation_image_h2/7491b74d6174e6f48517c5d9f1101bb4/image-61.jpg)

Stanford’s Deterministic Sieves [Lee et al., CoNLL’11], same example passage: sieve 4, Precise Constructs.

![Stanford’s Deterministic Sieves](http://slidetodoc.com/presentation_image_h2/7491b74d6174e6f48517c5d9f1101bb4/image-64.jpg)

Stanford’s Deterministic Sieves [Lee et al., CoNLL’11], same example passage: sieve 13, Pronoun Match.

![Stanford’s Deterministic Sieves](http://slidetodoc.com/presentation_image_h2/7491b74d6174e6f48517c5d9f1101bb4/image-70.jpg)

Stanford’s Deterministic Sieves [Lee et al., CoNLL’11]
• Apply tiers of deterministic rules, from highest to lowest precision:
1. Discourse Processing
2. Exact String Match
3. Relaxed Exact String Match
4. Precise Constructs
5. Strict Head Match, 1
6. Strict Head Match, 2
7. Strict Head Match, 3
8. Strict Head Match, 4
9. Proper Head Word Match
10. Alias
11. Relaxed Head Match
12. Lexical Chain
13. Pronouns
Ordering determined on separate development data.
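
The multi-pass idea, where rules are applied in tiers from highest to lowest precision and each pass builds on earlier merges, can be sketched as below. This is a toy illustration and not the Stanford system: the two rules, the last-token approximation of a head word, and the pronoun list are simplifying assumptions.

```python
PRONOUNS = {"he", "she", "it", "his", "her", "its", "they", "their"}

def exact_string_match(a, b):
    """Toy high-precision sieve: identical non-pronoun strings."""
    return a.lower() == b.lower() and a.lower() not in PRONOUNS

def head_match(a, b):
    """Toy lower-precision sieve: same last token, used as a crude head word."""
    if a.lower() in PRONOUNS or b.lower() in PRONOUNS:
        return False
    return a.split()[-1].lower() == b.split()[-1].lower()

def run_sieves(mentions, sieves):
    """Apply sieves in order; each sieve may merge clusters built by earlier ones."""
    cluster_of = list(range(len(mentions)))  # cluster id for each mention
    for sieve in sieves:
        for j in range(len(mentions)):
            for i in range(j):  # candidate antecedents precede mention j
                if cluster_of[i] != cluster_of[j] and sieve(mentions[i], mentions[j]):
                    old, new = cluster_of[j], cluster_of[i]
                    cluster_of = [new if c == old else c for c in cluster_of]
    clusters = {}
    for index, cid in enumerate(cluster_of):
        clusters.setdefault(cid, []).append(index)
    return sorted(clusters.values())

mentions = ["Obama", "Harvard Law School", "he", "the Harvard Law Review", "Obama"]
clusters = run_sieves(mentions, [exact_string_match, head_match])
```

In this toy run only the two "Obama" mentions are merged; the pronoun stays unresolved because no pronoun sieve is included.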

![Adaptive Clustering for Coreference](http://slidetodoc.com/presentation_image_h2/7491b74d6174e6f48517c5d9f1101bb4/image-71.jpg)

Adaptive Clustering for Coreference [ECAI’2012]
• An Adaptive Clustering (AC) approach to coreference:
– accommodates features defined on pairs of clusters.
• Use the expert rules as features in the AC approach:
1. Automatically learn the relative importance of the original sieves as features:
– no need for development data to determine ordering.
2. Easy to integrate additional, overlapping features:
• Semantic compatibility features for neutral pronouns.
– Web N-Gram statistics useful for computing semantic compatibility.

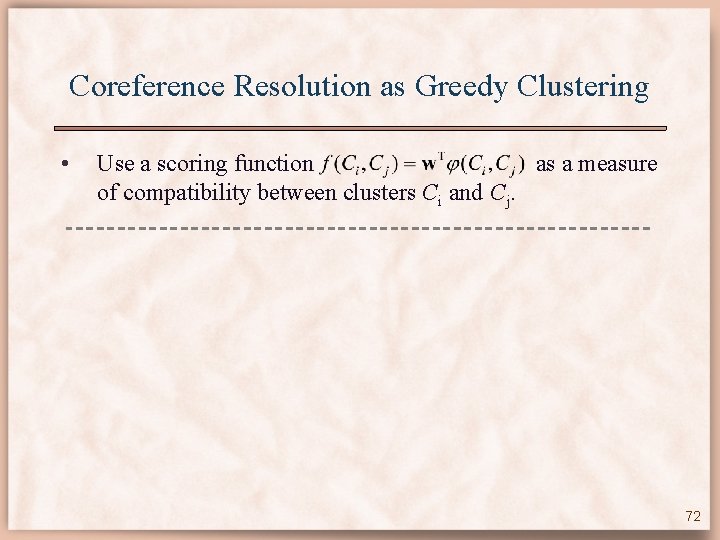

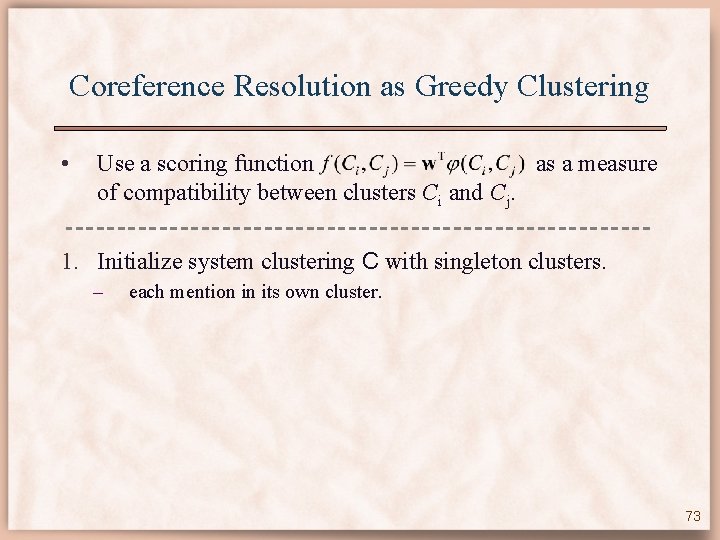

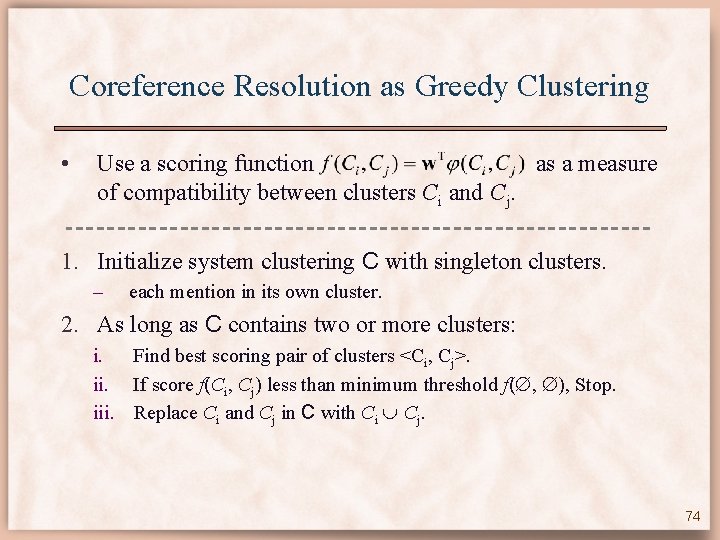

Coreference Resolution as Greedy Clustering
• Use a scoring function f(Ci, Cj) as a measure of compatibility between clusters Ci and Cj.
1. Initialize system clustering C with singleton clusters.
– each mention in its own cluster.
2. As long as C contains two or more clusters:
i. Find best scoring pair of clusters <Ci, Cj>.
ii. If score f(Ci, Cj) is less than the minimum threshold f(∅, ∅), stop.
iii. Replace Ci and Cj in C with Ci ∪ Cj.
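
The greedy procedure above can be sketched in a few lines of Python. The scoring function and the fixed numeric threshold (standing in for f(∅, ∅)) are passed in as parameters; the toy score based on string identity is a hypothetical stand-in for a learned f.

```python
from itertools import combinations

def greedy_cluster(mentions, score, threshold):
    """Greedy agglomerative clustering of mentions with a cluster-pair score."""
    clusters = [frozenset([m]) for m in mentions]  # step 1: singleton clusters
    while len(clusters) >= 2:                      # step 2
        # i. best scoring pair of clusters
        ci, cj = max(combinations(clusters, 2), key=lambda pair: score(*pair))
        # ii. stop when the best score falls below the threshold
        if score(ci, cj) < threshold:
            break
        # iii. replace Ci and Cj with their union
        clusters = [c for c in clusters if c not in (ci, cj)] + [ci | cj]
    return clusters

texts = ["Obama", "Chicago", "Obama"]  # mention strings, indexed by mention id

def toy_score(ci, cj):
    """Hypothetical score: 1.0 if any cross-cluster pair has identical strings."""
    return 1.0 if any(texts[a] == texts[b] for a in ci for b in cj) else 0.0

result = greedy_cluster(range(len(texts)), toy_score, threshold=0.5)
```

Because the score is defined on cluster pairs, it can use evidence from every mention already merged into a cluster, not only from one mention pair.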

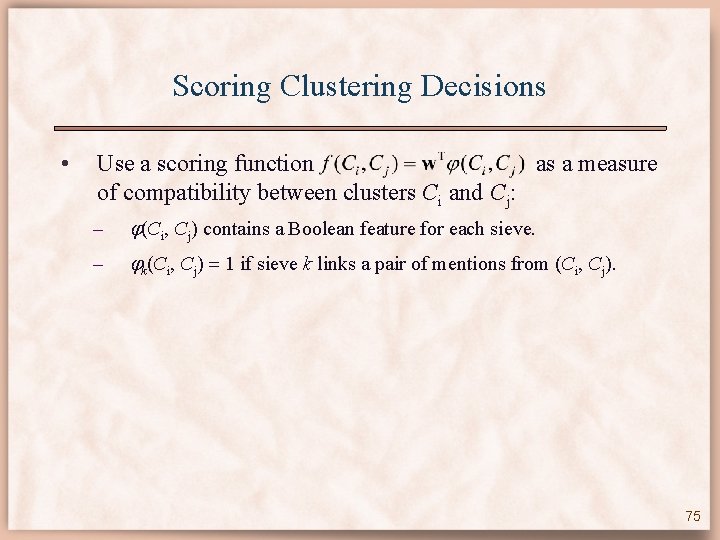

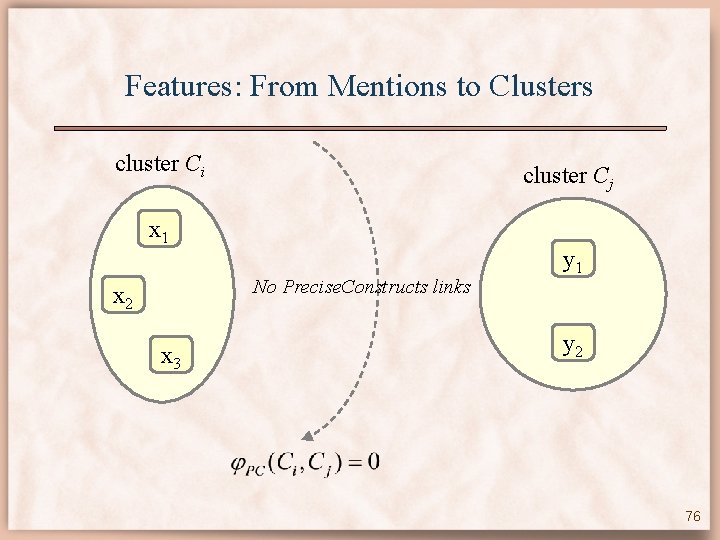

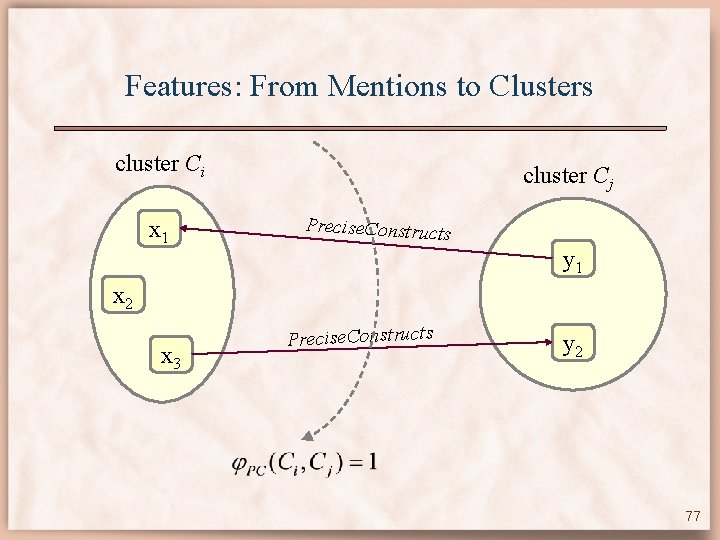

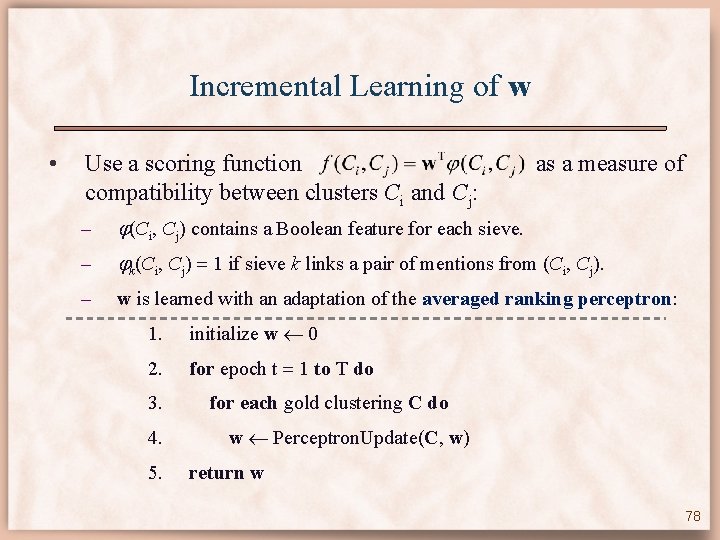

Scoring Clustering Decisions
• Use a scoring function f(Ci, Cj) = wᵀΦ(Ci, Cj) as a measure of compatibility between clusters Ci and Cj:
– Φ(Ci, Cj) contains a Boolean feature for each sieve.
– Φk(Ci, Cj) = 1 if sieve k links a pair of mentions from (Ci, Cj).

Features: From Mentions to Clusters
Figure: clusters Ci and Cj with mentions x1, x2, x3 and y1, y2; no PreciseConstructs links between the two clusters.

Features: From Mentions to Clusters
Figure: clusters Ci and Cj with mentions x1, x2, x3 and y1, y2; PreciseConstructs links connect mentions across the two clusters.
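
Lifting mention-level sieves to cluster-level Boolean features can be sketched as follows. The linear score and the two toy sieves are assumptions for illustration; the function names are hypothetical.

```python
def cluster_features(ci, cj, sieves):
    """Feature k is 1.0 if sieve k links at least one mention pair across Ci and Cj."""
    return [1.0 if any(sieve(x, y) for x in ci for y in cj) else 0.0
            for sieve in sieves]

def linear_score(w, phi):
    """Assumed linear scoring: dot product of weights and features."""
    return sum(wk * pk for wk, pk in zip(w, phi))

def same_string(x, y):
    return x.lower() == y.lower()

def same_last_token(x, y):
    return x.split()[-1].lower() == y.split()[-1].lower()

ci = ["Obama", "the president"]
cj = ["President", "he"]
phi = cluster_features(ci, cj, [same_string, same_last_token])
value = linear_score([2.0, 0.5], phi)
```

Here only the second toy sieve fires, because "the president" and "President" share their last token.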

Incremental Learning of w
• Use a scoring function f(Ci, Cj) = wᵀΦ(Ci, Cj) as a measure of compatibility between clusters Ci and Cj:
– Φ(Ci, Cj) contains a Boolean feature for each sieve.
– Φk(Ci, Cj) = 1 if sieve k links a pair of mentions from (Ci, Cj).
– w is learned with an adaptation of the averaged ranking perceptron:
1. initialize w ← 0
2. for epoch t ← 1 to T do
3. for each gold clustering C do
4. w ← PerceptronUpdate(C, w)
5. return w

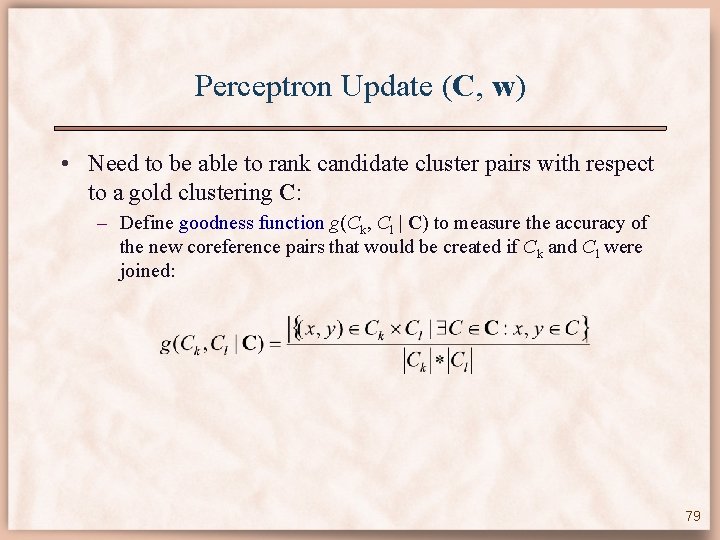

Perceptron Update (C, w) • Need to be able to rank candidate cluster pairs with respect to a gold clustering C: – Define goodness function g(Ck, Cl | C) to measure the accuracy of the new coreference pairs that would be created if Ck and Cl were joined: 79

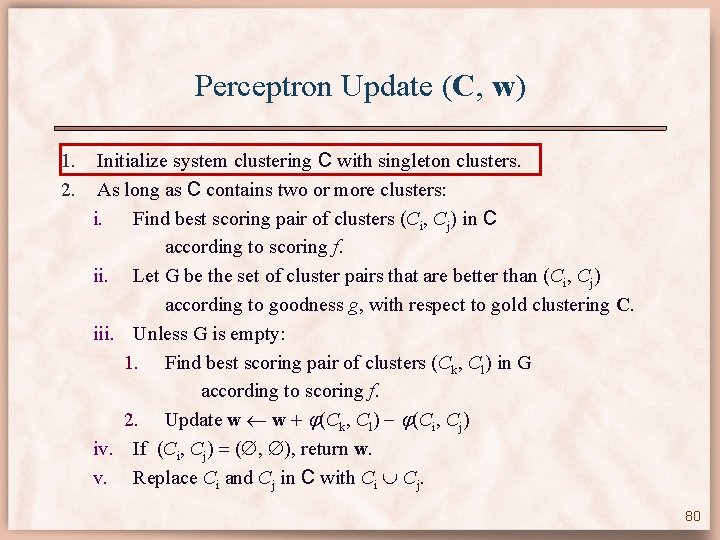

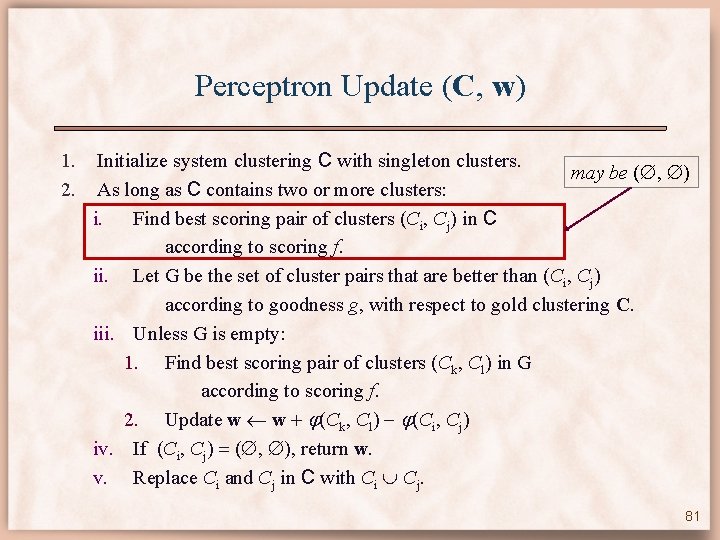

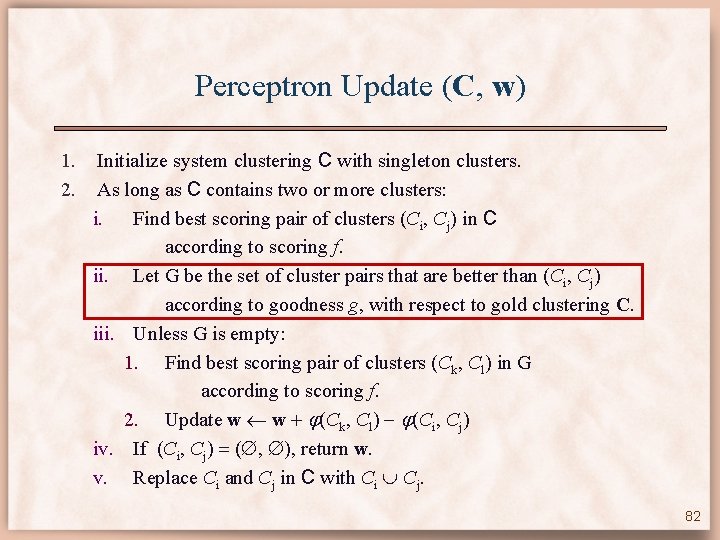

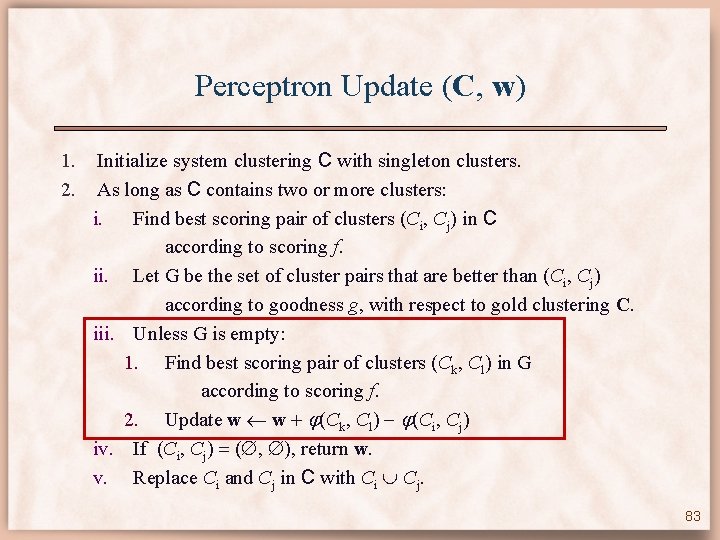

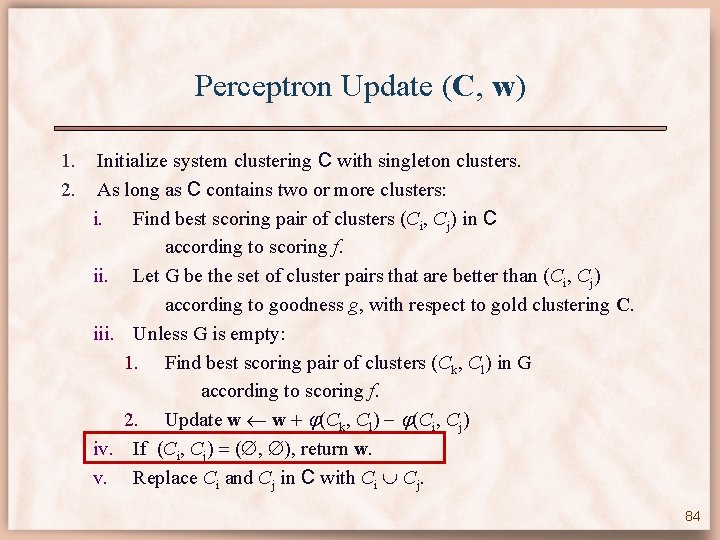

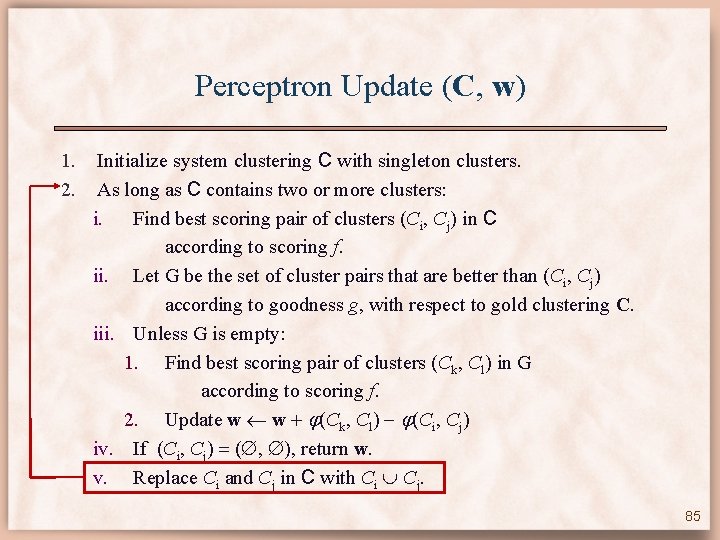

Perceptron Update (C, w)
1. Initialize system clustering Ĉ with singleton clusters.
2. As long as Ĉ contains two or more clusters:
i. Find best scoring pair of clusters (Ci, Cj) in Ĉ according to scoring f; this pair may be (∅, ∅).
ii. Let G be the set of cluster pairs that are better than (Ci, Cj) according to goodness g, with respect to gold clustering C.
iii. Unless G is empty:
1. Find best scoring pair of clusters (Ck, Cl) in G according to scoring f.
2. Update w ← w + Φ(Ck, Cl) − Φ(Ci, Cj)
iv. If (Ci, Cj) = (∅, ∅), return w.
v. Replace Ci and Cj in Ĉ with Ci ∪ Cj.
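
A Python sketch of one call to the update procedure is given below. Several pieces are assumptions, since the slides show them only as images: the goodness g is taken to be the fraction of gold-coreferent pairs among the new cross-cluster pairs, g(∅, ∅) is set to a hypothetical 0.5, and Φ(∅, ∅) is assumed to fire only a bias feature. Weight averaging is omitted.

```python
from itertools import combinations

STOP = (frozenset(), frozenset())  # the (∅, ∅) pseudo-pair: merge nothing and stop

def features(pair, sieves):
    """Boolean sieve features plus a bias slot that fires only for STOP (assumption)."""
    if pair == STOP:
        return [0.0] * len(sieves) + [1.0]
    ci, cj = pair
    return [1.0 if any(s(x, y) for x in ci for y in cj) else 0.0
            for s in sieves] + [0.0]

def goodness(pair, gold):
    """Assumed g: accuracy of the new coreference pairs created by the merge."""
    if pair == STOP:
        return 0.5  # hypothetical value; the source does not state g(∅, ∅)
    ci, cj = pair
    correct = sum(gold[x] == gold[y] for x in ci for y in cj)
    return correct / (len(ci) * len(cj))

def perceptron_update(mentions, gold, w, sieves):
    def f(pair):
        return sum(wk * pk for wk, pk in zip(w, features(pair, sieves)))
    clusters = [frozenset([m]) for m in mentions]          # step 1
    while len(clusters) >= 2:                              # step 2
        candidates = list(combinations(clusters, 2)) + [STOP]
        best = max(candidates, key=f)                      # i.
        better = [p for p in candidates
                  if goodness(p, gold) > goodness(best, gold)]  # ii.
        if better:                                         # iii.
            target = max(better, key=f)
            w = [wk + tk - bk for wk, tk, bk in
                 zip(w, features(target, sieves), features(best, sieves))]
        if best == STOP:                                   # iv.
            return w
        ci, cj = best                                      # v.
        clusters = [c for c in clusters if c not in (ci, cj)] + [ci | cj]
    return w

texts = ["Obama", "Obama", "Chicago"]
gold = {0: "obama", 1: "obama", 2: "chicago"}
same_string = lambda x, y: texts[x] == texts[y]
w1 = perceptron_update(range(3), gold, [0.0, 0.0], [same_string])
```

In this toy run the first merge is already correct, so the only update raises the weight of the bias slot, which discourages the later wrong merge with "Chicago".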

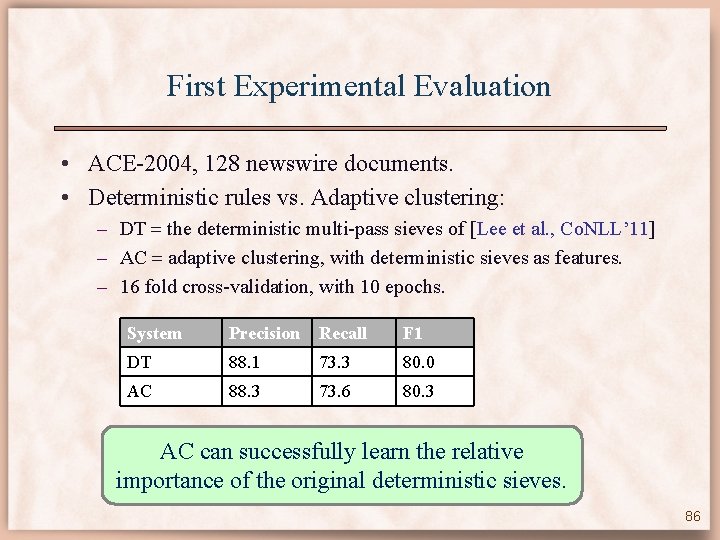

First Experimental Evaluation
• ACE-2004, 128 newswire documents.
• Deterministic rules vs. Adaptive clustering:
– DT = the deterministic multi-pass sieves of [Lee et al., CoNLL’11].
– AC = adaptive clustering, with deterministic sieves as features.
– 16-fold cross-validation, with 10 epochs.

| System | Precision | Recall | F1 |
|--------|-----------|--------|------|
| DT | 88.1 | 73.3 | 80.0 |
| AC | 88.3 | 73.6 | 80.3 |

AC can successfully learn the relative importance of the original deterministic sieves.

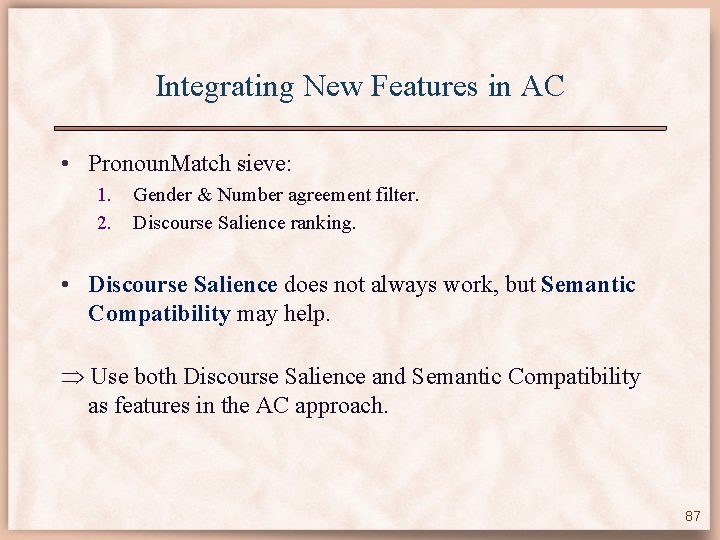

Integrating New Features in AC
• PronounMatch sieve:
  1. Gender & Number agreement filter.
  2. Discourse Salience ranking.
• Discourse Salience does not always work, but Semantic Compatibility may help.
Use both Discourse Salience and Semantic Compatibility as features in the AC approach. 87
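The two-step PronounMatch sieve (filter, then rank) might look roughly like the sketch below. The `Mention` fields, the `agrees` helper, the attribute values, and the rank given to "the nine justices" are hypothetical; the ranks for court, power and distribution follow the bracketed indices in the example used on the following slides.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Mention:
    text: str
    gender: str             # "neutral", "male", "female" or "unknown"
    number: str             # "singular", "plural" or "unknown"
    salience_rank: int = 0  # 1 = most salient; unused for the pronoun


def agrees(a: str, b: str) -> bool:
    """Attributes agree if equal or if either one is unknown."""
    return a == b or "unknown" in (a, b)


def pronoun_match(pronoun: Mention, candidates: List[Mention]) -> Optional[Mention]:
    # Step 1: gender & number agreement filter.
    compatible = [c for c in candidates
                  if agrees(c.gender, pronoun.gender)
                  and agrees(c.number, pronoun.number)]
    # Step 2: pick the most salient surviving candidate.
    return min(compatible, key=lambda c: c.salience_rank, default=None)


its = Mention("its", "neutral", "singular")
candidates = [
    Mention("the nine justices", "unknown", "plural", 9),  # rank invented
    Mention("court", "neutral", "singular", 3),
    Mention("power", "neutral", "singular", 2),
    Mention("distribution", "neutral", "singular", 1),
]
chosen = pronoun_match(its, candidates)
print(chosen.text)
```

With salience as the only ranking signal, the sieve selects "distribution", which is the kind of error that motivates adding semantic compatibility as a feature.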

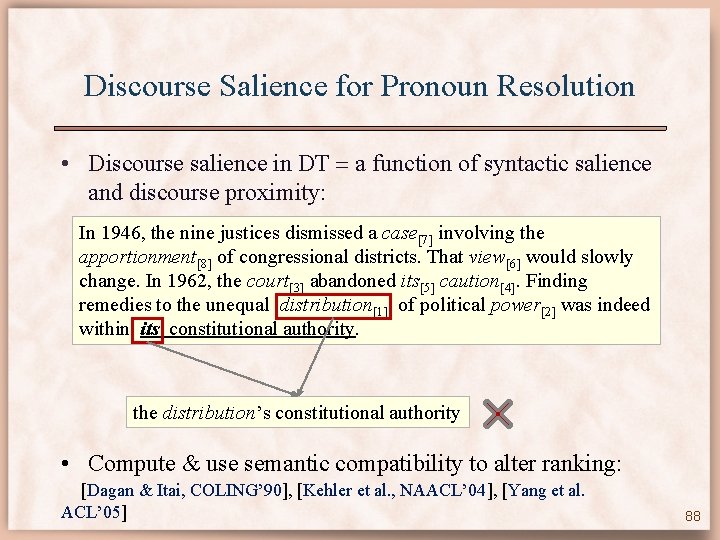

Discourse Salience for Pronoun Resolution
• Discourse salience in DT is a function of syntactic salience and discourse proximity:

In 1946, the nine justices dismissed a case[7] involving the apportionment[8] of congressional districts. That view[6] would slowly change. In 1962, the court[3] abandoned its[5] caution[4]. Finding remedies to the unequal distribution[1] of political power[2] was indeed within its constitutional authority.

Ranking by salience alone resolves the final “its” to distribution[1]: the distribution’s constitutional authority.
• Compute & use semantic compatibility to alter ranking: [Dagan & Itai, COLING’90], [Kehler et al., NAACL’04], [Yang et al., ACL’05] 88

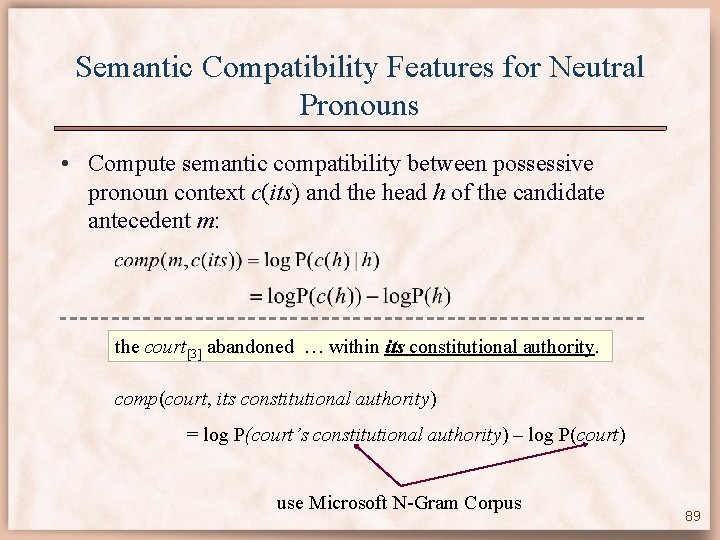

Semantic Compatibility Features for Neutral Pronouns
• Compute semantic compatibility between possessive pronoun context c(its) and the head h of the candidate antecedent m:

the court[3] abandoned … within its constitutional authority.

comp(court, its constitutional authority) = log P(court’s constitutional authority) – log P(court)

Use the Microsoft N-Gram Corpus to estimate P. 89
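The compatibility score is a difference of two log-probabilities, which a short sketch makes concrete. The counts below are invented, `log_prob` is a hypothetical stand-in for a real n-gram language model lookup (it is not the Microsoft N-Gram API), and base-10 logs with a floor count of 0.5 for unseen phrases are assumptions.

```python
import math

# Invented phrase counts standing in for a web-scale n-gram model.
TOY_COUNTS = {
    "court": 1000,
    "court's constitutional authority": 10,
    "view": 2000,
}
TOTAL = 1_000_000


def log_prob(phrase: str) -> float:
    """Hypothetical lookup: log10 relative frequency, crude floor for unseen."""
    return math.log10(TOY_COUNTS.get(phrase, 0.5) / TOTAL)


def compatibility(head: str, context: str) -> float:
    """comp(h, c) = log P(h's c) - log P(h)."""
    return log_prob(f"{head}'s {context}") - log_prob(head)


court = compatibility("court", "constitutional authority")
view = compatibility("view", "constitutional authority")
print(court, view)
```

Subtracting log P(h) turns the joint score into a conditional one, so a frequent head is not favoured merely for being frequent.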

![Semantic Compatibility Features for Neutral Pronouns](http://slidetodoc.com/presentation_image_h2/7491b74d6174e6f48517c5d9f1101bb4/image-90.jpg)

Semantic Compatibility Features for Neutral Pronouns

In 1946, the nine justices dismissed a case[7] involving the apportionment[8] of congressional districts. That view[6] would slowly change. In 1962, the court[3] abandoned its[5] caution[4]. Finding remedies to the unequal distribution[1] of political power[2] was indeed within its constitutional authority.

[3] log P(court’s constitutional authority | court) = -5.91
[5] log P(court’s constitutional authority | court) = -5.91
[7] log P(cases’s constitutional authority | cases) = -8.32
[2] log P(power’s constitutional authority | power) = -9.30
[8] log P(app-nt’s constitutional authority | app-nt) = -9.32
[4] log P(caution’s constitutional authority | caution) = -9.39
[1] log P(dist-ion’s constitutional authority | dist-ion) = -9.40
[6] log P(view’s constitutional authority | view) = -9.69 90
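The effect of re-ranking can be checked directly on the figures listed for this example. The scores are entered as negative log-probabilities; the sign is an assumption, chosen to be consistent with the comp values shown on the cluster-level slide.

```python
# (salience rank, head, compatibility score) for each candidate mention
candidates = [
    (3, "court", -5.91),
    (5, "court", -5.91),
    (7, "cases", -8.32),
    (2, "power", -9.30),
    (8, "app-nt", -9.32),
    (4, "caution", -9.39),
    (1, "dist-ion", -9.40),
    (6, "view", -9.69),
]

by_salience = min(candidates, key=lambda c: c[0])  # lowest rank wins
by_compat = max(candidates, key=lambda c: c[2])    # highest score wins
print(by_salience[1], by_compat[1])
```

Salience alone picks the distribution mention, while compatibility moves the court mentions to the top.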

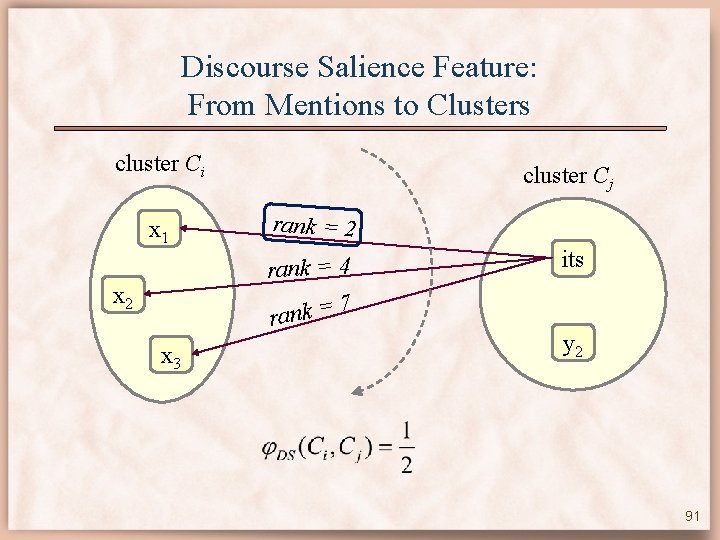

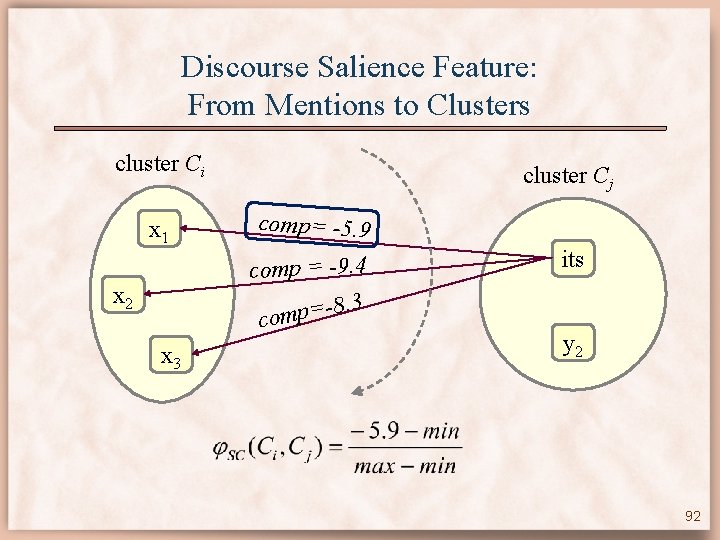

Discourse Salience Feature: From Mentions to Clusters
[Figure: clusters Ci and Cj with mentions x1, x2, x3, its, y2; mention-level labels rank = 2, rank = 4, rank = 7.] 91

Discourse Salience Feature: From Mentions to Clusters
[Figure: clusters Ci and Cj with mentions x1, x2, x3, its, y2; mention-level labels comp = -5.9, comp = -9.4, comp = -8.3.] 92

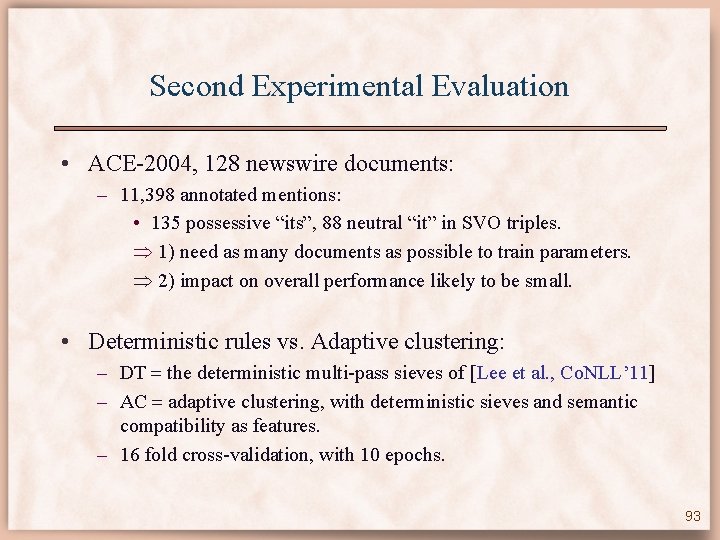

Second Experimental Evaluation
• ACE-2004, 128 newswire documents:
  – 11,398 annotated mentions:
    • 135 possessive “its”, 88 neutral “it” in SVO triples.
    1) Need as many documents as possible to train parameters.
    2) Impact on overall performance likely to be small.
• Deterministic rules vs. Adaptive clustering:
  – DT: the deterministic multi-pass sieves of [Lee et al., CoNLL’11].
  – AC: adaptive clustering, with deterministic sieves and semantic compatibility as features.
  – 16-fold cross-validation, with 10 epochs. 93

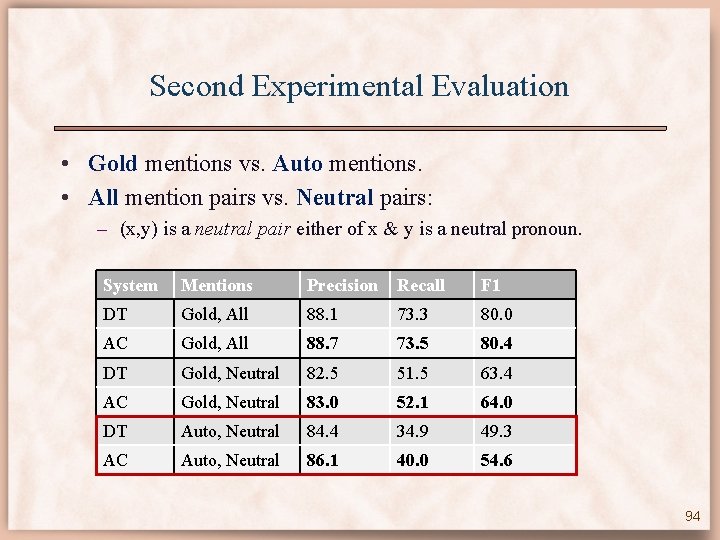

Second Experimental Evaluation
• Gold mentions vs. Auto mentions.
• All mention pairs vs. Neutral pairs:
  – (x, y) is a neutral pair if either x or y is a neutral pronoun.

| System | Mentions | Precision | Recall | F1 |
|--------|---------------|-----------|--------|------|
| DT | Gold, All | 88.1 | 73.3 | 80.0 |
| AC | Gold, All | 88.7 | 73.5 | 80.4 |
| DT | Gold, Neutral | 82.5 | 51.5 | 63.4 |
| AC | Gold, Neutral | 83.0 | 52.1 | 64.0 |
| DT | Auto, Neutral | 84.4 | 34.9 | 49.3 |
| AC | Auto, Neutral | 86.1 | 40.0 | 54.6 |

94

Conclusions & More Work
• Machine learning approaches to:
  – Word Sense Disambiguation.
  – Coreference Resolution.
  Aim: Link noun phrases to corresponding referent entities.
• Exploited large scale text repositories:
  – Wikipedia (hyperlinks).
  – Web (N-gram statistics).
• Automatically extract from Wikipedia a semantic network of knowledge about the world. 95

Questions? 96

![Wikipedia and NLP](http://slidetodoc.com/presentation_image_h2/7491b74d6174e6f48517c5d9f1101bb4/image-97.jpg)

Wikipedia and NLP
• Named Entity Disambiguation
  – [Bunescu and Pasca, EACL 2006]
  – [Cucerzan, EMNLP 2007]
• Word Sense Disambiguation
  – [Mihalcea, NAACL 2007], [Mihalcea and Csomai, CIKM 2007]
• Information Retrieval
  – [Milne, 2007], [Li et al., 2007],
  – [Potthast et al., 2008], [Cimiano et al., 2009]
• Question Answering
  – [Ahn et al., 2004], [Kaisser, 2008], [Ferrucci et al., 2010] 97

- Slides: 97