Combining Inductive and Analytical Learning

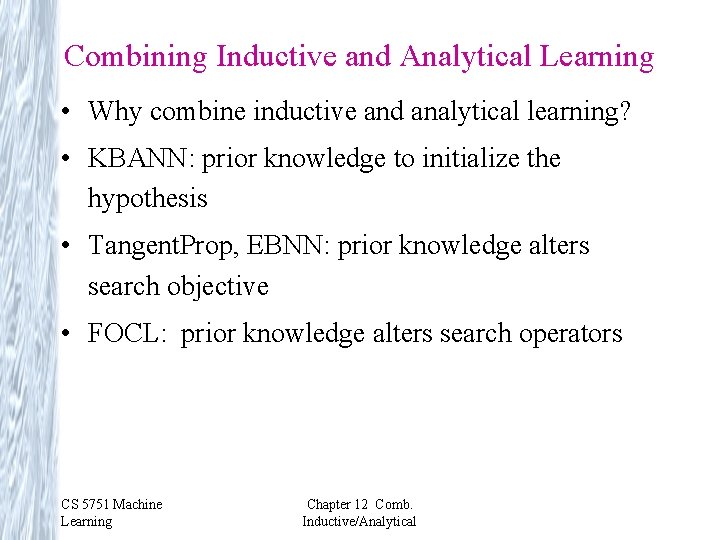

Combining Inductive and Analytical Learning

• Why combine inductive and analytical learning?
• KBANN: prior knowledge to initialize the hypothesis
• TangentProp, EBNN: prior knowledge alters search objective
• FOCL: prior knowledge alters search operators

CS 5751 Machine Learning, Chapter 12: Comb. Inductive/Analytical

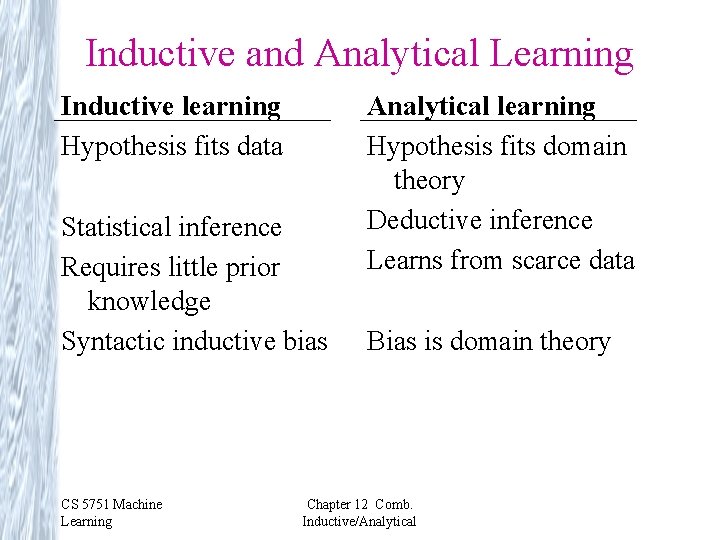

Inductive and Analytical Learning

| Inductive learning | Analytical learning |
|---|---|
| Hypothesis fits data | Hypothesis fits domain theory |
| Statistical inference | Deductive inference |
| Requires little prior knowledge | Learns from scarce data |
| Syntactic inductive bias | Bias is domain theory |

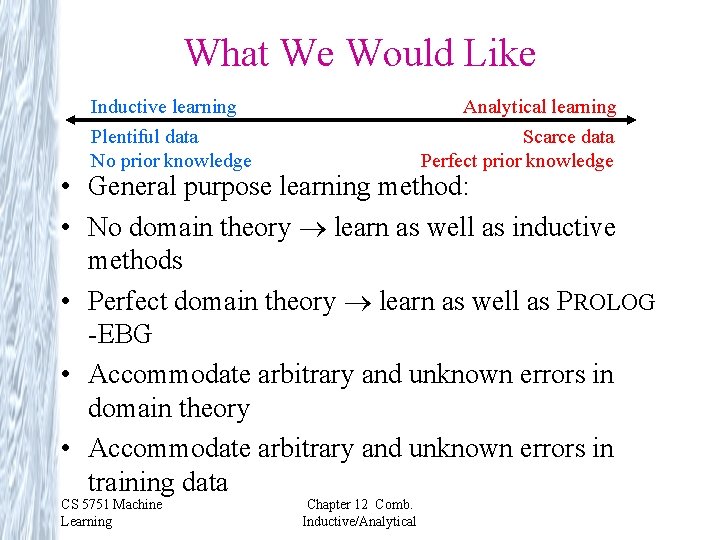

What We Would Like

Inductive learning: plentiful data, no prior knowledge
Analytical learning: scarce data, perfect prior knowledge

General purpose learning method:
• No domain theory: learn as well as inductive methods
• Perfect domain theory: learn as well as PROLOG-EBG
• Accommodate arbitrary and unknown errors in domain theory
• Accommodate arbitrary and unknown errors in training data

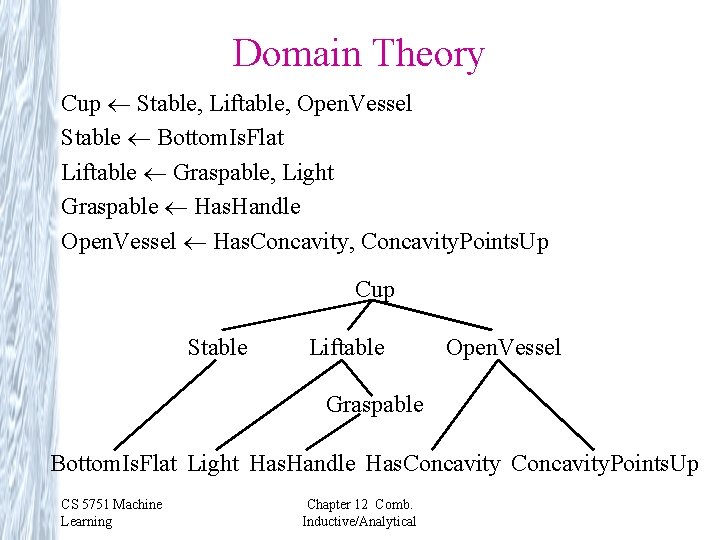

Domain Theory

Cup ← Stable, Liftable, OpenVessel
Stable ← BottomIsFlat
Liftable ← Graspable, Light
Graspable ← HasHandle
OpenVessel ← HasConcavity, ConcavityPointsUp

[Figure: dependency graph of the domain theory, with Cup at the top, the intermediate concepts Stable, Liftable, OpenVessel, and Graspable below it, and the attributes BottomIsFlat, Light, HasHandle, HasConcavity, and ConcavityPointsUp at the leaves]
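The domain theory above is a small set of propositional Horn clauses, so it can be checked mechanically. The following is a minimal sketch, not part of the original slides: the dictionary encoding, the function name `proves`, and the two example objects (`mug`, `bowl`) are illustrative assumptions.

```python
# Domain theory as propositional Horn clauses: head -> list of antecedents.
# (The dictionary encoding is an assumption made for this sketch.)
DOMAIN_THEORY = {
    "Cup": ["Stable", "Liftable", "OpenVessel"],
    "Stable": ["BottomIsFlat"],
    "Liftable": ["Graspable", "Light"],
    "Graspable": ["HasHandle"],
    "OpenVessel": ["HasConcavity", "ConcavityPointsUp"],
}


def proves(goal, facts, theory=DOMAIN_THEORY):
    """Return True if `goal` follows from the observed `facts` under `theory`."""
    if goal in facts:
        return True
    body = theory.get(goal)
    if body is None:
        # Not observed and not defined by any clause: cannot be proved.
        return False
    # A Horn clause fires only when every antecedent is provable.
    return all(proves(antecedent, facts, theory) for antecedent in body)


# Hypothetical examples, not taken from the training table in the slides.
mug = {"BottomIsFlat", "HasHandle", "Light", "HasConcavity", "ConcavityPointsUp"}
bowl = mug - {"HasHandle"}

print(proves("Cup", mug))
print(proves("Cup", bowl))
```

Under this theory the handle-less object is not classified as a cup, because Graspable can only be derived from HasHandle. A purely analytical learner inherits exactly this kind of limitation from its domain theory.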

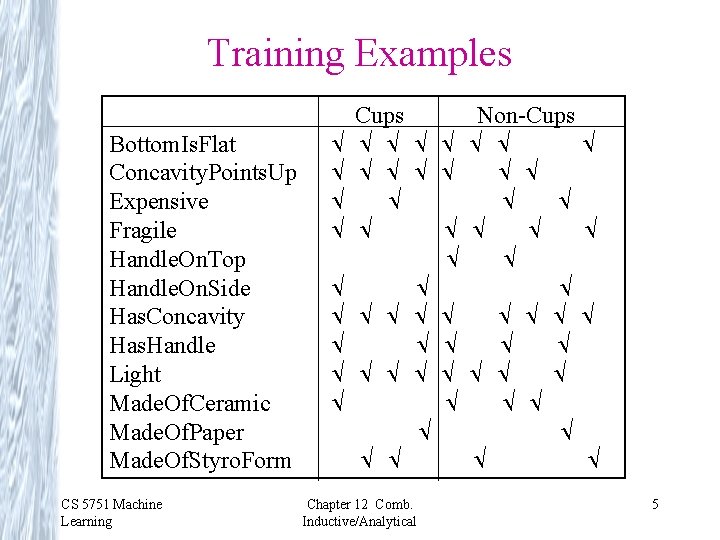

Training Examples

[Table: training examples, split into Cups and Non-Cups, described by the attributes BottomIsFlat, ConcavityPointsUp, Expensive, Fragile, HandleOnTop, HandleOnSide, HasConcavity, HasHandle, Light, MadeOfCeramic, MadeOfPaper, MadeOfStyrofoam]

KBANN

Knowledge Based Artificial Neural Networks

KBANN (data D, domain theory B)
1. Create a feedforward network h equivalent to B
2. Use BACKPROP to tune h to fit D

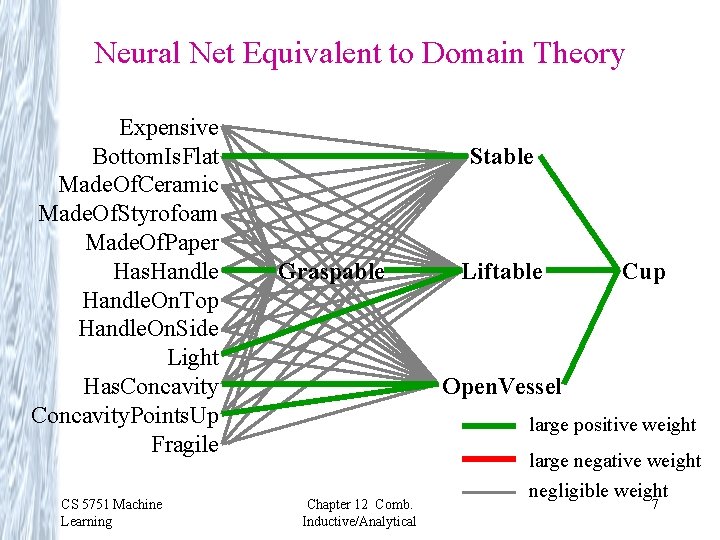

Neural Net Equivalent to Domain Theory

[Figure: network with input units Expensive, BottomIsFlat, MadeOfCeramic, MadeOfStyrofoam, MadeOfPaper, HasHandle, HandleOnTop, HandleOnSide, Light, HasConcavity, ConcavityPointsUp, Fragile; hidden units Stable, Graspable, Liftable, OpenVessel; output unit Cup. Legend: large positive weight, large negative weight, negligible weight]

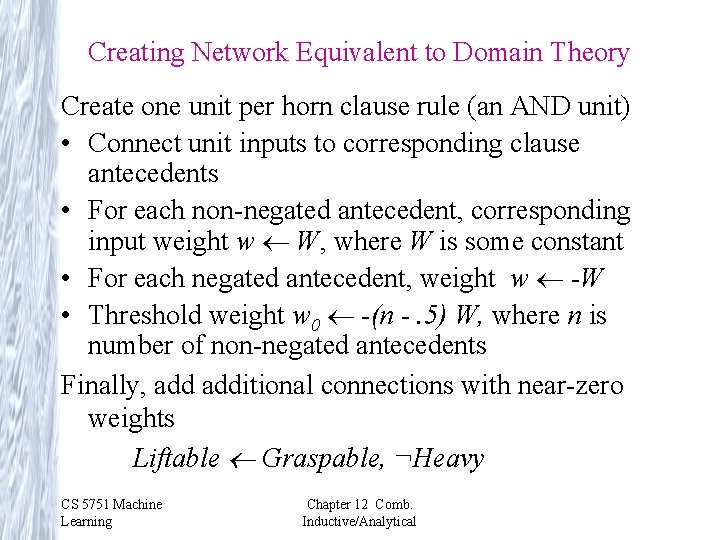

Creating Network Equivalent to Domain Theory

Create one unit per Horn clause rule (an AND unit)
• Connect unit inputs to corresponding clause antecedents
• For each non-negated antecedent, corresponding input weight $w \leftarrow W$, where $W$ is some constant
• For each negated antecedent, weight $w \leftarrow -W$
• Threshold weight $w_0 \leftarrow -(n - 0.5)W$, where $n$ is number of non-negated antecedents

Finally, add additional connections with near-zero weights

Example clause: Liftable ← Graspable, ¬Heavy
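The weight-setting rules above can be checked on the example clause Liftable ← Graspable, ¬Heavy. The sketch below is illustrative only: the value `W = 4.0`, the use of a sigmoid unit, the 0.5 decision cut-off, and the helper names `clause_to_unit` and `unit_output` are assumptions, since the slide only says that W is "some constant".

```python
import math

W = 4.0  # assumed value; the slide only requires "some constant"


def clause_to_unit(antecedents, W=W):
    """Map a Horn-clause body to (input weights, threshold weight w0).

    `antecedents` is a list of (name, negated) pairs.
    """
    # Non-negated antecedents get weight W, negated ones get -W.
    weights = {name: (-W if negated else W) for name, negated in antecedents}
    # n counts only the non-negated antecedents.
    n = sum(1 for _, negated in antecedents if not negated)
    w0 = -(n - 0.5) * W
    return weights, w0


def unit_output(weights, w0, inputs):
    """Sigmoid unit output for 0/1 inputs (sigmoid is an assumption here)."""
    net = w0 + sum(w * inputs.get(name, 0.0) for name, w in weights.items())
    return 1.0 / (1.0 + math.exp(-net))


# Liftable <- Graspable, not Heavy
weights, w0 = clause_to_unit([("Graspable", False), ("Heavy", True)])

for graspable in (0, 1):
    for heavy in (0, 1):
        out = unit_output(weights, w0, {"Graspable": graspable, "Heavy": heavy})
        print(graspable, heavy, round(out, 3))
```

With these settings the unit's output exceeds 0.5 only when Graspable is 1 and Heavy is 0, which matches the clause. A larger W pushes the outputs closer to 0 and 1, while the near-zero extra connections leave room for BACKPROP to learn dependencies the domain theory omits.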

Result of Refining the Network

[Figure: the same network after training, with input units Expensive, BottomIsFlat, MadeOfCeramic, MadeOfStyrofoam, MadeOfPaper, HasHandle, HandleOnTop, HandleOnSide, Light, HasConcavity, ConcavityPointsUp, Fragile; hidden units Stable, Graspable, Liftable, OpenVessel; output unit Cup. Legend: large positive weight, large negative weight, negligible weight]

KBANN Results

Classifying promoter regions in DNA (leave-one-out testing):
• Backpropagation: error rate 8/106
• KBANN: error rate 4/106

Similar improvements on other classification and control tasks.

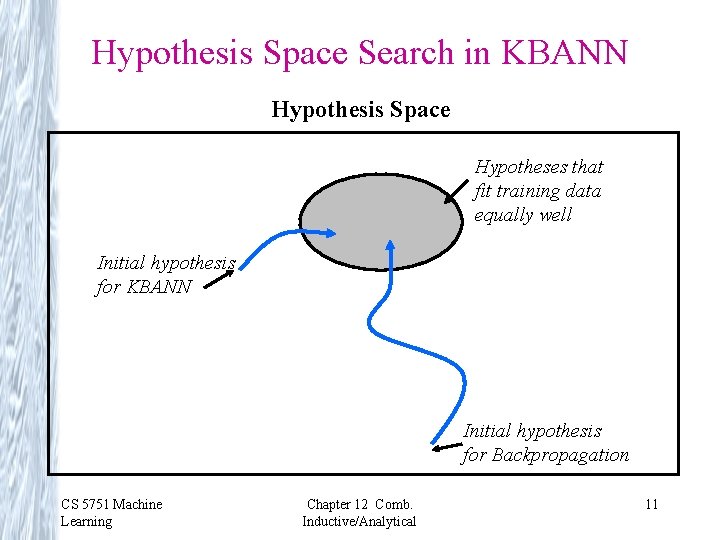

Hypothesis Space Search in KBANN

[Figure: hypothesis space showing the initial hypothesis for KBANN, the initial hypothesis for Backpropagation, and the region of hypotheses that fit the training data equally well]

EBNN

Explanation Based Neural Network

Key idea:
• Previously learned approximate domain theory
• Domain theory represented by collection of neural networks
• Learn target function as another neural network

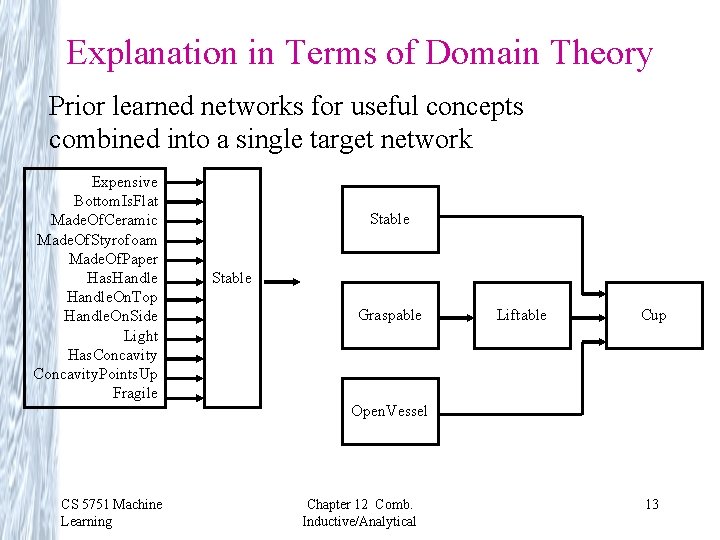

Explanation in Terms of Domain Theory

Prior learned networks for useful concepts combined into a single target network

[Figure: input attributes Expensive, BottomIsFlat, MadeOfCeramic, MadeOfStyrofoam, MadeOfPaper, HasHandle, HandleOnTop, HandleOnSide, Light, HasConcavity, ConcavityPointsUp, Fragile feeding the previously learned networks for Stable, Graspable, Liftable, and OpenVessel, which in turn feed the Cup network]

TangentProp

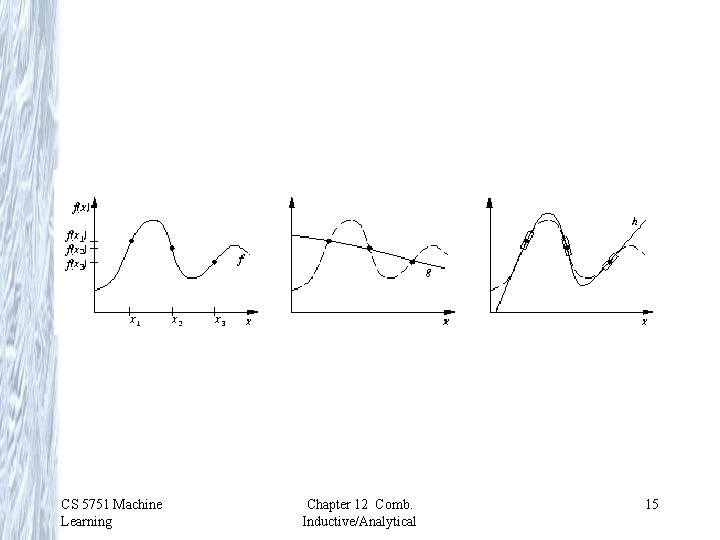


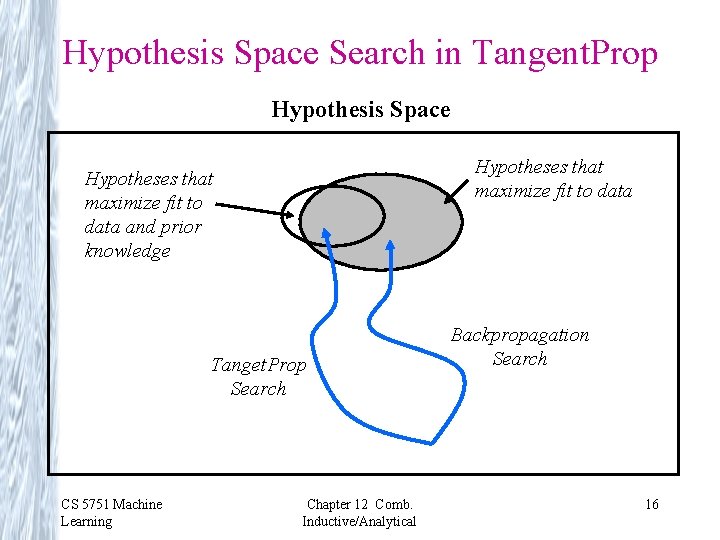

Hypothesis Space Search in TangentProp

[Figure: hypothesis space showing the TangentProp search and the Backpropagation search, with the region of hypotheses that maximize fit to data and prior knowledge]

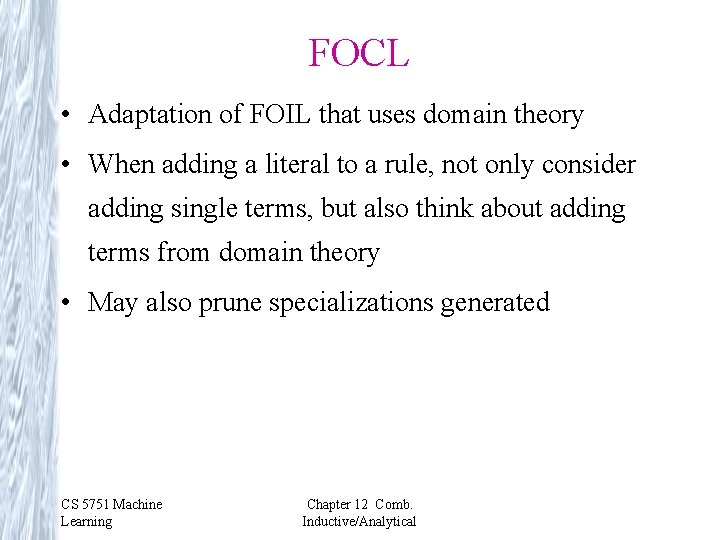

FOCL

• Adaptation of FOIL that uses a domain theory
• When adding a literal to a rule, consider not only single literals but also terms derived from the domain theory
• May also prune the specializations generated
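One way to picture the domain-theory-derived candidate is to unfold the target concept until only observable attributes remain. The sketch below does this for the Cup domain theory from earlier in the deck. It is a simplified illustration under stated assumptions: the dictionary encoding and the function name `operationalize` are hypothetical, each concept is assumed to have a single clause, and the pruning step is not implemented.

```python
# Cup domain theory from the earlier slide, encoded as head -> antecedents.
# (The encoding is an assumption made for this sketch.)
DOMAIN_THEORY = {
    "Cup": ["Stable", "Liftable", "OpenVessel"],
    "Stable": ["BottomIsFlat"],
    "Liftable": ["Graspable", "Light"],
    "Graspable": ["HasHandle"],
    "OpenVessel": ["HasConcavity", "ConcavityPointsUp"],
}


def operationalize(concept, theory):
    """Unfold `concept` until only literals not defined by `theory` remain."""
    body = theory.get(concept)
    if body is None:
        # Not the head of any clause, so it is an observable attribute.
        return [concept]
    literals = []
    for antecedent in body:
        for literal in operationalize(antecedent, theory):
            if literal not in literals:  # keep order, drop duplicates
                literals.append(literal)
    return literals


candidate_body = operationalize("Cup", DOMAIN_THEORY)
print(candidate_body)
# ['BottomIsFlat', 'HasHandle', 'Light', 'HasConcavity', 'ConcavityPointsUp']
```

The resulting conjunction is offered to the search as one extra candidate specialization alongside the usual single-literal candidates. The candidate shown on the search slide omits HasHandle, which is consistent with the pruning mentioned in the last bullet.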

![Search in FOCL](http://slidetodoc.com/presentation_image_h/e76a81399593be22a482b53e4c5406c6/image-18.jpg)

Search in FOCL

[Figure: search tree rooted at Cup ←, with the examples covered by each candidate in brackets]

First level of candidate specializations:
• Cup ← HasHandle [2+, 3-]
• Cup ← ¬HasHandle [2+, 3-]
• Cup ← Fragile [2+, 4-]
• Cup ← BottomIsFlat, Light, HasConcavity, ConcavityPointsUp [4+, 2-]
• ...

Specializations of Cup ← BottomIsFlat, Light, HasConcavity, ConcavityPointsUp:
• ..., HandleOnTop [0+, 2-]
• ..., ¬HandleOnTop [4+, 0-]
• ..., HandleOnSide [2+, 0-]
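The bracketed counts can be turned into scores to see why the domain-theory candidate is preferred at the first level. The sketch below assumes FOIL's information-gain measure in its propositional form, and assumes that the empty rule Cup ← covers 4 positive and 6 negative examples (the sum of the HasHandle and ¬HasHandle counts). The function name `foil_gain` and the candidate labels are illustrative.

```python
import math


def foil_gain(p0, n0, p1, n1):
    """Propositional FOIL gain: p0/n0 covered before, p1/n1 after the literal."""
    if p1 == 0:
        return 0.0  # nothing positive remains covered, so nothing is gained
    before = math.log2(p0 / (p0 + n0))
    after = math.log2(p1 / (p1 + n1))
    return p1 * (after - before)


# Assumed coverage of the empty rule "Cup <-": [2+, 3-] plus [2+, 3-].
P0, N0 = 4, 6

# First-level candidates and their (positive, negative) coverage from the figure.
candidates = {
    "HasHandle": (2, 3),
    "not HasHandle": (2, 3),
    "Fragile": (2, 4),
    "BottomIsFlat, Light, HasConcavity, ConcavityPointsUp": (4, 2),
}

gains = {name: foil_gain(P0, N0, p, n) for name, (p, n) in candidates.items()}
best = max(gains, key=gains.get)

for name, gain in gains.items():
    print(f"{gain:6.3f}  {name}")
print("best:", best)
```

Under these assumptions the single-literal candidates score at or below zero, while the conjunction derived from the domain theory scores highest, so the search continues from it.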

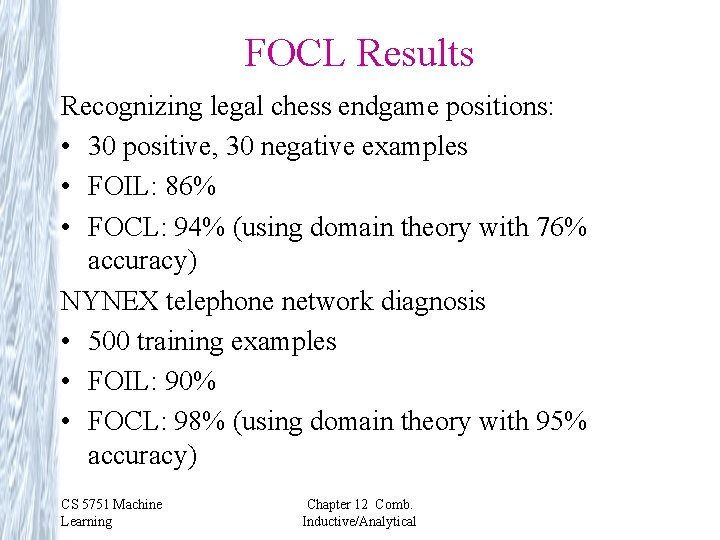

FOCL Results

Recognizing legal chess endgame positions:
• 30 positive, 30 negative examples
• FOIL: 86%
• FOCL: 94% (using domain theory with 76% accuracy)

NYNEX telephone network diagnosis:
• 500 training examples
• FOIL: 90%
• FOCL: 98% (using domain theory with 95% accuracy)
