Advances in Word Sense Disambiguation
Tutorial at AAAI-2005, July 9, 2005

Rada Mihalcea, University of North Texas, http://www.cs.unt.edu/~rada
Ted Pedersen, University of Minnesota, Duluth, http://www.d.umn.edu/~tpederse

[Note: slides have been modified/deleted/added]
[For those interested in lexical semantics, I suggest getting the entire tutorial]

Definitions
• Word sense disambiguation is the problem of selecting a sense for a word from a set of predefined possibilities.
  – The sense inventory usually comes from a dictionary or thesaurus.
  – Knowledge-intensive methods, supervised learning, and (sometimes) bootstrapping approaches
• Word sense discrimination is the problem of dividing the usages of a word into different meanings, without regard to any particular existing sense inventory.
  – Unsupervised techniques

Word Senses
• The meaning of a word in a given context
• Word sense representations
  – With respect to a dictionary
    chair = a seat for one person, with a support for the back; "he put his coat over the back of the chair and sat down"
    chair = the position of professor; "he was awarded an endowed chair in economics"
  – With respect to the translation in a second language
    chair = chaise
    chair = directeur
  – With respect to the context where it occurs (discrimination)
    “Sit on a chair” “Take a seat on this chair”
    “The chair of the Math Department” “The chair of the meeting”

Approaches to Word Sense Disambiguation
• Knowledge-Based Disambiguation
  – use of external lexical resources such as dictionaries and thesauri
  – discourse properties
• Supervised Disambiguation
  – based on a labeled training set
  – the learning system has:
    • a training set of feature-encoded inputs AND
    • their appropriate sense label (category)
• Unsupervised Disambiguation
  – based on unlabeled corpora
  – the learning system has:
    • a training set of feature-encoded inputs BUT
    • NOT their appropriate sense label (category)

All Words Word Sense Disambiguation
• Attempt to disambiguate all open-class words in a text
  “He put his suit over the back of the chair”
• Use information from dictionaries
  – Definitions / examples for each meaning
    • Find similarity between definitions and current context
  – Position in a semantic network
    • Find that “table” is closer to “chair/furniture” than to “chair/person”
• Use discourse properties
  – A word exhibits the same sense in a discourse / in a collocation

All Words Word Sense Disambiguation
• Minimally supervised approaches
  – Learn to disambiguate words using small annotated corpora
  – E.g., SemCor: a corpus where all open-class words are disambiguated
    • 200,000 running words
• Most frequent sense

Targeted Word Sense Disambiguation
(we saw this in the previous lecture notes)
• Disambiguate one target word
  “Take a seat on this chair”
  “The chair of the Math Department”
• WSD is viewed as a typical classification problem
  – use machine learning techniques to train a system
• Training:
  – Corpus of occurrences of the target word, each occurrence annotated with the appropriate sense
  – Build feature vectors:
    • a vector of relevant linguistic features that represents the context (e.g., a window of words around the target word)
• Disambiguation:
  – Disambiguate the target word in new unseen text
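The "window of words around the target word" can be sketched as below. This is only an illustration: the function name `window_features`, the feature-name format, and the window size `k` are assumptions, not part of the tutorial.

```python
def window_features(tokens, target_index, k=2):
    """Binary features for the words within +/- k positions of the target."""
    features = {}
    for offset in range(-k, k + 1):
        if offset == 0:
            continue  # skip the target word itself
        j = target_index + offset
        if 0 <= j < len(tokens):
            features[f"w[{offset:+d}]={tokens[j].lower()}"] = 1
    return features


tokens = "Take a seat on this chair".split()
print(window_features(tokens, tokens.index("chair")))
```

A supervised learner would be trained on such feature dictionaries paired with sense labels.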

Unsupervised Disambiguation
• Disambiguate word senses:
  – without supporting tools such as dictionaries and thesauri
  – without a labeled training text
• Without such resources, word senses are not labeled
  – We cannot say “chair/furniture” or “chair/person”
• We can:
  – Cluster/group the contexts of an ambiguous word into a number of groups
  – Discriminate between these groups without actually labeling them

Unsupervised Disambiguation
• Hypothesis: same senses of words will have similar neighboring words
• Disambiguation algorithm
  – Identify context vectors corresponding to all occurrences of a particular word
  – Partition them into regions of high density
  – Assign a sense to each such region
    “Sit on a chair” “Take a seat on this chair”
    “The chair of the Math Department” “The chair of the meeting”

Bounds on Performance
• Upper and lower bounds on performance:
  – Measure of how well an algorithm performs relative to the difficulty of the task.
• Upper bound:
  – Human performance
  – Around 97%-99% with few and clearly distinct senses
  – Inter-judge agreement:
    • With words with clear and distinct senses: 95% and up
    • With polysemous words with related senses: 65%-70%
• Lower bound (or baseline):
  – The assignment of a random sense / the most frequent sense
    • 90% is excellent for a word with 2 equiprobable senses
    • 90% is trivial for a word with 2 senses with probability ratios of 9 to 1
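The two lower-bound cases above follow from simple arithmetic on the sense distribution. A minimal sketch, with a hypothetical function name and made-up counts chosen to match the 1:1 and 9:1 ratios:

```python
def most_frequent_sense_baseline(sense_counts):
    """Accuracy obtained by always answering with the most frequent sense."""
    total = sum(sense_counts.values())
    return max(sense_counts.values()) / total


# Two equiprobable senses: the baseline is only 50%, so 90% is excellent.
print(most_frequent_sense_baseline({"sense1": 50, "sense2": 50}))
# Senses in a 9-to-1 ratio: the baseline already reaches 90%.
print(most_frequent_sense_baseline({"sense1": 90, "sense2": 10}))
```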

References
• (Gale, Church and Yarowsky 1992) Gale, W., Church, K., and Yarowsky, D. Estimating upper and lower bounds on the performance of word-sense disambiguation programs. ACL 1992.
• (Miller et al. 1994) Miller, G., Chodorow, M., Landes, S., Leacock, C., and Thomas, R. Using a semantic concordance for sense identification. ARPA Workshop 1994.
• (Miller 1995) Miller, G. WordNet: A lexical database. Communications of the ACM, 38(11), 1995.
• (Senseval) Senseval evaluation exercises. http://www.senseval.org

Part 3: Knowledge-based Methods for Word Sense Disambiguation

Outline
• Task definition
  – Machine Readable Dictionaries
• Algorithms based on Machine Readable Dictionaries
• Selectional Restrictions
• Measures of Semantic Similarity
• Heuristic-based Methods

Task Definition
• Knowledge-based WSD = class of WSD methods relying (mainly) on knowledge drawn from dictionaries and/or raw text
• Resources
  – Yes
    • Machine Readable Dictionaries
    • Raw corpora
  – No
    • Manually annotated corpora
• Scope
  – All open-class words

Machine Readable Dictionaries
• In recent years, most dictionaries have been made available in Machine Readable Dictionary (MRD) format
  – Oxford English Dictionary
  – Collins
  – Longman Dictionary of Contemporary English (LDOCE)
• Thesauruses add synonymy information
  – Roget's Thesaurus
• Semantic networks add more semantic relations
  – WordNet
  – EuroWordNet

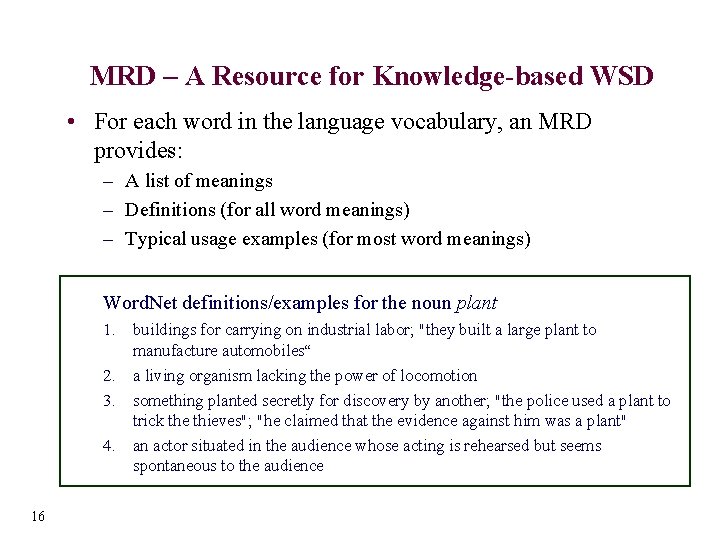

MRD – A Resource for Knowledge-based WSD
• For each word in the language vocabulary, an MRD provides:
  – A list of meanings
  – Definitions (for all word meanings)
  – Typical usage examples (for most word meanings)

WordNet definitions/examples for the noun plant
1. buildings for carrying on industrial labor; "they built a large plant to manufacture automobiles"
2. a living organism lacking the power of locomotion
3. something planted secretly for discovery by another; "the police used a plant to trick the thieves"; "he claimed that the evidence against him was a plant"
4. an actor situated in the audience whose acting is rehearsed but seems spontaneous to the audience

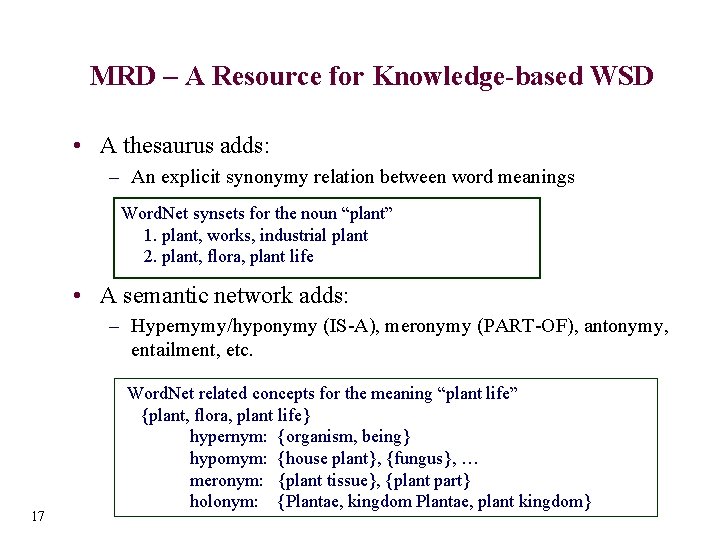

MRD – A Resource for Knowledge-based WSD
• A thesaurus adds:
  – An explicit synonymy relation between word meanings

WordNet synsets for the noun “plant”
1. plant, works, industrial plant
2. plant, flora, plant life

• A semantic network adds:
  – Hypernymy/hyponymy (IS-A), meronymy (PART-OF), antonymy, entailment, etc.

WordNet related concepts for the meaning “plant life” {plant, flora, plant life}
  hypernym: {organism, being}
  hyponym: {house plant}, {fungus}, …
  meronym: {plant tissue}, {plant part}
  holonym: {Plantae, kingdom Plantae, plant kingdom}


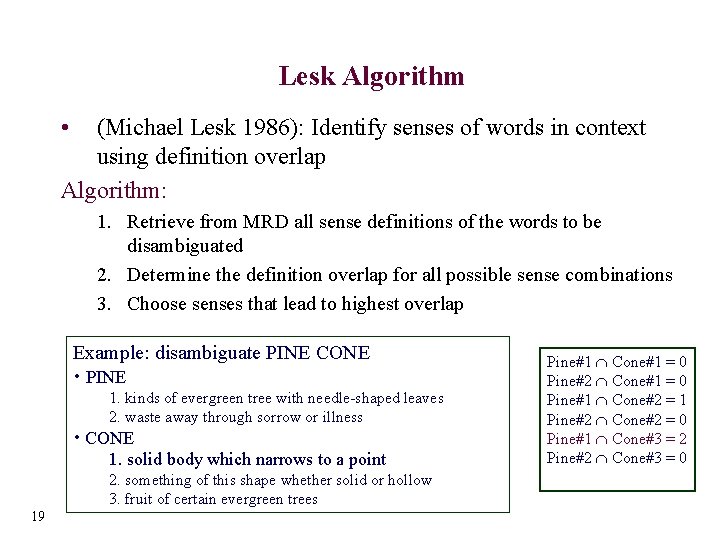

Lesk Algorithm
• (Michael Lesk 1986): Identify senses of words in context using definition overlap

Algorithm:
1. Retrieve from MRD all sense definitions of the words to be disambiguated
2. Determine the definition overlap for all possible sense combinations
3. Choose senses that lead to highest overlap

Example: disambiguate PINE CONE
• PINE
  1. kinds of evergreen tree with needle-shaped leaves
  2. waste away through sorrow or illness
• CONE
  1. solid body which narrows to a point
  2. something of this shape whether solid or hollow
  3. fruit of certain evergreen trees

Overlaps:
  Pine#1 ∩ Cone#1 = 0    Pine#2 ∩ Cone#1 = 0
  Pine#1 ∩ Cone#2 = 1    Pine#2 ∩ Cone#2 = 0
  Pine#1 ∩ Cone#3 = 2    Pine#2 ∩ Cone#3 = 0
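The three steps of the algorithm can be sketched as follows, using the PINE CONE definitions from the slide. The stopword list and the crude plural stripping are assumptions made for this illustration, so individual overlap counts can differ from those on the slide (for example, function words are ignored here), but the winning combination is the same.

```python
import re
from itertools import product

# Assumed stopword list; a real system would use a fuller one.
STOPWORDS = {"a", "an", "the", "of", "or", "to", "with", "which", "this", "through", "in"}


def content_words(text):
    """Lowercase, drop stopwords, and strip a trailing plural 's' (trees -> tree)."""
    words = [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]
    return {w[:-1] if w.endswith("s") and not w.endswith("ss") else w for w in words}


def lesk(definitions):
    """definitions: {word: [definition of sense 1, definition of sense 2, ...]}.

    Scores every sense combination by pairwise definition overlap and
    returns the best combination (1-based sense numbers) with its score.
    """
    words = list(definitions)
    bags = {w: [content_words(d) for d in definitions[w]] for w in words}
    best_score, best_combo = -1, None
    for combo in product(*(range(len(bags[w])) for w in words)):
        score = 0
        for i in range(len(words)):
            for j in range(i + 1, len(words)):
                score += len(bags[words[i]][combo[i]] & bags[words[j]][combo[j]])
        if score > best_score:
            best_score, best_combo = score, combo
    return {w: s + 1 for w, s in zip(words, best_combo)}, best_score


DEFINITIONS = {
    "pine": ["kinds of evergreen tree with needle-shaped leaves",
             "waste away through sorrow or illness"],
    "cone": ["solid body which narrows to a point",
             "something of this shape whether solid or hollow",
             "fruit of certain evergreen trees"],
}
print(lesk(DEFINITIONS))
```

Because every combination of senses is enumerated, the search space grows as the product of the number of senses per word, which is what motivates the simplified version.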

Lesk Algorithm: A Simplified Version
• Original Lesk definition: measure overlap between sense definitions for all words in context
  – Identify simultaneously the correct senses for all words in context
• Simplified Lesk (Kilgarriff & Rosenzweig 2000): measure overlap between sense definitions of a word and current context
  – Identify the correct sense for one word at a time
  – Search space significantly reduced

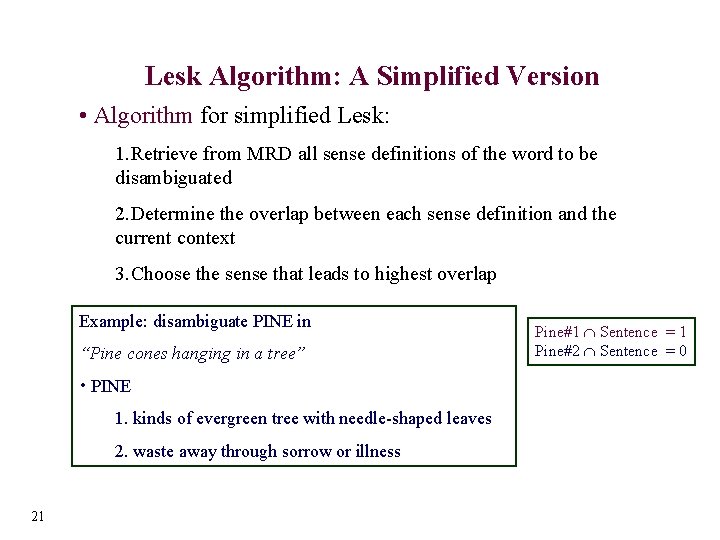

Lesk Algorithm: A Simplified Version
• Algorithm for simplified Lesk:
  1. Retrieve from MRD all sense definitions of the word to be disambiguated
  2. Determine the overlap between each sense definition and the current context
  3. Choose the sense that leads to highest overlap

Example: disambiguate PINE in “Pine cones hanging in a tree”
• PINE
  1. kinds of evergreen tree with needle-shaped leaves
  2. waste away through sorrow or illness

Overlaps:
  Pine#1 ∩ Sentence = 1
  Pine#2 ∩ Sentence = 0
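A minimal sketch of simplified Lesk on the same example. The stopword list and plural stripping are again assumptions of this illustration rather than part of the published algorithm.

```python
import re

# Assumed stopword list; a real system would use a fuller one.
STOPWORDS = {"a", "an", "the", "of", "or", "to", "with", "which", "this", "through", "in"}


def content_words(text):
    """Lowercase, drop stopwords, and strip a trailing plural 's' (cones -> cone)."""
    words = [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]
    return {w[:-1] if w.endswith("s") and not w.endswith("ss") else w for w in words}


def simplified_lesk(sense_definitions, context):
    """Return (best 1-based sense number, overlap of each definition with the context)."""
    context_bag = content_words(context)
    overlaps = [len(content_words(d) & context_bag) for d in sense_definitions]
    best = max(range(len(overlaps)), key=lambda i: overlaps[i])
    return best + 1, overlaps


PINE = ["kinds of evergreen tree with needle-shaped leaves",
        "waste away through sorrow or illness"]
print(simplified_lesk(PINE, "Pine cones hanging in a tree"))
```

Only the senses of one word are compared against a fixed context, so the cost is linear in the number of senses instead of exponential in the number of words.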

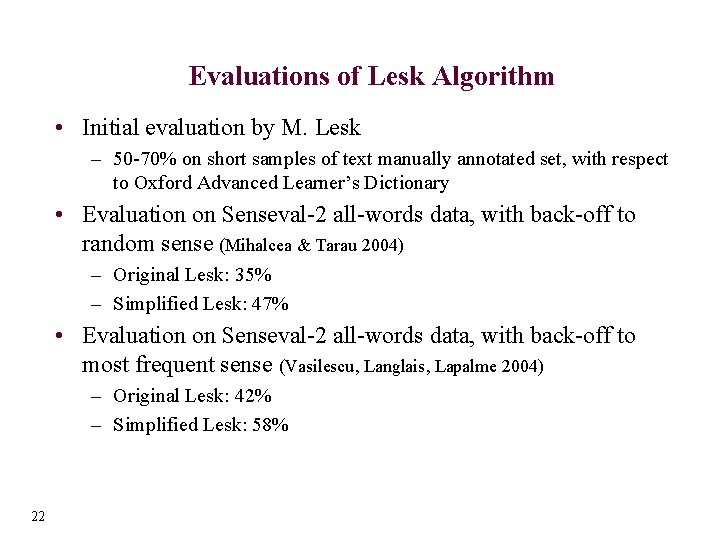

Evaluations of Lesk Algorithm
• Initial evaluation by M. Lesk
  – 50-70% on short samples of manually annotated text, with respect to the Oxford Advanced Learner’s Dictionary
• Evaluation on Senseval-2 all-words data, with back-off to random sense (Mihalcea & Tarau 2004)
  – Original Lesk: 35%
  – Simplified Lesk: 47%
• Evaluation on Senseval-2 all-words data, with back-off to most frequent sense (Vasilescu, Langlais, Lapalme 2004)
  – Original Lesk: 42%
  – Simplified Lesk: 58%


Selectional Preferences
• A way to constrain the possible meanings of words in a given context
• E.g., “Wash a dish” vs. “Cook a dish”
  – WASH-OBJECT vs. COOK-FOOD
• Capture information about possible relations between semantic classes
  – Common sense knowledge
• Alternative terminology
  – Selectional Restrictions
  – Selectional Preferences
  – Selectional Constraints

Acquiring Selectional Preferences
• From annotated corpora
  – We saw this in the previous lecture notes
• From raw corpora
  – Frequency counts
  – Information theory measures
  – Class-to-class relations

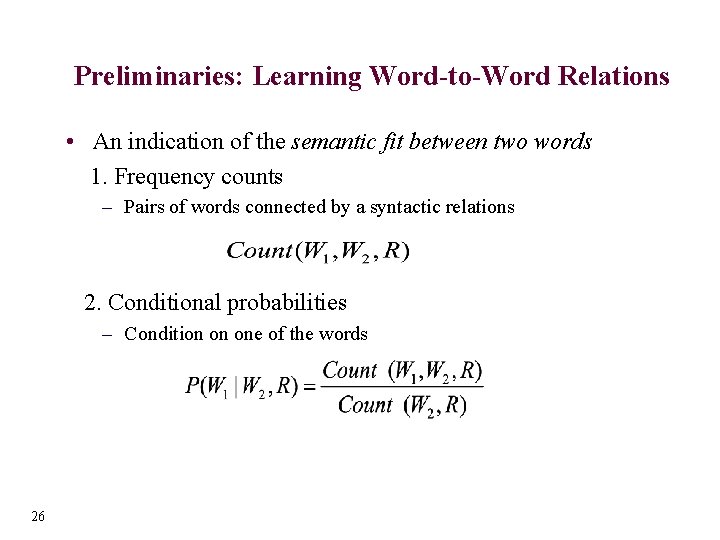

Preliminaries: Learning Word-to-Word Relations
• An indication of the semantic fit between two words
  1. Frequency counts
    – Pairs of words connected by a syntactic relation
  2. Conditional probabilities
    – Condition on one of the words
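Both measures can be sketched from a list of word pairs. The verb-object pairs below are invented for illustration, and conditioning on the verb is one of the two possible choices.

```python
from collections import Counter


def conditional_probabilities(pairs):
    """pairs: (verb, noun) tuples linked by one syntactic relation, e.g. verb-object.

    Returns P(noun | verb) estimated from raw frequency counts.
    """
    pairs = list(pairs)
    pair_counts = Counter(pairs)                        # 1. frequency counts
    verb_counts = Counter(verb for verb, _ in pairs)
    return {(verb, noun): count / verb_counts[verb]     # 2. condition on the verb
            for (verb, noun), count in pair_counts.items()}


# Hypothetical verb-object pairs extracted from a parsed corpus.
PAIRS = [("drink", "water"), ("drink", "water"), ("drink", "tea"), ("eat", "bread")]
print(conditional_probabilities(PAIRS))
```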

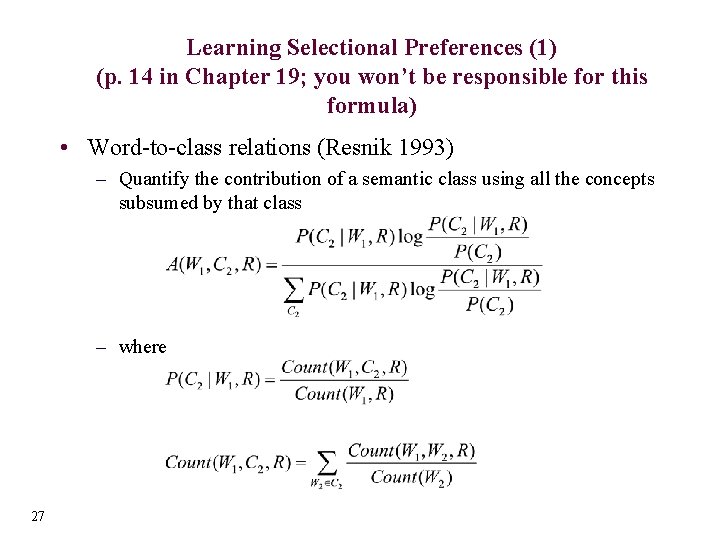

Learning Selectional Preferences (1)
(p. 14 in Chapter 19; you won’t be responsible for this formula)
• Word-to-class relations (Resnik 1993)
  – Quantify the contribution of a semantic class using all the concepts subsumed by that class


Semantic Similarity
• Words in a discourse must be related in meaning for the discourse to be coherent (Halliday and Hasan 1976)
• Use this property for WSD
  – Identify related meanings for words that share a common context

See Figure 19.6 in the chapter
• Basic idea: the shorter the path between two senses in a semantic network, the more similar they are.
• So, you can see that nickel and dime are closer to budget than they are to Richter scale (in Figure 19.6)

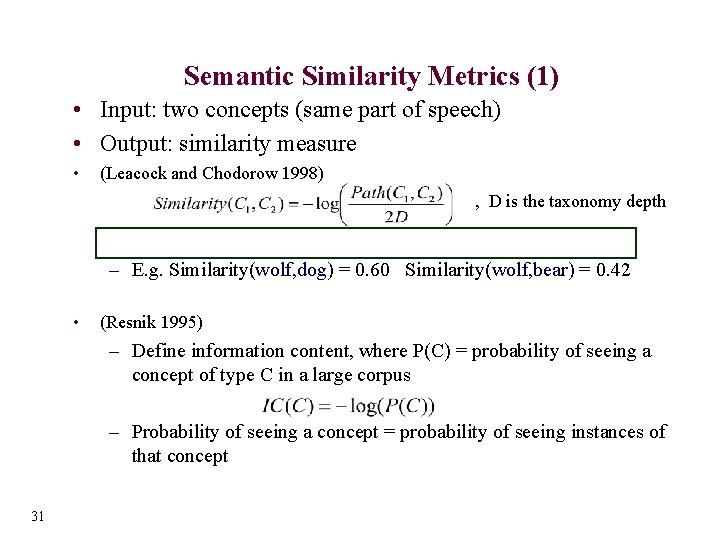

Semantic Similarity Metrics (1)
• Input: two concepts (same part of speech)
• Output: similarity measure
• (Leacock and Chodorow 1998): path-based similarity, where D is the taxonomy depth
  – E.g., Similarity(wolf, dog) = 0.60, Similarity(wolf, bear) = 0.42
• (Resnik 1995)
  – Define information content, where P(C) = probability of seeing a concept of type C in a large corpus
  – Probability of seeing a concept = probability of seeing instances of that concept

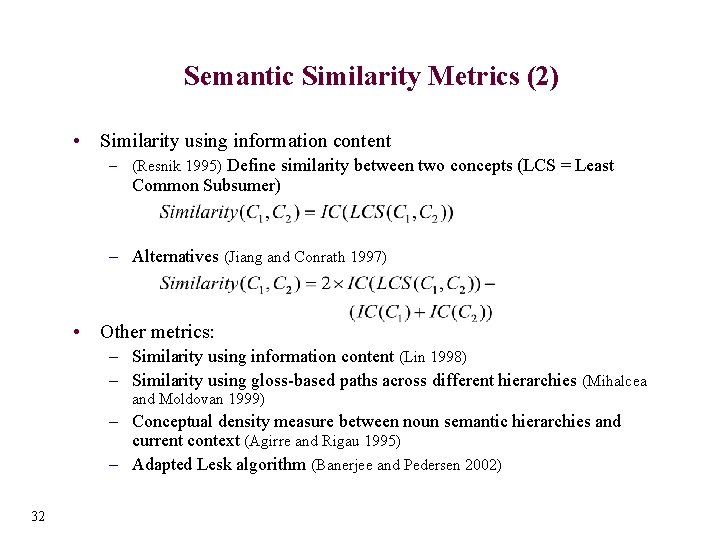

Semantic Similarity Metrics (2)
• Similarity using information content
  – (Resnik 1995) Define similarity between two concepts (LCS = Least Common Subsumer)
  – Alternatives (Jiang and Conrath 1997)
• Other metrics:
  – Similarity using information content (Lin 1998)
  – Similarity using gloss-based paths across different hierarchies (Mihalcea and Moldovan 1999)
  – Conceptual density measure between noun semantic hierarchies and current context (Agirre and Rigau 1995)
  – Adapted Lesk algorithm (Banerjee and Pedersen 2002)
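The formulas for these metrics did not survive in the slide text. The sketch below uses the forms in which the measures are commonly stated, which is an assumption about what the slides showed: Leacock-Chodorow as the negative log of path length over twice the taxonomy depth, information content as the negative log probability, Resnik as the information content of the LCS, Jiang-Conrath written as a similarity (negated distance), and Lin as a ratio. The natural logarithm and all counts are illustrative choices.

```python
import math


def lch_similarity(path_length, depth):
    """Leacock-Chodorow: -log(Path(C1, C2) / (2 * D)), D = taxonomy depth."""
    return -math.log(path_length / (2.0 * depth))


def information_content(concept_count, total_count):
    """Resnik: IC(C) = -log P(C), with P(C) estimated from corpus counts."""
    return -math.log(concept_count / total_count)


def resnik_similarity(ic_lcs):
    """Resnik: similarity is the information content of the least common subsumer."""
    return ic_lcs


def jiang_conrath_similarity(ic_c1, ic_c2, ic_lcs):
    """Jiang-Conrath as a similarity: 2 * IC(LCS) - (IC(C1) + IC(C2))."""
    return 2 * ic_lcs - (ic_c1 + ic_c2)


def lin_similarity(ic_c1, ic_c2, ic_lcs):
    """Lin: 2 * IC(LCS) / (IC(C1) + IC(C2))."""
    return 2 * ic_lcs / (ic_c1 + ic_c2)


# Hypothetical counts: 1000 concept instances in total, 10 of wolf, 40 of dog,
# and 100 under their assumed least common subsumer (canine).
ic_wolf = information_content(10, 1000)
ic_dog = information_content(40, 1000)
ic_canine = information_content(100, 1000)
print(resnik_similarity(ic_canine))
print(jiang_conrath_similarity(ic_wolf, ic_dog, ic_canine))
print(lin_similarity(ic_wolf, ic_dog, ic_canine))
```

In all of these, rarer (more specific) shared ancestors yield higher similarity, since their information content is larger.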

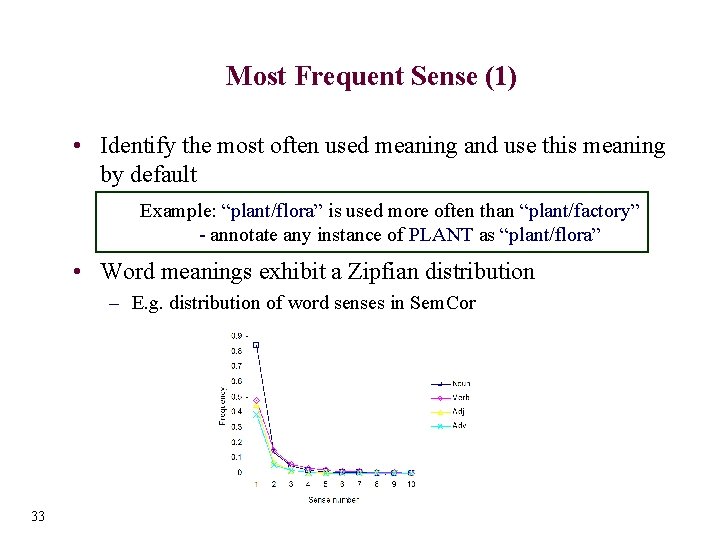

Most Frequent Sense (1)
• Identify the most often used meaning and use this meaning by default
  Example: “plant/flora” is used more often than “plant/factory”
  – annotate any instance of PLANT as “plant/flora”
• Word meanings exhibit a Zipfian distribution
  – E.g., distribution of word senses in SemCor

Most Frequent Sense (2)
(you aren’t responsible for this)
• Method 1: Find the most frequent sense in an annotated corpus
• Method 2: Find the most frequent sense using a method based on distributional similarity (McCarthy et al. 2004)
  1. Given a word w, find the top k distributionally similar words Nw = {n1, n2, …, nk}, with associated similarity scores {dss(w, n1), dss(w, n2), …, dss(w, nk)}
  2. For each sense wsi of w, identify the similarity with the words nj, using the sense of nj that maximizes this score
  3. Rank senses wsi of w based on the total similarity score

Most Frequent Sense (3)
• Word senses
  – pipe #1 = tobacco pipe
  – pipe #2 = tube of metal or plastic
• Distributionally similar words
  – N = {tube, cable, wire, tank, hole, cylinder, fitting, tap, …}
• For each word in N, find similarity with pipe#i (using the sense that maximizes the similarity)
  – pipe#1 – tube (#3) = 0.3
  – pipe#2 – tube (#1) = 0.6
• Compute score for each sense pipe#i
  – score (pipe#1) = 0.25
  – score (pipe#2) = 0.73

Note: results depend on the corpus used to find distributionally similar words => can find domain-specific predominant senses
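The ranking in steps 1 to 3 can be sketched as below. The slides only say that senses are ranked by a total similarity score; weighting each neighbour by its distributional similarity and normalising over the senses of the target word is an assumption here. Only the two tube similarities come from the slide; the dss values and the cable row are invented.

```python
def rank_senses(neighbours):
    """neighbours: {n_j: (dss(w, n_j), {sense_i: best similarity of sense_i to n_j})}.

    Each neighbour spreads its distributional similarity over the senses of w
    in proportion to how similar each sense is to that neighbour.
    """
    scores = {}
    for dss, sense_sims in neighbours.values():
        total = sum(sense_sims.values())
        if total == 0:
            continue  # this neighbour says nothing about any sense
        for sense, sim in sense_sims.items():
            scores[sense] = scores.get(sense, 0.0) + dss * sim / total
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


NEIGHBOURS = {
    "tube": (0.5, {"pipe#1": 0.3, "pipe#2": 0.6}),   # sense similarities from the slide
    "cable": (0.4, {"pipe#1": 0.1, "pipe#2": 0.4}),  # hypothetical values
}
print(rank_senses(NEIGHBOURS))
```

The first entry of the returned list is the predicted predominant sense for the corpus from which the neighbours were drawn.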

One Sense Per Discourse
• A word tends to preserve its meaning across all its occurrences in a given discourse (Gale, Church and Yarowsky 1992)
• What does this mean?
  E.g., if the ambiguous word PLANT occurs 10 times in a discourse, all instances of “plant” carry the same meaning
• Evaluation:
  – 8 words with two-way ambiguity, e.g., plant, crane, etc.
  – 98% of the two-word occurrences in the same discourse carry the same meaning
• The grain of salt: performance depends on granularity
  – (Krovetz 1998) experiments with words with more than two senses
  – Performance of “one sense per discourse” measured on SemCor is approx. 70%
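One simple way to apply the heuristic is to propagate the majority sense to every occurrence in the discourse. Majority voting is an assumption of this sketch; the slides do not prescribe how the discourse-level sense is chosen.

```python
from collections import Counter


def one_sense_per_discourse(tags):
    """tags: sense labels for each occurrence in one discourse (None = undecided).

    Returns the labels after assigning the majority sense to every occurrence.
    """
    known = [tag for tag in tags if tag is not None]
    if not known:
        return list(tags)  # nothing to propagate
    majority = Counter(known).most_common(1)[0][0]
    return [majority] * len(tags)


print(one_sense_per_discourse(["living", None, "living", "factory", None]))
```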

One Sense per Collocation
• A word tends to preserve its meaning when used in the same collocation (Yarowsky 1993)
  – Strong for adjacent collocations
  – Weaker as the distance between words increases
• An example
  The ambiguous word PLANT preserves its meaning in all its occurrences within the collocation “industrial plant”, regardless of the context where this collocation occurs
• Evaluation:
  – 97% precision on words with two-way ambiguity
• Finer granularity:
  – (Martinez and Agirre 2000) tested the “one sense per collocation” hypothesis on text annotated with WordNet senses
  – 70% precision on SemCor words

References
• (Agirre and Rigau 1995) Agirre, E. and Rigau, G. A proposal for word sense disambiguation using conceptual distance. RANLP 1995.
• (Agirre and Martinez 2001) Agirre, E. and Martinez, D. Learning class-to-class selectional preferences. CoNLL 2001.
• (Banerjee and Pedersen 2002) Banerjee, S. and Pedersen, T. An adapted Lesk algorithm for word sense disambiguation using WordNet. CICLING 2002.
• (Cowie, Guthrie and Guthrie 1992) Cowie, L., Guthrie, J. A., and Guthrie, L. Lexical disambiguation using simulated annealing. COLING 1992.
• (Gale, Church and Yarowsky 1992) Gale, W., Church, K., and Yarowsky, D. One sense per discourse. DARPA Workshop 1992.
• (Halliday and Hasan 1976) Halliday, M. and Hasan, R. Cohesion in English. Longman, 1976.
• (Galley and McKeown 2003) Galley, M. and McKeown, K. Improving word sense disambiguation in lexical chaining. IJCAI 2003.
• (Hirst and St-Onge 1998) Hirst, G. and St-Onge, D. Lexical chains as representations of context in the detection and correction of malapropisms. In WordNet: An electronic lexical database, MIT Press.
• (Jiang and Conrath 1997) Jiang, J. and Conrath, D. Semantic similarity based on corpus statistics and lexical taxonomy. COLING 1997.
• (Krovetz 1998) Krovetz, R. More than one sense per discourse. ACL-SIGLEX 1998.
• (Lesk 1986) Lesk, M. Automatic sense disambiguation using machine readable dictionaries: How to tell a pine cone from an ice cream cone. SIGDOC 1986.
• (Lin 1998) Lin, D. An information theoretic definition of similarity. ICML 1998.
• (Martinez and Agirre 2000) Martinez, D. and Agirre, E. One sense per collocation and genre/topic variations. EMNLP 2000.
• (Miller et al. 1994) Miller, G., Chodorow, M., Landes, S., Leacock, C., and Thomas, R. Using a semantic concordance for sense identification. ARPA Workshop 1994.
• (Miller 1995) Miller, G. WordNet: A lexical database. Communications of the ACM, 38(11), 1995.
• (Mihalcea and Moldovan 1999) Mihalcea, R. and Moldovan, D. A method for word sense disambiguation of unrestricted text. ACL 1999.
• (Mihalcea and Moldovan 2000) Mihalcea, R. and Moldovan, D. An iterative approach to word sense disambiguation. FLAIRS 2000.
• (Mihalcea, Tarau, Figa 2004) Mihalcea, R., Tarau, P., and Figa, E. PageRank on Semantic Networks with Application to Word Sense Disambiguation. COLING 2004.
• (Patwardhan, Banerjee, and Pedersen 2003) Patwardhan, S., Banerjee, S., and Pedersen, T. Using Measures of Semantic Relatedness for Word Sense Disambiguation. CICLING 2003.
• (Rada et al. 1989) Rada, R., Mili, H., Bicknell, E., and Blettner, M. Development and application of a metric on semantic nets. IEEE Transactions on Systems, Man, and Cybernetics, 19(1), 1989.
• (Resnik 1993) Resnik, P. Selection and Information: A Class-Based Approach to Lexical Relationships. University of Pennsylvania, 1993.
• (Resnik 1995) Resnik, P. Using information content to evaluate semantic similarity. IJCAI 1995.
• (Vasilescu, Langlais, Lapalme 2004) Vasilescu, F., Langlais, P., and Lapalme, G. Evaluating variants of the Lesk approach for disambiguating words. LREC 2004.
• (Yarowsky 1993) Yarowsky, D. One sense per collocation. ARPA Workshop 1993.


Part 4: Supervised Methods of Word Sense Disambiguation [This section has been deleted]

Part 5: Minimally Supervised Methods for Word Sense Disambiguation

Outline
• Task definition
  – What does “minimally” supervised mean?
• Bootstrapping algorithms
  – Co-training
  – Self-training
  – Yarowsky algorithm
• Using the Web for Word Sense Disambiguation
  – Web as a corpus
  – Web as collective mind

Task Definition
• Supervised WSD = learning sense classifiers starting with annotated data
• Minimally supervised WSD = learning sense classifiers from annotated data, with minimal human supervision
• Examples
  – Automatically bootstrap a corpus starting with a few human annotated examples
  – Use monosemous relatives / dictionary definitions to automatically construct sense tagged data


Bootstrapping Recipe
• Ingredients
  – (Some) labeled data
  – (Large amounts of) unlabeled data
  – (One or more) basic classifiers
• Output
  – Classifier that improves over the basic classifiers

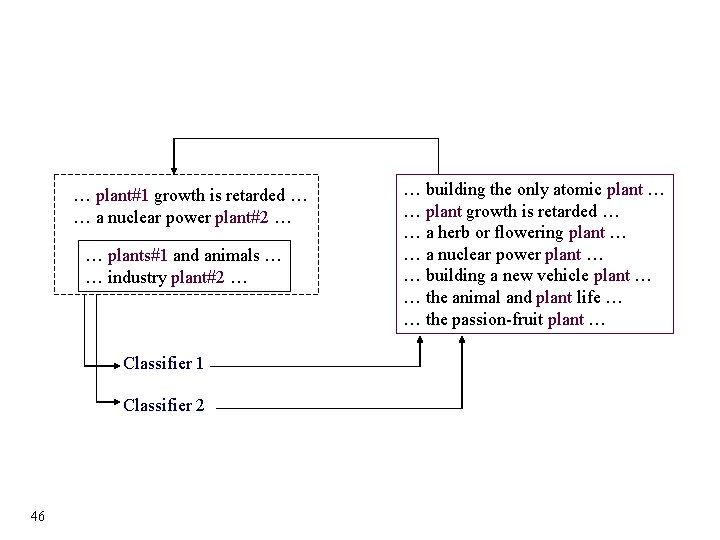

• Labeled examples
  – … plant#1 growth is retarded …
  – … a nuclear power plant#2 …
  – … plants#1 and animals …
  – … industry plant#2 …
• Classifiers
  – Classifier 1
  – Classifier 2
• Unlabeled examples
  – … building the only atomic plant …
  – … plant growth is retarded …
  – … a herb or flowering plant …
  – … a nuclear power plant …
  – … building a new vehicle plant …
  – … the animal and plant life …
  – … the passion-fruit plant …

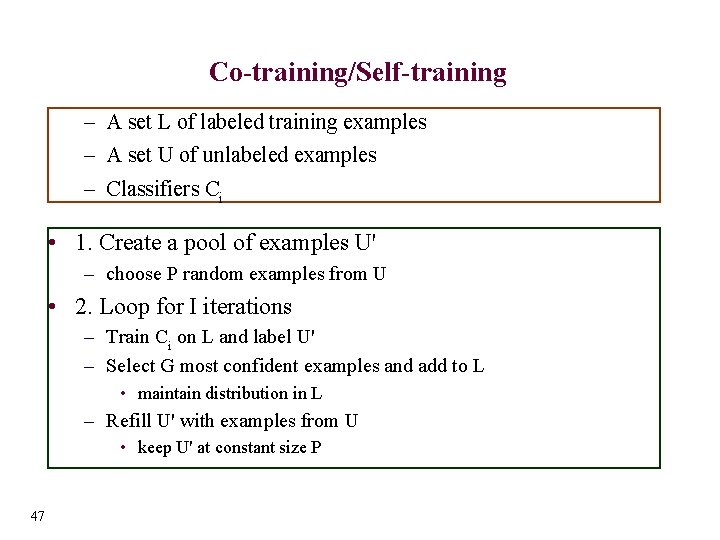

Co-training/Self-training
  – A set L of labeled training examples
  – A set U of unlabeled examples
  – Classifiers Ci
• 1. Create a pool of examples U'
  – choose P random examples from U
• 2. Loop for I iterations
  – Train Ci on L and label U'
  – Select G most confident examples and add to L
    • maintain distribution in L
  – Refill U' with examples from U
    • keep U' at constant size P
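The loop above can be sketched for the single-classifier (self-training) case as follows. The word-vote classifier, the toy contexts, and the parameter defaults are all assumptions made for illustration; the "maintain distribution in L" step is not implemented in this sketch.

```python
import random
from collections import Counter, defaultdict


def train(labeled):
    """Count how often each context word co-occurs with each sense."""
    counts = defaultdict(Counter)
    for words, sense in labeled:
        for word in words:
            counts[word][sense] += 1
    return counts


def predict(model, words):
    """Return (sense, confidence); confidence is the winning share of the votes."""
    votes = Counter()
    for word in words:
        votes.update(model.get(word, {}))
    if not votes:
        return None, 0.0
    sense, count = votes.most_common(1)[0]
    return sense, count / sum(votes.values())


def self_train(L, U, P=4, G=2, iterations=5, seed=0):
    rng = random.Random(seed)
    L, U = list(L), list(U)
    rng.shuffle(U)
    pool, U = U[:P], U[P:]                    # 1. pool U' of P random examples
    for _ in range(iterations):               # 2. loop for I iterations
        model = train(L)                      # train on L and label U'
        scored = [(predict(model, x), x) for x in pool]
        scored = [item for item in scored if item[0][0] is not None]
        scored.sort(key=lambda item: item[0][1], reverse=True)
        for (sense, _), x in scored[:G]:      # G most confident examples go to L
            L.append((x, sense))
            pool.remove(x)
        refill = P - len(pool)                # keep U' at constant size P
        pool, U = pool + U[:refill], U[refill:]
    return train(L), L


SEED_L = [(("growth", "retarded"), "living"), (("nuclear", "power"), "factory")]
UNLABELED = [("atomic", "nuclear"), ("growth", "flowering"),
             ("atomic", "building"), ("flowering", "herb")]
model, grown = self_train(SEED_L, UNLABELED, P=4, G=2, iterations=3)
print(len(grown), predict(model, ("herb",)))
```

Co-training follows the same loop but trains two classifiers on different views of the data, each adding its confident examples to the shared labeled set.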

Co-training
• (Blum and Mitchell 1998)
• Two classifiers
  – independent views
  – [independence condition can be relaxed]
• Co-training in Natural Language Learning
  – Statistical parsing (Sarkar 2001)
  – Co-reference resolution (Ng and Cardie 2003)
  – Part of speech tagging (Clark, Curran and Osborne 2003)
  – ...

Self-training
• (Nigam and Ghani 2000)
• One single classifier
• Retrain on its own output
• Self-training for Natural Language Learning
  – Part of speech tagging (Clark, Curran and Osborne 2003)
  – Co-reference resolution (Ng and Cardie 2003)
    • several classifiers through bagging

Yarowsky Algorithm
• (Yarowsky 1995)
• Similar to co-training
• Relies on two heuristics and a decision list
  – One sense per collocation:
    • Nearby words provide strong and consistent clues as to the sense of a target word
  – One sense per discourse:
    • The sense of a target word is highly consistent within a single document

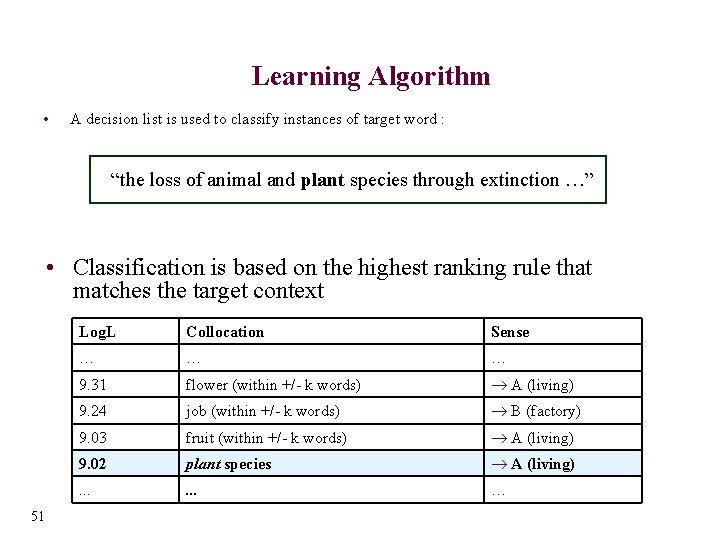

Learning Algorithm
• A decision list is used to classify instances of the target word:
  “the loss of animal and plant species through extinction …”
• Classification is based on the highest ranking rule that matches the target context

| LogL | Collocation | Sense |
|------|-------------|-------|
| … | … | … |
| 9.31 | flower (within +/- k words) | → A (living) |
| 9.24 | job (within +/- k words) | → B (factory) |
| 9.03 | fruit (within +/- k words) | → A (living) |
| 9.02 | plant species | → A (living) |
| … | … | … |
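Classification with a decision list can be sketched using the four rules shown above. The rule encoding (`"window"` vs. `"bigram"` tests), the window size `k=5`, and the assumption that the target word is the first word of a collocation rule are choices made for this illustration.

```python
# (log-likelihood, test, sense) triples taken from the slide's decision list.
RULES = [
    (9.31, ("window", "flower"), "A (living)"),
    (9.24, ("window", "job"), "B (factory)"),
    (9.03, ("window", "fruit"), "A (living)"),
    (9.02, ("bigram", "plant species"), "A (living)"),
]


def matches(test, tokens, target_index, k):
    kind, value = test
    if kind == "window":   # word anywhere within +/- k words of the target
        start, end = max(0, target_index - k), target_index + k + 1
        return value in tokens[start:target_index] + tokens[target_index + 1:end]
    if kind == "bigram":   # target word immediately followed by the second word
        first, second = value.split()
        following = tokens[target_index + 1:target_index + 2]
        return tokens[target_index] == first and following == [second]
    return False


def classify(tokens, target_index, rules=RULES, k=5):
    """Apply the highest-ranking rule that matches the target context."""
    for score, test, sense in sorted(rules, key=lambda rule: rule[0], reverse=True):
        if matches(test, tokens, target_index, k):
            return sense, score
    return None, 0.0


tokens = "the loss of animal and plant species through extinction".split()
print(classify(tokens, tokens.index("plant")))
```

Only the single best matching rule decides the sense; lower-ranked evidence is ignored rather than combined.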

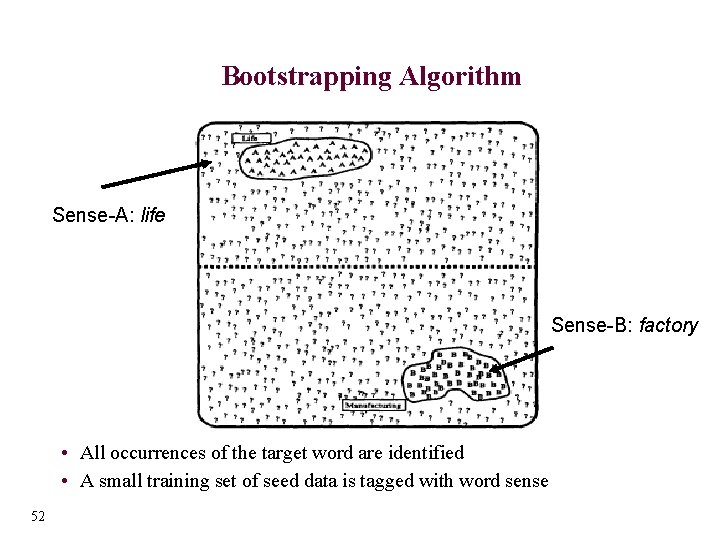

Bootstrapping Algorithm
Sense-A: life; Sense-B: factory
• All occurrences of the target word are identified
• A small training set of seed data is tagged with word sense

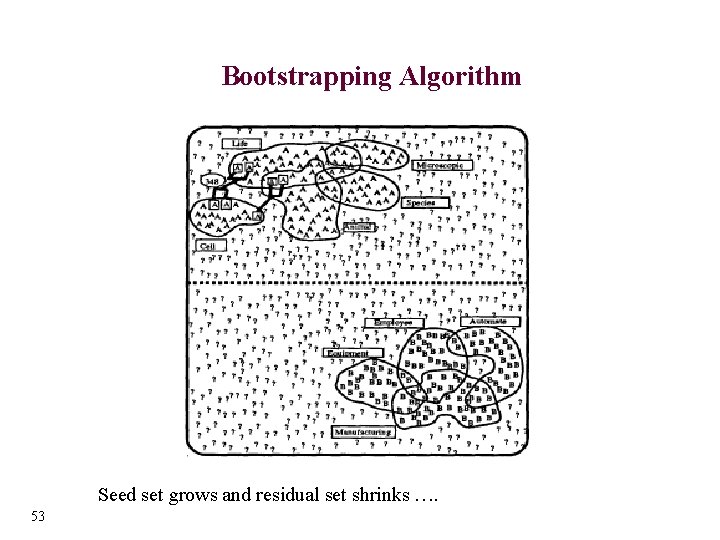

Bootstrapping Algorithm
• Seed set grows and residual set shrinks …

Bootstrapping Algorithm
• Convergence: stop when residual set stabilizes

Bootstrapping Algorithm
• Iterative procedure:
  – Train decision list algorithm on seed set
  – Classify residual data with decision list
  – Create new seed set by identifying samples that are tagged with a probability above a certain threshold
  – Retrain classifier on new seed set
• Selecting training seeds
  – Initial training set should accurately distinguish among possible senses
  – Strategies:
    • Select a single, defining seed collocation for each possible sense. Ex: “life” and “manufacturing” for target plant
    • Use words from dictionary definitions
    • Hand-label most frequent collocates
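A compact sketch of the iterative procedure, seeded with the collocations “life” and “manufacturing”. The smoothing constant, the score threshold, the bag-of-words contexts, and the toy data are assumptions; rules are ranked by the absolute log ratio of smoothed sense counts, and the one-sense-per-discourse step is left out.

```python
import math
from collections import Counter, defaultdict


def train_decision_list(tagged, alpha=0.1):
    """Rank context words by |log((count_A + alpha) / (count_B + alpha))|."""
    counts = defaultdict(Counter)
    for words, sense in tagged:
        for word in set(words):
            counts[word][sense] += 1
    rules = []
    for word, c in counts.items():
        a, b = c["A"] + alpha, c["B"] + alpha
        rules.append((abs(math.log(a / b)), word, "A" if a > b else "B"))
    return sorted(rules, reverse=True)


def classify(rules, words, threshold):
    """Sense of the best rule above the threshold that matches, else None."""
    for score, word, sense in rules:
        if score < threshold:
            break
        if word in words:
            return sense
    return None


def bootstrap(contexts, seeds, threshold=1.0, max_iter=10):
    # Tag the seed set: contexts containing a defining seed collocate.
    tags = {i: sense for i, words in enumerate(contexts)
            for seed, sense in seeds.items() if seed in words}
    rules = []
    for _ in range(max_iter):
        rules = train_decision_list([(contexts[i], s) for i, s in tags.items()])
        new_tags = dict(tags)
        for i, words in enumerate(contexts):   # classify the residual set
            if i not in new_tags:
                sense = classify(rules, words, threshold)
                if sense is not None:
                    new_tags[i] = sense
        if new_tags == tags:                   # residual set has stabilized
            break
        tags = new_tags
    return tags, rules


CONTEXTS = [
    {"plant", "life", "animal"}, {"plant", "manufacturing", "equipment"},
    {"plant", "animal", "species"}, {"plant", "equipment", "worker"},
    {"plant", "species", "extinction"}, {"plant", "worker", "union"},
]
tags, rules = bootstrap(CONTEXTS, {"life": "A", "manufacturing": "B"})
print(tags)
```

In this toy run the seed set grows from two tagged contexts to all six over two passes, after which nothing changes and the loop stops.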

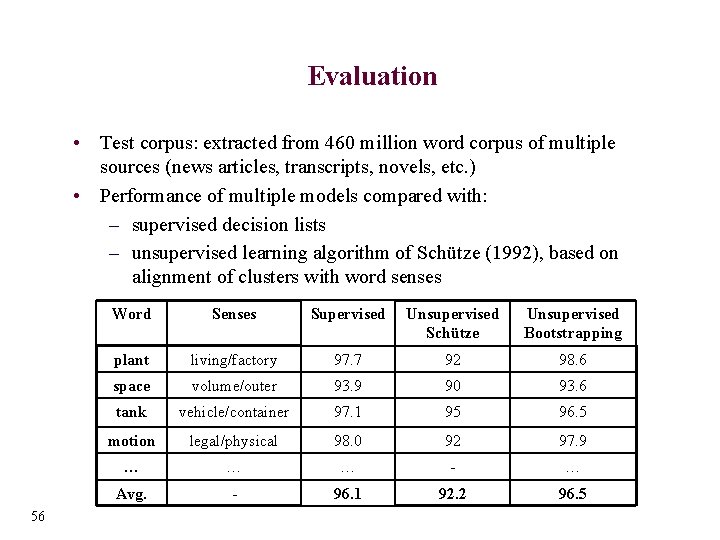

Evaluation
• Test corpus: extracted from 460 million word corpus of multiple sources (news articles, transcripts, novels, etc.)
• Performance of multiple models compared with:
  – supervised decision lists
  – unsupervised learning algorithm of Schütze (1992), based on alignment of clusters with word senses

| Word | Senses | Supervised | Unsupervised Schütze | Unsupervised Bootstrapping |
|------|--------|------------|----------------------|----------------------------|
| plant | living/factory | 97.7 | 92 | 98.6 |
| space | volume/outer | 93.9 | 90 | 93.6 |
| tank | vehicle/container | 97.1 | 95 | 96.5 |
| motion | legal/physical | 98.0 | 92 | 97.9 |
| … | … | … | - | … |
| Avg. | - | 96.1 | 92.2 | 96.5 |


The Web as a Corpus [This topic has been deleted]
• Use the Web as a large textual corpus
  – Build annotated corpora using monosemous relatives
  – Bootstrap annotated corpora starting with few seeds
    • Similar to (Yarowsky 1995)
• Use the (semi)automatically tagged data to train WSD classifiers

References
• (Abney 2002) Abney, S. Bootstrapping. Proceedings of ACL 2002.
• (Blum and Mitchell 1998) Blum, A. and Mitchell, T. Combining labeled and unlabeled data with co-training. Proceedings of COLT 1998.
• (Chklovski and Mihalcea 2002) Chklovski, T. and Mihalcea, R. Building a sense tagged corpus with Open Mind Word Expert. Proceedings of ACL 2002 workshop on WSD.
• (Clark, Curran and Osborne 2003) Clark, S., Curran, J. R., and Osborne, M. Bootstrapping POS taggers using unlabelled data. Proceedings of CoNLL 2003.
• (Mihalcea 1999) Mihalcea, R. An automatic method for generating sense tagged corpora. Proceedings of AAAI 1999.
• (Mihalcea 2002) Mihalcea, R. Bootstrapping large sense tagged corpora. Proceedings of LREC 2002.
• (Mihalcea 2004) Mihalcea, R. Co-training and Self-training for Word Sense Disambiguation. Proceedings of CoNLL 2004.
• (Ng and Cardie 2003) Ng, V. and Cardie, C. Weakly supervised natural language learning without redundant views. Proceedings of HLT-NAACL 2003.
• (Nigam and Ghani 2000) Nigam, K. and Ghani, R. Analyzing the effectiveness and applicability of co-training. Proceedings of CIKM 2000.
• (Sarkar 2001) Sarkar, A. Applying cotraining methods to statistical parsing. Proceedings of NAACL 2001.
• (Yarowsky 1995) Yarowsky, D. Unsupervised word sense disambiguation rivaling supervised methods. Proceedings of ACL 1995.

Part 6: Unsupervised Methods of Word Sense Discrimination

Outline
• What is Unsupervised Learning?
• Task Definition
• Agglomerative Clustering
• LSI/LSA
• Sense Discrimination Using Parallel Texts

What is Unsupervised Learning?
• Unsupervised learning identifies patterns in a large sample of data, without the benefit of any manually labeled examples or external knowledge sources
• These patterns are used to divide the data into clusters, where each member of a cluster has more in common with the other members of its own cluster than with the members of any other cluster
• Note! If you remove manual labels from supervised data and cluster, you may not discover the same classes as in supervised learning
  – Supervised classification identifies features that trigger a sense tag
  – Unsupervised clustering finds similarity between contexts

Task Definition
• Word Sense Discrimination reduces to the problem of finding the targeted words that occur in the most similar contexts and placing them in a cluster

Agglomerative Clustering
• Create a similarity matrix of instances to be discriminated
  – Results in a symmetric “instance by instance” matrix, where each cell contains the similarity score between a pair of instances
  – Typically a first order representation, where similarity is based on the features observed in the pair of instances
• Apply Agglomerative Clustering algorithm to matrix
  – To start, each instance is its own cluster
  – Form a cluster from the most similar pair of instances
  – Repeat until the desired number of clusters is obtained
• Advantages: high quality clustering
• Disadvantages: computationally expensive, must carry out exhaustive pairwise comparisons
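The clustering steps can be sketched on a small similarity matrix. Average linkage is assumed as the way to score similarity between clusters (the slide does not name a linkage criterion), and the matrix values are invented to mimic the four “chair” contexts used earlier.

```python
def agglomerative(sim, k):
    """sim: symmetric instance-by-instance similarity matrix; k: clusters wanted."""
    clusters = [[i] for i in range(len(sim))]   # each instance is its own cluster

    def link(a, b):
        # Average pairwise similarity between two clusters (assumed criterion).
        return sum(sim[i][j] for i in a for j in b) / (len(a) * len(b))

    while len(clusters) > k:
        # Exhaustive pairwise comparison: find the most similar pair of clusters.
        _, x, y = max((link(clusters[x], clusters[y]), x, y)
                      for x in range(len(clusters))
                      for y in range(x + 1, len(clusters)))
        clusters[x] = clusters[x] + clusters[y]
        del clusters[y]
    return clusters


# Hypothetical similarities: instances 0-1 are "furniture" contexts,
# instances 2-3 are "person" contexts.
SIM = [
    [1.0, 0.8, 0.1, 0.2],
    [0.8, 1.0, 0.2, 0.1],
    [0.1, 0.2, 1.0, 0.7],
    [0.2, 0.1, 0.7, 1.0],
]
print(agglomerative(SIM, 2))
```

The nested search over all cluster pairs at every merge is the exhaustive comparison that makes the method expensive on large data.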

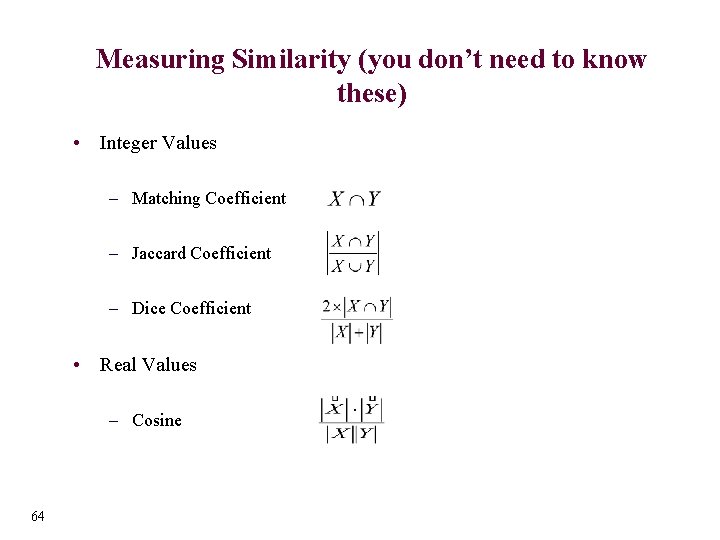

Measuring Similarity
(you don’t need to know these)
• Integer Values
  – Matching Coefficient
  – Jaccard Coefficient
  – Dice Coefficient
• Real Values
  – Cosine
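For reference, the four measures in their standard textbook forms, applied to feature sets (binary values) and to real-valued vectors. The example contexts are illustrative.

```python
import math


def matching(x, y):
    """Matching coefficient: number of shared features."""
    return len(x & y)


def jaccard(x, y):
    """Jaccard coefficient: shared features over all features in either set."""
    return len(x & y) / len(x | y)


def dice(x, y):
    """Dice coefficient: twice the shared features over the summed set sizes."""
    return 2 * len(x & y) / (len(x) + len(y))


def cosine(u, v):
    """Cosine of the angle between two real-valued vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


X = {"sit", "on", "chair"}
Y = {"seat", "on", "chair"}
print(matching(X, Y), jaccard(X, Y), dice(X, Y))
print(cosine([1.0, 2.0, 0.0], [2.0, 4.0, 0.0]))
```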

Evaluation of Unsupervised Methods
• If sense-tagged text is available, it can be used for evaluation
• Assume that sense tags represent “true” clusters, and compare these to discovered clusters
  – Find the mapping of clusters to senses that attains maximum accuracy
• Pseudo words are especially useful, since it is hard to find data that is discriminated
  – Pick two words or names from a corpus, and conflate them into one name. Then see how well you can discriminate.
  – http://www.d.umn.edu/~kulka020/kanaghaName.html
• Baseline algorithm: group all instances into one cluster; this will reach “accuracy” equal to the majority classifier
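Finding the cluster-to-sense mapping with maximum accuracy can be sketched by brute force over all one-to-one assignments, which is feasible for the handful of clusters typical here. Requiring the mapping to be one-to-one is an assumption of this sketch; the cluster assignments and gold tags are invented.

```python
from collections import Counter
from itertools import permutations


def best_mapping_accuracy(clusters, gold):
    """Accuracy under the one-to-one cluster-to-sense mapping that maximizes it."""
    cluster_ids = sorted(set(clusters))
    senses = sorted(set(gold))
    pairs = Counter(zip(clusters, gold))
    # Pad with None so that surplus clusters can stay unmapped.
    padded = senses + [None] * max(0, len(cluster_ids) - len(senses))
    best = 0
    for assignment in permutations(padded, len(cluster_ids)):
        correct = sum(pairs[(c, s)] for c, s in zip(cluster_ids, assignment))
        best = max(best, correct)
    return best / len(gold)


def majority_baseline(gold):
    """Accuracy of putting every instance in one cluster mapped to the top sense."""
    return Counter(gold).most_common(1)[0][1] / len(gold)


CLUSTERS = [0, 0, 0, 1, 1, 1]
GOLD = ["A", "A", "B", "B", "B", "B"]
print(best_mapping_accuracy(CLUSTERS, GOLD), majority_baseline(GOLD))
```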

Sense Discrimination Using Parallel Texts
• There is controversy as to what exactly is a “word sense” (e.g., Kilgarriff, 1997)
• It is sometimes unclear how fine-grained sense distinctions need to be in order to be useful in practice.
• Parallel text may present a solution to both problems!
  – Text in one language and its translation into another
• Resnik and Yarowsky (1997) suggest that word sense disambiguation concern itself with sense distinctions that manifest themselves across languages.
  – A “bill” in English may be a “pico” (bird jaw) or a “cuenta” (invoice) in Spanish.

Parallel Text
• Parallel text can be found on the Web, and there are several large corpora available (e.g., UN Parallel Text, Canadian Hansards)
• Manual annotation of sense tags is not required! However, text must be word aligned (translations identified between the two languages).
  – http://www.cs.unt.edu/~rada/wpt/ Workshop on Parallel Text, NAACL 2003
• Given word aligned parallel text, sense distinctions can be discovered (e.g., Li and Li, 2002; Diab, 2002)

References
• (Diab, 2002) Diab, Mona and Philip Resnik. An Unsupervised Method for Word Sense Tagging using Parallel Corpora. Proceedings of ACL, 2002.
• (Firth, 1957) A Synopsis of Linguistic Theory 1930-1955. In Studies in Linguistic Analysis, Oxford University Press, Oxford.
• (Kilgarriff, 1997) “I don’t believe in word senses”. Computers and the Humanities (31), pp. 91-113.
• (Li and Li, 2002) Word Translation Disambiguation Using Bilingual Bootstrapping. Proceedings of ACL, pp. 343-351.
• (McQuitty, 1966) Similarity Analysis by Reciprocal Pairs for Discrete and Continuous Data. Educational and Psychological Measurement (26), pp. 825-831.
• (Miller and Charles, 1991) Contextual correlates of semantic similarity. Language and Cognitive Processes, 6 (1), pp. 1-28.
• (Pedersen and Bruce, 1997) Distinguishing Word Sense in Untagged Text. In Proceedings of EMNLP-2, pp. 197-207.
• (Purandare and Pedersen, 2004) Word Sense Discrimination by Clustering Contexts in Vector and Similarity Spaces. Proceedings of the Conference on Natural Language and Learning, pp. 41-48.
• (Resnik and Yarowsky, 1997) A Perspective on Word Sense Disambiguation Methods and their Evaluation. The ACL-SIGLEX Workshop Tagging Text with Lexical Semantics, pp. 79-86.
• (Schütze, 1998) Automatic Word Sense Discrimination. Computational Linguistics, 24 (1), pp. 97-123.


Outline [most of this section deleted]
• Where to get the required ingredients?
  – Machine Readable Dictionaries
  – Machine Learning Algorithms
  – Sense Annotated Data
  – Raw Data
• Where to get WSD software?
• How to get your algorithms tested?
  – Senseval

Senseval
• Evaluation of WSD systems: http://www.senseval.org
  – Senseval 1: 1999 – about 10 teams
  – Senseval 2: 2001 – about 30 teams
  – Senseval 3: 2004 – about 55 teams
  – Senseval 4: 2007(?)
• Provides sense annotated data for many languages, for several tasks
  – Languages: English, Romanian, Chinese, Basque, Spanish, etc.
  – Tasks: Lexical Sample, All words, etc.
• Provides evaluation software
• Provides results of other participating systems

Thank You!
• Rada Mihalcea (rada@cs.unt.edu)
  – http://www.cs.unt.edu/~rada
• Ted Pedersen (tpederse@d.umn.edu)
  – http://www.d.umn.edu/~tpederse
