Word Sense Disambiguation CS 4705

Overview
• Selectional restriction based approaches
• Robust techniques
  – Machine Learning
    • Supervised
    • Unsupervised
  – Dictionary-based techniques

Disambiguation via Selectional Restrictions
• A step toward semantic parsing
  – Different verbs select for different thematic roles
    wash the dishes (takes washable-thing as patient)
    serve delicious dishes (takes food-type as patient)
• Method: rule-to-rule syntactico-semantic analysis
  – Semantic attachment rules are applied as sentences are syntactically parsed
    VP --> V NP
    V --> serve <theme> {theme: food-type}
  – Selectional restriction violation: no parse
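The restriction check described on this slide can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the parser-integrated method of the lecture: the toy `IS_A` hierarchy, the sense names `dish/crockery` and `dish/food`, and the helper names are all hypothetical.

```python
# Toy type hierarchy (hypothetical): each type maps to its parent type.
IS_A = {
    "dish/crockery": "washable-thing",
    "dish/food": "food-type",
    "washable-thing": "physical-object",
    "food-type": "physical-object",
}

# Selectional restrictions (hypothetical): the type each verb requires
# of its patient/theme argument.
RESTRICTIONS = {"wash": "washable-thing", "serve": "food-type"}

# Candidate senses for each ambiguous noun (hypothetical inventory).
SENSES = {"dish": ["dish/crockery", "dish/food"]}


def is_a(node, ancestor):
    """Return True if `ancestor` is `node` itself or lies above it in IS_A."""
    while node is not None:
        if node == ancestor:
            return True
        node = IS_A.get(node)
    return False


def admissible_senses(verb, noun):
    """Keep only the senses of `noun` that satisfy the verb's restriction."""
    required = RESTRICTIONS[verb]
    return [sense for sense in SENSES[noun] if is_a(sense, required)]


print(admissible_senses("wash", "dish"))   # ['dish/crockery']
print(admissible_senses("serve", "dish"))  # ['dish/food']
```

An empty result would correspond to the "no parse" case: every sense violates the verb's restriction.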

• Requires:
  – Writing selectional restrictions for each sense of each predicate, or using FrameNet
    • Serve alone has 15 verb senses
  – Hierarchical type information about each argument (à la WordNet)
    • How many hypernyms does dish have?
    • How many lexemes are hyponyms of dish?
• But also:
  – Sometimes selectional restrictions don’t restrict enough (Which dishes do you like?)
  – Sometimes they restrict too much (Eat dirt, worm! I’ll eat my hat!)

Can we take a more statistical approach?
How likely is dish/crockery to be the object of serve? dish/food?
• A simple approach (baseline): predict the most likely sense
  – Why might this work?
  – When will it fail?
• A better approach: learn from a tagged corpus
  – What needs to be tagged?
• An even better approach: Resnik’s selectional association (1997, 1998)
  – Estimate conditional probabilities of word senses from a corpus tagged only with verbs and their arguments (e.g. ragout is an object of served: Jane served/V ragout/Obj)

• How do we get the word sense probabilities?
  – For each verb object (e.g. ragout)
    • Look up hypernym classes in WordNet
    • Distribute “credit” for this object sense occurring with this verb among all the classes to which the object belongs
      Brian served/V the dish/Obj
      Jane served/V food/Obj
    • If ragout has N hypernym classes in WordNet, add 1/N to each class count (including food) as object of serve
    • If tureen has M hypernym classes in WordNet, add 1/M to each class count (including dish) as object of serve
  – Pr(c|v) = count(c, v)/count(v), for class c and verb v
  – How can this work?
    • Ambiguous words have many superordinate classes
      John served food/the dish/tuna/curry
    • There is a common sense among these which gets “credit” in each instance, eventually dominating the likelihood score

• To determine the most likely sense of ‘bass’ in Bill served bass
  – Having previously distributed ‘credit’ for each observed object of serve (fish-like things, musical-instrument-like things) across all of its hypernym classes (e.g. ‘fish’ and ‘musical instruments’)
  – Find the hypernym classes of bass (including fish and musical instruments)
  – Choose the class C with the highest probability, given that the verb is serve
• Results:
  – Baselines:
    • random choice of word sense: 26.8%
    • choose most frequent sense (NB: requires sense-labeled training corpus): 58.2%
  – Resnik’s: 44% correct with only pred/arg relations labeled
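The credit-distribution and class-selection steps can be sketched as follows. This is a simplified illustration that follows the slides' Pr(c|v) estimate only; Resnik's full selectional association measure also compares Pr(c|v) against the prior probability of the class, which is omitted here. The `HYPERNYMS` table is a hypothetical stand-in for a WordNet lookup, and the four-pair corpus is made up for the example.

```python
from collections import defaultdict

# Toy stand-in for WordNet (hypothetical): each noun maps to the classes
# that any of its senses belongs to.
HYPERNYMS = {
    "ragout": ["food"],
    "tuna": ["food", "fish"],
    "curry": ["food"],
    "dish": ["food", "crockery"],
    "bass": ["fish", "musical-instrument"],
}


def class_probabilities(pairs):
    """Estimate Pr(c | v) = count(c, v) / count(v) from (verb, object) pairs."""
    class_count = defaultdict(float)
    verb_count = defaultdict(float)
    for verb, obj in pairs:
        classes = HYPERNYMS[obj]
        # Split one unit of credit evenly among the object's classes.
        for c in classes:
            class_count[(c, verb)] += 1.0 / len(classes)
        verb_count[verb] += 1.0
    return {(c, v): n / verb_count[v] for (c, v), n in class_count.items()}


def best_class(verb, noun, probs):
    """Choose the class of `noun` with the highest Pr(c | verb)."""
    return max(HYPERNYMS[noun], key=lambda c: probs.get((c, verb), 0.0))


# Corpus tagged only with verbs and their objects (made-up data).
corpus = [("serve", "ragout"), ("serve", "tuna"),
          ("serve", "curry"), ("serve", "dish")]
probs = class_probabilities(corpus)

print(probs[("food", "serve")])            # 0.75
print(best_class("serve", "bass", probs))  # fish
```

No object in the toy corpus is sense-tagged, yet `food` accumulates most of the credit because it is shared by every object of serve, which is the effect the slides describe.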

Machine Learning Approaches
• Learn a classifier to assign one of the possible word senses to each word
  – Acquire knowledge from a labeled or unlabeled corpus
  – Human intervention only in labeling the corpus and selecting the set of features to use in training
• Input: feature vectors
  – Target (dependent variable)
  – Context (set of independent variables)
• Output: classification rules for unseen text

Supervised Learning
• Training and test sets with words labeled as to correct sense (It was the biggest [fish: bass] I’ve seen.)
  – Obtain values of independent variables automatically (POS, co-occurrence information, …)
  – Train classifier on training data
  – Test on test data
  – Result: classifier for use on unlabeled data

Input Features for WSD
• POS tags of target and neighbors
• Surrounding context words (stemmed or not)
• Punctuation, capitalization and formatting
• Partial parsing to identify thematic/grammatical roles and relations
• Collocational information:
  – How likely are target and left/right neighbor to co-occur
• Co-occurrence of neighboring words
  – Intuition: how often does sea (or related words) co-occur with bass?

  – How do we proceed?
    • Look at a window around the word to be disambiguated, in training data
    • Which features accurately predict the correct tag?
    • Can you think of other features that might be useful in general for WSD?
• Input to learner, e.g. Is the bass fresh today?
  [w-2, w-2/pos, w-1, w-1/pos, w+1, w+1/pos, w+2, w+2/pos, …]
  [is, V, the, DET, fresh, RB, today, N, …]
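The window-based feature vector shown above can be built with a short helper. This is a sketch under assumptions: the input is already POS-tagged, the tags are copied from the slide's example, and the `<pad>` symbol for positions outside the sentence is a hypothetical choice.

```python
def window_features(tagged, target_index, size=2):
    """Return [w-2, w-2/pos, w-1, w-1/pos, w+1, w+1/pos, w+2, w+2/pos].

    `tagged` is a list of (word, pos) pairs; the target word itself is skipped.
    """
    features = []
    for offset in range(-size, size + 1):
        if offset == 0:
            continue
        i = target_index + offset
        if 0 <= i < len(tagged):
            word, pos = tagged[i]
        else:
            word, pos = "<pad>", "<pad>"  # hypothetical padding symbol
        features.extend([word.lower(), pos])
    return features


# Tags follow the slide's example; they are not the output of a real tagger.
sentence = [("Is", "V"), ("the", "DET"), ("bass", "N"),
            ("fresh", "RB"), ("today", "N"), ("?", "PUNCT")]

print(window_features(sentence, 2))
# ['is', 'V', 'the', 'DET', 'fresh', 'RB', 'today', 'N']
```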

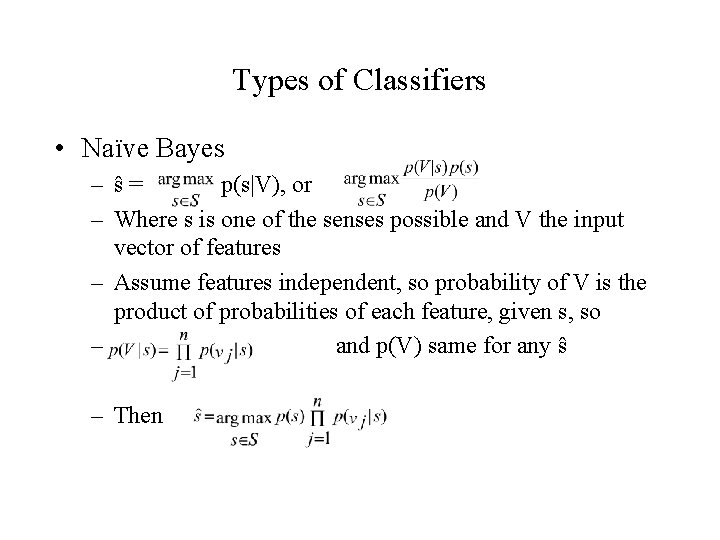

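Types of Classifiers
• Naïve Bayes
  – $\hat{s} = \arg\max_{s \in S} p(s \mid V)$, or $\hat{s} = \arg\max_{s \in S} \dfrac{p(V \mid s)\, p(s)}{p(V)}$
  – Where s is one of the possible senses in S and $V = (v_1, \ldots, v_n)$ is the input vector of features
  – Assume features are independent, so the probability of V given s is the product of the probabilities of each feature given s: $p(V \mid s) = \prod_{j=1}^{n} p(v_j \mid s)$
  – and p(V) is the same for any s
  – Then $\hat{s} = \arg\max_{s \in S} p(s) \prod_{j=1}^{n} p(v_j \mid s)$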
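A Naïve Bayes sense classifier along these lines can be sketched as below. The add-one smoothing and the use of log probabilities are assumptions added so the example runs on tiny data; the slide does not specify them. The sense labels `fish` and `music` and the training contexts are made up.

```python
import math
from collections import Counter, defaultdict


def train(examples):
    """Collect the counts Naive Bayes needs from (sense, feature_list) pairs."""
    sense_counts = Counter()
    feature_counts = defaultdict(Counter)
    vocab = set()
    for sense, features in examples:
        sense_counts[sense] += 1
        for f in features:
            feature_counts[sense][f] += 1
            vocab.add(f)
    return sense_counts, feature_counts, vocab


def classify(features, model):
    """Return argmax over s of log p(s) + sum_j log p(v_j | s)."""
    sense_counts, feature_counts, vocab = model
    total = sum(sense_counts.values())
    best_sense, best_score = None, float("-inf")
    for sense, n in sense_counts.items():
        score = math.log(n / total)
        # Add-one smoothing (an assumption) avoids log(0) for unseen features.
        denom = sum(feature_counts[sense].values()) + len(vocab)
        for f in features:
            score += math.log((feature_counts[sense][f] + 1) / denom)
        if score > best_score:
            best_sense, best_score = sense, score
    return best_sense


# Made-up training contexts for the two senses of "bass".
examples = [
    ("fish", ["caught", "river", "striped"]),
    ("fish", ["fresh", "grilled", "sea"]),
    ("music", ["guitar", "player", "band"]),
    ("music", ["played", "jazz", "guitar"]),
]
model = train(examples)

print(classify(["grilled", "fresh"], model))  # fish
print(classify(["jazz", "band"], model))      # music
```

p(V) is never computed, since it is the same for every sense and does not affect the argmax.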

Rule Induction Learners (e.g. Ripper)
• Given feature vectors of values for the independent variables, each paired with the observed sense in the training set (e.g. [fishing, NP, 3, …] + bass2)
• Produce a set of rules that perform best on the training data, e.g.
  – bass2 if w-1==‘fishing’ & pos==NP
  – …

Decision Lists
  – like case statements applying tests to input in turn
    fish within window --> bass1
    striped bass --> bass1
    guitar within window --> bass2
    bass player --> bass2
    …
  – Yarowsky ’96’s approach orders tests by individual accuracy on the entire training set, based on log-likelihood ratio
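A decision list ordered by log-likelihood ratio can be sketched as follows. This is an illustration in the spirit of the approach described, not Yarowsky's exact procedure: the smoothing constant `alpha`, the restriction to two senses, the use of single words as tests, and the training data are all assumptions. The labels `bass1` (fish) and `bass2` (music) stand for the slide's two senses.

```python
import math
from collections import Counter, defaultdict


def build_decision_list(examples, senses=("bass1", "bass2"), alpha=0.1):
    """Rank single-feature tests by |log(P(sense_a | f) / P(sense_b | f))|."""
    counts = defaultdict(Counter)
    for sense, features in examples:
        for f in set(features):
            counts[f][sense] += 1
    a, b = senses
    rules = []
    for f, c in counts.items():
        # alpha is a smoothing constant (an assumption) to avoid log(0).
        llr = math.log((c[a] + alpha) / (c[b] + alpha))
        if llr != 0:  # drop tests that favor neither sense
            rules.append((abs(llr), f, a if llr > 0 else b))
    rules.sort(key=lambda rule: rule[0], reverse=True)
    return rules


def classify(features, rules, default):
    """Apply tests in order; the first one that matches decides the sense."""
    present = set(features)
    for _, f, sense in rules:
        if f in present:
            return sense
    return default


# Made-up training contexts.
examples = [
    ("bass1", ["fish", "striped"]),
    ("bass1", ["fish", "caught"]),
    ("bass1", ["fish", "play"]),
    ("bass2", ["guitar", "player"]),
    ("bass2", ["guitar", "play"]),
]
rules = build_decision_list(examples)

print(classify(["play", "fish"], rules, default="bass1"))       # bass1
print(classify(["striped", "guitar"], rules, default="bass1"))  # bass2
```

In the second call both a fish cue and a music cue are present; `guitar` wins because its test ranks higher in the list, which is the case-statement behavior the slide describes.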

• Bootstrapping I
  – Start with a few labeled instances of target item as seeds to train initial classifier, C
  – Use high confidence classifications of C on unlabeled data as training data
  – Iterate
• Bootstrapping II
  – Start with sentences containing words strongly associated with each sense (e.g. sea and music for bass), either intuitively or from corpus or from dictionary entries
  – One Sense per Discourse hypothesis
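The seed-and-iterate loop can be sketched as below. This is a deliberately crude illustration: the "classifier" is just a count of sense-indicator words, "high confidence" is a score margin, and new indicators are any words seen with only one sense so far. All of these choices, the parameter names, and the data are assumptions; a real system would use a trained classifier with proper confidence estimates and would filter out function words.

```python
from collections import defaultdict


def bootstrap(contexts, seeds, rounds=5, margin=1):
    """Label contexts starting from seed words; needs at least two senses.

    contexts: list of word lists; seeds: {sense: set of seed words}.
    Returns {context_index: sense} for every context that got labeled.
    """
    indicators = {s: set(words) for s, words in seeds.items()}
    labels = {}
    for _ in range(rounds):
        changed = False
        # Step 1: label contexts where one sense clearly outscores the rest.
        for i, ctx in enumerate(contexts):
            if i in labels:
                continue
            scores = {s: len(indicators[s] & set(ctx)) for s in indicators}
            ranked = sorted(scores, key=scores.get, reverse=True)
            if scores[ranked[0]] - scores[ranked[1]] >= margin:
                labels[i] = ranked[0]
                changed = True
        if not changed:
            break
        # Step 2: "retrain" by rebuilding the indicator sets from the seeds
        # plus every word seen with exactly one sense so far.
        seen = defaultdict(set)
        for i, sense in labels.items():
            for w in contexts[i]:
                seen[w].add(sense)
        indicators = {s: set(words) for s, words in seeds.items()}
        for w, senses in seen.items():
            if len(senses) == 1:
                indicators[next(iter(senses))].add(w)
    return labels


# Made-up unlabeled contexts for "bass".
contexts = [
    ["caught", "a", "bass", "in", "the", "sea"],
    ["the", "bass", "player", "loves", "music"],
    ["he", "caught", "a", "huge", "bass"],
    ["the", "bass", "player", "tuned", "up"],
]
seeds = {"fish": {"sea"}, "music": {"music"}}

print(bootstrap(contexts, seeds))
# {0: 'fish', 1: 'music', 2: 'fish', 3: 'music'}
```

The last two contexts contain no seed word; they are labeled only in the second round, after `caught` and `player` have been picked up from the first round's confident labels.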

Unsupervised Learning
• Cluster feature vectors to ‘discover’ word senses using some similarity metric (e.g. cosine distance)
  – Represent each cluster as average of feature vectors it contains
  – Label clusters by hand with known senses
  – Classify unseen instances by proximity to these known and labeled clusters
• Evaluation problem
  – What are the ‘right’ senses?
  – Cluster impurity
  – How do you know how many clusters to create?
  – Some clusters may not map to ‘known’ senses
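The last two steps of the clustering approach (averaging each cluster, then classifying by proximity) can be sketched as follows. The clustering itself is assumed to have been done already and the clusters hand-labeled; the four feature dimensions and all vectors are made up for the example.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def centroid(vectors):
    """Represent a cluster as the average of the vectors it contains."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


# Hypothetical dimensions: counts of "sea", "fish", "guitar", "band"
# in the context window of each occurrence of "bass".
clusters = {
    "fish": [[2, 1, 0, 0], [1, 2, 0, 0]],
    "music": [[0, 0, 2, 1], [0, 0, 1, 1]],
}
centroids = {label: centroid(vectors) for label, vectors in clusters.items()}


def classify(vector):
    """Assign an unseen instance to the most similar labeled centroid."""
    return max(centroids, key=lambda label: cosine(vector, centroids[label]))


print(classify([1, 0, 0, 0]))  # fish
print(classify([0, 1, 3, 1]))  # music
```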

Dictionary Approaches
• Problem of scale for all ML approaches
  – Build a classifier for each sense ambiguity
• Machine readable dictionaries (Lesk ’86)
  – Retrieve all definitions of content words occurring in context of target (e.g. the happy seafarer ate the bass)
  – Compare for overlap with sense definitions of target entry (bass2: a type of fish that lives in the sea)
  – Choose sense with most overlap
• Limits: entries are short --> expand entries to ‘related’ words
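The overlap computation can be sketched as below. The glosses in `DEFINITIONS`, the `bass1` gloss, and the stop-word list are written for this example and are not taken from a real dictionary; only the `bass2` gloss comes from the slide.

```python
# Hypothetical stop-word list used to keep only content words.
STOP = {"a", "an", "the", "of", "in", "that", "or", "and", "who", "on", "to"}

# Toy dictionary (hypothetical glosses) for the context words.
DEFINITIONS = {
    "happy": ["feeling pleasure"],
    "seafarer": ["a person who travels on the sea"],
    "ate": ["consumed food"],
}

# Sense glosses for the target; the bass2 gloss is the one on the slide.
SENSES = {
    "bass": {
        "bass1": "the lowest part in music or an instrument that plays it",
        "bass2": "a type of fish that lives in the sea",
    }
}


def content_words(text):
    """Lowercase, split on whitespace, and drop stop words."""
    return {w for w in text.lower().split() if w not in STOP}


def lesk(target, context):
    """Pick the sense whose gloss overlaps most with the context words' glosses."""
    context_bag = set()
    for word in context:
        for gloss in DEFINITIONS.get(word, []):
            context_bag |= content_words(gloss)

    def overlap(sense):
        return len(content_words(SENSES[target][sense]) & context_bag)

    return max(SENSES[target], key=overlap)


print(lesk("bass", ["the", "happy", "seafarer", "ate", "the", "bass"]))  # bass2
```

The decision rests on a single shared word (`sea`), which illustrates the limit noted on the slide: short entries give little to overlap with.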

Summary
• Many useful approaches developed to do WSD
  – Supervised and unsupervised ML techniques
  – Novel uses of existing resources (WN, dictionaries)
• Future
  – More tagged training corpora becoming available
  – New learning techniques being tested, e.g. co-training
• Next class:
  – Ch 17: 3-5
