Web Crawler and Database

Dr. Ming-Kai Jiau (焦名楷), Research Assistant Professor, National Taipei University of Technology

Data-driven Business
Big data leads to massive data-driven businesses. Building a data-driven business of this scale takes a lot of time, effort, and money, largely because of the data sources: you have to collect a significant amount of data before you can launch your product or project.
◦ Direct user feedback (questionnaires, website form input, etc.)
◦ Server logs (logs from web servers, mail servers, proxy servers, etc.)
◦ Third-party APIs (Twitter, Facebook, Google, Instagram, etc.)
Be proactive in collecting data from the web.

What should you begin with?
IF YOU ARE STARTING TO COLLECT DATA FROM THE WEB

That's to learn Web Crawler
A web crawler (also called a spider, robot, or scutter) is an Internet bot that systematically browses the World Wide Web (web pages) while collecting web content.
Search engine
◦ Typically for the purpose of web indexing, such as Googlebot, Bingbot, etc.
◦ Crawling (website, meta, sitemap) + data indexing + result search
◦ <meta name="keywords" content="空腹熊貓是最方便的美食外送網✔我們提供便當, 日式料理, 義大利麵, 披薩, 等各式美食✔線上或者手機APP訂餐!方便快速✔">
◦ (The keywords above read roughly: "foodpanda is the most convenient food delivery site; we offer bento, Japanese food, pasta, pizza, and more; order online or with the mobile app, quick and easy.")
Web crawler in the age of Big Data
◦ This is the tool we embrace to collect data (even big data)
◦ Crawling + scraping + data storing

The origin of web crawling is the URL
UNIFORM RESOURCE LOCATOR

For example: http://www.ntut.edu.tw/

Enter

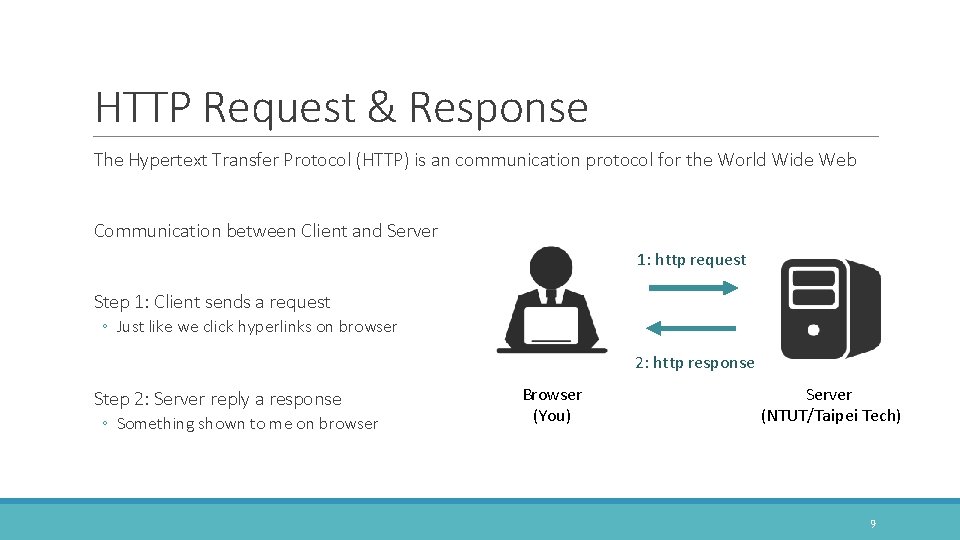

HTTP Request & Response
The Hypertext Transfer Protocol (HTTP) is a communication protocol for the World Wide Web. It defines the communication between a client, the browser (you), and a server (NTUT/Taipei Tech).
Step 1: The client sends an HTTP request
◦ Just as when we click a hyperlink in the browser
Step 2: The server replies with an HTTP response
◦ This is what the browser then shows to us

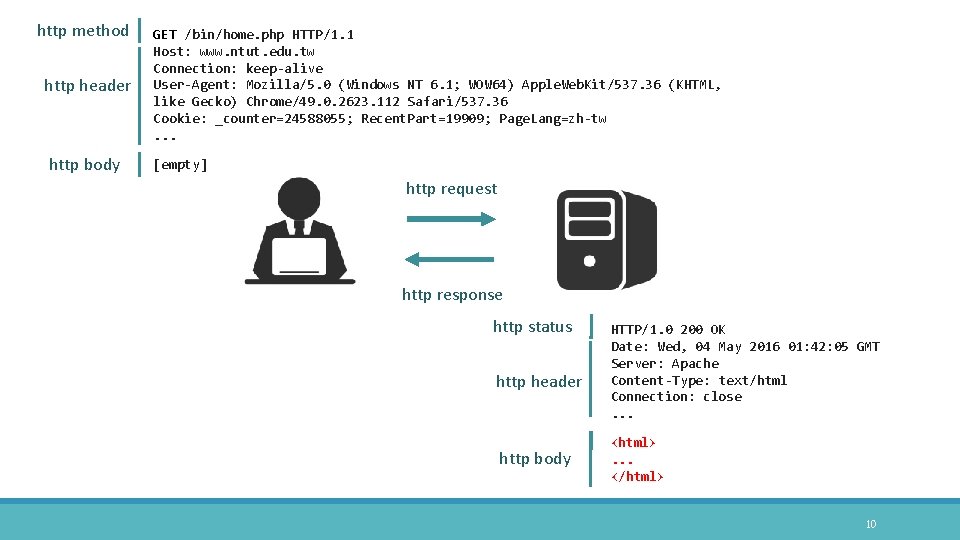

HTTP request (HTTP method, HTTP header, HTTP body)
    GET /bin/home.php HTTP/1.1
    Host: www.ntut.edu.tw
    Connection: keep-alive
    User-Agent: Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/49.0.2623.112 Safari/537.36
    Cookie: _counter=24588055; RecentPart=19909; PageLang=zh-tw
    ...
    [empty]
HTTP response (HTTP status, HTTP header, HTTP body)
    HTTP/1.0 200 OK
    Date: Wed, 04 May 2016 01:42:05 GMT
    Server: Apache
    Content-Type: text/html
    Connection: close
    ...
    <html>...</html>
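
To make the status/header/body split concrete, here is a minimal sketch in Python 3 (standard library only) that takes the response text from this slide apart. The helper name `split_http_response` is hypothetical, and real responses are better handled by an HTTP library; this only illustrates where the blank line separates header from body.

```python
# The raw response from the slide, with explicit CRLF line endings.
RAW_RESPONSE = (
    "HTTP/1.0 200 OK\r\n"
    "Date: Wed, 04 May 2016 01:42:05 GMT\r\n"
    "Server: Apache\r\n"
    "Content-Type: text/html\r\n"
    "Connection: close\r\n"
    "\r\n"
    "<html>...</html>"
)


def split_http_response(raw):
    """Return (status_code, reason, headers, body) for a raw response string."""
    # The first blank line separates the header section from the body.
    head, _, body = raw.partition("\r\n\r\n")
    status_line, *header_lines = head.split("\r\n")
    _version, code, reason = status_line.split(" ", 2)
    headers = {}
    for line in header_lines:
        # Split at the first colon only, so values such as dates stay intact.
        name, _, value = line.partition(":")
        headers[name.strip()] = value.strip()
    return int(code), reason, headers, body


status, reason, headers, body = split_http_response(RAW_RESPONSE)
print(status, reason)
print(headers["Content-Type"])
print(body)
```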

HTTP request

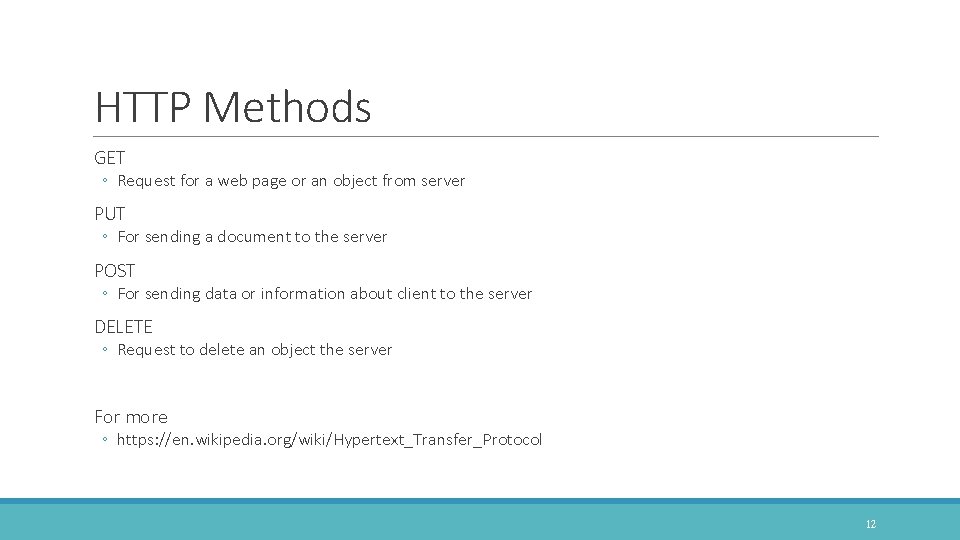

HTTP Methods
GET
◦ Requests a web page or an object from the server
PUT
◦ Sends a document to the server
POST
◦ Sends data or information about the client to the server
DELETE
◦ Requests deletion of an object on the server
For more
◦ https://en.wikipedia.org/wiki/Hypertext_Transfer_Protocol

HTTP response

HTTP status codes

List of HTTP Status Codes
2xx Success:
◦ 200 OK: standard response for successful HTTP requests.
3xx Redirection:
◦ 302 Found: a response with this status code additionally provides a URL in the Location header field.
4xx Client Error:
◦ 404 Not Found
◦ 400 Bad Request: the server cannot or will not process the request due to an apparent client error.
5xx Server Error:
◦ 503 Service Unavailable
◦ 502 Bad Gateway: the server was acting as a gateway or proxy and received an invalid response from the upstream server.
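
The grouping by first digit can be sketched in a few lines of Python 3. `HTTPStatus` comes from the standard library; the function `status_class` and its class names are illustrative assumptions that simply mirror the headings on this slide.

```python
from http import HTTPStatus


def status_class(code):
    """Map a numeric status code to the class names used on the slide."""
    classes = {2: "Success", 3: "Redirection", 4: "Client Error", 5: "Server Error"}
    # The hundreds digit decides the class; anything else is reported as "Other".
    return classes.get(code // 100, "Other")


for code in (200, 302, 404, 400, 503, 502):
    print(code, HTTPStatus(code).phrase, "->", status_class(code))
```

A crawler typically branches on the class rather than on each individual code, for example retrying on 5xx and giving up on 4xx.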

HTTP Request & Response
Both the request and the response contain two parts:
◦ HTTP header
◦ HTTP body

HTTP Header
Requests and responses use different header fields
◦ https://en.wikipedia.org/wiki/List_of_HTTP_header_fields
Request fields
◦ Accept, Accept-Encoding, Accept-Language, Connection, Cookie, Host, Referer, User-Agent, etc.
Response fields
◦ Connection, Content-Type, Date, Server, etc.

Cookie: Keeping State
Cookies were designed as a mechanism for websites to remember stateful information, such as:
◦ Items added to the shopping cart in an online store
◦ The user's browsing activity
  ◦ Clicking particular buttons
  ◦ Logging in
  ◦ Which pages were visited in the past
◦ Cookies can also store passwords and form content a user has previously entered
  ◦ Username
  ◦ E-mail address
  ◦ Credit card number

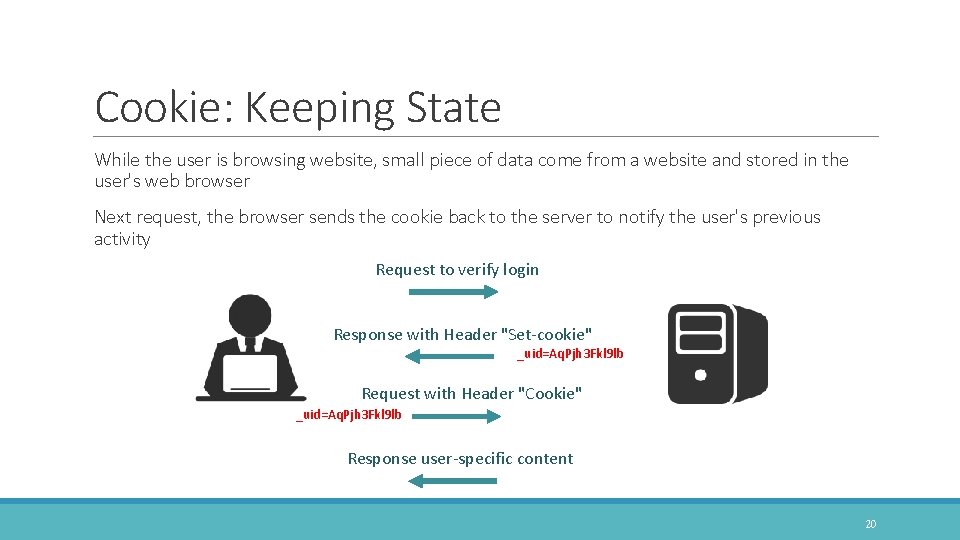

Cookie: Keeping State
While the user is browsing a website, a small piece of data is sent from the website and stored in the user's web browser. On the next request, the browser sends the cookie back to the server to notify it of the user's previous activity.
◦ Request to verify login
◦ Response with header "Set-Cookie": _uid=AqPjh3Fkl9lb
◦ Request with header "Cookie": _uid=AqPjh3Fkl9lb
◦ Response with user-specific content
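
The round trip above can be imitated offline with Python 3's standard `http.cookies` module. This is only a sketch of what a browser does: the `Path=/` attribute is an assumption added for illustration, and only the `_uid` value comes from the slide.

```python
from http.cookies import SimpleCookie

# Step 2: the server's response carries a Set-Cookie header.
# "Path=/" is a hypothetical attribute; only _uid=... appears on the slide.
set_cookie_header = "_uid=AqPjh3Fkl9lb; Path=/"

jar = SimpleCookie()
jar.load(set_cookie_header)  # the "browser" stores the cookie

# Step 3: the next request sends the stored name=value pairs back,
# without attributes such as Path.
cookie_header = "; ".join(
    "{}={}".format(name, morsel.value) for name, morsel in jar.items()
)
print("Cookie:", cookie_header)
```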

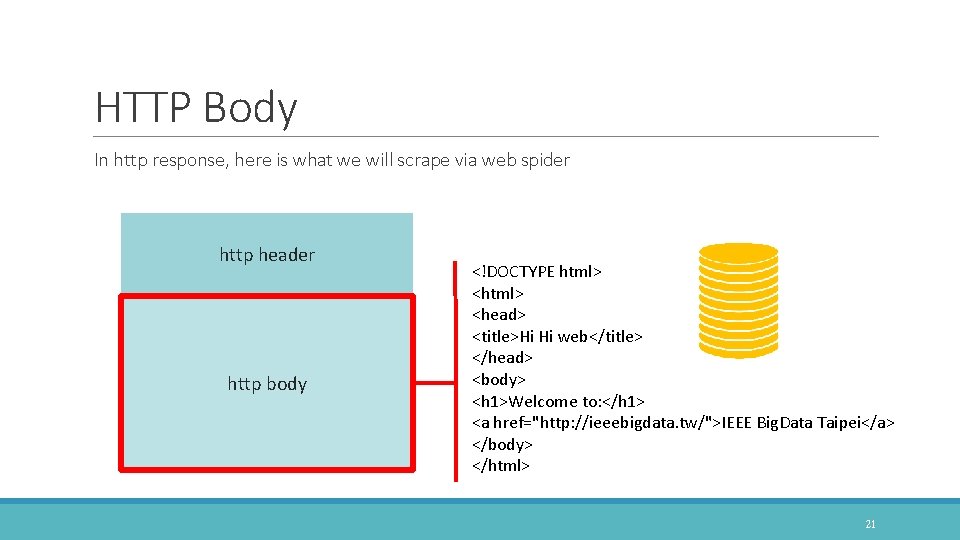

HTTP Body
The body of the HTTP response is what we will scrape with the web spider
    <!DOCTYPE html>
    <html>
    <head>
    <title>Hi Hi web</title>
    </head>
    <body>
    <h1>Welcome to: </h1>
    <a href="http://ieeebigdata.tw/">IEEE BigData Taipei</a>
    </body>
    </html>

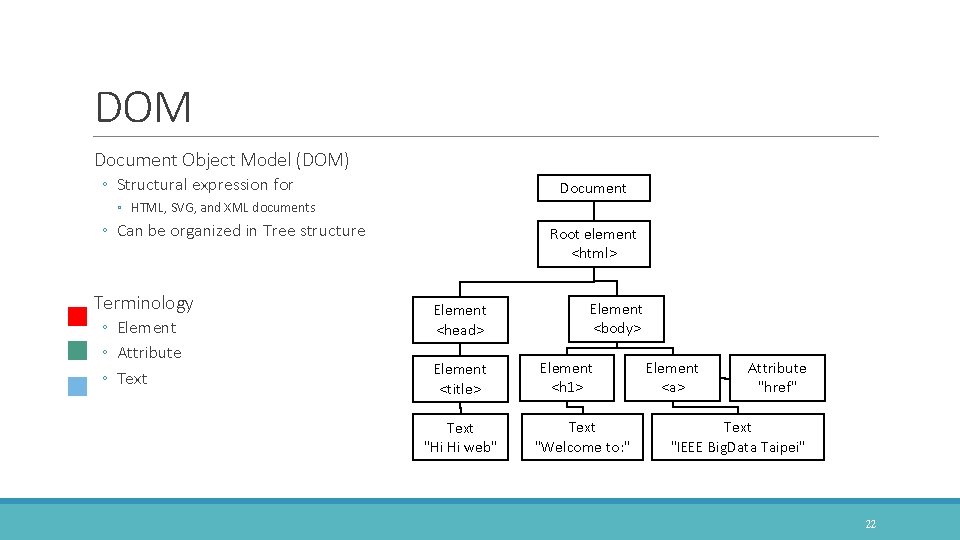

DOM
Document Object Model (DOM)
◦ A structural representation of a document
◦ Applies to HTML, SVG, and XML documents
◦ Can be organized as a tree structure
Terminology
◦ Element
◦ Attribute
◦ Text
Example tree
    Root element <html>
        Element <head>
            Element <title>
                Text "Hi Hi web"
        Element <body>
            Element <h1>
                Text "Welcome to: "
            Element <a>
                Attribute "href"
                Text "IEEE BigData Taipei"
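
As a rough illustration of the element/attribute/text terminology, the sketch below walks the sample page with Python 3's standard `html.parser` and records each node with its depth. The class name `TreePrinter` is hypothetical, and this event-based parser is only a stand-in for a real DOM implementation.

```python
from html.parser import HTMLParser

PAGE = """<!DOCTYPE html>
<html>
<head><title>Hi Hi web</title></head>
<body>
<h1>Welcome to: </h1>
<a href="http://ieeebigdata.tw/">IEEE BigData Taipei</a>
</body>
</html>"""


class TreePrinter(HTMLParser):
    """Record elements, attributes, and text together with their nesting depth."""

    def __init__(self):
        super().__init__()
        self.depth = 0
        self.nodes = []  # (depth, kind, value) tuples

    def handle_starttag(self, tag, attrs):
        self.nodes.append((self.depth, "element", tag))
        for name, value in attrs:
            self.nodes.append((self.depth + 1, "attribute", "{}={}".format(name, value)))
        self.depth += 1

    def handle_endtag(self, tag):
        self.depth -= 1

    def handle_data(self, data):
        if data.strip():  # skip whitespace-only text between tags
            self.nodes.append((self.depth, "text", data.strip()))


printer = TreePrinter()
printer.feed(PAGE)
for depth, kind, value in printer.nodes:
    print("  " * depth + "{}: {}".format(kind, value))
```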

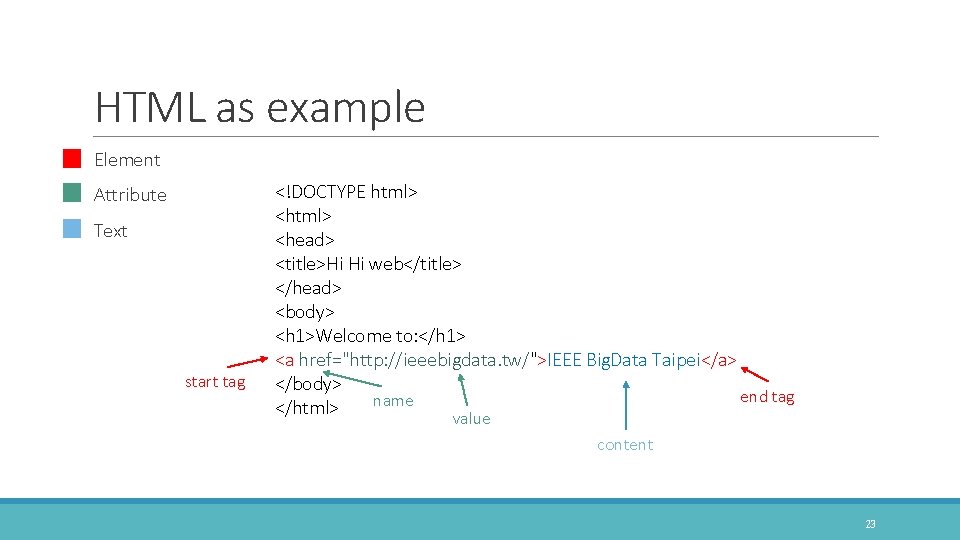

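HTML as example
    <!DOCTYPE html>
    <head>
    <title>Hi Hi web</title>
    </head>
    <body>
    <h1>Welcome to: </h1>
    <a href="http://ieeebigdata.tw/">IEEE BigData Taipei</a>
    </body>
    </html>
Labels in the figure
◦ Element: from start tag to end tag, e.g. <a ...> ... </a>
◦ Attribute: name (href) and value ("http://ieeebigdata.tw/")
◦ Text: the content between the tags ("IEEE BigData Taipei")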

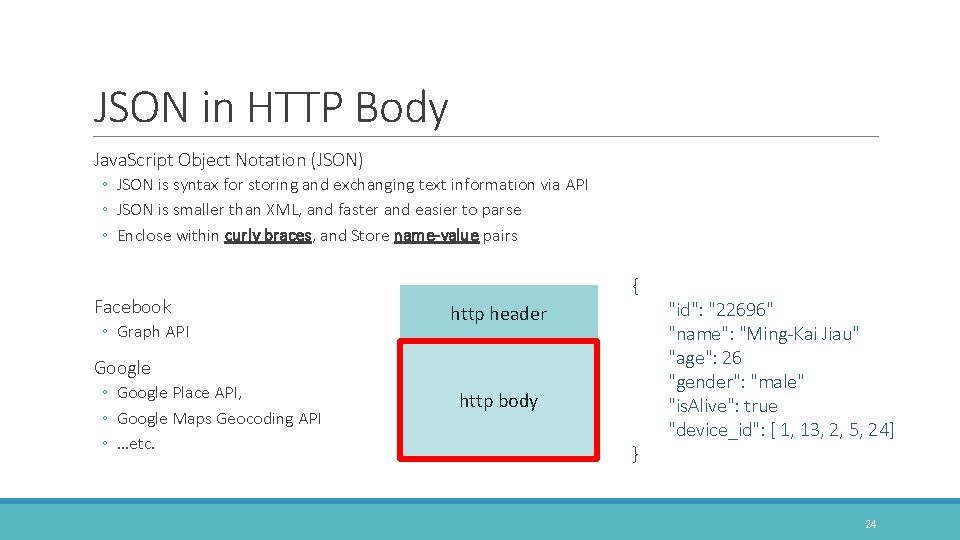

JSON in HTTP Body
JavaScript Object Notation (JSON)
◦ JSON is a syntax for storing and exchanging text information via APIs
◦ JSON is smaller than XML, and faster and easier to parse
◦ Data is enclosed in curly braces and stored as name-value pairs
Facebook
◦ Graph API
Google
◦ Google Places API
◦ Google Maps Geocoding API
◦ ...etc.
Example HTTP body
    {
      "id": "22696",
      "name": "Ming-Kai Jiau",
      "age": 26,
      "gender": "male",
      "isAlive": true,
      "device_id": [1, 13, 2, 5, 24]
    }
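
Parsing such a body takes one call in Python. The sketch below uses the standard `json` module on the example object from this slide, with commas between the pairs as JSON requires; nothing here touches the network.

```python
import json

# The example body from the slide, written as valid JSON.
body = """{
  "id": "22696",
  "name": "Ming-Kai Jiau",
  "age": 26,
  "gender": "male",
  "isAlive": true,
  "device_id": [1, 13, 2, 5, 24]
}"""

data = json.loads(body)          # JSON text -> Python dict
print(data["name"], data["age"])
print(data["isAlive"] is True)   # JSON true becomes Python True
print(data["device_id"][0])      # JSON arrays become Python lists
```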

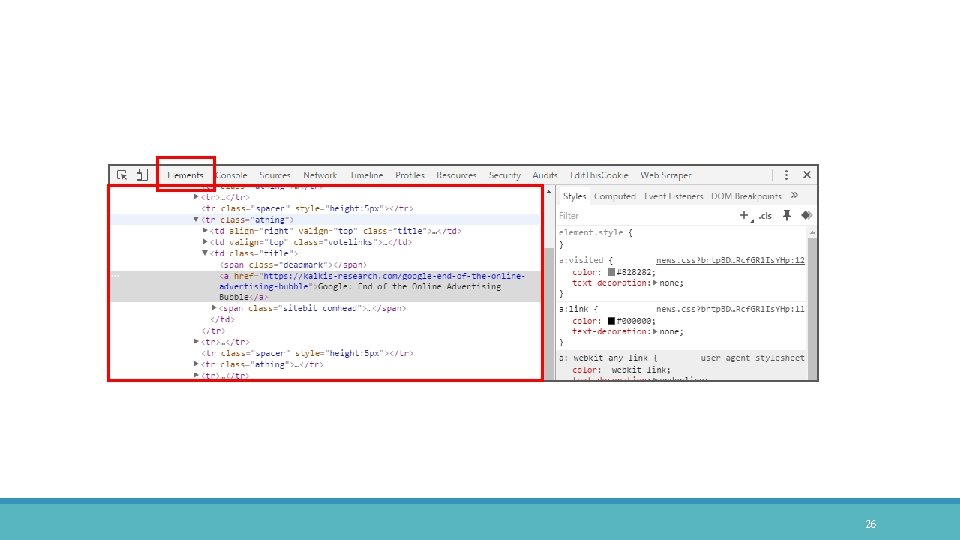

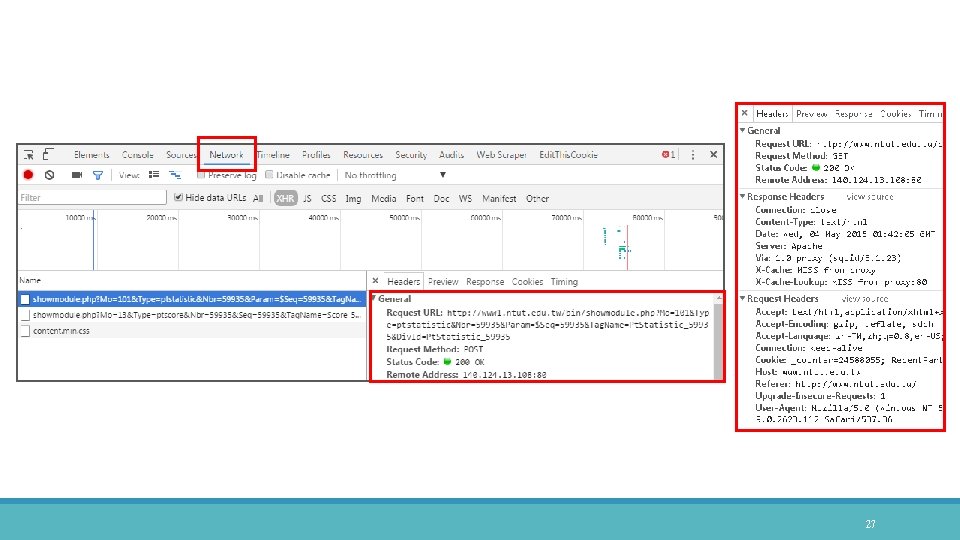

Developer Tools in Chrome
Step 1: Open your browser (Chrome)
Step 2: Open Developer Tools with a hotkey
◦ Ctrl + Shift + I
◦ F12
Developer Tools
◦ Elements: inspect the HTML content
◦ Network: observe HTTP requests and traffic


Google Extension: Postman
Create HTTP requests quickly
◦ Based on whatever request you want
Request capture
◦ Captured requests show up in Postman's history
Install Postman
◦ https://chrome.google.com/webstore/detail/postman/fhbjgbiflinjbdggehcddcbncdddomop?hl=en

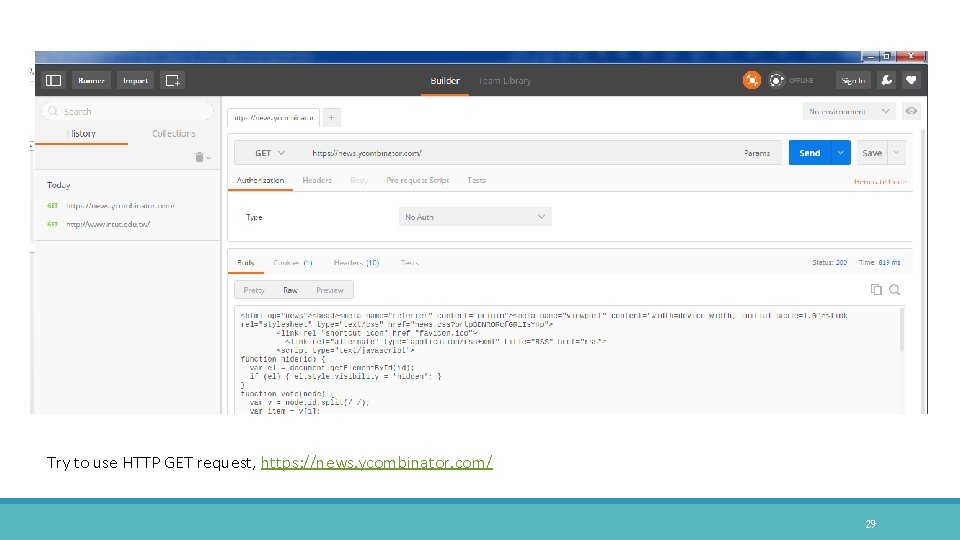

Try an HTTP GET request: https://news.ycombinator.com/

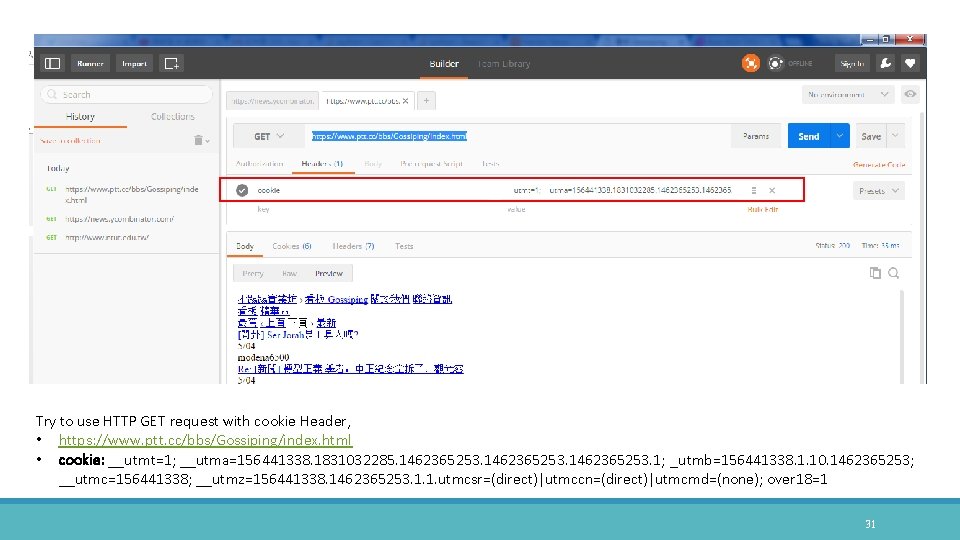

Try an HTTP GET request: https://www.ptt.cc/bbs/Gossiping/index.html

Try an HTTP GET request with a Cookie header
• https://www.ptt.cc/bbs/Gossiping/index.html
• cookie: __utmt=1; __utma=156441338.1831032285.1462365253.1; _utmb=156441338.1.10.1462365253; __utmc=156441338; __utmz=156441338.1462365253.1.1.utmcsr=(direct)|utmccn=(direct)|utmcmd=(none); over18=1

Other URLs
Redirection case: 露天拍賣 (Ruten)
◦ http://class.ruten.com.tw/category/main?00110002
Echo everything your request carries
◦ http://httpbin.org/get?key1=owo&key2=-w-
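
The httpbin URL carries its data in the query string. As a small offline sketch (Python 3 standard library, no request is actually sent), the URL can be taken apart and rebuilt like this:

```python
from urllib.parse import parse_qs, urlencode, urlsplit

url = "http://httpbin.org/get?key1=owo&key2=-w-"

parts = urlsplit(url)
params = parse_qs(parts.query)   # each value is a list, since keys may repeat
print(parts.netloc, parts.path)
print(params)

# Going the other way: build the query string from a dict of parameters.
rebuilt = "http://httpbin.org/get?" + urlencode({"key1": "owo", "key2": "-w-"})
print(rebuilt == url)
```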

Google Extension: EditThisCookie
Install the Chrome extension
◦ http://www.editthiscookie.com/

Python Requests
GOOD TUTORIAL: http://docs.python-requests.org/en/master/

Install Python
Resynchronize your package index files from their sources to make sure they are up to date
◦ $ sudo apt-get update
Use apt to install pip for Python 2.7
◦ $ sudo apt-get install python-pip
◦ http://python-packaging-user-guide.readthedocs.org/en/latest/install_requirements_linux/

Install
Requests is actively developed on GitHub
◦ https://github.com/kennethreitz/requests
To install Requests, run this command in your terminal of choice
◦ $ pip install requests

Quickstart: a GET request
Start the Python interpreter
◦ $ python
Begin by importing the Requests module
◦ >>> import requests
Now, let's try to get a web page
◦ >>> url = 'https://www.ptt.cc/bbs/Gossiping/index.html'
◦ >>> res = requests.get(url)
◦ Now we have a Response object called res.
Display the content of the response
◦ >>> print res.text

GET request with cookie
Set the cookie in the headers
◦ >>> headers = {'cookie': 'over18=1'}
Redo the GET request, this time with headers (i.e., the cookie)
◦ >>> res = requests.get(url, headers=headers)
Display the content of the response, which now gets past the age-agreement page
◦ >>> print res.text

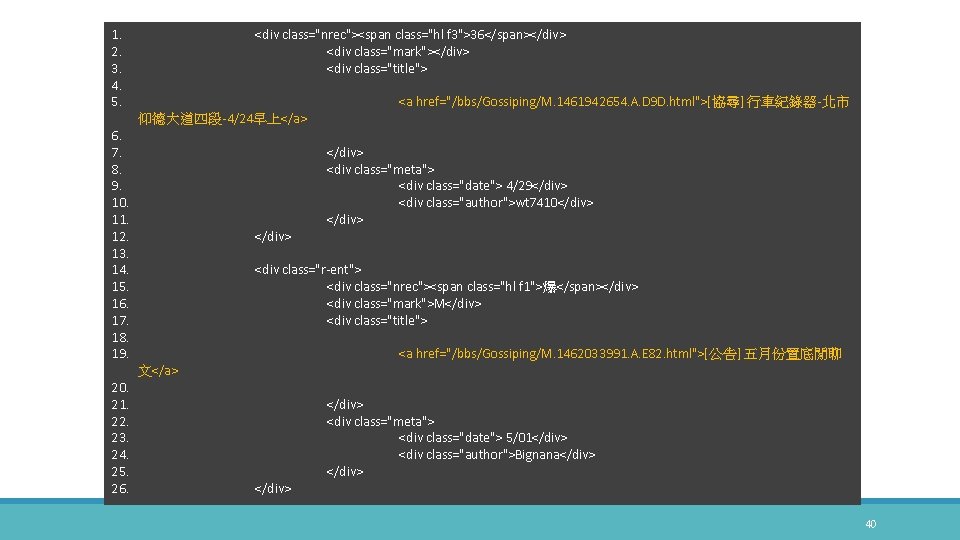

Excerpt of the returned HTML
    <div class="nrec"><span class="hl f3">36</span></div>
    <div class="mark"></div>
    <div class="title">
    <a href="/bbs/Gossiping/M.1461942654.A.D9D.html">[協尋] 行車紀錄器-北市 仰德大道四段-4/24早上</a>
    </div>
    <div class="meta">
    <div class="date"> 4/29</div>
    <div class="author">wt7410</div>
    <div class="r-ent">
    <div class="nrec"><span class="hl f1">爆</span></div>
    <div class="mark">M</div>
    <div class="title">
    <a href="/bbs/Gossiping/M.1462033991.A.E82.html">[公告] 五月份置底閒聊文</a>
    </div>
    <div class="meta">
    <div class="date"> 5/01</div>
    <div class="author">Bignana</div>

Web Crawler Solutions
FRAMEWORK AND SYSTEM

Web Crawler Solutions in Python
Scrapy
◦ http://scrapy.org/
Pyspider
◦ https://github.com/binux/pyspider
Comparison of Scrapy and Pyspider
◦ http://stackoverflow.com/questions/27243246/can-scrapy-be-replaced-by-pyspider
◦ https://www.quora.com/How-does-pyspider-compare-to-scrapy

Building Blocks of a Web Crawler
◦ Set a list of starting URLs (initial web pages)
◦ Request these URLs to begin fetching over the HTTP protocol
◦ Parse the HTTP responses (HTML, XML, JSON, etc.)
◦ Extract URLs and/or valuable data during parsing
◦ Place the extracted URLs on the queue
◦ Repeat until all the URLs have been fetched
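
The loop described above can be sketched without any network access. In the Python 3 example below, `FAKE_WEB`, `fetch_links`, and the `example.test` URLs are all hypothetical stand-ins for "request the page, parse it, extract its links"; a real crawler would replace `fetch_links` with an HTTP request plus a parser.

```python
from collections import deque

# Hypothetical in-memory "web": URL -> URLs linked from that page.
FAKE_WEB = {
    "http://example.test/": ["http://example.test/a", "http://example.test/b"],
    "http://example.test/a": ["http://example.test/b", "http://example.test/c"],
    "http://example.test/b": ["http://example.test/"],
    "http://example.test/c": [],
}


def fetch_links(url):
    """Stand-in for request + parse + extract URLs (no network access)."""
    return FAKE_WEB.get(url, [])


def crawl(start_urls):
    """Visit every reachable URL once, in first-in-first-out order."""
    queue = deque(start_urls)   # URLs waiting to be fetched
    seen = set(start_urls)      # URLs already queued, to avoid loops
    visited = []
    while queue:                # until all the URLs are fetched
        url = queue.popleft()
        visited.append(url)
        for link in fetch_links(url):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return visited


order = crawl(["http://example.test/"])
print(order)
```

The `seen` set is the design choice worth noting: without it, the link from page b back to the start page would keep the queue from ever emptying.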

Scrapy
WEB CRAWLER FRAMEWORK

Scrapy is a mature framework (latest is v1.0.6, 2016-05-04)
◦ GitHub: 13,814 stars, 3,874 forks (updated 2016-05-03)
◦ Unicode, redirection handling, download delay, gzipped responses, concurrent requests, HTTP cache, etc.
Scrapy crawling is fast
◦ It uses asynchronous (a.k.a. non-blocking) operations on top of Twisted (event-driven networking)
Scrapy has good and fast support for parsing HTML
◦ It is built on top of lxml (XML/HTML parsing library)

Scrapy
Official site
◦ http://scrapy.org/
Documentation
◦ http://doc.scrapy.org/en/latest/news.html
GitHub repository
◦ https://github.com/scrapy/
Scrapy 1.0 (today)
◦ Python 2 support
◦ NOTE: Scrapy 1.1 has beta Python 3 support (requires Twisted >= 15.5). See "Beta Python 3 Support" for more details and some limitations.

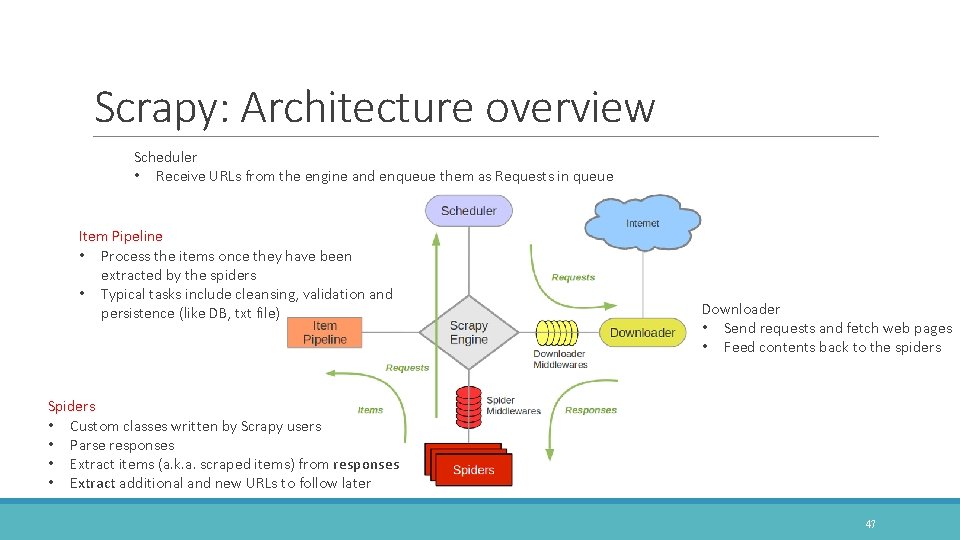

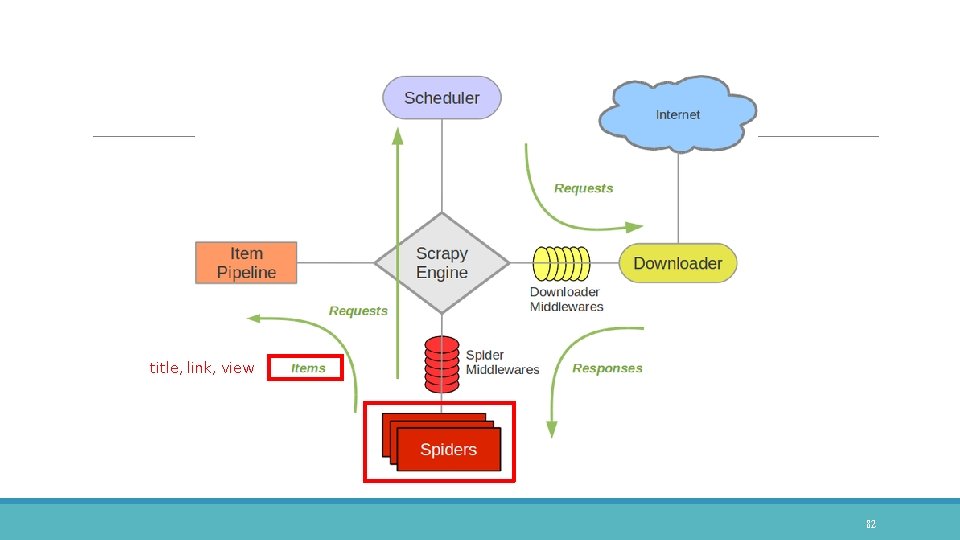

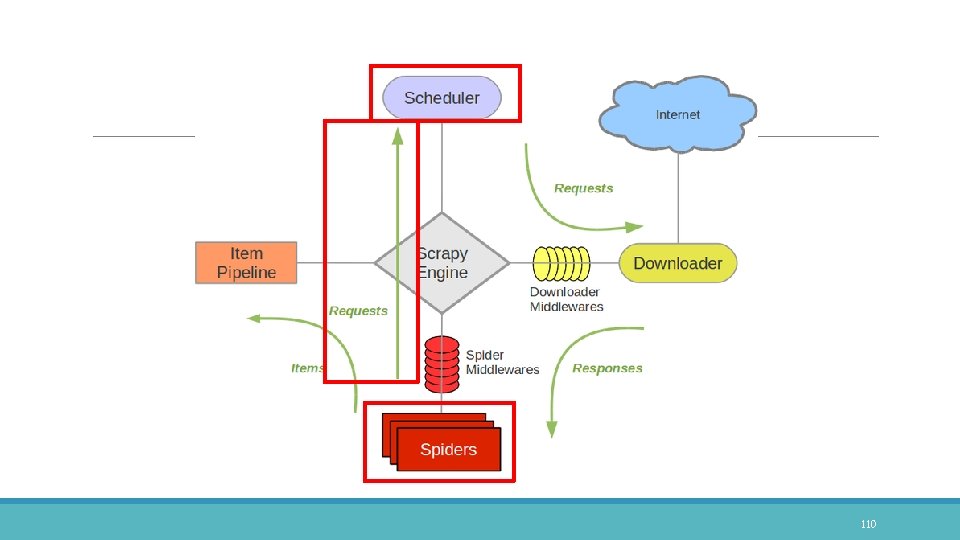

Scrapy: Architecture overview
Scheduler
• Receives URLs from the engine and enqueues them as Requests
Item Pipeline
• Processes the items once they have been extracted by the spiders
• Typical tasks include cleansing, validation, and persistence (e.g. DB, text file)
Downloader
• Sends requests and fetches web pages
• Feeds contents back to the spiders
Spiders
• Custom classes written by Scrapy users
• Parse responses
• Extract items (a.k.a. scraped items) from responses
• Extract additional, new URLs to follow later

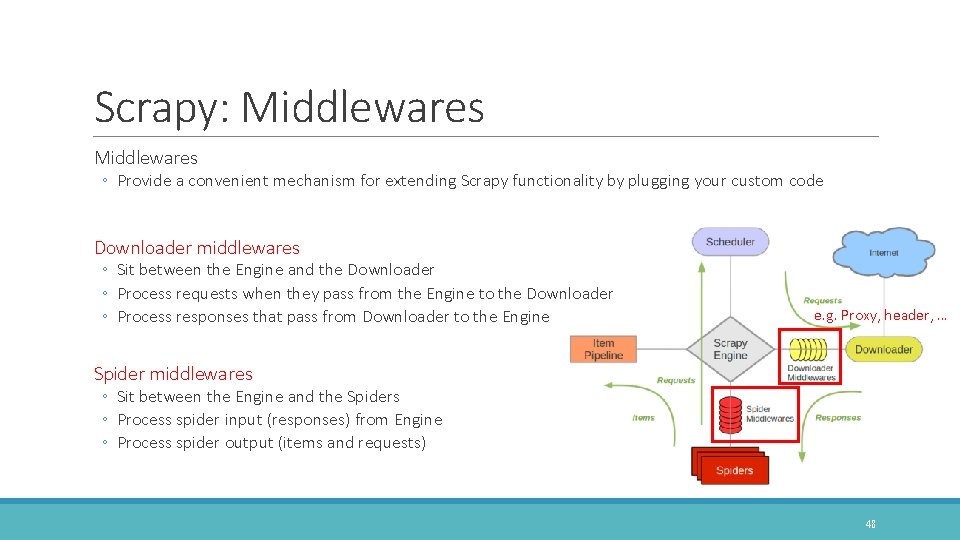

Scrapy: Middlewares
◦ Provide a convenient mechanism for extending Scrapy functionality by plugging in your custom code
Downloader middlewares
◦ Sit between the Engine and the Downloader
◦ Process requests as they pass from the Engine to the Downloader
◦ Process responses as they pass from the Downloader to the Engine
◦ E.g. proxy, header, ...
Spider middlewares
◦ Sit between the Engine and the Spiders
◦ Process spider input (responses) from the Engine
◦ Process spider output (items and requests)

Scrapy: Installation
FOLLOW ME IF POSSIBLE

Preliminary
Environment requirements
◦ Python 2.7
◦ Virtualenv
My OS
◦ Ubuntu 15.04 (desktop, amd64)

Preliminary: Install Virtualenv
virtualenv is a tool to create isolated Python environments.
◦ https://virtualenv.pypa.io/en/latest/
Install the virtualenv package with pip (the package management system for Python)
◦ $ sudo pip install virtualenv
Make your working directory and change into it
◦ $ mkdir ~/myworking
◦ $ cd ~/myworking
Create and activate a virtual environment for your Python work
◦ $ virtualenv SCRAPY_ENV
◦ $ source SCRAPY_ENV/bin/activate

Scrapy: Installation
Install the dependent packages in Ubuntu
◦ $ sudo apt-get install build-essential libssl-dev libffi-dev python-dev libxml2-dev libxslt1-dev
Download requirements.txt for installing Scrapy and its dependent Python packages
◦ (SCRAPY_ENV) $ curl -o requirements.txt https://raw.githubusercontent.com/kaikyle7997/scrapy_tutorial/master/requirements.txt
◦ Contents of requirements.txt:
    lxml == 3.6.0
    twisted == 16.0.0
    cryptography == 1.3.1
    scrapy == 1.0.5
Start installing Scrapy and its dependent Python packages
◦ (SCRAPY_ENV) $ pip install -r requirements.txt

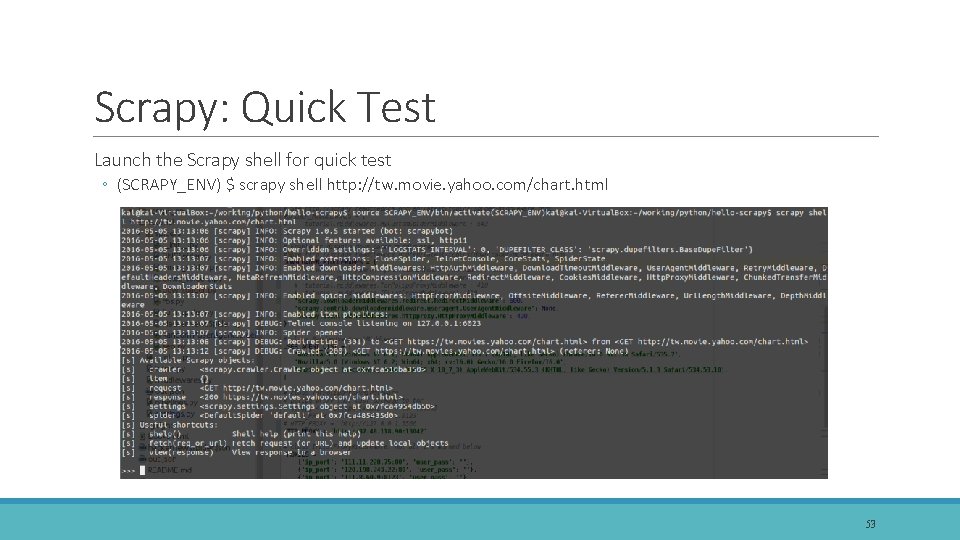

Scrapy: Quick Test
Launch the Scrapy shell for a quick test
◦ (SCRAPY_ENV) $ scrapy shell http://tw.movie.yahoo.com/chart.html

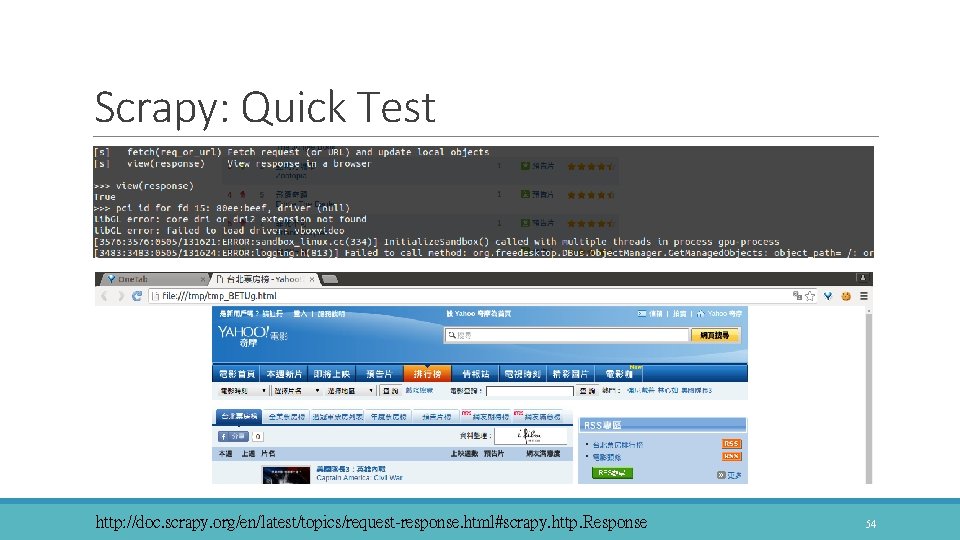

Scrapy: Quick Test
Open the downloaded response in your browser
◦ >>> view(response)
◦ http://doc.scrapy.org/en/latest/topics/request-response.html#scrapy.http.Response

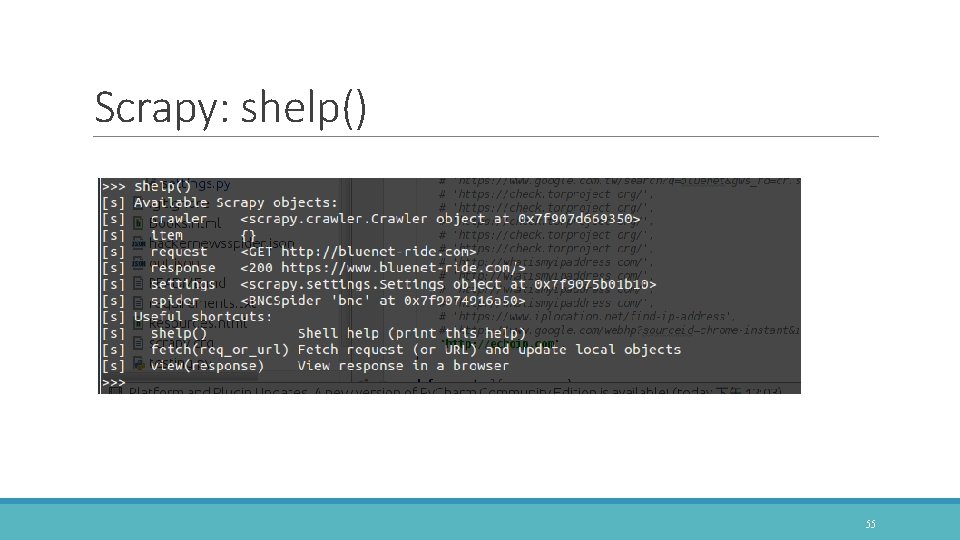

Scrapy: shelp()

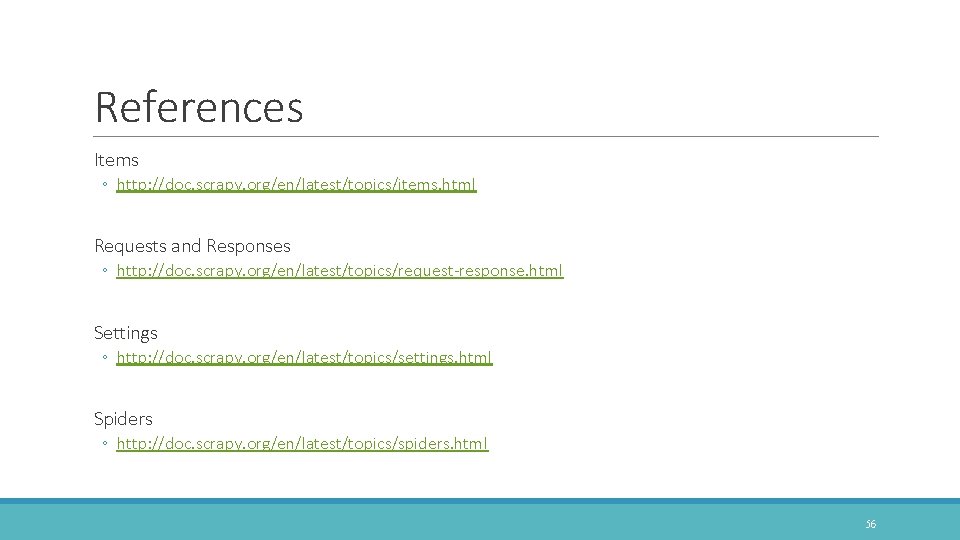

References
Items
◦ http://doc.scrapy.org/en/latest/topics/items.html
Requests and Responses
◦ http://doc.scrapy.org/en/latest/topics/request-response.html
Settings
◦ http://doc.scrapy.org/en/latest/topics/settings.html
Spiders
◦ http://doc.scrapy.org/en/latest/topics/spiders.html

Selectors
PARSING HTML
http://doc.scrapy.org/en/latest/topics/selectors.html

Parsing Libraries
When you're scraping web pages, the most common task you need to perform is to extract data from the HTML source.
BeautifulSoup
◦ A very popular web scraping library among Python programmers
◦ But it is slow at extraction
lxml
◦ An XML parsing library that also parses HTML
◦ Scrapy's selectors are built on it

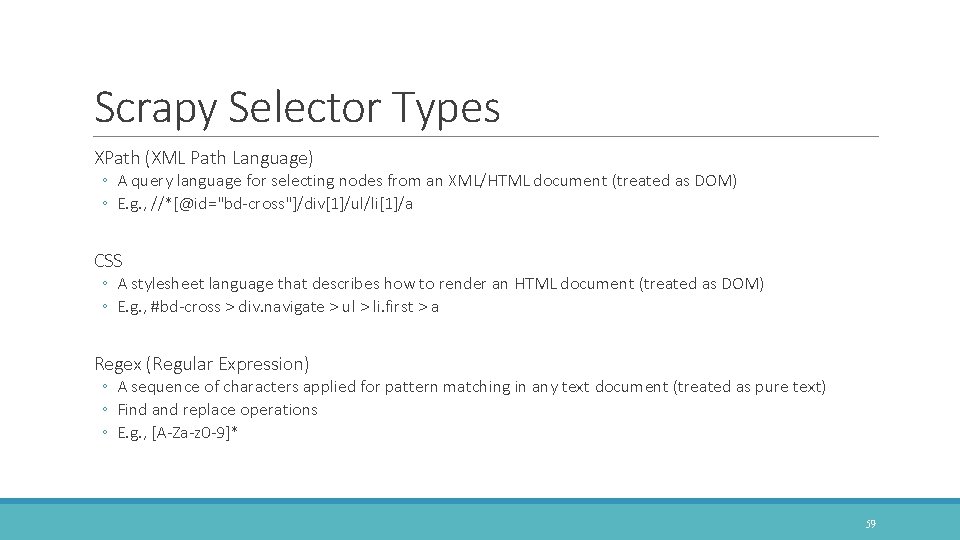

Scrapy Selector Types
XPath (XML Path Language)
◦ A query language for selecting nodes from an XML/HTML document (treated as DOM)
◦ E.g., //*[@id="bd-cross"]/div[1]/ul/li[1]/a
CSS
◦ A stylesheet language that describes how to render an HTML document (treated as DOM); its selector syntax is what we use to pick elements
◦ E.g., #bd-cross > div.navigate > ul > li.first > a
Regex (Regular Expression)
◦ A sequence of characters used for pattern matching in any text document (treated as plain text)
◦ Find and replace operations
◦ E.g., [A-Za-z0-9]*

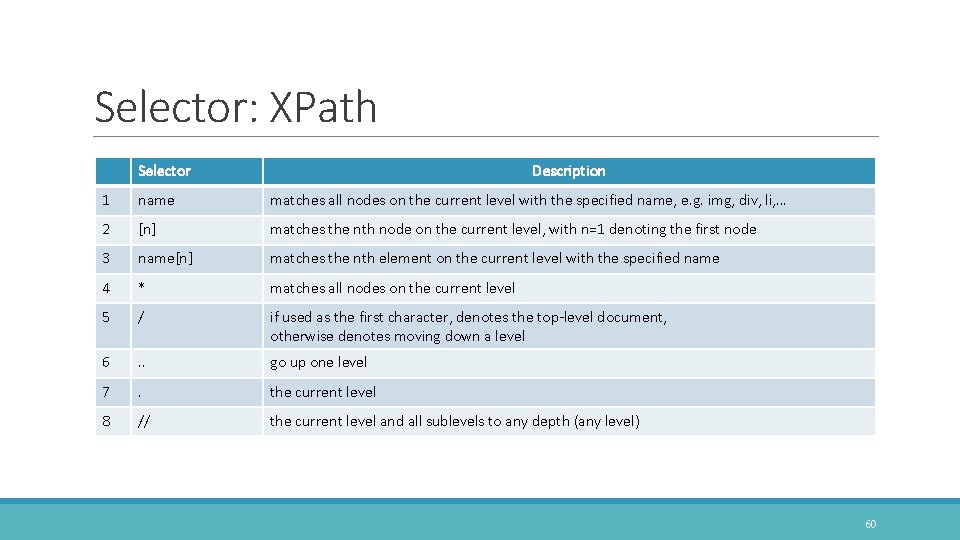

Selector: XPath
#   Selector   Description
1   name       Matches all nodes on the current level with the specified name, e.g. img, div, li, ...
2   [n]        Matches the nth node on the current level, with n=1 denoting the first node
3   name[n]    Matches the nth element on the current level with the specified name
4   *          Matches all nodes on the current level
5   /          If used as the first character, denotes the top-level document; otherwise denotes moving down a level
6   ..         Goes up one level
7   .          The current level
8   //         The current level and all sublevels to any depth (any level)


Selector: XPath
#   Selector                Description
9   [@key='value']          All elements with an attribute that matches the specified key/value pair
10  name[@key='value']      All elements with the specified name and an attribute that matches the specified key/value pair
11  [text()='value']        All elements with the specified text
12  name[text()='value']    All elements with the specified name and text
13  @name                   The attribute with the specified name, e.g. all URLs are returned by //@href
14  @*                      All attributes
15  text()                  Retrieves the text enclosed by an element, e.g. "I'm text!" in <span>I'm text!</span>
Try on "http://doc.scrapy.org/en/latest/_static/selectors-sample1.html"
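
A few of these selectors can be tried offline with Python 3's standard `xml.etree.ElementTree`, which supports only a limited XPath subset: no `text()` or `@name` steps, so attributes are read with `.get()` instead. This is not Scrapy's Selector, and the markup below is a hypothetical, well-formed snippet loosely modeled on the sample page.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed markup (ElementTree needs valid XML).
DOC = """<html><body>
<div id="images">
  <a href="image1.html">Name: My image 1 <img src="image1_thumb.jpg"/></a>
  <a href="image2.html">Name: My image 2 <img src="image2_thumb.jpg"/></a>
</div>
</body></html>"""

root = ET.fromstring(DOC)

# Row 8 (//): every <img> at any depth below the root.
all_imgs = root.findall(".//img")

# Rows 10 and 3: name[@key='value'] followed by name[n] (n=1 is the first).
first_a = root.find(".//div[@id='images']/a[1]")

# Stand-in for //img/@src: read the attribute from each matched element.
srcs = [img.get("src") for img in all_imgs]

print(len(all_imgs), first_a.get("href"), srcs)
```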

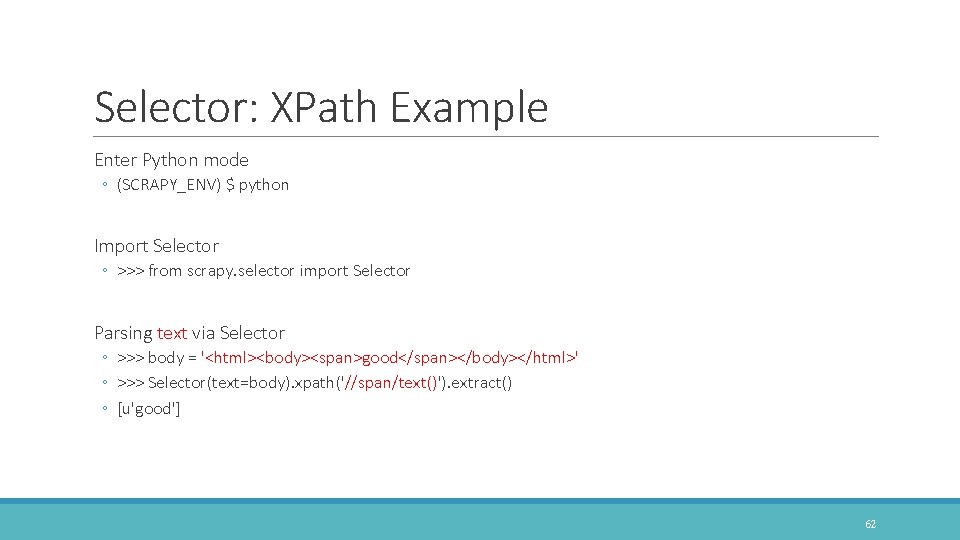

Selector: XPath Example
Enter Python mode
◦ (SCRAPY_ENV) $ python
Import Selector
◦ >>> from scrapy.selector import Selector
Parse text via Selector
◦ >>> body = '<html><body><span>good</span></body></html>'
◦ >>> Selector(text=body).xpath('//span/text()').extract()
◦ [u'good']

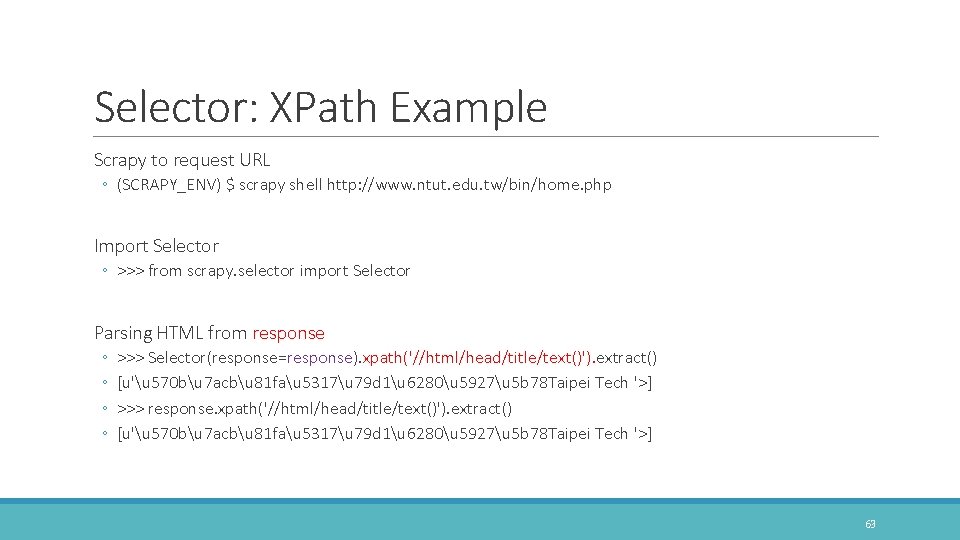

Selector: XPath Example
Request a URL with Scrapy
◦ (SCRAPY_ENV) $ scrapy shell http://www.ntut.edu.tw/bin/home.php
Import Selector
◦ >>> from scrapy.selector import Selector
Parse HTML from the response
◦ >>> Selector(response=response).xpath('//html/head/title/text()').extract()
◦ [u'\u570b\u7acb\u81fa\u5317\u79d1\u6280\u5927\u5b78 Taipei Tech ']
◦ >>> response.xpath('//html/head/title/text()').extract()
◦ [u'\u570b\u7acb\u81fa\u5317\u79d1\u6280\u5927\u5b78 Taipei Tech ']

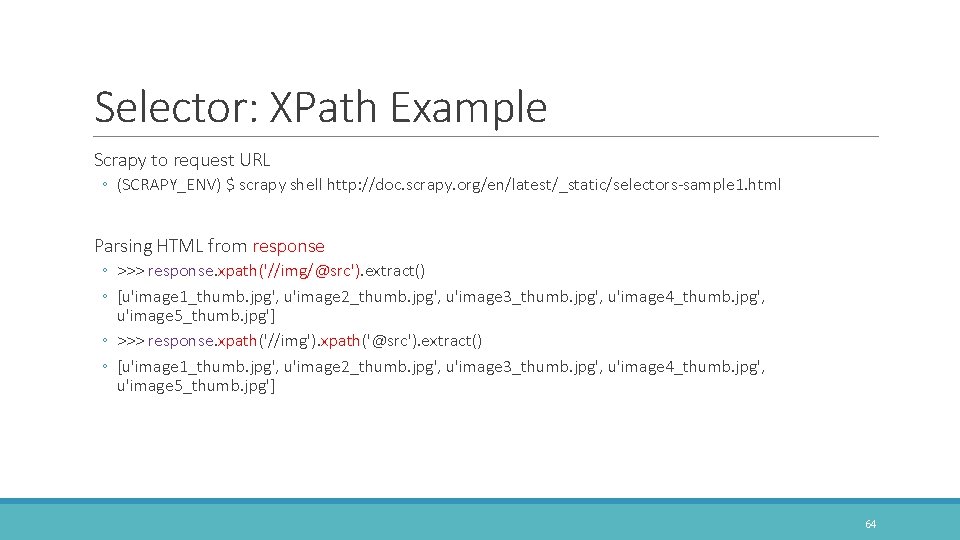

Selector: XPath Example
Request a URL with Scrapy
◦ (SCRAPY_ENV) $ scrapy shell http://doc.scrapy.org/en/latest/_static/selectors-sample1.html
Parse HTML from the response
◦ >>> response.xpath('//img/@src').extract()
◦ [u'image1_thumb.jpg', u'image2_thumb.jpg', u'image3_thumb.jpg', u'image4_thumb.jpg', u'image5_thumb.jpg']
◦ >>> response.xpath('//img').xpath('@src').extract()
◦ [u'image1_thumb.jpg', u'image2_thumb.jpg', u'image3_thumb.jpg', u'image4_thumb.jpg', u'image5_thumb.jpg']

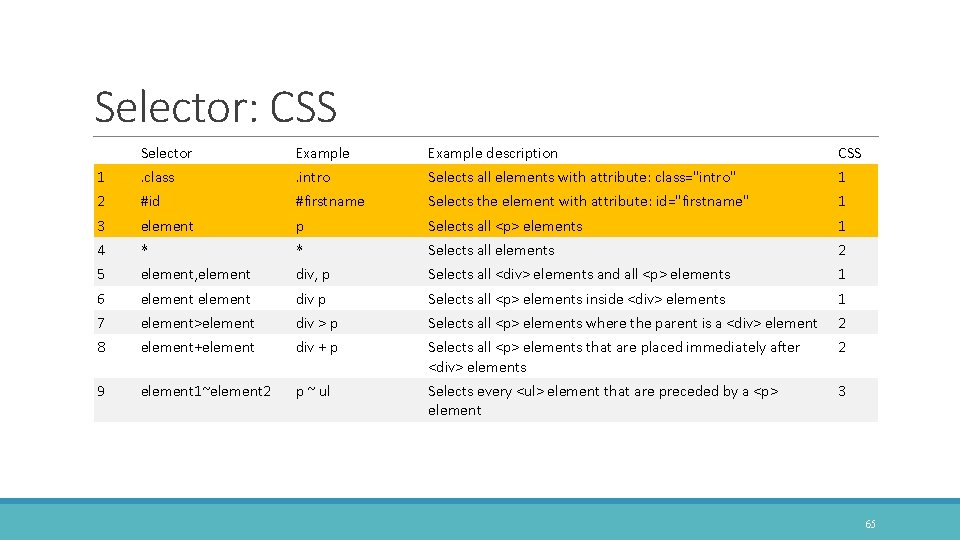

Selector: CSS
#   Selector             Example       Description                                                                  CSS
1   .class               .intro        Selects all elements with attribute class="intro"                            1
2   #id                  #firstname    Selects the element with attribute id="firstname"                            1
3   element              p             Selects all <p> elements                                                     1
4   *                    *             Selects all elements                                                         2
5   element,element      div, p        Selects all <div> elements and all <p> elements                              1
6   element element      div p         Selects all <p> elements inside <div> elements                               1
7   element>element      div > p       Selects all <p> elements where the parent is a <div> element                 2
8   element+element      div + p       Selects all <p> elements that are placed immediately after <div> elements    2
9   element1~element2    p ~ ul        Selects every <ul> element that is preceded by a <p> element                 3


Selector: CSS
#   Selector              Example                Description                                                                          CSS
10  [attribute]           [target]               Selects all elements with a target attribute                                         2
11  [attribute=value]     [target=_blank]        Selects all elements with target="_blank"                                            2
12  [attribute~=value]    [title~=flower]        Selects all elements with a title attribute containing the word "flower"             2
13  [attribute|=value]    [lang|=en]             Selects all elements with a lang attribute value starting with "en"                  2
14  [attribute^=value]    a[href^="https"]       Selects every <a> element whose href attribute value begins with "https"             3
15  [attribute$=value]    a[href$=".pdf"]        Selects every <a> element whose href attribute value ends with ".pdf"                3
16  [attribute*=value]    a[href*="w3schools"]   Selects every <a> element whose href attribute value contains the substring "w3schools"   3
More: W3C, w3schools
Try on "http://doc.scrapy.org/en/latest/_static/selectors-sample1.html"

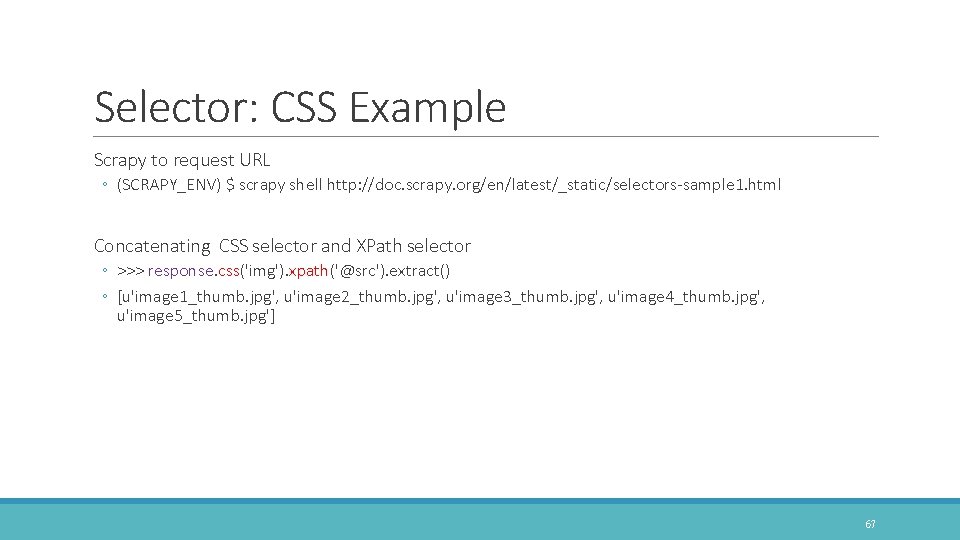

Selector: CSS Example
Request a URL with Scrapy
◦ (SCRAPY_ENV) $ scrapy shell http://doc.scrapy.org/en/latest/_static/selectors-sample1.html
Chain a CSS selector and an XPath selector
◦ >>> response.css('img').xpath('@src').extract()
◦ [u'image1_thumb.jpg', u'image2_thumb.jpg', u'image3_thumb.jpg', u'image4_thumb.jpg', u'image5_thumb.jpg']

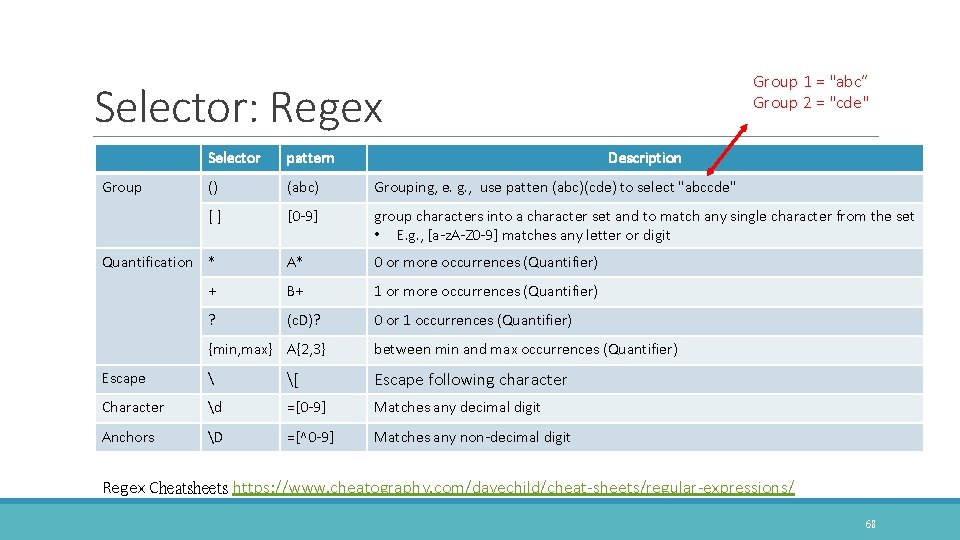

Selector: Regex
Category          Selector     Pattern     Description
Group             ()           (abc)       Grouping, e.g. use pattern (abc)(cde) to select "abccde": Group 1 = "abc", Group 2 = "cde"
Character set     []           [0-9]       Groups characters into a character set and matches any single character from the set, e.g. [a-zA-Z0-9] matches any letter or digit
Quantification    *            A*          0 or more occurrences (quantifier)
Quantification    +            B+          1 or more occurrences (quantifier)
Quantification    ?            (cD)?       0 or 1 occurrences (quantifier)
Quantification    {min,max}    A{2,3}      Between min and max occurrences (quantifier)
Escape            \            \[          Escapes the following character
Character         \d           =[0-9]      Matches any decimal digit
Character         \D           =[^0-9]     Matches any non-decimal digit
Regex cheat sheet: https://www.cheatography.com/davechild/cheat-sheets/regular-expressions/
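
The table entries can be checked directly with Python's standard `re` module. The sketch below (Python 3) exercises grouping, `\d`, a counted quantifier with an optional group, and an e-mail pattern; the sample strings other than "abccde" and the e-mail address are illustrative assumptions.

```python
import re

# Grouping: (abc)(cde) splits "abccde" into Group 1 and Group 2.
groups = re.fullmatch(r"(abc)(cde)", "abccde").groups()

# \d is shorthand for [0-9]; + means one or more occurrences.
digits = re.findall(r"\d+", "2 views, 14 views")

# A{2,3} allows two or three A's; (cD)? makes the group optional.
optional = [bool(re.fullmatch(r"A{2,3}(cD)?", s)) for s in ("AA", "AAAcD", "A")]

# An e-mail pattern: the dot before the last group is escaped so that it
# matches a literal "." rather than any character.
email = re.search(
    r"([a-z0-9_.-]+)@([\da-z.-]+)\.([a-z.]{2,6})", "abcd1234@gmail.com"
).groups()

print(groups, digits, optional, email)
```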

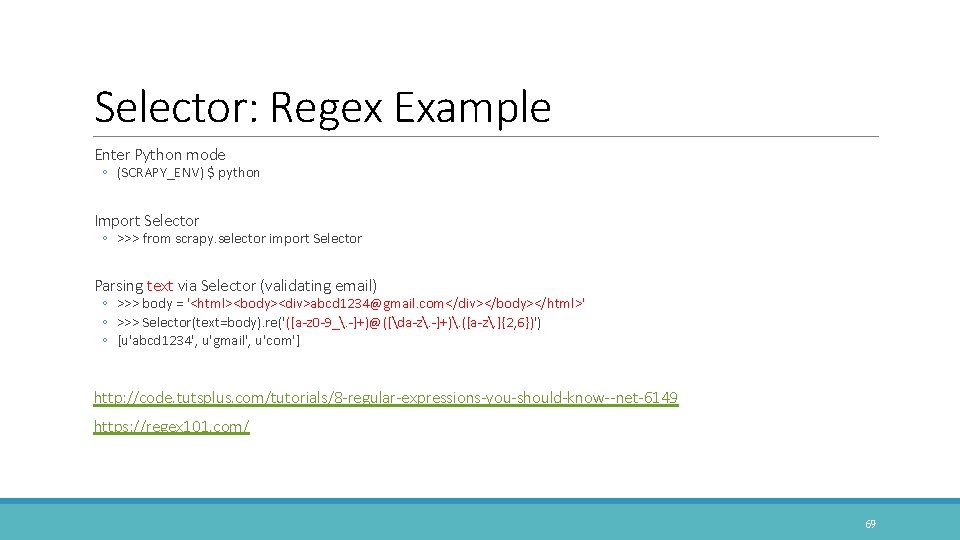

Selector: Regex Example
Enter Python mode
◦ (SCRAPY_ENV) $ python
Import Selector
◦ >>> from scrapy.selector import Selector
Parse text via Selector (validating an e-mail address)
◦ >>> body = '<html><body><div>abcd1234@gmail.com</div></body></html>'
◦ >>> Selector(text=body).re('([a-z0-9_\.-]+)@([\da-z\.-]+)\.([a-z\.]{2,6})')
◦ [u'abcd1234', u'gmail', u'com']
References
◦ http://code.tutsplus.com/tutorials/8-regular-expressions-you-should-know--net-6149
◦ https://regex101.com/

Scrapy: First Project
CREATE YOUR FIRST PROJECT TO CRAWL STACKOVERFLOW

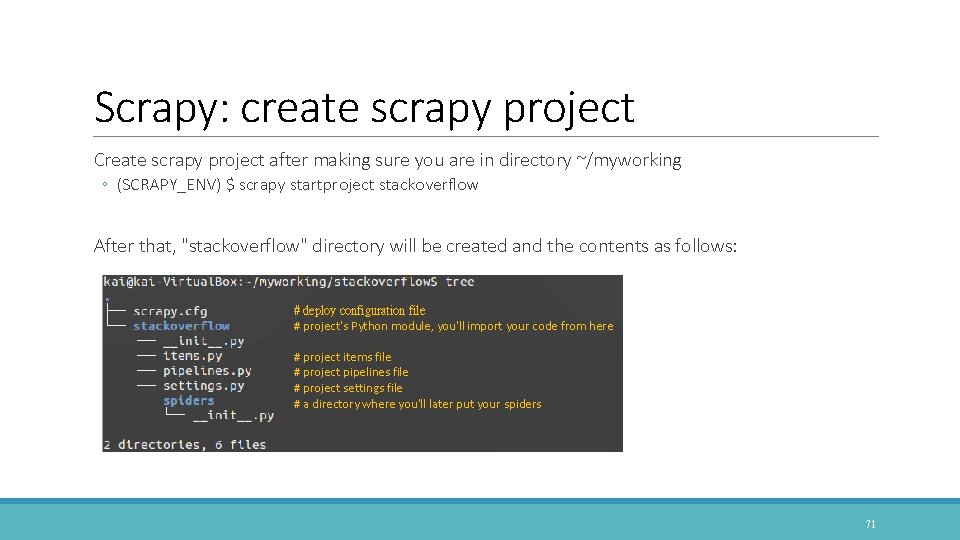

Scrapy: create scrapy project
Create the Scrapy project after making sure you are in the directory ~/myworking
◦ (SCRAPY_ENV) $ scrapy startproject stackoverflow
After that, the "stackoverflow" directory is created with the following contents:
    stackoverflow/
        scrapy.cfg            # deploy configuration file
        stackoverflow/        # project's Python module, you'll import your code from here
            __init__.py
            items.py          # project items file
            pipelines.py      # project pipelines file
            settings.py       # project settings file
            spiders/          # a directory where you'll later put your spiders
                __init__.py

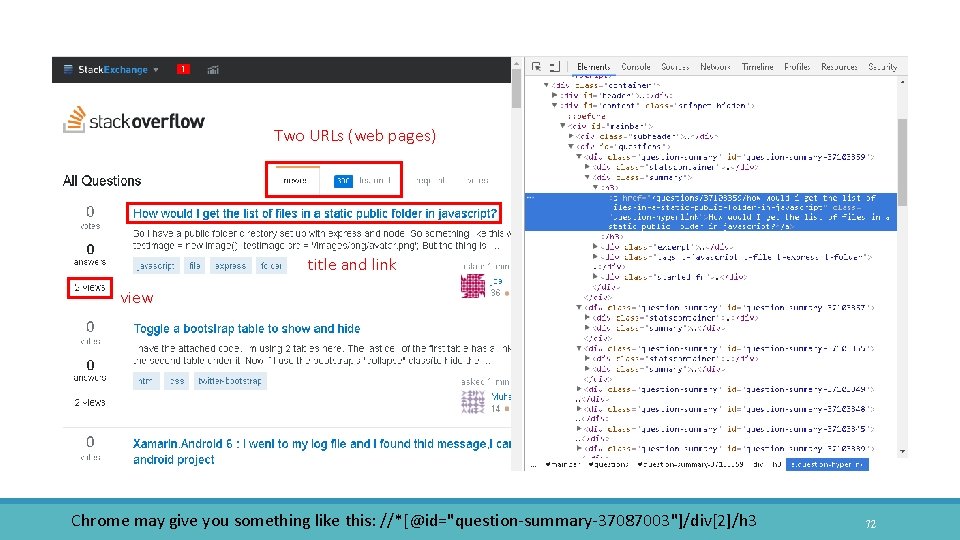

Two URLs (web pages); the fields to scrape are title, link, and view
Chrome may give you an XPath like this: //*[@id="question-summary-37087003"]/div[2]/h3

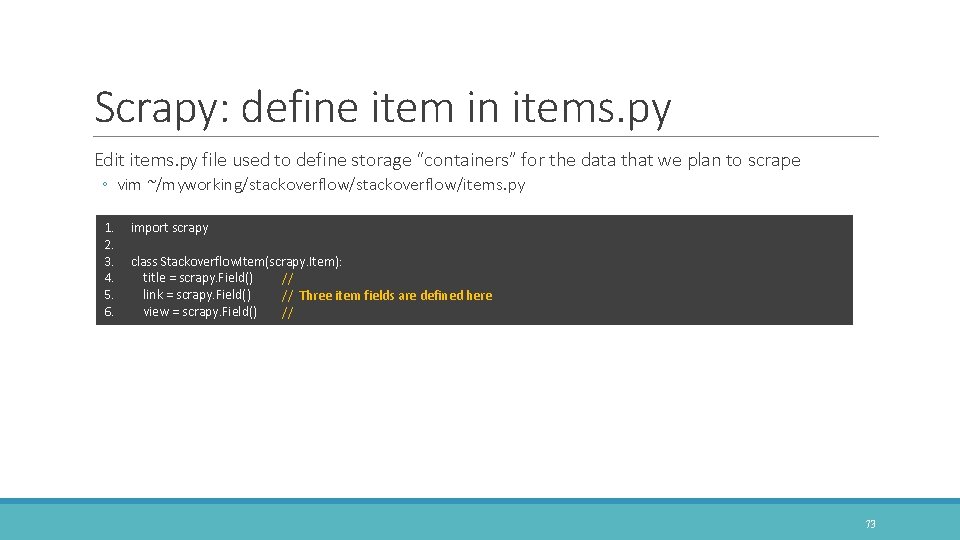

Scrapy: define item in items.py
Edit the items.py file, which defines the storage "containers" for the data that we plan to scrape
◦ vim ~/myworking/stackoverflow/items.py
    import scrapy

    class StackoverflowItem(scrapy.Item):
        # Three item fields are defined here
        title = scrapy.Field()
        link = scrapy.Field()
        view = scrapy.Field()

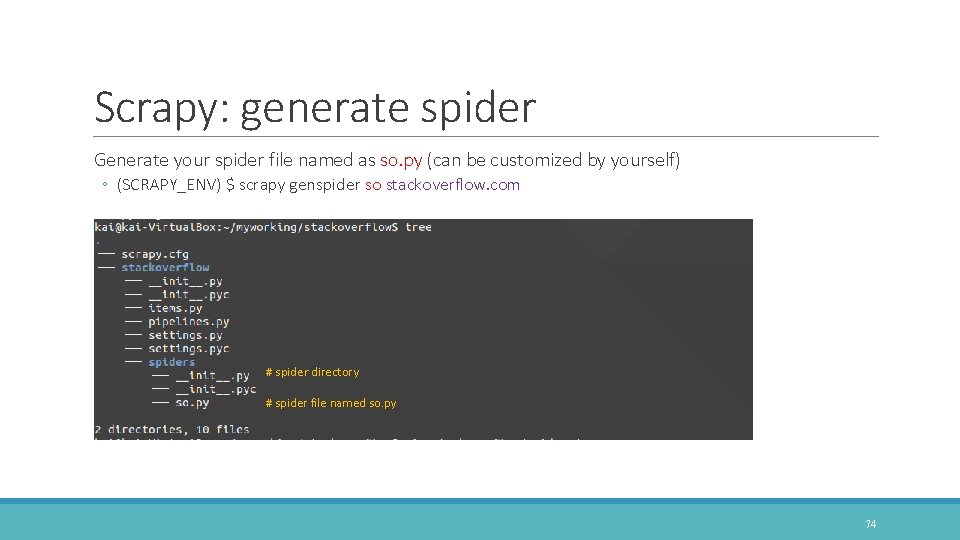

Scrapy: generate spider
Generate your spider file, named so.py here (you can choose your own name)
◦ (SCRAPY_ENV) $ scrapy genspider so stackoverflow.com
◦ The spider file so.py is created in the spider directory (spiders/)

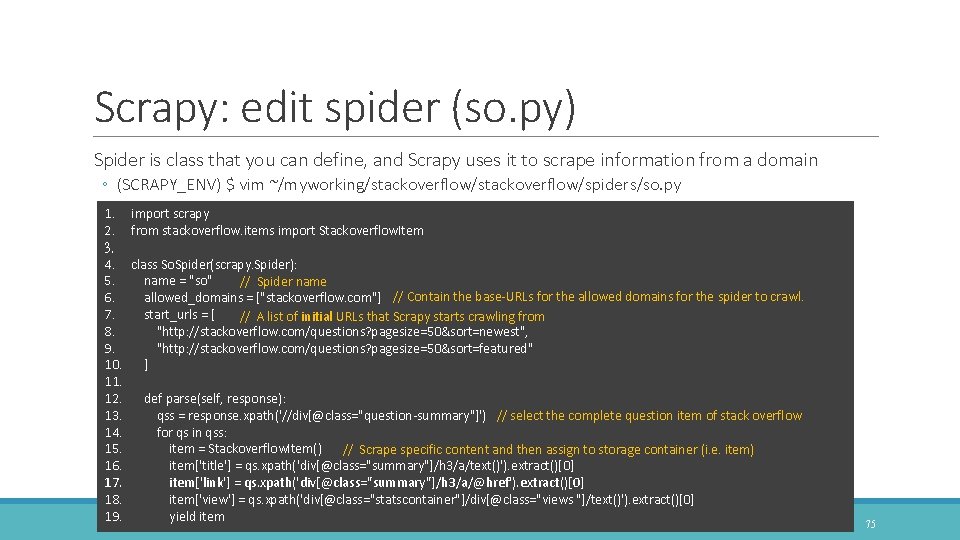

Scrapy: edit spider (so.py)
A spider is a class that you define; Scrapy uses it to scrape information from a domain
◦ (SCRAPY_ENV) $ vim ~/myworking/stackoverflow/spiders/so.py
    import scrapy
    from stackoverflow.items import StackoverflowItem

    class SoSpider(scrapy.Spider):
        name = "so"  # Spider name
        # Base URLs of the domains the spider is allowed to crawl
        allowed_domains = ["stackoverflow.com"]
        # A list of initial URLs that Scrapy starts crawling from
        start_urls = [
            "http://stackoverflow.com/questions?pagesize=50&sort=newest",
            "http://stackoverflow.com/questions?pagesize=50&sort=featured",
        ]

        def parse(self, response):
            # Select each complete question entry on the Stack Overflow page
            qss = response.xpath('//div[@class="question-summary"]')
            for qs in qss:
                # Scrape specific content and assign it to the storage container (i.e. item)
                item = StackoverflowItem()
                item['title'] = qs.xpath('div[@class="summary"]/h3/a/text()').extract()[0]
                item['link'] = qs.xpath('div[@class="summary"]/h3/a/@href').extract()[0]
                item['view'] = qs.xpath('div[@class="statscontainer"]/div[@class="views "]/text()').extract()[0]
                yield item

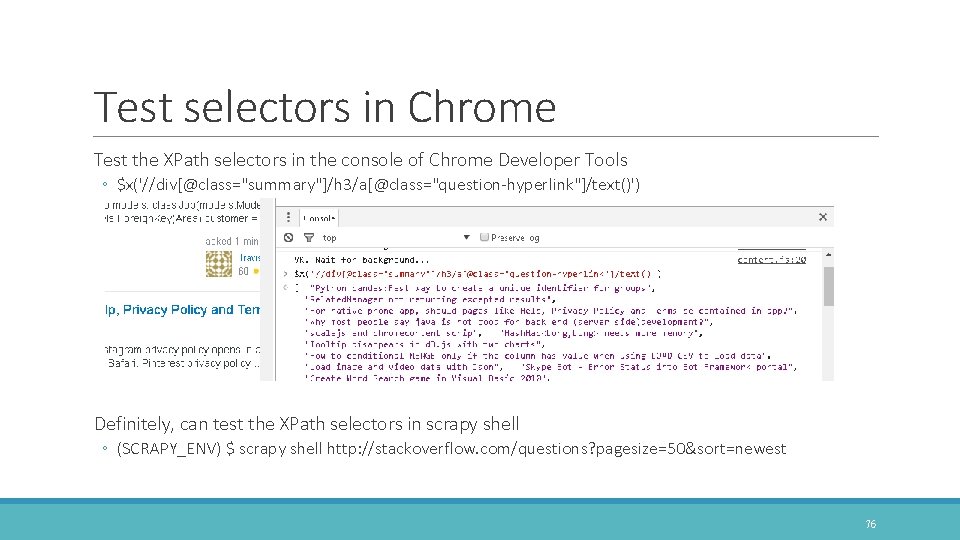

Test selectors in Chrome
Test the XPath selectors in the console of Chrome Developer Tools
◦ $x('//div[@class="summary"]/h3/a[@class="question-hyperlink"]/text()')
You can also test the XPath selectors in the Scrapy shell (quote the URL so the shell does not interpret the "&")
◦ (SCRAPY_ENV) $ scrapy shell "http://stackoverflow.com/questions?pagesize=50&sort=newest"

Scrapy: Start crawling
Run the command below in the path "~/myworking/stackoverflow"
◦ (SCRAPY_ENV) $ scrapy crawl so
◦ The log and the scraped data are shown on screen

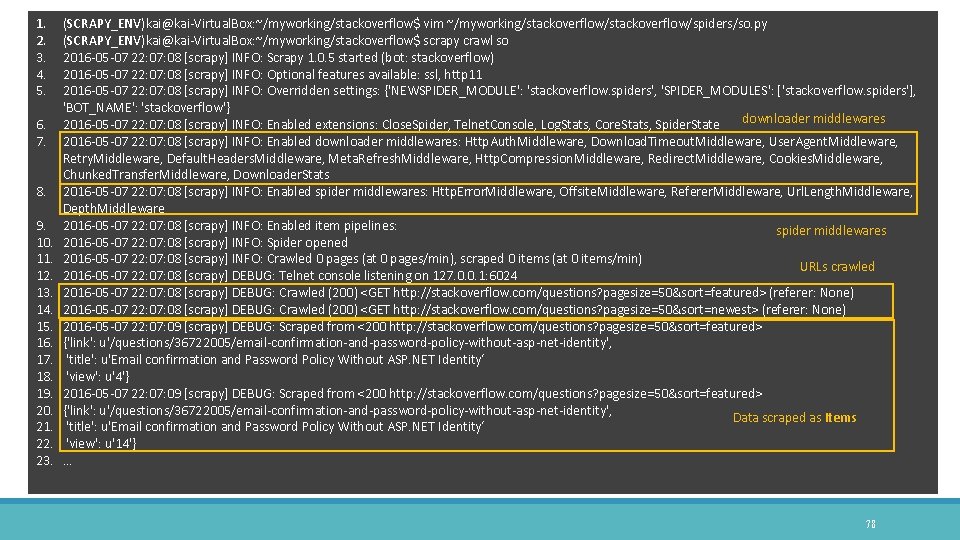

Crawl log (annotations on the slide: downloader middlewares, spider middlewares, URLs crawled, data scraped as items)
    (SCRAPY_ENV)kai@kai-VirtualBox:~/myworking/stackoverflow$ vim ~/myworking/stackoverflow/spiders/so.py
    (SCRAPY_ENV)kai@kai-VirtualBox:~/myworking/stackoverflow$ scrapy crawl so
    2016-05-07 22:07:08 [scrapy] INFO: Scrapy 1.0.5 started (bot: stackoverflow)
    2016-05-07 22:07:08 [scrapy] INFO: Optional features available: ssl, http11
    2016-05-07 22:07:08 [scrapy] INFO: Overridden settings: {'NEWSPIDER_MODULE': 'stackoverflow.spiders', 'SPIDER_MODULES': ['stackoverflow.spiders'], 'BOT_NAME': 'stackoverflow'}
    2016-05-07 22:07:08 [scrapy] INFO: Enabled extensions: CloseSpider, TelnetConsole, LogStats, CoreStats, SpiderState
    2016-05-07 22:07:08 [scrapy] INFO: Enabled downloader middlewares: HttpAuthMiddleware, DownloadTimeoutMiddleware, UserAgentMiddleware, RetryMiddleware, DefaultHeadersMiddleware, MetaRefreshMiddleware, HttpCompressionMiddleware, RedirectMiddleware, CookiesMiddleware, ChunkedTransferMiddleware, DownloaderStats
    2016-05-07 22:07:08 [scrapy] INFO: Enabled spider middlewares: HttpErrorMiddleware, OffsiteMiddleware, RefererMiddleware, UrlLengthMiddleware, DepthMiddleware
    2016-05-07 22:07:08 [scrapy] INFO: Enabled item pipelines:
    2016-05-07 22:07:08 [scrapy] INFO: Spider opened
    2016-05-07 22:07:08 [scrapy] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)
    2016-05-07 22:07:08 [scrapy] DEBUG: Telnet console listening on 127.0.0.1:6024
    2016-05-07 22:07:08 [scrapy] DEBUG: Crawled (200) <GET http://stackoverflow.com/questions?pagesize=50&sort=featured> (referer: None)
    2016-05-07 22:07:08 [scrapy] DEBUG: Crawled (200) <GET http://stackoverflow.com/questions?pagesize=50&sort=newest> (referer: None)
    2016-05-07 22:07:09 [scrapy] DEBUG: Scraped from <200 http://stackoverflow.com/questions?pagesize=50&sort=featured>
    {'link': u'/questions/36722005/email-confirmation-and-password-policy-without-asp-net-identity',
     'title': u'Email confirmation and Password Policy Without ASP.NET Identity',
     'view': u'4'}
    2016-05-07 22:07:09 [scrapy] DEBUG: Scraped from <200 http://stackoverflow.com/questions?pagesize=50&sort=featured>
    {'link': u'/questions/36722005/email-confirmation-and-password-policy-without-asp-net-identity',
     'title': u'Email confirmation and Password Policy Without ASP.NET Identity',
     'view': u'14'}
    …

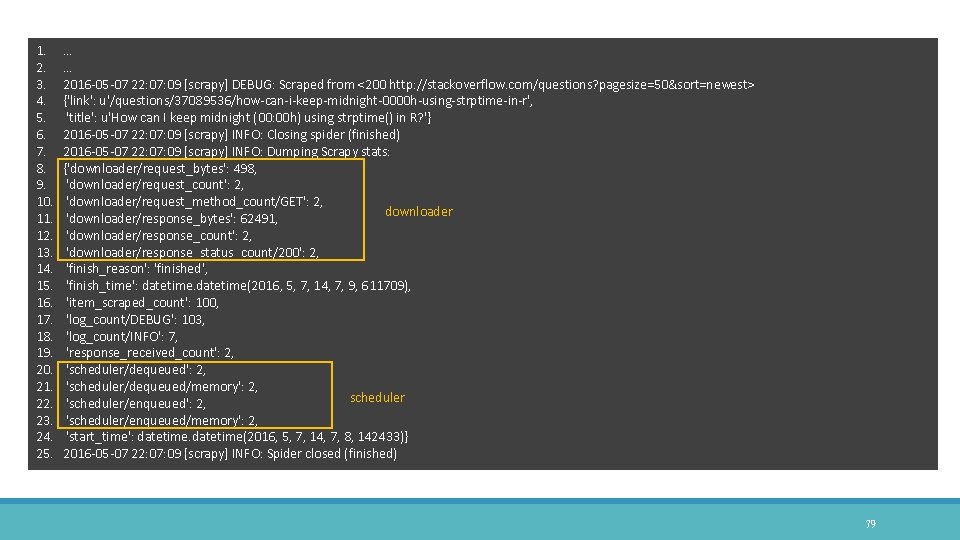

Crawl log, continued (annotations on the slide: downloader, scheduler)
    …
    …
    2016-05-07 22:07:09 [scrapy] DEBUG: Scraped from <200 http://stackoverflow.com/questions?pagesize=50&sort=newest>
    {'link': u'/questions/37089536/how-can-i-keep-midnight-0000h-using-strptime-in-r',
     'title': u'How can I keep midnight (00:00h) using strptime() in R?'}
    2016-05-07 22:07:09 [scrapy] INFO: Closing spider (finished)
    2016-05-07 22:07:09 [scrapy] INFO: Dumping Scrapy stats:
    {'downloader/request_bytes': 498,
     'downloader/request_count': 2,
     'downloader/request_method_count/GET': 2,
     'downloader/response_bytes': 62491,
     'downloader/response_count': 2,
     'downloader/response_status_count/200': 2,
     'finish_reason': 'finished',
     'finish_time': datetime(2016, 5, 7, 14, 7, 9, 611709),
     'item_scraped_count': 100,
     'log_count/DEBUG': 103,
     'log_count/INFO': 7,
     'response_received_count': 2,
     'scheduler/dequeued/memory': 2,
     'scheduler/enqueued': 2,
     'scheduler/enqueued/memory': 2,
     'start_time': datetime(2016, 5, 7, 14, 7, 8, 142433)}
    2016-05-07 22:07:09 [scrapy] INFO: Spider closed (finished)

Scrapy: Store in File
Crawl and dump the items to a JSON file
◦ (SCRAPY_ENV) $ scrapy crawl so -o items.json
◦ items.json will be generated in the current directory
Take a look at the JSON file
◦ (SCRAPY_ENV) $ vim ~/myworking/stackoverflow/items.json

items.json
    [{"title": "stm32f429 USB CDC VCP detection", "link": "/questions/37099580/stm32f429-usb-cdc-vcp-detection", "view": "\r\n 2 views\r\n"},
    {"title": "Maximum edge-weighted subtree T' constrained by number of max k edges, starting with the same root as T", "link": "/questions/37099578/maximum-edge-weighted-subtree-t-constrained-by-number-of-max-k-edges-starting", "view": "\r\n 1 view\r\n"},
    {"title": "How does Typescript load typings? (and what each TS-related file's purpose is)", "link": "/questions/37099577/how-does-typescript-load-typings-and-what-each-ts-related-files-purpose-is", "view": "\r\n 2 views\r\n"},
    {"title": "Windows Phone 8.1 app shows exception \u201cValue does not fall within the expected range.\u201d", "link": "/questions/37099571/windowsphone-8-1-app-shows-exception-value-does-not-fall-within-the-expected-r", "view": "\r\n 2 views\r\n"},
    {"title": "Execv.. what is (char *)0 in c?", "link": "/questions/37099570/execv-what-is-char-0-in-c", "view": "\r\n 6 views\r\n"},
    {"title": "I try use my local js file to get remote ip data, but it not works", "link": "/questions/37099567/i-try-use-my-local-js-file-to-get-remote-ip-data-butit-not-works", "view": "\r\n 2 views\r\n"},
    {"title": "Get youtube video title in php very slow", "link": "/questions/37099566/get-youtube-video-title-in-php-very-slow", "view": "\r\n 3 views\r\n"},
    {"title": "Adding a dial on an Alert Dialog", "link": "/questions/37099565/adding-a-dial-on-an-alert-dialog", "view": "\r\n 3 views\r\n"},
    {"title": "Docker - How can run the psql command in the postgres container?", "link": "/questions/37099564/docker-how-can-run-the-psql-command-inthe-postgres-container", "view": "\r\n 3 views\r\n"},
    {"title": "Colud Foundary getting ERROR: Unknown CloudFoundryException: 400 Bad Request ERROR: Cloud Foundry error code: -1", "link": "/questions/37099561/colud-foundary-getting-error-unknown-cloudfoundryexception-400-bad-request-err", "view": "\r\n 3 views\r\n"},
    {"title": "Filebeat Issue \u201c Error Initialising publisher: No outputs are defined.\u201d when trying to forward logs to Logstash", "link": "/questions/37099560/filebeat-issue-error-initialising-publisher-no-outputs-are-defined-when-tr", "view": "\r\n 3 views\r\n"},
    …

title, link, view

Item Pipeline
CLEANSING, VALIDATION AND PERSISTENCE (LIKE DB, TXT FILE)

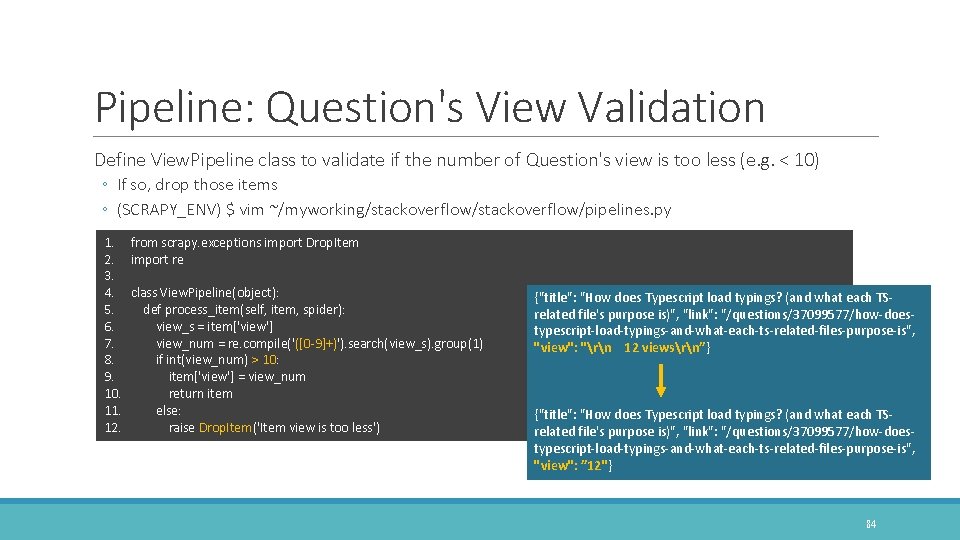

Pipeline: Question's View Validation
Define a ViewPipeline class that checks whether a question's view count is too low (e.g. < 10)
◦ If so, drop the item
◦ (SCRAPY_ENV) $ vim ~/myworking/stackoverflow/pipelines.py
    from scrapy.exceptions import DropItem
    import re

    class ViewPipeline(object):
        def process_item(self, item, spider):
            view_s = item['view']
            # Pull the digits out of strings such as "\r\n 12 views\r\n"
            view_num = re.compile('([0-9]+)').search(view_s).group(1)
            if int(view_num) >= 10:
                # Keep the item, storing only the cleaned number
                item['view'] = view_num
                return item
            else:
                raise DropItem('Item view count is too low')
Before the pipeline:
    {"title": "How does Typescript load typings? (and what each TS-related file's purpose is)", "link": "/questions/37099577/how-does-typescript-load-typings-and-what-each-ts-related-files-purpose-is", "view": "\r\n 12 views\r\n"}
After the pipeline:
    {"title": "How does Typescript load typings? (and what each TS-related file's purpose is)", "link": "/questions/37099577/how-does-typescript-load-typings-and-what-each-ts-related-files-purpose-is", "view": "12"}
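
The cleaning and threshold logic can be tried on its own, without Scrapy installed. In this Python 3 sketch, `clean_view` and `MIN_VIEWS` are hypothetical names; the threshold of 10 is taken from the "< 10" example, and returning `None` stands in for dropping the item. Unlike the slide's code, it also tolerates strings that contain no digits.

```python
import re

VIEW_RE = re.compile(r"([0-9]+)")
MIN_VIEWS = 10  # assumed threshold, from the "< 10" example


def clean_view(raw_view):
    """Return the view count as a string, or None if the item should be dropped."""
    match = VIEW_RE.search(raw_view)
    if match is None:
        return None  # no digits at all: treat as invalid
    count = int(match.group(1))
    return str(count) if count >= MIN_VIEWS else None


print(clean_view("\r\n 12 views\r\n"))  # kept, cleaned to the bare number
print(clean_view("\r\n 2 views\r\n"))   # too few views, would be dropped
```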

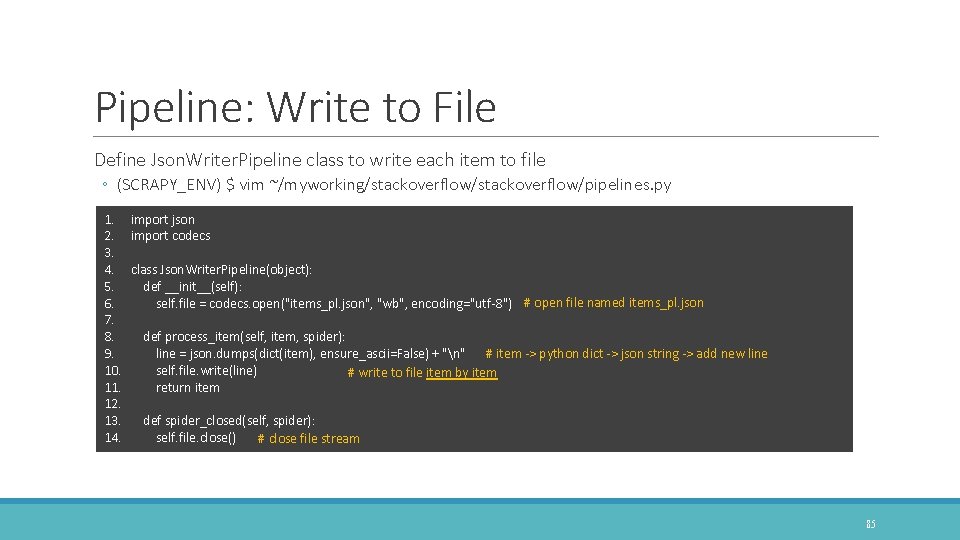

Pipeline: Write to File
Define a JsonWriterPipeline class that writes each item to a file
◦ (SCRAPY_ENV) $ vim ~/myworking/stackoverflow/pipelines.py
    import json
    import codecs

    class JsonWriterPipeline(object):
        def __init__(self):
            # Open the file named items_pl.json
            self.file = codecs.open("items_pl.json", "wb", encoding="utf-8")

        def process_item(self, item, spider):
            # item -> Python dict -> JSON string -> add newline
            line = json.dumps(dict(item), ensure_ascii=False) + "\n"
            self.file.write(line)  # write to the file item by item
            return item

        def close_spider(self, spider):
            # Scrapy calls close_spider on pipelines when the spider finishes
            self.file.close()  # close the file stream
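
The file this pipeline produces holds one JSON object per line. The Python 3 sketch below shows that format with an in-memory buffer instead of a real file; `write_items` and the two sample items are hypothetical, and `ensure_ascii=False` is kept so non-ASCII titles stay readable.

```python
import io
import json


def write_items(items, stream):
    """Write each item as one JSON object per line."""
    for item in items:
        stream.write(json.dumps(dict(item), ensure_ascii=False) + "\n")


# Hypothetical items; a StringIO buffer stands in for items_pl.json.
buffer = io.StringIO()
write_items([{"title": "標題 A", "view": "12"}, {"title": "B", "view": "30"}], buffer)

lines = buffer.getvalue().splitlines()
restored = [json.loads(line) for line in lines]  # each line parses on its own
print(lines[0])
print(restored[1]["view"])
```

Because every line is a complete JSON document, the file can be read back item by item without loading it whole.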

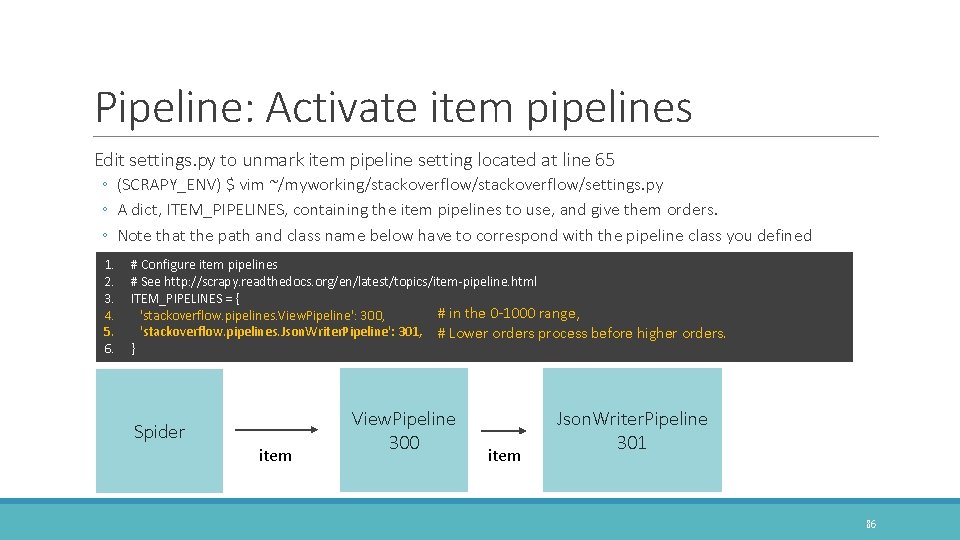

Pipeline: Activate item pipelines

Edit settings.py and uncomment the item pipeline setting located at line 65
◦ (SCRAPY_ENV) $ vim ~/myworking/stackoverflow/settings.py
◦ ITEM_PIPELINES is a dict that lists the item pipelines to use and assigns each an order value.
◦ Note that the path and class name below must match the pipeline classes you defined

    # Configure item pipelines
    # See http://scrapy.readthedocs.org/en/latest/topics/item-pipeline.html
    ITEM_PIPELINES = {
        # Order values are in the 0-1000 range.
        # Lower orders process before higher orders.
        'stackoverflow.pipelines.ViewPipeline': 300,
        'stackoverflow.pipelines.JsonWriterPipeline': 301,
    }

Flow: Spider → item → ViewPipeline (300) → item → JsonWriterPipeline (301)
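How the order values shape the flow can be simulated in plain Python. The sketch below is an illustration only, not Scrapy's implementation: `DropItem` here is a local stand-in for `scrapy.exceptions.DropItem`, and `ViewStage`, `CollectStage` and `run_pipelines` are hypothetical names.

```python
class DropItem(Exception):
    """Local stand-in for scrapy.exceptions.DropItem so the sketch runs without Scrapy."""


class ViewStage(object):
    def process_item(self, item):
        if int(item["view"]) <= 10:
            raise DropItem("Item view is too less")
        return item


class CollectStage(object):
    def __init__(self):
        self.seen = []

    def process_item(self, item):
        self.seen.append(item)
        return item


def run_pipelines(pipelines, items):
    """pipelines maps stage object -> order value; lower values run first."""
    ordered = sorted(pipelines, key=pipelines.get)
    passed, dropped = [], []
    for item in items:
        try:
            for stage in ordered:
                item = stage.process_item(item)
            passed.append(item)
        except DropItem:
            # a dropped item never reaches the later stages
            dropped.append(item)
    return passed, dropped
```

With the view check at 300 and the collector at 301, a dropped item is never seen by the collector, which is why the validation pipeline gets the lower number.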

Pipeline: Go to crawl

Crawl and dump items to a JSON file through the custom pipelines
◦ (SCRAPY_ENV) $ scrapy crawl so
◦ items_pl.json will be generated

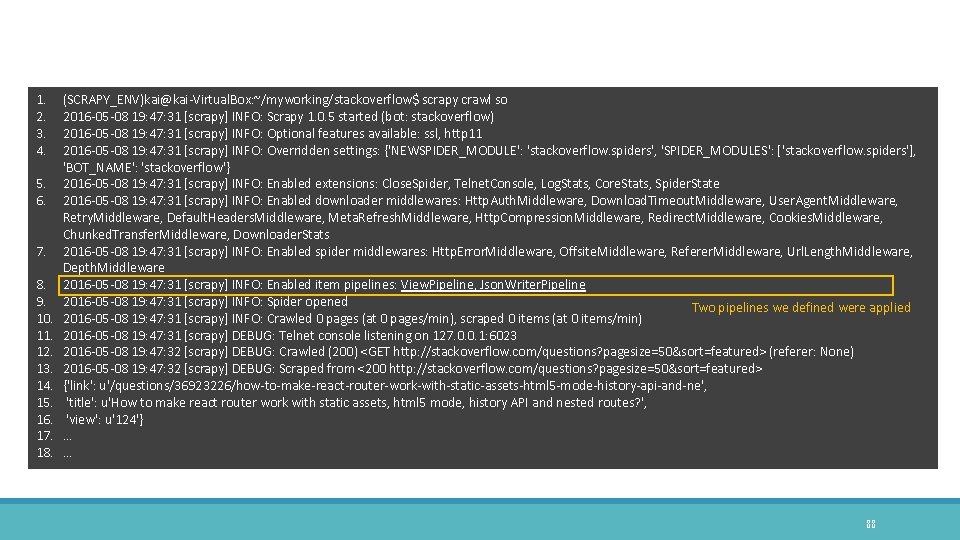

    (SCRAPY_ENV)kai@kai-VirtualBox:~/myworking/stackoverflow$ scrapy crawl so
    2016-05-08 19:47:31 [scrapy] INFO: Scrapy 1.0.5 started (bot: stackoverflow)
    2016-05-08 19:47:31 [scrapy] INFO: Optional features available: ssl, http11
    2016-05-08 19:47:31 [scrapy] INFO: Overridden settings: {'NEWSPIDER_MODULE': 'stackoverflow.spiders', 'SPIDER_MODULES': ['stackoverflow.spiders'], 'BOT_NAME': 'stackoverflow'}
    2016-05-08 19:47:31 [scrapy] INFO: Enabled extensions: CloseSpider, TelnetConsole, LogStats, CoreStats, SpiderState
    2016-05-08 19:47:31 [scrapy] INFO: Enabled downloader middlewares: HttpAuthMiddleware, DownloadTimeoutMiddleware, UserAgentMiddleware, RetryMiddleware, DefaultHeadersMiddleware, MetaRefreshMiddleware, HttpCompressionMiddleware, RedirectMiddleware, CookiesMiddleware, ChunkedTransferMiddleware, DownloaderStats
    2016-05-08 19:47:31 [scrapy] INFO: Enabled spider middlewares: HttpErrorMiddleware, OffsiteMiddleware, RefererMiddleware, UrlLengthMiddleware, DepthMiddleware
    2016-05-08 19:47:31 [scrapy] INFO: Enabled item pipelines: ViewPipeline, JsonWriterPipeline
    2016-05-08 19:47:31 [scrapy] INFO: Spider opened
    2016-05-08 19:47:31 [scrapy] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)
    2016-05-08 19:47:31 [scrapy] DEBUG: Telnet console listening on 127.0.0.1:6023
    2016-05-08 19:47:32 [scrapy] DEBUG: Crawled (200) <GET http://stackoverflow.com/questions?pagesize=50&sort=featured> (referer: None)
    2016-05-08 19:47:32 [scrapy] DEBUG: Scraped from <200 http://stackoverflow.com/questions?pagesize=50&sort=featured>
    {'link': u'/questions/36923226/how-to-make-react-router-work-with-static-assets-html5-mode-history-api-and-ne', 'title': u'How to make react router work with static assets, html5 mode, history API and nested routes?', 'view': u'124'}
    …

Note: the "Enabled item pipelines" line shows that the two pipelines we defined were applied.

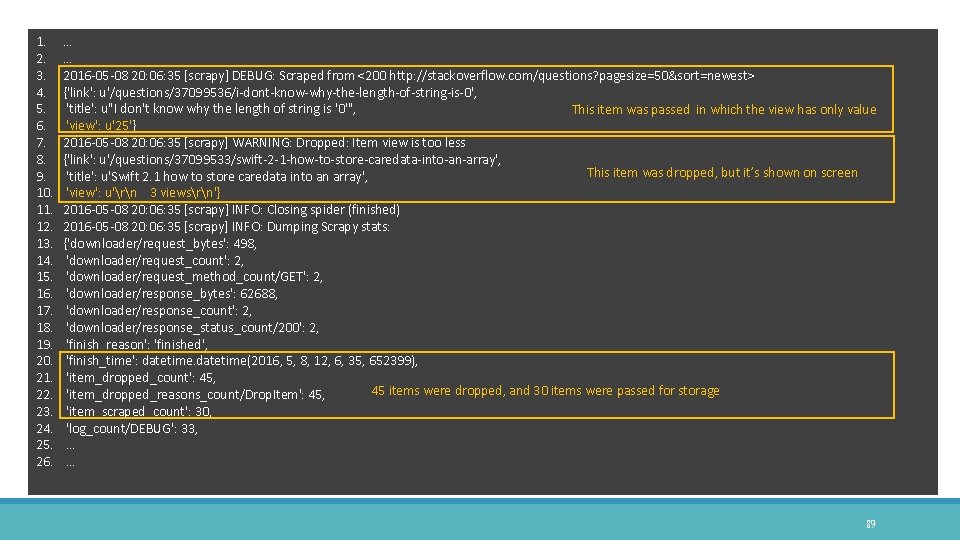

    …
    2016-05-08 20:06:35 [scrapy] DEBUG: Scraped from <200 http://stackoverflow.com/questions?pagesize=50&sort=newest>
    {'link': u'/questions/37099536/i-dont-know-why-the-length-of-string-is-0',
     'title': u"I don't know why the length of string is '0'",
     'view': u'25'}
    2016-05-08 20:06:35 [scrapy] WARNING: Dropped: Item view is too less
    {'link': u'/questions/37099533/swift-2-1-how-to-store-caredata-into-an-array',
     'title': u'Swift 2.1 how to store caredata into an array',
     'view': u'\r\n 3 views\r\n'}
    2016-05-08 20:06:35 [scrapy] INFO: Closing spider (finished)
    2016-05-08 20:06:35 [scrapy] INFO: Dumping Scrapy stats:
    {'downloader/request_bytes': 498,
     'downloader/request_count': 2,
     'downloader/request_method_count/GET': 2,
     'downloader/response_bytes': 62688,
     'downloader/response_count': 2,
     'downloader/response_status_count/200': 2,
     'finish_reason': 'finished',
     'finish_time': datetime(2016, 5, 8, 12, 6, 35, 652399),
     'item_dropped_count': 45,
     'item_dropped_reasons_count/DropItem': 45,
     'item_scraped_count': 30,
     'log_count/DEBUG': 33,
    …

Notes on the log:
◦ The first item was passed; its view field now holds only the number ('25').
◦ The second item (3 views) was dropped, but the drop is still shown on screen.
◦ 45 items were dropped, and 30 items were passed for storage.

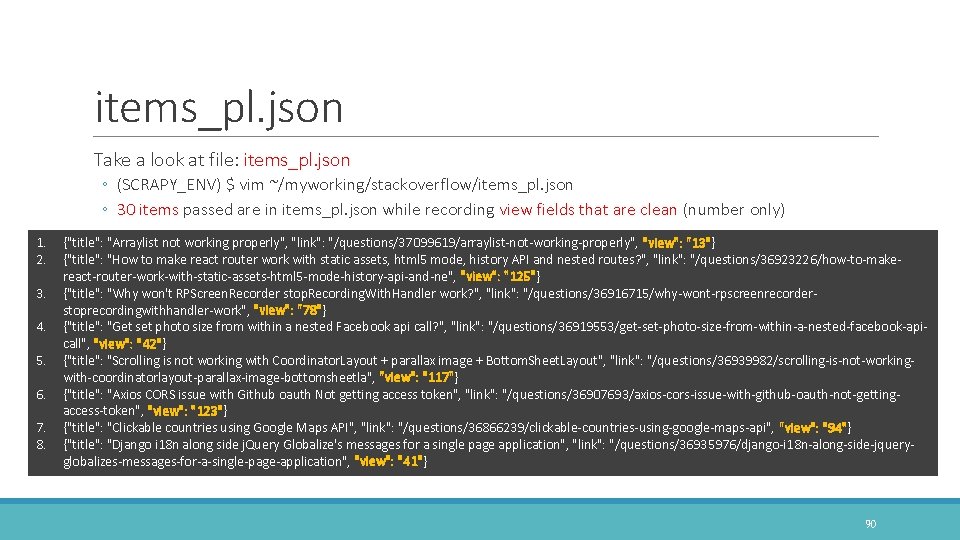

items_pl.json

Take a look at the file items_pl.json
◦ (SCRAPY_ENV) $ vim ~/myworking/stackoverflow/items_pl.json
◦ The 30 items that passed are in items_pl.json, and their view fields are clean (number only)

    {"title": "Arraylist not working properly", "link": "/questions/37099619/arraylist-not-working-properly", "view": "13"}
    {"title": "How to make react router work with static assets, html5 mode, history API and nested routes?", "link": "/questions/36923226/how-to-make-react-router-work-with-static-assets-html5-mode-history-api-and-ne", "view": "125"}
    {"title": "Why won't RPScreenRecorder stopRecordingWithHandler work?", "link": "/questions/36916715/why-wont-rpscreenrecorder-stoprecordingwithhandler-work", "view": "78"}
    {"title": "Get set photo size from within a nested Facebook api call?", "link": "/questions/36919553/get-set-photo-size-from-within-a-nested-facebook-api-call", "view": "42"}
    {"title": "Scrolling is not working with CoordinatorLayout + parallax image + BottomSheetLayout", "link": "/questions/36939982/scrolling-is-not-working-with-coordinatorlayout-parallax-image-bottomsheetla", "view": "117"}
    {"title": "Axios CORS issue with Github oauth Not getting access token", "link": "/questions/36907693/axios-cors-issue-with-github-oauth-not-getting-access-token", "view": "123"}
    {"title": "Clickable countries using Google Maps API", "link": "/questions/36866239/clickable-countries-using-google-maps-api", "view": "94"}
    {"title": "Django i18n along side jQuery Globalize's messages for a single page application", "link": "/questions/36935976/django-i18n-along-side-jquery-globalizes-messages-for-a-single-page-application", "view": "41"}

Persistence for Database CRAWL STACKOVERFLOW AND SAVE TO MONGODB 91

MongoDB is an open-source document database that provides high performance, high availability, and automatic scaling.
◦ https://www.mongodb.com/

Document Database
◦ A record in MongoDB is a document, which is a data structure composed of field and value pairs.
◦ MongoDB documents are similar to JSON objects.
◦ The values of fields may include other documents, arrays, and arrays of documents.

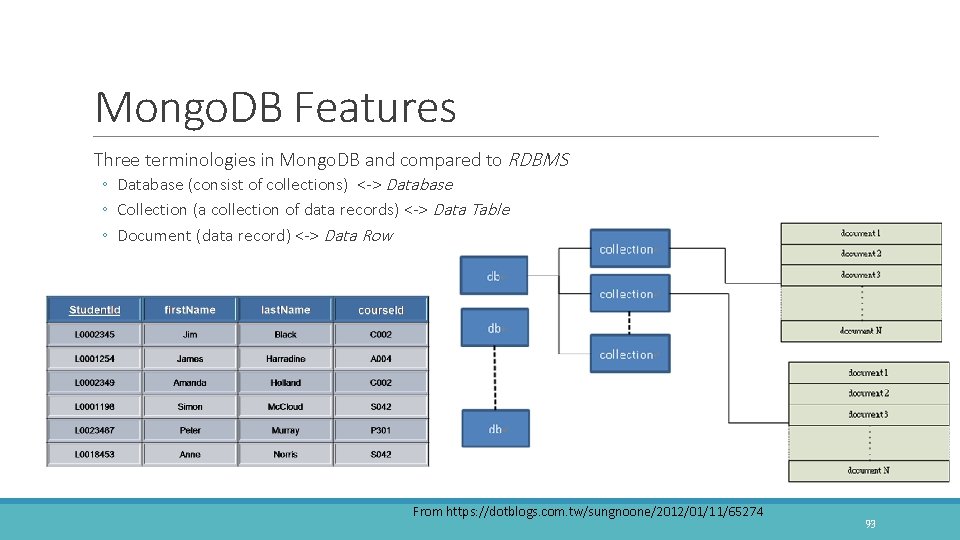

MongoDB Features

Three MongoDB terms and their RDBMS counterparts
◦ Database (consists of collections) <-> Database
◦ Collection (a collection of data records) <-> Data Table
◦ Document (data record) <-> Data Row

From https://dotblogs.com.tw/sungnoone/2012/01/11/65274

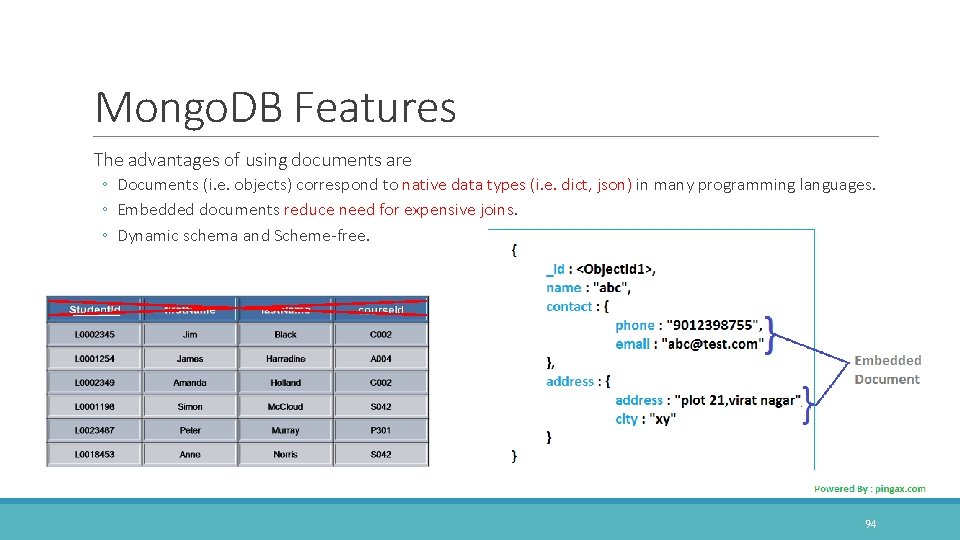

MongoDB Features

The advantages of using documents are
◦ Documents (i.e. objects) correspond to native data types (e.g. dict, JSON) in many programming languages.
◦ Embedded documents reduce the need for expensive joins.
◦ The schema is dynamic (schema-free).
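As a rough illustration of the first two points, a document maps directly onto a Python dict, and related data can be embedded instead of joined. The field names below (`tags`, `asker`, `answers`) are hypothetical and are not fields scraped in this deck.

```python
import json

# Hypothetical document; the field names are for illustration only.
question = {
    "title": "Arraylist not working properly",
    "view": 13,
    "tags": ["java", "arraylist"],                      # array value
    "asker": {"name": "someone", "reputation": 101},    # embedded document
    "answers": [                                        # array of documents
        {"author": "a", "accepted": True},
        {"author": "b", "accepted": False},
    ],
}


def accepted_authors(doc):
    """Read embedded answers directly; no join against another table is needed."""
    return [a["author"] for a in doc.get("answers", []) if a.get("accepted")]


# The same structure serializes to JSON without any mapping layer.
as_json = json.dumps(question)
```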

Install MongoDB

Import the public key used by the package management system
◦ sudo apt-key adv --keyserver hkp://keyserver.ubuntu.com:80 --recv EA312927
Create a list file for MongoDB (Ubuntu 14.04)
◦ echo "deb http://repo.mongodb.org/apt/ubuntu trusty/mongodb-org/3.2 multiverse" | sudo tee /etc/apt/sources.list.d/mongodb-org-3.2.list
Reload the local package database
◦ sudo apt-get update
Install the latest stable version of MongoDB
◦ sudo apt-get install -y mongodb-org
Run the following command to start mongod
◦ sudo service mongod start

https://docs.mongodb.com/manual/installation/

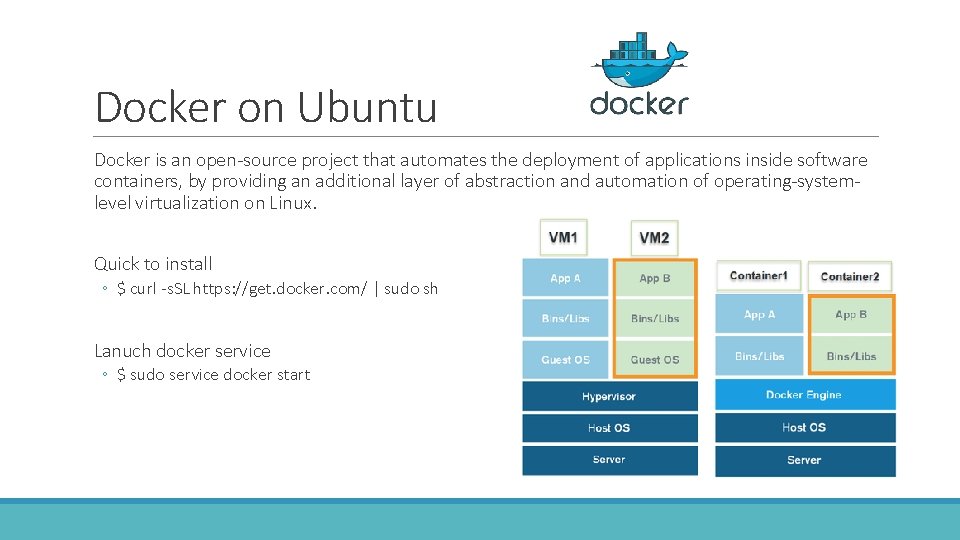

Docker on Ubuntu

Docker is an open-source project that automates the deployment of applications inside software containers, by providing an additional layer of abstraction and automation of operating-system-level virtualization on Linux.

Quick to install
◦ $ curl -sSL https://get.docker.com/ | sudo sh
Launch the docker service
◦ $ sudo service docker start

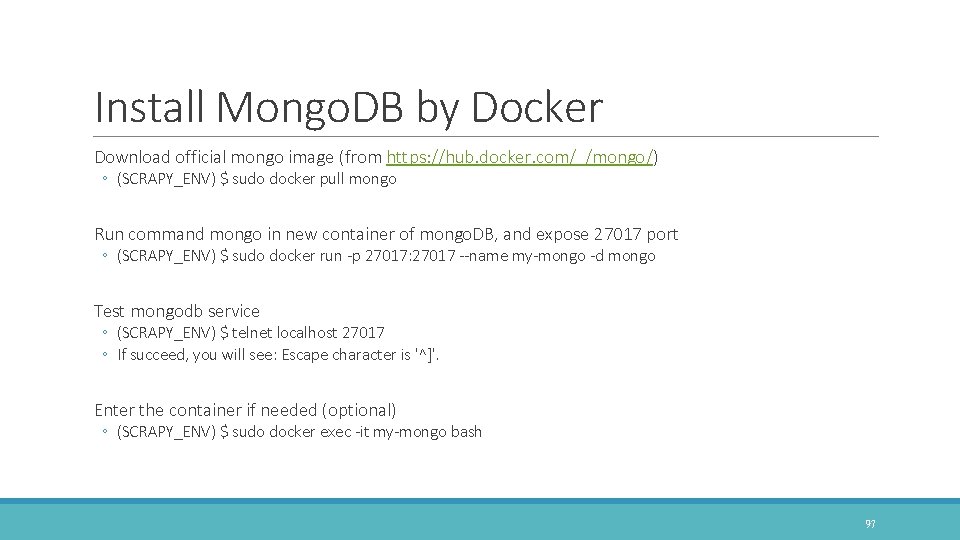

Install MongoDB by Docker

Download the official mongo image (from https://hub.docker.com/_/mongo/)
◦ (SCRAPY_ENV) $ sudo docker pull mongo
Run mongo in a new container and expose port 27017
◦ (SCRAPY_ENV) $ sudo docker run -p 27017:27017 --name my-mongo -d mongo
Test the mongodb service
◦ (SCRAPY_ENV) $ telnet localhost 27017
◦ If it succeeds, you will see: Escape character is '^]'.
Enter the container if needed (optional)
◦ (SCRAPY_ENV) $ sudo docker exec -it my-mongo bash

PyMongo is a Python driver for MongoDB
◦ https://api.mongodb.com/python/current/
◦ pip install pymongo==3.1.1  # included in previous requirements.txt

PyMongo covers connecting to MongoDB and getting, inserting, updating, and deleting data. The tutorial below is intended as an introduction to working with MongoDB and PyMongo.
◦ http://api.mongodb.com/python/current/tutorial.html
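The get, insert, update and delete operations can be sketched as one round trip. This is a minimal sketch, not the deck's own code: it assumes the collection methods `insert_one`, `find_one`, `update_one` and `delete_one` available in PyMongo 3.x, and the database and collection names `stackoverflow_demo` and `questions_demo` are made up for illustration.

```python
def crud_roundtrip(collection):
    """Insert, read, update and delete one document; return what was read at each step."""
    collection.insert_one({"title": "demo question", "view": "12"})
    found = collection.find_one({"title": "demo question"})

    collection.update_one({"title": "demo question"}, {"$set": {"view": "13"}})
    updated = collection.find_one({"title": "demo question"})

    collection.delete_one({"title": "demo question"})
    gone = collection.find_one({"title": "demo question"})
    return found, updated, gone


def demo_against_local_server():
    """Run the round trip against a MongoDB assumed to listen on localhost:27017."""
    import pymongo  # imported lazily so this module loads without PyMongo installed
    client = pymongo.MongoClient("localhost", 27017)
    # hypothetical database and collection names
    return crud_roundtrip(client["stackoverflow_demo"]["questions_demo"])
```

Calling `demo_against_local_server()` needs a running MongoDB, for example the Docker container started earlier; `crud_roundtrip` itself only relies on the four collection methods.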

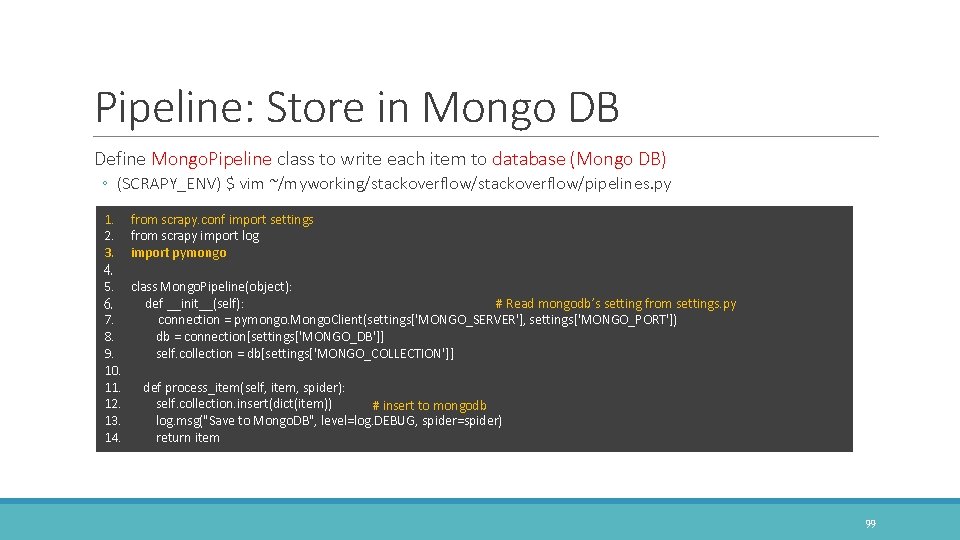

Pipeline: Store in MongoDB

Define a MongoPipeline class to write each item to the database (MongoDB)
◦ (SCRAPY_ENV) $ vim ~/myworking/stackoverflow/pipelines.py

    from scrapy.conf import settings
    from scrapy import log
    import pymongo

    class MongoPipeline(object):
        def __init__(self):
            # Read MongoDB settings from settings.py
            connection = pymongo.MongoClient(settings['MONGO_SERVER'], settings['MONGO_PORT'])
            db = connection[settings['MONGO_DB']]
            self.collection = db[settings['MONGO_COLLECTION']]

        def process_item(self, item, spider):
            self.collection.insert(dict(item))  # insert to mongodb
            log.msg("Save to MongoDB", level=log.DEBUG, spider=spider)
            return item

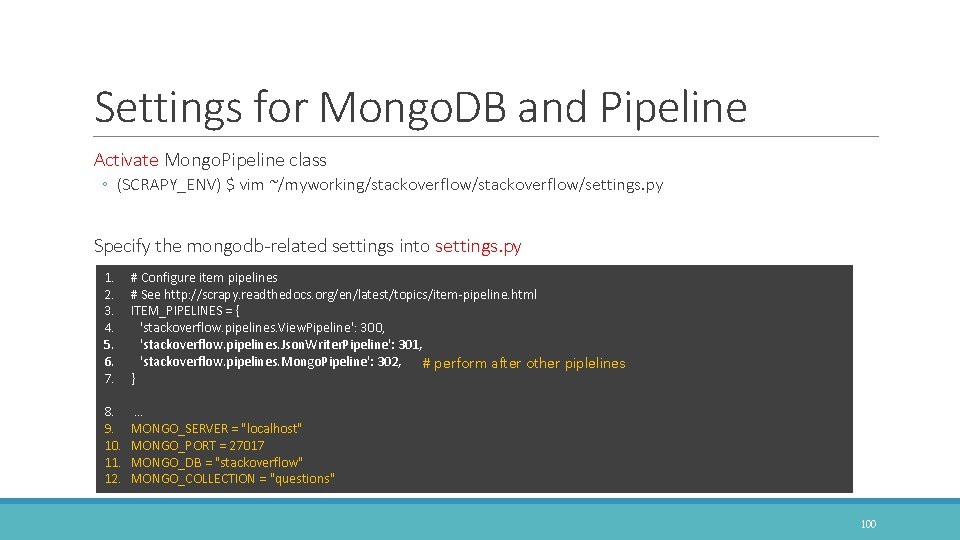

Settings for MongoDB and Pipeline

Activate the MongoPipeline class
◦ (SCRAPY_ENV) $ vim ~/myworking/stackoverflow/settings.py
Add the MongoDB-related settings to settings.py

    # Configure item pipelines
    # See http://scrapy.readthedocs.org/en/latest/topics/item-pipeline.html
    ITEM_PIPELINES = {
        'stackoverflow.pipelines.ViewPipeline': 300,
        'stackoverflow.pipelines.JsonWriterPipeline': 301,
        'stackoverflow.pipelines.MongoPipeline': 302,  # perform after other pipelines
    }
    …
    MONGO_SERVER = "localhost"
    MONGO_PORT = 27017
    MONGO_DB = "stackoverflow"
    MONGO_COLLECTION = "questions"

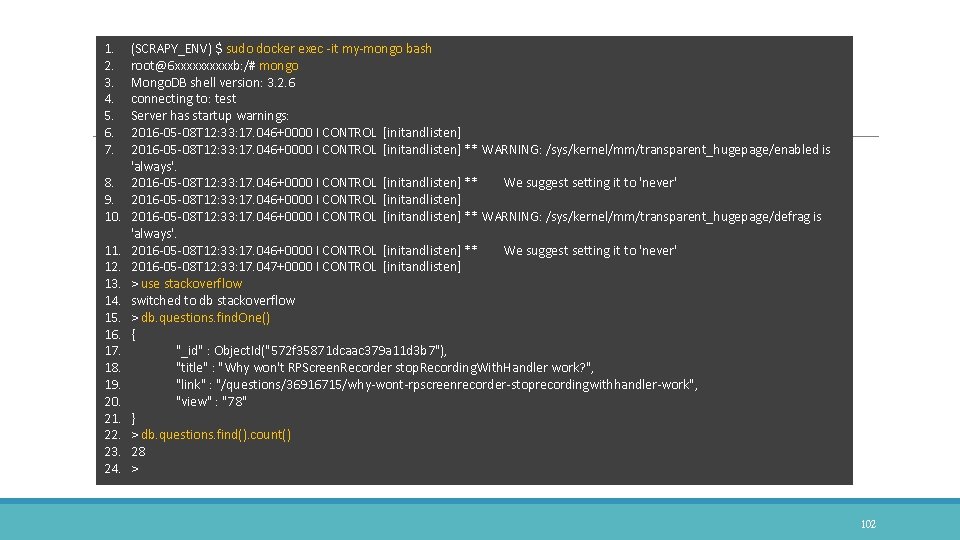

Pipeline: Go to crawl

Crawl and store items in a database through the custom pipelines
◦ (SCRAPY_ENV) $ scrapy crawl so
◦ Items will be stored in MongoDB one by one

Find the scraped items in MongoDB
◦ (SCRAPY_ENV) $ sudo docker exec -it my-mongo bash
◦ root@6xxxxb:/# mongo
◦ > use stackoverflow
◦ > db.questions.findOne()
◦ > db.questions.find().count()

    (SCRAPY_ENV) $ sudo docker exec -it my-mongo bash
    root@6xxxxxb:/# mongo
    MongoDB shell version: 3.2.6
    connecting to: test
    Server has startup warnings:
    2016-05-08T12:33:17.046+0000 I CONTROL [initandlisten] ** WARNING: /sys/kernel/mm/transparent_hugepage/enabled is 'always'.
    2016-05-08T12:33:17.046+0000 I CONTROL [initandlisten] ** We suggest setting it to 'never'
    2016-05-08T12:33:17.046+0000 I CONTROL [initandlisten] ** WARNING: /sys/kernel/mm/transparent_hugepage/defrag is 'always'.
    2016-05-08T12:33:17.046+0000 I CONTROL [initandlisten] ** We suggest setting it to 'never'
    2016-05-08T12:33:17.047+0000 I CONTROL [initandlisten]
    > use stackoverflow
    switched to db stackoverflow
    > db.questions.findOne()
    {
        "_id" : ObjectId("572f35871dcaac379a11d3b7"),
        "title" : "Why won't RPScreenRecorder stopRecordingWithHandler work?",
        "link" : "/questions/36916715/why-wont-rpscreenrecorder-stoprecordingwithhandler-work",
        "view" : "78"
    }
    > db.questions.find().count()
    28
    >

Clone demo code from GitHub

You can obtain and run this demo code easily
◦ git clone https://github.com/kaikyle7997/scrapy_mongodb_demo.git
GitHub Repos: scrapy_mongodb_demo
◦ https://github.com/kaikyle7997/scrapy_mongodb_demo

From Scraping Spider to Crawl. Spider FOLLOWING LINKS IN DEPTH 104

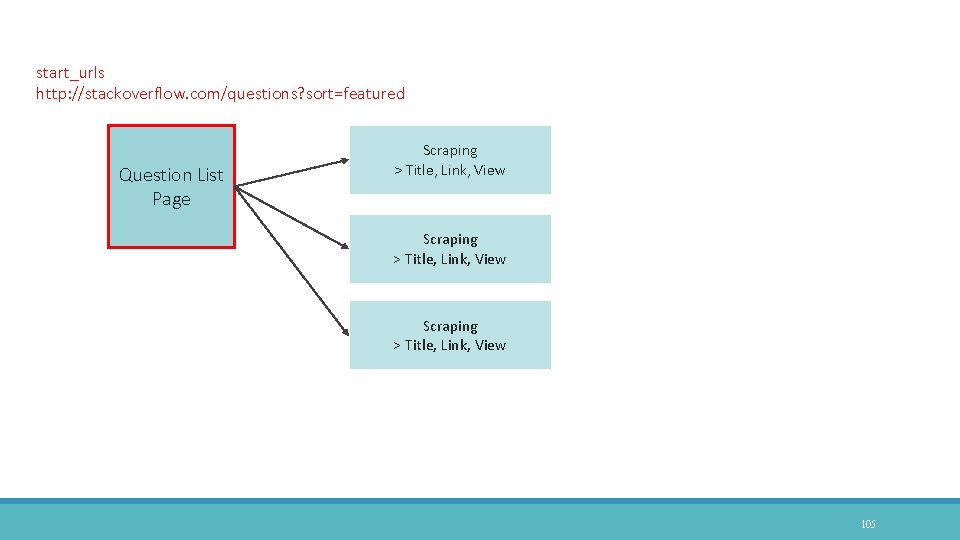

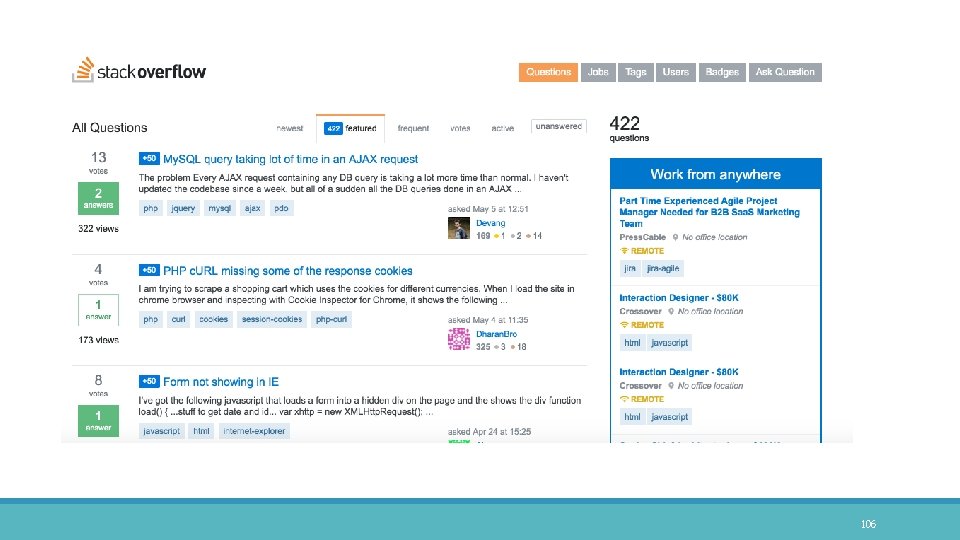

start_urls: http://stackoverflow.com/questions?sort=featured
Question List Page
◦ Scraping > Title, Link, View


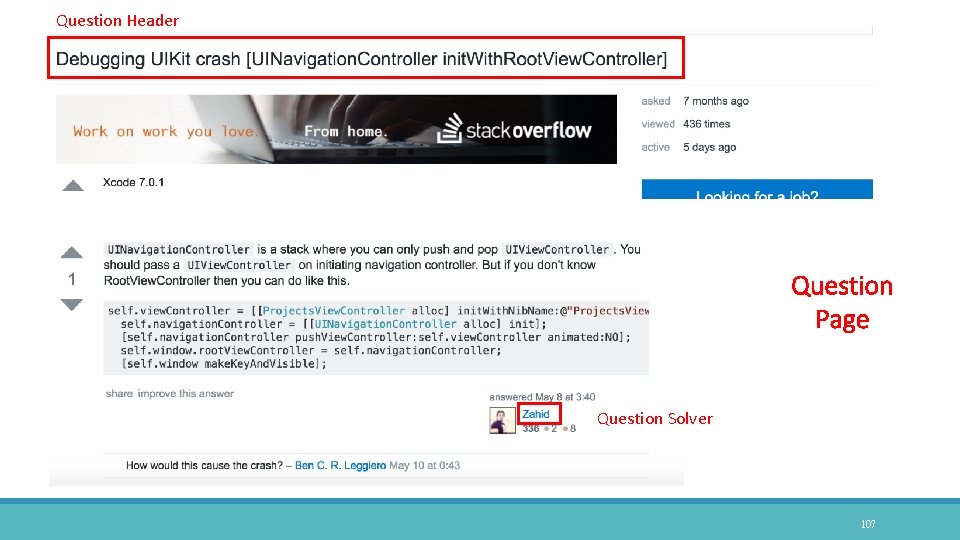

Question Header Question Page Question Solver 107

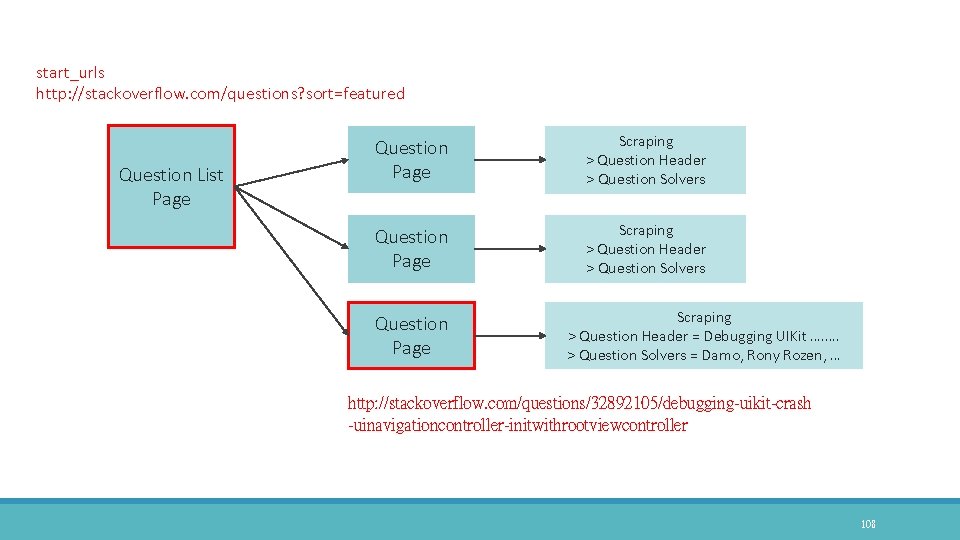

start_urls: http://stackoverflow.com/questions?sort=featured
Question List Page → Question Page
◦ Scraping > Question Header
◦ Scraping > Question Solvers

Example Question Page: http://stackoverflow.com/questions/32892105/debugging-uikit-crash-uinavigationcontroller-initwithrootviewcontroller
◦ Question Header = Debugging UIKit …. ….
◦ Question Solvers = Damo, Rony Rozen, …

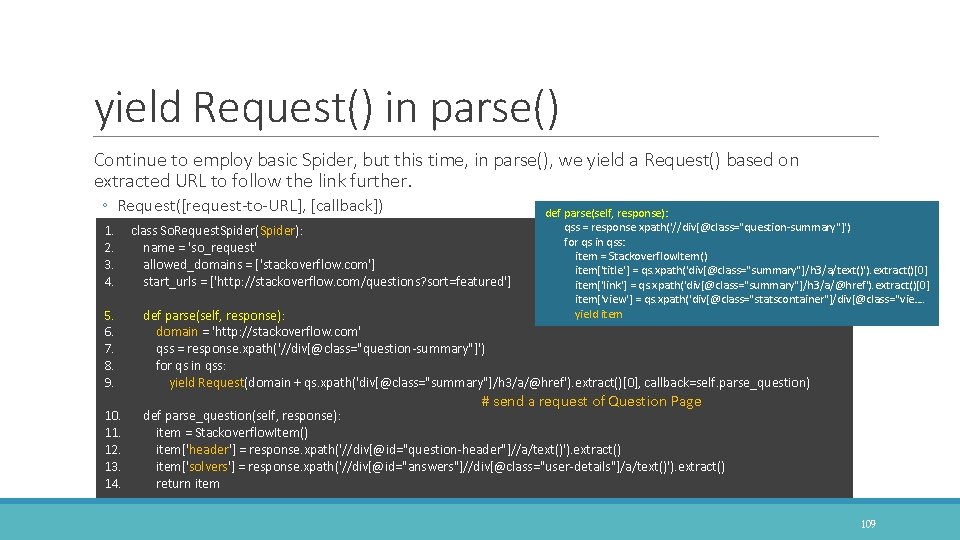

yield Request() in parse()

Continue to use the basic Spider, but this time parse() yields a Request() built from each extracted URL so that the link is followed further.
◦ Request([request-to-URL], [callback])

Previous parse(), which yields items from the Question List Page:

    def parse(self, response):
        qss = response.xpath('//div[@class="question-summary"]')
        for qs in qss:
            item = StackoverflowItem()
            item['title'] = qs.xpath('div[@class="summary"]/h3/a/text()').extract()[0]
            item['link'] = qs.xpath('div[@class="summary"]/h3/a/@href').extract()[0]
            item['view'] = qs.xpath('div[@class="statscontainer"]/div[@class="vie….
            yield item

New spider, which sends a request for each Question Page:

    from scrapy import Spider, Request
    from stackoverflow.items import StackoverflowItem

    class SoRequestSpider(Spider):
        name = 'so_request'
        allowed_domains = ['stackoverflow.com']
        start_urls = ['http://stackoverflow.com/questions?sort=featured']

        def parse(self, response):
            domain = 'http://stackoverflow.com'
            qss = response.xpath('//div[@class="question-summary"]')
            for qs in qss:
                # send a request of Question Page
                yield Request(domain + qs.xpath('div[@class="summary"]/h3/a/@href').extract()[0],
                              callback=self.parse_question)

        def parse_question(self, response):
            item = StackoverflowItem()
            item['header'] = response.xpath('//div[@id="question-header"]//a/text()').extract()
            item['solvers'] = response.xpath('//div[@id="answers"]//div[@class="user-details"]/a/text()').extract()
            return item
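Building the request URL by concatenating a domain string works for the relative links on this page, but it breaks if a link is already absolute. A possible alternative, sketched here with the standard library only, is to resolve each href against the URL of the page it came from; the helper name `absolute_links` is hypothetical.

```python
try:
    from urllib.parse import urljoin   # Python 3
except ImportError:
    from urlparse import urljoin       # Python 2


def absolute_links(page_url, hrefs):
    """Resolve each extracted href against the URL of the page it was found on."""
    return [urljoin(page_url, href) for href in hrefs]
```

Inside a spider, `page_url` would be `response.url`, and each resolved link could be passed to `Request(...)` as before.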


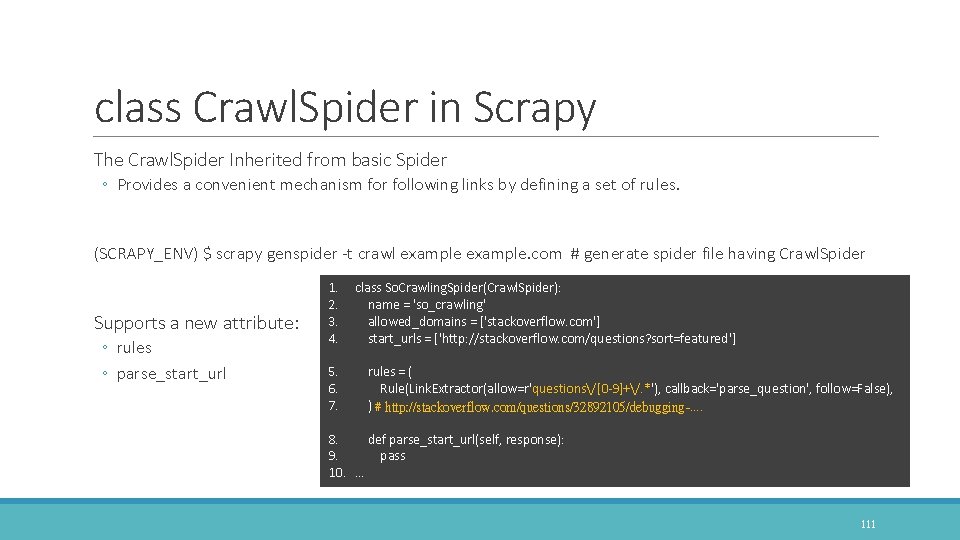

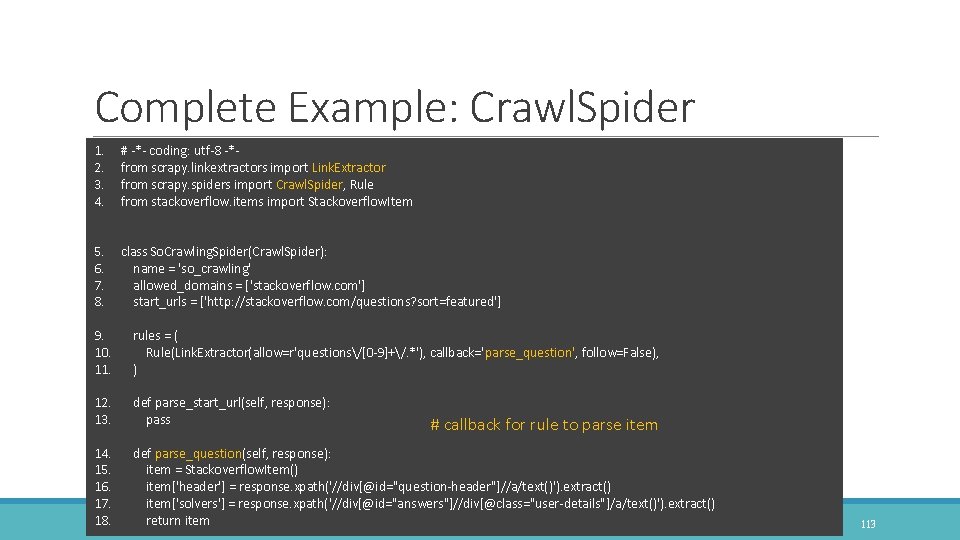

class CrawlSpider in Scrapy

CrawlSpider inherits from the basic Spider
◦ Provides a convenient mechanism for following links by defining a set of rules.
◦ (SCRAPY_ENV) $ scrapy genspider -t crawl example example.com  # generate a spider file based on CrawlSpider

Supports new attributes:
◦ rules
◦ parse_start_url

    class SoCrawlingSpider(CrawlSpider):
        name = 'so_crawling'
        allowed_domains = ['stackoverflow.com']
        start_urls = ['http://stackoverflow.com/questions?sort=featured']

        rules = (
            # matches e.g. http://stackoverflow.com/questions/32892105/debugging-….
            Rule(LinkExtractor(allow=r'questions/[0-9]+/.*'), callback='parse_question', follow=False),
        )

        def parse_start_url(self, response):
            pass
        …

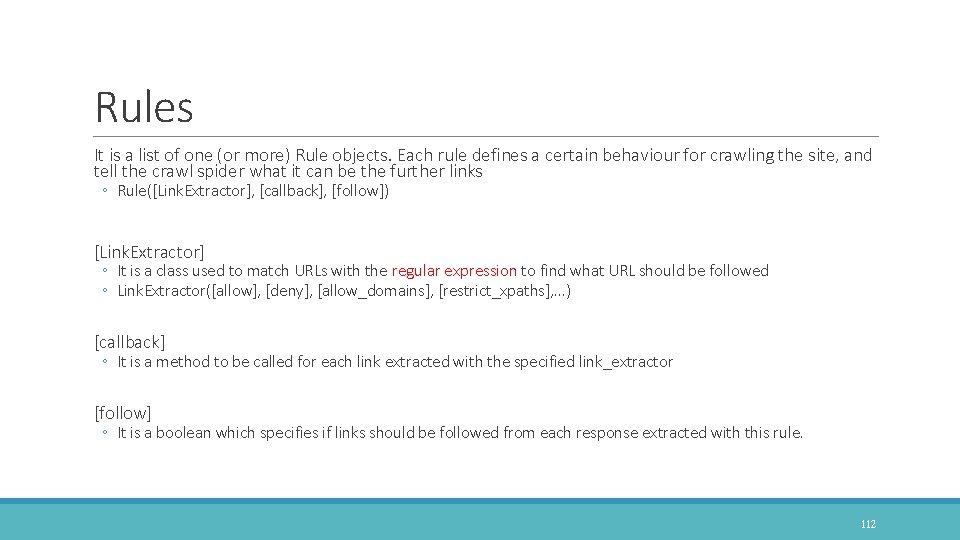

Rules

rules is a list of one (or more) Rule objects. Each rule defines a certain behaviour for crawling the site and tells the crawl spider which links to follow next.
◦ Rule([LinkExtractor], [callback], [follow])

[LinkExtractor]
◦ A class that matches URLs against regular expressions to find which URLs should be followed
◦ LinkExtractor([allow], [deny], [allow_domains], [restrict_xpaths], …)

[callback]
◦ A method to be called for each link extracted with the specified link_extractor

[follow]
◦ A boolean which specifies whether links should be followed from each response extracted with this rule.
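Which URLs an allow pattern accepts can be checked with the `re` module before running a crawl. The sketch below uses the pattern from this section's spider and assumes the pattern is applied as a search anywhere in the URL rather than a full match; `would_follow` is a hypothetical helper, not a Scrapy function.

```python
import re

# The allow pattern used by the CrawlSpider in this section.
ALLOW = re.compile(r'questions/[0-9]+/.*')


def would_follow(url):
    """True if the pattern is found anywhere in the URL (search, not full match)."""
    return ALLOW.search(url) is not None
```

Question pages such as `/questions/32892105/...` pass, while the list page `/questions?sort=featured` does not, because no numeric id follows `questions/`.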

Complete Example: CrawlSpider

    # -*- coding: utf-8 -*-
    from scrapy.linkextractors import LinkExtractor
    from scrapy.spiders import CrawlSpider, Rule
    from stackoverflow.items import StackoverflowItem

    class SoCrawlingSpider(CrawlSpider):
        name = 'so_crawling'
        allowed_domains = ['stackoverflow.com']
        start_urls = ['http://stackoverflow.com/questions?sort=featured']

        rules = (
            Rule(LinkExtractor(allow=r'questions/[0-9]+/.*'), callback='parse_question', follow=False),
        )

        def parse_start_url(self, response):
            pass

        # callback for rule to parse item
        def parse_question(self, response):
            item = StackoverflowItem()
            item['header'] = response.xpath('//div[@id="question-header"]//a/text()').extract()
            item['solvers'] = response.xpath('//div[@id="answers"]//div[@class="user-details"]/a/text()').extract()
            return item

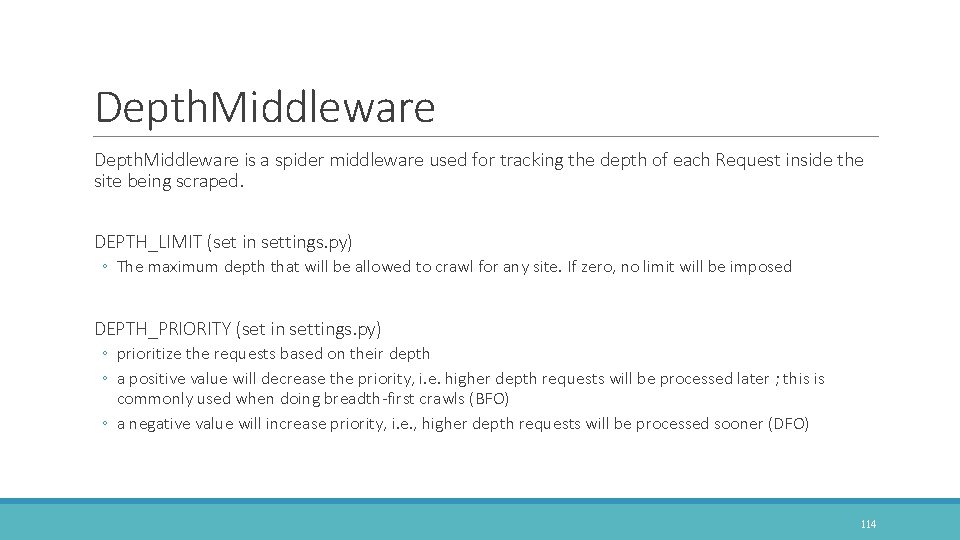

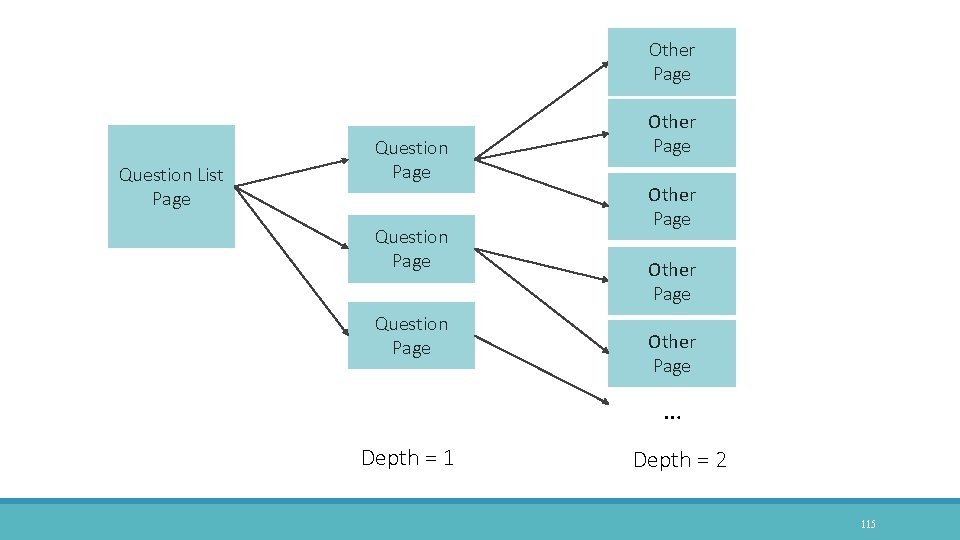

DepthMiddleware is a spider middleware used for tracking the depth of each Request inside the site being scraped.

DEPTH_LIMIT (set in settings.py)
◦ The maximum depth that will be allowed to crawl for any site. If zero, no limit will be imposed.

DEPTH_PRIORITY (set in settings.py)
◦ Prioritizes the requests based on their depth
◦ A positive value will decrease the priority, i.e. higher depth requests will be processed later; this is commonly used when doing breadth-first crawls (BFO)
◦ A negative value will increase the priority, i.e. higher depth requests will be processed sooner (DFO)
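The effect of a depth limit can be simulated on a toy link graph. The sketch below is an illustration, not Scrapy's implementation: the page names are hypothetical, the start page is assumed to have depth 0, and pages are visited breadth first.

```python
from collections import deque

# Toy link graph with hypothetical page names.
LINKS = {
    "list": ["q1", "q2"],
    "q1": ["other1"],
    "q2": ["other2"],
    "other1": [],
    "other2": [],
}


def crawl(start, depth_limit=0):
    """Breadth-first walk; depth_limit=0 means no limit, like DEPTH_LIMIT."""
    visited = {start: 0}          # page -> depth at which it was reached
    queue = deque([start])
    while queue:
        page = queue.popleft()
        depth = visited[page]
        if depth_limit and depth >= depth_limit:
            continue              # links on this page would exceed the limit
        for nxt in LINKS.get(page, []):
            if nxt not in visited:
                visited[nxt] = depth + 1
                queue.append(nxt)
    return visited
```

With `depth_limit=1` only the list page and the question pages are reached; the pages linked from the question pages are never requested.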

Other Page Question List Page Question Page Other Page … Depth = 1 Depth = 2 115

Clone demo code from GitHub

You can obtain and run this demo code easily
◦ git clone https://github.com/kaikyle7997/scrapy_crawl_indepth.git
GitHub Repos: scrapy_crawl_indepth
◦ https://github.com/kaikyle7997/scrapy_crawl_indepth
◦ Note that settings.py has two lines: DOWNLOAD_DELAY = 3 and DEPTH_LIMIT = 1

Scrapy on IDE EXPEDITE DEVELOPMENT 117

Help us to debug scrapy

So far we have only run scrapy from the terminal, where it is hard to find out why an error happens.

In an IDE such as PyCharm, you can set a breakpoint in the scrapy project to debug your code
◦ Observe the list of Selector results
◦ Observe the content of an item
◦ Response URL, status, body, and so on

PyCharm is an Integrated Development Environment (IDE) used for programming in Python.

Download PyCharm
◦ https://www.jetbrains.com/pycharm/download/

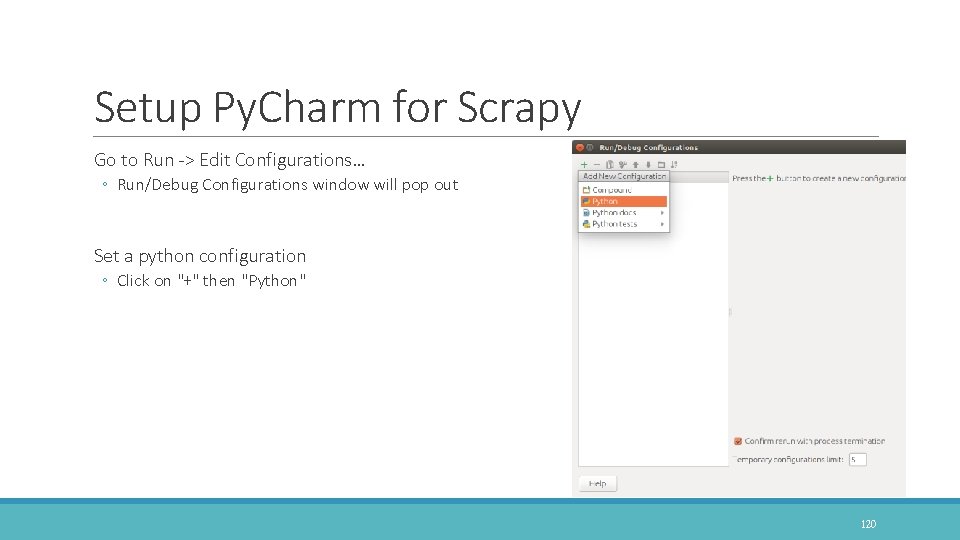

Setup PyCharm for Scrapy

Go to Run -> Edit Configurations…
◦ The Run/Debug Configurations window will pop up
Set a Python configuration
◦ Click on "+" then "Python"

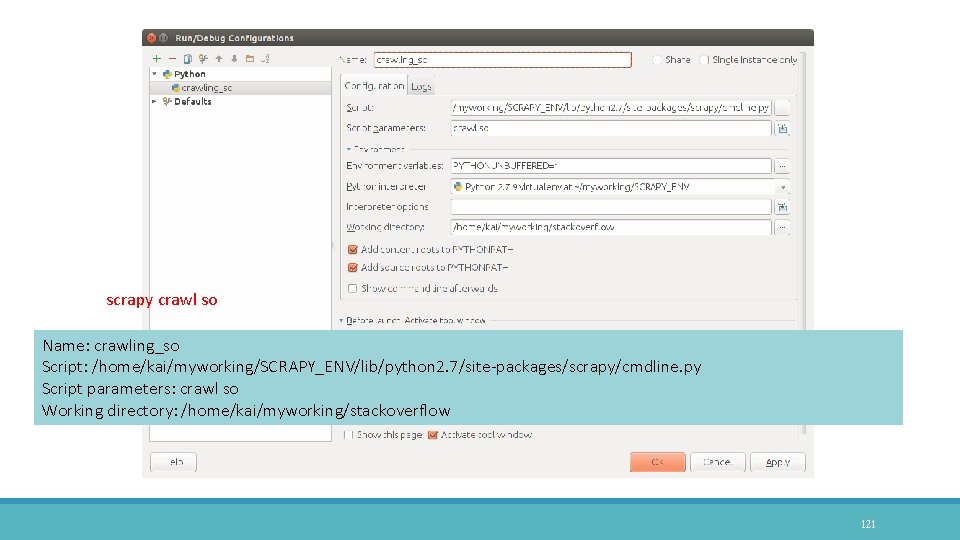

Run/Debug configuration equivalent to "scrapy crawl so"
◦ Name: crawling_so
◦ Script: /home/kai/myworking/SCRAPY_ENV/lib/python2.7/site-packages/scrapy/cmdline.py
◦ Script parameters: crawl so
◦ Working directory: /home/kai/myworking/stackoverflow

Prevent Getting Blocked/Banned WHILE SCRAPING WEBSITES 122

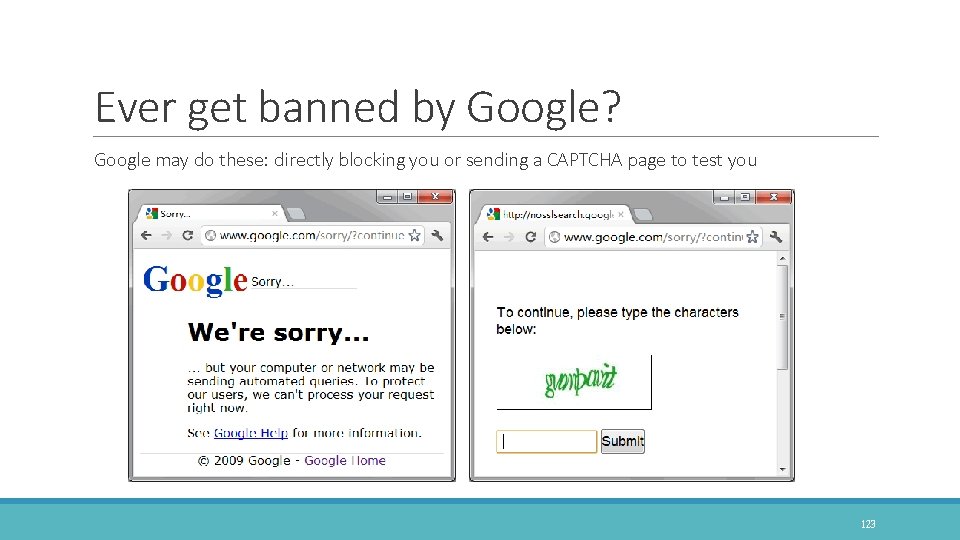

Ever get banned by Google?

Google may block you outright or send a CAPTCHA page to test you.

Best Practices to Web Crawling

Make crawling slower; do not DDoS the server, treat it nicely ★★★
◦ In Scrapy, the AutoThrottle extension automatically throttles crawling speed based on the load of both the Scrapy server and the website you are crawling.
◦ In settings.py: CONCURRENT_REQUESTS_PER_IP, DOWNLOAD_DELAY

Always respect robots.txt ★★★
◦ It informs web robots about which areas of the website should not be processed or scanned
◦ https://en.wikipedia.org/robots.txt
◦ If RobotsTxtMiddleware is enabled, Scrapy will respect robots.txt policies

Be aware of honeypots ★★

Do not follow the same crawling pattern ★
◦ Generally, detection is based on cookies
◦ Incorporate some random clicks on the page, mouse movements and random actions that make a spider look like a human.
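What respecting robots.txt means can be tried offline with the standard library's robots.txt parser. The rules and the user agent name `my-crawler` below are made up for illustration; a real crawler would fetch the target site's own robots.txt.

```python
try:
    from urllib.robotparser import RobotFileParser   # Python 3
except ImportError:
    from robotparser import RobotFileParser          # Python 2

# Illustrative rules only, not taken from any real site.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def build_parser(robots_txt):
    """Parse robots.txt content that is already in memory."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def allowed(parser, url, user_agent="my-crawler"):
    """True if the rules allow this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)
```

A crawler would call `allowed(...)` before each request and skip any URL for which it returns False.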

Best Practices to Web Crawling

User-Agent spoofing ★★
◦ User-Agent is an HTTP header that identifies the client to the server by application name, version and OS
◦ Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/49.0.2623.112 Safari/537.36
◦ In Scrapy, UserAgentMiddleware allows a spider to override the default user agent; to do so, the spider's user_agent attribute must be set.

Disguise your requests by rotating IPs ★★
◦ In addition to an IP pool, other solutions are VPN, Tor Network, and Proxy Services

HOW TO PREVENT GETTING BLACKLISTED WHILE SCRAPING
◦ https://learn.scrapehero.com/how-to-prevent-getting-blacklisted-while-scraping/

User-Agent Spoofing in Scrapy SETTINGS. PY OR MIDDLEWARE 126

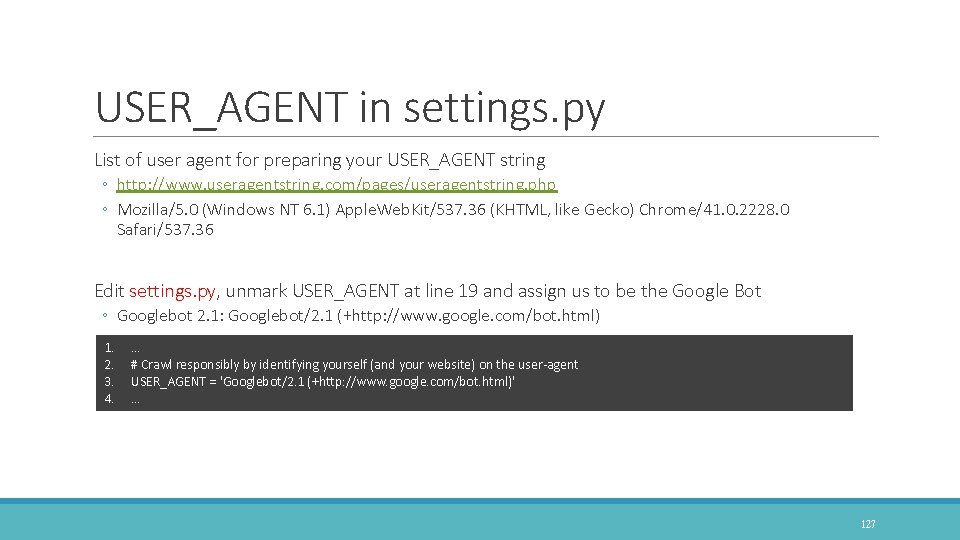

USER_AGENT in settings.py

List of user agents for preparing your USER_AGENT string
◦ http://www.useragentstring.com/pages/useragentstring.php
◦ Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2228.0 Safari/537.36

Edit settings.py, uncomment USER_AGENT at line 19 and set it so that we identify as Googlebot
◦ Googlebot 2.1: Googlebot/2.1 (+http://www.google.com/bot.html)

    …
    # Crawl responsibly by identifying yourself (and your website) on the user-agent
    USER_AGENT = 'Googlebot/2.1 (+http://www.google.com/bot.html)'
    …

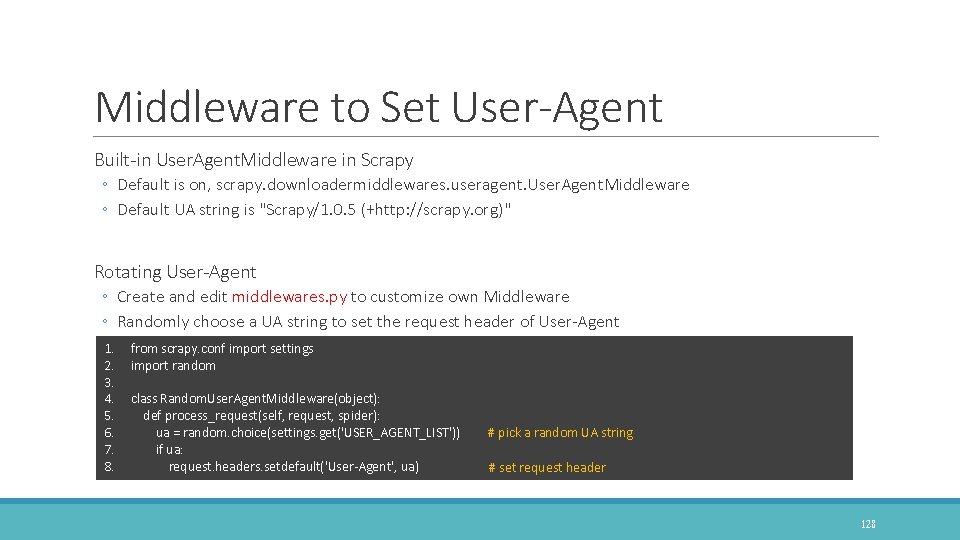

Middleware to Set User-Agent

Built-in UserAgentMiddleware in Scrapy
◦ Default is on, scrapy.downloadermiddlewares.useragent.UserAgentMiddleware
◦ Default UA string is "Scrapy/1.0.5 (+http://scrapy.org)"

Rotating User-Agent
◦ Create and edit middlewares.py to define your own middleware
◦ Randomly choose a UA string to set the User-Agent request header

    from scrapy.conf import settings
    import random

    class RandomUserAgentMiddleware(object):
        def process_request(self, request, spider):
            ua = random.choice(settings.get('USER_AGENT_LIST'))  # pick a random UA string
            if ua:
                request.headers.setdefault('User-Agent', ua)  # set request header

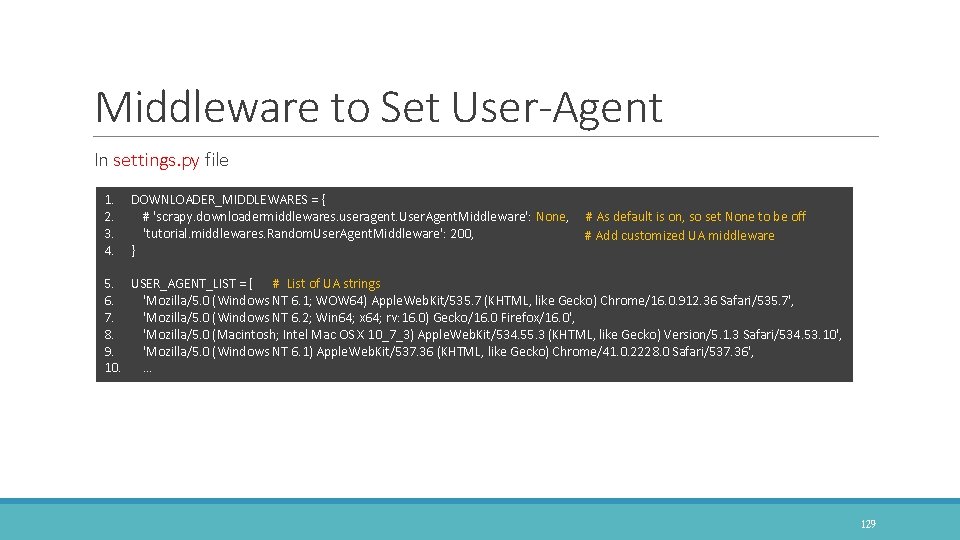

Middleware to Set User-Agent

In the settings.py file

    DOWNLOADER_MIDDLEWARES = {
        # The built-in middleware is on by default; uncomment the next line to set it to None and turn it off
        # 'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware': None,
        'tutorial.middlewares.RandomUserAgentMiddleware': 200,  # Add customized UA middleware
    }

    USER_AGENT_LIST = [  # List of UA strings
        'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.7 (KHTML, like Gecko) Chrome/16.0.912.36 Safari/535.7',
        'Mozilla/5.0 (Windows NT 6.2; Win64; x64; rv:16.0) Gecko/16.0 Firefox/16.0',
        'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_3) AppleWebKit/534.55.3 (KHTML, like Gecko) Version/5.1.3 Safari/534.53.10',
        'Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2228.0 Safari/537.36',
        …
    ]
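The selection logic itself does not depend on Scrapy and can be exercised with a plain dict standing in for the request headers. This is a minimal sketch under that assumption; `pick_user_agent` and `apply_user_agent` are hypothetical helpers, and the list holds two of the UA strings shown above. It also guards against an empty list, where `random.choice` would raise an error.

```python
import random

USER_AGENT_LIST = [
    'Mozilla/5.0 (Windows NT 6.2; Win64; x64; rv:16.0) Gecko/16.0 Firefox/16.0',
    'Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2228.0 Safari/537.36',
]


def pick_user_agent(ua_list, rng=random):
    """Pick one UA string at random, or None if the list is empty."""
    return rng.choice(ua_list) if ua_list else None


def apply_user_agent(headers, ua_list, rng=random):
    """Set User-Agent only if it is not already present, as setdefault does."""
    ua = pick_user_agent(ua_list, rng)
    if ua:
        headers.setdefault('User-Agent', ua)
    return headers
```

Passing a seeded `random.Random` instance as `rng` makes the choice repeatable, which helps when testing.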

Clone demo code from GitHub

You can obtain and run this demo code easily
◦ git clone https://github.com/kaikyle7997/scrapy_user_agent.git
GitHub Repos: scrapy_user_agent
◦ https://github.com/kaikyle7997/scrapy_user_agent

Rotating your IPs PROXY, TOR, POLIPO, PROXY SERVICE 131

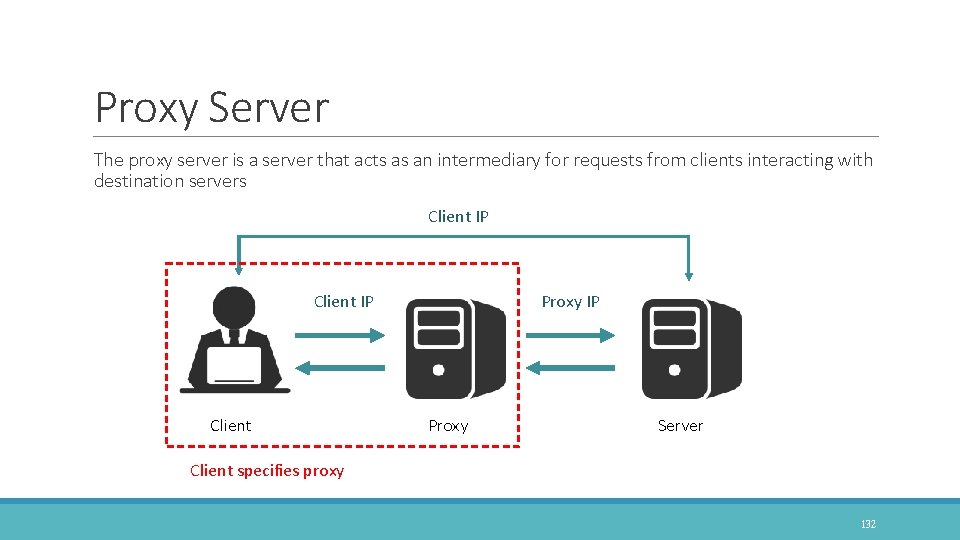

Proxy Server

A proxy server is a server that acts as an intermediary for requests from clients interacting with destination servers.
◦ The client specifies the proxy
◦ Client (client IP) → Proxy Server (proxy IP) → destination server

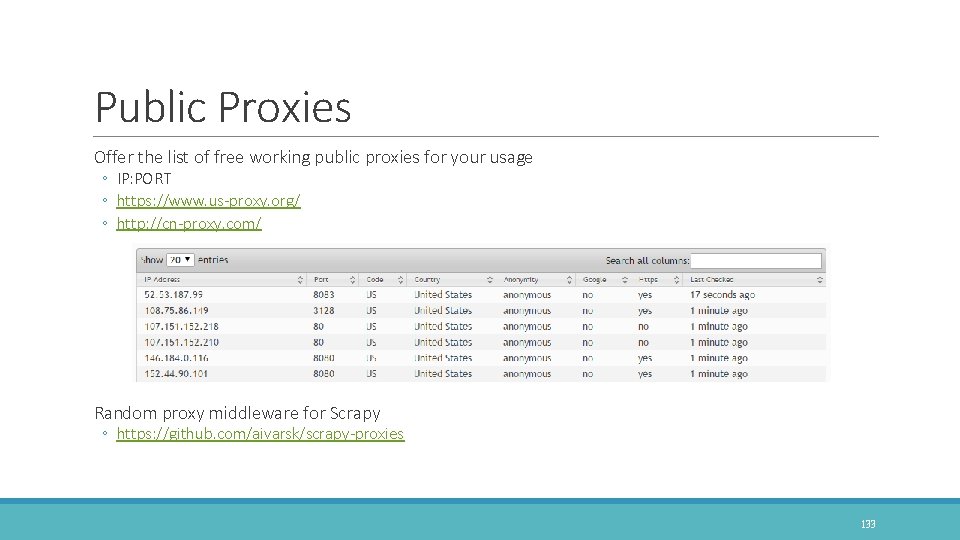

Public Proxies

Lists of free, working public proxies for your use
◦ IP:PORT
◦ https://www.us-proxy.org/
◦ http://cn-proxy.com/

Random proxy middleware for Scrapy
◦ https://github.com/aivarsk/scrapy-proxies

Polipo
◦ https://www.irif.univ-paris-diderot.fr/~jch/software/polipo/
◦ Polipo is a small and fast caching web proxy (a web cache, an HTTP proxy, a proxy server).
◦ HTTP traffic

Install Polipo on Ubuntu
◦ sudo apt-get install polipo

TOR Network

TOR (The Onion Router)
◦ https://www.torproject.org/
◦ Tor is free software for enabling anonymous communication

TOR Browser

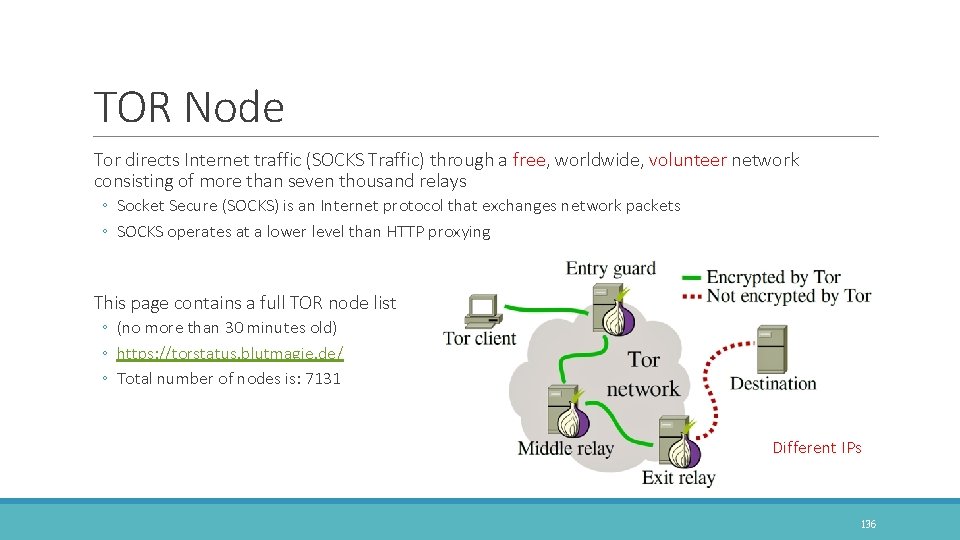

TOR Node

Tor directs Internet traffic (SOCKS traffic) through a free, worldwide, volunteer network consisting of more than seven thousand relays
◦ Socket Secure (SOCKS) is an Internet protocol that exchanges network packets
◦ SOCKS operates at a lower level than HTTP proxying

This page contains a full TOR node list
◦ (no more than 30 minutes old)
◦ https://torstatus.blutmagie.de/
◦ Total number of nodes: 7131 (different IPs)

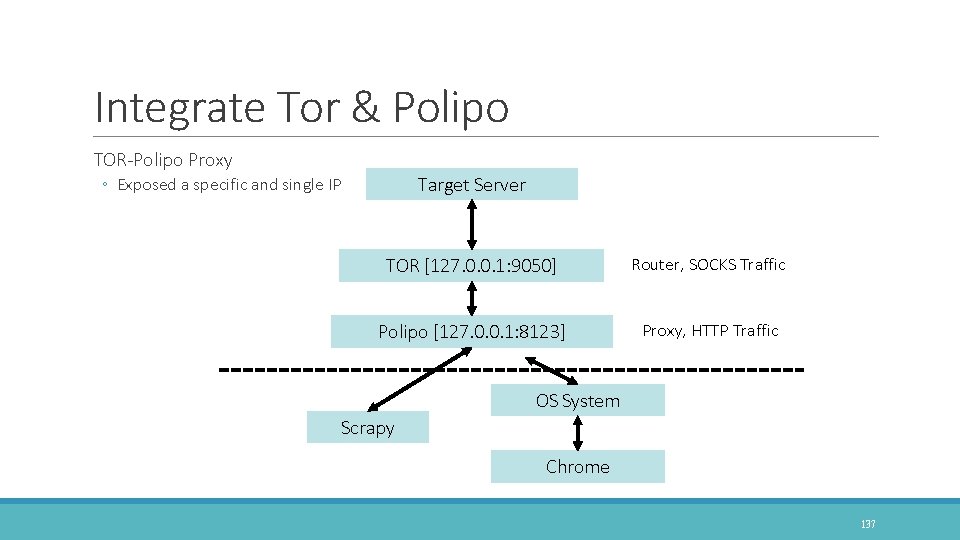

Integrate Tor & Polipo

TOR-Polipo Proxy
◦ Exposes a single, specific IP to the target server

Traffic path: Scrapy / Chrome (on the OS) → Polipo [127.0.0.1:8123] (proxy, HTTP traffic) → TOR [127.0.0.1:9050] (router, SOCKS traffic) → Target Server

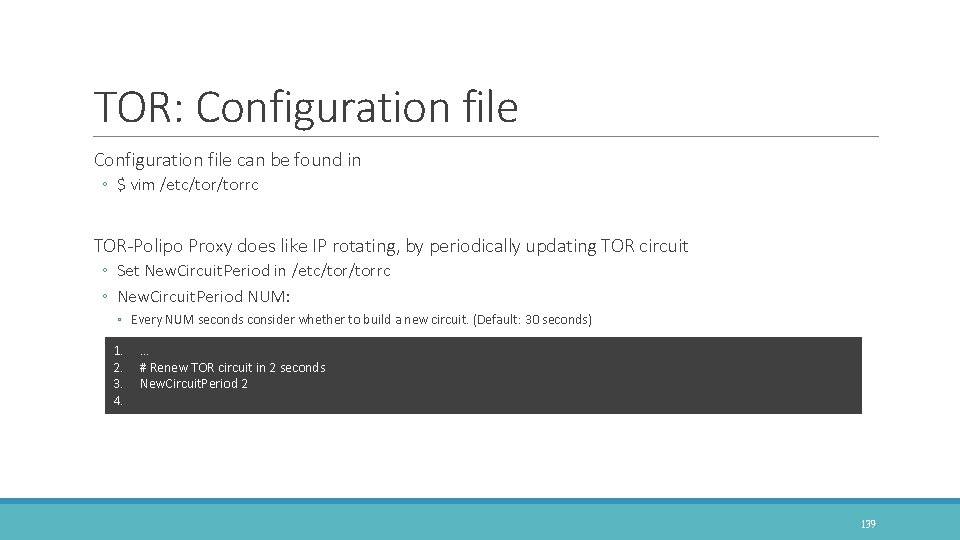

TOR: Installation

Tor on Debian or Ubuntu (the repository lines below are for Ubuntu "trusty")
◦ https://www.torproject.org/docs/debian.html.en

Add these two lines to the package list file /etc/apt/sources.list
◦ deb http://deb.torproject.org/torproject.org trusty main
◦ deb-src http://deb.torproject.org/torproject.org trusty main

Set the GPG key
◦ $ gpg --keyserver keys.gnupg.net --recv 886DDD89
◦ $ gpg --export A3C4F0F979CAA22CDBA8F512EE8CBC9E886DDD89 | sudo apt-key add -

After that, update your system and install tor
◦ $ apt-get update
◦ $ apt-get install tor deb.torproject.org-keyring

TOR: Configuration

The configuration file can be found at
◦ $ vim /etc/torrc

The TOR-Polipo proxy behaves like IP rotation by periodically renewing the TOR circuit
◦ Set NewCircuitPeriod in /etc/torrc
◦ NewCircuitPeriod NUM: every NUM seconds consider whether to build a new circuit. (Default: 30 seconds)

    …
    # Renew TOR circuit in 2 seconds
    NewCircuitPeriod 2

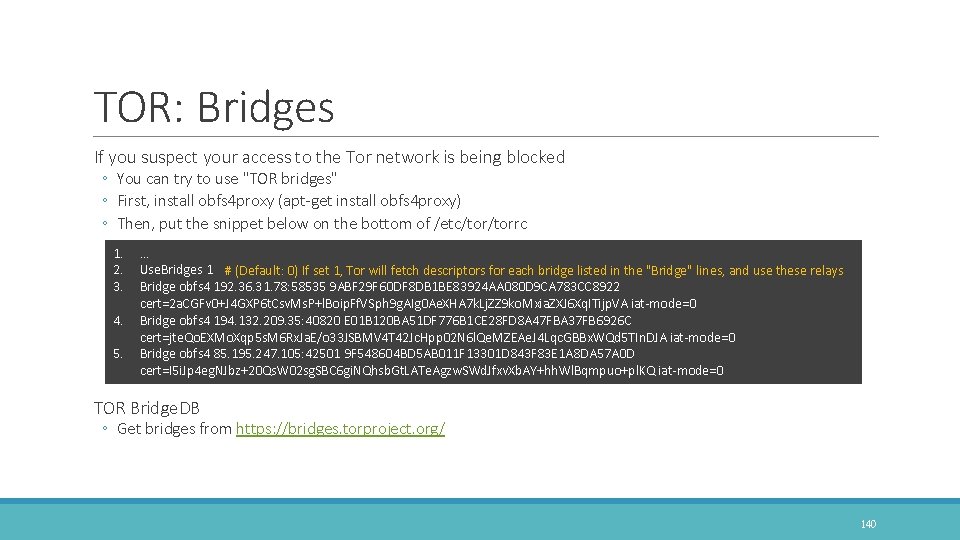

TOR: Bridges

If you suspect your access to the Tor network is being blocked
◦ You can try to use "TOR bridges"
◦ First, install obfs4proxy (apt-get install obfs4proxy)
◦ Then, put the snippet below at the bottom of /etc/torrc

    …
    # (Default: 0) If set to 1, Tor will fetch descriptors for each bridge listed in the "Bridge" lines, and use these relays
    UseBridges 1
    Bridge obfs4 192.36.31.78:58535 9ABF29F60DF8DB1BE83924AA080D9CA783CC8922 cert=2aCGFv0+J4GXP6tCsvMsP+lBoipFfVSph9gAIg0AeXHA7kLjZZ9koMxiaZXJ6XqITijpVA iat-mode=0
    Bridge obfs4 194.132.209.35:40820 E01B120BA51DF776B1CE28FD8A47FBA37FB6926C cert=jteQoEXMoXqp5sM6RxJaE/o33JSBMV4T42JcHpp02N6lQeMZEAeJ4LqcGBBxWQd5TInDJA iat-mode=0
    Bridge obfs4 85.195.247.105:42501 9F548604BD5AB011F13301D843F83E1A8DA57A0D cert=I5iJp4egNJbz+20QsW02sgSBC6giNQhsbGtLATeAgzwSWdJfxvXbAY+hhWlBqmpuo+plKQ iat-mode=0

TOR BridgeDB
◦ Get bridges from https://bridges.torproject.org/

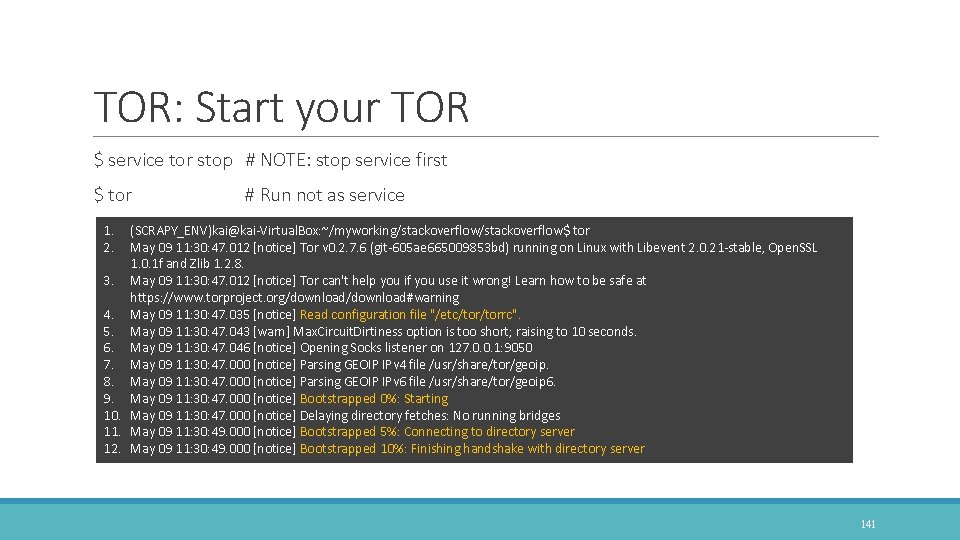

TOR: Start your TOR

$ service tor stop  # NOTE: stop the service first
$ tor  # run not as a service

    (SCRAPY_ENV)kai@kai-VirtualBox:~/myworking/stackoverflow$ tor
    May 09 11:30:47.012 [notice] Tor v0.2.7.6 (git-605ae665009853bd) running on Linux with Libevent 2.0.21-stable, OpenSSL 1.0.1f and Zlib 1.2.8.
    May 09 11:30:47.012 [notice] Tor can't help you if you use it wrong! Learn how to be safe at https://www.torproject.org/download#warning
    May 09 11:30:47.035 [notice] Read configuration file "/etc/torrc".
    May 09 11:30:47.043 [warn] MaxCircuitDirtiness option is too short; raising to 10 seconds.
    May 09 11:30:47.046 [notice] Opening Socks listener on 127.0.0.1:9050
    May 09 11:30:47.000 [notice] Parsing GEOIP IPv4 file /usr/share/tor/geoip.
    May 09 11:30:47.000 [notice] Parsing GEOIP IPv6 file /usr/share/tor/geoip6.
    May 09 11:30:47.000 [notice] Bootstrapped 0%: Starting
    May 09 11:30:47.000 [notice] Delaying directory fetches: No running bridges
    May 09 11:30:49.000 [notice] Bootstrapped 5%: Connecting to directory server
    May 09 11:30:49.000 [notice] Bootstrapped 10%: Finishing handshake with directory server

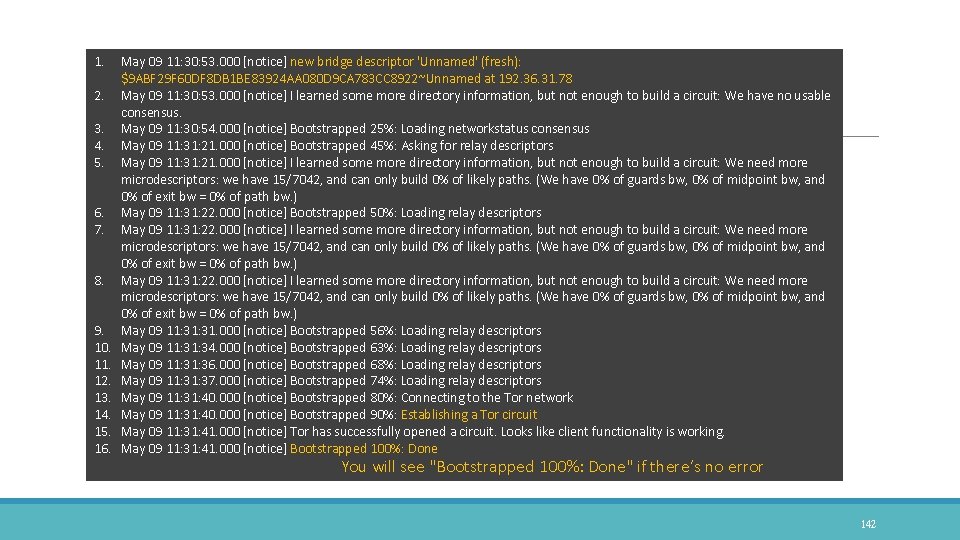

    May 09 11:30:53.000 [notice] new bridge descriptor 'Unnamed' (fresh): $9ABF29F60DF8DB1BE83924AA080D9CA783CC8922~Unnamed at 192.36.31.78
    May 09 11:30:53.000 [notice] I learned some more directory information, but not enough to build a circuit: We have no usable consensus.
    May 09 11:30:54.000 [notice] Bootstrapped 25%: Loading networkstatus consensus
    May 09 11:31:21.000 [notice] Bootstrapped 45%: Asking for relay descriptors
    May 09 11:31:21.000 [notice] I learned some more directory information, but not enough to build a circuit: We need more microdescriptors: we have 15/7042, and can only build 0% of likely paths. (We have 0% of guards bw, 0% of midpoint bw, and 0% of exit bw = 0% of path bw.)
    May 09 11:31:22.000 [notice] Bootstrapped 50%: Loading relay descriptors
    May 09 11:31:22.000 [notice] I learned some more directory information, but not enough to build a circuit: We need more microdescriptors: we have 15/7042, and can only build 0% of likely paths. (We have 0% of guards bw, 0% of midpoint bw, and 0% of exit bw = 0% of path bw.)
    May 09 11:31.000 [notice] Bootstrapped 56%: Loading relay descriptors
    May 09 11:34.000 [notice] Bootstrapped 63%: Loading relay descriptors
    May 09 11:36.000 [notice] Bootstrapped 68%: Loading relay descriptors
    May 09 11:37.000 [notice] Bootstrapped 74%: Loading relay descriptors
    May 09 11:31:40.000 [notice] Bootstrapped 80%: Connecting to the Tor network
    May 09 11:31:40.000 [notice] Bootstrapped 90%: Establishing a Tor circuit
    May 09 11:31:41.000 [notice] Tor has successfully opened a circuit. Looks like client functionality is working.
    May 09 11:31:41.000 [notice] Bootstrapped 100%: Done

You will see "Bootstrapped 100%: Done" if there is no error.

Test TOR-Polipo Proxy

$ curl --proxy http://localhost:8123 https://check.torproject.org/ | grep Congratulations
$ torify curl https://check.torproject.org/ | grep IP

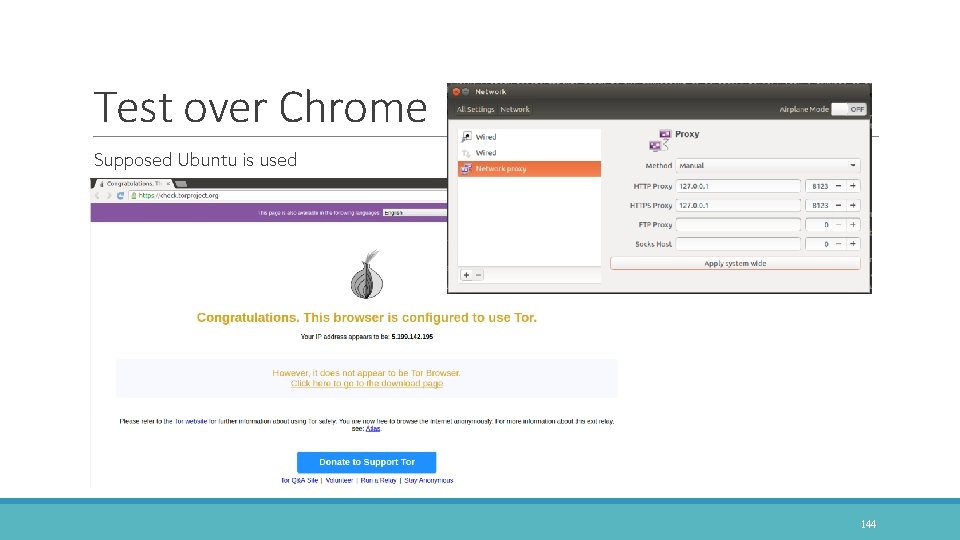

Test over Chrome (assuming Ubuntu is used)

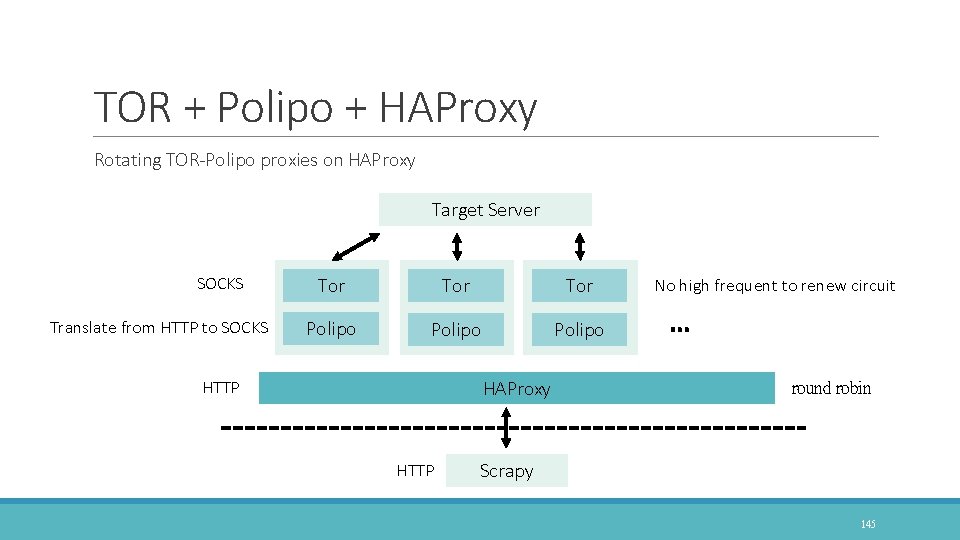

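TOR + Polipo + HAProxy

Rotating TOR-Polipo proxies on HAProxy
◦ Scrapy sends HTTP requests to HAProxy, which distributes them round robin across several Polipo instances
◦ Each Polipo translates HTTP to SOCKS for its own Tor instance, which connects to the target server
◦ No need to renew circuits at high frequency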
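Round-robin distribution simply hands each new request to the next backend in a fixed order and wraps around at the end. The sketch below shows the idea in plain Python; it is not how HAProxy is configured, and the proxy endpoints listed are hypothetical.

```python
from itertools import cycle

# Hypothetical local proxy endpoints, one per Polipo instance.
PROXIES = [
    'http://127.0.0.1:8123',
    'http://127.0.0.1:8124',
    'http://127.0.0.1:8125',
]


def round_robin(backends):
    """Return an endless iterator that yields the backends in order, then starts over."""
    return cycle(backends)


def assign(requests, backends):
    """Pair each request with the next backend in the rotation."""
    rotation = round_robin(backends)
    return [(req, next(rotation)) for req in requests]
```

With three backends, the fourth request goes back to the first backend, so consecutive requests leave through different Tor instances.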

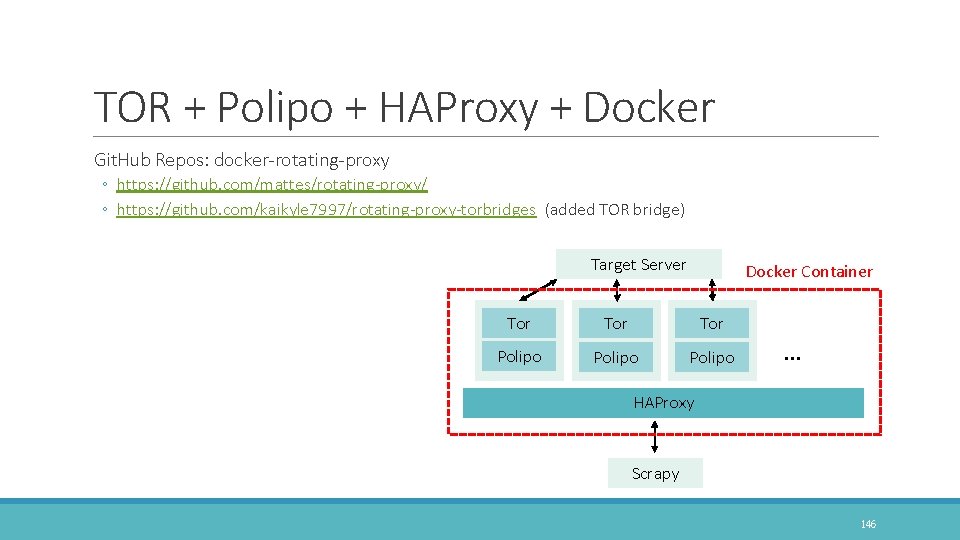

TOR + Polipo + HAProxy + Docker

GitHub Repos: docker-rotating-proxy
◦ https://github.com/mattes/rotating-proxy/
◦ https://github.com/kaikyle7997/rotating-proxy-torbridges (added TOR bridge)

Layout: Scrapy → Docker container [HAProxy → Polipo → Tor, …] → Target Server

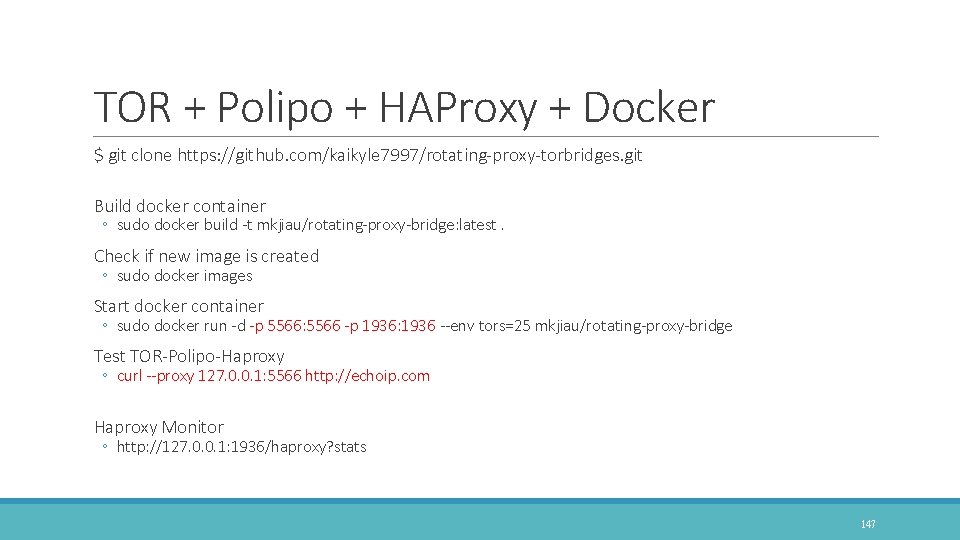

TOR + Polipo + HAProxy + Docker

$ git clone https://github.com/kaikyle7997/rotating-proxy-torbridges.git

Build the docker image
◦ sudo docker build -t mkjiau/rotating-proxy-bridge:latest .
Check that the new image was created
◦ sudo docker images
Start the docker container
◦ sudo docker run -d -p 5566:5566 -p 1936:1936 --env tors=25 mkjiau/rotating-proxy-bridge
Test TOR-Polipo-HAProxy
◦ curl --proxy 127.0.0.1:5566 http://echoip.com
HAProxy monitor
◦ http://127.0.0.1:1936/haproxy?stats

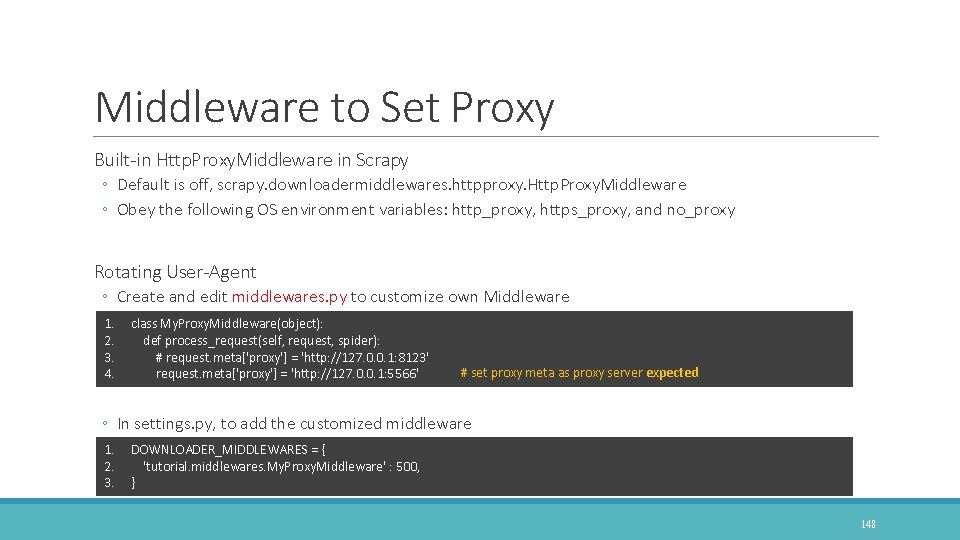

Middleware to Set Proxy

Built-in HttpProxyMiddleware in Scrapy
◦ Default is off, scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware
◦ Obeys the following OS environment variables: http_proxy, https_proxy, and no_proxy

Custom proxy middleware
◦ Create and edit middlewares.py to define your own middleware

    class MyProxyMiddleware(object):
        def process_request(self, request, spider):
            # single TOR-Polipo proxy:
            # request.meta['proxy'] = 'http://127.0.0.1:8123'
            # rotating proxy behind HAProxy:
            request.meta['proxy'] = 'http://127.0.0.1:5566'  # point the request at the proxy server

◦ In settings.py, add the customized middleware

    DOWNLOADER_MIDDLEWARES = {
        'tutorial.middlewares.MyProxyMiddleware': 500,
    }

Clone demo code from GitHub

You can obtain and run this demo code easily
◦ git clone https://github.com/kaikyle7997/scrapy_rotating_ips.git
GitHub Repos: scrapy_rotating_ips
◦ https://github.com/kaikyle7997/scrapy_rotating_ips

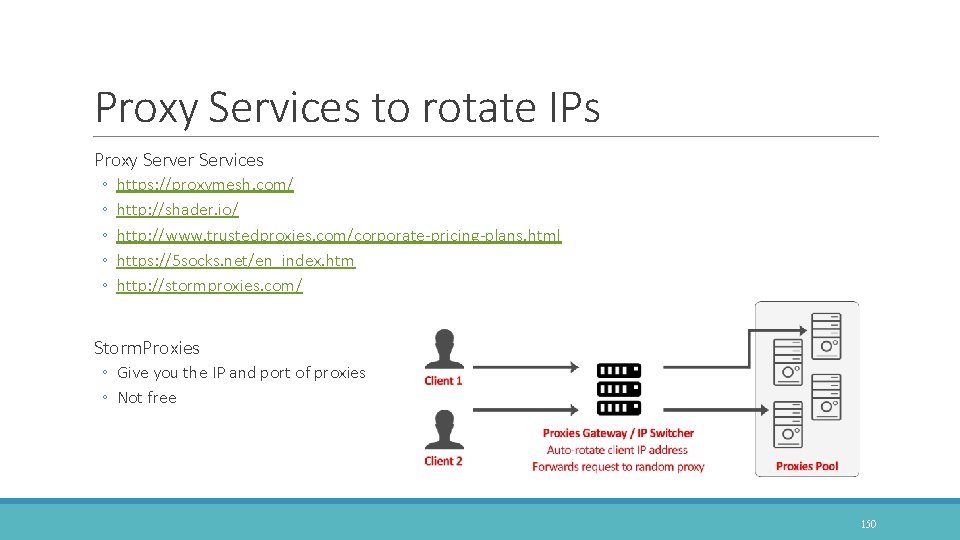

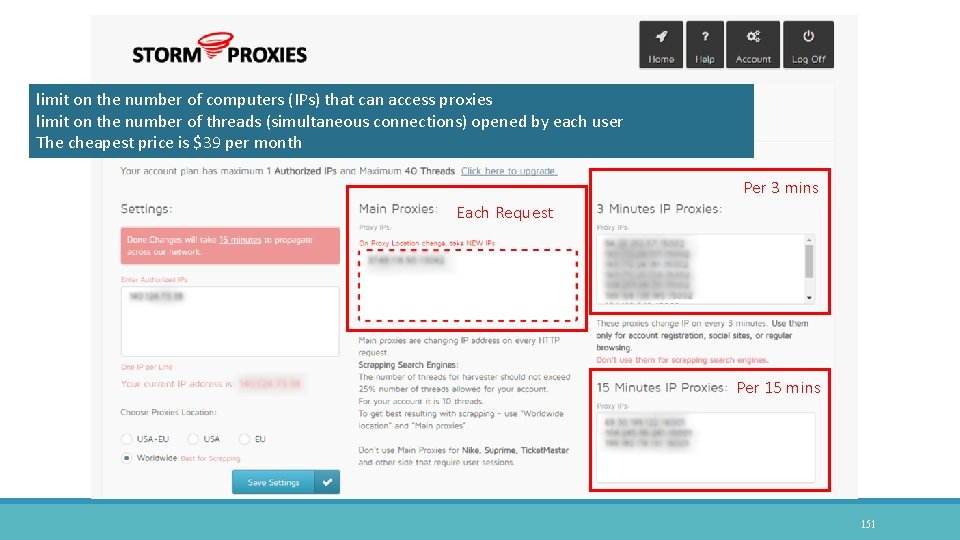

Proxy Services to rotate IPs

Proxy Server Services
◦ https://proxymesh.com/
◦ http://shader.io/
◦ http://www.trustedproxies.com/corporate-pricing-plans.html
◦ https://5socks.net/en_index.htm
◦ http://stormproxies.com/

StormProxies
◦ Gives you the IP and port of proxies
◦ Not free

◦ Limit on the number of computers (IPs) that can access the proxies
◦ Limit on the number of threads (simultaneous connections) opened by each user
◦ The cheapest price is $39 per month
◦ Labels shown on the pricing page: Per 3 mins, Each Request, Per 15 mins

More Scrapy Demo Cases

A scrapy project that can crawl the search results of Google/Bing/Baidu
◦ https://github.com/rabxwf/seCrawler
◦ https://github.com/kaikyle7997/seCrawler (added www.google.com.tw)

Multifarious scrapy examples
◦ Doubanbook, doubanmovie, googlescholar, hrtencent, qqnews, sinanews, zhihu, linkedin
◦ https://github.com/geekan/scrapy-examples
◦ https://github.com/kaikyle7997/scrapy-examples (backup)

THE END

Thank you for being here and for listening.
Ming-Kai Jiau (焦名楷)
Reach me: kasimjm7997@gmail.com / mkjiau@ntut.edu.tw
Download available at http://www.cc.ntut.edu.tw/~mkjiau/webcrawler/

- Slides: 153