
Mercator: A scalable, extensible Web crawler

Allan Heydon and Marc Najork, World Wide Web, 1999

2006. 5. 23, Young Geun Han

Contents

- Introduction
- Related Work
- Architecture of a scalable Web crawler
- Extensibility
- Crawler traps and other hazards
- Results of an extended crawl
- Conclusions

1. Introduction

- The motivations of this work
  - Due to the competitive nature of the search engine business, Web crawler design is not well-documented in the literature
  - To collect statistics about the Web
- Mercator, a scalable, extensible Web crawler
  - By scalable
    - Mercator is designed to scale up to the entire Web
    - The authors achieve scalability by implementing their data structures so that they use a bounded amount of memory, regardless of the size of the crawl
    - The vast majority of their data structures are stored on disk, and small parts of them are stored in memory for efficiency
  - By extensible
    - Mercator is designed in a modular way, with the expectation that new functionality will be added by third parties

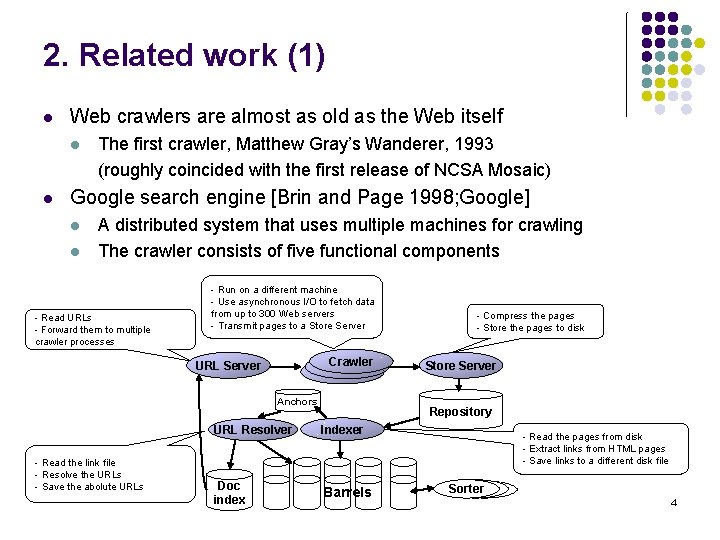

2. Related work (1)

- Web crawlers are almost as old as the Web itself
  - The first crawler, Matthew Gray’s Wanderer, was written in 1993 (roughly coinciding with the first release of NCSA Mosaic)
- Google search engine [Brin and Page 1998; Google]
  - A distributed system that uses multiple machines for crawling
  - The crawler consists of five functional components
    - URL Server: reads URLs and forwards them to multiple crawler processes
    - Crawler: each crawler process runs on a different machine, uses asynchronous I/O to fetch data from up to 300 Web servers, and transmits pages to a Store Server
    - Store Server: compresses the pages and stores them to disk
    - Indexer: reads the pages from disk, extracts links from HTML pages, and saves the links to a different disk file
    - URL Resolver: reads the link file, resolves the URLs, and saves the absolute URLs

Figure (Google architecture diagram), labels: Crawler, URL Server, Anchors, Store Server, Repository, URL Resolver, Indexer, Doc index, Barrels, Sorter

![2. Related work (2)](http://slidetodoc.com/presentation_image/9130aa64231ea03196fe4dc7335e4093/image-5.jpg)

2. Related work (2)

- Internet Archive [Burner 1997; InternetArchive]
  - The Internet Archive also uses multiple machines to crawl the Web
  - Each crawler process is assigned up to 64 sites to crawl
  - Each crawler reads a list of seed URLs and uses asynchronous I/O to fetch pages from per-site queues in parallel
  - When a page is downloaded, the crawler extracts the links and adds them to the appropriate site queue
  - Using a batch process, it merges “cross-site” URLs into the site-specific seed sets, filtering out duplicates in the process
- SPHINX [Miller and Bharat 1998]
  - The SPHINX system provides some of the customizability features (a mechanism for limiting which pages are crawled, document processing code)
  - SPHINX is targeted towards site-specific crawling, and therefore is not designed to be scalable

3. Architecture of a scalable Web crawler

- The basic algorithm of any Web crawler takes a list of seed URLs and repeats the following steps
  - Remove a URL from the URL list
  - Determine the IP address of its host name
  - Download the corresponding document
  - Extract any links contained in the document
  - For each of the extracted links, ensure that it is an absolute URL
  - Add the URL to the list of URLs to download, provided it has not been encountered before
- Functional components
  - a component (URL frontier) for storing the list of URLs to download
  - a component for resolving host names into IP addresses
  - a component for downloading documents using the HTTP protocol
  - a component for extracting links from HTML documents
  - a component for determining whether a URL has been encountered before
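
The basic algorithm above can be sketched in a few lines of Python. This is only an illustration, not Mercator's code: the names `crawl`, `LinkParser`, and the `fetch` callable are hypothetical, and DNS resolution plus the download are assumed to happen inside `fetch`, which returns the HTML text or `None`.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urldefrag


class LinkParser(HTMLParser):
    """Collects the href value of every <a> tag (hypothetical helper)."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seeds, fetch, max_pages=100):
    """Breadth-first crawl; `fetch(url)` returns HTML text or None."""
    frontier = deque(seeds)   # URLs that remain to be downloaded
    seen = set(seeds)         # every URL encountered so far
    visited = []
    while frontier and len(visited) < max_pages:
        url = frontier.popleft()            # remove a URL from the list
        html = fetch(url)                   # resolve the host and download
        if html is None:
            continue
        visited.append(url)
        parser = LinkParser()
        parser.feed(html)                   # extract the links
        for link in parser.links:
            # Make the link absolute and drop any #fragment.
            absolute, _ = urldefrag(urljoin(url, link))
            if absolute not in seen:        # add only unseen URLs
                seen.add(absolute)
                frontier.append(absolute)
    return visited
```

Passing `fetch` as a parameter keeps the sketch testable without network access; a dictionary's `get` method is enough to simulate a tiny Web.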

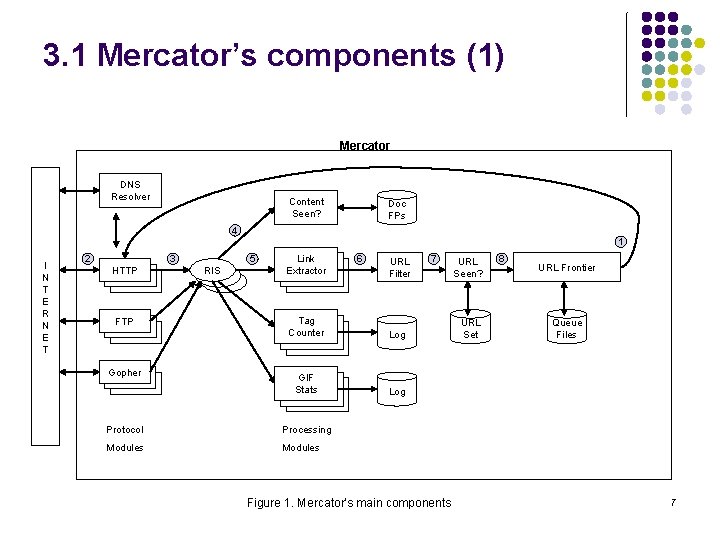

3.1 Mercator’s components (1)

Figure 1. Mercator’s main components: (1) URL Frontier with its queue files, (2) protocol modules (HTTP, FTP, Gopher) fetching from the Internet, supported by the DNS Resolver, (3) RIS, (4) Content Seen? test with the Doc FPs set, (5) processing modules (Link Extractor, Tag Counter, GIF Stats) with their logs, (6) URL Filter, (7) URL Seen? test with the URL Set, (8) URL Frontier

3.1 Mercator’s components (2)

The step numbers refer to the numbered components in Figure 1.

- The first step of the worker thread’s loop is to remove an absolute URL from the shared URL frontier for downloading (step 1)
- The protocol module's fetch method downloads the document from the Internet into a per-thread RewindInputStream (steps 2 and 3)
- The worker thread invokes the content-seen test to determine whether this document has been seen before (step 4)
- Based on the downloaded document's MIME type, the worker invokes the process method of each processing module associated with that MIME type (step 5)
- Each extracted link is converted into an absolute URL and tested against a user-supplied URL filter to determine if it should be downloaded (step 6)
- The worker performs the URL-seen test, which checks if the URL has been seen before (step 7)
- If the URL is new, it is added to the frontier (step 8)

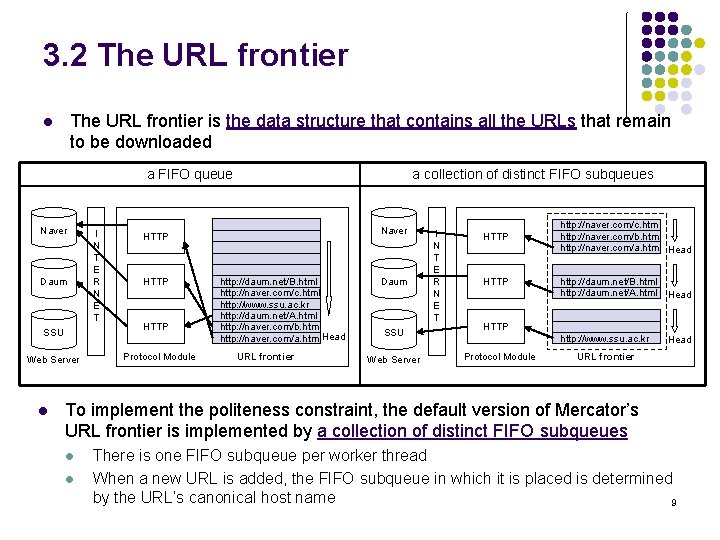

3.2 The URL frontier

- The URL frontier is the data structure that contains all the URLs that remain to be downloaded
- It can be implemented as
  - a FIFO queue
  - a collection of distinct FIFO subqueues
- To implement the politeness constraint, the default version of Mercator’s URL frontier is implemented by a collection of distinct FIFO subqueues
  - There is one FIFO subqueue per worker thread
  - When a new URL is added, the FIFO subqueue in which it is placed is determined by the URL’s canonical host name

Figure (single FIFO queue feeding the HTTP protocol module, which contacts the Naver, Daum, and SSU Web servers over the Internet), queue contents from tail to head: http://daum.net/B.html, http://naver.com/c.html, http://www.ssu.ac.kr, http://daum.net/A.html, http://naver.com/b.html, http://naver.com/a.html (Head)

Figure (distinct FIFO subqueues feeding the HTTP protocol module), subqueue contents from tail to head:

- http://naver.com/c.html, http://naver.com/b.html, http://naver.com/a.html (Head)
- http://daum.net/B.html, http://daum.net/A.html (Head)
- http://www.ssu.ac.kr (Head)
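
A minimal sketch of the subqueue idea follows, assuming a hypothetical `PoliteFrontier` class. Lowercasing the host name stands in for real canonicalization, and CRC-32 is just a convenient stable hash; neither choice comes from the slides.

```python
import zlib
from collections import deque
from urllib.parse import urlsplit


class PoliteFrontier:
    """One FIFO subqueue per worker thread, selected by host name."""

    def __init__(self, num_threads):
        self.subqueues = [deque() for _ in range(num_threads)]

    def _index(self, url):
        # Assumption: the lowercased host name is "canonical" enough here.
        host = (urlsplit(url).hostname or "").lower()
        return zlib.crc32(host.encode("utf-8")) % len(self.subqueues)

    def add(self, url):
        # All URLs of one host land in the same subqueue, so only one
        # worker thread ever talks to that host.
        self.subqueues[self._index(url)].append(url)

    def next_for(self, thread_id):
        """Return the next URL for a worker thread, or None if it has none."""
        queue = self.subqueues[thread_id]
        return queue.popleft() if queue else None
```

Because the subqueue is a function of the host name alone, at most one thread downloads from a given Web server at a time, which is what the politeness constraint asks for.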

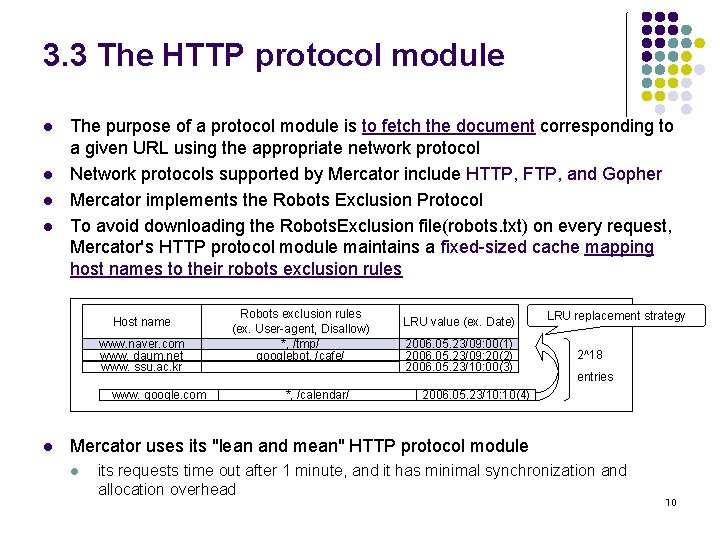

3.3 The HTTP protocol module

- The purpose of a protocol module is to fetch the document corresponding to a given URL using the appropriate network protocol
- Network protocols supported by Mercator include HTTP, FTP, and Gopher
- Mercator implements the Robots Exclusion Protocol
- To avoid downloading the robots exclusion file (robots.txt) on every request, Mercator's HTTP protocol module maintains a fixed-sized cache mapping host names to their robots exclusion rules
- Mercator uses its own "lean and mean" HTTP protocol module
  - its requests time out after 1 minute, and it has minimal synchronization and allocation overhead

Figure (robots exclusion cache example): a table with the columns Host name, Robots exclusion rules (e.g. User-agent, Disallow), and LRU value (e.g. date). Example hosts: www.naver.com, www.daum.net, www.ssu.ac.kr, www.google.com. Example rules: (*, /tmp/), (googlebot, /cafe/), (*, /calendar/). Example LRU values: 2006.05.23/09:00 (1), 2006.05.23/09:20 (2), 2006.05.23/10:00 (3), 2006.05.23/10:10 (4). The cache holds 2^18 entries and uses an LRU replacement strategy.
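
The fixed-size cache of robots exclusion rules could look roughly like the sketch below. `RobotsCache` and the `fetch_robots_txt` callable are hypothetical names; the parsing is delegated to Python's standard `urllib.robotparser`, which is an illustration choice and not what Mercator (a Java program) uses.

```python
from collections import OrderedDict
from urllib.robotparser import RobotFileParser


class RobotsCache:
    """LRU cache mapping host names to parsed robots exclusion rules."""

    def __init__(self, fetch_robots_txt, capacity=2**18):
        # fetch_robots_txt(host) -> text of that host's robots.txt
        # (hypothetical callable supplied by the caller).
        self.fetch = fetch_robots_txt
        self.capacity = capacity
        self.rules = OrderedDict()   # least recently used entry first

    def allowed(self, host, path, agent="*"):
        if host in self.rules:
            self.rules.move_to_end(host)         # mark as recently used
        else:
            parser = RobotFileParser()
            parser.parse(self.fetch(host).splitlines())
            self.rules[host] = parser
            if len(self.rules) > self.capacity:
                self.rules.popitem(last=False)   # evict the LRU entry
        return self.rules[host].can_fetch(agent, path)
```

With this structure robots.txt is downloaded once per host while the host stays in the cache, instead of once per request.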

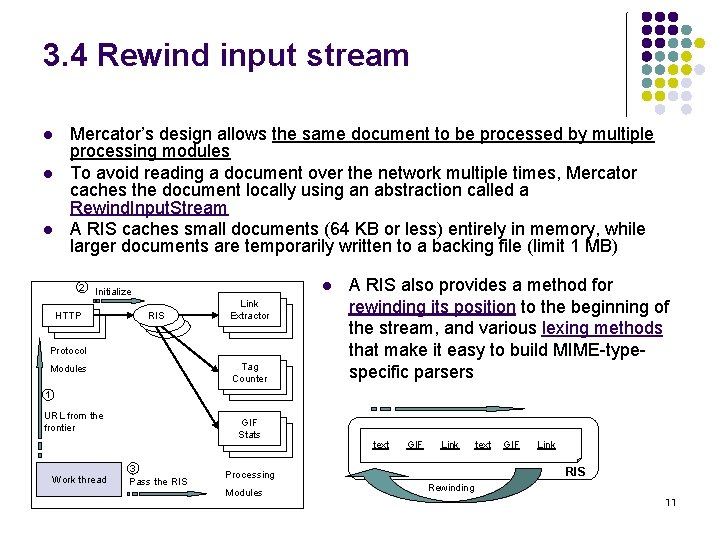

3.4 Rewind input stream

- Mercator’s design allows the same document to be processed by multiple processing modules
- To avoid reading a document over the network multiple times, Mercator caches the document locally using an abstraction called a RewindInputStream (RIS)
- A RIS caches small documents (64 KB or less) entirely in memory, while larger documents are temporarily written to a backing file (limit 1 MB)
- A RIS also provides a method for rewinding its position to the beginning of the stream, and various lexing methods that make it easy to build MIME-type-specific parsers

Figure (RIS usage): (1) the worker thread takes a URL from the frontier, (2) the HTTP protocol module initializes the RIS, (3) the worker passes the RIS to the processing modules (Link Extractor and Tag Counter for text, GIF Stats for GIF), rewinding the RIS between modules
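
A rough Python analogue of the RIS idea is sketched below. `RewindableStream` is a hypothetical name, the lexing methods are omitted, and treating the 1 MB limit as truncation is an assumption about what the slide's "limit 1 MB" means. The memory-then-disk behaviour comes from the standard `tempfile.SpooledTemporaryFile`.

```python
import tempfile

MEMORY_LIMIT = 64 * 1024     # documents up to 64 KB stay in memory
SIZE_LIMIT = 1024 * 1024     # assumption: keep at most 1 MB per document


class RewindableStream:
    """Caches a downloaded document so several modules can re-read it."""

    def __init__(self, source, chunk_size=8192):
        # SpooledTemporaryFile buffers in memory and rolls over to a
        # temporary file once more than MEMORY_LIMIT bytes are written.
        self._buf = tempfile.SpooledTemporaryFile(max_size=MEMORY_LIMIT)
        remaining = SIZE_LIMIT
        while remaining > 0:
            chunk = source.read(min(chunk_size, remaining))
            if not chunk:
                break
            self._buf.write(chunk)
            remaining -= len(chunk)
        self._buf.seek(0)

    def read(self, n=-1):
        return self._buf.read(n)

    def rewind(self):
        """Reset the position to the beginning of the document."""
        self._buf.seek(0)
```

Each processing module can read the stream to the end; the worker calls `rewind()` before handing it to the next module, so the network is read only once.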

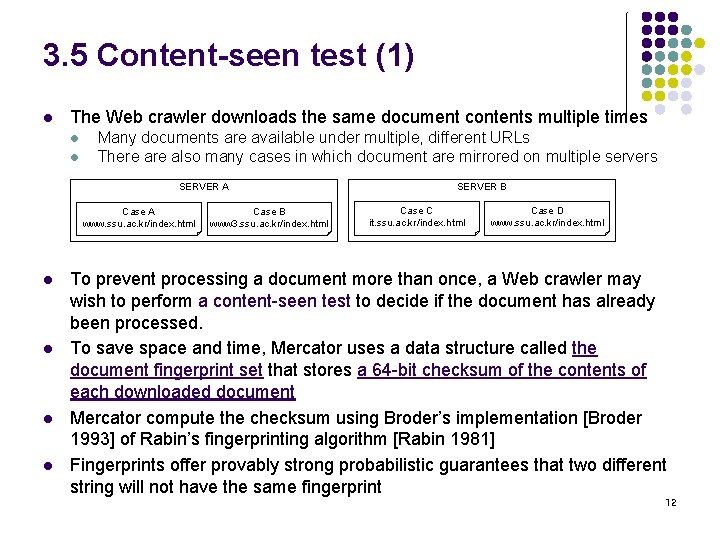

3.5 Content-seen test (1)

- A Web crawler may download the same document contents multiple times
  - Many documents are available under multiple, different URLs
  - There are also many cases in which documents are mirrored on multiple servers
- To prevent processing a document more than once, a Web crawler may wish to perform a content-seen test to decide if the document has already been processed
- To save space and time, Mercator uses a data structure called the document fingerprint set that stores a 64-bit checksum of the contents of each downloaded document
- Mercator computes the checksum using Broder’s implementation [Broder 1993] of Rabin’s fingerprinting algorithm [Rabin 1981]
- Fingerprints offer provably strong probabilistic guarantees that two different strings will not have the same fingerprint

Figure (duplicate content example): Server A with Case A www.ssu.ac.kr/index.html and Case B www3.ssu.ac.kr/index.html; Server B with Case C it.ssu.ac.kr/index.html and Case D www.ssu.ac.kr/index.html

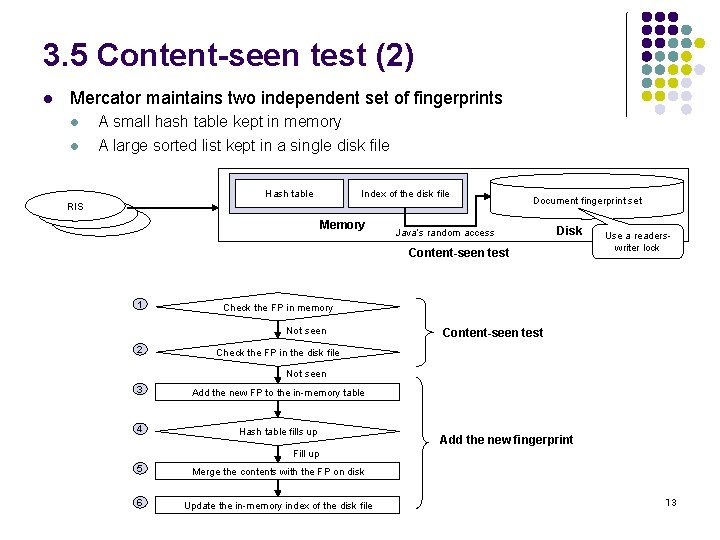

3.5 Content-seen test (2)

- Mercator maintains two independent sets of fingerprints
  - A small hash table kept in memory
  - A large sorted list kept in a single disk file

Figure (document fingerprint set): the in-memory part holds the hash table and an index of the disk file; the disk file is accessed through Java’s random access. The content-seen test on a document in the RIS proceeds as follows:

1. Check the fingerprint (FP) in the in-memory hash table
2. If not seen, check the FP in the disk file (using a readers-writer lock)
3. If not seen, add the new FP to the in-memory table
4. Adding new fingerprints eventually fills up the hash table
5. When it fills up, merge its contents with the FPs on disk
6. Update the in-memory index of the disk file
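
The two-level lookup can be sketched as follows. Everything here is a simplification under stated assumptions: `FingerprintSet` and `fingerprint64` are hypothetical names, a 64-bit BLAKE2b digest stands in for Rabin fingerprints, a sorted Python list stands in for the sorted disk file, and the readers-writer locking is omitted.

```python
import bisect
import hashlib


def fingerprint64(data: bytes) -> int:
    # Stand-in for Rabin fingerprinting: any well-mixed 64-bit hash
    # is enough to illustrate the data structure.
    return int.from_bytes(hashlib.blake2b(data, digest_size=8).digest(), "big")


class FingerprintSet:
    """Small in-memory set plus a large sorted 'disk' list."""

    def __init__(self, memory_capacity=4):
        self.memory = set()      # small hash table kept in memory
        self.disk = []           # sorted list; stands in for the disk file
        self.memory_capacity = memory_capacity

    def seen_before(self, document: bytes) -> bool:
        fp = fingerprint64(document)
        if fp in self.memory:                     # 1. check memory
            return True
        i = bisect.bisect_left(self.disk, fp)     # 2. binary search on "disk"
        if i < len(self.disk) and self.disk[i] == fp:
            return True
        self.memory.add(fp)                       # 3. remember the new FP
        if len(self.memory) >= self.memory_capacity:
            # 4-5. memory table is full: merge it into the sorted list
            self.disk = sorted(set(self.disk) | self.memory)
            self.memory.clear()
        return False
```

Keeping the disk side sorted is what makes the merge a single sequential pass and the lookup a binary search rather than a scan.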

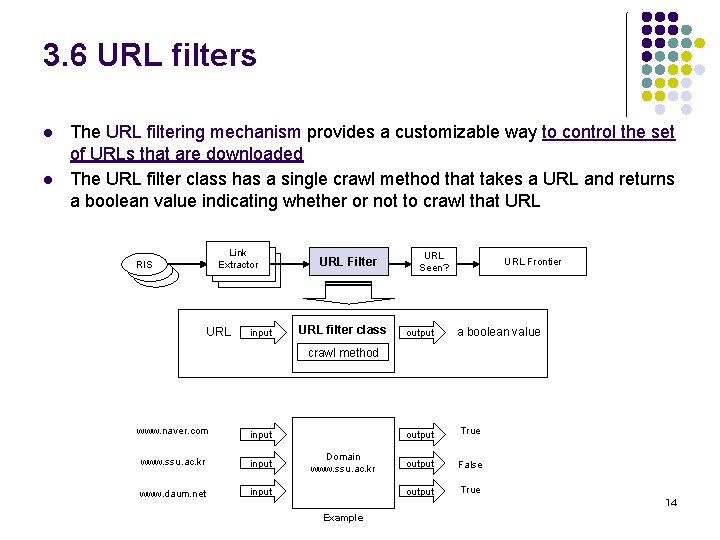

3.6 URL filters

- The URL filtering mechanism provides a customizable way to control the set of URLs that are downloaded
- The URL filter class has a single crawl method that takes a URL and returns a boolean value indicating whether or not to crawl that URL

Figure (position in the pipeline): RIS, Link Extractor, URL Filter, URL Seen?, URL Frontier. The URL filter class takes a URL as input to its crawl method and outputs a boolean value.

Example (filter configured with the domain www.ssu.ac.kr):

| Input | Output |
| --- | --- |
| www.naver.com | True |
| www.ssu.ac.kr | False |
| www.daum.net | True |
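
The filter interface is small enough to sketch directly. The class names `URLFilter` and `DomainFilter` are hypothetical; only the single `crawl` method returning a boolean comes from the slide. The subclass reproduces the example, where URLs on www.ssu.ac.kr are rejected.

```python
from urllib.parse import urlsplit


class URLFilter:
    """Base filter: a single crawl method deciding whether to download."""

    def crawl(self, url: str) -> bool:
        return True   # default: crawl everything


class DomainFilter(URLFilter):
    """Rejects every URL whose host equals the configured domain."""

    def __init__(self, blocked_host):
        self.blocked_host = blocked_host.lower()

    def crawl(self, url: str) -> bool:
        # urlsplit lowercases the host name for us.
        return urlsplit(url).hostname != self.blocked_host
```

A user-supplied filter only has to override `crawl`, so restricting a crawl to one domain is the same class with the comparison inverted.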

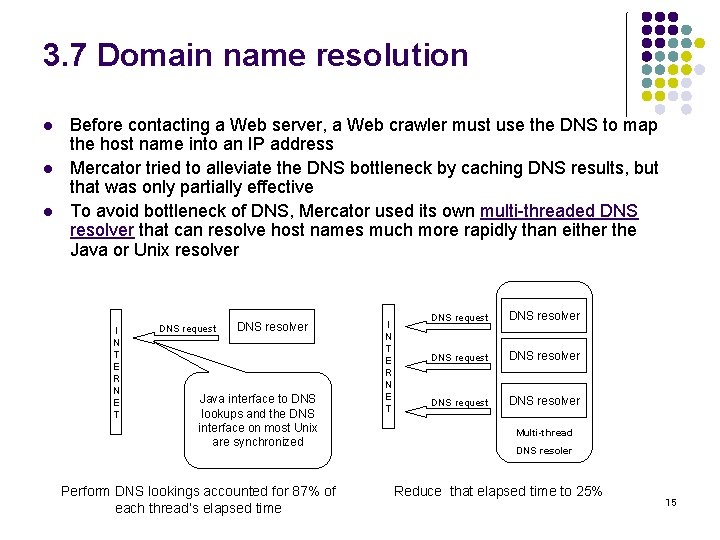

3.7 Domain name resolution

- Before contacting a Web server, a Web crawler must use the DNS to map the host name into an IP address
- Mercator tried to alleviate the DNS bottleneck by caching DNS results, but that was only partially effective
- To avoid the DNS bottleneck, Mercator uses its own multi-threaded DNS resolver that can resolve host names much more rapidly than either the Java or Unix resolver

Figure (before): DNS requests go to the DNS resolver one at a time, because the Java interface to DNS lookups and the DNS interface on most Unix systems are synchronized. Performing DNS lookups accounted for 87% of each thread’s elapsed time.

Figure (after): the multi-threaded DNS resolver issues DNS requests in parallel, reducing that elapsed time to 25%.

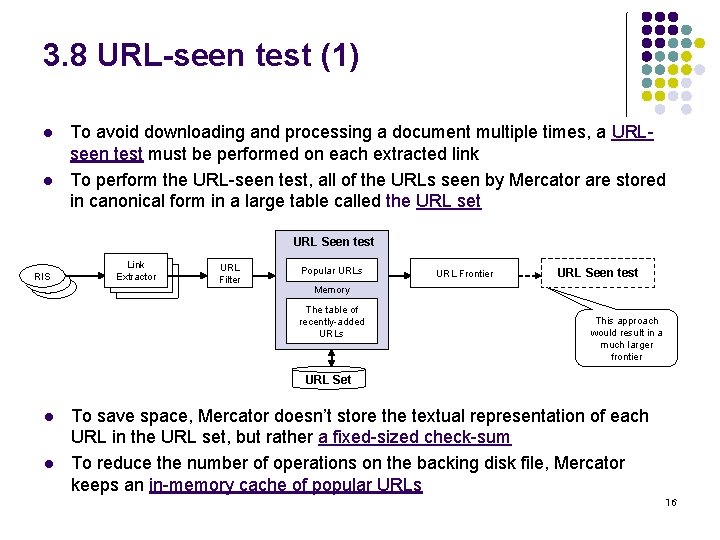

3.8 URL-seen test (1)

- To avoid downloading and processing a document multiple times, a URL-seen test must be performed on each extracted link
- To perform the URL-seen test, all of the URLs seen by Mercator are stored in canonical form in a large table called the URL set
- To save space, Mercator doesn’t store the textual representation of each URL in the URL set, but rather a fixed-sized checksum
- To reduce the number of operations on the backing disk file, Mercator keeps an in-memory cache of popular URLs

Figure (position in the pipeline): RIS, Link Extractor, URL Filter, URL Seen test, URL Frontier. The URL Seen test consults the in-memory cache of popular URLs, the in-memory table of recently-added URLs, and the URL Set. Diagram note: performing the URL-seen test only when a URL is removed from the frontier, rather than before it is added, would result in a much larger frontier.

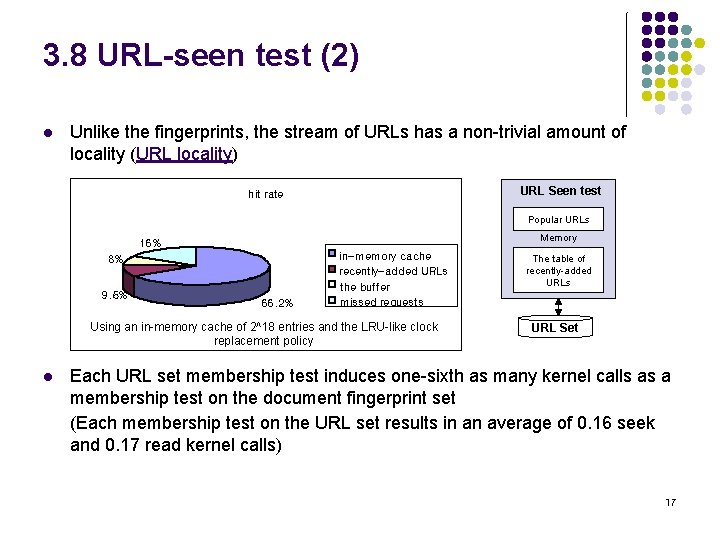

3.8 URL-seen test (2)

- Unlike the fingerprints, the stream of URLs has a non-trivial amount of locality (URL locality)
- Hit rates of the URL-seen test, using an in-memory cache of 2^18 entries and the LRU-like clock replacement policy:

| Where the request is answered | Share of requests |
| --- | --- |
| In-memory cache of popular URLs | 66.2% |
| Table of recently-added URLs | 9.5% |
| The buffer | 8% |
| Missed requests | 16% |

- Each URL set membership test induces one-sixth as many kernel calls as a membership test on the document fingerprint set (each membership test on the URL set results in an average of 0.16 seek and 0.17 read kernel calls)

3.8 URL-seen test (3)

- Host name locality
  - Host name locality arises because many links found in Web pages are to different documents on the same server
  - To preserve the locality, the authors compute the checksum of a URL by merging two independent fingerprints
    - The fingerprint of the URL’s host name
    - The fingerprint of the complete URL
  - These two fingerprints are merged so that the high-order bits of the checksum derive from the host name fingerprint
  - As a result, checksums for URLs with the same host component are numerically close together
  - The host name locality in the stream of URLs translates into access locality on the URL set’s backing disk file, allowing the kernel’s file system buffers to service read requests from memory more often
  - On extended crawls, this technique results in a significant reduction in disk load, and therefore in a significant performance improvement
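
The merging of the two fingerprints can be illustrated as below. The 24/40 bit split, the BLAKE2b stand-in for Rabin fingerprints, and the names `url_checksum` and `_fp` are all assumptions made for this sketch; the slide only states that the high-order bits come from the host name fingerprint.

```python
import hashlib
from urllib.parse import urlsplit

HOST_BITS = 24   # assumed width of the host name part
URL_BITS = 40    # assumed width of the complete-URL part


def _fp(text: str, bits: int) -> int:
    """Top `bits` bits of a 64-bit hash of `text` (stand-in fingerprint)."""
    digest = hashlib.blake2b(text.encode("utf-8"), digest_size=8).digest()
    return int.from_bytes(digest, "big") >> (64 - bits)


def url_checksum(url: str) -> int:
    """64-bit checksum whose high-order bits depend only on the host."""
    host = urlsplit(url).hostname or ""
    return (_fp(host, HOST_BITS) << URL_BITS) | _fp(url, URL_BITS)
```

Two URLs on the same host share their top 24 bits, so in a table sorted by checksum they sit next to each other, which is the source of the disk access locality described above.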

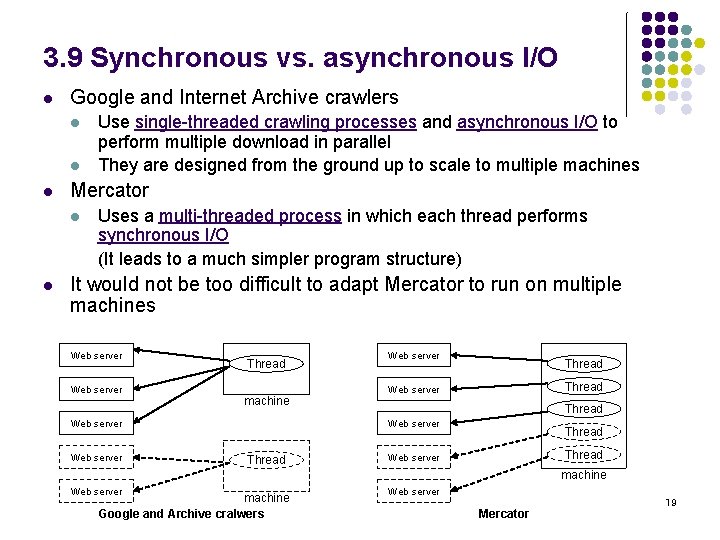

3.9 Synchronous vs. asynchronous I/O

- Google and Internet Archive crawlers
  - Use single-threaded crawling processes and asynchronous I/O to perform multiple downloads in parallel
  - They are designed from the ground up to scale to multiple machines
- Mercator
  - Uses a multi-threaded process in which each thread performs synchronous I/O (this leads to a much simpler program structure)
  - It would not be too difficult to adapt Mercator to run on multiple machines

Figure (comparison): Google and Internet Archive crawlers use several machines, each contacting multiple Web servers; Mercator uses one machine with several threads, each contacting one Web server

3.10 Checkpointing

- To be able to complete a crawl of the entire Web, Mercator writes regular snapshots of its state to disk
- An interrupted or aborted crawl can easily be restarted from the latest checkpoint
- Mercator’s core classes and all user-supplied modules are required to implement the checkpointing interface
- Checkpointing is coordinated using a global readers-writer lock
- Each worker thread acquires a read share of the lock while processing a downloaded document
- Once a day, Mercator’s main thread acquires the write share of the lock; once it has acquired the lock, it arranges for the checkpoint methods to be called
