Web Crawler Distributed IR Rong Jin A Basic

Web Crawler & Distributed IR Rong Jin

A Basic Crawler o o Initialize queue with URLs of known seed pages Repeat n n o Take URL from queue Fetch and parse page Extract URLs from page Add URLs to queue Fundamental assumption n The web is well linked.

Challenges o Fetch 1, 000, 000 pages in one month n o o almost 400 pages per second! Latency/bandwidth Politeness – don’t hit a server too often Duplicates Spider traps n n Malicious server that generates an infinite sequence of linked pages Sophisticated spider traps generate pages that are not easily identified as dynamic.

What A Crawler Should Do? o Be polite n n o Don’t hit a each site too often Only crawl pages you are allowed to crawl: robots. txt Be robust n Be immune to spider traps, duplicates, very large pages, very large websites, dynamic pages etc

Robot. txt o o Protocol for giving crawlers (“robots”) limited access to a website, originally from 1994 Examples: User-agent: * Disallow: /private/ Allow: /other/public/ User-agent: favoredcrawler Disallow: o Important: cache the robots. txt file of each site we are crawling

What A Crawler Should Do? o o Be capable of distributed operation Be scalable: need to be able to increase crawl rate by adding more machines Fetch pages of higher quality or dynamic pages first Continuous operation: get fresh version of already crawled pages

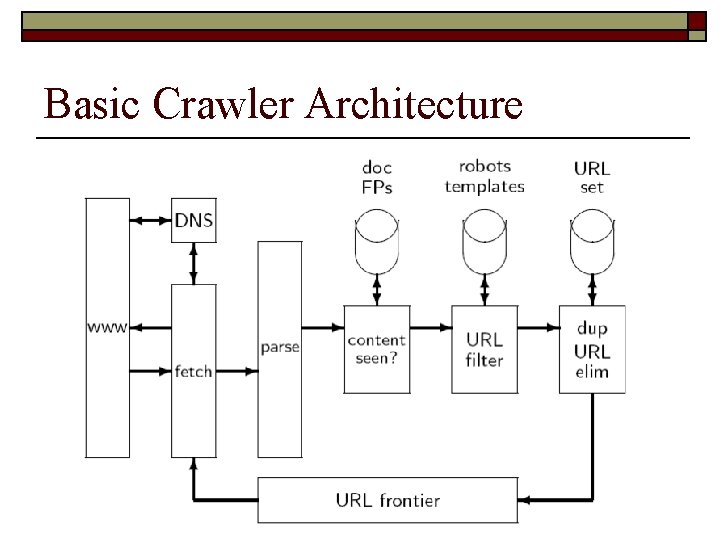

Basic Crawler Architecture

Basic Processing Steps o o o Pick a URL from the frontier Fetch the document at the URL Check if the document has content already seen (if yes: skip following steps) Index document Parse the document and extract URLs to other docs For each extracted URL: n n Does it fail certain tests (e. g. , spam)? Yes: skip Already in the frontier? Yes: skip

URL Normalization o o Some URLs extracted from a document are relative URLs. E. g. , at http: //mit. edu, we may have /aboutsite. html This is the same as: http: //mit. edu/aboutsite. html During parsing, we must normalize (expand) all relative URLs.

Content Seen o o o For each page fetched: check if the content is already in the index Check this using document fingerprints or shingles Skip documents whose content has already been indexed

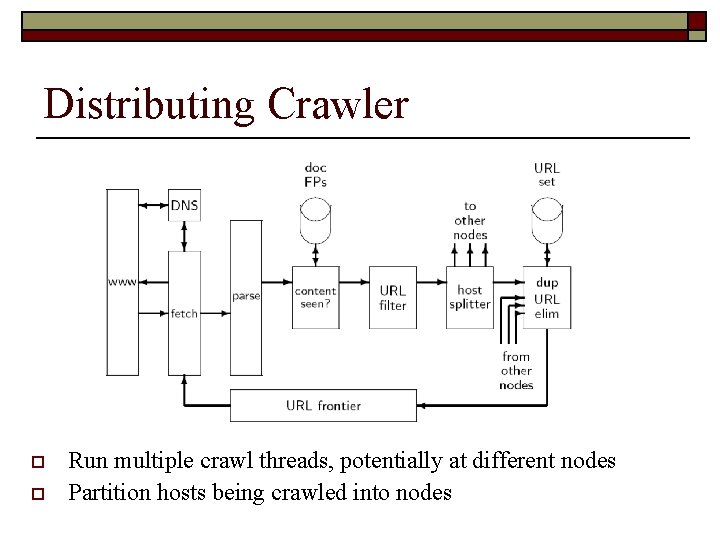

Distributing Crawler o o Run multiple crawl threads, potentially at different nodes Partition hosts being crawled into nodes

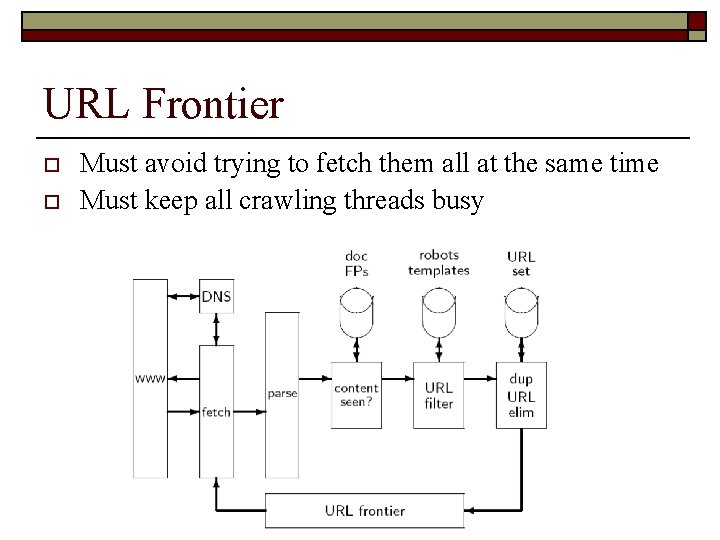

URL Frontier o o Must avoid trying to fetch them all at the same time Must keep all crawling threads busy

URL Frontier o Politeness: Don’t hit a web server too frequently n o o E. g. , insert a time gap between successive requests to the same server Freshness: Crawl some pages (e. g. , news sites) more often than others Not an easy problem

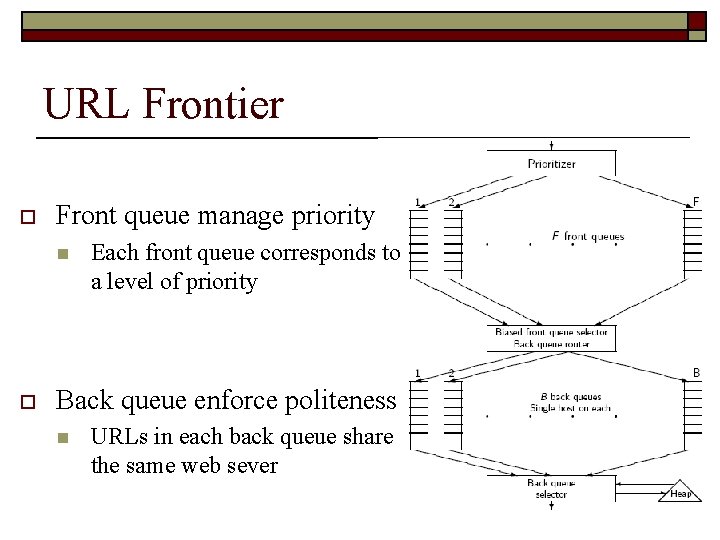

URL Frontier o Front queue manage priority n o Each front queue corresponds to a level of priority Back queue enforce politeness n URLs in each back queue share the same web sever

Multi-Thread Crawlers o o Extract the root of the back queue heap Fetch URL at head of corresponding back queue q Check if q is now empty If yes, n n pulling URLs from the front queues, and adding them to their corresponding back queues until the URL’s host does not have a back queue – then put the URL in q and create heap entry for it.

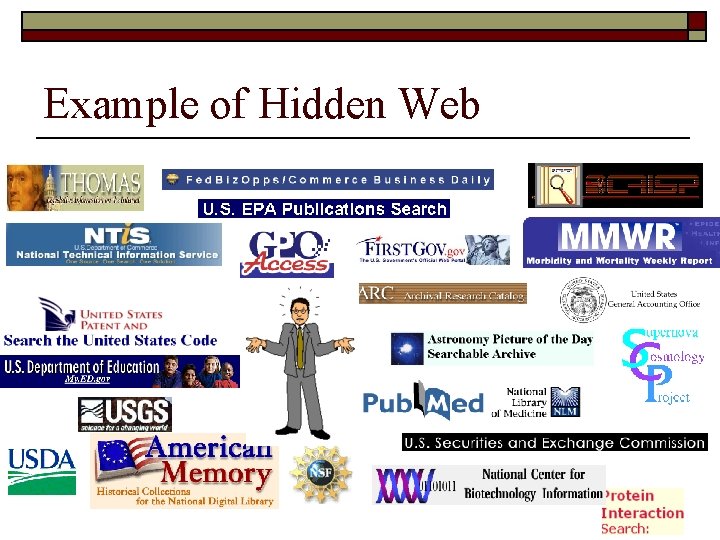

Federated Search o Visible Web: n o Accessible (crawled by) conventional search engines like Google or Yahoo! Hidden Web: n n Hidden from conventional engines (can not be crawled). Accessible via source-specific search engine Larger than Visible Web (2 -50 times, Sherman 2001) Created by professionals

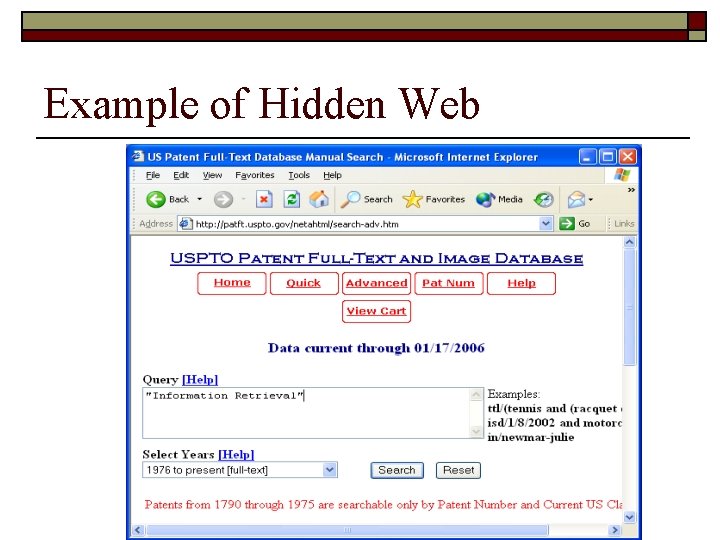

Example of Hidden Web

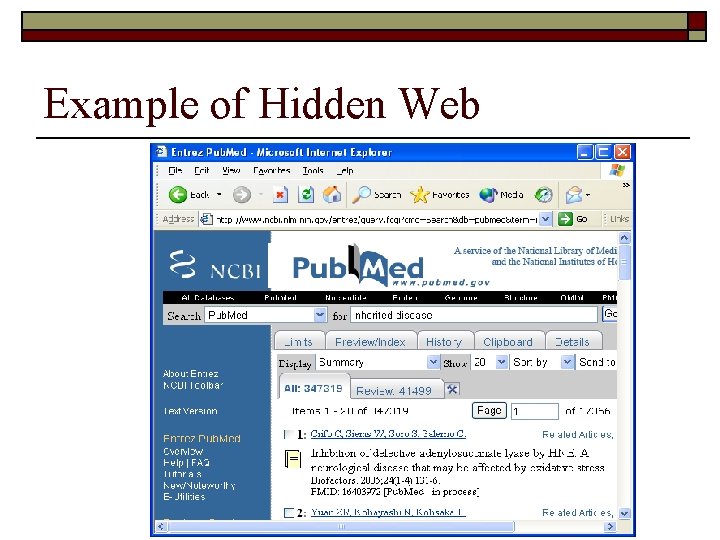

Example of Hidden Web

Example of Hidden Web

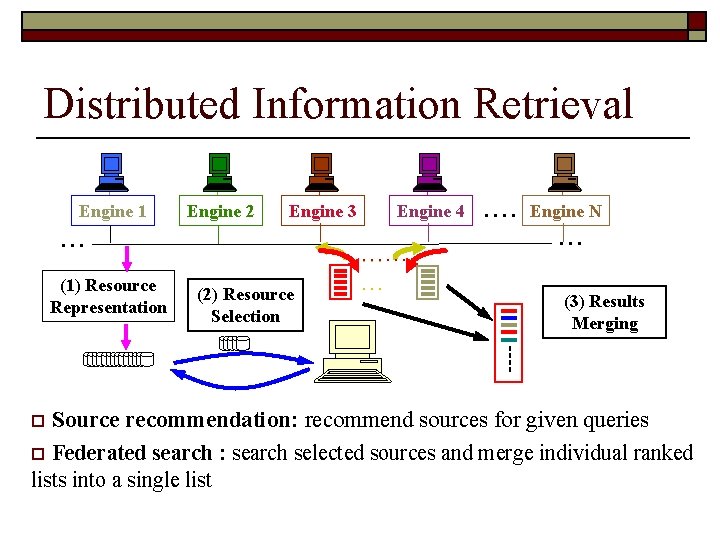

Distributed Information Retrieval Engine 1 Engine 2 Engine 3 . . . (1) Resource Representation (2) Resource Selection Engine 4 …… … … . . Engine N. . . (3) Results Merging Source recommendation: recommend sources for given queries o Federated search : search selected sources and merge individual ranked lists into a single list o

Resource Description o o Description by word occurrences Cooperative protocols n o Eg. STARTS protocol (Gravano et al. , 1997) Query sampling based approaches n n n Collect word occurrences by sending random queries and analyzing the returned docs Build centralized database by the returned docs of random queries Good for uncooperative environment

Resource Selection o o Select information sources that are most likely to provide relevant docs for given queries Basic steps n n Build a word histogram profile for each database Treat each database as a “big” doc, and select the database with the highest relevance for given queries

Result Merging o o Merge the returned docs from different databases into a single list Challenges: n n o Each database only returns a list, no scores Each database may use different retrieval algorithms, making their scores incomparable Solution n n Round and robin CORI merging algorithm (Callan, 1997) o Calibrate the scores from different database

- Slides: 23