Network-Aware Distributed Algorithms for Wireless Networks Nitin Vaidya

Network-Aware Distributed Algorithms for Wireless Networks
Nitin Vaidya
Electrical and Computer Engineering
University of Illinois at Urbana-Champaign


Multi-Channel Wireless Networks: Theory to Practice
Nitin Vaidya
Electrical and Computer Engineering
University of Illinois at Urbana-Champaign

Wireless Networks
- Infrastructure-Based Networks
- Infrastructure-Less (and Hybrid) Networks: mesh networks, ad hoc networks, sensor networks

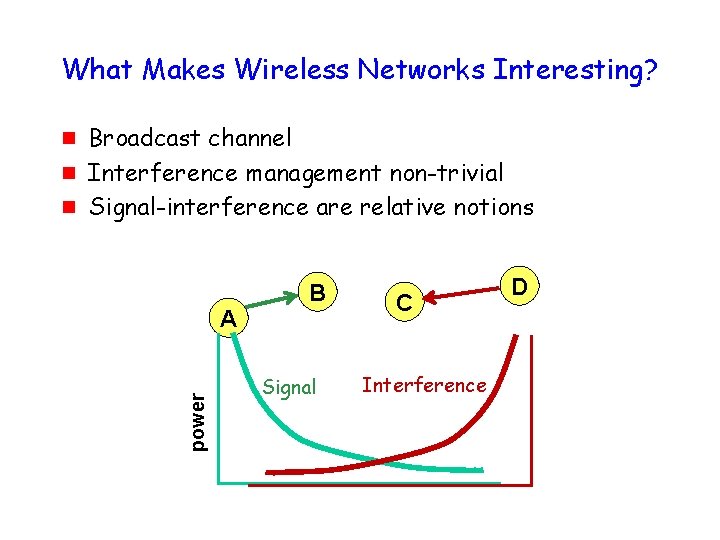

What Makes Wireless Networks Interesting?
- Broadcast channel
- Interference management is non-trivial
- Signal and interference are relative notions
[Figure: nodes A, B, C, D, with labels for power, signal, and interference]

What Makes Wireless Networks Interesting?
Many forms of diversity:
- Time
- Route
- Antenna
- Path
- Channel

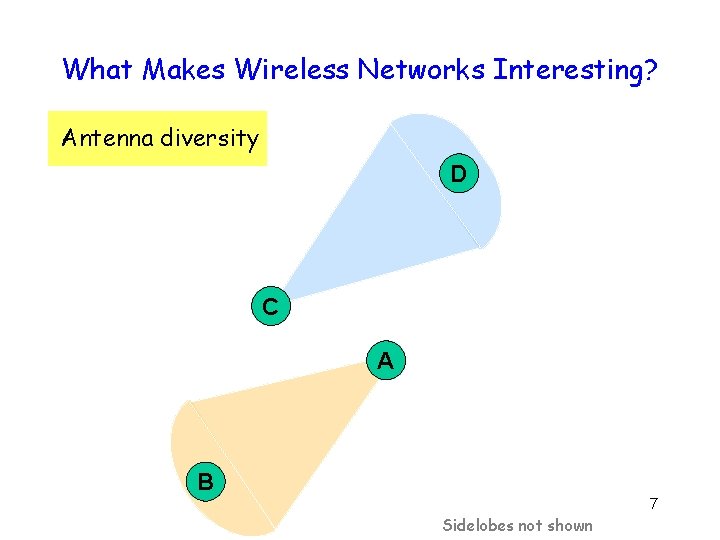

What Makes Wireless Networks Interesting?
- Antenna diversity
[Figure: nodes A, B, C, D with directional antenna beams; sidelobes not shown]

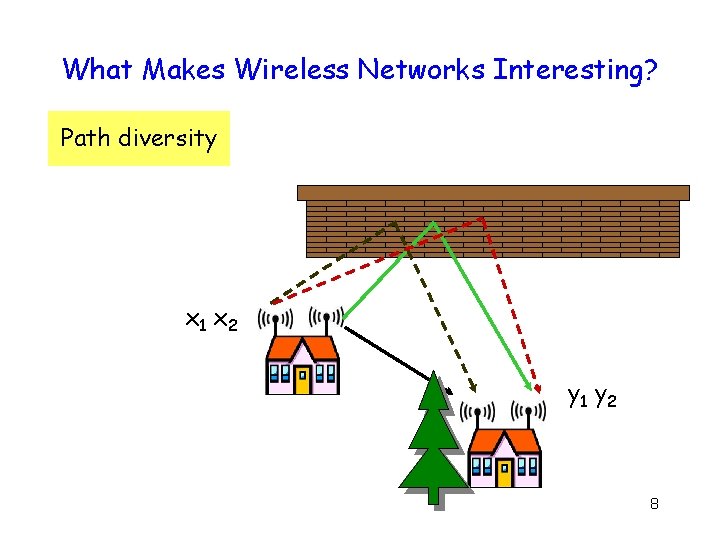

What Makes Wireless Networks Interesting?
- Path diversity
[Figure: signals $x_1$, $x_2$ and $y_1$, $y_2$]

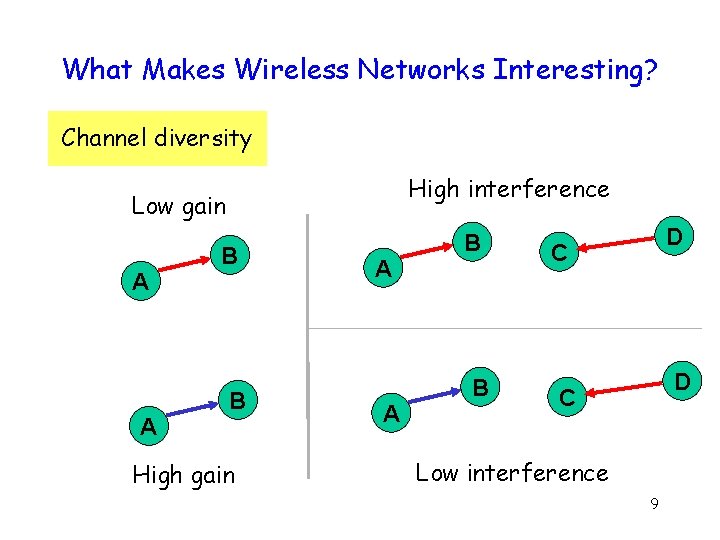

What Makes Wireless Networks Interesting?
- Channel diversity
[Figure: links between nodes A, B, C, D on different channels, labeled high gain, low gain, high interference, and low interference]

Research Challenge
- Dynamic adaptation to exploit available diversity

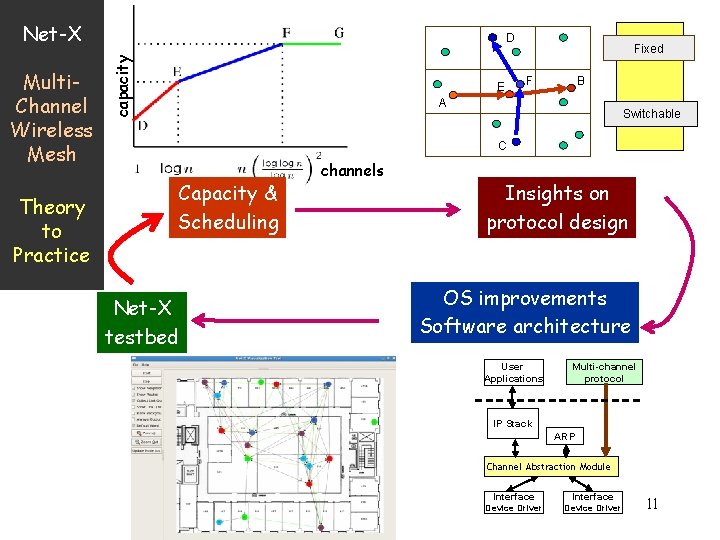

Net-X: Theory to Practice
[Figure: overview of the Net-X project. Labels: capacity; Multi-Channel Wireless Mesh (nodes A to F; Fixed; Switchable); Capacity & Scheduling; Net-X testbed; channels; Insights on protocol design; OS improvements; Software architecture. Software stack: User Applications, Multi-channel protocol, IP Stack, ARP, Channel Abstraction Module, Interface Device Driver]


“Secret to happiness is to lower your expectations to the point where they're already met”
(with apologies to Bill Watterson, Calvin & Hobbes)

Network-Aware Distributed Algorithms for Wireless Networks

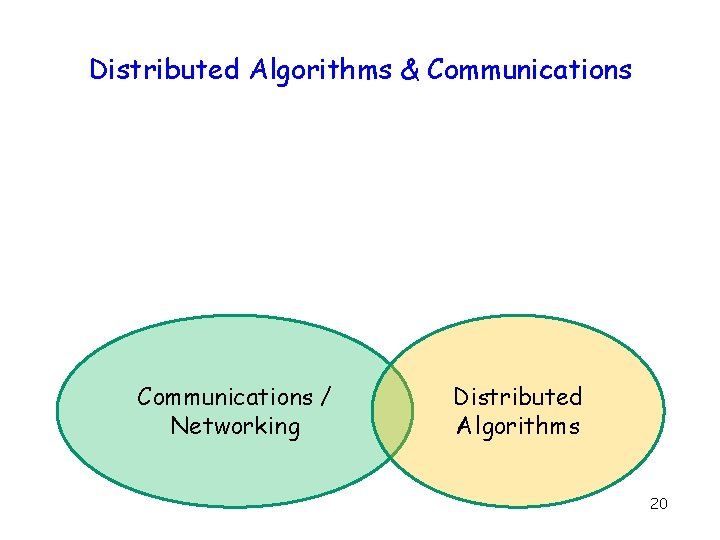

Distributed Algorithms & Communications / Networking
[Figure: two overlapping areas labeled Communications / Networking and Distributed Algorithms]

Distributed Algorithms & Communications
- Problems with overlapping scope
- But cultures differ
[Figure: two overlapping areas labeled Communications / Networking and Distributed Algorithms]

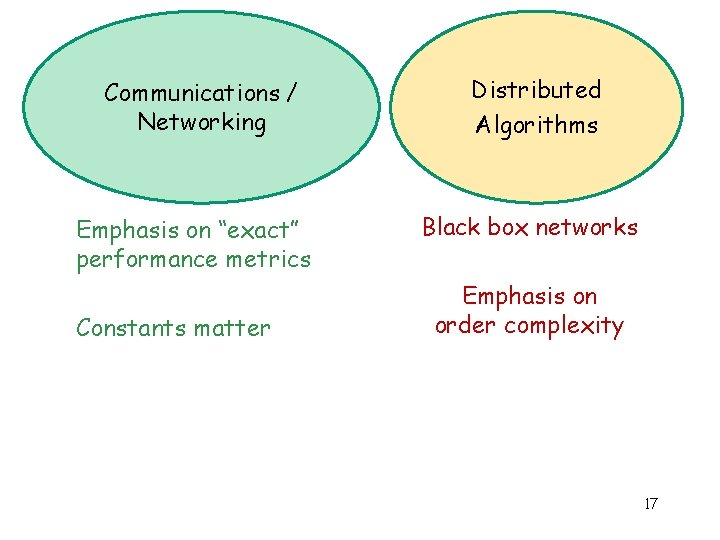

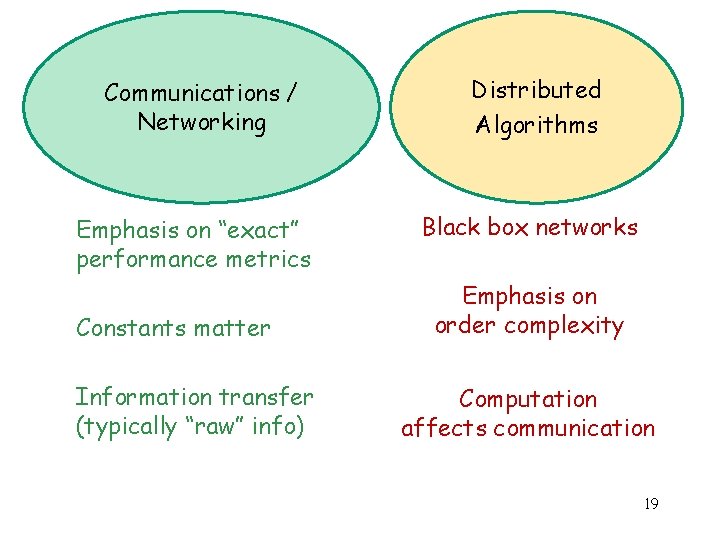


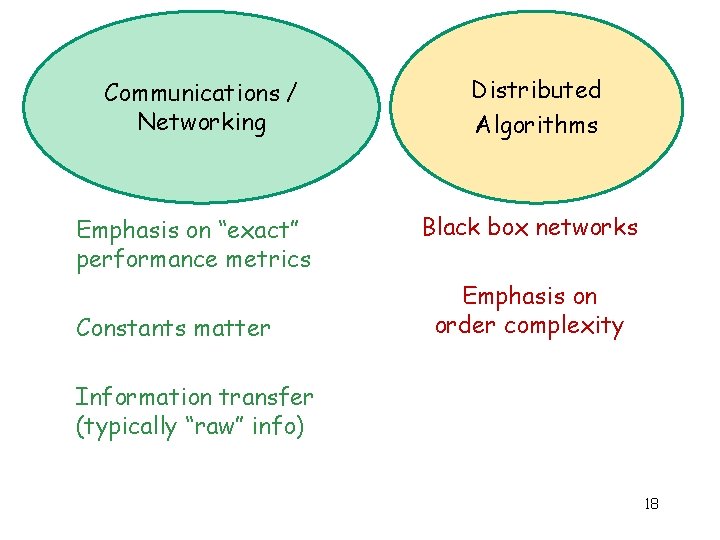


Communications / Networking
- Emphasis on “exact” performance metrics
- Constants matter
- Information transfer (typically “raw” info)

Distributed Algorithms
- Black box networks
- Emphasis on order complexity
- Computation affects communication


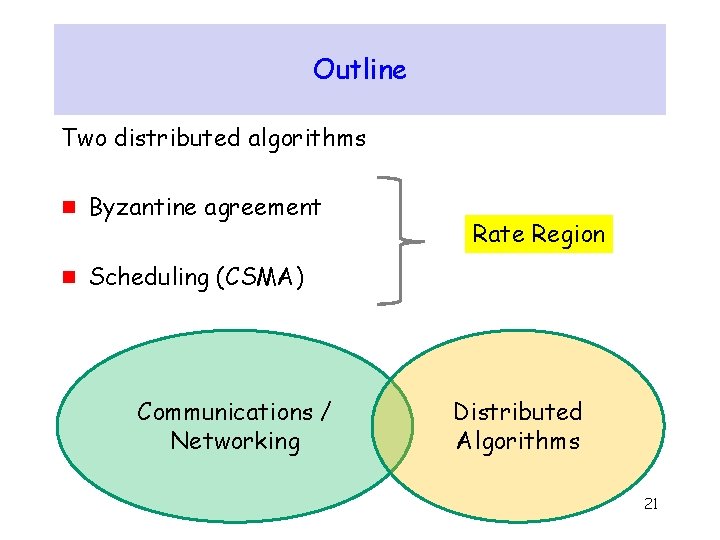

Outline
Two distributed algorithms:
- Byzantine agreement
- Scheduling (CSMA)
[Figure: Rate Region linking Communications / Networking and Distributed Algorithms]

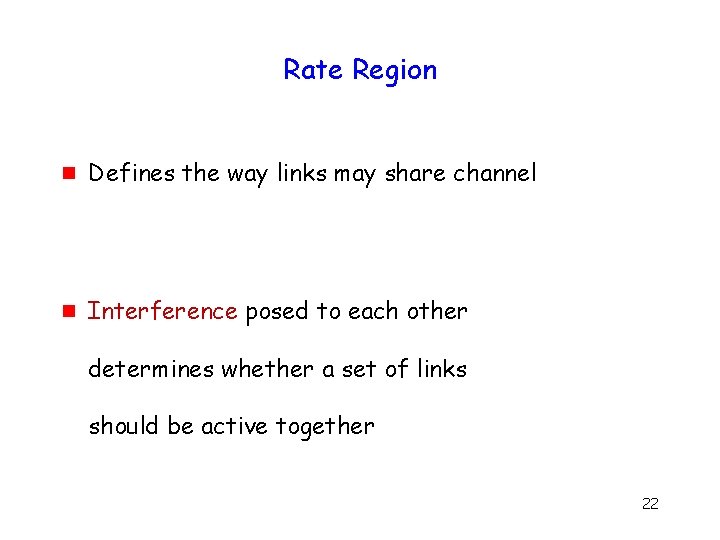

Rate Region
- Defines the way links may share the channel
- The interference that links pose to each other determines whether a set of links can be active together

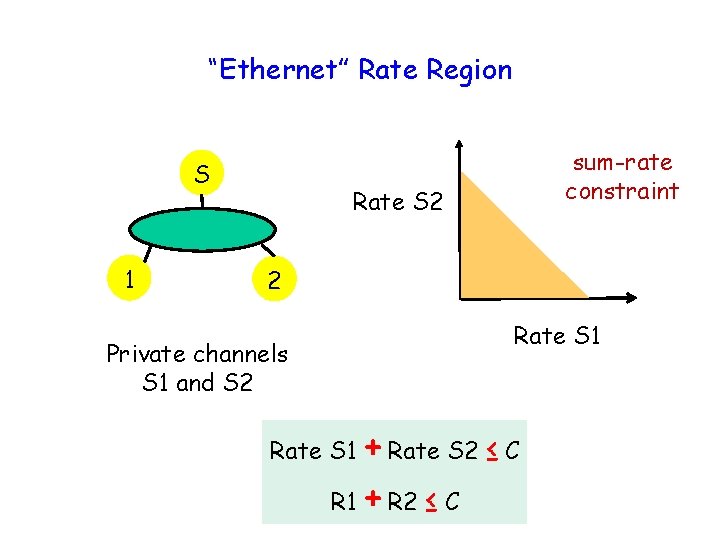

“Ethernet” Rate Region
- Private channels S1 and S2, subject to a sum-rate constraint
- Rate S1 + Rate S2 ≤ C, that is, $R_1 + R_2 \le C$
[Figure: source S with receivers 1 and 2; rate region plotted with axes Rate S1 and Rate S2]

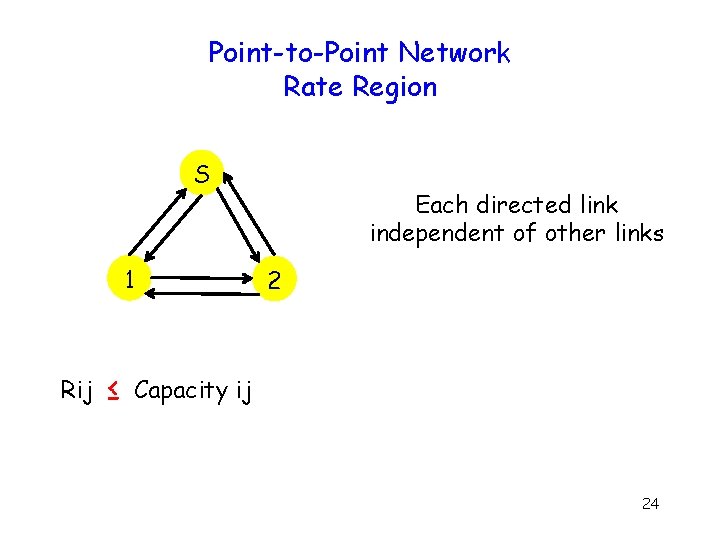

Point-to-Point Network Rate Region
- $R_{ij} \le \text{Capacity}_{ij}$
- Each directed link is independent of the other links
[Figure: network with nodes S, 1, and 2]

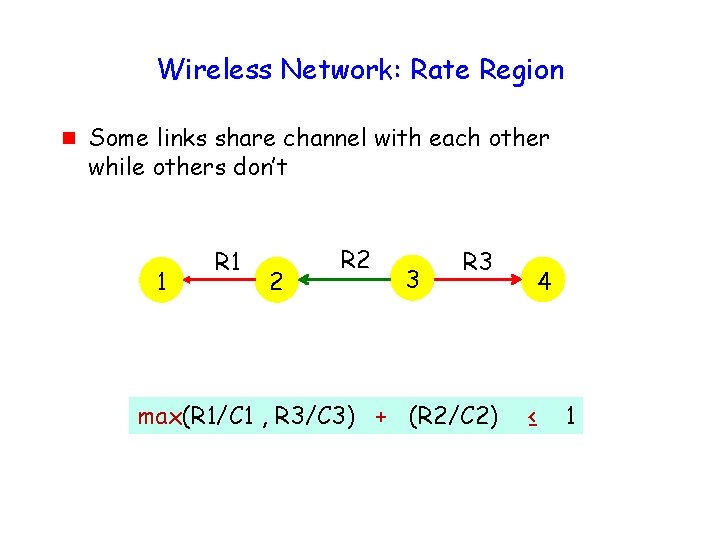

Wireless Network: Rate Region
- Some links share the channel with each other while others don't
- $\max(R_1/C_1,\ R_3/C_3) + R_2/C_2 \le 1$
[Figure: nodes 1, 2, 3, 4 with rates $R_1$, $R_2$, $R_3$ on the links between them]
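The constraint above can be checked numerically. The following is a minimal sketch, assuming the three-link example from the slide; the function name `in_wireless_region` and the sample rates and capacities are illustrative and do not come from the talk.

```python
def in_wireless_region(rates, caps):
    """Return True if (R1, R2, R3) satisfies max(R1/C1, R3/C3) + R2/C2 <= 1.

    rates and caps are 3-tuples (R1, R2, R3) and (C1, C2, C3).
    The names are hypothetical; only the inequality is from the slide.
    """
    r1, r2, r3 = rates
    c1, c2, c3 = caps
    # The max() term reflects that links 1 and 3 can use the same time
    # fraction, while link 2 needs a time fraction of its own.
    return max(r1 / c1, r3 / c3) + r2 / c2 <= 1.0


# Example usage with made-up capacities of 1.0 on every link.
print(in_wireless_region((0.5, 0.5, 0.5), (1.0, 1.0, 1.0)))
print(in_wireless_region((0.6, 0.5, 0.2), (1.0, 1.0, 1.0)))
```

The first call lies on the boundary of the region; the second violates the constraint because links 1 and 2 together would need more than the whole channel.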

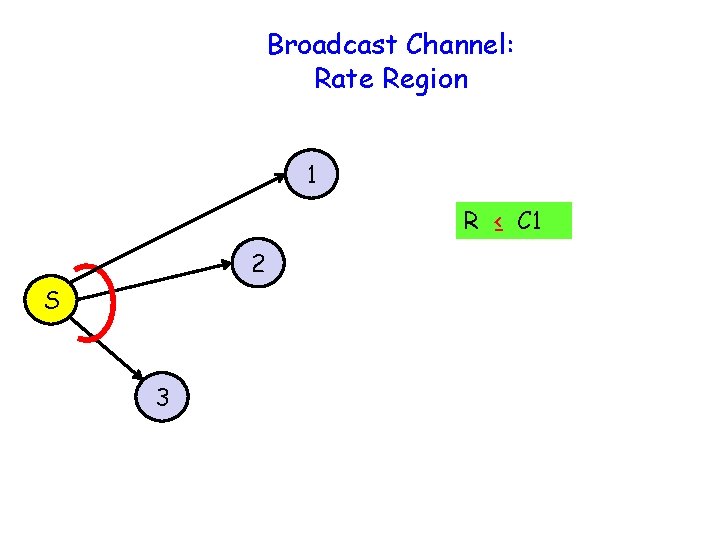

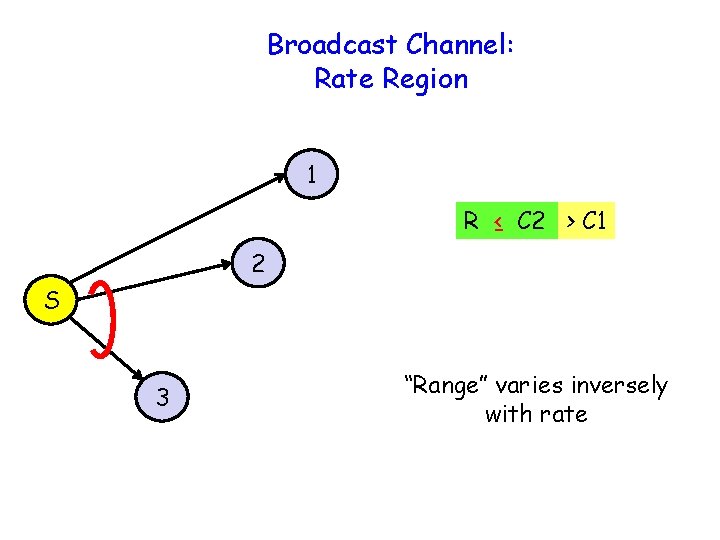

Broadcast Channel: Rate Region
- $R \le C_1$
[Figure: source S broadcasting to nodes 1, 2, 3]

Broadcast Channel: Rate Region
- $R \le C_2$, where $C_2 > C_1$
- “Range” varies inversely with rate
[Figure: source S broadcasting to nodes 1, 2, 3]

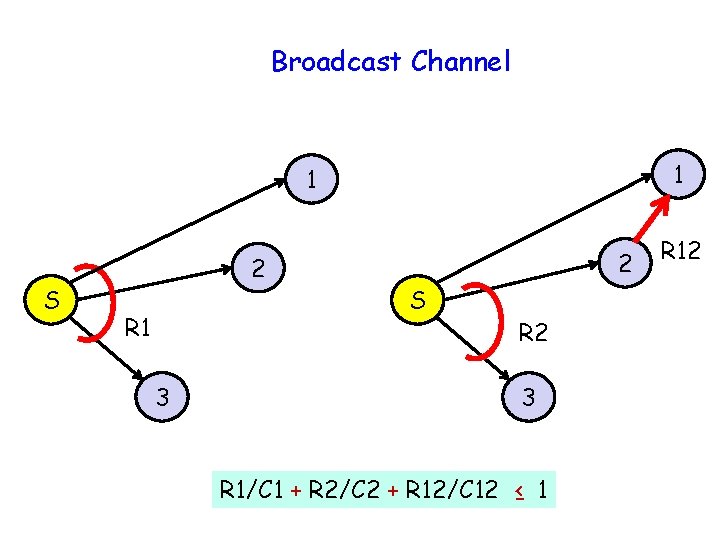

Broadcast Channel
- $R_1/C_1 + R_2/C_2 + R_{12}/C_{12} \le 1$
[Figure: source S and nodes 1, 2, 3, with rates $R_1$, $R_2$, and $R_{12}$]
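One way to read the broadcast-channel constraint is as time sharing at the source. The short derivation below is a sketch under that assumption; the time fractions $\tau_1$, $\tau_2$, $\tau_{12}$ are notation introduced here for illustration and do not appear in the slides.

```latex
% Assumption: S divides its time among three transmission modes,
% each used for a fraction \tau of the time at capacity C.
\begin{align*}
R_1 &= \tau_1 C_1, \qquad R_2 = \tau_2 C_2, \qquad R_{12} = \tau_{12} C_{12}, \\
\tau_1 + \tau_2 + \tau_{12} \le 1
  \;&\Longrightarrow\;
  \frac{R_1}{C_1} + \frac{R_2}{C_2} + \frac{R_{12}}{C_{12}} \le 1 .
\end{align*}
```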

Outline
Two distributed algorithms:
- Byzantine agreement
- Scheduling (CSMA)

Impact of Rate Region
- The network rate region affects the ability to perform multi-party computation
- Example: Byzantine agreement (broadcast)

Byzantine Agreement: Broadcast
Source S wants to send a message to n-1 receivers
- Fault-free receivers agree
- If S is fault-free, the receivers agree on its message
- Up to f failures

Impact of Rate Region
- How does the rate region affect broadcast performance?
- How to quantify the impact?

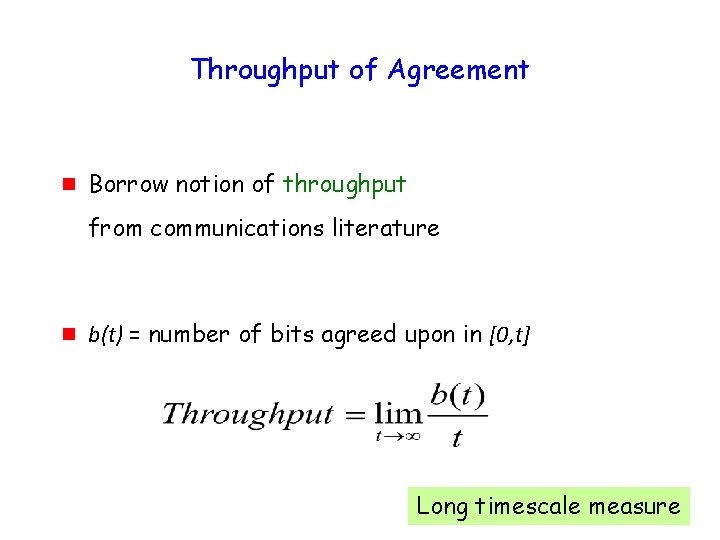

Throughput of Agreement
- Borrow the notion of throughput from the communications literature
- b(t) = number of bits agreed upon in [0, t]
- Long timescale measure

Capacity of Agreement
- Supremum of achievable throughputs for a given rate region
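The two definitions can be written compactly as follows. This is a sketch only: the slides describe throughput as a "long timescale measure" of b(t), so the limit form, the subscript $\mathcal{A}$ for an agreement algorithm, and the symbol names are assumptions made here for illustration.

```latex
% Assumed formalization: throughput as the long-run rate of agreed bits,
% capacity as the supremum over algorithms \mathcal{A} for a fixed rate region.
\begin{align*}
T_{\mathcal{A}} &= \lim_{t \to \infty} \frac{b_{\mathcal{A}}(t)}{t}, \\
C_{\text{agreement}} &= \sup_{\mathcal{A}} \, T_{\mathcal{A}} .
\end{align*}
```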

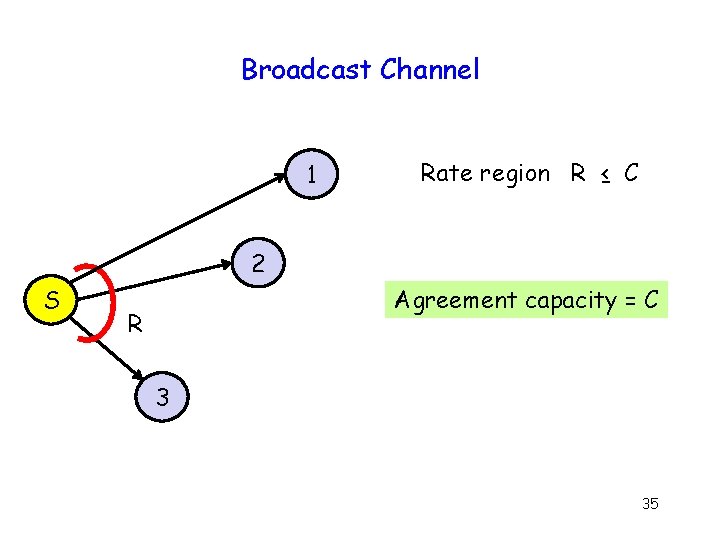

Broadcast Channel
- Rate region: $R \le C$
- Agreement capacity = C
[Figure: source S broadcasting at rate R to nodes 1, 2, 3]

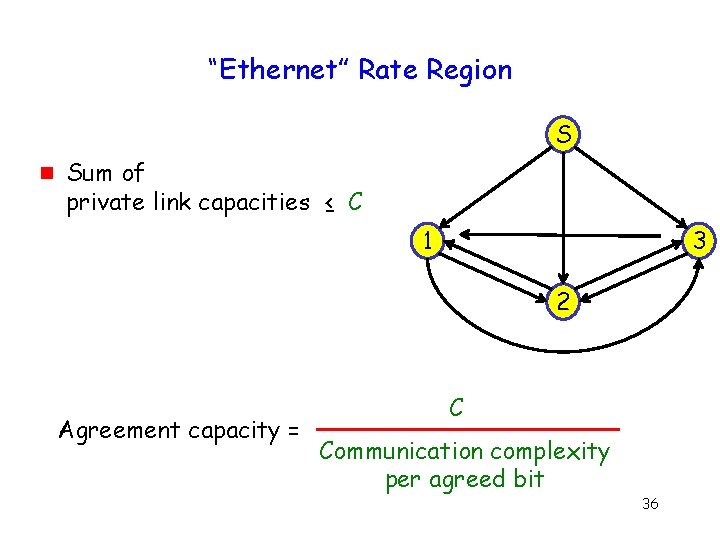

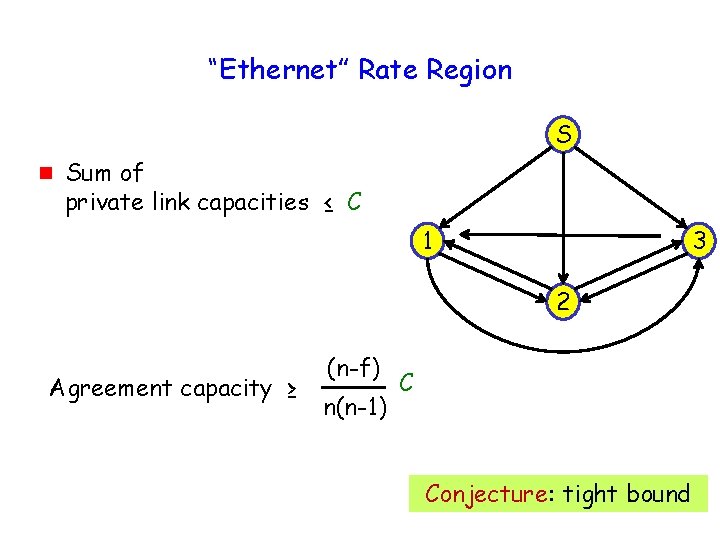

“Ethernet” Rate Region
- Sum of private link capacities ≤ C
- Agreement capacity = C / (communication complexity per agreed bit)
[Figure: source S and nodes 1, 2, 3]

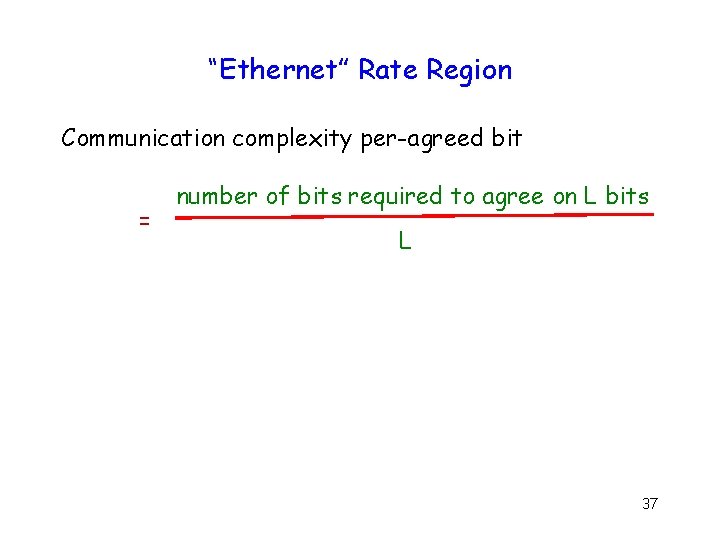

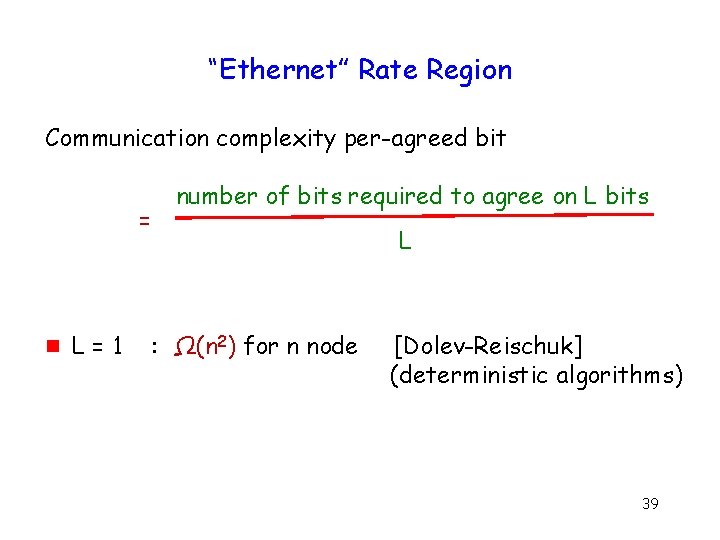

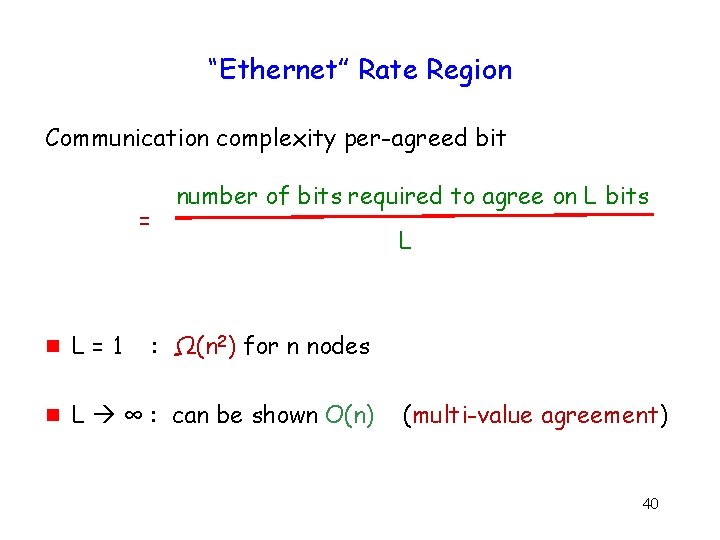

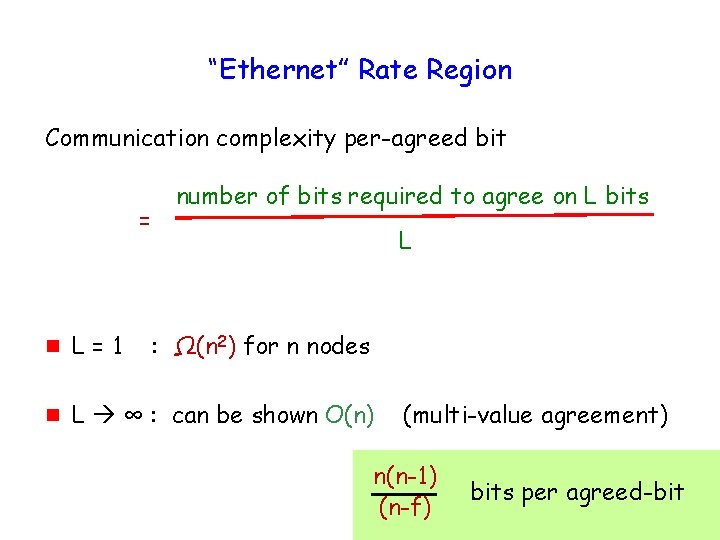

“Ethernet” Rate Region
Communication complexity per agreed bit = (number of bits required to agree on L bits) / L
- L = 1: $\Omega(n^2)$ for n nodes [Dolev-Reischuk] (deterministic algorithms)
- L → ∞: can be shown to be O(n) (multi-value agreement), namely $\frac{n(n-1)}{n-f}$ bits per agreed bit

“Ethernet” Rate Region
- Sum of private link capacities ≤ C
- Agreement capacity $\ge \frac{n-f}{n(n-1)}\, C$
- Conjecture: tight bound
[Figure: source S and nodes 1, 2, 3]
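To get a feel for the numbers, the sketch below evaluates the per-bit complexity $n(n-1)/(n-f)$ and the resulting lower bound $\frac{n-f}{n(n-1)} C$ for concrete values. The function names and the sample values of n, f, and C are illustrative assumptions; only the two formulas come from the slides.

```python
from fractions import Fraction


def bits_per_agreed_bit(n, f):
    """Communication complexity n(n-1)/(n-f) per agreed bit (large-L regime)."""
    if n < 2 or not 0 <= f < n:
        raise ValueError("need n >= 2 and 0 <= f < n")
    return Fraction(n * (n - 1), n - f)


def agreement_capacity_lower_bound(n, f, c):
    """Lower bound (n-f)/(n(n-1)) * C under the sum-rate constraint C."""
    # Capacity bound = C divided by the per-bit communication complexity.
    return Fraction(c) / bits_per_agreed_bit(n, f)


# Example with made-up values: 4 nodes, 1 fault, sum capacity 12.
print(bits_per_agreed_bit(4, 1))
print(agreement_capacity_lower_bound(4, 1, 12))
```

Exact fractions are used instead of floats so that the two quantities stay exact reciprocals of each other up to the factor C.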

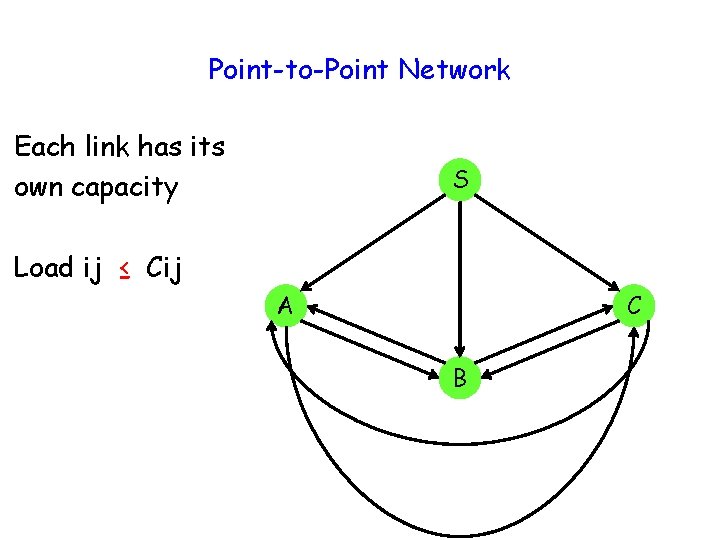

Point-to-Point Network
- Each link has its own capacity
- $\text{Load}_{ij} \le C_{ij}$
[Figure: four-node network with nodes S, A, B, C]

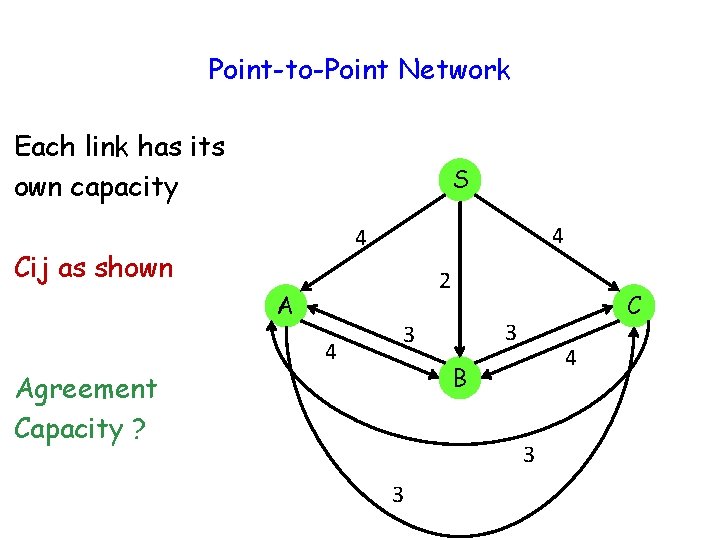

Point-to-Point Network
- Each link has its own capacity, $C_{ij}$ as shown
- Agreement capacity?
[Figure: four-node network S, A, B, C with link capacities labeled 2, 3, or 4]

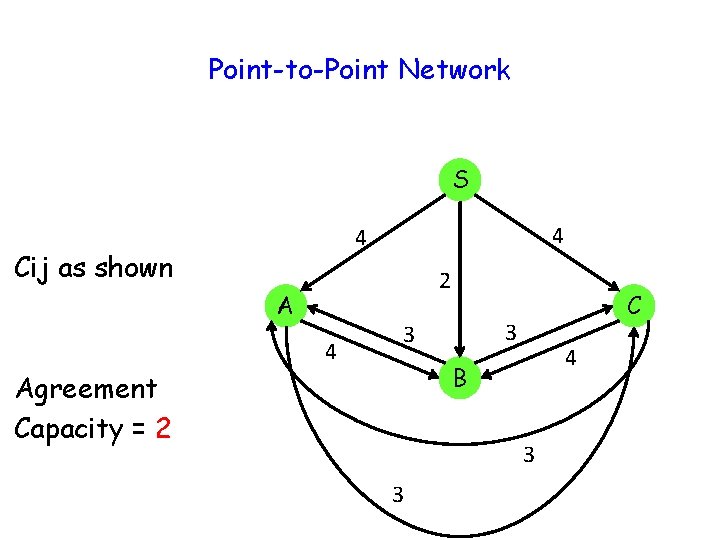

Point-to-Point Network
- $C_{ij}$ as shown
- Agreement capacity = 2
[Figure: four-node network S, A, B, C with link capacities labeled 2, 3, or 4]

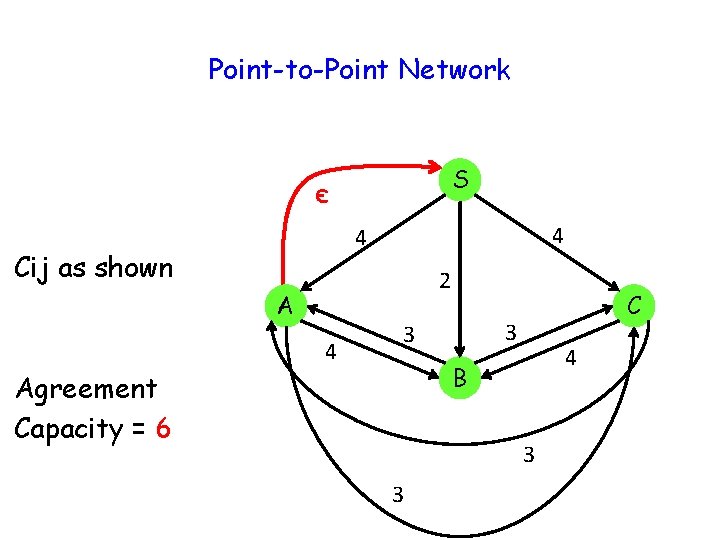

Point-to-Point Network
- $C_{ij}$ as shown
- Agreement capacity = 6
[Figure: four-node network S, A, B, C with link capacities labeled 2, 3, or 4, and one label ε]

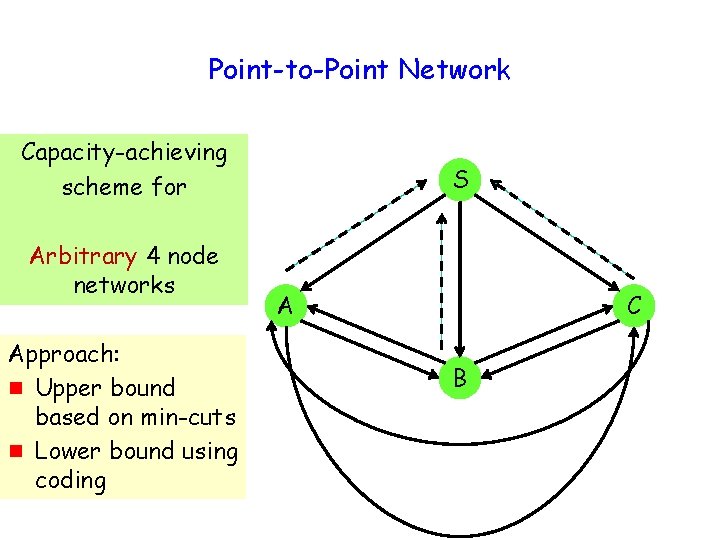

Point-to-Point Network
Capacity-achieving scheme for arbitrary 4-node networks
Approach:
- Upper bound based on min-cuts
- Lower bound using coding
[Figure: four-node network S, A, B, C]

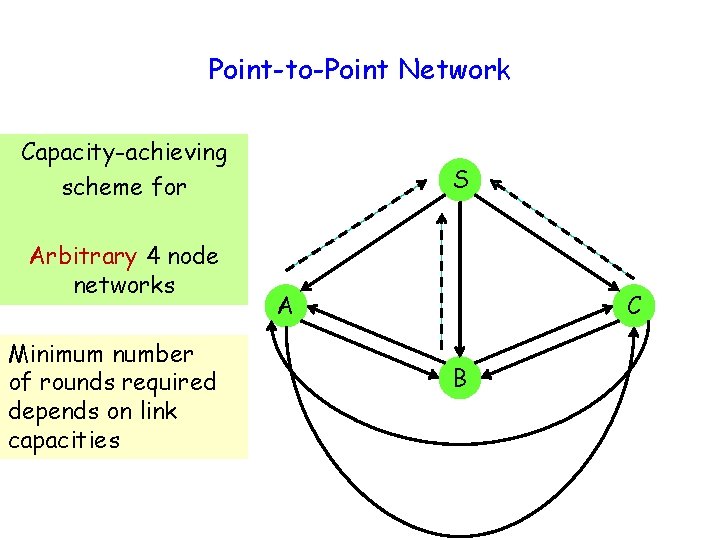

Point-to-Point Network
Capacity-achieving scheme for arbitrary 4-node networks
- The minimum number of rounds required depends on the link capacities
[Figure: four-node network S, A, B, C]

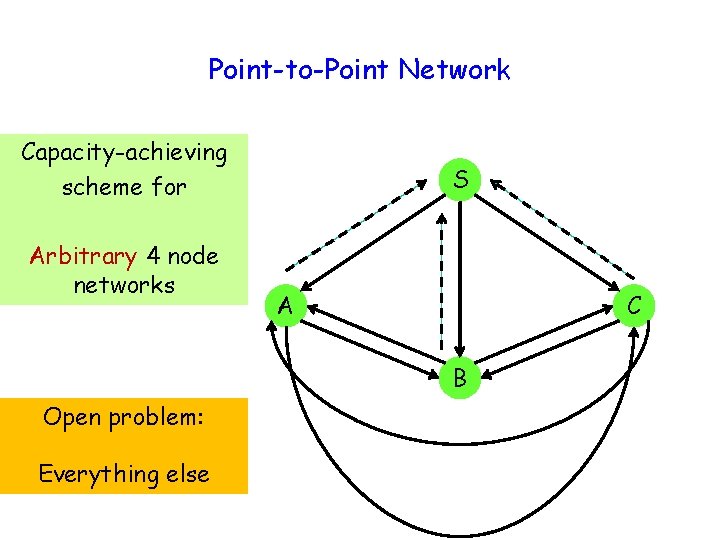

Point-to-Point Network
Capacity-achieving scheme for arbitrary 4-node networks
- Open problem: everything else
[Figure: four-node network S, A, B, C]

Open Problems
- Capacity-achieving agreement with general rate regions
- Subset of nodes as “receivers”
- Even the multicast problem with Byzantine nodes is unsolved
  - For multicast, source S is fault-free

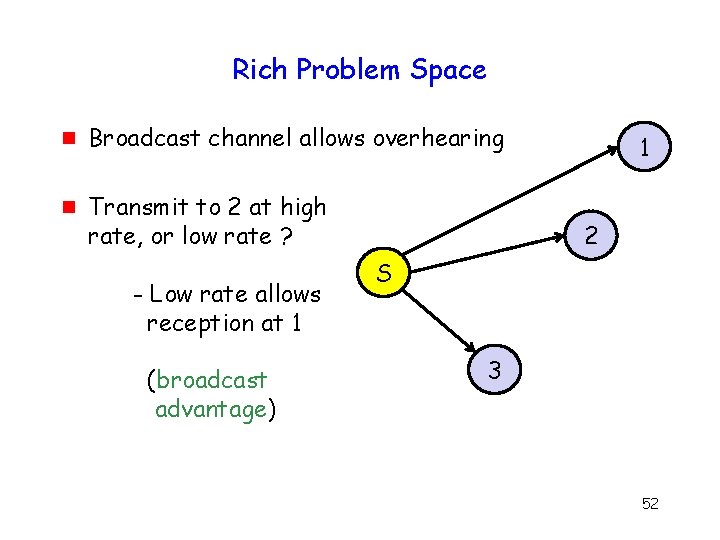

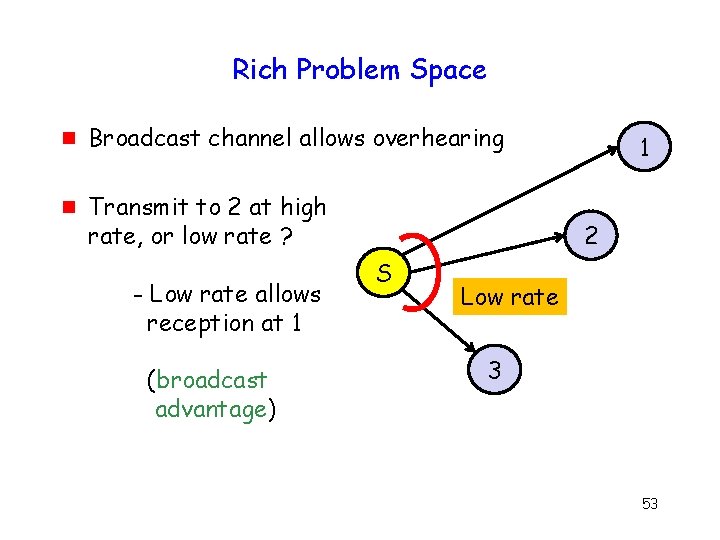

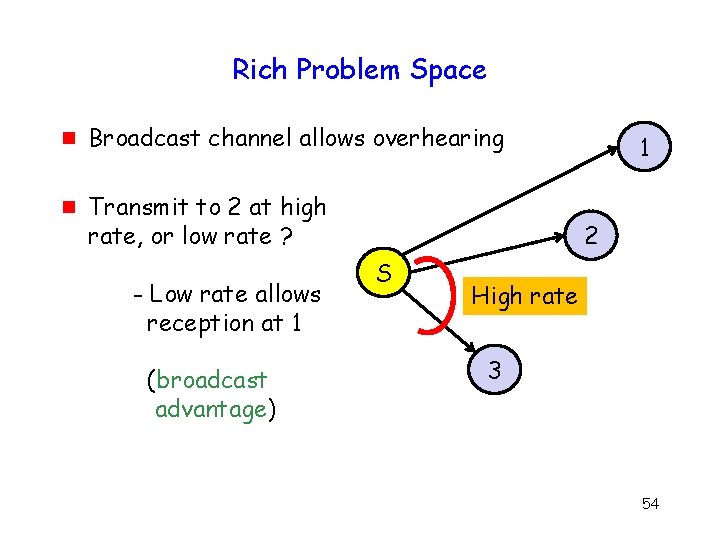

Rich Problem Space
- Broadcast channel allows overhearing
- Transmit to 2 at high rate, or low rate?
  - Low rate allows reception at 1 (broadcast advantage)
[Figure: source S and nodes 1, 2, 3; successive builds highlight the low-rate and the high-rate transmission]

Rich Problem Space
- How to model and exploit reception with probability < 1?
  - Need opportunistic algorithms
- Use of available diversity affects the rate region
  - How to dynamically adapt to channel variations?

Rich Problem Space
- Similar questions are relevant for any multi-party computation
[Figure: Communications / Networking and Distributed Algorithms]

And Now for Something Completely Different*
* Monty Python

Outline
Two distributed algorithms:
- Byzantine agreement
- Scheduling (CSMA)

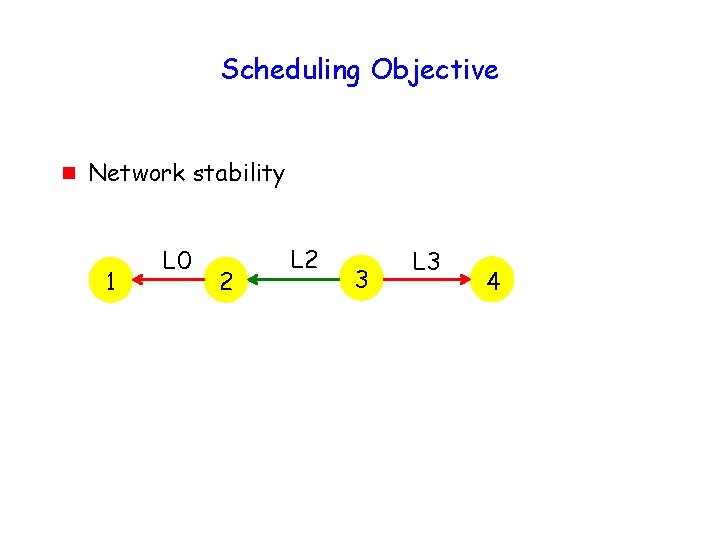

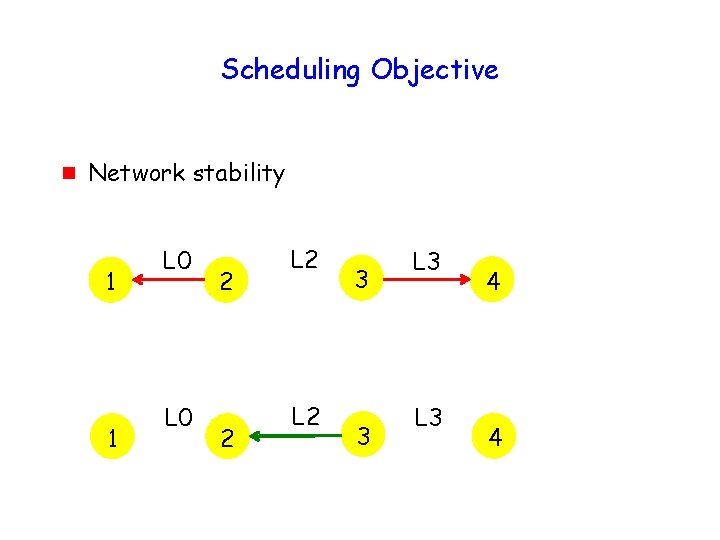

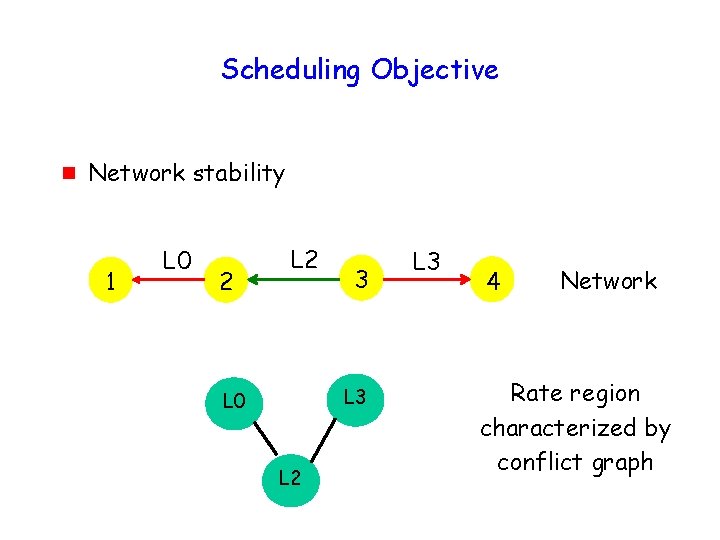

Scheduling Objective
- Network stability
[Figure: nodes 1, 2, 3, 4 connected by links L0, L2, L3]

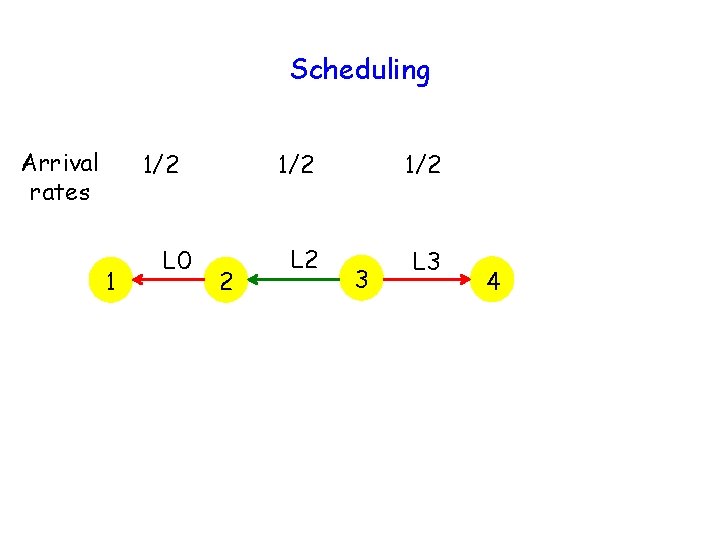

Scheduling
[Figure: nodes 1, 2, 3, 4 connected by links L0, L2, L3, annotated with arrival rates of 1/2]

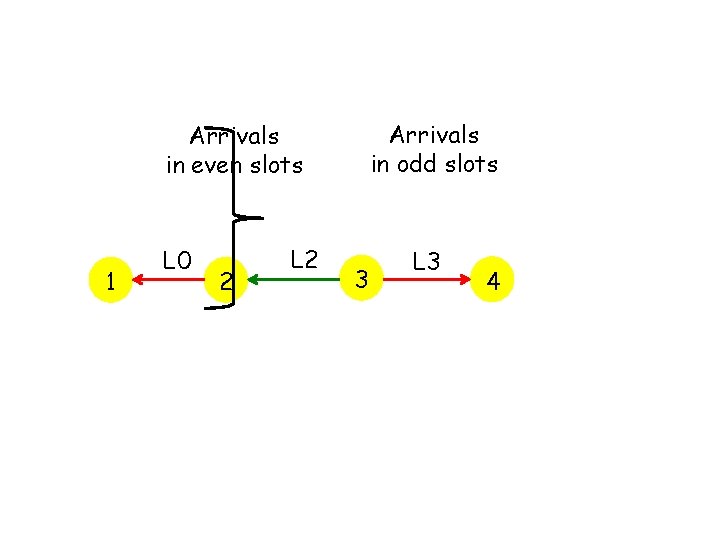

[Figure: nodes 1, 2, 3, 4 connected by links L0, L2, L3; some arrivals occur in odd slots and others in even slots]

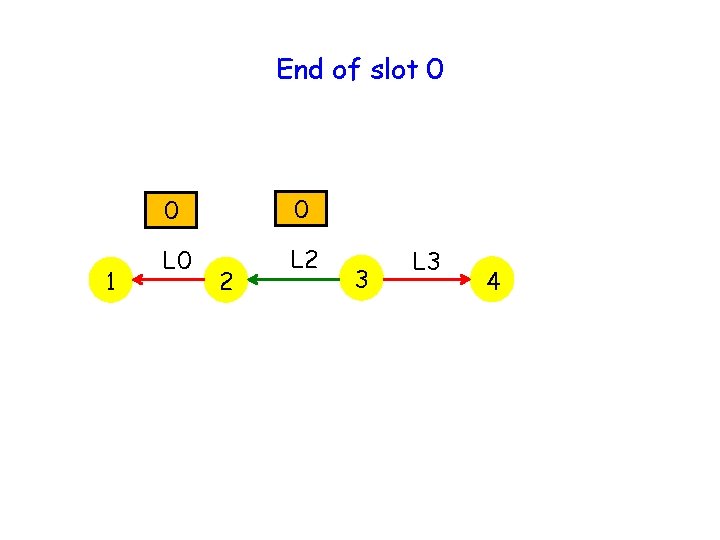

End of slot 0
[Figure: queue lengths on links L0, L2, L3 at the end of slot 0]

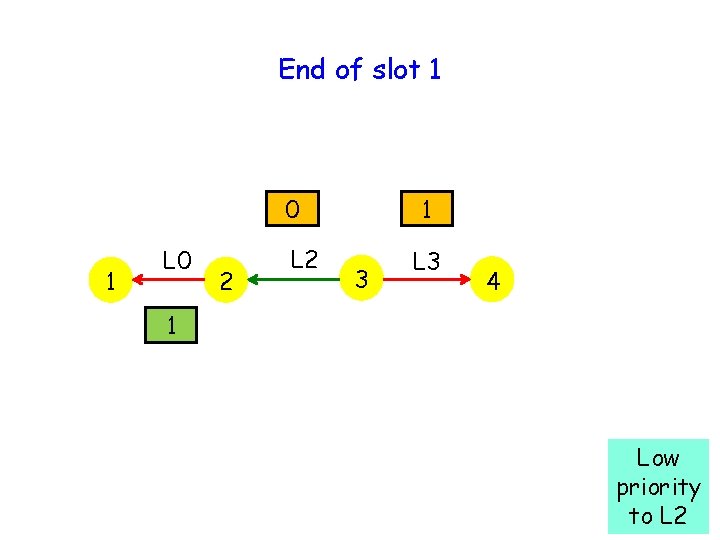

End of slot 1
- Low priority to L2
[Figure: queue lengths on links L0, L2, L3 at the end of slot 1]

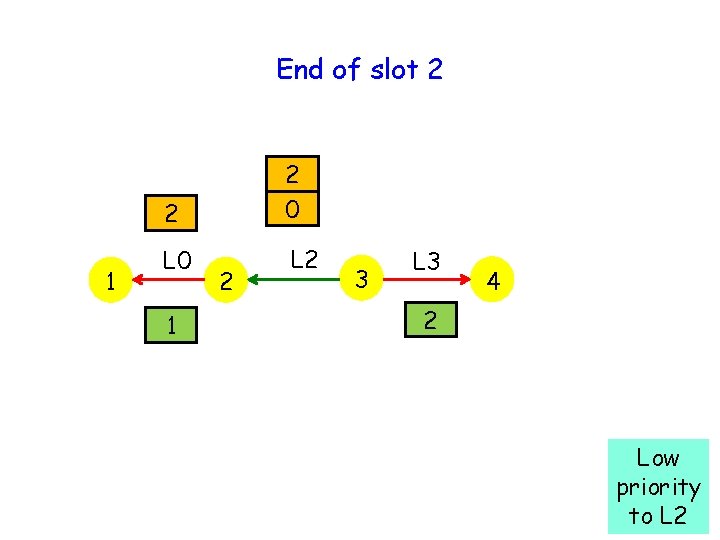

End of slot 2
- Low priority to L2
[Figure: queue lengths on links L0, L2, L3 at the end of slot 2]

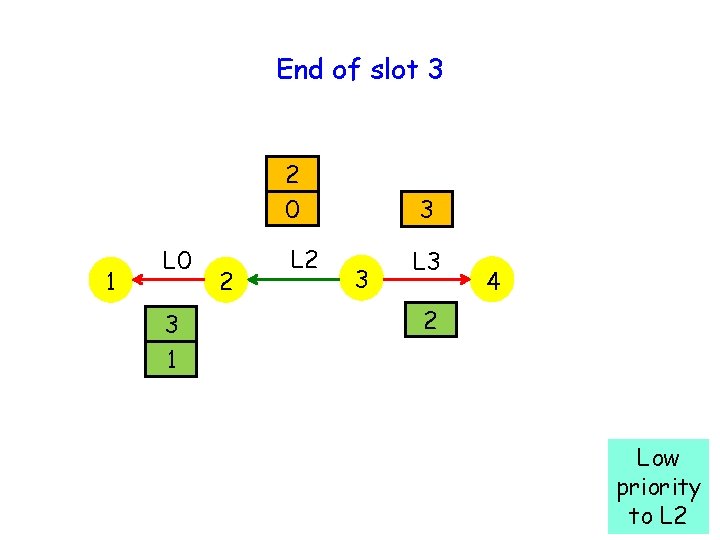

End of slot 3
- Low priority to L2
[Figure: queue lengths on links L0, L2, L3 at the end of slot 3]

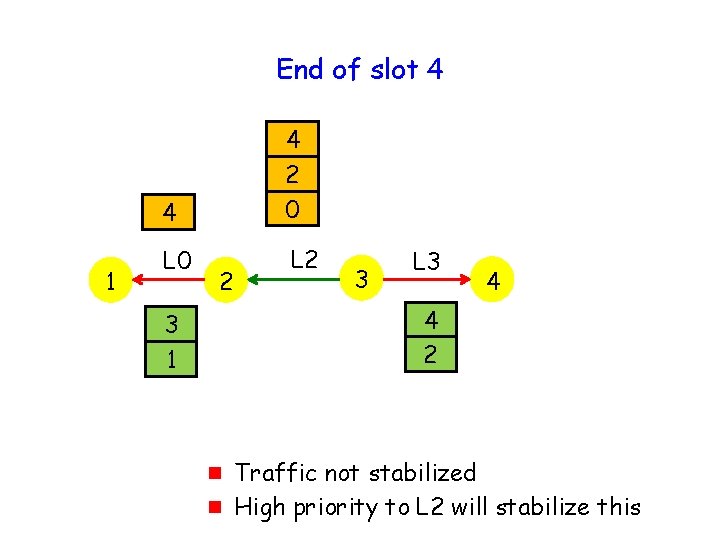

End of slot 4
- Traffic not stabilized
- High priority to L2 will stabilize this
[Figure: queue lengths on links L0, L2, L3 at the end of slot 4]

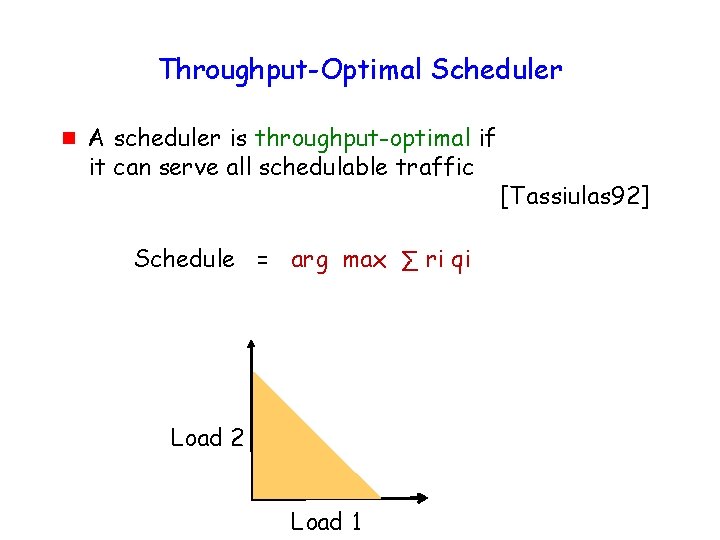

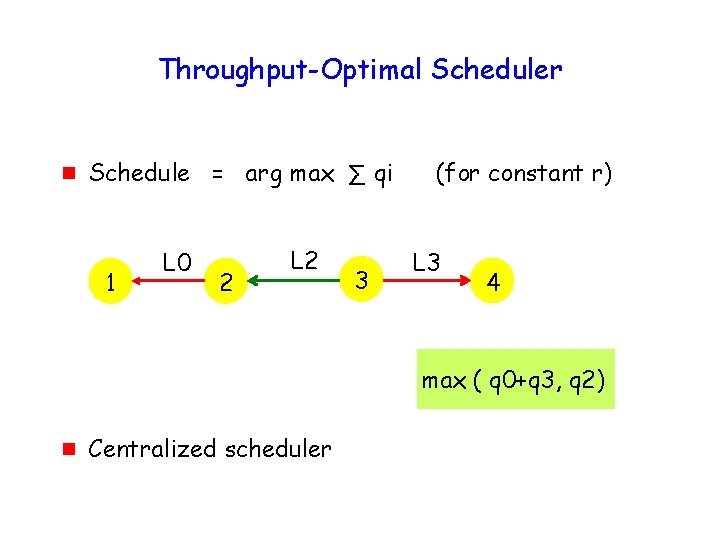

Throughput-Optimal Scheduler
- A scheduler is throughput-optimal if it can serve all schedulable traffic
- $\text{Schedule} = \arg\max \sum_i r_i q_i$ [Tassiulas 92]
[Figure: rate region with axes Load 1 and Load 2]

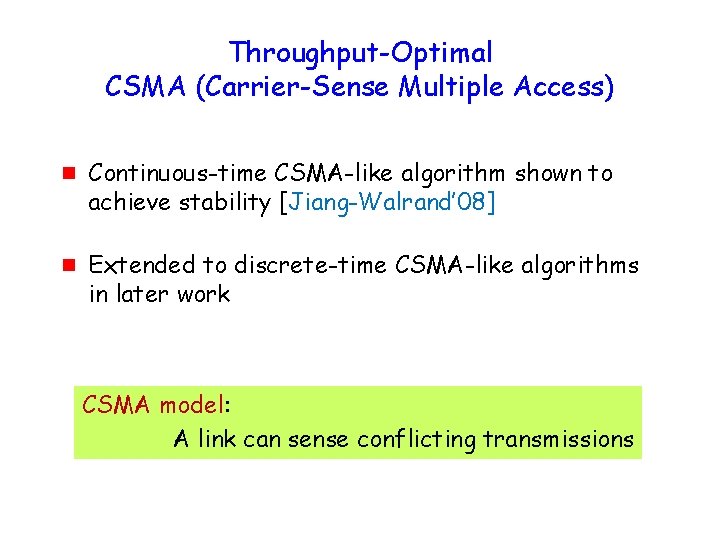

Throughput-Optimal CSMA (Carrier-Sense Multiple Access)
- Continuous-time CSMA-like algorithm shown to achieve stability [Jiang-Walrand '08]
- Extended to discrete-time CSMA-like algorithms in later work
- CSMA model: a link can sense conflicting transmissions

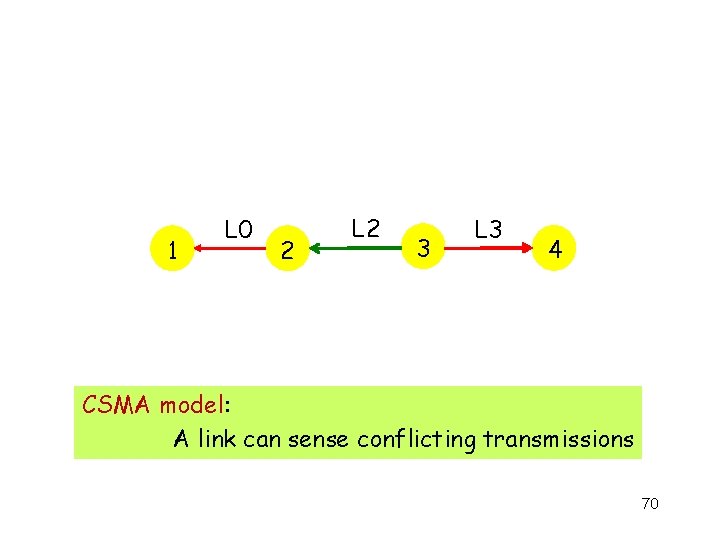

CSMA model: a link can sense conflicting transmissions
[Figure: nodes 1, 2, 3, 4 connected by links L0, L2, L3]


Imperfect Carrier Sensing
- Conflicting transmissions may not always be sensed, potentially leading to collisions

Imperfect Carrier Sensing
- Stability with imperfect carrier sensing?
- Yes, almost

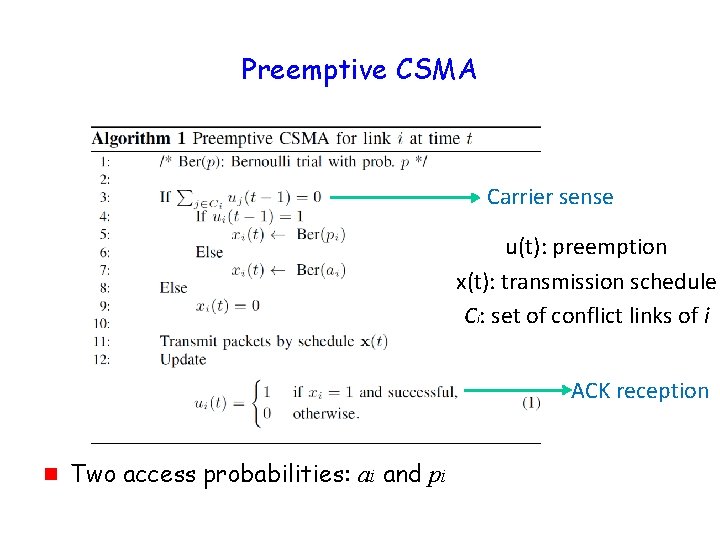

Proposed CSMA Algorithm
Two access probabilities:
- a: probability with which a node attempts to transmit the first packet in a “train”
- p: probability with which a “train” is extended
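The role of the two probabilities can be illustrated with a toy slotted simulation. This is a heavily simplified sketch and not the algorithm from the talk: it assumes perfect carrier sensing, a fixed p rather than one that depends on queue size, idle links that sense one after another in random order (so two conflicting links never start in the same slot), and no probe, ACK, or preemption mechanics. The conflict graph (L2 conflicts with L0 and L3) follows the chain example in the slides; `csma_step` and the parameter values are hypothetical.

```python
import random

# Conflict graph for the chain example: L2 conflicts with both L0 and L3.
CONFLICTS = {"L0": {"L2"}, "L2": {"L0", "L3"}, "L3": {"L2"}}


def csma_step(active, a, p, rng):
    """Advance one slot and return the set of links transmitting next."""
    # Links that are already transmitting extend their train with probability p.
    nxt = {link for link in sorted(active) if rng.random() < p}
    # Idle links sense the channel in random order; a link starts a new
    # train with probability a only if no conflicting link is transmitting.
    idle = [link for link in CONFLICTS if link not in active]
    rng.shuffle(idle)
    for link in idle:
        if not (CONFLICTS[link] & nxt) and rng.random() < a:
            nxt.add(link)
    return nxt


# Example usage: count how often each link transmits over many slots.
rng = random.Random(0)
active, counts = set(), {link: 0 for link in CONFLICTS}
for _ in range(10000):
    active = csma_step(active, a=0.2, p=0.8, rng=rng)
    for link in active:
        counts[link] += 1
print(counts)
```

Because new trains start only when no conflicting link is active, every schedule produced by this sketch is conflict-free; modeling sensing failures would require relaxing exactly that check.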

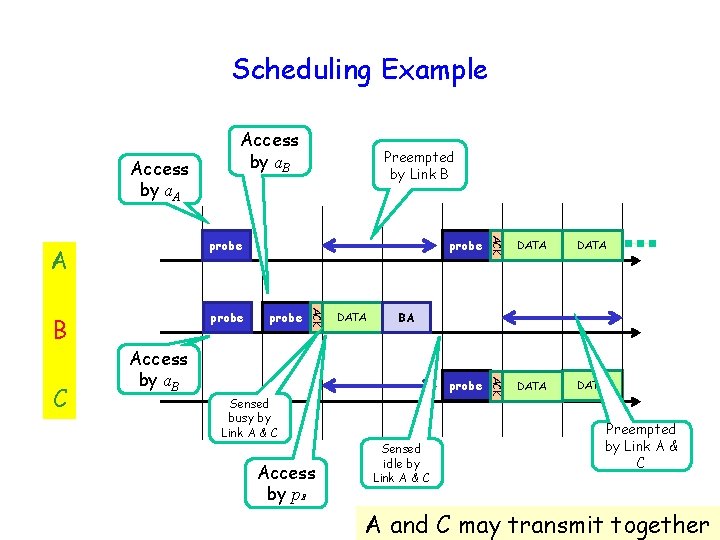

Scheduling Example
[Timing diagram: links A, B, and C exchange probe, DATA, and ACK frames. Labels: access by $a_A$; access by $a_B$; access by $p_B$; sensed busy by Links A and C; sensed idle by Links A and C; preempted by Link B; preempted by Links A and C; A and C may transmit together]

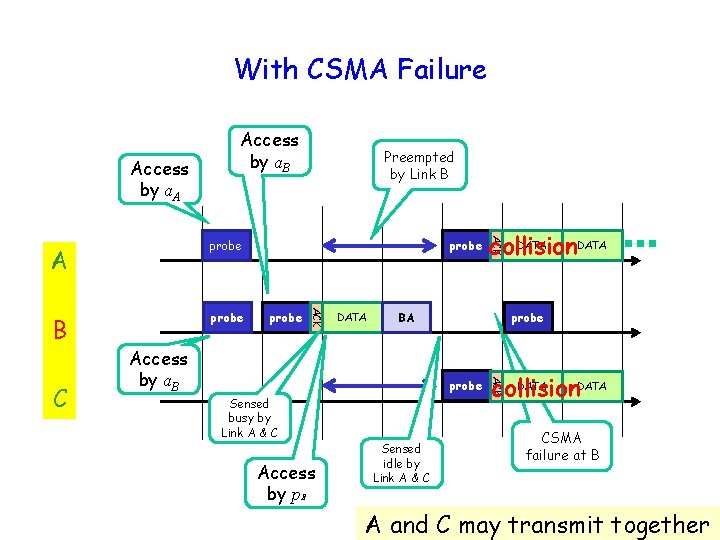

With CSMA Failure
[Timing diagram: links A, B, and C exchange probe, DATA, and ACK frames. Labels: access by $a_A$; access by $a_B$; access by $p_B$; sensed busy by Links A and C; sensed idle by Links A and C; CSMA failure at B; DATA collision; preempted by Link B; A and C may transmit together]

Stability with Sensing Failure
- A small enough access probability (a) suffices to stabilize an arbitrarily large fraction of the rate region
- The continuation probability (p) is a function of queue size

Open Problems
- Carrier sensing failures: correlation over time and space
- Asymmetric collisions
- Dynamic adaptation to time-varying channel

What does this have to do with distributed algorithms?

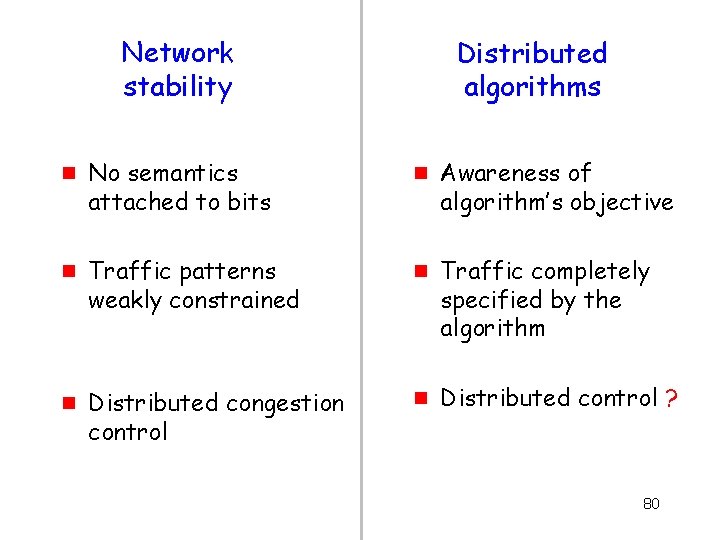

Network stability
- No semantics attached to bits
- Traffic patterns weakly constrained
- Distributed congestion control

Distributed algorithms
- Awareness of the algorithm's objective
- Traffic completely specified by the algorithm
- Distributed control?

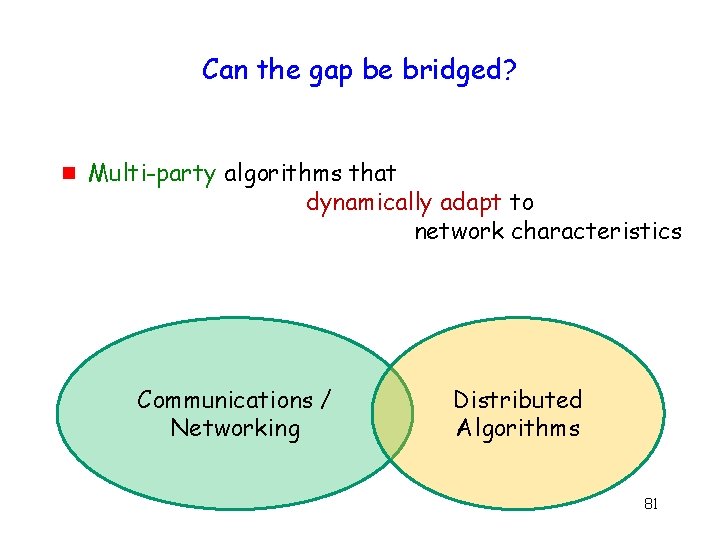

Can the gap be bridged?
- Multi-party algorithms that dynamically adapt to network characteristics
[Figure: Communications / Networking and Distributed Algorithms]

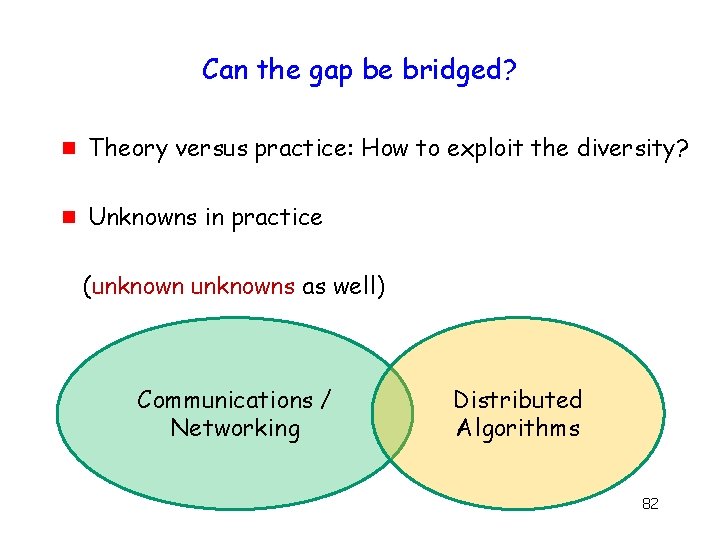

Can the gap be bridged?
- Theory versus practice: how to exploit the diversity?
- Unknowns in practice (unknowns as well)
[Figure: Communications / Networking and Distributed Algorithms]

Thanks!
www.crhc.illinois.edu/wireless


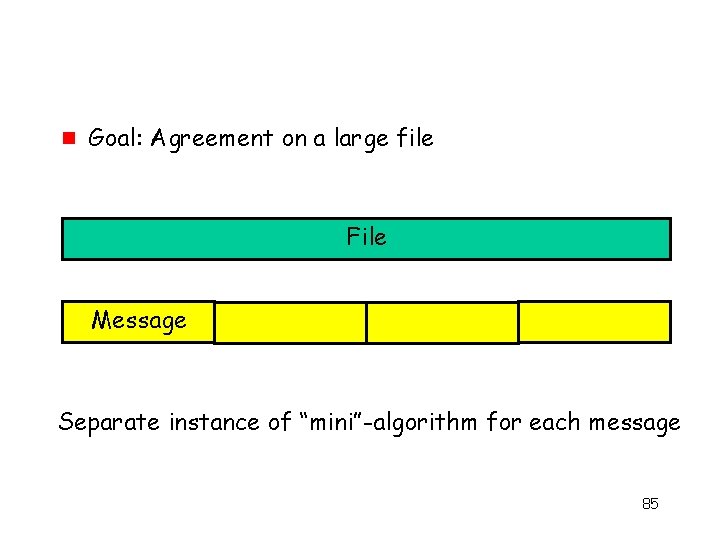

- Goal: agreement on a large file
- The file is divided into messages, with a separate instance of the “mini”-algorithm for each message

Back-up slides

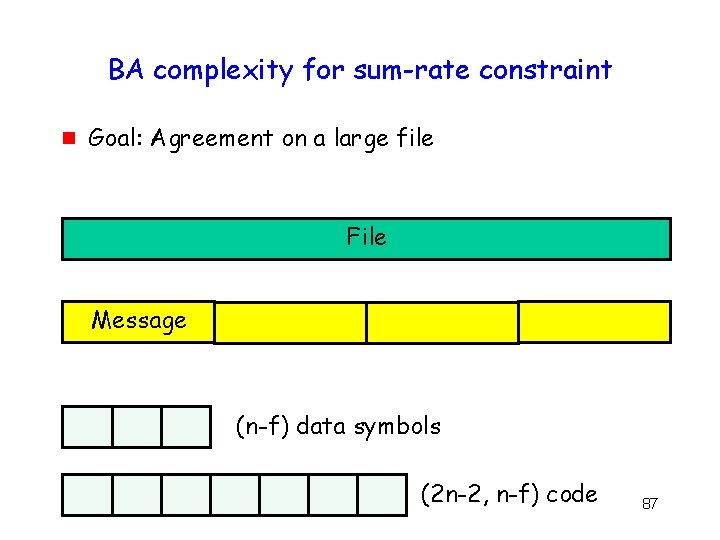

BA complexity for sum-rate constraint
- Goal: agreement on a large file
- Each message of the file consists of (n-f) data symbols, encoded with a (2n-2, n-f) code

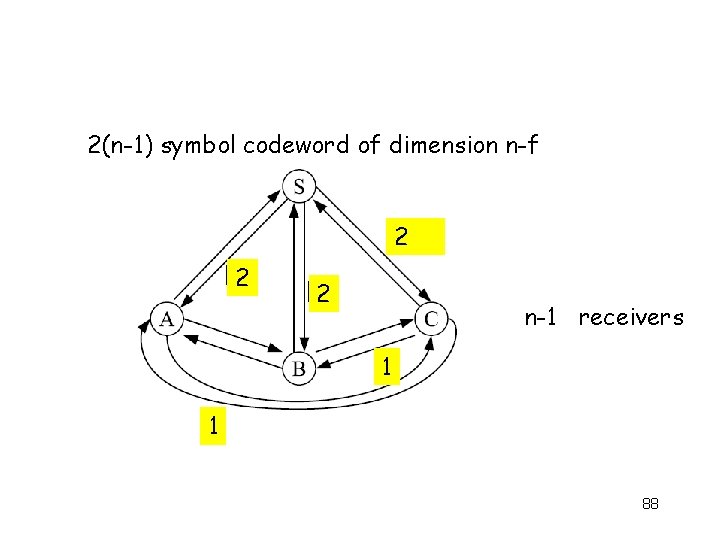

2(n-1)-symbol codeword of dimension n-f
[Figure: source and n-1 receivers; links from the source are labeled 2 and links between receivers are labeled 1]
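The symbol counts in this figure appear to account for the $n(n-1)/(n-f)$ complexity quoted earlier. The arithmetic below is a sketch under one reading of the labels, which is an assumption: the source sends 2 coded symbols to each of the n-1 receivers, and every receiver sends 1 symbol to each of the other n-2 receivers.

```latex
% Assumed reading of the figure labels (2 on source links, 1 on receiver links).
\begin{align*}
\text{symbols sent}
  &= \underbrace{2(n-1)}_{\text{source to receivers}}
   + \underbrace{(n-1)(n-2)}_{\text{receiver to receiver}}
   = n(n-1), \\
\frac{\text{symbols sent}}{\text{data symbols agreed}}
  &= \frac{n(n-1)}{n-f} .
\end{align*}
```

This count covers only the failure-free exchange; the cost of failure diagnosis is not included.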

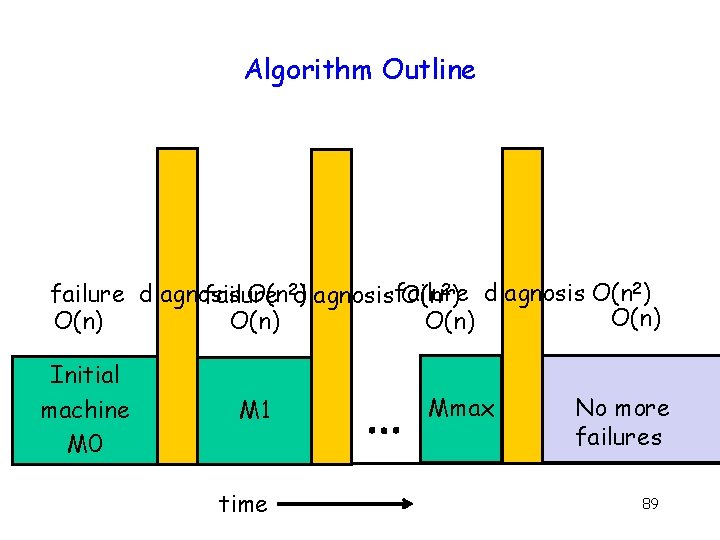

Algorithm Outline
[Figure: timeline of machines from the initial machine $M_0$ through $M_1$ to $M_{\max}$ (no more failures). Labels along the timeline: failure diagnosis, $O(n^2)$ (three times); $O(n)$]

CSMA

Scheduling Objective
- Network stability
- Rate region characterized by the conflict graph
[Figure: network of nodes 1, 2, 3, 4 with links L0, L2, L3, and the corresponding conflict graph on L0, L2, L3]

Throughput-Optimal Scheduler
- $\text{Schedule} = \arg\max \sum_i q_i$ (for constant r)
- For the example network: $\max(q_0 + q_3,\ q_2)$
- Centralized scheduler
[Figure: nodes 1, 2, 3, 4 connected by links L0, L2, L3]
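The rule $\max(q_0 + q_3, q_2)$ is the max-weight choice over the conflict-free link sets of the example. The sketch below enumerates those sets by brute force; it assumes equal rates on all links (the "constant r" case), and the names `independent_sets` and `max_weight_schedule` are illustrative, not from the talk.

```python
from itertools import combinations

# Conflict graph for the example: L2 conflicts with both L0 and L3.
CONFLICTS = {"L0": {"L2"}, "L2": {"L0", "L3"}, "L3": {"L2"}}


def independent_sets(conflicts):
    """Yield every non-empty set of links with no conflicting pair."""
    links = sorted(conflicts)
    for size in range(1, len(links) + 1):
        for subset in combinations(links, size):
            if all(conflicts[u].isdisjoint(subset) for u in subset):
                yield subset


def max_weight_schedule(queues, conflicts=CONFLICTS):
    """Pick the conflict-free set with the largest total queue length."""
    return max(independent_sets(conflicts),
               key=lambda links: sum(queues[link] for link in links))


# Example usage with made-up queue lengths.
print(max_weight_schedule({"L0": 3, "L2": 4, "L3": 2}))
print(max_weight_schedule({"L0": 1, "L2": 5, "L3": 2}))
```

Brute-force enumeration is exponential in the number of links and needs global queue information, which is one reason the slides describe this scheduler as centralized.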

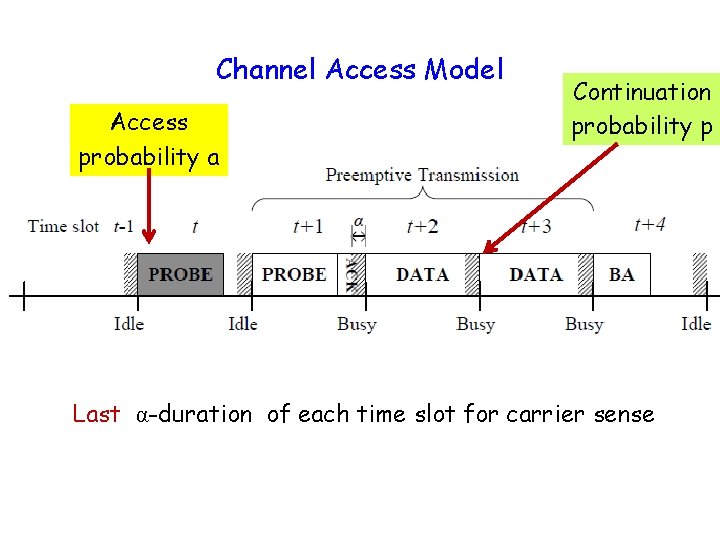

Channel Access Model
- Access probability a
- Continuation probability p
- The last α-duration of each time slot is used for carrier sensing

Preemptive CSMA
- u(t): preemption
- x(t): transmission schedule
- $C_i$: set of links that conflict with link i
- Two access probabilities: $a_i$ and $p_i$
[Figure labels: carrier sense; ACK reception]

Carrier Sense Failure: Main Result
- By choosing a small enough access probability, it is possible to stabilize an arbitrarily large fraction of the capacity region
- Proof complexity: the Markov chain is no longer reversible
- Use perturbation theory for Markov chains
