
The Elements of Statistical Learning

Thomas Lengauer, Christian Merkwirth

Using the book by Hastie, Tibshirani, Friedman

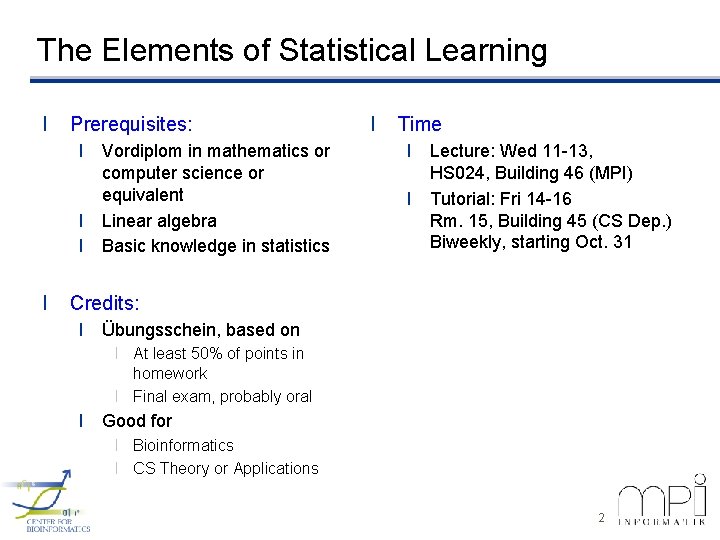

The Elements of Statistical Learning

- Prerequisites:
  - Vordiplom (intermediate diploma) in mathematics or computer science, or equivalent
  - Linear algebra
  - Basic knowledge of statistics
- Time:
  - Lecture: Wed 11-13, HS 024, Building 46 (MPI)
  - Tutorial: Fri 14-16, Rm. 15, Building 45 (CS Dept.), biweekly, starting Oct. 31
- Credits:
  - Exercise certificate (Übungsschein), based on
    - at least 50% of the points in homework
    - a final exam, probably oral
  - Good for
    - Bioinformatics
    - CS Theory or Applications

1. Introduction

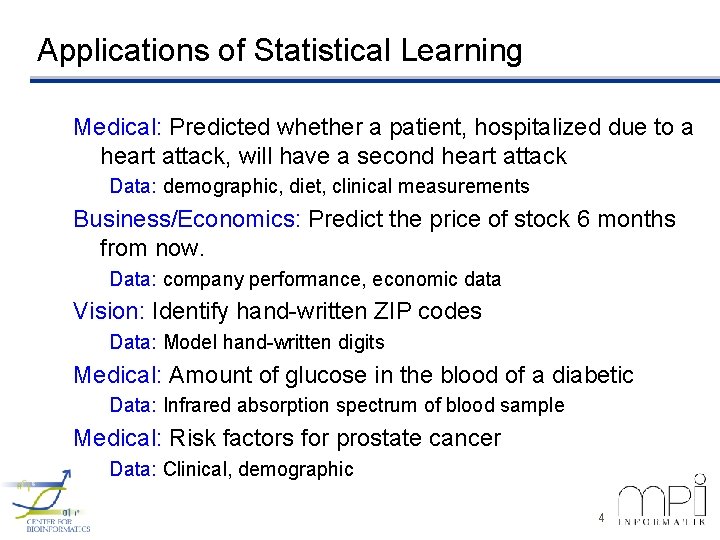

Applications of Statistical Learning

- Medical: Predict whether a patient hospitalized due to a heart attack will have a second heart attack. Data: demographic, diet, clinical measurements
- Business/Economics: Predict the price of a stock 6 months from now. Data: company performance, economic data
- Vision: Identify handwritten ZIP codes. Data: digitized images of handwritten digits
- Medical: Estimate the amount of glucose in the blood of a diabetic. Data: infrared absorption spectrum of a blood sample
- Medical: Identify risk factors for prostate cancer. Data: clinical, demographic

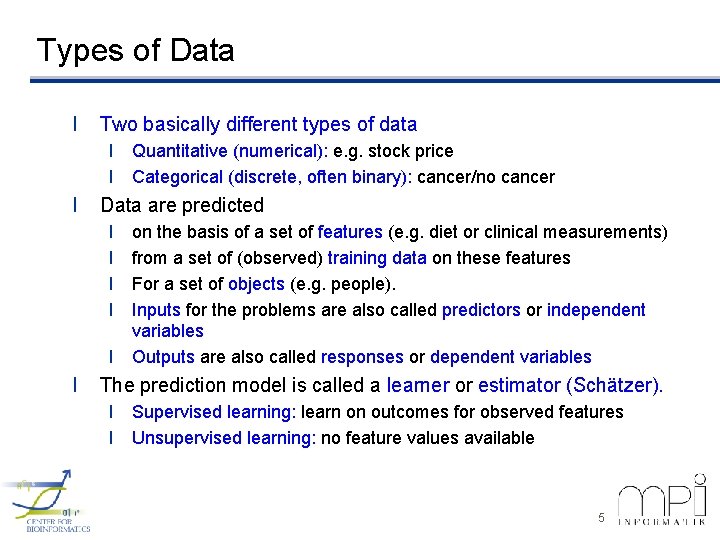

Types of Data

- Two basically different types of data:
  - Quantitative (numerical): e.g. stock price
  - Categorical (discrete, often binary): e.g. cancer/no cancer
- Data are predicted
  - on the basis of a set of features (e.g. diet or clinical measurements)
  - from a set of (observed) training data on these features
  - for a set of objects (e.g. people)
- Inputs for the problems are also called predictors or independent variables
- Outputs are also called responses or dependent variables
- The prediction model is called a learner or estimator (German: Schätzer)
- Supervised learning: learn from observed outcomes for observed features
- Unsupervised learning: no outcome values are available, only the features are observed

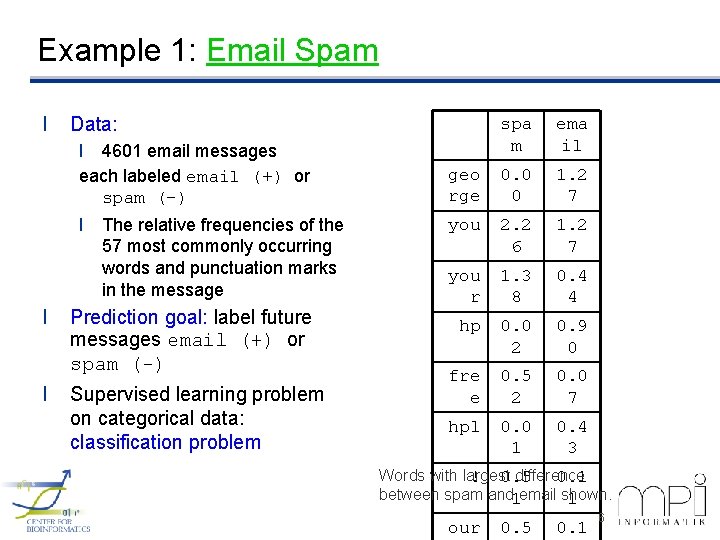

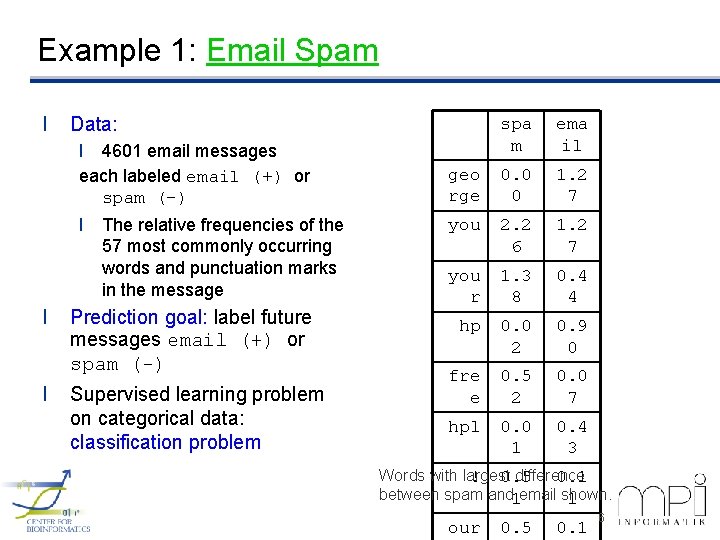

Example 1: Email Spam

- Data:
  - 4601 email messages, each labeled email (+) or spam (-)
  - The relative frequencies of the 57 most commonly occurring words and punctuation marks in the message
- Prediction goal: label future messages email (+) or spam (-)
- Supervised learning problem on categorical data: classification problem

Words with the largest difference between spam and email shown (average percentage of words or characters in a message equal to the indicated word or character):

| word | spam | email |
|---|---|---|
| george | 0.00 | 1.27 |
| you | 2.26 | 1.27 |
| your | 1.38 | 0.44 |
| hp | 0.02 | 0.90 |
| free | 0.52 | 0.07 |
| hpl | 0.01 | 0.43 |
| ! | 0.51 | 0.11 |
| our | 0.51 | 0.18 |

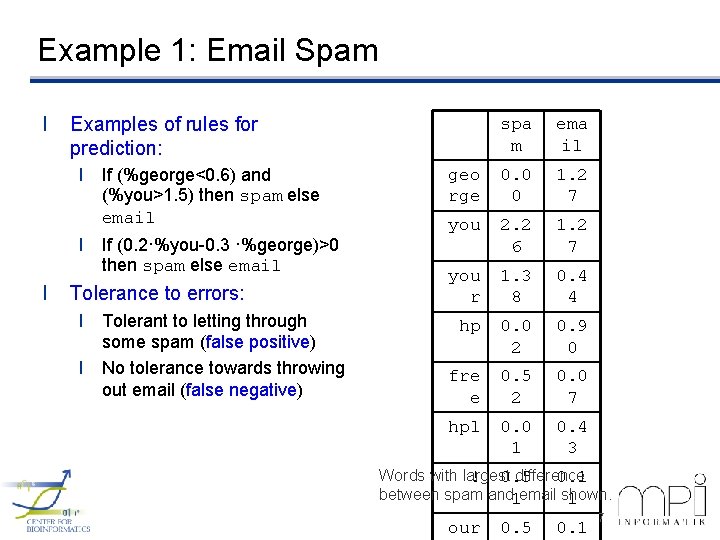

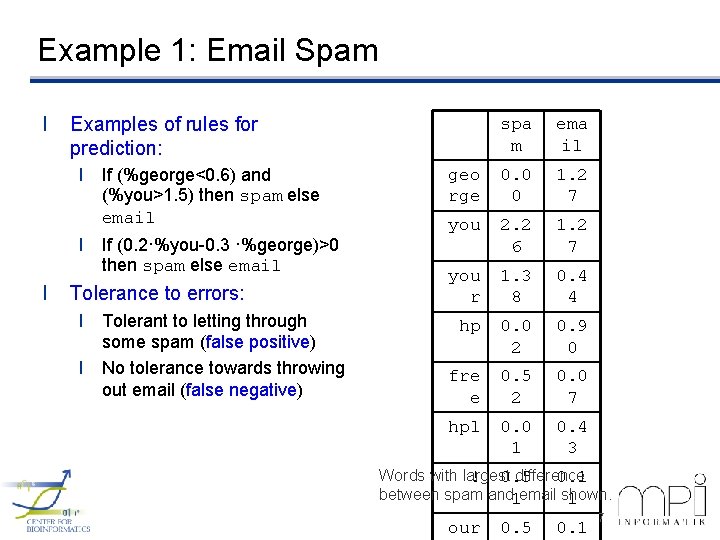

Example 1: Email Spam

- Examples of rules for prediction:
  - If (%george < 0.6) and (%you > 1.5) then spam else email
  - If (0.2·%you - 0.3·%george) > 0 then spam else email
- Tolerance to errors:
  - Tolerant to letting through some spam (false positive)
  - No tolerance towards throwing out email (false negative)
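The two example rules translate directly into code. The sketch below is a minimal illustration, not a spam filter: the function names are hypothetical, the inputs are the percentages of the words "george" and "you" in a message, and the test profiles are the average spam (george 0.00, you 2.26) and email (george 1.27, you 1.27) frequencies from the table.

```python
def rule_threshold(pct_george, pct_you):
    """Rule 1: if (%george < 0.6) and (%you > 1.5) then spam, else email."""
    return "spam" if pct_george < 0.6 and pct_you > 1.5 else "email"


def rule_linear(pct_george, pct_you):
    """Rule 2: if (0.2 * %you - 0.3 * %george) > 0 then spam, else email."""
    return "spam" if 0.2 * pct_you - 0.3 * pct_george > 0 else "email"


# Average word percentages (%george, %you) of spam and email messages
spam_profile = (0.00, 2.26)
email_profile = (1.27, 1.27)

for name, rule in (("threshold", rule_threshold), ("linear", rule_linear)):
    print(name, rule(*spam_profile), rule(*email_profile))
# threshold spam email
# linear spam email
```

Both rules separate the two average profiles. On individual messages they will still make errors, and that is where the asymmetric tolerance to errors matters.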

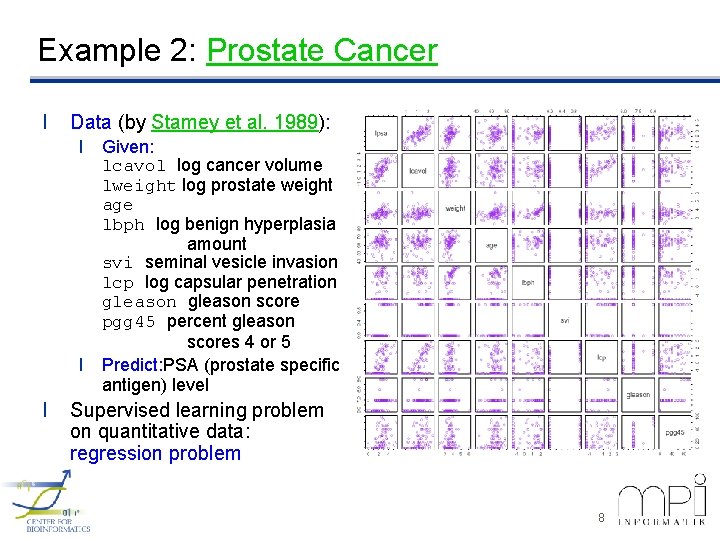

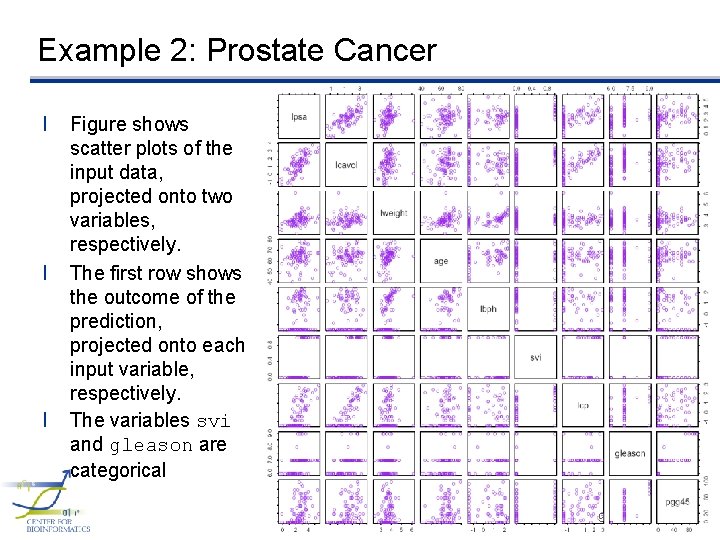

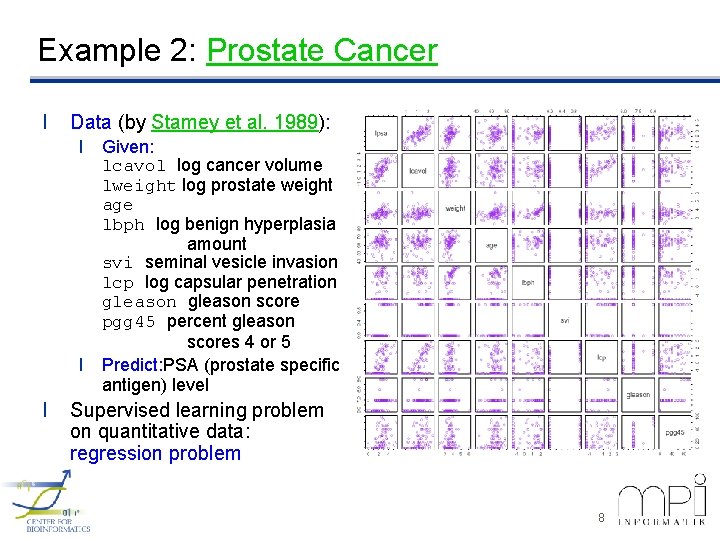

Example 2: Prostate Cancer

- Data (by Stamey et al. 1989):
  - Given:
    - lcavol: log cancer volume
    - lweight: log prostate weight
    - age
    - lbph: log benign hyperplasia amount
    - svi: seminal vesicle invasion
    - lcp: log capsular penetration
    - gleason: Gleason score
    - pgg45: percent of Gleason scores 4 or 5
  - Predict: PSA (prostate-specific antigen) level
- Supervised learning problem on quantitative data: regression problem

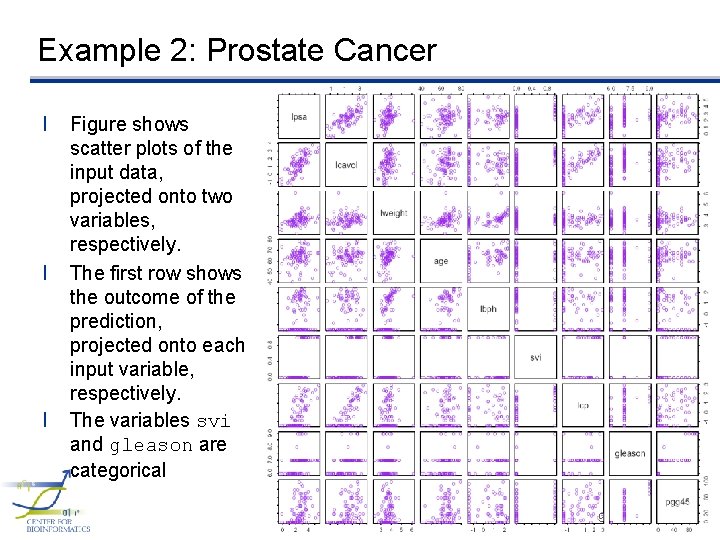

Example 2: Prostate Cancer

- The figure shows scatter plots of the data, projected onto each pair of variables.
- The first row shows the outcome to be predicted, plotted against each input variable.
- The variables svi and gleason are categorical.

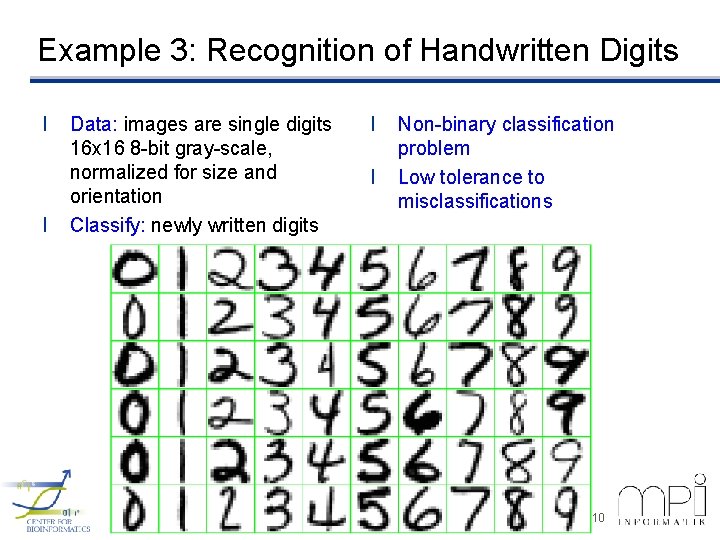

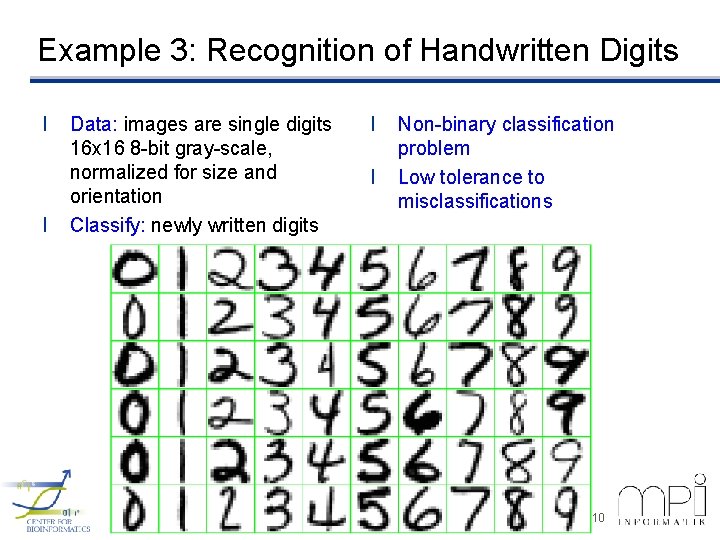

Example 3: Recognition of Handwritten Digits

- Data: images of single digits, 16 x 16 pixels, 8-bit gray scale, normalized for size and orientation
- Classify: newly written digits
  - Non-binary classification problem (10 classes, the digits 0-9)
  - Low tolerance to misclassifications

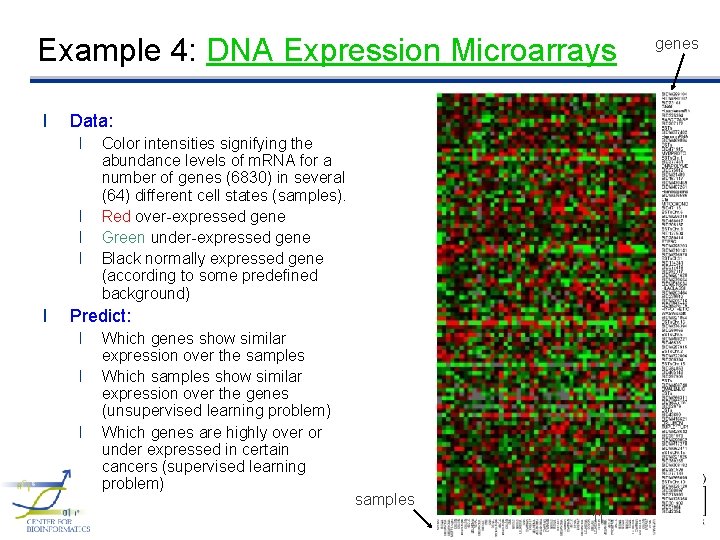

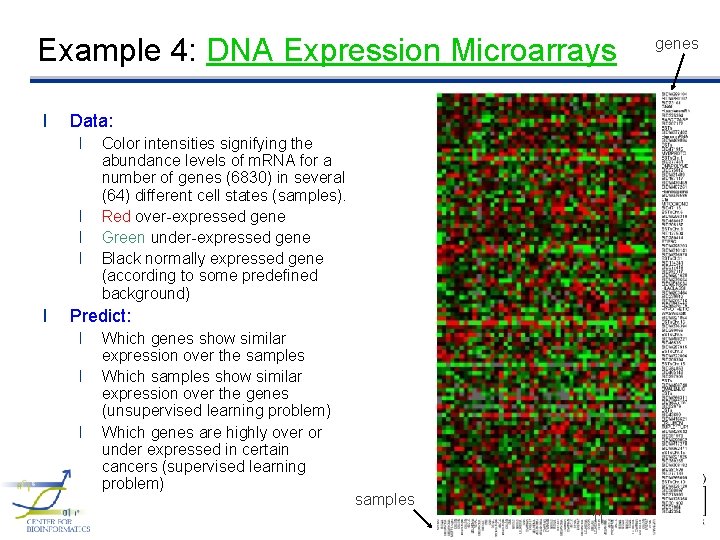

Example 4: DNA Expression Microarrays

- Data:
  - Color intensities signifying the abundance levels of mRNA for a number of genes (6830) in several (64) different cell states (samples)
  - Red: over-expressed gene
  - Green: under-expressed gene
  - Black: normally expressed gene (according to some predefined background)
- Predict:
  - Which genes show similar expression over the samples
  - Which samples show similar expression over the genes (unsupervised learning problem)
  - Which genes are highly over- or under-expressed in certain cancers (supervised learning problem)
- Figure axes: genes vs. samples

2. Overview of Supervised Learning

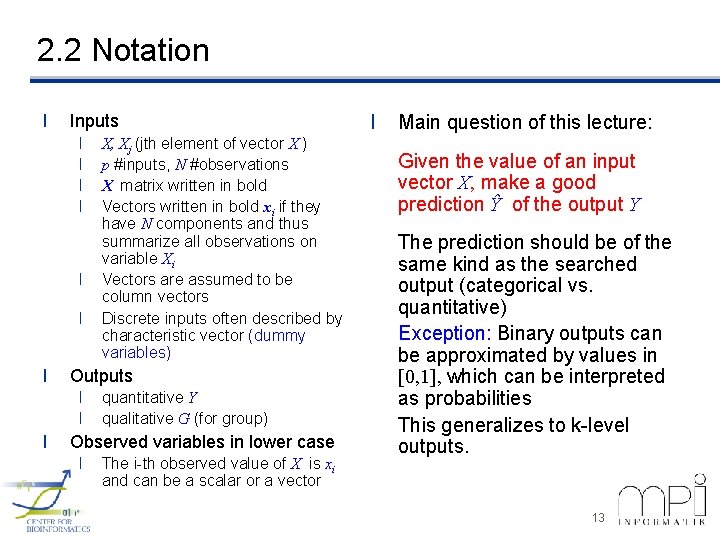

2.2 Notation

- Inputs
  - $X$, $X_j$ ($j$th element of vector $X$)
  - $p$: number of inputs, $N$: number of observations
  - Matrices are written in bold, e.g. $\mathbf{X}$
  - Vectors are written in bold, $\mathbf{x}_j$, if they have $N$ components and thus summarize all observations on variable $X_j$
  - Vectors are assumed to be column vectors
  - Discrete inputs are often described by a characteristic vector (dummy variables)
- Outputs
  - quantitative: $Y$
  - qualitative: $G$ (for group)
- Observed variables are written in lower case
  - The $i$th observed value of $X$ is $x_i$ and can be a scalar or a vector
- Main question of this lecture: given the value of an input vector $X$, make a good prediction $\hat Y$ of the output $Y$
  - The prediction should be of the same kind as the output sought (categorical vs. quantitative)
  - Exception: binary outputs can be approximated by values in $[0, 1]$, which can be interpreted as probabilities. This generalizes to $K$-level outputs.
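Dummy variables can be sketched as follows, assuming the levels of the categorical input are known in advance; `dummy_encode` is a hypothetical helper, not a library function.

```python
def dummy_encode(values, levels):
    """Encode each categorical value as a characteristic (dummy) vector.

    The vector has one 0/1 entry per level, with a single 1 at the position
    of the observed level; an unknown level raises a KeyError.
    """
    position = {level: j for j, level in enumerate(levels)}
    rows = []
    for value in values:
        row = [0] * len(levels)
        row[position[value]] = 1
        rows.append(row)
    return rows


print(dummy_encode(["RED", "GREEN", "RED"], ["GREEN", "RED"]))
# [[0, 1], [1, 0], [0, 1]]
```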

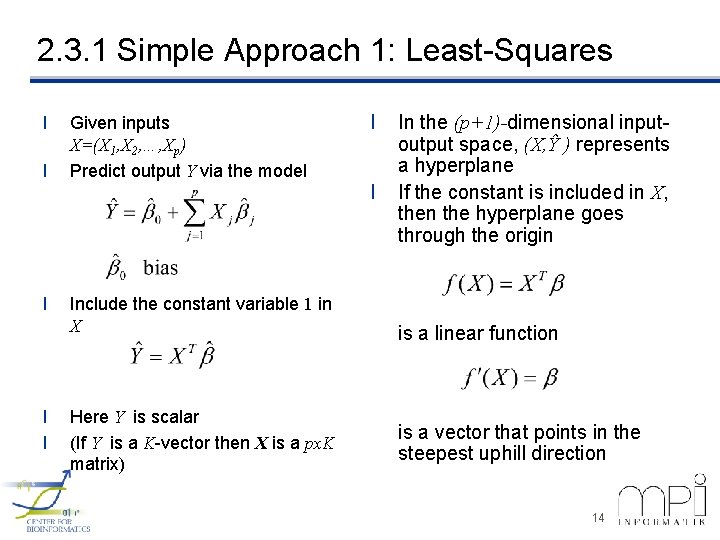

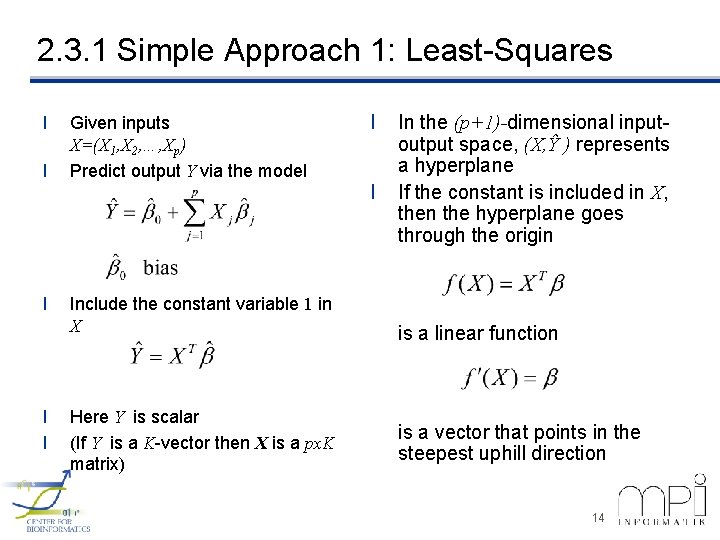

2.3.1 Simple Approach 1: Least Squares

- Given inputs $X = (X_1, X_2, \dots, X_p)^T$
- Predict the output $Y$ via the model $\hat Y = \hat\beta_0 + \sum_{j=1}^p X_j \hat\beta_j$
- Include the constant variable 1 in $X$; then $\hat Y = X^T\hat\beta$
- Here $Y$ is a scalar (if $Y$ is a $K$-vector, then $\beta$ is a $p \times K$ matrix of coefficients)
- In the $(p+1)$-dimensional input-output space, $(X, \hat Y)$ represents a hyperplane
  - If the constant is included in $X$, the hyperplane goes through the origin
  - $f(X) = X^T\beta$ is a linear function
  - $f'(X) = \beta$ is a vector that points in the steepest uphill direction

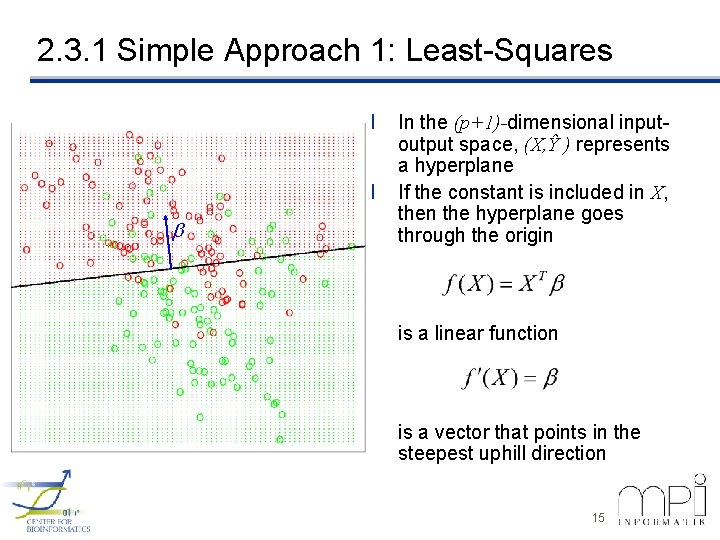

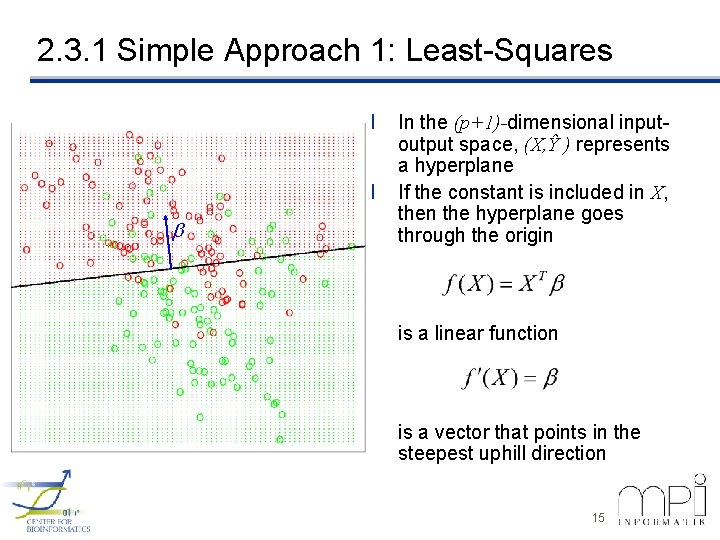


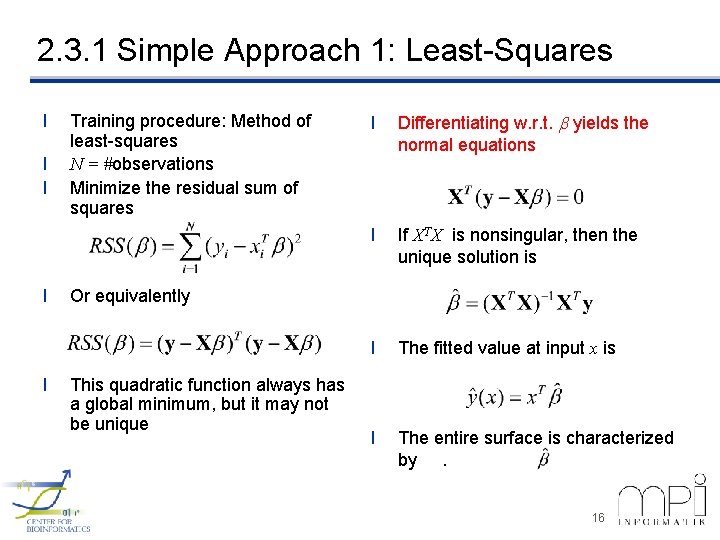

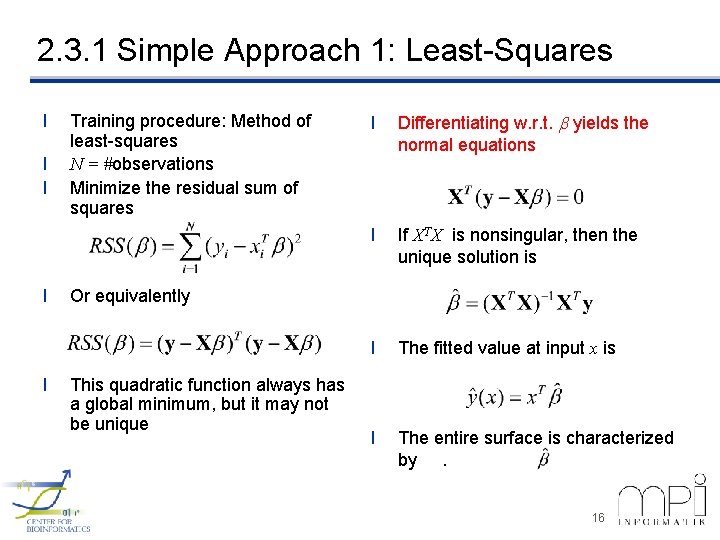

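2.3.1 Simple Approach 1: Least Squares

- Training procedure: method of least squares ($N$ = number of observations)
- Minimize the residual sum of squares $\mathrm{RSS}(\beta) = \sum_{i=1}^N (y_i - x_i^T\beta)^2$, or equivalently $\mathrm{RSS}(\beta) = (\mathbf{y} - \mathbf{X}\beta)^T(\mathbf{y} - \mathbf{X}\beta)$
  - This quadratic function always has a global minimum, but it may not be unique
- Differentiating w.r.t. $\beta$ yields the normal equations $\mathbf{X}^T(\mathbf{y} - \mathbf{X}\beta) = 0$
- If $\mathbf{X}^T\mathbf{X}$ is nonsingular, then the unique solution is $\hat\beta = (\mathbf{X}^T\mathbf{X})^{-1}\mathbf{X}^T\mathbf{y}$
- The fitted value at input $x$ is $\hat y(x) = x^T\hat\beta$
- The entire fitted surface is characterized by the $p$ parameters $\hat\beta$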
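For small problems the normal equations can be solved directly. This is a minimal pure-Python sketch, assuming `X` is a list of rows that already contain the constant 1 and that $\mathbf{X}^T\mathbf{X}$ is nonsingular; `least_squares` is a hypothetical name. In practice a QR- or SVD-based solver is numerically safer than forming $\mathbf{X}^T\mathbf{X}$ explicitly.

```python
def least_squares(X, y):
    """Solve the normal equations X^T X beta = X^T y by Gaussian elimination.

    X is a list of rows (including the constant 1 if an intercept is wanted),
    y a list of responses. Raises ValueError if X^T X is (numerically) singular.
    """
    n, p = len(X), len(X[0])
    # Augmented system [X^T X | X^T y]
    A = [[sum(X[i][a] * X[i][b] for i in range(n)) for b in range(p)]
         + [sum(X[i][a] * y[i] for i in range(n))]
         for a in range(p)]
    # Forward elimination with partial pivoting
    for col in range(p):
        pivot = max(range(col, p), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("X^T X is singular: the minimizer is not unique")
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(col + 1, p):
            factor = A[r][col] / A[col][col]
            for c in range(col, p + 1):
                A[r][c] -= factor * A[col][c]
    # Back substitution
    beta = [0.0] * p
    for r in range(p - 1, -1, -1):
        s = A[r][p] - sum(A[r][c] * beta[c] for c in range(r + 1, p))
        beta[r] = s / A[r][r]
    return beta


# Noise-free data from y = 1 + 2x: the fit recovers beta = (1, 2)
X = [[1.0, x] for x in (0.0, 1.0, 2.0, 3.0)]
y = [1.0 + 2.0 * x for x in (0.0, 1.0, 2.0, 3.0)]
beta = least_squares(X, y)
print([round(b, 6) for b in beta])  # [1.0, 2.0]
```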

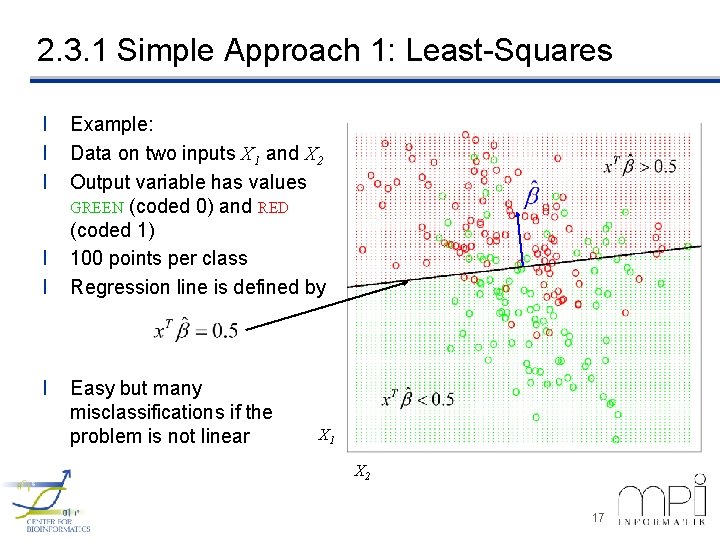

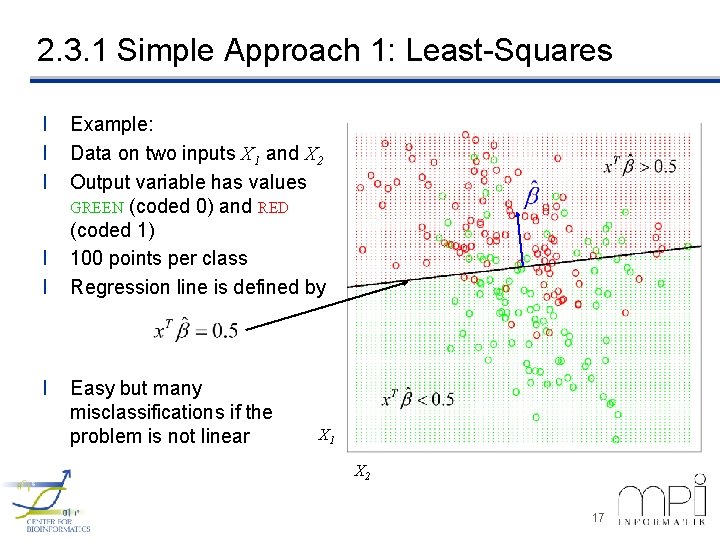

2.3.1 Simple Approach 1: Least Squares

- Example: data on two inputs $X_1$ and $X_2$
- The output variable has values GREEN (coded 0) and RED (coded 1), 100 points per class
- A point is classified as RED if $\hat Y > 0.5$ and as GREEN otherwise
- The decision boundary is the line $\{x : x^T\hat\beta = 0.5\}$
- Easy, but many misclassifications if the problem is not linear

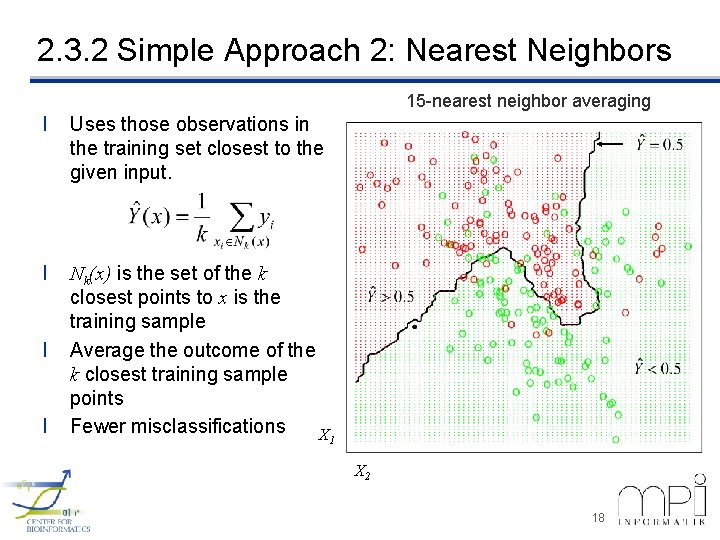

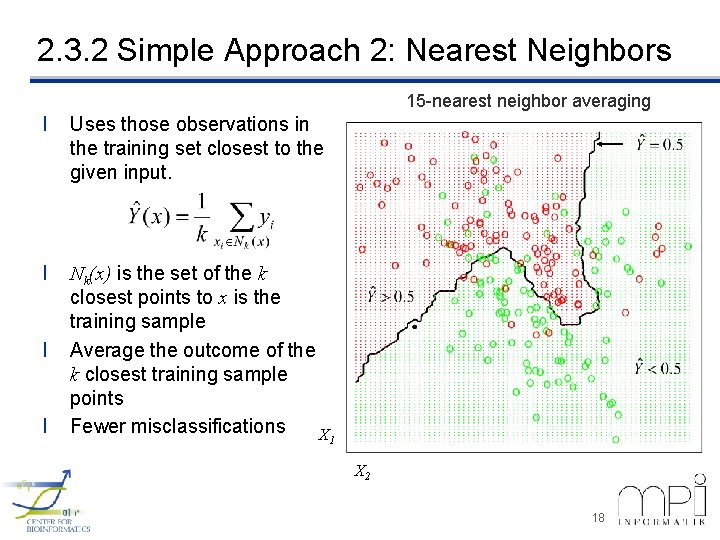

2.3.2 Simple Approach 2: Nearest Neighbors

15-nearest-neighbor averaging:

- Uses those observations in the training set closest to the given input: $\hat Y(x) = \frac{1}{k}\sum_{x_i \in N_k(x)} y_i$
- $N_k(x)$ is the set of the $k$ points $x_i$ in the training sample closest to $x$
- Average the outcome of the $k$ closest training sample points
- Fewer misclassifications

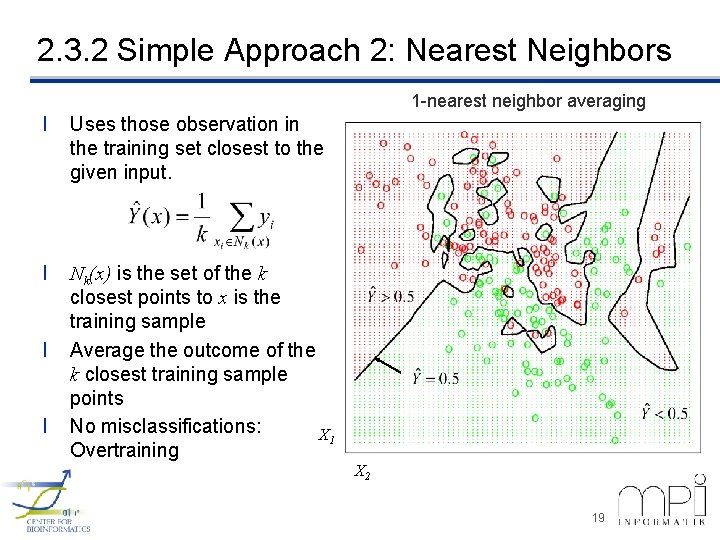

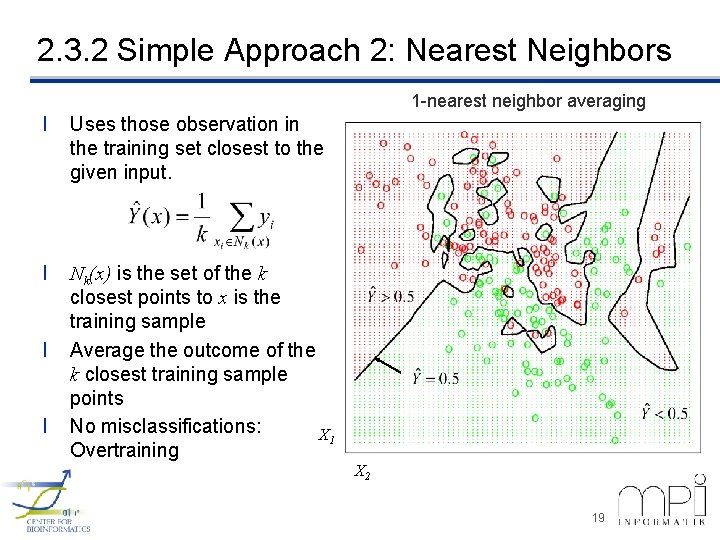

2.3.2 Simple Approach 2: Nearest Neighbors

1-nearest-neighbor averaging:

- Uses those observations in the training set closest to the given input
- $N_k(x)$ is the set of the $k$ points $x_i$ in the training sample closest to $x$, here with $k = 1$
- Average the outcome of the $k$ closest training sample points
- No misclassifications on the training data: overtraining (overfitting)
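A minimal k-nearest-neighbor sketch for the two-class example. It assumes Euclidean distance, the GREEN = 0 / RED = 1 coding, and classification by comparing the neighborhood average with 0.5. The training points are made up for illustration, and `math.dist` requires Python 3.8 or later.

```python
import math


def knn_average(x0, X, y, k):
    """Average y_i over the k training points x_i closest to x0 (Euclidean distance)."""
    order = sorted(range(len(X)), key=lambda i: math.dist(x0, X[i]))
    return sum(y[i] for i in order[:k]) / k


def knn_classify(x0, X, g, k):
    """Return RED (coded 1) if the neighborhood average exceeds 0.5, else GREEN (coded 0)."""
    return "RED" if knn_average(x0, X, g, k) > 0.5 else "GREEN"


# Hypothetical training set: three GREEN points near the origin, three RED points near (5, 5)
X = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (5.0, 5.0), (5.0, 6.0), (6.0, 5.0)]
g = [0, 0, 0, 1, 1, 1]

print(knn_classify((0.2, 0.2), X, g, k=3), knn_classify((5.5, 5.5), X, g, k=3))  # GREEN RED

# With k = 1 every training point is its own nearest neighbor, so the training error is zero
print(all(knn_classify(X[i], X, g, k=1) == ("RED" if g[i] else "GREEN")
          for i in range(len(X))))  # True
```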


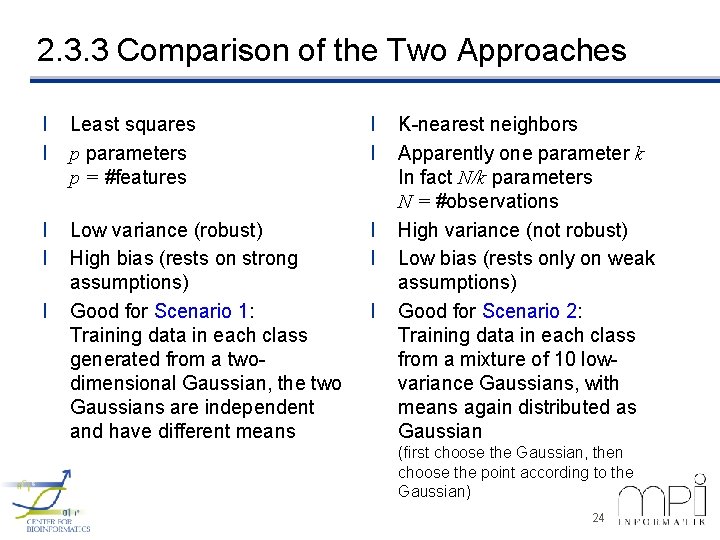


2.3.3 Comparison of the Two Approaches

- Least squares
  - $p$ parameters ($p$ = number of features)
  - Low variance (robust)
  - High bias (rests on strong assumptions)
  - Good for Scenario 1: training data in each class generated from a two-dimensional Gaussian with uncorrelated components; the two Gaussians have different means
- k-nearest neighbors
  - Apparently one parameter, $k$; in fact $N/k$ effective parameters ($N$ = number of observations)
  - High variance (not robust)
  - Low bias (rests only on weak assumptions)
  - Good for Scenario 2: training data in each class from a mixture of 10 low-variance Gaussians, with means again distributed as Gaussian (first choose the Gaussian, then choose the point according to the Gaussian)

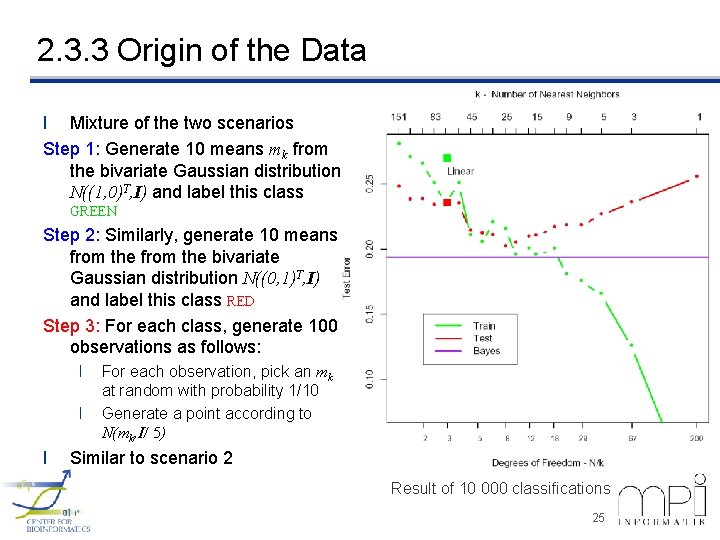

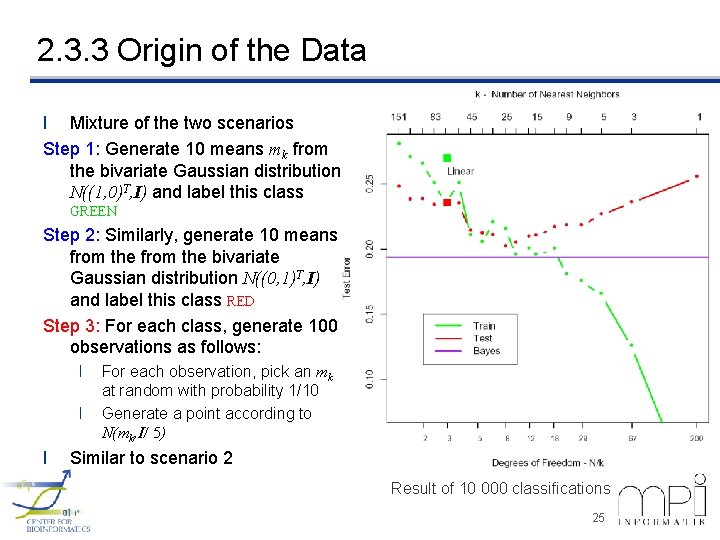

2.3.3 Origin of the Data

- Mixture of the two scenarios:
  - Step 1: Generate 10 means $m_k$ from the bivariate Gaussian distribution $N((1, 0)^T, \mathbf{I})$ and label this class GREEN
  - Step 2: Similarly, generate 10 means from the bivariate Gaussian distribution $N((0, 1)^T, \mathbf{I})$ and label this class RED
  - Step 3: For each class, generate 100 observations as follows:
    - For each observation, pick an $m_k$ at random with probability 1/10
    - Generate a point according to $N(m_k, \mathbf{I}/5)$
- Similar to Scenario 2
- Figure: result of 10,000 classifications
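The three-step recipe can be simulated as follows. This is a sketch under stated assumptions: the function name and the seed are hypothetical, and the covariance $\mathbf{I}/5$ is read as independent coordinates with standard deviation $\sqrt{1/5}$.

```python
import random


def make_mixture_data(n_per_class=100, n_means=10, seed=0):
    """Simulate the two-class Gaussian-mixture data.

    Returns a list of ((x1, x2), label) pairs with label 0 = GREEN, 1 = RED.
    """
    rng = random.Random(seed)
    centers = {0: (1.0, 0.0), 1: (0.0, 1.0)}  # GREEN: N((1,0)^T, I), RED: N((0,1)^T, I)
    sd = (1.0 / 5.0) ** 0.5                   # covariance I/5 -> standard deviation sqrt(1/5)
    data = []
    for label, (c1, c2) in centers.items():
        # Steps 1 and 2: draw the class means m_k from N(center, I)
        means = [(rng.gauss(c1, 1.0), rng.gauss(c2, 1.0)) for _ in range(n_means)]
        for _ in range(n_per_class):
            # Step 3: pick m_k with probability 1/10, then draw from N(m_k, I/5)
            m1, m2 = rng.choice(means)
            data.append(((rng.gauss(m1, sd), rng.gauss(m2, sd)), label))
    return data


data = make_mixture_data()
print(len(data))  # 200
```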

2.3.3 Variants of These Simple Methods

- Kernel methods: use weights that decrease smoothly to zero with distance from the target point, rather than the 0/1 cutoff used in nearest-neighbor methods
- In high-dimensional spaces, the distance kernels are modified to emphasize some variables more than others
- Local regression fits linear models (by least squares) locally rather than fitting constants locally
- Linear models fit to a basis expansion of the original inputs allow arbitrarily complex models
- Projection pursuit and neural network models are sums of nonlinearly transformed linear models

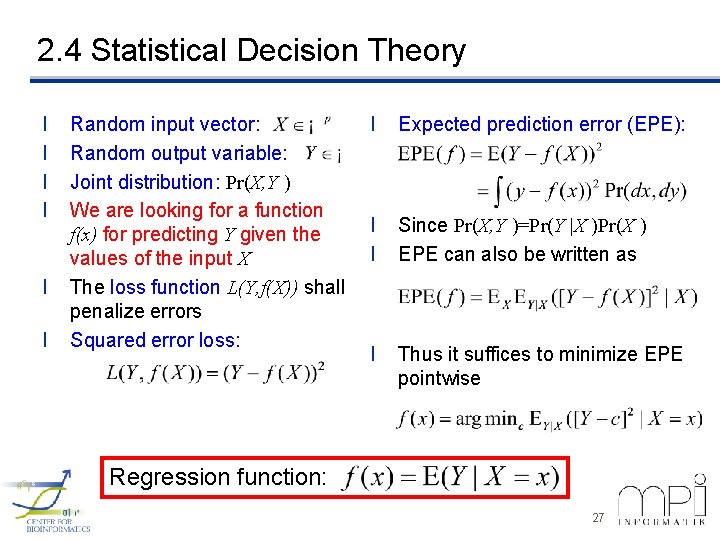

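2.4 Statistical Decision Theory

- Random input vector: $X \in \mathbb{R}^p$
- Random output variable: $Y \in \mathbb{R}$
- Joint distribution: $\Pr(X, Y)$
- We are looking for a function $f(x)$ for predicting $Y$ given the values of the input $X$
- The loss function $L(Y, f(X))$ penalizes errors
  - Squared error loss: $L(Y, f(X)) = (Y - f(X))^2$
- Expected prediction error (EPE): $\mathrm{EPE}(f) = E(Y - f(X))^2 = \int (y - f(x))^2 \Pr(dx, dy)$
- Since $\Pr(X, Y) = \Pr(Y \mid X)\Pr(X)$, EPE can also be written as $\mathrm{EPE}(f) = E_X E_{Y|X}\left([Y - f(X)]^2 \mid X\right)$
- Thus it suffices to minimize EPE pointwise: $f(x) = \operatorname{argmin}_c E_{Y|X}\left([Y - c]^2 \mid X = x\right)$
- Regression function: $f(x) = E(Y \mid X = x)$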
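The claim that pointwise minimization yields the conditional mean follows from adding and subtracting $\mu(x) = E(Y \mid X = x)$, a shorthand introduced only for this derivation:

```latex
\begin{aligned}
E\big([Y - c]^2 \mid X = x\big)
  &= E\big([(Y - \mu(x)) + (\mu(x) - c)]^2 \mid X = x\big) \\
  &= E\big([Y - \mu(x)]^2 \mid X = x\big)
     + 2\,(\mu(x) - c)\,E\big(Y - \mu(x) \mid X = x\big)
     + (\mu(x) - c)^2 \\
  &= E\big([Y - \mu(x)]^2 \mid X = x\big) + (\mu(x) - c)^2
\end{aligned}
```

The cross term vanishes because $E(Y - \mu(x) \mid X = x) = 0$, and the first term does not depend on $c$, so the minimum is attained at $c = \mu(x) = E(Y \mid X = x)$.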

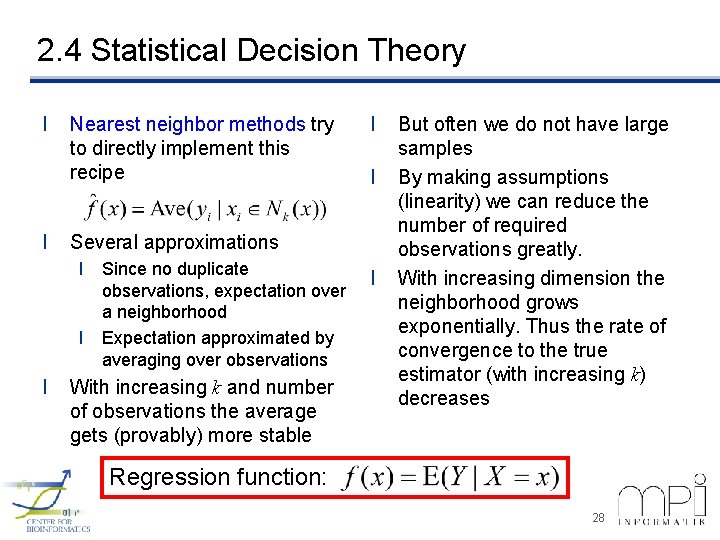

2.4 Statistical Decision Theory

- Regression function: $f(x) = E(Y \mid X = x)$
- Nearest-neighbor methods try to implement this recipe directly: $\hat f(x) = \operatorname{Ave}(y_i \mid x_i \in N_k(x))$
- Several approximations:
  - Since there are no duplicate observations, the expectation is conditioned on a neighborhood rather than on a single point
  - The expectation is approximated by averaging over observations
- With increasing $k$ and number of observations, the average gets (provably) more stable
- But often we do not have large samples
  - By making assumptions (linearity), we can reduce the number of required observations greatly
  - With increasing dimension, the neighborhood needed to capture $k$ points grows; thus the rate of convergence to the true regression function (with increasing $k$) decreases

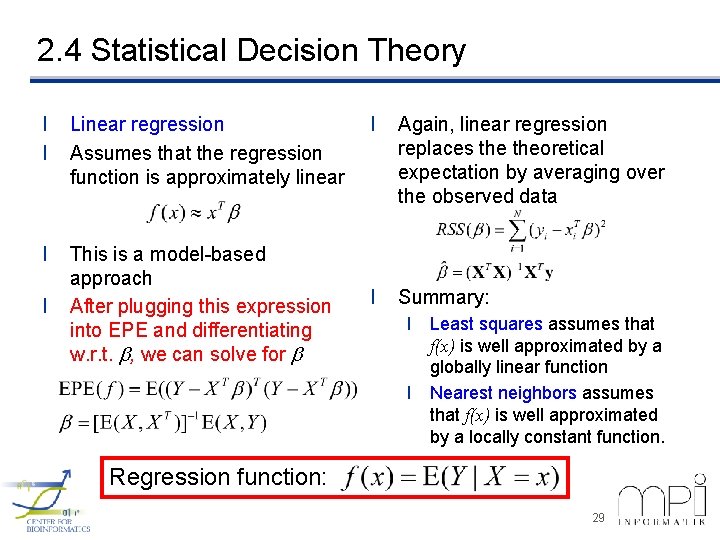

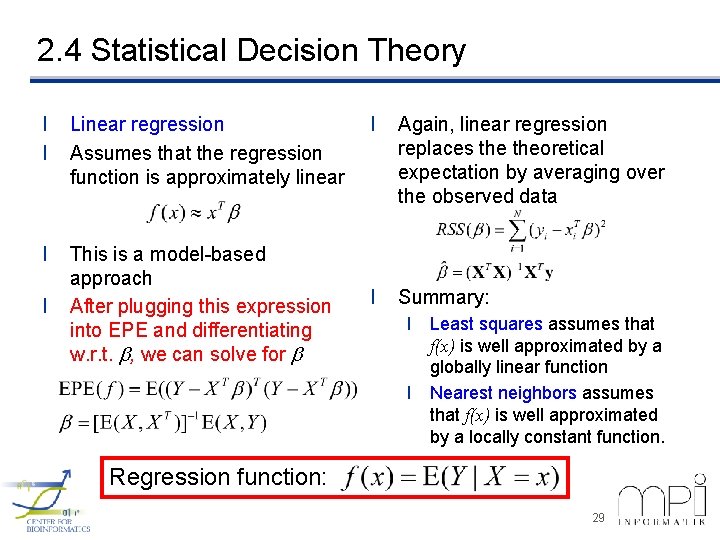

2.4 Statistical Decision Theory

- Linear regression assumes that the regression function is approximately linear: $f(x) \approx x^T\beta$
  - This is a model-based approach
  - After plugging this expression into EPE and differentiating w.r.t. $\beta$, we can solve for $\beta$: $\beta = [E(XX^T)]^{-1}E(XY)$
  - Again, linear regression replaces the theoretical expectation by averaging over the observed data
- Summary:
  - Least squares assumes that $f(x)$ is well approximated by a globally linear function
  - Nearest neighbors assumes that $f(x)$ is well approximated by a locally constant function

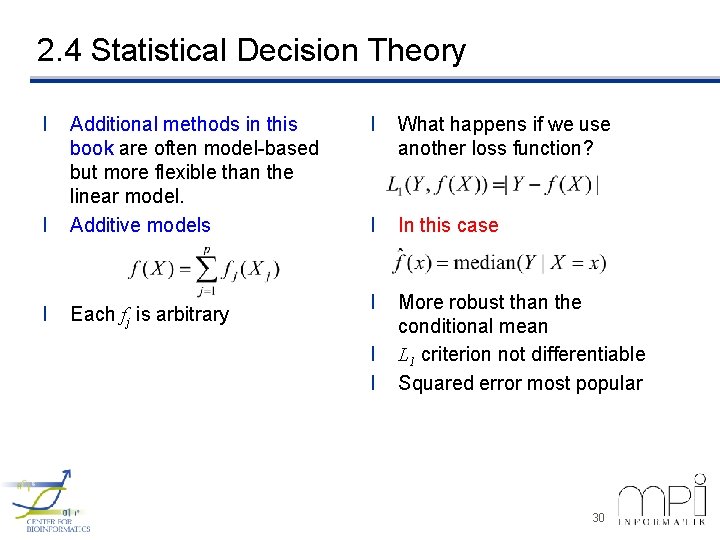

2.4 Statistical Decision Theory

- Additional methods in this book are often model-based but more flexible than the linear model
  - Additive models: $f(X) = \sum_{j=1}^p f_j(X_j)$, where each $f_j$ is arbitrary
- What happens if we use another loss function, e.g. the $L_1$ criterion $E|Y - f(X)|$?
  - In this case $\hat f(x) = \operatorname{median}(Y \mid X = x)$
  - More robust than the conditional mean
  - The $L_1$ criterion is not differentiable
- Squared error is the most popular loss

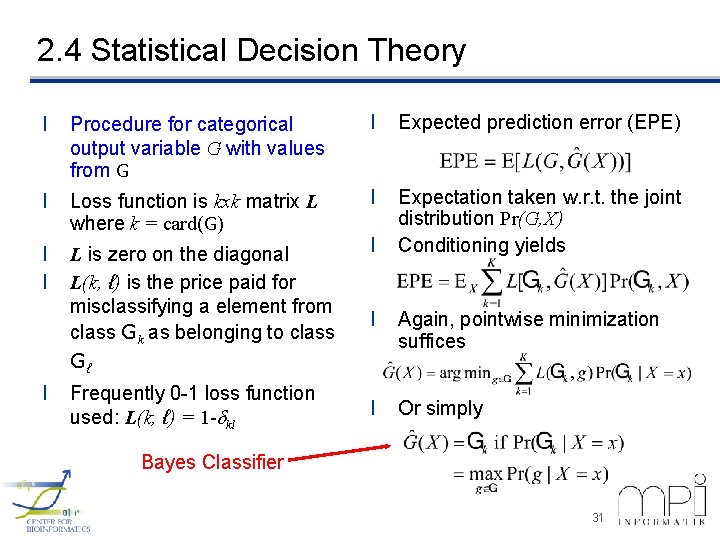

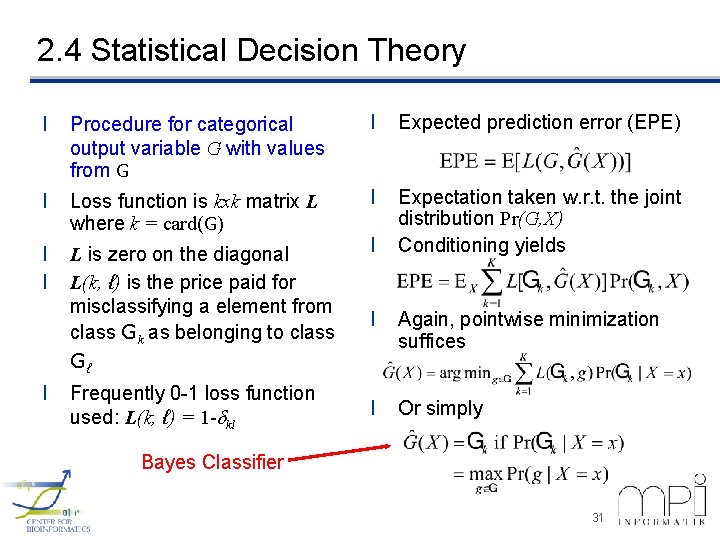

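2.4 Statistical Decision Theory

- Procedure for a categorical output variable $G$ with values from the set $\mathcal{G}$
- The loss function is a $K \times K$ matrix $\mathbf{L}$, where $K = \operatorname{card}(\mathcal{G})$
  - $\mathbf{L}$ is zero on the diagonal
  - $L(k, \ell)$ is the price paid for misclassifying an element from class $\mathcal{G}_k$ as belonging to class $\mathcal{G}_\ell$
  - Frequently the 0-1 loss function is used: $L(k, \ell) = 1 - \delta_{k\ell}$
- Expected prediction error: $\mathrm{EPE} = E[L(G, \hat G(X))]$
  - Expectation taken w.r.t. the joint distribution $\Pr(G, X)$
  - Conditioning yields $\mathrm{EPE} = E_X \sum_{k=1}^K L[\mathcal{G}_k, \hat G(X)] \Pr(\mathcal{G}_k \mid X)$
- Again, pointwise minimization suffices: $\hat G(x) = \operatorname{argmin}_{g \in \mathcal{G}} \sum_{k=1}^K L(\mathcal{G}_k, g) \Pr(\mathcal{G}_k \mid X = x)$
- With 0-1 loss this simplifies to the Bayes classifier: $\hat G(x) = \mathcal{G}_k$ if $\Pr(\mathcal{G}_k \mid X = x) = \max_{g \in \mathcal{G}} \Pr(g \mid X = x)$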
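A sketch of the pointwise Bayes decision for a general loss matrix, assuming the posterior probabilities $\Pr(\mathcal{G}_k \mid X = x)$ are known. Classes are indexed from 0, and the asymmetric 2 x 2 loss (cost 10 for discarding an email) is a hypothetical choice inspired by the spam example.

```python
def bayes_decision(posterior, loss):
    """Return the class index g minimizing sum_k loss[k][g] * Pr(G_k | X = x).

    posterior: list of class probabilities Pr(G_k | X = x), k = 0..K-1
    loss: K x K matrix, loss[k][l] = price of classifying class k as class l
    """
    K = len(posterior)
    expected = [sum(loss[k][g] * posterior[k] for k in range(K)) for g in range(K)]
    return min(range(K), key=lambda g: expected[g])


def zero_one_loss(K):
    """0-1 loss L(k, l) = 1 - delta_kl."""
    return [[0 if k == l else 1 for l in range(K)] for k in range(K)]


# With 0-1 loss the decision is the most probable class
print(bayes_decision([0.2, 0.5, 0.3], zero_one_loss(3)))  # 1

# Hypothetical asymmetric loss (class 0 = email, class 1 = spam): discarding email costs 10
loss = [[0, 10], [1, 0]]
print(bayes_decision([0.3, 0.7], loss))  # 0: keep as email despite a 70% spam probability
```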

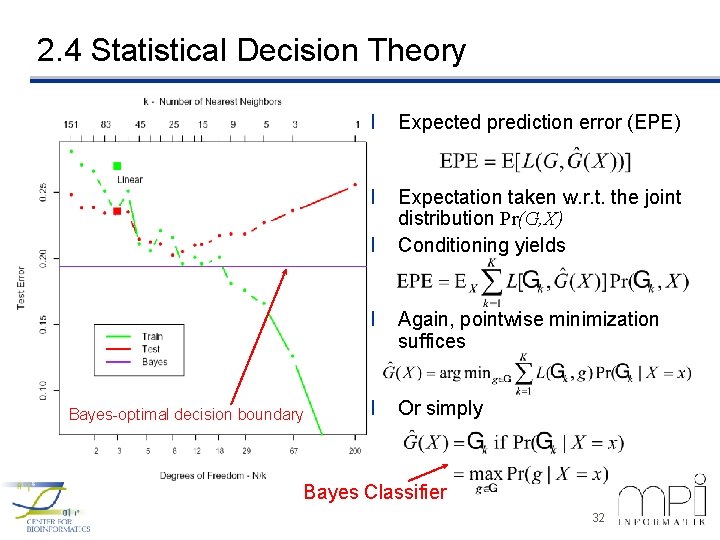

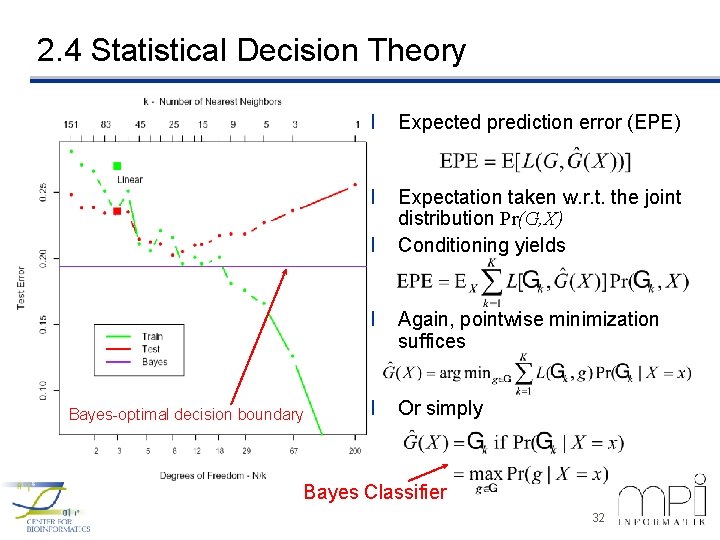

2.4 Statistical Decision Theory

- Bayes-optimal decision boundary: the boundary produced by the Bayes classifier, which assigns each point to its most probable class

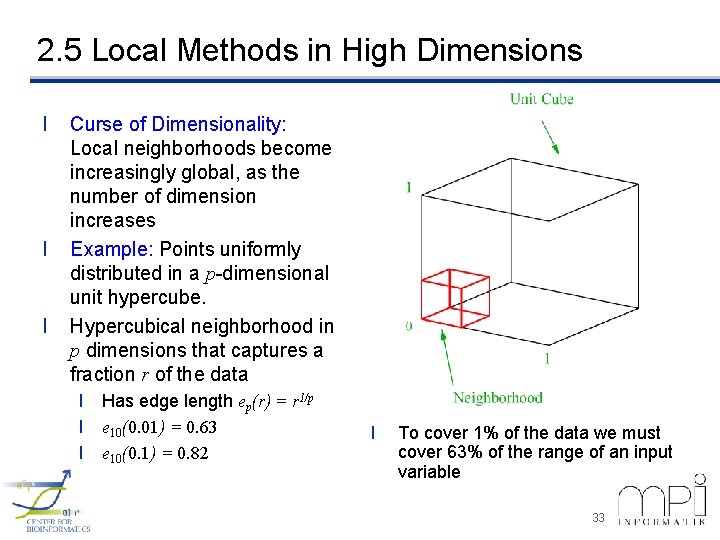

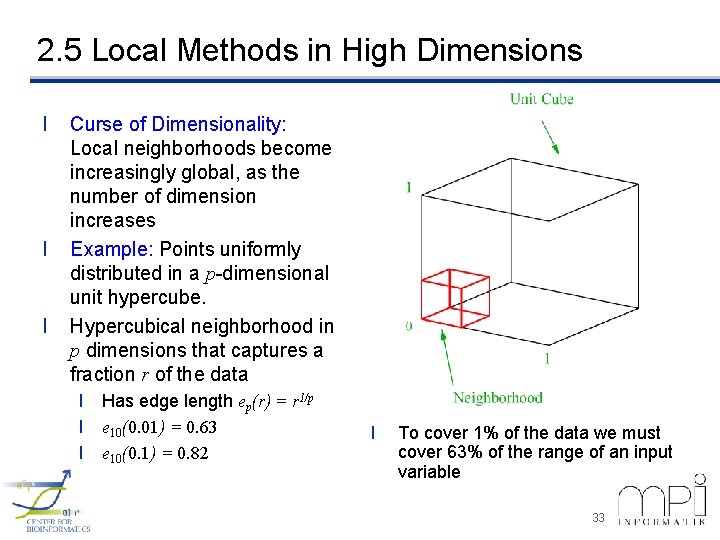

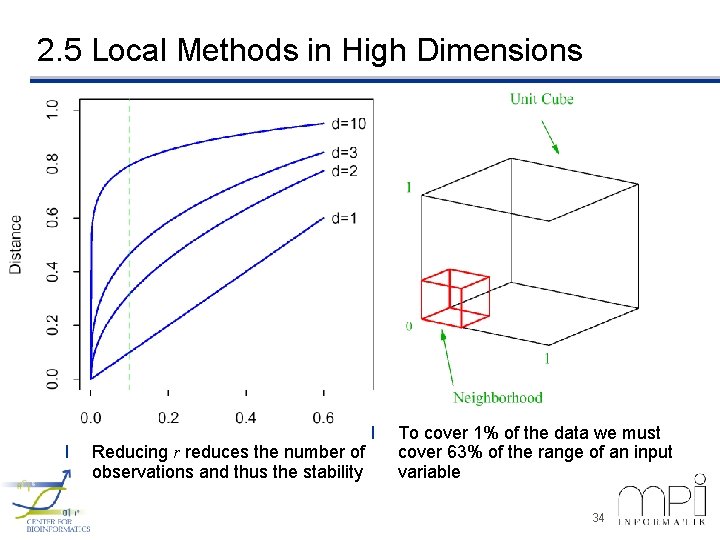

2.5 Local Methods in High Dimensions

- Curse of dimensionality: local neighborhoods become increasingly global as the number of dimensions increases
- Example: points uniformly distributed in a $p$-dimensional unit hypercube
  - A hypercubical neighborhood in $p$ dimensions that captures a fraction $r$ of the data has edge length $e_p(r) = r^{1/p}$
  - $e_{10}(0.01) = 0.63$
  - $e_{10}(0.1) = 0.79$
  - To cover 1% of the data, we must cover 63% of the range of each input variable
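The edge-length formula $e_p(r) = r^{1/p}$ is easy to check numerically. A minimal sketch; `edge_length` is a hypothetical name:

```python
def edge_length(r, p):
    """Edge length e_p(r) = r**(1/p) of a subcube holding a fraction r of uniform data in [0, 1]^p."""
    return r ** (1.0 / p)


for r in (0.01, 0.1):
    print(r, round(edge_length(r, 10), 2))
# 0.01 0.63
# 0.1 0.79
```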

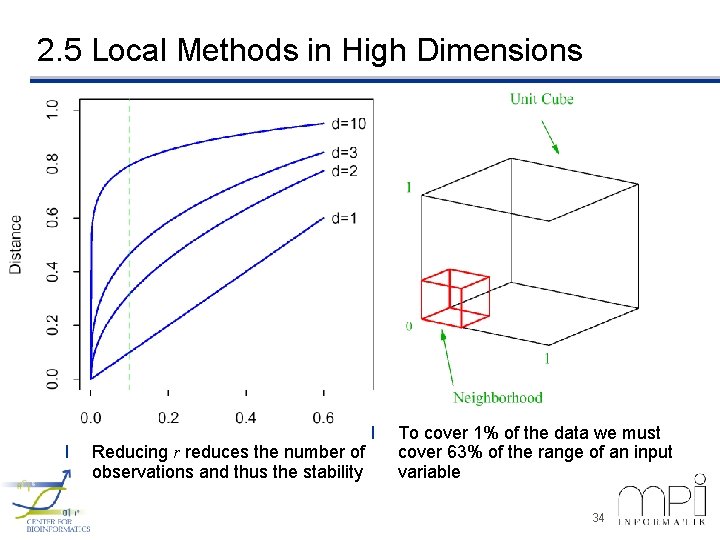

2.5 Local Methods in High Dimensions

- Reducing $r$ reduces the number of observations in the neighborhood and thus the stability of the estimate

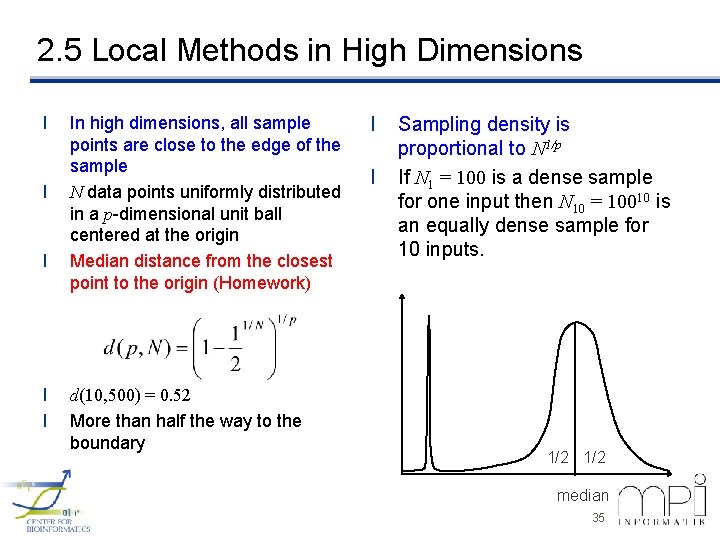

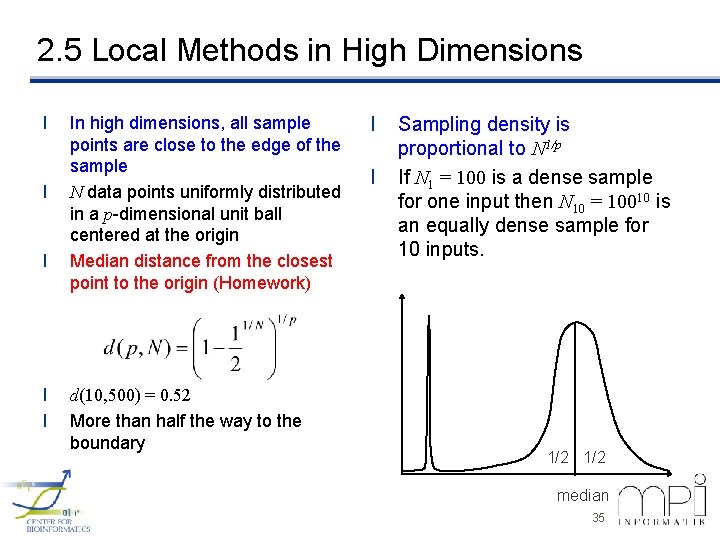

2.5 Local Methods in High Dimensions

- In high dimensions, all sample points are close to an edge of the sample
- Example: $N$ data points uniformly distributed in a $p$-dimensional unit ball centered at the origin
  - Median distance from the origin to the closest data point (homework): $d(p, N) = \left(1 - \left(\tfrac{1}{2}\right)^{1/N}\right)^{1/p}$
  - $d(10, 500) \approx 0.52$: more than half the way to the boundary
- Sampling density is proportional to $N^{1/p}$
  - If $N_1 = 100$ is a dense sample for one input, then $N_{10} = 100^{10}$ is an equally dense sample for 10 inputs
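Both quantities can be evaluated numerically. The sketch assumes the formula $d(p, N) = \left(1 - (1/2)^{1/N}\right)^{1/p}$, which follows from requiring that all $N$ points lie farther than $d$ from the origin with probability $(1 - d^p)^N = 1/2$. The helper names are hypothetical.

```python
def median_nearest_distance(p, N):
    """Median distance from the origin to the nearest of N points uniform in the unit p-ball."""
    return (1.0 - 0.5 ** (1.0 / N)) ** (1.0 / p)


def equally_dense_size(n1, p):
    """Sample size giving in p dimensions the density n1 has in one dimension (density ~ N**(1/p))."""
    return n1 ** p


print(round(median_nearest_distance(10, 500), 2))  # 0.52
print(equally_dense_size(100, 10) == 10 ** 20)      # True
```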

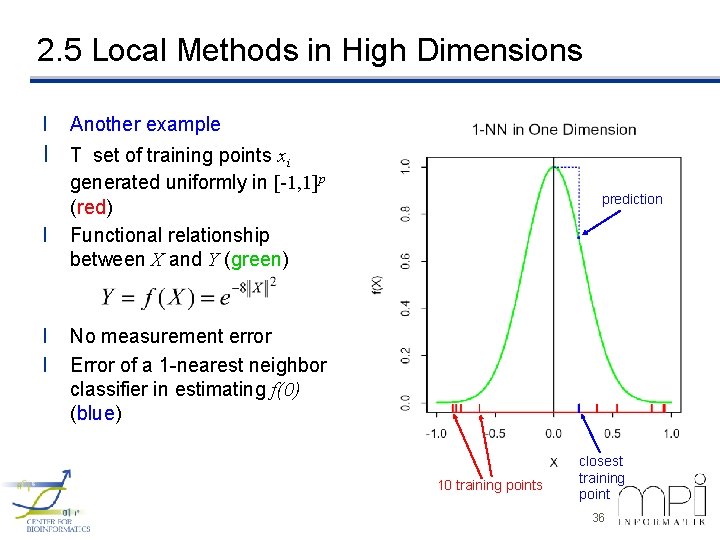

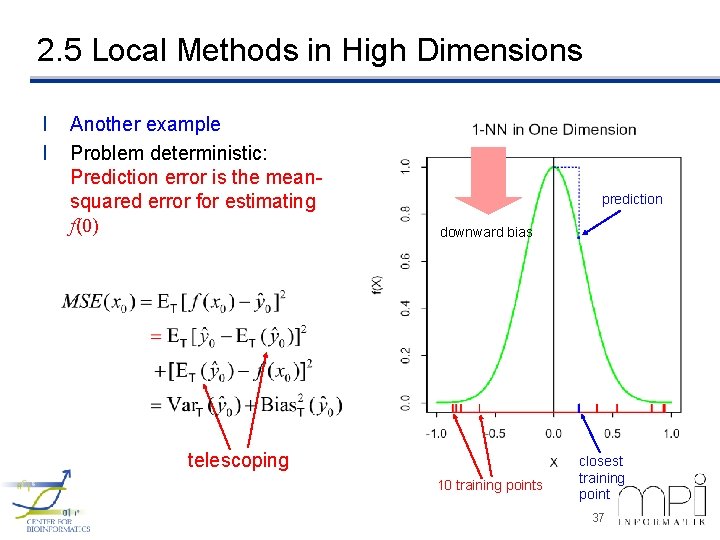

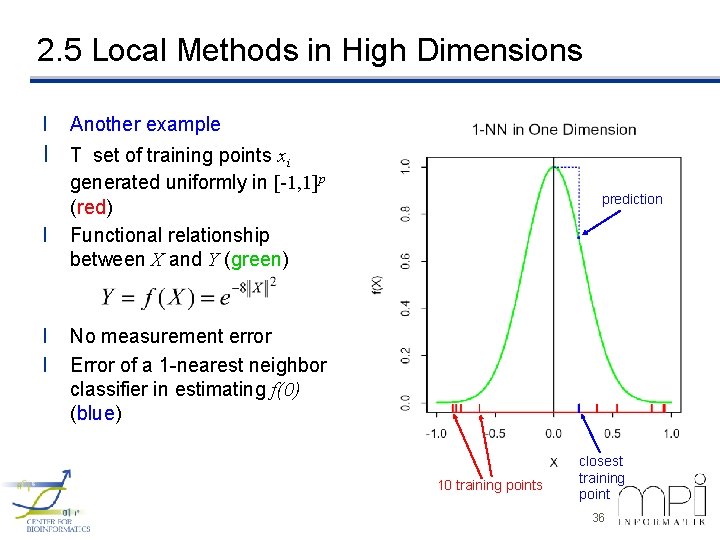

2.5 Local Methods in High Dimensions

- Another example:
  - $\mathcal{T}$: set of training points $x_i$, generated uniformly in $[-1, 1]^p$ (red)
  - Functional relationship between $X$ and $Y$ (green): $Y = f(X) = e^{-8\|X\|^2}$
  - No measurement error
  - Error of a 1-nearest-neighbor rule in estimating $f(0)$ (blue)
- Figure labels: 10 training points, closest training point, prediction

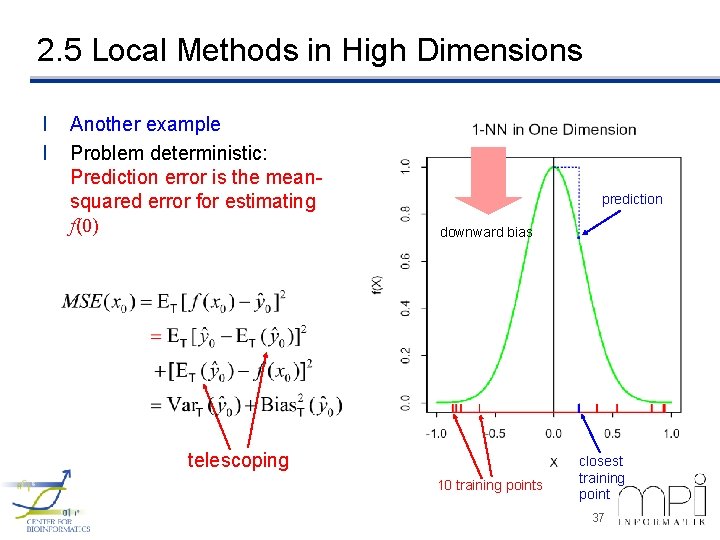

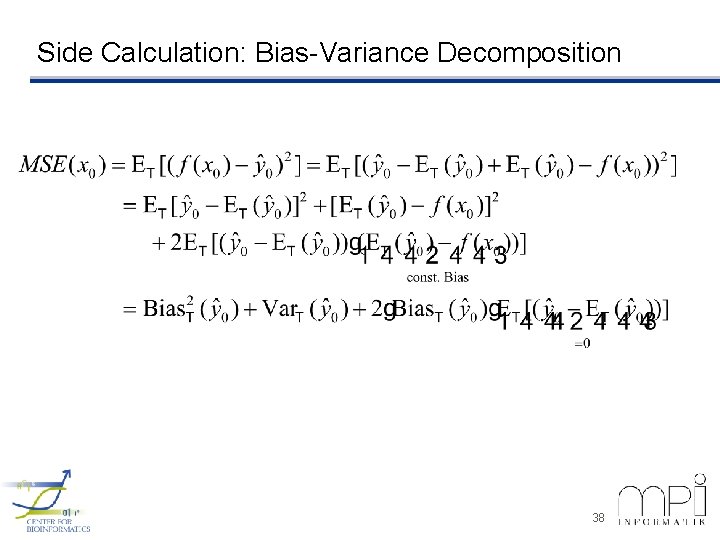

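2.5 Local Methods in High Dimensions

- Another example (continued)
- The problem is deterministic: the prediction error is the mean-squared error for estimating $f(0)$, which decomposes (by telescoping) into variance plus squared bias
- The 1-NN prediction is biased downward, since $f$ attains its maximum $f(0) = 1$ at the target point
- Figure labels: 10 training points, closest training point, prediction, downward bias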
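A small Monte Carlo sketch of the 1-nearest-neighbor estimate of $f(0)$. It assumes the target $f(X) = e^{-8\|X\|^2}$ used in the textbook example, $N = 1000$ training points per repetition and a modest number of repetitions. These settings are illustrative, not necessarily the ones behind the figures. Variance and squared bias are estimated separately so that their sum can be compared with the MSE.

```python
import math
import random


def target(x):
    """Target function f(x) = exp(-8 * ||x||^2); f(0) = 1 is its maximum."""
    return math.exp(-8.0 * sum(xi * xi for xi in x))


def one_nn_at_origin(p, N, rng):
    """Draw N training points uniformly in [-1, 1]^p; predict f(0) by y at the closest point."""
    points = [[rng.uniform(-1.0, 1.0) for _ in range(p)] for _ in range(N)]
    nearest = min(points, key=lambda x: sum(xi * xi for xi in x))
    return target(nearest)  # no measurement error: y_i = f(x_i)


def mse_bias_variance(p, N=1000, reps=40, seed=0):
    """Monte Carlo estimates of MSE, squared bias and variance of the 1-NN estimate of f(0)."""
    rng = random.Random(seed)
    truth = target([0.0] * p)
    estimates = [one_nn_at_origin(p, N, rng) for _ in range(reps)]
    mean = sum(estimates) / reps
    variance = sum((e - mean) ** 2 for e in estimates) / reps
    bias2 = (mean - truth) ** 2
    mse = sum((e - truth) ** 2 for e in estimates) / reps
    return mse, bias2, variance


for p in (1, 2, 5, 10):
    mse, bias2, variance = mse_bias_variance(p)
    print(f"p={p:2d}  MSE={mse:.3f}  bias^2={bias2:.3f}  variance={variance:.3f}")
```

Because every estimate is at most $f(0) = 1$, the bias is always downward, and it grows with $p$ as the nearest training point moves away from the origin.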

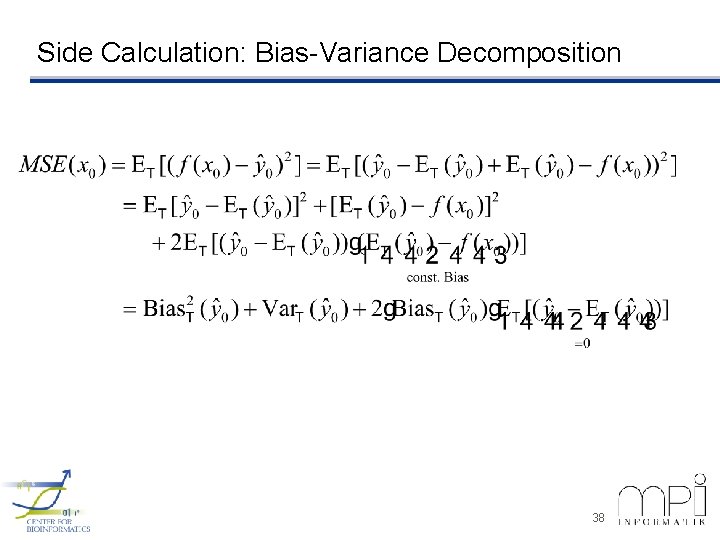

Side Calculation: Bias-Variance Decomposition
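The decomposition follows by adding and subtracting $E_{\mathcal{T}}(\hat y_0)$ inside the square, with $f(x_0)$ fixed because the problem is deterministic:

```latex
\begin{aligned}
\mathrm{MSE}(x_0) &= E_{\mathcal{T}}[f(x_0) - \hat y_0]^2 \\
&= E_{\mathcal{T}}\big[(\hat y_0 - E_{\mathcal{T}}\hat y_0) + (E_{\mathcal{T}}\hat y_0 - f(x_0))\big]^2 \\
&= E_{\mathcal{T}}[\hat y_0 - E_{\mathcal{T}}\hat y_0]^2
   + 2\,(E_{\mathcal{T}}\hat y_0 - f(x_0))\,E_{\mathcal{T}}[\hat y_0 - E_{\mathcal{T}}\hat y_0]
   + [E_{\mathcal{T}}\hat y_0 - f(x_0)]^2 \\
&= \mathrm{Var}_{\mathcal{T}}(\hat y_0) + \mathrm{Bias}^2(\hat y_0)
\end{aligned}
```

The cross term vanishes because $E_{\mathcal{T}}[\hat y_0 - E_{\mathcal{T}}\hat y_0] = 0$.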

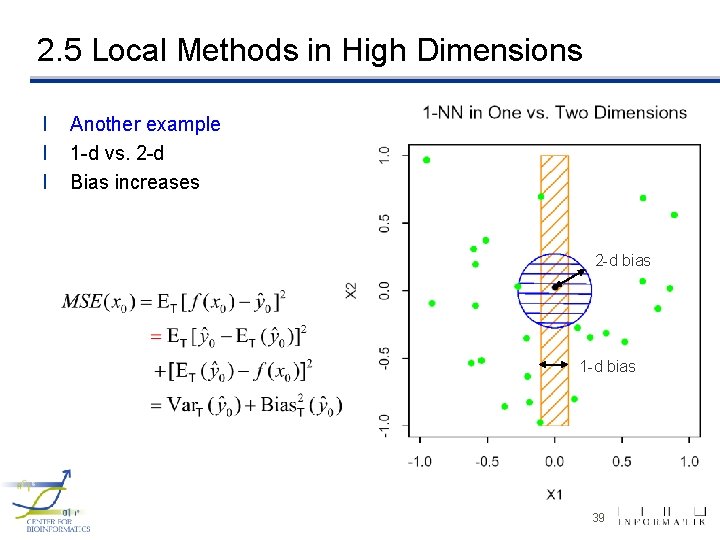

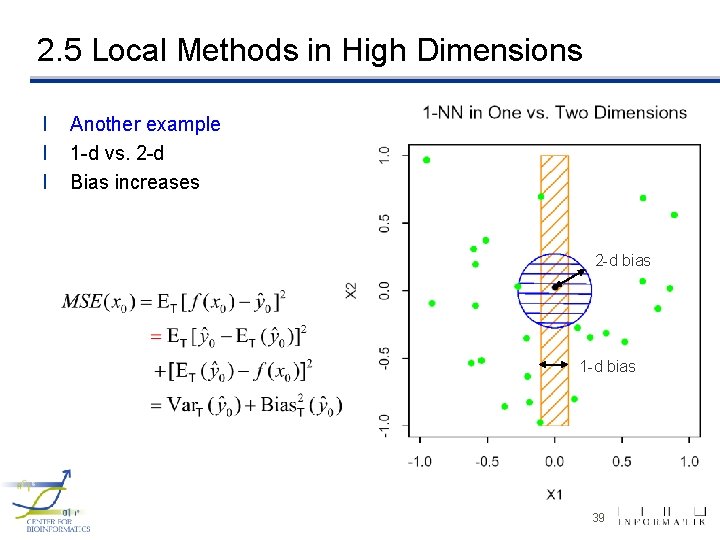

2.5 Local Methods in High Dimensions

- Another example: 1-D vs. 2-D
- The bias increases with the dimension (figure: 1-D bias vs. 2-D bias)

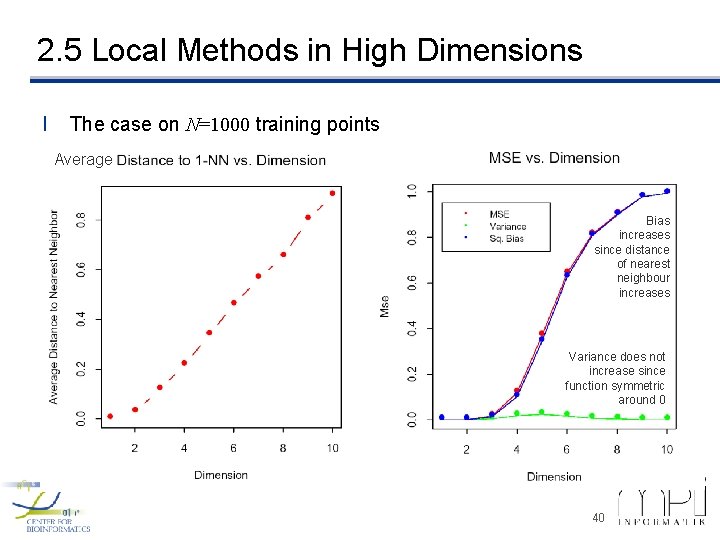

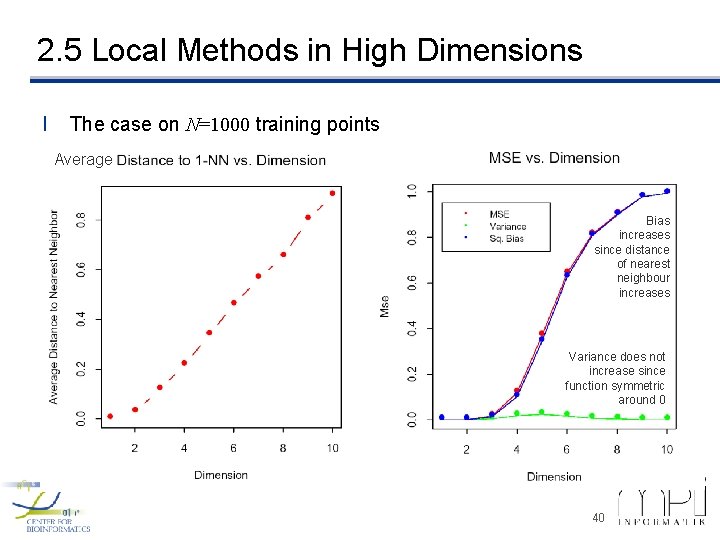

2.5 Local Methods in High Dimensions

- The case of $N = 1000$ training points:
  - The bias increases with dimension, since the average distance to the nearest neighbor increases
  - The variance does not increase, since the function is symmetric around 0

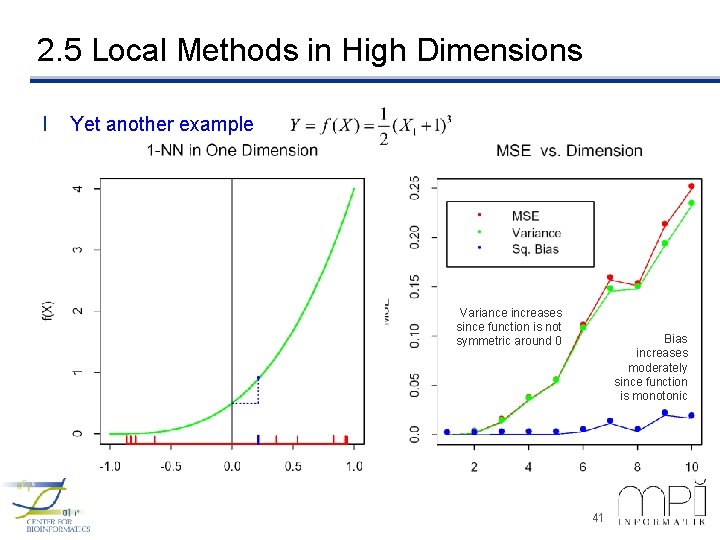

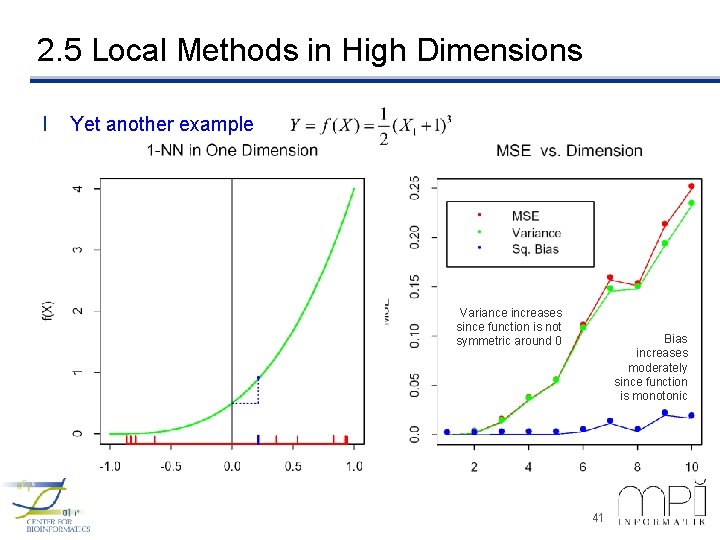

2.5 Local Methods in High Dimensions

- Yet another example:
  - The variance increases, since the function is not symmetric around 0
  - The bias increases only moderately, since the function is monotonic

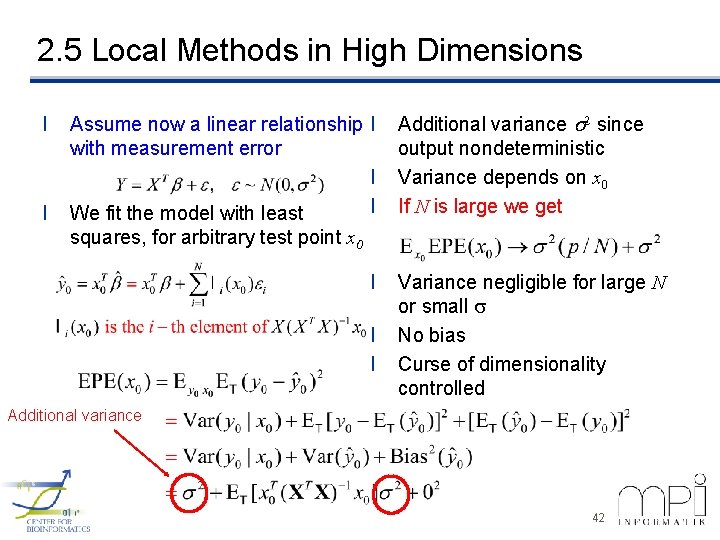

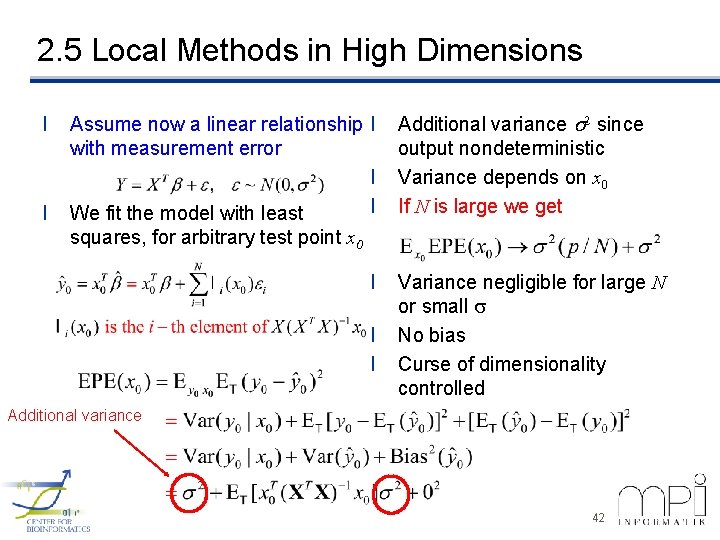

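2.5 Local Methods in High Dimensions

- Assume now a linear relationship with measurement error: $Y = X^T\beta + \varepsilon$, $\varepsilon \sim N(0, \sigma^2)$
- We fit the model by least squares; for an arbitrary test point $x_0$:
  $\mathrm{EPE}(x_0) = \sigma^2 + E_{\mathcal{T}}\, x_0^T(\mathbf{X}^T\mathbf{X})^{-1}x_0\,\sigma^2 + 0^2$
  - Additional variance $\sigma^2$, since the output is nondeterministic
  - The variance depends on $x_0$
  - No bias
- If $N$ is large (and $E(X) = 0$), we get $E_{x_0}\mathrm{EPE}(x_0) \approx \sigma^2 (p/N) + \sigma^2$
  - Variance negligible for large $N$ or small $\sigma$
  - No bias
  - Curse of dimensionality controlled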

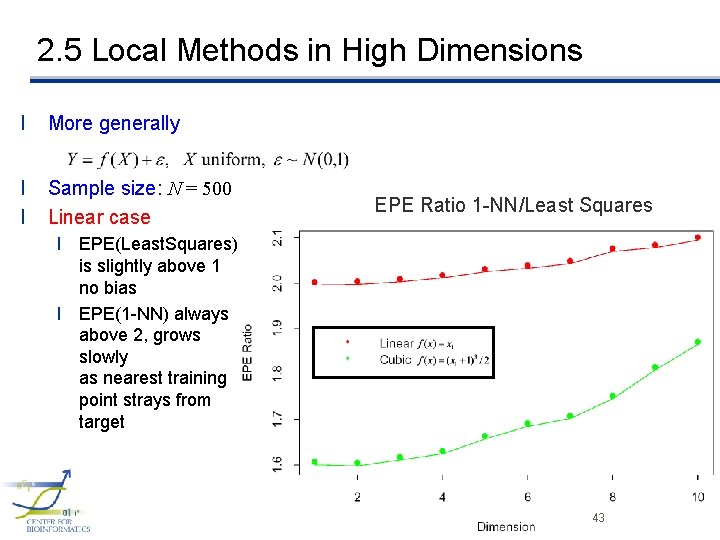

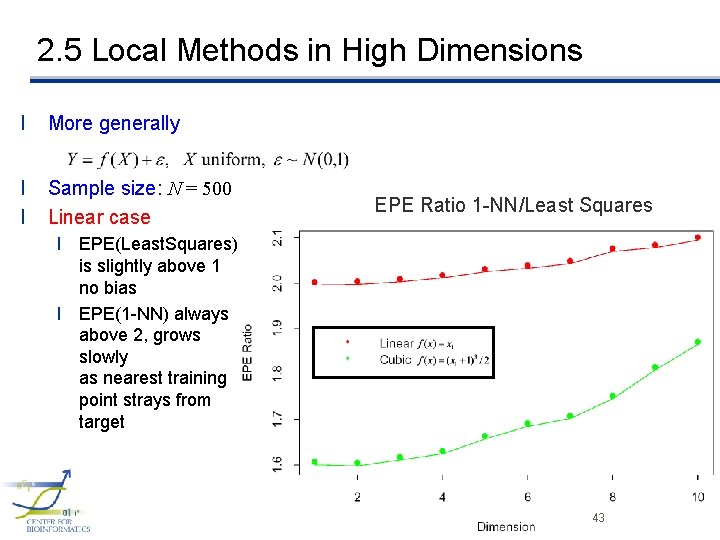

2.5 Local Methods in High Dimensions

- More generally: sample size $N = 500$, linear case; the figure shows the EPE ratio 1-NN / least squares
  - EPE(least squares) is slightly above 1 (no bias)
  - EPE(1-NN) is always above 2 and grows slowly as the nearest training point strays from the target

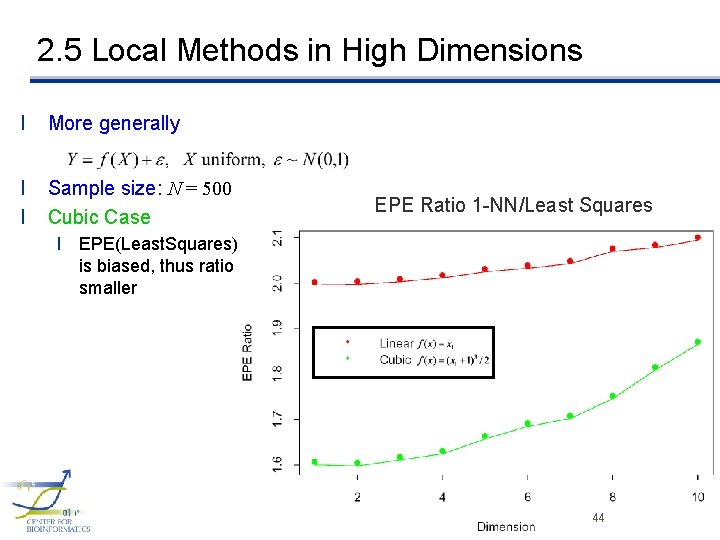

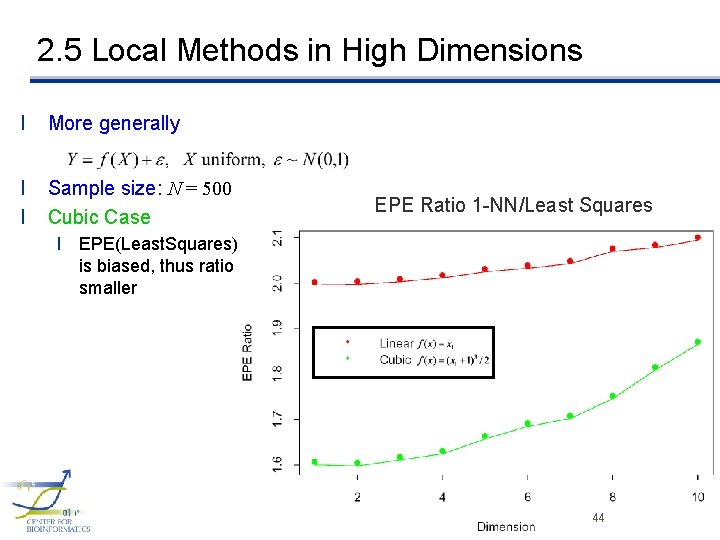

2.5 Local Methods in High Dimensions

- More generally: sample size $N = 500$, cubic case; the figure shows the EPE ratio 1-NN / least squares
  - Least squares is now biased, which raises its EPE; thus the ratio is smaller

2.6 Statistical Models

- NN methods are the direct implementation of $f(x) = E(Y \mid X = x)$
- But they can fail in two ways:
  - In high dimensions, the nearest neighbors need not be close to the target point
  - If special structure exists in the problem, it can be used to reduce both variance and bias

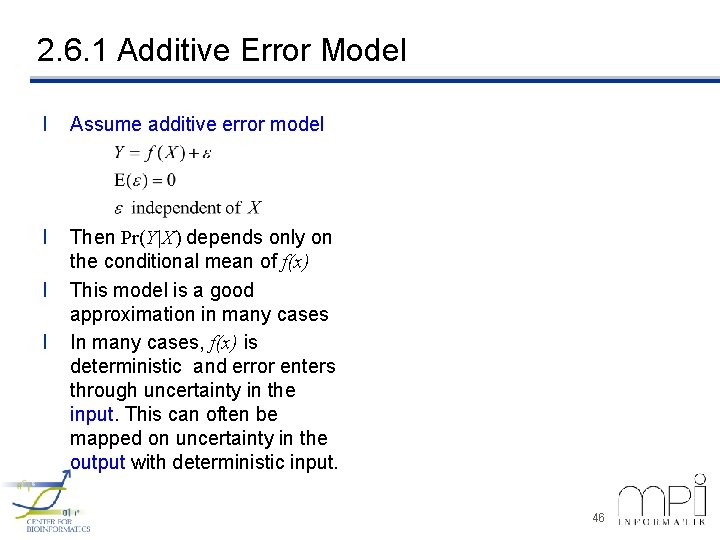

2.6.1 Additive Error Model

- Assume an additive error model: $Y = f(X) + \varepsilon$, where the random error $\varepsilon$ has $E(\varepsilon) = 0$ and is independent of $X$
- Then $\Pr(Y \mid X)$ depends on $X$ only through the conditional mean $f(x) = E(Y \mid X = x)$
- This model is a good approximation in many cases
- In many cases, $f(x)$ is deterministic and error enters through uncertainty in the input. This can often be mapped onto uncertainty in the output with deterministic input.

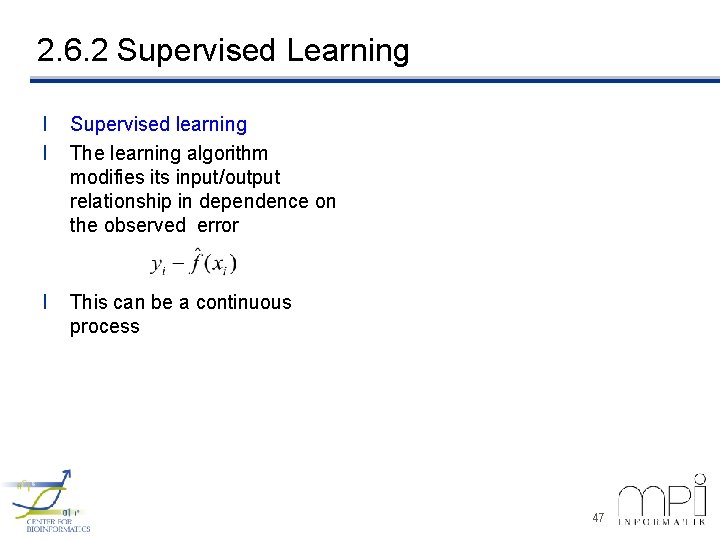

2.6.2 Supervised Learning

- Supervised learning: the learning algorithm modifies its input/output relationship depending on the observed errors $y_i - \hat f(x_i)$
- This can be a continuous process

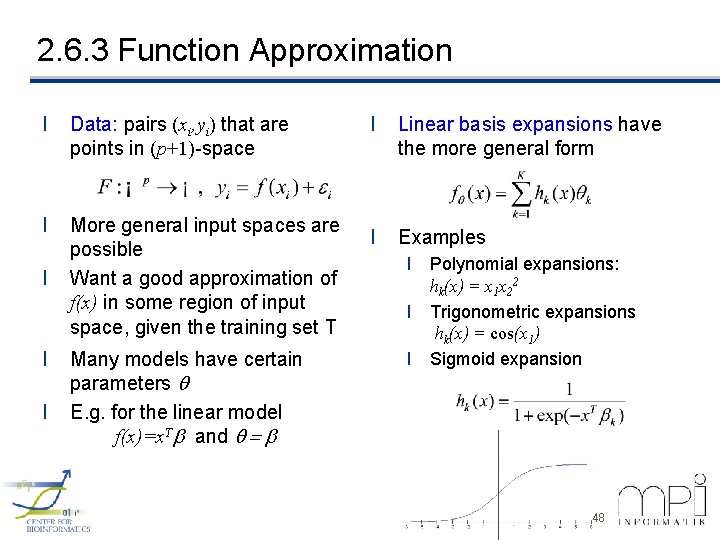

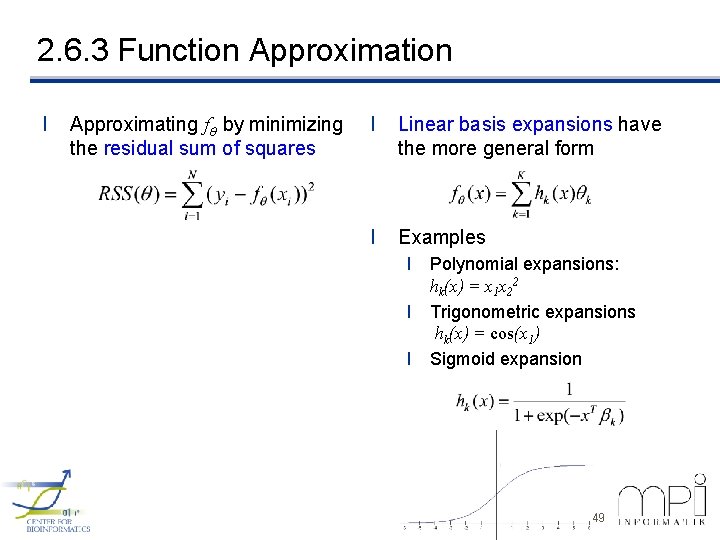

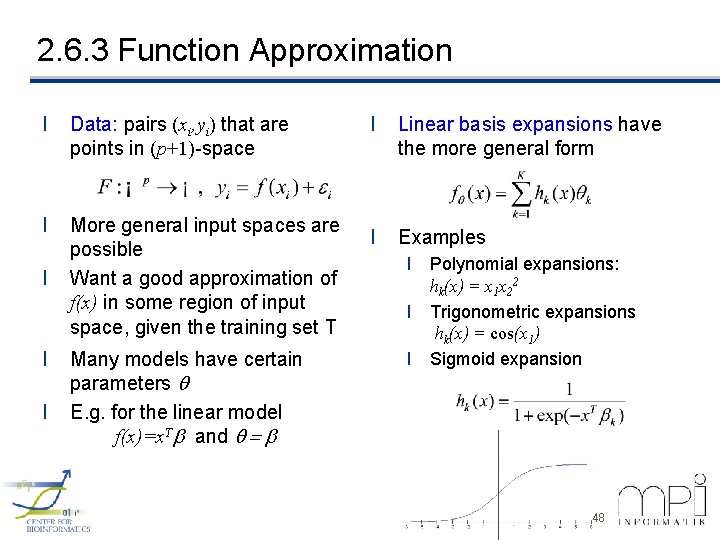

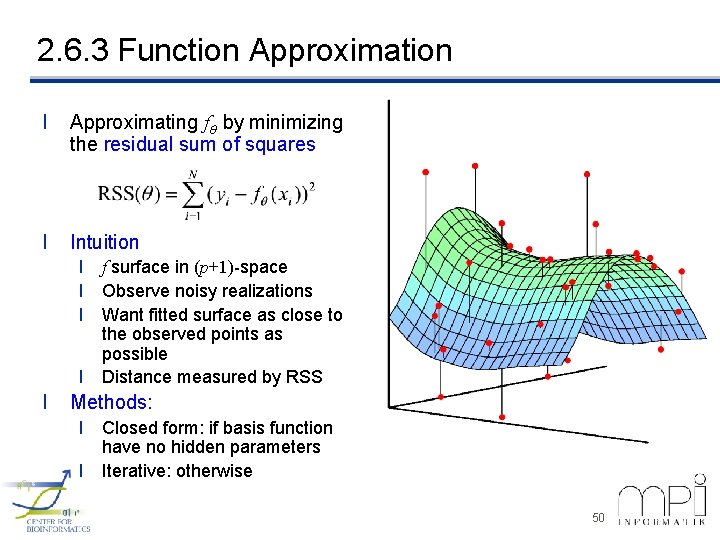

2.6.3 Function Approximation

- Data: pairs $(x_i, y_i)$ that are points in $(p+1)$-space
  - More general input spaces are possible
- Want a good approximation of $f(x)$ in some region of input space, given the training set $\mathcal{T}$
- Many models have certain parameters $\theta$
  - E.g. for the linear model $f(x) = x^T\beta$ and $\theta = \beta$
- Linear basis expansions have the more general form $f_\theta(x) = \sum_{k=1}^K h_k(x)\theta_k$
- Examples:
  - Polynomial expansions: $h_k(x) = x_1 x_2^2$
  - Trigonometric expansions: $h_k(x) = \cos(x_1)$
  - Sigmoid expansion: $h_k(x) = \dfrac{1}{1 + \exp(-x^T\beta_k)}$

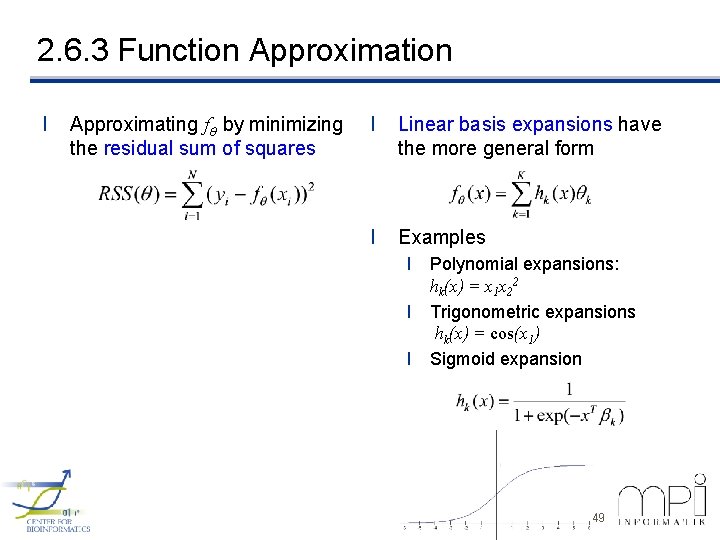

2.6.3 Function Approximation

- Approximating $f$ by minimizing the residual sum of squares: $\mathrm{RSS}(\theta) = \sum_{i=1}^N (y_i - f_\theta(x_i))^2$
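Because a linear basis expansion is linear in $\theta$, minimizing RSS is an ordinary least-squares problem on the transformed inputs $h_k(x_i)$. The sketch below assumes scalar inputs and the hypothetical basis $h(x) = (1, x, \cos x)$, and fits noise-free data generated from that basis.

```python
import math


def basis(x):
    """Hypothetical basis h(x) = (1, x, cos x): nonlinear in x, but f_theta is linear in theta."""
    return [1.0, x, math.cos(x)]


def fit_basis_expansion(xs, ys, h):
    """Minimize RSS(theta) = sum_i (y_i - sum_k h_k(x_i) theta_k)^2 via the normal equations."""
    H = [h(x) for x in xs]
    K = len(H[0])
    # Augmented matrix [H^T H | H^T y]
    A = [[sum(row[a] * row[b] for row in H) for b in range(K)]
         + [sum(row[a] * y for row, y in zip(H, ys))] for a in range(K)]
    # Gauss-Jordan elimination with partial pivoting (assumes H^T H is nonsingular)
    for col in range(K):
        piv = max(range(col, K), key=lambda r: abs(A[r][col]))
        A[col], A[piv] = A[piv], A[col]
        for r in range(K):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[k][K] / A[k][k] for k in range(K)]


def rss(theta, xs, ys, h):
    """Residual sum of squares of the expansion with coefficients theta."""
    return sum((y - sum(t * hk for t, hk in zip(theta, h(x)))) ** 2 for x, y in zip(xs, ys))


xs = [0.5 * i for i in range(12)]
ys = [2.0 + 0.5 * x - 3.0 * math.cos(x) for x in xs]  # noise-free data from the basis model
theta = fit_basis_expansion(xs, ys, basis)
print([round(t, 6) for t in theta])  # approximately [2.0, 0.5, -3.0]
```

If the basis functions themselves had free parameters (like the $\beta_k$ inside a sigmoid), the problem would no longer be linear in all parameters and an iterative method would be needed instead.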

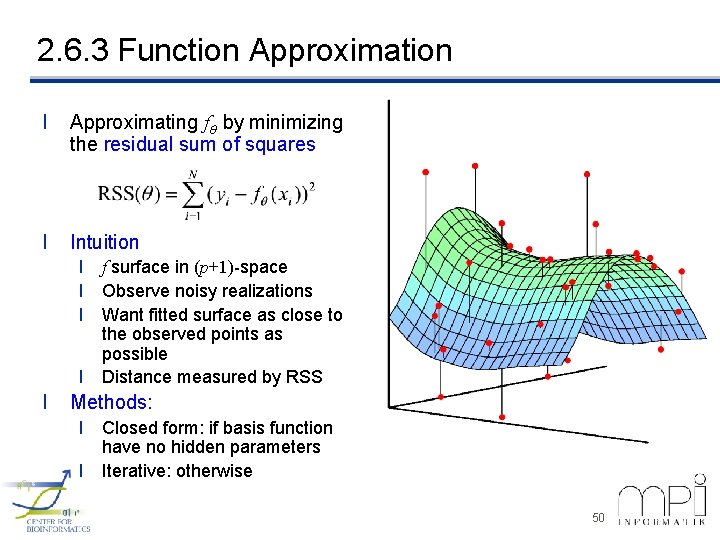

2.6.3 Function Approximation

- Approximating $f$ by minimizing the residual sum of squares
- Intuition:
  - $f$ is a surface in $(p+1)$-space
  - We observe noisy realizations of it
  - We want the fitted surface as close to the observed points as possible
  - Distance is measured by RSS
- Methods:
  - Closed form: if the basis functions have no hidden parameters
  - Iterative: otherwise

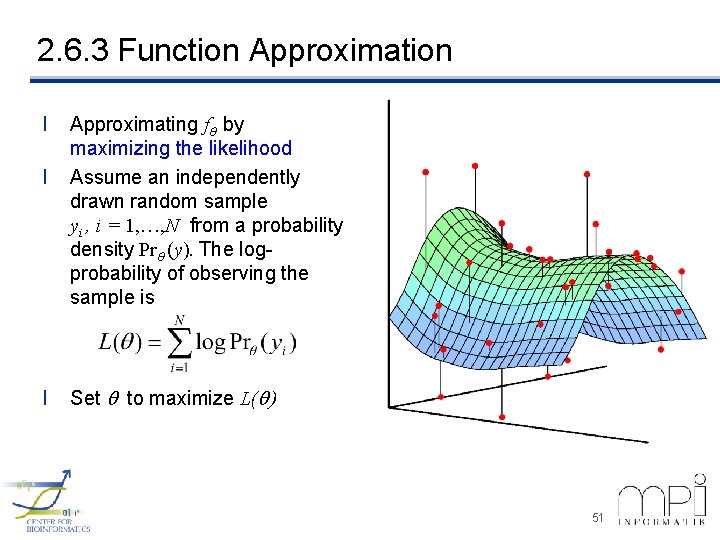

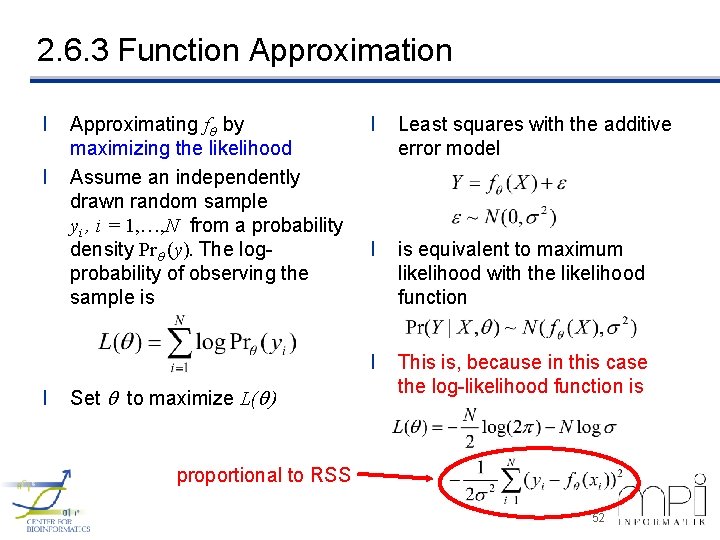

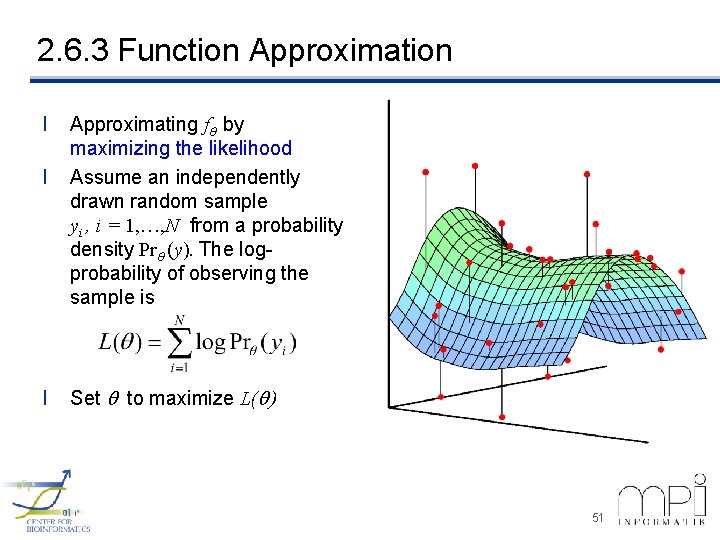

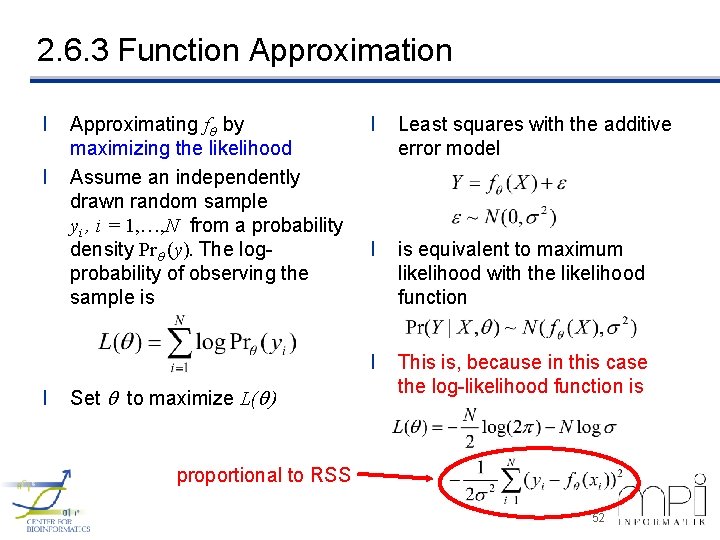


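2.6.3 Function Approximation

- Approximating $f$ by maximizing the likelihood
- Assume an independently drawn random sample $y_i$, $i = 1, \dots, N$, from a probability density $\Pr_\theta(y)$. The log-probability of observing the sample is $L(\theta) = \sum_{i=1}^N \log \Pr_\theta(y_i)$
- Set $\theta$ to maximize $L(\theta)$
- Least squares with the additive error model $Y = f_\theta(X) + \varepsilon$, $\varepsilon \sim N(0, \sigma^2)$, is equivalent to maximum likelihood with the likelihood function $\Pr(Y \mid X, \theta) = N(f_\theta(X), \sigma^2)$
- This is because in this case the log-likelihood function is $L(\theta) = -\frac{N}{2}\log(2\pi) - N\log\sigma - \frac{1}{2\sigma^2}\sum_{i=1}^N (y_i - f_\theta(x_i))^2$, i.e. a constant minus $\mathrm{RSS}(\theta)/(2\sigma^2)$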
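A numerical check of the equivalence. It assumes the hypothetical one-parameter model $f_\theta(x) = \theta x$, made-up data, Gaussian errors with a fixed $\sigma = 0.5$, and a grid search over $\theta$. The likelihood maximizer and the RSS minimizer coincide because $L(\theta)$ equals a constant minus $\mathrm{RSS}(\theta)/(2\sigma^2)$.

```python
import math


def gaussian_log_likelihood(res, sigma):
    """Log-likelihood of residuals y_i - f_theta(x_i) under independent N(0, sigma^2) errors."""
    N = len(res)
    return (-N / 2 * math.log(2 * math.pi) - N * math.log(sigma)
            - sum(r * r for r in res) / (2 * sigma ** 2))


xs = [0.0, 1.0, 2.0, 3.0]
ys = [0.1, 0.9, 2.2, 2.8]  # made-up observations


def residuals(theta):
    """Residuals of the hypothetical model f_theta(x) = theta * x."""
    return [y - theta * x for x, y in zip(xs, ys)]


def rss(theta):
    return sum(r * r for r in residuals(theta))


grid = [0.5 + 0.01 * i for i in range(101)]  # candidate values of theta
best_rss = min(grid, key=rss)
best_ml = max(grid, key=lambda t: gaussian_log_likelihood(residuals(t), sigma=0.5))
print(best_rss == best_ml, round(best_rss, 2))  # True 0.98
```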

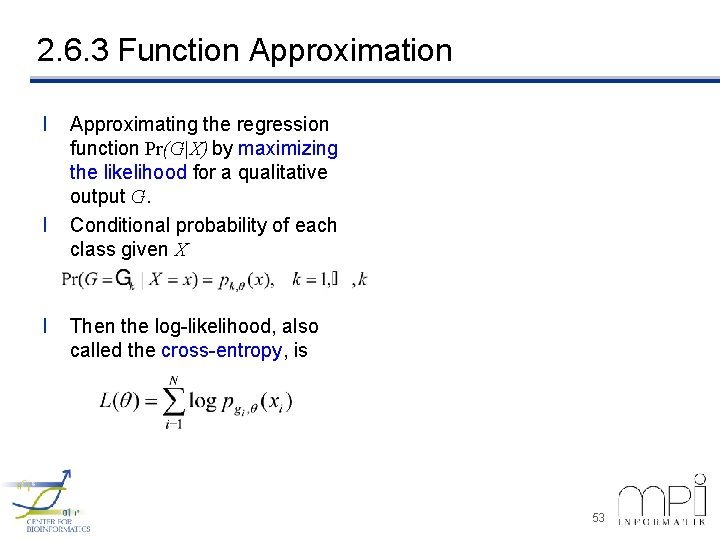

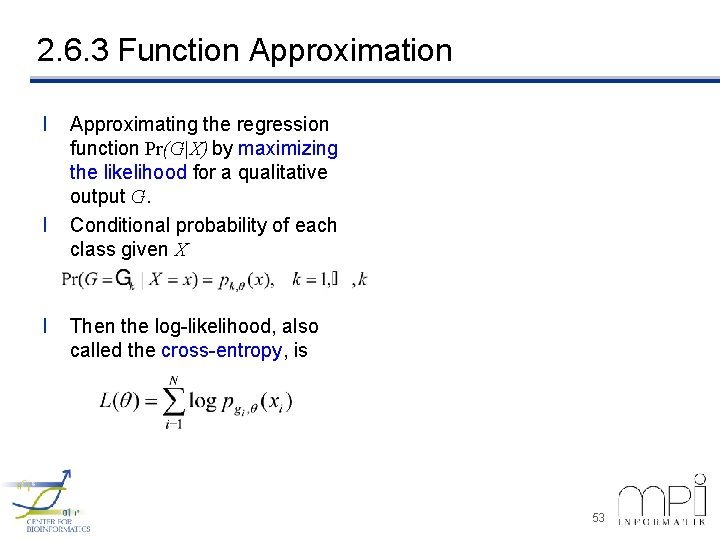

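2.6.3 Function Approximation

- Approximating the regression function $\Pr(G \mid X)$ by maximizing the likelihood for a qualitative output $G$
- Conditional probability of each class given $X$: $\Pr(G = \mathcal{G}_k \mid X = x) = p_{k,\theta}(x)$, $k = 1, \dots, K$
- Then the log-likelihood, also called the cross-entropy, is $L(\theta) = \sum_{i=1}^N \log p_{g_i,\theta}(x_i)$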
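A sketch of the cross-entropy log-likelihood, assuming the model's class probabilities $p_{k,\theta}(x_i)$ have already been computed; the probabilities and labels are made up. Software libraries usually minimize the negative of this quantity.

```python
import math


def log_likelihood(probs, labels):
    """Cross-entropy log-likelihood L(theta) = sum_i log p_{g_i}(x_i).

    probs[i][k] is the model's Pr(G = k | X = x_i); labels[i] is the observed class g_i.
    """
    return sum(math.log(p[g]) for p, g in zip(probs, labels))


probs = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1], [0.3, 0.3, 0.4]]  # made-up class probabilities
labels = [0, 1, 2]
print(round(log_likelihood(probs, labels), 4))  # -1.4961, i.e. log(0.7 * 0.8 * 0.4)
```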

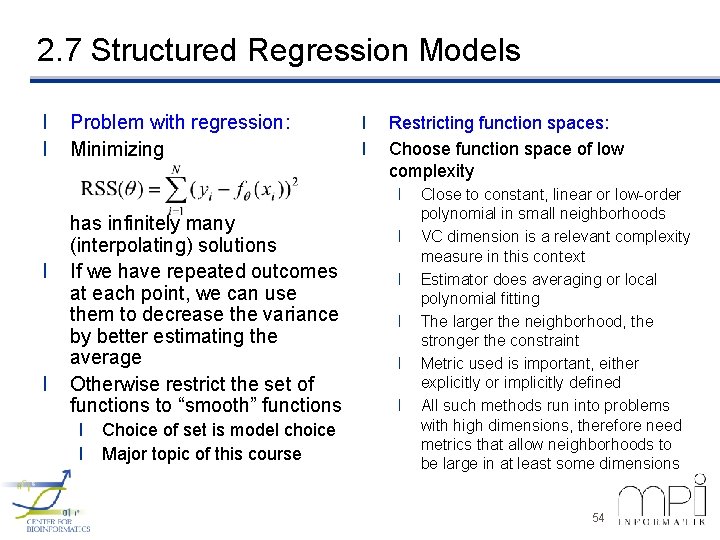

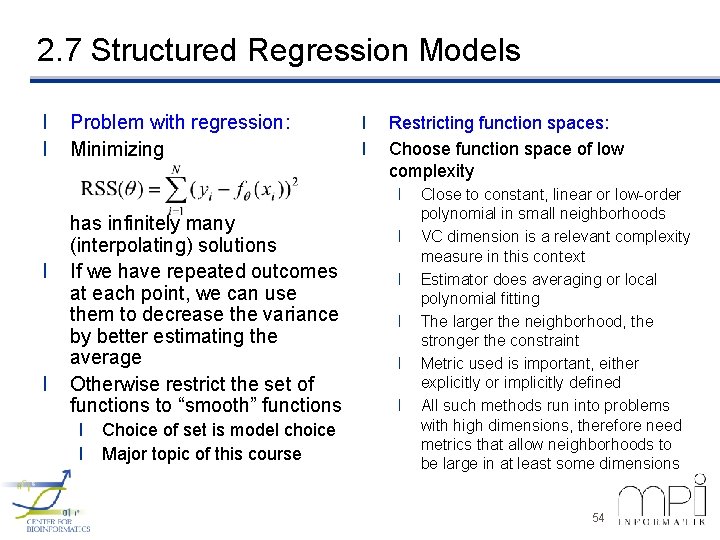

2.7 Structured Regression Models

- Problem with regression: minimizing $\mathrm{RSS}(f) = \sum_{i=1}^N (y_i - f(x_i))^2$ over all functions $f$ has infinitely many (interpolating) solutions
  - If we have repeated outcomes at each point, we can use them to decrease the variance by better estimating the average
  - Otherwise, restrict the set of functions to "smooth" functions
  - The choice of the set is a model choice, a major topic of this course
- Restricting function spaces: choose a function space of low complexity
  - Close to constant, linear, or low-order polynomial in small neighborhoods
  - VC dimension is a relevant complexity measure in this context
  - The estimator does averaging or local polynomial fitting
  - The larger the neighborhood, the stronger the constraint
  - The metric used is important, either explicitly or implicitly defined
  - All such methods run into problems in high dimensions and therefore need metrics that allow neighborhoods to be large in at least some dimensions

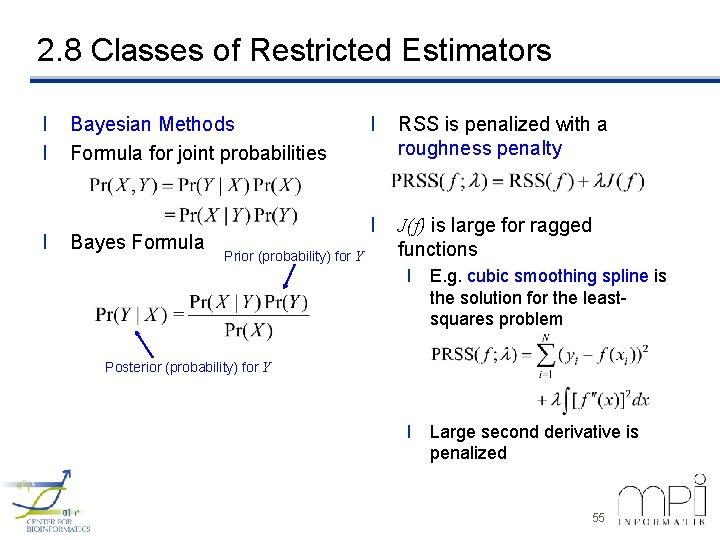

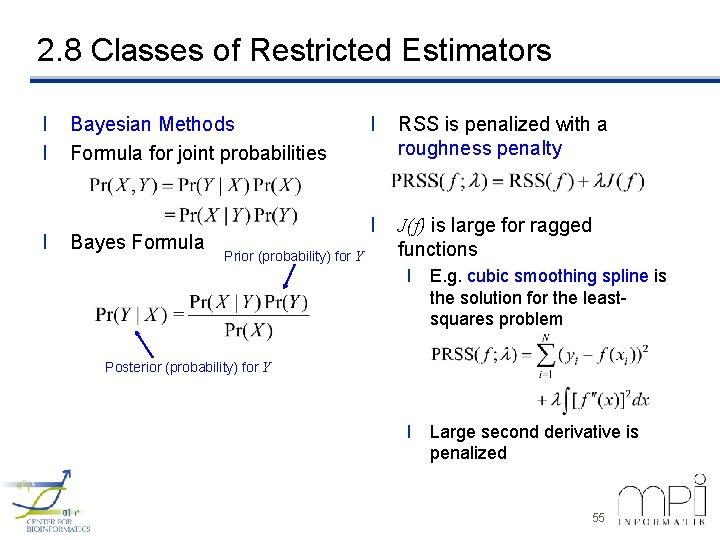

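2.8 Classes of Restricted Estimators

- Roughness penalty: RSS is penalized with a roughness penalty, $\mathrm{PRSS}(f; \lambda) = \mathrm{RSS}(f) + \lambda J(f)$
  - $J(f)$ is large for ragged functions
  - E.g. the cubic smoothing spline is the solution of the penalized least-squares problem $\mathrm{PRSS}(f; \lambda) = \sum_{i=1}^N (y_i - f(x_i))^2 + \lambda \int [f''(x)]^2 \, dx$
  - Large second derivatives are penalized
- Bayesian methods
  - Formula for joint probabilities: $\Pr(X, Y) = \Pr(Y \mid X)\Pr(X) = \Pr(X \mid Y)\Pr(Y)$
  - Bayes formula: $\Pr(Y \mid X) = \dfrac{\Pr(X \mid Y)\Pr(Y)}{\Pr(X)}$
  - $\Pr(Y)$ is the prior (probability) for $Y$; $\Pr(Y \mid X)$ is the posterior (probability) for $Y$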

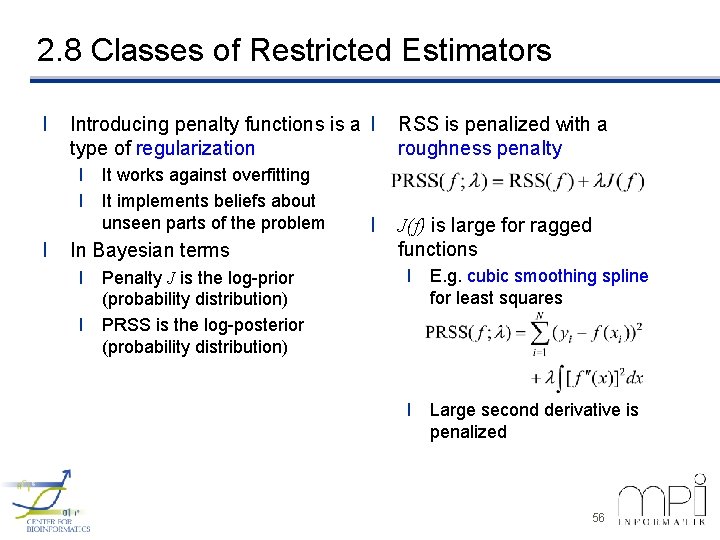

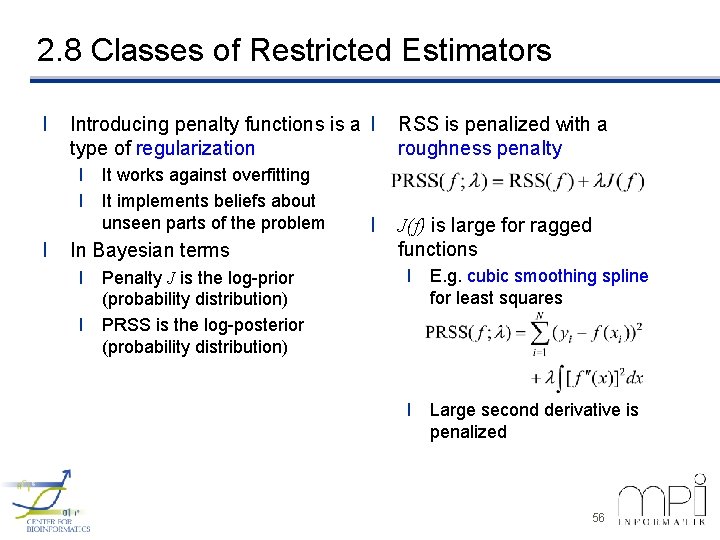

2.8 Classes of Restricted Estimators

- Introducing penalty functions is a type of regularization
  - It works against overfitting
  - It implements beliefs about unseen parts of the problem
- In Bayesian terms:
  - The penalty $\lambda J(f)$ corresponds to the log-prior (probability distribution), up to sign
  - $\mathrm{PRSS}(f; \lambda)$ corresponds to the log-posterior (probability distribution), up to sign

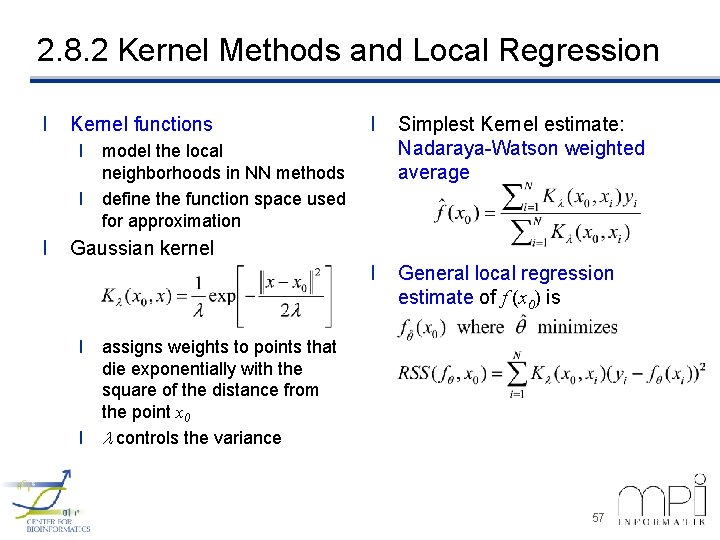

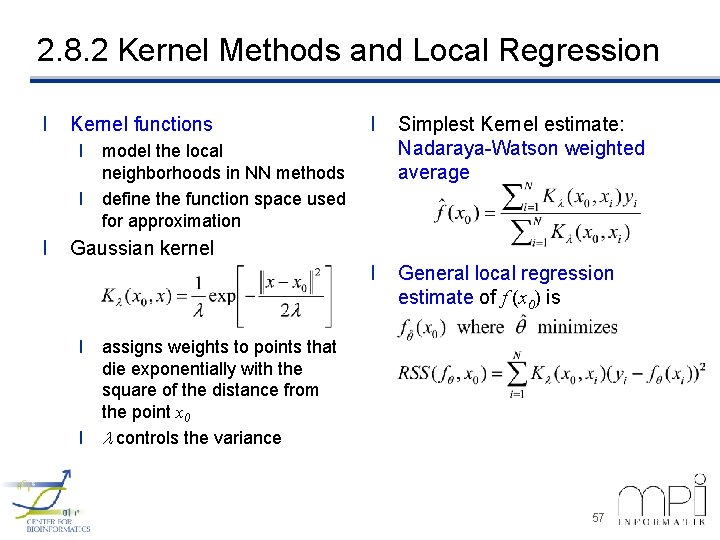

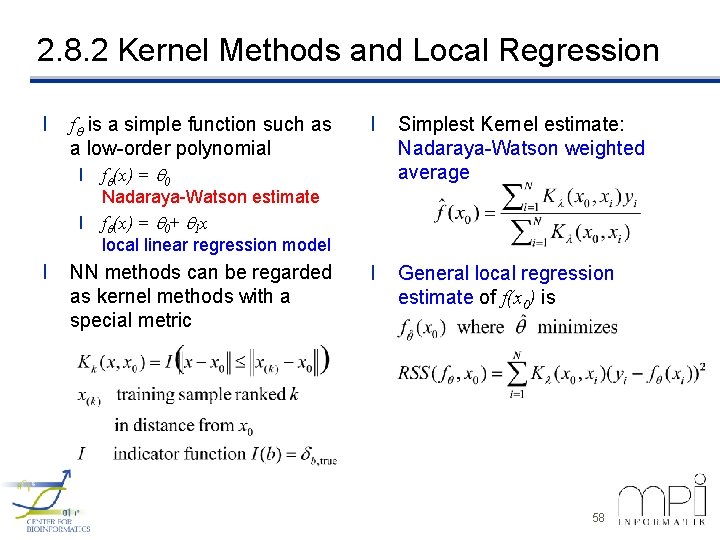

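2.8.2 Kernel Methods and Local Regression

- Kernel functions $K_\lambda(x_0, x)$
  - model the local neighborhoods in NN methods
  - define the function space used for approximation
- Gaussian kernel: $K_\lambda(x_0, x) = \frac{1}{\lambda}\exp\left[-\frac{\|x - x_0\|^2}{2\lambda}\right]$
  - assigns weights to points that die exponentially with the square of the distance from the point $x_0$
  - $\lambda$ controls the variance
- Simplest kernel estimate: the Nadaraya-Watson weighted average $\hat f(x_0) = \dfrac{\sum_{i=1}^N K_\lambda(x_0, x_i)\, y_i}{\sum_{i=1}^N K_\lambda(x_0, x_i)}$
- General local regression estimate of $f(x_0)$ is $f_{\hat\theta}(x_0)$, where $\hat\theta$ minimizes $\mathrm{RSS}(f_\theta, x_0) = \sum_{i=1}^N K_\lambda(x_0, x_i)\,(y_i - f_\theta(x_i))^2$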

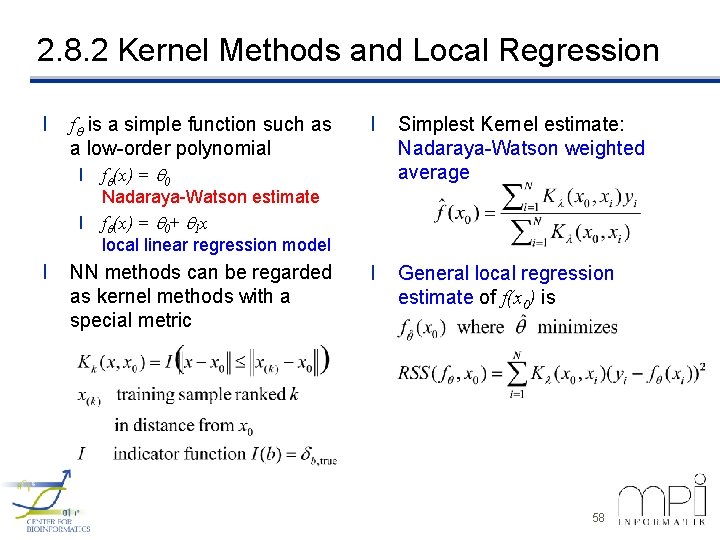

2.8.2 Kernel Methods and Local Regression

- $f_\theta$ is a simple function such as a low-order polynomial:
  - $f_\theta(x) = \theta_0$: Nadaraya-Watson estimate
  - $f_\theta(x) = \theta_0 + \theta_1 x$: local linear regression model
- NN methods can be regarded as kernel methods with a special, data-dependent metric: $K_k(x, x_0) = I(\|x - x_0\| \le \|x_{(k)} - x_0\|)$, where $x_{(k)}$ is the $k$th closest training point to $x_0$
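A one-dimensional sketch of the Nadaraya-Watson estimate with the Gaussian kernel $K_\lambda(x_0, x) = \frac{1}{\lambda}\exp\left(-\frac{(x - x_0)^2}{2\lambda}\right)$. It also shows the 0/1 weights that turn the same weighted average into a k-NN estimate. Inputs are assumed scalar, the data are made up, and the function names are hypothetical.

```python
import math


def gaussian_kernel(x0, x, lam):
    """K_lambda(x0, x) = (1/lambda) * exp(-(x - x0)^2 / (2 lambda)) for scalar inputs."""
    return math.exp(-((x - x0) ** 2) / (2.0 * lam)) / lam


def nadaraya_watson(x0, xs, ys, lam):
    """Kernel-weighted average of the y_i with weights K_lambda(x0, x_i)."""
    w = [gaussian_kernel(x0, x, lam) for x in xs]
    return sum(wi * yi for wi, yi in zip(w, ys)) / sum(w)


def knn_kernel(x0, xs, k):
    """k-NN 'kernel': weight 1 for the k points closest to x0, 0 otherwise (ties by list order)."""
    order = sorted(range(len(xs)), key=lambda i: abs(xs[i] - x0))
    chosen = set(order[:k])
    return [1.0 if i in chosen else 0.0 for i in range(len(xs))]


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [0.0, 1.0, 2.0, 3.0, 4.0]
print(nadaraya_watson(2.0, xs, ys, lam=1.0))   # symmetric neighborhood: close to 2.0
print(nadaraya_watson(0.9, xs, ys, lam=0.01))  # tiny lambda: follows the nearest point (about 1.0)
print(nadaraya_watson(0.9, xs, ys, lam=1e6))   # huge lambda: close to the global mean 2.0
w = knn_kernel(2.2, xs, 2)
print(sum(wi * yi for wi, yi in zip(w, ys)) / sum(w))  # 2.5: the 2-NN average
```

The width $\lambda$ plays the same bias-variance role as $k$ in nearest-neighbor averaging.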

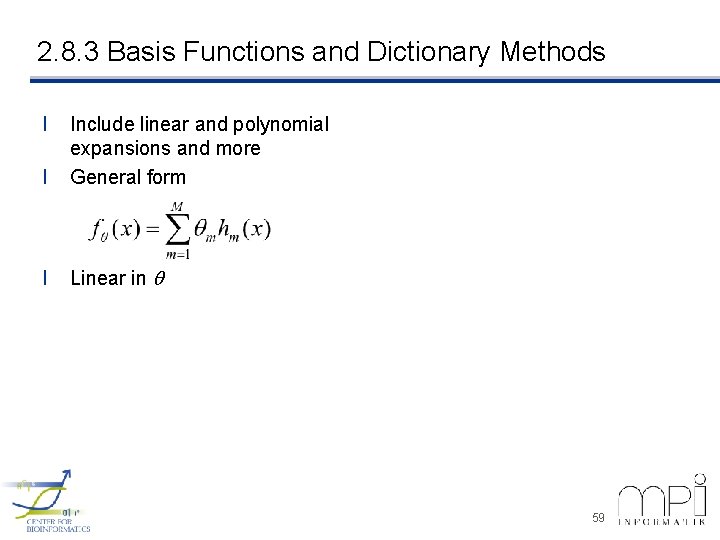

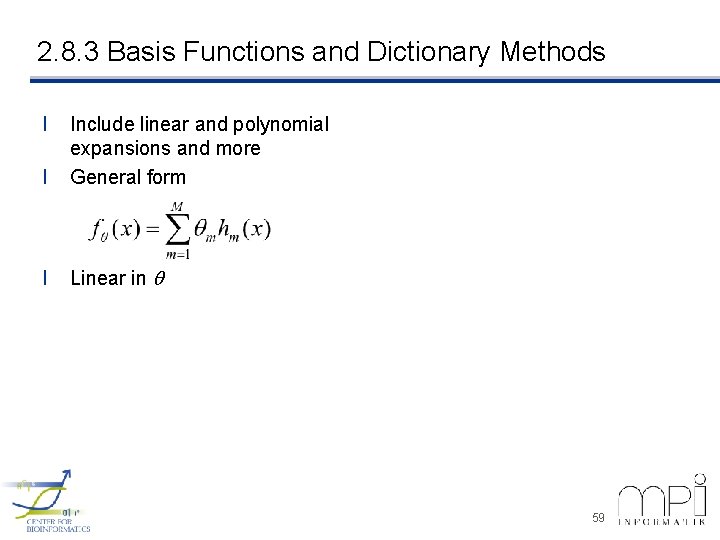

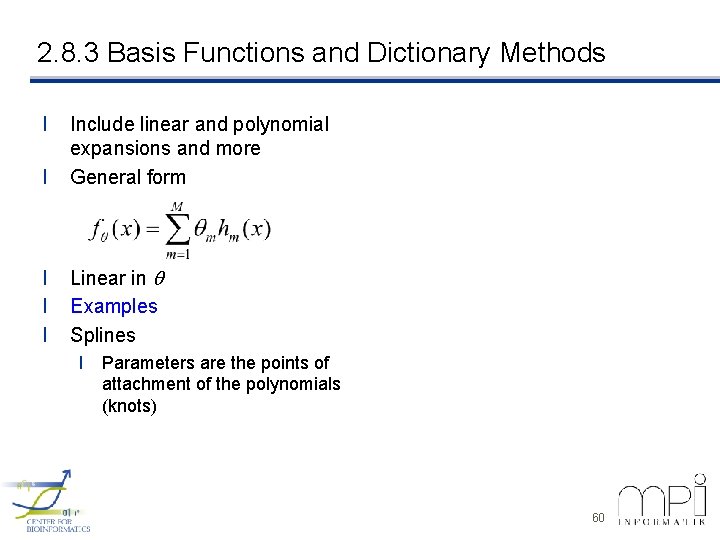

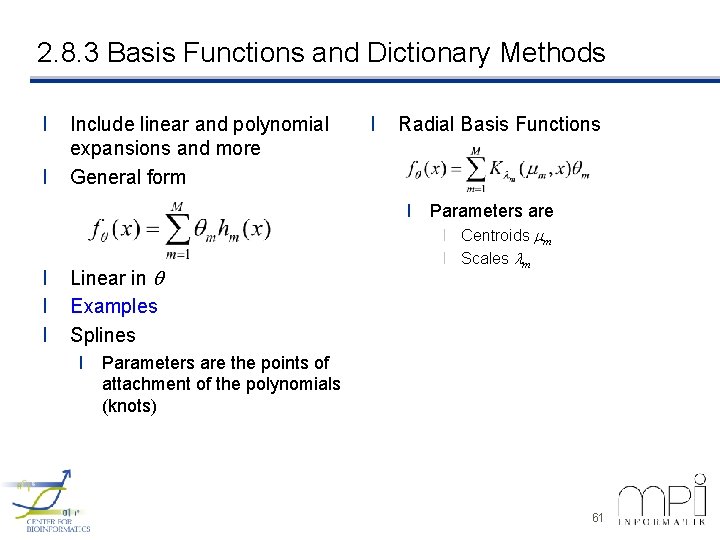

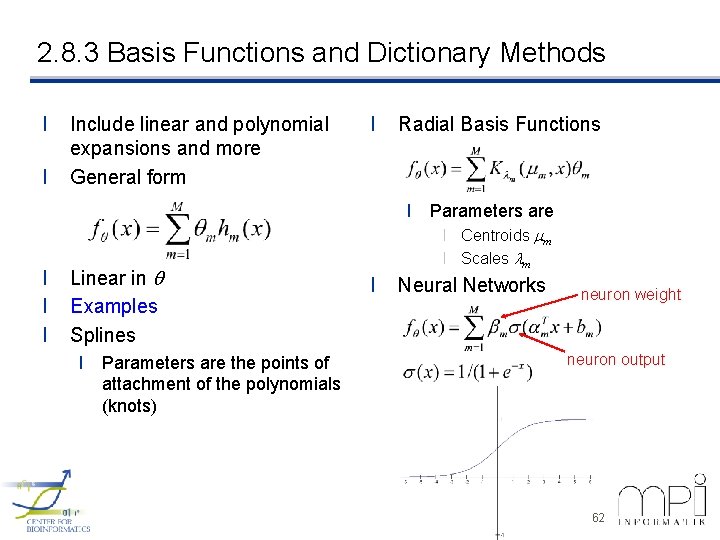


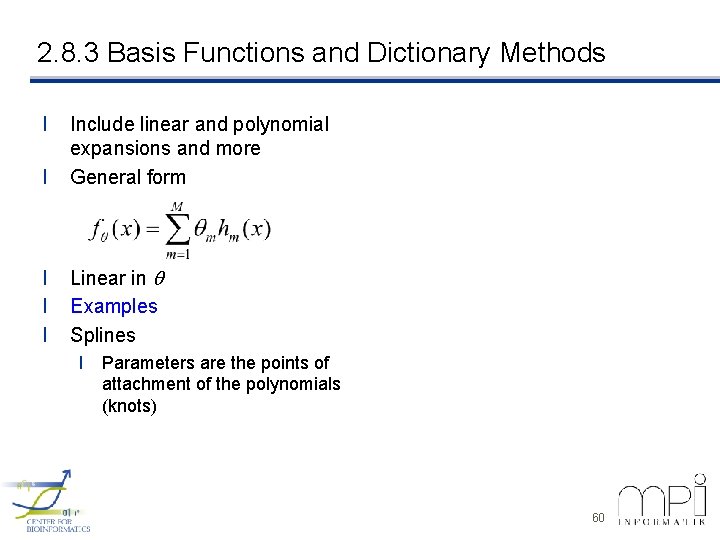


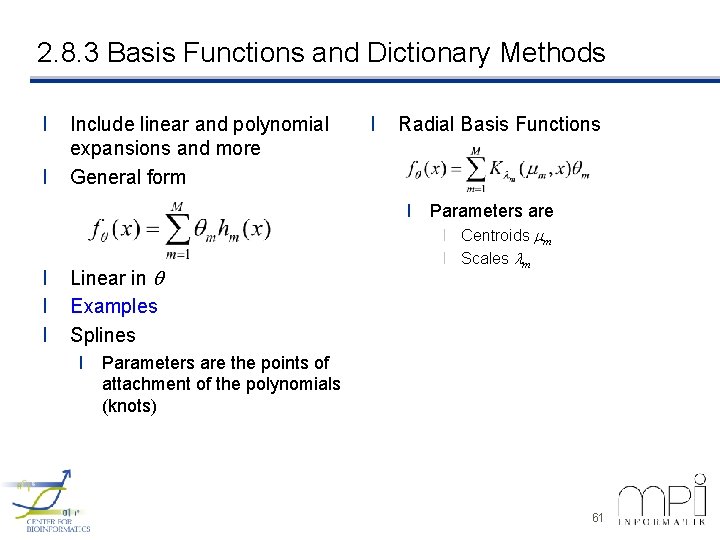


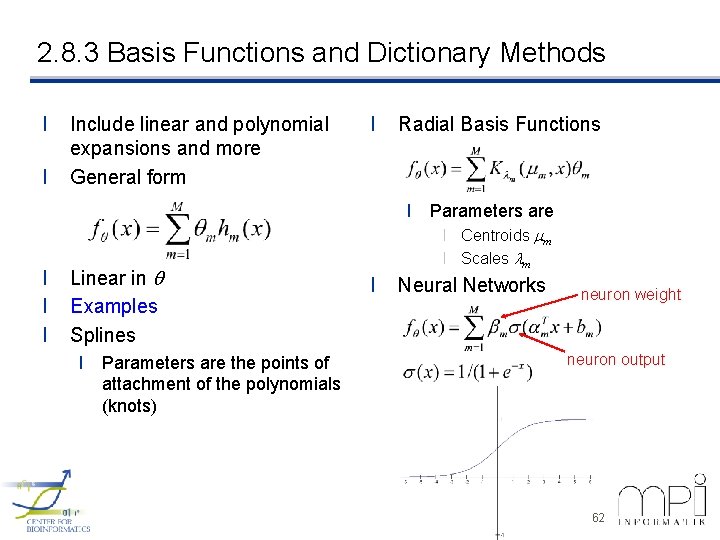

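2.8.3 Basis Functions and Dictionary Methods

- Include linear and polynomial expansions and more
- General form: $f_\theta(x) = \sum_{m=1}^M \theta_m h_m(x)$
  - Linear in $\theta$
- Examples:
  - Splines
    - Parameters are the points of attachment of the polynomials (knots)
  - Radial basis functions: $f_\theta(x) = \sum_{m=1}^M K_{\lambda_m}(\mu_m, x)\,\theta_m$
    - Parameters are the centroids $\mu_m$ and the scales $\lambda_m$
  - Neural networks: $f_\theta(x) = \sum_{m=1}^M \beta_m\,\sigma(\alpha_m^T x + b_m)$, with neuron weights $\alpha_m$ and neuron output function $\sigma$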
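Once the parameters are known, evaluating the two dictionary expansions is straightforward. This is a minimal sketch assuming Gaussian radial kernels $K_\lambda(\mu, x) = \frac{1}{\lambda}\exp\left(-\frac{\|x - \mu\|^2}{2\lambda}\right)$ and the logistic sigmoid $\sigma(v) = 1/(1 + e^{-v})$ as the neuron output function. Fitting the centroids, scales or neuron weights is nonlinear in those parameters and needs iterative methods, which this sketch does not attempt.

```python
import math


def rbf_expansion(x, centroids, scales, theta):
    """f_theta(x) = sum_m theta_m * K_{lambda_m}(mu_m, x) with Gaussian radial kernels."""
    total = 0.0
    for mu, lam, th in zip(centroids, scales, theta):
        d2 = sum((a - b) ** 2 for a, b in zip(x, mu))
        total += th * math.exp(-d2 / (2.0 * lam)) / lam
    return total


def sigmoid(v):
    """Logistic function, written to avoid overflow for large |v|."""
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def neural_net(x, alphas, biases, betas):
    """Single hidden layer: f(x) = sum_m beta_m * sigma(alpha_m^T x + b_m)."""
    return sum(beta * sigmoid(sum(a * xi for a, xi in zip(alpha, x)) + b)
               for alpha, b, beta in zip(alphas, biases, betas))


# Hypothetical parameters: two radial basis functions and two hidden neurons in 2-D
print(rbf_expansion([0.5, 0.5], [[0.0, 0.0], [1.0, 1.0]], [0.5, 0.5], [1.0, -1.0]))
print(neural_net([0.5, 0.5], [[1.0, -1.0], [2.0, 0.5]], [0.0, -1.0], [1.0, 0.5]))
```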

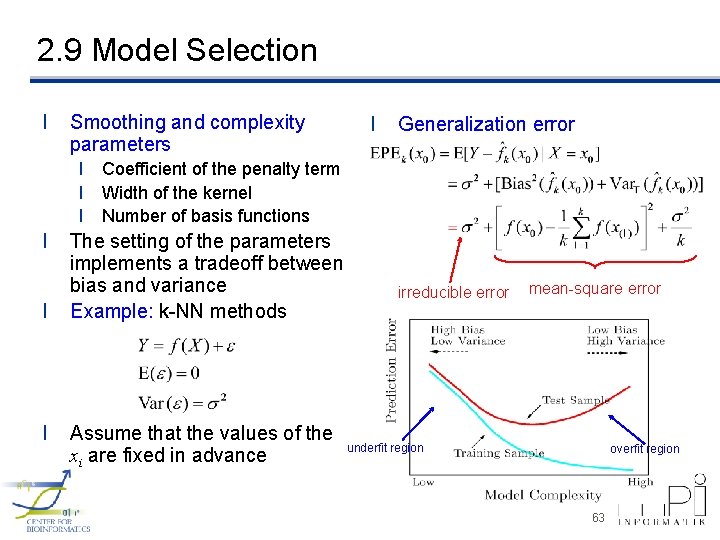

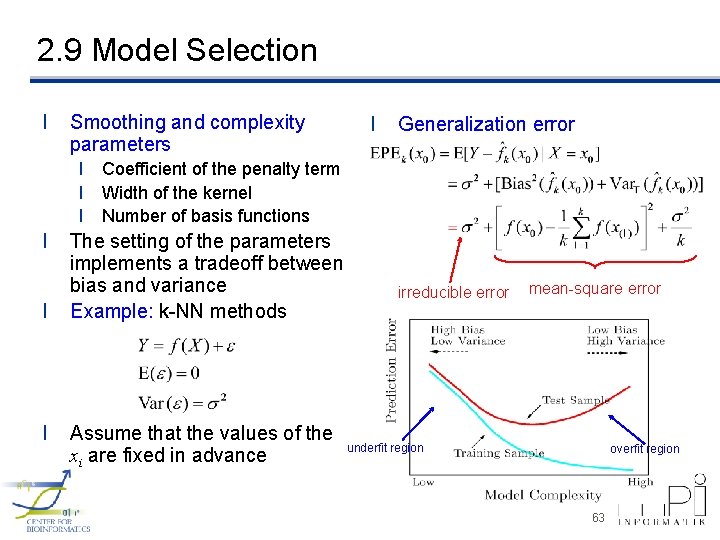

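2.9 Model Selection

- Smoothing and complexity parameters:
  - Coefficient of the penalty term
  - Width of the kernel
  - Number of basis functions
- The setting of the parameters implements a tradeoff between bias and variance
- Example: k-NN methods
  - Assume that the values of the $x_i$ are fixed in advance, and that $Y = f(X) + \varepsilon$ with $E(\varepsilon) = 0$ and $\operatorname{Var}(\varepsilon) = \sigma^2$
  - Generalization error: $\mathrm{EPE}_k(x_0) = E[(Y - \hat f_k(x_0))^2 \mid X = x_0] = \sigma^2 + \left[f(x_0) - \frac{1}{k}\sum_{\ell=1}^k f(x_{(\ell)})\right]^2 + \frac{\sigma^2}{k}$
  - The first term is the irreducible error; the other two (squared bias and variance) make up the mean-square error
- Figure labels: underfit region, overfit region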
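When $f$ is known, the k-NN decomposition can be evaluated directly. This sketch assumes scalar inputs, a hypothetical fixed design of 21 equally spaced points on $[-1, 1]$, the hypothetical target $f(x) = x^2$ and noise level $\sigma = 0.5$. Ties in distance are broken by the order of `xs`.

```python
def knn_epe(f, x0, xs, k, sigma):
    """Terms of EPE_k(x0) = sigma^2 + [f(x0) - mean of f over the k nearest x_i]^2 + sigma^2 / k.

    Returns (irreducible error, squared bias, variance) for a fixed design xs.
    """
    nearest = sorted(xs, key=lambda x: abs(x - x0))[:k]
    bias = f(x0) - sum(f(x) for x in nearest) / k
    return sigma ** 2, bias ** 2, sigma ** 2 / k


def f(x):
    """Hypothetical target function."""
    return x * x


xs = [i / 10 for i in range(-10, 11)]  # fixed design points on [-1, 1]
for k in (1, 5, 21):
    irreducible, bias2, var = knn_epe(f, 0.0, xs, k, sigma=0.5)
    print(k, round(bias2, 4), round(var, 4), round(irreducible + bias2 + var, 4))
```

As $k$ grows, the variance term $\sigma^2/k$ shrinks while the squared bias grows, since the averaged neighbors move further from the target point.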