SGD ON HADOOP FOR BIG DATA & HUGE MODELS

Alex Beutel

Based on work done with Abhimanu Kumar, Vagelis Papalexakis, Partha Talukdar, Qirong Ho, Christos Faloutsos, and Eric Xing

Outline
1. When to use SGD for distributed learning
2. Optimization
   • Review of DSGD
   • SGD for Tensors
   • SGD for ML models: topic modeling, dictionary learning, MMSB
3. Hadoop
   1. General algorithm
   2. Setting up the MapReduce body
   3. Reducer communication
   4. Distributed normalization
   5. “Always-On SGD”: how to deal with the straggler problem
4. Experiments
5. Looking Forward: Graphical Models and the Cloud

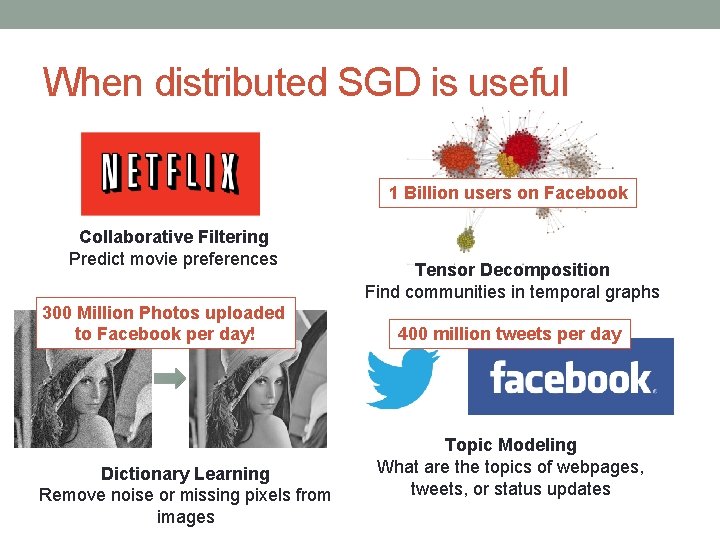

When distributed SGD is useful
• 1 billion users on Facebook
• Collaborative Filtering: predict movie preferences
• 300 million photos uploaded to Facebook per day!
• Dictionary Learning: remove noise or missing pixels from images
• Tensor Decomposition: find communities in temporal graphs
• 400 million tweets per day
• Topic Modeling: what are the topics of webpages, tweets, or status updates?

Gradient Descent

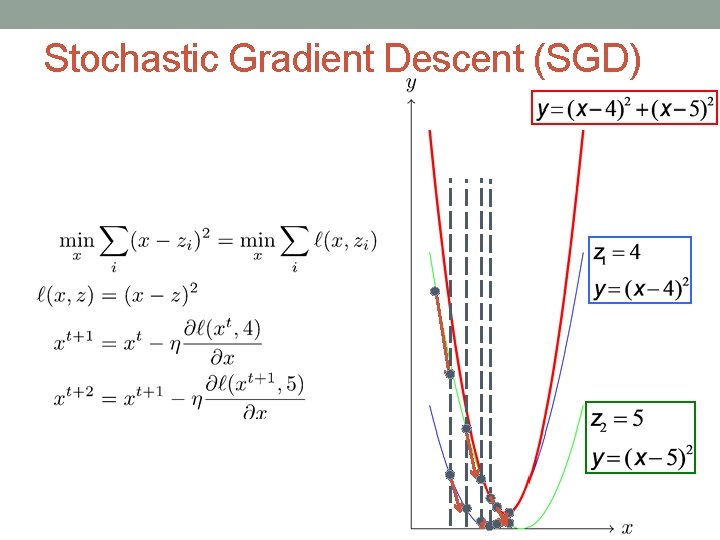

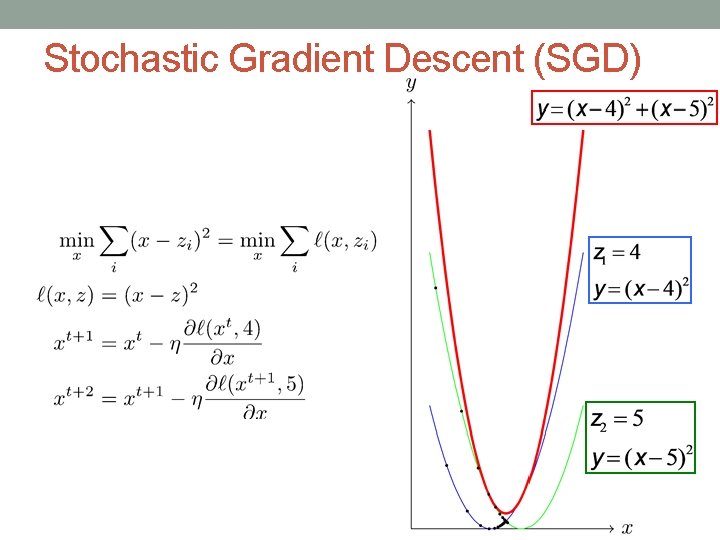

Stochastic Gradient Descent (SGD)

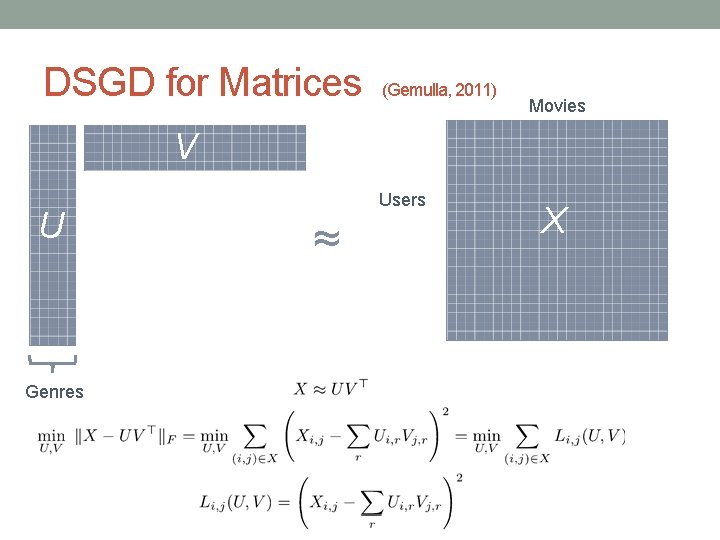

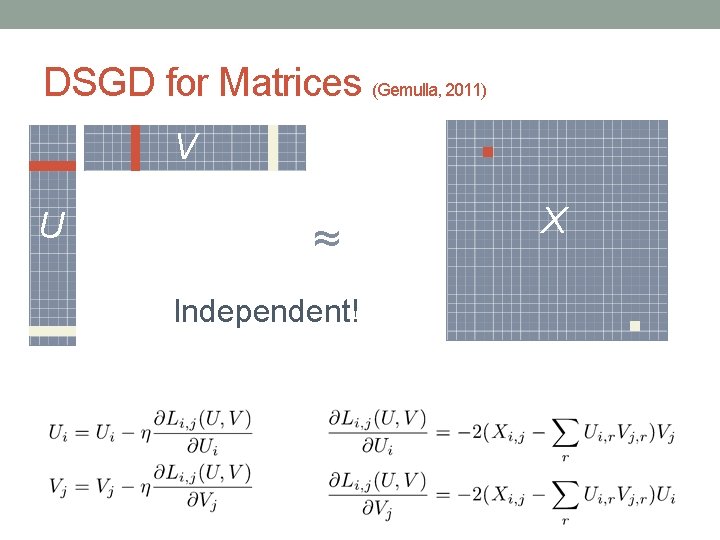

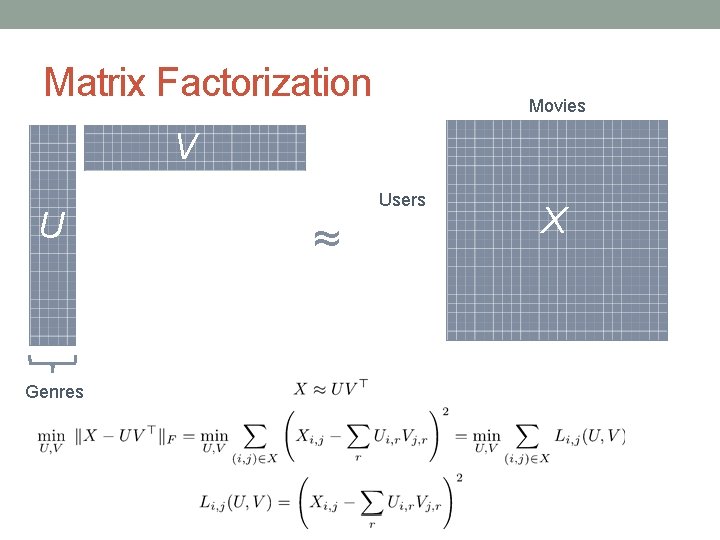

DSGD for Matrices (Gemulla, 2011): X ≈ UV, where X is Users × Movies, U is Users × Genres, and V is Genres × Movies

DSGD for Matrices (Gemulla, 2011): X ≈ UV. Updates for entries of X that share no row and no column are independent!

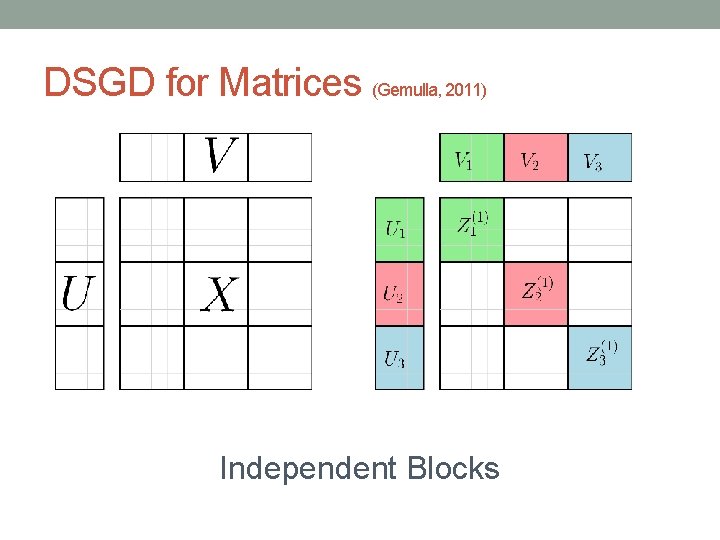

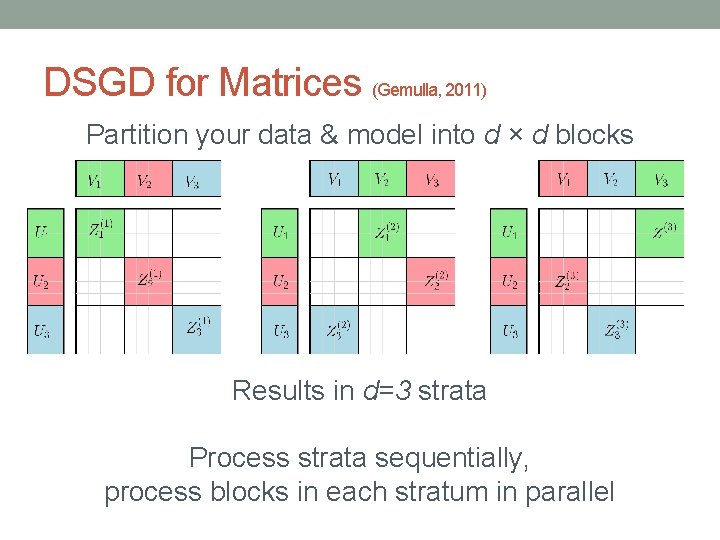

DSGD for Matrices (Gemulla, 2011) Independent Blocks

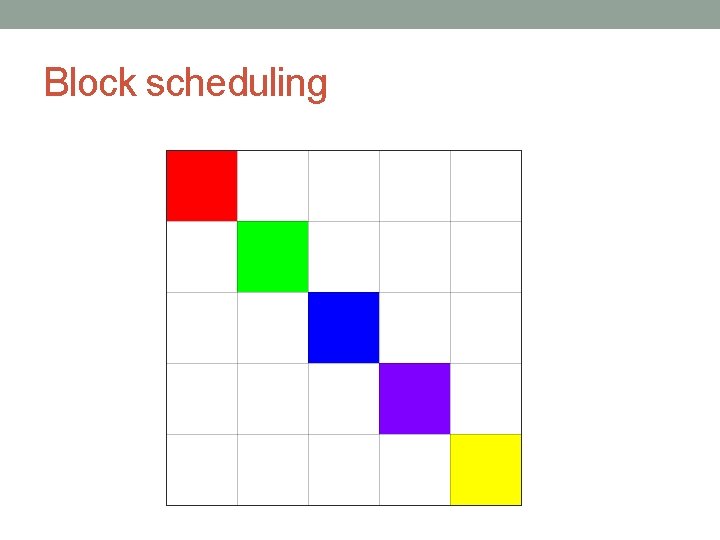

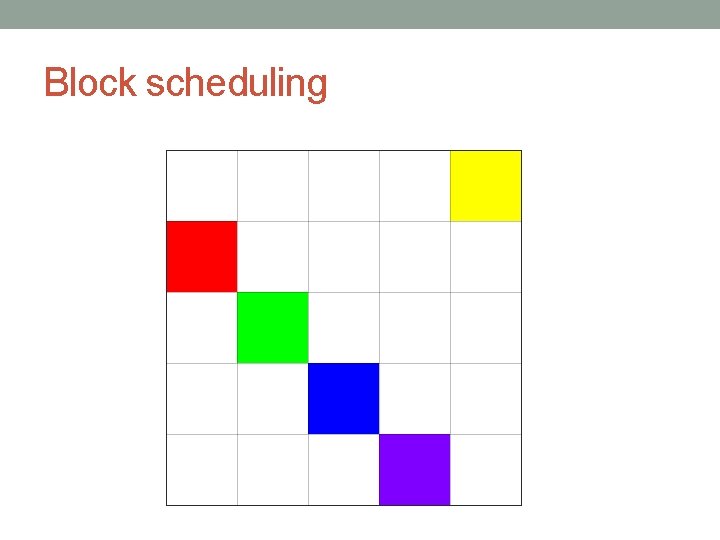

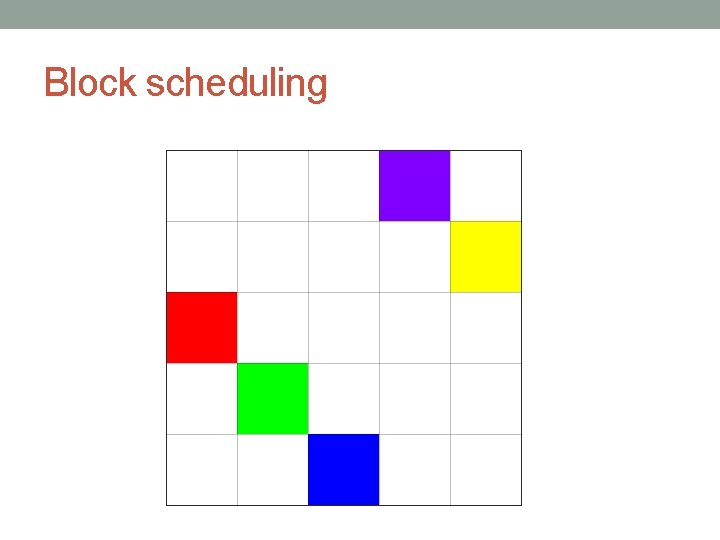

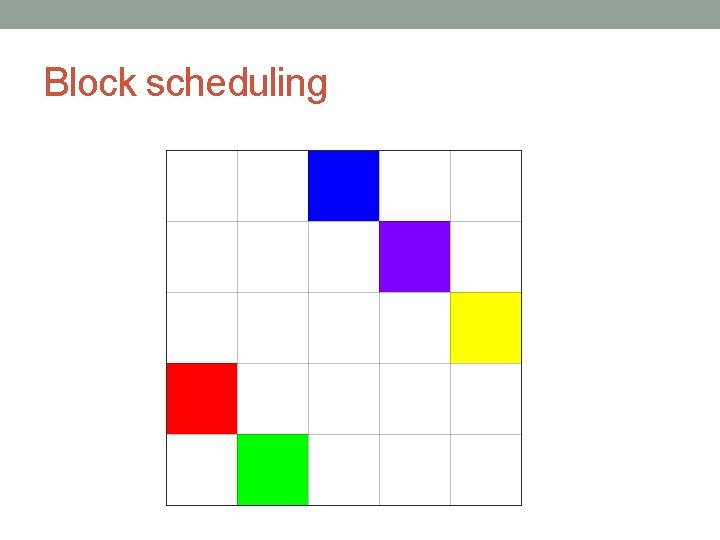

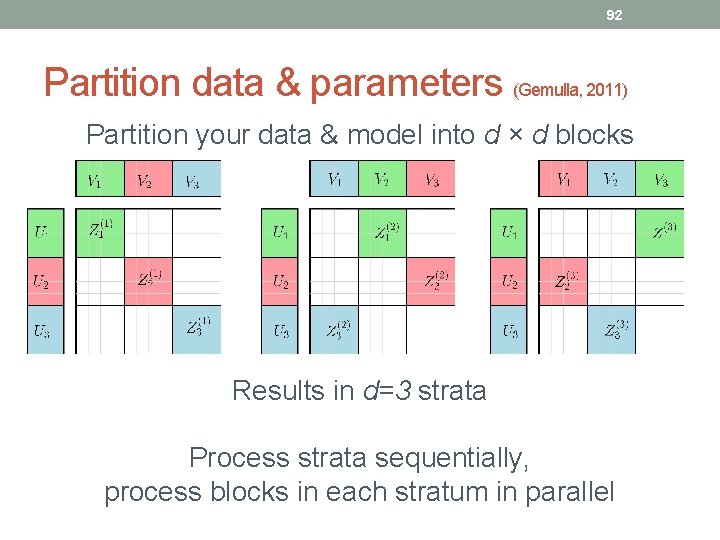

DSGD for Matrices (Gemulla, 2011)
• Partition your data & model into d × d blocks
• Results in d = 3 strata
• Process strata sequentially, process blocks in each stratum in parallel
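The partitioning into strata can be sketched in a few lines of Python. This is a minimal illustration, not the authors' code: it assumes the common diagonal-shift construction, and the names `matrix_strata` and `is_independent` are hypothetical.

```python
def matrix_strata(d):
    """Return d strata; each stratum is a list of d (row_block, col_block) pairs."""
    strata = []
    for s in range(d):
        # Shift the column block by s so every block in the stratum
        # touches a different row block and a different column block.
        strata.append([(i, (i + s) % d) for i in range(d)])
    return strata


def is_independent(stratum):
    """True if no two blocks in the stratum share a row block or a column block."""
    rows = [r for r, _ in stratum]
    cols = [c for _, c in stratum]
    return len(set(rows)) == len(rows) and len(set(cols)) == len(cols)


strata = matrix_strata(3)
for s, stratum in enumerate(strata):
    print(f"stratum {s}: {stratum}")
```

Each stratum can be handed to d workers at once because its blocks read and write disjoint slices of U and V; together the d strata cover all d × d blocks.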

TENSORS

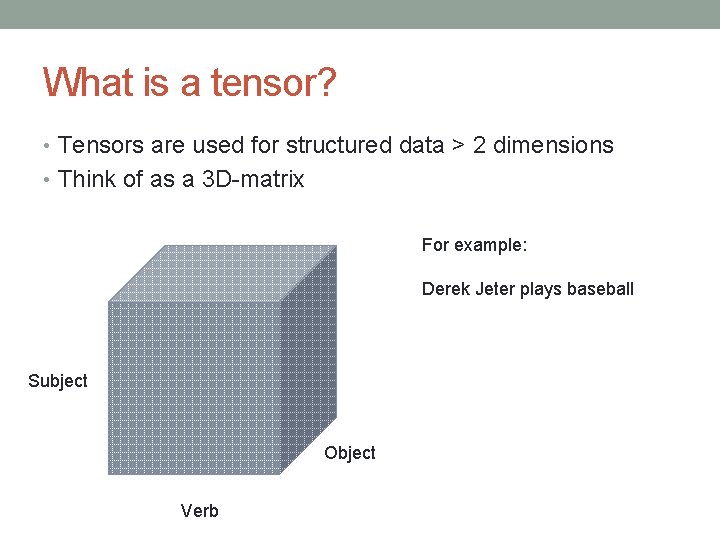

What is a tensor?
• Tensors are used for structured data with more than 2 dimensions
• Think of it as a 3D matrix
• For example: "Derek Jeter plays baseball", with axes Subject, Verb, and Object

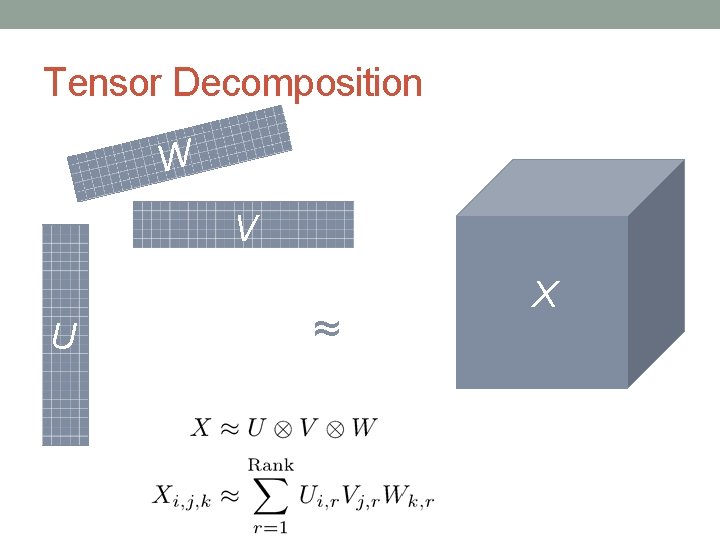

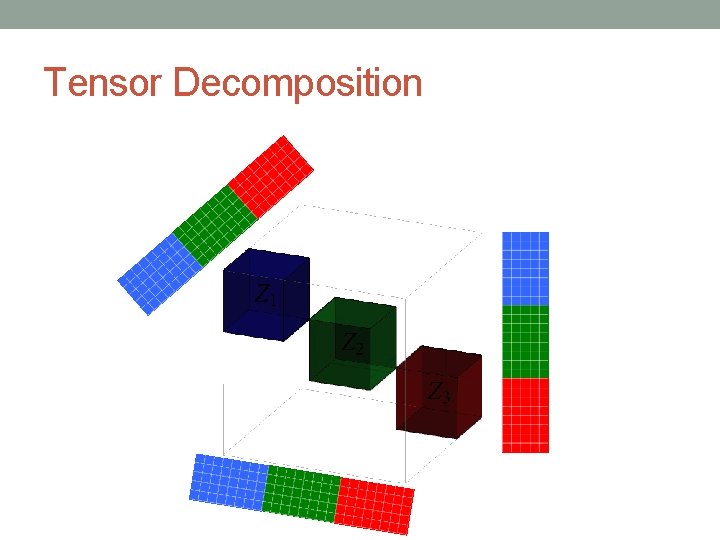

Tensor Decomposition: the tensor X is approximated by three factor matrices U, V, and W

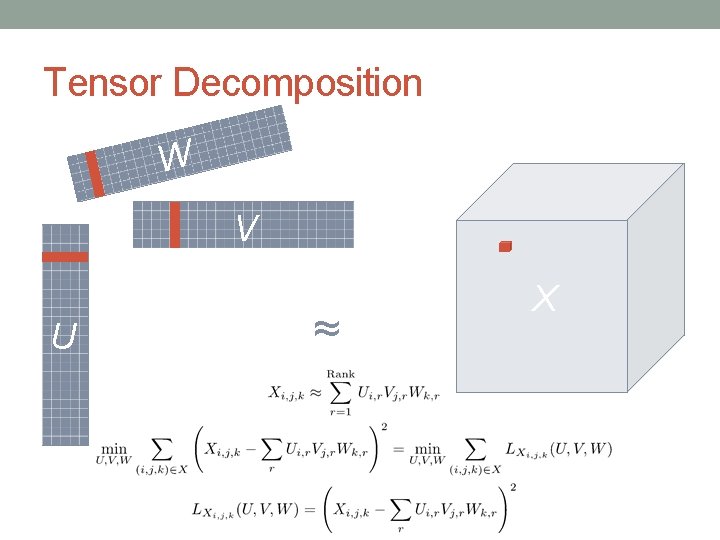

Tensor Decomposition: blocks of X that share no part of U, V, or W are independent; blocks that share any of them are not independent

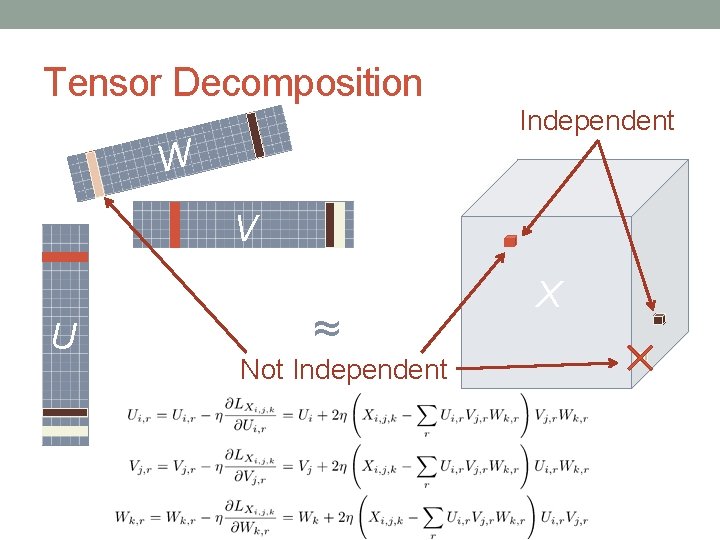

Tensor Decomposition

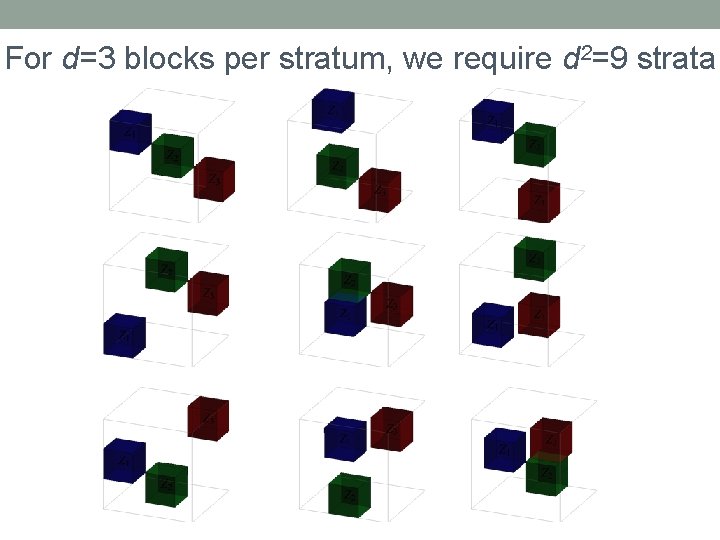

For d = 3 blocks per stratum, we require d² = 9 strata
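The count of d² strata can be checked with a short Python sketch. It assumes a shift-based construction for 3-mode tensors (the second and third mode are shifted independently); `tensor_strata` is a hypothetical helper name, not taken from the slides.

```python
from itertools import product


def tensor_strata(d):
    """Return d*d strata, each a list of d (i, j, k) block indices."""
    strata = []
    for a, b in product(range(d), repeat=2):
        # Shift the second and third mode independently so the d blocks
        # in a stratum never share a U, V, or W partition.
        strata.append([(i, (i + a) % d, (i + b) % d) for i in range(d)])
    return strata


strata = tensor_strata(3)
print(len(strata))   # number of strata
print(strata[0])     # blocks in the first stratum
```

With d = 3 there are 27 blocks in total and only 3 can run at once without sharing parameters, which is why 9 strata are needed to visit every block.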

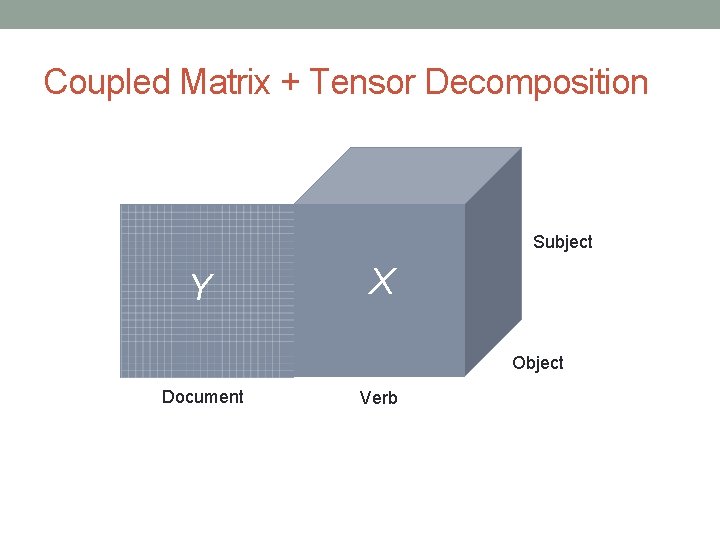

Coupled Matrix + Tensor Decomposition: a tensor X and a matrix Y, with modes Subject, Verb, Object, and Document

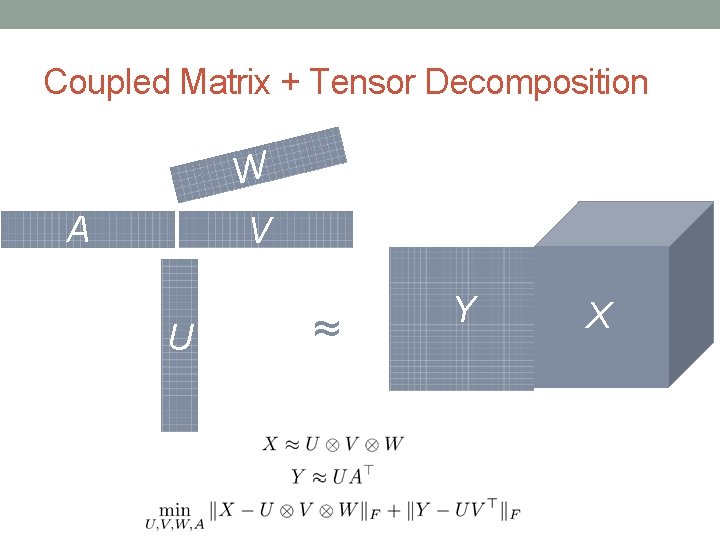

Coupled Matrix + Tensor Decomposition: X and Y are approximated jointly with factor matrices U, V, W, and A

Coupled Matrix + Tensor Decomposition

CONSTRAINTS & PROJECTIONS

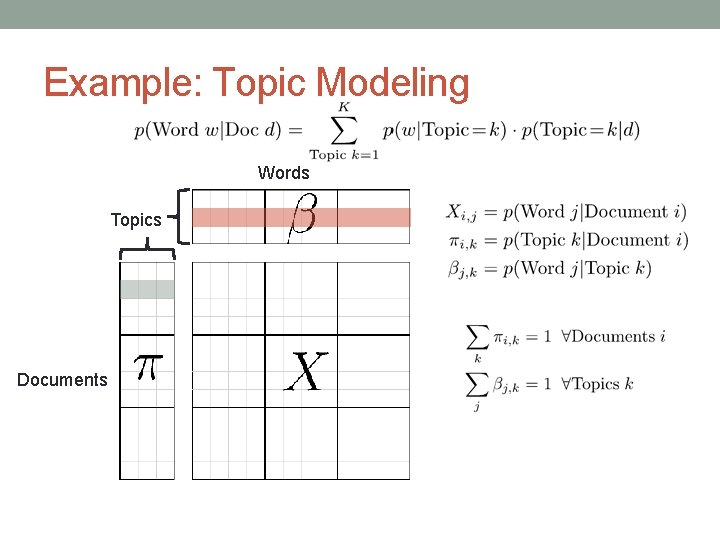

Example: Topic Modeling. The Documents × Words matrix is factored into Documents × Topics and Topics × Words

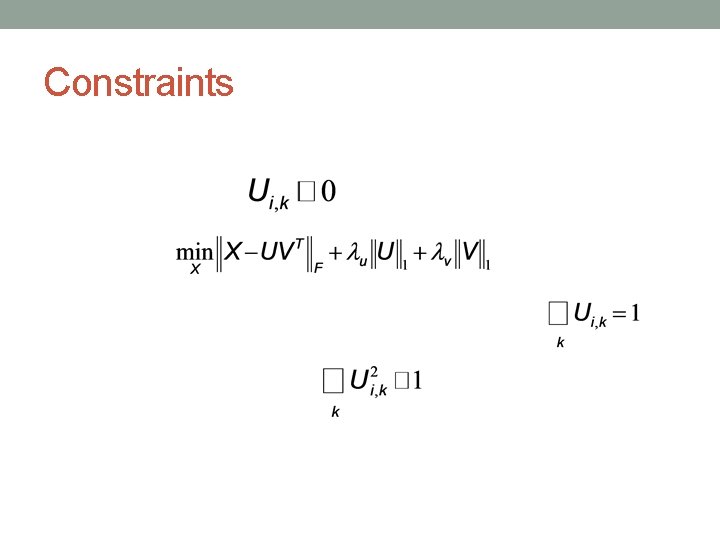

Constraints

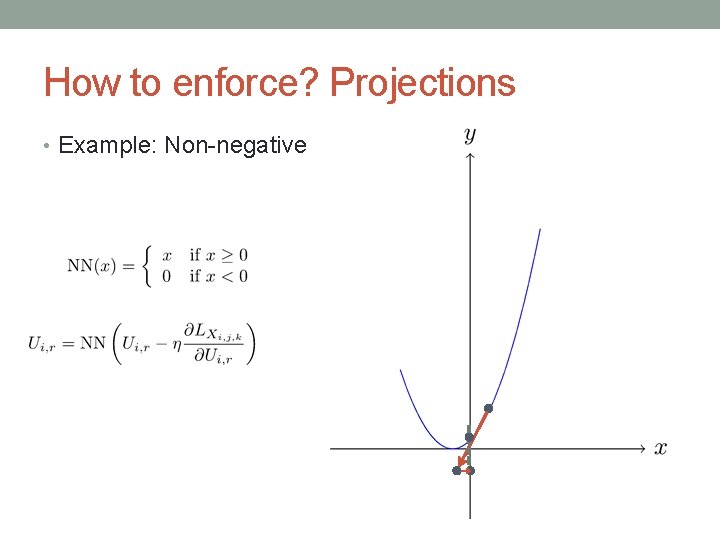

How to enforce? Projections
• Example: Non-negative

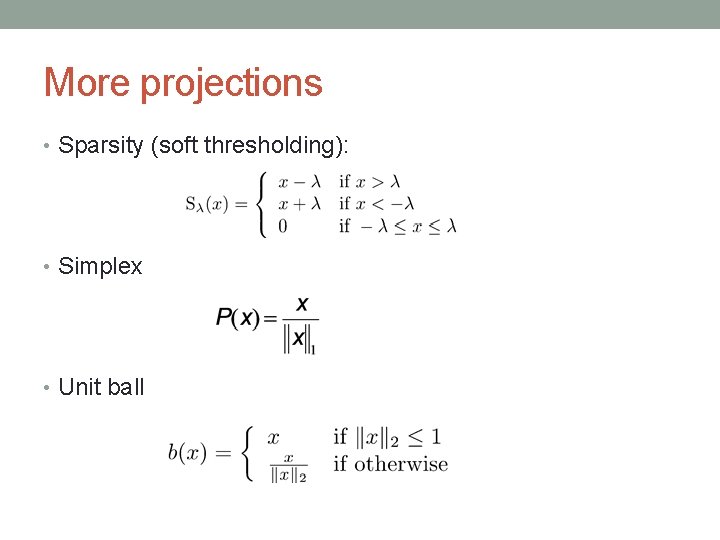

More projections
• Sparsity (soft thresholding)
• Simplex
• Unit ball
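The projection formulas on these slides did not survive extraction, so the following Python sketch uses the standard Euclidean versions as an assumption. All function names are hypothetical, and the simplex projection uses the well-known sort-based method rather than anything confirmed by the slides.

```python
import math


def project_nonnegative(x):
    """Clip negative entries to zero."""
    return [max(v, 0.0) for v in x]


def soft_threshold(x, lam):
    """Shrink each entry toward zero by lam (promotes sparsity)."""
    return [v - lam if v > lam else v + lam if v < -lam else 0.0 for v in x]


def project_unit_ball(x):
    """Rescale x onto the L2 unit ball if it lies outside it."""
    norm = math.sqrt(sum(v * v for v in x))
    return list(x) if norm <= 1.0 else [v / norm for v in x]


def project_simplex(x):
    """Euclidean projection onto {p : p_i >= 0, sum(p) = 1} (sort-based method)."""
    u = sorted(x, reverse=True)
    cumsum, theta = 0.0, 0.0
    for j, uj in enumerate(u, start=1):
        cumsum += uj
        t = (cumsum - 1.0) / j
        if uj - t > 0:
            theta = t  # keep the threshold from the largest j that qualifies
    return [max(v - theta, 0.0) for v in x]


print(project_nonnegative([-1.0, 2.0]))
print(soft_threshold([3.0, -0.5, -2.0], 1.0))
print(project_unit_ball([3.0, 4.0]))
print(project_simplex([0.2, 1.5, -0.3]))
```

In projected SGD, one of these functions would be applied to the affected parameter vector right after each gradient step.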

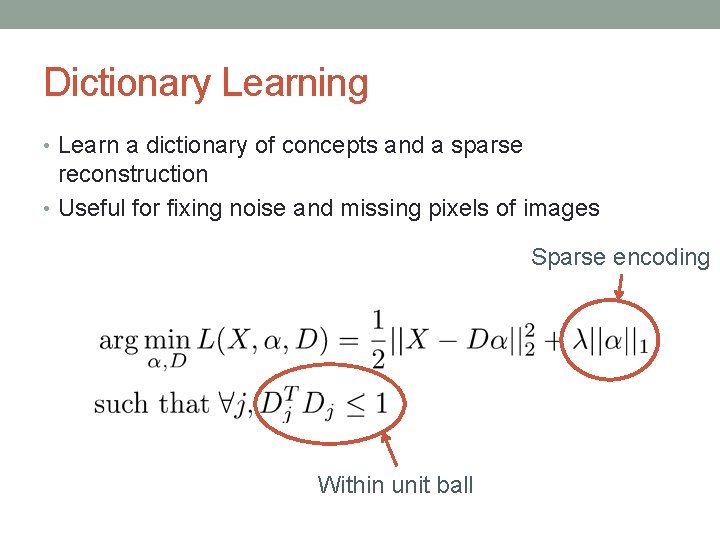

Dictionary Learning
• Learn a dictionary of concepts and a sparse reconstruction
• Useful for fixing noise and missing pixels of images
• Constraints: sparse encoding, within unit ball

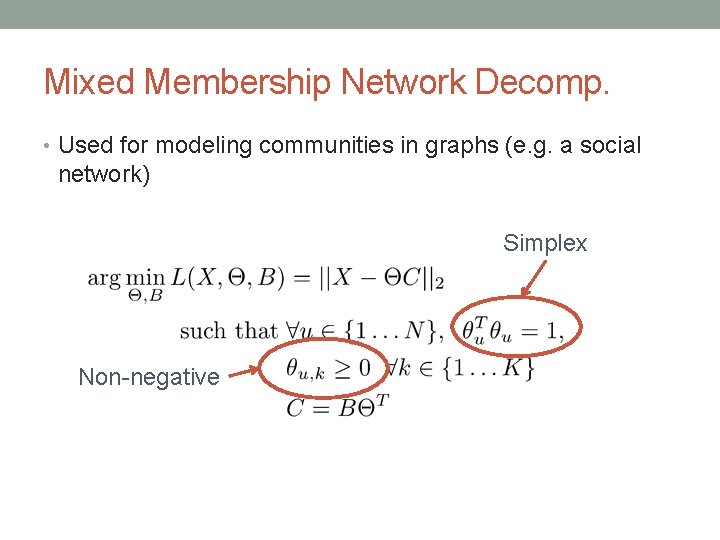

Mixed Membership Network Decomp.
• Used for modeling communities in graphs (e.g. a social network)
• Constraints: simplex, non-negative

IMPLEMENTING ON HADOOP

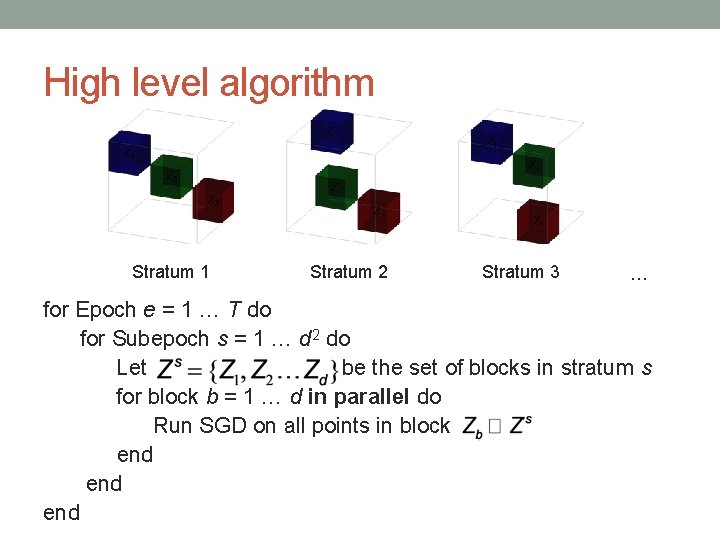

High level algorithm (strata are processed in order: Stratum 1, Stratum 2, Stratum 3, …)

for Epoch e = 1 … T do
    for Subepoch s = 1 … d² do
        Let Z_s be the set of blocks in stratum s
        for block b = 1 … d in parallel do
            Run SGD on all points in block b of Z_s
        end
    end
end
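The epoch and subepoch loop can be made concrete with a small, self-contained Python sketch. Everything specific here is an assumption for illustration: a synthetic rank-1 tensor, scalar factors per index, a fixed step size, and a shift-based choice of strata. The inner loop over blocks runs sequentially, although the blocks of one stratum could run in parallel.

```python
import random
from itertools import product

random.seed(0)
d, size, lr, epochs = 3, 2, 0.02, 20   # d blocks per mode, `size` indices per block
n = d * size

# Synthetic rank-1 tensor stored as a dict of (i, j, k) -> value.
true = [[random.uniform(0.5, 1.5) for _ in range(n)] for _ in range(3)]
X = {(i, j, k): true[0][i] * true[1][j] * true[2][k]
     for i, j, k in product(range(n), repeat=3)}

# Rank-1 factors U, V, W (one scalar per index), all initialised to 1.0.
U, V, W = [1.0] * n, [1.0] * n, [1.0] * n

# Group points by block so each worker only touches its own block.
blocks = {}
for (i, j, k), x in X.items():
    blocks.setdefault((i // size, j // size, k // size), []).append((i, j, k, x))


def loss():
    """Mean squared reconstruction error over all points."""
    return sum((x - U[i] * V[j] * W[k]) ** 2 for (i, j, k), x in X.items()) / len(X)


def sgd_on_block(points):
    """One SGD pass over the points of a single block."""
    for i, j, k, x in points:
        err = x - U[i] * V[j] * W[k]
        gu, gv, gw = err * V[j] * W[k], err * U[i] * W[k], err * U[i] * V[j]
        U[i] += lr * gu
        V[j] += lr * gv
        W[k] += lr * gw


initial_loss = loss()
for epoch in range(epochs):
    for a, b in product(range(d), repeat=2):      # d^2 subepochs, one per stratum
        stratum = [(i, (i + a) % d, (i + b) % d) for i in range(d)]
        for block in stratum:                     # parallelisable: no shared parameters
            sgd_on_block(blocks[block])
final_loss = loss()
print(f"loss: {initial_loss:.4f} -> {final_loss:.6f}")
```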

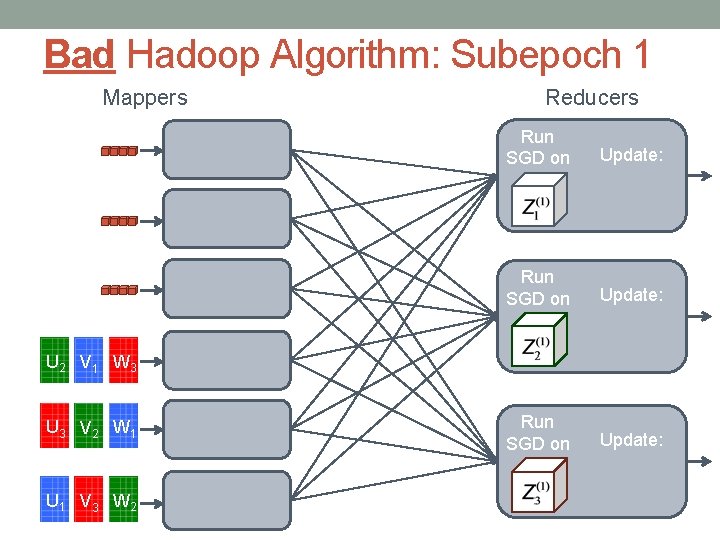

Bad Hadoop Algorithm: Subepoch 1
Mappers → Reducers. Each reducer runs SGD on one block and updates:
• U2, V1, W3
• U3, V2, W1
• U1, V3, W2

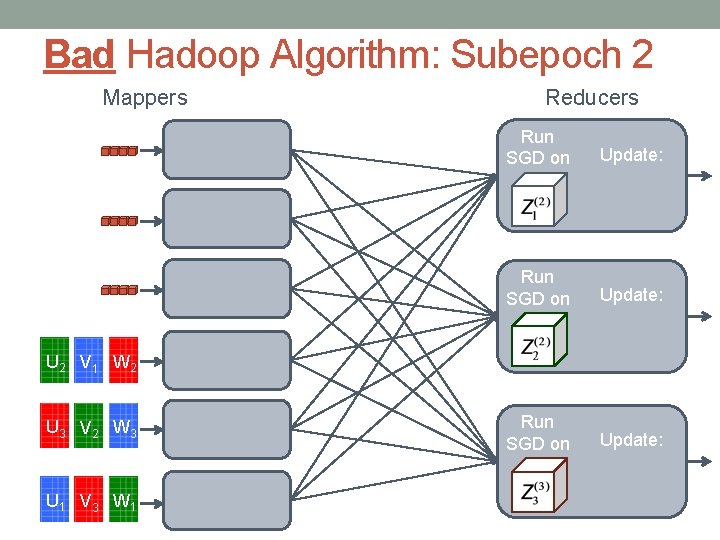

Bad Hadoop Algorithm: Subepoch 2
Mappers → Reducers. Each reducer runs SGD on one block and updates:
• U2, V1, W2
• U3, V2, W3
• U1, V3, W1

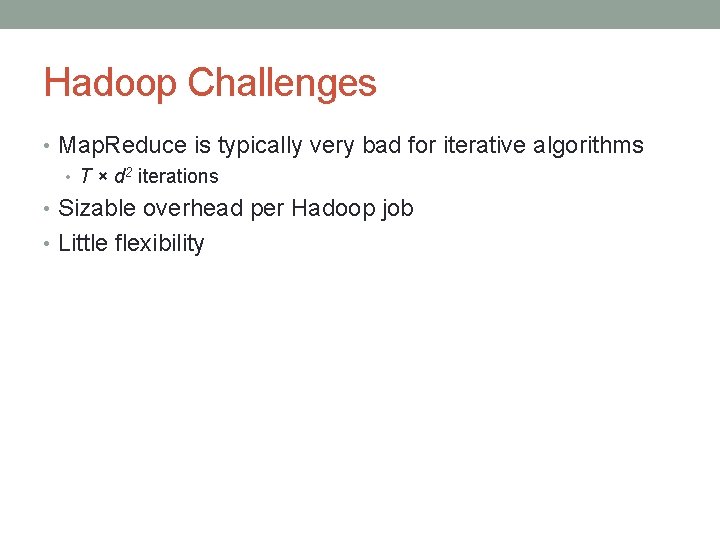

Hadoop Challenges
• MapReduce is typically very bad for iterative algorithms
• T × d² iterations
• Sizable overhead per Hadoop job
• Little flexibility

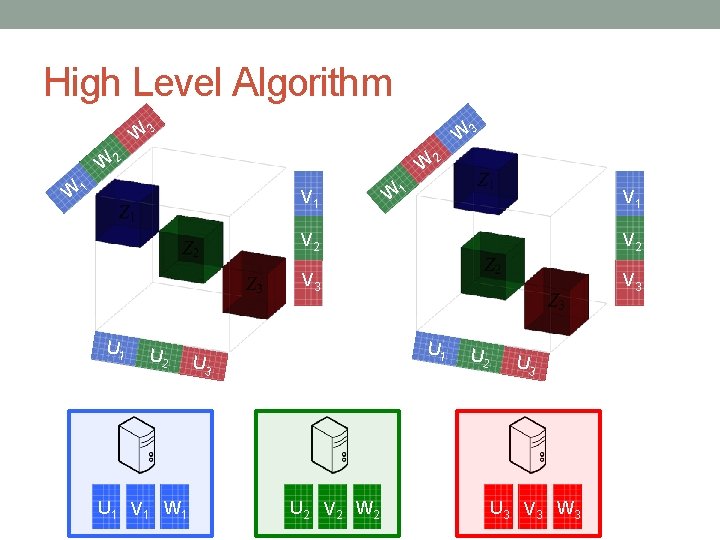

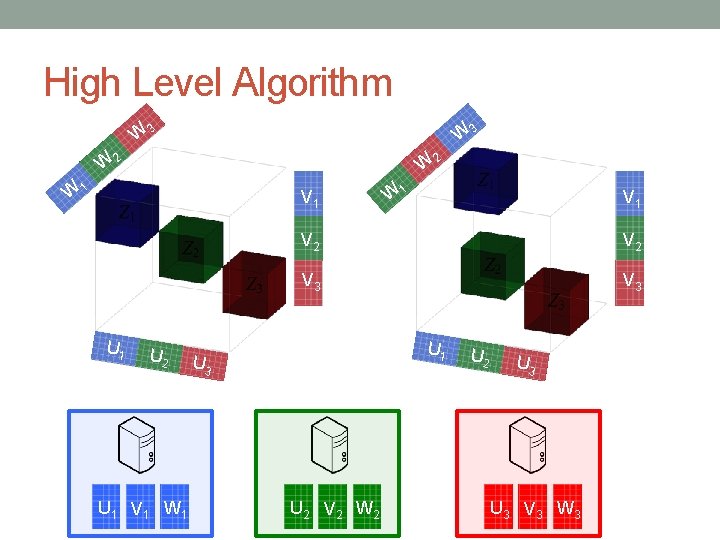

High Level Algorithm: parameter blocks processed in parallel: (U1, V1, W1), (U2, V2, W2), (U3, V3, W3)

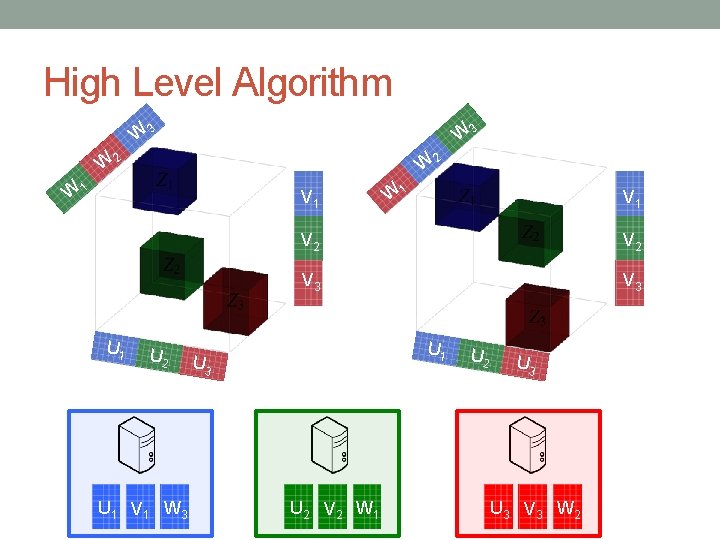

High Level Algorithm: parameter blocks processed in parallel: (U1, V1, W3), (U2, V2, W1), (U3, V3, W2)

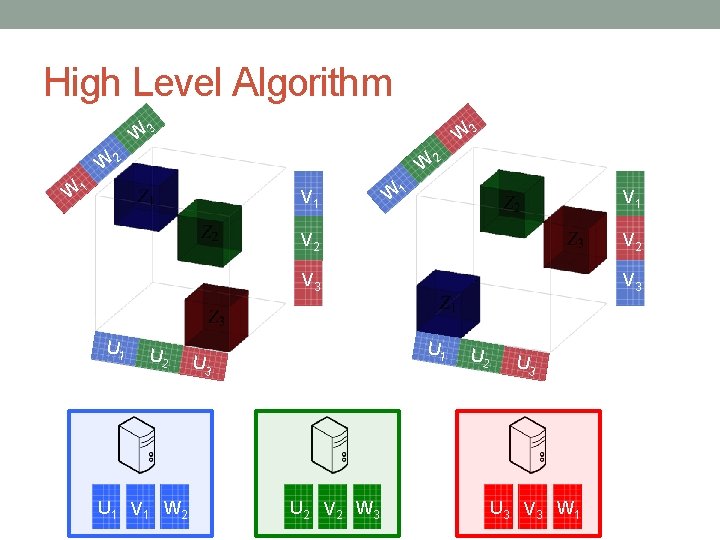

High Level Algorithm: parameter blocks processed in parallel: (U1, V1, W2), (U2, V2, W3), (U3, V3, W1)

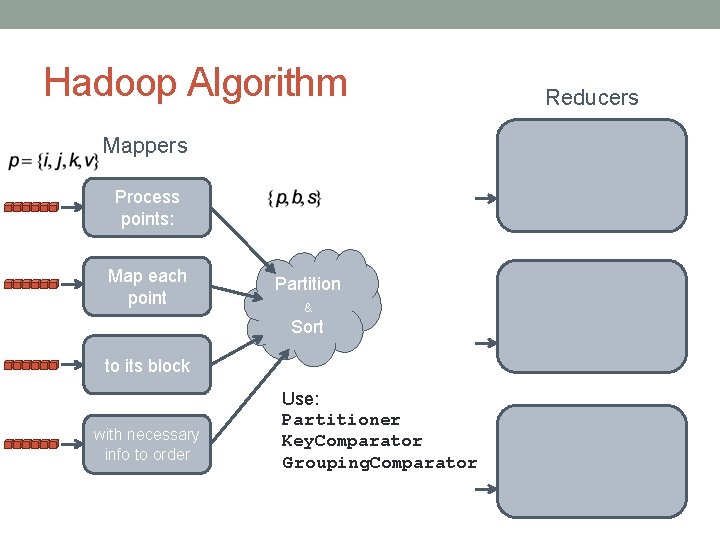

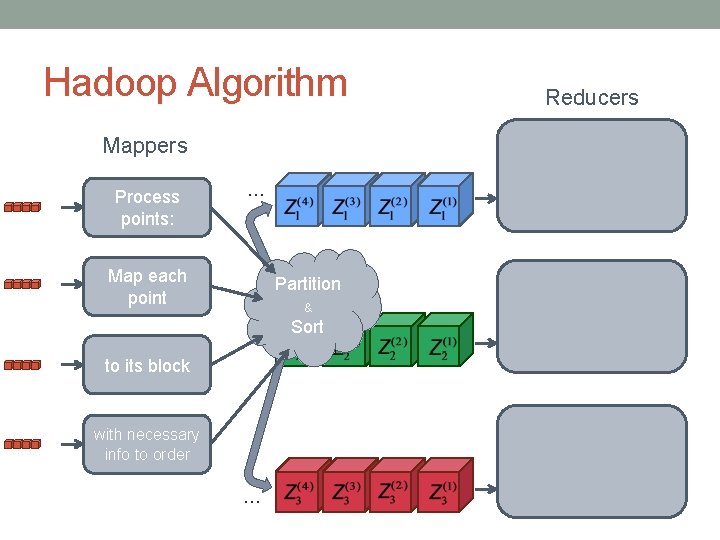

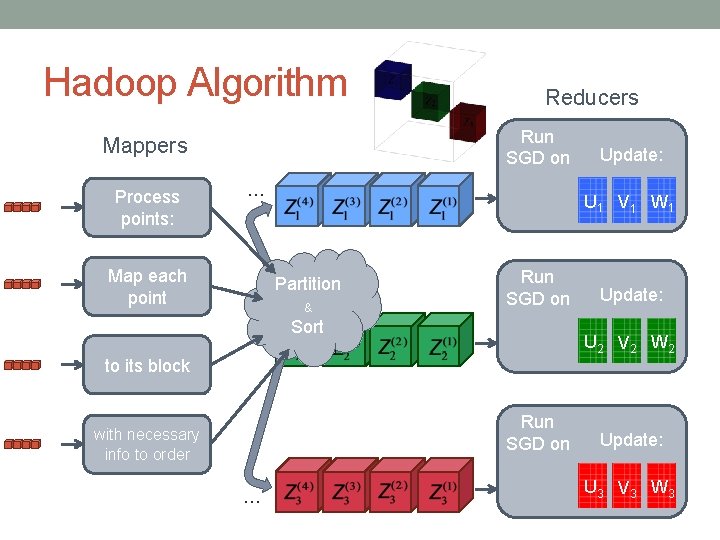

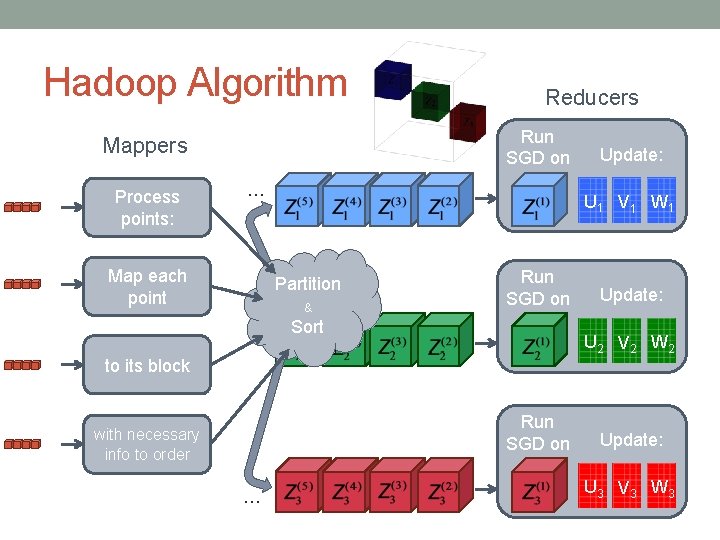

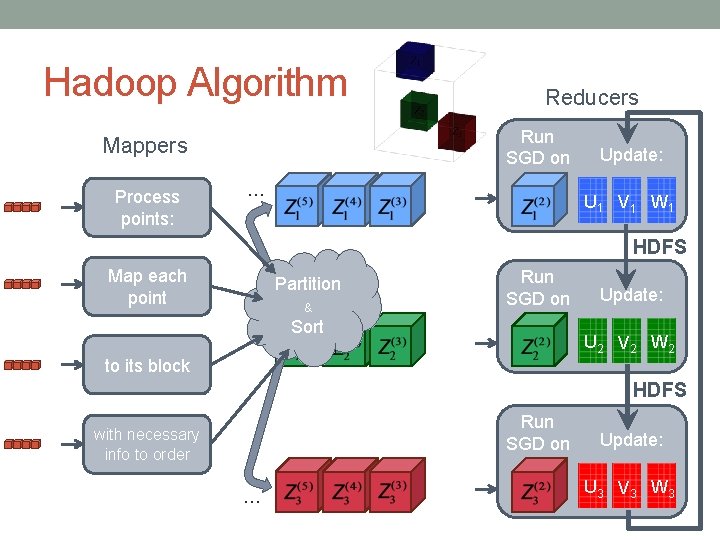

Hadoop Algorithm
• Mappers process points: map each point to its block, with the necessary info to order it
• Partition & Sort, using a Partitioner, KeyComparator, and GroupingComparator
• Reducers: each reducer runs SGD on its blocks in order and updates its parameters: (U1, V1, W1), (U2, V2, W2), (U3, V3, W3), …
• Updated parameters pass between reducers through HDFS
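The map, partition, and sort steps can be simulated in plain Python. This is only a sketch of the idea, not Hadoop API code: the composite key layout `(reducer, subepoch)`, the rule that reducer r owns U block r, and every name below are assumptions made for illustration.

```python
from itertools import groupby

d = 3           # blocks per mode (matches the d = 3 example)
block_size = 2  # indices per block (hypothetical)


def map_point(i, j, k, value):
    """Mapper: emit ((reducer, subepoch), point) for one tensor entry."""
    bi, bj, bk = i // block_size, j // block_size, k // block_size
    reducer = bi                                     # assumption: reducer r owns U block r
    subepoch = ((bj - bi) % d) * d + (bk - bi) % d   # stratum that contains this block
    return (reducer, subepoch), (i, j, k, value)


def partition(key):
    """Partitioner: route by reducer id only, ignoring the subepoch."""
    return key[0]


points = [(0, 0, 0, 1.0), (5, 1, 3, 2.0), (0, 2, 4, 3.0), (1, 1, 1, 4.0), (4, 5, 0, 5.0)]
emitted = [map_point(*p) for p in points]

# Shuffle: send each pair to its reducer.
per_reducer = {r: [] for r in range(d)}
for key, point in emitted:
    per_reducer[partition(key)].append((key, point))

for r, pairs in per_reducer.items():
    pairs.sort(key=lambda kv: kv[0])                           # role of the key comparator
    for key, group in groupby(pairs, key=lambda kv: kv[0]):    # role of the grouping comparator
        print(f"reducer {r}, subepoch {key[1]}: {[p for _, p in group]}")
```

Because the sort key includes the subepoch, each reducer receives its blocks in the order it has to process them and can stream through them without buffering the whole input.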

Hadoop Summary
1. Use mappers to send data points to the correct reducers in order
2. Use reducers as machines in a normal cluster
3. Use HDFS as the communication channel between reducers

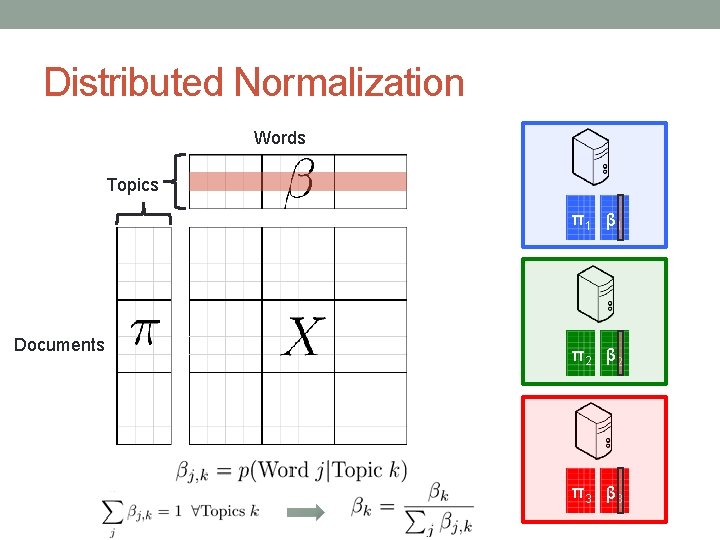

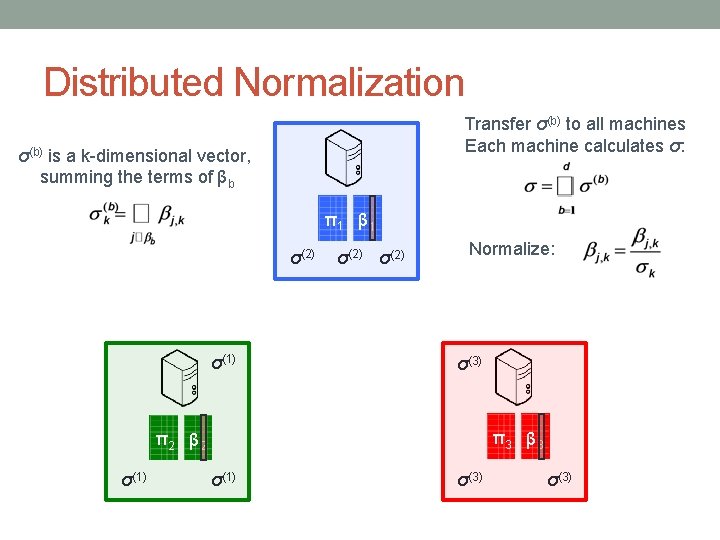

Distributed Normalization: the Documents × Topics matrix π and the Topics × Words matrix β are split into blocks (π1, β1), (π2, β2), (π3, β3)

Distributed Normalization
• Each machine b calculates σ(b), a k-dimensional vector summing the terms of β_b
• Transfer σ(b) to all machines
• Normalize each block (π1, β1), (π2, β2), (π3, β3) using σ(1), σ(2), and σ(3)
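The three steps can be sketched in Python as follows. The normalization formula itself is not recoverable from the slide, so this sketch assumes the goal is for each topic's row of β to sum to 1 across all machines; the toy data and variable names are hypothetical.

```python
k = 2  # number of topics (hypothetical)

# Each machine b holds beta_b: k rows (topics) over its own slice of the vocabulary.
beta_blocks = [
    [[1.0, 3.0], [2.0, 2.0]],   # machine 1
    [[4.0], [1.0]],             # machine 2
    [[2.0, 0.0], [3.0, 2.0]],   # machine 3
]

# Step 1: each machine computes its local k-dimensional sum sigma(b).
sigmas = [[sum(row) for row in block] for block in beta_blocks]

# Step 2: every machine receives all sigma(b) and adds them up.
sigma = [sum(s[t] for s in sigmas) for t in range(k)]

# Step 3: each machine normalises its own block with the global sums.
normalized = [[[v / sigma[t] for v in block[t]] for t in range(k)]
              for block in beta_blocks]

print(sigma)
print(normalized)
```

Only the k-dimensional vectors σ(b) cross the network, so the communication cost does not depend on the vocabulary size.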

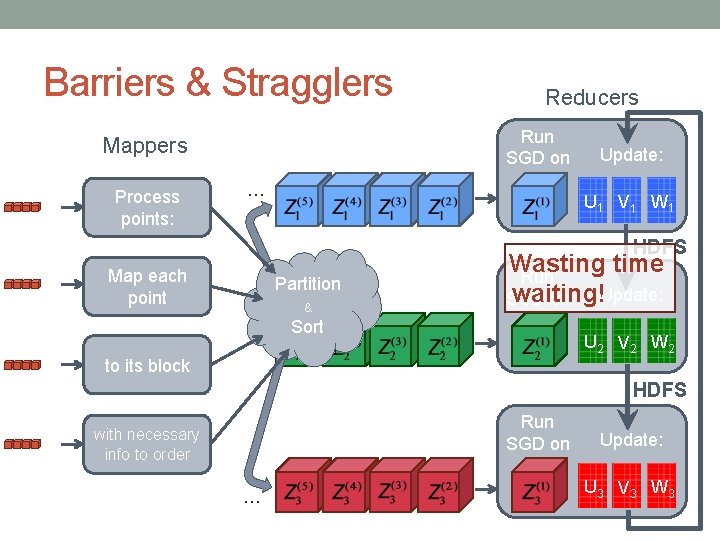

Barriers & Stragglers
• Mappers process points: map each point to its block, with the necessary info to order it
• Partition & Sort
• Reducers run SGD and update (U1, V1, W1), (U2, V2, W2), (U3, V3, W3), syncing through HDFS
• Reducers that finish early are wasting time waiting!

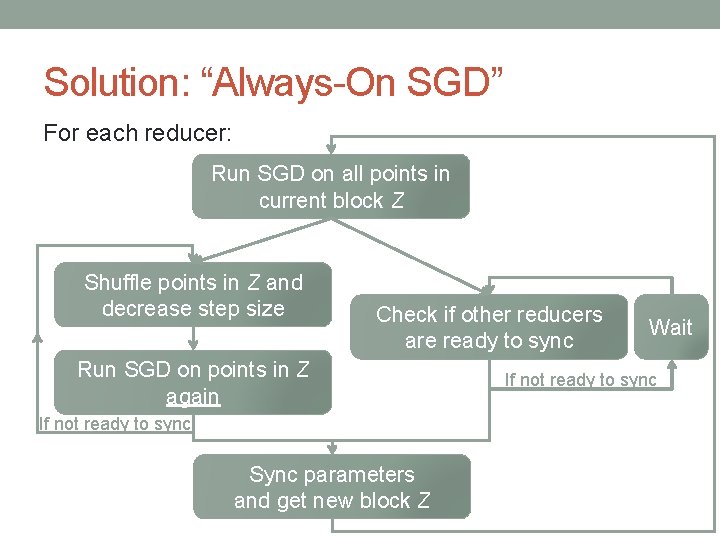

Solution: “Always-On SGD”
For each reducer:
1. Run SGD on all points in current block Z
2. Check if other reducers are ready to sync
3. If not ready to sync: instead of waiting, shuffle points in Z, decrease step size, run SGD on points in Z again, and check again
4. If ready to sync: sync parameters and get new block Z
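The per-reducer loop can be sketched in Python. This is a hedged illustration only: `sgd_pass` and `ready_to_sync` are hypothetical caller-supplied callbacks, and the halving step-size schedule (`decay=0.5`) is an assumption, since the slides do not give the schedule.

```python
import random


def always_on_sgd(block, sgd_pass, ready_to_sync, step_size=0.1, decay=0.5, seed=0):
    """Process one block, doing extra passes until the other reducers are ready.

    sgd_pass(points, step_size) and ready_to_sync() are supplied by the caller.
    Returns the number of passes made over the block.
    """
    rng = random.Random(seed)
    points = list(block)
    sgd_pass(points, step_size)       # first pass over block Z
    passes = 1
    while not ready_to_sync():
        rng.shuffle(points)           # revisit the old points in a new order
        step_size *= decay            # with a smaller step size
        sgd_pass(points, step_size)
        passes += 1
    return passes                     # the caller now syncs and fetches the next block


# Toy usage: the other reducers become ready on the third check.
checks = iter([False, False, True])
seen_steps = []
passes = always_on_sgd(
    block=[1, 2, 3],
    sgd_pass=lambda pts, lr: seen_steps.append(lr),
    ready_to_sync=lambda: next(checks),
)
print(passes, seen_steps)
```

A fast reducer therefore spends the time it would have idled at the barrier on additional, smaller updates to the block it already holds.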

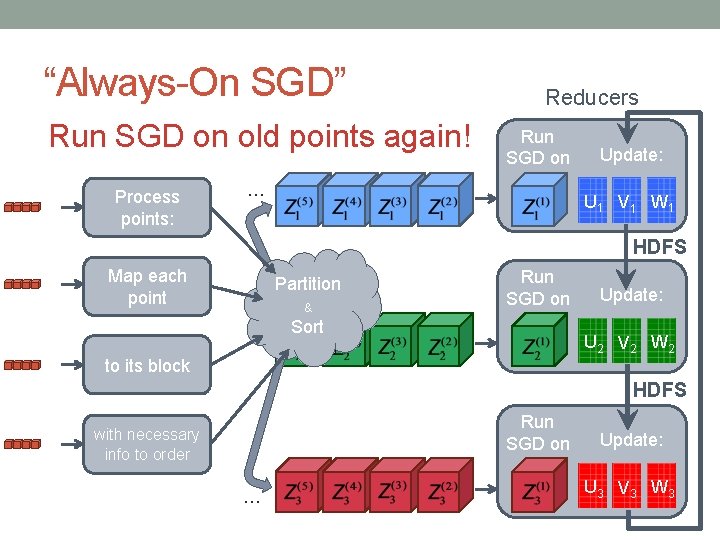

“Always-On SGD”
• Mappers process points: map each point to its block, with the necessary info to order it
• Partition & Sort
• Reducers run SGD and update (U1, V1, W1), (U2, V2, W2), (U3, V3, W3), syncing through HDFS
• Instead of waiting, reducers run SGD on old points again!

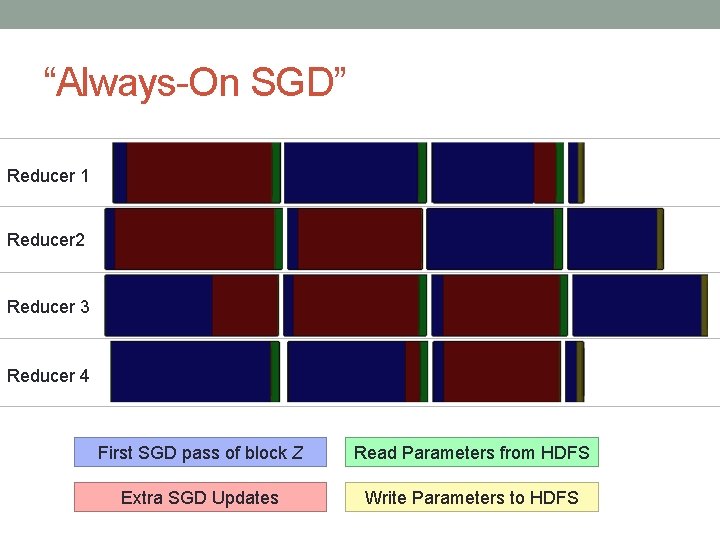

“Always-On SGD”: timeline for Reducers 1 to 4. Legend: read parameters from HDFS; first SGD pass of block Z; extra SGD updates; write parameters to HDFS

EXPERIMENTS

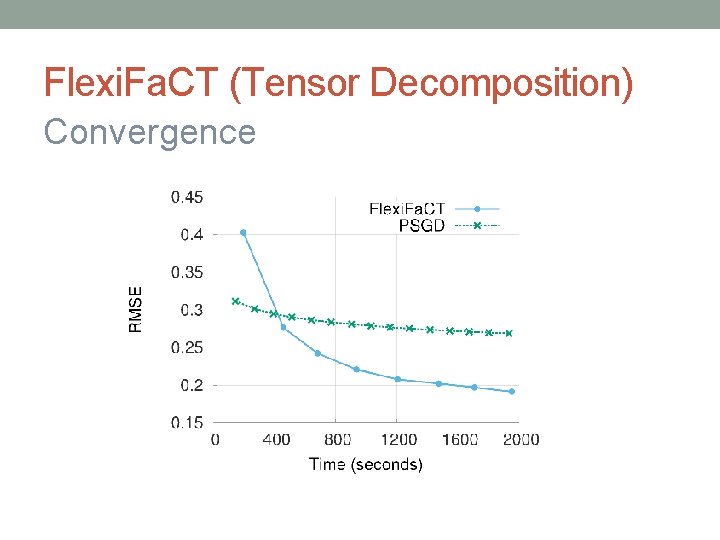

Flexi. Fa. CT (Tensor Decomposition) Convergence

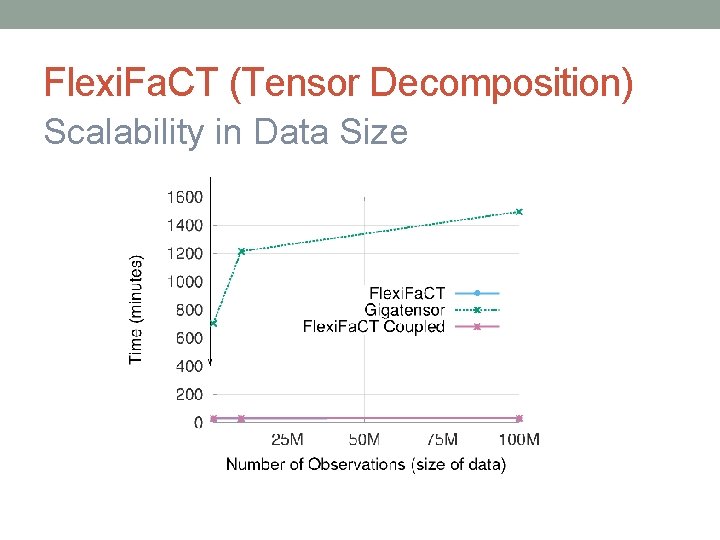

Flexi. Fa. CT (Tensor Decomposition) Scalability in Data Size

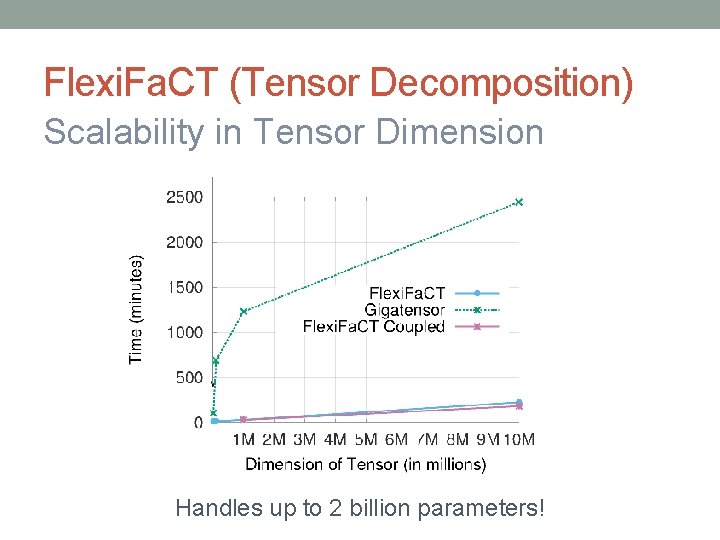

Flexi. Fa. CT (Tensor Decomposition) Scalability in Tensor Dimension Handles up to 2 billion parameters!

Flexi. Fa. CT (Tensor Decomposition) Scalability in Rank of Decomposition Handles up to 4 billion parameters!

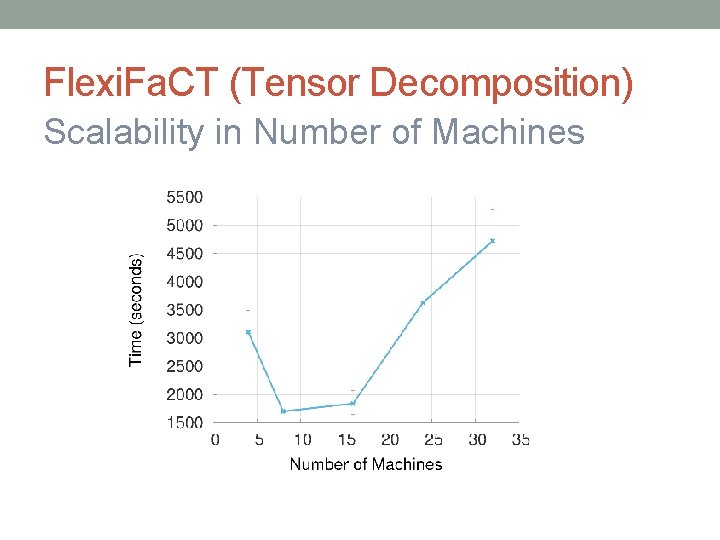

Flexi. Fa. CT (Tensor Decomposition) Scalability in Number of Machines

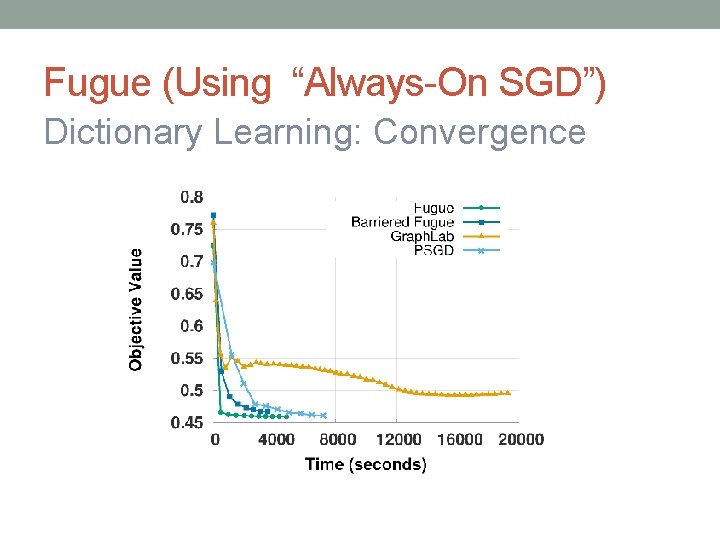

Fugue (Using “Always-On SGD”) Dictionary Learning: Convergence

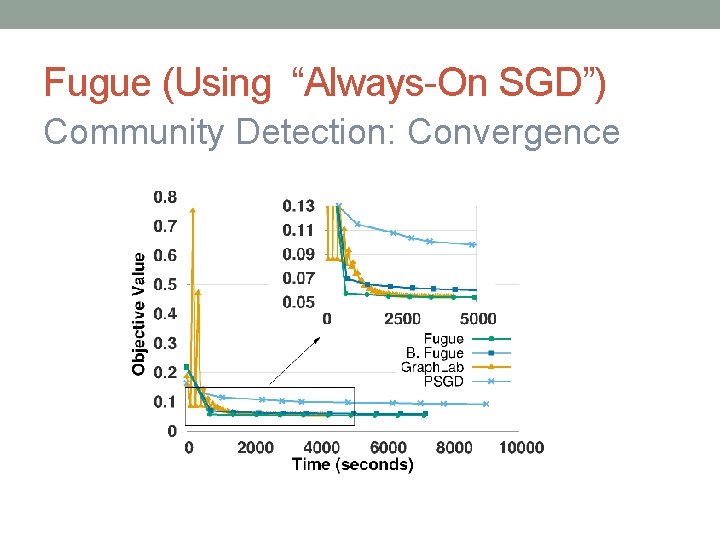

Fugue (Using “Always-On SGD”) Community Detection: Convergence

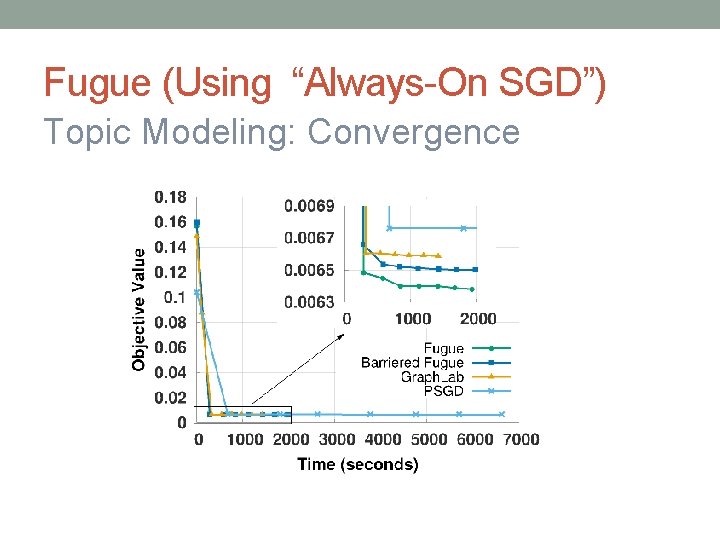

Fugue (Using “Always-On SGD”) Topic Modeling: Convergence

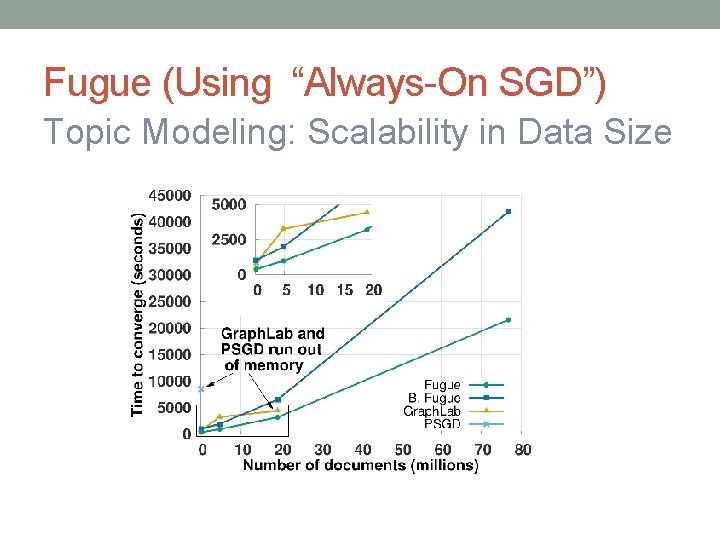

Fugue (Using “Always-On SGD”) Topic Modeling: Scalability in Data Size

Fugue (Using “Always-On SGD”) Topic Modeling: Scalability in Rank

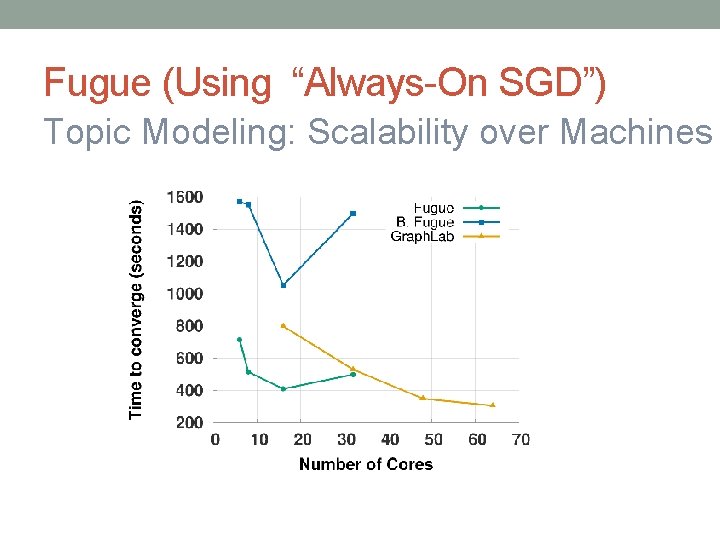

Fugue (Using “Always-On SGD”) Topic Modeling: Scalability over Machines

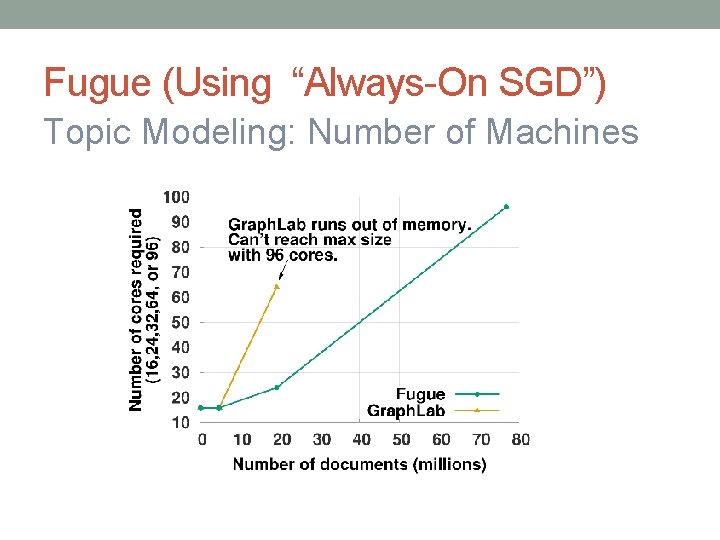

Fugue (Using “Always-On SGD”) Topic Modeling: Number of Machines

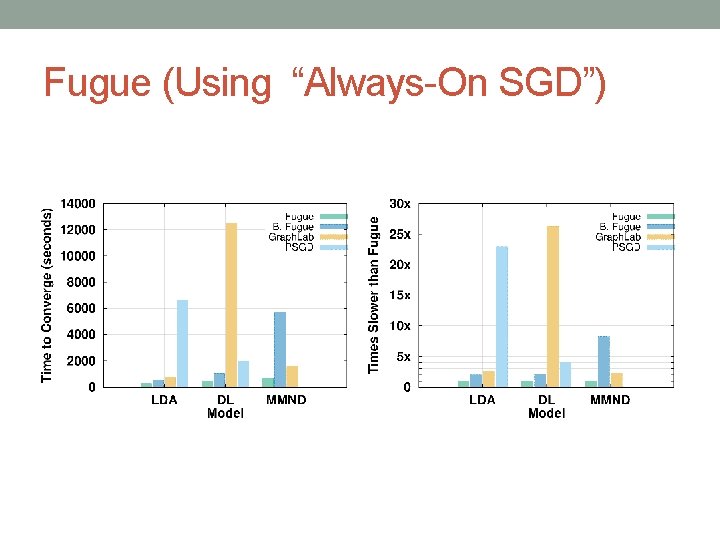

Fugue (Using “Always-On SGD”)

Key Points
• Flexible method for tensors & ML models
• Can use stock Hadoop by using HDFS for communication
• When waiting for slower machines, run updates on old data again

FURTHER SYSTEM IMPROVEMENTS

Joint work with Markus Weimer, Tom Minka, Yordan Zaykov and Vijay Narayanan at Microsoft

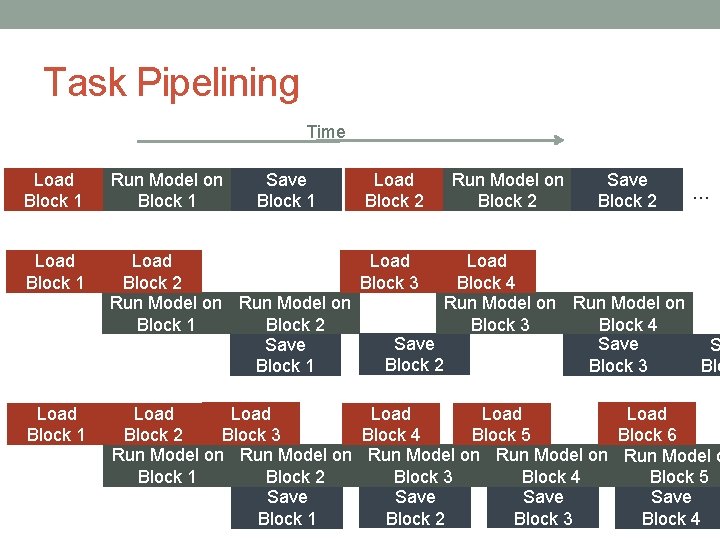

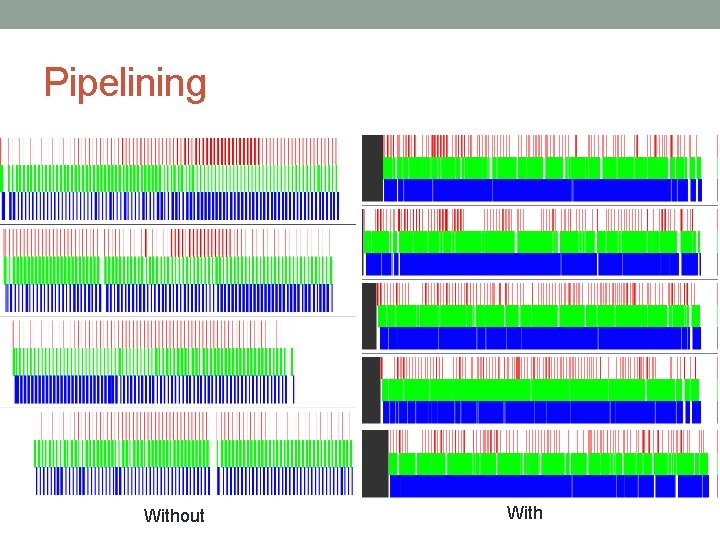

Task Pipelining: timeline figure with three stages per block (Load Block, Run Model on Block, Save Block). Without pipelining the stages run back to back; with pipelining, stages for different blocks overlap in time.

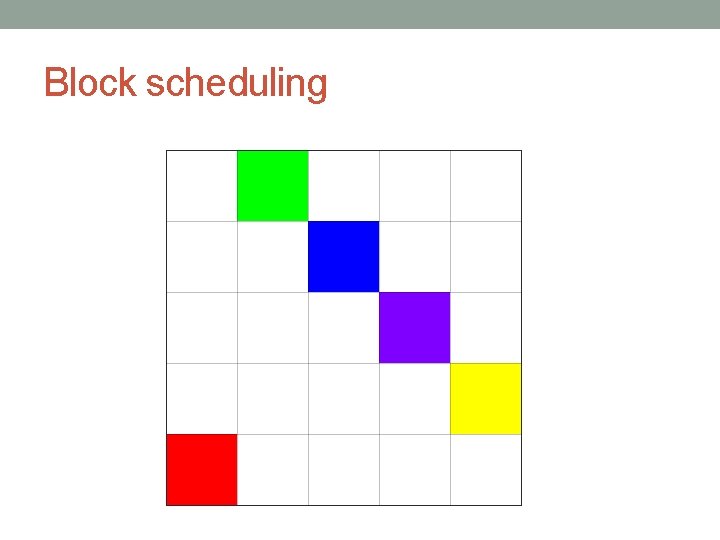

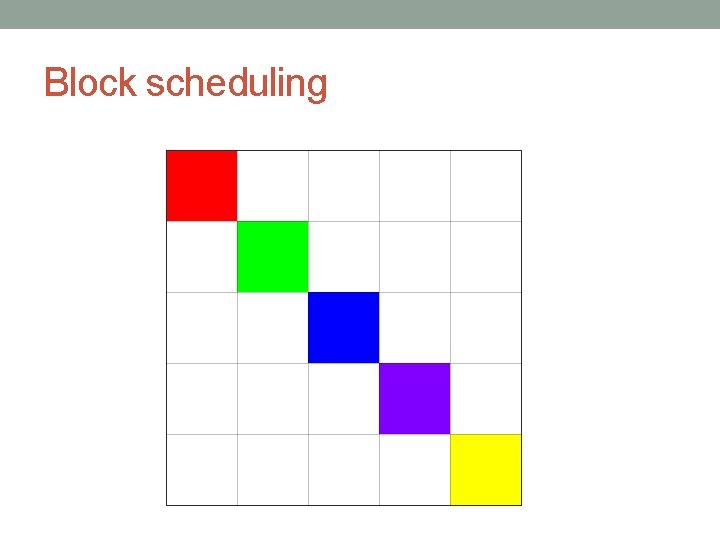

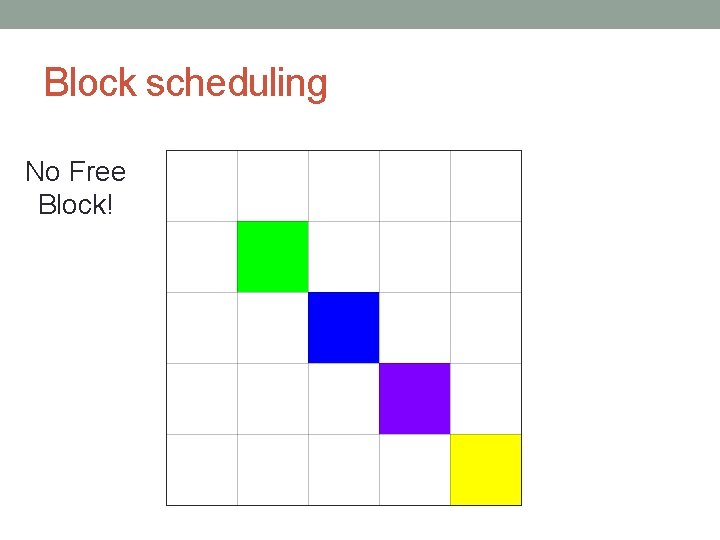

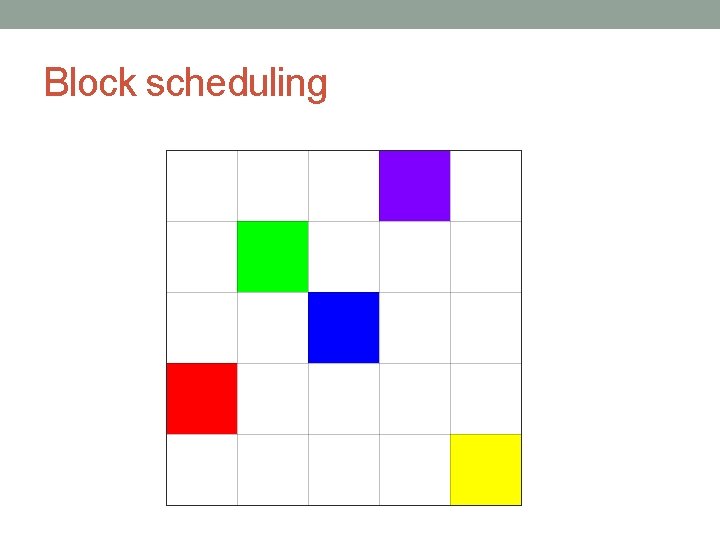

Block scheduling

Block scheduling: No Free Block!

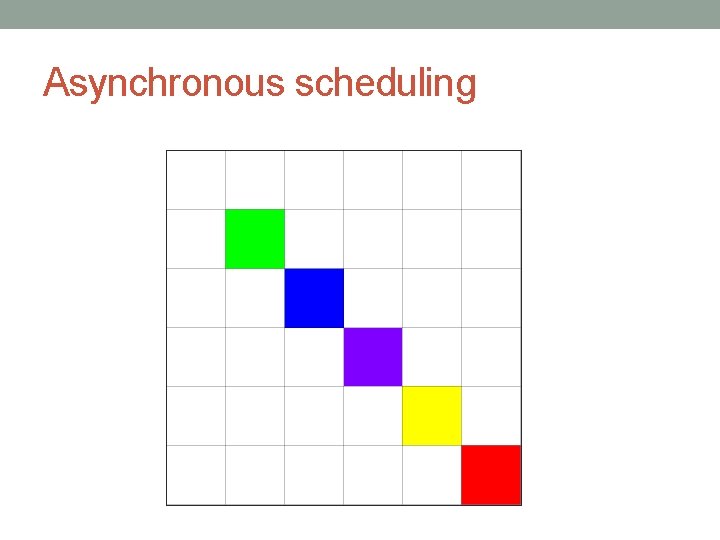

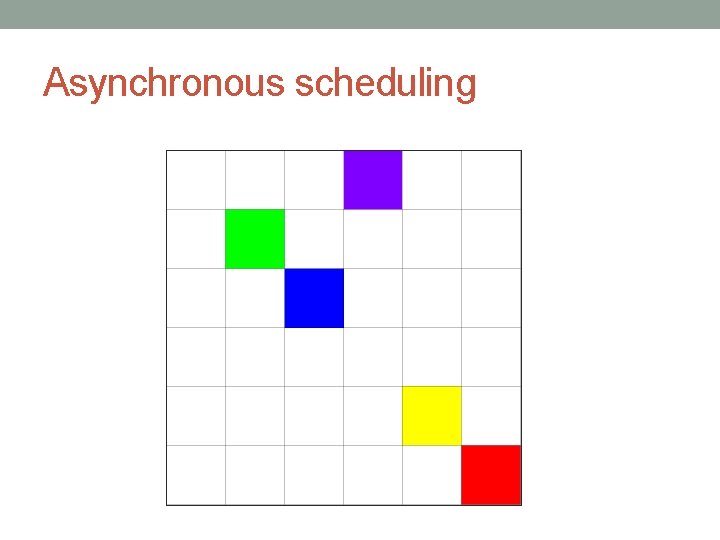

Asynchronous scheduling [Zhuang, 2013]: use a block grid that is 1 column bigger and 1 row bigger

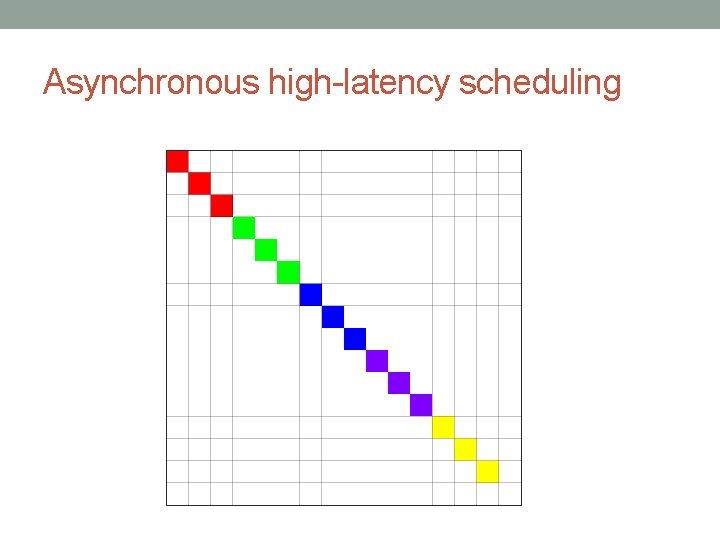

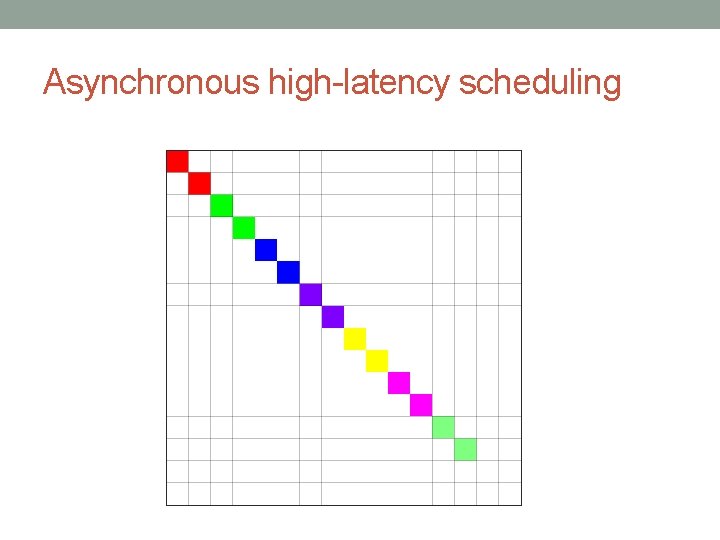

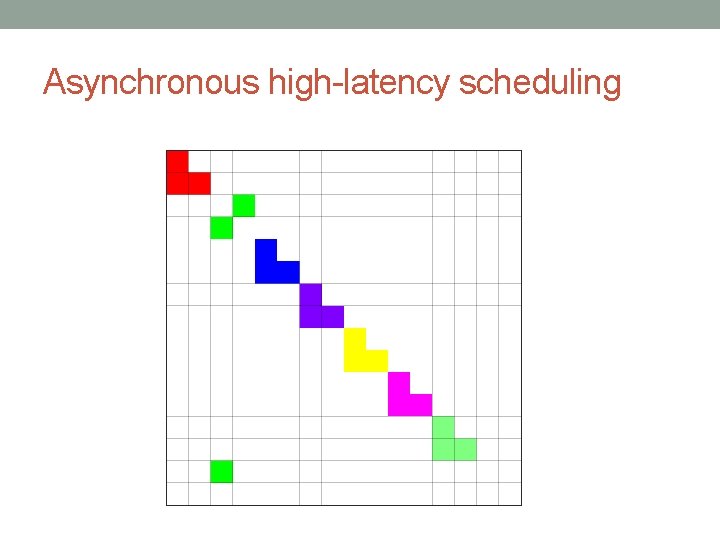

Asynchronous high-latency scheduling

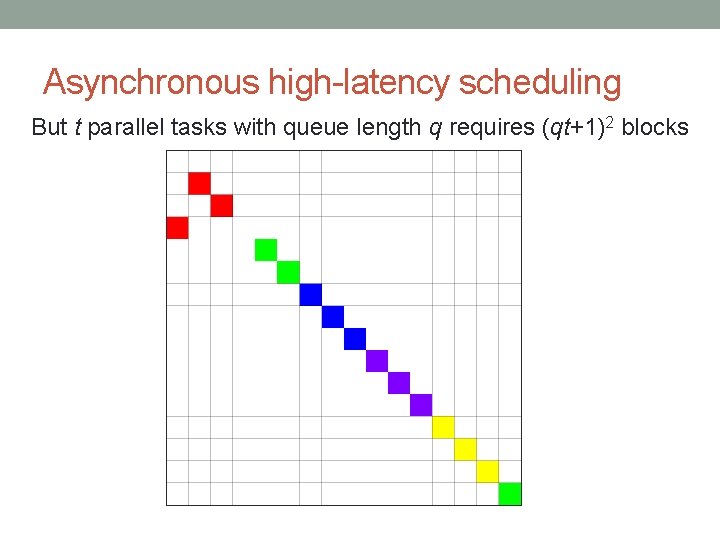

Asynchronous high-latency scheduling: but t parallel tasks with queue length q require (qt+1)² blocks
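A quick Python calculation shows how fast the block count grows under this formula. The function name `blocks_required` and the sample values of t and q are illustrative choices only.

```python
def blocks_required(t, q):
    """Blocks needed for t parallel tasks with queue length q: (q*t + 1)^2."""
    return (q * t + 1) ** 2


for t, q in [(3, 1), (3, 2), (8, 4)]:
    print(f"t={t}, q={q}: {blocks_required(t, q)} blocks")
```

With q = 1 the formula reduces to (t+1)², a grid one row and one column larger than the number of parallel tasks.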

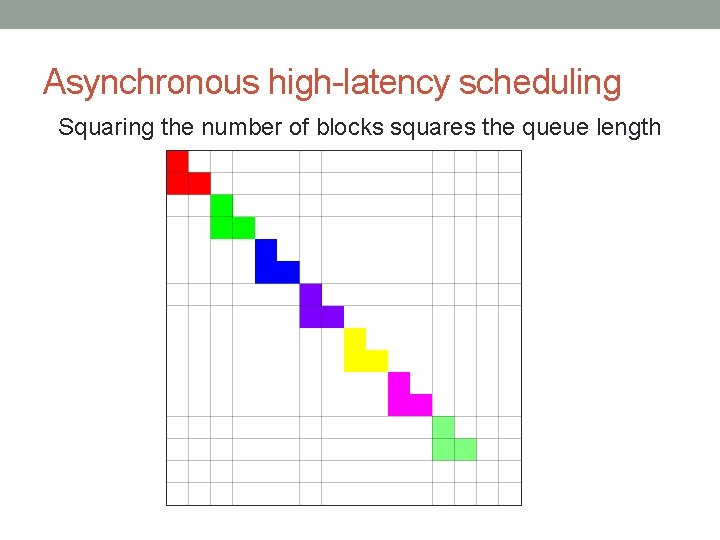

Asynchronous high-latency scheduling Squaring the number of blocks squares the queue length

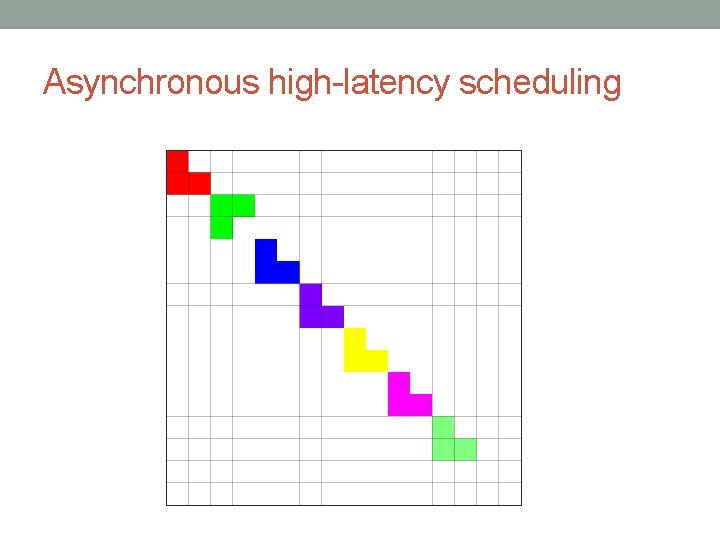

Pipelining: Without vs. With

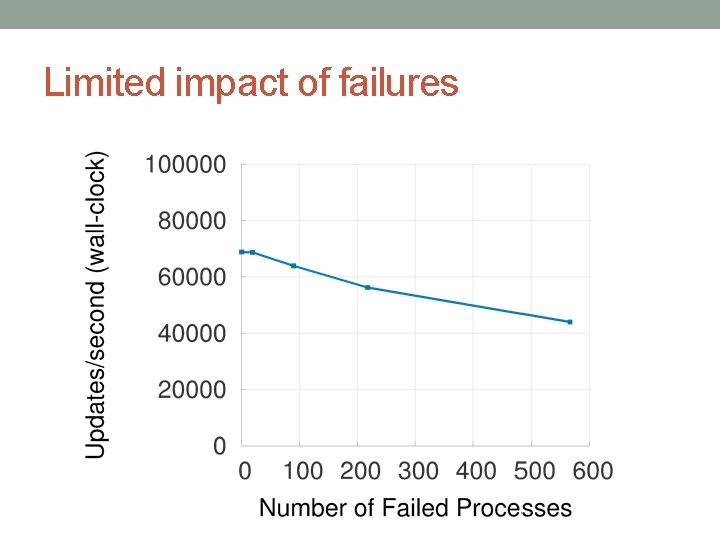

Limited impact of failures

BAYESIAN GRAPHICAL MODELS

Joint work with Markus Weimer, Tom Minka, Yordan Zaykov and Vijay Narayanan

Matrix Factorization: X ≈ UV, where X is Users × Movies, U is Users × Genres, and V is Genres × Movies

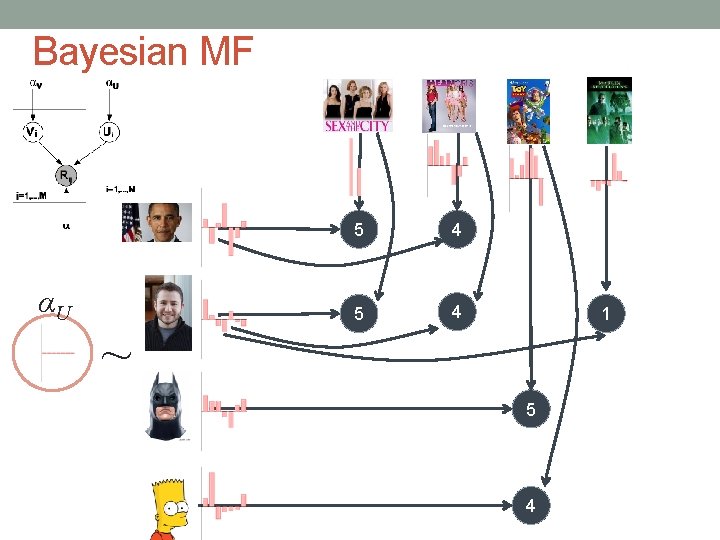

Bayesian MF (figure labels: prior αU; ratings 5, 4, 1, 5, 4)

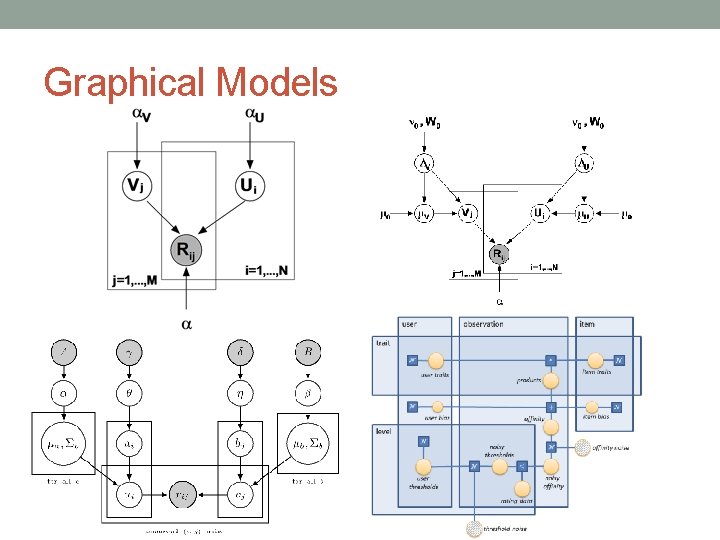

Graphical Models

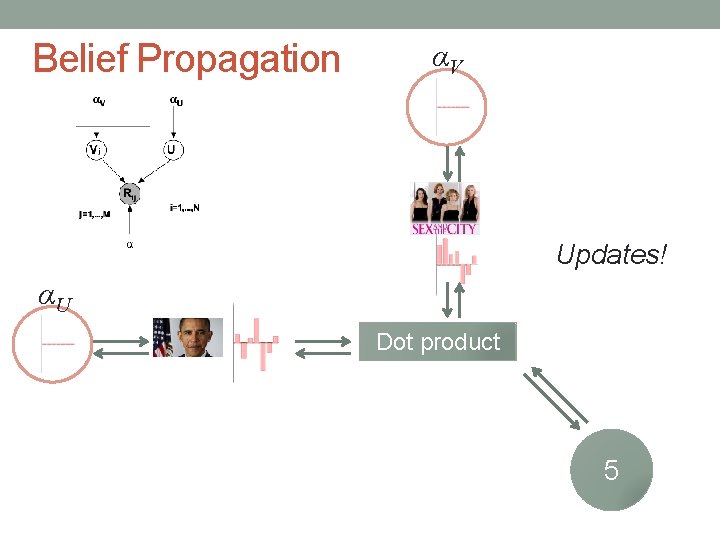

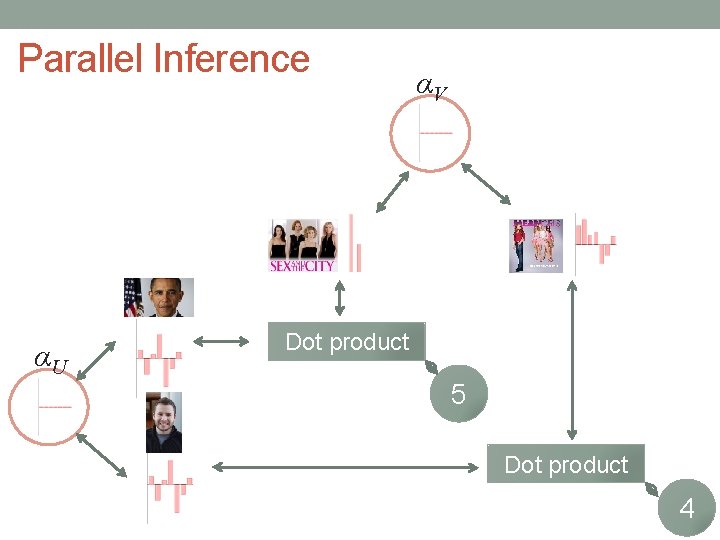

Belief Propagation: updates! (figure labels: αU, αV, dot product, rating 5)

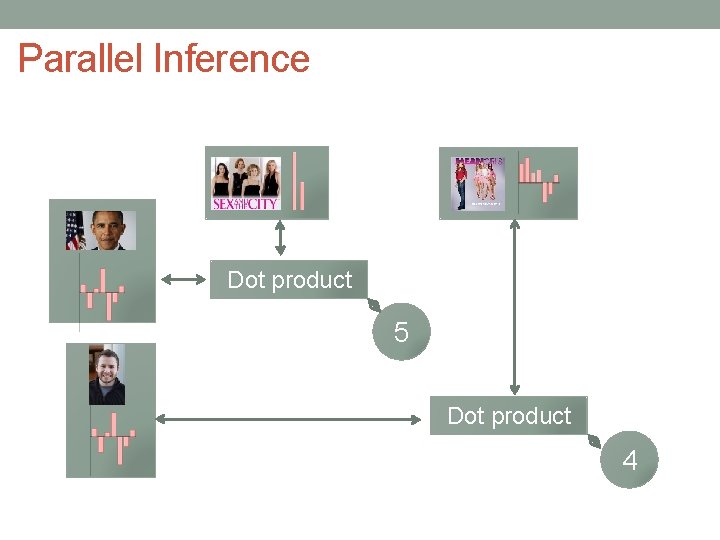

Parallel Inference Dot product 5 Dot product 4

Partition data & parameters (Gemulla, 2011)
• Partition your data & model into d × d blocks
• Results in d = 3 strata
• Process strata sequentially, process blocks in each stratum in parallel

Parallel Inference αU αV Dot product 5 Dot product 4

Questions?
Alex Beutel
abeutel@cs.cmu.edu
http://alexbeutel.com
