Recommender Systems: Latent Factor Models

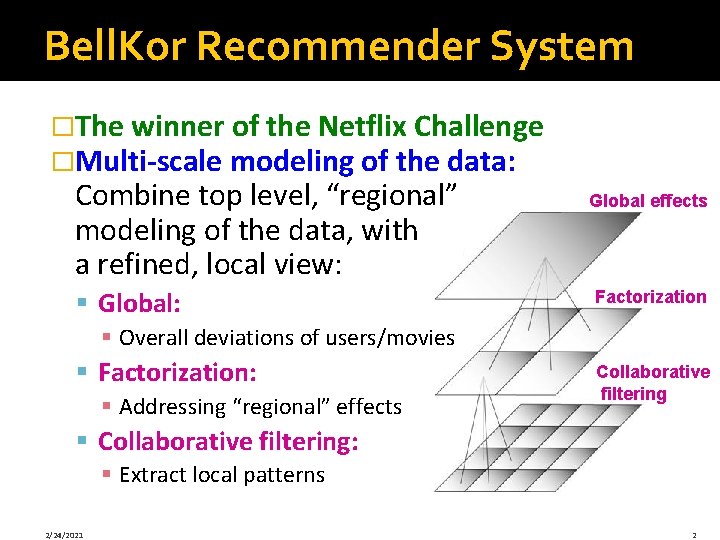

BellKor Recommender System

- The winner of the Netflix Challenge
- Multi-scale modeling of the data: combine top-level, “regional” modeling of the data with a refined, local view:
  - Global: overall deviations of users/movies
  - Factorization: addressing “regional” effects
  - Collaborative filtering: extracting local patterns

(Figure: three modeling levels, Global effects, Factorization, Collaborative filtering.)

2/24/2021

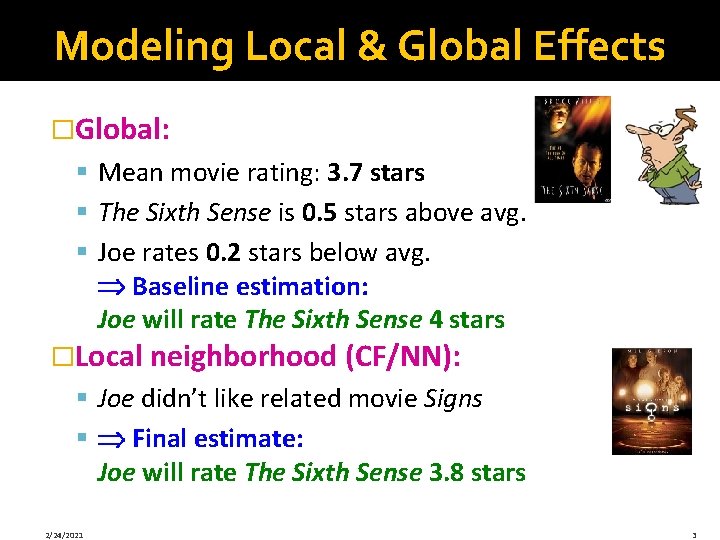

Modeling Local & Global Effects

Global:
- Mean movie rating: 3.7 stars
- The Sixth Sense is 0.5 stars above average
- Joe rates 0.2 stars below average
- Baseline estimate: Joe will rate The Sixth Sense 4 stars

Local neighborhood (CF/NN):
- Joe didn’t like the related movie Signs
- Final estimate: Joe will rate The Sixth Sense 3.8 stars
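The global part of this estimate is plain arithmetic on deviations from the mean. A minimal Python sketch using the slide’s numbers (the variable names are our own); the local CF correction that lowers the estimate to 3.8 is not modeled here:

```python
# Global-effects baseline: overall mean plus the movie's and the user's deviations.
mu = 3.7        # mean movie rating
b_movie = 0.5   # The Sixth Sense is 0.5 stars above average
b_user = -0.2   # Joe rates 0.2 stars below average

baseline = mu + b_movie + b_user
print(f"Baseline estimate for Joe on The Sixth Sense: {baseline:.1f} stars")
```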

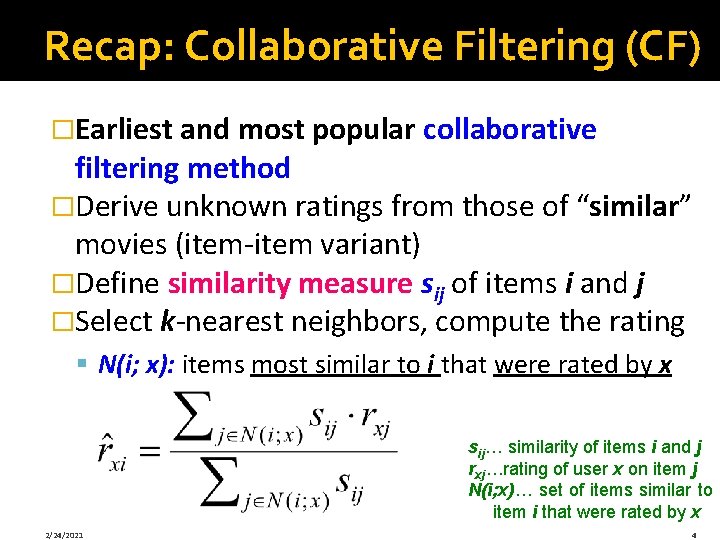

Recap: Collaborative Filtering (CF)

- Earliest and most popular collaborative filtering method
- Derive unknown ratings from those of “similar” movies (item-item variant)
- Define a similarity measure $s_{ij}$ of items $i$ and $j$
- Select the $k$ nearest neighbors and compute the rating:

$$\hat r_{xi} = \frac{\sum_{j \in N(i;x)} s_{ij}\, r_{xj}}{\sum_{j \in N(i;x)} s_{ij}}$$

where
- $s_{ij}$: similarity of items $i$ and $j$
- $r_{xj}$: rating of user $x$ on item $j$
- $N(i;x)$: set of items most similar to item $i$ that were rated by $x$
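The item-item rule above is a similarity-weighted average over $N(i;x)$. A minimal sketch in plain Python, assuming ratings are stored as a dict keyed by `(user, item)` and that item similarities $s_{ij}$ are already computed and symmetric; the function names and toy values are hypothetical:

```python
def similarity(sim, a, b):
    """Look up s_ab, treating the similarity table as symmetric (an assumption of this sketch)."""
    return sim.get((a, b), sim.get((b, a), 0.0))


def predict_item_item(ratings, sim, x, i, k=2):
    """Item-item CF: similarity-weighted average of x's ratings on the k items most similar to i.

    ratings: dict (user, item) -> rating, known entries only
    sim:     dict (item, item) -> similarity s_ij (hypothetical precomputed values)
    """
    rated_by_x = [j for (u, j) in ratings if u == x and j != i]
    # N(i; x): the k items rated by x that are most similar to i
    neighbors = sorted(rated_by_x, key=lambda j: similarity(sim, i, j), reverse=True)[:k]
    num = sum(similarity(sim, i, j) * ratings[(x, j)] for j in neighbors)
    den = sum(similarity(sim, i, j) for j in neighbors)
    return num / den if den else None  # None: no usable neighbors


# Hypothetical toy data: Joe rated items A, B, C; we predict his rating of item X.
ratings = {("joe", "A"): 4, ("joe", "B"): 2, ("joe", "C"): 5}
sim = {("X", "A"): 0.9, ("X", "B"): 0.2, ("X", "C"): 0.6}
prediction = predict_item_item(ratings, sim, "joe", "X", k=2)
print(round(prediction, 2))  # 4.4
```

With $k=2$, the neighbors are A and C, so the estimate is $(0.9 \cdot 4 + 0.6 \cdot 5)/(0.9 + 0.6) = 4.4$.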

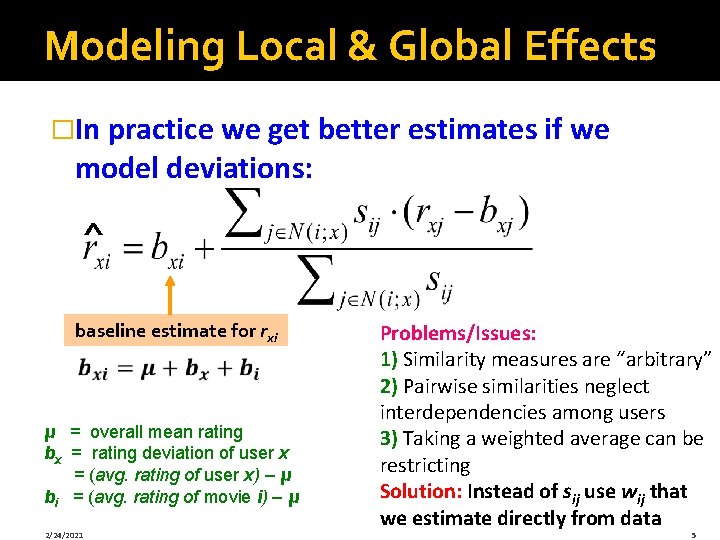

Modeling Local & Global Effects

In practice, we get better estimates if we model deviations:

$$\hat r_{xi} = b_{xi} + \frac{\sum_{j \in N(i;x)} s_{ij}\,(r_{xj} - b_{xj})}{\sum_{j \in N(i;x)} s_{ij}}$$

where $b_{xi} = \mu + b_x + b_i$ is the baseline estimate for $r_{xi}$, and
- $\mu$ = overall mean rating
- $b_x$ = rating deviation of user $x$ = (avg. rating of user $x$) $-\ \mu$
- $b_i$ = rating deviation of movie $i$ = (avg. rating of movie $i$) $-\ \mu$

Problems/issues:
1. Similarity measures are “arbitrary”
2. Pairwise similarities neglect interdependencies among users
3. Taking a weighted average can be restricting

Solution: instead of $s_{ij}$, use weights $w_{ij}$ that we estimate directly from the data.
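The deviation-based estimate can be sketched in plain Python, computing $\mu$, $b_x$, and $b_i$ exactly as defined above and then averaging the neighbors’ deviations from their own baselines. The ratings and similarities are hypothetical, and the fallback to the plain baseline when no neighbor has positive weight is our own choice:

```python
from statistics import mean


def baselines(ratings):
    """mu, b_x = (avg. rating of user x) - mu, b_i = (avg. rating of item i) - mu."""
    mu = mean(ratings.values())
    users = {u for u, _ in ratings}
    items = {j for _, j in ratings}
    b_user = {u: mean(r for (v, _), r in ratings.items() if v == u) - mu for u in users}
    b_item = {j: mean(r for (_, k), r in ratings.items() if k == j) - mu for j in items}
    return mu, b_user, b_item


def predict_with_baselines(ratings, sim, x, i, k=2):
    """r_hat_xi = b_xi + sum_j s_ij (r_xj - b_xj) / sum_j s_ij over the k nearest neighbors."""
    mu, b_user, b_item = baselines(ratings)

    def b(u, j):  # baseline estimate b_uj = mu + b_u + b_j (0 deviation if unseen)
        return mu + b_user.get(u, 0.0) + b_item.get(j, 0.0)

    def s(j):  # hypothetical similarity lookup s_ij
        return sim.get((i, j), 0.0)

    rated_by_x = [j for (u, j) in ratings if u == x and j != i]
    neighbors = sorted(rated_by_x, key=s, reverse=True)[:k]
    den = sum(s(j) for j in neighbors)
    if not den:
        return b(x, i)  # fall back to the baseline alone
    return b(x, i) + sum(s(j) * (ratings[(x, j)] - b(x, j)) for j in neighbors) / den


# Hypothetical toy data
ratings = {("joe", "A"): 4, ("joe", "B"): 2, ("ann", "A"): 5, ("ann", "X"): 4, ("ann", "B"): 3}
sim = {("X", "A"): 0.8, ("X", "B"): 0.4}
prediction = predict_with_baselines(ratings, sim, "joe", "X")
print(round(prediction, 2))
```

Here the baseline for Joe on X is $3.6 - 0.6 + 0.4 = 3.4$, and Joe rated both neighbors 0.1 above their baselines, so the estimate becomes 3.5.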

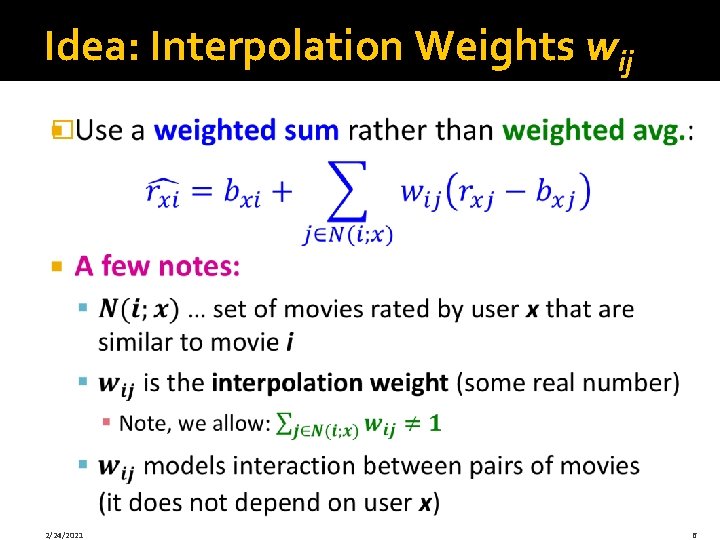

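Idea: Interpolation Weights $w_{ij}$

- Use a weighted sum rather than a weighted average:

$$\hat r_{xi} = b_{xi} + \sum_{j \in N(i;x)} w_{ij}\,(r_{xj} - b_{xj})$$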
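With weights $w_{ij}$ estimated from data instead of similarities $s_{ij}$, a natural form of the prediction is $\hat r_{xi} = b_{xi} + \sum_{j \in N(i;x)} w_{ij}(r_{xj} - b_{xj})$, a weighted sum with no normalizing denominator. A minimal sketch assuming that form; the baselines, ratings, and weights below are hypothetical:

```python
def predict_interpolation(b_xi, neighbors, w):
    """r_hat_xi = b_xi + sum_{j in N(i;x)} w_ij * (r_xj - b_xj).

    A weighted *sum*, not an average: the weights w_ij are learned from data and
    need not be positive or sum to 1.
    neighbors: dict j -> (r_xj, b_xj) for the items j in N(i; x)
    w:         dict j -> w_ij (hypothetical values here)
    """
    return b_xi + sum(w[j] * (r_xj - b_xj) for j, (r_xj, b_xj) in neighbors.items())


prediction = predict_interpolation(3.4, {"A": (4, 3.9), "B": (2, 1.9)}, {"A": 0.5, "B": 0.3})
print(round(prediction, 2))
```

Each neighbor’s deviation here is 0.1, so the estimate is $3.4 + 0.5 \cdot 0.1 + 0.3 \cdot 0.1 = 3.48$.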

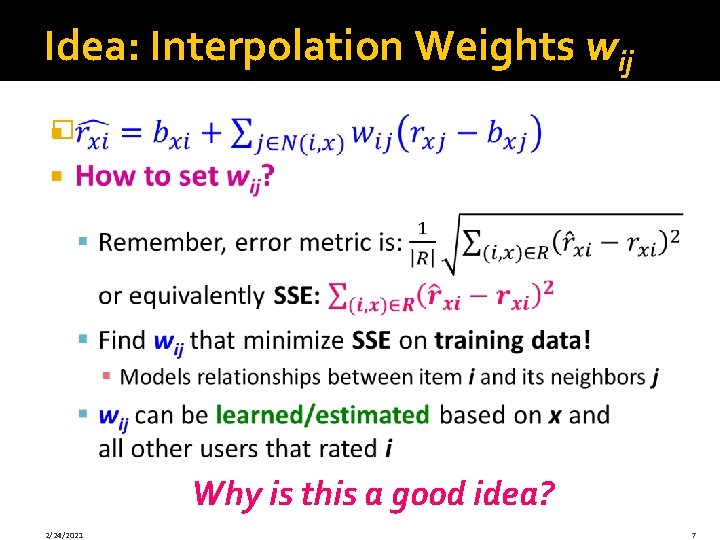

Idea: Interpolation Weights $w_{ij}$

- Why is this a good idea?

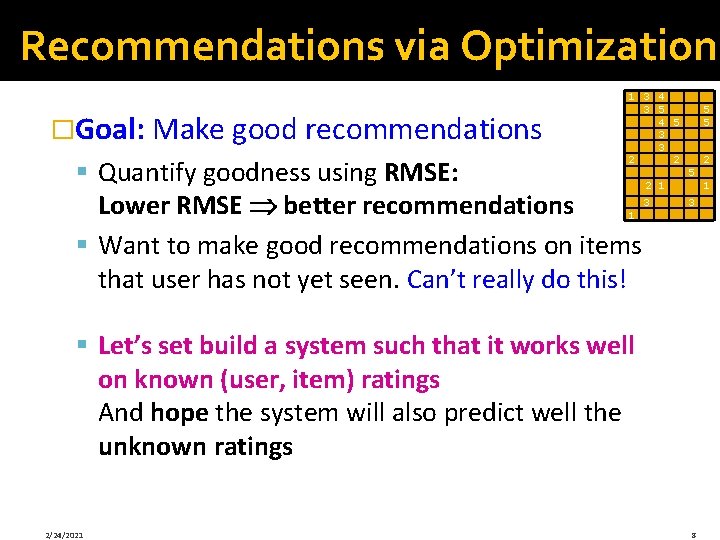

Recommendations via Optimization

- Goal: make good recommendations
  - Quantify goodness using RMSE: lower RMSE means better recommendations
  - We want to make good recommendations on items the user has not yet seen. We can’t really do this directly!
  - Instead, let’s build a system that works well on known (user, item) ratings, and hope it will also predict the unknown ratings well

(Figure: a partially filled user-item rating matrix.)
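RMSE compares predictions with the known ratings only. A minimal sketch, assuming predictions and ratings are dicts keyed by `(user, item)`; the data are hypothetical:

```python
import math


def rmse(predicted, actual):
    """Root-mean-square error over the known (user, item) pairs in `actual`."""
    squared_errors = [(predicted[key] - r) ** 2 for key, r in actual.items()]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Hypothetical known ratings and model predictions
actual = {("u1", "a"): 4, ("u1", "b"): 2, ("u2", "a"): 5}
predicted = {("u1", "a"): 3.0, ("u1", "b"): 2.0, ("u2", "a"): 5.0}
error = rmse(predicted, actual)
print(round(error, 4))  # 0.5774
```

One prediction is off by 1 star and two are exact, so the RMSE is $\sqrt{1/3} \approx 0.5774$.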

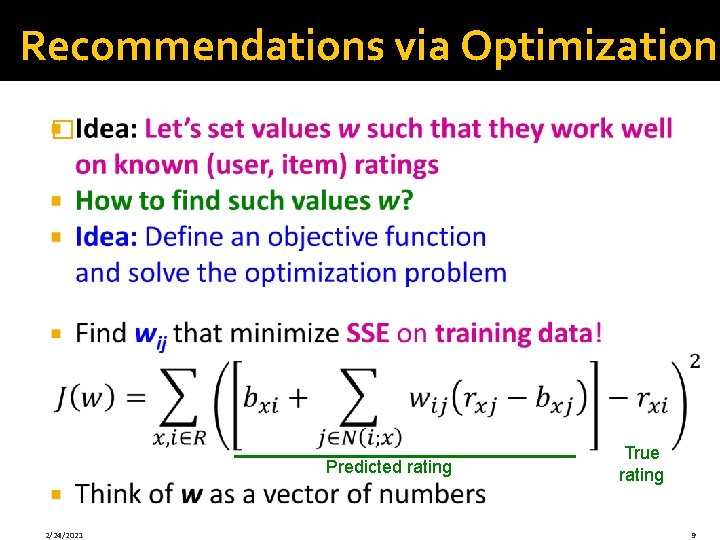

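Recommendations via Optimization

Objective: the sum of squared errors between predicted and true ratings, over the known ratings $R$:

$$J(w) = \sum_{(x,i) \in R} \Big( \underbrace{\big[\, b_{xi} + \textstyle\sum_{j \in N(i;x)} w_{ij}\,(r_{xj} - b_{xj}) \,\big]}_{\text{predicted rating}} - \underbrace{r_{xi}}_{\text{true rating}} \Big)^2$$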

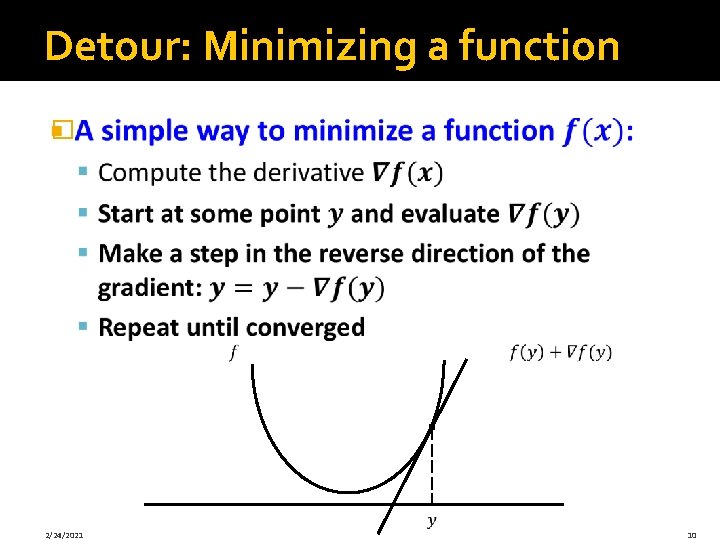

Detour: Minimizing a function
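A simple way to minimize a differentiable function is gradient descent: start somewhere, repeatedly step in the reverse direction of the derivative, and stop once the steps become tiny. A one-dimensional sketch; the example function, learning rate `eta`, and tolerance `eps` are our own illustrative choices:

```python
def gradient_descent(grad, y0, eta=0.1, eps=1e-9, max_iter=100_000):
    """Minimize a function given its derivative `grad`: step against the gradient
    until the size of an update drops below eps."""
    y = y0
    for _ in range(max_iter):
        y_next = y - eta * grad(y)  # step in the reverse direction of the gradient
        if abs(y_next - y) <= eps:
            return y_next
        y = y_next
    return y


# Example: f(y) = (y - 3)^2 has derivative 2(y - 3) and its minimum at y = 3.
y_min = gradient_descent(lambda y: 2 * (y - 3), y0=0.0)
print(round(y_min, 6))  # 3.0
```

With `eta=0.1`, each step shrinks the distance to the minimum by a factor of 0.8. A learning rate that is too large (here, above 1) would make the iterates diverge instead.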

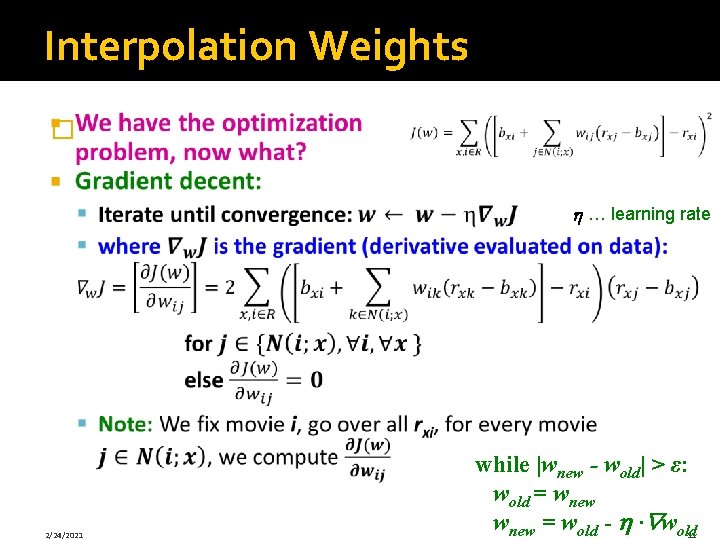

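Interpolation Weights

Minimize the objective by gradient descent, where $\eta$ is the learning rate and $\nabla w_{old}$ is the gradient of the objective evaluated at $w_{old}$:

- while $|w_{new} - w_{old}| > \varepsilon$:
  - $w_{old} = w_{new}$
  - $w_{new} = w_{old} - \eta \cdot \nabla w_{old}$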
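The loop above can be made concrete for one fixed item $i$. For the squared-error objective $J(w) = \sum_x ([b_{xi} + \sum_j w_{ij} d_{xj}] - r_{xi})^2$ with $d_{xj} = r_{xj} - b_{xj}$, the gradient is $\partial J / \partial w_{ij} = 2 \sum_x (\text{predicted} - \text{true})\, d_{xj}$. A sketch under a simplifying assumption (ours, not the slide’s): every user shares the same neighbor set $N(i)$, so $w$ is a plain list. The data are hypothetical:

```python
def fit_interpolation_weights(data, n_neighbors, eta=0.05, eps=1e-10, max_iter=100_000):
    """Gradient descent on J(w) = sum_x ([b_xi + sum_j w_ij * d_xj] - r_xi)^2 for one fixed item i.

    data: list of (b_xi, [d_xj for j in N(i)], r_xi) with d_xj = r_xj - b_xj.
    Simplifying assumption: all users share the same neighbor set N(i), so w is a plain list.
    """
    w = [0.0] * n_neighbors
    for _ in range(max_iter):
        grad = [0.0] * n_neighbors
        for b_xi, d, r_xi in data:
            err = b_xi + sum(wj * dj for wj, dj in zip(w, d)) - r_xi  # predicted - true rating
            for j, dj in enumerate(d):
                grad[j] += 2 * err * dj  # dJ/dw_ij
        w_new = [wj - eta * gj for wj, gj in zip(w, grad)]  # w_new = w_old - eta * grad
        if max(abs(a - b) for a, b in zip(w_new, w)) <= eps:  # stop once |w_new - w_old| <= eps
            return w_new
        w = w_new
    return w


# Hypothetical data, built so that w = [0.5, 0.25] fits every rating exactly.
data = [(3.0, [1.0, 0.0], 3.5), (3.0, [0.0, 2.0], 3.5), (2.0, [2.0, 2.0], 3.5)]
w = fit_interpolation_weights(data, n_neighbors=2)
print([round(v, 4) for v in w])  # [0.5, 0.25]
```

The learning rate matters: for this data, steps larger than roughly 0.09 would overshoot and diverge.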

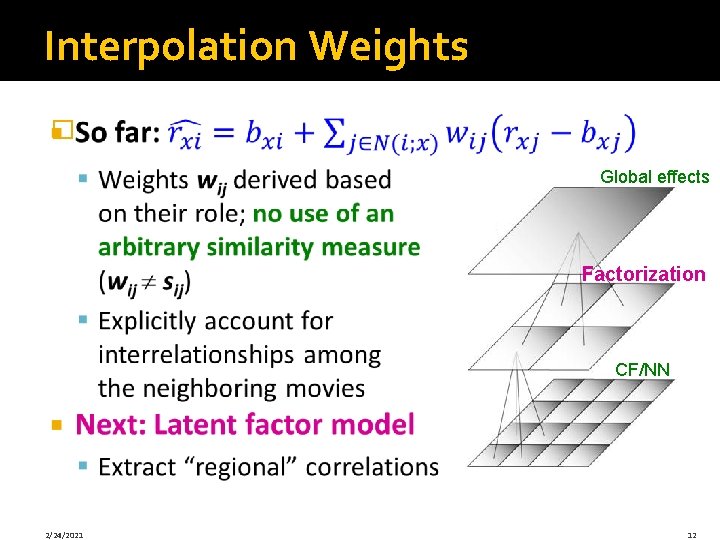

Interpolation Weights

(Figure: the three modeling levels, Global effects, Factorization, CF/NN.)

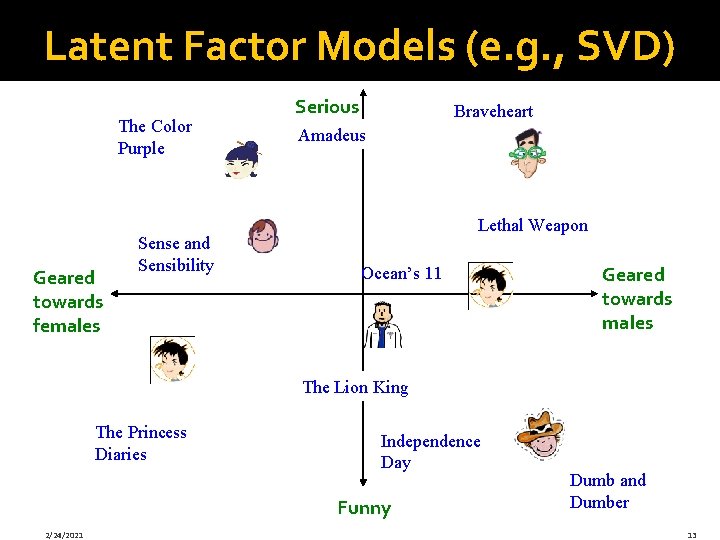

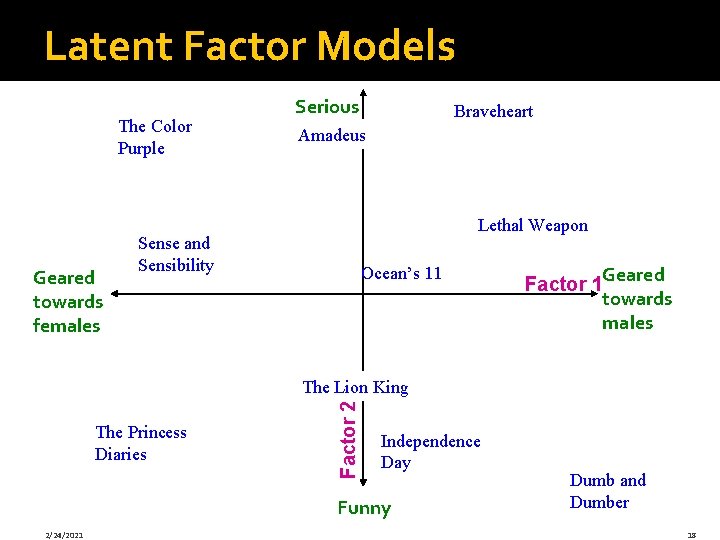

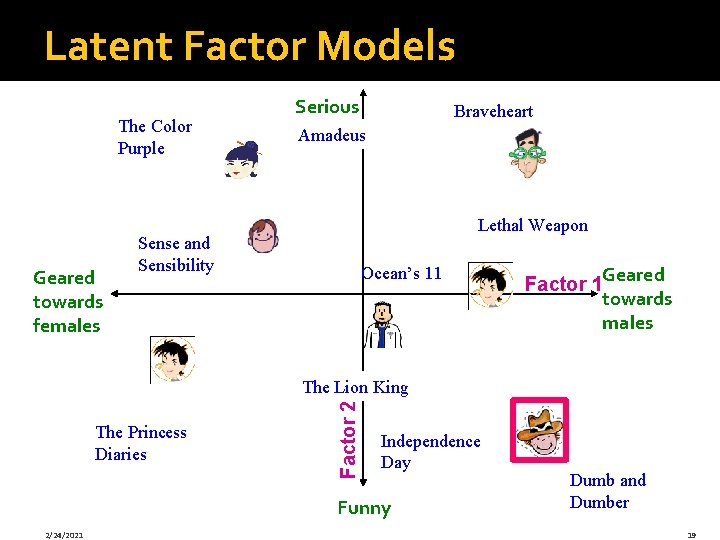

Latent Factor Models (e.g., SVD)

(Figure: movies placed along two axes, “Geared towards females” vs. “Geared towards males” and “Serious” vs. “Funny”; movies shown: The Color Purple, Sense and Sensibility, Braveheart, Amadeus, Lethal Weapon, Ocean’s 11, The Lion King, The Princess Diaries, Independence Day, Dumb and Dumber.)

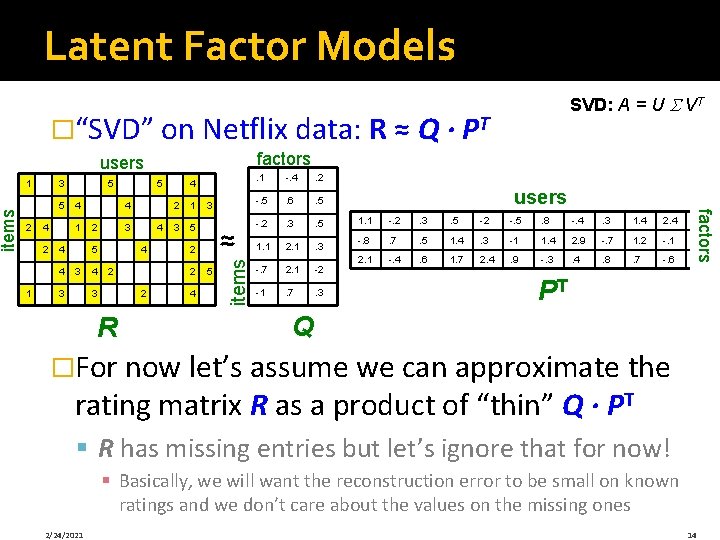

SVD: $A = U \Sigma V^T$

“SVD” on Netflix data: $R \approx Q \cdot P^T$

(Figure: the items × users rating matrix $R$ approximated by the product of an items × factors matrix $Q$ and a factors × users matrix $P^T$.)

- For now, let’s assume we can approximate the rating matrix $R$ as a product of “thin” matrices $Q \cdot P^T$
  - $R$ has missing entries, but let’s ignore that for now!
  - Basically, we want the reconstruction error to be small on known ratings, and we don’t care about the values on the missing ones
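“Small reconstruction error on known ratings, ignore the missing ones” can be sketched directly: multiply the thin factors and sum squared errors only where $R$ has an entry. In this plain-Python sketch, `None` marks a missing rating (our convention), and the matrices are hypothetical:

```python
def matmul(A, B):
    """Plain-Python matrix product of A (n x k) and B (k x m)."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def sse_known(R, Q, Pt):
    """Sum of squared errors between R and Q·P^T, counting only known entries (None = missing)."""
    R_hat = matmul(Q, Pt)
    return sum((r - r_hat) ** 2
               for row, row_hat in zip(R, R_hat)
               for r, r_hat in zip(row, row_hat)
               if r is not None)


Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 items x 2 factors
Pt = [[4.0, 1.0], [2.0, 3.0]]             # 2 factors x 2 users
R = [[4, None], [2, 3], [5, 4]]           # items x users, one missing rating
R_hat = matmul(Q, Pt)
error = sse_known(R, Q, Pt)
print(R_hat, error)
```

The missing entry still receives a value in `R_hat` (here 1.0), but it does not contribute to the error.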

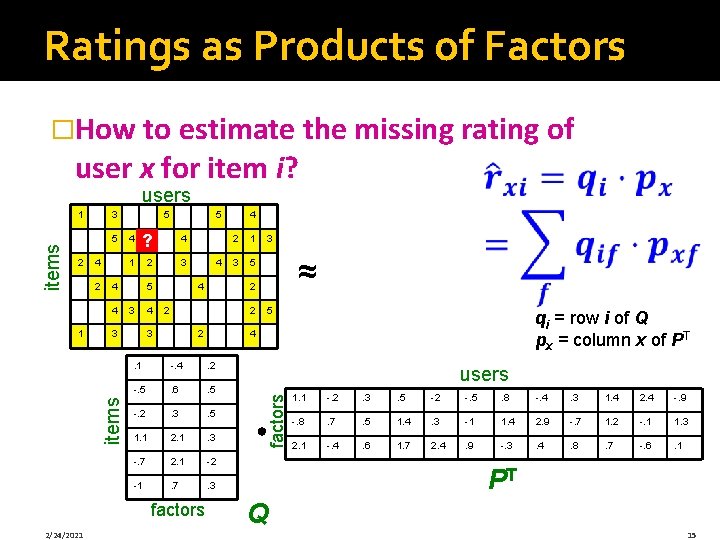

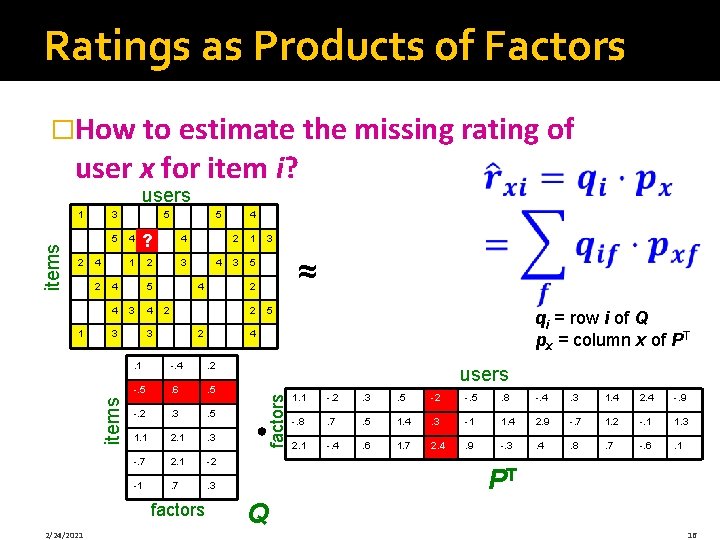

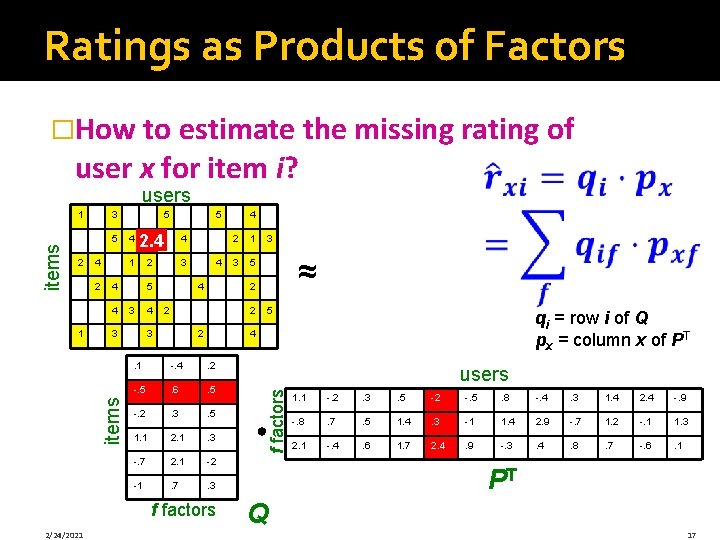

Ratings as Products of Factors

How do we estimate the missing rating of user $x$ for item $i$?

$$\hat r_{xi} = q_i \cdot p_x = \sum_f q_{if} \cdot p_{xf}$$

where
- $q_i$ = row $i$ of $Q$
- $p_x$ = column $x$ of $P^T$

(Figure: a missing entry “?” of $R$ computed from the matching row of $Q$ and column of $P^T$.)
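The estimate is a dot product over the $f$ factors. In the sketch below, the two 3-factor vectors are our reading of the row of $Q$ and the column of $P^T$ that belong to the missing entry in the slide’s garbled example matrices. Their product, about 2.38, rounds to the 2.4 the slide fills in:

```python
def predict(q_i, p_x):
    """r_hat_xi = q_i · p_x = sum over factors f of q_if * p_xf."""
    return sum(q_f * p_f for q_f, p_f in zip(q_i, p_x))


# Factor vectors as we read them from the example (an interpretation of the extracted figure)
q_i = [-0.5, 0.6, 0.5]  # row i of Q
p_x = [-2.0, 0.3, 2.4]  # column x of P^T
estimate = predict(q_i, p_x)
print(round(estimate, 1))  # 2.4
```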

Ratings as Products of Factors

In the example, the product $q_i \cdot p_x$ gives an estimate of 2.4 for the missing rating.

Latent Factor Models

(Figure: movies placed in a two-dimensional latent space with axes Factor 1 and Factor 2; the ends are labeled “Geared towards females”/“Geared towards males” and “Serious”/“Funny”; movies shown: The Color Purple, Sense and Sensibility, Braveheart, Amadeus, Lethal Weapon, Ocean’s 11, The Princess Diaries, The Lion King, Independence Day, Dumb and Dumber.)

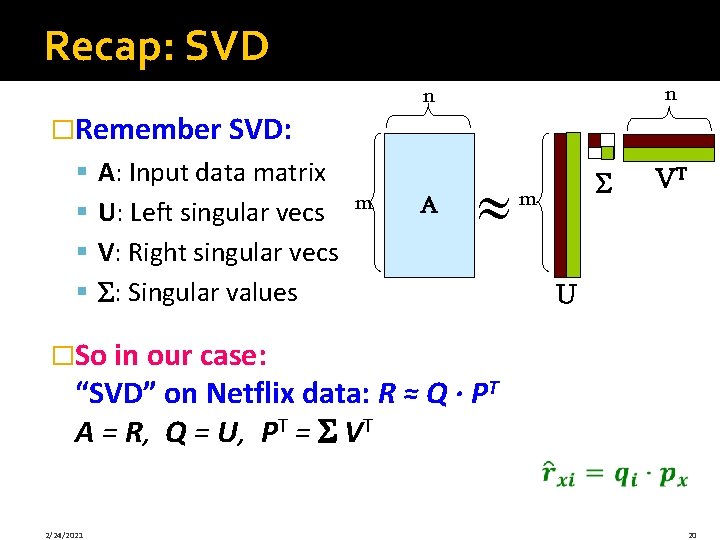

Recap: SVD

- Remember SVD: $A = U \Sigma V^T$, where
  - $A$: input data matrix ($m \times n$)
  - $U$: left singular vectors
  - $V$: right singular vectors
  - $\Sigma$: singular values
- So in our case, “SVD” on Netflix data: $R \approx Q \cdot P^T$, with $A = R$, $Q = U$, $P^T = \Sigma V^T$

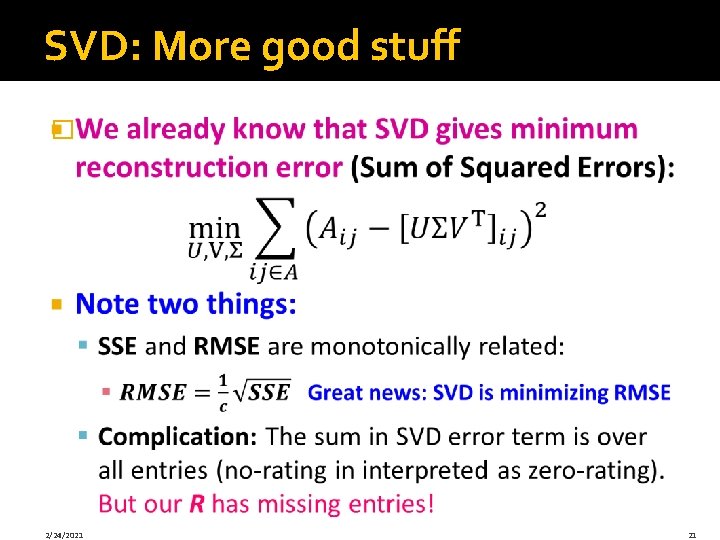

SVD: More good stuff

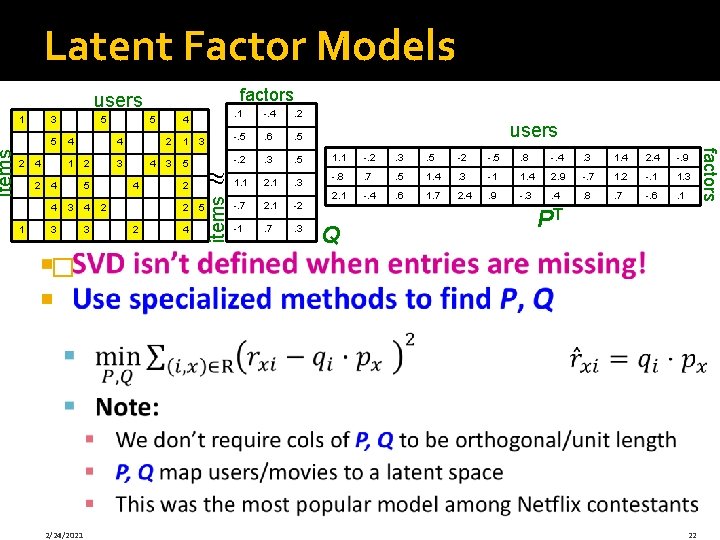

Latent Factor Models

(Figure: the items × users rating matrix $R$ approximated by $Q$ (items × factors) times $P^T$ (factors × users).)

Finding the Latent Factors

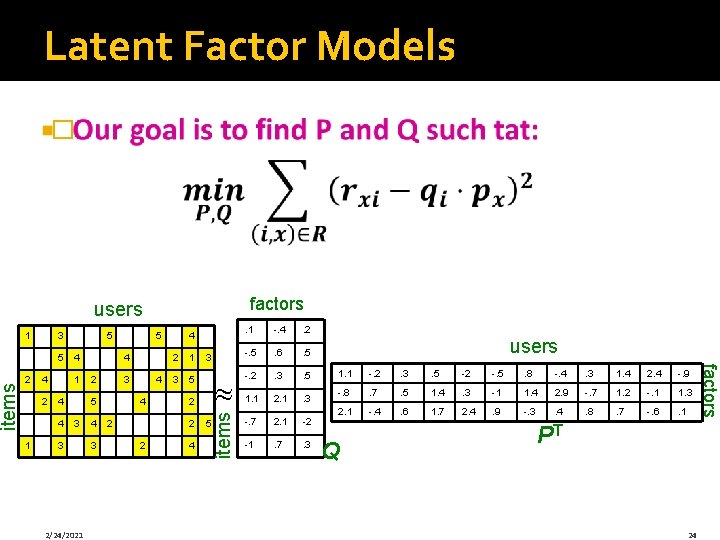

Latent Factor Models

Goal: find $P$ and $Q$ that make the reconstruction error small on the known ratings:

$$\min_{P,Q} \sum_{(i,x) \in R} (r_{xi} - q_i \cdot p_x)^2$$

(Figure: $R \approx Q \cdot P^T$.)

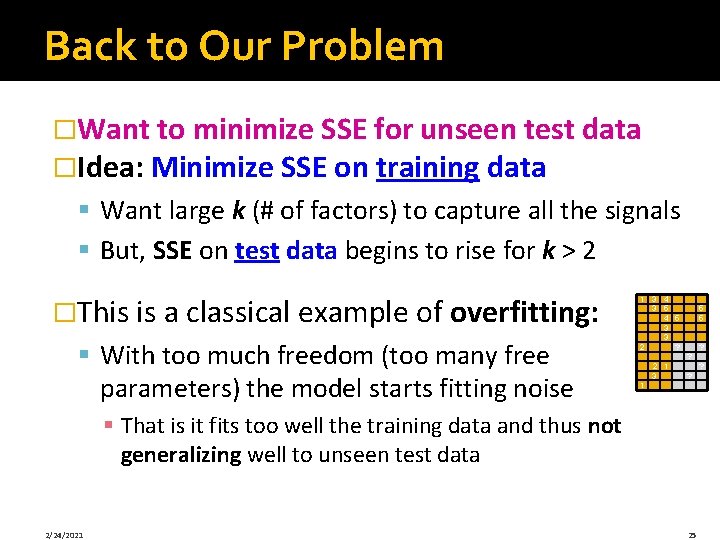

Back to Our Problem

- We want to minimize SSE on unseen test data
- Idea: minimize SSE on the training data
  - We want a large $k$ (number of factors) to capture all the signals
  - But SSE on test data begins to rise for $k > 2$
- This is a classical example of overfitting:
  - With too much freedom (too many free parameters), the model starts fitting noise
  - That is, it fits the training data too well and thus does not generalize well to unseen test data

(Figure: a rating matrix with unknown test entries marked “?”.)

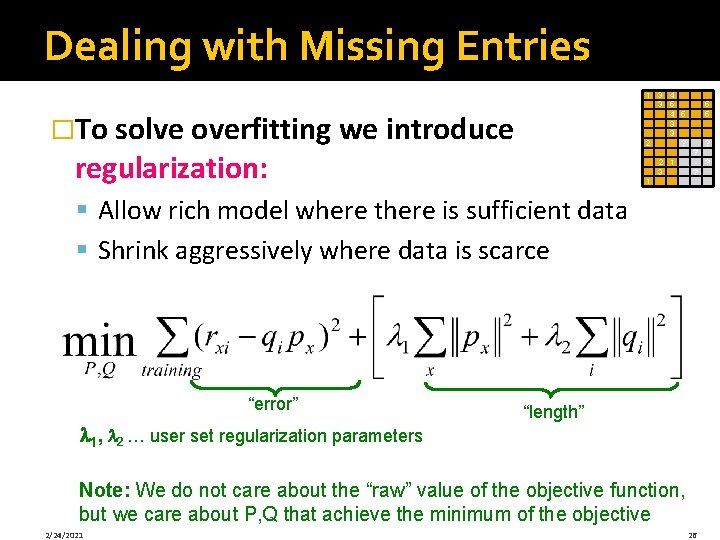

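Dealing with Missing Entries

- To solve overfitting, we introduce regularization:
  - Allow a rich model where there is sufficient data
  - Shrink aggressively where data is scarce

$$\min_{P,Q} \underbrace{\sum_{(x,i) \in \text{training}} (r_{xi} - q_i \cdot p_x)^2}_{\text{error}} + \underbrace{\Big[\lambda_1 \sum_x \lVert p_x \rVert^2 + \lambda_2 \sum_i \lVert q_i \rVert^2\Big]}_{\text{length}}$$

$\lambda_1, \lambda_2$: user-set regularization parameters

(Figure: a rating matrix with unknown entries marked “?”.)

Note: we do not care about the “raw” value of the objective function, but we care about the $P$, $Q$ that achieve the minimum of the objective.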
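The regularized objective is the training “error” plus a weighted “length” of all factor vectors, with $\lambda_1$ on the user vectors $p_x$ and $\lambda_2$ on the item vectors $q_i$. A minimal sketch, assuming factors are stored as dicts of lists; all values are hypothetical:

```python
def regularized_objective(ratings, P, Q, lam1, lam2):
    """sum over known (x, i) of (r_xi - q_i·p_x)^2 + lam1 * sum_x ||p_x||^2 + lam2 * sum_i ||q_i||^2.

    ratings: dict (x, i) -> r_xi; P: dict user -> factor list; Q: dict item -> factor list.
    """
    error = sum((r - sum(q * p for q, p in zip(Q[i], P[x]))) ** 2 for (x, i), r in ratings.items())
    length = (lam1 * sum(v * v for p in P.values() for v in p)
              + lam2 * sum(v * v for q in Q.values() for v in q))
    return error + length


value = regularized_objective({("u", "a"): 3.0}, {"u": [1.0, 1.0]}, {"a": [1.0, 1.0]}, 0.1, 0.1)
print(round(value, 4))  # 1.4
```

Here the prediction is 2, so the error is $(3-2)^2 = 1$, and the length term adds $0.1 \cdot 2 + 0.1 \cdot 2 = 0.4$. Larger $\lambda$ values push the minimizer toward shorter vectors, which matters most for users and items with few ratings.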

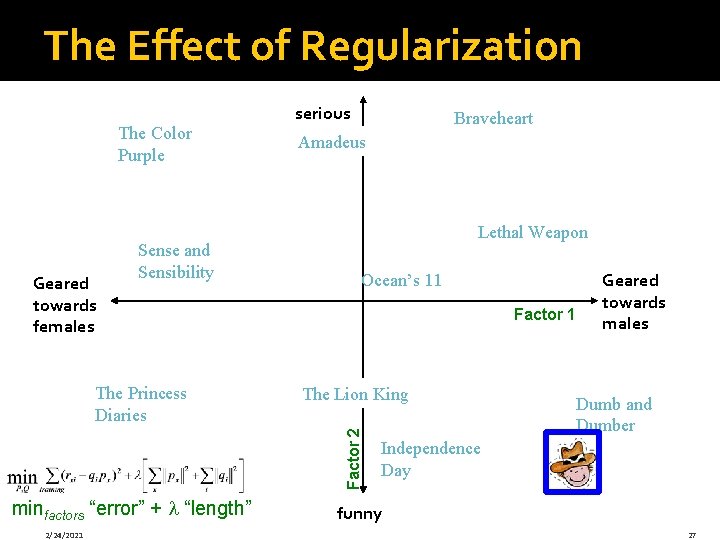

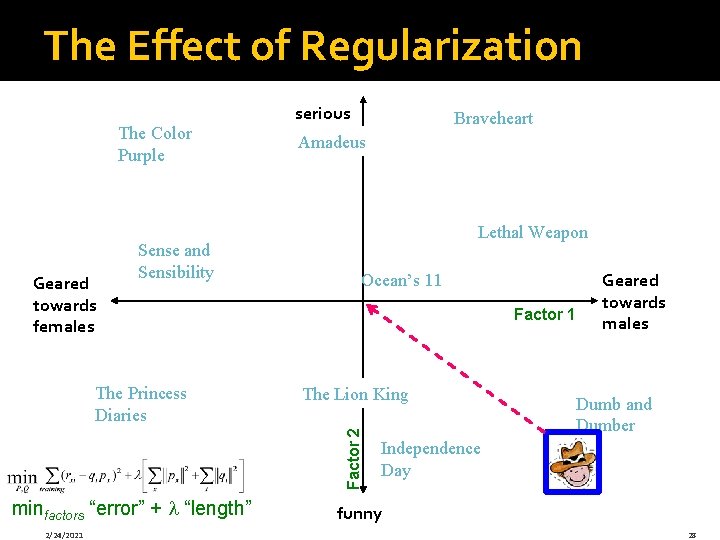

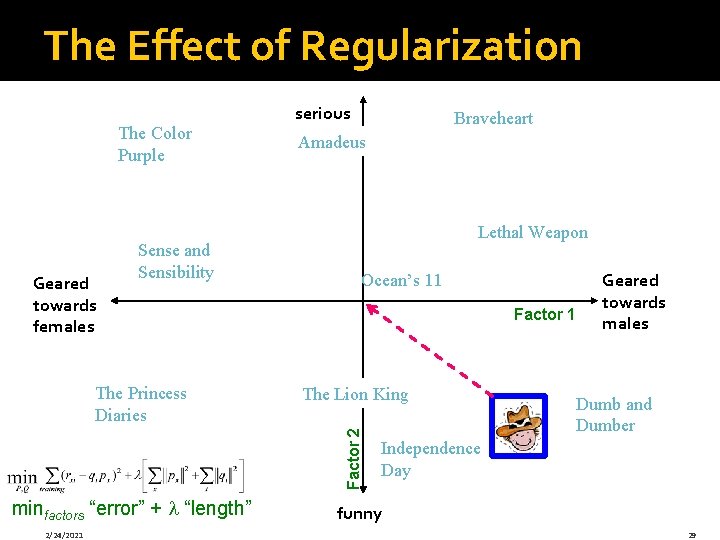

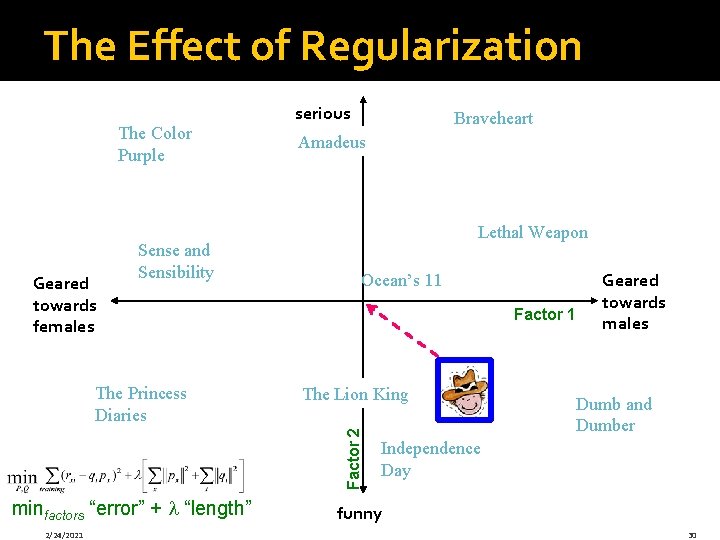

The Effect of Regularization

$\min_{\text{factors}}$ “error” + $\lambda$ “length”

(Figure: the two-dimensional movie map with axes Factor 1 and Factor 2; the ends are labeled “Geared towards females”/“Geared towards males” and “serious”/“funny”; movies shown: The Color Purple, Sense and Sensibility, Braveheart, Amadeus, Lethal Weapon, Ocean’s 11, The Lion King, The Princess Diaries, Dumb and Dumber, Independence Day.)


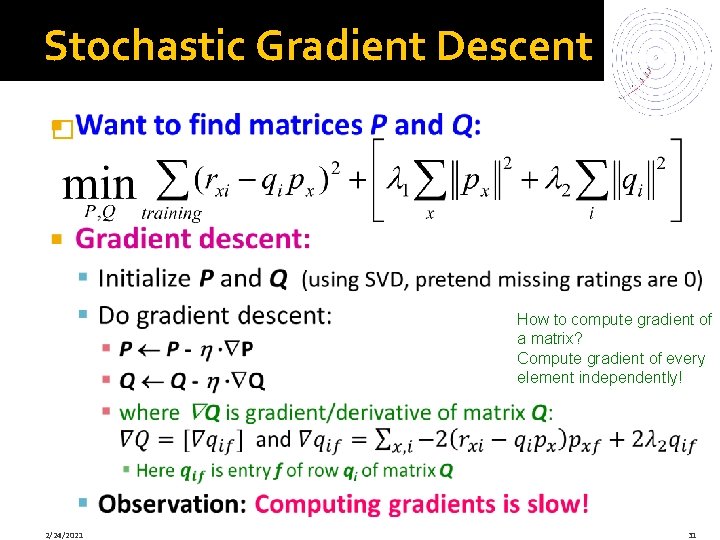

Stochastic Gradient Descent

- How do we compute the gradient of a matrix? Compute the gradient of every element independently!
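Take the gradient element by element for the regularized objective, with $\lambda_1$ on the user factors $p_x$ and $\lambda_2$ on the item factors $q_i$. This gives $\partial/\partial q_{if} = \sum_{x:(x,i) \in R} -2(r_{xi} - q_i \cdot p_x)\,p_{xf} + 2\lambda_2 q_{if}$, and symmetrically for $p_{xf}$ with $\lambda_1$. The sketch below is a minimal full-batch gradient descent in plain Python, where every step sums over all ratings before updating $P$ and $Q$. The data, initial factors, learning rate, and $\lambda$ values are hypothetical:

```python
def objective(ratings, P, Q, lam1, lam2):
    """Regularized SSE: sum (r_xi - q_i·p_x)^2 + lam1 sum ||p_x||^2 + lam2 sum ||q_i||^2."""
    err = sum((r - sum(a * b for a, b in zip(Q[i], P[x]))) ** 2 for (x, i), r in ratings.items())
    return (err + lam1 * sum(v * v for p in P.values() for v in p)
            + lam2 * sum(v * v for q in Q.values() for v in q))


def gradients(ratings, P, Q, lam1, lam2):
    """Gradient of the objective with respect to every single entry p_xf and q_if."""
    gP = {x: [2 * lam1 * v for v in p] for x, p in P.items()}  # from lam1 * ||p_x||^2
    gQ = {i: [2 * lam2 * v for v in q] for i, q in Q.items()}  # from lam2 * ||q_i||^2
    for (x, i), r in ratings.items():
        e = r - sum(a * b for a, b in zip(Q[i], P[x]))
        for f in range(len(Q[i])):
            gQ[i][f] += -2 * e * P[x][f]  # d/dq_if of (r_xi - q_i·p_x)^2
            gP[x][f] += -2 * e * Q[i][f]  # d/dp_xf of (r_xi - q_i·p_x)^2
    return gP, gQ


def gd_step(ratings, P, Q, lam1, lam2, eta):
    """One full-batch step: P <- P - eta * grad_P, Q <- Q - eta * grad_Q (all ratings used)."""
    gP, gQ = gradients(ratings, P, Q, lam1, lam2)
    return ({x: [v - eta * g for v, g in zip(P[x], gP[x])] for x in P},
            {i: [v - eta * g for v, g in zip(Q[i], gQ[i])] for i in Q})


# Hypothetical toy data with k = 2 factors
ratings = {("u1", "a"): 4.0, ("u1", "b"): 2.0, ("u2", "a"): 3.0}
P = {"u1": [0.5, 0.1], "u2": [0.3, 0.2]}
Q = {"a": [0.4, 0.3], "b": [0.2, 0.5]}
start = objective(ratings, P, Q, 0.01, 0.01)
for _ in range(500):
    P, Q = gd_step(ratings, P, Q, 0.01, 0.01, eta=0.01)
end = objective(ratings, P, Q, 0.01, 0.01)
print(round(start, 3), round(end, 3))
```

Each step loops over every rating, which is exactly what makes full-batch gradients slow on a dataset the size of Netflix’s.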

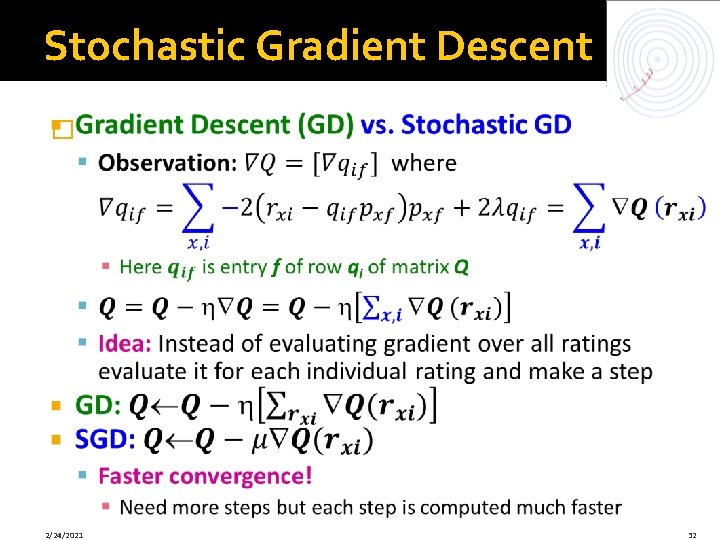

Stochastic Gradient Descent
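Stochastic gradient descent replaces the full sum with the gradient of a single rating’s term and updates $q_i$ and $p_x$ right after visiting $r_{xi}$. The sketch below rests on several assumptions of ours: hypothetical toy ratings, uniform random initialization, and the regularization gradient applied on every visit (a common convention, not necessarily the exact update on the slide):

```python
import random


def sgd(ratings, k=2, eta=0.01, lam1=0.01, lam2=0.01, epochs=500, seed=0):
    """SGD for min sum (r_xi - q_i·p_x)^2 + lam1 sum ||p_x||^2 + lam2 sum ||q_i||^2.

    Update q_i and p_x after each single rating instead of summing over all ratings.
    Initialization and hyperparameters are illustrative choices.
    """
    rng = random.Random(seed)
    P = {x: [rng.uniform(0.1, 0.5) for _ in range(k)] for x in sorted({u for u, _ in ratings})}
    Q = {i: [rng.uniform(0.1, 0.5) for _ in range(k)] for i in sorted({j for _, j in ratings})}
    data = list(ratings.items())
    for _ in range(epochs):
        rng.shuffle(data)  # visit the ratings in random order
        for (x, i), r in data:
            q, p = Q[i], P[x]
            e = r - sum(a * b for a, b in zip(q, p))
            # Gradient step for this one rating's loss; both updates use the old q and p.
            Q[i] = [qf + eta * (2 * e * pf - 2 * lam2 * qf) for qf, pf in zip(q, p)]
            P[x] = [pf + eta * (2 * e * qf - 2 * lam1 * pf) for qf, pf in zip(q, p)]
    return P, Q


def training_sse(ratings, P, Q):
    """Unregularized squared error on the training ratings."""
    return sum((r - sum(a * b for a, b in zip(Q[i], P[x]))) ** 2 for (x, i), r in ratings.items())


ratings = {("u1", "a"): 4, ("u1", "b"): 2, ("u2", "a"): 3,
           ("u2", "c"): 5, ("u3", "b"): 1, ("u3", "c"): 4}
sse_one_epoch = training_sse(ratings, *sgd(ratings, epochs=1))
sse_trained = training_sse(ratings, *sgd(ratings, epochs=500))
print(round(sse_one_epoch, 2), round(sse_trained, 2))
```

Both runs share the same seed, so they start from identical factors. The comparison shows the training error falling as more single-rating updates are applied.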

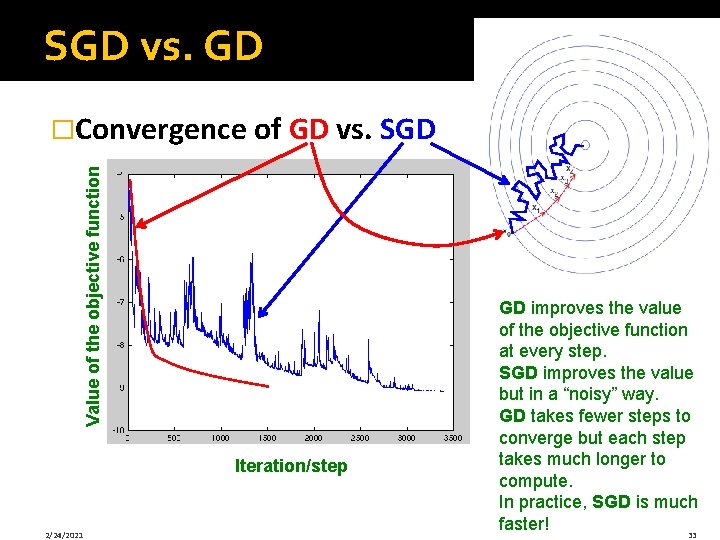

SGD vs. GD

Convergence of GD vs. SGD:

(Figure: value of the objective function vs. iteration/step.)

- GD improves the value of the objective function at every step.
- SGD improves the value, but in a “noisy” way.
- GD takes fewer steps to converge, but each step takes much longer to compute.
- In practice, SGD is much faster!

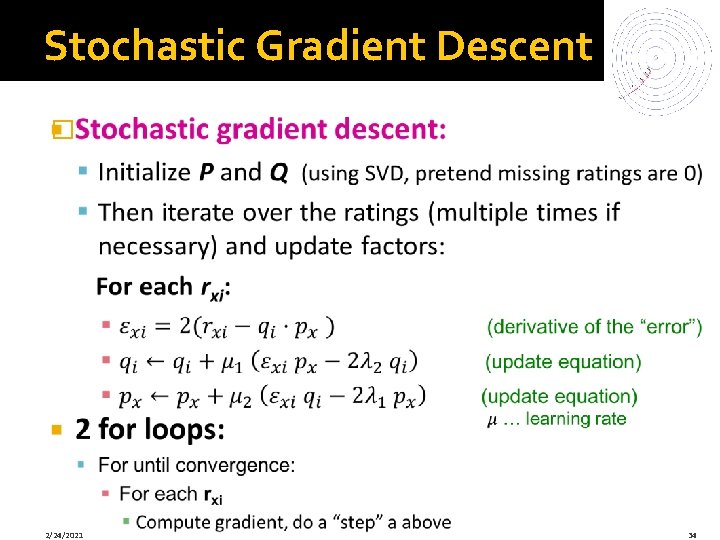

Stochastic Gradient Descent

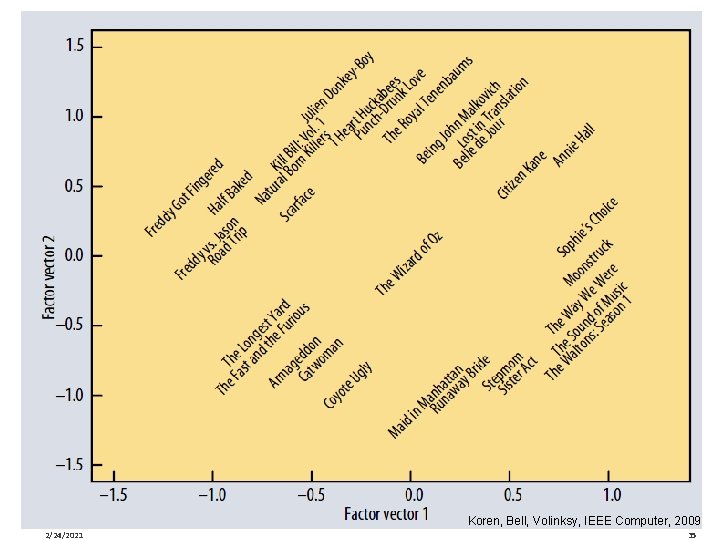

Koren, Bell, Volinsky, IEEE Computer, 2009

Extending Latent Factor Model to Include Biases

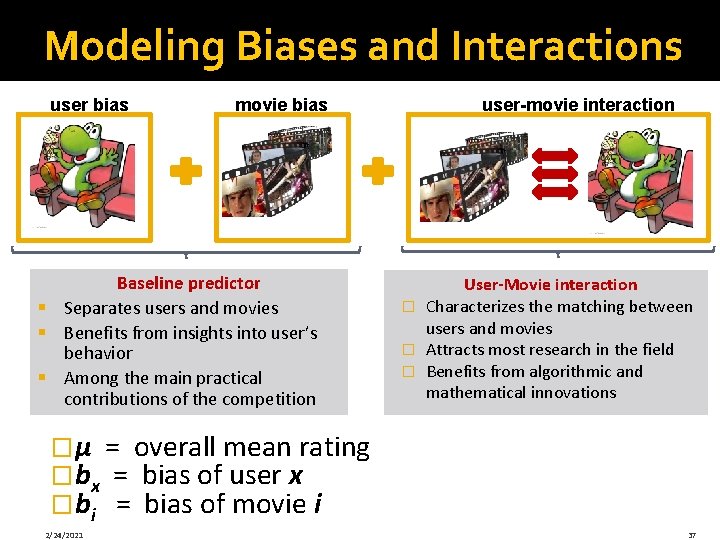

Modeling Biases and Interactions

$$\hat r_{xi} = \underbrace{\mu + b_x + b_i}_{\text{baseline predictor}} + \underbrace{q_i \cdot p_x}_{\text{user-movie interaction}}$$

- $\mu$ = overall mean rating
- $b_x$ = bias of user $x$
- $b_i$ = bias of movie $i$

Baseline predictor (user bias + movie bias):
- Separates users and movies
- Benefits from insights into users’ behavior
- Among the main practical contributions of the competition

User-movie interaction:
- Characterizes the matching between users and movies
- Attracts most research in the field
- Benefits from algorithmic and mathematical innovations

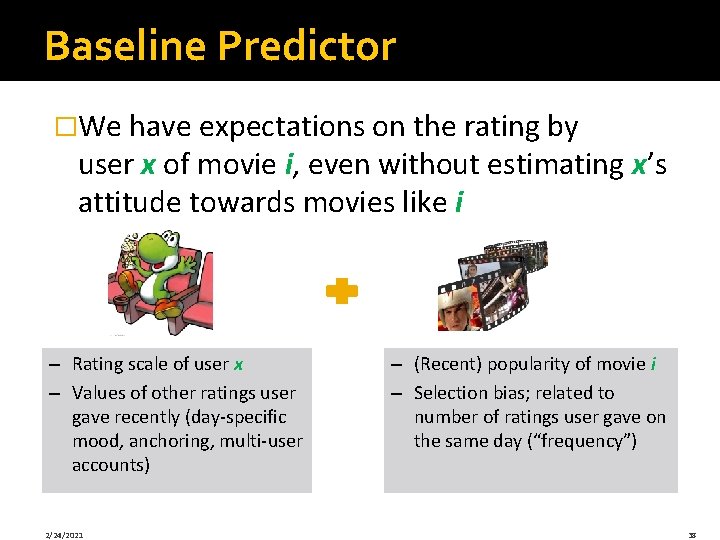

Baseline Predictor

We have expectations on the rating by user $x$ of movie $i$, even without estimating $x$’s attitude towards movies like $i$:
- Rating scale of user $x$
- Values of other ratings the user gave recently (day-specific mood, anchoring, multi-user accounts)
- (Recent) popularity of movie $i$
- Selection bias, related to the number of ratings the user gave on the same day (“frequency”)

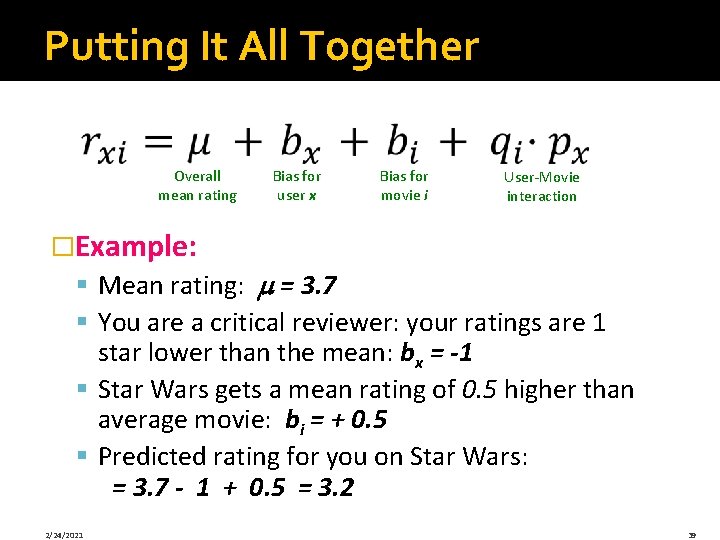

Putting It All Together

$$\hat r_{xi} = \underbrace{\mu}_{\text{overall mean rating}} + \underbrace{b_x}_{\text{bias for user } x} + \underbrace{b_i}_{\text{bias for movie } i} + \underbrace{q_i \cdot p_x}_{\text{user-movie interaction}}$$

Example:
- Mean rating: $\mu = 3.7$
- You are a critical reviewer: your ratings are 1 star lower than the mean: $b_x = -1$
- Star Wars gets a mean rating 0.5 higher than the average movie: $b_i = +0.5$
- Predicted rating for you on Star Wars: $3.7 - 1 + 0.5 = 3.2$
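The full prediction adds the interaction term to the baseline. A minimal sketch (function name ours) that reproduces the slide’s example, where no factors are given and the interaction is taken as 0. A second call uses hypothetical factor vectors:

```python
def predict_with_biases(mu, b_x, b_i, q_i=(), p_x=()):
    """r_hat_xi = mu + b_x + b_i + q_i·p_x (the interaction term is 0 if no factors are given)."""
    return mu + b_x + b_i + sum(q * p for q, p in zip(q_i, p_x))


star_wars = predict_with_biases(3.7, -1.0, 0.5)
print(round(star_wars, 1))  # 3.2

# Hypothetical factors: the interaction adds 0.2 * 1.0 + 0.1 * (-1.0) = 0.1
with_factors = predict_with_biases(3.7, -1.0, 0.5, [0.2, 0.1], [1.0, -1.0])
print(round(with_factors, 1))  # 3.3
```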

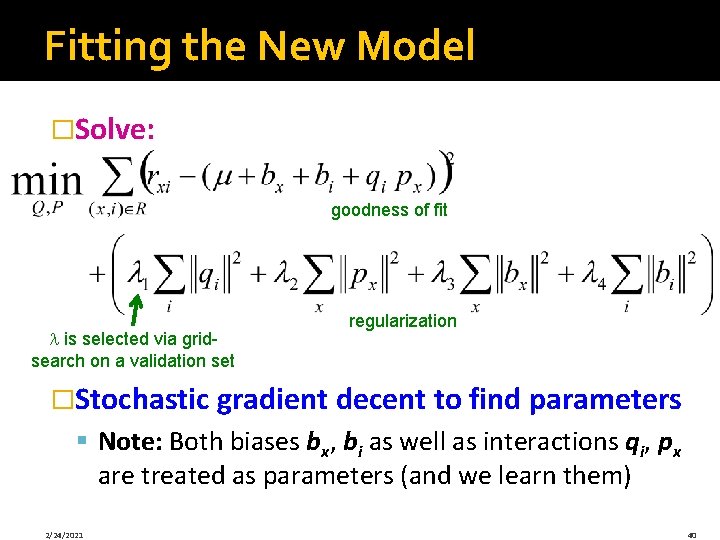

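Fitting the New Model

Solve:

$$\min_{Q,P,b} \underbrace{\sum_{(x,i) \in R} \big(r_{xi} - (\mu + b_x + b_i + q_i \cdot p_x)\big)^2}_{\text{goodness of fit}} + \underbrace{\Big(\lambda_1 \sum_x \lVert p_x \rVert^2 + \lambda_2 \sum_i \lVert q_i \rVert^2 + \lambda_3 \sum_x b_x^2 + \lambda_4 \sum_i b_i^2\Big)}_{\text{regularization}}$$

The regularization weights $\lambda$ are selected via grid search on a validation set.

- Use stochastic gradient descent to find the parameters
  - Note: both the biases $b_x$, $b_i$ and the interactions $q_i$, $p_x$ are treated as parameters (and we learn them)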
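A minimal SGD sketch for the biased model, in which $\mu$ is fixed to the global mean and $b_x$, $b_i$, $q_i$, $p_x$ are all learned. The assumptions are ours: hypothetical toy ratings, Gaussian initialization of the factors, zero-initialized biases, fixed rather than grid-searched $\lambda$ values, and regularization applied on every visit:

```python
import random


def fit_biased_mf(ratings, k=2, eta=0.01, lam=(0.01, 0.01, 0.01, 0.01), epochs=300, seed=0):
    """SGD for r_hat_xi = mu + b_x + b_i + q_i·p_x with L2 penalties lam = (lam1..lam4)
    on p_x, q_i, b_x, b_i. mu is fixed to the global mean; everything else is learned."""
    lam1, lam2, lam3, lam4 = lam
    rng = random.Random(seed)
    mu = sum(ratings.values()) / len(ratings)
    users = sorted({x for x, _ in ratings})
    items = sorted({i for _, i in ratings})
    P = {x: [rng.gauss(0.0, 0.1) for _ in range(k)] for x in users}
    Q = {i: [rng.gauss(0.0, 0.1) for _ in range(k)] for i in items}
    b_user = dict.fromkeys(users, 0.0)
    b_item = dict.fromkeys(items, 0.0)
    data = list(ratings.items())
    for _ in range(epochs):
        rng.shuffle(data)
        for (x, i), r in data:
            q, p = Q[i], P[x]
            e = r - (mu + b_user[x] + b_item[i] + sum(a * b for a, b in zip(q, p)))
            # Gradient steps for this single rating's loss, all using the same error e.
            b_user[x] += eta * (2 * e - 2 * lam3 * b_user[x])
            b_item[i] += eta * (2 * e - 2 * lam4 * b_item[i])
            Q[i] = [qf + eta * (2 * e * pf - 2 * lam2 * qf) for qf, pf in zip(q, p)]
            P[x] = [pf + eta * (2 * e * qf - 2 * lam1 * pf) for qf, pf in zip(q, p)]
    return mu, b_user, b_item, P, Q


def predict(model, x, i):
    mu, b_user, b_item, P, Q = model
    return mu + b_user[x] + b_item[i] + sum(a * b for a, b in zip(Q[i], P[x]))


ratings = {("u1", "a"): 4, ("u1", "b"): 2, ("u2", "a"): 3,
           ("u2", "c"): 5, ("u3", "b"): 1, ("u3", "c"): 4}
model = fit_biased_mf(ratings)
sse_mean_only = sum((r - model[0]) ** 2 for r in ratings.values())
sse_model = sum((r - predict(model, x, i)) ** 2 for (x, i), r in ratings.items())
print(round(sse_mean_only, 2), round(sse_model, 2))
```

Comparing with predicting $\mu$ for every rating shows how much the learned biases and interactions explain on the training data. In practice, a held-out validation set, not the training error, would guide the choice of the $\lambda$ values.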
