Max-Margin Latent Variable Models
M. Pawan Kumar
Kevin Miller, Rafi Witten, Tim Tang, Danny Goodman, Haithem Turki, Dan Preston, Ben Packer, Daphne Koller, Dan Selsam, Andrej Karpathy

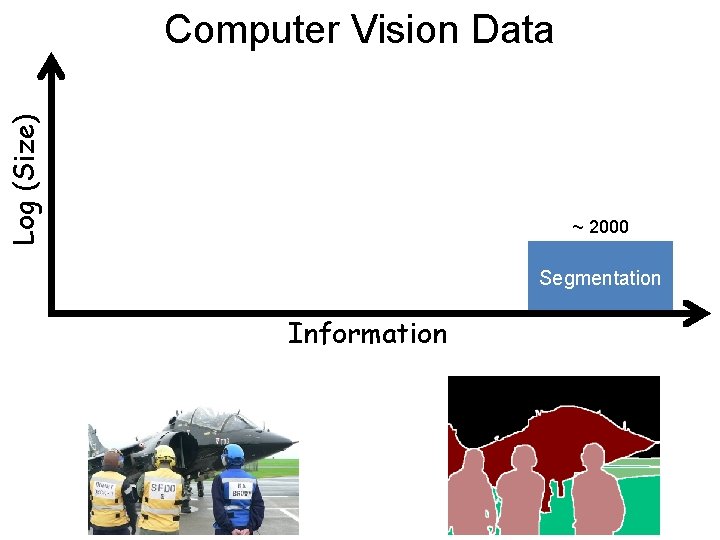

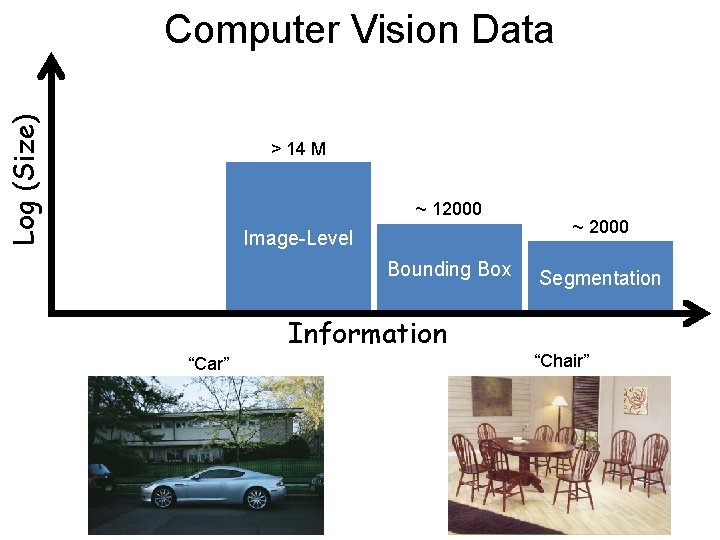

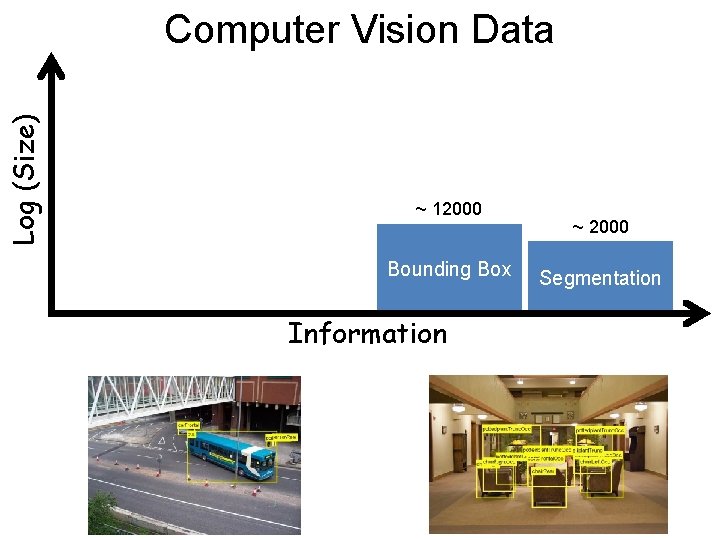

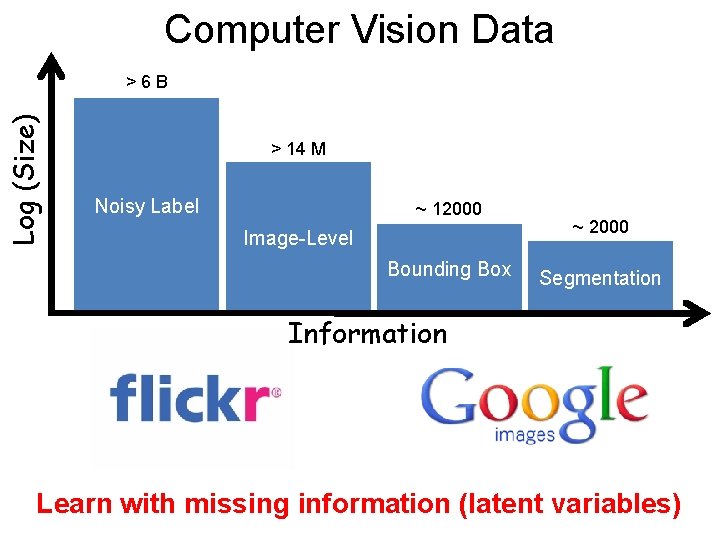

Computer Vision Data, plotted as log(size) by annotation type: segmentation ~2000; bounding box ~12,000; image-level labels (e.g., “Car”, “Chair”) >14 M; noisy labels >6 B. Richer annotations are scarcer, so we must learn with missing information (latent variables).

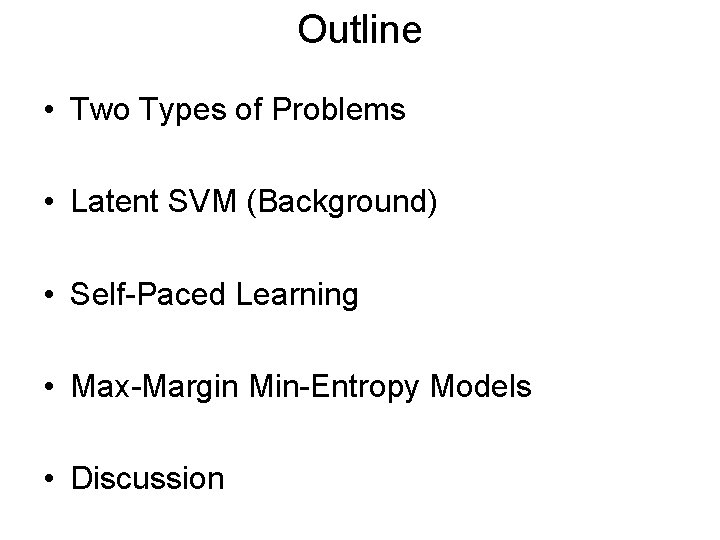

Outline
• Two Types of Problems
• Latent SVM (Background)
• Self-Paced Learning
• Max-Margin Min-Entropy Models
• Discussion

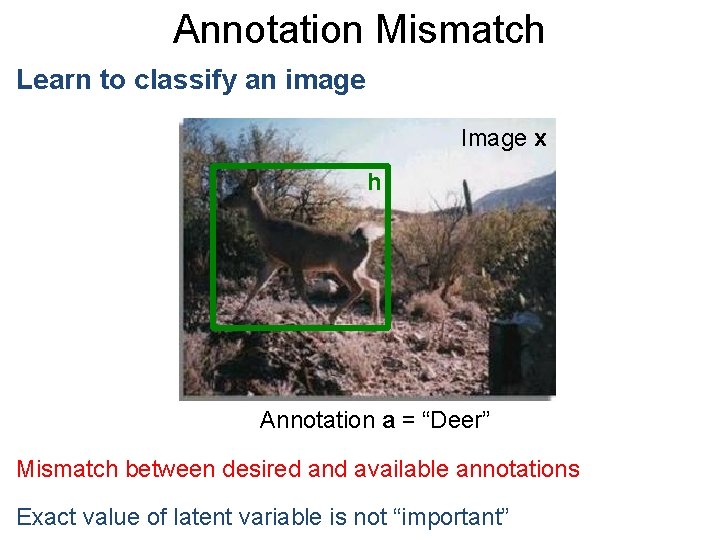

Annotation Mismatch: learn to classify an image. Image x, latent variable h, annotation a = “Deer”. There is a mismatch between the desired and the available annotations, but the exact value of the latent variable is not “important”.

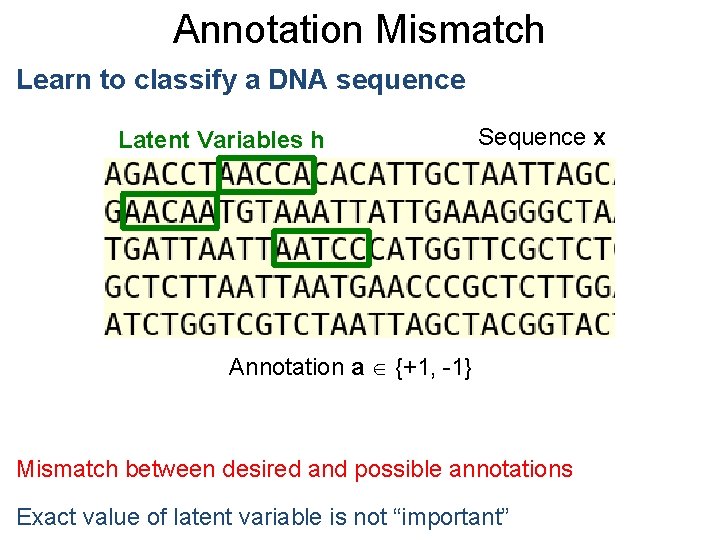

Annotation Mismatch: learn to classify a DNA sequence. Sequence x, latent variables h, annotation a ∈ {+1, −1}. There is a mismatch between the desired and the available annotations, but the exact value of the latent variable is not “important”.

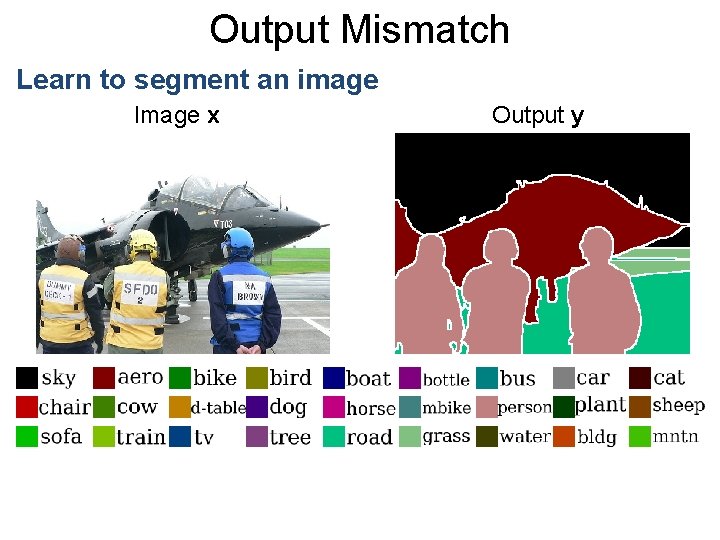

Output Mismatch: learn to segment an image. Image x, output y.

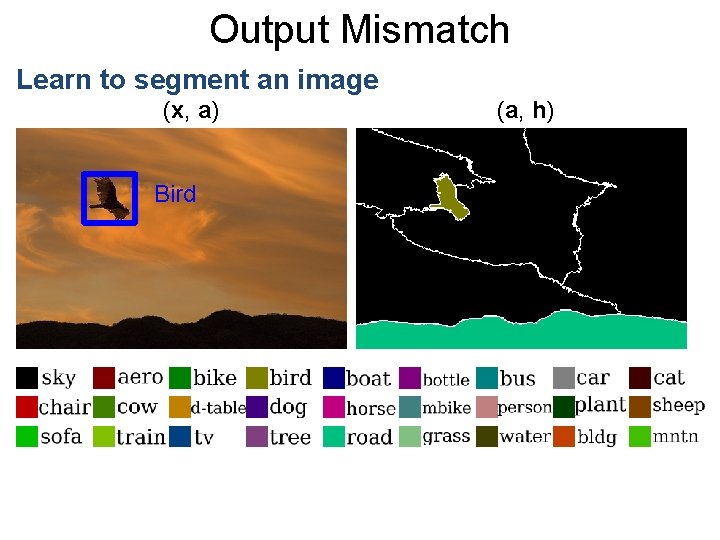

Output Mismatch: learn to segment an image. Available annotation (x, a), e.g., a = “Bird”; desired output (a, h).

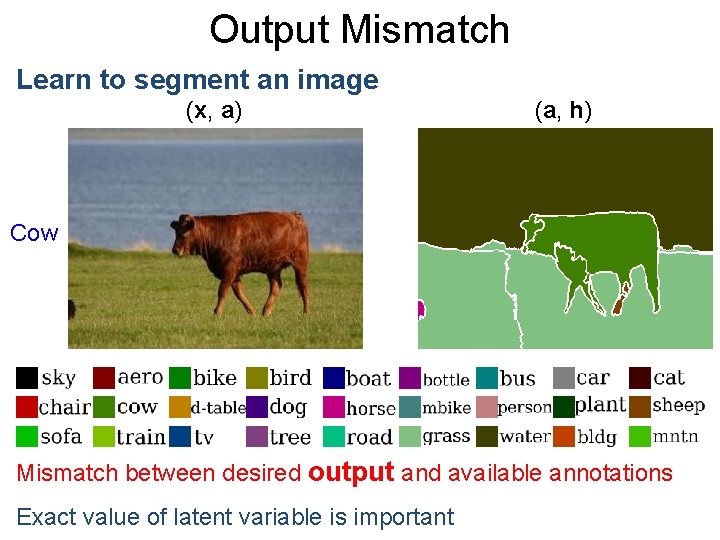

Output Mismatch: learn to segment an image. Available annotation (x, a), e.g., a = “Cow”; desired output (a, h). There is a mismatch between the desired output and the available annotations, and here the exact value of the latent variable is important.

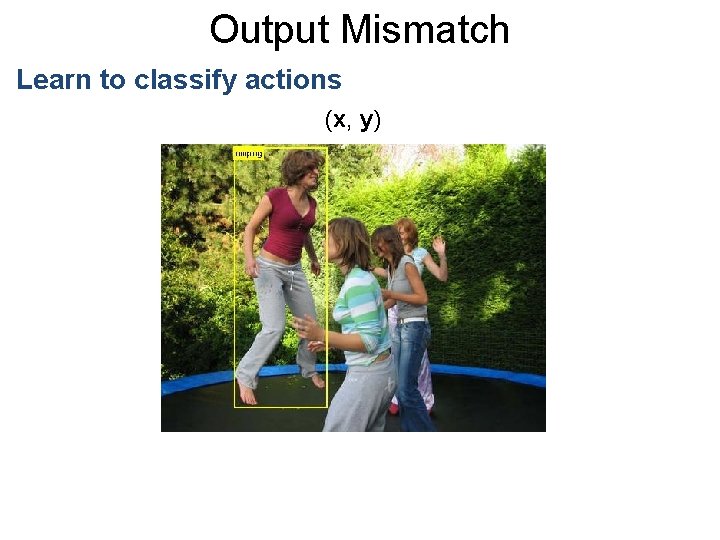

Output Mismatch: learn to classify actions, input x and output y.

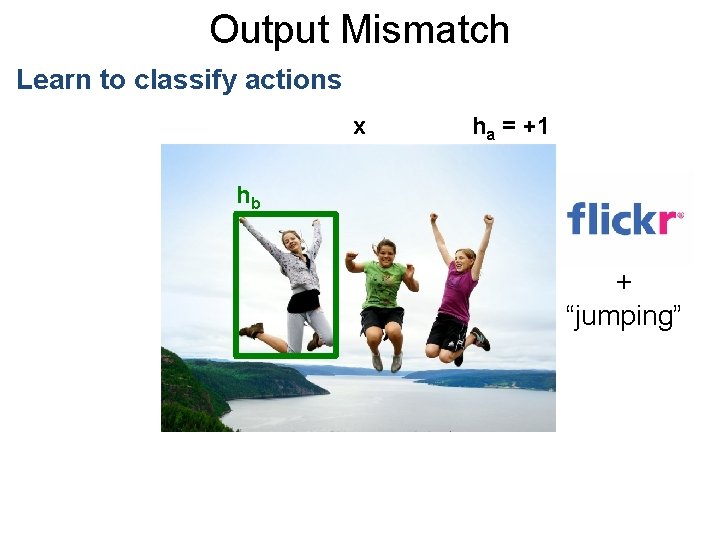

Output Mismatch: learn to classify actions. Image x with latent variables h_a and h_b; annotation a = +1 for “jumping”.

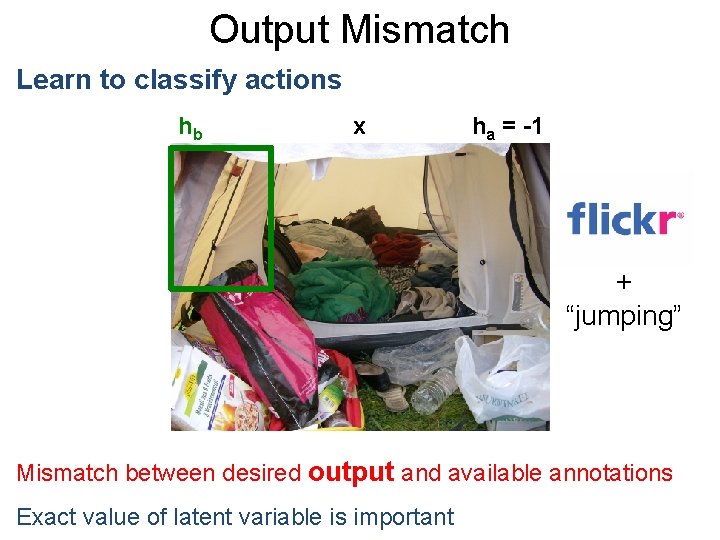

Output Mismatch: learn to classify actions. Image x with latent variables h_a and h_b; annotation a = −1 for “jumping”. There is a mismatch between the desired output and the available annotations, and here the exact value of the latent variable is important.

Outline, next section: Latent SVM (Background)

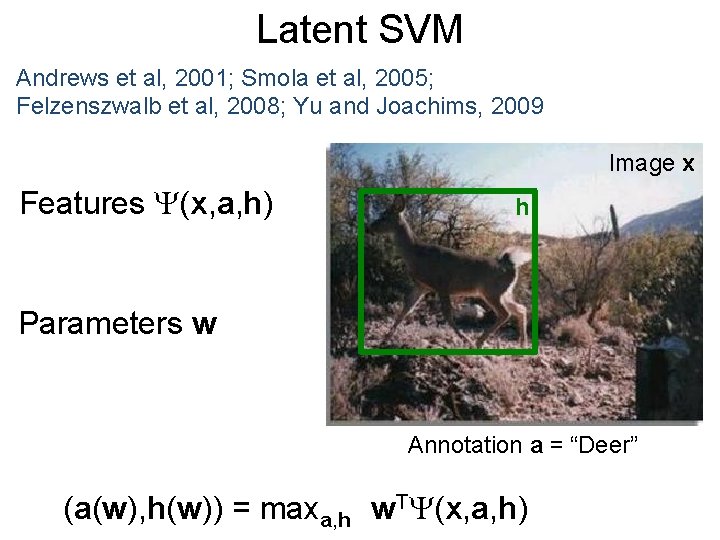

Latent SVM (Andrews et al., 2001; Smola et al., 2005; Felzenszwalb et al., 2008; Yu and Joachims, 2009): image x, latent variable h, annotation a = “Deer”, joint features $\Psi(x, a, h)$ and parameters w. Prediction: $(a(w), h(w)) = \arg\max_{a,h} w^\top \Psi(x, a, h)$.

Parameter Learning: the score of the best completion of the ground truth should exceed the score of every other output.

Parameter Learning: $\max_h w^\top \Psi(x_i, a_i, h) > w^\top \Psi(x_i, a, h)$ for all other outputs (a, h).

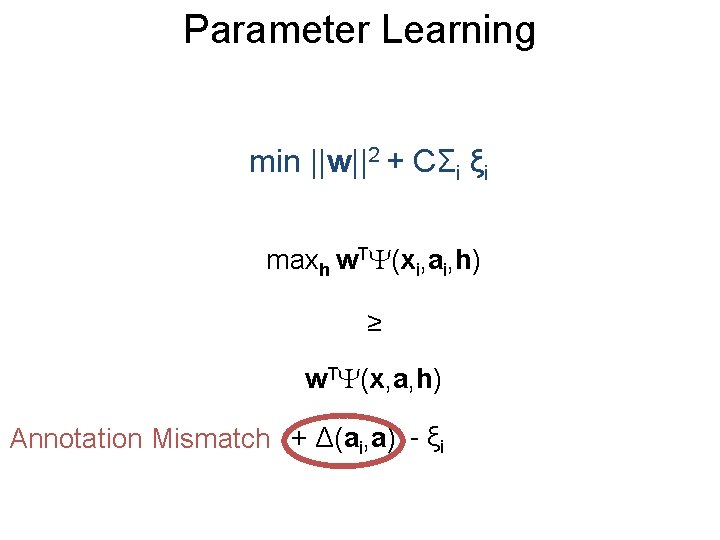

Parameter Learning (annotation mismatch): $\min_{w,\xi} \|w\|^2 + C\sum_i \xi_i$ subject to $\max_h w^\top \Psi(x_i, a_i, h) \ge w^\top \Psi(x_i, a, h) + \Delta(a_i, a) - \xi_i$ for all (a, h).

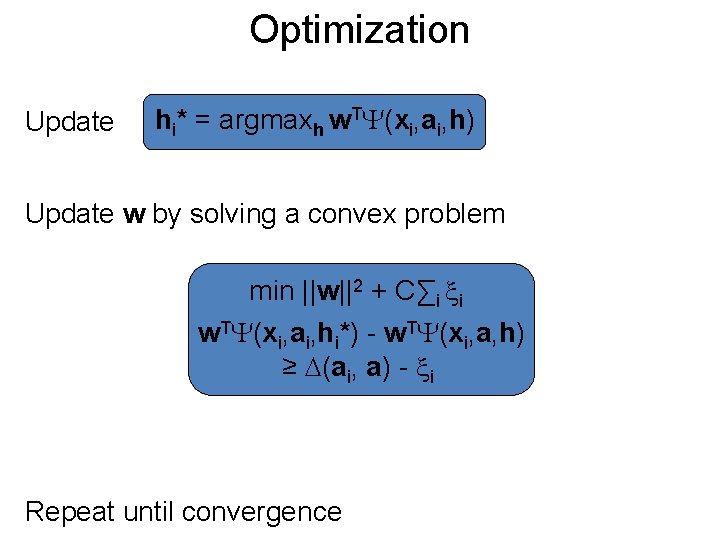

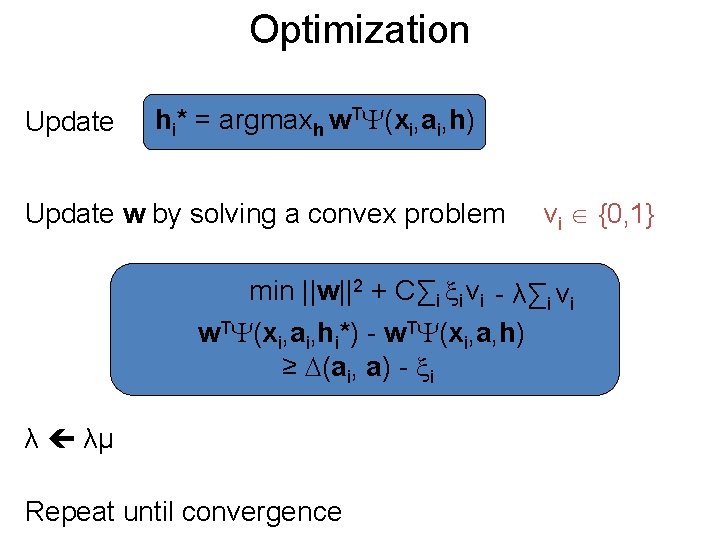

Optimization (CCCP):
• Update $h_i^* = \arg\max_h w^\top \Psi(x_i, a_i, h)$.
• Update w by solving the convex problem $\min_{w,\xi} \|w\|^2 + C\sum_i \xi_i$ subject to $w^\top \Psi(x_i, a_i, h_i^*) - w^\top \Psi(x_i, a, h) \ge \Delta(a_i, a) - \xi_i$.
• Repeat until convergence.
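
The alternating procedure above can be sketched on a toy problem. This is a minimal sketch under stated assumptions, not the implementation used in the cited work: the joint feature map (each x is a set of candidate regions, h picks one region, and $w^\top\Psi(x, a, h)$ is the dot product of region h with the weight block of class a), the 0/1 loss Δ, and the names `impute`, `predict`, `loss_augmented`, `update_w` and `cccp` are all hypothetical. The convex step uses plain subgradient descent instead of an exact QP solver.

```python
import numpy as np

# Hypothetical toy setup: x is an (H, D) array of candidate regions, the latent
# variable h selects one region, a is the class, and the joint feature map is
# chosen so that w^T Psi(x, a, h) = w[a] @ x[h], with w stored as an (A, D) array.

def impute(w, x, a):
    """h* = argmax_h w^T Psi(x, a, h) for the given annotation a."""
    return int(np.argmax(x @ w[a]))

def predict(w, x):
    """(a(w), h(w)) = argmax over (a, h) of w^T Psi(x, a, h)."""
    scores = x @ w.T                                  # scores[h, a]
    h, a = np.unravel_index(np.argmax(scores), scores.shape)
    return int(a), int(h)

def loss_augmented(w, x, a_true):
    """argmax over (a, h) of w^T Psi(x, a, h) + Delta(a_true, a), with 0/1 loss."""
    scores = x @ w.T + (np.arange(w.shape[0]) != a_true)[None, :]
    h, a = np.unravel_index(np.argmax(scores), scores.shape)
    return int(a), int(h), float(scores[h, a])

def update_w(w, data, h_star, C=1.0, steps=50, lr=0.1):
    """Convex step with h* fixed. Assumption: plain subgradient descent on
    ||w||^2 + C * sum_i xi_i stands in for an exact QP solver."""
    for _ in range(steps):
        grad = 2 * w
        for (x, a_i), h_i in zip(data, h_star):
            a, h, aug = loss_augmented(w, x, a_i)
            if aug - w[a_i] @ x[h_i] > 0:             # slack xi_i is positive
                grad[a] += C * x[h]
                grad[a_i] -= C * x[h_i]
        w = w - lr * grad
    return w

def cccp(data, num_classes, dim, outer_iters=5):
    w = np.zeros((num_classes, dim))
    for _ in range(outer_iters):                      # fixed count instead of a convergence test
        h_star = [impute(w, x, a) for x, a in data]   # step 1: impute latent variables
        w = update_w(w, data, h_star)                 # step 2: convex update of w
    return w

# Usage: the class signal sits in one of two regions; the other region is empty.
data = [
    (np.array([[1.0, 0.0], [0.0, 0.0]]), 0),
    (np.array([[0.0, 0.0], [1.0, 0.0]]), 0),
    (np.array([[0.0, 1.0], [0.0, 0.0]]), 1),
    (np.array([[0.0, 0.0], [0.0, 1.0]]), 1),
]
w = cccp(data, num_classes=2, dim=2)
print([predict(w, x)[0] for x, _ in data])           # [0, 0, 1, 1]
```

On the first pass, all latent variables are imputed from w = 0, so half of them point at the empty region. Re-imputing with the updated w corrects them, which is the role of the outer loop.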

Outline, next section: Self-Paced Learning

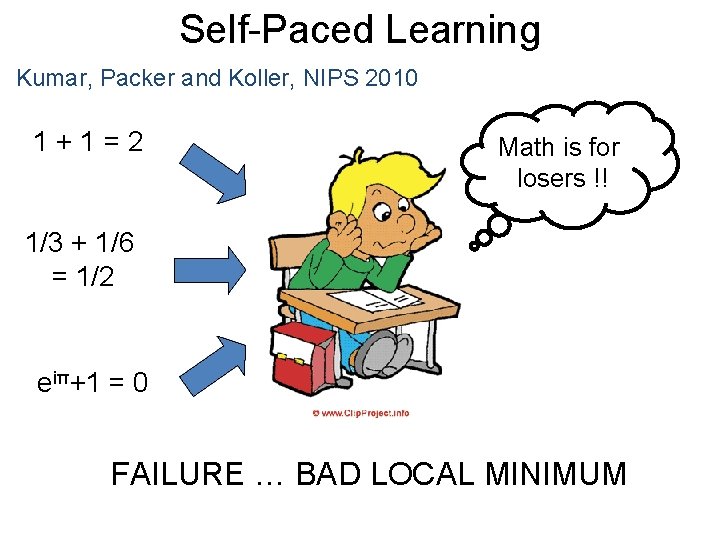

Self-Paced Learning (Kumar, Packer and Koller, NIPS 2010): examples 1+1=2, 1/3 + 1/6 = 1/2, $e^{i\pi} + 1 = 0$. Reaction: “Math is for losers!!” FAILURE … BAD LOCAL MINIMUM.

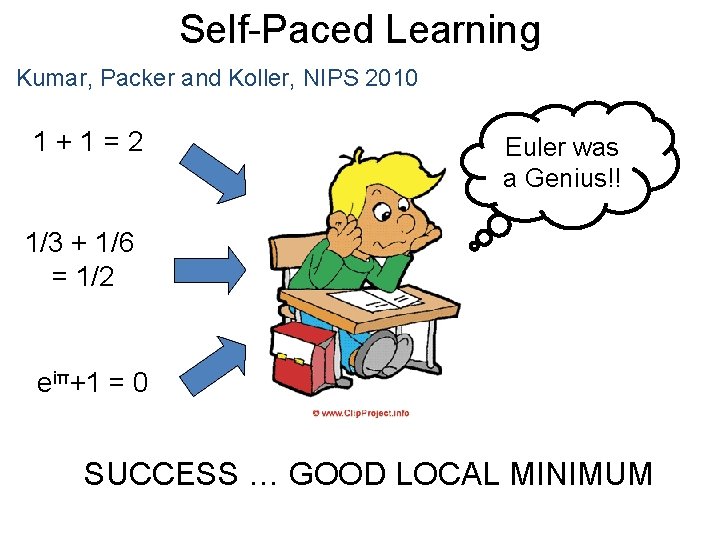

Self-Paced Learning (Kumar, Packer and Koller, NIPS 2010): examples 1+1=2, 1/3 + 1/6 = 1/2, $e^{i\pi} + 1 = 0$. Reaction: “Euler was a Genius!!” SUCCESS … GOOD LOCAL MINIMUM.

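Optimization (self-paced learning):
• Update $h_i^* = \arg\max_h w^\top \Psi(x_i, a_i, h)$.
• Update w and the sample indicators $v_i \in \{0, 1\}$ by solving (convex in w for fixed v) $\min_{w,\xi,v} \|w\|^2 + C\sum_i v_i \xi_i - \lambda\sum_i v_i$ subject to $w^\top \Psi(x_i, a_i, h_i^*) - w^\top \Psi(x_i, a, h) \ge \Delta(a_i, a) - \xi_i$.
• Anneal $\lambda \leftarrow \lambda\mu$.
• Repeat until convergence.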
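
With w fixed, every slack $\xi_i$ is fixed too. The objective then separates into independent terms $v_i(C\xi_i - \lambda)$, so the optimal indicator is $v_i = 1$ exactly when $C\xi_i < \lambda$. A sample counts as “easy” when its weighted slack is below λ, and growing λ admits harder samples. The sketch below shows only this selection rule and the annealing of λ. The slack values, the starting λ, μ = 2 (any μ > 1 grows λ) and the function names are hypothetical. The slacks are also held fixed here for illustration, whereas in the full loop they change after each update of w and $h_i^*$.

```python
import numpy as np

def select_easy(slacks, C, lam):
    """Minimise sum_i v_i * (C * xi_i - lam) over v_i in {0, 1} with w fixed.
    The terms are independent, so v_i = 1 exactly when C * xi_i < lam."""
    return (C * np.asarray(slacks, dtype=float) < lam).astype(int)

def annealing_schedule(slacks, C=1.0, lam=0.5, mu=2.0, rounds=4):
    """Return (lam, v) per round, growing lam <- lam * mu (mu > 1 assumed).
    Simplification: slacks are held fixed instead of being recomputed."""
    history = []
    for _ in range(rounds):
        history.append((lam, select_easy(slacks, C, lam).tolist()))
        lam *= mu
    return history

slacks = [0.0, 0.3, 0.8, 2.5, 5.0]      # hypothetical xi_i values
for lam, v in annealing_schedule(slacks):
    print(lam, v)
# 0.5 [1, 1, 0, 0, 0]
# 1.0 [1, 1, 1, 0, 0]
# 2.0 [1, 1, 1, 0, 0]
# 4.0 [1, 1, 1, 1, 0]
```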

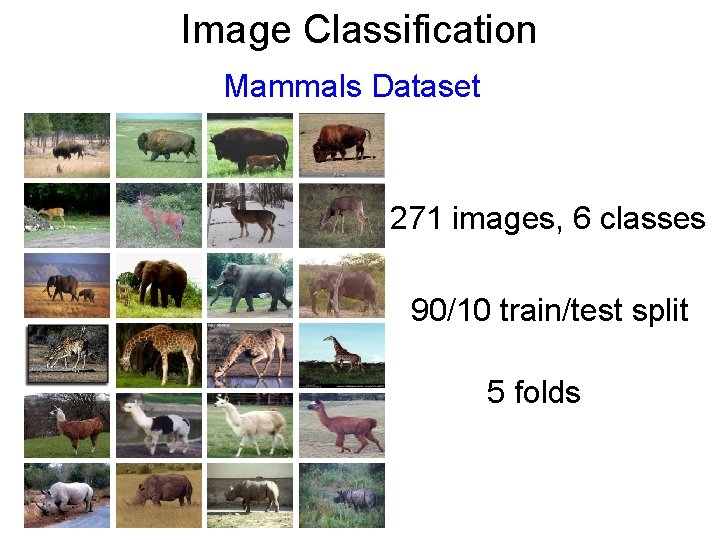

Image Classification: Mammals dataset, 271 images, 6 classes; 90/10 train/test split; 5 folds.

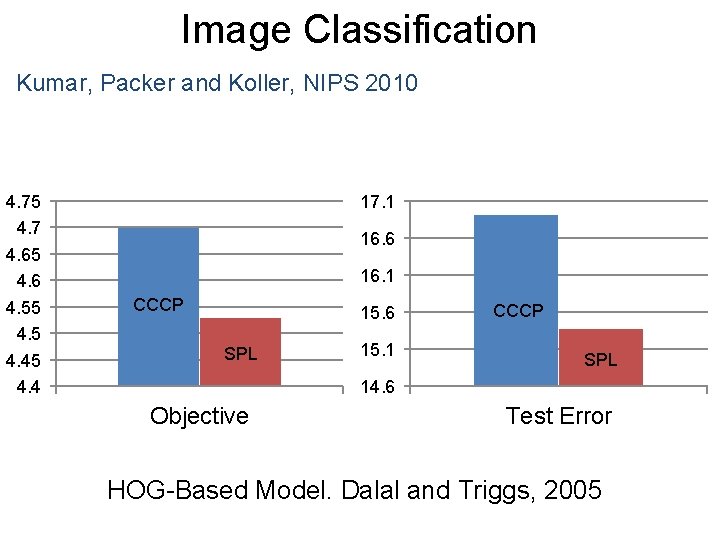

Image Classification (Kumar, Packer and Koller, NIPS 2010): [Figure: objective value and test error, CCCP vs. SPL.] HOG-based model (Dalal and Triggs, 2005).

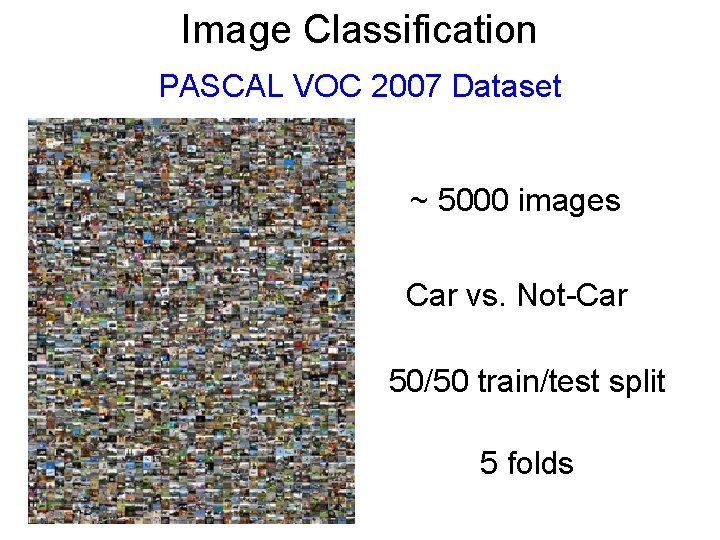

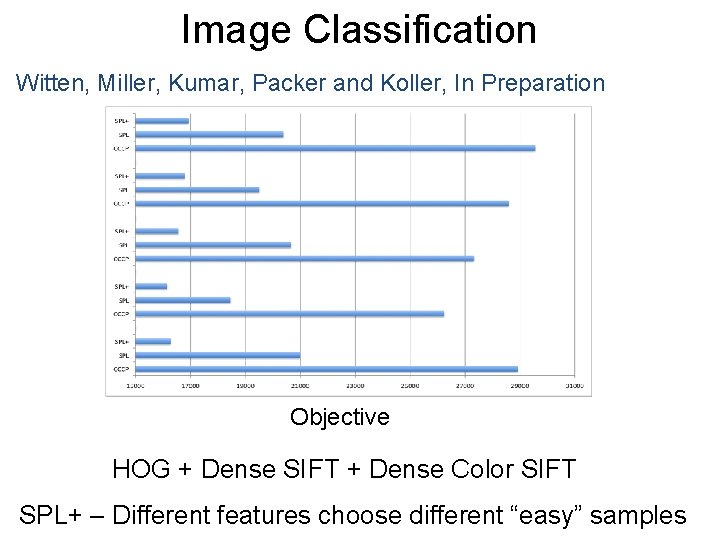

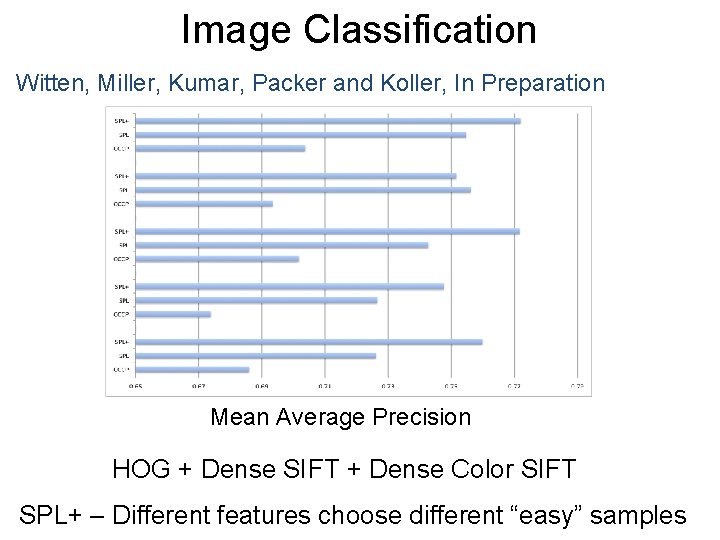

Image Classification: PASCAL VOC 2007 dataset, ~5000 images, car vs. not-car; 50/50 train/test split; 5 folds.

Image Classification (Witten, Miller, Kumar, Packer and Koller, In Preparation): [Figure: objective with HOG + Dense SIFT + Dense Color SIFT features.] SPL+: different features choose different “easy” samples.

Image Classification (Witten, Miller, Kumar, Packer and Koller, In Preparation): [Figure: mean average precision with HOG + Dense SIFT + Dense Color SIFT features.] SPL+: different features choose different “easy” samples.

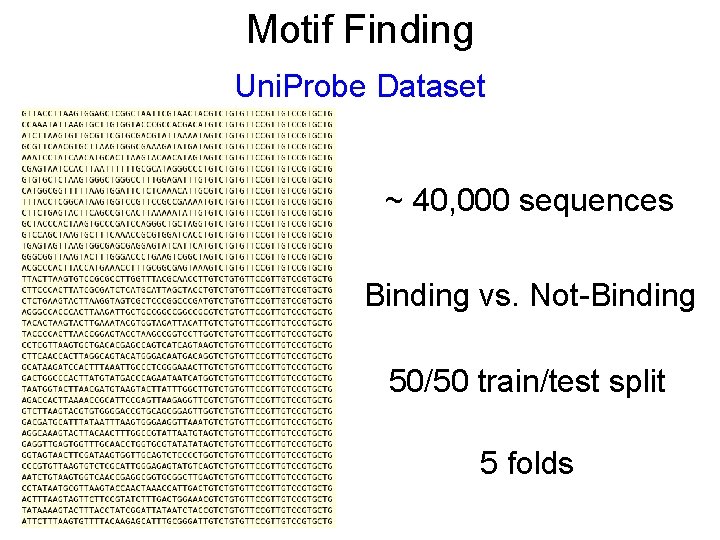

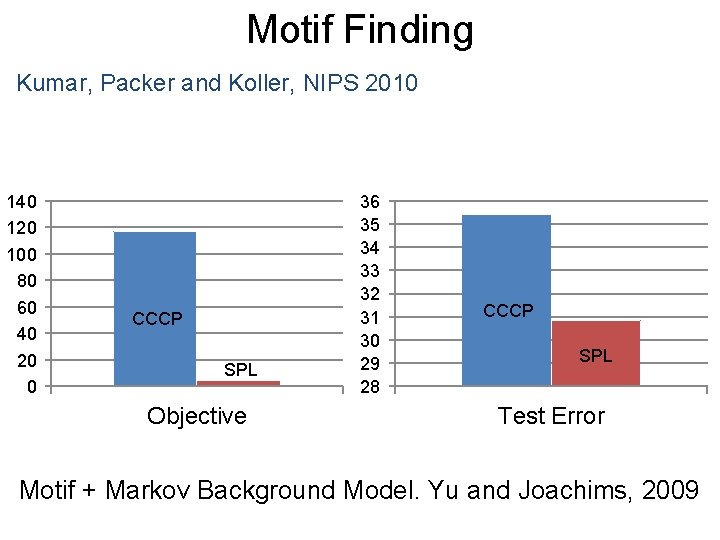

Motif Finding: UniProbe dataset, ~40,000 sequences, binding vs. not-binding; 50/50 train/test split; 5 folds.

Motif Finding (Kumar, Packer and Koller, NIPS 2010): [Figure: objective value and test error, CCCP vs. SPL.] Motif + Markov background model (Yu and Joachims, 2009).

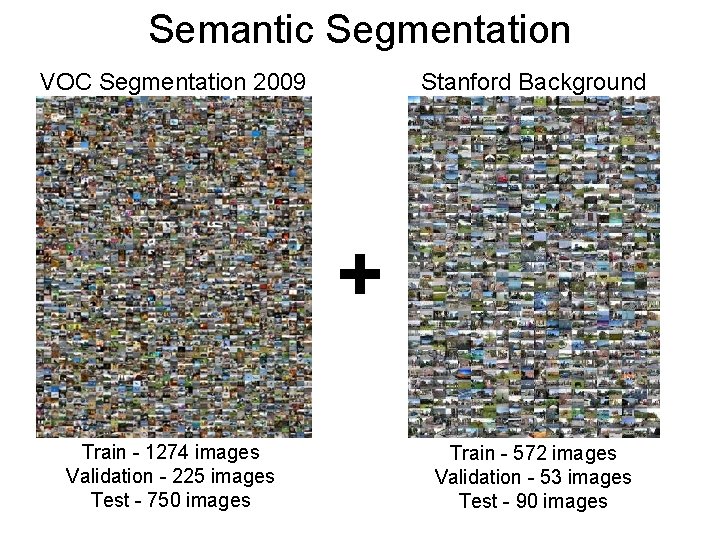

Semantic Segmentation: VOC Segmentation 2009 + Stanford Background.
• VOC Segmentation 2009: train 1274 images, validation 225 images, test 750 images
• Stanford Background: train 572 images, validation 53 images, test 90 images

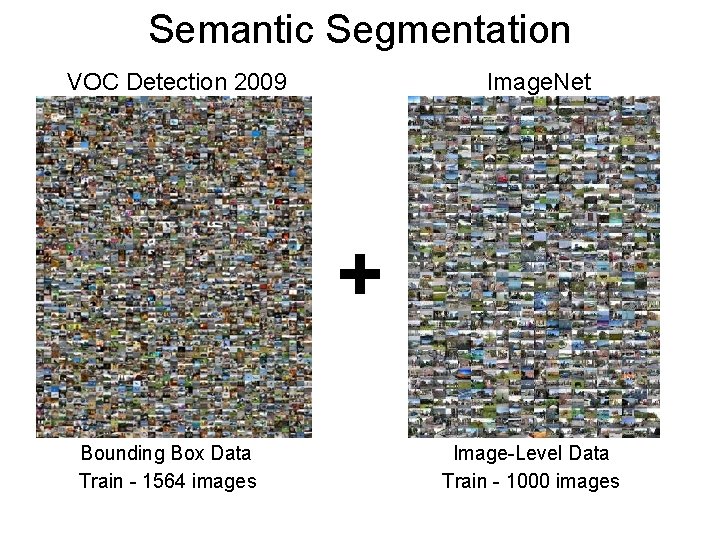

Semantic Segmentation, additional weakly annotated data from VOC Detection 2009 + ImageNet:
• Bounding-box data: train 1564 images
• Image-level data: train 1000 images

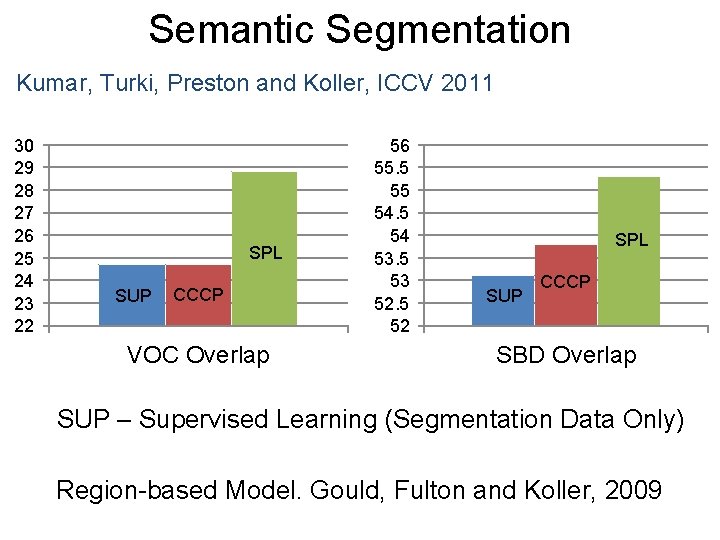

Semantic Segmentation (Kumar, Turki, Preston and Koller, ICCV 2011): [Figure: overlap on VOC and on SBD for SPL, SUP and CCCP.] SUP: supervised learning (segmentation data only). Region-based model (Gould, Fulton and Koller, 2009).

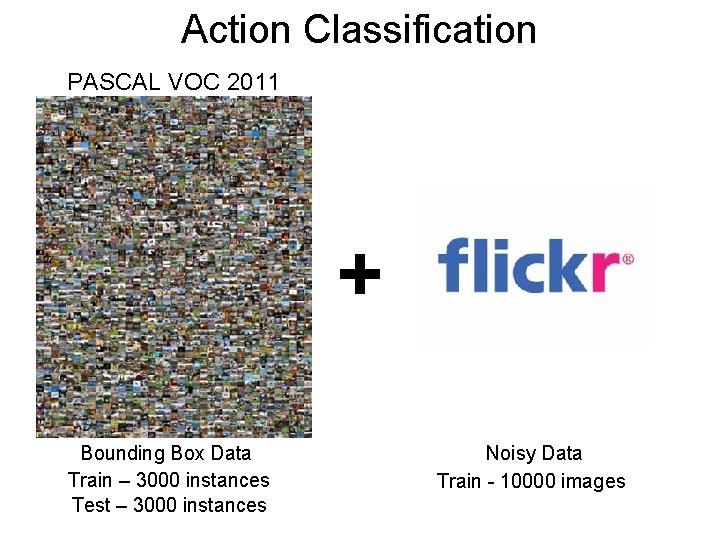

Action Classification: PASCAL VOC 2011.
• Bounding-box data: train 3000 instances, test 3000 instances
• Noisy data: train 10000 images

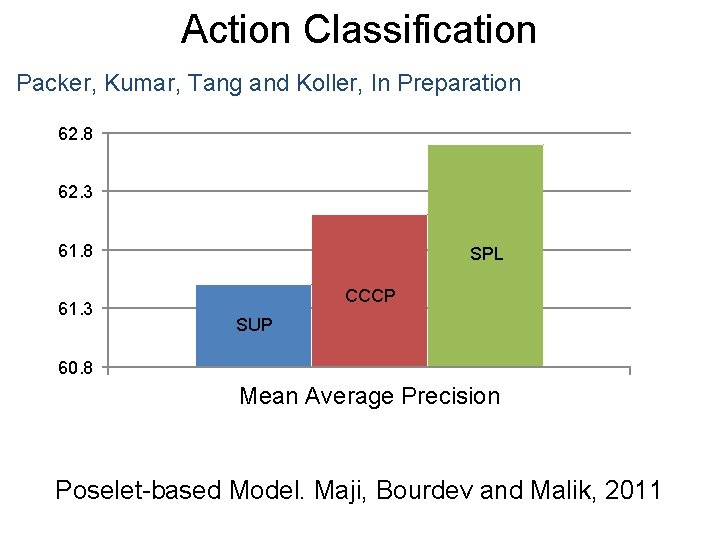

Action Classification (Packer, Kumar, Tang and Koller, In Preparation): [Figure: mean average precision for SPL, CCCP and SUP.] Poselet-based model (Maji, Bourdev and Malik, 2011).

Self-Paced Multiple Kernel Learning (Kumar, Packer and Koller, In Preparation): 1+1=2 (integers), 1/3 + 1/6 = 1/2 (rational numbers), $e^{i\pi} + 1 = 0$ (imaginary numbers). USE A FIXED MODEL.

Self-Paced Multiple Kernel Learning (Kumar, Packer and Koller, In Preparation): 1+1=2 (integers), 1/3 + 1/6 = 1/2 (rational numbers), $e^{i\pi} + 1 = 0$ (imaginary numbers). ADAPT THE MODEL COMPLEXITY.

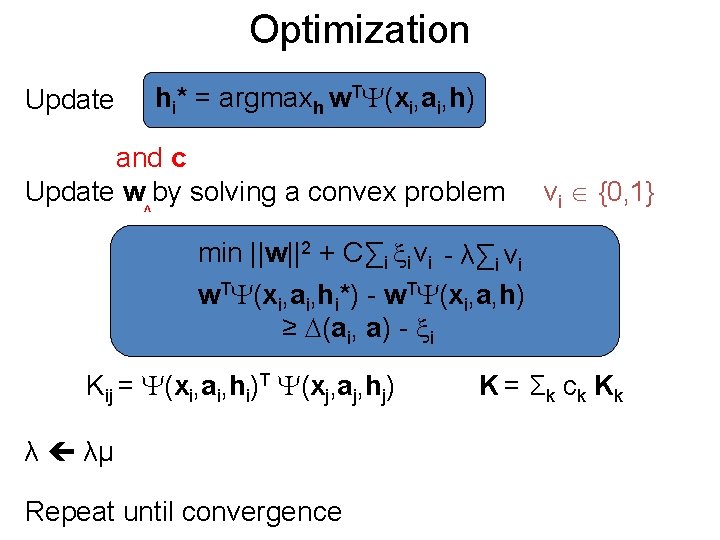

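Optimization (self-paced multiple kernel learning):
• Update $h_i^* = \arg\max_h w^\top \Psi(x_i, a_i, h)$.
• Update $v_i \in \{0, 1\}$ and the kernel weights c, where $K = \sum_k c_k K_k$ and $K_{ij} = \Psi(x_i, a_i, h_i)^\top \Psi(x_j, a_j, h_j)$.
• Update w by solving the convex problem $\min_{w,\xi} \|w\|^2 + C\sum_i v_i \xi_i - \lambda\sum_i v_i$ subject to $w^\top \Psi(x_i, a_i, h_i^*) - w^\top \Psi(x_i, a, h) \ge \Delta(a_i, a) - \xi_i$.
• Anneal $\lambda \leftarrow \lambda\mu$.
• Repeat until convergence.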
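
The kernel combination above can be written out directly. In this minimal sketch, the feature matrices are random placeholders for two hypothetical feature types, and the weights c are fixed by hand because the slide does not say how c is updated. Non-negative $c_k$ are assumed so that K stays positive semidefinite. The final check uses the fact that $\sum_k c_k K_k$ equals the Gram matrix of the concatenated features, each scaled by $\sqrt{c_k}$.

```python
import numpy as np

def gram(features):
    """K_ij = Psi_i^T Psi_j, where row i of `features` holds Psi(x_i, a_i, h_i)
    for one (hypothetical) feature type."""
    return features @ features.T

def combined_kernel(feature_sets, c):
    """K = sum_k c_k K_k. Non-negative weights (assumed here) keep K PSD."""
    c = np.asarray(c, dtype=float)
    if np.any(c < 0):
        raise ValueError("kernel weights c_k must be non-negative")
    return sum(ck * gram(F) for ck, F in zip(c, feature_sets))

rng = np.random.default_rng(0)
F1 = rng.normal(size=(5, 3))   # hypothetical feature type 1, 5 samples
F2 = rng.normal(size=(5, 4))   # hypothetical feature type 2, same samples
c = [0.7, 0.3]
K = combined_kernel([F1, F2], c)

# The same kernel, built from concatenated features scaled by sqrt(c_k).
G = np.hstack([np.sqrt(ck) * F for ck, F in zip(c, [F1, F2])])
print(np.allclose(K, G @ G.T))   # True
```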

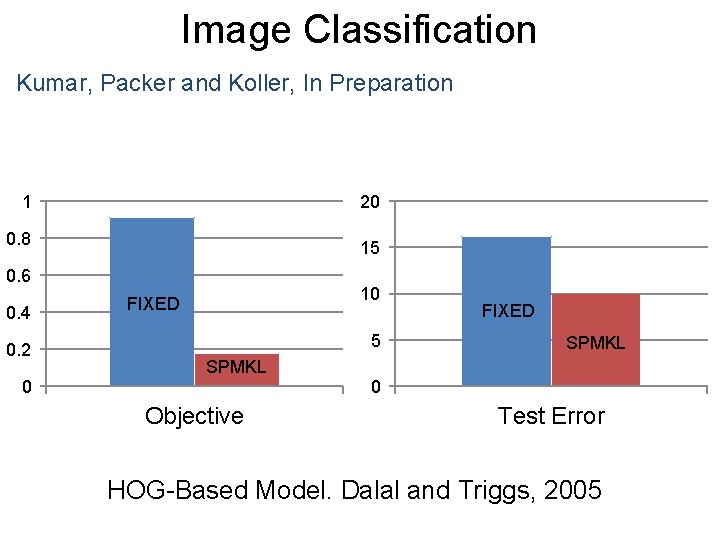

Image Classification: Mammals dataset, 271 images, 6 classes; 90/10 train/test split; 5 folds.

Image Classification (Kumar, Packer and Koller, In Preparation): [Figure: objective value and test error, FIXED vs. SPMKL.] HOG-based model (Dalal and Triggs, 2005).

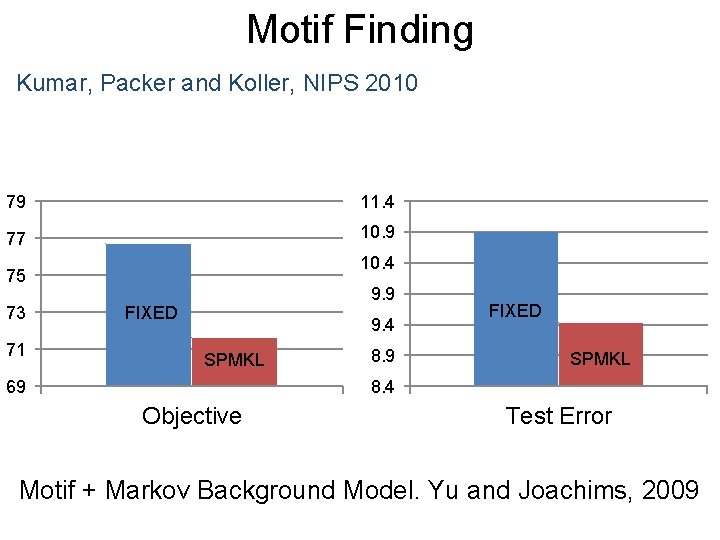

Motif Finding: UniProbe dataset, ~40,000 sequences, binding vs. not-binding; 50/50 train/test split; 5 folds.

Motif Finding (Kumar, Packer and Koller, NIPS 2010): [Figure: objective value and test error, FIXED vs. SPMKL.] Motif + Markov background model (Yu and Joachims, 2009).

Outline, next section: Max-Margin Min-Entropy Models

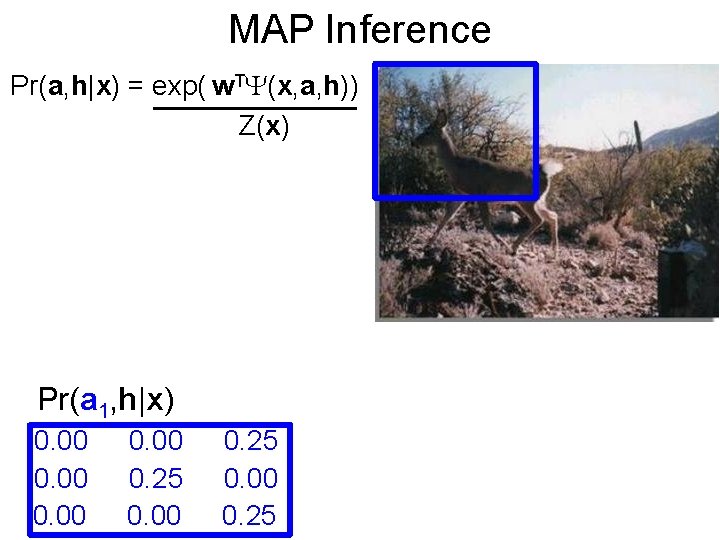

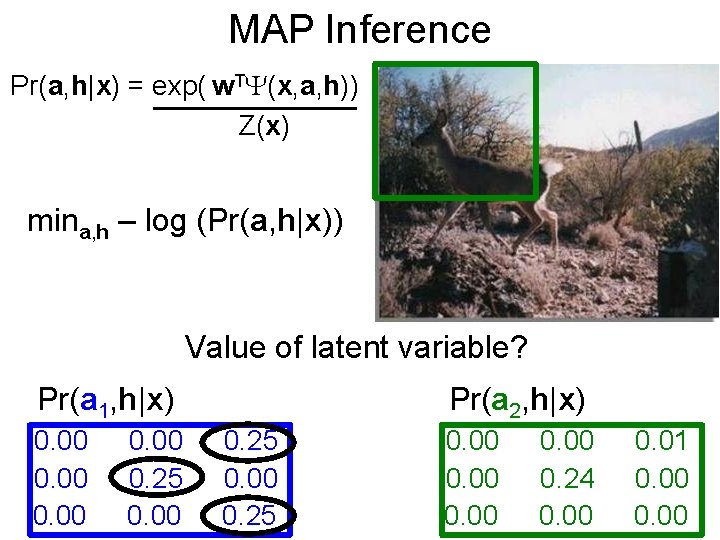

MAP Inference: $\Pr(a, h \mid x) = \exp(w^\top \Psi(x, a, h)) / Z(x)$, and MAP inference solves $\min_{a,h} -\log \Pr(a, h \mid x)$. What is the value of the latent variable? [Figure: example distributions $\Pr(a_1, h \mid x)$ and $\Pr(a_2, h \mid x)$ over h.]
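
The probabilities in the original figure are only partly recoverable. The sketch below therefore uses hypothetical scores to illustrate the issue the slide raises. MAP inference over (a, h) can pick an annotation whose probability mass sits on a single completion, while another annotation has more total mass $\Pr(a \mid x)$.

```python
import numpy as np

# Hypothetical scores w^T Psi(x, a, h) for two annotations and four latent values.
scores = np.array([[0.0, 2.0, 0.0, 0.0],    # a_1: one sharply peaked completion
                   [1.5, 1.5, 1.5, 1.5]])   # a_2: mass spread over all h
joint = np.exp(scores - scores.max())
joint /= joint.sum()                         # Pr(a, h | x) = exp(w^T Psi) / Z(x)

a_map, h_map = np.unravel_index(np.argmax(joint), joint.shape)
a_marginal = int(np.argmax(joint.sum(axis=1)))   # argmax_a Pr(a | x)

print(int(a_map), int(h_map), a_marginal)   # 0 1 1
```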

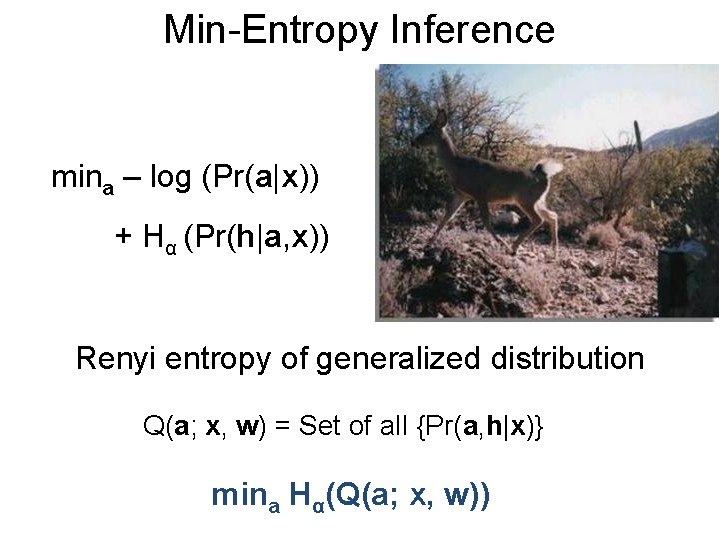

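Min-Entropy Inference: $\min_a \; -\log \Pr(a \mid x) + H_\alpha(\Pr(h \mid a, x))$. This equals $\min_a H_\alpha(Q(a; x, w))$, where $Q(a; x, w) = \{\Pr(a, h \mid x) : \text{all } h\}$ is a generalized distribution and $H_\alpha$ is the Rényi entropy of a generalized distribution.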
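
For a generalized (not necessarily normalized) distribution q, the Rényi entropy of order α (α > 0, α ≠ 1) is $H_\alpha(q) = \frac{1}{1-\alpha}\log\frac{\sum_j q_j^\alpha}{\sum_j q_j}$. Applied to $Q(a; x, w)$, whose total mass is $\Pr(a \mid x)$, it equals $-\log \Pr(a \mid x) + H_\alpha(\Pr(h \mid a, x))$, so the two forms of min-entropy inference coincide. As α → ∞, it tends to $-\log \max_h \Pr(a, h \mid x)$. The sketch below checks both facts numerically on a hypothetical joint distribution; the function names are illustrative. In this example, α = 2 picks a different annotation from MAP, while a large α recovers the MAP annotation.

```python
import numpy as np

def renyi_generalized(q, alpha):
    """Renyi entropy of order alpha (alpha > 0, alpha != 1) of a generalized
    distribution q: log(sum_j q_j**alpha / sum_j q_j) / (1 - alpha)."""
    q = np.asarray(q, dtype=float)
    return np.log(np.sum(q ** alpha) / np.sum(q)) / (1.0 - alpha)

def min_entropy_inference(joint, alpha):
    """argmin_a H_alpha(Q(a; x, w)), where row a of `joint` is Q(a; x, w) = {Pr(a, h | x)}."""
    return int(np.argmin([renyi_generalized(row, alpha) for row in joint]))

# Hypothetical Pr(a, h | x): rows are annotations a_1, a_2; columns are latent values h.
joint = np.array([[0.30, 0.05, 0.05, 0.05],
                  [0.28, 0.27, 0.00, 0.00]])

# The two forms agree: H_alpha(Q(a)) = -log Pr(a | x) + H_alpha(Pr(h | a, x)).
row = joint[0]
lhs = renyi_generalized(row, 2.0)
rhs = -np.log(row.sum()) + renyi_generalized(row / row.sum(), 2.0)
print(np.isclose(lhs, rhs))                                        # True

a_map = int(np.unravel_index(np.argmax(joint), joint.shape)[0])    # MAP annotation
print(a_map, min_entropy_inference(joint, 2.0), min_entropy_inference(joint, 200.0))  # 0 1 0
```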

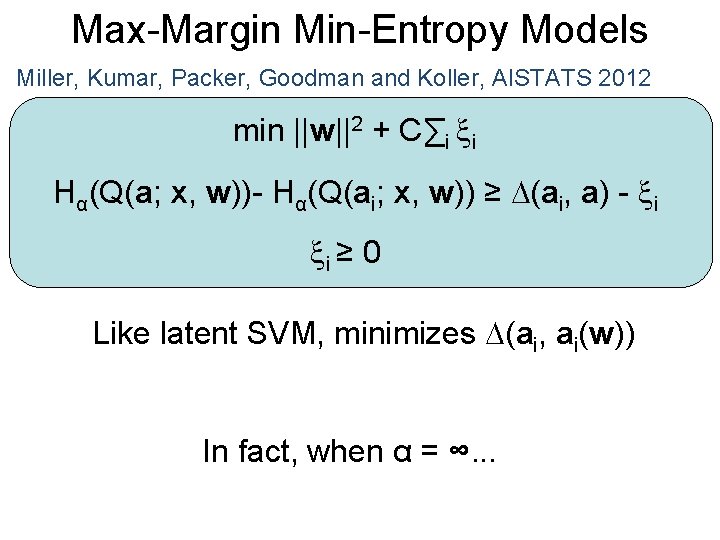

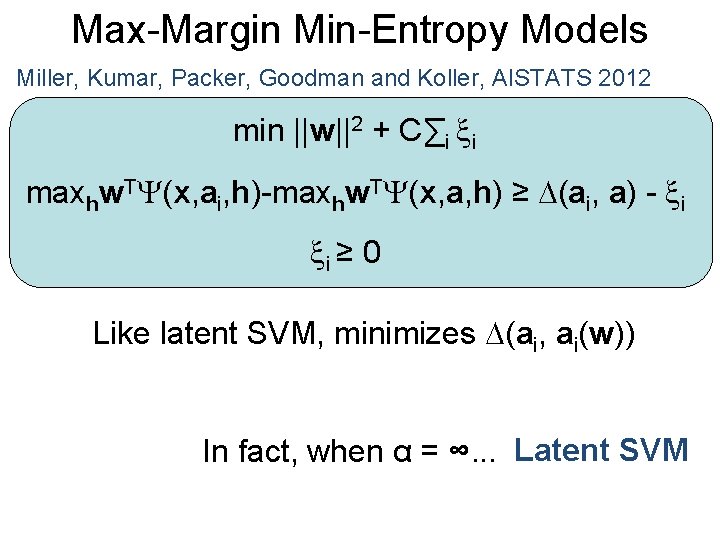

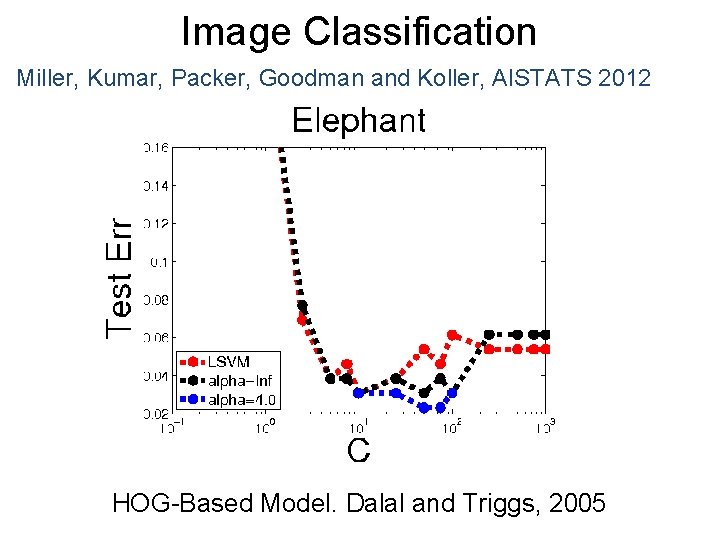

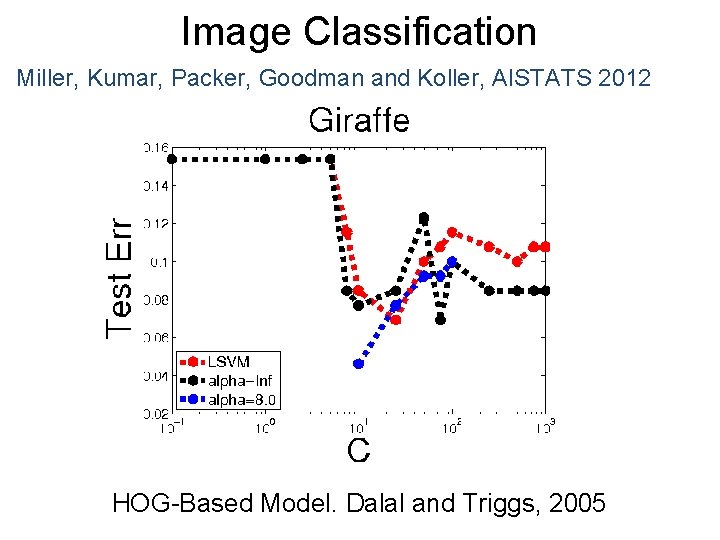

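Max-Margin Min-Entropy Models (Miller, Kumar, Packer, Goodman and Koller, AISTATS 2012): $\min_{w,\xi} \|w\|^2 + C\sum_i \xi_i$ subject to $H_\alpha(Q(a; x_i, w)) - H_\alpha(Q(a_i; x_i, w)) \ge \Delta(a_i, a) - \xi_i$ and $\xi_i \ge 0$. Like latent SVM, it minimizes an upper bound on the loss $\Delta(a_i, a_i(w))$. In fact, when α = ∞…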

Max-Margin Min-Entropy Models (Miller, Kumar, Packer, Goodman and Koller, AISTATS 2012): when α = ∞, the problem becomes $\min_{w,\xi} \|w\|^2 + C\sum_i \xi_i$ subject to $\max_h w^\top \Psi(x_i, a_i, h) - \max_h w^\top \Psi(x_i, a, h) \ge \Delta(a_i, a) - \xi_i$ and $\xi_i \ge 0$, which is exactly latent SVM.
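
The α = ∞ reduction can be checked numerically for a single training sample. Because $\Pr(a, h \mid x) = \exp(w^\top\Psi(x, a, h))/Z(x)$, every $H_\alpha(Q(a; x, w))$ contains the same additive $\log Z(x)$. That term cancels in the constraints, so the sketch works directly with the scores $w^\top\Psi(x_i, a, h)$. The scores, the 0/1 loss and the function names are hypothetical. `mmme_slack` returns the smallest $\xi_i \ge 0$ that satisfies all constraints for a fixed w.

```python
import numpy as np

def logsumexp(v):
    """Numerically stable log(sum(exp(v)))."""
    v = np.asarray(v, dtype=float)
    m = np.max(v)
    return m + np.log(np.sum(np.exp(v - m)))

def entropy_term(scores_a, alpha):
    """H_alpha(Q(a; x, w)) minus log Z(x), from scores s_h = w^T Psi(x, a, h)."""
    scores_a = np.asarray(scores_a, dtype=float)
    return (logsumexp(alpha * scores_a) - logsumexp(scores_a)) / (1.0 - alpha)

def mmme_slack(scores, a_true, alpha, delta):
    """Smallest xi_i >= 0 with H(Q(a)) - H(Q(a_i)) >= Delta(a_i, a) - xi_i for all a."""
    h_true = entropy_term(scores[a_true], alpha)
    violations = [delta(a_true, a) - (entropy_term(scores[a], alpha) - h_true)
                  for a in range(len(scores))]
    return max(0.0, max(violations))

def latent_svm_slack(scores, a_true, delta):
    """Smallest xi_i >= 0 for the latent SVM constraints (max over h of the scores)."""
    best_true = np.max(scores[a_true])
    return max(0.0, max(delta(a_true, a) + np.max(scores[a]) - best_true
                        for a in range(len(scores))))

def zero_one(a_true, a):
    return float(a_true != a)

scores = np.array([[0.0, 2.0, 0.0, 0.0],     # hypothetical w^T Psi(x_i, a_1, h)
                   [1.5, 1.5, 1.5, 1.5]])    # hypothetical w^T Psi(x_i, a_2, h); a_i = a_2
print(latent_svm_slack(scores, 1, zero_one))              # 1.5
for alpha in (2.0, 10.0, 1000.0):
    print(alpha, mmme_slack(scores, 1, alpha, zero_one))  # approaches 1.5 as alpha grows
```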

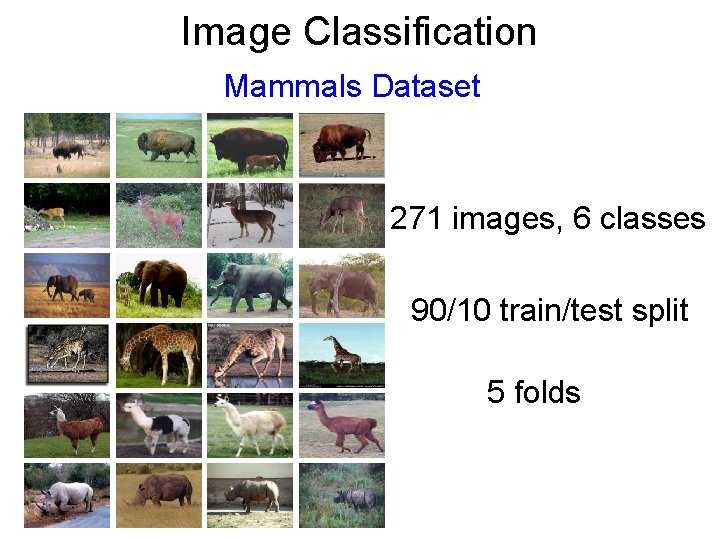

Image Classification: Mammals dataset, 271 images, 6 classes; 90/10 train/test split; 5 folds.

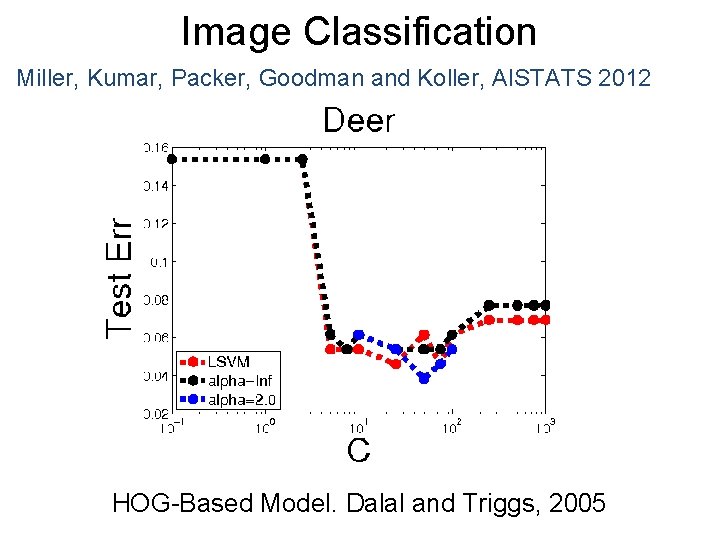

Image Classification (Miller, Kumar, Packer, Goodman and Koller, AISTATS 2012): HOG-based model (Dalal and Triggs, 2005).

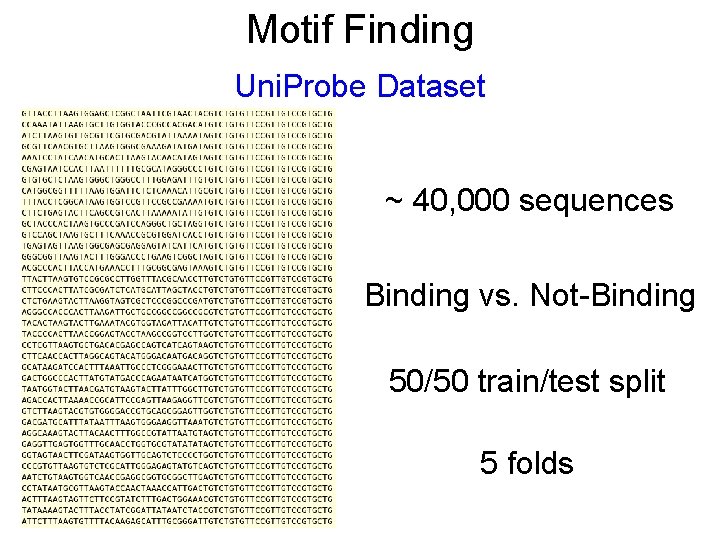

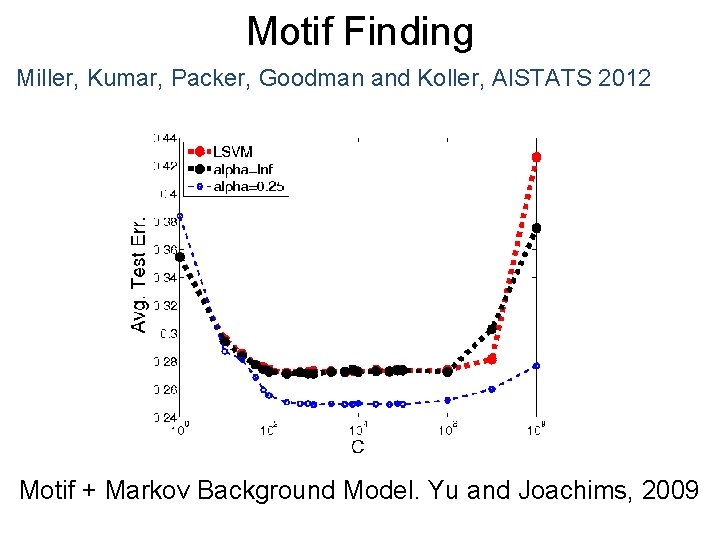

Motif Finding: UniProbe dataset, ~40,000 sequences, binding vs. not-binding; 50/50 train/test split; 5 folds.

Motif Finding (Miller, Kumar, Packer, Goodman and Koller, AISTATS 2012): Motif + Markov background model (Yu and Joachims, 2009).

Outline, next section: Discussion

Very Large Datasets
• Initialize parameters using supervised data
• Impute latent variables (inference)
• Select easy samples (very efficient)
• Update parameters using incremental SVM
• Refine efficiently with proximal regularization
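
The slide does not spell out the proximal term. A common form, assumed here, adds $\frac{\rho}{2}\|w - w_{\text{prev}}\|^2$ to the training objective, so that each refinement stays close to the previous parameters. The sketch applies it to a plain binary linear SVM trained by subgradient descent, as a stand-in for the latent, incremental SVM on the slide. The data, step size and function name are hypothetical.

```python
import numpy as np

def proximal_svm_update(X, y, w_prev, lam=0.1, rho=1.0, lr=0.01, steps=500):
    """Subgradient descent on
        lam/2 ||w||^2 + mean_i max(0, 1 - y_i w.x_i) + rho/2 ||w - w_prev||^2,
    warm-started at w_prev. Larger rho keeps the refined w closer to w_prev."""
    w = w_prev.copy()
    for _ in range(steps):
        active = y * (X @ w) < 1                      # samples with non-zero hinge loss
        hinge_grad = -(y[active][:, None] * X[active]).sum(axis=0) / len(y)
        grad = lam * w + hinge_grad + rho * (w - w_prev)
        w = w - lr * grad
    return w

X = np.array([[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]])
y = np.array([1.0, 1.0, -1.0, -1.0])
w_prev = np.zeros(2)
w_free = proximal_svm_update(X, y, w_prev, rho=0.0)   # no proximal term
w_prox = proximal_svm_update(X, y, w_prev, rho=50.0)  # strong proximal term
print(np.linalg.norm(w_free - w_prev) > np.linalg.norm(w_prox - w_prev))  # True
```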

Output Mismatch: minimize over w and θ the objective $\sum_h \Pr_\theta(h \mid a, x)\,\Delta(a, h, a(w), h(w)) + A(\theta)$, where A(θ) is C. R. Rao’s relative quadratic entropy.
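
The first term of this objective is an expected loss: the prediction (a(w), h(w)) is scored against every completion h of the ground-truth annotation, weighted by $\Pr_\theta(h \mid a, x)$. The slide does not define $\Pr_\theta$, Δ or A(θ). The sketch below therefore assumes a softmax form for $\Pr_\theta$ and a hypothetical pixel-mismatch loss on toy 4-pixel masks, and it leaves out A(θ) entirely.

```python
import numpy as np

def conditional(theta_scores):
    """Pr_theta(h | a, x) over candidate completions h of annotation a.
    Assumption: a softmax (log-linear) form in theta-dependent scores."""
    p = np.exp(theta_scores - np.max(theta_scores))
    return p / p.sum()

def delta(a, h, a_pred, h_pred):
    """Hypothetical loss: 1 for a wrong annotation, otherwise the fraction
    of pixels whose latent label differs."""
    if a != a_pred:
        return 1.0
    return float(np.mean(np.asarray(h) != np.asarray(h_pred)))

def expected_loss(theta_scores, completions, a, a_pred, h_pred):
    """sum_h Pr_theta(h | a, x) * Delta(a, h, a(w), h(w)); the A(theta) term is omitted."""
    p = conditional(np.asarray(theta_scores, dtype=float))
    return sum(p_h * delta(a, h, a_pred, h_pred) for p_h, h in zip(p, completions))

completions = [(0, 0, 1, 1), (0, 1, 1, 1), (1, 1, 1, 1)]   # toy masks for a = "Bird"
print(expected_loss([0.0, 0.0, 0.0], completions, "Bird", "Bird", (0, 0, 1, 1)))
```

With uniform $\Pr_\theta$, the three completions differ from the prediction in 0, 1 and 2 of 4 pixels, so the expected loss is 0.25. Raising the θ-score of the first completion drives it toward 0.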

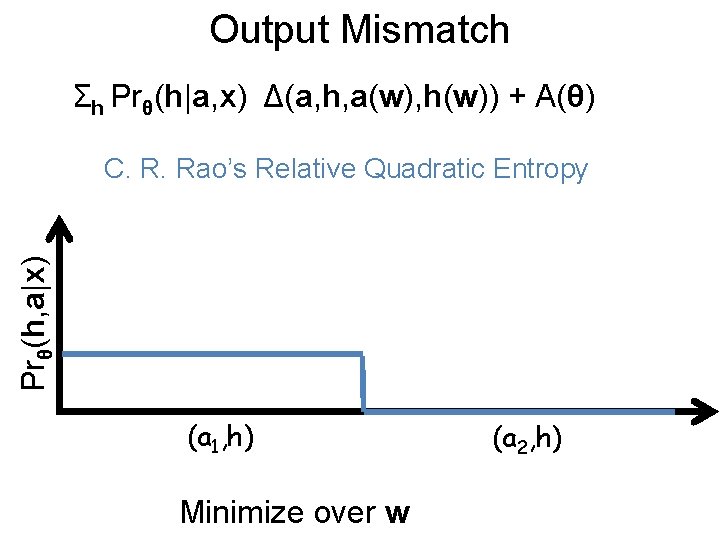

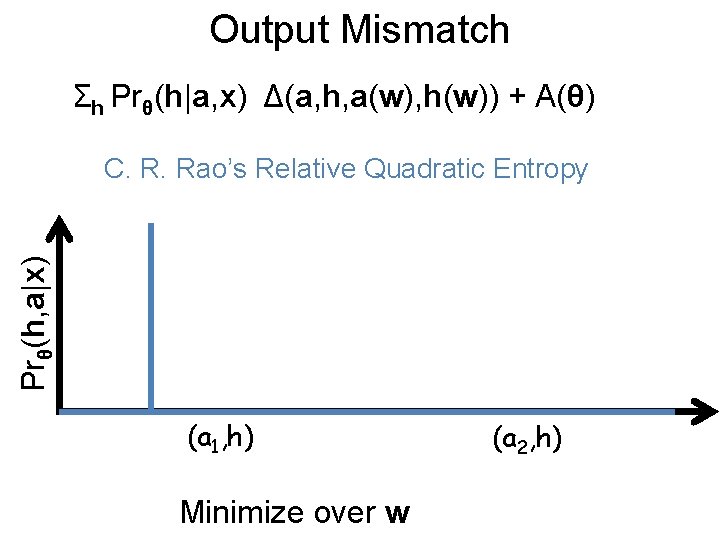

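Output Mismatch: objective $\sum_h \Pr_\theta(h \mid a, x)\,\Delta(a, h, a(w), h(w)) + A(\theta)$, with A(θ) given by C. R. Rao’s relative quadratic entropy. [Figure: $\Pr_\theta(h, a \mid x)$ over $(a_1, h)$ and $(a_2, h)$.] Minimize over w.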

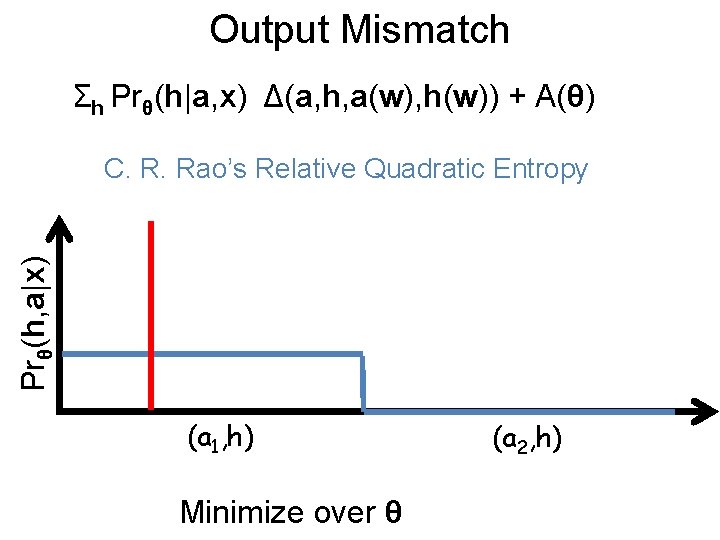

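Output Mismatch: objective $\sum_h \Pr_\theta(h \mid a, x)\,\Delta(a, h, a(w), h(w)) + A(\theta)$, with A(θ) given by C. R. Rao’s relative quadratic entropy. [Figure: $\Pr_\theta(h, a \mid x)$ over $(a_1, h)$ and $(a_2, h)$.] Minimize over θ.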

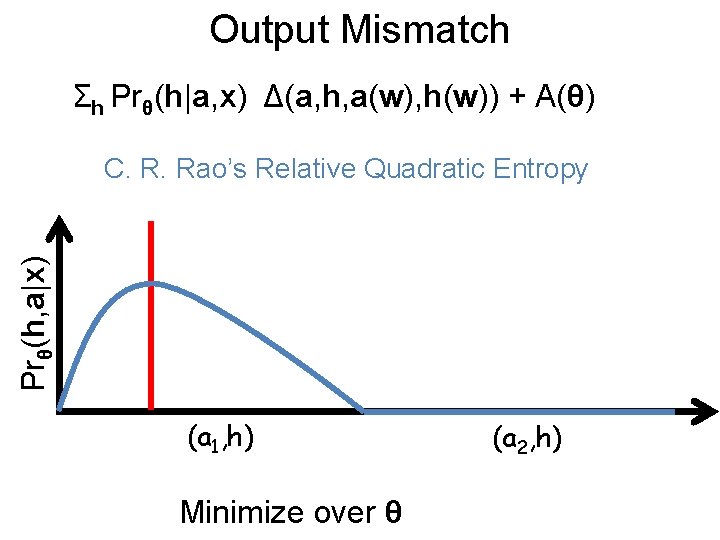

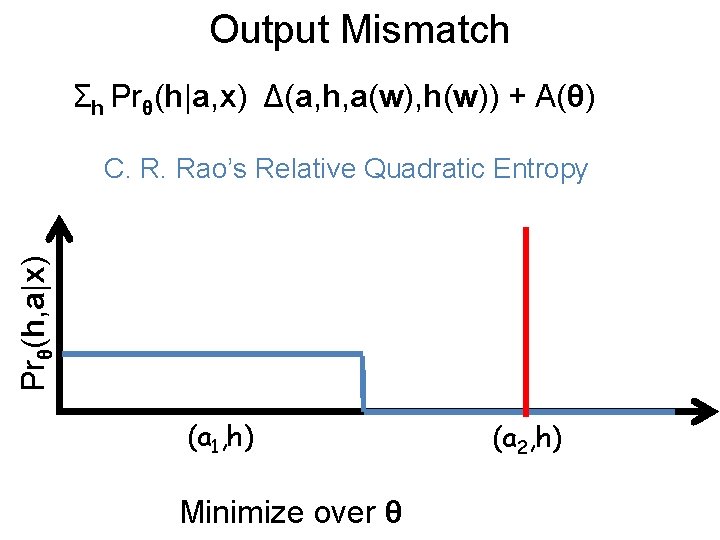

Questions?

- Slides: 65