
Performance Diagnosis of Cloud-Based Mobile Applications
Yu Kang
Supervised by Prof. Michael Lyu
05/07/2016

Outline
• Introduction
• Client Side Performance Diagnosis
• Cloud Side Performance Diagnosis
• Conclusion

Complaints on Performance
• User complaints on the performance of mobile apps

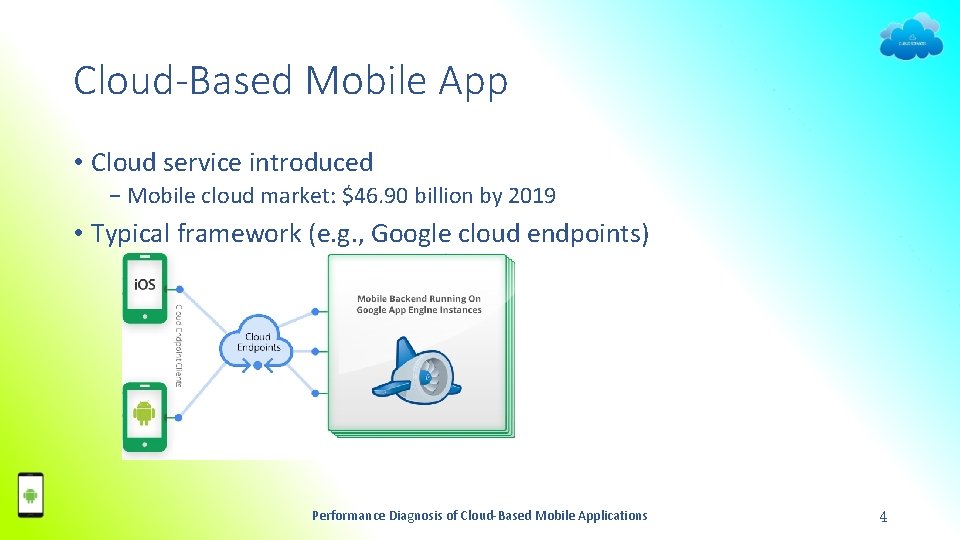

Cloud-Based Mobile App
• Cloud services introduced
  − Mobile cloud market: $46.90 billion by 2019
• Typical framework (e.g., Google Cloud Endpoints)

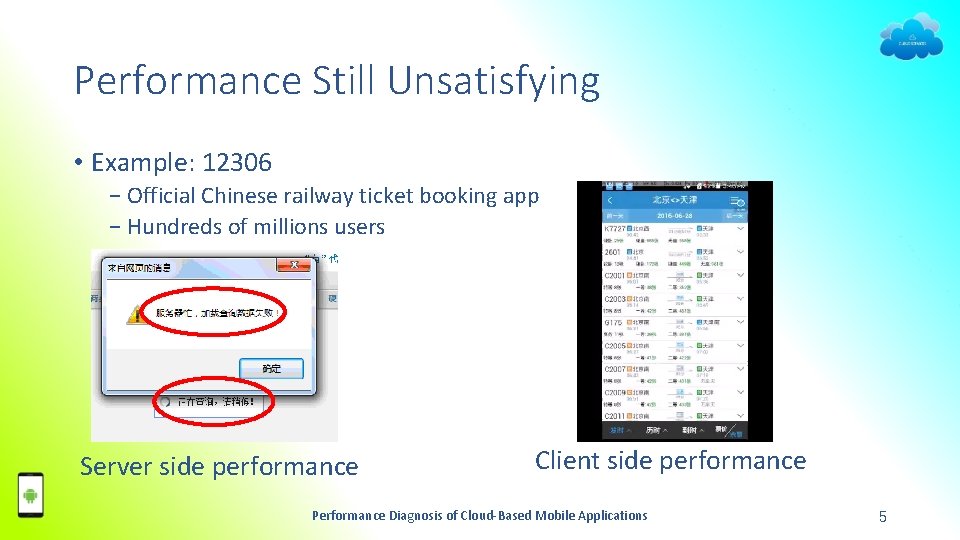

Performance Still Unsatisfying
• Example: 12306
  − Official Chinese railway ticket booking app
  − Hundreds of millions of users
(Figure panels: server-side performance; client-side performance)

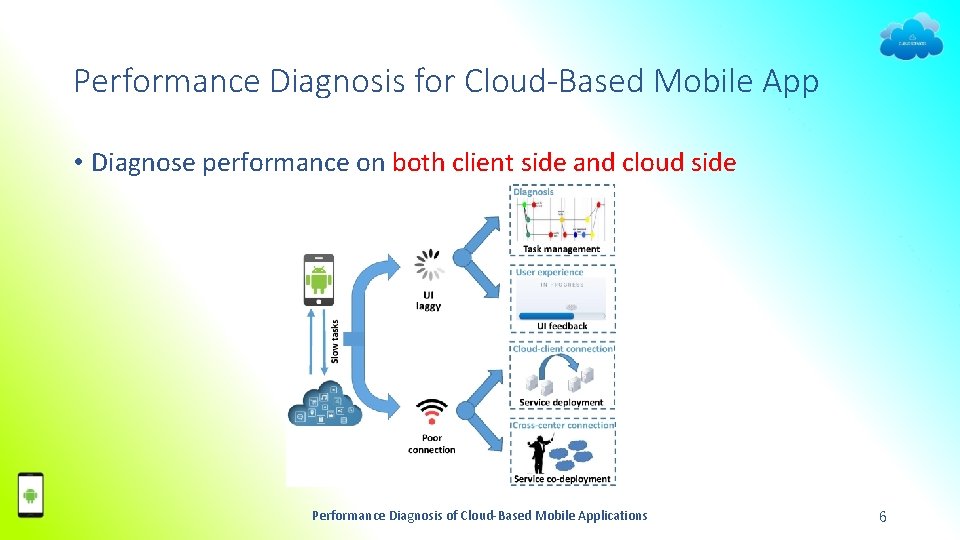

Performance Diagnosis for Cloud-Based Mobile App
• Diagnose performance on both the client side and the cloud side

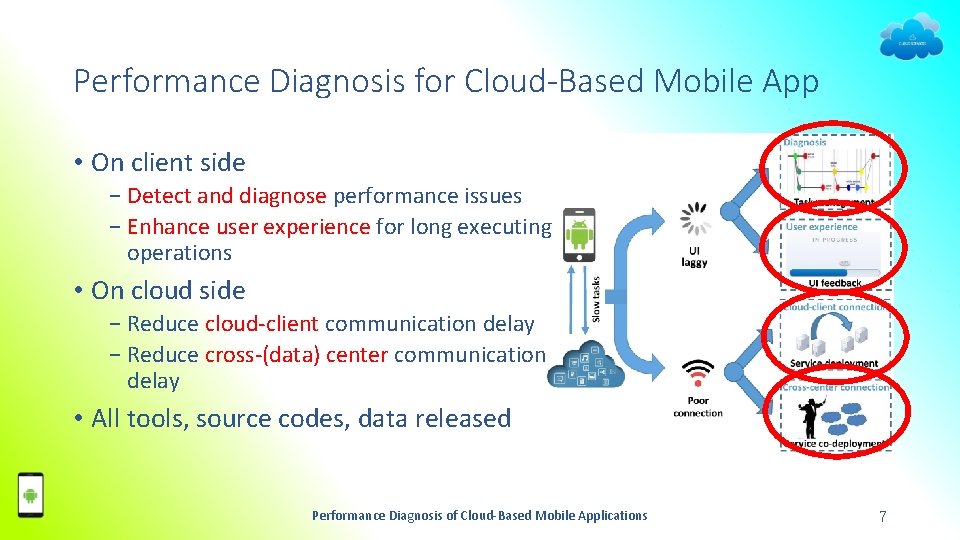

Performance Diagnosis for Cloud-Based Mobile App
• On the client side
  − Detect and diagnose performance issues
  − Enhance user experience for long-running operations
• On the cloud side
  − Reduce cloud-client communication delay
  − Reduce cross-data-center communication delay
• All tools, source code, and data released

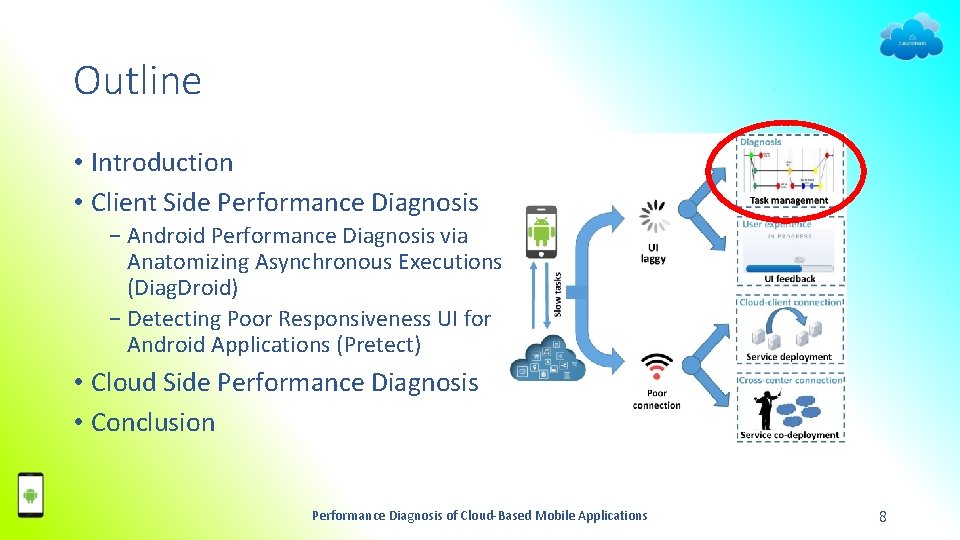

Outline
• Introduction
• Client Side Performance Diagnosis
  − Android Performance Diagnosis via Anatomizing Asynchronous Executions (DiagDroid)
  − Detecting Poor Responsiveness UI for Android Applications (Pretect)
• Cloud Side Performance Diagnosis
• Conclusion

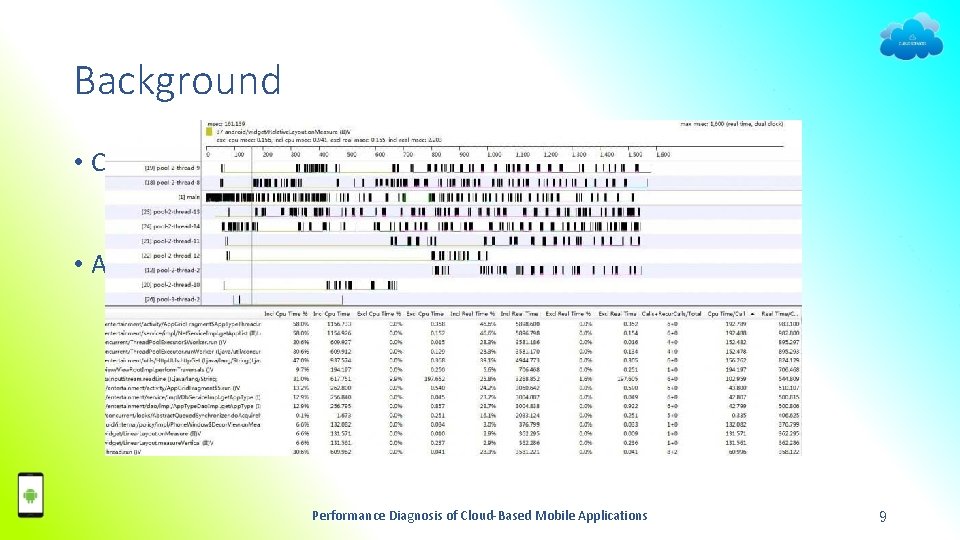

Background
• Currently no suitable tools
  − Require daunting human effort (e.g., Traceview and dmtracedump)
  − Cover limited scenarios (e.g., StrictMode, Asynchronizer)
• A new handy tool
  − User-friendly (i.e., locates performance issues automatically)
  − Covers more scenarios (e.g., asynchronous executions)

Android Application Specifics
• User-interface (UI) oriented
  − UI thread = main thread
  − UI thread must be kept responsive (non-blocking)
• Asynchronous executions
  − Time-consuming tasks
  − Run in worker threads
  − Update the UI afterwards ∝ user-perceived latency

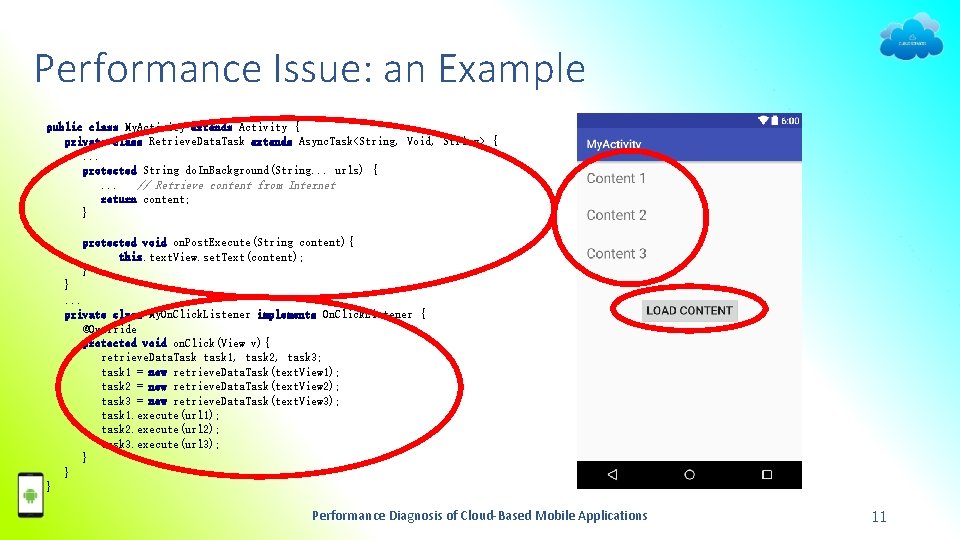

Performance Issue: an Example

    public class MyActivity extends Activity {
        private class RetrieveDataTask extends AsyncTask<String, Void, String> {
            private final TextView textView;

            RetrieveDataTask(TextView textView) {
                this.textView = textView;
            }

            @Override
            protected String doInBackground(String... urls) {
                // ...
                // Retrieve content from the Internet
                return content;
            }

            @Override
            protected void onPostExecute(String content) {
                textView.setText(content);
            }
        }
        // ...
        private class MyOnClickListener implements OnClickListener {
            @Override
            public void onClick(View v) {
                RetrieveDataTask task1, task2, task3;
                task1 = new RetrieveDataTask(textView1);
                task2 = new RetrieveDataTask(textView2);
                task3 = new RetrieveDataTask(textView3);
                task1.execute(url1);
                task2.execute(url2);
                task3.execute(url3);
            }
        }
    }

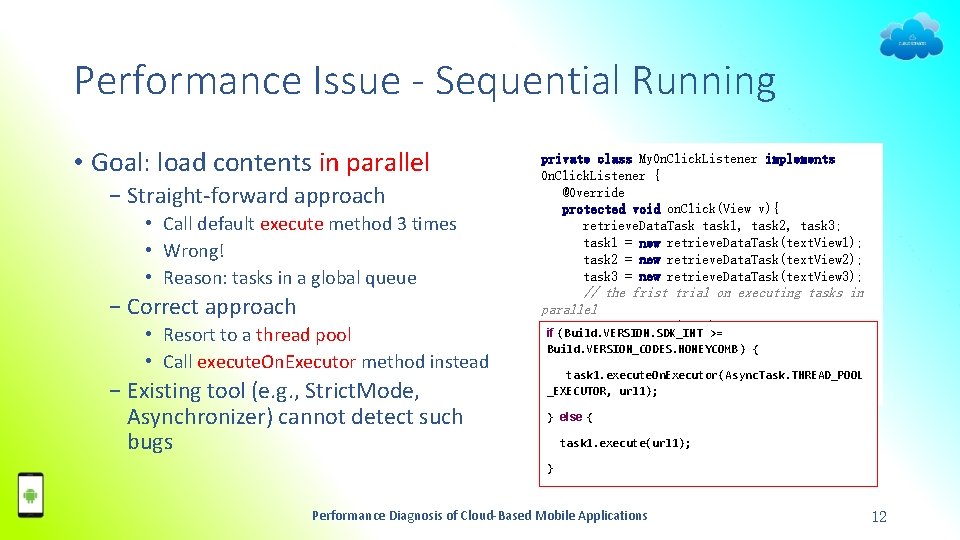

Performance Issue: Sequential Running
• Goal: load contents in parallel
  − Straightforward approach
    • Call the default execute method 3 times
    • Wrong!
    • Reason: tasks are placed in a global queue and run one at a time
  − Correct approach
    • Resort to a thread pool
    • Call the executeOnExecutor method instead
  − Existing tools (e.g., StrictMode, Asynchronizer) cannot detect such bugs

First trial (the tasks still run sequentially):

    // the first trial on executing tasks in parallel
    task1.execute(url1);
    task2.execute(url2);
    task3.execute(url3);

Correct approach (shown for task1; task2 and task3 are handled the same way):

    if (Build.VERSION.SDK_INT >= Build.VERSION_CODES.HONEYCOMB) {
        task1.executeOnExecutor(AsyncTask.THREAD_POOL_EXECUTOR, url1);
    } else {
        task1.execute(url1);
    }

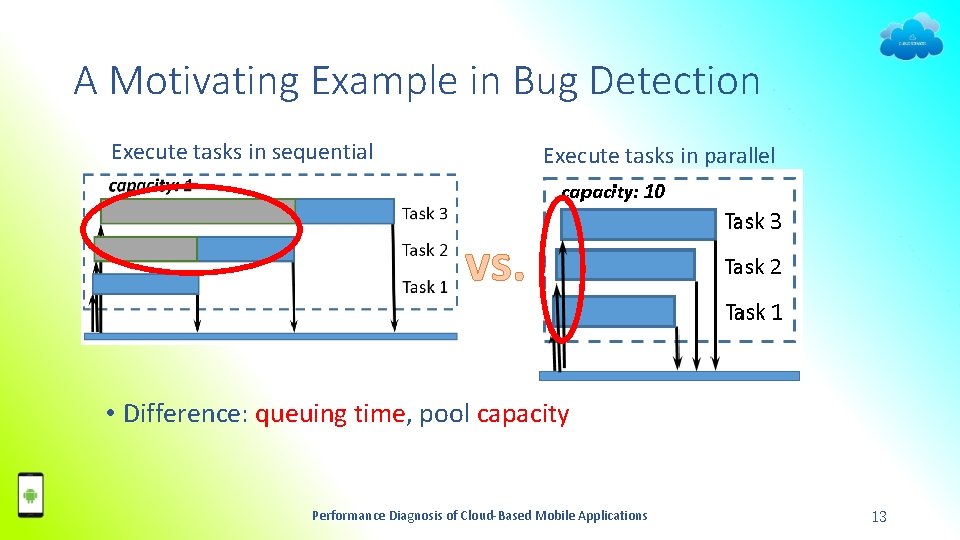

A Motivating Example in Bug Detection
(Figure: executing tasks sequentially vs. in parallel)
• Difference: queuing time, pool capacity

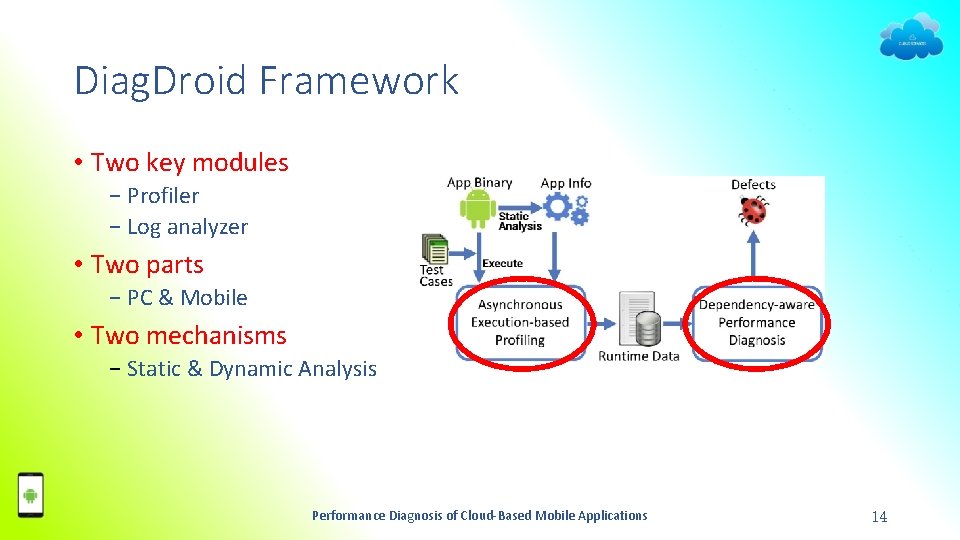
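The queuing-time difference in the figure comes from pool capacity. A minimal Java sketch of that effect, assuming tasks are served in FIFO order with known run times; `QueueSim` and `queuingTimes` are hypothetical names, not part of DiagDroid. On Android 3.0 (HONEYCOMB) and later, `AsyncTask.execute()` dispatches onto a single serial executor, so capacity 1 approximates the first trial, and a larger capacity approximates `executeOnExecutor(AsyncTask.THREAD_POOL_EXECUTOR, ...)` when enough threads are free.

```java
import java.util.PriorityQueue;

/** Hypothetical helper: simulates a FIFO task queue drained by a pool of fixed capacity. */
final class QueueSim {
    private QueueSim() {}

    /**
     * Returns each task's queuing time (start time minus request time).
     * Requests must be in non-decreasing time order; each task starts on the earliest free worker.
     */
    static long[] queuingTimes(long[] requestMs, long[] durationMs, int capacity) {
        if (capacity < 1) throw new IllegalArgumentException("capacity must be >= 1");
        // Min-heap holding the time at which each worker becomes free.
        PriorityQueue<Long> workerFreeAt = new PriorityQueue<>();
        for (int w = 0; w < capacity; w++) workerFreeAt.add(0L);
        long[] waits = new long[requestMs.length];
        for (int i = 0; i < requestMs.length; i++) {
            long free = workerFreeAt.poll();
            long start = Math.max(free, requestMs[i]);
            waits[i] = start - requestMs[i];
            workerFreeAt.add(start + durationMs[i]);  // worker busy until the task ends
        }
        return waits;
    }
}
```

For example, three 1000 ms tasks requested at time 0 wait 0, 1000, and 2000 ms with capacity 1, but 0 ms each with capacity 3.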

DiagDroid Framework
• Two key modules
  − Profiler
  − Log analyzer
• Two parts
  − PC & mobile
• Two mechanisms
  − Static & dynamic analysis

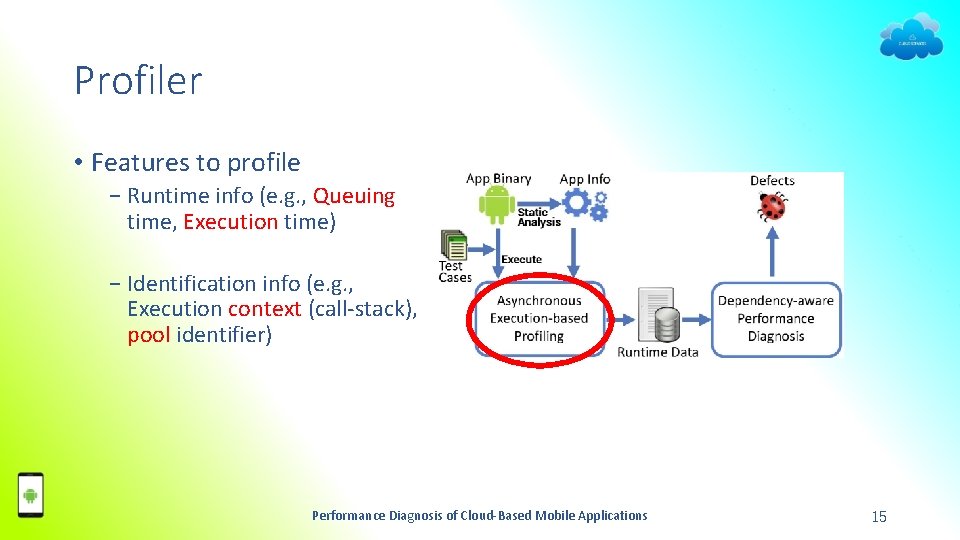

Profiler
• Features to profile
  − Runtime info (e.g., queuing time, execution time)
  − Identification info (e.g., execution context (call stack), pool identifier)

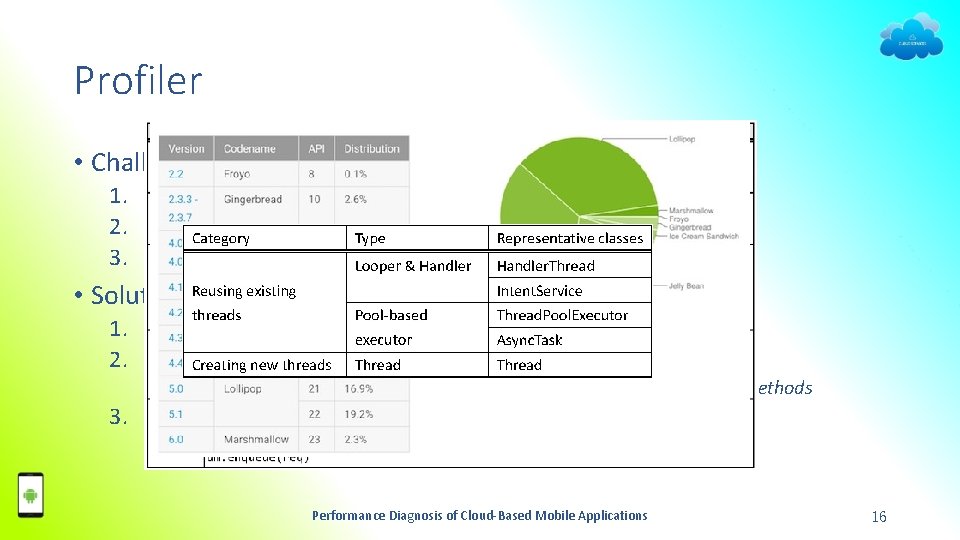

Profiler
• Challenges
  1. Numerous ways to run asynchronous executions
  2. Android fragmentation
  3. Low overhead
• Solutions
  1. An Android asynchronous execution taxonomy
  2. Hook only general framework methods
    • E.g., for ThreadPoolExecutor: the execute, beforeExecute, and afterExecute methods
  3. Granularity
    • Task level vs. method/line level

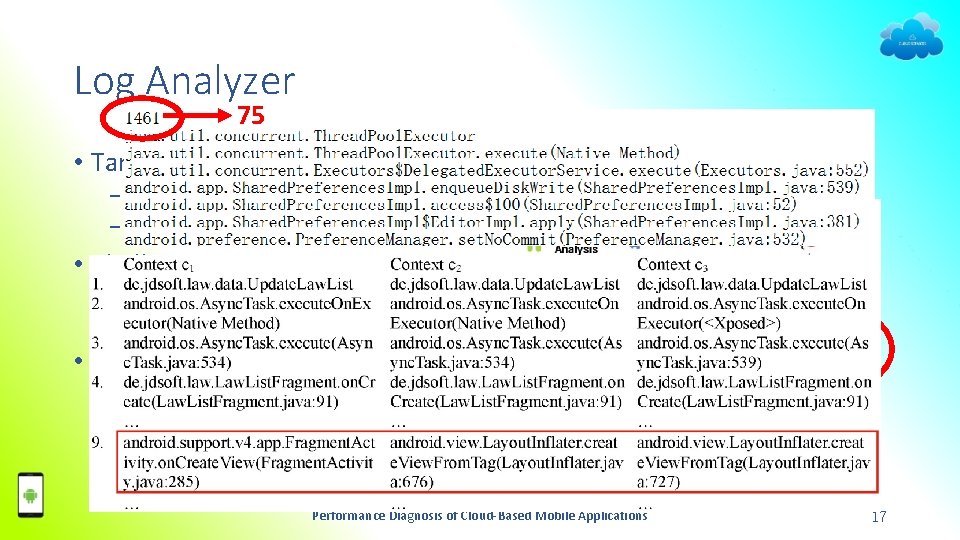

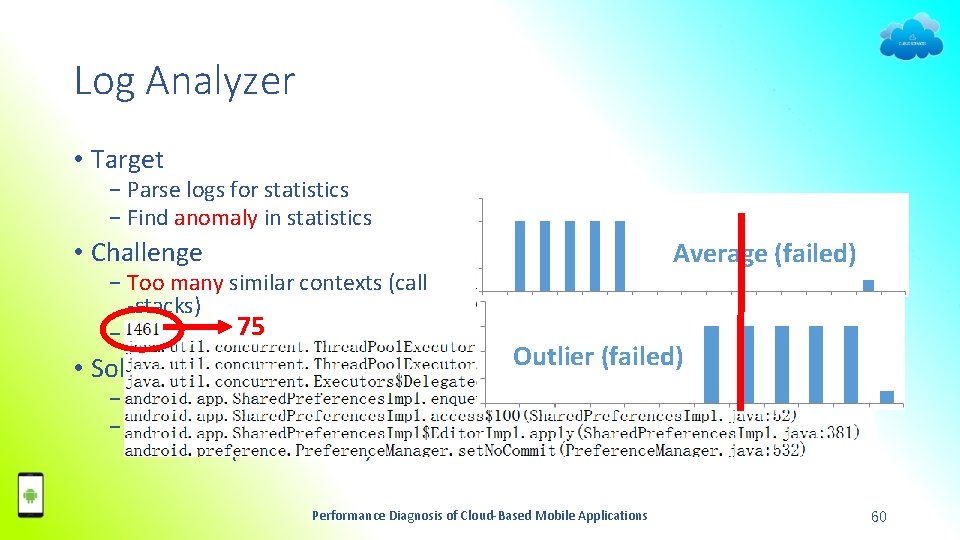

Log Analyzer
• Target
  − Parse logs for statistics
  − Find anomalies in statistics
• Challenge
  − Too many similar contexts (call stacks)
• Solution
  − Cluster similar contexts
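A rough sketch of the clustering-plus-anomaly step, assuming two things the slide does not specify: contexts are clustered by their top `depth` call-stack frames, and a cluster is flagged when its maximum queuing time exceeds a threshold T (the criterion the backup log-analyzer slide names). `TaskRecord`, `clusterKey`, and `anomalousClusters` are hypothetical names, not DiagDroid's code.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical record of one profiled asynchronous task; callStack.get(0) is the innermost frame. */
record TaskRecord(List<String> callStack, long queuingMs, long execMs) {}

final class LogAnalyzerSketch {
    private LogAnalyzerSketch() {}

    /** Assumed clustering rule: contexts that share the same top `depth` frames form one cluster. */
    static String clusterKey(List<String> callStack, int depth) {
        return String.join(" <- ", callStack.subList(0, Math.min(depth, callStack.size())));
    }

    /** Returns the keys of clusters whose maximum queuing time exceeds thresholdMs. */
    static List<String> anomalousClusters(List<TaskRecord> records, int depth, long thresholdMs) {
        Map<String, Long> maxQueuing = new LinkedHashMap<>();
        for (TaskRecord r : records) {
            // Keep the worst (maximum) queuing time seen for each cluster.
            maxQueuing.merge(clusterKey(r.callStack(), depth), r.queuingMs(), Long::max);
        }
        List<String> flagged = new ArrayList<>();
        for (Map.Entry<String, Long> e : maxQueuing.entrySet()) {
            if (e.getValue() > thresholdMs) flagged.add(e.getKey());
        }
        return flagged;
    }
}
```

Using the maximum rather than the average keeps a single extreme delay from being diluted by many fast runs of the same context.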

Experimental Study
• Test configuration
  − 4 devices
  − 4 types of pressure + 1 without pressure
• 30 minutes of testing per configuration
• 19,800 minutes of testing in total for 33 apps

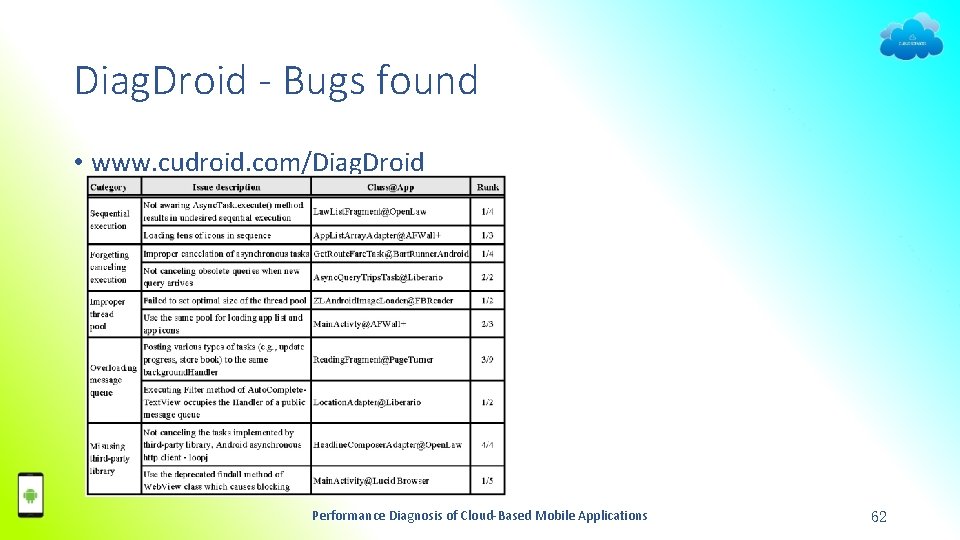
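The total is consistent with running every app under every device and pressure setting, which is the reading this check assumes:

```latex
4~\text{devices} \times (4 + 1)~\text{pressure settings} \times 30~\text{min} \times 33~\text{apps} = 19{,}800~\text{min}
```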

Bugs Found
• Found: 27 new bugs of 5 types in 33 open-source apps
  1. Sequential execution
  2. Forgetting to cancel executions
  3. Improper execution pool
  4. Message queue overloading
  5. Misusing third-party libraries
• Note:
  − No a priori knowledge of the apps

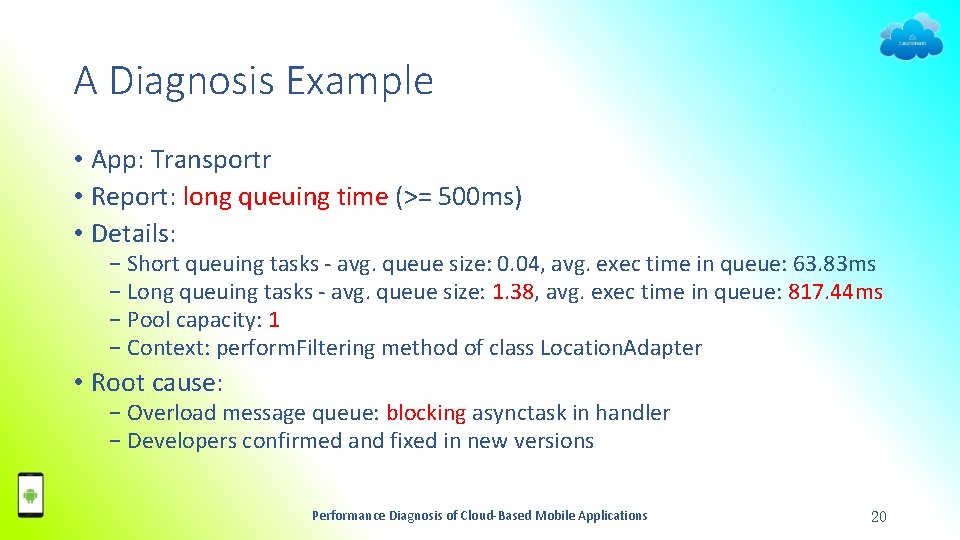

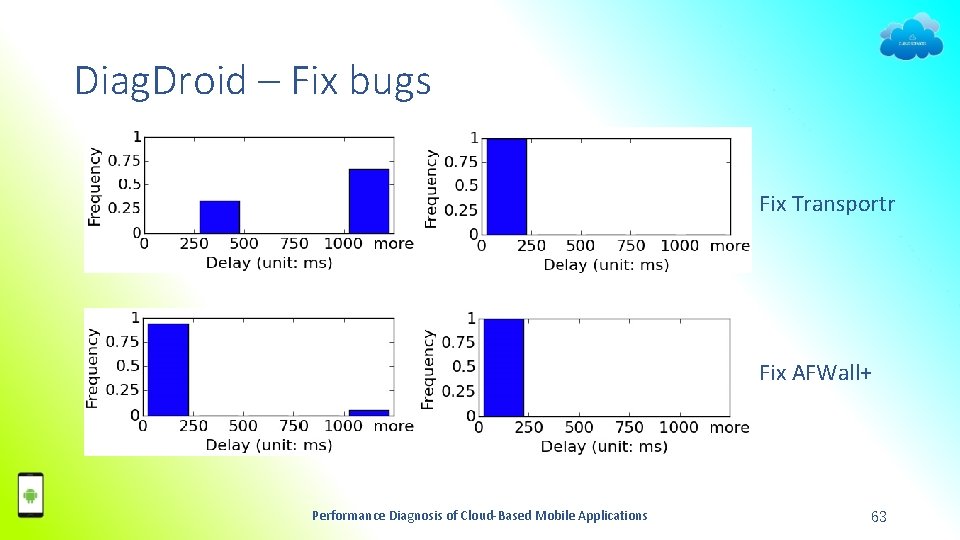

A Diagnosis Example
• App: Transportr
• Report: long queuing time (≥ 500 ms)
• Details:
  − Short-queuing tasks: avg. queue size 0.04, avg. exec time in queue 63.83 ms
  − Long-queuing tasks: avg. queue size 1.38, avg. exec time in queue 817.44 ms
  − Pool capacity: 1
  − Context: performFiltering method of class LocationAdapter
• Root cause:
  − Overloaded message queue: blocking AsyncTask in a handler
  − Developers confirmed and fixed it in new versions

Summary of DiagDroid
• New type of performance issues
• Novel diagnosis framework: DiagDroid
• Categorization of asynchronous executions
• New bugs found
• http://www.cudroid.com/DiagDroid

Outline
• Introduction
• Client Side Performance Diagnosis
  − Android Performance Diagnosis via Anatomizing Asynchronous Executions (DiagDroid)
  − Detecting Poor Responsiveness UI for Android Applications (Pretect)
• Cloud Side Performance Diagnosis
• Conclusion

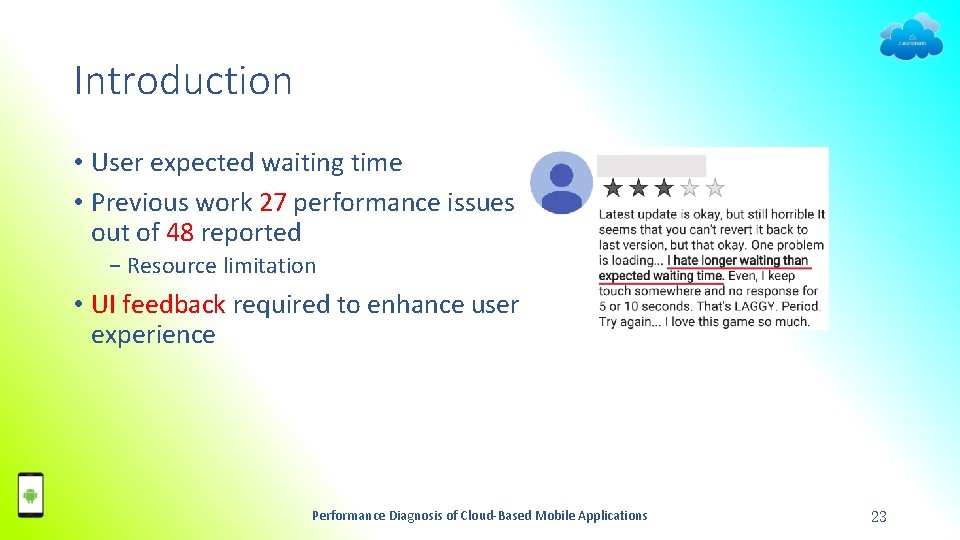

Introduction
• User-expected waiting time
• Previous work: 27 out of 48 reported performance issues
  − Resource limitation
• UI feedback required to enhance user experience

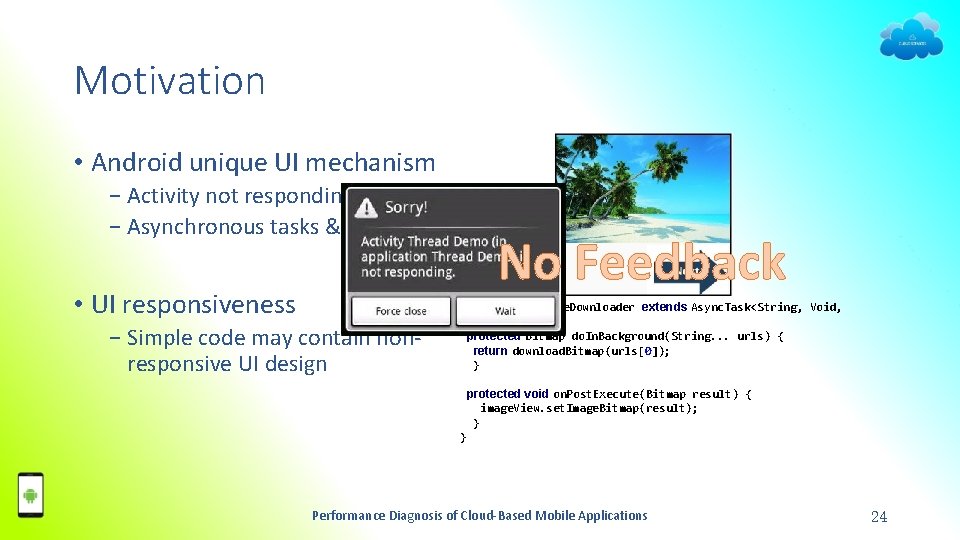

Motivation
• Android's unique UI mechanism
  − Application Not Responding (ANR)
  − Asynchronous tasks & UI update
• UI responsiveness
  − Simple code may contain a nonresponsive UI design
(Example: no feedback until the download finishes)

    private class ImageDownloader extends AsyncTask<String, Void, Bitmap> {
        @Override
        protected Bitmap doInBackground(String... urls) {
            return downloadBitmap(urls[0]);
        }

        @Override
        protected void onPostExecute(Bitmap result) {
            imageView.setImageBitmap(result);
        }
    }

Introduction
• Poor-responsive UI
  − Long-running operations without feedback
• Challenges
  1. Hard to obtain the feedback delay
  2. Threshold not clear
  3. Impossible to design feedback for all operations
• Our contribution
  − Real-world user study (for Challenge 2)
  − A tool (Pretect) that assists delay-tolerant UI design (for Challenges 1 & 3)

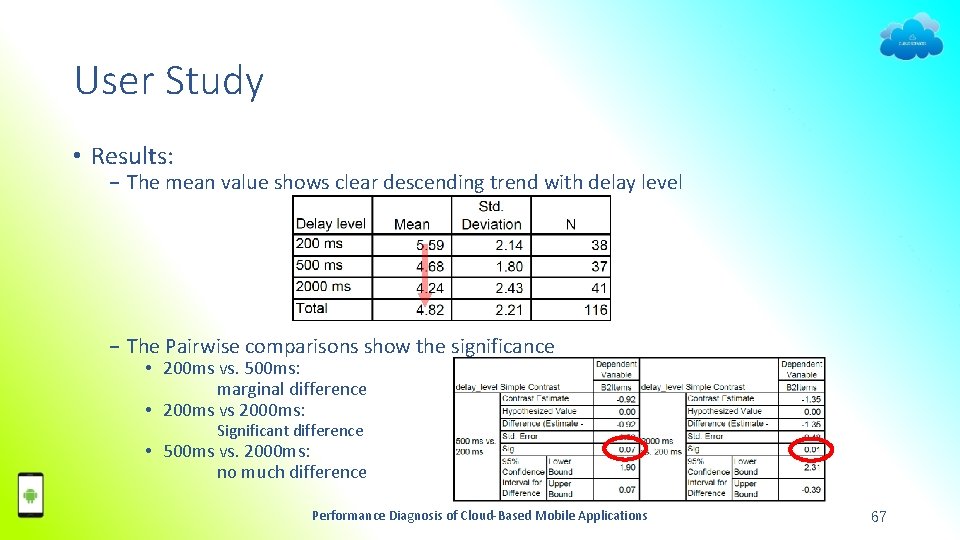

User Study
• Impatient mobile users
• Study the relationship between user experience & operation delay
• Test settings
  − Three delay levels (200 ms, 500 ms, 2000 ms)
  − Between-subject test (i.e., fixed delay level per user)
  − Compare overall performance ratings
• Results
  − (Relationship) User experience is related to UI responsiveness
  − (Threshold) No response for 500 ms is already laggy enough

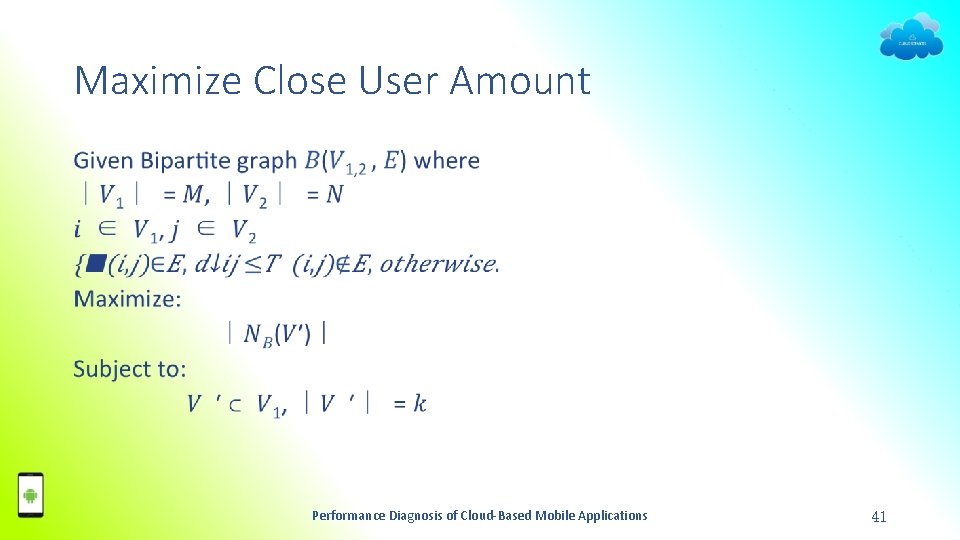

Problem Specification
• Operation feedback
  − The first screen update after a user operation
• Feedback delay
  − Latency between the input event and the feedback
• Poor-responsive operations
  − Operations with feedback delay ≥ T (T = 500 ms)

Challenging Task
• Task: detecting poor-responsive operations
• Existing tools fail
  − They detect abnormal tasks regardless of feedback delay (e.g., DiagDroid)
• First trial failed
  − Monitoring all display updates
• Solution
  − Monitor the UI update procedure
  − Separate system display updates (e.g., the notification bar) from app UI updates

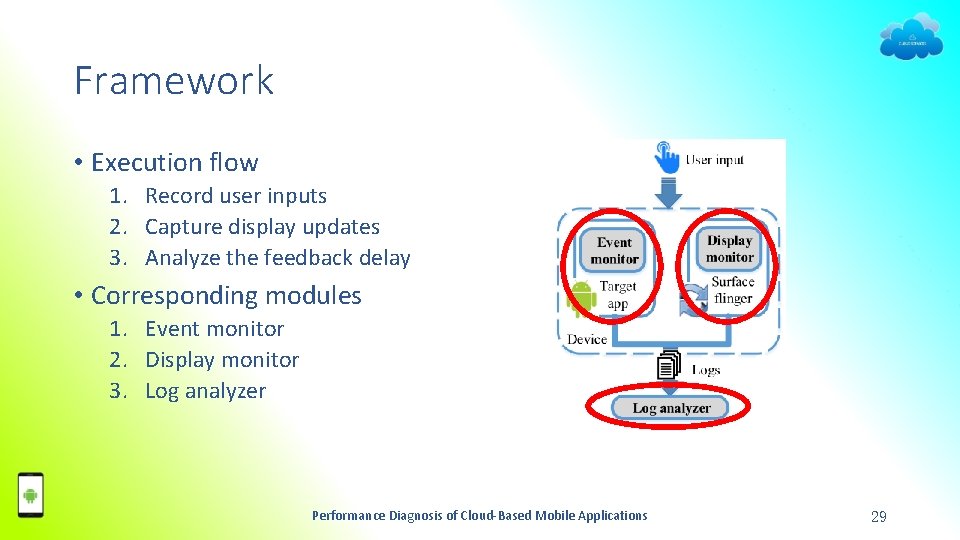

Framework
• Execution flow
  1. Record user inputs
  2. Capture display updates
  3. Analyze the feedback delay
• Corresponding modules
  1. Event monitor
  2. Display monitor
  3. Log analyzer

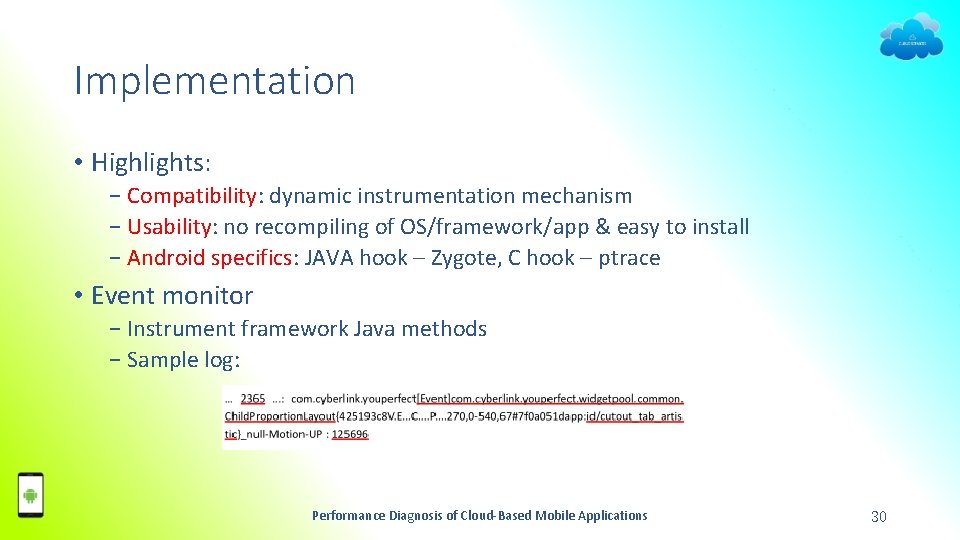

Implementation
• Highlights:
  − Compatibility: dynamic instrumentation mechanism
  − Usability: no recompiling of OS/framework/app & easy to install
  − Android specifics: Java hooks via Zygote, C hooks via ptrace
• Event monitor
  − Instrument framework Java methods
  − Sample log:

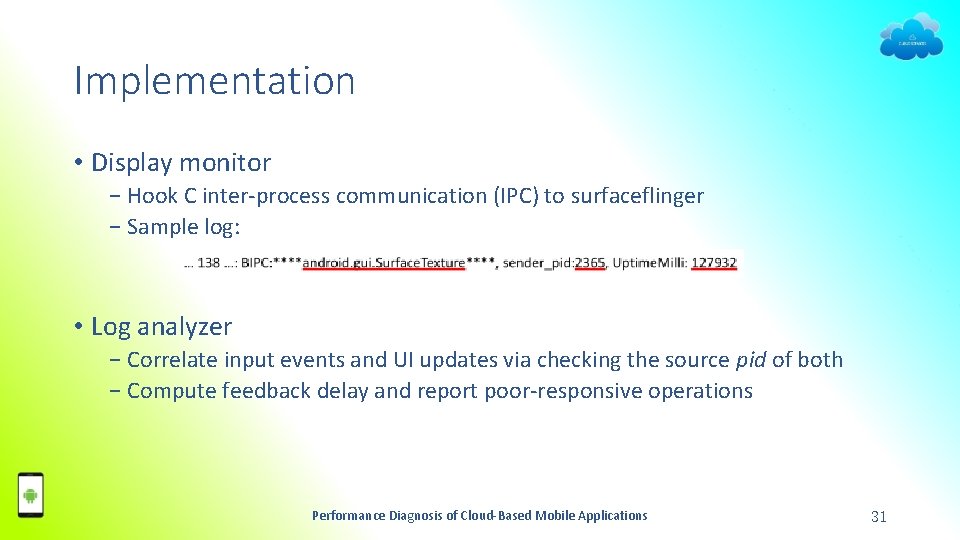

Implementation
• Display monitor
  − Hook C inter-process communication (IPC) to SurfaceFlinger
  − Sample log:
• Log analyzer
  − Correlate input events and UI updates by checking the source pid of both
  − Compute the feedback delay and report poor-responsive operations

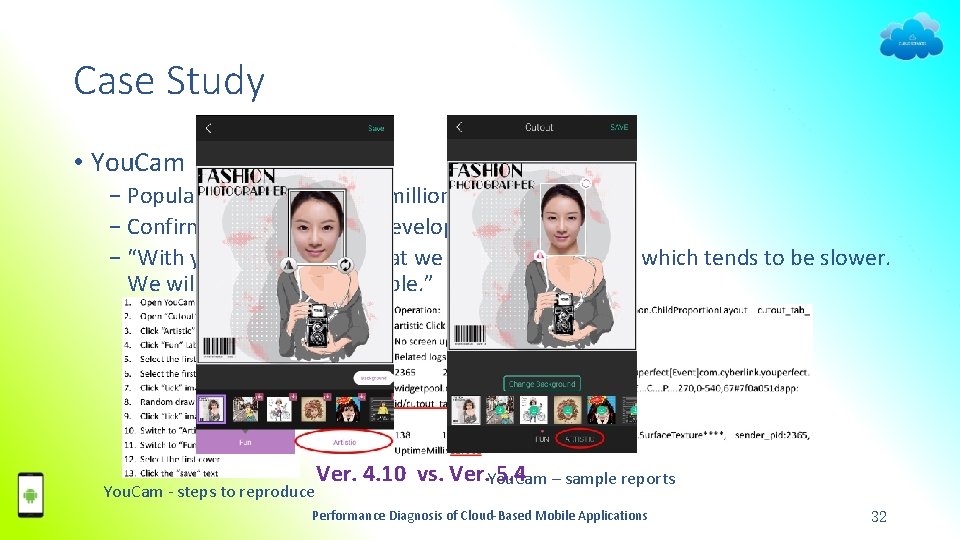
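The log analyzer's correlation step can be sketched as follows, under simplifying assumptions: the feedback for an input event is the first display update from the same pid at or after the event, events with no later update are skipped, and updates from other pids (e.g., system UI) are ignored. The record and method names are hypothetical, not Pretect's log format.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical log entries, each tagged with the source pid. */
record InputEvent(int pid, long timeMs, String operation) {}
record DisplayUpdate(int pid, long timeMs) {}
record PoorResponsive(String operation, long feedbackDelayMs) {}

final class FeedbackDelaySketch {
    private FeedbackDelaySketch() {}

    /** Reports operations whose feedback delay (first same-pid update minus event time) is >= thresholdMs. */
    static List<PoorResponsive> poorResponsive(List<InputEvent> events, List<DisplayUpdate> updates,
                                               long thresholdMs) {
        List<PoorResponsive> report = new ArrayList<>();
        for (InputEvent e : events) {
            long firstUpdate = Long.MAX_VALUE;
            for (DisplayUpdate u : updates) {
                // Only updates from the app's own process count as feedback.
                if (u.pid() == e.pid() && u.timeMs() >= e.timeMs() && u.timeMs() < firstUpdate) {
                    firstUpdate = u.timeMs();
                }
            }
            if (firstUpdate == Long.MAX_VALUE) continue;  // no feedback observed (skipped in this sketch)
            long delay = firstUpdate - e.timeMs();
            if (delay >= thresholdMs) report.add(new PoorResponsive(e.operation(), delay));
        }
        return report;
    }
}
```

With T = 500 ms, a tap at 0 ms whose first same-pid update arrives at 800 ms is reported, while a system update at 50 ms from another pid does not count as feedback.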

Case Study
• YouCam
  − Popular selfie app with over 60 million downloads
  − Confirmed & fixed by the developer
  − “With your hint, we find that we have used a widget which tends to be slower. We will fix as soon as possible.”
(Figure panels: YouCam steps to reproduce; Ver. 4.10 vs. Ver. 5.4; YouCam sample reports)

Summary of Pretect
• Real-world user study
• A tool (Pretect)
• Cases detected
• http://www.cudroid.com/pretect

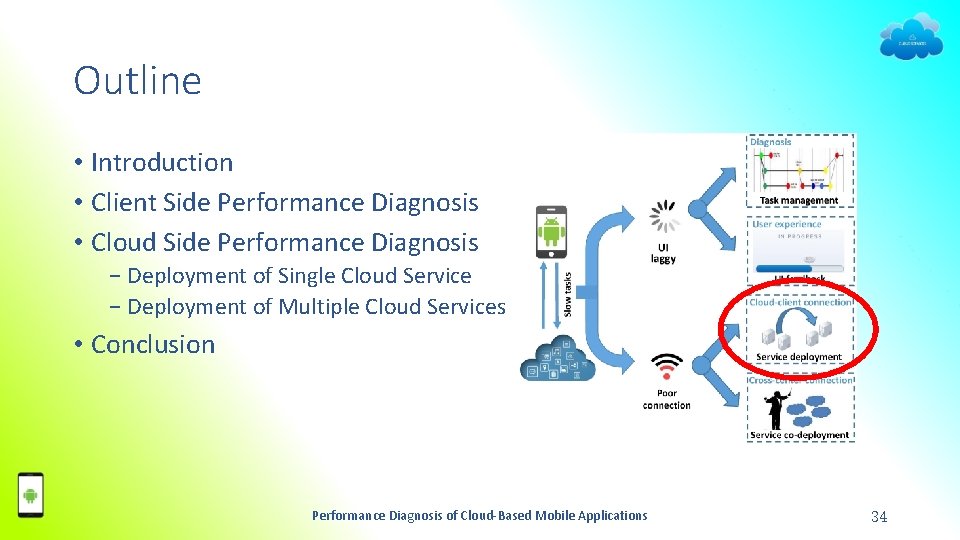

Outline
• Introduction
• Client Side Performance Diagnosis
• Cloud Side Performance Diagnosis
  − Deployment of Single Cloud Service
  − Deployment of Multiple Cloud Services
• Conclusion

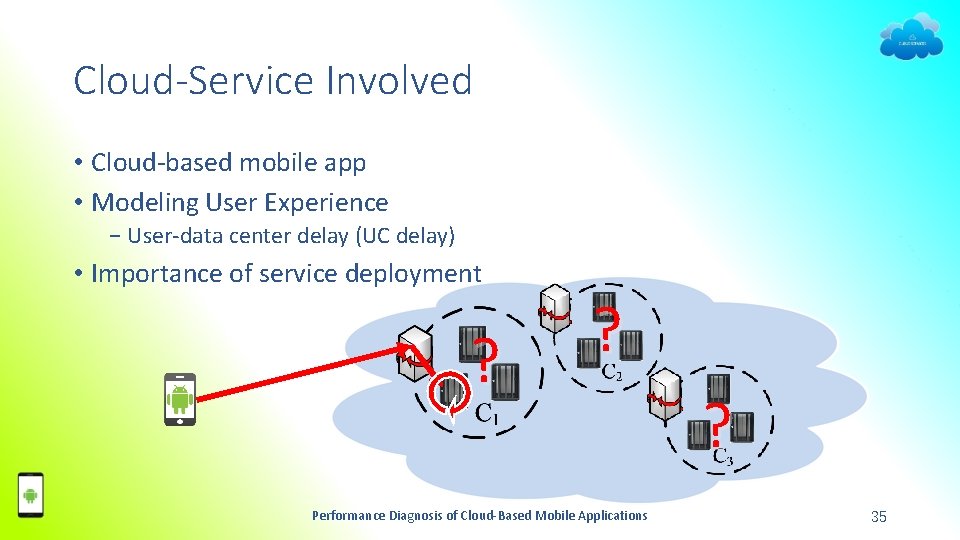

Cloud Service Involved
• Cloud-based mobile app
• Modeling user experience
  − User-data center delay (UC delay)
• Importance of service deployment

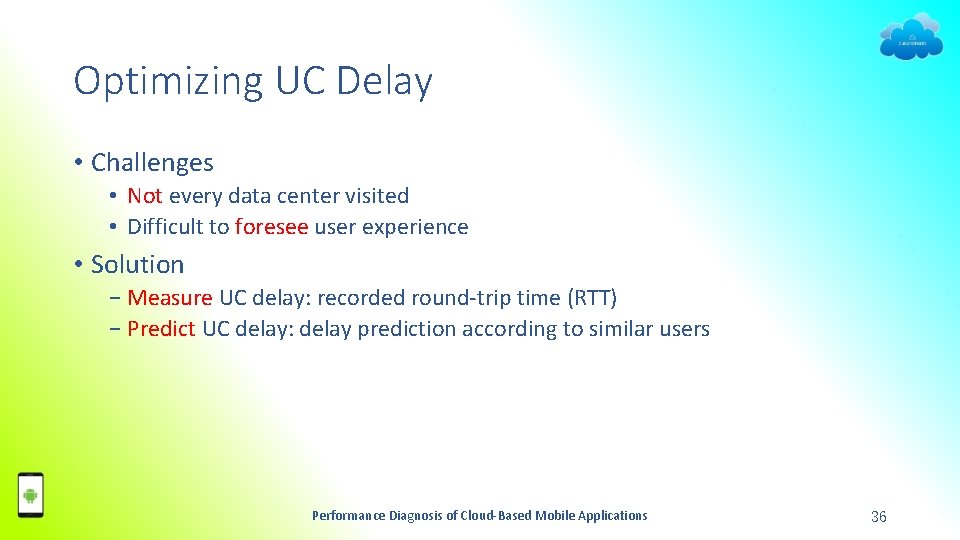

Optimizing UC Delay
• Challenges
  − Not every data center has been visited by each user
  − Difficult to foresee user experience
• Solution
  − Measure UC delay: recorded round-trip time (RTT)
  − Predict UC delay: prediction based on similar users
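One way to predict an unmeasured UC delay from similar users is a nearest-neighbor average. This is a minimal sketch under assumptions the slide does not state: similarity is the mean absolute RTT difference over data centers both users have measured, and the prediction is the mean RTT of the k most similar users who did visit the target data center. `UcDelayPredictor` is a hypothetical name; the thesis may use a different prediction method.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

/** Hypothetical sketch: predict a missing user-to-data-center RTT from the k most similar users. */
final class UcDelayPredictor {
    private UcDelayPredictor() {}

    /** Mean absolute RTT difference over data centers both users measured; NaN if none are shared. */
    static double distance(double[] a, double[] b) {
        double sum = 0;
        int n = 0;
        for (int j = 0; j < a.length; j++) {
            if (!Double.isNaN(a[j]) && !Double.isNaN(b[j])) {
                sum += Math.abs(a[j] - b[j]);
                n++;
            }
        }
        return n == 0 ? Double.NaN : sum / n;
    }

    /** rtt[u][j] = measured RTT (ms) of user u to data center j, or NaN if never visited. */
    static double predict(double[][] rtt, int user, int center, int k) {
        List<Integer> candidates = new ArrayList<>();
        for (int v = 0; v < rtt.length; v++) {
            // Keep users who measured the target center and share at least one center with `user`.
            if (v != user && !Double.isNaN(rtt[v][center]) && !Double.isNaN(distance(rtt[user], rtt[v]))) {
                candidates.add(v);
            }
        }
        if (candidates.isEmpty()) return Double.NaN;
        candidates.sort(Comparator.comparingDouble(v -> distance(rtt[user], rtt[v])));
        int m = Math.min(k, candidates.size());
        double sum = 0;
        for (int i = 0; i < m; i++) sum += rtt[candidates.get(i)][center];
        return sum / m;
    }
}
```

For instance, if user 0 has RTTs close to user 1 on the shared data centers, user 1's RTT to a data center user 0 never visited becomes the prediction when k = 1.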

Framework of Cloud-Based Services
• Measure UC delay
• Predict UC delay

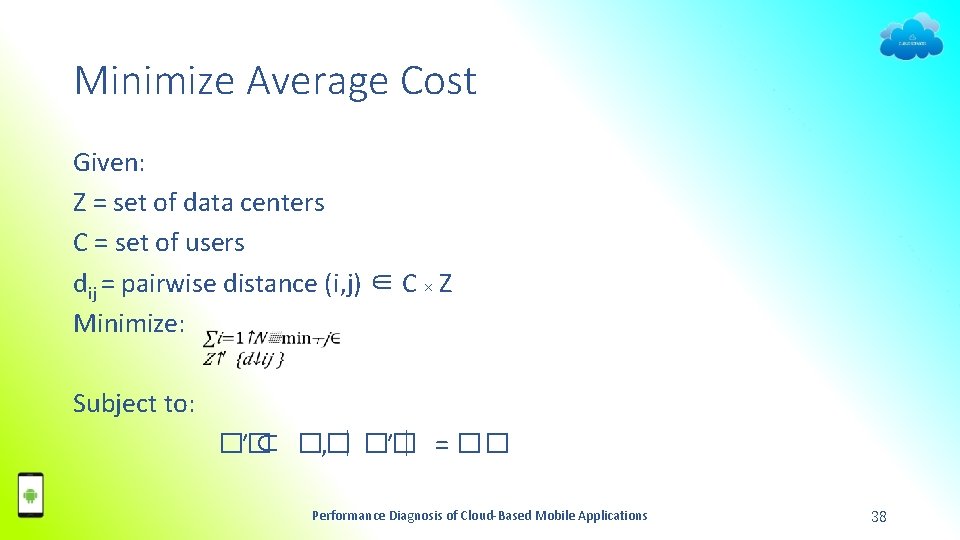

Minimize Average Cost
• Given:
  − $Z$ = set of data centers
  − $C$ = set of users
  − $d_{ij}$ = pairwise distance, $(i, j) \in C \times Z$
• Minimize: $\frac{1}{|C|} \sum_{i \in C} \min_{j \in Z'} d_{ij}$
• Subject to: $Z' \subset Z$, $|Z'| = k$

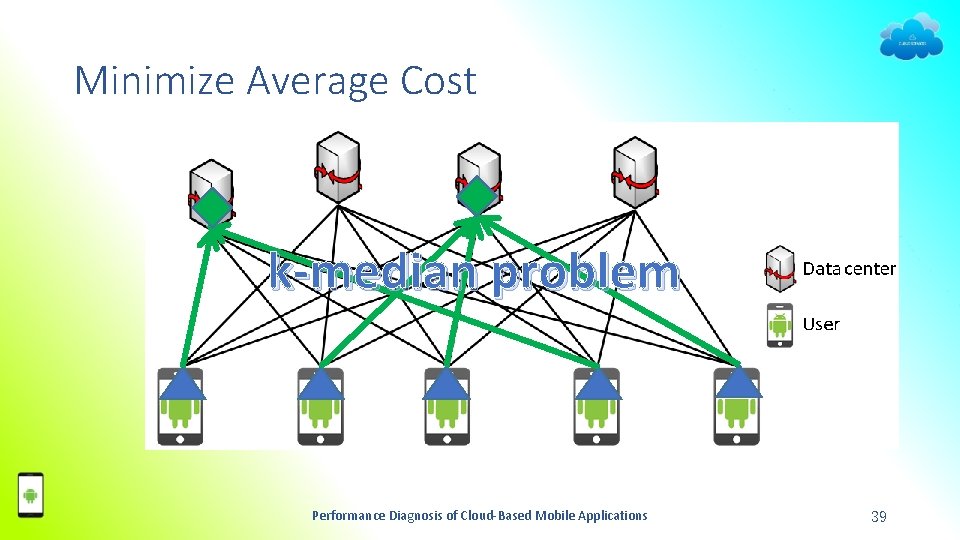

Minimize Average Cost
• k-median problem

Problems of the Model
• Minimizing the average is unnecessary
• Outlier users skew the average
• Tradeoff
  − (Response time) only needs to be ≤ threshold T
  − (User number) e.g., 99% good, 1% poor

Maximize the Number of Close Users

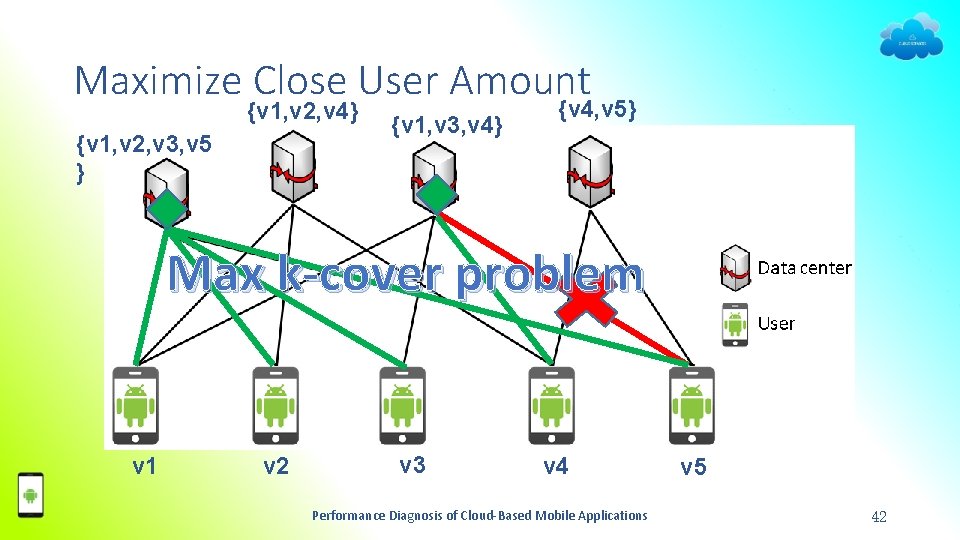

Maximize the Number of Close Users
• Max k-cover problem
(Figure: users v1 to v5 and candidate sets {v1, v2, v4}, {v1, v2, v3, v5}, {v1, v3, v4}, {v4, v5})

Real-World Dataset
• 303 PlanetLab computers
• 4,302 Internet services
• Response-time matrix of ≈130,000 values

Outline
• Introduction
• Client Side Performance Diagnosis
• Cloud Side Performance Diagnosis
  − Deployment of Single Cloud Service
  − Deployment of Multiple Cloud Services
• Conclusion

Background
• Extending the previous model
• Different cloud services may cooperate
  − YouTube & Facebook
  − Google Docs & Gmail
  − Taobao & Alipay
• Necessary to deploy together
  − Independent deployment is not enough
  − Global decision required

Motivating Example

Multi-Service Co-deployment Problem
• Services hosted by the same company
• Multiple services for different users (who may overlap)
• Interaction between services

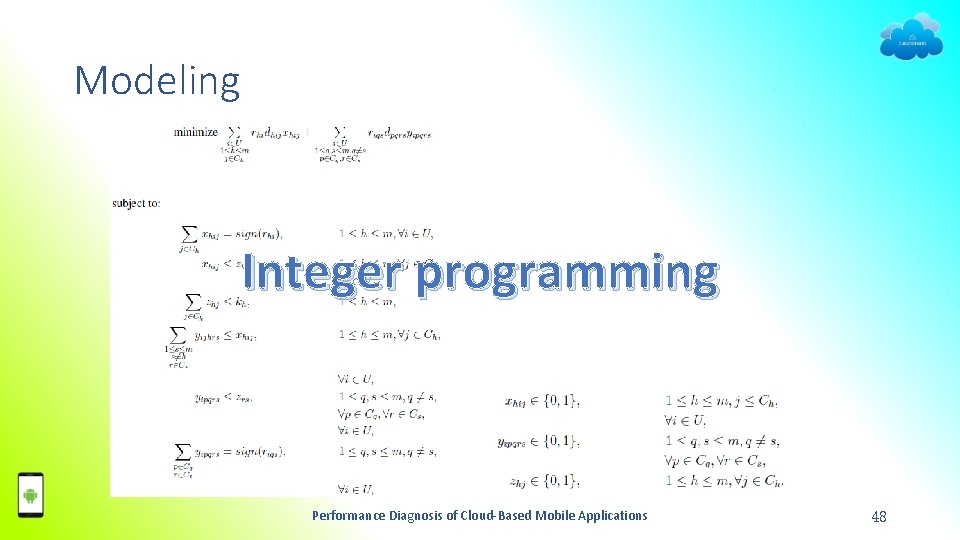

Modeling
• Integer programming

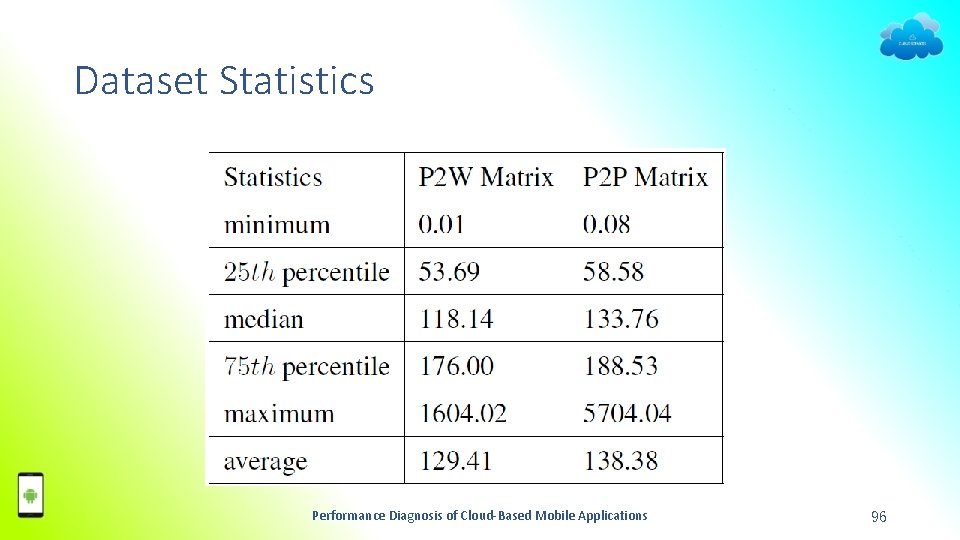

Real-World Dataset
1. 597 PlanetLab instances
2. Ping 2,213 web services
3. Ping all other PlanetLab peers (random order)
4. Obtain an Internet-service access matrix of ≈577,000 values & a peer-wise communication delay matrix of ≈94,000 values

Summary of Cloud Service Deployment
• Model user experience
• Formulate deployment problems
• Real-world dataset
• http://appsrv.cse.cuhk.edu.hk/~ykang/cloud

Outline
• Introduction
• Client Side Performance Diagnosis
• Cloud Side Performance Diagnosis
• Conclusion

Conclusion
• Enhancing the performance of cloud-based mobile apps
• On the client side
  − Detect and diagnose performance issues
  − Enhance user experience for long-running operations
• On the cloud side
  − Reduce cloud-client communication delay
  − Reduce cross-data-center communication delay
• All tools, source code, and data released

Thank you! Q & A

Backup Slides

DiagDroid

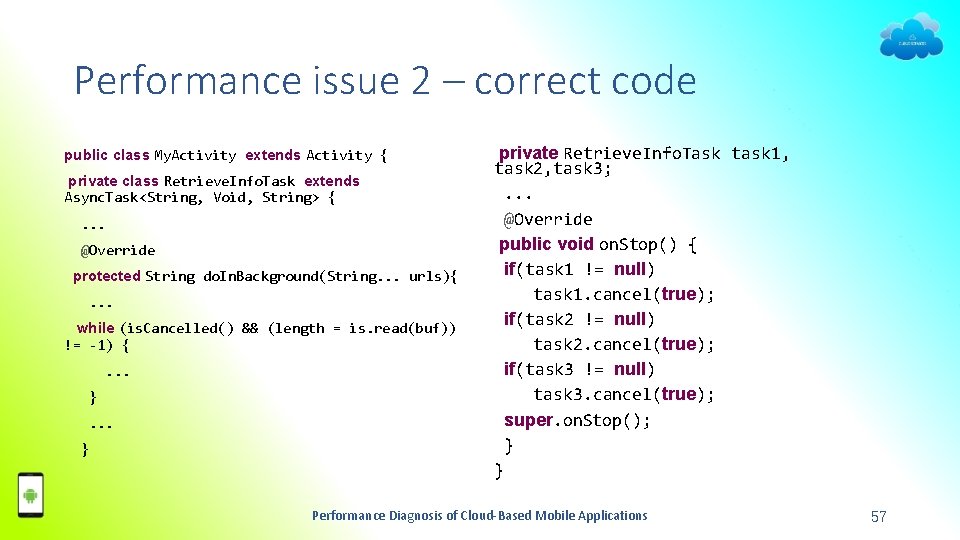

Performance Issue 2: Forgetting to Cancel
• Goal: deal with dead tasks
  − Straightforward approach
    • Do nothing
    • Wrong!
    • Reason: tasks are not cancelled automatically
  − Alternative approach
    • Cancel the task (e.g., downloading) by overriding the onCancelled method
    • Wrong!
    • Reason: onCancelled is called only after doInBackground returns, so it cannot stop a running task
  − Correct approach
    • Check isCancelled() periodically
    • Stop whenever it returns true
  − Existing tools (e.g., StrictMode, Asynchronizer) cannot detect such bugs

Performance Issue 2: Correct Code

    public class MyActivity extends Activity {
        private class RetrieveInfoTask extends AsyncTask<String, Void, String> {
            // ...
            @Override
            protected String doInBackground(String... urls) {
                // ...
                // Keep reading only while the task has NOT been cancelled
                while (!isCancelled() && (length = is.read(buf)) != -1) {
                    // ...
                }
                // ...
            }
        }

        private RetrieveInfoTask task1, task2, task3;
        // ...

        @Override
        protected void onStop() {
            if (task1 != null) task1.cancel(true);
            if (task2 != null) task2.cancel(true);
            if (task3 != null) task3.cancel(true);
            super.onStop();
        }
    }

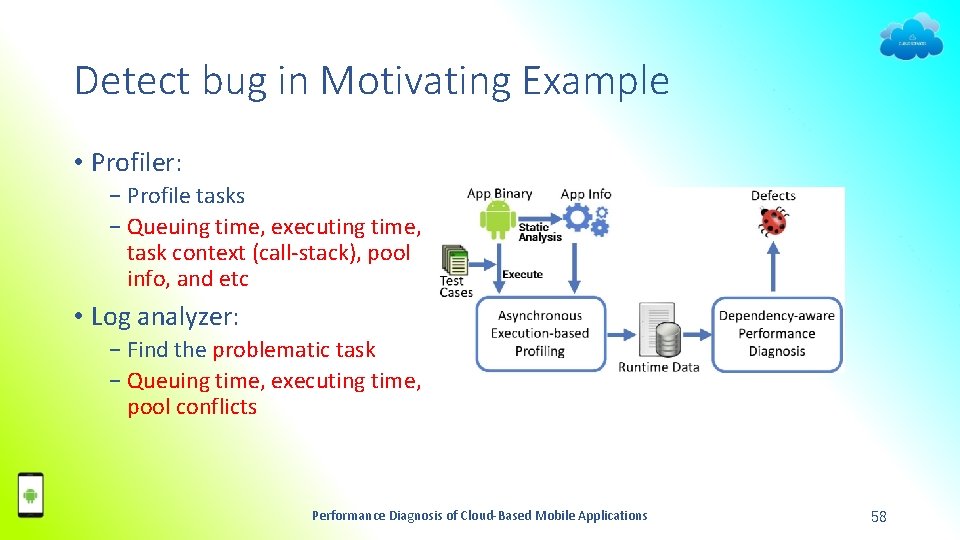
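The same cooperative-cancellation pattern can be exercised off-device. A plain-Java analogue of the corrected loop, assuming a `BooleanSupplier` stands in for `AsyncTask.isCancelled()`; `CancellableCopy` and `drain` are hypothetical names.

```java
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.util.function.BooleanSupplier;

/** Hypothetical plain-Java analogue of the doInBackground loop: stop reading once cancelled. */
final class CancellableCopy {
    private CancellableCopy() {}

    /** Reads in chunks, checking isCancelled before every read; returns the number of bytes consumed. */
    static long drain(InputStream in, int chunkSize, BooleanSupplier isCancelled) {
        byte[] buf = new byte[chunkSize];
        long total = 0;
        int length;
        try {
            // Same shape as the corrected loop: continue only while NOT cancelled and data remains.
            while (!isCancelled.getAsBoolean() && (length = in.read(buf)) != -1) {
                total += length;
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return total;
    }
}
```

Because the flag is checked once per chunk, a cancelled task stops within one chunk instead of reading the whole stream.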

Detect Bug in Motivating Example
• Profiler:
  − Profile tasks
  − Queuing time, execution time, task context (call stack), pool info, etc.
• Log analyzer:
  − Find the problematic task
  − Queuing time, execution time, pool conflicts

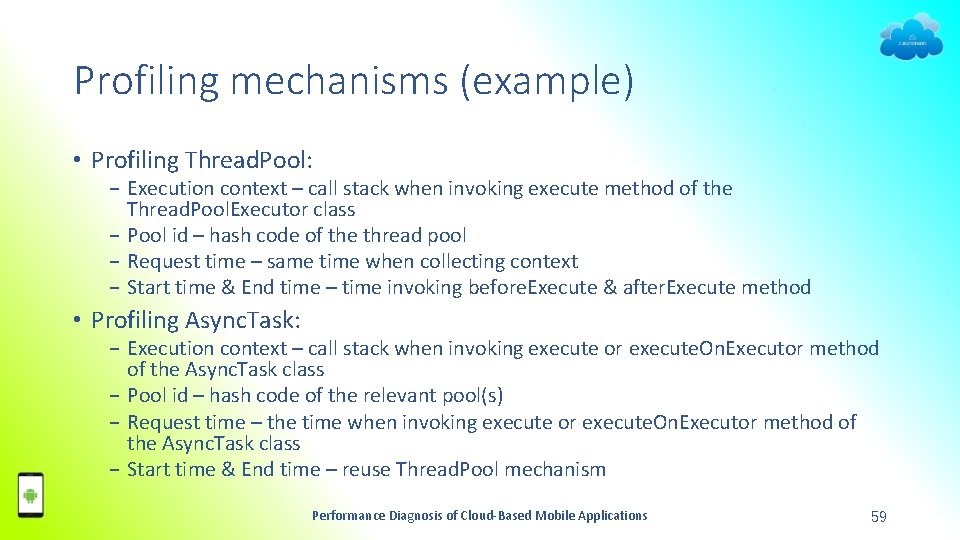

Profiling Mechanisms (Example)
• Profiling ThreadPool:
  − Execution context: call stack when invoking the execute method of the ThreadPoolExecutor class
  − Pool id: hash code of the thread pool
  − Request time: the time the context is collected
  − Start time & end time: the times beforeExecute & afterExecute are invoked
• Profiling AsyncTask:
  − Execution context: call stack when invoking the execute or executeOnExecutor method of the AsyncTask class
  − Pool id: hash code of the relevant pool(s)
  − Request time: the time the execute or executeOnExecutor method of the AsyncTask class is invoked
  − Start time & end time: reuse the ThreadPool mechanism
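The ThreadPool fields above map onto `ThreadPoolExecutor`'s own extension points. A minimal sketch that collects them by subclassing in plain Java, which is an assumption for illustration: DiagDroid hooks the framework methods dynamically rather than requiring apps to use a subclass. `ProfilingThreadPool` and `Profile` are hypothetical names; the pool id uses `System.identityHashCode`, which matches the default `hashCode()` of a pool.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;

/** Hypothetical profiling pool: records context, pool id, and request/start/end times per task. */
class ProfilingThreadPool extends ThreadPoolExecutor {
    /** Hypothetical per-task profile; times come from System.nanoTime(). */
    static final class Profile {
        final StackTraceElement[] context;  // call stack when execute() was invoked
        final int poolId;                   // hash code of the pool
        final long requestNs;
        volatile long startNs, endNs;

        Profile(StackTraceElement[] context, int poolId, long requestNs) {
            this.context = context;
            this.poolId = poolId;
            this.requestNs = requestNs;
        }

        long queuingNs() { return startNs - requestNs; }
        long executionNs() { return endNs - startNs; }
    }

    final Map<Runnable, Profile> profiles = new ConcurrentHashMap<>();

    ProfilingThreadPool(int threads) {
        super(threads, threads, 0L, TimeUnit.MILLISECONDS, new LinkedBlockingQueue<>());
    }

    @Override
    public void execute(Runnable command) {
        // Request time is taken at the same moment the context is collected.
        profiles.put(command, new Profile(Thread.currentThread().getStackTrace(),
                System.identityHashCode(this), System.nanoTime()));
        super.execute(command);
    }

    @Override
    protected void beforeExecute(Thread t, Runnable r) {
        super.beforeExecute(t, r);
        Profile p = profiles.get(r);
        if (p != null) p.startNs = System.nanoTime();
    }

    @Override
    protected void afterExecute(Runnable r, Throwable t) {
        super.afterExecute(r, t);
        Profile p = profiles.get(r);
        if (p != null) p.endNs = System.nanoTime();
    }
}
```

With a single thread, a task submitted right after a 50 ms task shows roughly 50 ms of queuing time, which is the signal the log analyzer looks for.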

Log Analyzer
• Target
  − Parse logs for statistics
  − Find anomalies in statistics
• Challenges
  − Too many similar contexts (call stacks)
  − Missing extreme cases
(Figure panels: Average (failed); Outlier (failed))
• Solution
  − Cluster similar contexts
  − Detect anomalies by maximum (> threshold T)

Other Modules
• Static analysis
  − Decompile the app and gather information from bytecode
  − Does not modify the original app
• Test executor
  − A guard for the Monkey exerciser (a random testing tool)
  − Supports plugging in any kind of test script

DiagDroid: Bugs Found
• www.cudroid.com/DiagDroid

DiagDroid: Fixed Bugs
(Figure panels: fix in Transportr; fix in AFWall+)

DiagDroid: Low Overhead
• 10,000 Monkey operations with DiagDroid on and off
• 200 ms interval between two operations
• CPU time measured with the time command
• 0.8% overhead

DiagDroid: Development Tips
1. Use a private pool instead of the public one when necessary.
2. Set a reasonable pool size.
3. Use third-party libraries carefully.
4. Keep responses effective.
5. Cancel executions when no longer needed.
6. Use the proper type of asynchronous execution.

Pretect

User Study
• Results:
  − The mean rating shows a clear descending trend as the delay level increases
  − Pairwise comparisons show the significance
    • 200 ms vs. 500 ms: marginal difference
    • 200 ms vs. 2000 ms: significant difference
    • 500 ms vs. 2000 ms: not much difference

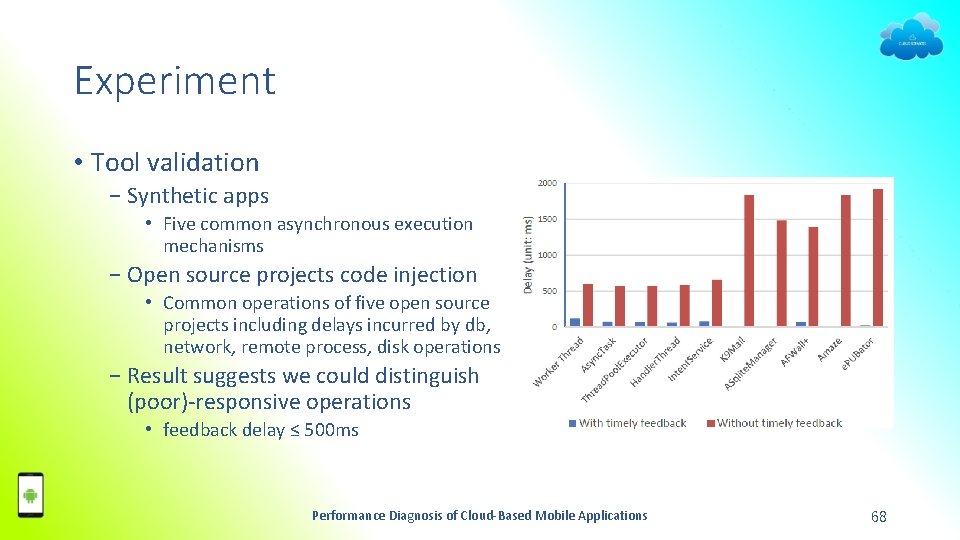

Experiment
• Tool validation
  − Synthetic apps
    • Five common asynchronous execution mechanisms
  − Code injection into open-source projects
    • Common operations of five open-source projects, including delays incurred by database, network, remote process, and disk operations
  − Results suggest that Pretect can distinguish responsive from poor-responsive operations
    • Feedback delay ≤ 500 ms

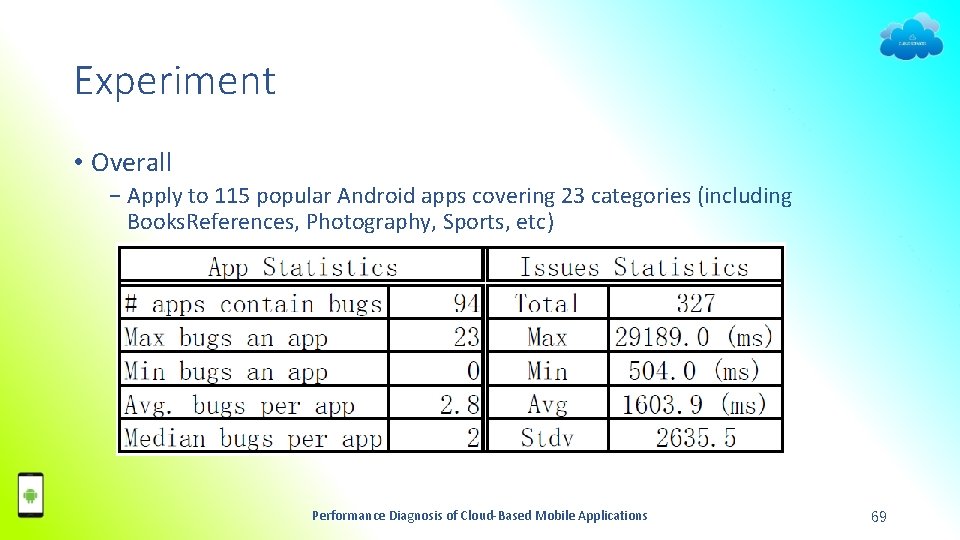

Experiment
• Overall
  − Applied to 115 popular Android apps covering 23 categories (including Books & References, Photography, Sports, etc.)

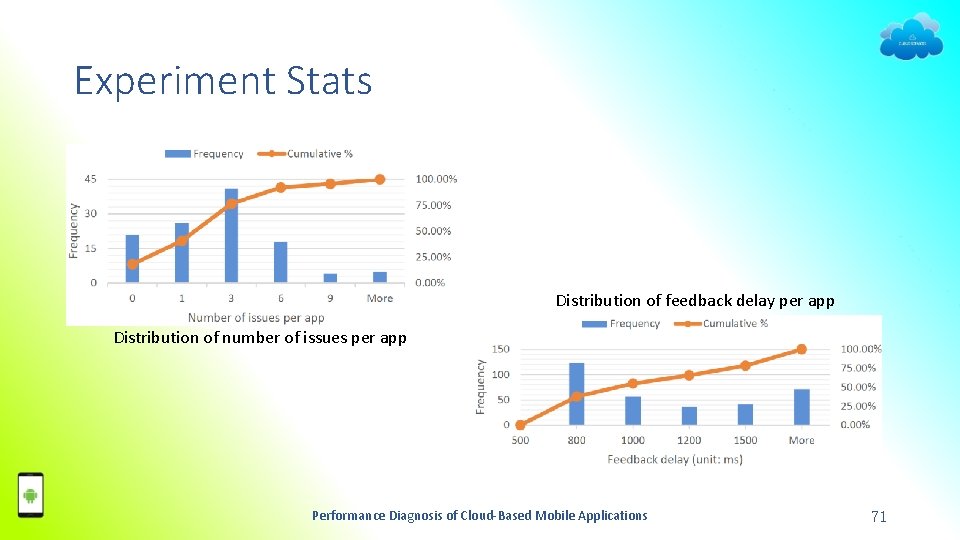

Experiment Stats
• 94/115 apps contain potential UI design defects
• 327 independent components with feedback delays ≥ 500 ms
• Maximum delay ≥ 29 s

Experiment Stats
(Figure panels: distribution of feedback delay per app; distribution of number of issues per app)

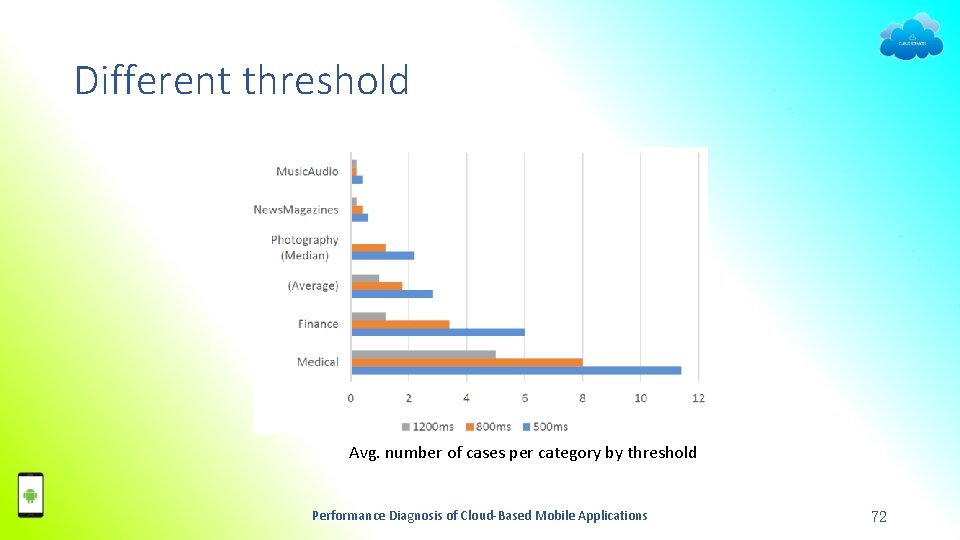

Different Thresholds
(Figure: average number of cases per category by threshold)

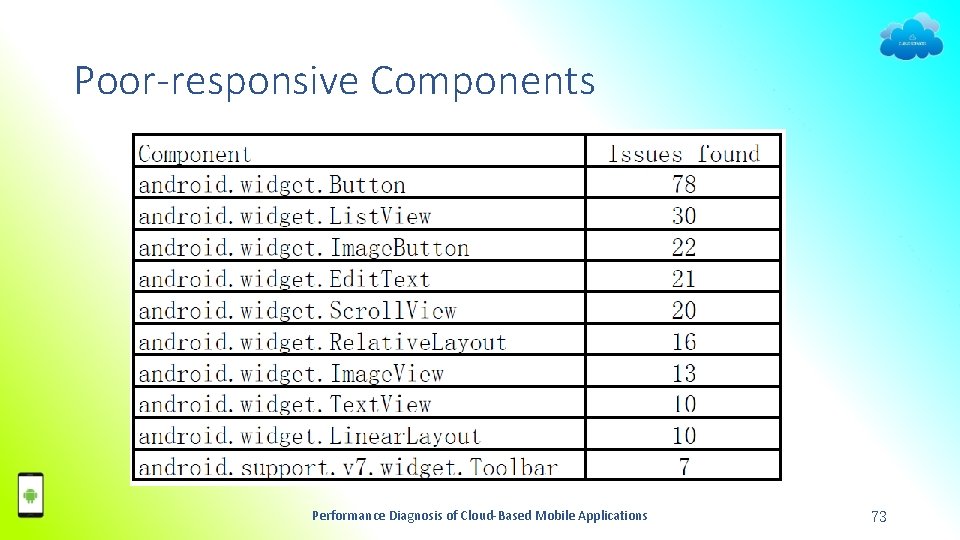

Poor-responsive Components

Single Service Deployment

Introduction
• Cloud computing systems
  − Auto scaling: dynamic allocation of computing resources
  − Elastic load balancing: distributes and balances incoming traffic

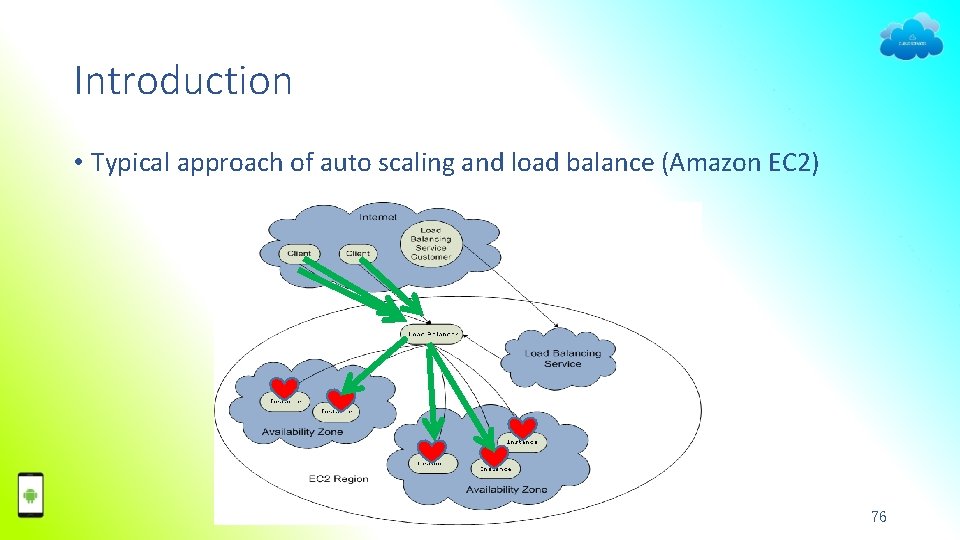

Introduction
• Typical approach of auto scaling and load balancing (Amazon EC2)

Introduction
• Current approaches are not optimized for users
  − Auto scaling: does not consider the distribution of end users
  − Elastic load balancing: does not take user specifics (e.g., user location) into consideration

Introduction
• Our contribution:
  − A user experience model in the cloud
  − A new service redeployment method
• Two advantages:
  1. Improve auto scaling techniques: launch the best set of service instances
  2. Extend elastic load balancing: direct each user request to a nearby instance


Minimize Average Cost
• k-median problem
• NP-hard
• W[2]-hard with k as parameter
• W[1]-hard with capacity l as parameter
• FPT with both k and l as parameters: an algorithm running in $O(f(k, l)\, n^{O(1)})$ time

Minimize Average Cost
• Algorithms:
  1. Exhaustive search
  2. Greedy algorithm
  3. Local search algorithm (3 + ε approximation)
  4. Random algorithm
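The greedy algorithm in this list can be sketched for the average-cost objective ($\frac{1}{|C|}\sum_{i} \min_{j \in Z'} d_{ij}$): repeatedly open the data center that lowers the average cost the most. This is a generic greedy k-median heuristic under that reading, not necessarily the exact variant evaluated in the thesis; `GreedyKMedian` is a hypothetical name and `d[i][j]` is the user-to-data-center delay.

```java
/** Hypothetical sketch of the greedy heuristic for k-median data center selection. */
final class GreedyKMedian {
    private GreedyKMedian() {}

    /** Average over users of the delay to the nearest selected data center. */
    static double averageCost(double[][] d, boolean[] selected) {
        double total = 0;
        for (double[] row : d) {
            double best = Double.POSITIVE_INFINITY;
            for (int j = 0; j < row.length; j++) if (selected[j]) best = Math.min(best, row[j]);
            total += best;
        }
        return total / d.length;
    }

    /** d[i][j] = delay between user i and data center j; returns indicators of the chosen centers. */
    static boolean[] select(double[][] d, int k) {
        int m = d[0].length;
        boolean[] selected = new boolean[m];
        for (int step = 0; step < k; step++) {
            int bestJ = -1;
            double bestCost = Double.POSITIVE_INFINITY;
            for (int j = 0; j < m; j++) {
                if (selected[j]) continue;
                selected[j] = true;  // tentatively open data center j
                double cost = averageCost(d, selected);
                selected[j] = false;
                if (cost < bestCost) { bestCost = cost; bestJ = j; }
            }
            if (bestJ < 0) break;    // fewer than k data centers available
            selected[bestJ] = true;
        }
        return selected;
    }
}
```

On the 4-user, 3-center delays `{{1,10,5},{2,9,5},{10,1,5},{9,2,5}}` with k = 2, greedy first opens the "central" center 2 and ends at an average cost of 3.25, while opening centers 0 and 1 gives 1.5. This gap is one reason to compare greedy against local search and exhaustive search.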


Maximize the Number of Close Users
• Max k-cover problem
• NP-hard
• W[2]-hard with k as parameter
• W[2]-hard (general) and FPT (tree-like) with maximum subset size as parameter
• FPT with both maximum subset size and capacity as parameters

Maximize the Number of Close Users
• Approximation algorithms:
  1. Greedy algorithm ((1 − 1/e) approximation)
  2. Local search algorithm

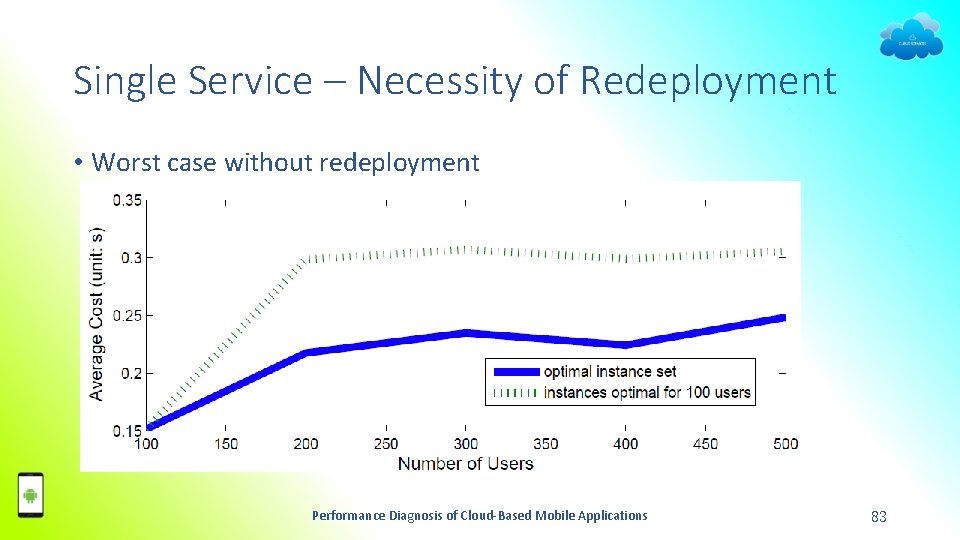
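A sketch of the standard greedy max k-cover procedure: at each step, pick the data center that covers the most not-yet-covered users. It assumes each candidate data center's set is the users whose delay to it is within threshold T; `GreedyMaxCover` is a hypothetical name, and users are bit indices in a `BitSet`.

```java
import java.util.ArrayList;
import java.util.BitSet;
import java.util.List;

/** Hypothetical sketch of the greedy (1 - 1/e)-approximation for max k-cover. */
final class GreedyMaxCover {
    private GreedyMaxCover() {}

    /** covers.get(j) = users within threshold T of data center j. Returns chosen indices in order. */
    static List<Integer> select(List<BitSet> covers, int k) {
        BitSet covered = new BitSet();
        List<Integer> chosen = new ArrayList<>();
        for (int step = 0; step < k; step++) {
            int bestJ = -1;
            int bestGain = 0;
            for (int j = 0; j < covers.size(); j++) {
                if (chosen.contains(j)) continue;
                BitSet gain = (BitSet) covers.get(j).clone();
                gain.andNot(covered);  // users newly covered by data center j
                if (gain.cardinality() > bestGain) {
                    bestGain = gain.cardinality();
                    bestJ = j;
                }
            }
            if (bestJ < 0) break;      // no remaining center covers a new user
            chosen.add(bestJ);
            covered.or(covers.get(bestJ));
        }
        return chosen;
    }
}
```

On the example sets from the max k-cover slide, {v1, v2, v4}, {v1, v2, v3, v5}, {v1, v3, v4}, {v4, v5}, with k = 2, greedy first takes {v1, v2, v3, v5} and then {v1, v2, v4}, which covers all five users.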

Single Service: Necessity of Redeployment
• Worst case without redeployment

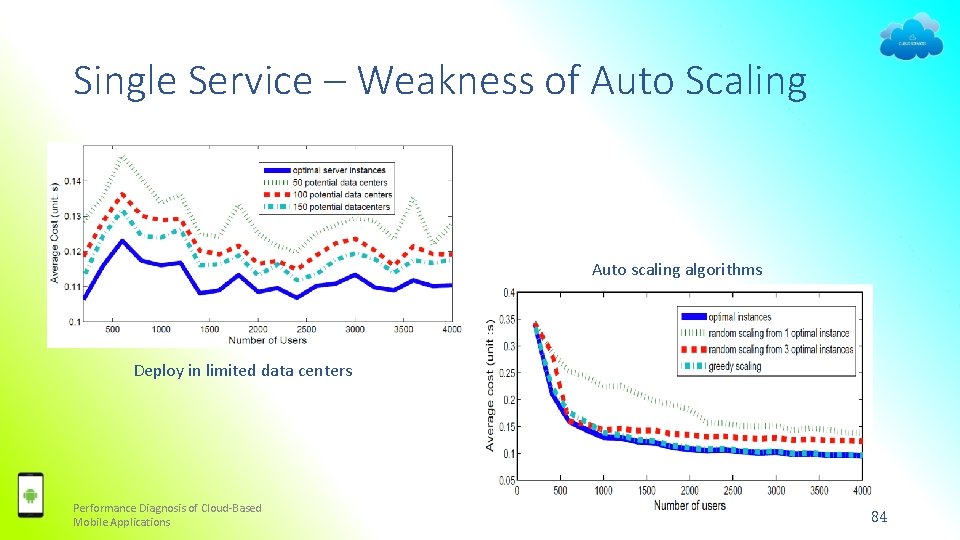

Single Service: Weakness of Auto Scaling
• Auto scaling algorithms deploy in a limited set of data centers

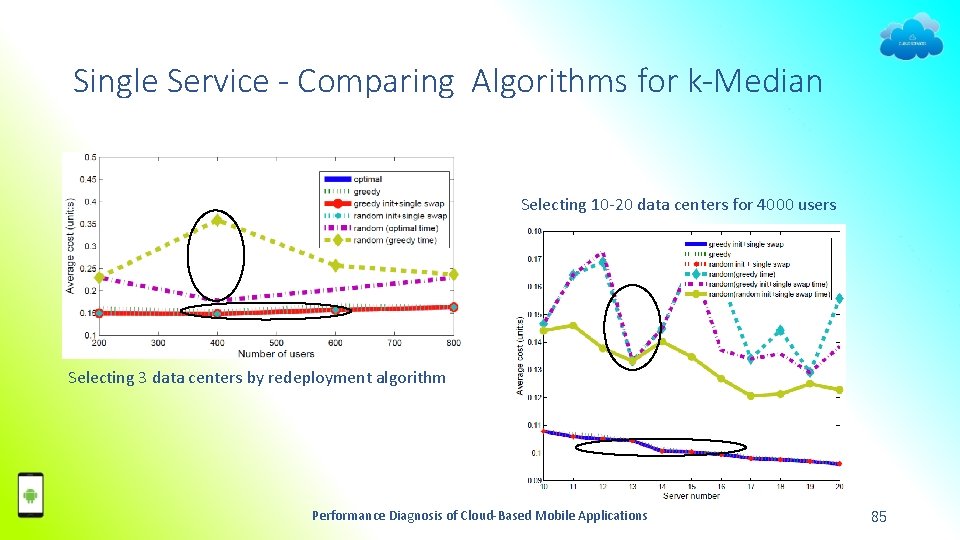

Single Service: Comparing Algorithms for k-Median
(Figure panels: selecting 10 to 20 data centers for 4,000 users; selecting 3 data centers by the redeployment algorithm)

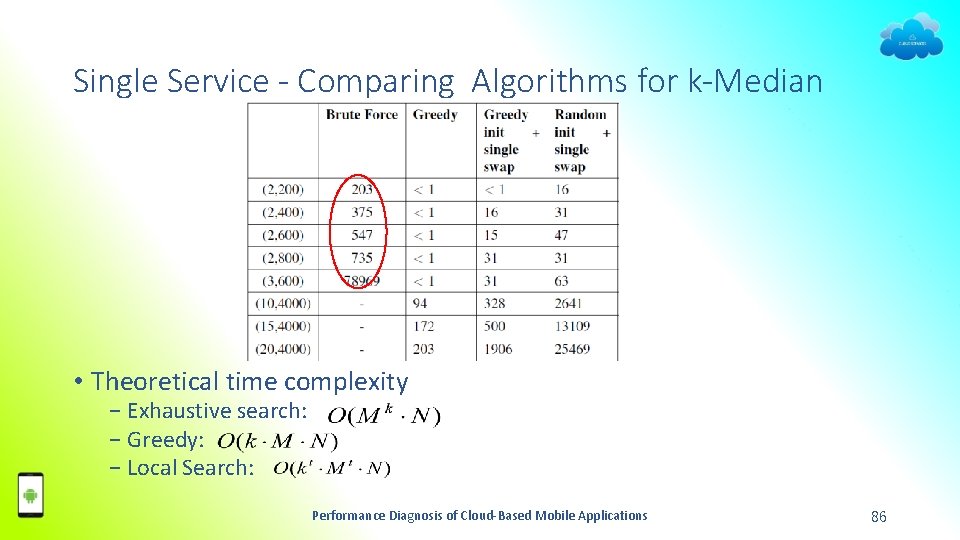

Single Service: Comparing Algorithms for k-Median
• Theoretical time complexity
  − Exhaustive search:
  − Greedy:
  − Local search:

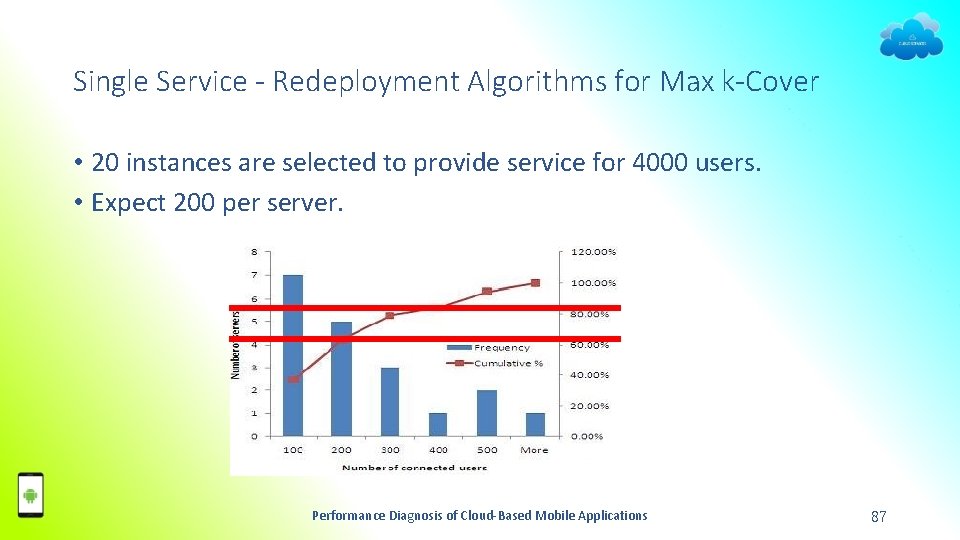

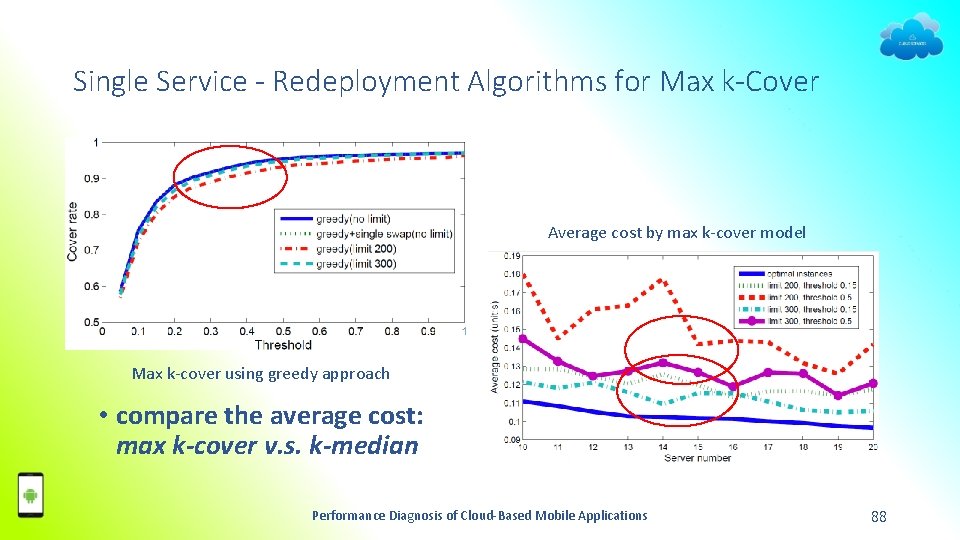

Single Service: Redeployment Algorithms for Max k-Cover
• 20 instances are selected to serve 4,000 users
• Expected load: 200 users per server

Single Service: Redeployment Algorithms for Max k-Cover
(Figure panels: average cost under the max k-cover model; max k-cover using the greedy approach)
• Compare the average cost: max k-cover vs. k-median

Multi-Service Deployment

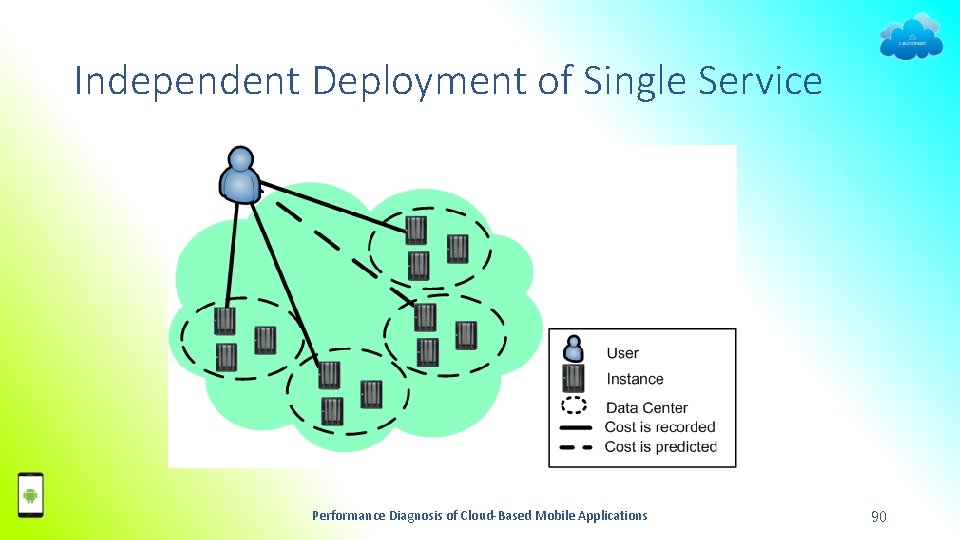

Independent Deployment of Single Service

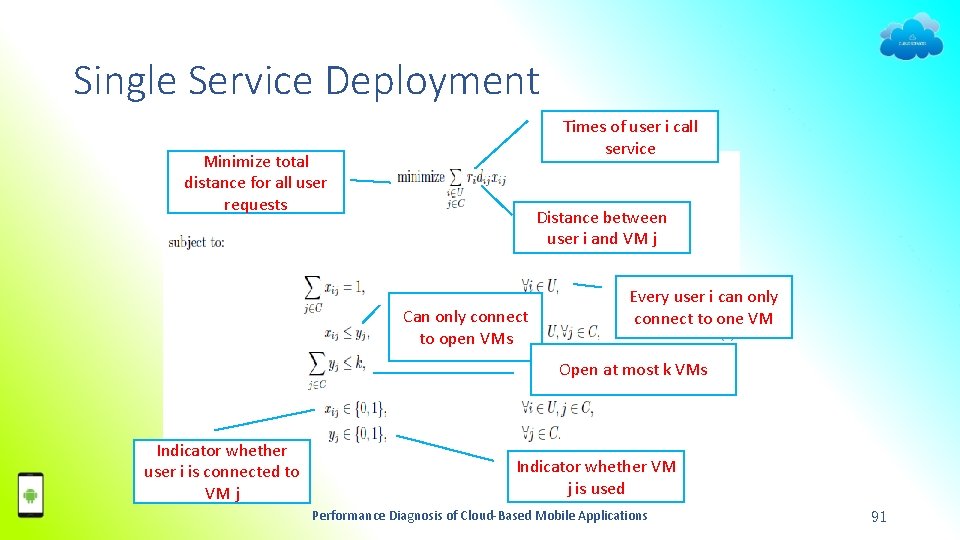

Single Service Deployment
(Figure: integer program with annotated terms)
• Objective: minimize the total distance for all user requests
  − Weight: number of times user i calls the service
  − Distance between user i and VM j
• Constraints:
  − Users can only connect to open VMs
  − Every user i can only connect to one VM
  − Open at most k VMs
• Variables:
  − Indicator of whether user i is connected to VM j
  − Indicator of whether VM j is used

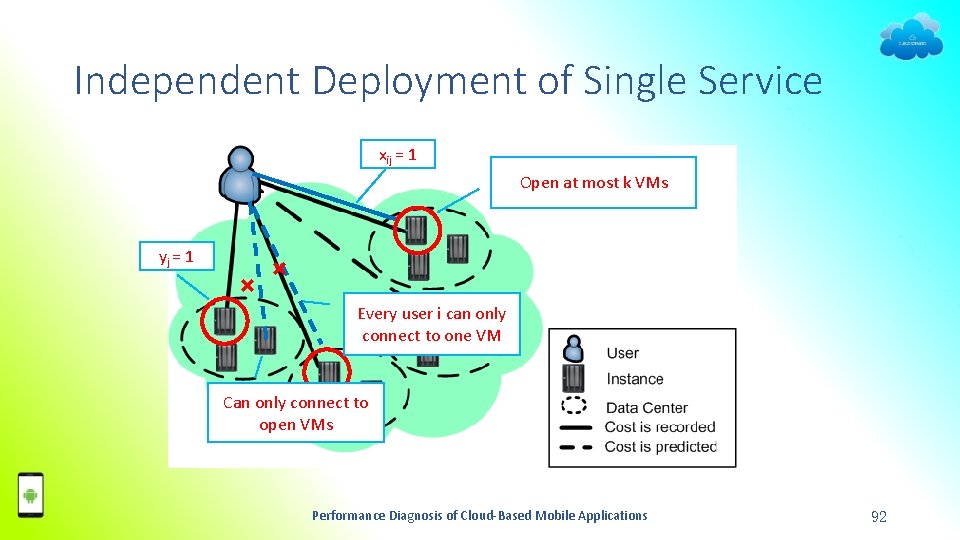
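The annotated terms correspond to a standard facility-location style integer program. A reconstruction under stated assumptions: $r_i$ is an assumed symbol for the number of times user $i$ calls the service, $C$, $Z$, and $d_{ij}$ follow the average-cost slide, $x_{ij}$ and $y_j$ follow the independent-deployment slide, and "every user connects to one VM" is written as an equality, assuming every user must be served.

```latex
\begin{aligned}
\min_{x,\,y} \quad & \sum_{i \in C} \sum_{j \in Z} r_i \, d_{ij} \, x_{ij} \\
\text{s.t.} \quad & x_{ij} \le y_j, && \forall i \in C,\ j \in Z \quad \text{(connect only to open VMs)} \\
& \sum_{j \in Z} x_{ij} = 1, && \forall i \in C \quad \text{(one VM per user)} \\
& \sum_{j \in Z} y_j \le k && \text{(open at most } k \text{ VMs)} \\
& x_{ij} \in \{0, 1\},\ y_j \in \{0, 1\}
\end{aligned}
```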

Independent Deployment of Single Service
(Figure: example with $x_{ij} = 1$ and $y_j = 1$, annotated with the constraints: open at most k VMs; every user i can only connect to one VM; users can only connect to open VMs)

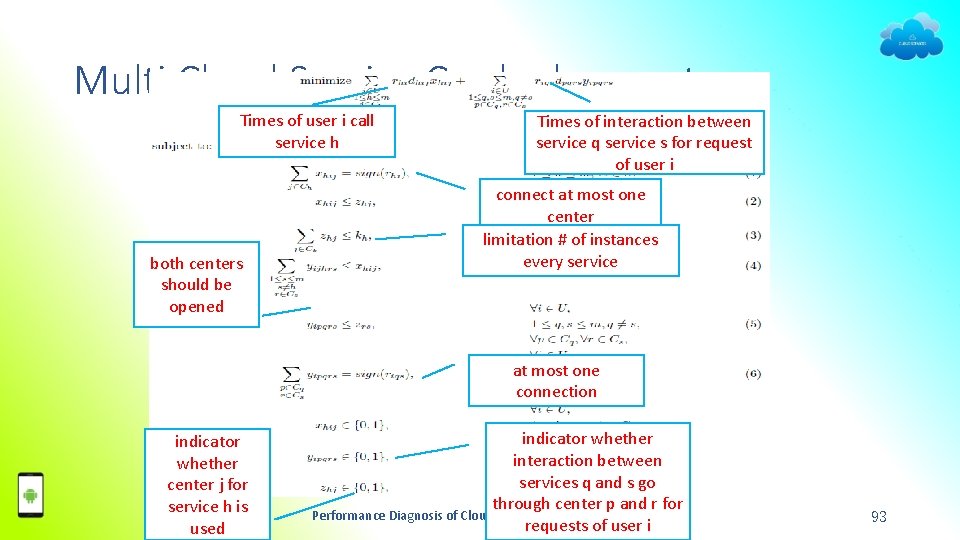

Multi Cloud Service Co-deployment
(Figure: integer program with annotated terms)
• Weights:
  − Number of times user i calls service h
  − Number of interactions between services q and s for requests of user i
• Constraints:
  − Both centers should be opened
  − Each user connects to at most one center
  − Limit on the number of instances of every service
  − Every service has at most one connection
• Variables:
  − Indicator of whether center j for service h is used
  − Indicator of whether the interaction between services q and s for requests of user i goes through centers p and r

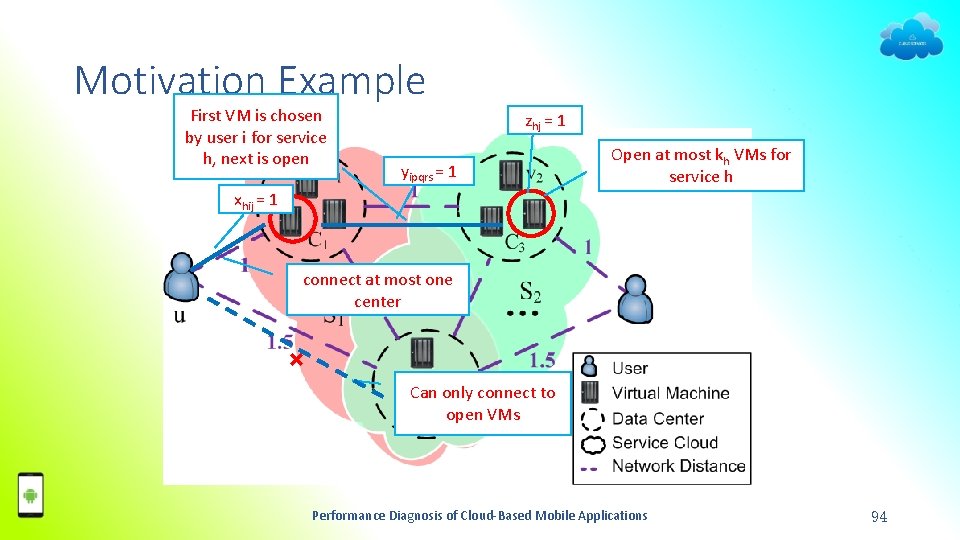

Motivating Example
(Figure: example with $z_{hj} = 1$, $y_{ipqrs} = 1$, and $x_{hij} = 1$, annotated: the first VM is chosen by user i for service h, and the next one is open; open at most $k_h$ VMs for service h; connect to at most one center; users can only connect to open VMs)

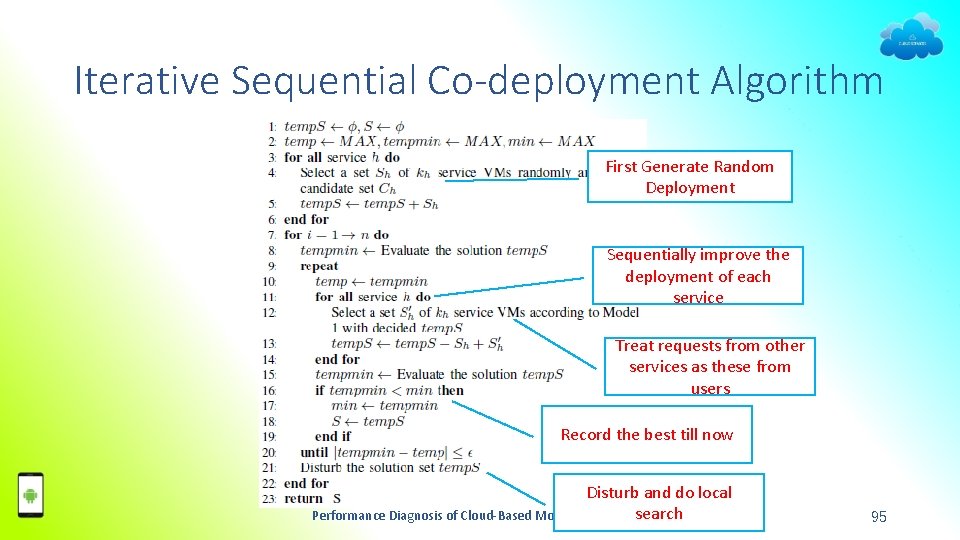

Iterative Sequential Co-deployment Algorithm
1. First, generate a random deployment
2. Sequentially improve the deployment of each service
  − Treat requests from other services as requests from users
3. Record the best deployment so far
4. Disturb and do local search

Dataset Statistics

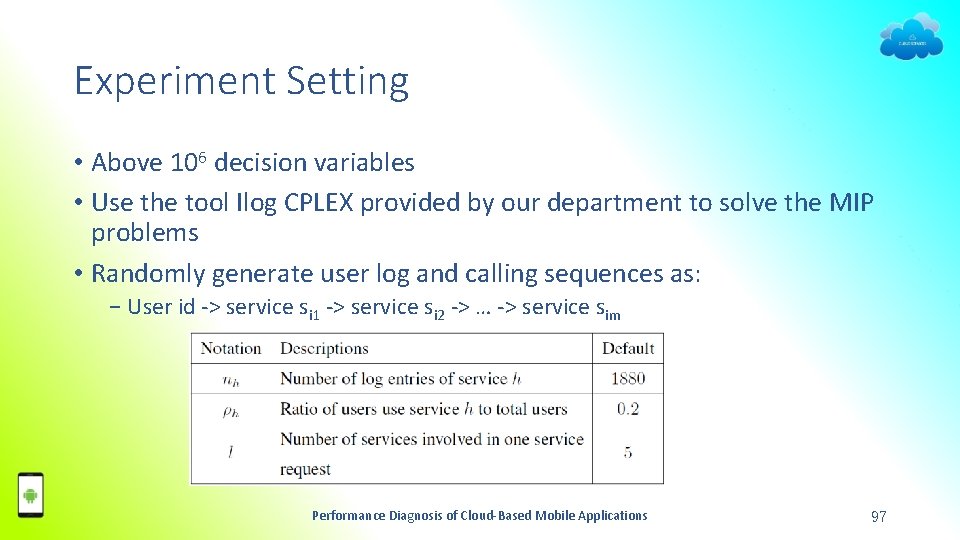

Experiment Setting
• Over $10^6$ decision variables
• Use IBM ILOG CPLEX, provided by our department, to solve the MIP problems
• Randomly generate user logs and calling sequences as:
  − user id → service $s_{i1}$ → service $s_{i2}$ → … → service $s_{im}$
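A seeded generator for logs of the form user id → $s_{i1}$ → … → $s_{im}$ might look like the sketch below. It simplifies the default setting's "on average 5 requests of other services" to a fixed count `otherCalls`, and assumes consecutive calls go to a different service and services are chosen uniformly; `CallSequenceGenerator` is a hypothetical name, not the experiment's actual script.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Random;

/** Hypothetical generator of synthetic request logs: user id -> s_i1 -> s_i2 -> ... -> s_im. */
final class CallSequenceGenerator {
    private CallSequenceGenerator() {}

    /** One log entry: the user and the ordered chain of services the request touches. */
    record Log(int userId, List<Integer> services) {}

    static List<Log> generate(int users, int services, int logsPerUser, int otherCalls, long seed) {
        if (services < 1) throw new IllegalArgumentException("need at least one service");
        if (otherCalls > 0 && services < 2) throw new IllegalArgumentException("need two services to chain calls");
        Random rnd = new Random(seed);  // fixed seed keeps experiments reproducible
        List<Log> logs = new ArrayList<>();
        for (int u = 0; u < users; u++) {
            for (int l = 0; l < logsPerUser; l++) {
                List<Integer> chain = new ArrayList<>();
                int current = rnd.nextInt(services);  // service the user calls directly
                chain.add(current);
                for (int c = 0; c < otherCalls; c++) {
                    int next = rnd.nextInt(services - 1);  // uniform over the other services
                    if (next >= current) next++;
                    chain.add(next);
                    current = next;
                }
                logs.add(new Log(u, chain));
            }
        }
        return logs;
    }
}
```

With 3 users, 10 services, 5 logs per user, and 5 follow-on calls, this yields 15 logs, each a chain of 6 services.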

Default Experiment Setting
• 1,881 users
• 10 services
• Deploy 10 service VMs from a candidate set of 100 data centers
• Each user of a service has 5 request logs
• One request of a service involves on average 5 requests of other services

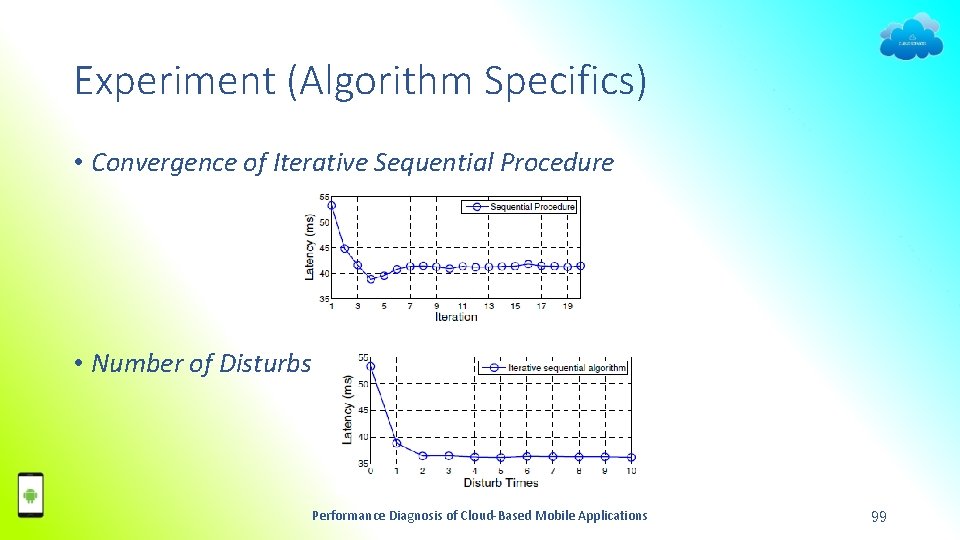

Experiment (Algorithm Specifics)
• Convergence of the iterative sequential procedure
• Number of disturbs

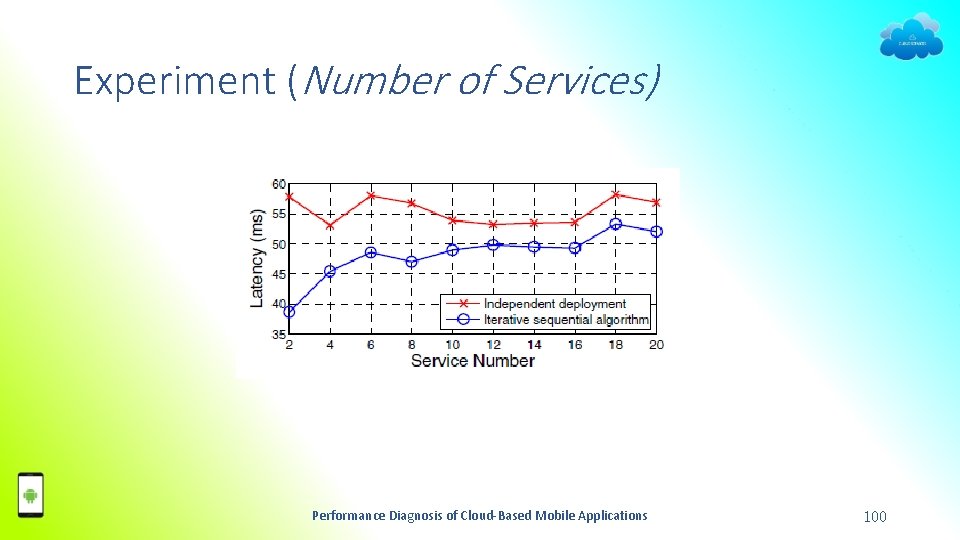

Experiment (Number of Services)

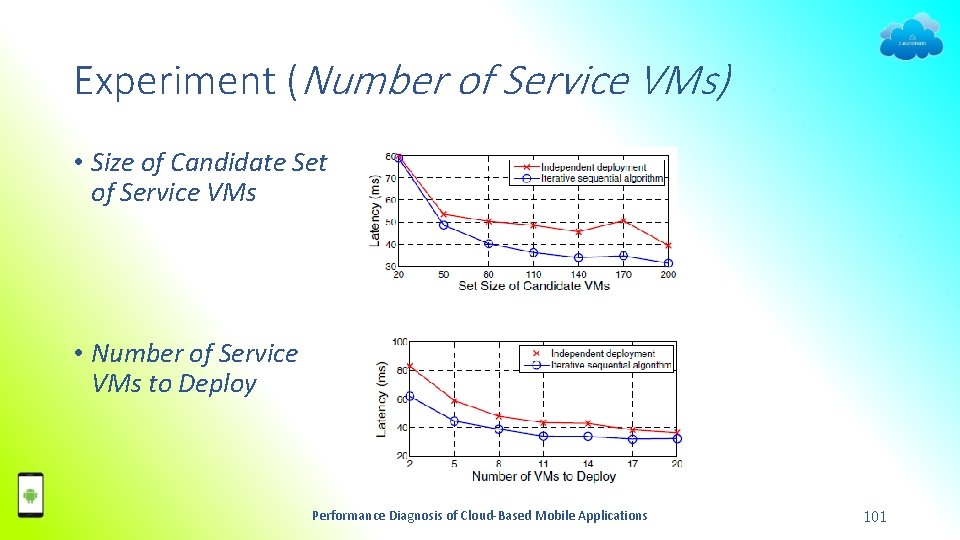

Experiment (Number of Service VMs)
• Size of the candidate set of service VMs
• Number of service VMs to deploy

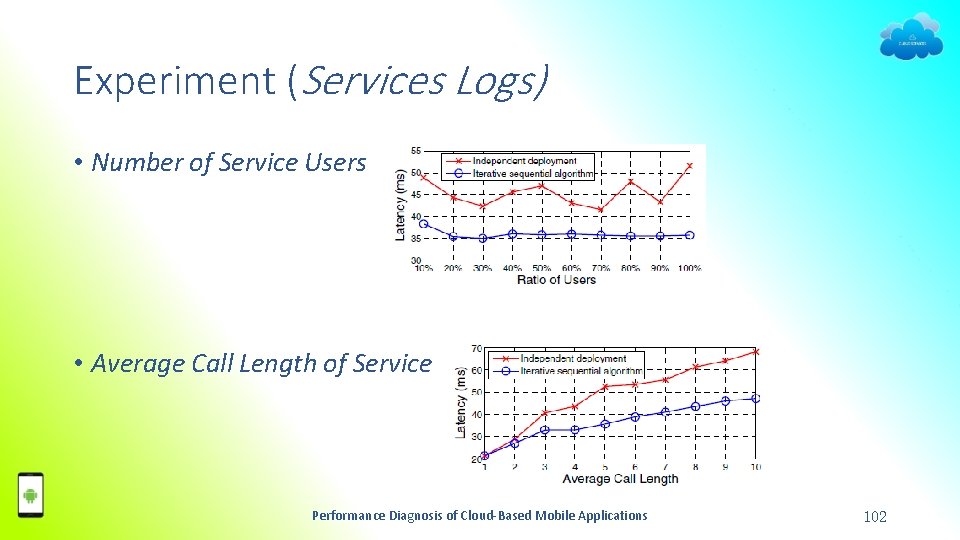

Experiment (Service Logs)
• Number of service users
• Average call length of service

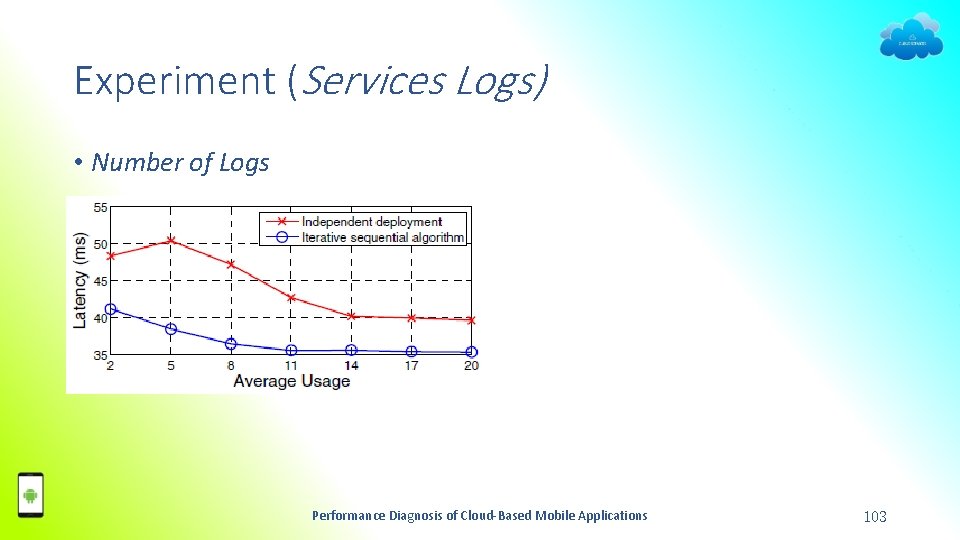

Experiment (Service Logs)
• Number of logs

Future Work
• Mobile-side user experience enhancement
  − Selective loading, computing in advance
  − Delay-tolerant UI design
• Cloud-side processing time optimization
  − Moving computation to data
• Client-cloud communication cost reduction
  − Reduce the number of communications

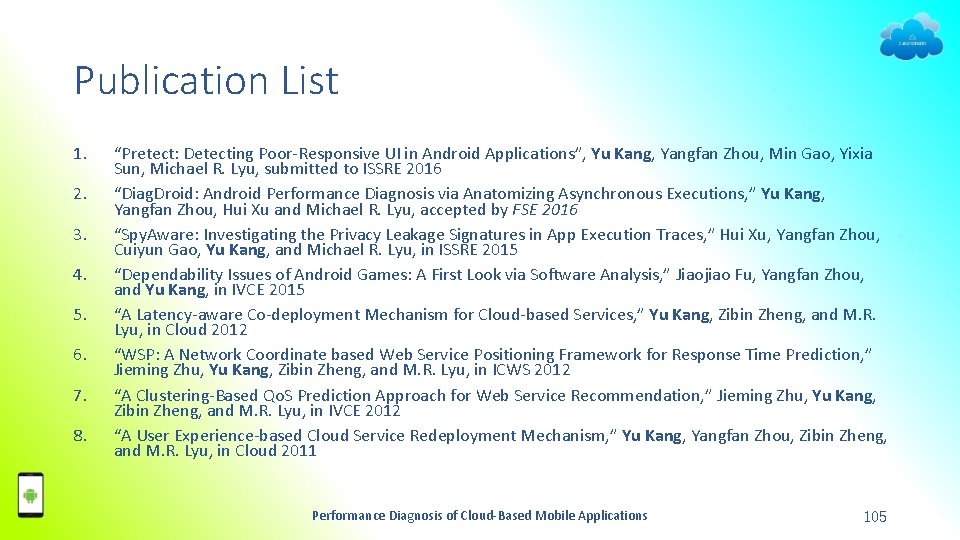

Publication List
1. “Pretect: Detecting Poor-Responsive UI in Android Applications,” Yu Kang, Yangfan Zhou, Min Gao, Yixia Sun, and Michael R. Lyu, submitted to ISSRE 2016.
2. “DiagDroid: Android Performance Diagnosis via Anatomizing Asynchronous Executions,” Yu Kang, Yangfan Zhou, Hui Xu, and Michael R. Lyu, accepted by FSE 2016.
3. “SpyAware: Investigating the Privacy Leakage Signatures in App Execution Traces,” Hui Xu, Yangfan Zhou, Cuiyun Gao, Yu Kang, and Michael R. Lyu, in ISSRE 2015.
4. “Dependability Issues of Android Games: A First Look via Software Analysis,” Jiaojiao Fu, Yangfan Zhou, and Yu Kang, in IVCE 2015.
5. “A Latency-aware Co-deployment Mechanism for Cloud-based Services,” Yu Kang, Zibin Zheng, and M. R. Lyu, in Cloud 2012.
6. “WSP: A Network Coordinate based Web Service Positioning Framework for Response Time Prediction,” Jieming Zhu, Yu Kang, Zibin Zheng, and M. R. Lyu, in ICWS 2012.
7. “A Clustering-Based QoS Prediction Approach for Web Service Recommendation,” Jieming Zhu, Yu Kang, Zibin Zheng, and M. R. Lyu, in IVCE 2012.
8. “A User Experience-based Cloud Service Redeployment Mechanism,” Yu Kang, Yangfan Zhou, Zibin Zheng, and M. R. Lyu, in Cloud 2011.
