Chapter 6 Floyds Algorithm The AllPairs ShortestPath Problem

Chapter 6 Floyd’s Algorithm

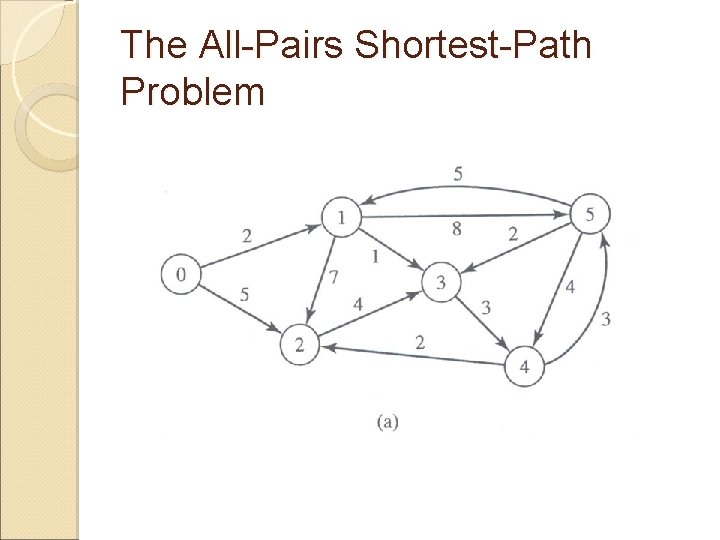

The All-Pairs Shortest-Path Problem

![Floyd’s Algorithm Input n – number of vertices a[0. . n-1, 0. . n-1] Floyd’s Algorithm Input n – number of vertices a[0. . n-1, 0. . n-1]](http://slidetodoc.com/presentation_image/317b7e79e2547b00783f84e3a8ff80e8/image-4.jpg)

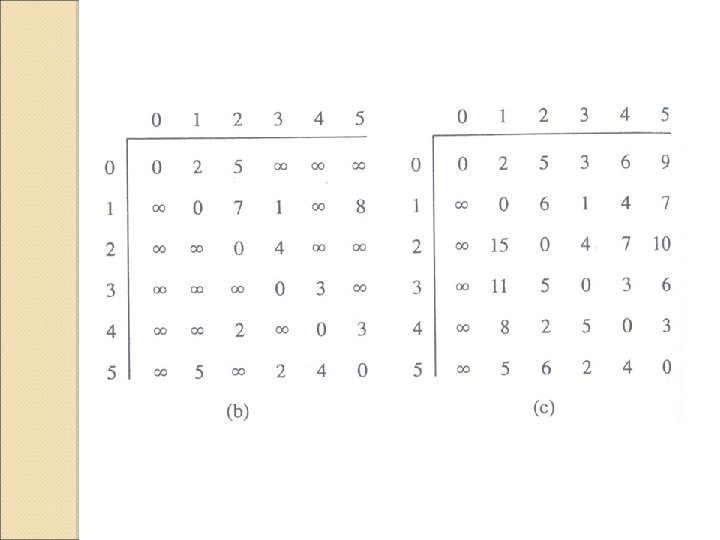

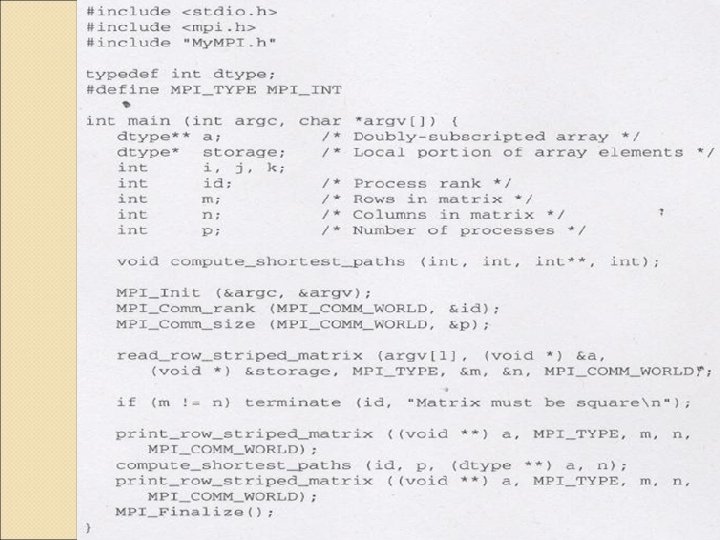

Floyd’s Algorithm Input n – number of vertices a[0. . n-1, 0. . n-1] – adjacency matrix Output Transformed a that contains the shortest path lengths for k ← 0 to n-1 for i ← 0 to n-1 for j ← 0 to n-1 a[i, j] ← min(a[i, j], a[i, k] + a[k, j]) endfor

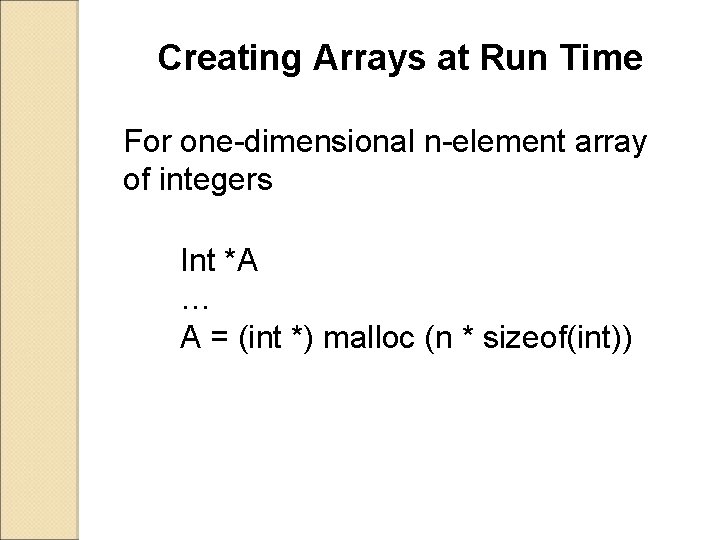

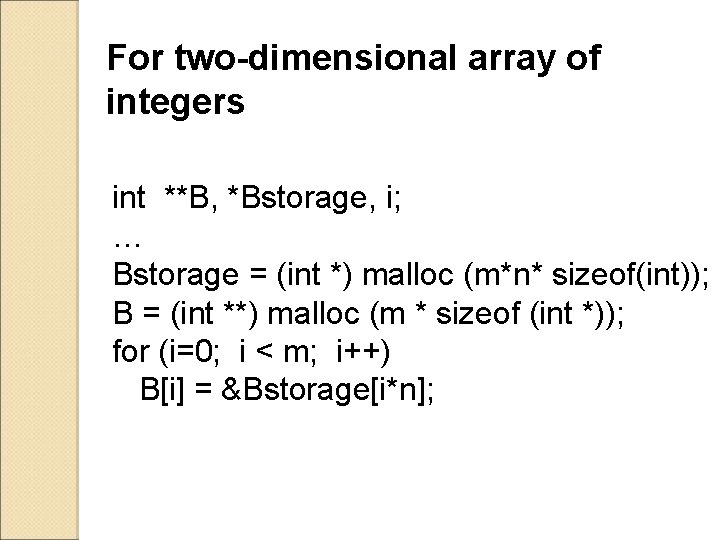

Creating Arrays at Run Time For one-dimensional n-element array of integers Int *A … A = (int *) malloc (n * sizeof(int))

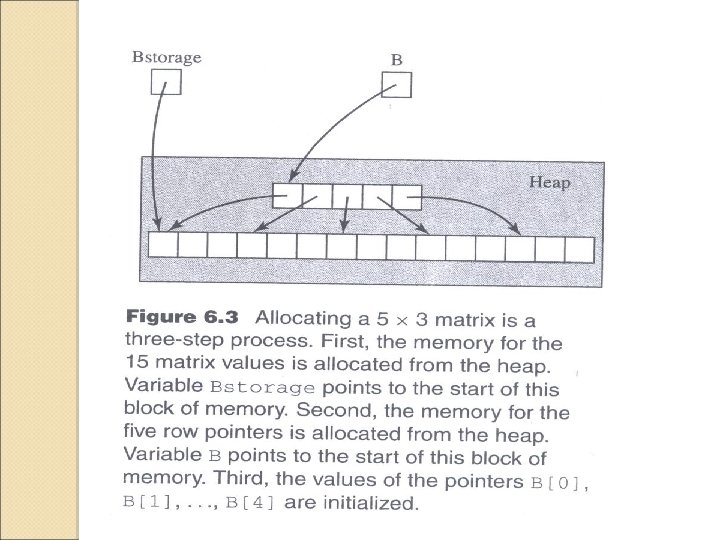

For two-dimensional array of integers int **B, *Bstorage, i; … Bstorage = (int *) malloc (m*n* sizeof(int)); B = (int **) malloc (m * sizeof (int *)); for (i=0; i < m; i++) B[i] = &Bstorage[i*n];

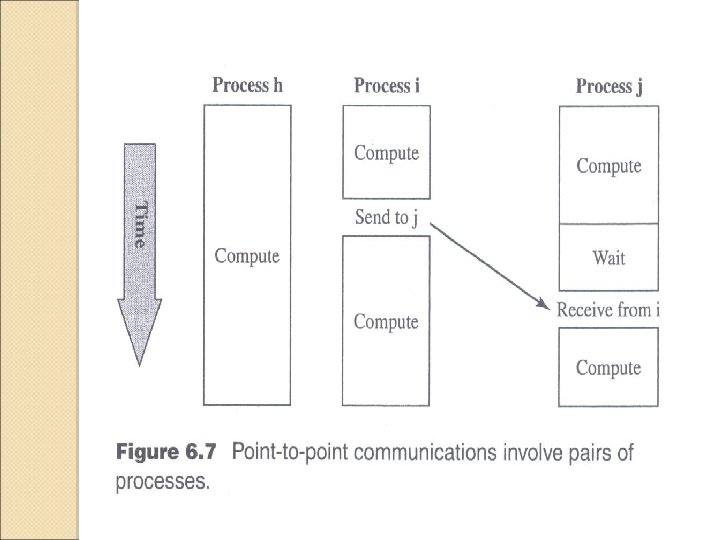

Point to Point Communication

Point to Point Communication Instruction ◦ MPI_Send ◦ MPI_Recv MPI Datatype MPI Tags Deadlock Non-blocking Communication ◦ MPI_Isend ◦ MPI_Irecv ◦ MPI_Wait ◦ MPI_Sendrecv

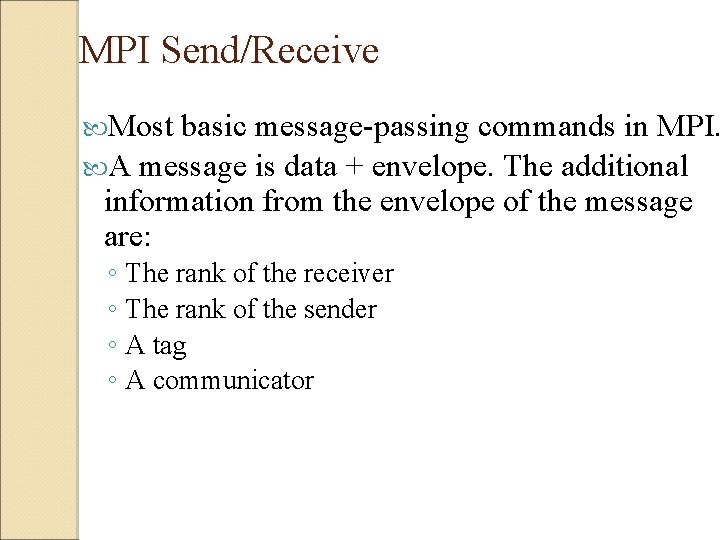

MPI Send/Receive Most basic message-passing commands in MPI. A message is data + envelope. The additional information from the envelope of the message are: ◦ The rank of the receiver ◦ The rank of the sender ◦ A tag ◦ A communicator

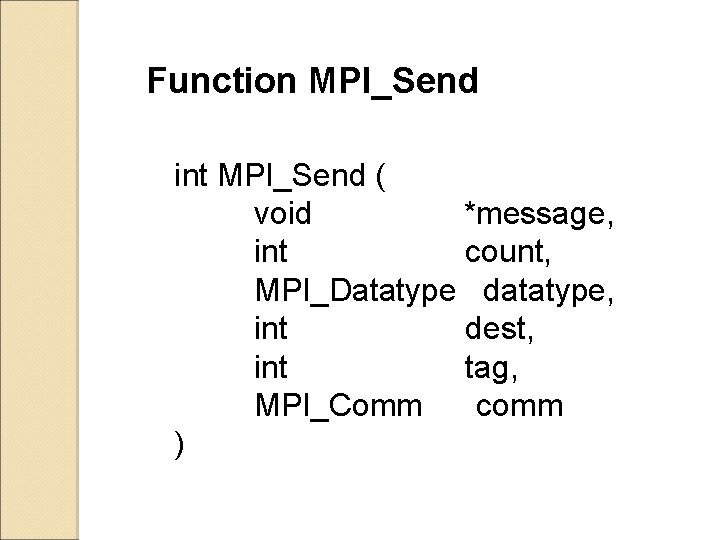

Function MPI_Send int MPI_Send ( void *message, int count, MPI_Datatype datatype, int dest, int tag, MPI_Comm comm )

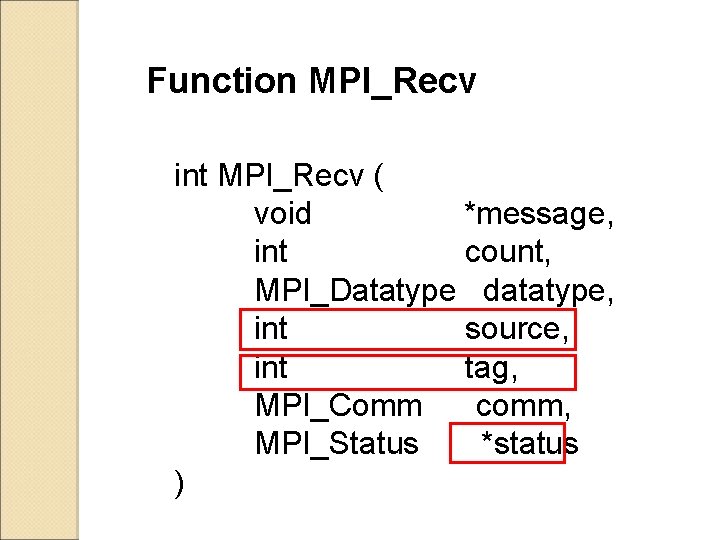

Function MPI_Recv int MPI_Recv ( void *message, int count, MPI_Datatype datatype, int source, int tag, MPI_Comm comm, MPI_Status *status )

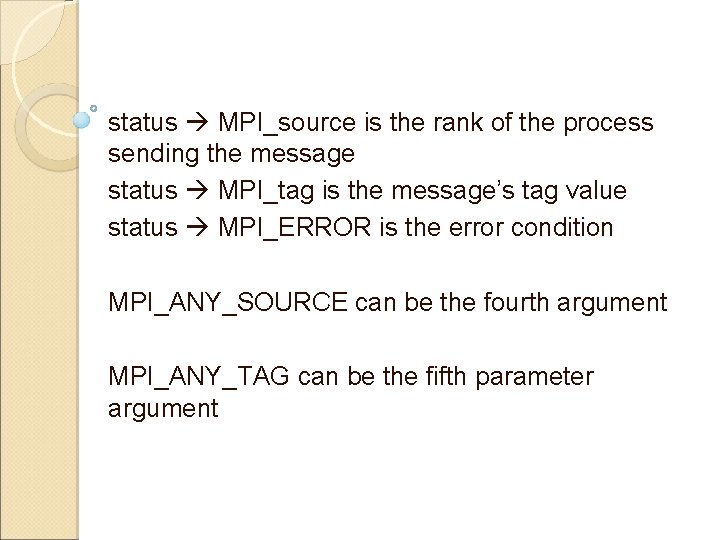

status MPI_source is the rank of the process sending the message status MPI_tag is the message’s tag value status MPI_ERROR is the error condition MPI_ANY_SOURCE can be the fourth argument MPI_ANY_TAG can be the fifth parameter argument

![MPI Send/Receive int Sdata[2] = {1, 2}, Rdata[2], count = 2, src = 0, MPI Send/Receive int Sdata[2] = {1, 2}, Rdata[2], count = 2, src = 0,](http://slidetodoc.com/presentation_image/317b7e79e2547b00783f84e3a8ff80e8/image-14.jpg)

MPI Send/Receive int Sdata[2] = {1, 2}, Rdata[2], count = 2, src = 0, dest = 3; if(CPU_id == src) MPI_Send(&Sdata, 2, MPI_INTEGER, dest, 1, MPI_COMM_WORLD); if(CPU_id == dest){ MPI_Status status; MPI_Recv(&Rdata, 2, MPI_INTEGER, src, 1, MPI_COMM_WORLD, &status); } CPU 0 [1, 2] Sdata CPU 1 MPI_Send CPU 2 CPU 3 Rdata [1, 2] MPI_Recv

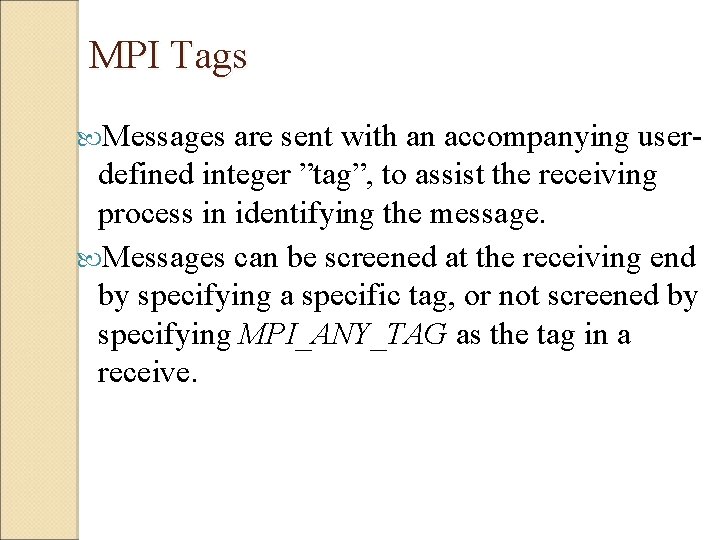

MPI Tags Messages are sent with an accompanying user- defined integer ”tag”, to assist the receiving process in identifying the message. Messages can be screened at the receiving end by specifying a specific tag, or not screened by specifying MPI_ANY_TAG as the tag in a receive.

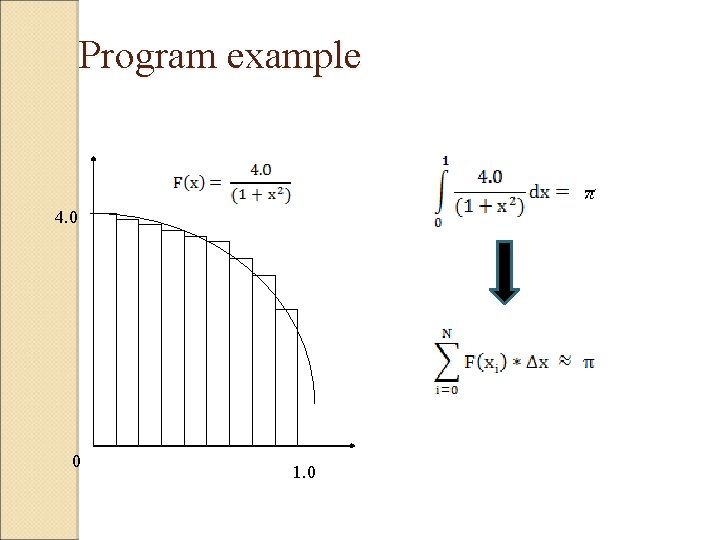

Program example 4. 0 0 1. 0

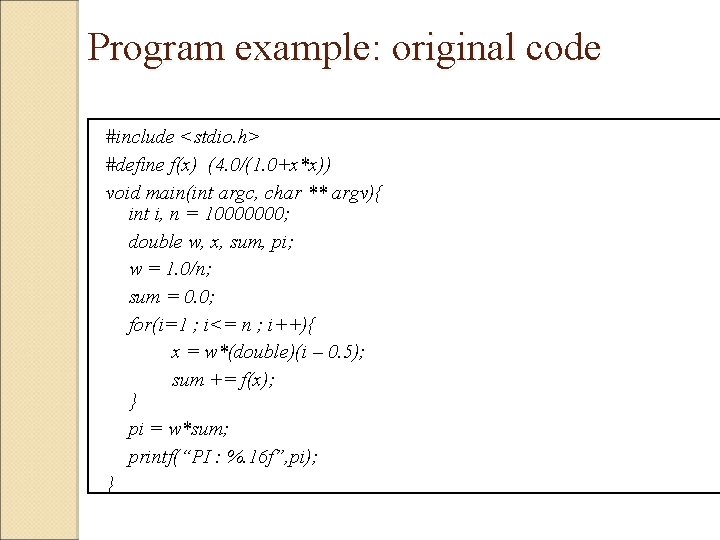

Program example: original code #include <stdio. h> #define f(x) (4. 0/(1. 0+x*x)) void main(int argc, char ** argv){ int i, n = 10000000; double w, x, sum, pi; w = 1. 0/n; sum = 0. 0; for(i=1 ; i<= n ; i++){ x = w*(double)(i – 0. 5); sum += f(x); } pi = w*sum; printf(“PI : %. 16 f”, pi); }

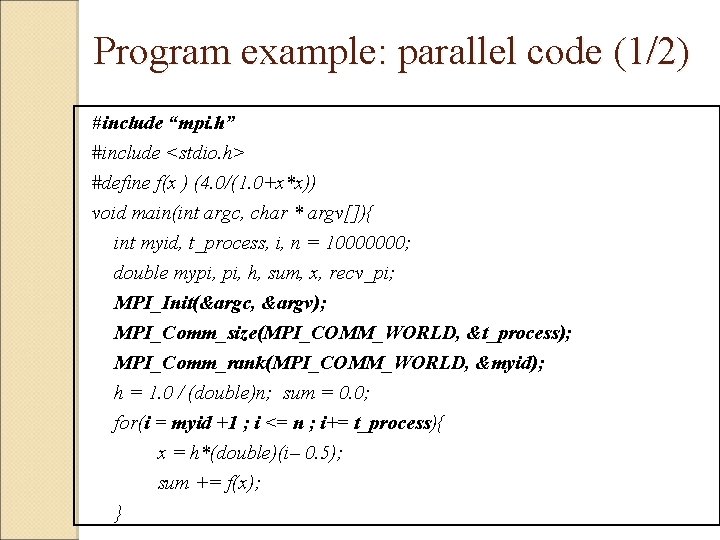

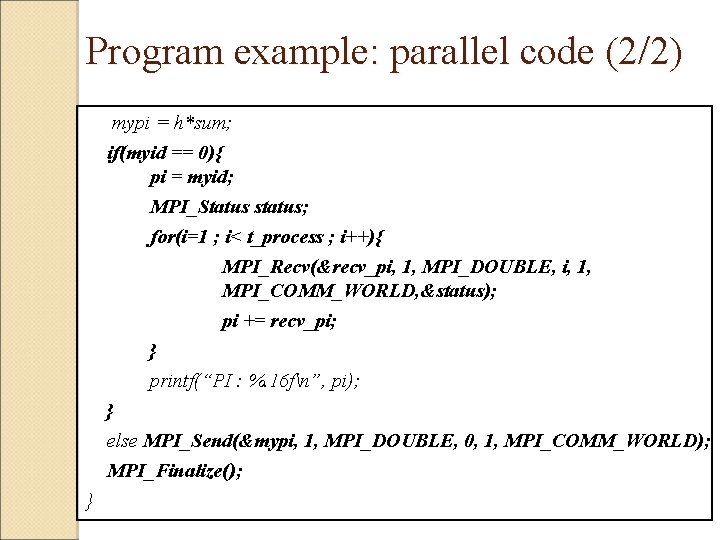

Program example: parallel code (1/2) #include “mpi. h” #include <stdio. h> #define f(x ) (4. 0/(1. 0+x*x)) void main(int argc, char * argv[]){ int myid, t_process, i, n = 10000000; double mypi, h, sum, x, recv_pi; MPI_Init(&argc, &argv); MPI_Comm_size(MPI_COMM_WORLD, &t_process); MPI_Comm_rank(MPI_COMM_WORLD, &myid); h = 1. 0 / (double)n; sum = 0. 0; for(i = myid +1 ; i <= n ; i+= t_process){ x = h*(double)(i– 0. 5); sum += f(x); }

Program example: parallel code (2/2) mypi = h*sum; if(myid == 0){ pi = myid; MPI_Status status; for(i=1 ; i< t_process ; i++){ MPI_Recv(&recv_pi, 1, MPI_DOUBLE, i, 1, MPI_COMM_WORLD, &status); pi += recv_pi; } printf(“PI : %. 16 fn”, pi); } else MPI_Send(&mypi, 1, MPI_DOUBLE, 0, 1, MPI_COMM_WORLD); MPI_Finalize(); }

Communication Definitions “Completion” of the communication means that memory locations used in the message transfer can be safely accessed ◦ Send: variable sent can be reused after completion. ◦ Receive: variable received can now be used. MPI communication modes differ in what conditions are needed for completion. Communication modes can be blocking or non -blocking.

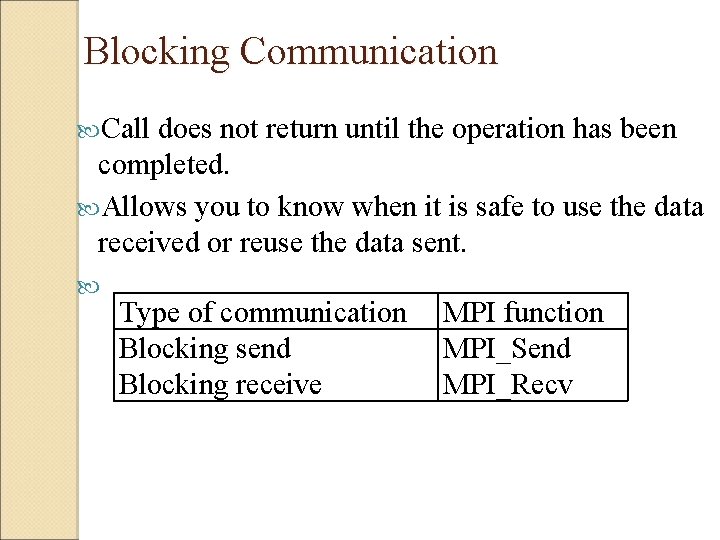

Blocking Communication Call does not return until the operation has been completed. Allows you to know when it is safe to use the data received or reuse the data sent. Type of communication MPI function Blocking send MPI_Send Blocking receive MPI_Recv

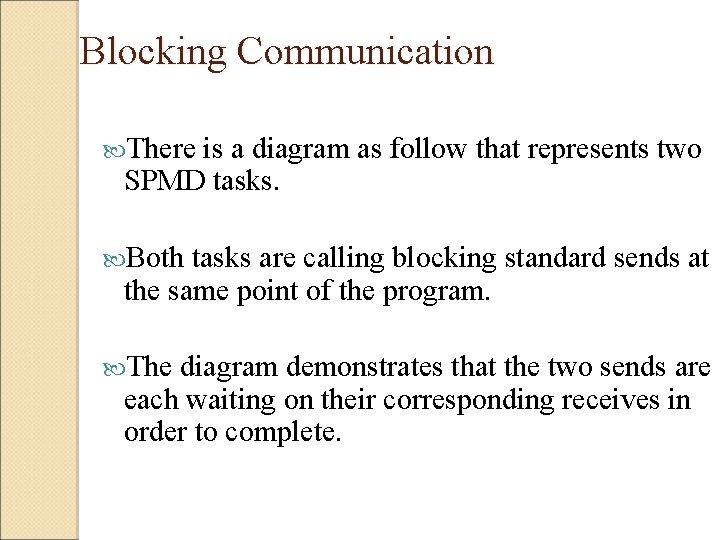

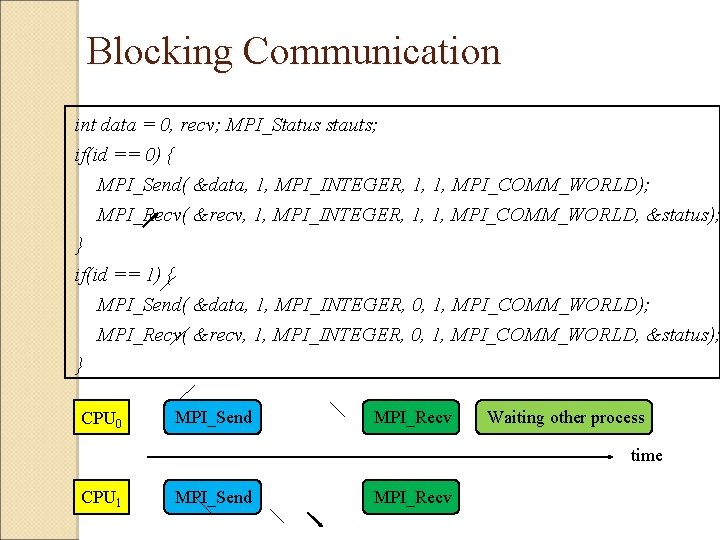

Blocking Communication There is a diagram as follow that represents two SPMD tasks. Both tasks are calling blocking standard sends at the same point of the program. The diagram demonstrates that the two sends are each waiting on their corresponding receives in order to complete.

Blocking Communication int data = 0, recv; MPI_Status stauts; if(id == 0) { MPI_Send( &data, 1, MPI_INTEGER, 1, 1, MPI_COMM_WORLD); MPI_Recv( &recv, 1, MPI_INTEGER, 1, 1, MPI_COMM_WORLD, &status); } if(id == 1) { MPI_Send( &data, 1, MPI_INTEGER, 0, 1, MPI_COMM_WORLD); MPI_Recv( &recv, 1, MPI_INTEGER, 0, 1, MPI_COMM_WORLD, &status); } CPU 0 MPI_Send MPI_Recv Waiting other process time CPU 1 MPI_Send MPI_Recv

Deadlock is a phenomenon most common with blocking communication. The waiting processes will never again change state, because the resources they have requested are held by other waiting processes.

Deadlock Condition Mutual Exclusion ◦ A resource that cannot be used by more than one process at a time. Hold and Wait ◦ Processes already holding resources may request new resources held by other processes. Non-Preemption ◦ No resource can be forcibly removed from a process holding it, resources can be released only by the explicit action of the process. Circular Wait ◦ Two or more processes form a circular chain where each process waits for a resource that the next process in the chain holds.

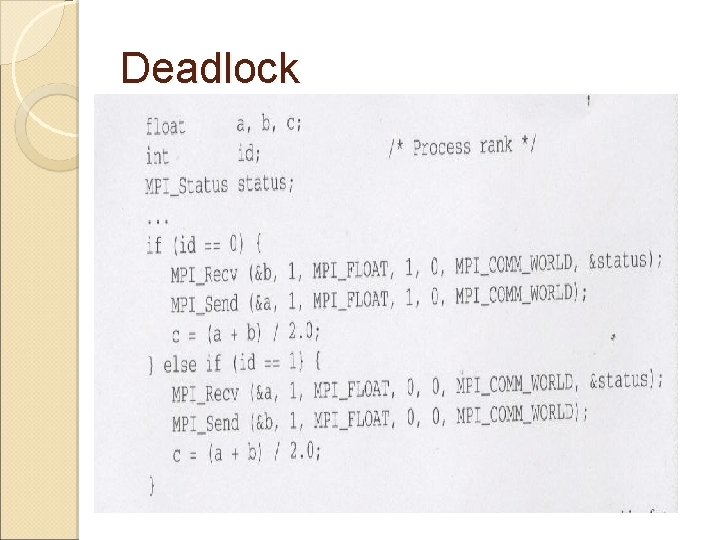

Deadlock

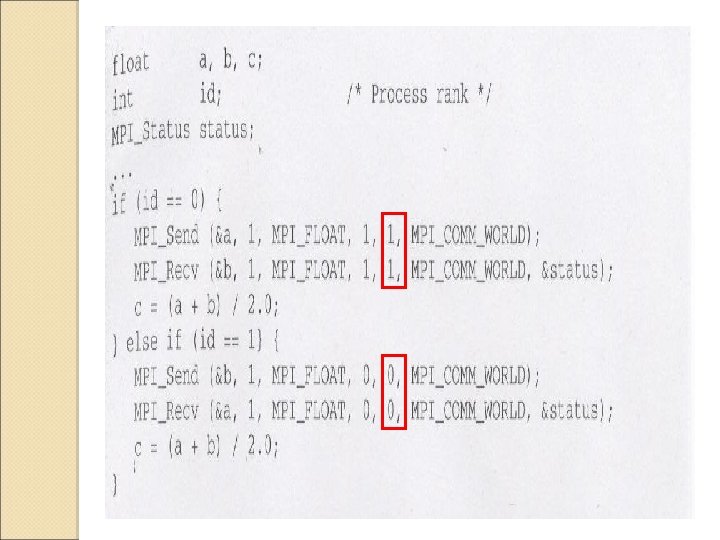

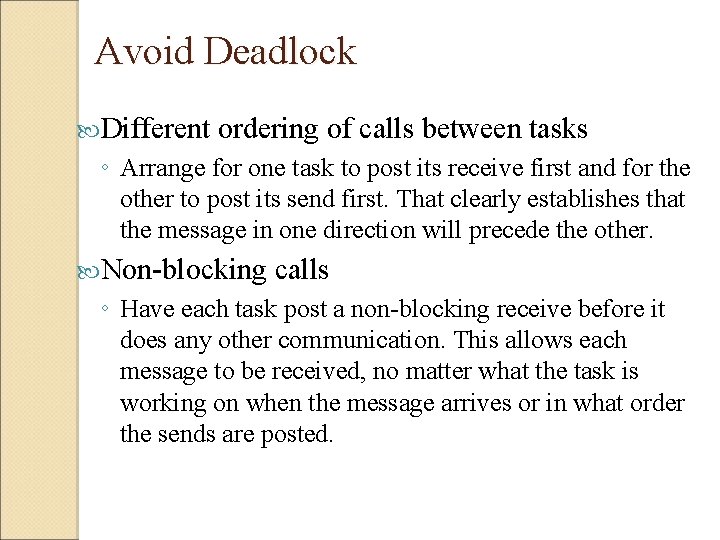

Avoid Deadlock Different ordering of calls between tasks ◦ Arrange for one task to post its receive first and for the other to post its send first. That clearly establishes that the message in one direction will precede the other. Non-blocking calls ◦ Have each task post a non-blocking receive before it does any other communication. This allows each message to be received, no matter what the task is working on when the message arrives or in what order the sends are posted.

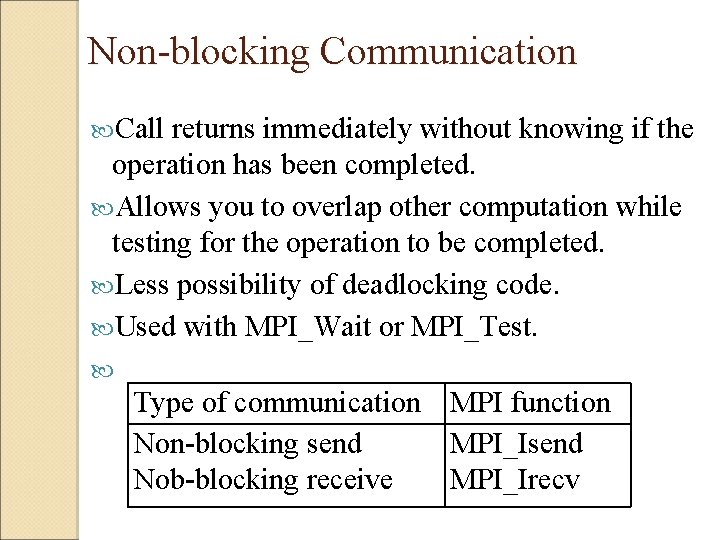

Non-blocking Communication Call returns immediately without knowing if the operation has been completed. Allows you to overlap other computation while testing for the operation to be completed. Less possibility of deadlocking code. Used with MPI_Wait or MPI_Test. Type of communication MPI function Non-blocking send MPI_Isend Nob-blocking receive MPI_Irecv

![MPI_Isend int data[], count, dest, tag, err; MPI_Datatype, type; MPI_Comm comm; MPI_Request request; err MPI_Isend int data[], count, dest, tag, err; MPI_Datatype, type; MPI_Comm comm; MPI_Request request; err](http://slidetodoc.com/presentation_image/317b7e79e2547b00783f84e3a8ff80e8/image-30.jpg)

MPI_Isend int data[], count, dest, tag, err; MPI_Datatype, type; MPI_Comm comm; MPI_Request request; err = MPI_Isend( &data, count, type, dest, tag, comm, &request); Data count type dest tag comm request Data which can be a scalar variable or an array. An amount of data, if count >1, then Data must be an array. Data type. CPU id which receive data. Message identifier. Communicator The serial number of this transmission.

![MPI_Irecv int data[], count, src, tag, err; MPI_Datatype; MPI_Comm comm; MPI_Request request; err MPI_Irecv int data[], count, src, tag, err; MPI_Datatype; MPI_Comm comm; MPI_Request request; err](http://slidetodoc.com/presentation_image/317b7e79e2547b00783f84e3a8ff80e8/image-31.jpg)

MPI_Irecv int data[], count, src, tag, err; MPI_Datatype; MPI_Comm comm; MPI_Request request; err = MPI_Irecv( &data, count, type, src, tag, comm, &request); Data which can be a scalar variable or an array. count An amount of data, if count >1, then Data must be an array. type Data type. src CPU id which send data. tag Message identifier. comm Communicator request The serial number of this transmission.

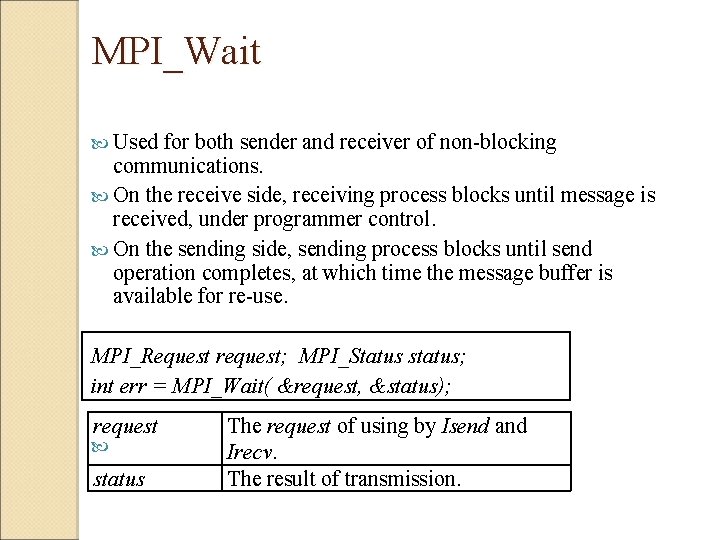

MPI_Wait Used for both sender and receiver of non-blocking communications. On the receive side, receiving process blocks until message is received, under programmer control. On the sending side, sending process blocks until send operation completes, at which time the message buffer is available for re-use. MPI_Request request; MPI_Status status; int err = MPI_Wait( &request, &status); request status The request of using by Isend and Irecv. The result of transmission.

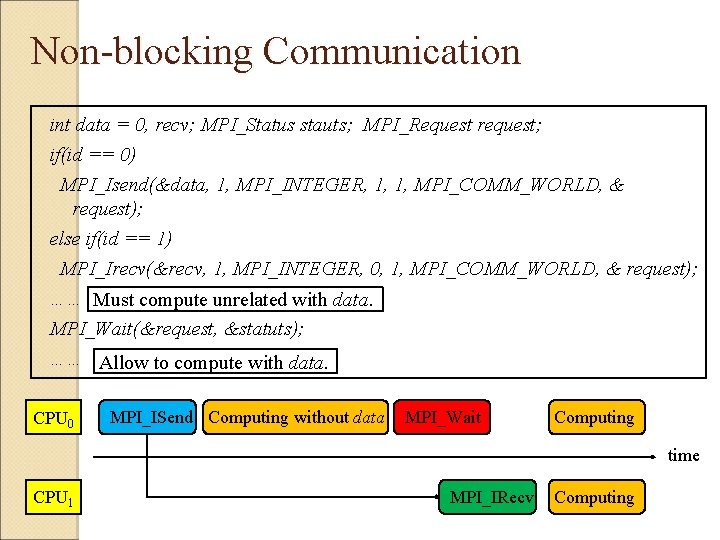

Non-blocking Communication int data = 0, recv; MPI_Status stauts; MPI_Request request; if(id == 0) MPI_Isend(&data, 1, MPI_INTEGER, 1, 1, MPI_COMM_WORLD, & request); else if(id == 1) MPI_Irecv(&recv, 1, MPI_INTEGER, 0, 1, MPI_COMM_WORLD, & request); …… Must compute unrelated with data. MPI_Wait(&request, &statuts); …… Allow to compute with data. CPU 0 MPI_ISend Computing without data MPI_Wait Computing time CPU 1 MPI_IRecv Computing

Avoid Deadlock MPI_Sendrecv, MPI_Sendrecv_replace ◦ Use MPI_Sendrecv. This is an elegant solution. In the _replace version, the system allocates some buffer space (not subject to the threshold limit) to deal with the exchange of messages. Buffered Mode ◦ Use buffered sends so that computation can proceed after copying the message to the user-supplied buffer. This will allow the receives to be executed.

![MPI_Sendrecv int Sdata[], Rdata[], Scount, Rcount, dest, src, Stag, Rtag, err; MPI_Comm comm; MPI_Datatype MPI_Sendrecv int Sdata[], Rdata[], Scount, Rcount, dest, src, Stag, Rtag, err; MPI_Comm comm; MPI_Datatype](http://slidetodoc.com/presentation_image/317b7e79e2547b00783f84e3a8ff80e8/image-35.jpg)

MPI_Sendrecv int Sdata[], Rdata[], Scount, Rcount, dest, src, Stag, Rtag, err; MPI_Comm comm; MPI_Datatype Stype, Rtype; MPI_Status status; err = MPI_Sendrecv( &Sdata, Scount, Stype, dest, Stag, &Rdata, Rcount, Rtype, src, Rtag, comm, &status); Sdata Rdata Scount, Rcount dest src Stag, Rtag comm status Data for sending. Data for receiving. The amount of data. CPU id which is received data. CPU id which is sent data. Data tag. Communicator. The result of transmission.

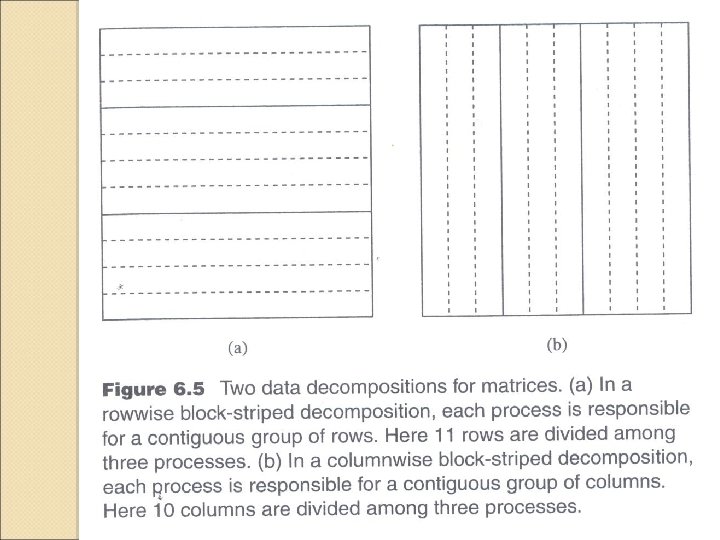

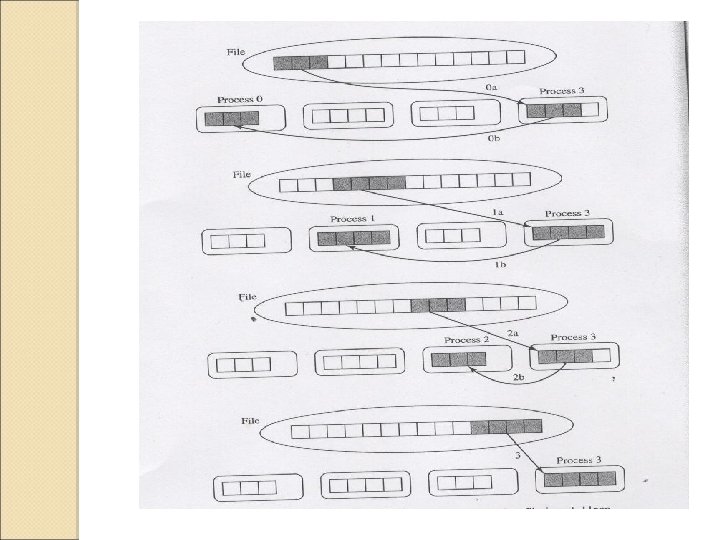

Designing the parallel algorithm Partitioning Communication Agglomeration Matrix input/output

Documenting the Parallel Program

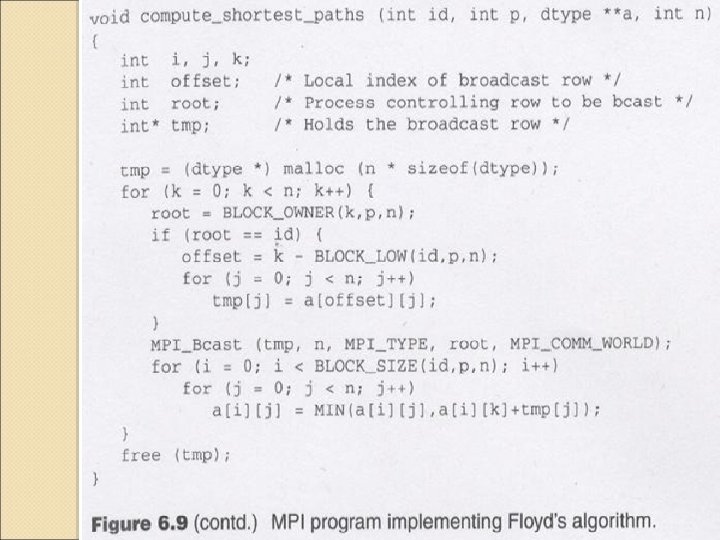

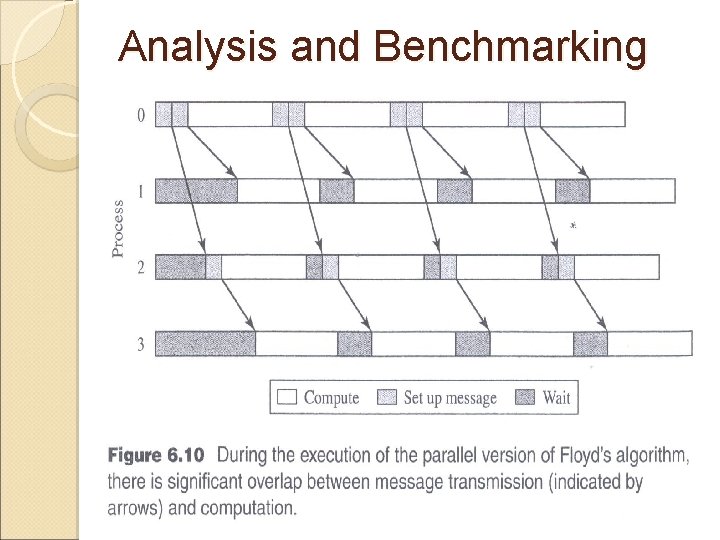

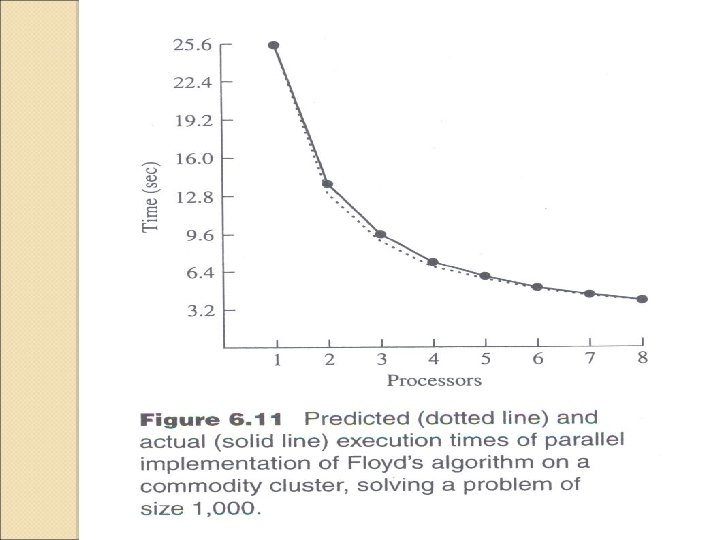

Analysis and Benchmarking

Collective Communication

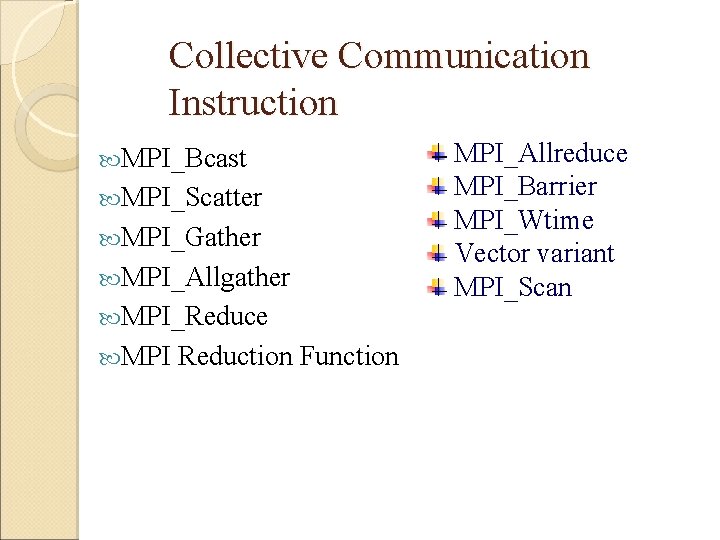

Collective Communication Instruction MPI_Bcast MPI_Scatter MPI_Gather MPI_Allgather MPI_Reduce MPI Reduction Function MPI_Allreduce MPI_Barrier MPI_Wtime Vector variant MPI_Scan

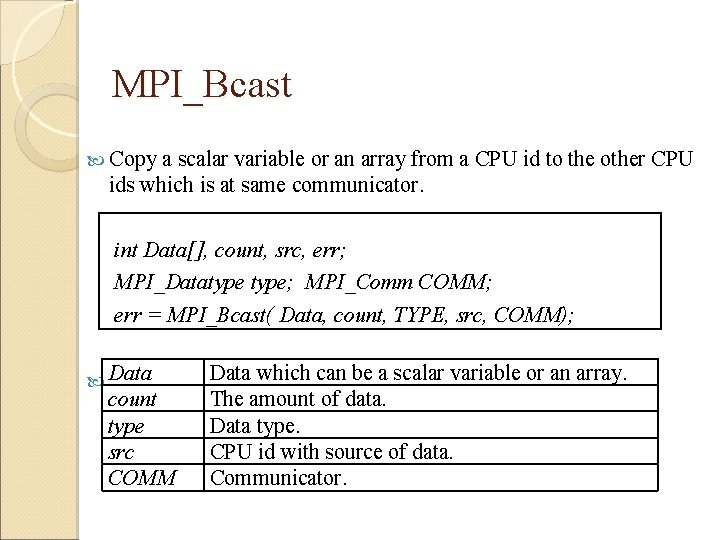

MPI_Bcast Copy a scalar variable or an array from a CPU id to the other CPU ids which is at same communicator. int Data[], count, src, err; MPI_Datatype; MPI_Comm COMM; err = MPI_Bcast( Data, count, TYPE, src, COMM); Data count type src COMM Data which can be a scalar variable or an array. The amount of data. Data type. CPU id with source of data. Communicator.

![MPI_Bcast int data[4] = {1, 2, 3, 4}; int count= 4, src='data:image/svg+xml,%3Csvg%20xmlns=%22http://www.w3.org/2000/svg%22%20viewBox=%220%200%20760%20570%22%3E%3C/svg%3E' data-src= 0; MPI_Bcast(data, MPI_Bcast int data[4] = {1, 2, 3, 4}; int count= 4, src='data:image/svg+xml,%3Csvg%20xmlns=%22http://www.w3.org/2000/svg%22%20viewBox=%220%200%20760%20570%22%3E%3C/svg%3E' data-src= 0; MPI_Bcast(data,](http://slidetodoc.com/presentation_image/317b7e79e2547b00783f84e3a8ff80e8/image-50.jpg)

MPI_Bcast int data[4] = {1, 2, 3, 4}; int count= 4, src= 0; MPI_Bcast(data, count, MPI_INTEGER, src, MPI_COMM_WORLD); CPU 0 CPU 1 CPU 2 CPU 3 data MPI_Bcast

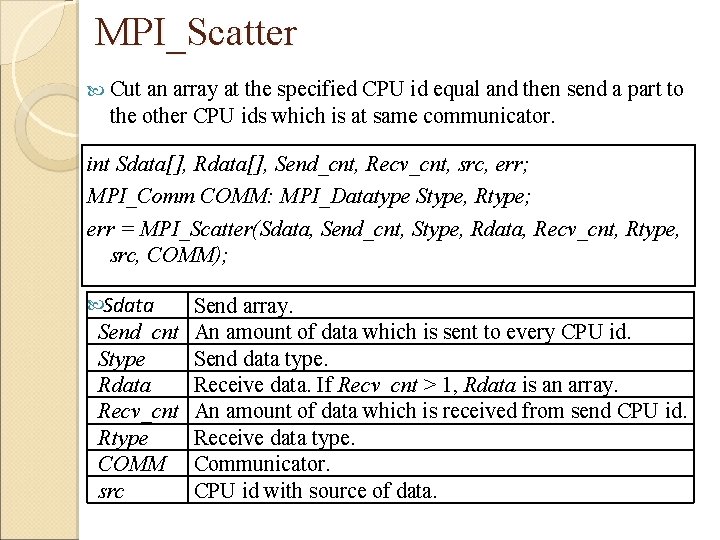

MPI_Scatter Cut an array at the specified CPU id equal and then send a part to the other CPU ids which is at same communicator. int Sdata[], Rdata[], Send_cnt, Recv_cnt, src, err; MPI_Comm COMM: MPI_Datatype Stype, Rtype; err = MPI_Scatter(Sdata, Send_cnt, Stype, Rdata, Recv_cnt, Rtype, src, COMM); Sdata Send array. Send_cnt An amount of data which is sent to every CPU id. Stype Send data type. Rdata Receive data. If Recv_cnt > 1, Rdata is an array. Recv_cnt An amount of data which is received from send CPU id. Rtype Receive data type. COMM Communicator. src CPU id with source of data.

![MPI_Scatter int Sdata[8] = {1, 2, 3, 4, 5, 6, 7, 8}, Rdata[2]; int MPI_Scatter int Sdata[8] = {1, 2, 3, 4, 5, 6, 7, 8}, Rdata[2]; int](http://slidetodoc.com/presentation_image/317b7e79e2547b00783f84e3a8ff80e8/image-52.jpg)

MPI_Scatter int Sdata[8] = {1, 2, 3, 4, 5, 6, 7, 8}, Rdata[2]; int Send_cnt = 2, Recv_cnt = 2, src = 0; MPI_Scatter( Sdata, Send_cnt, MPI_INTEGER, Rdata, Recv_cnt, MPI_INTEGER , src, MPI_COMM_WORLD); CPU 0 CPU 1 CPU 2 CPU 3 Rdata [1, 2] Rdata [3, 4] Rdata [5, 6] Rdata [7, 8] Sdata [1, 2, 3, 4, 5, 6, 7, 8] [1, 2] [3, 4] [5, 6] [7, 8] MPI_Scatter

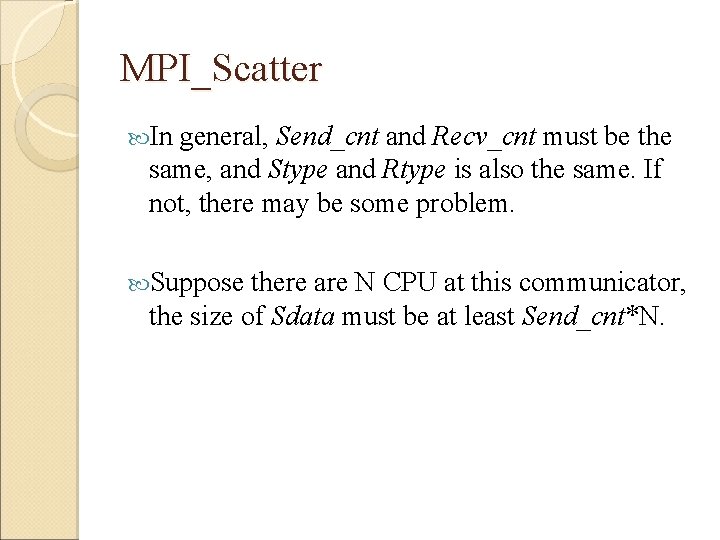

MPI_Scatter In general, Send_cnt and Recv_cnt must be the same, and Stype and Rtype is also the same. If not, there may be some problem. Suppose there are N CPU at this communicator, the size of Sdata must be at least Send_cnt*N.

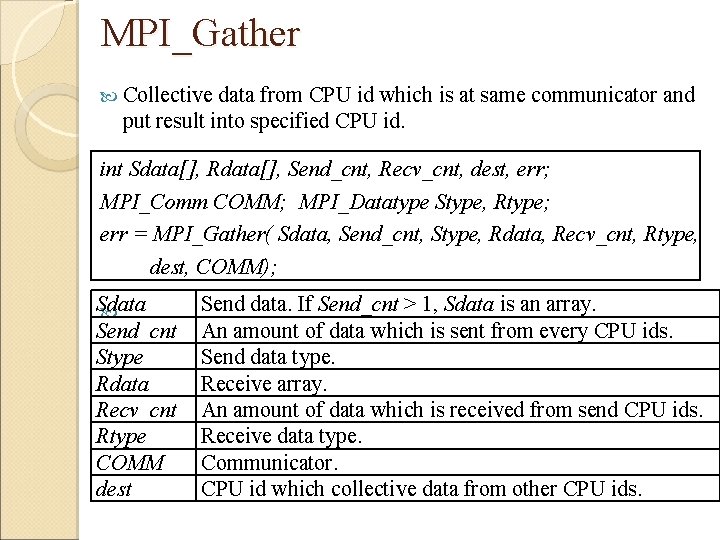

MPI_Gather Collective data from CPU id which is at same communicator and put result into specified CPU id. int Sdata[], Rdata[], Send_cnt, Recv_cnt, dest, err; MPI_Comm COMM; MPI_Datatype Stype, Rtype; err = MPI_Gather( Sdata, Send_cnt, Stype, Rdata, Recv_cnt, Rtype, dest, COMM); Sdata Send_cnt Stype Rdata Recv_cnt Rtype COMM dest Send data. If Send_cnt > 1, Sdata is an array. An amount of data which is sent from every CPU ids. Send data type. Receive array. An amount of data which is received from send CPU ids. Receive data type. Communicator. CPU id which collective data from other CPU ids.

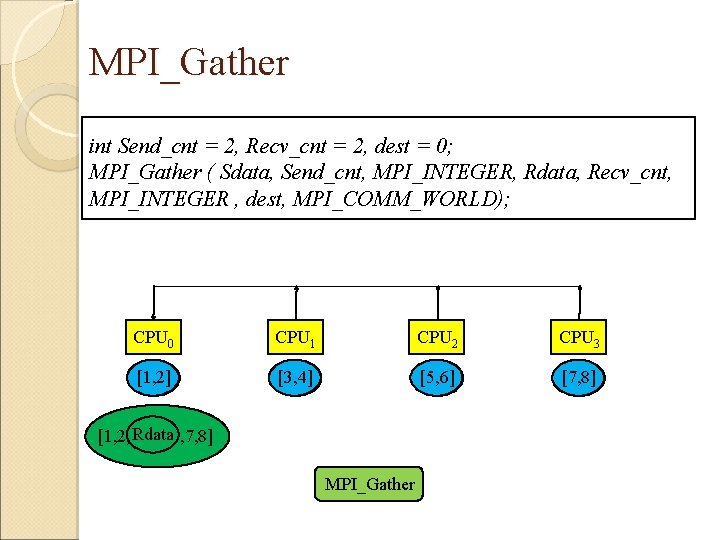

MPI_Gather int Send_cnt = 2, Recv_cnt = 2, dest = 0; MPI_Gather ( Sdata, Send_cnt, MPI_INTEGER, Rdata, Recv_cnt, MPI_INTEGER , dest, MPI_COMM_WORLD); CPU 0 CPU 1 CPU 2 CPU 3 [1, 2] Sdata [3, 4] Sdata [5, 6] Sdata [7, 8] Sdata Rdata [1, 2, 3, 4, 5, 6, 7, 8] MPI_Gather

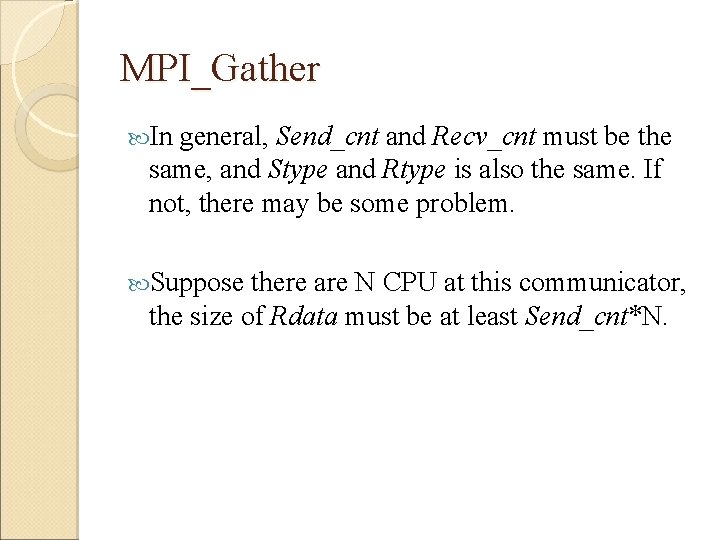

MPI_Gather In general, Send_cnt and Recv_cnt must be the same, and Stype and Rtype is also the same. If not, there may be some problem. Suppose there are N CPU at this communicator, the size of Rdata must be at least Send_cnt*N.

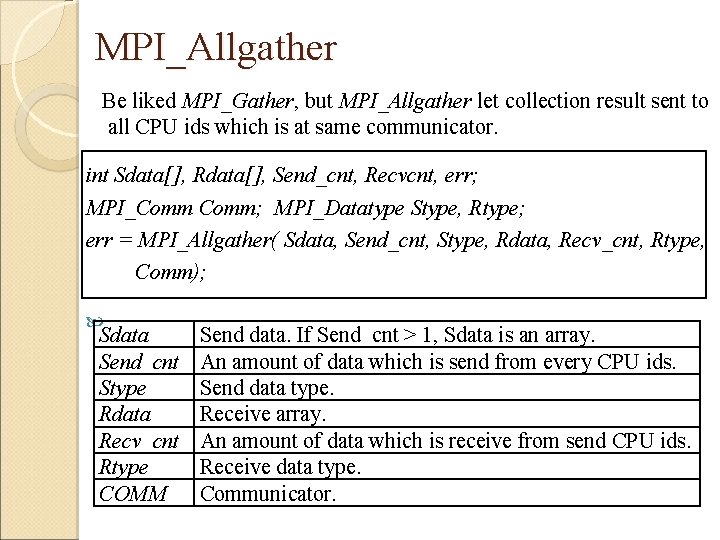

MPI_Allgather Be liked MPI_Gather, but MPI_Allgather let collection result sent to all CPU ids which is at same communicator. int Sdata[], Rdata[], Send_cnt, Recvcnt, err; MPI_Comm; MPI_Datatype Stype, Rtype; err = MPI_Allgather( Sdata, Send_cnt, Stype, Rdata, Recv_cnt, Rtype, Comm); Sdata Send_cnt Stype Rdata Recv_cnt Rtype COMM Send data. If Send_cnt > 1, Sdata is an array. An amount of data which is send from every CPU ids. Send data type. Receive array. An amount of data which is receive from send CPU ids. Receive data type. Communicator.

MPI_Allgather int Send_cnt = 2, Recv_cnt = 8; MPI_Allgather ( Sdata, Send_cnt, MPI_INTEGER, Rdata, Recv_cnt, MPI_INTEGER , MPI_COMM_WORLD); CPU 0 CPU 1 CPU 2 CPU 3 Sdata [1, 2] Sdata [3, 4] Sdata [5, 6] Sdata [7, 8] Rdata [1, 2, 3, 4, 5, 6, 7, 8] MPI_Allgather Rdata [1, 2, 3, 4, 5, 6, 7, 8]

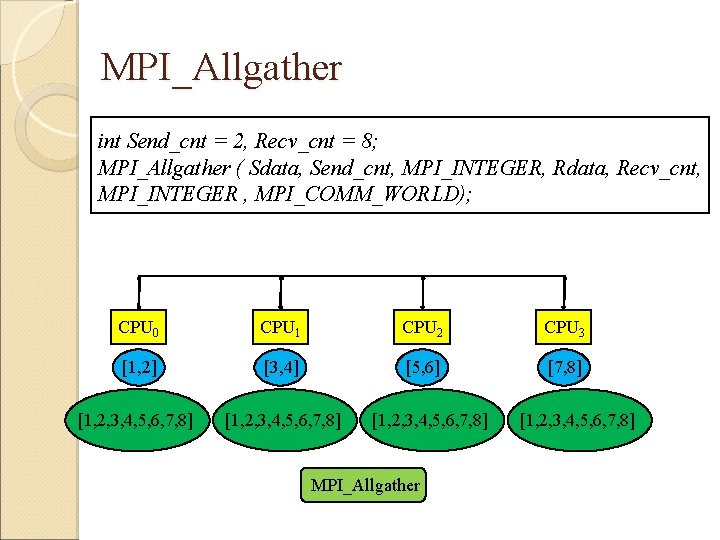

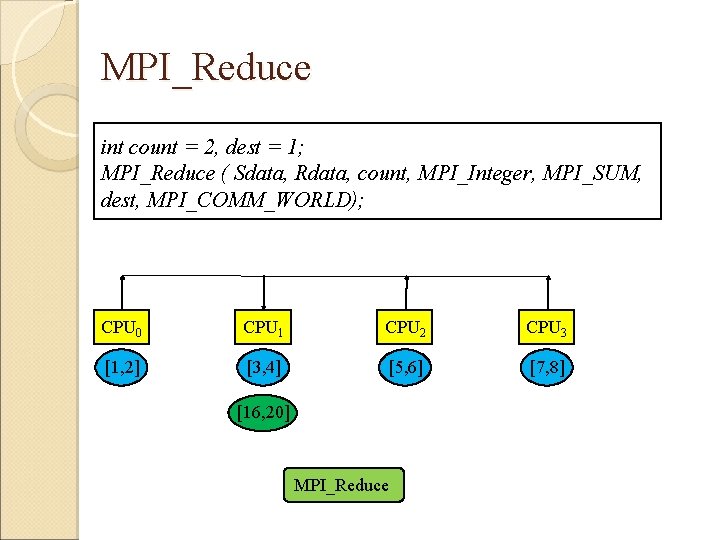

MPI_Reduce Calculate a data array from every CPU ids which is at same communicator and then put result to a specified CPU id. int Sdata[], Rdata[], count, dest, err; MPI_Comm COMM; MPI_Datatype; MPI_Op op; err = MPI_Reduce(Sdata, Rdata, count, type, op, dest, COMM); Sdata Rdata count type op COMM dest Send array. Receive array. The size of array. Data type. Integrated operations approach. Communicator. CPU id which receives array after computing.

MPI_Reduce int count = 2, dest = 1; MPI_Reduce ( Sdata, Rdata, count, MPI_Integer, MPI_SUM, dest, MPI_COMM_WORLD); CPU 0 CPU 1 CPU 2 CPU 3 Sdata [1, 2] Sdata [3, 4] Sdata [5, 6] Sdata [7, 8] Rdata [16, 20] MPI_Reduce

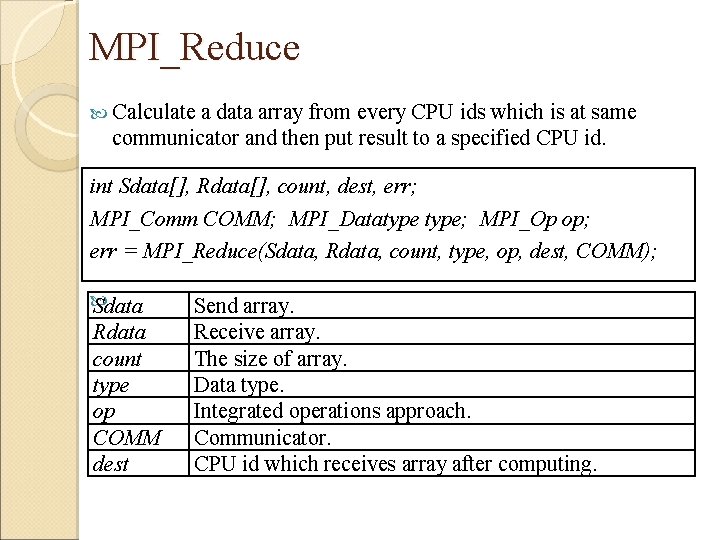

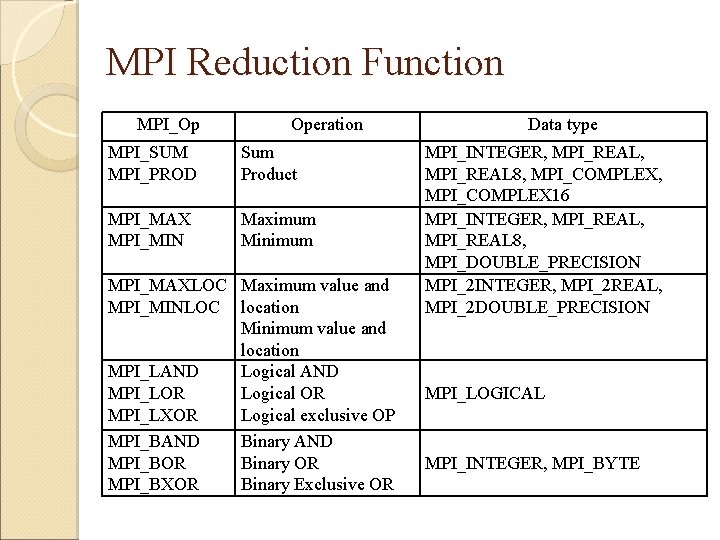

MPI Reduction Function MPI_Op Operation MPI_SUM MPI_PROD Sum Product MPI_MAX MPI_MIN Maximum Minimum MPI_MAXLOC Maximum value and MPI_MINLOC location Minimum value and location MPI_LAND Logical AND MPI_LOR Logical OR MPI_LXOR Logical exclusive OP MPI_BAND Binary AND MPI_BOR Binary OR MPI_BXOR Binary Exclusive OR Data type MPI_INTEGER, MPI_REAL, MPI_REAL 8, MPI_COMPLEX, MPI_COMPLEX 16 MPI_INTEGER, MPI_REAL, MPI_REAL 8, MPI_DOUBLE_PRECISION MPI_2 INTEGER, MPI_2 REAL, MPI_2 DOUBLE_PRECISION MPI_LOGICAL MPI_INTEGER, MPI_BYTE

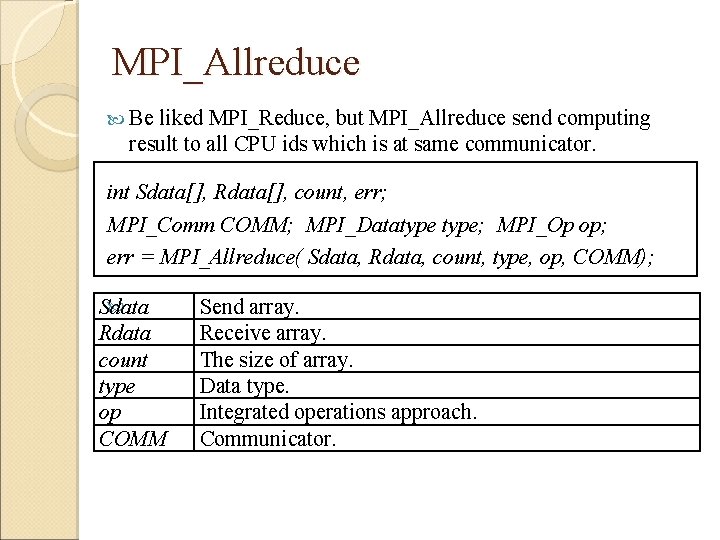

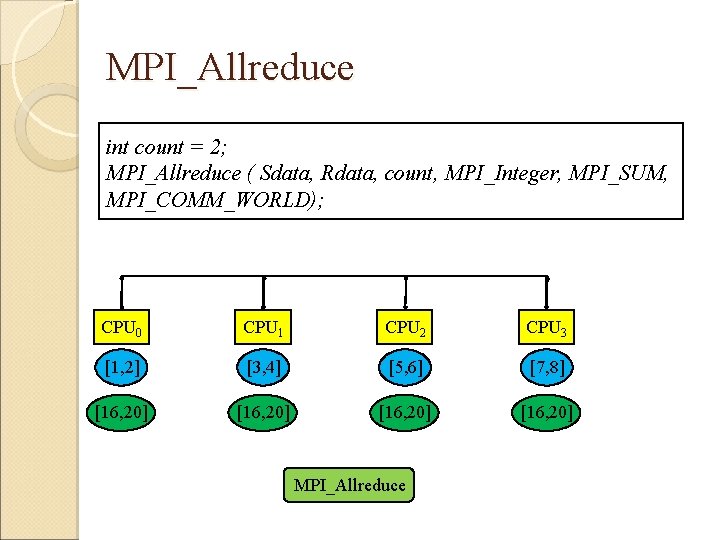

MPI_Allreduce Be liked MPI_Reduce, but MPI_Allreduce send computing result to all CPU ids which is at same communicator. int Sdata[], Rdata[], count, err; MPI_Comm COMM; MPI_Datatype; MPI_Op op; err = MPI_Allreduce( Sdata, Rdata, count, type, op, COMM); Sdata Rdata count type op COMM Send array. Receive array. The size of array. Data type. Integrated operations approach. Communicator.

MPI_Allreduce int count = 2; MPI_Allreduce ( Sdata, Rdata, count, MPI_Integer, MPI_SUM, MPI_COMM_WORLD); CPU 0 CPU 1 CPU 2 CPU 3 Sdata [1, 2] Sdata [3, 4] Sdata [5, 6] Sdata [7, 8] [16, 20] Rdata MPI_Allreduce

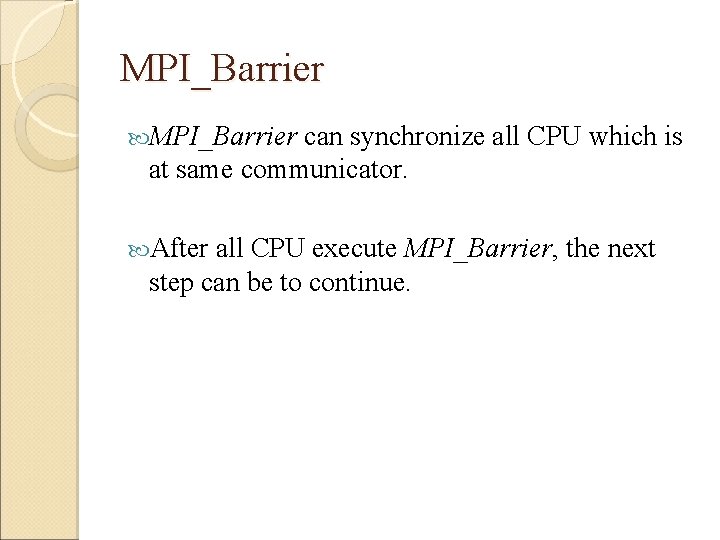

MPI_Barrier can synchronize all CPU which is at same communicator. After all CPU execute MPI_Barrier, the next step can be to continue.

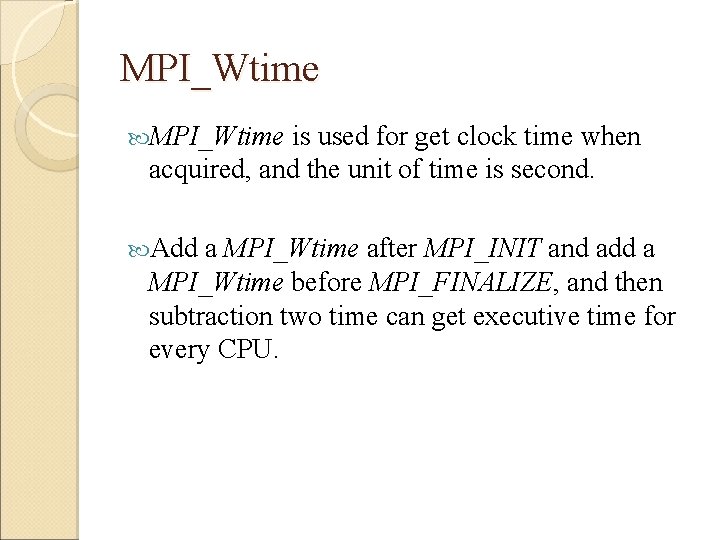

MPI_Wtime is used for get clock time when acquired, and the unit of time is second. Add a MPI_Wtime after MPI_INIT and add a MPI_Wtime before MPI_FINALIZE, and then subtraction two time can get executive time for every CPU.

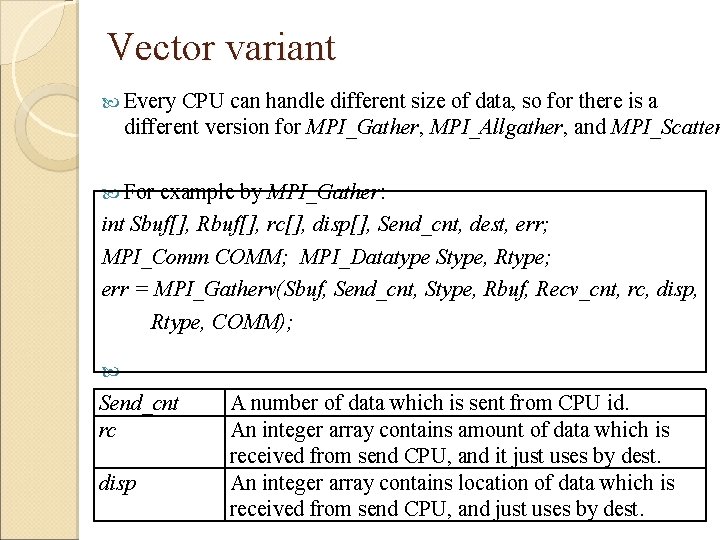

Vector variant Every CPU can handle different size of data, so for there is a different version for MPI_Gather, MPI_Allgather, and MPI_Scatter For example by MPI_Gather: int Sbuf[], Rbuf[], rc[], disp[], Send_cnt, dest, err; MPI_Comm COMM; MPI_Datatype Stype, Rtype; err = MPI_Gatherv(Sbuf, Send_cnt, Stype, Rbuf, Recv_cnt, rc, disp, Rtype, COMM); Send_cnt rc disp A number of data which is sent from CPU id. An integer array contains amount of data which is received from send CPU, and it just uses by dest. An integer array contains location of data which is received from send CPU, and just uses by dest.

![MPI_Gatherv int dest = 0, Send_cnt = sizeof(Sbuf); int rc[4] = {3, 1, 2, MPI_Gatherv int dest = 0, Send_cnt = sizeof(Sbuf); int rc[4] = {3, 1, 2,](http://slidetodoc.com/presentation_image/317b7e79e2547b00783f84e3a8ff80e8/image-67.jpg)

MPI_Gatherv int dest = 0, Send_cnt = sizeof(Sbuf); int rc[4] = {3, 1, 2, 2}, disp[4] = {0, 3, 4, 6}; MPI_Gatherv ( Sbuf, Send_cnt, MPI_INTEGER, Rbuf, rc, disp, MPI_INTEGER , dest, MPI_COMM_WORLD); CPU 0 CPU 1 CPU 2 CPU 3 [1, 2, 3 Sdata ] [4] Sdata [5, 6] Sdata [7, 8] Sdata Rdata [1, 2, 3, 4, 5, 6, 7, 8] MPI_Gatherv

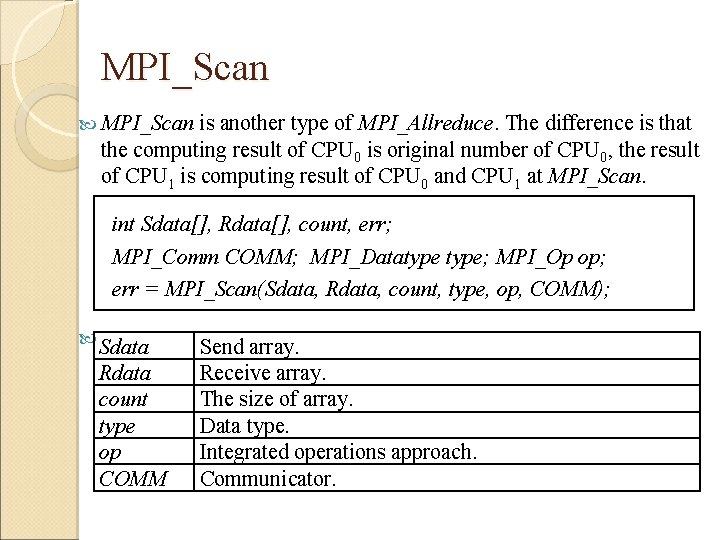

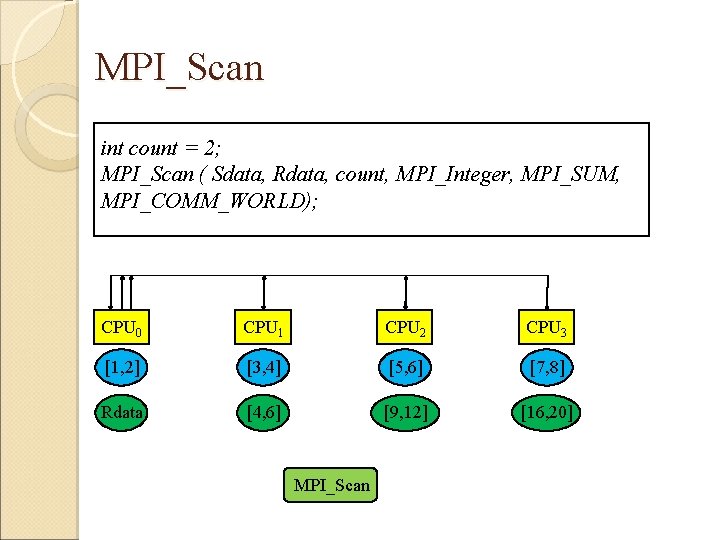

MPI_Scan is another type of MPI_Allreduce. The difference is that the computing result of CPU 0 is original number of CPU 0, the result of CPU 1 is computing result of CPU 0 and CPU 1 at MPI_Scan. int Sdata[], Rdata[], count, err; MPI_Comm COMM; MPI_Datatype; MPI_Op op; err = MPI_Scan(Sdata, Rdata, count, type, op, COMM); Sdata Rdata count type op COMM Send array. Receive array. The size of array. Data type. Integrated operations approach. Communicator.

MPI_Scan int count = 2; MPI_Scan ( Sdata, Rdata, count, MPI_Integer, MPI_SUM, MPI_COMM_WORLD); CPU 0 CPU 1 CPU 2 CPU 3 Sdata [1, 2] Sdata [3, 4] Sdata [5, 6] Sdata [7, 8] [1, 2] Rdata [4, 6] [9, 12] Rdata [16, 20] Rdata MPI_Scan

Related Functions

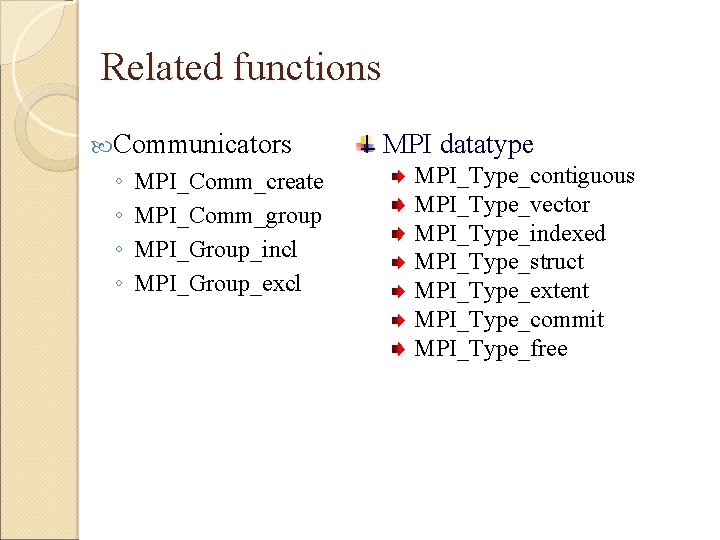

Related functions Communicators ◦ ◦ MPI_Comm_create MPI_Comm_group MPI_Group_incl MPI_Group_excl MPI datatype MPI_Type_contiguous MPI_Type_vector MPI_Type_indexed MPI_Type_struct MPI_Type_extent MPI_Type_commit MPI_Type_free

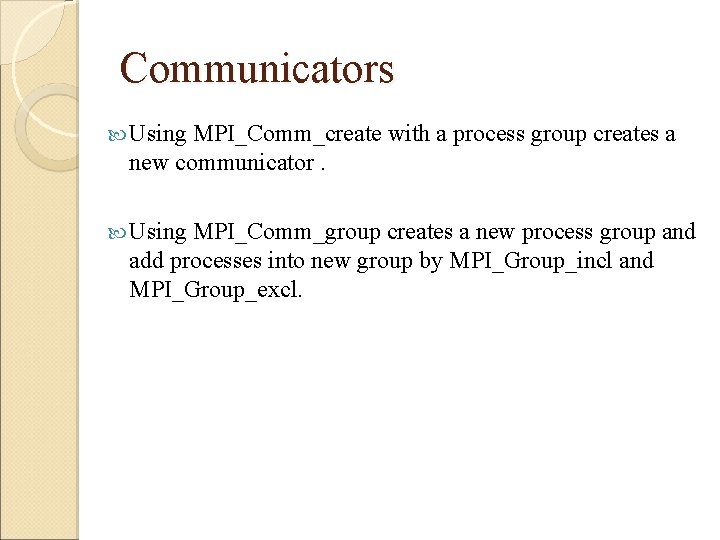

Communicators Using MPI_Comm_create with a process group creates a new communicator. Using MPI_Comm_group creates a new process group and add processes into new group by MPI_Group_incl and MPI_Group_excl.

MPI_Comm_create / MPI_Comm_group MPI_Comm, New_comm; MPI_Group group; int err = MPI_Comm_create( Comm, group, &New_comm); Comm group New_comm Existing communicator now. A process group which includes at new communicator. New communicator. MPI_Comm; MPI_Group group; int err = MPI_Comm_group( Comm, group); Comm group Communicator. A process group which includes all process at Comm.

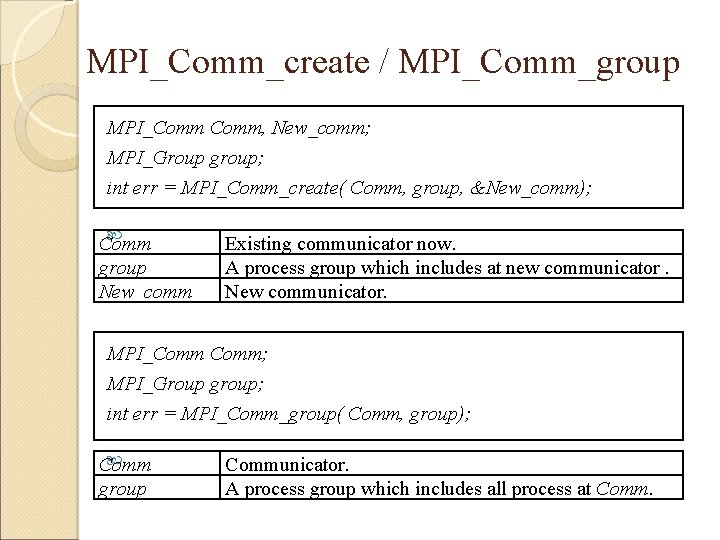

![MPI_Group_incl / MPI_Group_excl int num, ranks[], err; MPI_Group group, new_group; err = MPI_Group_incl( group, MPI_Group_incl / MPI_Group_excl int num, ranks[], err; MPI_Group group, new_group; err = MPI_Group_incl( group,](http://slidetodoc.com/presentation_image/317b7e79e2547b00783f84e3a8ff80e8/image-74.jpg)

MPI_Group_incl / MPI_Group_excl int num, ranks[], err; MPI_Group group, new_group; err = MPI_Group_incl( group, num, ranks, &new_group); group num ranks New_group A process group. An amount of processes which add to new group. CPU id which add to new group. New process group. int num, ranks[], err; MPI_Group group, new_group; err = MPI_Group_excl( group, num, ranks, &new_group); num ranks An amount of processes which don’t add to new group. CPU id which don’t add to new group.

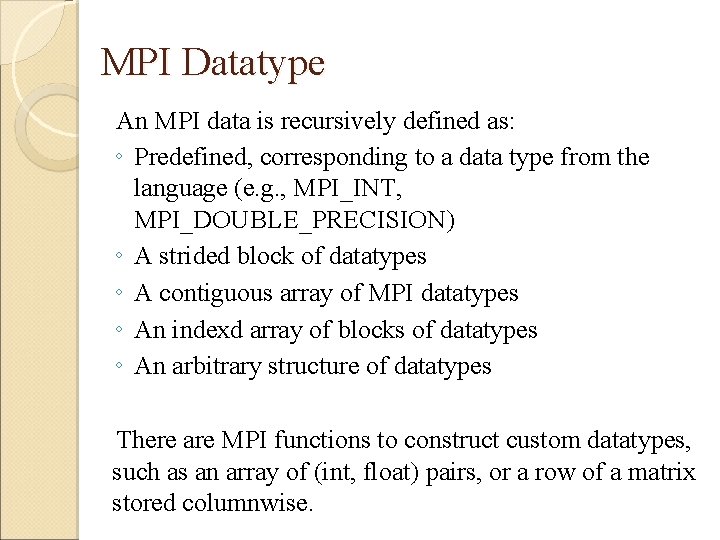

MPI Datatype An MPI data is recursively defined as: ◦ Predefined, corresponding to a data type from the language (e. g. , MPI_INT, MPI_DOUBLE_PRECISION) ◦ A strided block of datatypes ◦ A contiguous array of MPI datatypes ◦ An indexd array of blocks of datatypes ◦ An arbitrary structure of datatypes There are MPI functions to construct custom datatypes, such as an array of (int, float) pairs, or a row of a matrix stored columnwise.

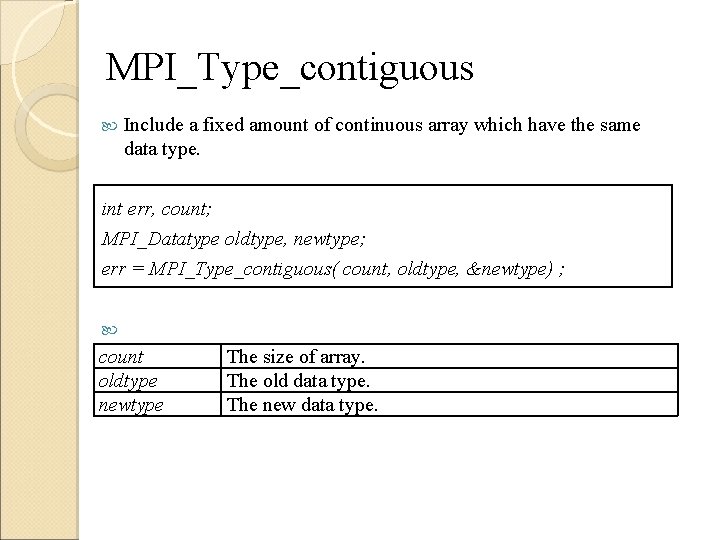

MPI_Type_contiguous Include a fixed amount of continuous array which have the same data type. int err, count; MPI_Datatype oldtype, newtype; err = MPI_Type_contiguous( count, oldtype, &newtype) ; count oldtype newtype The size of array. The old data type. The new data type.

MPI_Type_contiguous int count = 3; MPI_Datatype newtype; err = MPI_Type_contiguous( count, MPI_INTEGER, &newtype); Old: INTEGER 0 1 2 New: INTEGER element 0 element 1 element 2

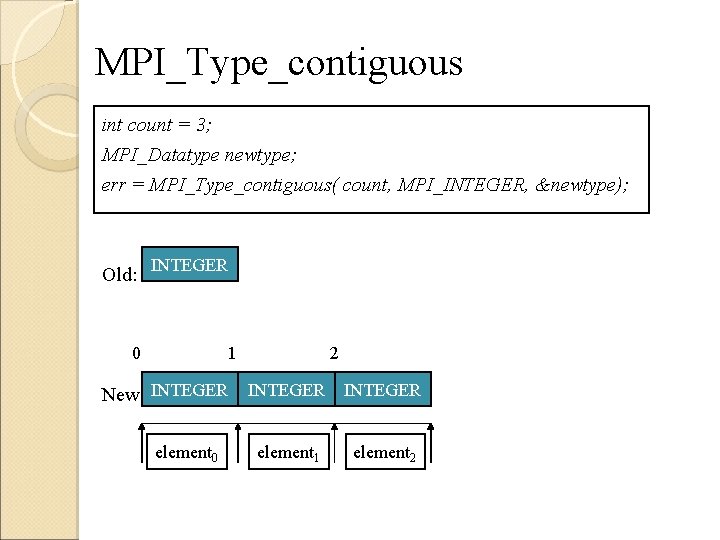

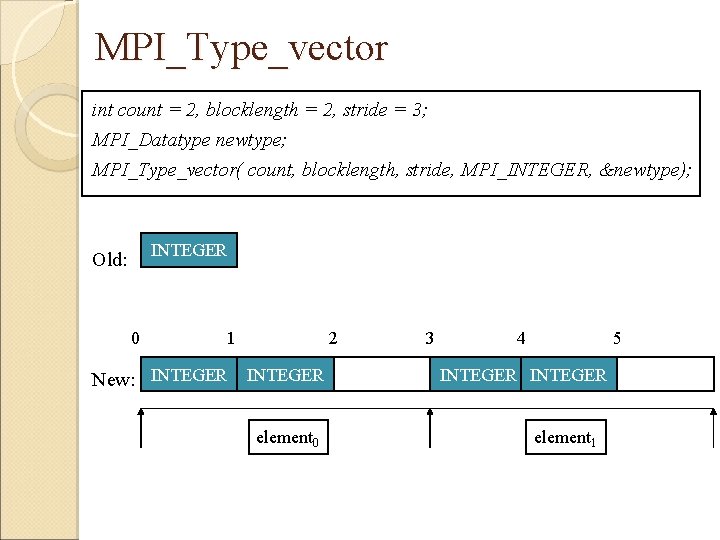

MPI_Type_vector Include a fixed size of interval of discontinuous array which have the same data type. int err, count, blocklength, stride; MPI_Datatype oldtype, newtype; err = MPI_Type_vector( count, blocklength, stride, oldtype, &newtype); count blocklength stride oldtype newtype The amount of block. The amount of data with old data type at a block. The distance of block, and using old data type as unit. The old data type. The new data type.

MPI_Type_vector int count = 2, blocklength = 2, stride = 3; MPI_Datatype newtype; MPI_Type_vector( count, blocklength, stride, MPI_INTEGER, &newtype); INTEGER Old: 0 1 2 3 4 5 New: INTEGER INTEGER element 0 element 1

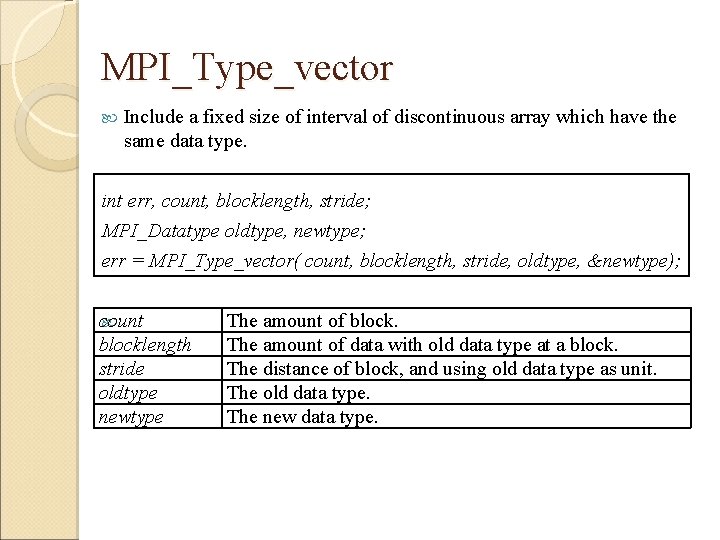

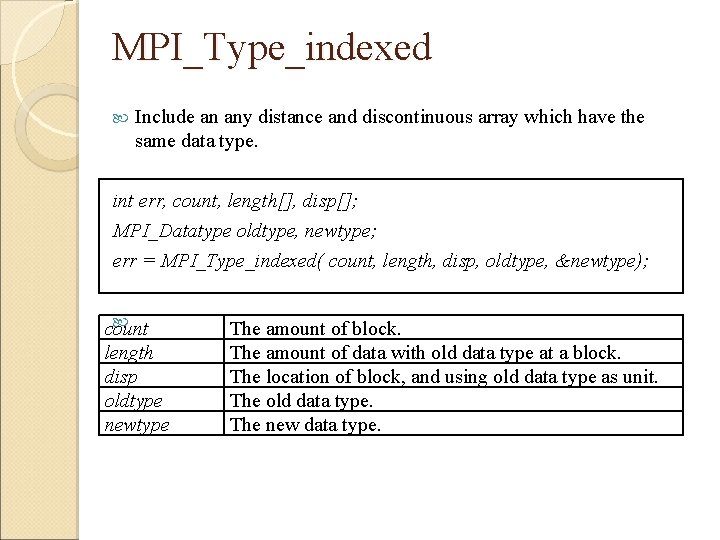

MPI_Type_indexed Include an any distance and discontinuous array which have the same data type. int err, count, length[], disp[]; MPI_Datatype oldtype, newtype; err = MPI_Type_indexed( count, length, disp, oldtype, &newtype); count length disp oldtype newtype The amount of block. The amount of data with old data type at a block. The location of block, and using old data type as unit. The old data type. The new data type.

![MPI_Type_indexed int count = 3, length[3] = {1, 3, 2}, disp[3] = {0, 3, MPI_Type_indexed int count = 3, length[3] = {1, 3, 2}, disp[3] = {0, 3,](http://slidetodoc.com/presentation_image/317b7e79e2547b00783f84e3a8ff80e8/image-81.jpg)

MPI_Type_indexed int count = 3, length[3] = {1, 3, 2}, disp[3] = {0, 3, 7}; MPI_Datatype newtype; MPI_Type_indexed( count, length, disp, MPI_INTEGER, &newtype); INT Old: 0 New: INT 1 2 INT element 0 3 INT 4 INT 5 INT 6 INT element 1 7 INT 8 INT element 2

![MPI_Datatype_struct Any combination of data types. int err, count, length[]; MPI_Aint disp[]; MPI_Datatype oldtype[], MPI_Datatype_struct Any combination of data types. int err, count, length[]; MPI_Aint disp[]; MPI_Datatype oldtype[],](http://slidetodoc.com/presentation_image/317b7e79e2547b00783f84e3a8ff80e8/image-82.jpg)

MPI_Datatype_struct Any combination of data types. int err, count, length[]; MPI_Aint disp[]; MPI_Datatype oldtype[], newtype; err = MPI_Type_struct( count, length, disp, oldtype, &newtype); count length disp oldtype newtype The amount of block. The amount of data with old data type at a block. The location of block, and using type as unit. The old data types. The new data type.

![MPI_Datatype_struct int count = 2, length[2] = {2, 4}, disp[2] = {0, extent(MPI_INTEGER)*2}; MPI_Datatype MPI_Datatype_struct int count = 2, length[2] = {2, 4}, disp[2] = {0, extent(MPI_INTEGER)*2}; MPI_Datatype](http://slidetodoc.com/presentation_image/317b7e79e2547b00783f84e3a8ff80e8/image-83.jpg)

MPI_Datatype_struct int count = 2, length[2] = {2, 4}, disp[2] = {0, extent(MPI_INTEGER)*2}; MPI_Datatype oldtype[2] = {MPI_INTEGER, MPI_DOUBLE}, newtype; MPI_Type_struct( count, length, disp, oldtype, &newtype); Old: New: INT Double INT element 0 Double element 1 Double

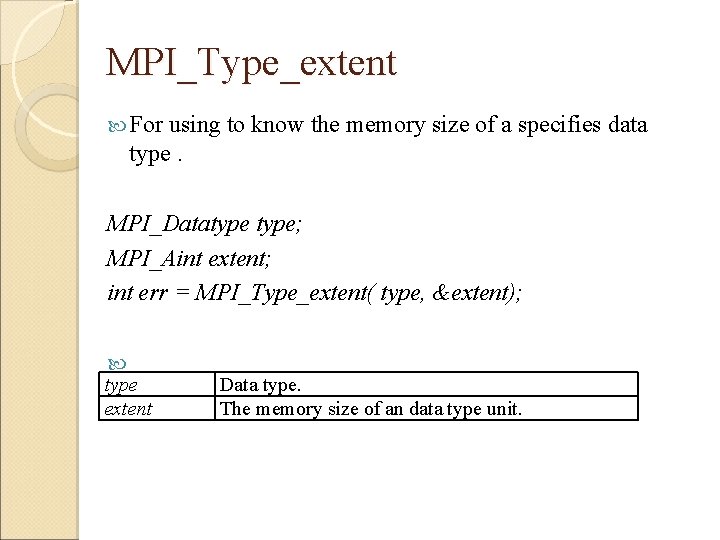

MPI_Type_extent For using to know the memory size of a specifies data type. MPI_Datatype; MPI_Aint extent; int err = MPI_Type_extent( type, &extent); type extent Data type. The memory size of an data type unit.

MPI_Type_commit / MPI_Type_free After constructing a data type, we must commit this data type, and then allow using this type. MPI_Datatype; int err = MPI_Type_commit( &type); If a type which is declared by user will never be used, can use MPI_Type_free to release memory space. MPI_Datatype; int err = MPI_Type_free( &type);

- Slides: 85