Timing Isolation for Memory Systems
Rodolfo Pellizzoni


Publications
• Single Core Equivalent Virtual Machines for Hard Real-Time Computing on Multicore Processors, IEEE Computer 2016
• Memory Bandwidth Management for Efficient Performance Isolation in Multi-core Platforms, IEEE Transactions on Computers 2016
• Schedulability Analysis for Memory Bandwidth Regulated Multicore Real-Time Systems, IEEE Transactions on Computers 2016
• A Real-Time Scratchpad-centric OS for Multi-core Embedded Systems, RTAS 2016
• WCET(m) Estimation in Multi-Core Systems using Single Core Equivalence, ECRTS 2015
• Parallelism-Aware Memory Interference Delay Analysis for COTS Multicore Systems, ECRTS 2015
• PALLOC: DRAM Bank-Aware Memory Allocator for Performance Isolation on Multicore Platforms, RTAS 2014
• Worst Case Analysis of DRAM Latency in Multi-Requestor Systems, RTSS 2013
• Real-Time Cache Management Framework for Multi-core Architectures, RTAS 2013

Thanks
– Ahmed Alhammad, Saud Wasly, Zheng Pei Wu
– Heechul Yun, Gang Yao, Renato Mancuso, Marco Caccamo, Lui Sha

Multicore Systems: Server, Desktop, Mobile, RT/Embedded

Exploiting Multicores
• Idea 1: combine multiple software partitions / applications
  – Industrial standards (ARINC 653, AUTOSAR) define systems in terms of partitions / software components
  – We can put more partitions on the same system
  – Problem: independent development/certification
  – This is the focus of this talk
• Idea 2: parallel applications
  – Computationally-intensive applications
  – Problem: task model and optimization

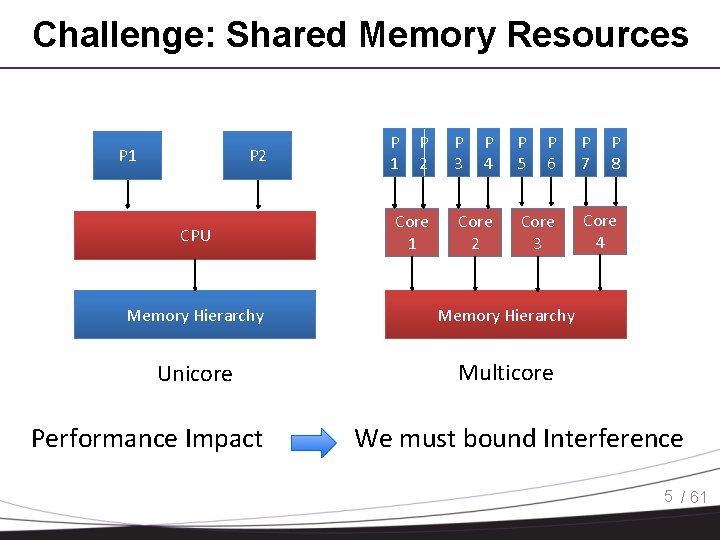

Challenge: Shared Memory Resources
[Figure: unicore (partitions P1 and P2 on one CPU) versus multicore (partitions P1 to P8 on Cores 1 to 4), both on top of a shared memory hierarchy; performance impact]
• We must bound interference

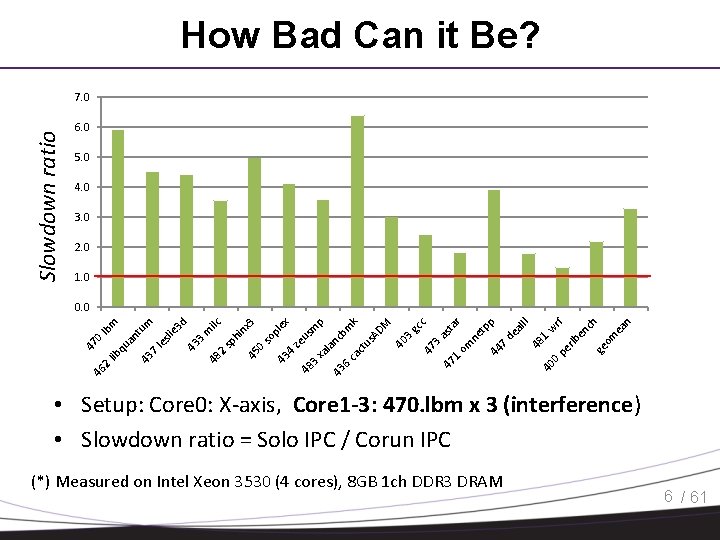

How Bad Can it Be?
[Figure: slowdown ratio (0.0 to 7.0) for each SPEC2006 benchmark]
• Setup: Core 0: X-axis benchmark, Cores 1-3: 470.lbm x 3 (interference)
• Slowdown ratio = Solo IPC / Corun IPC
(*) Measured on Intel Xeon 3530 (4 cores), 8 GB 1 ch DDR3 DRAM
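The slowdown metric on this slide is a simple ratio, and a minimal sketch may make it concrete. The function name and the IPC values below are illustrative assumptions and are not read off the plot.

```python
def slowdown_ratio(solo_ipc: float, corun_ipc: float) -> float:
    """Slowdown ratio = Solo IPC / Corun IPC, as defined on the slide."""
    if solo_ipc <= 0 or corun_ipc <= 0:
        raise ValueError("IPC values must be positive")
    return solo_ipc / corun_ipc


# Hypothetical measurements: the benchmark retires 1.2 instructions per cycle
# alone and 0.4 when three interfering co-runners are active.
example = slowdown_ratio(solo_ipc=1.2, corun_ipc=0.4)
print(f"slowdown ratio: {example:.2f}")
```

A ratio of 1.0 means no interference; larger values mean the task runs proportionally slower when co-scheduled.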

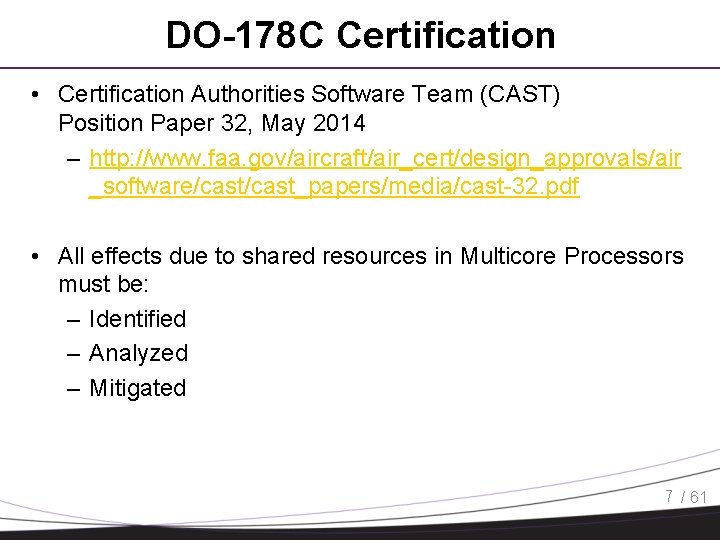

DO-178C Certification
• Certification Authorities Software Team (CAST) Position Paper 32, May 2014
  – http://www.faa.gov/aircraft/air_cert/design_approvals/air_software/cast_papers/media/cast-32.pdf
• All effects due to shared resources in Multicore Processors must be:
  – Identified
  – Analyzed
  – Mitigated

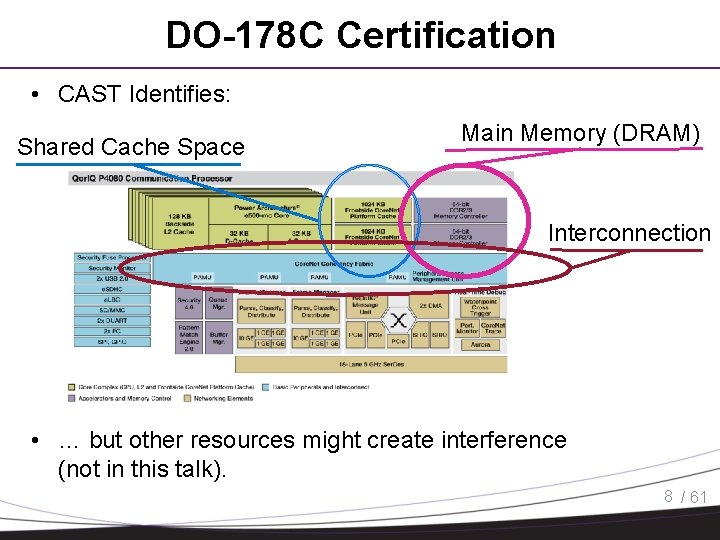

DO-178C Certification
• CAST identifies:
  – Shared Cache Space
  – Main Memory (DRAM)
  – Interconnection
• … but other resources might create interference (not in this talk).

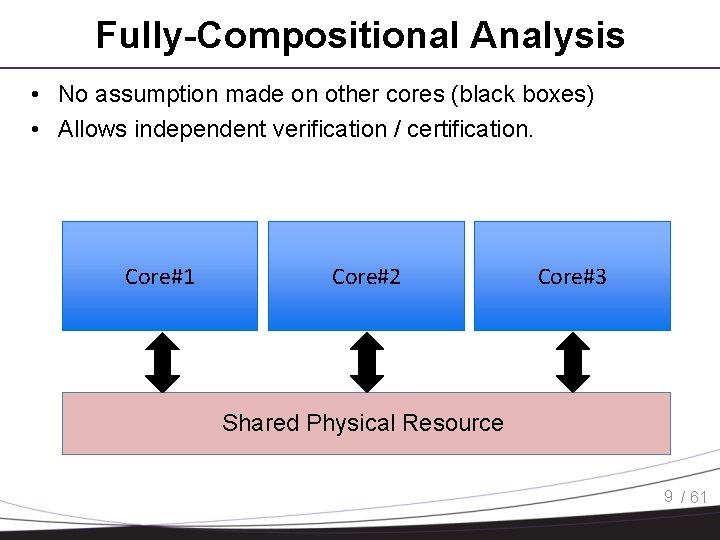

Fully-Compositional Analysis
• No assumption made on other cores (black boxes)
• Allows independent verification / certification.
[Figure: Core #1, Core #2, and Core #3 accessing a shared physical resource]

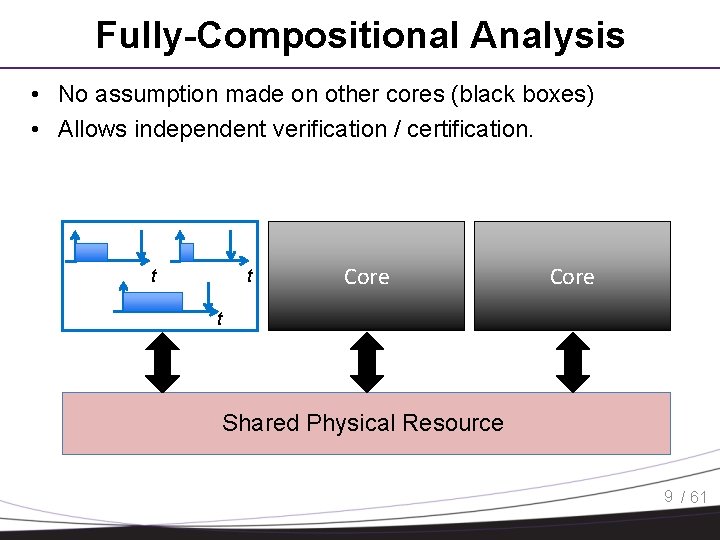


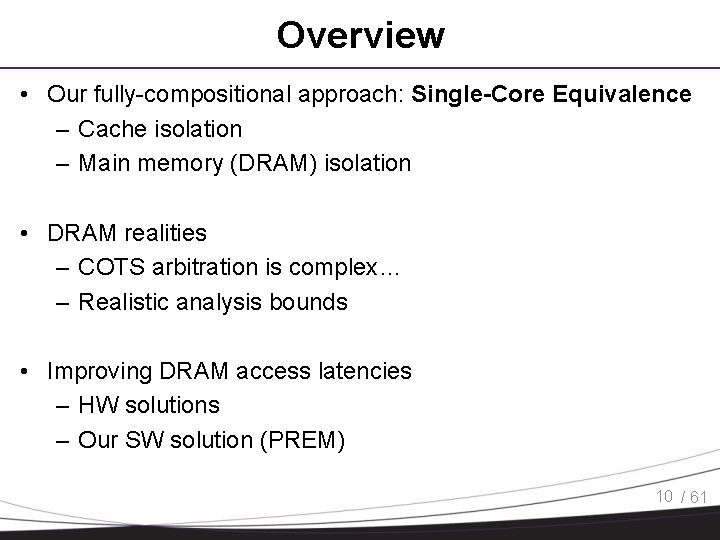

Overview
• Our fully-compositional approach: Single-Core Equivalence
  – Cache isolation
  – Main memory (DRAM) isolation
• DRAM realities
  – COTS arbitration is complex…
  – Realistic analysis bounds
• Improving DRAM access latencies
  – HW solutions
  – Our SW solution (PREM)

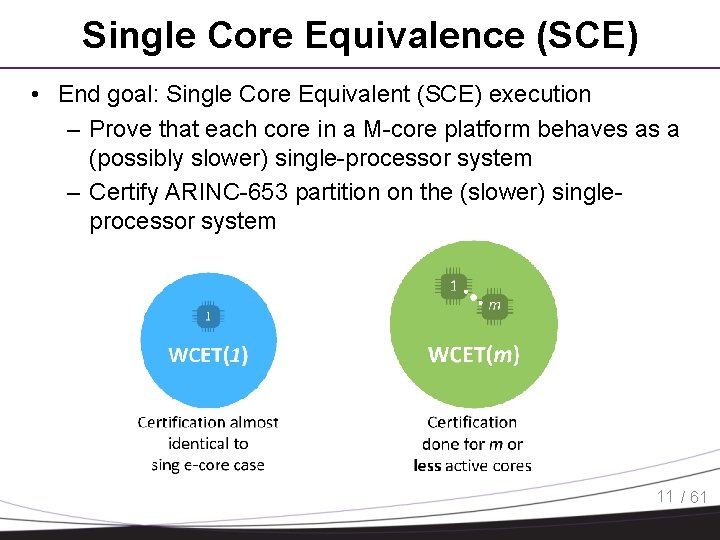

Single Core Equivalence (SCE)
• End goal: Single Core Equivalent (SCE) execution
  – Prove that each core in an M-core platform behaves as a (possibly slower) single-processor system
  – Certify each ARINC-653 partition on the (slower) single-processor system

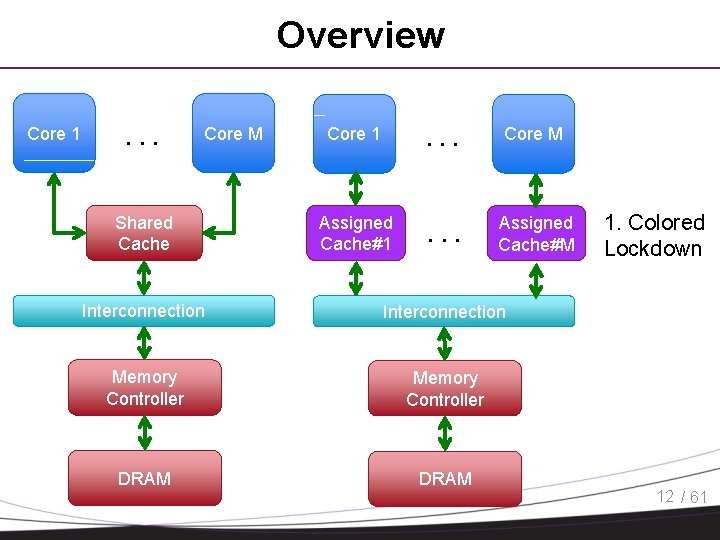


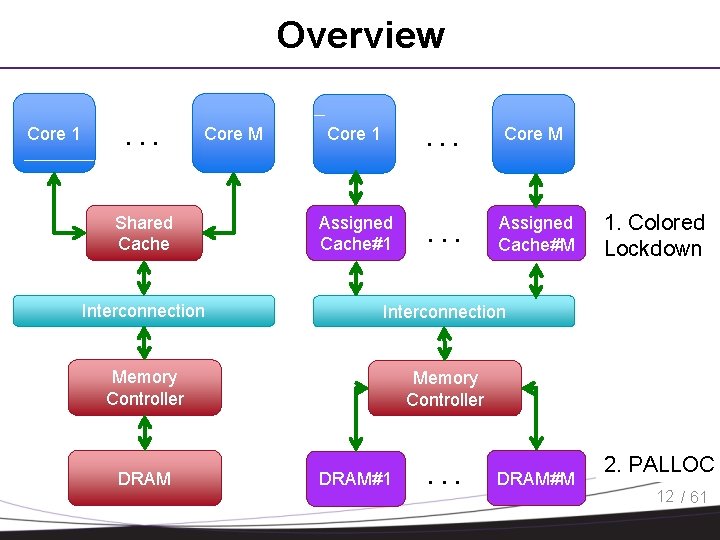


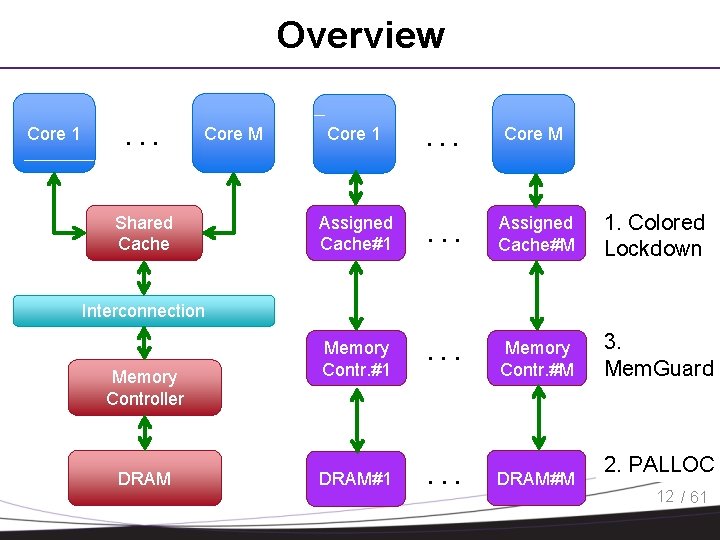

Overview
[Figure: before, Cores 1 to M share one cache, the interconnection, the memory controller, and DRAM; after, each core has its own assigned cache (Assigned Cache #1 to #M), memory controller share (Memory Contr. #1 to #M), and DRAM banks (DRAM #1 to #M)]
1. Colored Lockdown
2. PALLOC
3. MemGuard

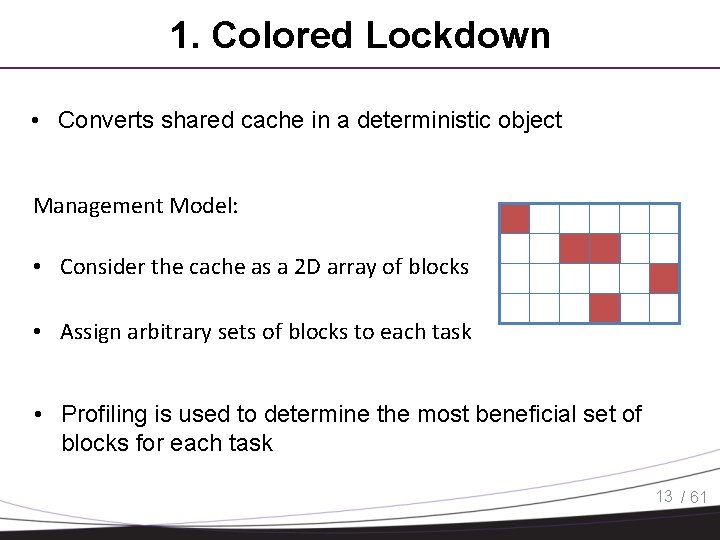

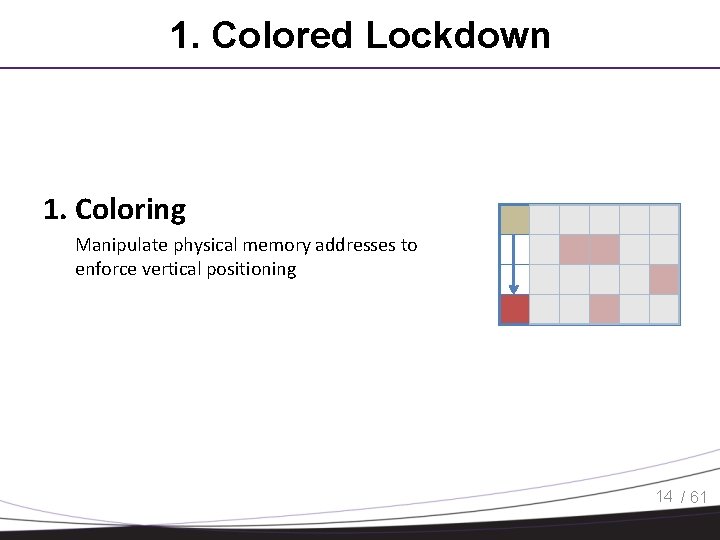

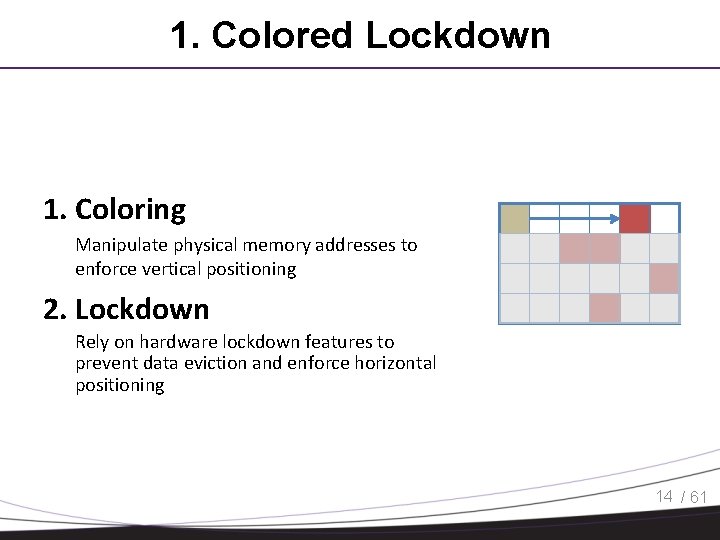

1. Colored Lockdown
• Converts the shared cache into a deterministic object
Management Model:
• Consider the cache as a 2D array of blocks
• Assign arbitrary sets of blocks to each task
• Profiling is used to determine the most beneficial set of blocks for each task


1. Colored Lockdown
1. Coloring: manipulate physical memory addresses to enforce vertical positioning
2. Lockdown: rely on hardware lockdown features to prevent data eviction and enforce horizontal positioning

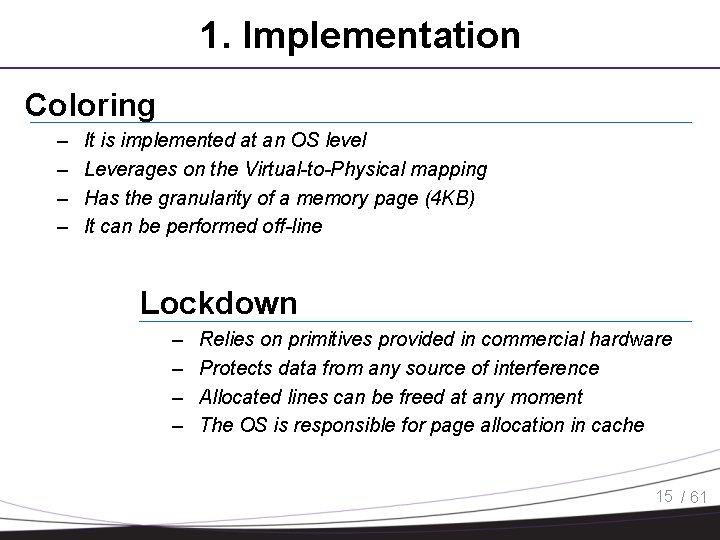

1. Implementation
Coloring
– It is implemented at the OS level
– Leverages the virtual-to-physical mapping
– Has the granularity of a memory page (4 KB)
– It can be performed off-line
Lockdown
– Relies on primitives provided in commercial hardware
– Protects data from any source of interference
– Allocated lines can be freed at any moment
– The OS is responsible for page allocation in cache
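As a rough illustration of coloring at page granularity, the sketch below derives a page's color from its physical address. It assumes a physically indexed, set-associative cache whose set index bits sit directly above the line offset; the cache geometry (2 MB, 16 ways) is a hypothetical example and is not taken from the slides.

```python
PAGE_SIZE = 4096  # 4 KB page granularity, as stated on the slide


def num_colors(cache_size: int, ways: int, page_size: int = PAGE_SIZE) -> int:
    """Number of page colors: bytes covered by one cache way / page size."""
    way_size = cache_size // ways
    return max(1, way_size // page_size)


def page_color(phys_addr: int, colors: int, page_size: int = PAGE_SIZE) -> int:
    """Color of the physical page that contains phys_addr."""
    return (phys_addr // page_size) % colors


# Hypothetical geometry: 2 MB shared cache with 16 ways gives 32 colors.
colors = num_colors(cache_size=2 * 1024 * 1024, ways=16)
for addr in (0x0000, 0x1000, 0x20000, 0x21000):
    print(hex(addr), "-> color", page_color(addr, colors))
```

Pages with the same color compete for the same cache sets, so an OS that hands each task pages of selected colors controls the vertical position of that task's data.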

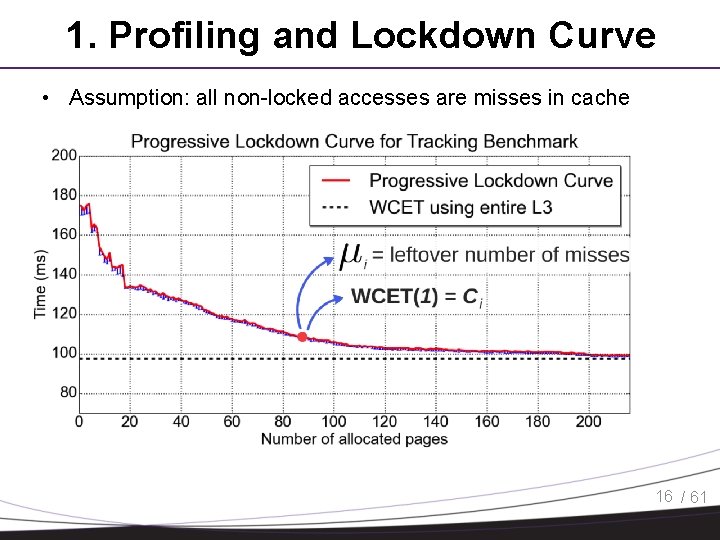

1. Profiling and Lockdown Curve • Assumption: all non-locked accesses are misses in cache 16 / 61

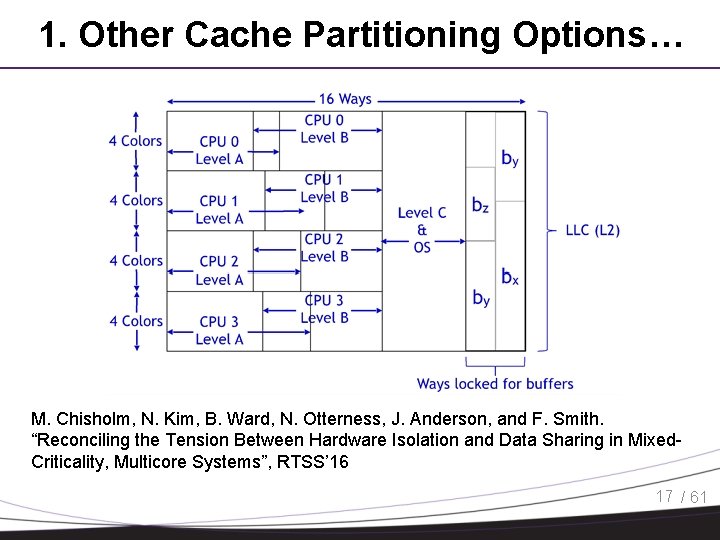

1. Other Cache Partitioning Options…
M. Chisholm, N. Kim, B. Ward, N. Otterness, J. Anderson, and F. Smith. “Reconciling the Tension Between Hardware Isolation and Data Sharing in Mixed-Criticality, Multicore Systems”, RTSS’16

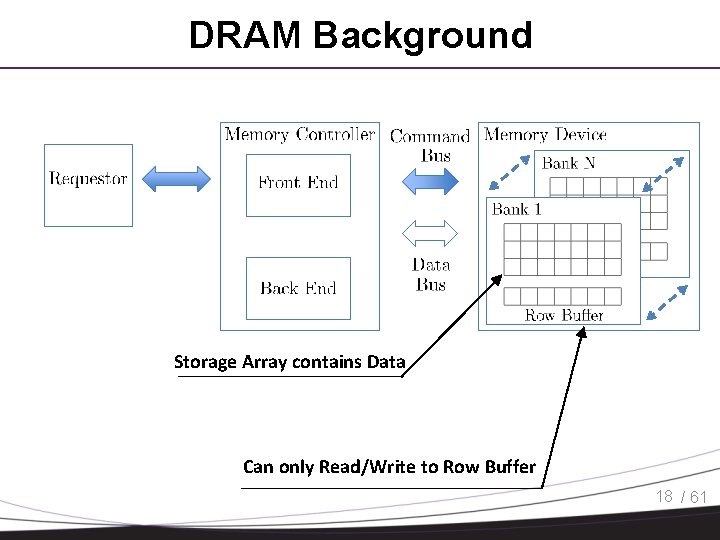

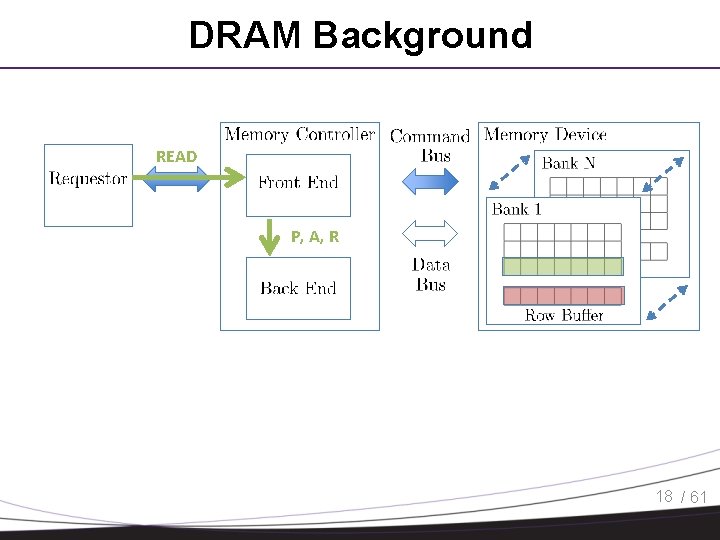

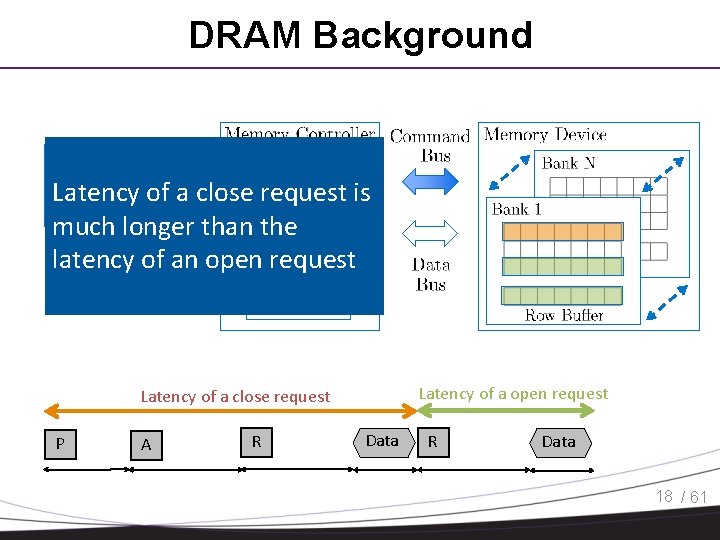

DRAM Background
• Storage array contains the data
• Can only read/write to the row buffer

DRAM Background
• Front end generates the needed commands
• Back end issues commands on the command bus

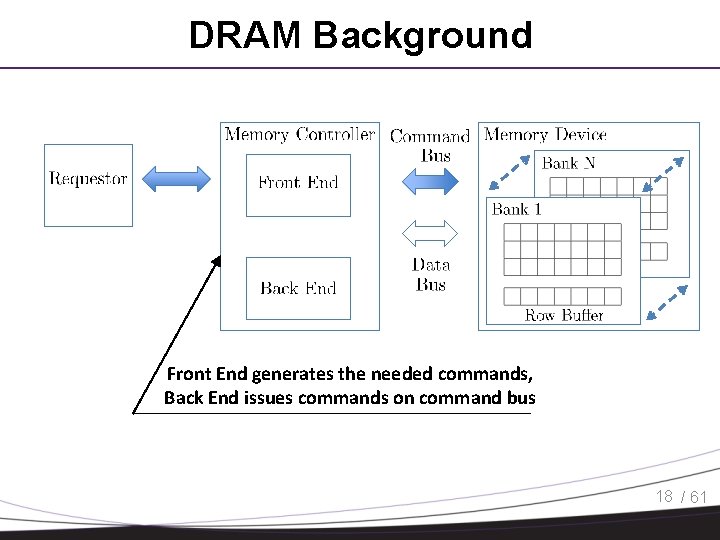

DRAM Background
• READ targeting data in this row
• Row buffer contains data from a different row

DRAM Background
• READ: P, A, R

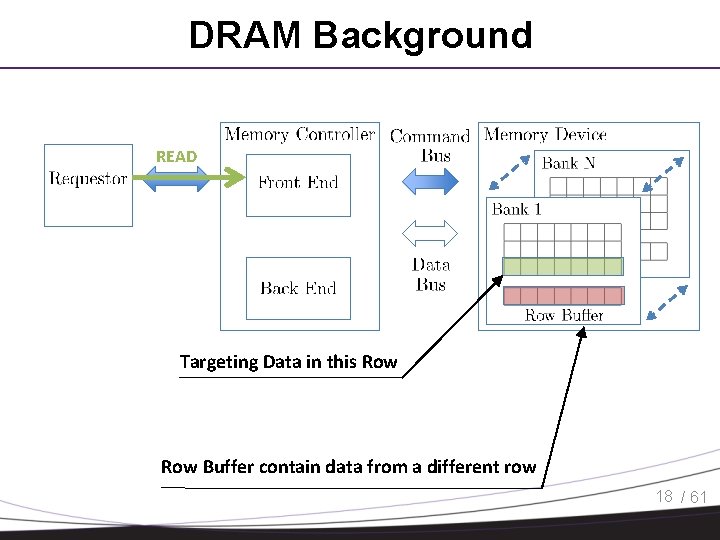

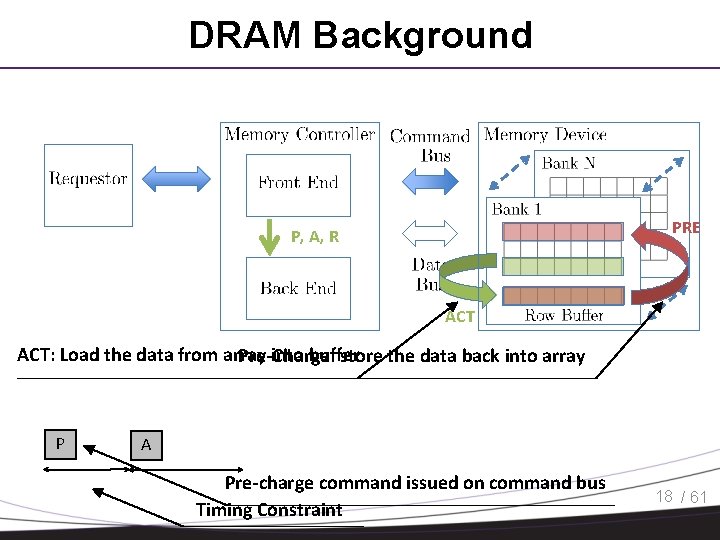

DRAM Background
• READ: P, A, R
• Pre-Charge (PRE): store the data back into the array
• ACT: load the data from the array into the buffer
[Figure: pre-charge command issued on the command bus, followed by a timing constraint before ACT]

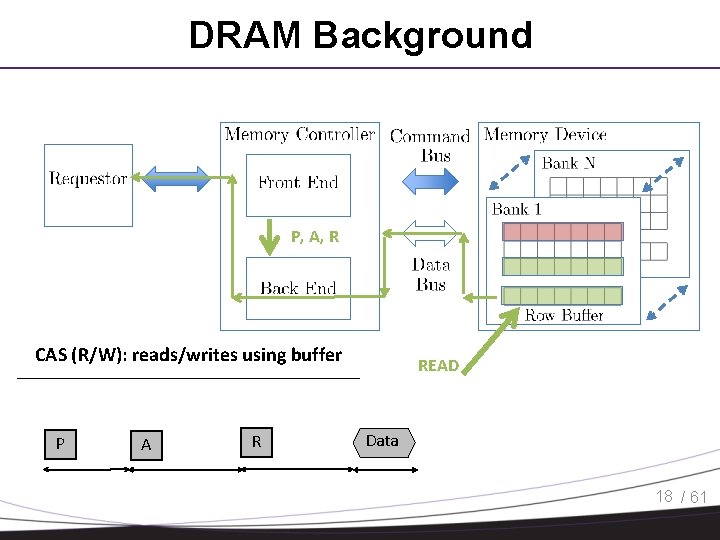

DRAM Background
• READ: P, A, R
• CAS (R/W): reads/writes using the buffer
[Figure: P, A, R commands on the command bus, followed by the data transfer]

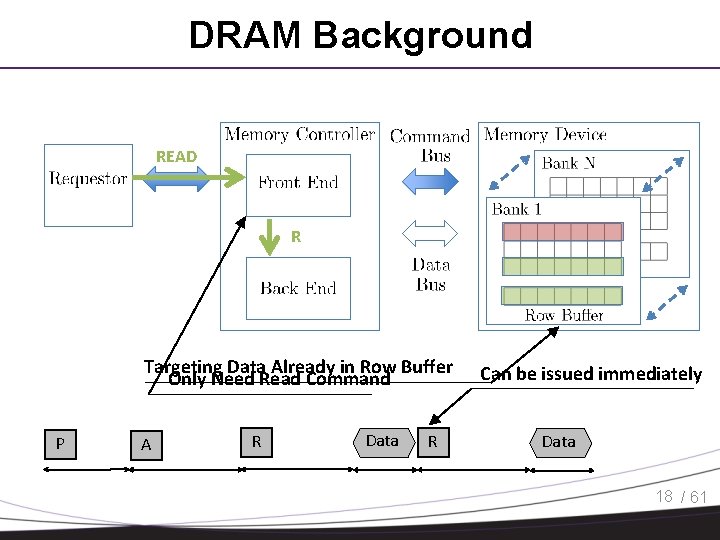

DRAM Background
• READ targeting data already in the row buffer
• Only the read command (R) is needed
• Can be issued immediately
[Figure: P, A, R, Data for the first request; R, Data for the second]

DRAM Background
• The latency of a close request (P, A, R, Data) is much longer than the latency of an open request (R, Data)
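The gap between open and close requests can be sketched with a simplified command-level model. The timing parameter names follow common DRAM terminology (tRP, tRCD, CL), but the cycle counts below are illustrative assumptions rather than values from the slides, and the model ignores all other timing constraints.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DramTiming:
    t_rp: int     # cycles from PRE (P) until ACT (A) may be issued
    t_rcd: int    # cycles from ACT (A) until CAS (R/W) may be issued
    t_cl: int     # cycles from a read CAS until the first data beat
    t_burst: int  # cycles the data transfer occupies the data bus


def open_request_latency(t: DramTiming) -> int:
    """Row already in the row buffer: only R, then data."""
    return t.t_cl + t.t_burst


def close_request_latency(t: DramTiming) -> int:
    """Different row in the row buffer: P, A, R, then data."""
    return t.t_rp + t.t_rcd + t.t_cl + t.t_burst


# Hypothetical cycle counts, chosen only to show the order of magnitude.
timing = DramTiming(t_rp=11, t_rcd=11, t_cl=11, t_burst=4)
print("open :", open_request_latency(timing), "cycles")
print("close:", close_request_latency(timing), "cycles")
```

With these assumed values the close request takes 37 cycles against 15 for the open request, which is the asymmetry the slide describes.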

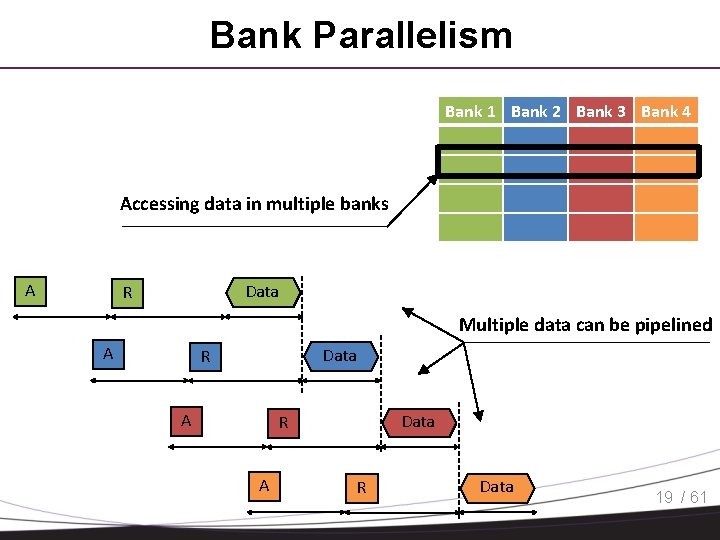

Bank Parallelism
• Accessing data in multiple banks (Bank 1 to Bank 4)
• Multiple data transfers can be pipelined
[Figure: overlapping A, R, Data sequences, one per bank]

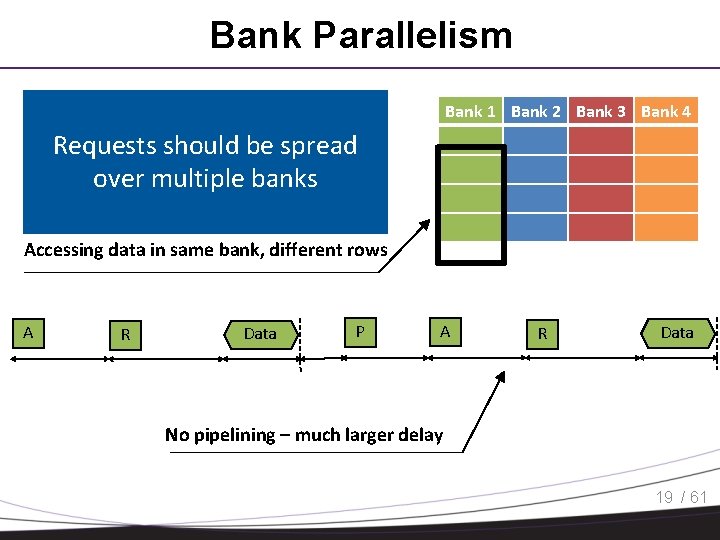

Bank Parallelism
• Accessing data in the same bank, different rows
• No pipelining: much larger delay
• Requests should be spread over multiple banks
[Figure: A, R, Data followed by P, A, R, Data on a single bank]
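A back-of-the-envelope comparison of the two cases might look as follows. This is a deliberately simplified model: in the multi-bank case only the data bus is treated as the bottleneck, in the same-bank case every request after the first pays a full P, A, R sequence, and constraints such as tRRD, tFAW, and tRAS are ignored. All cycle counts are assumed values.

```python
def pipelined_time(n: int, t_rcd: int, t_cl: int, t_burst: int) -> int:
    """n requests to n different banks: A/R commands overlap, data is back to back."""
    if n < 1:
        raise ValueError("need at least one request")
    return t_rcd + t_cl + n * t_burst


def same_bank_time(n: int, t_rp: int, t_rcd: int, t_cl: int, t_burst: int) -> int:
    """n requests to different rows of one bank: fully serialized."""
    if n < 1:
        raise ValueError("need at least one request")
    # Every request needs A, R, Data; all but the first also need a P first.
    return n * (t_rcd + t_cl + t_burst) + (n - 1) * t_rp


# Hypothetical timing values (cycles) for four requests.
print("4 banks  :", pipelined_time(4, t_rcd=11, t_cl=11, t_burst=4))
print("same bank:", same_bank_time(4, t_rp=11, t_rcd=11, t_cl=11, t_burst=4))
```

Under these assumptions four requests finish in 38 cycles when spread over banks and 137 cycles when they collide on one bank.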

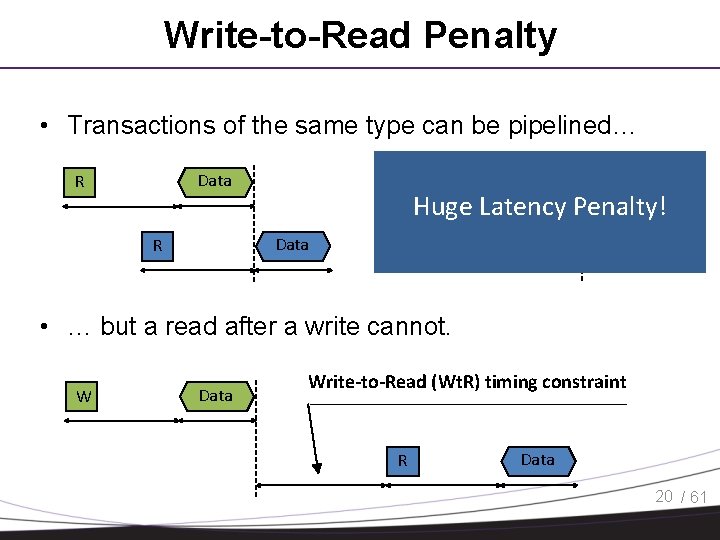

Write-to-Read Penalty
• Transactions of the same type can be pipelined…
• … but a read after a write cannot.
• Write-to-Read (WtR) timing constraint: huge latency penalty!
[Figure: back-to-back reads pipelined on the data bus; a write followed by a read separated by the WtR constraint]

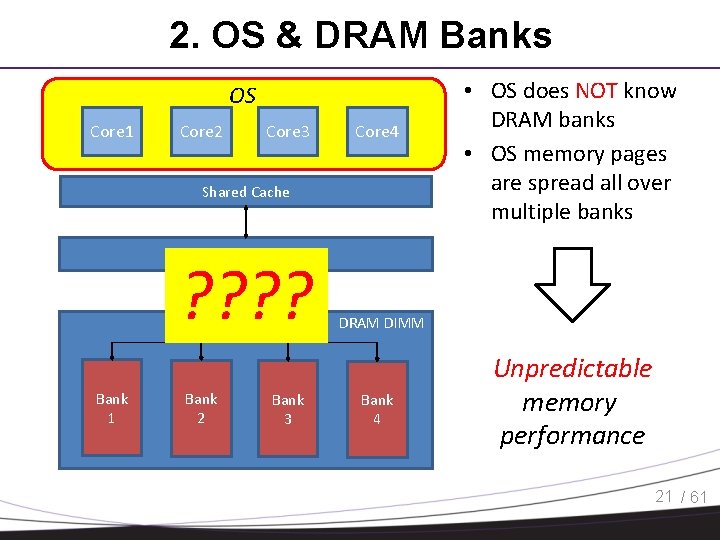

2. OS & DRAM Banks
• OS does NOT know DRAM banks
• OS memory pages are spread all over multiple banks
• Unpredictable memory performance
[Figure: Cores 1 to 4, shared cache, memory controller (MC), and a DRAM DIMM with Banks 1 to 4]

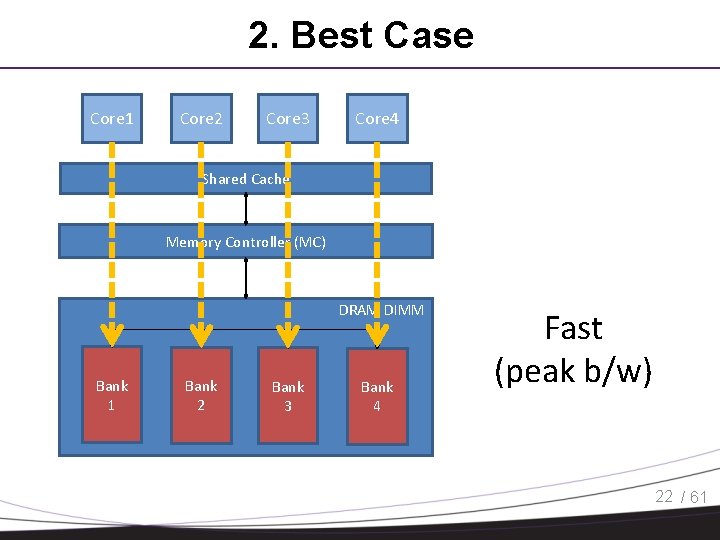

2. Best Case
[Figure: Cores 1 to 4, shared cache, memory controller (MC), and a DRAM DIMM with Banks 1 to 4]
• Fast (peak b/w)

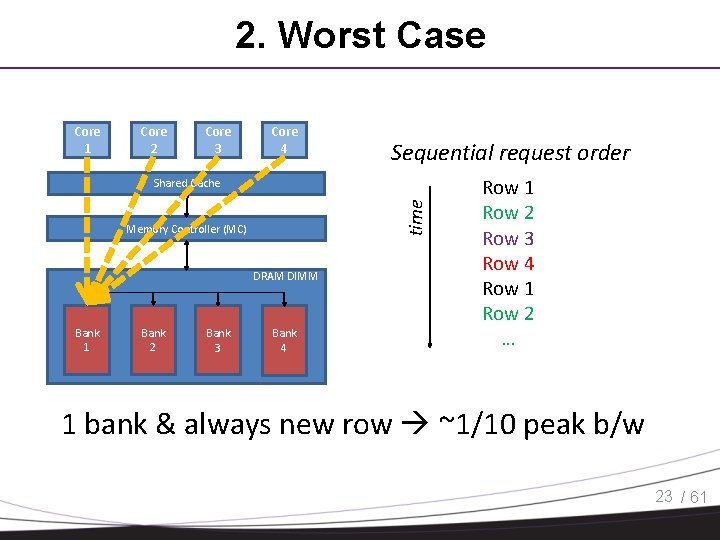

2. Worst Case
[Figure: requests from Cores 1 to 4 arrive in sequential order over time and target Row 1, Row 2, Row 3, Row 4, Row 1, Row 2, … of a single bank]
• 1 bank & always new row
• ~1/10 peak b/w

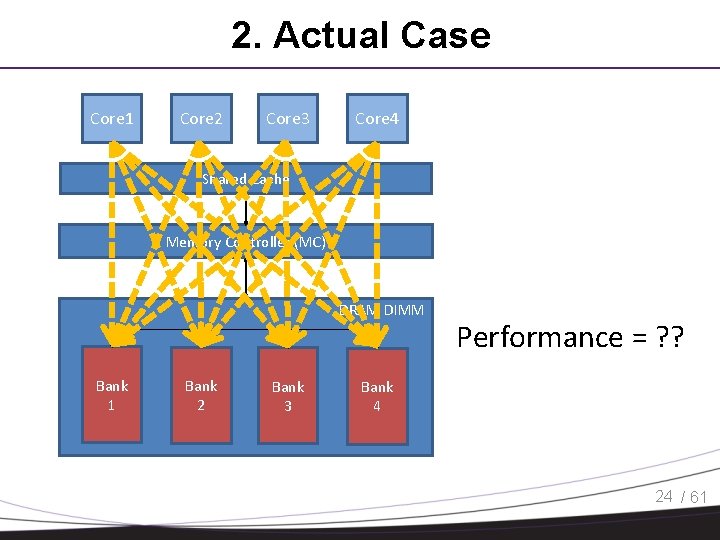

2. Actual Case
[Figure: Cores 1 to 4, shared cache, memory controller (MC), and a DRAM DIMM with Banks 1 to 4]
• Performance = ??

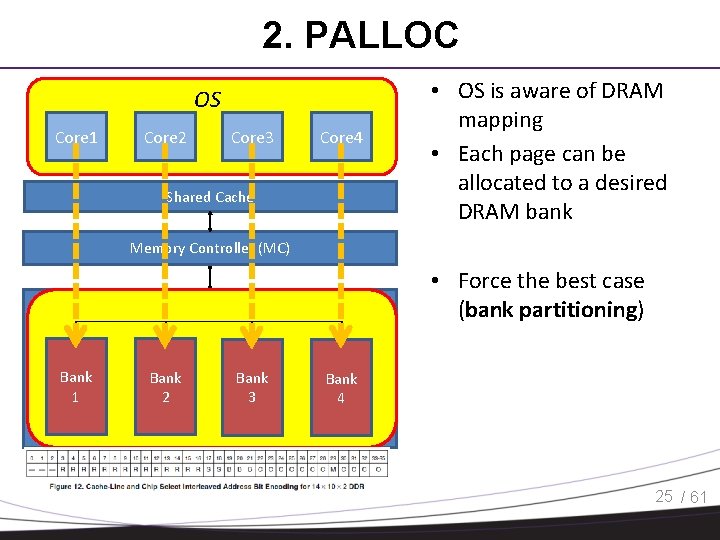

2. PALLOC
• OS is aware of DRAM mapping
• Each page can be allocated to a desired DRAM bank
• Force the best case (bank partitioning)
[Figure: Cores 1 to 4, shared cache, memory controller (MC), and a DRAM DIMM with Banks 1 to 4]
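The idea of bank-aware page allocation can be sketched in a few lines. The bank bit positions below (physical address bits 13 and 14) are a hypothetical mapping chosen for illustration; the real mapping depends on the platform's memory controller, and this sketch is not PALLOC's actual kernel code.

```python
from typing import List, Optional, Set

BANK_SHIFT = 13  # hypothetical: lowest physical address bit that selects the bank
BANK_BITS = 2    # hypothetical: 2 bits, i.e. 4 banks


def bank_of(phys_addr: int) -> int:
    """Extract the DRAM bank index from a physical address."""
    return (phys_addr >> BANK_SHIFT) & ((1 << BANK_BITS) - 1)


def alloc_page(free_pages: List[int], allowed_banks: Set[int]) -> Optional[int]:
    """Return (and remove) the first free page that lies in an allowed bank."""
    for i, addr in enumerate(free_pages):
        if bank_of(addr) in allowed_banks:
            return free_pages.pop(i)
    return None  # no free page in the requested banks


# A core restricted to bank 2 only ever receives pages from that bank.
free = [0x0000, 0x1000, 0x2000, 0x4000, 0x6000]
page = alloc_page(free, allowed_banks={2})
print(hex(page) if page is not None else None, "-> bank", bank_of(0x4000))
```

Giving each core a disjoint set of allowed banks is what forces the best case from the earlier slide: no two cores ever compete for the same row buffer.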

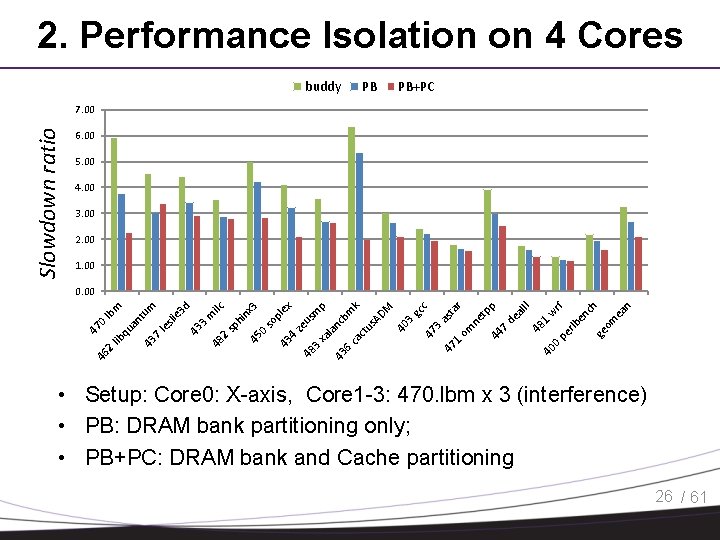

2. Performance Isolation on 4 Cores
[Figure: slowdown ratio (0.00 to 7.00) for each SPEC2006 benchmark under three configurations: buddy, PB, and PB+PC]
• Setup: Core 0: X-axis benchmark, Cores 1-3: 470.lbm x 3 (interference)
• PB: DRAM bank partitioning only
• PB+PC: DRAM bank and cache partitioning

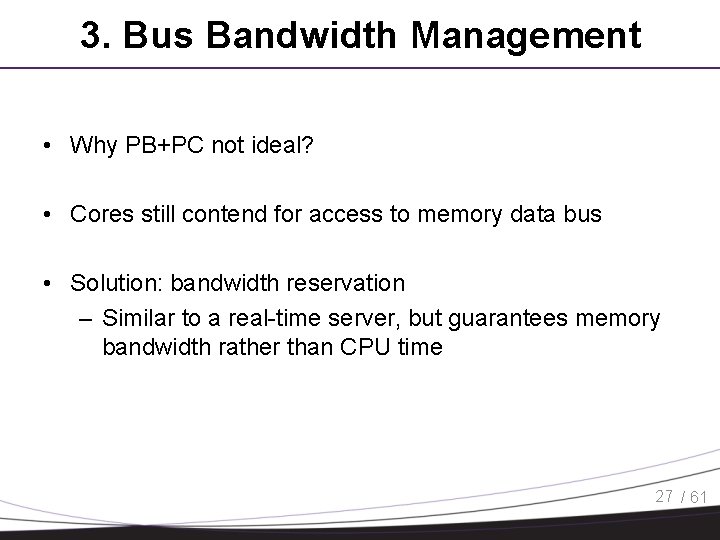

3. Bus Bandwidth Management
• Why is PB+PC not ideal?
  – Cores still contend for access to the memory data bus
• Solution: bandwidth reservation
  – Similar to a real-time server, but guarantees memory bandwidth rather than CPU time

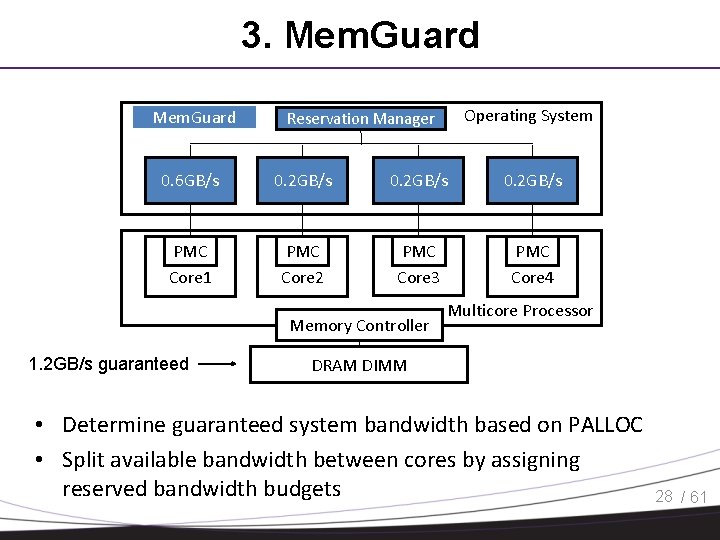

3. MemGuard
[Figure: an OS-level reservation manager drives per-core bandwidth regulators (e.g. 0.6 GB/s and 0.2 GB/s), each using the core's PMC; Cores 1 to 4 share the memory controller and DRAM DIMM, with 1.2 GB/s guaranteed]
• Determine guaranteed system bandwidth based on PALLOC
• Split available bandwidth between cores by assigning reserved bandwidth budgets

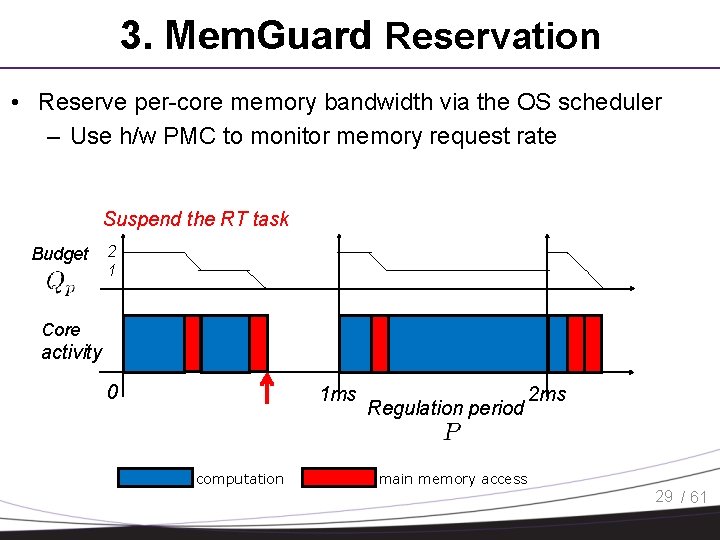

3. MemGuard Reservation
• Reserve per-core memory bandwidth via the OS scheduler
  – Use h/w PMC to monitor memory request rate
  – Suspend the RT task
[Figure: budget and core activity over time (0, 1 ms, 2 ms), showing computation and main memory access within each regulation period]
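A minimal sketch of budget-based regulation, under stated assumptions: the budget is expressed as a number of memory accesses per regulation period, a performance counter reports accesses, and the core is throttled once the budget is used up. The class and function names, the 1 ms period, and the 64-byte access size are assumptions for illustration and do not reproduce MemGuard's implementation.

```python
def budget_in_accesses(bandwidth_bytes_per_s: int, period_us: int,
                       line_bytes: int = 64) -> int:
    """Convert a reserved bandwidth into memory accesses allowed per period."""
    bytes_per_period = bandwidth_bytes_per_s * period_us // 1_000_000
    return bytes_per_period // line_bytes


class BandwidthRegulator:
    """Per-core regulator: counts accesses and throttles when the budget is spent."""

    def __init__(self, budget: int) -> None:
        self.budget = budget
        self.used = 0
        self.throttled = False

    def on_memory_access(self, count: int = 1) -> bool:
        """Called from the (simulated) PMC path; returns True once throttled."""
        self.used += count
        if self.used >= self.budget:
            self.throttled = True  # the OS would suspend the task here
        return self.throttled

    def on_period_start(self) -> None:
        """Replenish the budget at the start of each regulation period."""
        self.used = 0
        self.throttled = False


# 0.6 GB/s over an assumed 1 ms period with 64-byte accesses.
print(budget_in_accesses(600_000_000, period_us=1000))
```

In a real system the counter would be programmed to raise an overflow interrupt at the budget, so the regulator costs nothing until the budget is actually exhausted.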

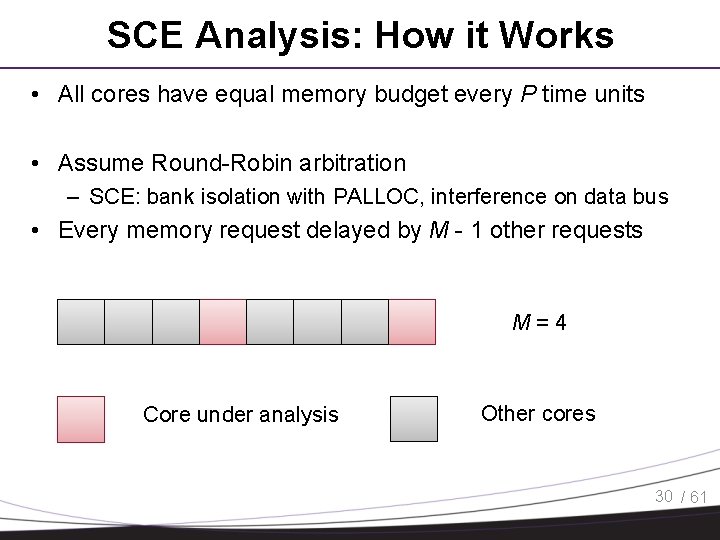

SCE Analysis: How it Works
• All cores have equal memory budget every P time units
• Assume Round-Robin arbitration
  – SCE: bank isolation with PALLOC, interference on data bus
• Every memory request is delayed by M - 1 other requests
[Figure: M = 4; each request of the core under analysis waits behind one request from each of the other cores]
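The round-robin argument can be turned into a small worked bound. This sketch assumes a fixed per-request service time on the data bus (a hypothetical parameter, not a value from the slides) and ignores the budget regulation term of the full analysis.

```python
def rr_interference(requests: int, cores: int, service_time_ns: int) -> int:
    """Worst-case interference: each request waits for M - 1 other requests."""
    return requests * (cores - 1) * service_time_ns


def rr_memory_time(requests: int, cores: int, service_time_ns: int) -> int:
    """Worst-case total memory time: own service plus interference."""
    return requests * service_time_ns + rr_interference(
        requests, cores, service_time_ns)


# Hypothetical task: 1000 memory requests, M = 4 cores, 50 ns per request.
print("interference:", rr_interference(1000, 4, 50), "ns")
print("memory time :", rr_memory_time(1000, 4, 50), "ns")
```

The memory time scales with M, which is why the equivalent single core is described as "possibly slower" rather than identical to the original.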

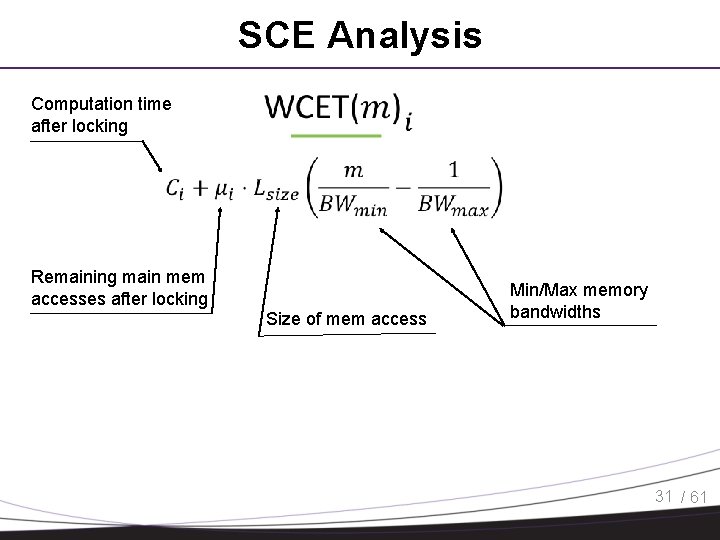

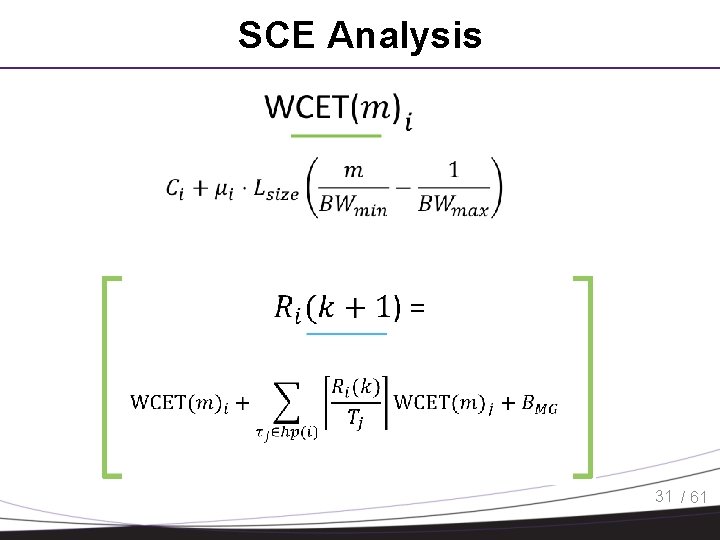

SCE Analysis
Terms used in the analysis:
• Computation time after locking
• Remaining main memory accesses after locking
• Size of a memory access
• Min/max memory bandwidths


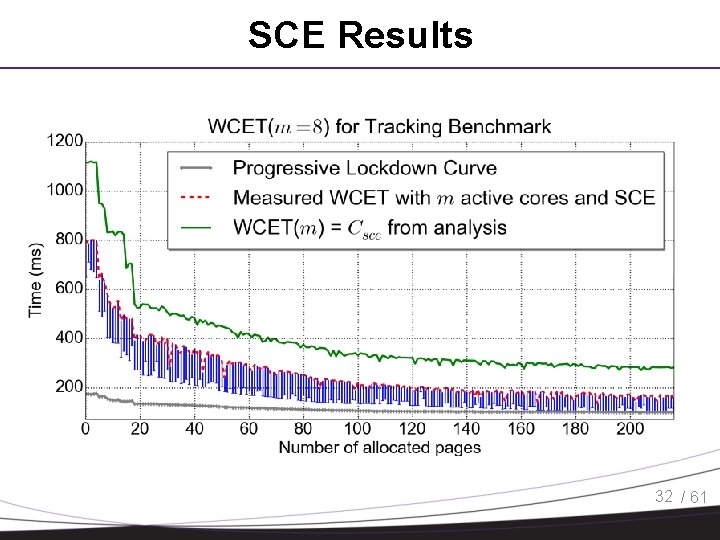

SCE Results 32 / 61

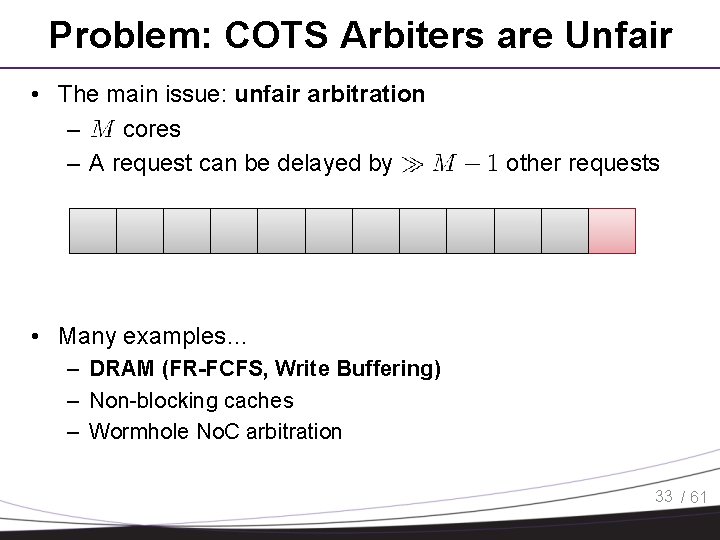

Problem: COTS Arbiters are Unfair
• The main issue: unfair arbitration
  – A request can be delayed by other requests (from other cores)
• Many examples…
  – DRAM (FR-FCFS, Write Buffering)
  – Non-blocking caches
  – Wormhole NoC arbitration

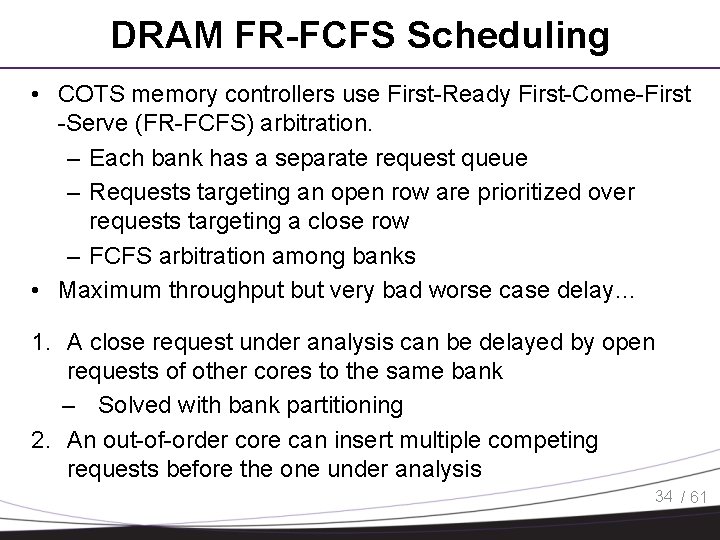

DRAM FR-FCFS Scheduling
• COTS memory controllers use First-Ready First-Come-First-Serve (FR-FCFS) arbitration.
  – Each bank has a separate request queue
  – Requests targeting an open row are prioritized over requests targeting a closed row
  – FCFS arbitration among banks
• Maximum throughput but very bad worst-case delay…
  1. A close request under analysis can be delayed by open requests of other cores to the same bank
     – Solved with bank partitioning
  2. An out-of-order core can insert multiple competing requests before the one under analysis
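The first unfairness case can be reproduced with a toy arbiter. This is a sketch of the policy as described on the slide (per-bank queues, open-row requests first, FCFS among banks); command timing and the "ready" check are omitted, and all names are illustrative.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Request:
    arrival: int  # arrival time, used for FCFS ordering
    core: int
    bank: int
    row: int


def pick_for_bank(queue: List[Request], open_row: Optional[int]) -> Optional[Request]:
    """Within a bank: oldest request to the open row, else oldest request."""
    if not queue:
        return None
    hits = [r for r in queue if r.row == open_row]
    return min(hits if hits else queue, key=lambda r: r.arrival)


def fr_fcfs_next(queues: Dict[int, List[Request]],
                 open_rows: Dict[int, Optional[int]]) -> Optional[Request]:
    """Among the per-bank candidates, serve the oldest one (FCFS among banks)."""
    candidates = []
    for bank, queue in queues.items():
        candidate = pick_for_bank(queue, open_rows.get(bank))
        if candidate is not None:
            candidates.append(candidate)
    if not candidates:
        return None
    chosen = min(candidates, key=lambda r: r.arrival)
    queues[chosen.bank].remove(chosen)
    open_rows[chosen.bank] = chosen.row  # the served row is now open
    return chosen


# Core 0's close request (row 5) arrives first, but core 1 keeps hitting open row 7.
queues = {0: [Request(0, 0, 0, 5), Request(1, 1, 0, 7), Request(2, 1, 0, 7)]}
open_rows: Dict[int, Optional[int]] = {0: 7}
order = []
while (req := fr_fcfs_next(queues, open_rows)) is not None:
    order.append(req.arrival)
print(order)
```

The oldest request is served last, which illustrates why bank partitioning is needed to remove this source of delay.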

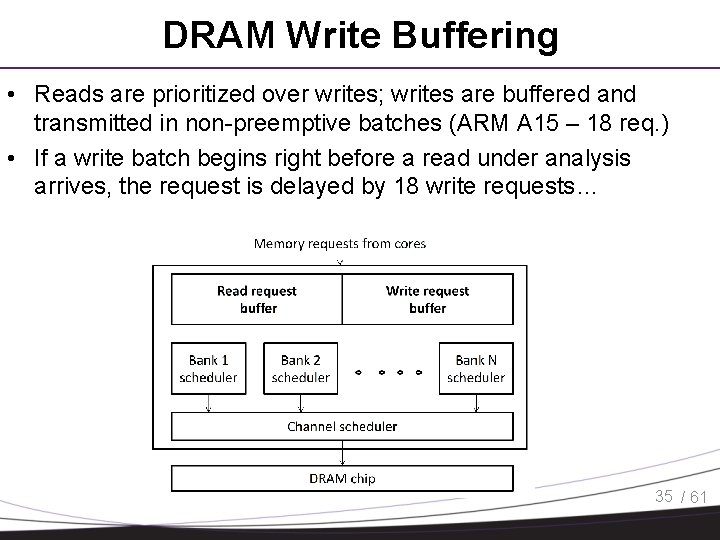

DRAM Write Buffering
• Reads are prioritized over writes; writes are buffered and transmitted in non-preemptive batches (ARM A15: 18 requests)
• If a write batch begins right before a read under analysis arrives, the read is delayed by 18 write requests…

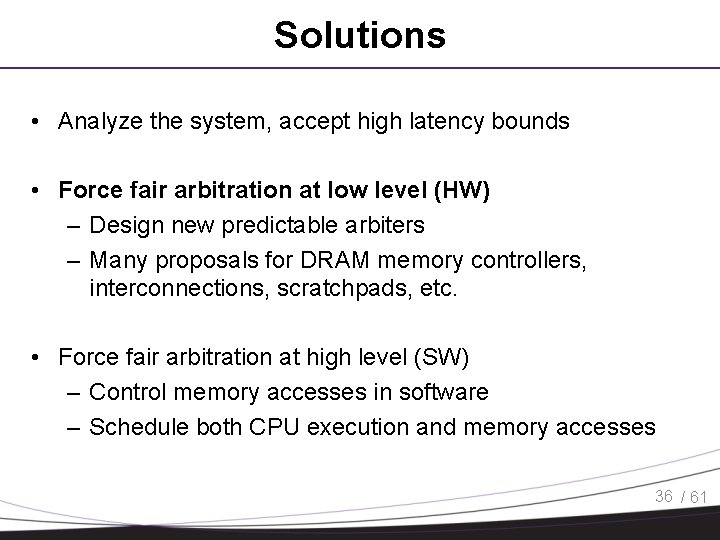

Solutions
• Analyze the system, accept high latency bounds
• Force fair arbitration at low level (HW)
  – Design new predictable arbiters
  – Many proposals for DRAM memory controllers, interconnections, scratchpads, etc.
• Force fair arbitration at high level (SW)
  – Control memory accesses in software
  – Schedule both CPU execution and memory accesses


Delay Analysis
• Goal
  – Compute the worst-case memory interference delay of a task under analysis
• Request-driven analysis
  – Based on the task’s own memory demand: H
  – Compute worst-case per-request delay: RD
  – Memory interference delay = RD x H
• FR-FCFS only: [Kim’14] H. Kim, D. de Niz, B. Andersson, M. Klein, O. Mutlu, and R. R. Rajkumar. “Bounding Memory Interference Delay in COTS-based Multi-Core Systems”, RTAS’14
• FR-FCFS + write bundling: [Ours] H. Yun, R. Pellizzoni and P. K. Valsan. “Parallelism-Aware Memory Interference Delay Analysis for COTS Multicore Systems”, ECRTS’15
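The request-driven bound is a single multiplication, shown here as a small sketch. The values of H and RD are hypothetical and only serve to illustrate the units.

```python
def memory_interference_delay(h: int, rd_ns: float) -> float:
    """Request-driven bound: every one of the task's H requests suffers RD."""
    if h < 0 or rd_ns < 0:
        raise ValueError("H and RD must be non-negative")
    return rd_ns * h


# Hypothetical task: H = 10,000 memory requests, RD = 120 ns per request.
delay = memory_interference_delay(h=10_000, rd_ns=120.0)
print(f"interference delay: {delay / 1e6:.1f} ms")
```

Because the bound is linear in RD, any tightening of the per-request delay carries over directly to the task-level bound.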

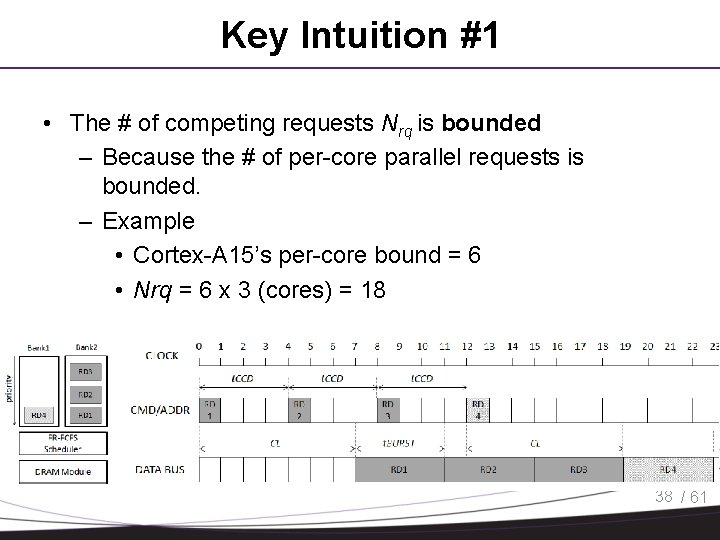

Key Intuition #1
• The # of competing requests Nrq is bounded
  – Because the # of per-core parallel requests is bounded.
  – Example
    • Cortex-A15’s per-core bound = 6
    • Nrq = 6 x 3 (cores) = 18
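The example on this slide can be written out as a one-line computation; the function and parameter names are illustrative.

```python
def competing_requests(per_core_bound: int, other_cores: int) -> int:
    """Nrq: outstanding requests that the other cores can have in flight."""
    return per_core_bound * other_cores


# Slide example: Cortex-A15 per-core bound of 6, three competing cores.
print(competing_requests(per_core_bound=6, other_cores=3))
```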

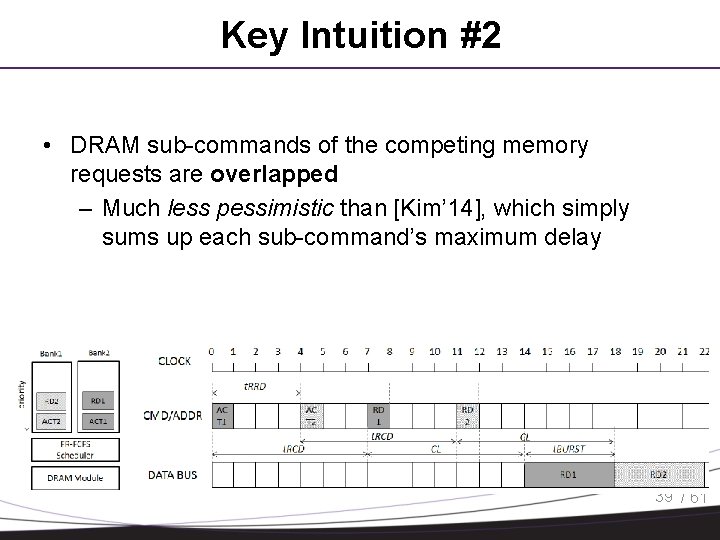

Key Intuition #2
• DRAM sub-commands of the competing memory requests are overlapped
  – Much less pessimistic than [Kim’14], which simply sums up each sub-command’s maximum delay

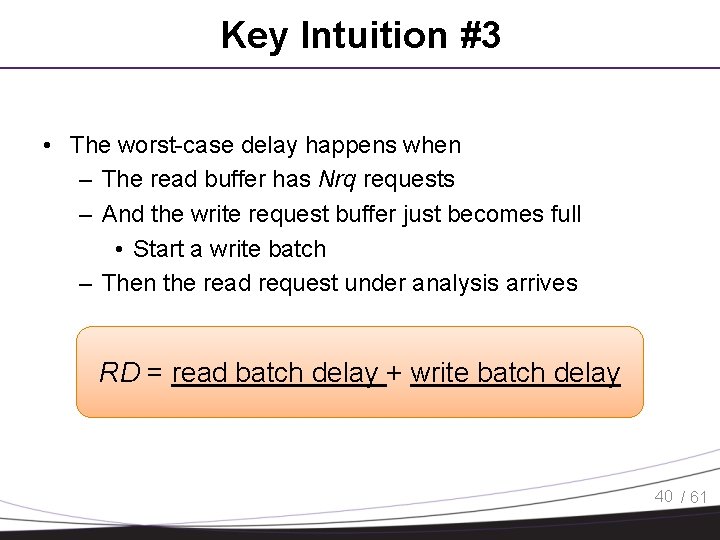

Key Intuition #3
• The worst-case delay happens when
  – The read buffer has Nrq requests
  – And the write request buffer just becomes full: start a write batch
  – Then the read request under analysis arrives
• RD = read batch delay + write batch delay

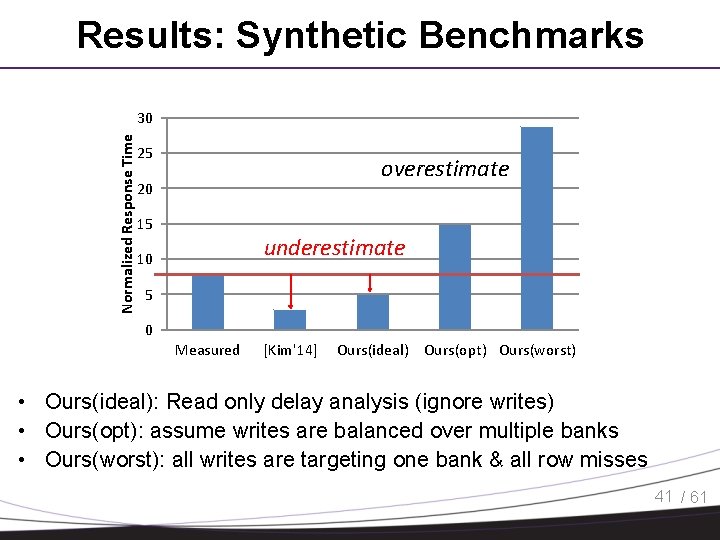

Results: Synthetic Benchmarks
[Figure: normalized response time (0 to 30) for Measured, [Kim'14], Ours(ideal), Ours(opt), and Ours(worst), with overestimate and underestimate regions marked]
• Ours(ideal): read-only delay analysis (ignore writes)
• Ours(opt): assume writes are balanced over multiple banks
• Ours(worst): all writes target one bank and are all row misses

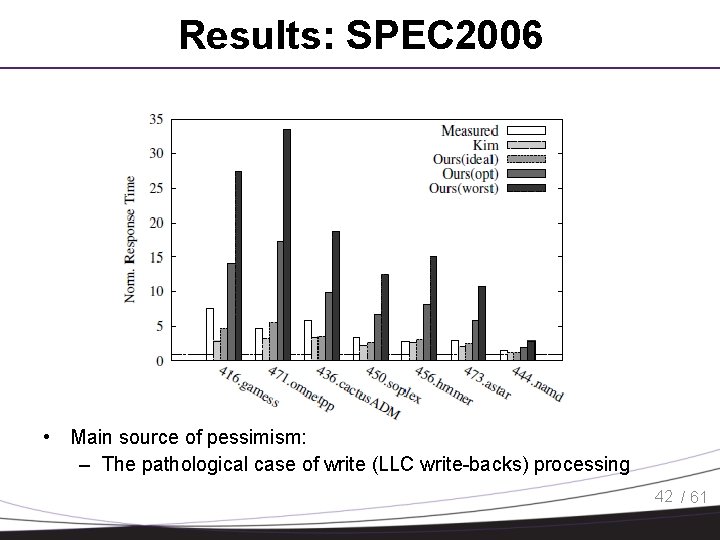

Results: SPEC 2006 • Main source of pessimism: – The pathological case of write (LLC write-backs) processing 42 / 61


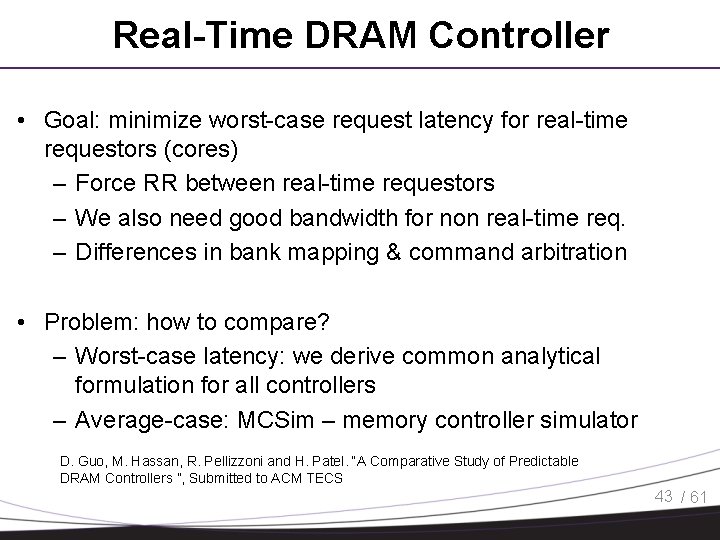

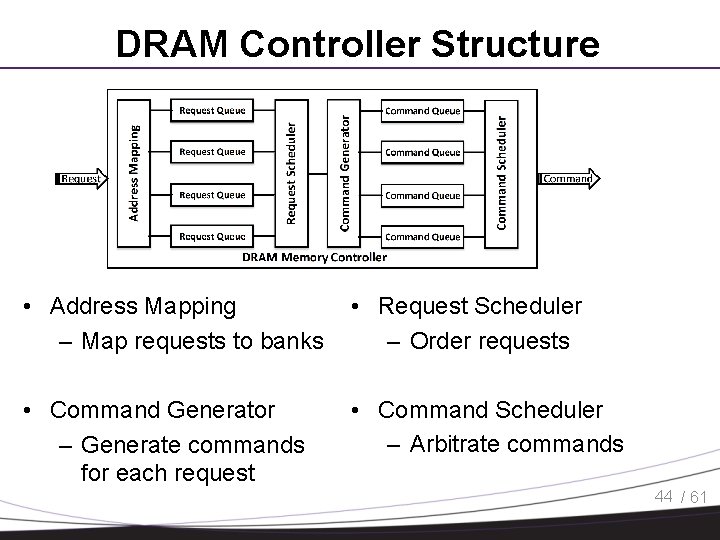

Real-Time DRAM Controller
• Goal: minimize worst-case request latency for real-time requestors (cores)
  – Force RR between real-time requestors
  – We also need good bandwidth for non real-time requestors
  – Differences in bank mapping & command arbitration
• Problem: how to compare?
  – Worst-case latency: we derive a common analytical formulation for all controllers
  – Average-case: MCSim, a memory controller simulator
D. Guo, M. Hassan, R. Pellizzoni and H. Patel. “A Comparative Study of Predictable DRAM Controllers”, submitted to ACM TECS

DRAM Controller Structure
• Address Mapping
  – Map requests to banks
• Request Scheduler
  – Order requests
• Command Generator
  – Generate commands for each request
• Command Scheduler
  – Arbitrate commands

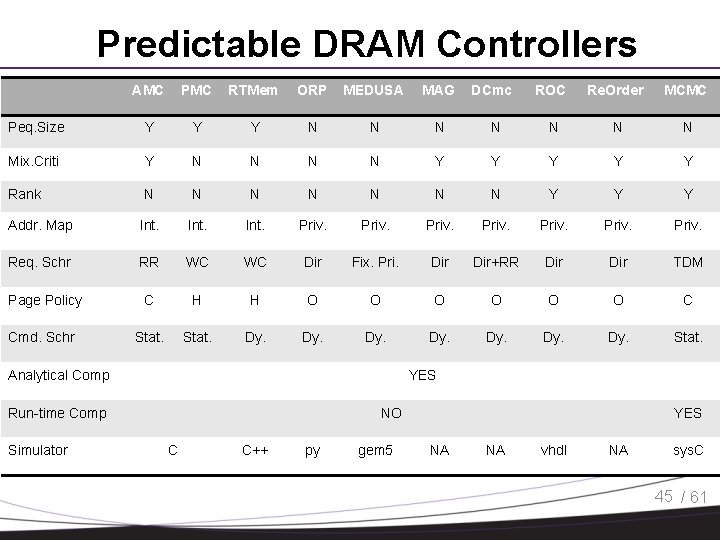

Predictable DRAM Controllers
[Table: comparison of AMC, PMC, RTMem, ORP, MEDUSA, MAG, ROC, ReOrder, MCMC, and DCmc along the attributes below]
• Req. Size: Y / N
• Mix. Criti: Y / N
• Rank: Y / N
• Addr. Map: Int., Priv.
• Req. Schr: RR, WC, Dir, Fix. Pri., Dir+RR, TDM
• Page Policy: C, H, O
• Cmd. Schr: Stat., Dy.
• Analytical Comp, Run-time Comp
• Simulator: C, C++, py, gem5, vhdl, sys. C, NA

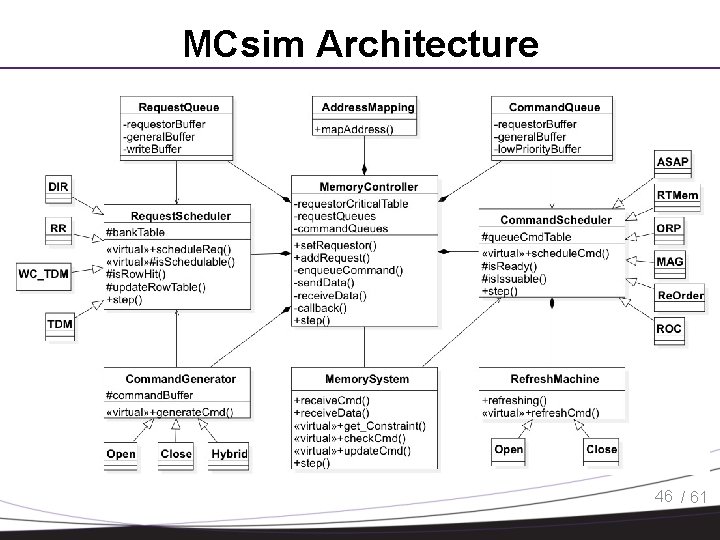

MCSim Architecture

MCSim
• Cycle-accurate simulator
• Implemented 10 state-of-the-art predictable MCs
• Each controller requires at most 200 lines of code
• Device simulation based on Ramulator
  – Yoongu Kim, Weikun Yang, and Onur Mutlu. “Ramulator: A Fast and Extensible DRAM Simulator”, CAL 2015.
• Use either memory traces or a full-system simulator (gem5)

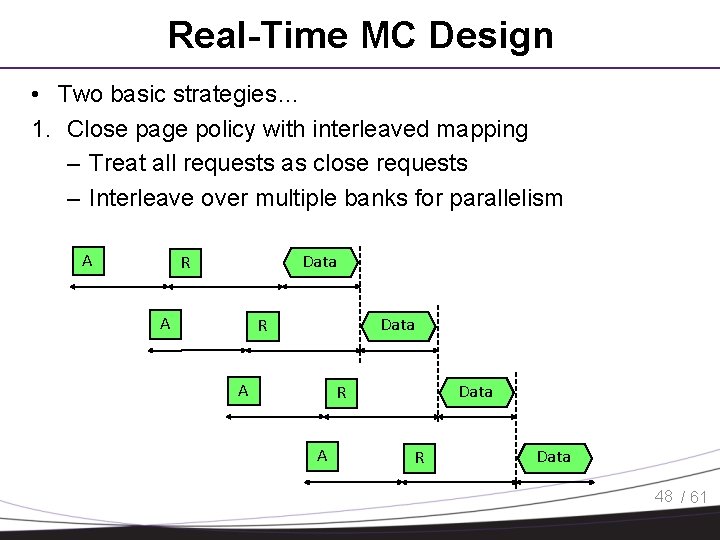


Real-Time MC Design
• Two basic strategies…
1. Close page policy with interleaved mapping
  – Treat all requests as close requests
  – Interleave over multiple banks for parallelism
2. Open page policy with private banks
  – Requests can either be open or close
  – Force bank partitioning to avoid row interference
  – Problem: must deal with write-read switching

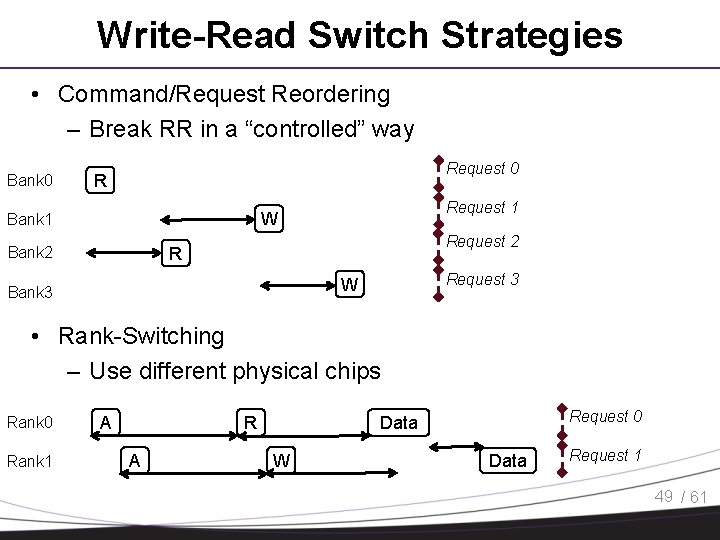

Write-Read Switch Strategies
• Command/Request Reordering
  – Break RR in a “controlled” way
  [Figure: Request 0 (R), Request 1 (W), Request 2 (R), and Request 3 (W) queued across Banks 0 to 3]
• Rank-Switching
  – Use different physical chips
  [Figure: Request 0 and Request 1 served on Rank 0 and Rank 1]

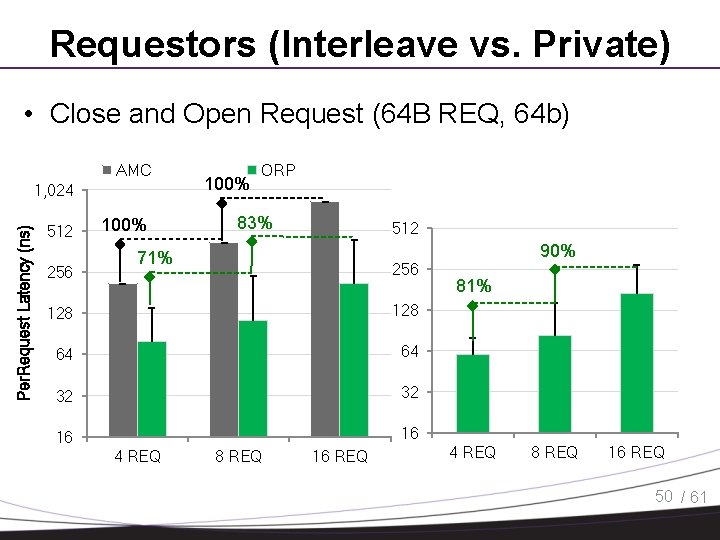

Requestors (Interleave vs. Private)
• Close and Open Request (64 B REQ, 64 b)
[Figure: per-request latency (ns) of AMC and ORP for 4, 8, and 16 requestors]

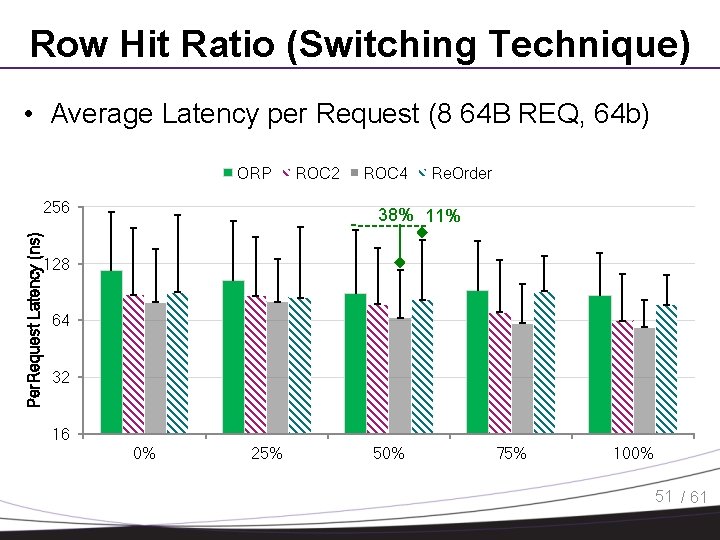

Row Hit Ratio (Switching Technique)
• Average Latency per Request (8 64 B REQ, 64 b)
[Figure: per-request latency (ns) of ORP, ROC 2, ROC 4, and ReOrder as the row hit ratio goes from 0% to 100%]

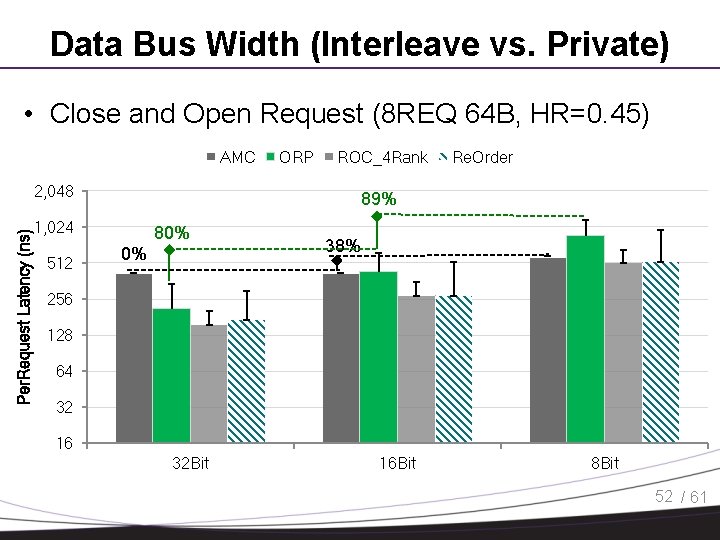

Data Bus Width (Interleave vs. Private)
• Close and Open Request (8 REQ 64 B, HR=0.45)
[Figure: per-request latency (ns) of AMC, ROC_4 Rank, ReOrder, and ORP for 32-bit, 16-bit, and 8-bit data buses]


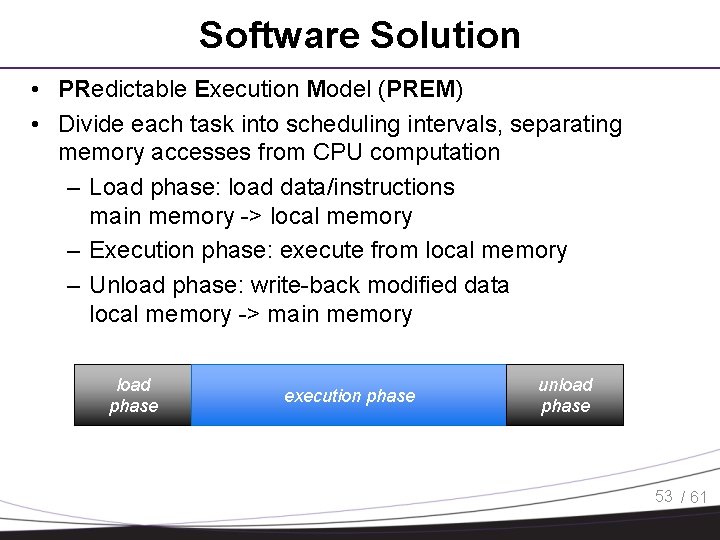

Software Solution
• PRedictable Execution Model (PREM)
• Divide each task into scheduling intervals, separating memory accesses from CPU computation
  – Load phase: load data/instructions, main memory -> local memory
  – Execution phase: execute from local memory
  – Unload phase: write back modified data, local memory -> main memory
[Figure: load phase, execution phase, unload phase]
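The three phases can be mimicked in plain Python to show the separation between memory traffic and computation. Dictionaries stand in for main memory and local memory, and the function and argument names are illustrative; a real PREM task would use a scratchpad or locked cache and DMA.

```python
from typing import Callable, Dict, List


def run_prem_interval(main_memory: Dict[str, int],
                      inputs: List[str],
                      outputs: List[str],
                      compute: Callable[[Dict[str, int]], None]) -> List[str]:
    """Run one scheduling interval as load -> execute -> unload."""
    trace = []

    # Load phase: copy everything the interval needs into local memory.
    local = {name: main_memory[name] for name in inputs}
    trace.append("load")

    # Execution phase: the computation only touches local memory.
    compute(local)
    trace.append("execute")

    # Unload phase: write modified data back to main memory.
    for name in outputs:
        main_memory[name] = local[name]
    trace.append("unload")
    return trace


def double_x(local: Dict[str, int]) -> None:
    local["y"] = local["x"] * 2


memory = {"x": 21, "y": 0}
print(run_prem_interval(memory, inputs=["x"], outputs=["y"], compute=double_x))
print(memory["y"])
```

Because the execution phase never accesses main memory, only the load and unload phases need to be arbitrated between cores.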

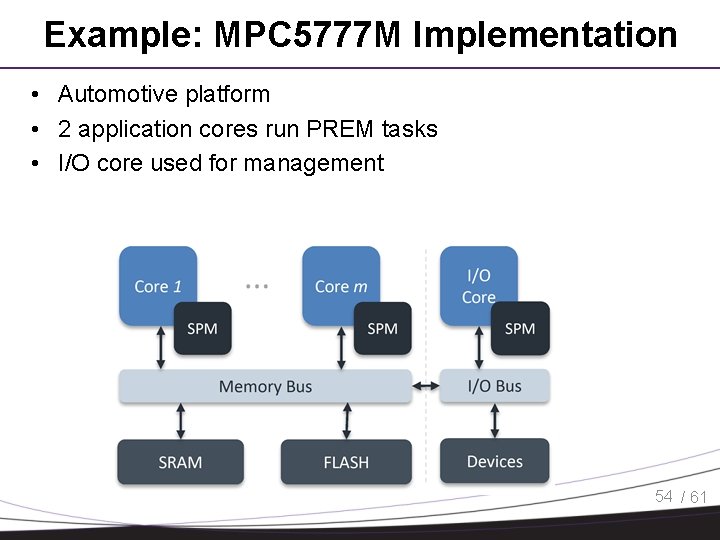

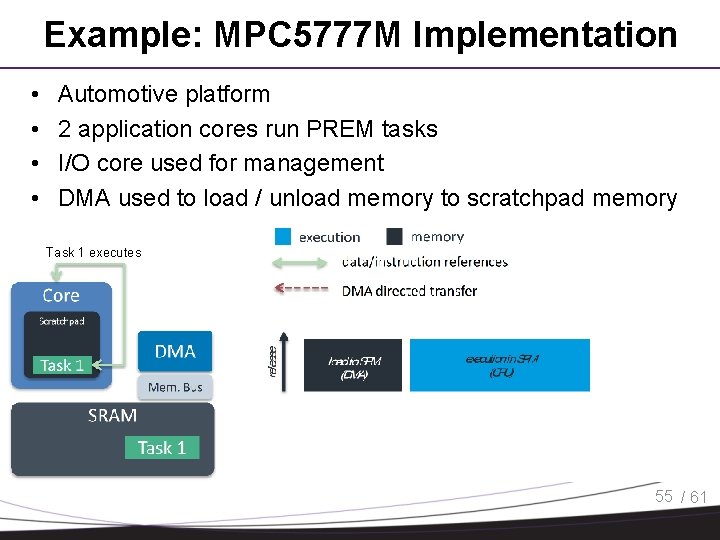


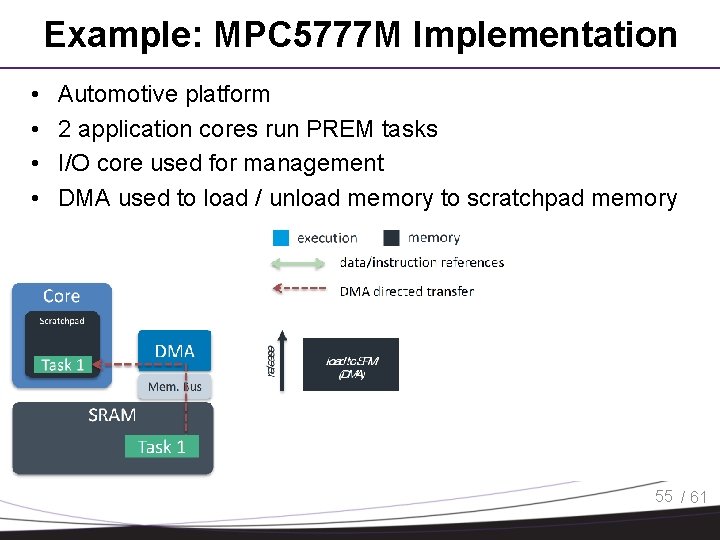

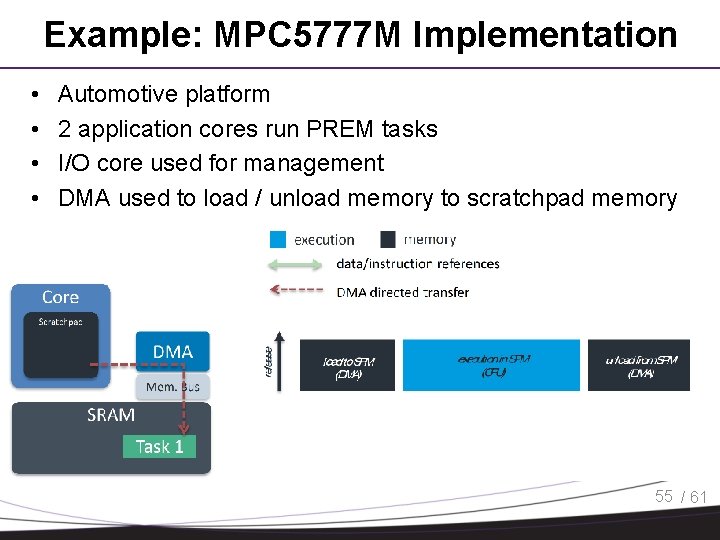

Example: MPC5777M Implementation
• Automotive platform
• 2 application cores run PREM tasks
• I/O core used for management
• DMA used to load / unload memory to scratchpad memory



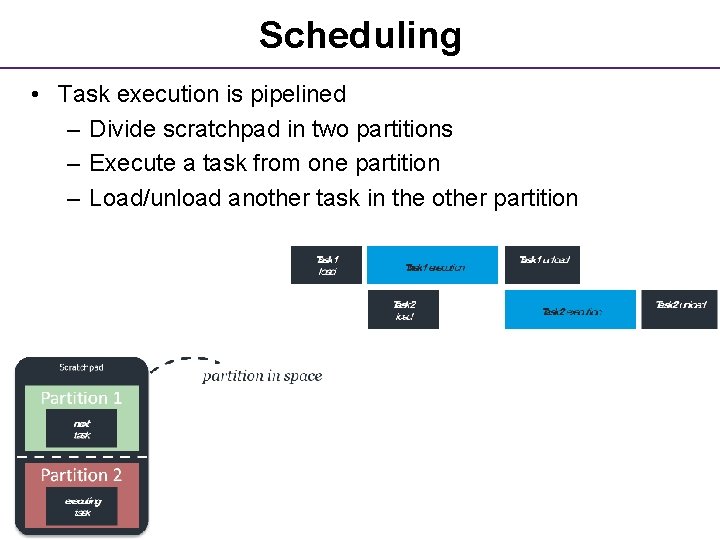

Scheduling
• Task execution is pipelined
  – Divide the scratchpad into two partitions
  – Execute a task from one partition
  – Load/unload another task in the other partition

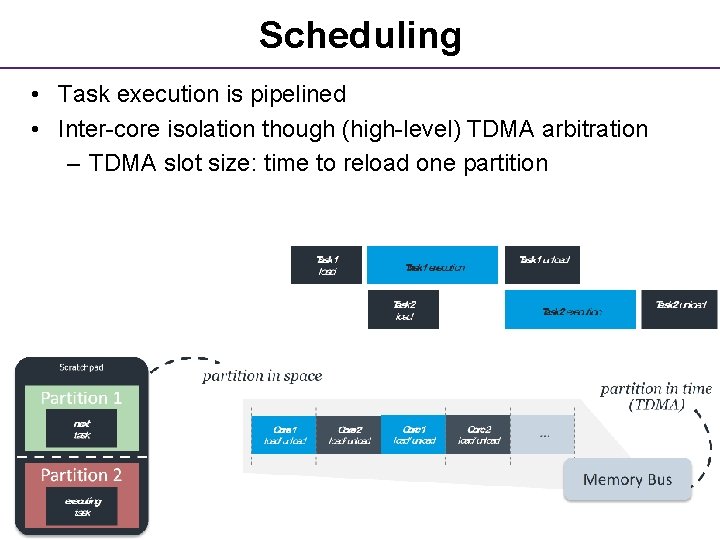

Scheduling
• Task execution is pipelined
• Inter-core isolation through (high-level) TDMA arbitration
  – TDMA slot size: time to reload one partition
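A sketch of the TDMA arithmetic, assuming equal-length slots assigned to cores in a fixed cyclic order. The slot length and the number of cores in the example are hypothetical, and time is expressed in abstract integer units.

```python
def slot_owner(t: int, slot_len: int, n_cores: int) -> int:
    """Core that owns the DMA / memory slot containing time t."""
    return (t // slot_len) % n_cores


def next_slot_start(t: int, core: int, slot_len: int, n_cores: int) -> int:
    """Earliest start time >= t of a slot that belongs to `core`."""
    frame = slot_len * n_cores      # length of one full TDMA round
    offset = core * slot_len        # start of the core's slot within a round
    rounds = -(-(t - offset) // frame)  # ceiling division
    return offset + max(0, rounds) * frame


# Two application cores, slot length 10 time units.
print(slot_owner(25, slot_len=10, n_cores=2))
print(next_slot_start(11, core=1, slot_len=10, n_cores=2))
```

Since a slot is long enough to reload one scratchpad partition, a core that just missed its slot waits at most one full round before its next load can start, independently of what the other cores do.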

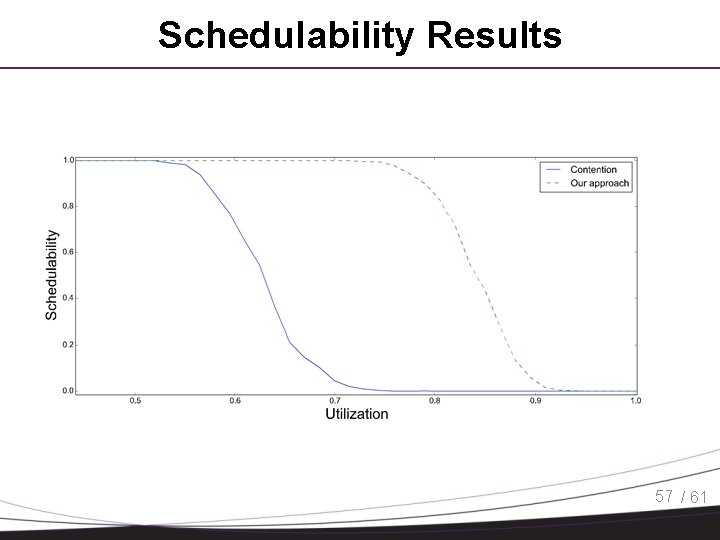

Schedulability Results 57 / 61

PREM Compilation
• Three main problems.
1. Task memory footprint too large to fit in SPM/cache
  – Break the task into multiple chunks
2. Data usage depends on inputs
  – Decide what to load based on control flow at run-time
3. Number of cores > number of tasks/threads
  – Pipeline memory phases with execution of the same task
• Solution: compiler-driven predictable data prefetching

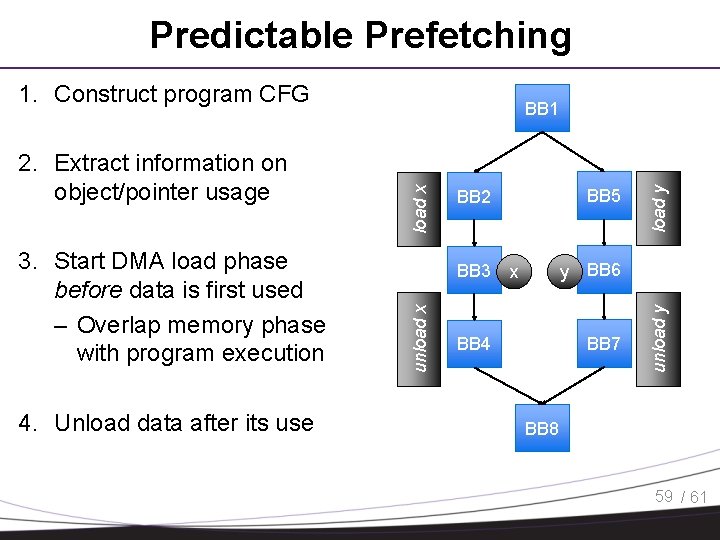

Predictable Prefetching
1. Construct program CFG
2. Extract information on object/pointer usage
3. Start DMA load phase before data is first used
  – Overlap memory phase with program execution
4. Unload data after its use
[Figure: CFG with basic blocks BB1 to BB8, annotated with load x, load y, unload x, and unload y around the blocks that use x and y]

Predictable Prefetching
• Preliminary implementation based on LLVM
• FIFO queue allows issuing multiple DMA operations
• Need either special hardware or a manager core for DMA management / pointer resolution
• Allocation algorithm optimized for worst-case execution time
• TDMA arbitration between cores for DMA operations

Conclusions
• Resource contention is a major problem for the deployment of real-time multicore systems
• Timing isolation is important for independent system verification / certification
• COTS arbiters are not designed for worst-case latency bounds…
• … but we can use a mix of OS / Compiler / HW solutions

Questions?



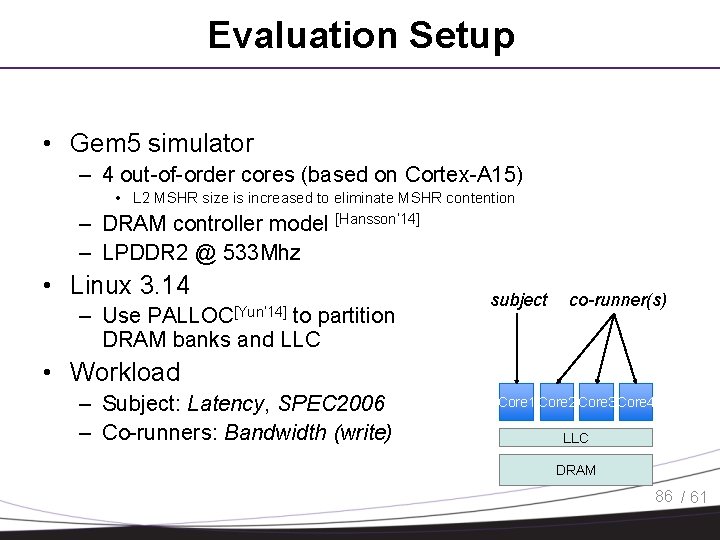

Evaluation Setup
• gem5 simulator
  – 4 out-of-order cores (based on Cortex-A15)
    • L2 MSHR size is increased to eliminate MSHR contention
  – DRAM controller model [Hansson’14]
  – LPDDR2 @ 533 MHz
• Linux 3.14
  – Use PALLOC [Yun’14] to partition DRAM banks and LLC
• Workload
  – Subject: Latency, SPEC2006
  – Co-runners: Bandwidth (write)
[Figure: subject and co-runner(s) on Cores 1 to 4, sharing the LLC and DRAM]

![DRAM FR-FCFS Scheduling](http://slidetodoc.com/presentation_image/5e571cf7cf260039bf72c2bdd1242d67/image-87.jpg)

DRAM FR-FCFS Scheduling [Rixner’00]
• Maximize memory throughput
• Bank scheduler (one per bank, Bank 1 to Bank N)
  – Open first
• Channel scheduler
  – Ready requests between banks are served FCFS
• Unfairness:
  – Open vs close (prevented with PALLOC)
  – FCFS instead of RR (not preventable; out-of-order cores get more bandwidth)
[Rixner’00] S. Rixner, W. J. Dally, U. J. Kapasi, P. Mattson, and J. Owens. Memory access scheduling. ACM SIGARCH Computer Architecture News, 2000.

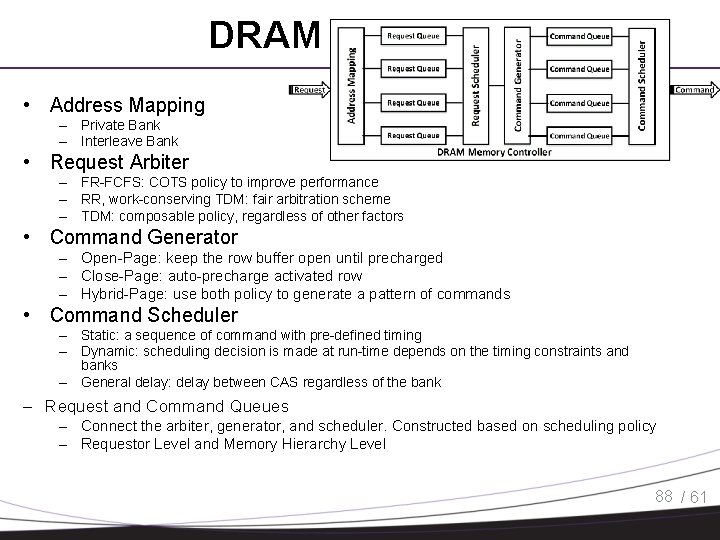

DRAM Controller
• Address Mapping
  – Private Bank
  – Interleave Bank
• Request Arbiter
  – FR-FCFS: COTS policy to improve performance
  – RR, work-conserving TDM: fair arbitration schemes
  – TDM: composable policy, regardless of other factors
• Command Generator
  – Open-Page: keep the row buffer open until precharged
  – Close-Page: auto-precharge the activated row
  – Hybrid-Page: use both policies to generate a pattern of commands
• Command Scheduler
  – Static: a sequence of commands with pre-defined timing
  – Dynamic: the scheduling decision is made at run-time, depending on the timing constraints and banks
  – General delay: delay between CAS commands regardless of the bank
• Request and Command Queues
  – Connect the arbiter, generator, and scheduler; constructed based on the scheduling policy
  – Requestor level and memory hierarchy level

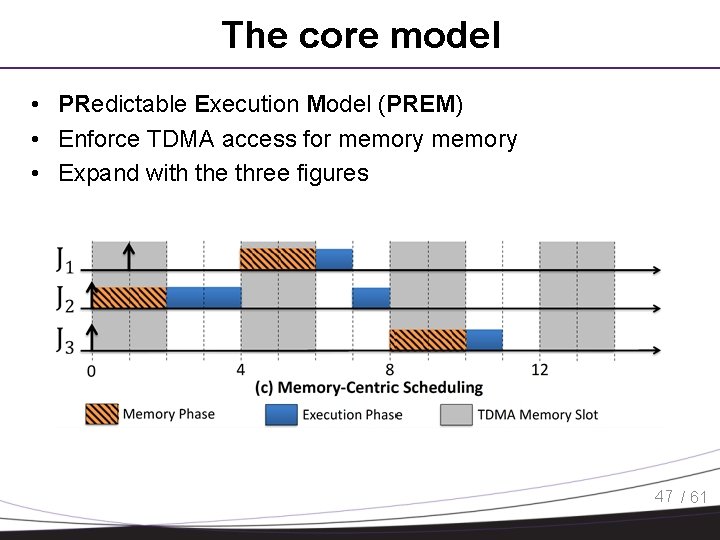

The core model
• PRedictable Execution Model (PREM)
• Enforce TDMA access for memory
• Expand with the three figures

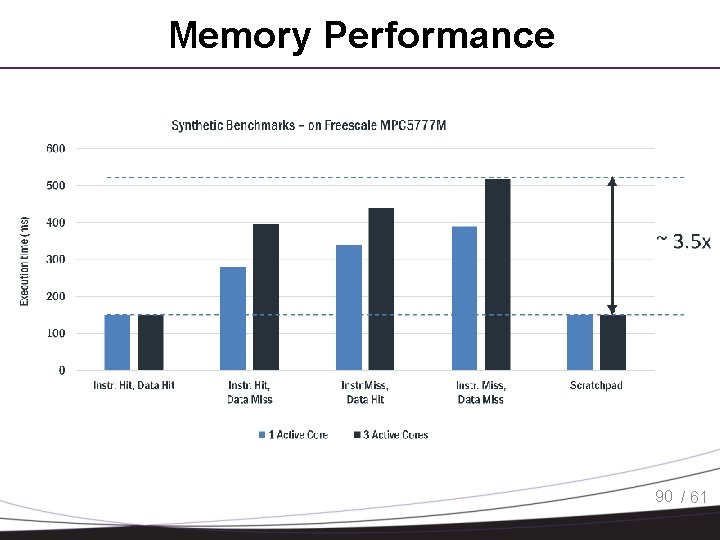

Memory Performance 90 / 61
