
Pattern Matching Algorithms: An Overview Shoshana Neuburger The Graduate Center, CUNY 9/15/2009

Overview • Pattern Matching in 1D • Dictionary Matching • Pattern Matching in 2D • Indexing – Suffix Tree – Suffix Array • Research Directions 2 of 59

What is Pattern Matching? Given a pattern and text, find the pattern in the text. 3 of 59

What is Pattern Matching? • Σ is an alphabet. • Input: Text $T = t_1 t_2 \dots t_n$, Pattern $P = p_1 p_2 \dots p_m$ • Output: All i such that $t_i t_{i+1} \dots t_{i+m-1} = p_1 p_2 \dots p_m$ 4 of 59

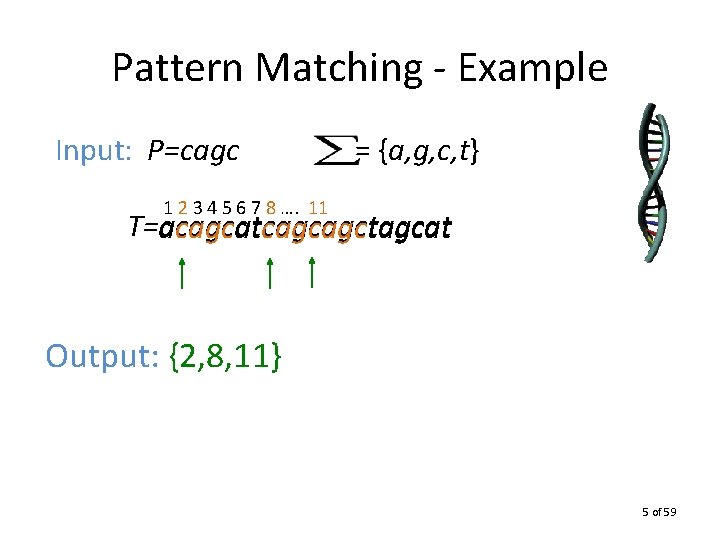

Pattern Matching - Example Input: Σ = {a, g, c, t}, P = cagc, T = acagcatcagcagctagcat (positions numbered from 1) Output: {2, 8, 11} 5 of 59

Pattern Matching Algorithms • Naïve Approach – Compare pattern to text at each location. – O(mn) time. • More efficient algorithms utilize information from previous comparisons. 6 of 59
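The naïve approach can be sketched in a few lines of Python. This is only an illustration: the function name `naive_search` is hypothetical, and positions are reported 1-based to match the example slide.

```python
def naive_search(text, pattern):
    """Return every 1-based position where pattern occurs in text (O(mn) time)."""
    n, m = len(text), len(pattern)
    hits = []
    for i in range(n - m + 1):
        # Compare the pattern against the text window that starts at i.
        if text[i:i + m] == pattern:
            hits.append(i + 1)  # convert to the slides' 1-based numbering
    return hits


print(naive_search("acagcatcagcagctagcat", "cagc"))  # [2, 8, 11]
```

Each window is compared from scratch, which is exactly the information the linear-time methods avoid throwing away.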

Pattern Matching Algorithms • Linear time methods have two stages 1. preprocess pattern in O(m) time and space. 2. scan text in O(n) time and space. • Knuth, Morris, Pratt (1977): automata method • Boyer, Moore (1977): can be sublinear 7 of 59

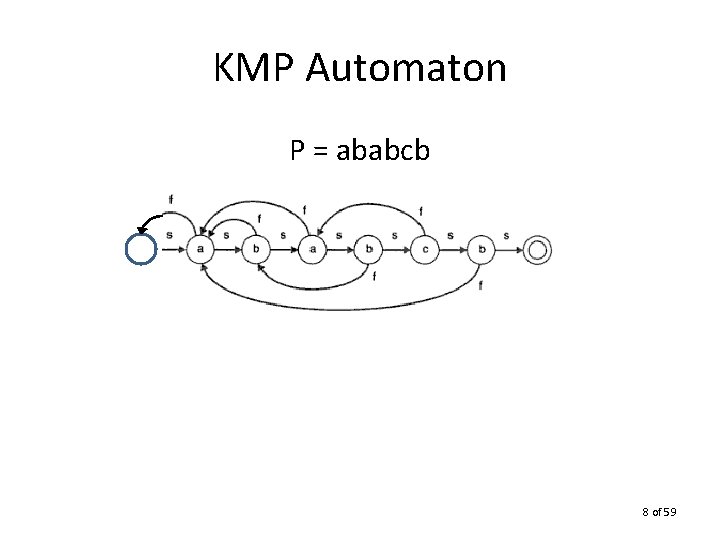

KMP Automaton P = ababcb 8 of 59
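A minimal sketch of the KMP idea, assuming the automaton is represented by its failure table rather than by explicit states. The names `failure_table` and `kmp_search` are illustrative, not taken from the slides.

```python
def failure_table(p):
    """fail[q] = length of the longest proper border of p[:q + 1]."""
    fail = [0] * len(p)
    k = 0
    for q in range(1, len(p)):
        while k > 0 and p[q] != p[k]:
            k = fail[k - 1]          # fall back to the next shorter border
        if p[q] == p[k]:
            k += 1
        fail[q] = k
    return fail


def kmp_search(text, p):
    """Return all 1-based occurrences of p in text, scanning the text once."""
    if not p:
        return []
    fail = failure_table(p)
    hits, k = [], 0                   # k = number of pattern characters matched
    for i, c in enumerate(text):
        while k > 0 and c != p[k]:
            k = fail[k - 1]
        if c == p[k]:
            k += 1
        if k == len(p):
            hits.append(i - len(p) + 2)   # 1-based start of the match
            k = fail[k - 1]               # keep going to find overlapping matches
    return hits


print(failure_table("ababcb"))                      # [0, 0, 1, 2, 0, 0]
print(kmp_search("acagcatcagcagctagcat", "cagc"))   # [2, 8, 11]
```

The text pointer never moves backwards; only the pattern state `k` is reset via the failure table.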

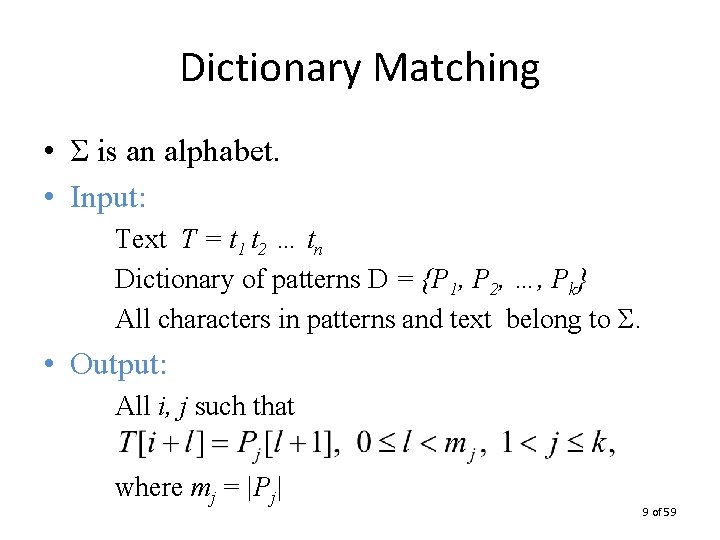

Dictionary Matching • Σ is an alphabet. • Input: Text $T = t_1 t_2 \dots t_n$, Dictionary of patterns $D = \{P_1, P_2, \dots, P_k\}$. All characters in patterns and text belong to Σ. • Output: All pairs (i, j) such that $t_i t_{i+1} \dots t_{i+m_j-1} = P_j$, where $m_j = |P_j|$ 9 of 59

Dictionary Matching Algorithms • Naïve Approach: – Use an efficient pattern matching algorithm for each pattern in the dictionary. – O(kn) time. More efficient algorithms process text once. 10 of 59

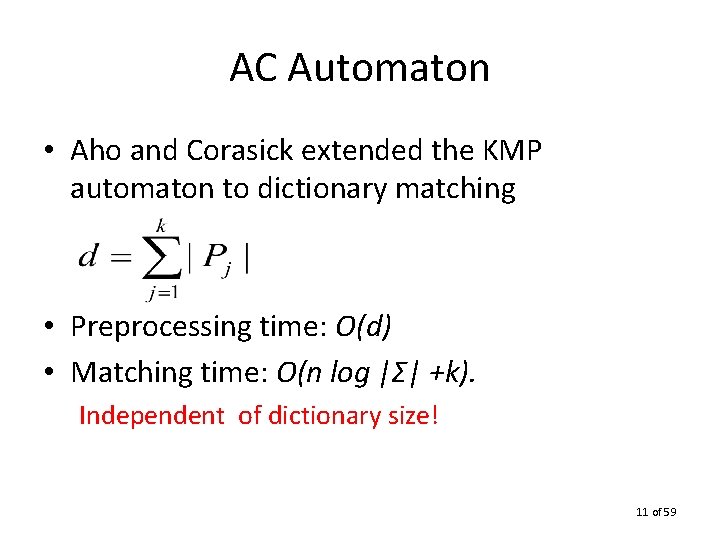

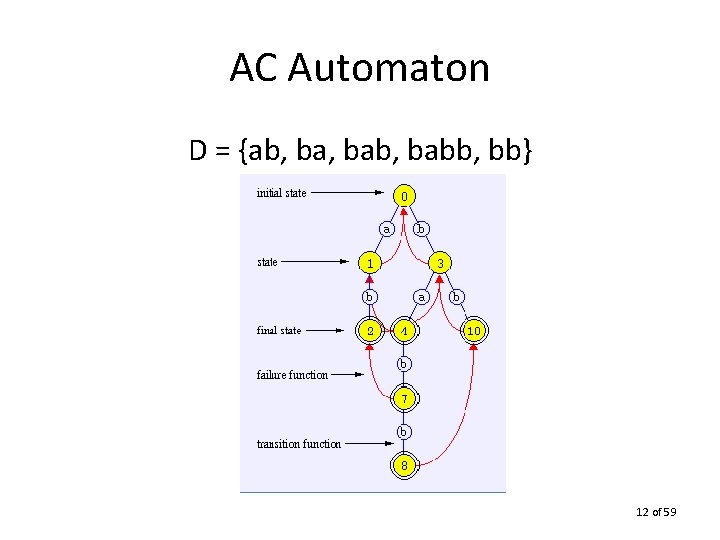

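AC Automaton • Aho and Corasick extended the KMP automaton to dictionary matching. • Preprocessing time: O(d), where d is the total length of the dictionary patterns. • Matching time: O(n log |Σ| + k), where k here counts the reported occurrences. Independent of dictionary size! 11 of 59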

AC Automaton D = {ab, bab, babb, bb} 12 of 59
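A compact sketch of an Aho-Corasick automaton for a dictionary such as the one above. It is an assumption-laden illustration: transitions are stored in Python dicts (hashing instead of the log |Σ| branching discussed on the slides), and the names `build_ac` and `ac_search` are hypothetical.

```python
from collections import deque


def build_ac(patterns):
    """Build goto, failure and output tables for the dictionary."""
    goto, fail, out = [{}], [0], [[]]
    for idx, pat in enumerate(patterns):
        s = 0
        for ch in pat:
            if ch not in goto[s]:
                goto.append({})
                fail.append(0)
                out.append([])
                goto[s][ch] = len(goto) - 1
            s = goto[s][ch]
        out[s].append(idx)               # pattern idx ends in state s
    queue = deque(goto[0].values())      # depth-1 states fail to the root
    while queue:
        s = queue.popleft()
        for ch, t in goto[s].items():
            queue.append(t)
            f = fail[s]
            while f and ch not in goto[f]:
                f = fail[f]
            fail[t] = goto[f].get(ch, 0)
            out[t] = out[t] + out[fail[t]]   # inherit matches ending at the suffix
    return goto, fail, out


def ac_search(text, patterns):
    """Return sorted (i, j) pairs: pattern j (1-based) occurs at text position i (1-based)."""
    goto, fail, out = build_ac(patterns)
    hits, s = [], 0
    for i, ch in enumerate(text):
        while s and ch not in goto[s]:
            s = fail[s]
        s = goto[s].get(ch, 0)
        for idx in out[s]:
            hits.append((i - len(patterns[idx]) + 2, idx + 1))
    return sorted(hits)


print(ac_search("babb", ["ab", "bab", "babb", "bb"]))
# [(1, 2), (1, 3), (2, 1), (3, 4)]
```

The text is scanned once regardless of how many patterns the dictionary holds.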

Dictionary Matching • KMP automaton does not depend on alphabet size while AC automaton does – branching. • Dori, Landau (2006): AC automaton is built in linear time for integer alphabets. • Breslauer (1995) eliminates log factor in text scanning stage. 13 of 59

Periodicity A crucial task in preprocessing stage of most pattern matching algorithms: computing periodicity. Many forms – failure table – witnesses 14 of 59

Periodicity • A periodic pattern can be superimposed on itself without mismatch before its midpoint. • Why is periodicity useful? Can quickly eliminate many candidates for pattern occurrence. 15 of 59

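Periodicity Definition: • S is periodic if $S = u' u^k$ with $k \ge 2$, where $u'$ is a proper suffix of $u$ (equivalently, $S = v^k v'$ with $v'$ a proper prefix of $v$). • S is periodic if its longest prefix that is also a suffix (its longest border) has length at least |S|/2. • The shortest period corresponds to the longest border. 16 of 59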

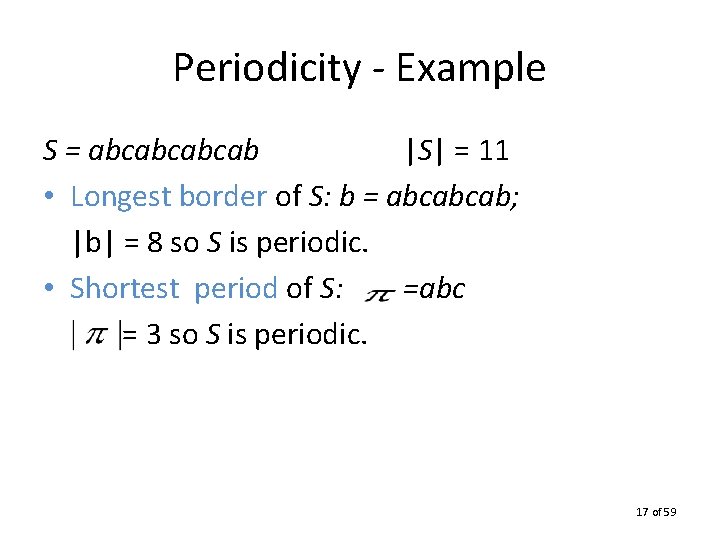

Periodicity - Example S = abcabcabcab, |S| = 11 • Longest border of S: b = abcabcab; |b| = 8 ≥ 11/2, so S is periodic. • Shortest period of S: abc, of length 3 = 11 − 8 ≤ 11/2, so S is periodic. 17 of 59
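The relation between borders and periods can be checked with a short sketch. It assumes the border is computed with a KMP-style failure table; the function names are illustrative.

```python
def longest_border(s):
    """Length of the longest proper prefix of s that is also a suffix of s."""
    fail = [0] * len(s)
    k = 0
    for q in range(1, len(s)):
        while k > 0 and s[q] != s[k]:
            k = fail[k - 1]
        if s[q] == s[k]:
            k += 1
        fail[q] = k
    return fail[-1] if s else 0


def shortest_period(s):
    """The shortest period length is |S| minus the longest border length."""
    return len(s) - longest_border(s)


def is_periodic(s):
    """Periodic in the slides' sense: longest border is at least half of |S|."""
    return 2 * longest_border(s) >= len(s)


s = "abcabcabcab"
print(longest_border(s), shortest_period(s), is_periodic(s))  # 8 3 True
```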

Witnesses Popular paradigm in pattern matching: 1. find consistent candidates 2. verify candidates consistent candidates → verification is linear 18 of 59

Witnesses • Vishkin introduced the duel to choose between two candidates by checking the value of a witness. • Alphabet-independent method. 19 of 59

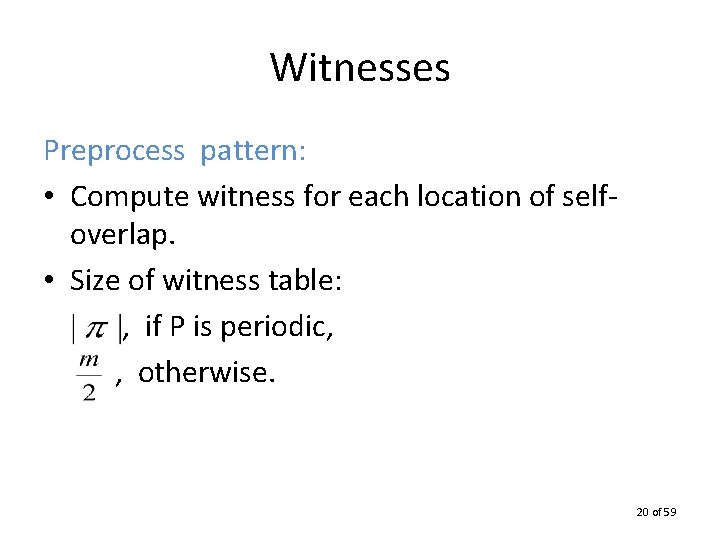

Witnesses Preprocess pattern: • Compute witness for each location of self-overlap. • Size of witness table: …, if P is periodic; …, otherwise. 20 of 59


Witnesses • WIT[i] = any k such that P[k] ≠ P[k-i+1]. • WIT[i] = 0, if there is no such k. k is a witness against i being a period of P. Example: Pattern Witness Table 21 of 59
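A direct, quadratic-time sketch of the witness definition above. It is not the efficient preprocessing the algorithms actually use, and it fills an entry for every starting position i rather than only the self-overlap locations; `witness_table` is a hypothetical name.

```python
def witness_table(p):
    """wit[i] = some k with P[k] != P[k - i + 1] (1-based), or 0 if none exists.

    Index 0 is unused so that the list can be read with the slides' 1-based i.
    """
    m = len(p)
    wit = [0] * (m + 1)
    for i in range(2, m + 1):            # i = 1 is the trivial self-alignment
        for k in range(i, m + 1):
            # 1-based P[k] is p[k - 1]; 1-based P[k - i + 1] is p[k - i].
            if p[k - 1] != p[k - i]:
                wit[i] = k
                break
    return wit


print(witness_table("abab"))  # [0, 0, 2, 0, 4]
```

For P = abab, a second copy starting at position 3 overlaps the first without mismatch, so WIT[3] = 0; positions 2 and 4 each have a witness.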

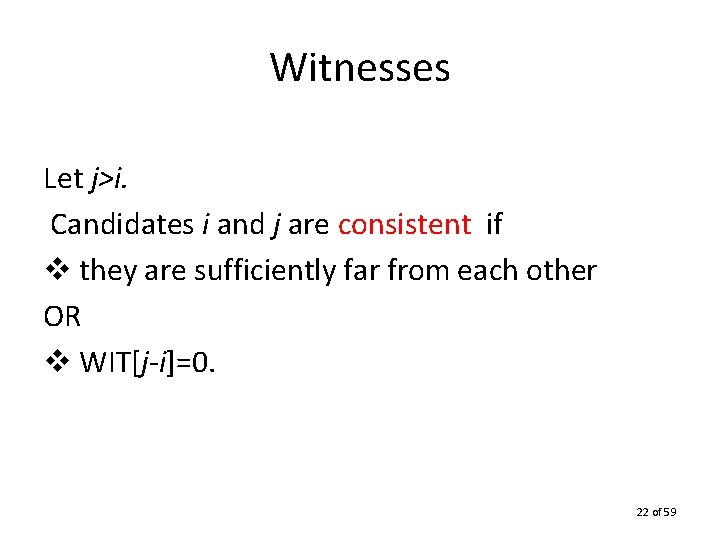

Witnesses Let j > i. Candidates i and j are consistent if • they are sufficiently far from each other, OR • WIT[j-i] = 0. 22 of 59

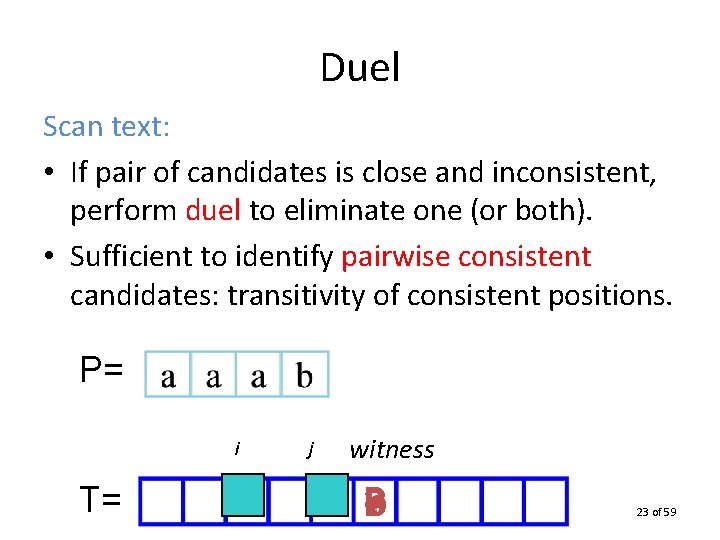

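Duel Scan text: • If pair of candidates is close and inconsistent, perform duel to eliminate one (or both). • Sufficient to identify pairwise consistent candidates: transitivity of consistent positions. (Figure: copies of P aligned at text positions i and j; the text character at the witness position, marked "?", is compared with the two differing pattern characters a and b.) 23 of 59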
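A duel can be sketched as follows. Assumptions: the witness table is indexed by starting position, as in the definition WIT[i] with P[k] ≠ P[k-i+1], so for text candidates i < j the relevant entry is the one for position j - i + 1 (the same table read by offset j - i). The helper names are hypothetical, and the table is built naively.

```python
def witness_table(p):
    """wit[i] = some 1-based k with P[k] != P[k - i + 1], or 0; index 0 unused."""
    m = len(p)
    wit = [0] * (m + 1)
    for i in range(2, m + 1):
        for k in range(i, m + 1):
            if p[k - 1] != p[k - i]:
                wit[i] = k
                break
    return wit


def duel(text, p, wit, i, j):
    """Return the surviving candidates among 1-based text positions i < j."""
    m = len(p)
    if j - i >= m:
        return {i, j}                    # too far apart to overlap
    w = wit[j - i + 1]
    if w == 0:
        return {i, j}                    # consistent: the overlap agrees
    c = text[i + w - 2]                  # text character under P[w] of the copy at i
    survivors = set()
    if c == p[w - 1]:                    # what the copy at i expects here
        survivors.add(i)
    if c == p[w - (j - i) - 1]:          # what the copy at j expects here
        survivors.add(j)
    return survivors                     # the two expectations differ, so at most one survives


p = "abab"
wit = witness_table(p)
print(duel("aabab", p, wit, 1, 2))   # {2}
```

One text character decides the duel, which is why the method does not depend on the alphabet size. Survivors are only candidates and still need verification.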

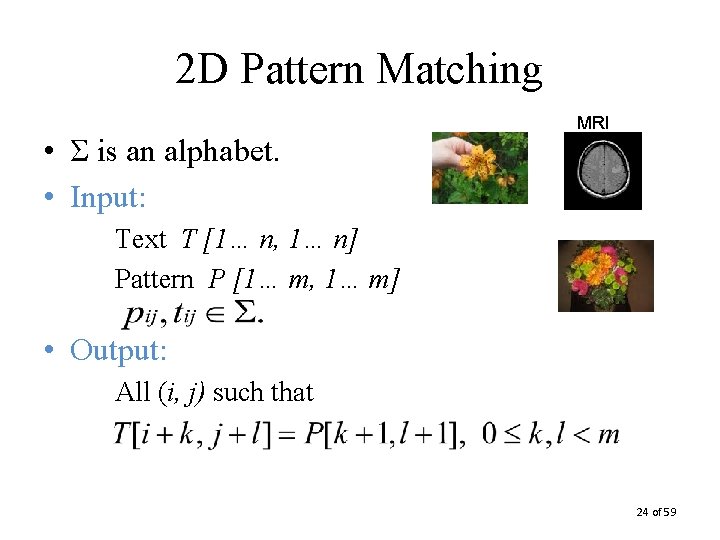

2D Pattern Matching • Σ is an alphabet. • Input: Text T[1 … n, 1 … n], Pattern P[1 … m, 1 … m] • Output: All (i, j) such that $T[i+k, j+l] = P[k+1, l+1]$ for all $0 \le k, l < m$ 24 of 59

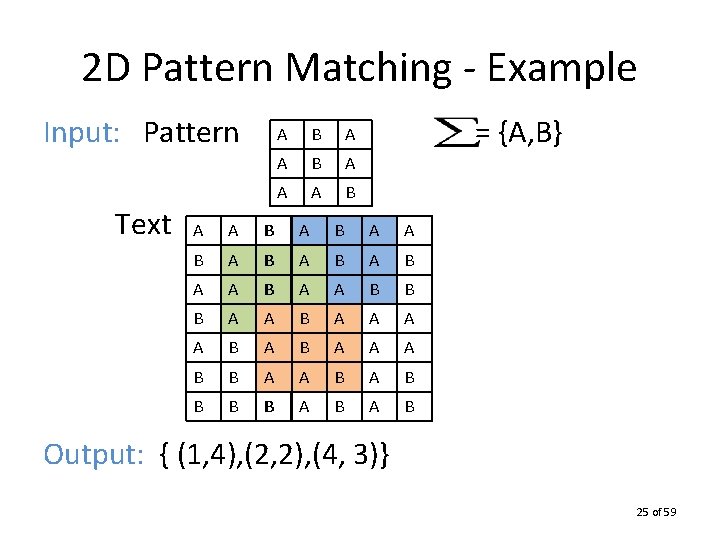

2D Pattern Matching - Example Input: Σ = {A, B} Pattern / Text: A B A A A B A A B A B A B A A B B B A A A A B B A A B B B B A B Output: {(1, 4), (2, 2), (4, 3)} 25 of 59

Bird / Baker • First linear-time 2D pattern matching algorithm. • View each pattern row as a metacharacter to linearize problem. • Convert 2D pattern matching to 1D. 26 of 59

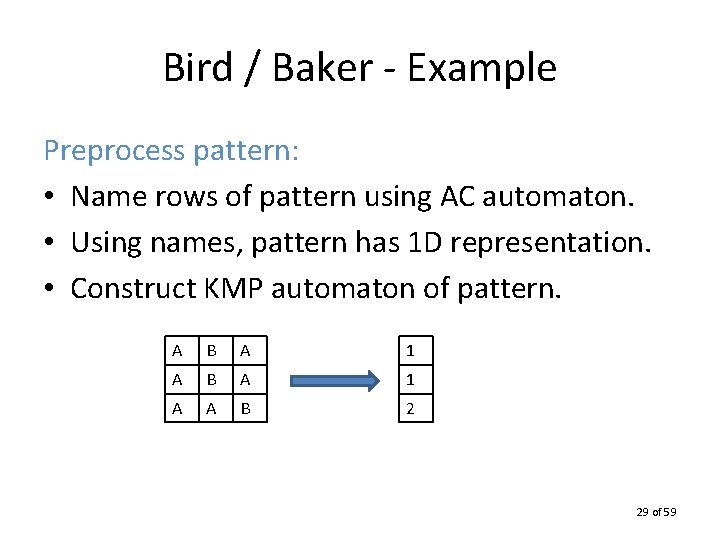

Bird / Baker Preprocess pattern: • Name rows of pattern using AC automaton. • Using names, pattern has 1D representation. • Construct KMP automaton of pattern. Identical rows receive identical names. 27 of 59

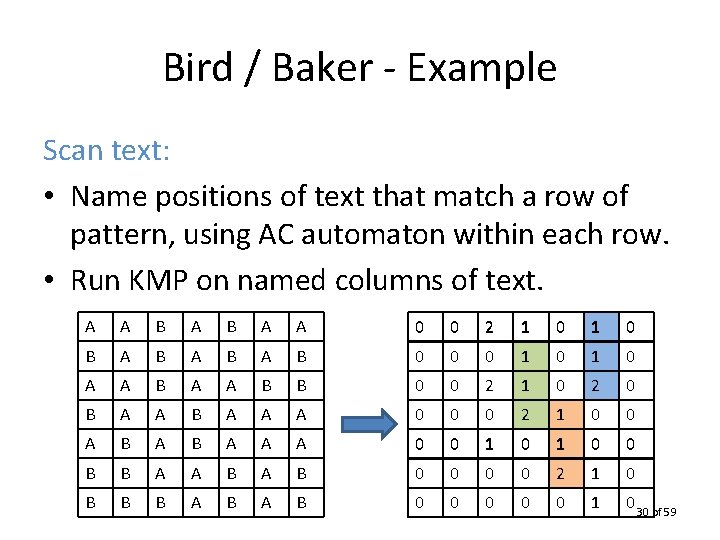

Bird / Baker Scan text: • Name positions of text that match a row of pattern, using AC automaton within each row. • Run KMP on named columns of text. Since the 1D names are unique, only one name can be given to a text location. 28 of 59
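The two stages can be sketched as below. This is a simplification, not the Bird / Baker algorithm as published: rows are named with a dictionary lookup on each text window instead of an AC automaton, so the running time is not linear. Names are attached to the window's start column rather than its end, and `bird_baker_sketch` is a hypothetical name.

```python
def failure_table(p):
    """KMP failure table; works for any sequence, here a list of row names."""
    fail = [0] * len(p)
    k = 0
    for q in range(1, len(p)):
        while k > 0 and p[q] != p[k]:
            k = fail[k - 1]
        if p[q] == p[k]:
            k += 1
        fail[q] = k
    return fail


def bird_baker_sketch(text, pattern):
    """Return sorted 1-based (row, column) top-left corners of pattern in text."""
    rows, cols = len(pattern), len(pattern[0])
    names = {}
    for row in pattern:                       # identical rows get identical names
        names.setdefault(row, len(names) + 1)
    p1d = [names[row] for row in pattern]     # 1D representation of the pattern
    width = len(text[0]) - cols + 1
    # named[r][c] = name of the pattern row found at text row r, column c (0 if none)
    named = [[names.get(line[c:c + cols], 0) for c in range(width)] for line in text]
    fail = failure_table(p1d)
    hits = []
    for c in range(width):                    # run KMP down each column of names
        k = 0
        for r in range(len(text)):
            x = named[r][c]
            while k > 0 and x != p1d[k]:
                k = fail[k - 1]
            if x == p1d[k]:
                k += 1
            if k == rows:
                hits.append((r - rows + 2, c + 1))
                k = fail[k - 1]
    return sorted(hits)


text = ["abab", "baba", "abab", "baba"]
print(bird_baker_sketch(text, ["ab", "ba"]))
# [(1, 1), (1, 3), (2, 2), (3, 1), (3, 3)]
```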

Bird / Baker - Example Preprocess pattern: • Name rows of pattern using AC automaton. • Using names, pattern has 1D representation. • Construct KMP automaton of pattern. Row names: A B A → 1, A A B → 2 29 of 59

Bird / Baker - Example Scan text: • Name positions of text that match a row of pattern, using AC automaton within each row. • Run KMP on named columns of text. A A B A A 0 0 2 1 0 B A B A B 0 0 0 1 0 A A B B 0 0 2 1 0 2 0 B A A A 0 0 0 2 1 0 0 A B A A A 0 0 1 0 0 B B A A B 0 0 2 1 0 B B B A B 0 0 0 1 0 30 of 59

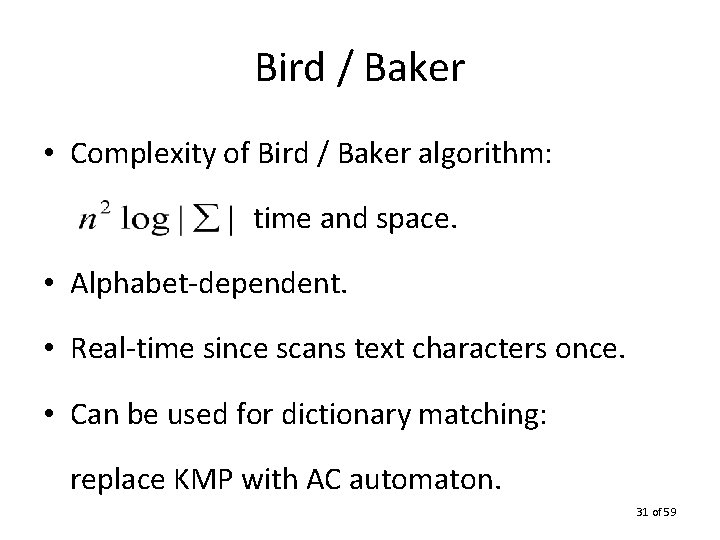

Bird / Baker • Complexity of Bird / Baker algorithm: linear time and space. • Alphabet-dependent. • Real-time, since it scans each text character once. • Can be used for dictionary matching: replace KMP with AC automaton. 31 of 59

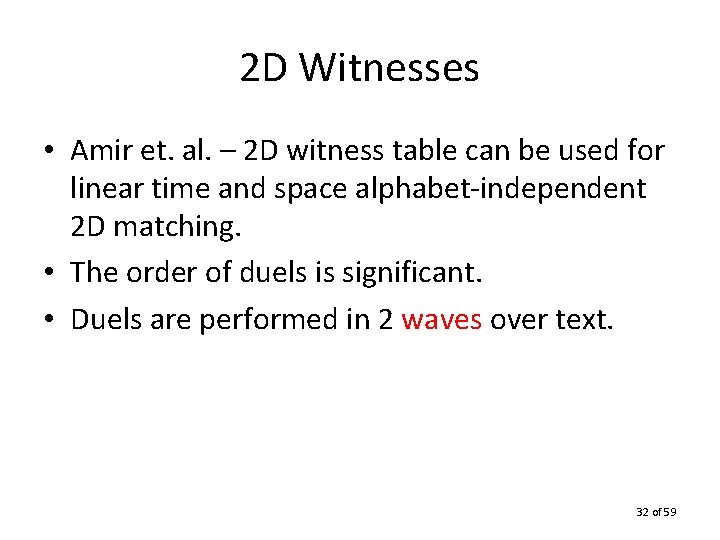

2D Witnesses • Amir et al. – 2D witness table can be used for linear time and space alphabet-independent 2D matching. • The order of duels is significant. • Duels are performed in 2 waves over text. 32 of 59

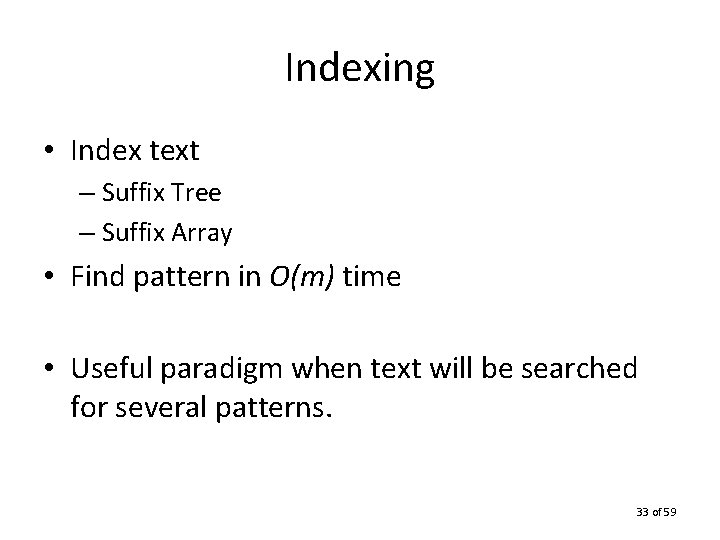

Indexing • Index text – Suffix Tree – Suffix Array • Find pattern in O(m) time • Useful paradigm when text will be searched for several patterns. 33 of 59

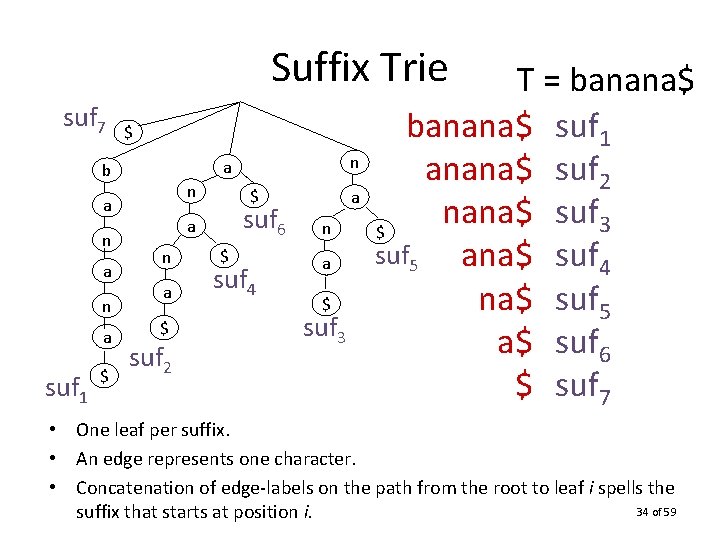

Suffix Trie T = banana$ Suffixes: suf 1 = banana$, suf 2 = anana$, suf 3 = nana$, suf 4 = ana$, suf 5 = na$, suf 6 = a$, suf 7 = $ • One leaf per suffix. • An edge represents one character. • Concatenation of edge-labels on the path from the root to leaf i spells the suffix that starts at position i. 34 of 59
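A small sketch of a suffix trie built from nested dicts, one character per edge. It takes quadratic space and is meant only to illustrate the structure; the `LEAF` marker key and the function names are assumptions of this sketch.

```python
LEAF = None  # special key that stores the suffix number at a leaf


def build_suffix_trie(t):
    """Insert every suffix of t (which should end in a unique '$') into a trie."""
    root = {}
    for i in range(len(t)):
        node = root
        for ch in t[i:]:
            node = node.setdefault(ch, {})   # one edge per character
        node[LEAF] = i + 1                   # leaf i spells the suffix starting at i
    return root


def occurrences(root, pattern):
    """Walk down the pattern, then collect all leaves below that node."""
    node = root
    for ch in pattern:
        if ch not in node:
            return []
        node = node[ch]
    found, stack = [], [node]
    while stack:
        cur = stack.pop()
        for key, child in cur.items():
            if key is LEAF:
                found.append(child)
            else:
                stack.append(child)
    return sorted(found)


trie = build_suffix_trie("banana$")
print(occurrences(trie, "ana"))  # [2, 4]
print(occurrences(trie, "na"))   # [3, 5]
```

Every occurrence of a pattern is a prefix of some suffix, so the leaves below the pattern's node are exactly its starting positions.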


Suffix Tree T = banana$ Suffixes: suf 1 = banana$, suf 2 = anana$, suf 3 = nana$, suf 4 = ana$, suf 5 = na$, suf 6 = a$, suf 7 = $ Edge labels are index pairs into T: [1, 7] = banana$, [2, 2] = a, [3, 4] = na, [5, 7] = na$, [7, 7] = $ • Compact representation of trie. • A node with one child is merged with its parent. • Up to n internal nodes. • O(n) space by using indices to label edges. 35 of 59

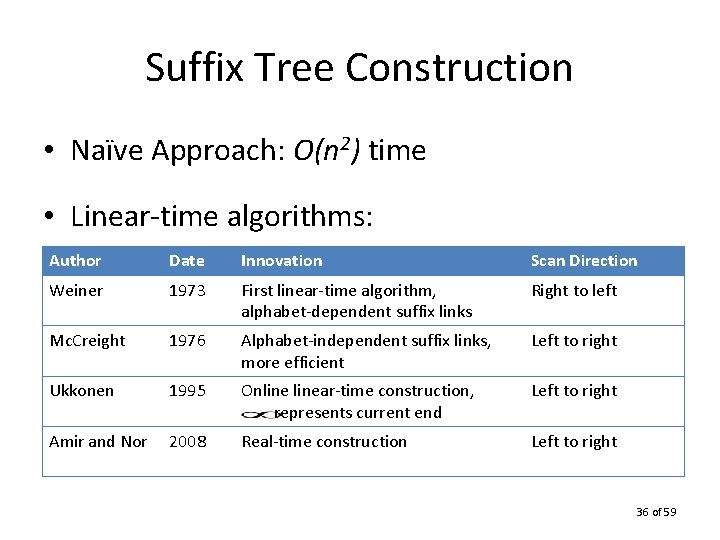

Suffix Tree Construction • Naïve Approach: $O(n^2)$ time • Linear-time algorithms:

| Author | Date | Innovation | Scan Direction |
| --- | --- | --- | --- |
| Weiner | 1973 | First linear-time algorithm, alphabet-dependent suffix links | Right to left |
| McCreight | 1976 | Alphabet-independent suffix links, more efficient | Left to right |
| Ukkonen | 1995 | Online linear-time construction, represents current end | Left to right |
| Amir and Nor | 2008 | Real-time construction | Left to right |

36 of 59

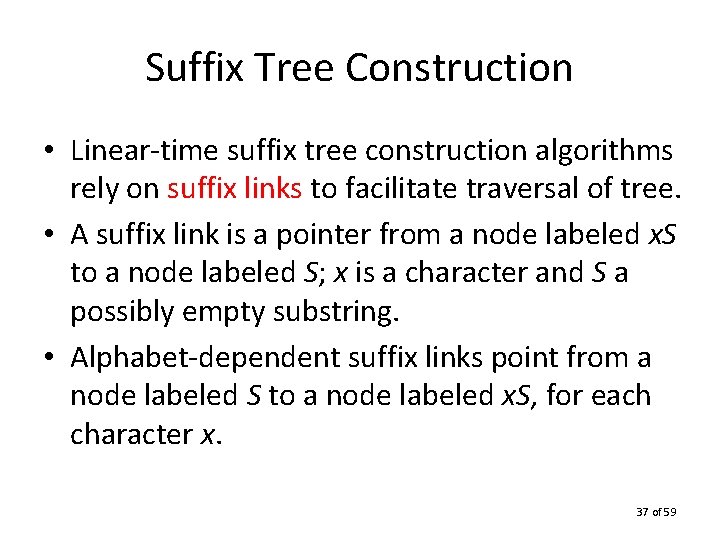

Suffix Tree Construction • Linear-time suffix tree construction algorithms rely on suffix links to facilitate traversal of tree. • A suffix link is a pointer from a node labeled xS to a node labeled S; x is a character and S a possibly empty substring. • Alphabet-dependent suffix links point from a node labeled S to a node labeled xS, for each character x. 37 of 59

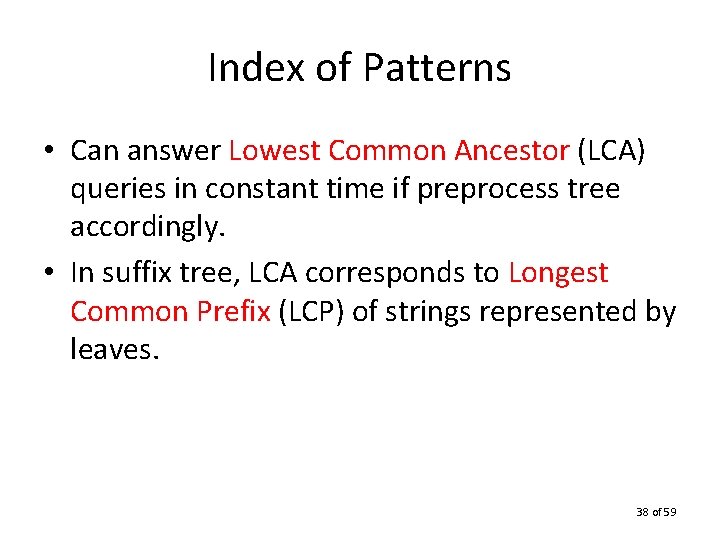

Index of Patterns • Lowest Common Ancestor (LCA) queries can be answered in constant time if the tree is preprocessed accordingly. • In a suffix tree, the LCA of two leaves corresponds to the Longest Common Prefix (LCP) of the strings they represent. 38 of 59

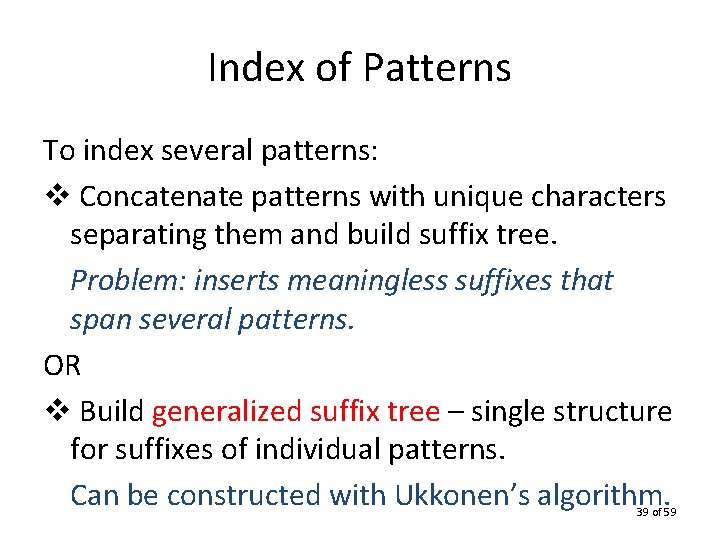

Index of Patterns To index several patterns: • Concatenate patterns with unique characters separating them and build suffix tree. Problem: inserts meaningless suffixes that span several patterns. OR • Build generalized suffix tree – single structure for suffixes of individual patterns. Can be constructed with Ukkonen’s algorithm. 39 of 59

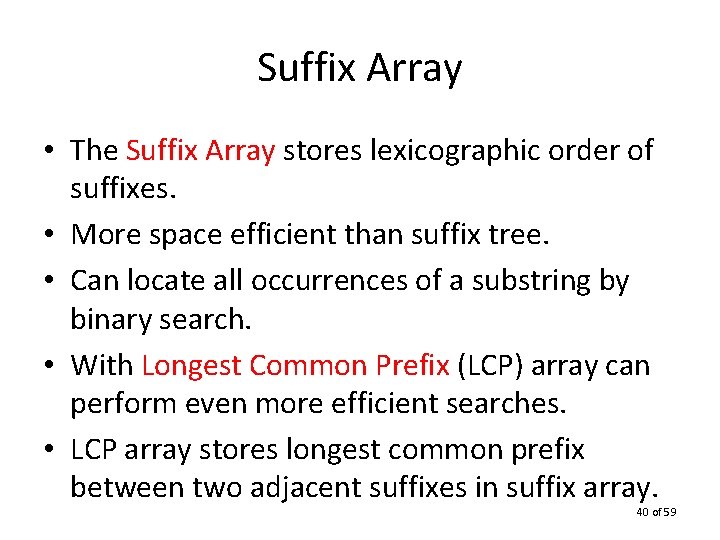

Suffix Array • The Suffix Array stores lexicographic order of suffixes. • More space efficient than suffix tree. • Can locate all occurrences of a substring by binary search. • With Longest Common Prefix (LCP) array can perform even more efficient searches. • LCP array stores longest common prefix between two adjacent suffixes in suffix array. 40 of 59

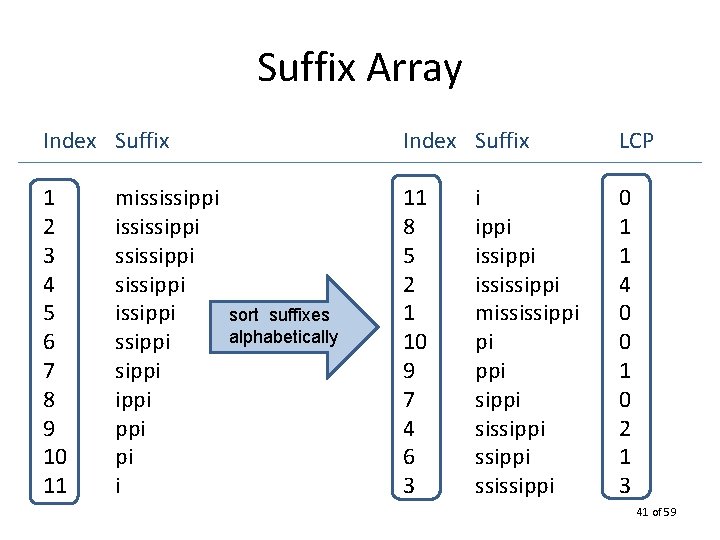

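Suffix Array: sort the suffixes of T = mississippi alphabetically.

| Index | Suffix | LCP | Suffix text |
| --- | --- | --- | --- |
| 1 | 11 | 0 | i |
| 2 | 8 | 1 | ippi |
| 3 | 5 | 1 | issippi |
| 4 | 2 | 4 | ississippi |
| 5 | 1 | 0 | mississippi |
| 6 | 10 | 0 | pi |
| 7 | 9 | 1 | ppi |
| 8 | 7 | 0 | sippi |
| 9 | 4 | 2 | sissippi |
| 10 | 6 | 1 | ssippi |
| 11 | 3 | 3 | ssissippi |

41 of 59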

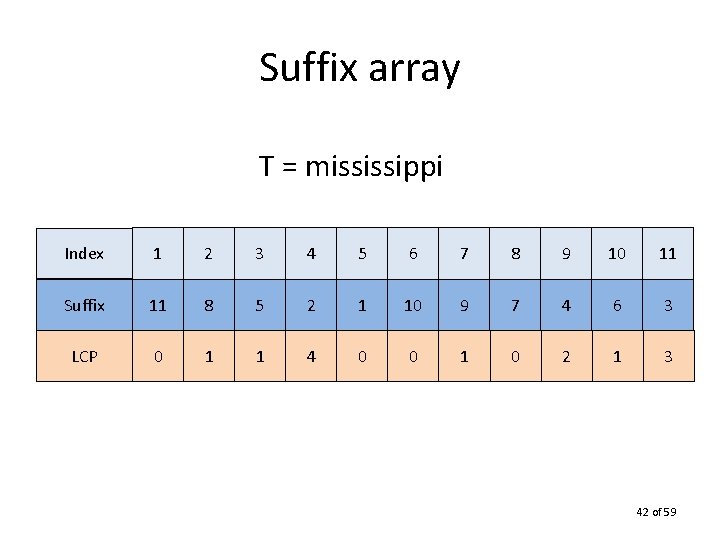

Suffix array T = mississippi Index 1 2 3 4 5 6 7 8 9 10 11 Suffix 11 8 5 2 1 10 9 7 4 6 3 LCP 0 1 1 4 0 0 1 0 2 1 3 42 of 59
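The table for T = mississippi can be reproduced with a naive sketch. It sorts the suffixes directly and compares neighbours character by character, so it shows the definitions rather than any of the efficient construction algorithms; the function names are illustrative.

```python
def suffix_array(t):
    """1-based starting positions of the suffixes of t in lexicographic order."""
    return sorted(range(1, len(t) + 1), key=lambda i: t[i - 1:])


def lcp_array(t, sa):
    """lcp[r] = longest common prefix of the suffixes at ranks r - 1 and r."""
    lcp = [0] * len(sa)
    for r in range(1, len(sa)):
        a, b = t[sa[r - 1] - 1:], t[sa[r] - 1:]
        k = 0
        while k < min(len(a), len(b)) and a[k] == b[k]:
            k += 1
        lcp[r] = k
    return lcp


t = "mississippi"
sa = suffix_array(t)
print(sa)                # [11, 8, 5, 2, 1, 10, 9, 7, 4, 6, 3]
print(lcp_array(t, sa))  # [0, 1, 1, 4, 0, 0, 1, 0, 2, 1, 3]
```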

Search in Suffix Array • O(m log n) time. • Idea: two binary searches – search for leftmost position of X – search for rightmost position of X • In between are all suffixes that begin with X. • With LCP array: O(m + log n) search. 43 of 59
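The two binary searches can be sketched as follows. This is the plain O(m log n) version without the LCP speed-up; the suffix array is built by naive sorting only to keep the example self-contained, and `sa_search` is a hypothetical name.

```python
def suffix_array(t):
    """1-based starting positions of the suffixes of t in lexicographic order."""
    return sorted(range(1, len(t) + 1), key=lambda i: t[i - 1:])


def sa_search(t, sa, x):
    """Return the sorted 1-based positions where x occurs in t."""
    m = len(x)

    def prefix(r):
        # First m characters of the suffix at rank r.
        return t[sa[r] - 1:sa[r] - 1 + m]

    lo, hi = 0, len(sa)
    while lo < hi:                      # leftmost rank whose prefix is >= x
        mid = (lo + hi) // 2
        if prefix(mid) < x:
            lo = mid + 1
        else:
            hi = mid
    left = lo
    hi = len(sa)
    while lo < hi:                      # leftmost rank whose prefix is > x
        mid = (lo + hi) // 2
        if prefix(mid) <= x:
            lo = mid + 1
        else:
            hi = mid
    return sorted(sa[left:lo])          # ranks in between all begin with x


t = "mississippi"
sa = suffix_array(t)
print(sa_search(t, sa, "ssi"))  # [3, 6]
print(sa_search(t, sa, "i"))    # [2, 5, 8, 11]
```

Each comparison costs up to m character checks and there are O(log n) of them, which gives the O(m log n) bound.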

Suffix Array Construction • Naïve Approach: $O(n^2)$ time • Indirect Construction: – preorder traversal of suffix tree – LCA queries for LCP. Problem: does not achieve better space efficiency. 44 of 59

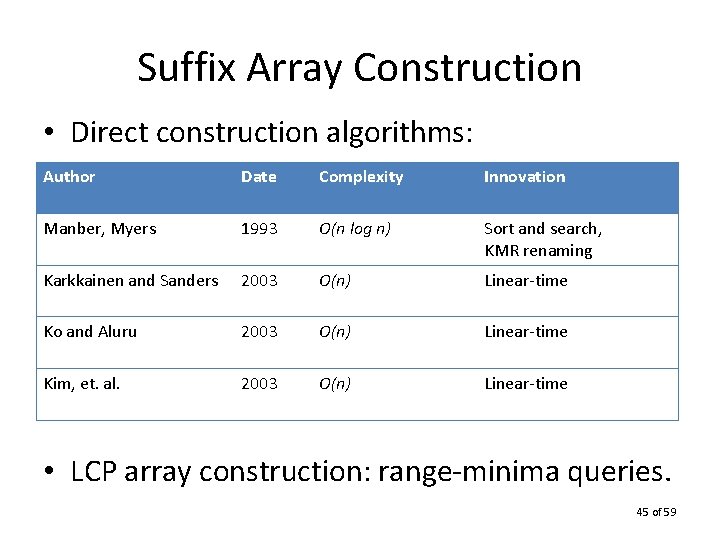

Suffix Array Construction • Direct construction algorithms:

| Author | Date | Complexity | Innovation |
| --- | --- | --- | --- |
| Manber, Myers | 1993 | O(n log n) | Sort and search, KMR renaming |
| Kärkkäinen and Sanders | 2003 | O(n) | Linear-time |
| Ko and Aluru | 2003 | O(n) | Linear-time |
| Kim et al. | 2003 | O(n) | Linear-time |

• LCP array construction: range-minima queries. 45 of 59

Compressed Indices Suffix Tree: O(n) words = O(n log n) bits Compressed suffix tree • Grossi and Vitter (2000) – O(n) space. • Sadakane (2007) – O(n log |Σ|) space. – Supports all suffix tree operations efficiently. – Slowdown of only polylog(n). 46 of 59

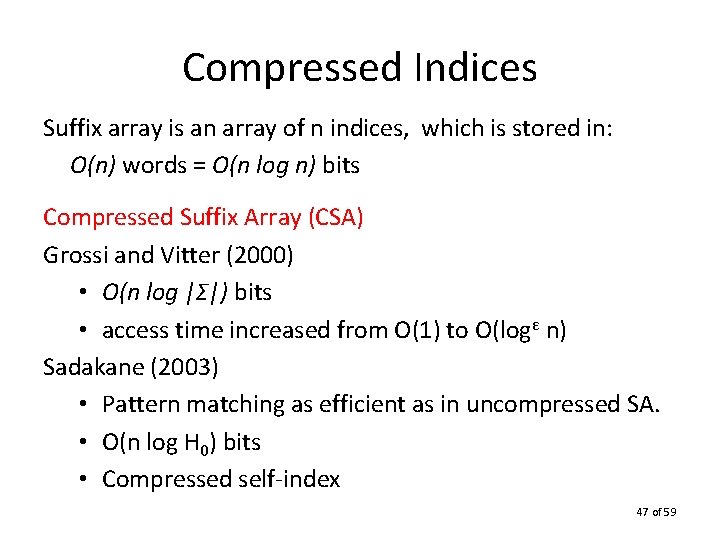

Compressed Indices Suffix array is an array of n indices, which is stored in: O(n) words = O(n log n) bits Compressed Suffix Array (CSA) Grossi and Vitter (2000) • O(n log |Σ|) bits • access time increased from O(1) to $O(\log^{\varepsilon} n)$ Sadakane (2003) • Pattern matching as efficient as in uncompressed SA. • $O(n \log H_0)$ bits • Compressed self-index 47 of 59

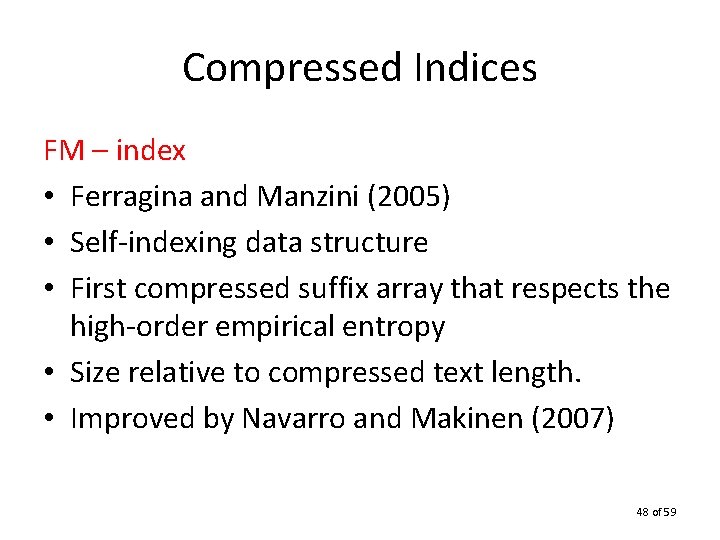

Compressed Indices FM-index • Ferragina and Manzini (2005) • Self-indexing data structure • First compressed suffix array that respects the high-order empirical entropy • Size relative to compressed text length. • Improved by Navarro and Mäkinen (2007) 48 of 59

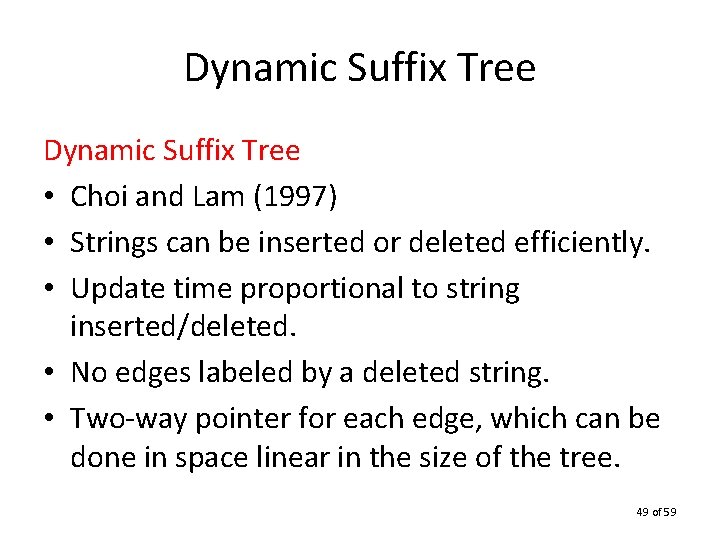

Dynamic Suffix Tree • Choi and Lam (1997) • Strings can be inserted or deleted efficiently. • Update time proportional to string inserted/deleted. • No edges labeled by a deleted string. • Two-way pointer for each edge, which can be done in space linear in the size of the tree. 49 of 59

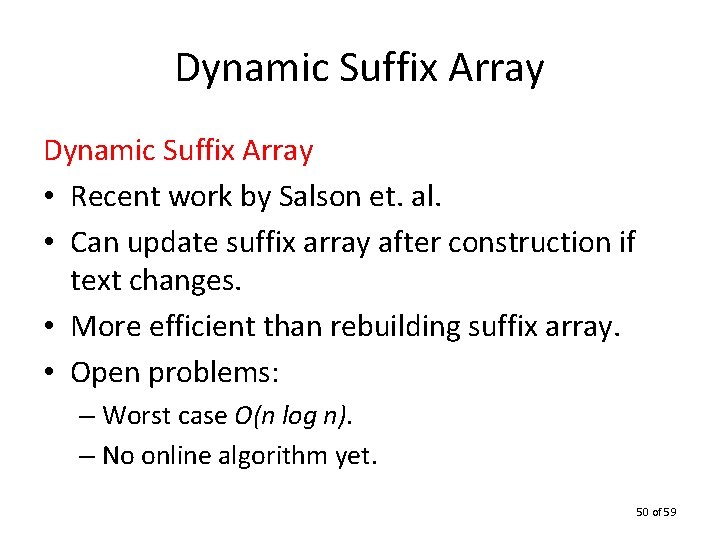

Dynamic Suffix Array • Recent work by Salson et al. • Can update suffix array after construction if text changes. • More efficient than rebuilding suffix array. • Open problems: – Worst case O(n log n). – No online algorithm yet. 50 of 59

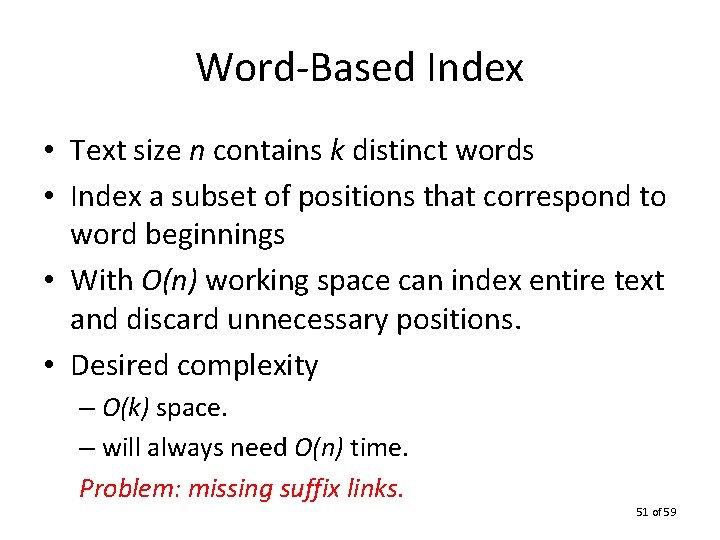

Word-Based Index • Text of size n contains k distinct words. • Index a subset of positions that correspond to word beginnings. • With O(n) working space, can index entire text and discard unnecessary positions. • Desired complexity – O(k) space. – will always need O(n) time. Problem: missing suffix links. 51 of 59

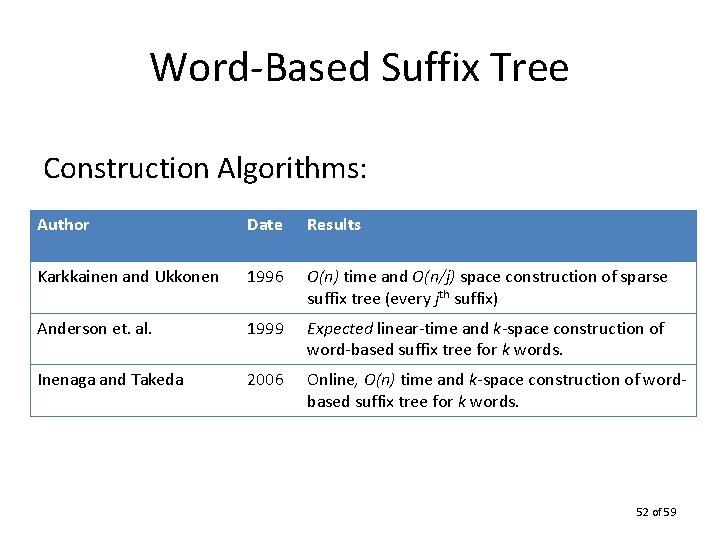

Word-Based Suffix Tree Construction Algorithms:

| Author | Date | Results |
| --- | --- | --- |
| Kärkkäinen and Ukkonen | 1996 | O(n) time and O(n/j) space construction of sparse suffix tree (every jth suffix) |
| Andersson et al. | 1999 | Expected linear-time and k-space construction of word-based suffix tree for k words |
| Inenaga and Takeda | 2006 | Online, O(n) time and k-space construction of word-based suffix tree for k words |

52 of 59

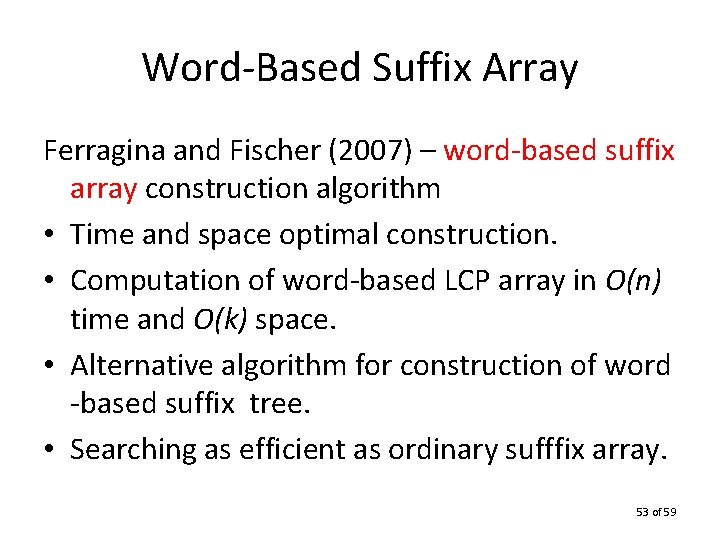

Word-Based Suffix Array Ferragina and Fischer (2007) – word-based suffix array construction algorithm • Time and space optimal construction. • Computation of word-based LCP array in O(n) time and O(k) space. • Alternative algorithm for construction of word-based suffix tree. • Searching as efficient as in an ordinary suffix array. 53 of 59

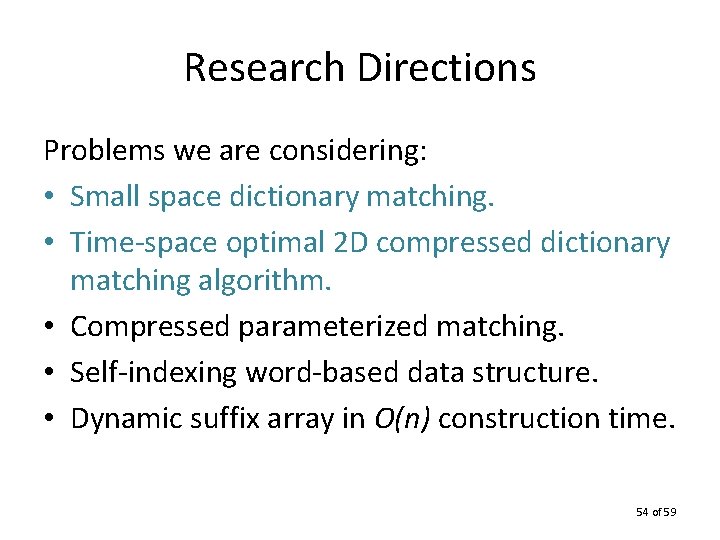

Research Directions Problems we are considering: • Small space dictionary matching. • Time-space optimal 2D compressed dictionary matching algorithm. • Compressed parameterized matching. • Self-indexing word-based data structure. • Dynamic suffix array in O(n) construction time. 54 of 59

Small-Space • Applications arise in which storage space is limited. • Many innovative algorithms exist for single pattern matching using small additional space: – Galil and Seiferas (1981) developed the first time-space optimal algorithm for pattern matching. – Rytter (2003) adapted the KMP algorithm to work in O(1) additional space, O(n) time. 55 of 59

Research Directions • Fast dictionary matching algorithms exist for 1D and 2D. They achieve expected sublinear time. • There is no deterministic dictionary matching method that works in linear time and small space. • We believe that recent results in compressed self-indexing will facilitate the development of a solution to the small space dictionary matching problem. 56 of 59

Compressed Matching • Data is compressed to save space. • Lossless compression schemes can be reversed without loss of data. • Pattern matching cannot be done in compressed text – pattern can span a compressed character. • LZ 78: data can be uncompressed in time and space proportional to the uncompressed data. 57 of 59

Research Directions • Amir et al. (2003) devised an algorithm for 2D LZ78 compressed matching. • They define strongly in-place as a criterion for the algorithm: that the extra space is proportional to the optimal compression of all strings of the given length. • We are seeking a time-space optimal solution to 2D compressed dictionary matching. 58 of 59

Thank you! 59 of 59
