UNSCENTED KALMAN FILTER FOR ROBOT MAPPING Kalman Filterbased

UNSCENTED KALMAN FILTER FOR ROBOT MAPPING

![Kalman Filter-based System • [Arras et al. 98]: • Laser range-finder and vision • Kalman Filter-based System • [Arras et al. 98]: • Laser range-finder and vision •](http://slidetodoc.com/presentation_image/1041390bbab08e8302a3fb72ae1caedb/image-2.jpg)

Kalman Filter-based System • [Arras et al. 98]: • Laser range-finder and vision • High precision (<1 cm accuracy) [Courtesy of Kai Arras] 2

KF, EKF and UKF

Taylor Approximation (EKF)

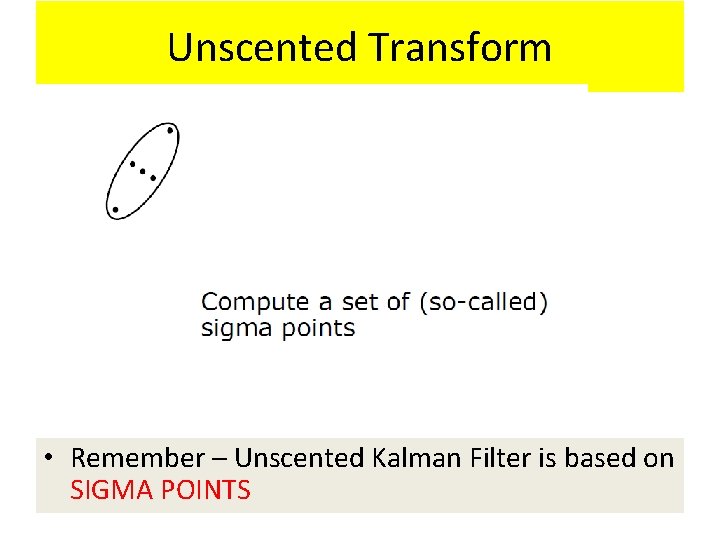

Unscented Transform

Unscented Transform • Remember – Unscented Kalman Filter is based on SIGMA POINTS

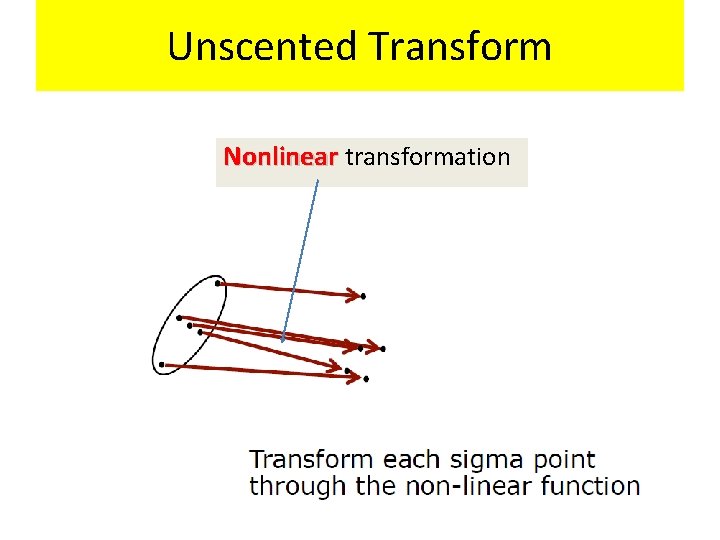

Unscented Transform Nonlinear transformation

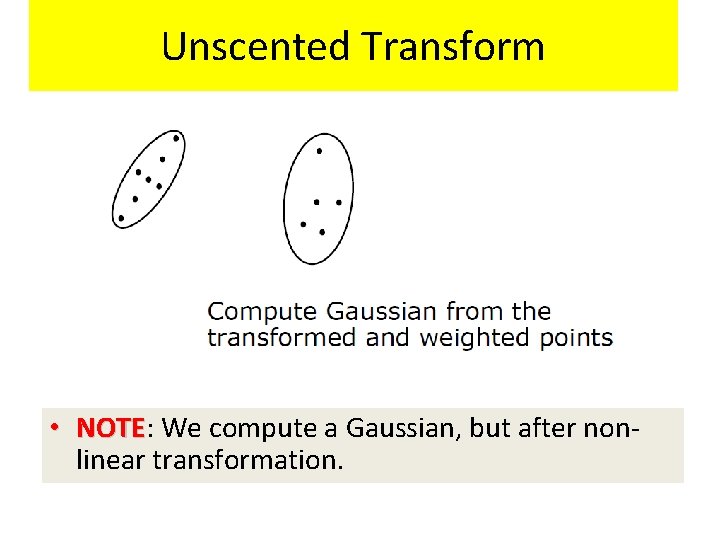

Unscented Transform • NOTE: NOTE We compute a Gaussian, but after nonlinear transformation.

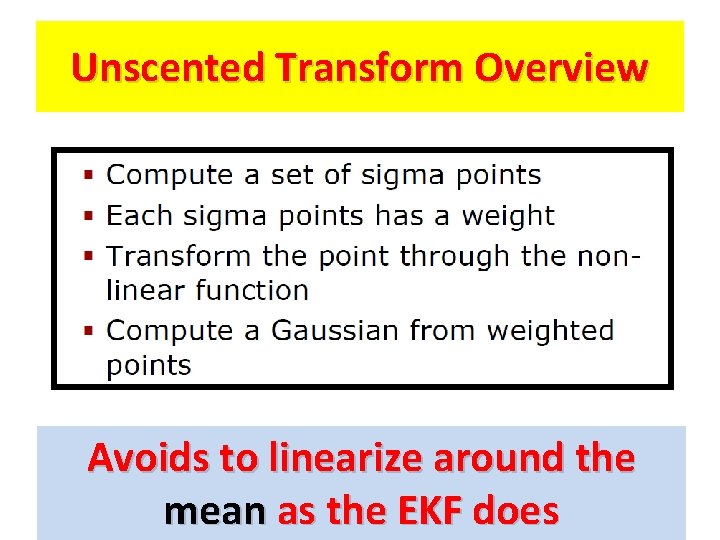

Unscented Transform Overview Avoids to linearize around the mean as the EKF does

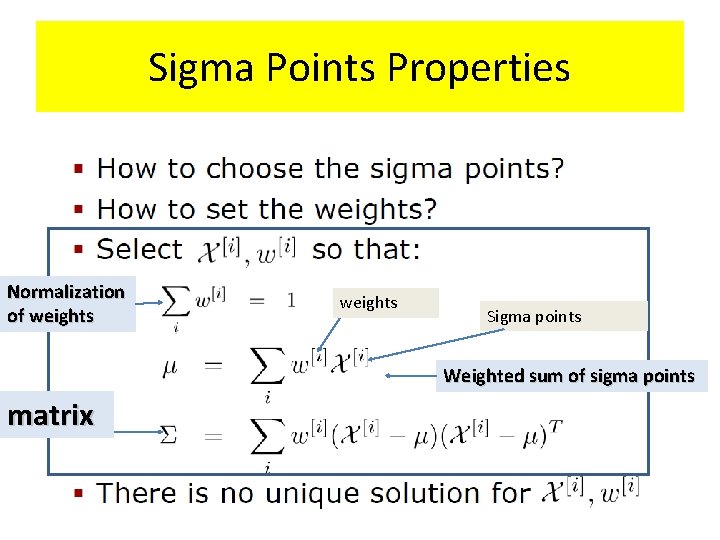

Sigma Points

Sigma Points

Sigma Points Properties Normalization of weights Sigma points Weighted sum of sigma points matrix

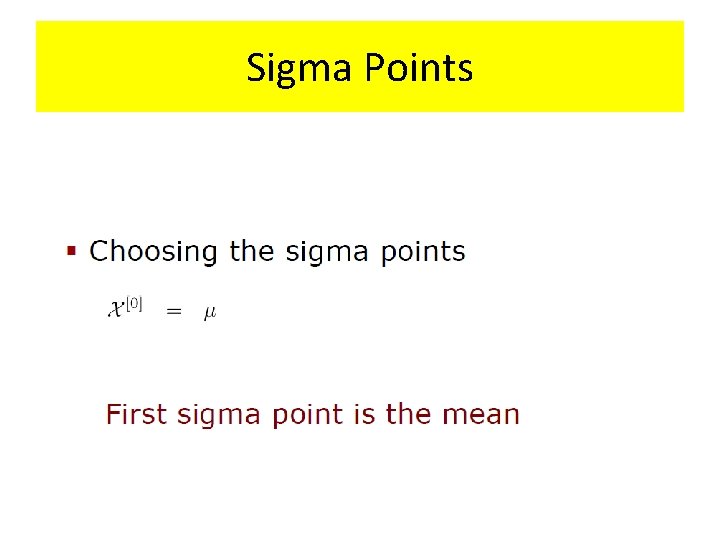

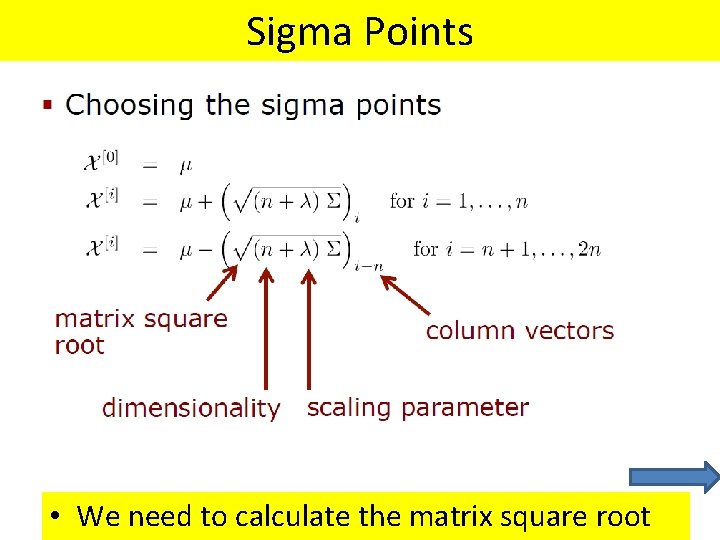

Sigma Points

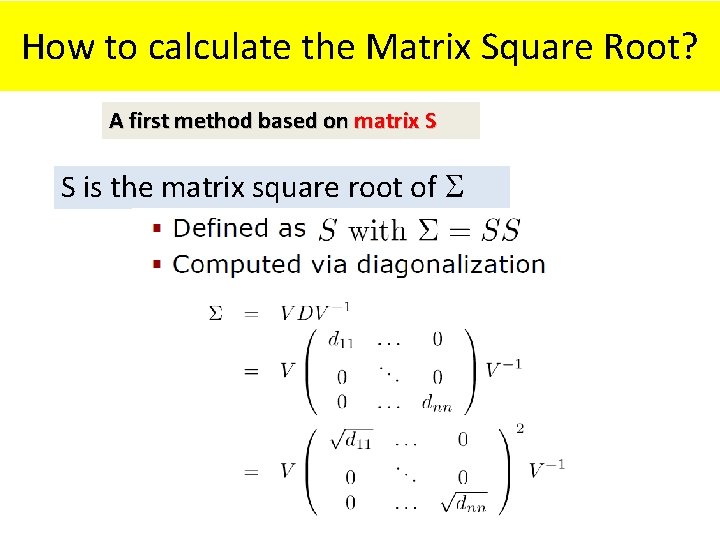

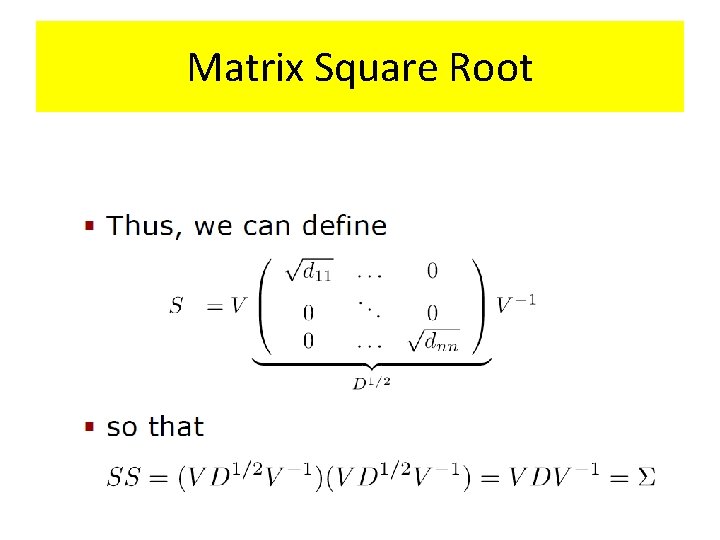

Sigma Points • We need to calculate the matrix square root

Matrix Square Root

How to calculate the Matrix Square Root? A first method based on matrix S S is the matrix square root of

Matrix Square Root

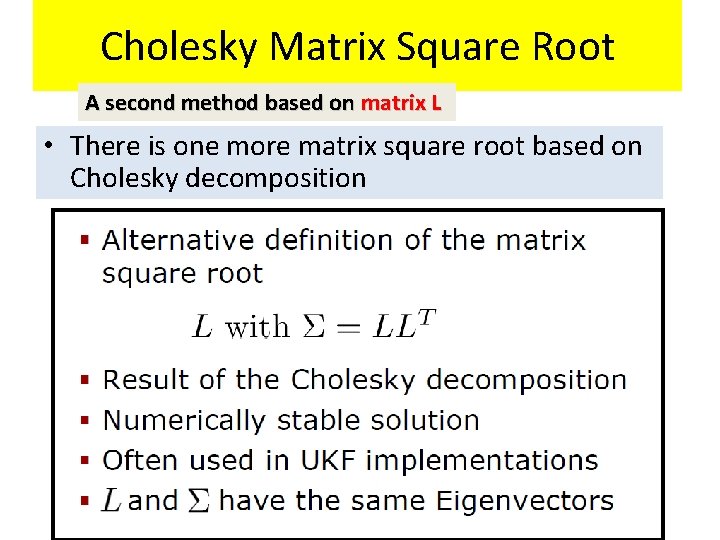

Cholesky Matrix Square Root A second method based on matrix L • There is one more matrix square root based on Cholesky decomposition

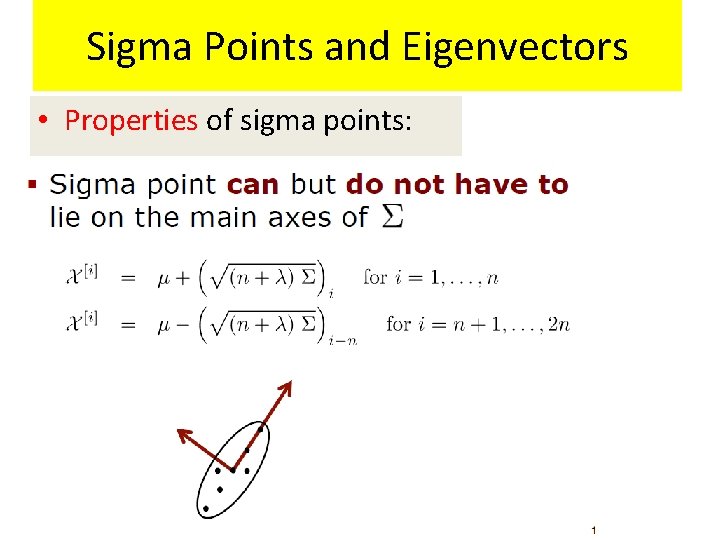

Sigma Points and Eigenvectors • Properties of sigma points:

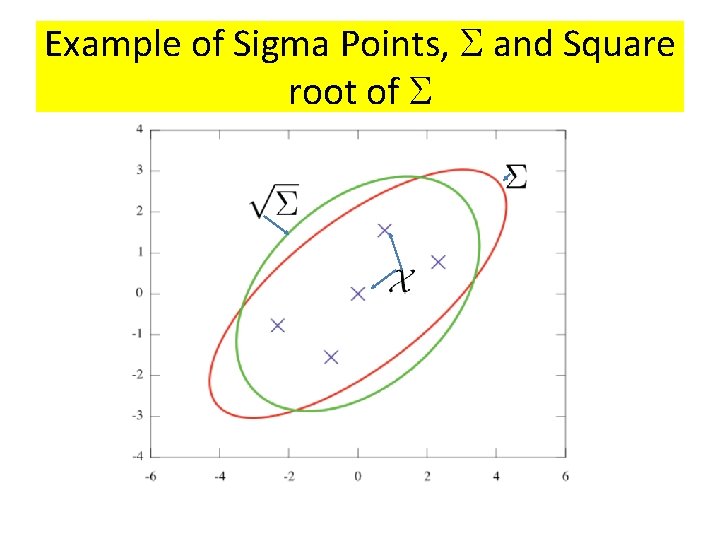

Example of Sigma Points, and Square root of

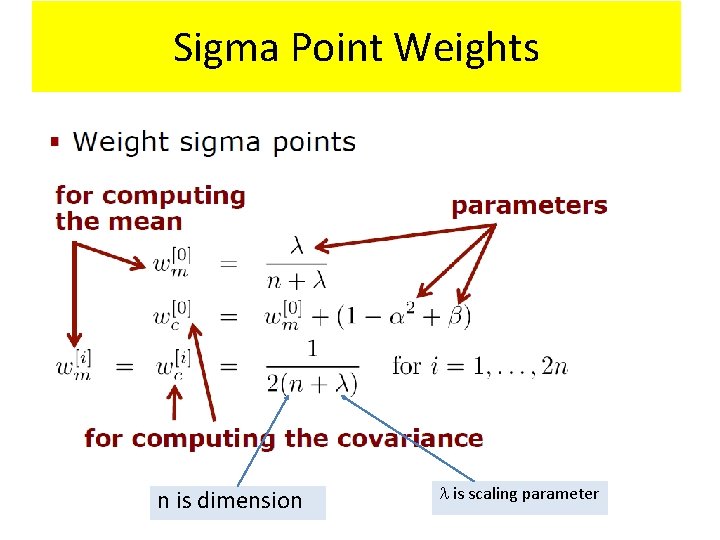

Sigma Point Weights n is dimension is scaling parameter

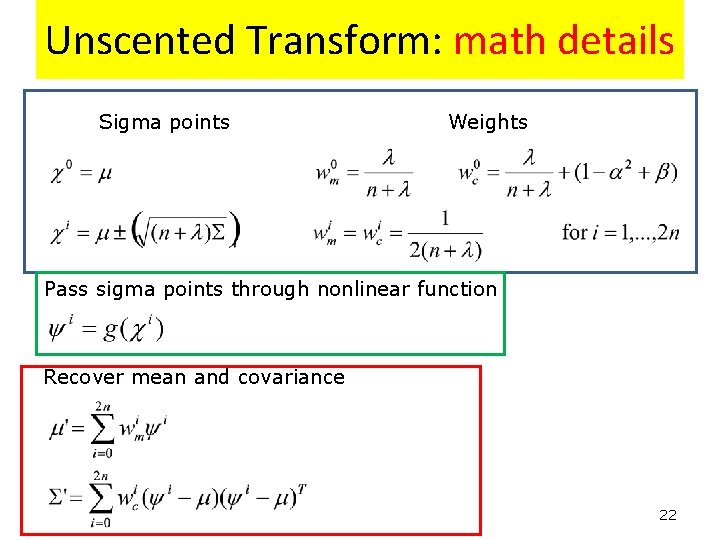

Unscented Transform: math details Sigma points Weights Pass sigma points through nonlinear function Recover mean and covariance 22

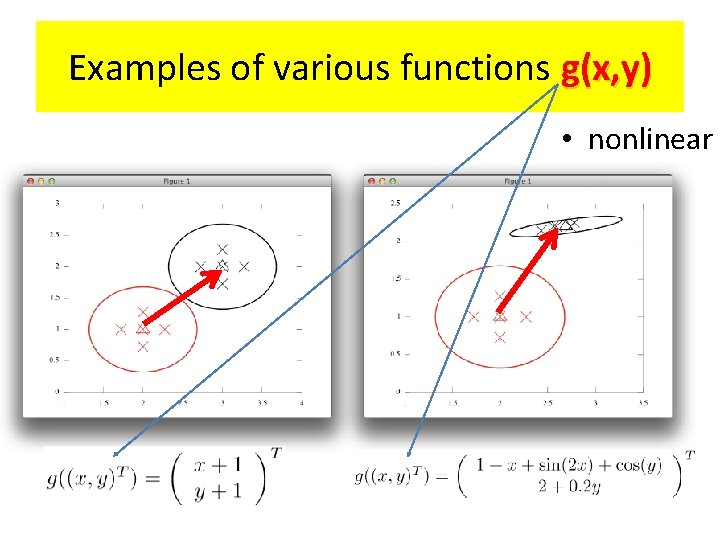

Examples of various functions g(x, y) • nonlinear

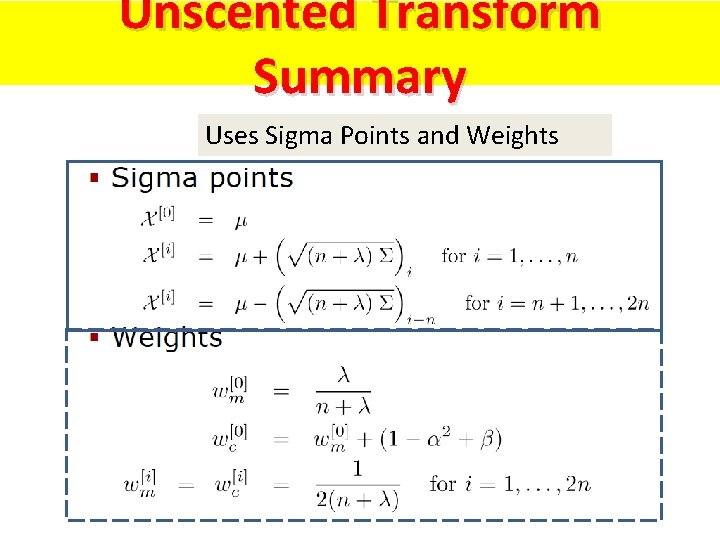

Unscented Transform Summary Uses Sigma Points and Weights

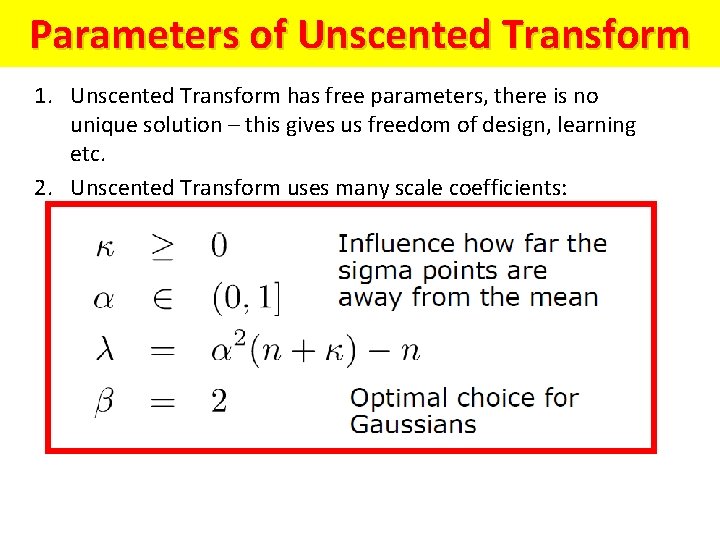

Parameters of Unscented Transform 1. Unscented Transform has free parameters, there is no unique solution – this gives us freedom of design, learning etc. 2. Unscented Transform uses many scale coefficients:

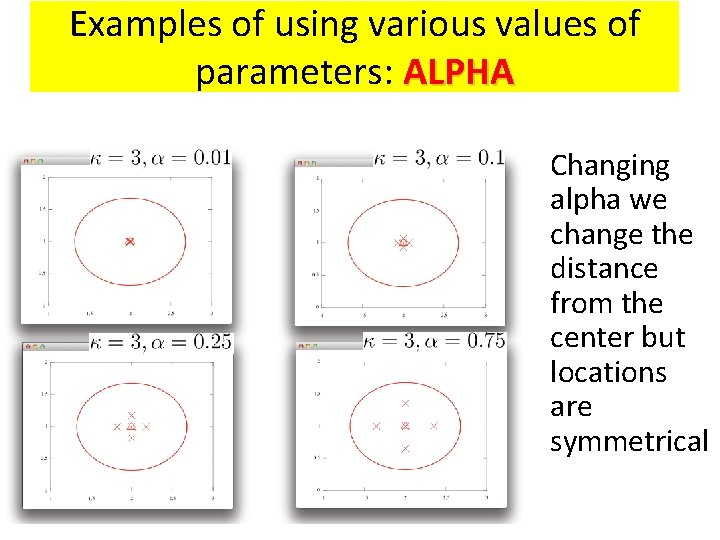

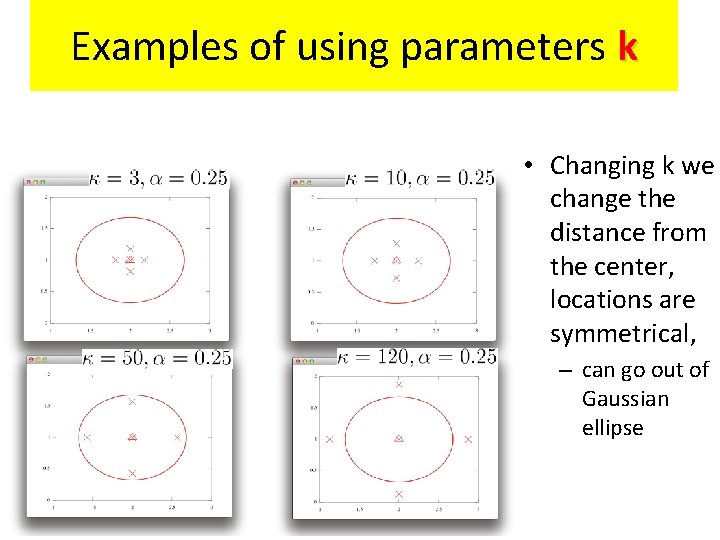

Examples of using various values of parameters: ALPHA • Changing alpha we change the distance from the center but locations are symmetrical

Examples of using parameters k • Changing k we change the distance from the center, locations are symmetrical, – can go out of Gaussian ellipse

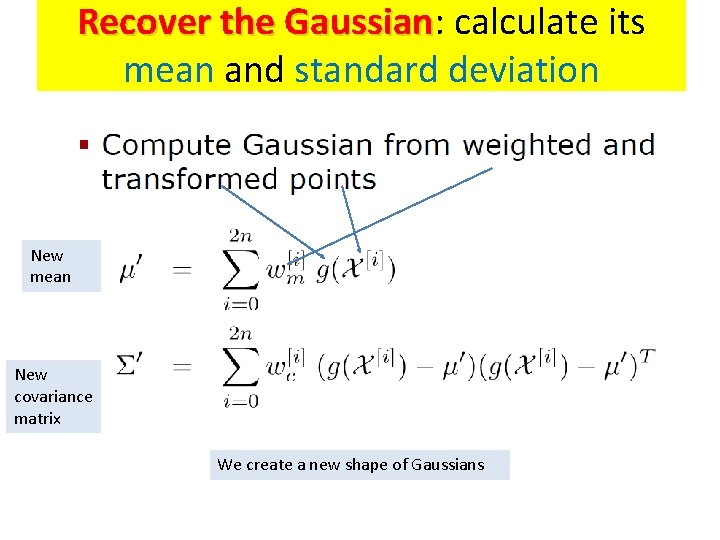

Recover the Gaussian

Recover the Gaussian: Gaussian calculate its mean and standard deviation New mean New covariance matrix We create a new shape of Gaussians

Examples of recovering gaussians

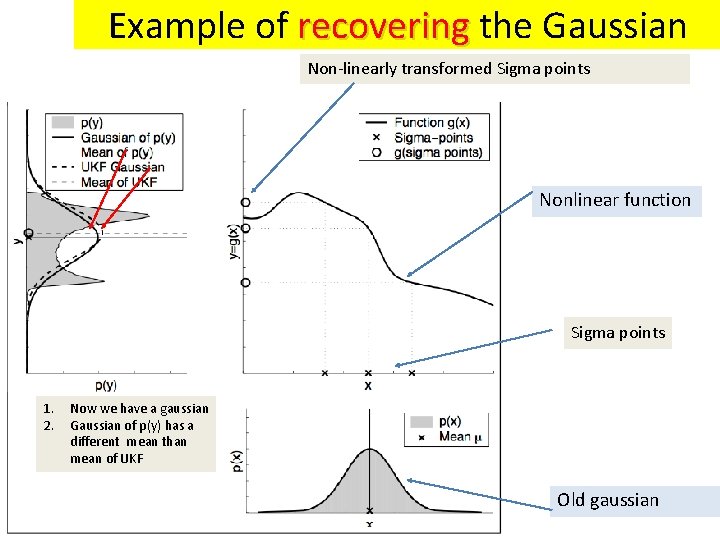

Example of recovering the Gaussian Non-linearly transformed Sigma points Nonlinear function Sigma points 1. 2. Now we have a gaussian Gaussian of p(y) has a different mean than mean of UKF Old gaussian

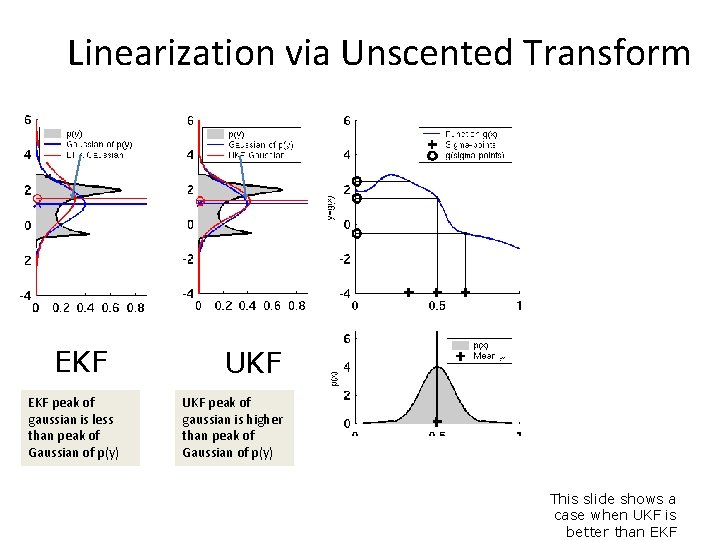

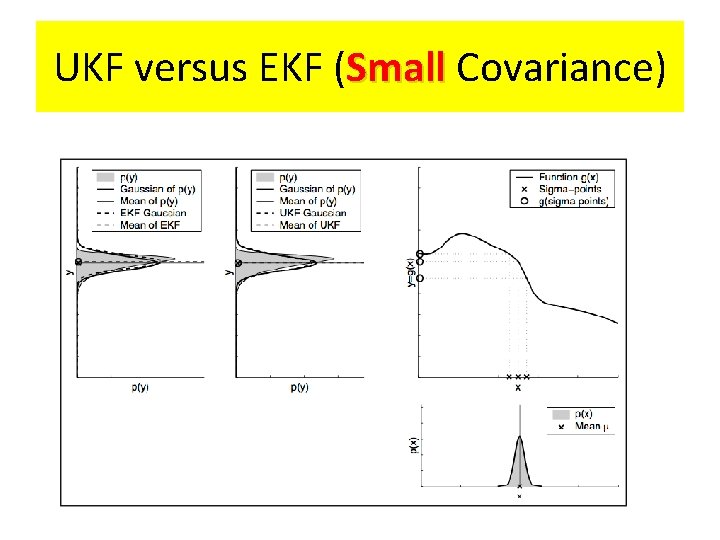

Linearization via Unscented Transform EKF peak of gaussian is less than peak of Gaussian of p(y) UKF peak of gaussian is higher than peak of Gaussian of p(y) This slide shows a case when UKF is better than EKF

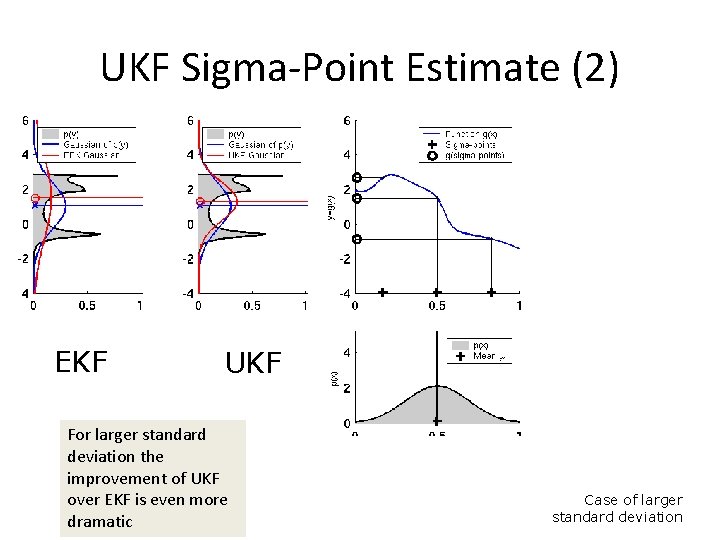

UKF Sigma-Point Estimate (2) EKF UKF For larger standard deviation the improvement of UKF over EKF is even more dramatic Case of larger standard deviation

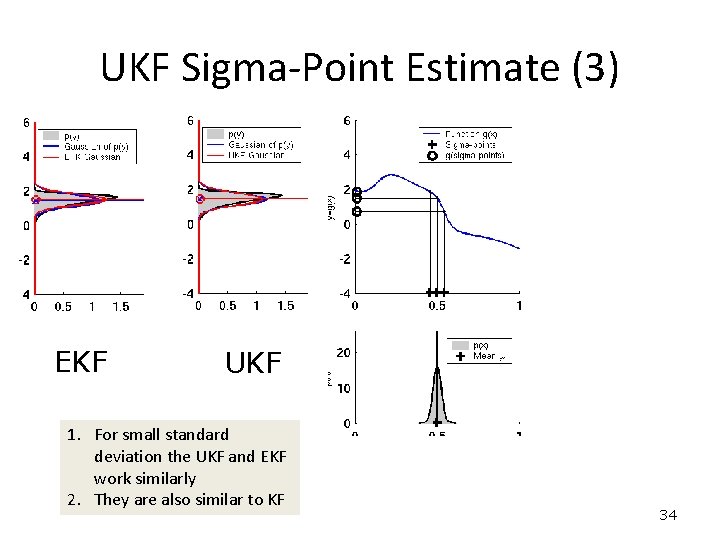

UKF Sigma-Point Estimate (3) EKF UKF 1. For small standard deviation the UKF and EKF work similarly 2. They are also similar to KF 34

UKF versus EKF

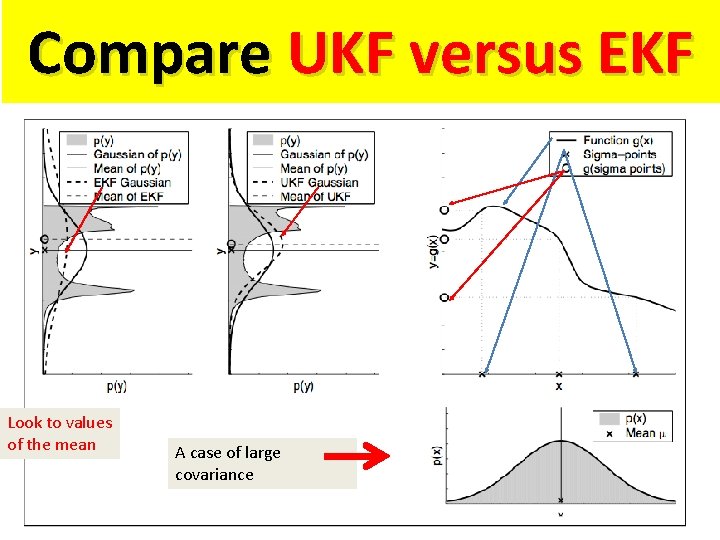

Compare UKF versus EKF Look to values of the mean A case of large covariance

UKF versus EKF (Small Covariance)

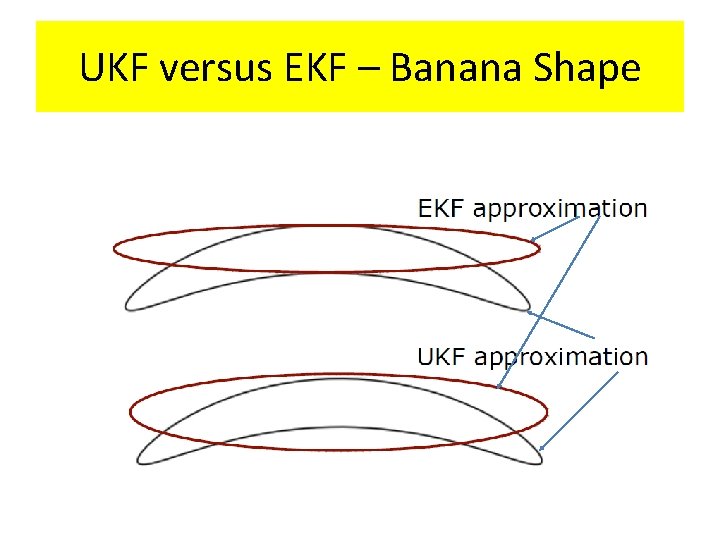

UKF versus EKF – Banana Shape

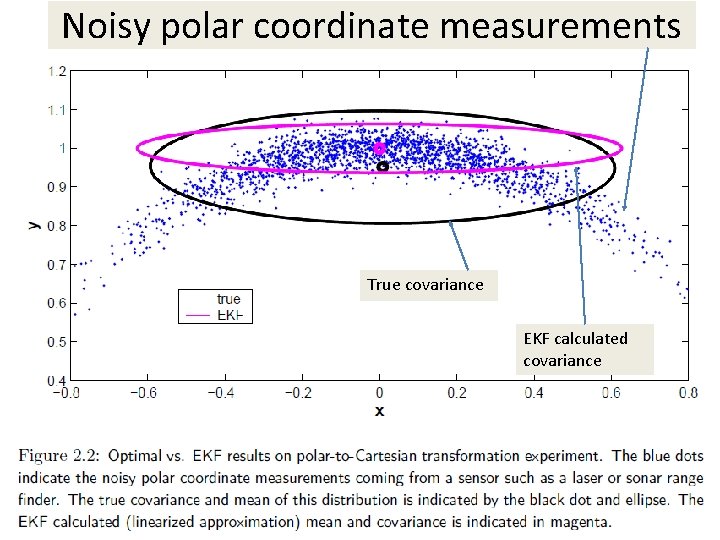

Noisy polar coordinate measurements True covariance EKF calculated covariance

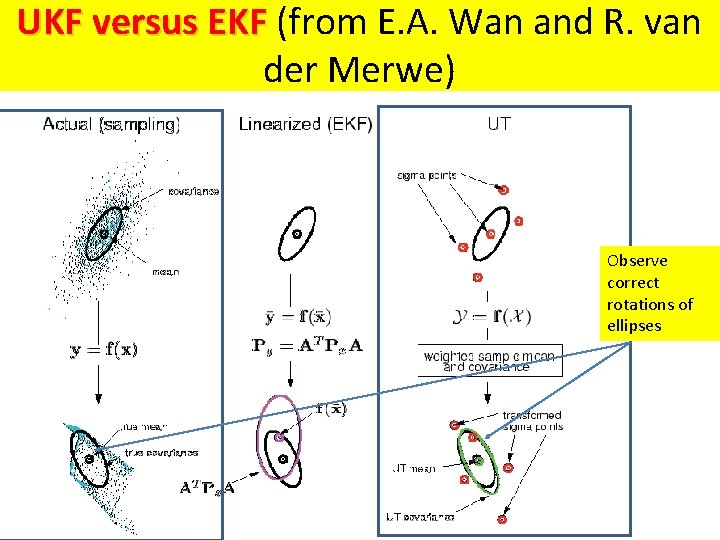

UKF versus EKF (from E. A. Wan and R. van der Merwe) Observe correct rotations of ellipses

UKF Algorithm

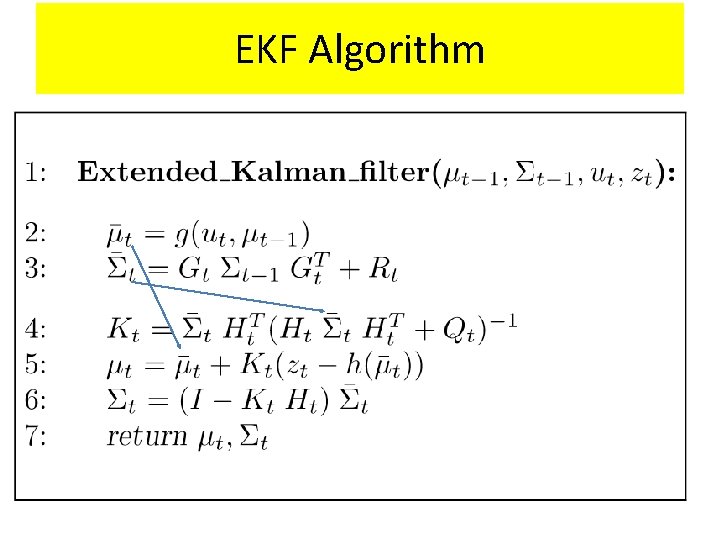

EKF Algorithm

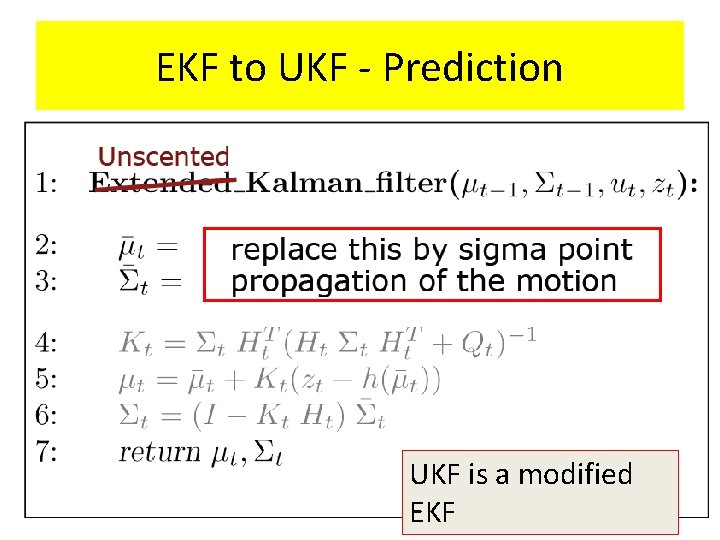

EKF to UKF - Prediction UKF is a modified EKF

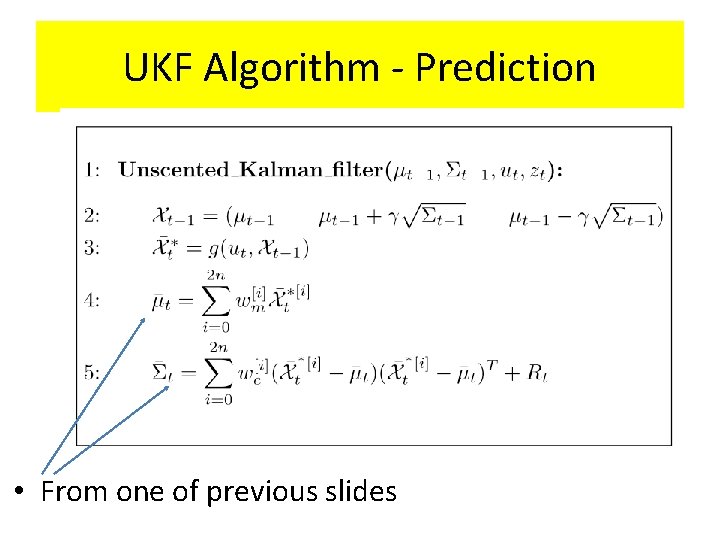

UKF Algorithm - Prediction • From one of previous slides

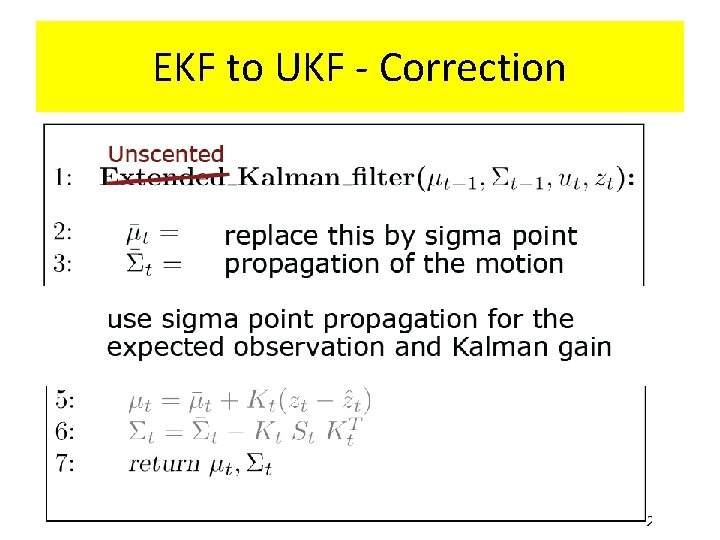

EKF to UKF - Correction

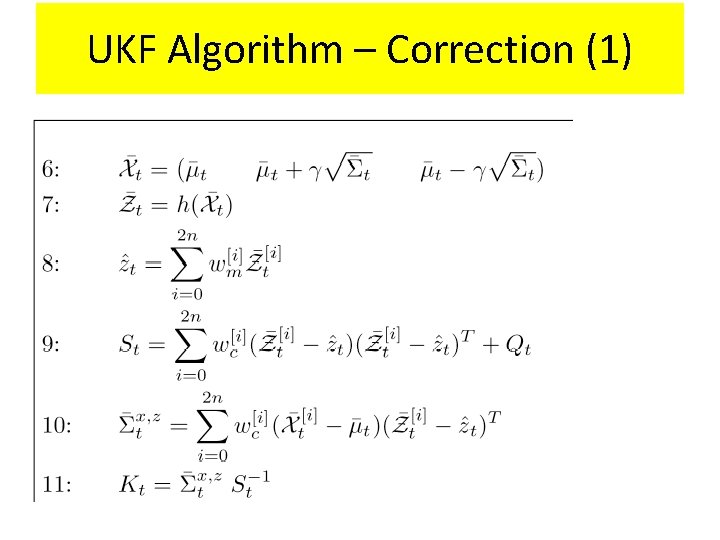

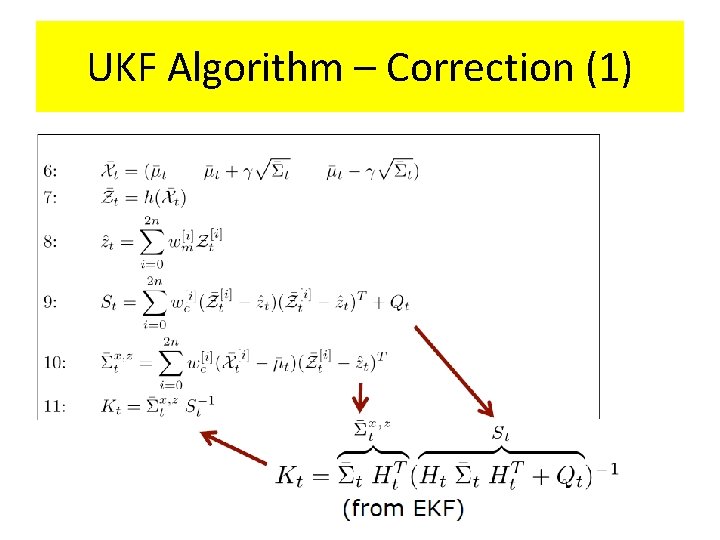

UKF Algorithm – Correction (1)

UKF Algorithm – Correction (1)

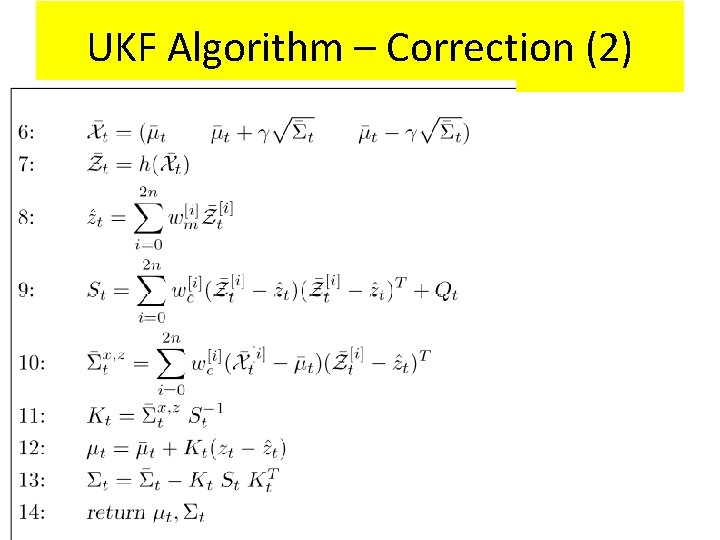

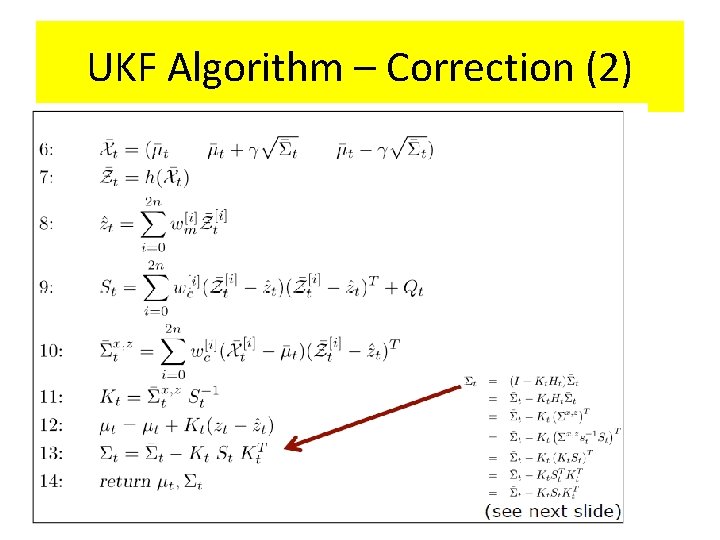

UKF Algorithm – Correction (2)

UKF Algorithm – Correction (2)

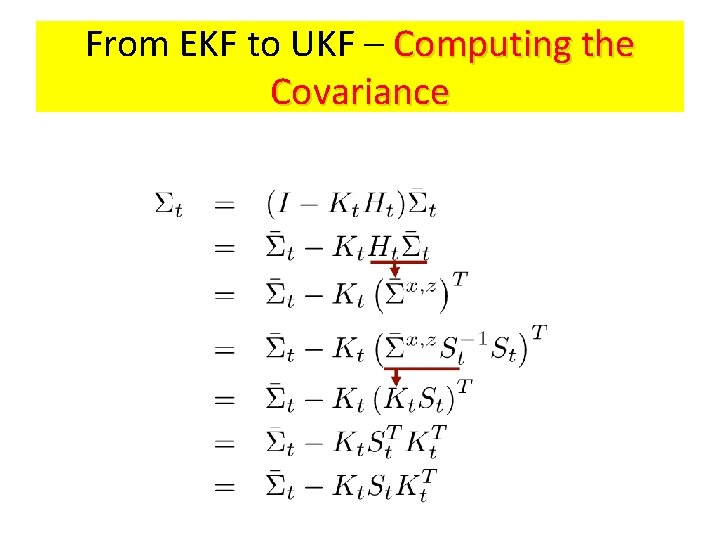

From EKF to UKF – Computing the Covariance

Example of UKF for localization

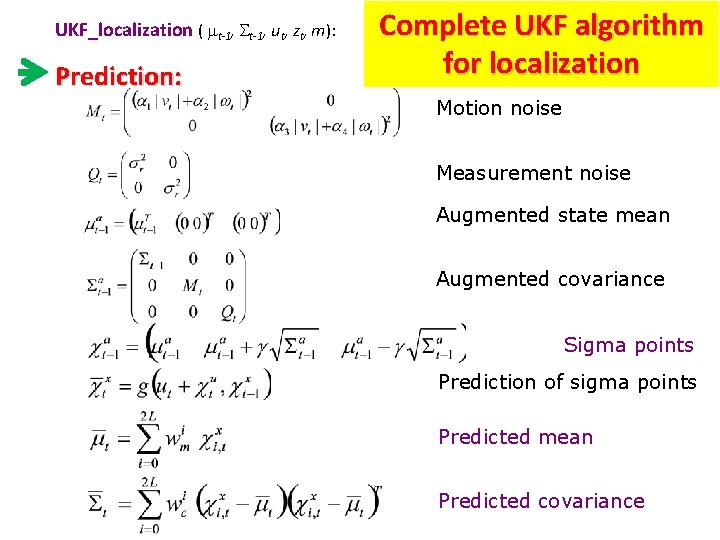

UKF_localization ( mt-1, ut, zt, m): Prediction: Complete UKF algorithm for localization Motion noise Measurement noise Augmented state mean Augmented covariance Sigma points Prediction of sigma points Predicted mean Predicted covariance

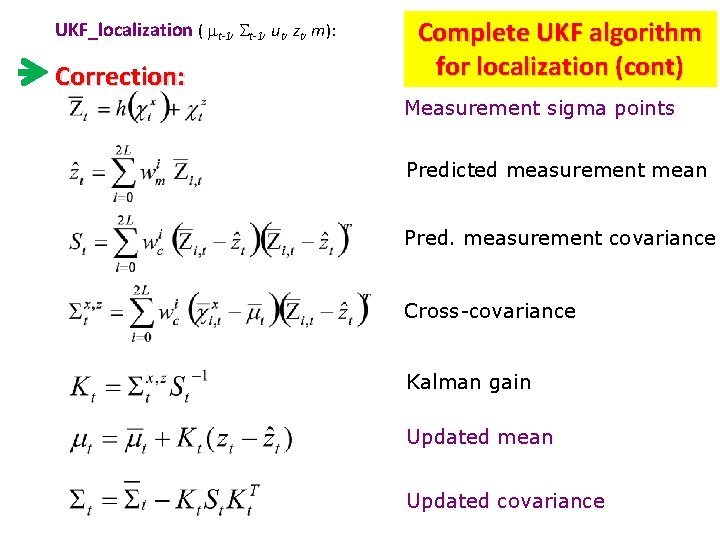

UKF_localization ( mt-1, ut, zt, m): Correction: Complete UKF algorithm for localization (cont) Measurement sigma points Predicted measurement mean Pred. measurement covariance Cross-covariance Kalman gain Updated mean Updated covariance

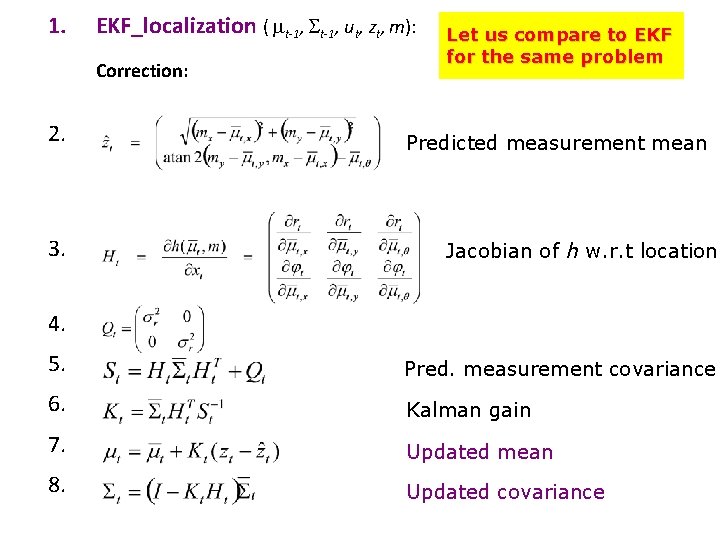

1. EKF_localization ( mt-1, ut, zt, m): Correction: 2. 3. Let us compare to EKF for the same problem Predicted measurement mean Jacobian of h w. r. t location 4. 5. Pred. measurement covariance 6. Kalman gain 7. Updated mean 8. Updated covariance

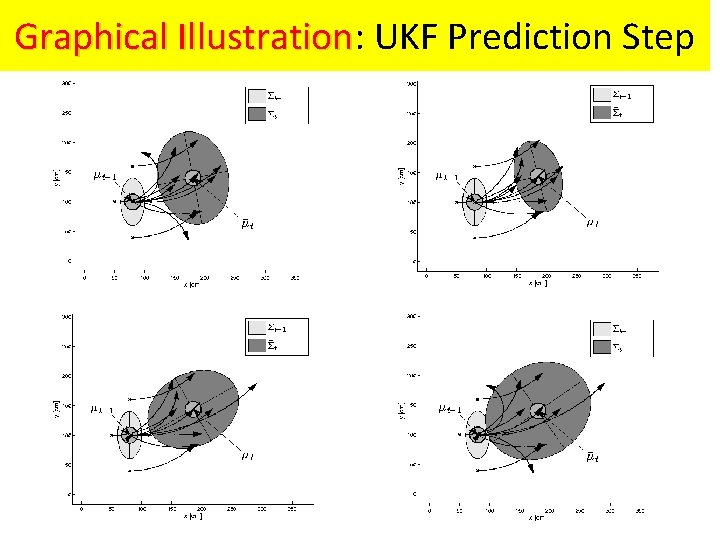

Graphical Illustration: Illustration UKF Prediction Step

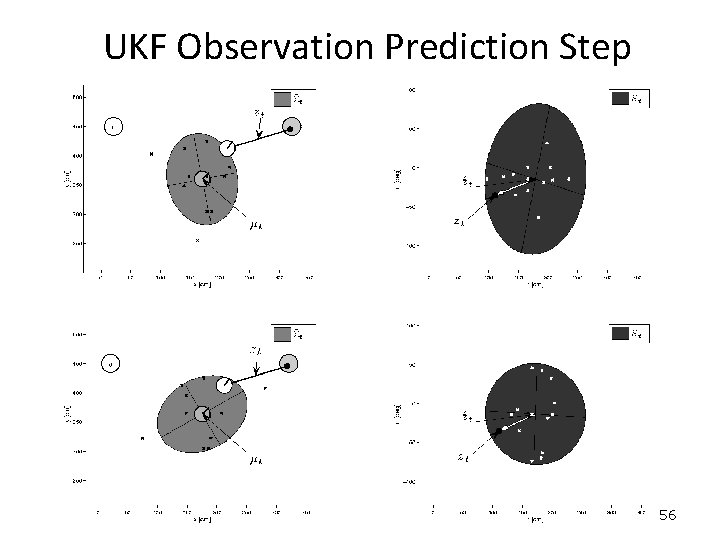

UKF Observation Prediction Step 56

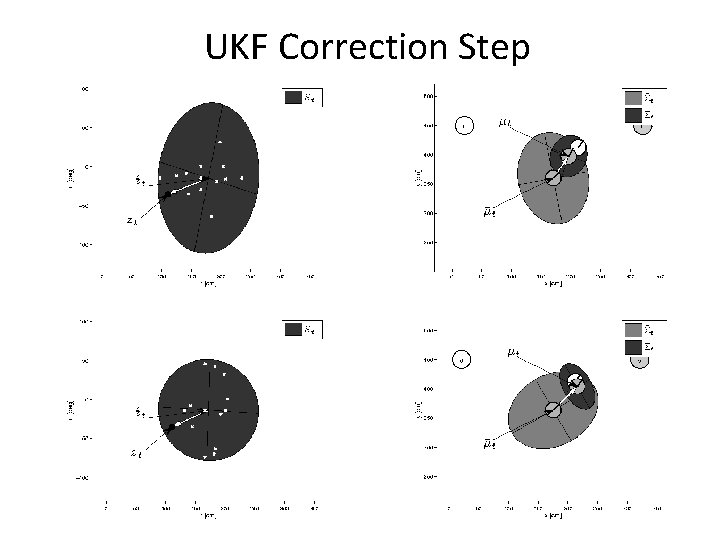

UKF Correction Step 57

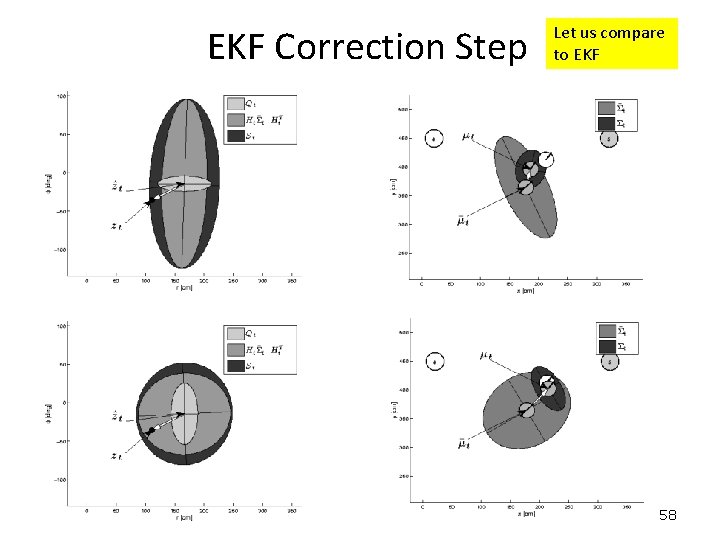

EKF Correction Step Let us compare to EKF 58

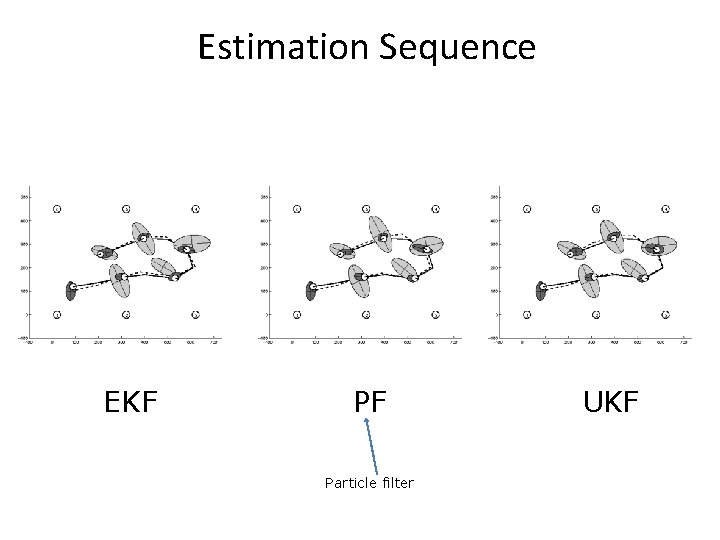

Estimation Sequence EKF PF Particle filter UKF

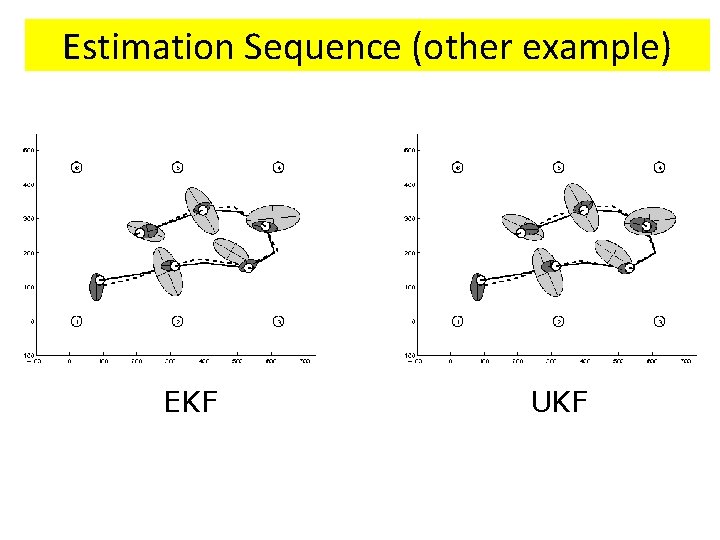

Estimation Sequence (other example) EKF UKF

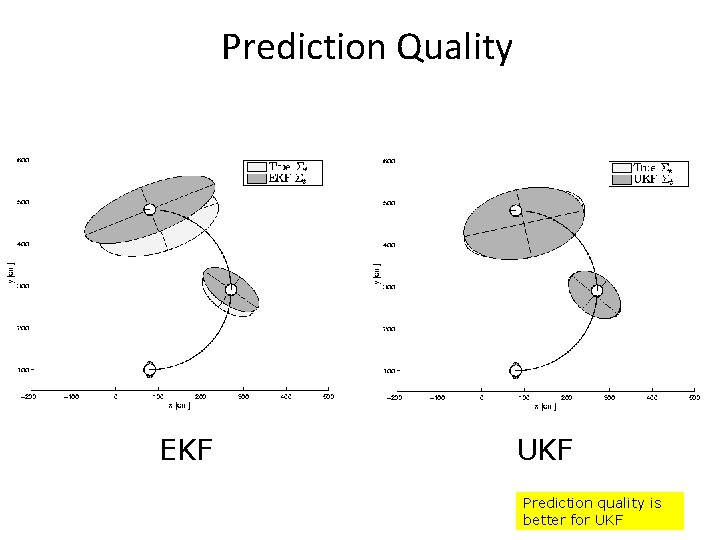

Prediction Quality EKF UKF Prediction quality is better for UKF

UT/UKF Summary

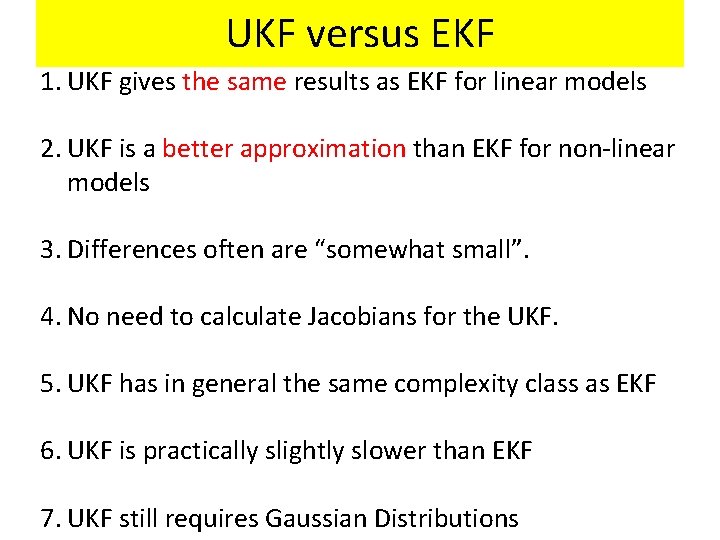

UKF versus EKF 1. UKF gives the same results as EKF for linear models 2. UKF is a better approximation than EKF for non-linear models 3. Differences often are “somewhat small”. 4. No need to calculate Jacobians for the UKF. 5. UKF has in general the same complexity class as EKF 6. UKF is practically slightly slower than EKF 7. UKF still requires Gaussian Distributions

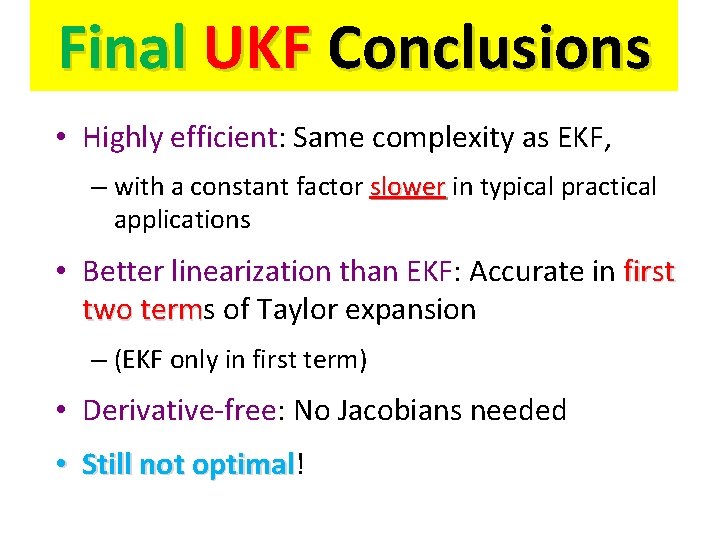

Final UKF Conclusions • Highly efficient: Same complexity as EKF, – with a constant factor slower in typical practical applications • Better linearization than EKF: Accurate in first two terms term of Taylor expansion – (EKF only in first term) • Derivative-free: No Jacobians needed • Still not optimal! optimal

Literature

Localization With Multi-Hypothesis Tracking

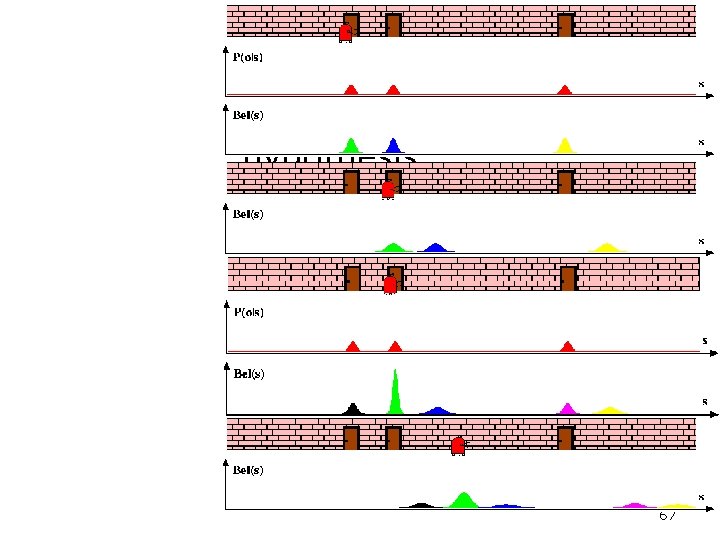

Multihypothesis Tracking 67

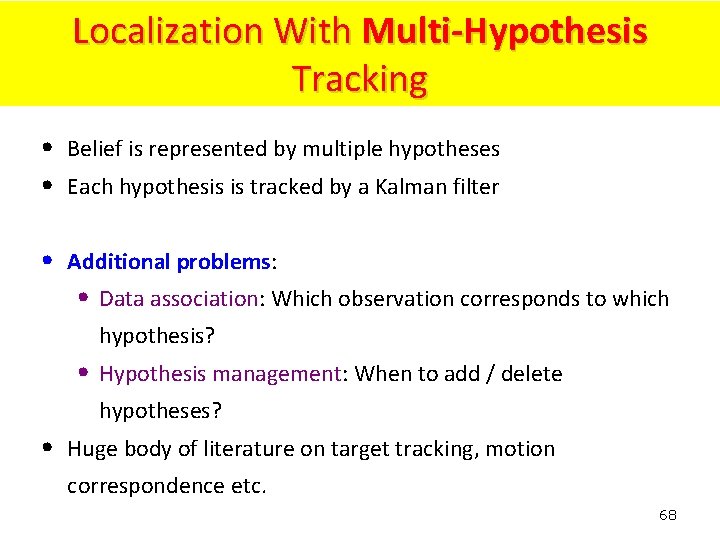

Localization With Multi-Hypothesis Tracking • Belief is represented by multiple hypotheses • Each hypothesis is tracked by a Kalman filter • Additional problems: • Data association: Which observation corresponds to which hypothesis? • Hypothesis management: When to add / delete hypotheses? • Huge body of literature on target tracking, motion correspondence etc. 68

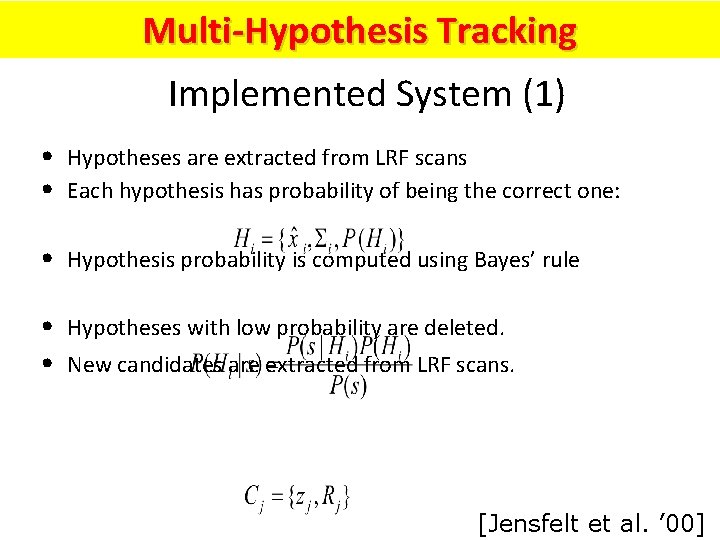

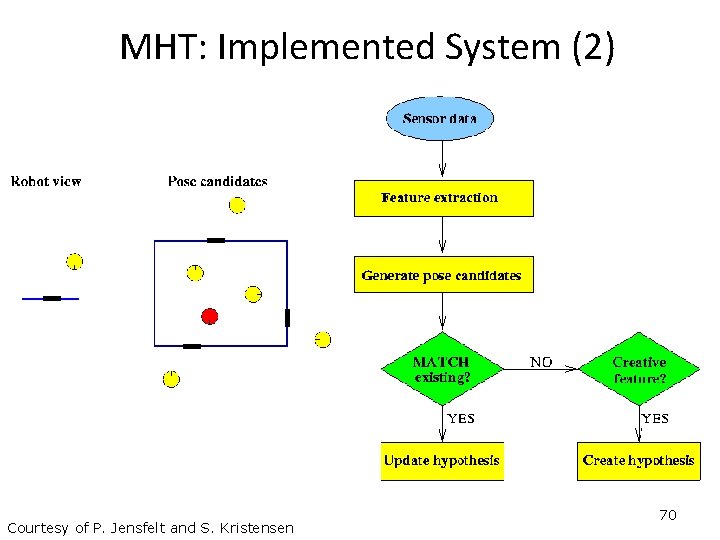

Multi-Hypothesis Tracking Implemented System (1) • Hypotheses are extracted from LRF scans • Each hypothesis has probability of being the correct one: • Hypothesis probability is computed using Bayes’ rule • Hypotheses with low probability are deleted. • New candidates are extracted from LRF scans. [Jensfelt et al. ’ 00]

MHT: Implemented System (2) Courtesy of P. Jensfelt and S. Kristensen 70

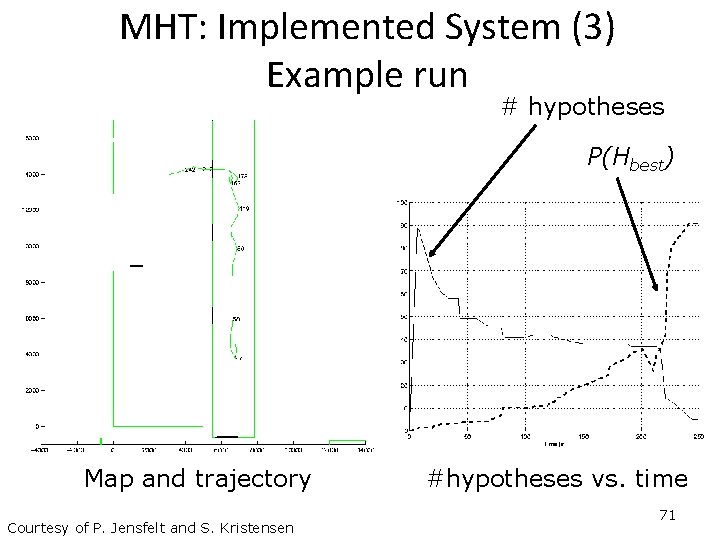

MHT: Implemented System (3) Example run # hypotheses P(Hbest) Map and trajectory Courtesy of P. Jensfelt and S. Kristensen #hypotheses vs. time 71

- Slides: 73