Inspect, ISP, and FIB: reduction-based verification and analysis

Inspect, ISP, and FIB: reduction-based verification and analysis tools for concurrent programs

Talk at MSR India, Bangalore Research Labs, June 6, 2008

Research Group: Yu Yang, Xiaofang Chen, Sarvani Vakkalanka, Subodh Sharma, Anh Vo, Michael DeLisi, Geof Sawaya

Faculty: Ganesh Gopalakrishnan (speaker) and Robert M. Kirby

School of Computing, University of Utah, Salt Lake City, UT

ganesh@cs.utah.edu

http://www.cs.utah.edu/formal_verification

Supported by Microsoft HPC Center Grant, NSF CNS-0509379, SRC TJ 1318

Multicores are the future! We need to employ and teach concurrent programming at an unprecedented scale. (Photo courtesy of Intel Corporation.)

Some of today’s proposals:

- Threads (various)
- Message Passing (various)
- Transactional Memory (various)
- OpenMP
- MPI
- Intel’s Ct
- Microsoft’s Parallel Fx
- Cilk Arts’s Cilk
- Intel’s TBB
- Nvidia’s Cuda
- …

Q: What tool feature is desired for any concurrency approach (Threads, Message Passing, Transactional Memory, OpenMP, MPI, Ct, Parallel Fx, Cilk, TBB, Cuda, …)?

A: The ability to verify over all RELEVANT interleavings!

Bugs to look for: deadlocks, data races, communication races, memory leaks.

- The approaches will have different “grain” sizes (notions of atomicity)
- Different types of interactions between “threads / processes”
- Different kinds of bugs

Yet the basics of verification remain the same: achieving the effect of having examined all possible interleavings by exploring only representative interleavings.

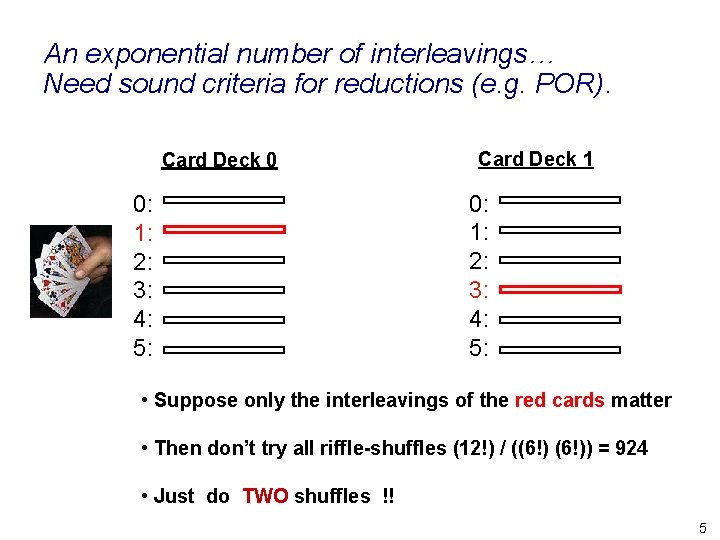

An exponential number of interleavings… Need sound criteria for reductions (e.g. POR).

Card Deck 0 holds cards 0 to 5; Card Deck 1 holds cards 0 to 5.

- Suppose only the interleavings of the red cards matter
- Then don’t try all riffle-shuffles: 12! / (6!)^2 = 924
- Just do TWO shuffles!!

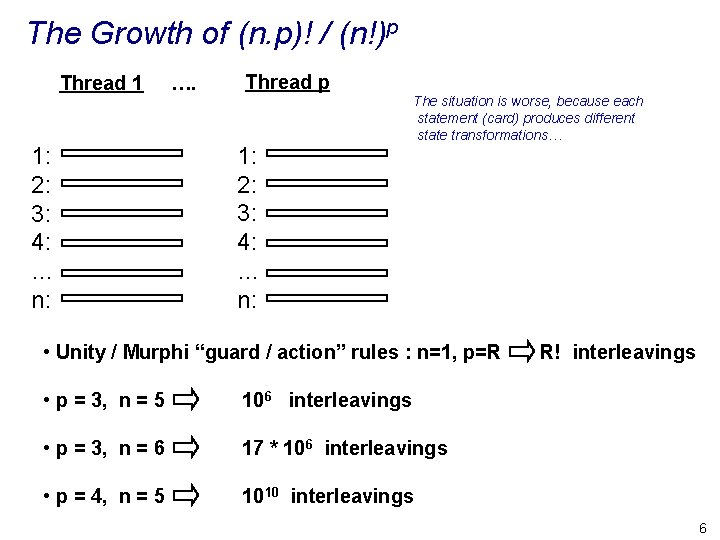

The Growth of (n·p)! / (n!)^p

Thread 1 through Thread p each execute statements 1 through n. The situation is worse, because each statement (card) produces different state transformations…

- Unity / Murphi “guard / action” rules: n = 1, p = R gives R! interleavings
- p = 3, n = 5: about 10^6 interleavings
- p = 3, n = 6: about 17 × 10^6 interleavings
- p = 4, n = 5: about 10^10 interleavings
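The counts quoted on this slide can be reproduced with a few lines of C. This is only a sketch: the function name `interleavings` is an assumption made for illustration, and the result is limited to what fits in 64 bits.

```c
#include <assert.h>
#include <stdint.h>

/* Number of interleavings of p threads with n atomic steps each:
 * (n*p)! / (n!)^p, built up as a product of binomial coefficients
 * so that every intermediate division is exact. */
uint64_t interleavings(unsigned n, unsigned p)
{
    uint64_t result = 1;
    uint64_t placed = 0;              /* steps merged into the schedule so far */

    for (unsigned k = 0; k < p; k++) {
        for (unsigned i = 1; i <= n; i++) {
            placed++;
            /* Guard against 64-bit overflow (an assumption of this sketch). */
            assert(result <= UINT64_MAX / placed);
            result = result * placed / i;
        }
    }
    return result;
}
```

Calling `interleavings(6, 2)` gives the card-deck figure of 924, and `interleavings(1, R)` degenerates to R!, matching the guard/action case.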

Ad-hoc Testing is INEFFECTIVE for thread verification! (Figure: Thread 1 through Thread p, each with statements 1 through n.)

Need Sound and Practically Justifiable Reduction Techniques!!

Growing Need to Verify Real-world Concurrent Programs

- One often discovers what one is doing through programming! Need a safety net!
- ‘Correct by construction’ methods work only around stable ideas. Multicore programming is not there yet.
- Tools are needed for TODAY’s problems. Inspect is one such tool.

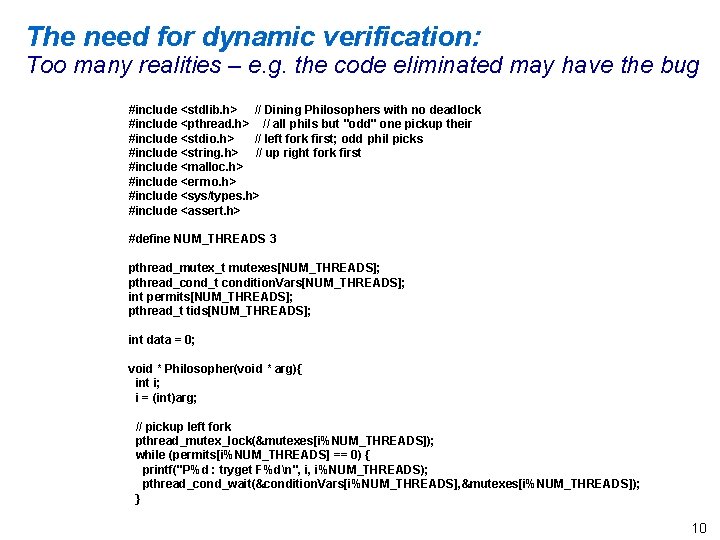

The need for dynamic verification: too many realities (e.g. the code eliminated may have the bug)

    #include <stdlib.h>     // Dining Philosophers with no deadlock
    #include <pthread.h>    // all phils but "odd" one pickup their
    #include <stdio.h>      // left fork first; odd phil picks
    #include <string.h>     // up right fork first
    #include <malloc.h>
    #include <errno.h>
    #include <sys/types.h>
    #include <assert.h>

    #define NUM_THREADS 3

    pthread_mutex_t mutexes[NUM_THREADS];
    pthread_cond_t conditionVars[NUM_THREADS];
    int permits[NUM_THREADS];
    pthread_t tids[NUM_THREADS];
    int data = 0;

    void * Philosopher(void * arg){
      int i;
      i = (int)arg;
      // pickup left fork
      pthread_mutex_lock(&mutexes[i%NUM_THREADS]);
      while (permits[i%NUM_THREADS] == 0) {
        printf("P%d : tryget F%d\n", i, i%NUM_THREADS);
        pthread_cond_wait(&conditionVars[i%NUM_THREADS], &mutexes[i%NUM_THREADS]);
      }

Need to support dynamic verification of thread programs

- Threads are too low level (Ed Lee, “The Problem with Threads”)
  - Complex global effects
  - Semantics are not compositional
  - Non-robustness against failure
- Yet, alternative proposals are in a state of flux
  - OpenMP
  - Transactional Memory
  - …
- Will need threads to IMPLEMENT alternate proposals!

Speaking in general

- Each parallel programming API class / approach seems to warrant its own dynamic verification approach
- It may be possible to employ common instrumentation / replay mechanisms in the long run
- As of now, one has to build customized implementations
  - We have implementations for Threads (Inspect) and MPI (ISP)
  - We are building an implementation for OpenMP (based on a backtrackable version of OMPi from Greece)

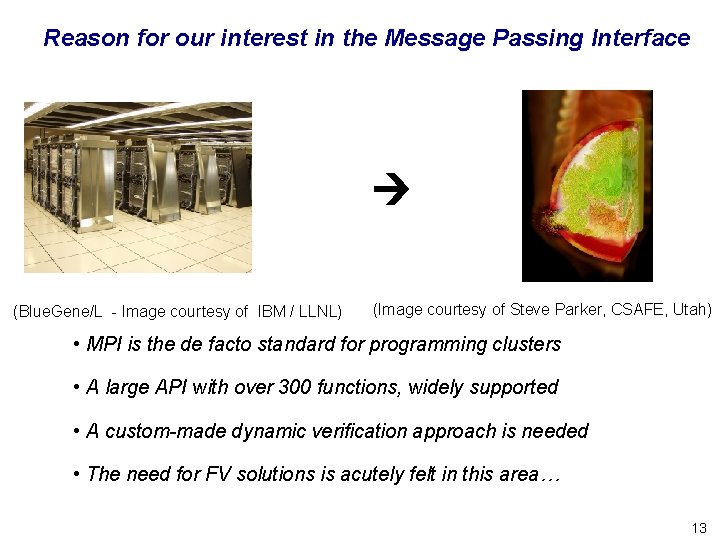

Reason for our interest in the Message Passing Interface

(Blue Gene/L image courtesy of IBM / LLNL; image courtesy of Steve Parker, CSAFE, Utah)

- MPI is the de facto standard for programming clusters
- A large API with over 300 functions, widely supported
- A custom-made dynamic verification approach is needed
- The need for FV solutions is acutely felt in this area…

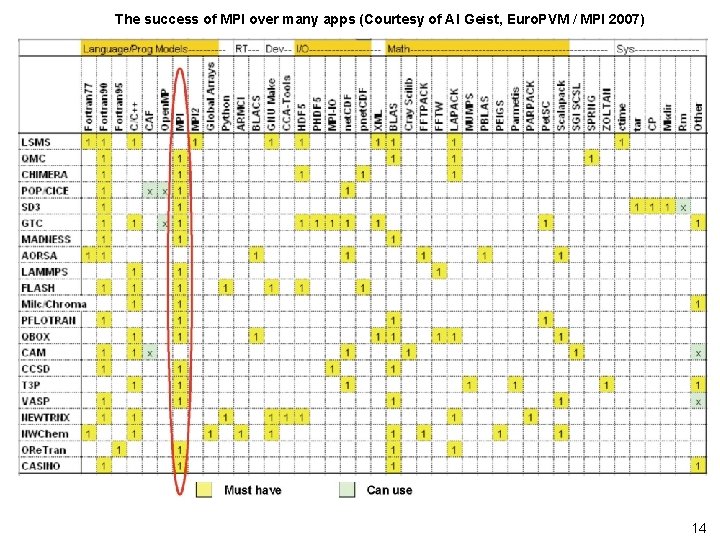

The success of MPI over many apps (Courtesy of Al Geist, EuroPVM/MPI 2007)

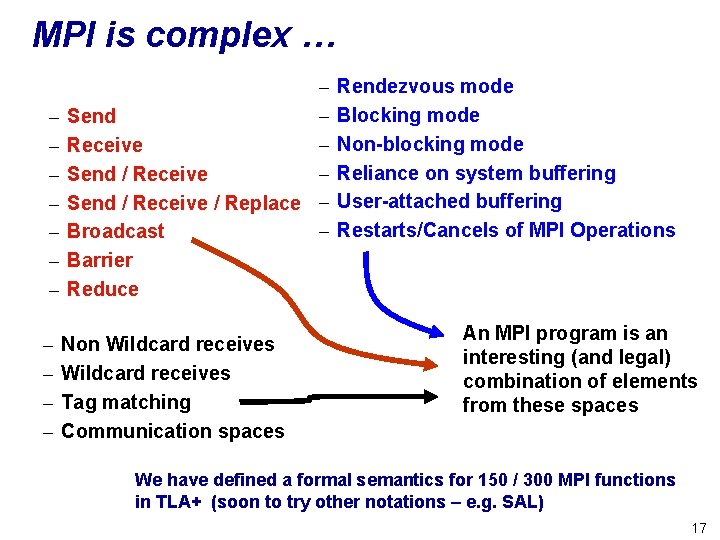

MPI is complex …

- Send
- Receive
- Send / Receive / Replace
- Broadcast
- Barrier
- Reduce
- Non Wildcard receives
- Tag matching
- Communication spaces
- Rendezvous mode
- Blocking mode
- Non-blocking mode
- Reliance on system buffering
- User-attached buffering
- Restarts/Cancels of MPI Operations

An MPI program is an interesting (and legal) combination of elements from these spaces.

MPI is complex … Yet, the complexity seems unavoidable to succeed at the scale of MPI’s deployment…

MPI is complex … We have defined a formal semantics for 150 of the 300 MPI functions in TLA+ (soon to try other notations, e.g. SAL).

Our approach with MPI: go after “low hanging bugs”

- Automated verification of common mistakes
  - Deadlocks
  - Communication Races
  - Resource Leaks

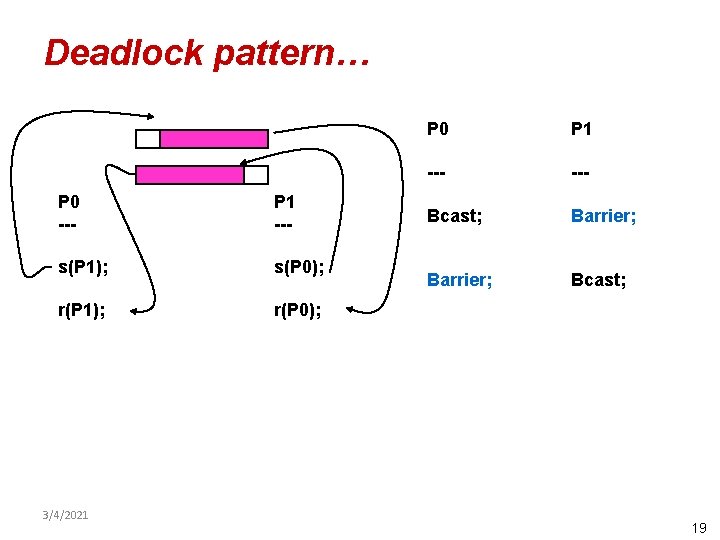

Deadlock pattern…

| P0 | P1 |
|---|---|
| s(P1); | s(P0); |
| r(P1); | r(P0); |

Collective variant (P0, P1): Bcast; Barrier; Bcast;

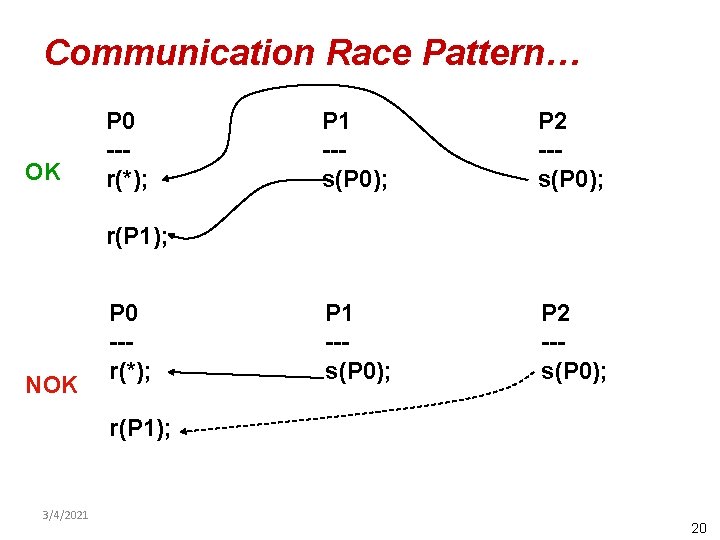

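Communication Race Pattern…

| P0 | P1 | P2 |
|---|---|---|
| r(*); | s(P0); | s(P0); |
| r(P1); | | |

OK when the wildcard receive r(*) matches P2’s send; NOK when it matches P1’s send, because r(P1) then has no send left to match.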

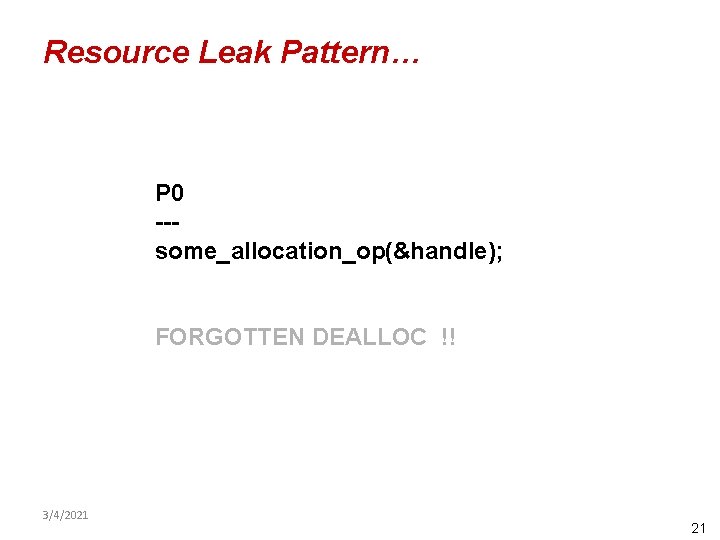

Resource Leak Pattern…

P0: some_allocation_op(&handle); FORGOTTEN DEALLOC!!

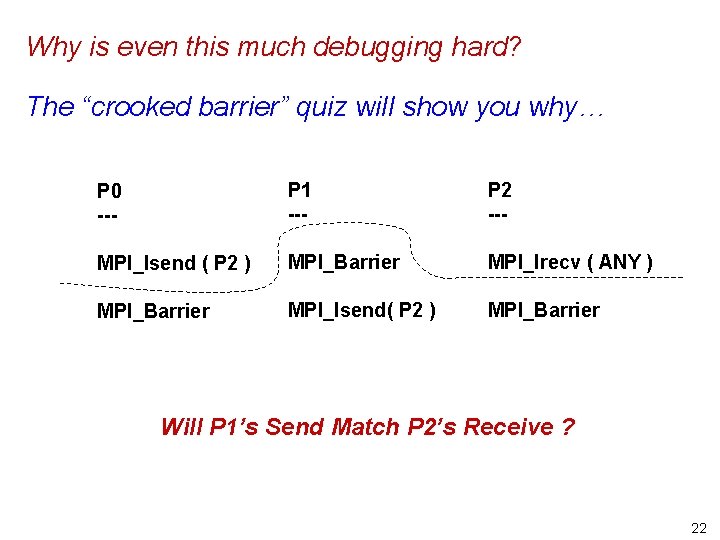

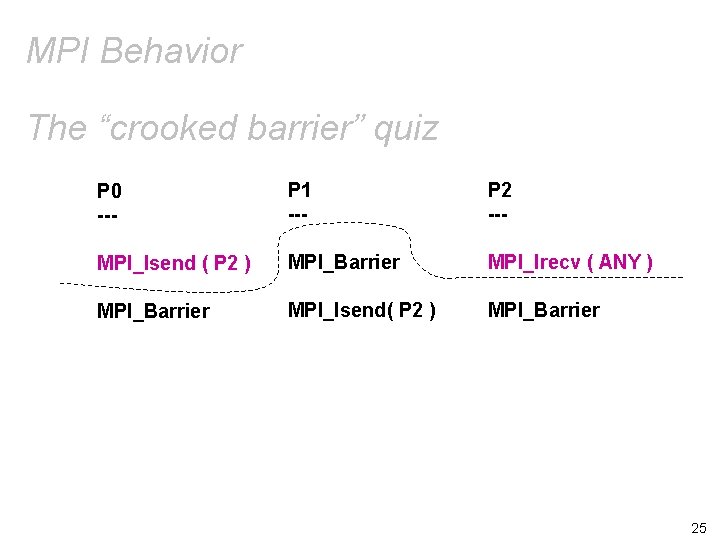

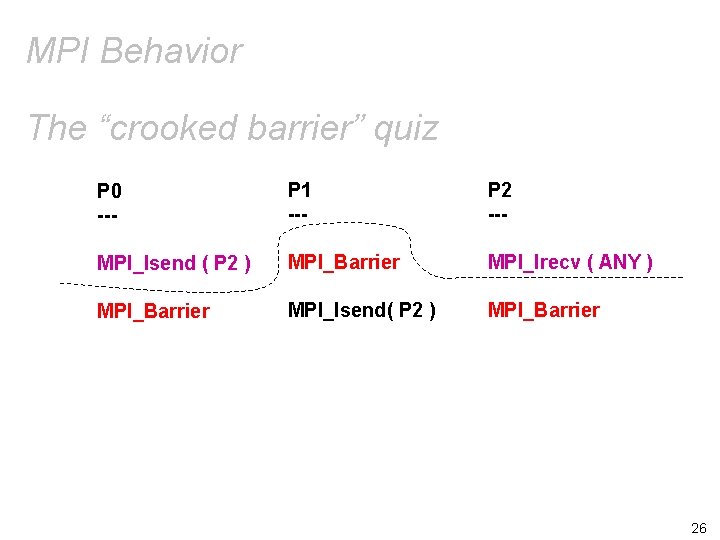

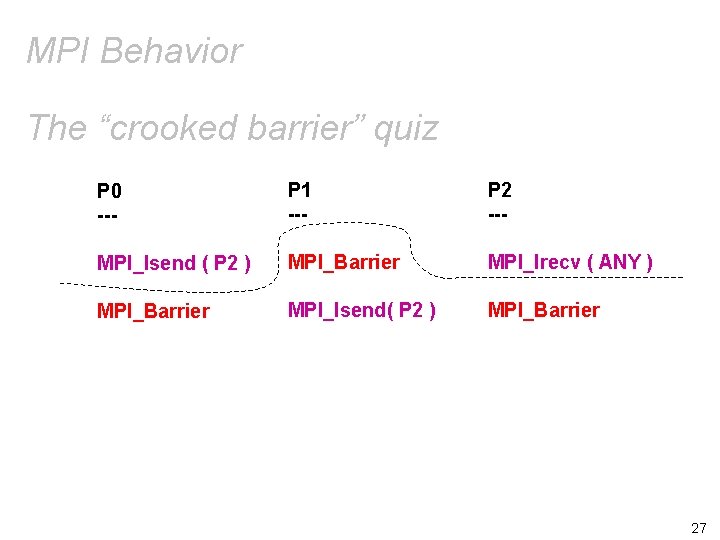

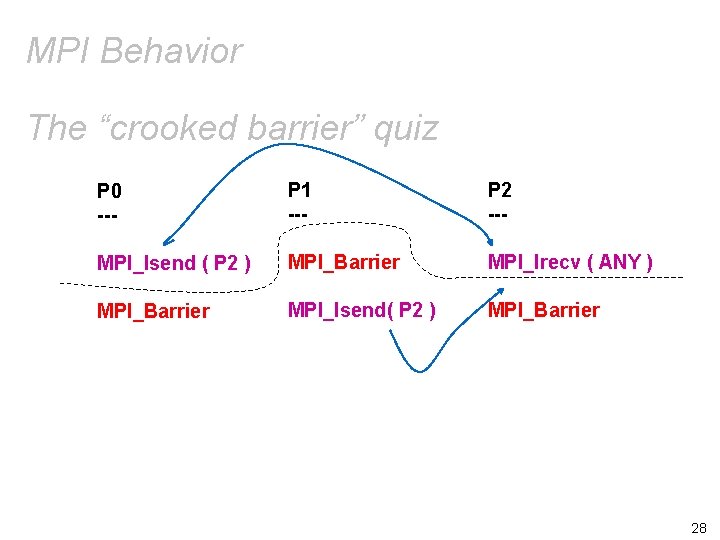

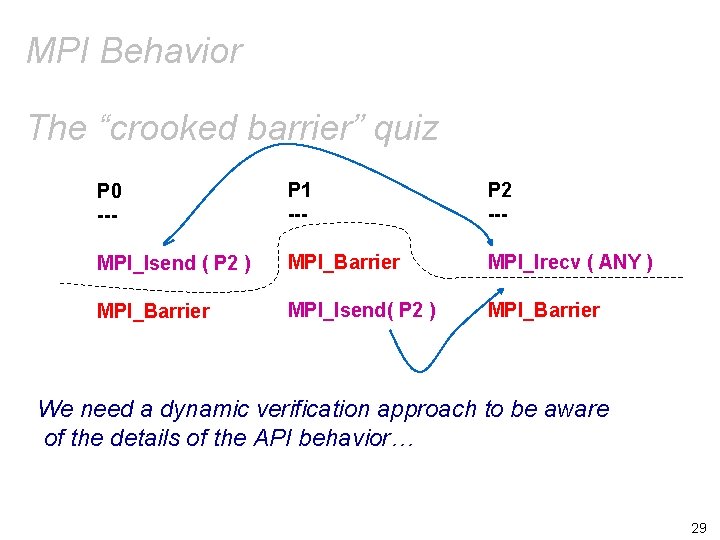

Why is even this much debugging hard? The “crooked barrier” quiz will show you why…

| P0 | P1 | P2 |
|---|---|---|
| MPI_Isend(P2) | MPI_Barrier | MPI_Irecv(ANY) |
| MPI_Barrier | MPI_Isend(P2) | MPI_Barrier |

Will P1’s Send Match P2’s Receive?

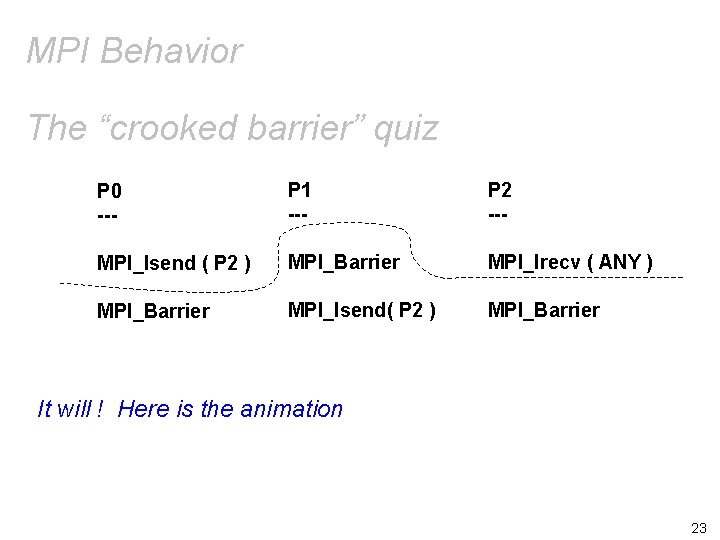

MPI Behavior: The “crooked barrier” quiz

| P0 | P1 | P2 |
|---|---|---|
| MPI_Isend(P2) | MPI_Barrier | MPI_Irecv(ANY) |
| MPI_Barrier | MPI_Isend(P2) | MPI_Barrier |

It will! Here is the animation.

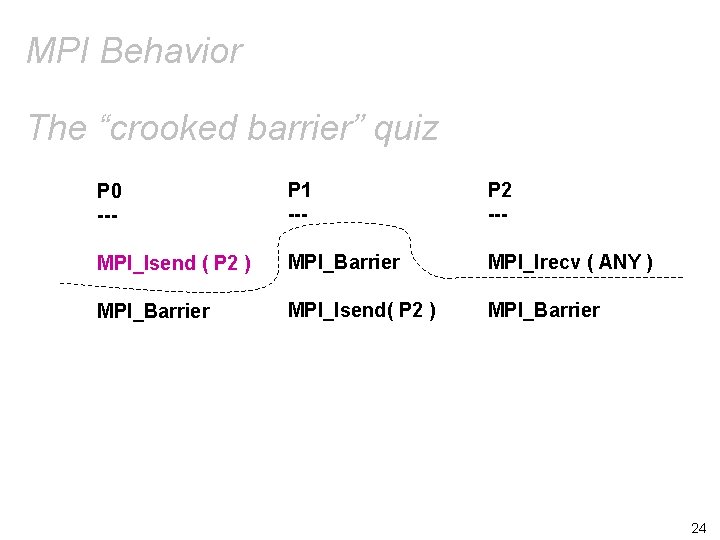


MPI Behavior: The “crooked barrier” quiz

| P0 | P1 | P2 |
|---|---|---|
| MPI_Isend(P2) | MPI_Barrier | MPI_Irecv(ANY) |
| MPI_Barrier | MPI_Isend(P2) | MPI_Barrier |

We need a dynamic verification approach to be aware of the details of the API behavior…

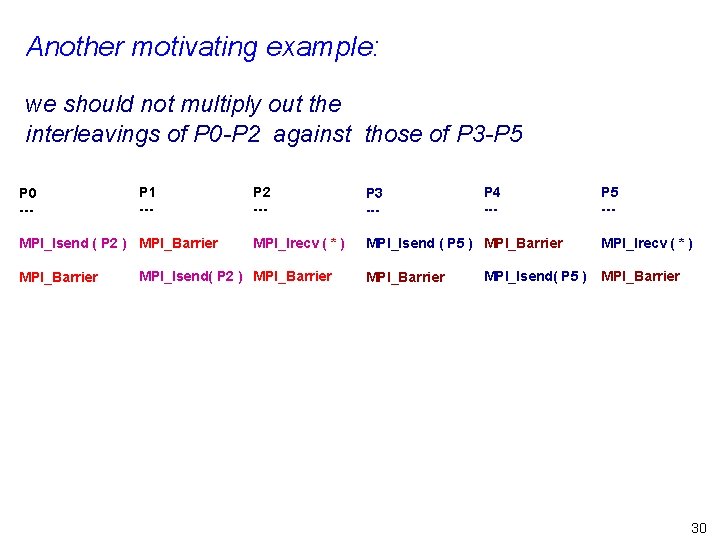

Another motivating example: we should not multiply out the interleavings of P0-P2 against those of P3-P5.

P0 --- P1 --- MPI_Isend(P2) MPI_Barrier P2 --- P3 --- MPI_Irecv(*) MPI_Isend(P5) MPI_Barrier MPI_Isend(P2) MPI_Barrier P4 --- MPI_Isend(P5) P5 --- MPI_Irecv(*) MPI_Barrier

Results pertaining to Inspect (to be presented at SPIN 2008)

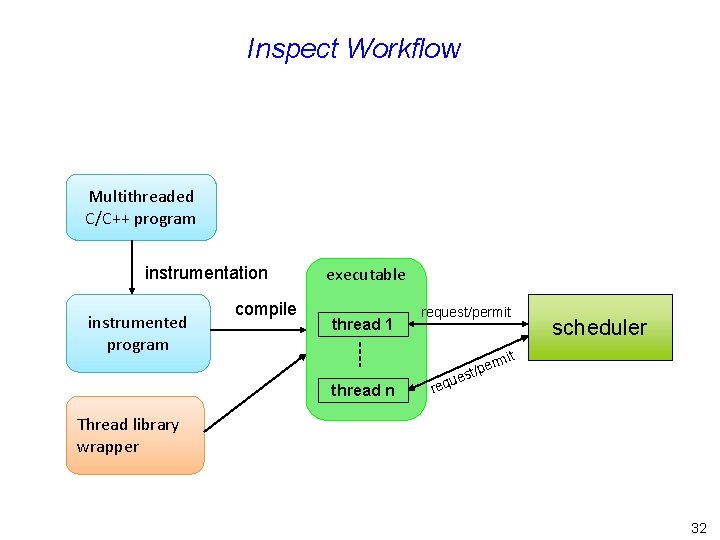

Inspect Workflow

(Figure: a multithreaded C/C++ program goes through instrumentation; the instrumented program is compiled into an executable. At run time, thread 1 through thread n exchange request/permit messages with the scheduler through the thread library wrapper.)

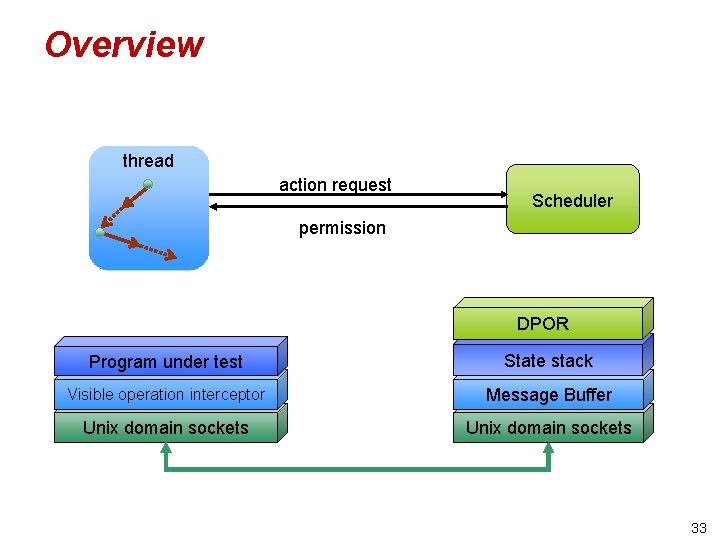

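Overview

(Figure: the program under test and the scheduler communicate over Unix domain sockets. A visible operation interceptor sends thread action requests through a message buffer; the scheduler, which runs DPOR over a state stack, answers with permissions.)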

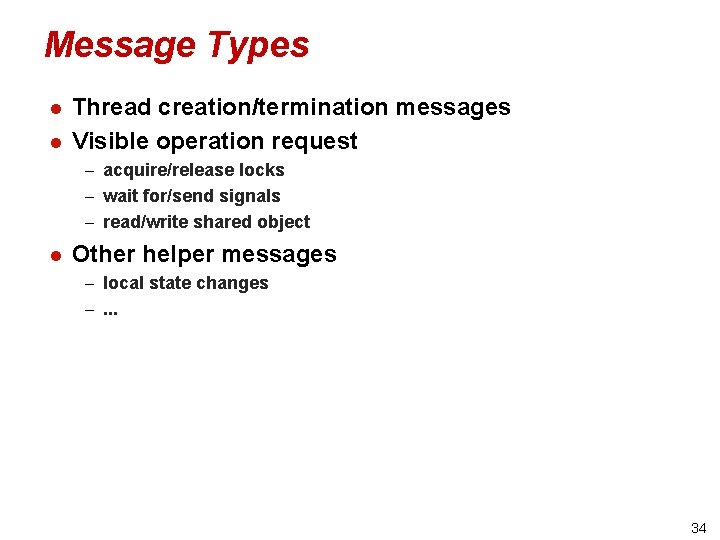

Message Types

- Thread creation/termination messages
- Visible operation request
  - acquire/release locks
  - wait for/send signals
  - read/write shared object
- Other helper messages
  - local state changes
  - ...

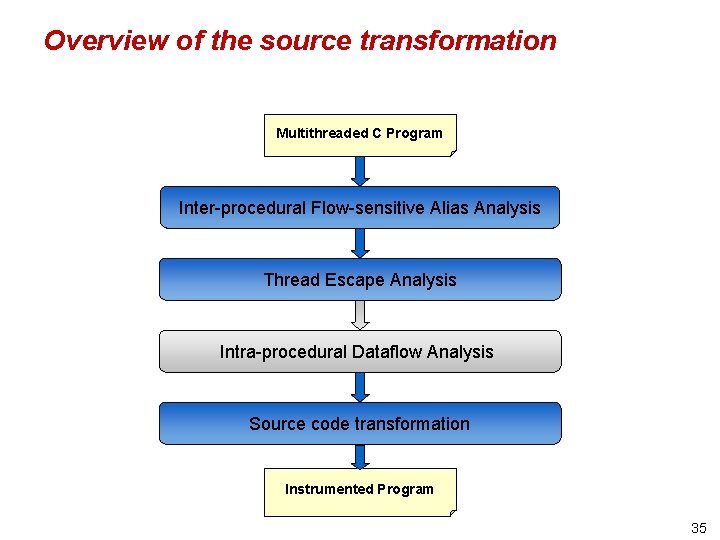

Overview of the source transformation

Multithreaded C Program → Inter-procedural Flow-sensitive Alias Analysis → Thread Escape Analysis → Intra-procedural Dataflow Analysis → Source code transformation → Instrumented Program

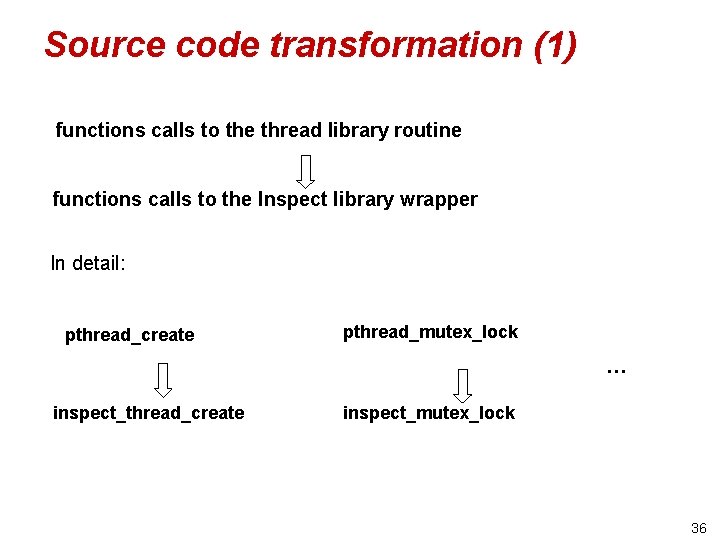

Source code transformation (1)

Function calls to the thread library routines are replaced by function calls to the Inspect library wrapper. In detail:

- pthread_create → inspect_thread_create
- pthread_mutex_lock → inspect_mutex_lock
- …

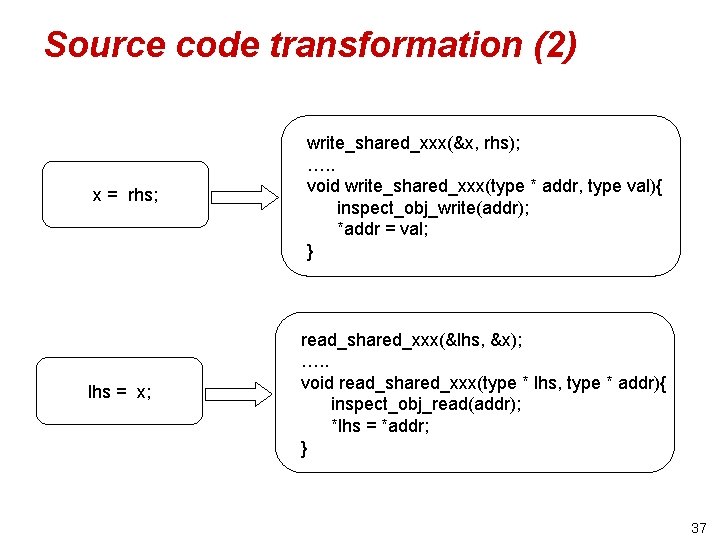

Source code transformation (2)

A write to a shared variable, `x = rhs;`, becomes:

    write_shared_xxx(&x, rhs);
    …
    void write_shared_xxx(type * addr, type val){
      inspect_obj_write(addr);
      *addr = val;
    }

A read of a shared variable, `lhs = x;`, becomes:

    read_shared_xxx(&lhs, &x);
    …
    void read_shared_xxx(type * lhs, type * addr){
      inspect_obj_read(addr);
      *lhs = *addr;
    }
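To make the wrapper pattern above concrete, here is a minimal sketch specialised to `int`. The two `inspect_obj_*` functions below are hypothetical stand-ins that only count events; in the real tool they would presumably notify the scheduler and wait for permission, which is not modelled here.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-ins for the Inspect runtime hooks (assumption):
 * they only count how many shared accesses were announced. */
static unsigned long read_events = 0;
static unsigned long write_events = 0;

static void inspect_obj_read(const void *addr)  { (void)addr; read_events++; }
static void inspect_obj_write(const void *addr) { (void)addr; write_events++; }

/* Instrumented form of "x = rhs;" for a shared int. */
void write_shared_int(int *addr, int val)
{
    assert(addr != NULL);
    inspect_obj_write(addr);   /* announce the visible operation first */
    *addr = val;               /* then perform the actual store */
}

/* Instrumented form of "lhs = x;" for a shared int. */
void read_shared_int(int *lhs, const int *addr)
{
    assert(lhs != NULL && addr != NULL);
    inspect_obj_read(addr);    /* announce the visible operation first */
    *lhs = *addr;              /* then perform the actual load */
}
```

The point of the design is that every shared access passes through one choke point, so the scheduler sees each visible operation before it takes effect.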

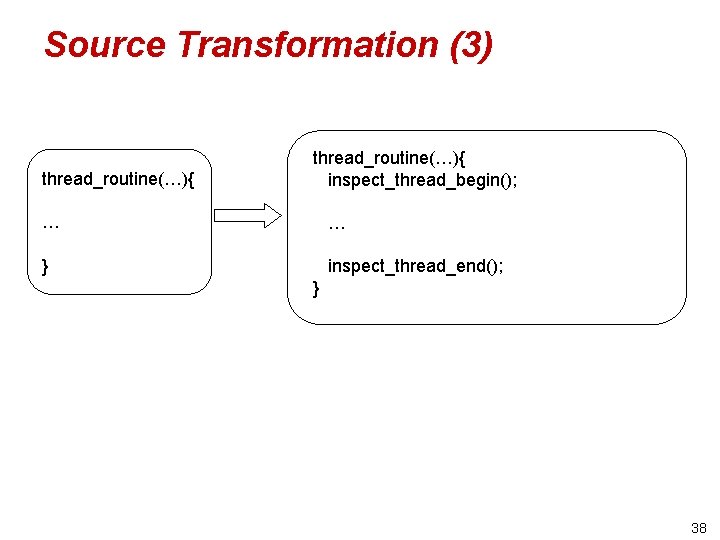

Source Transformation (3)

    thread_routine(…){
      inspect_thread_begin();
      …
      …
      inspect_thread_end();
    }

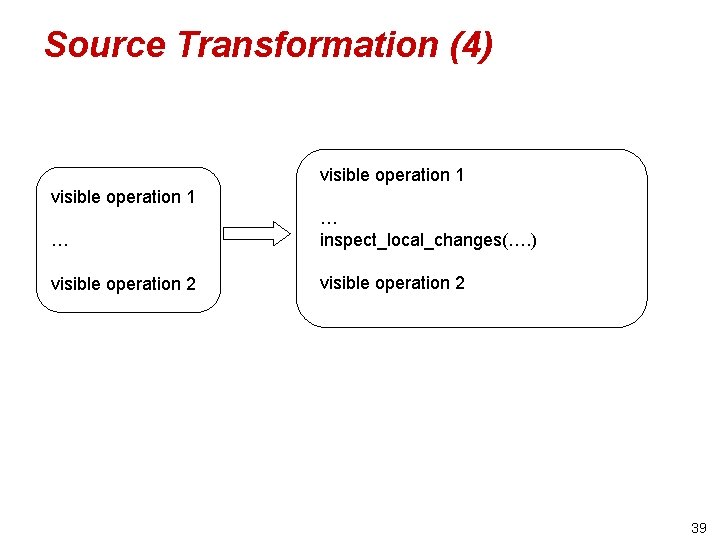

Source Transformation (4)

    visible operation 1
    …
    …
    inspect_local_changes(…)
    visible operation 2

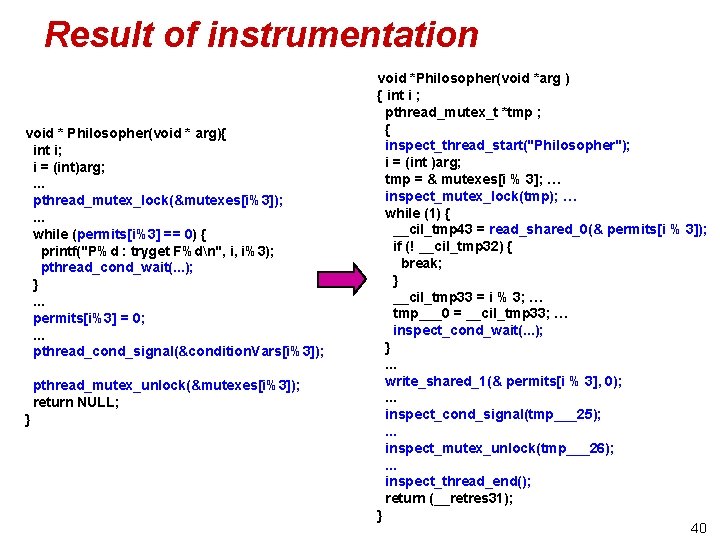

Result of instrumentation

Before:

    void * Philosopher(void * arg){
      int i;
      i = (int)arg;
      ...
      pthread_mutex_lock(&mutexes[i%3]);
      ...
      while (permits[i%3] == 0) {
        printf("P%d : tryget F%d\n", i, i%3);
        pthread_cond_wait(...);
      }
      ...
      permits[i%3] = 0;
      ...
      pthread_cond_signal(&conditionVars[i%3]);
      pthread_mutex_unlock(&mutexes[i%3]);
      return NULL;
    }

After:

    void *Philosopher(void *arg )
    {
      int i ;
      pthread_mutex_t *tmp ;
      {
      inspect_thread_start("Philosopher");
      i = (int )arg;
      tmp = & mutexes[i % 3];
      …
      inspect_mutex_lock(tmp);
      …
      while (1) {
        __cil_tmp43 = read_shared_0(& permits[i % 3]);
        if (! __cil_tmp32) {
          break;
        }
        __cil_tmp33 = i % 3;
        …
        tmp___0 = __cil_tmp33;
        …
        inspect_cond_wait(...);
      }
      ...
      write_shared_1(& permits[i % 3], 0);
      ...
      inspect_cond_signal(tmp___25);
      ...
      inspect_mutex_unlock(tmp___26);
      ...
      inspect_thread_end();
      return (__retres31);
      }
    }


Philosophers in PThreads…

    #include <stdlib.h>     // Dining Philosophers with no deadlock
    #include <pthread.h>    // all phils but "odd" one pickup their
    #include <stdio.h>      // left fork first; odd phil picks
    #include <string.h>     // up right fork first
    #include <malloc.h>
    #include <errno.h>
    #include <sys/types.h>
    #include <assert.h>

    #define NUM_THREADS 3

    pthread_mutex_t mutexes[NUM_THREADS];
    pthread_cond_t conditionVars[NUM_THREADS];
    int permits[NUM_THREADS];
    pthread_t tids[NUM_THREADS];
    int data = 0;

    void * Philosopher(void * arg){
      int i;
      i = (int)arg;

      // pickup left fork
      pthread_mutex_lock(&mutexes[i%NUM_THREADS]);
      while (permits[i%NUM_THREADS] == 0) {
        printf("P%d : tryget F%d\n", i, i%NUM_THREADS);
        pthread_cond_wait(&conditionVars[i%NUM_THREADS], &mutexes[i%NUM_THREADS]);
      }
      permits[i%NUM_THREADS] = 0;
      printf("P%d : get F%d\n", i, i%NUM_THREADS);
      pthread_mutex_unlock(&mutexes[i%NUM_THREADS]);

      // pickup right fork
      pthread_mutex_lock(&mutexes[(i+1)%NUM_THREADS]);
      while (permits[(i+1)%NUM_THREADS] == 0) {
        printf("P%d : tryget F%d\n", i, (i+1)%NUM_THREADS);
        pthread_cond_wait(&conditionVars[(i+1)%NUM_THREADS], &mutexes[(i+1)%NUM_THREADS]);
      }
      permits[(i+1)%NUM_THREADS] = 0;
      printf("P%d : get F%d\n", i, (i+1)%NUM_THREADS);
      pthread_mutex_unlock(&mutexes[(i+1)%NUM_THREADS]);

      //printf("philosopher %d thinks \n", i);
      printf("%d\n", i);
      // data = 10 * data + i;
      fflush(stdout);

      // putdown right fork
      pthread_mutex_lock(&mutexes[(i+1)%NUM_THREADS]);
      permits[(i+1)%NUM_THREADS] = 1;
      printf("P%d : put F%d\n", i, (i+1)%NUM_THREADS);
      pthread_cond_signal(&conditionVars[(i+1)%NUM_THREADS]);
      pthread_mutex_unlock(&mutexes[(i+1)%NUM_THREADS]);


…Philosophers in PThreads

      // putdown left fork
      pthread_mutex_lock(&mutexes[i%NUM_THREADS]);
      permits[i%NUM_THREADS] = 1;
      printf("P%d : put F%d \n", i, i%NUM_THREADS);
      pthread_cond_signal(&conditionVars[i%NUM_THREADS]);
      pthread_mutex_unlock(&mutexes[i%NUM_THREADS]);

      // putdown right fork
      pthread_mutex_lock(&mutexes[(i+1)%NUM_THREADS]);
      permits[(i+1)%NUM_THREADS] = 1;
      printf("P%d : put F%d \n", i, (i+1)%NUM_THREADS);
      pthread_cond_signal(&conditionVars[(i+1)%NUM_THREADS]);
      pthread_mutex_unlock(&mutexes[(i+1)%NUM_THREADS]);

      //printf(" data = %d \n", data);
      return NULL;
    }

    int main(){
      int i;
      for (i = 0; i < NUM_THREADS; i++)
        pthread_mutex_init(&mutexes[i], NULL);
      for (i = 0; i < NUM_THREADS; i++)
        pthread_cond_init(&conditionVars[i], NULL);
      for (i = 0; i < NUM_THREADS; i++)
        permits[i] = 1;

      for (i = 0; i < NUM_THREADS-1; i++){
        pthread_create(&tids[i], NULL, Philosopher, (void*)(i) );
      }
      pthread_create(&tids[NUM_THREADS-1], NULL, OddPhilosopher, (void*)(NUM_THREADS-1) );

      for (i = 0; i < NUM_THREADS; i++){
        pthread_join(tids[i], NULL);
      }
      for (i = 0; i < NUM_THREADS; i++){
        pthread_mutex_destroy(&mutexes[i]);
      }
      for (i = 0; i < NUM_THREADS; i++){
        pthread_cond_destroy(&conditionVars[i]);
      }
      //assert( data != 201);
      return 0;
    }

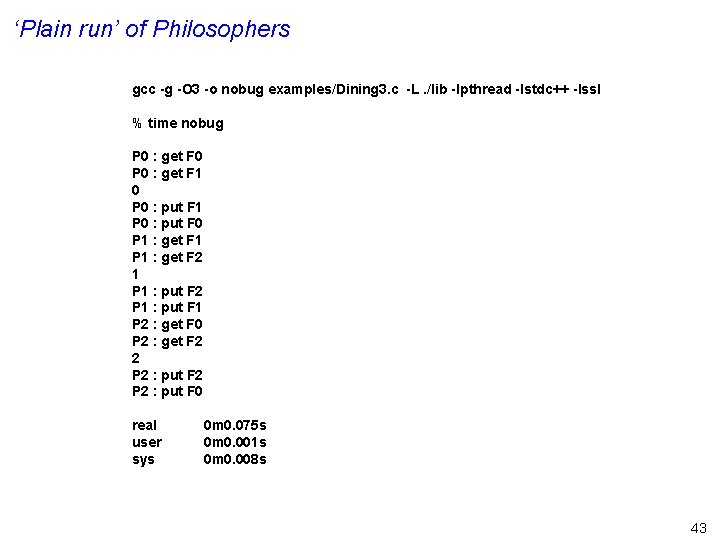

‘Plain run’ of Philosophers

    gcc -g -O3 -o nobug examples/Dining3.c -L./lib -lpthread -lstdc++ -lssl
    % time nobug
    P0 : get F0
    P0 : get F1
    0
    P0 : put F1
    P0 : put F0
    P1 : get F1
    P1 : get F2
    1
    P1 : put F2
    P1 : put F1
    P2 : get F0
    P2 : get F2
    2
    P2 : put F0

    real 0m0.075s
    user 0m0.001s
    sys  0m0.008s


…Buggy Philosophers in PThreads

The listing is identical to the previous Philosophers listing, except that main() starts the last thread with Philosopher instead of OddPhilosopher, so all three philosophers pick up their left fork first:

    int main(){
      int i;
      for (i = 0; i < NUM_THREADS; i++)
        pthread_mutex_init(&mutexes[i], NULL);
      for (i = 0; i < NUM_THREADS; i++)
        pthread_cond_init(&conditionVars[i], NULL);
      for (i = 0; i < NUM_THREADS; i++)
        permits[i] = 1;

      for (i = 0; i < NUM_THREADS-1; i++){
        pthread_create(&tids[i], NULL, Philosopher, (void*)(i) );
      }
      pthread_create(&tids[NUM_THREADS-1], NULL, Philosopher, (void*)(NUM_THREADS-1) );

      for (i = 0; i < NUM_THREADS; i++){
        pthread_join(tids[i], NULL);
      }
      for (i = 0; i < NUM_THREADS; i++){
        pthread_mutex_destroy(&mutexes[i]);
      }
      for (i = 0; i < NUM_THREADS; i++){
        pthread_cond_destroy(&conditionVars[i]);
      }
      //assert( data != 201);
      return 0;
    }

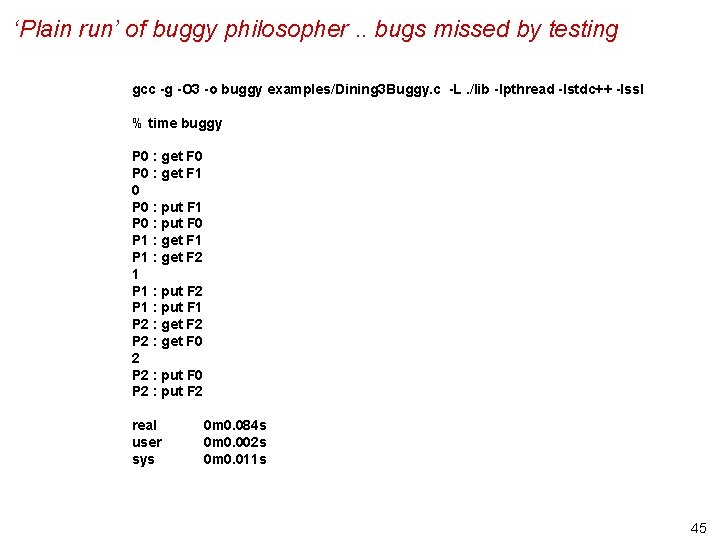

‘Plain run’ of buggy philosopher: bugs missed by testing

    gcc -g -O3 -o buggy examples/Dining3Buggy.c -L./lib -lpthread -lstdc++ -lssl
    % time buggy
    P0 : get F0
    P0 : get F1
    0
    P0 : put F1
    P0 : put F0
    P1 : get F1
    P1 : get F2
    1
    P1 : put F2
    P1 : put F1
    P2 : get F2
    P2 : get F0
    2
    P2 : put F0
    P2 : put F2

    real 0m0.084s
    user 0m0.002s
    sys  0m0.011s

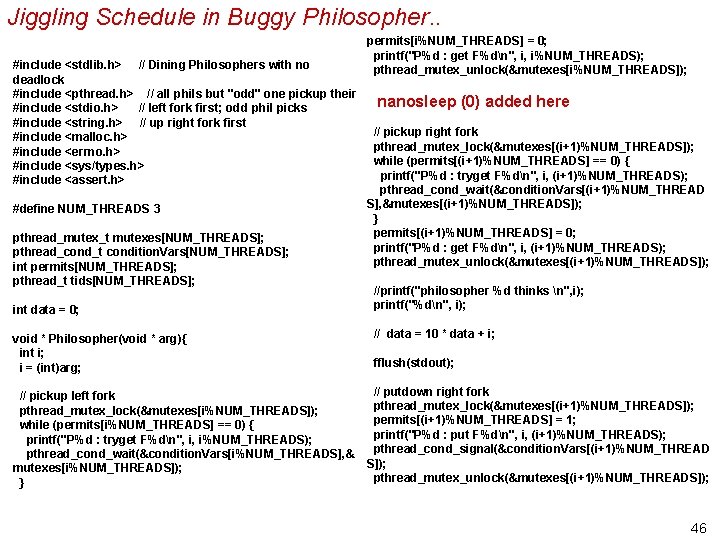

Jiggling Schedule in Buggy Philosopher

The buggy Philosopher listing is unchanged except for a nanosleep(0) added between releasing the left fork’s mutex and picking up the right fork:

      permits[i%NUM_THREADS] = 0;
      printf("P%d : get F%d\n", i, i%NUM_THREADS);
      pthread_mutex_unlock(&mutexes[i%NUM_THREADS]);

      // nanosleep (0) added here

      // pickup right fork
      pthread_mutex_lock(&mutexes[(i+1)%NUM_THREADS]);
      while (permits[(i+1)%NUM_THREADS] == 0) {
        printf("P%d : tryget F%d\n", i, (i+1)%NUM_THREADS);
        pthread_cond_wait(&conditionVars[(i+1)%NUM_THREADS], &mutexes[(i+1)%NUM_THREADS]);
      }

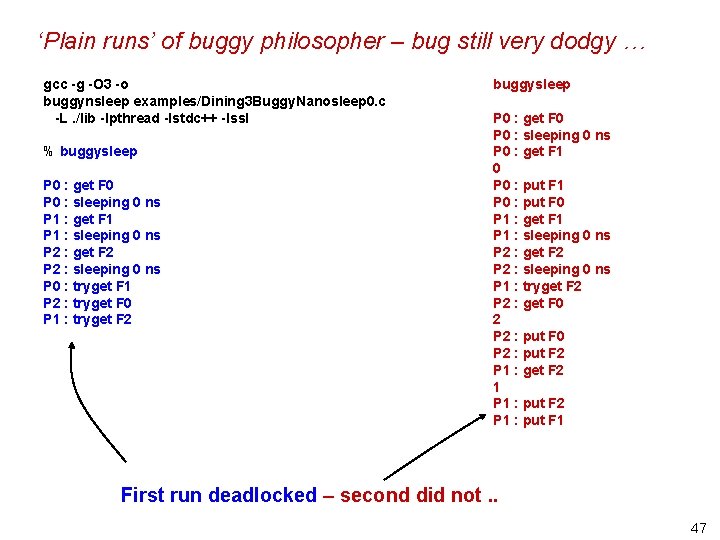

‘Plain runs’ of buggy philosopher: the bug is still very dodgy…

    gcc -g -O3 -o buggynsleep examples/Dining3BuggyNanosleep0.c -L./lib -lpthread -lstdc++ -lssl

First run:

    % buggysleep
    P0 : get F0
    P0 : sleeping 0 ns
    P1 : get F1
    P1 : sleeping 0 ns
    P2 : get F2
    P2 : sleeping 0 ns
    P0 : tryget F1
    P2 : tryget F0
    P1 : tryget F2

Second run:

    % buggysleep
    P0 : get F0
    P0 : sleeping 0 ns
    P0 : get F1
    0
    P0 : put F1
    P0 : put F0
    P1 : get F1
    P1 : sleeping 0 ns
    P2 : get F2
    P2 : sleeping 0 ns
    P1 : tryget F2
    P2 : get F0
    2
    P2 : put F0
    P2 : put F2
    P1 : get F2
    1
    P1 : put F2
    P1 : put F1

The first run deadlocked; the second did not.

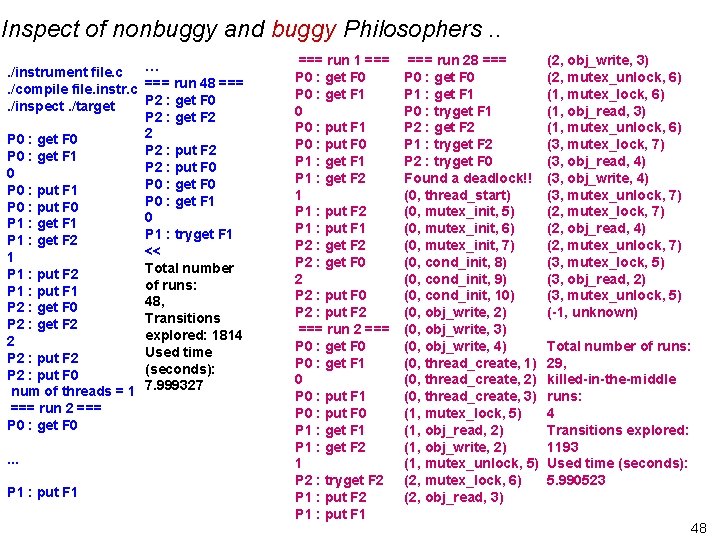

Inspect of nonbuggy and buggy Philosophers…

    ./instrument file.c
    ./compile file.instr.c
    ./inspect ./target

Non-buggy version (excerpts):

    P0 : get F0
    P0 : get F1
    0
    P0 : put F1
    P0 : put F0
    P1 : get F1
    P1 : get F2
    1
    P1 : put F2
    P1 : put F1
    P2 : get F0
    P2 : get F2
    2
    P2 : put F2
    P2 : put F0
    num of threads = 1
    === run 2 ===
    P0 : get F0
    ...
    P1 : put F1
    === run 48 ===
    P2 : get F0
    P2 : get F2
    2
    P2 : put F2
    P2 : put F0
    P0 : get F0
    P0 : get F1
    0
    P1 : tryget F1
    <<
    Total number of runs: 48, Transitions explored: 1814
    Used time (seconds): 7.999327

Buggy version (excerpts):

    === run 1 ===
    P0 : get F0
    P0 : get F1
    0
    P0 : put F1
    P0 : put F0
    P1 : get F1
    P1 : get F2
    1
    P1 : put F2
    P1 : put F1
    P2 : get F2
    P2 : get F0
    2
    P2 : put F0
    P2 : put F2
    === run 2 ===
    P0 : get F0
    P0 : get F1
    0
    P0 : put F1
    P0 : put F0
    P1 : get F1
    P1 : get F2
    1
    P2 : tryget F2
    P1 : put F1
    === run 28 ===
    P0 : get F0
    P1 : get F1
    P0 : tryget F1
    P2 : get F2
    P1 : tryget F2
    P2 : tryget F0
    Found a deadlock!!
    (0, thread_start)
    (0, mutex_init, 5) (0, mutex_init, 6) (0, mutex_init, 7)
    (0, cond_init, 8) (0, cond_init, 9) (0, cond_init, 10)
    (0, obj_write, 2) (0, obj_write, 3) (0, obj_write, 4)
    (0, thread_create, 1) (0, thread_create, 2) (0, thread_create, 3)
    (1, mutex_lock, 5) (1, obj_read, 2) (1, obj_write, 2) (1, mutex_unlock, 5)
    (2, mutex_lock, 6) (2, obj_read, 3) (2, obj_write, 3) (2, mutex_unlock, 6)
    (1, mutex_lock, 6) (1, obj_read, 3) (1, mutex_unlock, 6)
    (3, mutex_lock, 7) (3, obj_read, 4) (3, obj_write, 4) (3, mutex_unlock, 7)
    (2, mutex_lock, 7) (2, obj_read, 4) (2, mutex_unlock, 7)
    (3, mutex_lock, 5) (3, obj_read, 2) (3, mutex_unlock, 5)
    (-1, unknown)
    Total number of runs: 29, killed-in-the-middle runs: 4
    Transitions explored: 1193
    Used time (seconds): 5.990523

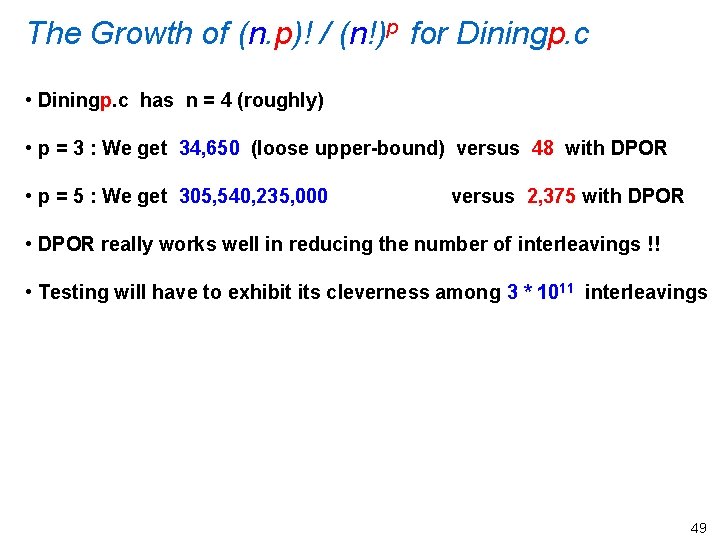

The Growth of (n·p)! / (n!)^p for Diningp.c

- Diningp.c has n = 4 (roughly)
- p = 3: we get 34,650 (loose upper bound) versus 48 with DPOR
- p = 5: we get 305,540,235,000 versus 2,375 with DPOR
- DPOR really works well in reducing the number of interleavings!!
- Testing will have to exhibit its cleverness among 3 × 10^11 interleavings
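As a check on the two upper bounds quoted above, the arithmetic can be worked out by hand under the slide's own rough assumption of n = 4 steps per philosopher:

```latex
\frac{(4\cdot 3)!}{(4!)^{3}} = \frac{479{,}001{,}600}{13{,}824} = 34{,}650,
\qquad
\frac{(4\cdot 5)!}{(4!)^{5}} = \frac{2{,}432{,}902{,}008{,}176{,}640{,}000}{7{,}962{,}624}
  = 305{,}540{,}235{,}000 \approx 3 \times 10^{11}.
```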

[ NEW SLIDE ] On the HUGE importance of DPOR

BEFORE INSTRUMENTATION

    void * thread_A(void* arg)
    {
      pthread_mutex_lock(&mutex);
      A_count++;
      pthread_mutex_unlock(&mutex);
    }

    void * thread_B(void * arg)
    {
      pthread_mutex_lock(&lock);
      B_count++;
      pthread_mutex_unlock(&lock);
    }

AFTER INSTRUMENTATION (transitions are shown as bands)

    void *thread_A(void *arg ) // thread_B is similar
    {
      void *__retres2 ;
      int __cil_tmp3 ;
      int __cil_tmp4 ;
      {
      inspect_thread_start("thread_A");
      inspect_mutex_lock(& mutex);
      __cil_tmp4 = read_shared_0(& A_count);
      __cil_tmp3 = __cil_tmp4 + 1;
      write_shared_1(& A_count, __cil_tmp3);
      inspect_mutex_unlock(& mutex);
      __retres2 = (void *)0;
      inspect_thread_end();
      return (__retres2);
      }
    }

- ONE interleaving with DPOR
- 252 = (10!) / (5!)^2 without DPOR

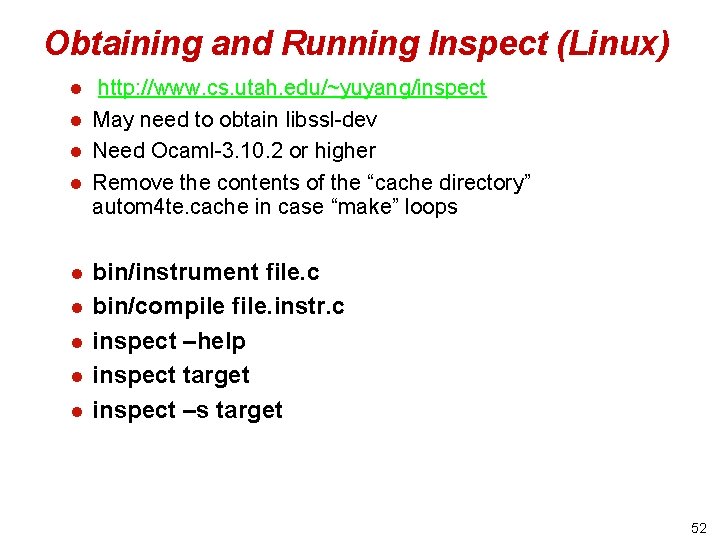

Obtaining and Running Inspect (Linux)

- http://www.cs.utah.edu/~yuyang/inspect
- May need to obtain libssl-dev
- Need OCaml 3.10.2 or higher
- Remove the contents of the “cache directory” autom4te.cache in case “make” loops
- bin/instrument file.c
- bin/compile file.instr.c
- inspect –help
- inspect target
- inspect –s target

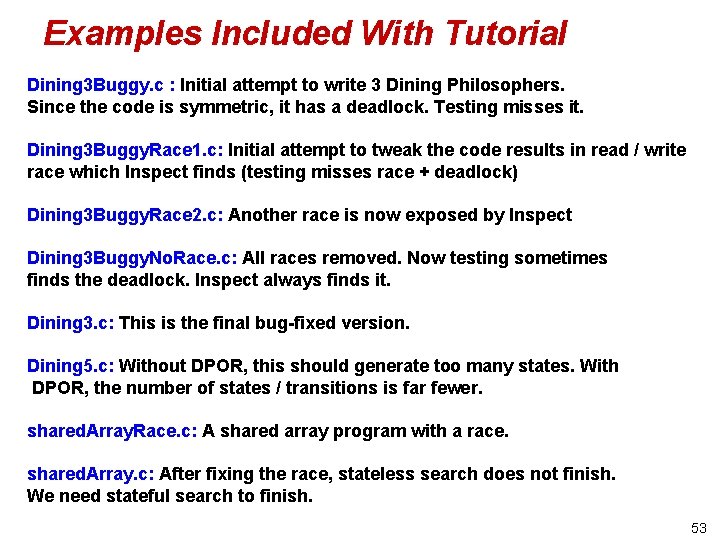

Examples Included With Tutorial

- Dining3Buggy.c: Initial attempt to write 3 Dining Philosophers. Since the code is symmetric, it has a deadlock. Testing misses it.
- Dining3BuggyRace1.c: Initial attempt to tweak the code results in a read / write race, which Inspect finds (testing misses race + deadlock).
- Dining3BuggyRace2.c: Another race is now exposed by Inspect.
- Dining3BuggyNoRace.c: All races removed. Now testing sometimes finds the deadlock. Inspect always finds it.
- Dining3.c: This is the final bug-fixed version.
- Dining5.c: Without DPOR, this should generate too many states. With DPOR, the number of states / transitions is far smaller.
- sharedArrayRace.c: A shared array program with a race.
- sharedArray.c: After fixing the race, stateless search does not finish. We need stateful search to finish.

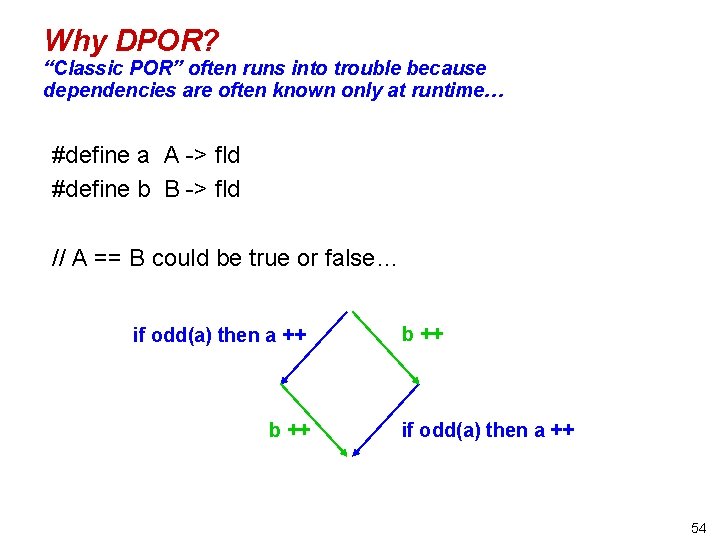

Why DPOR?

“Classic POR” often runs into trouble because dependencies are often known only at runtime…

    #define a A -> fld
    #define b B -> fld
    // A == B could be true or false…

    if odd(a) then a++
    b++
    if odd(a) then a++
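The aliasing problem can be made concrete with a small sketch. The names `struct Cell`, `step_if_odd_inc`, `step_inc`, and `steps_dependent` are assumptions made for illustration; the point is only that whether the two steps commute depends on whether `A` and `B` point at the same object, which is known at run time.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

struct Cell { int fld; };

/* "if odd(a) then a++" with a standing for A->fld. */
void step_if_odd_inc(struct Cell *A)
{
    assert(A != NULL);
    if (A->fld % 2 != 0)
        A->fld++;
}

/* "b++" with b standing for B->fld. */
void step_inc(struct Cell *B)
{
    assert(B != NULL);
    B->fld++;
}

/* The two steps touch the same location exactly when the pointers alias.
 * A static analysis must guess this; a dynamic one can simply compare. */
bool steps_dependent(const struct Cell *A, const struct Cell *B)
{
    return A == B;
}
```

With `A == B` and `fld` starting at 1, running the conditional step first ends at 3 while running the increment first ends at 2; with distinct cells both orders give the same result.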

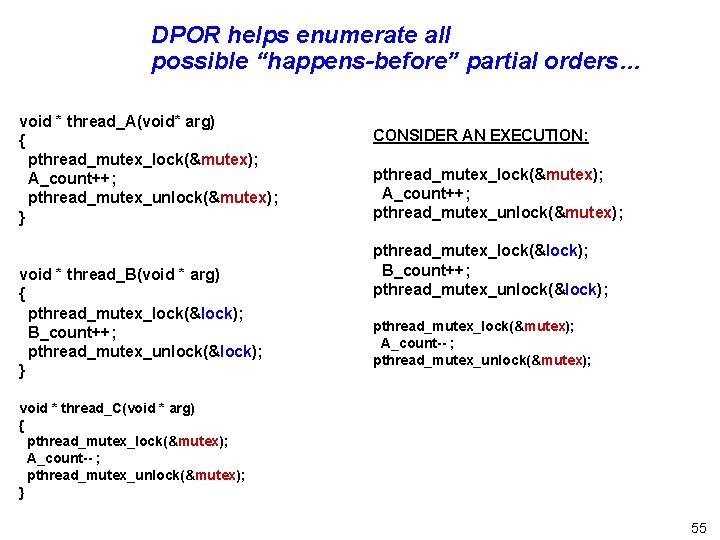

DPOR helps enumerate all possible “happens-before” partial orders…

    void * thread_A(void* arg)
    {
      pthread_mutex_lock(&mutex);
      A_count++;
      pthread_mutex_unlock(&mutex);
    }

    void * thread_B(void * arg)
    {
      pthread_mutex_lock(&lock);
      B_count++;
      pthread_mutex_unlock(&lock);
    }

    void * thread_C(void * arg)
    {
      pthread_mutex_lock(&mutex);
      A_count-- ;
      pthread_mutex_unlock(&mutex);
    }

CONSIDER AN EXECUTION:

    pthread_mutex_lock(&mutex);
    A_count++;
    pthread_mutex_unlock(&mutex);

    pthread_mutex_lock(&lock);
    B_count++;
    pthread_mutex_unlock(&lock);

    pthread_mutex_lock(&mutex);
    A_count-- ;
    pthread_mutex_unlock(&mutex);

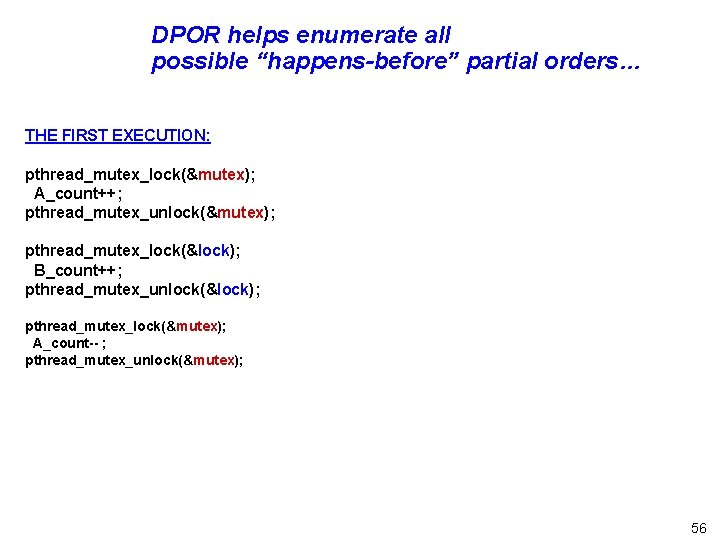

DPOR helps enumerate all possible “happens-before” partial orders… THE FIRST EXECUTION: pthread_mutex_lock(&mutex); A_count++; pthread_mutex_unlock(&mutex); pthread_mutex_lock(&lock); B_count++; pthread_mutex_unlock(&lock); pthread_mutex_lock(&mutex); A_count-- ; pthread_mutex_unlock(&mutex); 56

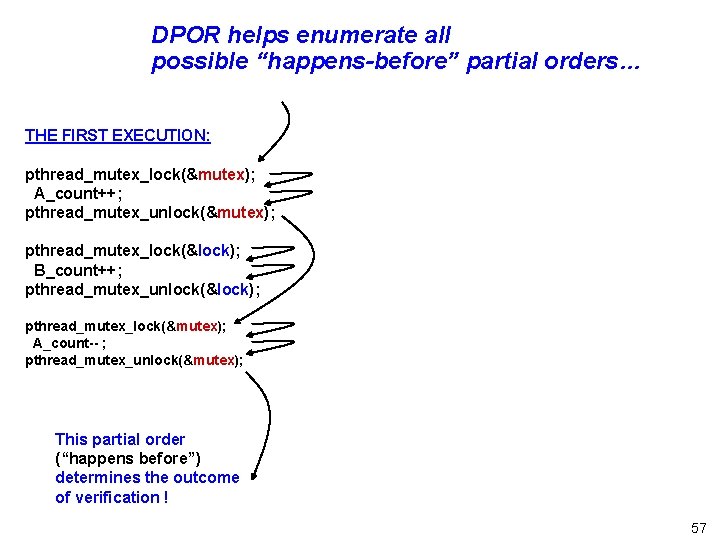

DPOR helps enumerate all possible “happens-before” partial orders… THE FIRST EXECUTION: pthread_mutex_lock(&mutex); A_count++; pthread_mutex_unlock(&mutex); pthread_mutex_lock(&lock); B_count++; pthread_mutex_unlock(&lock); pthread_mutex_lock(&mutex); A_count-- ; pthread_mutex_unlock(&mutex); This partial order (“happens before”) determines the outcome of verification ! 57

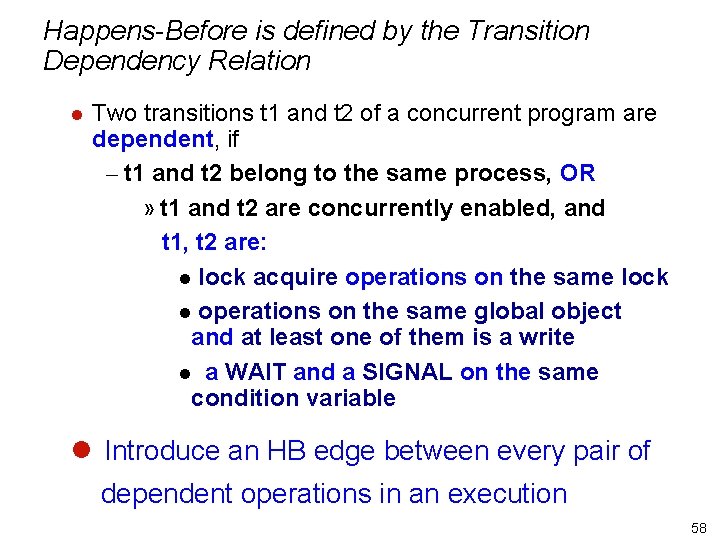

Happens-Before is defined by the Transition Dependency Relation

- Two transitions t1 and t2 of a concurrent program are dependent if
  - t1 and t2 belong to the same process, OR
  - t1 and t2 are concurrently enabled, and t1, t2 are:
    - lock acquire operations on the same lock
    - operations on the same global object, and at least one of them is a write
    - a WAIT and a SIGNAL on the same condition variable
- Introduce an HB edge between every pair of dependent operations in an execution
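The dependency relation above translates almost directly into a predicate. The sketch below is an illustration under stated assumptions: the `Transition` layout, the `OpKind` names, and the idea that locks, globals, and condition variables share one integer id space are all hypothetical, and whether two transitions are concurrently enabled is passed in rather than computed.

```c
#include <stdbool.h>

typedef enum { OP_LOCK, OP_UNLOCK, OP_READ, OP_WRITE, OP_WAIT, OP_SIGNAL } OpKind;

typedef struct {
    int thread;    /* id of the process/thread executing the transition */
    OpKind kind;   /* what the transition does */
    int object;    /* id of the lock, global object, or condition variable */
} Transition;

static bool is_data_access(OpKind k)
{
    return k == OP_READ || k == OP_WRITE;
}

/* Dependency test following the slide's definition. */
bool dependent(Transition a, Transition b, bool co_enabled)
{
    if (a.thread == b.thread)
        return true;                          /* same process */
    if (!co_enabled || a.object != b.object)
        return false;
    if (a.kind == OP_LOCK && b.kind == OP_LOCK)
        return true;                          /* acquires of the same lock */
    if (is_data_access(a.kind) && is_data_access(b.kind) &&
        (a.kind == OP_WRITE || b.kind == OP_WRITE))
        return true;                          /* same object, at least one write */
    if ((a.kind == OP_WAIT && b.kind == OP_SIGNAL) ||
        (a.kind == OP_SIGNAL && b.kind == OP_WAIT))
        return true;                          /* WAIT and SIGNAL on the same condvar */
    return false;
}
```

Two reads of the same object, or two lock acquires on different locks, come out independent, which is what lets the search skip reordering them.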

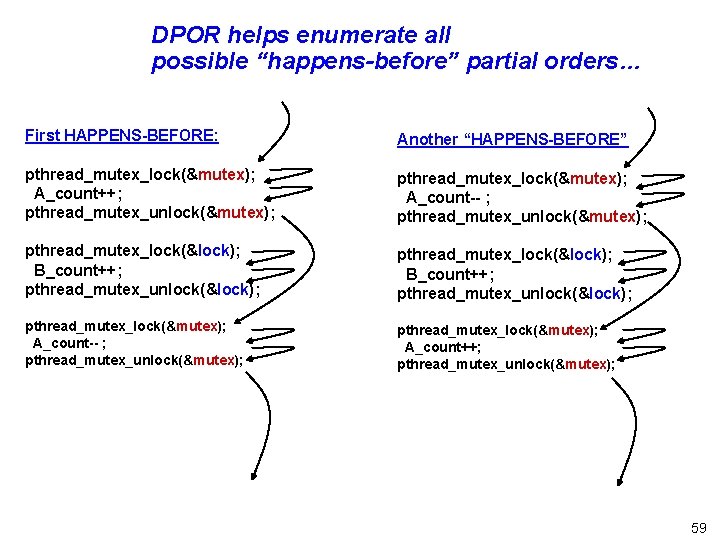

DPOR helps enumerate all possible “happens-before” partial orders…

First HAPPENS-BEFORE:

    pthread_mutex_lock(&mutex);
    A_count++;
    pthread_mutex_unlock(&mutex);

    pthread_mutex_lock(&lock);
    B_count++;
    pthread_mutex_unlock(&lock);

    pthread_mutex_lock(&mutex);
    A_count-- ;
    pthread_mutex_unlock(&mutex);

Another “HAPPENS-BEFORE”:

    pthread_mutex_lock(&mutex);
    A_count-- ;
    pthread_mutex_unlock(&mutex);

    pthread_mutex_lock(&mutex);
    A_count++;
    pthread_mutex_unlock(&mutex);

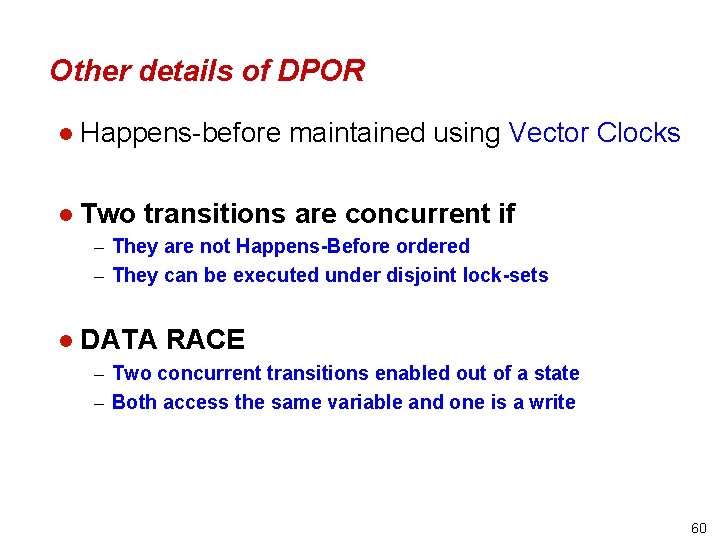

Other details of DPOR

- Happens-before is maintained using Vector Clocks
- Two transitions are concurrent if
  - they are not Happens-Before ordered
  - they can be executed under disjoint lock-sets
- DATA RACE
  - Two concurrent transitions enabled out of a state
  - Both access the same variable and one is a write
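The vector-clock part of this check can be sketched as follows. The fixed `NUM_THREADS`, the `VClock` type, and the function names are assumptions for illustration; the lock-set condition mentioned on the slide is deliberately left out of this sketch.

```c
#include <stdbool.h>

#define NUM_THREADS 3

typedef struct { unsigned c[NUM_THREADS]; } VClock;

/* True when a is componentwise less than or equal to b. */
bool vc_leq(const VClock *a, const VClock *b)
{
    for (int i = 0; i < NUM_THREADS; i++)
        if (a->c[i] > b->c[i])
            return false;
    return true;
}

/* a happens-before b: a <= b componentwise and the clocks differ. */
bool vc_happens_before(const VClock *a, const VClock *b)
{
    return vc_leq(a, b) && !vc_leq(b, a);
}

/* Neither clock is ordered before the other. */
bool vc_concurrent(const VClock *a, const VClock *b)
{
    return !vc_leq(a, b) && !vc_leq(b, a);
}

/* Merge src into dst, as done when dst's transition depends on src's. */
void vc_join(VClock *dst, const VClock *src)
{
    for (int i = 0; i < NUM_THREADS; i++)
        if (src->c[i] > dst->c[i])
            dst->c[i] = src->c[i];
}
```

A race check in this style would report two accesses to the same variable, at least one a write, whose clocks satisfy `vc_concurrent`.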

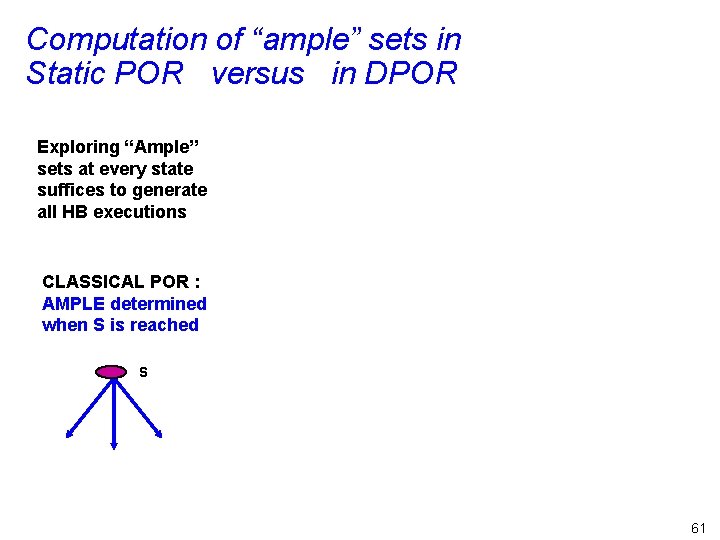

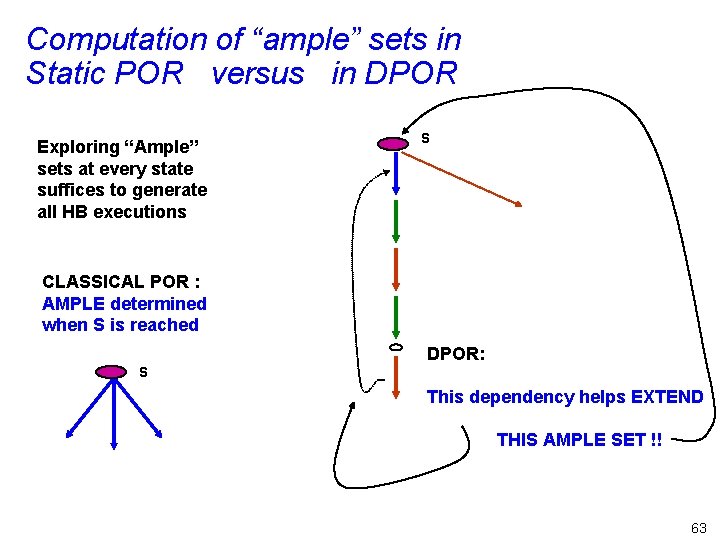


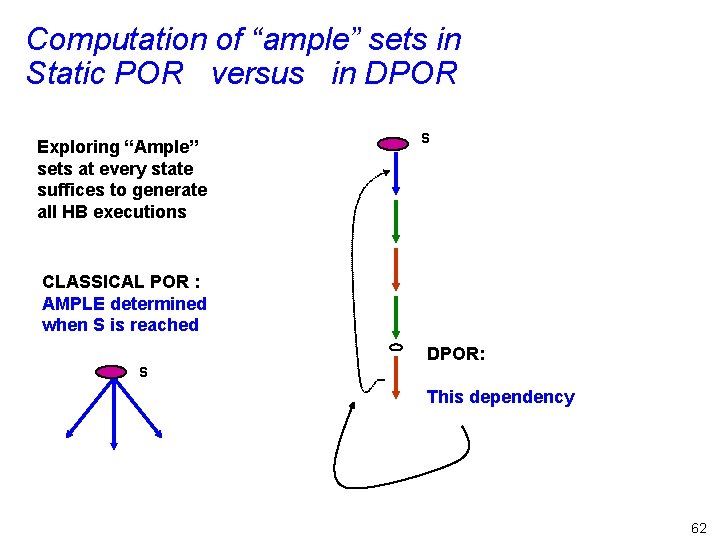


Computation of “ample” sets in Static POR versus in DPOR

Exploring “Ample” sets at every state suffices to generate all HB executions.

- CLASSICAL POR: AMPLE is determined when state S is reached
- DPOR: a dependency discovered later in the execution helps EXTEND the AMPLE SET at S!!

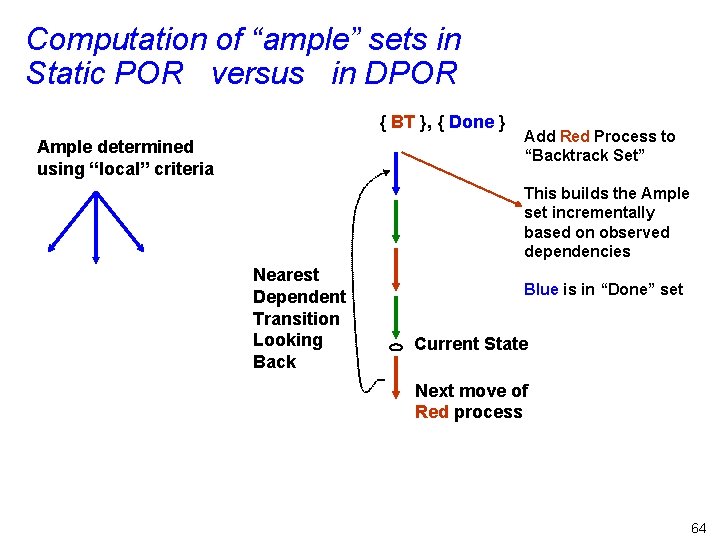

Computation of “ample” sets in Static POR versus in DPOR

(Figure: each state is annotated with its { BT }, { Done } sets.)

- Ample determined using “local” criteria
- From the Current State, consider the Next move of the Red process
- Looking Back, find the Nearest Dependent Transition
- Add the Red Process to the “Backtrack Set” there (Blue is in the “Done” set)
- This builds the Ample set incrementally based on observed dependencies

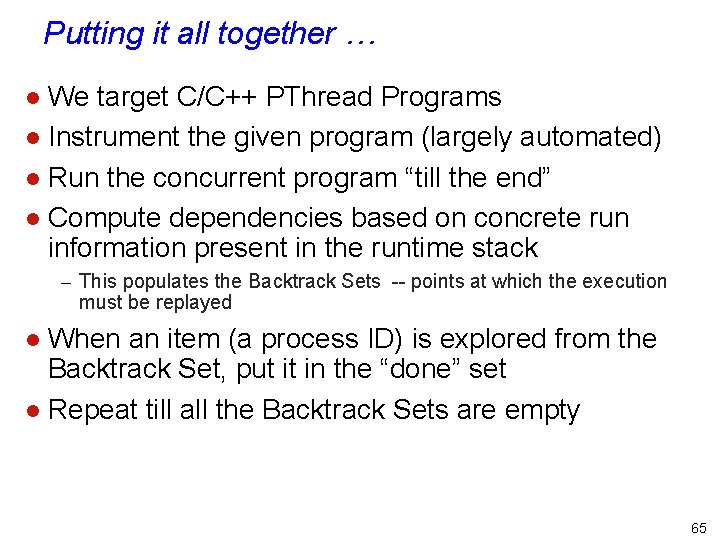

Putting it all together …

- We target C/C++ PThread Programs
- Instrument the given program (largely automated)
- Run the concurrent program “till the end”
- Compute dependencies based on concrete run information present in the runtime stack
  - This populates the Backtrack Sets: points at which the execution must be replayed
- When an item (a process ID) is explored from the Backtrack Set, put it in the “done” set
- Repeat till all the Backtrack Sets are empty
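The per-state bookkeeping in the last three bullets can be sketched with two bitmasks. This is not Inspect's implementation: `SearchState`, `ss_add_backtrack`, and `ss_take_next` are hypothetical names, and the dependency analysis that decides which thread to add is assumed to happen elsewhere.

```c
#include <assert.h>
#include <stdint.h>

#define MAX_THREADS 32

/* Backtrack and Done sets of one state on the search stack,
 * stored as bitmasks indexed by thread id. */
typedef struct {
    uint32_t backtrack;
    uint32_t done;
} SearchState;

void ss_init(SearchState *s)
{
    s->backtrack = 0;
    s->done = 0;
}

/* Record that thread tid must also be tried from this state,
 * unless it has already been explored from here. */
void ss_add_backtrack(SearchState *s, int tid)
{
    assert(tid >= 0 && tid < MAX_THREADS);
    uint32_t bit = (uint32_t)1 << tid;
    if (!(s->done & bit))
        s->backtrack |= bit;
}

/* Move the lowest pending thread from Backtrack to Done and return it,
 * or return -1 when the Backtrack set is empty. */
int ss_take_next(SearchState *s)
{
    for (int tid = 0; tid < MAX_THREADS; tid++) {
        uint32_t bit = (uint32_t)1 << tid;
        if (s->backtrack & bit) {
            s->backtrack &= ~bit;
            s->done |= bit;
            return tid;
        }
    }
    return -1;
}
```

The search terminates when `ss_take_next` returns -1 for every state on the stack, which corresponds to "repeat till all the Backtrack Sets are empty".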

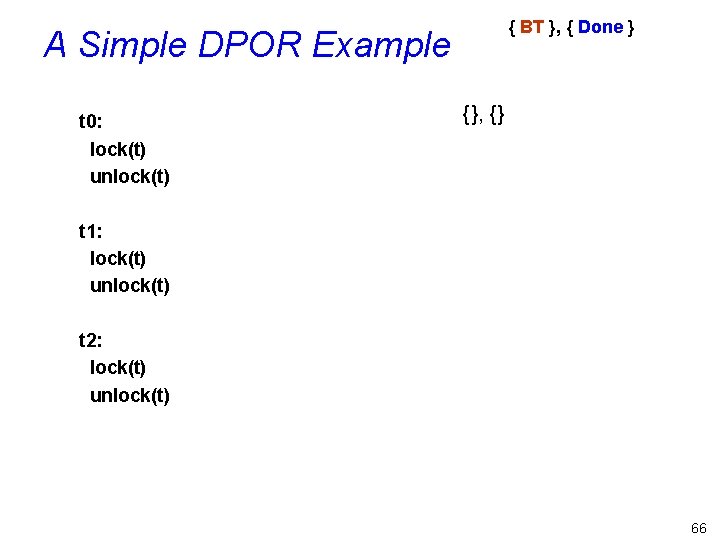

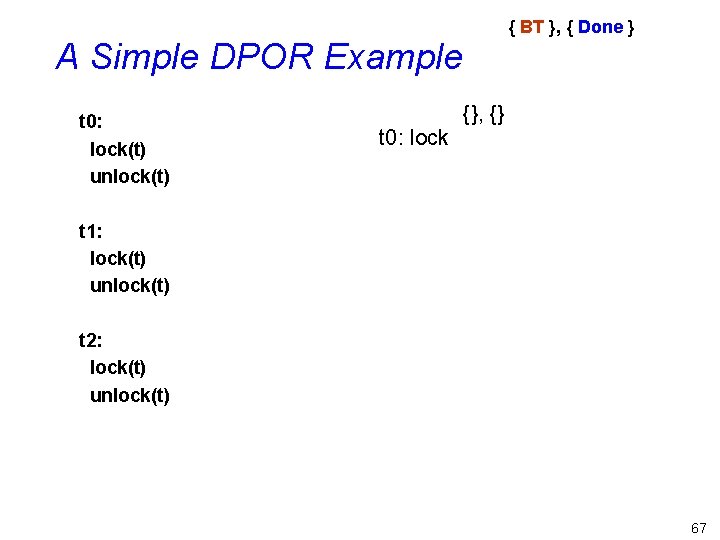

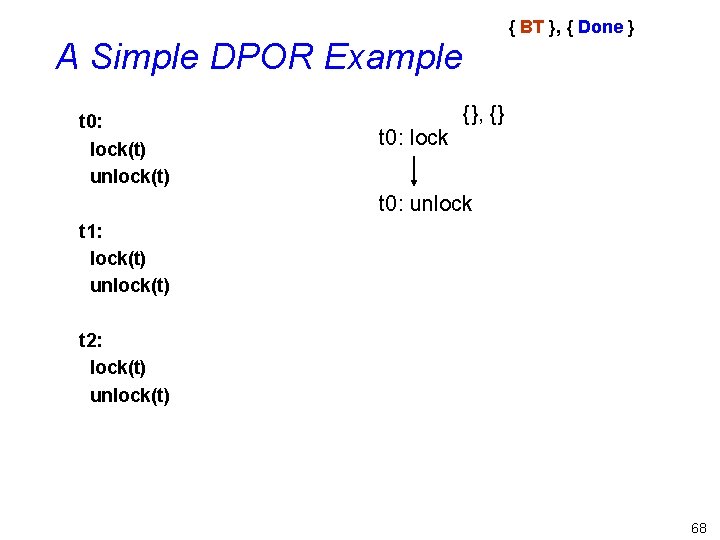

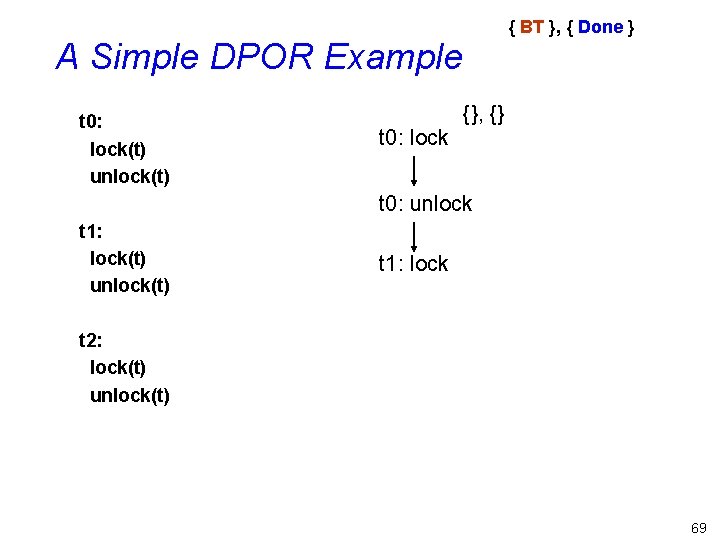

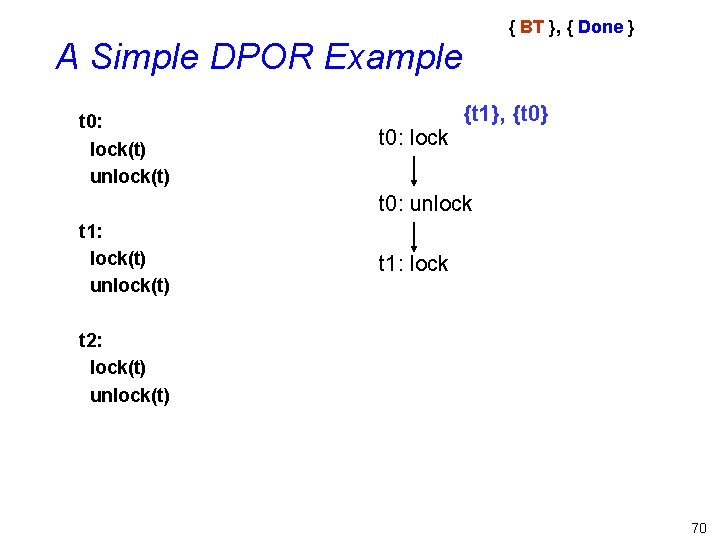

{ BT }, { Done } A Simple DPOR Example t 0: lock(t) unlock(t) {}, {} t 1: lock(t) unlock(t) t 2: lock(t) unlock(t) 66

{ BT }, { Done } A Simple DPOR Example t 0: lock(t) unlock(t) t 0: lock {}, {} t 1: lock(t) unlock(t) t 2: lock(t) unlock(t) 67

{ BT }, { Done } A Simple DPOR Example t 0: lock(t) unlock(t) t 0: lock {}, {} t 0: unlock t 1: lock(t) unlock(t) t 2: lock(t) unlock(t) 68

{ BT }, { Done } A Simple DPOR Example t 0: lock(t) unlock(t) t 0: lock {}, {} t 0: unlock t 1: lock(t) unlock(t) t 1: lock t 2: lock(t) unlock(t) 69

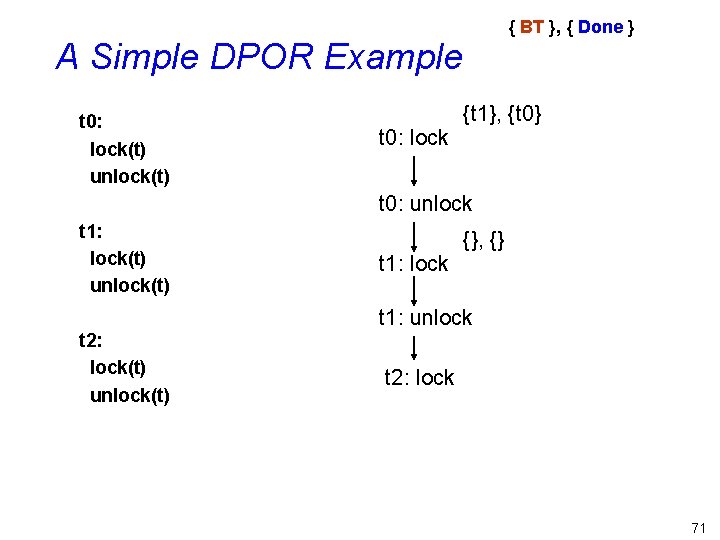

{ BT }, { Done } A Simple DPOR Example t 0: lock(t) unlock(t) t 0: lock {t 1}, {t 0} t 0: unlock t 1: lock(t) unlock(t) t 1: lock t 2: lock(t) unlock(t) 70

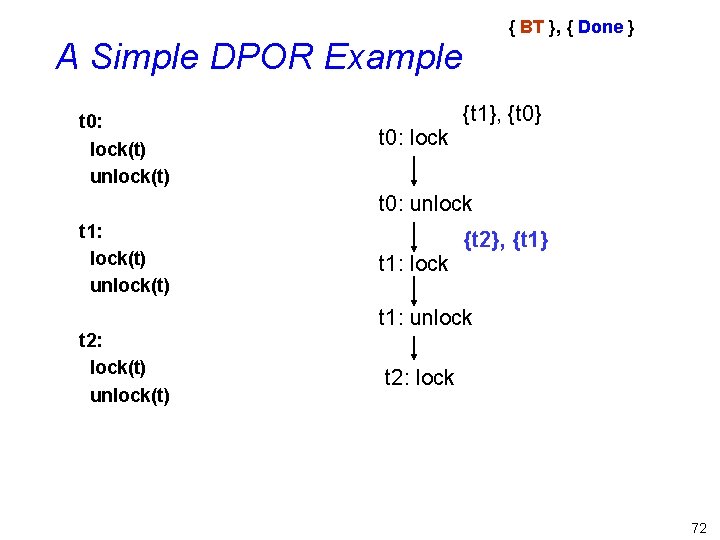

{ BT }, { Done } A Simple DPOR Example t 0: lock(t) unlock(t) t 0: lock {t 1}, {t 0} t 0: unlock t 1: lock(t) unlock(t) t 1: lock {}, {} t 1: unlock t 2: lock(t) unlock(t) t 2: lock 71

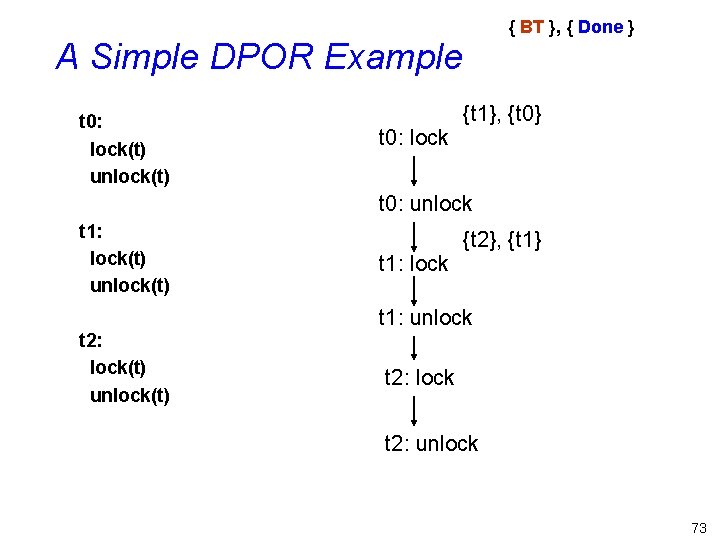

{ BT }, { Done } A Simple DPOR Example t 0: lock(t) unlock(t) t 0: lock {t 1}, {t 0} t 0: unlock t 1: lock(t) unlock(t) t 1: lock {t 2}, {t 1} t 1: unlock t 2: lock(t) unlock(t) t 2: lock 72

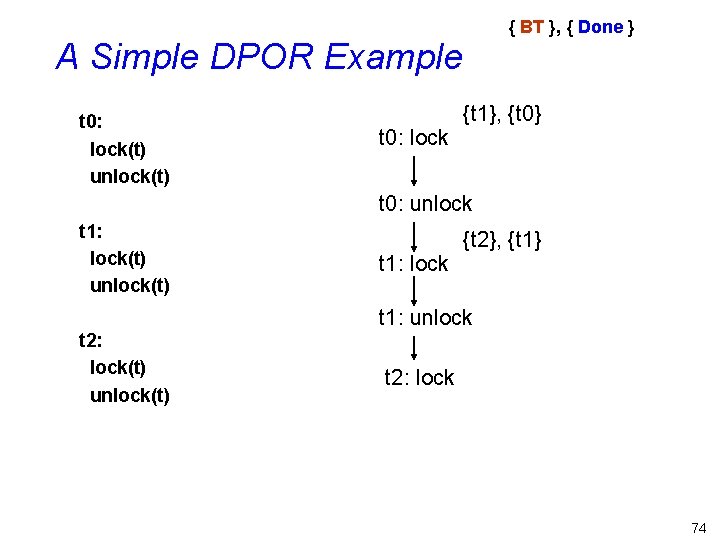

{ BT }, { Done } A Simple DPOR Example t 0: lock(t) unlock(t) t 0: lock {t 1}, {t 0} t 0: unlock t 1: lock(t) unlock(t) t 1: lock {t 2}, {t 1} t 1: unlock t 2: lock(t) unlock(t) t 2: lock t 2: unlock 73

{ BT }, { Done } A Simple DPOR Example t 0: lock(t) unlock(t) t 0: lock {t 1}, {t 0} t 0: unlock t 1: lock(t) unlock(t) t 1: lock {t 2}, {t 1} t 1: unlock t 2: lock(t) unlock(t) t 2: lock 74

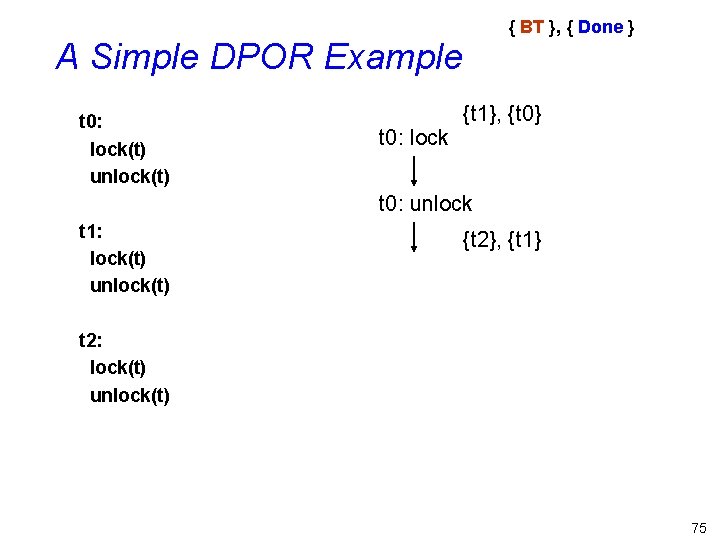

{ BT }, { Done } A Simple DPOR Example t 0: lock(t) unlock(t) t 0: lock {t 1}, {t 0} t 0: unlock t 1: lock(t) unlock(t) {t 2}, {t 1} t 2: lock(t) unlock(t) 75

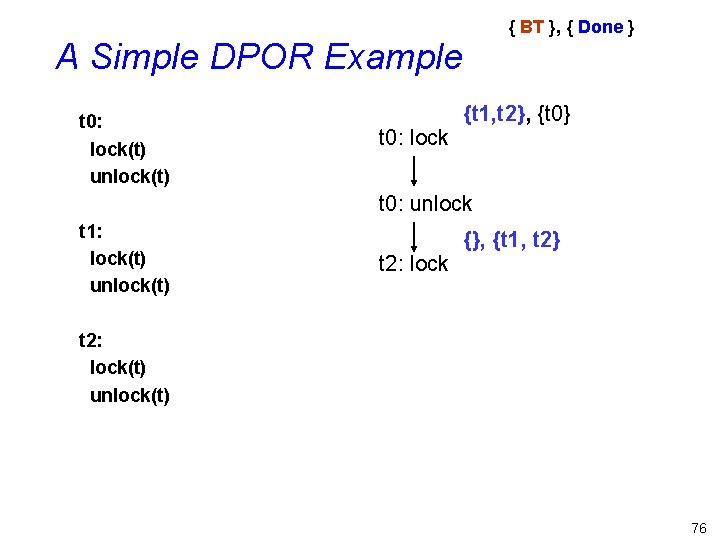

A Simple DPOR Example. Threads t0, t1, t2 each run lock(t) unlock(t). Trace with { BT }, { Done } annotations: t0: lock {t1, t2}, {t0}; t0: unlock; t2: lock {}, {t1, t2}

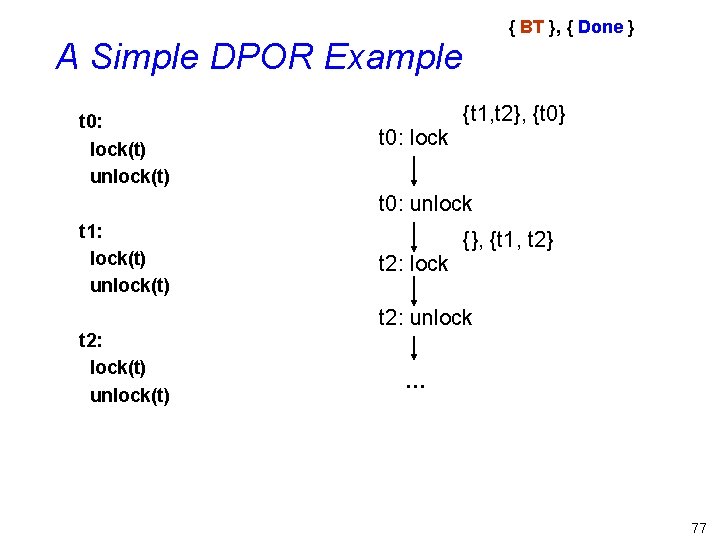

A Simple DPOR Example. Threads t0, t1, t2 each run lock(t) unlock(t). Trace with { BT }, { Done } annotations: t0: lock {t1, t2}, {t0}; t0: unlock; t2: lock {}, {t1, t2}; t2: unlock; …

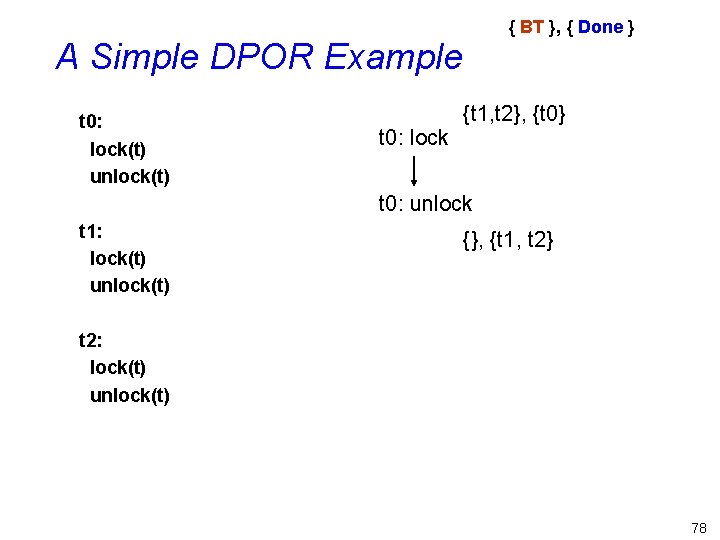

A Simple DPOR Example. Threads t0, t1, t2 each run lock(t) unlock(t). Trace with { BT }, { Done } annotations: t0: lock {t1, t2}, {t0}; t0: unlock; {}, {t1, t2}

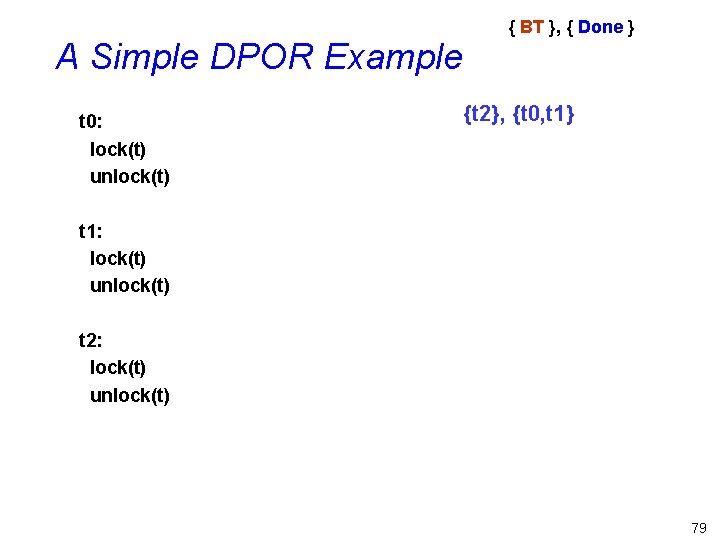

A Simple DPOR Example. Threads t0, t1, t2 each run lock(t) unlock(t). { BT }, { Done }: {t2}, {t0, t1}

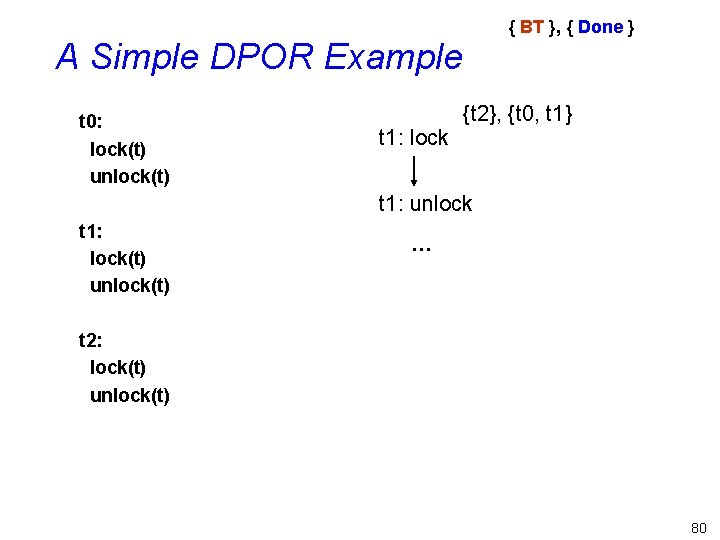

A Simple DPOR Example. Threads t0, t1, t2 each run lock(t) unlock(t). Trace with { BT }, { Done } annotations: t1: lock {t2}, {t0, t1}; t1: unlock; …
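For reference, the program under test in this example can be written as a small PThread program. The sketch below is an assumption about its shape (the slides give only `lock(t)` and `unlock(t)` per thread); the `order` array and the function name `run_lock_example` are added purely so that one run's acquisition order can be observed.

```c
#include <pthread.h>
#include <stddef.h>

static pthread_mutex_t t = PTHREAD_MUTEX_INITIALIZER;
static int order[3];       /* thread IDs in the order they acquired t */
static int next_slot = 0;  /* protected by t */

static void *worker(void *arg) {
    int id = *(int *)arg;
    pthread_mutex_lock(&t);
    order[next_slot++] = id;   /* recorded only to make the order visible */
    pthread_mutex_unlock(&t);
    return NULL;
}

/* Runs t0, t1, t2 once. Returns the number of lock acquisitions (3),
   or -1 if a thread could not be created. */
int run_lock_example(int out_order[3]) {
    pthread_t th[3];
    int ids[3] = {0, 1, 2};
    int created = 0;
    next_slot = 0;
    for (; created < 3; created++)
        if (pthread_create(&th[created], NULL, worker, &ids[created]) != 0) break;
    for (int i = 0; i < created; i++) pthread_join(th[i], NULL);
    for (int i = 0; i < created; i++) out_order[i] = order[i];
    return created == 3 ? next_slot : -1;
}
```

A single native run reports just one of the 3! = 6 possible acquisition orders, and which one depends on the OS scheduler. The backtrack sets in the trace above are what let a runtime checker revisit the other orders deliberately.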

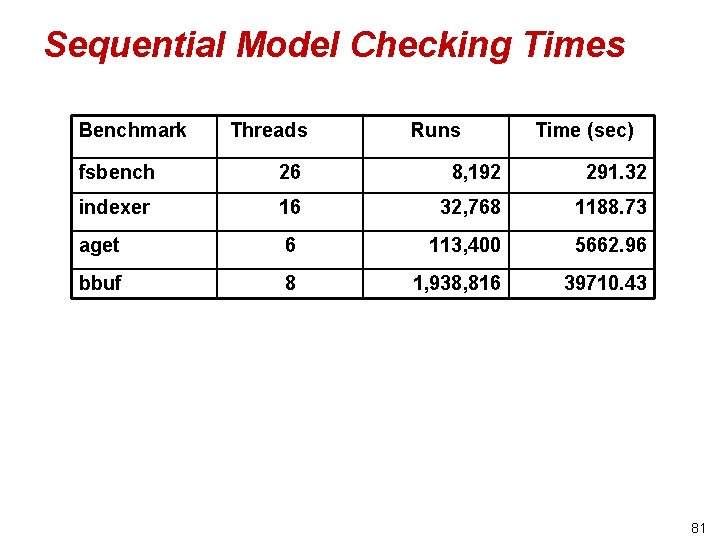

Sequential Model Checking Times

| Benchmark | Threads | Runs | Time (sec) |
|---|---|---|---|
| fsbench | 26 | 8,192 | 291.32 |
| indexer | 16 | 32,768 | 1188.73 |
| aget | 6 | 113,400 | 5662.96 |
| bbuf | 8 | 1,938,816 | 39710.43 |

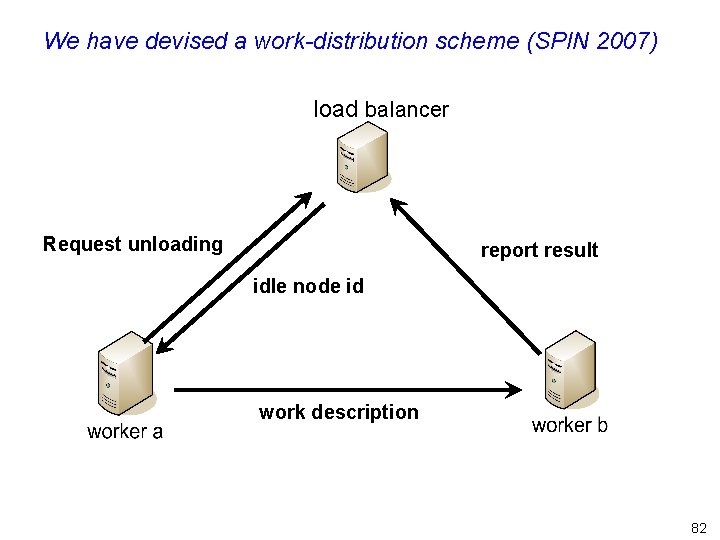

We have devised a work-distribution scheme (SPIN 2007)
[Diagram labels: load balancer; request unloading; report result; idle node id; work description]

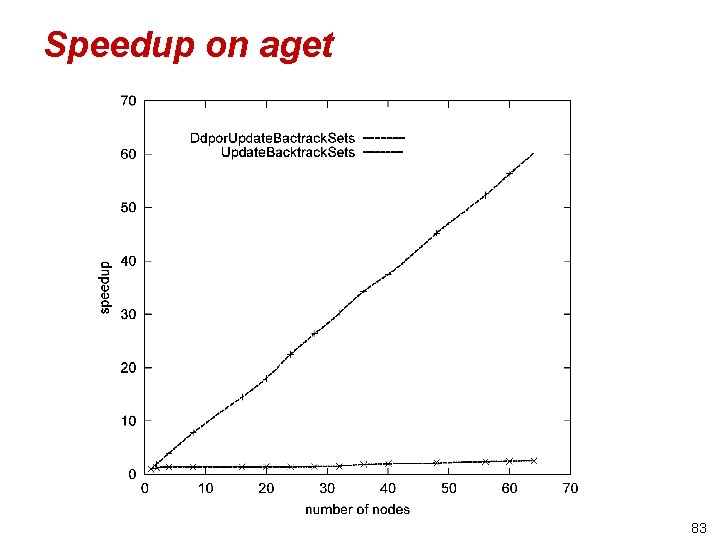

Speedup on aget 83

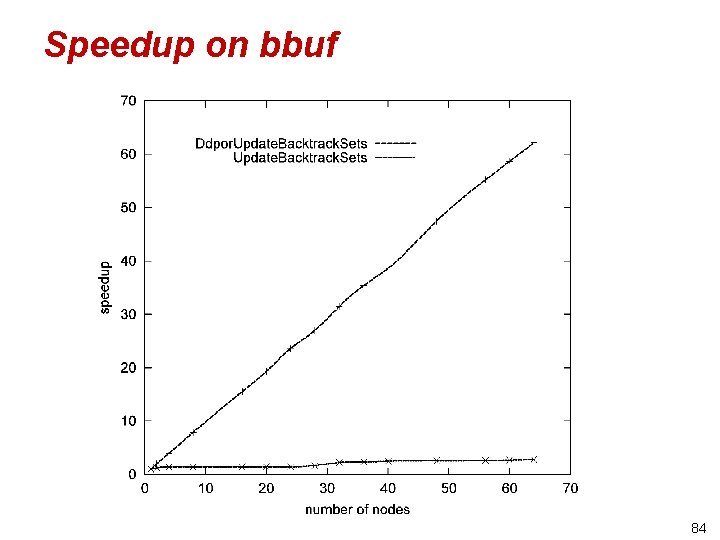

Speedup on bbuf 84

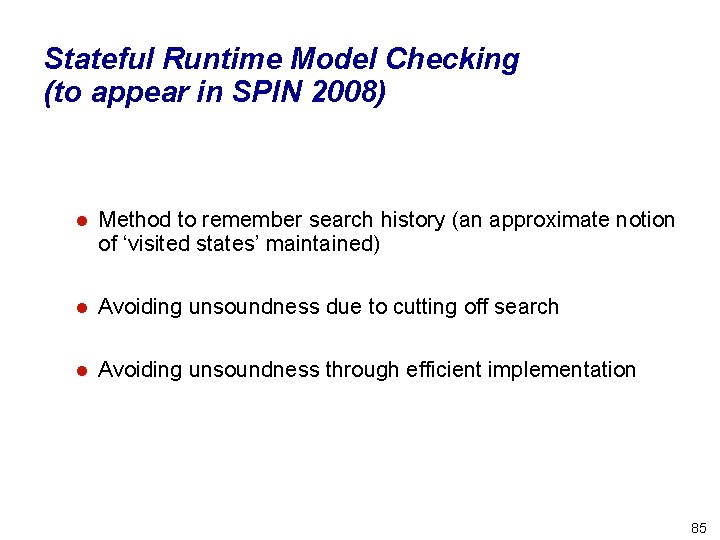

Stateful Runtime Model Checking (to appear in SPIN 2008)
• Method to remember search history (an approximate notion of ‘visited states’ is maintained)
• Avoiding unsoundness due to cutting off search
• Avoiding unsoundness through efficient implementation

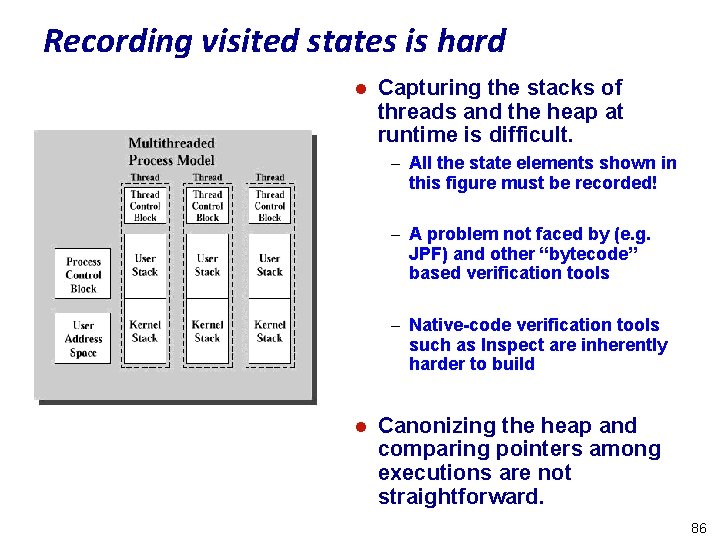

Recording visited states is hard
• Capturing the stacks of threads and the heap at runtime is difficult.
  – All the state elements shown in this figure must be recorded!
  – A problem not faced by “bytecode”-based verification tools (e.g. JPF)
  – Native-code verification tools such as Inspect are inherently harder to build
• Canonizing the heap and comparing pointers among executions are not straightforward.

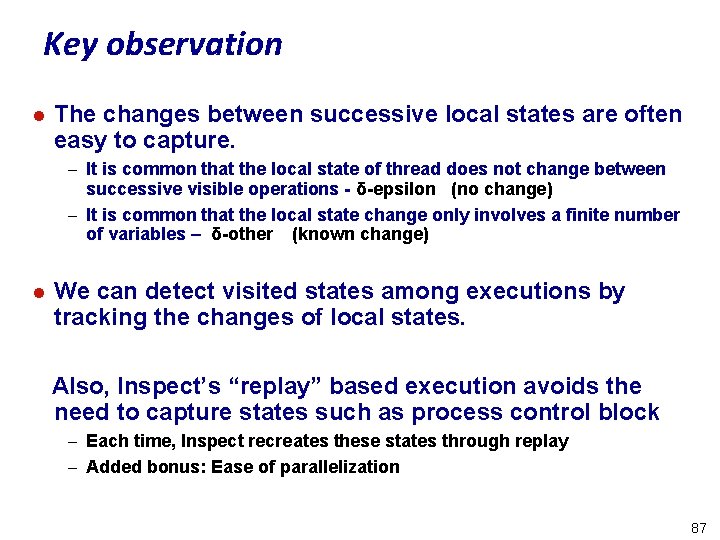

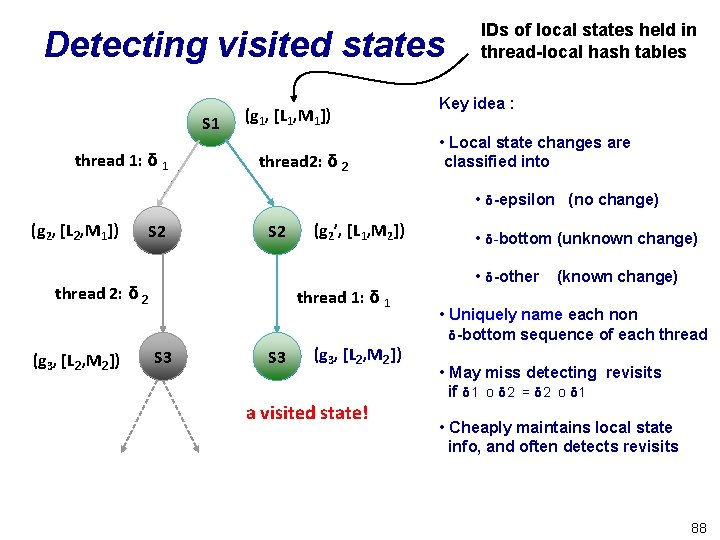

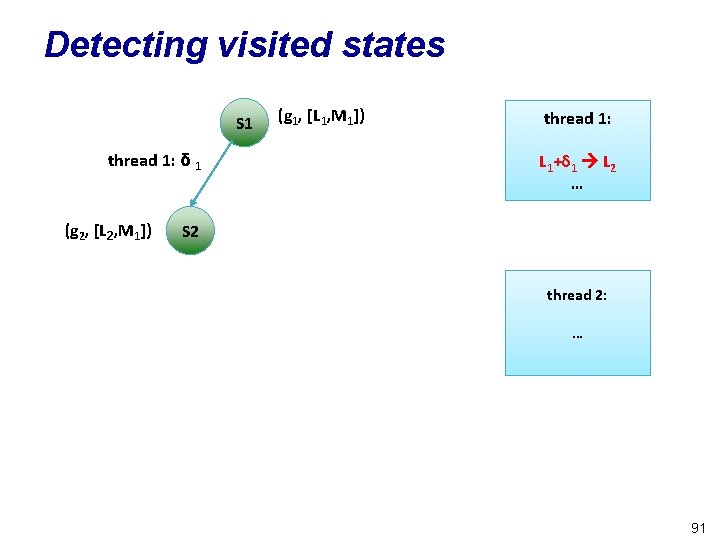

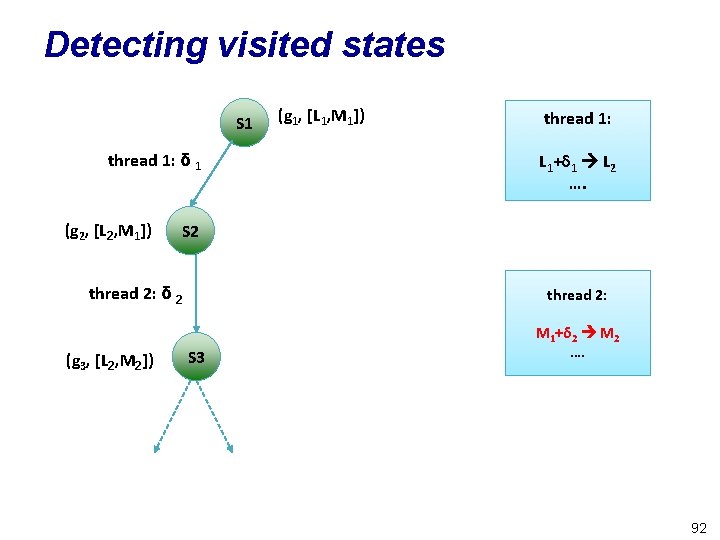

Key observation
• The changes between successive local states are often easy to capture.
  – It is common that the local state of a thread does not change between successive visible operations: δ-epsilon (no change)
  – It is common that a local state change involves only a finite number of variables: δ-other (known change)
• We can detect visited states among executions by tracking the changes of local states.
• Also, Inspect’s “replay”-based execution avoids the need to capture states such as the process control block
  – Each time, Inspect recreates these states through replay
  – Added bonus: ease of parallelization

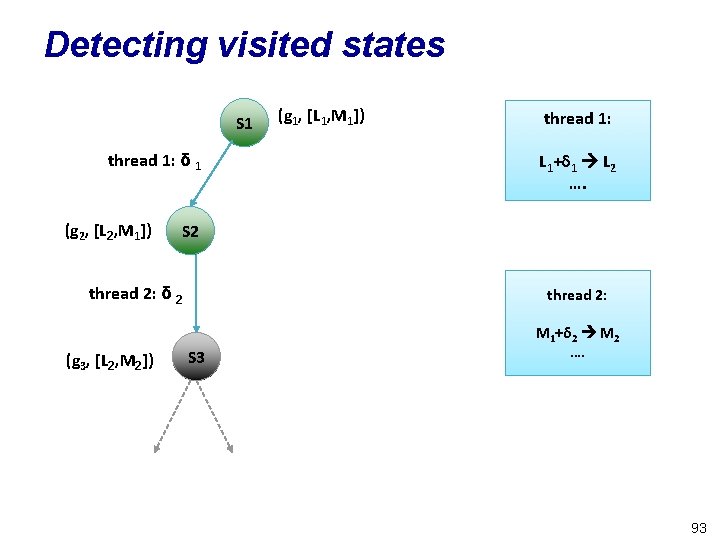

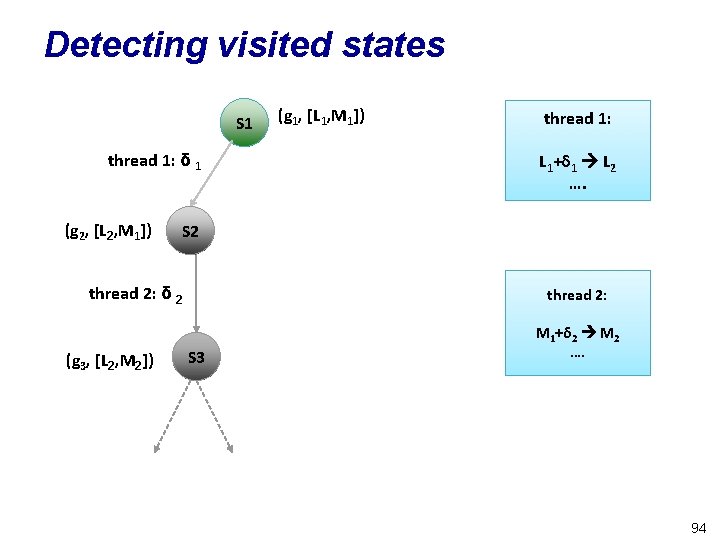

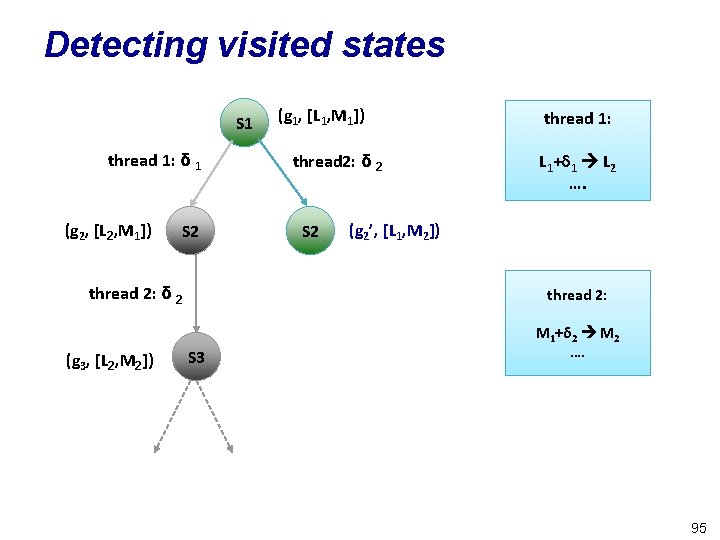

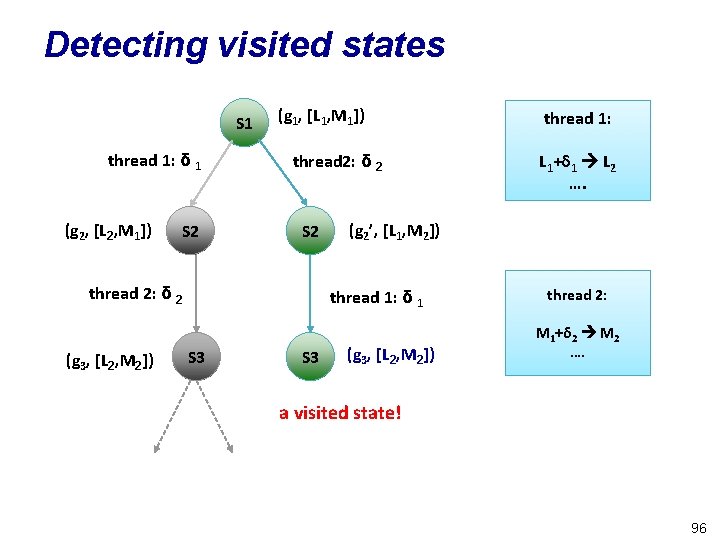

Detecting visited states
[Diagram: S1 (g1, [L1, M1]); thread 1: δ1 leads to S2 (g2, [L2, M1]); thread 2: δ2 leads to S3 (g3, [L2, M2]). Alternatively, from S1, thread 2: δ2 leads to (g2’, [L1, M2]) and thread 1: δ1 then leads to (g3, [L2, M2]), a visited state!]
IDs of local states are held in thread-local hash tables.
Key idea:
• Local state changes are classified into
  – δ-epsilon (no change)
  – δ-bottom (unknown change)
  – δ-other (known change)
• Uniquely name each non-δ-bottom sequence of each thread
• May miss detecting revisits if δ1 ∘ δ2 = δ2 ∘ δ1
• Cheaply maintains local state info, and often detects revisits

![Detecting visited states](http://slidetodoc.com/presentation_image_h/232cf04e444311dd84738d70217ba00e/image-89.jpg)

Detecting visited states: S1 (g1, [L1, M1]); thread 1: …; thread 2: …

![Detecting visited states](http://slidetodoc.com/presentation_image_h/232cf04e444311dd84738d70217ba00e/image-90.jpg)

Detecting visited states: S1 (g1, [L1, M1]); thread 1: δ1 leads to S2 (g2, [L2, M1]). Thread-local tables: thread 1: L1 + δ1 → L2, …; thread 2: …

Detecting visited states: S1 (g1, [L1, M1]); thread 1: δ1 leads to S2 (g2, [L2, M1]); thread 2: δ2 leads to S3 (g3, [L2, M2]). Thread-local tables: thread 1: L1 + δ1 → L2, …; thread 2: M1 + δ2 → M2, …

Detecting visited states: S1 (g1, [L1, M1]); thread 1: δ1 leads to S2 (g2, [L2, M1]); thread 2: δ2 leads to S3 (g3, [L2, M2]). Alternatively, from S1, thread 2: δ2 leads to (g2’, [L1, M2]). Thread-local tables: thread 1: L1 + δ1 → L2, …; thread 2: M1 + δ2 → M2, …

Detecting visited states: S1 (g1, [L1, M1]); thread 1: δ1 leads to S2 (g2, [L2, M1]); thread 2: δ2 leads to S3 (g3, [L2, M2]). Alternatively, from S1, thread 2: δ2 leads to (g2’, [L1, M2]); thread 1: δ1 then leads to (g3, [L2, M2]): a visited state! Thread-local tables: thread 1: L1 + δ1 → L2, …; thread 2: M1 + δ2 → M2, …
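The naming scheme in this walkthrough can be sketched as follows. This is a hedged illustration rather than Inspect's implementation: `StateSig`, `next_local_name`, the two sentinel delta values, and the hash mix are all assumptions, the global state is reduced to a single integer, and hash collisions between different delta sequences are ignored.

```c
#include <stdbool.h>
#include <stdint.h>

#define DELTA_EPSILON 0u          /* local state unchanged */
#define DELTA_BOTTOM  UINT32_MAX  /* unknown change: must never match an earlier name */

typedef struct {
    int      global;    /* stand-in for the global part g */
    uint64_t local[2];  /* names of the two thread-local states (L_i, M_i) */
} StateSig;

static uint64_t fresh_counter = 0;

/* Name the local state reached from `prev` by applying `delta`. */
uint64_t next_local_name(uint64_t prev, uint32_t delta) {
    if (delta == DELTA_EPSILON) return prev;
    if (delta == DELTA_BOTTOM)               /* fresh name, top bit set */
        return (UINT64_C(1) << 63) | ++fresh_counter;
    /* Known change: name the sequence "prev followed by delta" (top bit cleared). */
    uint64_t h = (prev ^ delta) * UINT64_C(1099511628211);
    return h & ~(UINT64_C(1) << 63);
}

/* One visible step by `thread`: update its local name and the global part. */
void apply(StateSig *s, int thread, uint32_t delta, int new_global) {
    s->local[thread] = next_local_name(s->local[thread], delta);
    s->global = new_global;
}

bool same_state(const StateSig *a, const StateSig *b) {
    return a->global == b->global &&
           a->local[0] == b->local[0] &&
           a->local[1] == b->local[1];
}
```

Starting two signatures from the same state and applying thread 1's δ1 and thread 2's δ2 in opposite orders gives equal signatures whenever the final global parts agree, which is the revisit detected on the last slide. Because names are attached to delta sequences, two different sequences within one thread that happen to produce the same local state get different names, so some revisits are missed; that costs efficiency, not soundness.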

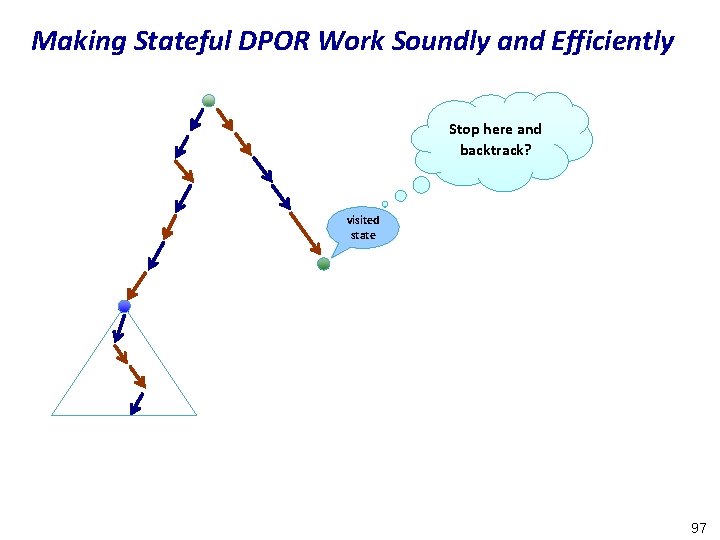

Making Stateful DPOR Work Soundly and Efficiently
Stop here and backtrack? [Diagram label: visited state]

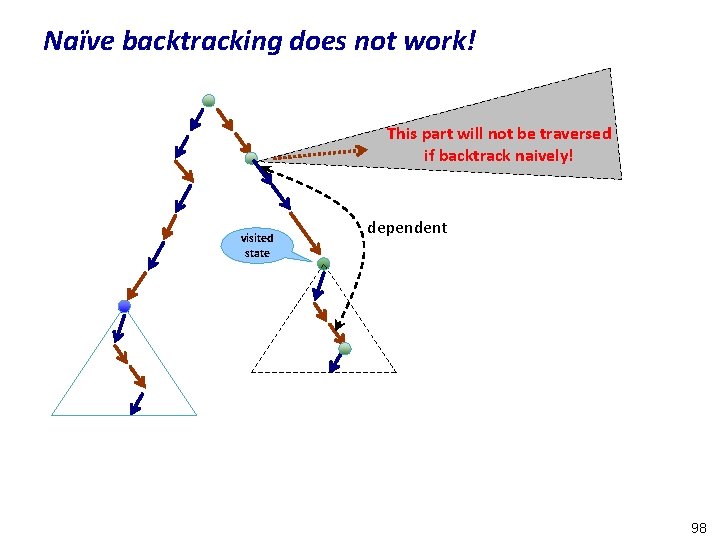

Naïve backtracking does not work!
This part will not be traversed if we backtrack naively! [Diagram labels: visited state; dependent]

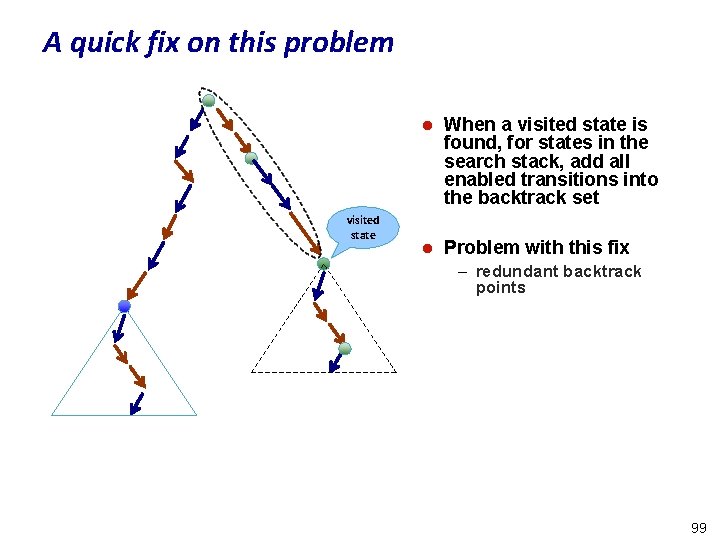

A quick fix for this problem
• When a visited state is found, for states in the search stack, add all enabled transitions into the backtrack set
• Problem with this fix: redundant backtrack points
[Diagram label: visited state]

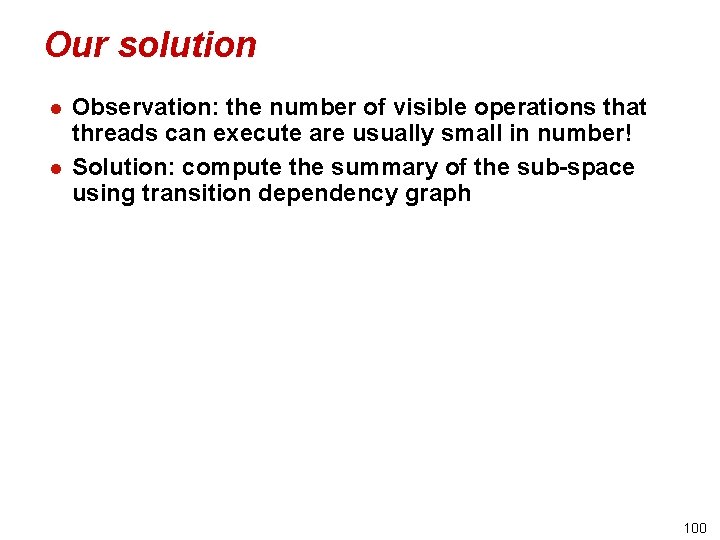

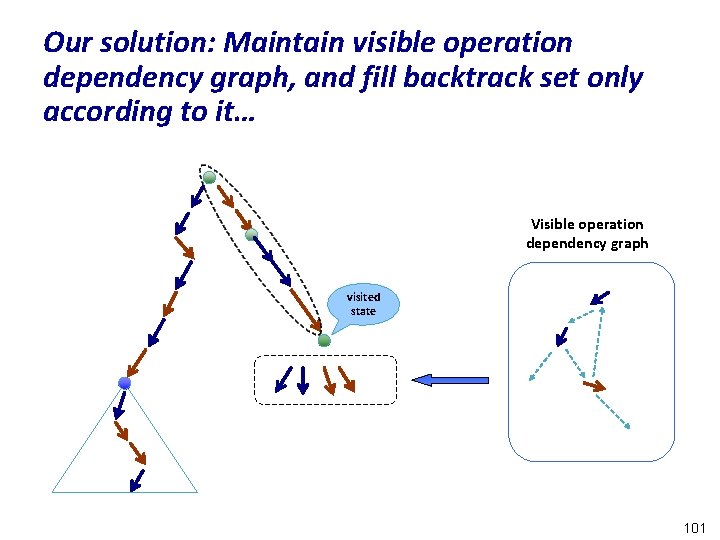

Our solution
• Observation: the number of visible operations that threads can execute is usually small!
• Solution: compute the summary of the sub-space using a transition dependency graph

Our solution: maintain a visible operation dependency graph, and fill the backtrack set only according to it… [Diagram labels: visible operation dependency graph; visited state]

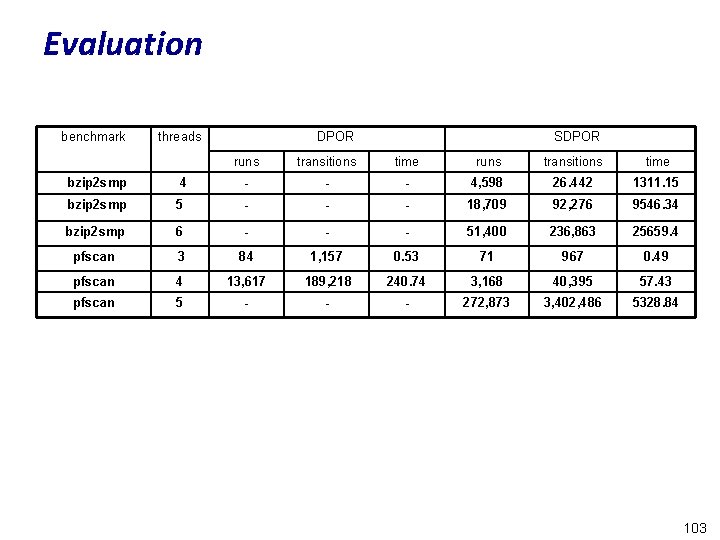

Evaluation
• Two (realistic) benchmarks
  – pfscan: a parallel file scanner
  – bzip2smp: a parallel file compressor

Evaluation

| benchmark | threads | DPOR runs | DPOR transitions | DPOR time | SDPOR runs | SDPOR transitions | SDPOR time |
|---|---|---|---|---|---|---|---|
| bzip2smp | 4 | - | - | - | 4,598 | 26,442 | 1311.15 |
| bzip2smp | 5 | - | - | - | 18,709 | 92,276 | 9546.34 |
| bzip2smp | 6 | - | - | - | 51,400 | 236,863 | 25659.4 |
| pfscan | 3 | 84 | 1,157 | 0.53 | 71 | 967 | 0.49 |
| pfscan | 4 | 13,617 | 189,218 | 240.74 | 3,168 | 40,395 | 57.43 |
| pfscan | 5 | - | - | - | 272,873 | 3,402,486 | 5328.84 |

RESULTS pertaining to ISP (to be presented at CAV 2008) 104

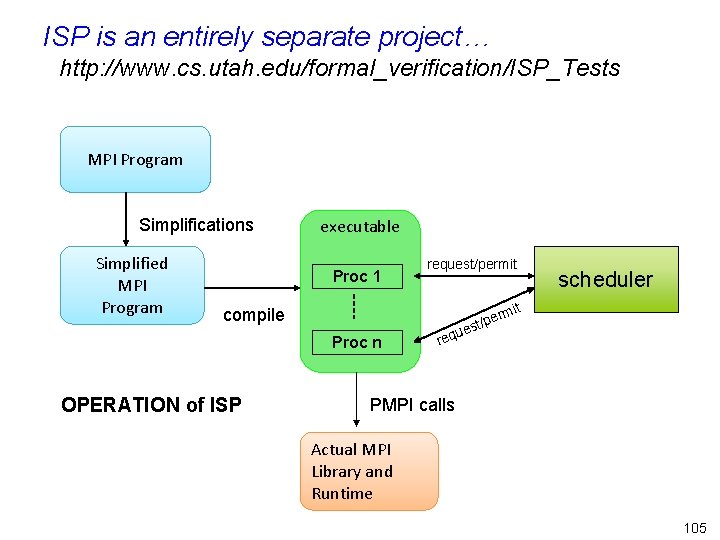

ISP is an entirely separate project… http://www.cs.utah.edu/formal_verification/ISP_Tests
OPERATION of ISP
[Diagram labels: MPI Program; Simplifications; Simplified MPI Program; compile; executable; Proc 1 … Proc n; request/permit; scheduler; PMPI calls; Actual MPI Library and Runtime]

MPI Program Verification Work prior to ISP
• Siegel and Avrunin, Siegel
  – MPI programs modeled in Promela
    » Models built by hand
  – MPI-SPIN employs some “MPI-aware” reductions
    » Version of SPIN with C functions serving to capture MPI
  – Symbolic execution to compare sequential and concurrent algorithms
    » Three precision levels of comparison
• Efforts based on static analysis
  – Vuduc, Quinlan, de Supinski, Dwyer, Hovland, …
    » the usual attributes of static analysis apply
• Dynamic Execution based Verification ESSENTIAL for MPI
  – Need to examine code-paths in MPI and user-level libraries
  – Bugs may be in the “surrounding code”
  – Dynamic reductions can dramatically reduce # of interleavings
  – MPI programs often compute many things (communicators, send targets, …)

Summary of ISP
• Dynamic verification of MPI programs suffers from
  – the inability to externally control how the MPI runtime performs message matches for wildcard receive statements
  – the inability to force a desired alternative execution
• ISP overcomes these problems by
  – Exploiting MPI’s out-of-order semantics
    » If the runtime does not follow certain program orderings, then one can afford to postpone the issue of certain MPI operations
    » Such postponement allows one to determine the maximal set of message sends that can ever match with a receive (later, we show that this is also the basis of a barrier removal algorithm)
  – ISP execution strategy guarantees AMPLE sets at every point
• Dealing with actual concurrent program runtimes in a DPOR approach is a growing reality to be confronted

ISP Results
• Found deadlocks missed by some other existing tools
  – Testing tools such as Marmot, MPICH run from the terminal
• Could finish examining some large benchmarks
  – Game of Life example (500+ lines of code)
  – “Lines of code” is an unfamiliar metric for MPI
    » even 4 lines of code can be “hard”
• This level of coverage is unattainable through “testing”, in the presence of
  – non-determinism
    » Never known statically whether code is deterministic
  – too many processes
    » Blind interleaving can kill any testing strategy
• Full table of results presented at http://www.cs.utah.edu/formal_verification/ISP_Tests

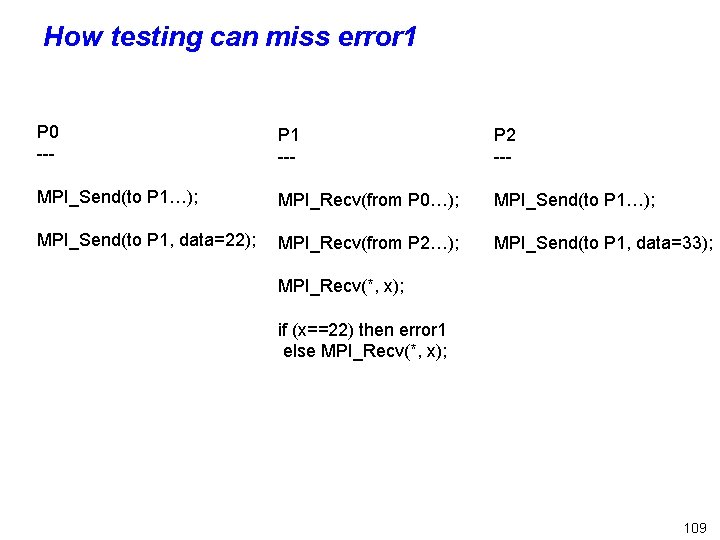

How testing can miss error1: P0 --- P1 --- P2 --- MPI_Send(to P1…); MPI_Recv(from P0…); MPI_Send(to P1, data=22); MPI_Recv(from P2…); MPI_Send(to P1, data=33); MPI_Recv(*, x); if (x==22) then error1 else MPI_Recv(*, x);

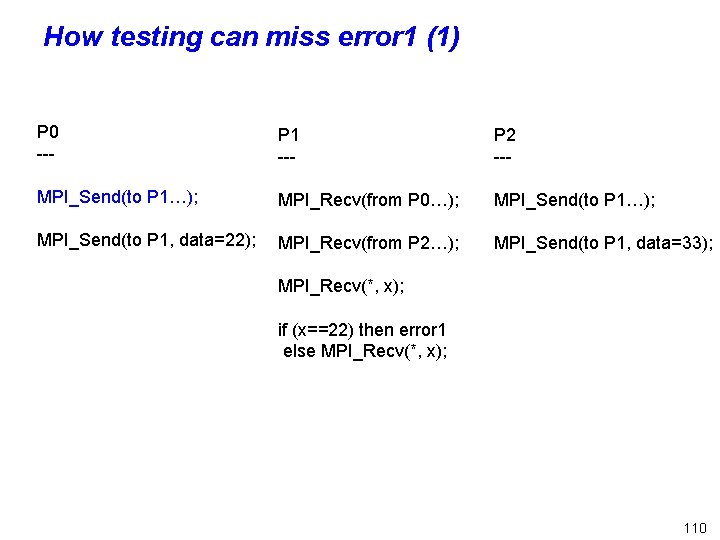

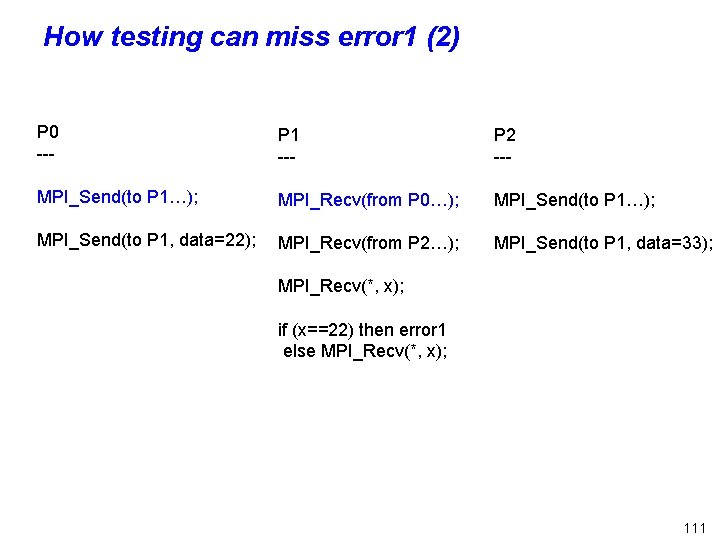

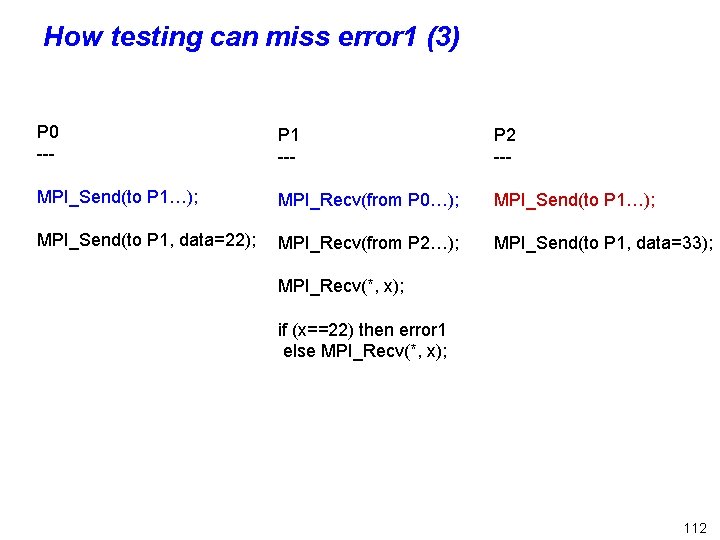

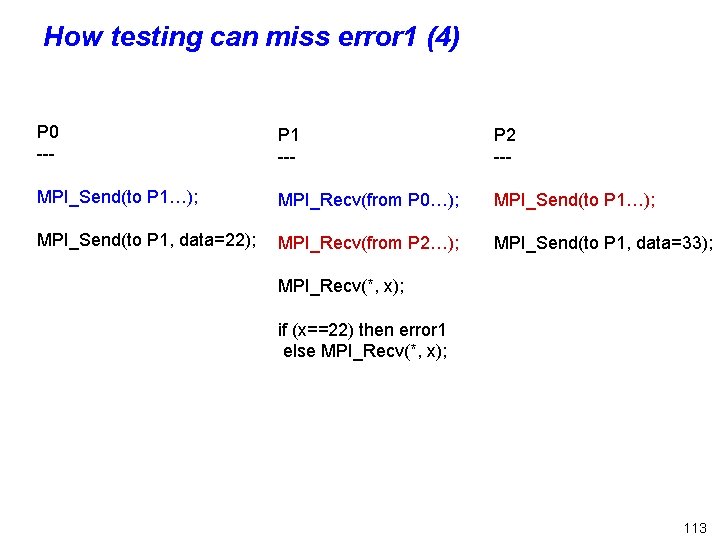

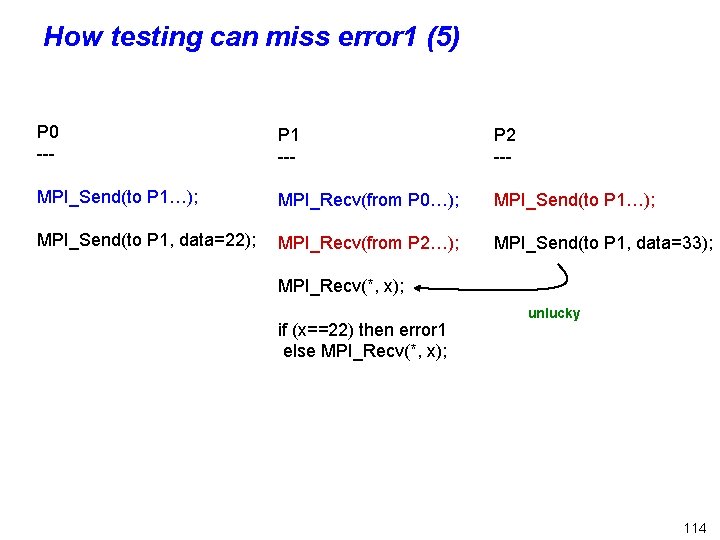

How testing can miss error1 (5): P0 --- P1 --- P2 --- MPI_Send(to P1…); MPI_Recv(from P0…); MPI_Send(to P1, data=22); MPI_Recv(from P2…); MPI_Send(to P1, data=33); MPI_Recv(*, x); if (x==22) then error1 else MPI_Recv(*, x); [Annotation: unlucky]

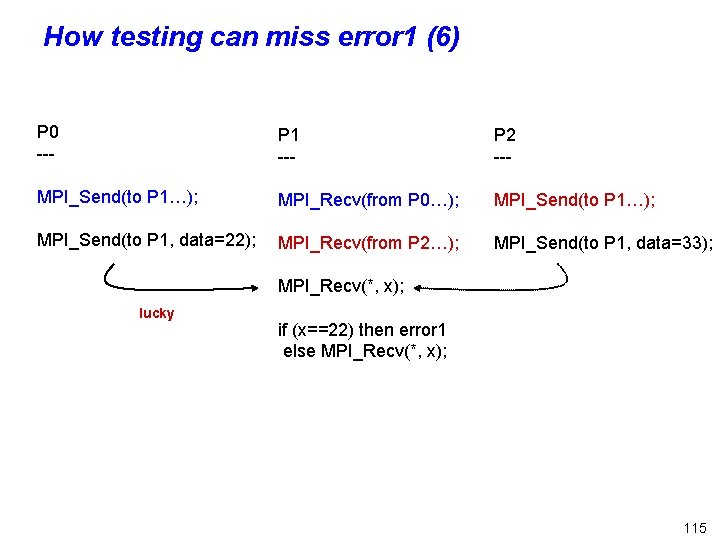

How testing can miss error1 (6): P0 --- P1 --- P2 --- MPI_Send(to P1…); MPI_Recv(from P0…); MPI_Send(to P1, data=22); MPI_Recv(from P2…); MPI_Send(to P1, data=33); MPI_Recv(*, x); if (x==22) then error1 else MPI_Recv(*, x); [Annotation: lucky]

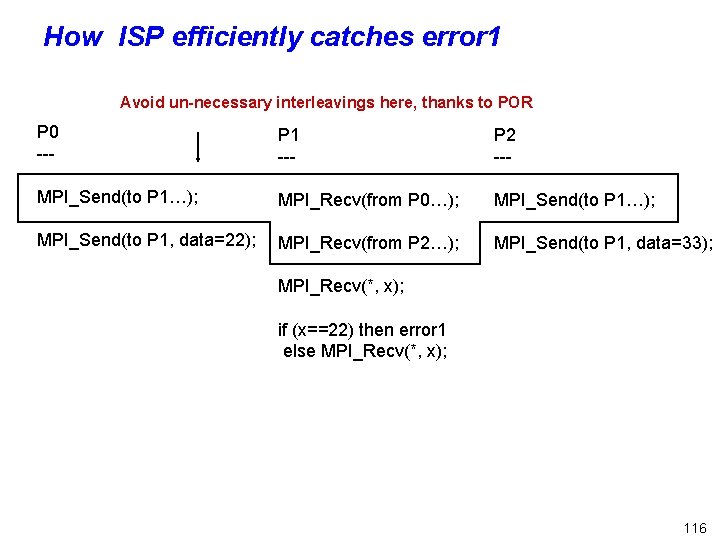

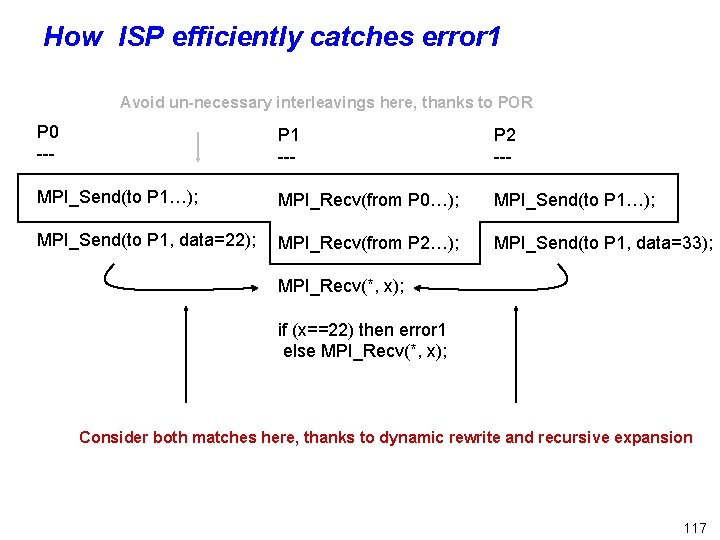

How ISP efficiently catches error1: P0 --- P1 --- P2 --- MPI_Send(to P1…); MPI_Recv(from P0…); MPI_Send(to P1, data=22); MPI_Recv(from P2…); MPI_Send(to P1, data=33); MPI_Recv(*, x); if (x==22) then error1 else MPI_Recv(*, x); [Annotations: “Avoid unnecessary interleavings here, thanks to POR”; “Consider both matches here, thanks to dynamic rewrite and recursive expansion”]
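The difference between a single test run and exploring every match of the wildcard receive can be sketched without any MPI calls. The code below is a toy model, not ISP: the function names are invented for illustration, the "messages" are plain integers, and the only behaviour modelled is that `error1` is reached when the wildcard receive returns 22.

```c
#include <stdbool.h>
#include <stddef.h>

/* Stand-in for the code after MPI_Recv(*, x): error1 is reached when x == 22. */
bool reaches_error1(int x) { return x == 22; }

/* Testing-style run: only the message the runtime happened to deliver is seen. */
bool single_run_finds_error(const int *payloads, size_t n, size_t delivered) {
    return delivered < n && reaches_error1(payloads[delivered]);
}

/* Exhaustive style: treat the wildcard as one specific receive per eligible
   sender and check each alternative. Returns the number of alternatives explored. */
size_t explore_all_matches(const int *payloads, size_t n, bool *found_error) {
    size_t explored = 0;
    *found_error = false;
    for (size_t sender = 0; sender < n; sender++) {
        explored++;
        if (reaches_error1(payloads[sender])) *found_error = true;
    }
    return explored;
}
```

With eligible payloads `{22, 33}`, a run in which 33 is delivered reports no error, while `explore_all_matches` explores two alternatives and sets `found_error`. The hard part, which this sketch assumes away, is knowing the full set of eligible senders at the point of the receive.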

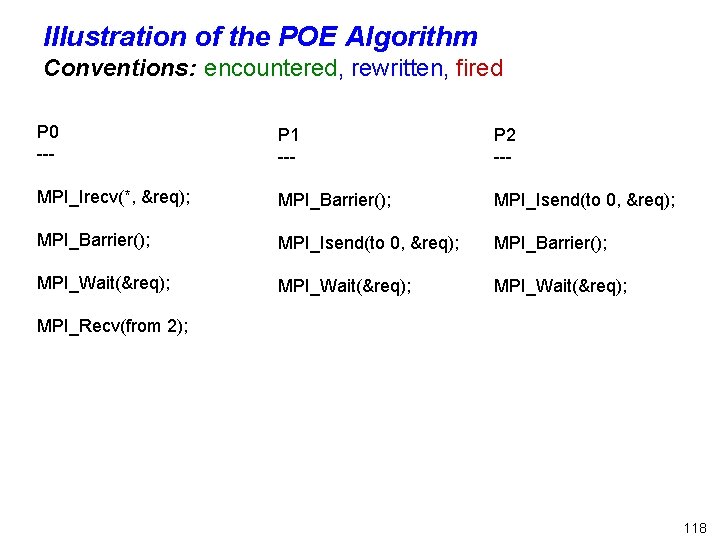

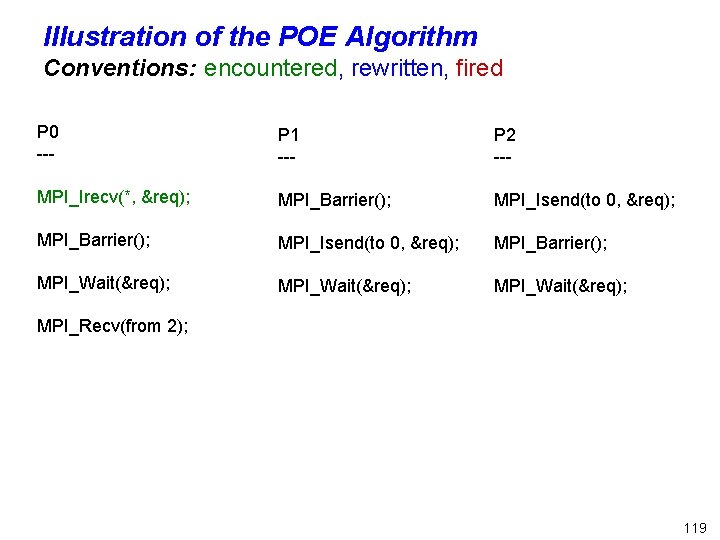

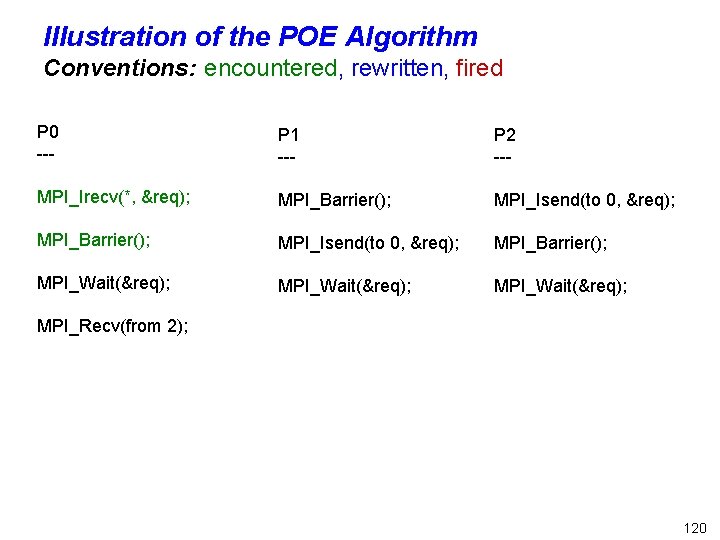

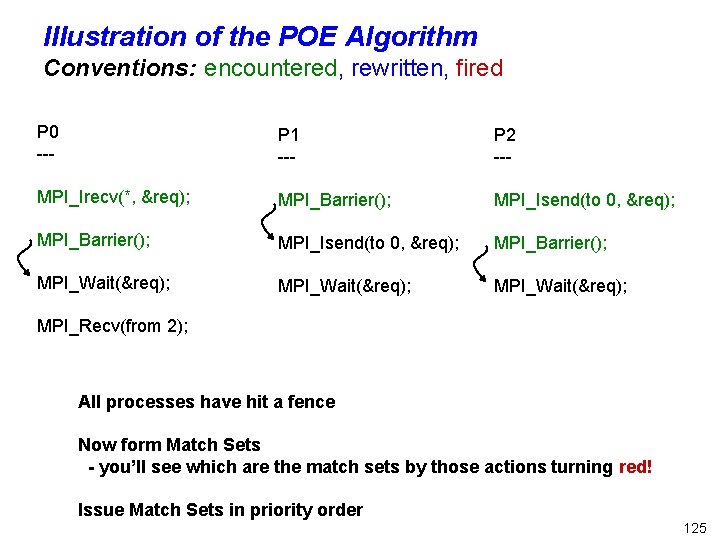

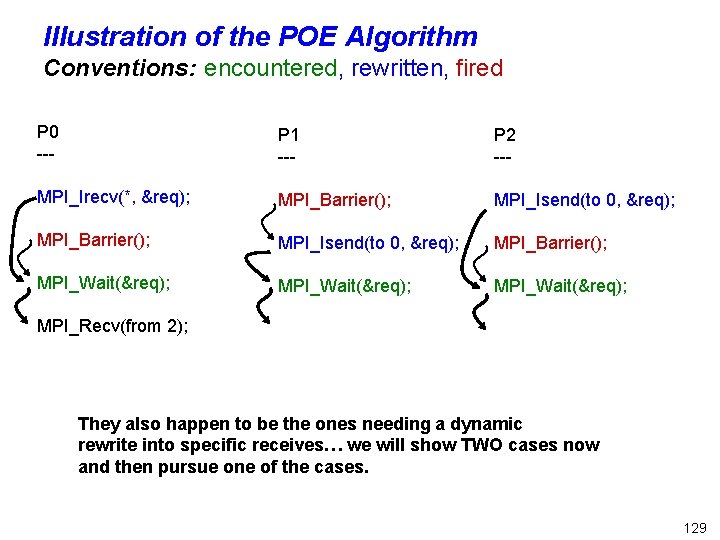

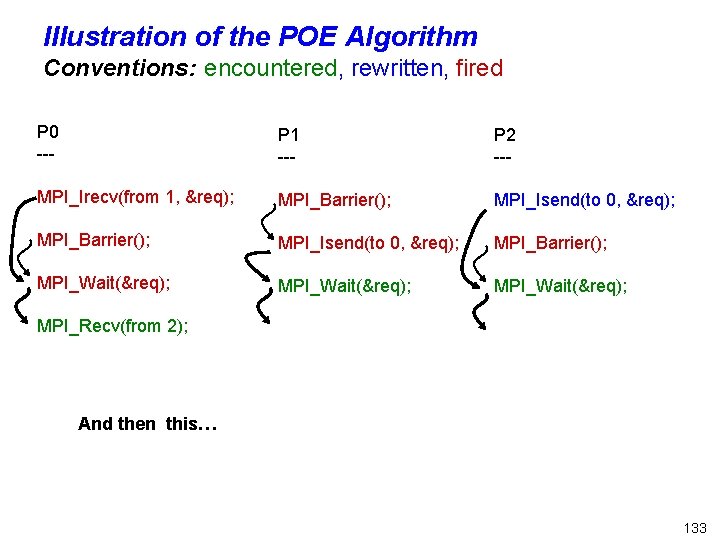

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2);

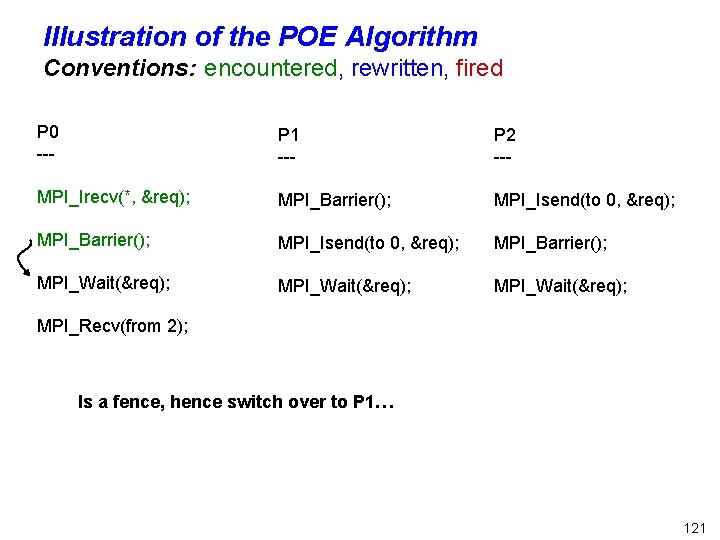

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); Is a fence, hence switch over to P1…

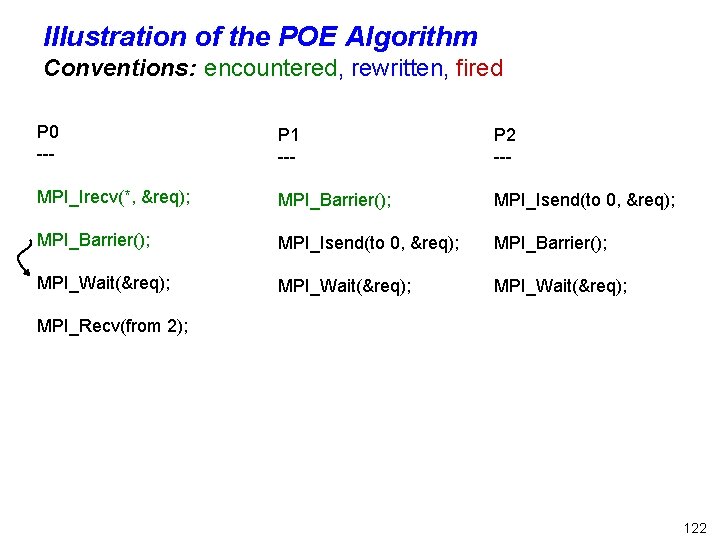

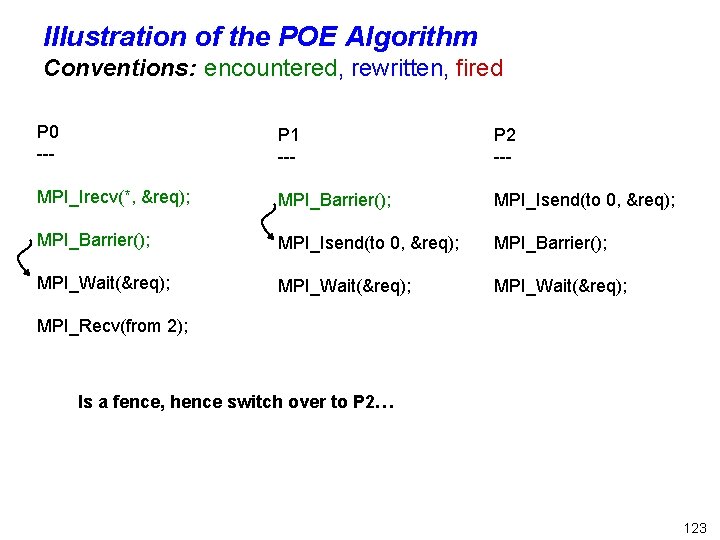

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); Is a fence, hence switch over to P2…

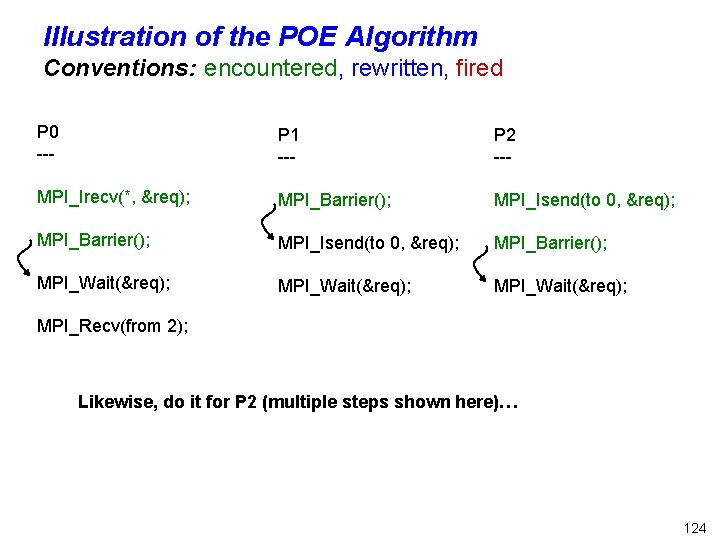

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); Likewise, do it for P2 (multiple steps shown here)…

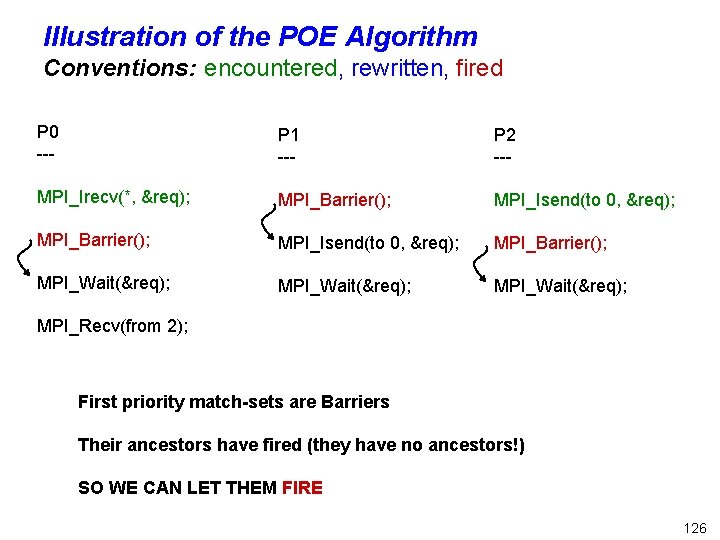

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); All processes have hit a fence. Now form Match Sets (you’ll see which are the match sets by those actions turning red!). Issue Match Sets in priority order.

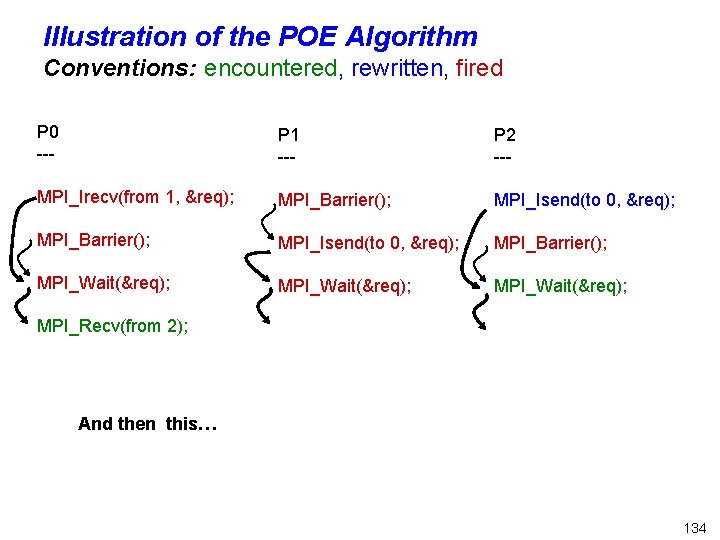

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); First-priority match sets are Barriers. Their ancestors have fired (they have no ancestors!), SO WE CAN LET THEM FIRE.

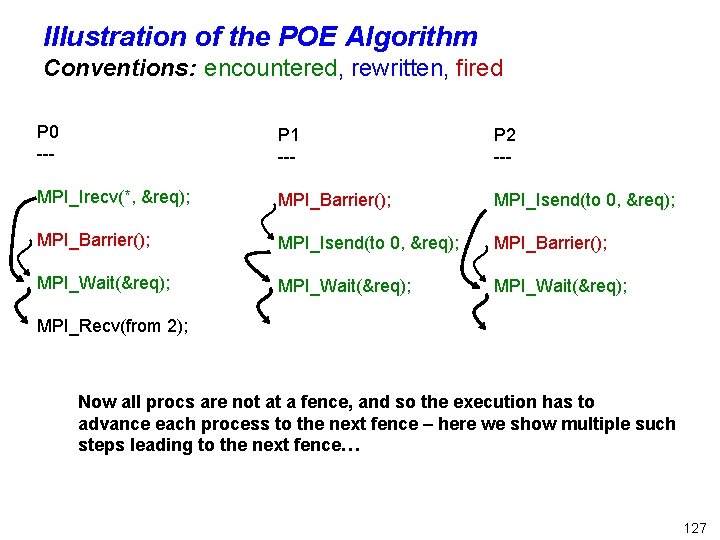

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); Now all procs are not at a fence, and so the execution has to advance each process to the next fence. Here we show multiple such steps leading to the next fence…

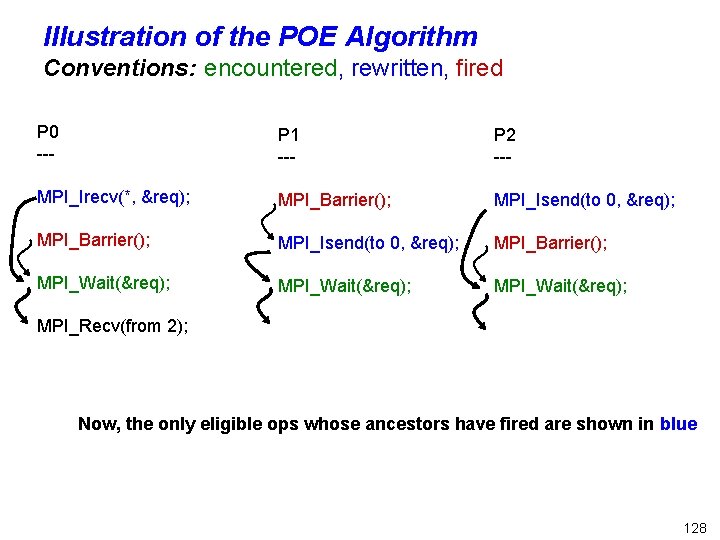

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); Now, the only eligible ops whose ancestors have fired are shown in blue.

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); They also happen to be the ones needing a dynamic rewrite into specific receives… we will show TWO cases now and then pursue one of the cases.

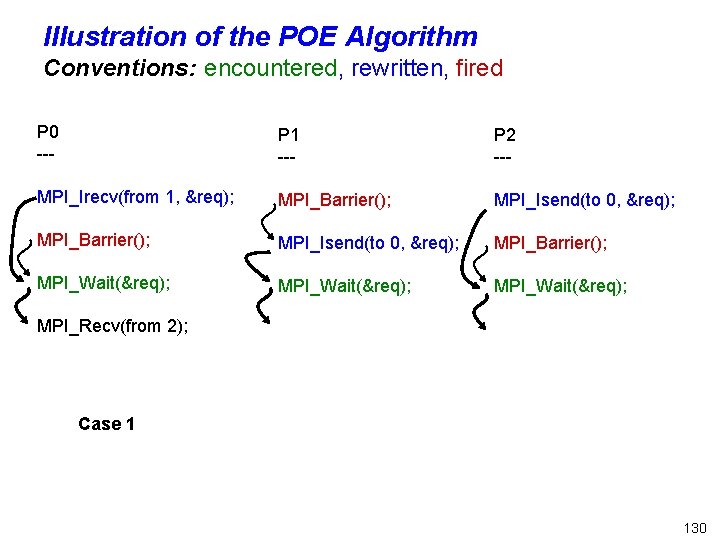

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(from 1, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); Case 1

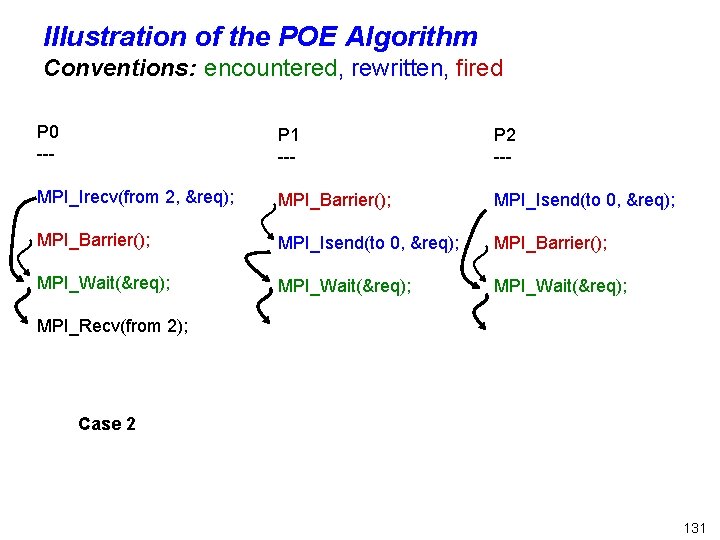

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(from 2, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); Case 2

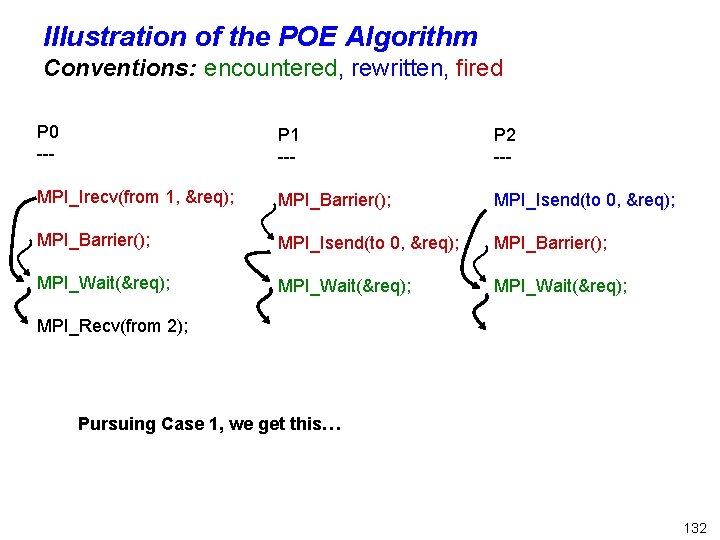

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(from 1, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); Pursuing Case 1, we get this…

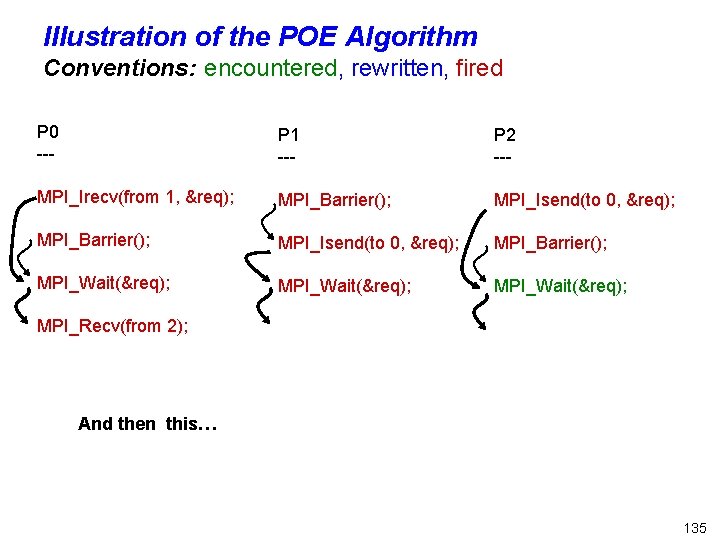

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(from 1, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); And then this…

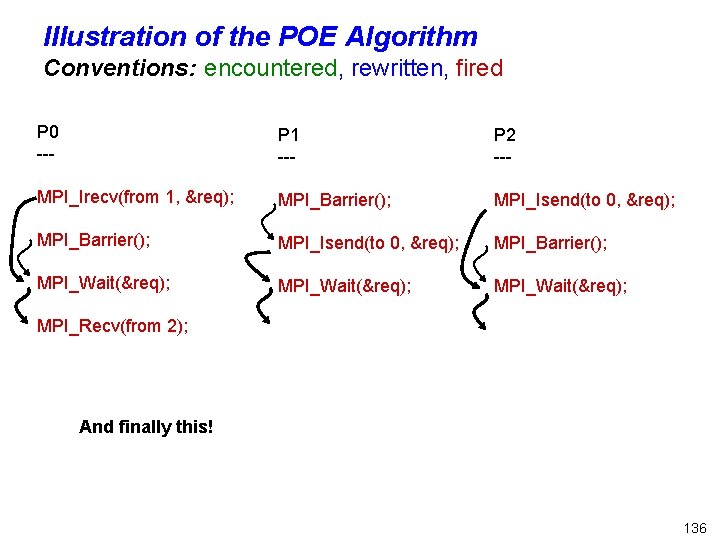

Illustration of the POE Algorithm. Conventions: encountered, rewritten, fired. P0 --- P1 --- P2 --- MPI_Irecv(from 1, &req); MPI_Barrier(); MPI_Isend(to 0, &req); MPI_Barrier(); MPI_Wait(&req); MPI_Recv(from 2); And finally this!

RESULTS pertaining to FIB 137

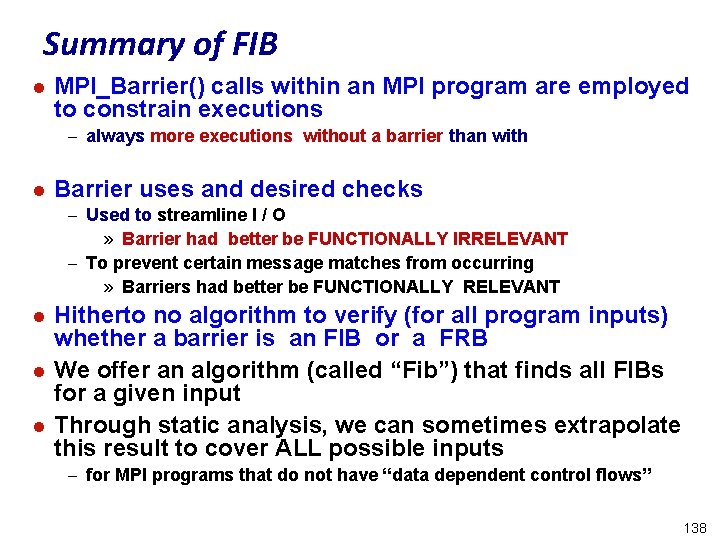

Summary of FIB
• MPI_Barrier() calls within an MPI program are employed to constrain executions
  – always more executions without a barrier than with
• Barrier uses and desired checks
  – Used to streamline I/O
    » Barrier had better be FUNCTIONALLY IRRELEVANT
  – To prevent certain message matches from occurring
    » Barriers had better be FUNCTIONALLY RELEVANT
• Hitherto no algorithm to verify (for all program inputs) whether a barrier is an FIB or an FRB
• We offer an algorithm (called “Fib”) that finds all FIBs for a given input
• Through static analysis, we can sometimes extrapolate this result to cover ALL possible inputs
  – for MPI programs that do not have “data dependent control flows”

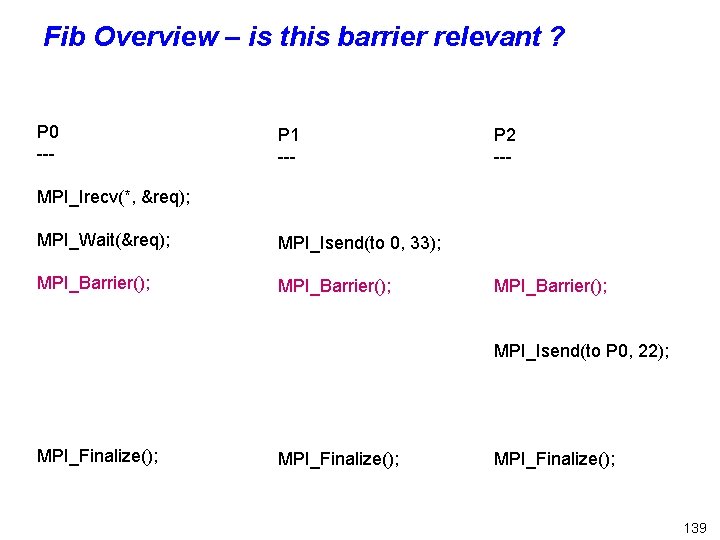

Fib Overview – is this barrier relevant? P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Wait(&req); MPI_Isend(to 0, 33); MPI_Barrier(); MPI_Isend(to P0, 22); MPI_Finalize();

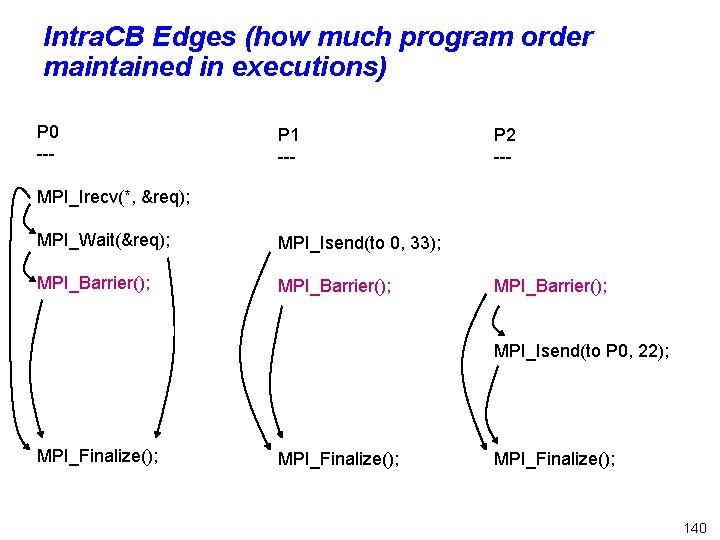

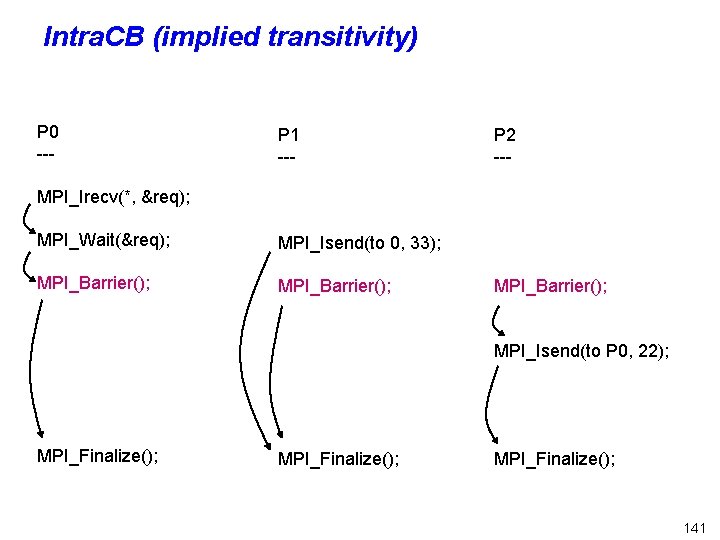

IntraCB Edges (how much program order is maintained in executions). P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Wait(&req); MPI_Isend(to 0, 33); MPI_Barrier(); MPI_Isend(to P0, 22); MPI_Finalize();

IntraCB (implied transitivity). P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Wait(&req); MPI_Isend(to 0, 33); MPI_Barrier(); MPI_Isend(to P0, 22); MPI_Finalize();

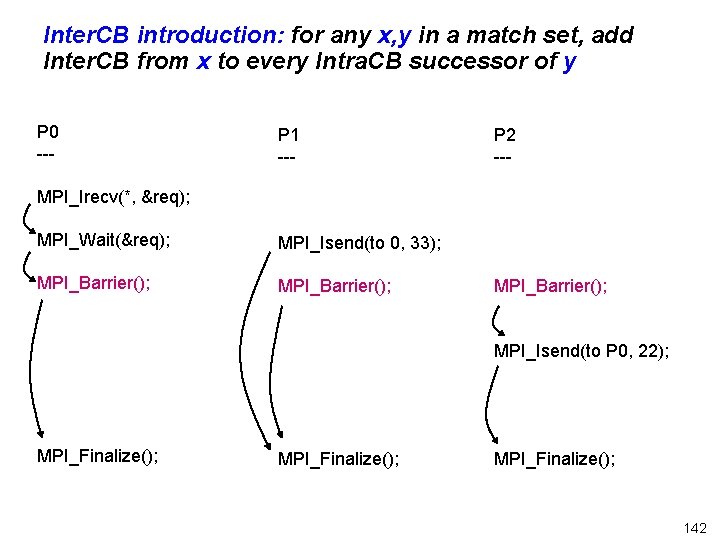

InterCB introduction: for any x, y in a match set, add InterCB from x to every IntraCB successor of y. P0 --- P1 --- P2 --- MPI_Irecv(*, &req); MPI_Wait(&req); MPI_Isend(to 0, 33); MPI_Barrier(); MPI_Isend(to P0, 22); MPI_Finalize();

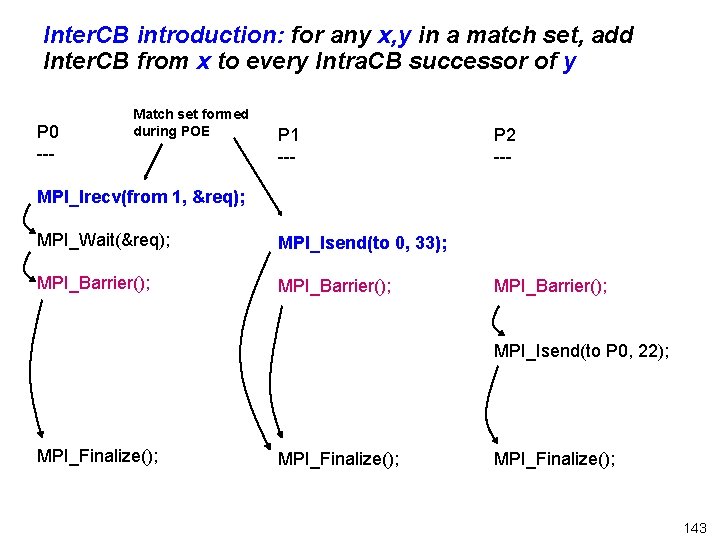

InterCB introduction: for any x, y in a match set, add InterCB from x to every IntraCB successor of y. P0 --- P1 --- P2 --- MPI_Irecv(from 1, &req); MPI_Wait(&req); MPI_Isend(to 0, 33); MPI_Barrier(); MPI_Isend(to P0, 22); MPI_Finalize(); [Annotation: match set formed during POE]

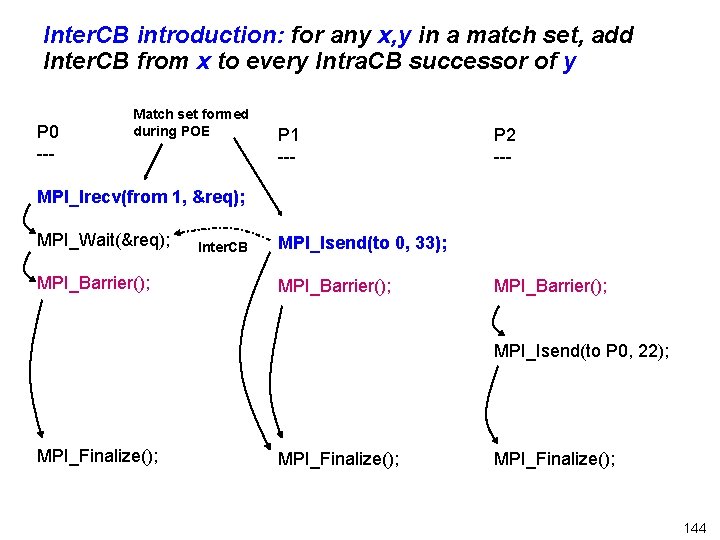

InterCB introduction: for any x, y in a match set, add InterCB from x to every IntraCB successor of y. P0 --- P1 --- P2 --- MPI_Irecv(from 1, &req); MPI_Wait(&req); MPI_Barrier(); [InterCB] MPI_Isend(to 0, 33); MPI_Barrier(); MPI_Isend(to P0, 22); MPI_Finalize(); [Annotation: match set formed during POE]

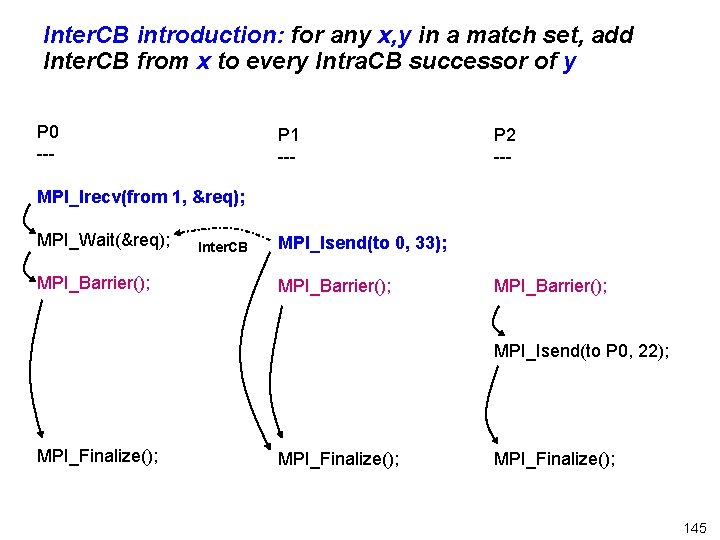

InterCB introduction: for any x, y in a match set, add InterCB from x to every IntraCB successor of y. P0 --- P1 --- P2 --- MPI_Irecv(from 1, &req); MPI_Wait(&req); MPI_Barrier(); [InterCB] MPI_Isend(to 0, 33); MPI_Barrier(); MPI_Isend(to P0, 22); MPI_Finalize();

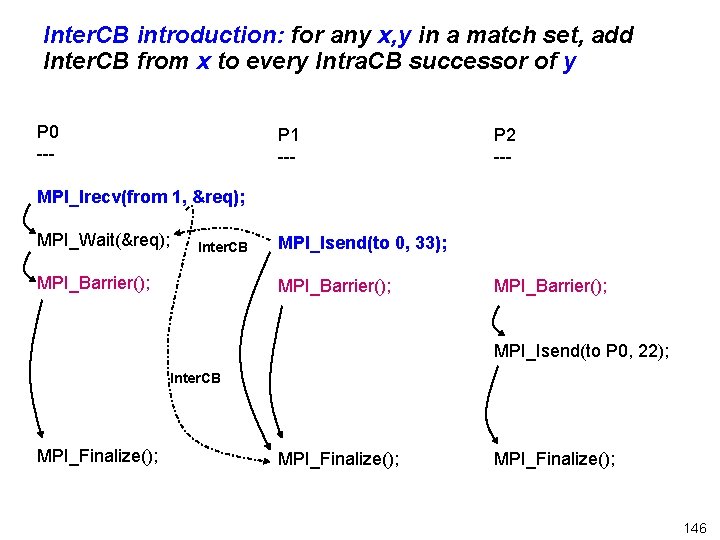

InterCB introduction: for any x, y in a match set, add InterCB from x to every IntraCB successor of y. P0 --- P1 --- P2 --- MPI_Irecv(from 1, &req); MPI_Wait(&req); [InterCB] MPI_Barrier(); MPI_Isend(to 0, 33); MPI_Barrier(); MPI_Isend(to P0, 22); [InterCB] MPI_Finalize();

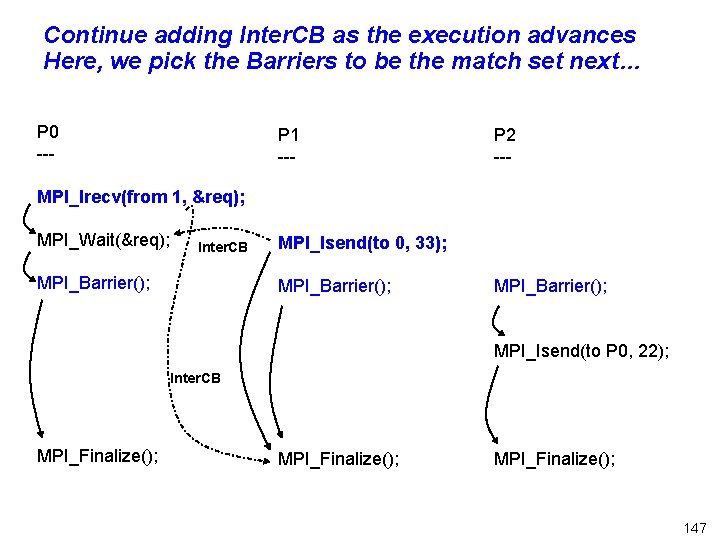

Continue adding InterCB as the execution advances. Here, we pick the Barriers to be the match set next… P0 --- P1 --- P2 --- MPI_Irecv(from 1, &req); MPI_Wait(&req); [InterCB] MPI_Barrier(); MPI_Isend(to 0, 33); MPI_Barrier(); MPI_Isend(to P0, 22); [InterCB] MPI_Finalize();

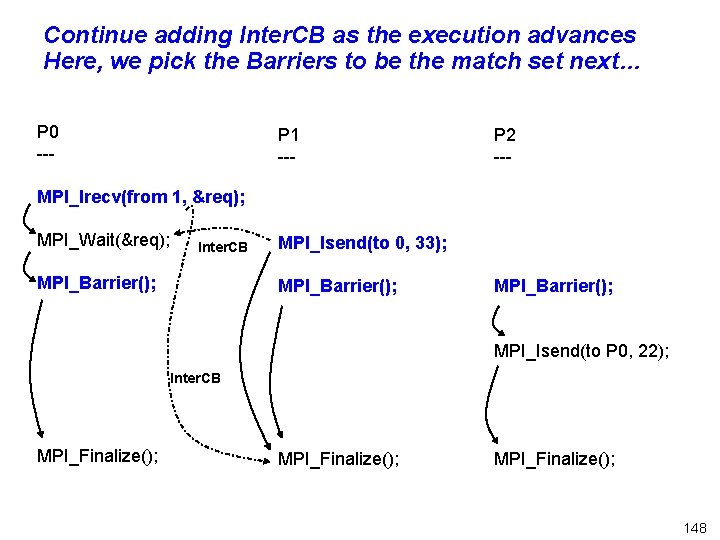

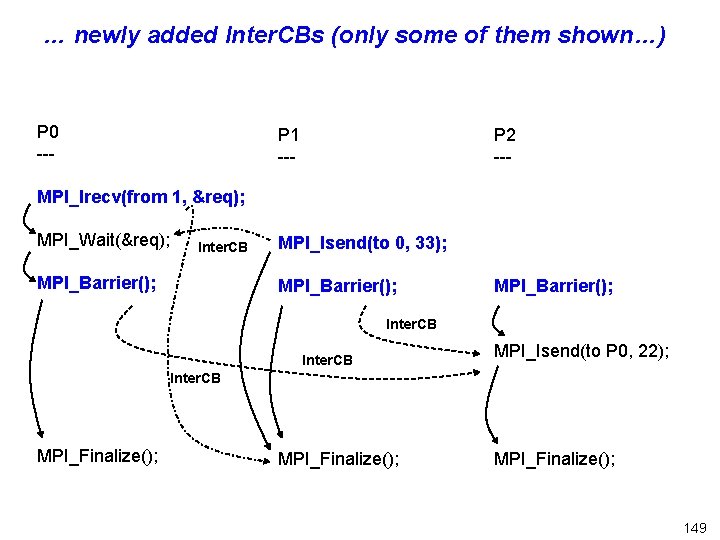

… newly added InterCBs (only some of them shown…). P0 --- P1 --- P2 --- MPI_Irecv(from 1, &req); MPI_Wait(&req); [InterCB] MPI_Barrier(); MPI_Isend(to 0, 33); MPI_Barrier(); [InterCB] MPI_Isend(to P0, 22); [InterCB] MPI_Finalize();

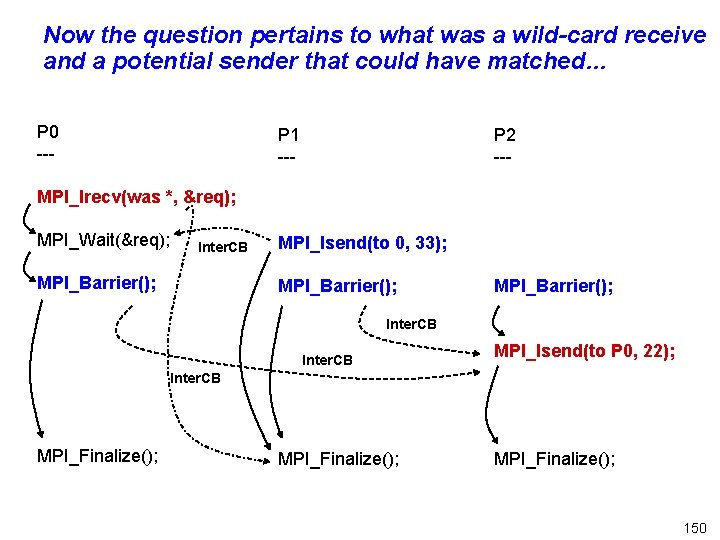

Now the question pertains to what was a wildcard receive and a potential sender that could have matched… P0 --- P1 --- P2 --- MPI_Irecv(was *, &req); MPI_Wait(&req); [InterCB] MPI_Barrier(); MPI_Isend(to 0, 33); MPI_Barrier(); [InterCB] MPI_Isend(to P0, 22); [InterCB] MPI_Finalize();

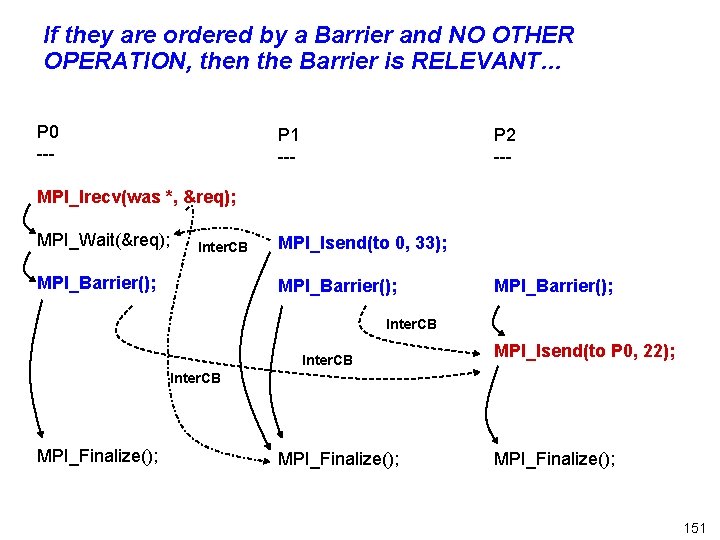

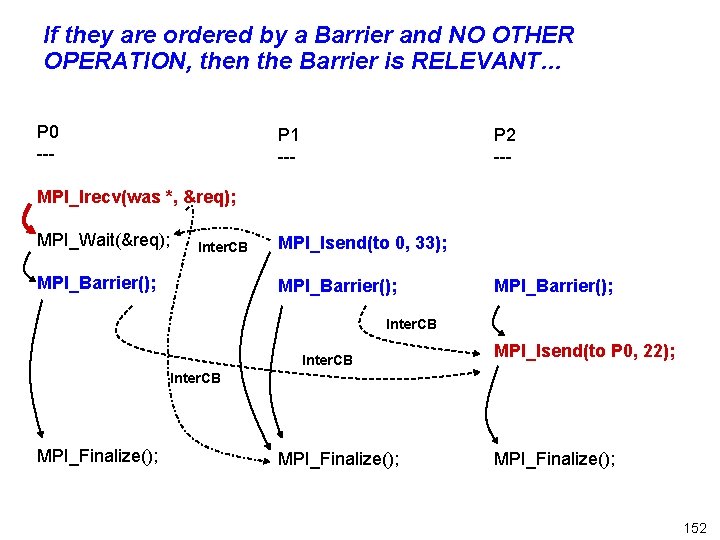

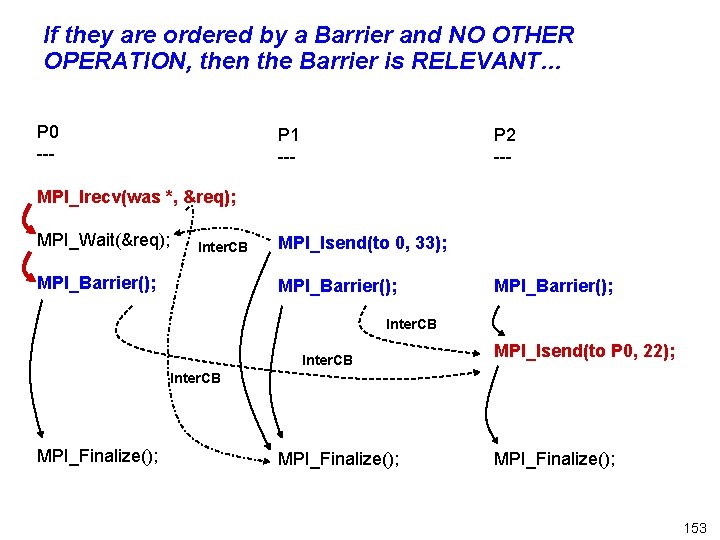

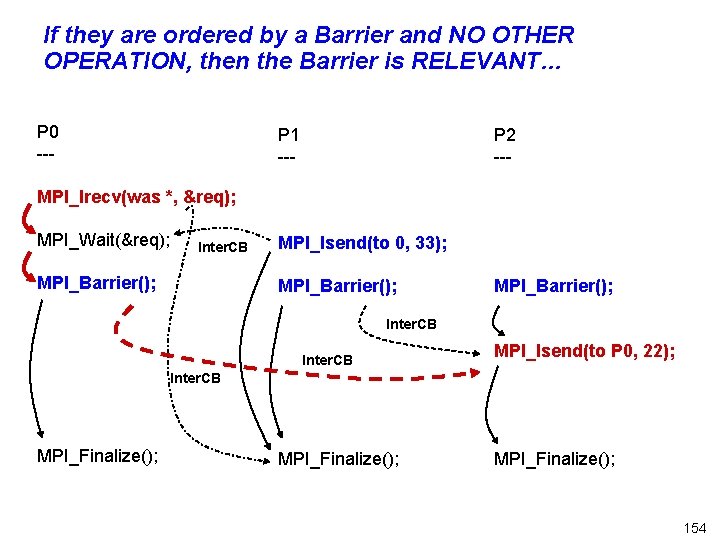

If they are ordered by a Barrier and NO OTHER OPERATION, then the Barrier is RELEVANT… P0 --- P1 --- P2 --- MPI_Irecv(was *, &req); MPI_Wait(&req); [InterCB] MPI_Barrier(); MPI_Isend(to 0, 33); MPI_Barrier(); [InterCB] MPI_Isend(to P0, 22); [InterCB] MPI_Finalize();

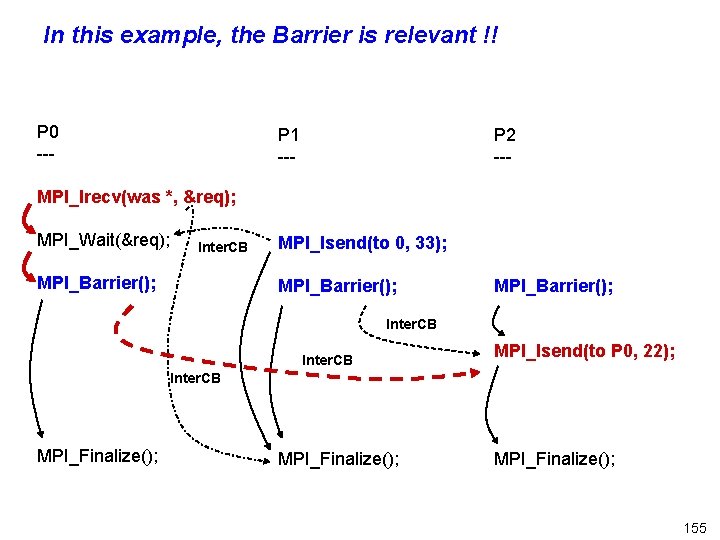

In this example, the Barrier is relevant! P0 --- P1 --- P2 --- MPI_Irecv(was *, &req); MPI_Wait(&req); [InterCB] MPI_Barrier(); MPI_Isend(to 0, 33); MPI_Barrier(); [InterCB] MPI_Isend(to P0, 22); [InterCB] MPI_Finalize();

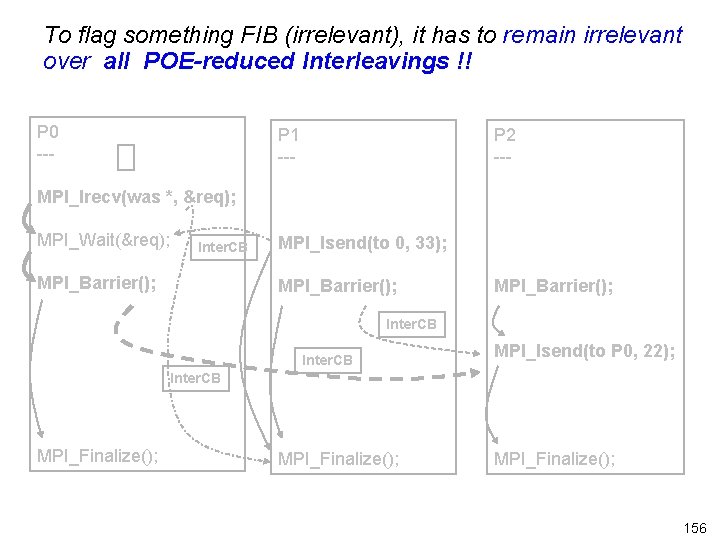

To flag something FIB (irrelevant), it has to remain irrelevant over all POE-reduced interleavings! P0 --- P1 --- P2 --- MPI_Irecv(was *, &req); MPI_Wait(&req); [InterCB] MPI_Barrier(); MPI_Isend(to 0, 33); MPI_Barrier(); [InterCB] MPI_Isend(to P0, 22); [InterCB] MPI_Finalize();
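The relevance criterion stated on these slides ("ordered by a Barrier and NO OTHER OPERATION") can be read as a reachability question on an ordering graph. The sketch below is a simplified reading under stated assumptions, not the Fib implementation: `OrderGraph` is a hypothetical adjacency matrix whose edges stand in for the IntraCB and InterCB edges, and the check covers a single execution, so a barrier it reports as not relevant would still have to stay that way over all POE-reduced interleavings.

```c
#include <stdbool.h>
#include <string.h>

#define MAX_OPS 16

typedef struct {
    int  n;                       /* number of operations (nodes) */
    bool edge[MAX_OPS][MAX_OPS];  /* edge[a][b]: a is ordered before b */
    bool is_barrier[MAX_OPS];     /* true for barrier operations */
} OrderGraph;

/* Depth-first reachability; when avoid_barriers is set, paths may not pass
   through a barrier node. */
static bool reach(const OrderGraph *g, int from, int to,
                  bool avoid_barriers, bool *seen) {
    if (from == to) return true;
    seen[from] = true;
    for (int next = 0; next < g->n; next++) {
        if (!g->edge[from][next] || seen[next]) continue;
        if (avoid_barriers && g->is_barrier[next] && next != to) continue;
        if (reach(g, next, to, avoid_barriers, seen)) return true;
    }
    return false;
}

/* True when recv is ordered before send, and every ordering path between
   them goes through a barrier. */
bool ordered_only_by_barrier(const OrderGraph *g, int recv, int send) {
    bool seen[MAX_OPS];
    memset(seen, 0, sizeof seen);
    if (!reach(g, recv, send, false, seen)) return false;  /* not ordered at all */
    memset(seen, 0, sizeof seen);
    return !reach(g, recv, send, true, seen);
}
```

For a three-node graph with edges receive → barrier → send, the function returns true (the barrier is what keeps the send from matching). Adding a direct receive → send edge makes it return false, since the two operations are then ordered even without the barrier.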

Summary of Fib
• Algorithm has been implemented within the ISP tool
• Very low overhead (so can keep it turned ‘on’ always…)
• Identified FIBs and FRBs in many examples
  – manually checked correctness of identification
• Simple static analysis facility has helped extend this claim over ALL external drivers
• Future work: extend reach of this static analysis method to be able to claim FIB over all possible inputs

Concluding Remarks
• We are “sold” on the merits of dynamic analysis
  – Gives a sense of realism
  – Can give designers “debugger-like” interfaces
    » yet “verifier-like” coverage
• Going Forward
  – Bug-preserving scaling methods are essential to develop
    » For MPI, OpenMP, …
  – Collaboration between API designers and verification tool builders
    » Make APIs easier to use in a “verification mode”
    » Keep “verification mode” and “execution mode” semantics in agreement
    » The plethora of concurrency APIs seems to require early attention to such a “verification mode API”
  – Static and Dynamic Analysis can work in synergy

Extra slides 159

Looking Further Ahead: Need to clear “idea log-jam in multi-core computing…”
“There isn’t such a thing as Republican clean air or Democratic clean air. We all breathe same air.”
There isn’t such a thing as an architectural-only solution, or a compilers-only solution, to future problems in multi-core computing…

Now you see it; Now you don’t! On the menace of non-reproducible bugs.
• Deterministic replay must ideally be an option
• User-programmable schedulers greatly emphasized by expert developers
• Runtime model-checking methods with state-space reduction hold promise in meshing with current practice…

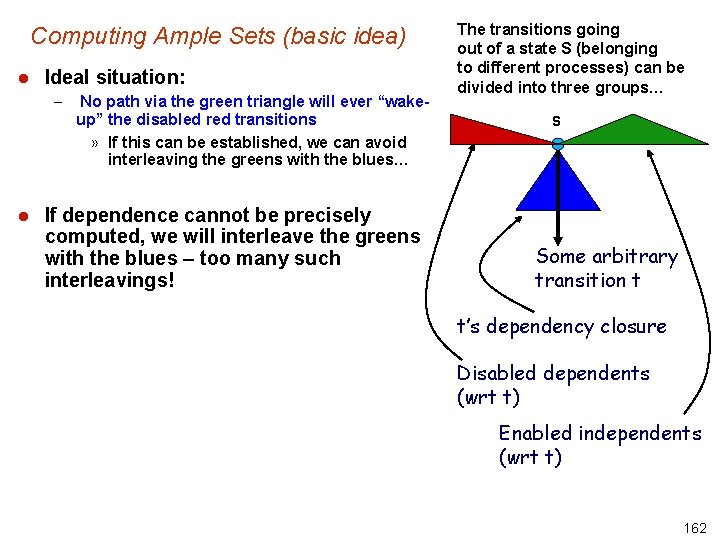

Computing Ample Sets (basic idea)
The transitions going out of a state S (belonging to different processes) can be divided into three groups…
[Diagram labels: S; some arbitrary transition t; t’s dependency closure; disabled dependents (wrt t); enabled independents (wrt t)]
• Ideal situation:
  – No path via the green triangle will ever “wake up” the disabled red transitions
    » If this can be established, we can avoid interleaving the greens with the blues…
• If dependence cannot be precisely computed, we will interleave the greens with the blues
  – too many such interleavings!
