Authorship Verification as a One-Class Classification Problem. Moshe Koppel and Jonathan Schler

Introduction § Goal – Given examples of the writing of a single author, determine whether a given text was written by this author § Authorship attribution – Given examples of the writing of several authors, determine which of them wrote a given anonymous text

Challenge § Negative samples are neither exhaustive nor representative § A single author may consciously vary his or her style from text to text

Authorship Verification § Naïve approach – Given examples of the writing of author A – Concoct a mishmash of works by other authors – Learn a model for A vs. not-A – Learn a model for A vs. X (a mystery work) – If A and X are easy to distinguish: different author; otherwise: same author

Authorship Verification § Unmasking: basic idea – A small number of features do most of the work in distinguishing between books – Iteratively remove the most useful features – Gauge the speed with which cross-validation accuracy degrades

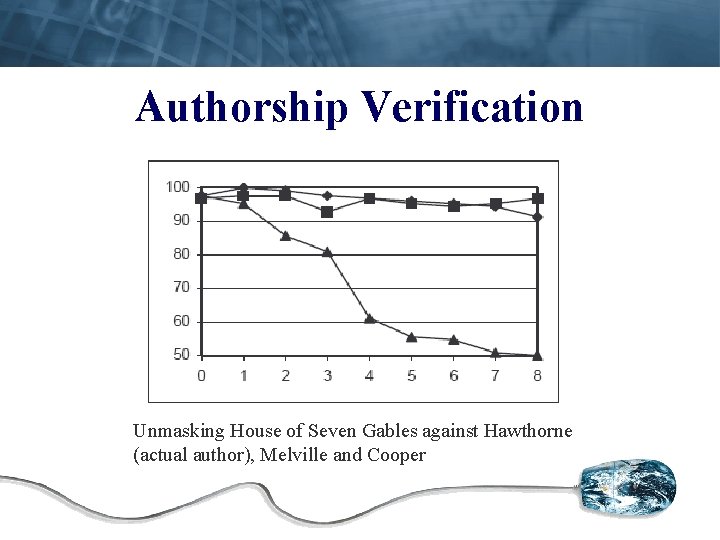

Authorship Verification § Unmasking House of Seven Gables against Hawthorne (actual author), Melville, and Cooper

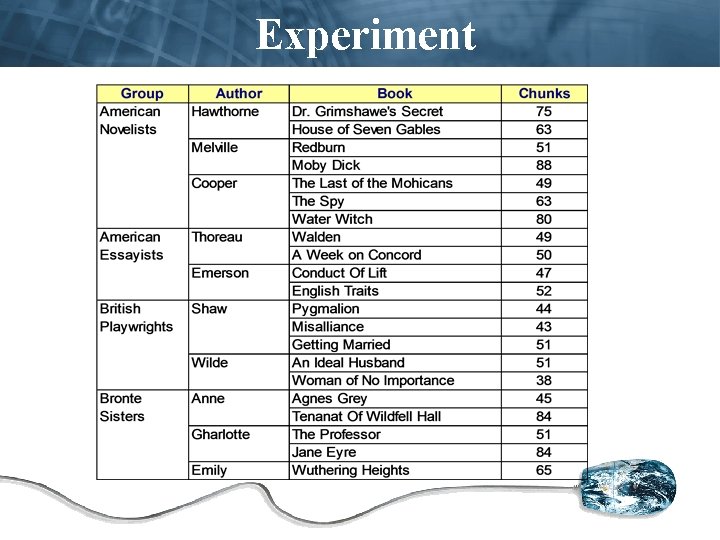

Experiment

Experiment § Use a one-class SVM as the baseline – 6 of 20 same-author pairs are correctly classified – 143 of 189 different-author pairs are correctly classified

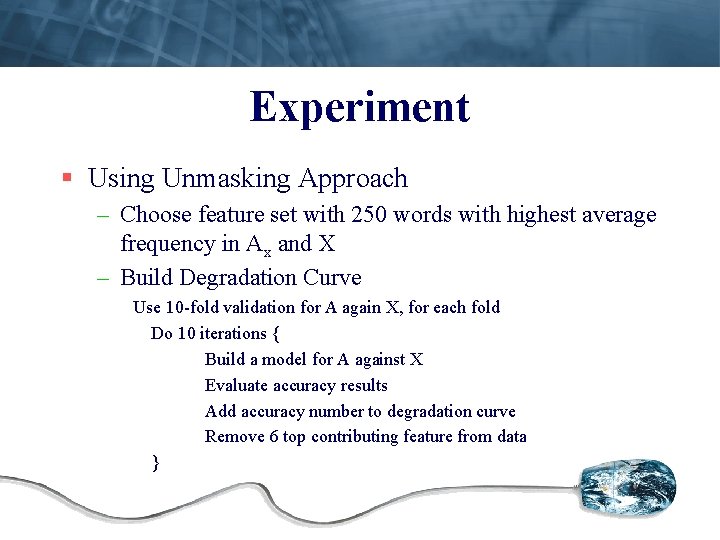

Experiment § Using the unmasking approach – Choose as the feature set the 250 words with the highest average frequency in Ax and X – Build the degradation curve using 10-fold cross-validation for A against X:
For each fold, do 10 iterations {
  Build a model for A against X
  Evaluate its accuracy
  Add the accuracy to the degradation curve
  Remove the 6 top-contributing features from the data
}
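The loop above can be sketched in plain Python. This is only an illustration under stated assumptions: all function names are hypothetical, a simple difference-of-centroids linear model stands in for a stronger linear learner such as a linear SVM, and "top contributing" is read as largest absolute weight. Chunks are assumed to be non-empty lists of word tokens.

```python
import random
from collections import Counter

def top_words(chunks_a, chunks_x, n=250):
    """Return the n words with the highest average relative frequency in A and X."""
    def rel_freq(chunks):
        counts = Counter(word for chunk in chunks for word in chunk)
        total = sum(counts.values())
        return {word: c / total for word, c in counts.items()}
    freq_a, freq_x = rel_freq(chunks_a), rel_freq(chunks_x)
    avg = {w: (freq_a.get(w, 0.0) + freq_x.get(w, 0.0)) / 2
           for w in set(freq_a) | set(freq_x)}
    return sorted(avg, key=lambda w: (-avg[w], w))[:n]

def vectorize(chunk, vocab):
    """Map a tokenized chunk to the relative frequencies of the vocabulary words."""
    counts = Counter(chunk)
    return [counts[w] / len(chunk) for w in vocab]

def train(xs, ys):
    """Stand-in linear model (assumption): weights = mean(A) - mean(X)."""
    dim = len(xs[0])
    def mean(label):
        rows = [x for x, y in zip(xs, ys) if y == label]
        return [sum(r[j] for r in rows) / len(rows) for j in range(dim)]
    mean_a, mean_x = mean(1), mean(0)
    w = [a - b for a, b in zip(mean_a, mean_x)]
    # Decision boundary passes through the midpoint of the two centroids.
    bias = -sum(wj * (a + b) / 2 for wj, a, b in zip(w, mean_a, mean_x))
    return w, bias

def predict(model, x):
    w, bias = model
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) + bias > 0 else 0

def degradation_curve(vecs_a, vecs_x, folds=10, iterations=10, drop=6, seed=0):
    """Average cross-validation accuracy after each round of feature removal."""
    data = [(v, 1) for v in vecs_a] + [(v, 0) for v in vecs_x]
    random.Random(seed).shuffle(data)
    data.sort(key=lambda d: d[1])  # stable sort: folds get a mix of both classes
    totals = [0.0] * iterations
    for f in range(folds):
        train_set = [d for i, d in enumerate(data) if i % folds != f]
        test_set = [d for i, d in enumerate(data) if i % folds == f]
        active = list(range(len(data[0][0])))  # indices of surviving features
        for it in range(iterations):
            xs = [[v[j] for j in active] for v, _ in train_set]
            ys = [y for _, y in train_set]
            model = train(xs, ys)
            hits = sum(predict(model, [v[j] for j in active]) == y
                       for v, y in test_set)
            totals[it] += hits / len(test_set)
            # Drop the features with the largest absolute weight.
            w = model[0]
            ranked = sorted(range(len(active)), key=lambda k: abs(w[k]), reverse=True)
            removed = set(ranked[:drop])
            active = [j for k, j in enumerate(active) if k not in removed]
    return [t / folds for t in totals]
```

A typical call would build `vocab = top_words(chunks_a, chunks_x)`, vectorize every chunk with it, and pass the two lists of vectors to `degradation_curve`. Each class needs at least two chunks, and there must be at least as many chunks as folds.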

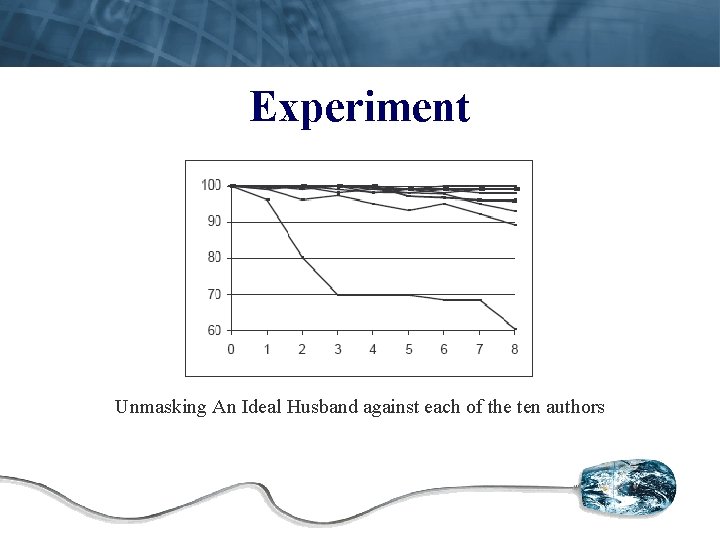

Experiment § Unmasking An Ideal Husband against each of the ten authors

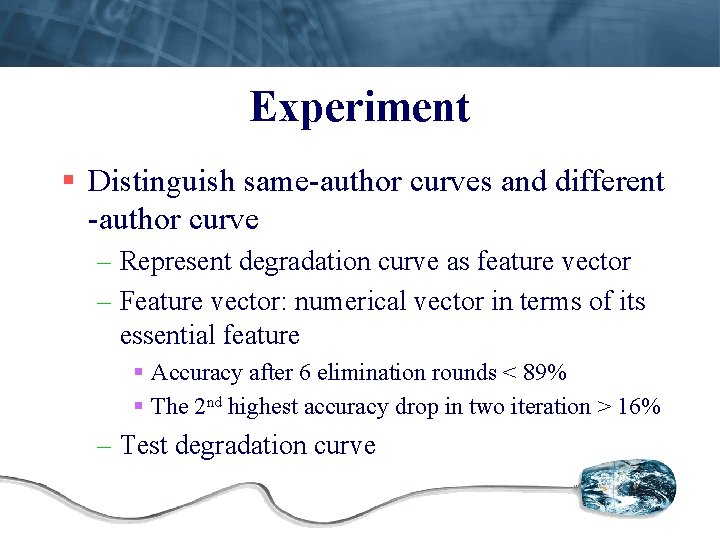

Experiment § Distinguish same-author curves from different-author curves – Represent each degradation curve as a feature vector – Feature vector: a numerical vector describing the curve's essential features § Accuracy after 6 elimination rounds < 89% § The second-highest accuracy drop over two iterations > 16% – Test the degradation curve
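As a rough illustration, the two curve features can be turned into a rule. This sketch rests on assumptions the slide does not spell out: that both conditions must hold together, that they indicate a same-author curve (fast degradation), that `curve[0]` is the accuracy before any elimination so that `curve[6]` is the accuracy after six rounds, and that a "drop over two iterations" means `curve[i] - curve[i + 2]`. The function names are hypothetical.

```python
def second_highest_two_step_drop(curve):
    """Second-largest value of curve[i] - curve[i + 2] over the whole curve."""
    drops = sorted((curve[i] - curve[i + 2] for i in range(len(curve) - 2)),
                   reverse=True)
    return drops[1]

def looks_like_same_author(curve, acc_threshold=0.89, drop_threshold=0.16):
    """Apply both slide thresholds; the curve needs at least 7 points."""
    return (curve[6] < acc_threshold
            and second_highest_two_step_drop(curve) > drop_threshold)

steep = [1.0, 0.95, 0.80, 0.70, 0.65, 0.62, 0.60, 0.58, 0.55, 0.52]
flat = [1.0, 0.99, 0.99, 0.98, 0.97, 0.97, 0.96, 0.95, 0.95, 0.94]
print(looks_like_same_author(steep))  # True
print(looks_like_same_author(flat))   # False
```

The two example curves are made-up numbers chosen to show one curve on each side of the thresholds, not data from the experiment.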

Experiment Result § 19 of 20 same-author pairs are correctly classified § 181 of 189 different-author pairs are correctly classified § Accuracy: 95.7%
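The reported accuracy follows directly from the pair counts. The short check below also computes the same figure for the one-class SVM baseline counts given earlier (6 of 20 and 143 of 189); the helper name `accuracy` is only illustrative.

```python
def accuracy(correct_same, correct_diff, total_same=20, total_diff=189):
    """Overall accuracy across same-author and different-author pairs."""
    return (correct_same + correct_diff) / (total_same + total_diff)

unmasking = accuracy(19, 181)  # 200 of 209 pairs
baseline = accuracy(6, 143)    # 149 of 209 pairs
print(f"{unmasking:.1%}")  # 95.7%
print(f"{baseline:.1%}")   # 71.3%
```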

Extension § Use negative examples to eliminate some false positives from the unmasking phase § In our case, the elimination method improved accuracy – 189 of 189 different-author pairs are correctly classified – It introduced a single new misclassification

Extension § Elimination
If alternative authors {A1, …, An} exist then {
  Build model M for classifying A vs. all alternative authors
  Test each chunk of X with model M
  For each alternative author Ai {
    Build model Mi for classifying Ai vs. {A and all other alternative authors}
    Test each chunk of X with model Mi
  }
  If the number of chunks assigned to some Ai > the number of chunks assigned to A then return different-author
}
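The elimination step can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function names are hypothetical, chunks are assumed to be already converted to equal-length numeric feature vectors, and a difference-of-centroids linear model stands in for whatever one-vs-rest learner is actually used.

```python
def train_one_vs_rest(pos, neg):
    """Stand-in linear model (assumption): separate pos from neg by centroids."""
    dim = len(pos[0])
    def mean(rows):
        return [sum(r[j] for r in rows) / len(rows) for j in range(dim)]
    mean_pos, mean_neg = mean(pos), mean(neg)
    w = [p - n for p, n in zip(mean_pos, mean_neg)]
    bias = -sum(wj * (p + n) / 2 for wj, p, n in zip(w, mean_pos, mean_neg))
    # The returned function is True when a chunk falls on the positive side.
    return lambda x: sum(wj * xj for wj, xj in zip(w, x)) + bias > 0

def eliminated(chunks_x, chunks_a, alternatives):
    """Return True if some alternative author claims more chunks of X than A does.

    alternatives is a list with one list of chunk vectors per alternative author.
    """
    if not alternatives:
        return False  # no negative data: leave the unmasking verdict as is
    everyone = [chunks_a] + list(alternatives)

    def votes_for(index):
        pos = everyone[index]
        neg = [v for k, rows in enumerate(everyone) if k != index for v in rows]
        model = train_one_vs_rest(pos, neg)
        return sum(1 for x in chunks_x if model(x))

    votes_a = votes_for(0)
    return any(votes_for(k) > votes_a for k in range(1, len(everyone)))
```

In use, a `True` result would override a same-author verdict from unmasking and report different-author instead; a `False` result leaves the unmasking verdict unchanged.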

Actual Literary Mystery § Two 19th-century collections of Hebrew-Aramaic documents – RP includes 509 documents (by Ben Ish Chai) – TL includes 524 documents (which Ben Ish Chai claims to have found in an archive)

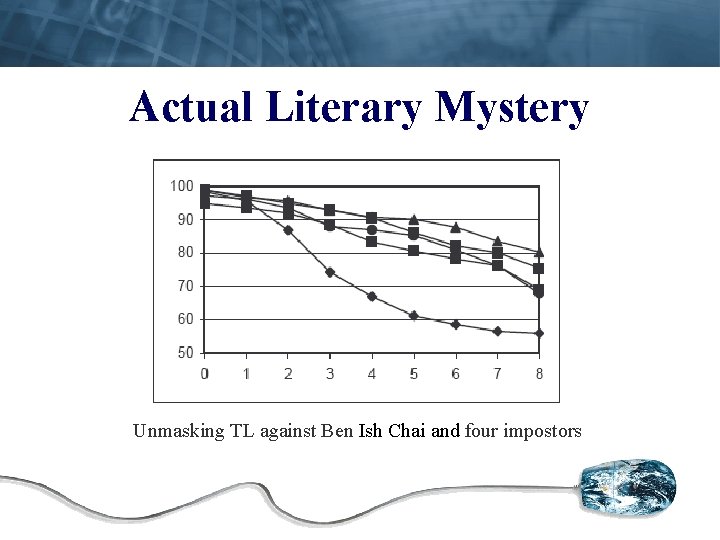

Actual Literary Mystery § Unmasking TL against Ben Ish Chai and four impostors

Conclusion § Unmasking – Completely ignores negative examples – High accuracy § Unmasking + elimination (with a little negative data) – Better accuracy § More experiments are needed to confirm that these methods also work well for other languages
