
Celebrating 25 Years of TREC The TREC Legal Track: Key Findings & The Track’s Impact on the Legal Profession Jason R. Baron Drinker Biddle November 15, 2016

Outline
§ Beginnings: How did the Legal Track come to be?
§ Issues & Findings
§ Post-Legal Track Continuing Research
§ Open Questions
§ “Measuring” the Legal Track’s Impact on IR & the Law

The Legal Profession at the Trailing Edge of Change
However, in 2006, real change did occur: the Federal Rules of Civil Procedure were amended to recognize a category of “electronically stored information” as within the scope of civil discovery (“e-discovery”).

In the course of my career, lawyers have gone from …. To this new reality….

Presidential E-mail

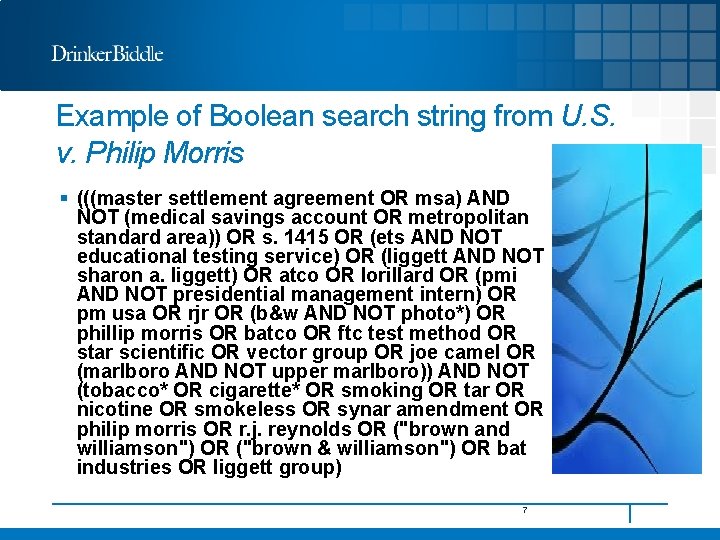

Example of Boolean search string from U.S. v. Philip Morris
(((master settlement agreement OR msa) AND NOT (medical savings account OR metropolitan standard area)) OR s. 1415 OR (ets AND NOT educational testing service) OR (liggett AND NOT sharon a. liggett) OR atco OR lorillard OR (pmi AND NOT presidential management intern) OR pm usa OR rjr OR (b&w AND NOT photo*) OR phillip morris OR batco OR ftc test method OR star scientific OR vector group OR joe camel OR (marlboro AND NOT upper marlboro)) AND NOT (tobacco* OR cigarette* OR smoking OR tar OR nicotine OR smokeless OR synar amendment OR philip morris OR r. j. reynolds OR ("brown and williamson") OR ("brown & williamson") OR bat industries OR liggett group)

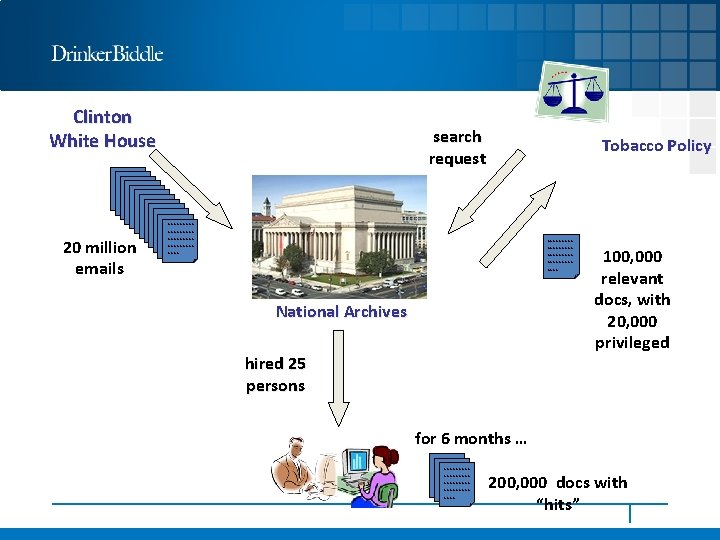

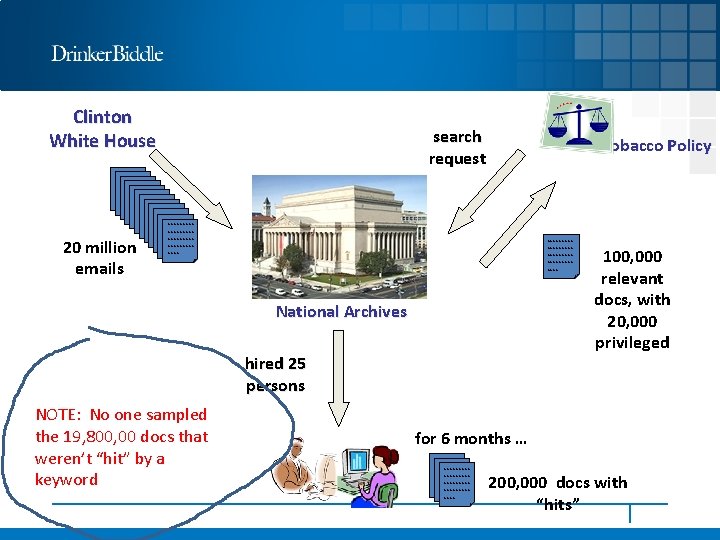


Clinton White House: 20 million emails
§ Search request: Tobacco Policy
§ 200,000 docs with “hits”
§ National Archives hired 25 persons for 6 months
§ 100,000 relevant docs, with 20,000 privileged
§ NOTE: No one sampled the 19,800,000 docs that weren’t “hit” by a keyword
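The unsampled 19,800,000 documents are the gap in this workflow. A minimal sketch of how a random sample of that "null set" could be projected into an estimate of missed relevant documents and of recall follows. The sample size and the count of relevant documents in the sample are invented for illustration (no such sample was drawn), and the function names are hypothetical.

```python
def estimate_missed_relevant(null_set_size: int, sample_size: int,
                             relevant_in_sample: int) -> float:
    """Project the relevant documents found in a random sample of the
    un-hit documents onto the whole un-hit set (a simple point estimate)."""
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    return null_set_size * relevant_in_sample / sample_size


def estimate_recall(found_relevant: int, missed_estimate: float) -> float:
    """Recall = relevant found / (relevant found + relevant missed)."""
    total = found_relevant + missed_estimate
    return found_relevant / total if total else 0.0


# From the slide: 19,800,000 docs were not hit; 100,000 relevant docs were found.
NULL_SET_SIZE = 19_800_000
FOUND_RELEVANT = 100_000

# ASSUMPTION: hypothetical sample of 2,000 un-hit docs, 10 judged relevant.
missed = estimate_missed_relevant(NULL_SET_SIZE, sample_size=2_000,
                                  relevant_in_sample=10)
recall = estimate_recall(FOUND_RELEVANT, missed)
print(f"estimated missed: {missed:,.0f}; estimated recall: {recall:.2f}")
```

A point estimate like this carries sampling error, so in practice it would be reported with a confidence interval.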

A flawed, inefficient approach to searching ESI…

In Search of A Better Way for Lawyers To Search ESI

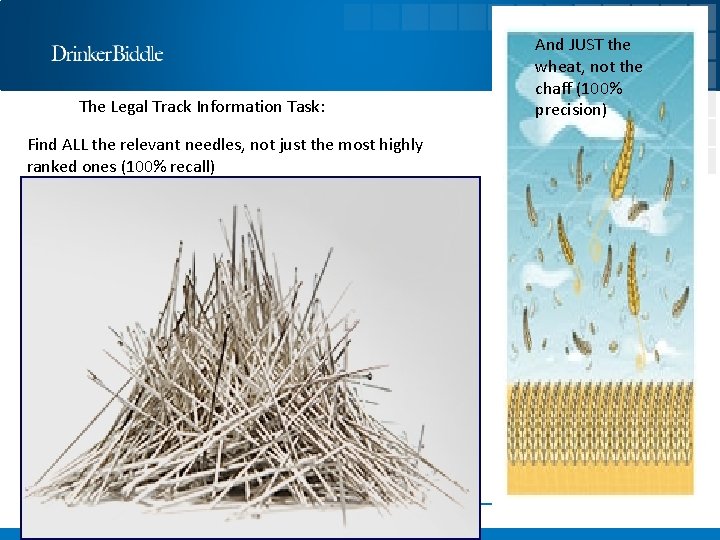

The Legal Track Information Task: Find ALL the relevant needles, not just the most highly ranked ones (100% recall) And JUST the wheat, not the chaff (100% precision)

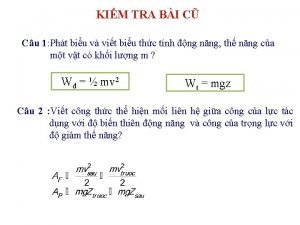

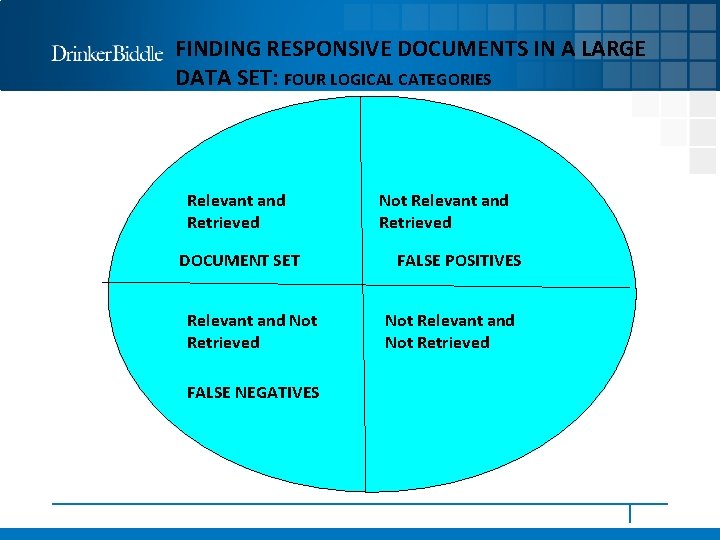

FINDING RESPONSIVE DOCUMENTS IN A LARGE DATA SET: FOUR LOGICAL CATEGORIES
DOCUMENT SET
§ Relevant and Retrieved
§ Relevant and Not Retrieved (FALSE NEGATIVES)
§ Not Relevant and Retrieved (FALSE POSITIVES)
§ Not Relevant and Not Retrieved
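Recall and precision, the two targets named in the Legal Track information task, follow directly from these four categories. The sketch below shows the arithmetic; the class name and the example counts are illustrative assumptions, not Legal Track data.

```python
from dataclasses import dataclass


@dataclass
class ReviewOutcome:
    relevant_retrieved: int          # found and relevant
    relevant_not_retrieved: int      # false negatives
    not_relevant_retrieved: int      # false positives
    not_relevant_not_retrieved: int  # correctly left behind

    def recall(self) -> float:
        """Share of all relevant documents that were retrieved."""
        relevant = self.relevant_retrieved + self.relevant_not_retrieved
        return self.relevant_retrieved / relevant if relevant else 0.0

    def precision(self) -> float:
        """Share of all retrieved documents that are relevant."""
        retrieved = self.relevant_retrieved + self.not_relevant_retrieved
        return self.relevant_retrieved / retrieved if retrieved else 0.0


# ASSUMPTION: made-up counts for a 1,000-document set.
outcome = ReviewOutcome(relevant_retrieved=80, relevant_not_retrieved=20,
                        not_relevant_retrieved=40, not_relevant_not_retrieved=860)
print(f"recall={outcome.recall():.2f} precision={outcome.precision():.2f}")
```

Note that the fourth category (not relevant, not retrieved) enters neither ratio, which is why a search can look precise while still missing most of the relevant documents.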

TREC Legal Track Coordinators 2006-2011
§ Jason R. Baron
§ Gordon V. Cormack
§ Maura R. Grossman
§ Bruce Hedin
§ David D. Lewis
§ Douglas W. Oard
§ Stephen Tomlinson
§ Paul Thompson

TREC Legal Track
§ The TREC Legal Track was designed to evaluate the effectiveness of search technologies in a real-world legal context
§ First-of-a-kind study using nonproprietary data since Blair/Maron research in 1985
§ Hypothetical complaints and 100+ “requests to produce” drafted by members of The Sedona Conference®
§ “Boolean negotiations” conducted by Sedona Conference lawyers as a baseline for search efforts
§ Documents to be searched were drawn from a publicly available 7 million document tobacco litigation Master Settlement Agreement database
§ Assessments principally accomplished by law student volunteers, later pro bono assistance from staff at document review shops
§ A new Interactive task added in 2008 and continued in 2009 using Topic Authorities and a post-adjudication round
§ In 2009, a second Enron data set was added as a separate task, with 11 participants
§ Participating teams of information scientists from around the world contributing computer runs, plus in 2008 and 2009 from legal service providers
§ In 2010, the Legal Track included two tasks: an Interactive task focused on end-to-end evaluation of an interactive process of review for responsiveness or privilege, and a Learning task focused on technology evaluation
§ In 2011, the Legal Track had a single task, referred to as the Learning task, in which participating teams could use either an interactive or a fully automated process to perform review for responsiveness

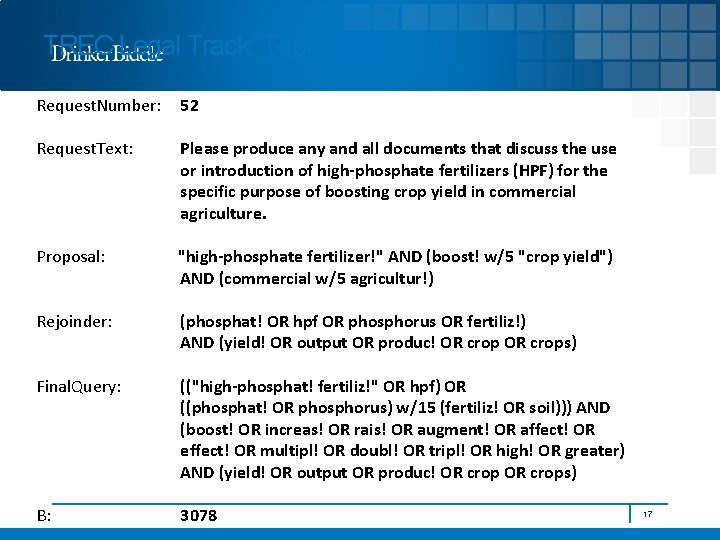

TREC Legal Track: Topics
Request Number: 52
Request Text: Please produce any and all documents that discuss the use or introduction of high-phosphate fertilizers (HPF) for the specific purpose of boosting crop yield in commercial agriculture.
Proposal: "high-phosphate fertilizer!" AND (boost! w/5 "crop yield") AND (commercial w/5 agricultur!)
Rejoinder: (phosphat! OR hpf OR phosphorus OR fertiliz!) AND (yield! OR output OR produc! OR crops)
Final Query: (("high-phosphat! fertiliz!" OR hpf) OR ((phosphat! OR phosphorus) w/15 (fertiliz! OR soil))) AND (boost! OR increas! OR rais! OR augment! OR affect! OR effect! OR multipl! OR doubl! OR tripl! OR high! OR greater) AND (yield! OR output OR produc! OR crops)
B: 3078
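The negotiated queries rely on two operators beyond AND/OR: truncation (`!`) and proximity (`w/n`). A rough sketch of how those operators might be evaluated against a tokenized document follows. It assumes that `term!` matches any word beginning with `term` and that `w/n` means "within n word positions, in either order"; the search system actually used may define these differently, phrase operands are not handled, and all function names are hypothetical.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def matches_term(token: str, term: str) -> bool:
    """'fertiliz!' matches any token starting with 'fertiliz';
    a term without '!' must match the token exactly."""
    if term.endswith("!"):
        return token.startswith(term[:-1])
    return token == term


def positions(tokens: list[str], term: str) -> list[int]:
    """Token positions at which the term matches."""
    return [i for i, tok in enumerate(tokens) if matches_term(tok, term)]


def within(tokens: list[str], left: str, right: str, n: int) -> bool:
    """ASSUMED semantics of 'left w/n right': some match of `left` lies
    at most n token positions from some match of `right`."""
    right_pos = positions(tokens, right)
    return any(abs(i - j) <= n
               for i in positions(tokens, left) for j in right_pos)


doc = tokenize("Commercial growers in modern agriculture")
print(within(doc, "commercial", "agricultur!", 5))
```

Here the clause `(commercial w/5 agricultur!)` from the Proposal is satisfied because "commercial" and "agriculture" sit four positions apart.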

Beyond Boolean: getting at the “dark matter” (i.e., relevant documents not found by keyword searches alone)

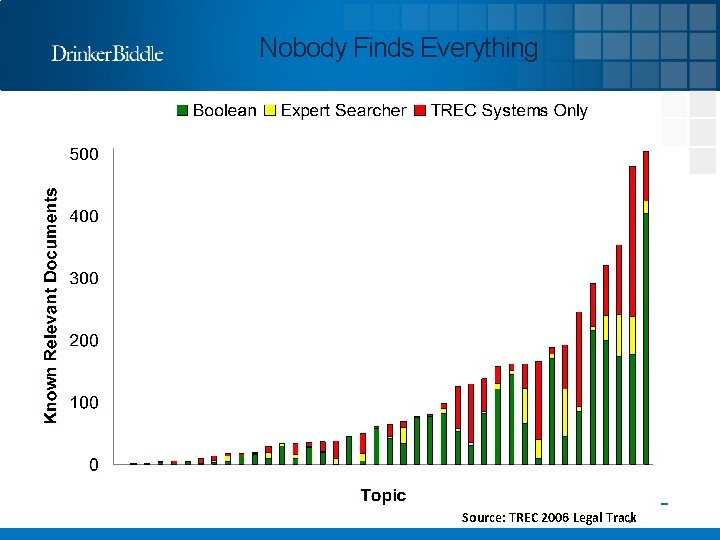

Nobody Finds Everything
Source: TREC 2006 Legal Track

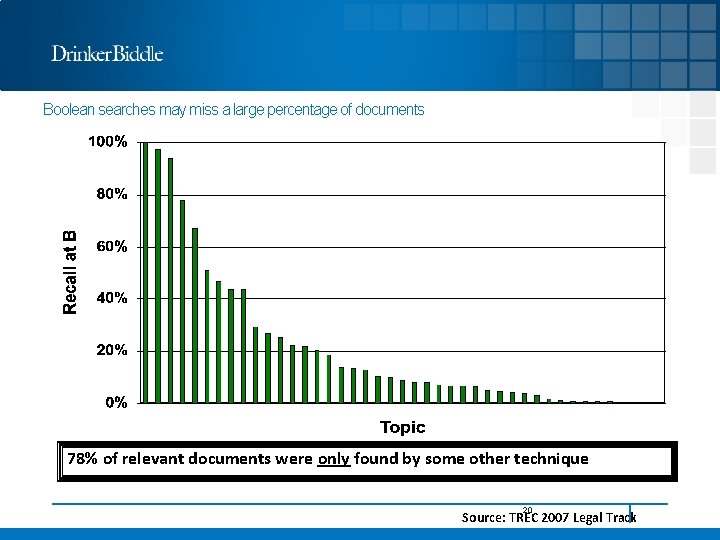

Boolean searches may miss a large percentage of documents
78% of relevant documents were only found by some other technique
Source: TREC 2007 Legal Track

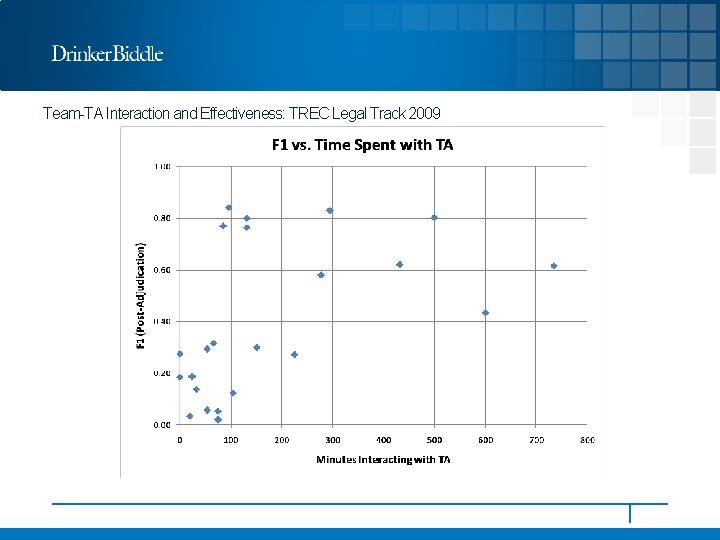

Team-TA Interaction and Effectiveness: TREC Legal Track 2009

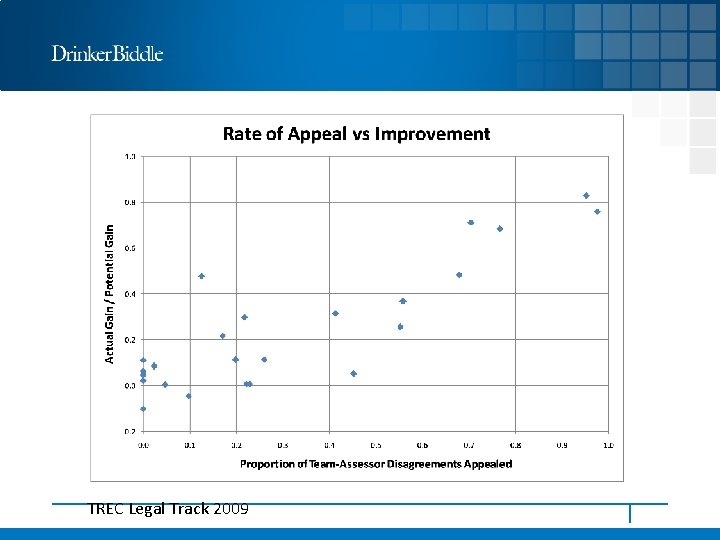

TREC Legal Track 2009

Maura Grossman & Gordon Cormack

Two Important Open Questions as of 2011, When the Legal Track Ended
§ Should lawyers use “training” or “seed” sets for training the machine learning algorithm?
§ Should lawyers rely on random sampling for the training set, or use known relevant documents (some of which may be privileged)?

ACM Publication
§ Evaluation of machine-learning protocols for technology-assisted review in electronic discovery
§ Authors: Gordon V. Cormack, University of Waterloo, ON, Canada; Maura R. Grossman, Wachtell, Lipton, Rosen & Katz, New York, NY, USA
§ Published in: SIGIR '14 Proceedings of the 37th international ACM SIGIR conference on Research & development in information retrieval, pages 153-162
§ Gold Coast, Queensland, Australia, July 6-11, 2014
§ ACM New York, NY, USA © 2014
§ ISBN: 978-1-4503-2257-7, doi: 10.1145/2600428.2609601

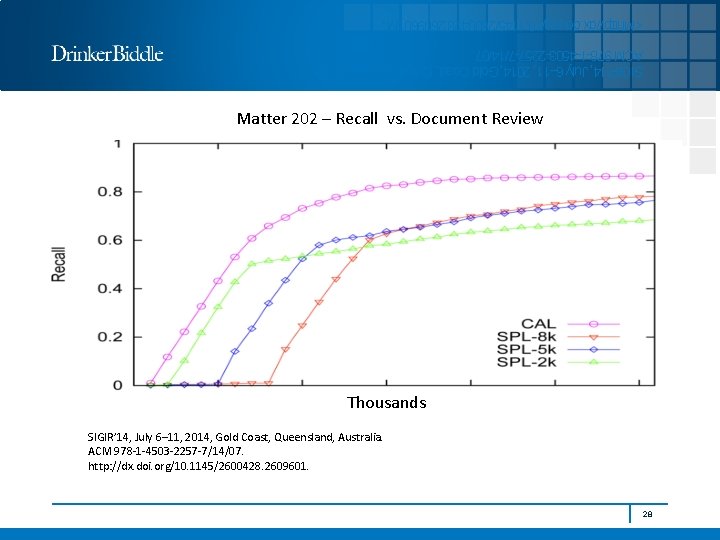

Figure: Matter 202, Recall vs. Document Review (Thousands). Source: SIGIR ’14, July 6–11, 2014, Gold Coast, Queensland, Australia. ACM 978-1-4503-2257-7/14/07. http://dx.doi.org/10.1145/2600428.2609601

Open Issues
§ Using machine learning methods for privilege review
§ Efficacy of advanced search across all types of electronically stored information (including future datastreams from the IoT)
§ Proving the reliability, accuracy, and efficiency of machine learning in an evidentiary hearing (under the Daubert legal test for the introduction of expert evidence)
§ How close can we reasonably get to 100% recall with 100% precision?
§ What type of “standards” can be expected in e-discovery which incorporate what we have learned from machine learning, but which do not hold machine learning to a higher standard?
§ How transparent should the process be?

Do lawyers and judges need to understand the black box?


The TREC Legal Track’s Impact

Summarizing the Impact of the TREC Legal Track
§ 2 major court decisions referencing TREC, with judicial blessing of technology-assisted review methods coming in 2012 (Da Silva Moore)
§ Briefs referencing the TREC Legal Track: in the hundreds
§ Online references to TREC Legal Track in the thousands
§ 57 published law reviews referencing TREC Legal Track
§ 8 spin-off international workshops (DESI I-VII) and SIRE at SIGIR
§ SIGIR and CIKM papers and presentations
§ Ph.D. dissertations
§ TREC Total Recall Track 2015-2016 (track coordinators include M. Grossman & G. Cormack)

If you build a legal track, lawyers and judges will cite to it

Victor Stanley v. Creative Pipe, 250 F.R.D. 251 (D. Md. 2008)
“In addition, there is room for optimism that as search and information retrieval methodologies are studied and tested, this will result in identifying those that are most effective and least expensive to employ for a variety of ESI discovery tasks. Such a study has been underway since 2006, when the National Institute of Standards and Technology (NIST), an agency within the U.S. Department of Commerce, embarked on a cooperative endeavor with the Department of Defense to evaluate the effectiveness of a variety of search methodologies. This project, known as the Text Retrieval Conference (TREC), evolved into the TREC Legal Track, a research effort aimed at studying the e-discovery review process to evaluate the effectiveness of a wide array of search methodologies. This evaluative process is open to participation by academics, law firms, corporate counsel and companies providing ESI discovery services. See: http://trec-legal.umiacs.umd.edu. The next test will occur in the summer of 2008. The goal of the project is to create industry best practices for use in electronic discovery. This project can be expected to identify both cost effective and reliable search and information retrieval methodologies and best practice recommendations, which, if adhered to, certainly would support an argument that the party employing them performed a reasonable ESI search, whether for privilege review or other purposes.”

Da Silva Moore v. Publicis Groupe & MSL Group, 287 F.R.D. 182 (S.D.N.Y. 2012) (Peck, Magistrate Judge)
Likewise, Wachtell, Lipton, Rosen & Katz litigation counsel Maura Grossman and University of Waterloo professor Gordon Cormack studied data from the Text Retrieval Conference Legal Track (TREC) and concluded that: “[T]he myth that exhaustive manual review is the most effective—and therefore the most defensible—approach to document review is strongly refuted. Technology-assisted review can (and does) yield more accurate results than exhaustive manual review, with much lower effort.” Maura R. Grossman & Gordon V. Cormack, Technology-Assisted Review in E-Discovery Can Be More Effective and More Efficient Than Exhaustive Manual Review, Rich. J.L. & Tech., Spring 2011, at 48.[12]
The technology-assisted reviews in the Grossman–Cormack article also demonstrated significant cost savings over manual review: “The technology-assisted reviews require, on average, human review of only 1.9% of the documents, a fifty-fold savings over exhaustive manual review.” Id. at 43.
Footnote 12: Grossman and Cormack also note that “not all technology-assisted reviews . . . are created equal” and that future studies will be needed to “address which technology-assisted review process(es) will improve most on manual review.” Id.

Da Silva Moore v. Publicis Groupe & MSL Group, 287 F.R.D. 182 (S.D.N.Y. 2012) (Peck, Magistrate Judge) (continued)
“This Opinion appears to be the first in which a Court has approved of the use of computer-assisted review. That does not mean computer-assisted review must be used in all cases, or that the exact ESI protocol approved here will be appropriate in all future cases that utilize computer-assisted review. What the Bar should take away from this Opinion is that computer-assisted review is an available tool and should be seriously considered for use in large-data-volume cases where it may save the producing party (or both parties) significant amounts of legal fees in document review. Counsel no longer have to worry about being the ‘first’ or ‘guinea pig’ for judicial acceptance of computer-assisted review. Computer-assisted review now can be considered judicially-approved for use in appropriate cases.”

1st E-discovery course taught in US at an iSchool
LBSC 708X / INFM 718X - Seminar on E-Discovery, Spring 2009, Doug Oard and J. R. Baron
Followed by NSF research grants thru the present day…

DESI (Discovery of ESI) Workshops and SIRE at SIGIR
§ Palo Alto 2007
§ London 2008
§ Barcelona 2009
§ Beijing 2010
§ Pittsburgh 2011
§ Rome 2013
§ San Diego 2015

RAND Report: Where the Money Goes: Understanding Litigant Expenditures for Producing Electronic Discovery (N. Pace et al. 2012)

THE DECADE OF DISCOVERY
• Jun 16 Royal Society of Netherlands Archivists
• May 11 Drexel University
• Apr 26 ARMA Northwest Portland OR
• Apr 7 ARMA Triangle Durham NC
• Feb 1 The London, NYC
• Jan 20 Seton Hall Law
-- 2015 --
• Nov 26 UK National Archives
• Oct 22 Univ of Kansas School of Law
• Oct 22 Washburn University School of Law
• Oct 21 UMKC School of Law
• Oct 8 University of Maryland iSchool
• Oct 7 Suffolk University Law School
• Oct 5 ARMA Annual Meeting
• Oct 1 UC San Diego Law School
• Sep 29 UC Hastings Law School
• Sep 28 UCLA Law School
• Sep 10 Emory Law
• Aug 26 Digital Government Institute
• Aug 21 John Marshall Law School
• May 13 Middlesex Univ., London UK
• May 12 London
• May 7 Zurich
• Apr 29 ARMA Madison
• Apr 23 Amsterdam U. of Applied Sciences
• Apr 22 Tuschinski Theater, The Netherlands
• Apr 20 Palais Brongniart, Paris
• Apr 16 Minneapolis - St. Paul Intn'l Film Festival
• Apr 15 Everett Dirksen Courthouse, Chicago
• Apr 2 U Pitt School of Law
• Mar 5 Oklahoma Bar Association
• Mar 3 Boston Univ. School of Law
• Feb 26 NKU Chase School of Law
• Feb 26 ACEDS Jacksonville
• Feb 19 Univ. of Denver Sturm College of Law
• Jan 28 ARMA NNJ
• Jan 27 National Archives, Washington DC
• Jan 20 Univ. of Florida Levin College of Law
-- 2014 --
• Dec 11 Bloomberg NYC
• Dec 4 SFJazz Center
• Dec 3 Google
• Dec 2 Bloomberg Houston
• Nov 19 Bloomberg DC
• Nov 14 Penn State Law
• Nov 12 Gene Siskel Theater
• Nov 11 Cardozo School of Law
• Nov 3 Univ. of South Carolina Law School
• Oct 20 Prague, CZ LawTech Europe Congress
• Oct 16 Montreal, QC Canada SSHRC Event
• Oct 4 NewFilmmakers NY Fall Film Series
• Oct 2 New York, NY Federal Attorneys
• Jun 21 Manhattan Film Festival
• May 31 Hoboken Intn'l Film Festival

The TREC Legal Track & alien features in the law…
§ Project management
§ Industrial productions
§ Using interdisciplinary approaches
§ Using advanced search techniques
§ Sampling and iteration
§ Cooperating with adversaries
§ Metrics and evaluation: the vocabulary of the law
Source: The Sedona Conference Commentary on Achieving Quality in E-Discovery (2013)

The Legal Community’s Understanding of the TREC Legal Track

Faith in Analytics

Analytics in other legal contexts

The Surveillance Society

Algorithm Ethics

Jason R. Baron
Of Counsel, Information Governance & eDiscovery Group
Drinker Biddle LLP
202.230.5196
Jason.baron@dbr.com
Twitter: @jasonrbaron
