Ben Cousins and Santosh Vempala The Volume Problem

The Volume Problem

Volume: first attempt
- Divide and conquer:
- Difficulty: the number of parts grows exponentially in n.
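The blow-up can be made concrete with a minimal sketch. The function name and the choice of the unit ball as the test body are illustrative assumptions, not taken from the slides. The code cuts the cube [-1, 1]^n into k^n cells and counts the cells whose centre lies in the ball; the cell count is the quantity that grows exponentially in n.

```python
import itertools


def grid_volume_unit_ball(n, k):
    """Estimate vol(unit ball in R^n) from a k x ... x k grid on [-1, 1]^n.

    Returns (volume estimate, number of cells examined).
    """
    h = 2.0 / k  # side length of one cell
    centres = [-1.0 + (i + 0.5) * h for i in range(k)]
    inside = 0
    # Enumerating every cell is the expensive part: there are k**n of them.
    for point in itertools.product(centres, repeat=n):
        if sum(c * c for c in point) <= 1.0:
            inside += 1
    return inside * h ** n, k ** n
```

With k = 10 cells per axis, n = 2 needs 100 cells and n = 6 needs a million; n = 20 would need 10^20, so the enumeration is only feasible in very low dimension.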

More generally: Integration

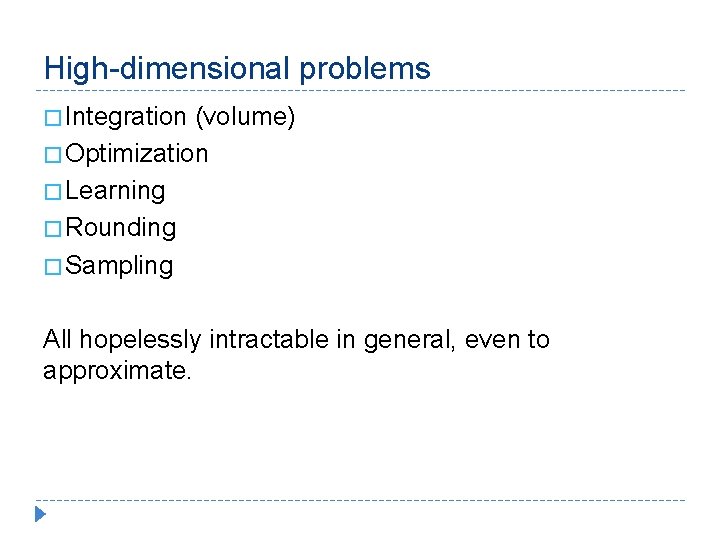

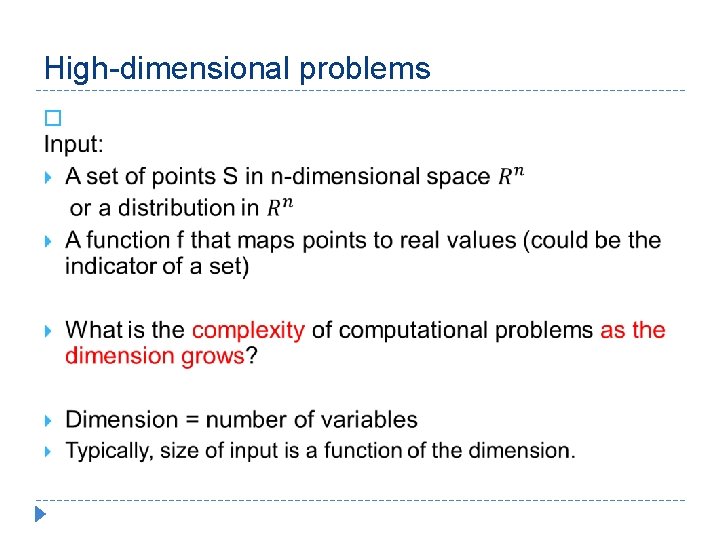

High-dimensional problems
- Integration (volume)
- Optimization
- Learning
- Rounding
- Sampling

All hopelessly intractable in general, even to approximate.

High-dimensional problems

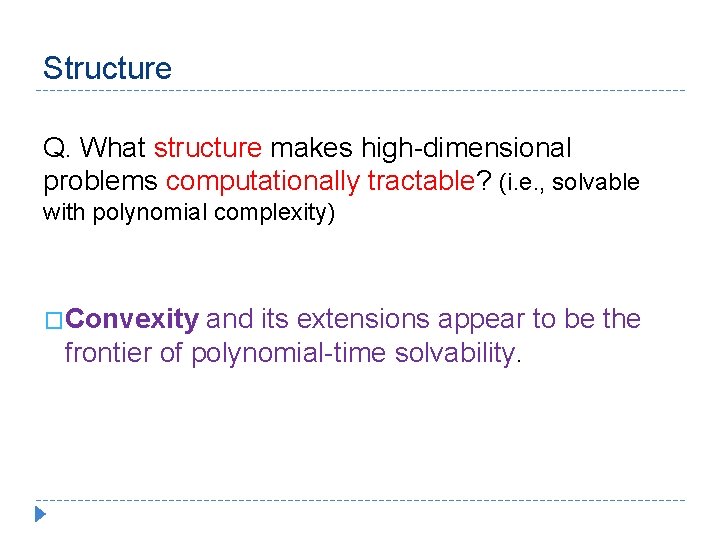

Structure

Q. What structure makes high-dimensional problems computationally tractable (i.e., solvable with polynomial complexity)?

- Convexity and its extensions appear to be the frontier of polynomial-time solvability.

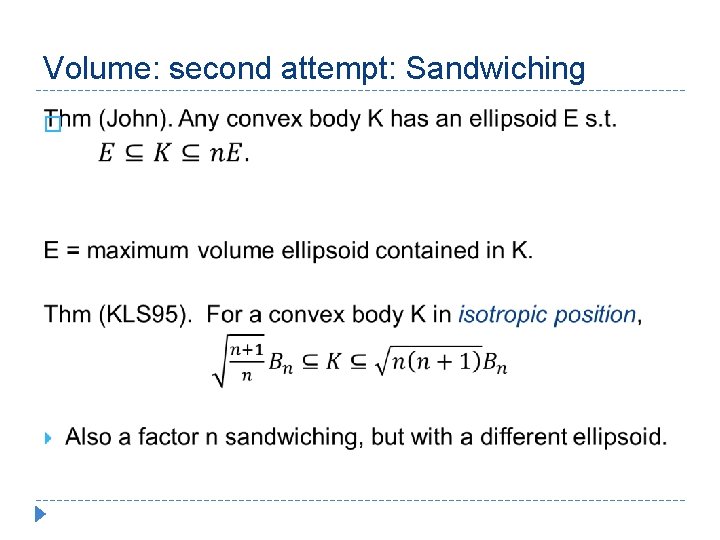

Volume: second attempt: Sandwiching �

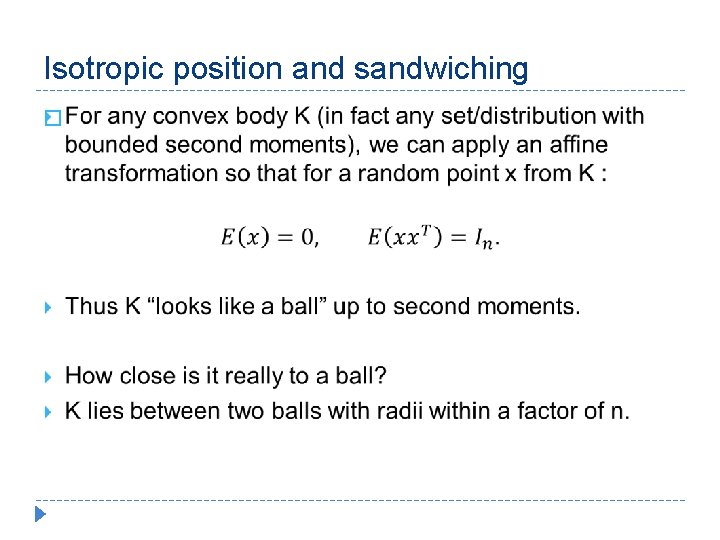

Isotropic position and sandwiching �

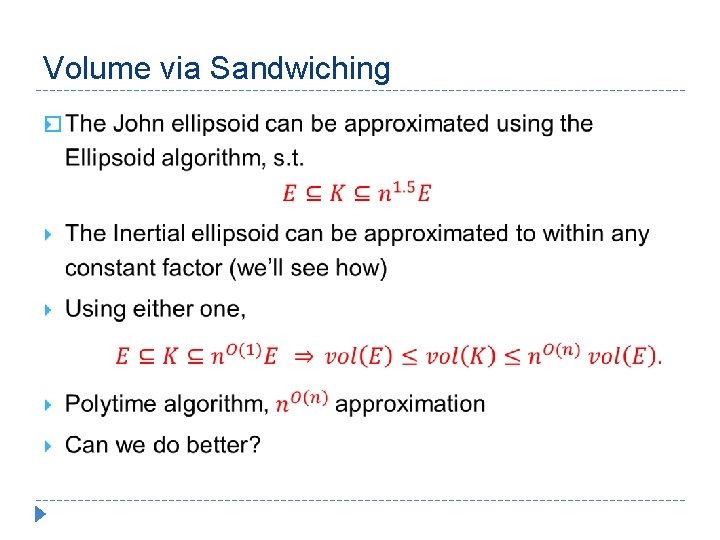

Volume via Sandwiching �

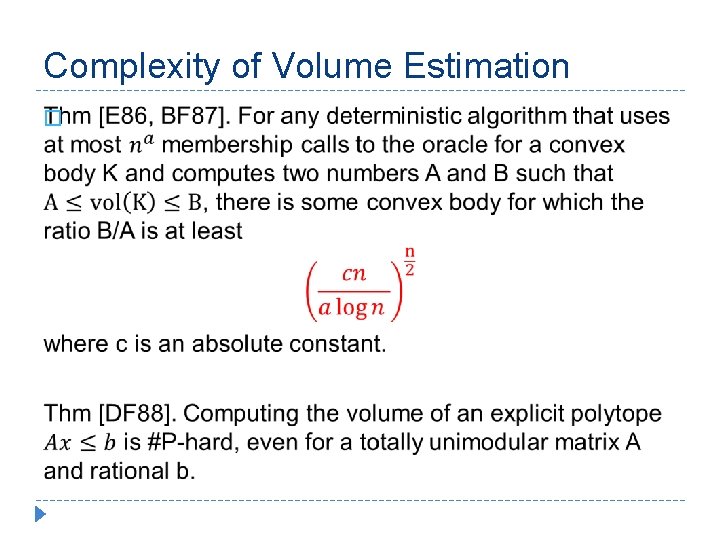

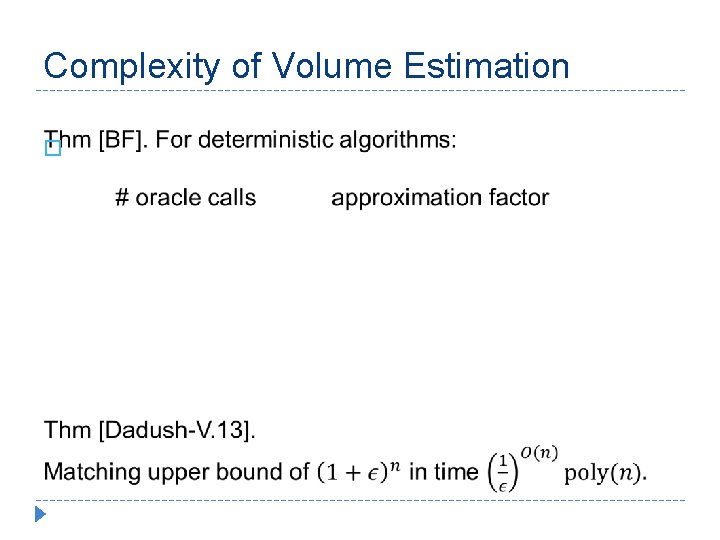

Complexity of Volume Estimation

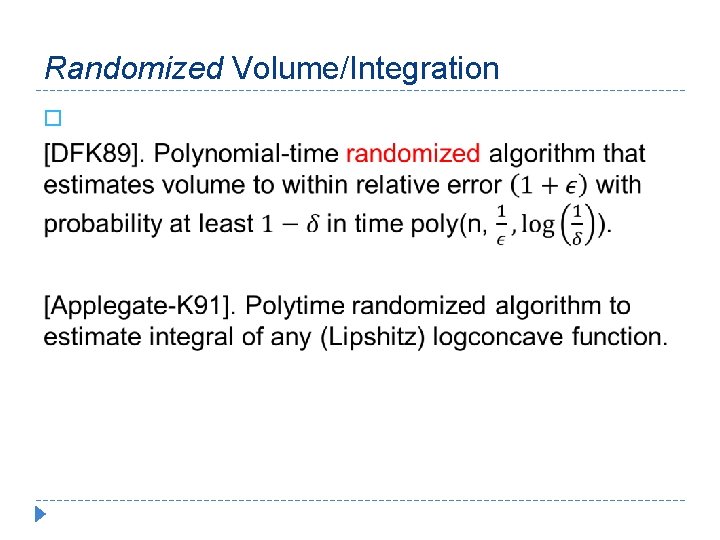

Randomized Volume/Integration

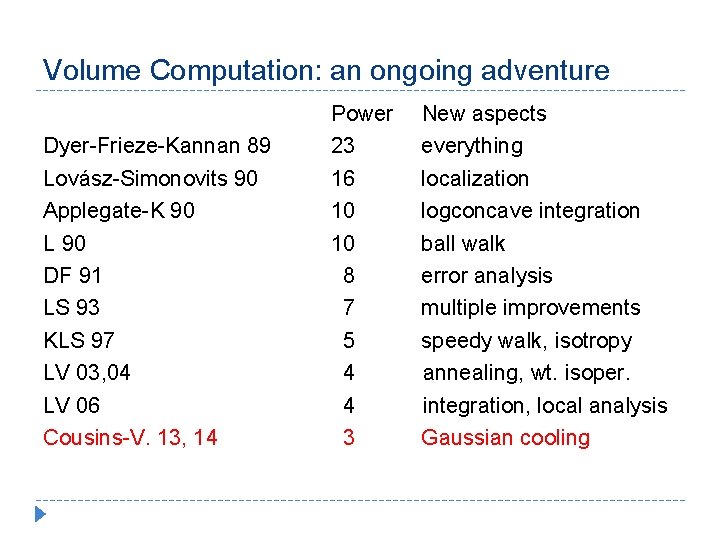

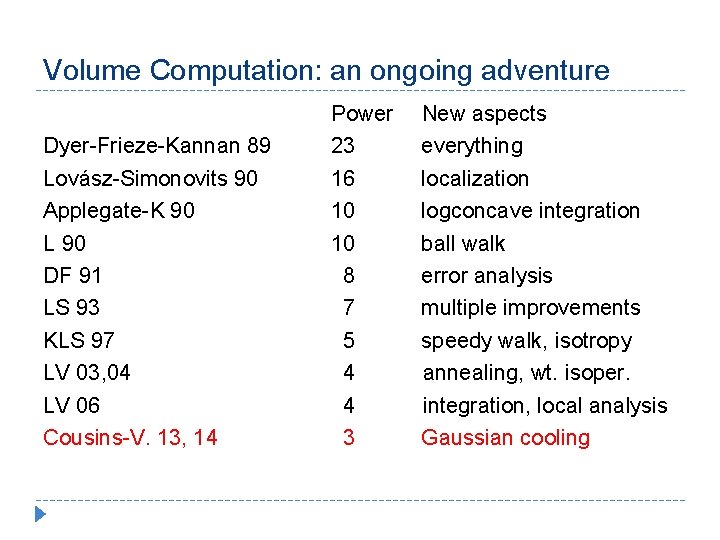

Volume Computation: an ongoing adventure

| Paper | Power (exponent of n) | New aspects |
|---|---|---|
| Dyer-Frieze-Kannan 89 | 23 | everything |
| Lovász-Simonovits 90 | 16 | localization |
| Applegate-K 90 | 10 | logconcave integration |
| L 90 | 10 | ball walk |
| DF 91 | 8 | error analysis |
| LS 93 | 7 | multiple improvements |
| KLS 97 | 5 | speedy walk, isotropy |
| LV 03, 04 | 4 | annealing, wt. isoper. |
| LV 06 | 4 | integration, local analysis |
| Cousins-V. 13, 14 | 3 | Gaussian cooling |

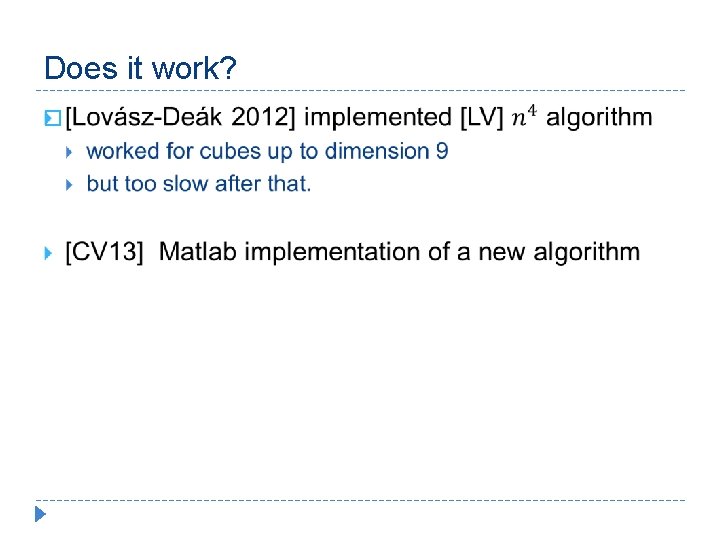

Does it work?

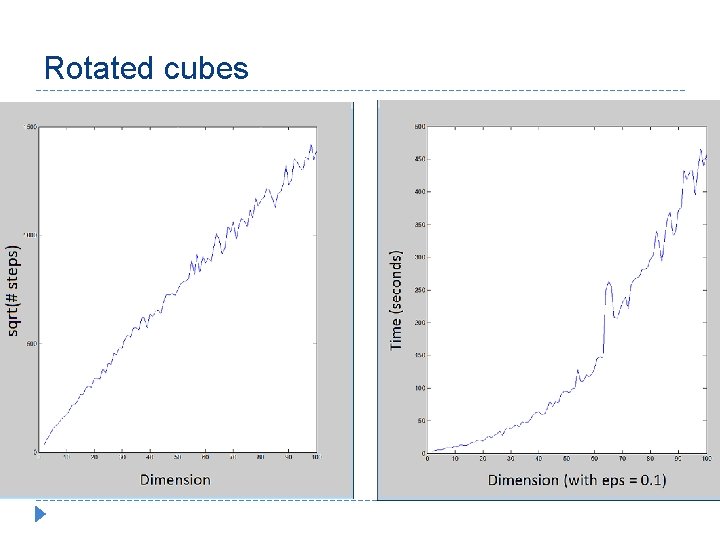

Rotated cubes

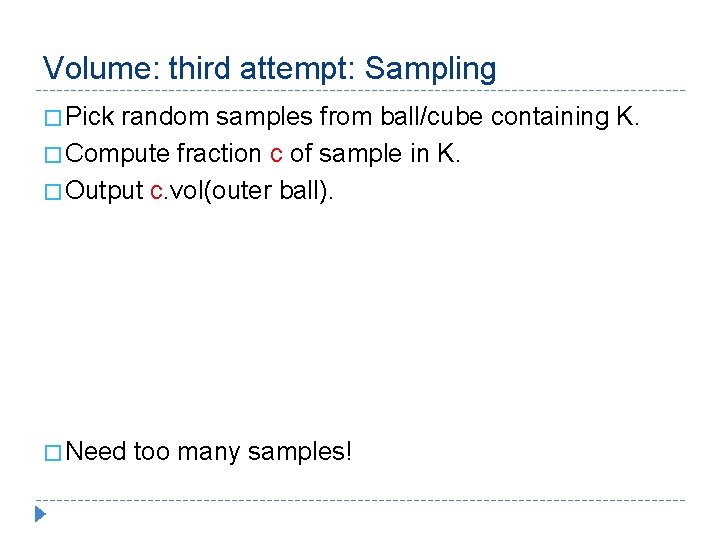

Volume: third attempt: Sampling
- Pick random samples from a ball/cube containing K.
- Compute the fraction c of samples in K.
- Output c · vol(outer ball).
- Need too many samples!
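The sampling attempt and its failure mode can be sketched as follows. This is a minimal illustration under stated assumptions: K is taken to be the unit ball and the enclosing body the cube [-1, 1]^n (so the output is c · vol(cube)), and both function names are hypothetical. The exact hit fraction shows why the number of samples needed explodes with n.

```python
import math
import random


def ball_fraction_exact(n):
    """Exact vol(unit ball) / vol([-1, 1]^n): the chance that a cube sample lands in the ball."""
    return math.pi ** (n / 2.0) / math.gamma(n / 2.0 + 1.0) / 2.0 ** n


def estimate_ball_volume(n, samples, seed=0):
    """Naive estimator: fraction of uniform cube samples inside the ball, times vol(cube)."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(samples):
        if sum(rng.uniform(-1.0, 1.0) ** 2 for _ in range(n)) <= 1.0:
            hits += 1
    c = hits / samples
    return c * 2.0 ** n
```

In the plane the hit fraction is π/4, so a few thousand samples suffice. In dimension 20 the fraction is below 10^-7, so tens of millions of samples are needed before a single one is expected to land in K.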

![Volume via Sampling [DFK 89] � Volume via Sampling [DFK 89] �](http://slidetodoc.com/presentation_image_h/14e76d0ef1d4c34f85f693e88e76a56c/image-18.jpg)

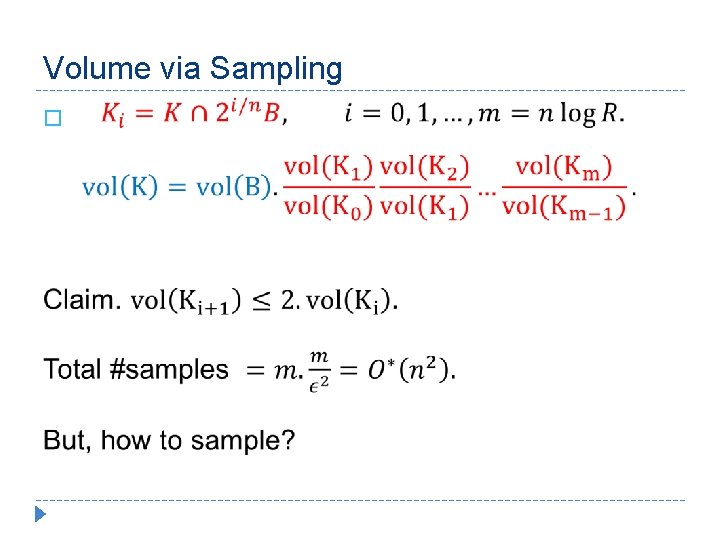

Volume via Sampling [DFK 89] �

Volume via Sampling �

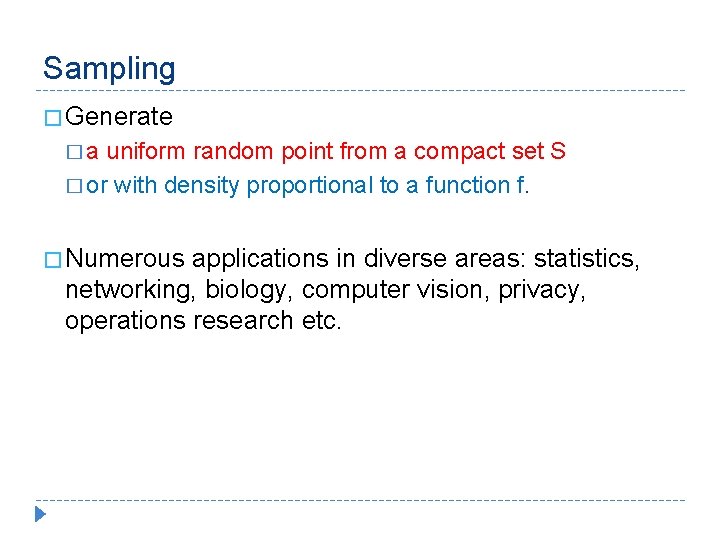

Sampling
- Generate
  - a uniform random point from a compact set S
  - or with density proportional to a function f.
- Numerous applications in diverse areas: statistics, networking, biology, computer vision, privacy, operations research, etc.
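One standard way to approach both variants of this task, given only a membership test for S and the ability to evaluate f, is a ball walk with a Metropolis filter. The sketch below is an illustration, not the authors' implementation; the function name, the step radius `delta`, and the callables `f` and `in_set` are hypothetical, and the starting point is assumed to satisfy f(x0) > 0.

```python
import math
import random


def metropolis_ball_walk(f, in_set, x0, steps, delta, rng):
    """Run `steps` moves of a ball walk whose stationary density is proportional to f on S."""
    x = list(x0)
    fx = f(x)
    n = len(x)
    for _ in range(steps):
        # Propose a uniform point in the ball of radius delta around x.
        d = [rng.gauss(0.0, 1.0) for _ in range(n)]
        norm = math.sqrt(sum(v * v for v in d))
        r = delta * rng.random() ** (1.0 / n)
        y = [xi + r * di / norm for xi, di in zip(x, d)]
        if not in_set(y):
            continue  # proposals outside S are rejected; the walk stays put
        fy = f(y)
        # Metropolis filter: accept with probability min(1, f(y) / f(x)).
        if rng.random() * fx <= fy:
            x, fx = y, fy
    return x
```

Passing a constant f gives the plain ball walk for uniform sampling from S. How many steps are enough is exactly the mixing-time question, which the code does not answer.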

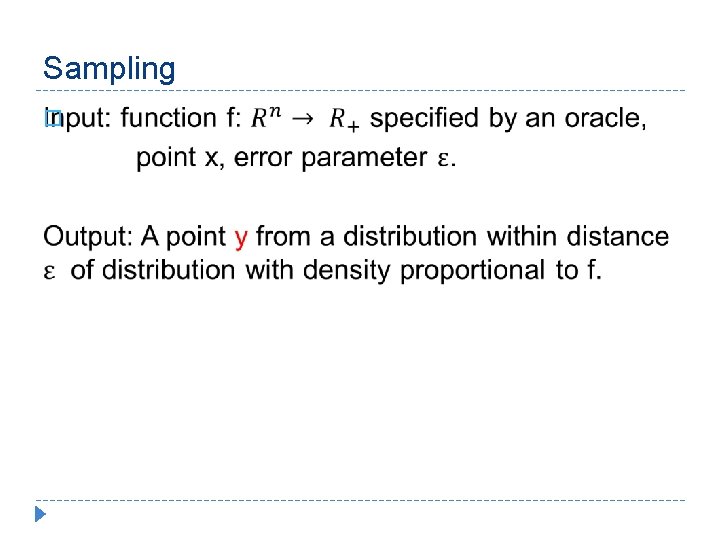

Sampling �

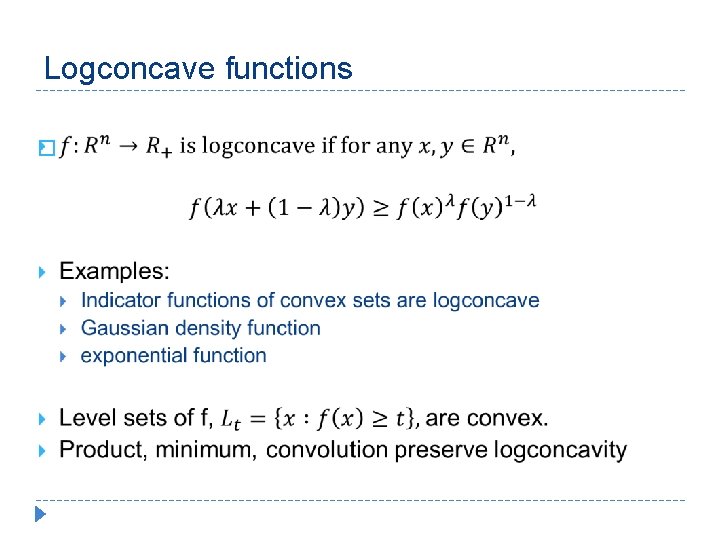

Logconcave functions �

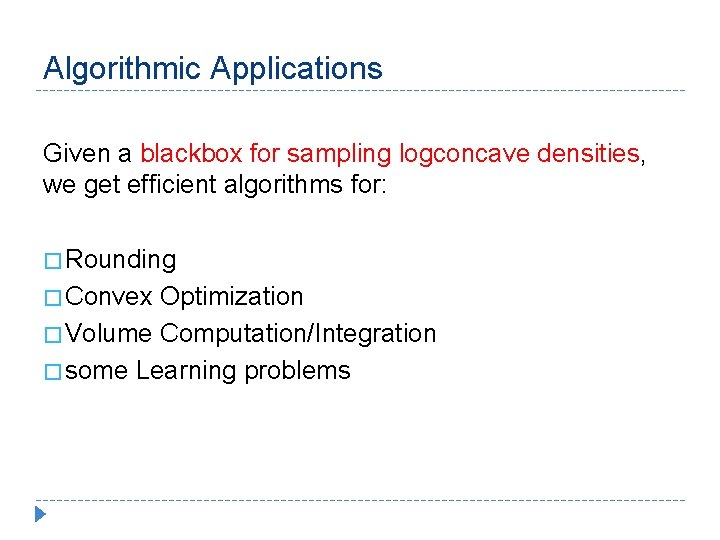

Algorithmic Applications

Given a blackbox for sampling logconcave densities, we get efficient algorithms for:
- Rounding
- Convex Optimization
- Volume Computation/Integration
- some Learning problems

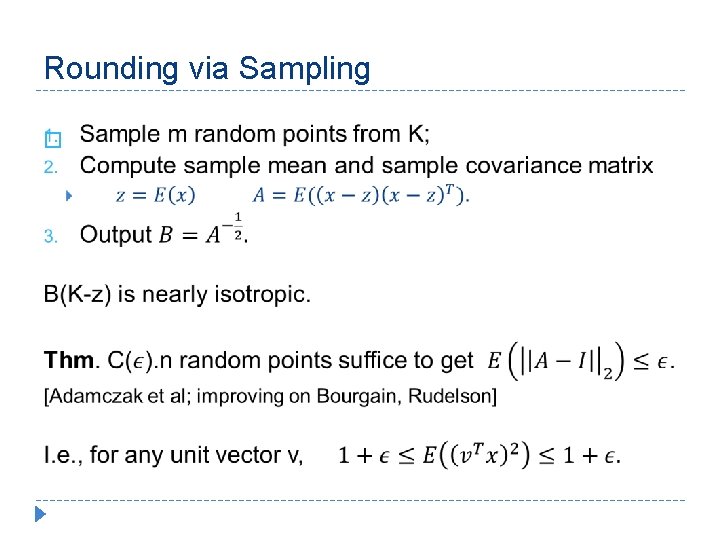

Rounding via Sampling �

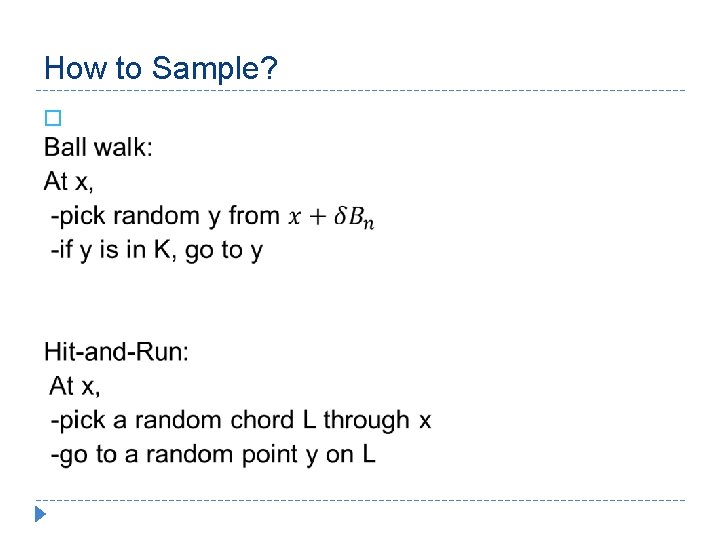

How to Sample? �

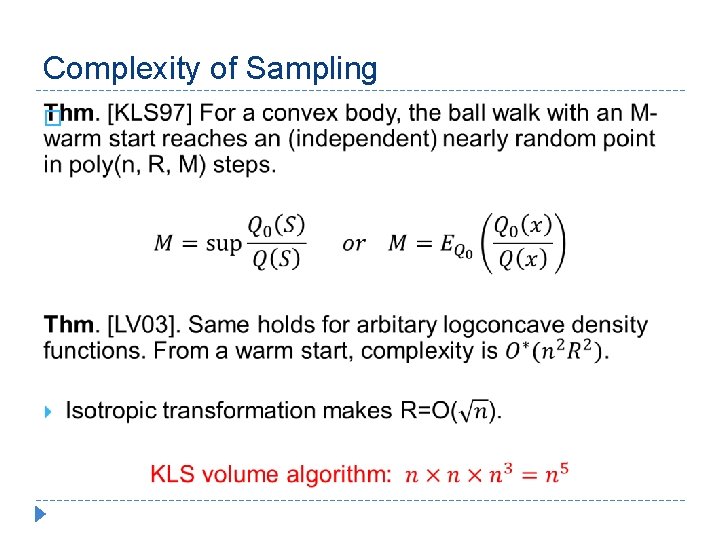

Complexity of Sampling �

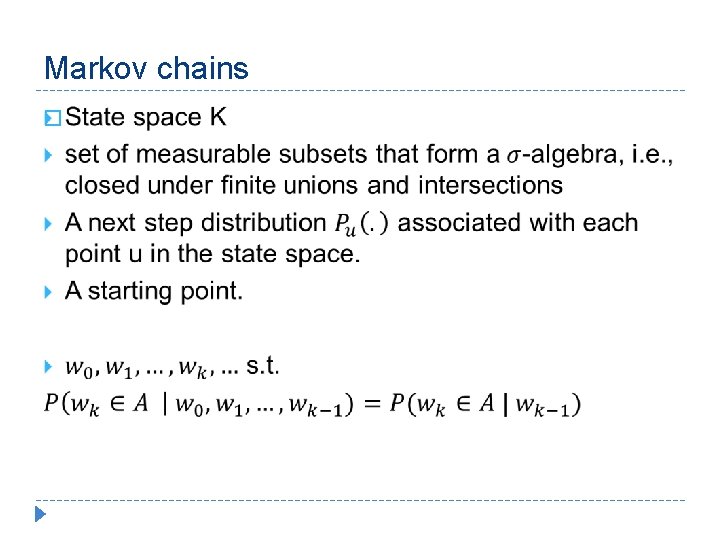

Markov chains �

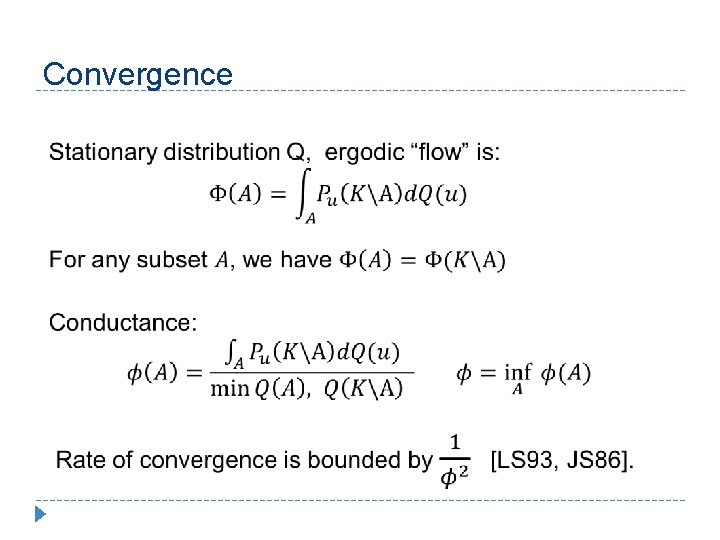

Convergence

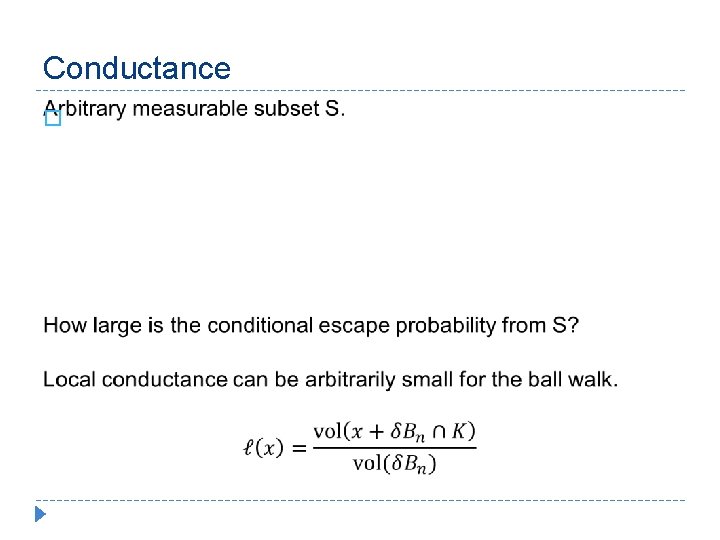

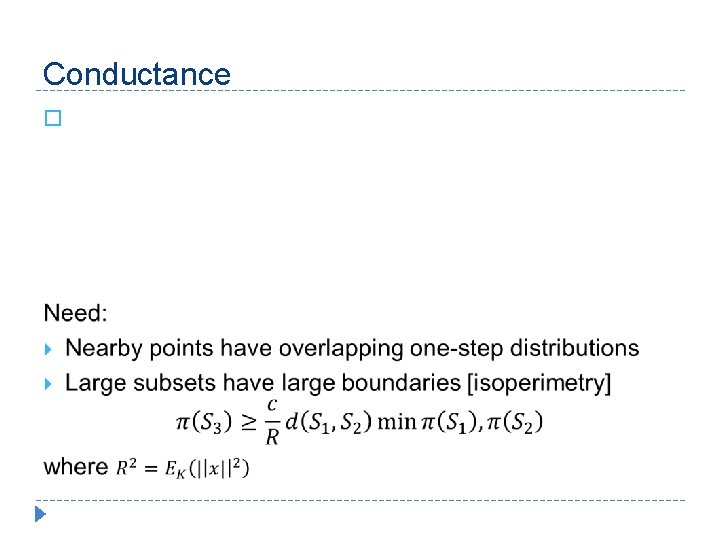

Conductance

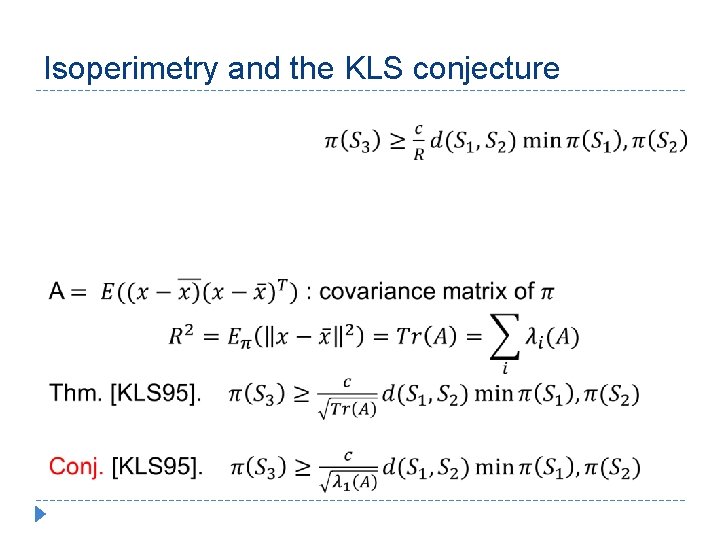

Isoperimetry and the KLS conjecture

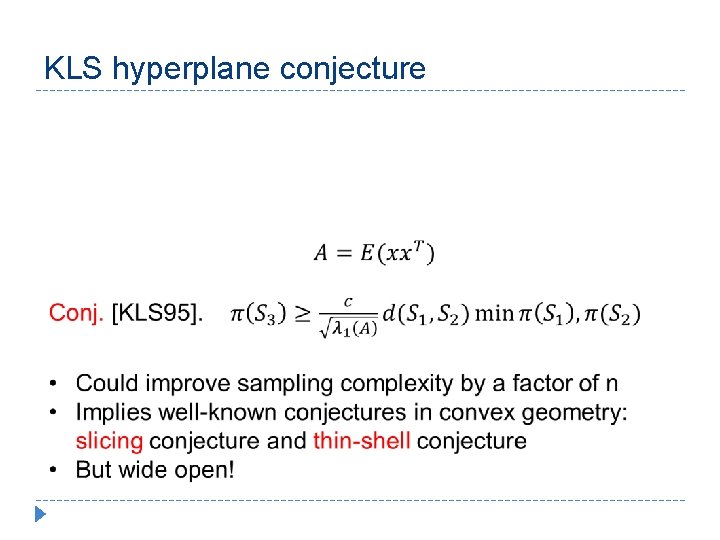

KLS hyperplane conjecture

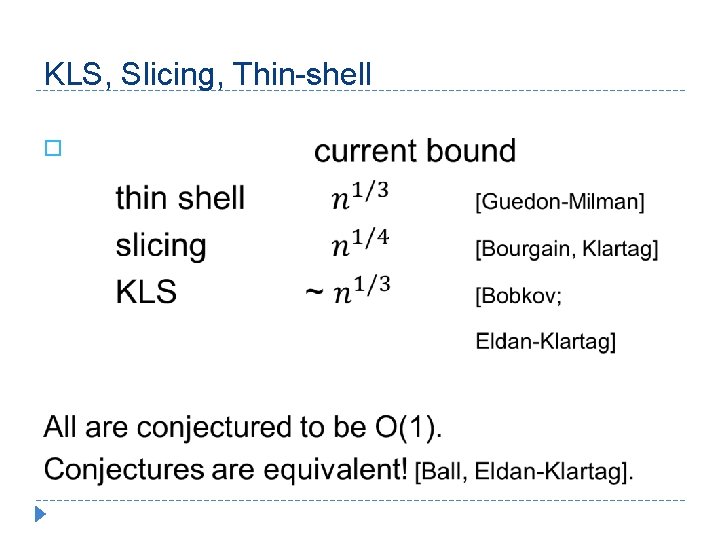

KLS, Slicing, Thin-shell

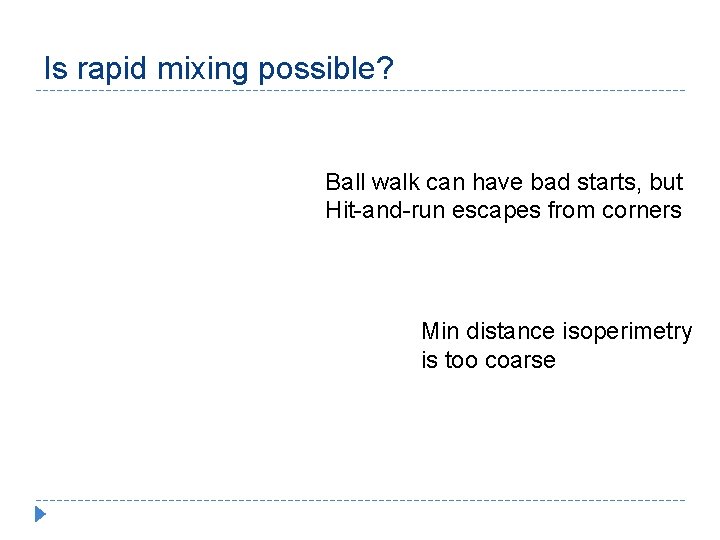

Is rapid mixing possible?
- Ball walk can have bad starts, but hit-and-run escapes from corners.
- Min distance isoperimetry is too coarse.
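A single hit-and-run step can be sketched for the special case of a bounded polytope {x : Ax ≤ b}, where the chord through the current point has a closed form. The polytope representation and the function name are assumptions made for illustration; the talk concerns general convex bodies accessed through an oracle.

```python
import math
import random


def hit_and_run_step(x, A, b, rng):
    """One hit-and-run move inside the bounded polytope {z : A z <= b}, starting from interior x."""
    n = len(x)
    # Pick a uniformly random direction on the unit sphere.
    d = [rng.gauss(0.0, 1.0) for _ in range(n)]
    norm = math.sqrt(sum(v * v for v in d))
    d = [v / norm for v in d]
    # Find the chord {x + t d : lo <= t <= hi} cut out by the constraints.
    lo, hi = -math.inf, math.inf
    for row, bi in zip(A, b):
        ad = sum(r * v for r, v in zip(row, d))
        slack = bi - sum(r * v for r, v in zip(row, x))
        if ad > 1e-12:
            hi = min(hi, slack / ad)
        elif ad < -1e-12:
            lo = max(lo, slack / ad)
    # Move to a uniformly random point on the chord.
    t = rng.uniform(lo, hi)
    return [xi + t * di for xi, di in zip(x, d)]
```

Because the next point is drawn from the whole chord rather than from a small ball, a start near a corner of the square [-1, 1]^2 such as (0.99, 0.99) can leave the corner in one step whenever the random line points into the interior.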

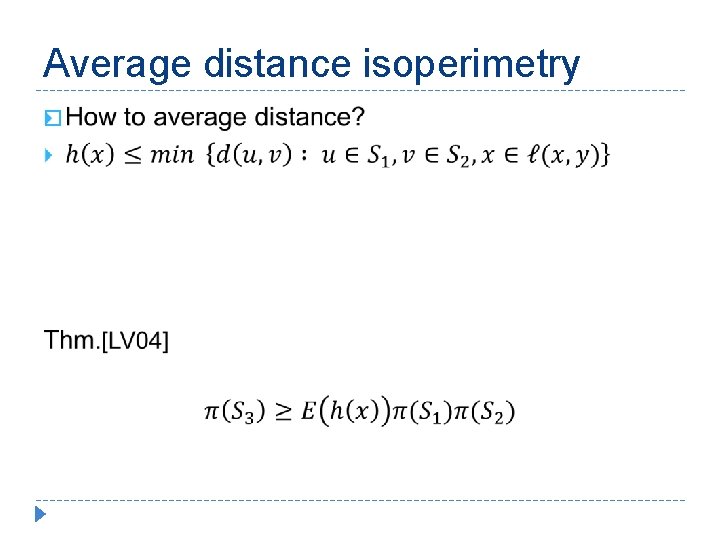

Average distance isoperimetry �

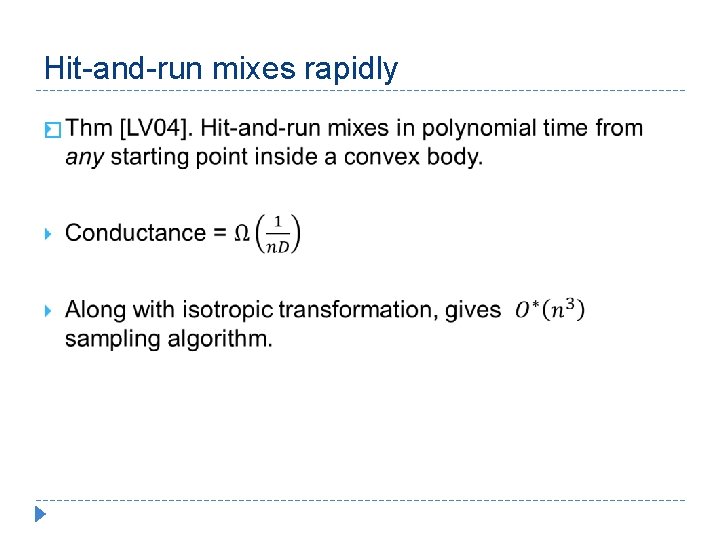

Hit-and-run mixes rapidly �

![Simulated Annealing [LV 03, Kalai-V. 04] � Simulated Annealing [LV 03, Kalai-V. 04] �](http://slidetodoc.com/presentation_image_h/14e76d0ef1d4c34f85f693e88e76a56c/image-37.jpg)

Simulated Annealing [LV 03, Kalai-V. 04] �

![Annealing [LV 06] � Annealing [LV 06] �](http://slidetodoc.com/presentation_image_h/14e76d0ef1d4c34f85f693e88e76a56c/image-38.jpg)

Annealing [LV 06] �

![Annealing [LV 03, 06] � Annealing [LV 03, 06] �](http://slidetodoc.com/presentation_image_h/14e76d0ef1d4c34f85f693e88e76a56c/image-39.jpg)

Annealing [LV 03, 06] �

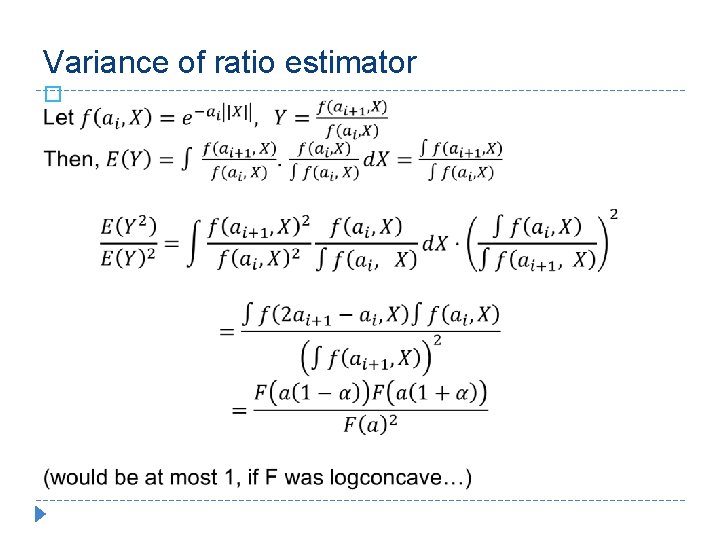

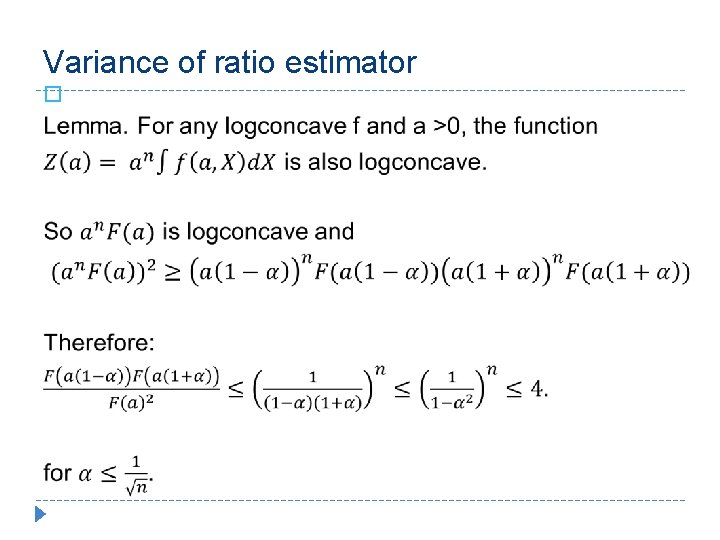

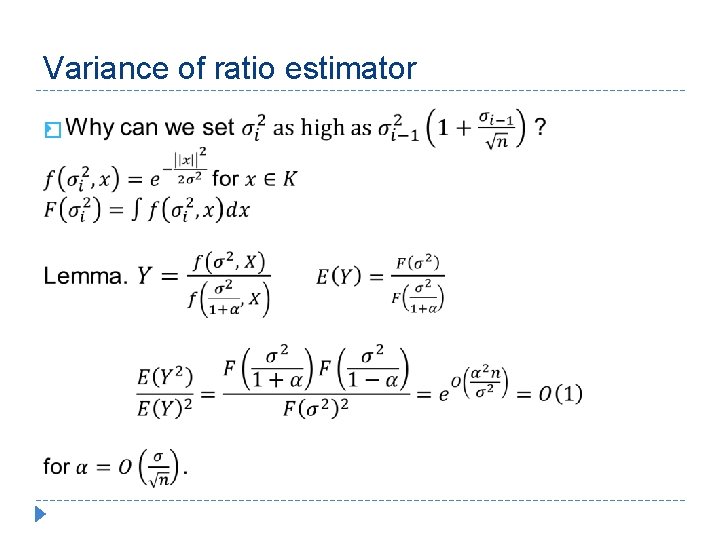

Variance of ratio estimator

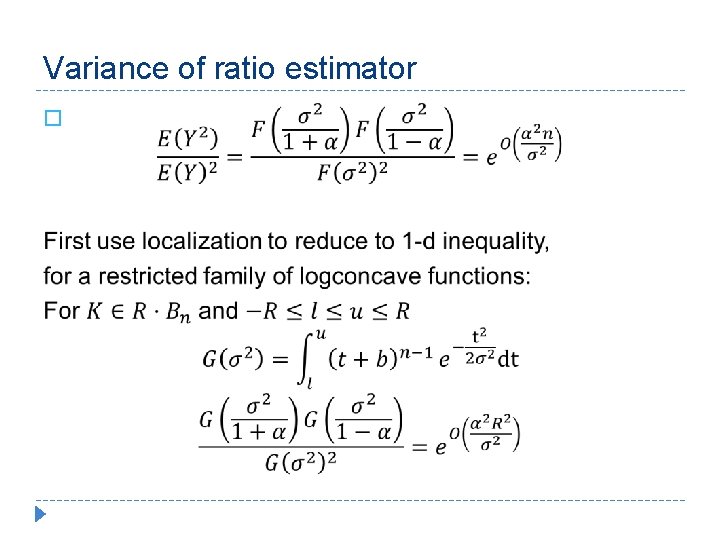

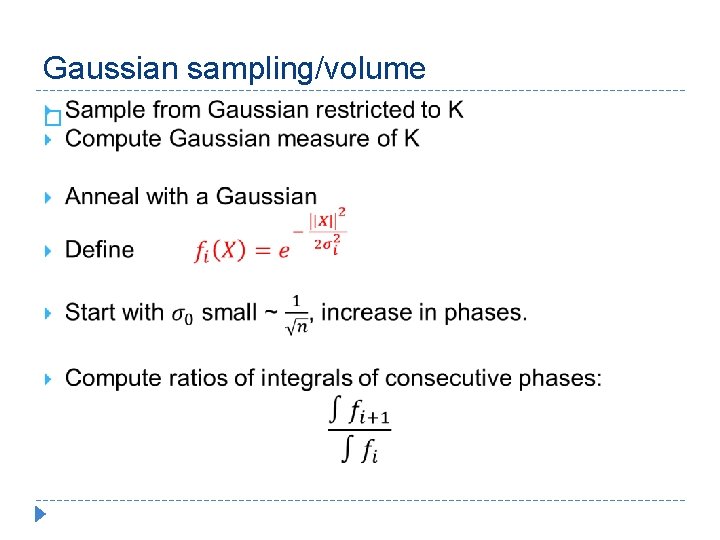

Gaussian sampling/volume �

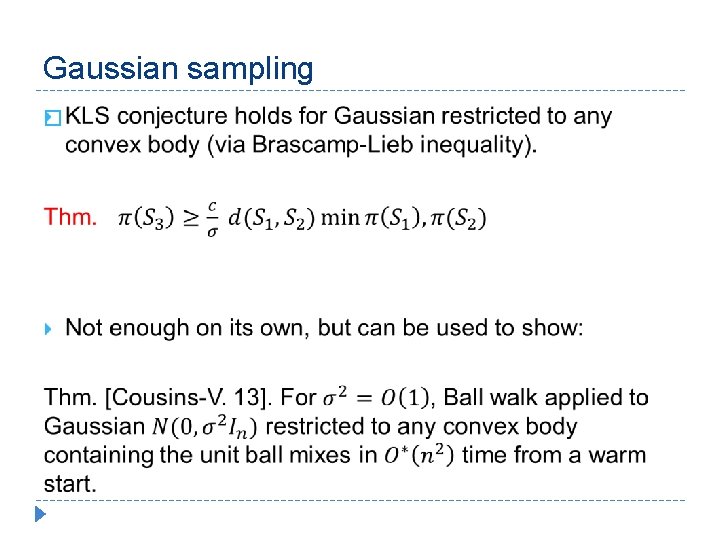

Gaussian sampling �

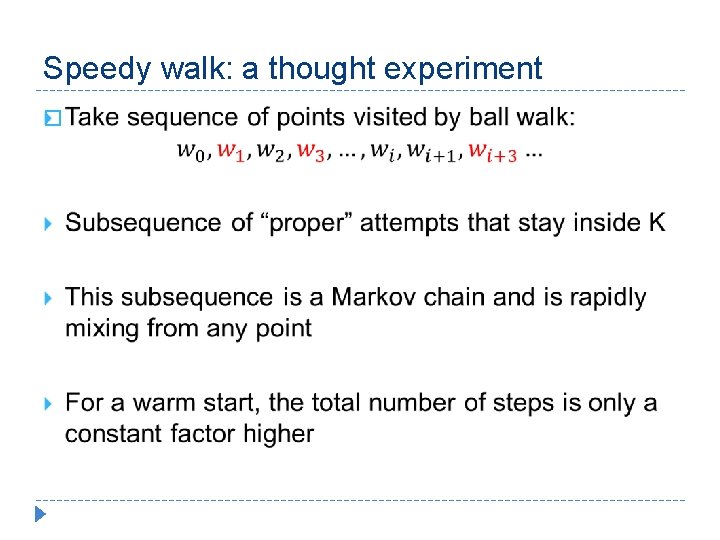

Speedy walk: a thought experiment �

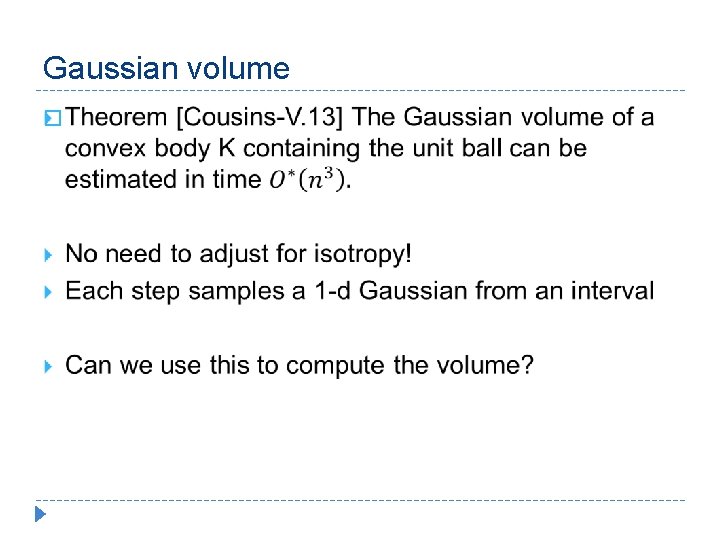

Gaussian volume �

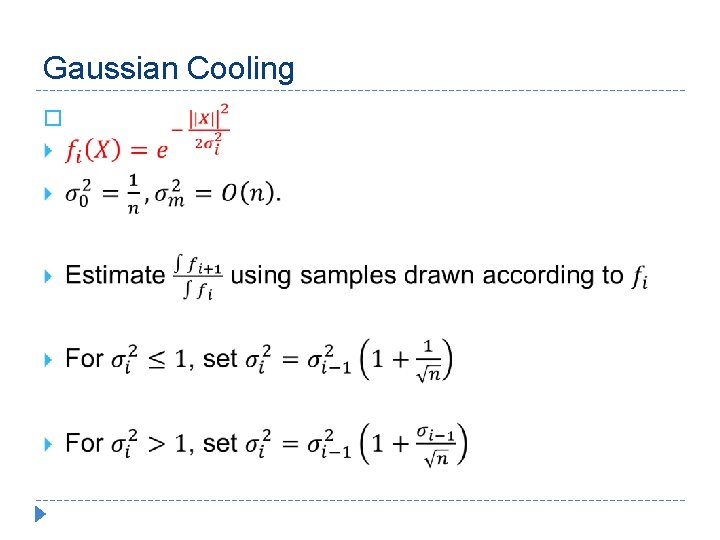

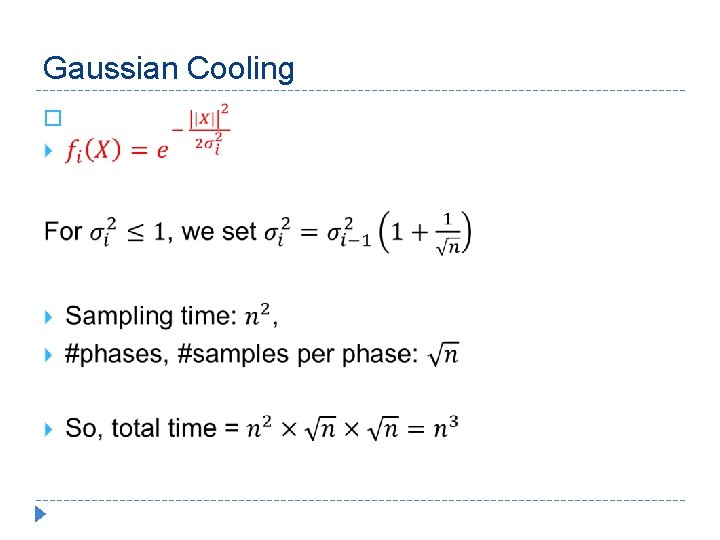

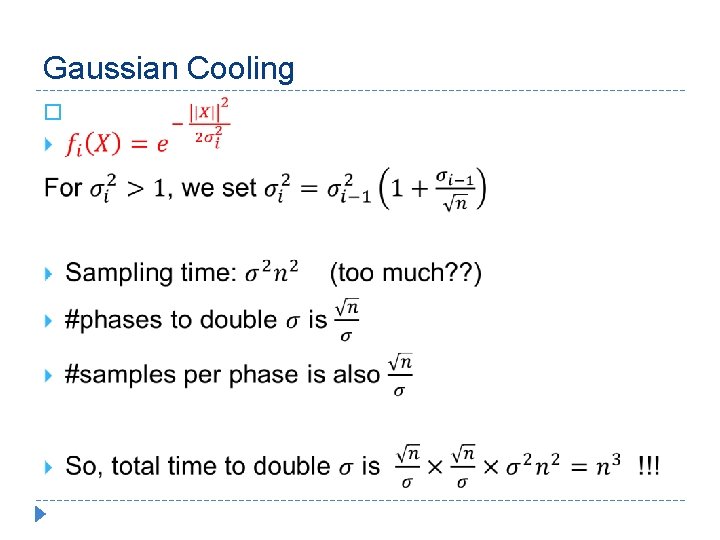

Gaussian Cooling

Variance of ratio estimator

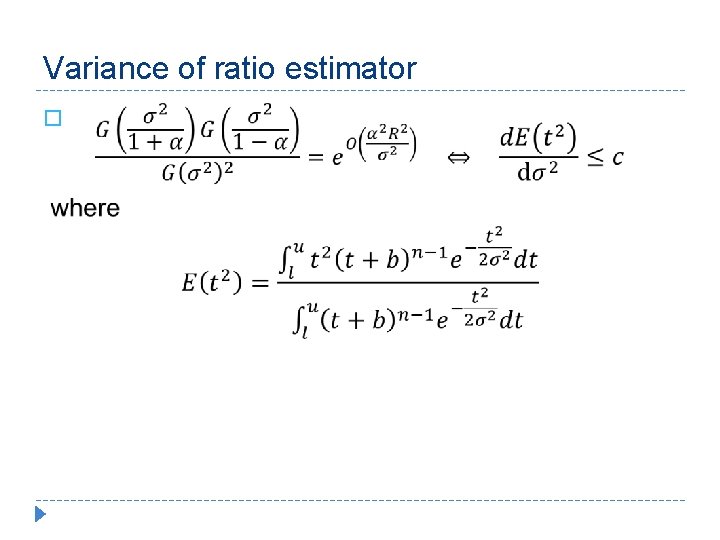

Variance of ratio estimator �

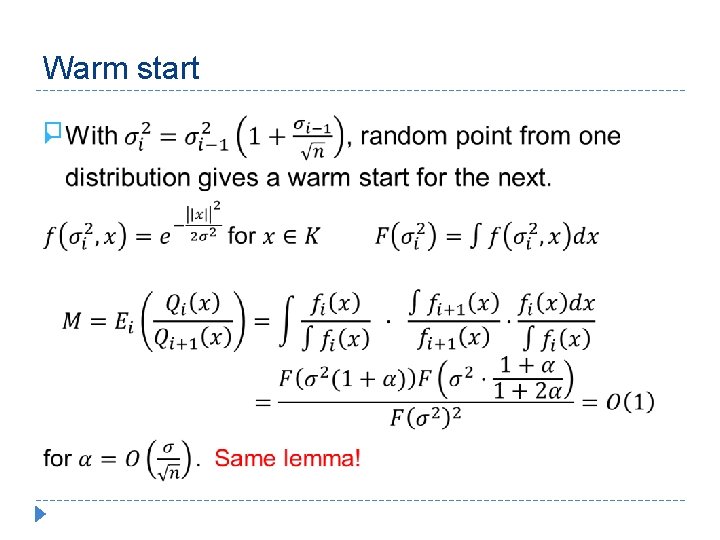

Warm start �

![Gaussian Cooling [CV 14] � Gaussian Cooling [CV 14] �](http://slidetodoc.com/presentation_image_h/14e76d0ef1d4c34f85f693e88e76a56c/image-54.jpg)

Gaussian Cooling [CV 14] �

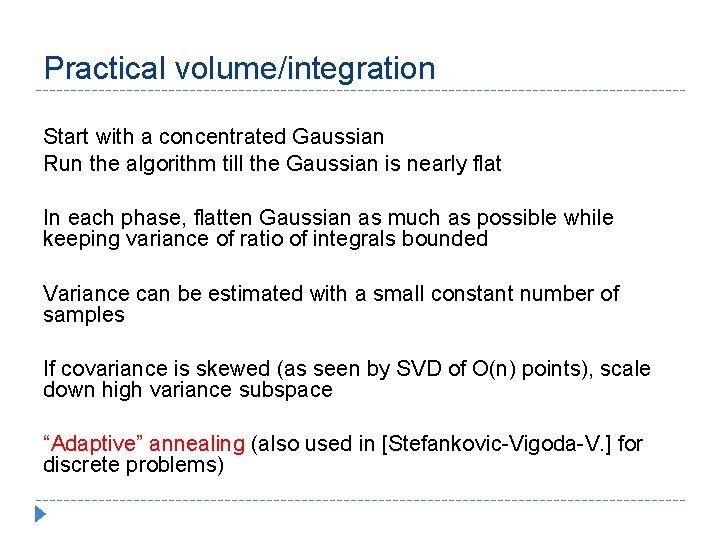

Practical volume/integration
- Start with a concentrated Gaussian.
- Run the algorithm until the Gaussian is nearly flat.
- In each phase, flatten the Gaussian as much as possible while keeping the variance of the ratio of integrals bounded.
- The variance can be estimated with a small constant number of samples.
- If the covariance is skewed (as seen by an SVD of O(n) points), scale down the high-variance subspace.
- “Adaptive” annealing (also used in [Stefankovic-Vigoda-V.] for discrete problems).
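The phase structure described here can be illustrated with a toy sketch. It makes several simplifying assumptions that are not from the slides: the body is the cube [-1, 1]^n, so each Gaussian restricted to it can be sampled exactly by per-coordinate rejection instead of by a random walk; the variance grows by a fixed factor `growth` per phase rather than adaptively; and the function names and default parameters are hypothetical. The volume is recovered as the integral of the first, concentrated Gaussian times a telescoping product of estimated ratios of integrals.

```python
import math
import random


def sample_truncated_gaussian(sigma, rng):
    """One coordinate of N(0, sigma^2) conditioned on [-1, 1], by rejection."""
    while True:
        x = rng.gauss(0.0, sigma)
        if -1.0 <= x <= 1.0:
            return x


def cube_volume_by_cooling(n, sigma0=0.2, growth=1.3, samples=3000, seed=0):
    """Estimate vol([-1, 1]^n) by flattening a Gaussian phase by phase."""
    rng = random.Random(seed)
    var = sigma0 ** 2
    # Phase 0: the Gaussian is so concentrated that its integral over the cube
    # is essentially its integral over all of R^n.
    estimate = (2.0 * math.pi * var) ** (n / 2.0)
    while True:
        last = var >= n  # flat enough: the final ratio goes to the uniform density
        next_var = var * growth
        sigma = math.sqrt(var)
        total = 0.0
        for _ in range(samples):
            sq = sum(sample_truncated_gaussian(sigma, rng) ** 2 for _ in range(n))
            if last:
                total += math.exp(sq / (2.0 * var))  # 1 / f_i(x)
            else:
                total += math.exp(sq / (2.0 * var) - sq / (2.0 * next_var))  # f_{i+1}(x) / f_i(x)
        estimate *= total / samples  # estimated ratio of consecutive integrals
        if last:
            return estimate
        var = next_var
```

For n = 3 the estimate should land near the true volume 8. A larger `growth` means fewer phases but a higher-variance ratio estimator in each one, which is the trade-off the adaptive schedule is meant to manage.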

Open questions
- How true is the KLS conjecture?
- When to stop a random walk? (How to decide if the current point is “random”?)
- Faster isotropy/rounding?
- How to get information before reaching stationarity?
- To make isotropic: run for N steps; transform using covariance; repeat.
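The "transform using covariance" step can be sketched as follows, assuming a batch of sample points is already available (in the procedure above they would come from N steps of a random walk, which this sketch does not perform). The function names are hypothetical, and the Cholesky factor is one of several valid choices of whitening map.

```python
import math


def mean_and_cov(points):
    """Sample mean and (biased) sample covariance of a list of points."""
    m, n = len(points), len(points[0])
    mu = [sum(p[j] for p in points) / m for j in range(n)]
    cov = [[sum((p[i] - mu[i]) * (p[j] - mu[j]) for p in points) / m
            for j in range(n)] for i in range(n)]
    return mu, cov


def cholesky(S):
    """Lower-triangular L with L L^T = S, for symmetric positive definite S."""
    n = len(S)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = S[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L


def to_isotropic(points):
    """Map points to y = L^{-1} (x - mean), so the sample covariance becomes the identity."""
    mu, cov = mean_and_cov(points)
    L = cholesky(cov)
    n = len(mu)
    out = []
    for p in points:
        y = []
        for i in range(n):  # forward substitution solves L y = p - mu
            y.append((p[i] - mu[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
        out.append(y)
    return out
```

Applying the same affine map to the body and repeating with fresh samples gives the iterative rounding loop; how few samples and steps suffice is the open question raised here.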

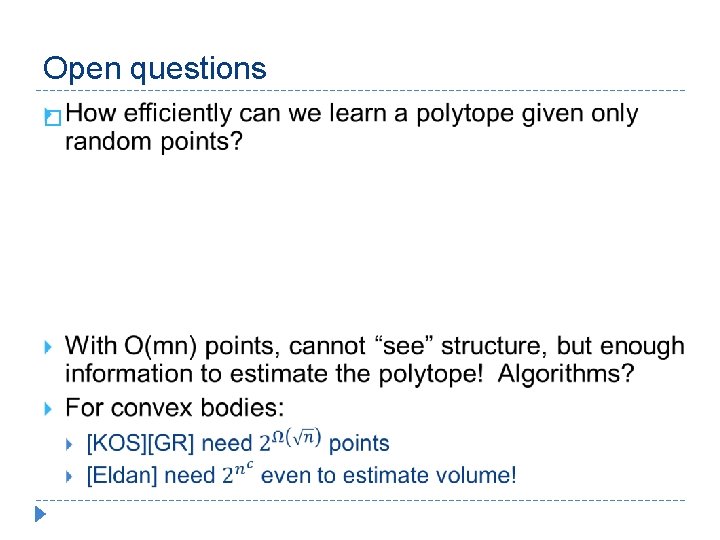

