Alphabet Soup of Deep Learning Topics Mark Stamp


Topics Covered

- Discuss the following in detail
  - Convolutional Neural Network (CNN)
  - Recurrent Neural Network (RNN)
    - Including LSTM and GRU
  - Residual Network (ResNet)
  - Generative Adversarial Network (GAN)
  - Word2Vec
- And briefly consider other things
  - Transfer learning, ensembles, vanishing & exploding gradient, TF-IDF, and more!

CNN

Convolutional Neural Network

- Typical ANN has fully connected layers
  - Good, since it can deal with interaction between any elements in feature vectors
  - Bad, due to large number of parameters
- For images, local structure dominates
- CNNs designed for images
  - Effective and efficient with respect to local structure
- CNNs also useful for speech and text analysis, among other applications

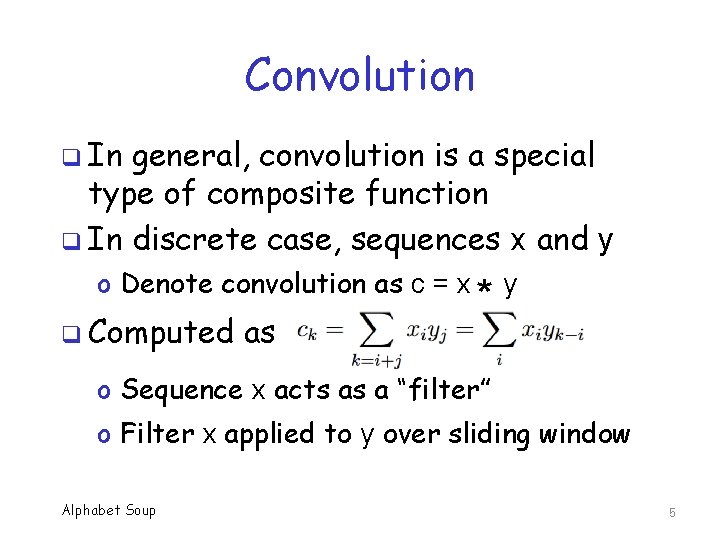

Convolution

- In general, convolution is a special type of composite function
- In discrete case, sequences x and y
  - Denote convolution as c = x * y
- Computed as [equation in slide image]
  - Sequence x acts as a “filter”
  - Filter x applied to y over sliding window

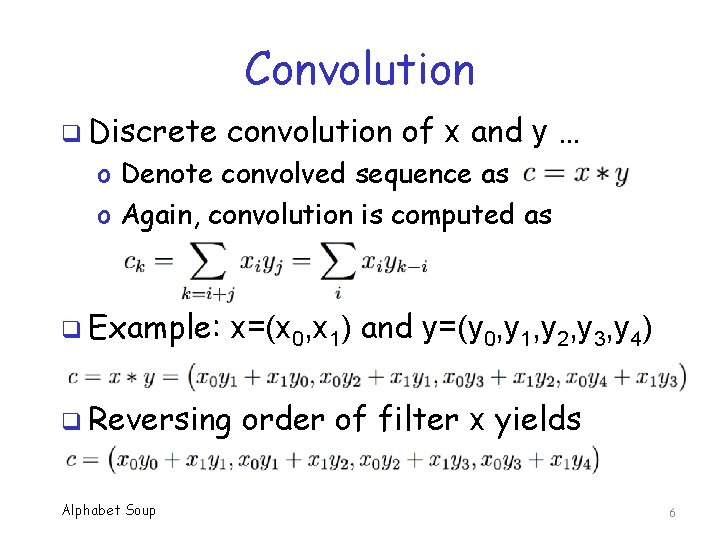

Convolution

- Discrete convolution of x and y…
  - Denote convolved sequence as [equation in slide image]
  - Again, convolution is computed as [equation in slide image]
- Example: x = (x_0, x_1) and y = (y_0, y_1, y_2, y_3, y_4)
- Reversing order of filter x yields [equation in slide image]

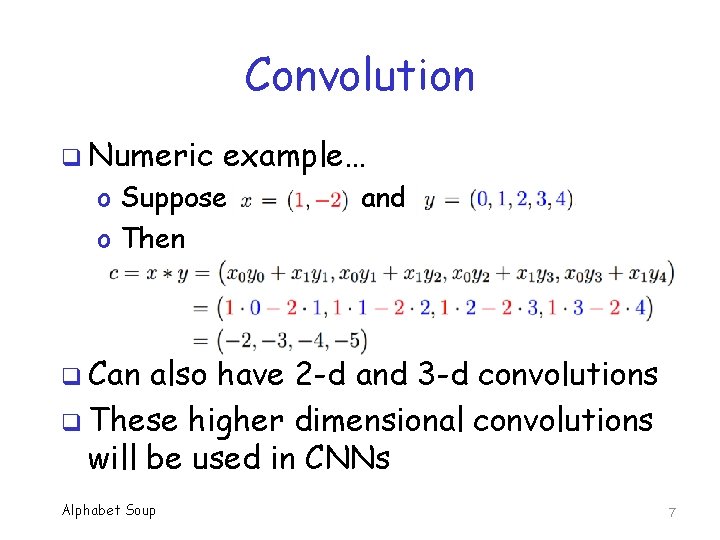

Convolution

- Numeric example…
  - Suppose [values in slide image]
  - Then [result in slide image]
- Can also have 2-d and 3-d convolutions
- These higher-dimensional convolutions will be used in CNNs
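
The sliding-window description of discrete convolution can be sketched in a few lines of Python. This is a minimal sketch, assuming the standard textbook definition in which c_k is the sum of x_j y_(k-j) over j; the slide's own formula is in an image and may use a different index range. The function names and the numeric values are illustrative and are not taken from the slides.

```python
def convolve(x, y):
    """Full discrete convolution: c[k] is the sum of x[j] * y[k - j] over j."""
    c = [0] * (len(x) + len(y) - 1)
    for j, xj in enumerate(x):
        for i, yi in enumerate(y):
            c[i + j] += xj * yi
    return c


def sliding_window(x, y):
    """Slide the reversed filter over y, one dot product per window position."""
    reversed_x = x[::-1]
    n = len(x)
    return [sum(a * b for a, b in zip(reversed_x, y[k:k + n]))
            for k in range(len(y) - n + 1)]


x = [1, 2]             # hypothetical filter (x_0, x_1)
y = [1, 0, 3, 2, 1]    # hypothetical sequence (y_0, ..., y_4)
print(convolve(x, y))        # all terms, including partial overlaps at both ends
print(sliding_window(x, y))  # only windows where the filter fits entirely inside y
```

Sliding the reversed filter over y reproduces the interior terms of the full convolution, which is why the filter order is reversed in the sliding-window view.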

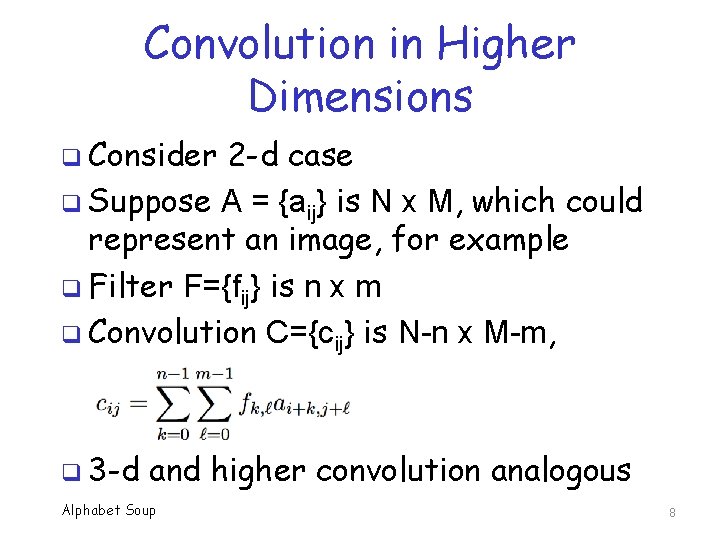

Convolution in Higher Dimensions

- Consider 2-d case
- Suppose A = {a_ij} is N x M, which could represent an image, for example
- Filter F = {f_ij} is n x m
- Convolution C = {c_ij} is (N-n+1) x (M-m+1), assuming no padding and a stride of 1
- 3-d and higher convolution analogous
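
A 2-d filter applied over a sliding window might look as follows. This is a sketch under stated assumptions: no padding, a stride of 1, and no flipping of the filter (CNN libraries typically apply the filter without flipping it, which is technically cross-correlation). With those assumptions an N x M input and an n x m filter give an (N-n+1) x (M-m+1) output. The function name `filter2d` and the example matrices are hypothetical.

```python
def filter2d(A, F):
    """Apply filter F to every n x m window of A (no padding, stride 1)."""
    N, M = len(A), len(A[0])
    n, m = len(F), len(F[0])
    return [[sum(F[a][b] * A[i + a][j + b] for a in range(n) for b in range(m))
             for j in range(M - m + 1)]
            for i in range(N - n + 1)]


# Hypothetical 4 x 4 "image" with a diagonal line, and a 3 x 3 diagonal filter
A = [[1, 0, 0, 0],
     [0, 1, 0, 0],
     [0, 0, 1, 0],
     [0, 0, 0, 1]]
F = [[1, 0, 0],
     [0, 1, 0],
     [0, 0, 1]]
C = filter2d(A, F)
print(C)   # largest values where the window lines up with the diagonal
```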

Filters

- We could simply define filters (convolutions) and then apply them to images
- However, in CNN, filters are learned!!!
- 1st convolutional layer filters are applied to image
  - Learn intuitive features, e.g., basic shapes, edges
- 2nd convolutional layer, filters applied to output of 1st layer, and so on
  - Learn more abstract features at each layer
  - Eventually, can distinguish “cat” from “dog”…
- Note: Final layer of CNN is fully connected

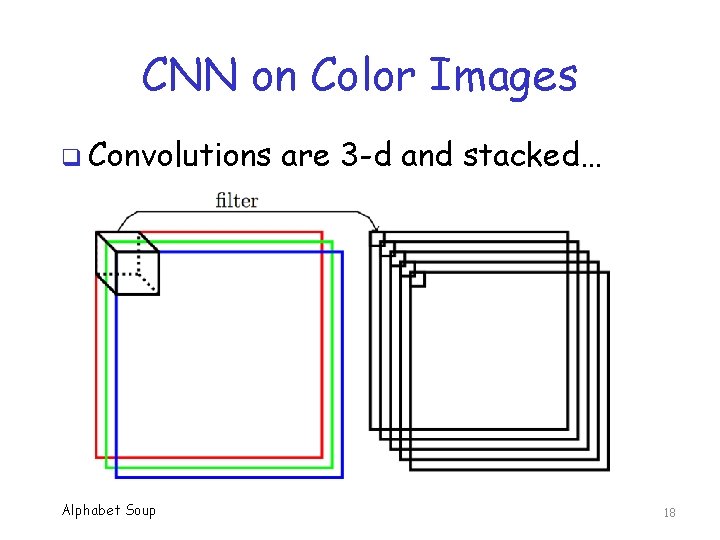

CNN Convolutions

- CNNs treat R, G, B as separate planes, and hence 1st convolutional layer is 3-d
- Typically, stack multiple filters at each layer
  - Each filter initialized at random
  - Each filter can learn different features
- Due to this stacking, 2nd and deeper layers are also 3-d convolutions

Pooling Layers

- In addition to convolutional layers, CNNs often include “pooling” layers
- Pooling uses a fixed, simple filter
  - Not learned, mostly to reduce size
  - Max pooling is most popular: select max value within a specified window size
- Pooling seems to be losing favor
  - Today, common to have no pooling layers
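
Max pooling is simple enough to sketch directly. The version below assumes non-overlapping windows (stride equal to the window size) and ignores any leftover rows or columns that do not fill a whole window; both choices are assumptions, and the function name and example values are hypothetical.

```python
def max_pool(A, size=2):
    """Keep the maximum of each non-overlapping size x size window of A."""
    rows, cols = len(A), len(A[0])
    return [[max(A[i + a][j + b] for a in range(size) for b in range(size))
             for j in range(0, cols - size + 1, size)]
            for i in range(0, rows - size + 1, size)]


A = [[1, 3, 2, 0],
     [4, 2, 1, 1],
     [0, 0, 5, 6],
     [1, 2, 7, 8]]
print(max_pool(A))   # a 4 x 4 input shrinks to 2 x 2
```

Nothing here is learned: the only parameter is the window size, which is why pooling mostly serves to reduce the size of the data passed to the next layer.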

Why CNN for Images?

- Fully connected neural network with a neuron for each pixel is impractical
  - CNN filter applied over whole image, so number of weights greatly reduced
- Most structure in image is local
  - CNN filter deals with local structure
- CNN gives some translation invariance
  - Can be viewed as reducing overfitting that would otherwise occur in images

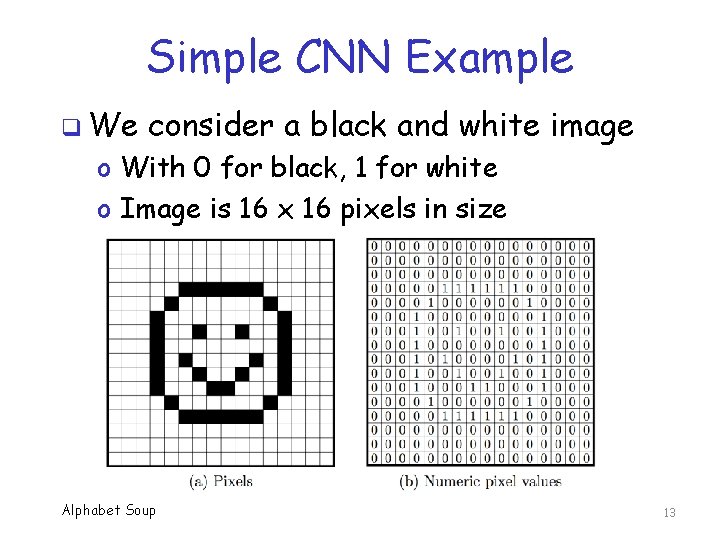

Simple CNN Example

- We consider a black and white image
  - With 0 for black, 1 for white
  - Image is 16 x 16 pixels in size

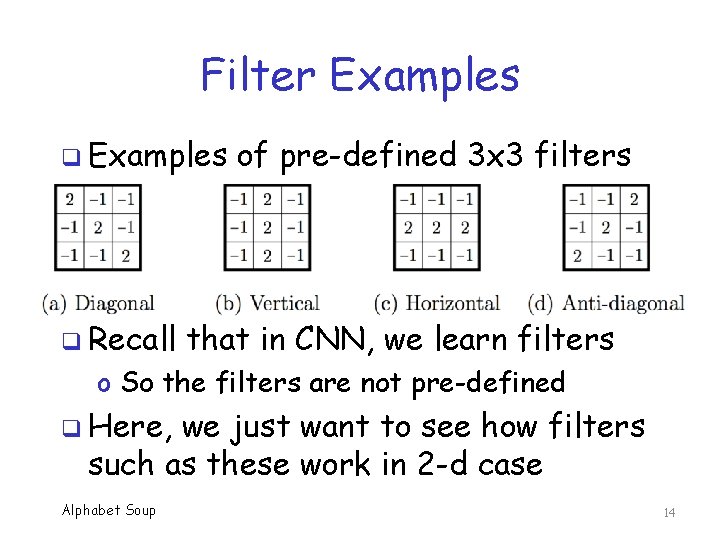

Filter Examples

- Examples of pre-defined 3 x 3 filters [shown in slide image]
- Recall that in CNN, we learn filters
  - So the filters are not pre-defined
- Here, we just want to see how filters such as these work in 2-d case

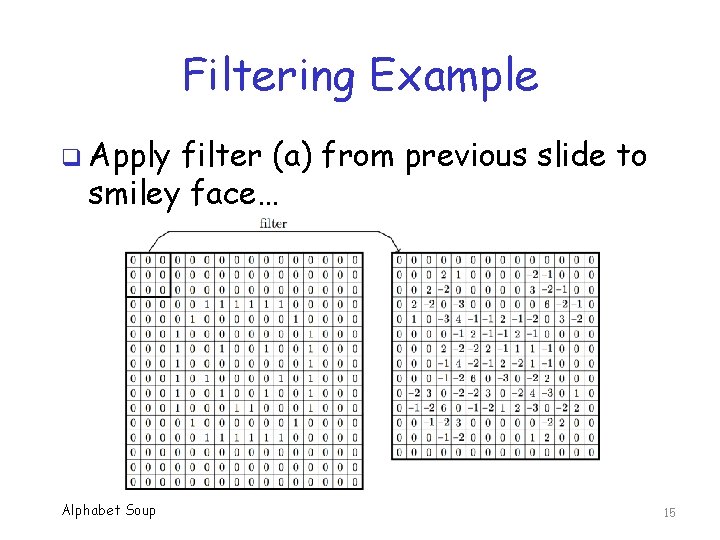

Filtering Example

- Apply filter (a) from previous slide to smiley face…

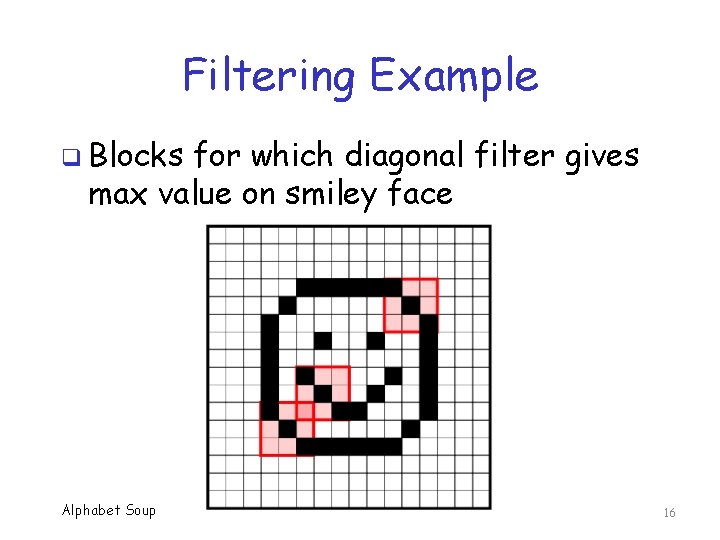

Filtering Example

- Blocks for which diagonal filter gives max value on smiley face

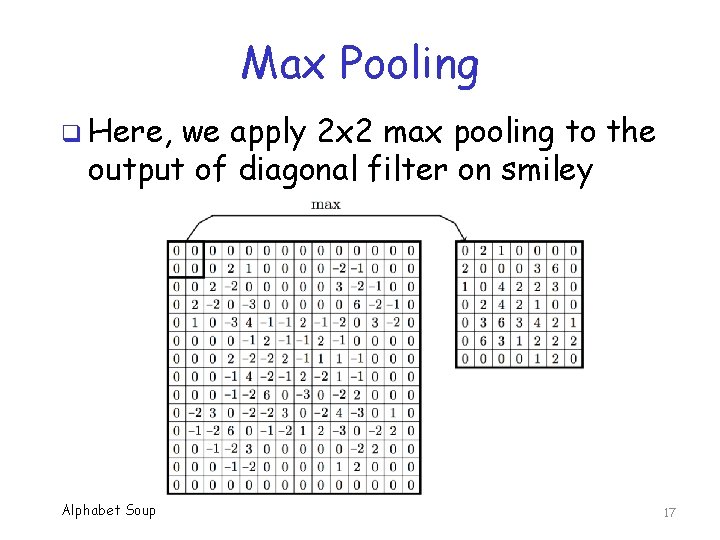

Max Pooling

- Here, we apply 2 x 2 max pooling to the output of diagonal filter on smiley

CNN on Color Images

- Convolutions are 3-d and stacked…

Visualizing CNNs

- Lots of attempts to visualize CNNs
- Typically, use some deconvolution to project filters into image space
- Here, we present one example…
- …not necessarily the best way to do it
- Many other visualizations…
- Seem to often be based on AlexNet
  - A specific CNN architecture

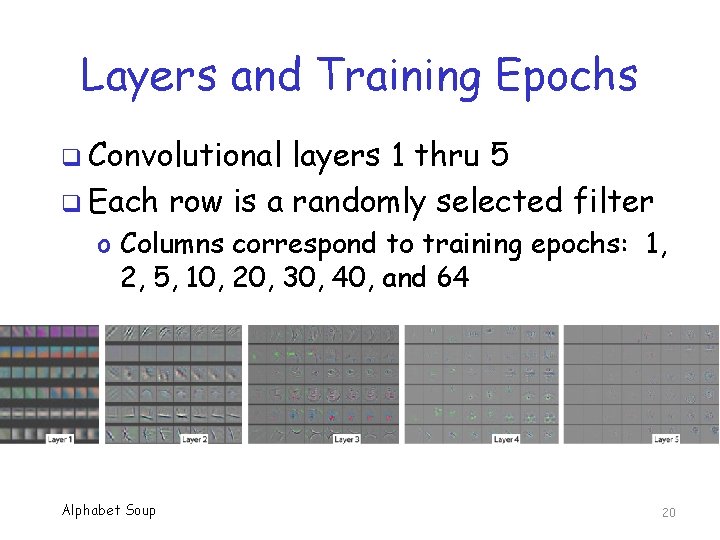

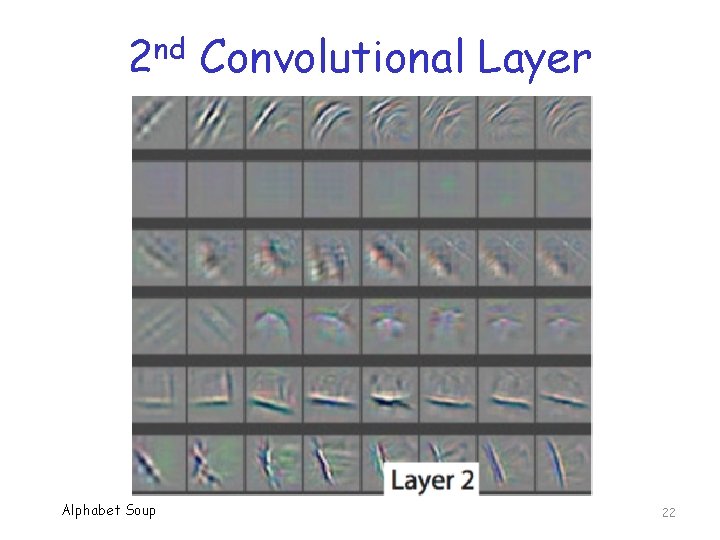

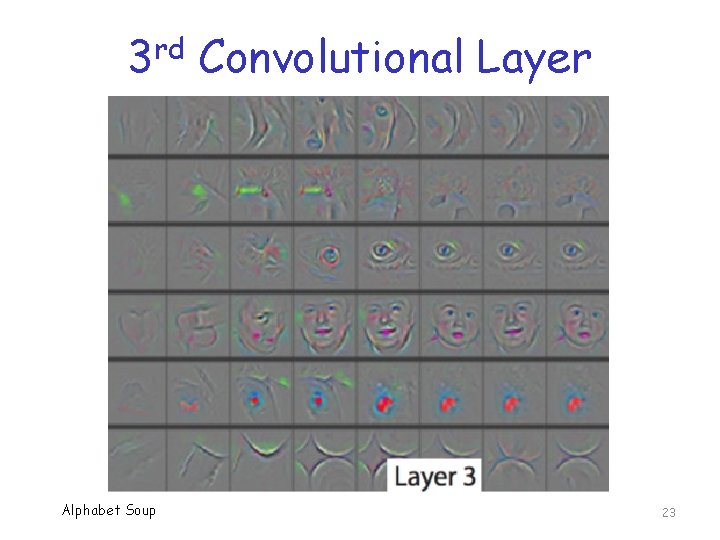

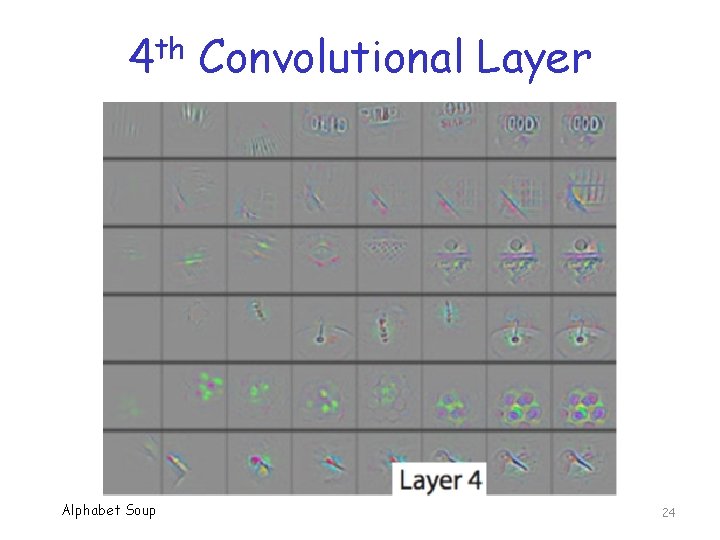

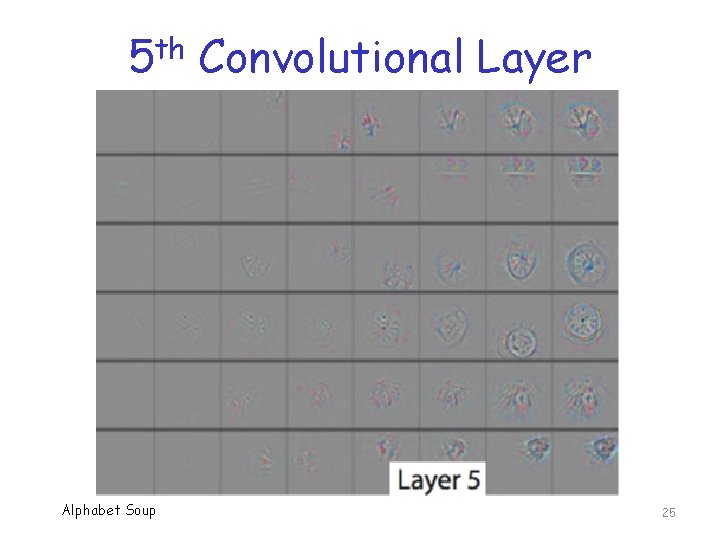

Layers and Training Epochs

- Convolutional layers 1 thru 5
- Each row is a randomly selected filter
  - Columns correspond to training epochs: 1, 2, 5, 10, 20, 30, 40, and 64

1st Convolutional Layer

2nd Convolutional Layer

3rd Convolutional Layer

4th Convolutional Layer

5th Convolutional Layer

CNN Summary

- Convolutions are simply filters
- Main ideas behind CNN
  - Training CNN means learning the filters
  - Filters are generally 3-d
  - Learn a stack of filters at each layer
  - Filters initialized at random, so stacked filters can learn different features
  - Can also interleave pooling layers
  - Output layer fully connected (classifier)

Vanilla RNN

Persistence of Memory

- Standard feedforward neural network will work great for many problems
- Feedforward network has no “memory”, so not ideal for sequential data
- When is memory needed?
- Suppose we want to tag part of speech
  - “All” can be adjective, adverb, etc.
  - Cannot tell which it is without context

Recurrent Neural Network

- RNN adds “memory” to a standard feedforward network
- Specifically, RNN includes output from previous step as part of state
- In theory, can “remember” forever!
- In practice, memory is limited
- Some tricks used to increase memory
  - LSTM (we discuss this separately)

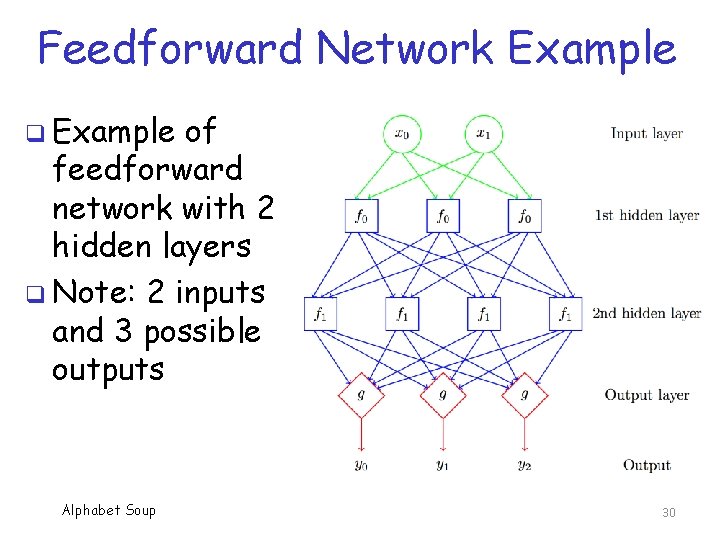

Feedforward Network Example

- Example of feedforward network with 2 hidden layers
- Note: 2 inputs and 3 possible outputs

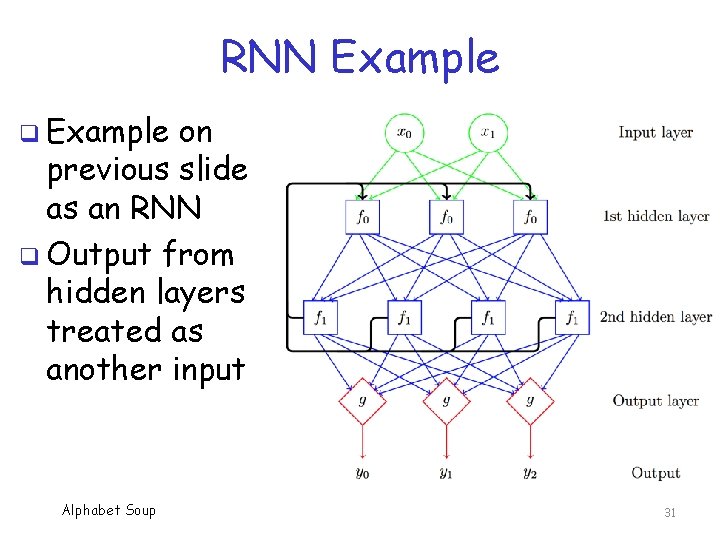

RNN Example

- Example on previous slide as an RNN
- Output from hidden layers treated as another input

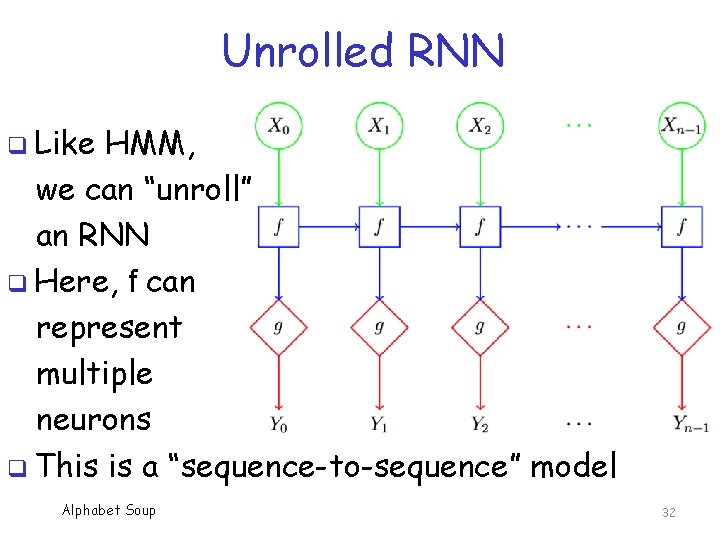

Unrolled RNN

- Like HMM, we can “unroll” an RNN
- Here, f can represent multiple neurons
- This is a “sequence-to-sequence” model

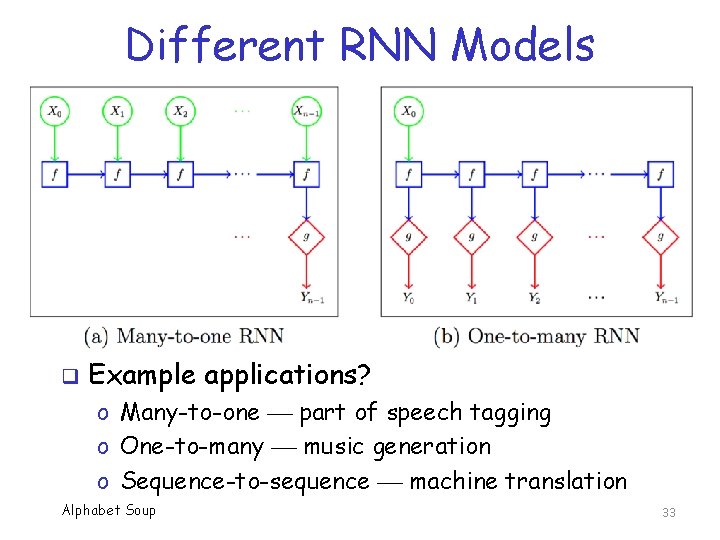

Different RNN Models

- Example applications?
  - Many-to-one: part of speech tagging
  - One-to-many: music generation
  - Sequence-to-sequence: machine translation

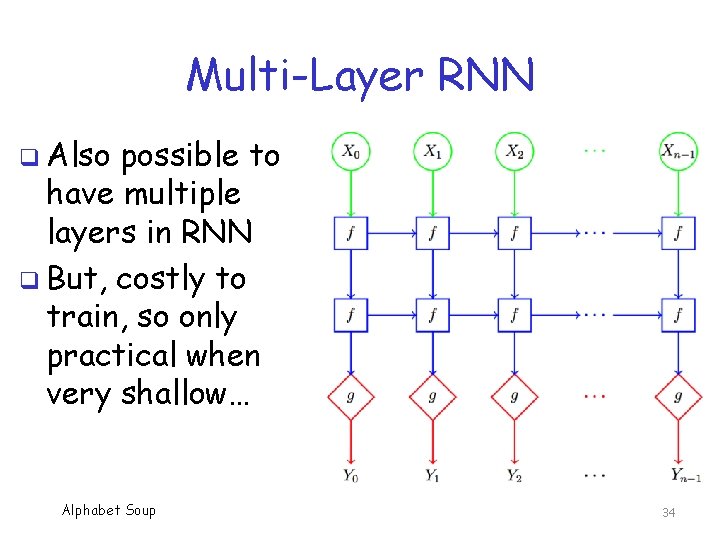

Multi-Layer RNN

- Also possible to have multiple layers in RNN
- But, costly to train, so only practical when very shallow…

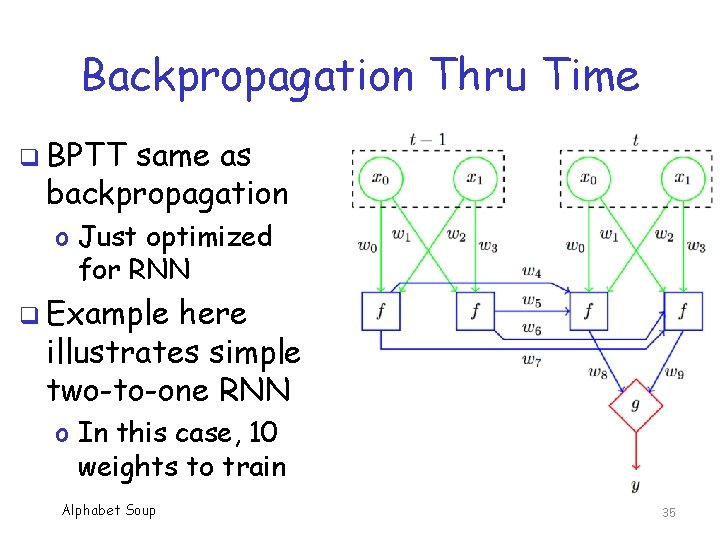

Backpropagation Thru Time

- BPTT same as backpropagation
  - Just optimized for RNN
- Example here illustrates simple two-to-one RNN
  - In this case, 10 weights to train

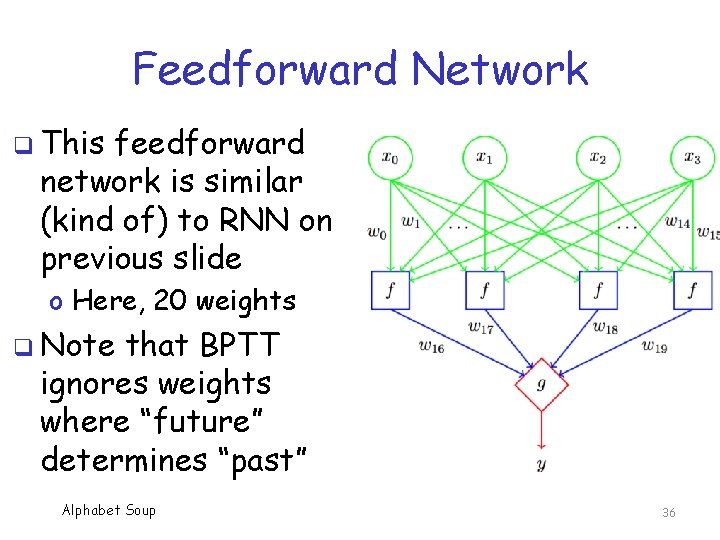

Feedforward Network

- This feedforward network is similar (kind of) to RNN on previous slide
  - Here, 20 weights
- Note that BPTT ignores weights where “future” determines “past”

Vanishing & Exploding Gradient

- RNN can extend far back in time
- When trying to backpropagate in time, gradient becomes unstable
  - Gradient can decrease exponentially (vanishing gradient)
  - Gradient can increase exponentially (exploding gradient)
  - Gradient can oscillate wildly
- This is bad, really bad

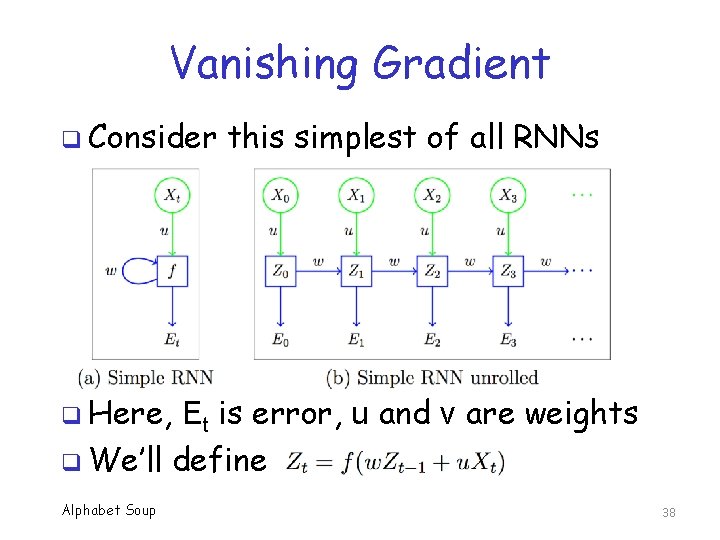

Vanishing Gradient

- Consider this simplest of all RNNs
- Here, E_t is error, u and v are weights
- We’ll define [equation in slide image]

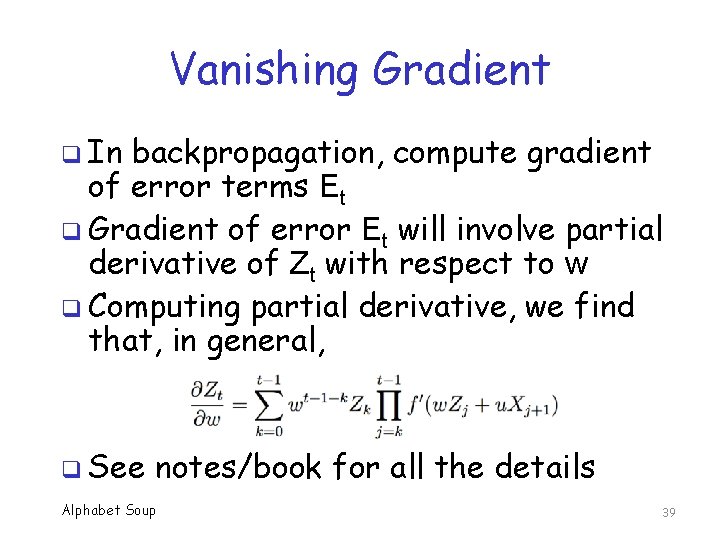

Vanishing Gradient

- In backpropagation, compute gradient of error terms E_t
- Gradient of error E_t will involve partial derivative of Z_t with respect to w
- Computing partial derivative, we find that, in general, [equation in slide image]
- See notes/book for all the details

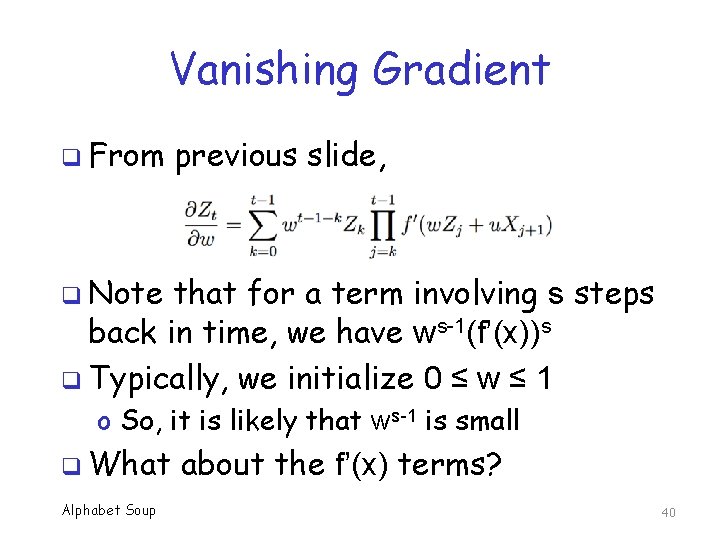

Vanishing Gradient

- From previous slide, [equation in slide image]
- Note that for a term involving s steps back in time, we have w^(s-1) (f’(x))^s
- Typically, we initialize 0 ≤ w ≤ 1
  - So, it is likely that w^(s-1) is small
- What about the f’(x) terms?

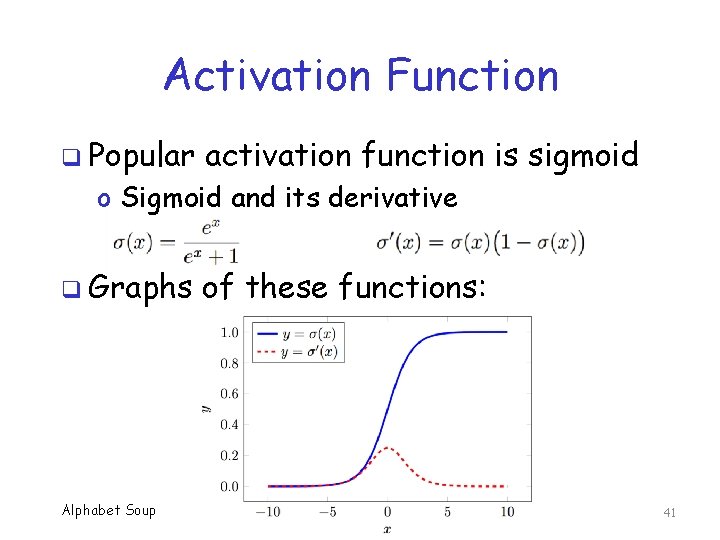

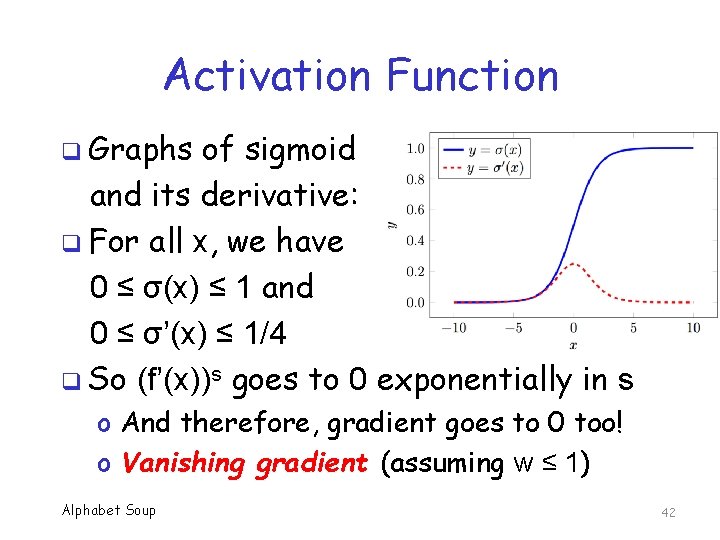

Activation Function

- Popular activation function is sigmoid
  - Sigmoid and its derivative: σ(x) = 1 / (1 + e^(-x)) and σ’(x) = σ(x)(1 - σ(x))
- Graphs of these functions: [plots in slide image]

Activation Function

- Graphs of sigmoid and its derivative: [plots in slide image]
- For all x, we have 0 ≤ σ(x) ≤ 1 and 0 ≤ σ’(x) ≤ 1/4
- So (f’(x))^s goes to 0 exponentially in s
  - And therefore, gradient goes to 0 too!
  - Vanishing gradient (assuming w ≤ 1)
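
The exponential decay is easy to see numerically. The sketch below evaluates the factor w^(s-1) (f’(x))^s with f taken to be the sigmoid; choosing w = 1 and x = 0 is an assumption made to show the best case, since σ’ reaches its maximum of 1/4 at x = 0. The function names are illustrative.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_prime(x):
    s = sigmoid(x)
    return s * (1.0 - s)


def backprop_factor(w, x, s):
    """The factor w^(s-1) * (f'(x))^s for a term s steps back in time."""
    return w ** (s - 1) * sigmoid_prime(x) ** s


for s in (1, 5, 10, 20):
    # w = 1 and x = 0 give the largest factor possible when 0 <= w <= 1
    print(s, backprop_factor(1.0, 0.0, s))
```

Even in this best case the factor is (1/4)^s, so a term 20 steps back is scaled by less than 10^-12.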

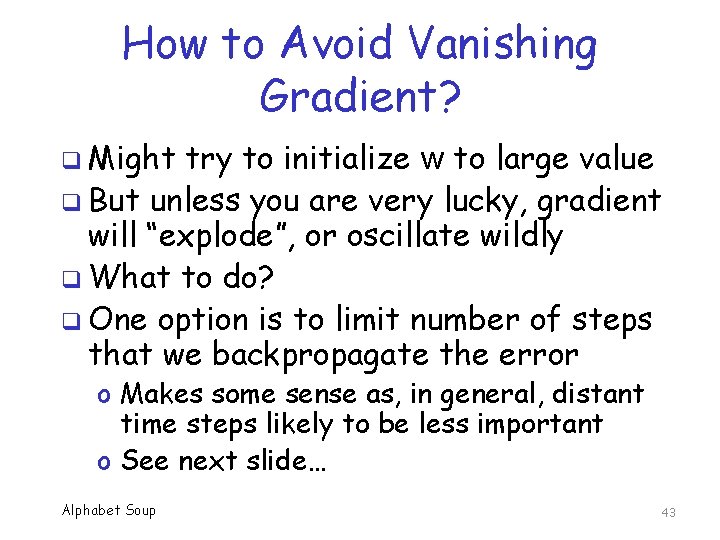

How to Avoid Vanishing Gradient?

- Might try to initialize w to large value
- But unless you are very lucky, gradient will “explode”, or oscillate wildly
- What to do?
- One option is to limit number of steps that we backpropagate the error
  - Makes some sense as, in general, distant time steps likely to be less important
  - See next slide…

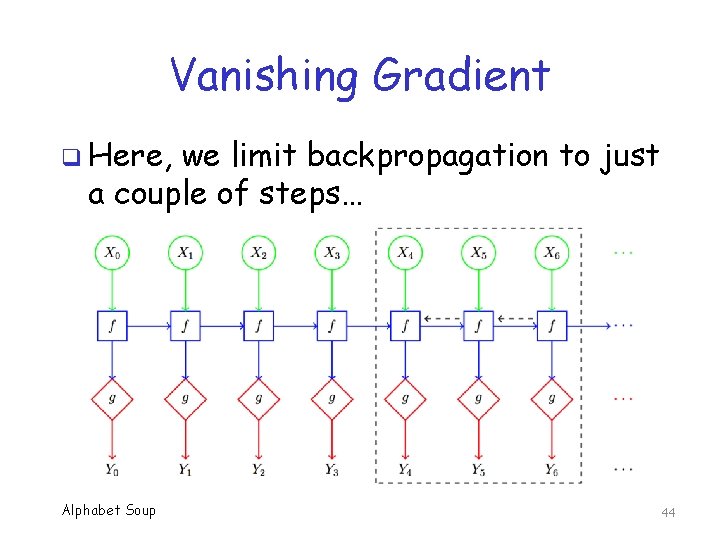

Vanishing Gradient

- Here, we limit backpropagation to just a couple of steps…

Vanishing Gradient

- But, in some cases, distant (in time) steps might be needed
- If gradient vanishes, cannot make use of training data that is far in past
- What to do in such cases?
  - One option is to modify RNN to allow gradient to easily “flow” further back
  - This is the idea behind LSTM…

RNN Summary

- RNN adds memory to neural network
- Can be trained using BPTT
- Vanishing gradient is a big problem!
  - Also a problem in other architectures
- Can limit backpropagation steps to reduce effect of vanishing gradient
- Can also change architecture to improve gradient flow (next topic…)

LSTM

Long Short-Term Memory

- LSTM is special RNN architecture
- Designed to allow gradient to “flow”
- Mitigates effect of vanishing, exploding, and unstable gradients
- Allows “gaps” between where data appears and where it is used by the model
- LSTM design is somewhat complex
  - But intuition should be fairly clear

LSTM Successes

- Commercially, LSTM may be the most successful ML/DL architecture ever
- Used in Google Allo, Google Translate, Apple’s Siri, Amazon Alexa, and more
- Dominance of LSTM may be waning
  - ResNet may have advantage over LSTM
  - ResNet is “better” in theory, and outperforms LSTM in many applications

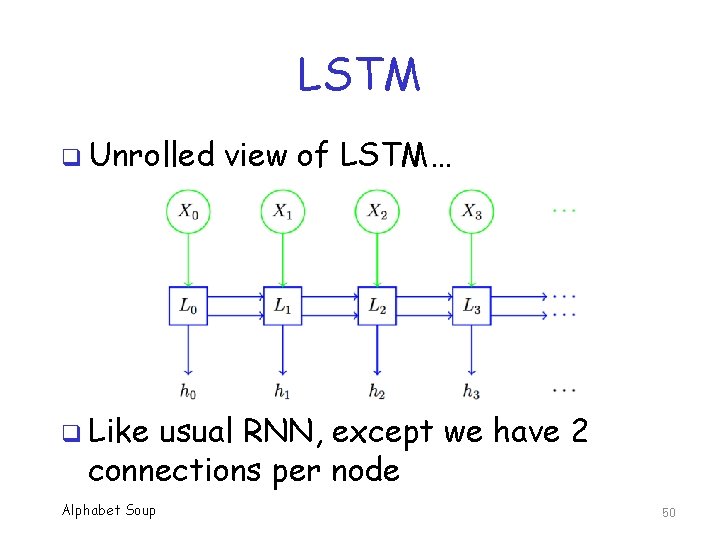

LSTM

- Unrolled view of LSTM…
- Like usual RNN, except we have 2 connections per node

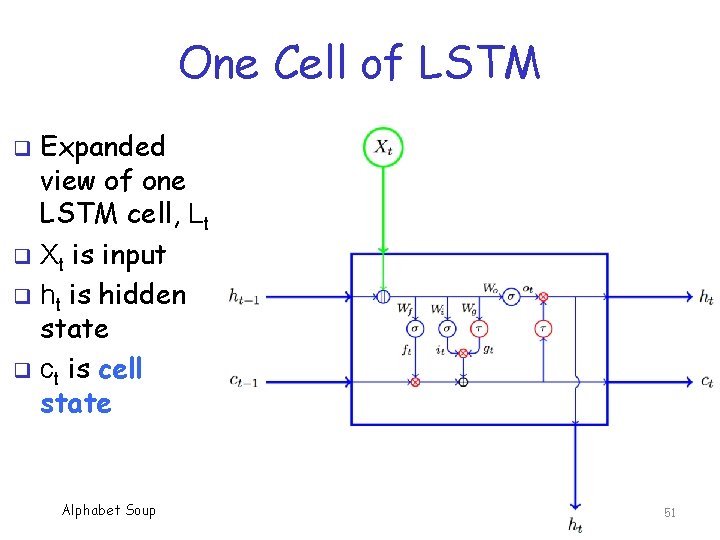

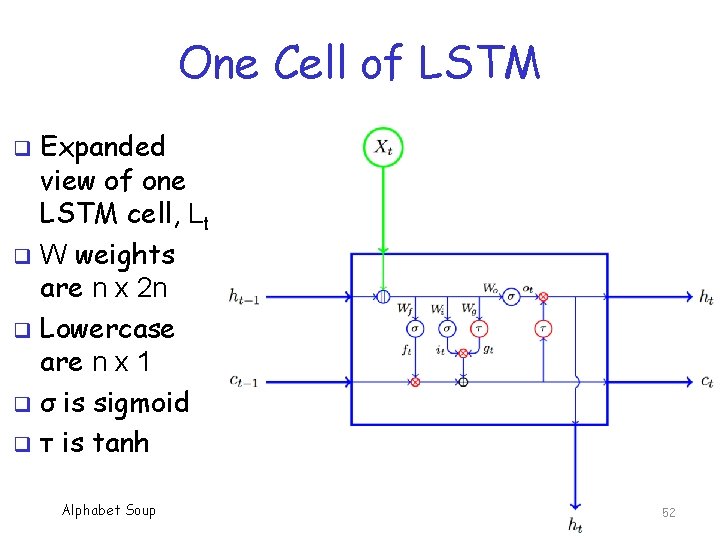

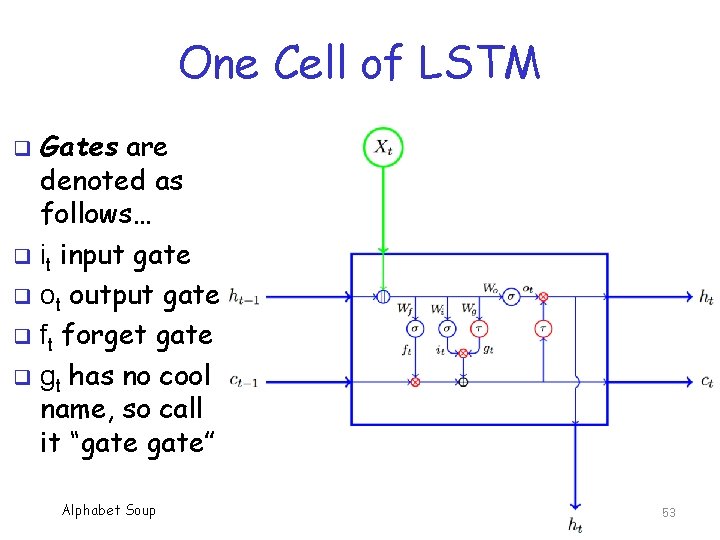

One Cell of LSTM

- Expanded view of one LSTM cell, L_t
- X_t is input
- h_t is hidden state
- c_t is cell state

One Cell of LSTM

- Expanded view of one LSTM cell, L_t
- W weights are n x 2n
- Lowercase are n x 1
- σ is sigmoid
- τ is tanh

One Cell of LSTM

- Gates are denoted as follows…
- i_t input gate
- o_t output gate
- f_t forget gate
- g_t has no cool name, so call it “gate”

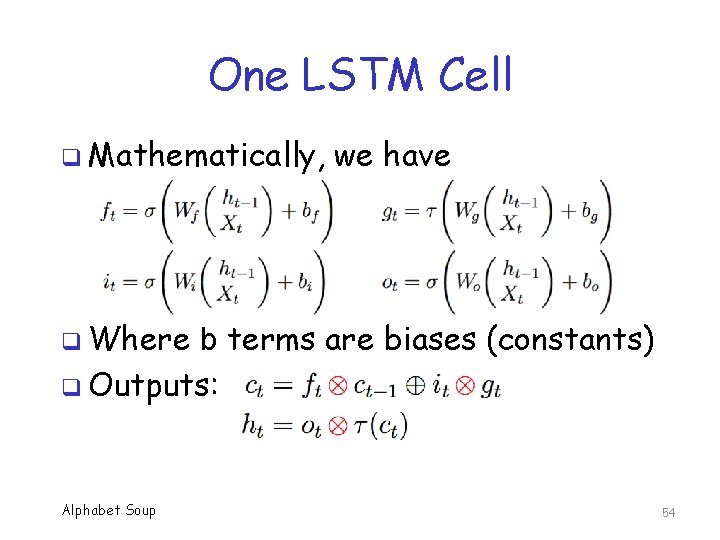

One LSTM Cell

- Mathematically, we have [equations in slide image]
- Where b terms are biases (constants)
- Outputs: [equations in slide image]

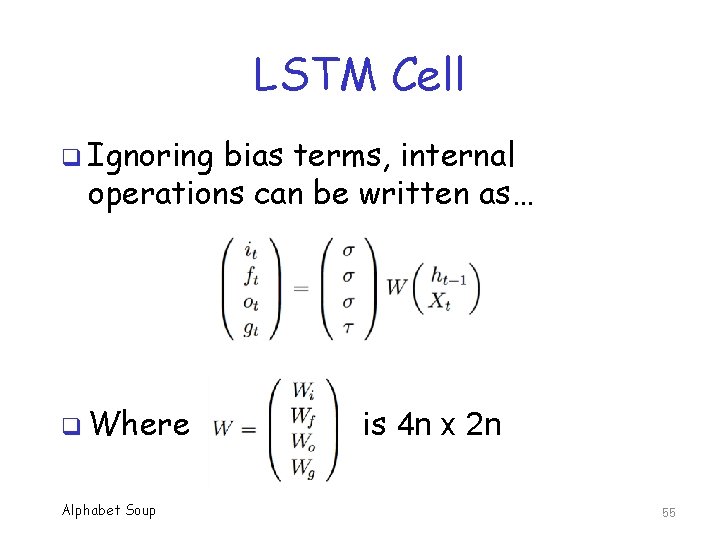

LSTM Cell

- Ignoring bias terms, internal operations can be written as… [equation in slide image]
- Where [matrix in slide image] is 4n x 2n
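
One step of an LSTM cell can be sketched in plain Python. The slide's equations are in images, so this sketch assumes the commonly used formulation: each gate is an activation applied to W [h_(t-1); X_t] + b with an n x 2n matrix W, the cell state is c_t = f_t ⊙ c_(t-1) + i_t ⊙ g_t, and the hidden state is h_t = o_t ⊙ τ(c_t). The concatenation order, the dictionary layout of the weights, and all numeric values are assumptions made for illustration.

```python
import math


def sigmoid(v):
    return [1.0 / (1.0 + math.exp(-a)) for a in v]


def affine(W, v, b):
    """Return W v + b, where W is a list of rows."""
    return [sum(w * a for w, a in zip(row, v)) + bi for row, bi in zip(W, b)]


def lstm_cell(x, h_prev, c_prev, W, b):
    """One LSTM step; W and b are dicts keyed by gate name 'f', 'i', 'o', 'g'."""
    hx = h_prev + x                                          # concatenation, length 2n
    f = sigmoid(affine(W["f"], hx, b["f"]))                  # forget gate
    i = sigmoid(affine(W["i"], hx, b["i"]))                  # input gate
    o = sigmoid(affine(W["o"], hx, b["o"]))                  # output gate
    g = [math.tanh(a) for a in affine(W["g"], hx, b["g"])]   # "gate"
    c = [fj * cj + ij * gj for fj, cj, ij, gj in zip(f, c_prev, i, g)]
    h = [oj * math.tanh(cj) for oj, cj in zip(o, c)]
    return h, c


n = 2
zero_matrix = [[0.0] * (2 * n) for _ in range(n)]
W = {k: zero_matrix for k in "fiog"}          # hypothetical all-zero weights
b = {k: [0.0] * n for k in "fiog"}
b["f"] = [-50.0, 50.0]                        # forget element 0, keep element 1
h, c = lstm_cell([1.0, 1.0], [0.0, 0.0], [3.0, 3.0], W, b)
print(c)   # element 0 of the old cell state is forgotten, element 1 is kept
```

The extreme forget-gate biases mimic the 0 or 1 gate values used in the intuition slides: the first element of the previous cell state is erased and the second passes through unchanged.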

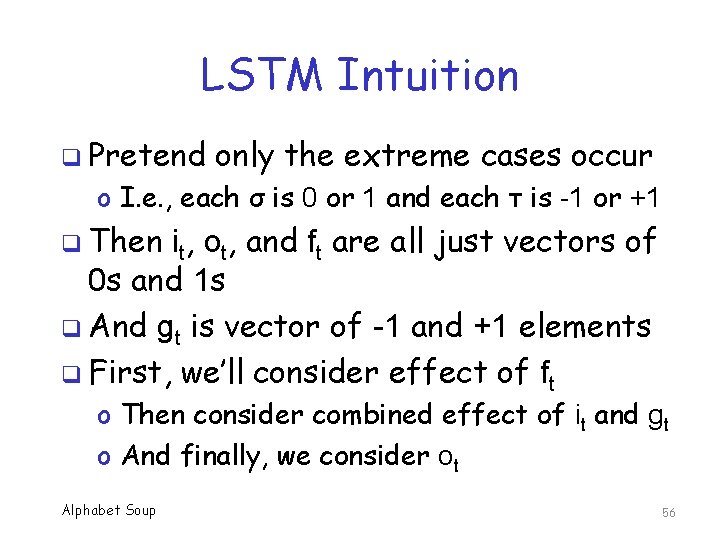

LSTM Intuition

- Pretend only the extreme cases occur
  - I.e., each σ is 0 or 1 and each τ is -1 or +1
- Then i_t, o_t, and f_t are all just vectors of 0s and 1s
- And g_t is vector of -1 and +1 elements
- First, we’ll consider effect of f_t
  - Then consider combined effect of i_t and g_t
  - And finally, we consider o_t

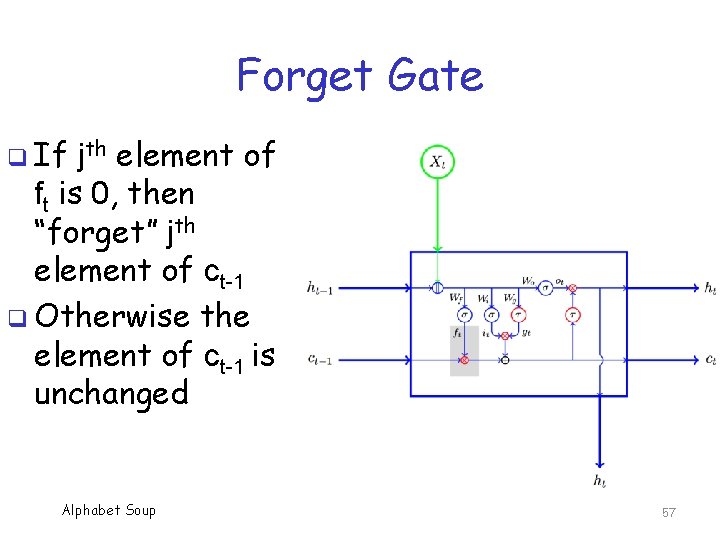

Forget Gate

- If jth element of f_t is 0, then “forget” jth element of c_{t-1}
- Otherwise the element of c_{t-1} is unchanged

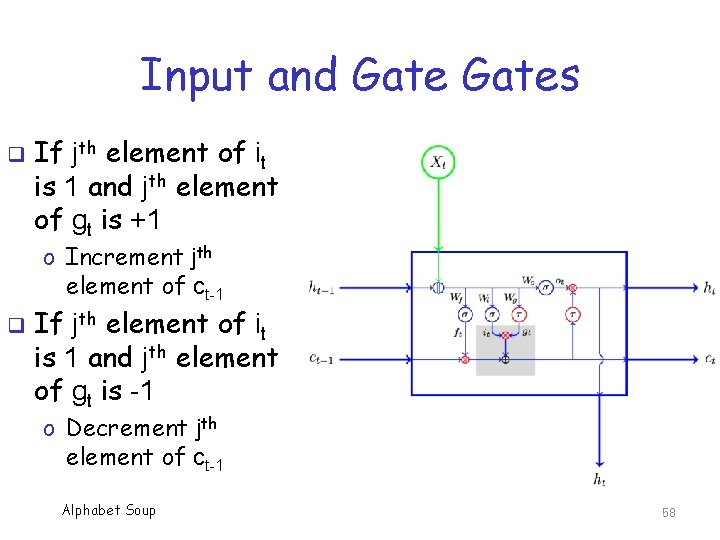

Input and Gates

- If jth element of i_t is 1 and jth element of g_t is +1
  - Increment jth element of c_{t-1}
- If jth element of i_t is 1 and jth element of g_t is -1
  - Decrement jth element of c_{t-1}

Input & Gates

- These 2 gates determine what is “remembered” in cell state
  - Cell state is like a persistent memory
- What to save (or not) is learned, since it depends on weights W_i and W_g
- Note “remember” and “forget” are independent of each other in LSTM
- A clever (and flexible) design…

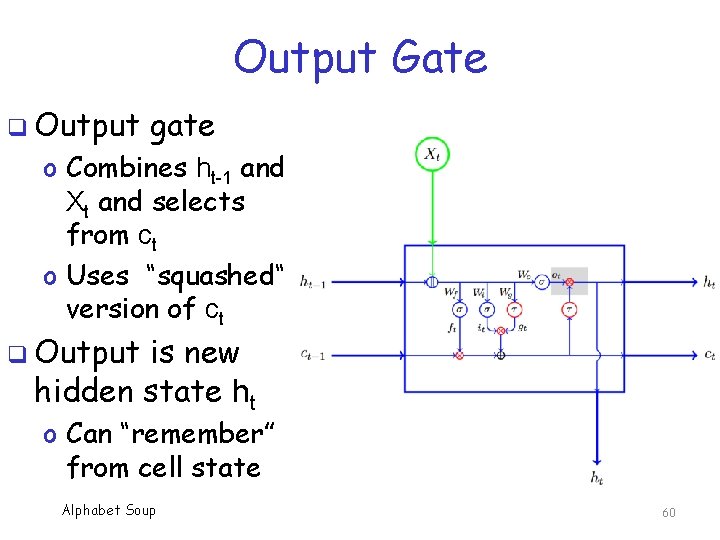

Output Gate

- Output gate
  - Combines h_{t-1} and X_t and selects from c_t
  - Uses “squashed” version of c_t
- Output is new hidden state h_t
  - Can “remember” from cell state

Reality Bites

- Functions σ and τ do not always yield extreme values!
- Regardless, the intuition is correct
  - Forget gate decides what to forget from previous cell state
  - Input and gate make incremental changes to cell state, based on the current input (decide what to remember)
  - Output can get info stored in cell state

LSTM: Bottom Line

- The purpose of LSTM is to mitigate effects of vanishing gradient
- Accomplishes this by use of cell state
  - Cell state acts as store of long-term info
  - Allows for “gaps”
- Effective/popular/profitable
- Many variants of the LSTM technique
  - Next, we discuss one of these, GRU

GRU

Variants of LSTM

- There are a large number of variants of the popular LSTM architecture
- Most are just minor tweaks to LSTM
- Gated recurrent unit (GRU) is a (relatively) radical departure
  - Significantly different architecture
  - Not just minor change to parameters

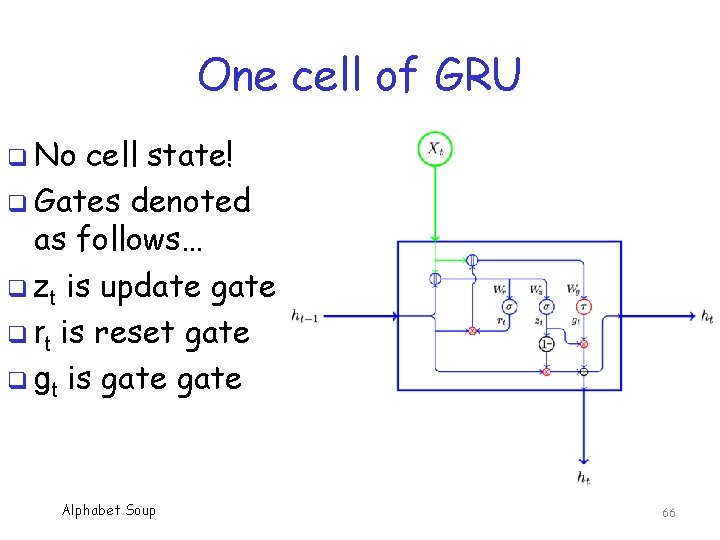

GRU

- Gated recurrent unit (GRU)
- Fewer parameters, so GRU is easier and faster to train than LSTM
  - But, GRU is less intuitive, less flexible
- GRU reduces 3 gates of LSTM to 2
  - GRU gates are “update” and “reset”
- And, GRU has no cell state!
  - All “memory” must reside in hidden state

One Cell of GRU

- No cell state!
- Gates denoted as follows…
- z_t is update gate
- r_t is reset gate
- g_t is gate

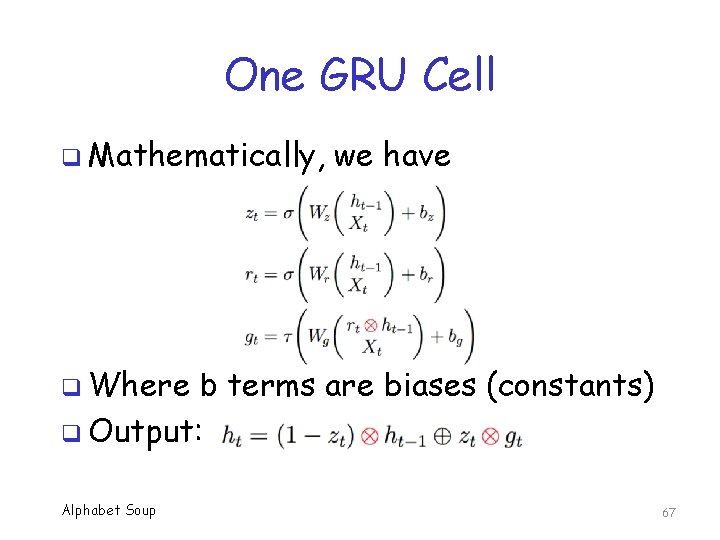

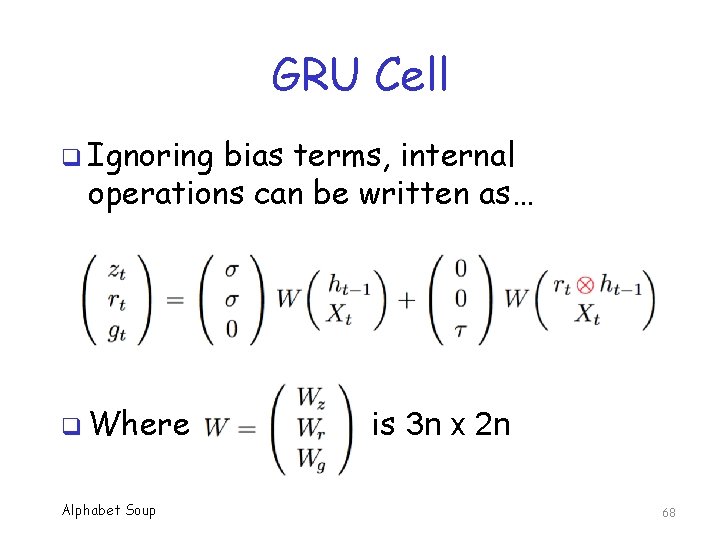

One GRU Cell

- Mathematically, we have [equations in slide image]
- Where b terms are biases (constants)
- Output: [equation in slide image]

GRU Cell

- Ignoring bias terms, internal operations can be written as… [equation in slide image]
- Where [matrix in slide image] is 3n x 2n
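
A GRU step can be sketched the same way. The slide's equations are in images, so this assumes one widely used formulation: z_t and r_t are sigmoids of W [h_(t-1); X_t] + b, g_t is the tanh of W_g [r_t ⊙ h_(t-1); X_t] + b_g, and h_t = (1 - z_t) ⊙ h_(t-1) + z_t ⊙ g_t. Some presentations swap the roles of z_t and 1 - z_t, so treat that choice, the concatenation order, and the example values as assumptions.

```python
import math


def sigmoid(v):
    return [1.0 / (1.0 + math.exp(-a)) for a in v]


def affine(W, v, b):
    """Return W v + b, where W is a list of rows."""
    return [sum(w * a for w, a in zip(row, v)) + bi for row, bi in zip(W, b)]


def gru_cell(x, h_prev, W, b):
    """One GRU step; W and b are dicts keyed by gate name 'z', 'r', 'g'."""
    hx = h_prev + x                                             # concatenation, length 2n
    z = sigmoid(affine(W["z"], hx, b["z"]))                     # update gate
    r = sigmoid(affine(W["r"], hx, b["r"]))                     # reset gate
    reset_h = [rj * hj for rj, hj in zip(r, h_prev)]
    g = [math.tanh(a) for a in affine(W["g"], reset_h + x, b["g"])]
    # No cell state: the new hidden state blends the old one with g
    return [(1.0 - zj) * hj + zj * gj for zj, hj, gj in zip(z, h_prev, g)]


n = 2
zero_matrix = [[0.0] * (2 * n) for _ in range(n)]
W = {k: zero_matrix for k in "zrg"}           # hypothetical all-zero weights
b = {k: [0.0] * n for k in "zrg"}
h = gru_cell([1.0, 1.0], [2.0, -1.0], W, b)
print(h)
```

The three n x 2n matrices stacked together match the 3n x 2n size quoted on the slide, compared with 4n x 2n for the LSTM.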

GRU Intuition

- GRU update gate acts like combined forget and output gates of LSTM
  - The use of 1 - z_t function implies anything not output must be forgotten
- Intuitively, this makes GRU less flexible, as compared to LSTM
- But, GRU works as well as LSTM in many applications

Other LSTM Variants?

- Recent paper compared a number of variants of LSTM
  - Some 8 different basic architectures
  - Some 5400 separate experiments
- Result? “…none of the variants can improve upon the standard LSTM architecture significantly”
  - Also, claim that forget and output gates are most important parts of LSTM

Recursive Neural Network

Recursive Neural Network

- Recursive neural network more general than recurrent neural network (RNN)
- Recursive neural network can be defined over any hierarchical structure
  - Trees, for example
  - Train via backpropagation thru structure (BPTS) with stochastic gradient descent
- RNN is recursive neural network in special case of a linear chain

RNN: Bottom Line

- RNN designed for sequential data
- Vanilla RNNs are subject to vanishing gradient, and other gradient issues
  - Limits length of history that is usable
- Complex RNN architectures designed to facilitate gradient “flow”
  - LSTM, GRU, etc.
  - LSTM has been extremely successful

ResNet

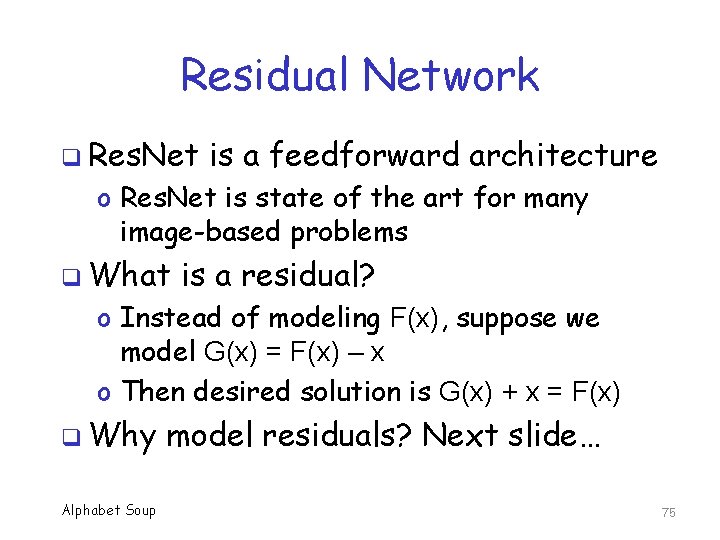

Residual Network

- ResNet is a feedforward architecture
  - ResNet is state of the art for many image-based problems
- What is a residual?
  - Instead of modeling F(x), suppose we model G(x) = F(x) - x
  - Then desired solution is G(x) + x = F(x)
- Why model residuals? Next slide…

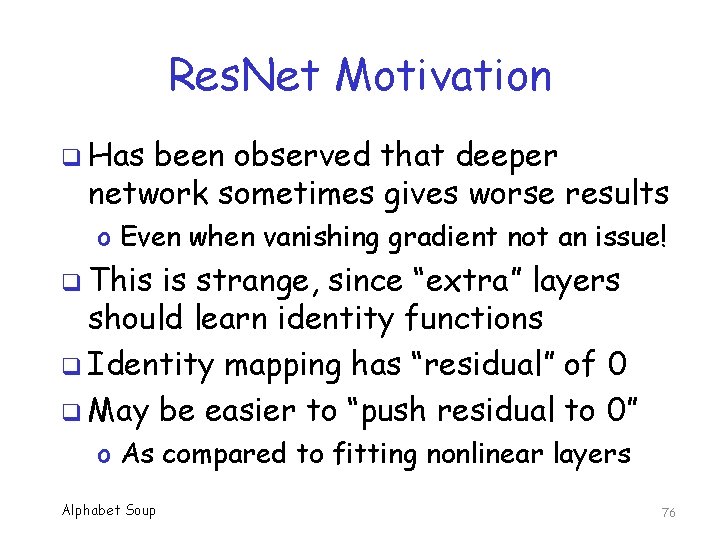

ResNet Motivation

- Has been observed that deeper network sometimes gives worse results
  - Even when vanishing gradient not an issue!
- This is strange, since “extra” layers should learn identity functions
- Identity mapping has “residual” of 0
- May be easier to “push residual to 0”
  - As compared to fitting nonlinear layers
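
The residual idea fits in a few lines. In the sketch below, G stands for whatever the layers inside a block compute, and the block returns G(x) + x, so a residual of 0 gives the identity mapping. The two example residual functions are hypothetical stand-ins for learned layers.

```python
def residual_block(x, G):
    """Return G(x) + x, where G is the residual mapping computed by the block's layers."""
    return [g + xi for g, xi in zip(G(x), x)]


def zero_residual(x):
    """A residual pushed all the way to 0."""
    return [0.0 for _ in x]


def small_residual(x):
    """A hypothetical small correction on top of the identity."""
    return [0.1 * xi for xi in x]


x = [1.0, -2.0, 3.0]
print(residual_block(x, zero_residual))    # identity: output equals input
print(residual_block(x, small_residual))   # identity plus a small correction
```

An extra block therefore only has to learn to output zeros in order to leave the network's behavior unchanged, rather than fitting the identity through nonlinear layers.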

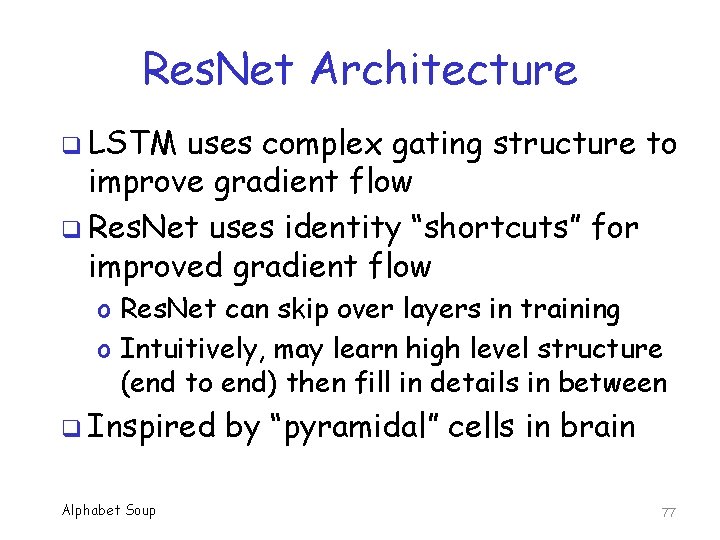

ResNet Architecture

- LSTM uses complex gating structure to improve gradient flow
- ResNet uses identity “shortcuts” for improved gradient flow
  - ResNet can skip over layers in training
  - Intuitively, may learn high level structure (end to end) then fill in details in between
- Inspired by “pyramidal” cells in brain

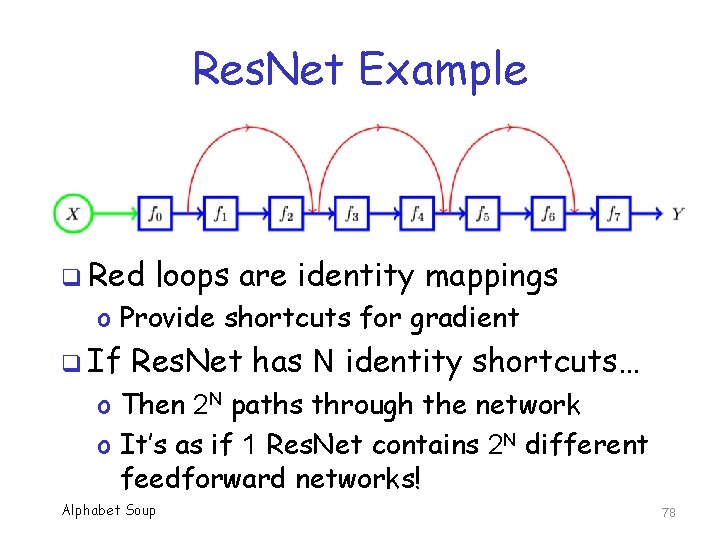

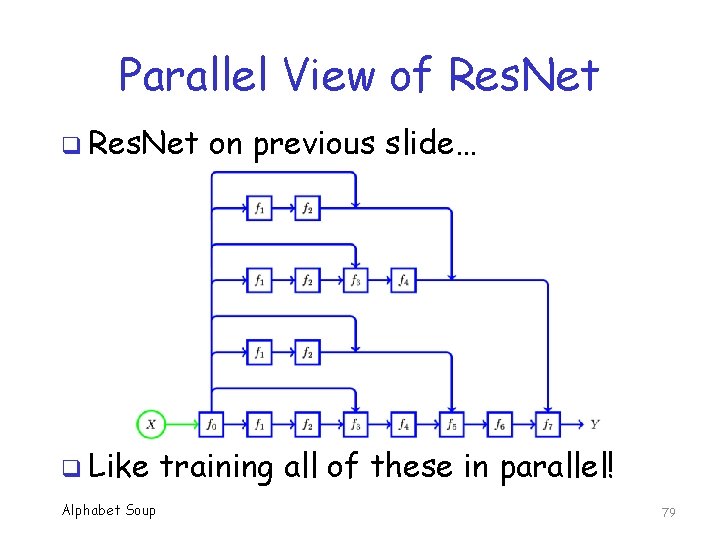

ResNet Example

- Red loops are identity mappings
  - Provide shortcuts for gradient
- If ResNet has N identity shortcuts…
  - Then 2^N paths through the network
  - It’s as if 1 ResNet contains 2^N different feedforward networks!

Parallel View of ResNet

- Like on previous slide… training all of these in parallel!

ResNet Paths

- Most paths in a ResNet are short
- Surprisingly, it has been shown that…
  - In spite of being trained simultaneously, paths have “ensemble like” behavior
  - Paths do not depend (much) on each other
- More surprising still…
  - Only short paths needed during training
  - Long paths do not contribute any gradient!
- ResNet “intuition” is not correct!

ResNet: Bottom Line

- ResNet state of the art in some cases
  - Theoretical and practical advantages
- Comparable to training multiple feedforward networks in parallel
- Surprisingly, short paths dominate
  - In effect, glue together many short paths
- So, gradient problems resolved not by clever design, but by short paths!!!

GAN

Generative Adversarial Network

- In GAN, two neural networks compete
  - One network generates fake samples designed to trick the other network
  - Other network tries to discriminate between fake and real samples
- Usually, we are interested in discriminator network
- But GAN can improve both networks
  - As compared to training only on real data

Generative vs Discriminative

- Let {X_i} be a collection of samples
  - And {Y_i} be corresponding class labels
- In statistics, a discriminative model gives us probability distribution P(Y | X)
  - Can then classify X by most likely class
- A generative model gives us P(X, Y)
  - That is, the joint probability distribution
  - Can generate pairs that satisfy distribution
  - Can always determine P(Y | X) from P(X, Y)
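
The last point, that P(Y | X) can always be recovered from P(X, Y), amounts to dividing by the marginal P(X). A minimal sketch follows; the joint table, its values, and the function names are hypothetical.

```python
def conditional(joint, x):
    """Return P(Y | X = x) as a dict, given the joint P(X, Y) as {(x, y): probability}."""
    p_x = sum(p for (xi, _), p in joint.items() if xi == x)   # marginal P(X = x)
    return {y: p / p_x for (xi, y), p in joint.items() if xi == x}


def classify(joint, x):
    """Classify x by its most likely class."""
    cond = conditional(joint, x)
    return max(cond, key=cond.get)


# Hypothetical joint distribution over two samples and two class labels
joint = {("a", 0): 0.375, ("a", 1): 0.125,
         ("b", 0): 0.125, ("b", 1): 0.375}
print(conditional(joint, "a"))
print(classify(joint, "b"))
```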

HMM Example

- Recall that HMM is triple λ = (A, B, π)
  - Where A has state transition probabilities
  - And B contains output probabilities
  - And π has initial state probabilities
- Can use trained HMM to generate sequences that match training data
  - Generate initial state based on π, get observation from B, next state from A, get observation from B, next state from A…
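
The generation procedure described on this slide can be sketched with the standard library's random module. Here A and B are row-stochastic lists of lists, with one row of B per hidden state; the matrices and the seed are hypothetical, not a trained model.

```python
import random


def generate(A, B, pi, length, rng):
    """Sample an observation sequence from the HMM (A, B, pi)."""
    state = rng.choices(range(len(pi)), weights=pi)[0]            # initial state from pi
    observations = []
    for _ in range(length):
        symbol = rng.choices(range(len(B[state])), weights=B[state])[0]   # observation from B
        observations.append(symbol)
        state = rng.choices(range(len(A[state])), weights=A[state])[0]    # next state from A
    return observations


# Hypothetical 2-state model over a 3-symbol alphabet
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.4, 0.1], [0.1, 0.3, 0.6]]
pi = [0.6, 0.4]
print(generate(A, B, pi, 10, random.Random(1)))
```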

HMM Example

- From previous slide, HMM is a generative model
- On the other hand, HMM can be used as a discriminative model!
  - We just need to set a score threshold
- For HMM, we obtain discriminative model from a generative model
- This always works

SVM Example

- Suppose we train an SVM
- Can use the SVM to classify samples
  - Thus, SVM is a discriminative model
- But SVM cannot be used (directly) to generate samples that fit distribution
- So, SVM discriminative model does not give rise to a generative model
- This is also, in general, the case

Generative vs Discriminative

- Both discriminative and generative models can solve discriminative problem
  - So, which is better?
- Generative models are more general
  - As compared to discriminative models
- Intuitively, harder to train more general models
  - Easier to train discriminative models?
- Discriminative is the winner?

Generative vs Discriminative

- So, are discriminative models better for discriminative problems?
- Not entirely clear that this is the case
- Research shows generative models may be better when training data is limited
  - At least in some cases
- In ML, discriminative models dominate
  - But generative becoming more popular…

GAN

- To train a discriminative network…
  - Use real training data, of course!
- But, let’s also use “fake” training data
  - Where the fake training data is designed to trick the discriminative network
- Using this method, discriminative network should be even stronger
  - As compared to only using real data

GAN

- People had thought of this before, but how to generate fake samples?
- Training data typically lives in high-dimensional space with unknown distribution
  - And when training, discriminator network is constantly changing
  - Discriminator network is a moving target
- What to do?

GAN

- Key insight of GAN: Train another neural network to make fake samples
  - Discriminative network is D
  - Generative network is G
- G network generates fake samples, trying to fool D network
- D network tries to distinguish between real and fake

GAN

- Another key insight of GAN: Train D and G simultaneously as a game
  - Minimax (aka minmax) game
  - More about this later…
- Why is this useful?
  - No need to explicitly model probability distribution of samples
  - G uses random noise as its probability distribution
  - Yields random samples that fit G model

GAN

- Generator G(z) generates fake sample
  - Based on random input z
- Discriminator D(x) gives probability that sample x is real
  - For example, D(x) = 1 means discriminator is certain that sample x is real
- Discriminator must consider both real and fake samples…
- But, generator only makes fake samples

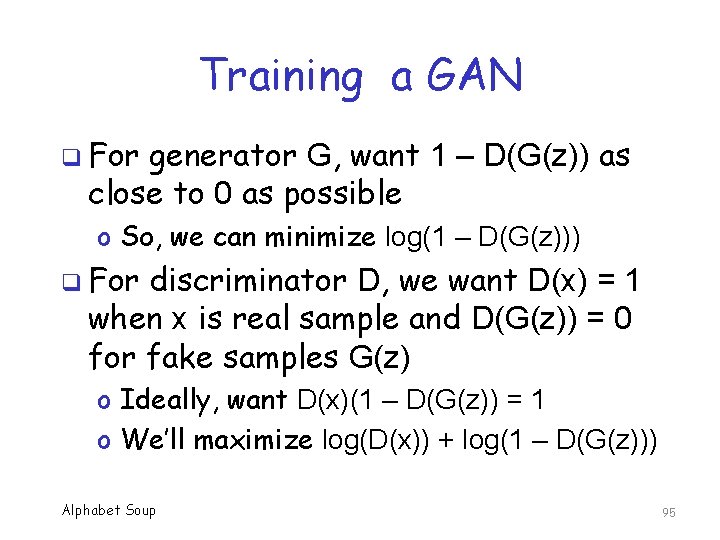

Training a GAN

- For generator G, want 1 - D(G(z)) as close to 0 as possible
  - So, we can minimize log(1 - D(G(z)))
- For discriminator D, we want D(x) = 1 when x is real sample and D(G(z)) = 0 for fake samples G(z)
  - Ideally, want D(x)(1 - D(G(z))) = 1
  - We’ll maximize log(D(x)) + log(1 - D(G(z)))

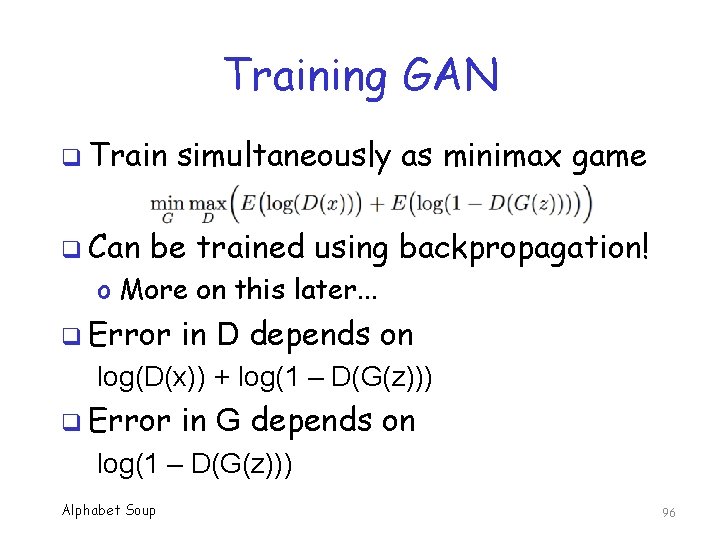

Training GAN

- Train simultaneously as minimax game
- Can be trained using backpropagation!
  - More on this later…
- Error in D depends on log(D(x)) + log(1 - D(G(z)))
- Error in G depends on log(1 - D(G(z)))

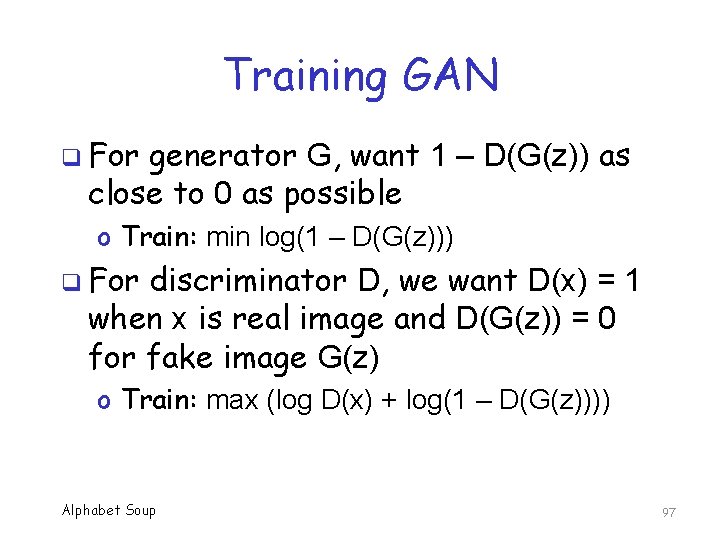

Training GAN

- For generator G, want 1 - D(G(z)) as close to 0 as possible
  - Train: min log(1 - D(G(z)))
- For discriminator D, we want D(x) = 1 when x is real image and D(G(z)) = 0 for fake image G(z)
  - Train: max (log D(x) + log(1 - D(G(z))))

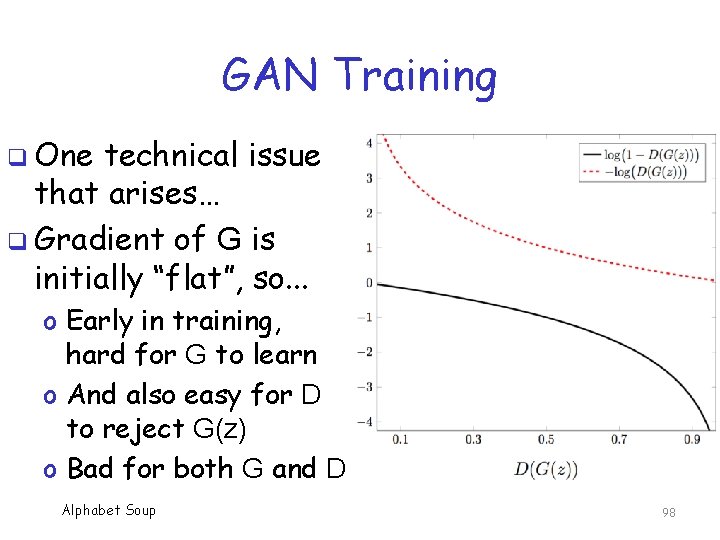

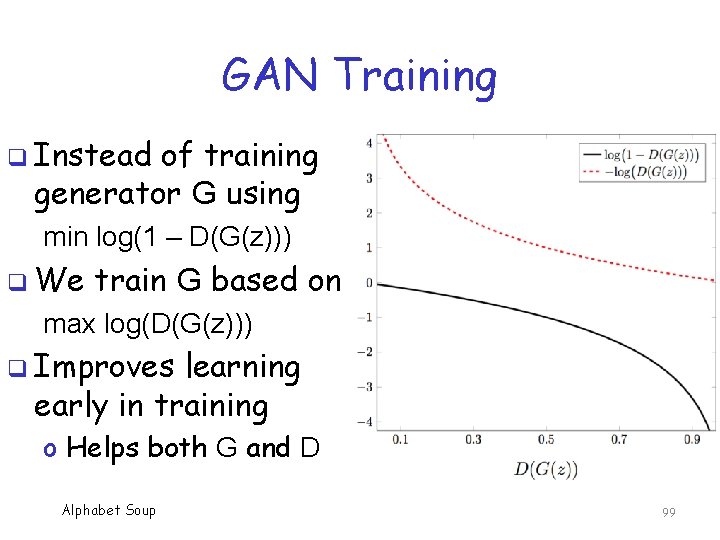

GAN Training

- One technical issue that arises…
- Gradient of G is initially “flat”, so…
  - Early in training, hard for G to learn
  - And also easy for D to reject G(z)
  - Bad for both G and D

GAN Training

- Instead of training generator G using min log(1 - D(G(z)))
- We train G based on max log(D(G(z)))
- Improves learning early in training
  - Helps both G and D
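
The “flat” gradient can be made concrete with a small calculation. Assume, purely for illustration, that the discriminator's output is D = σ(a) for some internal value a (a logit). Then the slope of log(1 - D) with respect to a is -σ(a), while the slope of log(D) is 1 - σ(a). Early in training D easily rejects fakes, so σ(a) is near 0 and the first slope nearly vanishes while the second stays near 1.

```python
import math


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def slope_minimize_form(a):
    """d/da of log(1 - D), with D = sigmoid(a): equals -sigmoid(a)."""
    return -sigmoid(a)


def slope_maximize_form(a):
    """d/da of log(D), with D = sigmoid(a): equals 1 - sigmoid(a)."""
    return 1.0 - sigmoid(a)


for a in (-6.0, -3.0, 0.0):
    # Columns: a, D(G(z)), slope when minimizing log(1 - D), slope when maximizing log(D)
    print(a, sigmoid(a), slope_minimize_form(a), slope_maximize_form(a))
```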

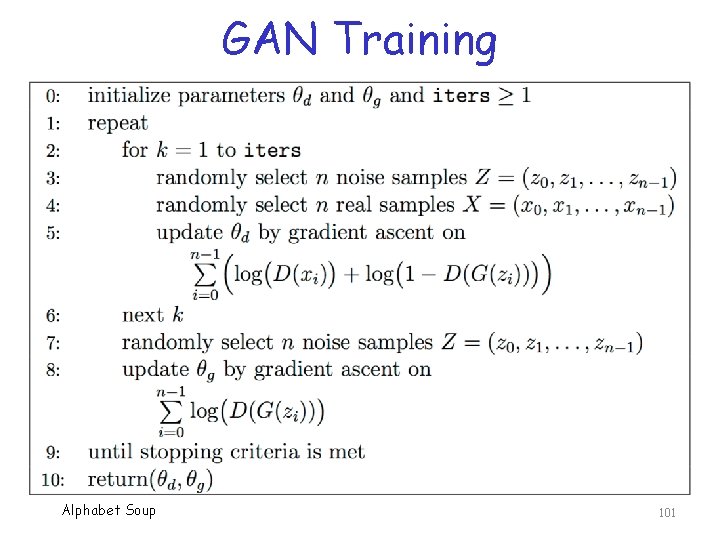

GAN Training

- Randomly select noise samples (z_1, z_2, …, z_m) and real samples (x_1, x_2, …, x_m)
- Update D by gradient ascent based on Σ_i (log D(x_i) + log(1 - D(G(z_i))))
- Randomly select another (z_1, z_2, …, z_m)
- Update G by gradient ascent based on Σ_i log D(G(z_i))
- Repeat
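
The alternating updates can be sketched on a deliberately tiny problem. Everything specific here is an assumption made so the gradients can be written by hand: samples are single numbers, “real” data is drawn from a normal distribution with mean 3, the generator is G(z) = z + θ, and the discriminator is D(x) = σ(wx + b). Real GANs use neural networks and automatic differentiation; this toy only illustrates the order of the steps and makes no claim about convergence.

```python
import math
import random


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def D(x, w, b):
    """Toy discriminator: probability that x is real."""
    return sigmoid(w * x + b)


def G(z, theta):
    """Toy generator: shifts the noise z by theta."""
    return z + theta


def d_objective(w, b, theta, xs, zs):
    return sum(math.log(D(x, w, b)) + math.log(1 - D(G(z, theta), w, b))
               for x, z in zip(xs, zs))


def d_gradient(w, b, theta, xs, zs):
    """Gradient of d_objective with respect to (w, b), derived by hand."""
    gw = gb = 0.0
    for x, z in zip(xs, zs):
        fake_x = G(z, theta)
        real, fake = D(x, w, b), D(fake_x, w, b)
        gw += (1 - real) * x - fake * fake_x
        gb += (1 - real) - fake
    return gw, gb


def g_objective(w, b, theta, zs):
    return sum(math.log(D(G(z, theta), w, b)) for z in zs)


def g_gradient(w, b, theta, zs):
    """Gradient of g_objective with respect to theta, derived by hand."""
    return sum((1 - D(G(z, theta), w, b)) * w for z in zs)


def train(steps=200, m=16, lr=0.05, seed=0):
    rng = random.Random(seed)
    w, b, theta = 0.1, 0.0, 0.0
    for _ in range(steps):
        zs = [rng.gauss(0, 1) for _ in range(m)]     # noise samples
        xs = [rng.gauss(3, 1) for _ in range(m)]     # "real" samples (assumed distribution)
        gw, gb = d_gradient(w, b, theta, xs, zs)
        w, b = w + lr * gw / m, b + lr * gb / m      # gradient ascent on D
        zs = [rng.gauss(0, 1) for _ in range(m)]     # fresh noise for G
        theta += lr * g_gradient(w, b, theta, zs) / m   # gradient ascent on G
    return w, b, theta


print(train())
```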

GAN Training

GAN: Bottom Line

- GAN is one of the most innovative recent ideas in field of ML/DL
  - Idea has been previously considered, but generating fake samples was a problem
- Game theory concepts are key to GAN
- Many variations on basic GAN
  - At least 50 different versions
- Powerful, useful, fashionable technique!

Word2Vec

Word2Vec

- Word2Vec embeds “words” (features) into a high-dimensional space
- Captures semantics of the language
- For example, suppose w_0 = “king”, w_1 = “man”, w_2 = “woman”, w_3 = “queen”
- Let V(w_i) be Word2Vec embedding of w_i
- Then V(w_3) is the “closest” vector to V(w_0) − V(w_1) + V(w_2)

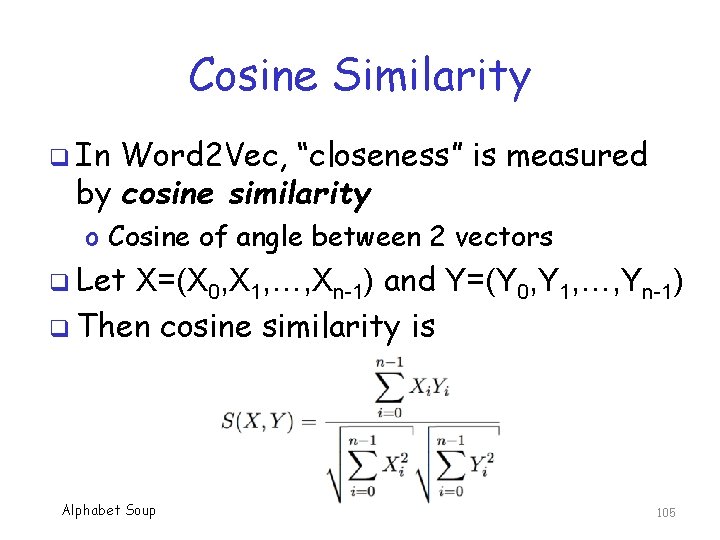

Cosine Similarity

- In Word2Vec, “closeness” is measured by cosine similarity
  - Cosine of angle between 2 vectors
- Let X = (X_0, X_1, …, X_{n-1}) and Y = (Y_0, Y_1, …, Y_{n-1})
- Then cosine similarity is cos(θ) = (X · Y) / (||X|| ||Y||)

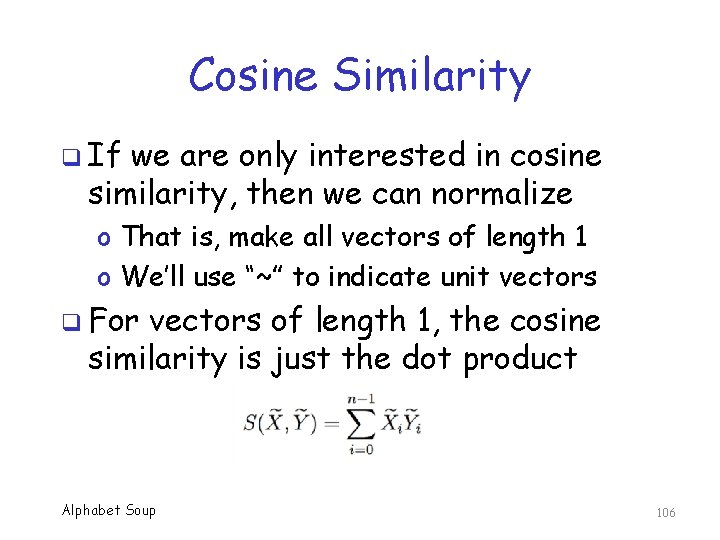

Cosine Similarity

- If we are only interested in cosine similarity, then we can normalize
  - That is, make all vectors of length 1
  - We’ll use “~” to indicate unit vectors
- For vectors of length 1, the cosine similarity is just the dot product
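
Both points, the definition of cosine similarity and the shortcut for unit vectors, can be checked with a short sketch. The vectors are hypothetical and the function names are illustrative.

```python
import math


def dot(X, Y):
    return sum(a * b for a, b in zip(X, Y))


def cosine_similarity(X, Y):
    """Cosine of the angle between X and Y."""
    return dot(X, Y) / (math.sqrt(dot(X, X)) * math.sqrt(dot(Y, Y)))


def normalize(X):
    """Scale X to length 1."""
    length = math.sqrt(dot(X, X))
    return [a / length for a in X]


X = [3.0, 4.0]
Y = [4.0, 3.0]
print(cosine_similarity(X, Y))
print(dot(normalize(X), normalize(Y)))   # same value, computed as a plain dot product
```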

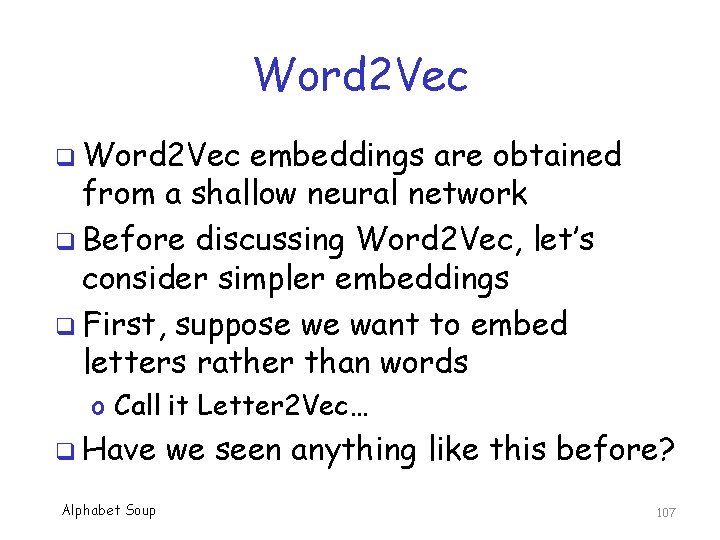

Word2Vec

- Word2Vec embeddings are obtained from a shallow neural network
- Before discussing Word2Vec, let’s consider simpler embeddings
- First, suppose we want to embed letters rather than words
  - Call it Letter2Vec…
- Have we seen anything like this before?

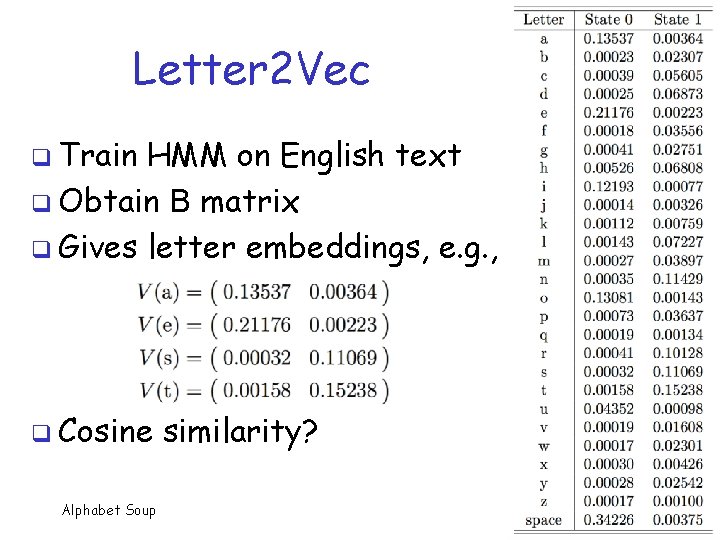

Letter2Vec

- Train HMM on English text
- Obtain B matrix
- Gives letter embeddings, e.g., [values in slide image]
- Cosine similarity?

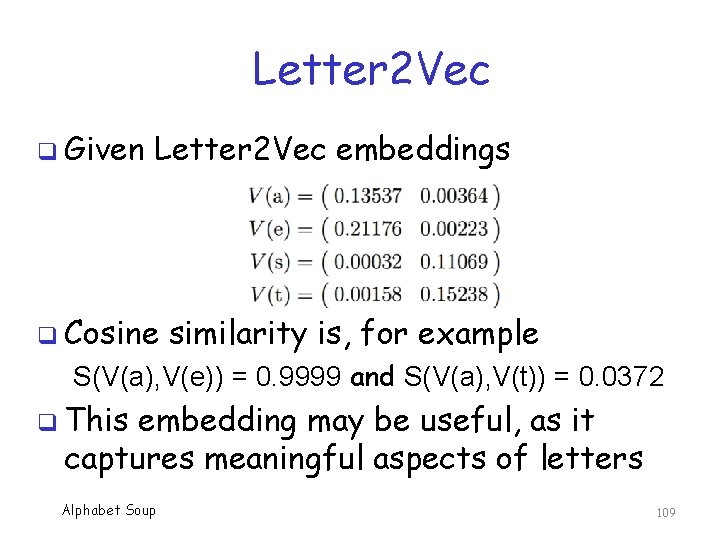

Letter2Vec

- Given Letter2Vec embeddings
- Cosine similarity is, for example, S(V(a), V(e)) = 0.9999 and S(V(a), V(t)) = 0.0372
- This embedding may be useful, as it captures meaningful aspects of letters

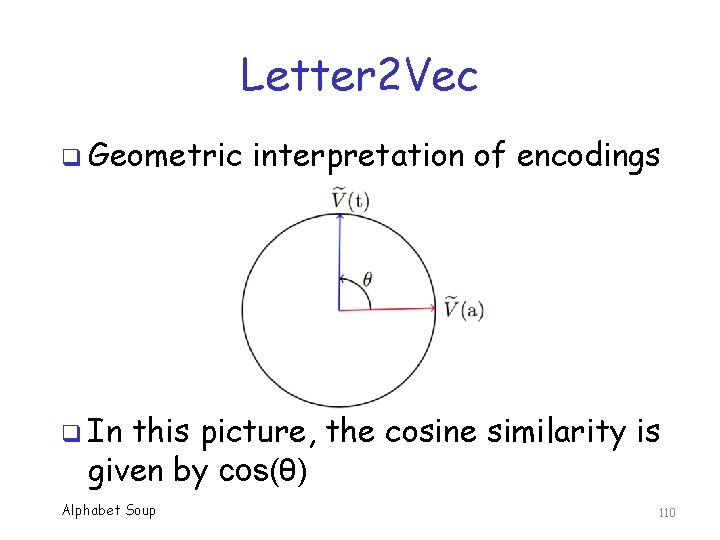

Letter2Vec

- Geometric interpretation of encodings
- In this picture, the cosine similarity is given by cos(θ)

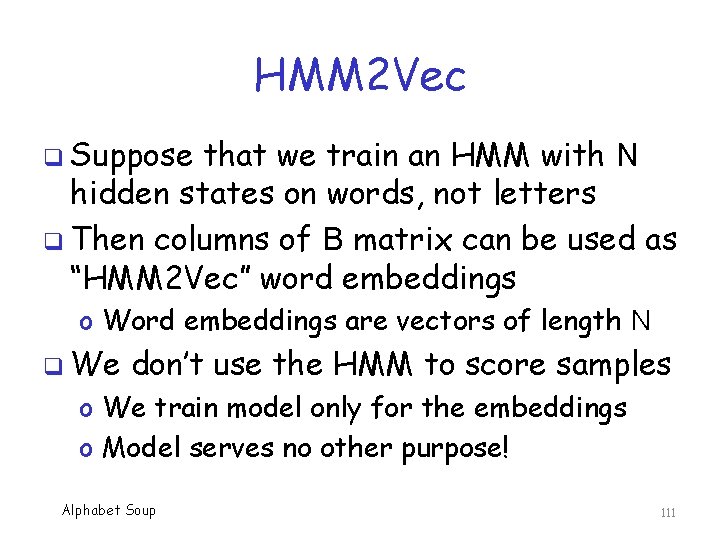

HMM2Vec

- Suppose that we train an HMM with N hidden states on words, not letters
- Then columns of B matrix can be used as “HMM2Vec” word embeddings
  - Word embeddings are vectors of length N
- We don’t use the HMM to score samples
  - We train model only for the embeddings
  - Model serves no other purpose!

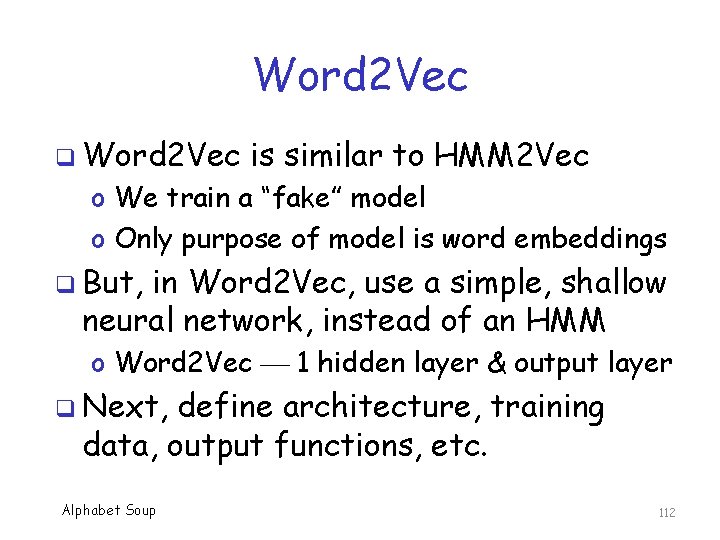

Word2Vec

- Word2Vec is similar to HMM2Vec
  - We train a “fake” model
  - Only purpose of model is word embeddings
- But, in Word2Vec, use a simple, shallow neural network, instead of an HMM
  - Word2Vec: 1 hidden layer & output layer
- Next, define architecture, training data, output functions, etc.

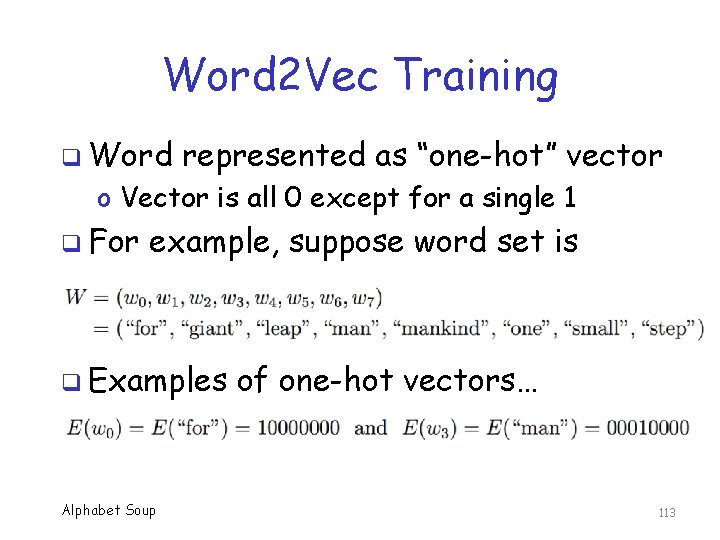

Word2Vec Training

- Word represented as “one-hot” vector
  - Vector is all 0 except for a single 1
- For example, suppose word set is [shown in slide image]
- Examples of one-hot vectors…

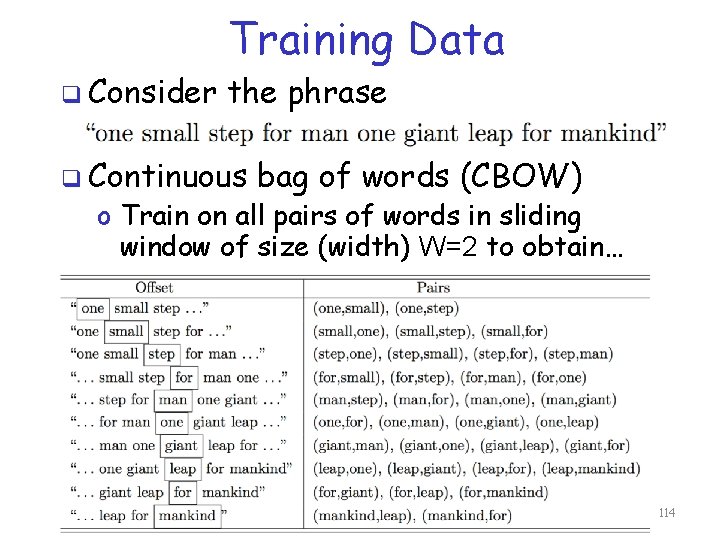

Training Data

- Consider the phrase [shown in slide image]
- Continuous bag of words (CBOW)
  - Train on all pairs of words in sliding window of size (width) W = 2 to obtain…

Training Data

- Each pair of words is treated as an input-output pair for training
- For example
  - (for, man) = (10000000, 00010000)
  - When input is 10000000, we train the model to output 00010000
- Example on previous slide has 34 such training pairs
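
Generating the training pairs is a simple sliding-window loop. The slide's phrase is in an image, so the phrase used below is a guess, chosen only because it reproduces the numbers quoted on the slide (8 distinct words, 34 pairs for W = 2, and the one-hot codes shown for “for” and “man” when the word set is sorted alphabetically). Treat the phrase, the alphabetical ordering, and the function names as assumptions.

```python
def training_pairs(words, W=2):
    """All (input, output) pairs of words at most W positions apart."""
    pairs = []
    for i, word in enumerate(words):
        for j in range(max(0, i - W), min(len(words), i + W + 1)):
            if j != i:
                pairs.append((word, words[j]))
    return pairs


def one_hot(word, vocab):
    """One-hot encoding of word as a string of 0s and 1s."""
    return "".join("1" if v == word else "0" for v in vocab)


# Assumed phrase: consistent with the slide's numbers, not confirmed by the source
phrase = "one small step for man one giant leap for mankind".split()
vocab = sorted(set(phrase))
pairs = training_pairs(phrase, W=2)
print(len(vocab), len(pairs))
print(one_hot("for", vocab), one_hot("man", vocab))
```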

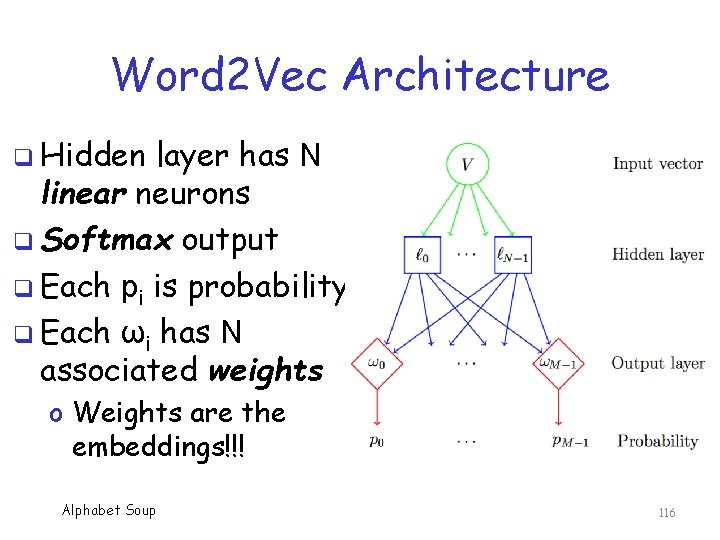

Word2Vec Architecture

- Hidden layer has N linear neurons
- Softmax output
- Each p_i is probability
- Each ω_i has N associated weights
  - Weights are the embeddings!!!

Word2Vec Model

- As with HMM2Vec, in Word2Vec, only interested in the model internals
- Don’t actually use the model to score
- Word2Vec learns context of words
  - Makes embedding vectors very useful
- But to learn context, need HUGE dataset, which requires some tricks…
  - Conceptually simple, difficult to train

Word2Vec Training

- Want to train on HUGE datasets…
- How huge is HUGE? State of the art…
  - About T = 10^9 training samples
  - Vocabulary of size M = 10k
  - Embedding vectors of size N = 300
- This is a ginormous model!
- How to train Word2Vec model of this magnitude?

Word2Vec Training Tricks

- Two main tricks used in Word2Vec…
- Subsampling
  - No need to sample common words like “the” as frequently as they appear in text
- Negative sampling
  - When training a neural net, each sample can potentially affect all weights
  - This would be too costly in Word2Vec
  - So, how to reduce the work?

Negative Sampling

- Again, when training a neural net, each sample can affect all of the weights
- Suppose output is word w_j
- In negative sampling, we adjust weights ω_j of “positive” sample w_j
- Then randomly select (small) subset from M-1 “negative” samples
  - Adjust weights of selected samples only

Word2Vec: Bottom Line

- Word2Vec is powerful and useful tool in natural language processing (NLP)
  - Also has uses in ML/DL more generally
- Train a “fake” shallow network
  - Then use weights as word embeddings
- Basic idea is pretty straightforward
- But, complex due to tricks needed to train on HUGE datasets

Deep Learning Mini Topics

Transfer Learning

Simple Substitution Cipher

- Assume that we want to train HMM on simple substitution ciphertext
  - Let’s use N = 26 hidden states
  - Then hidden states (the A matrix) should correspond to plaintext letters
- Suppose that we’ve already trained HMM with N = 26 on English
  - Then we can use this A matrix for the simple substitution problem

Transfer Learning

- Previous slide gives simple example of transfer learning with HMM
- Transfer learning popular for CNN
- Suppose we train a CNN on a large collection of generic images
  - Learning contained in hidden layers should be similar for other problems
  - Can reuse these hidden layers for other image problems, just retrain output layer

Transfer Learning with HMM

- Advantage of transfer learning?
- Can save vast amount of training time
- Training deep network on lots of data is extremely costly!!
- Example application: Detect malware by treating exe files as images
  - CNN with transfer learning works remarkably well for this problem

Ensembles

Ensemble Techniques

- Ensemble is combination of techniques
- We categorize ensembles as follows…
- Bagging
  - Generate classifiers based on subsets of data and/or features (e.g., Random Forest)
- Boosting
  - Combine classifiers into strong one (e.g., AdaBoost)
- Stacking
  - Combine disparate techniques using meta-classifier

Ensembles

- Note that stacking is more general than bagging or boosting
- Most recent competitions won using ensembles (Kaggle, KDD Cup, etc.)
- But ensembles can be impractical!
  - Netflix prize is a good example…
  - After paying $1M to winners, system was never fully implemented by Netflix!

Combo Architectures

Combination Architectures

- Sometimes combine techniques into one unified architecture
- Suppose we want to caption images…
  - Train CNN on images
  - Train an LSTM on captions
  - Combine these models by training output layer for captioning images
  - Result is an example of a so-called “encoder-decoder” model

Combination Architectures

- As another example, DNN-HMM
- Train a deep neural network
- Apply DNN to sequence of inputs
- Use results of final hidden layer of DNN as input to HMM
  - Then HMM acts as output layer for DNN
  - Advantage is that HMM is more analyzable
  - Might give better understanding of errors

Combination Architectures

- As yet another example, LSTHMM
- In LSTM, use cell state to “remember”
- HMM can be viewed as a store of long-term information
- Some interesting issues…
  - How to combine HMM & hidden state h_t?
  - How to update HMM?
- Lots of experimentation needed…

TF-IDF

TF-IDF

- Designed to automatically extract index terms from a set of documents
  - Other uses (e.g., malware detection)
- TF == Term Frequency
  - Relative frequency of term in a document
- IDF == Inverse Document Frequency
  - Fraction of documents that have the term
  - Reciprocal is the IDF (almost)
  - “Almost”, since log used in IDF calculation

TF-IDF

- Several ways to compute TF-IDF
  - We give one popular formula here…
- Let D = {d_0, d_1, …, d_{n-1}} be set of documents
  - And T = {t_0, t_1, …, t_{M-1}} is set of all distinct terms in D
  - And N_i is number of terms in document d_i
  - And N_{i,j} is the number of times that term t_j appears in document d_i

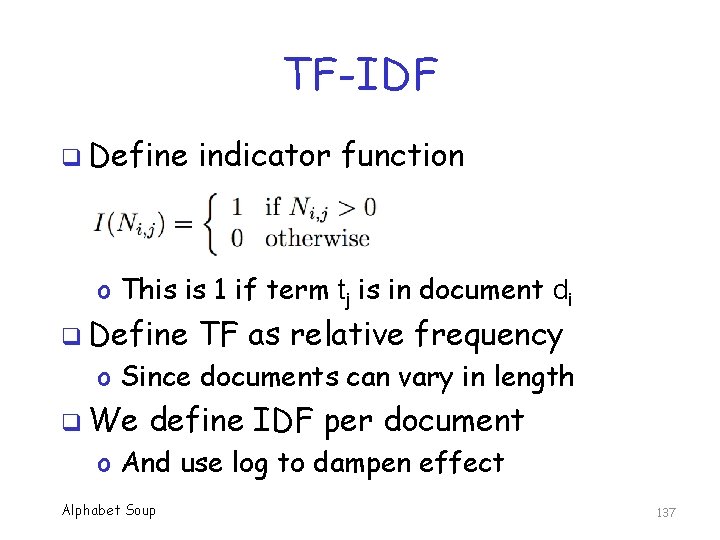

TF-IDF

- Define indicator function [equation in slide image]
  - This is 1 if term t_j is in document d_i
- Define TF as relative frequency [equation in slide image]
  - Since documents can vary in length
- We define IDF per document [equation in slide image]
  - And use log to dampen effect

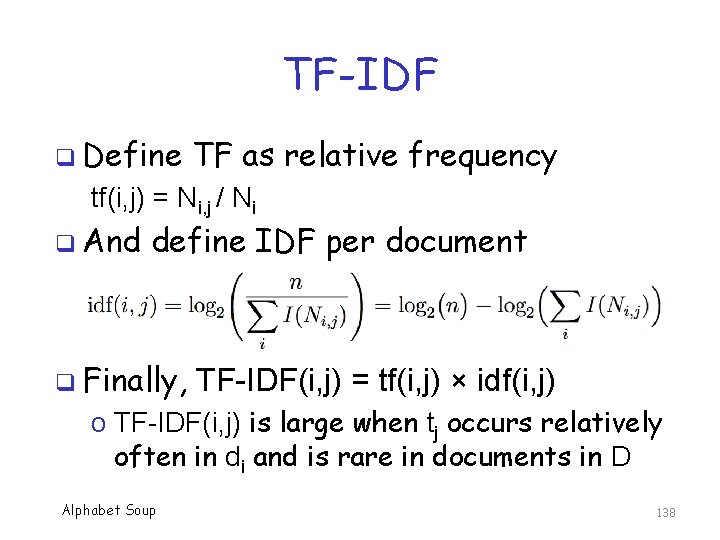

TF-IDF

- Define TF as relative frequency tf(i, j) = N_{i,j} / N_i
- And define IDF per document [equation in slide image]
- Finally, TF-IDF(i, j) = tf(i, j) × idf(i, j)
  - TF-IDF(i, j) is large when t_j occurs relatively often in d_i and is rare in documents in D
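
The computation can be sketched as follows. The TF part follows the slide, tf(i, j) = N_{i,j} / N_i. The slide's IDF formula is in an image, so the sketch assumes one common form, the log of n divided by the number of documents that contain the term; other variants exist. The documents and the function name are hypothetical.

```python
import math


def tf_idf(docs):
    """docs is a list of documents, each a list of terms; returns one score dict per document."""
    n = len(docs)
    doc_freq = {}                       # number of documents containing each term
    for d in docs:
        for t in set(d):
            doc_freq[t] = doc_freq.get(t, 0) + 1
    scores = []
    for d in docs:
        # tf = N_ij / N_i, idf = log(n / document frequency) (assumed form)
        scores.append({t: (d.count(t) / len(d)) * math.log(n / doc_freq[t])
                       for t in set(d)})
    return scores


docs = [["deep", "learning", "deep"], ["deep", "soup"]]   # hypothetical documents
for row in tf_idf(docs):
    print(row)
```

A term that appears in every document scores 0 under this form of IDF, no matter how often it occurs, while a term confined to one document keeps a positive score.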

Summary

Summary

- Here, we have covered a wide variety of deep learning topics in some detail
  - CNN, RNN (including LSTM & GRU), ResNet, GAN, Word2Vec
- Covered several more in less detail
  - Transfer learning, ensembles, etc.
- Goal was to provide enough detail to understand basic concepts
  - Without getting bogged down in trivialities

References

CNN References

- A. Karpathy, Convolutional neural networks for visual recognition, http://cs231n.github.io/convolutional-networks/
- A. Deshpande, A beginner’s guide to understanding convolutional neural networks, https://adeshpande3.github.io/A-Beginner%27s-Guide-To-Understanding-Convolutional-Neural-Networks/
- D. Cornelisse, An intuitive guide to convolutional neural networks, https://medium.freecodecamp.org/an-intuitive-guide-to-convolutional-neural-networks-260c2de0a050

RNN & LSTM References

- F.-F. Li, J. Johnson, and S. Yeung, Lecture 10: Recurrent Neural Networks, http://cs231n.stanford.edu/slides/2017/cs231n_2017_lecture10.pdf
- A. Narwekar and A. Pampari, Recurrent neural network architectures, http://slazebni.cs.illinois.edu/spring17/lec20_rnn.pdf

ResNet References

- K. He, X. Zhang, S. Ren, and J. Sun, Deep residual learning for image recognition, https://arxiv.org/pdf/1512.03385.pdf

GAN References

- I. J. Goodfellow, et al., Generative adversarial nets, in Proceedings of the 27th International Conference on Neural Information Processing Systems, NIPS’14, pp. 2672-2680
- F.-F. Li, J. Johnson, and S. Yeung, Lecture 13: Generative Models, http://cs231n.stanford.edu/slides/2017/cs231n_2017_lecture13.pdf

Word2Vec References

- C. McCormick, Word2vec tutorial - The skip-gram model, http://mccormickml.com/2016/04/19/word2vec-tutorial-the-skip-gram-model
- T. Mikolov, et al., Efficient estimation of word representations in vector space, https://arxiv.org/abs/1301.3781
- T. Mikolov, et al., Distributed representations of words and phrases and their compositionality, https://papers.nips.cc/paper/5021-distributed-representations-of-words-and-phrases-and-their-compositionality.pdf

Best Reference (Of Course…)

- M. Stamp, Alphabet soup of deep learning topics, http://www.cs.sjsu.edu/~stamp/RUA/alpha.pdf

- Slides: 147