
Synchronization

Process Synchronization • Background • The Critical-Section Problem • Peterson’s Solution • Synchronization Hardware • Mutex • Semaphores • Monitors • Other ways to provide synchronization • Classic Problems of Synchronization • Examples • Process Serializability • Atomic Transactions

Objectives • To introduce the critical-section problem, whose solutions can be used to ensure the consistency of shared data • To present both software and hardware solutions to the critical-section problem

Concurrency • Concurrent access to shared data may result in data inconsistency • Maintaining data consistency requires mechanisms to ensure the orderly execution of cooperating processes

Concurrency … • Concurrent threads/processes (informal) • Two processes are concurrent if they run at the same time or if their execution is interleaved in any order • Asynchronous • The processes require occasional synchronization • Independent • They do not have any reliance on each other • Synchronous • Frequent synchronization with each other – order of execution is guaranteed • Parallel • Processes run at the same time on separate processors

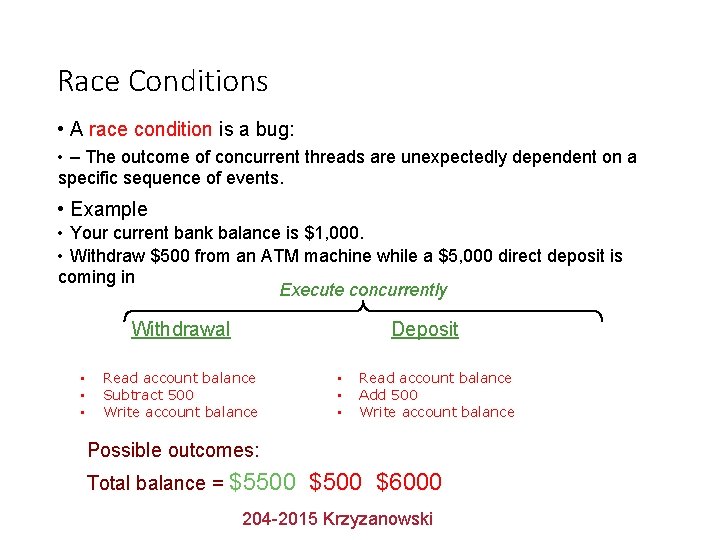

Race Conditions • A race condition is a bug: – The outcome of concurrent threads is unexpectedly dependent on a specific sequence of events. • Example • Your current bank balance is $1,000. • Withdraw $500 from an ATM while a $5,000 direct deposit is coming in • The two operations execute concurrently:
Withdrawal: • Read account balance • Subtract 500 • Write account balance
Deposit: • Read account balance • Add 5000 • Write account balance
Possible outcomes: Total balance = $5500 (correct), $500, or $6000. © 2014-2015 Paul Krzyzanowski

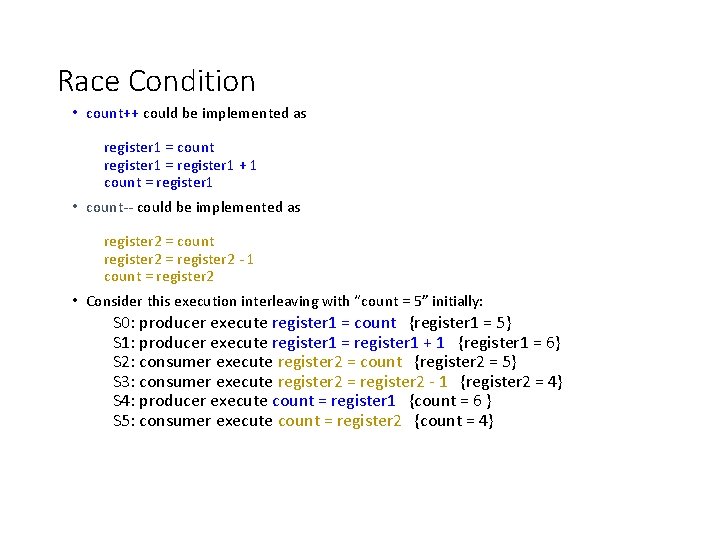

Race Condition • count++ could be implemented as
    register1 = count
    register1 = register1 + 1
    count = register1
• count-- could be implemented as
    register2 = count
    register2 = register2 - 1
    count = register2
• Consider this execution interleaving with “count = 5” initially:
    S0: producer executes register1 = count {register1 = 5}
    S1: producer executes register1 = register1 + 1 {register1 = 6}
    S2: consumer executes register2 = count {register2 = 5}
    S3: consumer executes register2 = register2 - 1 {register2 = 4}
    S4: producer executes count = register1 {count = 6}
    S5: consumer executes count = register2 {count = 4}

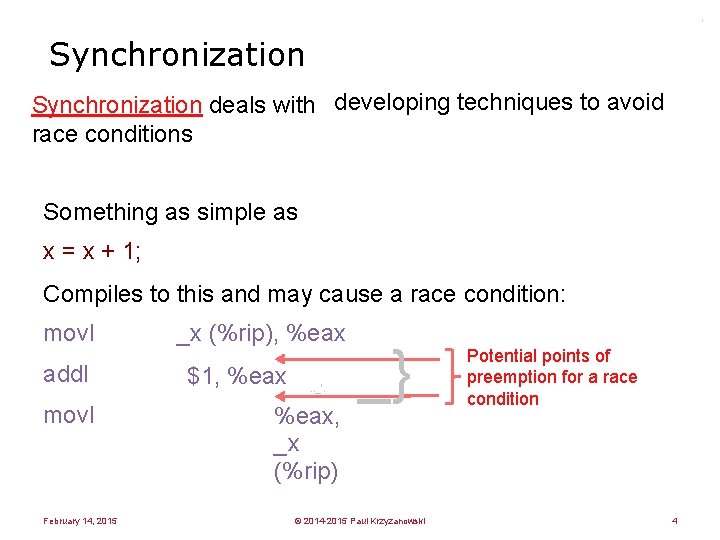

Synchronization deals with developing techniques to avoid race conditions • Something as simple as x = x + 1; compiles to this and may cause a race condition:
    movl _x(%rip), %eax
    addl $1, %eax
    movl %eax, _x(%rip)
• The potential points of preemption for a race condition lie between these instructions. February 14, 2015 © 2014-2015 Paul Krzyzanowski

Critical Section • The section of code that accesses a shared resource and whose execution should be atomic. • Execution should not be interrupted during a critical section

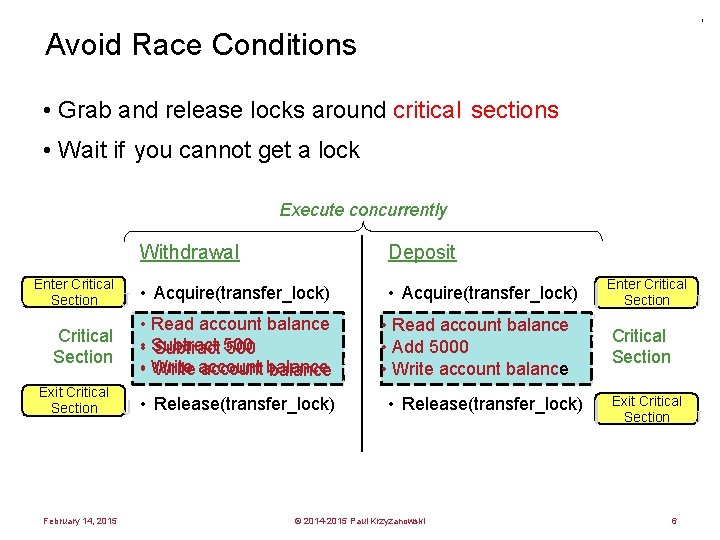

Avoid Race Conditions • Grab and release locks around critical sections • Wait if you cannot get a lock • The two operations execute concurrently:
Withdrawal: • Acquire(transfer_lock) [enter critical section] • Read account balance • Subtract 500 • Write account balance • Release(transfer_lock) [exit critical section]
Deposit: • Acquire(transfer_lock) [enter critical section] • Read account balance • Add 5000 • Write account balance • Release(transfer_lock) [exit critical section]

The Critical Section Problem • Design a protocol to allow threads to enter a critical section

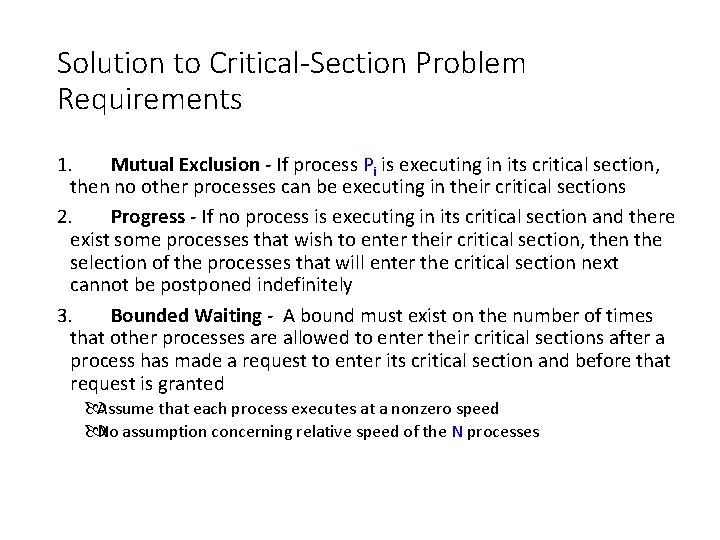

Solution to Critical-Section Problem • Requirements:
1. Mutual Exclusion - If process Pi is executing in its critical section, then no other processes can be executing in their critical sections
2. Progress - If no process is executing in its critical section and there exist some processes that wish to enter their critical section, then the selection of the processes that will enter the critical section next cannot be postponed indefinitely
3. Bounded Waiting - A bound must exist on the number of times that other processes are allowed to enter their critical sections after a process has made a request to enter its critical section and before that request is granted
• Assume that each process executes at a nonzero speed • No assumption is made concerning the relative speed of the N processes

Solution #1: Disable Interrupts • Disable all system interrupts before entering a critical section and re-enable them when leaving • Bad! – Gives the thread too much control over the system – Stops time updates and scheduling – What if the logic in the critical section goes wrong? – What if the critical section has a dependency on some other interrupt, thread, or system call? – What about multiple processors? Disabling interrupts affects just one processor • Advantage – Simple, guaranteed to work – Was often used in uniprocessor kernels

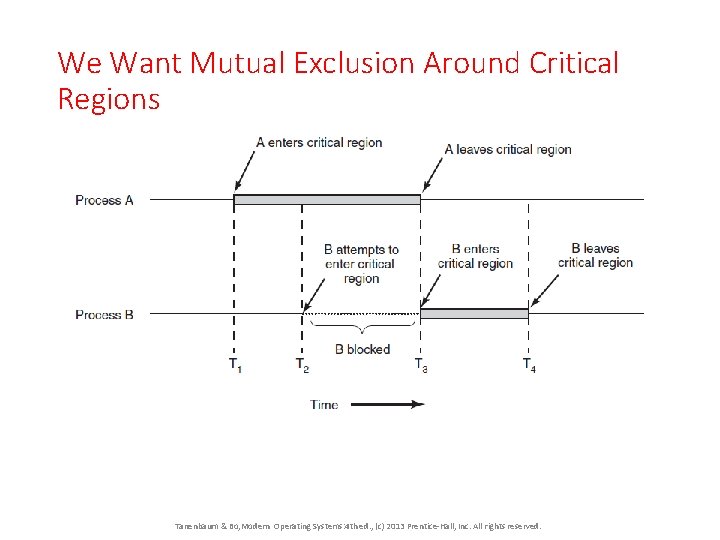

We Want Mutual Exclusion Around Critical Regions • Tanenbaum & Bos, Modern Operating Systems, 4th ed., (c) 2013 Prentice-Hall, Inc. All rights reserved.

Mutual Exclusion with Busy Waiting • Busy wait: while (not my turn); • Critical section; • Indicate my turn is over

Peterson’s Solution • Two process solution • Assume that the LOAD and STORE instructions are atomic; that is, cannot be interrupted. • Peterson’s solution requires that the two processes share two variables: • int turn; • Boolean flag[2] • The variable turn indicates whose turn it is to enter the critical section. • The flag array is used to indicate if a process is ready to enter the critical section. flag[i] = true implies that process Pi is ready!

![Algorithm for Process Pi](http://slidetodoc.com/presentation_image_h/09ac0b1483ebdf55c1ee9635501ef7f4/image-17.jpg)

Algorithm for Process Pi
do {
    flag[i] = TRUE;
    turn = j;
    while (flag[j] && turn == j);
    critical section
    flag[i] = FALSE;
    remainder section
} while (TRUE);
• Pj denotes the other process. With two processes P0 and P1: if I am process 0, then j = 1; if I am process 1, then j = 0.

Peterson’s Disadvantages • Difficult to implement for an arbitrary number of threads • BUSY WAITING • Busy waiting → Consumes processor time • Starvation is possible → Selection of waiting process is arbitrary • Deadlock is possible → If a low-priority process that holds the lock is preempted by a high-priority process that busy-waits for the same lock, the low-priority process may never run to release it

Synchronization Hardware Solution • Many systems provide hardware support for critical section code • Uniprocessors – could disable interrupts • Currently running code would execute without preemption • Generally too inefficient on multiprocessor systems • Operating systems using this are not broadly scalable • Modern machines provide special atomic hardware instructions • Atomic = non-interruptible • Either test a memory word and set its value • Or swap the contents of two memory words

Solution to Critical-section Problem Using Locks do { acquire lock critical section release lock remainder section } while (TRUE);

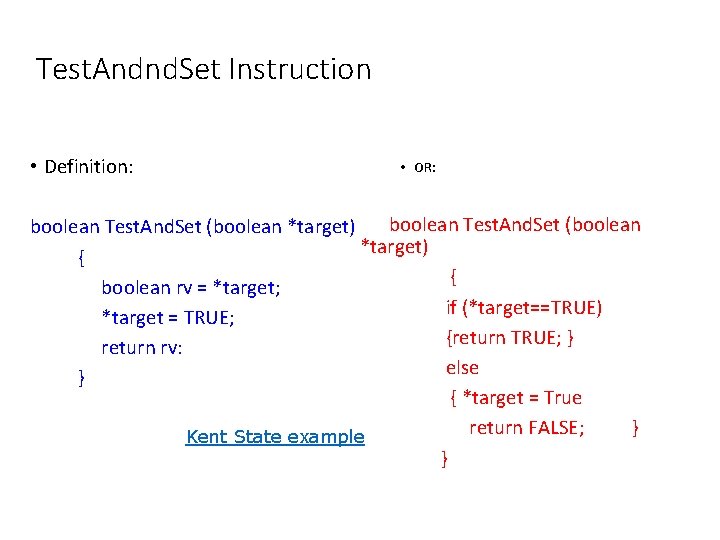

TestAndSet Instruction • Definition:
boolean TestAndSet (boolean *target) {
    boolean rv = *target;
    *target = TRUE;
    return rv;
}
• OR, equivalently (Kent State example):
boolean TestAndSet (boolean *target) {
    if (*target == TRUE) { return TRUE; }
    else { *target = TRUE; return FALSE; }
}

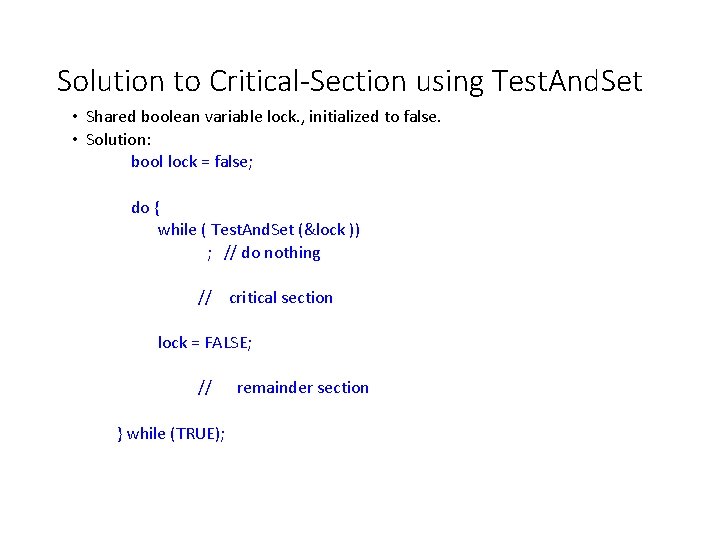

Solution to Critical-Section using TestAndSet • Shared boolean variable lock, initialized to false. • Solution:
bool lock = false;
do {
    while (TestAndSet(&lock))
        ; // do nothing
    // critical section
    lock = FALSE;
    // remainder section
} while (TRUE);

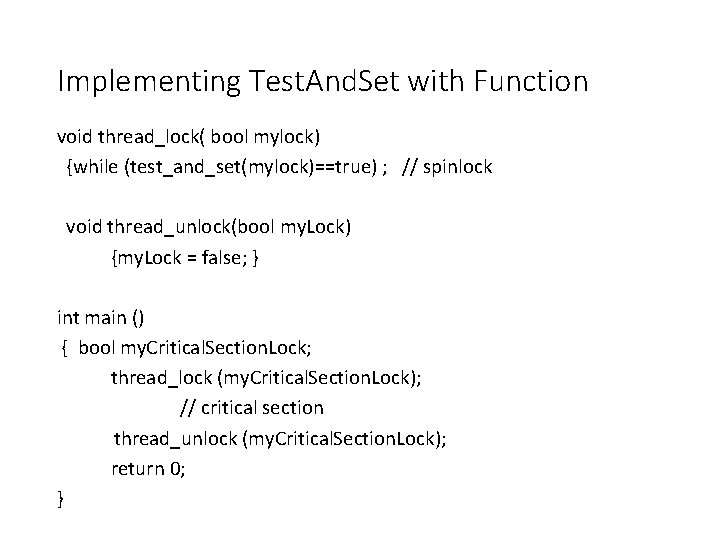

Implementing TestAndSet with Function
// The lock is passed by pointer so that every thread operates on the same variable
void thread_lock(bool *myLock) {
    while (test_and_set(myLock) == true)
        ; // spinlock
}
void thread_unlock(bool *myLock) {
    *myLock = false;
}
int main() {
    bool myCriticalSectionLock = false; // initially unlocked
    thread_lock(&myCriticalSectionLock);
    // critical section
    thread_unlock(&myCriticalSectionLock);
    return 0;
}

Mutex Lock - Mutual Exclusion • A shared variable that can be in one of two states: • Locked • Unlocked • A thread (or process) that needs access to a critical region requests the lock with • mutex_lock • If the lock is available, the thread gets the lock and enters the critical section • If not, the thread is blocked until the mutex is unlocked • If there are multiple threads waiting, the one that is unblocked next is chosen at random

NOT BUSY WAITING • The thread is moved to a wait state until the mutex is released.

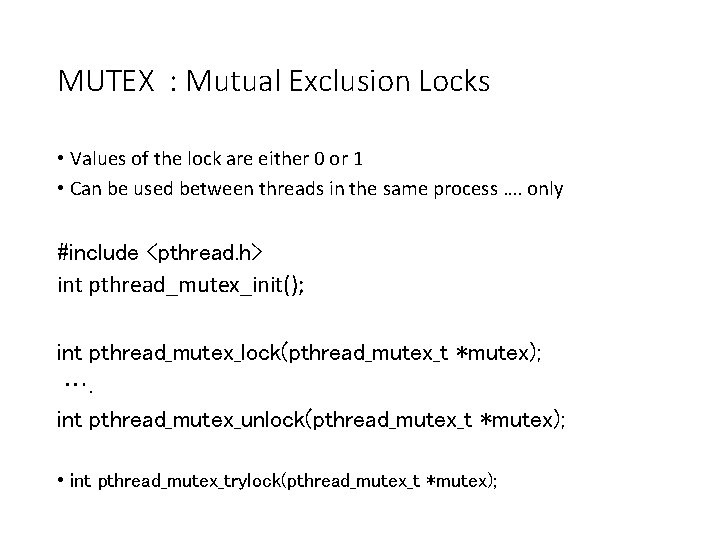

MUTEX: Mutual Exclusion Locks • Values of the lock are either 0 or 1 • By default, can be used only between threads in the same process
#include <pthread.h>
int pthread_mutex_init(pthread_mutex_t *mutex, const pthread_mutexattr_t *attr);
int pthread_mutex_lock(pthread_mutex_t *mutex);
int pthread_mutex_unlock(pthread_mutex_t *mutex);
int pthread_mutex_trylock(pthread_mutex_t *mutex);

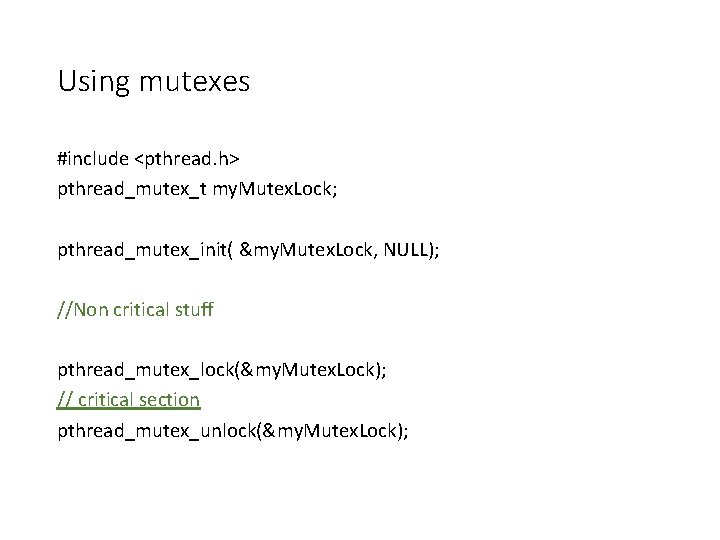

Using mutexes
#include <pthread.h>
pthread_mutex_t myMutexLock;
pthread_mutex_init(&myMutexLock, NULL);
// Non-critical stuff
pthread_mutex_lock(&myMutexLock);
// critical section
pthread_mutex_unlock(&myMutexLock);

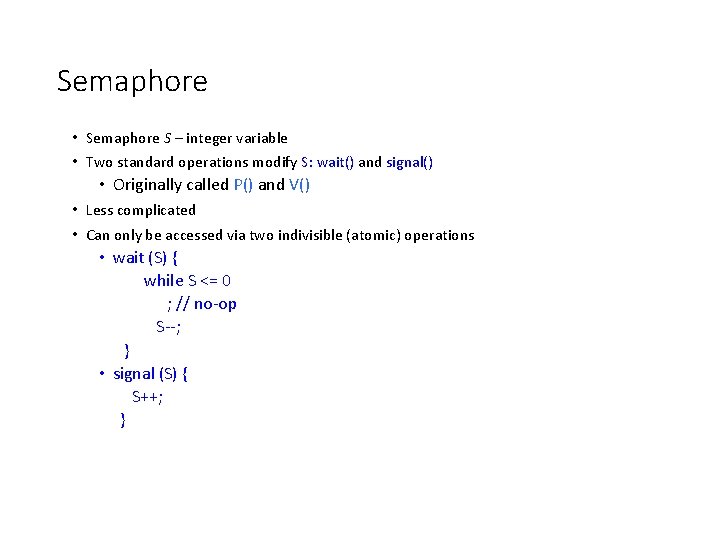

Semaphore • Semaphore S – integer variable • Two standard operations modify S: wait() and signal() • Originally called P() and V() • Less complicated • Can only be accessed via two indivisible (atomic) operations
wait (S) {
    while (S <= 0)
        ; // no-op
    S--;
}
signal (S) {
    S++;
}

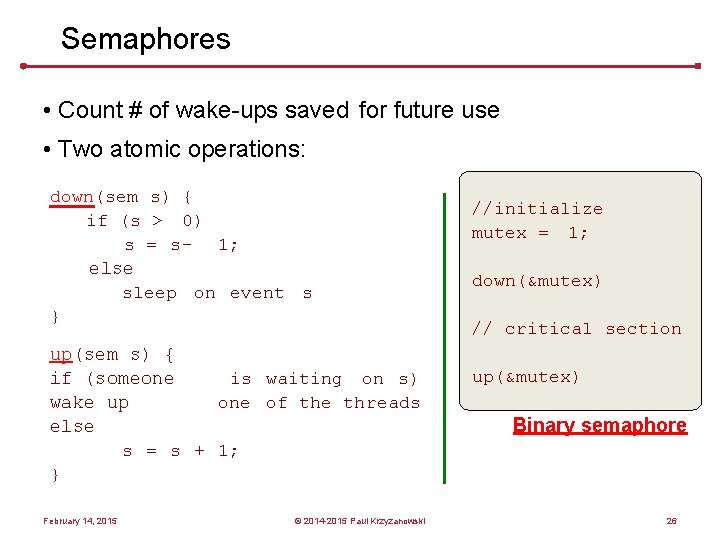

Semaphores • Count # of wake-ups saved for future use • Two atomic operations:
down(sem s) {
    if (s > 0) s = s - 1;
    else sleep on event s
}
up(sem s) {
    if (someone is waiting on s) wake up one of the threads
    else s = s + 1;
}
• Binary semaphore:
// initialize
mutex = 1;
down(&mutex)
// critical section
up(&mutex)

Semaphores • Count the number of threads that may enter a critical section at any given time. – Each down decreases the number of future accesses – When no more are allowed, processes have to wait – Each up lets a waiting process get in

Semaphore as General Synchronization Tool • Counting semaphore – integer value can range over an unrestricted domain • Binary semaphore – integer value can range only between 0 and 1; can be simpler to implement • Also known as mutex locks • Can implement a counting semaphore S as a binary semaphore • Provides mutual exclusion Semaphore mutex; // initialized to 1 do { wait (mutex); // Critical Section signal (mutex); // remainder section } while (TRUE);

Semaphore Implementation • Must guarantee that no two processes can execute wait () and signal () or post() on the same semaphore at the same time • Thus, implementation becomes the critical section problem where the wait and signal code are placed in the critical section. • Could now have busy waiting in critical section implementation • But implementation code is short • Little busy waiting if critical section rarely occupied • Note that applications may spend lots of time in critical sections and therefore this is not a good solution.

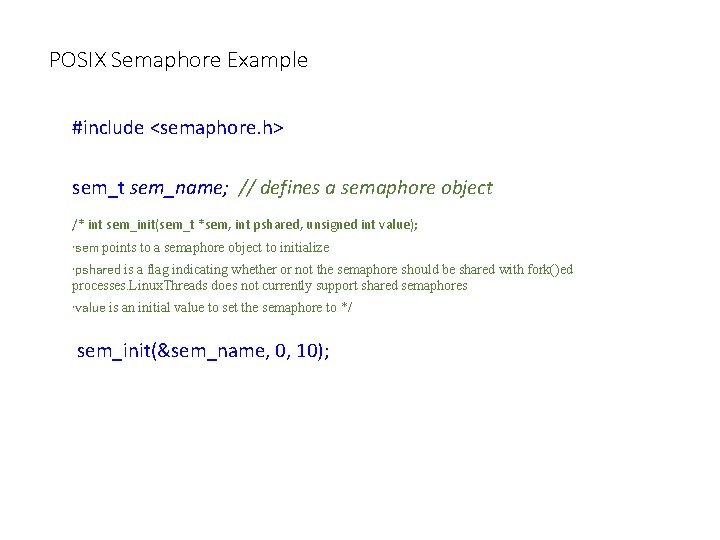

POSIX Semaphore Example
#include <semaphore.h>
sem_t sem_name; // defines a semaphore object
/* int sem_init(sem_t *sem, int pshared, unsigned int value);
   • sem points to a semaphore object to initialize
   • pshared is a flag indicating whether or not the semaphore should be shared with fork()ed processes. LinuxThreads does not currently support shared semaphores
   • value is an initial value to set the semaphore to */
sem_init(&sem_name, 0, 10);

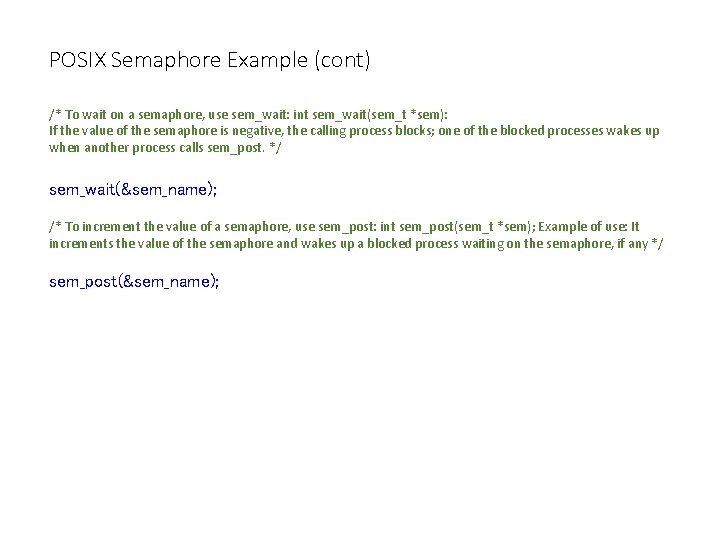

POSIX Semaphore Example (cont)
/* To wait on a semaphore, use sem_wait:
   int sem_wait(sem_t *sem);
   If the value of the semaphore is zero, the calling thread blocks; otherwise the value is decremented. One of the blocked threads wakes up when another thread calls sem_post. */
sem_wait(&sem_name);
/* To increment the value of a semaphore, use sem_post:
   int sem_post(sem_t *sem);
   It increments the value of the semaphore and wakes up a blocked thread waiting on the semaphore, if any. */
sem_post(&sem_name);

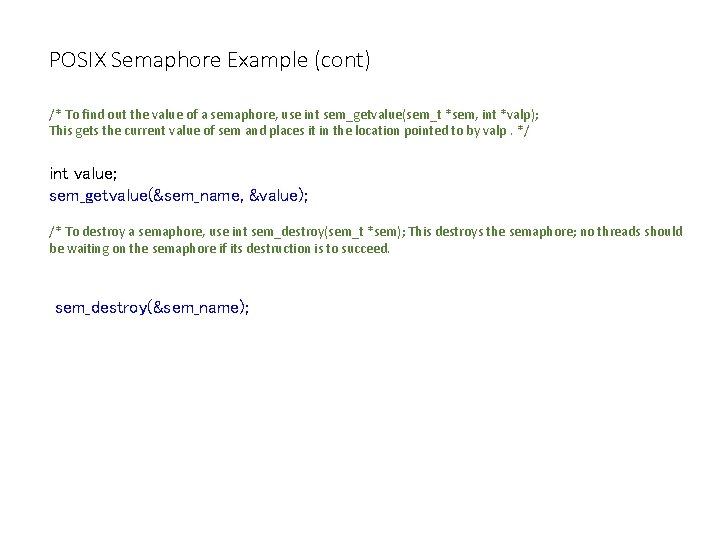

POSIX Semaphore Example (cont)
/* To find out the value of a semaphore, use
   int sem_getvalue(sem_t *sem, int *valp);
   This gets the current value of sem and places it in the location pointed to by valp. */
int value;
sem_getvalue(&sem_name, &value);
/* To destroy a semaphore, use
   int sem_destroy(sem_t *sem);
   This destroys the semaphore; no threads should be waiting on the semaphore if its destruction is to succeed. */
sem_destroy(&sem_name);

Classical Problems of Synchronization • Bounded-Buffer Problem • Readers and Writers Problem • Dining-Philosophers Problem

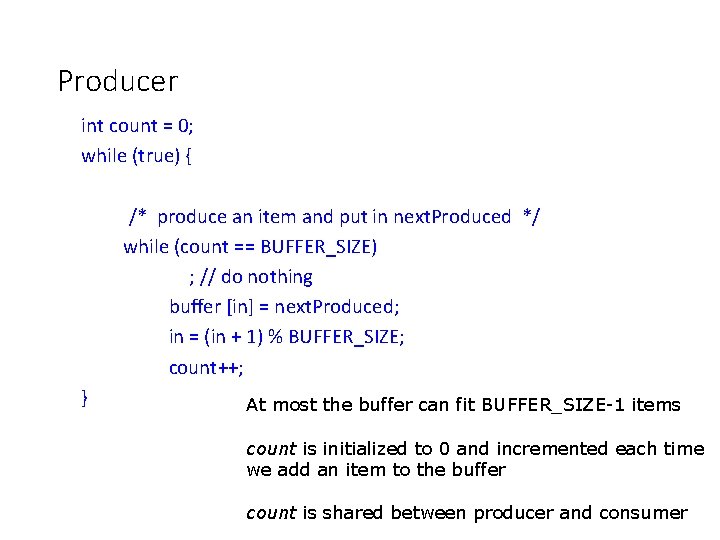

Producer
int count = 0;
while (true) {
    /* produce an item and put it in nextProduced */
    while (count == BUFFER_SIZE)
        ; // do nothing
    buffer[in] = nextProduced;
    in = (in + 1) % BUFFER_SIZE;
    count++;
}
• With count, the buffer can hold up to BUFFER_SIZE items (a version that uses only in and out can hold at most BUFFER_SIZE - 1) • count is initialized to 0 and incremented each time we add an item to the buffer • count is shared between producer and consumer

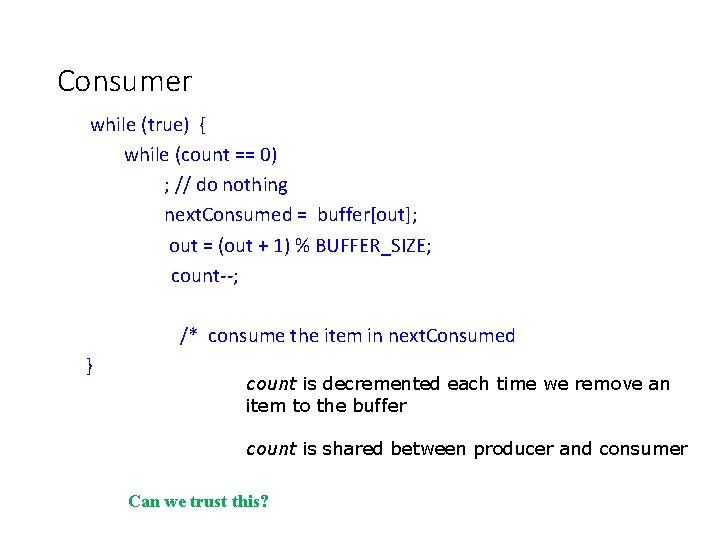

Consumer
while (true) {
    while (count == 0)
        ; // do nothing
    nextConsumed = buffer[out];
    out = (out + 1) % BUFFER_SIZE;
    count--;
    /* consume the item in nextConsumed */
}
• count is decremented each time we remove an item from the buffer • count is shared between producer and consumer • Can we trust this?

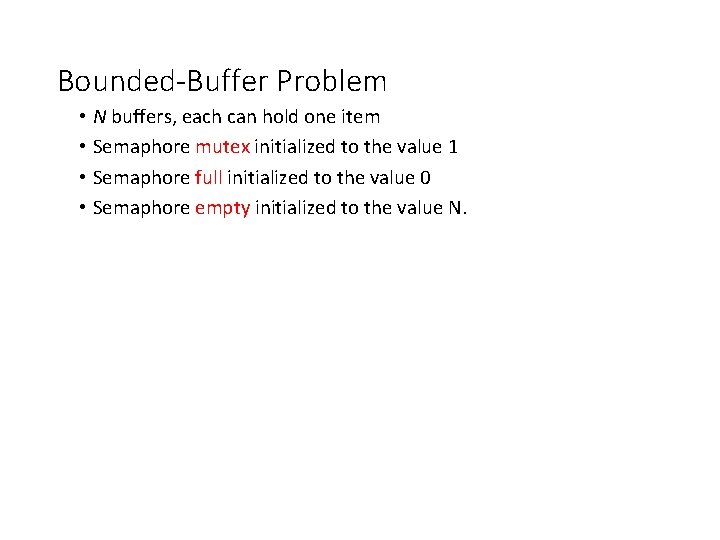

Bounded-Buffer Problem • N buffers, each can hold one item • Semaphore mutex initialized to the value 1 • Semaphore full initialized to the value 0 • Semaphore empty initialized to the value N.

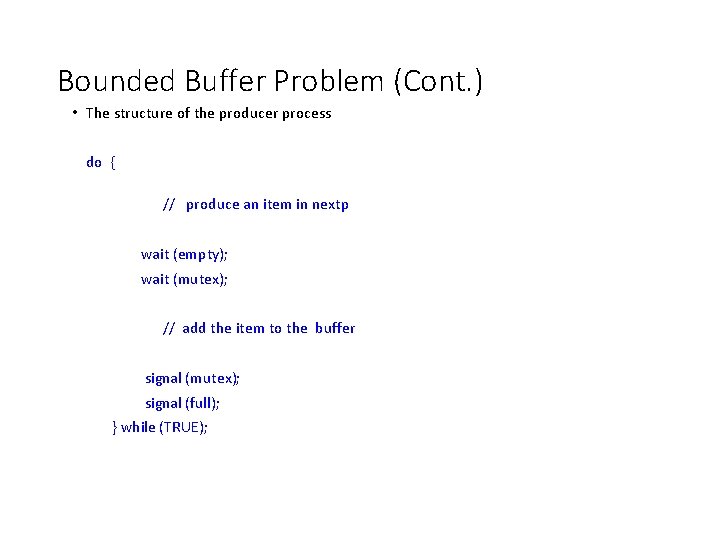

Bounded Buffer Problem (Cont. ) • The structure of the producer process do { // produce an item in nextp wait (empty); wait (mutex); // add the item to the buffer signal (mutex); signal (full); } while (TRUE);

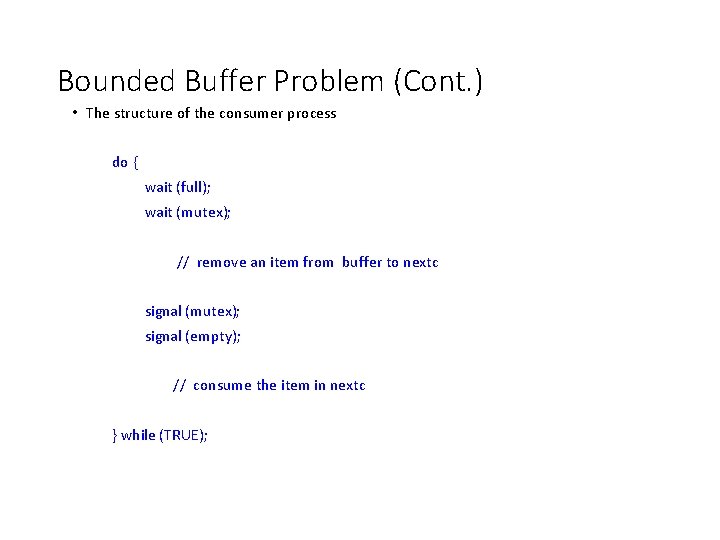

Bounded Buffer Problem (Cont. ) • The structure of the consumer process do { wait (full); wait (mutex); // remove an item from buffer to nextc signal (mutex); signal (empty); // consume the item in nextc } while (TRUE);

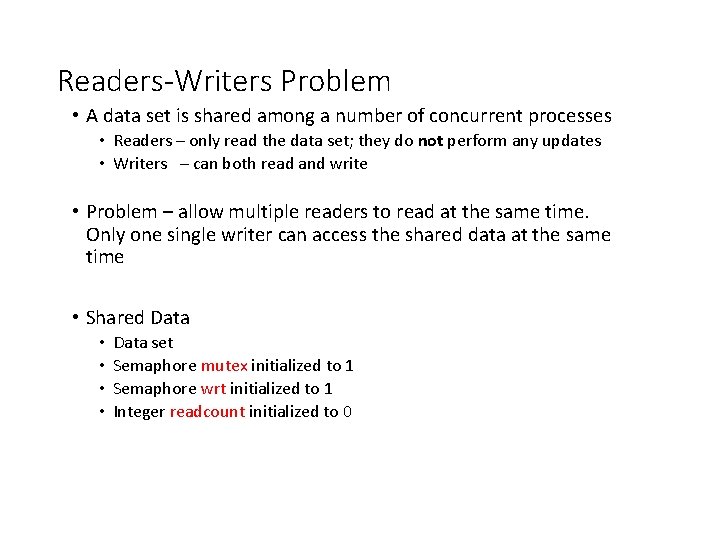

Readers-Writers Problem • A data set is shared among a number of concurrent processes • Readers – only read the data set; they do not perform any updates • Writers – can both read and write • Problem – allow multiple readers to read at the same time. Only one writer can access the shared data at a time • Shared Data • Data set • Semaphore mutex initialized to 1 • Semaphore wrt initialized to 1 • Integer readcount initialized to 0

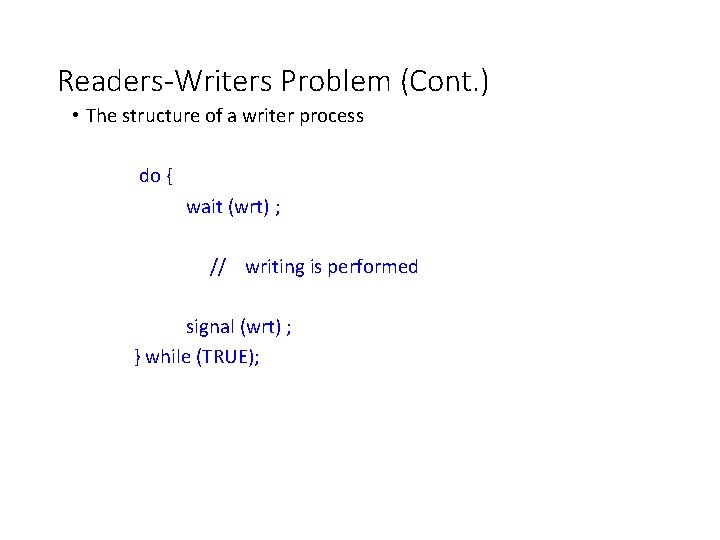

Readers-Writers Problem (Cont. ) • The structure of a writer process do { wait (wrt) ; // writing is performed signal (wrt) ; } while (TRUE);

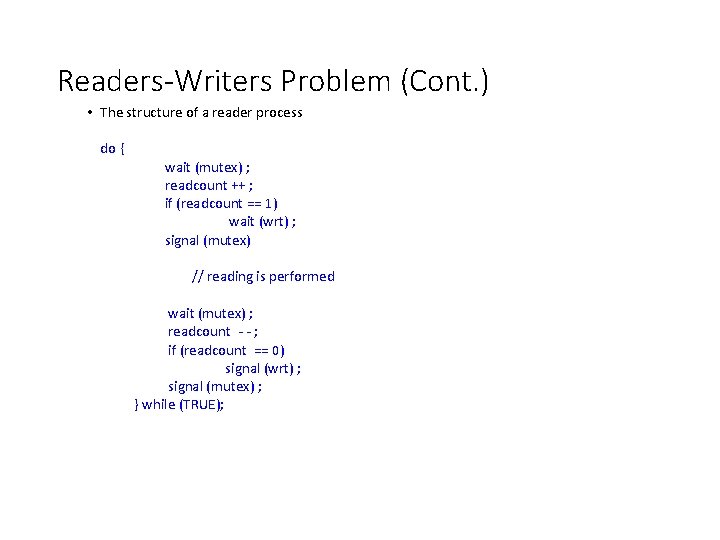

Readers-Writers Problem (Cont.) • The structure of a reader process
do {
    wait (mutex);
    readcount++;
    if (readcount == 1) wait (wrt);   // first reader locks out writers
    signal (mutex);
    // reading is performed
    wait (mutex);
    readcount--;
    if (readcount == 0) signal (wrt); // last reader lets writers in
    signal (mutex);
} while (TRUE);

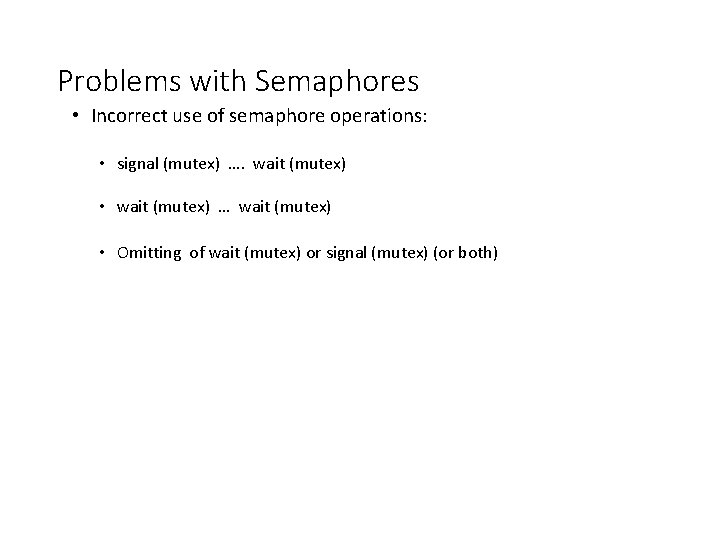

Problems with Semaphores • Incorrect use of semaphore operations: • signal (mutex) …. wait (mutex) • wait (mutex) … wait (mutex) • Omitting of wait (mutex) or signal (mutex) (or both)

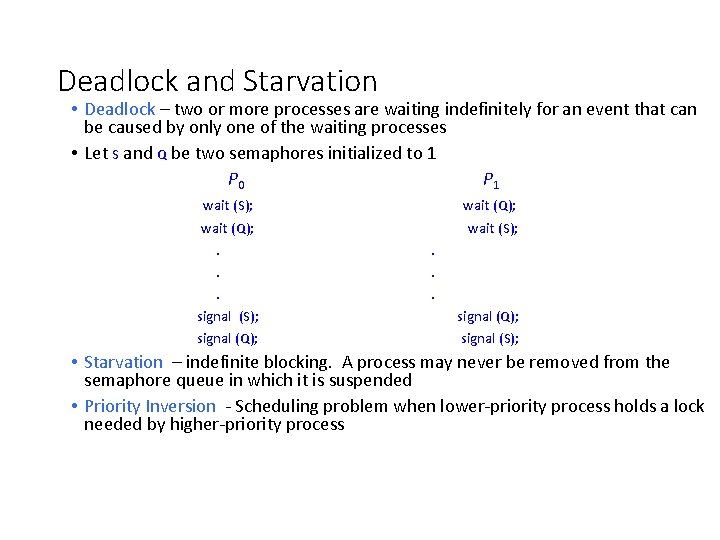

Deadlock and Starvation • Deadlock – two or more processes are waiting indefinitely for an event that can be caused by only one of the waiting processes • Let S and Q be two semaphores initialized to 1
P0: wait (S); wait (Q); . . . signal (S); signal (Q);
P1: wait (Q); wait (S); . . . signal (Q); signal (S);
• Starvation – indefinite blocking. A process may never be removed from the semaphore queue in which it is suspended • Priority Inversion – scheduling problem when a lower-priority process holds a lock needed by a higher-priority process

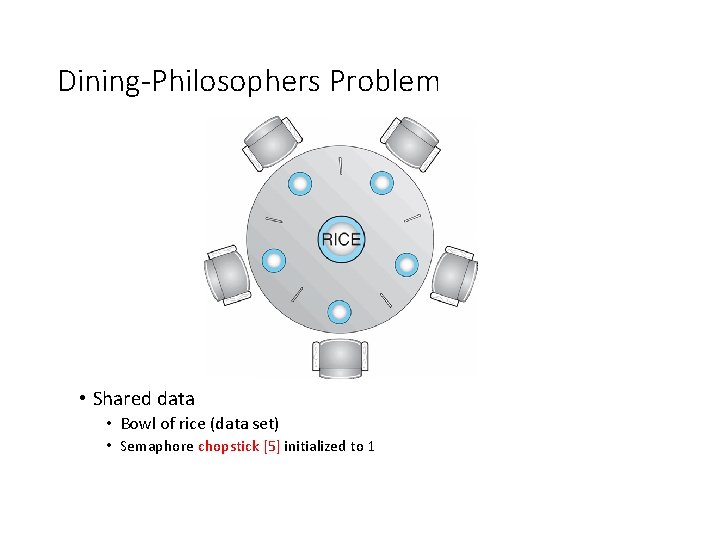

Dining-Philosophers Problem • Shared data • Bowl of rice (data set) • Semaphore chopstick [5] initialized to 1

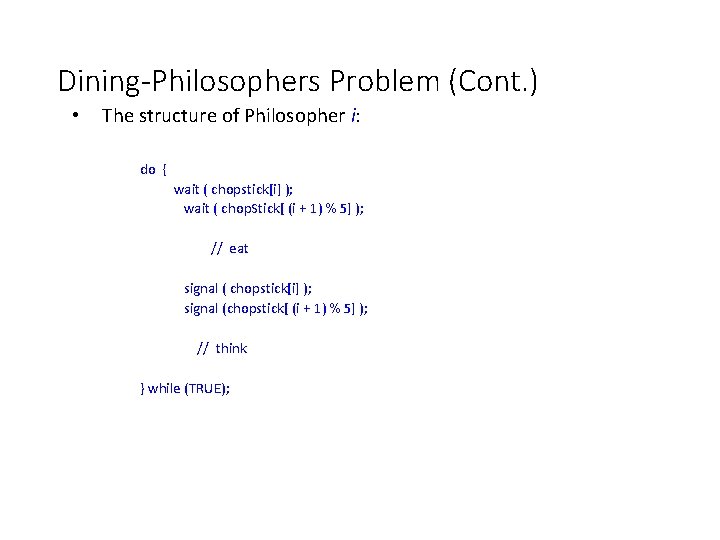

Dining-Philosophers Problem (Cont.) • The structure of Philosopher i:
do {
    wait ( chopstick[i] );
    wait ( chopstick[ (i + 1) % 5] );
    // eat
    signal ( chopstick[i] );
    signal ( chopstick[ (i + 1) % 5] );
    // think
} while (TRUE);

Condition variables • Remember: • A mutex prevents multiple threads from accessing a shared variable at the same time • Condition variable: allows one thread to inform other threads about changes in the state of a shared variable (or resource) and allows other threads to wait (block/sleep) for such notification • While mutexes implement synchronization by controlling thread access to data, condition variables allow threads to synchronize based upon the actual value of data.

Signaling Changes of State: Condition Variables • Condition variables allow one thread to inform other threads about changes in the state of a shared variable or resource, and allow the other threads to wait (block) for such notification • … instead of continually checking

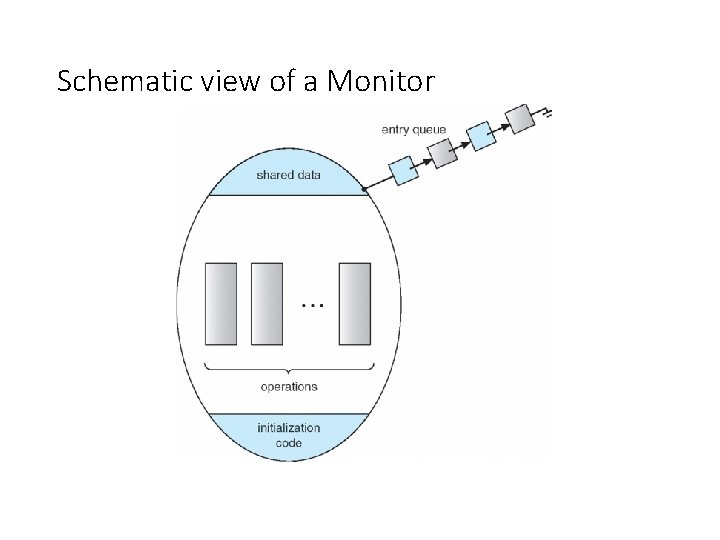

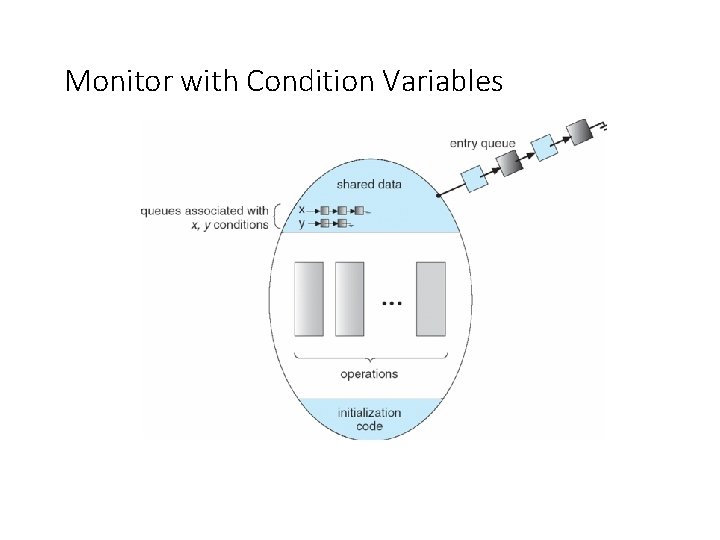

Monitor • Language-supplied construct • A monitor class presents a set of programmer-defined operations (procedures) that are provided mutual exclusion within the monitor • Monitor Properties • Shared data can only be accessed by the monitor’s procedures • Only ONE process at a time can execute within the monitor

Schematic view of a Monitor

Monitor with Condition Variables

![Solution to Dining Philosophers](http://slidetodoc.com/presentation_image_h/09ac0b1483ebdf55c1ee9635501ef7f4/image-54.jpg)

Solution to Dining Philosophers
monitor DP {
    enum { THINKING, HUNGRY, EATING } state[5];
    condition self[5];
    void pickup (int i) {
        state[i] = HUNGRY;
        test(i);
        if (state[i] != EATING) self[i].wait();
    }
    void putdown (int i) {
        state[i] = THINKING;
        // test left and right neighbors
        test((i + 4) % 5);
        test((i + 1) % 5);
    }

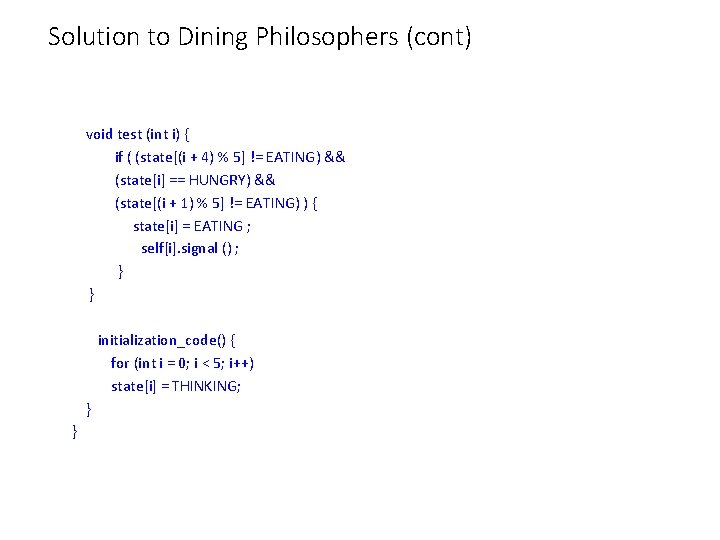

Solution to Dining Philosophers (cont)
    void test (int i) {
        if ( (state[(i + 4) % 5] != EATING) && (state[i] == HUNGRY) && (state[(i + 1) % 5] != EATING) ) {
            state[i] = EATING;
            self[i].signal();
        }
    }
    initialization_code() {
        for (int i = 0; i < 5; i++)
            state[i] = THINKING;
    }
}

Solution to Dining Philosophers (cont) • Each philosopher i invokes the operations pickup() and putdown() in the following sequence: DiningPhilosophers.pickup (i); EAT DiningPhilosophers.putdown (i);

CONDITION VARIABLES USING PTHREADS • The principal operations are signal and wait • Two operations on a condition variable: • pthread_cond_wait() – the thread that invokes the operation releases the associated mutex and is suspended • pthread_cond_signal() – resumes one of the threads (if any) that invoked pthread_cond_wait()

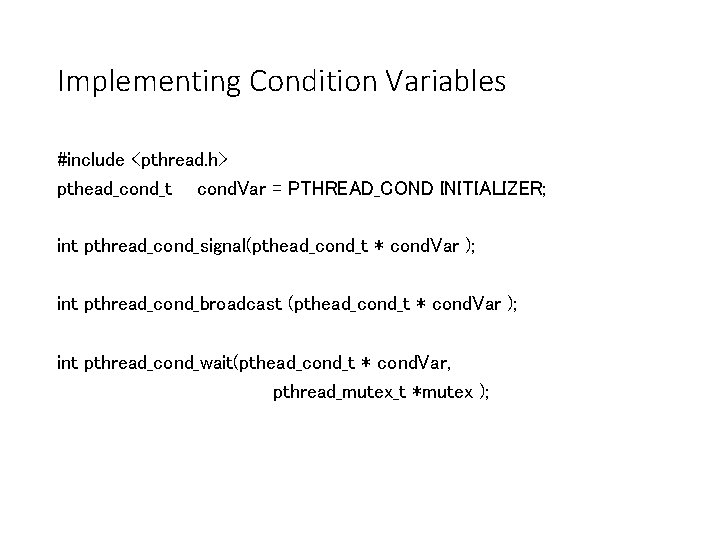

Implementing Condition Variables
#include <pthread.h>
pthread_cond_t condVar = PTHREAD_COND_INITIALIZER;
int pthread_cond_signal(pthread_cond_t *condVar);
int pthread_cond_broadcast(pthread_cond_t *condVar);
int pthread_cond_wait(pthread_cond_t *condVar, pthread_mutex_t *mutex);