CS 6045: Advanced Algorithms
Dynamic Programming

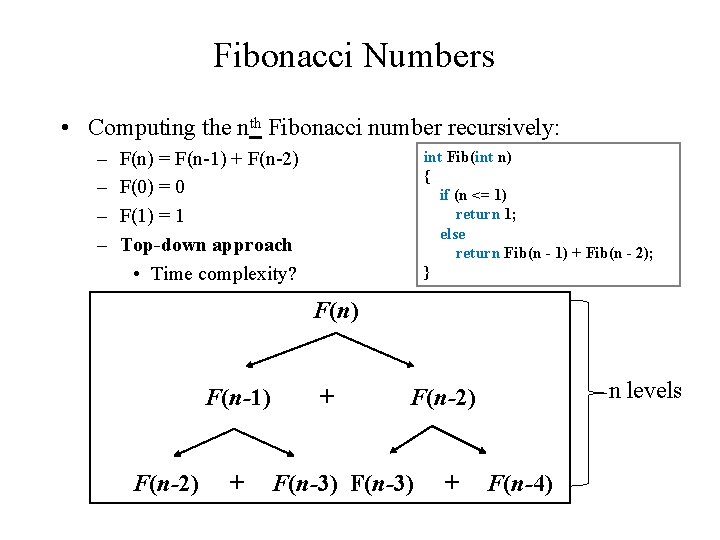

Fibonacci Numbers
• Computing the nth Fibonacci number recursively (top-down approach):
  – F(n) = F(n-1) + F(n-2)
  – F(0) = 0
  – F(1) = 1
• Time complexity?

    int Fib(int n) {
        if (n <= 1)
            return n;   /* base cases: F(0) = 0, F(1) = 1 */
        else
            return Fib(n - 1) + Fib(n - 2);
    }

[Figure: recursion tree with about n levels. F(n) splits into F(n-1) and F(n-2), F(n-1) splits into F(n-2) and F(n-3), and so on.]

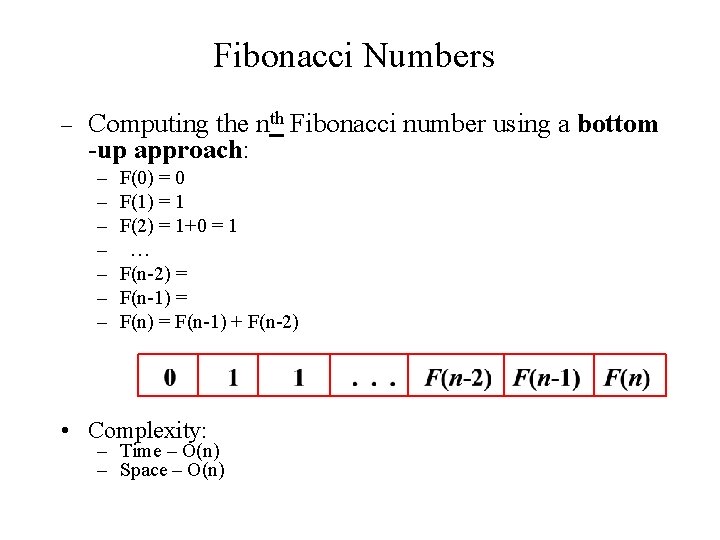

Fibonacci Numbers
• Computing the nth Fibonacci number using a bottom-up approach:
  – F(0) = 0
  – F(1) = 1
  – F(2) = 1 + 0 = 1
  – …
  – F(n) = F(n-1) + F(n-2)
• Complexity:
  – Time: O(n)
  – Space: O(n)
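The bottom-up computation can be sketched in Python as follows. This is a minimal illustration, not part of the original slides; the function name `fib_bottom_up` and the list `table` are assumptions made for the example.

```python
def fib_bottom_up(n: int) -> int:
    """Return F(n) by filling a table from F(0) upward (O(n) time, O(n) space)."""
    if n < 0:
        raise ValueError("n must be non-negative")
    table = [0] * (n + 1)          # table[i] will hold F(i); table[0] = F(0) = 0
    if n >= 1:
        table[1] = 1               # F(1) = 1
    for i in range(2, n + 1):
        # Each entry is computed once from the two entries before it.
        table[i] = table[i - 1] + table[i - 2]
    return table[n]
```

Only the last two entries are ever read, so the table could be replaced by two variables to bring the space down to O(1).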

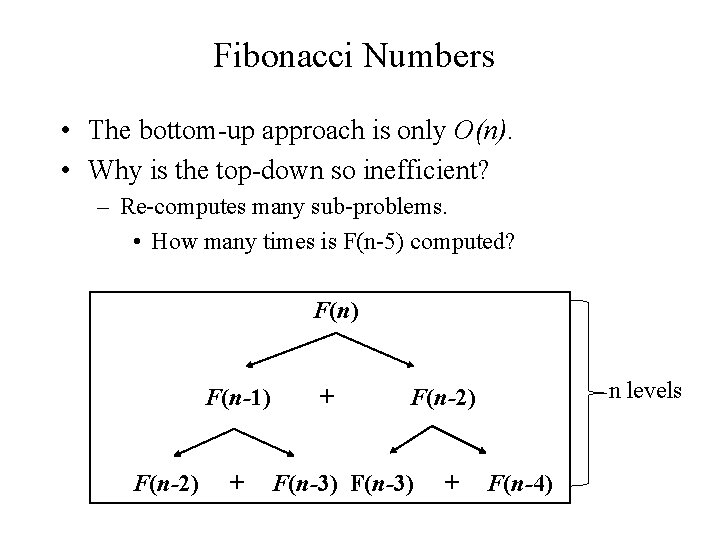

Fibonacci Numbers
• The bottom-up approach takes only O(n) time.
• Why is the top-down approach so inefficient?
  – It re-computes many sub-problems.
• How many times is F(n-5) computed?
[Figure: the same recursion tree, about n levels deep, in which F(n-2), F(n-3), F(n-4), … each appear more than once.]
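To make the re-computation concrete, the sketch below counts how many calls the plain recursive version makes, and contrasts it with a memoized version that solves each sub-problem once. The names `count_naive_calls` and `fib_memo` are assumptions introduced for illustration; `functools.lru_cache` is the standard-library cache decorator.

```python
from functools import lru_cache


def count_naive_calls(n: int) -> int:
    """Number of calls the plain recursive Fib makes for input n."""
    if n <= 1:
        return 1                   # a base-case call makes no further calls
    # One call for n itself, plus every call made by the two recursive branches.
    return 1 + count_naive_calls(n - 1) + count_naive_calls(n - 2)


@lru_cache(maxsize=None)
def fib_memo(n: int) -> int:
    """Top-down Fibonacci that stores each F(k) the first time it is computed."""
    if n <= 1:
        return n
    return fib_memo(n - 1) + fib_memo(n - 2)
```

For example, `count_naive_calls(20)` is 21891, while the memoized version evaluates each of F(0)..F(20) only once.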

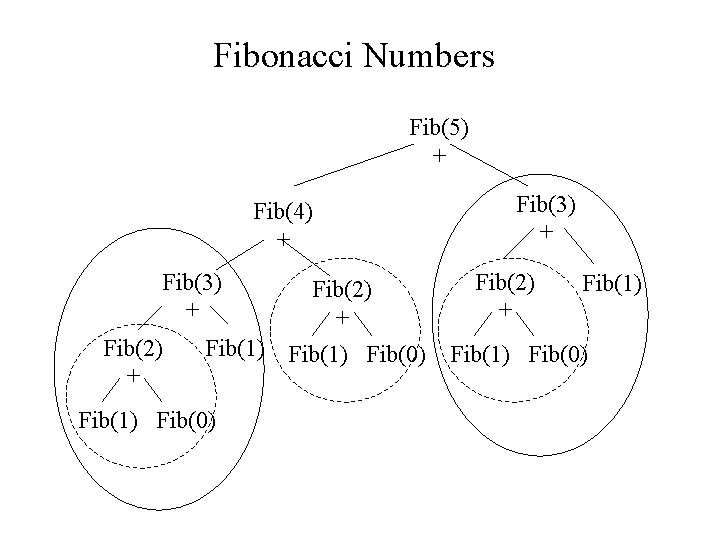

Fibonacci Numbers
[Figure: recursion tree for Fib(5). Fib(5) calls Fib(4) and Fib(3); the Fib(3) subtree, which expands into Fib(2) + Fib(1) with Fib(2) = Fib(1) + Fib(0), appears twice.]

Dynamic Programming
• Dynamic programming (DP) is an algorithm design technique for optimization problems, which typically ask us to minimize or maximize some quantity.
• Like divide and conquer, DP solves problems by combining solutions to sub-problems.
• Unlike divide and conquer, the sub-problems are not independent.
  – Sub-problems overlap (they share sub-problems).
• Dynamic programming is a meta-technique, not a particular algorithm.
• “Programming” here refers to a “tableau” (tabular) method, not to writing code.

Dynamic Programming
• In dynamic programming we usually reduce running time by using more space.
• We solve the problem by solving sub-problems of increasing size and saving each optimal solution, usually in a table.
• The table is then used to find optimal solutions to larger problems.
• Time is saved because each sub-problem is solved only once.

Dynamic Programming
• The best way to get a feel for this is through some more examples:
  – Longest Common Subsequence
  – 0-1 Knapsack Problem
  – Matrix Chain optimization

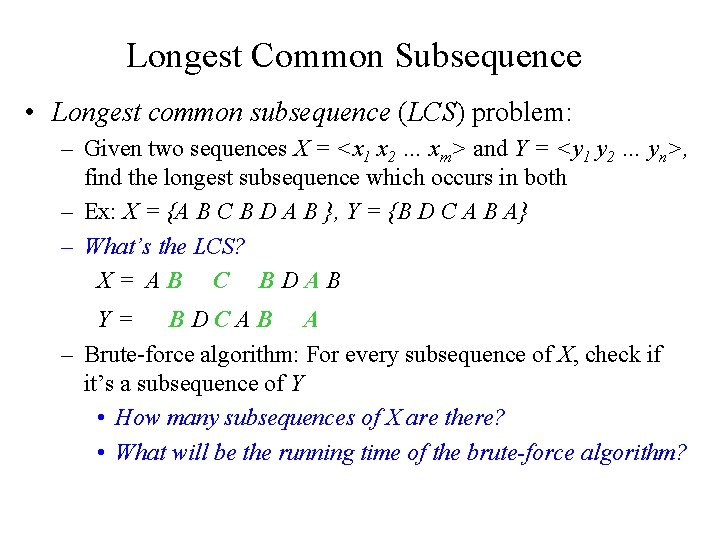

Longest Common Subsequence
• Longest common subsequence (LCS) problem:
  – Given two sequences $X = \langle x_1, x_2, \ldots, x_m \rangle$ and $Y = \langle y_1, y_2, \ldots, y_n \rangle$, find the longest subsequence that occurs in both.
  – Ex: X = ABCBDAB, Y = BDCABA
  – What is the LCS?
  – Brute-force algorithm: for every subsequence of X, check whether it is a subsequence of Y.
    • How many subsequences of X are there?
    • What will be the running time of the brute-force algorithm?

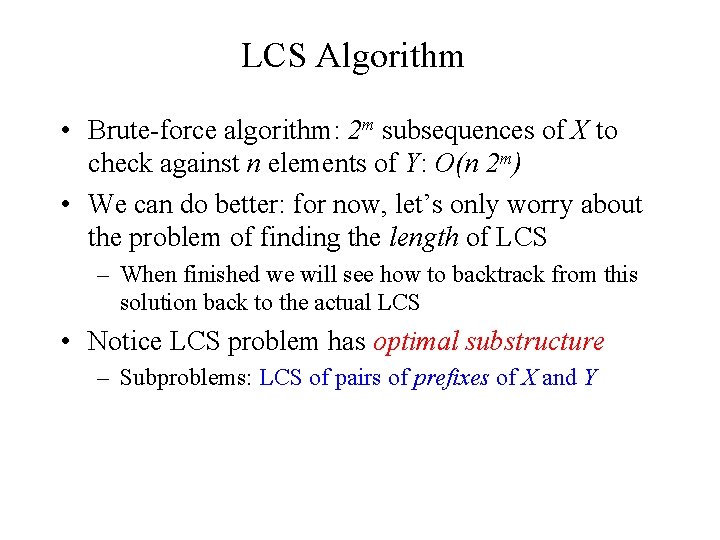

LCS Algorithm
• Brute-force algorithm: there are $2^m$ subsequences of X to check against the n elements of Y, giving $O(n 2^m)$ time.
• We can do better. For now, let’s only worry about finding the length of the LCS.
  – When finished, we will see how to backtrack from this solution to the actual LCS.
• Notice that the LCS problem has optimal substructure.
  – Sub-problems: LCS of pairs of prefixes of X and Y.

LCS Algorithm
• First we’ll find the length of the LCS. Later we’ll modify the algorithm to find the LCS itself.
• Define $X_i$ and $Y_j$ to be the prefixes of X and Y of length i and j respectively ($i \le m$, $j \le n$):
  – $X_i = \langle x_1, x_2, \ldots, x_i \rangle$; $Y_j = \langle y_1, y_2, \ldots, y_j \rangle$
• Define c[i, j] to be the length of the LCS of $X_i$ and $Y_j$.
• Then the length of the LCS of X and Y is c[m, n].

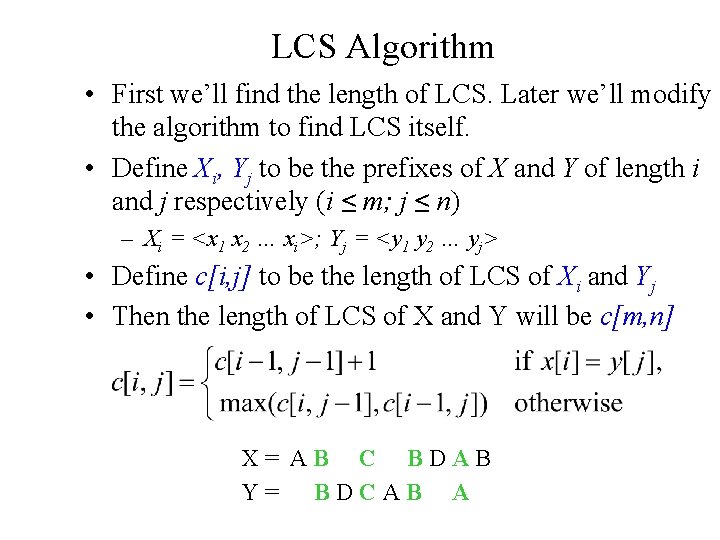

LCS recursive solution
• We start with i = j = 0 (empty prefixes of X and Y).
• Since $X_0$ and $Y_0$ are empty strings, their LCS is empty (i.e., c[0, 0] = 0).
• The LCS of the empty string and any other string is empty, so for every i and j: c[0, j] = c[i, 0] = 0.

LCS recursive solution
• When we calculate c[i, j], we consider two cases.
• First case: $x_i = y_j$. One more symbol of X and Y matches, so the length of the LCS of $X_i$ and $Y_j$ equals the length of the LCS of the shorter prefixes $X_{i-1}$ and $Y_{j-1}$, plus 1: c[i, j] = c[i-1, j-1] + 1.

LCS recursive solution
• Second case: $x_i \ne y_j$.
• As the symbols don’t match, our solution is not improved, and the length of LCS($X_i$, $Y_j$) is the same as before, i.e., the maximum of LCS($X_i$, $Y_{j-1}$) and LCS($X_{i-1}$, $Y_j$): c[i, j] = max(c[i-1, j], c[i, j-1]).
• Why not just take the length of LCS($X_{i-1}$, $Y_{j-1}$)?
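Putting the base case and the two cases together, the recurrence for c[i, j] can be written in one place. This is a restatement of the slides in LaTeX, using the same symbols ($x_i$, $y_j$, c[i, j]):

```latex
c[i, j] =
\begin{cases}
0 & \text{if } i = 0 \text{ or } j = 0, \\
c[i-1, j-1] + 1 & \text{if } i, j > 0 \text{ and } x_i = y_j, \\
\max\bigl(c[i-1, j],\; c[i, j-1]\bigr) & \text{if } i, j > 0 \text{ and } x_i \ne y_j.
\end{cases}
```

Every entry depends only on entries with a smaller i or a smaller j, which is why the table can be filled row by row.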

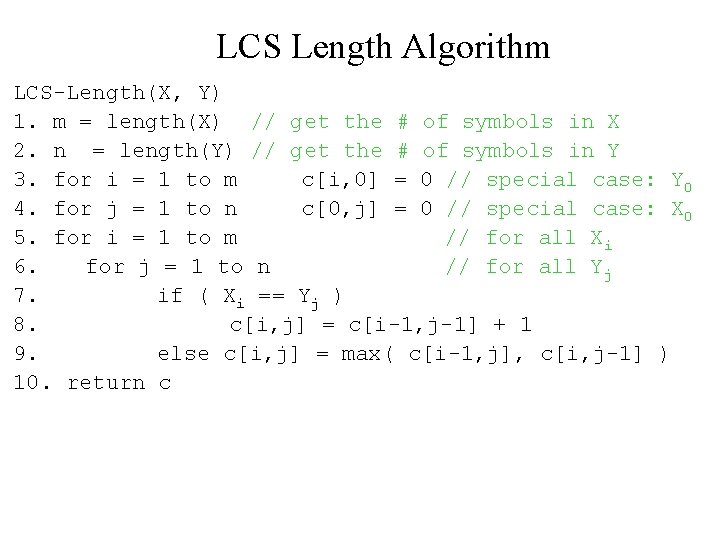

LCS Length Algorithm

    LCS-Length(X, Y)
        m = length(X)               // number of symbols in X
        n = length(Y)               // number of symbols in Y
        for i = 0 to m
            c[i, 0] = 0             // special case: Y_0 is empty
        for j = 0 to n
            c[0, j] = 0             // special case: X_0 is empty
        for i = 1 to m              // for all X_i
            for j = 1 to n          // for all Y_j
                if x_i == y_j
                    c[i, j] = c[i-1, j-1] + 1
                else
                    c[i, j] = max(c[i-1, j], c[i, j-1])
        return c
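A runnable version of LCS-Length might look like the sketch below. It follows the pseudocode closely; the function name `lcs_length_table` is an assumption, and because Python strings are 0-indexed, symbol $x_i$ is read as `x[i - 1]`.

```python
def lcs_length_table(x: str, y: str) -> list[list[int]]:
    """Return the table c, where c[i][j] is the LCS length of x[:i] and y[:j]."""
    m, n = len(x), len(y)
    # Row 0 and column 0 stay 0: the LCS with an empty prefix is empty.
    c = [[0] * (n + 1) for _ in range(m + 1)]
    for i in range(1, m + 1):
        for j in range(1, n + 1):
            if x[i - 1] == y[j - 1]:
                # Matching symbols extend the LCS of the two shorter prefixes.
                c[i][j] = c[i - 1][j - 1] + 1
            else:
                # Otherwise keep the better of dropping x_i or dropping y_j.
                c[i][j] = max(c[i - 1][j], c[i][j - 1])
    return c
```

The length of the LCS is the bottom-right entry, `lcs_length_table(x, y)[len(x)][len(y)]`.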

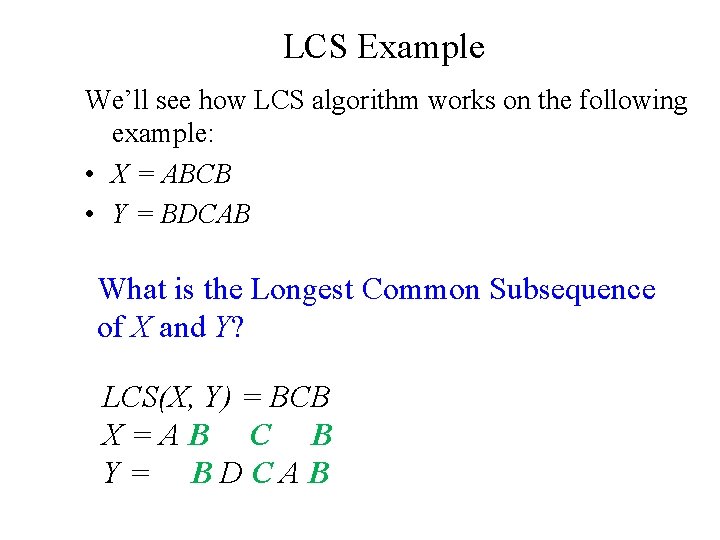

LCS Example
We’ll see how the LCS algorithm works on the following example:
• X = ABCB
• Y = BDCAB
What is the longest common subsequence of X and Y?
LCS(X, Y) = BCB

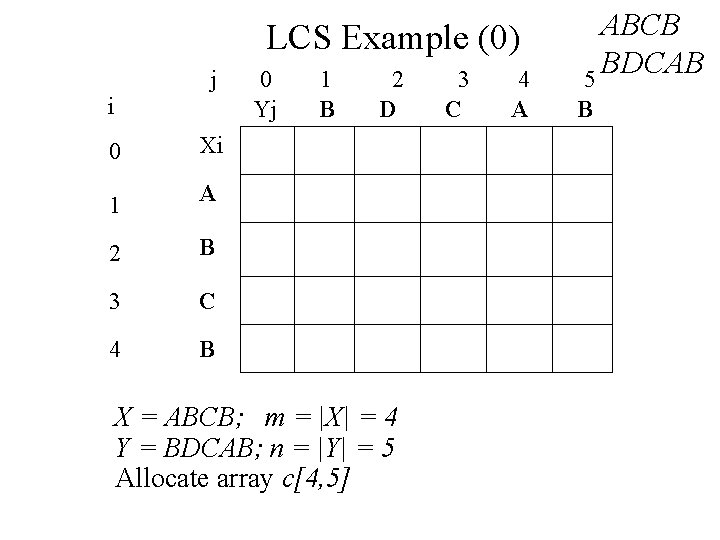

LCS Example (0)
• X = ABCB; m = |X| = 4
• Y = BDCAB; n = |Y| = 5
• Allocate array c[0..4, 0..5]: rows i = 0..4 are labeled by $x_i$ (A, B, C, B for i = 1..4) and columns j = 0..5 by $y_j$ (B, D, C, A, B for j = 1..5).

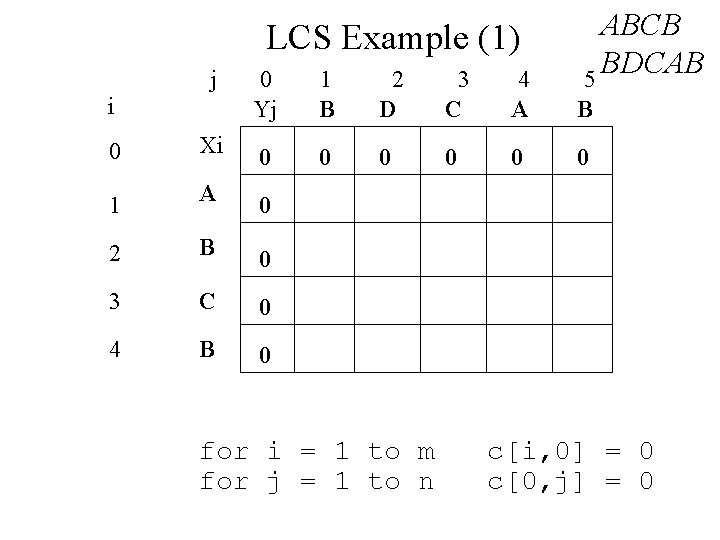

LCS Example (1)
• Initialize column 0 and row 0 to zero:
  – for i = 0 to m: c[i, 0] = 0
  – for j = 0 to n: c[0, j] = 0

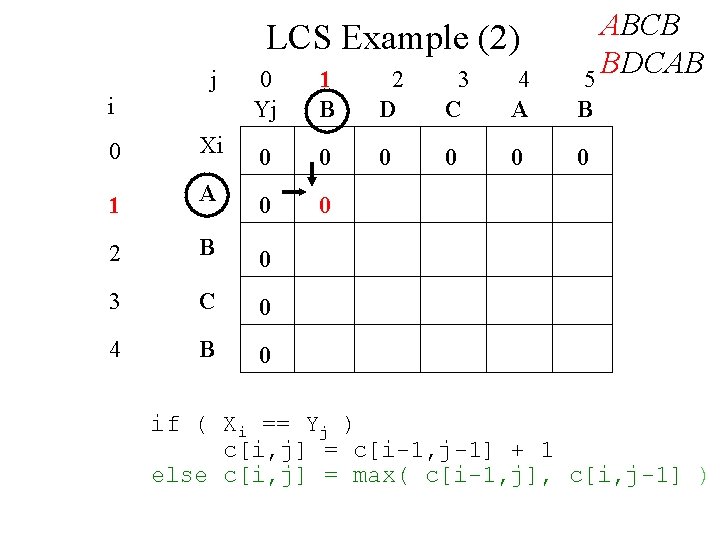

LCS Example (2)
• $x_1$ = A, $y_1$ = B: no match, so c[1, 1] = max(c[0, 1], c[1, 0]) = 0.
• Row 1 (A) so far: 0 0

LCS Example (3)
• $x_1$ = A does not match $y_2$ = D or $y_3$ = C, so c[1, 2] = c[1, 3] = 0.
• Row 1 (A) so far: 0 0 0 0

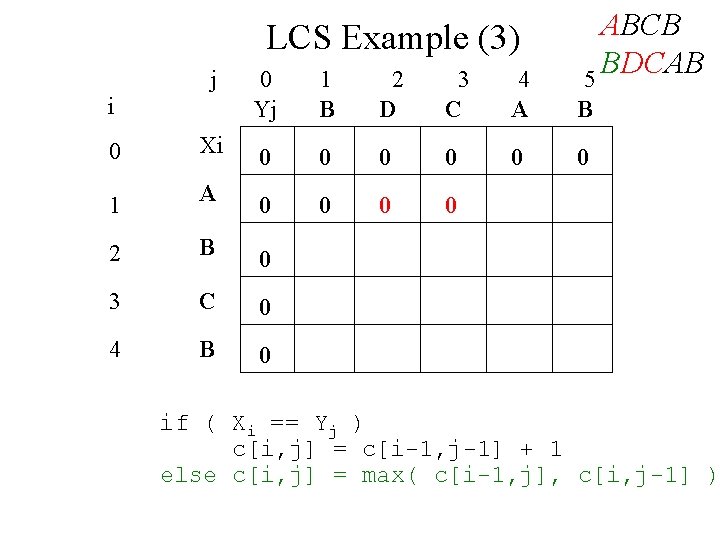

LCS Example (4)
• $x_1$ = A matches $y_4$ = A, so c[1, 4] = c[0, 3] + 1 = 1.
• Row 1 (A) so far: 0 0 0 0 1

LCS Example (5)
• $x_1$ = A, $y_5$ = B: no match, so c[1, 5] = max(c[0, 5], c[1, 4]) = 1.
• Row 1 (A) complete: 0 0 0 0 1 1

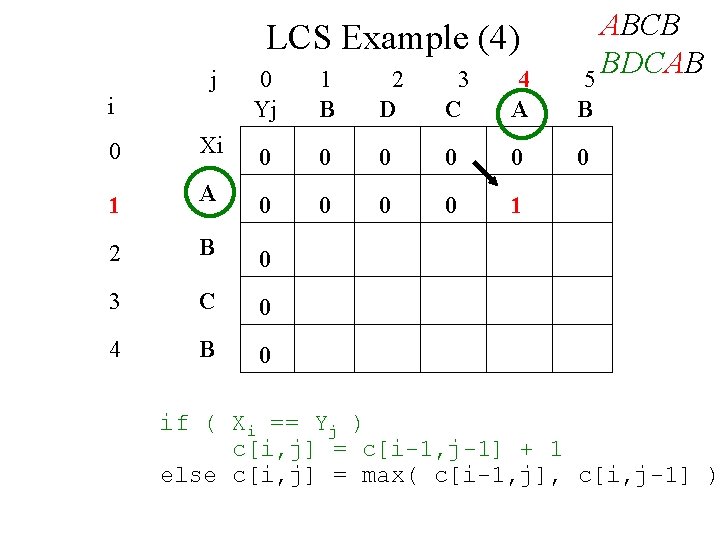

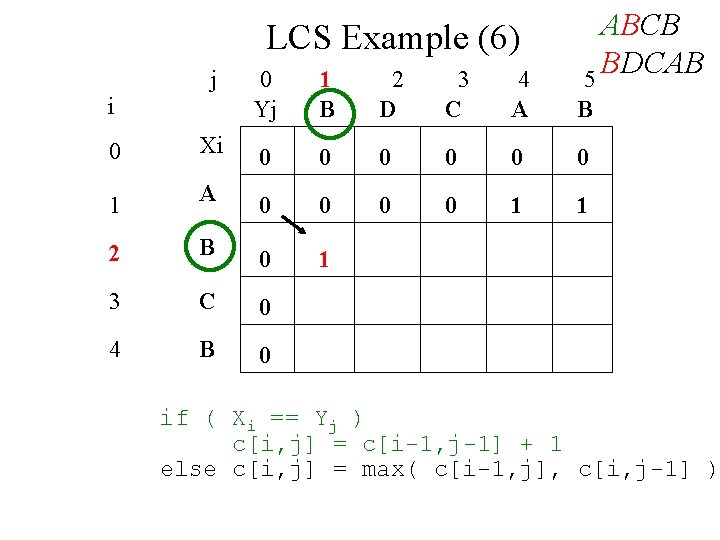

LCS Example (6)
• $x_2$ = B matches $y_1$ = B, so c[2, 1] = c[1, 0] + 1 = 1.
• Row 2 (B) so far: 0 1

LCS Example (7)
• $x_2$ = B does not match $y_2$ = D, $y_3$ = C or $y_4$ = A, so c[2, 2] = c[2, 3] = c[2, 4] = 1 (the maximum of the entry above and the entry to the left).
• Row 2 (B) so far: 0 1 1 1 1

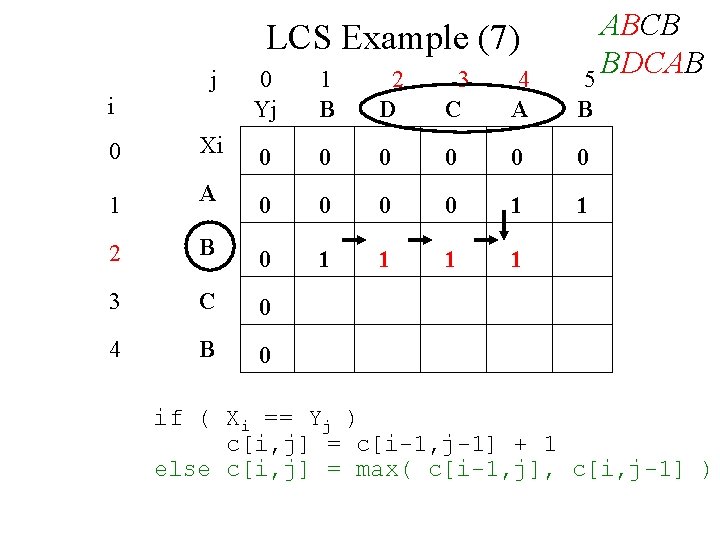

LCS Example (8)
• $x_2$ = B matches $y_5$ = B, so c[2, 5] = c[1, 4] + 1 = 2.
• Row 2 (B) complete: 0 1 1 1 1 2

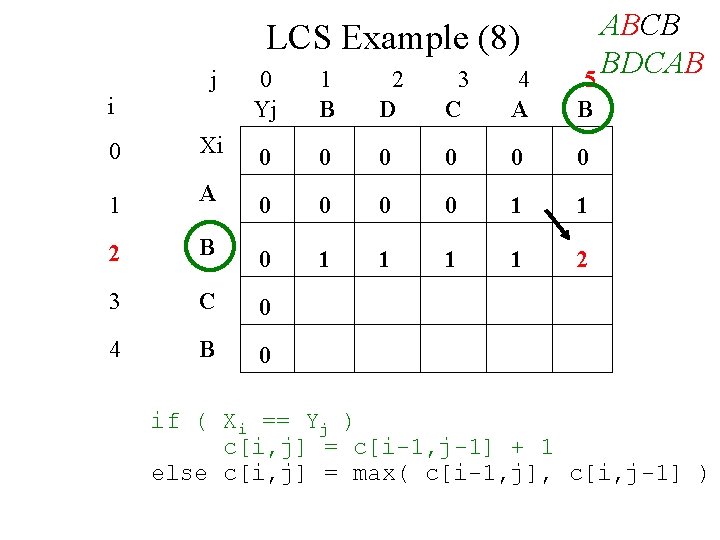

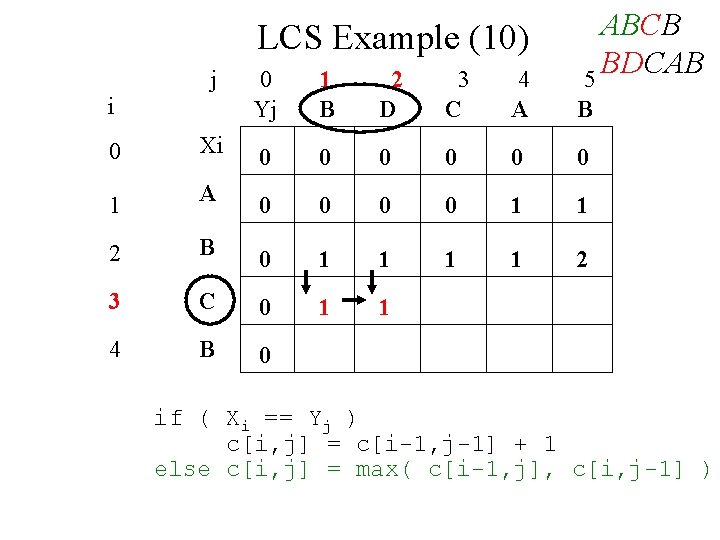

LCS Example (10)
• $x_3$ = C does not match $y_1$ = B or $y_2$ = D, so c[3, 1] = c[3, 2] = 1.
• Row 3 (C) so far: 0 1 1

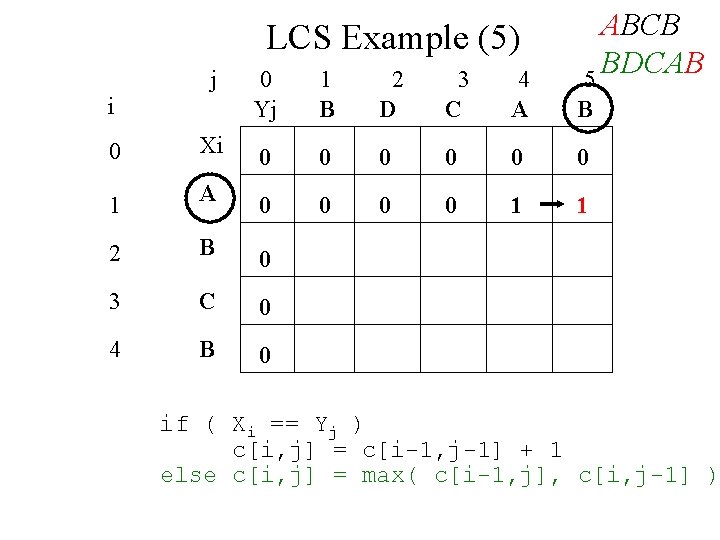

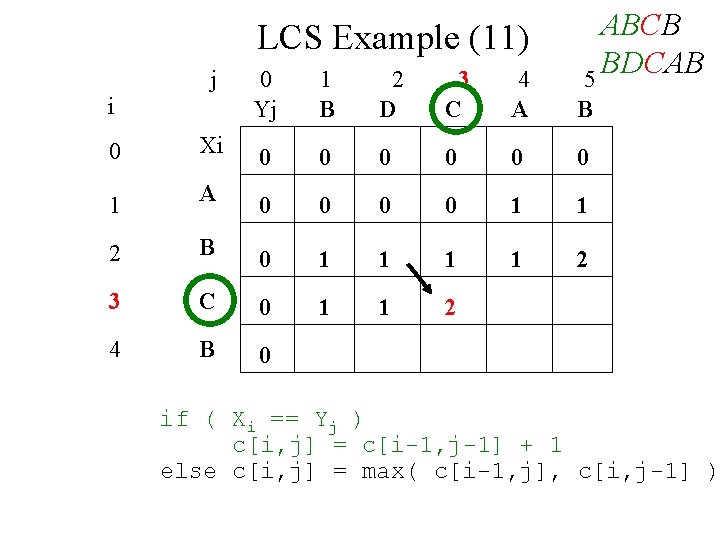

LCS Example (11)
• $x_3$ = C matches $y_3$ = C, so c[3, 3] = c[2, 2] + 1 = 2.
• Row 3 (C) so far: 0 1 1 2

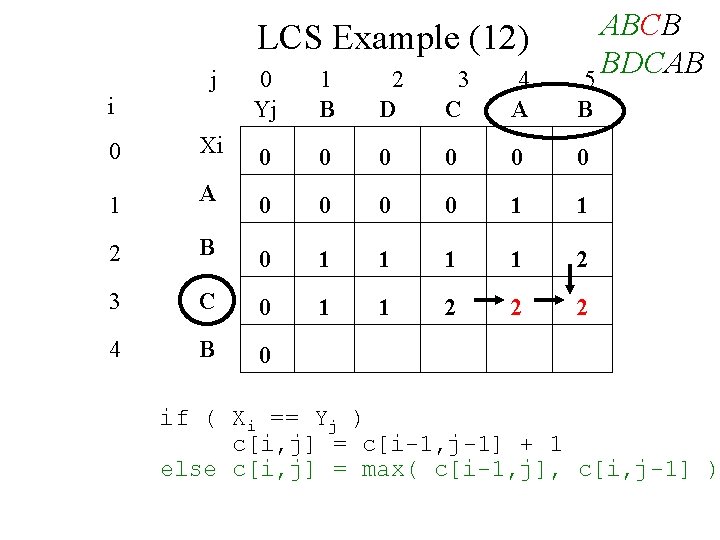

LCS Example (12)
• $x_3$ = C does not match $y_4$ = A or $y_5$ = B, so c[3, 4] = c[3, 5] = 2.
• Row 3 (C) complete: 0 1 1 2 2 2

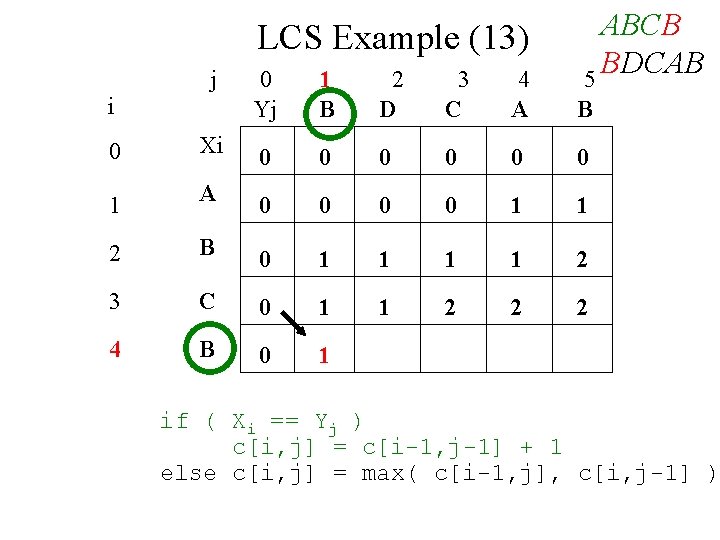

LCS Example (13)
• $x_4$ = B matches $y_1$ = B, so c[4, 1] = c[3, 0] + 1 = 1.
• Row 4 (B) so far: 0 1

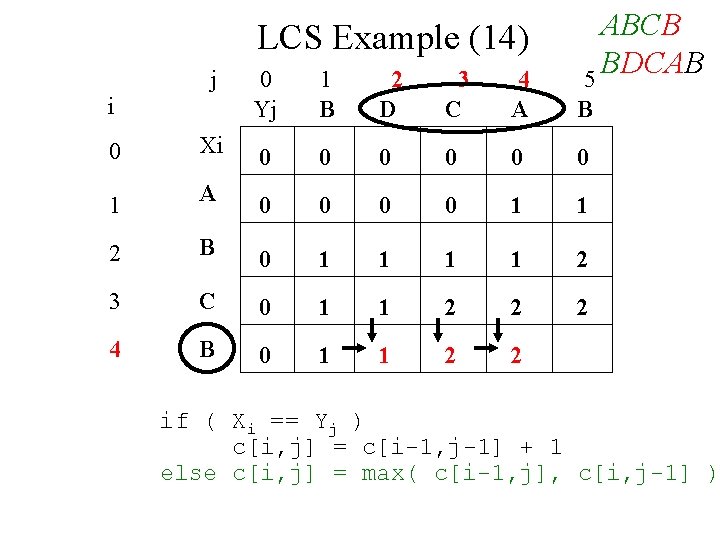

LCS Example (14)
• $x_4$ = B does not match $y_2$ = D, $y_3$ = C or $y_4$ = A, so c[4, 2] = 1, c[4, 3] = 2 and c[4, 4] = 2.
• Row 4 (B) so far: 0 1 1 2 2

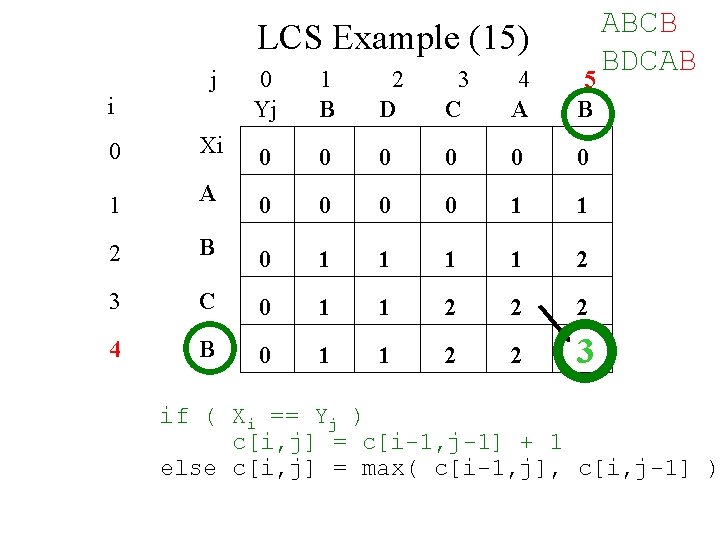

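LCS Example (15)
• $x_4$ = B matches $y_5$ = B, so c[4, 5] = c[3, 4] + 1 = 3.
• Completed table for X = ABCB, Y = BDCAB:

| i | $x_i$ | j = 0 | 1 (B) | 2 (D) | 3 (C) | 4 (A) | 5 (B) |
|---|---|---|---|---|---|---|---|
| 0 |   | 0 | 0 | 0 | 0 | 0 | 0 |
| 1 | A | 0 | 0 | 0 | 0 | 1 | 1 |
| 2 | B | 0 | 1 | 1 | 1 | 1 | 2 |
| 3 | C | 0 | 1 | 1 | 2 | 2 | 2 |
| 4 | B | 0 | 1 | 1 | 2 | 2 | 3 |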

LCS Algorithm Running Time
• The LCS algorithm calculates the value of every entry of the array c[0..m, 0..n].
• So what is the running time? O(mn), since each c[i, j] is calculated in constant time and the array has (m+1)(n+1) entries.

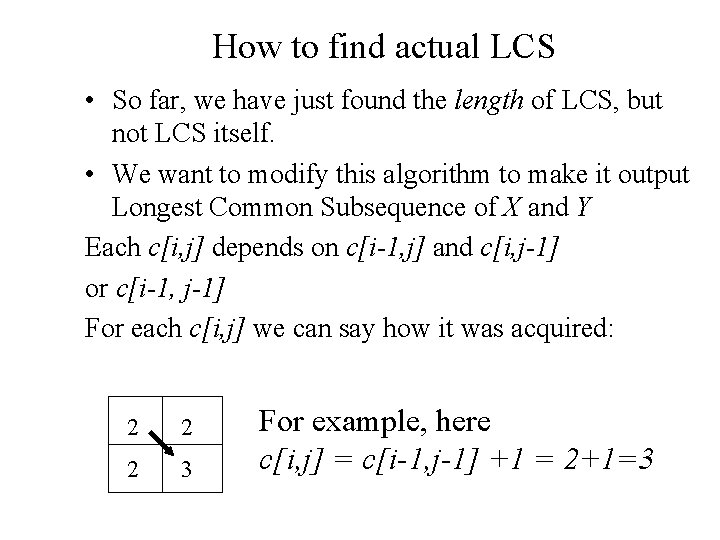

How to find actual LCS
• So far, we have found only the length of the LCS, not the LCS itself.
• We want to modify this algorithm so that it outputs the longest common subsequence of X and Y.
• Each c[i, j] depends on c[i-1, j] and c[i, j-1], or on c[i-1, j-1].
• For each c[i, j] we can say how it was acquired. For example, in a 2 × 2 corner of the table with entries 2, 2 on top and 2, 3 below, the bottom-right entry is c[i, j] = c[i-1, j-1] + 1 = 2 + 1 = 3.

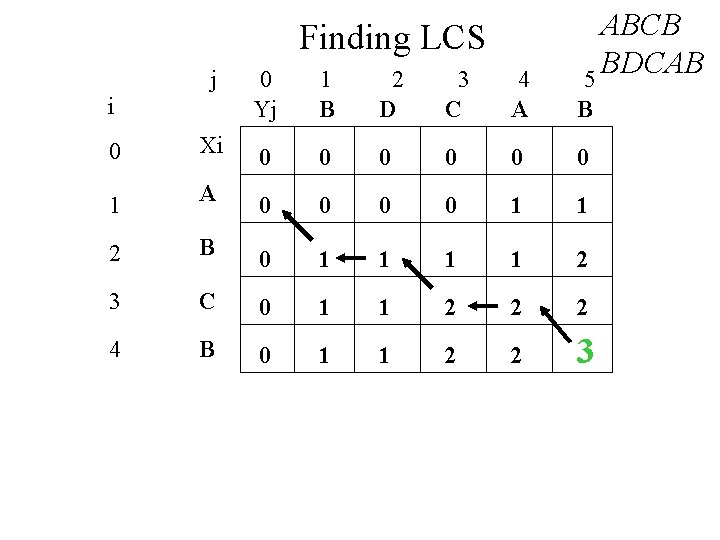

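Finding LCS

| i | $x_i$ | j = 0 | 1 (B) | 2 (D) | 3 (C) | 4 (A) | 5 (B) |
|---|---|---|---|---|---|---|---|
| 0 |   | 0 | 0 | 0 | 0 | 0 | 0 |
| 1 | A | 0 | 0 | 0 | 0 | 1 | 1 |
| 2 | B | 0 | 1 | 1 | 1 | 1 | 2 |
| 3 | C | 0 | 1 | 1 | 2 | 2 | 2 |
| 4 | B | 0 | 1 | 1 | 2 | 2 | 3 |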

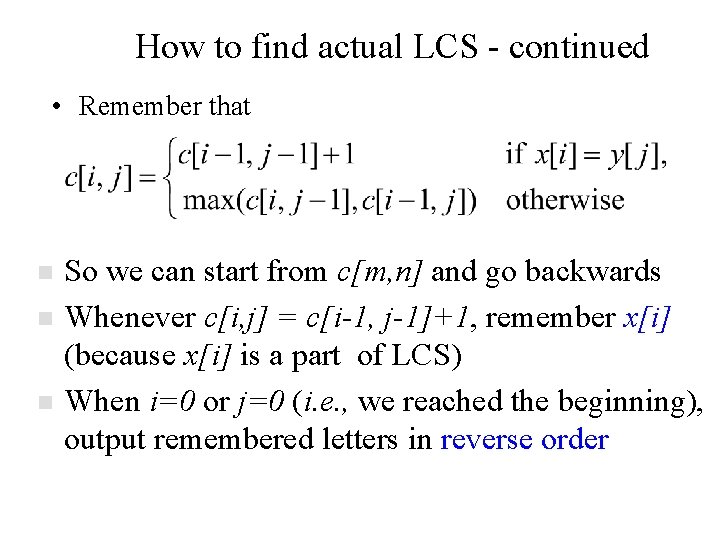

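How to find actual LCS - continued
• Remember that c[i, j] = c[i-1, j-1] + 1 if $x_i = y_j$, and c[i, j] = max(c[i-1, j], c[i, j-1]) otherwise.
  – So we can start from c[m, n] and go backwards.
  – Whenever $x_i = y_j$ (so c[i, j] = c[i-1, j-1] + 1), remember $x_i$, because $x_i$ is a part of the LCS, and move to c[i-1, j-1]. Otherwise move to whichever of c[i-1, j] and c[i, j-1] is larger.
  – When i = 0 or j = 0 (i.e., we reached the beginning), output the remembered letters in reverse order.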
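The backtracking steps above can be sketched as follows. The function name `lcs` and the tie-breaking rule (move up when the two neighbours are equal) are assumptions for this example; a different tie-break may return a different, equally long subsequence.

```python
def lcs(x: str, y: str) -> str:
    """Return one longest common subsequence of x and y."""
    m, n = len(x), len(y)
    # Build the length table exactly as in LCS-Length.
    c = [[0] * (n + 1) for _ in range(m + 1)]
    for i in range(1, m + 1):
        for j in range(1, n + 1):
            if x[i - 1] == y[j - 1]:
                c[i][j] = c[i - 1][j - 1] + 1
            else:
                c[i][j] = max(c[i - 1][j], c[i][j - 1])

    # Walk back from c[m][n], remembering a symbol at every diagonal step.
    remembered = []
    i, j = m, n
    while i > 0 and j > 0:
        if x[i - 1] == y[j - 1]:
            remembered.append(x[i - 1])
            i -= 1
            j -= 1
        elif c[i - 1][j] >= c[i][j - 1]:
            i -= 1                 # the value came from the entry above
        else:
            j -= 1                 # the value came from the entry to the left
    # Symbols were collected from the end, so reverse them.
    return "".join(reversed(remembered))
```

For X = ABCB and Y = BDCAB this returns BCB. The backtracking itself takes O(m + n) steps, since every step decreases i or j.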

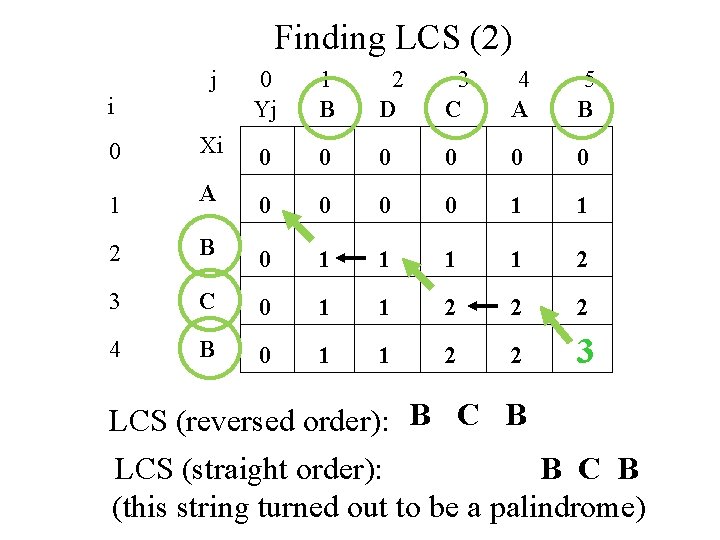

Finding LCS (2)
• Backtracking from c[4, 5] = 3 takes a diagonal step, and remembers a letter, at c[4, 5] (B), c[3, 3] (C) and c[2, 1] (B).
• LCS (reversed order): B C B
• LCS (straight order): B C B (this string turned out to be a palindrome)

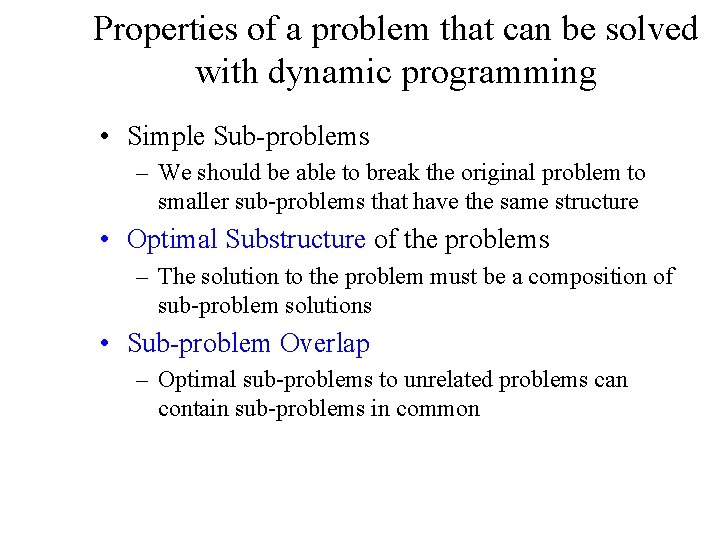

Properties of a problem that can be solved with dynamic programming
• Simple sub-problems
  – We should be able to break the original problem into smaller sub-problems that have the same structure.
• Optimal substructure of the problems
  – The solution to the problem must be a composition of sub-problem solutions.
• Sub-problem overlap
  – Optimal solutions to unrelated sub-problems can contain sub-problems in common.

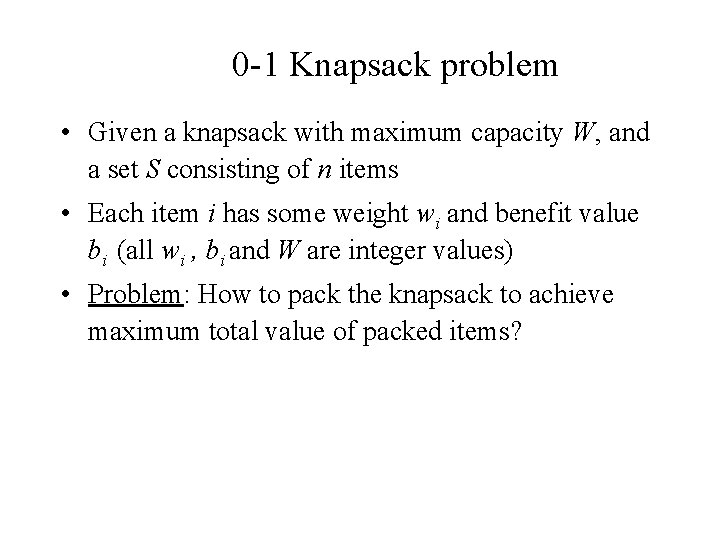

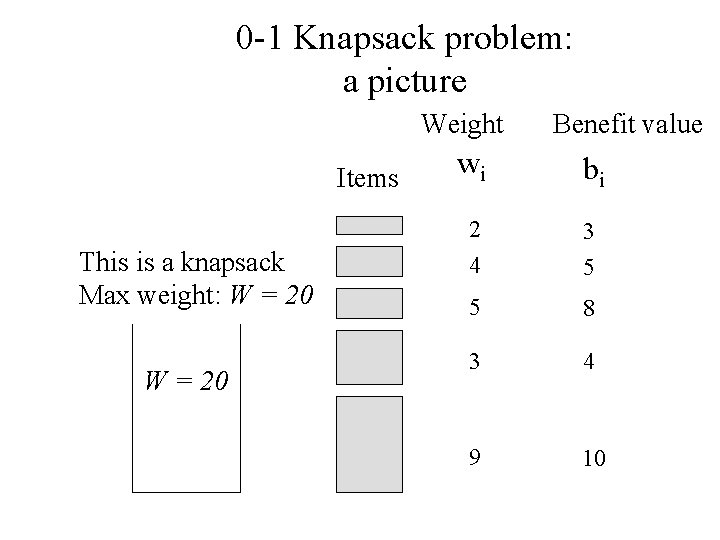

0-1 Knapsack problem
• Given a knapsack with maximum capacity W, and a set S consisting of n items.
• Each item i has some weight $w_i$ and benefit value $b_i$ (all $w_i$, $b_i$ and W are integer values).
• Problem: how to pack the knapsack to achieve the maximum total value of packed items?

0-1 Knapsack problem: a picture
[Figure: a knapsack with max weight W = 20 and five items.]

| Item | Weight $w_i$ | Benefit value $b_i$ |
|---|---|---|
| 1 | 2 | 3 |
| 2 | 4 | 5 |
| 3 | 5 | 8 |
| 4 | 3 | 4 |
| 5 | 9 | 10 |

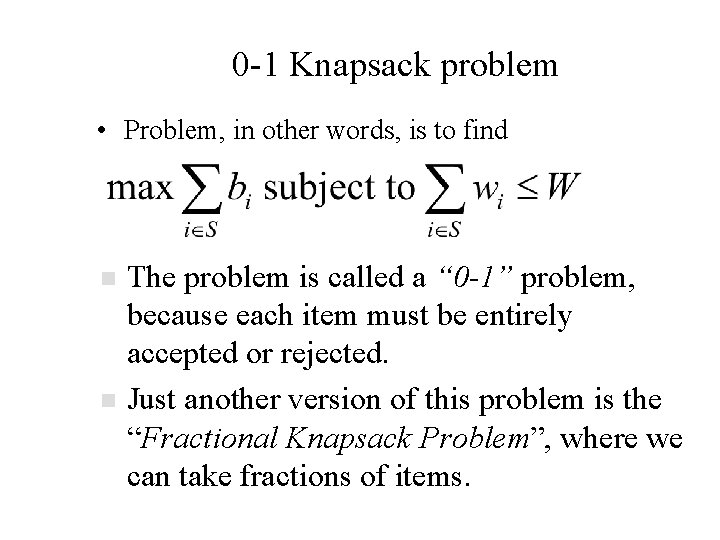

0-1 Knapsack problem
• The problem, in other words, is to find a subset $T \subseteq S$ that maximizes $\sum_{i \in T} b_i$ subject to $\sum_{i \in T} w_i \le W$.
• The problem is called a “0-1” problem because each item must be entirely accepted or rejected.
• Another version of this problem is the “Fractional Knapsack Problem”, where we can take fractions of items.

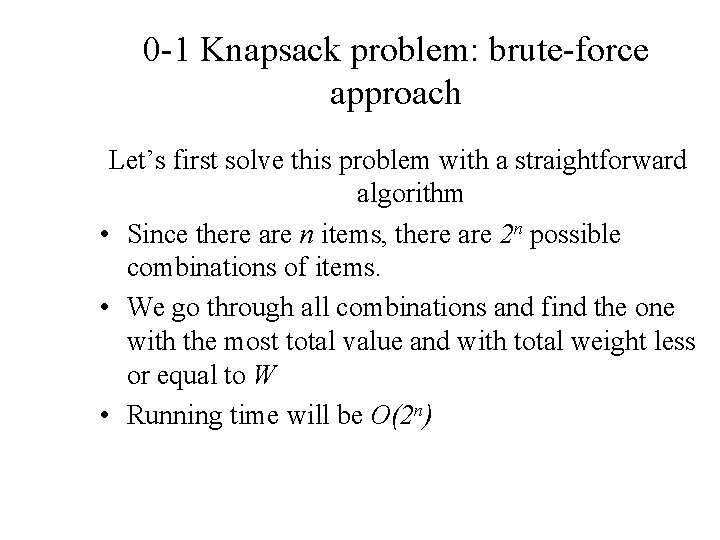

0-1 Knapsack problem: brute-force approach
Let’s first solve this problem with a straightforward algorithm.
• Since there are n items, there are $2^n$ possible combinations of items.
• We go through all combinations and find the one with the most total value and with total weight less than or equal to W.
• Running time will be $O(2^n)$.
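As a point of comparison, the brute-force search can be sketched in a few lines. The function name `knapsack_brute_force` and the representation of items as `(weight, benefit)` pairs are assumptions for this example. Note that summing each subset adds a factor of n on top of the $2^n$ subsets enumerated.

```python
from itertools import combinations


def knapsack_brute_force(items, capacity):
    """Best total benefit over all subsets of (weight, benefit) pairs that fit."""
    best = 0                                   # the empty subset always fits
    for size in range(len(items) + 1):
        for subset in combinations(items, size):
            weight = sum(w for w, _ in subset)
            benefit = sum(b for _, b in subset)
            if weight <= capacity and benefit > best:
                best = benefit
    return best
```

On the five items pictured earlier with W = 20, this returns 26.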

0-1 Knapsack problem: brute-force approach
• Can we do better?
• Yes, with an algorithm based on dynamic programming.
• We need to carefully identify the sub-problems.
Let’s try this: if items are labeled 1..n, then a sub-problem would be to find an optimal solution for $S_k$ = {items labeled 1, 2, …, k}, where k ≤ n.

Defining a Subproblem
If items are labeled 1..n, then a sub-problem would be to find an optimal solution for $S_k$ = {items labeled 1, 2, …, k}.
• This is a valid sub-problem definition.
• The question is: can we describe the final solution ($S_n$) in terms of sub-problems ($S_k$)?
• Unfortunately, we can’t do that. Explanation follows.

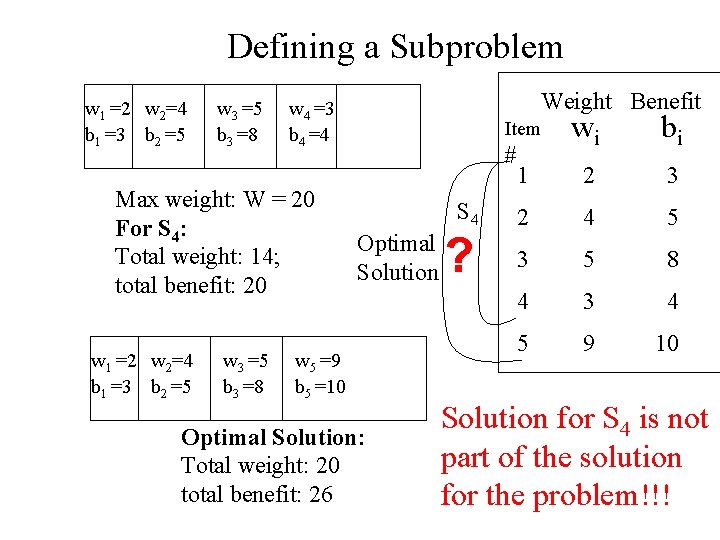

Defining a Subproblem
Max weight: W = 20

| Item # | Weight $w_i$ | Benefit $b_i$ |
|---|---|---|
| 1 | 2 | 3 |
| 2 | 4 | 5 |
| 3 | 5 | 8 |
| 4 | 3 | 4 |
| 5 | 9 | 10 |

• For $S_4$, the optimal solution takes items 1, 2, 3 and 4: total weight 14, total benefit 20.
• For the whole problem ($S_5$), the optimal solution takes items 1, 2, 3 and 5: total weight 20, total benefit 26.
• The solution for $S_4$ is not part of the solution for the problem!

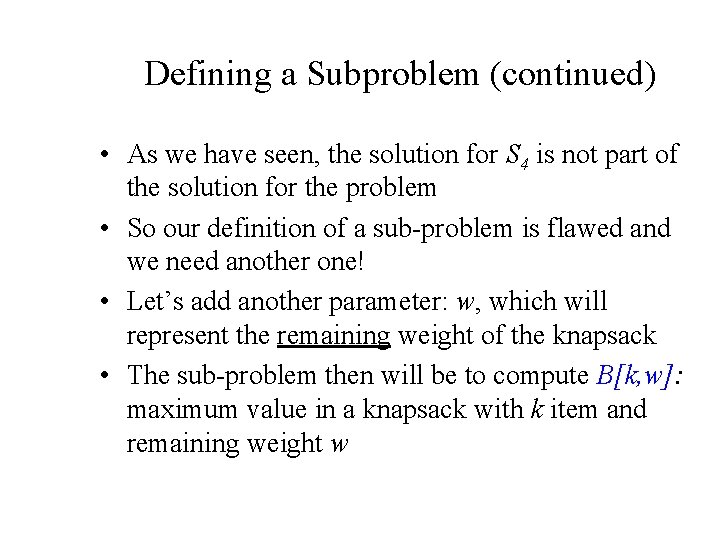

Defining a Subproblem (continued)
• As we have seen, the solution for $S_4$ is not part of the solution for the problem.
• So our definition of a sub-problem is flawed and we need another one!
• Let’s add another parameter: w, which will represent the remaining capacity (weight) of the knapsack.
• The sub-problem then will be to compute B[k, w]: the maximum value achievable using items 1..k in a knapsack with remaining capacity w.

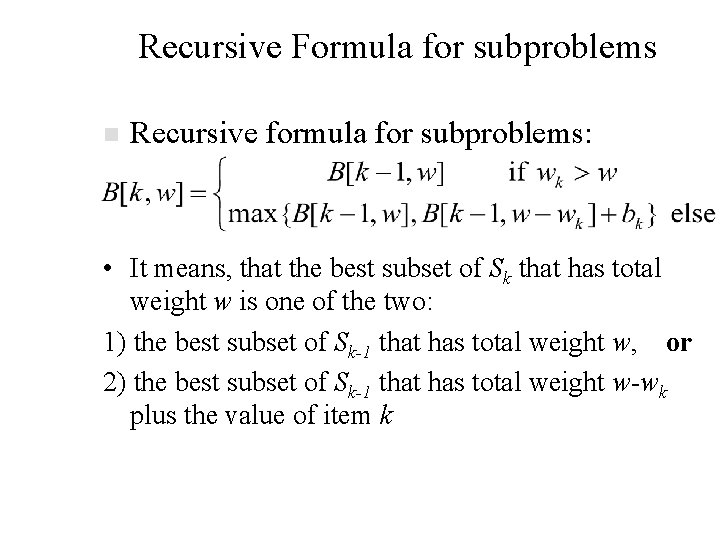

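Recursive Formula for subproblems
• Recursive formula for subproblems:
  $$B[k, w] = \begin{cases} B[k-1, w] & \text{if } w_k > w \\ \max\{B[k-1, w],\; B[k-1, w - w_k] + b_k\} & \text{otherwise} \end{cases}$$
• It means that the best subset of $S_k$ with total weight at most w is one of two: 1) the best subset of $S_{k-1}$ with total weight at most w, or 2) the best subset of $S_{k-1}$ with total weight at most $w - w_k$, plus item k.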

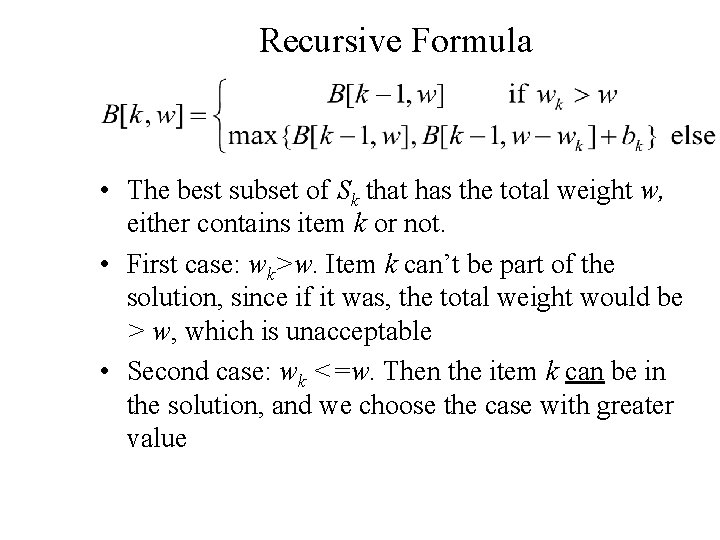

Recursive Formula
• The best subset of $S_k$ with total weight at most w either contains item k or not.
• First case: $w_k > w$. Item k can’t be part of the solution, since if it were, the total weight would be > w, which is unacceptable.
• Second case: $w_k \le w$. Then item k can be in the solution, and we choose the case with the greater value.

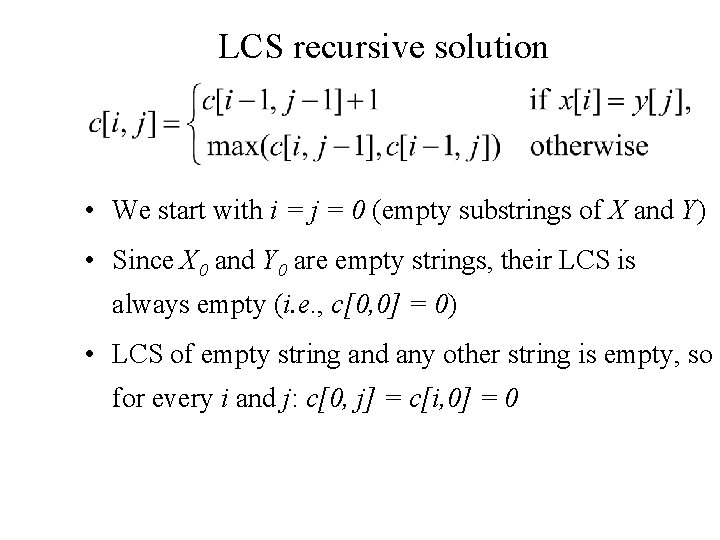

0-1 Knapsack Algorithm

    for w = 0 to W
        B[0, w] = 0
    for i = 1 to n
        B[i, 0] = 0
        for w = 1 to W
            if w_i <= w                          // item i can be part of the solution
                if b_i + B[i-1, w - w_i] > B[i-1, w]
                    B[i, w] = b_i + B[i-1, w - w_i]
                else
                    B[i, w] = B[i-1, w]
            else
                B[i, w] = B[i-1, w]              // w_i > w
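A direct Python rendering of this algorithm is sketched below. The function name `knapsack_table` and the `(weight, benefit)` pair representation are assumptions for this example. Item i of the slides is `items[i - 1]` because Python lists are 0-indexed.

```python
def knapsack_table(items, capacity):
    """Return B, where B[i][w] is the best benefit using items 1..i within weight w."""
    n = len(items)
    # Row 0 (no items) and column 0 (no capacity) stay 0.
    B = [[0] * (capacity + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        w_i, b_i = items[i - 1]
        for w in range(1, capacity + 1):
            B[i][w] = B[i - 1][w]              # default: leave item i out
            if w_i <= w and b_i + B[i - 1][w - w_i] > B[i][w]:
                B[i][w] = b_i + B[i - 1][w - w_i]   # taking item i is better
    return B
```

The answer to the whole problem is `B[n][capacity]`. The two nested loops make the O(nW) running time visible.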

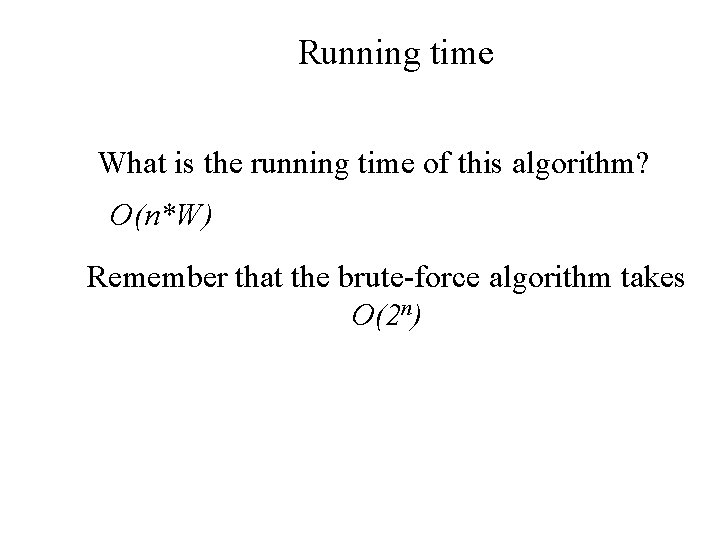

Running time
• What is the running time of this algorithm? O(nW).
• Remember that the brute-force algorithm takes $O(2^n)$.

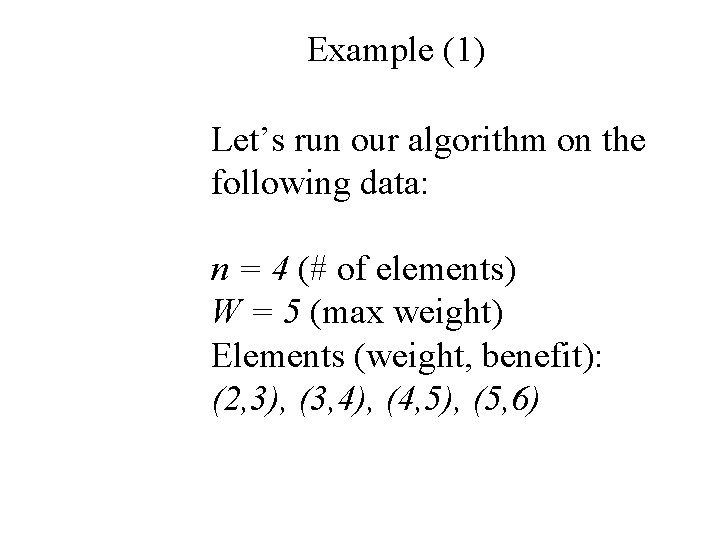

Example (1)
Let’s run our algorithm on the following data:
• n = 4 (# of elements)
• W = 5 (max weight)
• Elements (weight, benefit): (2, 3), (3, 4), (4, 5), (5, 6)

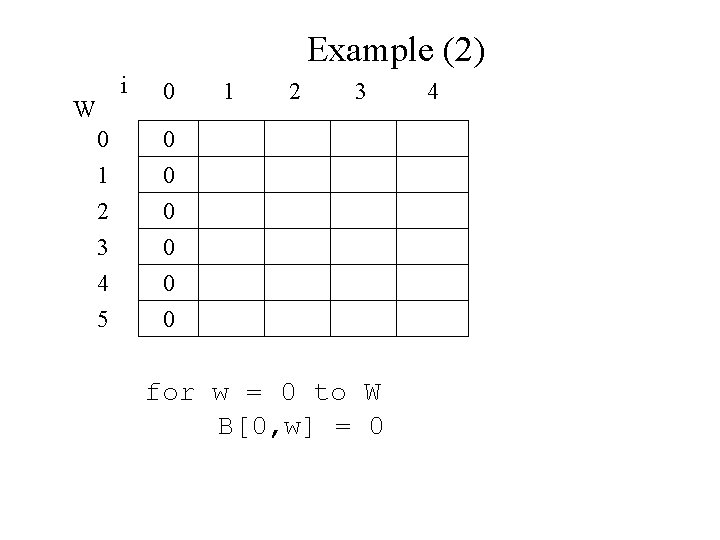

Example (2)
• The table B is indexed by i = 0..4 and w = 0..5.
• for w = 0 to W: B[0, w] = 0, so B[0, 0..5] = 0.

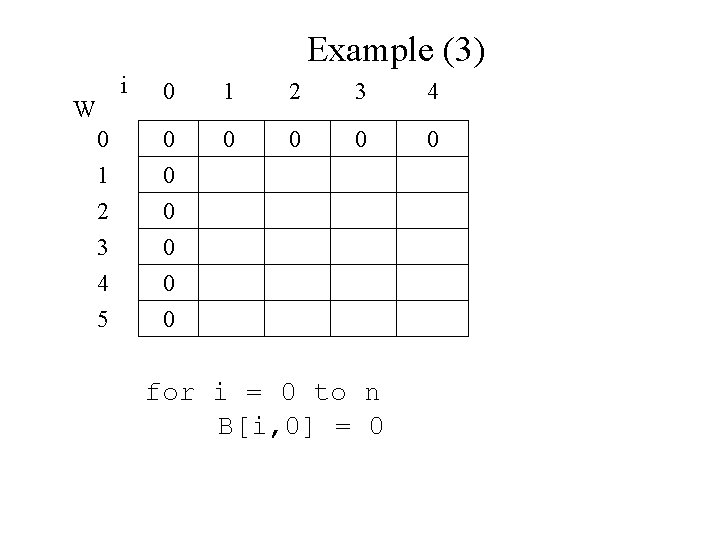

Example (3)
• for i = 0 to n: B[i, 0] = 0, so B[0..4, 0] = 0.

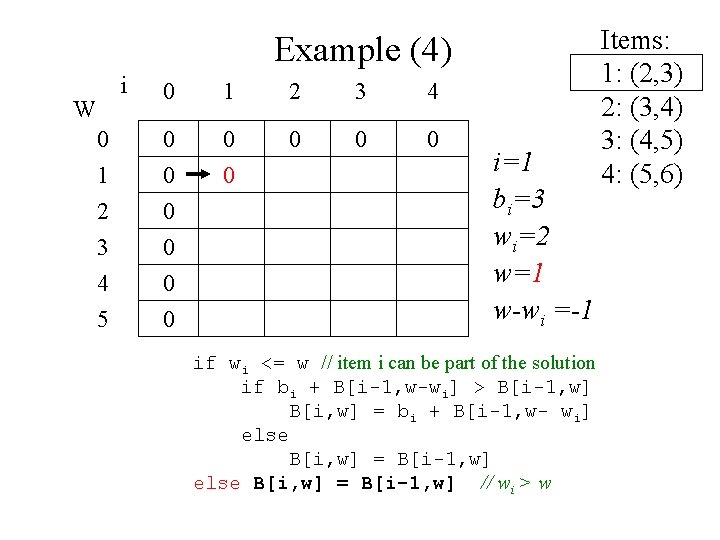

Example (4)
• i = 1, $b_i$ = 3, $w_i$ = 2, w = 1, $w - w_i$ = -1
• Since $w_i > w$, B[1, 1] = B[0, 1] = 0.

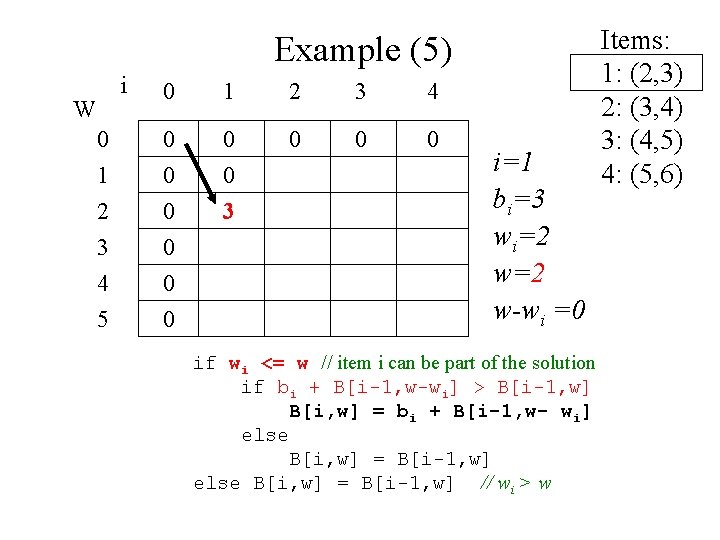

Example (5)
• i = 1, $b_i$ = 3, $w_i$ = 2, w = 2, $w - w_i$ = 0
• $b_i$ + B[0, 0] = 3 > B[0, 2] = 0, so B[1, 2] = 3.

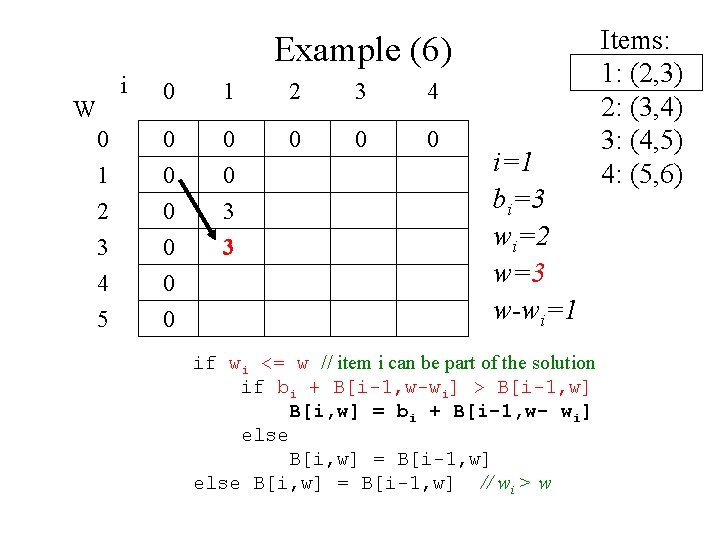

Example (6)
• i = 1, $b_i$ = 3, $w_i$ = 2, w = 3, $w - w_i$ = 1
• $b_i$ + B[0, 1] = 3 > B[0, 3] = 0, so B[1, 3] = 3.

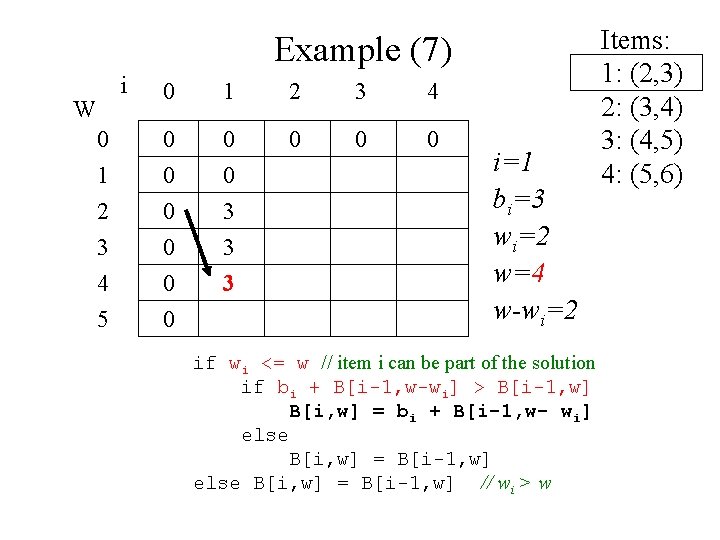

Example (7)
• i = 1, $b_i$ = 3, $w_i$ = 2, w = 4, $w - w_i$ = 2
• $b_i$ + B[0, 2] = 3 > B[0, 4] = 0, so B[1, 4] = 3.

Example (8)
• i = 1, $b_i$ = 3, $w_i$ = 2, w = 5, $w - w_i$ = 3
• $b_i$ + B[0, 3] = 3 > B[0, 5] = 0, so B[1, 5] = 3.
• Row i = 1 complete: 0 0 3 3 3 3

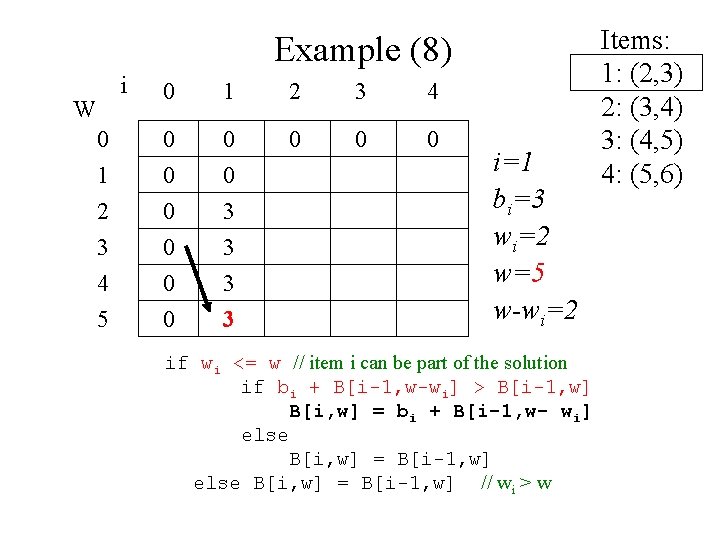

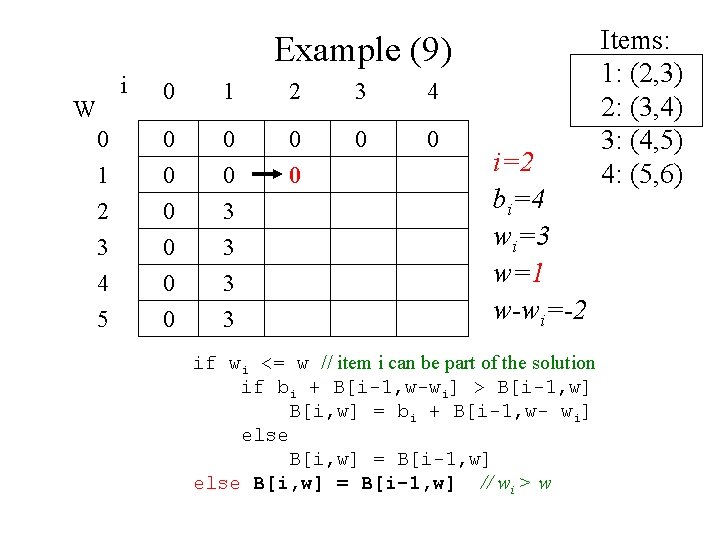

Example (9)
• i = 2, $b_i$ = 4, $w_i$ = 3, w = 1, $w - w_i$ = -2
• Since $w_i > w$, B[2, 1] = B[1, 1] = 0.

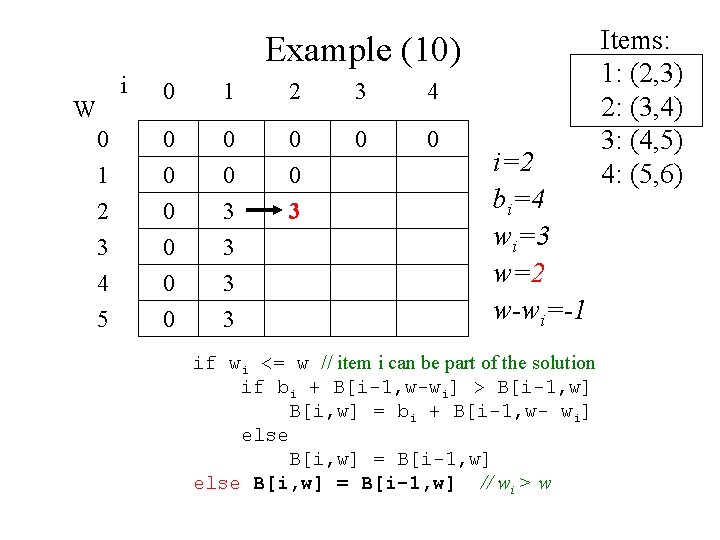

Example (10)
• i = 2, $b_i$ = 4, $w_i$ = 3, w = 2, $w - w_i$ = -1
• Since $w_i > w$, B[2, 2] = B[1, 2] = 3.

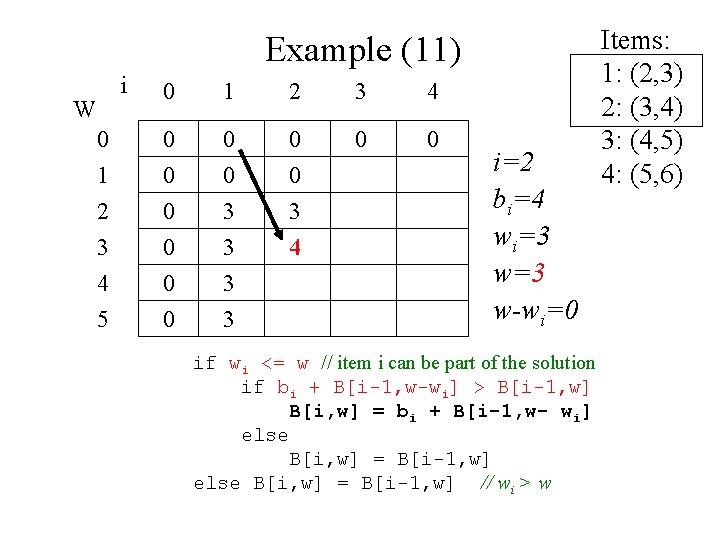

Example (11)
• i = 2, $b_i$ = 4, $w_i$ = 3, w = 3, $w - w_i$ = 0
• $b_i$ + B[1, 0] = 4 > B[1, 3] = 3, so B[2, 3] = 4.

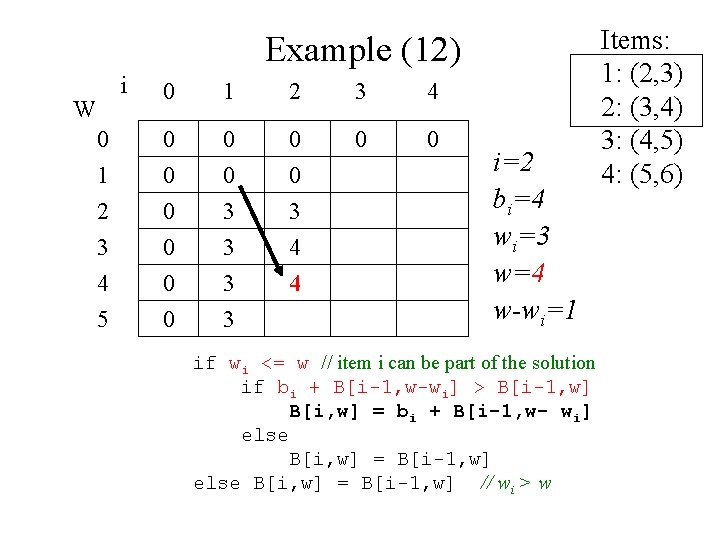

Example (12)
• i = 2, $b_i$ = 4, $w_i$ = 3, w = 4, $w - w_i$ = 1
• $b_i$ + B[1, 1] = 4 > B[1, 4] = 3, so B[2, 4] = 4.

Example (13)
• i = 2, $b_i$ = 4, $w_i$ = 3, w = 5, $w - w_i$ = 2
• $b_i$ + B[1, 2] = 4 + 3 = 7 > B[1, 5] = 3, so B[2, 5] = 7.
• Row i = 2 complete: 0 0 3 4 4 7

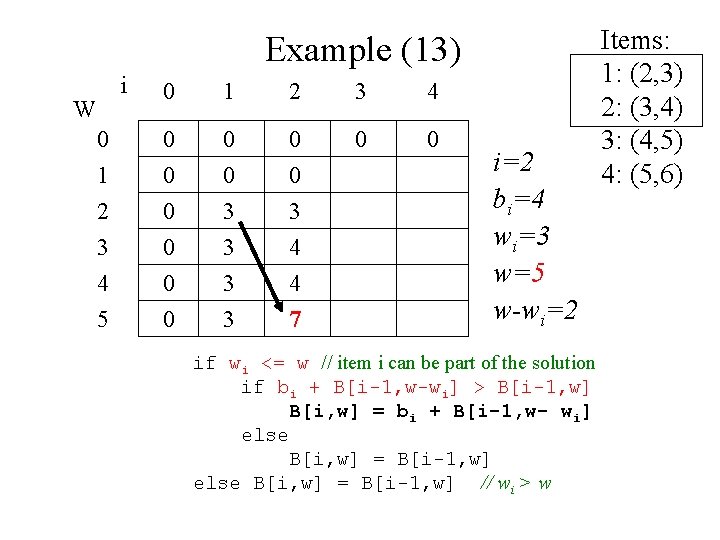

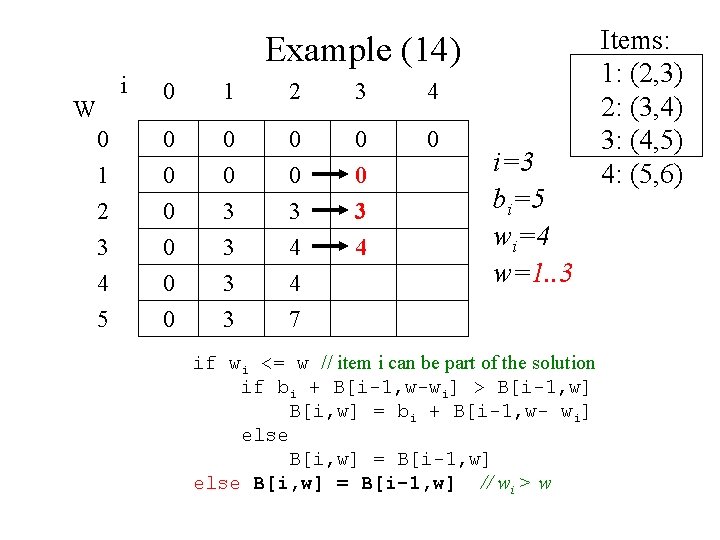

Example (14)
• i = 3, $b_i$ = 5, $w_i$ = 4, w = 1..3
• Since $w_i > w$, B[3, w] = B[2, w] for w = 1, 2, 3, giving 0, 3, 4.

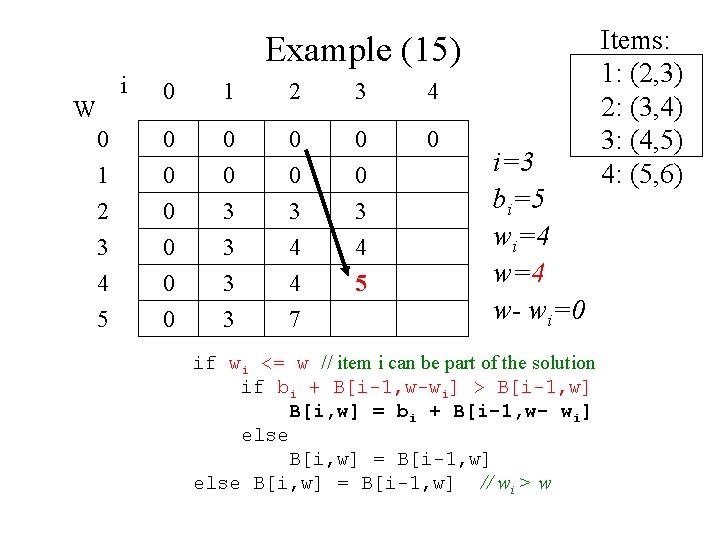

Example (15)
• i = 3, $b_i$ = 5, $w_i$ = 4, w = 4, $w - w_i$ = 0
• $b_i$ + B[2, 0] = 5 > B[2, 4] = 4, so B[3, 4] = 5.

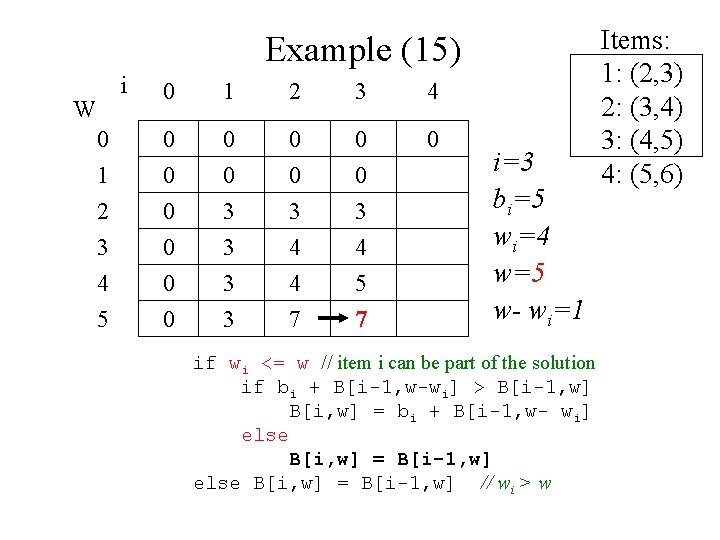

Example (15, continued)
• i = 3, $b_i$ = 5, $w_i$ = 4, w = 5, $w - w_i$ = 1
• $b_i$ + B[2, 1] = 5 < B[2, 5] = 7, so B[3, 5] = B[2, 5] = 7.
• Row i = 3 complete: 0 0 3 4 5 7

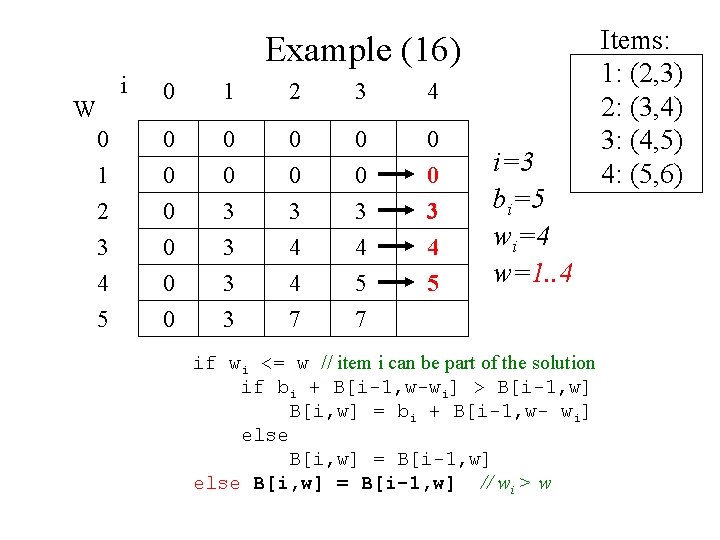

Example (16)
• i = 4, $b_i$ = 6, $w_i$ = 5, w = 1..4
• Since $w_i > w$, B[4, w] = B[3, w] for w = 1, …, 4, giving 0, 3, 4, 5.

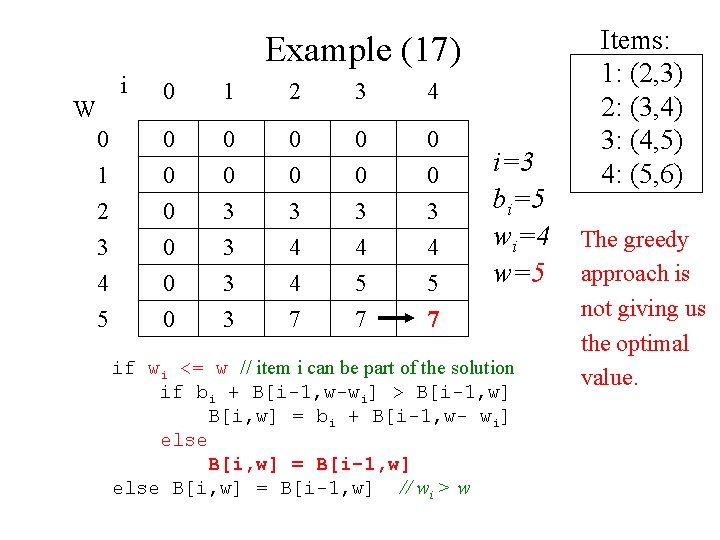

Example (17)
• i = 4, $b_i$ = 6, $w_i$ = 5, w = 5, $w - w_i$ = 0
• $b_i$ + B[3, 0] = 6 < B[3, 5] = 7, so B[4, 5] = B[3, 5] = 7.
• Row i = 4 complete: 0 0 3 4 5 7. The maximum value that fits in the knapsack is B[4, 5] = 7.

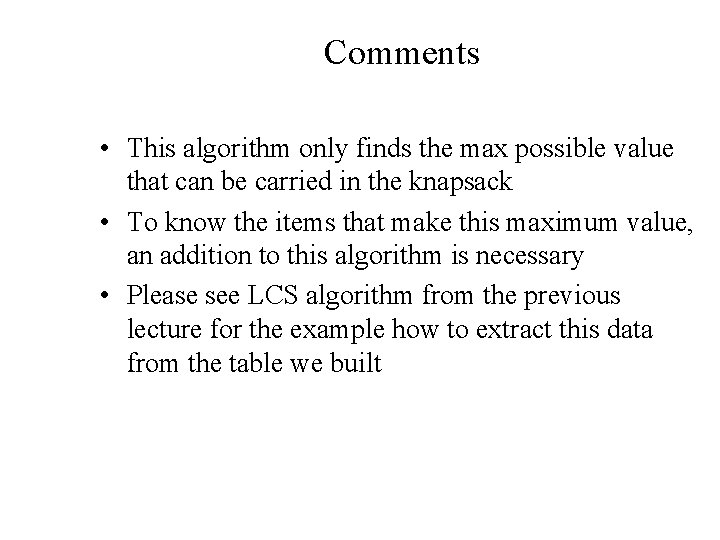

Comments
• This algorithm finds only the maximum possible value that can be carried in the knapsack.
• To know which items make up this maximum value, an addition to this algorithm is necessary.
• Please see the LCS algorithm from the previous lecture for an example of how to extract this data from the table we built.

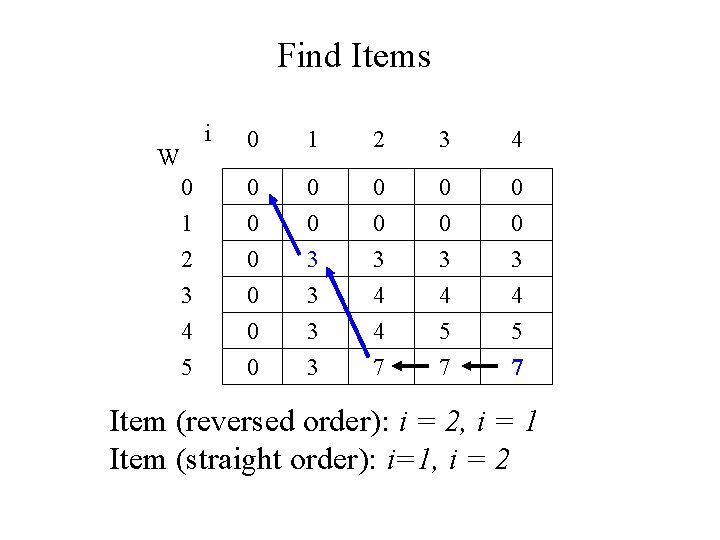

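Find Items

| i \ w | 0 | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 1 | 0 | 0 | 3 | 3 | 3 | 3 |
| 2 | 0 | 0 | 3 | 4 | 4 | 7 |
| 3 | 0 | 0 | 3 | 4 | 5 | 7 |
| 4 | 0 | 0 | 3 | 4 | 5 | 7 |

• Starting from B[4, 5] = 7, move up one row while B[i, w] = B[i-1, w]. When the value changes, item i is in the solution and w decreases by $w_i$.
• Items (reversed order): i = 2, i = 1
• Items (straight order): i = 1, i = 2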
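The traceback through the table can be sketched as below. The function name `knapsack_items` is an assumption for this example. It rebuilds the table so the block is self-contained, then walks from B[n, W] upward and reports 1-based item numbers as in the slides.

```python
def knapsack_items(items, capacity):
    """Return (best benefit, sorted 1-based indices of the chosen items)."""
    n = len(items)
    B = [[0] * (capacity + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        w_i, b_i = items[i - 1]
        for w in range(1, capacity + 1):
            B[i][w] = B[i - 1][w]
            if w_i <= w and b_i + B[i - 1][w - w_i] > B[i][w]:
                B[i][w] = b_i + B[i - 1][w - w_i]

    chosen = []
    w = capacity
    for i in range(n, 0, -1):
        # If the value differs from the row above, item i must have been taken.
        if B[i][w] != B[i - 1][w]:
            chosen.append(i)
            w -= items[i - 1][0]
    chosen.reverse()                           # collected in reversed order
    return B[n][capacity], chosen
```

For the example data, `knapsack_items([(2, 3), (3, 4), (4, 5), (5, 6)], 5)` gives `(7, [1, 2])`.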

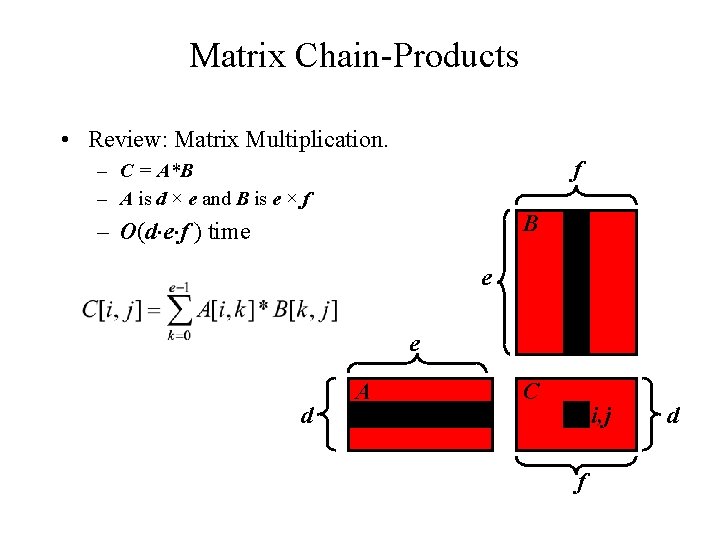

Matrix Chain-Products
• Review: matrix multiplication.
  – C = A*B
  – A is d × e and B is e × f
  – O(def) time
[Figure: row i of A (d × e) and column j of B (e × f) combine to give entry C[i, j] of the d × f result.]

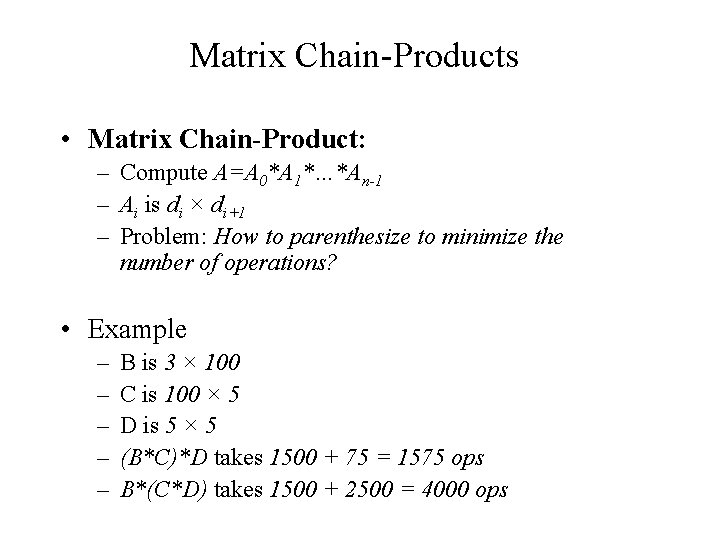

Matrix Chain-Products
• Matrix chain-product:
  – Compute $A = A_0 A_1 \cdots A_{n-1}$
  – $A_i$ is $d_i \times d_{i+1}$
  – Problem: how to parenthesize to minimize the number of operations?
• Example:
  – B is 3 × 100
  – C is 100 × 5
  – D is 5 × 5
  – (B*C)*D takes 1500 + 75 = 1575 ops
  – B*(C*D) takes 1500 + 2500 = 4000 ops

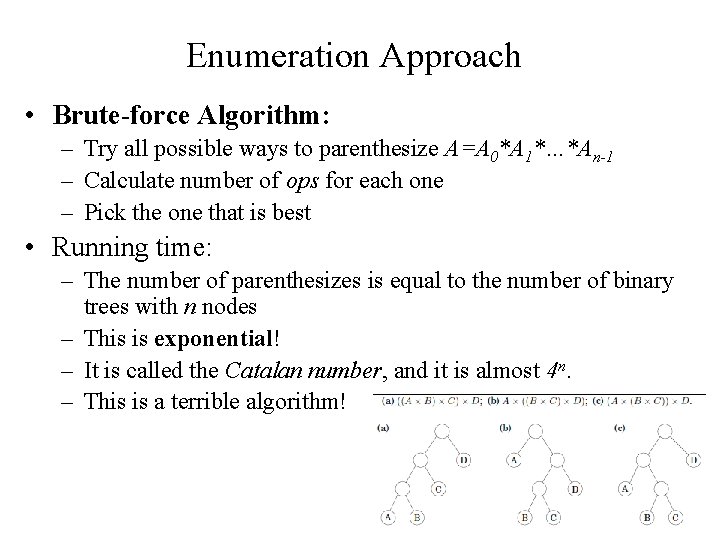

Enumeration Approach
• Brute-force algorithm:
  – Try all possible ways to parenthesize $A = A_0 A_1 \cdots A_{n-1}$
  – Calculate the number of ops for each one
  – Pick the one that is best
• Running time:
  – The number of parenthesizations is equal to the number of binary trees with n leaves (one leaf per matrix).
  – This is exponential!
  – It is called the Catalan number, and it is almost $4^n$.
  – This is a terrible algorithm!

Greedy Approach
• Idea #1: repeatedly select the product that uses the fewest operations.
• Counter-example:
  – A is 101 × 11
  – B is 11 × 9
  – C is 9 × 100
  – D is 100 × 99
  – Greedy idea #1 gives A*((B*C)*D), which takes 9900 + 108900 + 109989 = 228789 ops
  – (A*B)*(C*D) takes 9999 + 89991 + 89100 = 189090 ops
• The greedy approach is not giving us the optimal value.
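The operation counts in these examples are easy to check mechanically. The sketch below is an illustration only: the function name `chain_cost` and the nested-pair encoding of a parenthesization are assumptions. It computes the cost of a given parenthesization, charging d·e·f operations for a (d × e) by (e × f) product.

```python
def chain_cost(expr, dims):
    """Return (rows, cols, ops) for a parenthesized product.

    expr is either a matrix name (str) or a pair (left, right) of sub-expressions;
    dims maps each matrix name to its (rows, cols).
    """
    if isinstance(expr, str):
        rows, cols = dims[expr]
        return rows, cols, 0                   # a single matrix costs nothing
    left, right = expr
    l_rows, l_cols, l_ops = chain_cost(left, dims)
    r_rows, r_cols, r_ops = chain_cost(right, dims)
    if l_cols != r_rows:
        raise ValueError("inner dimensions do not match")
    # Cost of both sub-products plus the final (l_rows x l_cols)(r_rows x r_cols) product.
    return l_rows, r_cols, l_ops + r_ops + l_rows * l_cols * r_cols


dims = {"A": (101, 11), "B": (11, 9), "C": (9, 100), "D": (100, 99)}
greedy = chain_cost(("A", (("B", "C"), "D")), dims)[2]      # A*((B*C)*D)
better = chain_cost((("A", "B"), ("C", "D")), dims)[2]      # (A*B)*(C*D)
```

Here `greedy` evaluates to 228789 and `better` to 189090, matching the counts in the counter-example.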

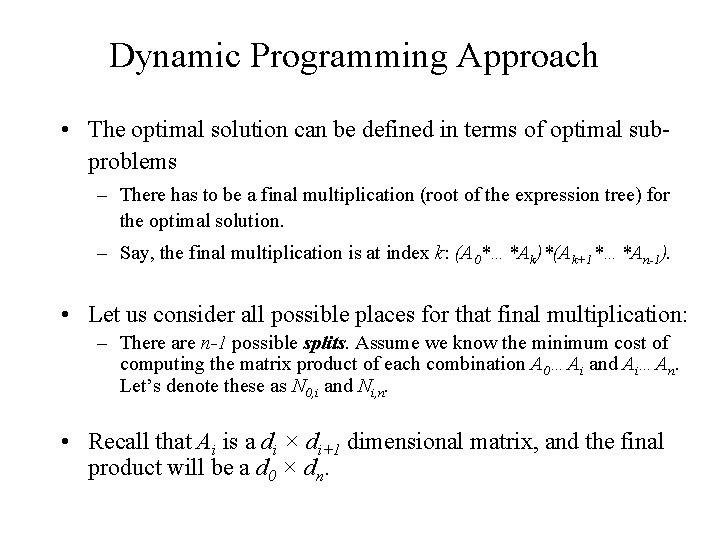

Dynamic Programming Approach
• The optimal solution can be defined in terms of optimal sub-problems.
  – There has to be a final multiplication (the root of the expression tree) for the optimal solution.
  – Say the final multiplication is at index k: $(A_0 \cdots A_k)(A_{k+1} \cdots A_{n-1})$.
• Let us consider all possible places for that final multiplication:
  – There are n-1 possible splits. Assume we know the minimum cost of computing the matrix products $A_0 \cdots A_k$ and $A_{k+1} \cdots A_{n-1}$ for each split. Let’s denote these costs $N_{0,k}$ and $N_{k+1,n-1}$.
• Recall that $A_i$ is a $d_i \times d_{i+1}$ matrix, and the final product will be $d_0 \times d_n$.

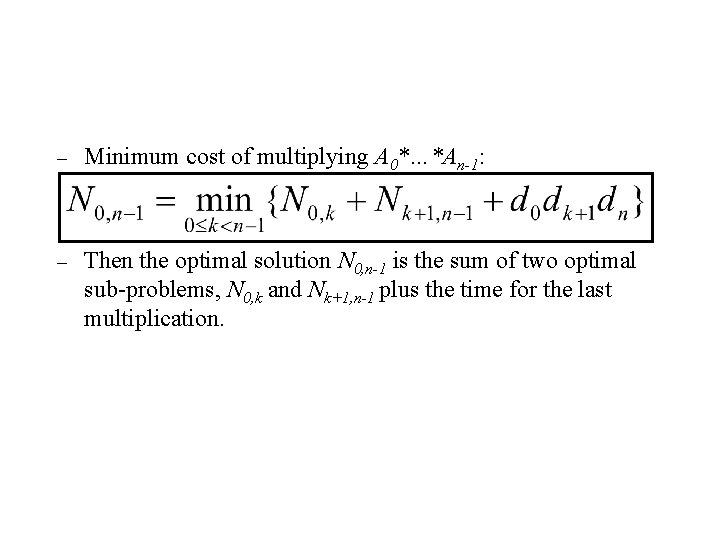

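Dynamic Programming Approach
• Minimum cost of multiplying $A_0 \cdots A_{n-1}$:
  $$N_{0,n-1} = \min_{0 \le k < n-1} \{ N_{0,k} + N_{k+1,n-1} + d_0 d_{k+1} d_n \}$$
• That is, the optimal solution $N_{0,n-1}$ is the sum of two optimal sub-problems, $N_{0,k}$ and $N_{k+1,n-1}$, plus the time for the last multiplication.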

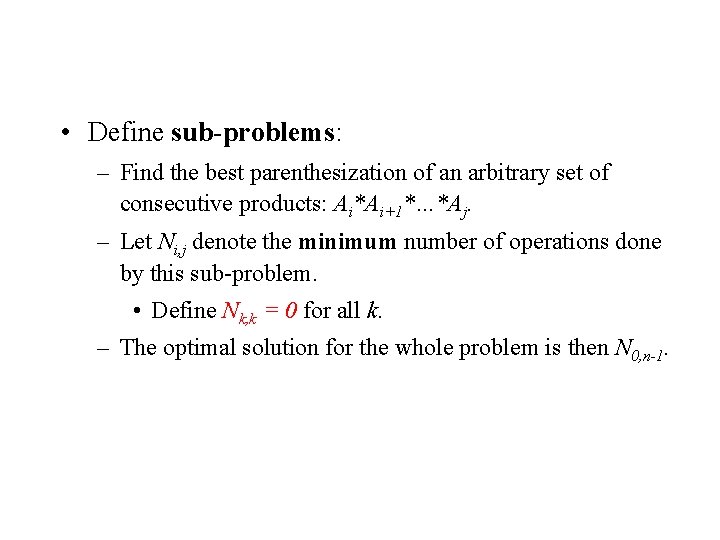

Dynamic Programming Approach
• Define sub-problems:
  – Find the best parenthesization of an arbitrary set of consecutive products: $A_i A_{i+1} \cdots A_j$.
  – Let $N_{i,j}$ denote the minimum number of operations done by this sub-problem.
    • Define $N_{k,k} = 0$ for all k.
  – The optimal solution for the whole problem is then $N_{0,n-1}$.

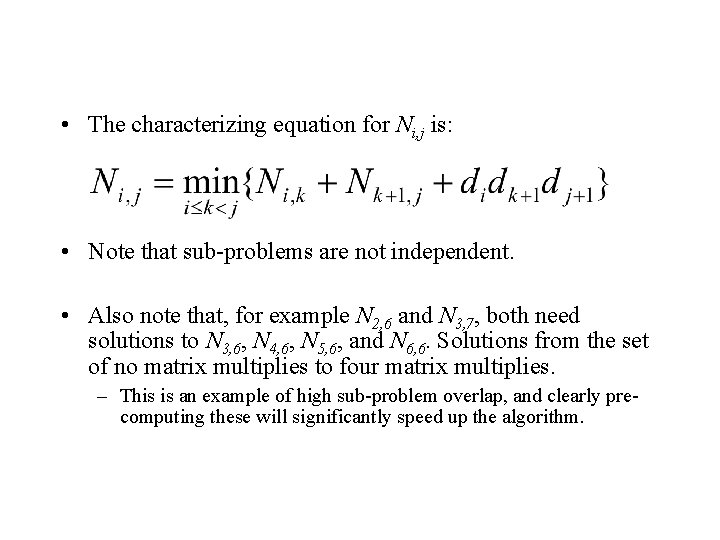

Dynamic Programming Approach
• The characterizing equation for $N_{i,j}$ is:
  $$N_{i,j} = \min_{i \le k < j} \{ N_{i,k} + N_{k+1,j} + d_i d_{k+1} d_{j+1} \}$$
• Note that sub-problems are not independent: they overlap.
• Also note that, for example, $N_{2,6}$ and $N_{3,7}$ both need solutions to $N_{3,6}$, $N_{4,6}$, $N_{5,6}$, and $N_{6,6}$. These range from a single matrix (no multiplications) to a chain of four matrices.
  – This is an example of high sub-problem overlap, and clearly precomputing these will significantly speed up the algorithm.

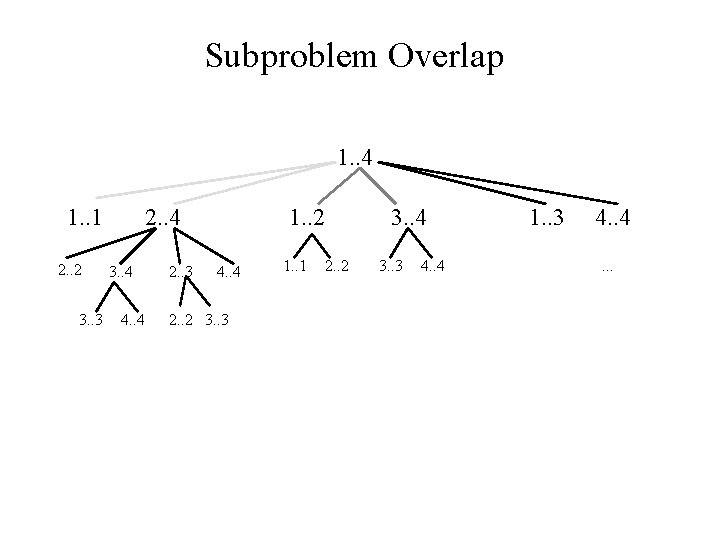

Subproblem Overlap
[Figure: recursion tree for the chain 1..4. The root 1..4 branches into splits such as (1..1, 2..4), (1..2, 3..4) and (1..3, 4..4); sub-chains such as 2..3, 3..4, 2..2, 3..3 and 4..4 appear repeatedly.]

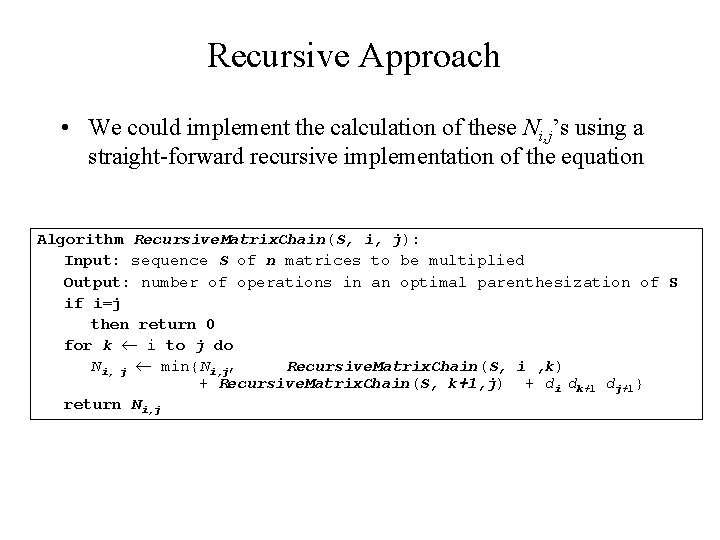

Recursive Approach
• We could implement the calculation of these $N_{i,j}$’s using a straightforward recursive implementation of the equation:

    Algorithm RecursiveMatrixChain(S, i, j):
        Input: sequence S of n matrices to be multiplied
        Output: number of operations in an optimal parenthesization of A_i … A_j
        if i = j then
            return 0
        N[i, j] ← +∞
        for k ← i to j - 1 do
            N[i, j] ← min{N[i, j], RecursiveMatrixChain(S, i, k) + RecursiveMatrixChain(S, k+1, j) + d_i d_{k+1} d_{j+1}}
        return N[i, j]
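A runnable sketch of the recursive approach is given below. It assumes the chain is described by a dimension list `d`, so that $A_t$ is `d[t]` × `d[t+1]`. The function name `recursive_matrix_chain` is an assumption for this example. It recomputes shared sub-problems, so it is only practical for short chains.

```python
import math


def recursive_matrix_chain(d, i, j):
    """Minimum number of operations to compute A_i ... A_j (A_t is d[t] x d[t+1])."""
    if i == j:
        return 0                               # a single matrix needs no work
    best = math.inf
    for k in range(i, j):                      # final multiplication after A_k
        cost = (recursive_matrix_chain(d, i, k)
                + recursive_matrix_chain(d, k + 1, j)
                + d[i] * d[k + 1] * d[j + 1])
        best = min(best, cost)
    return best
```

For the chain B, C, D with sizes 3 × 100, 100 × 5 and 5 × 5, `recursive_matrix_chain([3, 100, 5, 5], 0, 2)` returns 1575.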

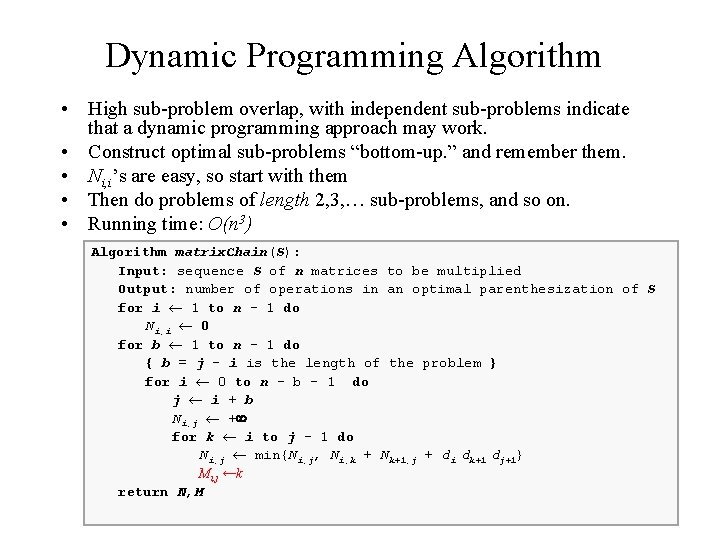

Dynamic Programming Algorithm
• High sub-problem overlap, together with independent sub-problems, indicates that a dynamic programming approach may work.
• Construct optimal sub-problems “bottom-up” and remember them.
• The $N_{i,i}$’s are easy, so start with them.
• Then do sub-problems of length 2, 3, …, and so on.
• Running time: $O(n^3)$

    Algorithm matrixChain(S):
        Input: sequence S of n matrices to be multiplied
        Output: number of operations in an optimal parenthesization of S
        for i ← 0 to n - 1 do
            N[i, i] ← 0
        for b ← 1 to n - 1 do                { b = j - i is the length of the problem }
            for i ← 0 to n - b - 1 do
                j ← i + b
                N[i, j] ← +∞
                for k ← i to j - 1 do
                    if N[i, k] + N[k+1, j] + d_i d_{k+1} d_{j+1} < N[i, j] then
                        N[i, j] ← N[i, k] + N[k+1, j] + d_i d_{k+1} d_{j+1}
                        M[i, j] ← k          { remember the best split }
        return N, M

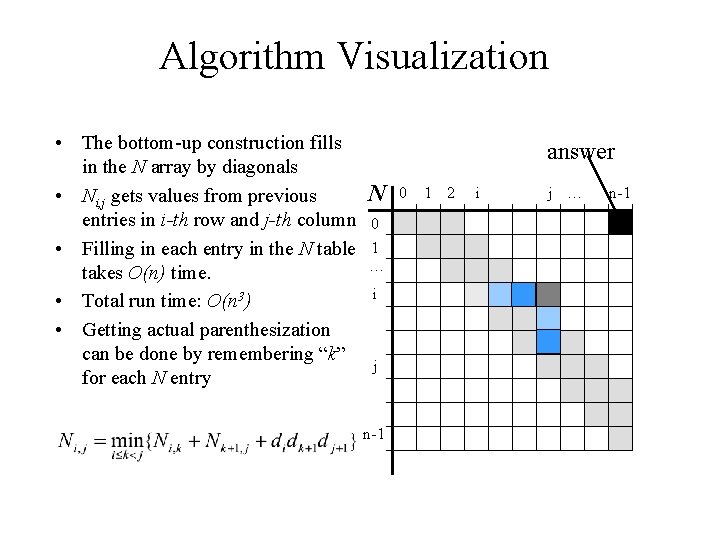

Algorithm Visualization
• The bottom-up construction fills in the N array by diagonals.
• $N_{i,j}$ gets values from previous entries in the i-th row and j-th column.
• Filling in each entry in the N table takes O(n) time.
• Total run time: $O(n^3)$
• Getting the actual parenthesization can be done by remembering “k” for each N entry.
[Figure: the n × n table N with rows i and columns j indexed 0..n-1; the entry $N_{0,n-1}$ is marked “answer”.]

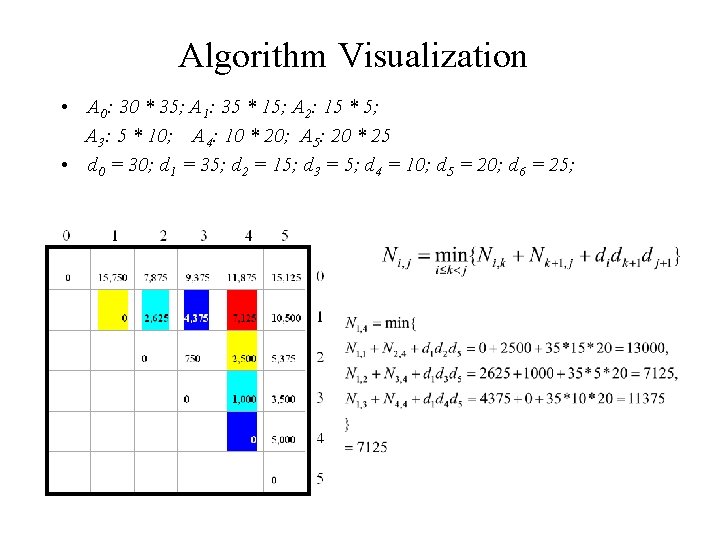

Algorithm Visualization
• $A_0$: 30 × 35; $A_1$: 35 × 15; $A_2$: 15 × 5; $A_3$: 5 × 10; $A_4$: 10 × 20; $A_5$: 20 × 25
• $d_0$ = 30; $d_1$ = 35; $d_2$ = 15; $d_3$ = 5; $d_4$ = 10; $d_5$ = 20; $d_6$ = 25

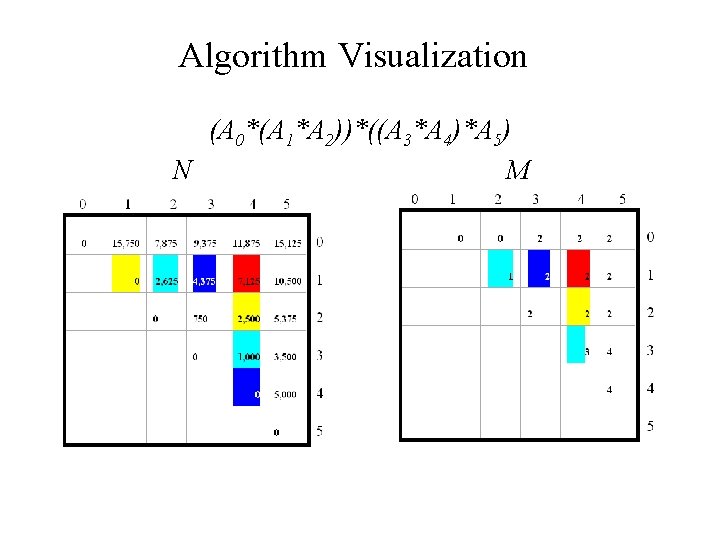

Algorithm Visualization
• Optimal parenthesization: $(A_0 (A_1 A_2))((A_3 A_4) A_5)$
[Figure: the filled N and M tables for this instance.]
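The bottom-up algorithm can be sketched in Python and run on the six-matrix instance above. The function names `matrix_chain` and `parenthesize` and the output string format are assumptions for this example; `d` is the dimension list $d_0, \ldots, d_n$.

```python
import math


def matrix_chain(d):
    """Return (N, M): N[i][j] is the minimum cost of A_i..A_j, M[i][j] the best split k."""
    n = len(d) - 1                             # number of matrices
    N = [[0] * n for _ in range(n)]            # N[i][i] = 0 on the main diagonal
    M = [[None] * n for _ in range(n)]
    for b in range(1, n):                      # b = j - i, filled diagonal by diagonal
        for i in range(n - b):
            j = i + b
            N[i][j] = math.inf
            for k in range(i, j):
                cost = N[i][k] + N[k + 1][j] + d[i] * d[k + 1] * d[j + 1]
                if cost < N[i][j]:
                    N[i][j] = cost
                    M[i][j] = k                # remember where the last product splits
    return N, M


def parenthesize(M, i, j):
    """Rebuild the optimal parenthesization of A_i..A_j from the split table M."""
    if i == j:
        return f"A{i}"
    k = M[i][j]
    return f"({parenthesize(M, i, k)}*{parenthesize(M, k + 1, j)})"


N, M = matrix_chain([30, 35, 15, 5, 10, 20, 25])
```

For this instance `N[0][5]` is 15125 and `parenthesize(M, 0, 5)` gives `((A0*(A1*A2))*((A3*A4)*A5))`, which agrees with the parenthesization shown on the slide.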

Matrix Chain-Products
• Some final thoughts:
  – We replaced an $O(2^n)$ algorithm with an $O(n^3)$ algorithm.
  – While the generic top-down recursive algorithm would have solved $O(2^n)$ sub-problems, there are only $O(n^2)$ distinct sub-problems.
    • This implies a high overlap of sub-problems.
  – The two sub-problems on either side of a split are independent:
    • The solution to $A_0 A_1 \cdots A_k$ is independent of the solution to $A_{k+1} \cdots A_{n-1}$.

Matrix Chain-Products Summary
• Determine the cost of each pair-wise multiplication, then the minimum cost of multiplying three consecutive matrices (2 possible choices), using the pre-computed costs for two matrices.
• Repeat until we compute the minimum cost of multiplying all n matrices, using the minimum costs already computed for products of up to n-1 matrices.
  – n-1 possible choices.

Dynamic Programming Steps
• A dynamic programming approach consists of a sequence of 4 steps:
  1. Characterize the structure of an optimal solution
  2. Recursively define the value of an optimal solution
  3. Compute the value of an optimal solution in a bottom-up fashion
  4. Construct an optimal solution from the computed information

Dynamic Programming Summary
• Summary of the basic idea:
  – Optimal substructure: an optimal solution to the problem consists of optimal solutions to sub-problems
  – Overlapping sub-problems: few sub-problems in total, many recurring instances of each
  – Solve bottom-up, building a table of solved sub-problems that are used to solve larger ones
• Variations:
  – “Table” could be 3-dimensional, triangular, a tree, etc.

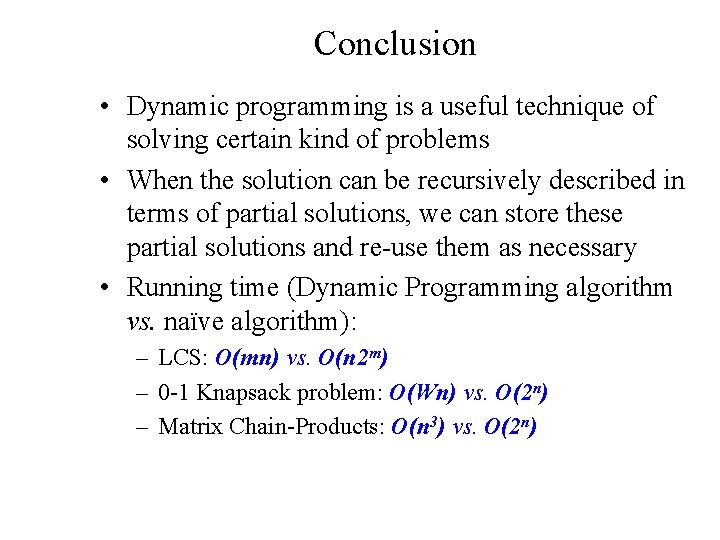

Conclusion
• Dynamic programming is a useful technique for solving certain kinds of problems.
• When the solution can be recursively described in terms of partial solutions, we can store these partial solutions and re-use them as necessary.
• Running time (dynamic programming algorithm vs. naïve algorithm):
  – LCS: $O(mn)$ vs. $O(n 2^m)$
  – 0-1 Knapsack problem: $O(Wn)$ vs. $O(2^n)$
  – Matrix Chain-Products: $O(n^3)$ vs. $O(2^n)$
