Approaches to Problem Solving greedy algorithms dynamic programming

Approaches to Problem Solving
- greedy algorithms
- dynamic programming
- backtracking
- divide-and-conquer

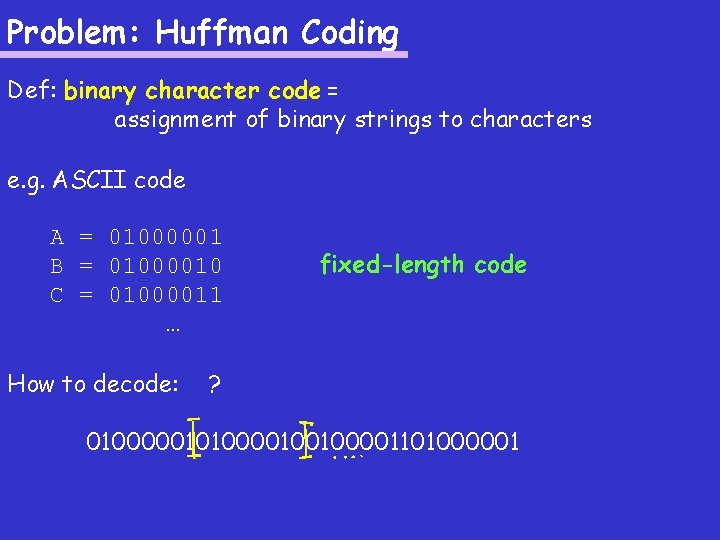

Problem: Huffman Coding

Def: binary character code = assignment of binary strings to characters

e.g. the ASCII code, a fixed-length code:
- A = 01000001
- B = 01000010
- C = 01000011
- …

How to decode 01000001010000100100001101000001?
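Decoding a fixed-length code only requires cutting the bit string into equal blocks. A minimal sketch, assuming 8-bit codewords and only the three table entries shown on the slide (the function name `decode_fixed` is hypothetical):

```python
def decode_fixed(bits, table, width=8):
    """Decode a bit string by cutting it into blocks of `width` bits."""
    # Invert the table: codeword -> character.
    inverse = {codeword: ch for ch, codeword in table.items()}
    blocks = [bits[i:i + width] for i in range(0, len(bits), width)]
    return "".join(inverse[block] for block in blocks)


# Only the three codewords listed on the slide.
ascii_table = {"A": "01000001", "B": "01000010", "C": "01000011"}
print(decode_fixed("01000001010000100100001101000001", ascii_table))  # ABCA
```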

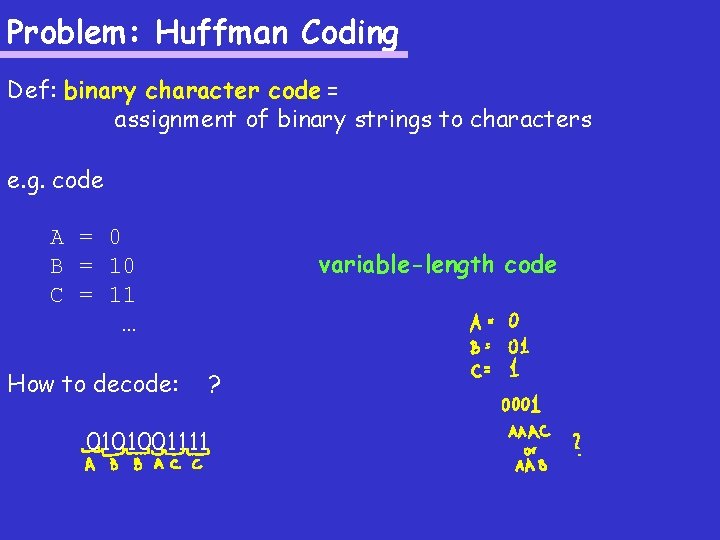


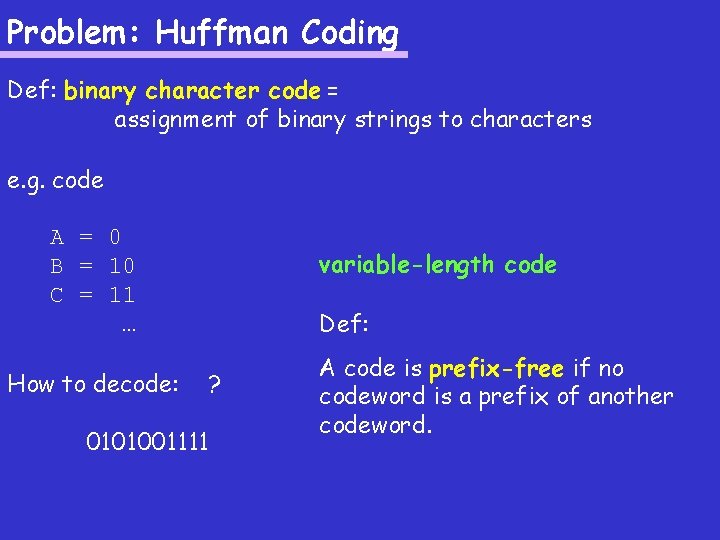

Example of a variable-length code: A = 0, B = 10, C = 11, …

How to decode 0101001111?

Def: A code is prefix-free if no codeword is a prefix of another codeword.
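With a prefix-free code, the decoder can read bits left to right and emit a character as soon as the bits read so far form a codeword. A minimal sketch for the code A = 0, B = 10, C = 11 (the function name `decode_prefix_free` is hypothetical):

```python
def decode_prefix_free(bits, code):
    """Decode `bits` greedily; correct whenever `code` is prefix-free."""
    inverse = {codeword: ch for ch, codeword in code.items()}
    decoded = []
    current = ""
    for bit in bits:
        current += bit
        if current in inverse:
            # No codeword is a prefix of another, so this match is final.
            decoded.append(inverse[current])
            current = ""
    if current:
        raise ValueError("trailing bits do not form a codeword")
    return "".join(decoded)


code = {"A": "0", "B": "10", "C": "11"}
print(decode_prefix_free("0101001111", code))  # ABBACC
```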

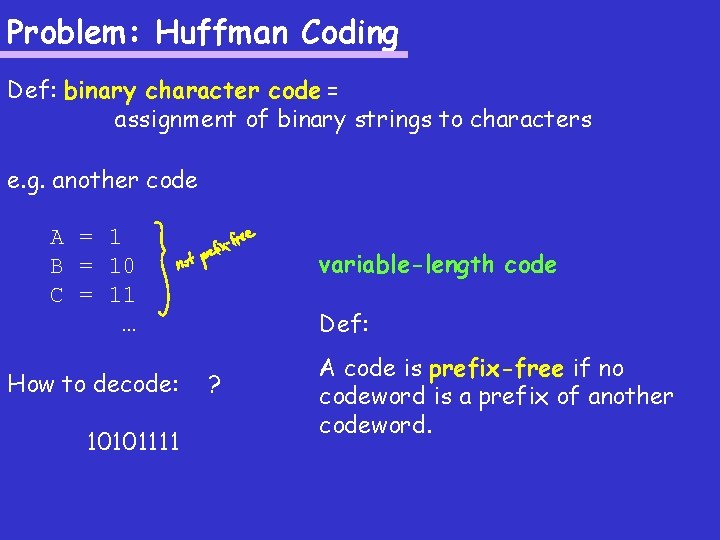

Another variable-length code: A = 1, B = 10, C = 11, …

How to decode 10101111?

This code is not prefix-free (A = 1 is a prefix of both B = 10 and C = 11), so the decoding is ambiguous.
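The difference between the two example codes can be checked mechanically. The sketch below tests the prefix-free property and counts how many ways a bit string can be split into codewords; both function names are hypothetical.

```python
def is_prefix_free(code):
    """True if no codeword is a prefix of a different symbol's codeword."""
    words = list(code.values())
    return not any(
        i != j and words[j].startswith(words[i])
        for i in range(len(words))
        for j in range(len(words))
    )


def count_parsings(bits, code):
    """Number of ways to split `bits` into a sequence of codewords."""
    words = set(code.values())
    ways = [1] + [0] * len(bits)  # ways[i] = parsings of bits[:i]
    for end in range(1, len(bits) + 1):
        for word in words:
            if end >= len(word) and bits[end - len(word):end] == word:
                ways[end] += ways[end - len(word)]
    return ways[len(bits)]


good = {"A": "0", "B": "10", "C": "11"}
bad = {"A": "1", "B": "10", "C": "11"}
print(is_prefix_free(good), is_prefix_free(bad))  # True False
print(count_parsings("10101111", bad))            # 5
```

For the second code, 10101111 has several valid parsings (for example BBAAAA and BBCC), which is exactly the ambiguity the prefix-free property rules out.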

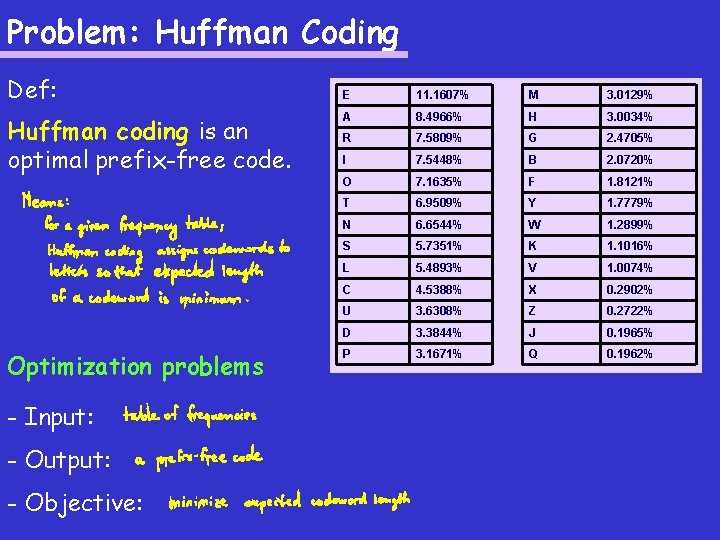

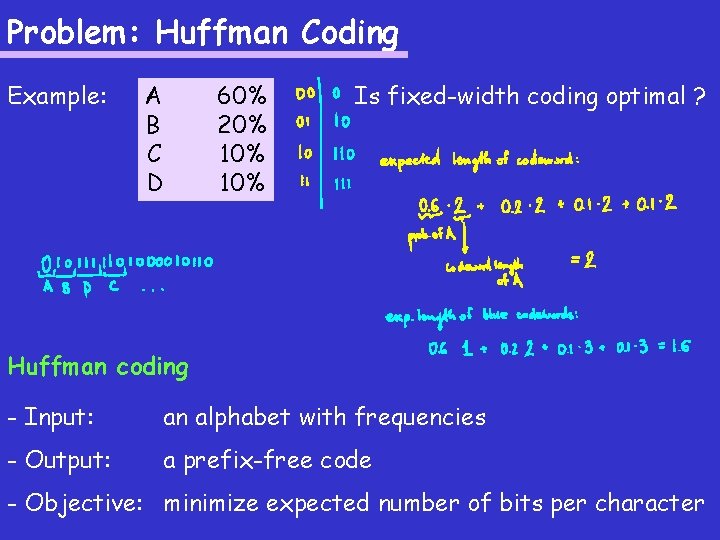


Def: Huffman coding is an optimal prefix-free code.

Huffman coding
- Input: an alphabet with frequencies
- Output: a prefix-free code
- Objective: minimize expected number of bits per character

Example input (letter frequencies):

| Letter | Frequency | Letter | Frequency |
|---|---|---|---|
| E | 11.1607% | M | 3.0129% |
| A | 8.4966% | H | 3.0034% |
| R | 7.5809% | G | 2.4705% |
| I | 7.5448% | B | 2.0720% |
| O | 7.1635% | F | 1.8121% |
| T | 6.9509% | Y | 1.7779% |
| N | 6.6544% | W | 1.2899% |
| S | 5.7351% | K | 1.1016% |
| L | 5.4893% | V | 1.0074% |
| C | 4.5388% | X | 0.2902% |
| U | 3.6308% | Z | 0.2722% |
| D | 3.3844% | J | 0.1965% |
| P | 3.1671% | Q | 0.1962% |

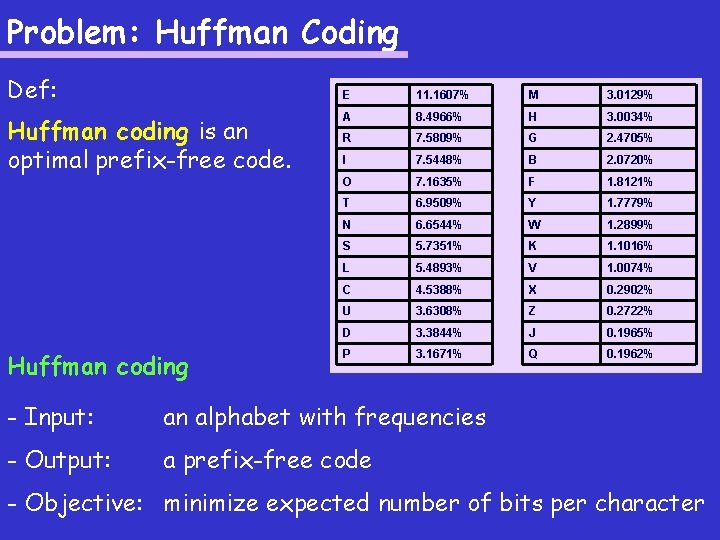

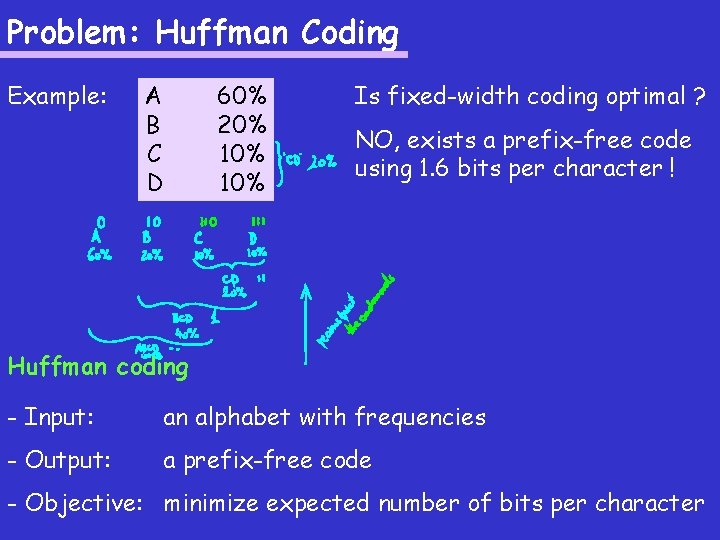


Example: an alphabet with frequencies A 60%, B 20%, C 10%, D 10%.

Is fixed-width coding optimal?

No. Fixed-width coding needs 2 bits per character, but there exists a prefix-free code using 1.6 bits per character, e.g. with codeword lengths 1, 2, 3, 3: 0.6 × 1 + 0.2 × 2 + 0.1 × 3 + 0.1 × 3 = 1.6.
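The 1.6 figure can be checked by computing the expected codeword length directly. The sketch below assumes D has the remaining 10% (the slide lists only three percentages for four letters) and uses one possible prefix-free code with lengths 1, 2, 3, 3; the names are hypothetical.

```python
def expected_bits(freq_percent, code):
    """Expected number of bits per character; frequencies are in percent."""
    return sum(freq_percent[s] * len(code[s]) for s in freq_percent) / 100


# Assumption: D takes the remaining 10%.
freq = {"A": 60, "B": 20, "C": 10, "D": 10}
fixed_code = {"A": "00", "B": "01", "C": "10", "D": "11"}
prefix_code = {"A": "0", "B": "10", "C": "110", "D": "111"}

print(expected_bits(freq, fixed_code))   # 2.0
print(expected_bits(freq, prefix_code))  # 1.6
```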

![Problem: Huffman Coding, the Huffman algorithm](http://slidetodoc.com/presentation_image/882cc0bb7163fbdfc2c4134646e5c99b/image-10.jpg)

Huffman([a1, f1], [a2, f2], …, [an, fn])

    if n = 1 then
        code[a1] ← ""
    else
        let fi, fj be the 2 smallest f's
        Huffman([ai, fi+fj], [a1, f1], …, [an, fn])    // the list omits ai, aj
        code[aj] ← code[ai] + "0"
        code[ai] ← code[ai] + "1"
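The recursive procedure can be transcribed almost line by line. The following is a minimal sketch, not a tuned implementation: it re-sorts the list at every level instead of using a priority queue, and, as in the pseudocode, the merged symbol temporarily reuses the name ai. The function name and the test frequencies (with D assumed to be 10%) are illustrative.

```python
def huffman(items):
    """items: list of (symbol, frequency) pairs. Returns {symbol: codeword}."""
    if len(items) == 1:
        return {items[0][0]: ""}
    items = sorted(items, key=lambda pair: pair[1])
    (ai, fi), (aj, fj) = items[0], items[1]  # the two smallest frequencies
    # Recurse on the list with ai, aj replaced by one merged symbol named ai.
    code = huffman([(ai, fi + fj)] + items[2:])
    # Order matters: extend aj from the merged codeword before overwriting it.
    code[aj] = code[ai] + "0"
    code[ai] = code[ai] + "1"
    return code


freqs = [("A", 60), ("B", 20), ("C", 10), ("D", 10)]
code = huffman(freqs)
bits = sum(f * len(code[s]) for s, f in freqs) / 100
print(code, bits)
```

On this input the codeword lengths come out as 1, 2, 3, 3, giving 1.6 bits per character.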

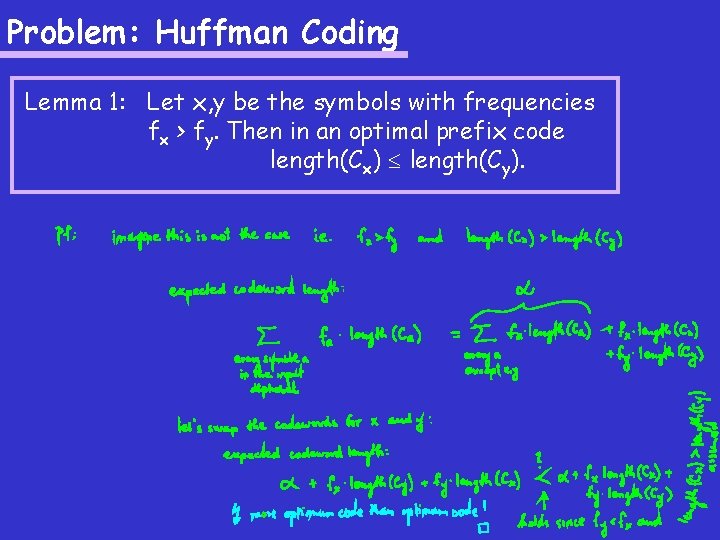

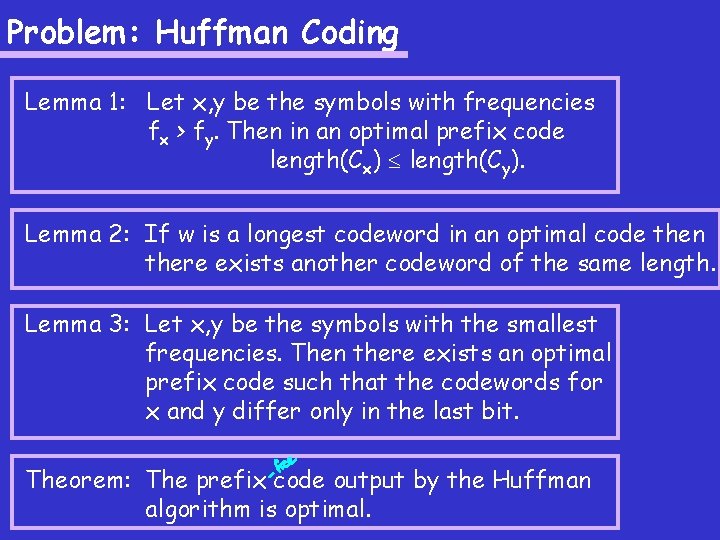

Problem: Huffman Coding

Lemma 1: Let x, y be symbols with frequencies fx > fy. Then in an optimal prefix code length(Cx) ≤ length(Cy).

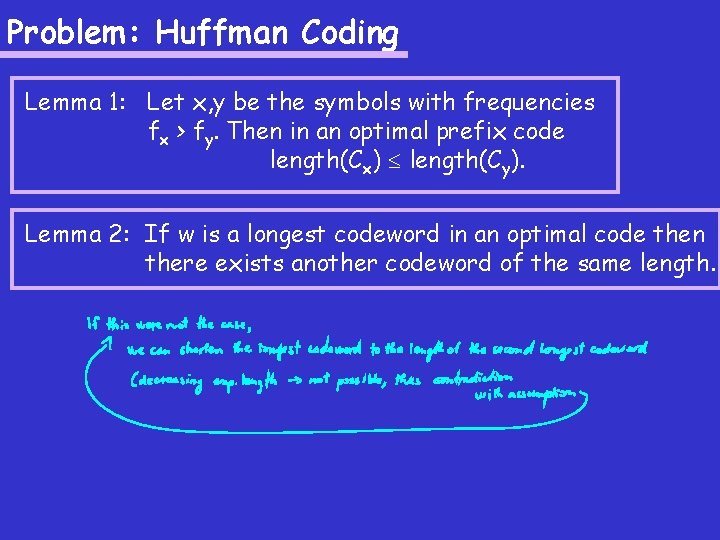

Lemma 2: If w is a longest codeword in an optimal code then there exists another codeword of the same length.

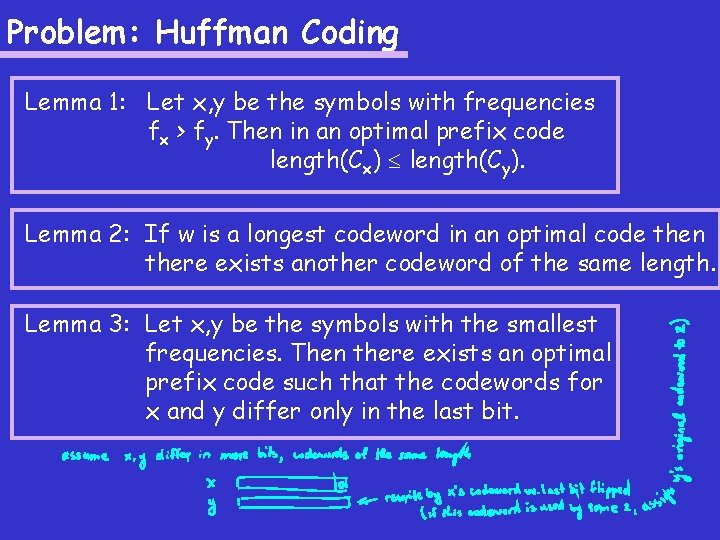

Lemma 3: Let x, y be the symbols with the smallest frequencies. Then there exists an optimal prefix code such that the codewords for x and y differ only in the last bit.

Theorem: The prefix code output by the Huffman algorithm is optimal.

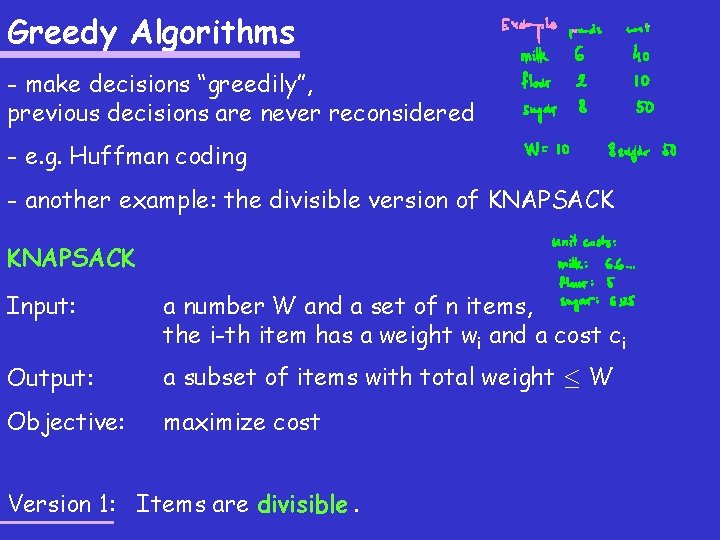

Greedy Algorithms
- make decisions "greedily", previous decisions are never reconsidered
- e.g. Huffman coding
- another example: the divisible version of KNAPSACK

KNAPSACK
- Input: a number W and a set of n items, the i-th item has a weight wi and a cost ci
- Output: a subset of items with total weight ≤ W
- Objective: maximize total cost

Version 1: Items are divisible.

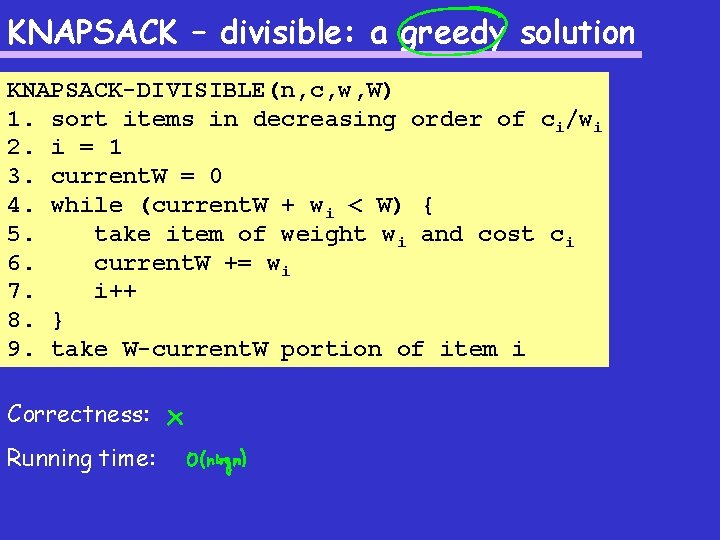

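KNAPSACK – divisible: a greedy solution

KNAPSACK-DIVISIBLE(n, c, w, W)

    sort items in decreasing order of ci/wi
    i = 1
    currentW = 0
    while (i ≤ n and currentW + wi < W) {
        take item of weight wi and cost ci
        currentW += wi
        i++
    }
    if (i ≤ n) take a portion of weight W - currentW of item i

Correctness: by an exchange argument, any solution can be changed to use more of the item with the highest ci/wi without decreasing the total cost.

Running time: O(n log n), dominated by the sort.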
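A minimal Python sketch of the greedy algorithm for the divisible version, assuming positive weights. The function name and the three-item test instance are illustrative, not from the slides.

```python
def knapsack_divisible(costs, weights, capacity):
    """Maximum total cost when items may be taken fractionally."""
    # Consider items in decreasing order of cost per unit of weight.
    order = sorted(range(len(costs)),
                   key=lambda i: costs[i] / weights[i],
                   reverse=True)
    total = 0.0
    remaining = capacity
    for i in order:
        if remaining <= 0:
            break
        take = min(weights[i], remaining)  # whole item, or the part that fits
        total += costs[i] * take / weights[i]
        remaining -= take
    return total


# Hypothetical instance: ratios 6, 5, 4 and capacity 50.
print(knapsack_divisible([60, 100, 120], [10, 20, 30], 50))  # 240.0
```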

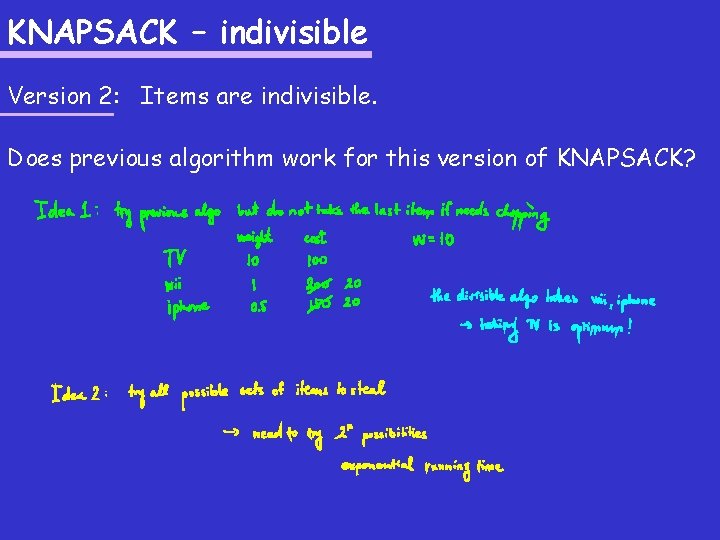

KNAPSACK – indivisible

Version 2: Items are indivisible.

Does the previous algorithm work for this version of KNAPSACK?
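A small experiment suggests the answer. The sketch below applies the same ratio ordering but takes only whole items (skipping an item that does not fit, which is a slight variation on stopping at it). The instance is hypothetical.

```python
def greedy_indivisible(costs, weights, capacity):
    """Ratio-ordered greedy restricted to whole items (not optimal in general)."""
    order = sorted(range(len(costs)),
                   key=lambda i: costs[i] / weights[i],
                   reverse=True)
    total = 0
    remaining = capacity
    for i in order:
        if weights[i] <= remaining:
            total += costs[i]
            remaining -= weights[i]
    return total


# Greedy takes the items of weight 10 and 20 for a cost of 160,
# but the items of weight 20 and 30 fit exactly and cost 220.
print(greedy_indivisible([60, 100, 120], [10, 20, 30], 50))  # 160
```

So the greedy rule can miss the optimum once items cannot be split.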

Backtracking
- "brute force": try all possibilities
- running time?
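For indivisible KNAPSACK, trying all possibilities means deciding for each item whether to skip it or take it. A minimal sketch (function name and instance are hypothetical); in the worst case it explores on the order of 2^n branches.

```python
def knapsack_backtrack(costs, weights, capacity, i=0):
    """Best total cost using items i, i+1, ... within the given capacity."""
    if i == len(costs):
        return 0
    # Branch 1: skip item i.
    best = knapsack_backtrack(costs, weights, capacity, i + 1)
    # Branch 2: take item i, if it still fits.
    if weights[i] <= capacity:
        taken = costs[i] + knapsack_backtrack(
            costs, weights, capacity - weights[i], i + 1)
        best = max(best, taken)
    return best


print(knapsack_backtrack([60, 100, 120], [10, 20, 30], 50))  # 220
```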

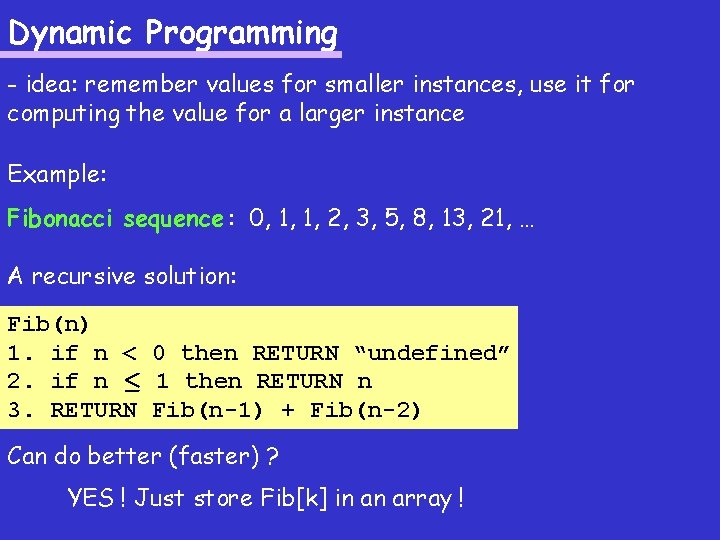

Dynamic Programming
- idea: remember values for smaller instances, use them for computing the value for a larger instance

Example: Fibonacci sequence: 0, 1, 1, 2, 3, 5, 8, 13, 21, …

A recursive solution:

Fib(n)

    if n < 0 then RETURN "undefined"
    if n ≤ 1 then RETURN n
    RETURN Fib(n-1) + Fib(n-2)
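The cost of the plain recursion becomes visible when the calls are counted. In the sketch below, `fib` follows the pseudocode (raising an error instead of returning "undefined"), and `fib_calls` is a hypothetical helper that counts how many calls `fib(n)` makes.

```python
def fib(n):
    """n-th Fibonacci number by direct recursion."""
    if n < 0:
        raise ValueError("undefined for negative n")
    if n <= 1:
        return n
    return fib(n - 1) + fib(n - 2)


def fib_calls(n):
    """Number of calls to fib made while evaluating fib(n), for n >= 0."""
    if n <= 1:
        return 1
    return 1 + fib_calls(n - 1) + fib_calls(n - 2)


print(fib(10), fib_calls(10))  # 55 177
```

The call count itself grows like the Fibonacci numbers, i.e. exponentially in n, because the same subproblems are recomputed many times.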


Can we do better (faster)? Yes: just store Fib[k] in an array.

FibDynProg(n)

    Fib[0] = 0
    Fib[1] = 1
    for i = 2 to n do
        Fib[i] = Fib[i-1] + Fib[i-2]
    RETURN Fib[n]

The heart of the solution: Fib[k] = the k-th Fibonacci number
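The array-based version translates directly to Python. A minimal sketch (the function name is illustrative); it performs about n additions instead of an exponential number of recursive calls.

```python
def fib_dyn_prog(n):
    """n-th Fibonacci number, filling a table from the bottom up."""
    if n < 0:
        raise ValueError("undefined for negative n")
    fib = [0, 1] + [0] * (n - 1)  # fib[k] will hold the k-th Fibonacci number
    for i in range(2, n + 1):
        fib[i] = fib[i - 1] + fib[i - 2]
    return fib[n]


print([fib_dyn_prog(k) for k in range(9)])  # [0, 1, 1, 2, 3, 5, 8, 13, 21]
```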

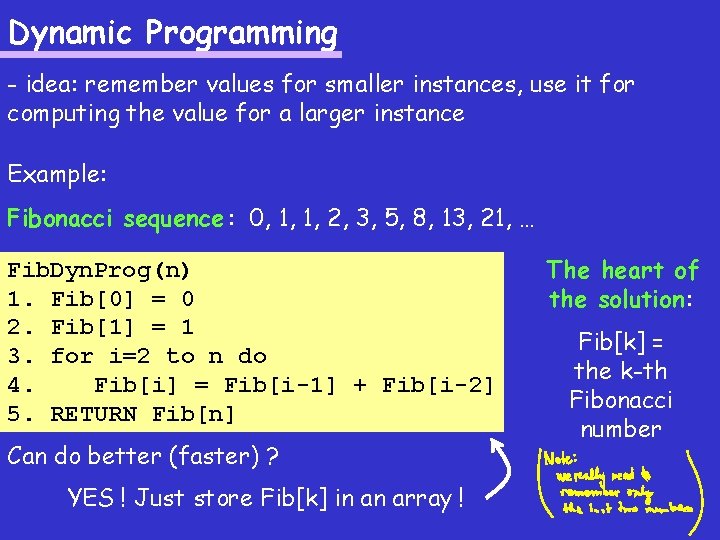

KNAPSACK – indivisible: a dyn-prog solution

A recursive approach (returns only the best cost, not the set of items):

KNAP-IND-REC(n, c, w, W)

    if n ≤ 0
        return 0
    if W < wn
        withLastItem = -1 // undefined
    else
        withLastItem = cn + KNAP-IND-REC(n-1, c, w, W-wn)
    withoutLastItem = KNAP-IND-REC(n-1, c, w, W)
    return max{withLastItem, withoutLastItem}
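One way to see the step from this recursion to dynamic programming is to keep the same recurrence but remember each result. The sketch below does this with `functools.lru_cache`, which is a swapped-in memoization technique and not the slides' table-based algorithm; the function names and the instance are hypothetical.

```python
from functools import lru_cache


def knap_ind_memo(c, w, W):
    """Best cost for indivisible KNAPSACK via the recursion plus memoization."""

    @lru_cache(maxsize=None)
    def best(n, cap):
        # Best cost using only the first n items with capacity cap.
        if n <= 0:
            return 0
        without_last = best(n - 1, cap)
        if cap < w[n - 1]:
            return without_last  # item n does not fit
        with_last = c[n - 1] + best(n - 1, cap - w[n - 1])
        return max(with_last, without_last)

    return best(len(c), W)


print(knap_ind_memo([60, 100, 120], [10, 20, 30], 50))  # 220
```

Each pair (n, cap) is now evaluated at most once, which is the same saving the table S[k][v] provides.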

![KNAPSACK – indivisible: a dyn-prog solution](http://slidetodoc.com/presentation_image/882cc0bb7163fbdfc2c4134646e5c99b/image-25.jpg)


![KNAPSACK – indivisible: a dyn-prog solution](http://slidetodoc.com/presentation_image/882cc0bb7163fbdfc2c4134646e5c99b/image-26.jpg)

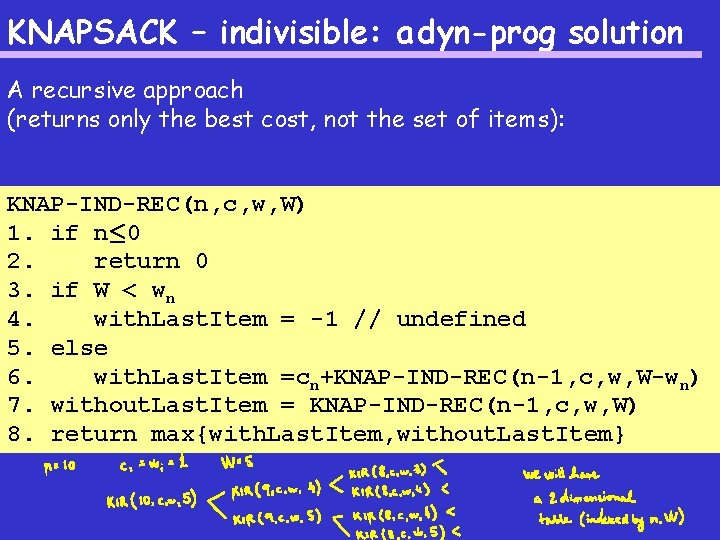

KNAPSACK – indivisible: a dyn-prog solution The heart of the algorithm: S[k][v] = maximum cost of a subset of the first k items, where the weight of the subset is at most v

![KNAPSACK – indivisible: a dyn-prog solution](http://slidetodoc.com/presentation_image/882cc0bb7163fbdfc2c4134646e5c99b/image-27.jpg)

KNAPSACK-INDIVISIBLE(n, c, w, W)

    init S[0][v] = 0 for every v = 0, …, W
    init S[k][0] = 0 for every k = 0, …, n
    for v = 1 to W do
        for k = 1 to n do
            S[k][v] = S[k-1][v]
            if (wk ≤ v) and (S[k-1][v-wk] + ck > S[k][v]) then
                S[k][v] = S[k-1][v-wk] + ck
    RETURN S[n][W]
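A minimal Python sketch of the table-filling algorithm, assuming the weights and W are non-negative integers (they are used as table indices). Python lists are 0-based, so item k of the pseudocode is `c[k - 1]`, `w[k - 1]`; the test instance is hypothetical.

```python
def knapsack_indivisible(c, w, W):
    """Maximum cost of a subset of items with total weight at most W."""
    n = len(c)
    # S[k][v] = maximum cost of a subset of the first k items with weight <= v.
    S = [[0] * (W + 1) for _ in range(n + 1)]
    for v in range(1, W + 1):
        for k in range(1, n + 1):
            S[k][v] = S[k - 1][v]  # option 1: do not use item k
            if w[k - 1] <= v and S[k - 1][v - w[k - 1]] + c[k - 1] > S[k][v]:
                S[k][v] = S[k - 1][v - w[k - 1]] + c[k - 1]  # option 2: use it
    return S[n][W]


print(knapsack_indivisible([60, 100, 120], [10, 20, 30], 50))  # 220
```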

![KNAPSACK – indivisible: a dyn-prog solution](http://slidetodoc.com/presentation_image/882cc0bb7163fbdfc2c4134646e5c99b/image-28.jpg)

Running time: O(nW), since the table S has (n+1)(W+1) entries and each entry is computed in constant time.

![KNAPSACK – indivisible: a dyn-prog solution](http://slidetodoc.com/presentation_image/882cc0bb7163fbdfc2c4134646e5c99b/image-29.jpg)

How to output a solution?
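One common way to recover the chosen items is to walk back through the filled table: item k belongs to the solution exactly when S[k][v] differs from S[k-1][v]. The sketch below is one possible approach under the same integer-weight assumption, with a hypothetical function name and instance; it reports 1-based item numbers as in the pseudocode.

```python
def knapsack_with_items(c, w, W):
    """Return (best cost, sorted list of 1-based indices of chosen items)."""
    n = len(c)
    S = [[0] * (W + 1) for _ in range(n + 1)]
    for k in range(1, n + 1):
        for v in range(1, W + 1):
            S[k][v] = S[k - 1][v]
            if w[k - 1] <= v:
                S[k][v] = max(S[k][v], S[k - 1][v - w[k - 1]] + c[k - 1])
    # Trace back from S[n][W]: a change between rows k-1 and k means item k
    # was needed to reach this value.
    chosen = []
    v = W
    for k in range(n, 0, -1):
        if S[k][v] != S[k - 1][v]:
            chosen.append(k)
            v -= w[k - 1]
    chosen.reverse()
    return S[n][W], chosen


print(knapsack_with_items([60, 100, 120], [10, 20, 30], 50))  # (220, [2, 3])
```

The traceback takes O(n) extra time once the table is available.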
