Presto: Edge-based Load Balancing for Fast Datacenter Networks

Keqiang He, Eric Rozner, Kanak Agarwal, Wes Felter, John Carter, Aditya Akella

Background
• Datacenter networks support a wide variety of traffic
– Elephants (throughput sensitive): data ingestion, VM migration, backups
– Mice (latency sensitive): search, gaming, web, RPCs

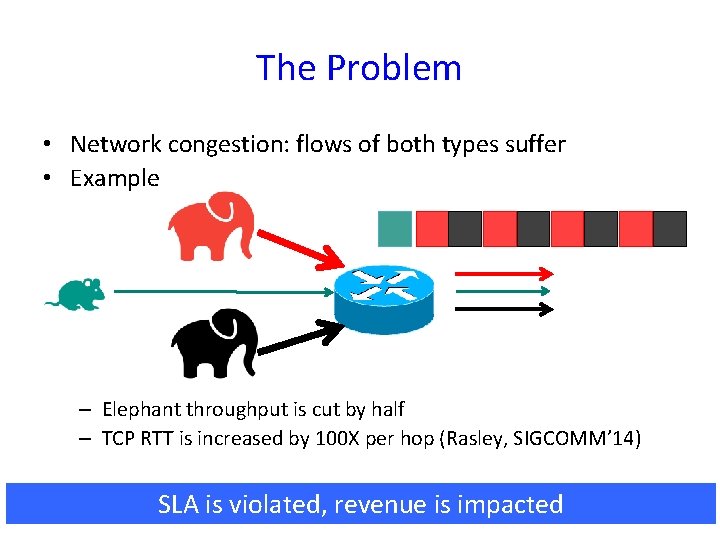

The Problem
• Network congestion: flows of both types suffer
• Example
– Elephant throughput is cut by half
– TCP RTT is increased by 100X per hop (Rasley, SIGCOMM’14)
• SLA is violated, revenue is impacted

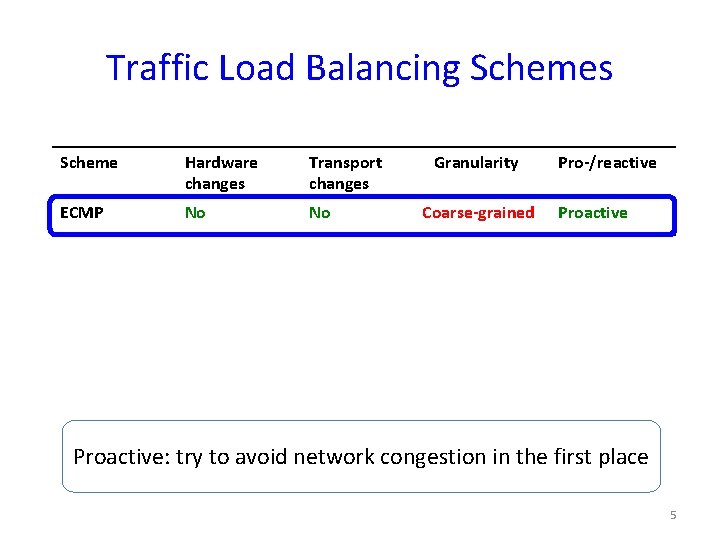

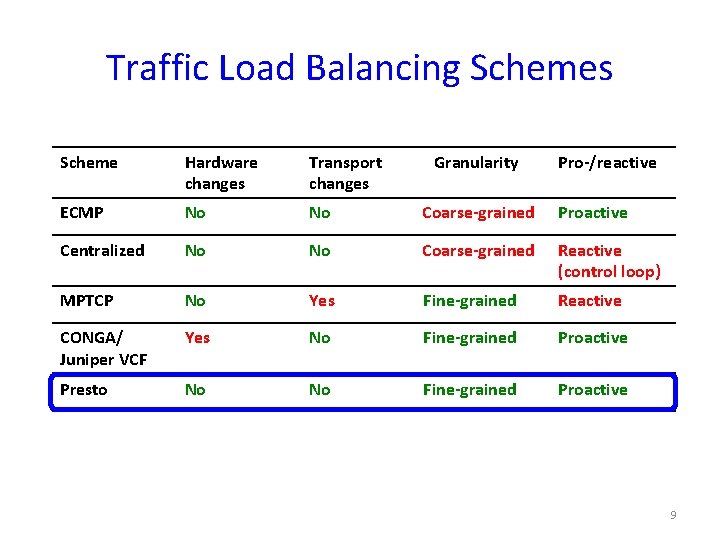

Traffic Load Balancing Schemes Scheme Hardware changes Transport changes Granularity Pro-/reactive 4

Traffic Load Balancing Schemes Scheme Hardware changes Transport changes ECMP No No Granularity Coarse-grained Pro-/reactive Proactive: try to avoid network congestion in the first place 5

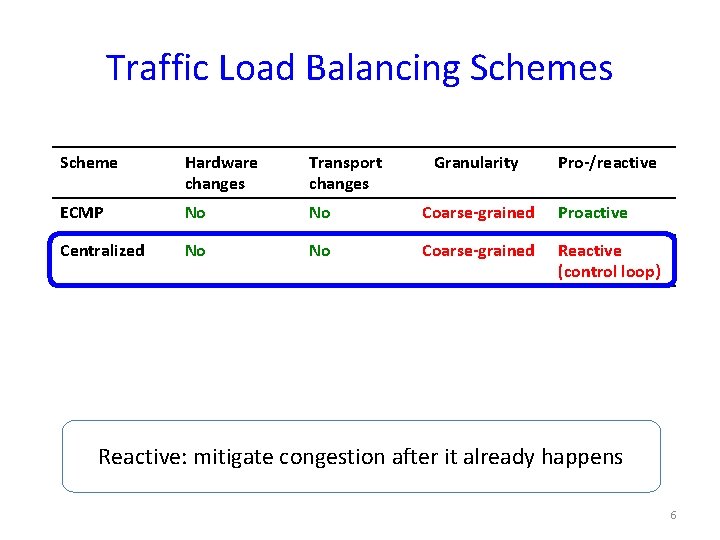

Traffic Load Balancing Schemes Scheme Hardware changes Transport changes Granularity Pro-/reactive ECMP No No Coarse-grained Proactive Centralized No No Coarse-grained Reactive (control loop) Reactive: mitigate congestion after it already happens 6

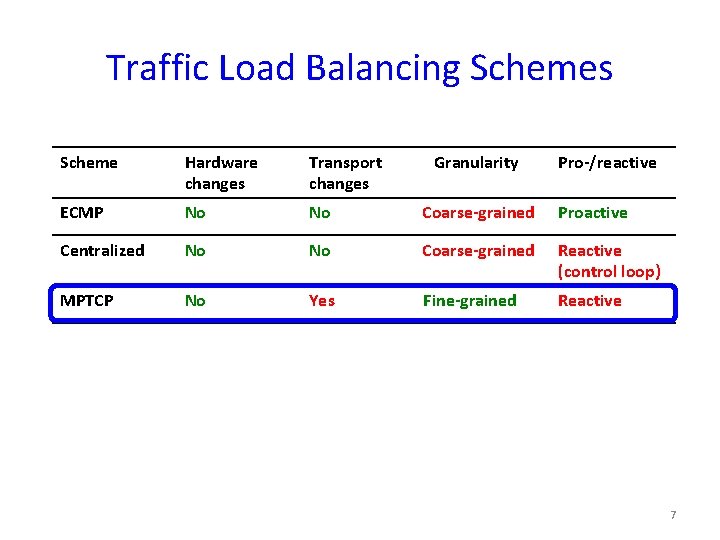

Traffic Load Balancing Schemes Scheme Hardware changes Transport changes Granularity Pro-/reactive ECMP No No Coarse-grained Proactive Centralized No No Coarse-grained Reactive (control loop) MPTCP No Yes Fine-grained Reactive 7

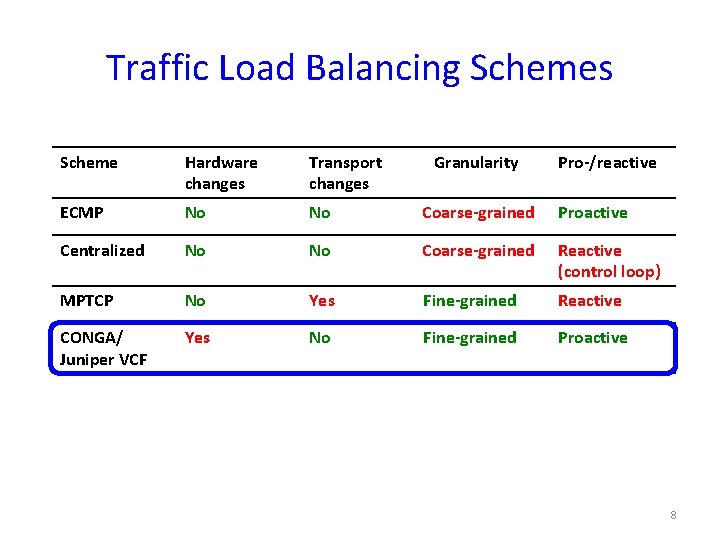

Traffic Load Balancing Schemes Scheme Hardware changes Transport changes Granularity Pro-/reactive ECMP No No Coarse-grained Proactive Centralized No No Coarse-grained Reactive (control loop) MPTCP No Yes Fine-grained Reactive CONGA/ Juniper VCF Yes No Fine-grained Proactive 8

Traffic Load Balancing Schemes Scheme Hardware changes Transport changes Granularity Pro-/reactive ECMP No No Coarse-grained Proactive Centralized No No Coarse-grained Reactive (control loop) MPTCP No Yes Fine-grained Reactive CONGA/ Juniper VCF Yes No Fine-grained Proactive Presto No No Fine-grained Proactive 9

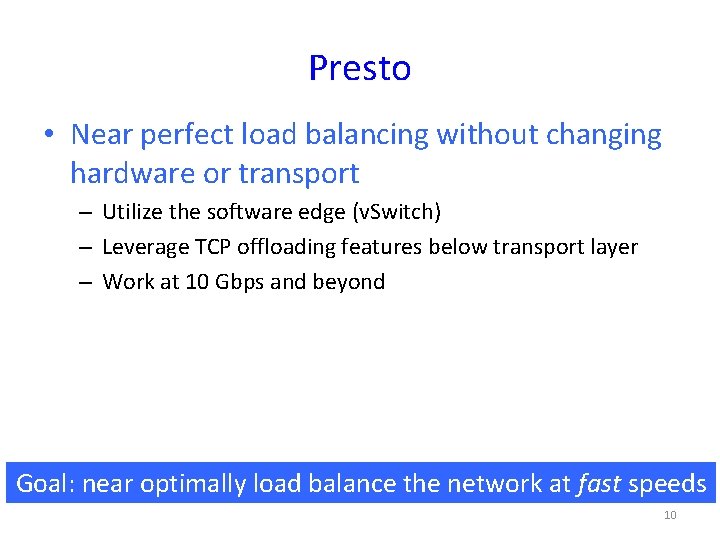

Presto
• Near perfect load balancing without changing hardware or transport
– Utilize the software edge (vSwitch)
– Leverage TCP offloading features below the transport layer
– Work at 10 Gbps and beyond
• Goal: near-optimally load balance the network at fast speeds

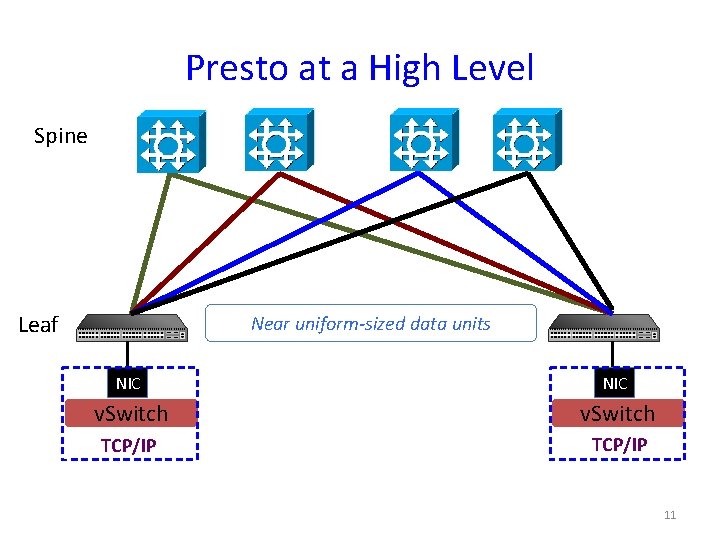

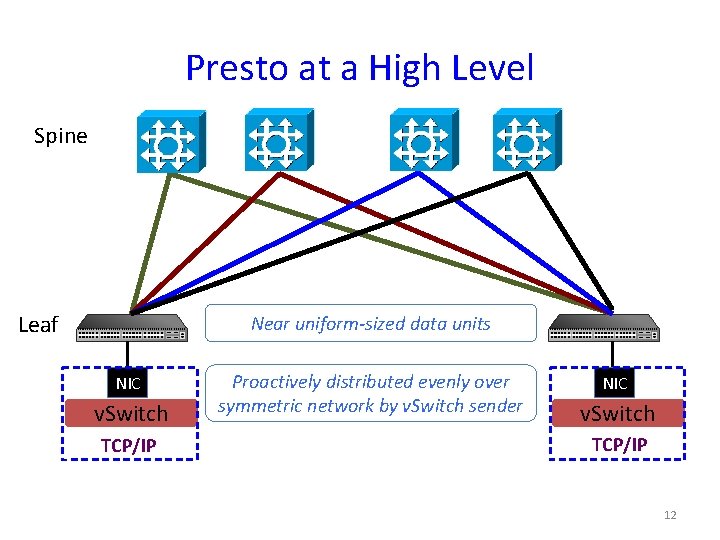

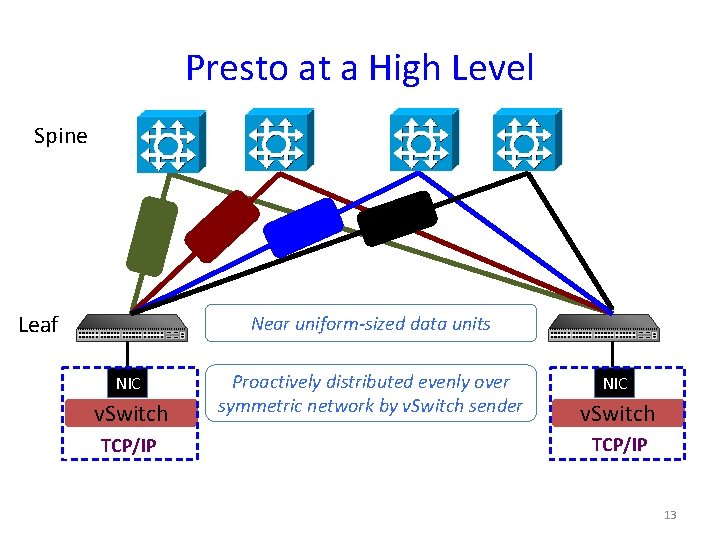

Presto at a High Level Spine Leaf Near uniform-sized data units NIC v. Switch TCP/IP 11

Presto at a High Level Spine Leaf Near uniform-sized data units NIC v. Switch TCP/IP Proactively distributed evenly over symmetric network by v. Switch sender NIC v. Switch TCP/IP 12

Presto at a High Level Spine Leaf Near uniform-sized data units NIC v. Switch TCP/IP Proactively distributed evenly over symmetric network by v. Switch sender NIC v. Switch TCP/IP 13

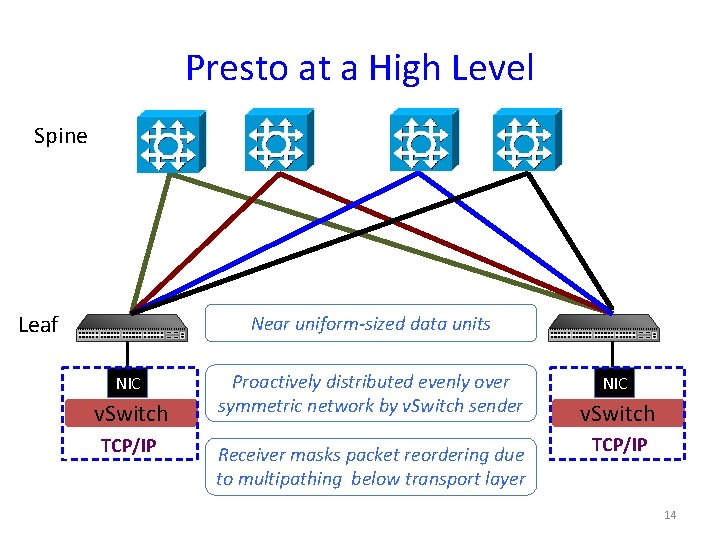

Presto at a High Level Spine Leaf Near uniform-sized data units NIC v. Switch TCP/IP Proactively distributed evenly over symmetric network by v. Switch sender Receiver masks packet reordering due to multipathing below transport layer NIC v. Switch TCP/IP 14

Outline
• Sender
• Receiver
• Evaluation

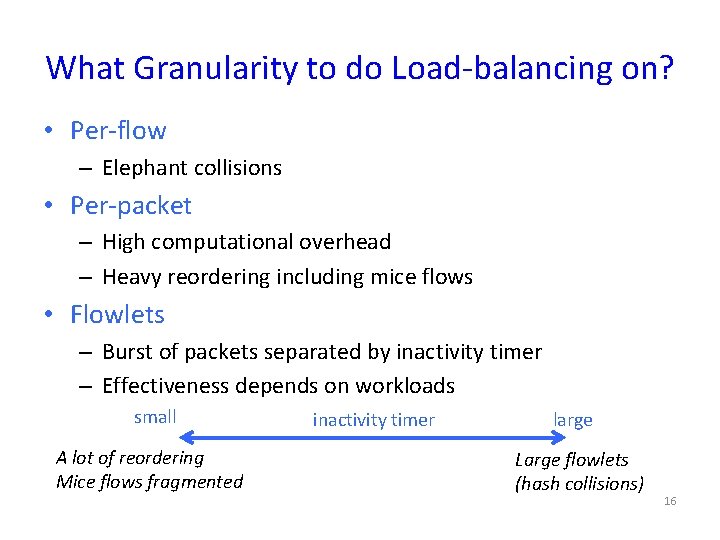

What Granularity to Do Load Balancing On?
• Per-flow
– Elephant collisions
• Per-packet
– High computational overhead
– Heavy reordering, including for mice flows
• Flowlets
– Bursts of packets separated by an inactivity timer
– Effectiveness depends on workloads
– Small inactivity timer: a lot of reordering, mice flows fragmented
– Large inactivity timer: large flowlets (hash collisions)
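The inactivity-timer trade-off for flowlets can be illustrated with a minimal sketch. The function name `split_into_flowlets`, the timestamps, and the two timer values are assumptions chosen for illustration; they do not come from the slides.

```python
from typing import List


def split_into_flowlets(arrivals_us: List[float], gap_us: float) -> List[List[float]]:
    """Group packet timestamps into flowlets.

    A new flowlet starts whenever the idle time since the previous
    packet exceeds the inactivity timer gap_us.
    """
    flowlets: List[List[float]] = []
    for t in arrivals_us:
        if flowlets and t - flowlets[-1][-1] <= gap_us:
            flowlets[-1].append(t)   # still within the current burst
        else:
            flowlets.append([t])     # idle gap exceeded: start a new flowlet
    return flowlets


# Hypothetical packet arrival times (microseconds) for one flow.
arrivals = [0, 10, 20, 500, 510, 2000]
small_timer = split_into_flowlets(arrivals, gap_us=5)     # every packet is its own flowlet
large_timer = split_into_flowlets(arrivals, gap_us=1000)  # almost the whole flow is one flowlet
```

With the small timer the flow is fragmented into many flowlets (more chances to reorder); with the large timer the flowlets become large enough to collide like whole flows.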

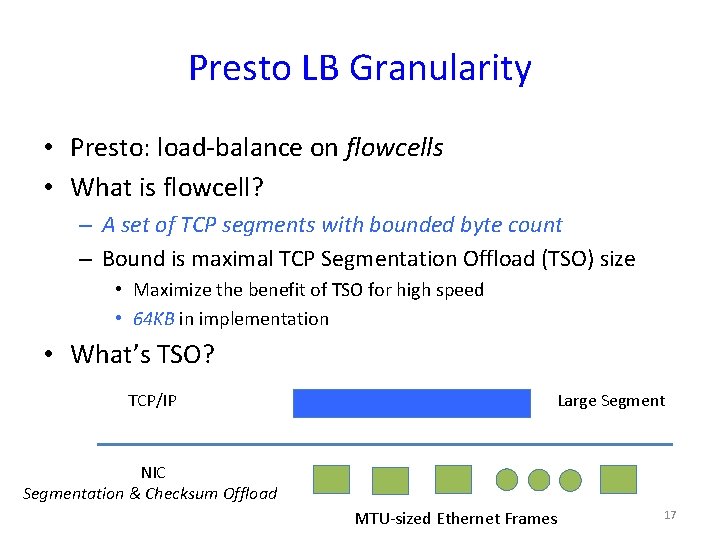

Presto LB Granularity • Presto: load-balance on flowcells • What is flowcell? – A set of TCP segments with bounded byte count – Bound is maximal TCP Segmentation Offload (TSO) size • Maximize the benefit of TSO for high speed • 64 KB in implementation • What’s TSO? TCP/IP Large Segment NIC Segmentation & Checksum Offload MTU-sized Ethernet Frames 17

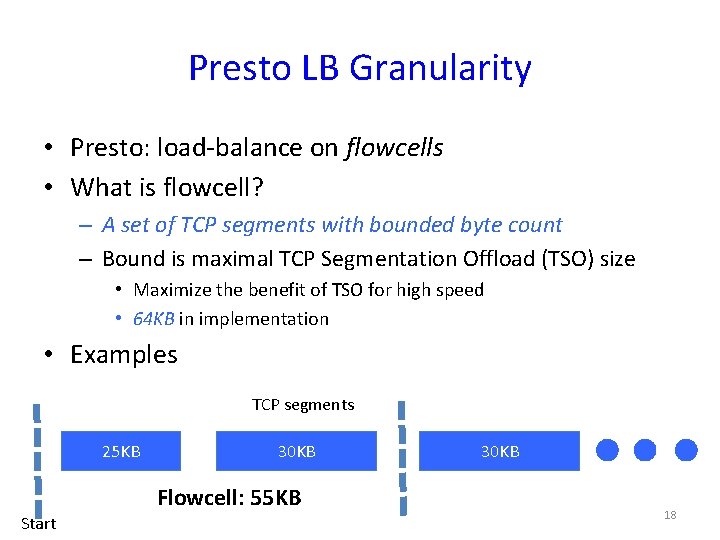

Presto LB Granularity • Presto: load-balance on flowcells • What is flowcell? – A set of TCP segments with bounded byte count – Bound is maximal TCP Segmentation Offload (TSO) size • Maximize the benefit of TSO for high speed • 64 KB in implementation • Examples TCP segments 25 KB 30 KB Flowcell: 55 KB Start 30 KB 18

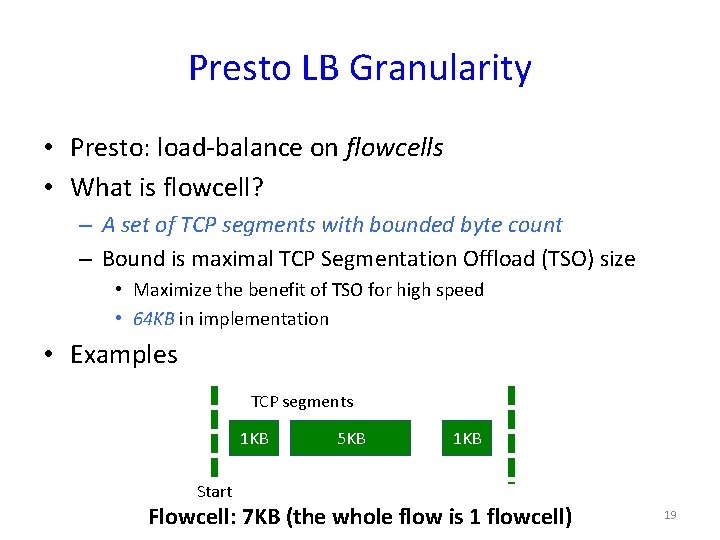

Presto LB Granularity • Presto: load-balance on flowcells • What is flowcell? – A set of TCP segments with bounded byte count – Bound is maximal TCP Segmentation Offload (TSO) size • Maximize the benefit of TSO for high speed • 64 KB in implementation • Examples TCP segments 1 KB 5 KB 1 KB Start Flowcell: 7 KB (the whole flow is 1 flowcell) 19
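The two flowcell examples can be reproduced with a short sketch. The function `assign_flowcells` and its exact boundary rule (start a new flowcell when adding a segment would exceed the bound) are assumptions made for illustration; the slides give only the 64 KB bound and the example outcomes.

```python
KB = 1024
FLOWCELL_BOUND = 64 * KB  # bound taken from the slides: maximal TSO size


def assign_flowcells(segment_sizes, bound=FLOWCELL_BOUND):
    """Return a flowcell ID (starting at 1) for each TCP segment of one flow.

    Assumed rule: a new flowcell starts when adding the next segment
    would push the current flowcell past the byte bound.
    """
    ids = []
    current_id = 1
    current_bytes = 0
    for size in segment_sizes:
        if current_bytes > 0 and current_bytes + size > bound:
            current_id += 1
            current_bytes = 0
        current_bytes += size
        ids.append(current_id)
    return ids


first_example = assign_flowcells([25 * KB, 30 * KB, 30 * KB])   # 55 KB flowcell, then a new one
second_example = assign_flowcells([1 * KB, 5 * KB, 1 * KB])     # whole flow fits in one flowcell
```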

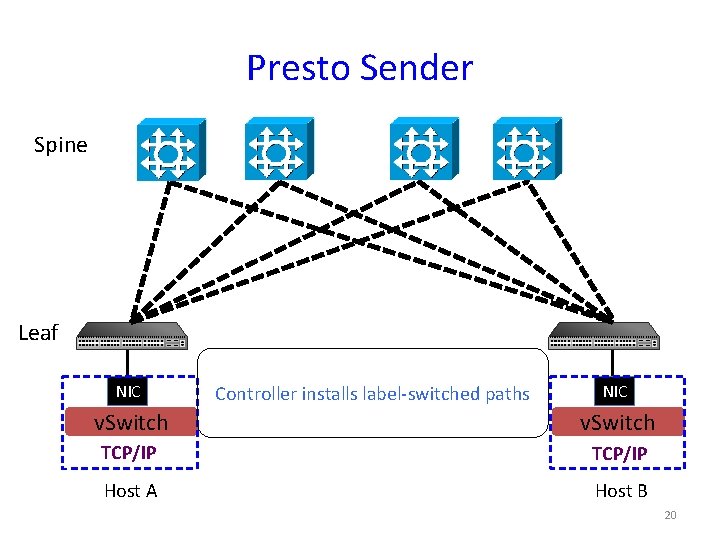

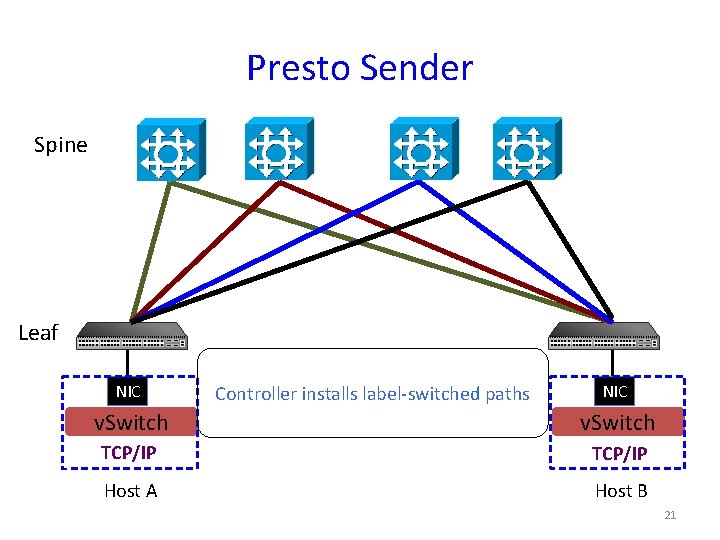

Presto Sender Spine Leaf NIC Controller installs label-switched paths NIC v. Switch TCP/IP Host A Host B 20

Presto Sender Spine Leaf NIC Controller installs label-switched paths NIC v. Switch TCP/IP Host A Host B 21

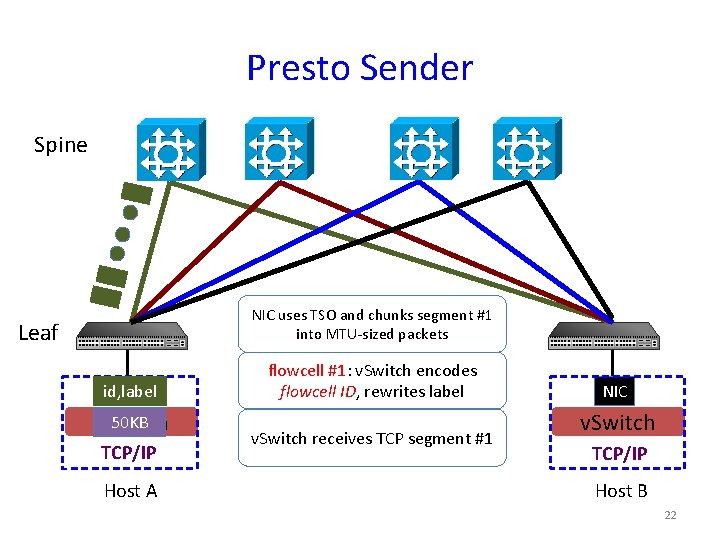

Presto Sender Spine NIC uses TSO and chunks segment #1 into MTU-sized packets Leaf id, label NIC 50 KB v. Switch TCP/IP Host A flowcell #1: v. Switch encodes flowcell ID, rewrites label v. Switch receives TCP segment #1 NIC v. Switch TCP/IP Host B 22

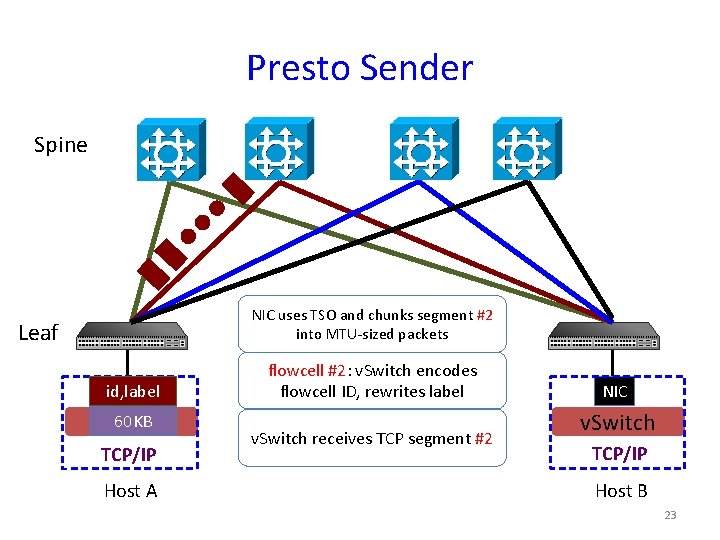

Presto Sender Spine NIC uses TSO and chunks segment #2 into MTU-sized packets Leaf id, label NIC 60 KB v. Switch TCP/IP Host A flowcell #2: v. Switch encodes flowcell ID, rewrites label v. Switch receives TCP segment #2 NIC v. Switch TCP/IP Host B 23


Benefits
• Most flows are smaller than 64 KB [Benson, IMC’11]
– The majority of mice are not exposed to reordering
• Most bytes come from elephants [Alizadeh, SIGCOMM’10]
– Traffic is routed in uniform sizes
• Fine-grained and deterministic scheduling over disjoint paths
– Near optimal load balancing
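One way to picture deterministic scheduling of flowcells over disjoint paths is a per-flow round robin over the installed labels. This is a sketch under assumptions: the slides say the scheduling is deterministic but do not name the policy, and the class `RoundRobinPaths`, the flow key, and the label strings are hypothetical.

```python
class RoundRobinPaths:
    """Assign each new flowcell of a flow to the next label in a fixed order."""

    def __init__(self, labels):
        self.labels = list(labels)   # hypothetical label-switched path identifiers
        self.next_index = {}         # per-flow position in the label list
        self.assigned = {}           # (flow, flowcell_id) -> label

    def label_for(self, flow, flowcell_id):
        key = (flow, flowcell_id)
        if key not in self.assigned:
            i = self.next_index.get(flow, 0)
            self.assigned[key] = self.labels[i % len(self.labels)]
            self.next_index[flow] = i + 1
        # All packets of one flowcell reuse the same label, hence the same path.
        return self.assigned[key]


paths = RoundRobinPaths(["path-0", "path-1"])
labels_used = [paths.label_for("A->B", cell) for cell in (1, 1, 2, 3)]
```

Keeping every packet of a flowcell on one path is what the receiver later relies on to tell loss from reordering.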

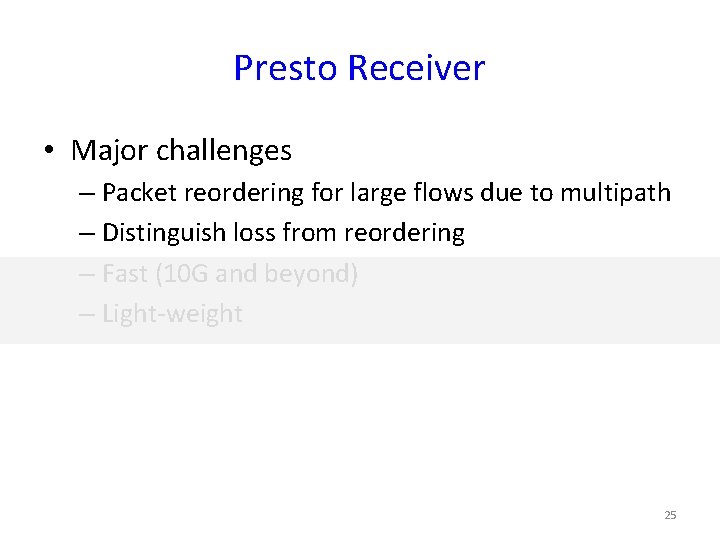

Presto Receiver
• Major challenges
– Packet reordering for large flows due to multipath
– Distinguish loss from reordering
– Fast (10G and beyond)
– Light-weight

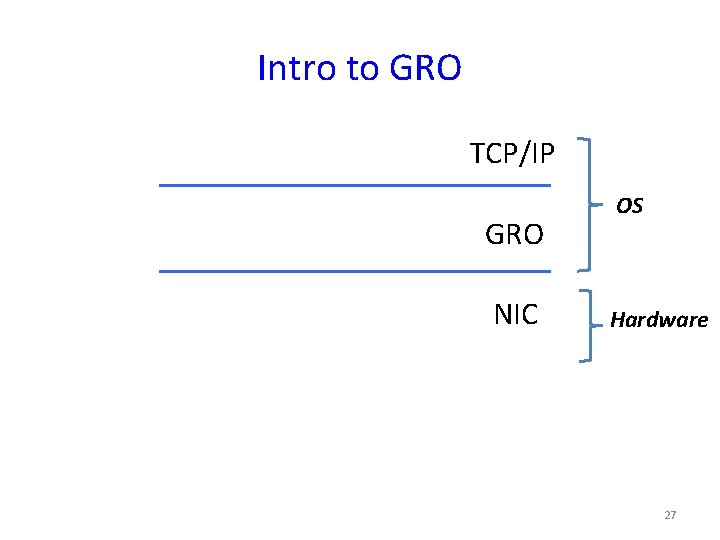

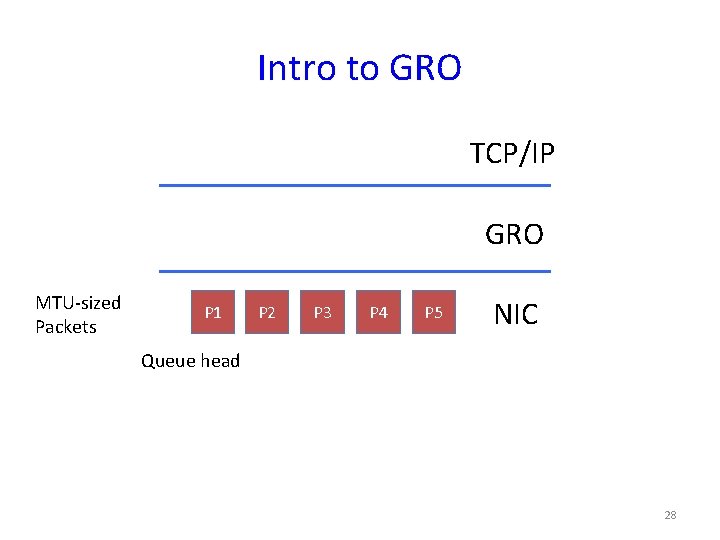

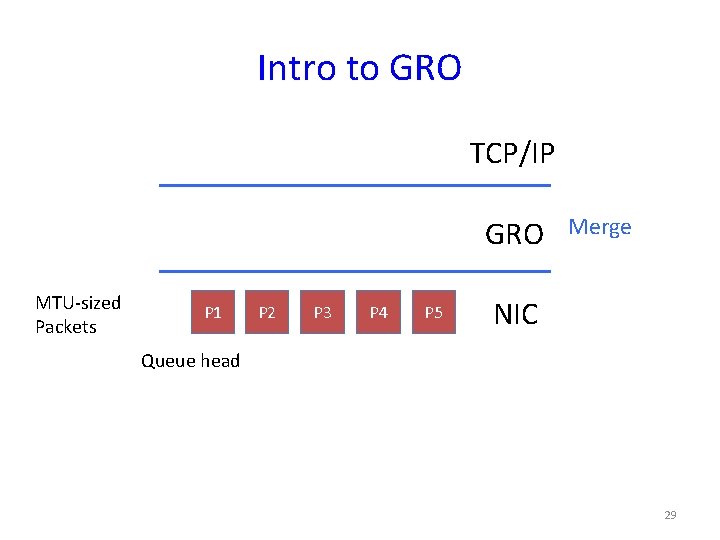

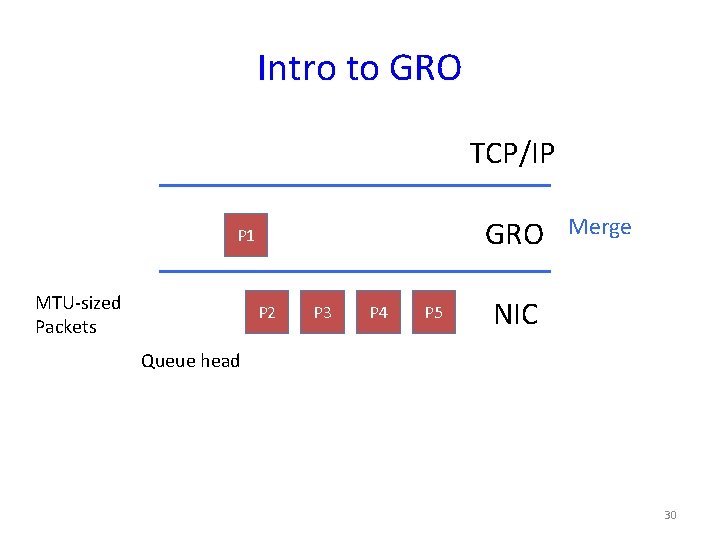

Intro to GRO • Generic Receive Offload (GRO) – The reverse process of TSO 26

Intro to GRO TCP/IP GRO NIC OS Hardware 27

Intro to GRO TCP/IP GRO MTU-sized Packets P 1 P 2 P 3 P 4 P 5 NIC Queue head 28

Intro to GRO TCP/IP GRO MTU-sized Packets P 1 P 2 P 3 P 4 P 5 Merge NIC Queue head 29

Intro to GRO TCP/IP GRO P 1 MTU-sized Packets P 2 P 3 P 4 P 5 Merge NIC Queue head 30

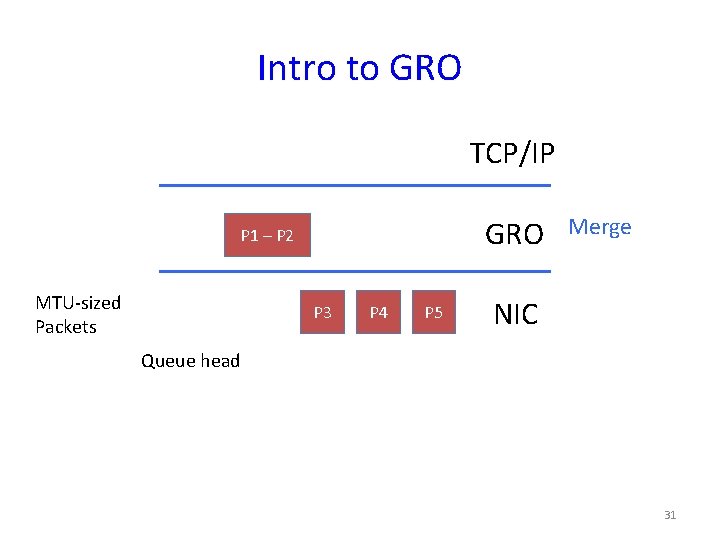

Intro to GRO TCP/IP GRO P 1 – P 2 MTU-sized Packets P 3 P 4 P 5 Merge NIC Queue head 31

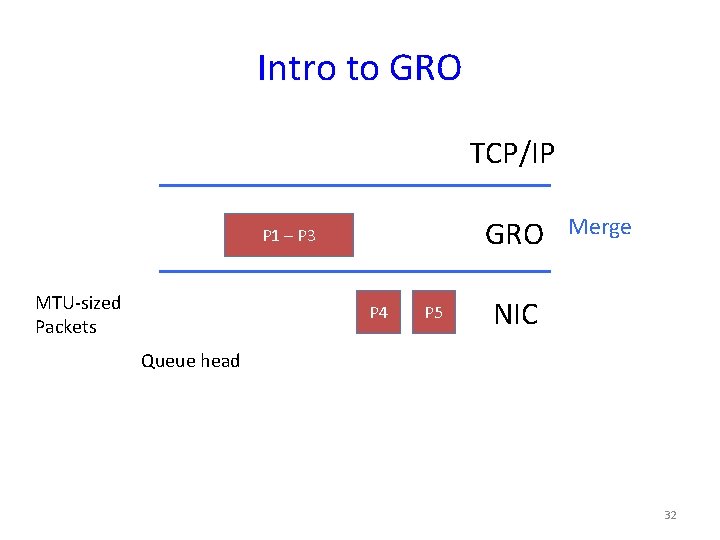

Intro to GRO TCP/IP GRO P 1 – P 3 MTU-sized Packets P 4 P 5 Merge NIC Queue head 32

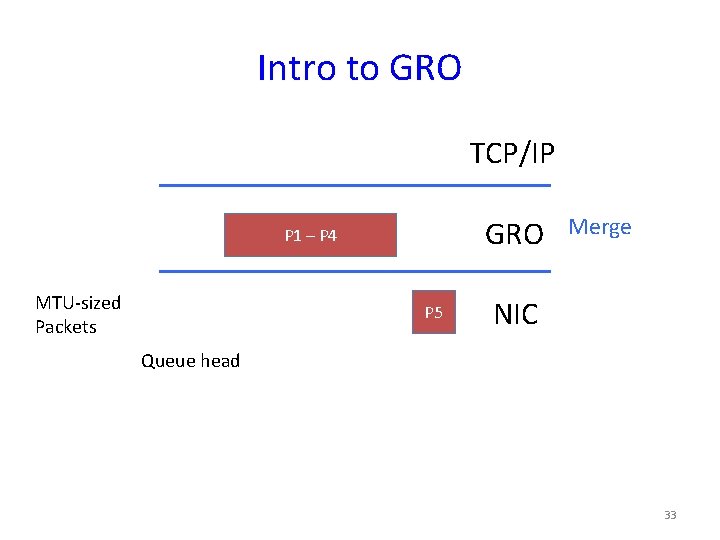

Intro to GRO TCP/IP GRO P 1 – P 4 MTU-sized Packets P 5 Merge NIC Queue head 33

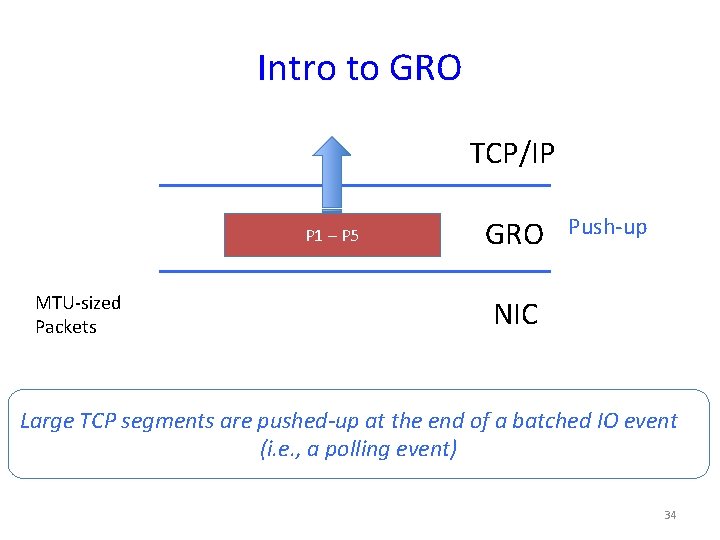

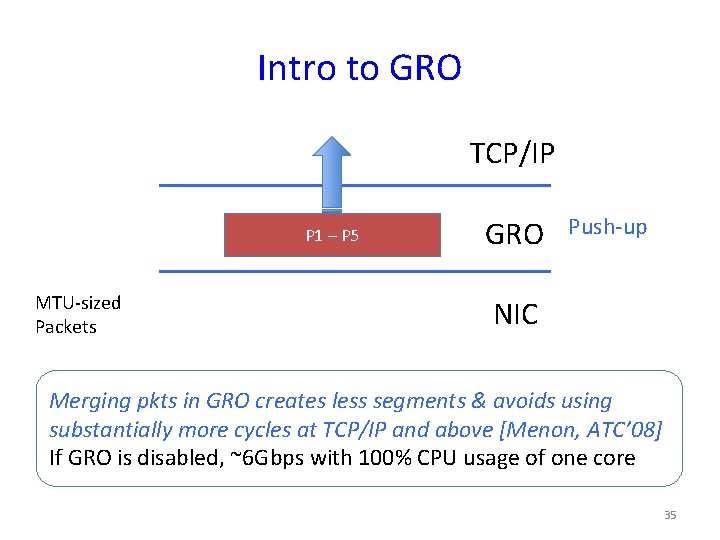

Intro to GRO TCP/IP P 1 – P 5 MTU-sized Packets GRO Push-up NIC Large TCP segments are pushed-up at the end of a batched IO event (i. e. , a polling event) 34

Intro to GRO TCP/IP P 1 – P 5 MTU-sized Packets GRO Push-up NIC Merging pkts in GRO creates less segments & avoids using substantially more cycles at TCP/IP and above [Menon, ATC’ 08] If GRO is disabled, ~6 Gbps with 100% CPU usage of one core 35

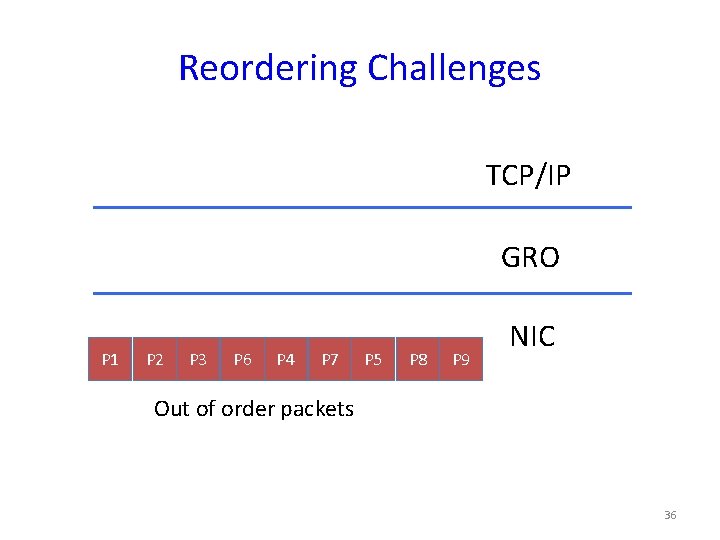

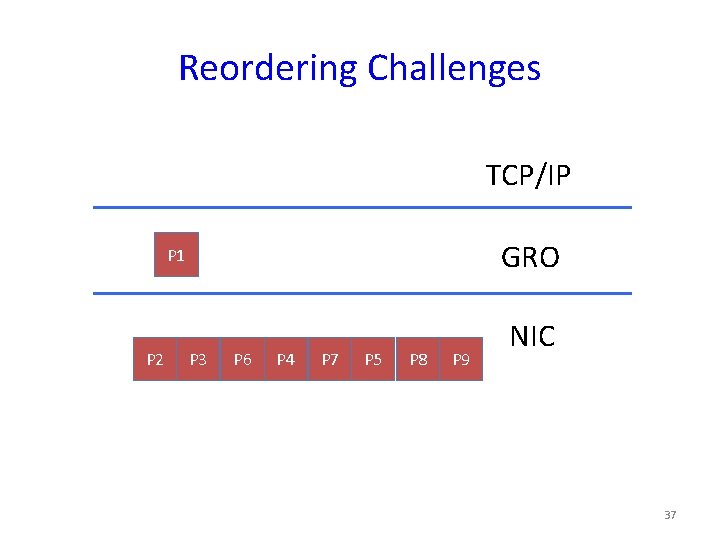

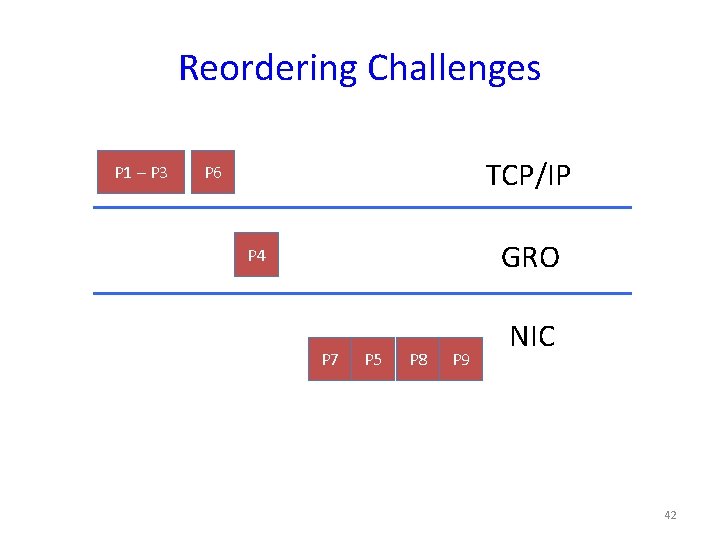

Reordering Challenges TCP/IP GRO P 1 P 2 P 3 P 6 P 4 P 7 P 5 P 8 P 9 NIC Out of order packets 36

Reordering Challenges TCP/IP GRO P 1 P 2 P 3 P 6 P 4 P 7 P 5 P 8 P 9 NIC 37

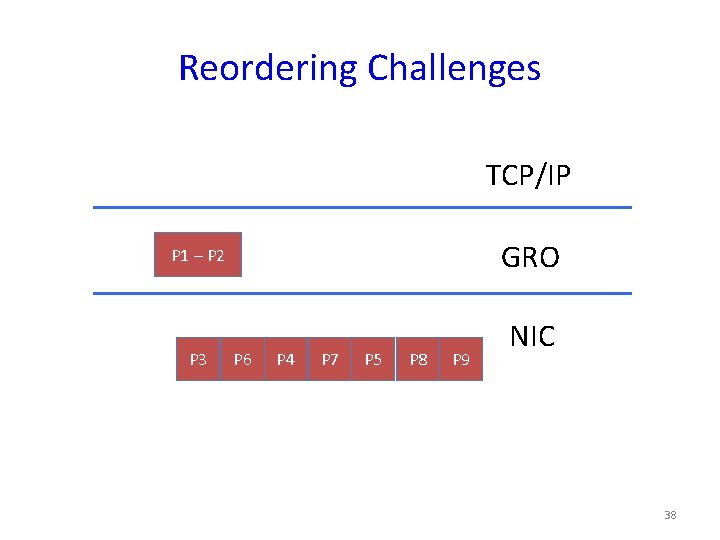

Reordering Challenges TCP/IP GRO P 1 – P 2 P 3 P 6 P 4 P 7 P 5 P 8 P 9 NIC 38

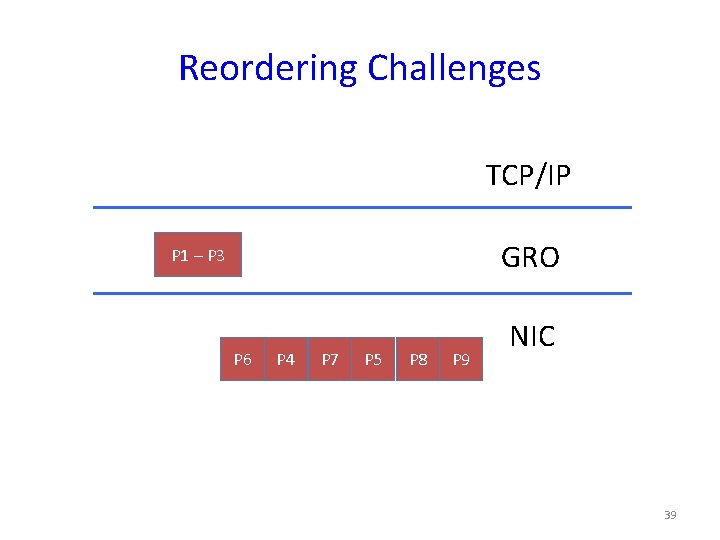

Reordering Challenges TCP/IP GRO P 1 – P 3 P 6 P 4 P 7 P 5 P 8 P 9 NIC 39

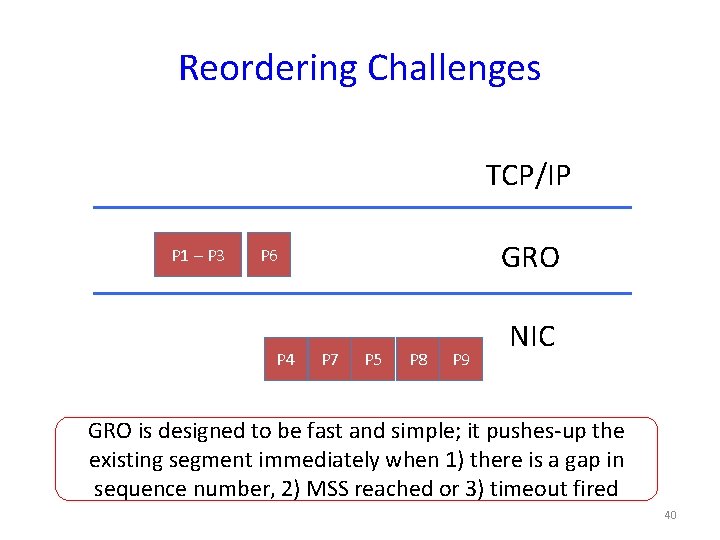

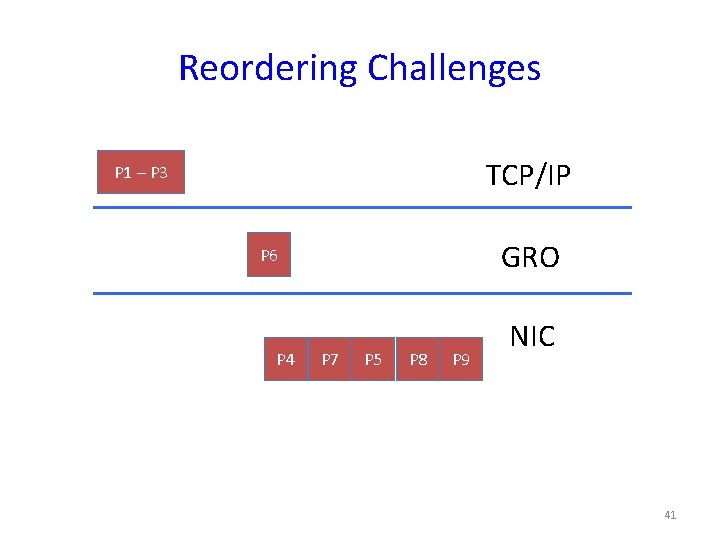

Reordering Challenges TCP/IP P 1 – P 3 GRO P 6 P 4 P 7 P 5 P 8 P 9 NIC GRO is designed to be fast and simple; it pushes-up the existing segment immediately when 1) there is a gap in sequence number, 2) MSS reached or 3) timeout fired 40

Reordering Challenges TCP/IP P 1 – P 3 GRO P 6 P 4 P 7 P 5 P 8 P 9 NIC 41

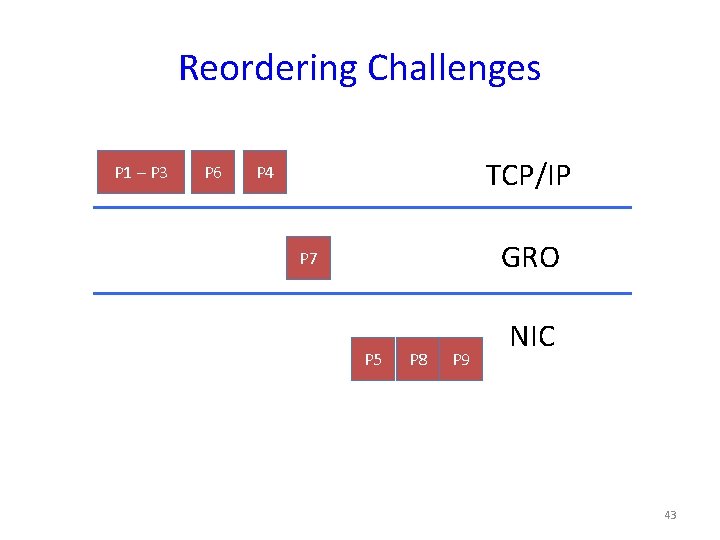

Reordering Challenges P 1 – P 3 TCP/IP P 6 GRO P 4 P 7 P 5 P 8 P 9 NIC 42

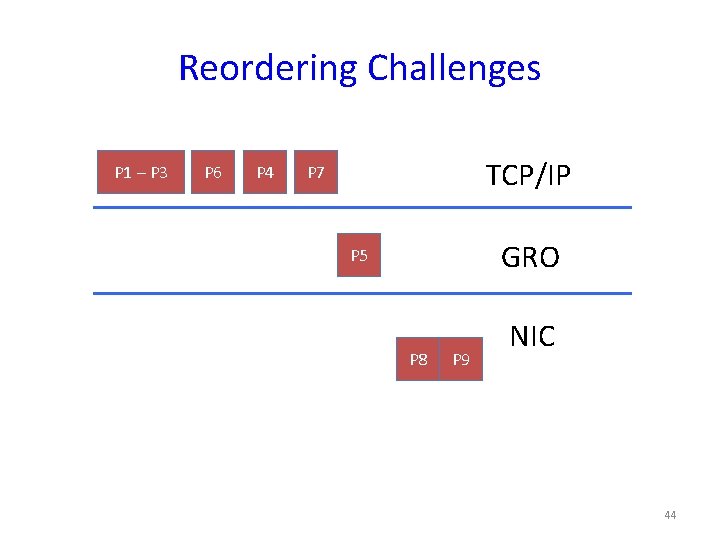

Reordering Challenges P 1 – P 3 P 6 TCP/IP P 4 GRO P 7 P 5 P 8 P 9 NIC 43

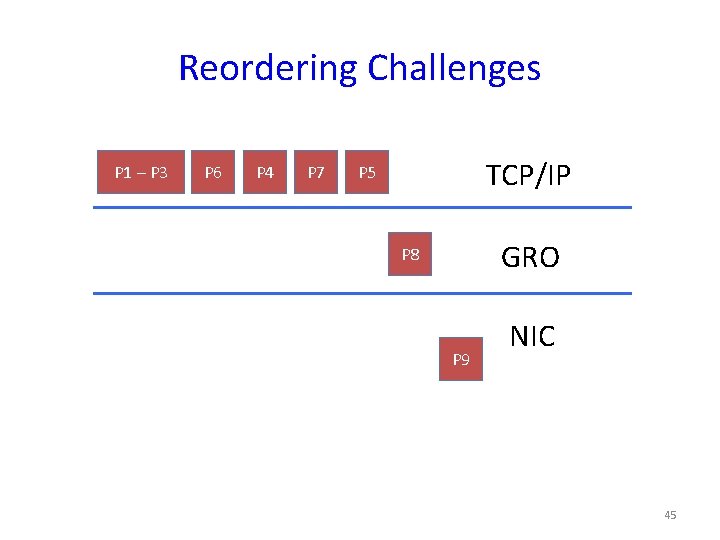

Reordering Challenges P 1 – P 3 P 6 P 4 TCP/IP P 7 GRO P 5 P 8 P 9 NIC 44

Reordering Challenges P 1 – P 3 P 6 P 4 P 7 TCP/IP P 5 GRO P 8 P 9 NIC 45

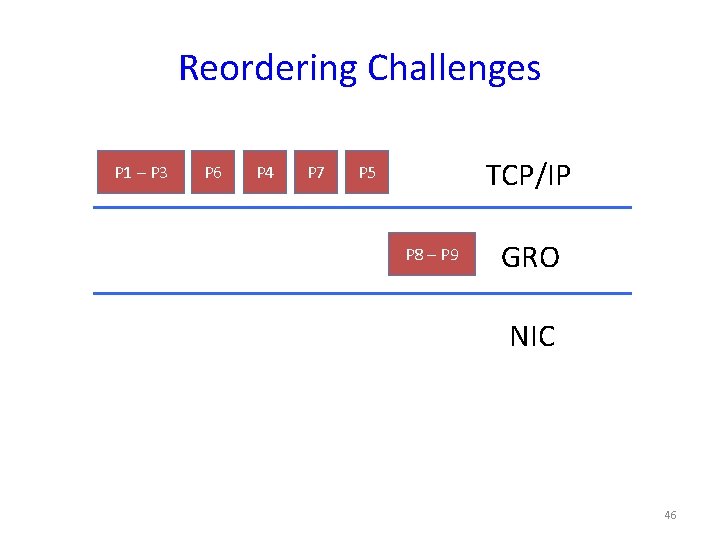

Reordering Challenges P 1 – P 3 P 6 P 4 P 7 TCP/IP P 5 P 8 – P 9 GRO NIC 46

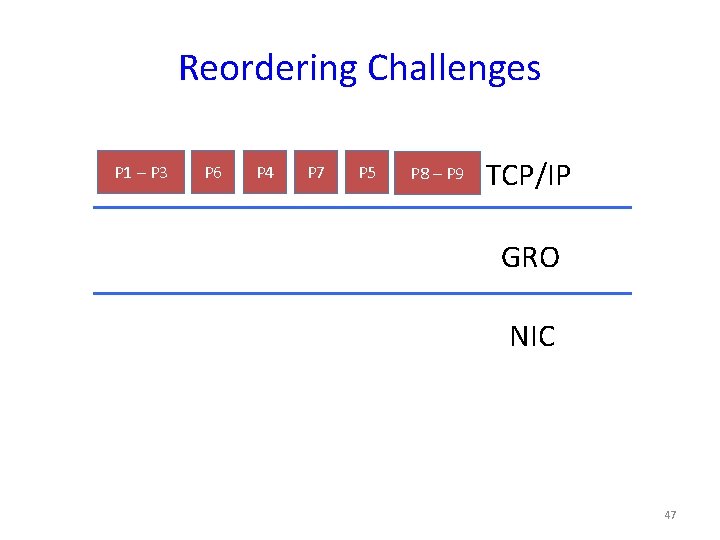

Reordering Challenges P 1 – P 3 P 6 P 4 P 7 P 5 P 8 – P 9 TCP/IP GRO NIC 47

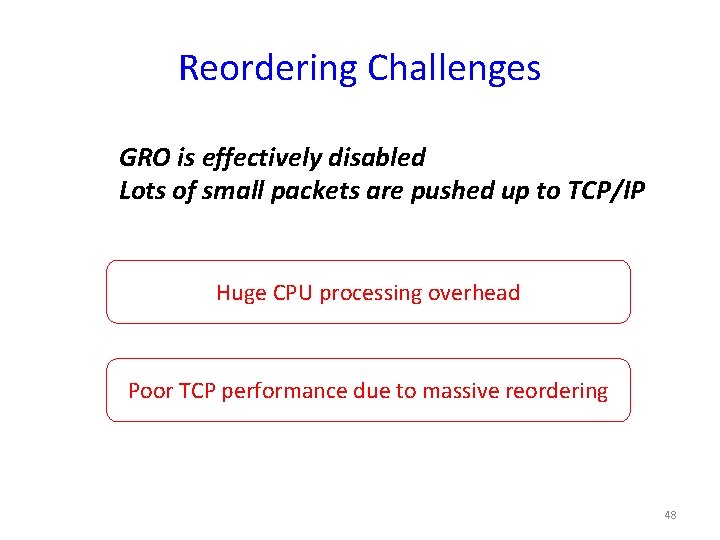

Reordering Challenges
• GRO is effectively disabled
• Lots of small packets are pushed up to TCP/IP
• Huge CPU processing overhead
• Poor TCP performance due to massive reordering
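The effect of reordering on unmodified GRO can be simulated with a small sketch. It models only the sequence-gap rule from the slides (size limit and timeout are omitted), treats packet numbers as sequence numbers, and the function name `simple_gro` is an assumption for illustration, not kernel code.

```python
def simple_gro(arrivals):
    """Merge consecutive packet numbers; push up the held segment on any gap.

    Returns the list of (first, last) segments in the order they are
    pushed up to TCP/IP.
    """
    pushed = []
    held = None  # (first, last) of the segment currently being merged
    for p in arrivals:
        if held is not None and p == held[1] + 1:
            held = (held[0], p)          # in sequence: extend the held segment
        else:
            if held is not None:
                pushed.append(held)      # gap: push up what we have immediately
            held = (p, p)
    if held is not None:
        pushed.append(held)              # end of the batched I/O event
    return pushed


# Arrival order used in the slides.
segments = simple_gro([1, 2, 3, 6, 4, 7, 5, 8, 9])
```

Nine packets produce six segments, four of them a single packet, which is why GRO is described as effectively disabled under reordering.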

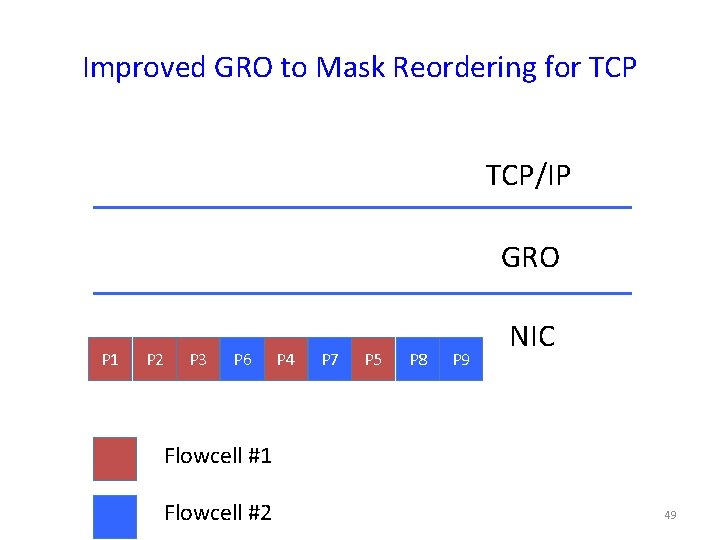

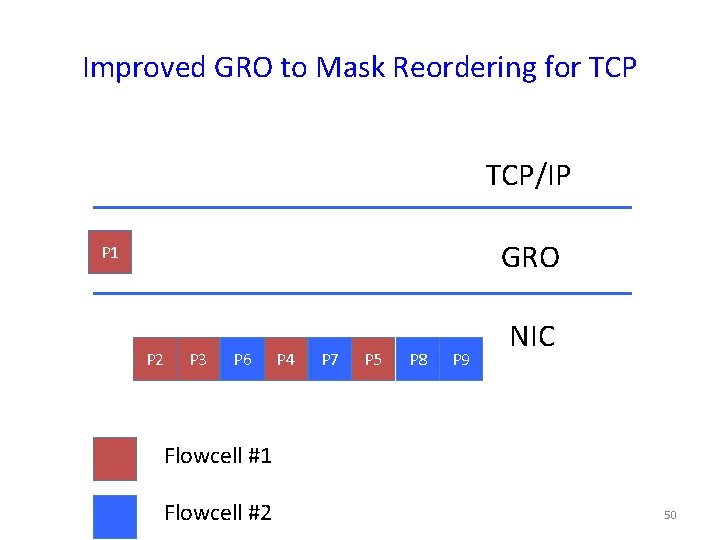

Improved GRO to Mask Reordering for TCP/IP GRO P 1 P 2 P 3 P 6 P 4 P 7 P 5 P 8 P 9 NIC Flowcell #1 Flowcell #2 49

Improved GRO to Mask Reordering for TCP/IP GRO P 1 P 2 P 3 P 6 P 4 P 7 P 5 P 8 P 9 NIC Flowcell #1 Flowcell #2 50

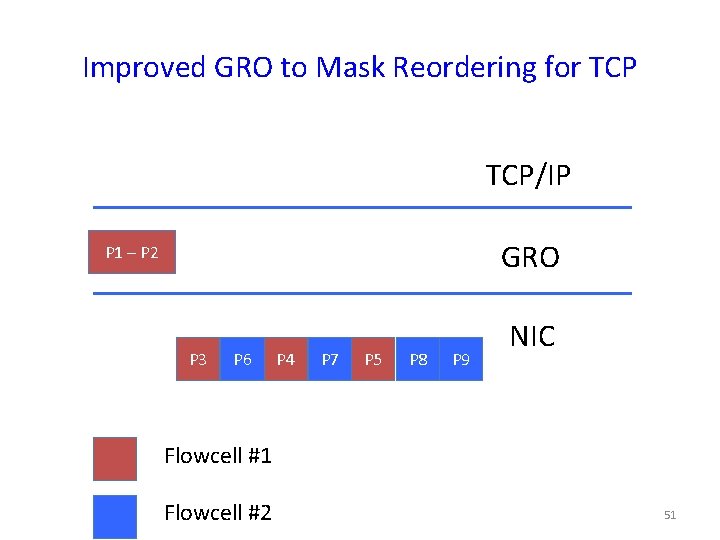

Improved GRO to Mask Reordering for TCP/IP GRO P 1 – P 2 P 3 P 6 P 4 P 7 P 5 P 8 P 9 NIC Flowcell #1 Flowcell #2 51

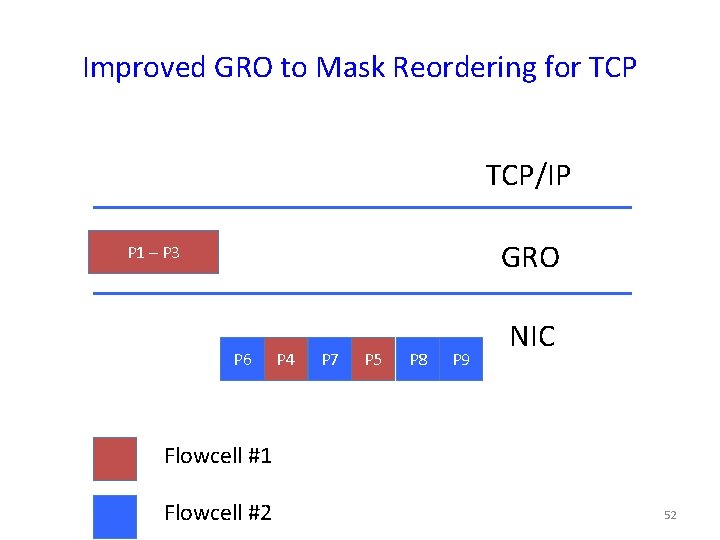

Improved GRO to Mask Reordering for TCP/IP GRO P 1 – P 3 P 6 P 4 P 7 P 5 P 8 P 9 NIC Flowcell #1 Flowcell #2 52

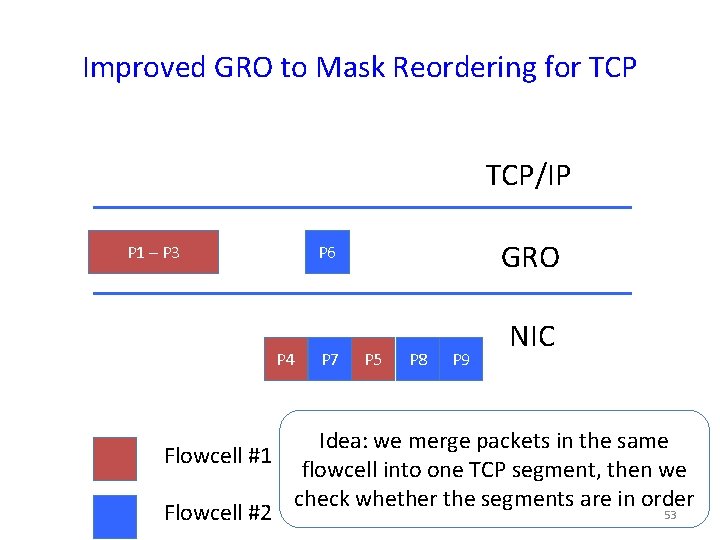

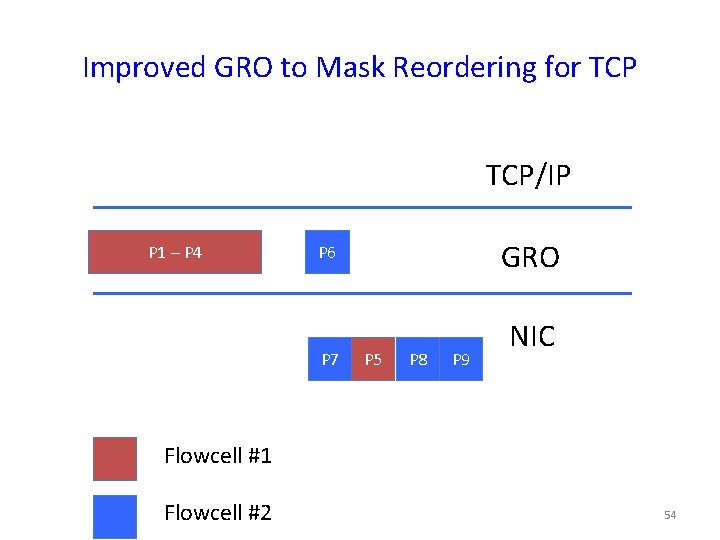

Improved GRO to Mask Reordering for TCP/IP P 1 – P 3 P 4 Flowcell #1 Flowcell #2 GRO P 6 P 7 P 5 P 8 P 9 NIC Idea: we merge packets in the same flowcell into one TCP segment, then we check whether the segments are in order 53

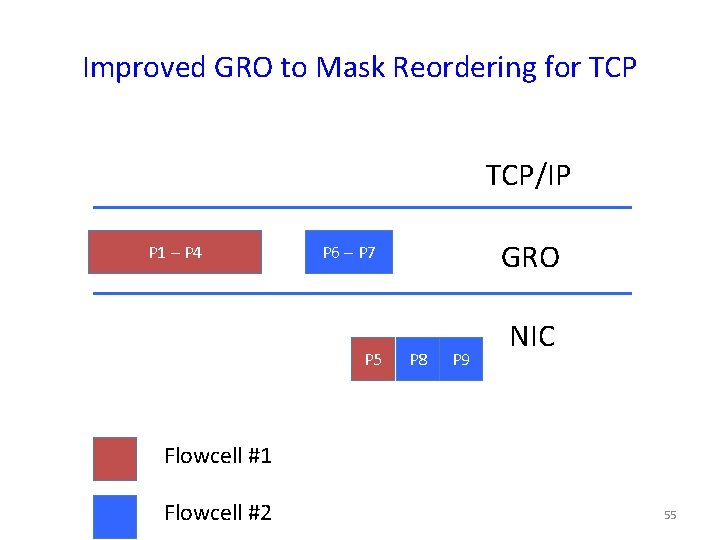

Improved GRO to Mask Reordering for TCP/IP P 1 – P 4 GRO P 6 P 7 P 5 P 8 P 9 NIC Flowcell #1 Flowcell #2 54

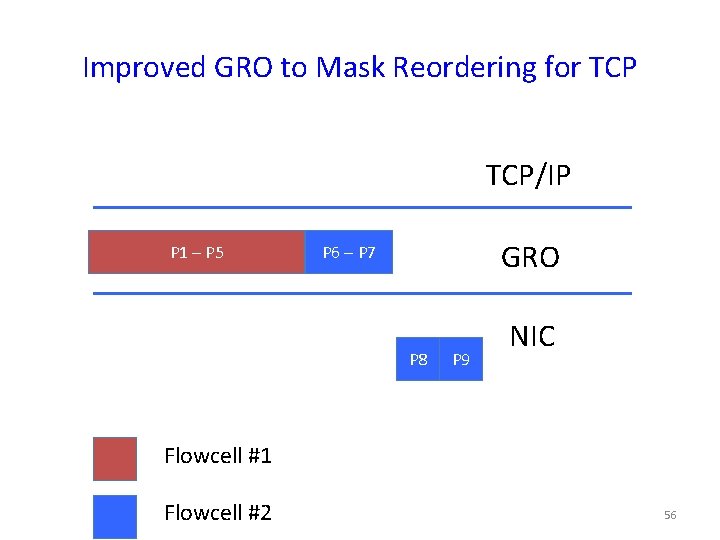

Improved GRO to Mask Reordering for TCP/IP P 1 – P 4 GRO P 6 – P 7 P 5 P 8 P 9 NIC Flowcell #1 Flowcell #2 55

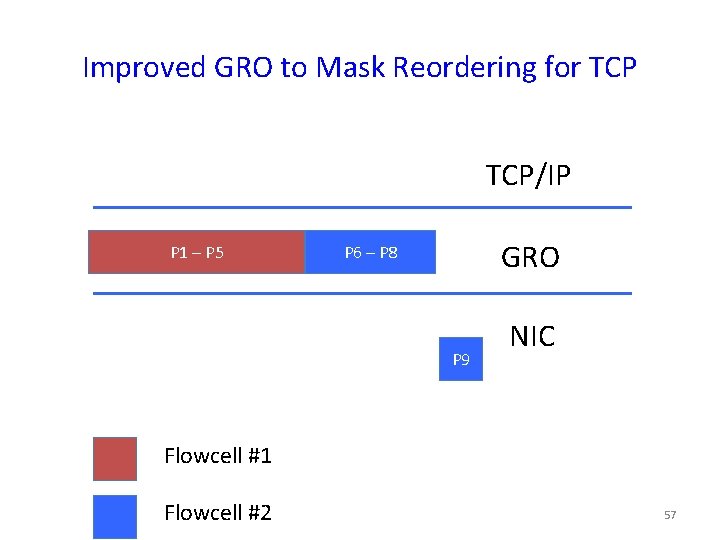

Improved GRO to Mask Reordering for TCP/IP P 1 – P 5 GRO P 6 – P 7 P 8 P 9 NIC Flowcell #1 Flowcell #2 56

Improved GRO to Mask Reordering for TCP/IP P 1 – P 5 GRO P 6 – P 8 P 9 NIC Flowcell #1 Flowcell #2 57

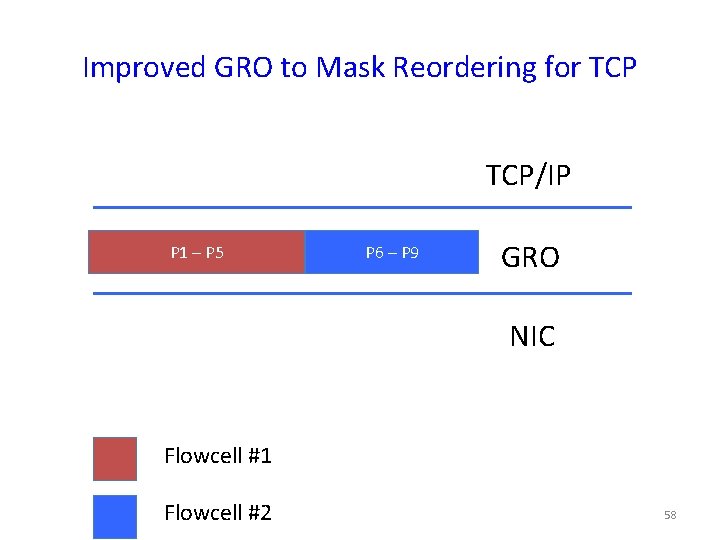

Improved GRO to Mask Reordering for TCP/IP P 1 – P 5 P 6 – P 9 GRO NIC Flowcell #1 Flowcell #2 58

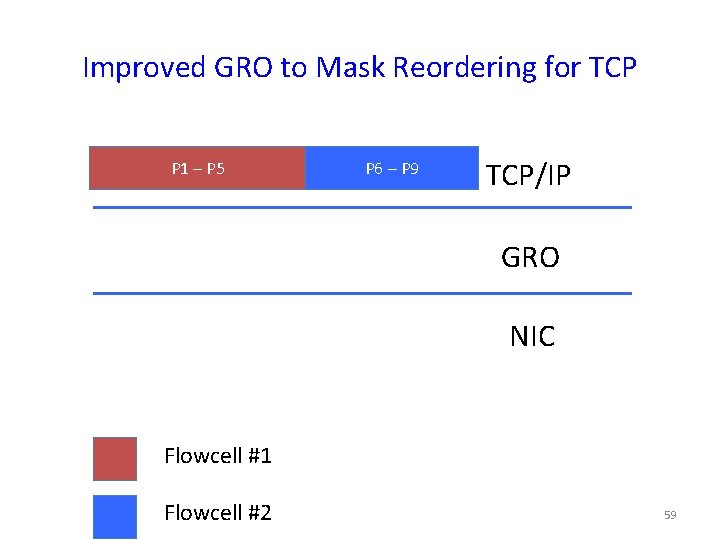

Improved GRO to Mask Reordering for TCP P 1 – P 5 P 6 – P 9 TCP/IP GRO NIC Flowcell #1 Flowcell #2 59

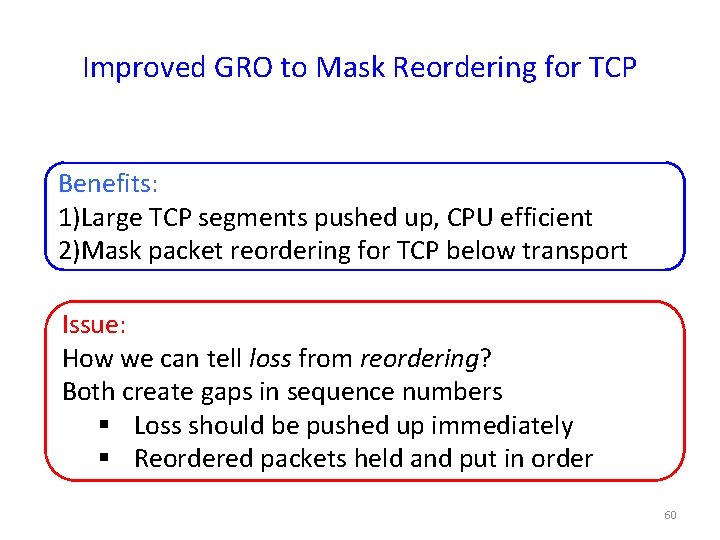

Improved GRO to Mask Reordering for TCP
• Benefits
– Large TCP segments are pushed up, which is CPU efficient
– Packet reordering is masked for TCP, below the transport layer
• Issue: how can we tell loss from reordering? Both create gaps in sequence numbers
– Loss should be pushed up immediately
– Reordered packets should be held and put in order
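A minimal sketch of the merge-by-flowcell idea follows. It assumes each packet carries a flowcell ID and a packet number standing in for its sequence number, and it deliberately leaves loss handling out; `flowcell_gro` is a hypothetical name, not the modified kernel function.

```python
def flowcell_gro(arrivals):
    """Merge packets per flowcell, then return segments in flowcell order.

    arrivals is a list of (flowcell_id, packet_number) pairs in arrival order.
    """
    segments = {}  # flowcell_id -> [first, last]
    for cell, p in arrivals:
        seg = segments.get(cell)
        if seg is None:
            segments[cell] = [p, p]
        elif p == seg[1] + 1:
            seg[1] = p                   # next packet of the same flowcell
        else:
            # A gap inside a flowcell is the loss case, not modeled in this sketch.
            raise ValueError("gap inside a flowcell")
    return [tuple(segments[c]) for c in sorted(segments)]


# Arrival order from the slides, tagged with flowcell IDs.
arrivals = [(1, 1), (1, 2), (1, 3), (2, 6), (1, 4), (2, 7), (1, 5), (2, 8), (2, 9)]
merged = flowcell_gro(arrivals)
```

The same interleaved arrivals that produced six segments under the gap rule now yield two large, in-order segments.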

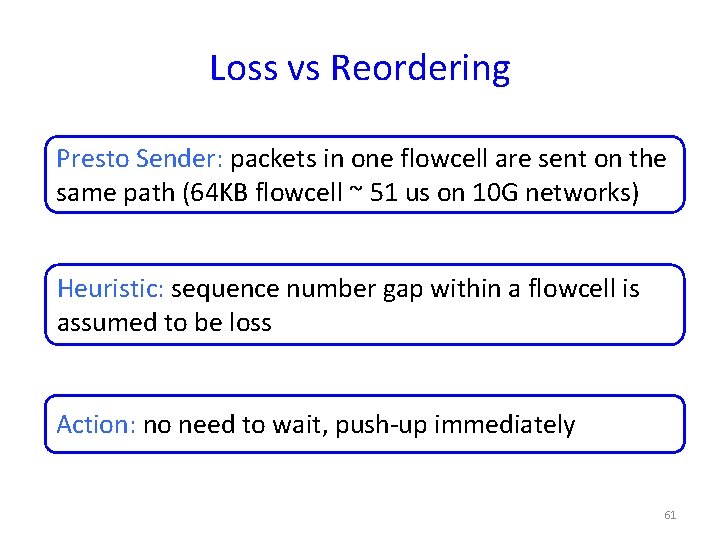

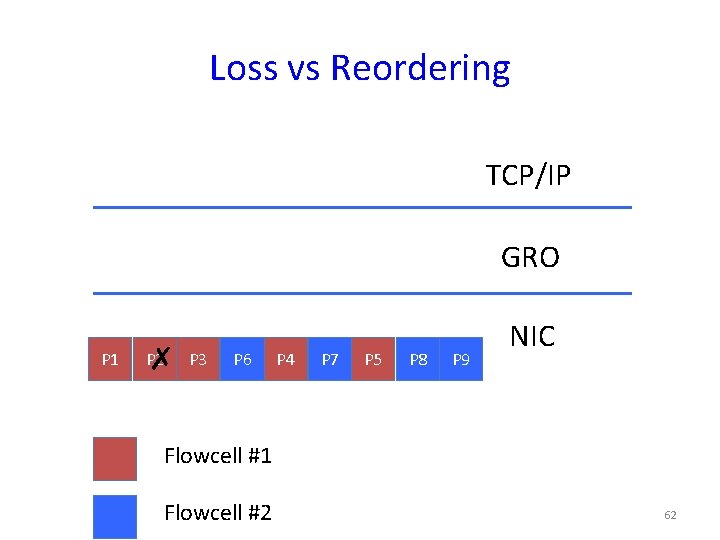

Loss vs Reordering
• Presto sender: packets in one flowcell are sent on the same path (a 64 KB flowcell takes ~51 µs on 10G networks)
• Heuristic: a sequence number gap within a flowcell is assumed to be loss
• Action: no need to wait, push up immediately
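The ~51 µs figure is the serialization time of one flowcell at line rate. The sketch below checks the arithmetic; the helper name `transmit_time_us` is hypothetical, and reading 64 KB as 64,000 bytes is an assumption (with 65,536 bytes the result is about 52.4 µs, still in the same range).

```python
def transmit_time_us(num_bytes: int, link_gbps: float) -> float:
    """Time to serialize num_bytes on a link of link_gbps, in microseconds."""
    bits = num_bytes * 8
    bits_per_us = link_gbps * 1_000   # 1 Gbps = 1,000 bits per microsecond
    return bits / bits_per_us


decimal_kb = transmit_time_us(64_000, 10)   # 64 KB read as 64,000 bytes
binary_kb = transmit_time_us(65_536, 10)    # 64 KB read as 65,536 bytes
```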

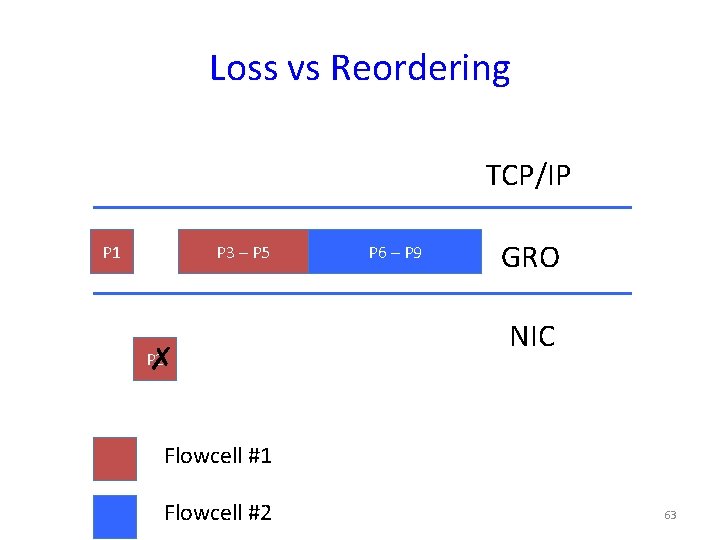

Loss vs Reordering TCP/IP GRO P 1 P 2 ✗ P 3 P 6 P 4 P 7 P 5 P 8 P 9 NIC Flowcell #1 Flowcell #2 62

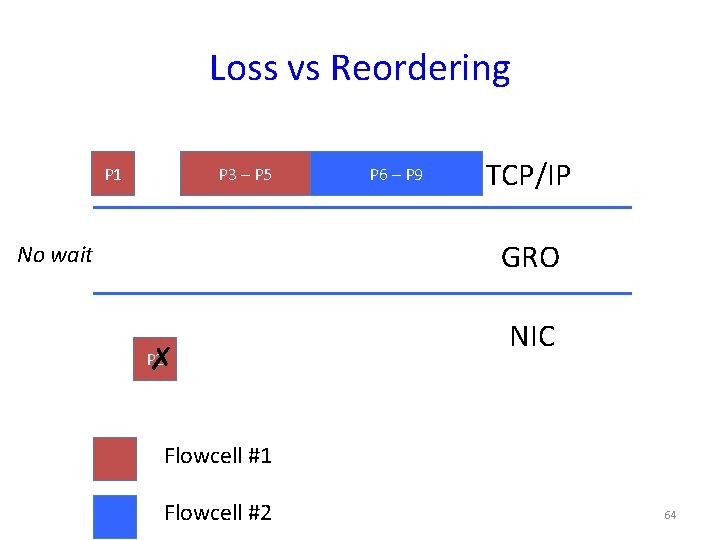

Loss vs Reordering TCP/IP P 1 P 3 – P 5 P 2 ✗ P 6 – P 9 GRO NIC Flowcell #1 Flowcell #2 63

Loss vs Reordering P 1 P 3 – P 5 P 6 – P 9 TCP/IP GRO No wait P 2 ✗ NIC Flowcell #1 Flowcell #2 64

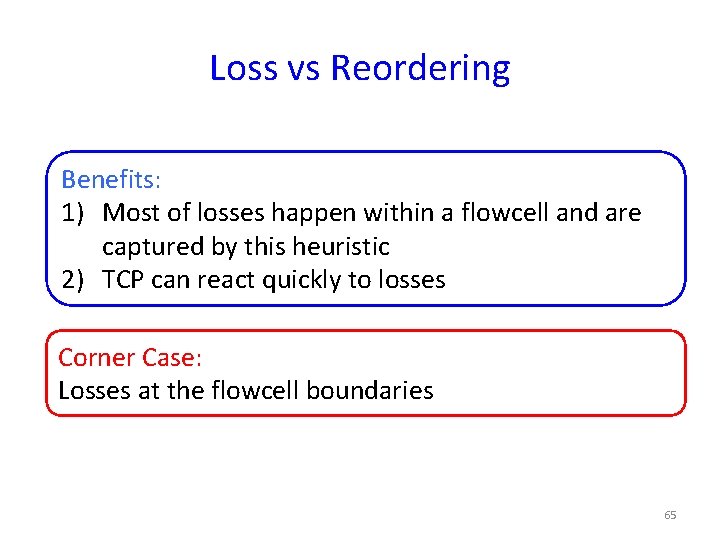

Loss vs Reordering
• Benefits
– Most losses happen within a flowcell and are captured by this heuristic
– TCP can react quickly to losses
• Corner case: losses at the flowcell boundaries

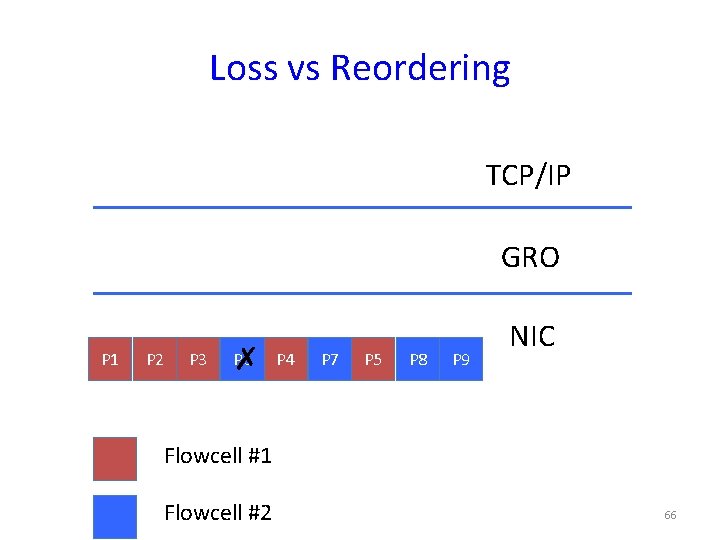

Loss vs Reordering TCP/IP GRO P 1 P 2 P 3 P 6 ✗ P 4 P 7 P 5 P 8 P 9 NIC Flowcell #1 Flowcell #2 66

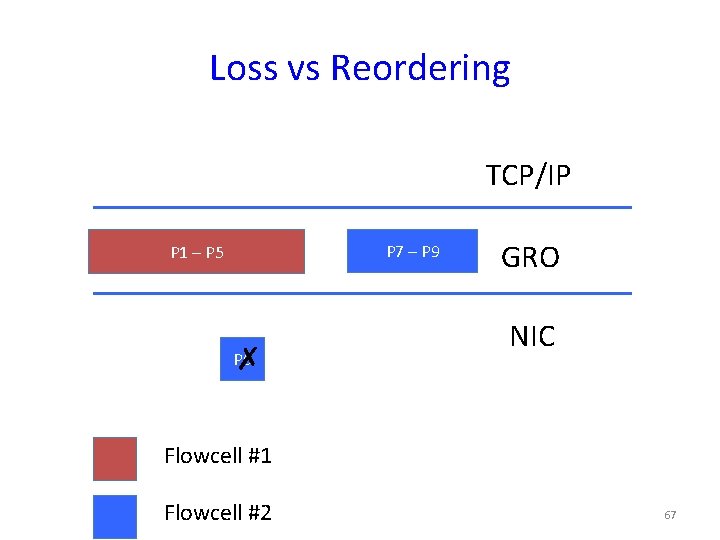

Loss vs Reordering TCP/IP P 7 – P 9 P 1 – P 5 P 6 ✗ GRO NIC Flowcell #1 Flowcell #2 67

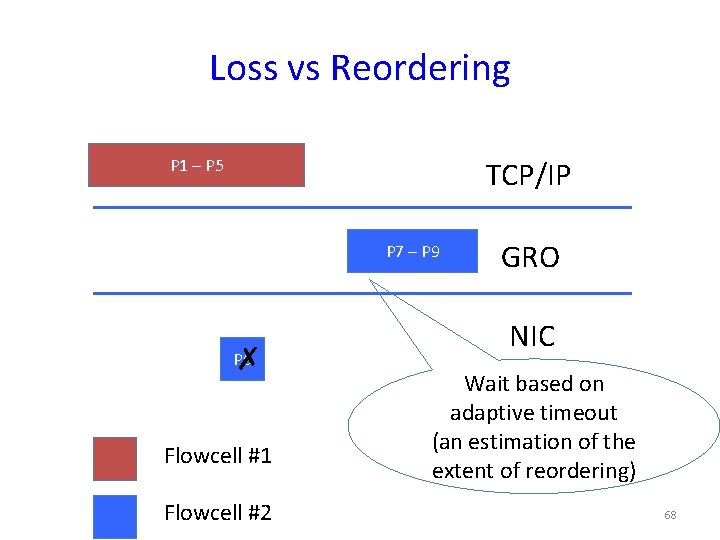

Loss vs Reordering P 1 – P 5 TCP/IP P 7 – P 9 P 6 ✗ Flowcell #1 Flowcell #2 GRO NIC Wait based on adaptive timeout (an estimation of the extent of reordering) 68

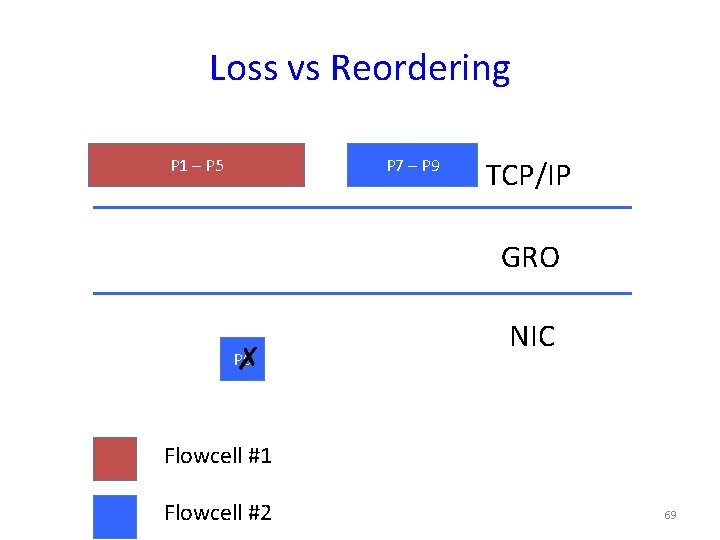

Loss vs Reordering P 1 – P 5 P 7 – P 9 TCP/IP GRO P 6 ✗ NIC Flowcell #1 Flowcell #2 69

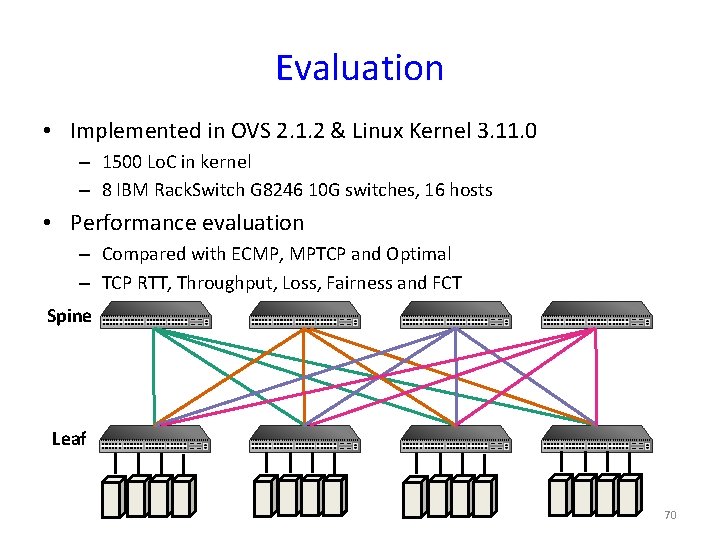
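The two cases above (a gap inside a flowcell versus a gap at a flowcell boundary) can be summarized as a small decision function. This is a sketch under assumptions: `on_packet` and its return strings are hypothetical, packet numbers stand in for sequence numbers, and the adaptive timeout itself is not modeled.

```python
def on_packet(last_cell: int, last_seq: int, pkt_cell: int, pkt_seq: int) -> str:
    """Decide how the receiver treats a packet relative to the last in-order one."""
    if pkt_seq == last_seq + 1:
        return "in_order"               # no gap: keep merging
    if pkt_cell == last_cell:
        # Same flowcell means same path, so a gap is assumed to be loss.
        return "assume_loss_push_up"
    # Different flowcell: the gap may be reordering across paths.
    return "hold_until_timeout"


within_flowcell = on_packet(last_cell=1, last_seq=1, pkt_cell=1, pkt_seq=3)  # P2 missing
at_boundary = on_packet(last_cell=1, last_seq=5, pkt_cell=2, pkt_seq=7)      # P6 missing
no_gap = on_packet(last_cell=1, last_seq=5, pkt_cell=2, pkt_seq=6)
```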

Evaluation
• Implemented in OVS 2.1.2 & Linux kernel 3.11.0
– 1500 LoC in kernel
– 8 IBM RackSwitch G8246 10G switches, 16 hosts
• Performance evaluation
– Compared with ECMP, MPTCP, and Optimal
– TCP RTT, throughput, loss, fairness, and FCT
(Figure: spine-leaf testbed topology)

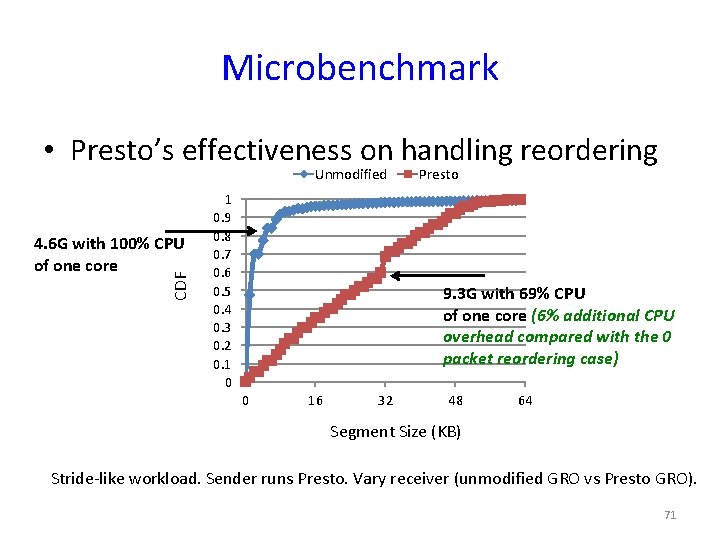

Microbenchmark
• Presto’s effectiveness on handling reordering
• Stride-like workload. Sender runs Presto. Vary receiver (unmodified GRO vs Presto GRO).
• Unmodified GRO: 4.6 Gbps with 100% CPU of one core
• Presto GRO: 9.3 Gbps with 69% CPU of one core (6% additional CPU overhead compared with the case of no packet reordering)
(Figure: CDF of segment size, 0–64 KB, for Unmodified and Presto)

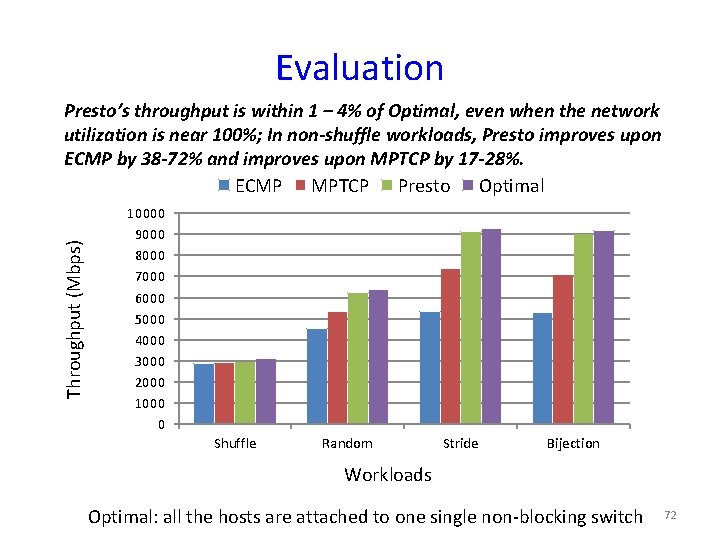

Evaluation
• Presto’s throughput is within 1–4% of Optimal, even when the network utilization is near 100%
• In non-shuffle workloads, Presto improves upon ECMP by 38–72% and upon MPTCP by 17–28%
• Optimal: all the hosts are attached to one single non-blocking switch
(Figure: throughput in Mbps, 0–10000, for ECMP, MPTCP, Presto, and Optimal across the Shuffle, Random, Stride, and Bijection workloads)

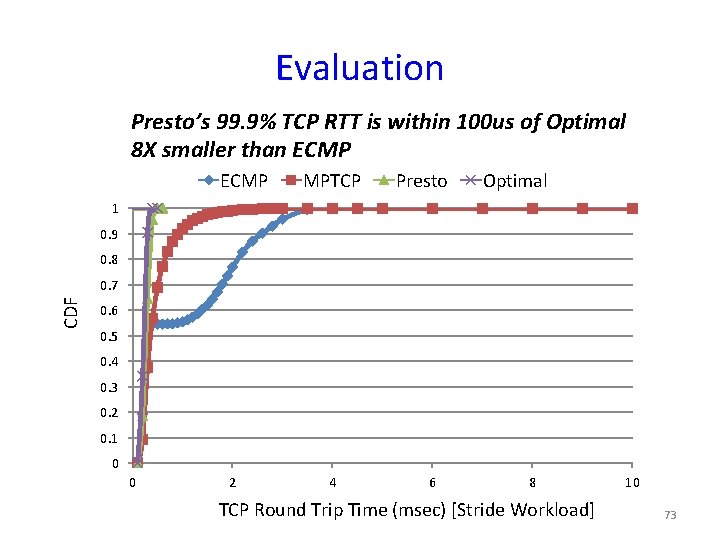

Evaluation
• Presto’s 99.9th-percentile TCP RTT is within 100 µs of Optimal
• 8X smaller than ECMP
(Figure: CDF of TCP round trip time in msec, 0–10, for ECMP, MPTCP, Presto, and Optimal; Stride workload)

Additional Evaluation
• Presto scales to multiple paths
• Presto handles congestion gracefully
– Loss rate, fairness index
• Comparison to flowlet switching
• Comparison to local, per-hop load balancing
• Trace-driven evaluation
• Impact of north-south traffic
• Impact of link failures

Conclusion
• Presto: moving a network function, load balancing, out of datacenter network hardware and into the software edge
• No changes to hardware or transport
• Performance is close to a giant switch

Thanks!
