Base-Delta-Immediate Compression: Practical Data Compression for On-Chip Caches

Base-Delta-Immediate Compression: Practical Data Compression for On-Chip Caches
Gennady Pekhimenko§, Vivek Seshadri§, Onur Mutlu§, Michael A. Kozuch†, Phillip B. Gibbons†, Todd C. Mowry§
§ Carnegie Mellon University, † Intel Labs Pittsburgh
Published at PACT 2012
Presented by Marc-Philippe Bartholomä

Problem & Goal

Large Cache Improves Performance

- Larger capacity ⇒ fewer misses ⇒ better performance
- Larger capacity ⇒ fewer off-chip cache misses
  - Avoids memory bandwidth bottleneck
  - Especially important for multi-core with shared memory

But increasing capacity by scaling the conventional design:

- Slower caches
- More power consumption
- More area required

Idea: Compress the data in caches to save on hardware costs

Goals of Cache Compression

- Compression/decompression need to be very fast
  - Decompression is on the critical path
- Simple compression logic avoids large power and area costs
- Must compress the data effectively
  - Otherwise there isn’t much gain in capacity

Background

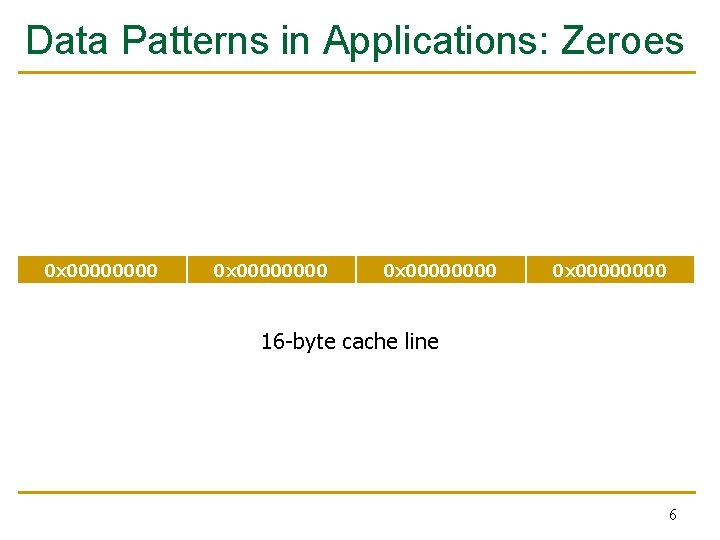

Data Patterns in Applications: Zeroes

0x00000000 (16-byte cache line)

Data Pattern: Repeated Values

0xCAFE4A11

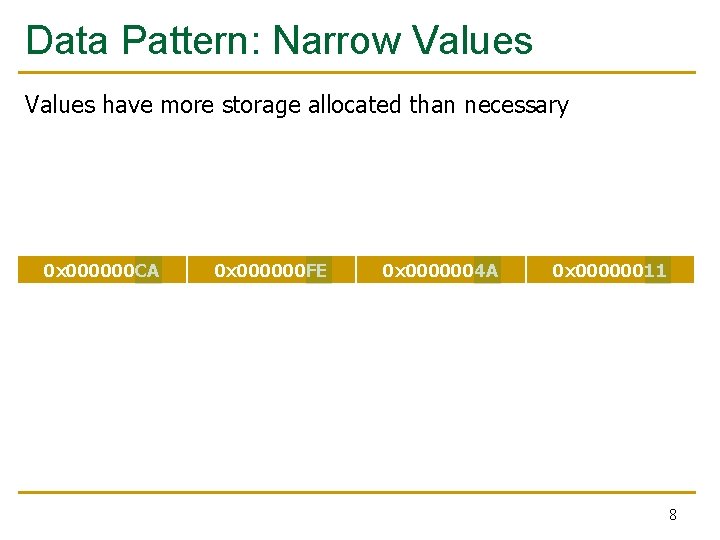

Data Pattern: Narrow Values

Narrow values have more storage allocated than necessary.

0x000000CA 0x000000FE 0x0000004A 0x00000011
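The three patterns shown so far can be told apart with a few comparisons. The following is a minimal sketch, not the paper's detector: the function name `classify` is hypothetical, and "narrow" is assumed here to mean that every word fits in a single byte.

```python
def classify(words):
    """Label a cache line (a list of unsigned words) with the first pattern that matches."""
    if all(w == 0 for w in words):
        return "zeros"
    if len(set(words)) == 1:
        return "repeated"
    # Assumption of this sketch: a value is "narrow" if it fits in one byte.
    if all(w < 0x100 for w in words):
        return "narrow"
    return "other"


print(classify([0x00000000] * 4))
print(classify([0xCAFE4A11] * 4))
print(classify([0x000000CA, 0x000000FE, 0x0000004A, 0x00000011]))
```

The order of the checks matters: an all-zero line is also a repeated-value line and a narrow line, so the most specific pattern is tested first.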

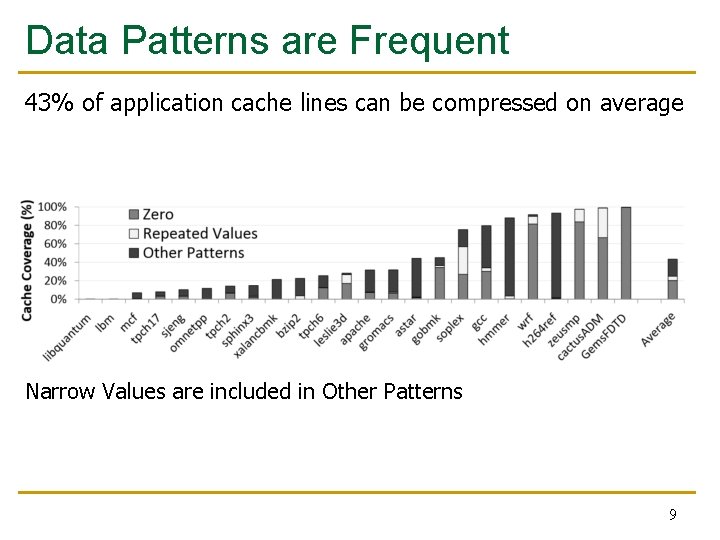

Data Patterns are Frequent

- 43% of application cache lines can be compressed on average
- Narrow Values are included in Other Patterns

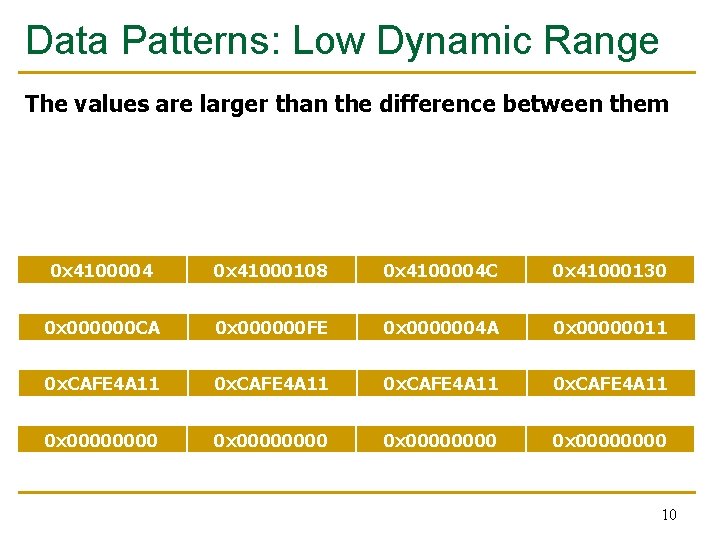

Data Patterns: Low Dynamic Range

The values are larger than the difference between them.

- Low dynamic range: 0x41000004 0x41000108 0x4100004C 0x41000130
- Narrow values: 0x000000CA 0x000000FE 0x0000004A 0x00000011
- Repeated values: 0xCAFE4A11
- Zeroes: 0x00000000

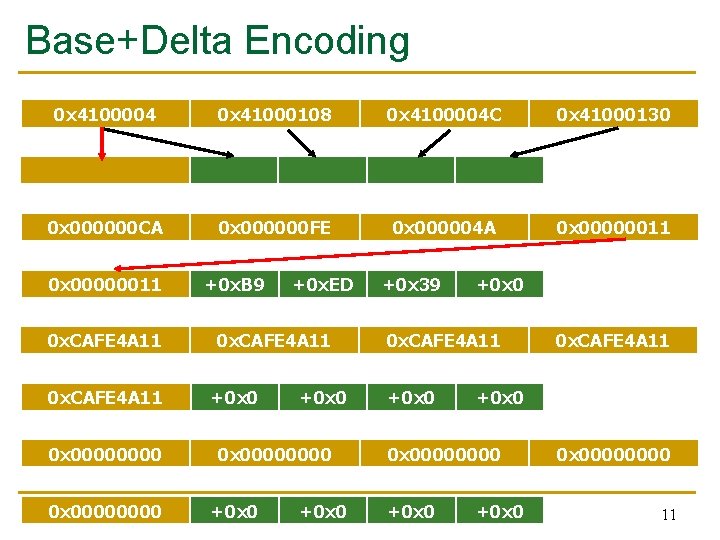

Base+Delta Encoding

- 0x41000004 0x41000108 0x4100004C 0x41000130 ⇒ base 0x41000004, deltas +0x0 +0x104 +0x48 +0x12C
- 0x000000CA 0x000000FE 0x0000004A 0x00000011 ⇒ base 0x00000011, deltas +0xB9 +0xED +0x39 +0x0
- 0xCAFE4A11 (repeated) ⇒ base 0xCAFE4A11, all deltas +0x0
- 0x00000000 (zeroes) ⇒ base 0x00000000, all deltas +0x0
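A minimal sketch of single-base encoding, applied to the low-dynamic-range example (with the first word read as 0x41000004, the value the deltas imply). The function name `base_delta_encode` is hypothetical, and two simplifications are assumed: the first word is always taken as the base, and deltas are checked against a signed range of `delta_bytes` bytes.

```python
def base_delta_encode(words, delta_bytes):
    """Return (base, deltas) if every delta fits in delta_bytes, else None."""
    base = words[0]  # assumption: the first word serves as the base
    limit = 1 << (8 * delta_bytes - 1)  # signed range is [-limit, limit)
    deltas = [w - base for w in words]
    if all(-limit <= d < limit for d in deltas):
        return base, deltas
    return None


line = [0x41000004, 0x41000108, 0x4100004C, 0x41000130]
print(base_delta_encode(line, 1))  # not encodable: 0x104 needs more than one byte
base, deltas = base_delta_encode(line, 2)
print(hex(base), [hex(d) for d in deltas])
```

With 2-byte deltas the 16-byte line shrinks to one 4-byte base plus four 2-byte deltas, 12 bytes in total.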

Novelty

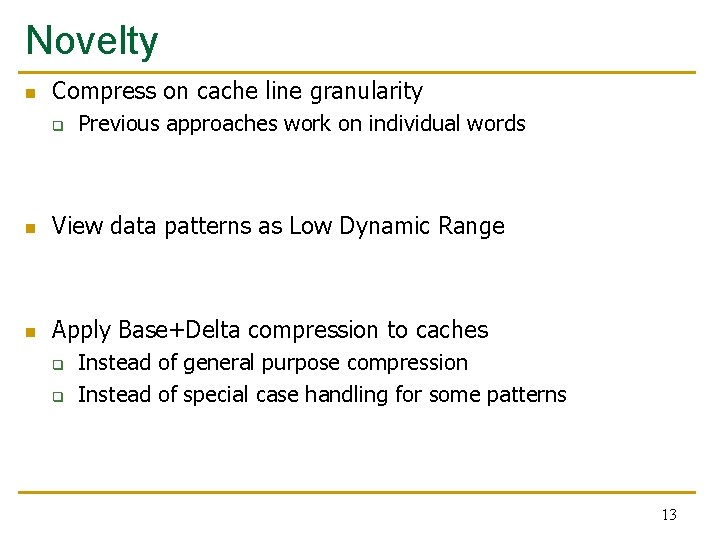

Novelty

- Compress on cache line granularity
  - Previous approaches work on individual words
- View data patterns as Low Dynamic Range
- Apply Base+Delta compression to caches
  - Instead of general purpose compression
  - Instead of special case handling for some patterns

Key Approach and Ideas

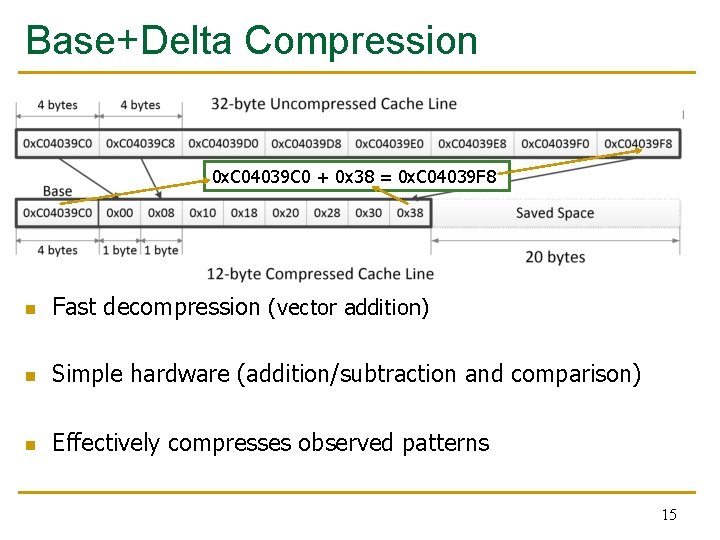

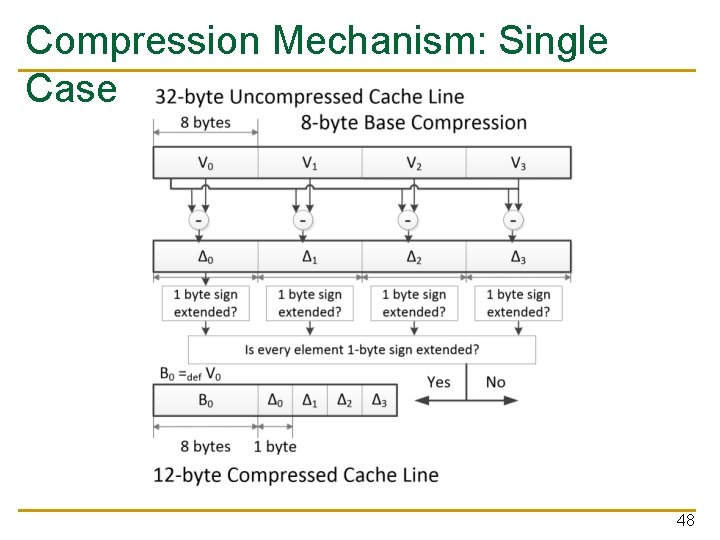

Base+Delta Compression

0xC04039C0 + 0x38 = 0xC04039F8

- Fast decompression (vector addition)
- Simple hardware (addition/subtraction and comparison)
- Effectively compresses observed patterns
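Decompression adds the base to every delta. The sketch below does this with a list comprehension; in hardware the additions would run in parallel as one vector operation. The function name `base_delta_decode` and the 4-byte word width are assumptions, and the leading `0x00` delta is made up for illustration (only `0x38` comes from the slide).

```python
def base_delta_decode(base, deltas, word_bytes=4):
    """Rebuild the original words; each addition is independent of the others."""
    mask = (1 << (8 * word_bytes)) - 1  # wrap to the word width, as hardware would
    return [(base + d) & mask for d in deltas]


words = base_delta_decode(0xC04039C0, [0x00, 0x38])
print([hex(w) for w in words])
```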

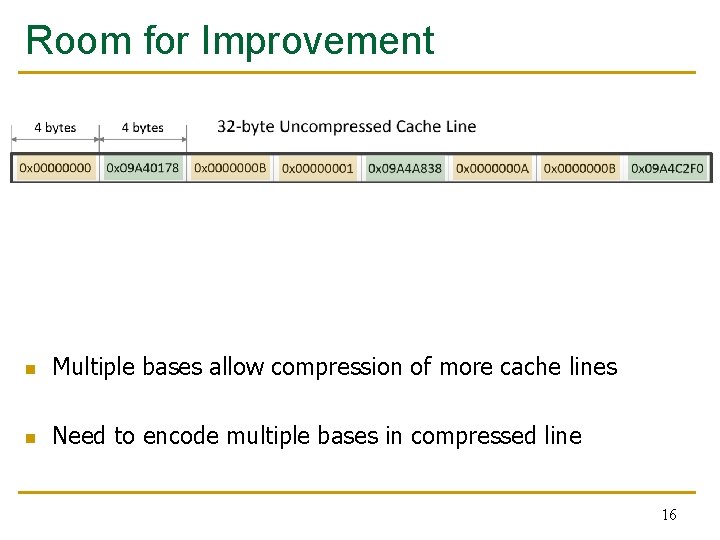

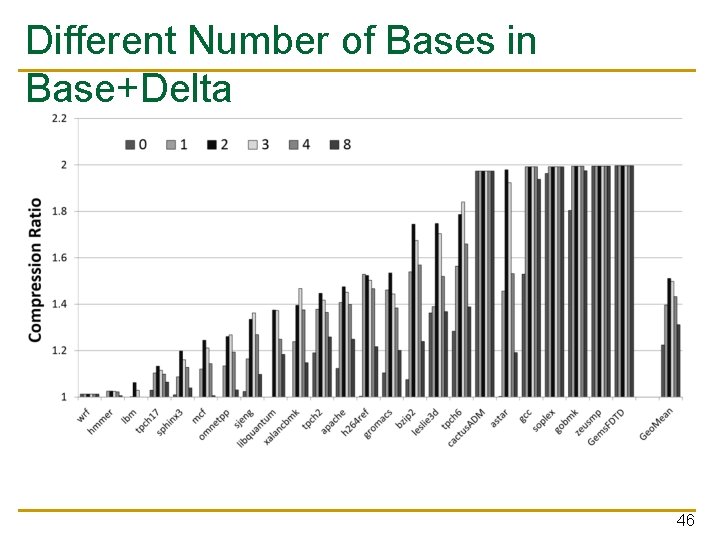

Room for Improvement

- Multiple bases allow compression of more cache lines
- Need to encode multiple bases in compressed line

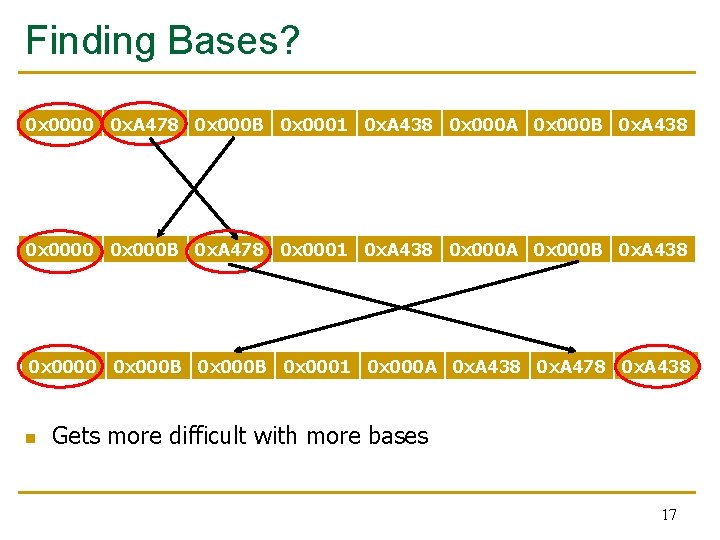

Finding Bases?

0x0000 0xA478 0x000B 0x0001 0xA438 0x000A 0x000B 0xA438

- Gets more difficult with more bases

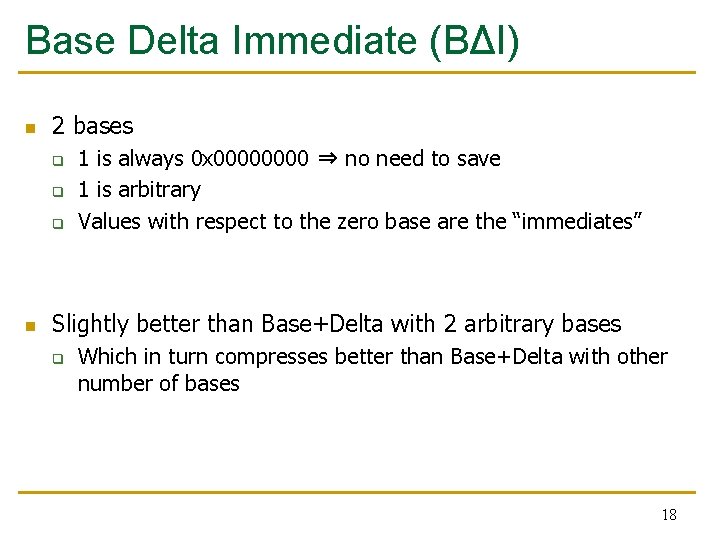

Base Delta Immediate (BΔI)

- 2 bases
  - 1 is always 0x0000 ⇒ no need to save
  - 1 is arbitrary
  - Values with respect to the zero base are the “immediates”
- Slightly better than Base+Delta with 2 arbitrary bases
  - Which in turn compresses better than Base+Delta with other number of bases

Mechanism

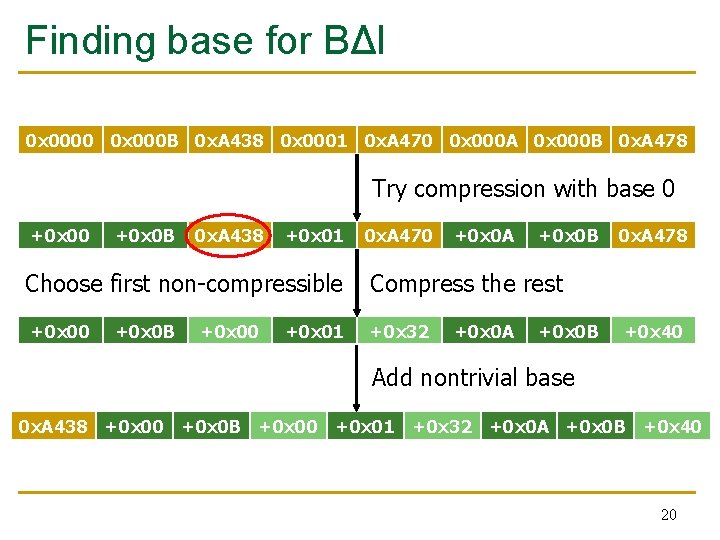

Finding base for BΔI

Values: 0x0000 0x000B 0xA438 0x0001 0xA470 0x000A 0x000B 0xA478

1. Try compression with base 0: +0x00 +0x0B 0xA438 +0x01 0xA470 +0x0A +0x0B 0xA478
2. Choose first non-compressible value: 0xA438
3. Compress the rest: 0xA438 ⇒ +0x00, 0xA470 ⇒ +0x38, 0xA478 ⇒ +0x40
4. Add nontrivial base: 0xA438 | +0x00 +0x0B +0x00 +0x01 +0x38 +0x0A +0x0B +0x40

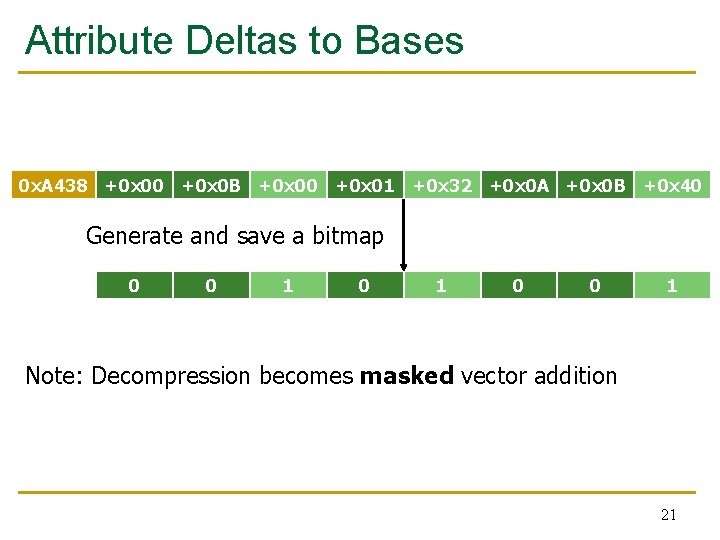

Attribute Deltas to Bases

0xA438 | +0x00 +0x0B +0x00 +0x01 +0x38 +0x0A +0x0B +0x40

Generate and save a bitmap: 0 0 1

Note: Decompression becomes masked vector addition
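The base-finding steps and the bitmap can be sketched together as follows. This is an illustration under stated assumptions, not the paper's hardware design: the names `fits`, `bdi_compress` and `bdi_decompress` are hypothetical, words are treated as unsigned integers, and deltas are checked against a signed range of `delta_bytes` bytes.

```python
def fits(value, delta_bytes):
    """True if value is representable as a signed integer of delta_bytes bytes."""
    limit = 1 << (8 * delta_bytes - 1)
    return -limit <= value < limit


def bdi_compress(words, delta_bytes):
    """Return (base, deltas, bitmap), or None if the line is not compressible."""
    base = None
    deltas, bitmap = [], []
    for w in words:
        if fits(w, delta_bytes):       # immediate: delta from the implicit zero base
            deltas.append(w)
            bitmap.append(0)
            continue
        if base is None:               # first value that fails against zero becomes the base
            base = w
        if not fits(w - base, delta_bytes):
            return None
        deltas.append(w - base)        # e.g. 0xA470 - 0xA438 = 0x38
        bitmap.append(1)
    return base, deltas, bitmap


def bdi_decompress(base, deltas, bitmap):
    """Masked vector addition: the base is added only where the bitmap bit is 1."""
    return [d + (base if bit else 0) for d, bit in zip(deltas, bitmap)]


words = [0x0000, 0x000B, 0xA438, 0x0001, 0xA470, 0x000A, 0x000B, 0xA478]
base, deltas, bitmap = bdi_compress(words, delta_bytes=1)
print(hex(base), [hex(d) for d in deltas], bitmap)
assert bdi_decompress(base, deltas, bitmap) == words
```

For the example values the bitmap starts with 0 0 1, because the first two values are immediates and the third is the base itself.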

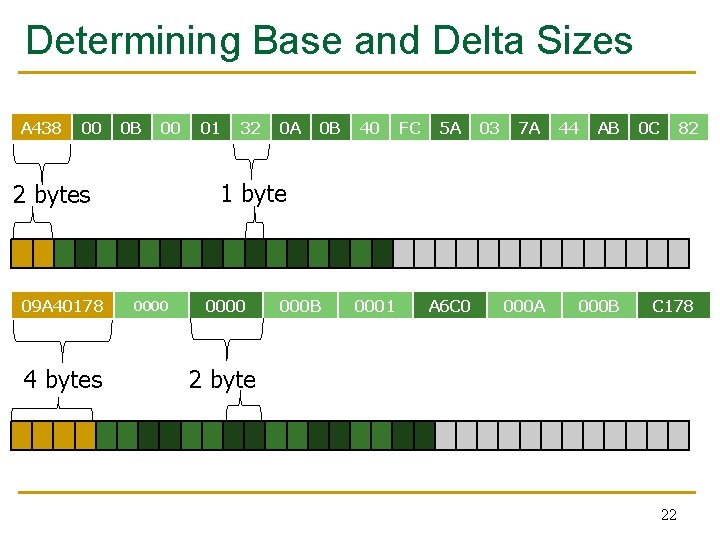

Determining Base and Delta Sizes

[Figure: example cache lines encoded with different base and delta sizes; labels: 4 bytes, 2 bytes, 1 byte]

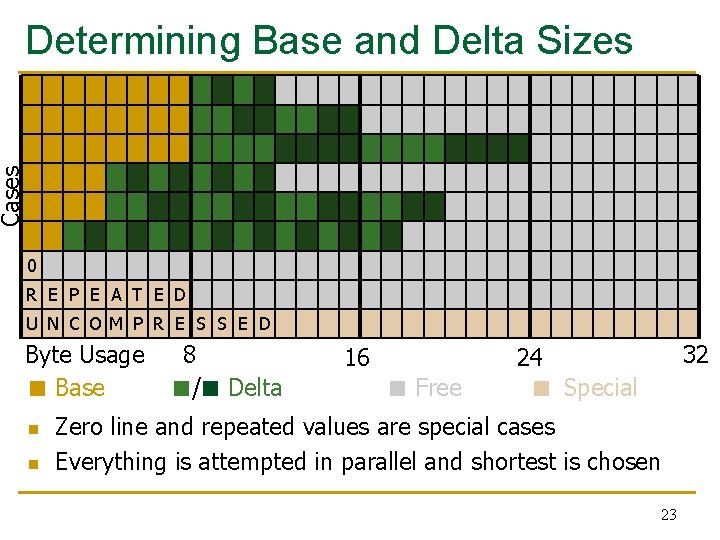

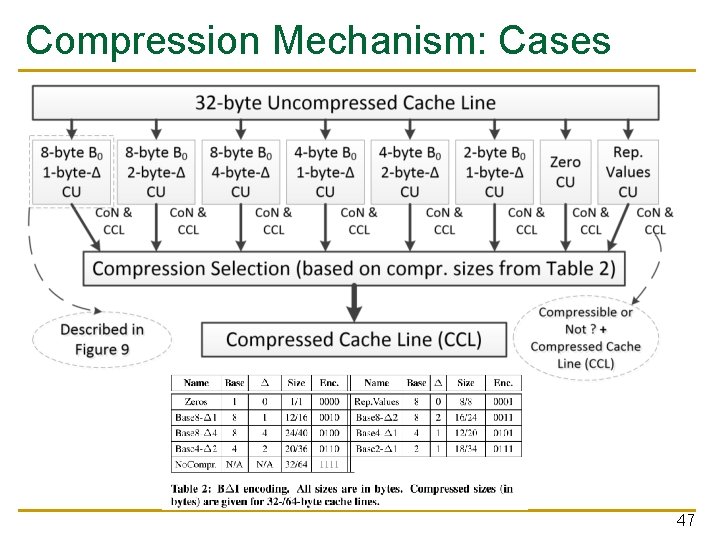

Cases Determining Base and Delta Sizes

[Figure: byte usage per case, from zero and repeated lines up to uncompressed; axis 0 to 32 bytes; legend: Base, Delta, Free, Special]

- Zero line and repeated values are special cases
- Everything is attempted in parallel and shortest is chosen
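A sketch of "try everything and keep the shortest", written as a sequential loop where hardware would evaluate all cases in parallel. Several things here are assumptions of the sketch rather than facts from the slides: the set of (base size, delta size) pairs in `CONFIGS`, the 1-byte and 8-byte sizes charged for the two special cases, little-endian word order, and counting only base plus deltas (no bitmap) toward the compressed size.

```python
CONFIGS = [(8, 1), (8, 2), (8, 4), (4, 1), (4, 2), (2, 1)]  # (base_bytes, delta_bytes), assumed


def split_words(line, size):
    """Cut a bytes object into unsigned little-endian words of the given size."""
    return [int.from_bytes(line[i:i + size], "little") for i in range(0, len(line), size)]


def fits(value, delta_bytes):
    limit = 1 << (8 * delta_bytes - 1)
    return -limit <= value < limit


def bdi_size(line, base_bytes, delta_bytes):
    """Compressed size in bytes for one configuration, or None if it does not apply."""
    words = split_words(line, base_bytes)
    # The first word that is not an immediate becomes the arbitrary base.
    base = next((w for w in words if not fits(w, delta_bytes)), 0)
    if all(fits(w, delta_bytes) or fits(w - base, delta_bytes) for w in words):
        return base_bytes + len(words) * delta_bytes
    return None


def best_encoding(line):
    """Return (name, size) of the smallest applicable encoding."""
    candidates = [("uncompressed", len(line))]
    if not any(line):
        candidates.append(("zeros", 1))      # assumed size for the zero-line case
    if len(set(split_words(line, 8))) == 1:
        candidates.append(("repeated", 8))   # assumed size for the repeated-value case
    for base_bytes, delta_bytes in CONFIGS:
        size = bdi_size(line, base_bytes, delta_bytes)
        if size is not None:
            candidates.append((f"base{base_bytes}-delta{delta_bytes}", size))
    return min(candidates, key=lambda c: c[1])


values = [0x41000004, 0x41000108, 0x4100004C, 0x41000130] * 2
line = b"".join(v.to_bytes(4, "little") for v in values)  # a 32-byte line
print(best_encoding(line))
```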

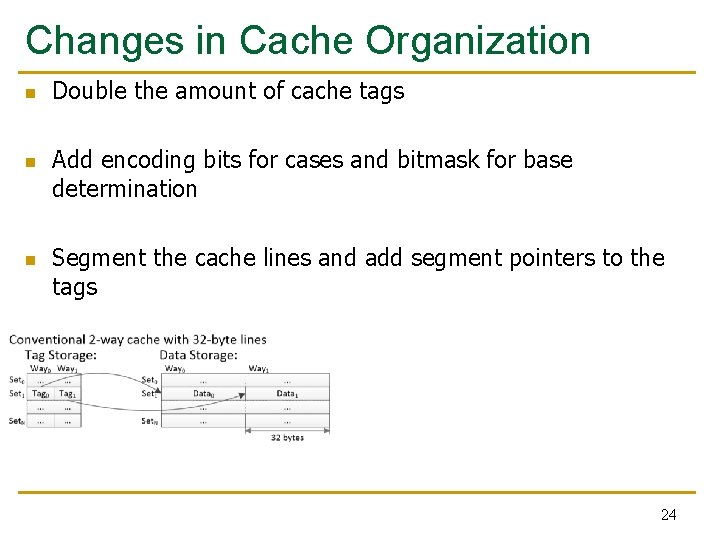

Changes in Cache Organization

- Double the amount of cache tags
- Add encoding bits for cases and bitmask for base determination
- Segment the cache lines and add segment pointers to the tags

Key Results: Methodology and Evaluation

Methodology

- x86-based simulation
- 1-4 cores
- SPEC2006, TPC-H and Apache web server workloads
- L1/L2/L3 cache latencies from CACTI [Thoziyoor+, ISCA’08]

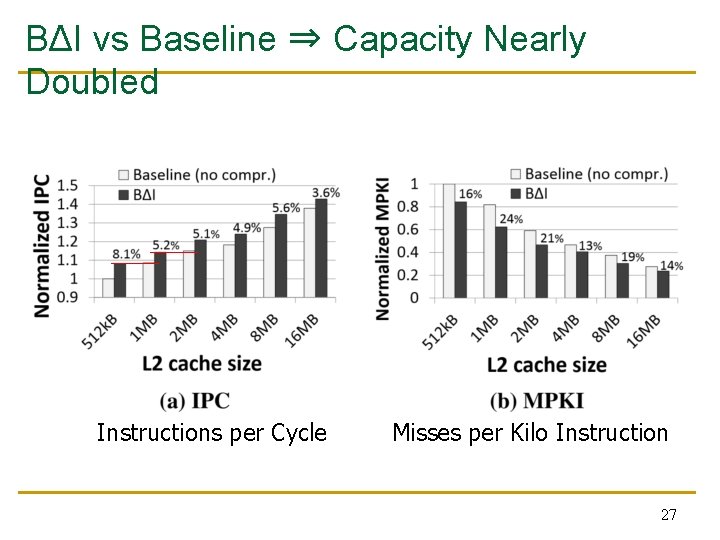

BΔI vs Baseline ⇒ Capacity Nearly Doubled

[Figure panels: Instructions per Cycle; Misses per Kilo Instruction]

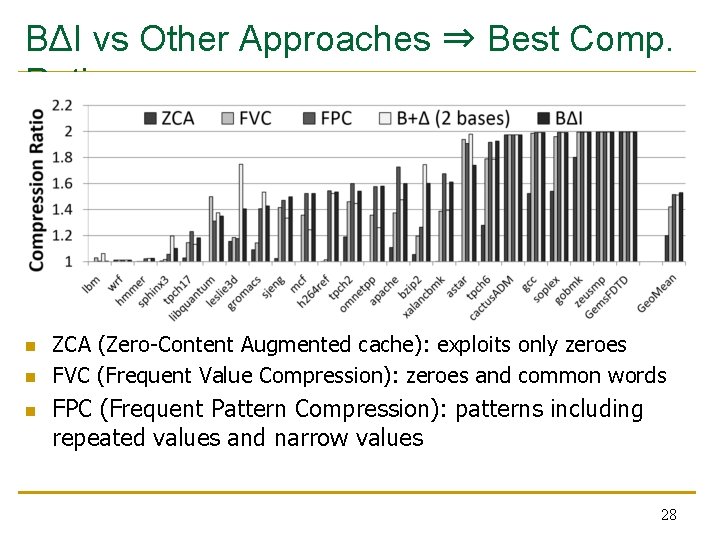

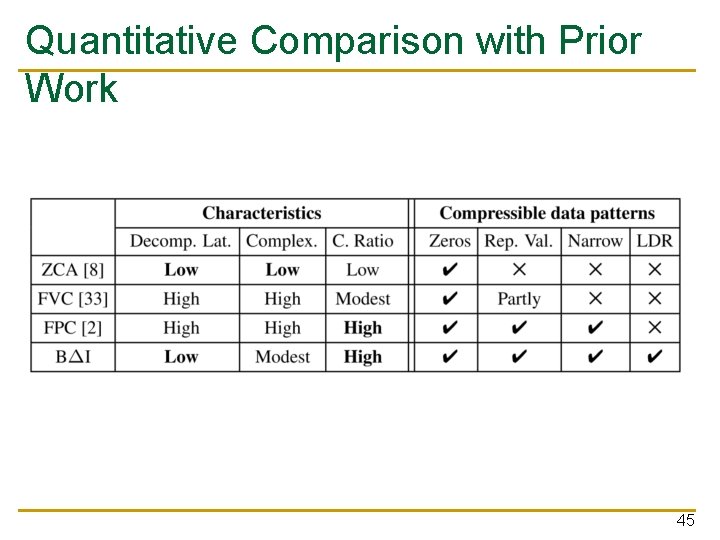

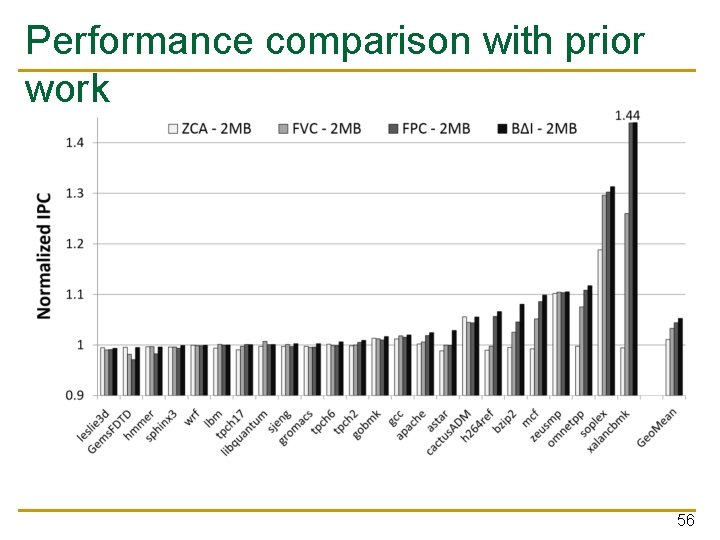

BΔI vs Other Approaches ⇒ Best Compression Ratio

- ZCA (Zero-Content Augmented cache): exploits only zeroes
- FVC (Frequent Value Compression): zeroes and common words
- FPC (Frequent Pattern Compression): patterns including repeated values and narrow values

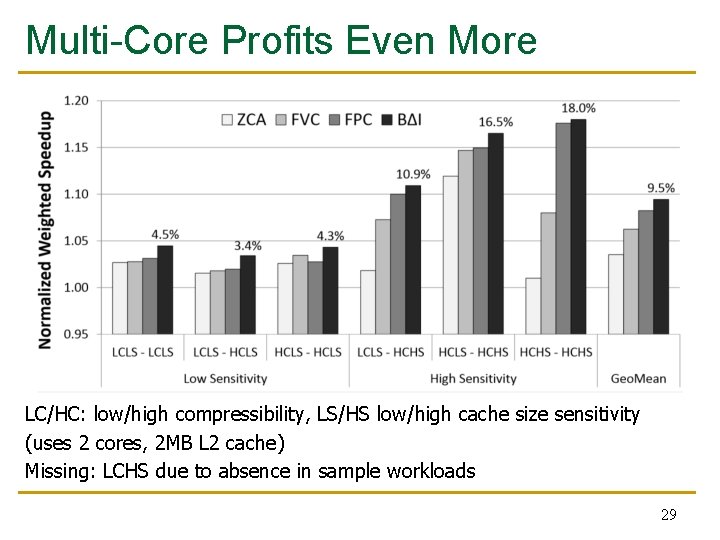

Multi-Core Profits Even More

- LC/HC: low/high compressibility
- LS/HS: low/high cache size sensitivity
- Uses 2 cores, 2 MB L2 cache
- Missing: LCHS due to absence in sample workloads

Summary

Summary

- Goal: Increase cache capacity using data compression at lower cost
- Key Insight: A significant fraction (43%) of real-world cache lines can be compressed
- Key Mechanism: Base+Delta encoding fits well to exploit low dynamic range patterns
- Key Results: BΔI yields nearly the performance gain of a cache with double capacity without the same costs in area and power
  - 5.1% avg. performance increase on single-core over baseline
  - 9.5% avg. performance increase on dual-core over baseline

Strengths

Strengths of the Paper

- Novel approach leading to significant improvement
- Thorough analysis and evaluation of patterns, previous approaches and variants
- Elegant solution and principled design
- Easy-to-understand, well-structured paper
- Transparent to the OS and applications
- Compression mechanism is predictable for the user

Weaknesses

Weaknesses/Limitations of the Paper

- Requires double amount of cache tags
  - Potential bottleneck
- Adds the possibility of eviction when writing with cache-hit
- Because real capacity is unknown, it is harder to optimize applications
- Missing category for multi-core workload
- Analysis of cache size only for Base+Delta (no bitmap)
- Compressed data patterns don’t capture floating point values
- Too much latency for L1 cache

Thoughts and Ideas

Extensions

- Special case with only the 0 base
- Base finding approach generalizes to 2 arbitrary bases
  - Analyze the benefit of switching between BΔI and Base+Delta with 1 or 2 bases
- To save on cache tags you could load 2 contiguous cache lines
- Include base bitmap in the deltas
- For repeated values of size up to 4 bytes, you could save them using the bitmask for base attribution

Takeaways

Key Takeaways

- Paper is a prime example of principled design
  - Carefully examines the potential
  - Thoroughly analyzes the tradeoffs
  - Picks the best variant
- Data compression is viable for on-chip caches

Questions

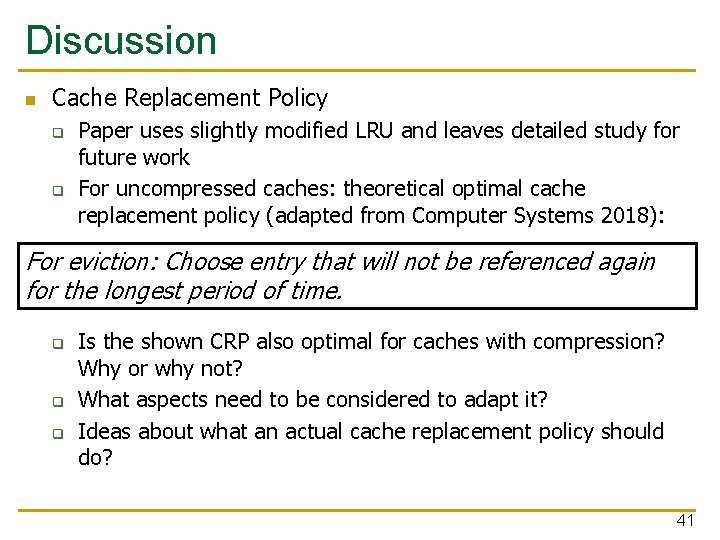

Discussion

- Cache Replacement Policy
  - Paper uses slightly modified LRU and leaves detailed study for future work
  - For uncompressed caches: theoretical optimal cache replacement policy (adapted from Computer Systems 2018): for eviction, choose the entry that will not be referenced again for the longest period of time.
  - Is the shown CRP also optimal for caches with compression? Why or why not?
  - What aspects need to be considered to adapt it?
  - Ideas about what an actual cache replacement policy should do?
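As a starting point for the discussion, the optimal policy for uncompressed caches can be sketched as below. The function name `optimal_victim` is hypothetical, and the sketch assumes the full future reference sequence is known, which is why the policy is only theoretical.

```python
def optimal_victim(cached, future_refs):
    """Pick the cached entry whose next reference lies farthest in the future."""
    def next_use(entry):
        try:
            return future_refs.index(entry)
        except ValueError:
            return float("inf")  # never referenced again: ideal eviction candidate
    return max(cached, key=next_use)


print(optimal_victim(["A", "B", "C"], ["A", "C", "A", "B"]))
```

The sketch treats every entry as having the same size, which is the assumption the questions above ask you to revisit for compressed caches.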

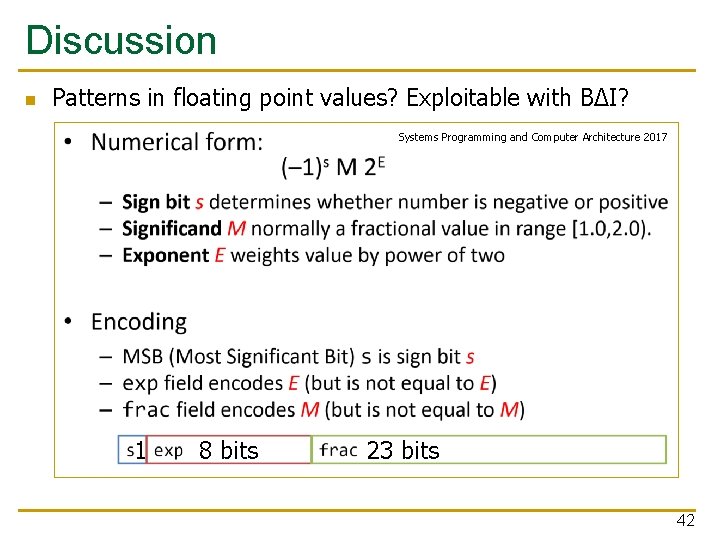

Discussion

- Patterns in floating point values? Exploitable with BΔI?

[Figure: 32-bit floating-point layout with fields of 1 bit (sign), 8 bits (exponent) and 23 bits (fraction); source: Systems Programming and Computer Architecture 2017]
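To explore the question, it can help to split single-precision values into the three fields from the figure and see which bits change between neighbouring values. The helper `float32_fields` below is a hypothetical name; it relies only on Python's standard `struct` module.

```python
import struct


def float32_fields(x):
    """Return (sign, exponent, fraction) of x encoded as an IEEE 754 single."""
    bits = struct.unpack(">I", struct.pack(">f", x))[0]
    sign = bits >> 31
    exponent = (bits >> 23) & 0xFF
    fraction = bits & 0x7FFFFF
    return sign, exponent, fraction


for value in (1.0, 1.5, -2.0):
    sign, exponent, fraction = float32_fields(value)
    print(value, sign, exponent, hex(fraction))
```

Values of similar magnitude share the sign and exponent fields, while the fraction bits can differ across the whole 23-bit range, so the integer difference between two such words is not necessarily small.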


Backup Slides

Quantitative Comparison with Prior Work

Different Number of Bases in Base+Delta

Compression Mechanism: Cases

Compression Mechanism: Single Case

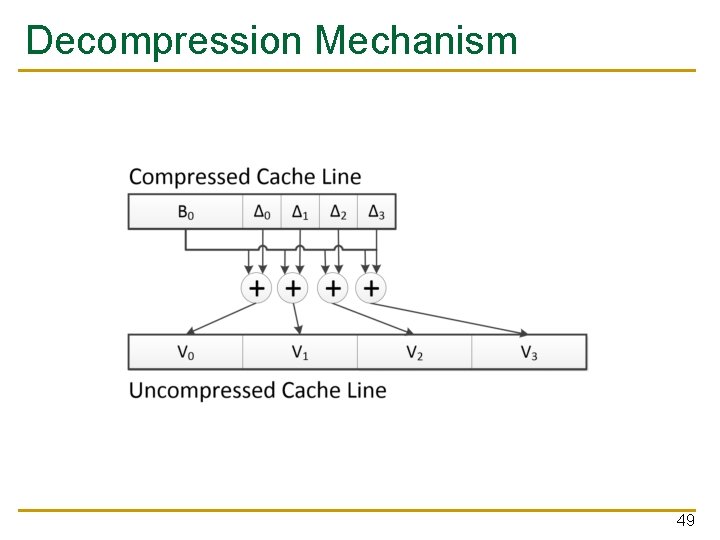

Decompression Mechanism

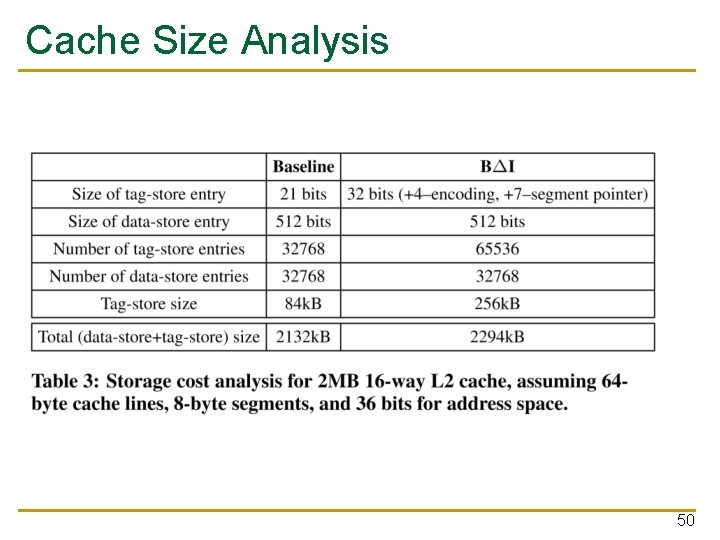

Cache Size Analysis

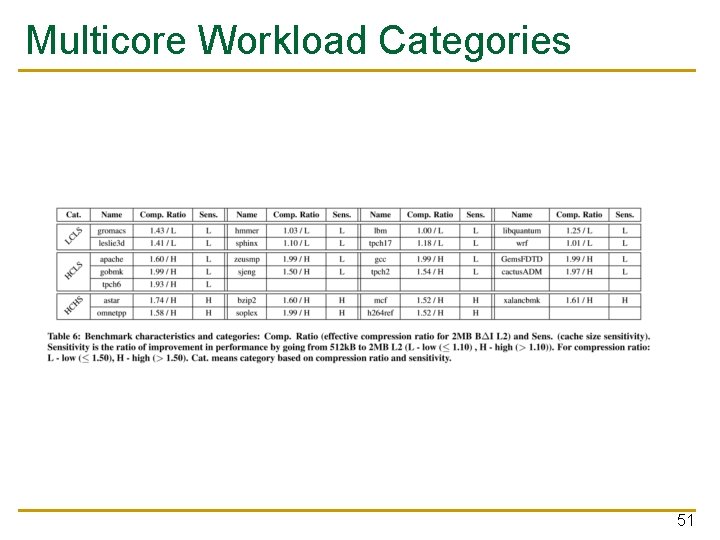

Multicore Workload Categories

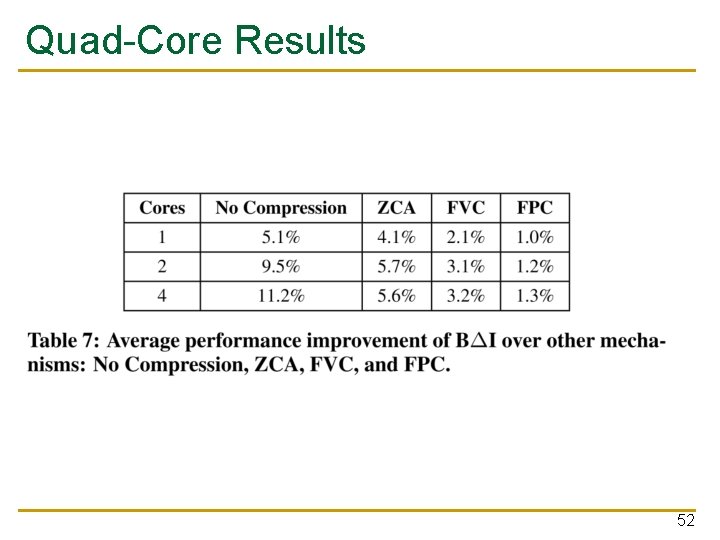

Quad-Core Results

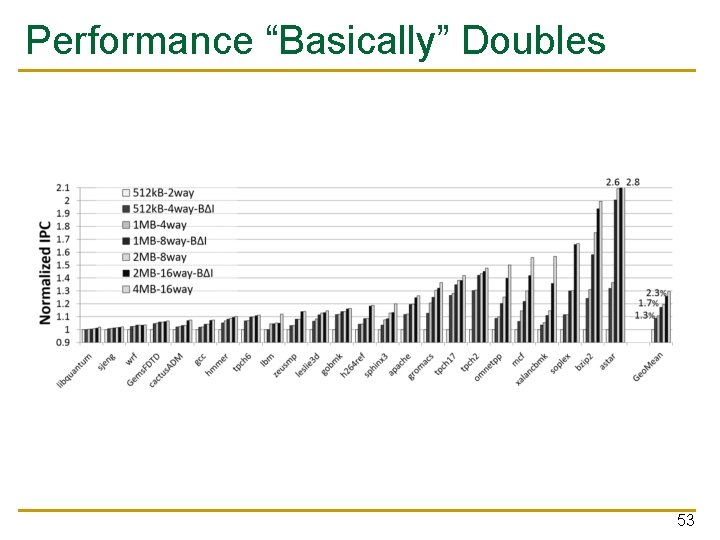

Performance “Basically” Doubles

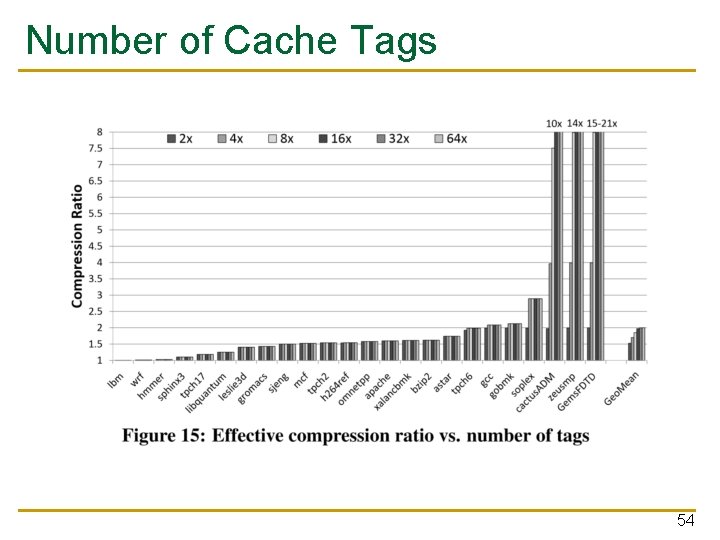

Number of Cache Tags

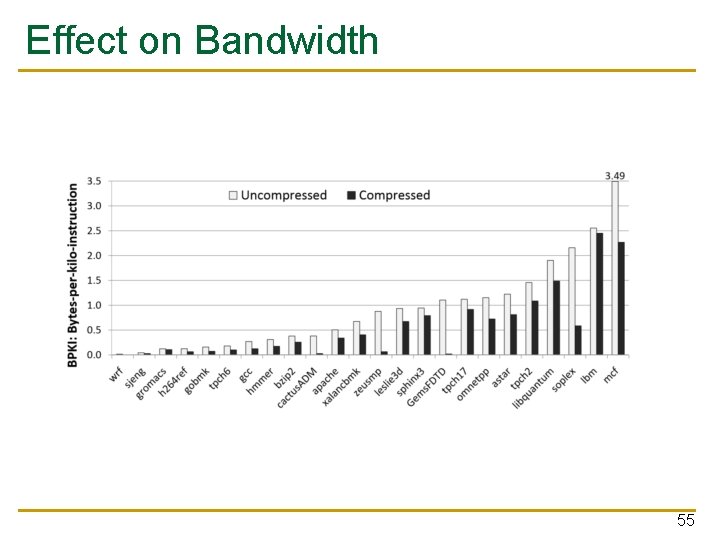

Effect on Bandwidth

Performance Comparison with Prior Work

- Slides: 56