Architectures and Design Techniques for Energy Efficient Embedded DSP and Multimedia

Ingrid Verbauwhede, Christian Piguet, Bart Kienhuis/Ed Deprettere, Patrick Schaumont

Outline
• Intro
• Low Power Observations
• SoC Architectures: Ingrid
• Low Power Components: Christian
• Design Methods: Ed
• Design Methods: Patrick
• Conclusion

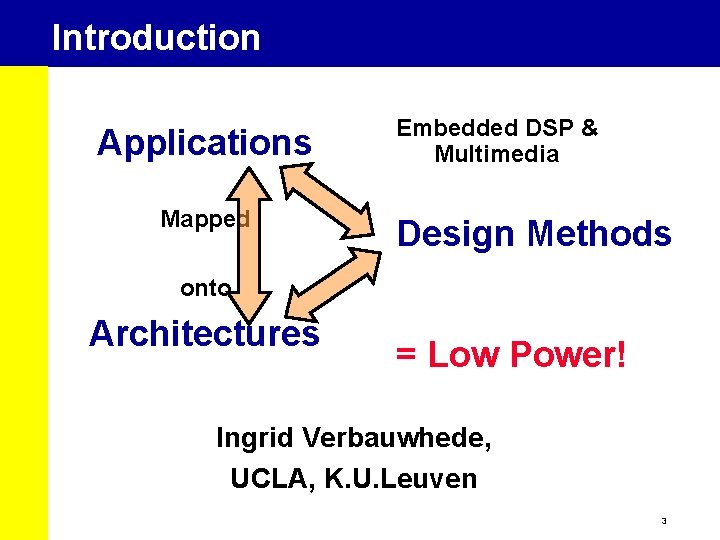

Introduction
Embedded DSP & Multimedia: Applications mapped onto Architectures with Design Methods = Low Power!
Ingrid Verbauwhede, UCLA, K.U. Leuven

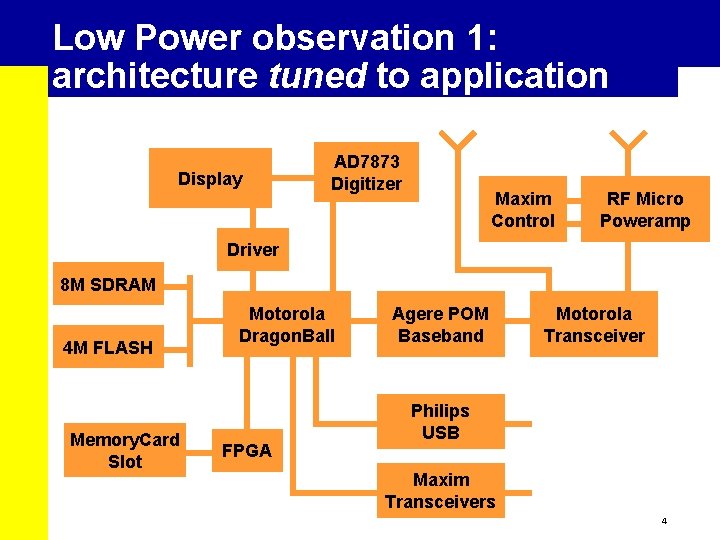

Low Power observation 1: architecture tuned to application
Board components: Display; AD7873 Digitizer; Maxim Control; RF Micro Poweramp; Driver; 8M SDRAM; 4M FLASH; Memory Card Slot; Motorola DragonBall; FPGA; Agere POM Baseband; Motorola Transceiver; Philips USB; Maxim Transceivers

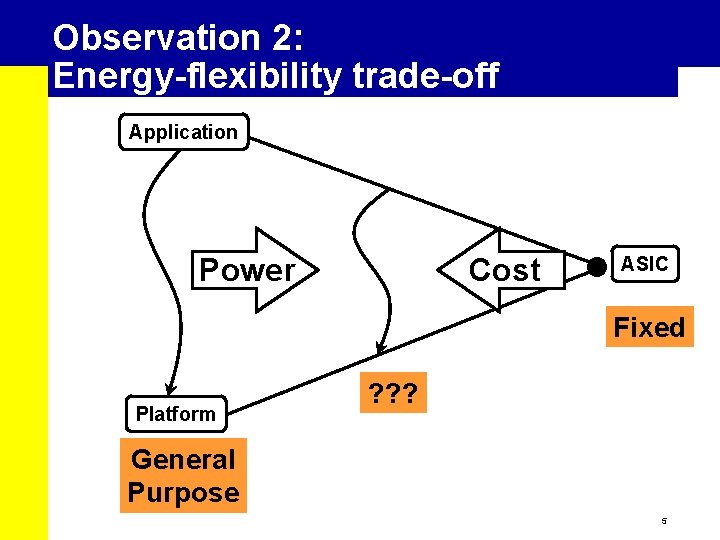

Observation 2: Energy-flexibility trade-off
Figure labels: Application; Power; Cost; ASIC (fixed); Platform (???); General Purpose

Example: DSP processors
• Specialized instructions: MAC
• Dedicated co-processors: Viterbi acceleration

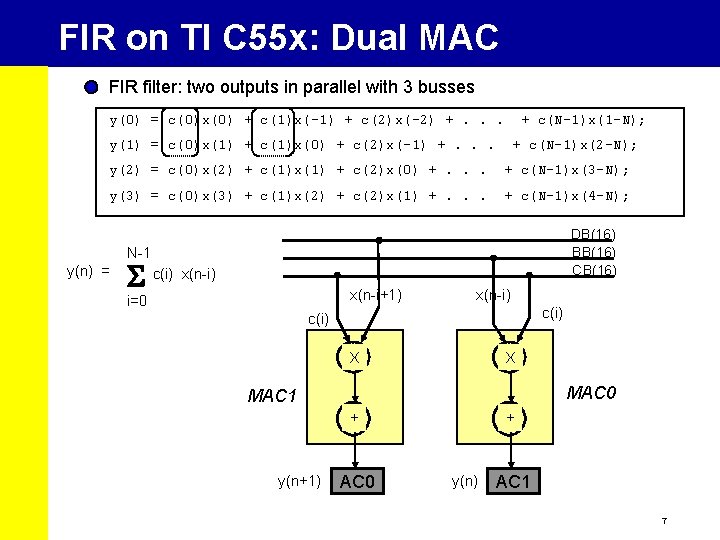

FIR on TI C55x: Dual MAC
FIR filter: two outputs in parallel with 3 busses

y(0) = c(0)x(0) + c(1)x(-1) + c(2)x(-2) + ... + c(N-1)x(1-N);
y(1) = c(0)x(1) + c(1)x(0) + c(2)x(-1) + ... + c(N-1)x(2-N);
y(2) = c(0)x(2) + c(1)x(1) + c(2)x(0) + ... + c(N-1)x(3-N);
y(3) = c(0)x(3) + c(1)x(2) + c(2)x(1) + ... + c(N-1)x(4-N);

$$y(n) = \sum_{i=0}^{N-1} c(i)\, x(n-i)$$

Figure labels: three 16-bit busses DB(16), BB(16), CB(16) carrying x(n-i+1), x(n-i) and c(i); MAC 0 accumulates y(n+1) in AC0; MAC 1 accumulates y(n) in AC1
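The dual-MAC idea on this slide can be illustrated with a small Python sketch. It is a behavioural model only, not TI code: the function names `fir_single` and `fir_dual_mac` are hypothetical, and samples are held in a plain list indexed from 0. Each loop iteration fetches one coefficient c(i) and uses it in two multiply-accumulates, one for y(n) and one for y(n+1).

```python
def fir_single(c, x, n):
    """Reference: y(n) = sum over i of c(i) * x(n - i), one MAC per tap."""
    if n < len(c) - 1 or n >= len(x):
        raise ValueError("n must leave N-1 past samples available")
    return sum(c[i] * x[n - i] for i in range(len(c)))


def fir_dual_mac(c, x, n):
    """Compute y(n) and y(n+1) in one pass, sharing each coefficient fetch."""
    if n < len(c) - 1 or n + 1 >= len(x):
        raise ValueError("need samples x(n-N+1) .. x(n+1)")
    ac0 = 0  # accumulates y(n+1), like MAC 0 / AC0 on the slide
    ac1 = 0  # accumulates y(n),   like MAC 1 / AC1 on the slide
    for i in range(len(c)):
        coeff = c[i]                 # fetched once per tap
        ac0 += coeff * x[n - i + 1]  # MAC 0
        ac1 += coeff * x[n - i]      # MAC 1
    return ac1, ac0


coeffs = [1, 2, 3]
samples = [1, 0, 2, 5, 4]
y2, y3 = fir_dual_mac(coeffs, samples, 2)
```

In this model each tap costs three operand fetches for two outputs, which is where the three busses on the slide come in.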

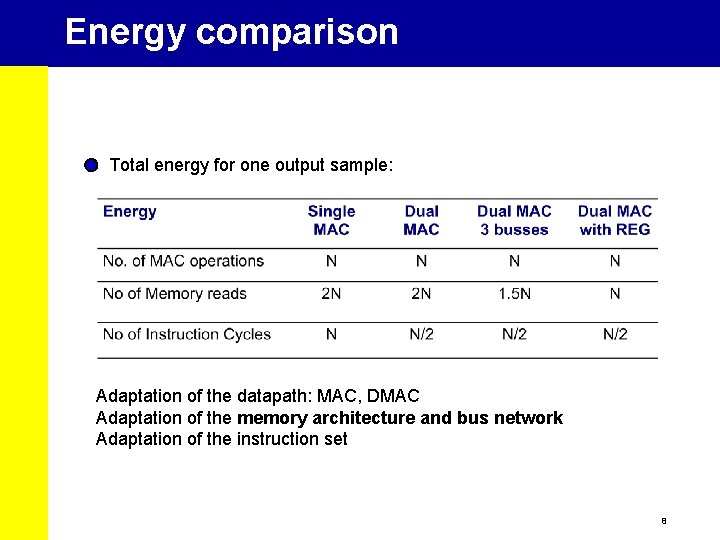

Energy comparison
Total energy for one output sample:
• Adaptation of the datapath: MAC, DMAC
• Adaptation of the memory architecture and bus network
• Adaptation of the instruction set

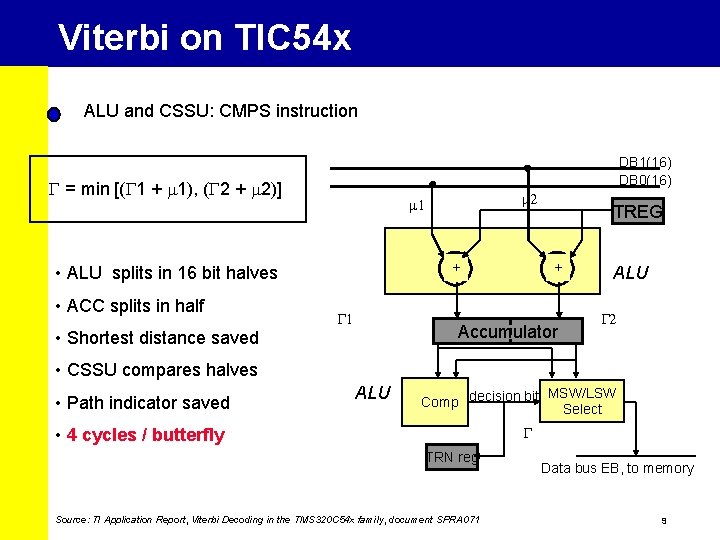

Viterbi on TI C54x
ALU and CSSU: CMPS instruction

G = min[(G1 + m1), (G2 + m2)]

• ALU splits in 16-bit halves
• ACC splits in half
• Shortest distance saved
• CSSU compares halves
• Path indicator saved
• 4 cycles / butterfly

Figure labels: data busses DB1(16), DB0(16); TREG; ALU; Accumulator; MSW/LSW compare; decision bit; select G; TRN register; data bus EB, to memory
Source: TI Application Report, Viterbi Decoding in the TMS320C54x family, document SPRA071
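As a minimal sketch of the add-compare-select step G = min[(G1 + m1), (G2 + m2)], the Python below returns both the surviving path metric and a decision bit, and shifts that bit into a 16-bit history word. This is a simplified model and not the C54x instruction semantics: the function names, the tie-breaking rule and the exact shift behaviour are assumptions made for illustration.

```python
def add_compare_select(g1, m1, g2, m2):
    """Return (G, decision) with G = min(g1 + m1, g2 + m2).

    decision is 0 when the first candidate wins (ties included), 1 otherwise.
    The tie-breaking rule is an assumption of this sketch.
    """
    cand1 = g1 + m1
    cand2 = g2 + m2
    if cand1 <= cand2:
        return cand1, 0
    return cand2, 1


def shift_decision(history, decision):
    """Shift one decision bit into a 16-bit path-history word (TRN-like)."""
    return ((history << 1) | (decision & 1)) & 0xFFFF


history = 0
for g1, m1, g2, m2 in [(3, 2, 1, 5), (4, 3, 2, 1)]:
    metric, bit = add_compare_select(g1, m1, g2, m2)
    history = shift_decision(history, bit)
```

The saved decision bits are what a later traceback pass would read to recover the most likely path.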

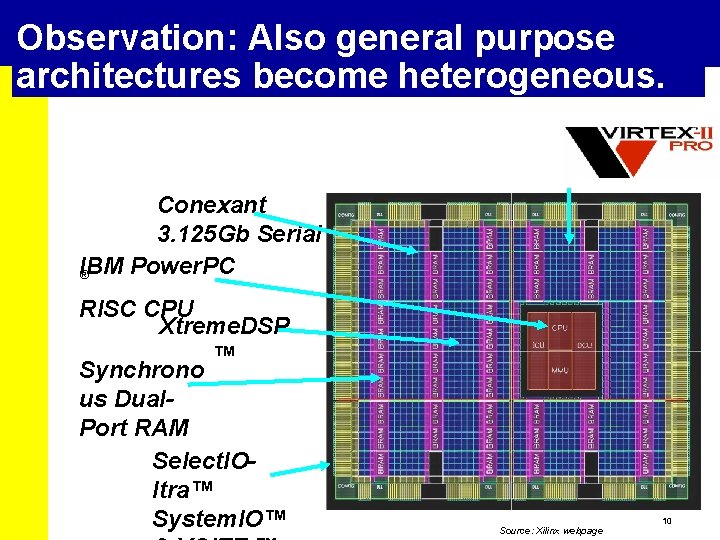

Observation: Also general purpose architectures become heterogeneous.
Figure labels: Conexant 3.125 Gb Serial; IBM PowerPC® RISC CPU; XtremeDSP™; Synchronous Dual-Port RAM; SelectIO-Ultra™; SystemIO™
Source: Xilinx webpage

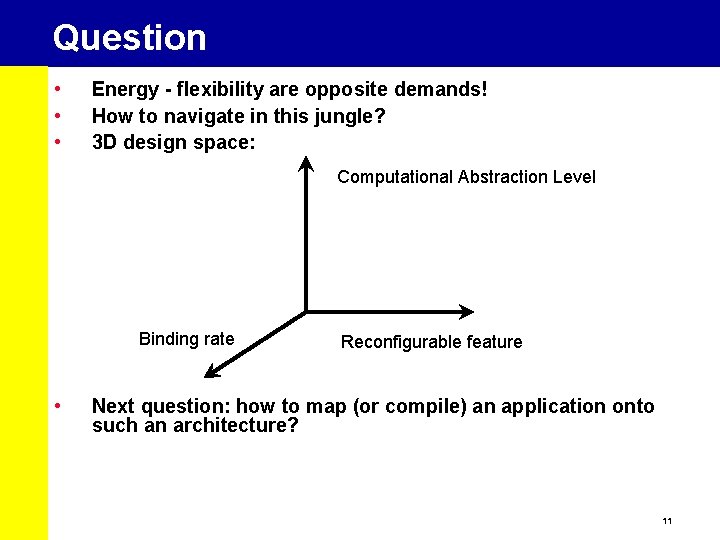

Question
• Energy and flexibility are opposite demands!
• How to navigate in this jungle?
• 3D design space:
  - Computational Abstraction Level
  - Binding rate
  - Reconfigurable feature
• Next question: how to map (or compile) an application onto such an architecture?

Flexibility (1) - Abstraction level
Computational Abstraction Level
• Instruction set level = “programmable”
• CLB level = “reconfigurable”

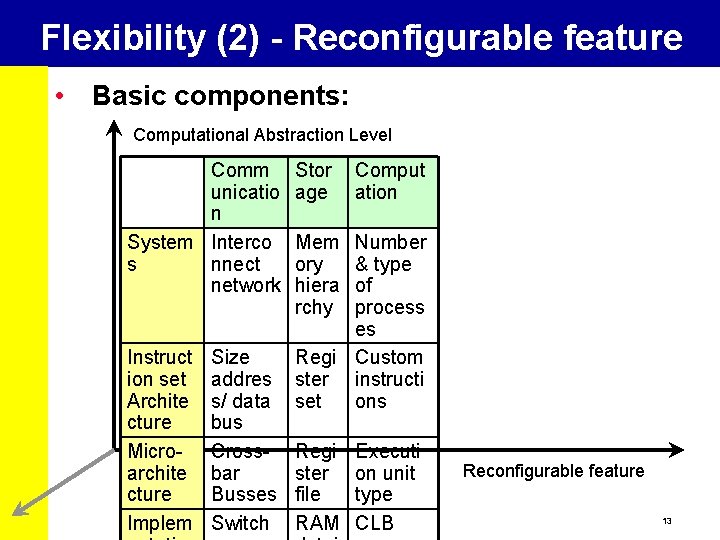

Flexibility (2) - Reconfigurable feature
• Basic components (columns: reconfigurable feature; rows: computational abstraction level):

| Computational Abstraction Level | Communication | Storage | Computation |
|---|---|---|---|
| System | Interconnect network | Memory hierarchy | Number & type of processes |
| Instruction set Architecture | Size address/data bus | Register set | Custom instructions |
| Micro-architecture | Crossbar, Busses | Register file | Execution unit type |
| Implementation | Switch | RAM | CLB |

Flexibility (3) - Binding rate
Compare processing to binding
• Configurable (“compile-time”)
• Re-configurable
• Dynamic reconfigurable (“adaptive”)

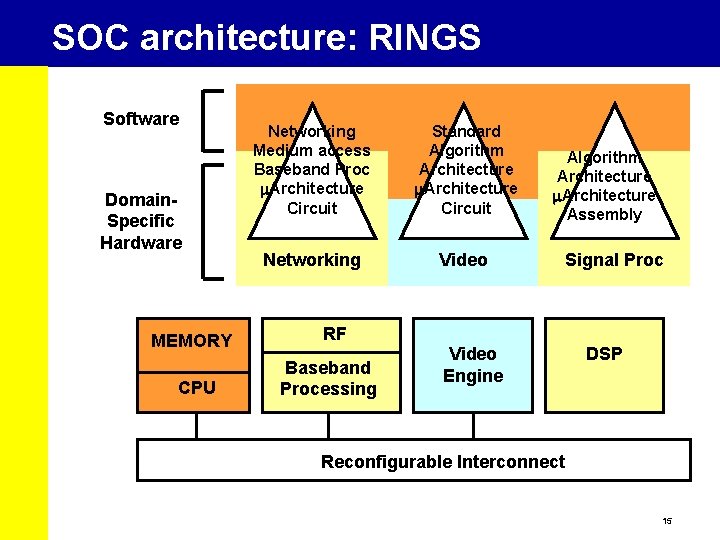

SOC architecture: RINGS
Figure labels: Software; Domain-Specific Hardware; MEMORY; CPU; DSP; Networking (Medium access, Baseband Processing); Signal Processing (RF, Baseband Processing); Video (Video Engine); abstraction levels Standard, Algorithm, Architecture, µArchitecture, Circuit, Assembly; Reconfigurable Interconnect

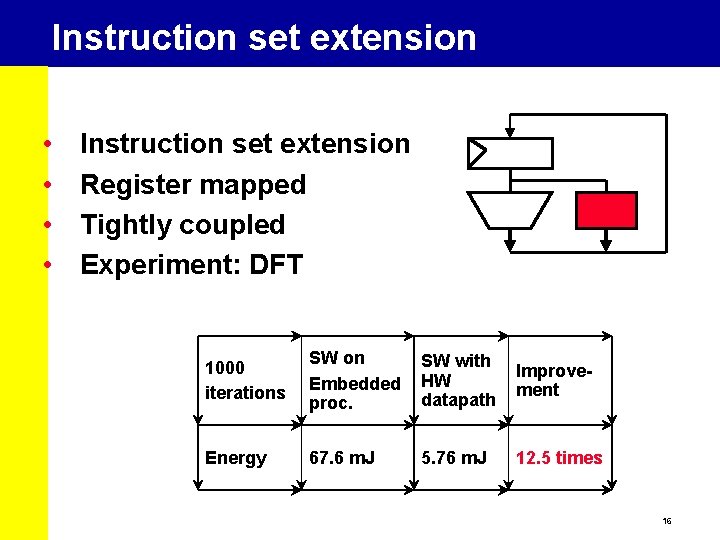

Instruction set extension
• Register mapped
• Tightly coupled
• Experiment: DFT

| 1000 iterations | SW on embedded proc. | SW with HW datapath | Improvement |
|---|---|---|---|
| Energy | 67.6 mJ | 5.76 mJ | 12.5 times |

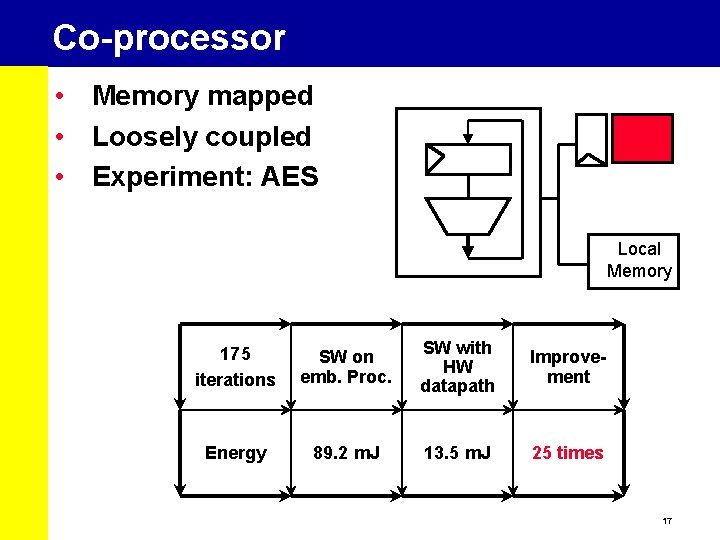

Co-processor
• Memory mapped
• Loosely coupled
• Experiment: AES
Figure label: Local Memory

| 175 iterations | SW on emb. proc. | SW with HW datapath | Improvement |
|---|---|---|---|
| Energy | 89.2 mJ | 13.5 mJ | 25 times |

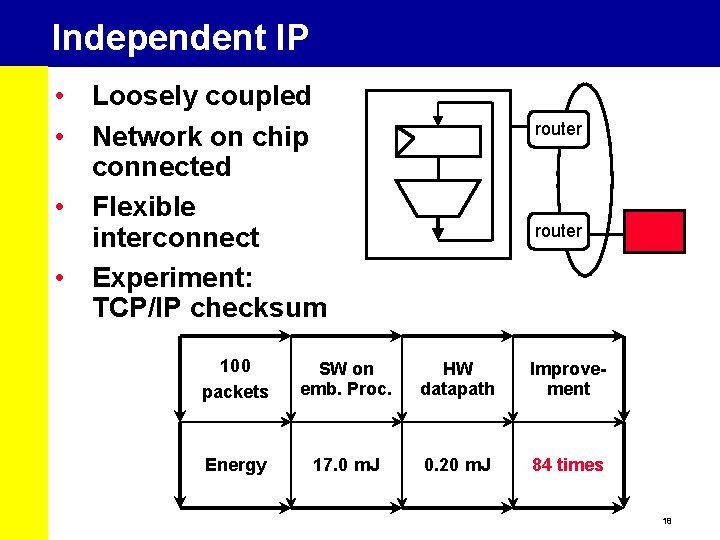

Independent IP
• Loosely coupled
• Network on chip connected
• Flexible interconnect
• Experiment: TCP/IP checksum router

| 100 packets | SW on emb. proc. | HW datapath | Improvement |
|---|---|---|---|
| Energy | 17.0 mJ | 0.20 mJ | 84 times |

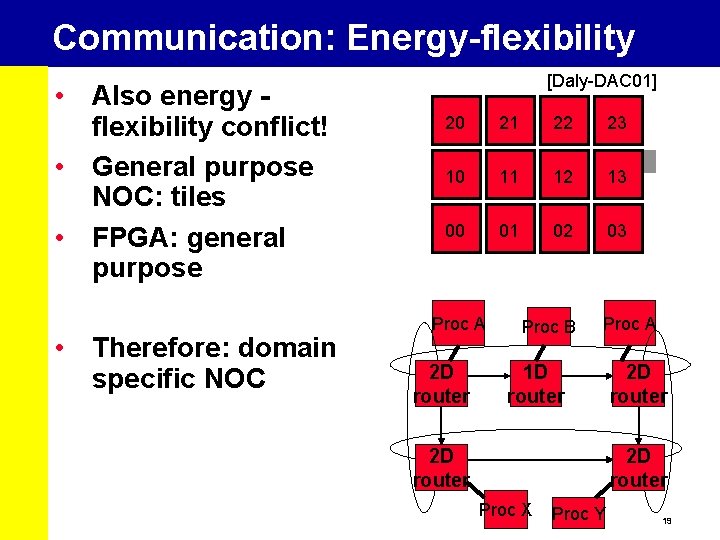

Communication: Energy-flexibility
• Also energy-flexibility conflict!
• General purpose NOC: tiles
• FPGA: general purpose
• Therefore: domain specific NOC [Daly-DAC 01]
Figure labels: general-purpose tile grid (tiles 00 to 03, 10 to 13, 20 to 23); domain-specific NOC with Proc A, Proc B, Proc X and Proc Y connected through 1D and 2D routers

Conclusion
• Low Power by going domain-specific
• Energy-flexibility conflict
• How to “program” this RINGS?
• Next:
  - Ultra-low power components: Christian
  - Design exploration: Ed
  - Co-design environment: Patrick

Efficient Embedded DSP Ultra-Low-Power Components Christian Piguet, CSEM

Ultra Low Power DSP Processors
• The design of DSP processors is very challenging, as it has to take into account contradictory goals:
  - an increased throughput request at a reduced energy budget
  - new issues due to very deep submicron technologies, such as interconnect delays and leakage
• History of hearing aids circuits:
  - analog filters 15 years ago
  - digital ASIC-like circuits 5 years ago
  - powerful DSP processors today, below 1 Volt and 1 mW

DSP Architectures for Low-Power
• single MAC DSP core of 5-10 years ago
• parallel architectures with several MACs working in parallel
• VLIW or multitask DSP architectures
• Benchmark:
  - number of simple operations executed per clock cycle, up to 50 or more
• Drawbacks of VLIW:
  - very large instruction words, up to 256 bits
  - some instructions in the set are still missing
  - transistor count is not favorable to reduce leakage

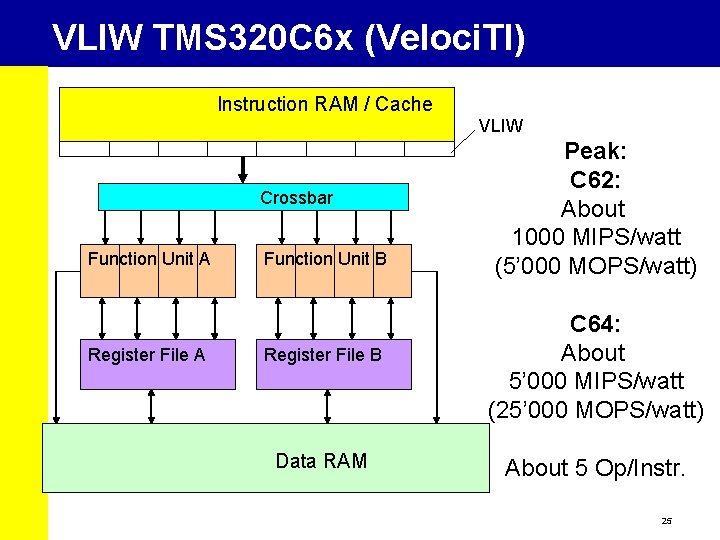

VLIW TMS320C6x (VelociTI)
Figure labels: Instruction RAM / Cache; VLIW; Crossbar; Function Unit A; Register File A; Function Unit B; Register File B; Data RAM
Peak:
• C62: about 1000 MIPS/watt (5’000 MOPS/watt)
• C64: about 5’000 MIPS/watt (25’000 MOPS/watt)
• About 5 Op/Instr.

3 Ways to be more Energy Efficient
• to design specific very small DSP engines for each task, in such a way that each DSP task is executed in the most energy efficient way on the smallest piece of hardware (N co-processors)
• to design reconfigurable architectures such as the DART cluster
• in which configuration bits allow the user to modify the hardware in such a way that it fits the executed algorithms much better.

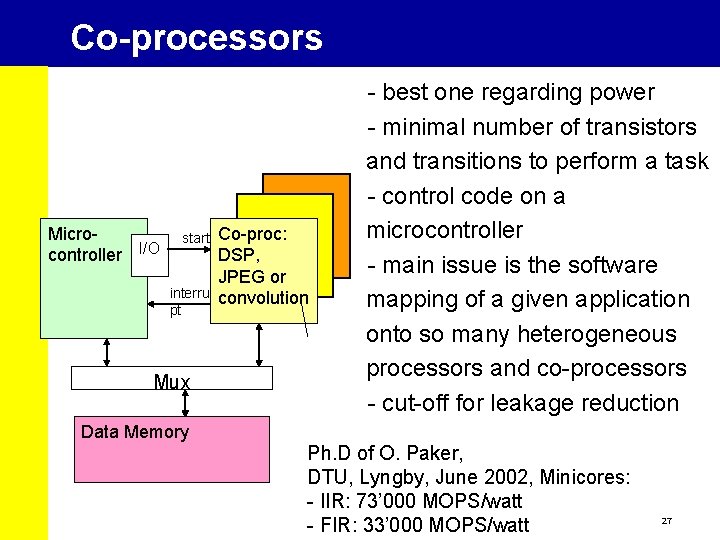

Co-processors
- best one regarding power
- minimal number of transistors and transitions to perform a task
- control code on a microcontroller
- main issue is the software mapping of a given application onto so many heterogeneous processors and co-processors
- cut-off for leakage reduction
Figure labels: Microcontroller; I/O; start; interrupt; Mux; Co-proc (DSP, JPEG or convolution); Data Memory
Ph.D. of O. Paker, DTU, Lyngby, June 2002, Minicores:
- IIR: 73’000 MOPS/watt
- FIR: 33’000 MOPS/watt

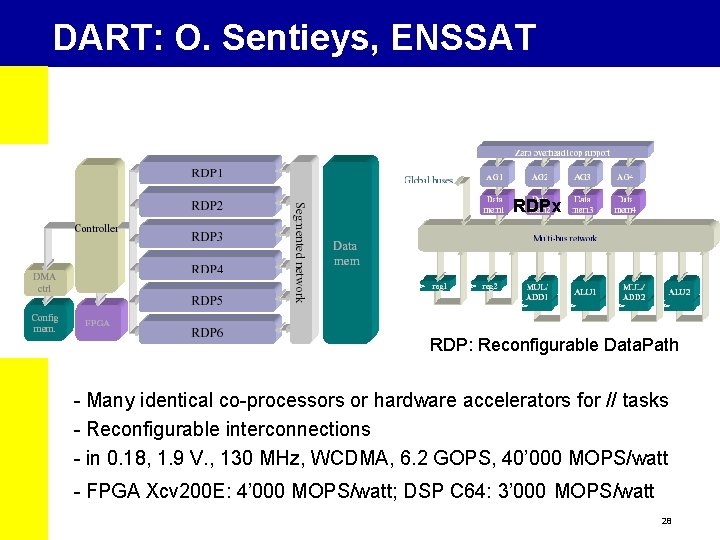

DART: O. Sentieys, ENSSAT
RDP: Reconfigurable DataPath
- Many identical co-processors or hardware accelerators for parallel tasks
- Reconfigurable interconnections
- in 0.18 µm, 1.9 V, 130 MHz, WCDMA: 6.2 GOPS, 40’000 MOPS/watt
- FPGA XCV200E: 4’000 MOPS/watt; DSP C64: 3’000 MOPS/watt

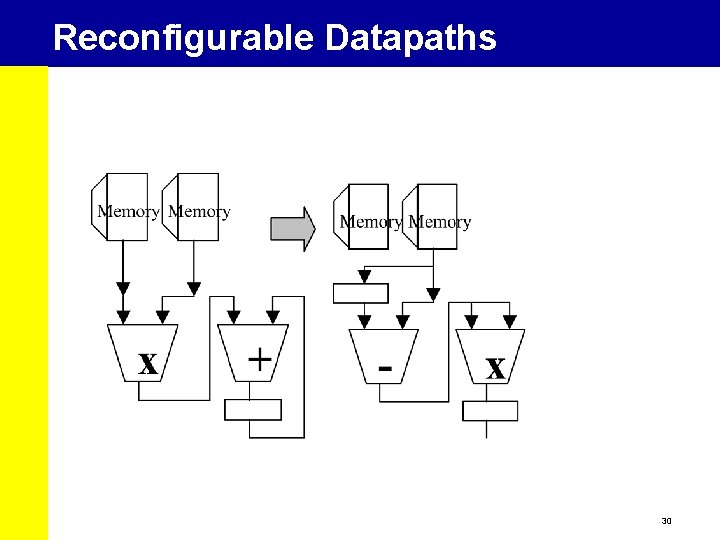

Reconfigurable DSP Architectures
• Not FPGA, much more efficient than FPGA. The key point is to reconfigure only a limited number of units:
  - Reconfigurable datapath
  - Reconfigurable interconnections
  - Reconfigurable Addressing Units (AGU)
• FPGA:
  - MACGIC DSP consumes 1 mW/MHz in 0.18 µm
  - Same MACGIC in Altera Stratix consumes 10 mW/MHz plus 900 mW of static power, so 1’000 mW at 10 MHz
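The 1’000 mW figure follows from adding dynamic and static power. The short Python sketch below redoes that arithmetic with the numbers from the slide; the helper name `total_power_mw` is hypothetical, and the static power of the 0.18 µm MACGIC is taken as zero only because the slide quotes no value for it.

```python
def total_power_mw(dynamic_mw_per_mhz, freq_mhz, static_mw=0.0):
    """Total power = dynamic power (scales with clock frequency) + static power."""
    return dynamic_mw_per_mhz * freq_mhz + static_mw


FREQ_MHZ = 10.0

# MACGIC as a dedicated 0.18 um design: 1 mW/MHz (static power assumed 0 here).
macgic_asic_mw = total_power_mw(1.0, FREQ_MHZ)

# Same MACGIC mapped onto an Altera Stratix FPGA: 10 mW/MHz + 900 mW static.
macgic_fpga_mw = total_power_mw(10.0, FREQ_MHZ, static_mw=900.0)

ratio = macgic_fpga_mw / macgic_asic_mw
print(macgic_asic_mw, macgic_fpga_mw, ratio)
```

At this low clock rate the static term dominates the FPGA figure, which is why the gap is two orders of magnitude rather than the factor of ten suggested by the per-MHz numbers alone.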

Reconfigurable Datapaths

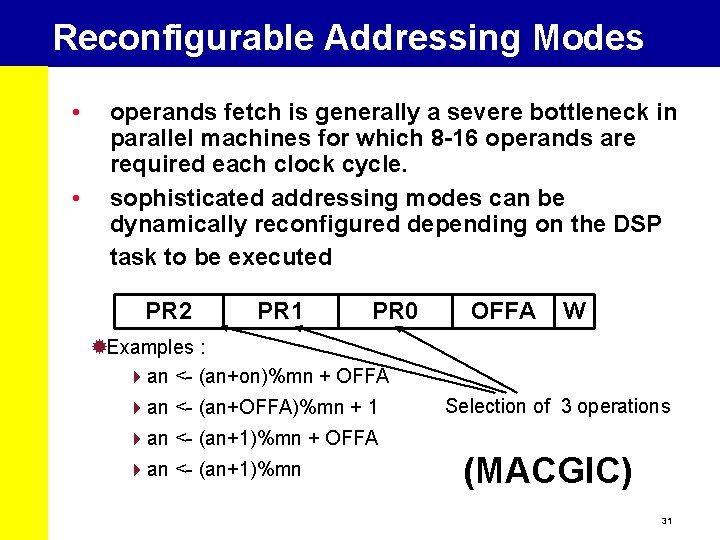

Reconfigurable Addressing Modes
• operands fetch is generally a severe bottleneck in parallel machines, for which 8-16 operands are required each clock cycle.
• sophisticated addressing modes can be dynamically reconfigured depending on the DSP task to be executed
Figure labels: PR2, PR1, PR0, OFFA
Examples (selection of 3 operations, MACGIC):
    an <- (an+on)%mn + OFFA
    an <- (an+OFFA)%mn + 1
    an <- (an+1)%mn + OFFA
    an <- (an+1)%mn
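The address-update examples above can be read as small functions of the address register an, the offset register on, the modulo register mn and the constant OFFA. The Python sketch below evaluates them literally as written on the slide; the mode names and the `agu_step` helper are hypothetical and say nothing about how MACGIC actually encodes or selects these operations.

```python
# Each entry maps a (hypothetical) mode name to one update rule from the slide.
AGU_MODES = {
    "index_mod_offa": lambda an, on, mn, offa: (an + on) % mn + offa,
    "offa_mod_plus_one": lambda an, on, mn, offa: (an + offa) % mn + 1,
    "inc_mod_offa": lambda an, on, mn, offa: (an + 1) % mn + offa,
    "inc_mod": lambda an, on, mn, offa: (an + 1) % mn,
}


def agu_step(mode, an, on=0, mn=1, offa=0):
    """Return the next value of address register `an` for the selected mode."""
    return AGU_MODES[mode](an, on, mn, offa)


# Walk a circular buffer of 8 words with the plain post-increment-modulo rule.
addr = 6
visited = []
for _ in range(4):
    visited.append(addr)
    addr = agu_step("inc_mod", addr, mn=8)
```

Reconfiguring the address unit then amounts to choosing which of these update rules is wired in for the current DSP task.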

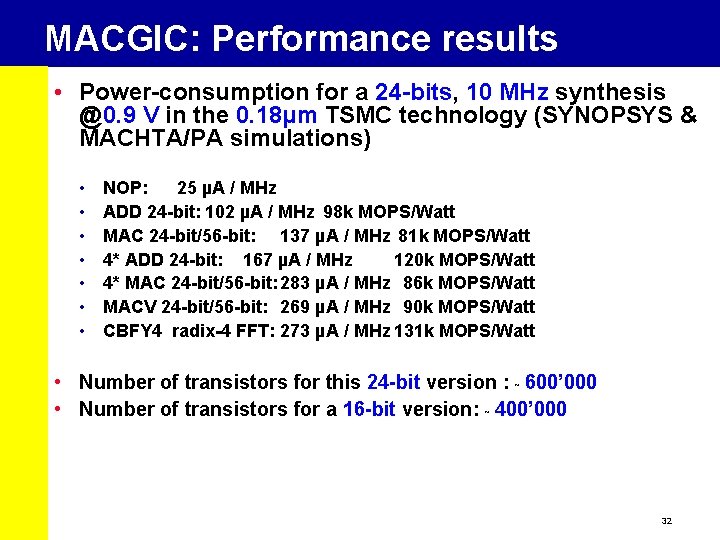

MACGIC: Performance results
• Power consumption for a 24-bit, 10 MHz synthesis @ 0.9 V in the 0.18 µm TSMC technology (SYNOPSYS & MACH TA/PA simulations)

| Instruction | Current | Efficiency |
|---|---|---|
| NOP | 25 µA/MHz | |
| ADD 24-bit | 102 µA/MHz | 98k MOPS/Watt |
| MAC 24-bit/56-bit | 137 µA/MHz | 81k MOPS/Watt |
| 4* ADD 24-bit | 167 µA/MHz | 120k MOPS/Watt |
| 4* MAC 24-bit/56-bit | 283 µA/MHz | 86k MOPS/Watt |
| MACV 24-bit/56-bit | 269 µA/MHz | 90k MOPS/Watt |
| CBFY4 radix-4 FFT | 273 µA/MHz | 131k MOPS/Watt |

• Number of transistors for this 24-bit version: ~600’000
• Number of transistors for a 16-bit version: ~400’000

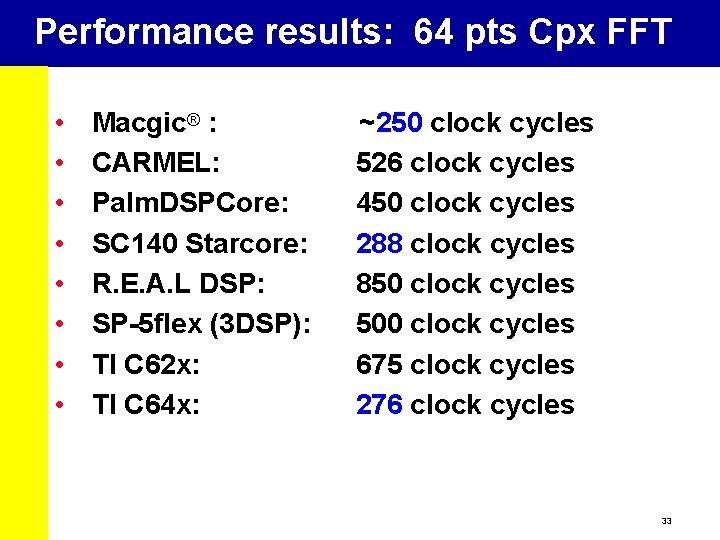

Performance results: 64 pts Cpx FFT

| Processor | Clock cycles |
|---|---|
| Macgic® | ~250 |
| CARMEL | 526 |
| PalmDSPCore | 450 |
| SC140 StarCore | 288 |
| R.E.A.L. DSP | 850 |
| SP-5 flex (3 DSP) | 500 |
| TI C62x | 675 |
| TI C64x | 276 |

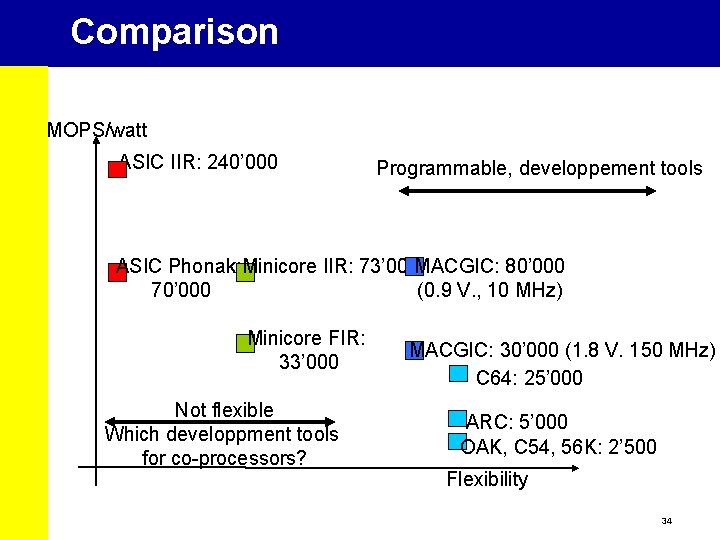

Comparison MOPS/watt
Figure: MOPS/watt versus flexibility.
• Not flexible (which development tools for co-processors?): ASIC IIR: 240’000; Minicore IIR: 73’000; Minicore FIR: 33’000
• Programmable, development tools: MACGIC: 30’000 (1.8 V, 150 MHz); C64: 25’000; ARC: 5’000; OAK, C54, 56K: 2’500
• Also plotted: ASIC Phonak and MACGIC (0.9 V, 10 MHz), at 80’000 and 70’000

Design & Architecture Exploration Ed Deprettere, Professor Bart Kienhuis, Assistant Professor Leiden University LIACS, The Netherlands

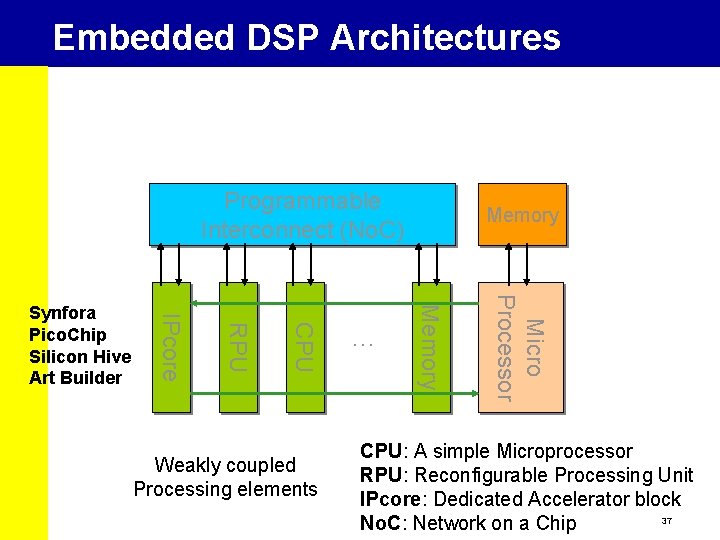

Embedded DSP Architectures
Figure labels: Programmable Interconnect (NoC); Micro Processor; Memory; weakly coupled processing elements (CPU, RPU, IPcore, ...)
Examples: Synfora, PicoChip, Silicon Hive, Art Builder
• CPU: a simple microprocessor
• RPU: Reconfigurable Processing Unit
• IPcore: dedicated accelerator block
• NoC: Network on a Chip

System Level Design
• Three aspects are important in System Level Design:
  - The Architecture
  - The Application
  - How the Application is Mapped on the Architecture
• To optimize a system, you need to take all three aspects into consideration.
• This is expressed in terms of the Y-chart.

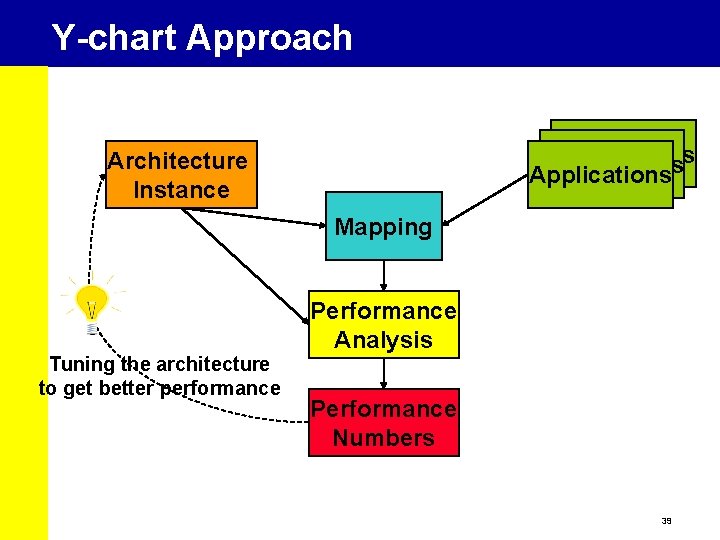

Y-chart Approach
Figure labels: Applications; Architecture Instance; Mapping; Performance Analysis; Performance Numbers
Tuning the architecture to get better performance

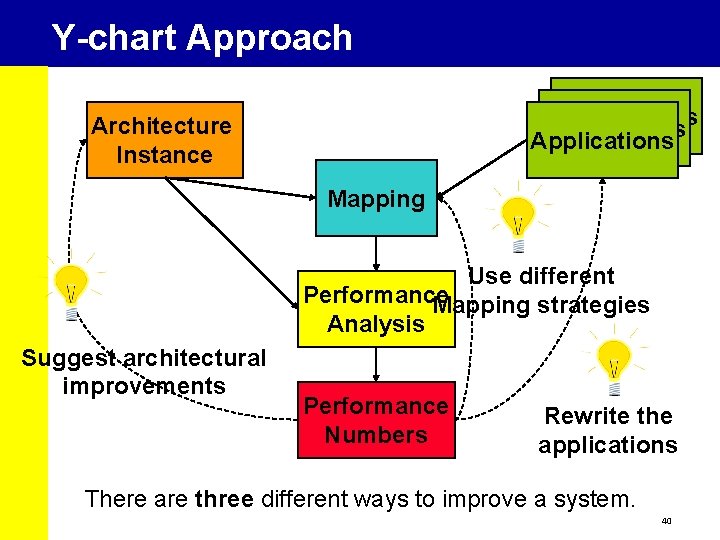

Y-chart Approach
Figure labels: Applications; Architecture Instance; Mapping; Performance Analysis; Performance Numbers
There are three different ways to improve a system:
• Use different mapping strategies
• Suggest architectural improvements
• Rewrite the applications

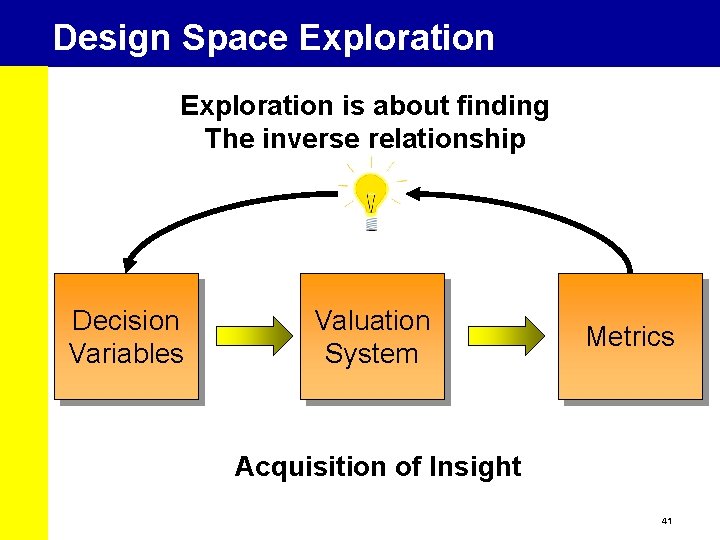

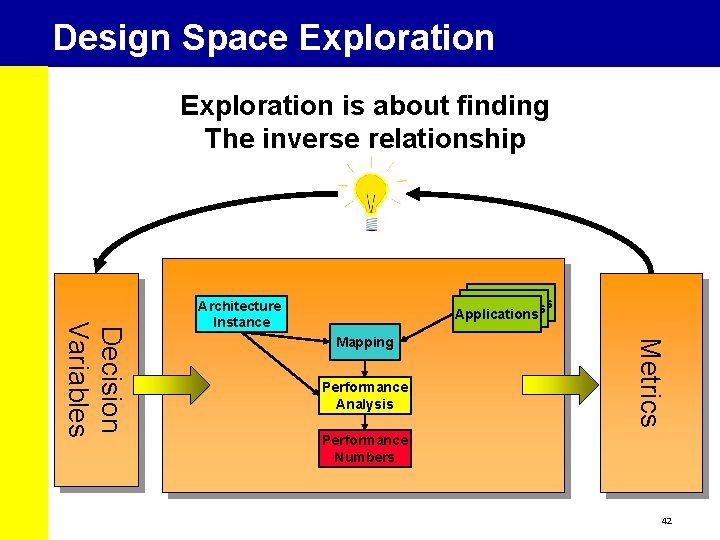

Design Space Exploration is about finding the inverse relationship
Figure labels: Decision Variables; System; Valuation; Metrics; Acquisition of Insight

Design Space Exploration is about finding the inverse relationship
Figure labels: Decision Variables (Applications, Architecture Instance, Mapping); Performance Analysis; Metrics (Performance Numbers)

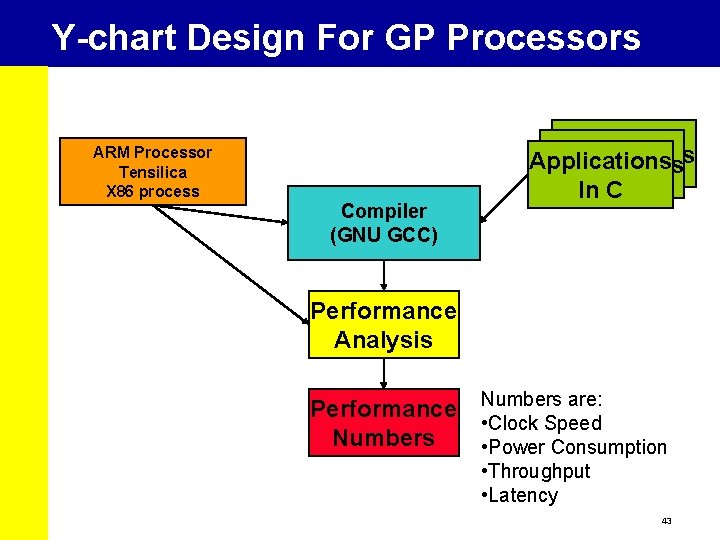

Y-chart Design For GP Processors
Figure labels: ARM Processor; Tensilica; x86 processor; Compiler (GNU GCC); Applications in C; Performance Analysis
Performance Numbers are:
• Clock Speed
• Power Consumption
• Throughput
• Latency

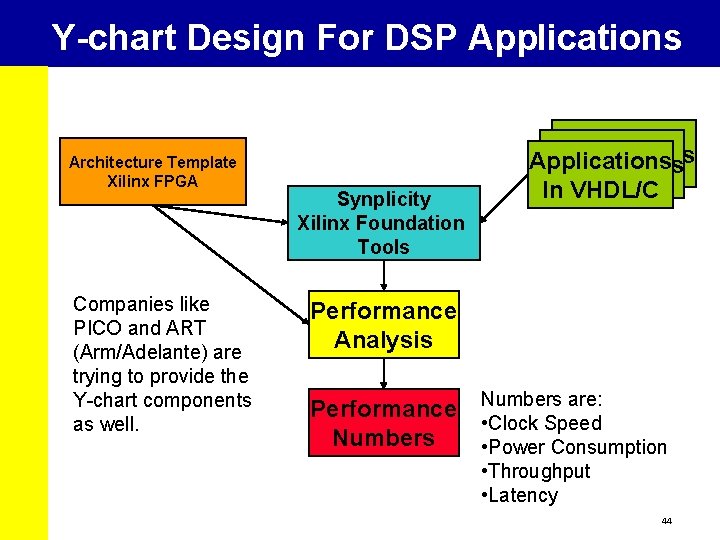

Y-chart Design For DSP Applications
Figure labels: Architecture Template; Xilinx FPGA; Synplicity; Xilinx Foundation Tools; Applications in VHDL/C; Performance Analysis
Companies like PICO and ART (Arm/Adelante) are trying to provide the Y-chart components as well.
Performance Numbers are:
• Clock Speed
• Power Consumption
• Throughput
• Latency

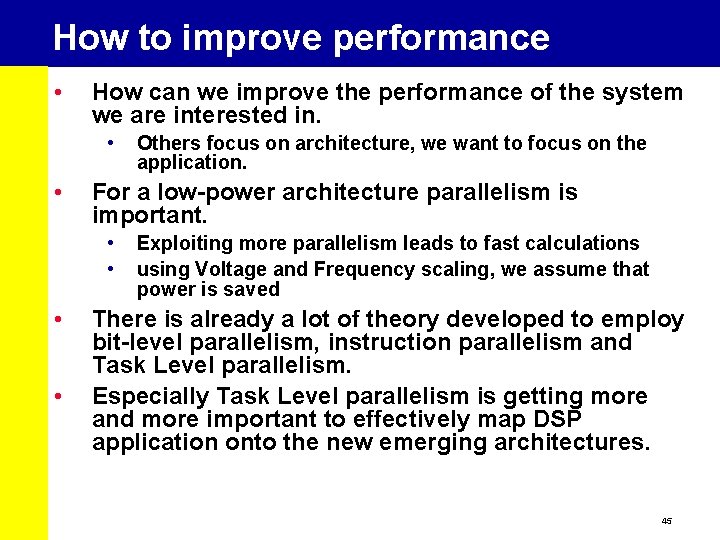

How to improve performance
• How can we improve the performance of the system we are interested in?
  - Others focus on architecture; we want to focus on the application.
• For a low-power architecture, parallelism is important.
  - Exploiting more parallelism leads to faster calculations; using voltage and frequency scaling, we assume that power is saved.
• There is already a lot of theory developed to employ bit-level parallelism, instruction-level parallelism and task-level parallelism.
• Task-level parallelism especially is getting more and more important to effectively map DSP applications onto the new emerging architectures.

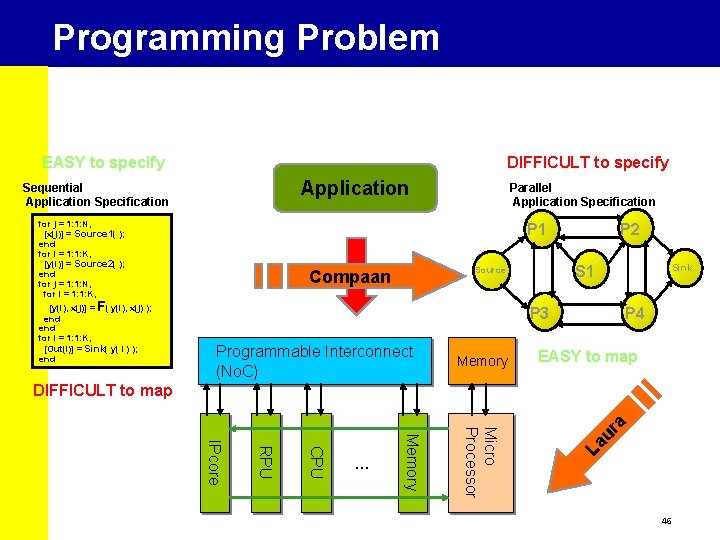

Programming Problem
Sequential Application Specification: EASY to specify, DIFFICULT to map

    for j = 1:1:N,
      [x(j)] = Source1( );
    end
    for i = 1:1:K,
      [y(i)] = Source2( );
    end
    for j = 1:1:N,
      for i = 1:1:K,
        [y(i), x(j)] = F( y(i), x(j) );
      end
    end
    for i = 1:1:K,
      [Out(i)] = Sink( y(i) );
    end

Parallel Application Specification: DIFFICULT to specify, EASY to map
Figure labels: Compaan (from sequential to parallel specification); process network with Source, Sink, S1 and processes P1 to P4; Laura (from process network to architecture); Programmable Interconnect (NoC); Micro Processor; Memory; CPU; RPU; IPcore; ...

![Kahn Process Network (KPN)](http://slidetodoc.com/presentation_image_h/c13db55c981e0a16006a09d78097401a/image-47.jpg)

Kahn Process Network (KPN)
• Kahn Process Networks [Kahn 1974][Parks&Lee 95]
  - Processes run autonomously
  - Communicate via unbounded FIFOs
  - Synchronize via blocking read
• Process is either
  - executing (execute)
  - communicating (send/get)
• Characteristics
  - Deterministic
  - Distributed Control: no global scheduler is needed
  - Distributed Memory: no memory contention
Figure labels: processes A, B and C with functions Fa, Fb and Fc, connected by FIFOs; each process repeats get, execute, send
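A minimal sketch of these properties in Python, using one thread per process and `queue.Queue` objects as the FIFOs. It is an illustration only, not Compaan or Laura output: the three-stage A -> B -> C network, the squaring function and the `STOP` end-of-stream token are assumptions added so the example terminates.

```python
import queue
import threading

STOP = object()  # hypothetical end-of-stream token, not part of the KPN model


def producer(out_fifo, values):
    """Process A: send every value, then signal end of stream."""
    for v in values:
        out_fifo.put(v)            # send (never blocks: the FIFO is unbounded)
    out_fifo.put(STOP)


def worker(in_fifo, out_fifo, func):
    """Process B: get, execute, send, repeated until the stream ends."""
    while True:
        token = in_fifo.get()      # blocking read
        if token is STOP:
            out_fifo.put(STOP)
            return
        out_fifo.put(func(token))  # execute, then send


def consumer(in_fifo, results):
    """Process C: collect tokens in arrival order."""
    while True:
        token = in_fifo.get()      # blocking read
        if token is STOP:
            return
        results.append(token)


def run_network(values):
    ab, bc = queue.Queue(), queue.Queue()  # maxsize 0 means unbounded
    results = []
    threads = [
        threading.Thread(target=producer, args=(ab, values)),
        threading.Thread(target=worker, args=(ab, bc, lambda v: v * v)),
        threading.Thread(target=consumer, args=(bc, results)),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

No thread is told when to run; the blocking reads alone order the work, and the result is the same for any thread schedule, which is the determinism property listed above.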

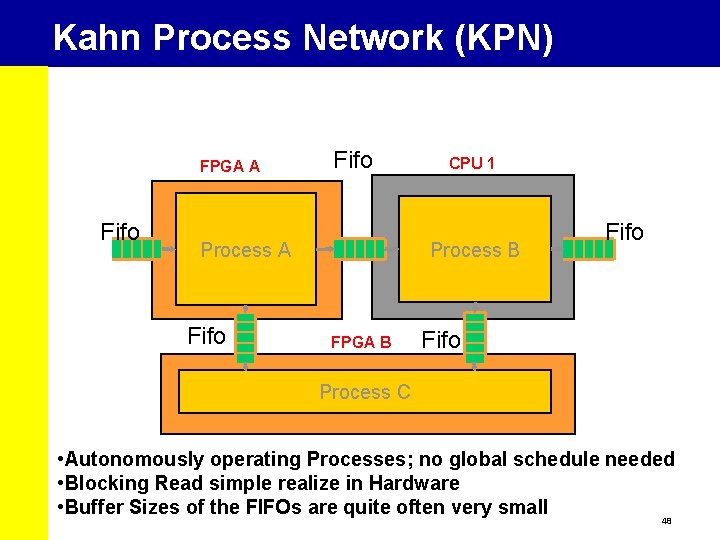

Kahn Process Network (KPN)
Figure labels: Process A on FPGA A; Process B on CPU 1; Process C on FPGA B; FIFOs between the processes
• Autonomously operating processes; no global schedule needed
• Blocking read is simple to realize in hardware
• Buffer sizes of the FIFOs are quite often very small

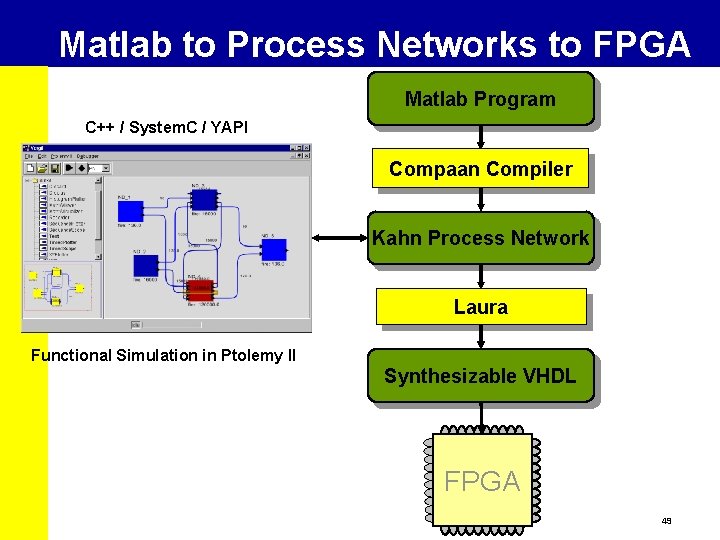

Matlab to Process Networks to FPGA
Figure labels: Matlab Program; Compaan Compiler; Kahn Process Network; C++ / SystemC / YAPI; Functional Simulation in Ptolemy II; Laura; Synthesizable VHDL; FPGA

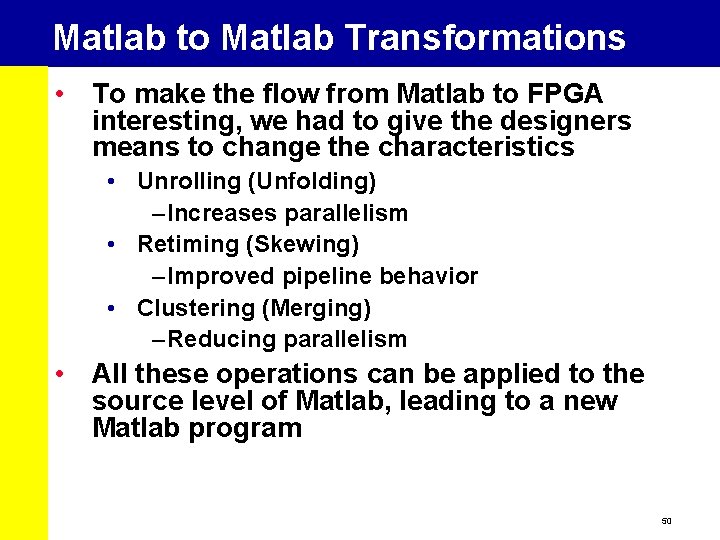

Matlab to Matlab Transformations
• To make the flow from Matlab to FPGA interesting, we had to give the designers means to change the characteristics:
  - Unrolling (Unfolding): increases parallelism
  - Retiming (Skewing): improves pipeline behavior
  - Clustering (Merging): reduces parallelism
• All these operations can be applied at the source level of Matlab, leading to a new Matlab program

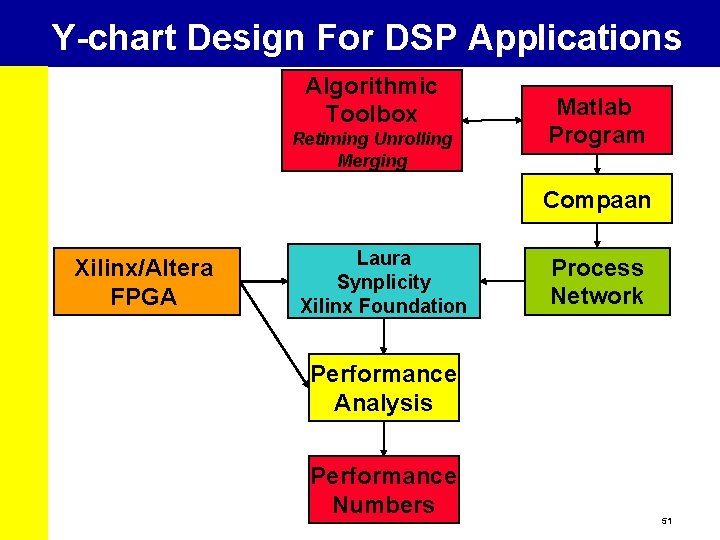

Y-chart Design For DSP Applications
Figure labels: Algorithmic Toolbox (Retiming, Unrolling, Merging); Matlab Program; Compaan; Process Network; Laura; Xilinx/Altera FPGA; Synplicity; Xilinx Foundation; Performance Analysis; Performance Numbers

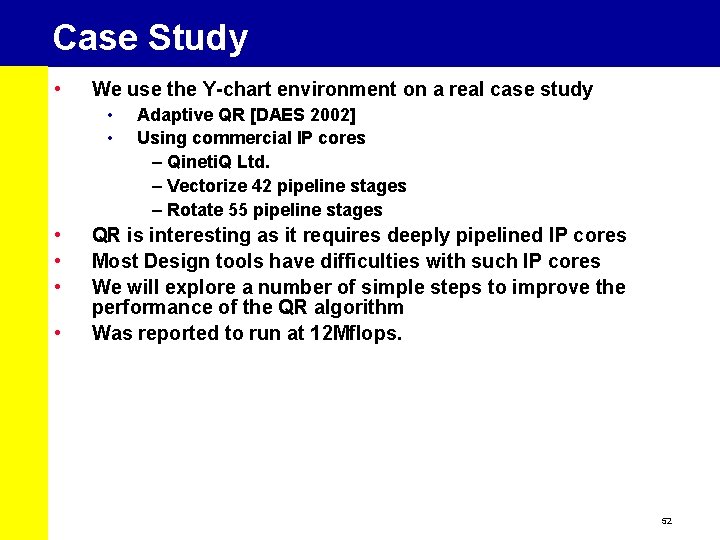

Case Study
• We use the Y-chart environment on a real case study
  - Adaptive QR [DAES 2002]
  - Using commercial IP cores from QinetiQ Ltd.
    - Vectorize: 42 pipeline stages
    - Rotate: 55 pipeline stages
• QR is interesting as it requires deeply pipelined IP cores
• Most design tools have difficulties with such IP cores
• We will explore a number of simple steps to improve the performance of the QR algorithm
• Was reported to run at 12 Mflops.

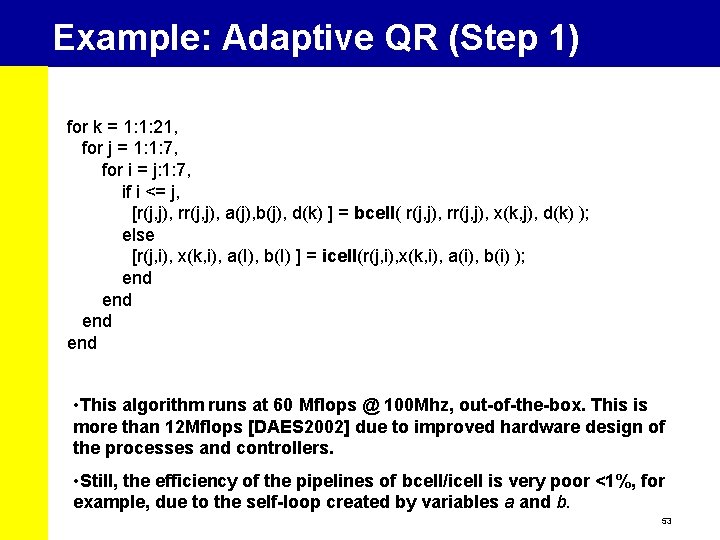

Example: Adaptive QR (Step 1)

    for k = 1:1:21,
      for j = 1:1:7,
        for i = j:1:7,
          if i <= j,
            [r(j,j), rr(j,j), a(j), b(j), d(k)] = bcell( r(j,j), rr(j,j), x(k,j), d(k) );
          else
            [r(j,i), x(k,i), a(i), b(i)] = icell( r(j,i), x(k,i), a(i), b(i) );
          end
        end
      end
    end

• This algorithm runs at 60 Mflops @ 100 MHz, out of the box. This is more than the 12 Mflops of [DAES 2002], due to improved hardware design of the processes and controllers.
• Still, the efficiency of the bcell/icell pipelines is very poor (<1%), for example because of the self-loop created by variables a and b.

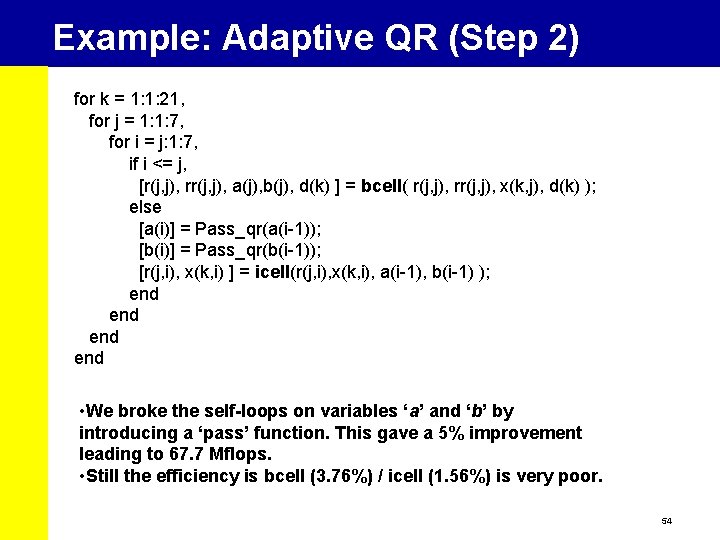

Example: Adaptive QR (Step 2)

    for k = 1:1:21,
      for j = 1:1:7,
        for i = j:1:7,
          if i <= j,
            [r(j,j), rr(j,j), a(j), b(j), d(k)] = bcell( r(j,j), rr(j,j), x(k,j), d(k) );
          else
            [a(i)] = Pass_qr( a(i-1) );
            [b(i)] = Pass_qr( b(i-1) );
            [r(j,i), x(k,i)] = icell( r(j,i), x(k,i), a(i-1), b(i-1) );
          end
        end
      end
    end

• We broke the self-loops on variables ‘a’ and ‘b’ by introducing a ‘pass’ function. This gave a 5% improvement leading to 67.7 Mflops.
• Still, the efficiency of bcell (3.76%) / icell (1.56%) is very poor.

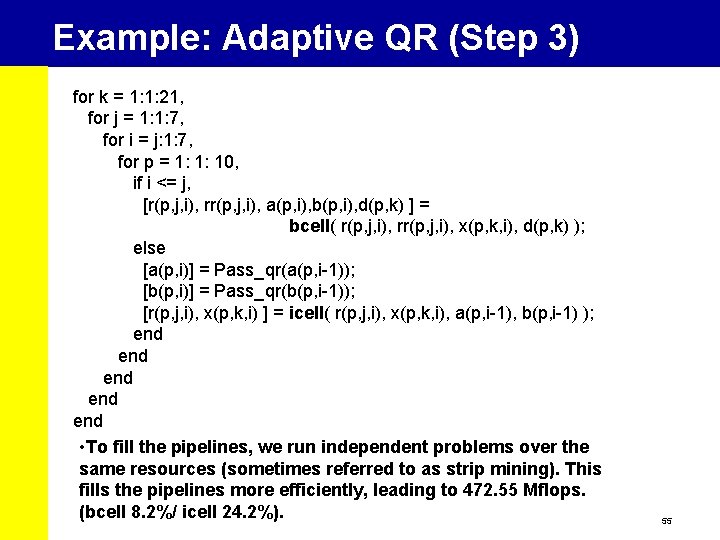

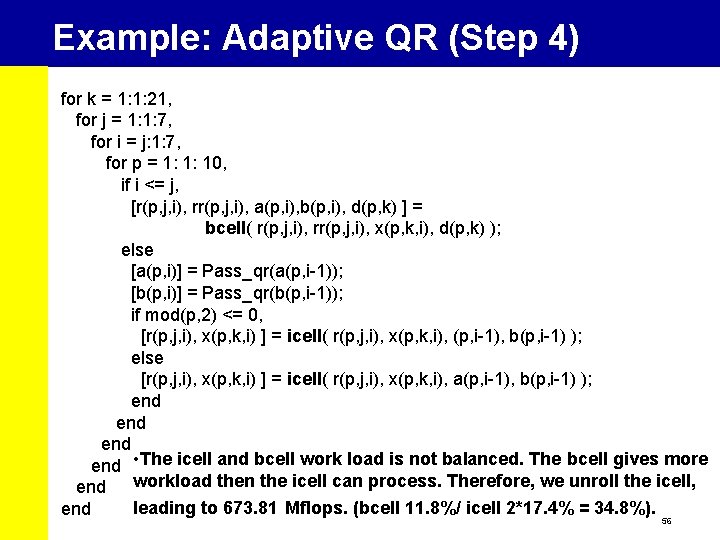

Example: Adaptive QR (Step 3)

    for k = 1:1:21,
      for j = 1:1:7,
        for i = j:1:7,
          for p = 1:1:10,
            if i <= j,
              [r(p,j,i), rr(p,j,i), a(p,i), b(p,i), d(p,k)] = bcell( r(p,j,i), rr(p,j,i), x(p,k,i), d(p,k) );
            else
              [a(p,i)] = Pass_qr( a(p,i-1) );
              [b(p,i)] = Pass_qr( b(p,i-1) );
              [r(p,j,i), x(p,k,i)] = icell( r(p,j,i), x(p,k,i), a(p,i-1), b(p,i-1) );
            end
          end
        end
      end
    end

• To fill the pipelines, we run independent problems over the same resources (sometimes referred to as strip mining). This fills the pipelines more efficiently, leading to 472.55 Mflops (bcell 8.2% / icell 24.2%).
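Why interleaving independent problems helps can be seen in a toy model of one deeply pipelined unit. The Python sketch below is not the Compaan/Laura flow and does not try to reproduce the Mflops or efficiency figures above; the function name and the simple issue rule are assumptions. Each problem is a chain of dependent operations, so its next operation may only issue once the previous result has left the pipeline, `depth` cycles later.

```python
def pipeline_utilization(depth, problems, ops_per_problem):
    """Fraction of cycles in which the pipelined unit accepts a new operation.

    Each problem is a chain of dependent operations: its next operation can
    issue only `depth` cycles after the previous one was issued.
    """
    ready_at = [0] * problems            # earliest issue cycle per problem
    remaining = [ops_per_problem] * problems
    issued = 0
    cycle = 0
    while any(remaining):
        for p in range(problems):        # fixed priority, one issue per cycle
            if remaining[p] and ready_at[p] <= cycle:
                remaining[p] -= 1
                ready_at[p] = cycle + depth
                issued += 1
                break
        cycle += 1
    return issued / cycle


single = pipeline_utilization(depth=55, problems=1, ops_per_problem=100)
interleaved = pipeline_utilization(depth=55, problems=10, ops_per_problem=100)
```

With a single problem the unit idles for most of each 55-cycle round trip; with ten interleaved problems roughly ten times as many cycles do useful work, and the unit would only be full once the number of problems reaches the pipeline depth.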

Example: Adaptive QR (Step 4)

    for k = 1:1:21,
      for j = 1:1:7,
        for i = j:1:7,
          for p = 1:1:10,
            if i <= j,
              [r(p,j,i), rr(p,j,i), a(p,i), b(p,i), d(p,k)] = bcell( r(p,j,i), rr(p,j,i), x(p,k,i), d(p,k) );
            else
              [a(p,i)] = Pass_qr( a(p,i-1) );
              [b(p,i)] = Pass_qr( b(p,i-1) );
              if mod(p, 2) <= 0,
                [r(p,j,i), x(p,k,i)] = icell( r(p,j,i), x(p,k,i), a(p,i-1), b(p,i-1) );
              else
                [r(p,j,i), x(p,k,i)] = icell( r(p,j,i), x(p,k,i), a(p,i-1), b(p,i-1) );
              end
            end
          end
        end
      end
    end

• The icell and bcell workload is not balanced. The bcell gives more workload than the icell can process. Therefore, we unroll the icell, leading to 673.81 Mflops (bcell 11.8% / icell 2*17.4% = 34.8%).

Conclusions
• Optimizing a System Level Design for Low Power requires that you look at the architecture, the mapping, and the application.
• The Y-chart gives a simple framework to tune a system for optimal performance.
• The Y-chart forms the basis for DSE.
• We showed that by changing the way applications are written, we get, in a number of steps, an order of magnitude better performance: 60 Mflops -> 673 Mflops. This without changing the architecture!

Domain-Specific Co-design Environments Patrick Schaumont, UCLA

Let’s waste no time!
Figure labels: DATE Party; You; Presentation

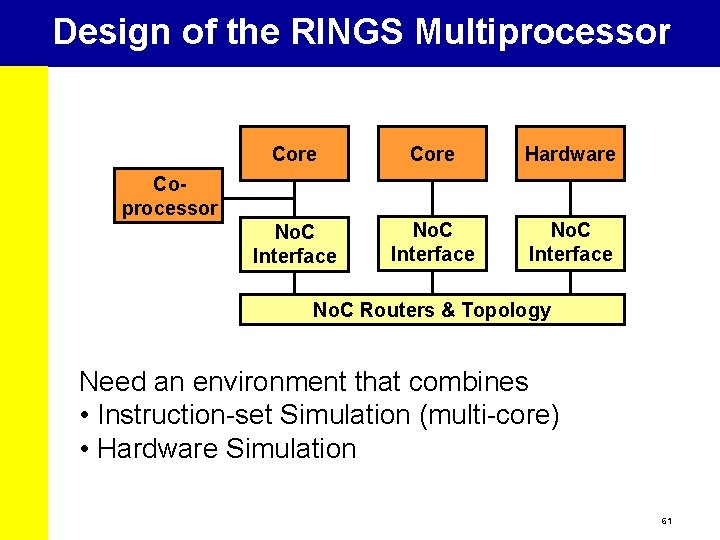

Design of the RINGS Multiprocessor
Figure labels: Core; Hardware; NoC Interface; Coprocessor; NoC Routers & Topology
Need an environment that combines
• Instruction-set Simulation (multi-core)
• Hardware Simulation

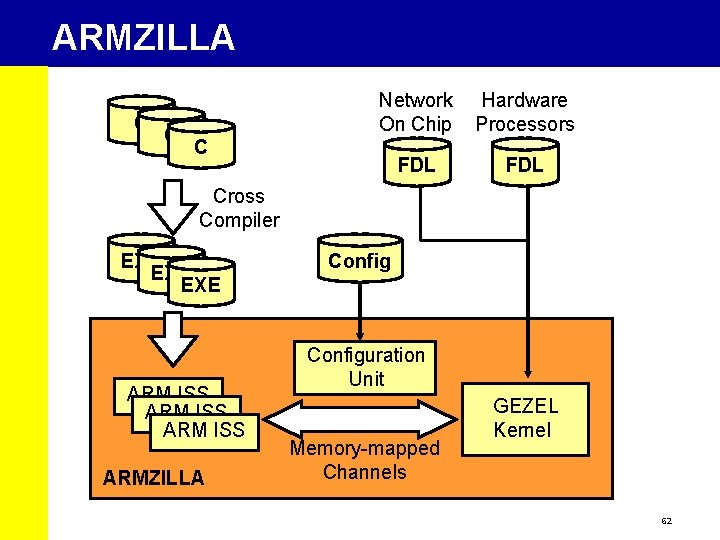

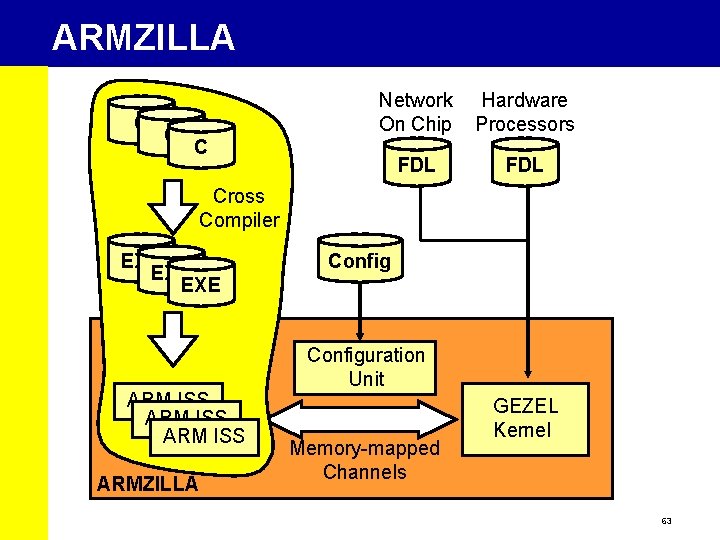

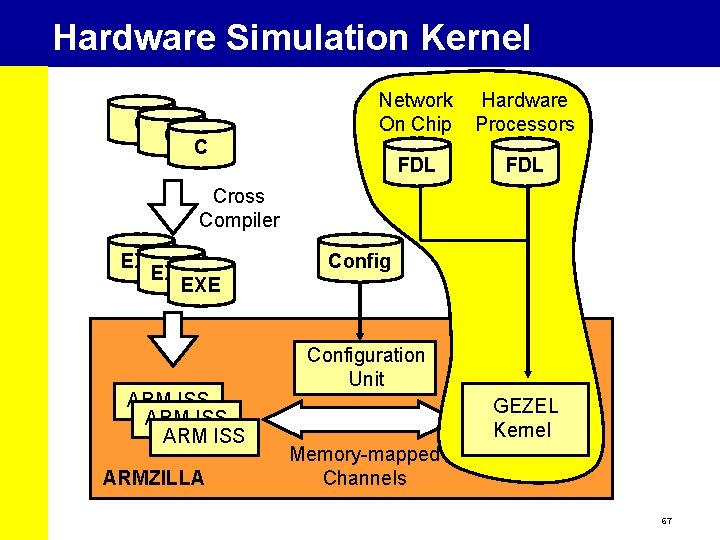

ARMZILLA
Figure labels: C sources; C Cross Compiler; EXE; ARM ISS; FDL (Network On Chip, Hardware Processors); GEZEL Kernel; ARMZILLA Configuration Unit; Memory-mapped Channels

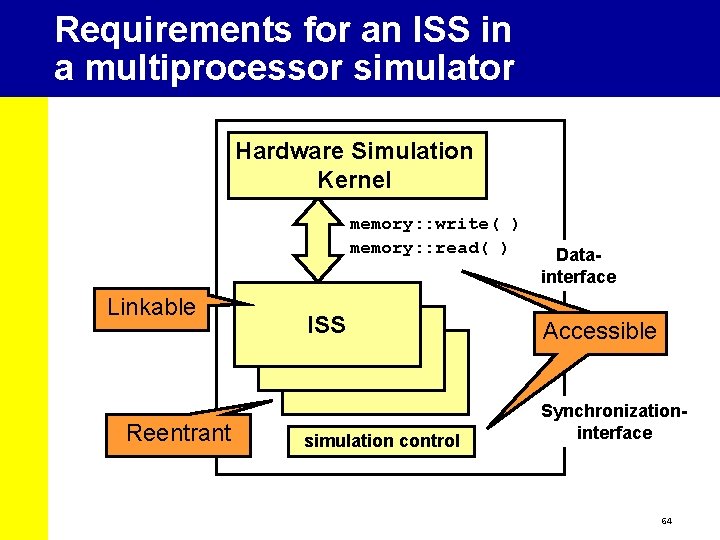

Requirements for an ISS in a multiprocessor simulator
• Linkable
• Reentrant
• Data interface
• Accessible simulation control
• Synchronization interface
Figure labels: ISS; Hardware Simulation Kernel; memory::write(); memory::read()

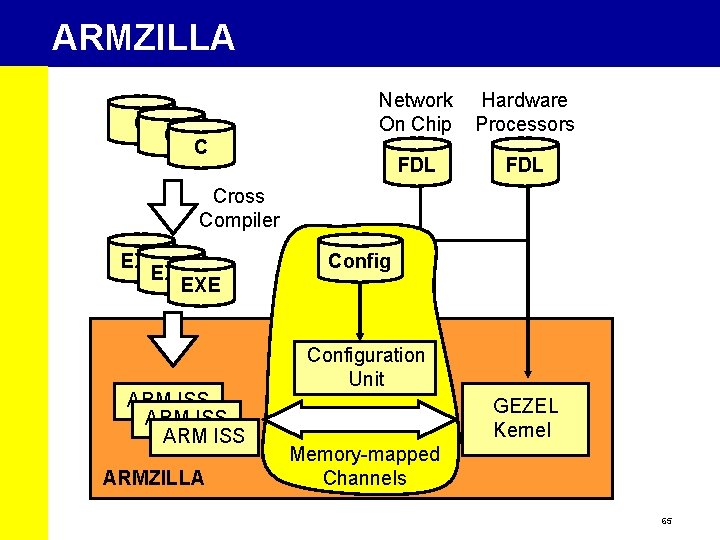

ARMZILLA
Figure labels: C sources; C Cross Compiler; EXE; ARM ISS; FDL (Network On Chip, Hardware Processors); GEZEL Kernel; ARMZILLA Configuration Unit; Memory-mapped Channels

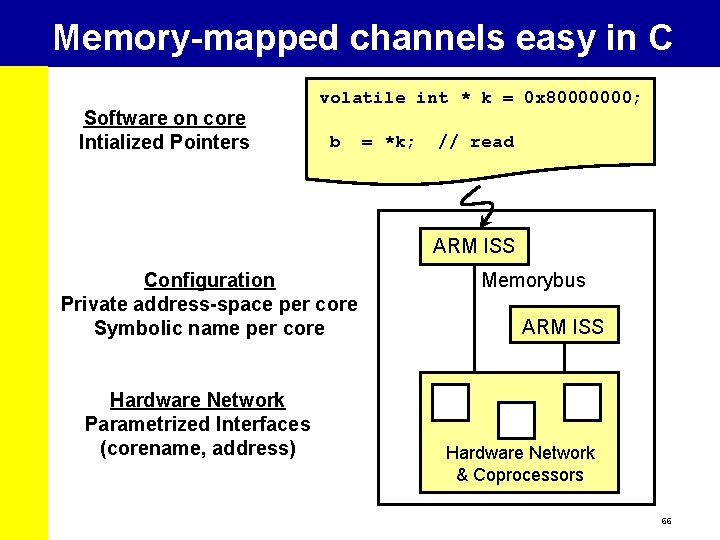

Memory-mapped channels
• Software on core: easy in C, using initialized pointers

    volatile int *k = (volatile int *) 0x80000000;
    b = *k; // read

• ARM ISS Configuration: private address space per core; symbolic name per core
• Hardware Network: parametrized interfaces (corename, address)
Figure labels: ARM ISS; Memory bus; Hardware Network & Coprocessors
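The (corename, address) pairing can be pictured as an address decoder sitting between each ISS and the hardware model. The Python sketch below is a toy model of that idea and not the ARMZILLA or GEZEL API: the class names `Channel` and `MemoryBus`, the FIFO behaviour of a channel and the core names are all hypothetical.

```python
class Channel:
    """Hypothetical FIFO-like hardware port reachable through one address."""

    def __init__(self):
        self._data = []

    def write(self, value):
        self._data.append(value)

    def read(self):
        # Reading an empty channel returns 0 in this toy model.
        return self._data.pop(0) if self._data else 0


class MemoryBus:
    """Routes each core's loads and stores to private RAM or to a channel."""

    def __init__(self):
        self._ram = {}        # (corename, address) -> value, private per core
        self._channels = {}   # (corename, address) -> Channel

    def map_channel(self, corename, address, channel):
        self._channels[(corename, address)] = channel

    def write(self, corename, address, value):
        channel = self._channels.get((corename, address))
        if channel is not None:
            channel.write(value)
        else:
            self._ram[(corename, address)] = value

    def read(self, corename, address):
        channel = self._channels.get((corename, address))
        if channel is not None:
            return channel.read()
        return self._ram.get((corename, address), 0)


bus = MemoryBus()
link = Channel()
bus.map_channel("ball", 0x80000000, link)    # writer side
bus.map_channel("field", 0x80000000, link)   # reader side
bus.write("ball", 0x80000000, 42)
received = bus.read("field", 0x80000000)
```

Keying the map on both the core name and the address is what lets every core reuse the same pointer value while still owning a private address space.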

Hardware Simulation Kernel
Figure labels: C sources; C Cross Compiler; EXE; ARM ISS; FDL (Network On Chip, Hardware Processors); GEZEL Kernel; ARMZILLA Configuration Unit; Memory-mapped Channels

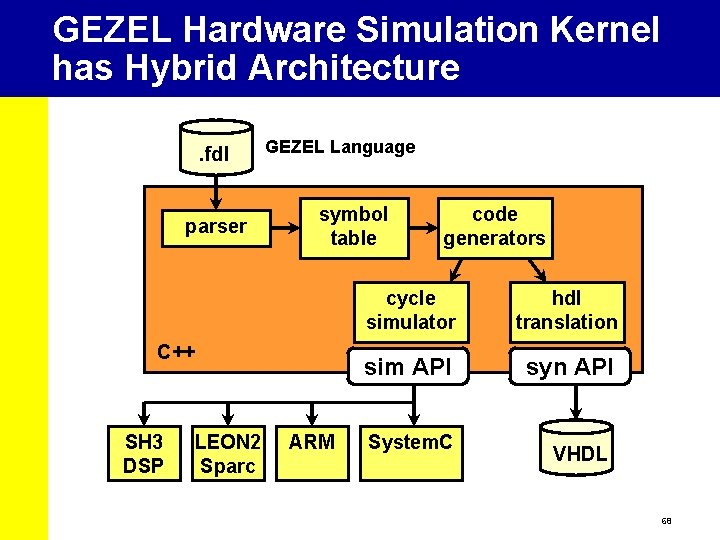

GEZEL Hardware Simulation Kernel has Hybrid Architecture
Figure labels: GEZEL Language; fdl parser; symbol table; cycle simulator; sim API; C++; SH3 DSP; LEON2 Sparc; ARM; code generators; hdl translation; syn API; SystemC; VHDL

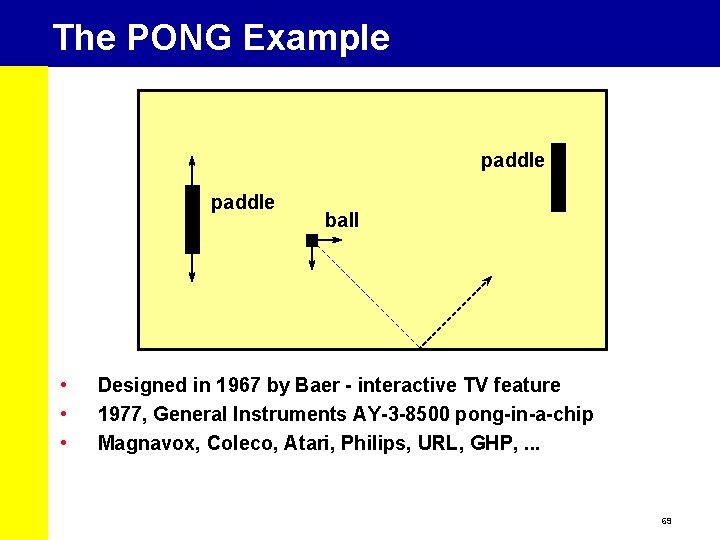

The PONG Example
Figure labels: paddle; ball
• Designed in 1967 by Baer: interactive TV feature
• 1977, General Instruments AY-3-8500 pong-in-a-chip
• Magnavox, Coleco, Atari, Philips, URL, GHP, ...

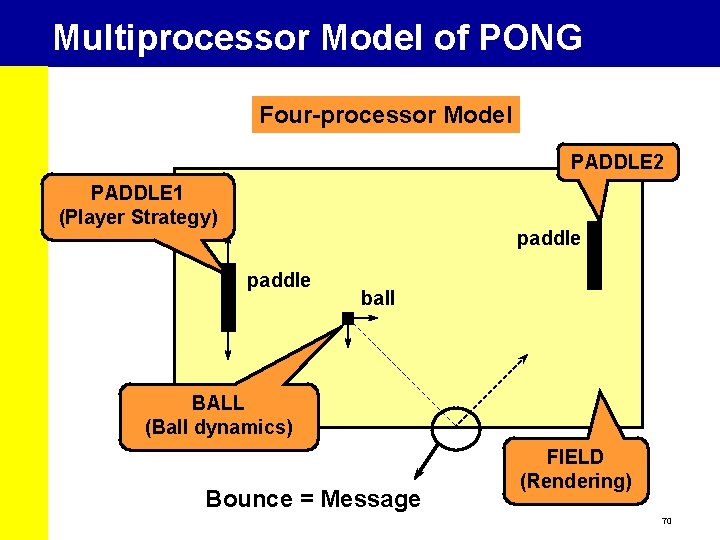

Multiprocessor Model of PONG
Four-processor Model:
• PADDLE1, PADDLE2 (Player Strategy)
• BALL (Ball dynamics)
• FIELD (Rendering)
Bounce = Message

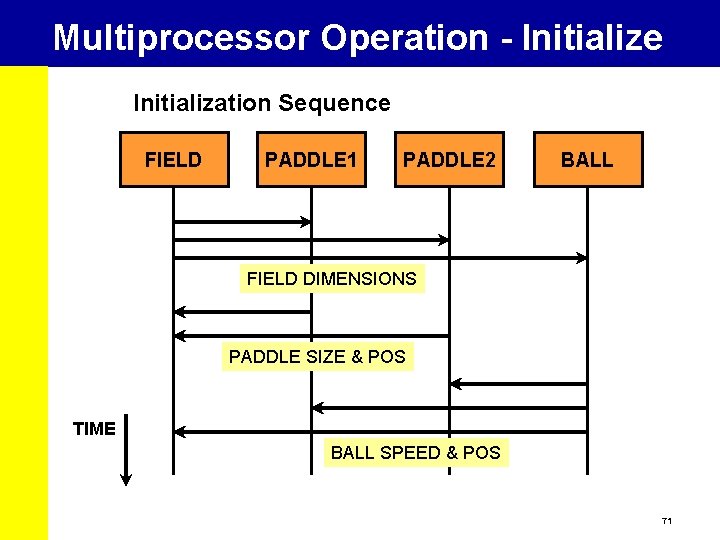

Multiprocessor Operation - Initialize
Initialization Sequence between FIELD, PADDLE1, PADDLE2 and BALL
Messages: FIELD DIMENSIONS; PADDLE SIZE & POS; TIME; BALL SPEED & POS

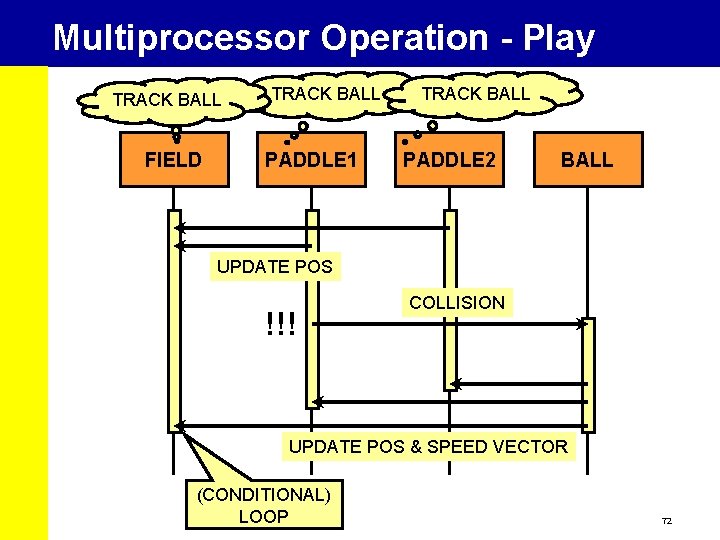

Multiprocessor Operation - Play
Play Sequence between FIELD, PADDLE1, PADDLE2 and BALL (LOOP)
Messages: TRACK BALL (both paddles); UPDATE POS; COLLISION!!!; UPDATE POS & SPEED VECTOR (CONDITIONAL)

Let’s play!

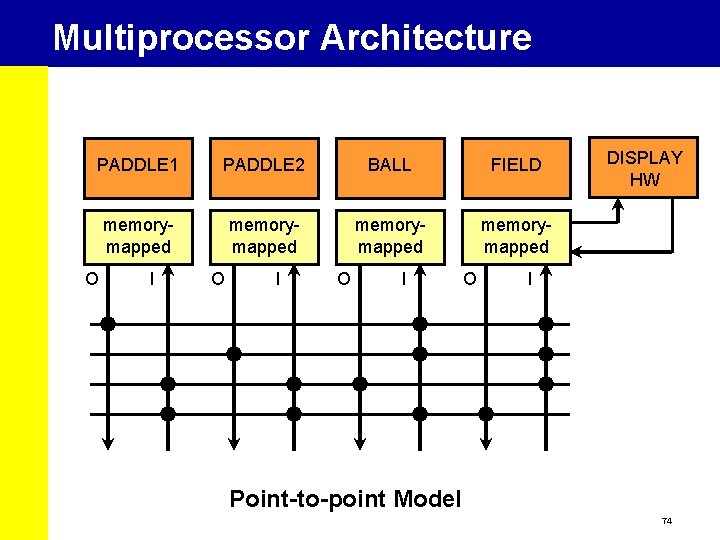

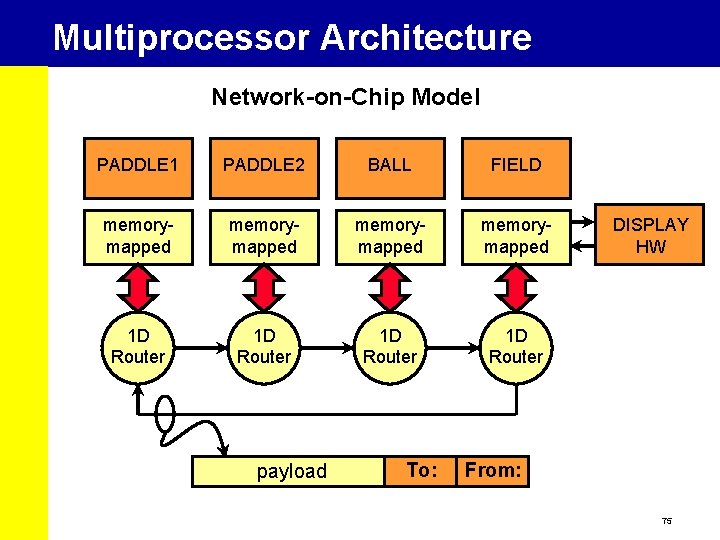

Multiprocessor Architecture
Point-to-point Model
Figure labels: PADDLE1; PADDLE2; BALL; FIELD; memory-mapped input (I) and output (O) ports; DISPLAY HW

Multiprocessor Architecture
Network-on-Chip Model
Figure labels: PADDLE1; PADDLE2; BALL; FIELD; memory-mapped; 1D Router; DISPLAY HW; packet fields To, From, payload
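A packet with To, From and payload fields is enough for a one-dimensional router to decide where to forward it. The Python sketch below shows one way that decision could look; the linear chain of numbered nodes, the port names and the `deliver` helper are assumptions made for illustration and are not taken from the GEZEL model of the PONG example.

```python
from dataclasses import dataclass


@dataclass
class Packet:
    to: int          # destination node index on the 1D chain
    frm: int         # source node index
    payload: object  # message body, e.g. a bounce notification


def route_1d(packet, position):
    """Pick the output port at the router sitting at `position`."""
    if packet.to == position:
        return "local"
    return "right" if packet.to > position else "left"


def deliver(packet):
    """Follow the packet hop by hop and return the list of visited nodes."""
    position = packet.frm
    hops = [position]
    while True:
        port = route_1d(packet, position)
        if port == "local":
            return hops
        position += 1 if port == "right" else -1
        hops.append(position)


# Hypothetical node numbering: 0 = PADDLE1, 1 = PADDLE2, 2 = BALL, 3 = FIELD.
path = deliver(Packet(to=3, frm=0, payload="bounce"))
```

Compared with the point-to-point model, the processors keep the same memory-mapped ports and only the hardware between them changes.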

Links
• SimIt-ARM ISS (W. Qin, Princeton University): http://www.ee.princeton.edu/~wqin/armsim.htm
• Cross Compiler arm-linux-gcc: ftp://ftp.arm.linux.org.uk
• GEZEL & ARMZILLA: http://www.ee.ucla.edu/~schaum/gezel
All these tools are free! (free as in freedom, not as in free beer)

Conclusion
Embedded DSP & Multimedia: Applications mapped onto Architectures with Design Methods = Low Power!

Thanks for your attention!