Spectral Graph Theory (Basics)
Charalampos (Babis) E. Tsourakakis, CMU SCS

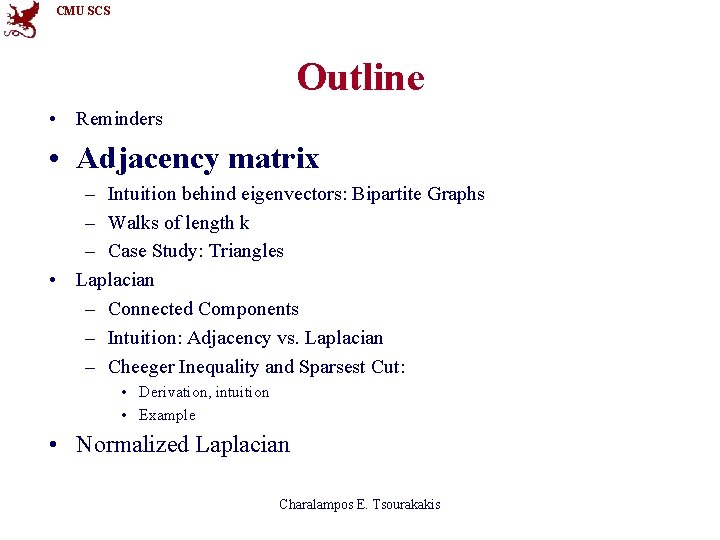

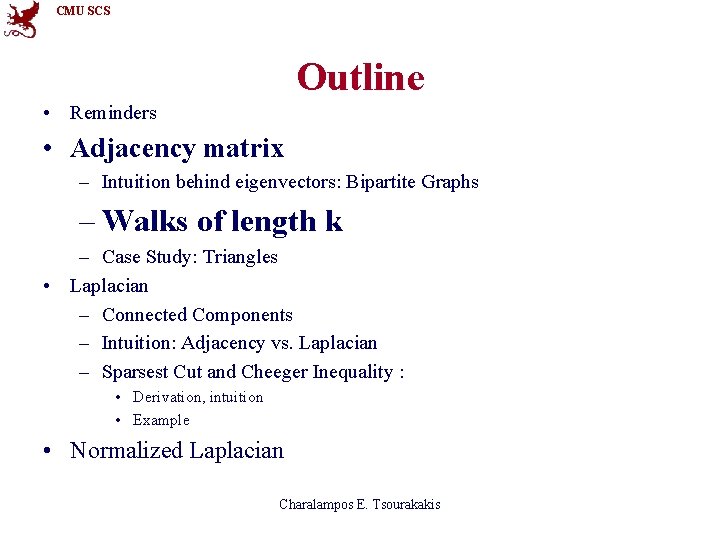

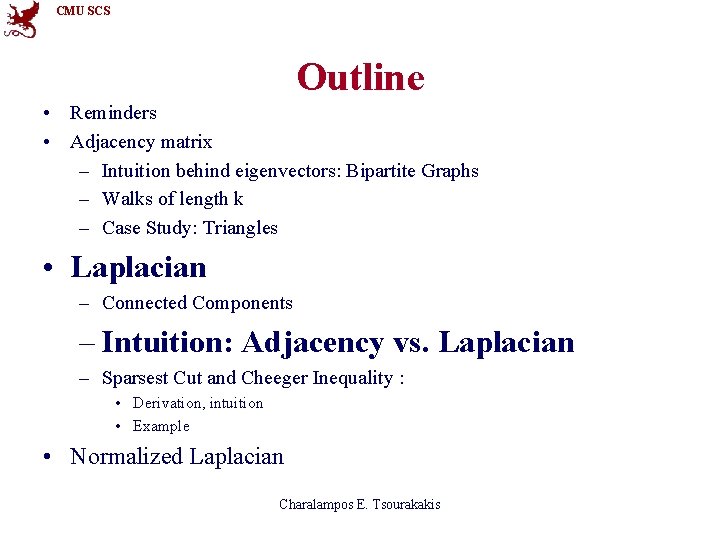

Outline
• Reminders
• Adjacency matrix
  – Intuition behind eigenvectors: Bipartite Graphs
  – Walks of length k
  – Case Study: Triangles
• Laplacian
  – Connected Components
  – Intuition: Adjacency vs. Laplacian
  – Sparsest Cut and Cheeger Inequality:
    • Derivation, intuition
    • Example
• Normalized Laplacian

Matrix Representations of G(V, E)
Associate a matrix to a graph:
• Adjacency matrix
• Laplacian
• Normalized Laplacian
(Slide label: "Main focus".)

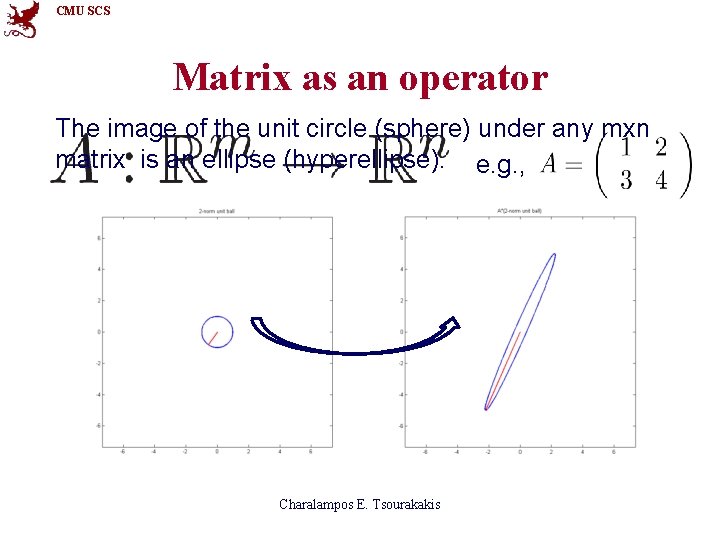

Matrix as an operator
The image of the unit circle (sphere) under any m×n matrix is an ellipse (hyperellipse); the slide figure shows an example.

More Reminders
• Let M be a symmetric n×n matrix. If $Mx = \lambda x$ for a nonzero vector x, then λ is an eigenvalue and x an eigenvector of M.

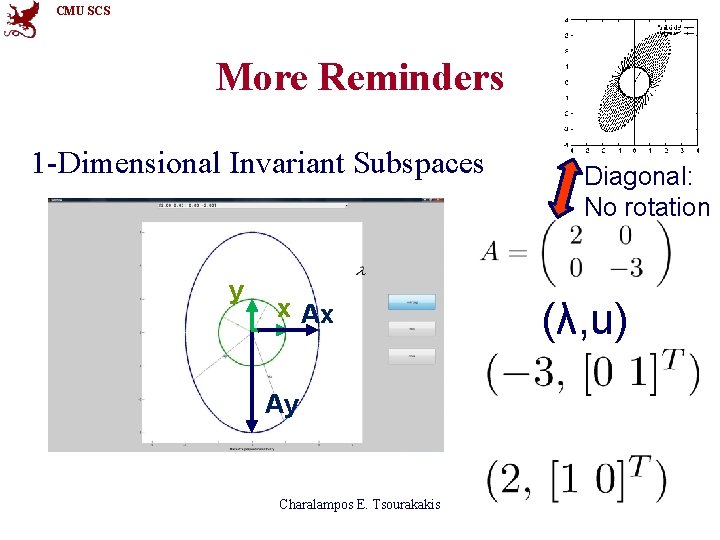

More Reminders: 1-Dimensional Invariant Subspaces
[Figure: vectors x, y and their images Ax, Ay. Diagonal matrix: no rotation. Eigenpair (λ, u).]

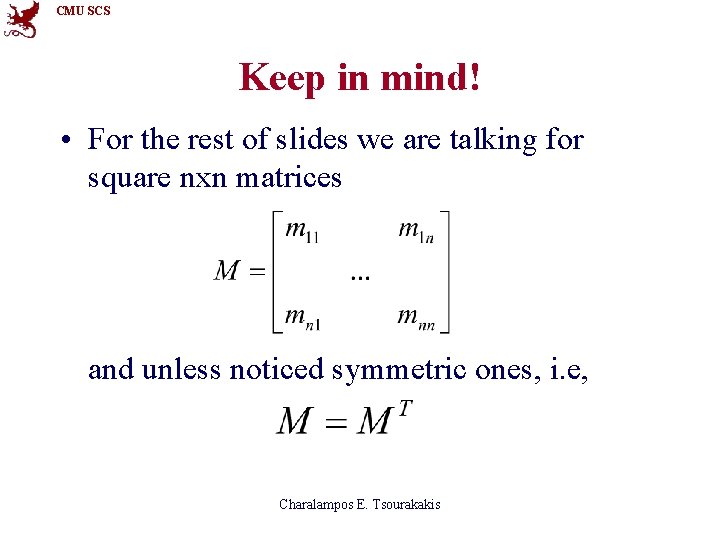

Keep in mind!
• For the rest of the slides we consider square n×n matrices and, unless noted otherwise, symmetric ones, i.e., $M = M^T$.

Adjacency matrix: undirected graph
[Figure: undirected graph on nodes 1, 2, 3, 4 and its adjacency matrix A.]

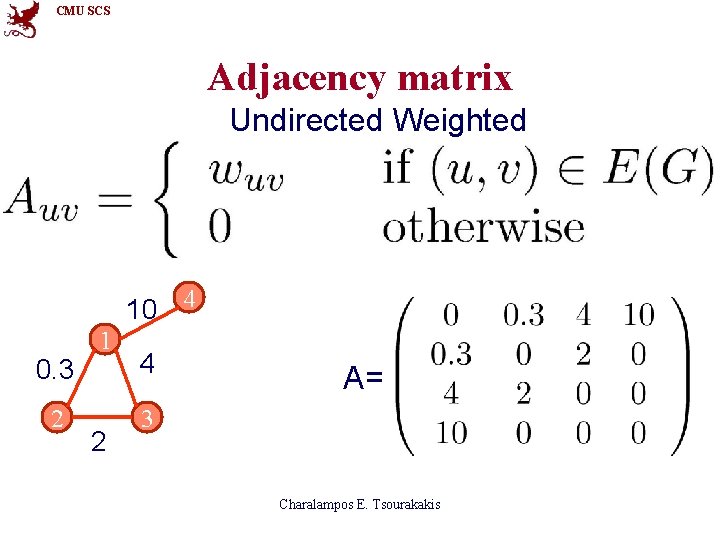

Adjacency matrix: undirected weighted graph
[Figure: weighted undirected graph on nodes 1, 2, 3, 4 (the values 10, 0.3, 2 and 4 appear as edge weights) and its weighted adjacency matrix A.]

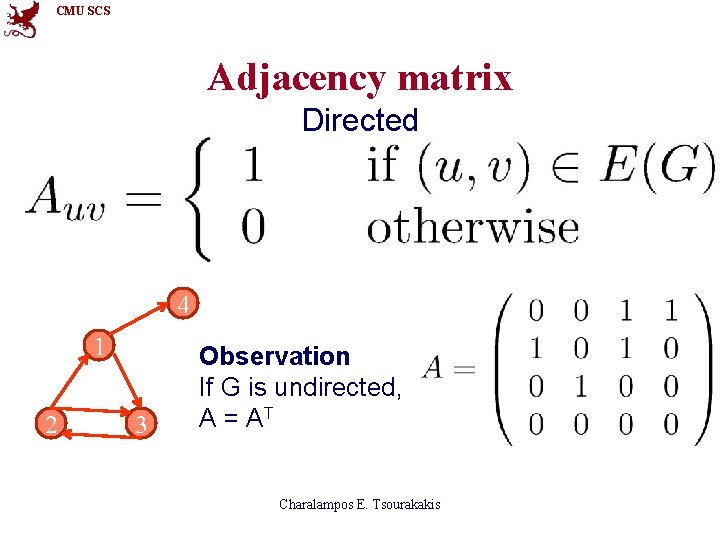

Adjacency matrix: directed graph
[Figure: directed graph on nodes 1, 2, 3, 4 and its adjacency matrix.]
Observation: if G is undirected, $A = A^T$.
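
As a rough illustration of the adjacency-matrix slides, the sketch below builds A from an edge list and checks the observation that A is symmetric when G is undirected. The edge list and the helper names `adjacency_matrix` and `is_symmetric` are assumptions made for this example; they are not read off the slide figures.

```python
def adjacency_matrix(n, edges, directed=False):
    """Build an n x n 0/1 adjacency matrix from 1-based (u, v) pairs."""
    A = [[0] * n for _ in range(n)]
    for u, v in edges:
        A[u - 1][v - 1] = 1
        if not directed:
            A[v - 1][u - 1] = 1  # undirected edges are stored in both directions
    return A


def is_symmetric(M):
    n = len(M)
    return all(M[i][j] == M[j][i] for i in range(n) for j in range(n))


# Hypothetical edge list on nodes 1..4 (illustration only).
edges = [(1, 2), (1, 3), (2, 3), (1, 4)]
A_undirected = adjacency_matrix(4, edges)
A_directed = adjacency_matrix(4, edges, directed=True)

print(is_symmetric(A_undirected))  # symmetric: A equals its transpose
print(is_symmetric(A_directed))    # in general not symmetric
```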

![Spectral Theorem slide](http://slidetodoc.com/presentation_image_h/d76677094ad770775169c3dd90c229ef/image-13.jpg)

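Spectral Theorem
• If $M = M^T$, then
$$M = \begin{pmatrix} x_1 & \cdots & x_n \end{pmatrix} \begin{pmatrix} \lambda_1 & & 0 \\ & \ddots & \\ 0 & & \lambda_n \end{pmatrix} \begin{pmatrix} x_1^T \\ \vdots \\ x_n^T \end{pmatrix}$$
where the $\lambda_i$ are the eigenvalues and the $x_i$ the corresponding eigenvectors.
• Reminder 1: $x_i$, $x_j$ are orthogonal.
• Reminder 2: $x_i$ is the i-th principal axis and $\lambda_i$ is the length of the i-th principal axis.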

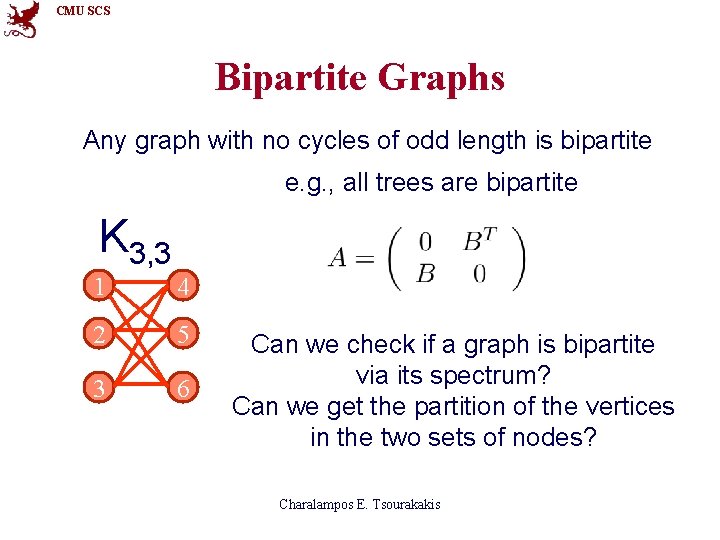

Bipartite Graphs
Any graph with no cycles of odd length is bipartite, e.g., all trees are bipartite.
[Figure: $K_{3,3}$ with nodes 1, 2, 3 on one side and 4, 5, 6 on the other.]
• Can we check if a graph is bipartite via its spectrum?
• Can we get the partition of the vertices into the two sets of nodes?

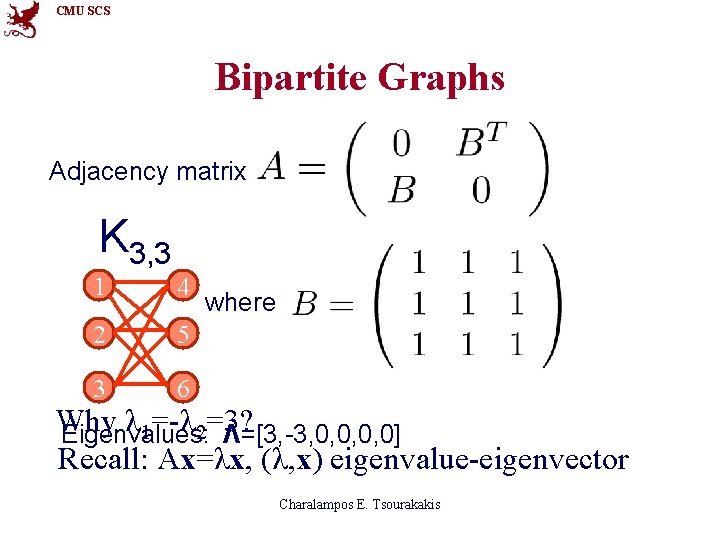

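Bipartite Graphs
Adjacency matrix of $K_{3,3}$ (nodes 1, 2, 3 on one side; 4, 5, 6 on the other):
$$A = \begin{pmatrix} 0 & B \\ B^T & 0 \end{pmatrix}, \quad \text{where } B = \begin{pmatrix} 1 & 1 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{pmatrix}$$
Eigenvalues: Λ = [3, -3, 0, 0, 0, 0]
Why $\lambda_1 = -\lambda_2 = 3$?
Recall: $Ax = \lambda x$, where (λ, x) is an eigenvalue-eigenvector pair.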

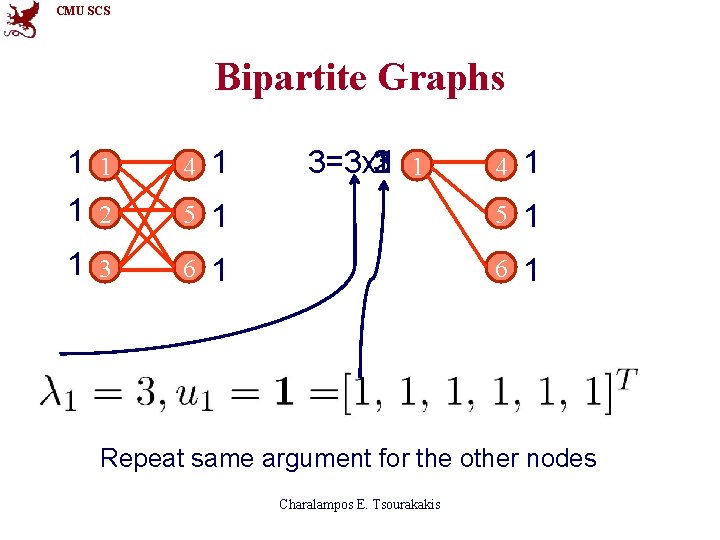

Bipartite Graphs
[Figure: $K_{3,3}$ with the value 1 assigned to every node. At node 1 the values of its neighbors 4, 5, 6 sum to 1 + 1 + 1 = 3 = 3 × 1.]
Repeat the same argument for the other nodes.

Bipartite Graphs
[Figure: $K_{3,3}$ with the value 1 on nodes 1, 2, 3 and -1 on nodes 4, 5, 6. At node 1 the values of its neighbors sum to -1 - 1 - 1 = -3 = (-3) × 1.]
Repeat the same argument for the other nodes.

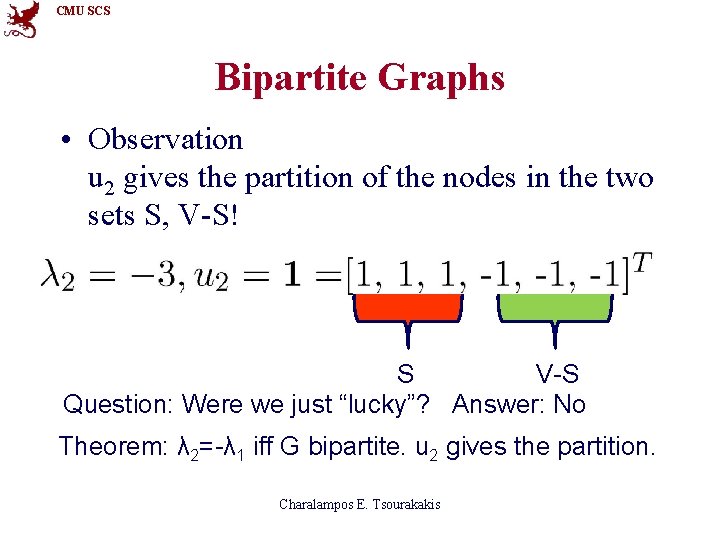

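Bipartite Graphs
• Observation: $u_2$ gives the partition of the nodes into the two sets S, V-S!
• Question: Were we just “lucky”? Answer: No.
• Theorem: for a connected graph G, $\lambda_2 = -\lambda_1$ iff G is bipartite, where $\lambda_1$ is the largest eigenvalue and $\lambda_2$ the most negative one (as in the $K_{3,3}$ example). $u_2$ gives the partition.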
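
The $K_{3,3}$ argument can be checked numerically. The following is a minimal sketch in plain Python: it builds the adjacency matrix, verifies the two eigenpairs for 3 and -3 by direct multiplication, and reads the bipartition off the signs of the second vector. The names `matvec`, `u1`, `u2`, `S` and `V_minus_S` are chosen for this example only.

```python
# K_{3,3}: nodes 1..3 on one side, 4..6 on the other (0-based indices 0..5).
n = 6
A = [[1 if (i < 3) != (j < 3) else 0 for j in range(n)] for i in range(n)]


def matvec(M, x):
    return [sum(m * v for m, v in zip(row, x)) for row in M]


u1 = [1, 1, 1, 1, 1, 1]      # candidate eigenvector for eigenvalue 3
u2 = [1, 1, 1, -1, -1, -1]   # candidate eigenvector for eigenvalue -3

is_eigpair_plus3 = matvec(A, u1) == [3 * v for v in u1]
is_eigpair_minus3 = matvec(A, u2) == [-3 * v for v in u2]

# The sign pattern of u2 recovers the two sides of the bipartition.
S = [i + 1 for i, v in enumerate(u2) if v > 0]
V_minus_S = [i + 1 for i, v in enumerate(u2) if v < 0]
print(is_eigpair_plus3, is_eigpair_minus3, S, V_minus_S)
```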

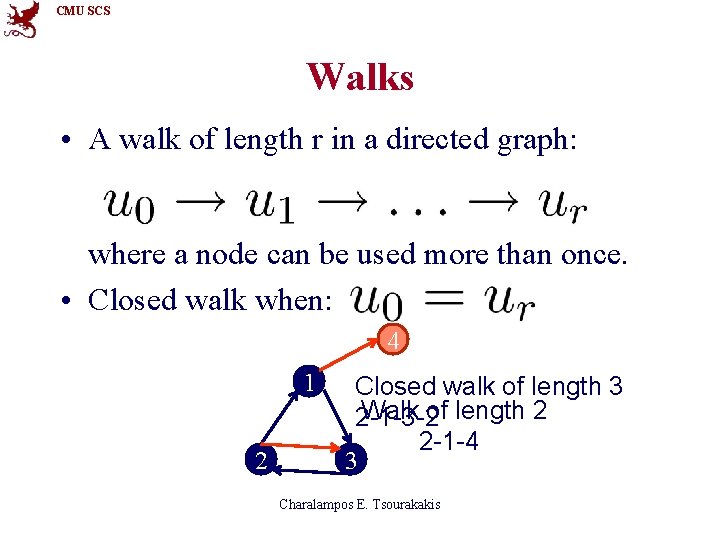

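Walks
• A walk of length r in a directed graph is a sequence of nodes $v_0, v_1, \dots, v_r$ with $(v_{i-1}, v_i) \in E$ for $i = 1, \dots, r$, where a node can be used more than once.
• Closed walk when $v_0 = v_r$.
[Figure: directed graph on nodes 1, 2, 3, 4.]
• Walk of length 2: 2-1-4
• Closed walk of length 3: 2-1-3-2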

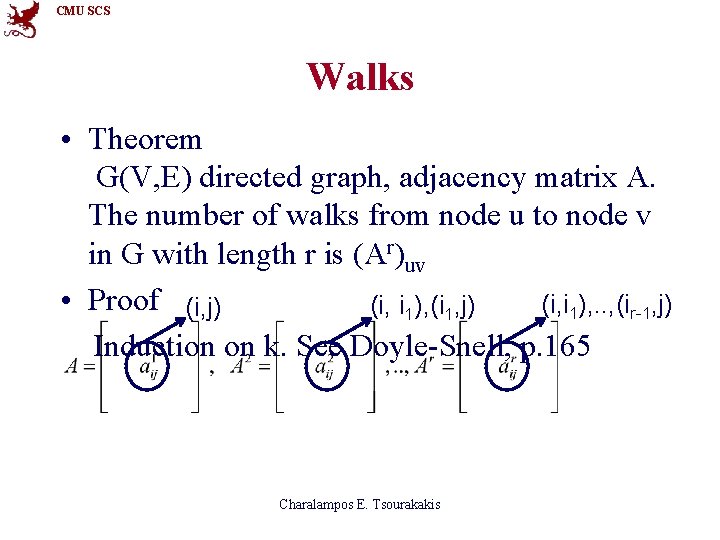

Walks
• Theorem: Let G(V, E) be a directed graph with adjacency matrix A. The number of walks of length r from node u to node v in G is $(A^r)_{uv}$.
• Proof: Induction on the walk length. A walk of length 1 from i to j is the edge (i, j); a walk of length 2 is $(i, i_1), (i_1, j)$; a walk of length r is $(i, i_1), \dots, (i_{r-1}, j)$. See Doyle-Snell, p. 165.
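
To make the theorem concrete, here is a small sketch that counts walks by raising A to a power. The edge set is an assumption: it is inferred from the two walks listed on the Walks slide (2-1-4 and 2-1-3-2) and may not match the slide figure exactly. `matmul` and `matpow` are helper names introduced for this example.

```python
def matmul(X, Y):
    n, m, p = len(X), len(Y), len(Y[0])
    return [[sum(X[i][k] * Y[k][j] for k in range(m)) for j in range(p)]
            for i in range(n)]


def matpow(A, r):
    """Return A^r by repeated multiplication (fine for tiny examples)."""
    n = len(A)
    R = [[int(i == j) for j in range(n)] for i in range(n)]
    for _ in range(r):
        R = matmul(R, A)
    return R


# Assumed directed edges, inferred from the walks 2-1-4 and 2-1-3-2.
edges = [(2, 1), (1, 3), (3, 2), (1, 4)]
A = [[0] * 4 for _ in range(4)]
for u, v in edges:
    A[u - 1][v - 1] = 1

A2, A3 = matpow(A, 2), matpow(A, 3)
walks_2_to_4 = A2[1][3]    # walks of length 2 from node 2 to node 4
closed_3_at_2 = A3[1][1]   # closed walks of length 3 starting at node 2
row_of_sink = A3[3]        # node 4 has no outgoing edges in this edge set
print(walks_2_to_4, closed_3_at_2, row_of_sink)
```

In this assumed graph node 4 has no outgoing edges, so its row stays zero in every power of A.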

Walks
[Figure: the directed example graph on nodes 1, 2, 3, 4 with powers of A; the highlighted entries are i = 2, j = 4 and i = 3, j = 3, shown with the corresponding walks.]

Walks
[Figure: the directed example graph on nodes 1, 2, 3, 4.]
Always 0: node 4 is a sink.

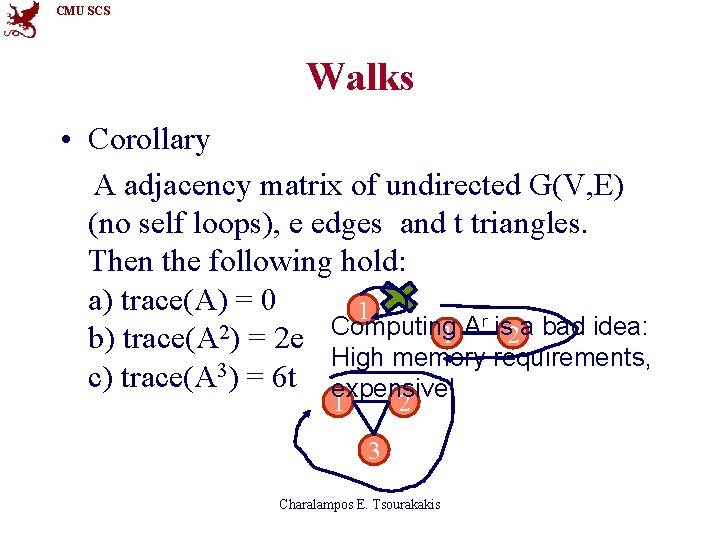

Walks
• Corollary: Let A be the adjacency matrix of an undirected graph G(V, E) with no self loops, e edges and t triangles. Then the following hold:
  a) $\operatorname{trace}(A) = 0$
  b) $\operatorname{trace}(A^2) = 2e$
  c) $\operatorname{trace}(A^3) = 6t$
• Computing $A^r$ is a bad idea: high memory requirements, expensive!
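
The three trace identities can be sanity-checked on a toy graph. This is only a sketch: the graph (a triangle with one pendant edge) is a made-up example, and forming the powers of A explicitly is reasonable only at this size, as the slide warns.

```python
def matmul(X, Y):
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def trace(M):
    return sum(M[i][i] for i in range(len(M)))


# Hypothetical undirected graph: triangle 1-2-3 plus the pendant edge 3-4.
edges = [(1, 2), (2, 3), (1, 3), (3, 4)]
n = 4
A = [[0] * n for _ in range(n)]
for u, v in edges:
    A[u - 1][v - 1] = A[v - 1][u - 1] = 1

A2 = matmul(A, A)
A3 = matmul(A2, A)
num_edges = trace(A2) // 2       # trace(A^2) = 2e
num_triangles = trace(A3) // 6   # trace(A^3) = 6t
print(trace(A), num_edges, num_triangles)
```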

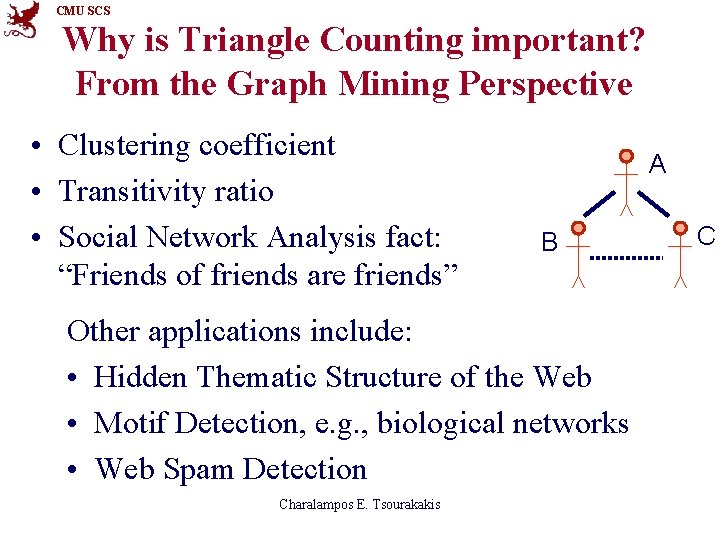

Why is Triangle Counting important?
From the Graph Mining Perspective:
• Clustering coefficient
• Transitivity ratio
• Social Network Analysis fact: “Friends of friends are friends”
[Figure: triangle on nodes A, B, C.]
Other applications include:
• Hidden Thematic Structure of the Web
• Motif Detection, e.g., biological networks
• Web Spam Detection

![Theorem EigenTriangle slide](http://slidetodoc.com/presentation_image_h/d76677094ad770775169c3dd90c229ef/image-28.jpg)

Theorem [EigenTriangle]
δ(G) = number of triangles in graph G(V, E).
$$\delta(G) = \frac{1}{6} \sum_{i=1}^{n} \lambda_i^3$$
where $\lambda_1, \dots, \lambda_n$ are the eigenvalues of the adjacency matrix A.
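
One way to see why the triangle count depends only on the eigenvalues is to combine the trace corollary with the spectral theorem. The short derivation below is a sketch under the deck's standing assumption that A is symmetric, so that $A = X \Lambda X^T$ with $X^T X = I$; the symbol names follow the Spectral Theorem slide.

```latex
\operatorname{trace}(A^3)
  = \operatorname{trace}\!\left(X \Lambda^3 X^T\right)
  = \operatorname{trace}\!\left(\Lambda^3 X^T X\right)
  = \sum_{i=1}^{n} \lambda_i^3 ,
\qquad\text{and}\qquad
\operatorname{trace}(A^3) = 6\,\delta(G)
\;\Longrightarrow\;
\delta(G) = \frac{1}{6} \sum_{i=1}^{n} \lambda_i^3 .
```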

![Theorem EigenTriangleLocal slide](http://slidetodoc.com/presentation_image_h/d76677094ad770775169c3dd90c229ef/image-29.jpg)

Theorem [EigenTriangleLocal]
δ(i) = number of triangles vertex i participates in.
$$\delta(i) = \frac{1}{2} \sum_{j=1}^{n} \lambda_j^3 u_{j,i}^2$$
where $u_j$ is the j-th eigenvector and $u_{j,i}$ is the i-th entry of $u_j$.
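
The local theorem is the diagonal version of the trace identity: the quantity $\sum_j \lambda_j^3 u_{j,i}^2$ is the i-th diagonal entry of $A^3$. The sketch below does not compute eigenvectors; it checks the equivalent statement $\delta(i) = (A^3)_{ii} / 2$ on a hypothetical graph with two triangles that share an edge.

```python
def matmul(X, Y):
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


# Hypothetical graph with two triangles, 1-2-3 and 2-3-4, sharing the edge 2-3.
edges = [(1, 2), (2, 3), (1, 3), (3, 4), (2, 4)]
n = 4
A = [[0] * n for _ in range(n)]
for u, v in edges:
    A[u - 1][v - 1] = A[v - 1][u - 1] = 1

A3 = matmul(matmul(A, A), A)
local_triangles = [A3[i][i] // 2 for i in range(n)]  # delta(i) = (A^3)_ii / 2
total_triangles = sum(local_triangles) // 3          # each triangle is counted at 3 vertices
print(local_triangles, total_triangles)
```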

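Algorithm’s idea
[Figure: eigenvalue spectrum of the Political Blogs graph.]
• The bulk of the spectrum is almost symmetric around 0, so the cubes of those eigenvalues nearly cancel: omit them!
• Keep only the top few eigenvalues (3 in this example)!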

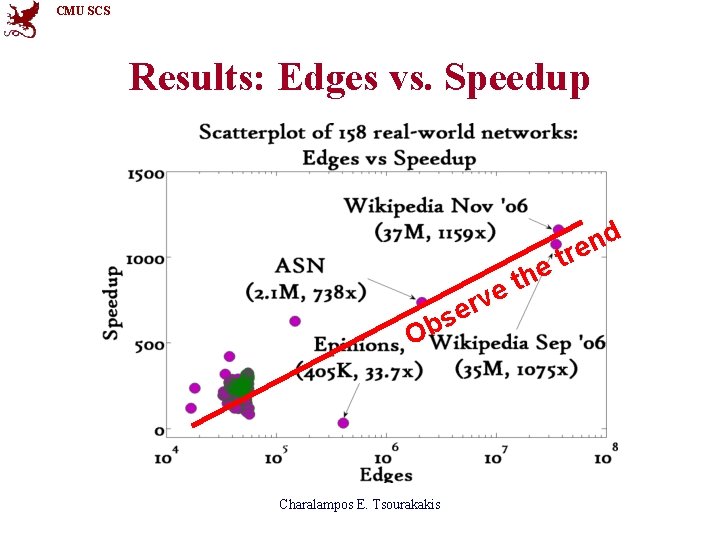

Results: Edges vs. Speedup
[Figure: speedup versus number of edges.]
Observe the trend.

#Eigenvalues vs. ϱ
2 to 3 eigenvalues give almost ideal results!

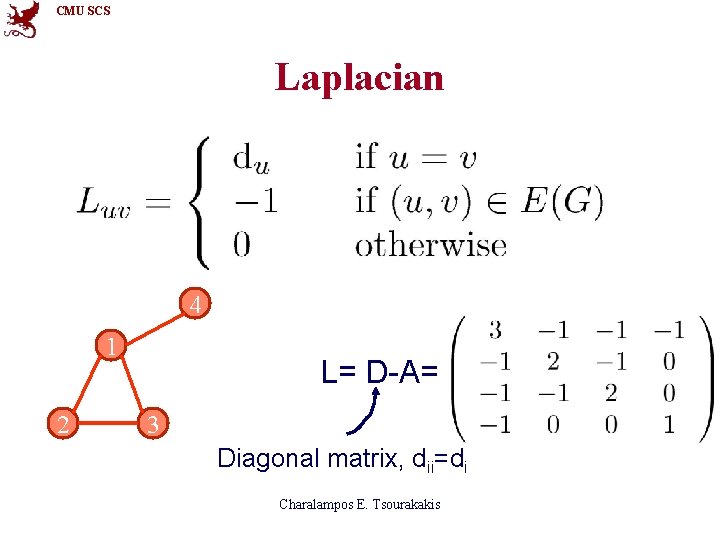

Laplacian
$L = D - A$, where D is the diagonal matrix with $d_{ii} = d_i$ (the degree of node i).
[Figure: graph on nodes 1, 2, 3, 4 and its Laplacian.]

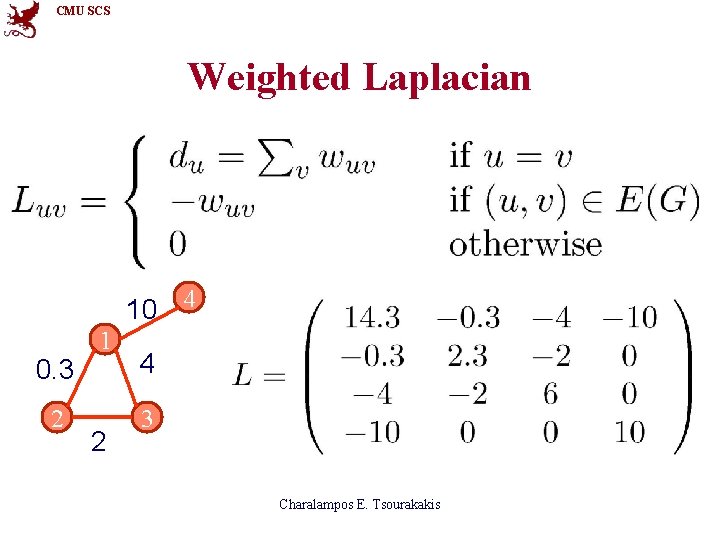

Weighted Laplacian
[Figure: weighted graph on nodes 1, 2, 3, 4 (the values 10, 0.3, 2 and 4 appear as edge weights) and its weighted Laplacian.]
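
A minimal sketch of how L = D - A can be assembled directly from a weighted edge list. The weights below are illustrative and are not the ones on the slide; `laplacian` is a helper name introduced here. Every row of a Laplacian sums to zero, which gives a quick correctness check.

```python
def laplacian(n, weighted_edges):
    """L = D - A for an undirected graph given as 1-based (u, v, w) triples."""
    L = [[0.0] * n for _ in range(n)]
    for u, v, w in weighted_edges:
        i, j = u - 1, v - 1
        L[i][j] -= w   # off-diagonal entries: minus the edge weight
        L[j][i] -= w
        L[i][i] += w   # diagonal entries: weighted degree
        L[j][j] += w
    return L


# Hypothetical weighted graph on nodes 1..4 (weights chosen for illustration).
edges = [(1, 2, 2.0), (1, 3, 4.0), (2, 3, 0.5), (1, 4, 10.0)]
L = laplacian(4, edges)
row_sums = [sum(row) for row in L]
print(L[0], row_sums)
```

For an unweighted graph, pass weight 1 for every edge.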

Connected Components
• Lemma: Let G be a graph with n vertices and c connected components. If L is the Laplacian of G, then rank(L) = n - c.
• Proof: see Godsil-Royle, p. 279.

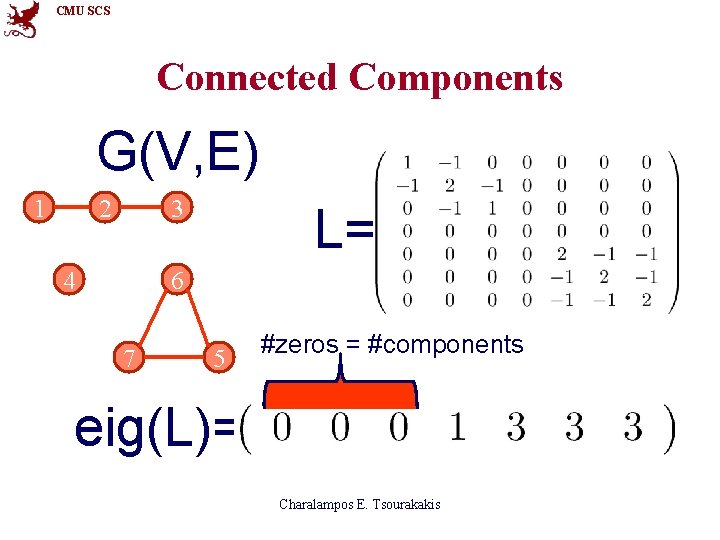

Connected Components
[Figure: graph G(V, E) on nodes 1 to 7, its Laplacian L, and eig(L).]
The number of zero eigenvalues of L equals the number of connected components.

Connected Components
[Figure: graph G(V, E) on nodes 1 to 7 with one edge of weight 0.01, its Laplacian L, and eig(L).]
• #zeros = #components
• A small nonzero eigenvalue indicates a “good cut”.
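
The lemma rank(L) = n - c can be tried out without an eigenvalue solver. The sketch below computes the rank exactly with Gaussian elimination over fractions and recovers the number of components. The 7-node graph is a made-up example with two components, not the graph in the slide figure.

```python
from fractions import Fraction


def laplacian(n, edges):
    L = [[Fraction(0)] * n for _ in range(n)]
    for u, v in edges:
        i, j = u - 1, v - 1
        L[i][j] -= 1
        L[j][i] -= 1
        L[i][i] += 1
        L[j][j] += 1
    return L


def rank(M):
    """Rank by exact Gaussian elimination over the rationals."""
    M = [row[:] for row in M]
    rows, cols = len(M), len(M[0])
    r = 0
    for c in range(cols):
        pivot = next((i for i in range(r, rows) if M[i][c] != 0), None)
        if pivot is None:
            continue
        M[r], M[pivot] = M[pivot], M[r]
        for i in range(r + 1, rows):
            factor = M[i][c] / M[r][c]
            M[i] = [a - factor * b for a, b in zip(M[i], M[r])]
        r += 1
    return r


# Hypothetical 7-node graph with two components: {1, 2, 3, 4} and {5, 6, 7}.
edges = [(1, 2), (2, 3), (3, 4), (4, 1), (5, 6), (6, 7), (5, 7)]
n = 7
num_components = n - rank(laplacian(n, edges))
print(num_components)
```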

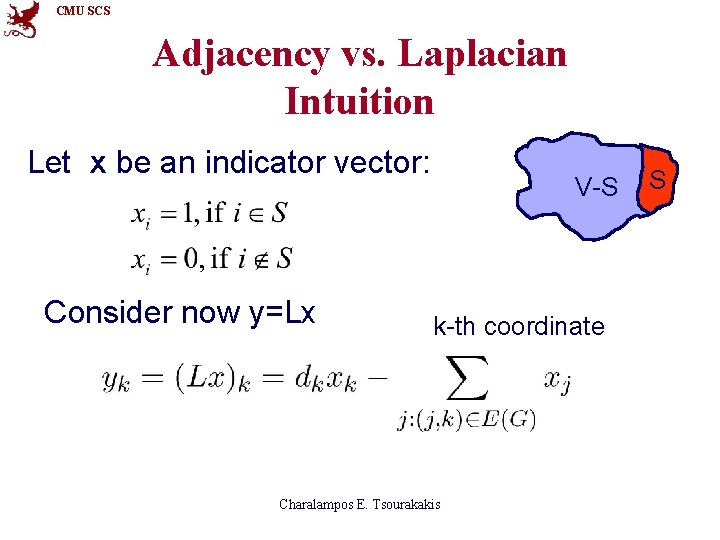

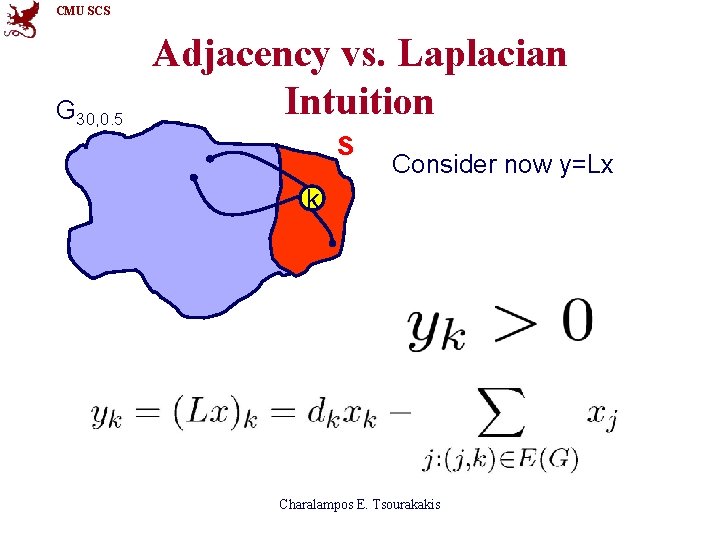

Adjacency vs. Laplacian Intuition
Let x be the indicator vector of a vertex set S: $x_i = 1$ if $i \in S$ and $x_i = 0$ if $i \in V - S$.
Consider now y = Lx and look at its k-th coordinate.

Adjacency vs. Laplacian Intuition
[Figure: the graph $G_{30,0.5}$ with the vertex set S and a node k highlighted.]
Consider now y = Lx and its k-th coordinate.
• Laplacian: connectivity
• Adjacency: #paths
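
A small numeric sketch of this intuition, on a made-up graph rather than $G_{30,0.5}$. With x the 0/1 indicator of S, the k-th coordinate of Lx is $d_k x_k - \sum_{j \sim k} x_j$: for k in S it equals the number of neighbors of k outside S, and for k outside S it is minus the number of neighbors inside S. The k-th coordinate of Ax simply counts the neighbors of k that lie in S.

```python
def matvec(M, x):
    return [sum(m * v for m, v in zip(row, x)) for row in M]


# Hypothetical graph: triangles 1-2-3 and 4-5-6 joined by the edge 3-4.
edges = [(1, 2), (1, 3), (2, 3), (3, 4), (4, 5), (4, 6), (5, 6)]
n = 6
A = [[0] * n for _ in range(n)]
for u, v in edges:
    A[u - 1][v - 1] = A[v - 1][u - 1] = 1
L = [[(sum(A[i]) if i == j else -A[i][j]) for j in range(n)] for i in range(n)]

S = {1, 2, 3}
x = [1 if i + 1 in S else 0 for i in range(n)]  # indicator vector of S

y = matvec(L, x)  # nonzero only at nodes that touch the boundary of S
z = matvec(A, x)  # number of neighbors of each node that lie in S
print(y, z)
```

Only nodes 3 and 4, the endpoints of the single crossing edge, get a nonzero entry in y.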

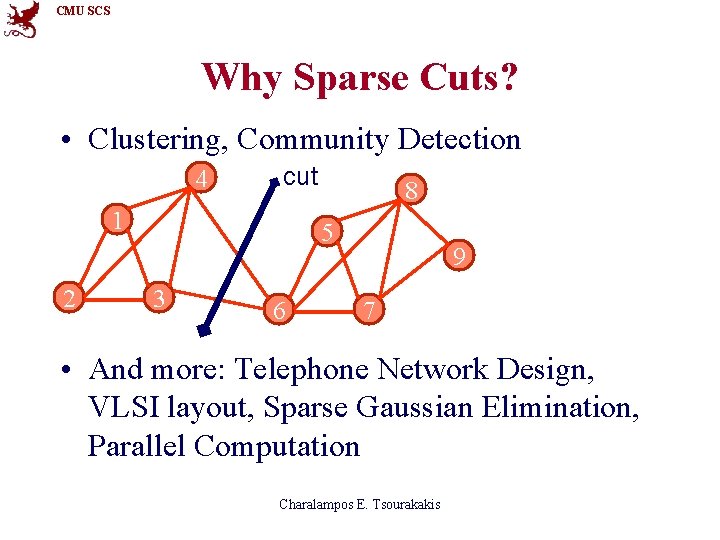

Why Sparse Cuts?
• Clustering, Community Detection
[Figure: graph on nodes 1 to 9 with a cut separating it into two groups.]
• And more: Telephone Network Design, VLSI layout, Sparse Gaussian Elimination, Parallel Computation

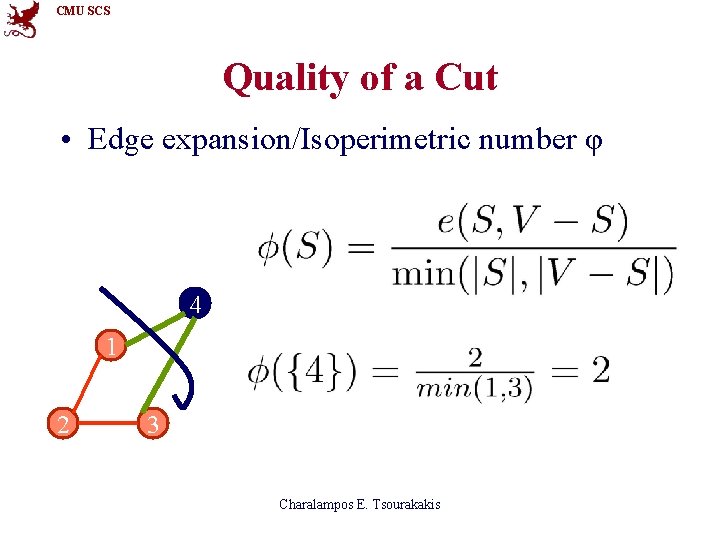

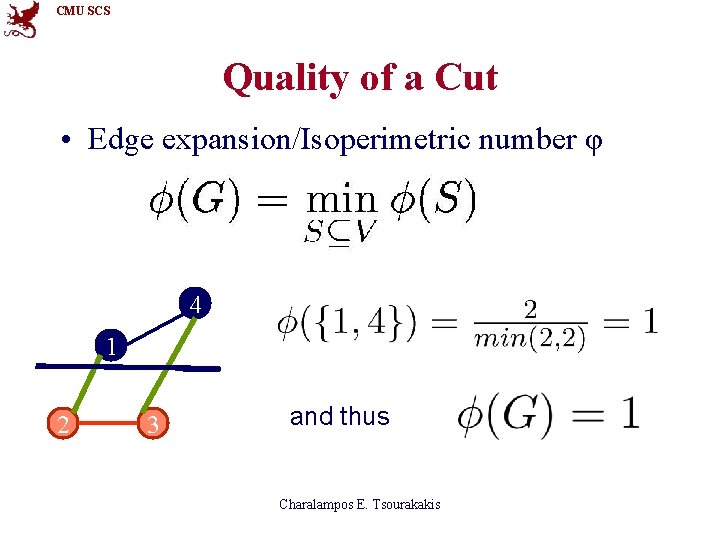

Quality of a Cut
• Edge expansion / isoperimetric number φ
[Figure: example graph on nodes 1, 2, 3, 4 with the definition of φ and the value it yields for this graph; the formulas are in the slide image.]
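
The slide's formula did not survive extraction, so the sketch below assumes the usual definition of the isoperimetric number, $\phi(G) = \min_{0 < |S| \le n/2} |E(S, V-S)| / |S|$, and evaluates it by brute force on a hypothetical 4-node graph. The function names are introduced for this example, and enumerating all subsets is feasible only for very small graphs.

```python
from itertools import combinations


def cut_size(edges, S):
    """Number of edges with exactly one endpoint in S."""
    return sum(1 for u, v in edges if (u in S) != (v in S))


def edge_expansion(nodes, edges):
    """min over nonempty S with |S| <= n/2 of |E(S, V-S)| / |S| (brute force)."""
    best = None
    for k in range(1, len(nodes) // 2 + 1):
        for S in combinations(nodes, k):
            ratio = cut_size(edges, set(S)) / k
            if best is None or ratio < best[0]:
                best = (ratio, set(S))
    return best


# Hypothetical graph: triangle 1-2-3 with a pendant node 4 attached to node 1.
nodes = [1, 2, 3, 4]
edges = [(1, 2), (2, 3), (1, 3), (1, 4)]
phi, S = edge_expansion(nodes, edges)
print(phi, S)
```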

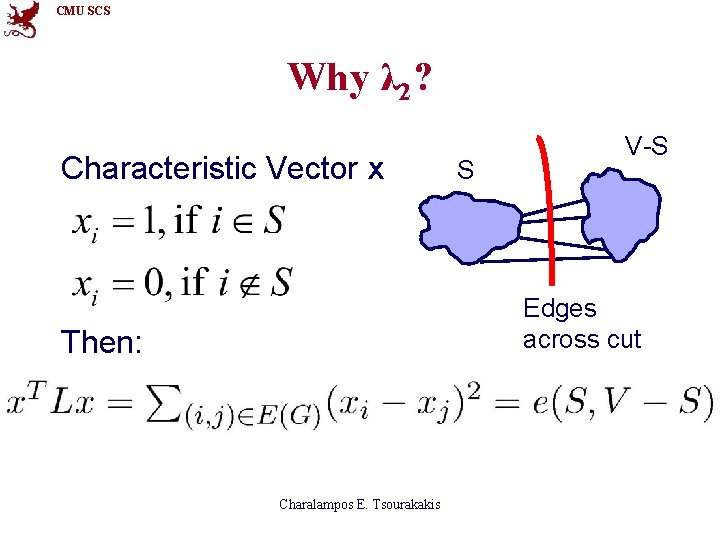

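Why λ2?
Characteristic vector x of the cut (S, V-S): $x_i = 1$ if $i \in S$ and $x_i = 0$ if $i \in V - S$.
Then:
$$x^T L x = \sum_{(i,j) \in E} (x_i - x_j)^2 = \text{number of edges across the cut}$$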

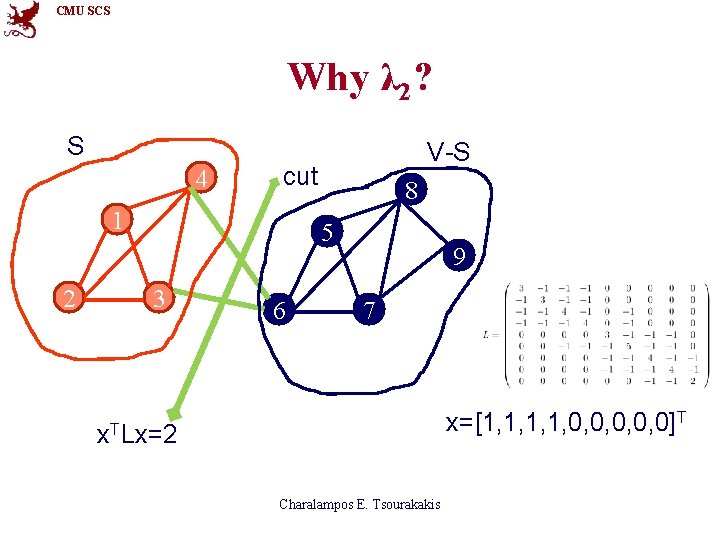

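Why λ2?
[Figure: graph on nodes 1 to 9 with a cut between S (containing nodes 1, 2, 4) and V-S.]
$x = [1, \dots, 1, 0, \dots, 0]^T$ (ones on S, zeros on V-S), and $x^T L x = 2$: two edges cross the cut.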
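
The identity behind this slide, that $x^T L x$ counts the edges crossing the cut when x is a 0/1 indicator, can be checked on a toy graph. The 9-node edge list below is hypothetical (the edges of the slide's graph are not recoverable from the text); it was chosen so that exactly two edges cross the cut.

```python
# Hypothetical 9-node graph with S = {1, 2, 3, 4}.
edges = [(1, 2), (1, 3), (1, 4), (2, 3), (3, 4),           # inside S
         (2, 5), (3, 6),                                   # across the cut
         (5, 6), (5, 8), (6, 7), (6, 9), (7, 9), (8, 9)]   # inside V - S
n = 9
S = {1, 2, 3, 4}

# Laplacian L = D - A.
L = [[0] * n for _ in range(n)]
for u, v in edges:
    i, j = u - 1, v - 1
    L[i][j] -= 1
    L[j][i] -= 1
    L[i][i] += 1
    L[j][j] += 1

x = [1 if i + 1 in S else 0 for i in range(n)]
quadratic_form = sum(x[i] * L[i][j] * x[j] for i in range(n) for j in range(n))
crossing_edges = sum(1 for u, v in edges if (u in S) != (v in S))
print(quadratic_form, crossing_edges)
```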

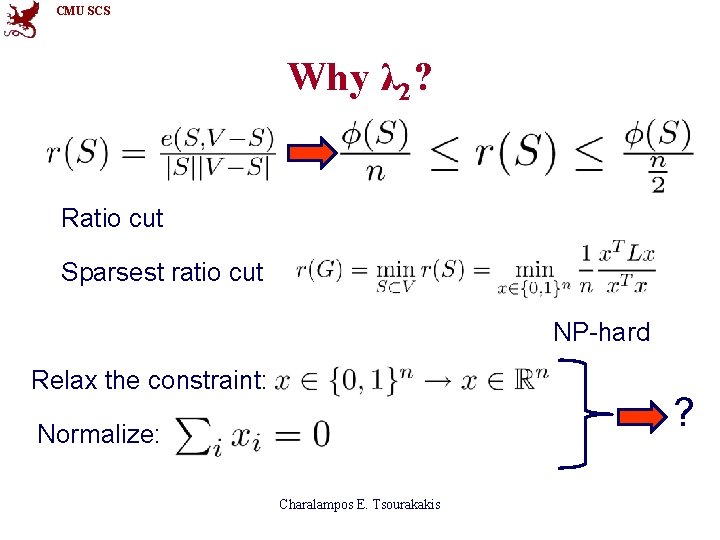

CMU SCS Why λ 2? Ratio cut Sparsest ratio cut NP-hard Relax the constraint: ? Normalize: Charalampos E. Tsourakakis

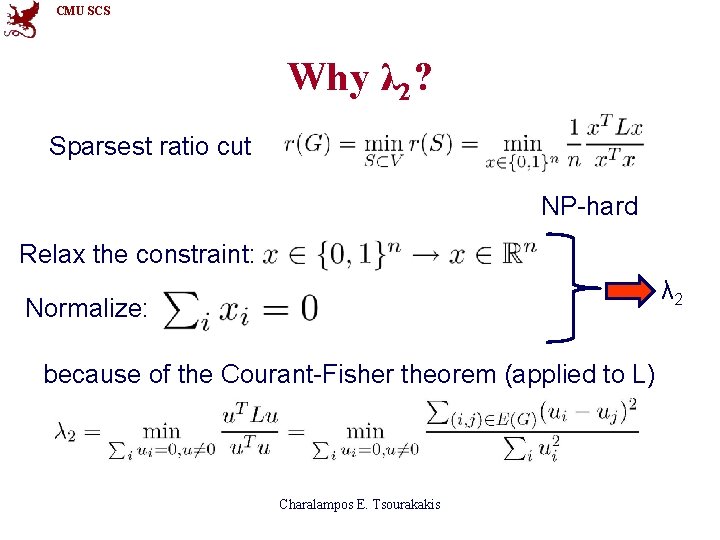

CMU SCS Why λ 2? Sparsest ratio cut NP-hard Relax the constraint: λ 2 Normalize: because of the Courant-Fisher theorem (applied to L) Charalampos E. Tsourakakis
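
The formulas for this step were in the slide images, so the following is a sketch of the standard derivation rather than a transcription. It assumes the ratio cut of S is $|E(S, V-S)| / (|S|\,|V-S|)$, that x is the 0/1 characteristic vector of S, and that $\lambda_2$ denotes the second-smallest eigenvalue of L.

```latex
% Ratio cut written with the characteristic vector x of S:
\frac{|E(S, V-S)|}{|S|\,|V-S|}
  = \frac{\sum_{(i,j) \in E} (x_i - x_j)^2}{\sum_{i<j} (x_i - x_j)^2}
  = \frac{x^T L x}{\sum_{i<j} (x_i - x_j)^2}

% Relax x \in \{0,1\}^n to x \in \mathbb{R}^n. The ratio is unchanged by
% x \mapsto x + c\mathbf{1}, so normalize \sum_i x_i = 0, which gives
% \sum_{i<j} (x_i - x_j)^2 = n \sum_i x_i^2:
\min_{x \perp \mathbf{1},\ x \neq 0} \frac{x^T L x}{n\, x^T x}
  = \frac{\lambda_2}{n}
  \qquad \text{(Courant-Fischer, applied to } L\text{)}
```

Under these assumptions the relaxed optimum is attained by the eigenvector of $\lambda_2$, which is why that eigenvector is used to find the cut.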

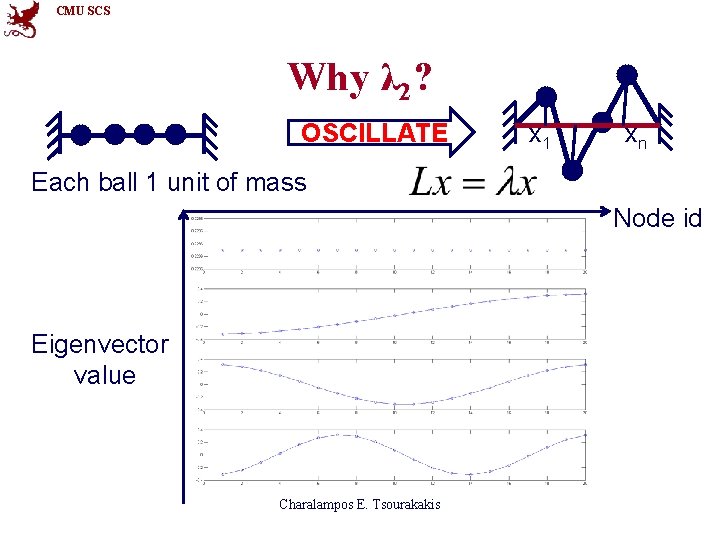

Why λ2?
[Figure: a chain of balls $x_1, \dots, x_n$ that oscillates, each ball having 1 unit of mass; plot of eigenvector value against node id.]

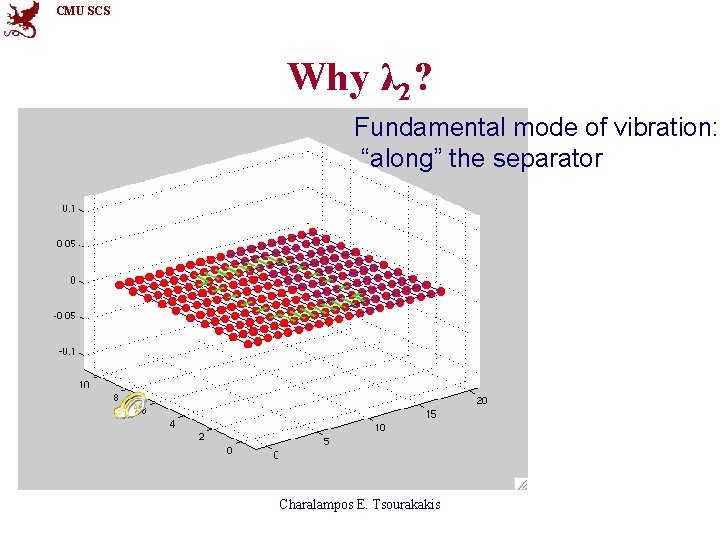

Why λ2?
Fundamental mode of vibration: “along” the separator

Cheeger Inequality
• Step 1: Sort the vertices in non-decreasing order of the value assigned to them by the second eigenvector.
• Step 2: Decide where to cut. Two common heuristics:
  – Bisection
  – Best ratio cut
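
The two steps above can be sketched as a "sweep" over the sorted vertices. This is a minimal illustration, not the author's implementation: the function name `sweep_cut`, the ratio $|E(S, V-S)| / (|S|\,|V-S|)$ used for the best ratio cut, and the input vector (made-up numbers standing in for a second eigenvector) are all assumptions.

```python
def sweep_cut(values, edges, rule="ratio"):
    """Sort nodes by `values` and choose a prefix S of the sorted order.

    rule="bisection": cut in the middle of the sorted order.
    rule="ratio": minimise |E(S, V-S)| / (|S| * |V-S|) over all prefixes.
    """
    n = len(values)
    order = sorted(range(1, n + 1), key=lambda v: values[v - 1])  # Step 1
    if rule == "bisection":
        return set(order[: n // 2])
    best_ratio, best_S = None, None
    for k in range(1, n):                                          # Step 2
        S = set(order[:k])
        cut = sum(1 for u, v in edges if (u in S) != (v in S))
        ratio = cut / (k * (n - k))
        if best_ratio is None or ratio < best_ratio:
            best_ratio, best_S = ratio, S
    return best_S


# Hypothetical input: two triangles joined by the edge 3-4, and made-up
# values standing in for the second eigenvector (not computed here).
edges = [(1, 2), (1, 3), (2, 3), (3, 4), (4, 5), (4, 6), (5, 6)]
values = [-0.46, -0.46, -0.26, 0.26, 0.46, 0.46]
S_ratio = sweep_cut(values, edges, rule="ratio")
S_bisect = sweep_cut(values, edges, rule="bisection")
print(S_ratio, S_bisect)
```

On this symmetric toy input both heuristics pick the same side; on unbalanced graphs they can disagree.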

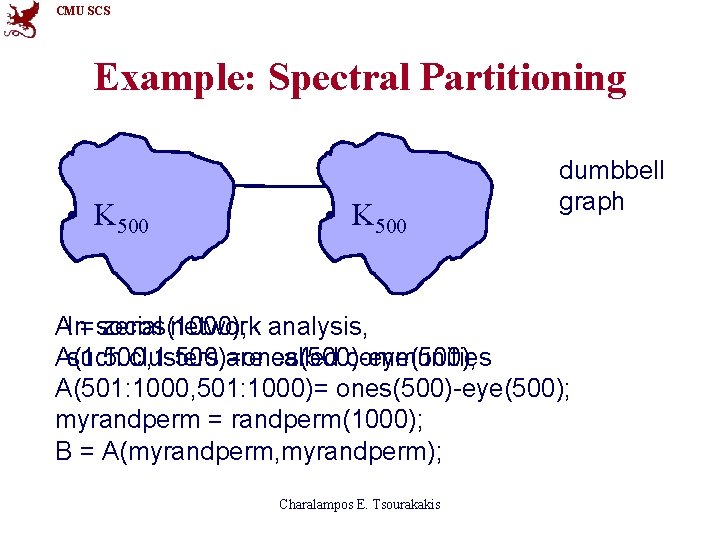

Example: Spectral Partitioning
• $K_{500}$ dumbbell graph. In social network analysis, such clusters are called communities.

    A = zeros(1000);
    A(1:500, 1:500) = ones(500) - eye(500);
    A(501:1000, 501:1000) = ones(500) - eye(500);
    A(500, 501) = 1; A(501, 500) = 1;  % bridge edge (not in the slide code; added so the two cliques form a connected dumbbell)
    myrandperm = randperm(1000);
    B = A(myrandperm, myrandperm);

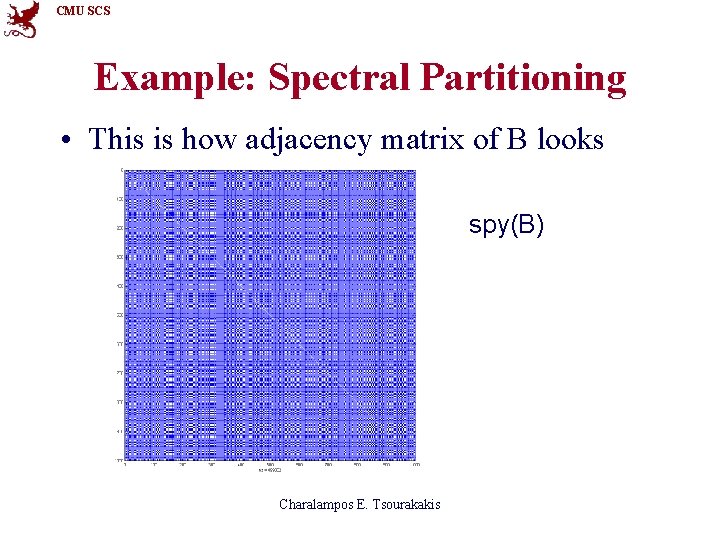

Example: Spectral Partitioning
• This is how the adjacency matrix B looks:

    spy(B)

Example: Spectral Partitioning
• This is what the 2nd eigenvector of the Laplacian of B looks like:

    L = diag(sum(B)) - B;
    [u, v] = eigs(L, 2, 'SM');
    plot(u(:, 1), 'x')

Not much information yet…

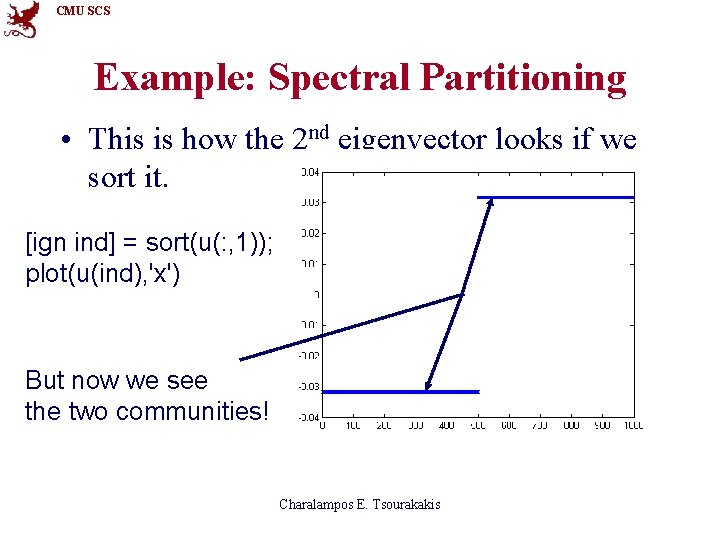

Example: Spectral Partitioning
• This is how the 2nd eigenvector looks if we sort it:

    [ign, ind] = sort(u(:, 1));
    plot(u(ind, 1), 'x')

But now we see the two communities!

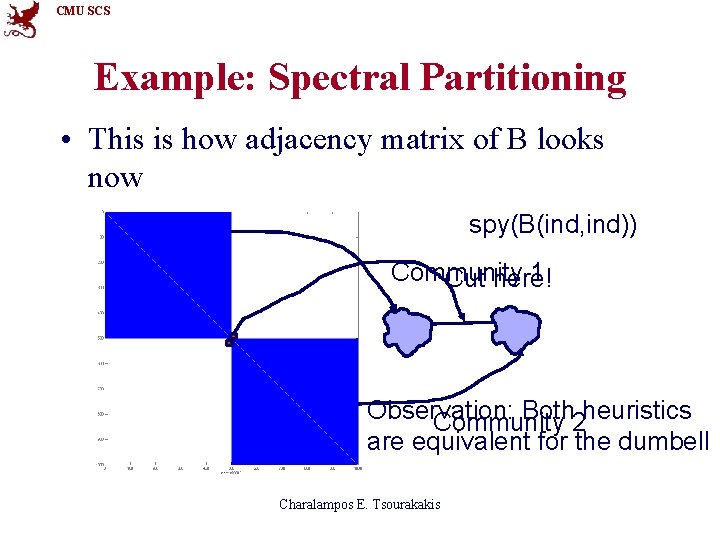

Example: Spectral Partitioning
• This is how the adjacency matrix B looks now:

    spy(B(ind, ind))

[Figure: reordered matrix with two diagonal blocks, Community 1 and Community 2, and the marker "Cut here!" between them.]
Observation: both heuristics are equivalent for the dumbbell.
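
For readers without MATLAB, the same experiment can be sketched on a much smaller dumbbell in plain Python. This is an assumption-laden illustration, not the slide's code: it uses two 5-cliques joined by one bridge edge, and it approximates the second eigenvector by power iteration on cI - L with the all-ones direction projected out instead of calling an eigensolver. `dumbbell_laplacian` and `fiedler_vector` are names introduced here.

```python
import math


def dumbbell_laplacian(k):
    """Laplacian of two k-cliques joined by one bridge edge (nodes 0..2k-1)."""
    n = 2 * k
    A = [[0.0] * n for _ in range(n)]
    for block in (range(k), range(k, n)):
        for i in block:
            for j in block:
                if i != j:
                    A[i][j] = 1.0
    A[k - 1][k] = A[k][k - 1] = 1.0  # bridge between the two cliques
    return [[(sum(A[i]) if i == j else -A[i][j]) for j in range(n)]
            for i in range(n)]


def fiedler_vector(L, iters=300):
    """Approximate the eigenpair of the second-smallest eigenvalue of L.

    Power iteration on c*I - L, keeping the iterate orthogonal to all-ones.
    """
    n = len(L)
    c = 2.0 * max(L[i][i] for i in range(n))  # upper bound on the largest eigenvalue
    x = [float(i) for i in range(n)]
    for _ in range(iters):
        mean = sum(x) / n
        x = [v - mean for v in x]                  # project out the all-ones vector
        norm = math.sqrt(sum(v * v for v in x))
        x = [v / norm for v in x]
        Lx = [sum(L[i][j] * x[j] for j in range(n)) for i in range(n)]
        x = [c * x[i] - Lx[i] for i in range(n)]   # multiply by c*I - L
    norm = math.sqrt(sum(v * v for v in x))
    x = [v / norm for v in x]
    lam = sum(x[i] * sum(L[i][j] * x[j] for j in range(n)) for i in range(n))
    return lam, x


L = dumbbell_laplacian(5)
lam2, u = fiedler_vector(L)
order = sorted(range(len(u)), key=lambda i: u[i])  # Step 1: sort by eigenvector value
community_1 = sorted(order[:5])                    # Step 2: bisection
community_2 = sorted(order[5:])
print(round(lam2, 4), community_1, community_2)
```

The sign of the computed vector is arbitrary, so which clique ends up as `community_1` is not meaningful; only the split is.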

Where does it go from here?
• Normalized Laplacian
  – Ng, Jordan, Weiss Spectral Clustering
  – Laplacian Eigenmaps for Manifold Learning
  – Computer Vision and many more applications…
Standard reference: Spectral Graph Theory, monograph by Fan Chung Graham

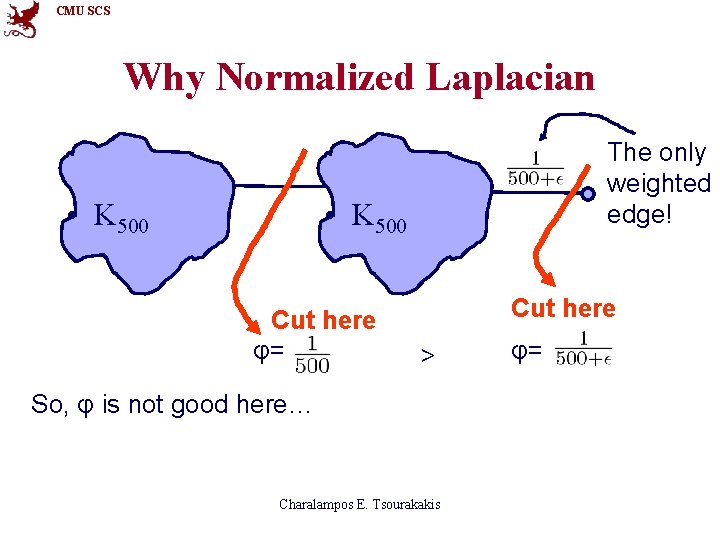

CMU SCS Why Normalized Laplacian • K 500 The only weighted edge! • K 500 Cut here φ= Cut here > So, φ is not good here… Charalampos E. Tsourakakis φ=

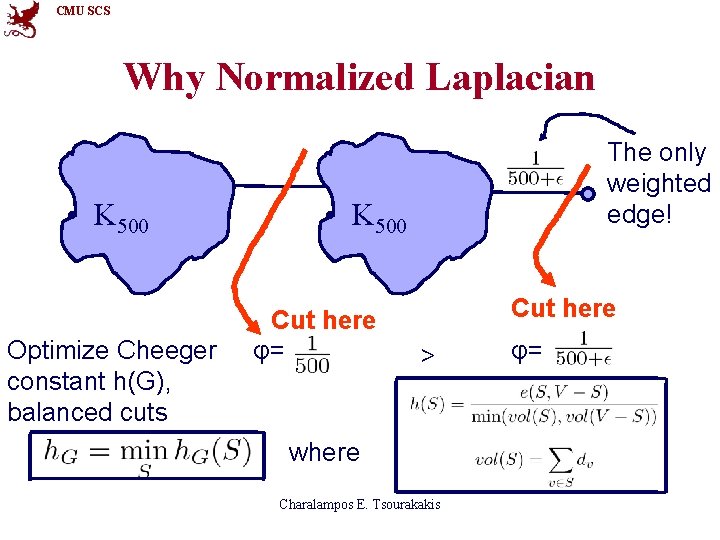

CMU SCS Why Normalized Laplacian • K 500 Optimize Cheeger constant h(G), balanced cuts The only weighted edge! • K 500 Cut here φ= Cut here > where Charalampos E. Tsourakakis φ=
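
The definition following "where" on this slide was lost in extraction. For orientation, the block below states the standard definitions from Chung's monograph, which the deck cites as its reference; whether the slide used exactly this notation is an assumption. Here $d_i$ is the (weighted) degree of node i, the graph is assumed connected, and $\lambda_2(\mathcal{L})$ is the second-smallest eigenvalue of the normalized Laplacian.

```latex
\operatorname{vol}(S) = \sum_{i \in S} d_i ,
\qquad
h(G) = \min_{\emptyset \neq S \subset V}
       \frac{|E(S, V-S)|}{\min\{\operatorname{vol}(S),\ \operatorname{vol}(V-S)\}}

\mathcal{L} = D^{-1/2} L D^{-1/2} = I - D^{-1/2} A D^{-1/2} ,
\qquad
\frac{\lambda_2(\mathcal{L})}{2} \;\le\; h(G) \;\le\; \sqrt{2\,\lambda_2(\mathcal{L})}
```

Because the denominator uses volume rather than the number of nodes, cutting off a small, low-degree piece is penalized, which is the sense in which h(G) prefers balanced cuts.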

Conclusions
• Spectrum tells us a lot about the graph.
• What to remember:
  – What is an eigenvector (f: Nodes → Reals)
  – Adjacency: #Paths
  – Laplacian: Sparsest Cut and Intuition
  – Normalized Laplacian: Normalized cuts, tend to avoid unbalanced cuts

References
• A list of references is on my web site, in the KDD tutorial web page: www.cs.cmu.edu/~ctsourak/

Thank you!
