Deep Learning from Programs Marc Brockschmidt MSR Cambridge

(Deep) Learning from Programs Marc Brockschmidt - MSR Cambridge @mmjb86

Personal History: Termination Proving System.out.println(“Hello World!”)

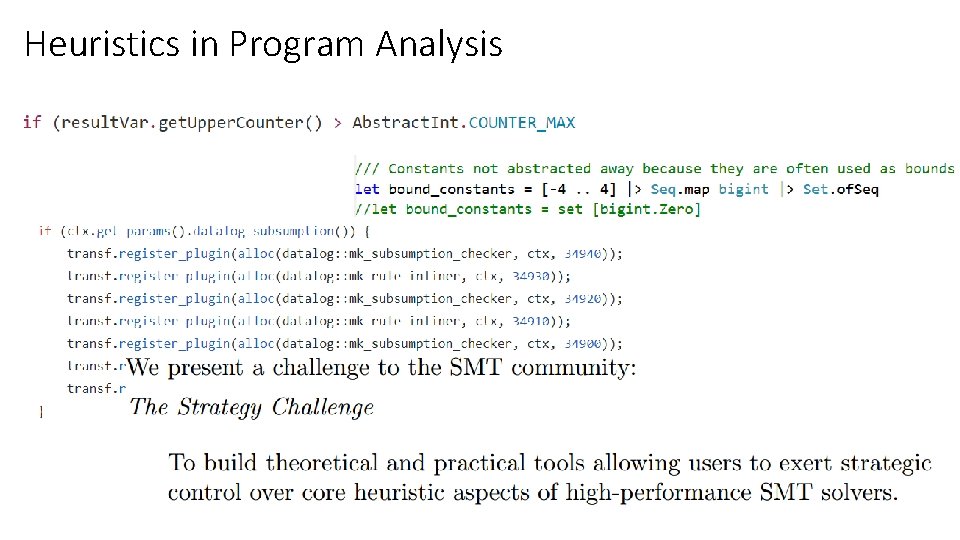

Heuristics in Program Analysis

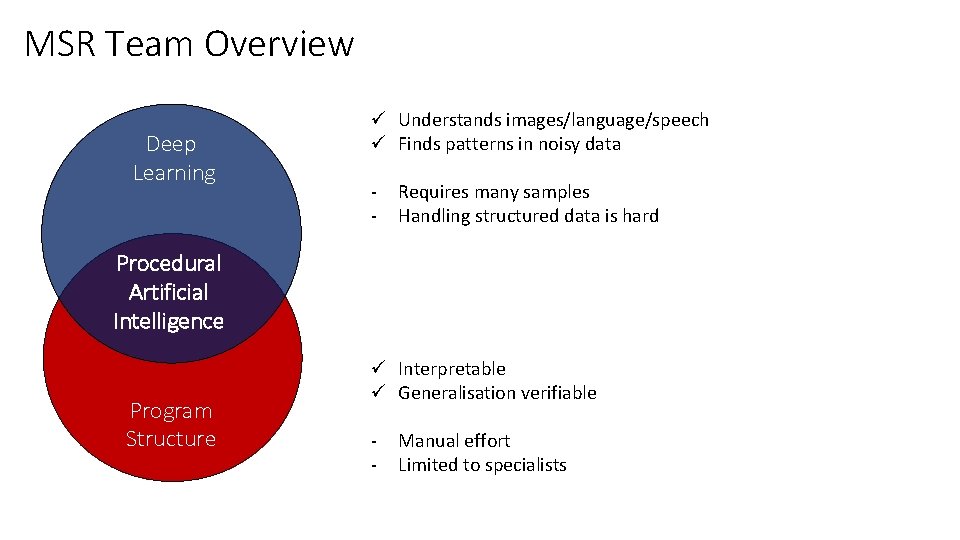

MSR Team Overview
Deep Learning
✓ Understands images/language/speech
✓ Finds patterns in noisy data
- Requires many samples
- Handling structured data is hard
Procedural Artificial Intelligence
Program Structure
✓ Interpretable
✓ Generalisation verifiable
- Manual effort
- Limited to specialists

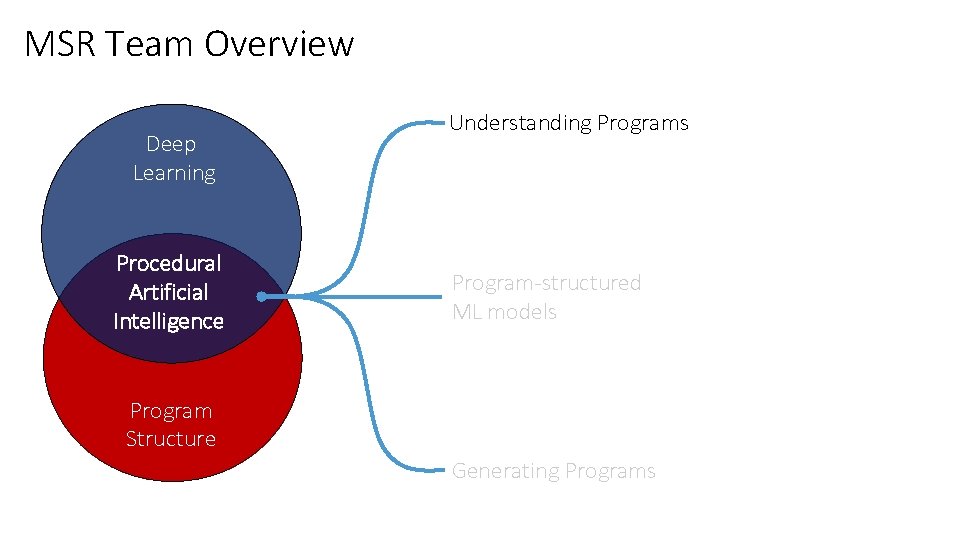

MSR Team Overview
Deep Learning | Procedural Artificial Intelligence | Program Structure
• Understanding Programs
• Program-structured ML models
• Generating Programs

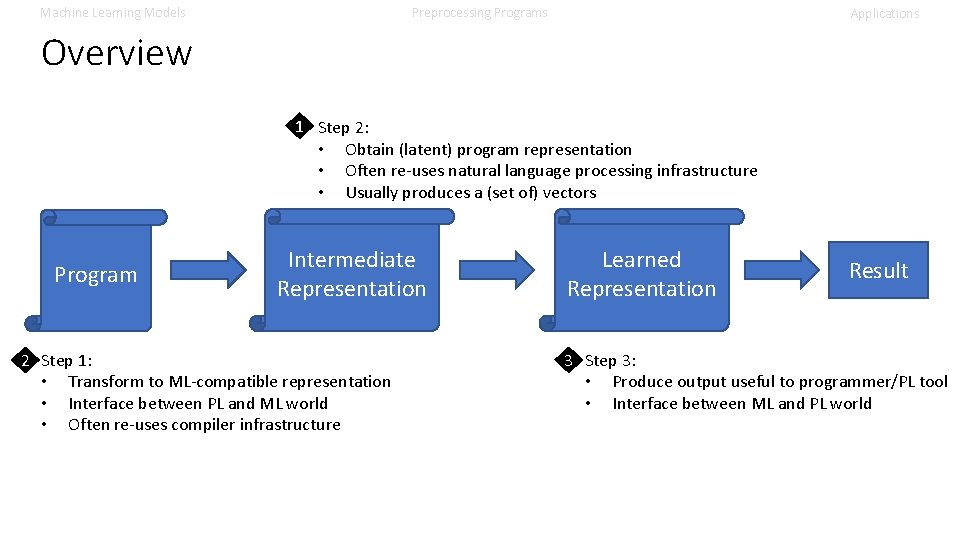

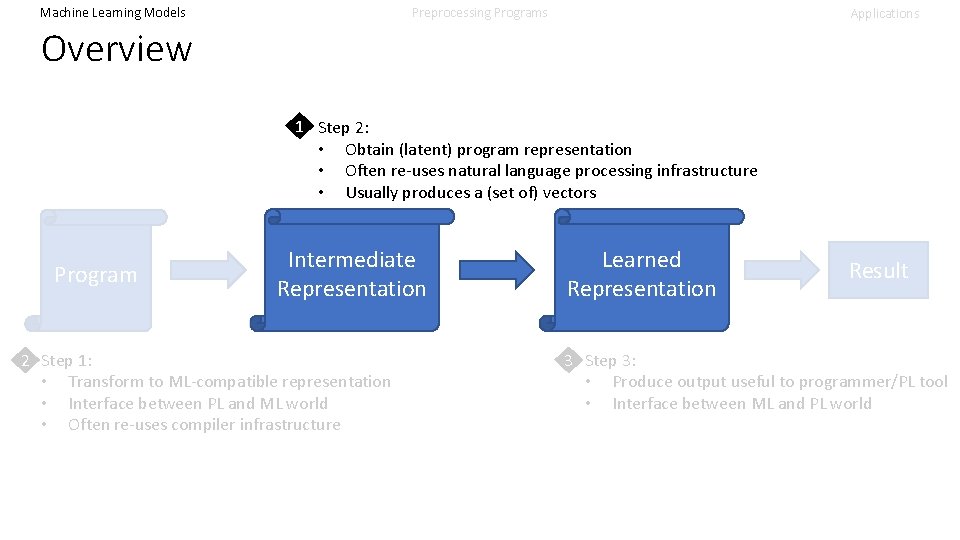

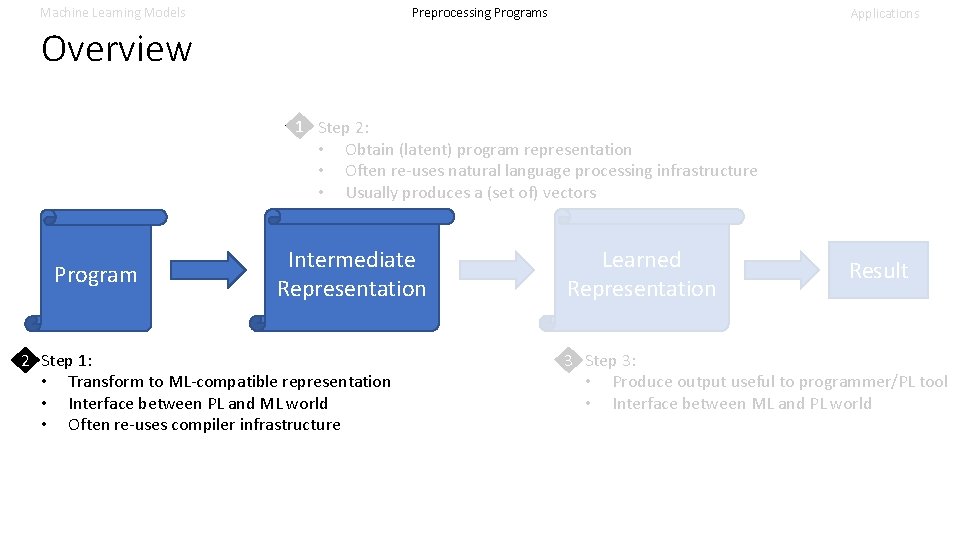

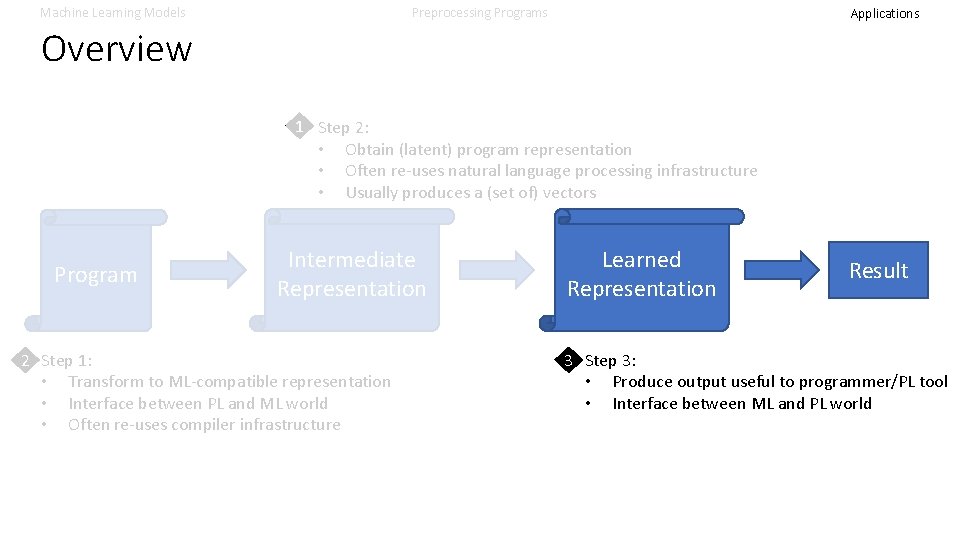

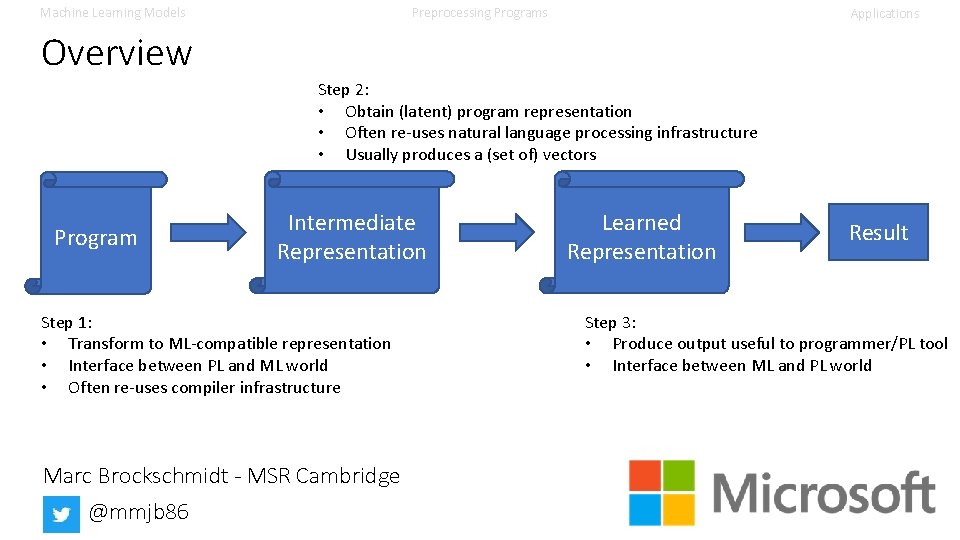

Overview
Program → Intermediate Representation → Learned Representation → Result
Step 1 (Preprocessing Programs): Program → Intermediate Representation
• Transform to ML-compatible representation
• Interface between PL and ML world
• Often re-uses compiler infrastructure
Step 2 (Machine Learning Models): Intermediate Representation → Learned Representation
• Obtain (latent) program representation
• Often re-uses natural language processing infrastructure
• Usually produces a (set of) vectors
Step 3 (Applications): Learned Representation → Result
• Produce output useful to programmer/PL tool
• Interface between ML and PL world

ML Models – Part 1
• Things not covered
• (Linear) Regression
• Multi-Layer Perceptrons
• How to split data

Not Covered Today
1. Details of training ML systems
   See generic tutorials for internals (e.g., backprop)
2. Concrete implementations
   But: Many relevant papers come with F/OSS artefacts
3. Non-neural approaches
   But: Ask me for pointers, and I’ll allude to these from time to time

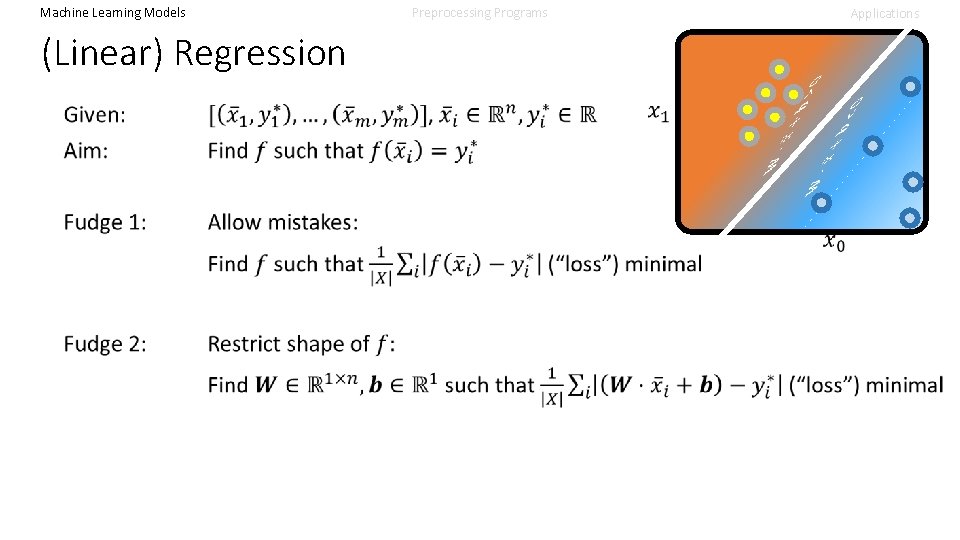

Machine Learning Models Preprocessing Programs Applications (Linear) Regression

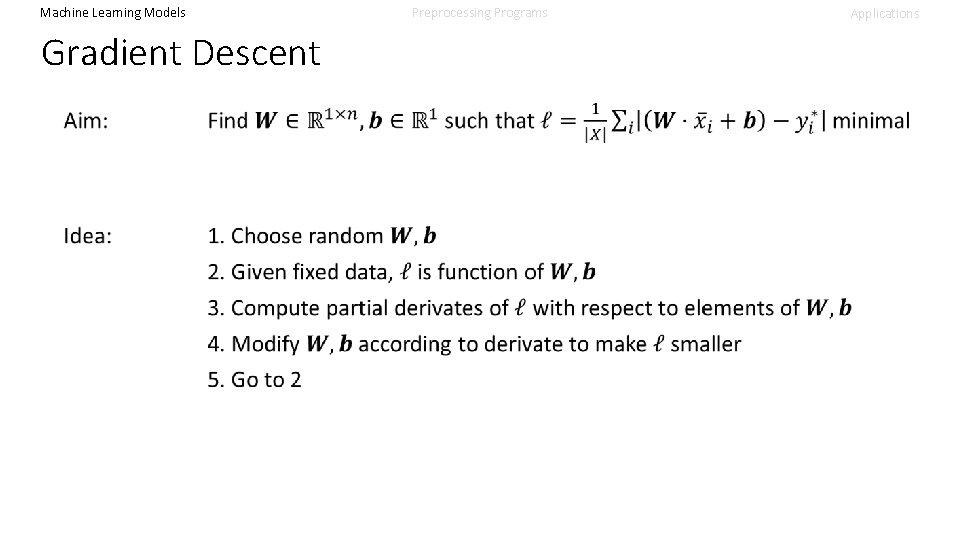

Machine Learning Models Gradient Descent Preprocessing Programs Applications

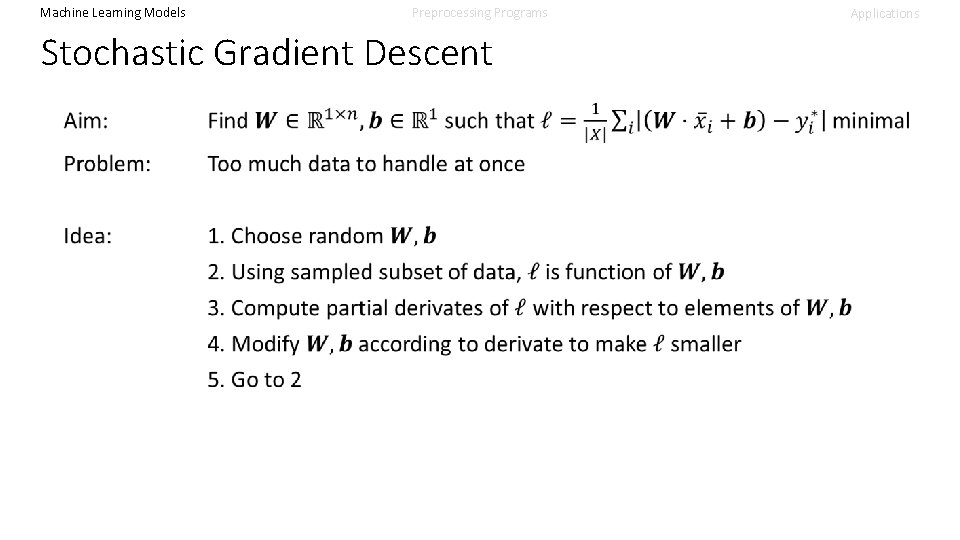

Machine Learning Models Preprocessing Programs Stochastic Gradient Descent Applications

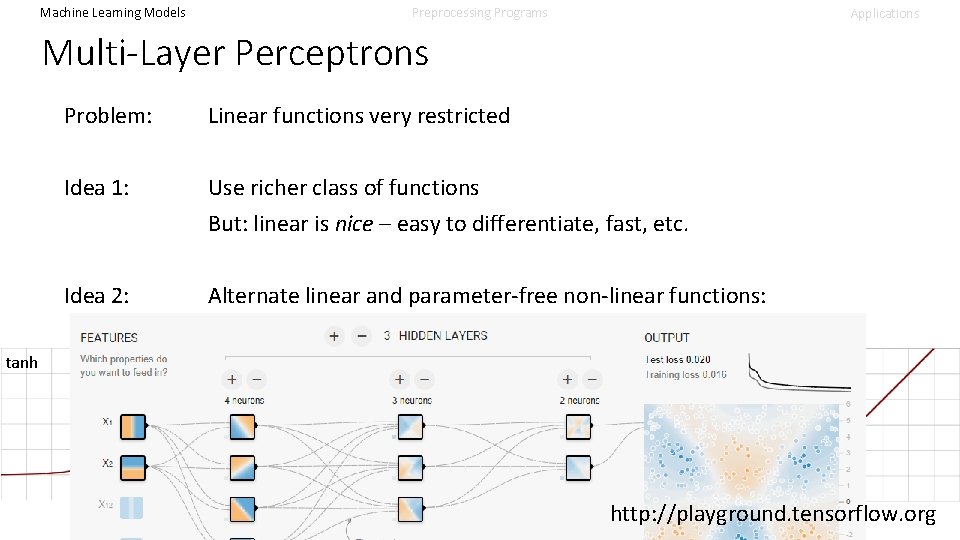

Multi-Layer Perceptrons
Problem: Linear functions very restricted
Idea 1: Use richer class of functions
But: linear is nice – easy to differentiate, fast, etc.
Idea 2: Alternate linear and parameter-free non-linear functions: sigmoid, tanh, ReLU, …
http://playground.tensorflow.org
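A minimal sketch of "Idea 2" in plain Python, assuming ReLU as the non-linearity and randomly initialised weights. The helper names (`linear`, `relu`, `init_layer`, `mlp_forward`) are illustrative and not taken from the talk.

```python
import random

def relu(v):
    """Parameter-free non-linearity, applied element-wise."""
    return [max(0.0, x) for x in v]

def linear(weights, bias, v):
    """Affine map W v + b; `weights` is a list of rows."""
    return [sum(w * x for w, x in zip(row, v)) + b
            for row, b in zip(weights, bias)]

def init_layer(n_in, n_out, rng):
    """Random weights and zero bias (an arbitrary choice for this sketch)."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)]
               for _ in range(n_out)]
    return weights, [0.0] * n_out

def mlp_forward(layers, v):
    """Alternate linear layers with ReLU; the last layer stays linear."""
    for i, (weights, bias) in enumerate(layers):
        v = linear(weights, bias, v)
        if i < len(layers) - 1:
            v = relu(v)
    return v

rng = random.Random(0)
layers = [init_layer(2, 4, rng), init_layer(4, 1, rng)]
output = mlp_forward(layers, [0.5, -1.0])
```

All trainable parameters sit in the linear layers, so differentiation stays as simple as in the purely linear case.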

Data splitting

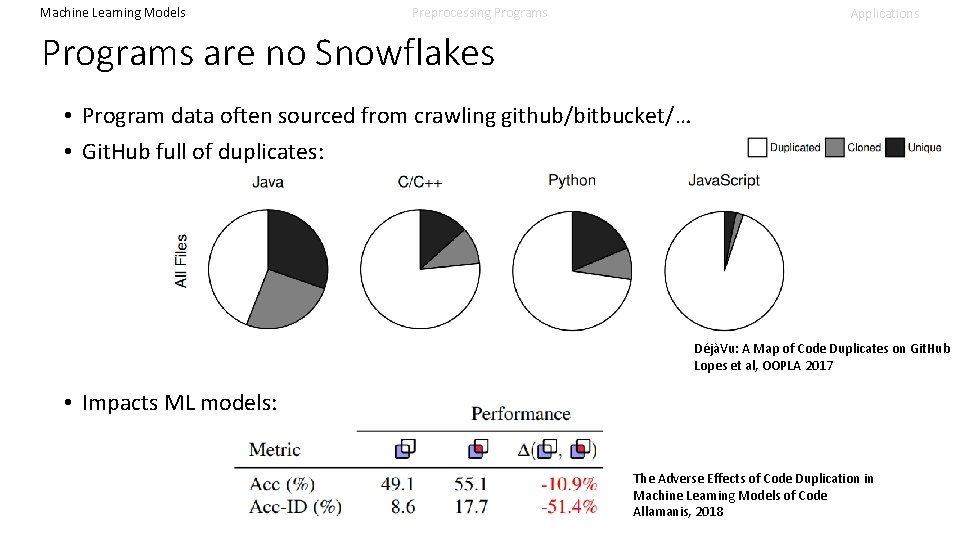

Programs are no Snowflakes
• Program data often sourced from crawling GitHub/Bitbucket/…
• GitHub full of duplicates: DéjàVu: A Map of Code Duplicates on GitHub, Lopes et al., OOPSLA 2017
• Impacts ML models: The Adverse Effects of Code Duplication in Machine Learning Models of Code, Allamanis, 2018
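One simple guard against duplicates leaking between training and test data is to deduplicate the corpus before splitting it. The sketch below only detects files that are identical up to whitespace. The cited papers study near-duplicates with more refined similarity measures, so this is a simplified stand-in, and the file paths are invented.

```python
import hashlib
import re

def fingerprint(source: str) -> str:
    """Hash of the file content with all whitespace runs collapsed."""
    normalized = re.sub(r"\s+", " ", source).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

def deduplicate(files: dict) -> dict:
    """Keep the first file seen for every fingerprint."""
    seen = set()
    unique = {}
    for path, source in files.items():
        key = fingerprint(source)
        if key not in seen:
            seen.add(key)
            unique[path] = source
    return unique

# Hypothetical corpus: the first two files differ only in layout.
corpus = {
    "a/Foo.java": "class Foo { int x = 1; }",
    "b/Foo.java": "class Foo {\n    int x = 1;\n}",
    "c/Bar.java": "class Bar { }",
}
unique_files = deduplicate(corpus)
```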

ML Models – Part 2: Sequences
• Vocabularies & Embeddings
• Bag of Words
• Recurrent NNs
• 1D Convolutional NNs
• Attentional Models

Machine Learning Models Preprocessing Programs Learning from !Numbers Applications

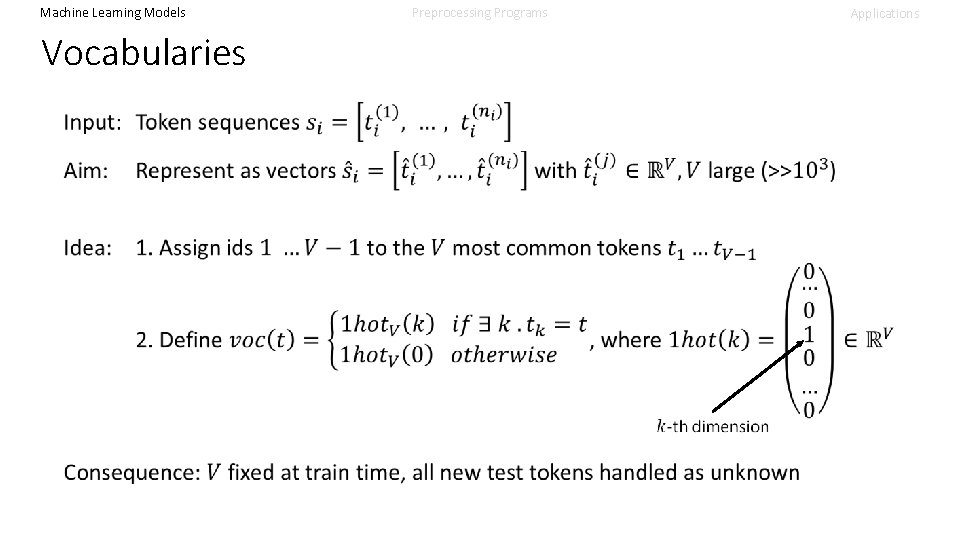

Machine Learning Models Preprocessing Programs Applications Vocabularies

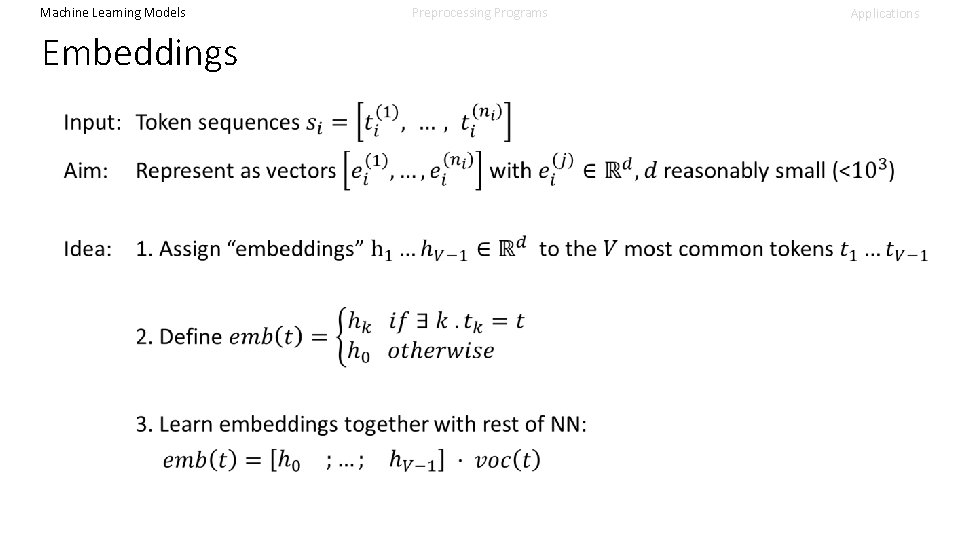

Machine Learning Models Embeddings Preprocessing Programs Applications

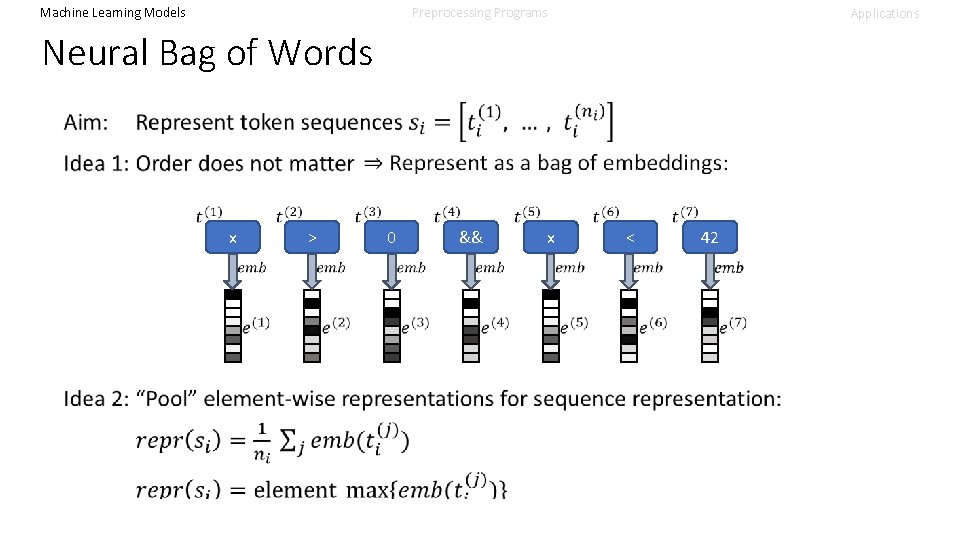

Neural Bag of Words
Example input: x > 0 && x < 42

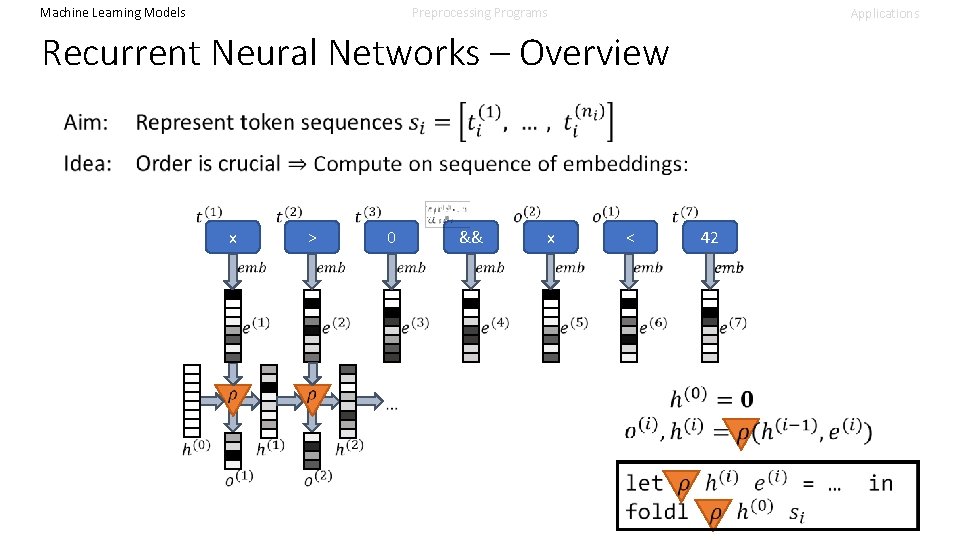
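A sketch of how vocabulary, embeddings and the neural bag of words fit together, assuming that the bag-of-words representation is the average of the token embeddings and that unknown tokens share one reserved id. The embedding values are random stand-ins for learned parameters. `build_vocab` and `bag_of_words` are illustrative names.

```python
import random

UNK = "<unk>"

def build_vocab(token_sequences, max_size):
    """Map the most frequent tokens to ids; id 0 is reserved for unknown tokens."""
    counts = {}
    for seq in token_sequences:
        for tok in seq:
            counts[tok] = counts.get(tok, 0) + 1
    most_common = sorted(counts, key=lambda t: (-counts[t], t))[: max_size - 1]
    vocab = {UNK: 0}
    for tok in most_common:
        vocab[tok] = len(vocab)
    return vocab

def bag_of_words(tokens, vocab, embeddings):
    """Average the embedding vectors of all tokens; token order is ignored."""
    dim = len(embeddings[0])
    total = [0.0] * dim
    for tok in tokens:
        vec = embeddings[vocab.get(tok, vocab[UNK])]
        total = [a + b for a, b in zip(total, vec)]
    return [a / len(tokens) for a in total]

tokens = ["x", ">", "0", "&&", "x", "<", "42"]
vocab = build_vocab([tokens], max_size=10)
rng = random.Random(0)
embeddings = [[rng.gauss(0.0, 1.0) for _ in range(4)] for _ in vocab]
representation = bag_of_words(tokens, vocab, embeddings)
shuffled = bag_of_words(list(reversed(tokens)), vocab, embeddings)
```

Reversing the tokens yields the same vector up to rounding, which is exactly the information the sequence models on the following slides try to keep.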

Machine Learning Models Preprocessing Programs Applications Recurrent Neural Networks – Overview x > && x 42 < 0

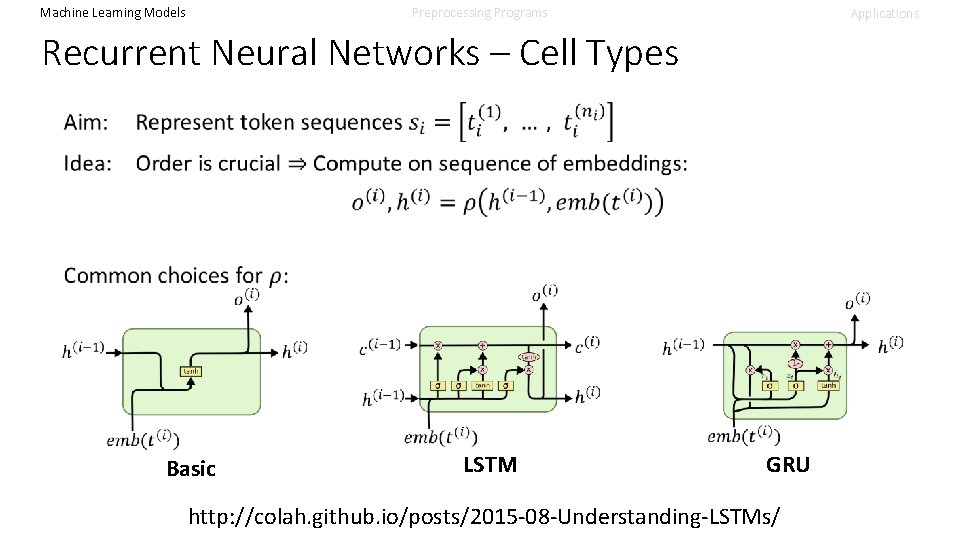

Machine Learning Models Preprocessing Programs Applications Recurrent Neural Networks – Cell Types Basic LSTM GRU http: //colah. github. io/posts/2015 -08 -Understanding-LSTMs/

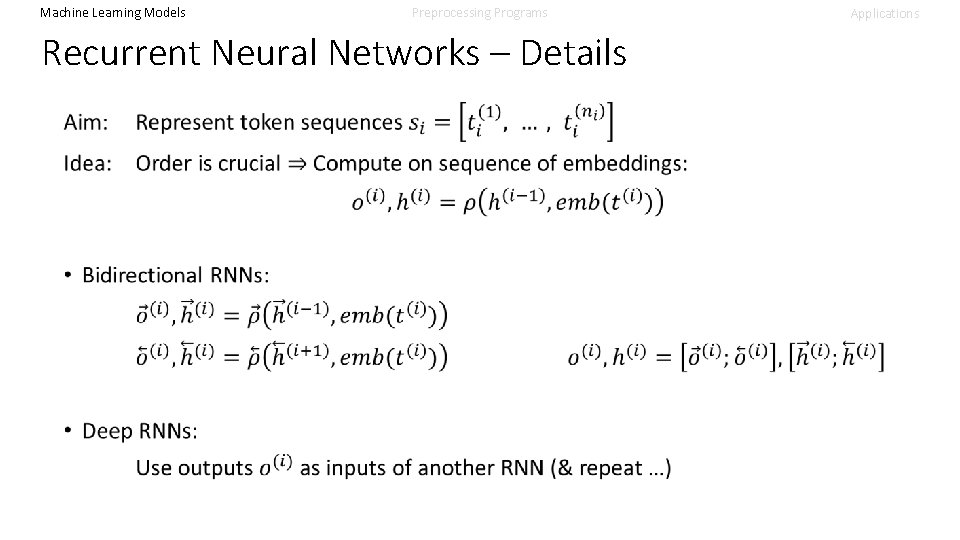

Machine Learning Models Preprocessing Programs Recurrent Neural Networks – Details Applications

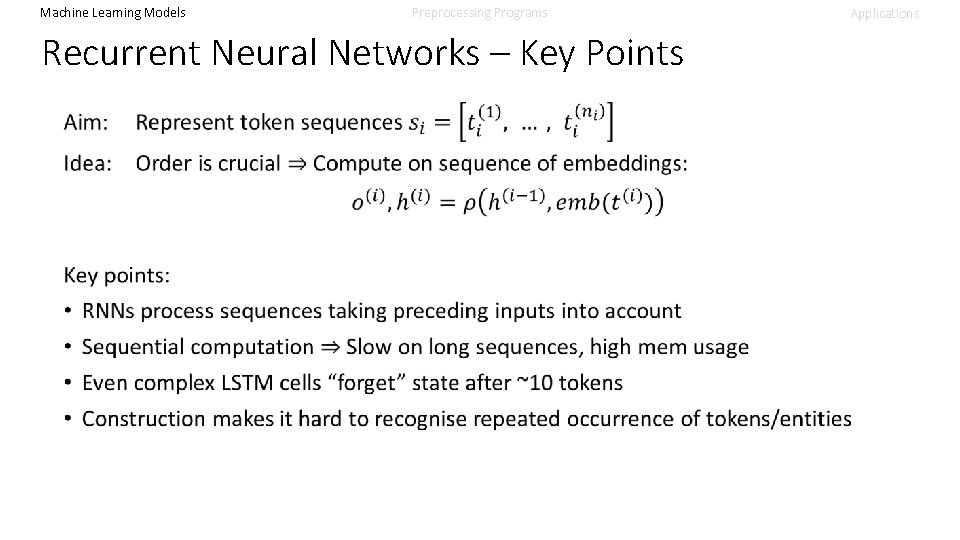

Machine Learning Models Preprocessing Programs Recurrent Neural Networks – Key Points Applications

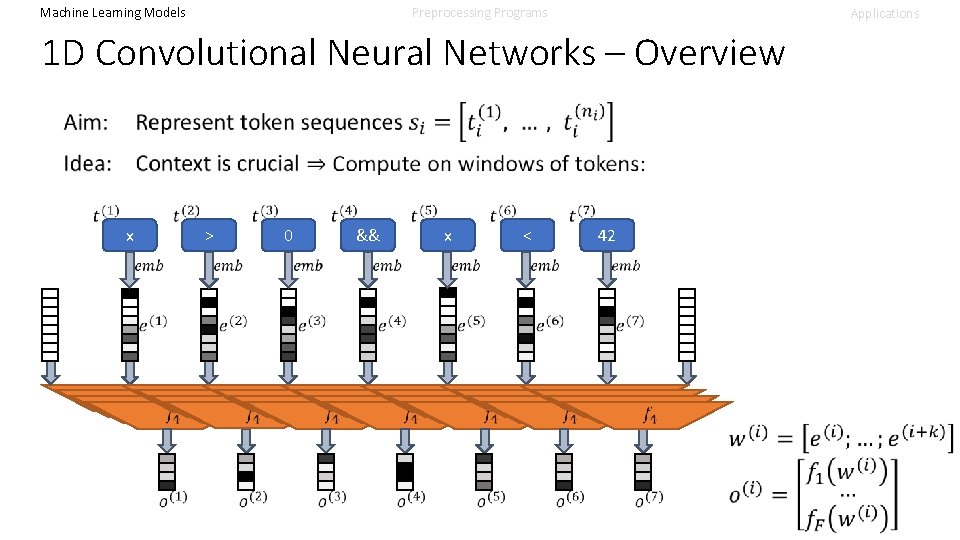

Machine Learning Models Preprocessing Programs Applications 1 D Convolutional Neural Networks – Overview x > 0 && x < 42

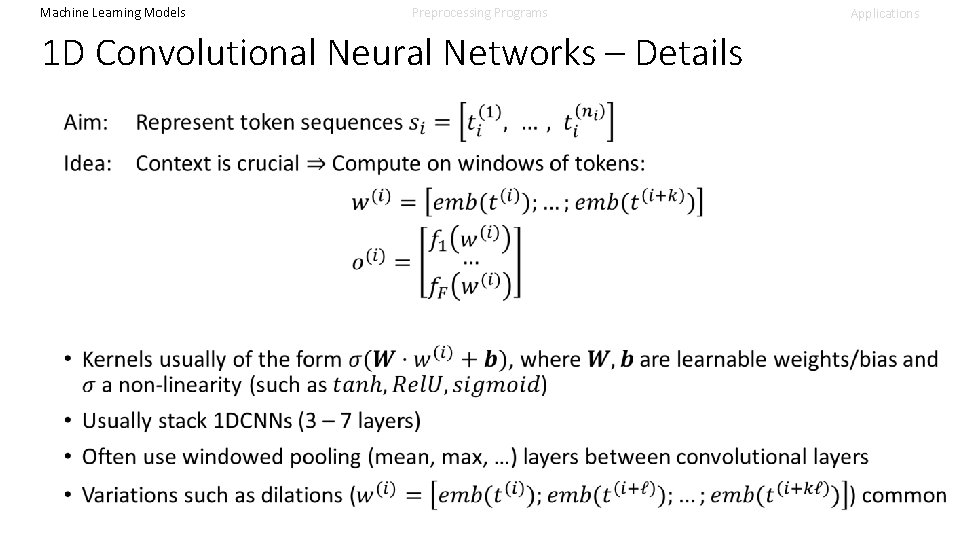

Machine Learning Models Preprocessing Programs 1 D Convolutional Neural Networks – Details Applications

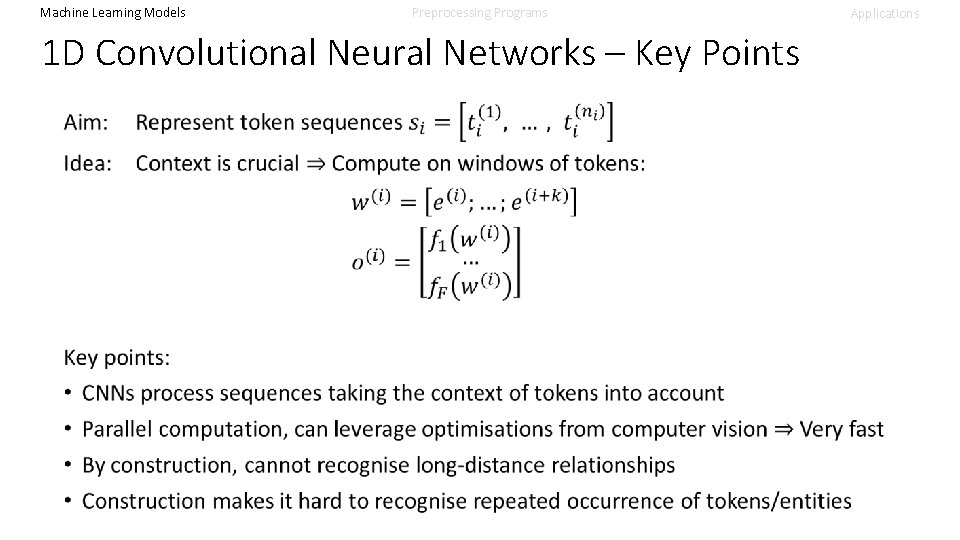

Machine Learning Models Preprocessing Programs 1 D Convolutional Neural Networks – Key Points Applications

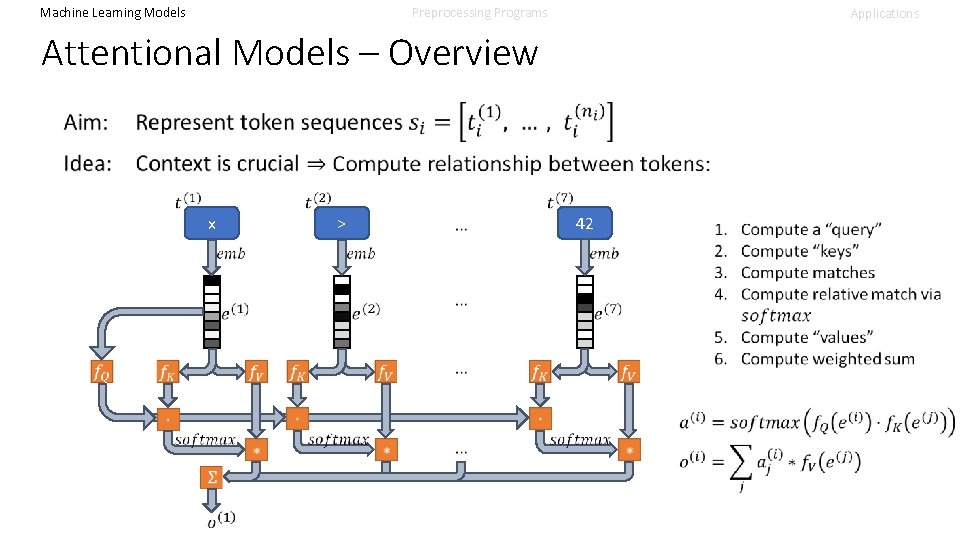

Machine Learning Models Preprocessing Programs Applications Attentional Models – Overview x 42 >

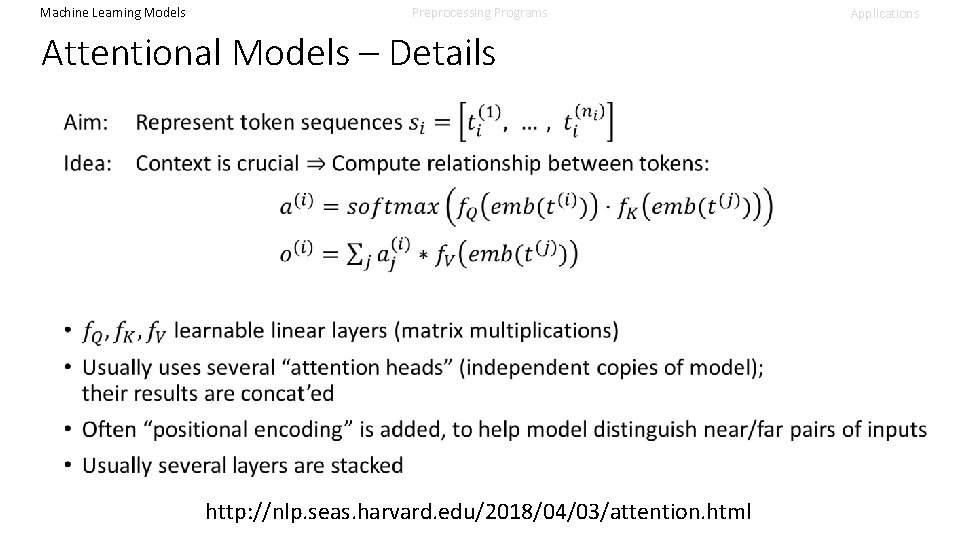

Machine Learning Models Preprocessing Programs Attentional Models – Details http: //nlp. seas. harvard. edu/2018/04/03/attention. html Applications

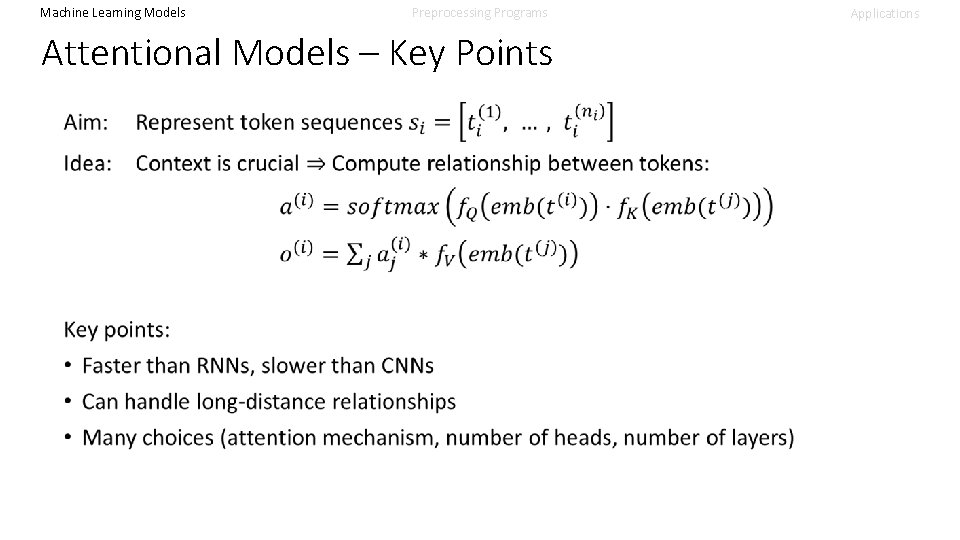

Machine Learning Models Preprocessing Programs Attentional Models – Key Points Applications

ML Models – Part 3: Structures
• TreeNNs
• Graph NNs

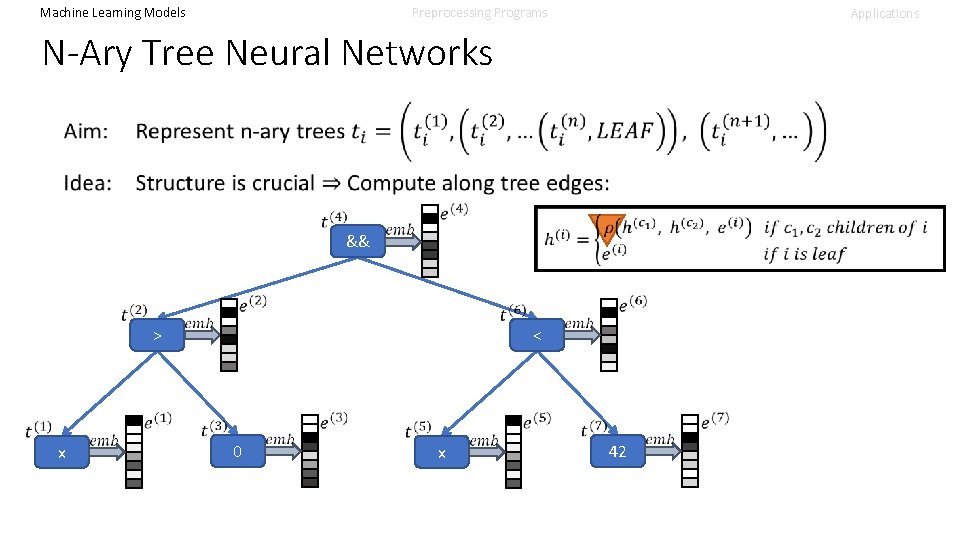

Machine Learning Models Preprocessing Programs Applications N-Ary Tree Neural Networks x > && 0 x < 42

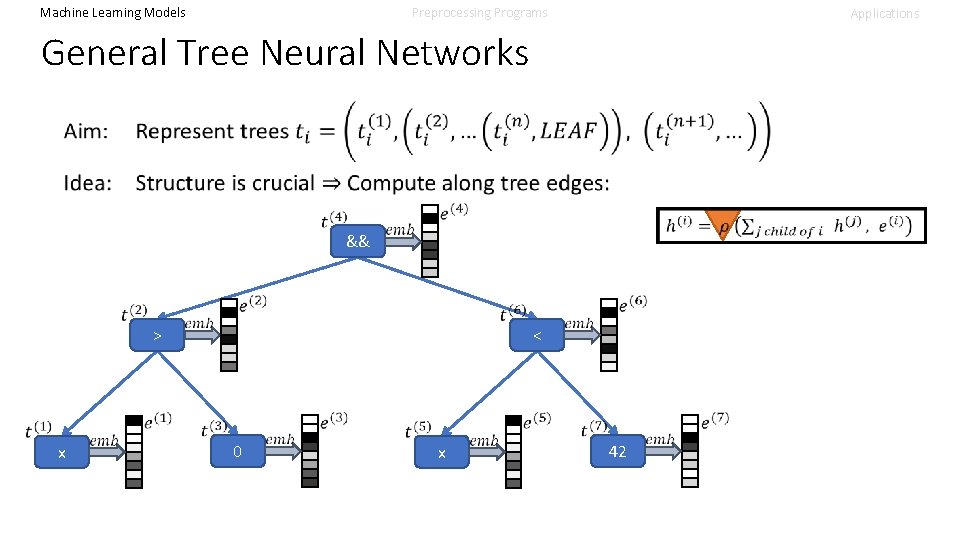

Machine Learning Models Preprocessing Programs Applications General Tree Neural Networks x > && 0 x < 42

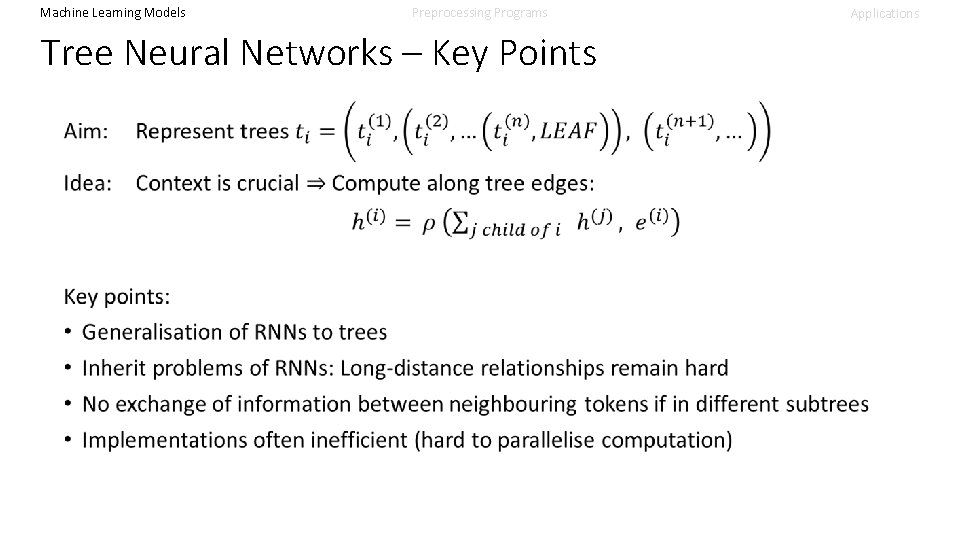

Machine Learning Models Preprocessing Programs Tree Neural Networks – Key Points Applications

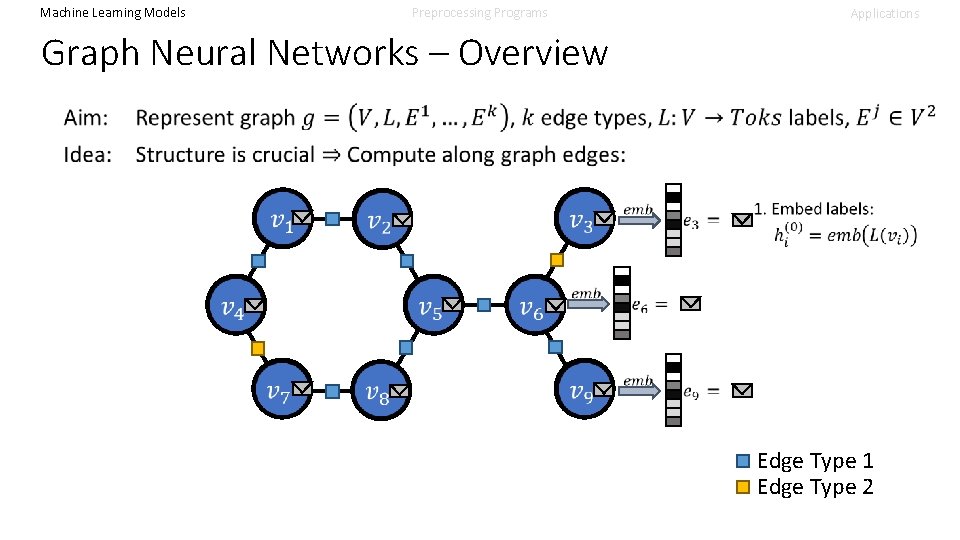

Machine Learning Models Preprocessing Programs Applications Graph Neural Networks – Overview Edge Type 1 Edge Type 2

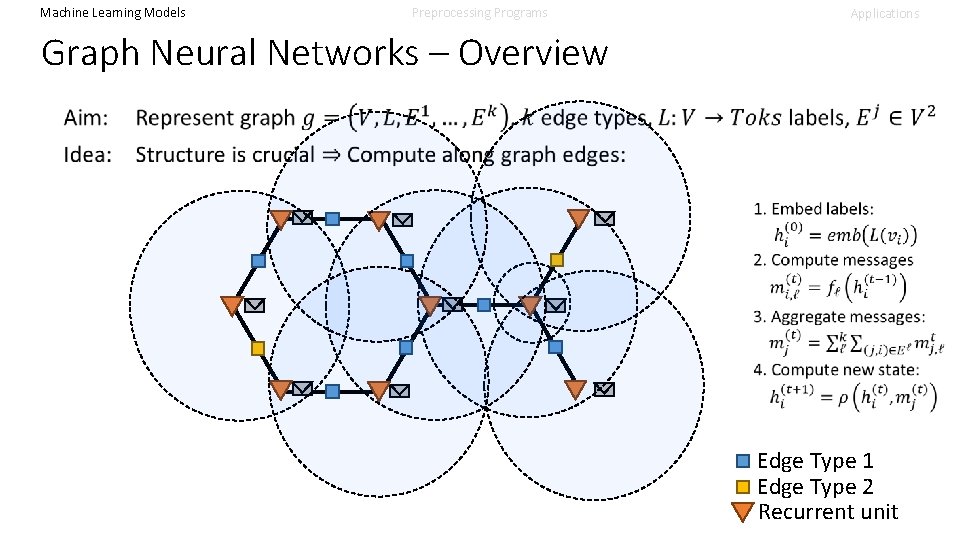

Machine Learning Models Preprocessing Programs Applications Graph Neural Networks – Overview Edge Type 1 Edge Type 2 Recurrent unit

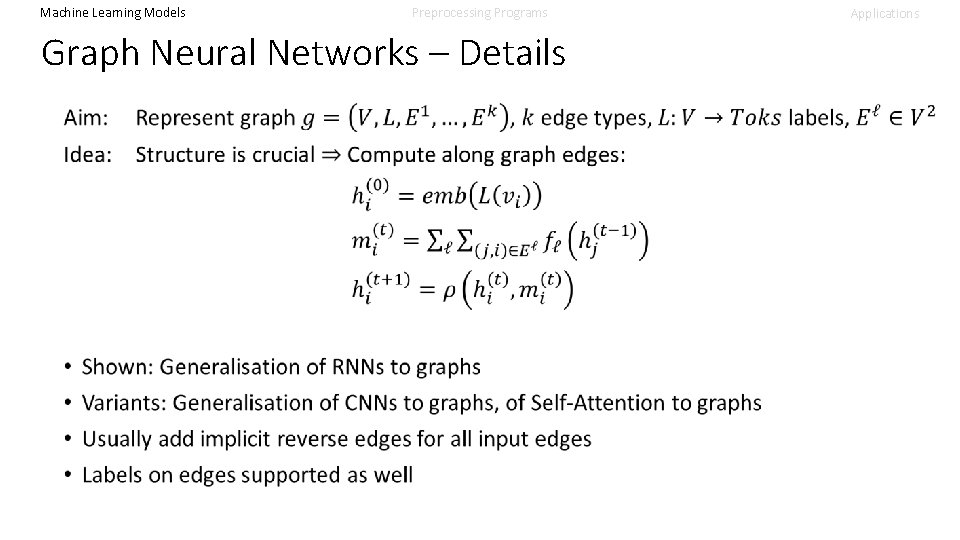

Machine Learning Models Preprocessing Programs Graph Neural Networks – Details Applications

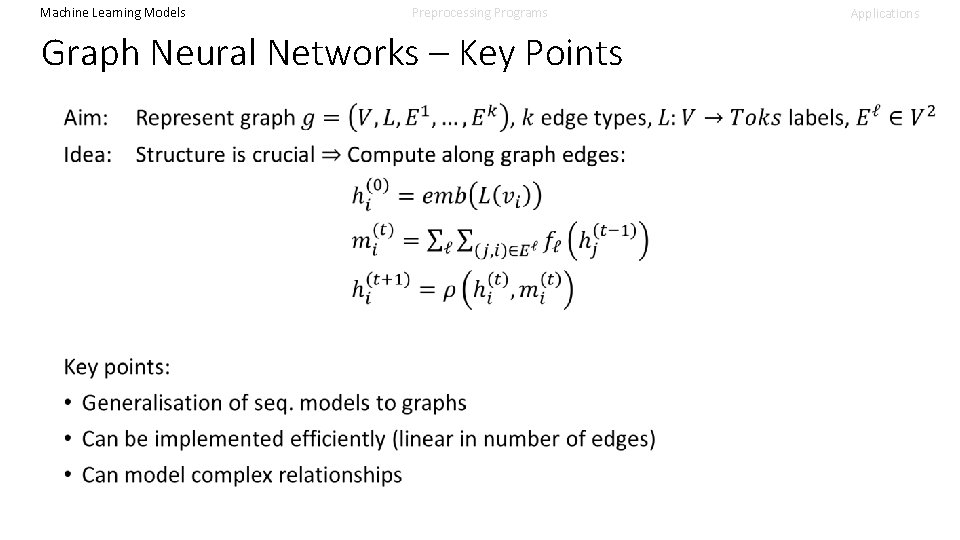

Machine Learning Models Preprocessing Programs Graph Neural Networks – Key Points Applications

Model Summary
• Learning from structure widely studied; many variations:
  • Sets (bag of words)
  • Sequences (RNNs, 1D-CNNs, Self-Attention)
  • Trees (TreeNN variants)
  • Graphs (GNN variants)
• Tension between computational effort and precision
• Key insight: Domain knowledge needed to select right model

“Programs”
Liberal definition: Element of language with semantics
Examples:
• Files in Java, C, Haskell, …
• Single Expressions
• Compiler IR / Assembly
• (SMT) Formulas
• Diffs of Programs

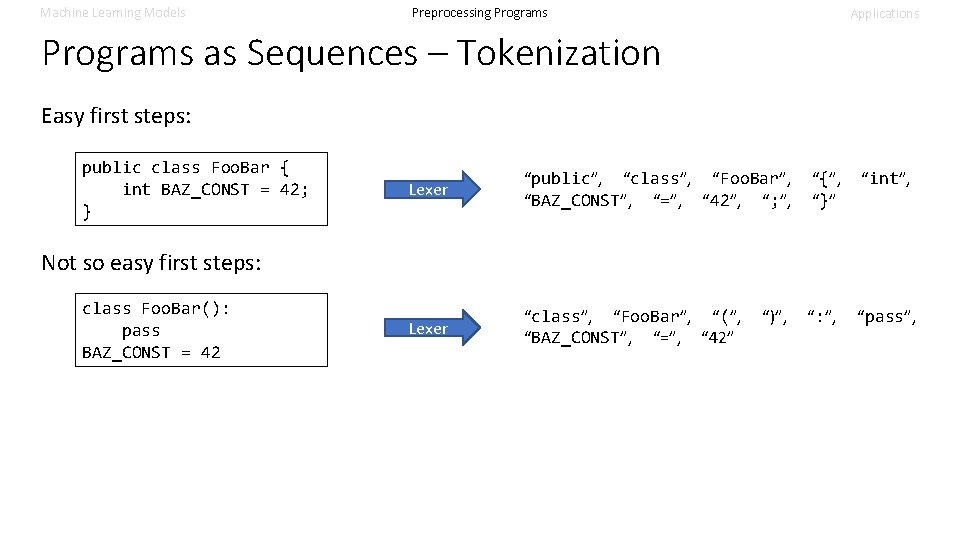

Programs as Sequences – Tokenization
Easy first steps:
public class FooBar { int BAZ_CONST = 42; }
Lexer: “public”, “class”, “FooBar”, “{”, “int”, “BAZ_CONST”, “=”, “42”, “;”, “}”
Not so easy first steps:
class FooBar(): pass BAZ_CONST = 42
Lexer: “class”, “FooBar”, “(”, “)”, “:”, “pass”, “BAZ_CONST”, “=”, “42”
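The Python example is "not so easy" presumably because layout carries meaning: a flat token list no longer says which statements belong to the class body. The sketch below uses Python's standard `tokenize` module, which emits explicit `INDENT` and `DEDENT` tokens for this purpose. The line breaks and indentation of the source string are an assumption about how the slide laid out the snippet.

```python
import io
import tokenize

def lex_python(source: str):
    """Return (token type name, text) pairs from Python's own lexer."""
    result = []
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        name = tokenize.tok_name[tok.type]
        if name in ("NL", "COMMENT", "ENDMARKER"):
            continue  # drop tokens that carry no program structure
        result.append((name, tok.string))
    return result

# Assumed layout: BAZ_CONST is defined after the class, at top level.
source = "class FooBar():\n    pass\nBAZ_CONST = 42\n"
tokens = lex_python(source)
kinds = [kind for kind, _ in tokens]
```

Keeping the `INDENT`/`DEDENT` markers in the token sequence is one way to preserve block structure for a sequence model.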

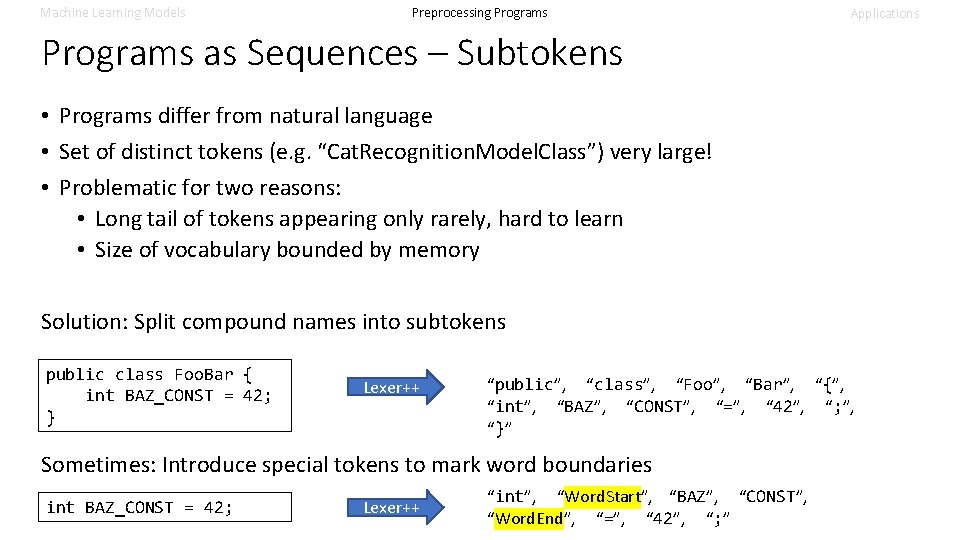

Programs as Sequences – Subtokens
• Programs differ from natural language
• Set of distinct tokens (e.g. “CatRecognitionModelClass”) very large!
• Problematic for two reasons:
  • Long tail of tokens appearing only rarely, hard to learn
  • Size of vocabulary bounded by memory
Solution: Split compound names into subtokens
public class FooBar { int BAZ_CONST = 42; }
Lexer++: “public”, “class”, “Foo”, “Bar”, “{”, “int”, “BAZ”, “CONST”, “=”, “42”, “;”, “}”
Sometimes: Introduce special tokens to mark word boundaries
int BAZ_CONST = 42;
Lexer++: “int”, “WordStart”, “BAZ”, “CONST”, “WordEnd”, “=”, “42”, “;”
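A possible "Lexer++" post-processing step, sketched with a regular expression that splits camelCase and snake_case names. The exact splitting rules used in practice vary between papers, so the pattern below is an assumption. The `WordStart` and `WordEnd` markers follow the slide.

```python
import re

# Upper-case runs (acronyms, constants), capitalised or lower-case words, digit runs.
SUBTOKEN_RE = re.compile(r"[A-Z]+(?![a-z])|[A-Z]?[a-z]+|\d+")

def split_subtokens(token: str):
    """Split an identifier into subtokens; non-identifiers are returned unchanged."""
    parts = SUBTOKEN_RE.findall(token)
    return parts or [token]

def lex_with_subtokens(tokens, mark_boundaries=False):
    """Expand every token into subtokens, optionally wrapping compound names."""
    out = []
    for tok in tokens:
        parts = split_subtokens(tok)
        if mark_boundaries and len(parts) > 1:
            out.extend(["WordStart", *parts, "WordEnd"])
        else:
            out.extend(parts)
    return out

tokens = ["int", "BAZ_CONST", "=", "42", ";"]
plain = lex_with_subtokens(tokens)
marked = lex_with_subtokens(tokens, mark_boundaries=True)
```

With boundary markers the original token sequence can be reconstructed from the subtoken sequence, which matters for generative tasks.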

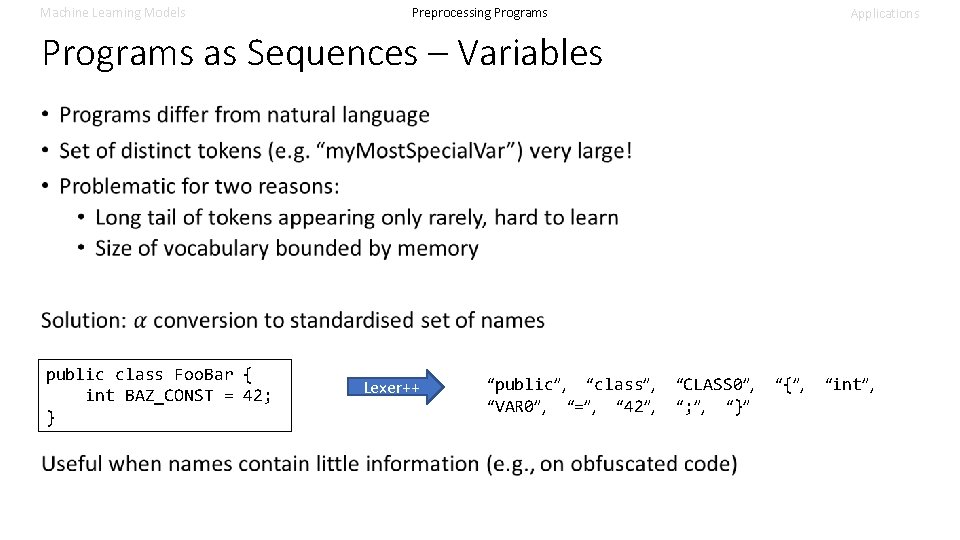

Programs as Sequences – Variables
public class FooBar { int BAZ_CONST = 42; }
Lexer++: “public”, “class”, “CLASS0”, “{”, “int”, “VAR0”, “=”, “42”, “;”, “}”
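Replacing identifiers by numbered placeholders can be sketched as follows. The sketch assumes that a front end has already determined which tokens are declared names and of which kind. The `declared` mapping is a hypothetical input standing in for that analysis.

```python
def anonymize(tokens, declared):
    """Replace declared identifiers by CLASS0, VAR0, ... in order of first occurrence."""
    mapping = {}   # original name -> placeholder
    counters = {}  # kind -> next free index
    out = []
    for tok in tokens:
        if tok in declared:
            if tok not in mapping:
                kind = declared[tok]
                index = counters.get(kind, 0)
                counters[kind] = index + 1
                mapping[tok] = f"{kind}{index}"
            out.append(mapping[tok])
        else:
            out.append(tok)
    return out

tokens = ["public", "class", "FooBar", "{", "int", "BAZ_CONST", "=", "42", ";", "}"]
declared = {"FooBar": "CLASS", "BAZ_CONST": "VAR"}  # assumed output of a front end
anonymized = anonymize(tokens, declared)
```

The vocabulary shrinks considerably this way, at the price of discarding whatever meaning the names carried.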

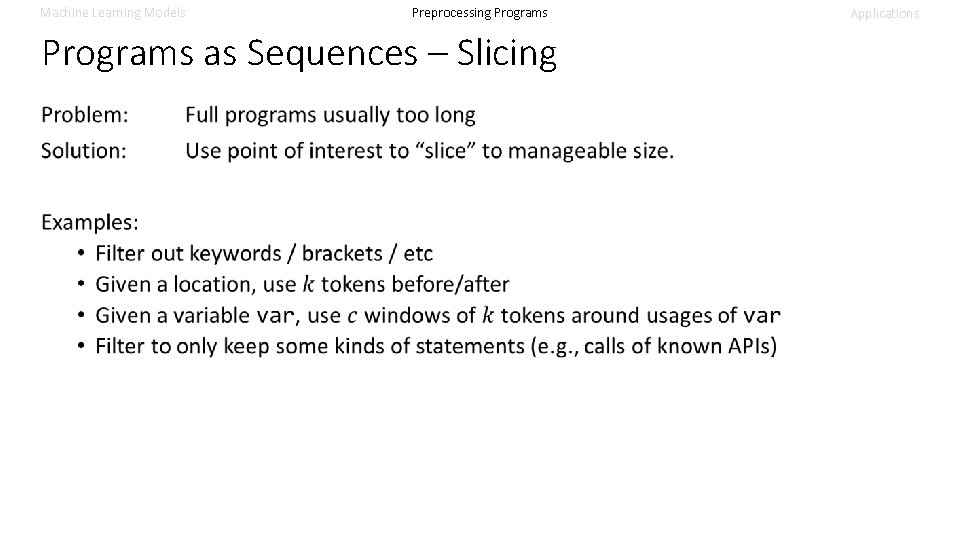

Machine Learning Models Preprocessing Programs as Sequences – Slicing Applications

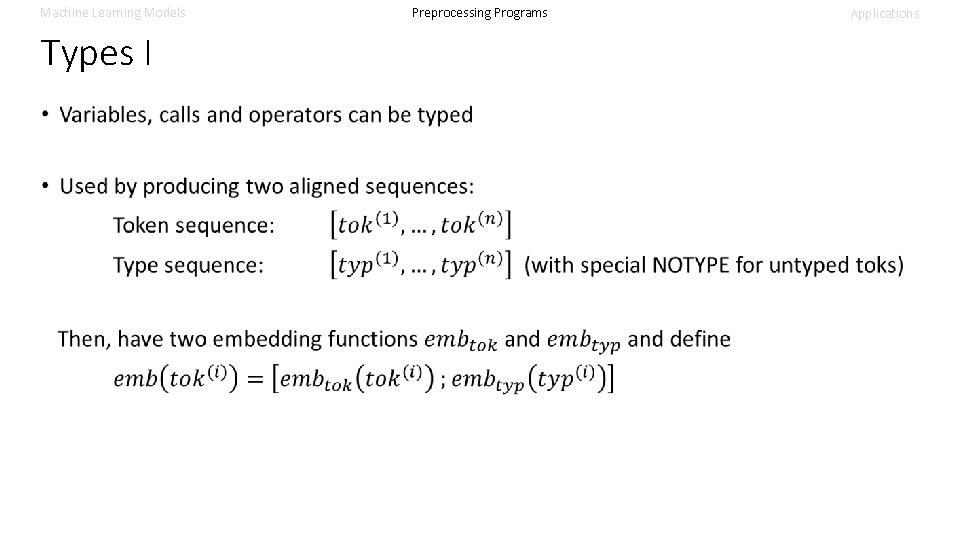

Machine Learning Models Types I Preprocessing Programs Applications

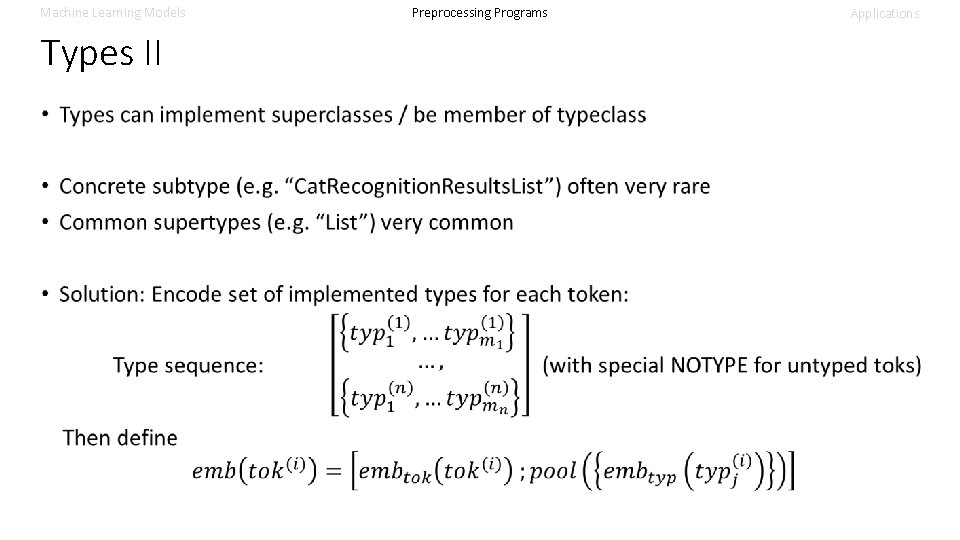

Machine Learning Models Types II Preprocessing Programs Applications

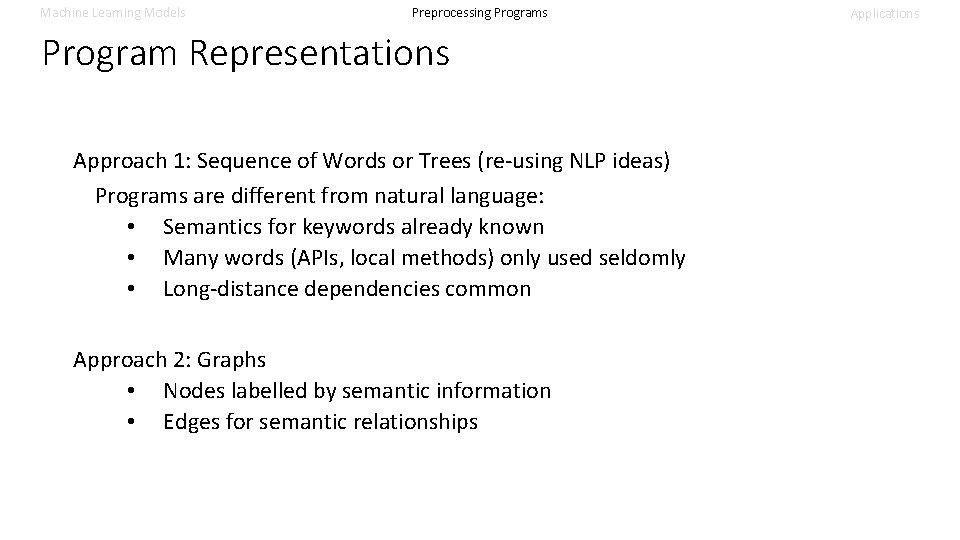

Program Representations
Approach 1: Sequence of Words or Trees (re-using NLP ideas)
Programs are different from natural language:
• Semantics for keywords already known
• Many words (APIs, local methods) used only seldom
• Long-distance dependencies common
Approach 2: Graphs
• Nodes labelled by semantic information
• Edges for semantic relationships

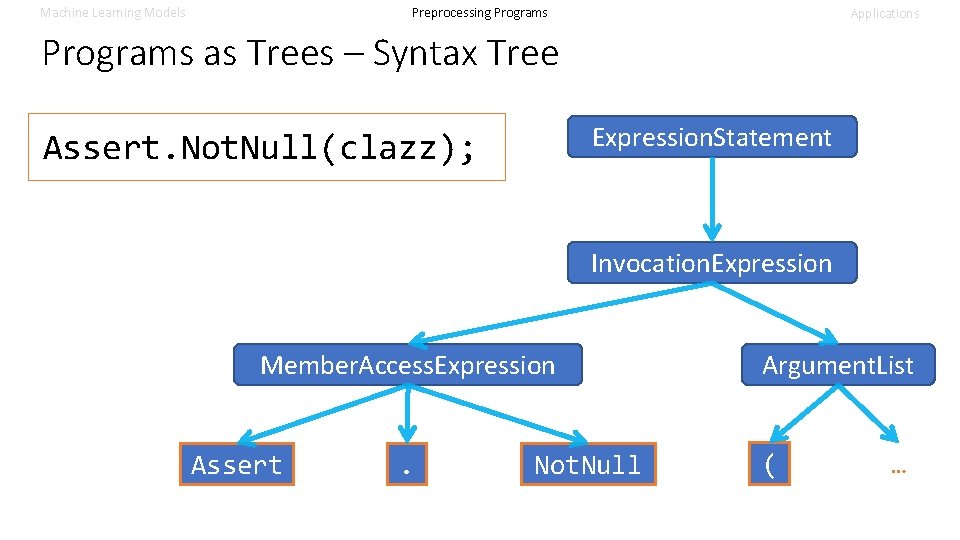

Programs as Trees – Syntax Tree
Assert.NotNull(clazz);
ExpressionStatement
  InvocationExpression
    MemberAccessExpression: Assert . NotNull
    ArgumentList: ( …

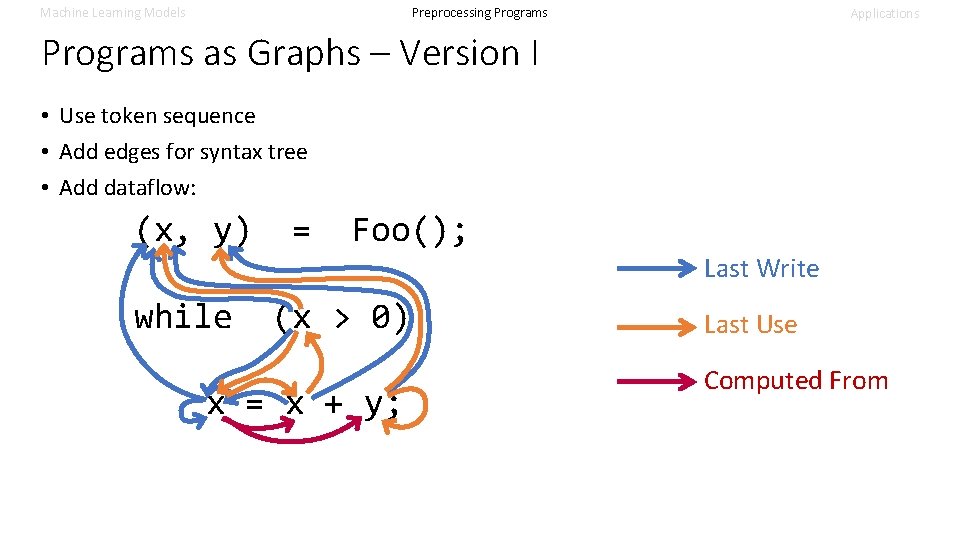

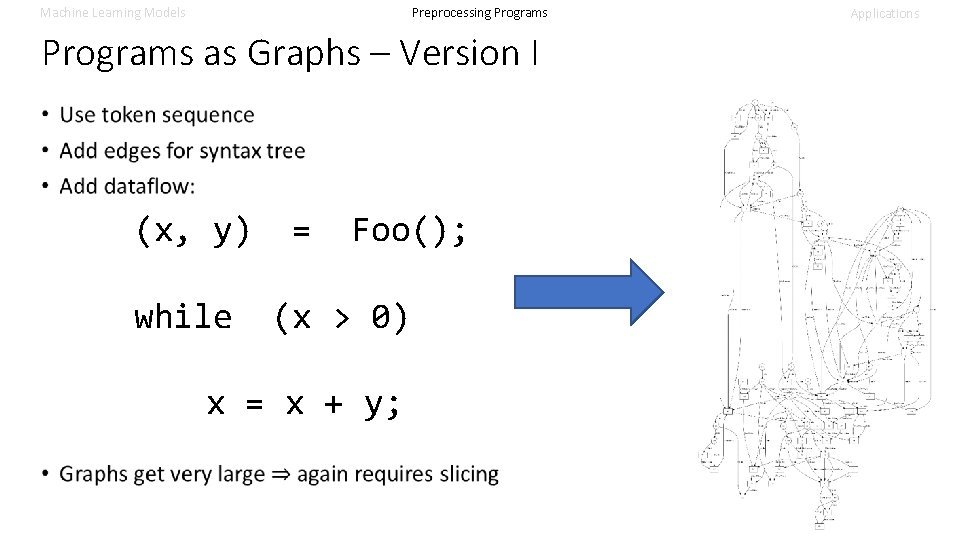

Programs as Graphs – Version I
• Use token sequence
• Add edges for syntax tree
• Add dataflow: Last Write, Last Use, Computed From
Example:
(x, y) = Foo();
while (x > 0)
  x = x + y;

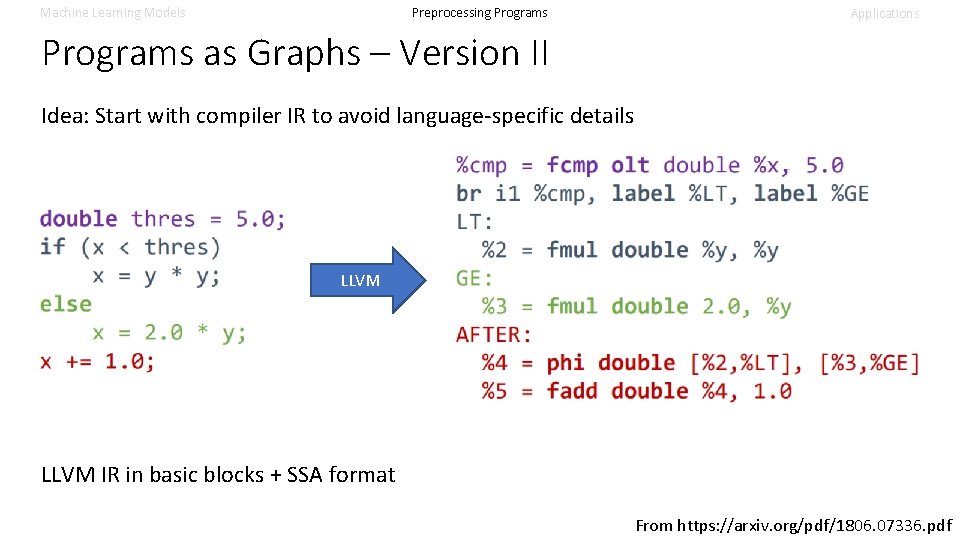

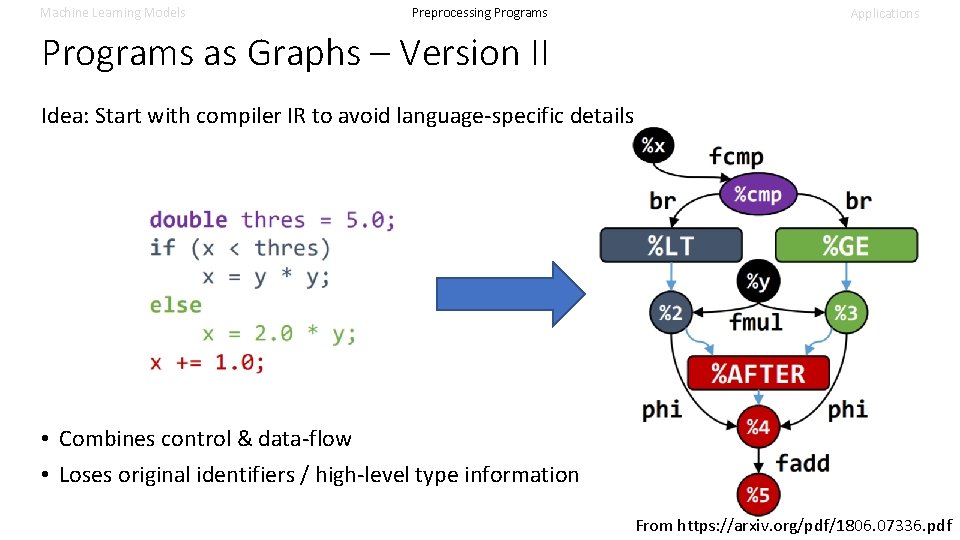

Machine Learning Models Preprocessing Programs Applications Programs as Graphs – Version II Idea: Start with compiler IR to avoid language-specific details LLVM IR in basic blocks + SSA format From https: //arxiv. org/pdf/1806. 07336. pdf

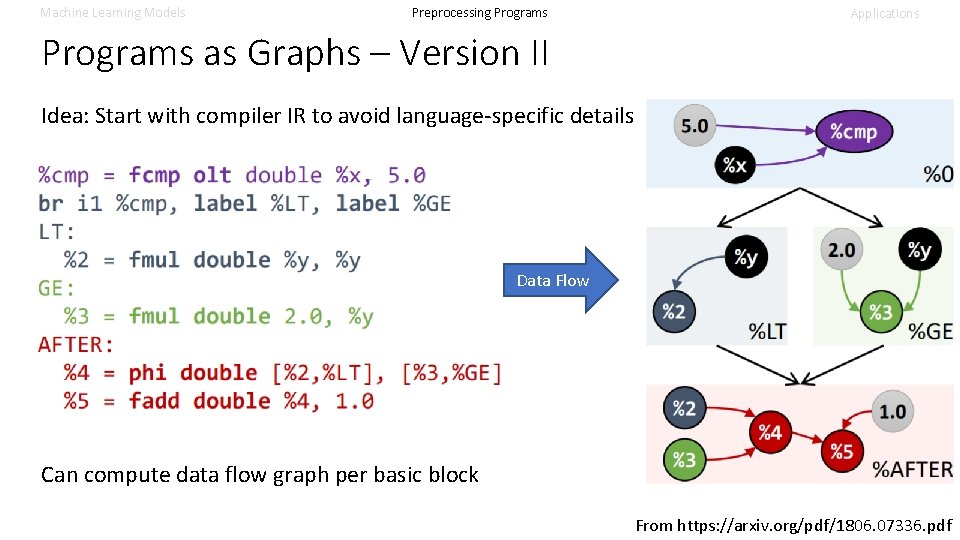

Machine Learning Models Preprocessing Programs Applications Programs as Graphs – Version II Idea: Start with compiler IR to avoid language-specific details Data Flow Can compute data flow graph per basic block From https: //arxiv. org/pdf/1806. 07336. pdf

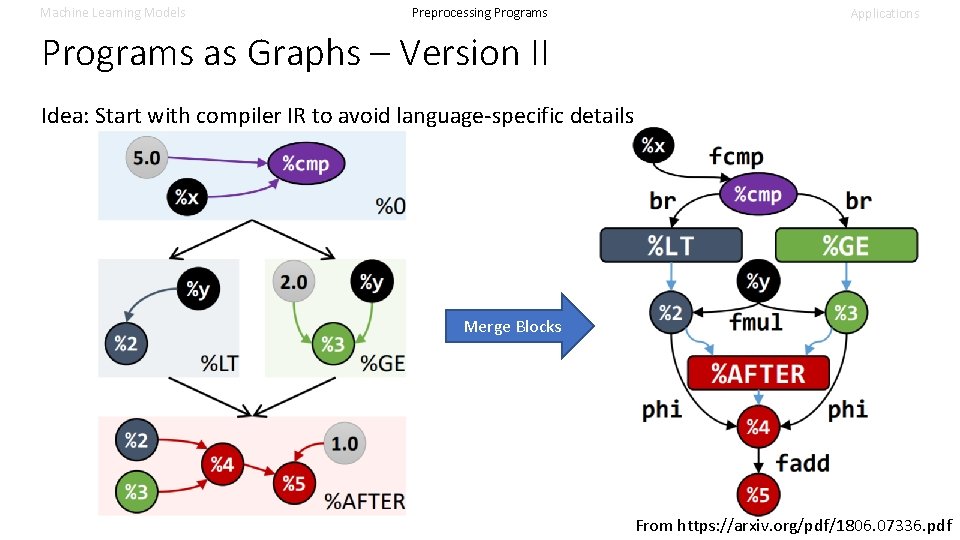

Machine Learning Models Preprocessing Programs Applications Programs as Graphs – Version II Idea: Start with compiler IR to avoid language-specific details Merge Blocks From https: //arxiv. org/pdf/1806. 07336. pdf

Machine Learning Models Preprocessing Programs Applications Programs as Graphs – Version II Idea: Start with compiler IR to avoid language-specific details • Combines control & data-flow • Loses original identifiers / high-level type information From https: //arxiv. org/pdf/1806. 07336. pdf

Applications – Classification Tasks
• Bug Finding
• Probabilistic Type Inference
• Selecting Optimal Parameters

Classification
Cat, Dog, …

DeepBugs – Overview
Old Idea: Variable/Class/… names are meaningful
New Idea: Use names to find bugs!
Example: promise.done(error, result) vs. promise.done(res, err)
DeepBugs: A Learning Approach to Name-based Bug Detection. Michael Pradel & Koushik Sen

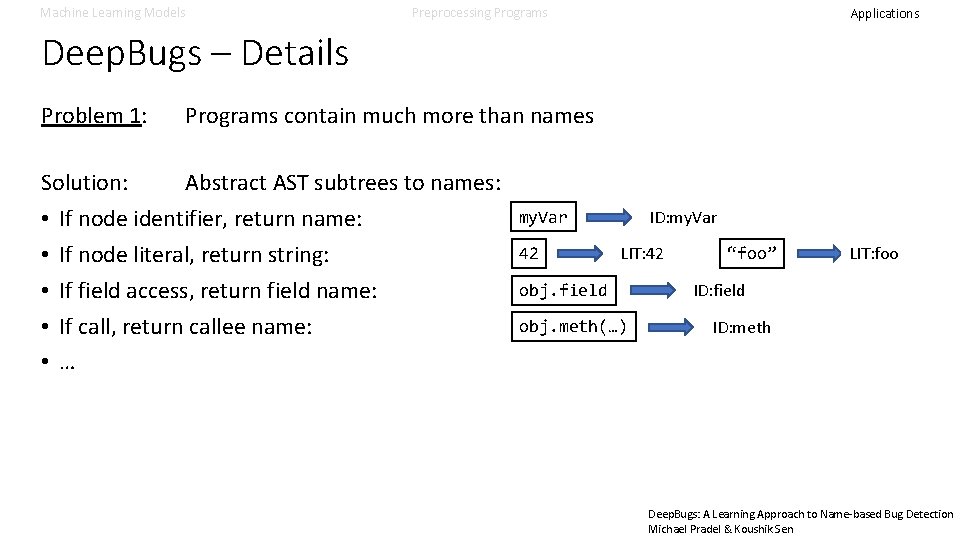

DeepBugs – Details
Problem 1: Programs contain much more than names
Solution: Abstract AST subtrees to names:
• If node identifier, return name: myVar → ID:myVar
• If node literal, return string: 42 → LIT:42, “foo” → LIT:foo
• If field access, return field name: obj.field → ID:field
• If call, return callee name: obj.meth(…) → ID:meth
• …
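The abstraction rules can be sketched with Python's standard `ast` module. DeepBugs itself works on JavaScript, so the Python AST is only a stand-in here. The function `name_of` and the helper `expr` are illustrative names, and only the four rules listed on the slide are covered.

```python
import ast

def name_of(node):
    """Abstract an expression node to a short name string, or None if no rule applies."""
    if isinstance(node, ast.Name):        # identifier -> its name
        return f"ID:{node.id}"
    if isinstance(node, ast.Constant):    # literal -> its string form
        return f"LIT:{node.value}"
    if isinstance(node, ast.Attribute):   # field access -> field name
        return f"ID:{node.attr}"
    if isinstance(node, ast.Call):        # call -> name of the callee
        return name_of(node.func)
    return None

def expr(source: str):
    """Parse a single expression and return its AST node."""
    return ast.parse(source, mode="eval").body

examples = {src: name_of(expr(src))
            for src in ["myVar", "42", '"foo"', "obj.field", "obj.meth(1)"]}
```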

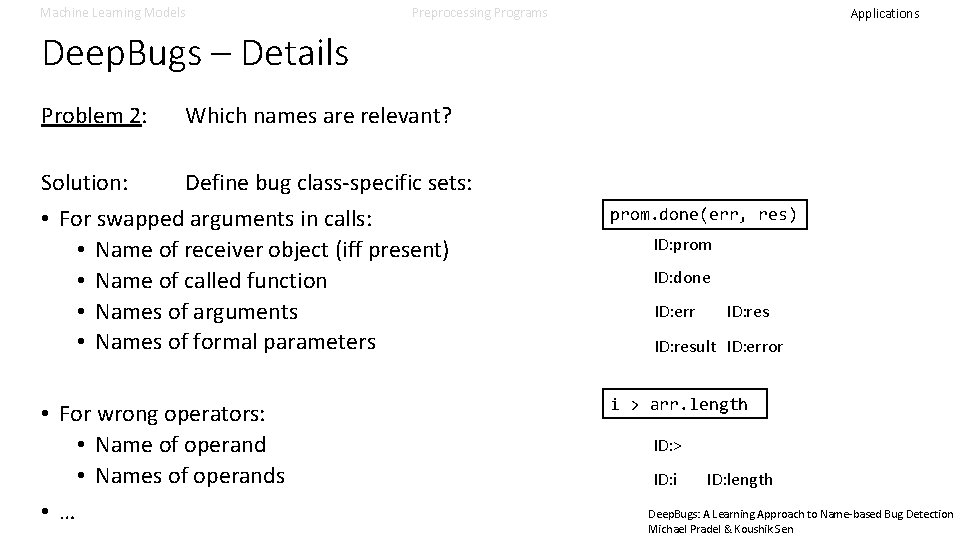

DeepBugs – Details
Problem 2: Which names are relevant?
Solution: Define bug class-specific sets:
• For swapped arguments in calls:
  • Name of receiver object (iff present)
  • Name of called function
  • Names of arguments
  • Names of formal parameters
  Example: prom.done(err, res) → ID:prom, ID:done, ID:err, ID:result, ID:error
• For wrong operators:
  • Name of operator
  • Names of operands
  Example: i > arr.length → ID:>, ID:i, ID:length
• …

DeepBugs – Details
Problem 3: Need training data with bugs
Solution: Generate using bug class-specific permutations:
• For swapped arguments in calls: Swap arguments
• For wrong operators: Replace operator
• …
Training data: 50% correct (original data) + 50% buggy (permuted data)
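Generating the 50/50 training set for the swapped-arguments bug class could look roughly like this. Calls are represented as hypothetical `(callee, argument names)` pairs. The real pipeline works on extracted AST information, so this is a simplified sketch.

```python
import random

def swap_arguments(call):
    """Return a copy of (callee, args) with the first two arguments swapped."""
    callee, args = call
    swapped = list(args)
    swapped[0], swapped[1] = swapped[1], swapped[0]
    return (callee, tuple(swapped))

def make_training_data(calls, seed=0):
    """Label original calls 0 (correct) and their swapped variants 1 (buggy)."""
    examples = []
    for call in calls:
        _, args = call
        if len(args) < 2 or args[0] == args[1]:
            continue  # swapping would not produce a different call
        examples.append((call, 0))
        examples.append((swap_arguments(call), 1))
    random.Random(seed).shuffle(examples)
    return examples

# Hypothetical calls extracted from a corpus.
calls = [
    ("done", ("error", "result")),
    ("setTimeout", ("callback", "delay")),
    ("log", ("msg",)),
]
data = make_training_data(calls)
```

The approach assumes that the original code is mostly correct. Any real bugs already in the corpus end up as label noise.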

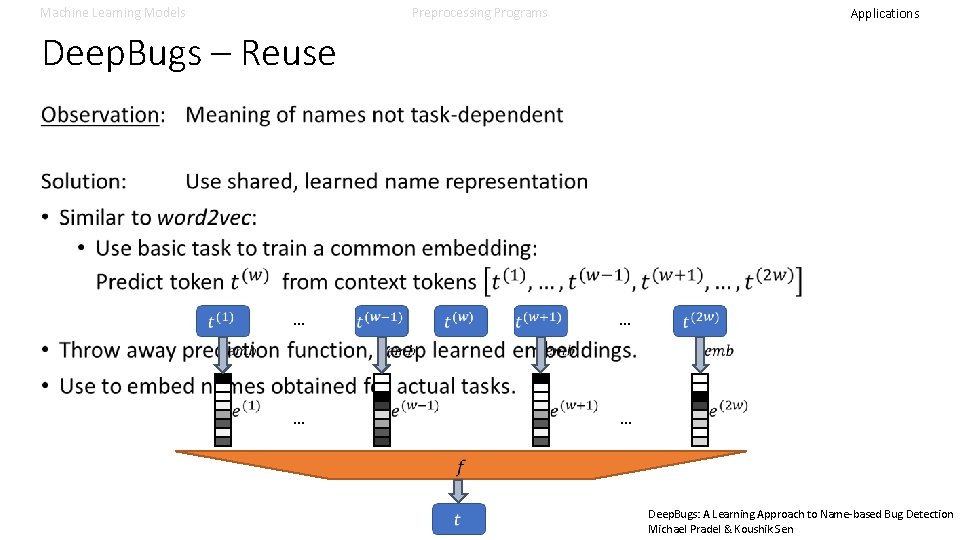

Machine Learning Models Preprocessing Programs Applications Deep. Bugs – Reuse … … … Deep. Bugs: A Learning Approach to Name-based Bug Detection Michael Pradel & Koushik Sen

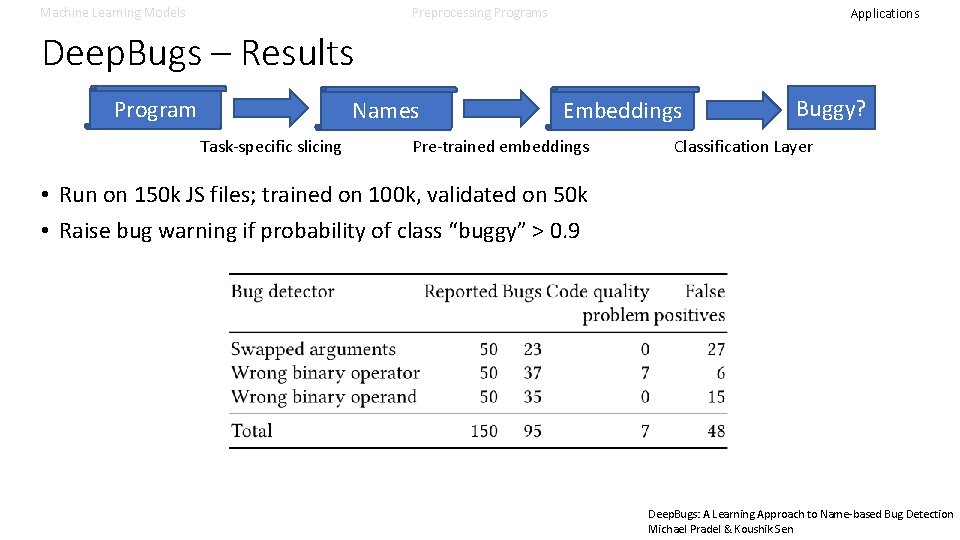

Machine Learning Models Preprocessing Programs Applications Deep. Bugs – Results Program Names Task-specific slicing Embeddings Pre-trained embeddings Buggy? Classification Layer • Run on 150 k JS files; trained on 100 k, validated on 50 k • Raise bug warning if probability of class “buggy” > 0. 9 Deep. Bugs: A Learning Approach to Name-based Bug Detection Michael Pradel & Koushik Sen

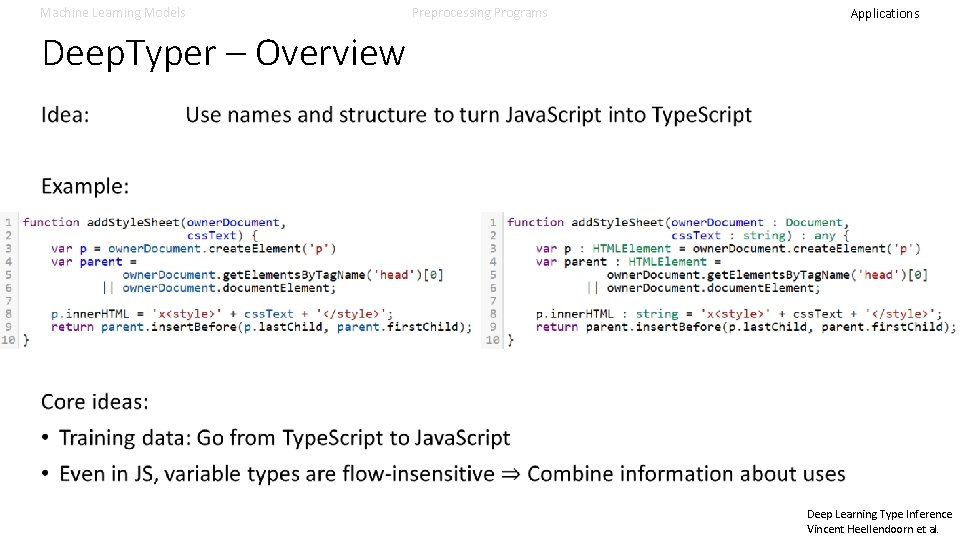

Machine Learning Models Preprocessing Programs Applications Deep. Typer – Overview Deep Learning Type Inference Vincent Heellendoorn et al.

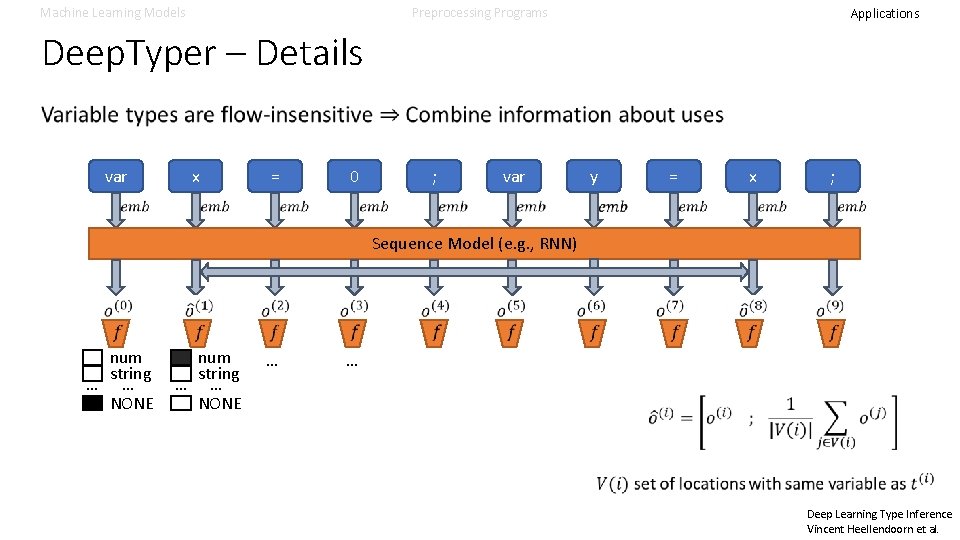

Machine Learning Models Preprocessing Programs Applications Deep. Typer – Details var x = 0 ; var y = x ; Sequence Model (e. g. , RNN) num string … … NONE … … Deep Learning Type Inference Vincent Heellendoorn et al.

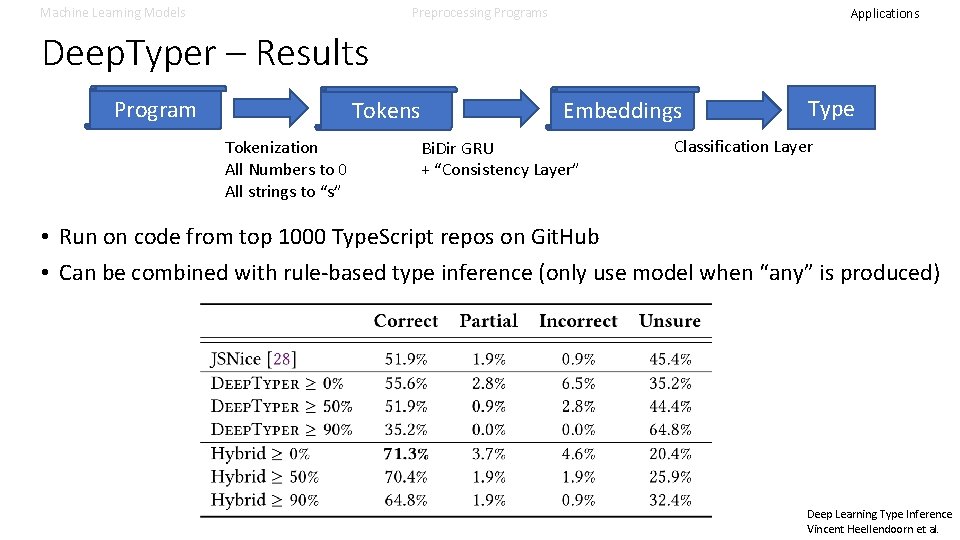

Machine Learning Models Preprocessing Programs Applications Deep. Typer – Results Program Tokens Tokenization All Numbers to 0 All strings to “s” Embeddings Bi. Dir GRU + “Consistency Layer” Type Classification Layer • Run on code from top 1000 Type. Script repos on Git. Hub • Can be combined with rule-based type inference (only use model when “any” is produced) Deep Learning Type Inference Vincent Heellendoorn et al.

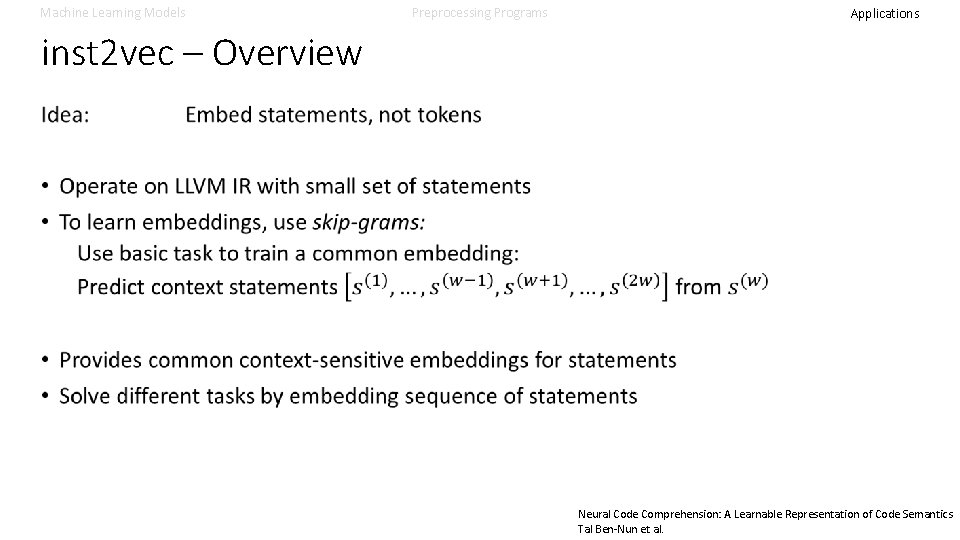

inst2vec – Overview
Neural Code Comprehension: A Learnable Representation of Code Semantics. Tal Ben-Nun et al.

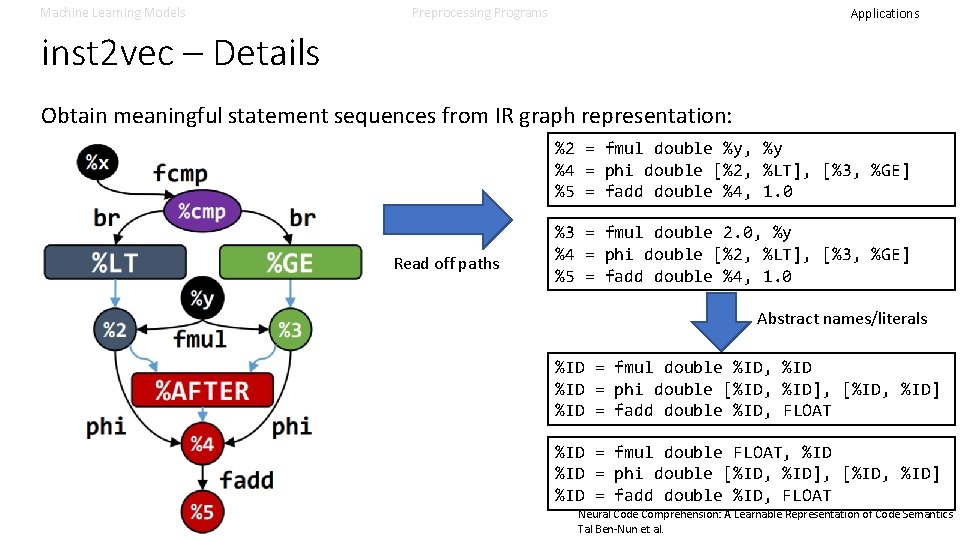

inst2vec – Details
Obtain meaningful statement sequences from IR graph representation.
Read off paths:
Path 1:
%2 = fmul double %y, %y
%4 = phi double [%2, %LT], [%3, %GE]
%5 = fadd double %4, 1.0
Path 2:
%3 = fmul double 2.0, %y
%4 = phi double [%2, %LT], [%3, %GE]
%5 = fadd double %4, 1.0
Abstract names/literals:
Path 1:
%ID = fmul double %ID, %ID
%ID = phi double [%ID, %ID], [%ID, %ID]
%ID = fadd double %ID, FLOAT
Path 2:
%ID = fmul double FLOAT, %ID
%ID = phi double [%ID, %ID], [%ID, %ID]
%ID = fadd double %ID, FLOAT
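The name/literal abstraction can be approximated with two regular expressions. This sketch covers only the cases visible on the slide (`%`-prefixed identifiers and floating-point literals). The actual inst2vec preprocessing handles more of LLVM IR, so treat the patterns as assumptions.

```python
import re

IDENTIFIER_RE = re.compile(r"%[\w.]+")  # %2, %y, %LT, ...
FLOAT_RE = re.compile(r"(?<![\w.%])-?\d+\.\d+(?:[eE][+-]?\d+)?")

def abstract_statement(stmt: str) -> str:
    """Replace identifiers by %ID and floating-point literals by FLOAT."""
    stmt = IDENTIFIER_RE.sub("%ID", stmt)
    return FLOAT_RE.sub("FLOAT", stmt)

path = [
    "%3 = fmul double 2.0, %y",
    "%4 = phi double [%2, %LT], [%3, %GE]",
    "%5 = fadd double %4, 1.0",
]
abstracted = [abstract_statement(stmt) for stmt in path]
```

After abstraction, statements that differ only in register names or constants share one vocabulary entry.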

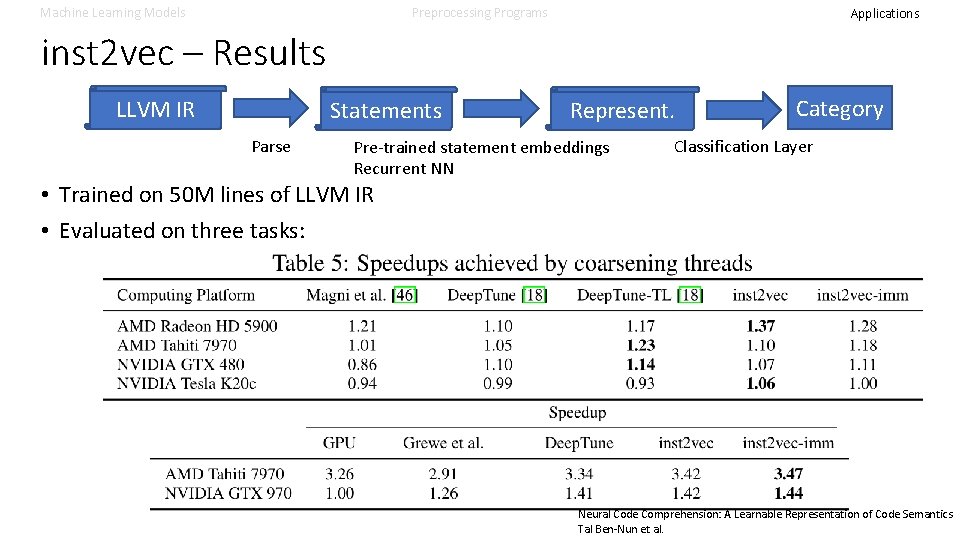

inst2vec – Results
Pipeline: LLVM IR → Parse → Statements → Pre-trained statement embeddings, Recurrent NN → Represent. → Classification Layer → Category
• Trained on 50M lines of LLVM IR
• Evaluated on three tasks:

Applications – Ranking Tasks
• Bug Finding
• Premise Selection
• Ordering Search Spaces

Ranking
23.42, 42.23, -0.3

Program Graphs – Overview
Idea: Directly learn from graph representation of program
• Transform program (slice) into graph
• Use GNN to compute representation taking context into account
• Read out final representations to compute results
Learning to Represent Programs with Graphs. Allamanis et al.

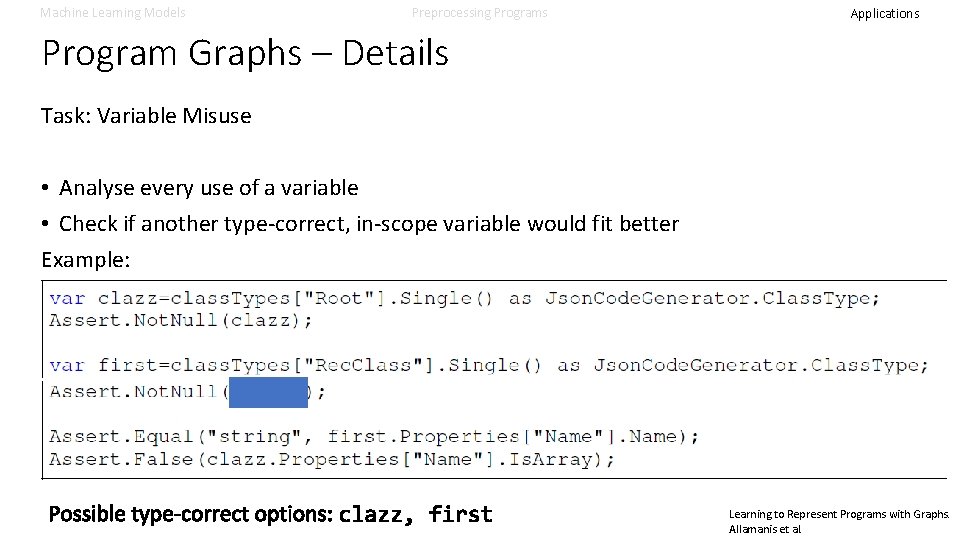

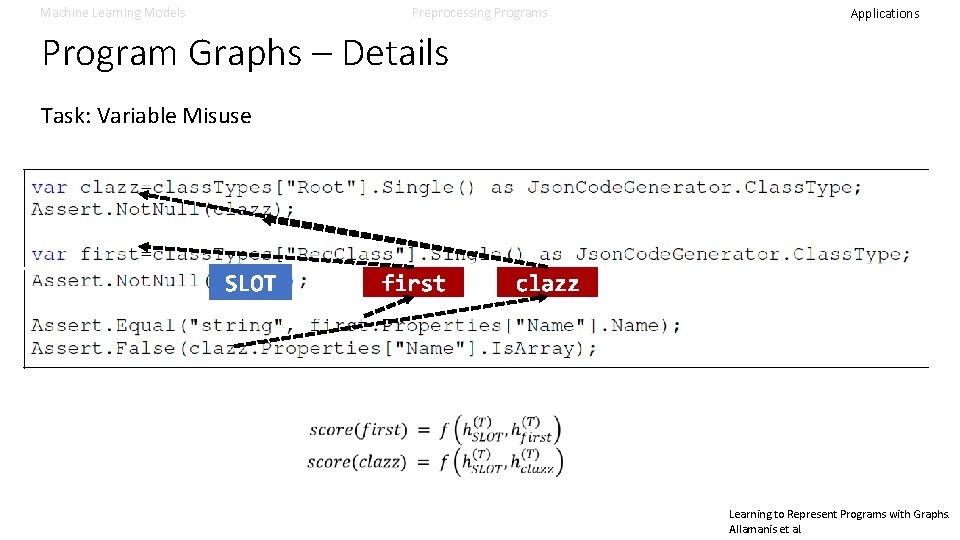

Program Graphs – Details
Task: Variable Misuse
• Analyse every use of a variable
• Check if another type-correct, in-scope variable would fit better
Example:

Program Graphs – Details
Task: Variable Misuse

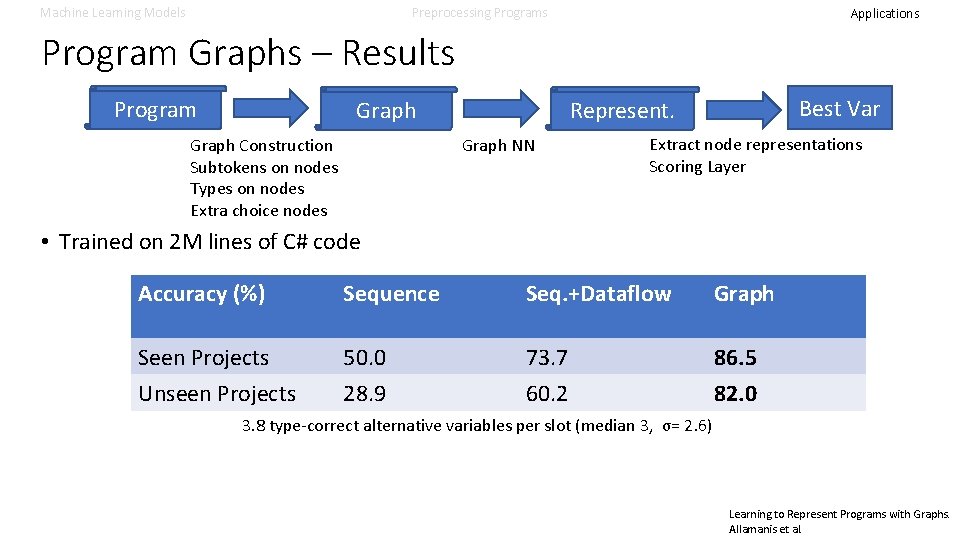

Program Graphs – Results
Pipeline: Program → Graph Construction (subtokens on nodes, types on nodes, extra choice nodes) → Graph → Graph NN → Represent. → Extract node representations, Scoring Layer → Best Var
• Trained on 2M lines of C# code

| Accuracy (%) | Sequence | Seq. + Dataflow | Graph |
|---|---|---|---|
| Seen Projects | 50.0 | 73.7 | 86.5 |
| Unseen Projects | 28.9 | 60.2 | 82.0 |

3.8 type-correct alternative variables per slot (median 3, σ = 2.6)

DeepMath – Overview
Idea: Theorem proving would be faster if only relevant facts were used
• Automated Theorem Proving is to a large degree search
• ATPs take proof goal, try to apply premises to make progress
• Time spent on trying premises that are “obviously” useless
Can a machine learn to identify the most useful premises?
DeepMath - Deep Sequence Models for Premise Selection. Alemi et al.

DeepMath – Details

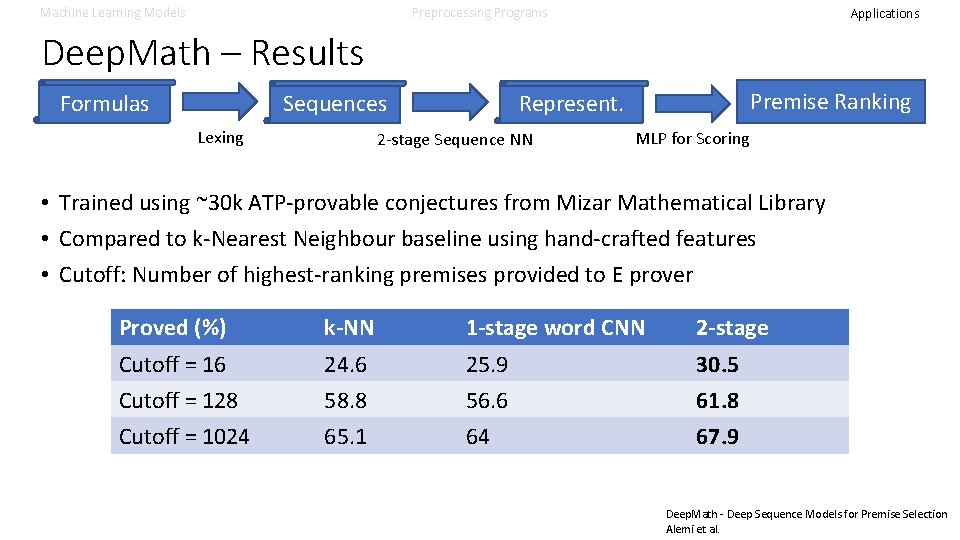

DeepMath – Results
Pipeline: Formulas → Lexing → Sequences → 2-stage Sequence NN → Represent. → MLP for Scoring → Premise Ranking
• Trained using ~30k ATP-provable conjectures from Mizar Mathematical Library
• Compared to k-Nearest Neighbour baseline using hand-crafted features
• Cutoff: Number of highest-ranking premises provided to E prover

| Proved (%) | k-NN | 1-stage word CNN | 2-stage |
|---|---|---|---|
| Cutoff = 16 | 24.6 | 25.9 | 30.5 |
| Cutoff = 128 | 58.8 | 56.6 | 61.8 |
| Cutoff = 1024 | 65.1 | 64 | 67.9 |
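The role of the cutoff can be illustrated in a few lines: the model scores every premise for a given conjecture, and only the top-ranked ones are handed to the prover. The scores and lemma names below are invented placeholders for the output of the scoring network.

```python
def select_premises(scores: dict, cutoff: int):
    """Return the `cutoff` premises with the highest model score, best first."""
    ranked = sorted(scores, key=lambda premise: scores[premise], reverse=True)
    return ranked[:cutoff]

# Hypothetical relevance scores for one conjecture.
scores = {"lemma_a": 0.91, "lemma_b": 0.15, "lemma_c": 0.78, "lemma_d": 0.02}
top2 = select_premises(scores, cutoff=2)
```

A small cutoff keeps the prover's search space small but risks dropping a premise the proof needs, which is why results are reported for several cutoffs.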

DeepCoder - Overview
Idea: Program synthesis would be faster if only relevant functions were used
• Program Synthesis is to a large degree search
• Programming-by-Example takes I/O examples, searches for program
• Time spent on trying functions that are “obviously” useless
Can a machine learn to identify the most useful functions?
DeepCoder: Learning to Write Programs. Balog et al.

DeepCoder - Details

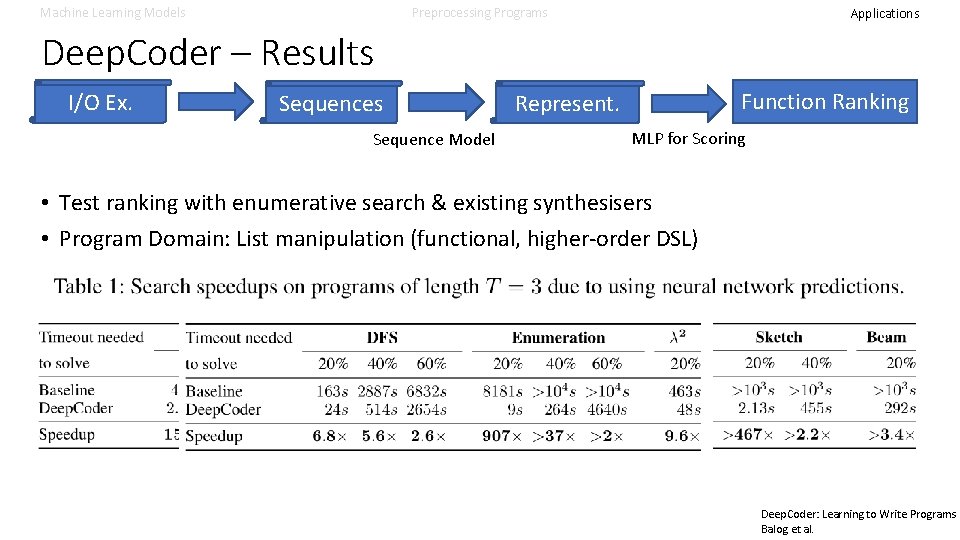

DeepCoder – Results
Pipeline: I/O Ex. → Sequences → Sequence Model → Represent. → MLP for Scoring → Function Ranking
• Test ranking with enumerative search & existing synthesisers
• Program Domain: List manipulation (functional, higher-order DSL)
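How a function ranking speeds up enumerative search can be shown on a toy scale. The four-function DSL and the probability dictionaries below are made up for illustration. DeepCoder's DSL is richer and its probabilities come from a trained network.

```python
from itertools import product

# Toy list-manipulation DSL (hypothetical, much smaller than DeepCoder's).
DSL = {
    "reverse": lambda xs: xs[::-1],
    "sort": lambda xs: sorted(xs),
    "double": lambda xs: [2 * x for x in xs],
    "drop_first": lambda xs: xs[1:],
}

def run(program, xs):
    """Apply the functions of a program from left to right."""
    for name in program:
        xs = DSL[name](xs)
    return xs

def guided_search(examples, predicted, max_length=3):
    """Enumerate programs by length, trying functions with high predicted score first."""
    order = sorted(DSL, key=lambda name: predicted.get(name, 0.0), reverse=True)
    tried = 0
    for length in range(1, max_length + 1):
        for program in product(order, repeat=length):
            tried += 1
            if all(run(program, inp) == out for inp, out in examples):
                return list(program), tried
    return None, tried

examples = [([3, 1, 2], [6, 4, 2]), ([5, 4], [10, 8])]
good_ranking = {"sort": 0.9, "reverse": 0.8, "double": 0.7, "drop_first": 0.05}
bad_ranking = {"drop_first": 0.9, "double": 0.3, "reverse": 0.2, "sort": 0.1}
good_program, good_tried = guided_search(examples, good_ranking)
bad_program, bad_tried = guided_search(examples, bad_ranking)
```

Both rankings eventually find a correct program because the search stays complete. The ranking only changes how many candidates are tried first.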

Applications – Generative Tasks
• Generating Sequences
• Generating Names
• Generating Invariants
• Generating Strategies

Generative Tasks
A black and white kitten looking at the camera.
A puppy sitting on a wood floor.
An angry hairball with eyes.

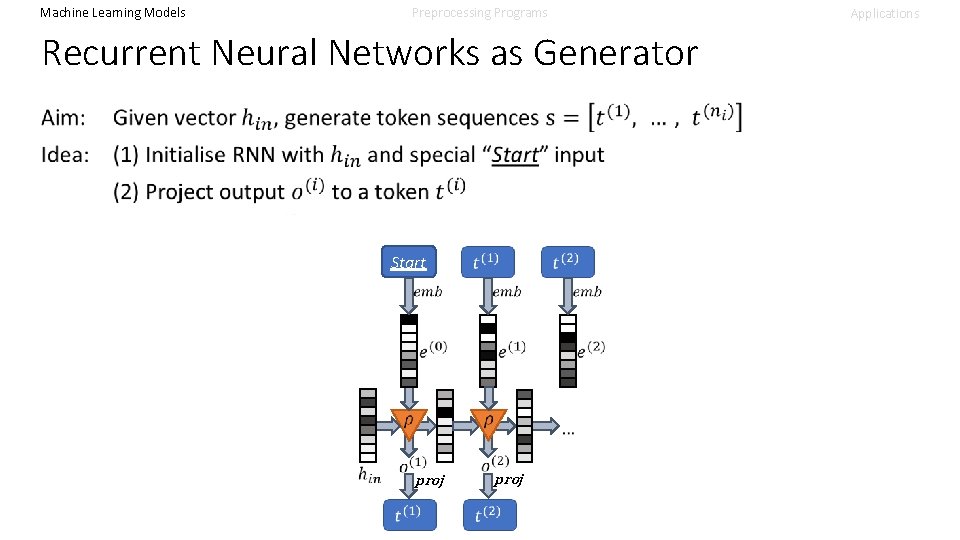

Recurrent Neural Networks as Generator
Start proj

Sequence Models as Generators
• Similar extensions exist for 1D-CNN / Self-Attentional models
• Known as seq2seq when initial input comes from sequence model (This is what drives Neural Machine Translation, Chat Bots, …)
• Similar extensions exist for tree / graph models

use2name - Overview

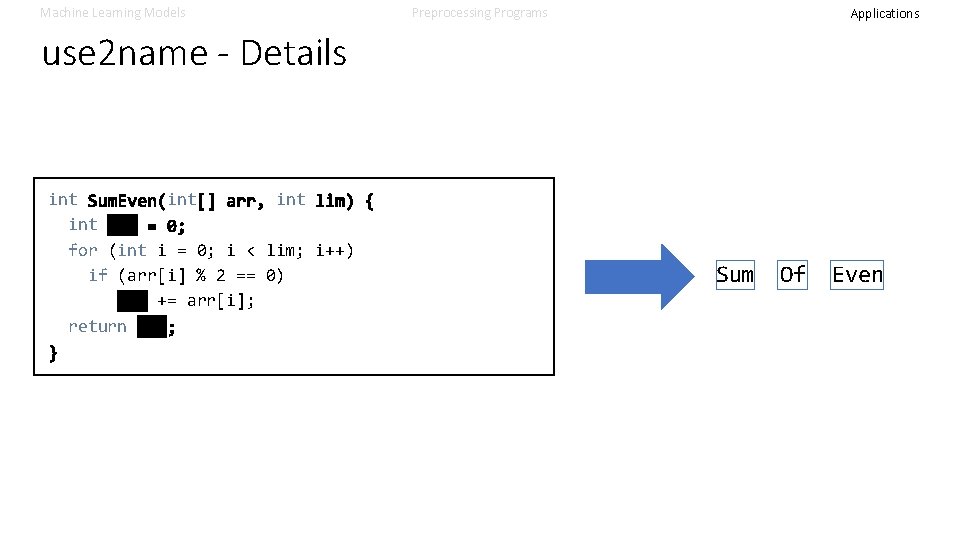

use2name - Details
int int
for (int i = 0; i < lim; i++)
  if (arr[i] % 2 == 0)
    sum += arr[i];
return
Sum Of Even

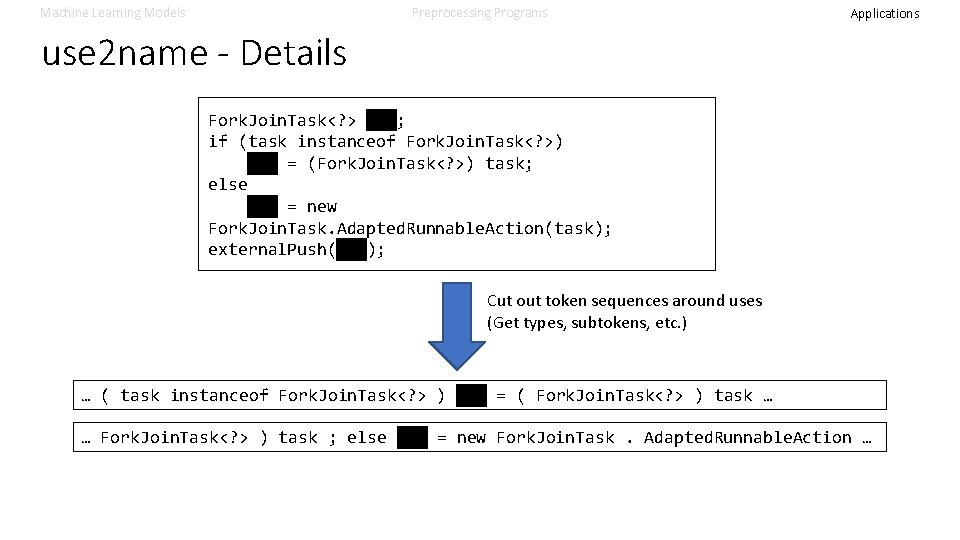

use2name - Details
ForkJoinTask<?> job;
if (task instanceof ForkJoinTask<?>)
  job = (ForkJoinTask<?>) task;
else
  job = new ForkJoinTask.AdaptedRunnableAction(task);
externalPush(job);
Cut out token sequences around uses (get types, subtokens, etc.):
… ( task instanceof ForkJoinTask<?> ) job = ( ForkJoinTask<?> ) task …
… ForkJoinTask<?> ) task ; else job = new ForkJoinTask.AdaptedRunnableAction …
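Cutting out the token context around each use of a variable might be sketched as below. The window width of three tokens and the pre-lexed token list are assumptions. The variable's own name is left out of the windows because it is the prediction target.

```python
def usage_windows(tokens, name, width=3):
    """Return (left context, right context) token windows around each use of `name`."""
    windows = []
    for i, tok in enumerate(tokens):
        if tok == name:
            left = tokens[max(0, i - width): i]
            right = tokens[i + 1: i + 1 + width]
            windows.append((left, right))
    return windows

# Token sequence for the slide's example, lexed by hand.
tokens = [
    "if", "(", "task", "instanceof", "ForkJoinTask<?>", ")",
    "job", "=", "(", "ForkJoinTask<?>", ")", "task", ";",
    "else", "job", "=", "new", "ForkJoinTask.AdaptedRunnableAction",
    "(", "task", ")", ";",
    "externalPush", "(", "job", ")", ";",
]
windows = usage_windows(tokens, "job")
```

Each window becomes one input sequence, so a variable with several uses is represented by a set of sequences.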

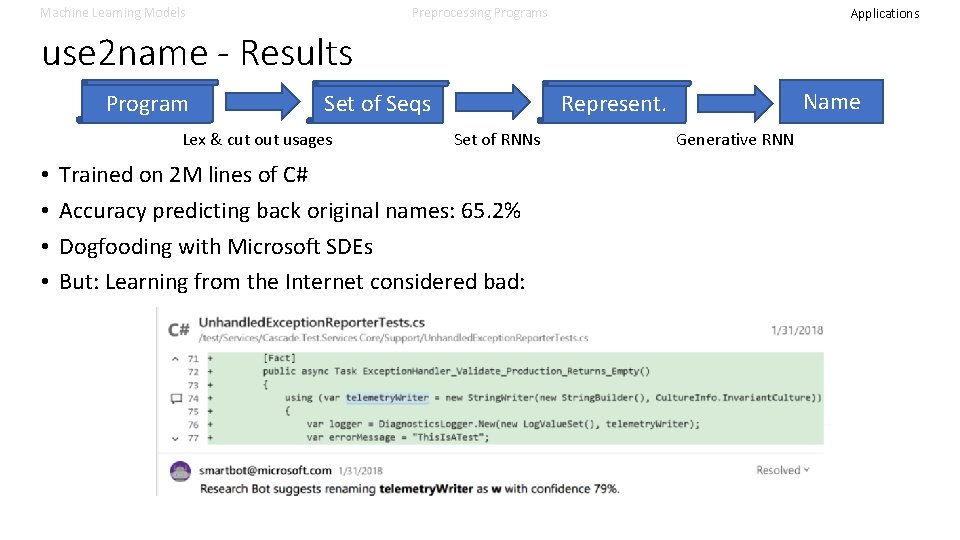

use2name - Results
Pipeline: Program → Lex & cut out usages → Set of Seqs → Set of RNNs → Represent. → Generative RNN → Name
• Trained on 2M lines of C#
• Accuracy predicting back original names: 65.2%
Dogfooding with Microsoft SDEs
But: Learning from the Internet considered bad:

code2inv - Overview
Learning Loop Invariants for Program Verification. Si et al.

Sidebar: Reinforcement Learning
• Tasks discussed so far: Supervised Learning
  • Each input has known, unique correct output
  • Models trained to map inputs to correct outputs
• In reality:
  • Agent interacts with environment (a black box): Agent takes action (e.g. “king’s pawn to e4”), environment reacts (e.g. “c5”), …
  • Certain actions lead to a reward (e.g. checkmate)
  • Many action sequences can be correct
• Reinforcement Learning: Learn agent that maximizes reward

code2inv - Details
• Actions: Proposing an invariant
• Environment:
  • Reward if invariant has hand-selected properties:
    • No trivial atoms (“x == x”, “1 < 2”, “x < x”)
    • No contradictions
  • Reward if invariant covers known counterexample traces
  • Reward if invariant sufficient to prove program correct

code2inv - Details
Loop invariant generation as Reinforcement Learning problem
Initially: Program as graph & compute context-sensitive representation per node
Generating an invariant: Extend invariant candidate by fresh predicate (using generative model), considering
• Predicates generated so far
• Program graph node representations
Reward: Environment checks that hand-selected properties hold (terminates if not)
• No trivial predicates (“x == x”, “1 < 2”, “x < x”)
• No contradictions

code2inv - Details
Checking an invariant:
Reward 1: Known counterexamples are covered:
• Counterexamples imply invariant on loop entry
• Invariant is inductive for counterexamples
• Invariant implies post-condition
Partial reward provided if not all of the above hold, but process restarts
Reward 2: Invariant checked with z3:
• New counterexample: Add to set, compute partial reward
• Otherwise: Done!

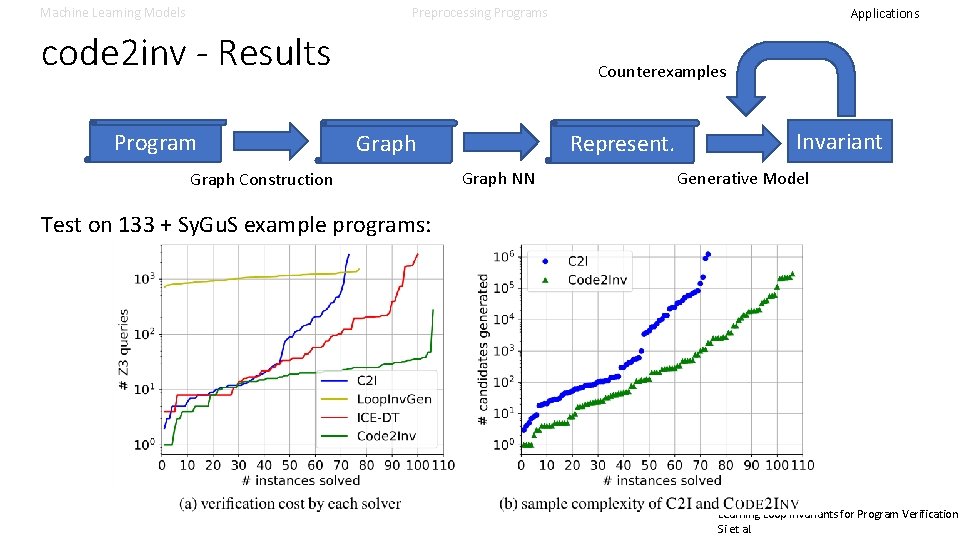

Machine Learning Models Preprocessing Programs code 2 inv - Results Program Applications Counterexamples Represent. Graph Construction Graph NN Invariant Generative Model Test on 133 + Sy. Gu. S example programs: Learning Loop Invariants for Program Verification Si et al.

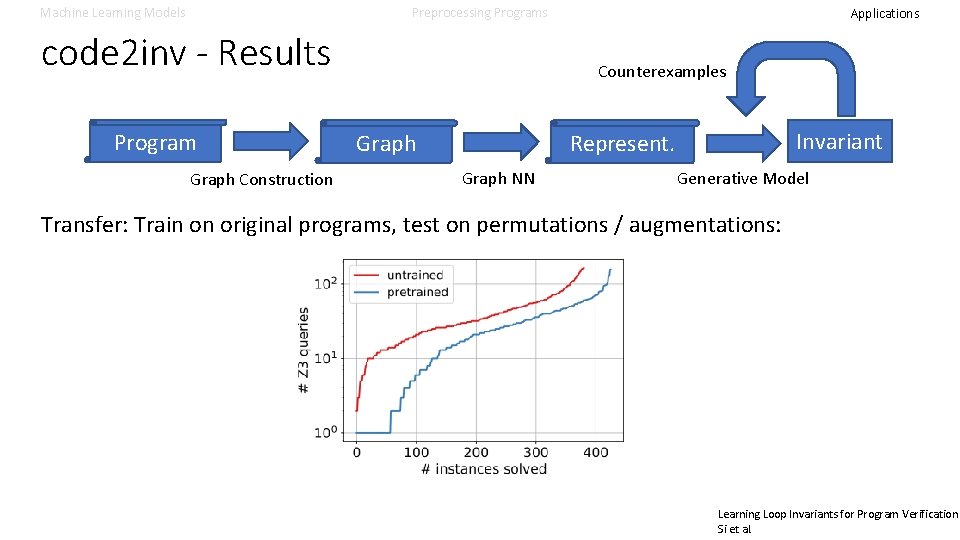

Machine Learning Models Preprocessing Programs code 2 inv - Results Program Graph Construction Applications Counterexamples Invariant Represent. Graph NN Generative Model Transfer: Train on original programs, test on permutations / augmentations: Learning Loop Invariants for Program Verification Si et al.

Machine Learning Models Preprocessing Programs Applications fast. SMT - Overview Learning to Solve SMT Formulas Mislav Balunović et al.

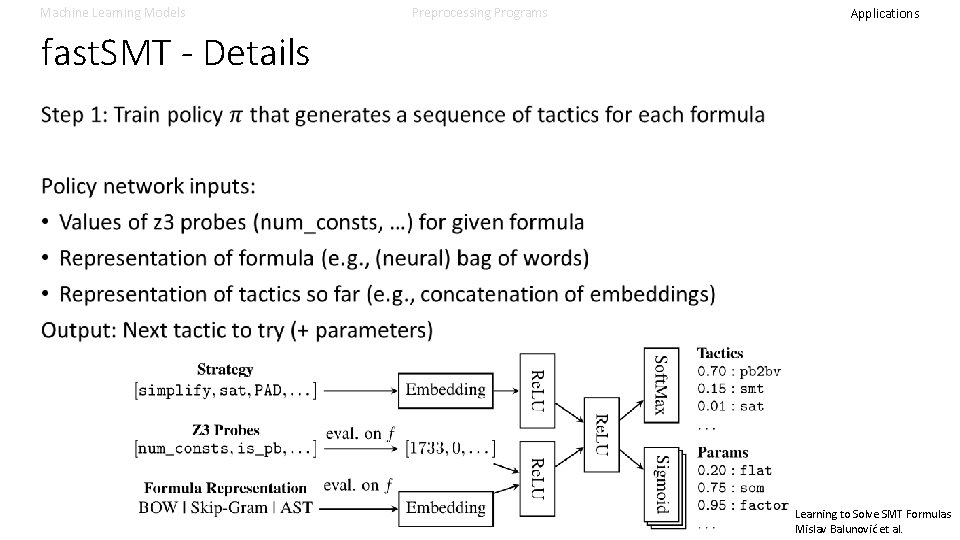

Machine Learning Models Preprocessing Programs Applications fast. SMT - Details Step 1: Train policy that generates a sequence of tactics for each formula Policy network inputs: • Values of z 3 probes (num_consts, …) for given formula • Representation of formula (e. g. , (neural) bag of words) • Representation of tactics so far (e. g. , concatenation of embeddings) Output: Next tactic to try (+ parameters) Learning to Solve SMT Formulas Mislav Balunović et al.

Machine Learning Models Preprocessing Programs Applications fast. SMT - Details Learning to Solve SMT Formulas Mislav Balunović et al.

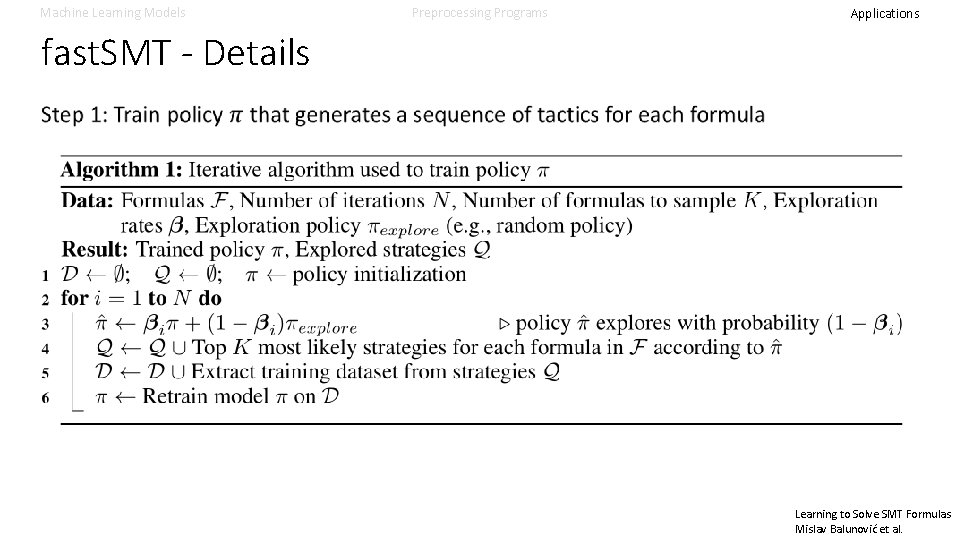

Machine Learning Models Preprocessing Programs Applications fast. SMT - Details Learning to Solve SMT Formulas Mislav Balunović et al.

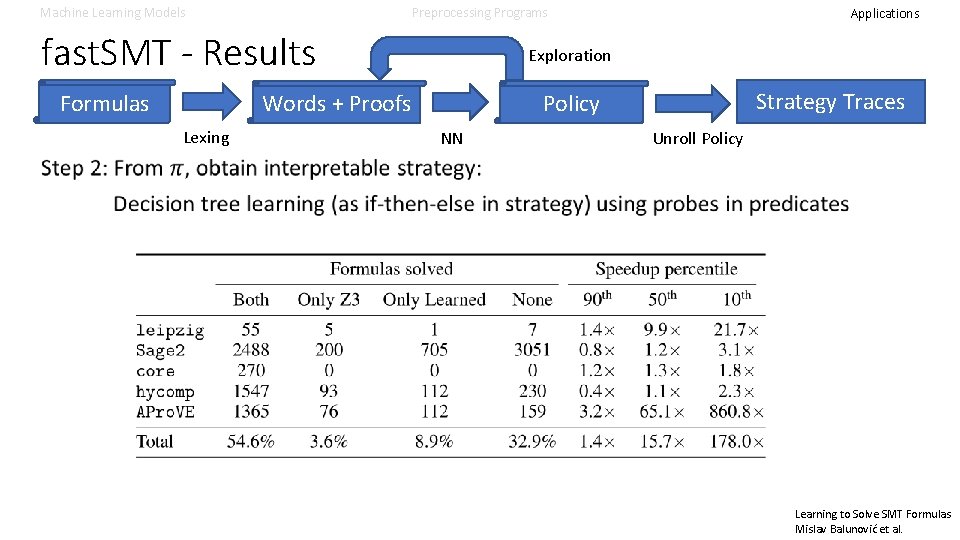

Machine Learning Models Preprocessing Programs fast. SMT - Results Formulas Exploration Strategy Traces Policy Words + Proofs Lexing Applications NN Unroll Policy Learning to Solve SMT Formulas Mislav Balunović et al.

Applications – Clustering Tasks • Finding Code Rules

Machine Learning Models Preprocessing Programs Applications Edit Representations - Overview Learning To Represent Edits Yin et al.

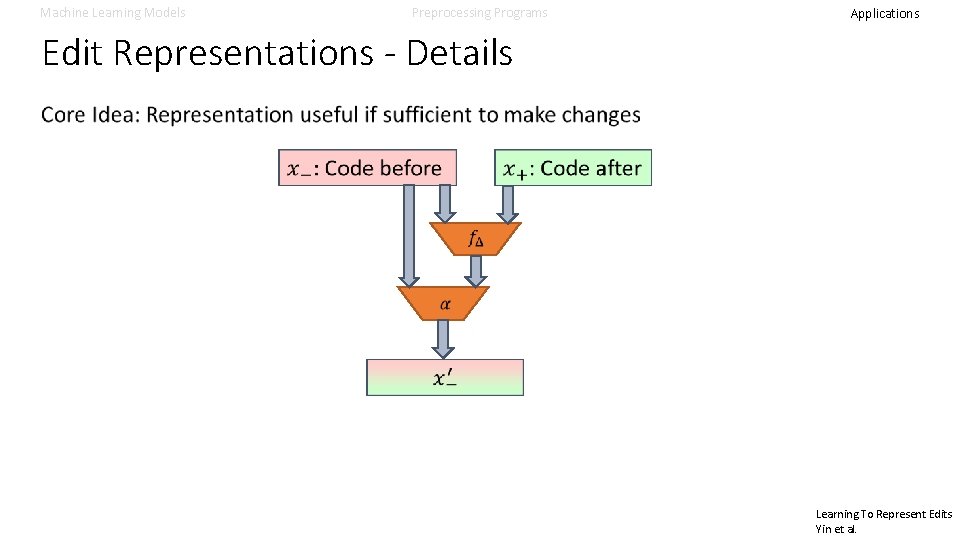

Machine Learning Models Preprocessing Programs Applications Edit Representations - Details Learning To Represent Edits Yin et al.

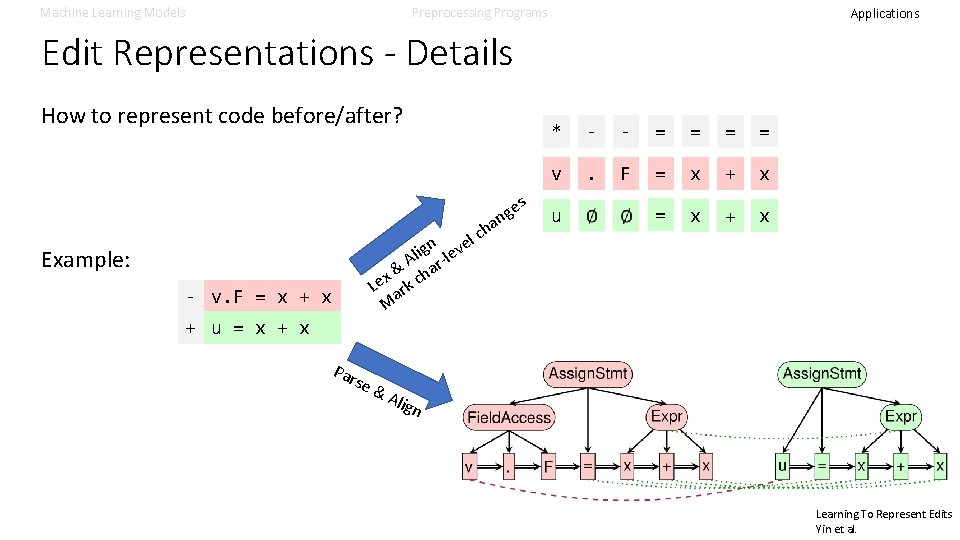

Edit Representations - Details
How to represent code before/after?
• Lex & Align: mark char-level changes
• Parse & Align
Example: v.F = x + x → u = x + x

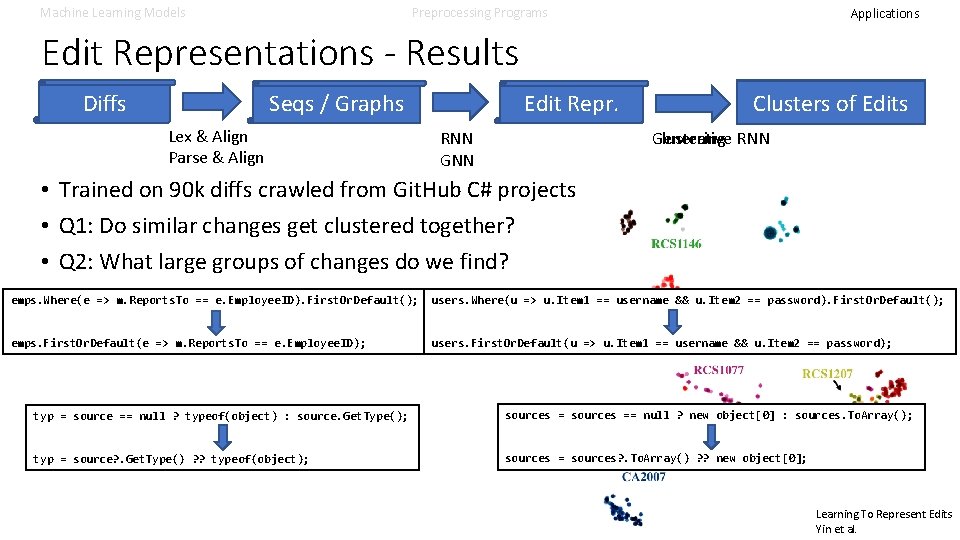

Edit Representations - Results
Pipeline: Diffs → Lex & Align / Parse & Align → Seqs / Graphs → RNN / GNN → Edit Repr. → Generative RNN → Edited Code; Edit Repr. → Clustering → Clusters of Edits
• Trained on 90k diffs crawled from GitHub C# projects
• Q1: Do similar changes get clustered together?
• Q2: What large groups of changes do we find?
Before:
emps.Where(e => m.ReportsTo == e.EmployeeID).FirstOrDefault();
users.Where(u => u.Item1 == username && u.Item2 == password).FirstOrDefault();
After:
emps.FirstOrDefault(e => m.ReportsTo == e.EmployeeID);
users.FirstOrDefault(u => u.Item1 == username && u.Item2 == password);
Before:
typ = source == null ? typeof(object) : source.GetType();
sources == null ? new object[0] : sources.ToArray();
After:
typ = source?.GetType() ?? typeof(object);
sources = sources?.ToArray() ?? new object[0];

Conclusions
• The reality of Machine Learning
• Recap

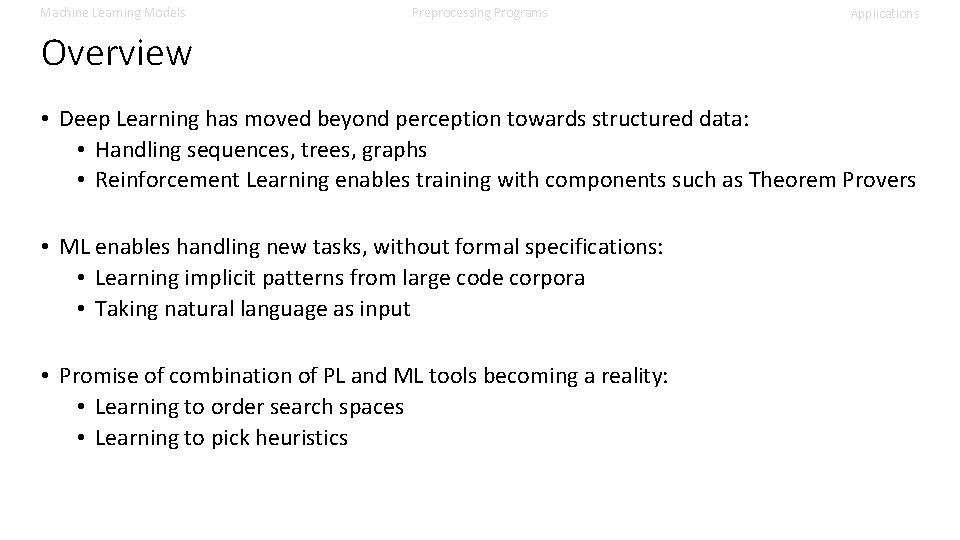

Overview
• Deep Learning has moved beyond perception towards structured data:
  • Handling sequences, trees, graphs
  • Reinforcement Learning enables training with components such as Theorem Provers
• ML enables handling new tasks, without formal specifications:
  • Learning implicit patterns from large code corpora
  • Taking natural language as input
• Promise of combination of PL and ML tools becoming a reality:
  • Learning to order search spaces
  • Learning to pick heuristics

Overview
Program → Intermediate Representation → Learned Representation → Result
Step 1: Transform to ML-compatible representation; interface between PL and ML world; often re-uses compiler infrastructure
Step 2: Obtain (latent) program representation; often re-uses natural language processing infrastructure; usually produces a (set of) vectors
Step 3: Produce output useful to programmer/PL tool; interface between ML and PL world
Marc Brockschmidt - MSR Cambridge @mmjb86
