
Blazes: coordination analysis for distributed programs

Peter Alvaro, Neil Conway, Joseph M. Hellerstein (UC Berkeley); David Maier (Portland State)

Distributed systems are hard Asynchrony Partial Failure

Asynchrony isn’t that hard. Amelioration: logical timestamps, deterministic interleaving

Partial failure isn’t that hard. Amelioration: replication, replay

Asynchrony × partial failure is hard²
Logical timestamps, deterministic interleaving, replication, replay

Asynchrony × partial failure is hard²
Replication, replay
Today:
• Consistency criteria for fault-tolerant distributed systems
• Blazes: analysis and enforcement

This talk is all setup

Frame of mind:
1. Dataflow: a model of distributed computation
2. Anomalies: what can go wrong?
3. Remediation strategies
   1. Component properties
   2. Delivery mechanisms

Framework: Blazes – coordination analysis and synthesis

Little boxes: the dataflow model
• Generalization of distributed services
• Components interact via asynchronous calls (streams)

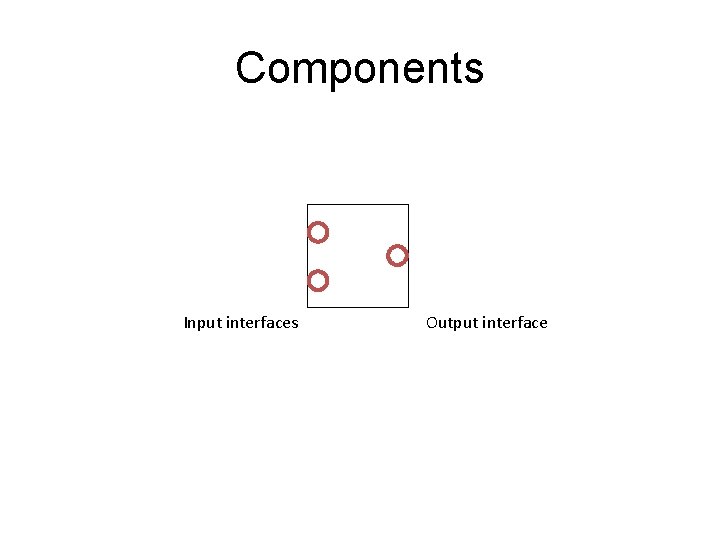

Components: input interfaces, output interface

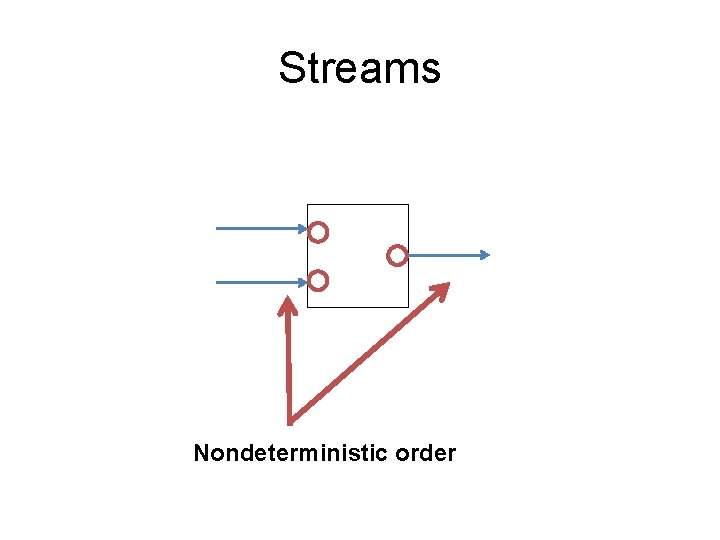

Streams: nondeterministic order

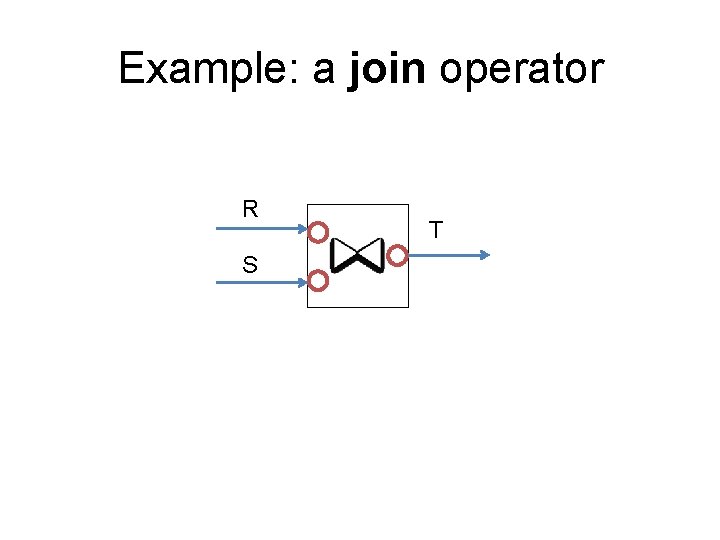

Example: a join operator (interfaces R, S, T)

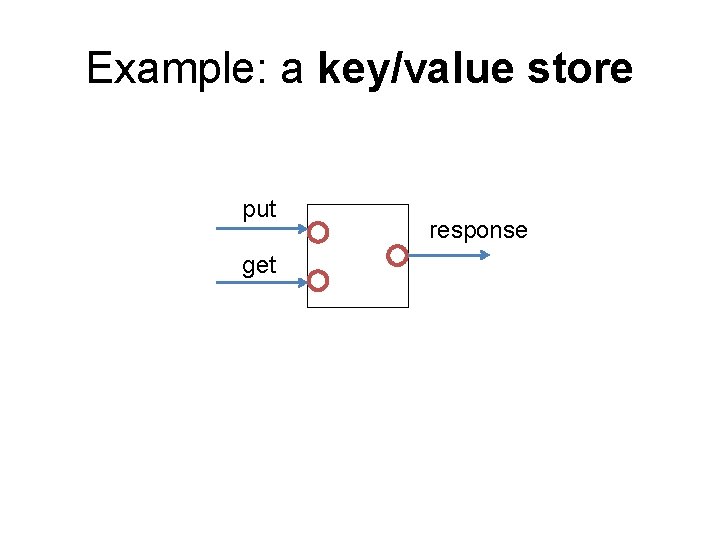

Example: a key/value store (interfaces put, get, response)

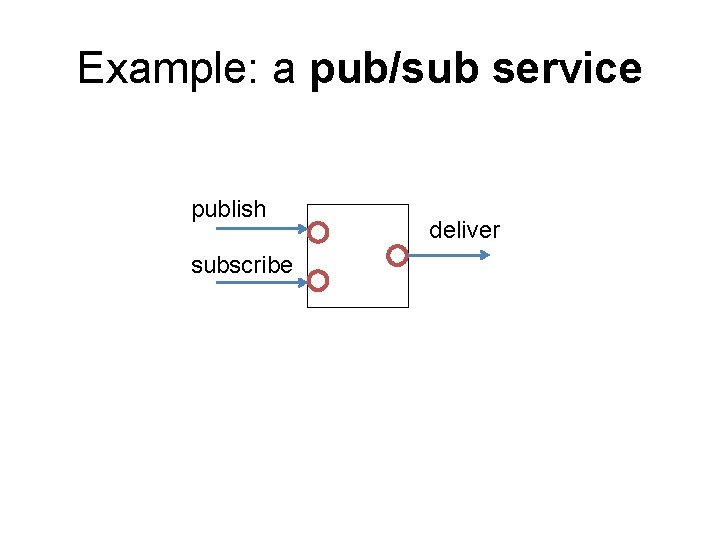

Example: a pub/sub service (interfaces publish, subscribe, deliver)

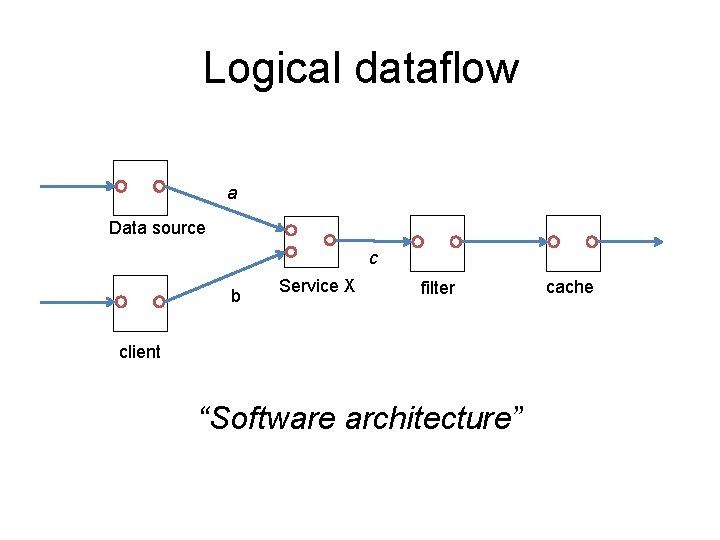

Logical dataflow (“software architecture”)
Figure labels: data source, Service X, filter, cache, client; streams a, b, c

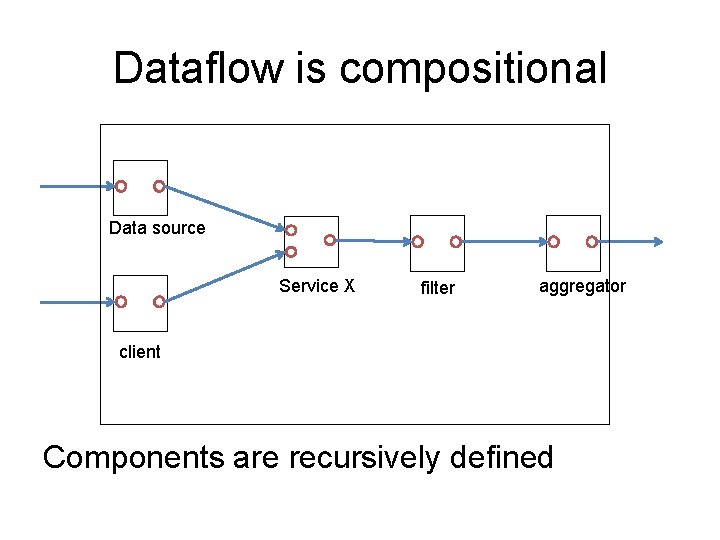

Dataflow is compositional: components are recursively defined
Figure labels: data source, Service X, filter, aggregator, client

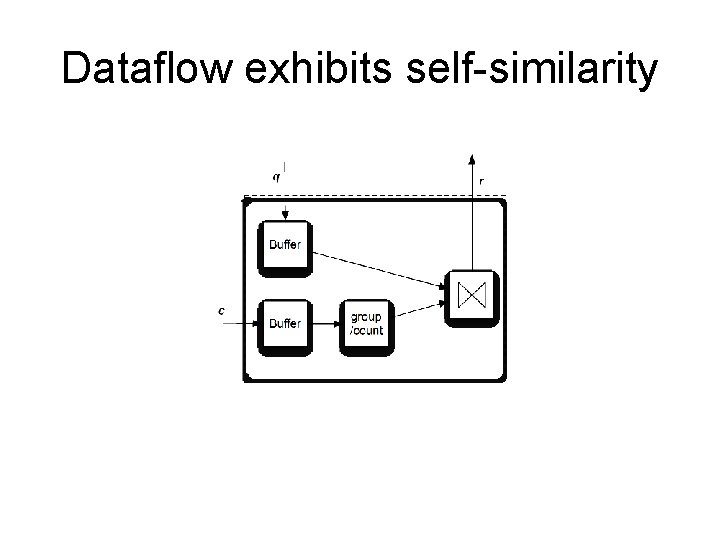

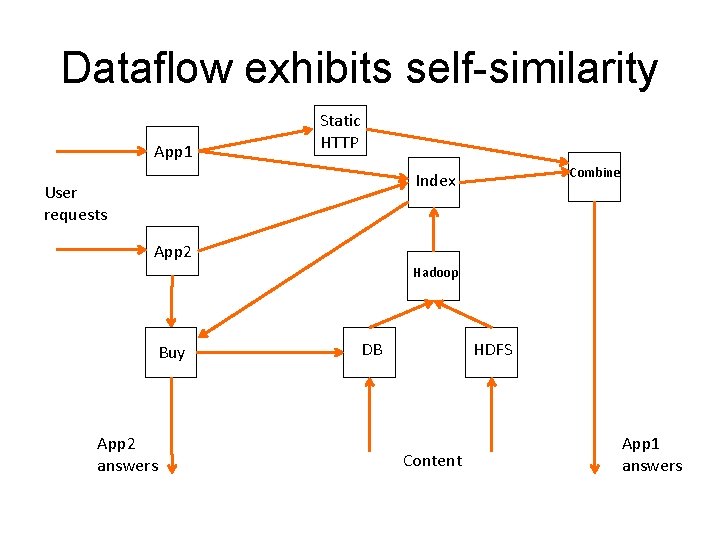


Dataflow exhibits self-similarity
Figure labels: user requests, static HTTP, App 1, App 2, Buy, Content, DB, Hadoop, Index, HDFS, Combine, App 1 answers, App 2 answers

Physical dataflow

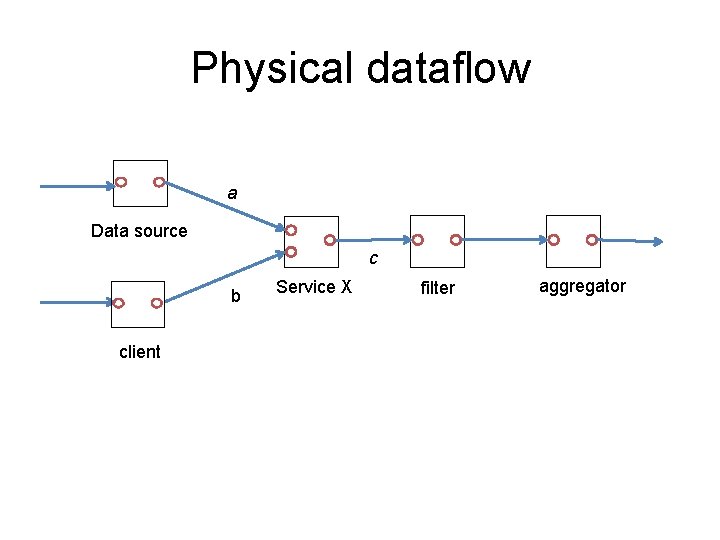

Physical dataflow
Figure labels: data source, Service X, filter, aggregator, client; streams a, b, c

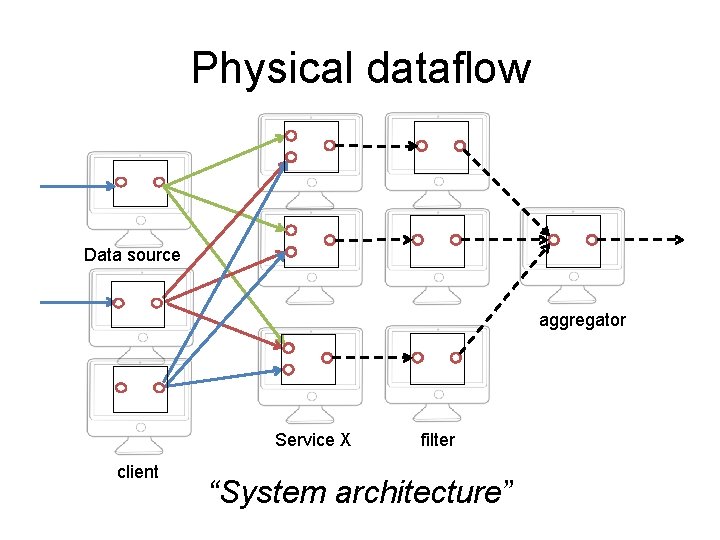

Physical dataflow (“system architecture”)
Figure labels: data source, Service X, filter, aggregator, client

What could go wrong?

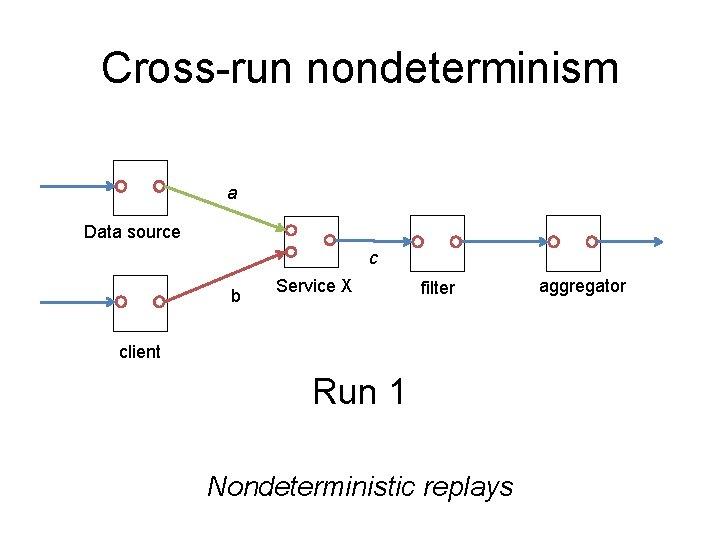

Cross-run nondeterminism: nondeterministic replays (run 1)
Figure labels: data source, Service X, filter, aggregator, client; streams a, b, c

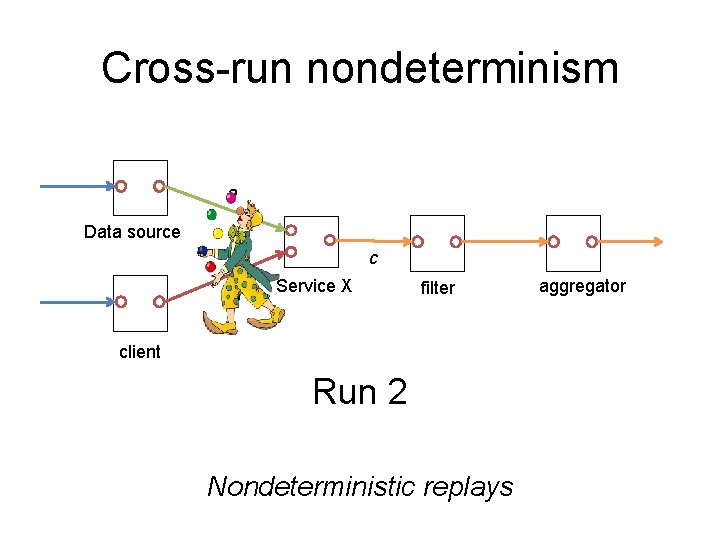

Cross-run nondeterminism: nondeterministic replays (run 2)
Figure labels: data source, Service X, filter, aggregator, client; streams a, b, c
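To make the two runs concrete, here is a minimal sketch in plain Ruby. The two components (`last_writer_wins` and `accumulate`) are hypothetical stand-ins, not taken from the talk: one is order-sensitive, the other is not. Replaying the same messages in a different delivery order changes the result of the first but not of the second.

```ruby
require 'set'

# Hypothetical order-sensitive component: keeps only the last message seen.
def last_writer_wins(messages)
  messages.reduce(nil) { |_state, msg| msg }
end

# Hypothetical order-insensitive component: accumulates messages into a set.
def accumulate(messages)
  messages.reduce(Set.new) { |state, msg| state | [msg] }
end

run1 = [:a, :b, :c]  # delivery order observed in run 1
run2 = [:c, :a, :b]  # same messages, different delivery order in run 2

puts last_writer_wins(run1) == last_writer_wins(run2)  # false
puts accumulate(run1) == accumulate(run2)              # true
```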

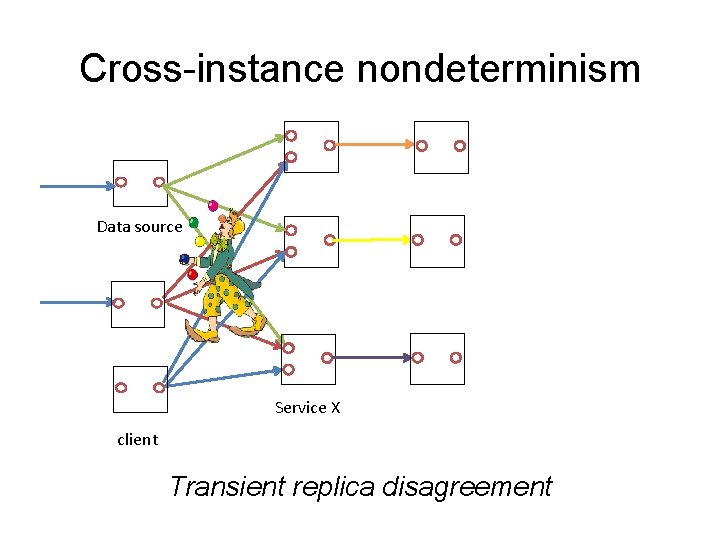

Cross-instance nondeterminism: transient replica disagreement
Figure labels: data source, Service X, client

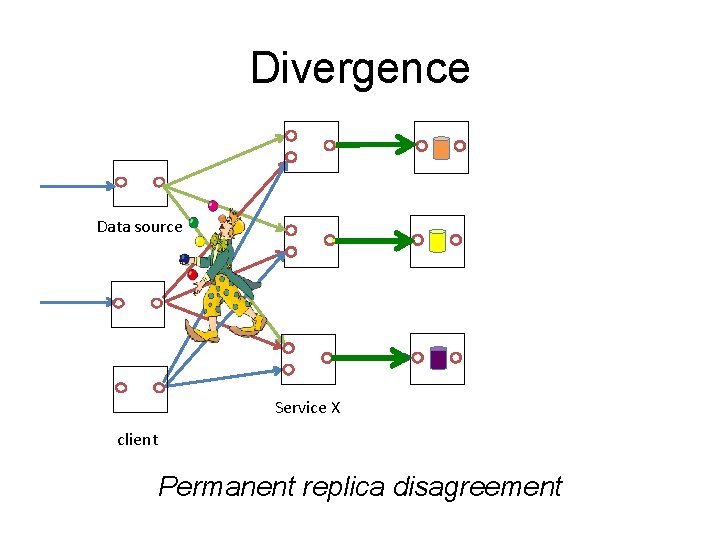

Divergence: permanent replica disagreement
Figure labels: data source, Service X, client

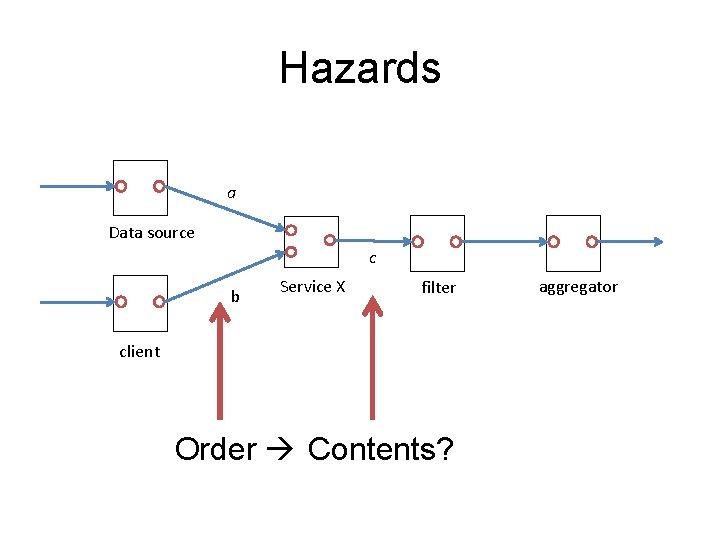

Hazards: order, contents?
Figure labels: data source, Service X, filter, aggregator, client; streams a, b, c

Preventing the anomalies
1. Understand component semantics (and disallow certain compositions)

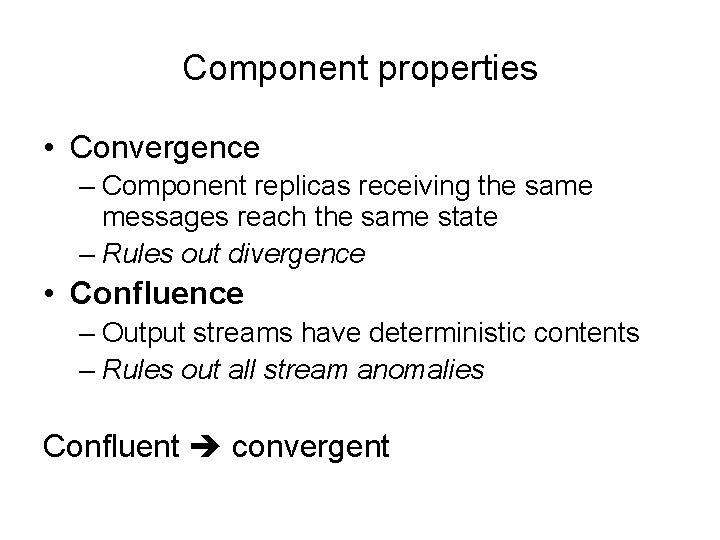

Component properties
• Convergence
  – Component replicas receiving the same messages reach the same state
  – Rules out divergence

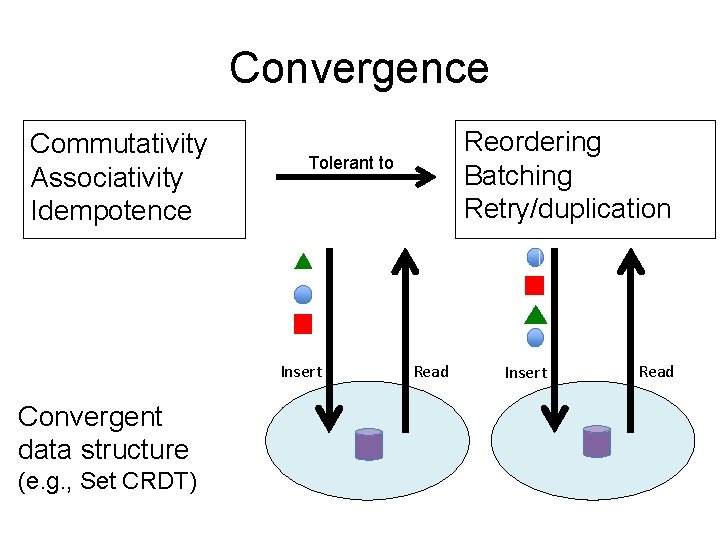

Convergence
Convergent data structure (e.g., Set CRDT); operations: Insert, Read
• Commutativity: tolerant to reordering
• Associativity: tolerant to batching
• Idempotence: tolerant to retry/duplication
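A minimal sketch of such a convergent structure, assuming the simplest Set CRDT (a grow-only set). The class name `GrowOnlySet` and its methods are hypothetical, chosen only for illustration. Inserts applied in a different order, in batches, or more than once all leave replicas in the same state.

```ruby
require 'set'

# Hypothetical grow-only set replica (the simplest Set CRDT).
class GrowOnlySet
  def initialize
    @items = Set.new
  end

  # Insert one element; repeating an insert has no further effect.
  def insert(item)
    @items.add(item)
    self
  end

  # Insert a batch of elements via set union.
  def merge(items)
    @items.merge(items)
    self
  end

  # Return a copy of the current contents.
  def read
    @items.dup
  end
end

a = GrowOnlySet.new.insert(1).insert(2).insert(3)            # original order
b = GrowOnlySet.new.insert(3).insert(1).insert(2)            # reordered
c = GrowOnlySet.new.merge([1, 2]).merge([3])                 # batched
d = GrowOnlySet.new.insert(1).insert(1).insert(2).insert(3)  # retried

puts [b, c, d].all? { |replica| replica.read == a.read }  # true
```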

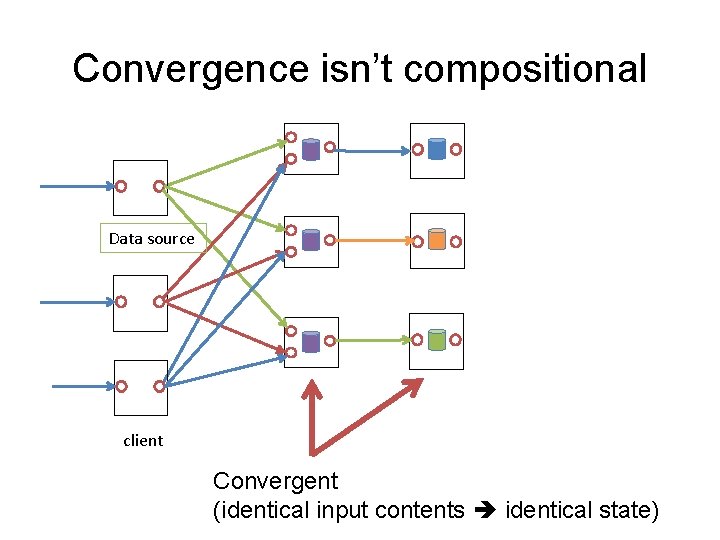

Convergence isn’t compositional
Convergent (identical input contents ⇒ identical state)
Figure labels: data source, client

Component properties
• Convergence
  – Component replicas receiving the same messages reach the same state
  – Rules out divergence
• Confluence
  – Output streams have deterministic contents
  – Rules out all stream anomalies

Confluent ⇒ convergent

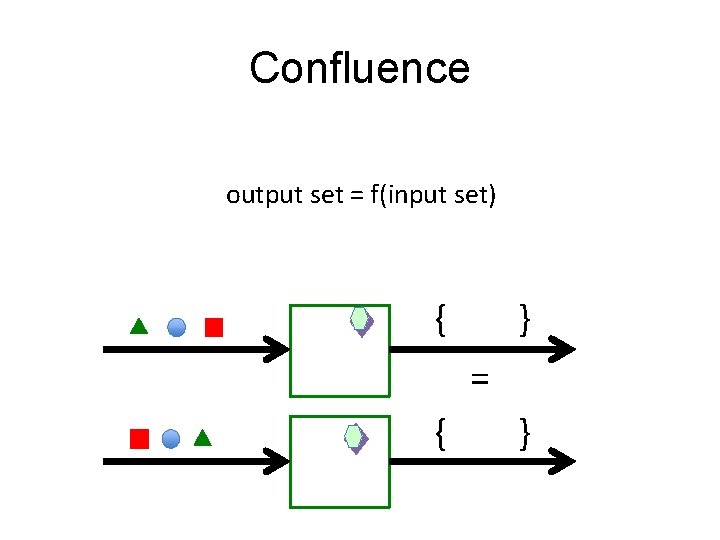

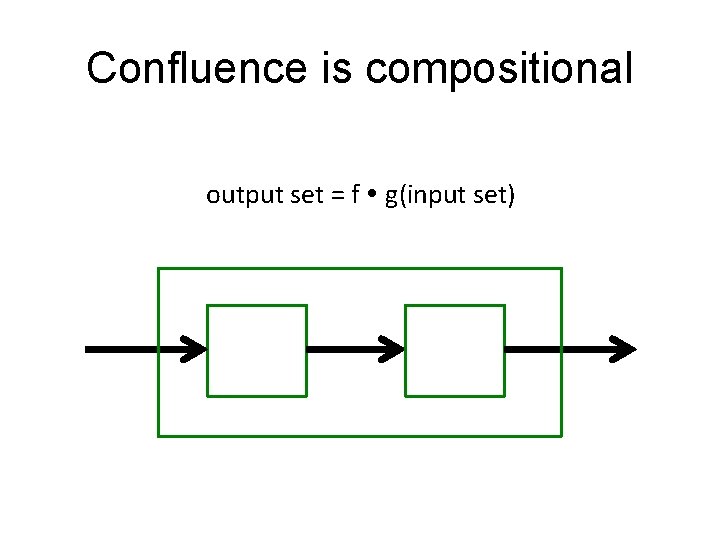

Confluence
output set = f(input set)

Confluence is compositional
output set = (f ∘ g)(input set)
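The composition claim can be checked on a toy pipeline. In this sketch the two components (`filter_even` and `square`) are hypothetical and each one maps an input set to an output set. Feeding one into the other yields the same output set for every delivery order of the input messages.

```ruby
require 'set'

# Two hypothetical confluent components: output set = function of input set.
filter_even = ->(inputs) { inputs.select(&:even?).to_set }
square      = ->(inputs) { inputs.map { |x| x * x }.to_set }

# Composition: the output of filter_even is the input of square.
pipeline = ->(inputs) { square.call(filter_even.call(inputs)) }

messages = [1, 2, 3, 4]

# Try every delivery order of the same four messages.
outputs = messages.permutation.map { |order| pipeline.call(order) }

puts outputs.all? { |out| out == Set[4, 16] }  # true
```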

Preventing the anomalies
1. Understand component semantics (and disallow certain compositions)
2. Constrain message delivery orders
   1. Ordering

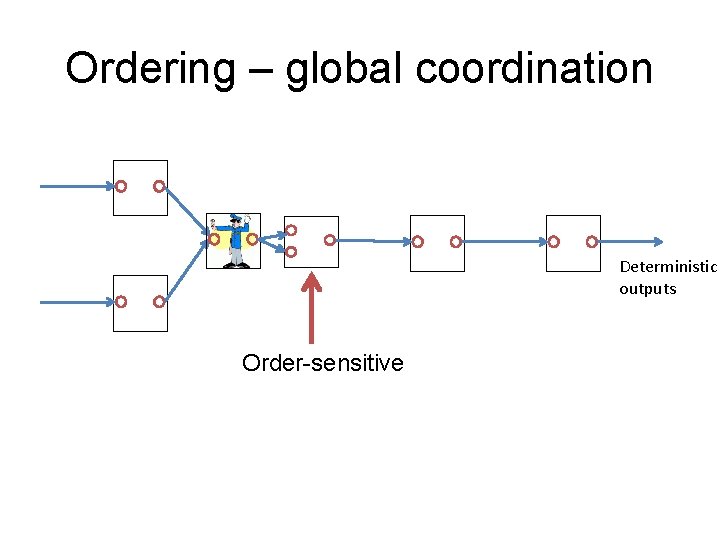

Ordering – global coordination Deterministic outputs Order-sensitive
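One way to picture ordering as a delivery mechanism: if every message carries a globally agreed sequence number, an order-sensitive replica can buffer early arrivals and apply messages strictly in sequence. The sketch below assumes such numbers already exist (producing them is the expensive, coordinated part). `OrderedReplica` is a hypothetical name, not part of Blazes.

```ruby
# Hypothetical replica that applies messages strictly in sequence-number order.
class OrderedReplica
  attr_reader :state

  def initialize
    @next_seq = 0   # next sequence number we are allowed to apply
    @buffer = {}    # messages that arrived early, keyed by sequence number
    @state = nil
  end

  def receive(seq, value)
    @buffer[seq] = value
    # Drain the buffer for as long as the next expected message is present.
    while @buffer.key?(@next_seq)
      @state = @buffer.delete(@next_seq)  # last-writer-wins: order-sensitive
      @next_seq += 1
    end
    self
  end
end

sequenced = [[0, :a], [1, :b], [2, :c]]

r1 = OrderedReplica.new
sequenced.each { |seq, value| r1.receive(seq, value) }          # in-order arrival

r2 = OrderedReplica.new
sequenced.reverse.each { |seq, value| r2.receive(seq, value) }  # reversed arrival

puts r1.state == r2.state  # true
```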

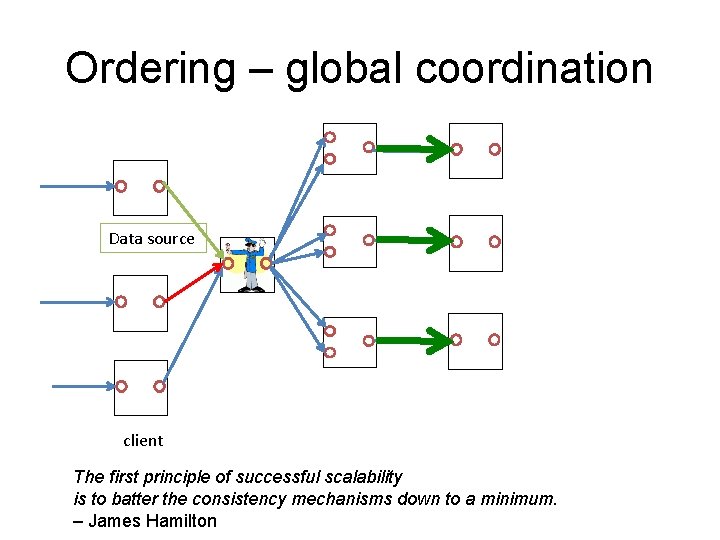

Ordering – global coordination
“The first principle of successful scalability is to batter the consistency mechanisms down to a minimum.” – James Hamilton
Figure labels: data source, client

Preventing the anomalies
1. Understand component semantics (and disallow certain compositions)
2. Constrain message delivery orders
   1. Ordering
   2. Barriers and sealing

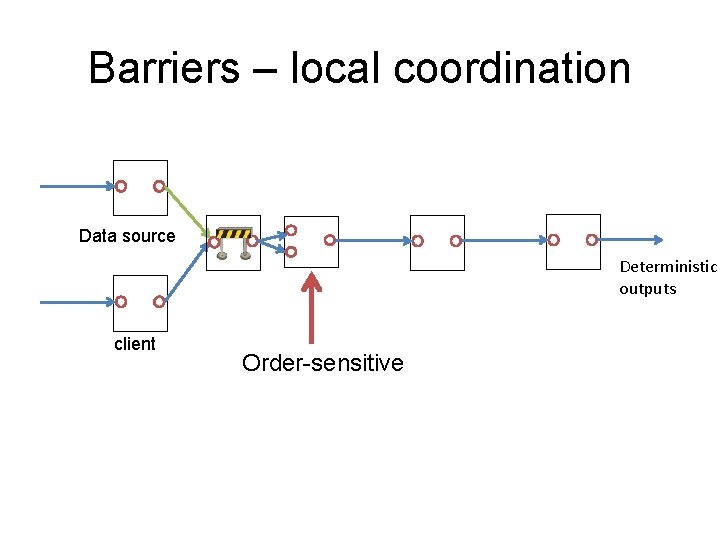

Barriers – local coordination Data source Deterministic outputs client Order-sensitive

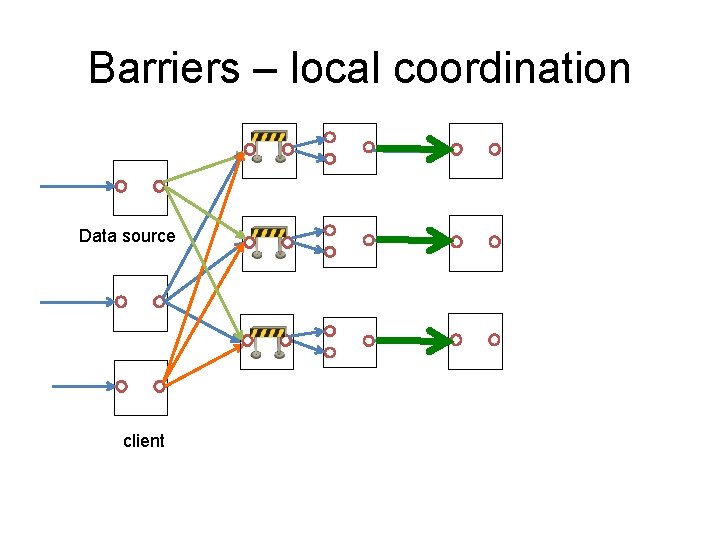

Barriers – local coordination Data source client

Sealing – continuous barriers
• Do partitions of (infinite) input streams “end”?
• Can components produce deterministic results given “complete” input partitions?

Sealing: partition barriers for infinite streams

Sealing – continuous barriers
Finite partitions of infinite inputs are common
• …in distributed systems
  – Sessions
  – Transactions
  – Epochs / views
• …and applications
  – Auctions
  – Chats
  – Shopping carts
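A minimal sketch of sealing, using the shopping-cart partitions as an example. It assumes that the seal for a key is delivered only after all data for that key, while messages for different keys may interleave arbitrarily. The class `SealedCounter` and the message shapes are hypothetical. The component holds back its answer for a partition until that partition is sealed, so the output is the same across runs.

```ruby
# Hypothetical per-partition aggregator: buffers values for each key and
# emits a total only once the producer seals that key.
class SealedCounter
  attr_reader :outputs

  def initialize
    @pending = Hash.new { |hash, key| hash[key] = [] }
    @outputs = {}
  end

  # msg is [:data, key, value] or [:seal, key]
  def receive(msg)
    kind, key, value = msg
    case kind
    when :data
      raise ArgumentError, "partition #{key} already sealed" if @outputs.key?(key)
      @pending[key] << value
    when :seal
      # The partition is complete: emit its result and drop the buffer.
      @outputs[key] = @pending.delete(key).to_a.sum
    end
    self
  end
end

run1 = [[:data, "cart1", 2], [:data, "cart2", 5], [:data, "cart1", 3],
        [:seal, "cart1"], [:seal, "cart2"]]
run2 = [[:data, "cart2", 5], [:seal, "cart2"], [:data, "cart1", 3],
        [:data, "cart1", 2], [:seal, "cart1"]]

out1 = run1.reduce(SealedCounter.new) { |c, msg| c.receive(msg) }.outputs
out2 = run2.reduce(SealedCounter.new) { |c, msg| c.receive(msg) }.outputs

puts out1 == out2  # true
```

No global order is needed here: each partition is coordinated only with its own seal.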

Blazes: consistency analysis + coordination selection

Blazes: Mode 1: Grey boxes

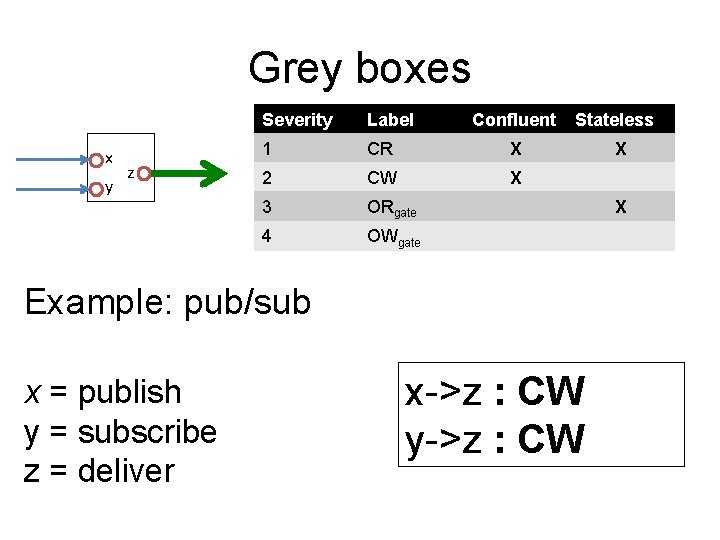

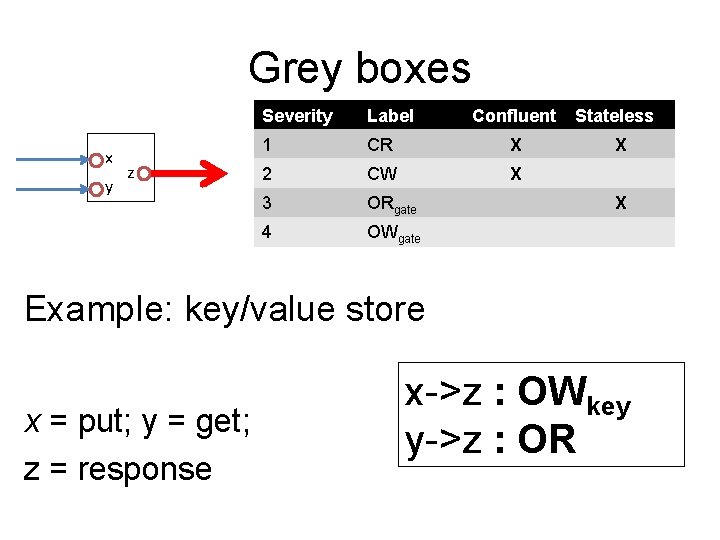

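Grey boxes

Figure labels: inputs x, y; output z; “deterministic but unordered”

| Severity | Label | Confluent | Stateless |
|---|---|---|---|
| 1 | CR | X | X |
| 2 | CW | X | |
| 3 | ORgate | | X |
| 4 | OWgate | | |

Example: pub/sub
x = publish; y = subscribe; z = deliver
x->z : CW
y->z : CWT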

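Grey boxes

Figure labels: inputs x, y; output z; “deterministic but unordered”

| Severity | Label | Confluent | Stateless |
|---|---|---|---|
| 1 | CR | X | X |
| 2 | CW | X | |
| 3 | ORgate | | X |
| 4 | OWgate | | |

Example: key/value store
x = put; y = get; z = response
x->z : OWkey
y->z : ORT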

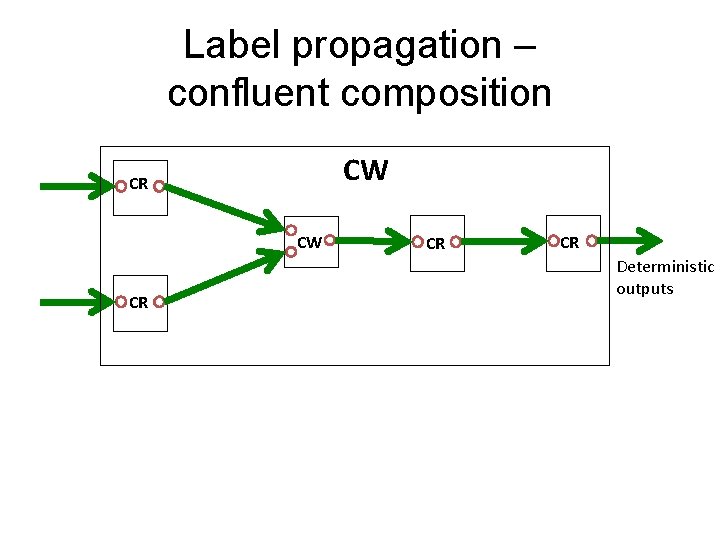

Label propagation – confluent composition CW CR CR CR Deterministic outputs

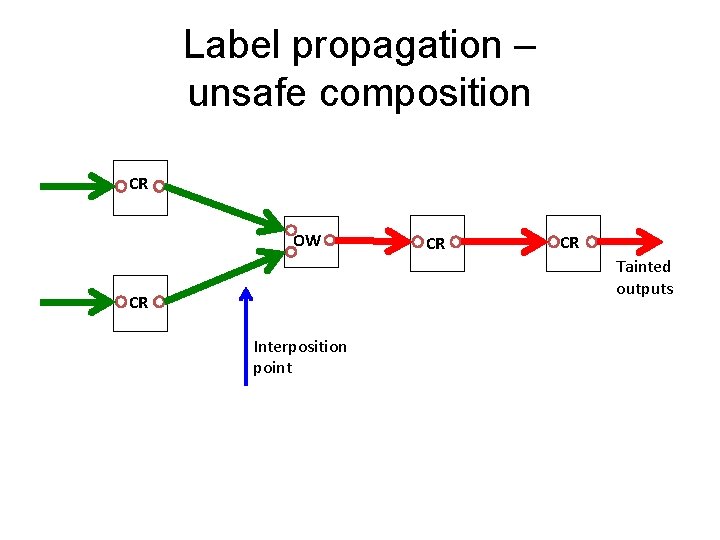

Label propagation – unsafe composition CR OW CR CR Tainted outputs CR Interposition point

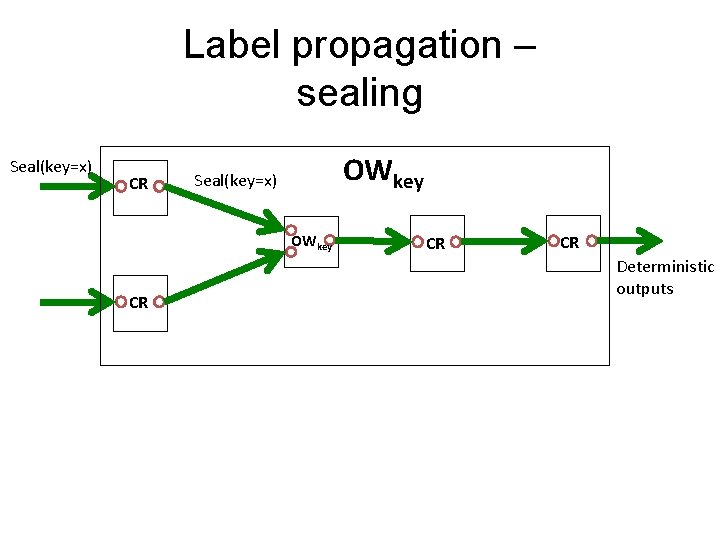

Label propagation – sealing Seal(key=x) CR OWkey Seal(key=x) OWkey CR CR CR Deterministic outputs

Blazes: Mode 2: White boxes

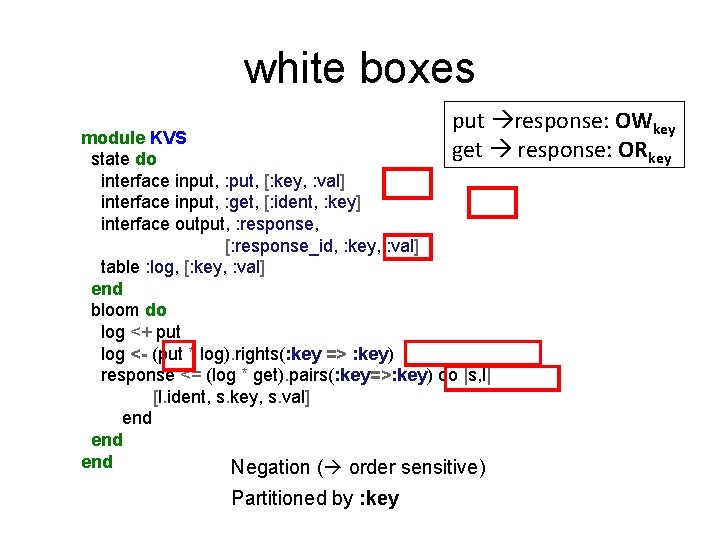

White boxes

put -> response: OWkey
get -> response: ORkey

```ruby
module KVS
  state do
    interface input, :put, [:key, :val]
    interface input, :get, [:ident, :key]
    interface output, :response, [:response_id, :key, :val]
    table :log, [:key, :val]
  end

  bloom do
    # Store each put at the next timestep.
    log <+ put
    # Delete any existing log entry with the same key as an incoming put.
    log <- (put * log).rights(:key => :key)
    # Answer each get by joining it against the log on :key.
    response <= (log * get).pairs(:key => :key) do |s, l|
      [l.ident, s.key, s.val]
    end
  end
end
```

Annotations: negation (⇒ order sensitive); partitioned by :key

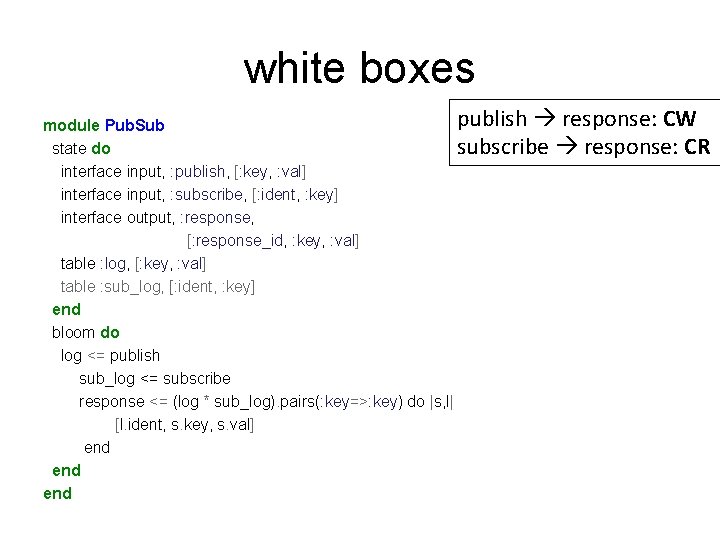

White boxes

publish -> response: CW
subscribe -> response: CR

```ruby
module PubSub
  state do
    interface input, :publish, [:key, :val]
    interface input, :subscribe, [:ident, :key]
    interface output, :response, [:response_id, :key, :val]
    table :log, [:key, :val]
    table :sub_log, [:ident, :key]
  end

  bloom do
    # Accumulate publications and subscriptions; nothing is ever deleted.
    log <= publish
    sub_log <= subscribe
    # Deliver each publication to every subscriber of its key.
    response <= (log * sub_log).pairs(:key => :key) do |s, l|
      [l.ident, s.key, s.val]
    end
  end
end
```

The Blazes frame of mind:
• Asynchronous dataflow model
• Focus on consistency of data in motion
  – Component semantics
  – Delivery mechanisms and costs
• Automatic, minimal coordination

Queries?
