TDD Topics in Distributed Databases Distributed database Distributed

TDD: Topics in Distributed Databases Distributed database Distributed query processing: joins and non-join queries Updating distributed data 1

Distributed databases Data is stored in several sites (nodes), geographically or administratively across multiple systems Each site is running an independent DBMS Data centers What do we get? – Increased availability and reliability – Increased parallelism Complications – Catalog management: distributed data independence and distributed transaction atomicity – Query processing and optimization: replication and fragmentation – Increased update costs, concurrency control: locking, deadlock, commit protocol, recovery 2

Architectures 3

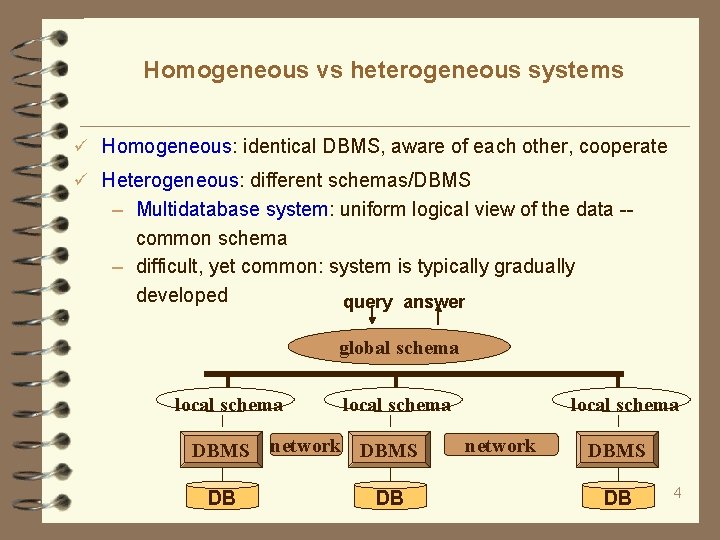

Homogeneous vs heterogeneous systems Homogeneous: identical DBMS, aware of each other, cooperate Heterogeneous: different schemas/DBMS – Multidatabase system: uniform logical view of the data -common schema – difficult, yet common: system is typically gradually developed query answer global schema local schema DBMS DB local schema network DBMS DB 4

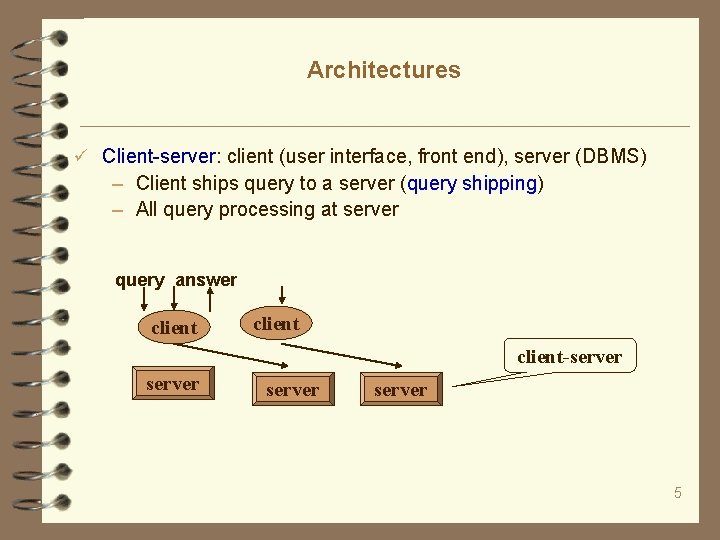

Architectures Client-server: client (user interface, front end), server (DBMS) – Client ships query to a server (query shipping) – All query processing at server query answer client-server 5

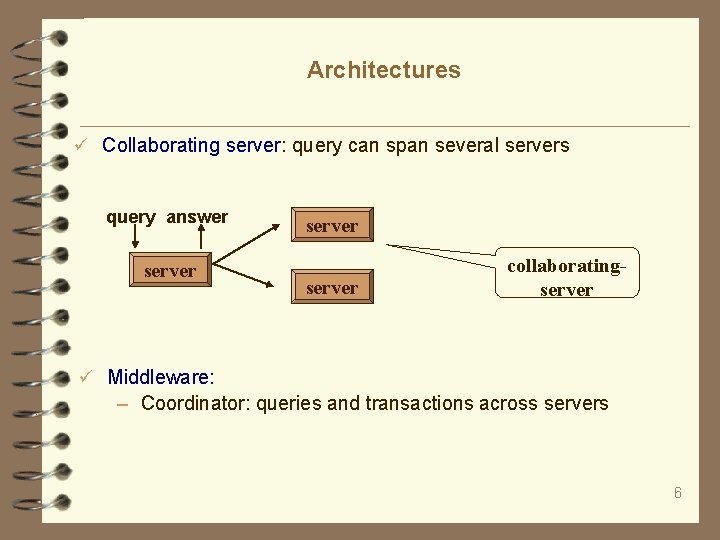

Architectures Collaborating server: query can span several servers query answer server collaboratingserver Middleware: – Coordinator: queries and transactions across servers 6

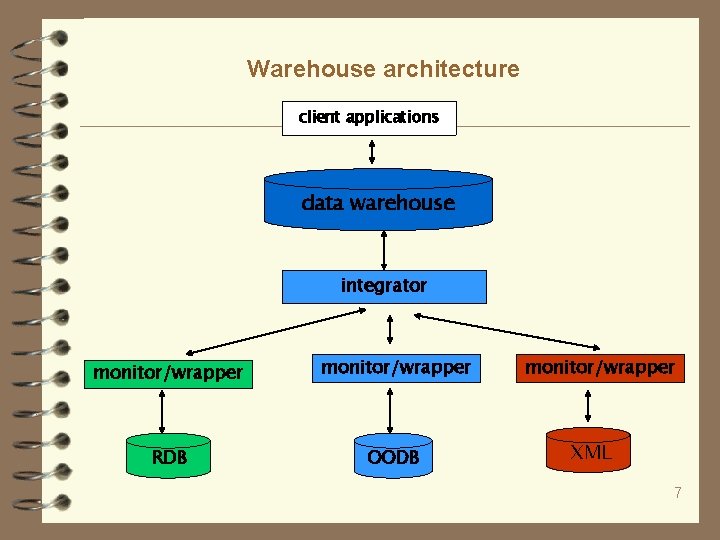

Warehouse architecture client applications data warehouse integrator monitor/wrapper RDB OODB monitor/wrapper XML 7

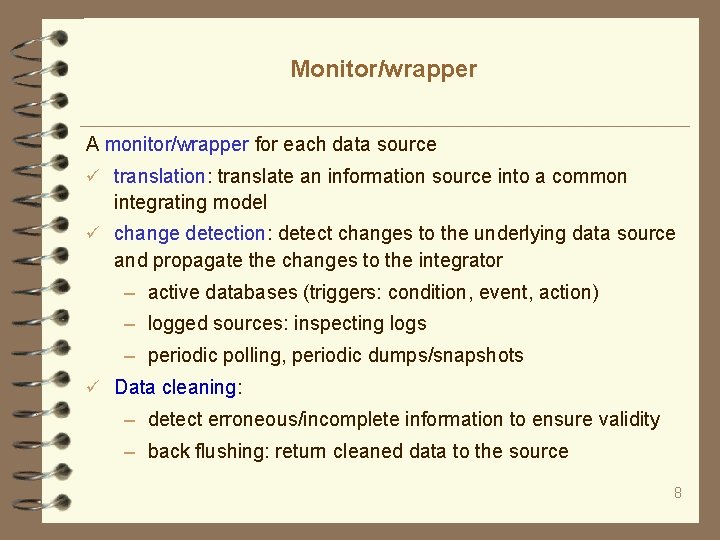

Monitor/wrapper A monitor/wrapper for each data source translation: translate an information source into a common integrating model change detection: detect changes to the underlying data source and propagate the changes to the integrator – active databases (triggers: condition, event, action) – logged sources: inspecting logs – periodic polling, periodic dumps/snapshots Data cleaning: – detect erroneous/incomplete information to ensure validity – back flushing: return cleaned data to the source 8

Integrator Receive change notifications from the wrapper/monitors and reflect the changes in the data warehouse. Typically a rule-based engine: merging information (data fusion) handling references Data cleaning: – removing redundancies and inconsistencies – inserting default values – blocking sources 9

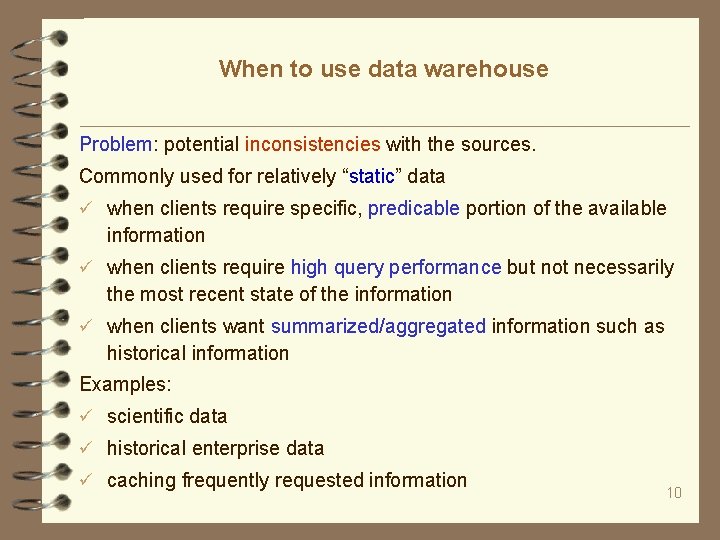

When to use data warehouse Problem: potential inconsistencies with the sources. Commonly used for relatively “static” data when clients require specific, predicable portion of the available information when clients require high query performance but not necessarily the most recent state of the information when clients want summarized/aggregated information such as historical information Examples: scientific data historical enterprise data caching frequently requested information 10

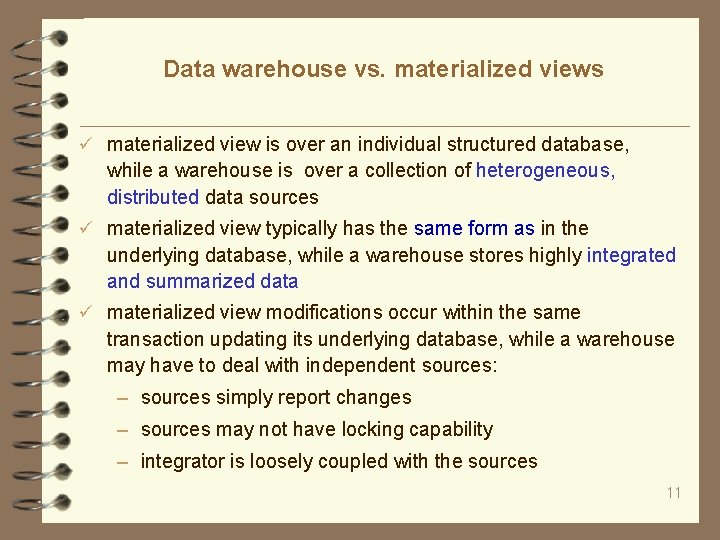

Data warehouse vs. materialized views materialized view is over an individual structured database, while a warehouse is over a collection of heterogeneous, distributed data sources materialized view typically has the same form as in the underlying database, while a warehouse stores highly integrated and summarized data materialized view modifications occur within the same transaction updating its underlying database, while a warehouse may have to deal with independent sources: – sources simply report changes – sources may not have locking capability – integrator is loosely coupled with the sources 11

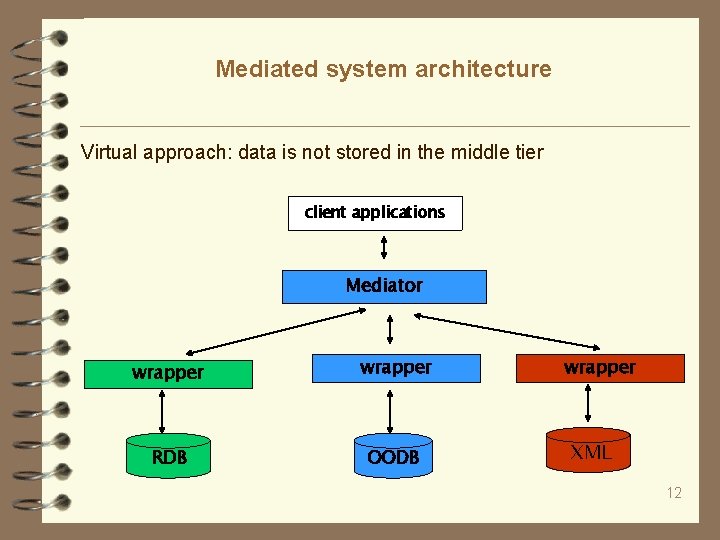

Mediated system architecture Virtual approach: data is not stored in the middle tier client applications Mediator wrapper RDB OODB wrapper XML 12

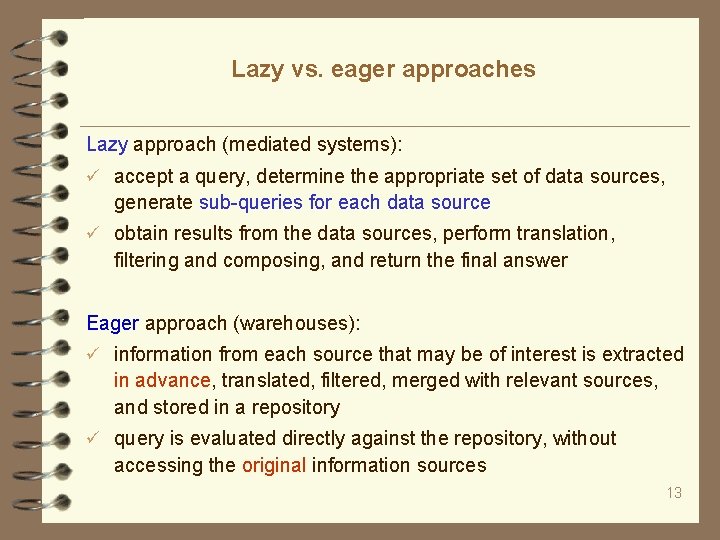

Lazy vs. eager approaches Lazy approach (mediated systems): accept a query, determine the appropriate set of data sources, generate sub-queries for each data source obtain results from the data sources, perform translation, filtering and composing, and return the final answer Eager approach (warehouses): information from each source that may be of interest is extracted in advance, translated, filtered, merged with relevant sources, and stored in a repository query is evaluated directly against the repository, without accessing the original information sources 13

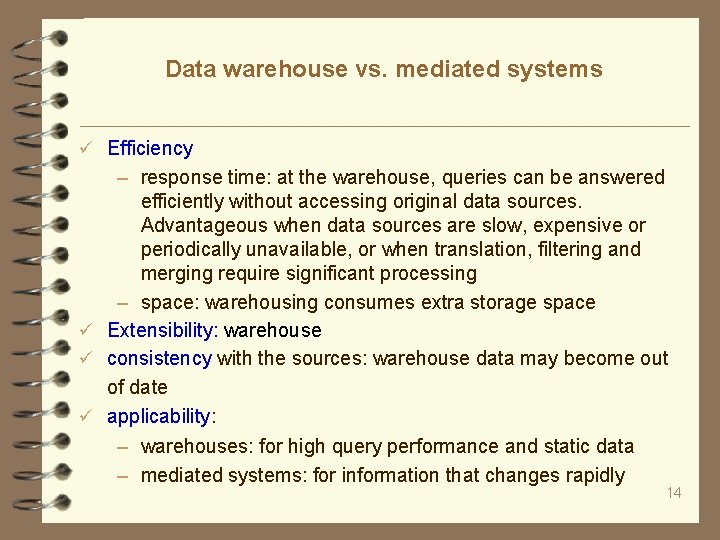

Data warehouse vs. mediated systems Efficiency – response time: at the warehouse, queries can be answered efficiently without accessing original data sources. Advantageous when data sources are slow, expensive or periodically unavailable, or when translation, filtering and merging require significant processing – space: warehousing consumes extra storage space Extensibility: warehouse consistency with the sources: warehouse data may become out of date applicability: – warehouses: for high query performance and static data – mediated systems: for information that changes rapidly 14

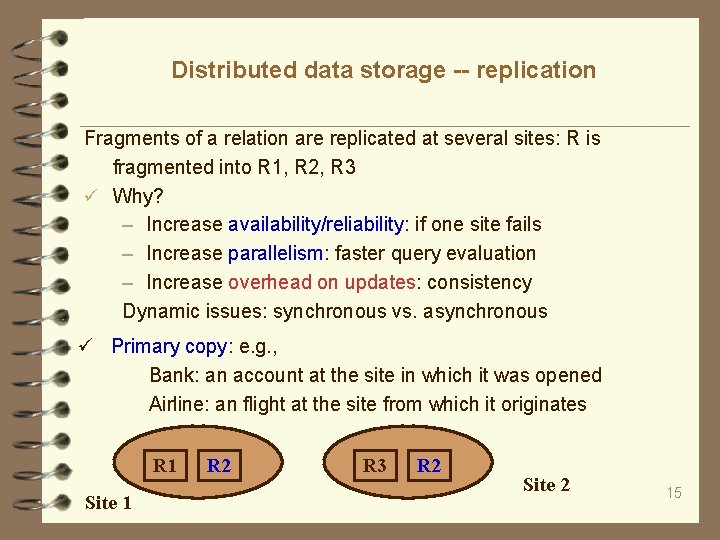

Distributed data storage -- replication Fragments of a relation are replicated at several sites: R is fragmented into R 1, R 2, R 3 Why? – Increase availability/reliability: if one site fails – Increase parallelism: faster query evaluation – Increase overhead on updates: consistency Dynamic issues: synchronous vs. asynchronous Primary copy: e. g. , Bank: an account at the site in which it was opened Airline: an flight at the site from which it originates R 1 Site 1 R 2 R 3 R 2 Site 2 15

Distributed data storage -- fragmentation A relation R may be fragmented or partitioned Horizontal Vertical: lossless join Question: how to reconstruct the original R? eid name city 001 joe NYC 002 mary NYC 003 grace EDI fragmentation: determined by local ownership query answer global schema local schema NYC DBMS DB local schema network EDI DBMS DB 16

Transparency, independence Distributed data transparency (independence): – location (name) transparency – fragmentation – replication transparency (catalog management) Transaction atomicity: across several sites – All changes persist if the transaction commits – None persists if the transaction aborts Data independency and transaction atomicity are not supported currently: the users have to be aware of where data is located 17

Distributed query processing: joins and non-join queries 18 18

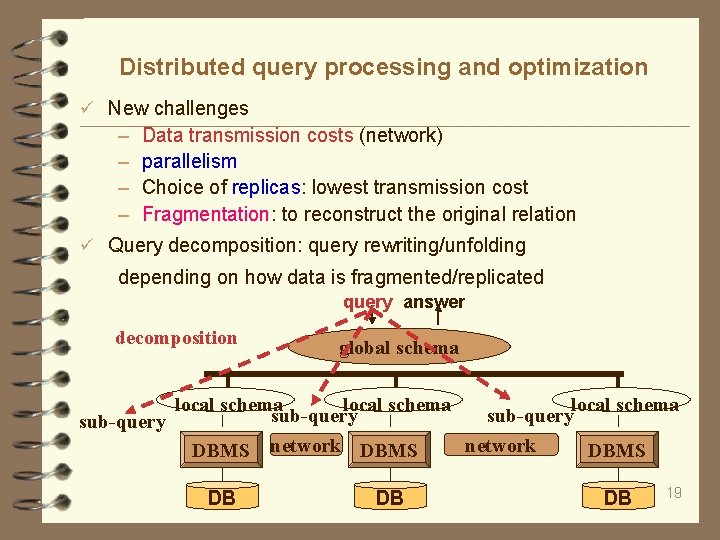

Distributed query processing and optimization New challenges – – Data transmission costs (network) parallelism Choice of replicas: lowest transmission cost Fragmentation: to reconstruct the original relation Query decomposition: query rewriting/unfolding depending on how data is fragmented/replicated query answer decomposition global schema local schema sub-query network DBMS DB DB DB 19

Non-join queries Schema: account(acc-num, branch-name, balance) Query Q: select * from account where branch-name = `EDI” Storage: database DB is horizontally fragmented, based on branch-name: NYC, Philly, EDI, … denoted by DB 1, …, DBn DB = DB 1 … DBn Processing: – Rewrite Q into Q(DB 1) … Q(DBn) – Q(DBi) is empty if branch-name <> EDI • Q(DB 1), where DB 1 is the EDI branch – Q(DB 1) = Q’(DB 1) • Q’: select * from account 20

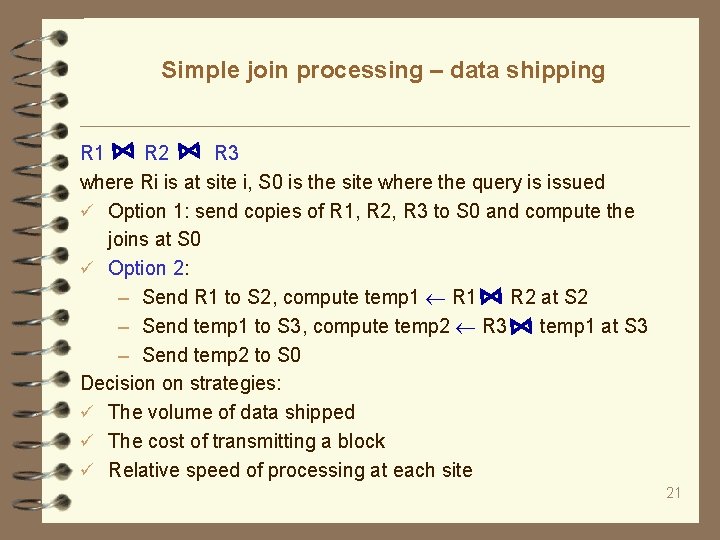

Simple join processing – data shipping R 1 R 2 R 3 where Ri is at site i, S 0 is the site where the query is issued Option 1: send copies of R 1, R 2, R 3 to S 0 and compute the joins at S 0 Option 2: – Send R 1 to S 2, compute temp 1 R 1 R 2 at S 2 – Send temp 1 to S 3, compute temp 2 R 3 temp 1 at S 3 – Send temp 2 to S 0 Decision on strategies: The volume of data shipped The cost of transmitting a block Relative speed of processing at each site 21

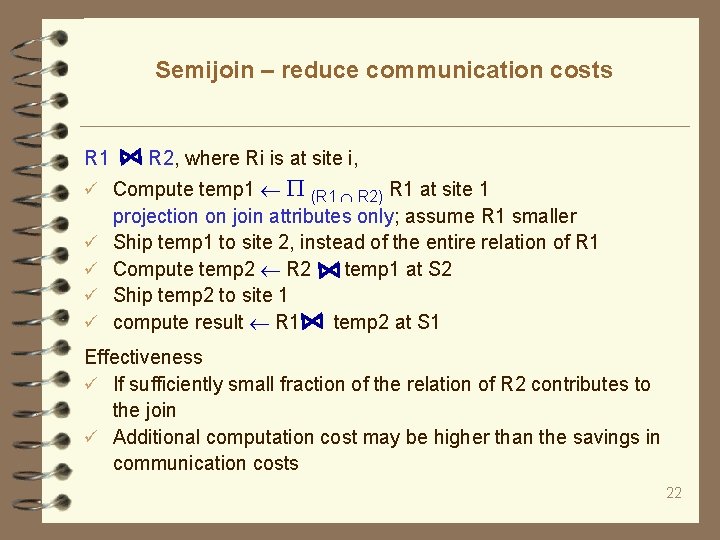

Semijoin – reduce communication costs R 1 R 2, where Ri is at site i, Compute temp 1 (R 1 R 2) R 1 at site 1 projection on join attributes only; assume R 1 smaller Ship temp 1 to site 2, instead of the entire relation of R 1 Compute temp 2 R 2 temp 1 at S 2 Ship temp 2 to site 1 compute result R 1 temp 2 at S 1 Effectiveness If sufficiently small fraction of the relation of R 2 contributes to the join Additional computation cost may be higher than the savings in communication costs 22

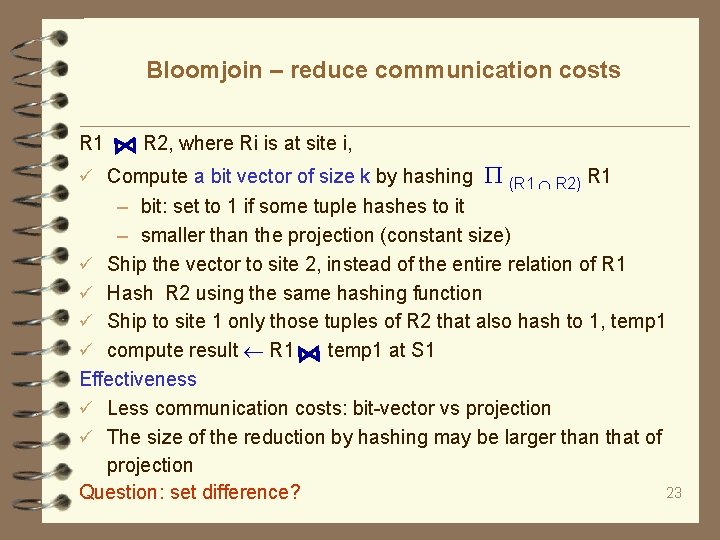

Bloomjoin – reduce communication costs R 1 R 2, where Ri is at site i, Compute a bit vector of size k by hashing (R 1 R 2) R 1 – bit: set to 1 if some tuple hashes to it – smaller than the projection (constant size) Ship the vector to site 2, instead of the entire relation of R 1 Hash R 2 using the same hashing function Ship to site 1 only those tuples of R 2 that also hash to 1, temp 1 compute result R 1 temp 1 at S 1 Effectiveness Less communication costs: bit-vector vs projection The size of the reduction by hashing may be larger than that of projection 23 Question: set difference?

exploring parallelism Consider R 1 R 2 R 3 R 4, where Ri is at site i temp 1 R 1 R 2, by shipping R 1 to site 2 temp 2 R 3 R 4, by shipping R 3 to site 4 result temp 1 temp 2 -- pipelining Question: R 1 R 3, using R 2 parallel Pipelined parallelism Semi-join both 24

Distributed query optimization The volume of data shipped The cost of transmitting a block Relative speed of processing at each site Site selection: replication Two-step optimization At compile time, generate a query plan – along the same lines as centralized DBMS Every time before the query is executed, transform the plan and carry out site selection (determine where the operators are to be executed) – dynamic, just site selection 25

Practice: validation of functional dependencies A functional dependency (FD) defined on schema R: X Y – For any instance D of R, D satisfies the FD if for any pair of tuples t and t’, if t[X] = t’[X], then t[Y] = t’[Y] – Violations of the FD in D: horizontally or but vertically { t | there exists t’ in D, such that t[X] = t’[X], t[Y] t’[Y] } Now suppose that D is fragmented and distributed Develop an algorithm that given fragmented and distributed D and an FD, computes all the violations of the FD in D – semijoin Minimize data shipment – bloomjoin Questions: what can we do if we are given a set of FDs to validate? 26

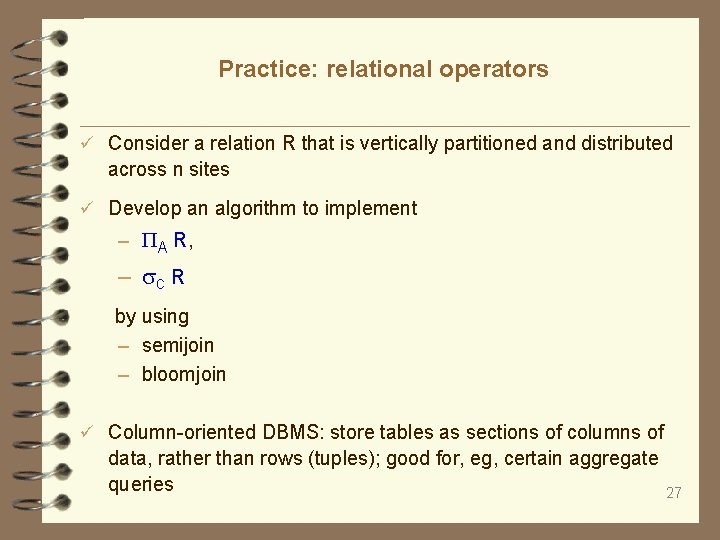

Practice: relational operators Consider a relation R that is vertically partitioned and distributed across n sites Develop an algorithm to implement – A R, – C R by using – semijoin – bloomjoin Column-oriented DBMS: store tables as sections of columns of data, rather than rows (tuples); good for, eg, certain aggregate queries 27

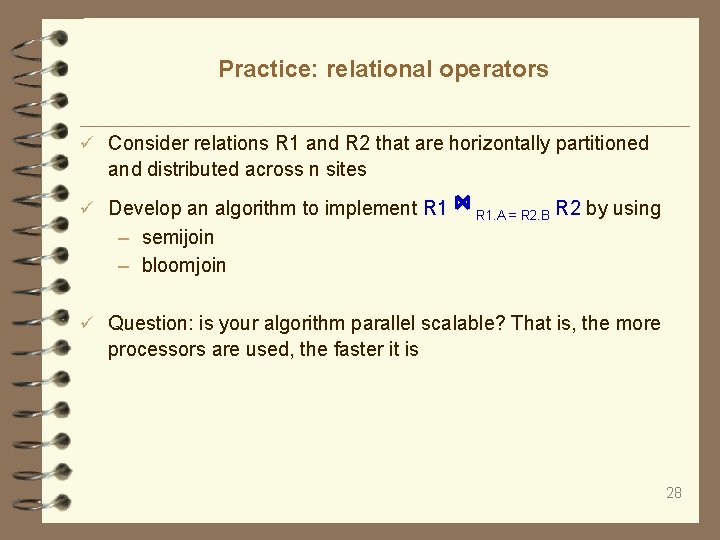

Practice: relational operators Consider relations R 1 and R 2 that are horizontally partitioned and distributed across n sites Develop an algorithm to implement R 1. A = R 2. B R 2 by using – semijoin – bloomjoin Question: is your algorithm parallel scalable? That is, the more processors are used, the faster it is 28

Updating distributed data 29 29

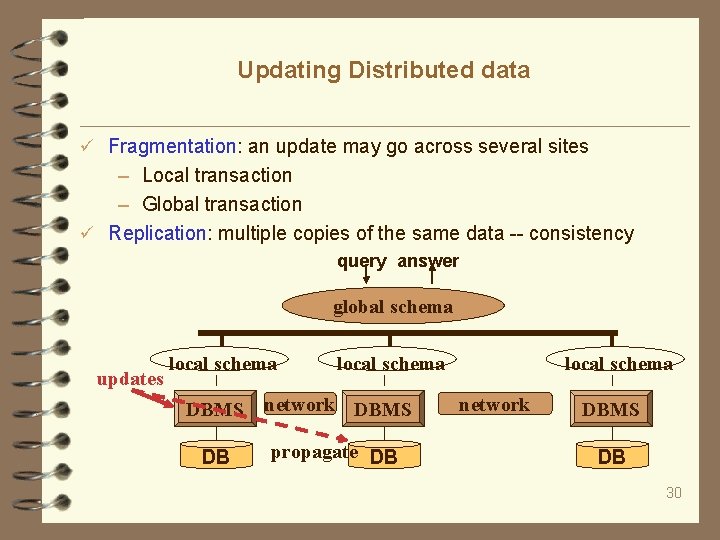

Updating Distributed data Fragmentation: an update may go across several sites – Local transaction – Global transaction Replication: multiple copies of the same data -- consistency query answer global schema updates local schema DBMS network DBMS DB propagate DB local schema network DBMS DB 30

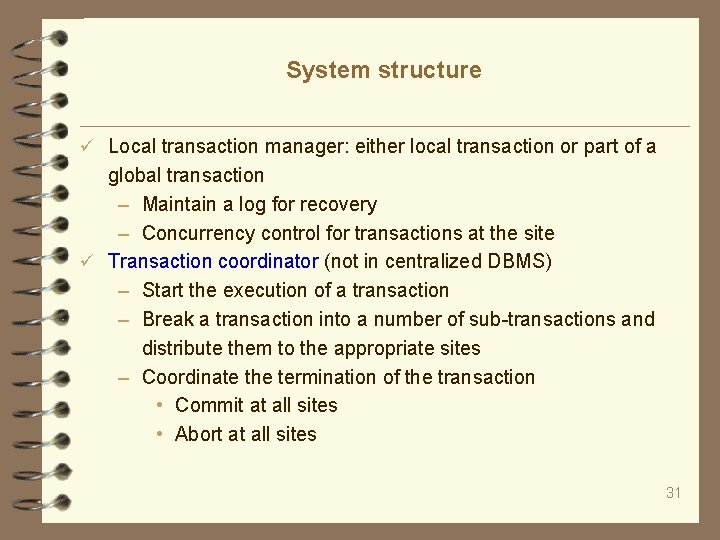

System structure Local transaction manager: either local transaction or part of a global transaction – Maintain a log for recovery – Concurrency control for transactions at the site Transaction coordinator (not in centralized DBMS) – Start the execution of a transaction – Break a transaction into a number of sub-transactions and distribute them to the appropriate sites – Coordinate the termination of the transaction • Commit at all sites • Abort at all sites 31

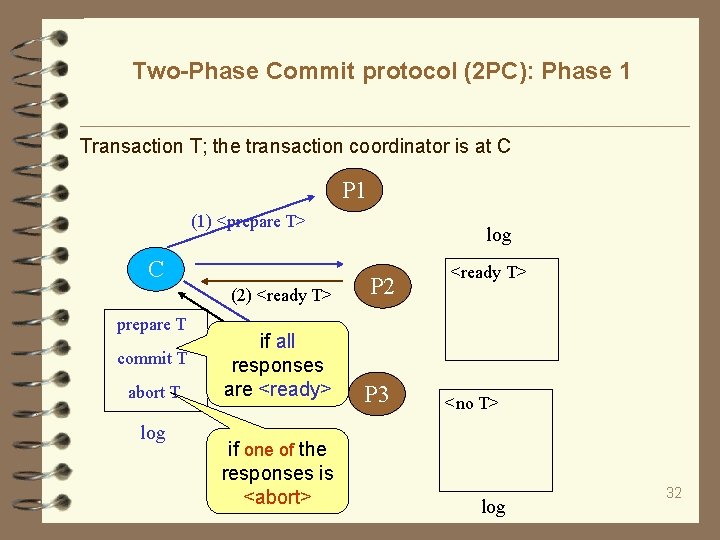

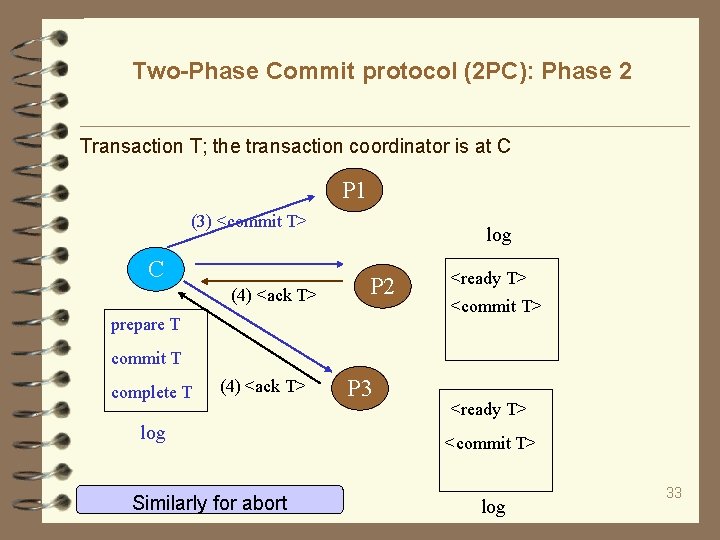

Two-Phase Commit protocol (2 PC): Phase 1 Transaction T; the transaction coordinator is at C P 1 (1) <prepare T> C (2) <ready T> prepare T commit T abort T log if all responses (2) T> are<abort <ready> if one of the responses is <abort> log P 2 P 3 <ready T> <no T> log 32

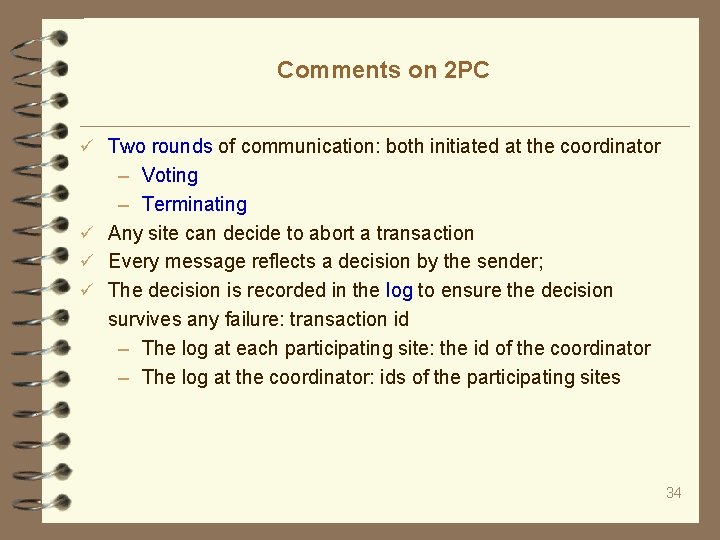

Two-Phase Commit protocol (2 PC): Phase 2 Transaction T; the transaction coordinator is at C P 1 (3) <commit T> C (4) <ack T> log P 2 prepare T <ready T> <commit T> commit T complete T (4) <ack T> log Similarly for abort P 3 <ready T> <commit T> log 33

Comments on 2 PC Two rounds of communication: both initiated at the coordinator – Voting – Terminating Any site can decide to abort a transaction Every message reflects a decision by the sender; The decision is recorded in the log to ensure the decision survives any failure: transaction id – The log at each participating site: the id of the coordinator – The log at the coordinator: ids of the participating sites 34

Concurrency control Single local manager: at a chosen site – Simplementation and deadlock handling – Bottleneck – Vulnerability: if the site fails Distributed lock manager: each site has one to update data item D at site j – Send request to the lock manager at site j – Request is either granted or delayed – Deadlock handling is hard Major complication: replicated data 35

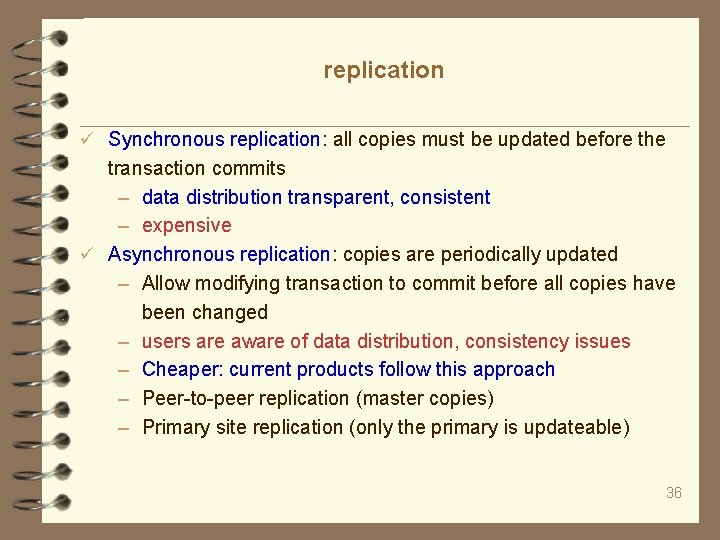

replication Synchronous replication: all copies must be updated before the transaction commits – data distribution transparent, consistent – expensive Asynchronous replication: copies are periodically updated – Allow modifying transaction to commit before all copies have been changed – users are aware of data distribution, consistency issues – Cheaper: current products follow this approach – Peer-to-peer replication (master copies) – Primary site replication (only the primary is updateable) 36

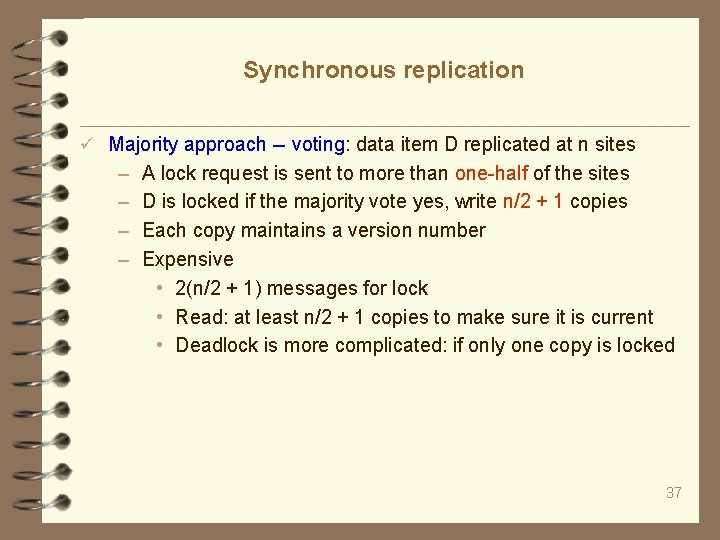

Synchronous replication Majority approach -- voting: data item D replicated at n sites – – A lock request is sent to more than one-half of the sites D is locked if the majority vote yes, write n/2 + 1 copies Each copy maintains a version number Expensive • 2(n/2 + 1) messages for lock • Read: at least n/2 + 1 copies to make sure it is current • Deadlock is more complicated: if only one copy is locked 37

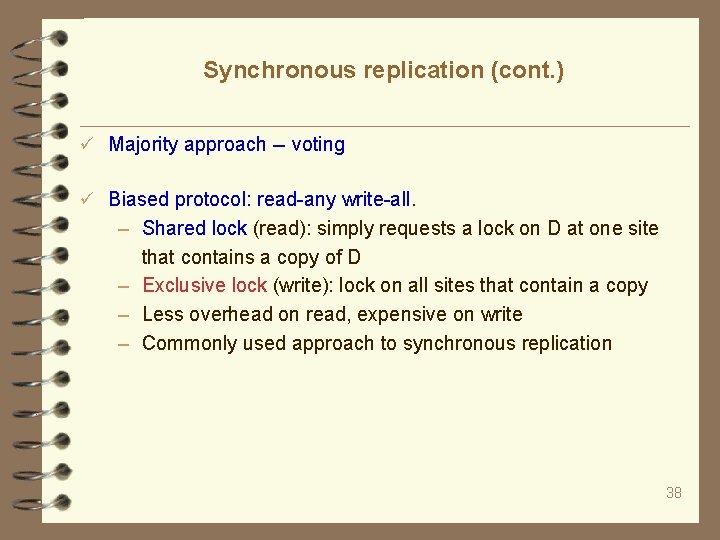

Synchronous replication (cont. ) Majority approach -- voting Biased protocol: read-any write-all. – Shared lock (read): simply requests a lock on D at one site that contains a copy of D – Exclusive lock (write): lock on all sites that contain a copy – Less overhead on read, expensive on write – Commonly used approach to synchronous replication 38

Synchronous replication -- exercise A distributed system uses the majority (voting) approach to update data replicas. Suppose that a data item D is replicated at 4 different sites: S 1, S 2, S 3, S 4. What should be done if a site S wants to Write D Read D 39

Synchronous replication -- answer A distributed system uses majority the (voting) approach to update data replicas. Suppose that a data item D is replicated at 4 different sites: S 1, S 2, S 3, S 4. What should be done if a site S wants to Write D – The site S sends a lock request to any 3 of S 1, S 2, S 3, S 4 – The write operation is conducted if the lock is granted by the lock manager of all the 3 sites; otherwise it is delayed until the lock can be granted Read D – The site S reads copies of D from at least 3 sites – It picks the copy with the highest version number 40

Asynchronous replication Primary site: choose exactly one copy, residing at a primary site – A lock request is sent to the primary site – Replicas at other sites may not be updated; they are secondary to the primary copy – Simple to implement – Main issues: • D becomes inaccessible if the primary site fails • Propagation of changes from the primary site to others 41

Asynchronous replication (cont. ) Primary site Peer-to-peer: more than one of the copies can be a master – Change to a master copy must be propagated to others – Conflicts of changes to two copies have to be resolved – Best used when conflicts do not arise: e. g. , • Each master site owns a distinct fragment • Updating rights owned by one master at a time 42

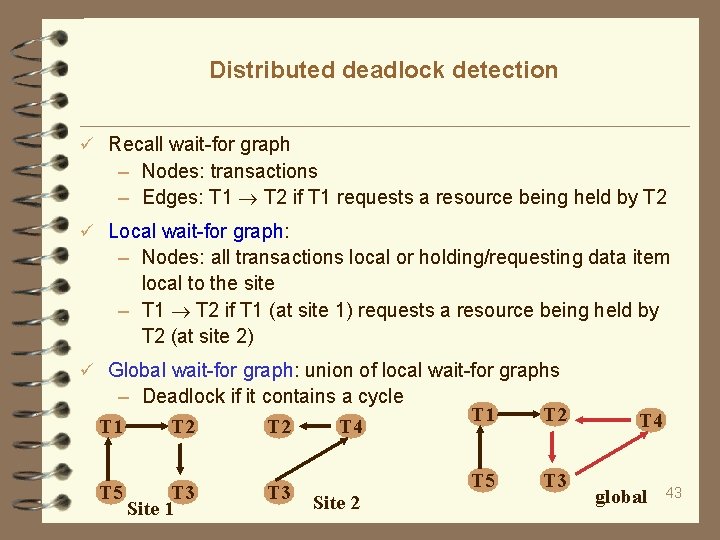

Distributed deadlock detection Recall wait-for graph – Nodes: transactions – Edges: T 1 T 2 if T 1 requests a resource being held by T 2 Local wait-for graph: – Nodes: all transactions local or holding/requesting data item local to the site – T 1 T 2 if T 1 (at site 1) requests a resource being held by T 2 (at site 2) Global wait-for graph: union of local wait-for graphs – Deadlock if it contains a cycle T 1 T 2 T 4 T 5 T 3 Site 1 T 3 Site 2 T 1 T 2 T 5 T 3 T 4 global 43

False cycles Due to communication delay Site 1: local wait-for graph has T 1 T 2 releases the resource – deletion of the edge Site 2: T 2 requests the resource again – addition of T 2 T 1 Cycle if insert T 2 T 1 arrives before removal of T 1 T 2 Centralized deadlock detection – deadlock detection coordinator Constructs/maintains global wait-for graph Detect cycles If it finds a cycle, chose a victim to be rolled back Distributed deadlock manager? More expensive T 1 Site 1 T 2 T 1 T 2 Site 2 T 1 T 2 global 44

Summary and review Homogeneous vs heterogeneous systems Replication and fragmentation. Pros and cons of replication. How to reconstruct a fragmented relation (vertical, horizontal)? Simple join (data shipping), semijoin, bloomjoin set-difference? Intersection? Aggregation? Transaction manager and coordinator. Responsibilities? Describe 2 PC. Recovery: coordinator and participating sites Replication: – majority, read-any write-all, – primary site, peer-to-peer Local wait-for graph, global wait-for graph, deadlock detection, deadlock handling 45

Reading list Map. Reduce tutorial: http: //hadoop. apache. org/docs/r 1. 2. 1/mapred_tutorial. html Take a look at the following: – Cassandra, http: //en. wikipedia. org/wiki/Apache_Cassandra – Clusterpoint, http: //en. wikipedia. org/wiki/Clusterpoint – Riak, http: //en. wikipedia. org/wiki/Riak 46

Reading for the next week 1. Pregel: a system for large-scale graph processing http: //kowshik. github. io/JPregel/pregel_paper. pdf 2. Distributed Graph. Lab: A Framework for Machine Learning in the Cloud, http: //vldb. org/pvldb/vol 5/p 716_yuchenglow_vldb 2012. pdf 3. Power. Graph: Distributed Graph-Parallel Computation on Natural Graphs, http: //select. cs. cmu. edu/publications/paperdir/osdi 2012 gonzalez-low-gu-bickson-guestrin. pdf 4. Graph. Chi: Large-Scale Graph Computation on Just a PC, http: //select. cs. cmu. edu/publications/paperdir/osdi 2012 -kyrolablelloch-guestrin. pdf 5. Performance Guarantees for Distributed Reachability Queries, http: //vldb. org/pvldb/vol 5/p 1304_wenfeifan_vldb 2012. pdf 6. W. Fan, X. Wang, and Y. Wu. Distributed Graph Simulation: Impossibility and Possibility. VLDB 2014. (parallel model) http: //homepages. inf. ed. ac. uk/wenfei/papers/vldb 14 -impossibility. pdf Pick two papers and write reviews 47

- Slides: 47