RGB Display of Hyperspectral Images
Barak Ugav, Yishai Gronich

Introduction Hyperspectral images have a lot of information in each pixel: each “color” is typically a 150-400 dimensional vector of real values, representing the reflectance of the pixel at each wavelength. After processing the colors, the human eye sends the brain only 3 values to describe each color! Useful information may be lost.

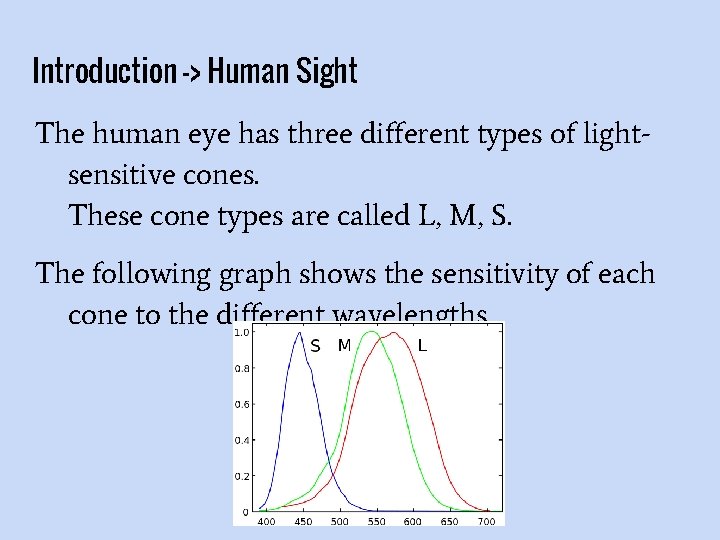

Introduction -> Human Sight The human eye has three different types of light-sensitive cones. These cone types are called L, M, and S. The following graph shows the sensitivity of each cone type to the different wavelengths.

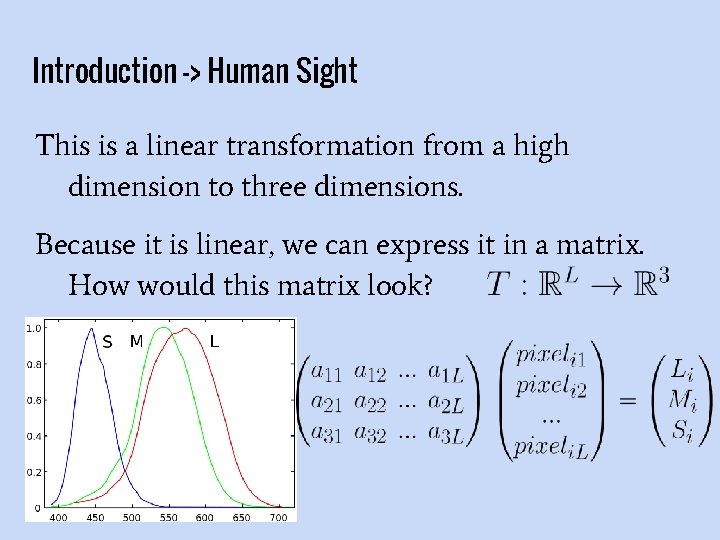

Introduction -> Human Sight The response of the cones is a linear transformation from a high dimension to three dimensions. Because it is linear, we can express it as a matrix. How would this matrix look?

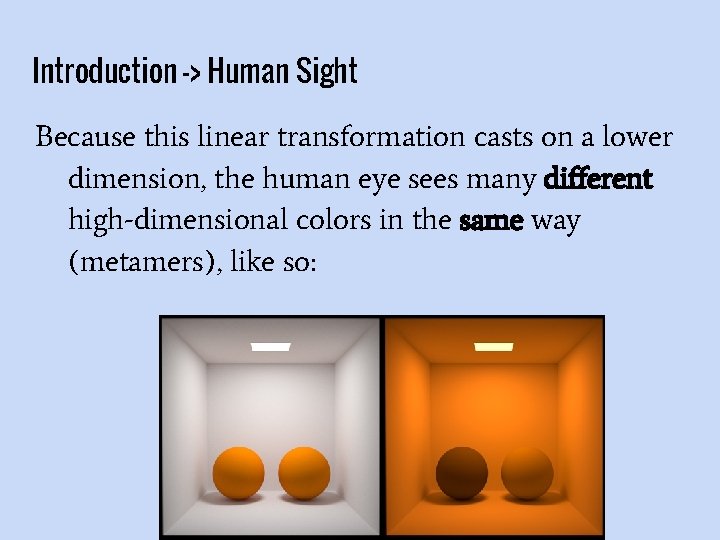

Introduction -> Human Sight Because this linear transformation casts on a lower dimension, the human eye sees many different high-dimensional colors in the same way (metamers), like so:

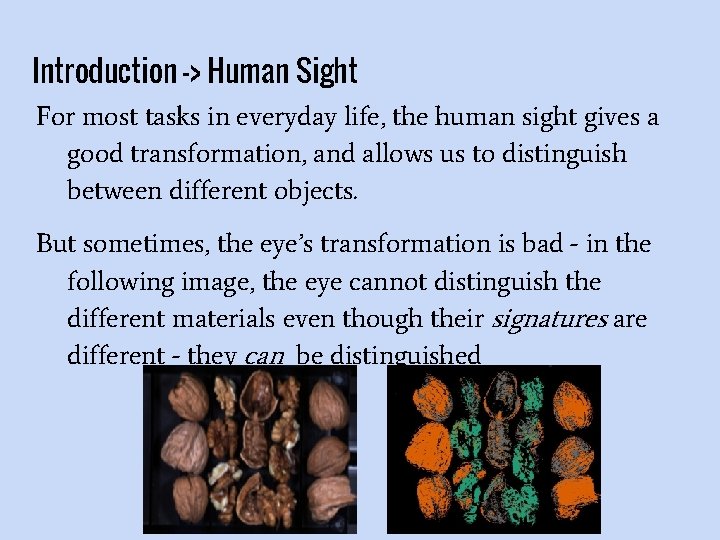

Introduction -> Human Sight For most tasks in everyday life, human sight gives a good transformation and allows us to distinguish between different objects. But sometimes the eye’s transformation is bad: in the following image, the eye cannot distinguish the different materials even though their signatures are different, so in principle they can be distinguished.

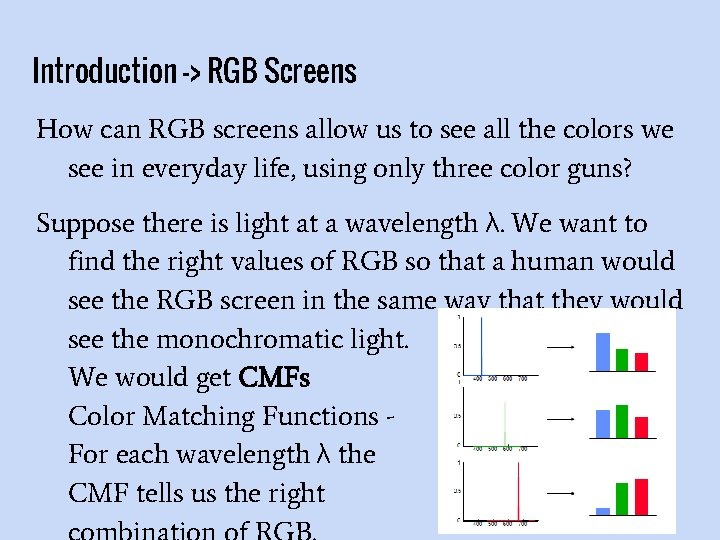

Introduction -> RGB Screens How can RGB screens allow us to see all the colors we see in everyday life, using only three color guns? Suppose there is light at a wavelength λ. We want to find the right RGB values so that a human would see the RGB screen in the same way that they would see the monochromatic light. Doing this for every wavelength gives the CMFs (Color Matching Functions). For each wavelength λ, the CMFs tell us the right RGB values.

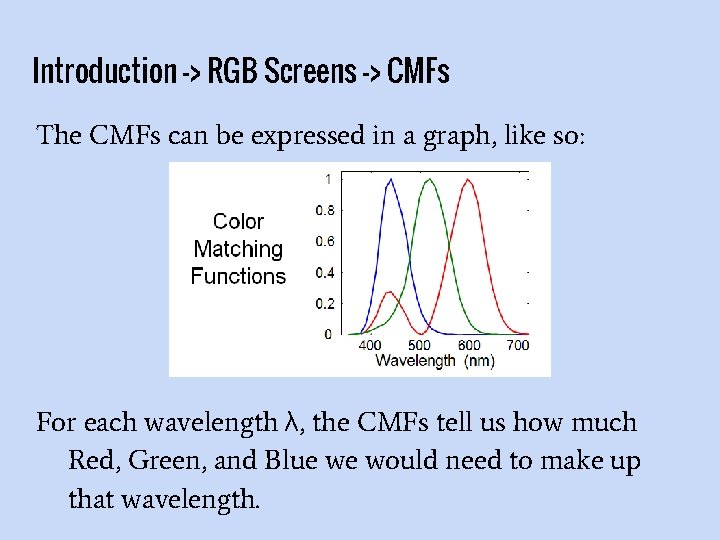

Introduction -> RGB Screens -> CMFs The CMFs can be expressed in a graph, like so: For each wavelength λ, the CMFs tell us how much Red, Green, and Blue we would need to make up that wavelength.

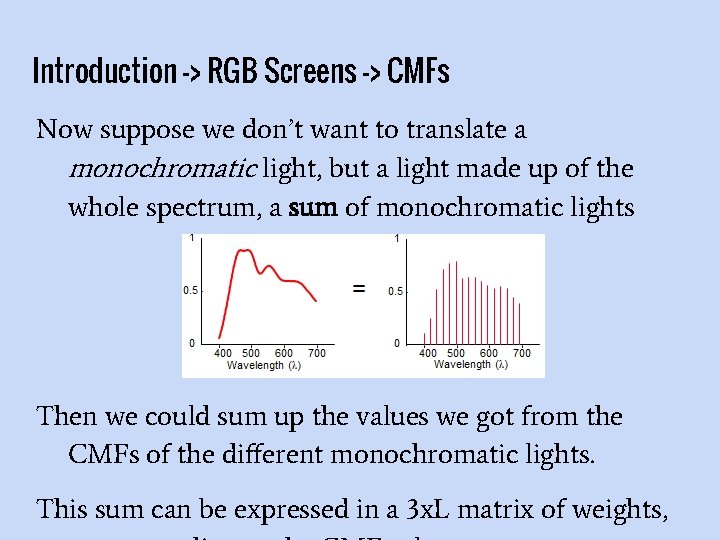

Introduction -> RGB Screens -> CMFs Now suppose we don’t want to translate a monochromatic light, but a light made up of the whole spectrum, that is, a sum of monochromatic lights. Then we could sum up the values we got from the CMFs of the different monochromatic lights. This sum can be expressed as a 3 × L matrix of weights:

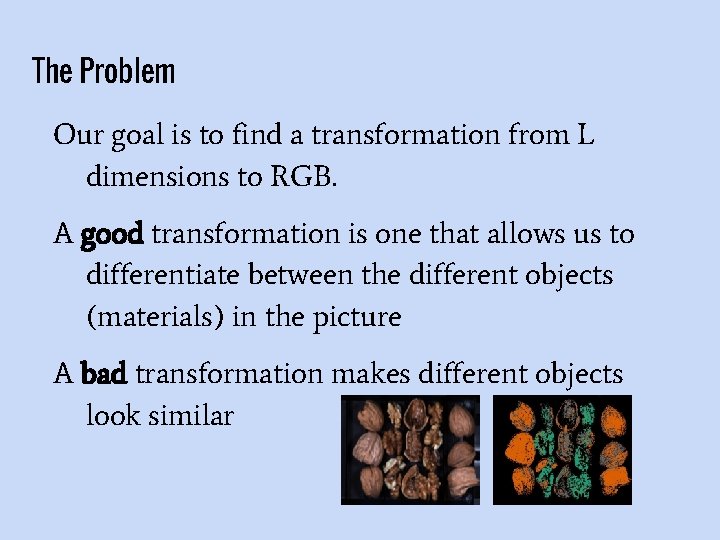
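The summation over monochromatic lights is a matrix-vector product. The following is a minimal sketch under stated assumptions: the `cmf` array is a hypothetical 3 × L matrix filled with placeholder bell curves (not real colorimetric data), and `L`, `wavelengths`, `bump` and `spectrum_to_rgb` are illustrative names that do not come from the slides.

```python
import numpy as np

L = 200  # number of spectral bands (assumed for illustration)
wavelengths = np.linspace(400.0, 700.0, L)  # nanometres, assumed range


def bump(center, width=40.0):
    """Placeholder bell curve standing in for one color matching function."""
    return np.exp(-0.5 * ((wavelengths - center) / width) ** 2)


# Hypothetical 3 x L weight matrix: rows stand for the R, G and B curves.
cmf = np.stack([bump(600.0), bump(550.0), bump(450.0)])


def spectrum_to_rgb(spectrum):
    """Sum the per-wavelength RGB contributions (3 x L matrix times L-vector)."""
    return cmf @ np.asarray(spectrum, dtype=float)


# Two monochromatic lights, each with energy in a single band.
mono_a = np.zeros(L)
mono_a[50] = 1.0
mono_b = np.zeros(L)
mono_b[150] = 2.0

# The RGB value of the combined light equals the sum of the individual values.
combined = spectrum_to_rgb(mono_a + mono_b)
```

Because the mapping is linear, a monochromatic light in band `i` simply picks out column `i` of the matrix, which is what the CMF graph shows for each wavelength.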

The Problem Our goal is to find a transformation from L dimensions to RGB. A good transformation is one that allows us to differentiate between the different objects (materials) in the picture. A bad transformation makes different objects look similar.

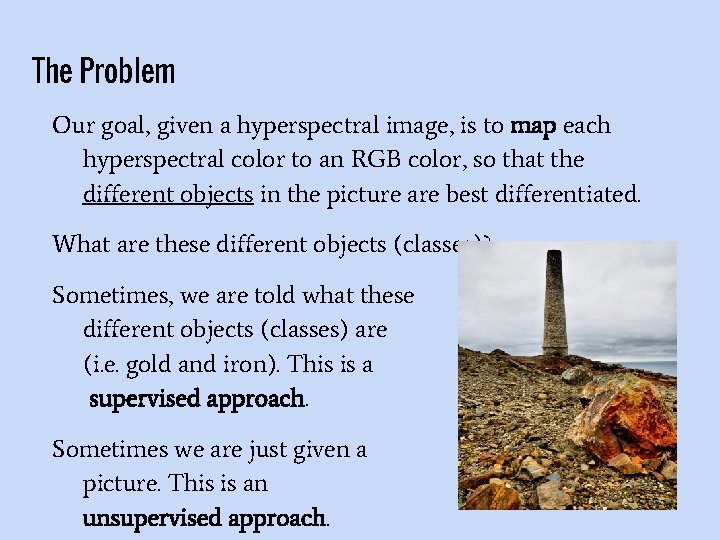

The Problem Our goal, given a hyperspectral image, is to map each hyperspectral color to an RGB color, so that the different objects in the picture are best differentiated. What are these different objects (classes)? Sometimes we are told what they are (e.g. gold and iron). This is a supervised approach. Sometimes we are just given a picture. This is an unsupervised approach.

The Problem - definitions
● L: the number of bands in our hyperspectral image, typically 150-400.
● p: the number of different materials (classes) that we want to distinguish. For example, if we want to identify gold and iron, then p = 2.
● s_i: the signature (unique reflectance levels) of class i. It is an L-dimensional vector.
Figure: Reflectance signature of basalt

Solutions The solutions are divided into two groups:
● Fixed solutions
  ○ High performance
  ○ Color consistency
  ○ Poor results for some images
● Adaptive solutions
  ○ Better results

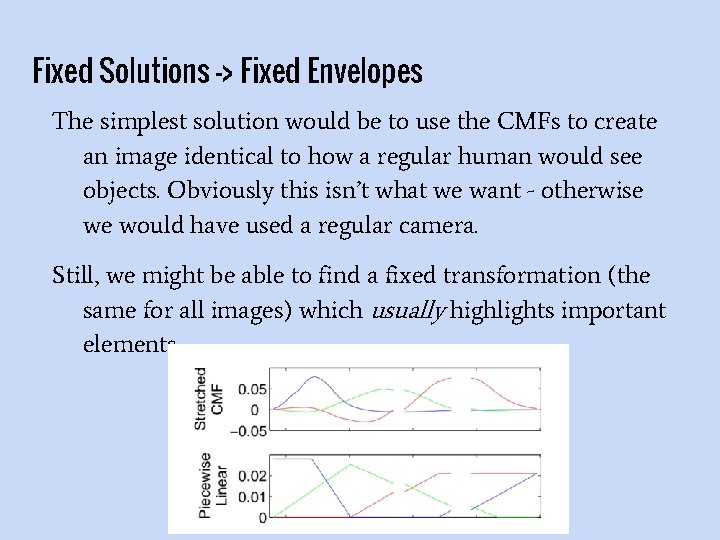

Fixed Solutions -> Fixed Envelopes The simplest solution would be to use the CMFs to create an image identical to how a regular human would see objects. Obviously this isn’t what we want - otherwise we would have used a regular camera. Still, we might be able to find a fixed transformation (the same for all images) which usually highlights important elements.

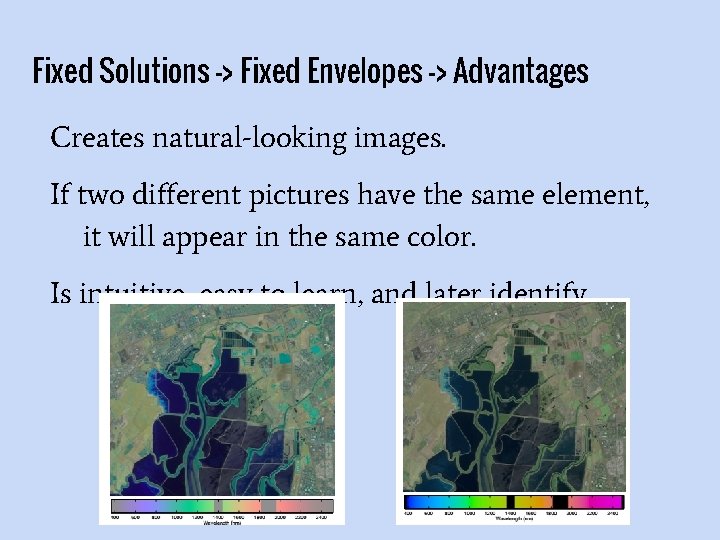

Fixed Solutions -> Fixed Envelopes -> Advantages
● Creates natural-looking images.
● If two different pictures have the same element, it will appear in the same color.
● Is intuitive, easy to learn, and easy to identify later.

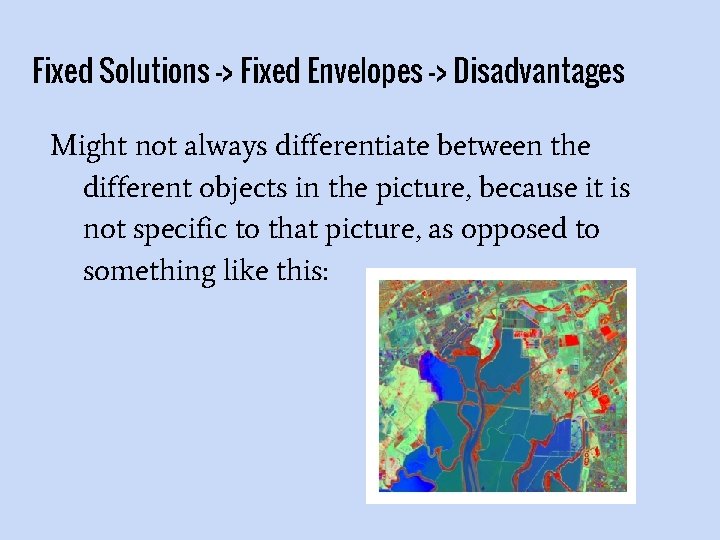

Fixed Solutions -> Fixed Envelopes -> Disadvantages Might not always differentiate between the different objects in the picture, because it is not specific to that picture, as opposed to something like this:

Fixed Solutions -> Neural Networks The neural network solution uses classified training data to create the transformation once, rather than for each image.

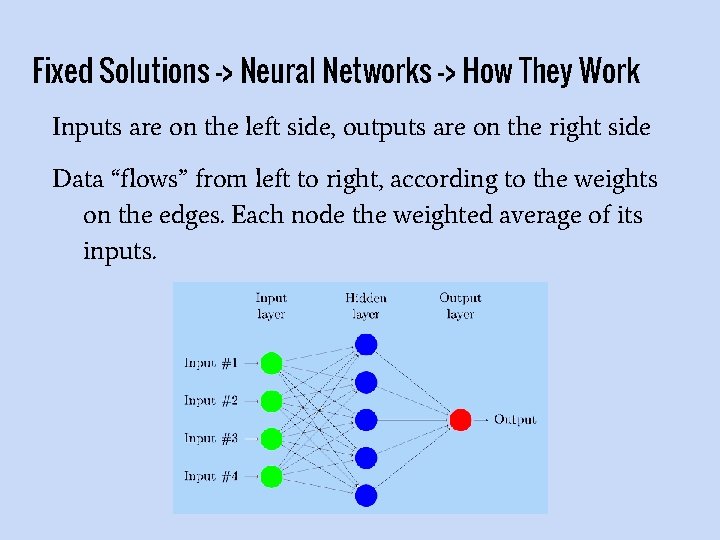

Fixed Solutions -> Neural Networks -> How They Work Inputs are on the left side, outputs are on the right side. Data “flows” from left to right, according to the weights on the edges. Each node computes the weighted average of its inputs.

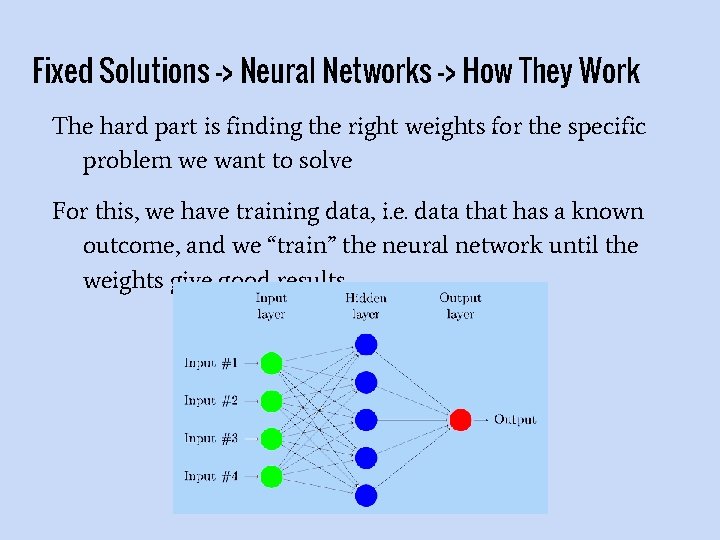

Fixed Solutions -> Neural Networks -> How They Work The hard part is finding the right weights for the specific problem we want to solve. For this, we have training data, i.e. data that has a known outcome, and we “train” the neural network until the weights give good results.

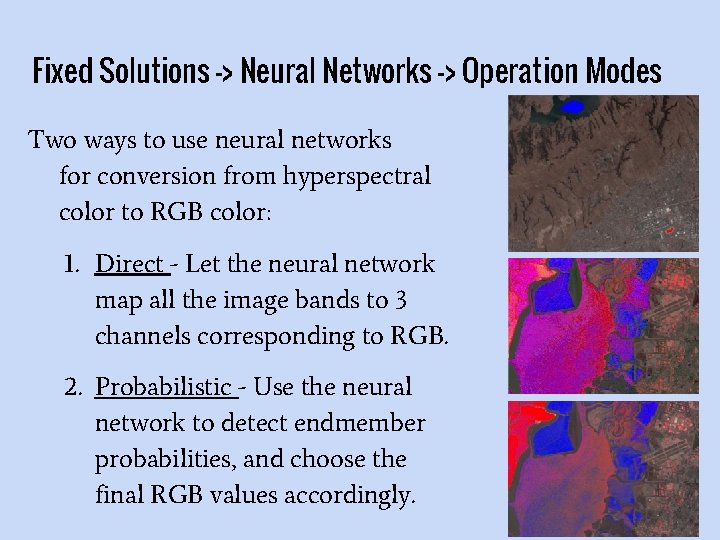

Fixed Solutions -> Neural Networks -> Operation Modes There are two ways to use neural networks for conversion from hyperspectral color to RGB color:
1. Direct: let the neural network map all the image bands to 3 channels corresponding to RGB.
2. Probabilistic: use the neural network to detect endmember probabilities, and choose the final RGB values accordingly.

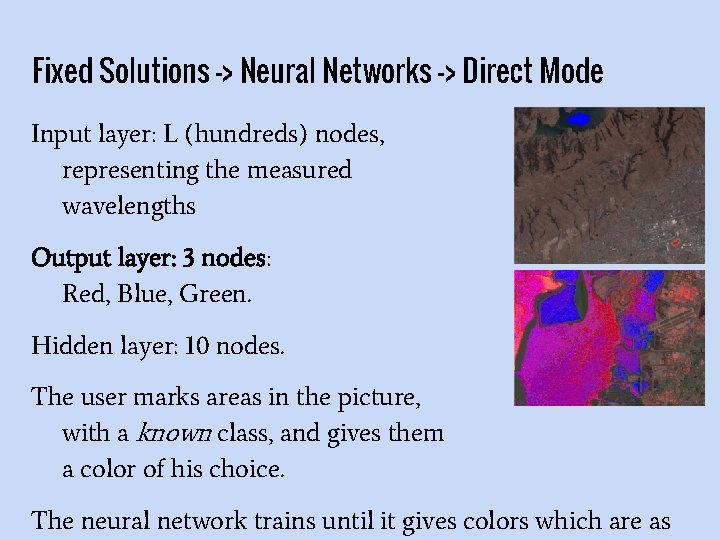

Fixed Solutions -> Neural Networks -> Direct Mode
● Input layer: L (hundreds) nodes, representing the measured wavelengths.
● Output layer: 3 nodes: Red, Blue, Green.
● Hidden layer: 10 nodes.
The user marks areas in the picture with a known class, and gives them a color of their choice. The neural network trains until it gives colors which are as similar as possible to the chosen ones.

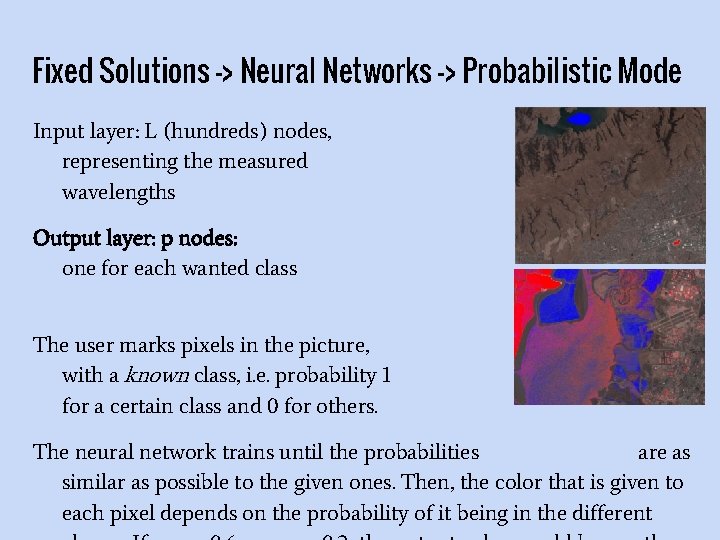
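The layer sizes of the direct mode can be illustrated with a forward pass. This is only a sketch under assumptions: the sigmoid activation, the random untrained weights, the value of `L`, and the names `W1`, `W2` and `direct_rgb` are illustrative choices, and the training step itself is not shown.

```python
import numpy as np

rng = np.random.default_rng(0)
L, hidden, out = 200, 10, 3  # L is assumed; 10 and 3 are the sizes on the slide

# Untrained random weights. Training would adjust them so that the outputs
# match the colors the user assigned to the marked areas.
W1 = rng.normal(scale=0.1, size=(hidden, L))
b1 = np.zeros(hidden)
W2 = rng.normal(scale=0.1, size=(out, hidden))
b2 = np.zeros(out)


def sigmoid(x):
    """Squash values into (0, 1); the choice of activation is an assumption."""
    return 1.0 / (1.0 + np.exp(-x))


def direct_rgb(pixel):
    """Map one L-dimensional pixel to three output values in (0, 1)."""
    h = sigmoid(W1 @ pixel + b1)      # hidden layer, 10 nodes
    return sigmoid(W2 @ h + b2)       # output layer, 3 nodes


rgb = direct_rgb(rng.random(L))
```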

Fixed Solutions -> Neural Networks -> Probabilistic Mode
● Input layer: L (hundreds) nodes, representing the measured wavelengths.
● Output layer: p nodes, one for each wanted class.
The user marks pixels in the picture with a known class, i.e. probability 1 for a certain class and 0 for the others. The neural network trains until the probabilities are as similar as possible to the given ones. Then, the color that is given to each pixel depends on the probability of it being in the different classes.

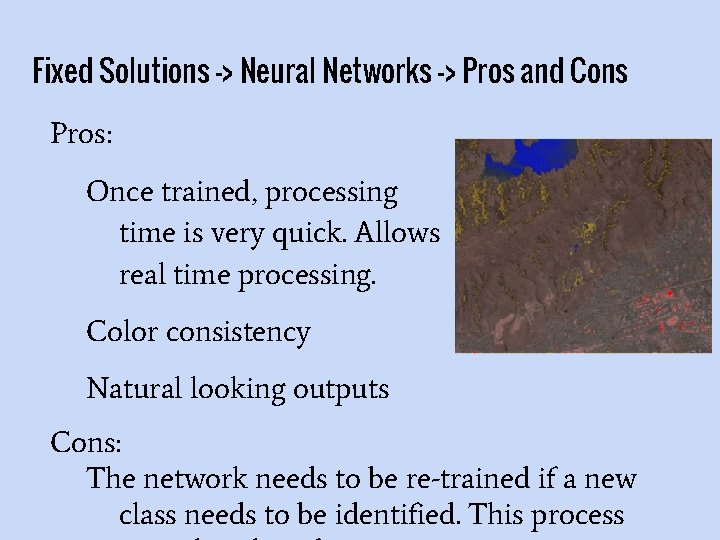
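One plausible way to turn class probabilities into a color is a probability-weighted blend of per-class colors. The slides do not state the exact rule, so the blending below is an assumption, as are the `class_colors` table and the function name `probabilities_to_rgb`.

```python
import numpy as np

# Hypothetical display colors, one RGB row per class (p = 3 here).
class_colors = np.array([
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
])


def probabilities_to_rgb(probs):
    """Blend the class colors, weighted by the per-class probabilities."""
    probs = np.asarray(probs, dtype=float)
    probs = probs / probs.sum(axis=-1, keepdims=True)  # make them sum to 1
    return probs @ class_colors


pure = probabilities_to_rgb([1.0, 0.0, 0.0])   # a pixel surely in class 0
mixed = probabilities_to_rgb([0.5, 0.5, 0.0])  # a pixel split between two classes
```

With this rule a pixel that certainly belongs to one class gets exactly that class's color, and uncertain pixels get intermediate colors.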

Fixed Solutions -> Neural Networks -> Pros and Cons
Pros:
● Once trained, processing time is very quick. Allows real-time processing.
● Color consistency
● Natural-looking outputs
Cons:
● The network needs to be re-trained if a new class needs to be identified. This process ...

Adaptive Solutions
● Some of the adaptive solutions are supervised (they receive information about the different materials that need to be differentiated): INAPCA, LDA.
● Other adaptive solutions are unsupervised (they receive only an image): 1 Bit, PCA, NAPCA.

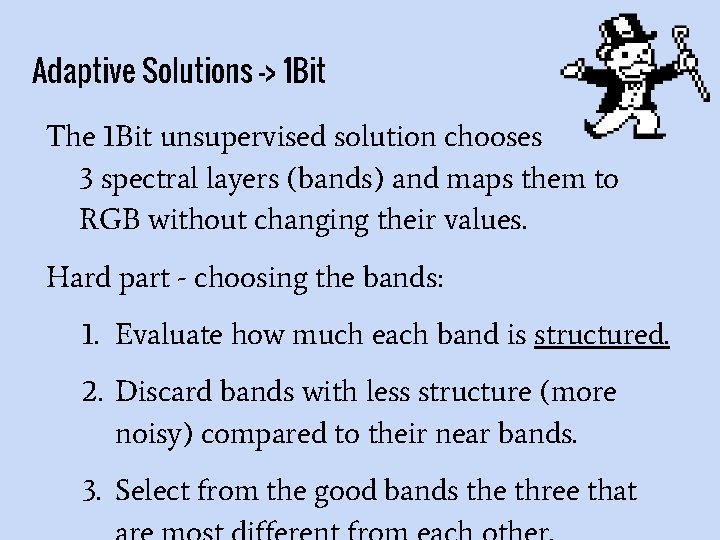

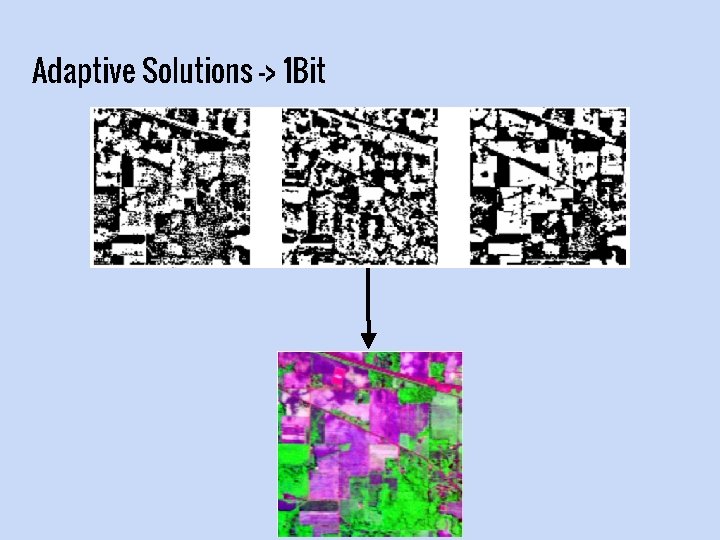

Adaptive Solutions -> 1 Bit The 1 Bit unsupervised solution chooses 3 spectral layers (bands) and maps them to RGB without changing their values. The hard part is choosing the bands:
1. Evaluate how structured each band is.
2. Discard bands with less structure (more noise) compared to their neighboring bands.
3. Select from the good bands the three that are most different from each other.

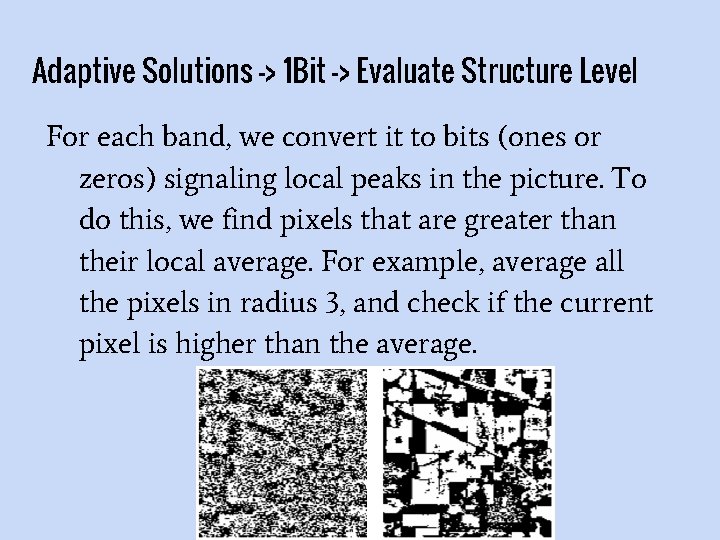

Adaptive Solutions -> 1 Bit -> Evaluate Structure Level For each band, we convert it to bits (ones or zeros) signaling local peaks in the picture. To do this, we find pixels that are greater than their local average. For example, average all the pixels in radius 3, and check if the current pixel is higher than the average.

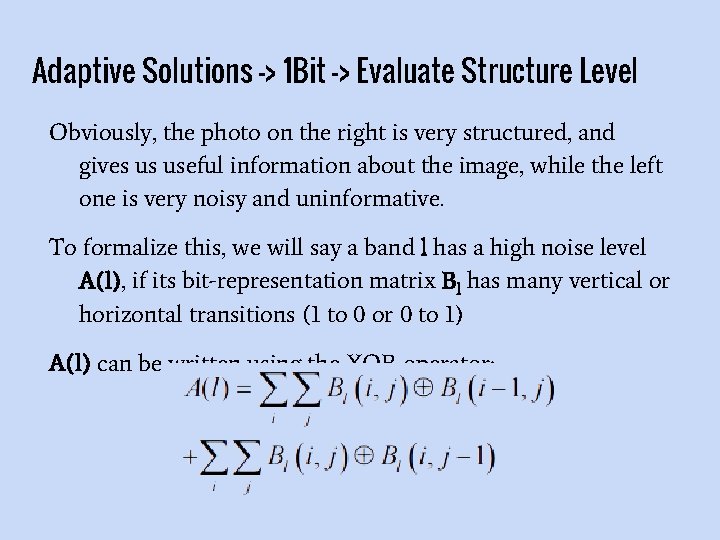
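The conversion of a band to bits can be sketched as follows. This is a minimal illustration that assumes a square (2·radius + 1) window and edge padding at the image border; the slides do not specify the window shape or border handling, and `one_bit_transform` is a hypothetical name.

```python
import numpy as np


def one_bit_transform(band, radius=3):
    """Return a 0/1 map marking pixels that are brighter than their local mean."""
    band = np.asarray(band, dtype=float)
    size = 2 * radius + 1
    # Repeat the border pixels so every pixel has a full neighborhood.
    padded = np.pad(band, radius, mode="edge")
    windows = np.lib.stride_tricks.sliding_window_view(padded, (size, size))
    local_mean = windows.mean(axis=(-2, -1))
    return (band > local_mean).astype(np.uint8)


# A single bright pixel on a dark background is the only local peak.
band = np.zeros((9, 9))
band[4, 4] = 1.0
bits = one_bit_transform(band)
flat_bits = one_bit_transform(np.ones((5, 5)))  # a flat band has no peaks
```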

Adaptive Solutions -> 1 Bit -> Evaluate Structure Level Obviously, the photo on the right is very structured and gives us useful information about the image, while the left one is very noisy and uninformative. To formalize this, we will say a band l has a high noise level A(l) if its bit-representation matrix B_l has many vertical or horizontal transitions (1 to 0 or 0 to 1). A(l) can be written using the XOR operator:

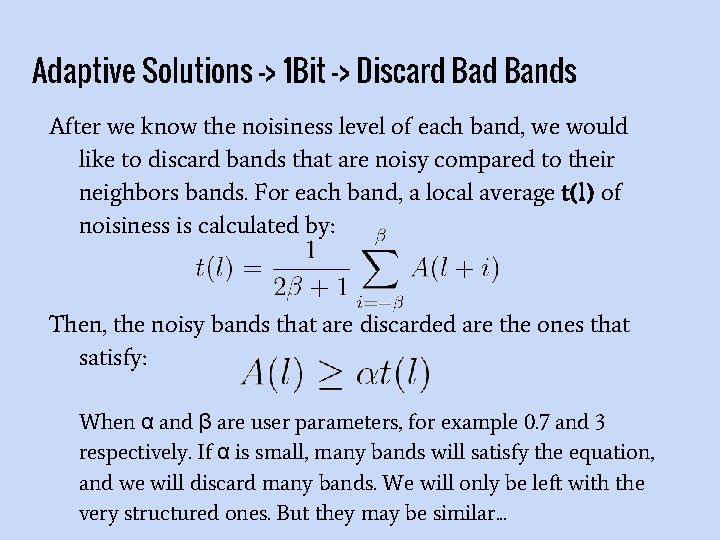
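Counting transitions with XOR can be sketched like this. The version below returns a raw count of horizontal plus vertical transitions; any normalization used in the original formula is not reproduced, and `transition_count` is an illustrative name.

```python
import numpy as np


def transition_count(bits):
    """Count 0/1 changes between horizontally and vertically adjacent pixels."""
    bits = np.asarray(bits, dtype=bool)
    horizontal = np.logical_xor(bits[:, 1:], bits[:, :-1]).sum()
    vertical = np.logical_xor(bits[1:, :], bits[:-1, :]).sum()
    return int(horizontal + vertical)


# A structured map: left half 0, right half 1.
structured = np.zeros((4, 4), dtype=np.uint8)
structured[:, 2:] = 1

# A noisy-looking map: a checkerboard flips at every step.
checker = np.indices((4, 4)).sum(axis=0) % 2

a_structured = transition_count(structured)
a_checker = transition_count(checker)
```

The structured map has one transition per row and none between rows, while the checkerboard changes between every pair of neighbors, so its count is much higher.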

Adaptive Solutions -> 1 Bit -> Discard Bands Once we know the noisiness level of each band, we would like to discard bands that are noisy compared to their neighboring bands. For each band, a local average t(l) of noisiness is calculated by: Then, the noisy bands that are discarded are the ones that satisfy: where α and β are user parameters, for example 0.7 and 3 respectively. If α is small, many bands will satisfy the condition, and we will discard many bands. We will only be left with the very structured ones. But they may be similar...

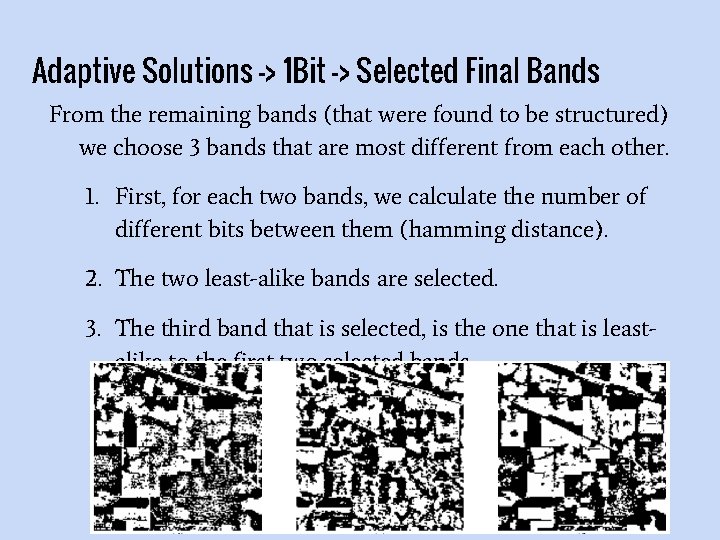

Adaptive Solutions -> 1 Bit -> Selected Final Bands From the remaining bands (that were found to be structured) we choose the 3 bands that are most different from each other.
1. First, for each pair of bands, we calculate the number of bits that differ between them (the Hamming distance).
2. The two least-alike bands are selected.
3. The third band that is selected is the one that is least alike to the first two selected bands.
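The selection steps above can be sketched as follows. How "least alike to the first two" is measured is not spelled out in the slides; this sketch assumes the sum of the Hamming distances to both selected bands, and `select_three_bands` is a hypothetical helper that expects at least three bands.

```python
import numpy as np
from itertools import combinations


def select_three_bands(bit_maps):
    """bit_maps: array of shape (n_bands, H, W) of 0/1. Returns three indices."""
    flat = np.asarray(bit_maps, dtype=bool).reshape(len(bit_maps), -1)
    n = len(flat)
    # Step 1: Hamming distance between every pair of bands.
    dist = np.zeros((n, n), dtype=int)
    for i, j in combinations(range(n), 2):
        dist[i, j] = dist[j, i] = np.count_nonzero(flat[i] != flat[j])
    # Step 2: the two least-alike bands.
    first, second = np.unravel_index(np.argmax(dist), dist.shape)
    # Step 3: the band farthest from both (assumed: largest summed distance).
    combined = dist[first] + dist[second]
    combined[[first, second]] = -1  # never pick an already selected band
    third = int(np.argmax(combined))
    return int(first), int(second), third


# Four tiny hypothetical bit maps of 2 x 4 pixels each.
maps = np.array([
    [0, 0, 0, 0, 0, 0, 0, 0],
    [1, 1, 1, 1, 1, 1, 0, 0],
    [0, 0, 0, 0, 1, 1, 1, 1],
    [0, 0, 0, 0, 0, 0, 0, 1],
]).reshape(4, 2, 4)

chosen = select_three_bands(maps)
```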

Adaptive Solutions -> 1 Bit

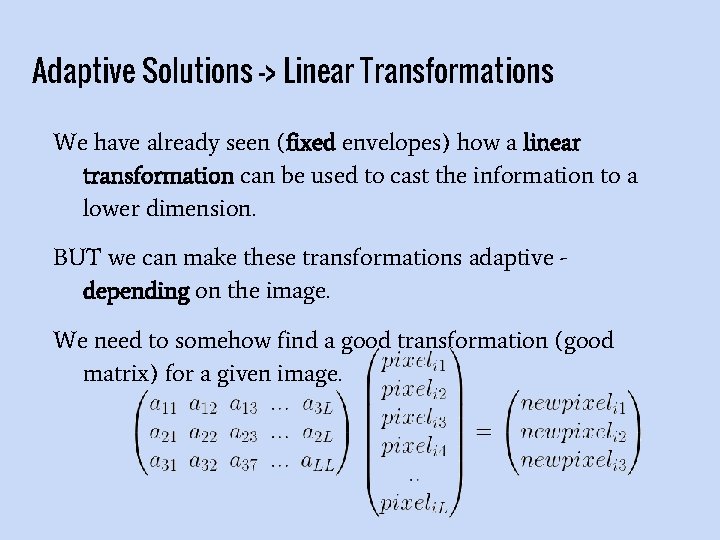

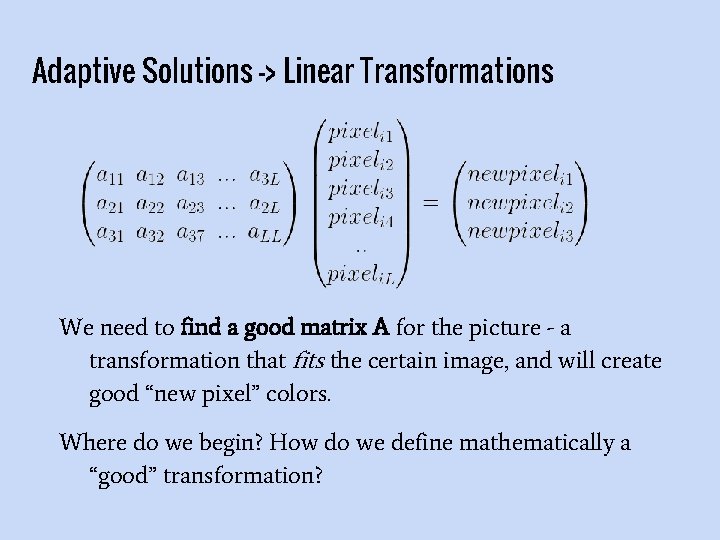

Adaptive Solutions -> Linear Transformations We have already seen (fixed envelopes) how a linear transformation can be used to cast the information to a lower dimension. BUT we can make these transformations adaptive depending on the image. We need to somehow find a good transformation (good matrix) for a given image.

Adaptive Solutions -> Linear Transformations We need to find a good matrix A for the picture - a transformation that fits the certain image, and will create good “new pixel” colors. Where do we begin? How do we define mathematically a “good” transformation?

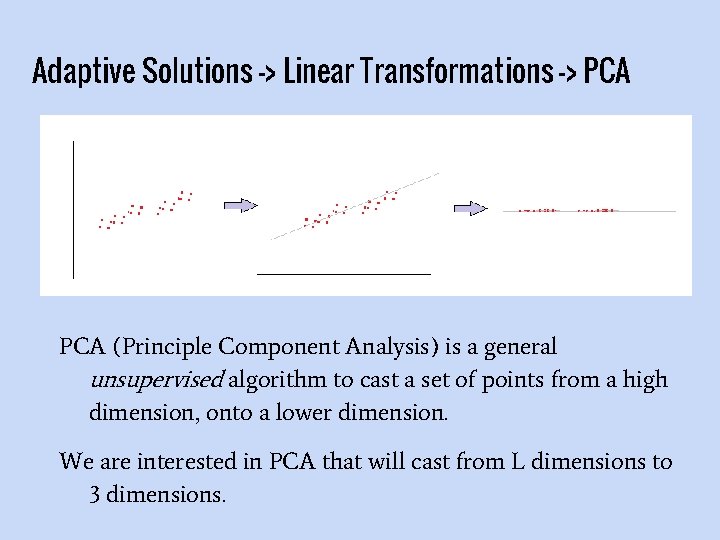

Adaptive Solutions -> Linear Transformations -> PCA PCA (Principal Component Analysis) is a general unsupervised algorithm for casting a set of points from a high dimension onto a lower dimension. We are interested in PCA that will cast from L dimensions to 3 dimensions.

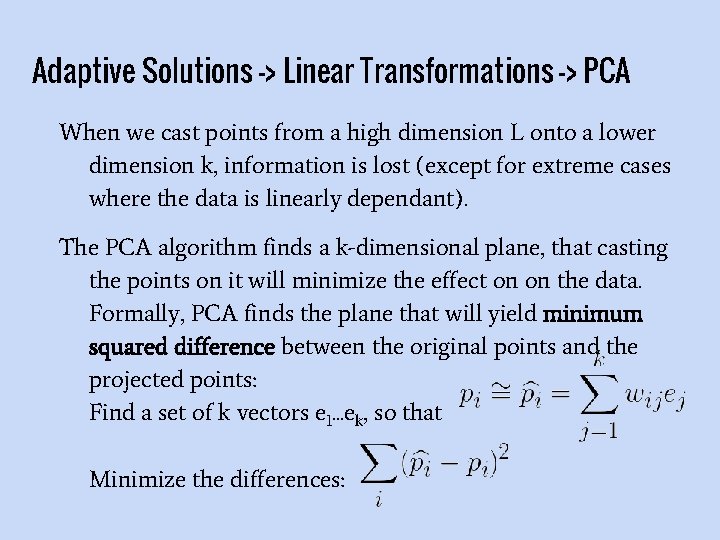

Adaptive Solutions -> Linear Transformations -> PCA When we cast points from a high dimension L onto a lower dimension k, information is lost (except for extreme cases where the data is linearly dependent). The PCA algorithm finds the k-dimensional plane for which casting the points onto it changes the data as little as possible. Formally, PCA finds the plane that yields the minimum squared difference between the original points and the projected points: find a set of k vectors $e_1, \dots, e_k$ that minimize the differences:

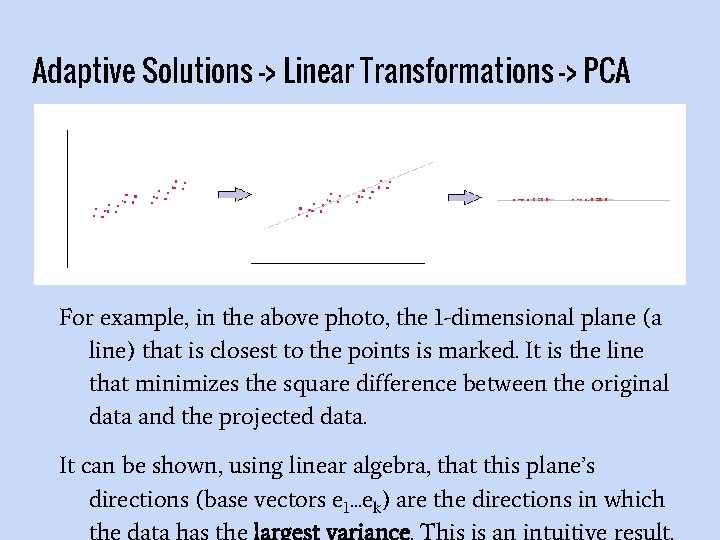

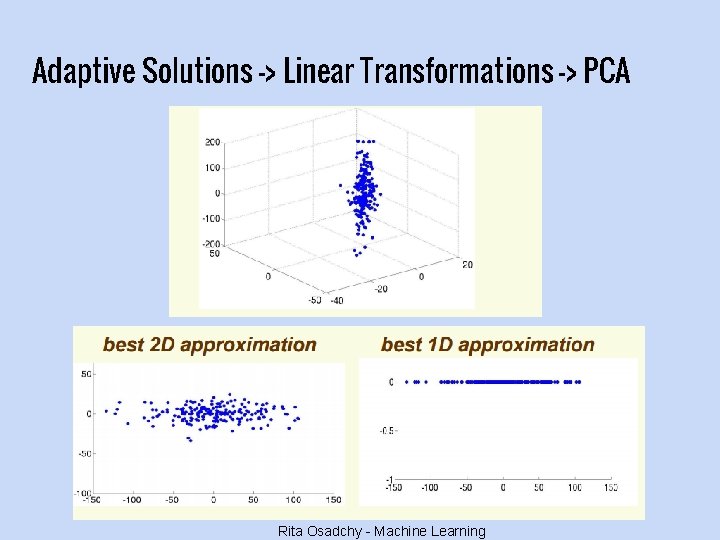

Adaptive Solutions -> Linear Transformations -> PCA For example, in the above photo, the 1-dimensional plane (a line) that is closest to the points is marked. It is the line that minimizes the squared difference between the original data and the projected data. It can be shown, using linear algebra, that this plane’s directions (basis vectors $e_1, \dots, e_k$) are the directions in which the data has the largest variance. This is an intuitive result.

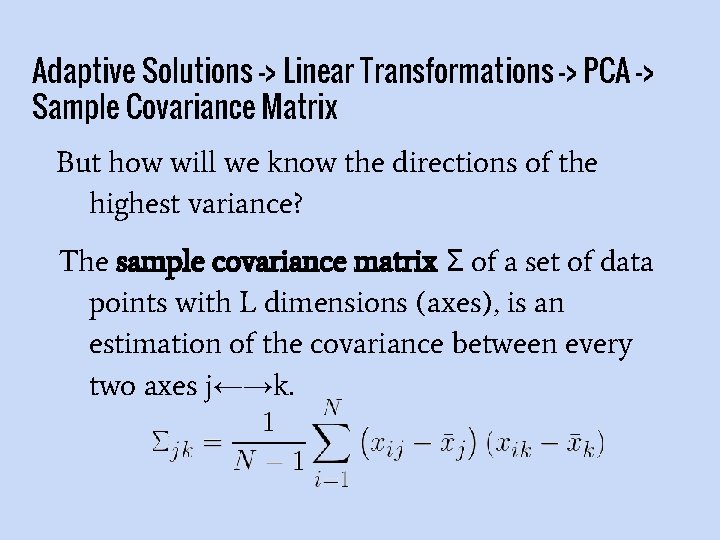

Adaptive Solutions -> Linear Transformations -> PCA -> Sample Covariance Matrix But how will we know the directions of the highest variance? The sample covariance matrix Σ of a set of data points with L dimensions (axes) is an estimate of the covariance between every two axes j and k.

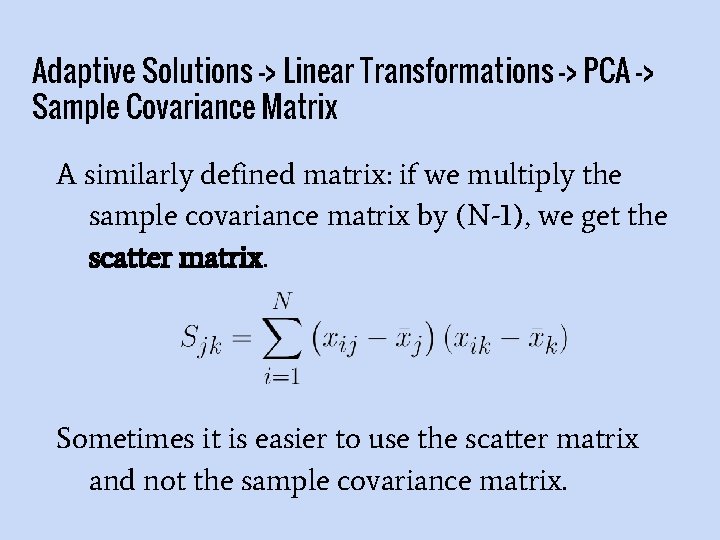

Adaptive Solutions -> Linear Transformations -> PCA -> Sample Covariance Matrix A similarly defined matrix: if we multiply the sample covariance matrix by (N-1), we get the scatter matrix. Sometimes it is easier to use the scatter matrix and not the sample covariance matrix.

Adaptive Solutions -> Linear Transformations -> PCA -> Sample Covariance Matrix The eigenvectors of the sample covariance matrix (which are the same as those of the scatter matrix) are the orthogonal directions of highest variance, and their eigenvalues are the corresponding variances. We are interested in the k eigenvectors with the highest eigenvalues.

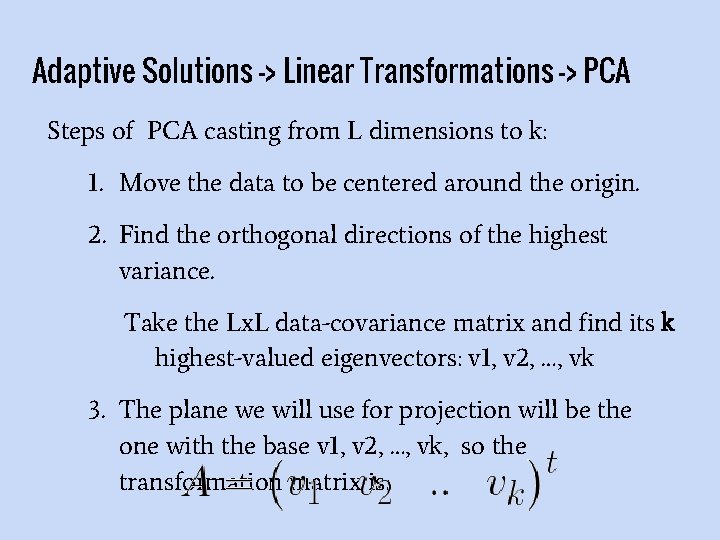
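The relationship between the two matrices can be checked numerically. This is a small sketch on hypothetical random data (50 points, L = 4); it relies on `numpy.cov` dividing by N - 1 and on `numpy.linalg.eigh` returning eigenvalues in ascending order.

```python
import numpy as np

rng = np.random.default_rng(0)
data = rng.normal(size=(50, 4))  # 50 hypothetical points with L = 4
n = len(data)

centered = data - data.mean(axis=0)
scatter = centered.T @ centered          # scatter matrix
cov = np.cov(data, rowvar=False)         # sample covariance (divides by N - 1)

cov_vals, cov_vecs = np.linalg.eigh(cov)
sc_vals, sc_vecs = np.linalg.eigh(scatter)

# Same directions (up to sign); eigenvalues differ only by the factor N - 1.
same_directions = np.allclose(np.abs(cov_vecs), np.abs(sc_vecs), atol=1e-6)
scaled_values = np.allclose(sc_vals, (n - 1) * cov_vals)
```

Since only the ranking of the eigenvalues matters for choosing the top k directions, either matrix can be used.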

Adaptive Solutions -> Linear Transformations -> PCA Steps of PCA casting from L dimensions to k:
1. Move the data to be centered around the origin.
2. Find the orthogonal directions of the highest variance. Take the L × L data-covariance matrix and find its k eigenvectors with the highest eigenvalues: $v_1, v_2, \dots, v_k$.
3. The plane we will use for projection will be the one with the basis $v_1, v_2, \dots, v_k$, so the transformation matrix is:

Adaptive Solutions -> Linear Transformations -> PCA Rita Osadchy - Machine Learning

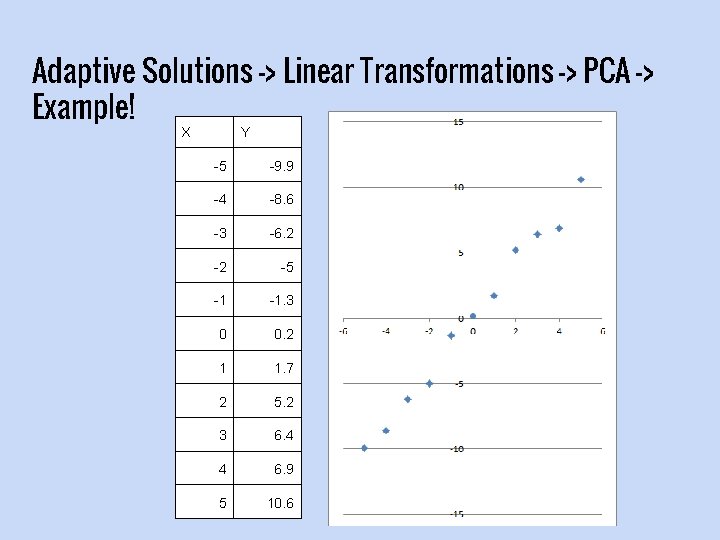
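The PCA steps can be sketched in a few lines of NumPy. The layout of the transformation matrix here (one eigenvector per row, giving a k × L matrix `A`) is an assumption about the figure, and `pca_project` and the random `cube` are illustrative, not part of the original material.

```python
import numpy as np


def pca_project(points, k=3):
    """Project an (N, L) array of points onto its k top principal directions."""
    points = np.asarray(points, dtype=float)
    centered = points - points.mean(axis=0)        # step 1: center the data
    cov = np.cov(centered, rowvar=False)           # L x L covariance matrix
    eigvals, eigvecs = np.linalg.eigh(cov)         # eigenvalues in ascending order
    top = np.argsort(eigvals)[::-1][:k]            # step 2: k largest eigenvalues
    A = eigvecs[:, top].T                          # step 3: k x L transformation
    return centered @ A.T, A


# Hypothetical hyperspectral cube: height x width x L bands.
rng = np.random.default_rng(0)
cube = rng.random((8, 8, 20))

pixels = cube.reshape(-1, cube.shape[-1])          # one row per pixel
projected, A = pca_project(pixels, k=3)
three_channel = projected.reshape(8, 8, 3)
```

The three output channels are not yet valid display values; they would still need to be shifted and scaled into the range the screen expects.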

Adaptive Solutions -> Linear Transformations -> PCA -> Example!

| X | Y |
|---|---|
| -5 | -9.9 |
| -4 | -8.6 |
| -3 | -6.2 |
| -2 | -5 |
| -1 | -1.3 |
| 0 | 0.2 |
| 1 | 1.7 |
| 2 | 5.2 |
| 3 | 6.4 |
| 4 | 6.9 |
| 5 | 10.6 |

Adaptive Solutions -> Linear Transformations -> PCA -> Example! Step 1: Since our data’s mean is already at (0, 0), we don’t need to do anything in this step.

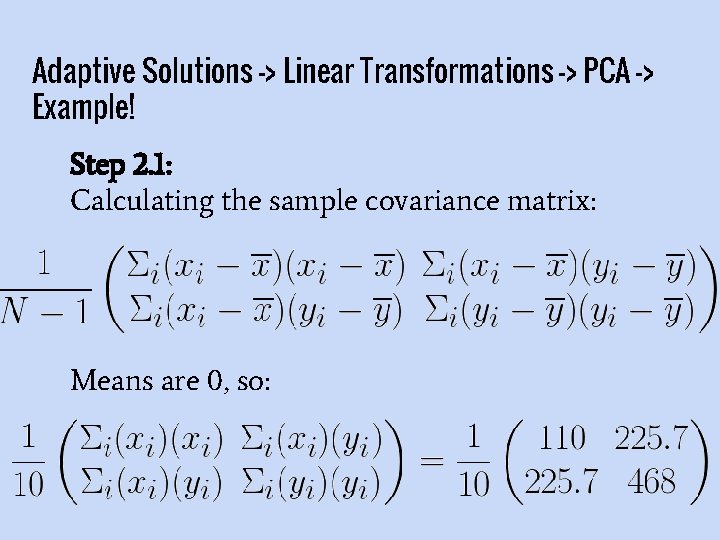

Adaptive Solutions -> Linear Transformations -> PCA -> Example! Step 2.1: Calculate the sample covariance matrix. The means are 0, so:

Adaptive Solutions -> Linear Transformations -> PCA -> Example! Step 2.2: Calculate the eigenvectors and eigenvalues of the covariance matrix.

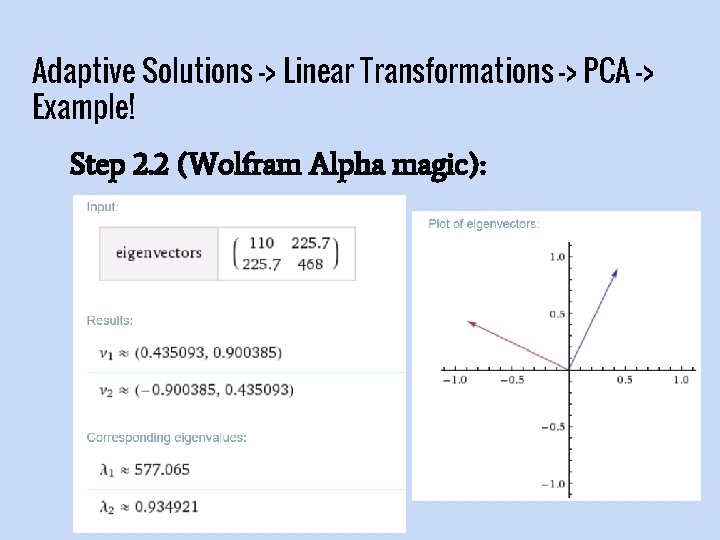

Adaptive Solutions -> Linear Transformations -> PCA -> Example! Step 2.2 (Wolfram Alpha magic):

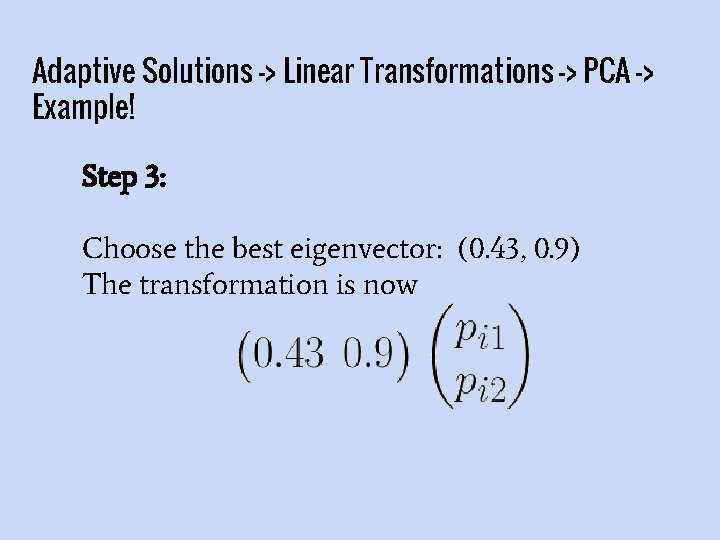

Adaptive Solutions -> Linear Transformations -> PCA -> Example! Step 3: Choose the best eigenvector: (0.43, 0.9). The transformation is now:

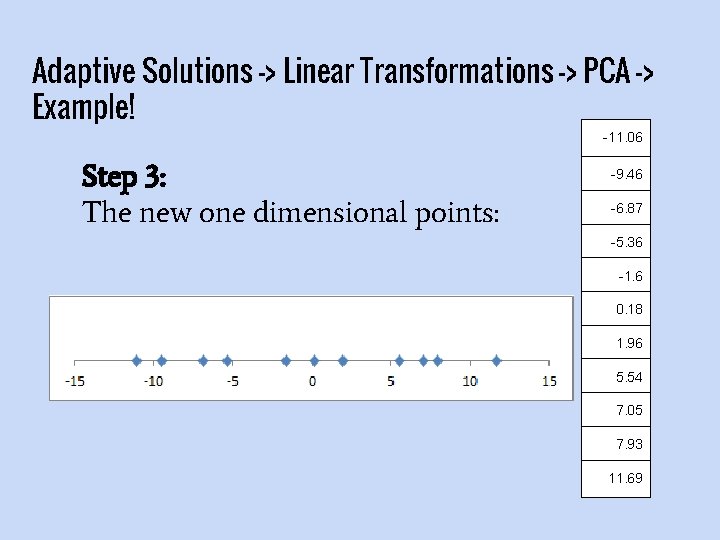

Adaptive Solutions -> Linear Transformations -> PCA -> Example! Step 3: The new one-dimensional points: -11.06, -9.46, -6.87, -5.36, -1.6, 0.18, 1.96, 5.54, 7.05, 7.93, 11.69

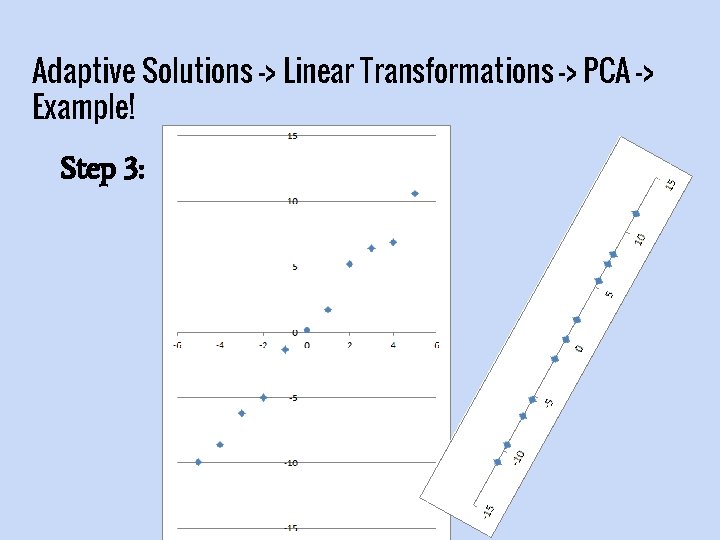

Adaptive Solutions -> Linear Transformations -> PCA -> Example! Step 3:

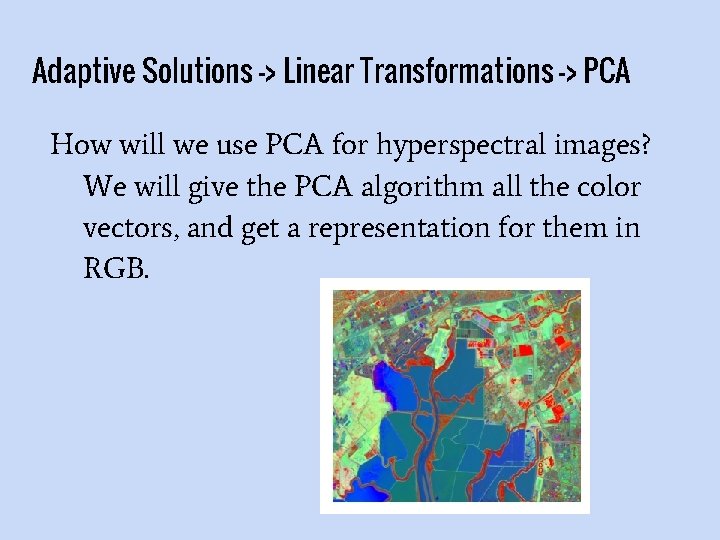
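The worked example can be reproduced with NumPy instead of Wolfram Alpha. This sketch uses the eleven (X, Y) points from the example; the variable names are illustrative. The eigenvector sign returned by `numpy.linalg.eigh` is arbitrary, so it is flipped here to point in the positive X direction.

```python
import numpy as np

x = np.arange(-5, 6, dtype=float)
y = np.array([-9.9, -8.6, -6.2, -5.0, -1.3, 0.2, 1.7, 5.2, 6.4, 6.9, 10.6])
data = np.column_stack([x, y])

# Step 1: the means are already (numerically) zero, so no shift is needed.
means = data.mean(axis=0)

# Step 2: sample covariance matrix and its eigen-decomposition.
cov = np.cov(data, rowvar=False)
eigvals, eigvecs = np.linalg.eigh(cov)

# Step 3: keep the eigenvector with the largest eigenvalue and project.
v = eigvecs[:, np.argmax(eigvals)]
if v[0] < 0:
    v = -v  # fix the arbitrary sign
projected = data @ v
```

The unrounded eigenvector agrees with the (0.43, 0.9) quoted in the example up to rounding; the projected values differ slightly from the listed ones because those follow from the rounded vector.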

Adaptive Solutions -> Linear Transformations -> PCA How will we use PCA for hyperspectral images? We will give the PCA algorithm all the color vectors, and get a representation for them in RGB.

Adaptive Solutions -> Linear Transformations -> PCA -> Pros and Cons In some sense, PCA preserves maximum information, because the new positions are as close as possible to the old ones. But:
1. It will not necessarily differentiate between the classes we are interested in.
2. It usually produces bright colors, which confusingly make areas look more important.

Adaptive Solutions -> Linear Transformations -> NAPCA Another problem is that PCA is sensitive to noise.

Adaptive Solutions -> Linear Transformations -> NAPCA NAPCA (Noise Adjusted PCA) is also an unsupervised technique, and it is based on PCA. The goal is to eliminate noise and “then” use PCA.

Adaptive Solutions -> Linear Transformations -> NAPCA Definitions for NAPCA:
● Σ is the sample covariance matrix, as we have seen before.
● Σn is an estimate of the noise covariance matrix. There are good ways to estimate this matrix, and special ways for hyperspectral images.
● F is an inverse of the noise covariance matrix Σn.
● z_NAPCA is the result of the NAPCA algorithm on the hyperspectral color vector z.

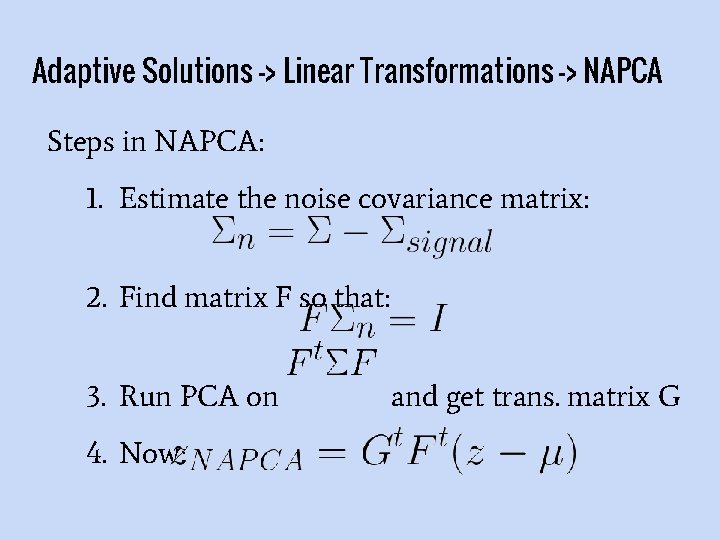

Adaptive Solutions -> Linear Transformations -> NAPCA Steps in NAPCA:
1. Estimate the noise covariance matrix Σn.
2. Find the matrix F so that:
3. Run PCA on:
   and get the transformation matrix G.
4. Now:

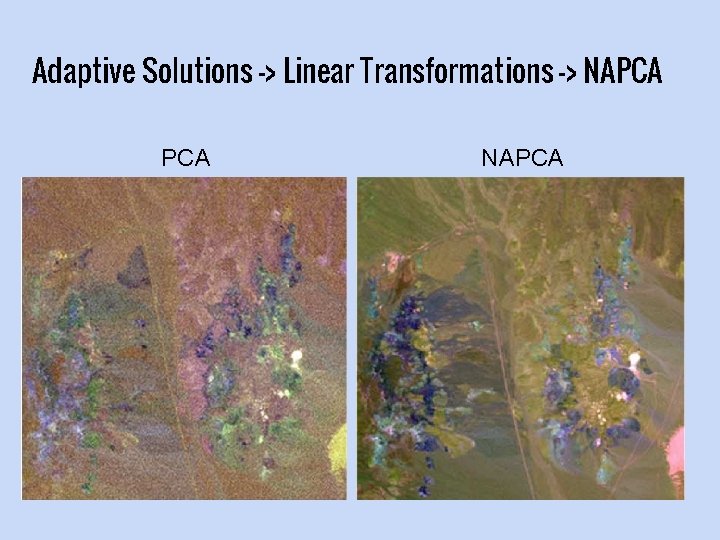
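A noise-adjusted PCA can be sketched as "whiten the noise, then run ordinary PCA". This sketch assumes the common formulation in which F satisfies Fᵀ Σn F = I; the exact definition of F and the final formula used in the slides are in the figures and may differ. The noise covariance is taken as given here (its estimation is not shown), and `napca_transform` is a hypothetical name.

```python
import numpy as np


def napca_transform(pixels, noise_cov, k=3):
    """Whiten the noise with F, then apply PCA to the whitened pixels."""
    pixels = np.asarray(pixels, dtype=float)
    # Assumed whitening matrix: F.T @ noise_cov @ F equals the identity.
    evals, evecs = np.linalg.eigh(noise_cov)
    F = evecs / np.sqrt(evals)                 # scale column j by 1/sqrt(evals[j])
    centered = pixels - pixels.mean(axis=0)
    whitened = centered @ F
    # Ordinary PCA on the noise-adjusted data gives the matrix G.
    w_vals, w_vecs = np.linalg.eigh(np.cov(whitened, rowvar=False))
    G = w_vecs[:, np.argsort(w_vals)[::-1][:k]]
    return whitened @ G, F, G


# Hypothetical data: 500 pixels with 6 bands and a made-up noise covariance.
rng = np.random.default_rng(0)
pixels = rng.normal(size=(500, 6))
B = rng.normal(size=(6, 6))
noise_cov = B @ B.T + np.eye(6)                # symmetric positive definite

z_napca, F, G = napca_transform(pixels, noise_cov, k=3)
```

After the whitening step the noise has equal variance in every direction, so the subsequent PCA ranks directions by signal rather than by noise.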

Adaptive Solutions -> Linear Transformations -> NAPCA

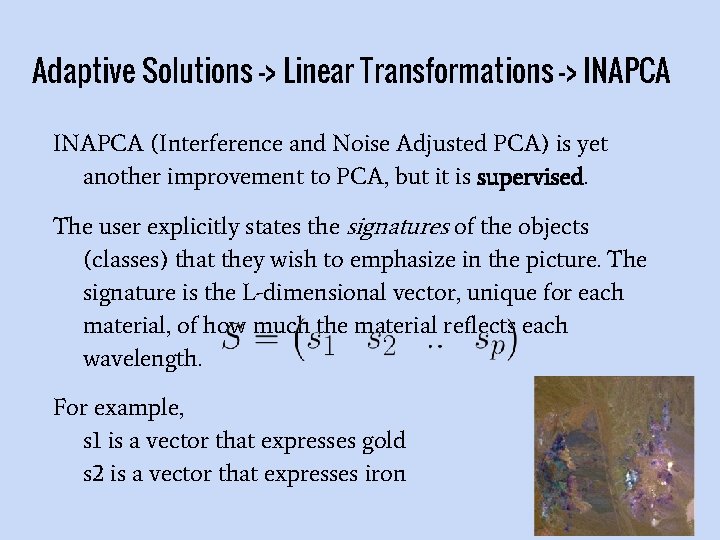

Adaptive Solutions -> Linear Transformations -> INAPCA INAPCA (Interference and Noise Adjusted PCA) is yet another improvement to PCA, but it is supervised. The user explicitly states the signatures of the objects (classes) that they wish to emphasize in the picture. The signature is the L-dimensional vector, unique for each material, of how much the material reflects each wavelength. For example:
● s_1 is a vector that expresses gold
● s_2 is a vector that expresses iron

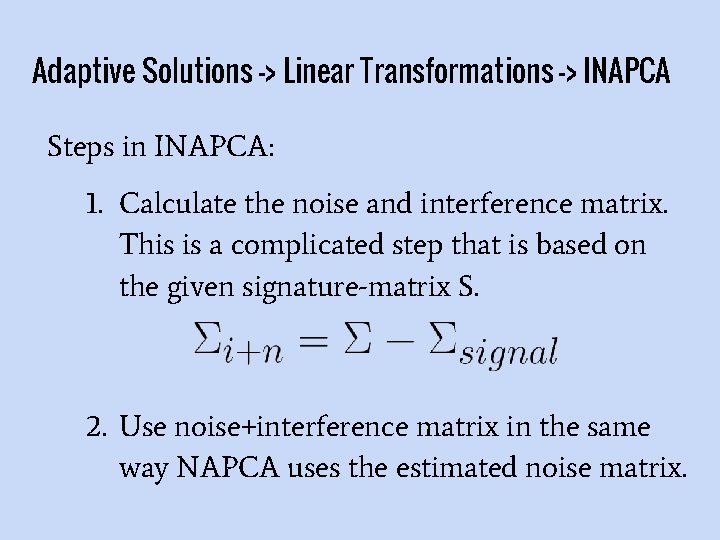

Adaptive Solutions -> Linear Transformations -> INAPCA Steps in INAPCA:
1. Calculate the noise and interference matrix. This is a complicated step that is based on the given signature matrix S.
2. Use the noise and interference matrix in the same way NAPCA uses the estimated noise matrix.

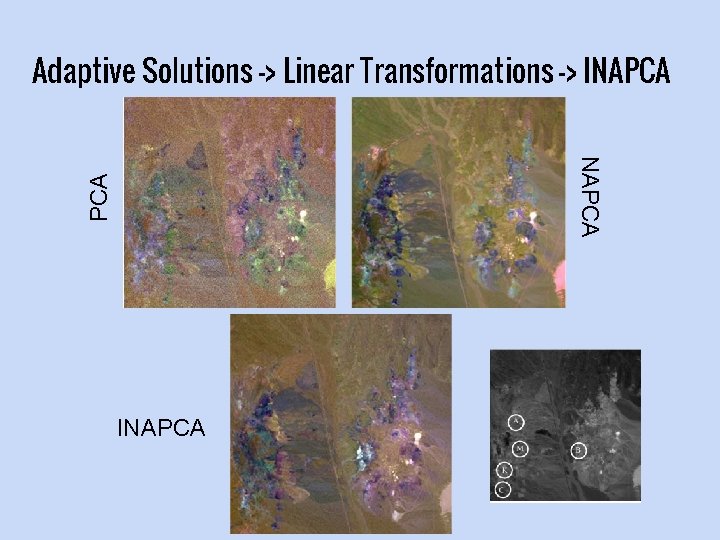

Adaptive Solutions -> Linear Transformations -> INAPCA INAPCA usually performs better than NAPCA because it has user-given data about the specific materials we would like to see.

Adaptive Solutions -> Linear Transformations -> INAPCA INAPCA

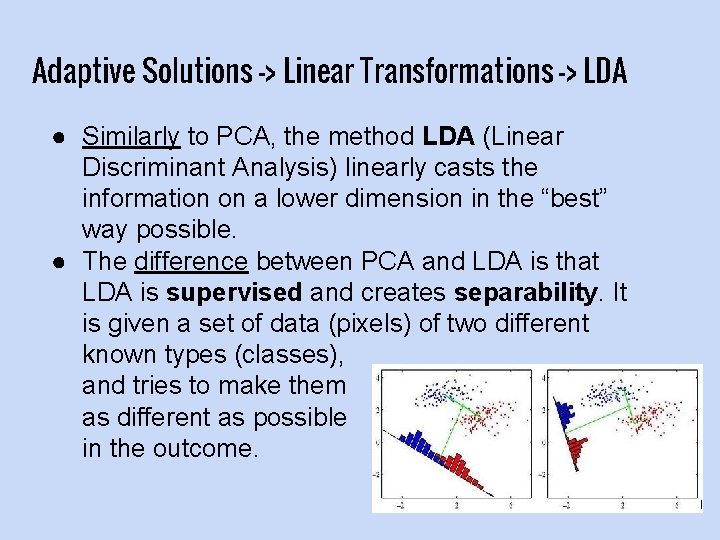

Adaptive Solutions -> Linear Transformations -> LDA
● Similarly to PCA, LDA (Linear Discriminant Analysis) linearly casts the information onto a lower dimension in the “best” way possible.
● The difference between PCA and LDA is that LDA is supervised and creates separability. It is given a set of data (pixels) of two different known types (classes), and tries to make them as different as possible in the outcome.

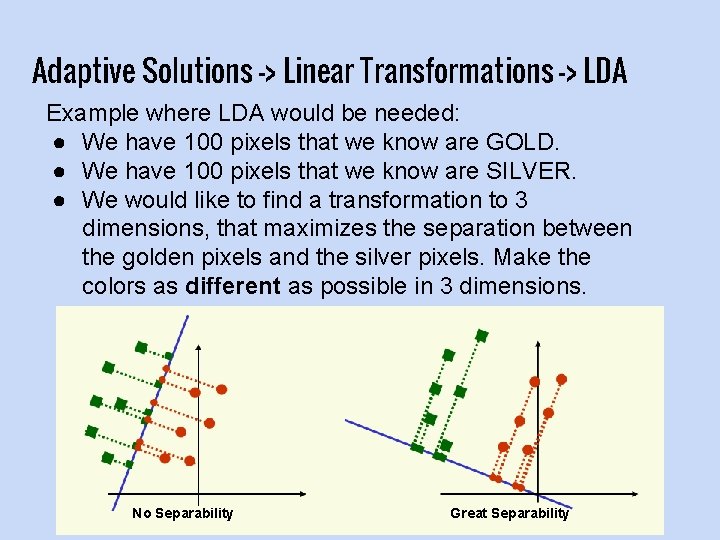

Adaptive Solutions -> Linear Transformations -> LDA Example where LDA would be needed:
● We have 100 pixels that we know are GOLD.
● We have 100 pixels that we know are SILVER.
● We would like to find a transformation to 3 dimensions that maximizes the separation between the golden pixels and the silver pixels, making the colors as different as possible in 3 dimensions.
Figure panels: No Separability, Great Separability

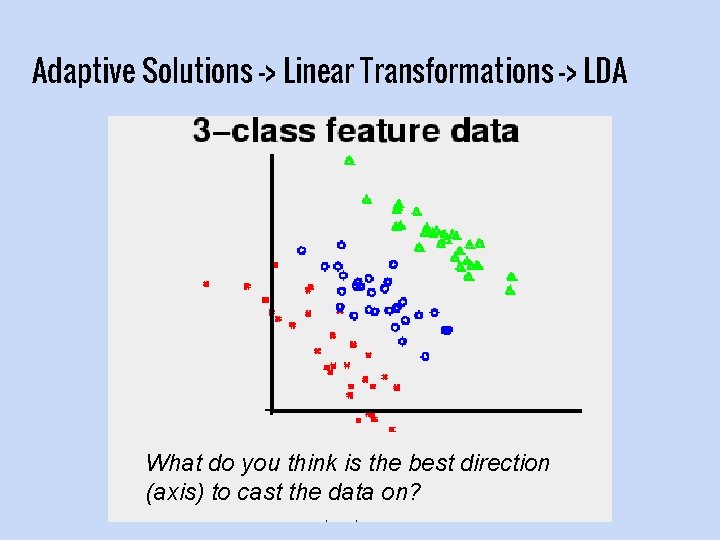

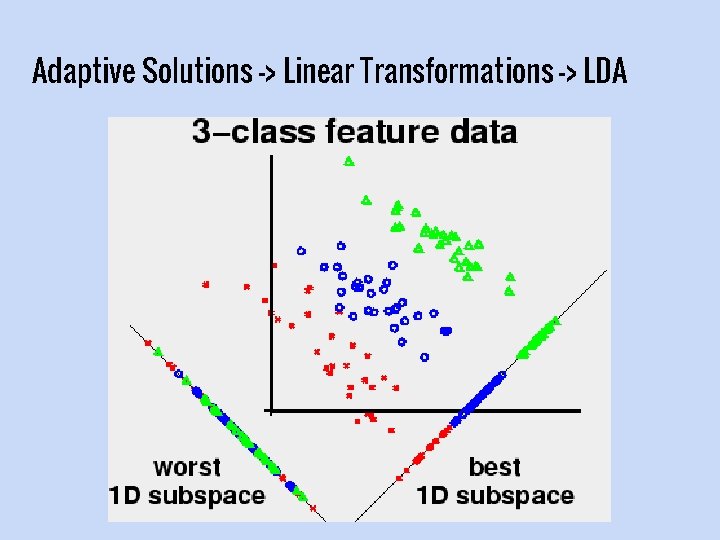

Adaptive Solutions -> Linear Transformations -> LDA What do you think is the best direction (axis) to cast the data on?

Adaptive Solutions -> Linear Transformations -> LDA
● Mathematically, we will define the quality of a certain projection:
  ○ As high as possible: the squared distance between the class means of the projected elements.
  ○ As low as possible: the sum of the different classes’ variances.

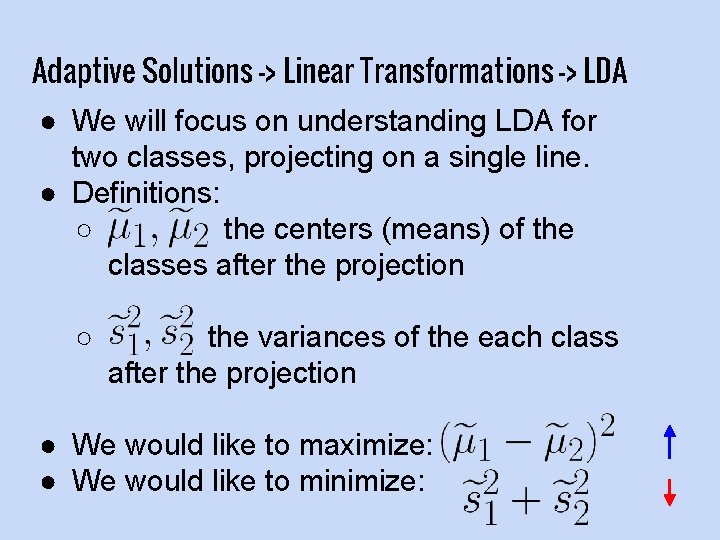

Adaptive Solutions -> Linear Transformations -> LDA
● We will focus on understanding LDA for two classes, projecting onto a single line.
● Definitions:
  ○ the centers (means) of the classes after the projection
  ○ the variances of each class after the projection
● We would like to maximize:
● We would like to minimize:

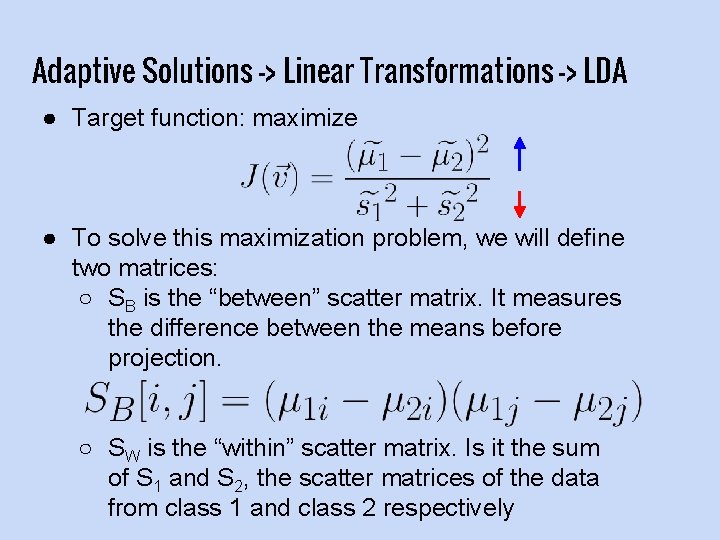

Adaptive Solutions -> Linear Transformations -> LDA
● Target function: maximize
● To solve this maximization problem, we will define two matrices:
  ○ $S_B$ is the “between” scatter matrix. It measures the difference between the means before projection.
  ○ $S_W$ is the “within” scatter matrix. It is the sum of $S_1$ and $S_2$, the scatter matrices of the data from class 1 and class 2 respectively.

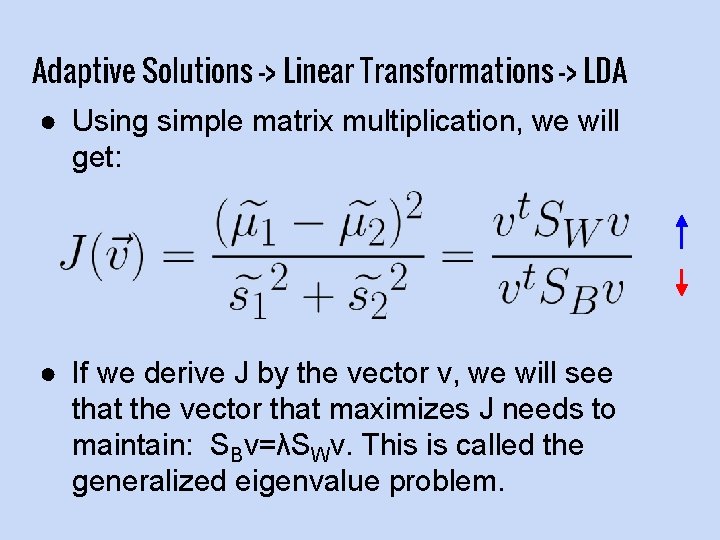

Adaptive Solutions -> Linear Transformations -> LDA
● Using simple matrix multiplication, we will get:
● If we differentiate J with respect to the vector v, we will see that the vector that maximizes J must satisfy $S_B v = \lambda S_W v$. This is called the generalized eigenvalue problem.

Adaptive Solutions -> Linear Transformations -> LDA
● So, we need to solve the equation $S_B v = \lambda S_W v$ using techniques for the generalized eigenvalue problem.
● The eigenvectors v which solve the equation are the directions which best separate our two (or more) classes.

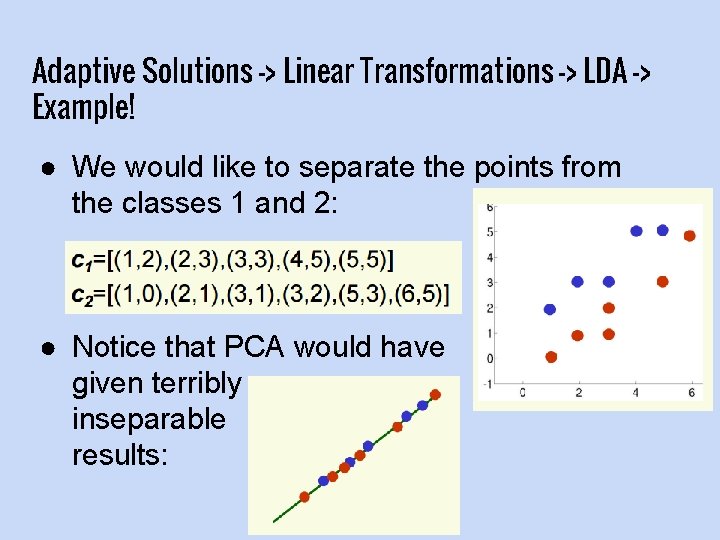
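For two classes, the generalized eigenvalue problem can be solved directly with NumPy. This is a sketch under assumptions: the two small point sets are hypothetical illustration data (not necessarily the points used in the example slides), `lda_direction` is an illustrative name, and the problem is solved by taking the top eigenvector of $S_W^{-1} S_B$, which requires $S_W$ to be invertible.

```python
import numpy as np


def lda_direction(class1, class2):
    """Return a unit vector v solving SB v = lambda SW v for the largest lambda."""
    class1 = np.asarray(class1, dtype=float)
    class2 = np.asarray(class2, dtype=float)
    m1, m2 = class1.mean(axis=0), class2.mean(axis=0)
    S1 = (class1 - m1).T @ (class1 - m1)       # scatter matrix of class 1
    S2 = (class2 - m2).T @ (class2 - m2)       # scatter matrix of class 2
    SW = S1 + S2                               # "within" scatter
    diff = (m1 - m2).reshape(-1, 1)
    SB = diff @ diff.T                         # "between" scatter
    # SB v = lambda SW v  is equivalent to  inv(SW) @ SB v = lambda v.
    eigvals, eigvecs = np.linalg.eig(np.linalg.solve(SW, SB))
    v = np.real(eigvecs[:, np.argmax(np.real(eigvals))])
    return v / np.linalg.norm(v)


# Hypothetical 2-D points from two elongated, overlapping-looking classes.
c1 = np.array([[1, 2], [2, 3], [3, 3], [4, 5], [5, 5]])
c2 = np.array([[1, 0], [2, 1], [3, 1], [3, 2], [5, 3], [6, 5]])

v = lda_direction(c1, c2)
p1, p2 = c1 @ v, c2 @ v   # one-dimensional projections of each class
```

On this data the projections of the two classes do not overlap, even though both classes are stretched along roughly the same diagonal, which is the direction PCA would pick.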

Adaptive Solutions -> Linear Transformations -> LDA -> Example!
● We would like to separate the points from the classes 1 and 2:
● Notice that PCA would have given terribly inseparable results:

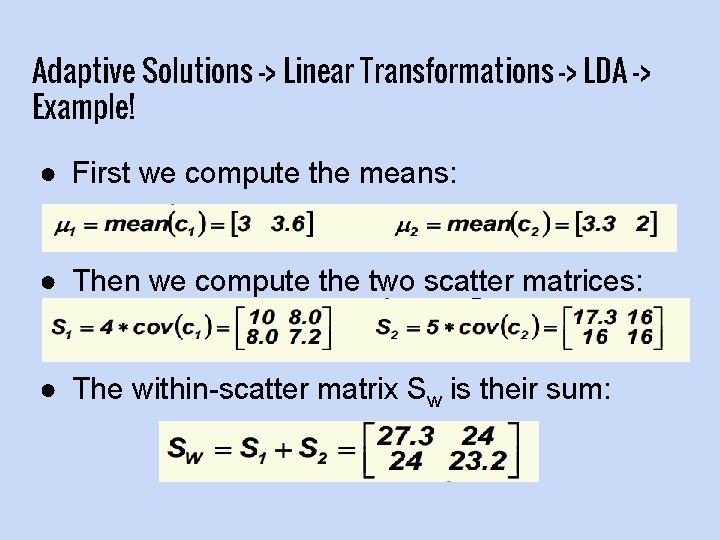

Adaptive Solutions -> Linear Transformations -> LDA -> Example!
● First we compute the means:
● Then we compute the two scatter matrices:
● The within-scatter matrix $S_W$ is their sum:

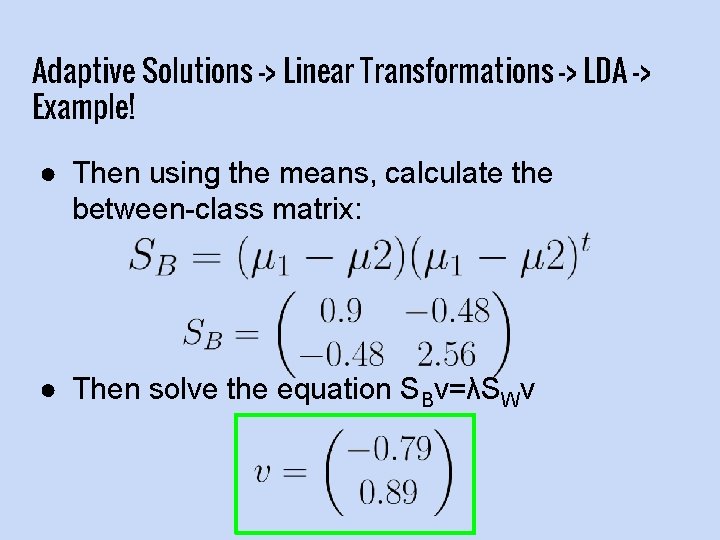

Adaptive Solutions -> Linear Transformations -> LDA -> Example!
● Then, using the means, calculate the between-class matrix:
● Then solve the equation $S_B v = \lambda S_W v$

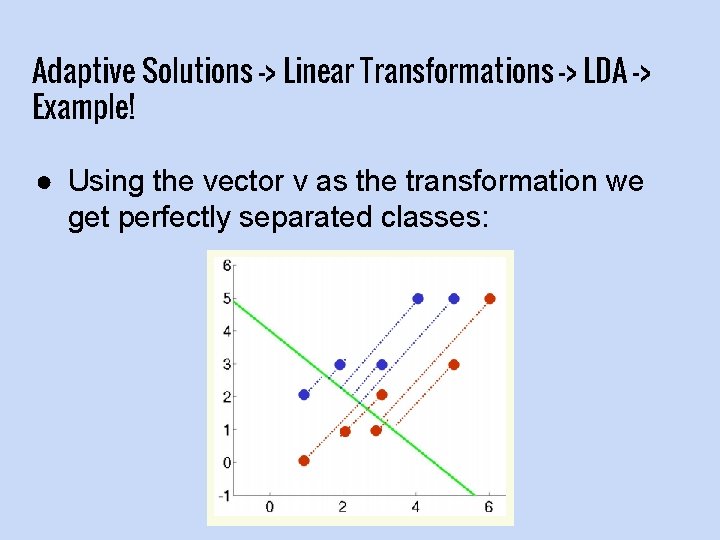

Adaptive Solutions -> Linear Transformations -> LDA -> Example! ● Using the vector v as the transformation we get perfectly separated classes:

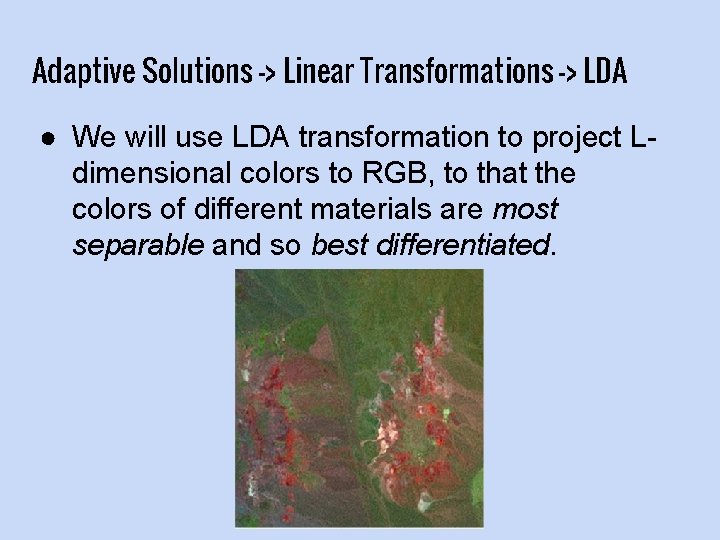

Adaptive Solutions -> Linear Transformations -> LDA ● We will use the LDA transformation to project L-dimensional colors to RGB, so that the colors of different materials are most separable and so best differentiated.

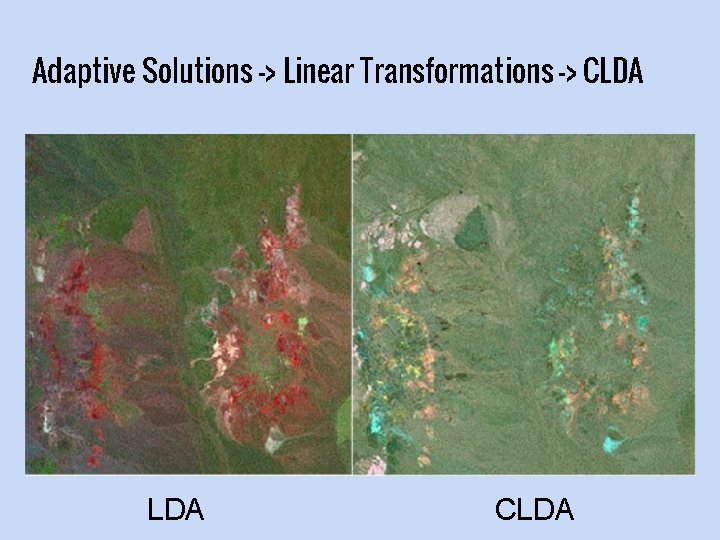

Adaptive Solutions -> Linear Transformations -> CLDA
● Constrained LDA is similar to LDA. It takes data from L dimensions in p classes, and projects it onto p dimensions.
● Its constraint is that every class mean has to be in a different direction (axis).
● It sometimes provides better results. It also allows classification together with dimension reduction.

Conclusions
● We have seen many different techniques to display hyperspectral images in RGB.
● Each technique has advantages and disadvantages.
● Usually, LDA and INAPCA give very good results, but sometimes we prefer using less complicated methods, like 1 Bit or fixed envelopes.

References
● Spectral Sensitivity, Wikipedia: https://en.wikipedia.org/wiki/Spectral_sensitivity
● Resonon Inc Website: http://www.resonon.com/index.html
● ICA Explained: http://sccn.ucsd.edu/~arno/indexica.html
● Linear Discriminant Analysis: https://en.wikipedia.org/wiki/Linear_discriminant_analysis
● Rita Osadchy, Machine Learning: http://www.cs.haifa.ac.il/~rita/ml_course/course.html
● CLDA for Hyperspectral Images: https://pdfs.semanticscholar.org/aa1c/07d95444e0d785387c22585806104884cc77.pdf
● Color Display for Hyperspectral Imagery: http://www.ece.msstate.edu/~du/TGRS-VIS2.pdf
● A Low-Complexity Approach for Color Display of Hyperspectral Remote-Sensing Images Using One-Bit Transform Based Band Selection: http://kulis.kocaeli.edu.tr/pub/tgrs08_hsidisplay.pdf
● Design Goals and Solutions for Display of Hyperspectral Images: https://pdfs.semanticscholar.org/258f/d2c3a49c88b4e764ae84203e863fb5be21e9.pdf
● Linear Fusion of Image Sets for Display: http://www.umbc.edu/rssipl/people/jwang/citation_4.pdf
● Dimensionality Reduction for Useful Display of Hyperspectral Images: http://jeffcole.org/academics/image_display.pdf

THE END Questions?