Embedded Systems in Silicon TD 5102 Advanced Architectures

Embedded Systems in Silicon TD 5102
Advanced Architectures with emphasis on ILP exploitation
Henk Corporaal
http://www.ics.ele.tue.nl/~heco/courses/EmbSystems
Technical University Eindhoven
DTI / NUS Singapore 2005/2006
H.C. TD 5102

Future
We foresee that many characteristics of current high-performance architectures will find their way into the embedded domain.

What are we talking about?
ILP = Instruction Level Parallelism = the ability to perform multiple operations (or instructions), from a single instruction stream, in parallel

Processor Components Overview
• Motivation and Goals
• Trends in Computer Architecture
• ILP Processors
• Transport Triggered Architectures
• Configurable components
• Summary and Conclusions

Motivation for ILP (and other types of parallelism)
• Increasing VLSI densities; decreasing feature size
• Increasing performance requirements
• New application areas, like
– Multi-media (image, audio, video, 3-D)
– intelligent search and filtering engines
– neural, fuzzy, genetic computing
• More functionality
• Use of existing code (compatibility)
• Low power: $P = f C V^2$

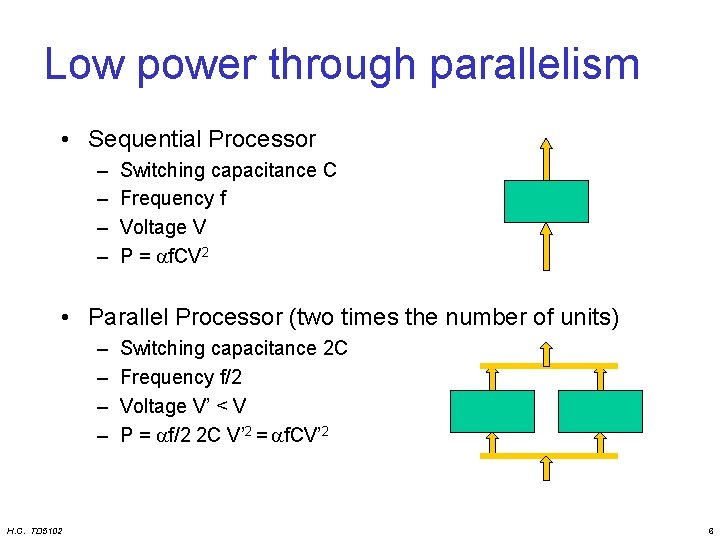

Low power through parallelism
• Sequential Processor
– Switching capacitance $C$
– Frequency $f$
– Voltage $V$
– $P = f C V^2$
• Parallel Processor (two times the number of units)
– Switching capacitance $2C$
– Frequency $f/2$
– Voltage $V' < V$
– $P = (f/2) \cdot 2C \cdot V'^2 = f C V'^2$
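A minimal sketch of the comparison above, assuming hypothetical operating points (the frequency, capacitance and both voltages are illustrative values, not taken from the slides). It only evaluates $P = f C V^2$ for the two configurations and shows that the power ratio reduces to $(V'/V)^2$.

```python
def dynamic_power(f_hz, c_farad, v_volt):
    """Dynamic switching power P = f * C * V^2."""
    return f_hz * c_farad * v_volt ** 2


# Hypothetical operating points, chosen only for illustration.
f, c, v = 200e6, 1e-9, 1.2
v_reduced = 0.9  # assumed lower supply voltage that still meets timing at f/2

p_sequential = dynamic_power(f, c, v)
# Parallel version: twice the switching capacitance at half the frequency.
p_parallel = dynamic_power(f / 2, 2 * c, v_reduced)

ratio = p_parallel / p_sequential  # equals (v_reduced / v) ** 2
```

The saving comes entirely from the lower supply voltage; at equal voltage the two configurations dissipate the same power.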

ILP Goals
• Making the most powerful single-chip processor
• Exploiting parallelism between independent instructions (or operations) in programs
• Exploit hardware concurrency
– multiple FUs, buses, reg files, bypass paths, etc.
• Code compatibility
– binary: superscalar and super-pipelined
– HLL: VLIW
• Incorporate enhanced functionality (ASIP)

Trends in Computer Architecture
• Bridging the semantic gap
• Performance increase
• VLSI developments
• Architecture developments: design space
• The role of the compiler
• Right match

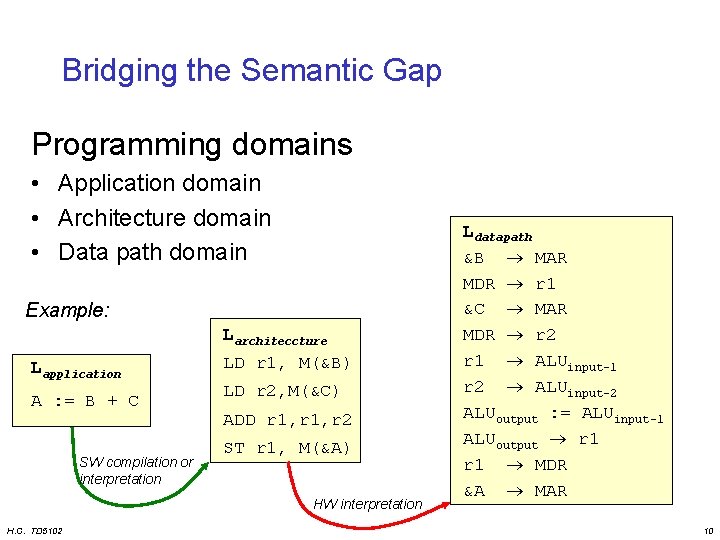

Bridging the Semantic Gap
Programming domains
• Application domain
• Architecture domain
• Data path domain
Example:
L_application: A := B + C
(SW compilation or interpretation)
L_architecture: LD r1, M(&B); LD r2, M(&C); ADD r1, r2; ST r1, M(&A)
(HW interpretation)
L_datapath: &B → MAR; MDR → r1; &C → MAR; MDR → r2; r1 → ALUinput-1; r2 → ALUinput-2; ALUoutput := ALUinput-1 + ALUinput-2; ALUoutput → r1; r1 → MDR; &A → MAR

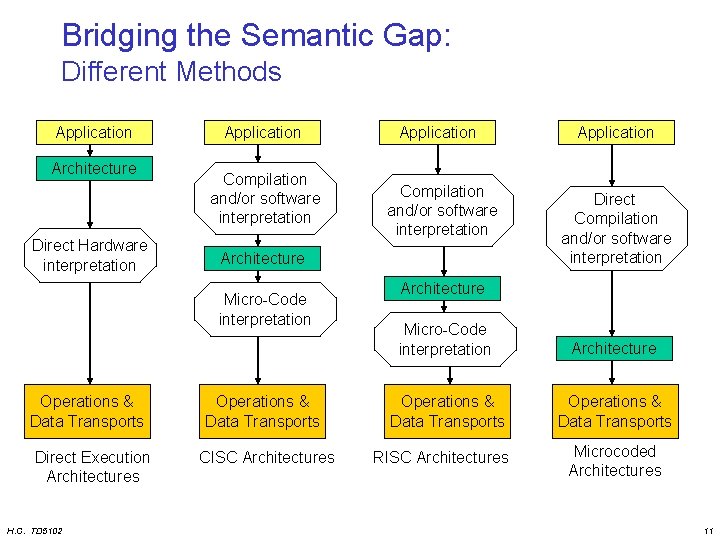

Bridging the Semantic Gap: Different Methods
[Figure: four paths from Application via Architecture to Operations & Data Transports, for Direct Execution Architectures (direct hardware interpretation), CISC Architectures, RISC Architectures and Microcoded Architectures (compilation and/or software interpretation, followed by micro-code or direct interpretation)]

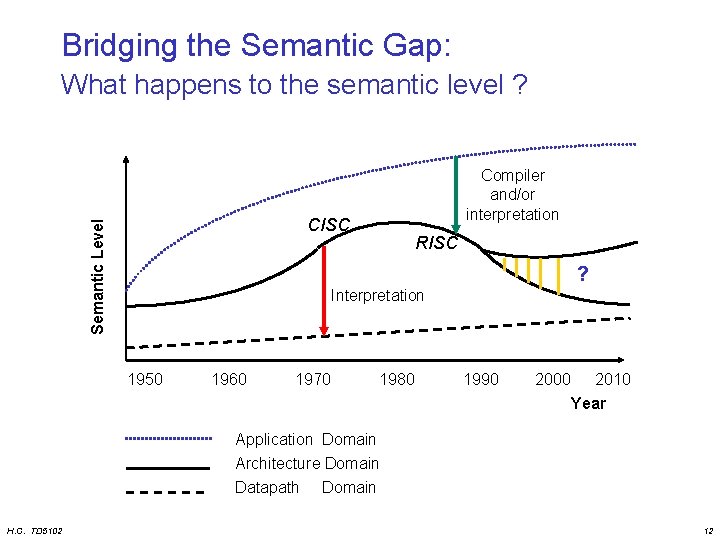

Bridging the Semantic Gap: What happens to the semantic level?
[Figure: semantic level of the architecture versus year (1950 to 2010), between the application domain (compiler and/or interpretation above) and the datapath domain (interpretation below); CISC and RISC are marked, the future is marked "?"]

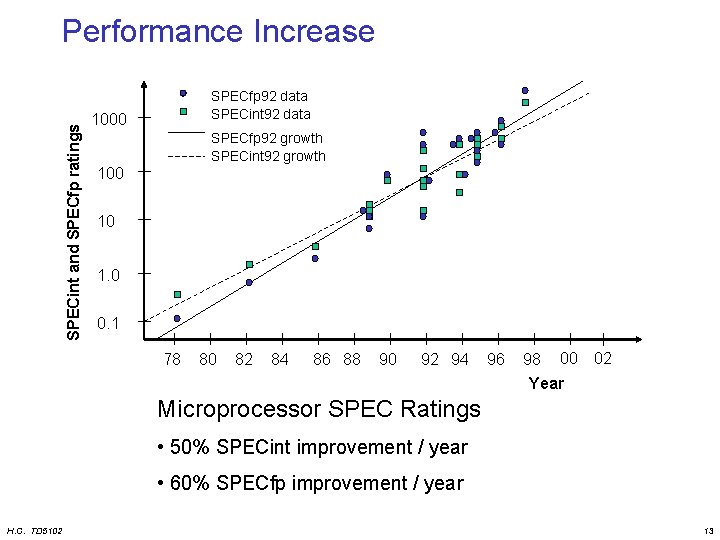

Performance Increase
[Figure: microprocessor SPEC ratings (SPECint92 and SPECfp92 data and growth curves), logarithmic scale from 0.1 to 1000, years 1978 to 2002]
• 50% SPECint improvement / year
• 60% SPECfp improvement / year

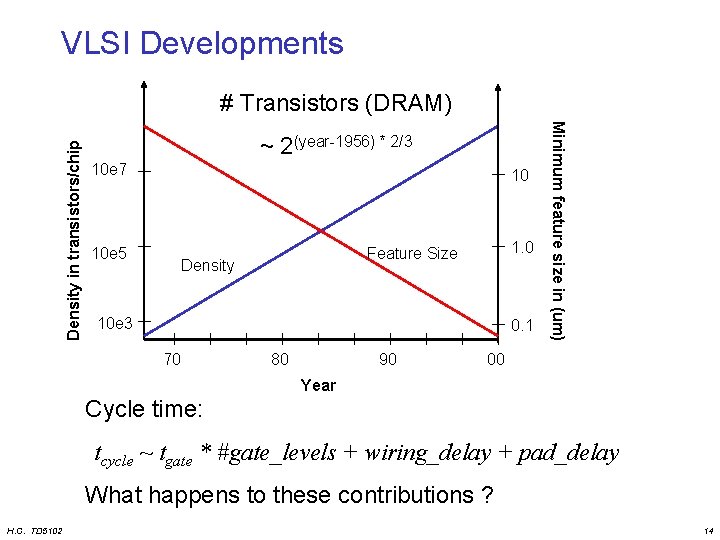

VLSI Developments
[Figure: minimum feature size (um) and density (transistors/chip) versus year, 1970 to 2000]
# Transistors (DRAM) $\sim 2^{(\text{year} - 1956) \cdot 2/3}$
Cycle time: $t_{cycle} \sim t_{gate} \cdot \#gate\_levels + wiring\_delay + pad\_delay$
What happens to these contributions?

Architecture Developments
How to improve performance?
• (Super)-pipelining
• Powerful instructions
– MD-technique: multiple data operands per operation
– MO-technique: multiple operations per instruction
• Multiple instruction issue

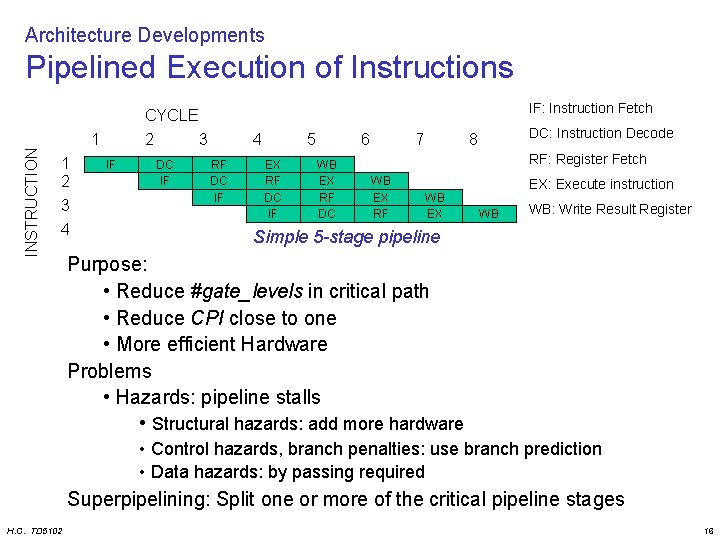

Architecture Developments
Pipelined Execution of Instructions
IF: Instruction Fetch, DC: Instruction Decode, RF: Register Fetch, EX: Execute instruction, WB: Write Result Register

| Instruction | Cycle 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|
| 1 | IF | DC | RF | EX | WB | | | |
| 2 | | IF | DC | RF | EX | WB | | |
| 3 | | | IF | DC | RF | EX | WB | |
| 4 | | | | IF | DC | RF | EX | WB |

Simple 5-stage pipeline
Purpose:
• Reduce #gate_levels in critical path
• Reduce CPI close to one
• More efficient hardware
Problems
• Hazards: pipeline stalls
• Structural hazards: add more hardware
• Control hazards, branch penalties: use branch prediction
• Data hazards: bypassing required
Superpipelining: split one or more of the critical pipeline stages
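The claim that pipelining brings CPI close to one can be checked with a small sketch. It assumes an idealized pipeline in which one instruction enters per cycle and stalls are simply added on top; the function names are illustrative.

```python
def pipelined_cycles(n_instructions, n_stages, stall_cycles=0):
    """Cycles needed when one instruction enters the pipeline per cycle."""
    return n_stages + (n_instructions - 1) + stall_cycles


def cpi(n_instructions, n_stages, stall_cycles=0):
    """Average cycles per instruction for the whole run."""
    return pipelined_cycles(n_instructions, n_stages, stall_cycles) / n_instructions


# Four instructions on the 5-stage pipeline finish in cycle 8.
cycles_for_four = pipelined_cycles(4, 5)
# For long instruction streams the fill time is amortized.
cpi_long_run = cpi(1000, 5)
```

Every stall cycle caused by a hazard adds directly to the total, which is why the hazards listed above matter.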

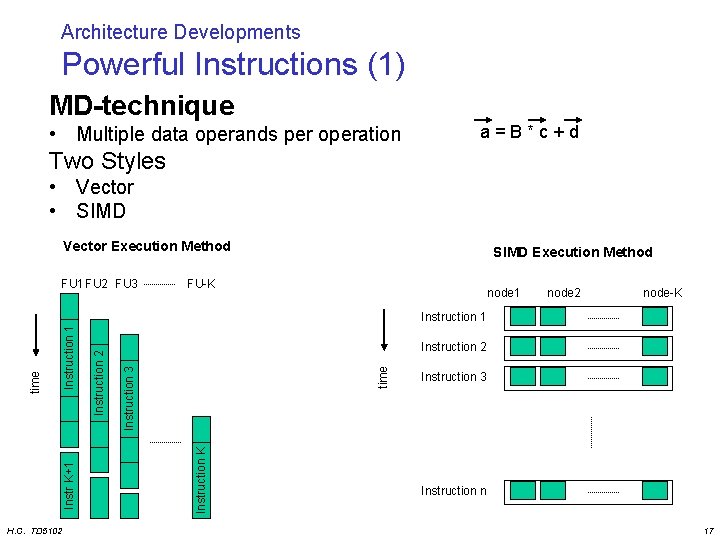

Architecture Developments
Powerful Instructions (1)
MD-technique
• Multiple data operands per operation
Example: a = B*c + d
Two Styles
• Vector
• SIMD
[Figure: vector execution method (instructions 1 to K flow through FU 1, FU 2, FU 3, ..., FU-K over time) and SIMD execution method (each instruction 1, 2, 3, ..., n executes on node 1, node 2, ..., node-K at the same time)]

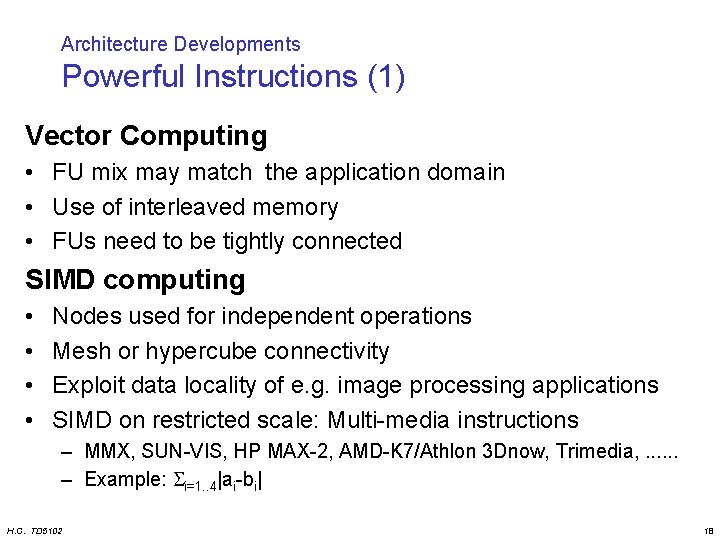

Architecture Developments
Powerful Instructions (1)
Vector Computing
• FU mix may match the application domain
• Use of interleaved memory
• FUs need to be tightly connected
SIMD computing
• Nodes used for independent operations
• Mesh or hypercube connectivity
• Exploit data locality of e.g. image processing applications
SIMD on restricted scale: Multi-media instructions
– MMX, SUN-VIS, HP MAX-2, AMD-K7/Athlon 3DNow, TriMedia, ...
– Example: $\sum_{i=1}^{4} |a_i - b_i|$
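The multi-media example above is a sum of absolute differences over four sub-word elements. A scalar reference sketch of what such an instruction computes (the function name `sad4` is hypothetical; real instruction sets perform this on packed sub-words in a single operation):

```python
def sad4(a, b):
    """Sum of absolute differences over four elements: sum(|a_i - b_i|)."""
    if len(a) != 4 or len(b) != 4:
        raise ValueError("sad4 expects exactly four elements per operand")
    return sum(abs(x - y) for x, y in zip(a, b))


# Two rows of four pixel values, e.g. from a motion-estimation inner loop.
result = sad4([10, 20, 30, 40], [12, 18, 33, 40])
```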

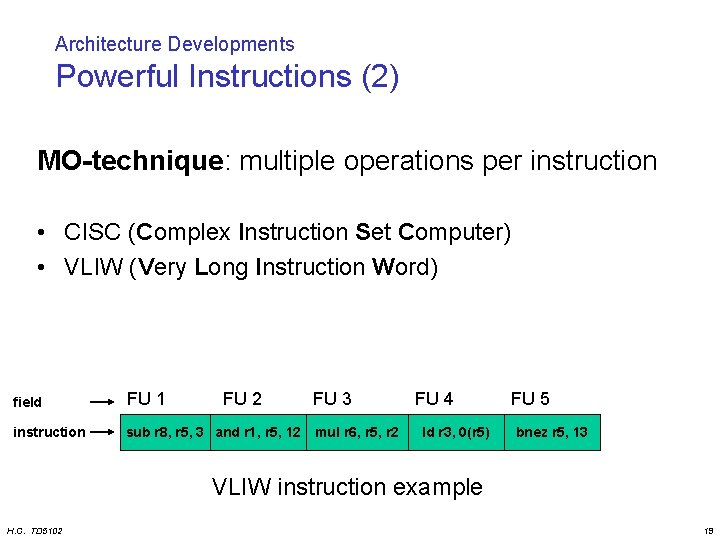

Architecture Developments
Powerful Instructions (2)
MO-technique: multiple operations per instruction
• CISC (Complex Instruction Set Computer)
• VLIW (Very Long Instruction Word)
VLIW instruction example:

| field | FU 1 | FU 2 | FU 3 | FU 4 | FU 5 |
|---|---|---|---|---|---|
| instruction | sub r8, r5, 3 | and r1, r5, 12 | mul r6, r5, r2 | ld r3, 0(r5) | bnez r5, 13 |

Architecture Developments: Powerful Instructions (2)
VLIW Characteristics
• Only RISC-like operation support, hence short cycle times
• Flexible: can implement any FU mixture
• Extensible
• Tight inter-FU connectivity required
• Large instructions
• Not binary compatible

Architecture Developments
Multiple instruction issue (per cycle)
Who guarantees semantic correctness?
• User specifies multiple instruction streams
– MIMD (Multiple Instruction Multiple Data)
• Run-time detection of ready instructions
– Superscalar
• Compile into dataflow representation
– Dataflow processors

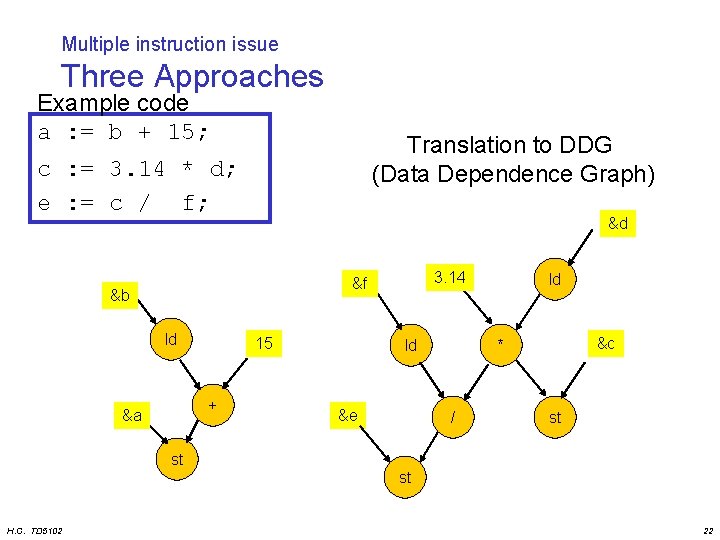

Multiple instruction issue: Three Approaches
Example code:
a := b + 15;
c := 3.14 * d;
e := c / f;
Translation to DDG (Data Dependence Graph):
[Figure: DDG with three chains: ld &b, + 15, st &a; ld &d, * 3.14, st &c; ld &f, / (taking the result of *), st &e]

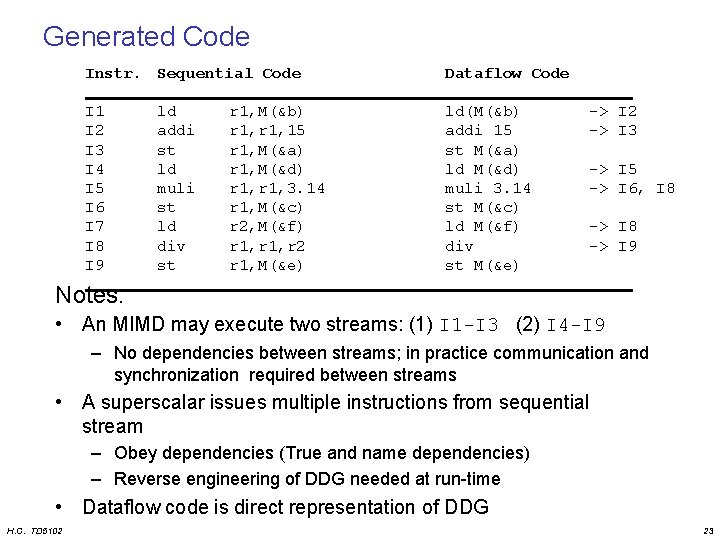

Generated Code

| Instr. | Sequential Code | Dataflow Code |
|---|---|---|
| I1 | ld r1, M(&b) | ld M(&b) -> I2 |
| I2 | addi r1, 15 | addi 15 -> I3 |
| I3 | st r1, M(&a) | st M(&a) |
| I4 | ld r1, M(&d) | ld M(&d) -> I5 |
| I5 | muli r1, 3.14 | muli 3.14 -> I6, I8 |
| I6 | st r1, M(&c) | st M(&c) |
| I7 | ld r2, M(&f) | ld M(&f) -> I8 |
| I8 | div r1, r2 | div -> I9 |
| I9 | st r1, M(&e) | st M(&e) |

Notes:
• An MIMD may execute two streams: (1) I1-I3 (2) I4-I9
– No dependencies between streams; in practice communication and synchronization required between streams
• A superscalar issues multiple instructions from the sequential stream
– Obey dependencies (true and name dependencies)
– Reverse engineering of DDG needed at run-time
• Dataflow code is a direct representation of the DDG
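The "reverse engineering of the DDG" that a superscalar performs at run-time can be sketched as follows. This is a simplified illustration, not a description of any real issue logic: it tracks only true (read-after-write) register dependences and assumes the memory addresses &a to &f are all distinct, so no dependences flow through memory. The tuple encoding of the nine instructions is an assumption made for the example.

```python
# (name, destination register or None, source registers) for I1..I9.
PROGRAM = [
    ("I1", "r1", []),            # ld   r1, M(&b)
    ("I2", "r1", ["r1"]),        # addi r1, 15
    ("I3", None, ["r1"]),        # st   r1, M(&a)
    ("I4", "r1", []),            # ld   r1, M(&d)
    ("I5", "r1", ["r1"]),        # muli r1, 3.14
    ("I6", None, ["r1"]),        # st   r1, M(&c)
    ("I7", "r2", []),            # ld   r2, M(&f)
    ("I8", "r1", ["r1", "r2"]),  # div  r1, r2
    ("I9", None, ["r1"]),        # st   r1, M(&e)
]


def true_dependences(program):
    """Return (producer, consumer) edges for read-after-write dependences."""
    last_writer = {}  # register -> name of the most recent instruction writing it
    edges = []
    for name, dest, sources in program:
        for reg in sources:
            if reg in last_writer:
                edges.append((last_writer[reg], name))
        if dest is not None:
            last_writer[dest] = name
    return edges


edges = true_dependences(PROGRAM)
```

The recovered edges are the arrows of the dataflow code. The reuse of r1 by I4 creates name dependences on I1 to I3, which this sketch ignores and which register renaming removes.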

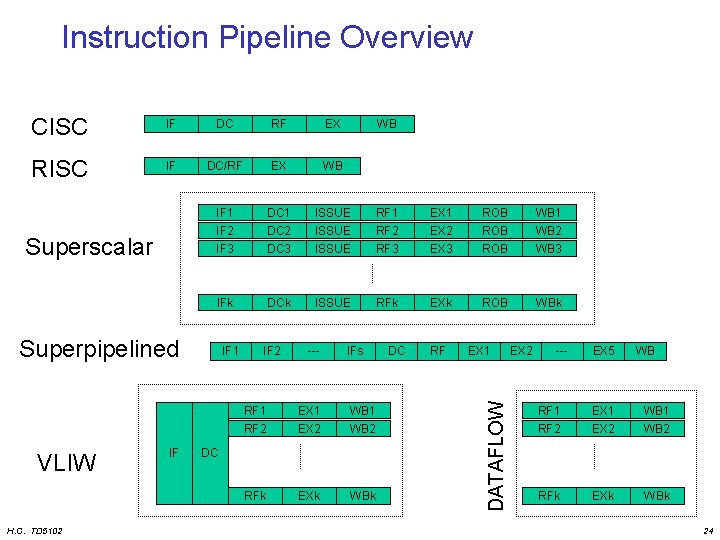

Instruction Pipeline Overview
[Figure: pipeline stage diagrams (IF, DC, RF, EX, WB, plus ISSUE and ROB stages where present) for CISC, RISC, superscalar, superpipelined, VLIW and dataflow processors]

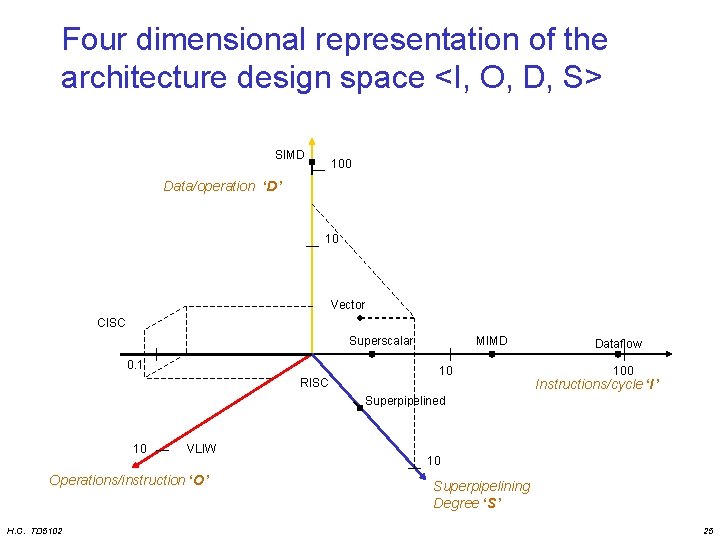

Four dimensional representation of the architecture design space <I, O, D, S>
[Figure: four axes, Instructions/cycle 'I', Operations/instruction 'O', Data/operation 'D' and Superpipelining degree 'S', with the positions of CISC, RISC, Superscalar, Superpipelined, VLIW, Vector, SIMD, MIMD and Dataflow architectures]

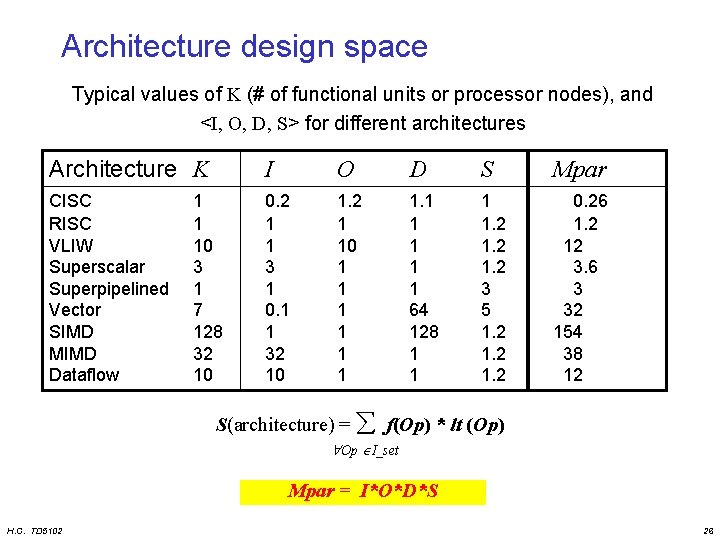

Architecture design space
Typical values of K (# of functional units or processor nodes), and <I, O, D, S> for different architectures

| Architecture | K | I | Mpar |
|---|---|---|---|
| CISC | 1 | 0.2 | 0.26 |
| RISC | 1 | 1 | 1.2 |
| VLIW | 10 | 1 | 12 |
| Superscalar | 3 | 3 | 3.6 |
| Superpipelined | 1 | 1 | 3 |
| Vector | 7 | 0.1 | 32 |
| SIMD | 128 | 1 | 154 |
| MIMD | 32 | 32 | 38 |
| Dataflow | 10 | 10 | 12 |

Partial O, D, S values: 1.2 1 10 1 1 1 1 64 128 1 1.2 3 5 1.2
$S(\text{architecture}) = \sum_{Op \in I\_set} f(Op) \cdot lt(Op)$
$M_{par} = I \cdot O \cdot D \cdot S$

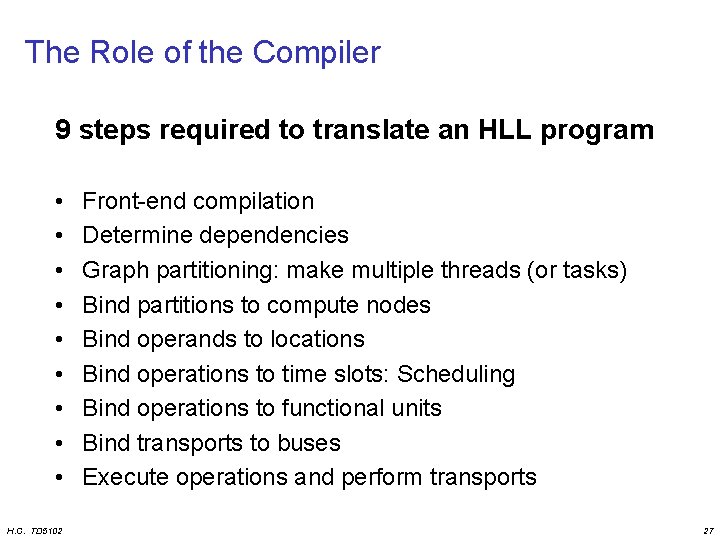

The Role of the Compiler
9 steps required to translate an HLL program
• Front-end compilation
• Determine dependencies
• Graph partitioning: make multiple threads (or tasks)
• Bind partitions to compute nodes
• Bind operands to locations
• Bind operations to time slots: Scheduling
• Bind operations to functional units
• Bind transports to buses
• Execute operations and perform transports

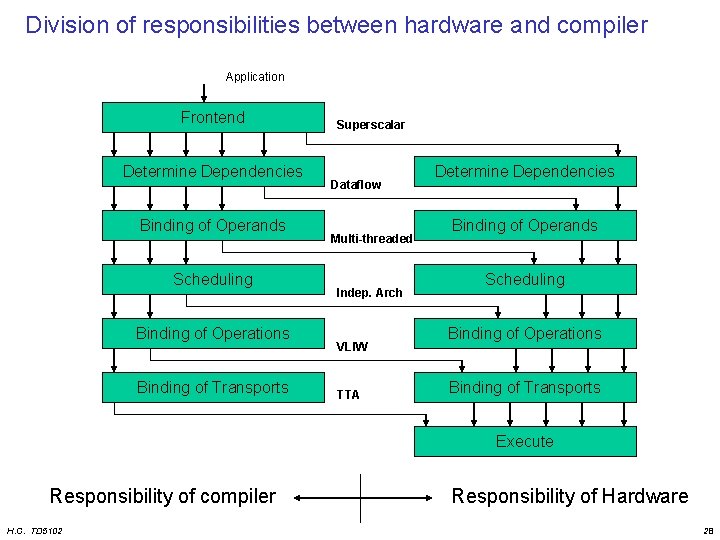

Division of responsibilities between hardware and compiler
[Figure: the steps Application, Frontend, Determine Dependencies, Binding of Operands, Scheduling, Binding of Operations, Binding of Transports and Execute, each marked as responsibility of the compiler or responsibility of the hardware, for the architectures Superscalar, Dataflow, Multi-threaded, Indep. Arch, VLIW and TTA]

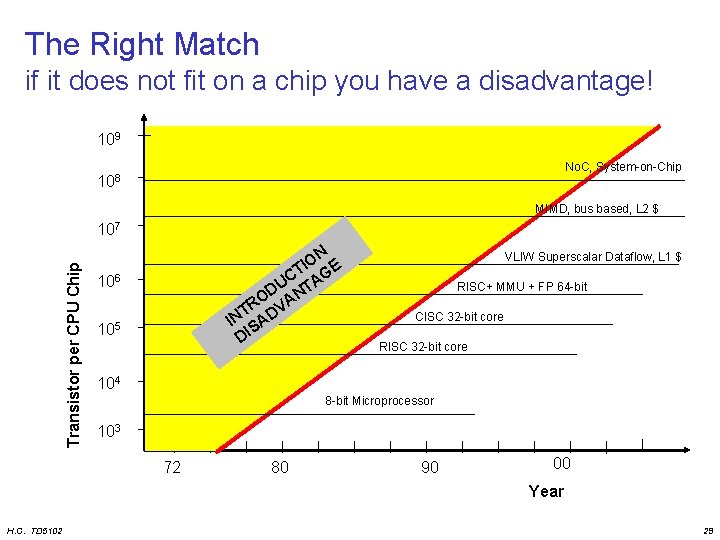

The Right Match
If it does not fit on a chip you have a disadvantage!
[Figure: transistors per CPU chip ($10^3$ to $10^9$) versus year (1972 to 2000), showing when each architecture fits on a chip: 8-bit microprocessor, RISC 32-bit core, CISC 32-bit core, RISC + MMU + FP 64-bit, VLIW / Superscalar / Dataflow with L1 $, bus-based MIMD with L2 $, NoC and System-on-Chip; introducing an architecture before it fits is marked as a disadvantage]

ILP Processors
• Overview
• General ILP organization
• VLIW concept
– examples like: TriMedia, Mpact, TMS320C6x, IA-64
• Superscalar concept
– examples like: HP-PA 8000, Alpha 21264, MIPS R10k/R12k, Pentium I-IV, AMD 5-7, UltraSparc
– (Ref: IEEE Micro April 1996, HotChips issue)
• Comparing Superscalar and VLIW

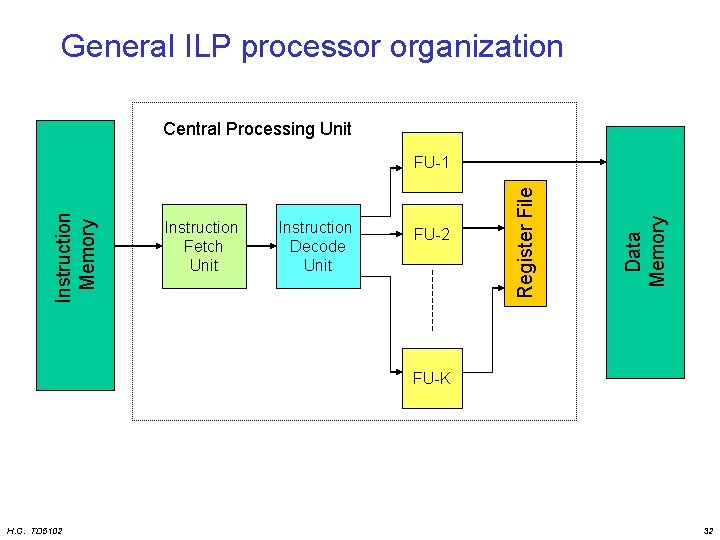

General ILP processor organization
[Figure: Central Processing Unit containing an Instruction Fetch Unit, an Instruction Decode Unit, function units FU-1, FU-2, ..., FU-K and a Register File, connected to Instruction Memory and Data Memory]

ILP processor characteristics
• Issue multiple operations/instructions per cycle
• Multiple concurrent Function Units
• Pipelined execution
• Shared register file
• Four Superscalar variants
– In-order/Out-of-order execution
– In-order/Out-of-order completion

VLIW
Very Long Instruction Word Architecture

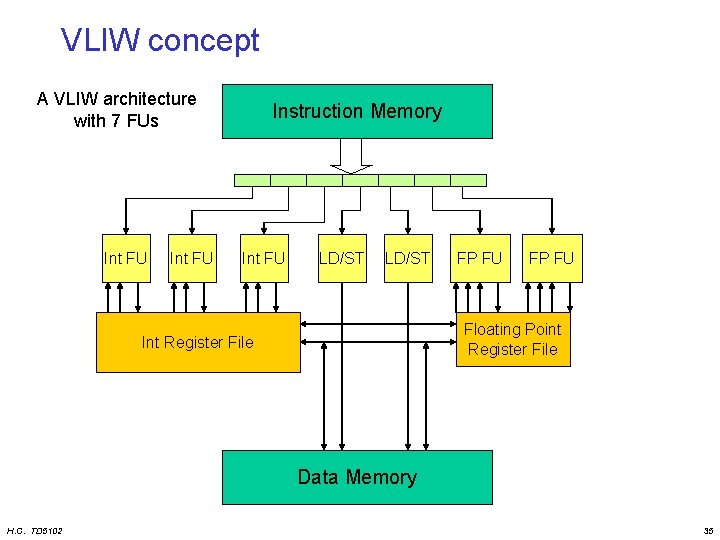

VLIW concept
A VLIW architecture with 7 FUs
[Figure: Instruction Memory feeding integer FUs, LD/ST units and FP FUs, which share an Int Register File and a Floating Point Register File and access Data Memory]

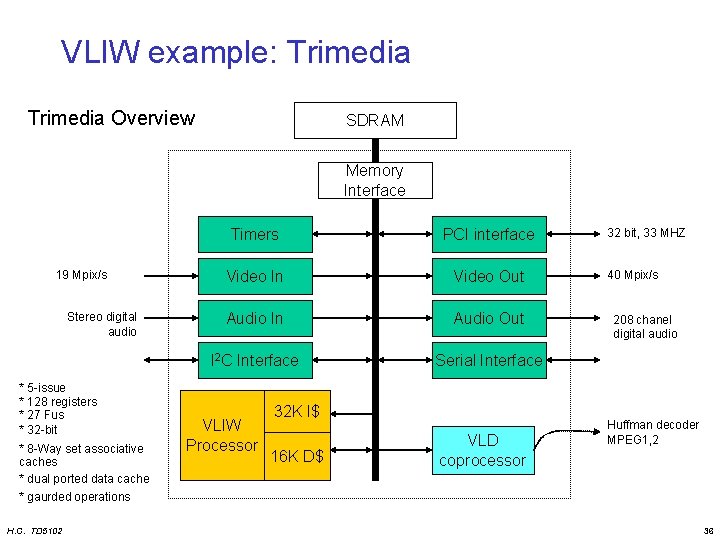

VLIW example: TriMedia
Overview
• 5-issue
• 128 registers
• 27 FUs
• 32-bit
• 8-way set associative caches
• dual ported data cache
• guarded operations
[Figure: block diagram with SDRAM, memory interface, timers, PCI interface (32 bit, 33 MHz), Video In (19 Mpix/s), Video Out (40 Mpix/s), Audio In (stereo digital audio), Audio Out (208 channel digital audio), I2C interface, serial interface, VLIW processor with 32K I$ and 16K D$, and VLD coprocessor (Huffman decoder, MPEG 1, 2)]

VLIW example: TMS320C62
VelociTI Processor
• 8 operations (of 32-bit) per instruction (256 bit)
• Two clusters
– 8 FUs: 4 FUs / cluster (2 multipliers, 6 ALUs)
– 2 x 16 registers
– One port available to read from register file of other cluster
• Flexible addressing modes (like circular addressing)
• Flexible instruction packing
• All operations conditional
• 5 ns, 200 MHz, 0.25 um, 5-layer CMOS
• 128 KB on-chip RAM

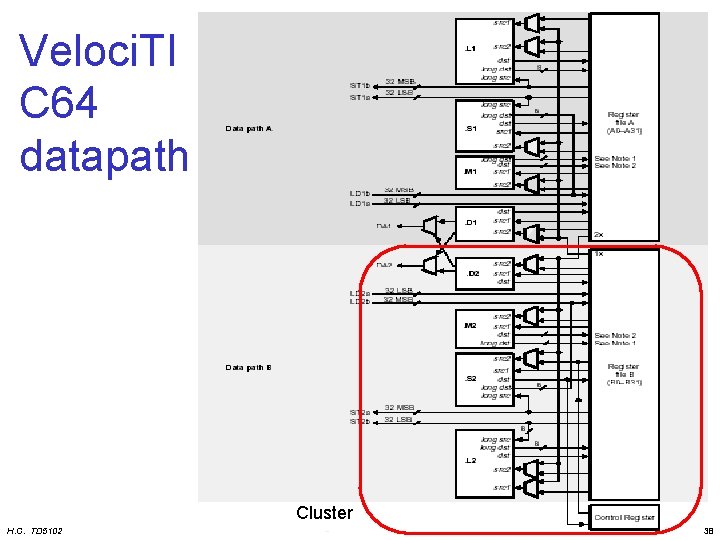

VelociTI C64 datapath
[Figure: datapath of one cluster]

VLIW example: IA-64
Intel/HP 64-bit VLIW-like architecture
• 128-bit instruction bundle containing 3 instructions
• 128 integer + 128 floating point registers: 7-bit reg id.
• Guarded instructions
– 64-entry boolean register file; relies heavily on if-conversion to remove branches
• Specify instruction independence
– some extra bits per bundle
• Fully interlocked
– i.e. no delay slots: operations are latency compatible within the family of architectures
• Split loads
– non-trapping load + exception check

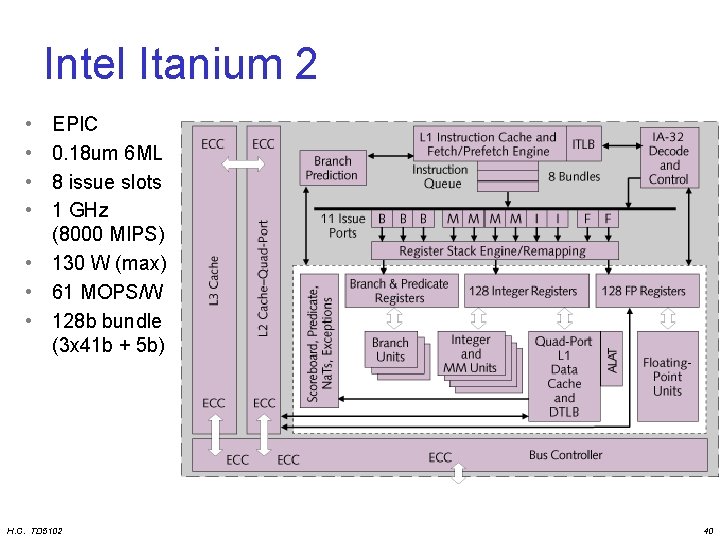

Intel Itanium 2
• EPIC
• 0.18 um, 6 ML
• 8 issue slots
• 1 GHz (8000 MIPS)
• 130 W (max)
• 61 MOPS/W
• 128 b bundle (3 x 41 b + 5 b)

Superscalar
Multiple Instructions / Cycle

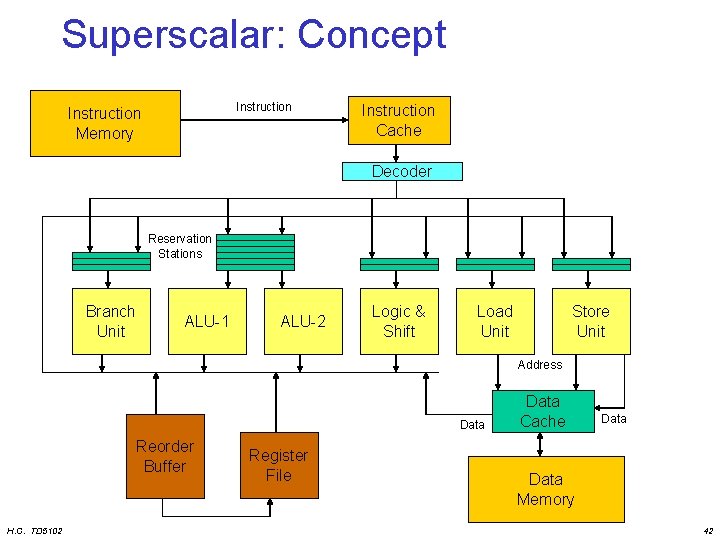

Superscalar: Concept
[Figure: Instruction Memory, Instruction Cache, Decoder, Reservation Stations, function units (Branch Unit, ALU-1, ALU-2, Logic & Shift, Load Unit, Store Unit), Reorder Buffer, Register File, Data Cache (address and data paths) and Data Memory]

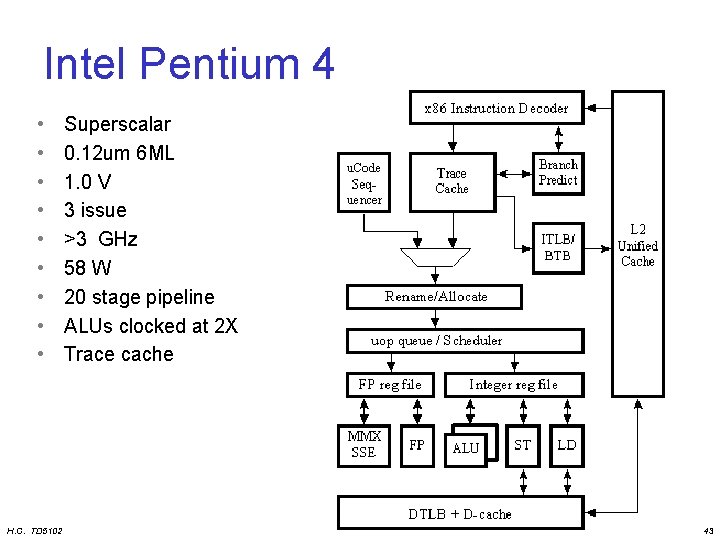

Intel Pentium 4
• Superscalar
• 0.12 um, 6 ML
• 1.0 V
• 3 issue
• >3 GHz
• 58 W
• 20 stage pipeline
• ALUs clocked at 2X
• Trace cache

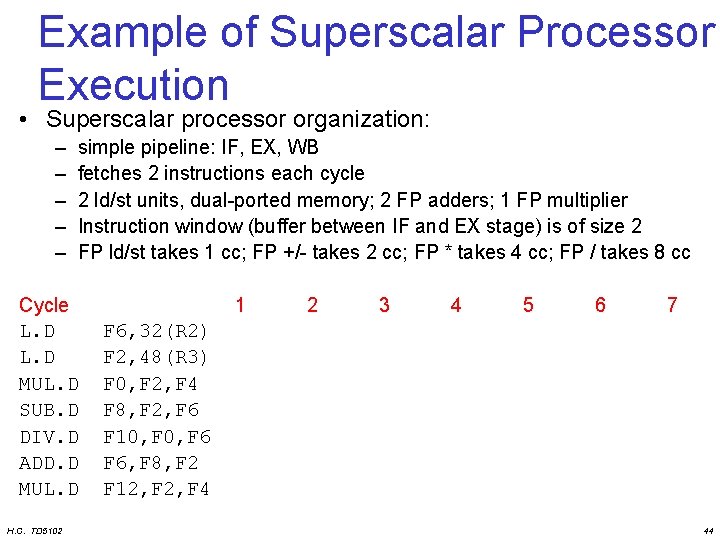

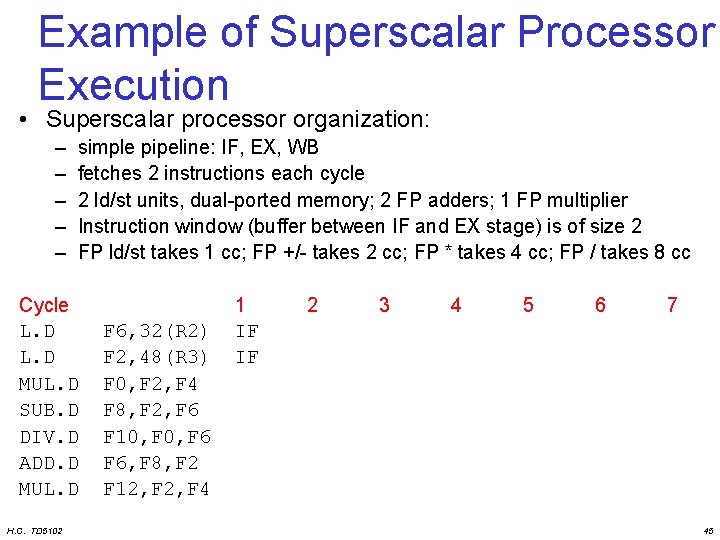

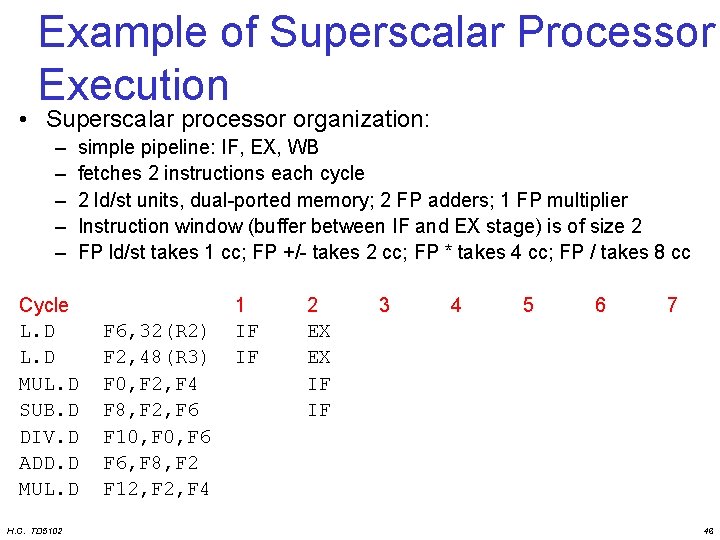

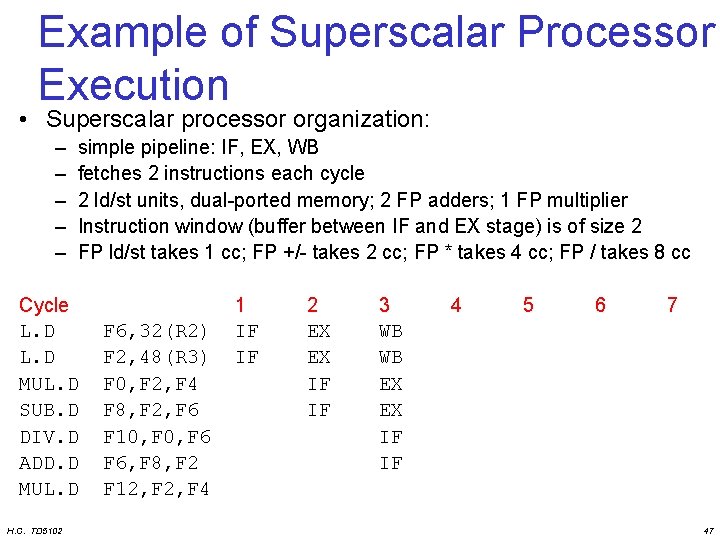

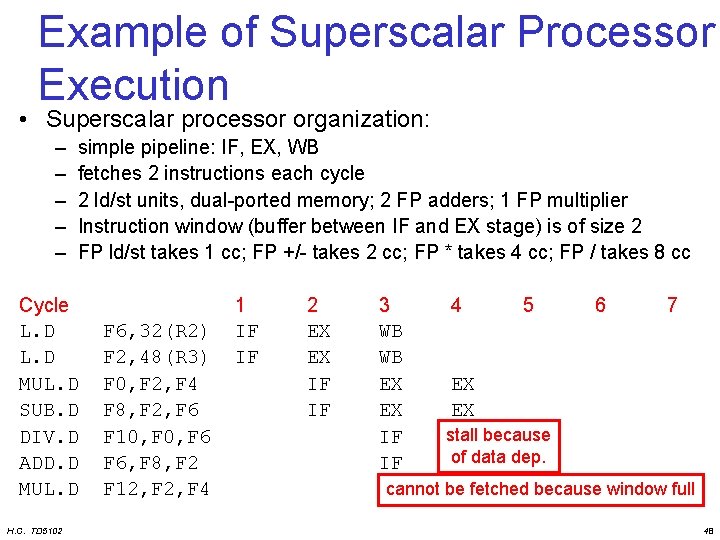

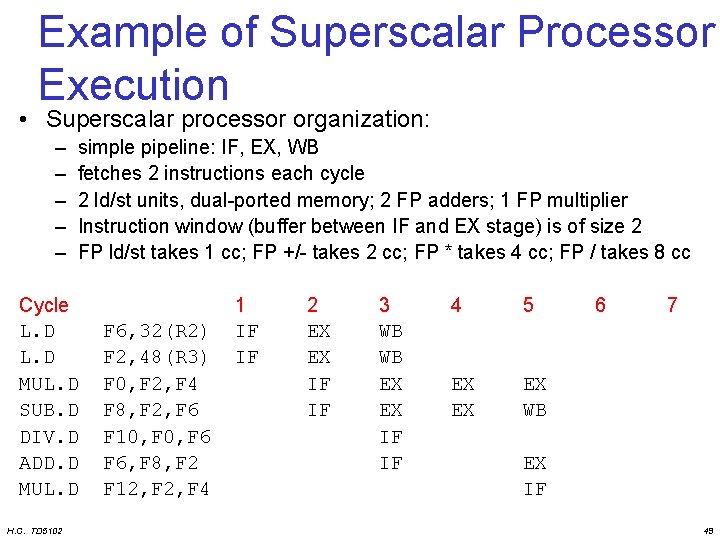

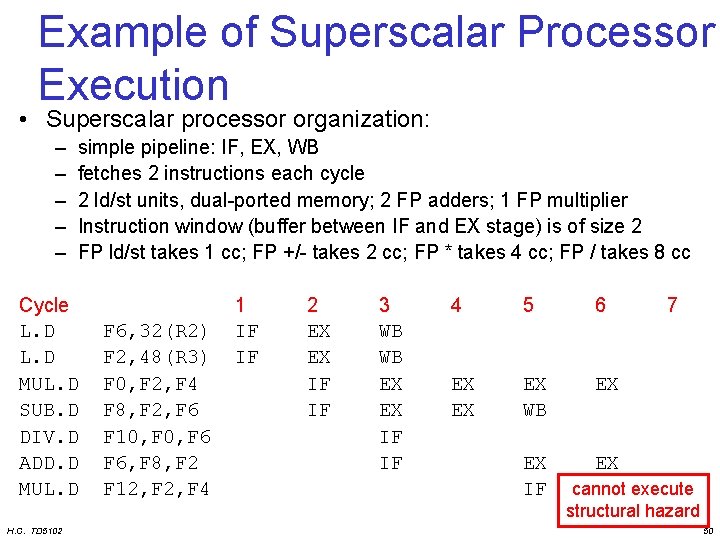

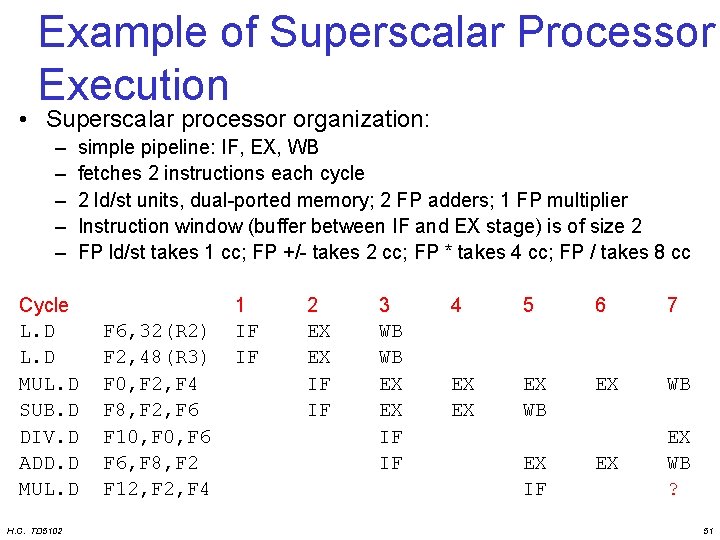

Example of Superscalar Processor Execution
• Superscalar processor organization:
– simple pipeline: IF, EX, WB
– fetches 2 instructions each cycle
– 2 ld/st units, dual-ported memory; 2 FP adders; 1 FP multiplier
– Instruction window (buffer between IF and EX stage) is of size 2
– FP ld/st takes 1 cc; FP +/- takes 2 cc; FP * takes 4 cc; FP / takes 8 cc
Instruction mnemonics: L.D, MUL.D, SUB.D, DIV.D, ADD.D, MUL.D
Operands, in program order: F6, 32(R2); F2, 48(R3); F0, F2, F4; F8, F2, F6; F10, F6; F6, F8, F2; F12, F4
[Timing diagram over cycles 1 to 7, initially empty]

Example of Superscalar Processor Execution (cycles 1 to 3)
• Cycle 1: instructions 1 and 2 in IF
• Cycle 2: instructions 1 and 2 in EX; instructions 3 and 4 in IF
• Cycle 3: instructions 1 and 2 in WB; instructions 3 and 4 in EX; instructions 5 and 6 in IF

Example of Superscalar Processor Execution (cycle 4)
• Two instructions are in EX
• Annotations: stall because of data dependence; cannot be fetched because window full

Example of Superscalar Processor Execution (cycle 5)
[Timing diagram extended through cycle 5]

Example of Superscalar Processor Execution (cycle 6)
• Annotation: cannot execute, structural hazard

Example of Superscalar Processor Execution (cycle 7)
[Timing diagram with one entry left open, marked "?"]

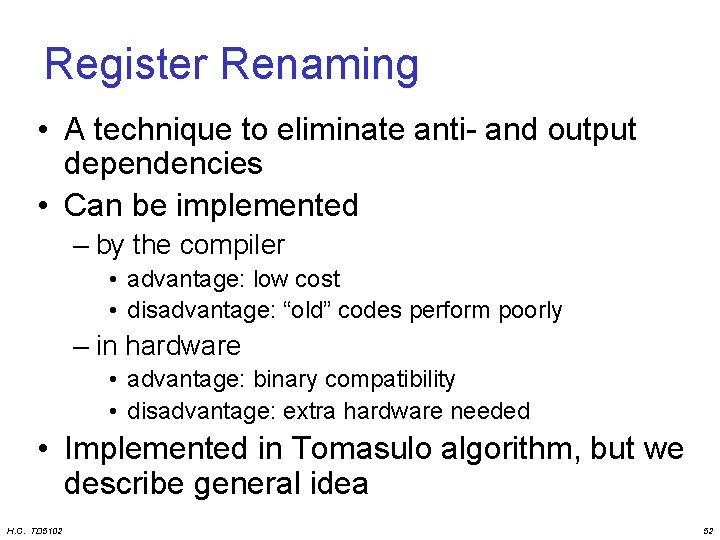

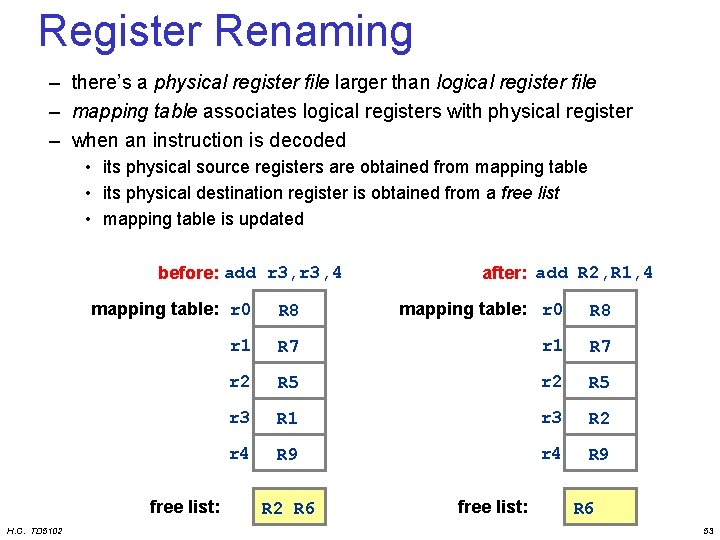

Register Renaming
• A technique to eliminate anti- and output dependencies
• Can be implemented
– by the compiler
• advantage: low cost
• disadvantage: "old" codes perform poorly
– in hardware
• advantage: binary compatibility
• disadvantage: extra hardware needed
• Implemented in the Tomasulo algorithm, but we describe the general idea

Register Renaming
– there is a physical register file larger than the logical register file
– a mapping table associates logical registers with physical registers
– when an instruction is decoded
• its physical source registers are obtained from the mapping table
• its physical destination register is obtained from a free list
• the mapping table is updated
Example:
before: add r3, r3, 4
mapping table: r0 → R8, r1 → R7, r2 → R5, r3 → R1, r4 → R9; free list: R2, R6
after: add R2, R1, 4
mapping table: r0 → R8, r1 → R7, r2 → R5, r3 → R2, r4 → R9; free list: R6

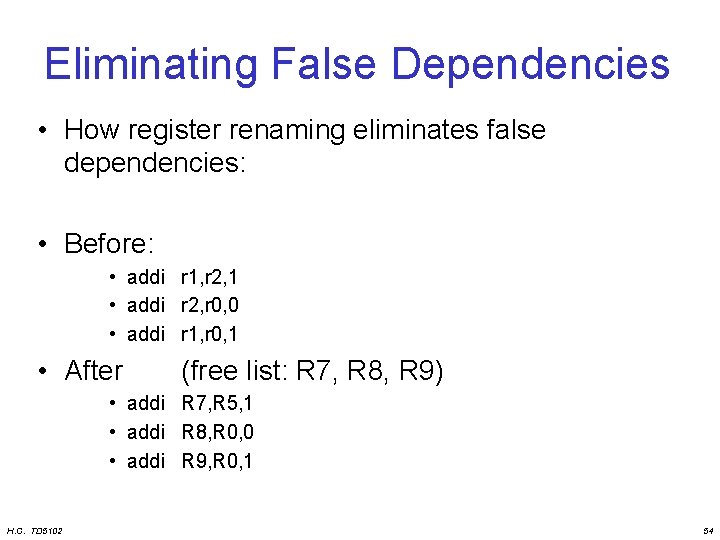

Eliminating False Dependencies
• How register renaming eliminates false dependencies:
• Before:
– addi r1, r2, 1
– addi r2, r0, 0
– addi r1, r0, 1
• After (free list: R7, R8, R9)
– addi R7, R5, 1
– addi R8, R0, 0
– addi R9, R0, 1
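The decode-time steps described above (look up sources, take a destination from the free list, update the mapping table) can be sketched as below. This is a minimal illustration, not a model of any specific processor: returning physical registers to the free list at commit is omitted, and the initial mapping of r1 to R1 is an assumption, since the slide does not give it.

```python
from collections import deque


class Renamer:
    """Maps logical registers to physical registers at decode time."""

    def __init__(self, mapping, free_list):
        self.mapping = dict(mapping)  # logical -> physical
        self.free = deque(free_list)  # unused physical registers

    def rename(self, dest, sources):
        # Sources are read through the mapping table before it is updated.
        phys_sources = [self.mapping[s] for s in sources]
        # The destination always gets a fresh physical register.
        phys_dest = self.free.popleft()
        self.mapping[dest] = phys_dest
        return phys_dest, phys_sources


# r1 -> R1 is a hypothetical initial mapping; the others follow the slide.
renamer = Renamer({"r0": "R0", "r1": "R1", "r2": "R5"}, ["R7", "R8", "R9"])
first = renamer.rename("r1", ["r2"])   # addi r1, r2, 1
second = renamer.rename("r2", ["r0"])  # addi r2, r0, 0
third = renamer.rename("r1", ["r0"])   # addi r1, r0, 1
```

Because every write gets its own physical register, the second instruction no longer conflicts with the read of r2 by the first, and the third no longer conflicts with the write of r1 by the first.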

Branch Prediction

Branch Prediction
Motivation
High branch penalties in pipelined processors:
• With on average one out of five instructions being a branch, the maximum ILP is five
• The situation is even worse for multiple-issue processors, because we need to provide an instruction stream of n instructions per cycle
• Idea: predict the outcome of branches based on their history and execute instructions speculatively

5 Branch Prediction Schemes
1. 1-bit Branch Prediction Buffer
2. 2-bit Branch Prediction Buffer
3. Correlating Branch Prediction Buffer
4. Branch Target Buffer
5. Return Address Predictors
+ A way to get rid of those malicious branches

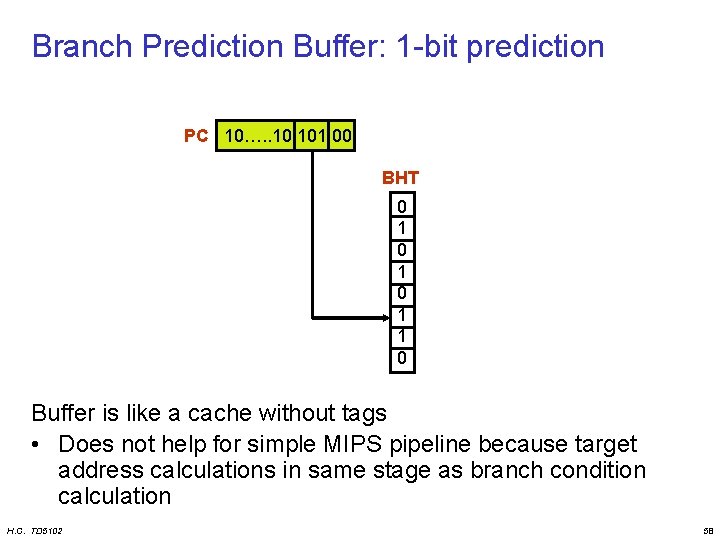

Branch Prediction Buffer: 1-bit prediction
[Figure: low-order bits of the PC index a Branch History Table (BHT) of 1-bit entries]
Buffer is like a cache without tags
• Does not help for the simple MIPS pipeline because the target address calculation is in the same stage as the branch condition calculation

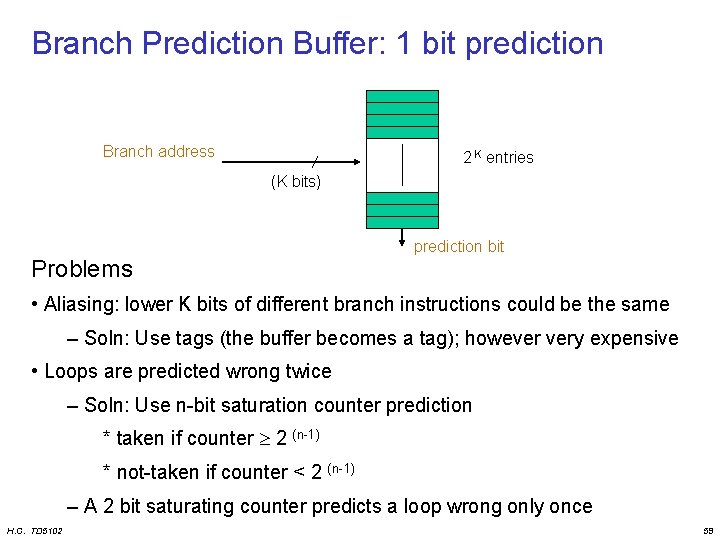

Branch Prediction Buffer: 1-bit prediction
[Figure: K bits of the branch address index a table of $2^K$ entries, each holding one prediction bit]
Problems
• Aliasing: the lower K bits of different branch instructions could be the same
– Soln: use tags (the buffer becomes a cache); however, very expensive
• Loops are predicted wrong twice
– Soln: use n-bit saturating counter prediction
* taken if counter $\geq 2^{n-1}$
* not-taken if counter $< 2^{n-1}$
– A 2-bit saturating counter predicts a loop wrong only once

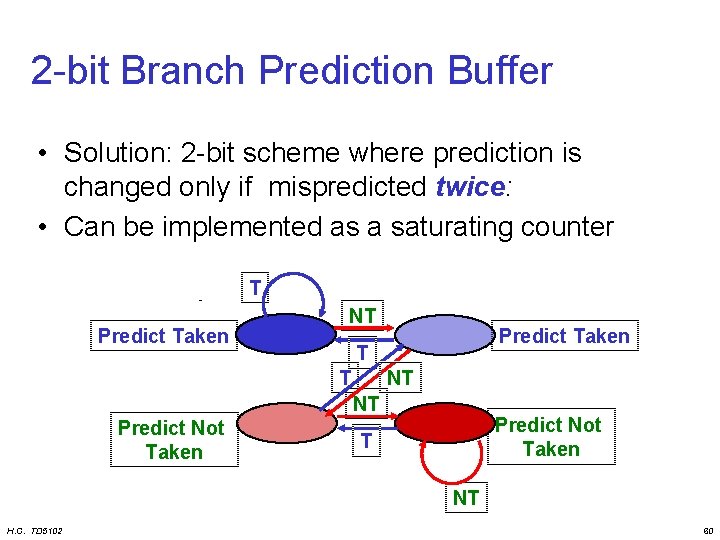

2-bit Branch Prediction Buffer
• Solution: 2-bit scheme where the prediction is changed only if mispredicted twice
• Can be implemented as a saturating counter
[Figure: state diagram with two Predict Taken states and two Predict Not Taken states; T (taken) and NT (not taken) outcomes move between neighbouring states]
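A minimal sketch of the n-bit saturating counter, used here to count mispredictions for a loop branch that is taken three times and then falls through, executed three times in a row. The loop shape and the warmed-up initial counter values are assumptions made for the example.

```python
class SaturatingCounter:
    """n-bit counter: predict taken when the value is at least 2**(n-1)."""

    def __init__(self, bits=2, value=0):
        self.max = (1 << bits) - 1
        self.threshold = 1 << (bits - 1)
        self.value = value

    def predict(self):
        return self.value >= self.threshold

    def update(self, taken):
        if taken:
            self.value = min(self.max, self.value + 1)
        else:
            self.value = max(0, self.value - 1)


def mispredictions(counter, outcomes):
    """Count wrong predictions while training the counter on the outcomes."""
    misses = 0
    for taken in outcomes:
        if counter.predict() != taken:
            misses += 1
        counter.update(taken)
    return misses


# Loop branch: taken, taken, taken, not taken; the loop is run three times.
loop_outcomes = [True, True, True, False] * 3
misses_1bit = mispredictions(SaturatingCounter(bits=1, value=1), loop_outcomes)
misses_2bit = mispredictions(SaturatingCounter(bits=2, value=3), loop_outcomes)
```

Once warmed up, the 1-bit scheme misses at the loop exit and again at the next loop entry, while the 2-bit scheme misses only at the exit.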

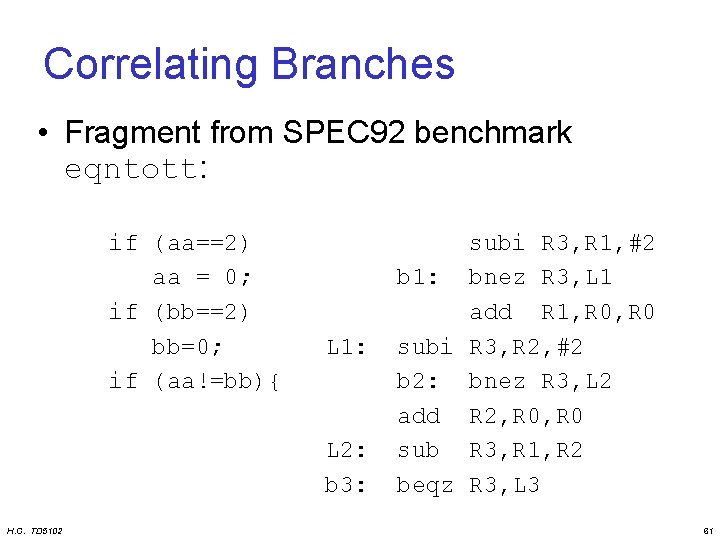

Correlating Branches
• Fragment from SPEC92 benchmark eqntott:
if (aa==2) aa = 0;
if (bb==2) bb = 0;
if (aa!=bb) {
Compiled code:
    subi R3, R1, #2
b1: bnez R3, L1
    add R1, R0
L1: subi R3, R2, #2
b2: bnez R3, L2
    add R2, R0
L2: sub R3, R1, R2
b3: beqz R3, L3

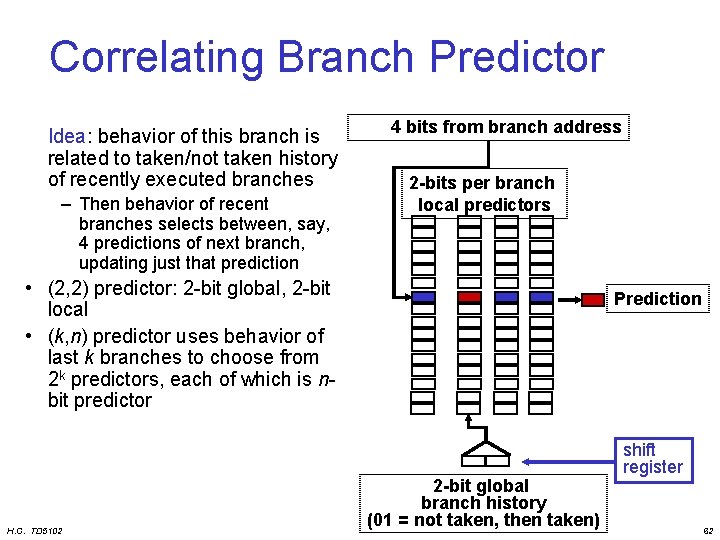

Correlating Branch Predictor
Idea: the behavior of this branch is related to the taken/not-taken history of recently executed branches
– Then the behavior of recent branches selects between, say, 4 predictions of the next branch, updating just that prediction
• (2, 2) predictor: 2-bit global, 2-bit local
• (k, n) predictor uses the behavior of the last k branches to choose from $2^k$ predictors, each of which is an n-bit predictor
[Figure: 4 bits from the branch address select a row of 2-bit per-branch local predictors; a 2-bit global branch history shift register (01 = not taken, then taken) selects the prediction within the row]
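A minimal sketch of a (k, n) correlating predictor as described above, assuming word-aligned branch addresses and a table indexed by a few low address bits. The class and parameter names are illustrative. The example trains a (2, 2) predictor on a branch that alternates between taken and not taken, a pattern a plain 2-bit counter cannot learn.

```python
class CorrelatingPredictor:
    """(k, n) predictor: k global history bits pick one of 2**k n-bit counters."""

    def __init__(self, k=2, n=2, index_bits=4):
        self.k = k
        self.index_bits = index_bits
        self.max = (1 << n) - 1
        self.threshold = 1 << (n - 1)
        self.history = 0  # global shift register of the last k outcomes
        self.table = [[0] * (1 << k) for _ in range(1 << index_bits)]

    def _row(self, pc):
        # Drop the two byte-offset bits, then use the low address bits as index.
        return self.table[(pc >> 2) & ((1 << self.index_bits) - 1)]

    def predict(self, pc):
        return self._row(pc)[self.history] >= self.threshold

    def update(self, pc, taken):
        row = self._row(pc)
        value = row[self.history]
        row[self.history] = min(self.max, value + 1) if taken else max(0, value - 1)
        self.history = ((self.history << 1) | int(taken)) & ((1 << self.k) - 1)


predictor = CorrelatingPredictor(k=2, n=2)
outcomes = [True, False] * 10  # alternating branch at one address
misses = []
for taken in outcomes:
    misses.append(predictor.predict(0x40) != taken)
    predictor.update(0x40, taken)
```

After a short warm-up the history bits identify the position in the alternating pattern, so each history value selects a counter that has settled on the right outcome.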

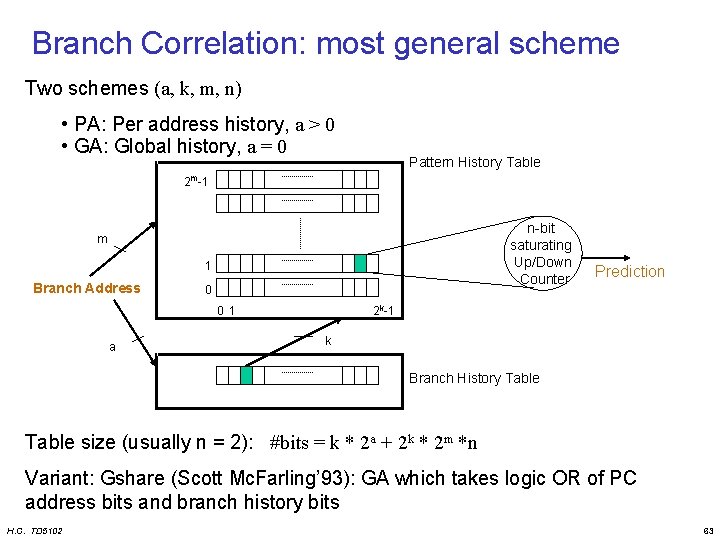

Branch Correlation: most general scheme
Two schemes (a, k, m, n)
• PA: Per-address history, a > 0
• GA: Global history, a = 0
[Figure: a bits of the branch address select one of $2^a$ k-bit entries in the Branch History Table; the k history bits together with m bits of the branch address select one of $2^k \cdot 2^m$ n-bit saturating up/down counters in the Pattern History Table, which delivers the prediction]
Table size (usually n = 2): $\#bits = k \cdot 2^a + 2^k \cdot 2^m \cdot n$
Variant: Gshare (Scott McFarling '93): GA which takes the logic XOR of PC address bits and branch history bits
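The table-size formula can be evaluated for the two configurations named in the accuracy comparison, GA(0, 11, 5, 2) and PA(10, 6, 4, 2). The sketch also shows a gshare-style index; gshare as published by McFarling combines the address and history bits with an exclusive OR. Dropping two byte-offset bits from the PC is an assumption for word-aligned instructions.

```python
def predictor_bits(a, k, m, n):
    """Storage of an (a, k, m, n) scheme: k * 2^a + 2^k * 2^m * n bits."""
    return k * 2 ** a + 2 ** k * 2 ** m * n


def gshare_index(pc, history, bits):
    """XOR the PC bits with the global history to index the counter table."""
    mask = (1 << bits) - 1
    return ((pc >> 2) ^ history) & mask


ga_bits = predictor_bits(0, 11, 5, 2)   # global history scheme
pa_bits = predictor_bits(10, 6, 4, 2)   # per-address history scheme
index = gshare_index(0x4C, 0b1010, 4)
```

XOR spreads branches with the same history over different counters, which reduces aliasing compared with concatenating fewer bits of each.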

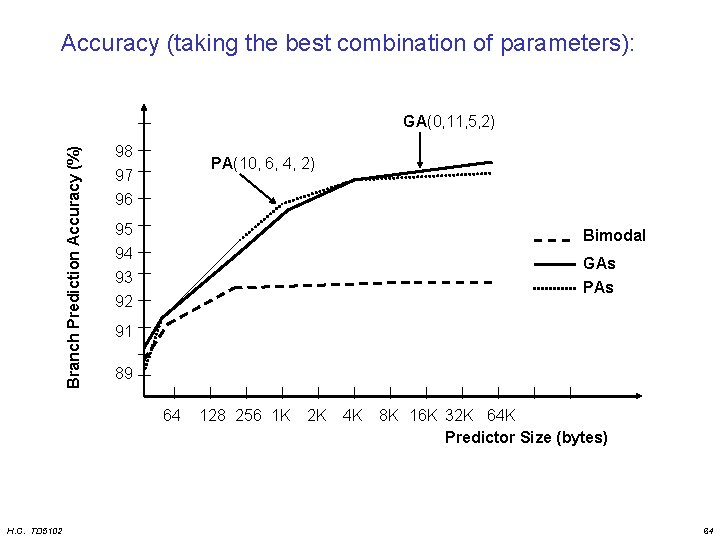

Accuracy (taking the best combination of parameters):
[Figure: branch prediction accuracy (89% to 98%) versus predictor size (64 bytes to 64 Kbytes) for Bimodal, PAs and GAs predictors; marked points include GA(0, 11, 5, 2) and PA(10, 6, 4, 2)]

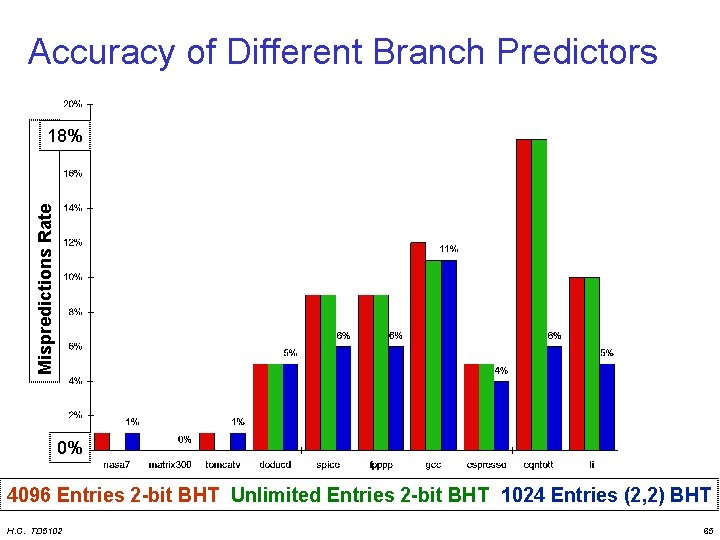

Accuracy of Different Branch Predictors
[Figure: misprediction rate (0% to 18%) for a 4096-entry 2-bit BHT, an unlimited-entry 2-bit BHT and a 1024-entry (2, 2) BHT]

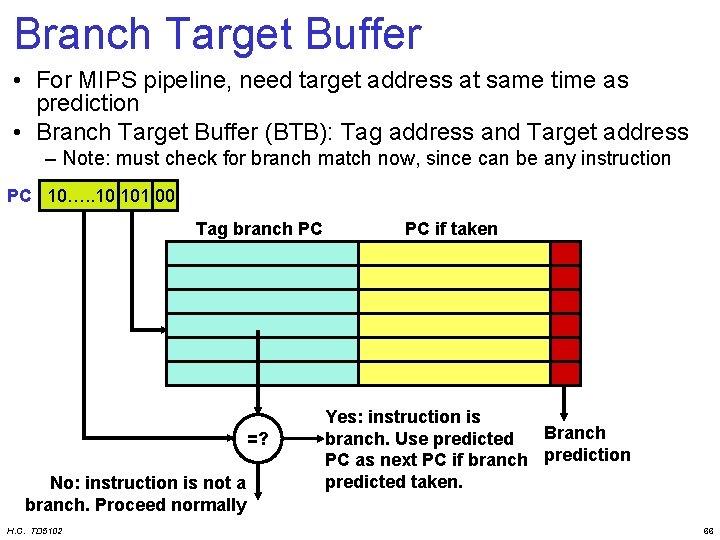

Branch Target Buffer
• For the MIPS pipeline, the target address is needed at the same time as the prediction
• Branch Target Buffer (BTB): tag address and target address
– Note: must check for a branch match now, since it can be any instruction
[Figure: the PC is compared (=?) with the stored tag (branch PC); each entry also holds the PC if taken and the branch prediction]
• No: instruction is not a branch. Proceed normally
• Yes: instruction is a branch. Use the predicted PC as the next PC if the branch is predicted taken
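The lookup described above can be sketched as a small direct-mapped table with tags. The table size, the 4-byte instruction size and the single prediction flag per entry are assumptions chosen to keep the example short.

```python
class BranchTargetBuffer:
    """Direct-mapped BTB: each entry holds (tag, target, predict_taken)."""

    def __init__(self, index_bits=4):
        self.index_bits = index_bits
        self.entries = [None] * (1 << index_bits)

    def _split(self, pc):
        word = pc >> 2  # assume 4-byte instructions
        return word & ((1 << self.index_bits) - 1), word >> self.index_bits

    def next_pc(self, pc):
        index, tag = self._split(pc)
        entry = self.entries[index]
        # Hit and predicted taken: fetch from the stored target.
        if entry is not None and entry[0] == tag and entry[2]:
            return entry[1]
        # Miss (not a known branch) or predicted not taken: fall through.
        return pc + 4

    def update(self, pc, target, taken):
        index, tag = self._split(pc)
        self.entries[index] = (tag, target, taken)


btb = BranchTargetBuffer()
before = btb.next_pc(0x100)        # unknown instruction
btb.update(0x100, 0x200, True)
after = btb.next_pc(0x100)         # known branch, predicted taken
aliased = btb.next_pc(0x140)       # same index, different tag
```

The tag comparison is what distinguishes the BTB from the tagless prediction buffer: an instruction that merely shares the index does not get redirected.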

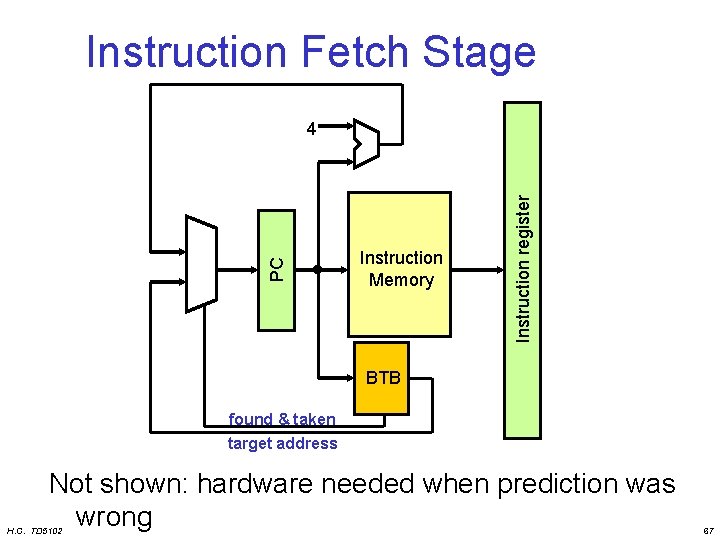

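Instruction Fetch Stage
[Figure: the PC addresses the Instruction Memory and the BTB; the next PC is either PC + 4 or, when the BTB entry is found and predicted taken, the target address; the fetched instruction goes to the instruction register]
Not shown: hardware needed when the prediction was wrong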

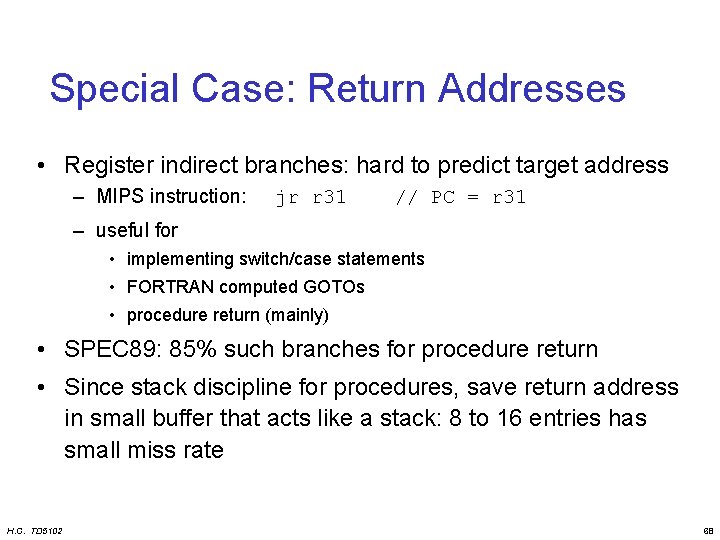

Special Case: Return Addresses
• Register indirect branches: hard to predict target address
– MIPS instruction: jr r31 // PC = r31
– useful for
• implementing switch/case statements
• FORTRAN computed GOTOs
• procedure return (mainly)
• SPEC89: 85% of such branches are procedure returns
• Since procedures follow a stack discipline, save the return address in a small buffer that acts like a stack: 8 to 16 entries gives a small miss rate
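A minimal sketch of such a return address stack. The overflow policy (discarding the oldest entry) and the method names are assumptions; real designs differ in how they handle overflow and misprediction recovery.

```python
class ReturnAddressStack:
    """Small hardware-like stack that predicts targets of procedure returns."""

    def __init__(self, depth=8):
        self.depth = depth
        self.stack = []

    def on_call(self, return_address):
        if len(self.stack) == self.depth:
            self.stack.pop(0)  # full: drop the oldest entry
        self.stack.append(return_address)

    def predict_return(self):
        # An empty stack yields no prediction.
        return self.stack.pop() if self.stack else None


ras = ReturnAddressStack(depth=2)
ras.on_call(0x104)   # outer call
ras.on_call(0x208)   # nested call
inner = ras.predict_return()
outer = ras.predict_return()
empty = ras.predict_return()
```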

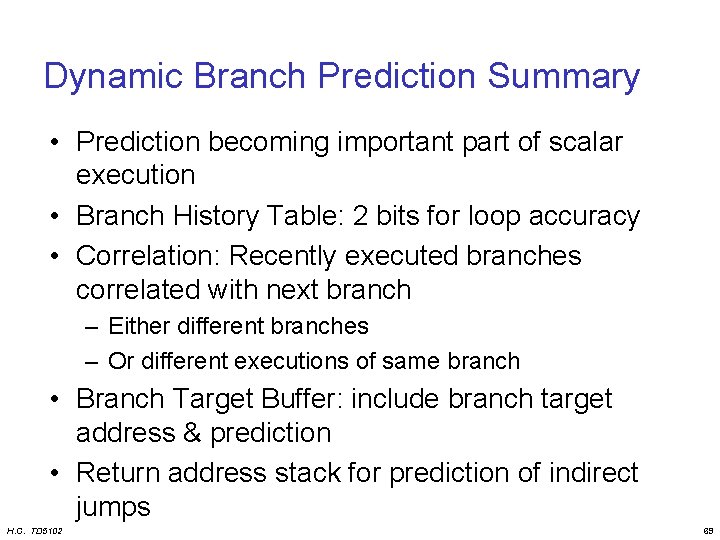

Dynamic Branch Prediction Summary
• Prediction is becoming an important part of scalar execution
• Branch History Table: 2 bits for loop accuracy
• Correlation: recently executed branches are correlated with the next branch
– Either different branches
– Or different executions of the same branch
• Branch Target Buffer: includes branch target address & prediction
• Return address stack for prediction of indirect jumps

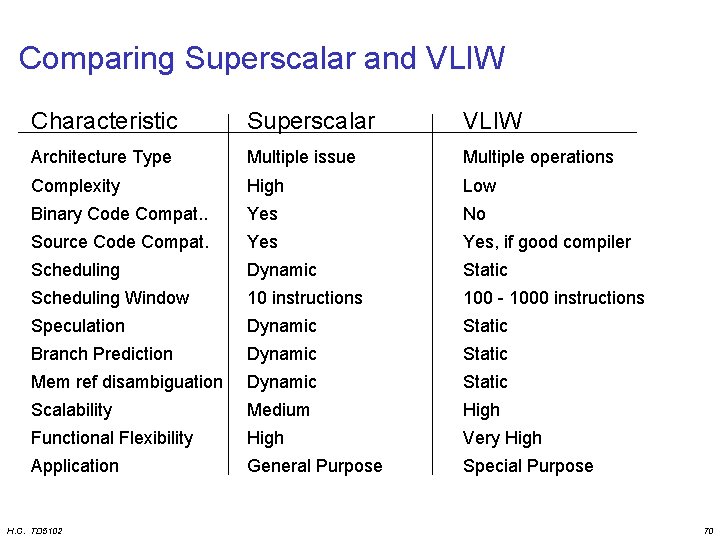

Comparing Superscalar and VLIW

| Characteristic | Superscalar | VLIW |
|---|---|---|
| Architecture type | Multiple issue | Multiple operations |
| Complexity | High | Low |
| Binary code compatibility | Yes | No |
| Source code compatibility | Yes | Yes, if good compiler |
| Scheduling | Dynamic | Static |
| Scheduling window | 10 instructions | 100 - 1000 instructions |
| Speculation | Dynamic | Static |
| Branch prediction | Dynamic | Static |
| Mem ref disambiguation | Dynamic | Static |
| Scalability | Medium | High |
| Functional flexibility | High | Very high |
| Application | General purpose | Special purpose |

Limitations of Multiple-Issue Processors
• Available ILP is limited (we are not programming with parallelism in mind)
• Hardware cost
– adding more functional units is easy
– more memory ports and register ports needed
– dependency check needs $O(n^2)$ comparisons
• Limitations of VLIW processors
– Loop unrolling increases code size
– Unfilled slots waste bits
– Cache miss stalls pipeline
• Research topic: scheduling loads
– Binary incompatibility (not EPIC)

Reducing Datapath Complexity: TTA
TTA: Transport Triggered Architecture
Overview
Philosophy: MIRROR THE PROGRAMMING PARADIGM
• Program transports; operations are side effects of transports
• Compiler is in control of hardware transport capacity

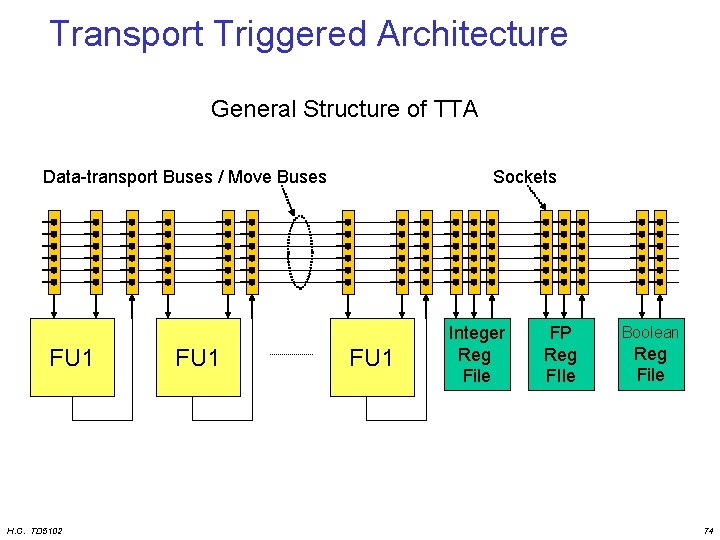

Transport Triggered Architecture
General Structure of TTA
[Figure: data-transport buses (move buses) connected through sockets to the FUs, the Integer Reg File, the FP Reg File and the Boolean Reg File]

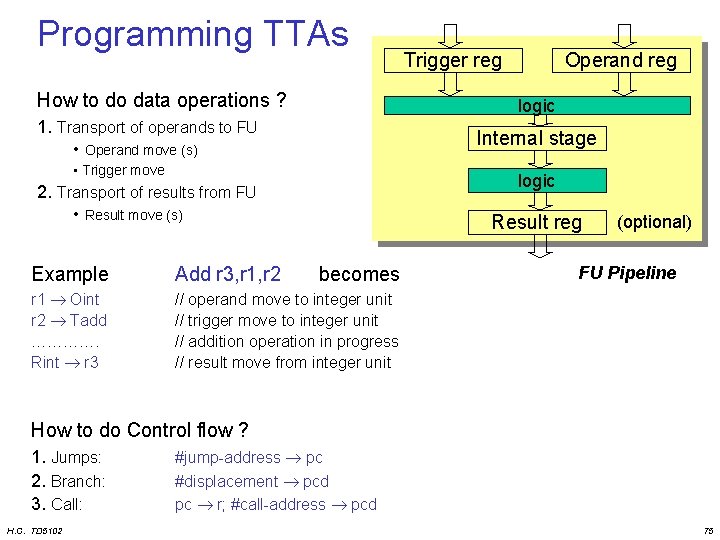

Programming TTAs
How to do data operations?
1. Transport of operands to FU
• Operand move(s)
• Trigger move
2. Transport of results from FU
• Result move(s)
[Figure: FU pipeline with labels Trigger reg, Operand reg, logic, Internal stage, logic, Result reg, (optional)]
Example: add r3, r1, r2 becomes
r1 → Oint // operand move to integer unit
r2 → Tadd // trigger move to integer unit
………… // addition operation in progress
Rint → r3 // result move from integer unit
How to do control flow?
1. Jumps: #jump-address → pc
2. Branch: #displacement → pcd
3. Call: pc → r; #call-address → pcd

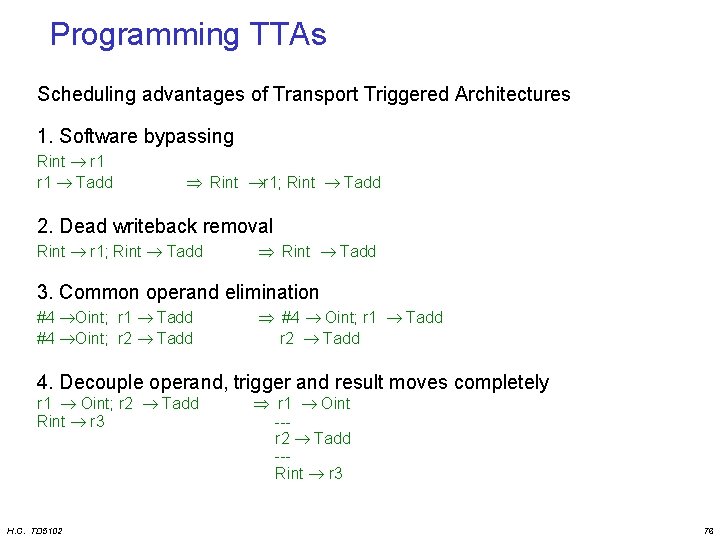

Programming TTAs
Scheduling advantages of Transport Triggered Architectures
1. Software bypassing
Rint → r1; r1 → Tadd becomes Rint → r1; Rint → Tadd
2. Dead writeback removal
Rint → r1; Rint → Tadd becomes Rint → Tadd
3. Common operand elimination
#4 → Oint; r1 → Tadd; #4 → Oint; r2 → Tadd becomes #4 → Oint; r1 → Tadd; r2 → Tadd
4. Decouple operand, trigger and result moves completely
r1 → Oint; r2 → Tadd; Rint → r3 becomes r1 → Oint; ---; r2 → Tadd; ---; Rint → r3
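The move notation and the software bypassing transformation can be sketched on lists of (source, destination) pairs. This is an illustration under stated assumptions, not the MOVE compiler's algorithm: FU result registers are recognised by a leading capital "R", general registers by a lowercase "r", and the result register is assumed to still hold the value when the later move executes.

```python
def add_to_moves(dest, src1, src2):
    """Translate 'add dest, src1, src2' into three TTA moves (source, destination)."""
    return [(src1, "Oint"), (src2, "Tadd"), ("Rint", dest)]


def software_bypass(moves):
    """Let later moves read an FU result register instead of the register-file copy."""
    forwarded = {}  # general register -> FU result register holding the same value
    bypassed = []
    for src, dst in moves:
        src = forwarded.get(src, src)
        if src.startswith("R") and dst.startswith("r"):
            forwarded[dst] = src      # dst now mirrors the result register
        else:
            forwarded.pop(dst, None)  # dst was overwritten by something else
        bypassed.append((src, dst))
    return bypassed


moves = add_to_moves("r3", "r1", "r2")
bypassed = software_bypass([("Rint", "r1"), ("r1", "Tadd")])
```

If r1 turns out not to be read again, the remaining move `("Rint", "r1")` is a dead writeback and can be dropped, which is the second optimization in the list; detecting that requires liveness information not modelled here.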

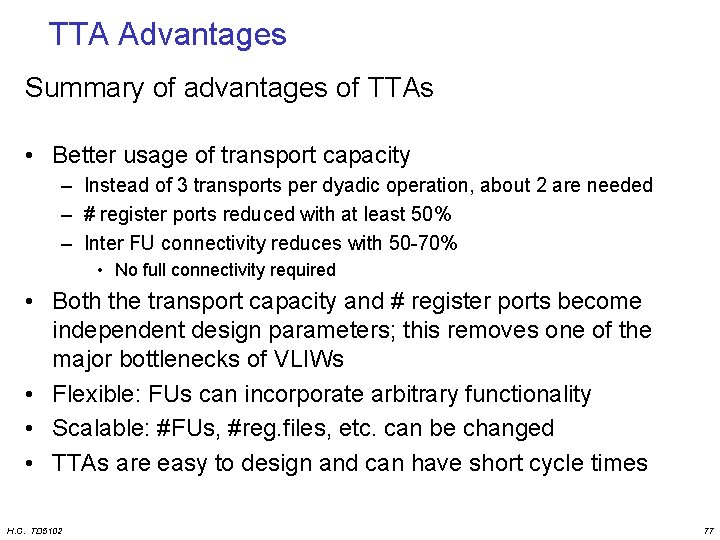

TTA Advantages
Summary of advantages of TTAs
• Better usage of transport capacity
– Instead of 3 transports per dyadic operation, about 2 are needed
– # register ports reduced by at least 50%
– Inter-FU connectivity reduced by 50-70%
• No full connectivity required
• Both the transport capacity and # register ports become independent design parameters; this removes one of the major bottlenecks of VLIWs
• Flexible: FUs can incorporate arbitrary functionality
• Scalable: #FUs, #reg. files, etc. can be changed
• TTAs are easy to design and can have short cycle times

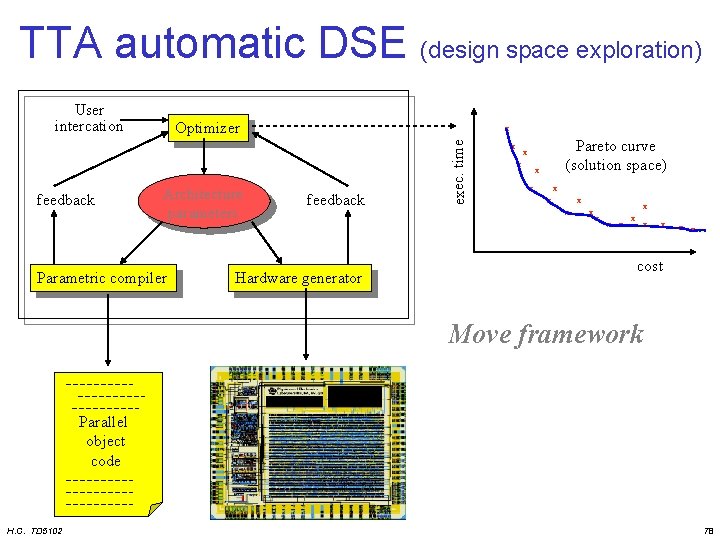

TTA automatic DSE (design space exploration)
[Figure: the Move framework. User interaction and an Optimizer set the architecture parameters; a parametric compiler produces parallel object code and a hardware generator produces the chip; both give feedback to the optimizer. The explored solutions are plotted as execution time versus cost, and the best ones form the Pareto curve (solution space)]
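The Pareto curve in such an exploration consists of the design points that no other point beats in both cost and execution time. A minimal sketch of extracting it, with made-up (cost, execution time) pairs standing in for evaluated architectures:

```python
def pareto_front(points):
    """Return the (cost, exec_time) points not dominated by a cheaper, faster one."""
    front = []
    best_time = float("inf")
    # Visit points from cheapest to most expensive; keep a point only if it
    # is faster than every cheaper point seen so far.
    for cost, time in sorted(points):
        if time < best_time:
            front.append((cost, time))
            best_time = time
    return front


# Hypothetical exploration results: (cost, execution time).
explored = [(1, 10), (2, 8), (3, 9), (4, 4), (5, 4), (2, 12)]
front = pareto_front(explored)
```

A designer then picks a point on this curve according to the cost or performance budget.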

Overview
• Motivation and Goals
• Trends in Computer Architecture
• RISC processors
• ILP Processors
• Transport Triggered Architectures
• Configurable HW components
• Summary and Conclusions

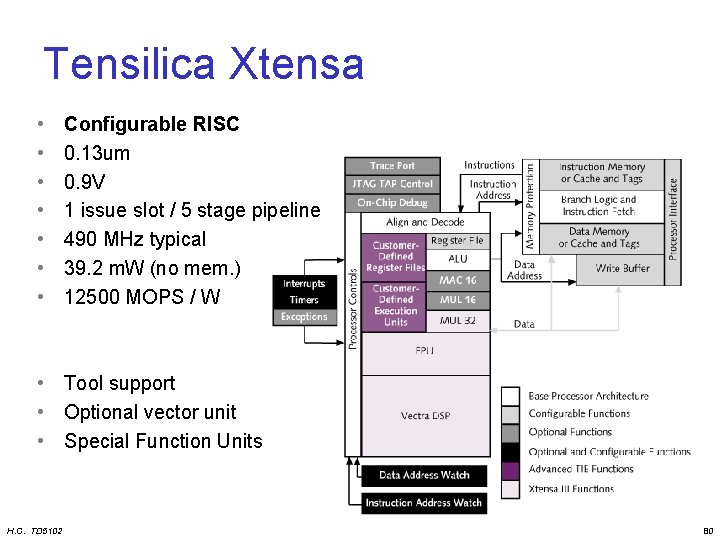

Tensilica Xtensa
• Configurable RISC
• 0.13 um
• 0.9 V
• 1 issue slot / 5 stage pipeline
• 490 MHz typical
• 39.2 mW (no mem.)
• 12500 MOPS / W
• Tool support
• Optional vector unit
• Special Function Units

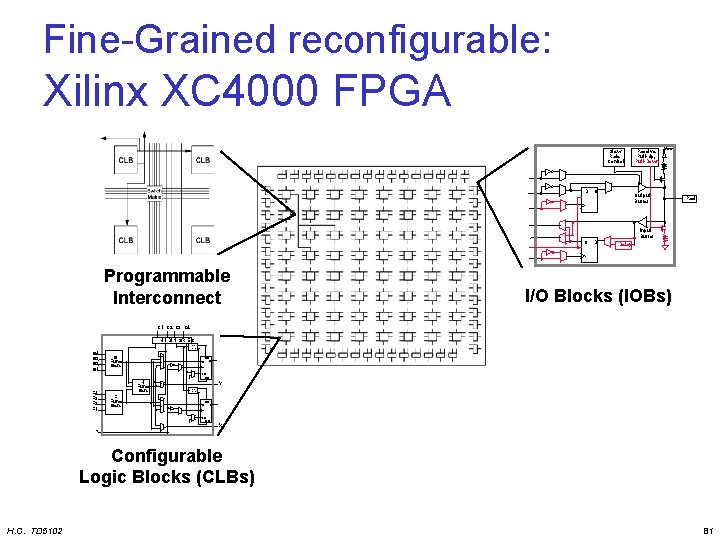

Fine-Grained reconfigurable: Xilinx XC4000 FPGA
[Figure: I/O Blocks (IOBs) with pad, input and output buffers, slew rate control and passive pull-up/pull-down; Programmable Interconnect; Configurable Logic Blocks (CLBs) with F, G and H function generators, S/R control and two flip-flops]

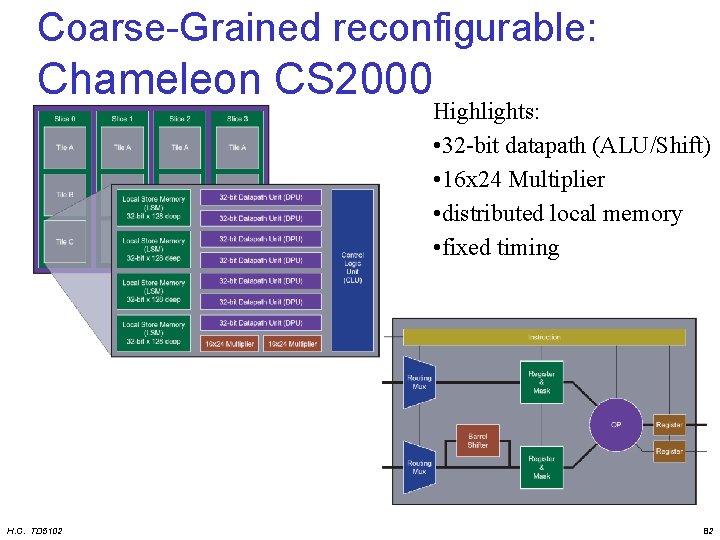

Coarse-Grained reconfigurable: Chameleon CS2000
Highlights:
• 32-bit datapath (ALU/Shift)
• 16 x 24 Multiplier
• distributed local memory
• fixed timing

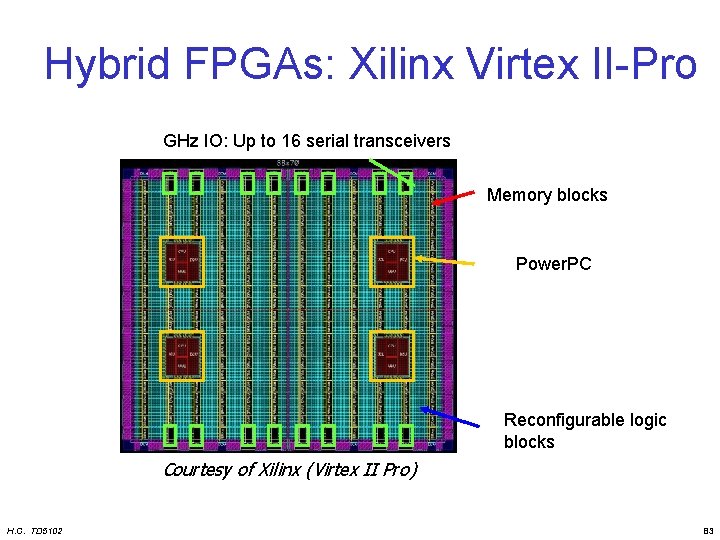

Hybrid FPGAs: Xilinx Virtex II-Pro
[Figure: chip layout with GHz IO (up to 16 serial transceivers), PowerPCs, memory blocks and reconfigurable logic blocks. Courtesy of Xilinx (Virtex II Pro)]

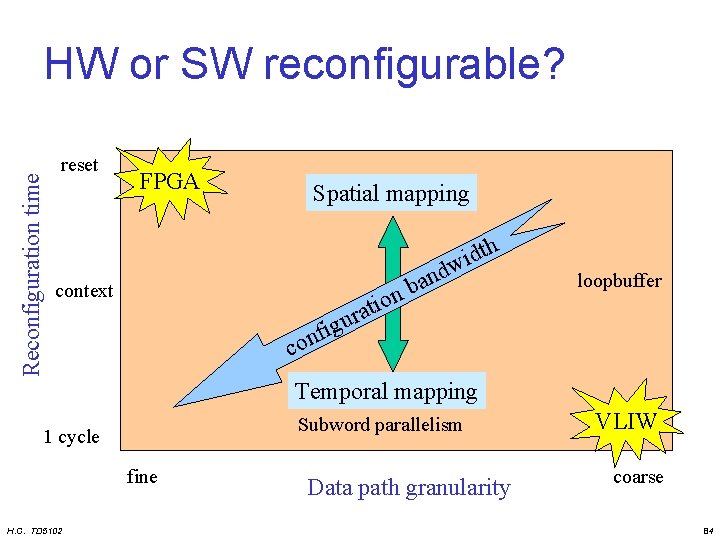

HW or SW reconfigurable?
[Figure: reconfiguration time (from reset, via context and loopbuffer, down to 1 cycle) versus data path granularity (fine to coarse), with configuration bandwidth along the diagonal; FPGA (spatial mapping) at the fine-grained end, VLIW (temporal mapping) at the coarse-grained end, and subword parallelism in between]

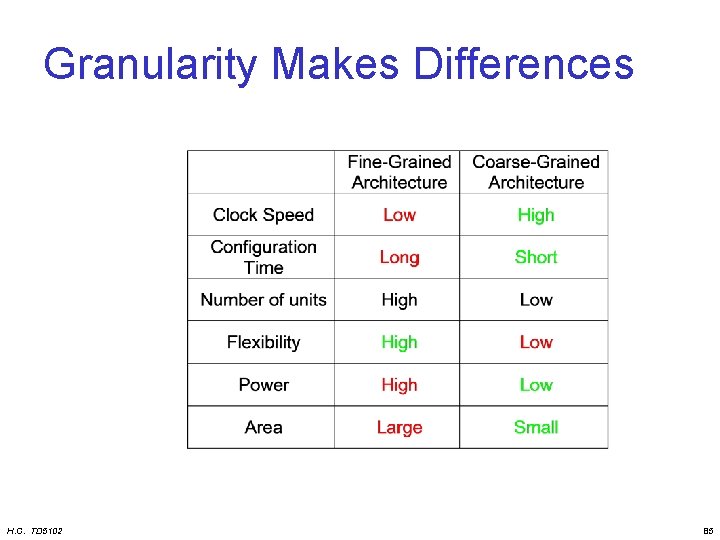

Granularity Makes Differences

Overview
• Motivation and Goals
• Trends in Computer Architecture
• RISC processors
• ILP Processors
• Transport Triggered Architectures
• Configurable components
• Multi-threading
• Summary and Conclusions

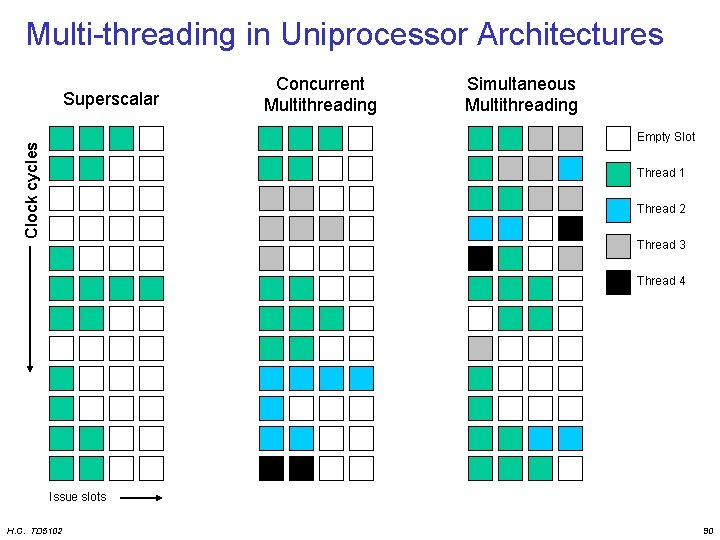

Multi-threading
Definition:
• A multi-threading architecture is a single-processor architecture which can execute 2 or more threads (or processes) either
– simultaneously (SMT architecture), or
– with an extremely short context switch (1 or a few cycles)
• A multi-core architecture has 2 or more processor cores on the same die. It can (also) execute 2 or more threads simultaneously!

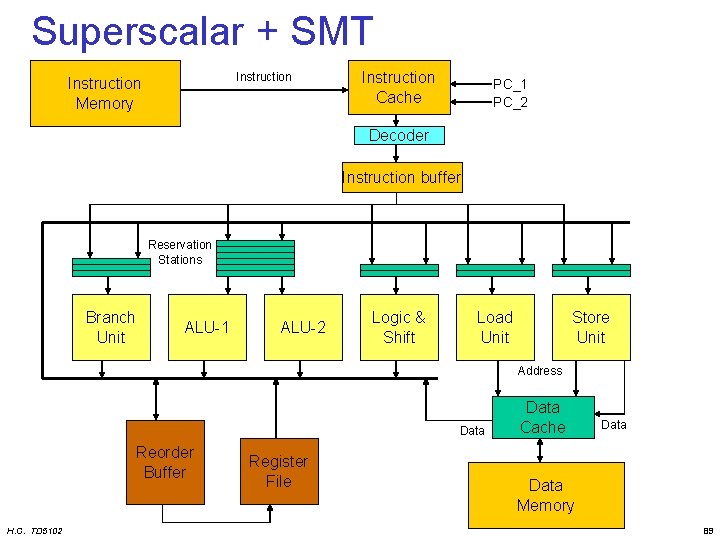

Simultaneous Multithreading Characteristics
• An SMT is an extension of a superscalar architecture allowing multiple threads to run simultaneously
• It has separate front-ends for the different threads but shares the back-end between all threads
• Each thread has its own
– Program counter
– Re-order buffer (if used)
– Branch History Register
• General registers, caches, branch prediction tables, reservation stations, FUs, etc. can be shared

Superscalar + SMT
[Figure: the superscalar organization extended with one program counter per thread (PC_1, PC_2) and an instruction buffer: Instruction Memory, Instruction Cache, Decoder, Instruction buffer, Reservation Stations, function units (Branch Unit, ALU-1, ALU-2, Logic & Shift, Load Unit, Store Unit), Reorder Buffer, Register File, Data Cache and Data Memory]

Multi-threading in Uniprocessor Architectures
[Figure: issue slots versus clock cycles for Superscalar, Concurrent Multithreading and Simultaneous Multithreading; legend: empty slot, thread 1, thread 2, thread 3, thread 4]

Future Processor Components
• New TriMedia
– VLIW with deeper pipeline, L1 and L2 cache, branch prediction
– used in SpaceCake cell
• Sony-IBM PS3 Cell architecture
• Merrimac (Stanford; successor of Imagine):
– combines operation (VLIW) and data level parallelism (SIMD)
• TRIPS (Texas Austin / IBM) and SCALE (MIT)
– processors combine task, operation and data level parallelism
• Silicon Hive (Philips):
– Coarse-grain programmable kind of VLIW with many ALUs, multipliers, ...
• See also, for many more architectures and platforms:
– WWW Computer Architecture Page: www.cs.wisc.edu/~arch/www
– HOT chips: www.hotchips.org (especially the archives)

Summary and Conclusions
ILP architectures have great potential
• Superscalars
– Binary compatible upgrade path
• VLIWs
– Very flexible ASIPs
• TTAs
– Avoid control and datapath bottlenecks
– Completely compiler controlled
– Very good cost-performance ratio
– Low power
• Multi-threading
– Surpass exploitable ILP in applications
– How to choose threads?

What should you choose?
Depends on:
• application characteristics
– what types of parallelism can you exploit
– what is a good memory hierarchy
• size of each level
• bandwidth of each level
• performance requirements
• energy budget
• money budget
• available tooling