
Computer Architecture, Lecture 10b: Memory Latency. Prof. Onur Mutlu, ETH Zürich, Fall 2018, 18 October 2018

DRAM Memory: A Low-Level Perspective

DRAM Module and Chip

Goals
• Cost
• Latency
• Bandwidth
• Parallelism
• Power
• Energy
• Reliability
• …

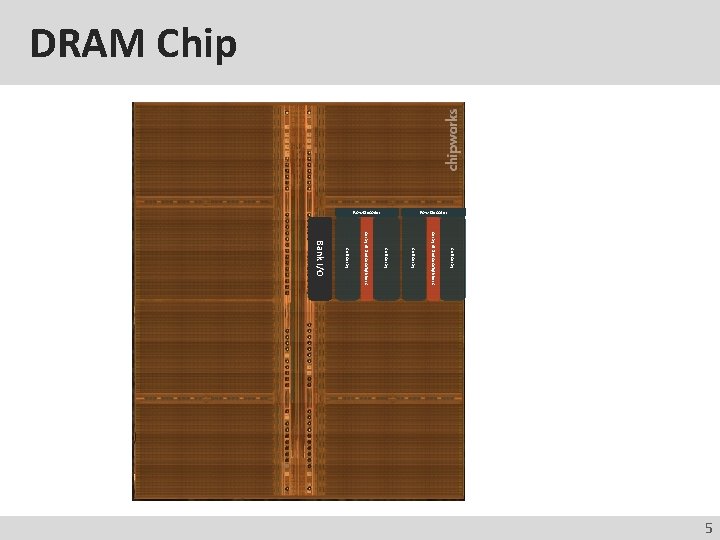

DRAM Chip (figure labels: row decoder, cell arrays, array of sense amplifiers, bank I/O)

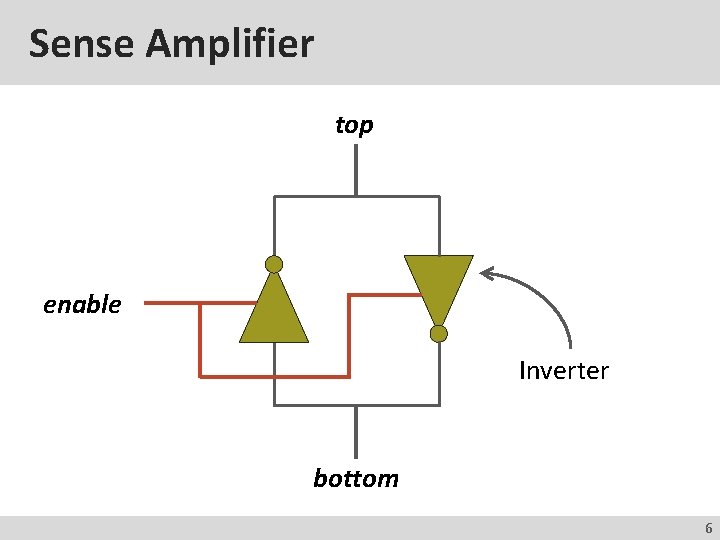

Sense Amplifier (figure labels: top, enable, inverter, bottom)

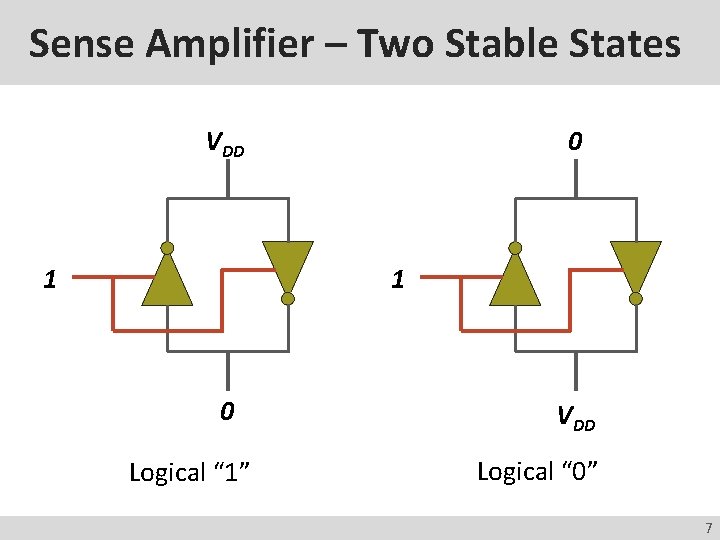

Sense Amplifier – Two Stable States (figure: one node at VDD and the other at 0 encodes logical “1”; the opposite assignment encodes logical “0”)

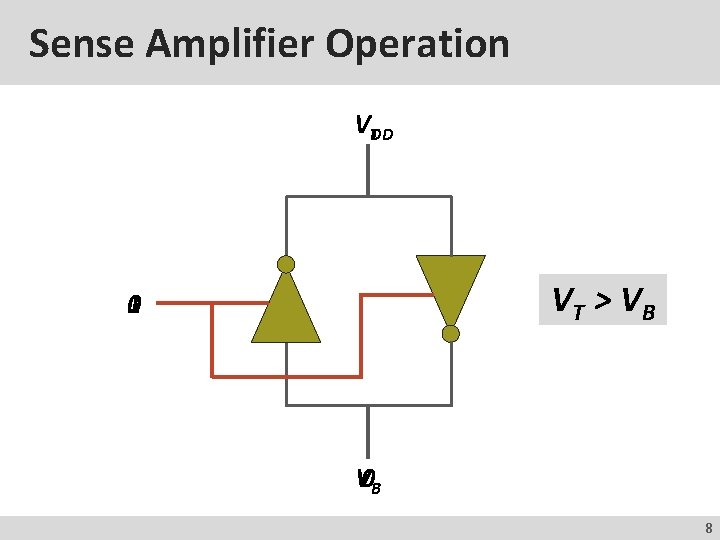

Sense Amplifier Operation (figure: when VT > VB, the top node is driven to VDD and the bottom node to 0)

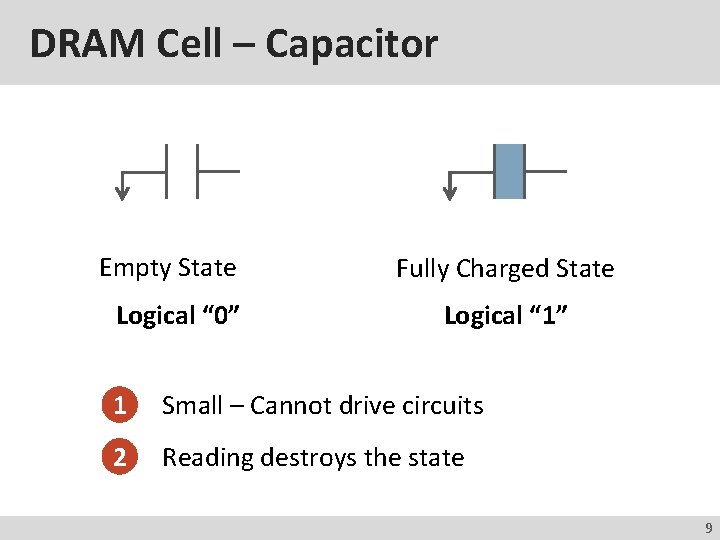

DRAM Cell – Capacitor
• Empty state: logical “0”
• Fully charged state: logical “1”
1. Small – cannot drive circuits
2. Reading destroys the state

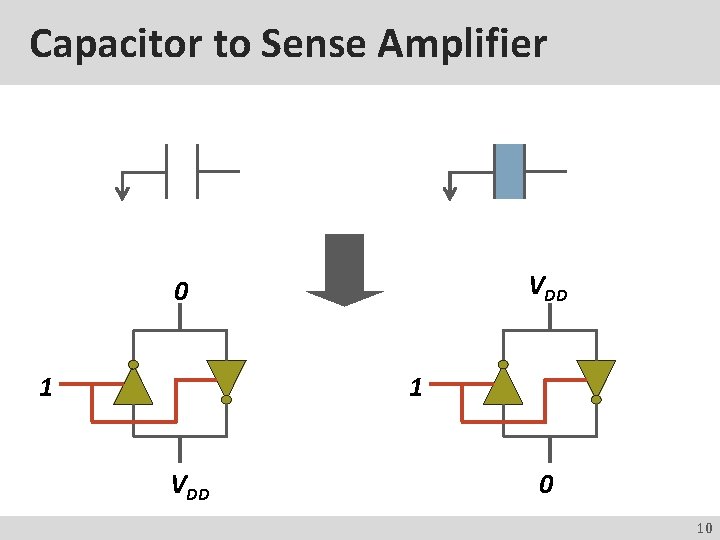

Capacitor to Sense Amplifier (figure: a cell capacitor connected to a sense amplifier; node labels VDD, 0, and 1)

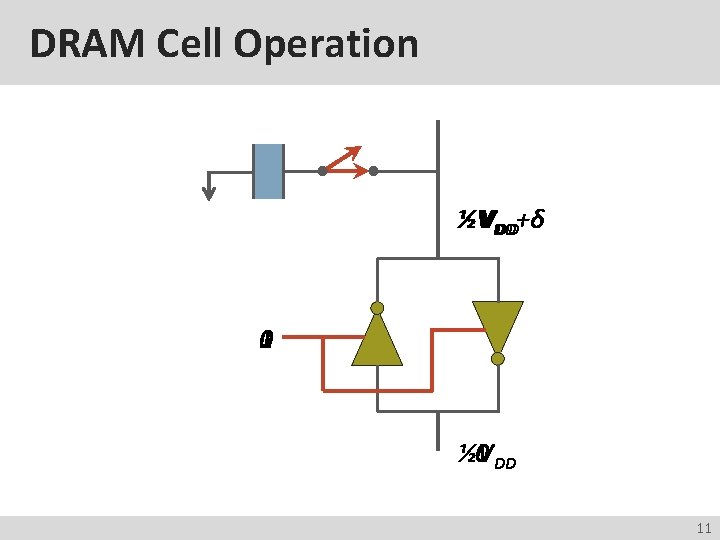

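DRAM Cell Operation (figure: the bitline starts at ½VDD, charge sharing with the cell moves it to ½VDD+δ, and the sense amplifier then amplifies it to VDD or 0)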

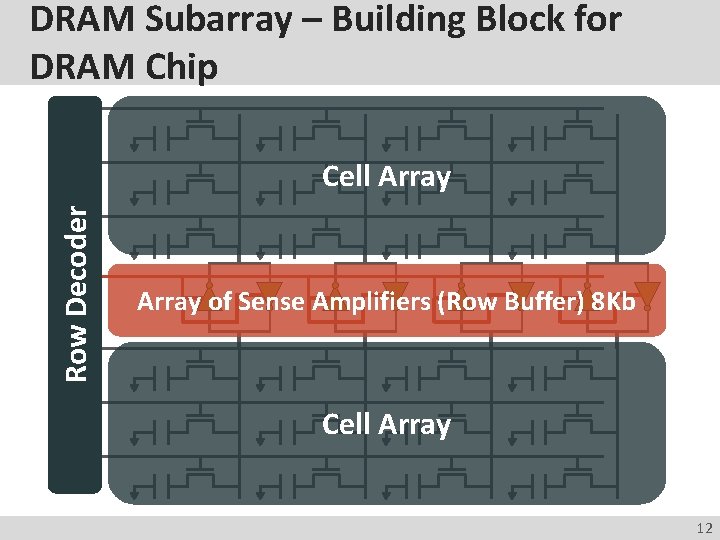

DRAM Subarray – Building Block for DRAM Chip (figure labels: row decoder, cell arrays, array of sense amplifiers forming the 8 Kb row buffer)

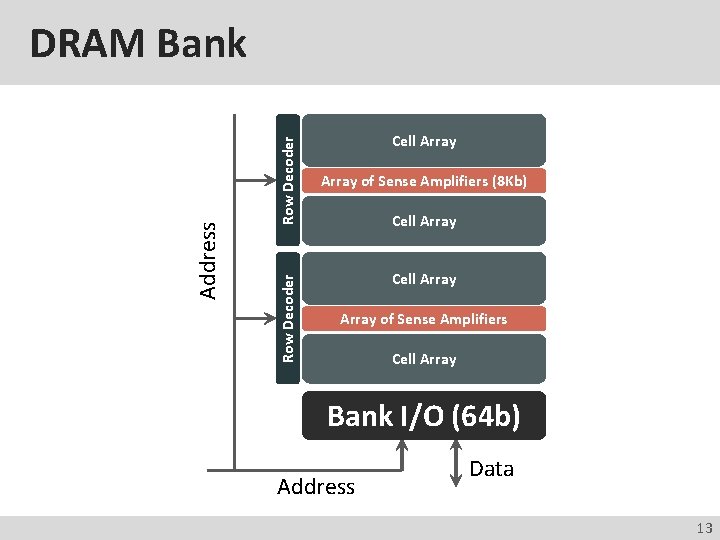

DRAM Bank (figure labels: row decoder with address input, cell arrays, arrays of sense amplifiers (8 Kb), bank I/O (64 b) with address and data)

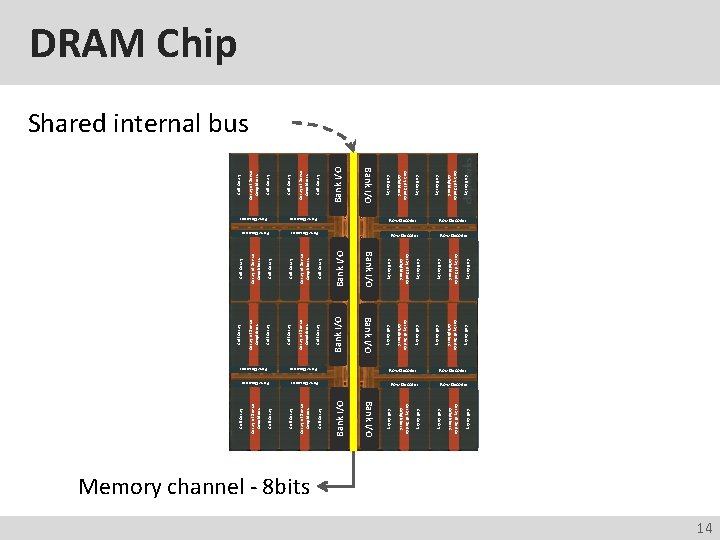

DRAM Chip (figure: multiple banks, each with row decoders, cell arrays, arrays of sense amplifiers, and bank I/O, connected through a shared internal bus to an 8-bit memory channel)

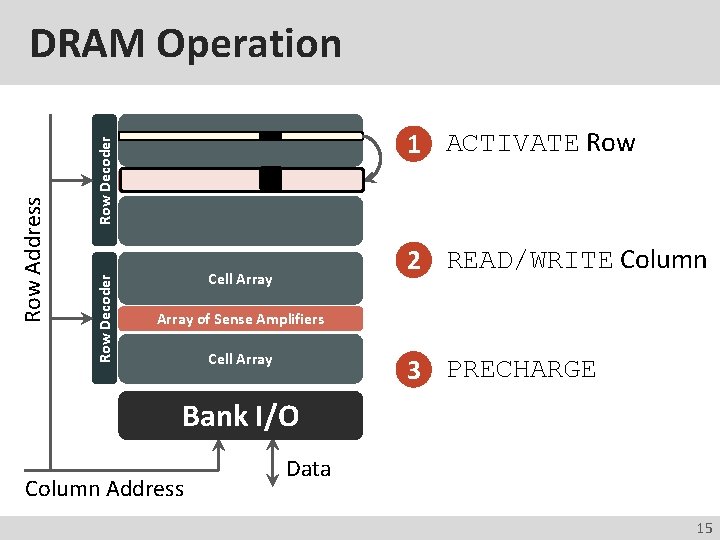

DRAM Operation
1. ACTIVATE a row (row address → row decoder)
2. READ/WRITE a column (column address → array of sense amplifiers → bank I/O → data)
3. PRECHARGE
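
The three-command sequence above can be sketched as a tiny bank model. This is only an illustration: the timing values, the `Bank` class, and the open-row policy (the row stays in the row buffer until a different row is needed) are assumptions, not taken from the lecture.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative timing values in nanoseconds (assumptions, not from the lecture).
T_RCD = 13.0  # ACTIVATE -> first column command
T_CL = 13.0   # READ -> data
T_RP = 13.0   # PRECHARGE -> next ACTIVATE


@dataclass
class Bank:
    open_row: Optional[int] = None  # row currently held in the row buffer

    def read(self, row: int) -> float:
        """Return the latency (ns) of reading one column from `row`."""
        if self.open_row == row:
            return T_CL                  # row hit: READ only
        latency = 0.0
        if self.open_row is not None:
            latency += T_RP              # row conflict: PRECHARGE first
        latency += T_RCD + T_CL          # ACTIVATE, then READ
        self.open_row = row
        return latency


bank = Bank()
closed = bank.read(5)    # bank is precharged: ACTIVATE + READ
hit = bank.read(5)       # same row again: READ only
conflict = bank.read(9)  # different row: PRECHARGE + ACTIVATE + READ
```

With these assumed values, a row conflict costs three times as much as a row hit, which is why the row-level commands dominate access latency.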

Memory Latency: Fundamental Tradeoffs

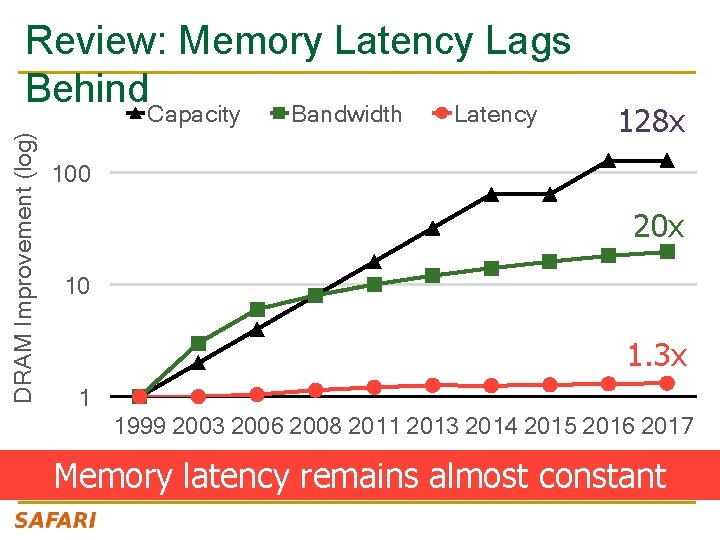

Review: Memory Latency Lags Behind (plot: DRAM improvement on a log scale, 1999–2017: capacity 128x, bandwidth 20x, latency 1.3x). Memory latency remains almost constant

DRAM Latency Is Critical for Performance
• In-memory Databases [Mao+, EuroSys’12; Clapp+ (Intel), IISWC’15]
• Graph/Tree Processing [Xu+, IISWC’12; Umuroglu+, FPL’15]
• In-Memory Data Analytics [Clapp+ (Intel), IISWC’15; Awan+, BDCloud’15]
• Datacenter Workloads [Kanev+ (Google), ISCA’15]
Long memory latency → performance bottleneck

The Memory Latency Problem
• High memory latency is a significant limiter of system performance and energy-efficiency
• It is becoming increasingly so with higher memory contention in multi-core and heterogeneous architectures
 – Exacerbating the bandwidth need
 – Exacerbating the QoS problem
• It increases processor design complexity due to the mechanisms incorporated to tolerate memory latency


Retrospective: Conventional Latency Tolerance Techniques
• Caching [initially by Wilkes, 1965]
 – Widely used, simple, effective, but inefficient, passive
 – Not all applications/phases exhibit temporal or spatial locality
• Prefetching [initially in IBM 360/91, 1967]
 – Works well for regular memory access patterns
 – Prefetching irregular access patterns is difficult, inaccurate, and hardware-intensive
• Multithreading [initially in CDC 6600, 1964]
 – Works well if there are multiple threads
 – Improving single thread performance using multithreading hardware is an ongoing research effort
• Out-of-order execution [initially by Tomasulo, 1967]
 – Tolerates cache misses that cannot be prefetched
 – Requires extensive hardware resources for tolerating long latencies
None of These Fundamentally Reduce Memory Latency

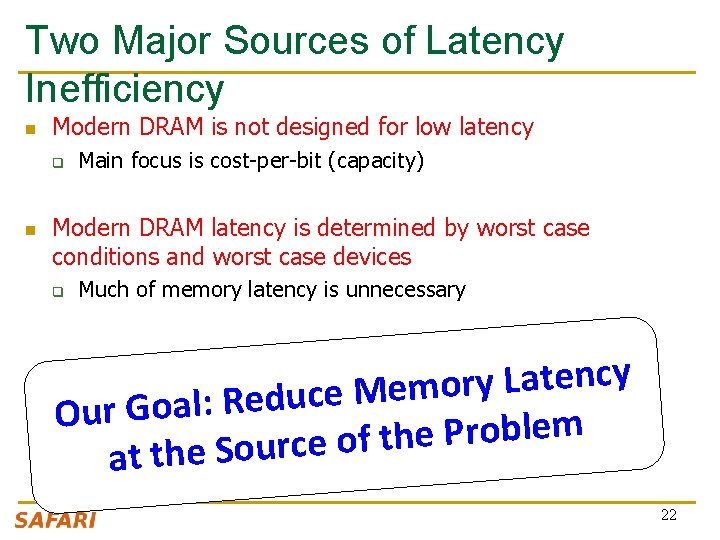

Two Major Sources of Latency Inefficiency
• Modern DRAM is not designed for low latency
 – Main focus is cost-per-bit (capacity)
• Modern DRAM latency is determined by worst case conditions and worst case devices
 – Much of memory latency is unnecessary
Our Goal: Reduce Memory Latency at the Source of the Problem

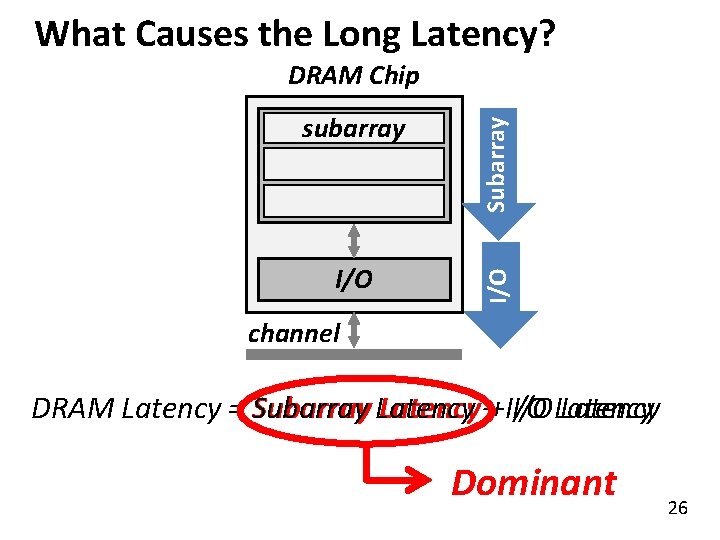

What Causes the Long Memory Latency?

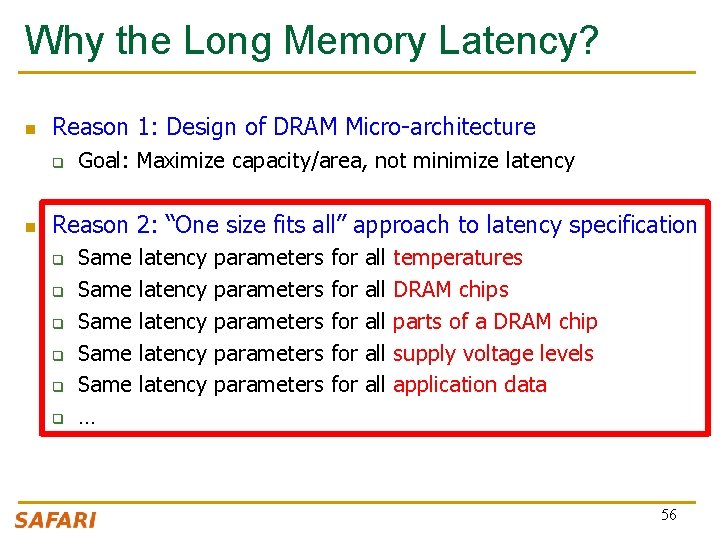

Why the Long Memory Latency?
• Reason 1: Design of DRAM Micro-architecture
 – Goal: Maximize capacity/area, not minimize latency
• Reason 2: “One size fits all” approach to latency specification
 – Same latency parameters for all temperatures
 – Same latency parameters for all DRAM chips
 – Same latency parameters for all parts of a DRAM chip
 – Same latency parameters for all supply voltage levels
 – Same latency parameters for all application data
 – …

Tiered Latency DRAM

What Causes the Long Latency? DRAM Latency = Subarray Latency + I/O Latency, where the subarray latency is dominant (figure labels: DRAM chip, subarray, cell array, I/O, channel)

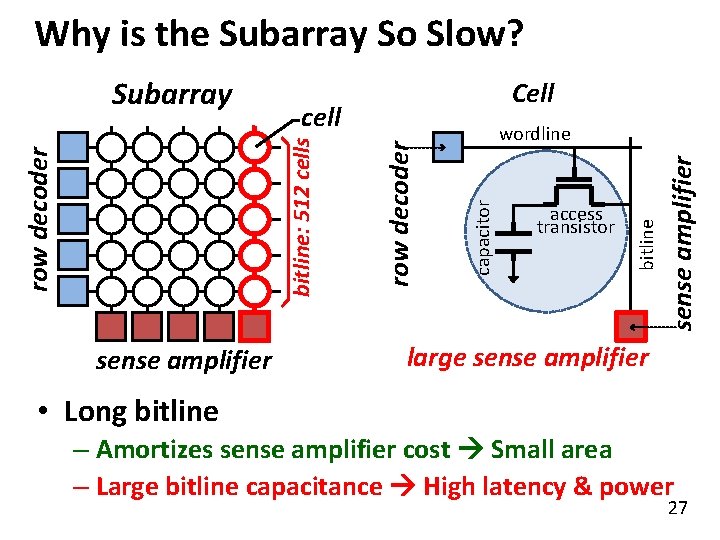

Why is the Subarray So Slow?
• Long bitline (512 cells per bitline)
 – Amortizes sense amplifier cost → small area
 – Large bitline capacitance → high latency & power
(figure labels: subarray, cell, access transistor, capacitor, bitline, wordline, row decoder, large sense amplifier)

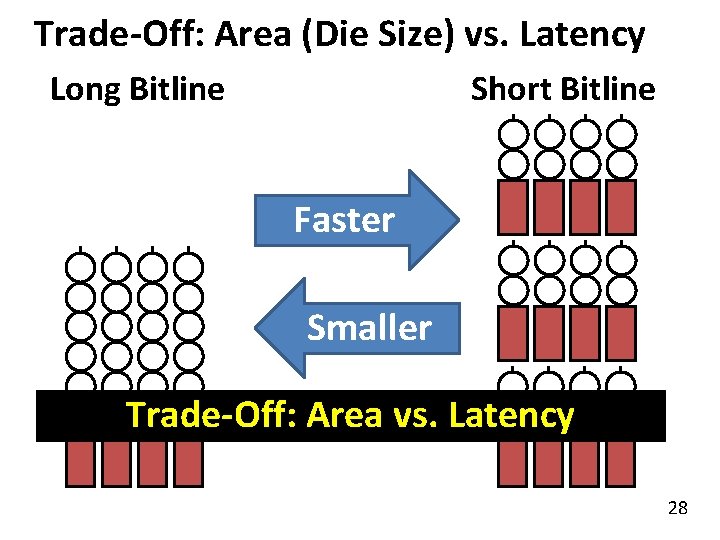

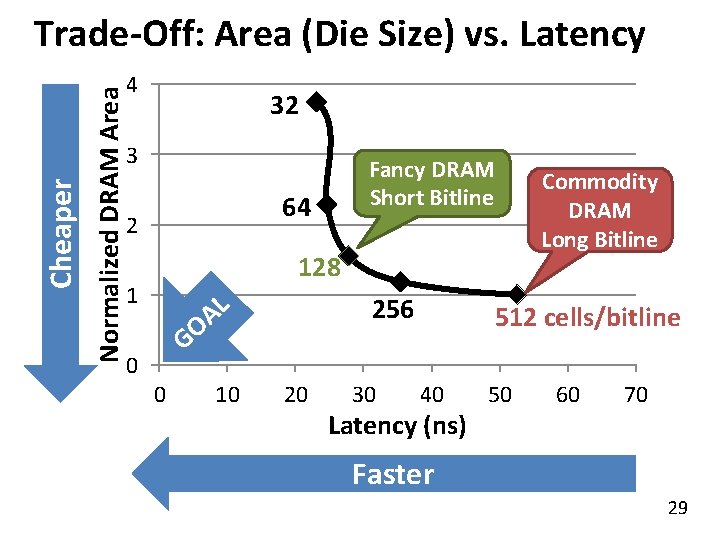

Trade-Off: Area (Die Size) vs. Latency (figure: a long bitline is smaller; a short bitline is faster)

Trade-Off: Area (Die Size) vs. Latency (plot: normalized DRAM area, where lower is cheaper, versus latency in ns, where lower is faster, for 32, 64, 128, 256, and 512 cells/bitline; commodity DRAM uses long bitlines with 512 cells/bitline, fancy DRAM uses short bitlines; the goal is low area together with low latency)

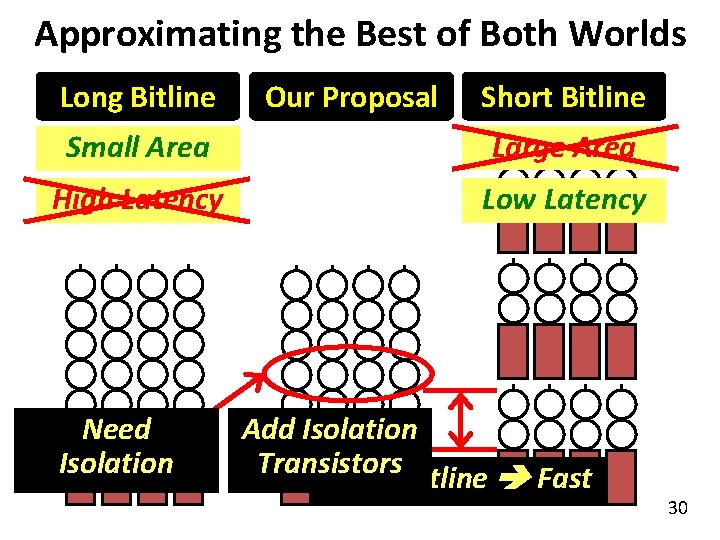

Approximating the Best of Both Worlds
• Long bitline: small area, high latency
• Short bitline: large area, low latency
• Our proposal: add isolation transistors to a long bitline, so that the isolated part behaves like a short, fast bitline

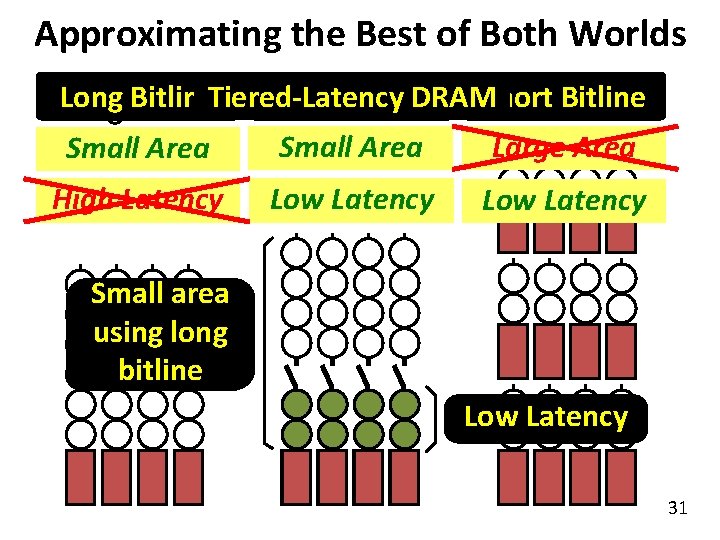

Approximating the Best of Both Worlds
• Long bitline: small area, high latency
• Short bitline: large area, low latency
• Our proposal, Tiered-Latency DRAM: small area (using a long bitline) and low latency

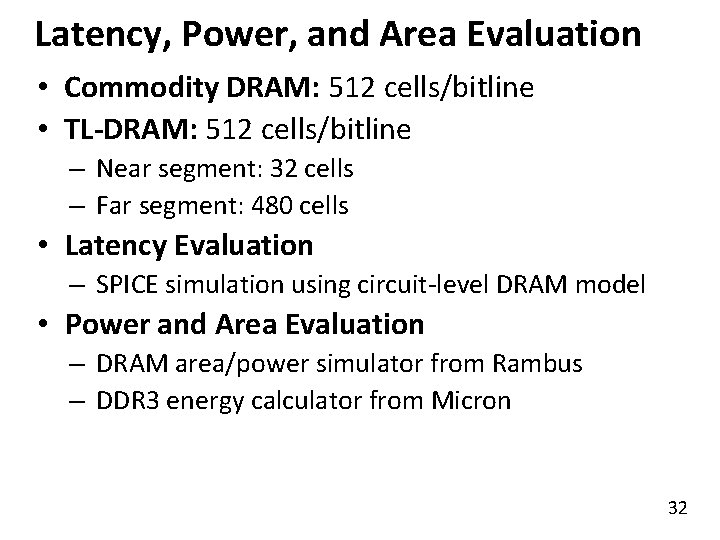

Latency, Power, and Area Evaluation
• Commodity DRAM: 512 cells/bitline
• TL-DRAM: 512 cells/bitline
 – Near segment: 32 cells
 – Far segment: 480 cells
• Latency Evaluation
 – SPICE simulation using circuit-level DRAM model
• Power and Area Evaluation
 – DRAM area/power simulator from Rambus
 – DDR3 energy calculator from Micron


Commodity DRAM vs. TL-DRAM [HPCA 2013]
• DRAM Latency (tRC), relative to commodity DRAM (100%, 52.5 ns): near segment –56%, far segment +23%
• DRAM Power, relative to commodity DRAM (100%): near segment –51%, far segment +49%
• DRAM Area Overhead ~3%: mainly due to the isolation transistors
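
As a rough worked example, the percentages on this slide can be turned into absolute latencies. The sketch assumes that 52.5 ns is the commodity tRC baseline and that the percentages are rounded, so the results are approximate. The `average_trc` helper and the hit rate passed to it are hypothetical additions that show how a near-segment hit rate would blend the two latencies.

```python
BASELINE_TRC_NS = 52.5  # commodity DRAM tRC, as read from the slide


def scaled(baseline: float, change_percent: float) -> float:
    """Apply a relative change given in percent to a baseline value."""
    return baseline * (1.0 + change_percent / 100.0)


near_trc = scaled(BASELINE_TRC_NS, -56)  # near segment: 56% lower
far_trc = scaled(BASELINE_TRC_NS, +23)   # far segment: 23% higher


def average_trc(near_hit_rate: float) -> float:
    """Hypothetical blend: fraction of accesses served by the near segment."""
    return near_hit_rate * near_trc + (1.0 - near_hit_rate) * far_trc


print(f"near: {near_trc:.1f} ns, far: {far_trc:.1f} ns")
print(f"average at 90% near-segment hits: {average_trc(0.9):.1f} ns")
```

The far segment is slower than commodity DRAM, so the benefit depends on how many accesses the near segment can capture.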

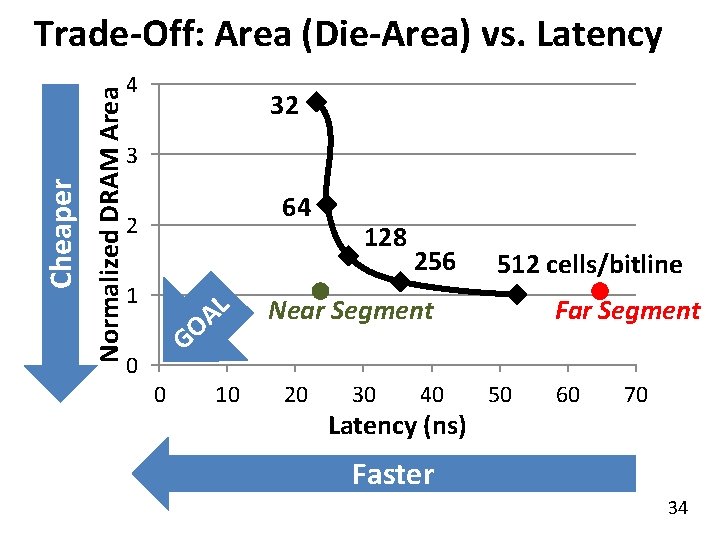

Trade-Off: Area (Die-Area) vs. Latency (plot: normalized DRAM area, where lower is cheaper, versus latency in ns, where lower is faster, for 32, 64, 128, 256, and 512 cells/bitline, with the TL-DRAM near segment and far segment added; the goal is low area together with low latency)

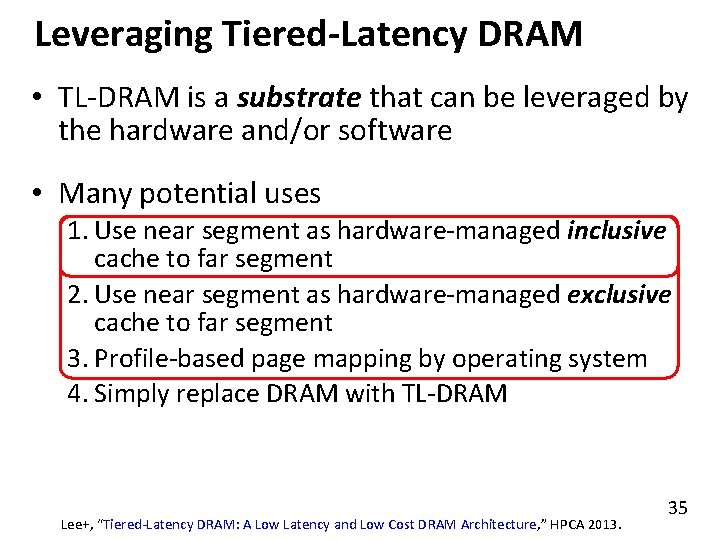

Leveraging Tiered-Latency DRAM
• TL-DRAM is a substrate that can be leveraged by the hardware and/or software
• Many potential uses
 1. Use near segment as hardware-managed inclusive cache to far segment
 2. Use near segment as hardware-managed exclusive cache to far segment
 3. Profile-based page mapping by operating system
 4. Simply replace DRAM with TL-DRAM
Lee+, “Tiered-Latency DRAM: A Low Latency and Low Cost DRAM Architecture,” HPCA 2013.

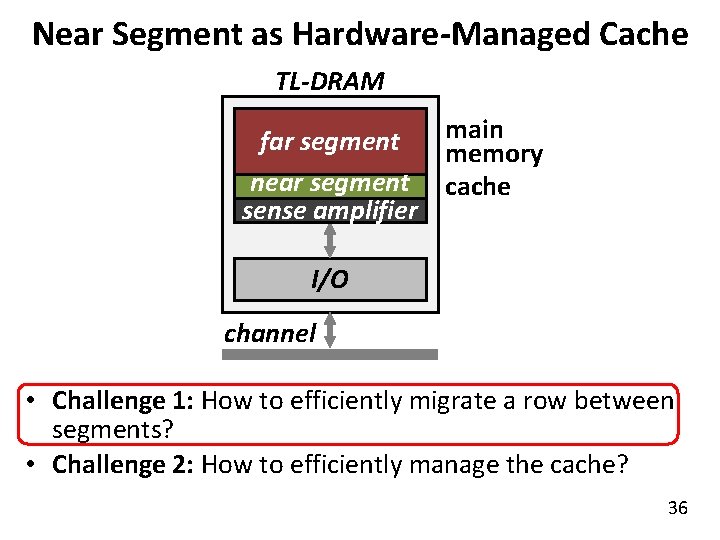

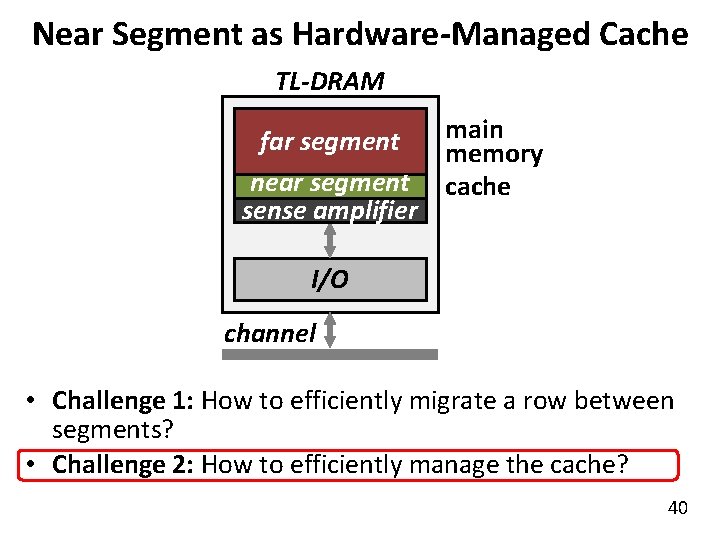

Near Segment as Hardware-Managed Cache (figure: TL-DRAM subarray with the far segment as main memory and the near segment as cache, connected through the sense amplifier and I/O to the channel)
• Challenge 1: How to efficiently migrate a row between segments?
• Challenge 2: How to efficiently manage the cache?

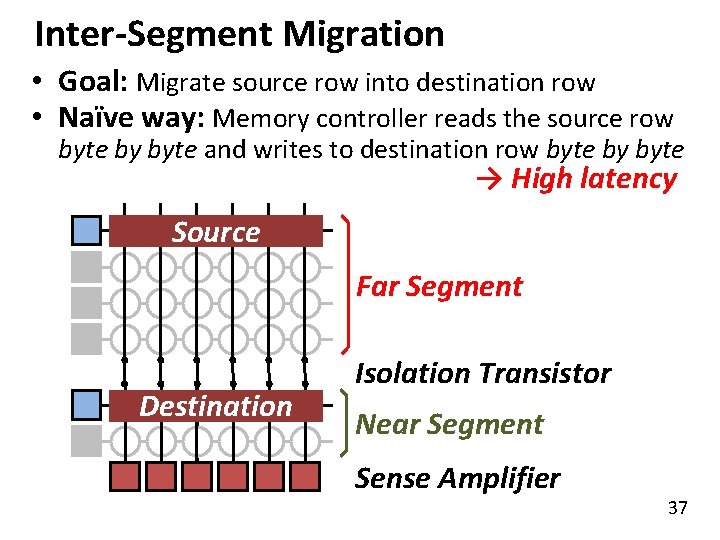

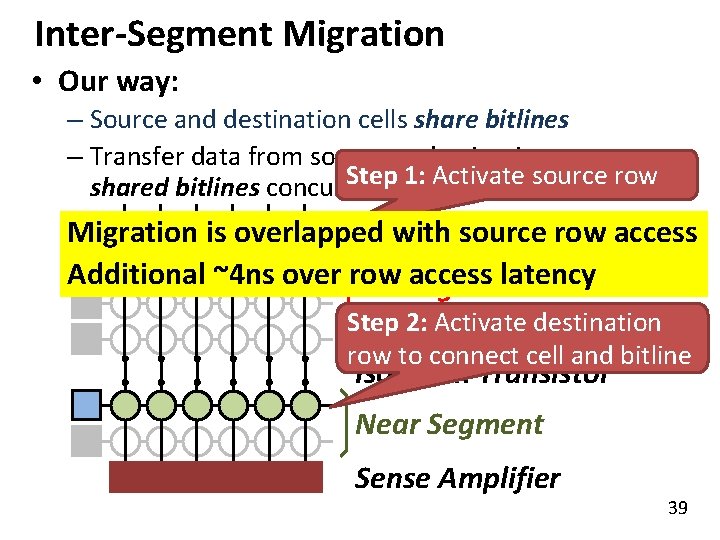

Inter-Segment Migration
• Goal: Migrate source row into destination row
• Naïve way: Memory controller reads the source row byte by byte and writes to destination row byte by byte → High latency
(figure labels: source row in the far segment, isolation transistor, destination row in the near segment, sense amplifier)

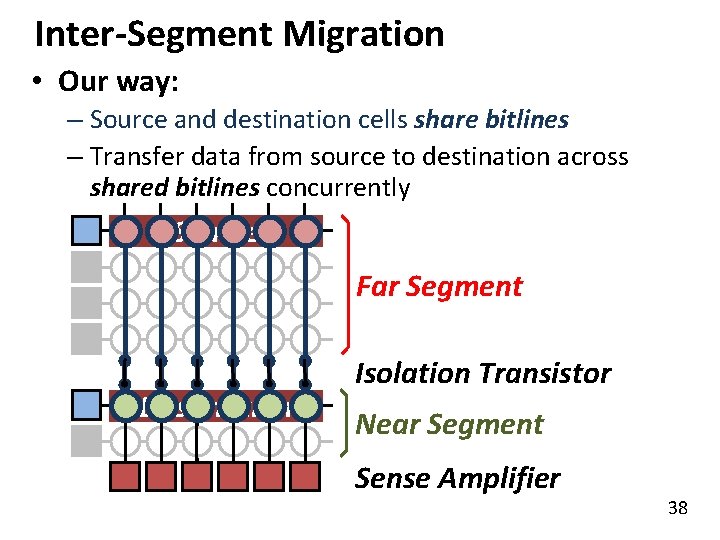

Inter-Segment Migration
• Our way:
 – Source and destination cells share bitlines
 – Transfer data from source to destination across shared bitlines concurrently
• Step 1: Activate source row
• Step 2: Activate destination row to connect cell and bitline
• Migration is overlapped with source row access: additional ~4 ns over row access latency
(figure labels: far segment, isolation transistor, near segment, sense amplifier)


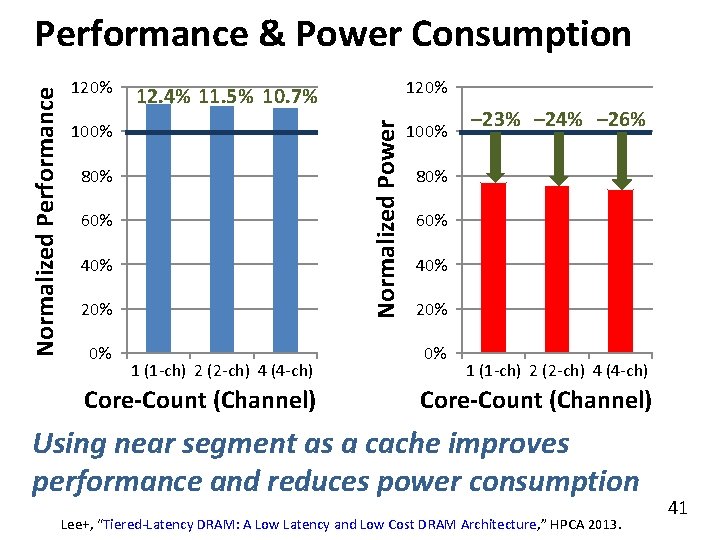

Performance & Power Consumption (figure: for 1 core (1 channel), 2 cores (2 channels), and 4 cores (4 channels), normalized performance improves by 12.4%, 11.5%, and 10.7%, and normalized power drops by 23%, 24%, and 26%, respectively). Using near segment as a cache improves performance and reduces power consumption. Lee+, “Tiered-Latency DRAM: A Low Latency and Low Cost DRAM Architecture,” HPCA 2013.

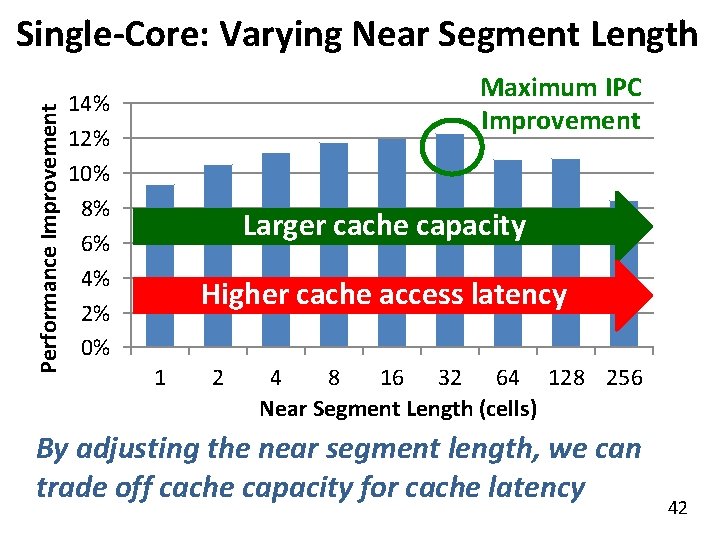

Single-Core: Varying Near Segment Length (plot: IPC improvement, 0% to 14%, versus near segment length from 1 to 256 cells; a longer near segment gives larger cache capacity but higher cache access latency; the peak is marked as the maximum IPC improvement). By adjusting the near segment length, we can trade off cache capacity for cache latency

More on TL-DRAM
• Donghyuk Lee, Yoongu Kim, Vivek Seshadri, Jamie Liu, Lavanya Subramanian, and Onur Mutlu, "Tiered-Latency DRAM: A Low Latency and Low Cost DRAM Architecture," Proceedings of the 19th International Symposium on High-Performance Computer Architecture (HPCA), Shenzhen, China, February 2013.


LISA: Low-Cost Inter-Linked Subarrays [HPCA 2016]

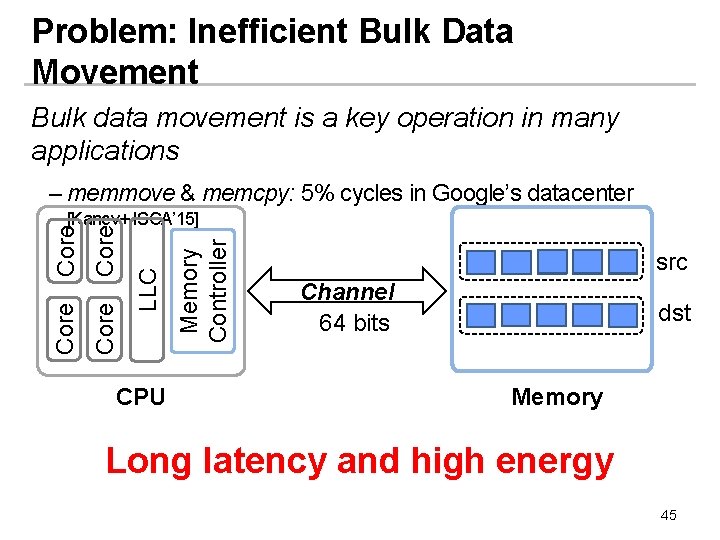

Problem: Inefficient Bulk Data Movement
• Bulk data movement is a key operation in many applications
 – memmove & memcpy: 5% cycles in Google’s datacenter [Kanev+ ISCA’15]
• Data moves from src to dst over the 64-bit memory channel and through the memory controller, LLC, and core → long latency and high energy

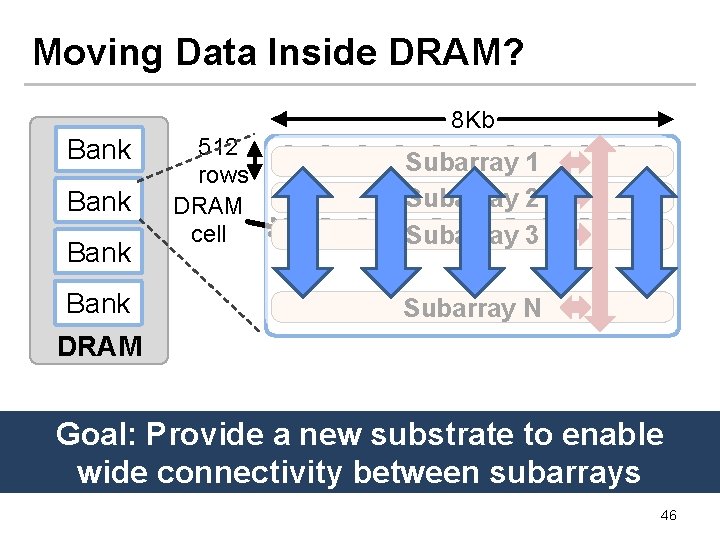

Moving Data Inside DRAM? (figure: a DRAM bank with Subarray 1 to Subarray N, each with 512 rows of 8 Kb, connected by a 64 b internal data bus)
• Low connectivity in DRAM is the fundamental bottleneck for bulk data movement
• Goal: Provide a new substrate to enable wide connectivity between subarrays

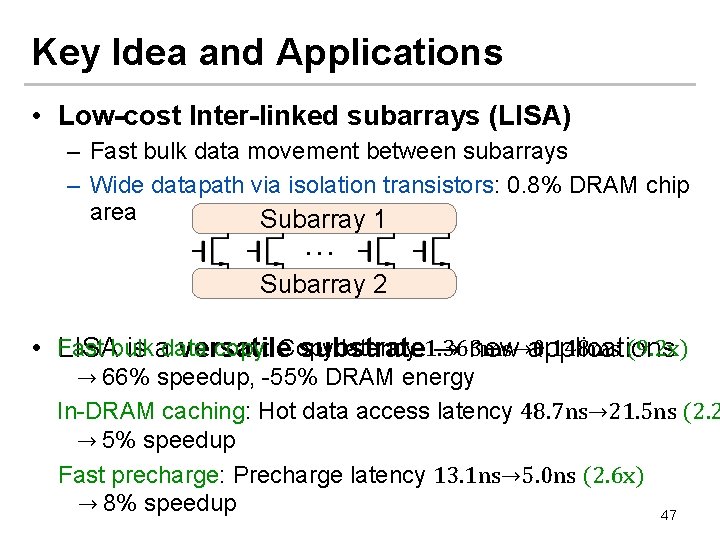

Key Idea and Applications
• Low-cost Inter-linked subarrays (LISA)
 – Fast bulk data movement between subarrays
 – Wide datapath via isolation transistors: 0.8% DRAM chip area
• LISA is a versatile substrate → new applications
 – Fast bulk data copy: Copy latency 1.363 ms → 0.148 ms (9.2x) → 66% speedup, -55% DRAM energy
 – In-DRAM caching: Hot data access latency 48.7 ns → 21.5 ns (2.2x) → 5% speedup
 – Fast precharge: Precharge latency 13.1 ns → 5.0 ns (2.6x) → 8% speedup
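
The improvement factors quoted on this slide follow directly from the before/after latencies. A short check, using only the numbers on the slide (the dictionary keys are illustrative names):

```python
# (before, after) latency pairs as quoted on the slide.
latencies = {
    "bulk copy (ms)": (1.363, 0.148),
    "hot data access (ns)": (48.7, 21.5),
    "precharge (ns)": (13.1, 5.0),
}

# Improvement factor = latency before / latency after.
factors = {name: before / after for name, (before, after) in latencies.items()}

for name, factor in factors.items():
    print(f"{name}: {factor:.2f}x")
```

The hot-data ratio evaluates to about 2.27, so the 2.2x on the slide appears to be truncated rather than rounded.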

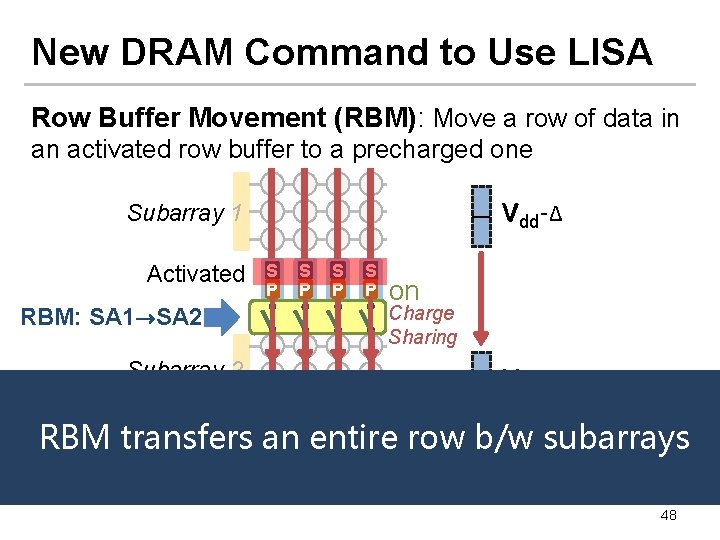

New DRAM Command to Use LISA
• Row Buffer Movement (RBM): Move a row of data in an activated row buffer to a precharged one
• RBM: SA1→SA2 (figure: with the isolation transistors on, charge sharing moves the activated bitlines of Subarray 1 from Vdd to Vdd−Δ and the precharged bitlines of Subarray 2 from Vdd/2 to Vdd/2+Δ; the sense amplifiers then amplify the charge)
• RBM transfers an entire row between subarrays

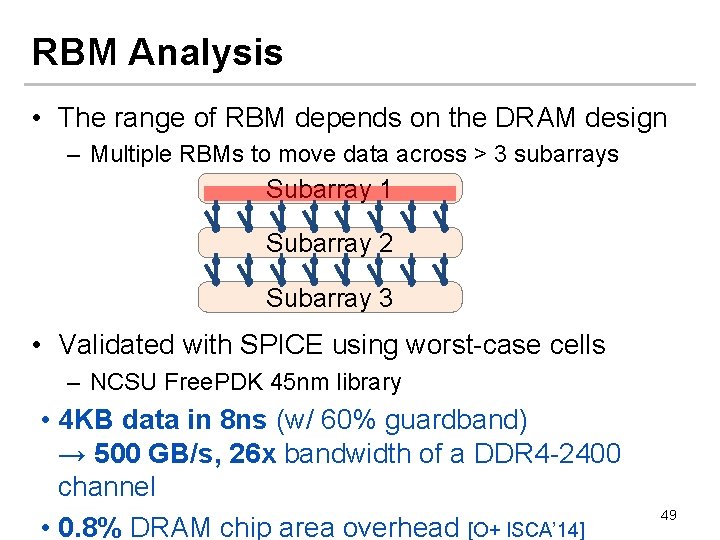

RBM Analysis
• The range of RBM depends on the DRAM design
 – Multiple RBMs to move data across > 3 subarrays
• Validated with SPICE using worst-case cells
 – NCSU FreePDK 45 nm library
• 4 KB data in 8 ns (w/ 60% guardband) → 500 GB/s, 26x bandwidth of a DDR4-2400 channel
• 0.8% DRAM chip area overhead [O+ ISCA’14]
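
The bandwidth claim can be reproduced with simple arithmetic. The sketch assumes a standard 64-bit (8-byte) DDR4 channel and decimal gigabytes; the variable names are illustrative.

```python
ROW_BYTES = 4 * 1024   # 4 KB moved by one RBM
RBM_TIME_NS = 8.0      # RBM latency including the guardband

# Bytes per nanosecond equals gigabytes (10^9 bytes) per second.
rbm_gb_per_s = ROW_BYTES / RBM_TIME_NS

# DDR4-2400: 2400 mega-transfers per second, 8 bytes per transfer (assumed 64-bit channel).
ddr4_2400_gb_per_s = 2400e6 * 8 / 1e9

ratio = rbm_gb_per_s / ddr4_2400_gb_per_s
print(f"RBM: {rbm_gb_per_s:.0f} GB/s, DDR4-2400: {ddr4_2400_gb_per_s:.1f} GB/s, ratio: {ratio:.1f}x")
```

The exact result is 512 GB/s, which the slide rounds to 500 GB/s; 500 / 19.2 gives the quoted 26x.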

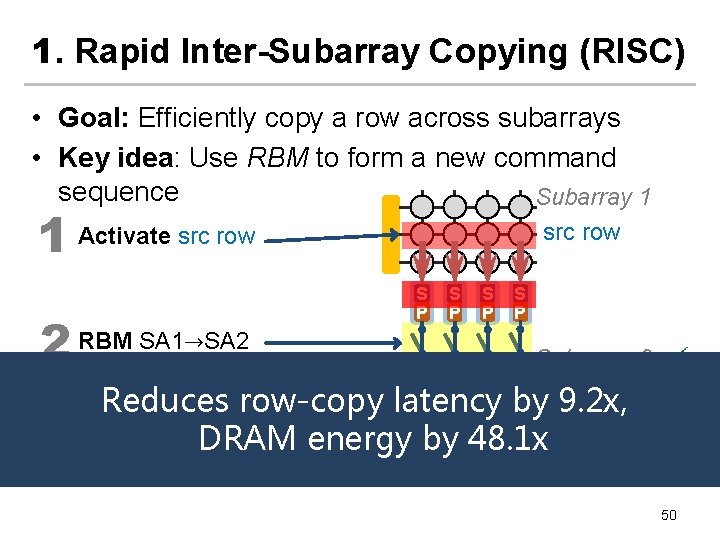

1. Rapid Inter-Subarray Copying (RISC)
• Goal: Efficiently copy a row across subarrays
• Key idea: Use RBM to form a new command sequence
 1. Activate src row (Subarray 1)
 2. RBM SA1→SA2
 3. Activate dst row (Subarray 2; writes the row buffer into the dst row)
• Reduces row-copy latency by 9.2x, DRAM energy by 48.1x

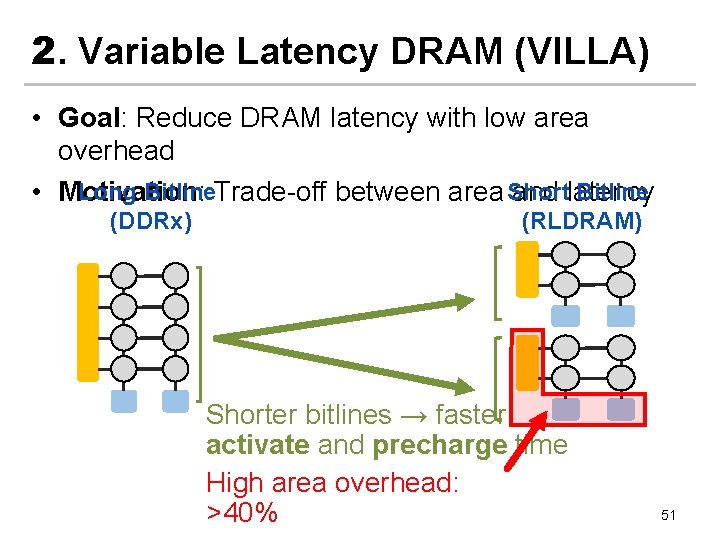

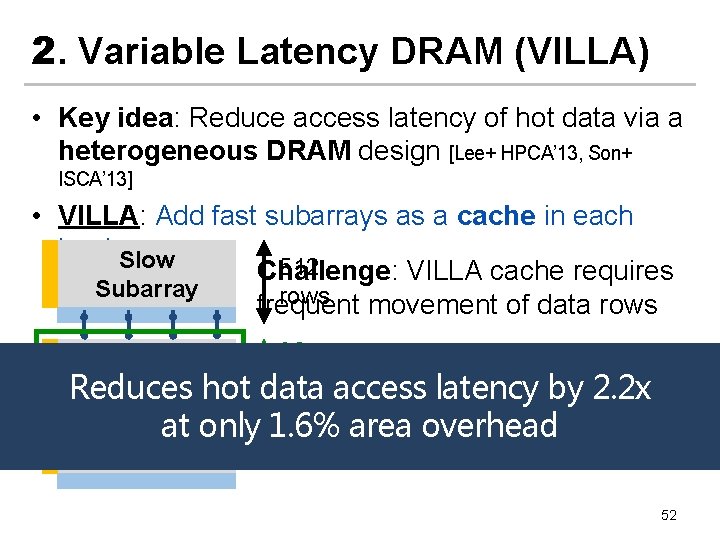

2. Variable Latency DRAM (VILLA)
• Goal: Reduce DRAM latency with low area overhead
• Motivation: Trade-off between area and latency
 – Long bitline (DDRx) vs. short bitline (RLDRAM)
 – Shorter bitlines → faster activate and precharge time
 – High area overhead: >40%

2. Variable Latency DRAM (VILLA)
• Key idea: Reduce access latency of hot data via a heterogeneous DRAM design [Lee+ HPCA’13, Son+ ISCA’13]
• VILLA: Add fast subarrays as a cache in each bank (figure: slow subarrays with 512 rows, fast subarray with 32 rows)
• Challenge: VILLA cache requires frequent movement of data rows
• LISA: Cache rows rapidly from slow to fast subarrays
• Reduces hot data access latency by 2.2x at only 1.6% area overhead

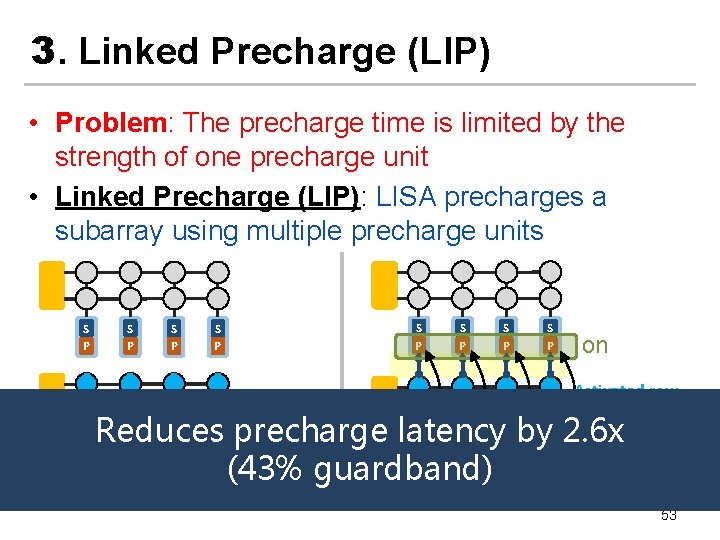

3. Linked Precharge (LIP)
• Problem: The precharge time is limited by the strength of one precharge unit
• Linked Precharge (LIP): LISA precharges a subarray using multiple precharge units
• Reduces precharge latency by 2.6x (43% guardband)
(figure: precharging an activated row in conventional DRAM versus linked precharging in LISA DRAM)

More on LISA
• Kevin K. Chang, Prashant J. Nair, Saugata Ghose, Donghyuk Lee, Moinuddin K. Qureshi, and Onur Mutlu, "Low-Cost Inter-Linked Subarrays (LISA): Enabling Fast Inter-Subarray Data Movement in DRAM," Proceedings of the 22nd International Symposium on High-Performance Computer Architecture (HPCA), Barcelona, Spain, March 2016.

What Causes the Long DRAM Latency?


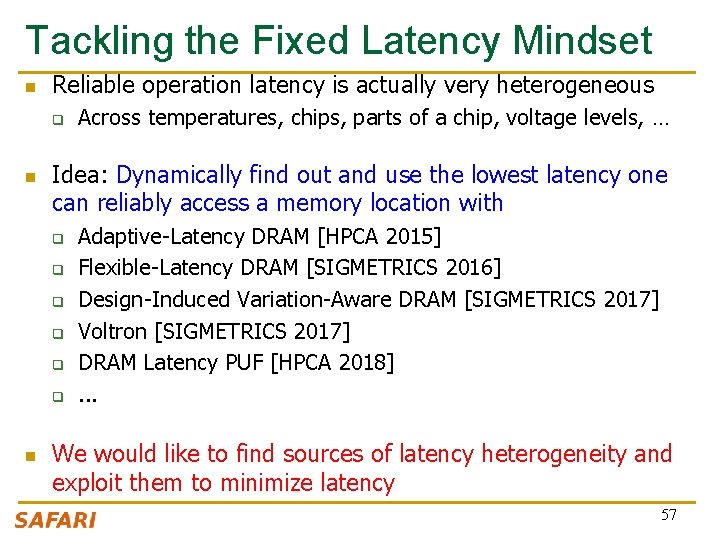

Tackling the Fixed Latency Mindset
• Reliable operation latency is actually very heterogeneous
 – Across temperatures, chips, parts of a chip, voltage levels, …
• Idea: Dynamically find out and use the lowest latency one can reliably access a memory location with
 – Adaptive-Latency DRAM [HPCA 2015]
 – Flexible-Latency DRAM [SIGMETRICS 2016]
 – Design-Induced Variation-Aware DRAM [SIGMETRICS 2017]
 – Voltron [SIGMETRICS 2017]
 – DRAM Latency PUF [HPCA 2018]
 – ...
• We would like to find sources of latency heterogeneity and exploit them to minimize latency

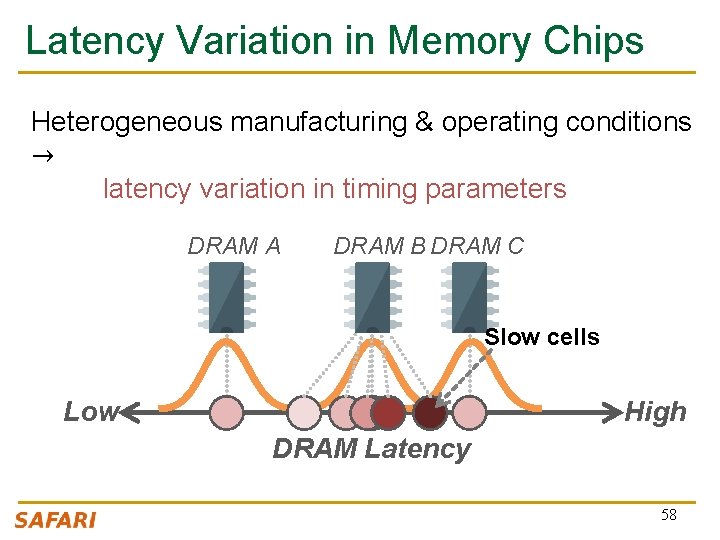

Latency Variation in Memory Chips: Heterogeneous manufacturing & operating conditions → latency variation in timing parameters (figure: DRAM A, DRAM B, and DRAM C on a DRAM latency axis from low to high, with slow cells marked)

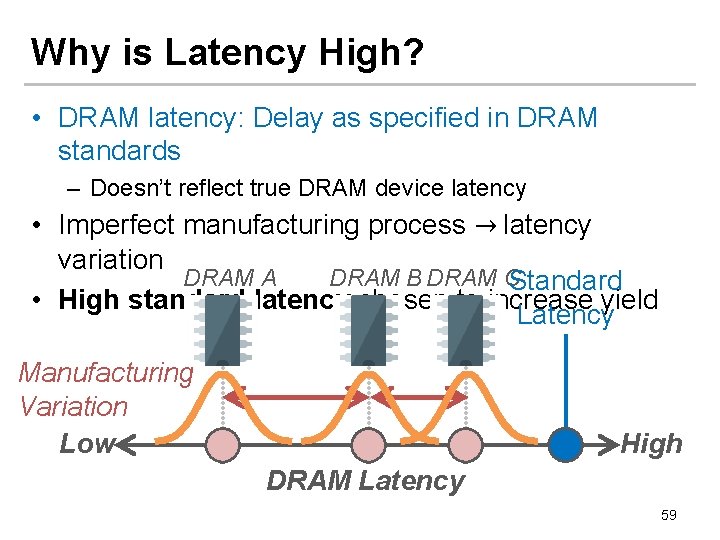

Why is Latency High?
• DRAM latency: Delay as specified in DRAM standards
 – Doesn’t reflect true DRAM device latency
• Imperfect manufacturing process → latency variation
• High standard latency chosen to increase yield
(figure: DRAM A, DRAM B, and DRAM C spread by manufacturing variation on a DRAM latency axis from low to high, with the standard latency marked)

What Causes the Long Memory Latency?
• Conservative timing margins!
• DRAM timing parameters are set to cover the worst case
• Worst-case temperatures
 – 85 degrees vs. common-case
 – to enable a wide range of operating conditions
• Worst-case devices
 – DRAM cell with smallest charge across any acceptable device
 – to tolerate process variation at acceptable yield
• This leads to large timing margins for the common case

Understanding and Exploiting Variation in DRAM Latency

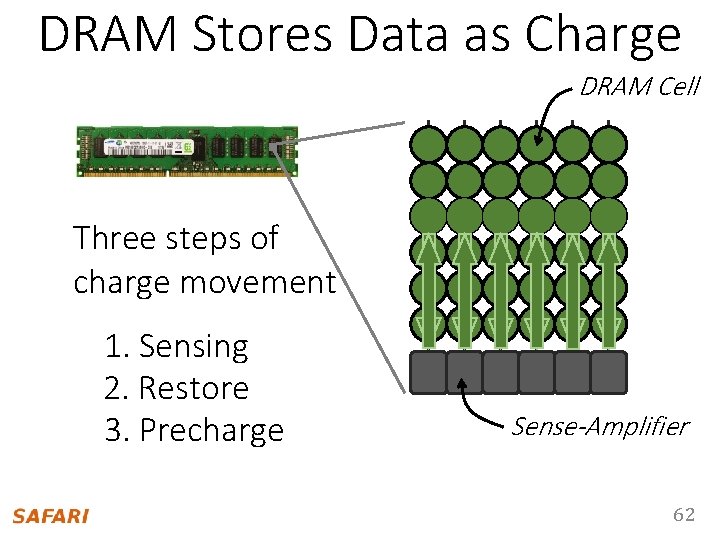

DRAM Stores Data as Charge (figure: DRAM cell and sense amplifier)
Three steps of charge movement:
1. Sensing
2. Restore
3. Precharge

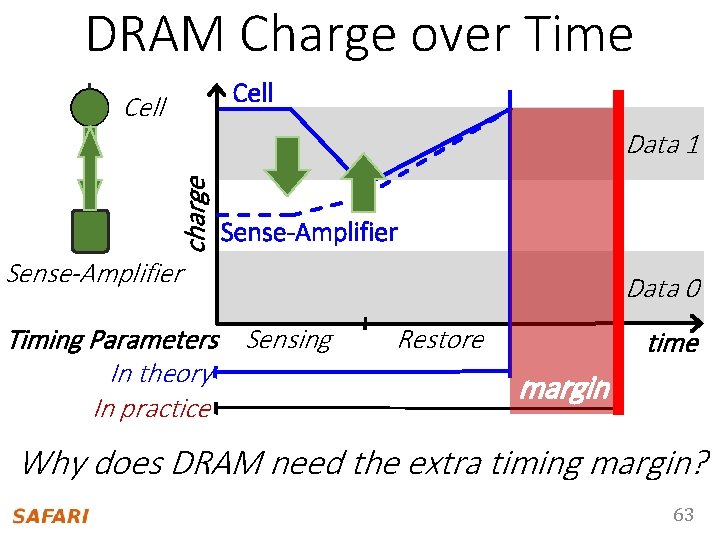

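DRAM Charge over Time (plot: charge at the cell and at the sense amplifier over time for data 1 and data 0, through sensing and restore; the timing parameters used in practice are longer than those needed in theory, which leaves a margin). Why does DRAM need the extra timing margin?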

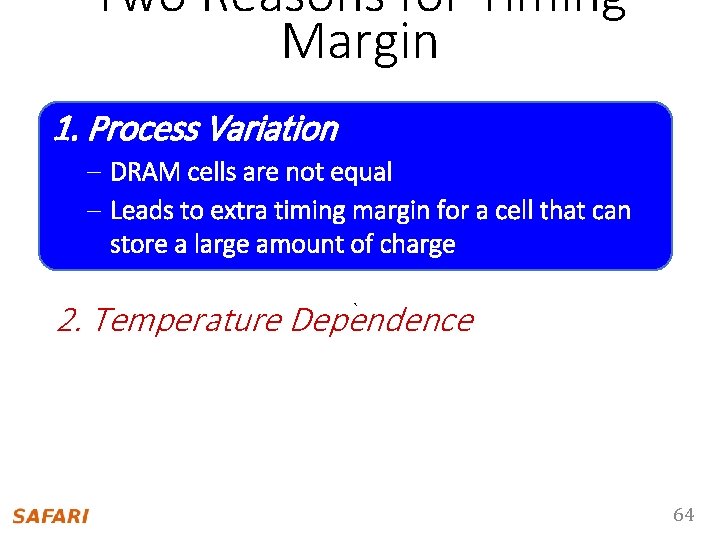

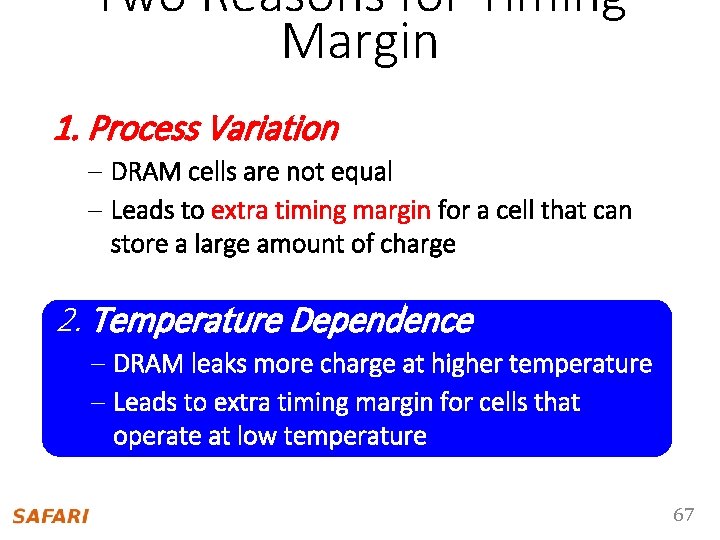

Two Reasons for Timing Margin
1. Process Variation
 – DRAM cells are not equal
 – Leads to extra timing margin for a cell that can store a large amount of charge
2. Temperature Dependence
 – DRAM leaks more charge at higher temperature
 – Leads to extra timing margin when operating at low temperature

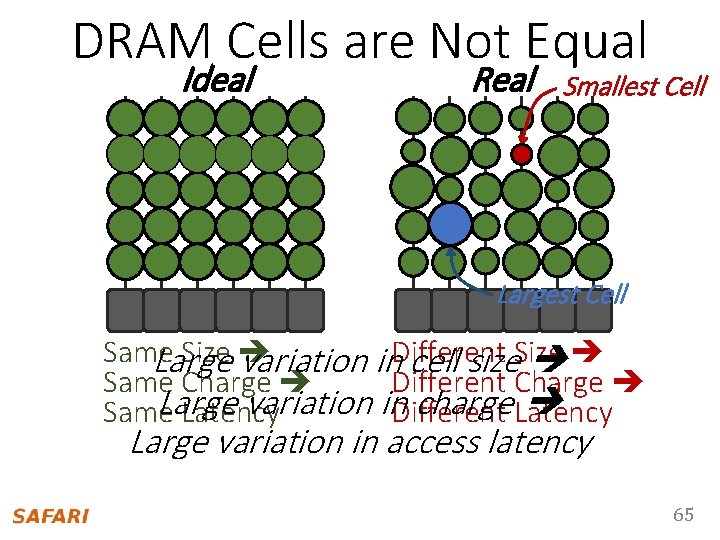

DRAM Cells are Not Equal
• Ideal: same size → same charge → same latency
• Real: different size (smallest cell vs. largest cell) → different charge → different latency
• Large variation in cell size, large variation in charge, large variation in access latency

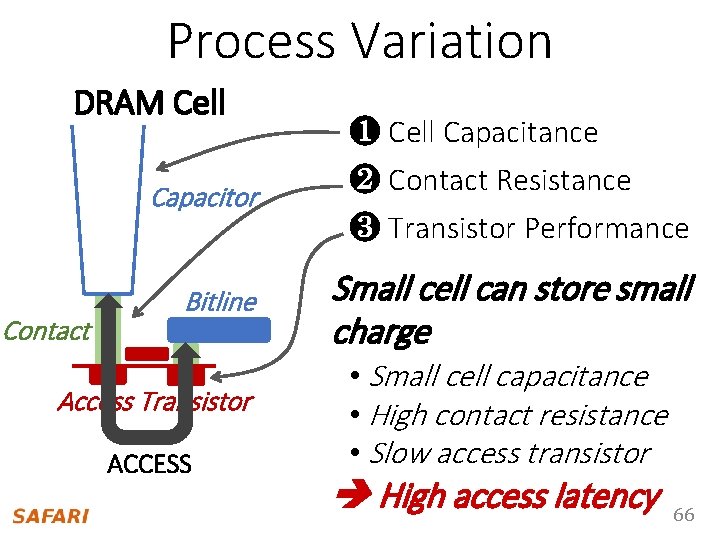

Process Variation (figure: DRAM cell with capacitor, contact, access transistor, and bitline)
❶ Cell capacitance
❷ Contact resistance
❸ Transistor performance
Small cell can store small charge:
• Small cell capacitance
• High contact resistance
• Slow access transistor
→ High access latency

Two Reasons for Timing Margin
1. Process Variation
 – DRAM cells are not equal
 – Leads to extra timing margin for a cell that can store a large amount of charge
2. Temperature Dependence
 – DRAM leaks more charge at higher temperature
 – Leads to extra timing margin for cells that operate at low temperature

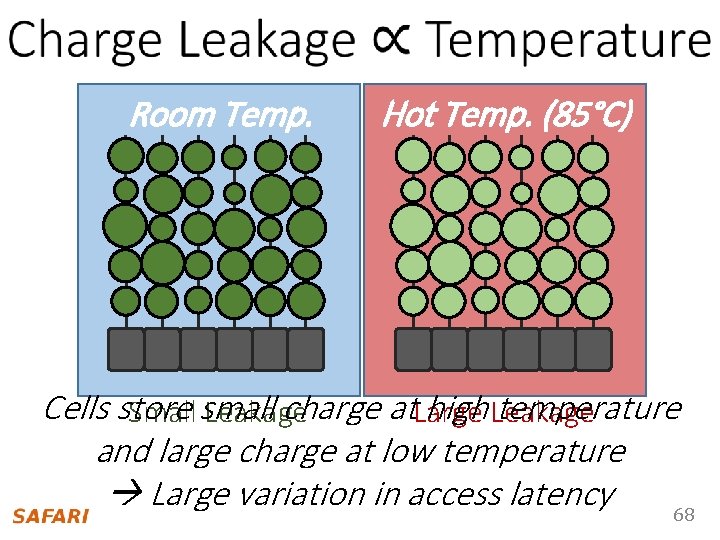

Temperature Dependence (figure: room temperature with small leakage vs. hot temperature, 85°C, with large leakage). Cells store small charge at high temperature and large charge at low temperature → Large variation in access latency

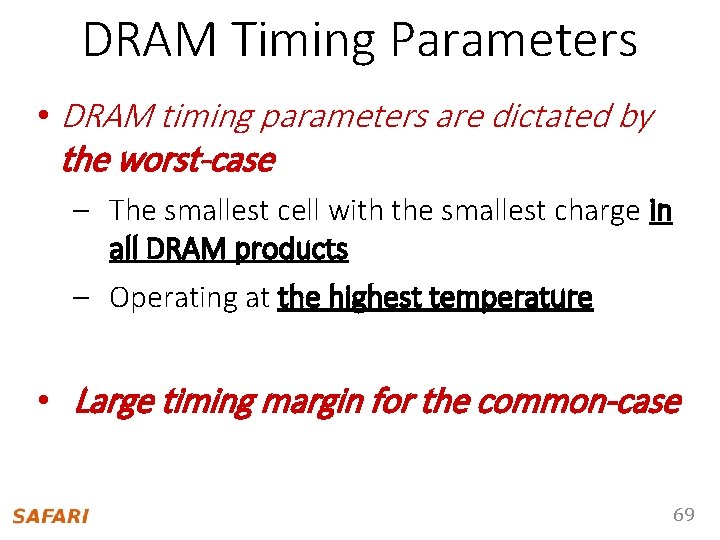

DRAM Timing Parameters
• DRAM timing parameters are dictated by the worst-case
 – The smallest cell with the smallest charge in all DRAM products
 – Operating at the highest temperature
• Large timing margin for the common-case
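
A toy calculation illustrates how a worst-case specification creates margin for everything else. All latency values below are hypothetical and chosen only for illustration; the point is that the standard value is the maximum over all cells and temperatures, and every other case operates with slack.

```python
# Hypothetical minimum reliable latency (ns) per (cell strength, temperature in °C).
required_ns = {
    ("typical cell", 55): 9.0,
    ("typical cell", 85): 11.0,
    ("weakest cell", 55): 11.5,
    ("weakest cell", 85): 13.0,
}

# The standard must cover the worst case: the weakest cell at the highest temperature.
standard_ns = max(required_ns.values())

# Timing margin wasted in each case when the standard value is used everywhere.
margins = {case: standard_ns - need for case, need in required_ns.items()}

for (cell, temp), margin in margins.items():
    print(f"{cell} at {temp}°C: {margin:.1f} ns of unused margin")
```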


Adaptive-Latency DRAM [HPCA 2015]
• Idea: Optimize DRAM timing for the common case
 – Current temperature
 – Current DRAM module
• Why would this reduce latency?
 – A DRAM cell can store much more charge in the common case (low temperature, strong cell) than in the worst case
 – More charge in a DRAM cell → faster sensing, charge restoration, precharging → faster access (read, write, refresh, …)
Lee+, “Adaptive-Latency DRAM: Optimizing DRAM Timing for the Common-Case,” HPCA 2015.

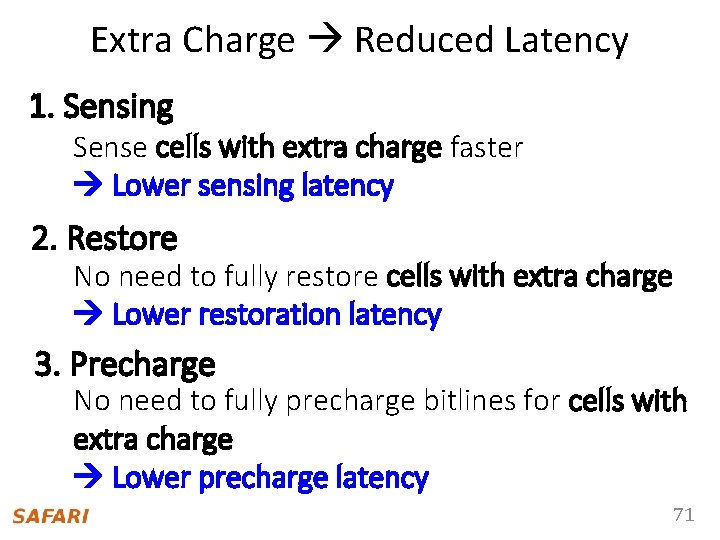

Extra Charge → Reduced Latency
1. Sensing: sense cells with extra charge faster → lower sensing latency
2. Restore: no need to fully restore cells with extra charge → lower restoration latency
3. Precharge: no need to fully precharge bitlines for cells with extra charge → lower precharge latency

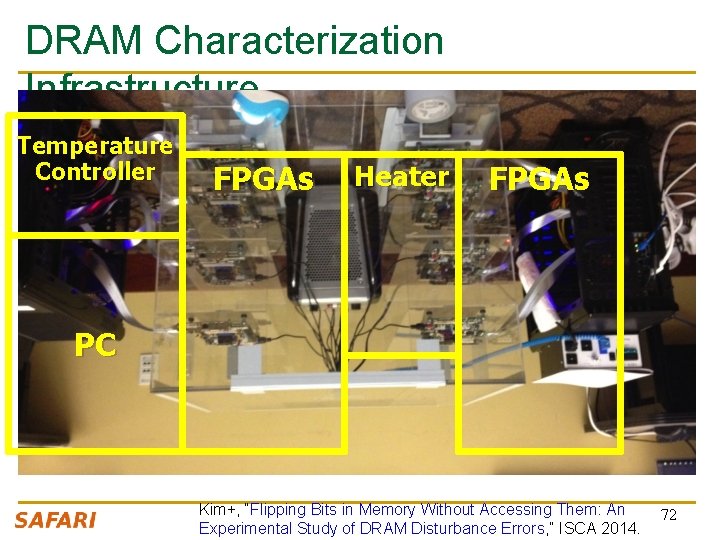

DRAM Characterization Infrastructure (figure labels: temperature controller, FPGAs, heater, PC). Kim+, “Flipping Bits in Memory Without Accessing Them: An Experimental Study of DRAM Disturbance Errors,” ISCA 2014.

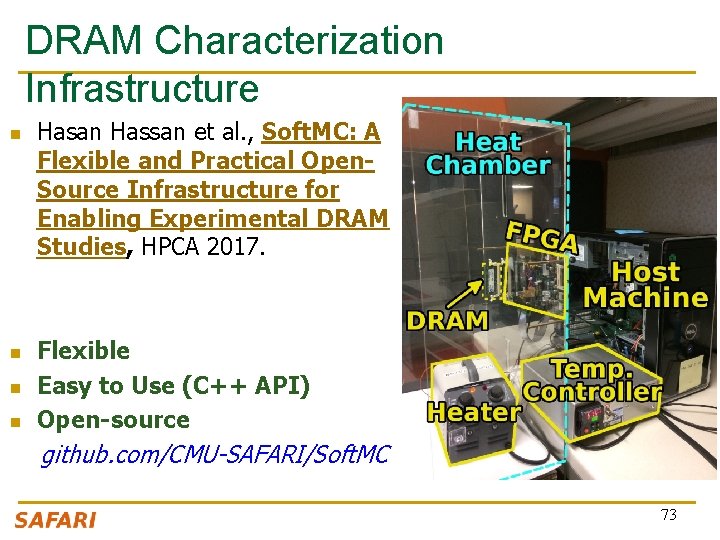

DRAM Characterization Infrastructure
• Hasan Hassan et al., “SoftMC: A Flexible and Practical Open-Source Infrastructure for Enabling Experimental DRAM Studies,” HPCA 2017.
• Flexible
• Easy to use (C++ API)
• Open-source: github.com/CMU-SAFARI/SoftMC

SoftMC: Open Source DRAM Infrastructure
• https://github.com/CMU-SAFARI/SoftMC

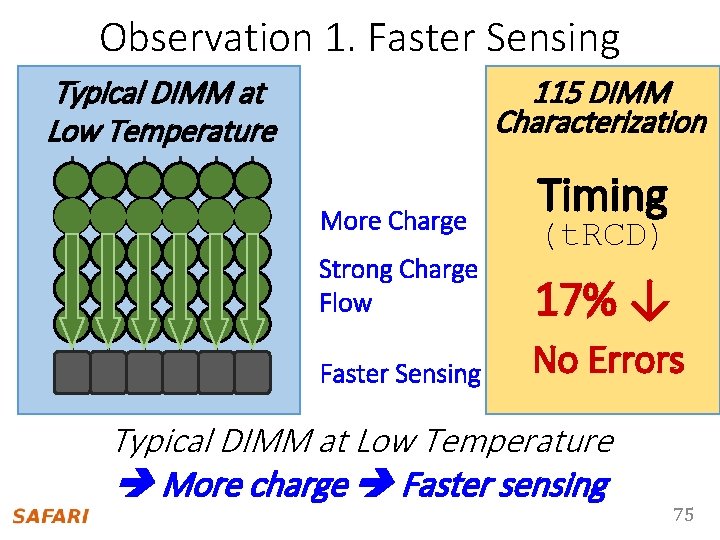

Observation 1. Faster Sensing
• Typical DIMM at low temperature → more charge → strong charge flow → faster sensing
• 115 DIMM characterization: timing (tRCD) 17% ↓, no errors

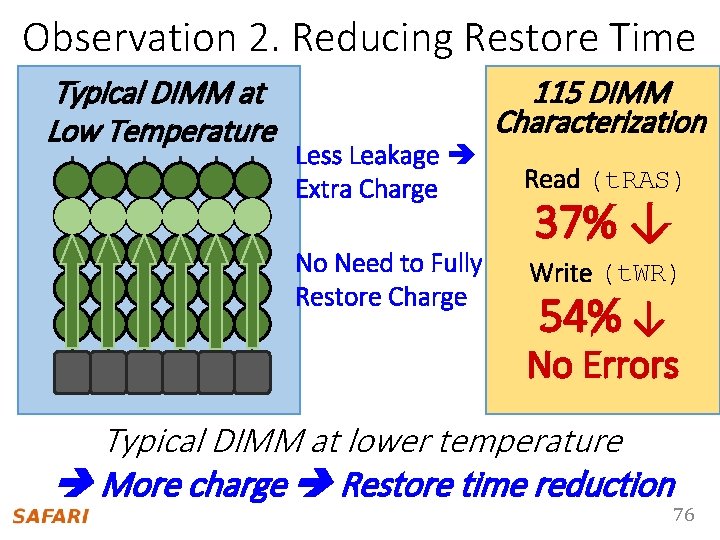

Observation 2. Reducing Restore Time
• Typical DIMM at low temperature → less leakage → extra charge → no need to fully restore charge
• 115 DIMM characterization: read (tRAS) 37% ↓, write (tWR) 54% ↓, no errors
• Typical DIMM at lower temperature → more charge → restore time reduction

AL-DRAM
• Key idea
 – Optimize DRAM timing parameters online
• Two components
 – DRAM manufacturer provides multiple sets of reliable DRAM timing parameters at different temperatures for each DIMM
 – System monitors DRAM temperature & uses appropriate DRAM timing parameters
Lee+, “Adaptive-Latency DRAM: Optimizing DRAM Timing for the Common-Case,” HPCA 2015.
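
The two components can be sketched as a per-DIMM table lookup. Everything in this sketch is an assumption made for illustration: the `Timing` type, the table layout, the baseline values (typical DDR3-1600 numbers), and the helper names. The 55°C entry applies the per-parameter reductions that this lecture reports for 55°C (17.3% sensing, 37.3% read restore, 54.8% write restore, 35.2% precharge). A real controller would also round each value up to a whole number of clock cycles.

```python
from typing import List, NamedTuple, Tuple


class Timing(NamedTuple):
    t_rcd: float  # sensing (ns)
    t_ras: float  # restore on read (ns)
    t_wr: float   # restore on write (ns)
    t_rp: float   # precharge (ns)


def reduced(value_ns: float, percent: float) -> float:
    """Reduce a timing value by the given percentage."""
    return round(value_ns * (1.0 - percent / 100.0), 2)


# Assumed baseline timings, specified for the worst case (85°C).
STANDARD = Timing(t_rcd=13.75, t_ras=35.0, t_wr=15.0, t_rp=13.75)

# Hypothetical set the manufacturer would ship for operation up to 55°C.
AT_55C = Timing(
    t_rcd=reduced(STANDARD.t_rcd, 17.3),
    t_ras=reduced(STANDARD.t_ras, 37.3),
    t_wr=reduced(STANDARD.t_wr, 54.8),
    t_rp=reduced(STANDARD.t_rp, 35.2),
)

# Per-DIMM table sorted by maximum temperature (°C).
TIMING_TABLE: List[Tuple[float, Timing]] = [(55.0, AT_55C), (85.0, STANDARD)]


def select_timing(temperature_c: float) -> Timing:
    """Pick the fastest timing set that is reliable at the current temperature."""
    for max_temp, timing in TIMING_TABLE:
        if temperature_c <= max_temp:
            return timing
    return STANDARD  # outside the profiled range: fall back to the standard


print(select_timing(40.0))
print(select_timing(70.0))
```

The fallback keeps the sketch conservative: any temperature the table does not cover uses the standard timings.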

DRAM Temperature
• DRAM temperature measurement
 – Server cluster: Operates at under 34°C
 – Desktop: Operates at under 50°C
 – DRAM standard optimized for 85°C
• DRAM operates at low temperatures in the common-case
• Previous works – DRAM temperature is low
 – El-Sayed+ SIGMETRICS 2012
 – Liu+ ISCA 2007
• Previous works – Maintain low DRAM temperature
 – David+ ICAC 2011
 – Liu+ ISCA 2007
 – Zhu+ ITHERM 2008

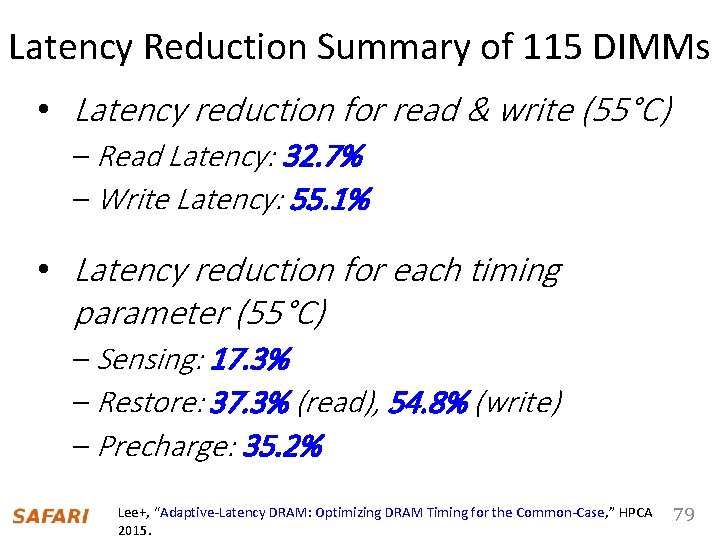

Latency Reduction Summary of 115 DIMMs
• Latency reduction for read & write (55°C)
 – Read Latency: 32.7%
 – Write Latency: 55.1%
• Latency reduction for each timing parameter (55°C)
 – Sensing: 17.3%
 – Restore: 37.3% (read), 54.8% (write)
 – Precharge: 35.2%
Lee+, “Adaptive-Latency DRAM: Optimizing DRAM Timing for the Common-Case,” HPCA 2015.

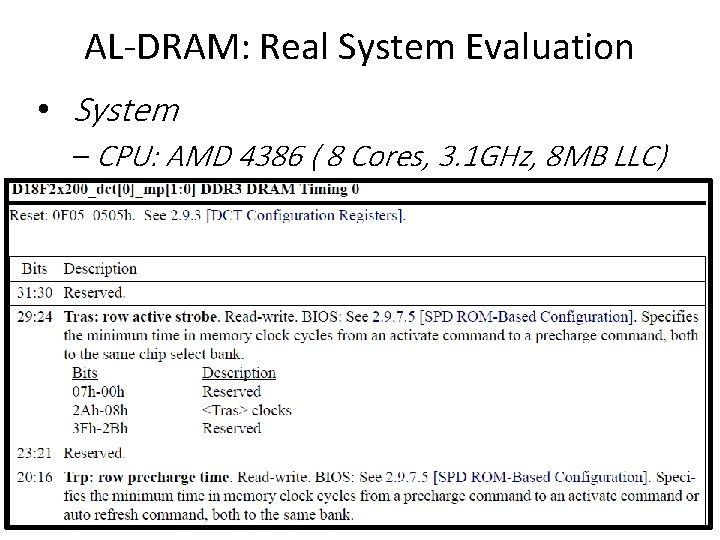

AL-DRAM: Real System Evaluation
• System
 – CPU: AMD 4386 (8 cores, 3.1 GHz, 8 MB LLC)
 – DRAM: 4 GByte DDR3-1600 (800 MHz clock)
 – OS: Linux
 – Storage: 128 GByte SSD
• Workload
 – 35 applications from SPEC, STREAM, Parsec, Memcached, Apache, GUPS

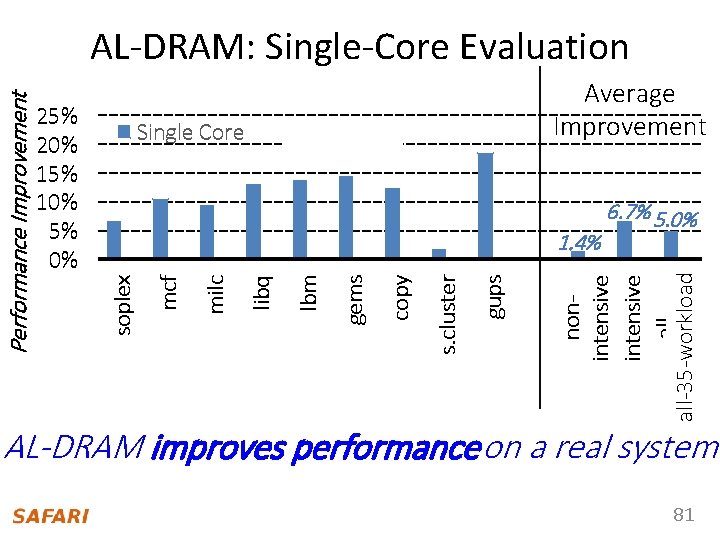

AL-DRAM: Single-Core Evaluation (plot: performance improvement, 0% to 25%, for soplex, mcf, milc, libq, lbm, gems, copy, s.cluster, and gups; average improvement: 6.7% for memory-intensive, 1.4% for non-intensive, and 5.0% for all 35 workloads). AL-DRAM improves performance on a real system

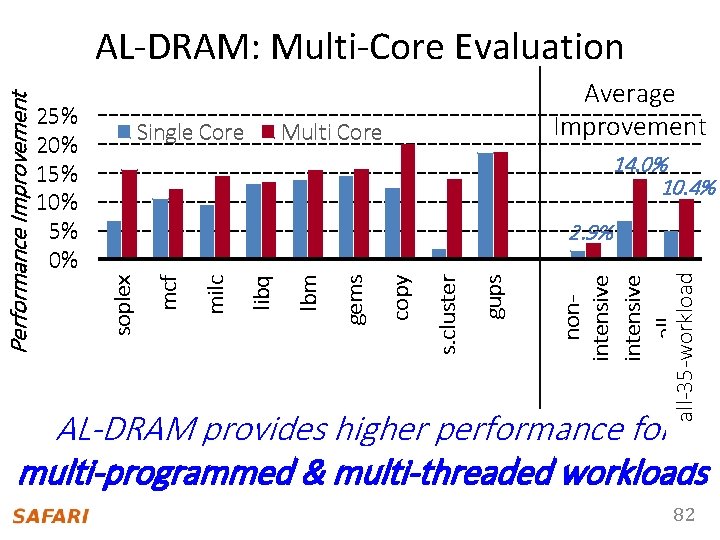

AL-DRAM: Multi-Core Evaluation (plot: performance improvement, 0% to 25%, for soplex, mcf, milc, libq, lbm, gems, copy, s.cluster, and gups; average improvement: 14.0% for memory-intensive, 2.9% for non-intensive, and 10.4% for all 35 workloads). AL-DRAM provides higher performance for multi-programmed & multi-threaded workloads

Reducing Latency Also Reduces Energy
• AL-DRAM reduces DRAM power consumption by 5.8%
• Major reason: reduction in row activation time

AL-DRAM: Advantages & Disadvantages
• Advantages
 + Simple mechanism to reduce latency
 + Significant system performance and energy benefits
 + Benefits higher at low temperature
 + Low cost, low complexity
• Disadvantages
 - Need to determine reliable operating latencies for different temperatures and different DIMMs → higher testing cost (might not be that difficult for low temperatures)

More on AL-DRAM
• Donghyuk Lee, Yoongu Kim, Gennady Pekhimenko, Samira Khan, Vivek Seshadri, Kevin Chang, and Onur Mutlu, "Adaptive-Latency DRAM: Optimizing DRAM Timing for the Common-Case," Proceedings of the 21st International Symposium on High-Performance Computer Architecture (HPCA), Bay Area, CA, February 2015.

Different Types of Latency Variation
• AL-DRAM exploits latency variation
 – Across time (different temperatures)
 – Across chips
• Is there also latency variation within a chip?
 – Across different parts of a chip

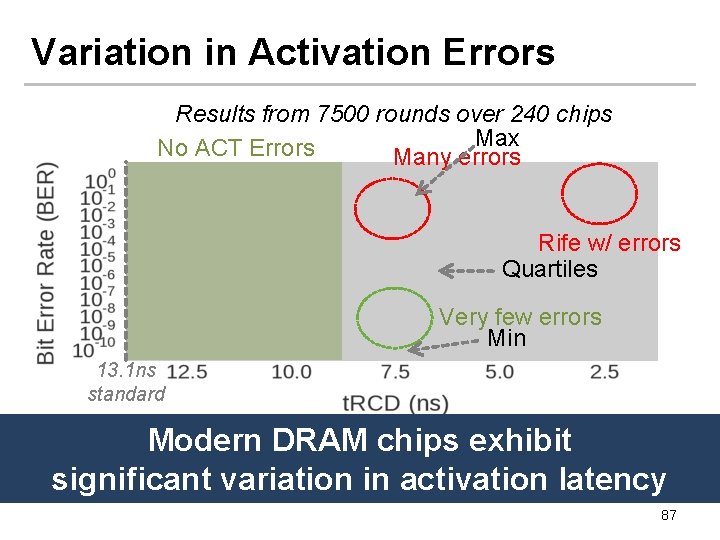

Variation in Activation Errors
• Results from 7500 rounds over 240 chips (plot: min, quartiles, and max of activation errors; regions labeled no ACT errors, very few errors, many errors, and rife with errors; the standard is 13.1 ns)
• Different characteristics across DIMMs
• Modern DRAM chips exhibit significant variation in activation latency

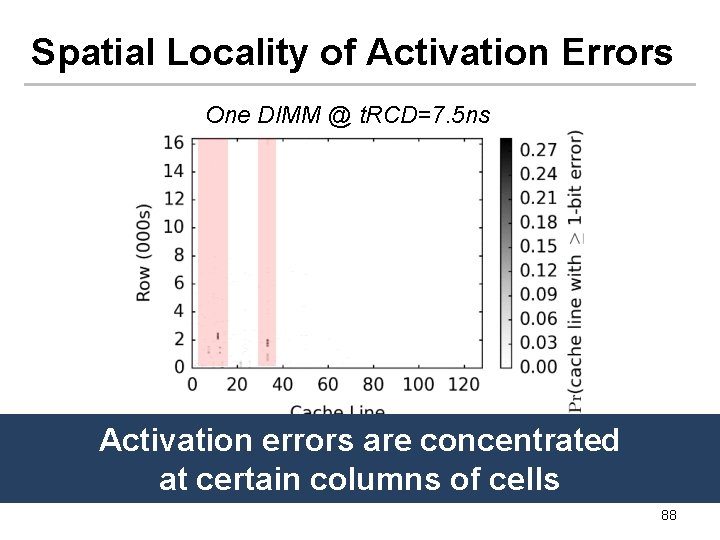

Spatial Locality of Activation Errors (figure: one DIMM at tRCD = 7.5 ns). Activation errors are concentrated at certain columns of cells

Mechanism to Reduce DRAM Latency
• Observation: DRAM timing errors (slow DRAM cells) are concentrated on certain regions
• Flexible-LatencY (FLY) DRAM
 – A software-transparent design that reduces latency
• Key idea:
 1) Divide memory into regions of different latencies
 2) Memory controller: Use lower latency for regions without slow cells; higher latency for other regions
Chang+, “Understanding Latency Variation in Modern DRAM Chips: Experimental Characterization, Analysis, and Optimization,” SIGMETRICS 2016.
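
The key idea can be sketched as a region table consulted by the memory controller. The region granularity, the profile contents, and the function name are hypothetical; the three tRCD levels (13 ns, 10 ns, 7.5 ns) are the ones that appear in the FLY-DRAM configuration figure in this lecture.

```python
from typing import Dict

REGION_ROWS = 512        # hypothetical region size, in rows
BASELINE_TRCD_NS = 13.0  # baseline latency level from the configuration figure

# Hypothetical profile: region index -> lowest tRCD (ns) observed to be error-free.
region_trcd: Dict[int, float] = {0: 7.5, 1: 10.0, 2: 7.5}


def trcd_for_row(row: int) -> float:
    """Return the tRCD the controller should use when activating `row`."""
    region = row // REGION_ROWS
    # Regions that were never profiled conservatively use the baseline latency.
    return region_trcd.get(region, BASELINE_TRCD_NS)


print(trcd_for_row(100))   # row in region 0
print(trcd_for_row(600))   # row in region 1
print(trcd_for_row(5000))  # row in an unprofiled region
```

Because the lookup happens inside the controller, software sees no change, which matches the software-transparent design goal.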

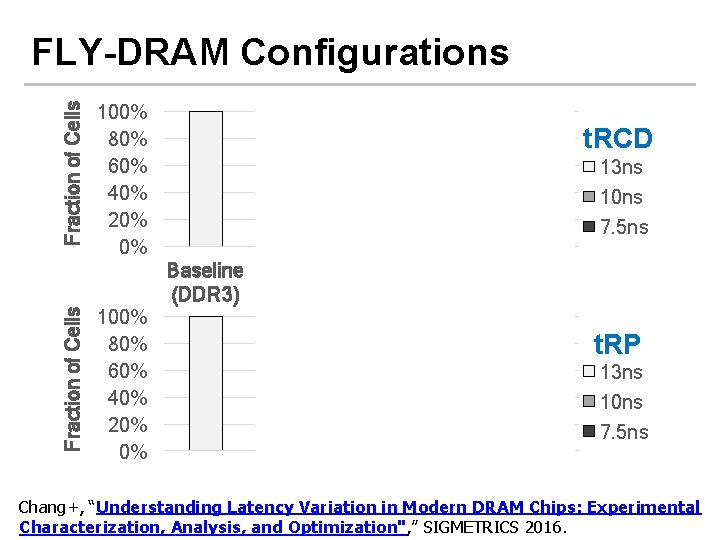

FLY-DRAM Configurations (figure: fraction of cells, 0% to 100%, operating at 13 ns, 10 ns, or 7.5 ns for tRCD and for tRP, shown for the DDR3 baseline, the profiles of 3 real DIMMs D1 to D3, and an upper bound; data labels for tRCD: 93%, 12%, 99%; data labels for tRP: 74%, 99%). Chang+, “Understanding Latency Variation in Modern DRAM Chips: Experimental Characterization, Analysis, and Optimization,” SIGMETRICS 2016.

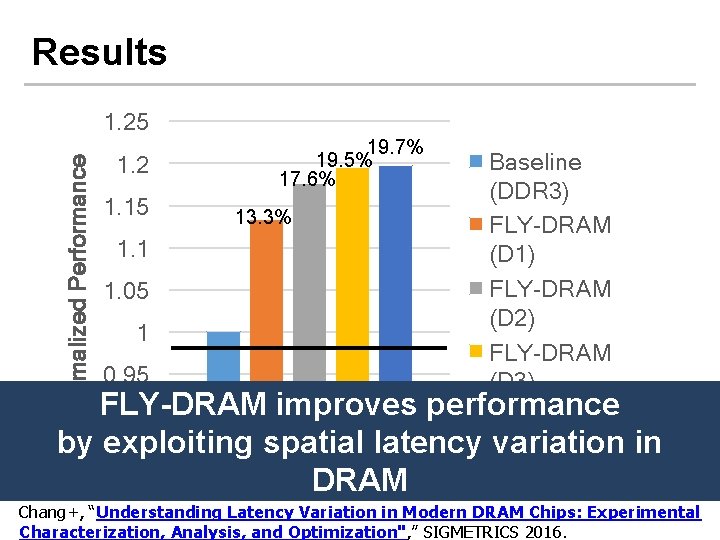

Results (plot: normalized performance over 40 workloads relative to the DDR3 baseline; FLY-DRAM (D1) 13.3%, FLY-DRAM (D2) 17.6%, FLY-DRAM (D3) 19.5%, upper bound 19.7%). FLY-DRAM improves performance by exploiting spatial latency variation in DRAM. Chang+, “Understanding Latency Variation in Modern DRAM Chips: Experimental Characterization, Analysis, and Optimization,” SIGMETRICS 2016.

FLY-DRAM: Advantages & Disadvantages
• Advantages
 + Reduces latency significantly
 + Exploits significant within-chip latency variation
• Disadvantages
 - Need to determine reliable operating latencies for different parts of a chip → higher testing cost
 - Slightly more complicated controller

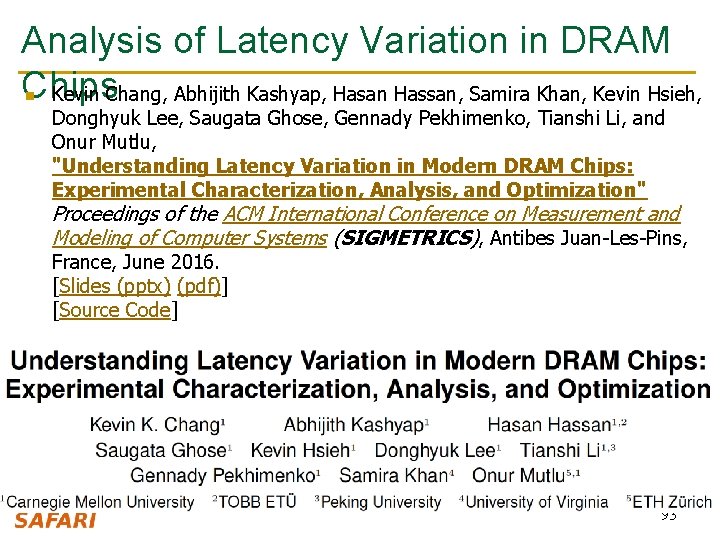

Analysis of Latency Variation in DRAM Chips
• Kevin Chang, Abhijith Kashyap, Hasan Hassan, Samira Khan, Kevin Hsieh, Donghyuk Lee, Saugata Ghose, Gennady Pekhimenko, Tianshi Li, and Onur Mutlu, "Understanding Latency Variation in Modern DRAM Chips: Experimental Characterization, Analysis, and Optimization," Proceedings of the ACM International Conference on Measurement and Modeling of Computer Systems (SIGMETRICS), Antibes Juan-Les-Pins, France, June 2016.


We did not cover the following slides in lecture. These are for your benefit.

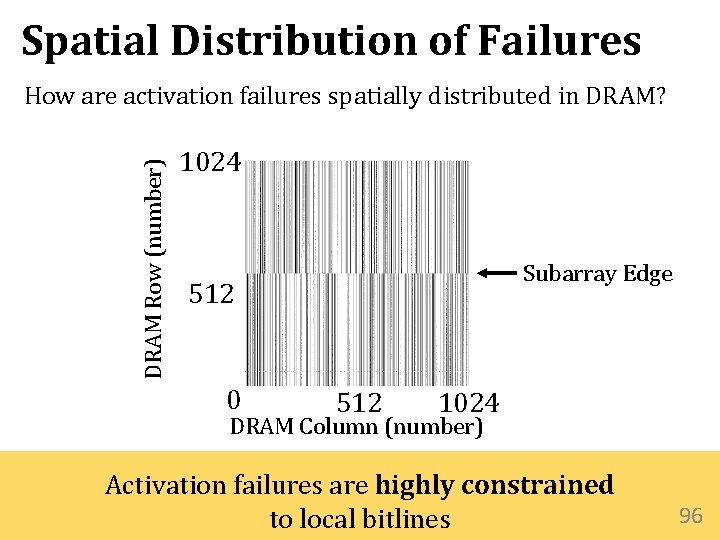

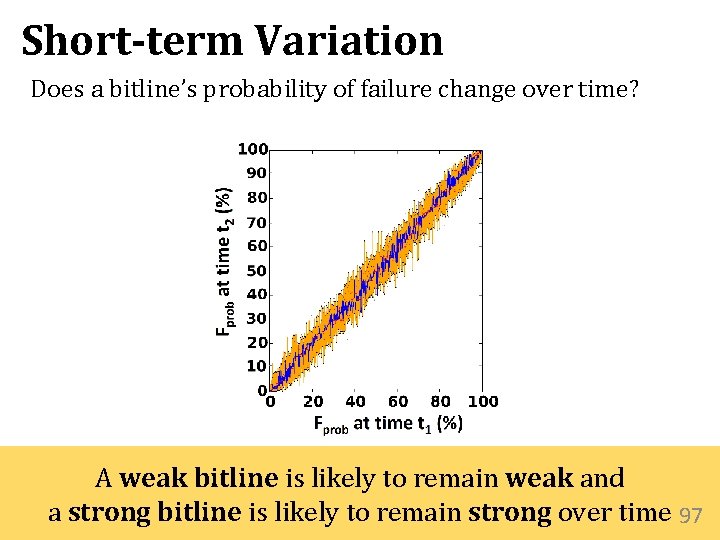

Spatial Distribution of Failures: How are activation failures spatially distributed in DRAM? (plot: DRAM row number, 0 to 1024, versus DRAM column number, 0 to 1024, with a subarray edge marked at row 512). Activation failures are highly constrained to local bitlines

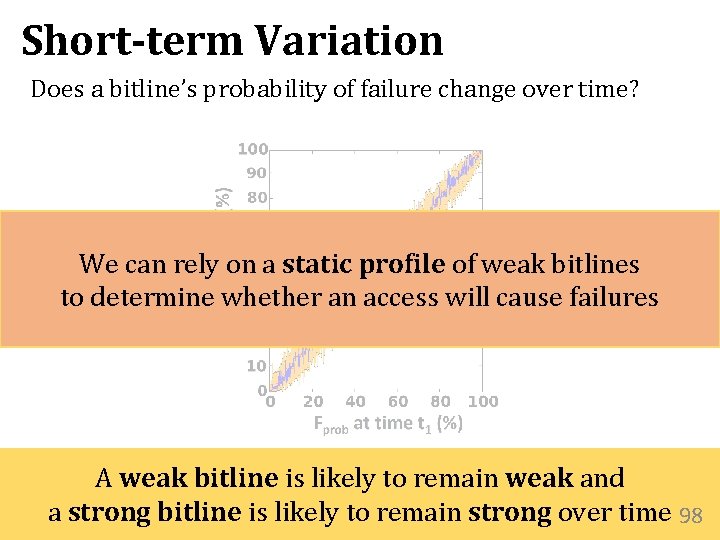

Short-term Variation: Does a bitline’s probability of failure change over time?
• A weak bitline is likely to remain weak and a strong bitline is likely to remain strong over time
• We can rely on a static profile of weak bitlines to determine whether an access will cause failures

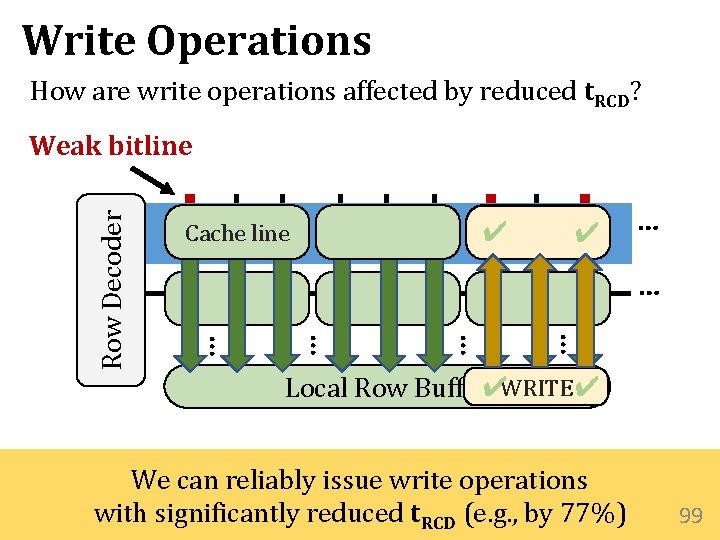

Write Operations: How are write operations affected by reduced tRCD? (figure: a WRITE to a cache line succeeds on both strong and weak bitlines; labels: row decoder, weak bitline, local row buffer). We can reliably issue write operations with significantly reduced tRCD (e.g., by 77%)

Solar-DRAM
Uses a static profile of weak subarray columns
• Identifies subarray columns as weak or strong
• Obtained in a one-time profiling step
Three Components
1. Variable-latency cache lines (VLC)
2. Reordered subarray columns (RSC)
3. Reduced latency for writes (RLW)


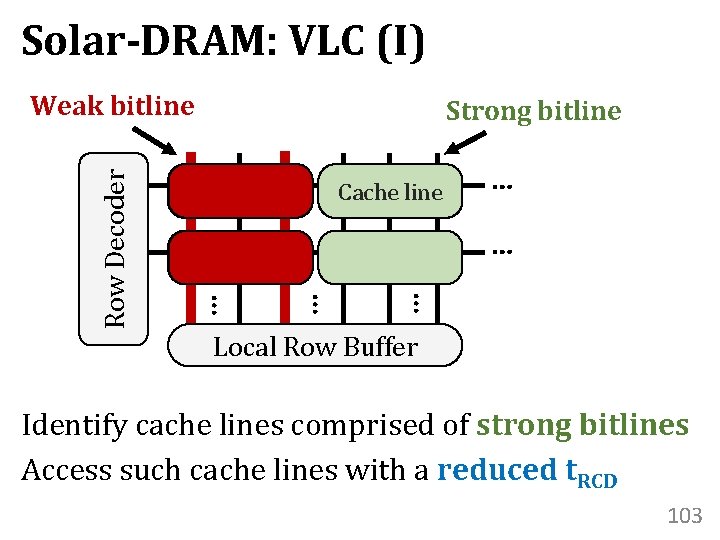

Solar-DRAM: VLC (I)
• Identify cache lines comprised of strong bitlines
• Access such cache lines with a reduced tRCD
(figure labels: row decoder, strong bitline, weak bitline, cache line, local row buffer)
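
The VLC decision can be sketched as a check against the static profile. The bitline-to-cache-line mapping, the profile contents, both tRCD values, and the function names are hypothetical and serve only to illustrate the decision the slide describes.

```python
from typing import Set

BITLINES_PER_CACHE_LINE = 512  # assumed: a 64-byte cache line spans 512 bitlines
DEFAULT_TRCD_NS = 13.0         # hypothetical standard value
REDUCED_TRCD_NS = 10.0         # hypothetical reduced value

# Hypothetical static profile of weak bitlines from the one-time profiling step.
weak_bitlines: Set[int] = {700, 701, 5000}


def is_strong_cache_line(line_index: int) -> bool:
    """A cache line is strong only if none of its bitlines are weak."""
    start = line_index * BITLINES_PER_CACHE_LINE
    end = start + BITLINES_PER_CACHE_LINE
    return not any(start <= bitline < end for bitline in weak_bitlines)


def trcd_for_read(line_index: int) -> float:
    """Use the reduced tRCD only for cache lines made of strong bitlines."""
    if is_strong_cache_line(line_index):
        return REDUCED_TRCD_NS
    return DEFAULT_TRCD_NS


print(trcd_for_read(0))  # no weak bitlines in 0..511
print(trcd_for_read(1))  # bitlines 700 and 701 fall in 512..1023
```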


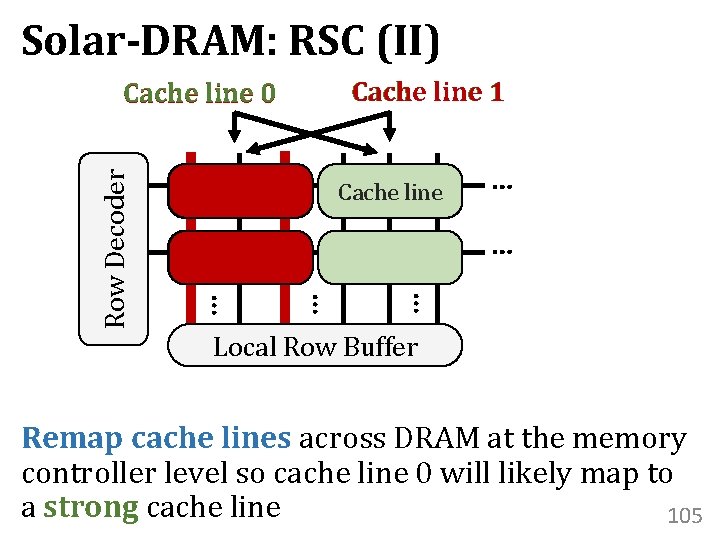

Solar-DRAM: RSC (II)
• Remap cache lines across DRAM at the memory controller level so cache line 0 will likely map to a strong cache line
(figure labels: row decoder, cache line 0, cache line 1, local row buffer)


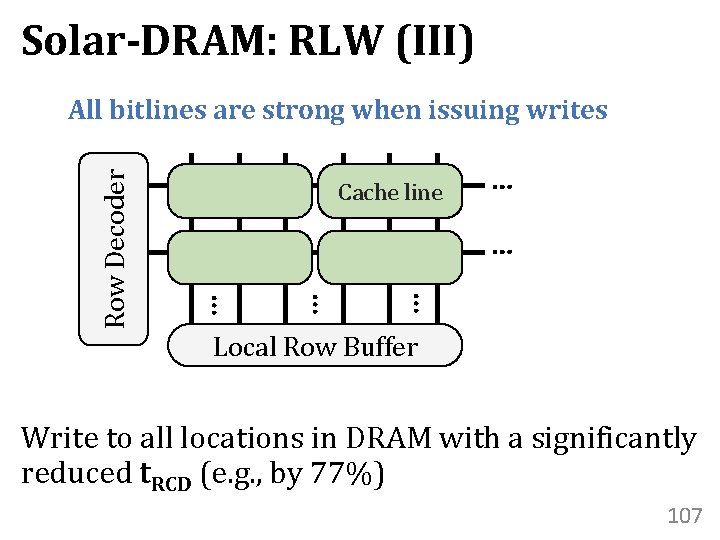

Solar-DRAM: RLW (III)
• All bitlines are strong when issuing writes
• Write to all locations in DRAM with a significantly reduced tRCD (e.g., by 77%)
(figure labels: row decoder, cache line, local row buffer)

More on Solar-DRAM
• Jeremie S. Kim, Minesh Patel, Hasan Hassan, and Onur Mutlu, "Solar-DRAM: Reducing DRAM Access Latency by Exploiting the Variation in Local Bitlines," Proceedings of the 36th IEEE International Conference on Computer Design (ICCD), Orlando, FL, USA, October 2018.

Why Is There Spatial Latency Variation Within a Chip?

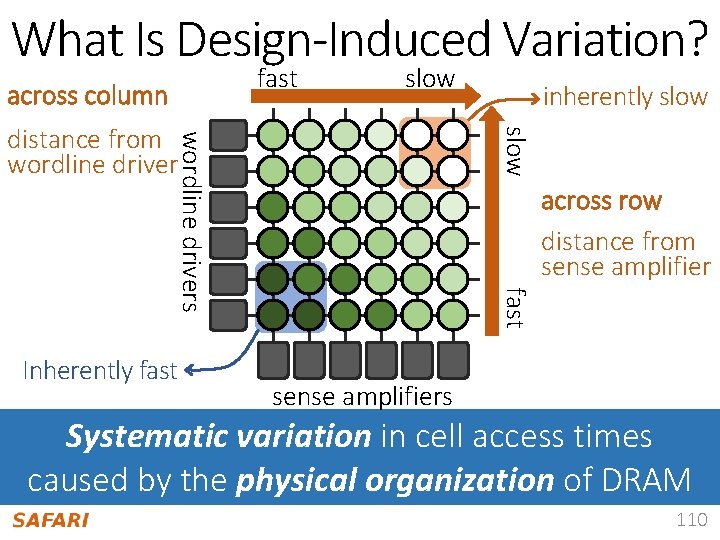

What Is Design-Induced Variation?
• Across column: distance from wordline driver
• Across row: distance from sense amplifier
• Some cells are inherently fast and others inherently slow (figure labels: wordline drivers, sense amplifiers, fast, slow)
Systematic variation in cell access times caused by the physical organization of DRAM

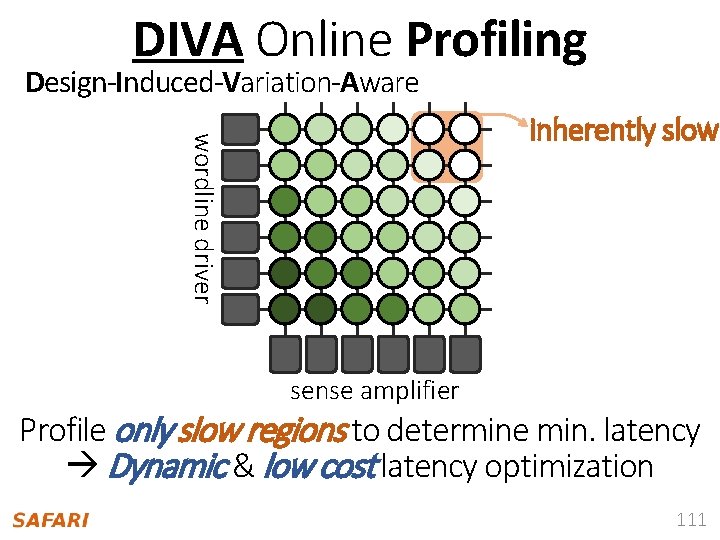

DIVA Online Profiling (Design-Induced-Variation-Aware)
• Profile only slow regions to determine min. latency → Dynamic & low cost latency optimization
(figure labels: wordline driver, sense amplifier, inherently slow region)

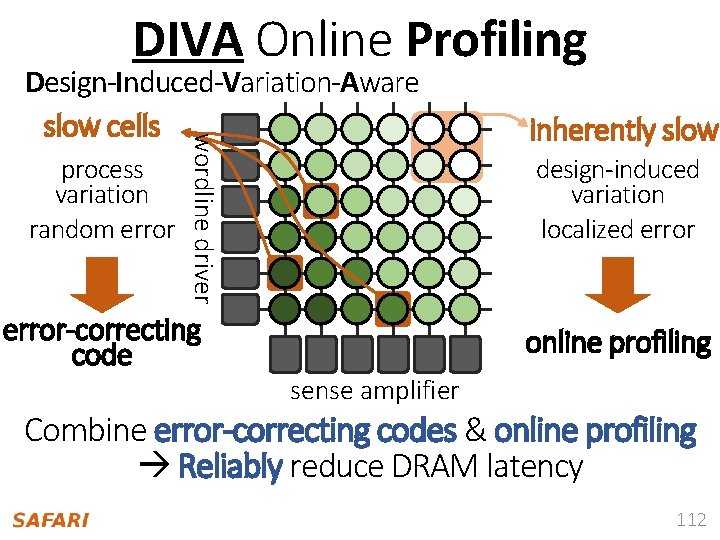

DIVA Online Profiling (Design-Induced-Variation-Aware)
• Design-induced variation → inherently slow cells → localized error → online profiling
• Process variation → slow cells → random error → error-correcting code
• Combine error-correcting codes & online profiling → Reliably reduce DRAM latency
(figure labels: wordline driver, sense amplifier)

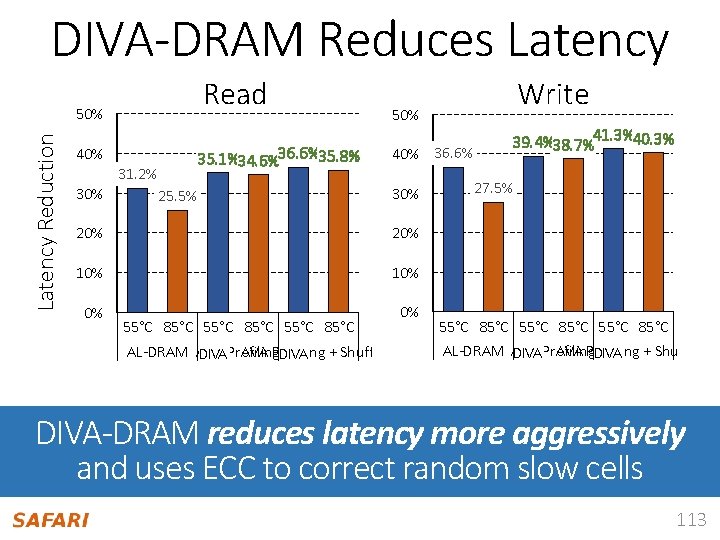

DIVA-DRAM Reduces Latency (figure: read and write latency reduction, 0% to 50%, at 55°C and 85°C for AL-DRAM, AVA Profiling, and DIVA Profiling + Shuffling; read data labels: 31.2%, 35.1%, 34.6%, 36.6%, 35.8%, 25.5%; write data labels: 36.6%, 39.4%, 38.7%, 41.3%, 40.3%, 27.5%). DIVA-DRAM reduces latency more aggressively and uses ECC to correct random slow cells

DIVA-DRAM: Advantages & Disadvantages
• Advantages
 + Automatically finds the lowest reliable operating latency at system runtime (lower production-time testing cost)
 + Reduces latency more than prior methods (w/ ECC)
 + Reduces latency at high temperatures as well
• Disadvantages
 - Requires knowledge of inherently-slow regions
 - Requires ECC (Error Correcting Codes)
 - Imposes overhead during runtime profiling

Design-Induced Latency Variation in DRAM
• Donghyuk Lee, Samira Khan, Lavanya Subramanian, Saugata Ghose, Rachata Ausavarungnirun, Gennady Pekhimenko, Vivek Seshadri, and Onur Mutlu, "Design-Induced Latency Variation in Modern DRAM Chips: Characterization, Analysis, and Latency Reduction Mechanisms," Proceedings of the ACM International Conference on Measurement and Modeling of Computer Systems (SIGMETRICS), Urbana-Champaign, IL, USA, June 2017.
