Chip Architectures: Design Rationale By Joe Peric

Rationale
- Performance: We don’t want slow computers
- Cost: They have to be affordable
- Money: How much cash to design and test?
- Time: How much time do we have?
- Target Market: Who will want it?
- Competition: What do we do to beat the competition, and what are they doing?

Problems in Delivering Solutions
- Clock frequency
- Core efficiency
- Processor complexity
- Power and heat
- Old mindsets in new times
- Communicating with the rest of the computer
- How do we build parallel computers?

Clock frequency
- Increasing clock frequency generally decreases latency and increases throughput, provided memory and the rest of the system can keep up
- It is the simplest way to increase processor performance
- Essentially the “heartbeat” of a processor
- How it is viewed by different parties

Core Efficiency
- More core efficiency = less wastefulness
- Increasing core efficiency leads to more complex cores
- The increasing complexity could potentially make the cores less efficient
- “Useful” execution per unit time is a decent measure of efficiency
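
The two slides above can be tied together with the textbook execution-time model: time = instructions / (IPC × clock). The sketch below is only an illustration under that simple model; the function name and all the numbers are made up for the example and ignore memory stalls.

```python
def execution_time_s(instructions, ipc, clock_hz):
    """Seconds to run a program under the simple model time = N / (IPC * f)."""
    return instructions / (ipc * clock_hz)

# Hypothetical workload: one billion instructions on three made-up designs.
baseline = execution_time_s(1e9, ipc=1.0, clock_hz=2e9)      # reference design
faster_clock = execution_time_s(1e9, ipc=1.0, clock_hz=3e9)  # raise the clock by 50%
better_core = execution_time_s(1e9, ipc=1.5, clock_hz=2e9)   # raise "useful" work per cycle by 50%

print(baseline, faster_clock, better_core)
```

In this model a 50% higher clock and a 50% more efficient core give the same run time, which is why the two are treated as competing ways to spend the design budget.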

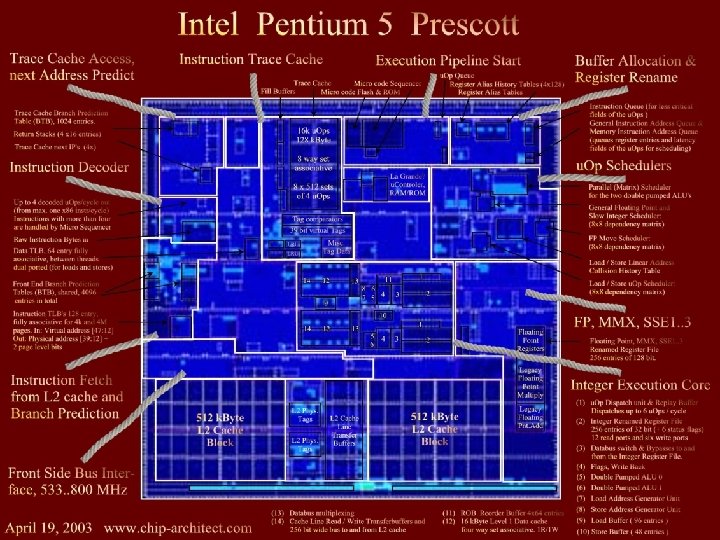

Complexity
- CPUs were originally CISC, and were complicated
- Hardware was expensive
- RISC was born to make hardware cheap by decreasing complexity
- Today’s situation: CISC/RISC hybrid with astronomical complexity

Old CPU And now…

Complexity
- Makes it harder to design, build, and test new chips
- Steeper learning curves
- Larger CPUs
- Slower, more expensive, longer time to reach market
- Things have always been moving this way but may change in the near future

CISC
- Complex instruction set computing
- CPU can handle a large range of instructions
- New instructions are added over time to new chip designs when compilers demand them
- Chips got expensive
- Many instructions weren’t used often, but were still implemented in the chips
- Chips got larger and more complex

RISC
- Reduced instruction set computing
- CISC costs increased with complexity
- RISC was to be the cheap hardware alternative
- Idea took Amdahl’s law to heart
- Increased compiler costs
- RISC computers started to become more CISC-like over time
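
As a reminder of what taking Amdahl’s law to heart means here, the sketch below computes overall speedup when only a fraction of execution time is improved. The fractions and factors are hypothetical, chosen only to contrast a rarely used instruction with a common one.

```python
def amdahl_speedup(fraction_improved, improvement_factor):
    """Overall speedup when `fraction_improved` of run time gets `improvement_factor` times faster."""
    return 1.0 / ((1.0 - fraction_improved) + fraction_improved / improvement_factor)

# Hypothetical numbers: a rare complex instruction vs. the common simple ones.
rare_case = amdahl_speedup(0.02, 10.0)   # 2% of run time made 10x faster
common_case = amdahl_speedup(0.80, 2.0)  # 80% of run time made 2x faster

print(round(rare_case, 3), round(common_case, 3))
```

Under these assumed numbers, a modest gain on the common case beats a large gain on the rare case, which is the argument for spending hardware on the simple, frequently used instructions.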

VLIW
- Very long instruction word
- Designed to remedy the problem of RISC mutating into CISC
- Puts extreme emphasis on compiler development and scheduling of code
- VLIW executes “words” made up of multiple RISC-like instructions
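
To make the compiler’s job concrete, here is a minimal sketch of greedy bundle packing. It is not how any particular VLIW compiler works: the `pack_bundles` helper, the `(dest, sources)` instruction format, and the bundle width are all assumptions for illustration, and it assumes every destination name is written only once, so only read-after-write dependences matter.

```python
def pack_bundles(instructions, width=3):
    """Greedily pack (dest, sources) instructions, given in program order, into bundles.

    An instruction is placed in the earliest bundle that comes after the
    bundles producing all of its sources and that still has a free slot.
    """
    bundles = []
    ready_at = {}  # value name -> index of the first bundle that may read it
    for dest, sources in instructions:
        slot = max((ready_at.get(s, 0) for s in sources), default=0)
        while slot < len(bundles) and len(bundles[slot]) >= width:
            slot += 1  # bundle is full, try the next one
        while len(bundles) <= slot:
            bundles.append([])
        bundles[slot].append((dest, sources))
        ready_at[dest] = slot + 1  # consumers must wait for a later bundle
    return bundles

# Hypothetical five-instruction program: c needs a and b, e needs c and d.
program = [("a", []), ("b", []), ("c", ["a", "b"]), ("d", []), ("e", ["c", "d"])]
bundles = pack_bundles(program, width=2)
for i, bundle in enumerate(bundles):
    print(i, [dest for dest, _ in bundle])
```

All of this scheduling happens before the program runs, which is where the emphasis on compiler development comes from.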

VLIW
- Didn’t catch on much
- Software development too long and slow
- Instruction-level parallelism is already achieved through current hardware
- Achieving ILP through software just makes the software more expensive

VLIW Examples
- Intel’s Itanium
- New instruction set (IA-64)
- Relied heavily on compiler optimization for an ISA that hadn’t existed before
- Huge investment and startup costs
- Itanium chips were/are expensive and provide little benefit over standard, well-established solutions

VLIW Examples

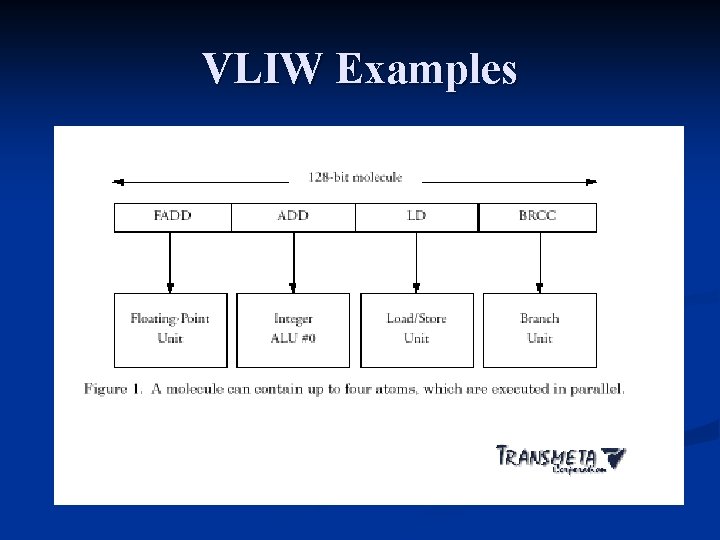

VLIW Examples
- Transmeta Crusoe
- Had its own, brand new native ISA
- Had code-morphing software that read machine code and transformed it into Crusoe ISA code in real time
- Code-morphing made this machine ISA-independent, but very slow

Power and Heat
- Not an issue ten years ago compared to today
- CPUs draw much more power than ever
- They produce more heat than ever
- How to give CPU power
- How to cool CPU
- Power to cooling

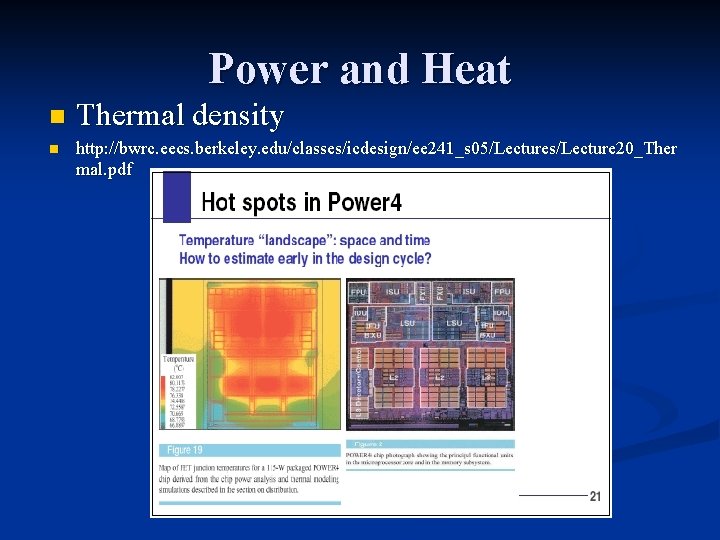

Power and Heat
- Thermal density: http://bwrc.eecs.berkeley.edu/classes/icdesign/ee241_s05/Lectures/Lecture20_Thermal.pdf

Power and Heat
- Cost to wire more pins to deliver more power
- Most pins dedicated to this

Power and Heat
- What is the problem with heat?
- How to remove it
- How to prevent it to begin with?
- Clock frequency, manufacturing processes
- Other “tricks” to decrease power usage and heat output
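
Why clock frequency and manufacturing process matter for heat can be seen from the standard first-order model of switching power, P ≈ a · C · V² · f. The sketch below uses that model only; it ignores leakage, and the capacitance, voltage, and frequency values are invented for illustration.

```python
def dynamic_power_w(capacitance_f, voltage_v, clock_hz, activity=1.0):
    """First-order switching power: activity * C * V^2 * f (leakage ignored)."""
    return activity * capacitance_f * voltage_v ** 2 * clock_hz

# Hypothetical chip: 20 nF of switched capacitance.
base = dynamic_power_w(20e-9, voltage_v=1.4, clock_hz=3.0e9)
scaled = dynamic_power_w(20e-9, voltage_v=1.2, clock_hz=2.4e9)  # lower voltage and clock

print(round(base, 1), round(scaled, 1), round(scaled / base, 2))
```

Because voltage enters squared, lowering voltage together with frequency cuts power faster than it cuts clock speed, which is one of the “tricks” in this model.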

Engineer Mentality
- Loving an idea
- Market is the plaintiff, judge, jury and executioner. Many fail to realize this
- Brand new ideas are OK but tend to fail
- Old ideas were OK but are outdated and inapplicable
- Slightly new but not radical ideas are best

Engineer Mentality
- What has the market led us to?
- x86 architecture
- 26 years of backwards compatibility in a slow, inefficient monster that stole ideas from most other design philosophies
- Started in the original IBM PC; now it’s everywhere
- Radical new designs keep losing to this beast
- Engineer mentality must disappointingly be limited by the real world and the market

Solutions that worked in the past
- Increase clock frequency
- Increase width
- Make memory faster, more integrated, more available
- OoO (out-of-order) execution
- Prediction
- Deeper, wider execution pipelines

Problems with old solutions
- Each of the past few years has seen diminishing returns from microarchitectural enhancements
- ILP and clock increases add engineering complexity and provide less and less benefit each year
- Heat and power
- Cost
- Time to market

The rest of the computer
- Processor is useless if it is outside the socket
- How does it communicate with other CPUs, memory, the rest of the computer?
- Many different approaches by different companies as to how it is done

Xeon’s bus
- Essentially the same design as the original Pentium’s bus
- Has increased in frequency and bus width
- A few electrical improvements
- Huge standardized technology; Intel is huge, so it cannot change its base technologies quickly
- Congested design

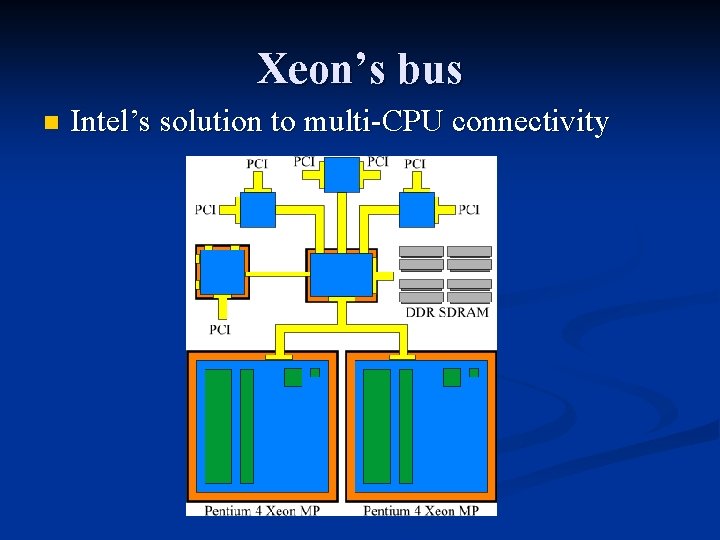

Xeon’s bus
- Intel’s solution to multi-CPU connectivity

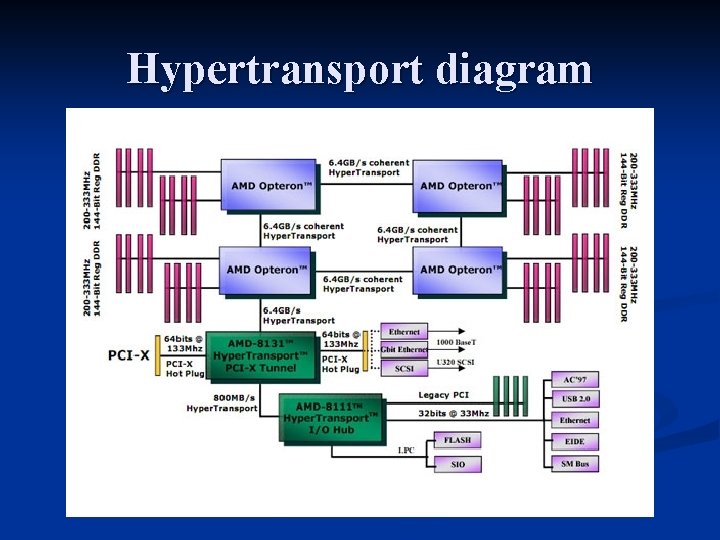

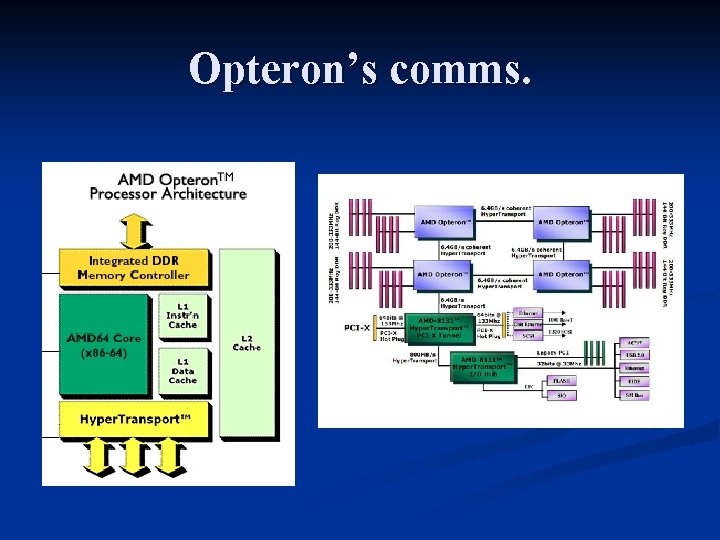

HyperTransport
- Newer than Intel’s interconnectivity approach
- So far more promising
- Open for use by anyone in the consortium
- Tries to simplify interconnections, reduce cost, and spread bandwidth over the system to avoid concentrated congestion

Hypertransport diagram

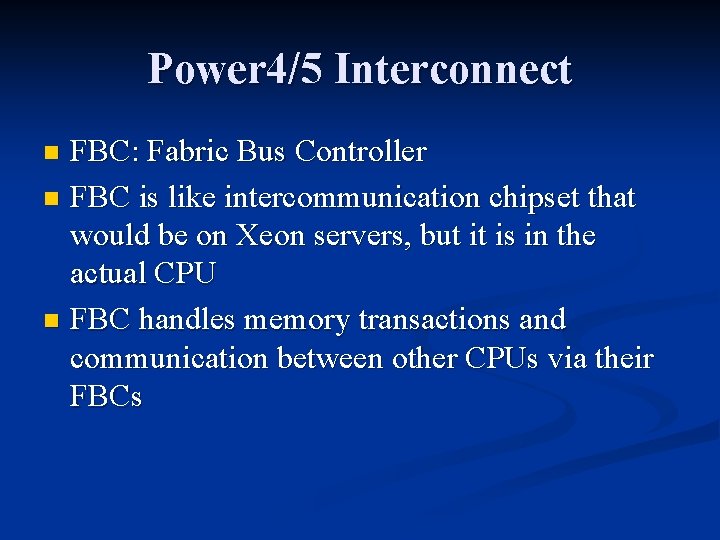

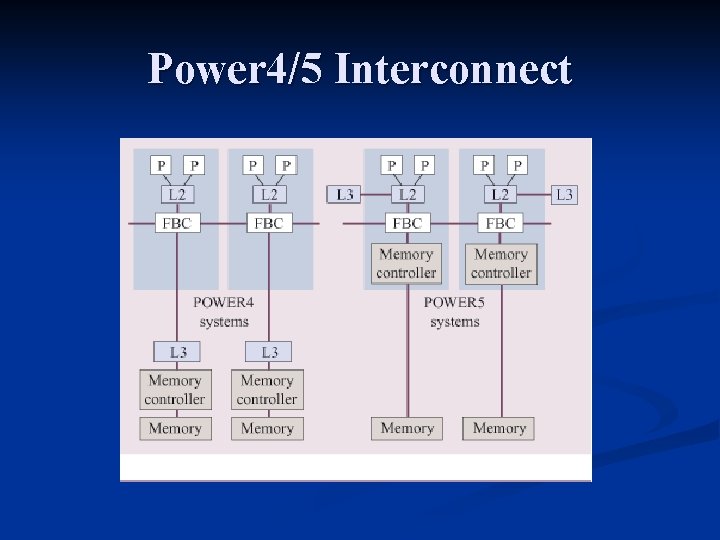

Power 4/5 Interconnect
- FBC: Fabric Bus Controller
- The FBC is like the intercommunication chipset found on Xeon servers, but it sits inside the CPU itself
- The FBC handles memory transactions and communication with other CPUs via their FBCs

Multiprocessing
- Connect multiple computers together with their own CPUs
- Beowulf clusters
- To some extent the Internet
- A LAN
- Slow connections between CPUs

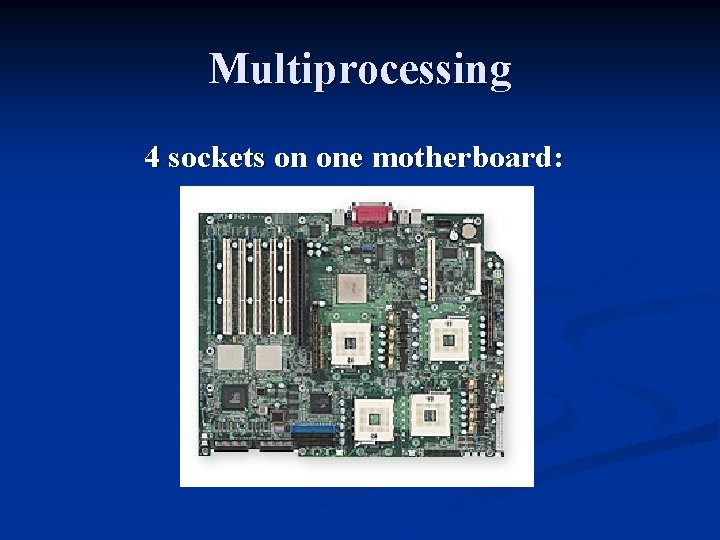

Multiprocessing
- To remedy the problem of slow, time-consuming communication, processors are put on the same circuit board
- Communication is much faster between processors and applications
- Can be expensive if you want many CPUs to be connected in this fashion

Multiprocessing 4 sockets on one motherboard:

Multiprocessing
- If the communication is still too slow and takes too long, just stick the CPUs right next to each other with no obstruction in between
- Multi-core solutions
- Quick communication between threads
- External comms. must accommodate 2 CPUs now
- Twice the space for one CPU
- If you buy two CPUs, you’re stuck with both

Multiprocessing
- The current trend in computer architecture
- Single cores have not been doubling in performance every 18 months; in the past 5 years it is closer to every 36 months (or more)
- If you want to go faster, don’t wait 18 months to double your speed. Go parallel instead
- Design one core, copy & paste
- Multiple cores can perform load balancing to help keep cool
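
The gap between the two doubling rates compounds quickly. The short calculation below compares 18-month and 36-month doubling over the 5-year window mentioned on the slide; the function name is just for illustration.

```python
def growth_factor(months, doubling_period_months):
    """Performance multiple after `months` if performance doubles every `doubling_period_months`."""
    return 2 ** (months / doubling_period_months)

five_years = 60  # months
fast = growth_factor(five_years, 18)  # the historical expectation
slow = growth_factor(five_years, 36)  # the rate the slide reports for recent single cores

print(round(fast, 1), round(slow, 1))
```

Over five years that is roughly a tenfold gain versus roughly a threefold gain, which is the shortfall that going parallel is meant to make up.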

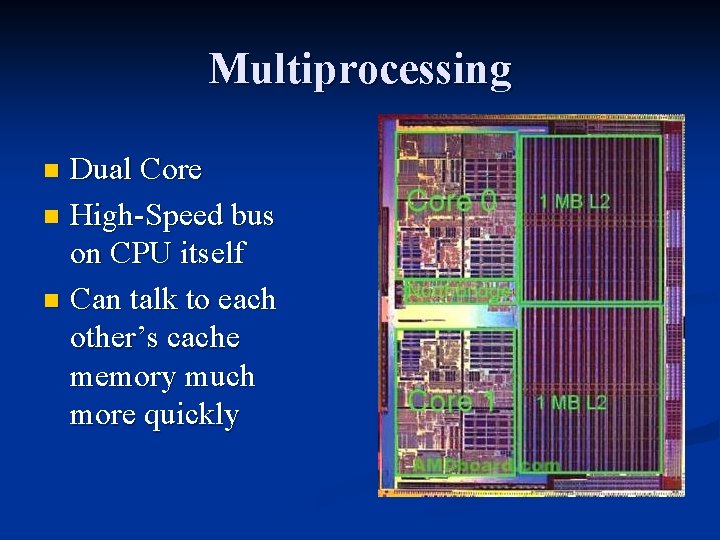

Multiprocessing
- Dual Core
- High-speed bus on the CPU itself
- Cores can reach each other’s cache memory much more quickly

Multiprocessing
- SMT: Simultaneous multithreading
- Allows each CPU core to execute multiple tasks/threads simultaneously instead of sequentially
- “Hyperthreading” is Intel’s implementation of SMT on its CPUs
- Threads communicate with each other directly, not through a high-speed interconnect

Multiprocessing
- SMT increases CPU efficiency
- One CPU pretending to be two CPUs is actually effective
- Two threads on a single core are not as fast as two threads on separate cores
- Two threads on one core must fight for / share execution resources
- SMT is actually real multitasking
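
A toy model can illustrate both claims: SMT fills issue slots a single thread would leave empty, yet two threads sharing a core still lose to two separate cores. The per-cycle demand lists and the issue width below are invented, and real cores share far more than issue slots.

```python
def issue_counts(demand_a, demand_b, width):
    """Instructions issued by thread A alone, thread B alone, and both under SMT.

    demand_a / demand_b: how many instructions each thread could issue per cycle.
    width: issue slots the core offers per cycle.
    """
    a_alone = sum(min(a, width) for a in demand_a)
    b_alone = sum(min(b, width) for b in demand_b)
    smt = sum(min(a + b, width) for a, b in zip(demand_a, demand_b))
    return a_alone, b_alone, smt

# Hypothetical 4-wide core observed for four cycles (16 issue slots in total).
a_alone, b_alone, smt = issue_counts([1, 4, 0, 2], [3, 1, 4, 2], width=4)
print(a_alone, b_alone, smt)
```

In this made-up trace one thread alone uses 7 or 10 of the 16 slots, SMT uses all 16, and two separate cores would issue 7 + 10 = 17, slightly more than the shared core.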

Microprocessors
- Intel’s Xeon
- AMD’s Opteron
- Sun’s Niagara
- IBM’s Cell
- Intel’s Terascale

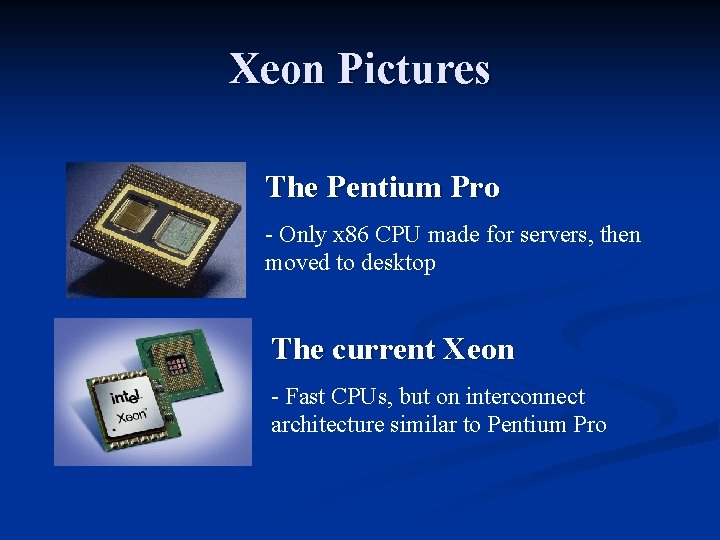

Xeon
- Intel’s workstation/server CPU
- Originally started as the Pentium Pro
- Lucrative market
- Always had weak comms. buses
- Add plenty of on-chip memory to alleviate the problem
- Xeons are Pentiums given features to work as server chips

Xeon Pictures
- The Pentium Pro: only x86 CPU made for servers, then moved to desktop
- The current Xeon: fast CPUs, but on an interconnect architecture similar to the Pentium Pro

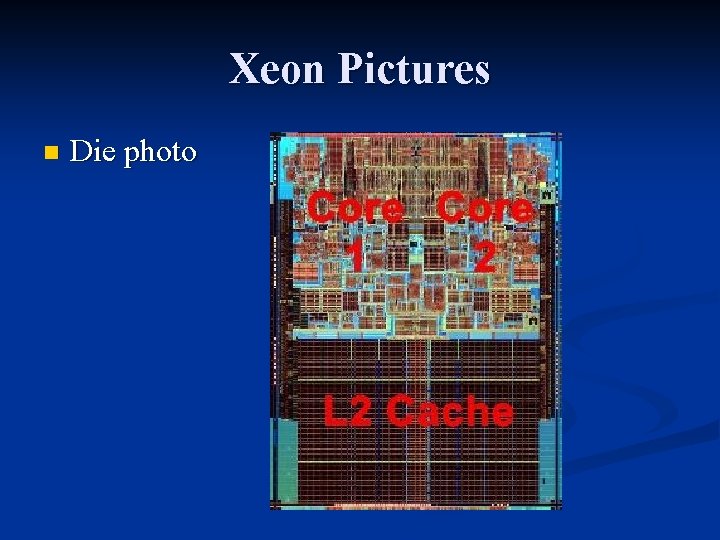

Xeon Pictures
- Die photo

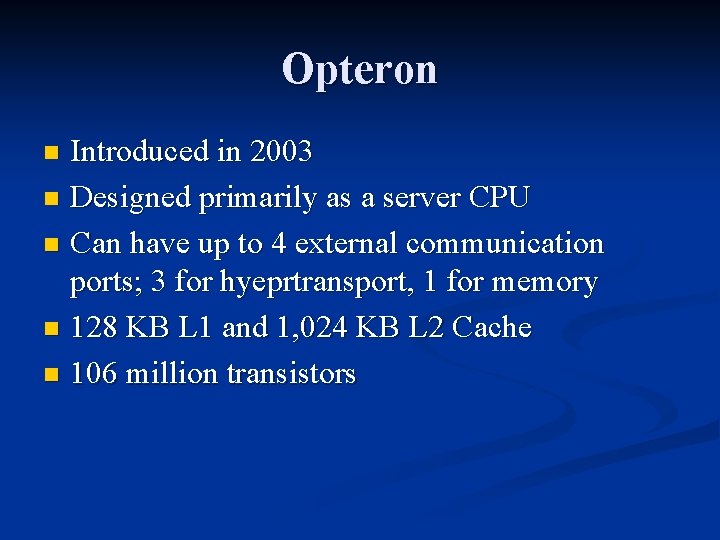

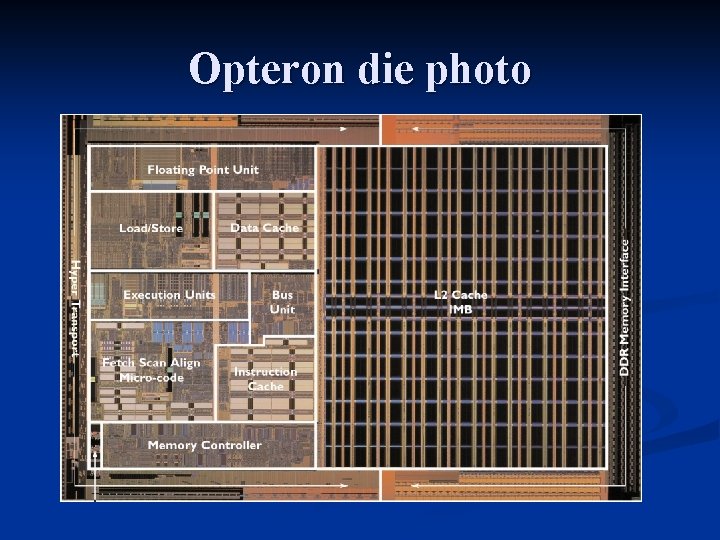

Opteron
- Introduced in 2003
- Designed primarily as a server CPU
- Can have up to 4 external communication ports: 3 for HyperTransport, 1 for memory
- 128 KB L1 and 1,024 KB L2 cache
- 106 million transistors

Opteron’s comms.

Opteron die photo

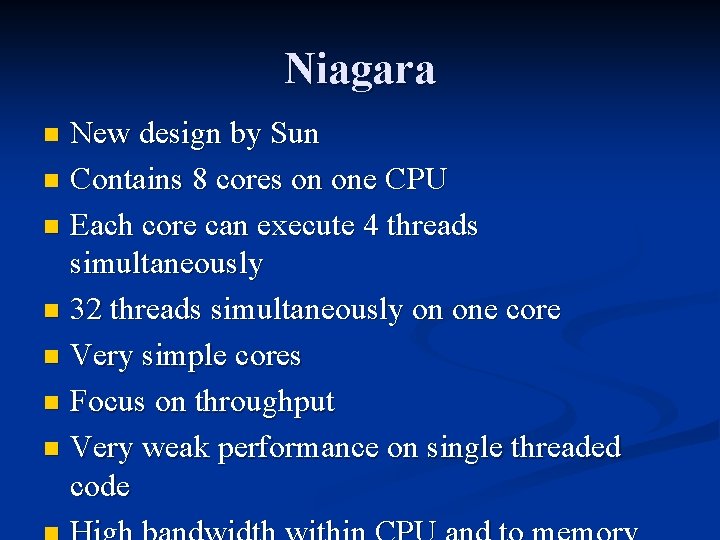

Niagara
- New design by Sun
- Contains 8 cores on one CPU
- Each core can execute 4 threads simultaneously
- 32 threads simultaneously on one chip
- Very simple cores
- Focus on throughput
- Very weak performance on single-threaded code

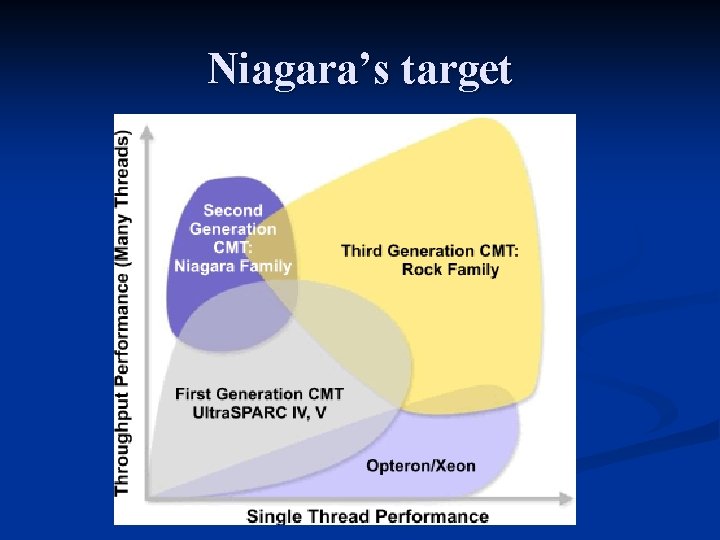

Niagara’s target

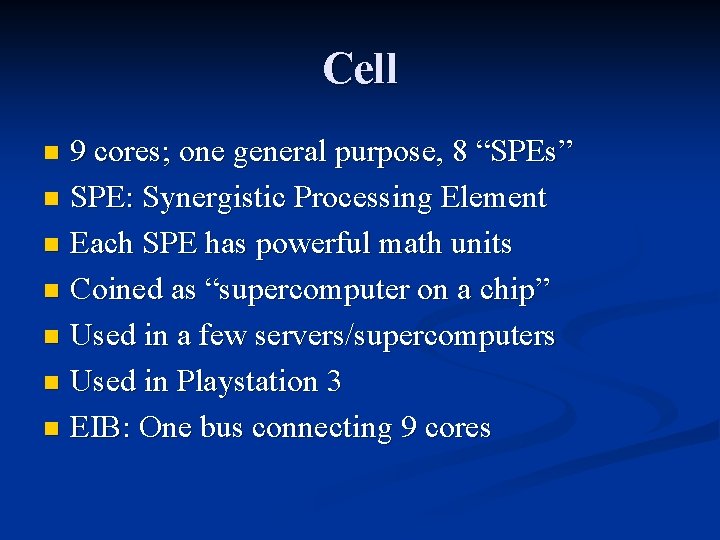

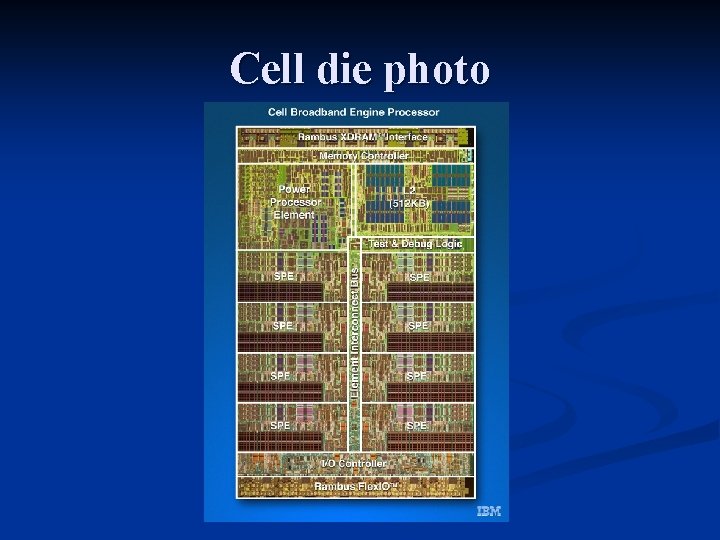

Cell
- 9 cores: one general purpose, 8 “SPEs”
- SPE: Synergistic Processing Element
- Each SPE has powerful math units
- Billed as a “supercomputer on a chip”
- Used in a few servers/supercomputers
- Used in the PlayStation 3
- EIB (Element Interconnect Bus): one bus connecting all 9 cores

Cell die photo

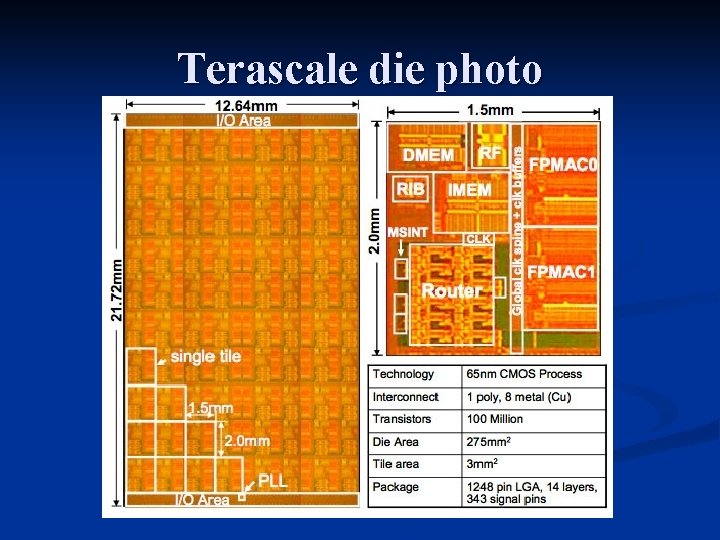

Terascale
- Proof-of-concept design
- The chip itself is toy-like with respect to real power and ISA
- Each core has a router on it
- Allows seamless addition of cores to the CPU
- Very cheap to design
- Not very effective for performance

Terascale die photo

Terascale
- Each core can communicate with its immediate neighbour on each of 4 sides
- Example chip had 80 cores
- Cores not in use decrease power consumption
- If an area of the CPU gets too hot, work done in that area is passed to other cores
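
The neighbour-only wiring can be sketched as a 2D mesh. The slides give only the core count, so the 8 × 10 layout, the row-major core numbering, and both helper functions below are assumptions for illustration.

```python
ROWS, COLS = 8, 10  # assumed layout; the slides only state that there are 80 cores

def neighbours(core):
    """Core ids directly above, below, left, and right of `core` (row-major numbering)."""
    row, col = divmod(core, COLS)
    candidates = [(row - 1, col), (row + 1, col), (row, col - 1), (row, col + 1)]
    return [r * COLS + c for r, c in candidates if 0 <= r < ROWS and 0 <= c < COLS]

def hops(src, dst):
    """Fewest neighbour-to-neighbour hops between two cores on the mesh."""
    r1, c1 = divmod(src, COLS)
    r2, c2 = divmod(dst, COLS)
    return abs(r1 - r2) + abs(c1 - c2)

print(neighbours(0), neighbours(45), hops(0, 79))
```

Cores on the edge of the mesh have fewer than four neighbours, and a message between opposite corners must pass through many routers, which hints at why such a design is cheap to extend but not automatically fast.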

The Future
- Old methods of improving performance are no longer as fruitful as they used to be
- Systems are becoming more integrated, as fewer chips are needed in a computer to perform the same functions
- Parallelism at the instruction level seems to be fully exploited by compilers and hardware
- CPUs are dealing with thermal density problems

The Future
- Moore’s law is holding and now transistor budgets are becoming more relaxed
- Cores have become ridiculously complicated
- We are now seeing limits to sequential computing at the hardware level
- Single-core performance does not look promising going into the future
- Where does this lead us?

The Future
- Parallel is promising for performance
- One simple core can be copied many times
- Most new designs have parallelism in mind already
- Software is taking its sweet time to catch up
- Programmers need software to help them parallelize their programs!
- OSes need better scheduling and allocation algorithms!

The Future
- Most current compute-intensive programs and algorithms can be parallelized
- Uses: media processing is embarrassingly parallel and obtains a near-linear performance increase with more SMT and cores
- Making programmers write parallel code could lead to better programs, or programs that scale with better hardware!
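
“Embarrassingly parallel” means the work splits into chunks that never need to talk to each other. The sketch below brightens a list of pixel values chunk by chunk; the function names and pixel data are hypothetical. It uses a thread pool only to show the structure, since in CPython a process pool would be the usual choice for real CPU-bound speedup.

```python
from concurrent.futures import ThreadPoolExecutor

def brighten(chunk, amount=10):
    """Brighten 8-bit pixel values, clamping at 255. Each pixel is independent."""
    return [min(255, p + amount) for p in chunk]

def split(pixels, parts):
    """Cut `pixels` into at most `parts` contiguous chunks."""
    size = max(1, -(-len(pixels) // parts))  # ceiling division, at least 1
    return [pixels[i:i + size] for i in range(0, len(pixels), size)]

def brighten_parallel(pixels, workers=4):
    """Process each chunk on its own worker and stitch the results back in order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        chunks = list(pool.map(brighten, split(pixels, workers)))
    return [p for chunk in chunks for p in chunk]

pixels = [0, 100, 250, 255] * 3  # made-up image data
print(brighten_parallel(pixels) == brighten(pixels))
```

Because no chunk depends on another, adding workers needs no change to `brighten` itself, which is the property that lets such workloads scale with more cores.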
