
Runtime Guarantees for Real-Time Systems
Reinhard Wilhelm, Björn Wachter
AVACS Spring School 2010

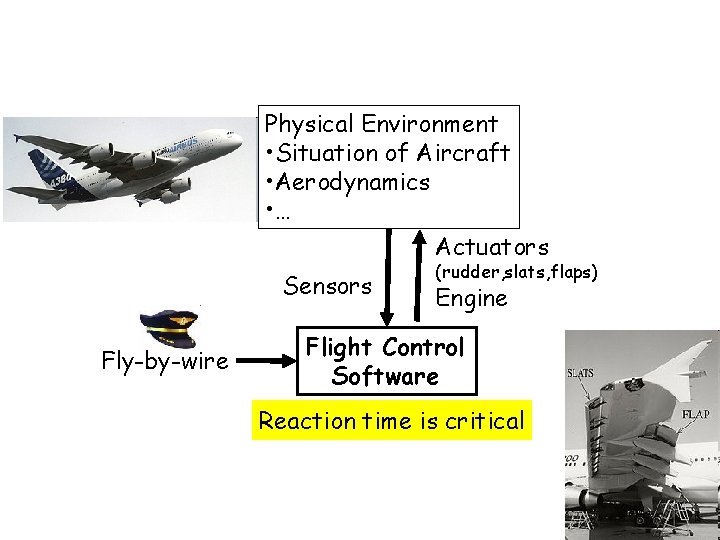

Hard Real-Time Systems
• Physical environment: situation of the aircraft, aerodynamics, …
• Sensors and actuators: fly-by-wire (rudder, slats, flaps), engine
• Flight control software
Reaction time is critical.

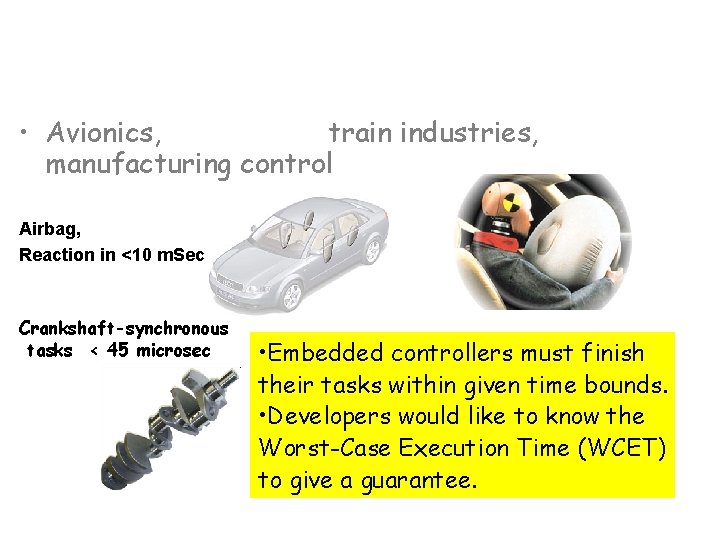

Hard Real-Time Systems
• Hard real-time systems are found in the avionics, automotive, and train industries and in manufacturing control.
  – Airbag: reaction in < 10 ms
  – Crankshaft-synchronous tasks: < 45 µs
• Embedded controllers must finish their tasks within given time bounds.
• Developers would like to know the Worst-Case Execution Time (WCET) to give a guarantee.

Overview
• The Problem
• Sketch of a Solution
• Tool Architecture
• Analyses

Static Timing Analysis - the Application Domain
The problem:
Given
1. a software expected to produce some reaction,
2. a hardware platform, on which the software runs,
3. a required reaction time.
Derive: a guarantee for timeliness.

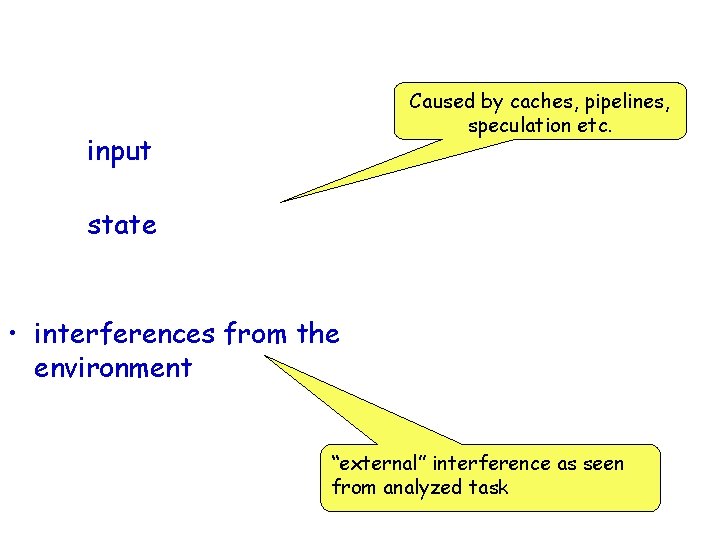

What does Execution Time Depend on?
• the input – inevitable,
• the state of the execution platform – this is (relatively) new, caused by caches, pipelines, speculation, etc.,
• interferences from the environment, i.e. “external” interference as seen from the analyzed task – in the presence of preemptive scheduling and interrupts.

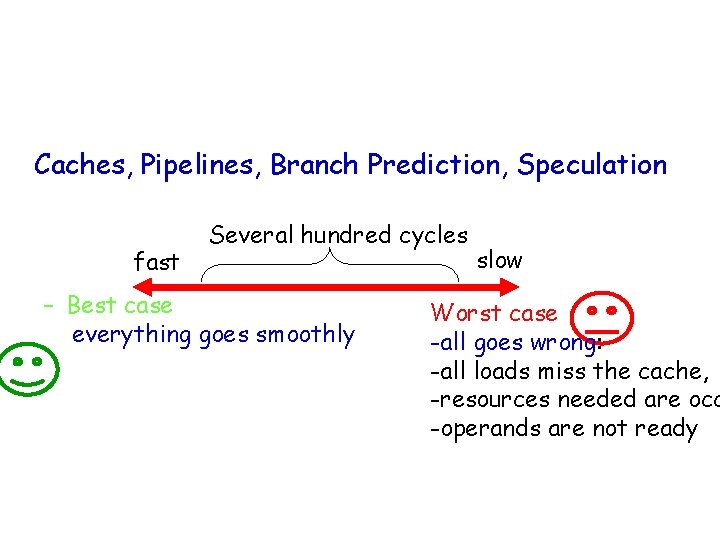

Modern Hardware Features
• Modern processors increase performance by using caches, pipelines, branch prediction, and speculation.
• These features make the computation of bounds difficult. Execution times can differ by several hundred cycles between
  – the best case (fast), where everything goes smoothly: no cache miss, operands ready, needed resources free, branch correctly predicted, and
  – the worst case (slow), where everything goes wrong: all loads miss the cache, needed resources are occupied, operands are not ready.

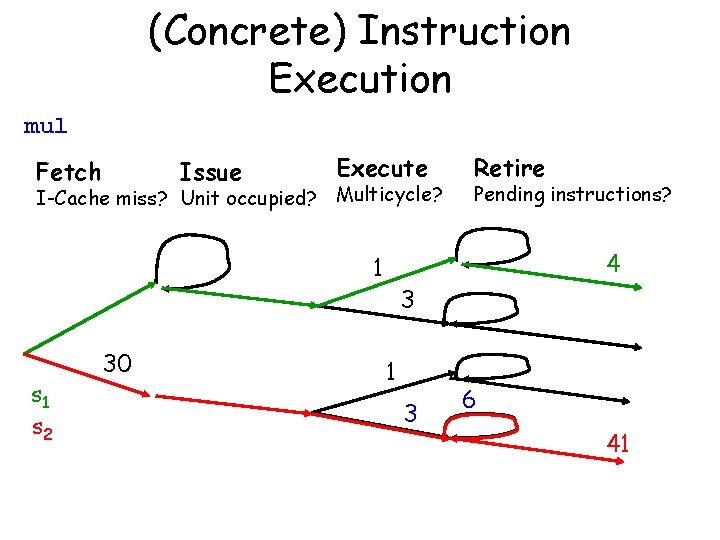

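(Concrete) Instruction Execution
(Figure: execution of a mul instruction through the stages Fetch (I-cache miss?), Issue (unit occupied?), Execute (multicycle?), and Retire (pending instructions?); the cycle count per stage depends on whether execution starts in state s1 or s2.)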

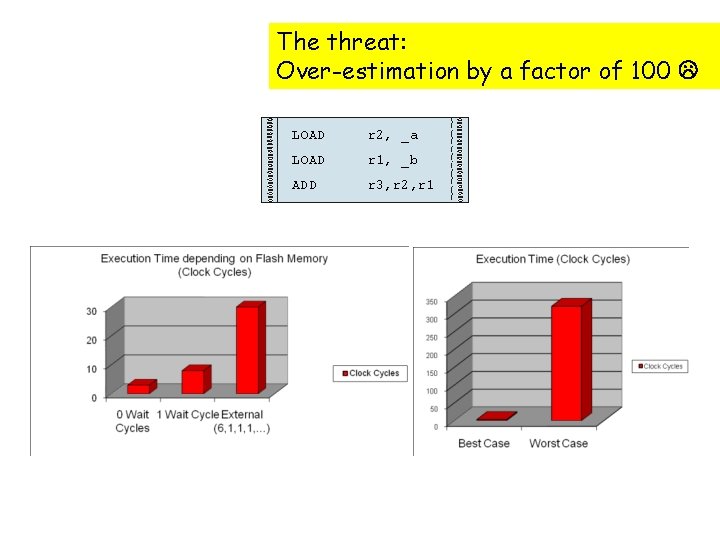

Access Times
The statement x = a + b; compiles to: LOAD r2, _a; LOAD r1, _b; ADD r3, r2, r1
(Figure: execution times of this sequence on the MPC 5xx and the PPC 755.)
The threat: over-estimation by a factor of 100.

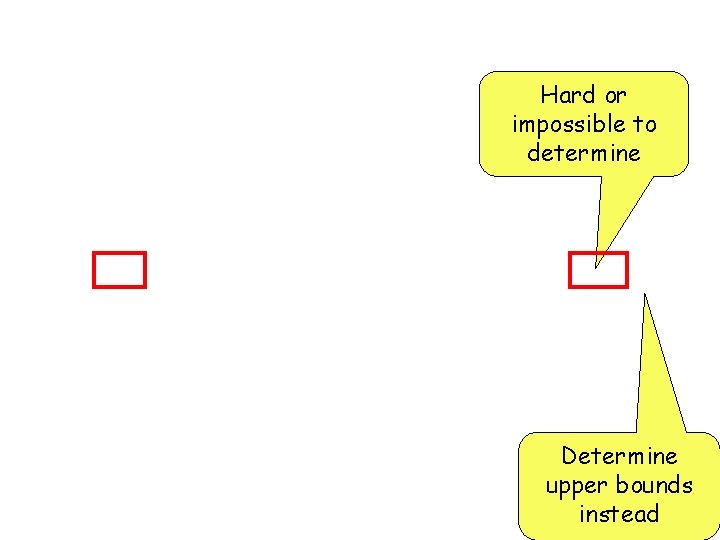

Notions in Timing Analysis
The actual worst-case execution time is hard or impossible to determine, so upper bounds are determined instead.

High-Level Requirements for Timing Analysis
• Upper bounds must be safe, i.e. not underestimated.
• Upper bounds should be tight, i.e. not far away from real execution times.
• Analogous for lower bounds.
• Analysis effort must be tolerable.
Note: all analyzed programs are terminating and loop bounds need to be known, so this is no decidability problem, but a complexity problem!

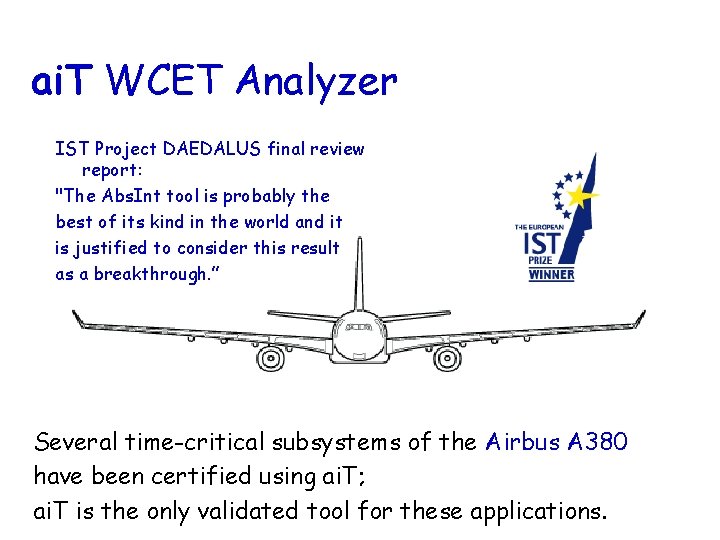

aiT WCET Analyzer
IST Project DAEDALUS final review report: "The AbsInt tool is probably the best of its kind in the world and it is justified to consider this result as a breakthrough."
Several time-critical subsystems of the Airbus A380 have been certified using aiT; aiT is the only validated tool for these applications.

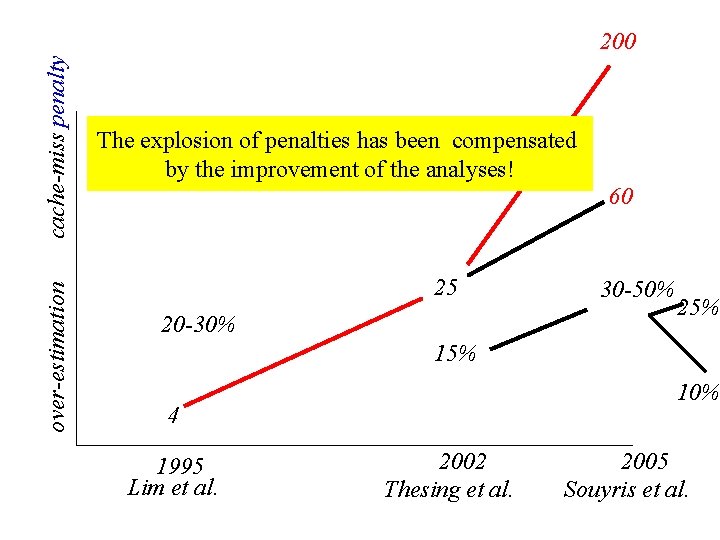

Tremendous Progress during the past 15 Years
(Figure: cache-miss penalty and over-estimation for 1995 Lim et al., 2002 Thesing et al., and 2005 Souyris et al.)
The explosion of penalties has been compensated by the improvement of the analyses!

Timing Accidents and Penalties
Timing Accident – cause for an increase of the execution time of an instruction
Timing Penalty – the associated increase
• Types of timing accidents
  – Cache misses
  – Pipeline stalls
  – Branch mispredictions
  – Bus collisions
  – Memory refresh of DRAM
  – TLB miss

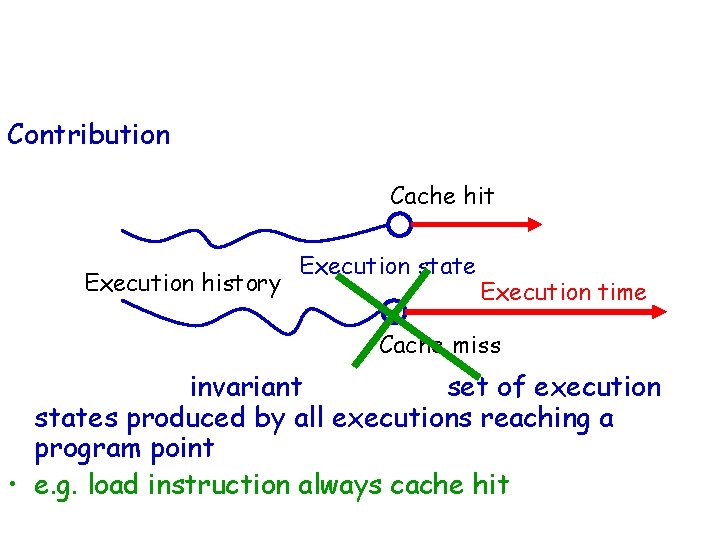

History-Sensitivity of Instruction Execution-Time
• The contribution of the execution of an instruction to a program's execution time depends on the execution state, e.g. cache hit or cache miss, and thus on the execution history.
• Needed: an invariant about the set of execution states produced by all executions reaching a program point,
• e.g. a load instruction is always a cache hit.

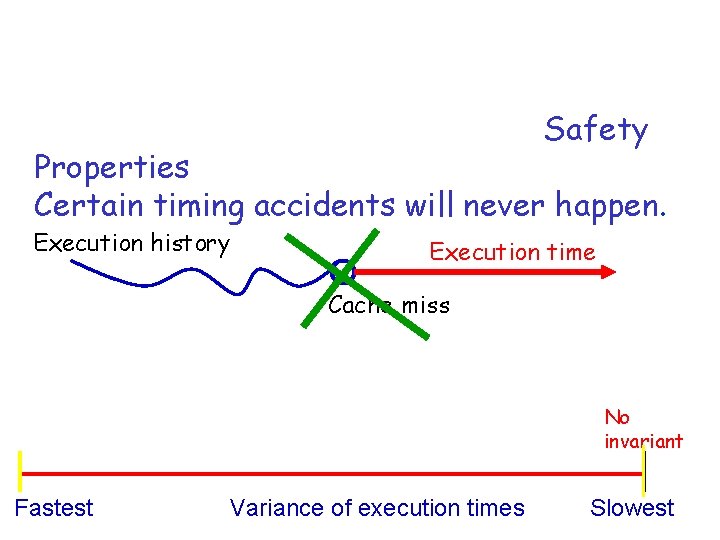

Deriving Run-Time Guarantees
• Our method and tool, aiT, derives safety properties from these invariants: certain timing accidents, e.g. a cache miss, will never happen.
• The more accidents are excluded, the lower the upper bound.
(Figure: the variance of execution times between the fastest and the slowest execution when no invariant is known.)

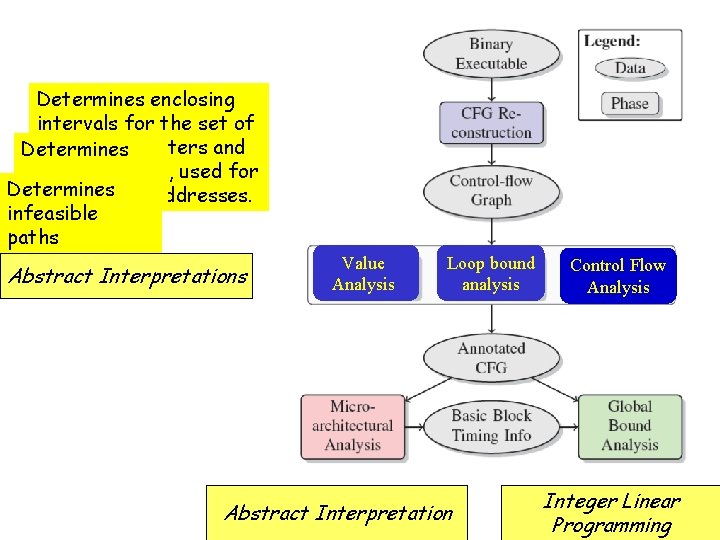

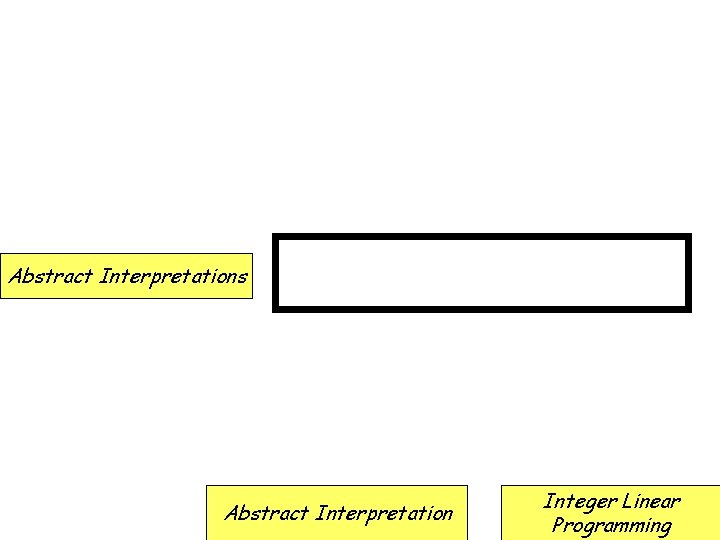

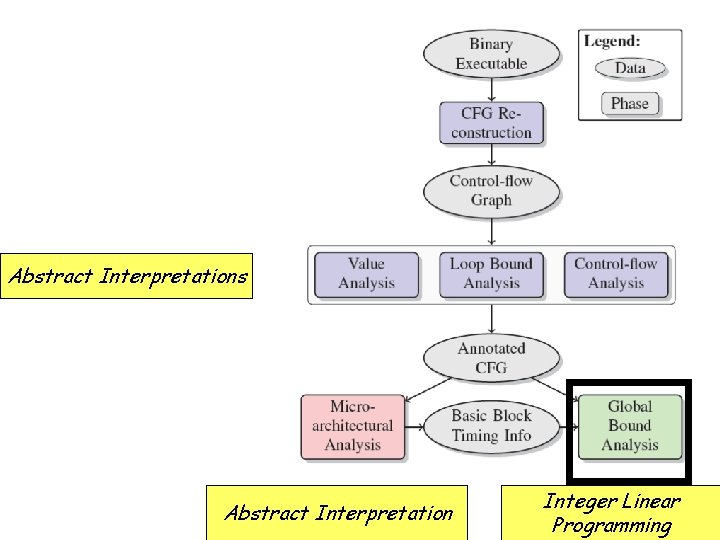

Tool Architecture
• Value analysis (abstract interpretation): determines enclosing intervals for the set of values in registers and local variables, used for determining addresses.
• Loop bound analysis (abstract interpretation): determines loop bounds.
• Control flow analysis: determines infeasible paths.
• Integer Linear Programming

Tool Architecture Abstract Interpretations Caches, Pipelines Abstract Interpretation Integer Linear Programming

Overview
• Intro (20 min)
• Analysis
  – Caches (20 min)
  – Pipelines (25 min)
  – Value Analysis (20 min)
  – IPET (5 min)
• Conclusion (5 min)

Caches: Small & Fast Memory on Chip
• Bridge the speed gap between CPU and RAM.
• Caches work well in the average case:
  – Programs access data locally (many hits).
  – Programs reuse items (instructions, data).
  – Access patterns are distributed evenly across the cache.
• Cache performance has a strong influence on system performance!
• The precision of cache analysis has a strong influence on the degree of over-estimation!

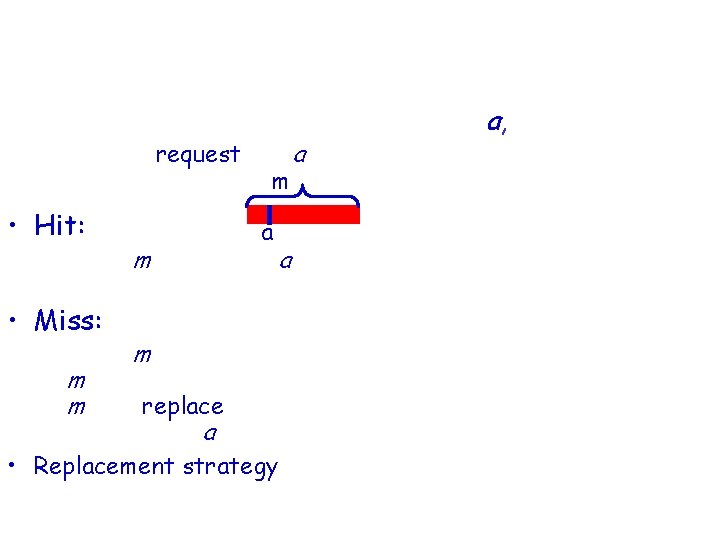

Caches: How they work
CPU: read/write at memory address a; sends a request for a to the bus.
Cases:
• Hit: block m containing a is in the cache; the request is served in the next cycle.
• Miss: block m is not in the cache; m is transferred from main memory to the cache, m may replace some block in the cache, and the request for a is served as soon as possible while the transfer still continues.
• Replacement strategy: LRU, PLRU, FIFO, ... determine which line to replace in a full cache (set).

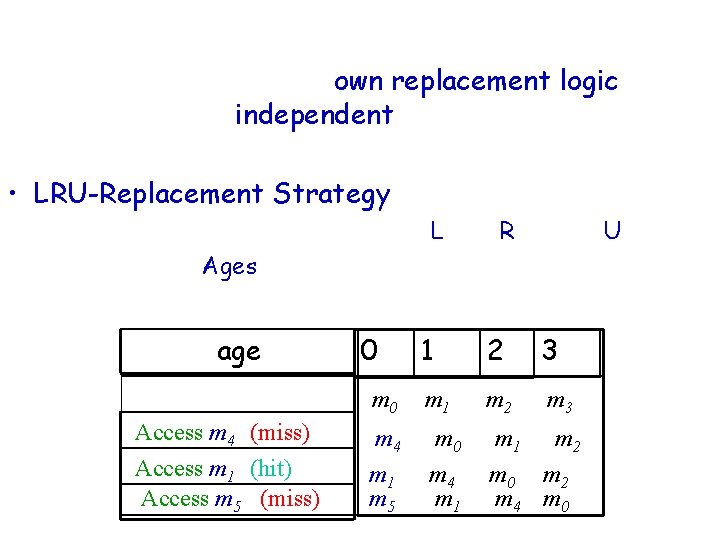

LRU Strategy
• Each cache set has its own replacement logic => cache sets are independent: everything is explained in terms of one set.
• LRU replacement strategy:
  – Replace the block that has been Least Recently Used.
  – Modeled by ages.
• Example: 4-way set associative cache, contents listed by age (0 = youngest, 3 = oldest):
  – initially: m0, m1, m2, m3
  – after access to m4 (miss): m4, m0, m1, m2
  – after access to m1 (hit): m1, m4, m0, m2
  – after access to m5 (miss): m5, m1, m4, m0
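The age-based LRU model can be sketched in a few lines of Python. This is a minimal illustration, not the tool's implementation; the function name `lru_access` and the list-by-age representation are assumptions made for this example.

```python
def lru_access(cache_set, block):
    """Return the contents of one LRU cache set after accessing `block`.

    `cache_set` lists blocks by age: index 0 is the youngest, the last
    index the oldest. The list length is the associativity.
    (Hypothetical helper for illustration only.)
    """
    if block in cache_set:
        # Hit: the block becomes youngest; only younger blocks age by one.
        remaining = [b for b in cache_set if b != block]
    else:
        # Miss: the oldest block is evicted, every other block ages by one.
        remaining = cache_set[:-1]
    return [block] + remaining


cache = ["m0", "m1", "m2", "m3"]
for access in ["m4", "m1", "m5"]:
    cache = lru_access(cache, access)
    print(access, cache)
```

Running the loop replays the three accesses of the 4-way example, one line per access.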

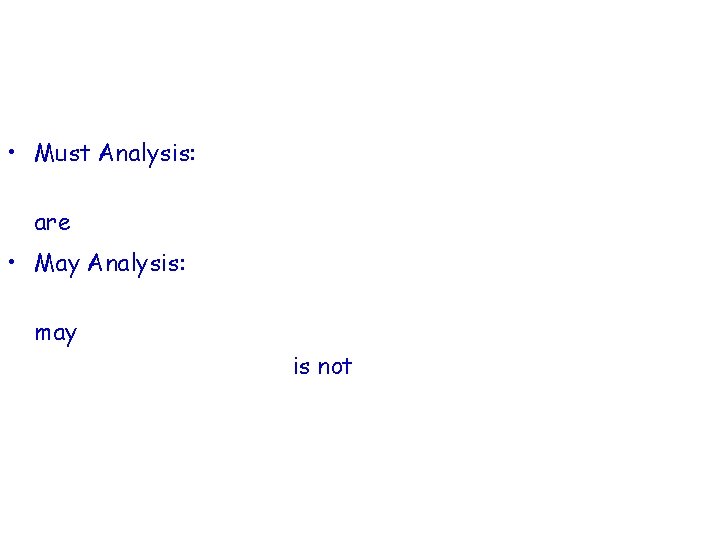

Cache Analysis
How to statically precompute cache contents:
• Must analysis: for each program point (and context), find out which blocks are in the cache. This gives a prediction of cache hits.
• May analysis: for each program point (and context), find out which blocks may be in the cache. The complement says what is not in the cache, which gives a prediction of cache misses.

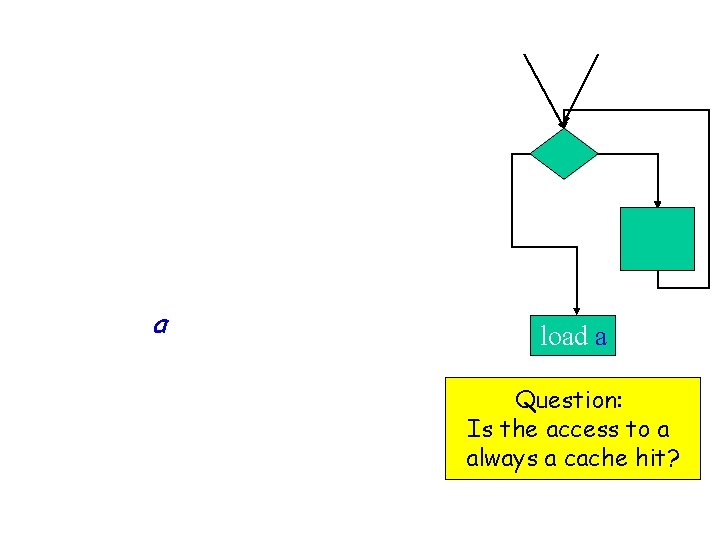

(Must) Cache Analysis
• Consider one instruction in the program, e.g. load a.
• There may be many paths leading to this instruction.
• How can we compute whether a will always be in the cache, independently of which path execution takes?
Question: Is the access to a always a cache hit?

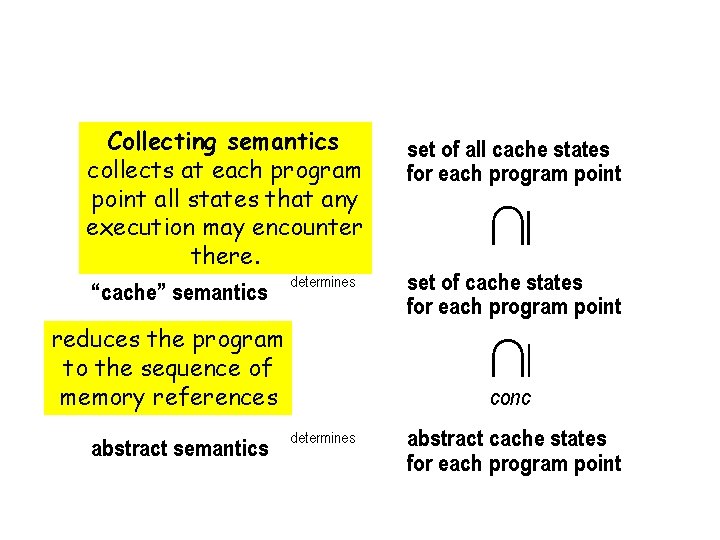

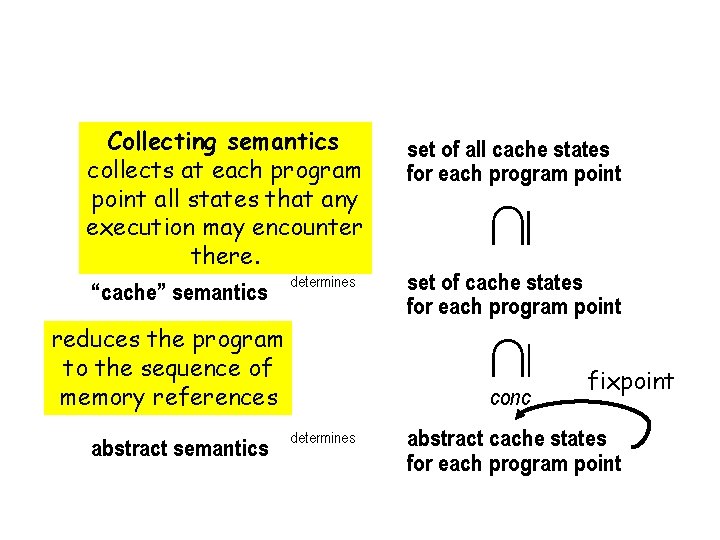

Cache Analysis: Over-approximation of the Collecting Semantics
• The semantics determines the collecting semantics, which collects at each program point all states that any execution may encounter there.
• The “cache” semantics reduces the program to the sequence of memory references; it determines the set of all cache states for each program point.
• The abstract semantics determines abstract cache states for each program point; their concretization (conc) is a set of cache states for each program point.

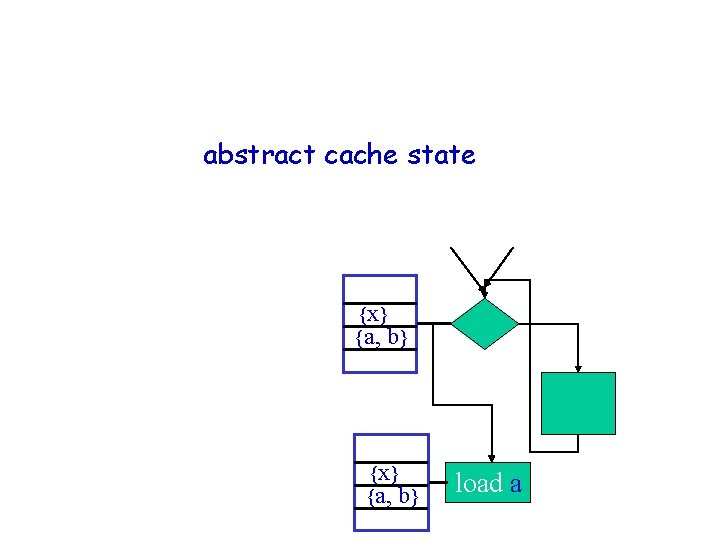

Cache Analysis – how does it work?
For each program point,
• compute an abstract cache state,
• which represents the set of memory blocks guaranteed to be in the cache each time execution reaches this program point.
(Figure: an abstract cache state with entries {x} and {a, b} before the instruction load a.)

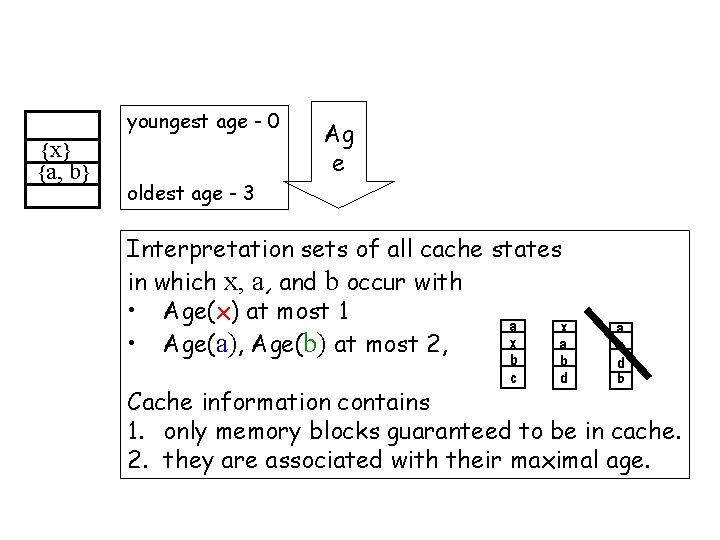

Abstract Cache
An abstract cache holds one set of memory blocks per age, from the youngest age 0 to the oldest age 3. Example (ages 0 to 3): {}, {x}, {a, b}, {}.
Interpretation: the set of all cache states in which x, a, and b occur with
• Age(x) at most 1,
• Age(a), Age(b) at most 2.
Cache information contains
1. only memory blocks guaranteed to be in cache,
2. associated with their maximal age.

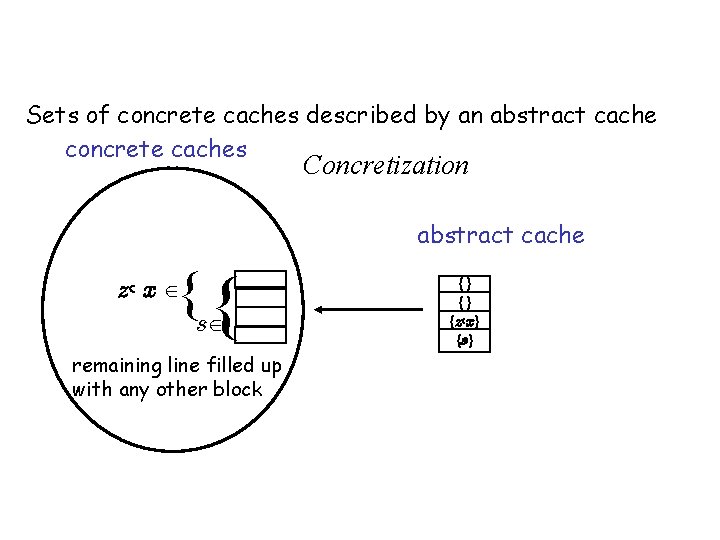

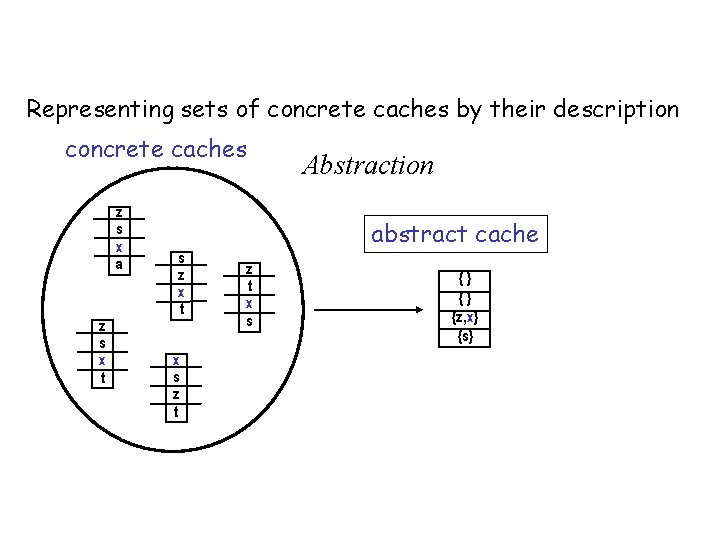

Abstract Domain: Must Cache
Concretization: the set of concrete caches described by an abstract cache.
• Abstract cache (ages 0 to 3): {}, {}, {z, x}, {s}
• Concrete caches: z and x occur with age at most 2 and s with age at most 3; the remaining line is filled up with any other block.
Abstraction and concretization form a Galois connection.

Abstract Domain: Must Cache
Abstraction: representing sets of concrete caches by their description.
• Concrete caches (listed from age 0 to age 3): z s x a; z s x t; s z x t; x s z t; z t x s
• Abstract cache (ages 0 to 3): {}, {}, {z, x}, {s}

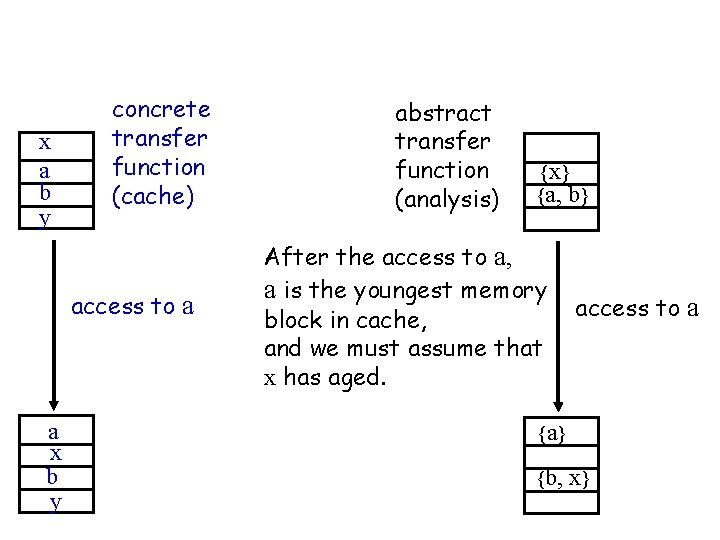

(Must) Cache analysis of a memory access with LRU replacement
• Concrete transfer function (cache): an access to a turns the cache x, a, b, y (ages 0 to 3) into a, x, b, y.
• Abstract transfer function (analysis): an access to a turns the abstract cache {}, {x}, {a, b}, {} (ages 0 to 3) into {a}, {}, {b, x}, {}.
After the access to a, a is the youngest memory block in the cache, and we must assume that x has aged.
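The abstract transfer function for a must cache under LRU can be sketched as follows. This is a minimal sketch under the assumption that an abstract cache is a list of sets indexed by maximal age; the name `must_update` is hypothetical and not taken from any tool.

```python
def must_update(abstract, block):
    """Abstract LRU update for a must cache (illustrative sketch).

    `abstract[i]` holds the blocks whose maximal age is i.
    Returns the abstract cache after an access to `block`.
    """
    ways = len(abstract)
    # Age bound of the accessed block; `ways` if it is not guaranteed cached.
    h = next((i for i, s in enumerate(abstract) if block in s), ways)
    new = [set() for _ in range(ways)]
    new[0] = {block}                      # accessed block becomes youngest
    for i in range(1, ways):
        if i < h:
            new[i] = set(abstract[i - 1])  # younger blocks age by one
        elif i == h:
            # blocks of age h-1 age into h; other blocks of age h stay
            new[i] = set(abstract[i - 1]) | (abstract[i] - {block})
        else:
            new[i] = set(abstract[i])      # older blocks keep their bound
    return new


before = [set(), {"x"}, {"a", "b"}, set()]
print(must_update(before, "a"))
```

If the accessed block is not in the abstract cache, every set shifts by one age and the oldest set is dropped, which mirrors a possible eviction.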

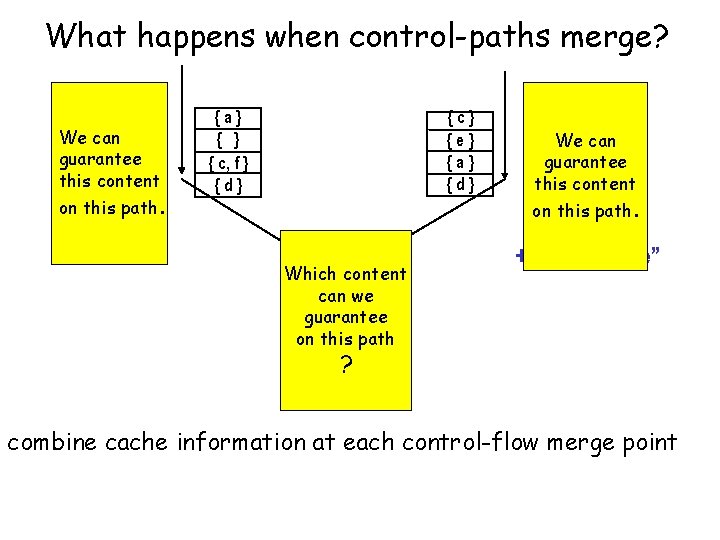

What happens when control paths merge?
• On one path we can guarantee this content (ages 0 to 3): {c}, {e}, {a}, {d}
• On the other path we can guarantee this content: {a}, {}, {c, f}, {d}
• Which content can we guarantee after the merge? {}, {}, {a, c}, {d}
Combine cache information at each control-flow merge point: “intersection + maximal age”.
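The “intersection + maximal age” rule can be written down directly. The sketch below assumes the same list-of-sets representation of abstract must caches as used in the slide (index = maximal age); `must_join` is a hypothetical name.

```python
def must_join(left, right):
    """Join two abstract must caches at a control-flow merge (sketch).

    Keeps only blocks guaranteed on both paths (intersection) and
    assigns each of them the larger of its two age bounds.
    """
    ways = len(left)
    age_left = {b: i for i, s in enumerate(left) for b in s}
    age_right = {b: i for i, s in enumerate(right) for b in s}
    joined = [set() for _ in range(ways)]
    for block in age_left.keys() & age_right.keys():
        joined[max(age_left[block], age_right[block])].add(block)
    return joined


path1 = [{"c"}, {"e"}, {"a"}, {"d"}]
path2 = [{"a"}, set(), {"c", "f"}, {"d"}]
print(must_join(path1, path2))
```

Blocks e and f are dropped because each is guaranteed on only one path.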

Cache Analysis: Over-approximation of the Collecting Semantics
• The semantics determines the collecting semantics, which collects at each program point all states that any execution may encounter there.
• The “cache” semantics reduces the program to the sequence of memory references; it determines the set of all cache states for each program point.
• The abstract semantics determines, as a fixpoint, abstract cache states for each program point; their concretization (conc) is a set of cache states for each program point.

Predictability of Caches - Speed of Recovery from Uncertainty - J. Reineke et al. : Predictability of Cache Replacement Policies, Real-Time Systems, Springer, 2007

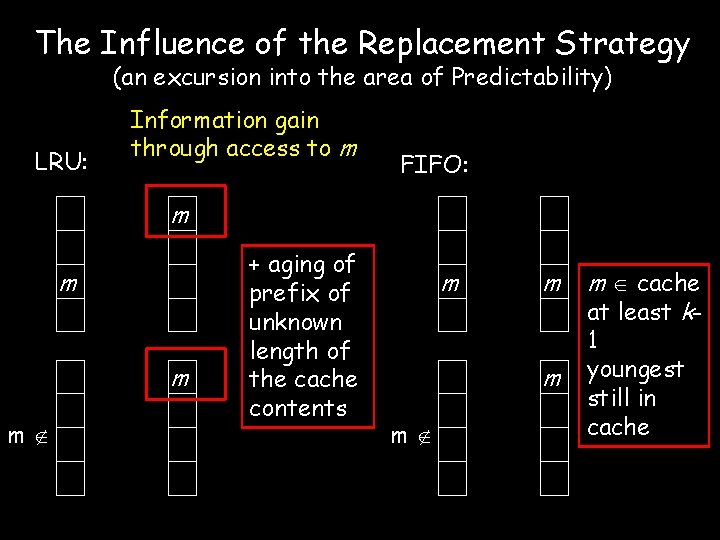

The Influence of the Replacement Strategy (an excursion into the area of predictability)
Information gain through an access to m:
• LRU: m is in the cache, plus aging of a prefix of unknown length of the cache contents.
• FIFO: m is in the cache; at least the k − 1 youngest blocks are still in the cache.

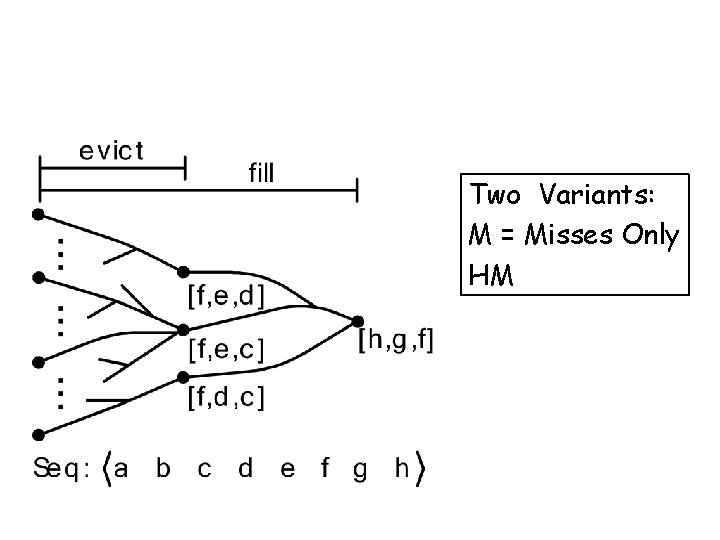

Metrics of Predictability: evict & fill Two Variants: M = Misses Only HM

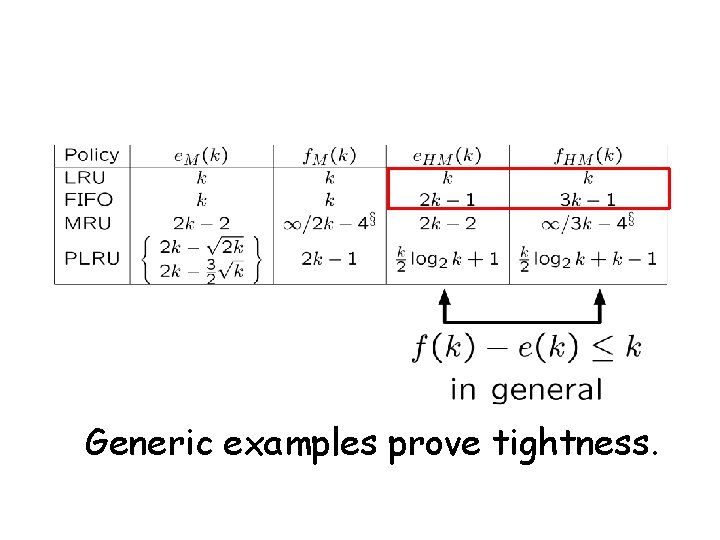

Results: tight bounds Generic examples prove tightness.

Wrap-up
• Cache analysis
  – Problem solved for LRU
• … but other strategies exist
  – These are provably less predictable
  – Analyses are on-going work
• Design recommendation
  – Use LRU caches: most predictable replacement strategy

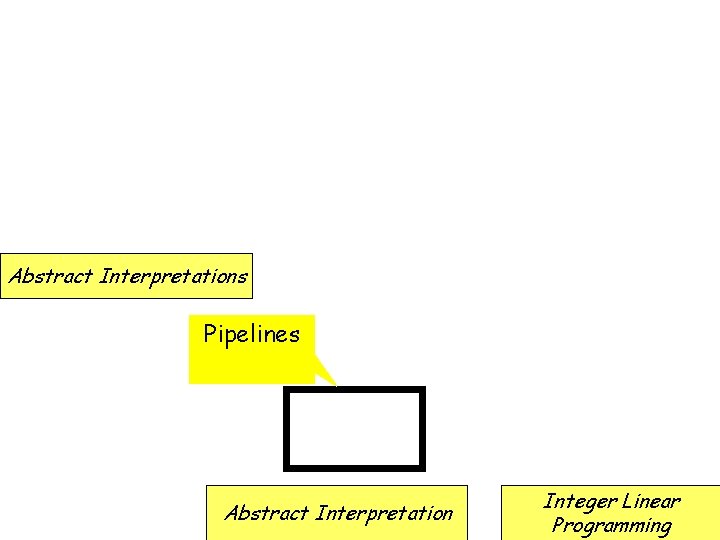

Tool Architecture Abstract Interpretations Pipelines Abstract Interpretation Integer Linear Programming

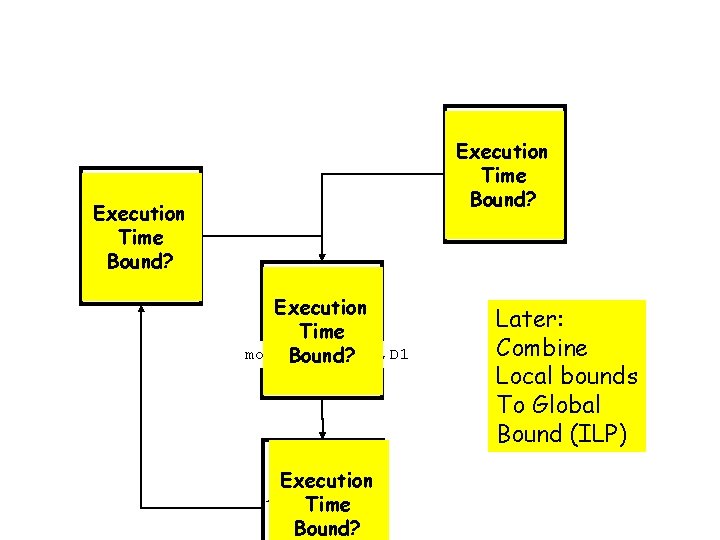

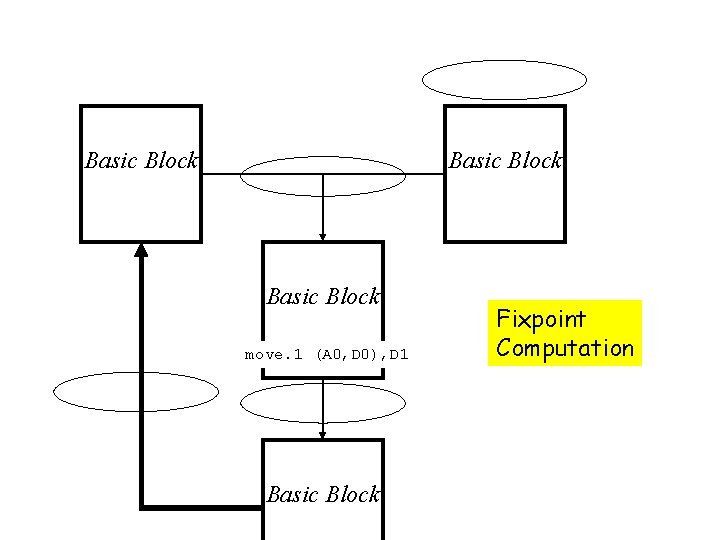

(Figure: control-flow graph of basic blocks, one of them containing move.l (A0,D0),D1; each basic block is annotated with the question “Execution time bound?”.)
Later: combine local bounds to a global bound (ILP).

Block = Sequence of Instructions time Instruction 1 Instruction 2 Instruction 3 Time bound for entire block

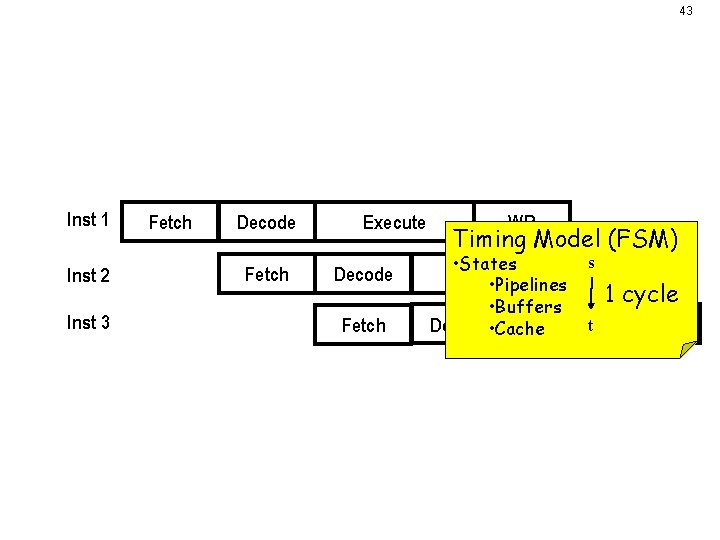

What really happens
• Pipeline: overlapped execution of instructions. Inst 1, Inst 2, and Inst 3 each pass through Fetch, Decode, Execute, and WB, each starting one stage behind its predecessor.
• Timing model (FSM): a transition from state s to state t takes 1 cycle. States comprise
  – pipelines,
  – buffers,
  – cache.
• Timing behavior is influenced by
  – availability of resources: bus, cache,
  – speculation, branch prediction, …

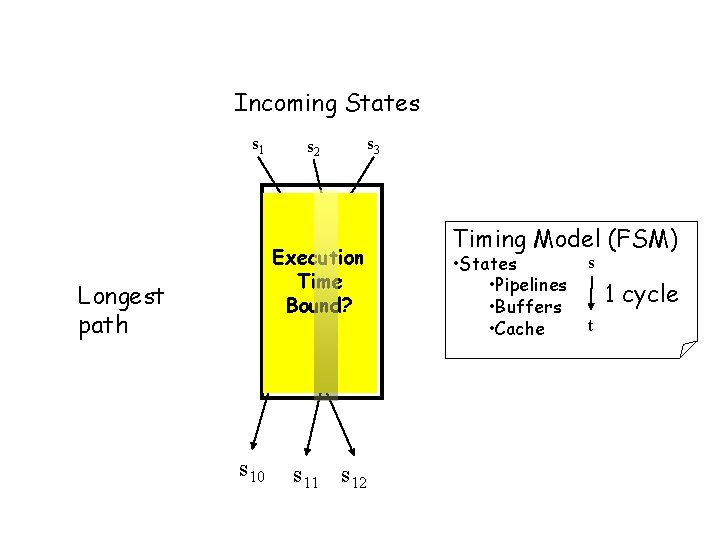

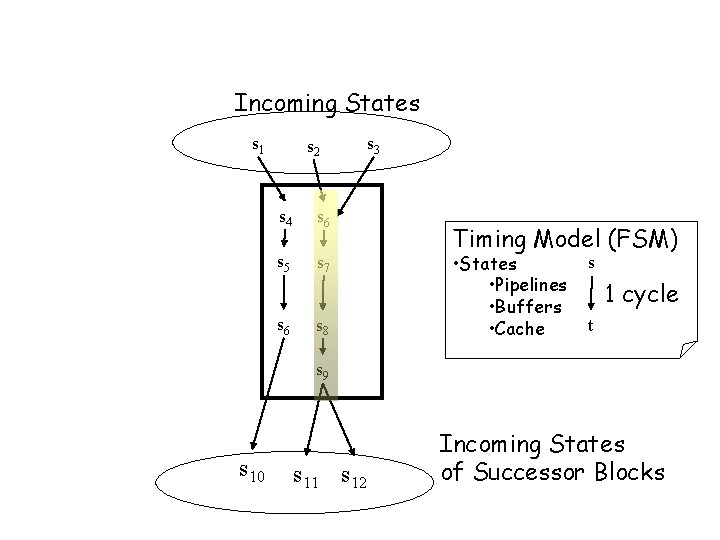

Time Bounds for Basic Blocks
(Figure: state graph of the timing model (FSM) for one basic block, from the incoming states s1, s2, s3 through the states s4 to s12; each transition from s to t takes 1 cycle; states comprise pipelines, buffers, and cache.)
Execution time bound: the longest path.
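The “longest path” bound can be illustrated on a small explicit state graph. This is a sketch under stated assumptions: the graph below is invented for illustration (the edges of the slide's figure are not recoverable), the graph is assumed acyclic within a block, and `block_time_bound`, `successors`, `incoming`, and `final` are hypothetical names.

```python
from functools import lru_cache


def block_time_bound(successors, incoming, final):
    """Upper bound (in cycles) for one basic block (illustrative sketch).

    `successors` maps a hardware state to the states reachable in one
    cycle; `final` are states in which the block has finished. The
    bound is the longest path from any incoming state to a final state.
    """
    @lru_cache(maxsize=None)
    def longest(state):
        if state in final:
            return 0
        # Each transition of the timing model costs one cycle.
        return 1 + max(longest(t) for t in successors[state])

    return max(longest(s) for s in incoming)


# Hypothetical state graph, not the one from the slide.
successors = {
    "s1": ["s4"], "s2": ["s4", "s5"], "s3": ["s5"],
    "s4": ["s6"], "s5": ["s6", "s7"],
    "s6": ["s9"], "s7": ["s8"], "s8": ["s9"],
}
bound = block_time_bound(successors, ["s1", "s2", "s3"], frozenset({"s9"}))
print(bound)
```

The final states reached here would serve as incoming states of the successor blocks.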

Time Bounds for Basic Blocks
(Figure: the same state graph of the timing model (FSM), from the incoming states s1, s2, s3 through the states s4 to s8; the final states s9, s10, s11, s12 are the incoming states of the successor blocks.)

Basic Block move. 1 (A 0, D 0), D 1 Basic Block Fixpoint Computation

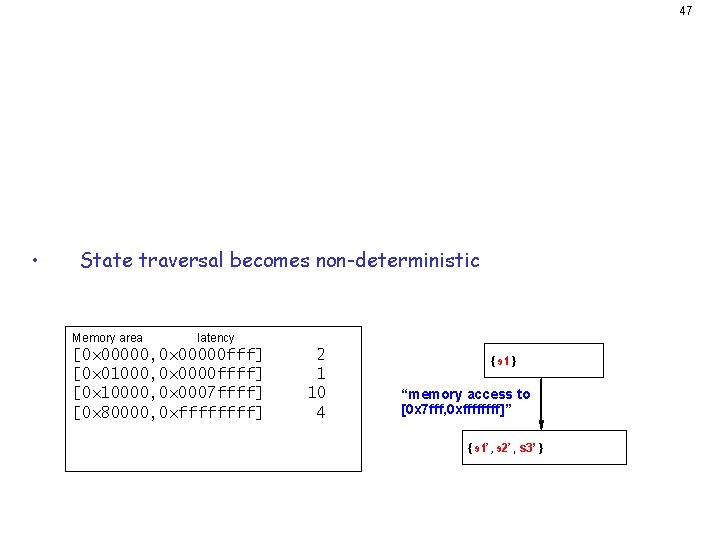

Challenge: Imprecise Information
• Imprecision due to abstraction.
  – Value analysis computes intervals.
  – Cache analysis predicts “don’t know” (hit or miss).
• State traversal becomes non-deterministic.
Memory area and latency:
• [0x00000, 0x00000fff]: 2
• [0x01000, 0x0000ffff]: 1
• [0x10000, 0x0007ffff]: 10
• [0x80000, 0xffff]: 4
Example: from { s1 }, a “memory access to [0x7fff, 0xffff]” leads to { s1’, s2’, s3’ }.

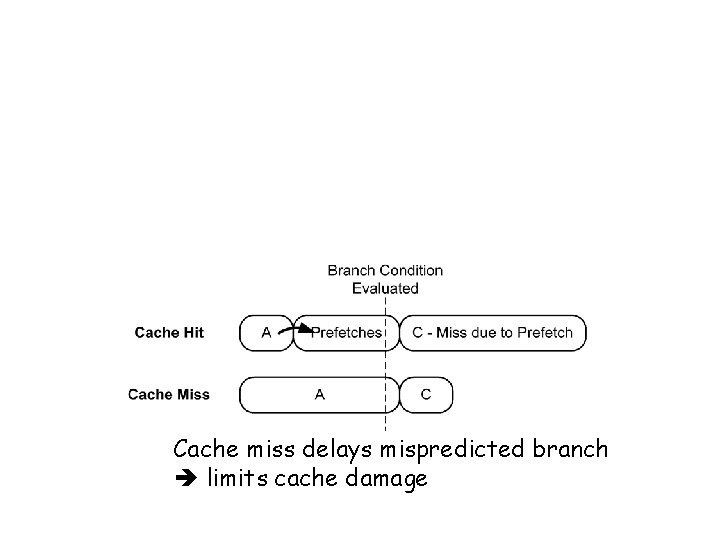

Timing Anomalies
• Counterintuitive timing behaviour: a cache miss is not always the worst case [Lundqvist/Stenström 1999].
• Example: a cache miss delays a mispredicted branch, which limits cache damage.

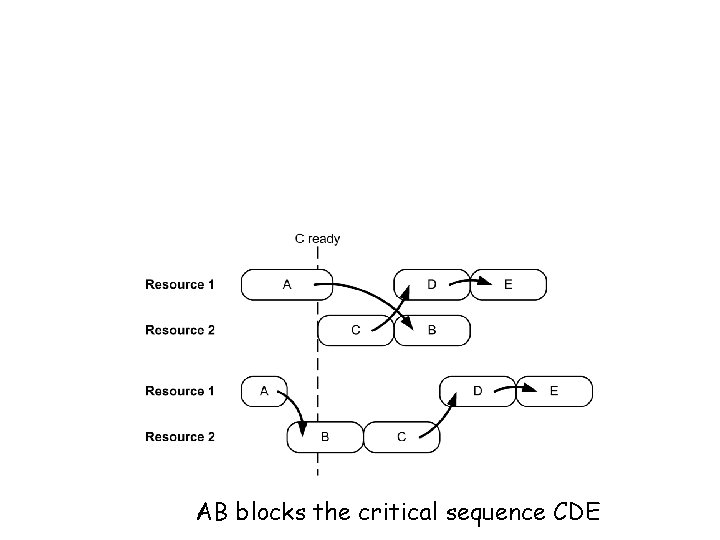

Timing Anomalies
• Counterintuitive timing behaviour: the local worst case does not entail the global worst case.
• Example: AB blocks the critical sequence CDE.

Timing Anomalies and Domino Effects
• Timing anomalies inhibit local assumptions.
• Domino effects: the difference in execution time between two hardware states cannot be bounded by a constant.
  – PPC 755 pipeline [Schneider 2003]
  – PLRU cache replacement [Berg 2006]

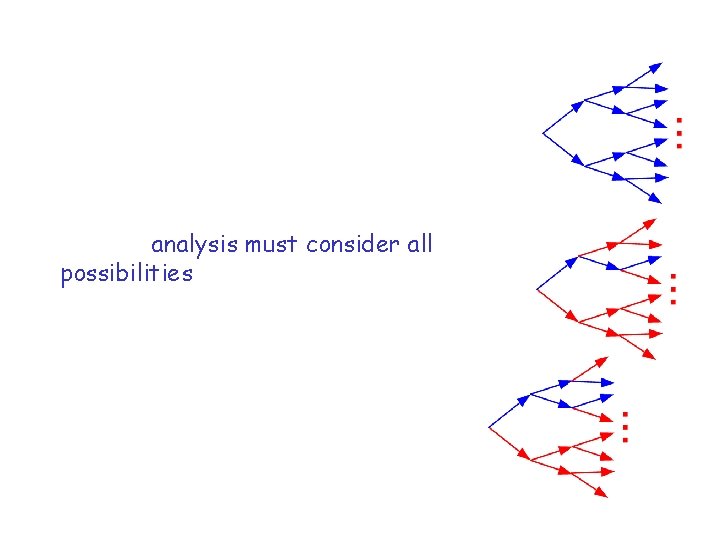

What is the problem? • It makes timing analysis more difficult: The analysis has to follow all possibilities -> exponential blow-up • Pipeline analysis must consider all possibilities. – Explicit state representation doesn’t scale well. – Analysis can become infeasible [Thesing 2004].

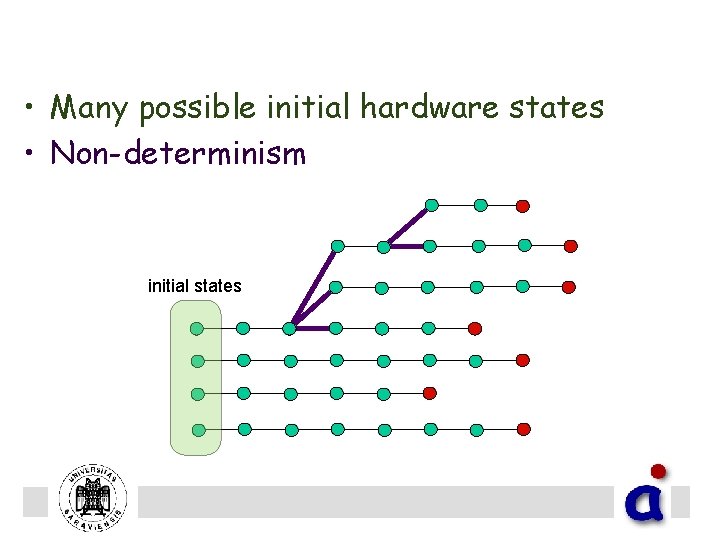

Challenge: State Explosion • Many possible initial hardware states • Non-determinism in timing model initial states

What to do?
• Most technologies presented in this talk are well-established.
• Now: some discussion of on-going work
  – How to tackle state explosion?
• On-going research: symbolic state traversal for timing analysis (research within AVACS R2)

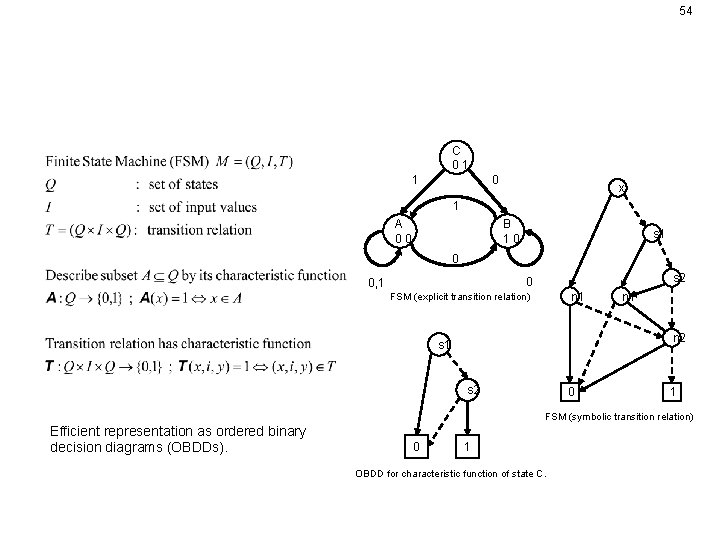

Solution: Symbolic Representation of System and its States
(Figure: an FSM with states A = 00, B = 10, and C = 01, shown once with an explicit transition relation and once with a symbolic transition relation over the state bits s1, s2 and the next-state bits n1, n2; the OBDD for the characteristic function of state C.)
Efficient representation as ordered binary decision diagrams (OBDDs).

OBDD Performance
• Explicit: performance depends on the number of states.
• Symbolic: performance depends on BDD size; exploiting redundancy gives a compact representation.
  – Size is worst-case exponential in the number of variables.
  – Finding an optimal variable ordering is NP-hard.

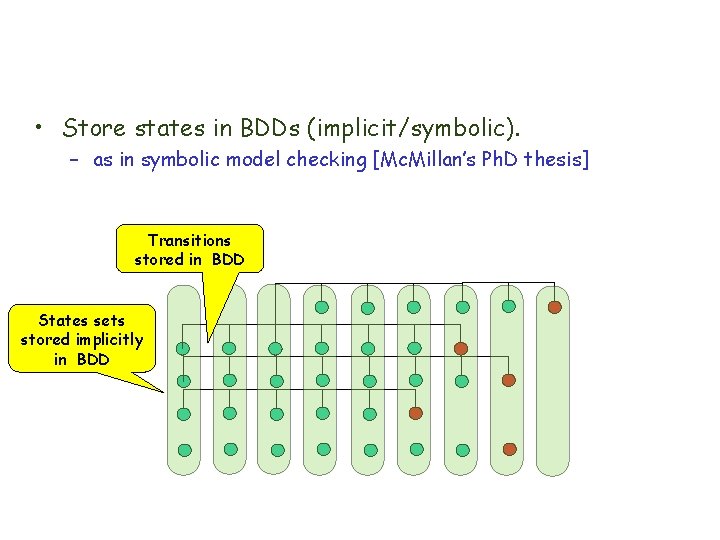

Symbolic Timing Analysis
• Store states in BDDs (implicit/symbolic),
  – as in symbolic model checking [McMillan’s PhD thesis].
• Explore states in BFS order, layer by layer.
• Exploits redundancies in the large state space:
  – many states are equal up to a few bits.
(Figure labels: transitions stored in BDD; state sets stored implicitly in BDD.)

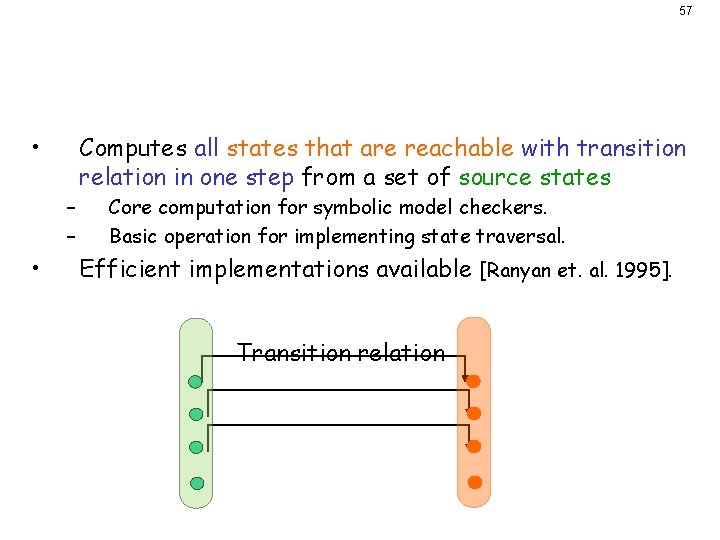

Image Computation
• Computes all states that are reachable with the transition relation in one step from a set of source states.
  – Core computation for symbolic model checkers.
  – Basic operation for implementing state traversal.
• Efficient implementations available [Ranjan et al. 1995].
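To make the operation concrete, here is a sketch of image computation and layer-by-layer traversal. Note the substitution: it uses explicit Python sets as a stand-in for OBDDs, so it shows what is computed, not how a symbolic engine computes it. The names `image` and `reachable_layers` and the example relation are assumptions.

```python
def image(states, transitions):
    """All states reachable in one step from `states`.

    `transitions` is a set of (source, target) pairs. A symbolic
    engine would compute the same set on OBDDs; plain sets are used
    here only for illustration.
    """
    return {t for (s, t) in transitions if s in states}


def reachable_layers(initial, transitions):
    """Breadth-first traversal, one image computation per layer."""
    seen = set(initial)
    frontier = set(initial)
    layers = [set(initial)]
    while frontier:
        frontier = image(frontier, transitions) - seen
        if frontier:
            layers.append(set(frontier))
        seen |= frontier
    return seen, layers


# Hypothetical transition relation over three states.
T = {("A", "B"), ("A", "C"), ("B", "C"), ("C", "A")}
seen, layers = reachable_layers({"A"}, T)
print(layers)
```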

Transition Relation
• Transition relation T: conjunction of
  – the transition relation for the program-independent abstract processor model M,
  – the transition relation for program-analysis information:
    • control-flow information, e.g. branch targets,
    • value analysis information (register contents), dependencies, …

Optimizations
Goal: reduce the state bits required in the encoding.
– 32-bit addresses are too large -> compactly enumerate.
1. Uniquely identify all instructions in all contexts for building TL.
• Number of state bits.
• Number of conjoined relations in TL.
• Yet: program size still affects every OBDD operation!

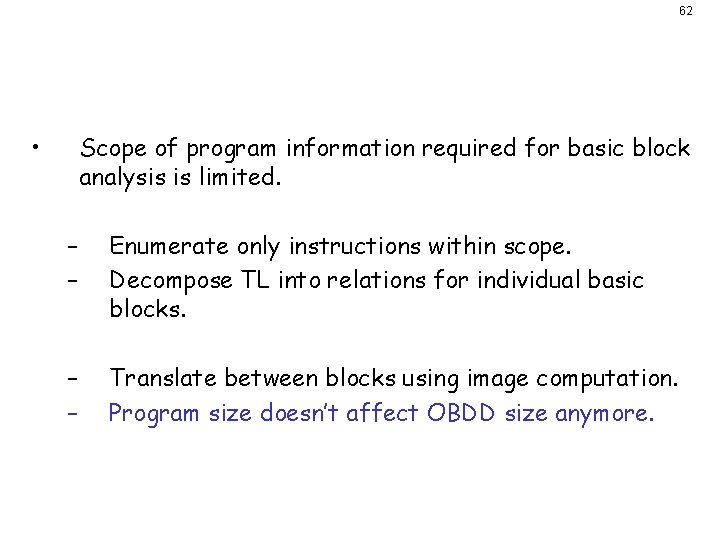

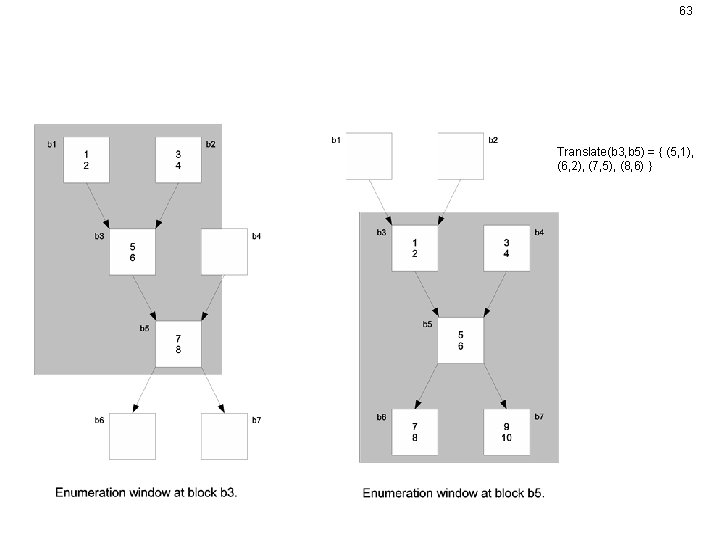

Decomposition I
• Scope of program information required for basic block analysis is limited.
  – Enumerate only instructions within scope.
  – Decompose TL into relations for individual basic blocks.
  – Translate between blocks using image computation.
  – Program size doesn’t affect OBDD size anymore.

Decomposition II
Translate(b3, b5) = { (5, 1), (6, 2), (7, 5), (8, 6) }

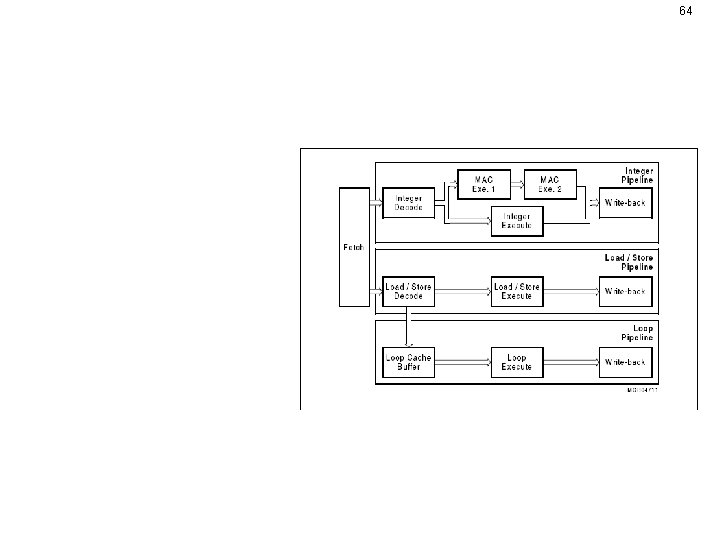

Infineon TriCore
• 2 major pipelines
• 1 minor pipeline
• 16 byte instruction prefetch buffer
• static branch prediction
Used in the automotive industry.
Commercial, explicit-state model available (aiT).
– Can be reduced to pipeline core.

Implementations
• Explicit state implementation:
  – full model including peripheral modules: 696 bytes per state
  – pipeline core representation alone: 500 bytes per state
• Symbolic implementation:
  – implements most of the pipeline core: ~ 240 bits per state
  – carefully tuned to minimize required state bits
  – loop pipeline still has simplified representation

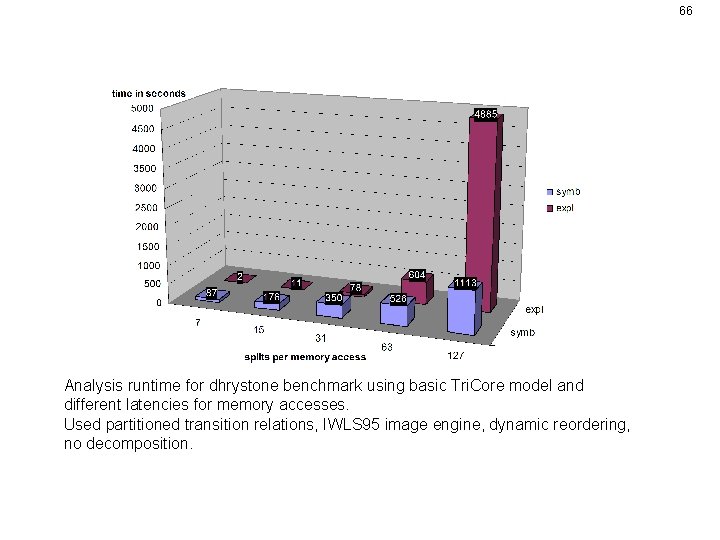

Symbolic vs. Explicit
Analysis runtime for the dhrystone benchmark using the basic TriCore model and different latencies for memory accesses. Used partitioned transition relations, the IWLS95 image engine, dynamic reordering, and no decomposition.

Summary
• Symbolic state implementation scales much better:
  – Lots of redundancy between abstract states considered concurrently by the analysis.
  – Frequently needed operations like set union and checking for equality of sets have efficient OBDD implementations.
• OBDD performance depends on size of abstract models.
  – Models require careful tuning.
  – Scales very well with growing program size (decomposition).
EMSOFT 2009, joint work with Stephan Wilhelm

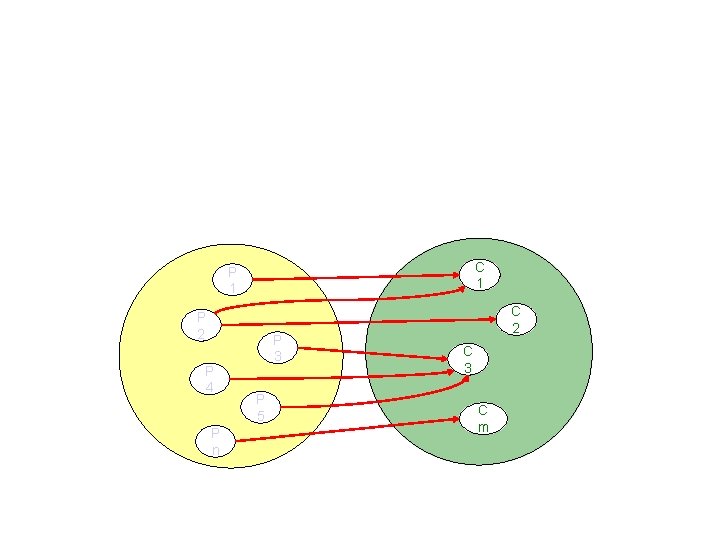

Ongoing Work: Pipeline-Cache Integration I
• Analyses must interact during runtime (cyclic dependency).
• Relation between pipeline and cache states affects precision.
(Figure: relation between the abstract pipeline states P1, …, Pn and the abstract cache states C1, …, Cm.)

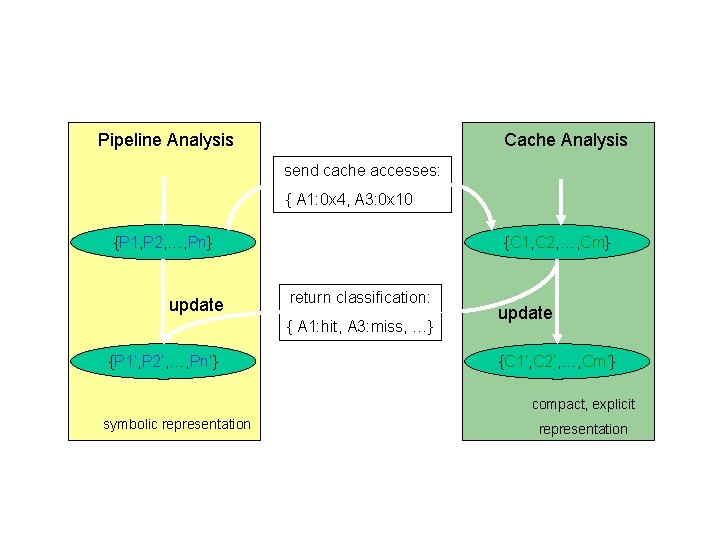

On-going Work: Pipeline-Cache Integration II
1. The pipeline analysis, holding the states {P1, P2, …, Pn}, sends cache accesses to the cache analysis: { A1: 0x4, A3: 0x10, … }.
2. The cache analysis updates its states {C1, C2, …, Cm} to {C1’, C2’, …, Cm’} and returns a classification: { A1: hit, A3: miss, … }.
3. The pipeline analysis updates its states to {P1’, P2’, …, Pn’}.
(Figure labels: compact, explicit representation; symbolic representation.)

Tool Architecture Abstract Interpretations Abstract Interpretation Integer Linear Programming

Static Analysis in Timing Analysis
Invariants about the values of variables:
• Constant propagation (CP)
  – propagates statically available information about the values of variables, e.g. variable x always has value 5 at program point p,
  – traditional static analysis in compilers.
• Interval analysis (IA), Cousot & Cousot ’77
  – determines intervals enclosing all potential values of variables, e.g. variable x always has a value between -2 and … at program point p,
  – used to exclude index-out-of-bound errors.

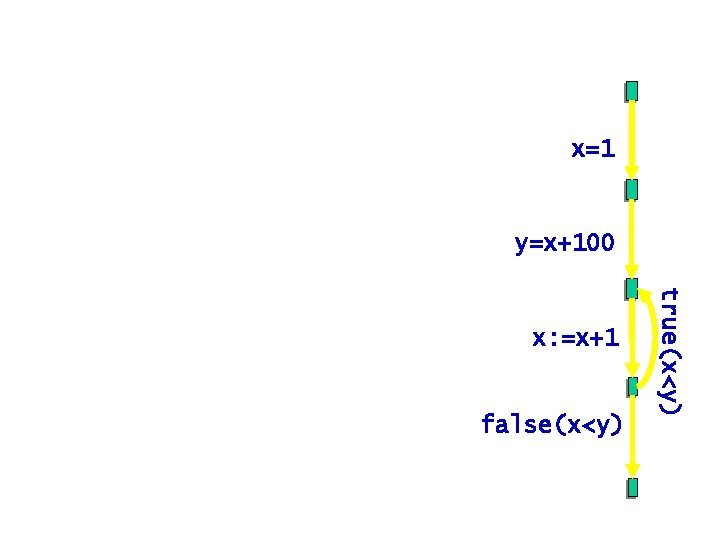

Simple imperative language
• finite set of program variables: Var
• arithmetic expressions: +, -, *, /
• statements:
  – assignment: x := e,
  – conditions e with outgoing edges true(e), false(e)
• programs are represented by their control-flow graph; statements/conditions/instructions are associated with edges
(Figure: example control-flow graph with edges labeled x := 1, true(x<y), false(x<y), x := x+1, and y := x+100.)

Collecting Semantics
• A state binds variables to values: $\mathit{States} = \mathit{Var} \to \mathbb{Z}$
• Collecting semantics
  – for each program point, compute the set of states reached during any execution,
    • a (possibly infinite) subset of $\mathit{States}$,
  – in general, non-computable,
  – need (efficiently) computable static approximations!

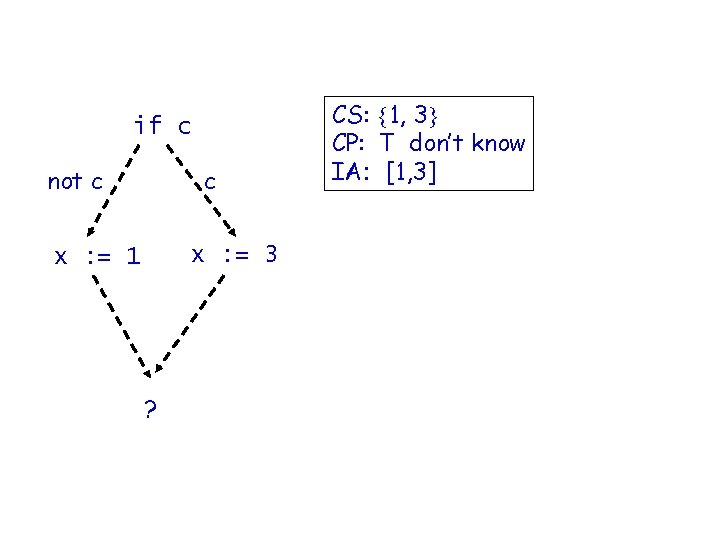

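What should be the value of x?
(Figure: a branch on condition c; one branch executes x := 3, the other x := 1; the branches merge at the point marked “?”.)
At the merge point:
• CS: $\{1, 3\}$
• CP: $\top$ (don’t know)
• IA: $[1, 3]$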

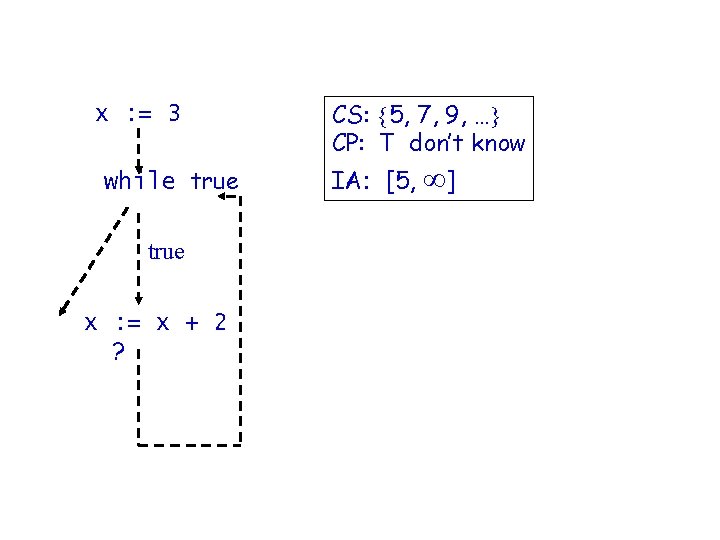

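What should be the value?
(Figure: x := 3, followed by a while loop whose body executes x := x + 2; the point marked “?” follows the assignment in the loop body.)
• CS: $\{5, 7, 9, \ldots\}$
• CP: $\top$ (don’t know)
• IA: $[5, +\infty)$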

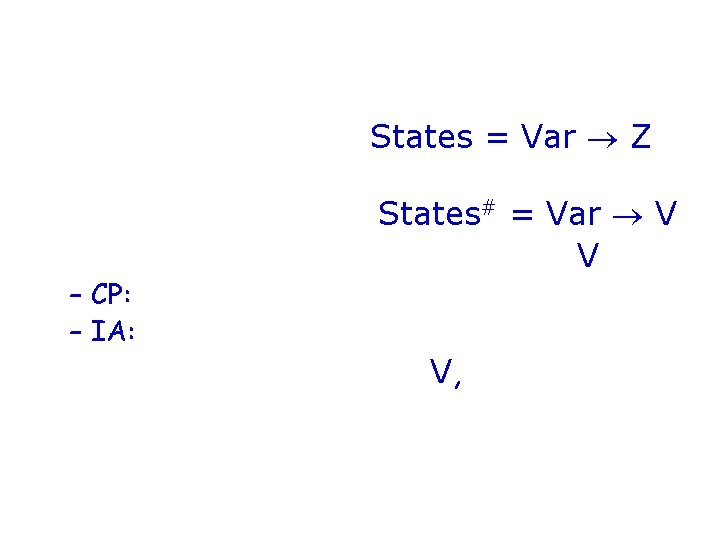

Two Different Abstractions of the Same Concrete Semantics
• Concrete semantics: $\mathit{States} = \mathit{Var} \to \mathbb{Z}$
• Both are domains of functions binding variables to “values”: $\mathit{States}^\# = \mathit{Var} \to V$,
• but with different domains of “values” $V$:
  – CP: $\mathbb{Z}$ and some value representing unknown,
  – IA: intervals over $\mathbb{Z}$ including $-\infty$ and $+\infty$.
• Both need arithmetic on $V$: define $[\![e]\!]^\#$ to describe the new value associated with $x$ by $[\![x := e]\!]^\#$.

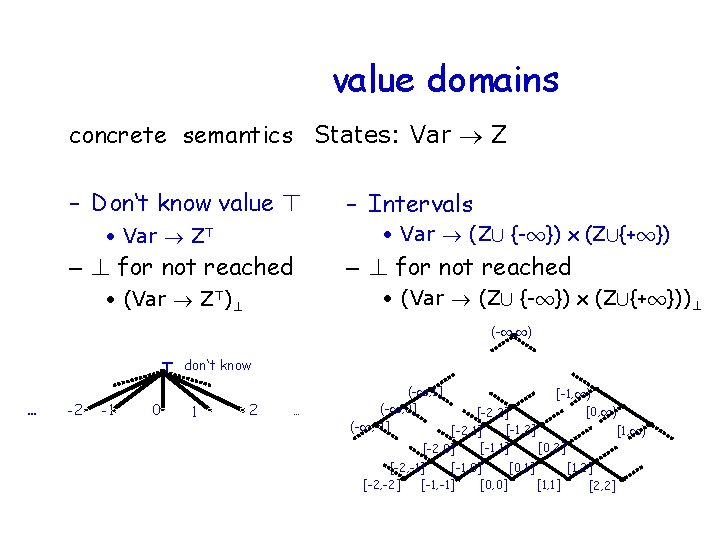

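CP vs. IA Domain
Different value domains for the same concrete semantics, $\mathit{States}: \mathit{Var} \to \mathbb{Z}$.
• CP
  – $\top$ stands for “don’t know value”: $\mathit{Var} \to \mathbb{Z}^\top$
  – $\bot$ stands for “not reached”: $(\mathit{Var} \to \mathbb{Z}^\top)_\bot$
  – value lattice: $\top$ (don’t know) above the pairwise incomparable values $\ldots, -2, -1, 0, 1, 2, \ldots$
• IA
  – intervals: $\mathit{Var} \to (\mathbb{Z} \cup \{-\infty\}) \times (\mathbb{Z} \cup \{+\infty\})$
  – $\bot$ stands for “not reached”: $(\mathit{Var} \to (\mathbb{Z} \cup \{-\infty\}) \times (\mathbb{Z} \cup \{+\infty\}))_\bot$
  – value lattice: intervals ordered by inclusion, from singletons such as $[0, 0]$ up to $(-\infty, +\infty)$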

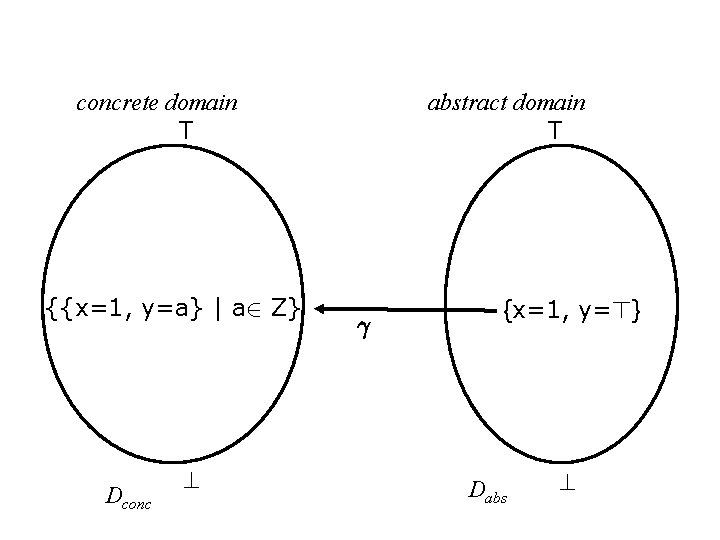

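Meaning Function/Concretization
The concretization $\gamma : D_{abs} \to D_{conc}$ maps an abstract element to the set of concrete states it describes, e.g.
$\gamma(\{x = 1, y = \top\}) = \{\{x = 1, y = a\} \mid a \in \mathbb{Z}\}$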

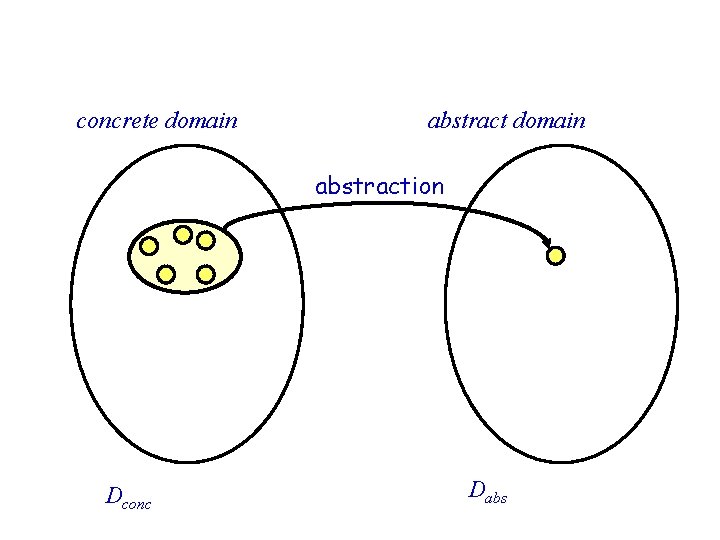

Abstraction
The abstraction $\alpha : D_{conc} \to D_{abs}$ maps elements of the concrete domain to the abstract domain.

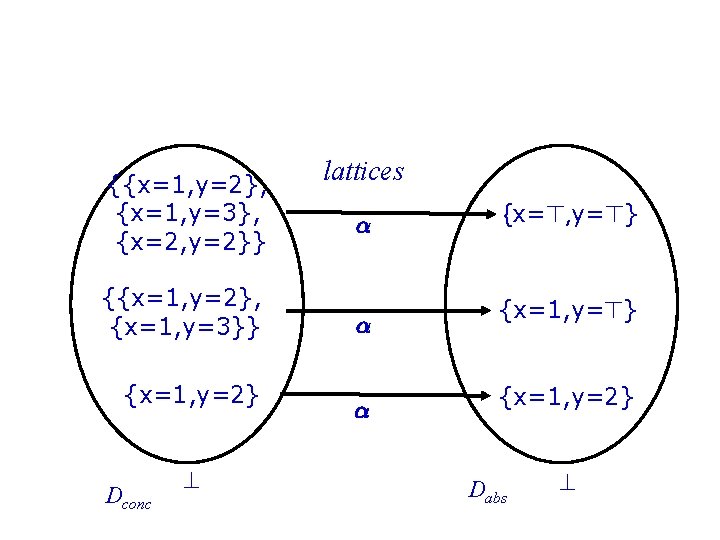

Abstraction
Examples for CP; both domains are lattices:
• $\alpha(\{\{x=1, y=2\}, \{x=1, y=3\}, \{x=2, y=2\}\}) = \{x=\top, y=\top\}$
• $\alpha(\{\{x=1, y=2\}, \{x=1, y=3\}\}) = \{x=1, y=\top\}$
• $\alpha(\{\{x=1, y=2\}\}) = \{x=1, y=2\}$

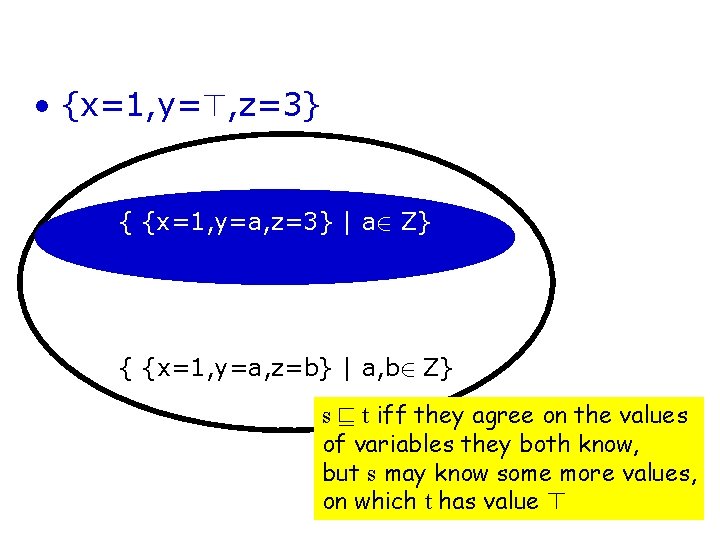

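Order $\sqsubseteq$
• $\{x=1, y=\top, z=3\} \sqsubseteq \{x=1, y=\top, z=\top\}$, since $\{\{x=1, y=a, z=3\} \mid a \in \mathbb{Z}\} \subseteq \{\{x=1, y=a, z=b\} \mid a, b \in \mathbb{Z}\}$
• $s \sqsubseteq t$ iff they agree on the values of variables they both know, but $s$ may know some more values, on which $t$ has value $\top$.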

CP: Properties of Order
• The CP domain has infinite size
  – infinitely many values
• … however, it is of finite height
  – no infinite ascending chains
    • finitely many program variables
    • can only go up in the lattice by changing a value to $\top$
(Figure: value lattice with $\top$ (don’t know) above $\ldots, -2, -1, 0, 1, 2, \ldots$)


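CP: Expression evaluation
• Abstract evaluation of a binary operation $\mathrm{op}$:
$$[\![a \mathbin{\mathrm{op}} b]\!]^\#(s) = \begin{cases} \top & \text{if } [\![a]\!]^\#(s) = \top \text{ or } [\![b]\!]^\#(s) = \top \\ [\![a]\!]^\#(s) \mathbin{\mathrm{op}} [\![b]\!]^\#(s) & \text{otherwise} \end{cases}$$
• Examples:
  – $1 + 2 = 3$,
  – $1 + \top = \top$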
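The abstract evaluation rule for constant propagation is short enough to sketch in Python. The expression encoding (nested tuples), the sentinel `TOP`, and the function name `abstract_eval` are assumptions made for this example; division is left out to keep the sketch free of division-by-zero handling.

```python
import operator

TOP = object()  # sentinel standing for "don't know"
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def abstract_eval(expr, state):
    """Evaluate `expr` in the abstract CP state `state` (sketch).

    `expr` is an int constant, a variable name, or a tuple
    (op, left, right). `state` maps variable names to ints or TOP.
    """
    if isinstance(expr, int):
        return expr
    if isinstance(expr, str):
        return state[expr]
    op, left, right = expr
    a = abstract_eval(left, state)
    b = abstract_eval(right, state)
    # If either operand is unknown, so is the result.
    if a is TOP or b is TOP:
        return TOP
    return OPS[op](a, b)


print(abstract_eval(("+", 1, 2), {}))
print(abstract_eval(("+", 1, "y"), {"y": TOP}) is TOP)
```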


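Abstract Semantics $[\![\cdot]\!]^\#$
• $[\![x := e]\!]^\#(s) = s[x \mapsto [\![e]\!]^\#(s)]$
• $[\![\mathit{true}(e)]\!]^\#(s) = \begin{cases} \bot & \text{if } [\![e]\!]^\#(s) = \mathit{ff} \\ s & \text{otherwise} \end{cases}$
• $[\![\mathit{false}(e)]\!]^\#(s) = \begin{cases} \bot & \text{if } [\![e]\!]^\#(s) = \mathit{tt} \\ s & \text{otherwise} \end{cases}$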


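More Precise Value Domain - Intervals
The interval lattice has infinite height. Excerpt, ordered by inclusion from top to bottom:
• $(-\infty, +\infty)$
• $(-\infty, 1]$, $[-1, +\infty)$
• $(-\infty, 0]$, $[-2, 2]$, $[0, +\infty)$
• $(-\infty, -1]$, $[-2, 1]$, $[-1, 2]$, $[1, +\infty)$
• $[-2, 0]$, $[-1, 1]$, $[0, 2]$
• $[-2, -1]$, $[-1, 0]$, $[0, 1]$, $[1, 2]$
• $[-2, -2]$, $[-1, -1]$, $[0, 0]$, $[1, 1]$, $[2, 2]$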


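IA Domain
• Abstract domain: $(\mathit{Var} \to (\mathbb{Z} \cup \{-\infty\}) \times (\mathbb{Z} \cup \{+\infty\}))_\bot$
• $\bot$ in the function domain stands for “not reached”.
(Figure: an interval marked on the number line $-2, -1, 0, 1, 2$.)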


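Value Domain: Intervals
Abstraction: $\alpha(M) = [\inf M, \sup M]$
• $\alpha(\{1, 3, 5, 7\}) = [1, 7]$
• $\alpha(\{1, 2, 3, \ldots\}) = [1, +\infty)$
• $\{1, 3, 5, 7\} \subseteq \{1, 2, 3, \ldots\}$ in $D_{conc}$ corresponds to $[1, 7] \sqsubseteq [1, +\infty)$ in $D_{abs}$.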


Value Domain: Intervals
Concretization: $\gamma([1, 7]) = \{m \in \mathbb{Z} \mid 1 \le m \le 7\} = \{1, 2, 3, 4, 5, 6, 7\}$


IA: expression evaluation
• Addition: $[l_1, u_1] + [l_2, u_2] = [l_1 + l_2, u_1 + u_2]$, where $-\infty + \text{anything} = -\infty$ and $+\infty + \text{anything} = +\infty$
• Unary minus: $-[l_1, u_1] = [-u_1, -l_1]$
• Multiplication: …
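Interval arithmetic as defined above can be sketched with Python tuples. This is an illustration only: intervals are assumed to be pairs (lower, upper) with `-math.inf` allowed only as a lower bound and `math.inf` only as an upper bound, and the function names are hypothetical. The join (smallest enclosing interval) is added because it is what merges values at control-flow joins.

```python
import math


def interval_add(a, b):
    """[l1, u1] + [l2, u2] = [l1 + l2, u1 + u2]."""
    (l1, u1), (l2, u2) = a, b
    # Lower bounds are finite or -inf, upper bounds finite or +inf,
    # so -inf and +inf are never added to each other.
    return (l1 + l2, u1 + u2)


def interval_neg(a):
    """-[l, u] = [-u, -l]."""
    l, u = a
    return (-u, -l)


def interval_join(a, b):
    """Smallest interval enclosing both arguments."""
    return (min(a[0], b[0]), max(a[1], b[1]))


print(interval_join((1, 1), (3, 3)))
print(interval_add((5, math.inf), (2, 2)))
print(interval_neg((1, 7)))
```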


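Abstract Semantics $[\![\cdot]\!]^\#$
• $[\![x := e]\!]^\#(s) = s[x \mapsto [\![e]\!]^\#(s)]$
• $[\![\mathit{true}(e)]\!]^\#(s) = \begin{cases} \bot & \text{if } [\![e]\!]^\#(s) = \mathit{ff} \\ s & \text{otherwise} \end{cases}$
• $[\![\mathit{false}(e)]\!]^\#(s) = \begin{cases} \bot & \text{if } [\![e]\!]^\#(s) = \mathit{tt} \\ s & \text{otherwise} \end{cases}$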

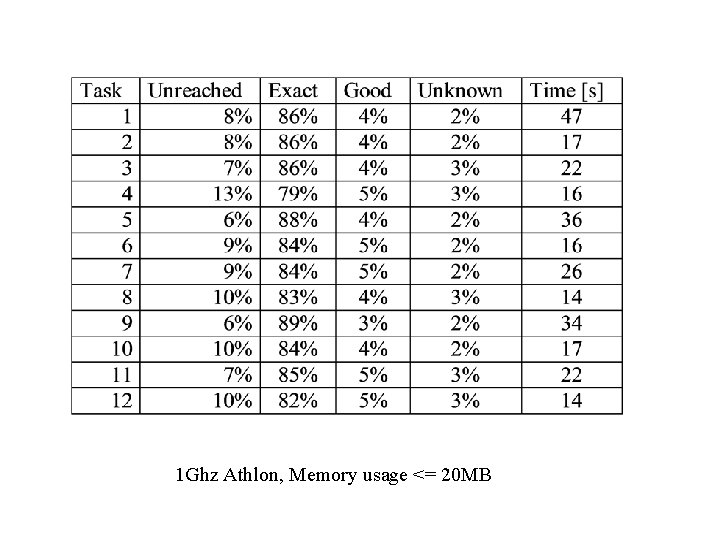

Interval Analysis in Timing Analysis
• Data-cache analysis needs effective addresses at analysis time to know where accesses go.
• Effective addresses are approximately precomputed by an interval analysis for the values in registers and local variables.
• “Exact” intervals: singleton intervals.
• “Good” intervals: addresses fit into less than 16 cache lines.

Value Analysis (Airbus Benchmark)
1 GHz Athlon, memory usage <= 20 MB

Tool Architecture Abstract Interpretations Abstract Interpretation Integer Linear Programming

Path Analysis by Integer Linear Programming (ILP)
• Execution time of a program = $\sum_{\text{basic block } b} \mathit{Execution\_Time}(b) \times \mathit{Execution\_Count}(b)$
• The ILP solver maximizes this function to determine the WCET.
• Program structure is described by linear constraints:
  – automatically created from the CFG structure,
  – user-provided loop/recursion bounds,
  – arbitrary additional linear constraints to exclude infeasible paths.

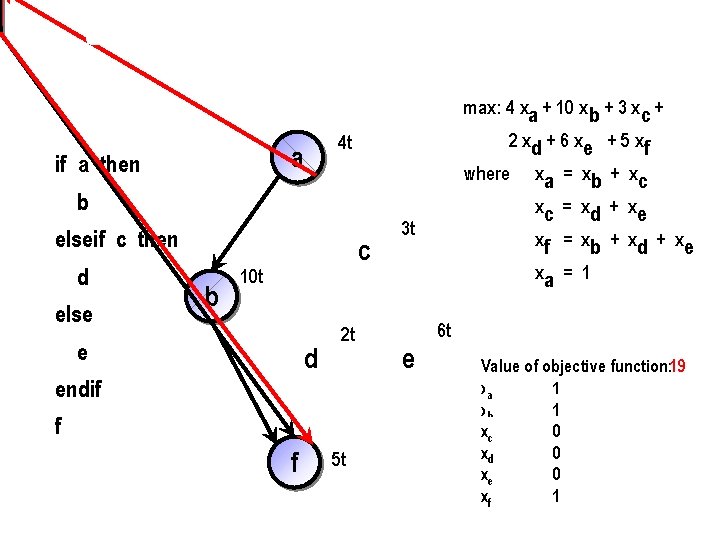

Example (simplified constraints)
Program: if a then b elseif c then d else e endif; f
Execution times of the basic blocks: a: 4t, b: 10t, c: 3t, d: 2t, e: 6t, f: 5t
max: $4x_a + 10x_b + 3x_c + 2x_d + 6x_e + 5x_f$
where
• $x_a = x_b + x_c$
• $x_c = x_d + x_e$
• $x_f = x_b + x_d + x_e$
• $x_a = 1$
Value of objective function: 19, with $x_a = 1$, $x_b = 1$, $x_c = 0$, $x_d = 0$, $x_e = 0$, $x_f = 1$.
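The example can be checked with a few lines of Python. Note the substitution: instead of an ILP solver, this sketch simply enumerates all 0/1 assignments of the execution counts, which is sufficient here because the program is loop-free and $x_a = 1$ bounds every count by 1. The names `TIMES`, `feasible`, and `solve` are assumptions for this illustration.

```python
from itertools import product

# Execution time bound per basic block, taken from the example.
TIMES = {"a": 4, "b": 10, "c": 3, "d": 2, "e": 6, "f": 5}


def feasible(x):
    """Structural constraints of the example's control-flow graph."""
    return (x["a"] == 1
            and x["a"] == x["b"] + x["c"]
            and x["c"] == x["d"] + x["e"]
            and x["f"] == x["b"] + x["d"] + x["e"])


def solve():
    """Brute-force maximization over all 0/1 execution counts."""
    best = None
    for counts in product((0, 1), repeat=len(TIMES)):
        x = dict(zip(TIMES, counts))
        if feasible(x):
            value = sum(TIMES[b] * x[b] for b in TIMES)
            if best is None or value > best[0]:
                best = (value, x)
    return best


value, counts = solve()
print(value, counts)
```

A real path analysis hands the same objective and constraints to an ILP solver, which also copes with loop bounds and counts larger than 1.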

Conclusion
• Static Timing Analysis
  – Execution time bounds for embedded software
• Ingredients of successful approach
  – Decomposition of the problem
    • Microarchitectural analysis
    • Static analyses: value analysis
    • Implicit path enumeration
  – Heavy use of abstractions
    • Cache
    • Pipeline

Relevant Publications (from my group)
• C. Ferdinand et al.: Reliable and Precise WCET Determination of a Real-Life Processor, EMSOFT 2001
• R. Heckmann et al.: The Influence of Processor Architecture on the Design and the Results of WCET Tools, IEEE Proc. on Real-Time Systems, July 2003
• M. Langenbach et al.: Pipeline Modeling for Timing Analysis, SAS 2002
• St. Thesing et al.: An Abstract Interpretation-based Timing Validation of Hard Real-Time Avionics Software, IPDS 2003
• R. Wilhelm: AI + ILP is good for WCET, MC is not, nor ILP alone, VMCAI 2004
• L. Thiele, R. Wilhelm: Design for Timing Predictability, 25th Anniversary edition of the Kluwer Journal Real-Time Systems, Dec. 2004
• R. Wilhelm: Determination of Execution-Time Bounds, CRC Handbook on Embedded Systems, 2005
• J. Reineke et al.: Predictability of Cache Replacement Policies, Real-Time Systems, Springer, 2007
• R. Wilhelm et al.: The worst-case execution-time problem – overview of methods and survey of tools, ACM Transactions on Embedded Computing Systems (TECS), Volume 7, Issue 3 (April 2008)
• R. Wilhelm et al.: Memory Hierarchies, Pipelines, and Buses for Future Architectures in Time-critical Embedded Systems, IEEE TCAD, July 2009
