Timing Analysis and Timing Predictability Reinhard Wilhelm Saarbrücken

Structure of the Talk 1. Timing Analysis – the Problem 2. Timing Analysis – our Solution • the overall approach, tool architecture • cache analysis • predictability of cache replacement strategies 3. Results and experience 4. The influence of Software 5. Design for Timing Predictability • Conclusion

Industrial Needs Hard real-time systems, often in safety-critical applications, abound – aeronautics, automotive, train industries, manufacturing control. Side airbag in a car: reaction in < 10 ms. Wing vibration of an airplane: sensing every 5 ms.

Hard Real-Time Systems • Embedded controllers are expected to finish their tasks reliably within time bounds. • Task scheduling must be performed • Essential: upper bound on the execution times of all tasks statically known • Commonly called the Worst-Case Execution Time (WCET) • Analogously, Best-Case Execution Time (BCET)

Modern Hardware Features • Modern processors increase performance by using: Caches, Pipelines, Branch Prediction, Speculation • These features make WCET computation difficult: Execution times of instructions vary widely – Best case - everything goes smoothly: no cache miss, operands ready, needed resources free, branch correctly predicted – Worst case - everything goes wrong: all loads miss the cache, resources needed are occupied, operands are not ready – Span may be several hundred cycles

Timing Accidents and Penalties Timing Accident – cause for an increase of the execution time of an instruction. Timing Penalty – the associated increase. • Types of timing accidents – Cache misses – Pipeline stalls – Branch mispredictions – Bus collisions – Memory refresh of DRAM – TLB miss

How to Deal with Murphy’s Law? Essentially three different answers: • Accepting: Every timing accident that may happen will happen • Fighting: Reliably showing that many/most Timing Accidents cannot happen • Cheating: monitoring “enough” runs to get a good feeling

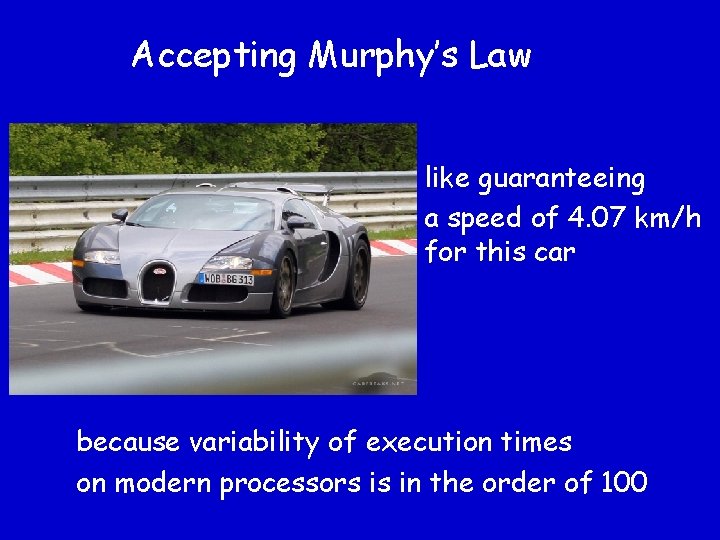

Accepting Murphy’s Law is like guaranteeing a speed of 4.07 km/h for this car, because the variability of execution times on modern processors is on the order of a factor of 100.

Cheating to deal with Murphy’s Law • measuring “enough” runs to feel comfortable • how many runs are “enough”? • Example: Analogy – Testing vs. Verification: AMD was offered a verification of the K7. They had tested the design with 80 000 test vectors and considered verification unnecessary. The verification attempt discovered 2 000 bugs! The only remaining solution: Fighting Murphy’s Law!

Execution Time is History-Sensitive Contribution of the execution of an instruction to a program's execution time • depends on the execution state, i.e., on the execution so far, • i.e., cannot be determined in isolation • history sensitivity is not only a problem – it’s also a chance!

Deriving Run-Time Guarantees • Static Program Analysis derives Invariants about all execution states at a program point. • Derive Safety Properties from these invariants: Certain timing accidents will never happen. Example: At program point p, instruction fetch will never cause a cache miss. • The more accidents excluded, the lower the upper bound. • (And the more accidents predicted, the higher the lower bound.)

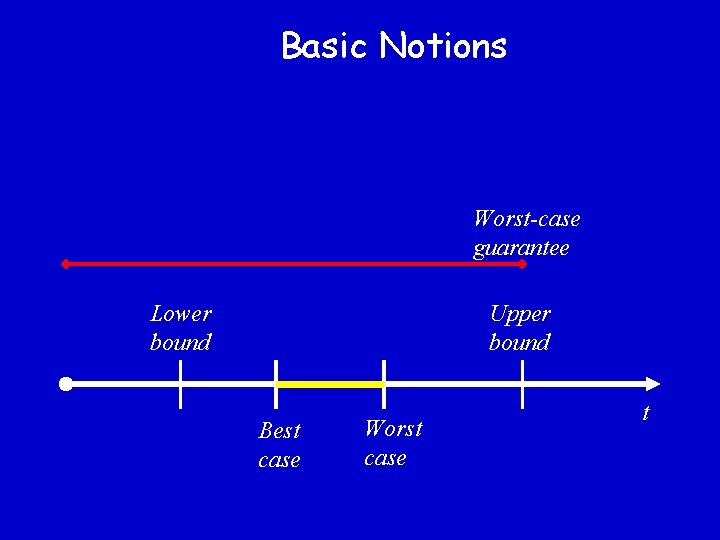

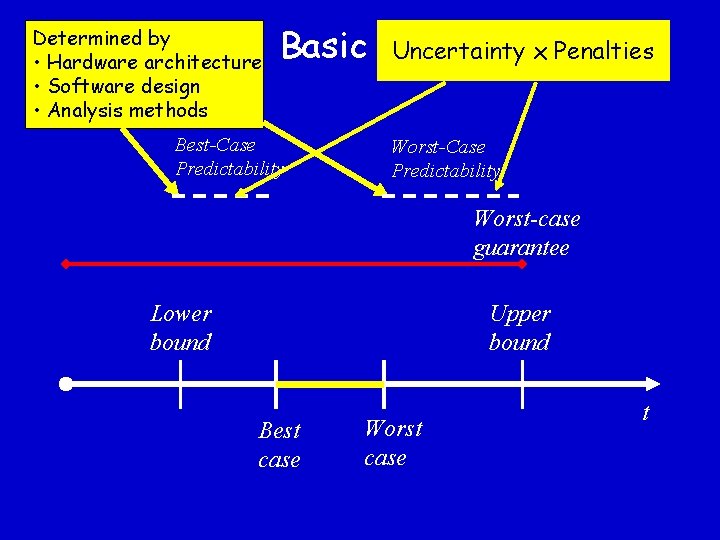

Basic Notions [Figure: time axis t marking, from left to right, the lower bound, the best case, the worst case, and the upper bound; the upper bound is the worst-case guarantee.]

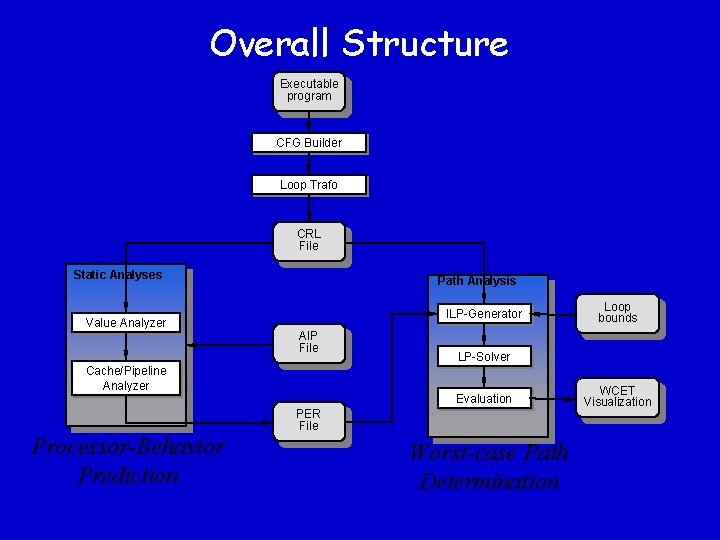

Overall Approach: Natural Modularization 1. Processor-Behavior Analysis: • Uses Abstract Interpretation • Excludes as many Timing Accidents as possible • Determines upper bound for basic blocks (in contexts) 2. Bounds Calculation: • Maps control flow graph to an integer linear program • Determines upper bound and associated path

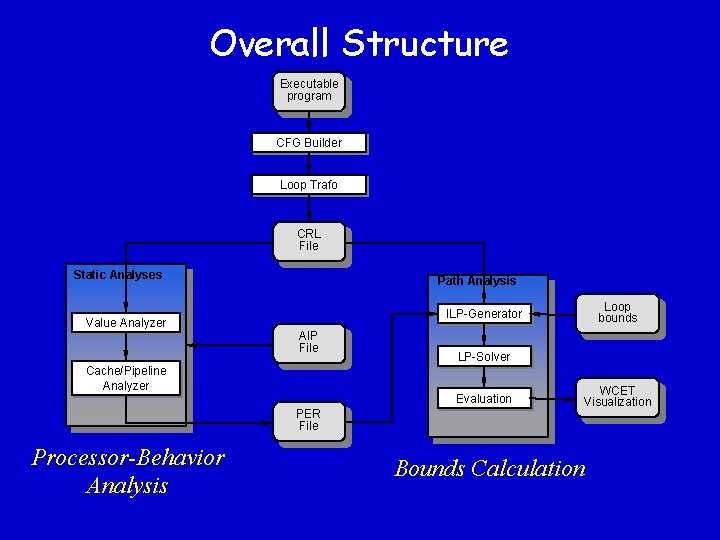

Overall Structure [Figure: tool architecture. Front end: Executable program, CFG Builder, Loop Trafo, CRL File. Processor-Behavior Analysis (Static Analyses): Value Analyzer, Cache/Pipeline Analyzer, Loop bounds, AIP File. Bounds Calculation (Path Analysis): ILP-Generator, LP-Solver, Evaluation, PER File. Output: WCET Visualization.]

Static Program Analysis Applied to WCET Determination • Upper bounds must be safe, i.e. not underestimated • Upper bounds should be tight, i.e. not far away from real execution times • Analogous for lower bounds • Effort must be tolerable

Abstract Interpretation (AI) • semantics-based method for static program analysis • Basic idea of AI: Perform the program's computations using value descriptions or abstract values in place of the concrete values, start with a description of all possible inputs • AI supports correctness proofs • Tool support (Program-Analysis Generator PAG)

Abstract Interpretation – the Ingredients and one Example • abstract domain – complete semilattice, related to concrete domain by abstraction and concretization functions, e.g. intervals of integers (including -∞, ∞) instead of integer values • abstract transfer functions for each statement type – abstract versions of their semantics, e.g. arithmetic and assignment on intervals • a join function combining abstract values from different control-flow paths – lub on the lattice, e.g. “union” on intervals • Example: Interval analysis (Cousot/Halbwachs 78)
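
The three ingredients can be sketched for the interval domain as follows. This is a minimal illustration, not the PAG or aiT implementation; the names `Interval`, `const`, `join`, and `TOP` are chosen here for illustration only.

```python
from dataclasses import dataclass
import math


@dataclass(frozen=True)
class Interval:
    """Abstract value: all integers between lo and hi (bounds may be infinite)."""
    lo: float
    hi: float

    def __add__(self, other):
        # Abstract transfer function for addition: add the bounds pairwise.
        return Interval(self.lo + other.lo, self.hi + other.hi)

    def join(self, other):
        # Least upper bound: the smallest interval containing both operands.
        return Interval(min(self.lo, other.lo), max(self.hi, other.hi))


def const(c):
    """Abstraction of a single concrete value."""
    return Interval(c, c)


# Description of "all possible inputs".
TOP = Interval(-math.inf, math.inf)

# if cond: x = 1 else: x = 5
x = const(1).join(const(5))   # join of the two control-flow paths
# y = x + 10
y = x + const(10)
print(x, y)
```

The join deliberately over-approximates: the interval for `x` also contains 2, 3, and 4, which no concrete run produces. That loss of precision is the price for a description that is safe for all paths.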

Value Analysis • Motivation: – Provide access information to data-cache/pipeline analysis – Detect infeasible paths – Derive loop bounds • Method: calculate intervals, i.e. lower and upper bounds, as in the example above for the values occurring in the machine program (addresses, register contents, local and global variables)

Value Analysis II
• Intervals are computed along the CFG edges
• At joins, intervals are “unioned”: D1: [-2, +2] and D1: [-4, 0] give D1: [-4, +2]
D1: [-4, 4], A0: [0x1000, 0x1000]
move.l #4, D0
D0: [4, 4], D1: [-4, 4], A0: [0x1000, 0x1000]
add.l D1, D0
D0: [0, 8], D1: [-4, 4], A0: [0x1000, 0x1000]
move.l (A0, D0), D1
Which address is accessed here? access [0x1000, 0x1008]
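
The numbers on this slide can be reproduced with a few lines of interval arithmetic. This is a sketch only: intervals are plain `(lo, hi)` tuples, the register file is a dictionary, and the helper names `add` and `join` are illustrative.

```python
def add(a, b):
    """Interval addition on (lo, hi) tuples."""
    return (a[0] + b[0], a[1] + b[1])


def join(a, b):
    """Interval "union": smallest interval covering both."""
    return (min(a[0], b[0]), max(a[1], b[1]))


# Abstract register contents before the first instruction.
regs = {"D1": (-4, 4), "A0": (0x1000, 0x1000)}

regs["D0"] = (4, 4)                        # move.l #4, D0
regs["D0"] = add(regs["D1"], regs["D0"])   # add.l D1, D0
access = add(regs["A0"], regs["D0"])       # address used by move.l (A0, D0), D1

# Two incoming CFG edges carrying different intervals for D1.
joined_d1 = join((-2, 2), (-4, 0))

print(regs["D0"], [hex(v) for v in access], joined_d1)
```

The resulting address range is what the data-cache analysis receives as access information for the load.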

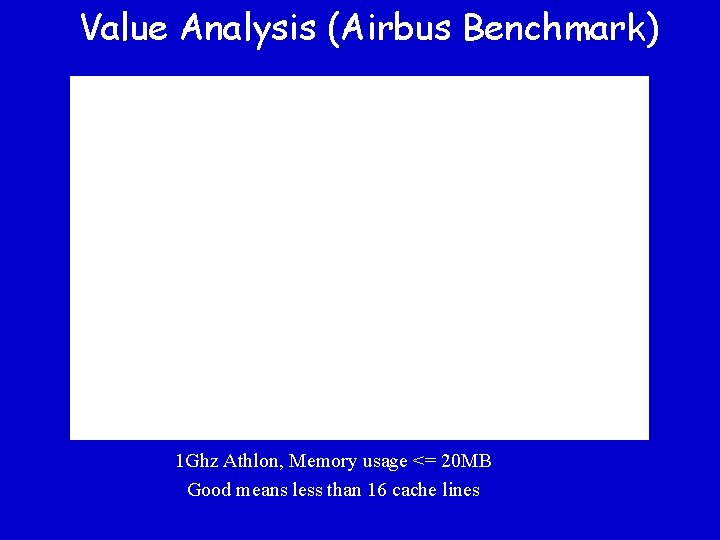

Value Analysis (Airbus Benchmark) 1 GHz Athlon, memory usage <= 20 MB. “Good” means less than 16 cache lines.

Caches: Fast Memory on Chip • Caches are used, because – Fast main memory is too expensive – The speed gap between CPU and memory is too large and increasing • Caches work well in the average case: – Programs access data locally (many hits) – Programs reuse items (instructions, data) – Access patterns are distributed evenly across the cache

Caches: How They Work The CPU wants to read/write at memory address a and sends a request for a to the bus. Cases: • Block m containing a is in the cache (hit): the request for a is served in the next cycle • Block m is not in the cache (miss): m is transferred from main memory to the cache, m may replace some block in the cache, and the request for a is served asap while the transfer still continues • Several replacement strategies (LRU, PLRU, FIFO, ...) determine which line to replace

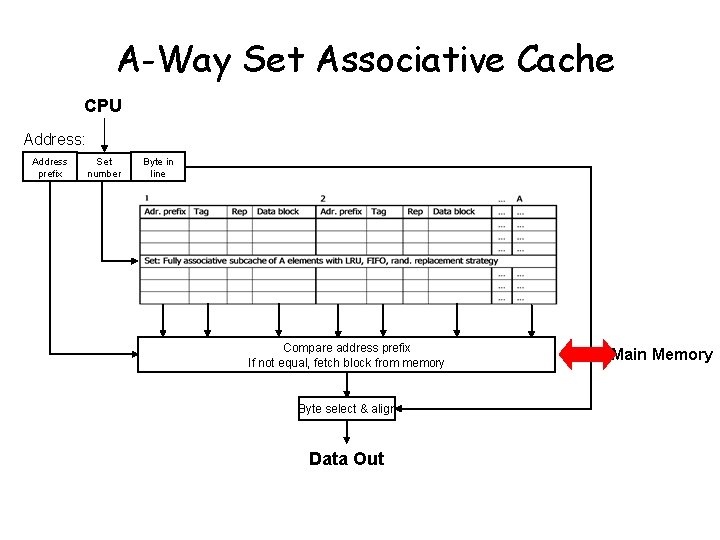

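A-Way Set Associative Cache [Figure: the CPU address is split into address prefix, set number, and byte in line. The address prefix is compared with the prefixes stored in the selected set; if not equal, the block is fetched from main memory. Byte select & align produces Data Out.]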
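
The address split in the figure can be sketched as below. The line size and number of sets are hypothetical defaults, not the parameters of any processor discussed in the talk, and both are assumed to be powers of two.

```python
def split_address(addr, line_size=16, num_sets=128):
    """Split an address into (address prefix, set number, byte in line).

    line_size and num_sets are illustrative values and must be powers of two.
    """
    offset_bits = line_size.bit_length() - 1
    set_bits = num_sets.bit_length() - 1
    byte_in_line = addr & (line_size - 1)
    set_number = (addr >> offset_bits) & (num_sets - 1)
    prefix = addr >> (offset_bits + set_bits)
    return prefix, set_number, byte_in_line


prefix, set_number, byte_in_line = split_address(0x1234)
print(prefix, set_number, byte_in_line)
```

Only the set number decides which cache set an access touches, which is why the cache analysis can treat each set independently.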

Cache Analysis How to statically precompute cache contents: • Must Analysis: For each program point (and calling context), find out which blocks are in the cache • May Analysis: For each program point (and calling context), find out which blocks may be in the cache Complement says what is not in the cache

Must-Cache and May-Cache Information • Must Analysis determines safe information about cache hits. Each predicted cache hit reduces the upper bound • May Analysis determines safe information about cache misses. Each predicted cache miss increases the lower bound

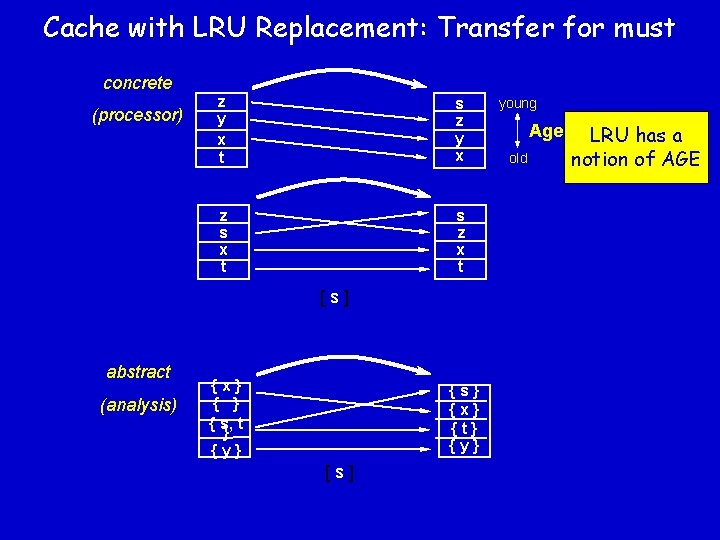

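Cache with LRU Replacement: Transfer for must [Figure, positions ordered by age from “young” (left) to “old” (right). Concrete (processor), access to s: [z, y, x, t] becomes [s, z, y, x], and [z, s, x, t] becomes [s, z, x, t]. Abstract (analysis), access to s: [{x}, { }, {s, t}, {y}] becomes [{s}, {x}, {t}, {y}].] LRU has a notion of AGE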
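
A possible formulation of the must-cache transfer function for one cache set is sketched below. It assumes the abstract cache is a list of sets indexed by an upper bound on age (index 0 is youngest); the function name `must_update` is illustrative.

```python
def must_update(cache, m):
    """Must-cache update for an access to block m (one cache set, LRU).

    cache[i] holds the blocks whose age is guaranteed to be at most i.
    """
    ways = len(cache)
    # Age bound of m, or `ways` if m is not guaranteed to be cached.
    h = next((i for i, line in enumerate(cache) if m in line), ways)
    new = [set() for _ in range(ways)]
    new[0].add(m)                      # m becomes the youngest block
    for age, line in enumerate(cache):
        for block in line - {m}:
            # Blocks that may be younger than m grow older by one.
            new_age = age + 1 if age < h else age
            if new_age < ways:         # otherwise no longer guaranteed
                new[new_age].add(block)
    return new


before = [{"x"}, set(), {"s", "t"}, {"y"}]
after = must_update(before, "s")
print(after)
```

Applied to singleton lines, the same function reproduces the concrete LRU behavior of the figure, for example an access to s in [z, y, x, t].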

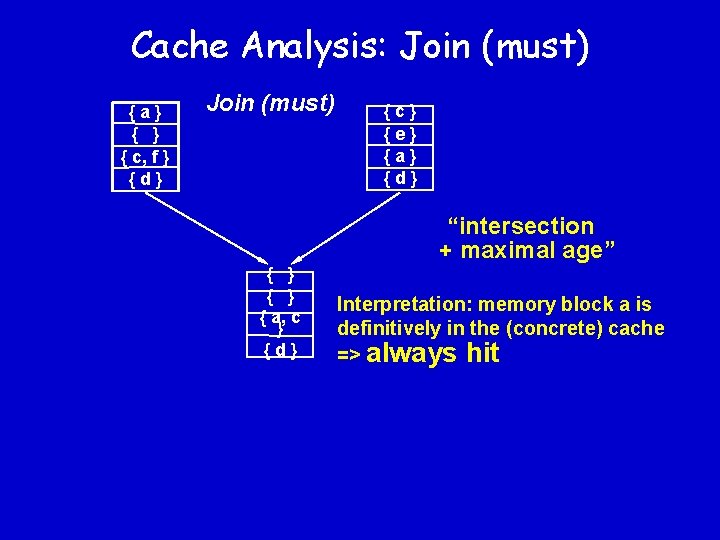

Cache Analysis: Join (must) [Figure: joining [{a}, { }, {c, f}, {d}] and [{c}, {e}, {a}, {d}] by “intersection + maximal age” gives [{ }, { }, {a, c}, {d}].] Interpretation: memory block a is definitely in the (concrete) cache => always hit
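
“Intersection + maximal age” can be written down directly. The sketch below uses the same list-of-sets representation as the figure; `must_join` is an illustrative name.

```python
def must_join(c1, c2):
    """Join of two must-caches: keep blocks present in both, at their maximal age."""
    ways = len(c1)
    age1 = {b: i for i, line in enumerate(c1) for b in line}
    age2 = {b: i for i, line in enumerate(c2) for b in line}
    out = [set() for _ in range(ways)]
    for block in age1.keys() & age2.keys():
        out[max(age1[block], age2[block])].add(block)
    return out


left = [{"a"}, set(), {"c", "f"}, {"d"}]
right = [{"c"}, {"e"}, {"a"}, {"d"}]
print(must_join(left, right))
```

Blocks e and f disappear because they are cached on only one of the two incoming paths, so a hit can no longer be guaranteed for them.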

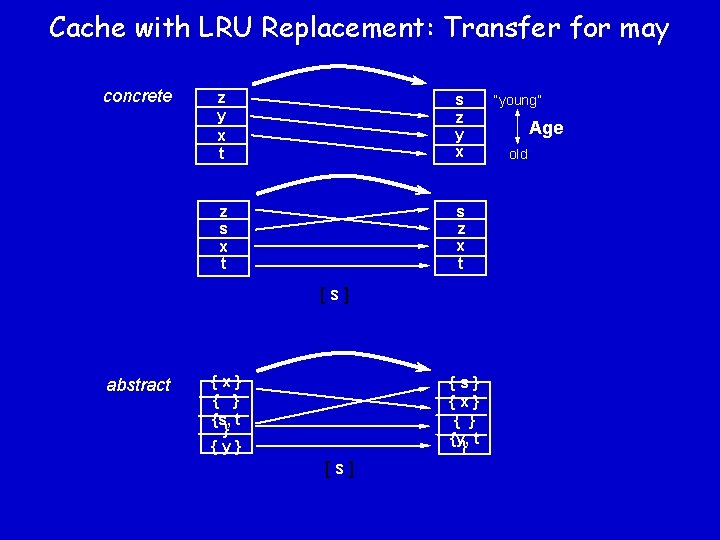

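Cache with LRU Replacement: Transfer for may [Figure, positions ordered by age from “young” (left) to “old” (right). Concrete, access to s: [z, y, x, t] becomes [s, z, y, x], and [z, s, x, t] becomes [s, z, x, t]. Abstract, access to s: [{x}, { }, {s, t}, {y}] becomes [{s}, {x}, { }, {y, t}].]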

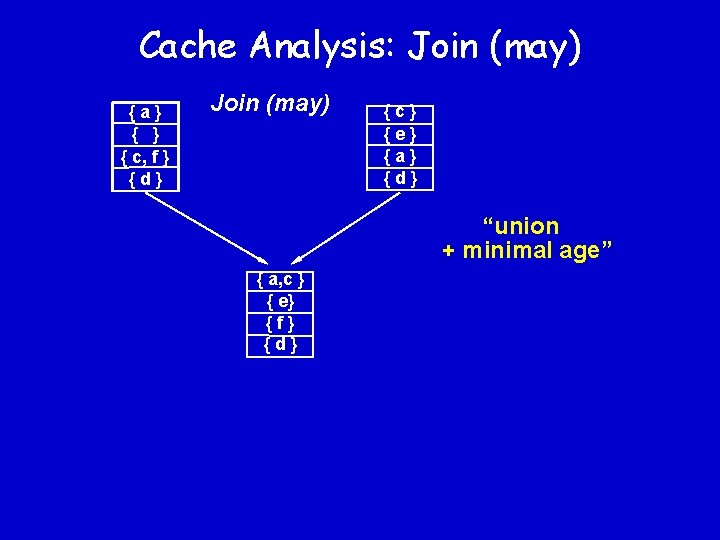

Cache Analysis: Join (may) [Figure: joining [{a}, { }, {c, f}, {d}] and [{c}, {e}, {a}, {d}] by “union + minimal age” gives [{a, c}, {e}, {f}, {d}].]
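
For comparison with the must analysis, here is a sketch of the may-cache transfer and join for one cache set. In this representation the index is a lower bound on age; `may_update` and `may_join` are illustrative names.

```python
def may_update(cache, m):
    """May-cache update for an access to block m (one cache set, LRU).

    cache[i] holds the blocks whose age is at least i if they are cached at all.
    """
    ways = len(cache)
    h = next((i for i, line in enumerate(cache) if m in line), ways)
    new = [set() for _ in range(ways)]
    new[0].add(m)
    for age, line in enumerate(cache):
        for block in line - {m}:
            # Blocks with a bound up to that of m are at least one step older afterwards.
            new_age = age + 1 if age <= h else age
            if new_age < ways:         # otherwise definitely evicted
                new[new_age].add(block)
    return new


def may_join(c1, c2):
    """Join of two may-caches: union of blocks, each at its minimal age."""
    out, seen = [], set()
    for line1, line2 in zip(c1, c2):
        both = line1 | line2
        out.append(both - seen)        # keep only the first (youngest) occurrence
        seen |= both
    return out


updated = may_update([{"x"}, set(), {"s", "t"}, {"y"}], "s")
joined = may_join([{"a"}, set(), {"c", "f"}, {"d"}],
                  [{"c"}, {"e"}, {"a"}, {"d"}])
print(updated, joined)
```

A block that is absent from the may-cache is definitely not cached, which is how the analysis predicts misses.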

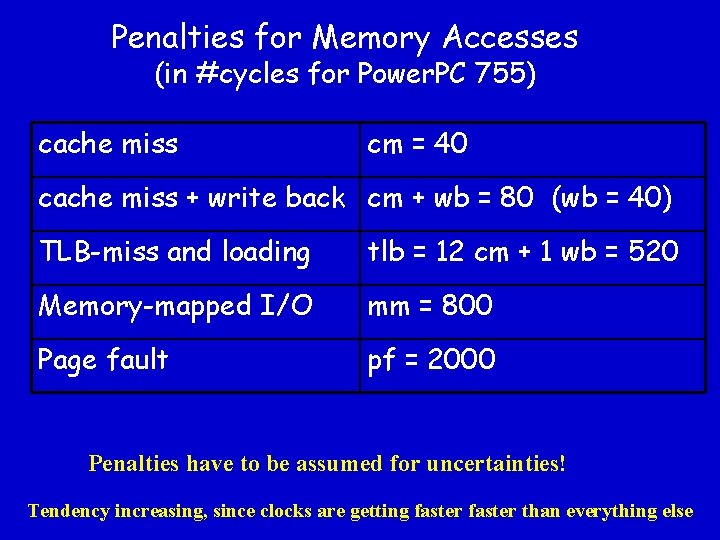

Penalties for Memory Accesses (in #cycles for PowerPC 755) • cache miss: cm = 40 • cache miss + write back: cm + wb = 80 (wb = 40) • TLB miss and loading: tlb = 12 cm + 1 wb = 520 • Memory-mapped I/O: mm = 800 • Page fault: pf = 2000. Penalties have to be assumed for uncertainties! The tendency is increasing, since clocks are getting faster than everything else

Cache Impact of Language Constructs • Pointer to data • Function pointer • Dynamic method invocation • Service demultiplexing CORBA

The Cost of Uncertainty Cost of statically unresolvable dynamic behavior. Basic idea: What does it cost me if I cannot exclude a timing accident? This could be the basis for design and implementation decisions.

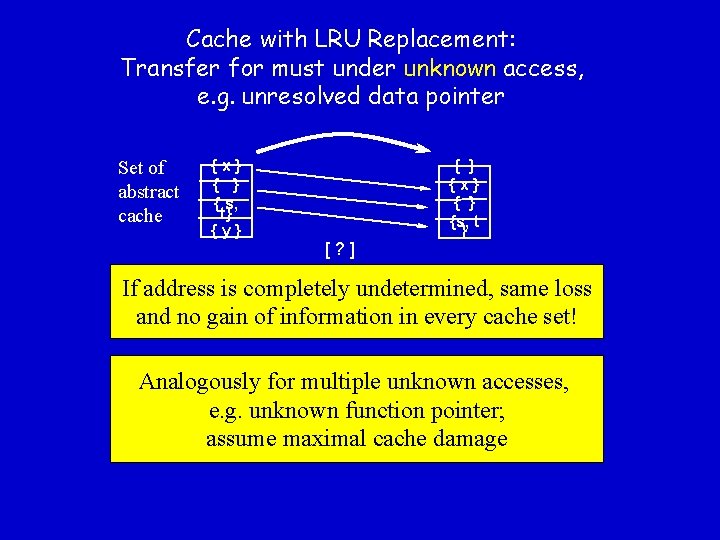

Cache with LRU Replacement: Transfer for must under unknown access, e.g. unresolved data pointer [Figure: set of abstract caches; an access to an unknown address [?] turns [{x}, { }, {s, t}, {y}] into [{ }, {x}, { }, {s, t}].] If the address is completely undetermined, there is the same loss and no gain of information in every cache set! Analogously for multiple unknown accesses, e.g. unknown function pointer; assume maximal cache damage
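
The effect of an unknown access on the must information can be sketched as follows, assuming one list-of-sets abstract cache per cache set; the function names and the dictionary of cache sets are illustrative.

```python
def must_update_unknown(cache):
    """Must-cache update for an access to an unknown block in this cache set.

    Every block may have aged by one, the oldest line is lost, nothing is gained.
    """
    return [set()] + [set(line) for line in cache[:-1]]


def unknown_access_everywhere(cache_sets):
    """A completely undetermined address may hit any set, so all sets are damaged."""
    return {index: must_update_unknown(c) for index, c in cache_sets.items()}


one_set = [{"x"}, set(), {"s", "t"}, {"y"}]
damaged = must_update_unknown(one_set)
print(damaged)
```

After as many unknown accesses as the cache has ways, no guaranteed content is left in any set, which is one way to read "maximal cache damage".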

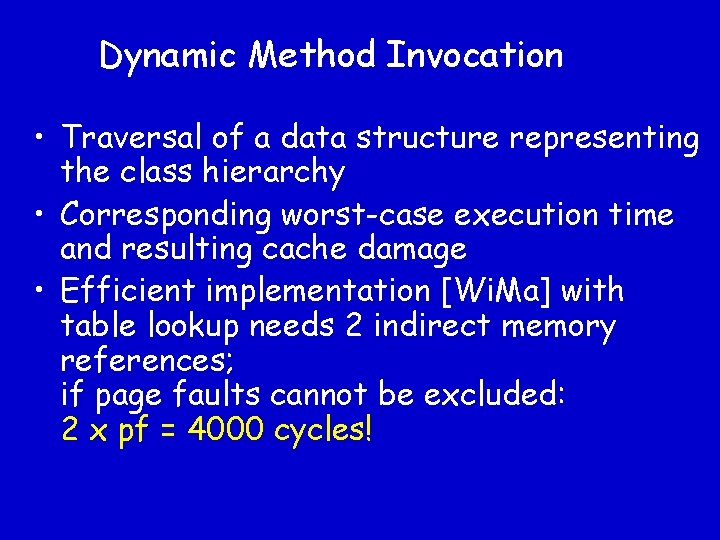

Dynamic Method Invocation • Traversal of a data structure representing the class hierarchy • Corresponding worst-case execution time and resulting cache damage • Efficient implementation [WiMa] with table lookup needs 2 indirect memory references; if page faults cannot be excluded: 2 x pf = 4000 cycles!

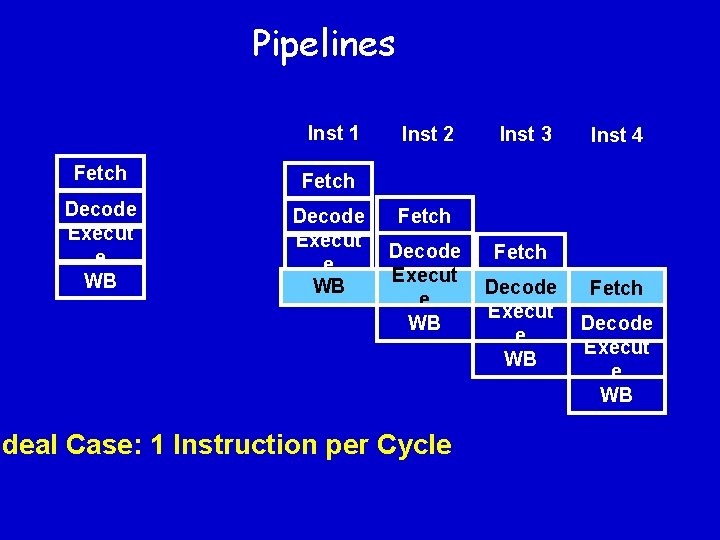

Pipelines [Figure: Inst 1 to Inst 4 each pass through the stages Fetch, Decode, Execute, WB, overlapped in time.] Ideal Case: 1 Instruction per Cycle

Pipeline Hazards: • Data Hazards: Operands not yet available (Data Dependences) • Resource Hazards: Consecutive instructions use same resource • Control Hazards: Conditional branch • Instruction-Cache Hazards: Instruction fetch causes cache miss

More Threats • Out-of-order execution: consider all possible execution orders • Speculation: ditto • Timing Anomalies: considering the locally worst-case path is insufficient

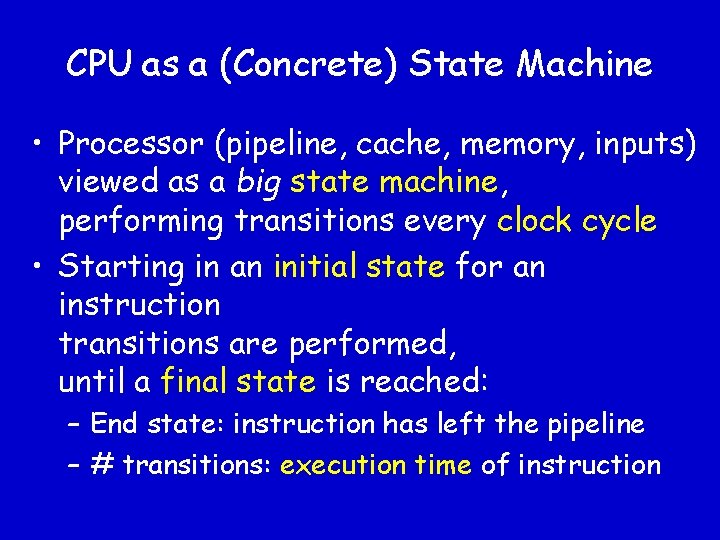

CPU as a (Concrete) State Machine • Processor (pipeline, cache, memory, inputs) viewed as a big state machine, performing transitions every clock cycle • Starting in an initial state for an instruction, transitions are performed until a final state is reached: – End state: instruction has left the pipeline – # transitions: execution time of instruction

A Concrete Pipeline Executing a Basic Block function exec (b : basic block, s : concrete pipeline state) t : trace interprets the instruction stream of b starting in state s, producing trace t. The successor basic block is interpreted starting in initial state last(t). length(t) gives the number of cycles

An Abstract Pipeline Executing a Basic Block function exec (b : basic block, s : abstract pipeline state) t : trace interprets the instruction stream of b (annotated with cache information) starting in state s, producing trace t. length(t) gives the number of cycles

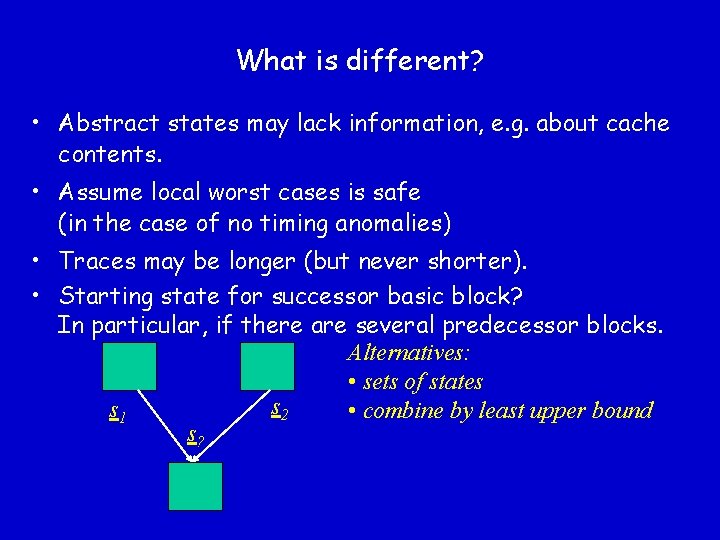

What is different? • Abstract states may lack information, e.g. about cache contents. • Assuming local worst cases is safe (in the absence of timing anomalies) • Traces may be longer (but never shorter). • Starting state for the successor basic block? In particular, if there are several predecessor blocks (ending in states s1 and s2). Alternatives: • sets of states • combine by least upper bound

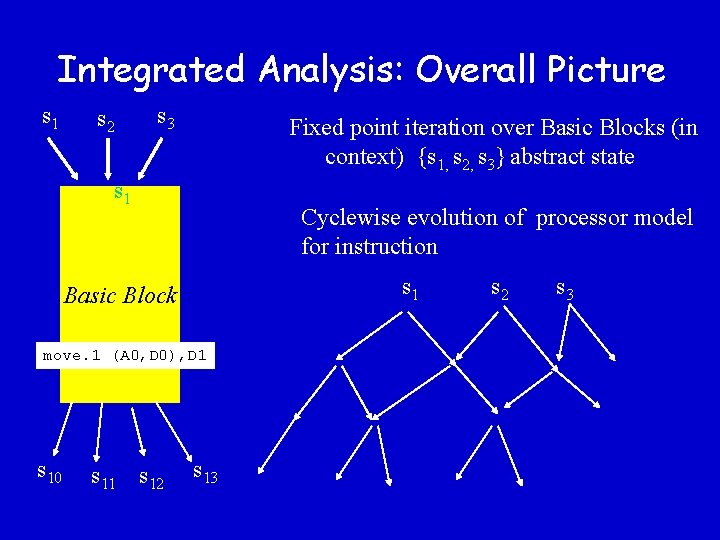

Integrated Analysis: Overall Picture [Figure: fixed point iteration over basic blocks (in context). The abstract state at a basic block is a set of states, e.g. {s1, s2, s3}. For each of them, e.g. s1, the processor model is evolved cycle-wise for an instruction of the basic block, here move.l (A0, D0), D1, yielding successor states s10, s11, s12, s13.]

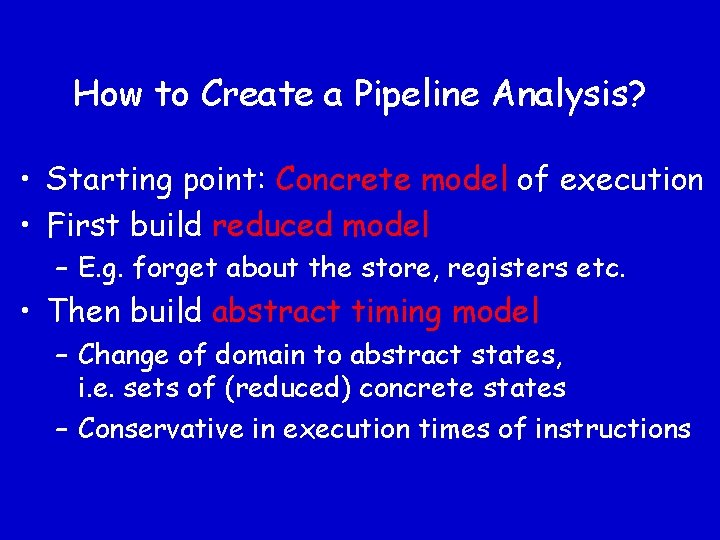

How to Create a Pipeline Analysis? • Starting point: Concrete model of execution • First build reduced model – E.g. forget about the store, registers etc. • Then build abstract timing model – Change of domain to abstract states, i.e. sets of (reduced) concrete states – Conservative in execution times of instructions

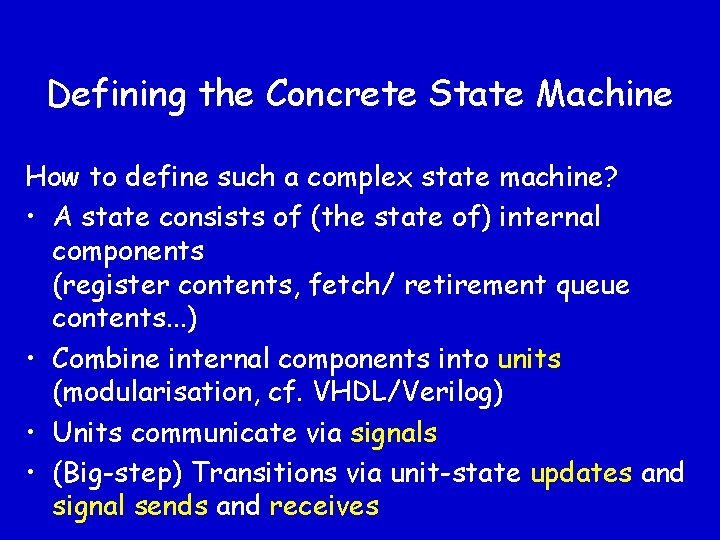

Defining the Concrete State Machine How to define such a complex state machine? • A state consists of (the state of) internal components (register contents, fetch/retirement queue contents, ...) • Combine internal components into units (modularisation, cf. VHDL/Verilog) • Units communicate via signals • (Big-step) Transitions via unit-state updates and signal sends and receives

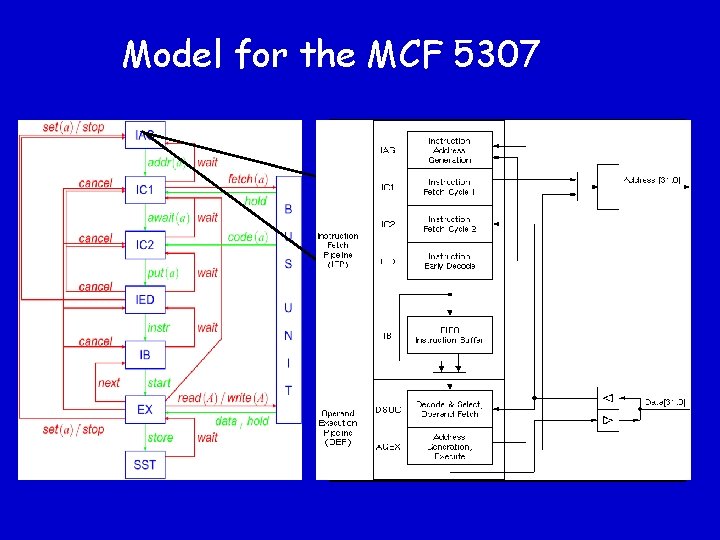

An Example: MCF5307 • MCF5307 is a V3 ColdFire family member • ColdFire is the successor family to the M68K processor generation • Restricted in instruction size, addressing modes and implemented M68K opcodes • MCF5307: small and cheap chip with integrated peripherals • Separated but coupled bus/core clock frequencies

ColdFire Pipeline The ColdFire pipeline consists of • a Fetch Pipeline of 4 stages – Instruction Address Generation (IAG) – Instruction Fetch Cycle 1 (IC1) – Instruction Fetch Cycle 2 (IC2) – Instruction Early Decode (IED) • an Instruction Buffer (IB) for 8 instructions • an Execution Pipeline of 2 stages – Decoding and register operand fetching (1 cycle) – Memory access and execution (1 – many cycles)

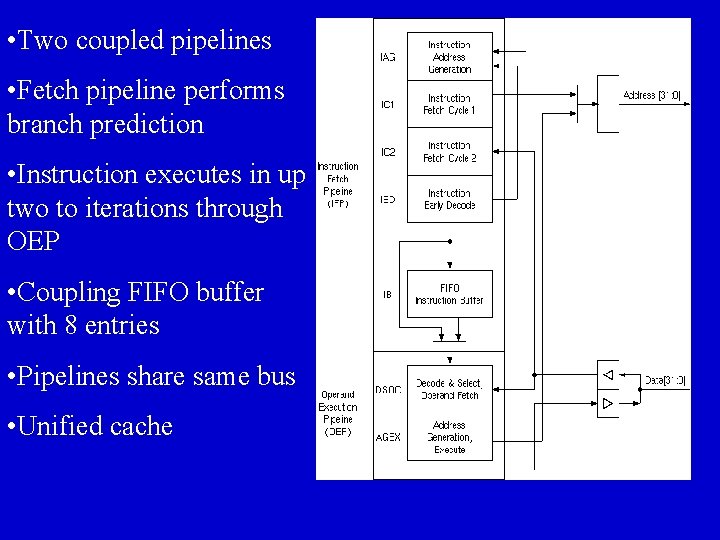

• Two coupled pipelines • Fetch pipeline performs branch prediction • Instruction executes in up to two iterations through OEP • Coupling FIFO buffer with 8 entries • Pipelines share same bus • Unified cache

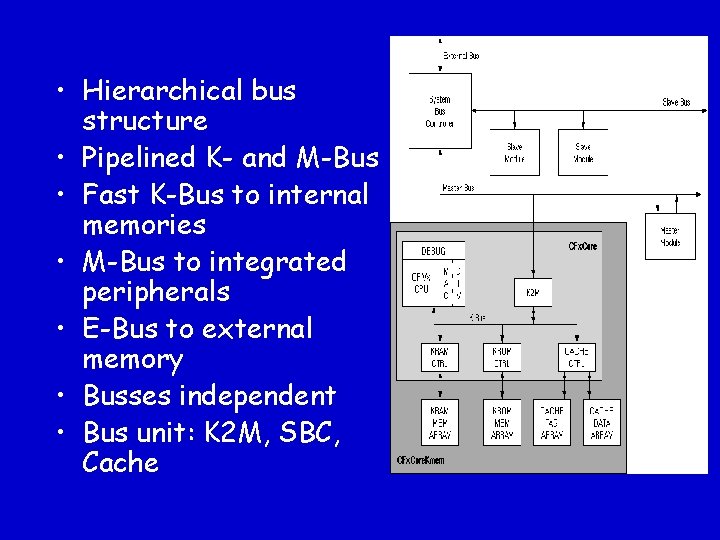

• Hierarchical bus structure • Pipelined K- and M-Bus • Fast K-Bus to internal memories • M-Bus to integrated peripherals • E-Bus to external memory • Busses independent • Bus unit: K2M, SBC, Cache

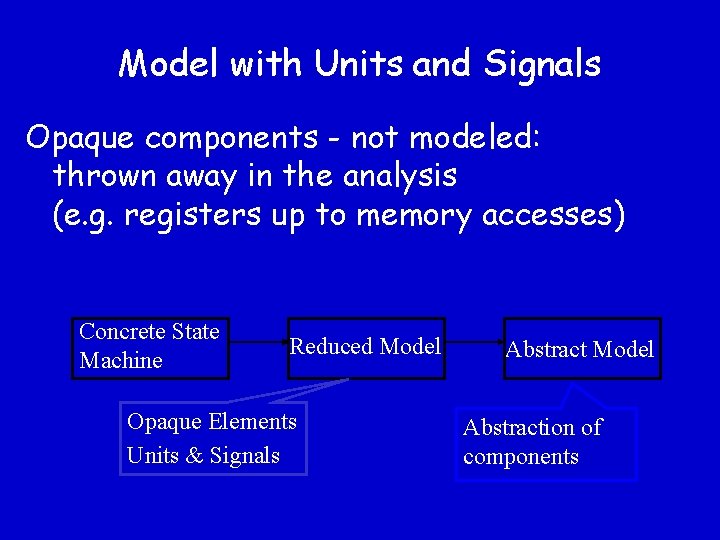

Model with Units and Signals Opaque components are not modeled: they are thrown away in the analysis (e.g. registers, up to memory accesses). [Figure: Concrete State Machine, Reduced Model (opaque elements removed; units & signals), Abstract Model (abstraction of components).]

Model for the MCF5307
State: Address | STOP
Evolution (input signal, state => new state, output signal):
wait, x => x, ---
set(a), x => a+4, addr(a+4)
stop, x => STOP, ---
---, a => a+4, addr(a+4)
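
Read as a transition table, this unit can be sketched as a small step function. Everything beyond the four rows of the table is an assumption made for illustration: the encoding of signals as strings and tuples, the name `unit_step`, and in particular the behavior in state STOP without an input signal, which the slide does not specify.

```python
STOP = "STOP"


def unit_step(state, signal=None):
    """One cycle of the unit: returns (new_state, output_signal).

    state is an address (int) or STOP; signal is None, "wait", "stop",
    or ("set", a). Output is None or ("addr", address).
    """
    if signal == "wait":
        return state, None                    # wait, x => x, ---
    if isinstance(signal, tuple) and signal[0] == "set":
        a = signal[1]
        return a + 4, ("addr", a + 4)         # set(a), x => a+4, addr(a+4)
    if signal == "stop":
        return STOP, None                     # stop, x => STOP, ---
    if state == STOP:
        return STOP, None                     # assumption: not given in the table
    return state + 4, ("addr", state + 4)     # ---, a => a+4, addr(a+4)


state = 0x100
state, out = unit_step(state)                 # no signal: advance by one word
print(hex(state), out)
```

In the full model, one such function per unit is evaluated every cycle, and the output signals of one unit become the input signals of others.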

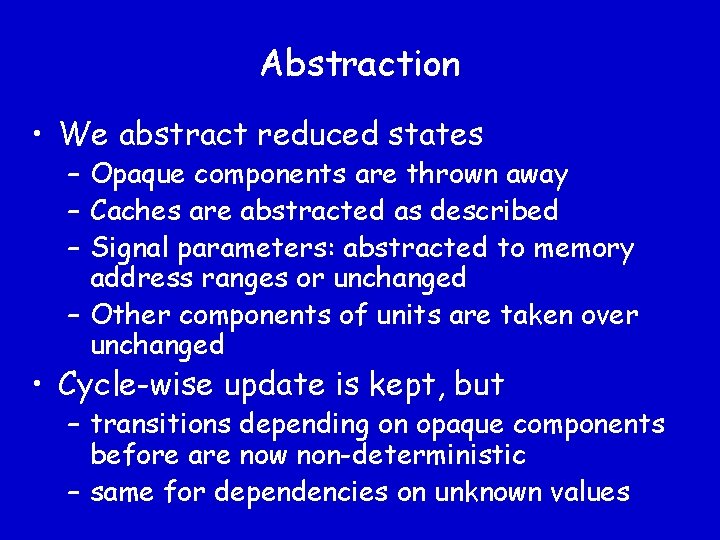

Abstraction • We abstract reduced states – Opaque components are thrown away – Caches are abstracted as described – Signal parameters: abstracted to memory address ranges or unchanged – Other components of units are taken over unchanged • Cycle-wise update is kept, but – transitions depending on opaque components before are now non-deterministic – same for dependencies on unknown values

Nondeterminism • In the reduced model, one state resulted in one new state after a one-cycle transition • Now, one state can have several successor states – Transitions from set of states to set of states

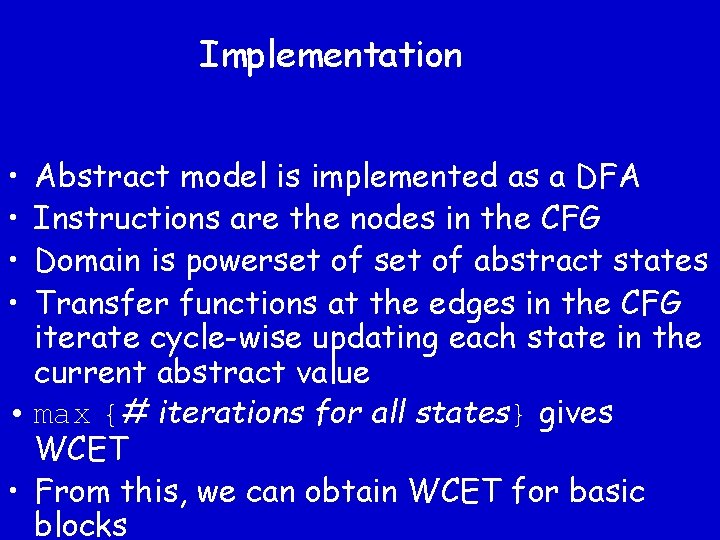

Implementation • Abstract model is implemented as a data-flow analysis (DFA) • Instructions are the nodes in the CFG • Domain is powerset of abstract states • Transfer functions at the edges in the CFG iterate cycle-wise, updating each state in the current abstract value • max {# iterations for all states} gives WCET • From this, we can obtain WCET for basic blocks
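
The cycle-wise transfer function over sets of abstract states can be sketched with a toy model. The model below is purely hypothetical: a state is the number of remaining cycles of a load, `None` stands for an unknown cache status, and the latencies `HIT_CYCLES` and `MISS_CYCLES` are made-up values, not those of any real pipeline.

```python
HIT_CYCLES = 1    # hypothetical latency of a load that hits the cache
MISS_CYCLES = 4   # hypothetical latency of a load that misses


def step(state):
    """One clock cycle; returns the set of possible successor states."""
    if state is None:
        # Unknown cache status: the transition is non-deterministic,
        # so both the hit and the miss successor are followed.
        return {HIT_CYCLES - 1, MISS_CYCLES - 1}
    return {state - 1}


def is_final(state):
    """The instruction has left the pipeline."""
    return state == 0


def analyze_instruction(initial_states):
    """Evolve all states cycle by cycle; returns (max #iterations, final states)."""
    current, finals, cycles = set(initial_states), set(), 0
    while current:
        cycles += 1
        pending = set()
        for s in current:
            for t in step(s):
                (finals if is_final(t) else pending).add(t)
        current = pending
    return cycles, finals


bound_unknown, _ = analyze_instruction({None})
bound_hit, _ = analyze_instruction({HIT_CYCLES})
print(bound_unknown, bound_hit)
```

When the cache analysis classifies the access as a guaranteed hit, the miss branch is never explored and the bound drops accordingly, which is exactly how excluded timing accidents lower the upper bound.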

Overall Structure [Figure: tool architecture. Front end: Executable program, CFG Builder, Loop Trafo, CRL File. Processor-Behavior Prediction (Static Analyses): Value Analyzer, Cache/Pipeline Analyzer, Loop bounds, AIP File. Worst-case Path Determination (Path Analysis): ILP-Generator, LP-Solver, Evaluation, PER File. Output: WCET Visualization.]

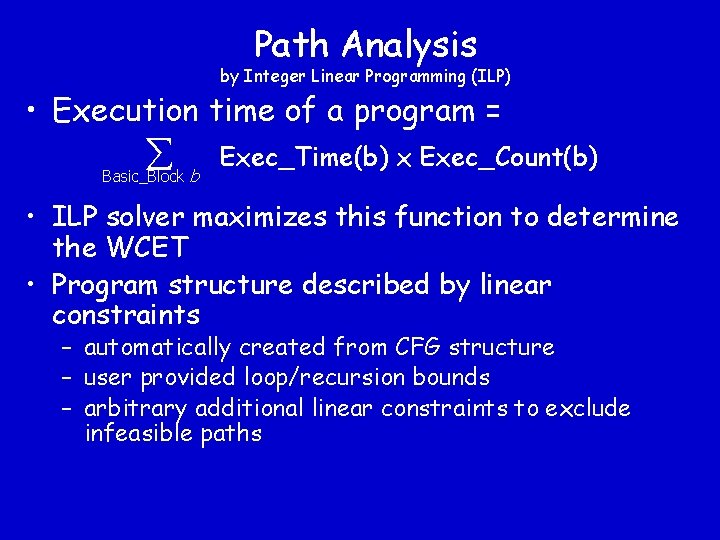

Path Analysis by Integer Linear Programming (ILP) • Execution time of a program = $\sum_{\text{Basic\_Block } b} \text{Exec\_Time}(b) \times \text{Exec\_Count}(b)$ • ILP solver maximizes this function to determine the WCET • Program structure described by linear constraints – automatically created from CFG structure – user provided loop/recursion bounds – arbitrary additional linear constraints to exclude infeasible paths

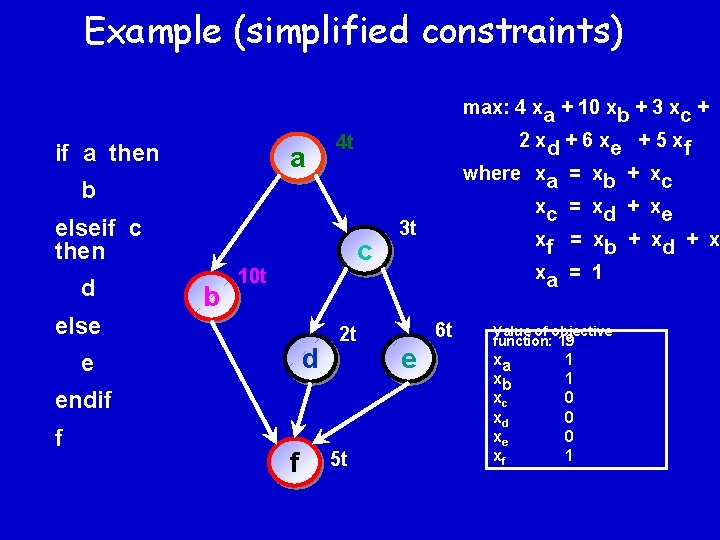

Example (simplified constraints)
Program: if a then b elseif c then d else e endif; f
Execution times: a: 4t, b: 10t, c: 3t, d: 2t, e: 6t, f: 5t
max: 4 xa + 10 xb + 3 xc + 2 xd + 6 xe + 5 xf
where xa = xb + xc, xc = xd + xe, xf = xb + xd + xe, xa = 1
Value of objective function: 19
Solution: xa = 1, xb = 1, xc = 0, xd = 0, xe = 0, xf = 1
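
The example is small enough to check by exhaustive search instead of an ILP solver. The sketch below is not how the tool works; it assumes that every execution count is 0 or 1, which holds here because the program has no loops and xa = 1.

```python
from itertools import product

# Execution-time bounds of the basic blocks (in units of t).
times = {"a": 4, "b": 10, "c": 3, "d": 2, "e": 6, "f": 5}


def feasible(x):
    """Structural constraints derived from the control-flow graph."""
    return (x["a"] == 1
            and x["a"] == x["b"] + x["c"]
            and x["c"] == x["d"] + x["e"]
            and x["f"] == x["b"] + x["d"] + x["e"])


def objective(x):
    return sum(times[block] * x[block] for block in times)


# Enumerate all 0/1 assignments of execution counts and keep the best feasible one.
candidates = (dict(zip(times, bits)) for bits in product((0, 1), repeat=len(times)))
best_x = max((x for x in candidates if feasible(x)), key=objective)
best_value = objective(best_x)
print(best_value, best_x)
```

The three feasible assignments correspond to the three paths through the conditional, with values 19, 18, and 14; the maximum is attained on the path a, b, f.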

Basic Notions [Figure: time axis t marking the lower bound, the best case, the worst case, and the upper bound (the worst-case guarantee), with the labels Best-Case Predictability and Uncertainty x Penalties.] Determined by • Hardware architecture • Software design • Analysis methods

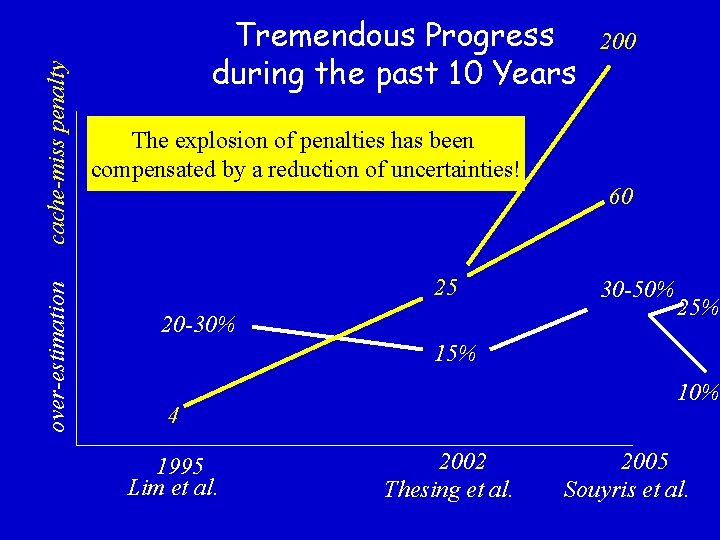

Tremendous Progress during the past 10 Years [Figure: cache-miss penalty and over-estimation for 1995 (Lim et al.), 2002 (Thesing et al.), and 2005 (Souyris et al.). Values shown for the penalty: 4, 25, 60, 200; values shown for the over-estimation: 20-30%, 30-50%, 25%, 10%.] The explosion of penalties has been compensated by a reduction of uncertainties!

aiT WCET Analyzer IST Project DAEDALUS final review report: "The AbsInt tool is probably the best of its kind in the world and it is justified to consider this result as a breakthrough."

Timing Predictability • Experience has shown that the precision of results depends on system characteristics, both • of the underlying hardware platform and • of the software layers

Timing Predictability • System characteristics determine – size of penalties: hardware architecture – analyzability: HW architecture, programming language, SW design • Many “advances” in computer architecture have increased average-case performance at the cost of worst-case performance

Conclusion • The determination of safe and precise upper bounds on execution times by static program analysis essentially solves the problem • Usability, e. g. need for user annotation, can still be improved • Precision greatly depends on predictability properties of the system • Integration into the design process is necessary
