Given by: Erez Eyal, Uri Klein


Lecture Outline
- Exact Nearest Neighbor search (overview)
  - Definition
  - Low dimensions
  - KD-Trees
- Approximate Nearest Neighbor search, LSH based (detailed)
  - Locality Sensitive Hashing families
  - Algorithm for Hamming Cube
  - Algorithm for Euclidean space
- Summary

Nearest Neighbor Search in Springfield?

Nearest “Neighbor” Search for Homer Simpson? (features: home planet distance, height, weight, color)

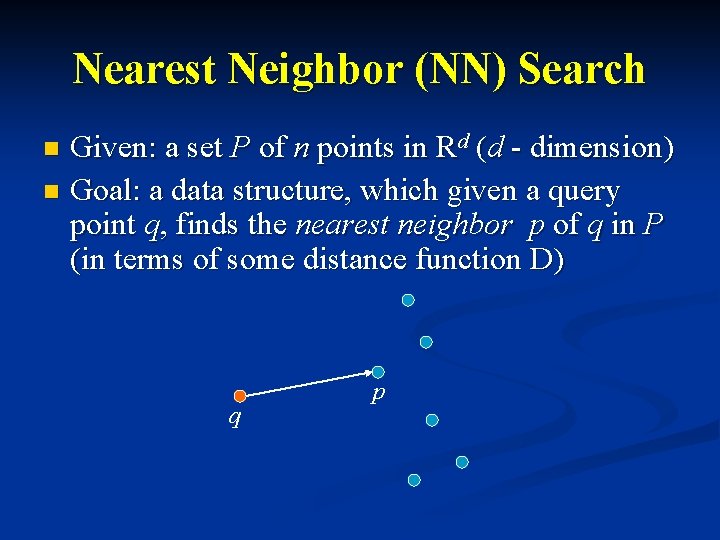

Nearest Neighbor (NN) Search
- Given: a set $P$ of $n$ points in $\mathbb{R}^d$ ($d$ is the dimension)
- Goal: a data structure which, given a query point $q$, finds the nearest neighbor $p$ of $q$ in $P$ (with respect to some distance function $D$)

Nearest Neighbor Search
We are interested in designing a data structure with the following objectives:
- Space: $O(dn)$
- Query time: $O(d \log n)$
- Data structure construction time is not important
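As a baseline, the definition can be met by a plain linear scan: it needs no structure beyond the $O(dn)$ input, but every query costs $O(dn)$ time, far from the $O(d \log n)$ target. A minimal sketch, assuming Euclidean distance for $D$ (the slides leave $D$ generic); `nearest_neighbor` is a hypothetical name:

```python
import math
from typing import List, Sequence

Point = Sequence[float]


def nearest_neighbor(P: List[Point], q: Point) -> Point:
    """Exact NN of q in P by a linear scan over all n points."""
    if not P:
        raise ValueError("P must contain at least one point")
    # Assumption: D is the Euclidean distance; any other metric could be swapped in.
    return min(P, key=lambda p: math.dist(p, q))


print(nearest_neighbor([(0, 0), (3, 4), (1, 1)], (2, 2)))  # (1, 1)
```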


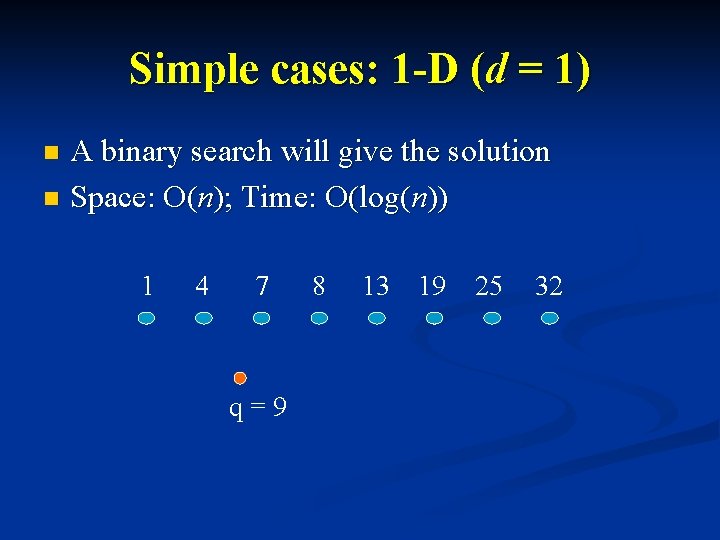

Simple cases: 1-D ($d = 1$)
A binary search gives the solution.
- Space: $O(n)$; Time: $O(\log n)$
- Example: for the sorted points 1, 4, 7, 8, 13, 19, 25, 32 and the query $q = 9$, the nearest neighbor is 8
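The 1-D case can be sketched with Python's standard `bisect` module: after sorting once, a binary search finds where $q$ would be inserted, and only the two values around that position can be nearest. `nn_1d` is a hypothetical name; the list is assumed non-empty and already sorted.

```python
import bisect
from typing import List


def nn_1d(sorted_points: List[float], q: float) -> float:
    """Exact NN in 1-D: O(n) space, O(log n) query time."""
    i = bisect.bisect_left(sorted_points, q)  # first index whose value is >= q
    # Only the neighbors on either side of the insertion point can be nearest.
    candidates = sorted_points[max(i - 1, 0):i + 1]
    return min(candidates, key=lambda p: abs(p - q))


print(nn_1d([1, 4, 7, 8, 13, 19, 25, 32], 9))  # 8
```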

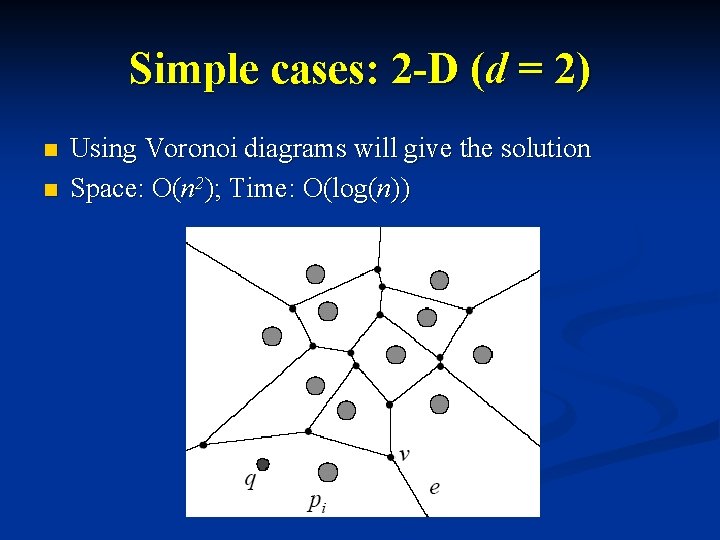

Simple cases: 2-D ($d = 2$)
Voronoi diagrams give the solution.
- Space: $O(n^2)$; Time: $O(\log n)$


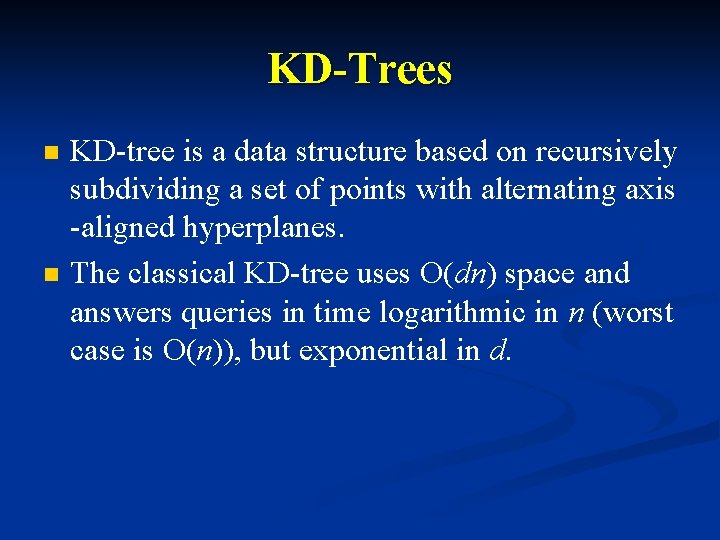

KD-Trees
- A KD-tree is a data structure based on recursively subdividing a set of points with alternating axis-aligned hyperplanes.
- The classical KD-tree uses $O(dn)$ space and answers queries in time logarithmic in $n$ (worst case $O(n)$), but exponential in $d$.

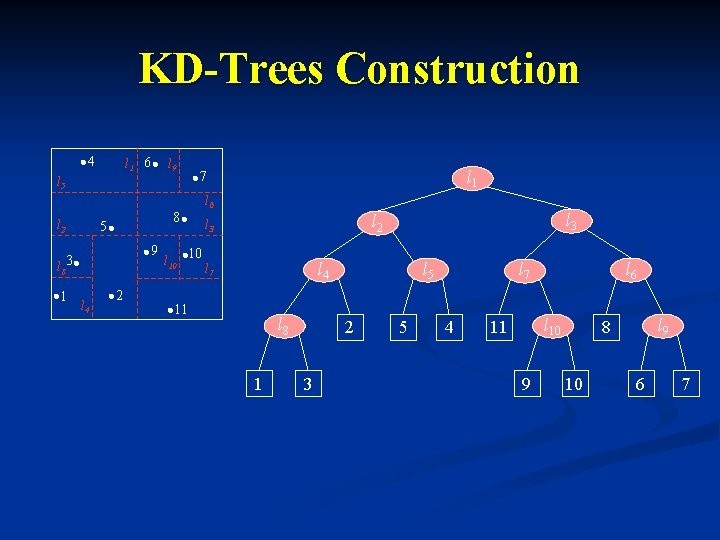

KD-Trees Construction (figure: eleven points, labeled 1 to 11, recursively split by the axis-aligned lines $l_1, \dots, l_{10}$, and the matching binary tree with the lines as internal nodes and the points as leaves)

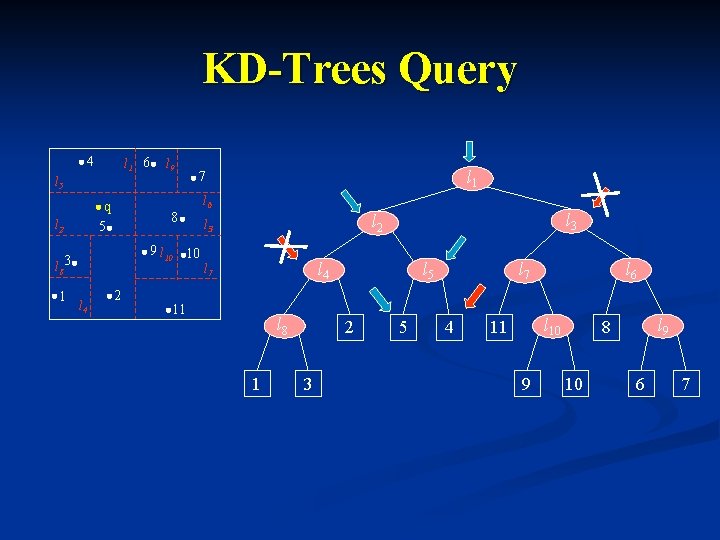

KD-Trees Query (figure: a query point $q$ placed in the same subdivision of points 1 to 11 by lines $l_1, \dots, l_{10}$, with the corresponding tree)

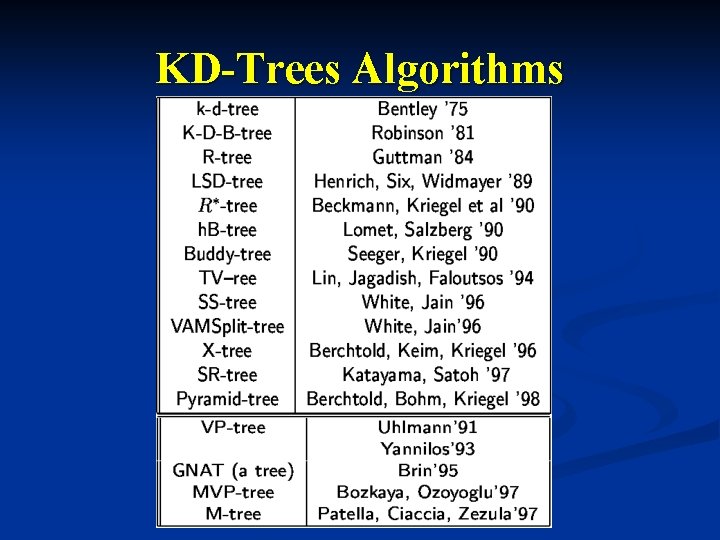

KD-Trees Algorithms
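The construction and query described above can be sketched as follows. This is a minimal sketch under stated assumptions: it uses the common variant that stores the median point at every node (the figure instead keeps points at the leaves and split lines at internal nodes), splits on axis `depth % d`, and uses Euclidean distance. `Node`, `build` and `query` are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple

Point = Tuple[float, ...]


@dataclass
class Node:
    point: Point            # the median point on `axis` for this subtree
    axis: int               # splitting coordinate; alternates with depth
    left: Optional["Node"]
    right: Optional["Node"]


def build(points: List[Point], depth: int = 0) -> Optional[Node]:
    """Recursively split at the median, cycling through the d axes."""
    if not points:
        return None
    axis = depth % len(points[0])
    pts = sorted(points, key=lambda p: p[axis])
    mid = len(pts) // 2
    return Node(pts[mid], axis,
                build(pts[:mid], depth + 1),
                build(pts[mid + 1:], depth + 1))


def query(node: Optional[Node], q: Point, best: Optional[Point] = None) -> Optional[Point]:
    """Descend toward q first, then visit the other subtree only if the splitting
    hyperplane is closer to q than the best point found so far."""
    if node is None:
        return best
    if best is None or math.dist(q, node.point) < math.dist(q, best):
        best = node.point
    diff = q[node.axis] - node.point[node.axis]
    near, far = (node.left, node.right) if diff < 0 else (node.right, node.left)
    best = query(near, q, best)
    if abs(diff) < math.dist(q, best):
        best = query(far, q, best)
    return best
```

Sorting at every level makes this construction $O(n \log^2 n)$ for fixed $d$, which fits the slides' remark that construction time is not important. The pruning test is what degrades in high dimension: the hyperplane is rarely farther than the current best, so most subtrees end up being visited.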


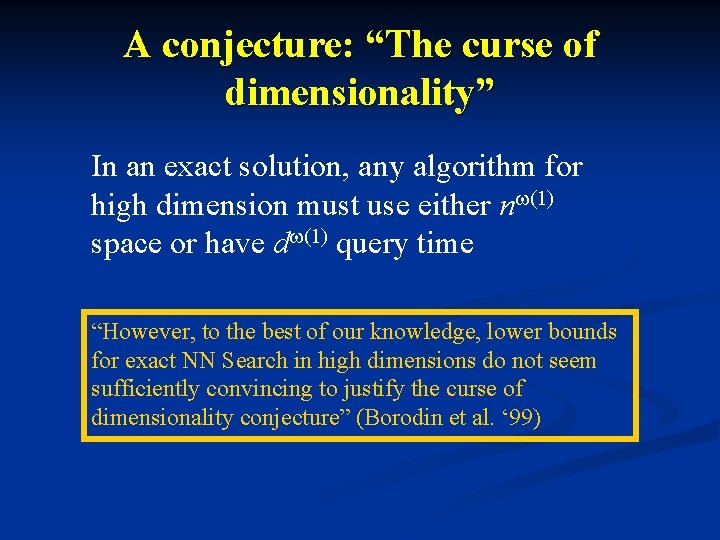

A conjecture: “The curse of dimensionality”
For an exact solution, any algorithm for high dimension must use either $n^{\omega(1)}$ space or have $d^{\omega(1)}$ query time.
“However, to the best of our knowledge, lower bounds for exact NN Search in high dimensions do not seem sufficiently convincing to justify the curse of dimensionality conjecture” (Borodin et al. ’99)

Why Approximate NN?
- Approximation allows a significant speedup of the calculation (on the order of 10's to 100's)
- Fixed-precision arithmetic on a computer causes approximation anyway
- Heuristics are used for mapping features to numerical values (causing uncertainty anyway)

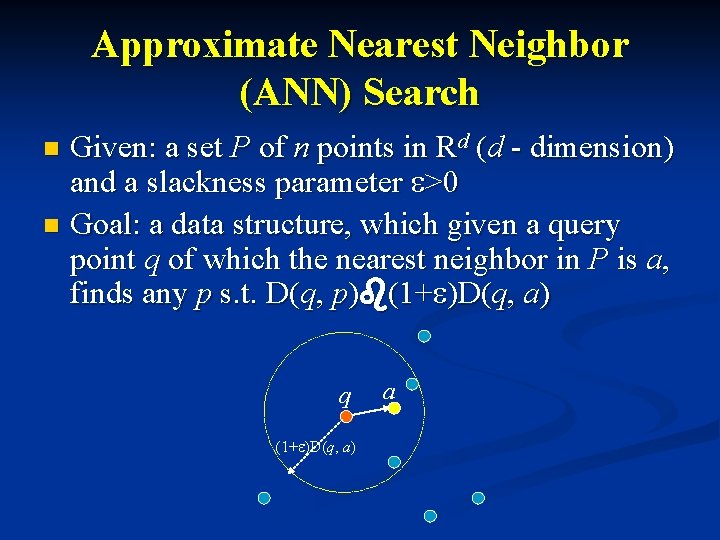

Approximate Nearest Neighbor (ANN) Search
- Given: a set $P$ of $n$ points in $\mathbb{R}^d$ ($d$ is the dimension) and a slackness parameter $\varepsilon > 0$
- Goal: a data structure which, given a query point $q$ whose nearest neighbor in $P$ is $a$, finds any $p$ s.t. $D(q, p) \le (1+\varepsilon) D(q, a)$
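To make the $(1+\varepsilon)$ condition concrete, the helper below computes the exact NN distance by brute force and checks a candidate $p$ against it. It is a sketch assuming Euclidean $D$; `is_approx_nn` is a hypothetical helper meant for testing an ANN structure, not an ANN structure itself.

```python
import math
from typing import List, Sequence

Point = Sequence[float]


def is_approx_nn(P: List[Point], q: Point, p: Point, eps: float) -> bool:
    """True iff D(q, p) <= (1 + eps) * D(q, a), where a is q's exact NN in P."""
    d_star = min(math.dist(a, q) for a in P)  # D(q, a) for the exact NN a
    return math.dist(p, q) <= (1 + eps) * d_star


P = [(0.0, 0.0), (2.0, 0.0), (10.0, 0.0)]
print(is_approx_nn(P, (0.9, 0.0), (2.0, 0.0), 0.25))  # True: 1.1 <= 1.125
print(is_approx_nn(P, (0.9, 0.0), (2.0, 0.0), 0.1))   # False: 1.1 > 0.99
```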

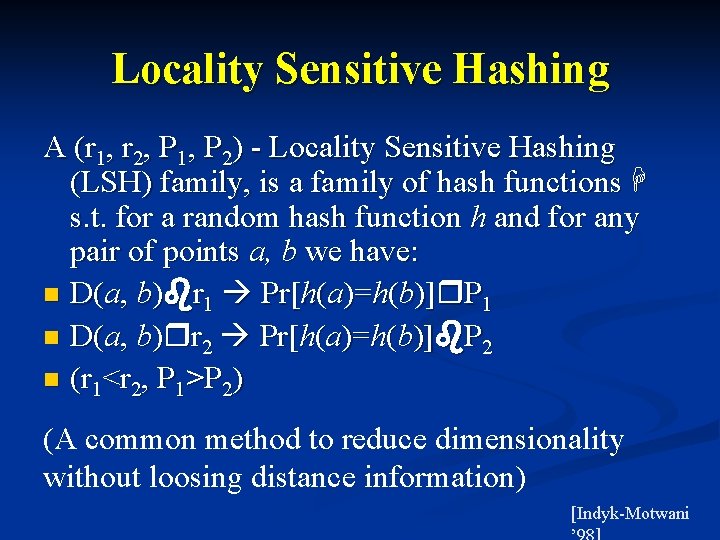

Locality Sensitive Hashing
A $(r_1, r_2, P_1, P_2)$-Locality Sensitive Hashing (LSH) family is a family of hash functions $\mathcal{H}$ s.t. for a random hash function $h \in \mathcal{H}$ and for any pair of points $a, b$ we have:
- $D(a, b) \le r_1 \Rightarrow \Pr[h(a) = h(b)] \ge P_1$
- $D(a, b) \ge r_2 \Rightarrow \Pr[h(a) = h(b)] \le P_2$
- ($r_1 < r_2$, $P_1 > P_2$)

(A common method to reduce dimensionality without losing distance information) [Indyk-Motwani

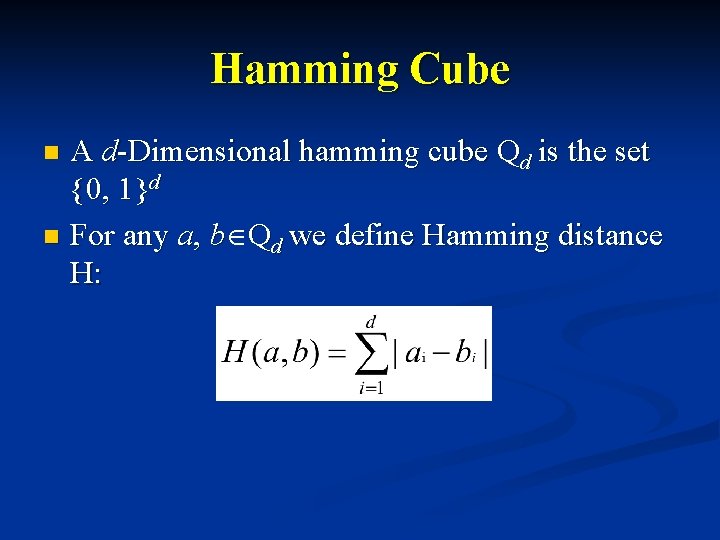

Hamming Cube
- A $d$-dimensional Hamming cube $Q^d$ is the set $\{0, 1\}^d$
- For any $a, b \in Q^d$ we define the Hamming distance $H$: $H(a, b) = |\{ i : a_i \ne b_i \}|$

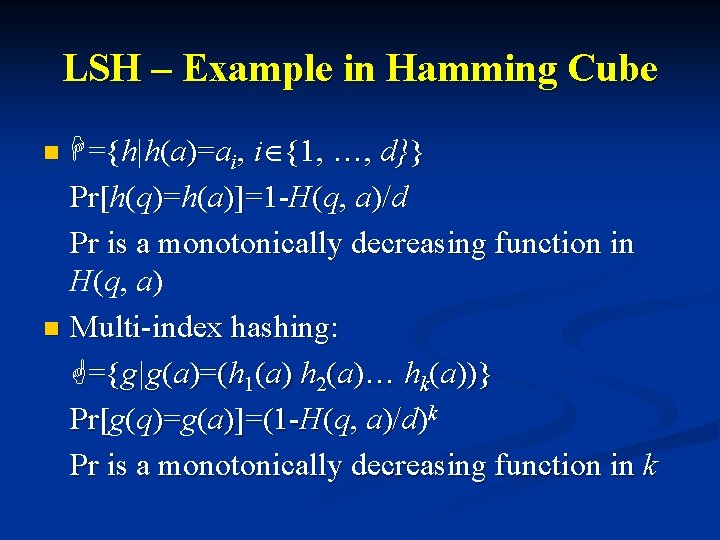

LSH – Example in Hamming Cube
- $\mathcal{H} = \{ h \mid h(a) = a_i,\ i \in \{1, \dots, d\} \}$
- $\Pr[h(q) = h(a)] = 1 - H(q, a)/d$
- Pr is a monotonically decreasing function of $H(q, a)$
- Multi-index hashing: $G = \{ g \mid g(a) = (h_1(a), h_2(a), \dots, h_k(a)) \}$
- $\Pr[g(q) = g(a)] = (1 - H(q, a)/d)^k$
- Pr is a monotonically decreasing function of $k$
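The bit-sampling family and its concatenation $g$ can be sketched in a few lines, assuming each $h_i$ picks its coordinate independently and uniformly (with replacement). Enumerating all $d^k$ equally likely index tuples gives the exact collision probability, which matches $(1 - H(q, a)/d)^k$. All function names are hypothetical.

```python
import itertools
import random
from typing import Callable, Sequence, Tuple

Bits = Sequence[int]


def hamming(a: Bits, b: Bits) -> int:
    return sum(x != y for x, y in zip(a, b))


def sample_g(d: int, k: int, rng: random.Random) -> Callable[[Bits], Tuple[int, ...]]:
    """Draw g = (h_1, ..., h_k): each h_i reads one coordinate chosen uniformly."""
    idx = [rng.randrange(d) for _ in range(k)]
    return lambda a: tuple(a[i] for i in idx)


def collision_prob_exact(q: Bits, a: Bits, k: int) -> float:
    """Pr[g(q) = g(a)], by enumerating all d**k equally likely index tuples."""
    d = len(q)
    hits = sum(all(q[i] == a[i] for i in idx)
               for idx in itertools.product(range(d), repeat=k))
    return hits / d ** k


def collision_prob_formula(q: Bits, a: Bits, k: int) -> float:
    return (1 - hamming(q, a) / len(q)) ** k


q, a = (0, 0, 0, 0), (1, 0, 0, 0)
print(collision_prob_exact(q, a, 2), collision_prob_formula(q, a, 2))  # 0.5625 0.5625
```

Raising $k$ shrinks the collision probability of far pairs faster than that of near pairs, which is why concatenation sharpens the gap between $P_1$ and $P_2$.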


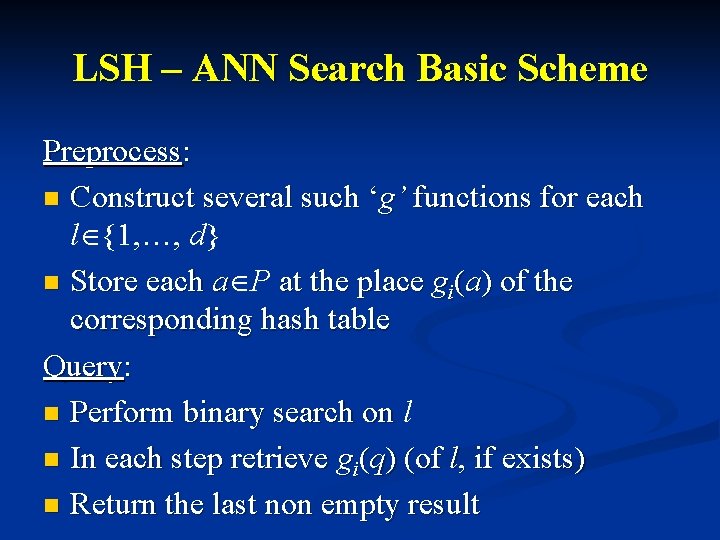

LSH – ANN Search Basic Scheme
Preprocess:
- Construct several such $g$ functions for each $l \in \{1, \dots, d\}$
- Store each $a \in P$ at the place $g_i(a)$ of the corresponding hash table

Query:
- Perform a binary search on $l$
- In each step retrieve $g_i(q)$ (of $l$, if it exists)
- Return the last non-empty result

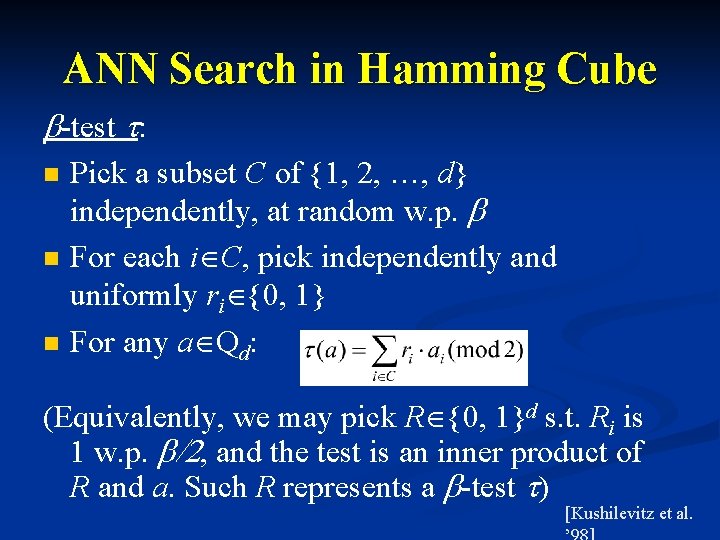

ANN Search in Hamming Cube
$\beta$-test $\tau$:
- Pick a subset $C$ of $\{1, 2, \dots, d\}$ by including each index independently at random w.p. $\beta$
- For each $i \in C$, pick independently and uniformly $r_i \in \{0, 1\}$
- For any $a \in Q^d$: $\tau(a) = \sum_{i \in C} r_i a_i \pmod 2$

(Equivalently, we may pick $R \in \{0, 1\}^d$ s.t. $R_i$ is 1 w.p. $\beta/2$, and the test is the inner product of $R$ and $a$ (mod 2). Such an $R$ represents a $\beta$-test $\tau$.) [Kushilevitz et al.
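A $\beta$-test in its $R$-vector form can be sketched as below. The closed form it is checked against follows from the definition: $\tau(a) \ne \tau(b)$ exactly when $\sum_{i : a_i \ne b_i} R_i$ is odd, and a sum of $H(a, b)$ independent Bernoulli($\beta/2$) bits is odd with probability $\frac{1}{2}\left(1 - (1 - \beta)^{H(a, b)}\right)$. The enumeration is only meant for tiny $d$; function names are hypothetical.

```python
import itertools
import random
from typing import List, Sequence


def sample_beta_test(d: int, beta: float, rng: random.Random) -> List[int]:
    """R-vector form of a beta-test: each R_i is 1 independently w.p. beta / 2."""
    return [1 if rng.random() < beta / 2 else 0 for _ in range(d)]


def apply_test(R: Sequence[int], a: Sequence[int]) -> int:
    """tau(a) = <R, a> mod 2."""
    return sum(r * x for r, x in zip(R, a)) % 2


def disagreement_exact(a: Sequence[int], b: Sequence[int], beta: float) -> float:
    """Pr[tau(a) != tau(b)], summing the probability of every R in {0,1}^d."""
    p = beta / 2
    total = 0.0
    for R in itertools.product((0, 1), repeat=len(a)):
        weight = 1.0
        for r in R:
            weight *= p if r else 1 - p
        if apply_test(R, a) != apply_test(R, b):
            total += weight
    return total


def disagreement_formula(a: Sequence[int], b: Sequence[int], beta: float) -> float:
    """Closed form (1 - (1 - beta)**H(a, b)) / 2."""
    h = sum(x != y for x, y in zip(a, b))
    return 0.5 * (1 - (1 - beta) ** h)


a, b = (0, 0, 0, 0), (1, 1, 0, 1)
print(disagreement_exact(a, b, 0.5), disagreement_formula(a, b, 0.5))
```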

![ANN Search in Hamming Cube](http://slidetodoc.com/presentation_image/350c1a87db5ba42b0016f8c842bc700e/image-25.jpg)

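ANN Search in Hamming Cube
- Define: $\Delta(a, b) = \Pr[\tau(a) \ne \tau(b)]$; for a $\beta$-test this equals $\frac{1}{2}\left(1 - (1 - \beta)^{H(a, b)}\right)$, which increases with $H(a, b)$
- For a query $q$, let $H(a, q) \le l$ and $H(b, q) > l(1+\varepsilon)$. Then for $\beta = 1/(2l)$: $\Delta(a, q) \le \delta_1 < \delta_2 < \Delta(b, q)$, where $\delta_1 = \frac{1}{2}\left(1 - \left(1 - \frac{1}{2l}\right)^{l}\right)$ and $\delta_2 = \frac{1}{2}\left(1 - \left(1 - \frac{1}{2l}\right)^{(1+\varepsilon) l}\right)$
- And define: $\delta = \delta_2 - \delta_1 = \Theta(1 - e^{-\varepsilon/2})$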
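The thresholds are easy to evaluate numerically. This sketch plugs $H = l$ and $H = (1+\varepsilon) l$ into the disagreement formula with $\beta = 1/(2l)$; `thresholds` is a hypothetical helper.

```python
from typing import Tuple


def disagreement(h: float, beta: float) -> float:
    """Delta as a function of the Hamming distance h: (1 - (1 - beta)**h) / 2."""
    return 0.5 * (1 - (1 - beta) ** h)


def thresholds(l: int, eps: float) -> Tuple[float, float, float]:
    """delta_1, delta_2 and the gap delta = delta_2 - delta_1 for beta = 1/(2l)."""
    beta = 1 / (2 * l)
    delta1 = disagreement(l, beta)
    delta2 = disagreement((1 + eps) * l, beta)
    return delta1, delta2, delta2 - delta1


for l in (1, 10, 1000):
    print(l, thresholds(l, 0.5))
```

As $l$ grows, $(1 - \frac{1}{2l})^l$ approaches $e^{-1/2}$, so the gap tends to $\frac{1}{2} e^{-1/2}(1 - e^{-\varepsilon/2})$, consistent with $\delta = \Theta(1 - e^{-\varepsilon/2})$. Small $\varepsilon$ means a small gap, which is where the $\varepsilon^{-2}$ dependence of the complexities comes from.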

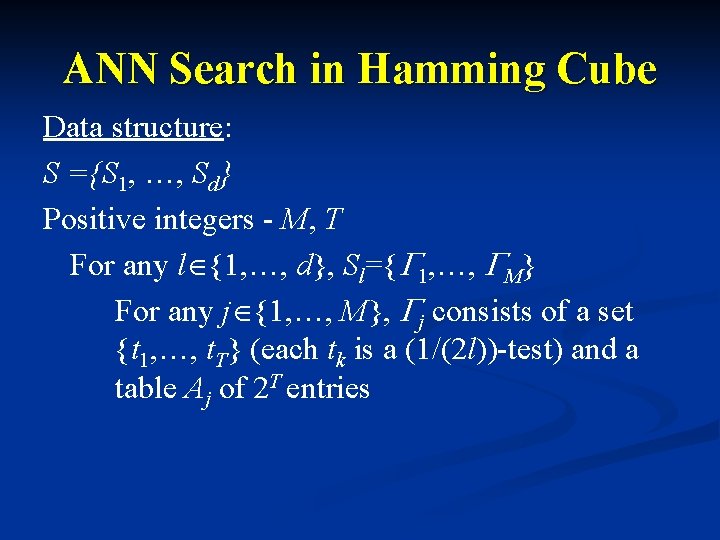

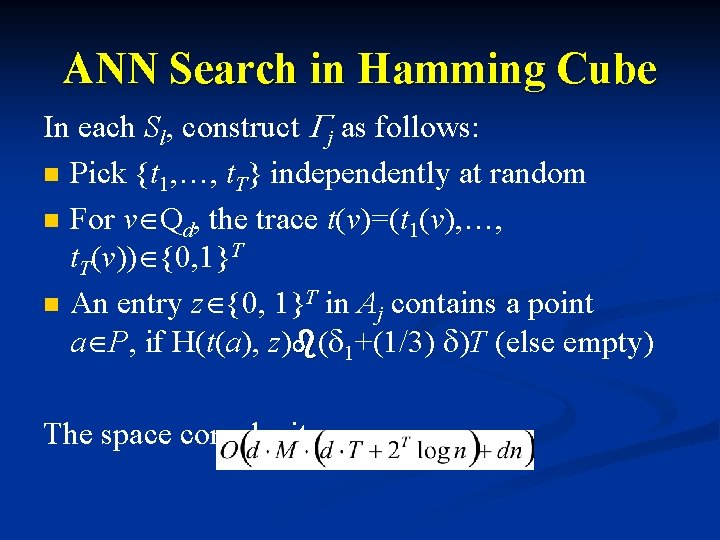

ANN Search in Hamming Cube
Data structure:
- $S = \{S_1, \dots, S_d\}$
- Positive integers $M$, $T$
- For any $l \in \{1, \dots, d\}$, $S_l = \{G_1, \dots, G_M\}$
- For any $j \in \{1, \dots, M\}$, $G_j$ consists of a set $\{\tau_1, \dots, \tau_T\}$ (each $\tau_k$ is a $(1/(2l))$-test) and a table $A_j$ of $2^T$ entries

ANN Search in Hamming Cube
In each $S_l$, construct $G_j$ as follows:
- Pick $\{\tau_1, \dots, \tau_T\}$ independently at random
- For $v \in Q^d$, the trace is $\tau(v) = (\tau_1(v), \dots, \tau_T(v)) \in \{0, 1\}^T$
- An entry $z \in \{0, 1\}^T$ in $A_j$ contains a point $a \in P$ if $H(\tau(a), z) \le (\delta_1 + \frac{1}{3}\delta) T$ (else it is empty)

The space complexity:
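Building one $G_j$ can be sketched as below: draw $T$ independent $(1/(2l))$-tests, compute each point's trace, and fill all $2^T$ table entries. The slide says an entry contains "a point" when one qualifies; this sketch assumes the first qualifying point is kept. `delta1` and `delta` are passed in as parameters, and all names are hypothetical. Enumerating $2^T$ entries is only practical for small $T$.

```python
import itertools
import random
from typing import Dict, List, Optional, Sequence, Tuple

Bits = Tuple[int, ...]


def sample_beta_test(d: int, beta: float, rng: random.Random) -> List[int]:
    # R-vector form of a beta-test: R_i = 1 with probability beta / 2.
    return [1 if rng.random() < beta / 2 else 0 for _ in range(d)]


def apply_test(R: Sequence[int], v: Sequence[int]) -> int:
    return sum(r * x for r, x in zip(R, v)) % 2


def trace(tests: List[List[int]], v: Sequence[int]) -> Bits:
    # tau(v) = (tau_1(v), ..., tau_T(v)) in {0,1}^T
    return tuple(apply_test(R, v) for R in tests)


def hamming(u: Sequence[int], v: Sequence[int]) -> int:
    return sum(x != y for x, y in zip(u, v))


def build_G(P: List[Bits], l: int, T: int, delta1: float, delta: float,
            rng: random.Random) -> Tuple[List[List[int]], Dict[Bits, Optional[Bits]]]:
    """One G_j of S_l: T independent (1/(2l))-tests and the table A_j over {0,1}^T."""
    d = len(P[0])
    tests = [sample_beta_test(d, 1 / (2 * l), rng) for _ in range(T)]
    traces = [trace(tests, a) for a in P]
    radius = (delta1 + delta / 3) * T
    A: Dict[Bits, Optional[Bits]] = {}
    for z in itertools.product((0, 1), repeat=T):
        # Assumption: when several points qualify, keep the first one found.
        A[z] = next((a for a, ta in zip(P, traces) if hamming(ta, z) <= radius), None)
    return tests, A
```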

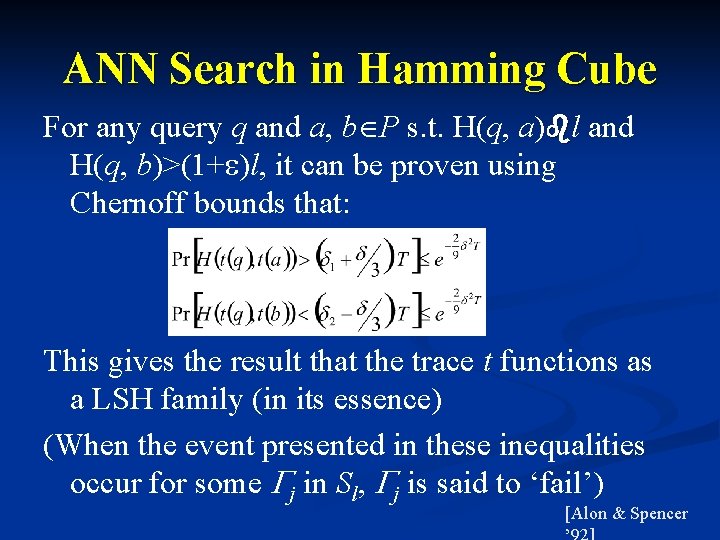

ANN Search in Hamming Cube
For any query $q$ and $a, b \in P$ s.t. $H(q, a) \le l$ and $H(q, b) > (1+\varepsilon) l$, it can be proven using Chernoff bounds that:
This shows that the trace $\tau$ essentially functions as an LSH family.
(When the event presented in these inequalities occurs for some $G_j$ in $S_l$, $G_j$ is said to ‘fail’.) [Alon & Spencer

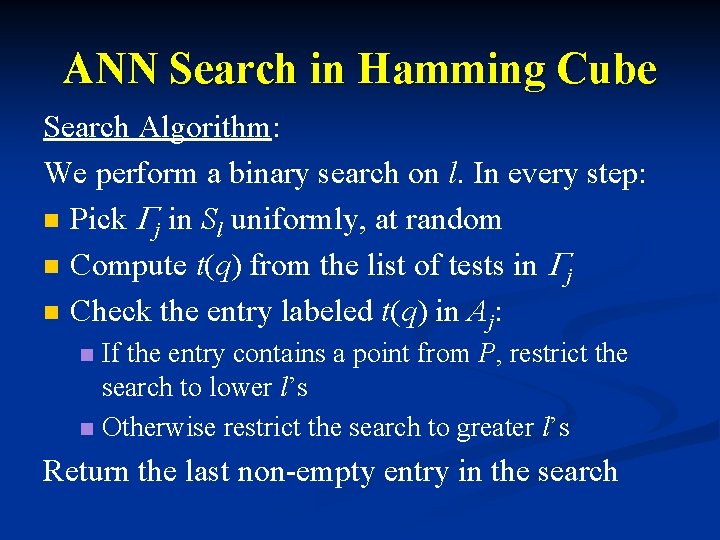

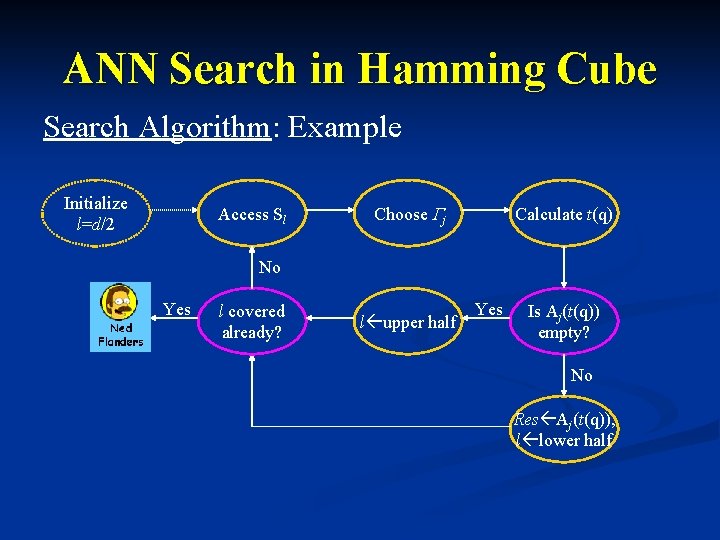

ANN Search in Hamming Cube
Search Algorithm: we perform a binary search on $l$. In every step:
- Pick $G_j$ in $S_l$ uniformly at random
- Compute $\tau(q)$ from the list of tests in $G_j$
- Check the entry labeled $\tau(q)$ in $A_j$:
  - If the entry contains a point from $P$, restrict the search to lower $l$'s
  - Otherwise, restrict the search to greater $l$'s
- Return the last non-empty entry in the search

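ANN Search in Hamming Cube
Search Algorithm: Example (flowchart)
1. Initialize $l = d/2$
2. Access $S_l$ and choose $G_j$
3. Calculate $\tau(q)$
4. Is $A_j(\tau(q))$ empty?
   - No: Res $\leftarrow A_j(\tau(q))$; continue in the lower half of $l$
   - Yes: continue in the upper half of $l$
5. Has $l$ been covered already? If yes, stop; otherwise go back to step 2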
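The control flow of the search can be sketched independently of how the tables are built. Here `lookup(l, q)` is a hypothetical callback standing for "pick $G_j$ in $S_l$ at random, compute $\tau(q)$, and return the content of $A_j(\tau(q))$, or `None` if it is empty"; the loop is an ordinary binary search over $l \in \{1, \dots, d\}$ that remembers the last non-empty answer.

```python
from typing import Callable, Optional, Tuple

Bits = Tuple[int, ...]


def search_on_l(d: int, q: Bits,
                lookup: Callable[[int, Bits], Optional[Bits]]) -> Optional[Bits]:
    """Binary search on l; returns the last non-empty entry seen (None if all were empty)."""
    lo, hi = 1, d
    result: Optional[Bits] = None
    while lo <= hi:            # stop once every l has been covered
        l = (lo + hi) // 2
        found = lookup(l, q)
        if found is not None:
            result = found     # non-empty entry: remember it, try smaller l
            hi = l - 1
        else:
            lo = l + 1         # empty entry: try larger l
    return result
```

Only $O(\log d)$ lookups are made per query. In the real structure each lookup may fail with small probability, which is what the failure analysis on the following slides bounds.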

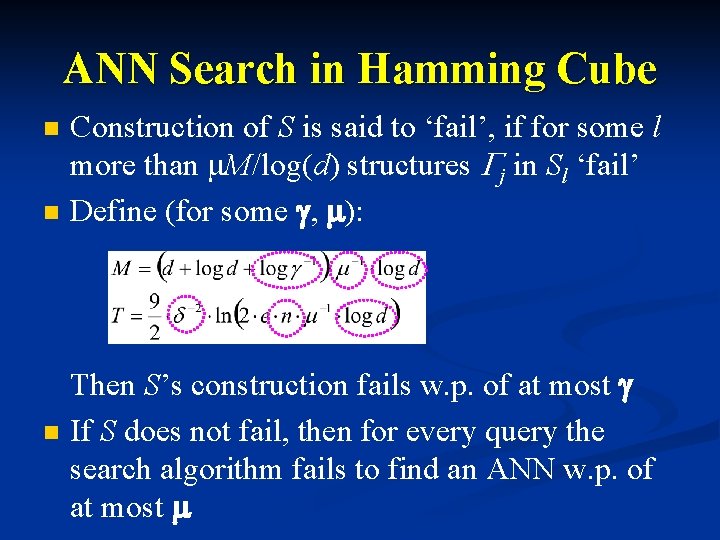

ANN Search in Hamming Cube
- Construction of $S$ is said to ‘fail’ if, for some $l$, more than $\mu M / \log d$ structures $G_j$ in $S_l$ ‘fail’
- Define (for some $\gamma$, $\mu$):
- Then $S$'s construction fails w.p. at most $\gamma$
- If $S$ does not fail, then for every query the search algorithm fails to find an ANN w.p. at most $\mu$

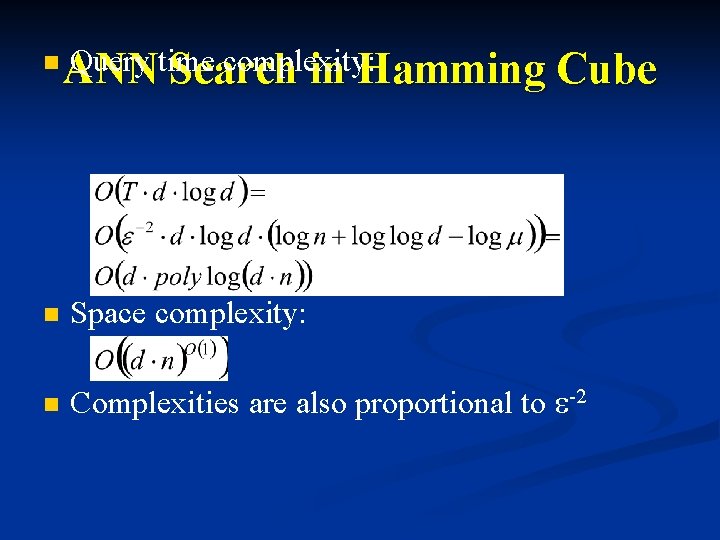

ANN Search in Hamming Cube
- Query time complexity:
- Space complexity:
- Complexities are also proportional to $\varepsilon^{-2}$


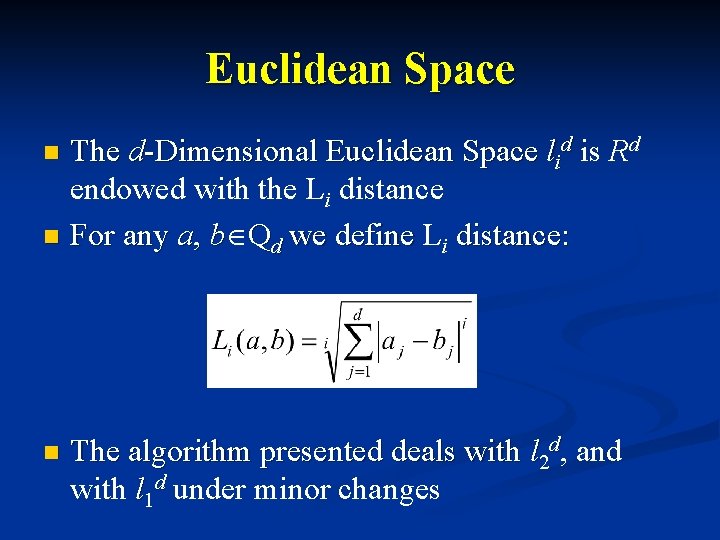

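Euclidean Space
- The $d$-dimensional Euclidean space $l_i^d$ is $\mathbb{R}^d$ endowed with the $L_i$ distance
- For any $a, b \in \mathbb{R}^d$ we define the $L_i$ distance: $L_i(a, b) = \left(\sum_{j=1}^{d} |a_j - b_j|^i\right)^{1/i}$
- The algorithm presented deals with $l_2^d$, and with $l_1^d$ under minor changes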

Euclidean Space
Define:
- $B(a, r)$ is the closed ball around $a$ with radius $r$
- $D(a, r) = P \cap B(a, r)$ (a subset of $\mathbb{R}^d$) [Kushilevitz et al.

LSH – ANN Search Extended Scheme
Preprocess:
- Prepare a data structure for each ‘Hamming ball’ induced by any $a, b \in P$

Query:
- Start with some maximal ball
- In each step calculate the ANN
- Stop according to some threshold

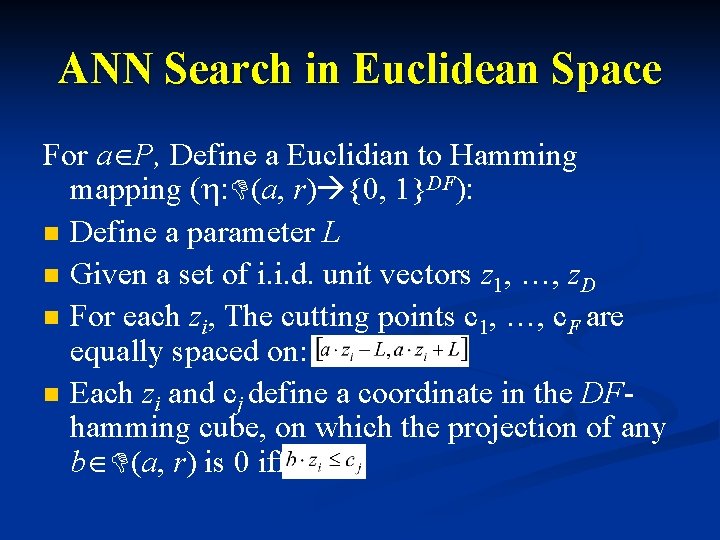

ANN Search in Euclidean Space
For $a \in P$, define a Euclidean-to-Hamming mapping $h: D(a, r) \to \{0, 1\}^{DF}$:
- Define a parameter $L$
- Given a set of i.i.d. unit vectors $z_1, \dots, z_D$
- For each $z_i$, the cutting points $c_1, \dots, c_F$ are equally spaced on:
- Each $z_i$ and $c_j$ define a coordinate in the $DF$-dimensional Hamming cube, on which the projection of any $b \in D(a, r)$ is 0 iff
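The idea of the mapping (random directions plus cutting points along each direction) can be sketched as follows. The interval for the cutting points and the exact 0/1 rule are not given in the text above, so this sketch assumes the $F$ points are equally spaced on $[\langle a, z_i \rangle - r, \langle a, z_i \rangle + r]$ and that a coordinate is 0 iff the projection lies below the cutting point; the parameter $L$ is not modeled. `make_mapping` and the other names are hypothetical.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple


def random_unit_vector(d: int, rng: random.Random) -> List[float]:
    # A normalized Gaussian vector is uniformly distributed on the unit sphere.
    v = [rng.gauss(0.0, 1.0) for _ in range(d)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def dot(u: Sequence[float], v: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(u, v))


def make_mapping(a: Sequence[float], r: float, D: int, F: int,
                 rng: random.Random) -> Callable[[Sequence[float]], Tuple[int, ...]]:
    """Hypothetical h: D(a, r) -> {0,1}^(D*F) from D directions and F cuts per direction."""
    if F < 2:
        raise ValueError("need at least two cutting points")
    zs = [random_unit_vector(len(a), rng) for _ in range(D)]
    cuts = []
    for z in zs:
        center = dot(a, z)
        step = 2 * r / (F - 1)
        # ASSUMPTION: cutting points equally spaced on [<a,z> - r, <a,z> + r].
        cuts.append([center - r + j * step for j in range(F)])

    def h(b: Sequence[float]) -> Tuple[int, ...]:
        bits: List[int] = []
        for z, cs in zip(zs, cuts):
            p = dot(b, z)
            # ASSUMPTION: the coordinate is 0 iff the projection lies below c_j.
            bits.extend(0 if p < c else 1 for c in cs)
        return tuple(bits)

    return h


h = make_mapping((0.0, 0.0, 0.0), 1.0, 2, 3, random.Random(0))  # d = 3, D = 2, F = 3
print(h((0.0, 0.0, 0.0)), h((0.3, -0.2, 0.9)))
```

Under these assumptions each block of $F$ bits is a threshold pattern (ones, then zeros), so the Hamming distance between two codes within a block counts the cutting points lying between the two projections on $z_i$. That count grows with the projected Euclidean distance, which is the intuition behind the mapping preserving relative distances.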

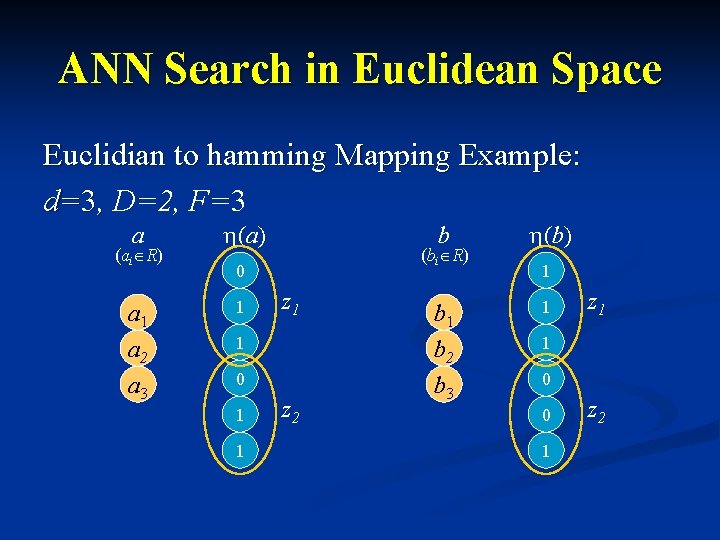

ANN Search in Euclidean Space
Euclidean-to-Hamming Mapping Example: $d = 3$, $D = 2$, $F = 3$ (figure: points $a$ and $b$, with real coordinates $a_1, a_2, a_3$ and $b_1, b_2, b_3$, are projected on $z_1$ and $z_2$, giving the binary codes $h(a)$ and $h(b)$)

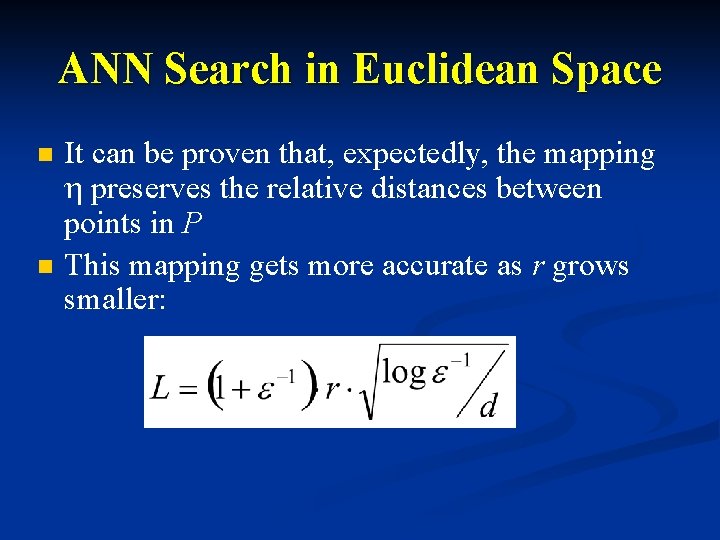

ANN Search in Euclidean Space
- It can be proven that, in expectation, the mapping $h$ preserves the relative distances between points in $P$
- This mapping gets more accurate as $r$ grows smaller:

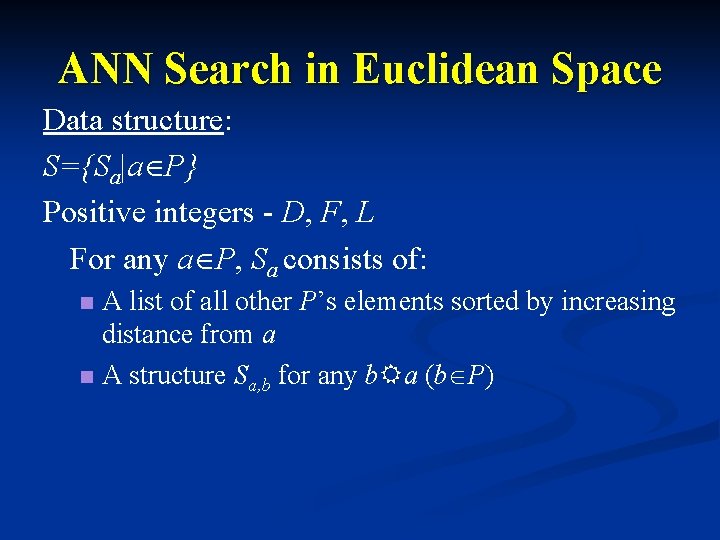

ANN Search in Euclidean Space
Data structure:
- $S = \{S_a \mid a \in P\}$
- Positive integers $D$, $F$, $L$
- For any $a \in P$, $S_a$ consists of:
  - A list of all other elements of $P$, sorted by increasing distance from $a$
  - A structure $S_{a,b}$ for any $b \ne a$ ($b \in P$)

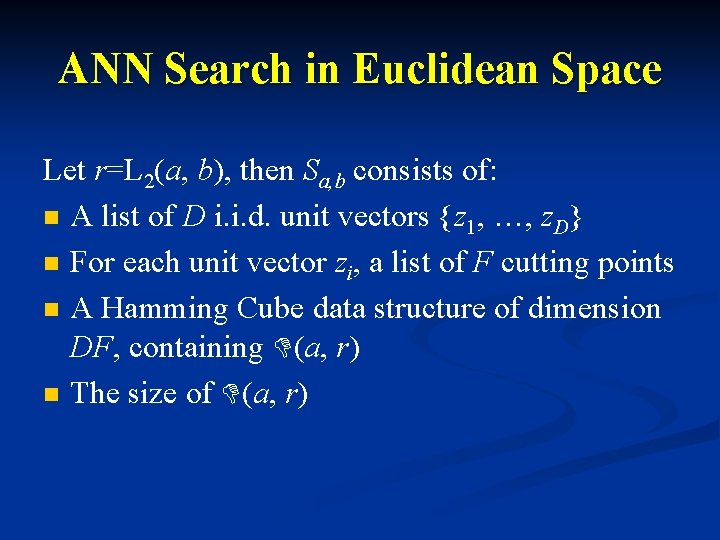

ANN Search in Euclidean Space
Let $r = L_2(a, b)$; then $S_{a,b}$ consists of:
- A list of $D$ i.i.d. unit vectors $\{z_1, \dots, z_D\}$
- For each unit vector $z_i$, a list of $F$ cutting points
- A Hamming Cube data structure of dimension $DF$, containing $D(a, r)$
- The size of $D(a, r)$

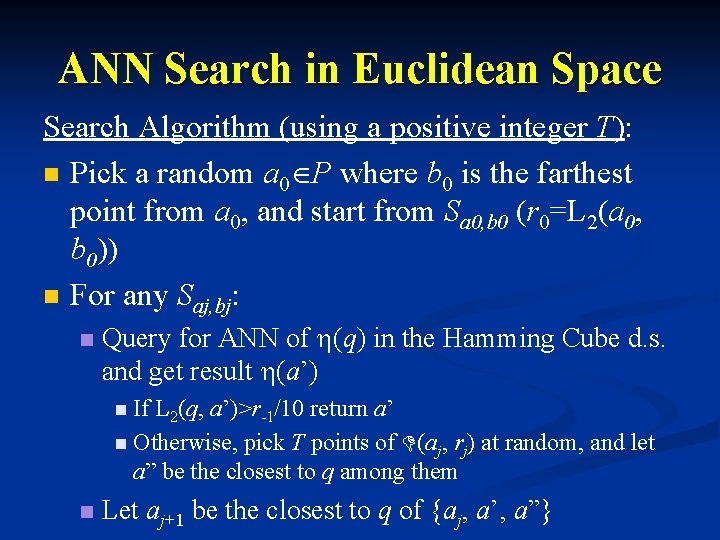

ANN Search in Euclidean Space
Search Algorithm (using a positive integer $T$):
- Pick a random $a_0 \in P$, where $b_0$ is the farthest point from $a_0$, and start from $S_{a_0, b_0}$ ($r_0 = L_2(a_0, b_0)$)
- For any $S_{a_j, b_j}$:
  - Query the Hamming Cube data structure for an ANN of $h(q)$ and get the result $h(a')$
  - If $L_2(q, a')$ > r-1/10, return $a'$
  - Otherwise, pick $T$ points of $D(a_j, r_j)$ at random, and let $a''$ be the closest to $q$ among them
  - Let $a_{j+1}$ be the closest to $q$ of $\{a_j, a', a''\}$
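The sampling step that produces $a_{j+1}$ can be sketched on its own. `ball` stands for the stored point list $D(a_j, r_j)$ and `a_prime` for the point returned by the Hamming-cube query; both names, like `refine`, are hypothetical. The sketch assumes the $T$ points are drawn without replacement and uses Euclidean distance.

```python
import math
import random
from typing import List, Tuple

Point = Tuple[float, ...]


def refine(q: Point, a_j: Point, a_prime: Point, ball: List[Point], T: int,
           rng: random.Random) -> Point:
    """Return a_{j+1}: the closest to q among a_j, a' and a'', where a'' is the
    closest of T points drawn at random from the ball D(a_j, r_j)."""
    def dist_to_q(p: Point) -> float:
        return math.dist(p, q)

    sample = rng.sample(ball, min(T, len(ball)))  # without replacement
    candidates = [a_j, a_prime]
    if sample:
        candidates.append(min(sample, key=dist_to_q))  # a''
    return min(candidates, key=dist_to_q)
```

Sampling is what keeps the number of iterations low: if $a''$ is the closest of $T$ random points of the ball, then with probability at least $1 - 2^{-T}$ at least half of the ball is no closer to $q$ than $a''$.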

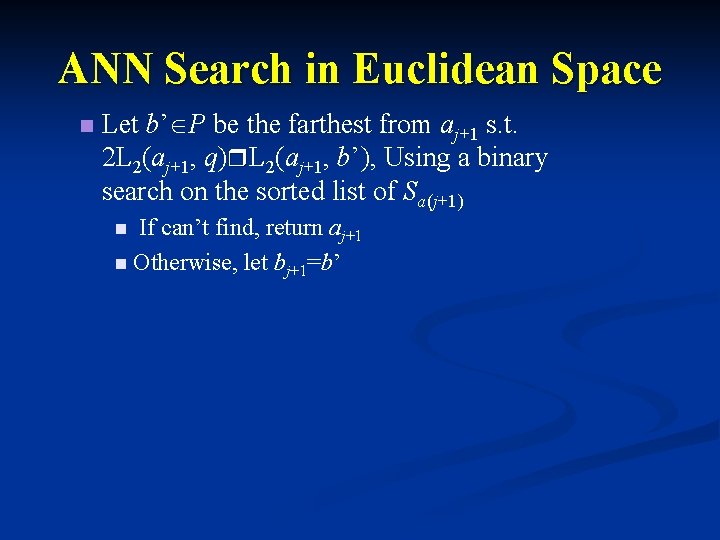

ANN Search in Euclidean Space
- Let $b' \in P$ be the farthest point from $a_{j+1}$ s.t. $2 L_2(a_{j+1}, q) \ge L_2(a_{j+1}, b')$, found using a binary search on the sorted list of $S_{a_{j+1}}$
  - If no such point can be found, return $a_{j+1}$
  - Otherwise, let $b_{j+1} = b'$
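Because $S_a$ keeps the other points sorted by distance from $a$, finding $b'$ is a single `bisect` call on the stored distances: the farthest point with $L_2(a, b') \le 2 L_2(a, q)$ sits just before the insertion point of $2 L_2(a, q)$. A sketch assuming the distances are stored alongside the sorted list; `sorted_list` and `next_b` are hypothetical names.

```python
import bisect
import math
from typing import List, Optional, Tuple

Point = Tuple[float, ...]


def sorted_list(a: Point, P: List[Point]) -> Tuple[List[Point], List[float]]:
    """The list stored in S_a: the other points of P by increasing L2 distance from a."""
    others = sorted((p for p in P if p != a), key=lambda p: math.dist(a, p))
    return others, [math.dist(a, p) for p in others]


def next_b(a: Point, q: Point, others: List[Point], dists: List[float]) -> Optional[Point]:
    """Farthest b' with L2(a, b') <= 2 * L2(a, q), or None (then the search returns a)."""
    idx = bisect.bisect_right(dists, 2 * math.dist(a, q))
    return others[idx - 1] if idx > 0 else None


others, dists = sorted_list((0.0, 0.0), [(0.0, 0.0), (5.0, 0.0), (1.0, 0.0), (3.0, 0.0)])
print(next_b((0.0, 0.0), (2.0, 0.0), others, dists))  # (3.0, 0.0)
```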

ANN Search in Euclidean Space (figure: $a_i$, $b_i$ and $q$)
Each ball in the search contains $q$'s (exact) NN.

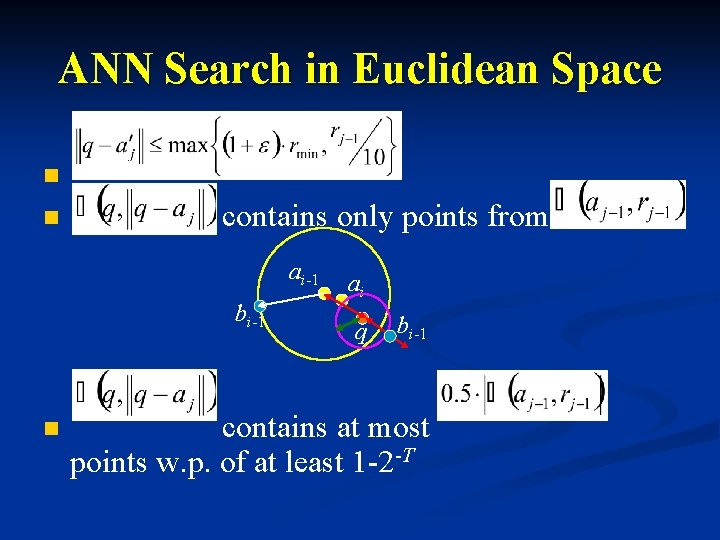

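ANN Search in Euclidean Space (figure: $a_{i-1}$, $b_{i-1}$, $a_i$ and $q$)
- The ball of step $i$ contains only points from the ball of step $i-1$ (around $a_{i-1}$, through $b_{i-1}$)
- It contains at most … points w.p. at least $1 - 2^{-T}$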

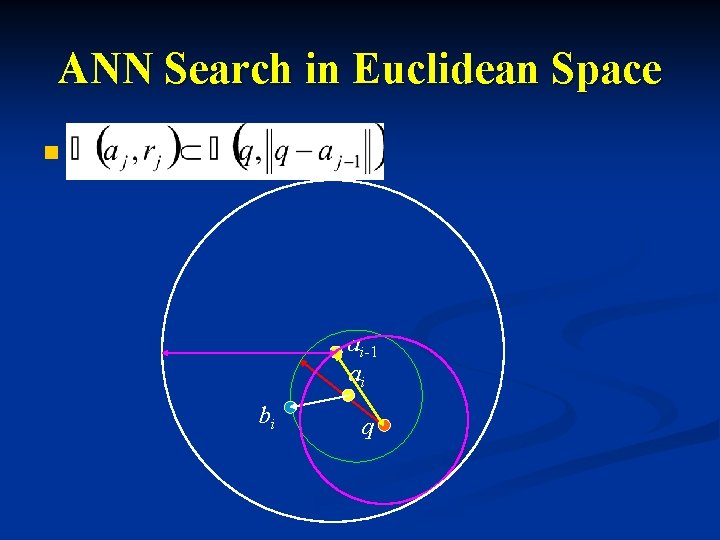

ANN Search in Euclidean Space (figure: $a_{i-1}$, $a_i$, $b_i$ and $q$)

ANN Search in Euclidean Space
Conclusion: in the expected case, this gives $O(\log n)$ iterations.

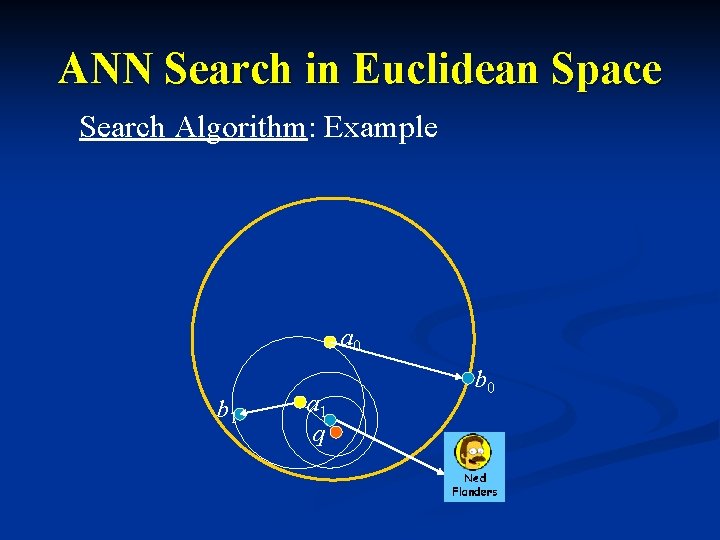

ANN Search in Euclidean Space
Search Algorithm: Example (figure: $a_0$, $b_0$, $a_1$, $b_1$ and $q$)

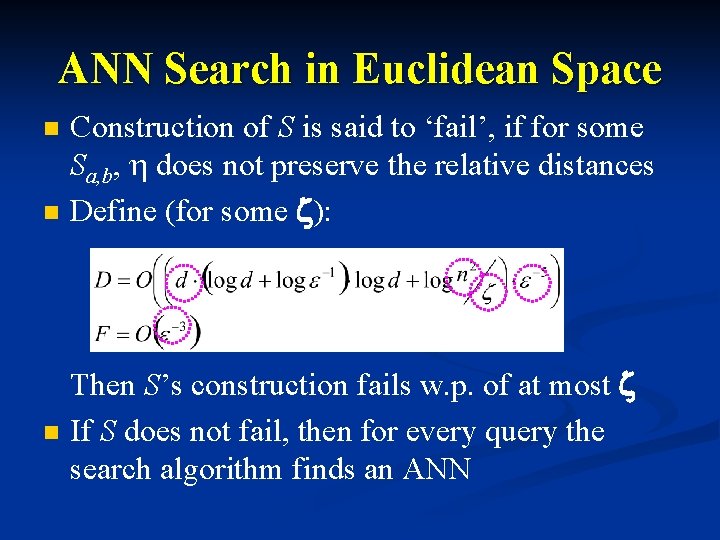

ANN Search in Euclidean Space
- Construction of $S$ is said to ‘fail’ if, for some $S_{a,b}$, $h$ does not preserve the relative distances
- Define (for some $\zeta$):
- Then $S$'s construction fails w.p. at most $\zeta$
- If $S$ does not fail, then for every query the search algorithm finds an ANN

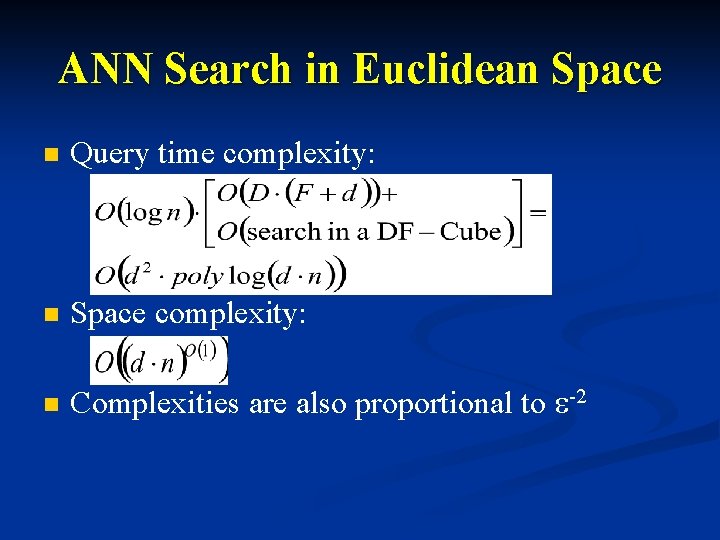

ANN Search in Euclidean Space
- Query time complexity:
- Space complexity:
- Complexities are also proportional to $\varepsilon^{-2}$

Remark – Additional Work
Related Works:
- Jon M. Kleinberg. “Two Algorithms for Nearest-Neighbor Search in High Dimensions”, 1997
- P. Indyk and R. Motwani. “Approximate Nearest Neighbors: Towards Removing the Curse of Dimensionality”, 1998
- A. Gionis, P. Indyk and R. Motwani. “Similarity Search in High Dimensions via Hashing”, 1999

![Remark – Additional Work: Related Works (Indyk and Motwani ’99)](http://slidetodoc.com/presentation_image/350c1a87db5ba42b0016f8c842bc700e/image-52.jpg)

Remark – Additional Work
Related Works: [P. Indyk and R. Motwani ’99]

![Remark – Additional Work: Related Works (Indyk and Motwani ’99)](http://slidetodoc.com/presentation_image/350c1a87db5ba42b0016f8c842bc700e/image-53.jpg)


Summary
- The Goal: linear space and logarithmic search time
- Approximate nearest neighbor
- Locality Sensitive Hash functions
- Amplify probability by concatenating
- Discretization of values by projection of points on unit vectors

Good Bye (Approximate) Neighbor
For questions feel free to consult your neighbors: uri.klein@weizmann.ac.il, erez.eyal@weizmann.ac.il
[http://www.thesimpsons.com]
