COMP 9313 Big Data Management Lecturer Xin Cao

COMP 9313: Big Data Management Lecturer: Xin Cao Course web site: http: //www. cse. unsw. edu. au/~cs 9313/

Chapter 11: No. SQL, HBase, and Hive 11. 2

Part 1: Introduction to No. SQL 11. 3

What does RDBMS provide? n Relational model with schemas n Powerful, flexible query language (SQL) n Transactional semantics: ACID n Rich ecosystem, lots of tool support (My. SQL, Postgre. SQL, etc. ) 11. 4

What is No. SQL? n The name stands for Not Only SQL n Does not use SQL as querying language n Class of non relational data storage systems n The term NOSQL was introduced by Eric Evans when an event was organized to discuss open source distributed databases n It's not a replacement for a RDBMS but compliments it n All No. SQL offerings relax one or more of the ACID properties (will talk about the CAP theorem) 11. 5

What is No. SQL? n Key features (advantages): non relational l don’t require strict schema l data are replicated to multiple nodes (so, identical & fault tolerant) and can be partitioned: l 4 down nodes easily replaced 4 no single point of failure horizontal scalable l cheap, easy to implement (open source) l l massive write performance l fast key value access 11. 6

Why No. SQL? n Web apps have different needs (than the apps that RDBMS were designed for) l Low and predictable response time (latency) l Scalability & elasticity (at low cost!) l High availability l Flexible schemas / semi structured data l Geographic distribution (multiple datacenters) n Web apps can (usually) do without l Transactions / strong consistency / integrity l Complex queries 11. 7

Who are Using No. SQL? n Google (Big. Table) n Linked. In (Voldemort) n Facebook (Cassandra) n Twitter (HBase, Cassandra) n Baidu (Hyper. Table) 11. 8

Three Major Papers for No. SQL n Three major papers were the seeds of the No. SQL movement l Big. Table (Google) l Dynamo (Amazon) 4 Ring partition and replication 4 Gossip protocol (discovery and error detection) 4 Distributed key value data store 4 Eventual consistency l CAP Theorem (discuss in the next few slides) 11. 9

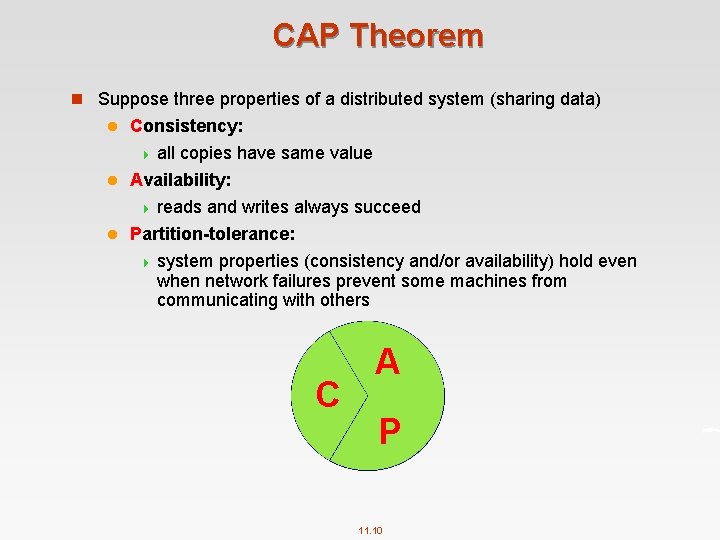

CAP Theorem n Suppose three properties of a distributed system (sharing data) Consistency: 4 all copies have same value l Availability: 4 reads and writes always succeed l Partition-tolerance: 4 system properties (consistency and/or availability) hold even when network failures prevent some machines from communicating with others l C A P 11. 10

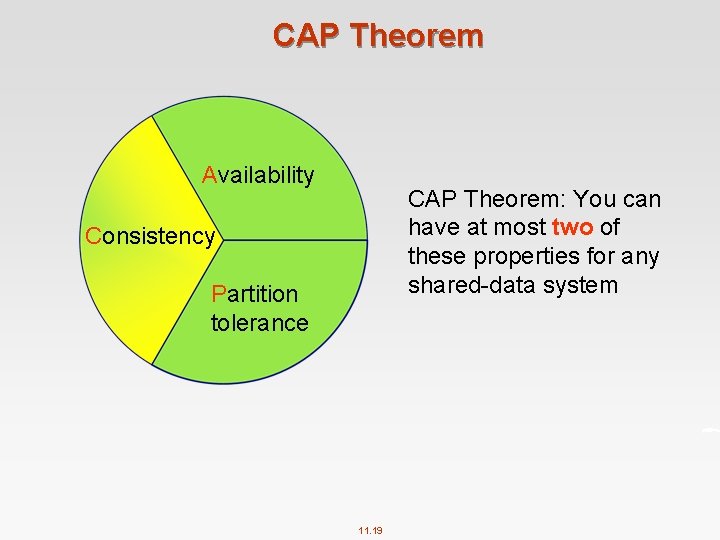

CAP Theorem n Brewer’s CAP Theorem: l For any system sharing data, it is “impossible” to guarantee simultaneously all of these three properties l You can have at most two of these three properties for any shared data system n Very large systems will “partition” at some point: That leaves either C or A to choose from (traditional DBMS prefers C over A and P ) l In almost all cases, you would choose A over C (except in specific applications such as order processing) l 11. 11

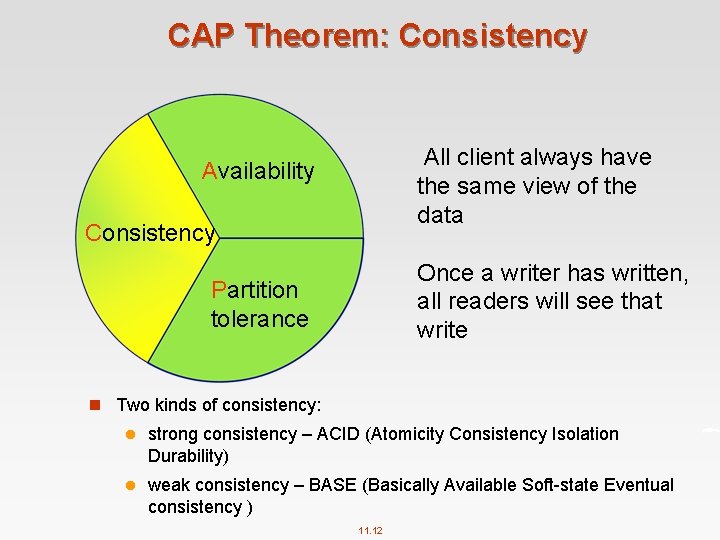

CAP Theorem: Consistency All client always have the same view of the data Availability Consistency Once a writer has written, all readers will see that write Partition tolerance n Two kinds of consistency: l strong consistency – ACID (Atomicity Consistency Isolation Durability) l weak consistency – BASE (Basically Available Soft state Eventual consistency ) 11. 12

ACID & CAP n ACID l A DBMS is expected to support “ACID transactions, ” processes that are: Atomicity: either the whole process is done or none is l Consistency: only valid data are written l Isolation: one operation at a time l Durability: once committed, it stays that way l n CAP l Consistency: all data on cluster has the same copies Availability: cluster always accepts reads and writes l Partition tolerance: guaranteed properties are maintained even when network failures prevent some machines from communicating with others l 11. 13

Consistency Model n A consistency model determines rules for visibility and apparent order of updates n Example: l Row X is replicated on nodes M and N l Client A writes row X to node N l Some period of time t elapses l Client B reads row X from node M l Does client B see the write from client A? l Consistency is a continuum with tradeoffs l For NOSQL, the answer would be: “maybe” l CAP theorem states: “strong consistency can't be achieved at the same time as availability and partition-tolerance” 11. 14

Eventual Consistency n When no updates occur for a long period of time, eventually all updates will propagate through the system and all the nodes will be consistent n For a given accepted update and a given node, eventually either the update reaches the node or the node is removed from service n Known as BASE (Basically Available, Soft state, Eventual consistency), as opposed to ACID n http: //en. wikipedia. org/wiki/Eventual_consistency 11. 15

Eventual Consistency n The types of large systems based on CAP aren't ACID they are BASE (http: //queue. acm. org/detail. cfm? id=1394128): l Basically Available system seems to work all the time l Soft State it doesn't have to be consistent all the time l Eventually Consistent becomes consistent at some later time n Everyone who builds big applications builds them on CAP and BASE: Google, Yahoo, Facebook, Amazon, e. Bay, etc. 11. 16

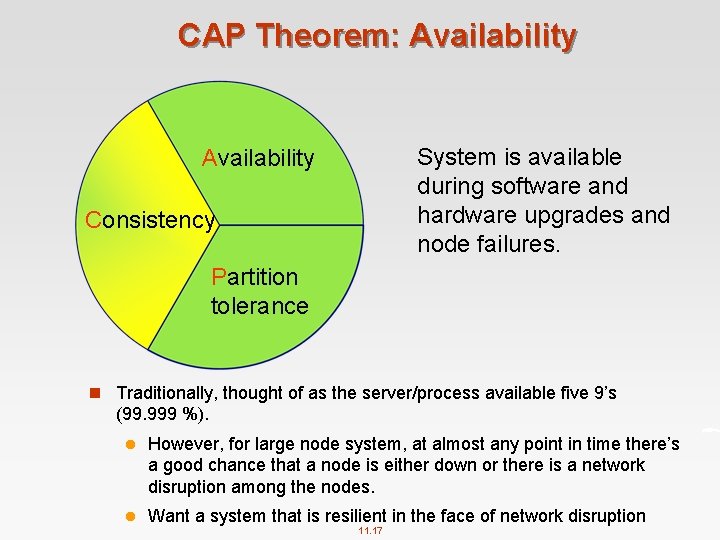

CAP Theorem: Availability System is available during software and hardware upgrades and node failures. Availability Consistency Partition tolerance n Traditionally, thought of as the server/process available five 9’s (99. 999 %). l However, for large node system, at almost any point in time there’s a good chance that a node is either down or there is a network disruption among the nodes. l Want a system that is resilient in the face of network disruption 11. 17

CAP Theorem: Partition-Tolerance A system can continue to operate in the presence of a network partitions. Availability Consistency Partition tolerance 11. 18

CAP Theorem Availability CAP Theorem: You can have at most two of these properties for any shared data system Consistency Partition tolerance 11. 19

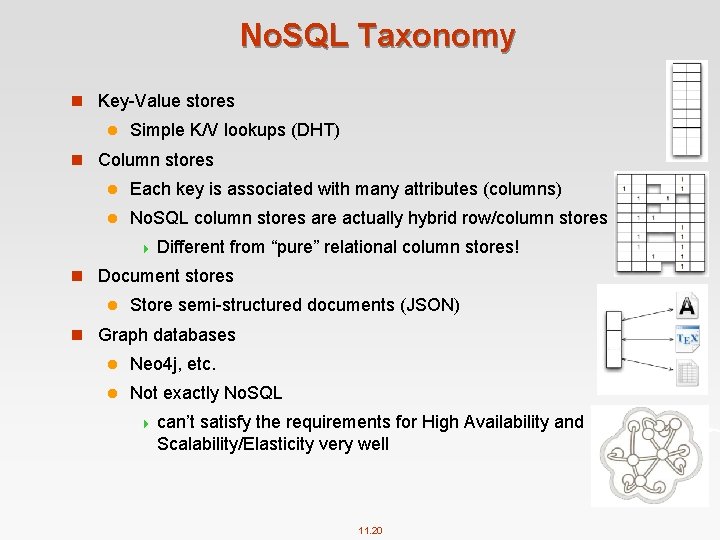

No. SQL Taxonomy n Key Value stores l Simple K/V lookups (DHT) n Column stores l Each key is associated with many attributes (columns) l No. SQL column stores are actually hybrid row/column stores 4 Different from “pure” relational column stores! n Document stores l Store semi structured documents (JSON) n Graph databases l Neo 4 j, etc. l Not exactly No. SQL 4 can’t satisfy the requirements for High Availability and Scalability/Elasticity very well 11. 20

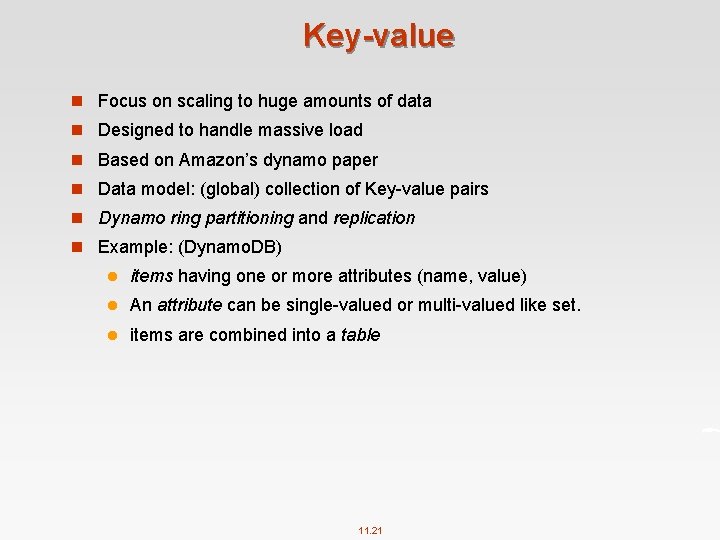

Key-value n Focus on scaling to huge amounts of data n Designed to handle massive load n Based on Amazon’s dynamo paper n Data model: (global) collection of Key value pairs n Dynamo ring partitioning and replication n Example: (Dynamo. DB) l items having one or more attributes (name, value) l An attribute can be single valued or multi valued like set. l items are combined into a table 11. 21

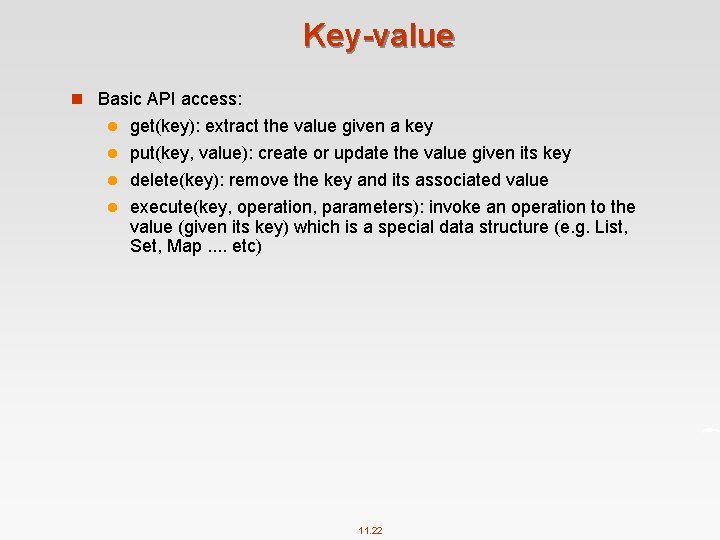

Key-value n Basic API access: get(key): extract the value given a key l put(key, value): create or update the value given its key l delete(key): remove the key and its associated value l execute(key, operation, parameters): invoke an operation to the value (given its key) which is a special data structure (e. g. List, Set, Map. . etc) l 11. 22

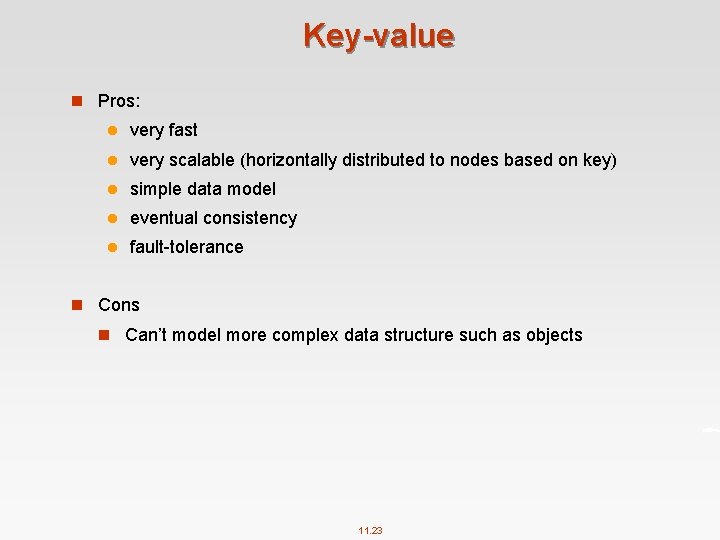

Key-value n Pros: l very fast l very scalable (horizontally distributed to nodes based on key) l simple data model l eventual consistency l fault tolerance n Cons n Can’t model more complex data structure such as objects 11. 23

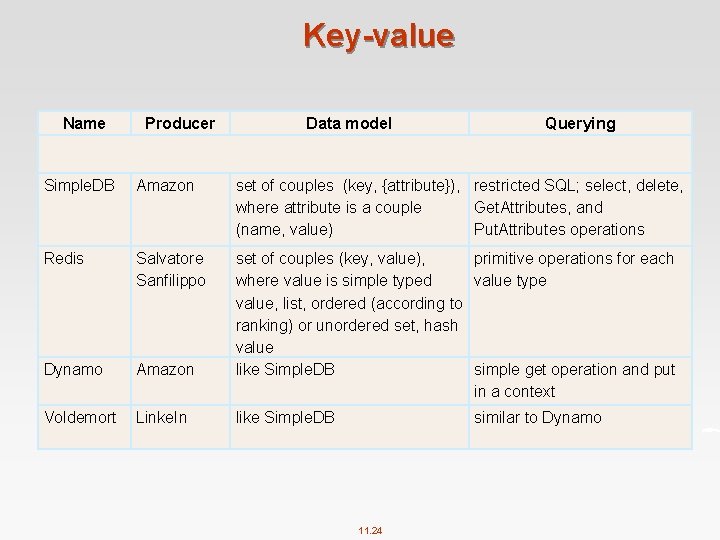

Key-value Name Producer Data model Querying Simple. DB Amazon set of couples (key, {attribute}), restricted SQL; select, delete, where attribute is a couple Get. Attributes, and (name, value) Put. Attributes operations Redis Salvatore Sanfilippo Dynamo Amazon set of couples (key, value), primitive operations for each where value is simple typed value type value, list, ordered (according to ranking) or unordered set, hash value like Simple. DB simple get operation and put in a context Voldemort Linke. In like Simple. DB similar to Dynamo 11. 24

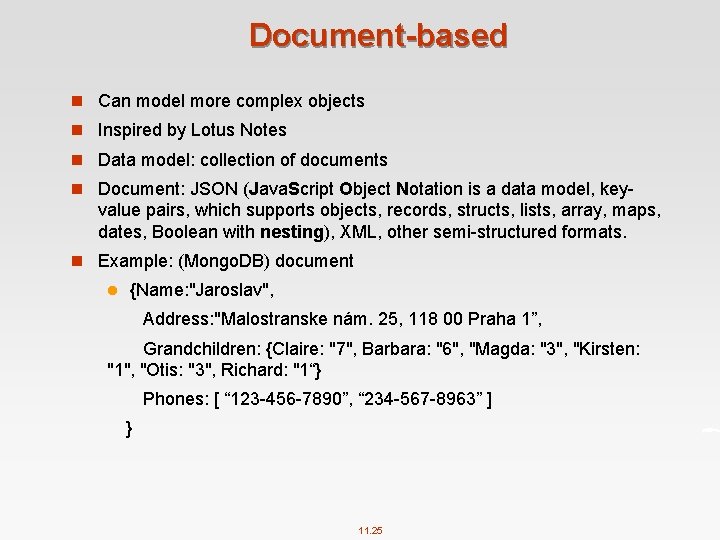

Document-based n Can model more complex objects n Inspired by Lotus Notes n Data model: collection of documents n Document: JSON (Java. Script Object Notation is a data model, key value pairs, which supports objects, records, structs, lists, array, maps, dates, Boolean with nesting), XML, other semi structured formats. n Example: (Mongo. DB) document l {Name: "Jaroslav", Address: "Malostranske nám. 25, 118 00 Praha 1”, Grandchildren: {Claire: "7", Barbara: "6", "Magda: "3", "Kirsten: "1", "Otis: "3", Richard: "1“} Phones: [ “ 123 456 7890”, “ 234 567 8963” ] } 11. 25

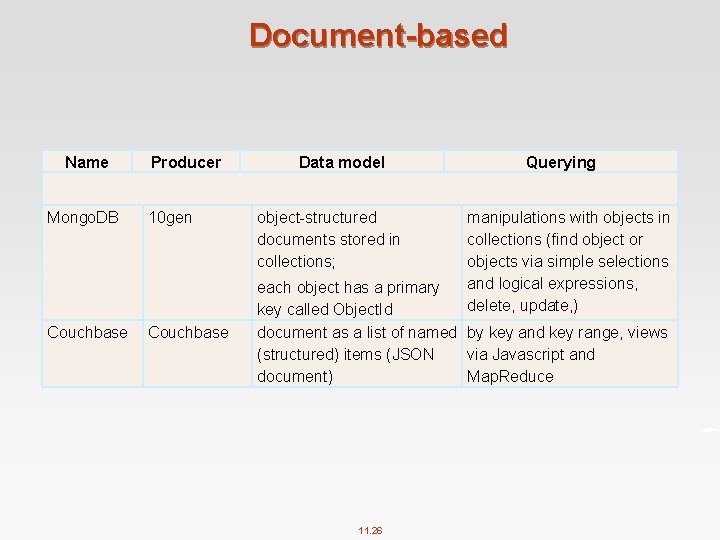

Document-based Name Mongo. DB Couchbase Producer 10 gen Couchbase Data model object structured documents stored in collections; Querying manipulations with objects in collections (find object or objects via simple selections and logical expressions, delete, update, ) each object has a primary key called Object. Id document as a list of named by key and key range, views (structured) items (JSON via Javascript and document) Map. Reduce 11. 26

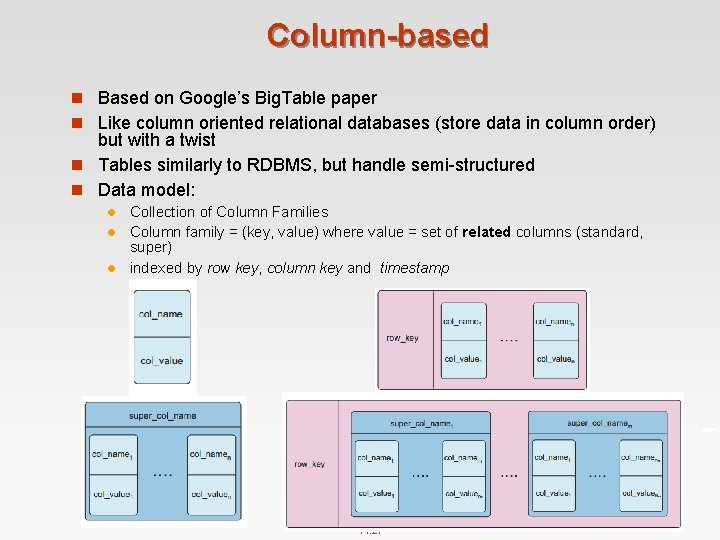

Column-based n Based on Google’s Big. Table paper n Like column oriented relational databases (store data in column order) but with a twist n Tables similarly to RDBMS, but handle semi structured n Data model: Collection of Column Families l Column family = (key, value) where value = set of related columns (standard, super) l indexed by row key, column key and timestamp l 11. 27

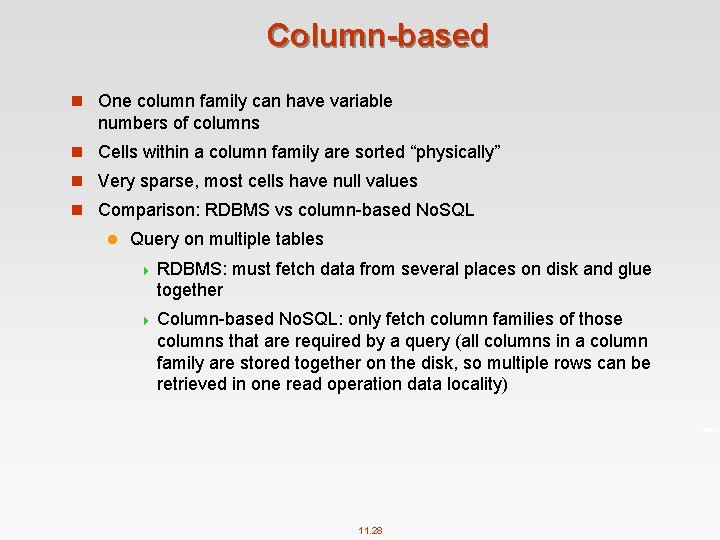

Column-based n One column family can have variable numbers of columns n Cells within a column family are sorted “physically” n Very sparse, most cells have null values n Comparison: RDBMS vs column based No. SQL l Query on multiple tables 4 RDBMS: must fetch data from several places on disk and glue together 4 Column based No. SQL: only fetch column families of those columns that are required by a query (all columns in a column family are stored together on the disk, so multiple rows can be retrieved in one read operation data locality) 11. 28

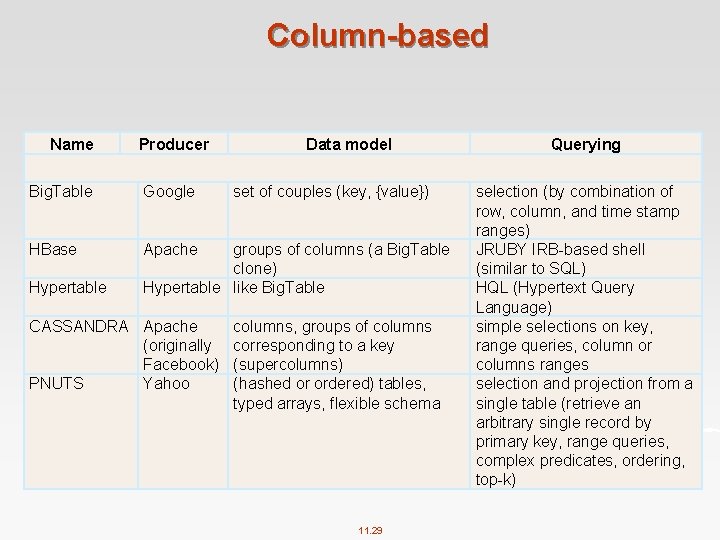

Column-based Name Producer Big. Table Google HBase Apache Hypertable Data model set of couples (key, {value}) groups of columns (a Big. Table clone) Hypertable like Big. Table CASSANDRA Apache (originally Facebook) PNUTS Yahoo columns, groups of columns corresponding to a key (supercolumns) (hashed or ordered) tables, typed arrays, flexible schema 11. 29 Querying selection (by combination of row, column, and time stamp ranges) JRUBY IRB based shell (similar to SQL) HQL (Hypertext Query Language) simple selections on key, range queries, column or columns ranges selection and projection from a single table (retrieve an arbitrary single record by primary key, range queries, complex predicates, ordering, top k)

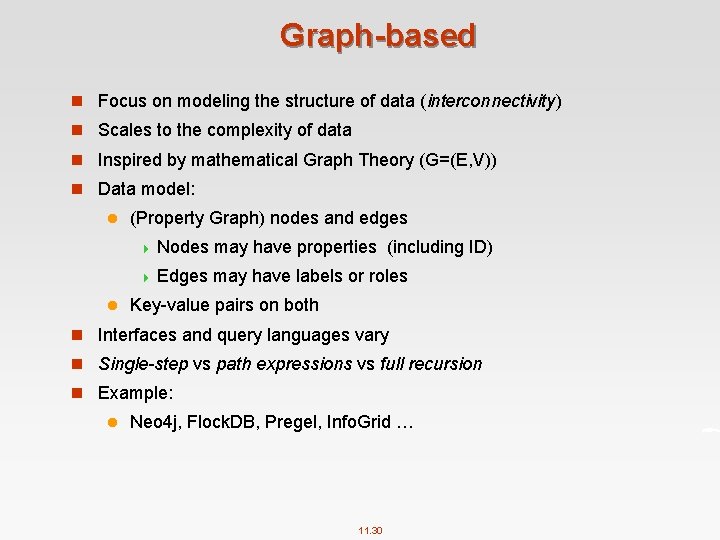

Graph-based n Focus on modeling the structure of data (interconnectivity) n Scales to the complexity of data n Inspired by mathematical Graph Theory (G=(E, V)) n Data model: l (Property Graph) nodes and edges 4 Nodes may have properties (including ID) 4 Edges may have labels or roles l Key value pairs on both n Interfaces and query languages vary n Single-step vs path expressions vs full recursion n Example: l Neo 4 j, Flock. DB, Pregel, Info. Grid … 11. 30

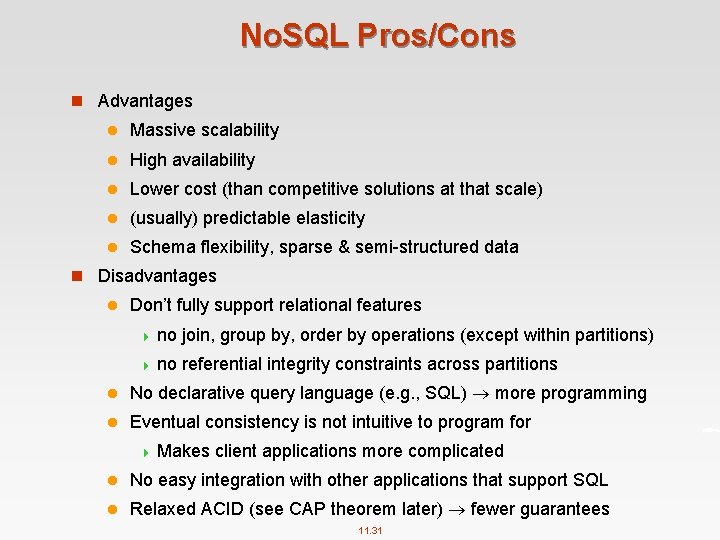

No. SQL Pros/Cons n Advantages l Massive scalability l High availability l Lower cost (than competitive solutions at that scale) l (usually) predictable elasticity l Schema flexibility, sparse & semi structured data n Disadvantages l Don’t fully support relational features 4 no join, group by, order by operations (except within partitions) 4 no referential integrity constraints across partitions l No declarative query language (e. g. , SQL) more programming l Eventual consistency is not intuitive to program for 4 Makes client applications more complicated l No easy integration with other applications that support SQL l Relaxed ACID (see CAP theorem later) fewer guarantees 11. 31

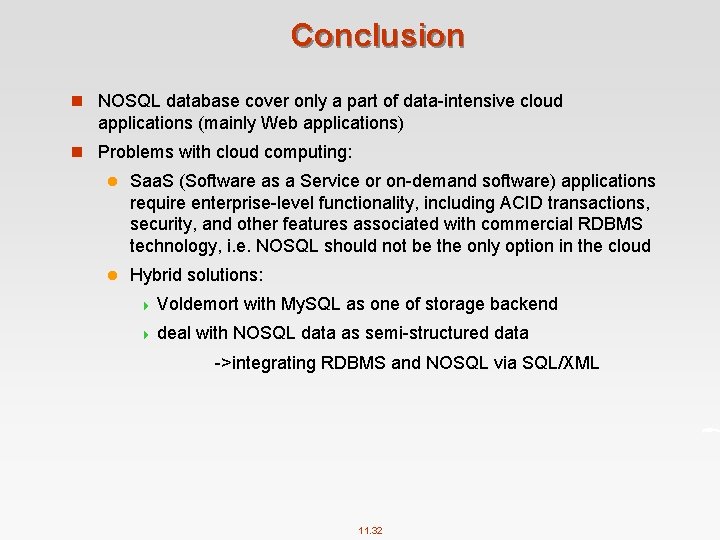

Conclusion n NOSQL database cover only a part of data intensive cloud applications (mainly Web applications) n Problems with cloud computing: l Saa. S (Software as a Service or on demand software) applications require enterprise level functionality, including ACID transactions, security, and other features associated with commercial RDBMS technology, i. e. NOSQL should not be the only option in the cloud l Hybrid solutions: 4 Voldemort with My. SQL as one of storage backend 4 deal with NOSQL data as semi structured data >integrating RDBMS and NOSQL via SQL/XML 11. 32

Part 2: Introduction to HBase 11. 33

What is HBase? n HBase is an open-source, distributed, column-oriented database built on top of HDFS based on Big. Table l Distributed – uses HDFS for storage l Row/column store l Column oriented nulls are free l Multi Dimensional (Versions) l Untyped stores byte[] n HBase is part of Hadoop n HBase is the Hadoop application to use when you require real time read/write random access to very large datasets l Aim to support low latency random access 11. 34

How Data is Stored in HBase ? n A sparse, distributed, persistent multi-dimensional sorted map n Sparse l Sparse data is supported with no waste of costly storage space l HBase can handle the fact that we don’t (yet) know that information l HBase as a schema less data store; that is, it’s fluid — we can add to, subtract from or modify the schema as you go along n Distributed and persistent l Persistent simply means that the data you store in HBase will persist or remain after our program or session ends l Just as HBase is an open source implementation of Big. Table, HDFS is an open source implementation of GFS. l HBase leverages HDFS to persist its data to disk storage. l By storing data in HDFS, HBase offers reliability, availability, seamless scalability and high performance — all on cost effective distributed servers. 11. 35

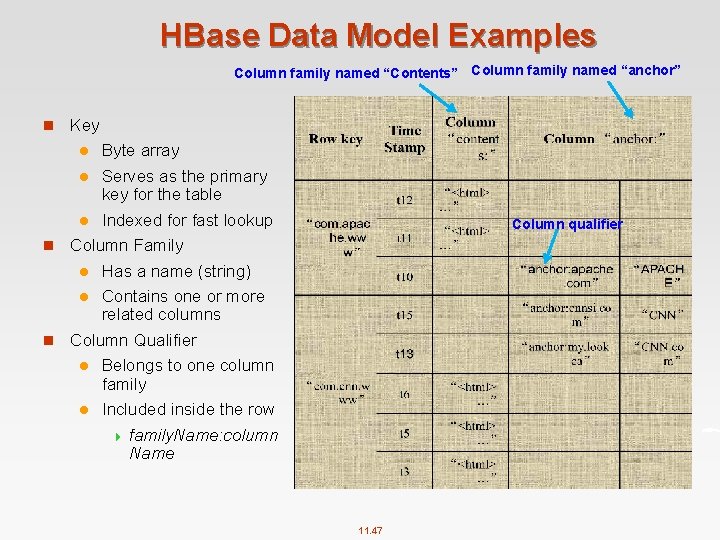

What is HBase? (Cont’) n Multi dimensional sorted map l A map (also known as an associative array) is an abstract collection of key value pairs, where the key is unique. l The keys are stored in HBase and sorted. l Each value can have multiple versions, which makes the data model multidimensional. By default, data versions are implemented with a timestamp. 11. 36

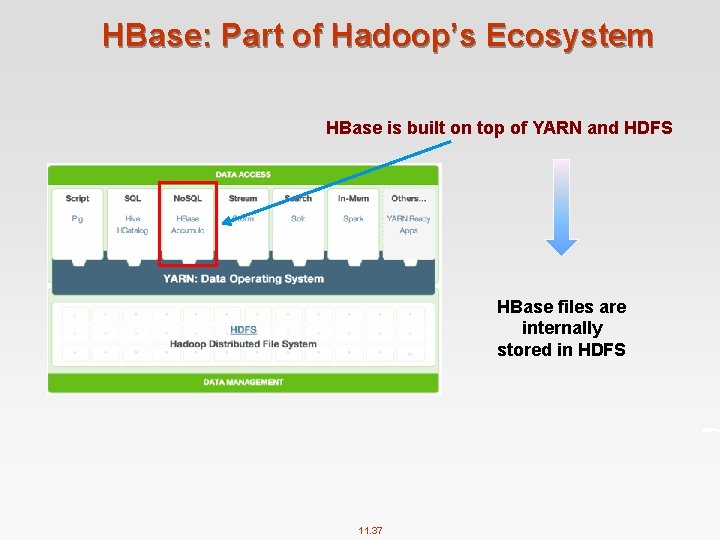

HBase: Part of Hadoop’s Ecosystem HBase is built on top of YARN and HDFS HBase files are internally stored in HDFS 11. 37

HBase vs. HDFS n Both are distributed systems that scale to hundreds or thousands of nodes n HDFS is good for batch processing (scans over big files) l Not good for record lookup l Not good for incremental addition of small batches l Not good for updates n HBase is designed to efficiently address the above points l Fast record lookup l Support for record level insertion l Support for updates (not in place) n HBase updates are done by creating new versions of values 11. 38

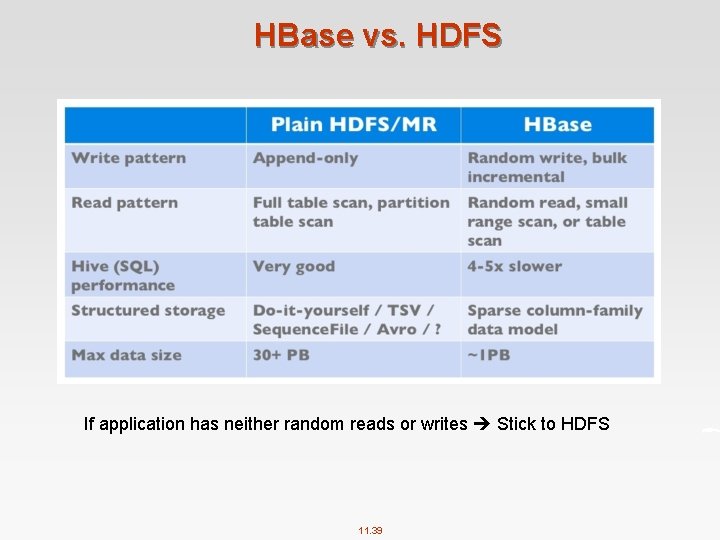

HBase vs. HDFS If application has neither random reads or writes Stick to HDFS 11. 39

HBase Characteristics n Tables have one primary index, the row key. n No join operators. n Scans and queries can select a subset of available columns, perhaps by using a wildcard. n There are three types of lookups: l Fast lookup using row key and optional timestamp. l Full table scan l Range scan from region start to end. n Limited atomicity and transaction support. l HBase supports multiple batched mutations of single rows only. l Data is unstructured and untyped. n No accessed or manipulated via SQL. l Programmatic access via Java, HBase shell, Thrift (Ruby, Python, Perl, C++, . . ) etc. 11. 40

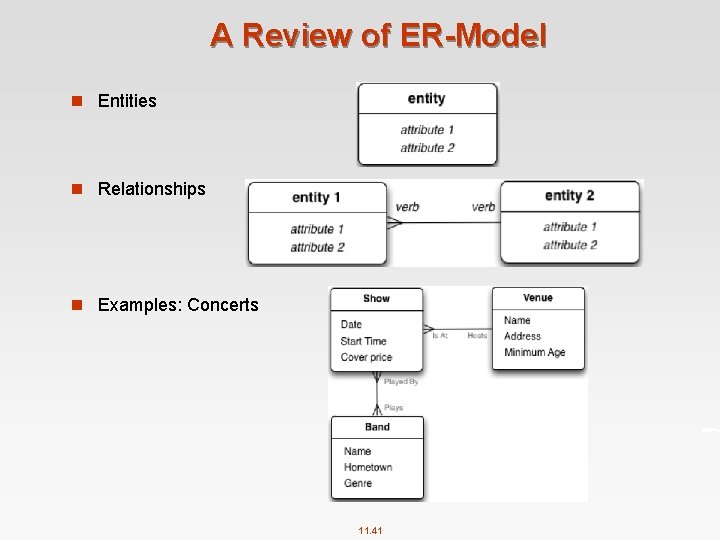

A Review of ER-Model n Entities n Relationships n Examples: Concerts 11. 41

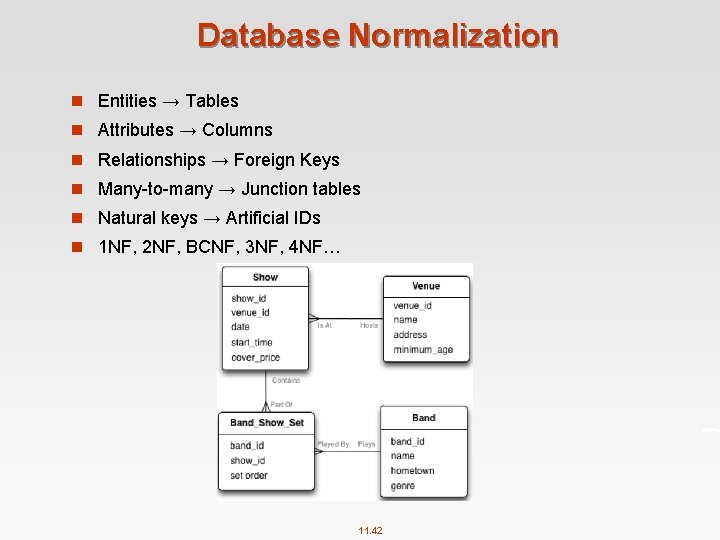

Database Normalization n Entities → Tables n Attributes → Columns n Relationships → Foreign Keys n Many to many → Junction tables n Natural keys → Artificial IDs n 1 NF, 2 NF, BCNF, 3 NF, 4 NF… 11. 42

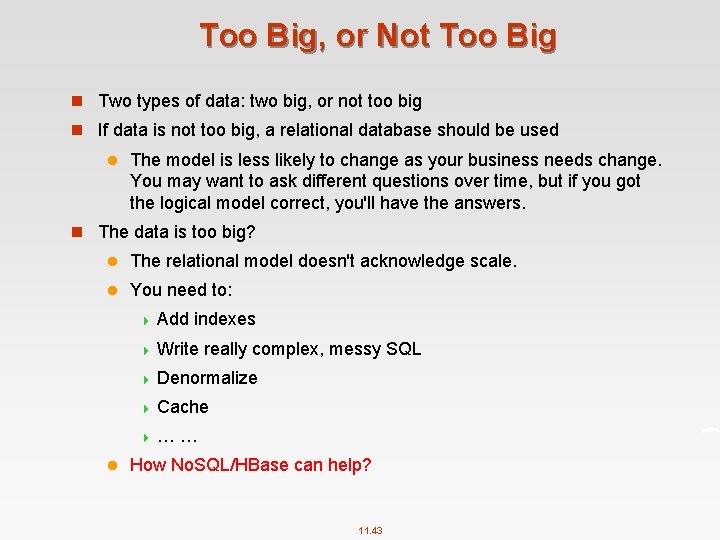

Too Big, or Not Too Big n Two types of data: two big, or not too big n If data is not too big, a relational database should be used l The model is less likely to change as your business needs change. You may want to ask different questions over time, but if you got the logical model correct, you'll have the answers. n The data is too big? l The relational model doesn't acknowledge scale. l You need to: 4 Add indexes 4 Write really complex, messy SQL 4 Denormalize 4 Cache 4 … … l How No. SQL/HBase can help? 11. 43

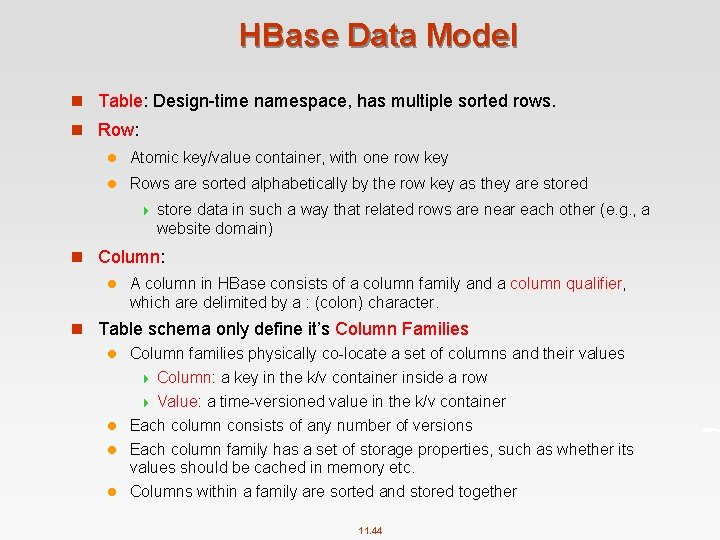

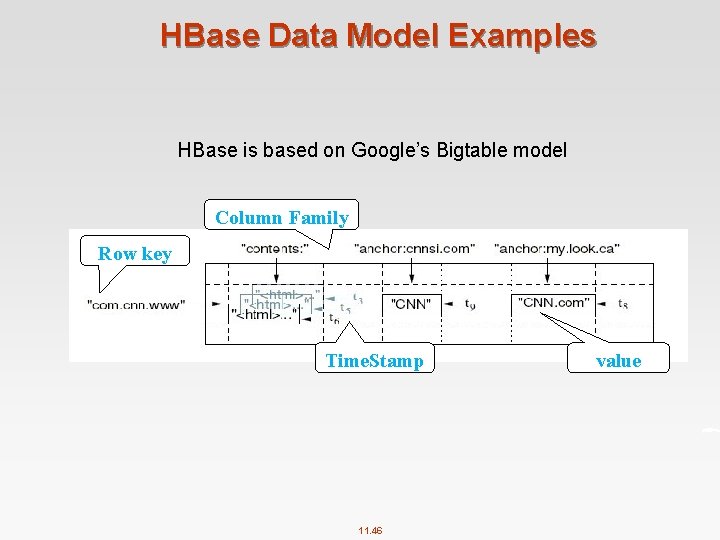

HBase Data Model n Table: Design time namespace, has multiple sorted rows. n Row: l Atomic key/value container, with one row key l Rows are sorted alphabetically by the row key as they are stored 4 store data in such a way that related rows are near each other (e. g. , a website domain) n Column: l A column in HBase consists of a column family and a column qualifier, which are delimited by a : (colon) character. n Table schema only define it’s Column Families l Column families physically co locate a set of columns and their values 4 Column: a key in the k/v container inside a row 4 Value: a time versioned value in the k/v container l Each column consists of any number of versions l Each column family has a set of storage properties, such as whether its values should be cached in memory etc. l Columns within a family are sorted and stored together 11. 44

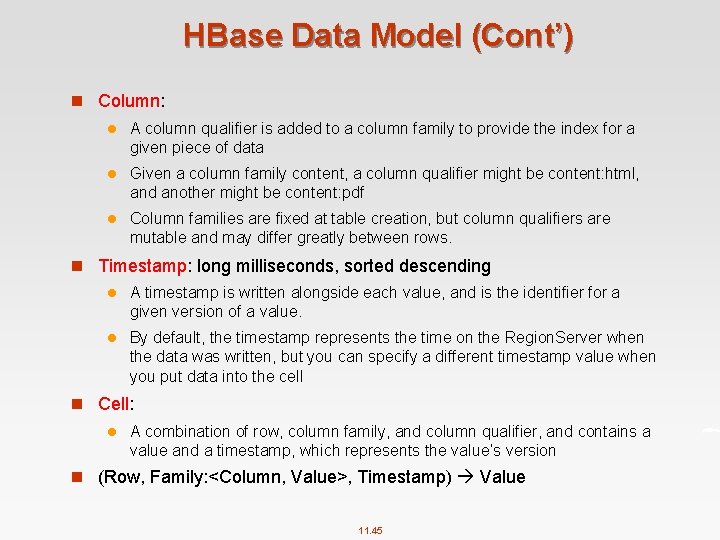

HBase Data Model (Cont’) n Column: l A column qualifier is added to a column family to provide the index for a given piece of data l Given a column family content, a column qualifier might be content: html, and another might be content: pdf l Column families are fixed at table creation, but column qualifiers are mutable and may differ greatly between rows. n Timestamp: long milliseconds, sorted descending l A timestamp is written alongside each value, and is the identifier for a given version of a value. l By default, the timestamp represents the time on the Region. Server when the data was written, but you can specify a different timestamp value when you put data into the cell n Cell: l A combination of row, column family, and column qualifier, and contains a value and a timestamp, which represents the value’s version n (Row, Family: <Column, Value>, Timestamp) Value 11. 45

HBase Data Model Examples HBase is based on Google’s Bigtable model Column Family Row key Time. Stamp 11. 46 value

HBase Data Model Examples Column family named “Contents” Column family named “anchor” n Key Byte array l Serves as the primary key for the table l Indexed for fast lookup n Column Family l Has a name (string) l Contains one or more related columns n Column Qualifier l Belongs to one column family l l Column qualifier Included inside the row 4 family. Name: column Name 11. 47

HBase Data Model Examples Version number for each row n Version Number l Unique within each key l By default System’s timestamp l Data type is Long value n Value l Byte array 11. 48

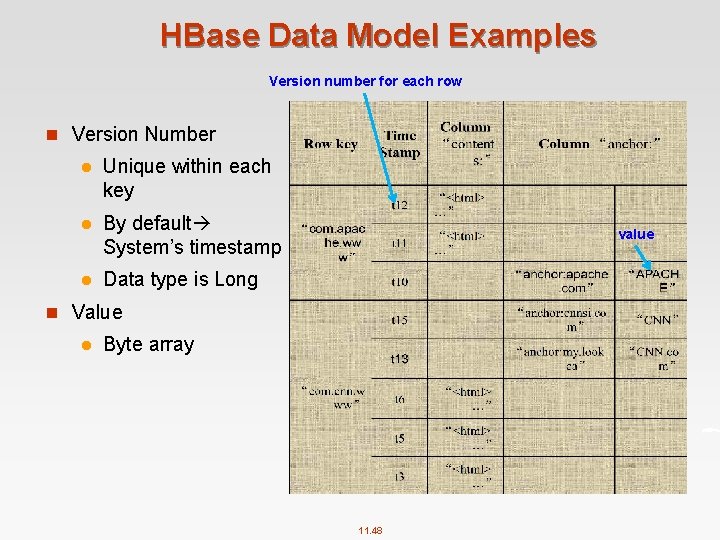

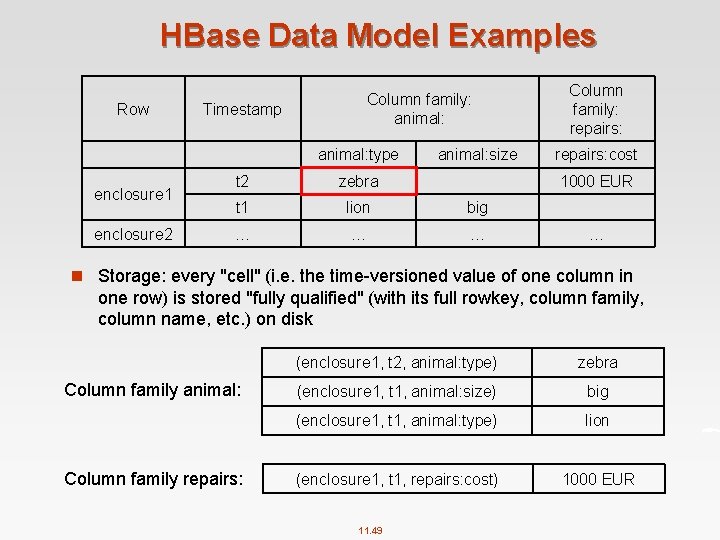

HBase Data Model Examples Row Column family: animal: Timestamp animal: type enclosure 1 enclosure 2 animal: size t 2 zebra t 1 lion big … … … Column family: repairs: cost 1000 EUR … n Storage: every "cell" (i. e. the time versioned value of one column in one row) is stored "fully qualified" (with its full rowkey, column family, column name, etc. ) on disk Column family animal: Column family repairs: (enclosure 1, t 2, animal: type) zebra (enclosure 1, t 1, animal: size) big (enclosure 1, t 1, animal: type) lion (enclosure 1, t 1, repairs: cost) 1000 EUR 11. 49

HBase Data Model Examples 11. 50

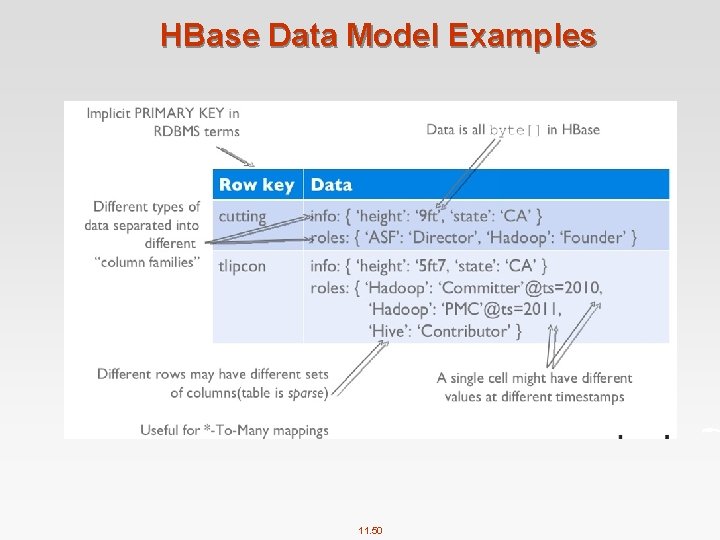

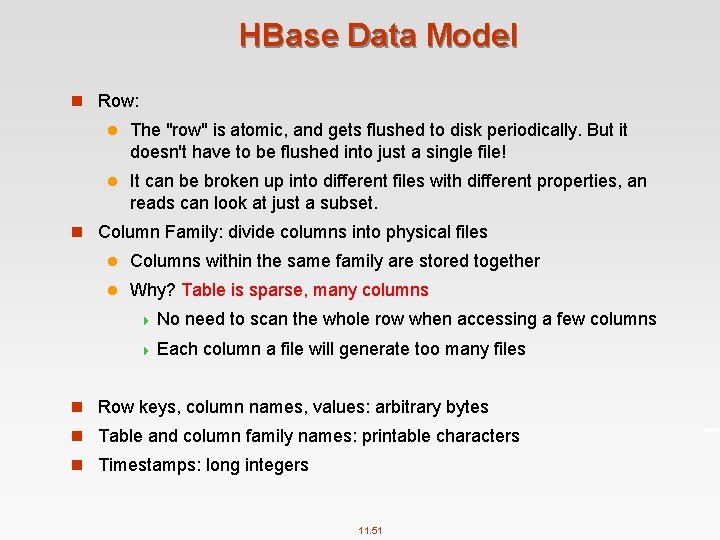

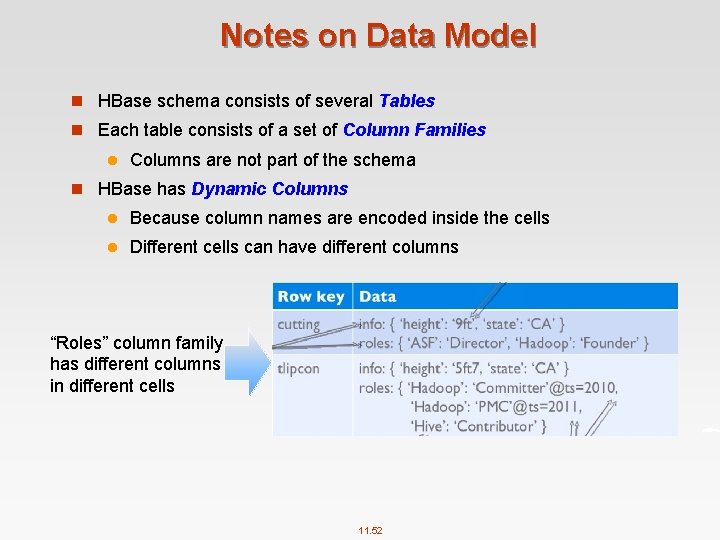

HBase Data Model n Row: l The "row" is atomic, and gets flushed to disk periodically. But it doesn't have to be flushed into just a single file! l It can be broken up into different files with different properties, an reads can look at just a subset. n Column Family: divide columns into physical files l Columns within the same family are stored together l Why? Table is sparse, many columns 4 No need to scan the whole row when accessing a few columns 4 Each column a file will generate too many files n Row keys, column names, values: arbitrary bytes n Table and column family names: printable characters n Timestamps: long integers 11. 51

Notes on Data Model n HBase schema consists of several Tables n Each table consists of a set of Column Families l Columns are not part of the schema n HBase has Dynamic Columns l Because column names are encoded inside the cells l Different cells can have different columns “Roles” column family has different columns in different cells 11. 52

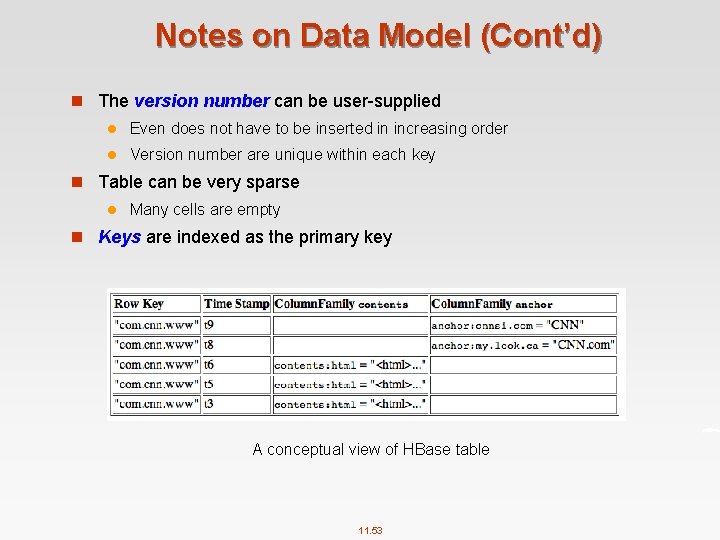

Notes on Data Model (Cont’d) n The version number can be user supplied l Even does not have to be inserted in increasing order l Version number are unique within each key n Table can be very sparse l Many cells are empty n Keys are indexed as the primary key A conceptual view of HBase table 11. 53

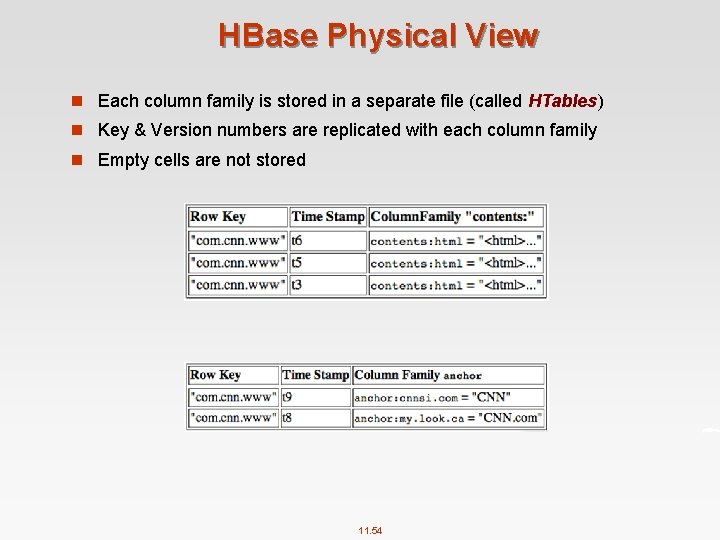

HBase Physical View n Each column family is stored in a separate file (called HTables) n Key & Version numbers are replicated with each column family n Empty cells are not stored 11. 54

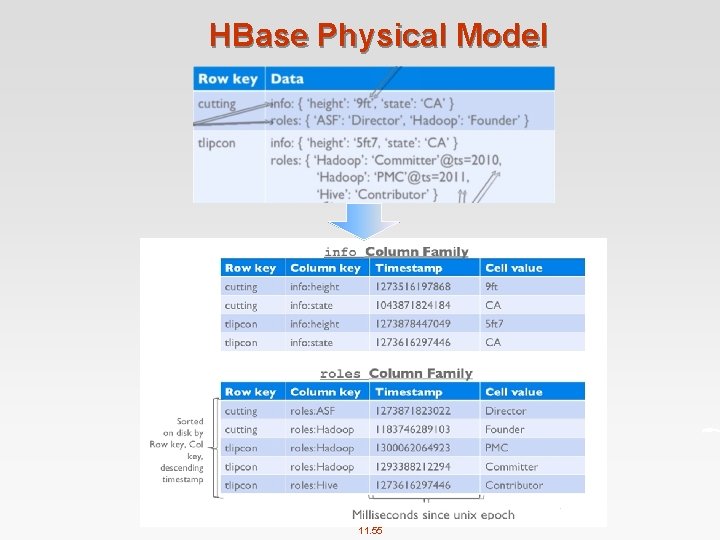

HBase Physical Model 11. 55

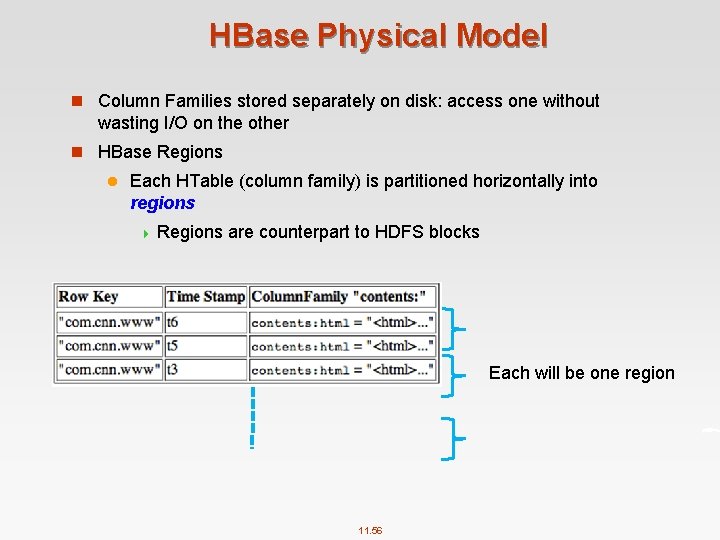

HBase Physical Model n Column Families stored separately on disk: access one without wasting I/O on the other n HBase Regions l Each HTable (column family) is partitioned horizontally into regions 4 Regions are counterpart to HDFS blocks Each will be one region 11. 56

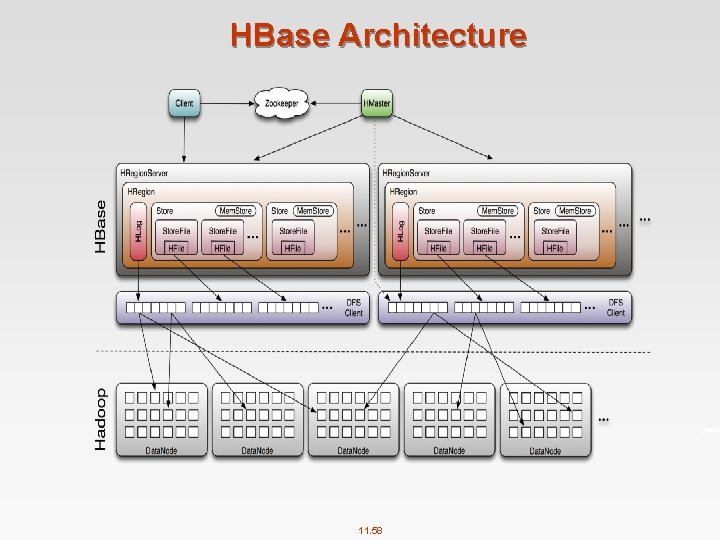

HBase Architecture n Major Components l The Master. Server (HMaster) 4 One master server 4 Responsible for coordinating the slaves 4 Assigns regions, detects failures 4 Admin functions l The Region. Server (HRegion. Server) 4 Many region servers 4 Region (HRegion) – A subset of a table’s rows, like horizontal range partitioning – Automatically done 4 Manages data regions 4 Serves data for reads and writes (using a log) l The HBase client 11. 57

HBase Architecture 11. 58

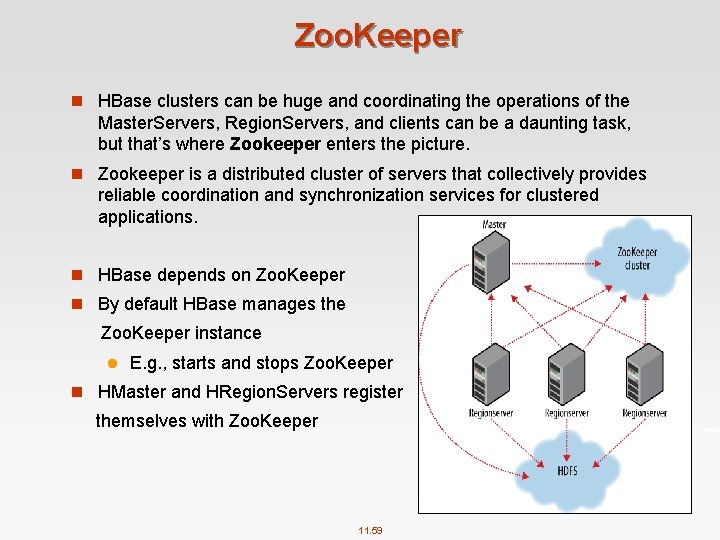

Zoo. Keeper n HBase clusters can be huge and coordinating the operations of the Master. Servers, Region. Servers, and clients can be a daunting task, but that’s where Zookeeper enters the picture. n Zookeeper is a distributed cluster of servers that collectively provides reliable coordination and synchronization services for clustered applications. n HBase depends on Zoo. Keeper n By default HBase manages the Zoo. Keeper instance l E. g. , starts and stops Zoo. Keeper n HMaster and HRegion. Servers register themselves with Zoo. Keeper 11. 59

Install HBase n Install Java and Hadoop first n Download at: https: //hbase. apache. org/ n The current stable version: 1. 2. 6 n Install: $ tar xzf hbase 1. 2. 6. tar. gz $ mv hbase 1. 2. 6 ~/hbase n Environment variables in ~/. bashrc export HBASE_HOME = ~/hbase export PATH = $HBASE_HOME/bin: $PATH n Edit hbase env. sh: $ vim $HBASE_HOME/conf/hbase env. sh export JAVA_HOME = /usr/lib/jvm/… export HBASE_MANAGES_ZK = true l hbase maintains its own Zoo. Keeper 11. 60

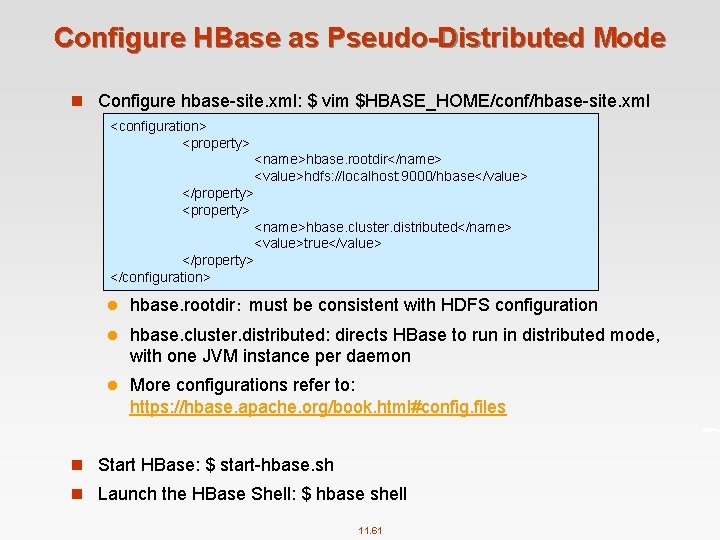

Configure HBase as Pseudo-Distributed Mode n Configure hbase site. xml: $ vim $HBASE_HOME/conf/hbase site. xml <configuration> <property> <name>hbase. rootdir</name> <value>hdfs: //localhost: 9000/hbase</value> </property> <name>hbase. cluster. distributed</name> <value>true</value> </property> </configuration> l hbase. rootdir: must be consistent with HDFS configuration l hbase. cluster. distributed: directs HBase to run in distributed mode, with one JVM instance per daemon l More configurations refer to: https: //hbase. apache. org/book. html#config. files n Start HBase: $ start hbase. sh n Launch the HBase Shell: $ hbase shell 11. 61

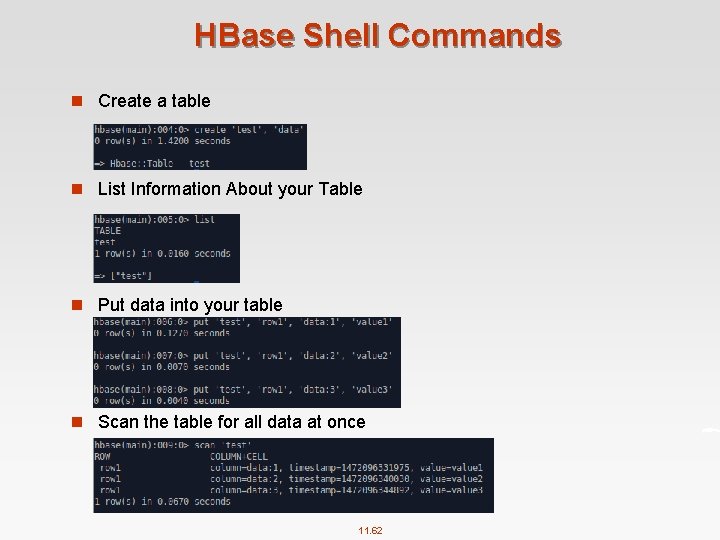

HBase Shell Commands n Create a table n List Information About your Table n Put data into your table n Scan the table for all data at once 11. 62

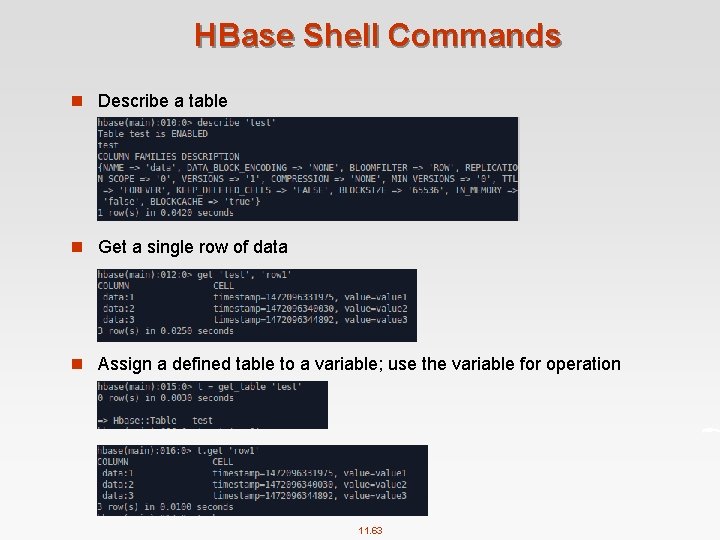

HBase Shell Commands n Describe a table n Get a single row of data n Assign a defined table to a variable; use the variable for operation 11. 63

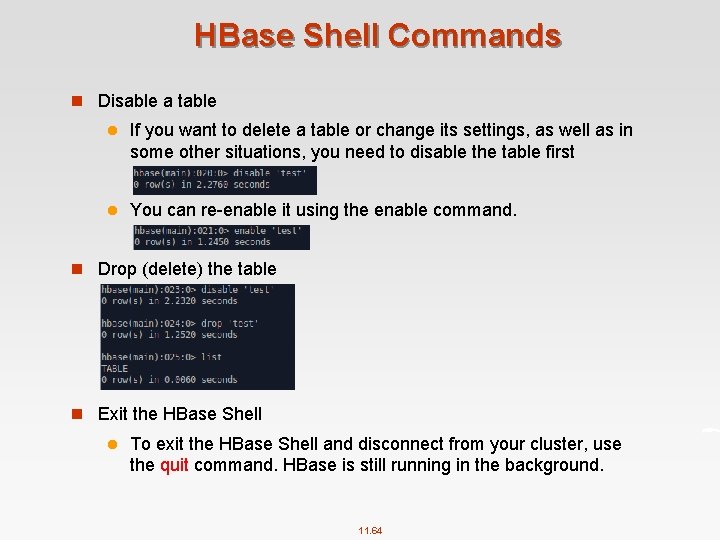

HBase Shell Commands n Disable a table l If you want to delete a table or change its settings, as well as in some other situations, you need to disable the table first l You can re enable it using the enable command. n Drop (delete) the table n Exit the HBase Shell l To exit the HBase Shell and disconnect from your cluster, use the quit command. HBase is still running in the background. 11. 64

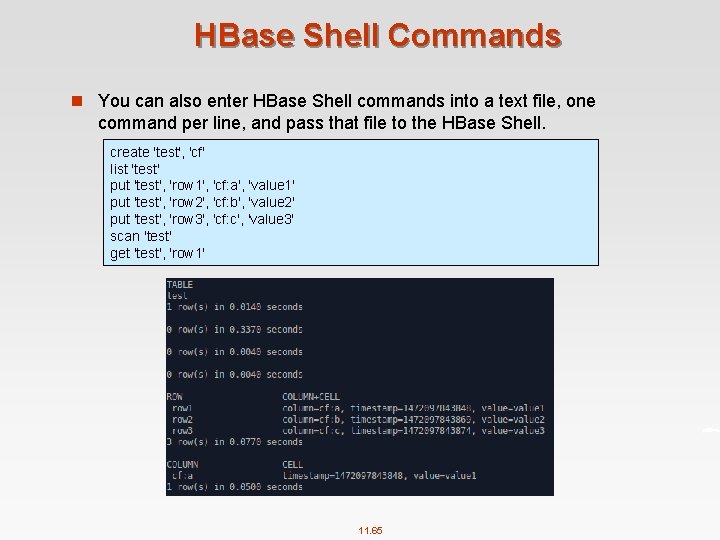

HBase Shell Commands n You can also enter HBase Shell commands into a text file, one command per line, and pass that file to the HBase Shell. create 'test', 'cf' list 'test' put 'test', 'row 1', 'cf: a', 'value 1' put 'test', 'row 2', 'cf: b', 'value 2' put 'test', 'row 3', 'cf: c', 'value 3' scan 'test' get 'test', 'row 1' 11. 65

HBase benefits than RDBMS n No real indexes n Automatic partitioning n Scale linearly and automatically with new nodes n Commodity hardware n Fault tolerance n Batch processing 11. 66

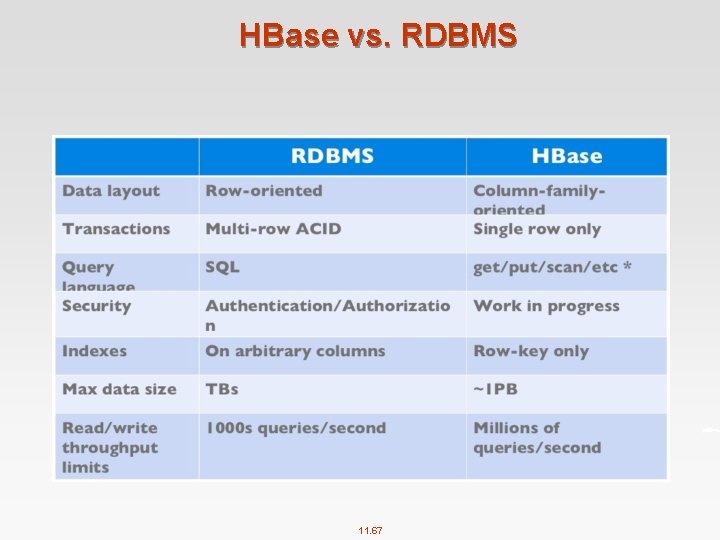

HBase vs. RDBMS 11. 67

When to use HBase n You need random write, random read, or both (but not neither, otherwise stick to HDFS) n You need to do many thousands of operations per second on multiple TB of data n Your acces patterns are well known and simple 11. 68

Part 3: Introduction to Hive 11. 69

What is Hive? n A data warehouse system for Hadoop that l facilitates easy data summarization l supports ad hoc queries (still batch though…) l created by Facebook n A mechanism to project structure onto this data and query the data using a SQL like language – Hive. QL l Interactive console –or l Execute scripts l Kicks off one or more Map. Reduce jobs in the background n An ability to use indexes, built in user defined functions 11. 70

Motivation of Hive n Limitation of MR l Have to use M/R model l Not Reusable l Error prone l For complex jobs: 4 Multiple stage of Map/Reduce functions 4 Just like ask developer to write specified physical execution plan in the database n Hive intuitive l Make the unstructured data looks like tables regardless how it really lays out l SQL based query can be directly against these tables l Generate specified execution plan for this query 11. 71

Hive Features n A subset of SQL covering the most common statements n Agile data types: Array, Map, Struct, and JSON objects n User Defined Functions and Aggregates n Regular Expression support n Map. Reduce support n JDBC support n Partitions and Buckets (for performance optimization) n Views and Indexes 11. 72

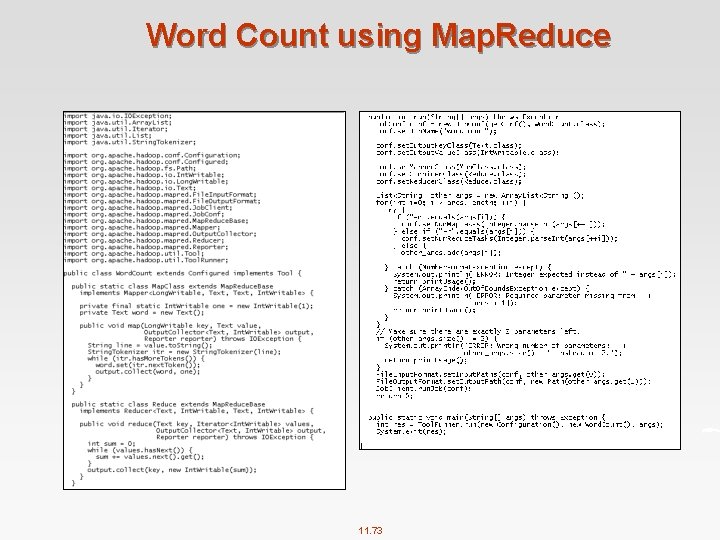

Word Count using Map. Reduce 11. 73

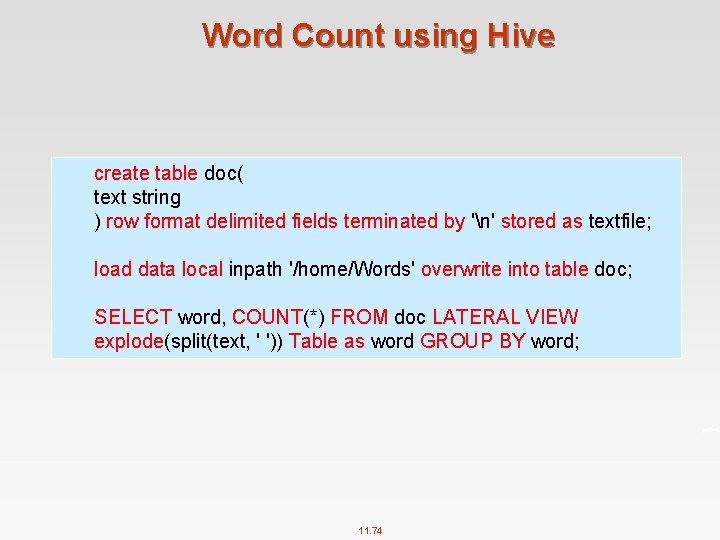

Word Count using Hive create table doc( text string ) row format delimited fields terminated by 'n' stored as textfile; load data local inpath '/home/Words' overwrite into table doc; SELECT word, COUNT(*) FROM doc LATERAL VIEW explode(split(text, ' ')) Table as word GROUP BY word; 11. 74

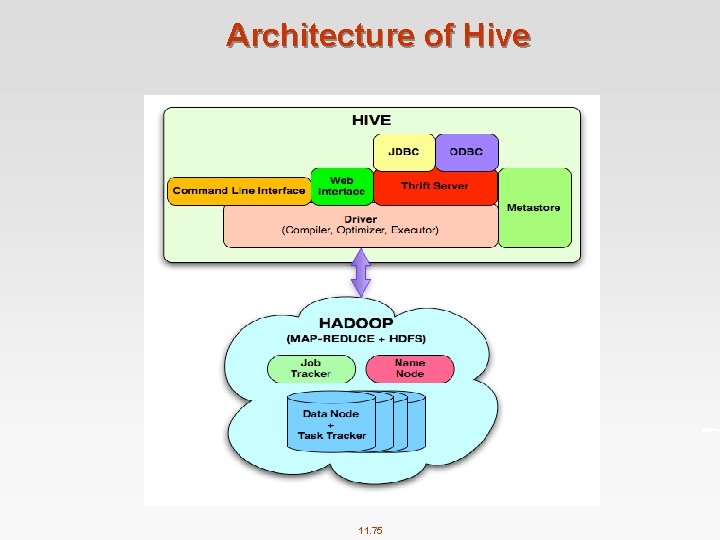

Architecture of Hive 11. 75

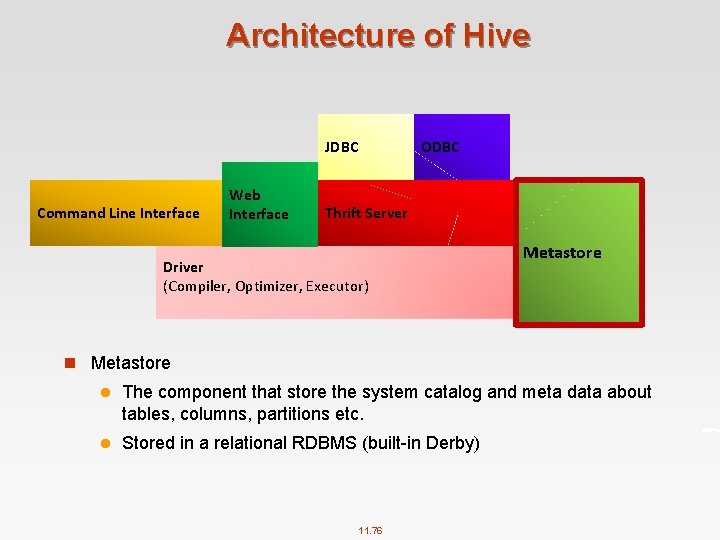

Architecture of Hive JDBC Command Line Interface Web Interface ODBC Thrift Server Driver (Compiler, Optimizer, Executor) Metastore n Metastore l The component that store the system catalog and meta data about tables, columns, partitions etc. l Stored in a relational RDBMS (built in Derby) 11. 76

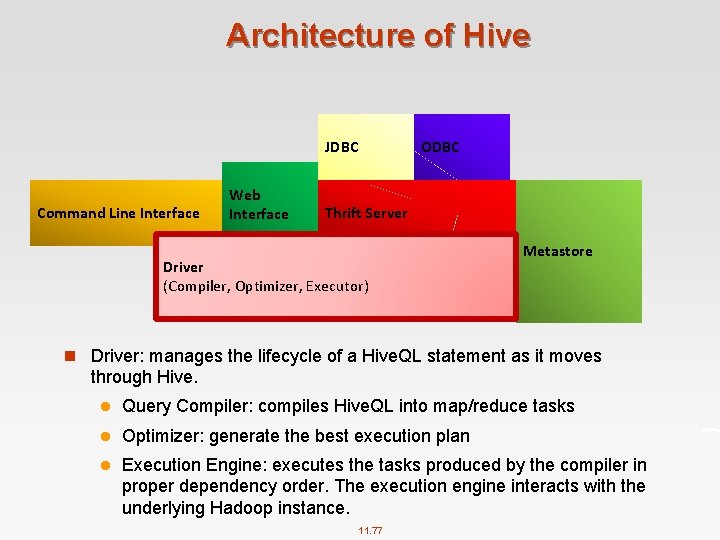

Architecture of Hive JDBC Command Line Interface Web Interface ODBC Thrift Server Driver (Compiler, Optimizer, Executor) Metastore n Driver: manages the lifecycle of a Hive. QL statement as it moves through Hive. l Query Compiler: compiles Hive. QL into map/reduce tasks l Optimizer: generate the best execution plan l Execution Engine: executes the tasks produced by the compiler in proper dependency order. The execution engine interacts with the underlying Hadoop instance. 11. 77

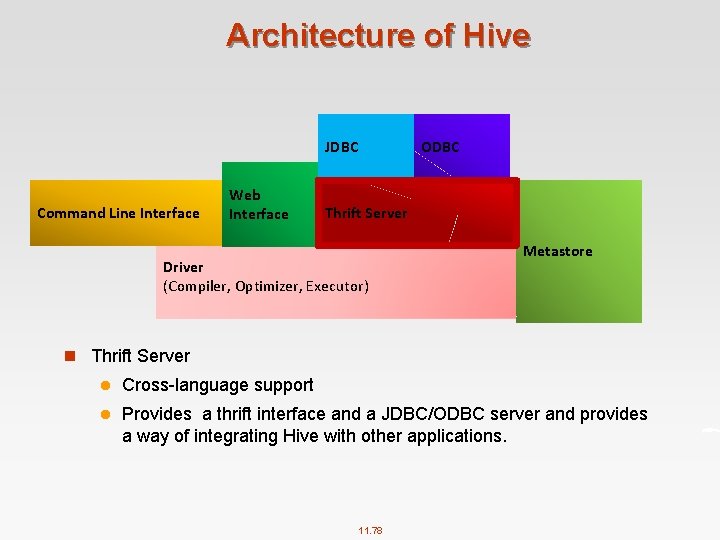

Architecture of Hive JDBC Command Line Interface Web Interface ODBC Thrift Server Driver (Compiler, Optimizer, Executor) Metastore n Thrift Server l Cross language support l Provides a thrift interface and a JDBC/ODBC server and provides a way of integrating Hive with other applications. 11. 78

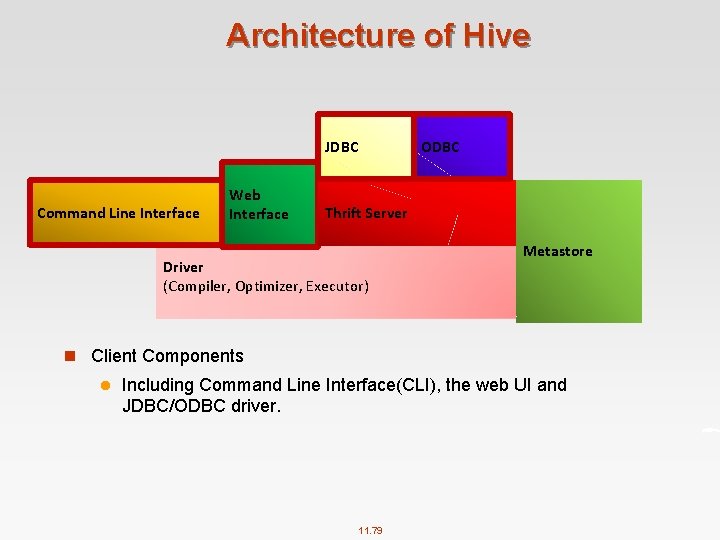

Architecture of Hive JDBC Command Line Interface Web Interface ODBC Thrift Server Driver (Compiler, Optimizer, Executor) Metastore n Client Components l Including Command Line Interface(CLI), the web UI and JDBC/ODBC driver. 11. 79

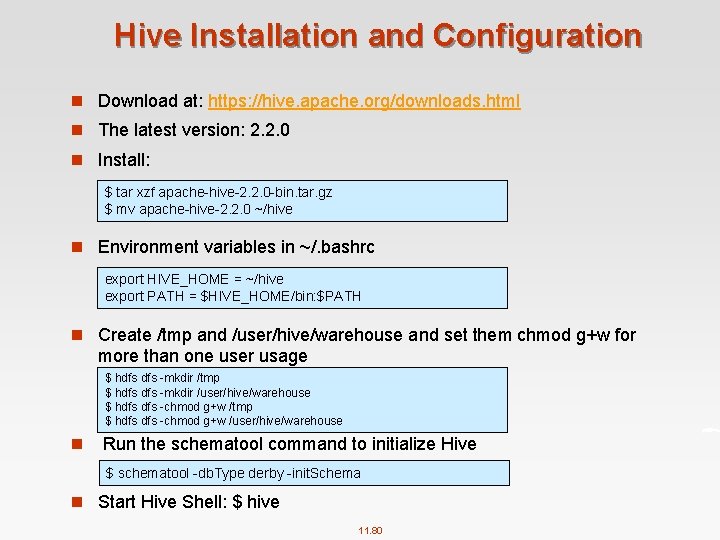

Hive Installation and Configuration n Download at: https: //hive. apache. org/downloads. html n The latest version: 2. 2. 0 n Install: $ tar xzf apache hive 2. 2. 0 bin. tar. gz $ mv apache hive 2. 2. 0 ~/hive n Environment variables in ~/. bashrc export HIVE_HOME = ~/hive export PATH = $HIVE_HOME/bin: $PATH n Create /tmp and /user/hive/warehouse and set them chmod g+w for more than one user usage $ hdfs mkdir /tmp $ hdfs mkdir /user/hive/warehouse $ hdfs chmod g+w /tmp $ hdfs chmod g+w /user/hive/warehouse n Run the schematool command to initialize Hive $ schematool db. Type derby init. Schema n Start Hive Shell: $ hive 11. 80

Hive Type System n Primitive types l Integers: TINYINT, SMALLINT, BIGINT. l Boolean: BOOLEAN. l Floating point numbers: FLOAT, DOUBLE. l Fixed point numbers: DECIMAL l String: STRING, CHAR, VARCHAR. l Date and time types: TIMESTAMP, DATE n Complex types l Structs: c has type {a INT; b INT}. c. a to access the first field l Maps: M['group']. l Arrays: ['a', 'b', 'c'], A[1] returns 'b'. n Example l list< map<string, struct< p 1: int, p 2: int > > > l Represents list of associative arrays that map strings to structs that contain two ints 11. 81

Hive Data Model n Databases: Namespaces function to avoid naming conflicts for tables, views, partitions, columns, and so on. n Tables: Homogeneous units of data which have the same schema. l Analogous to tables in relational DBs. l Each table has corresponding directory in HDFS. l An example table: page_views: 4 timestamp—which is of INT type that corresponds to a UNIX timestamp of when the page was viewed. 4 userid —which is of BIGINT type that identifies the user who viewed the page. 4 page_url—which is of STRING type that captures the location of the page. 4 referer_url—which is of STRING that captures the location of the page from where the user arrived at the current page. 4 IP—which is of STRING type that captures the IP address from where the page request was made. 11. 82

Hive Data Model (Cont’) n Partitions: l Each Table can have one or more partition Keys which determines how the data is stored l Example: 4 Given the table page_views, we can define two partitions a date_partition of type STRING and country_partition of type STRING 4 All "US" data from "2009 12 23" is a partition of the page_views table l Partition columns are virtual columns, they are not part of the data itself but are derived on load l It is the user's job to guarantee the relationship between partition name and data content n Buckets: Data in each partition may in turn be divided into Buckets based on the value of a hash function of some column of the Table l Example: the page_views table may be bucketed by userid 11. 83

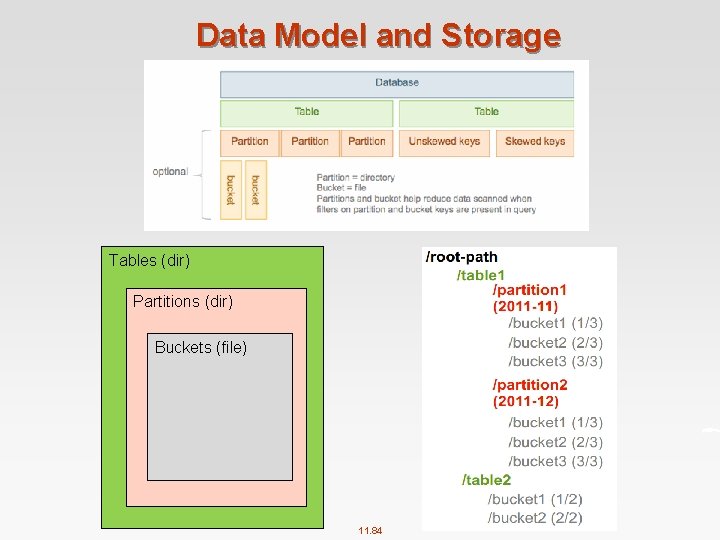

Data Model and Storage Tables (dir) Partitions (dir) Buckets (file) 11. 84

![Create Table n Syntax: CREATE TABLE [IF NOT EXISTS] [db_name. ]table_name [(col_name data_type [COMMENT Create Table n Syntax: CREATE TABLE [IF NOT EXISTS] [db_name. ]table_name [(col_name data_type [COMMENT](http://slidetodoc.com/presentation_image/a40b5225ac6bf418ddb0e84c81af1f29/image-85.jpg)

Create Table n Syntax: CREATE TABLE [IF NOT EXISTS] [db_name. ]table_name [(col_name data_type [COMMENT col_comment], . . . )] [COMMENT table_comment] [PARTITIONED BY (col_name data_type [COMMENT col_comment], . . . )] [CLUSTERED BY (col_name, . . . ) [SORTED BY (col_name [ASC|DESC], . . . )] INTO num_buckets BUCKETS] [ROW FORMAT row_format] [STORED AS file_format] See full CREATE TABLE command at: https: //cwiki. apache. org/confluence/display/Hive/Language. Manual+DDL 11. 85

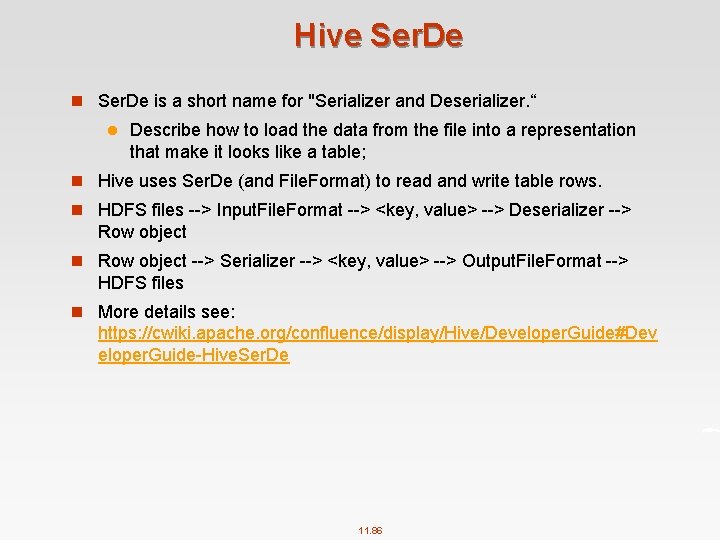

Hive Ser. De n Ser. De is a short name for "Serializer and Deserializer. “ l Describe how to load the data from the file into a representation that make it looks like a table; n Hive uses Ser. De (and File. Format) to read and write table rows. n HDFS files > Input. File. Format > <key, value> > Deserializer > Row object n Row object > Serializer > <key, value> > Output. File. Format > HDFS files n More details see: https: //cwiki. apache. org/confluence/display/Hive/Developer. Guide#Dev eloper. Guide Hive. Ser. De 11. 86

![Hive Ser. De row_format : DELIMITED [FIELDS TERMINATED BY char [ESCAPED BY char]] [COLLECTION Hive Ser. De row_format : DELIMITED [FIELDS TERMINATED BY char [ESCAPED BY char]] [COLLECTION](http://slidetodoc.com/presentation_image/a40b5225ac6bf418ddb0e84c81af1f29/image-87.jpg)

Hive Ser. De row_format : DELIMITED [FIELDS TERMINATED BY char [ESCAPED BY char]] [COLLECTION ITEMS TERMINATED BY char] [MAP KEYS TERMINATED BY char] [LINES TERMINATED BY char] Default values: Ctrl+A, Ctrl+B, Ctrl+C, new line, respectively file_format: : SEQUENCEFILE | TEXTFILE (Default, depending on hive. default. fileformat configuration) | RCFILE (Note: Available in Hive 0. 6. 0 and later) | ORC (Note: Available in Hive 0. 11. 0 and later) | PARQUET (Note: Available in Hive 0. 13. 0 and later) | AVRO (Note: Available in Hive 0. 14. 0 and later) | INPUTFORMAT input_format_classname OUTPUTFORMAT output_format_classname 11. 87

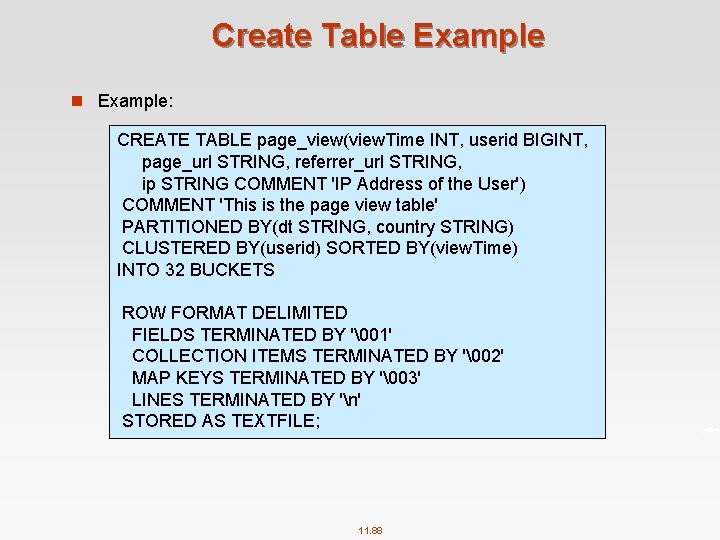

Create Table Example n Example: CREATE TABLE page_view(view. Time INT, userid BIGINT, page_url STRING, referrer_url STRING, ip STRING COMMENT 'IP Address of the User') COMMENT 'This is the page view table' PARTITIONED BY(dt STRING, country STRING) CLUSTERED BY(userid) SORTED BY(view. Time) INTO 32 BUCKETS ROW FORMAT DELIMITED FIELDS TERMINATED BY '�01' COLLECTION ITEMS TERMINATED BY '�02' MAP KEYS TERMINATED BY '�03' LINES TERMINATED BY 'n' STORED AS TEXTFILE; 11. 88

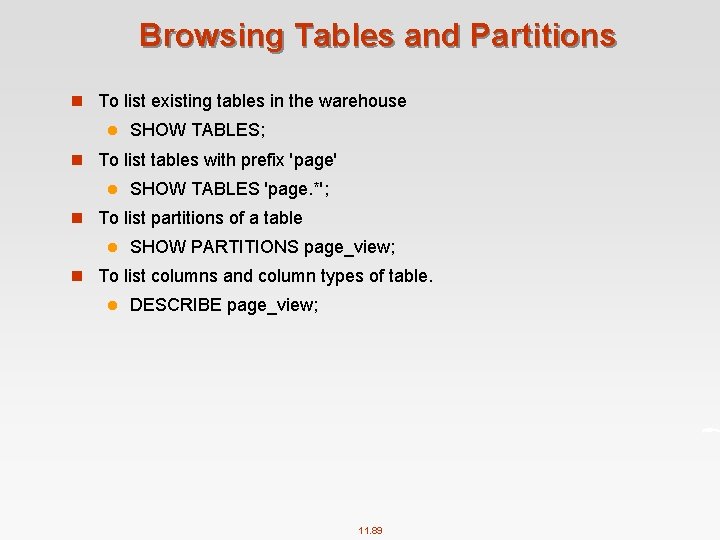

Browsing Tables and Partitions n To list existing tables in the warehouse l SHOW TABLES; n To list tables with prefix 'page' l SHOW TABLES 'page. *'; n To list partitions of a table l SHOW PARTITIONS page_view; n To list columns and column types of table. l DESCRIBE page_view; 11. 89

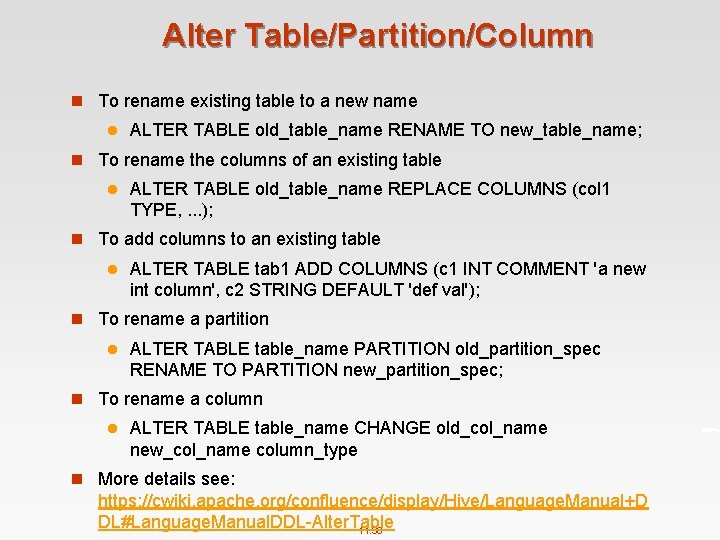

Alter Table/Partition/Column n To rename existing table to a new name l ALTER TABLE old_table_name RENAME TO new_table_name; n To rename the columns of an existing table l ALTER TABLE old_table_name REPLACE COLUMNS (col 1 TYPE, . . . ); n To add columns to an existing table l ALTER TABLE tab 1 ADD COLUMNS (c 1 INT COMMENT 'a new int column', c 2 STRING DEFAULT 'def val'); n To rename a partition l ALTER TABLE table_name PARTITION old_partition_spec RENAME TO PARTITION new_partition_spec; n To rename a column l ALTER TABLE table_name CHANGE old_col_name new_col_name column_type n More details see: https: //cwiki. apache. org/confluence/display/Hive/Language. Manual+D DL#Language. Manual. DDL Alter. Table 11. 90

![Drop Table/Partition n To drop a table l DROP TABLE [IF EXISTS] table_name l Drop Table/Partition n To drop a table l DROP TABLE [IF EXISTS] table_name l](http://slidetodoc.com/presentation_image/a40b5225ac6bf418ddb0e84c81af1f29/image-91.jpg)

Drop Table/Partition n To drop a table l DROP TABLE [IF EXISTS] table_name l Example: 4 DROP TABLE page_view n To drop a paritition l ALTER TABLE table_name DROP [IF EXISTS] PARTITION partition_spec[, PARTITION partition_spec, . . . ] l Example: 4 ALTER TABLE pv_users DROP PARTITION (ds='2008 08 08') 11. 91

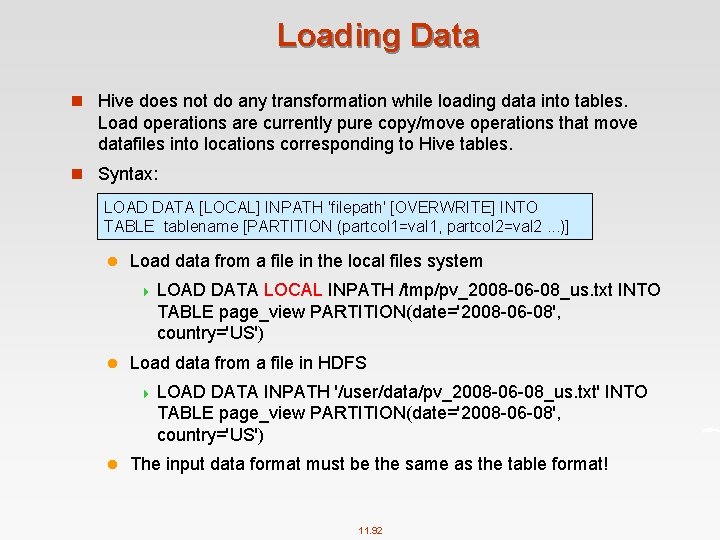

Loading Data n Hive does not do any transformation while loading data into tables. Load operations are currently pure copy/move operations that move datafiles into locations corresponding to Hive tables. n Syntax: LOAD DATA [LOCAL] INPATH 'filepath' [OVERWRITE] INTO TABLE tablename [PARTITION (partcol 1=val 1, partcol 2=val 2. . . )] l Load data from a file in the local files system 4 LOAD DATA LOCAL INPATH /tmp/pv_2008 06 08_us. txt INTO TABLE page_view PARTITION(date='2008 06 08', country='US') l Load data from a file in HDFS 4 LOAD DATA INPATH '/user/data/pv_2008 06 08_us. txt' INTO TABLE page_view PARTITION(date='2008 06 08', country='US') l The input data format must be the same as the table format! 11. 92

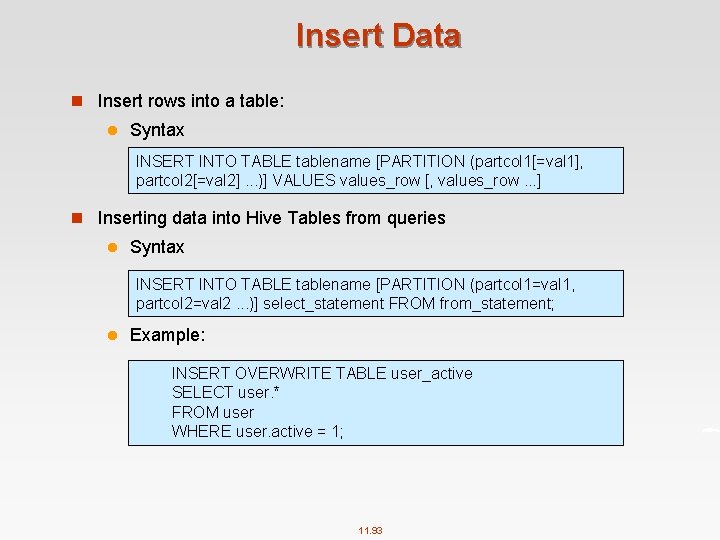

Insert Data n Insert rows into a table: l Syntax INSERT INTO TABLE tablename [PARTITION (partcol 1[=val 1], partcol 2[=val 2]. . . )] VALUES values_row [, values_row. . . ] n Inserting data into Hive Tables from queries l Syntax INSERT INTO TABLE tablename [PARTITION (partcol 1=val 1, partcol 2=val 2. . . )] select_statement FROM from_statement; l Example: INSERT OVERWRITE TABLE user_active SELECT user. * FROM user WHERE user. active = 1; 11. 93

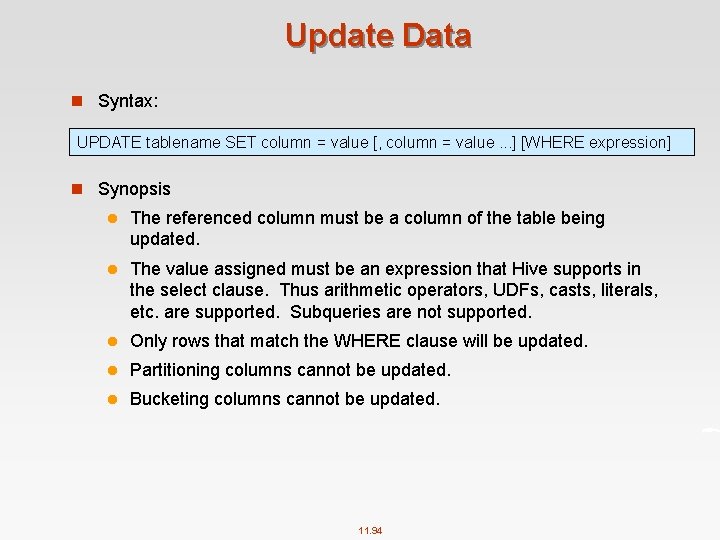

Update Data n Syntax: UPDATE tablename SET column = value [, column = value. . . ] [WHERE expression] n Synopsis l The referenced column must be a column of the table being updated. l The value assigned must be an expression that Hive supports in the select clause. Thus arithmetic operators, UDFs, casts, literals, etc. are supported. Subqueries are not supported. l Only rows that match the WHERE clause will be updated. l Partitioning columns cannot be updated. l Bucketing columns cannot be updated. 11. 94

![Query Data n Select Syntax: SELECT [ALL | DISTINCT] select_expr, . . . FROM Query Data n Select Syntax: SELECT [ALL | DISTINCT] select_expr, . . . FROM](http://slidetodoc.com/presentation_image/a40b5225ac6bf418ddb0e84c81af1f29/image-95.jpg)

Query Data n Select Syntax: SELECT [ALL | DISTINCT] select_expr, . . . FROM table_reference [WHERE where_condition] [GROUP BY col_list] [ORDER BY col_list] [CLUSTER BY col_list | [DISTRIBUTE BY col_list] [SORT BY col_list] ] [LIMIT number] 11. 95

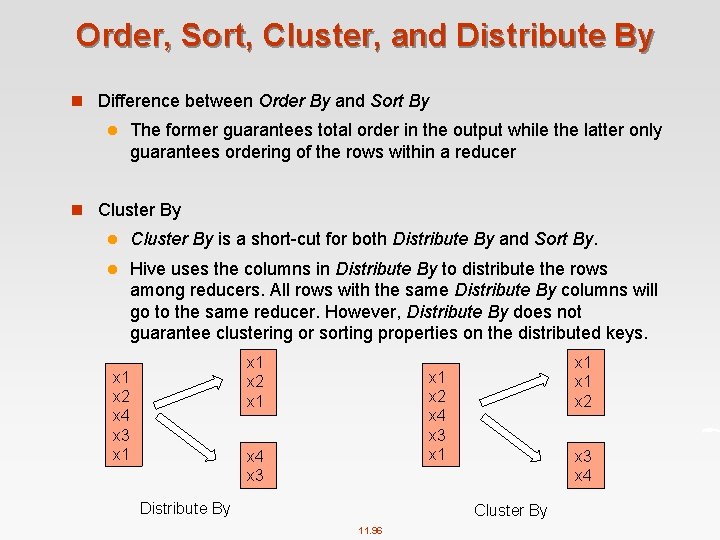

Order, Sort, Cluster, and Distribute By n Difference between Order By and Sort By l The former guarantees total order in the output while the latter only guarantees ordering of the rows within a reducer n Cluster By l Cluster By is a short cut for both Distribute By and Sort By. l Hive uses the columns in Distribute By to distribute the rows among reducers. All rows with the same Distribute By columns will go to the same reducer. However, Distribute By does not guarantee clustering or sorting properties on the distributed keys. x 1 x 2 x 4 x 3 x 1 x 1 x 2 x 4 x 3 x 1 x 4 x 3 Distribute By x 3 x 4 Cluster By 11. 96

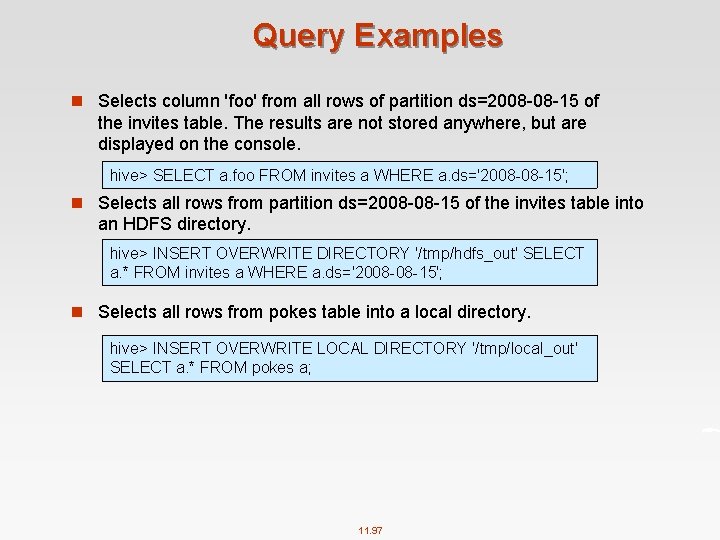

Query Examples n Selects column 'foo' from all rows of partition ds=2008 08 15 of the invites table. The results are not stored anywhere, but are displayed on the console. hive> SELECT a. foo FROM invites a WHERE a. ds='2008 08 15'; n Selects all rows from partition ds=2008 08 15 of the invites table into an HDFS directory. hive> INSERT OVERWRITE DIRECTORY '/tmp/hdfs_out' SELECT a. * FROM invites a WHERE a. ds='2008 08 15'; n Selects all rows from pokes table into a local directory. hive> INSERT OVERWRITE LOCAL DIRECTORY '/tmp/local_out' SELECT a. * FROM pokes a; 11. 97

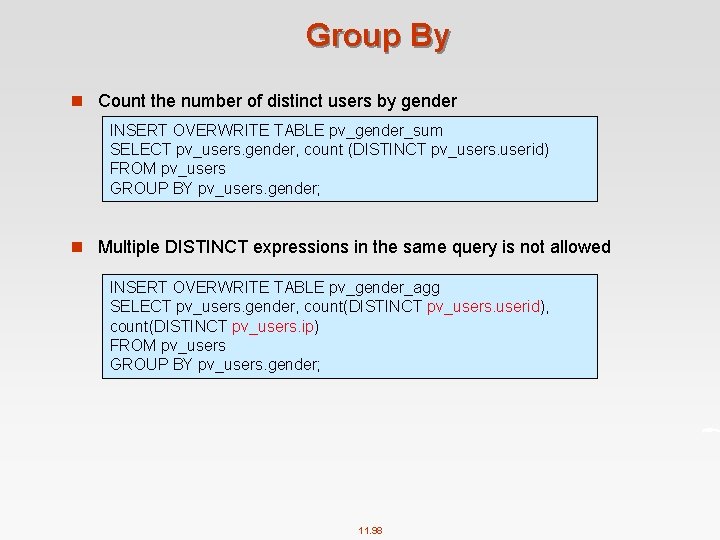

Group By n Count the number of distinct users by gender INSERT OVERWRITE TABLE pv_gender_sum SELECT pv_users. gender, count (DISTINCT pv_users. userid) FROM pv_users GROUP BY pv_users. gender; n Multiple DISTINCT expressions in the same query is not allowed INSERT OVERWRITE TABLE pv_gender_agg SELECT pv_users. gender, count(DISTINCT pv_users. userid), count(DISTINCT pv_users. ip) FROM pv_users GROUP BY pv_users. gender; 11. 98

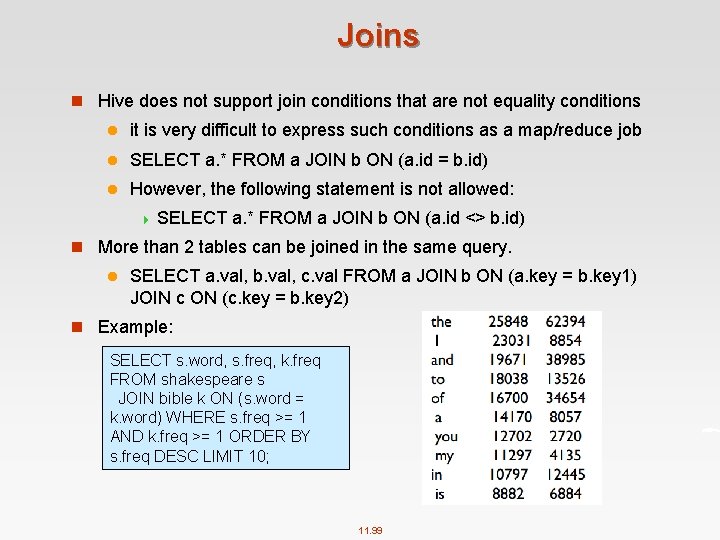

Joins n Hive does not support join conditions that are not equality conditions l it is very difficult to express such conditions as a map/reduce job l SELECT a. * FROM a JOIN b ON (a. id = b. id) l However, the following statement is not allowed: 4 SELECT a. * FROM a JOIN b ON (a. id <> b. id) n More than 2 tables can be joined in the same query. l SELECT a. val, b. val, c. val FROM a JOIN b ON (a. key = b. key 1) JOIN c ON (c. key = b. key 2) n Example: SELECT s. word, s. freq, k. freq FROM shakespeare s JOIN bible k ON (s. word = k. word) WHERE s. freq >= 1 AND k. freq >= 1 ORDER BY s. freq DESC LIMIT 10; 11. 99

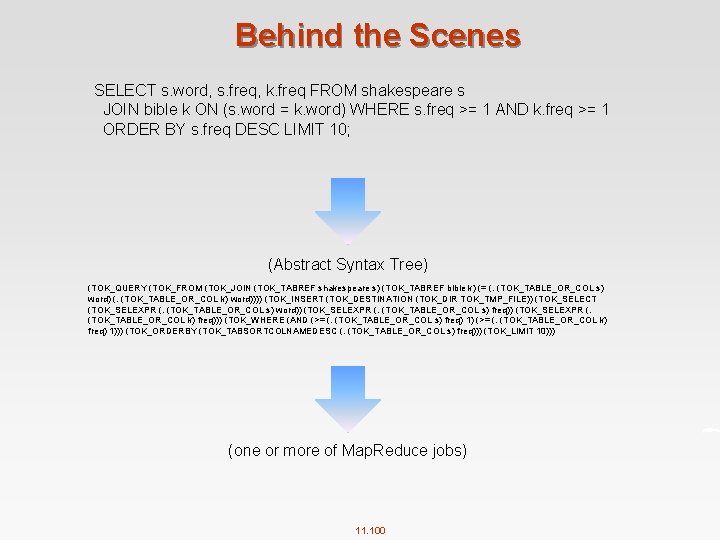

Behind the Scenes SELECT s. word, s. freq, k. freq FROM shakespeare s JOIN bible k ON (s. word = k. word) WHERE s. freq >= 1 AND k. freq >= 1 ORDER BY s. freq DESC LIMIT 10; (Abstract Syntax Tree) (TOK_QUERY (TOK_FROM (TOK_JOIN (TOK_TABREF shakespeare s) (TOK_TABREF bible k) (= (. (TOK_TABLE_OR_COL s) word) (. (TOK_TABLE_OR_COL k) word)))) (TOK_INSERT (TOK_DESTINATION (TOK_DIR TOK_TMP_FILE)) (TOK_SELECT (TOK_SELEXPR (. (TOK_TABLE_OR_COL s) word)) (TOK_SELEXPR (. (TOK_TABLE_OR_COL s) freq)) (TOK_SELEXPR (. (TOK_TABLE_OR_COL k) freq))) (TOK_WHERE (AND (>= (. (TOK_TABLE_OR_COL s) freq) 1) (>= (. (TOK_TABLE_OR_COL k) freq) 1))) (TOK_ORDERBY (TOK_TABSORTCOLNAMEDESC (. (TOK_TABLE_OR_COL s) freq))) (TOK_LIMIT 10))) (one or more of Map. Reduce jobs) 11. 100

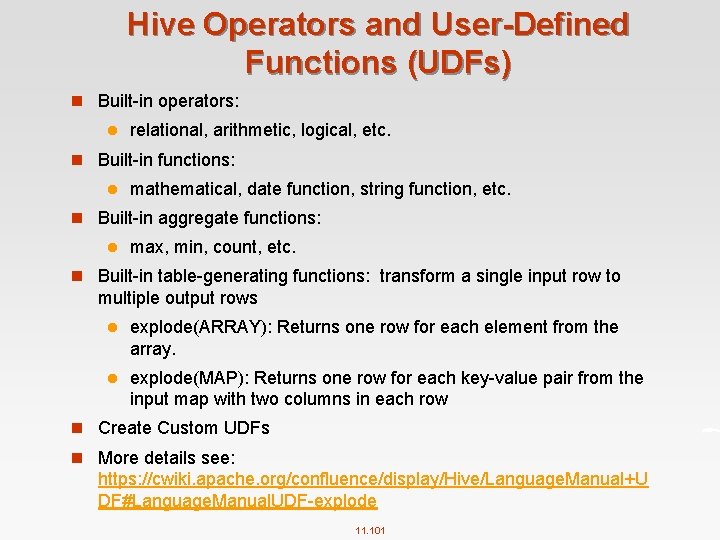

Hive Operators and User-Defined Functions (UDFs) n Built in operators: l relational, arithmetic, logical, etc. n Built in functions: l mathematical, date function, string function, etc. n Built in aggregate functions: l max, min, count, etc. n Built in table generating functions: transform a single input row to multiple output rows l explode(ARRAY): Returns one row for each element from the array. l explode(MAP): Returns one row for each key value pair from the input map with two columns in each row n Create Custom UDFs n More details see: https: //cwiki. apache. org/confluence/display/Hive/Language. Manual+U DF#Language. Manual. UDF explode 11. 101

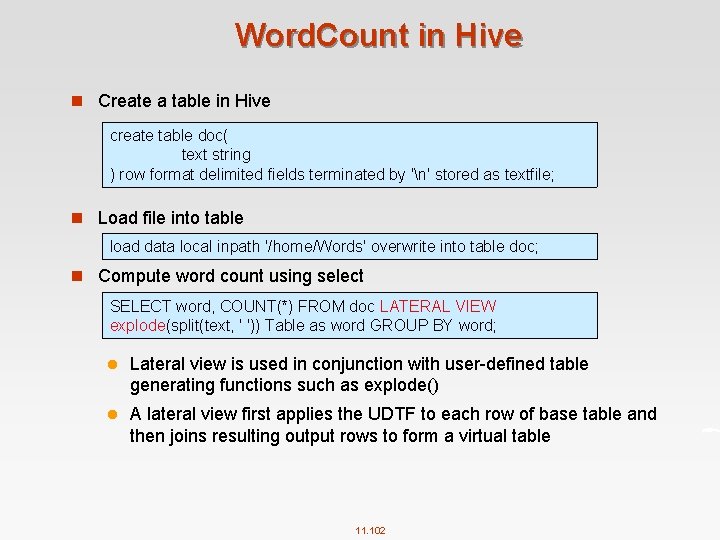

Word. Count in Hive n Create a table in Hive create table doc( text string ) row format delimited fields terminated by 'n' stored as textfile; n Load file into table load data local inpath '/home/Words' overwrite into table doc; n Compute word count using select SELECT word, COUNT(*) FROM doc LATERAL VIEW explode(split(text, ' ')) Table as word GROUP BY word; l Lateral view is used in conjunction with user defined table generating functions such as explode() l A lateral view first applies the UDTF to each row of base table and then joins resulting output rows to form a virtual table 11. 102

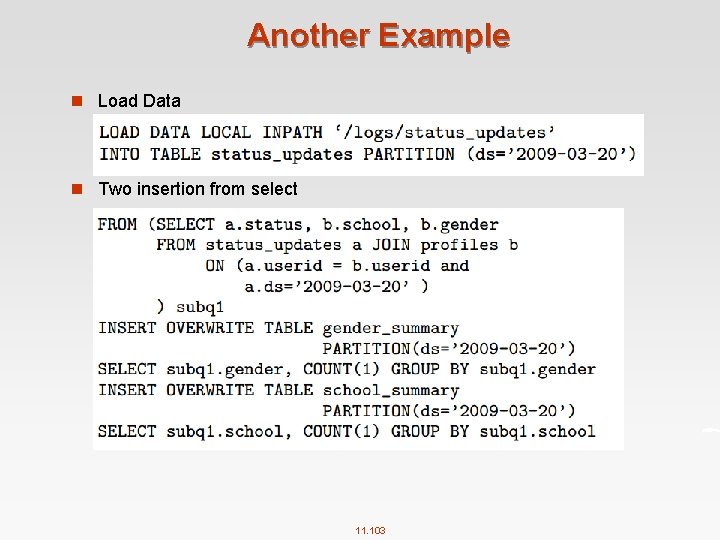

Another Example n Load Data n Two insertion from select 11. 103

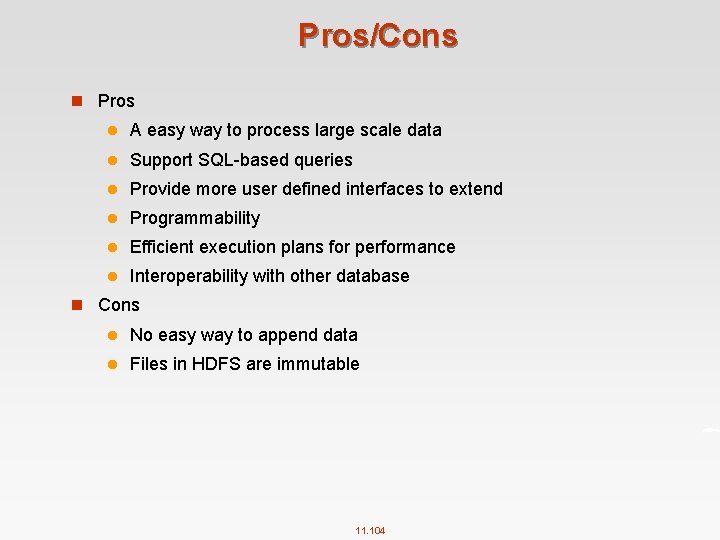

Pros/Cons n Pros l A easy way to process large scale data l Support SQL based queries l Provide more user defined interfaces to extend l Programmability l Efficient execution plans for performance l Interoperability with other database n Cons l No easy way to append data l Files in HDFS are immutable 11. 104

Applications of Hive n Log processing l Daily Report l User Activity Measurement n Data/Text mining l Machine learning (Training Data) n Business intelligence l Advertising Delivery l Spam Detection 11. 105

References n https: //hbase. apache. org/book. html n https: //cwiki. apache. org/confluence/display/Hive/Home#Home Hive. Documentation n http: //www. tutorialspoint. com/hive/ n Hadoop The Definitive Guide. HBase Chapter. n Hadoop the Definitive Guide. Hive Chapter 11. 106

End of Chapter 11

- Slides: 107