COMP 9313 Big Data Management Lecturer Xin Cao

COMP9313: Big Data Management
Lecturer: Xin Cao
Course web site: http://www.cse.unsw.edu.au/~cs9313/

Chapter 12: Revision and Exam Preparation

Learning outcomes

After completing this course, you are expected to:
- elaborate the important characteristics of Big Data (Chapter 1)
- develop an appropriate storage structure for a Big Data repository (Chapter 5)
- utilize the map/reduce paradigm and the Spark platform to manipulate Big Data (Chapters 2, 3, 4, and 7)
- use a high-level query language to manipulate Big Data (Chapter 6)
- develop efficient solutions for analytical problems involving Big Data (Chapters 8-11)

Final exam

- Final written exam (100 pts)
- Six questions in total on six topics
- Three hours
- You can bring the printed lecture notes
- If you are ill on the day of the exam, do not attend the exam. I will not accept any medical special consideration claims from people who have already attempted the exam.

Topic 1: MapReduce (Chapters 2 and 3)

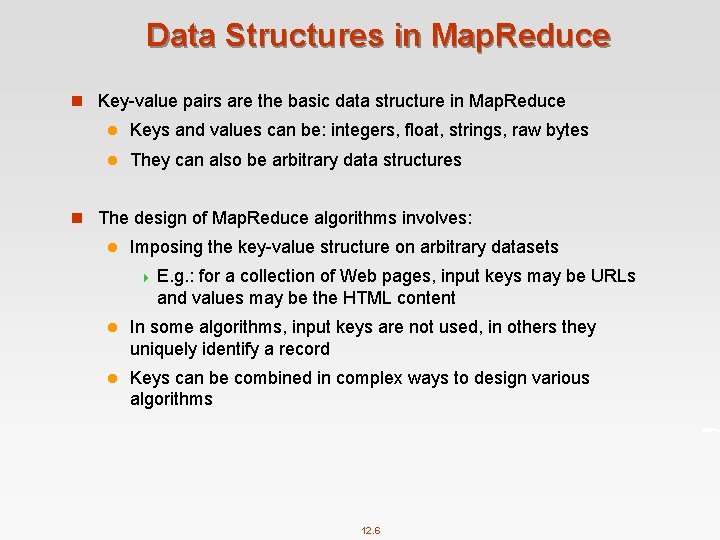

Data Structures in MapReduce

- Key-value pairs are the basic data structure in MapReduce
  - Keys and values can be: integers, floats, strings, raw bytes
  - They can also be arbitrary data structures
- The design of MapReduce algorithms involves:
  - Imposing the key-value structure on arbitrary datasets
    - E.g.: for a collection of Web pages, input keys may be URLs and values may be the HTML content
  - In some algorithms, input keys are not used; in others they uniquely identify a record
  - Keys can be combined in complex ways to design various algorithms

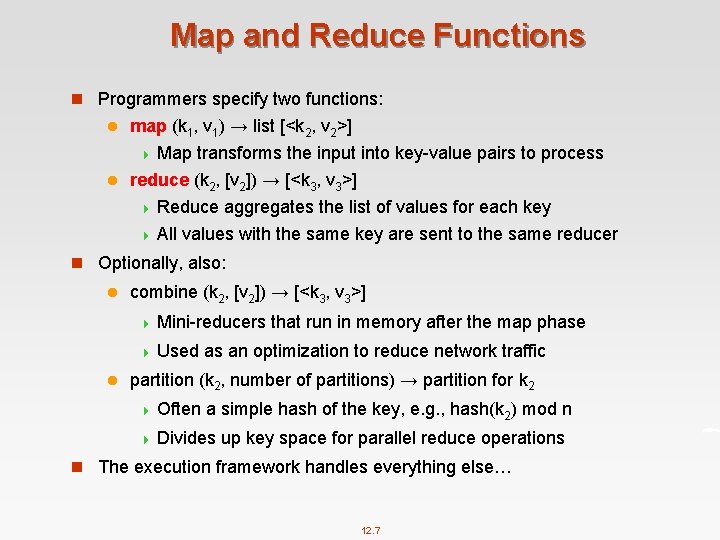

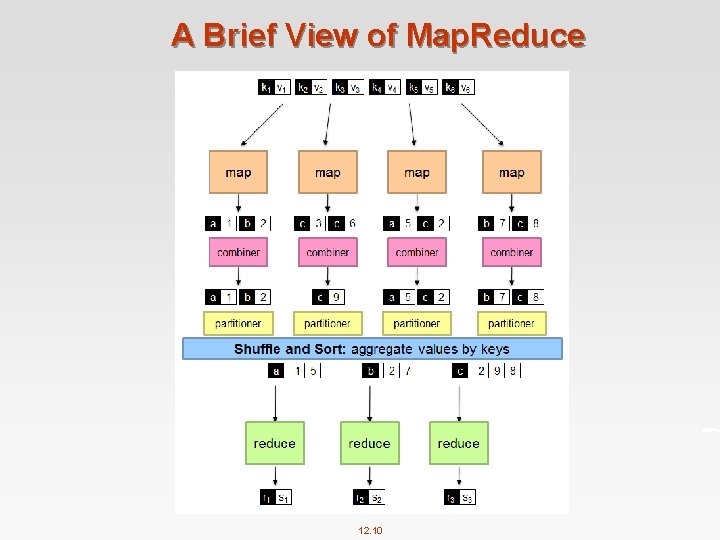

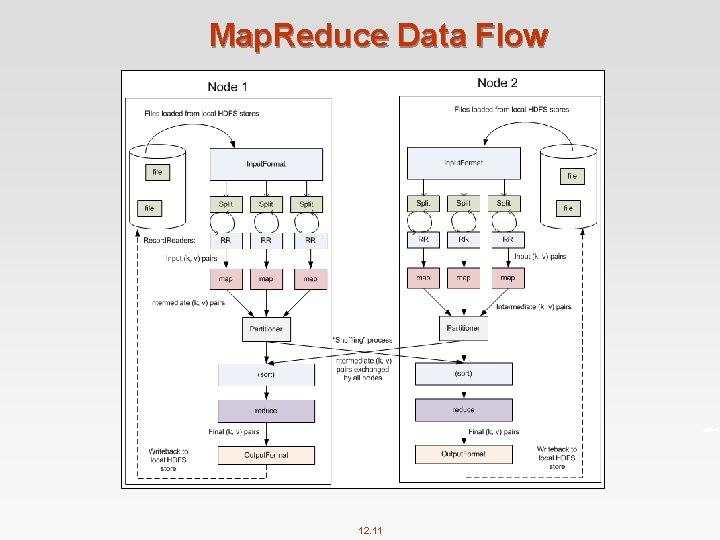

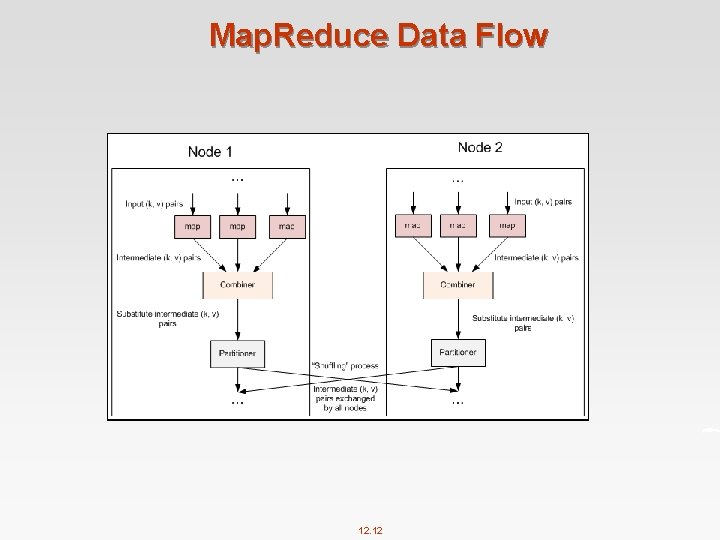

Map and Reduce Functions

- Programmers specify two functions:
  - map (k1, v1) → list [<k2, v2>]
    - Map transforms the input into key-value pairs to process
  - reduce (k2, [v2]) → [<k3, v3>]
    - Reduce aggregates the list of values for each key
    - All values with the same key are sent to the same reducer
- Optionally, also:
  - combine (k2, [v2]) → [<k2, v2>]
    - Mini-reducers that run in memory after the map phase
    - Used as an optimization to reduce network traffic
  - partition (k2, number of partitions) → partition for k2
    - Often a simple hash of the key, e.g., hash(k2) mod n
    - Divides up key space for parallel reduce operations
- The execution framework handles everything else…
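The signatures above can be tried out in a small single-process simulation. This is only a sketch under stated assumptions: `map_fn`, `reduce_fn`, `partition_fn` and `run_job` are illustrative names (not Hadoop APIs), the word-count task and the two input documents are made up, and the "shuffle" is an in-memory grouping.

```python
from collections import defaultdict

def map_fn(doc_id, text):
    """map(k1, v1) -> list[(k2, v2)]: emit (word, 1) for every word."""
    return [(word, 1) for word in text.split()]

def reduce_fn(word, counts):
    """reduce(k2, [v2]) -> [(k3, v3)]: sum the counts for one word."""
    return [(word, sum(counts))]

def partition_fn(key, num_partitions):
    """partition(k2, n) -> partition index (a deterministic toy hash mod n)."""
    return sum(key.encode("utf-8")) % num_partitions

def run_job(records, num_reducers=2):
    # Map phase: apply map_fn to every input record.
    intermediate = []
    for k1, v1 in records:
        intermediate.extend(map_fn(k1, v1))
    # Shuffle phase: route each pair to a reducer and group values by key.
    partitions = [defaultdict(list) for _ in range(num_reducers)]
    for k2, v2 in intermediate:
        partitions[partition_fn(k2, num_reducers)][k2].append(v2)
    # Reduce phase: each reducer processes its keys in sorted order.
    output = []
    for groups in partitions:
        for k2 in sorted(groups):
            output.extend(reduce_fn(k2, groups[k2]))
    return output

docs = [("d1", "big data big ideas"), ("d2", "data flows")]  # hypothetical input
word_counts = dict(run_job(docs))
print(word_counts)
```

Because every pair with the same key lands in the same partition, each reducer sees the complete value list for its keys, which is the guarantee the slide relies on.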

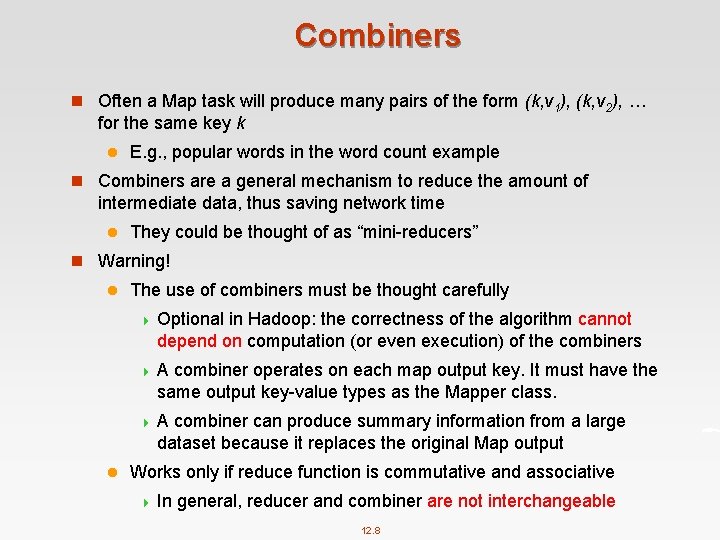

Combiners

- Often a Map task will produce many pairs of the form (k, v1), (k, v2), … for the same key k
  - E.g., popular words in the word count example
- Combiners are a general mechanism to reduce the amount of intermediate data, thus saving network time
  - They could be thought of as “mini-reducers”
- Warning!
  - The use of combiners must be thought through carefully
    - Optional in Hadoop: the correctness of the algorithm cannot depend on computation (or even execution) of the combiners
    - A combiner operates on each map output key. It must have the same output key-value types as the Mapper class.
    - A combiner can produce summary information from a large dataset because it replaces the original Map output
  - Reusing the reducer as a combiner works only if the reduce function is commutative and associative
    - In general, reducer and combiner are not interchangeable
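A small numeric check makes the warning concrete. The sketch below uses made-up values for a single key split across two map tasks; it is plain Python, not Hadoop code, and all names are illustrative.

```python
def reduce_sum(values):
    return sum(values)

def reduce_mean(values):
    return sum(values) / len(values)

# Values for one key, as produced by two different map tasks (hypothetical).
map_task_outputs = [[1, 2, 3], [10]]
all_values = [v for task in map_task_outputs for v in task]

# Sum is commutative and associative: combining per map task first is safe.
combined_sums = [reduce_sum(task) for task in map_task_outputs]
sum_with_combiner = reduce_sum(combined_sums)
sum_without_combiner = reduce_sum(all_values)

# Mean is not: the mean of per-task means differs from the true mean.
combined_means = [reduce_mean(task) for task in map_task_outputs]
mean_with_naive_combiner = reduce_mean(combined_means)
true_mean = reduce_mean(all_values)

# A correct combiner for the mean carries (sum, count) pairs instead.
partials = [(sum(task), len(task)) for task in map_task_outputs]
mean_from_partials = sum(s for s, _ in partials) / sum(c for _, c in partials)

print(sum_with_combiner, sum_without_combiner)
print(mean_with_naive_combiner, true_mean, mean_from_partials)
```

For the (sum, count) variant to satisfy the type rule above, the mapper itself would also have to emit (value, 1) pairs, so that combiner input and output have the same type.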

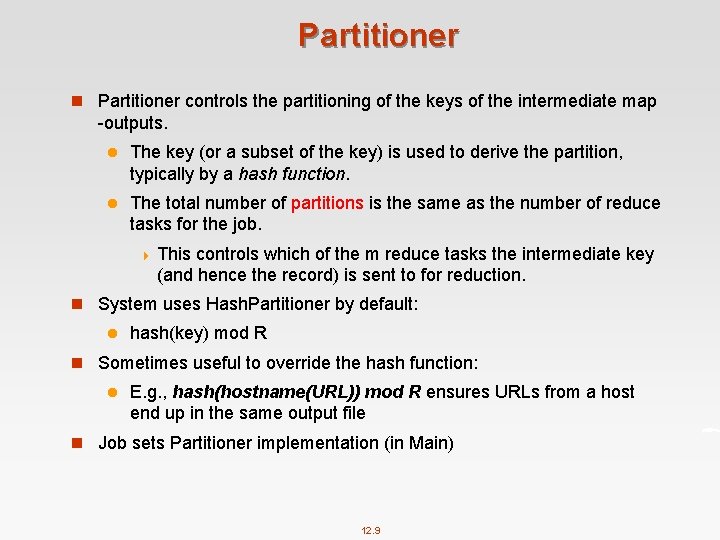

Partitioner

- Partitioner controls the partitioning of the keys of the intermediate map outputs.
  - The key (or a subset of the key) is used to derive the partition, typically by a hash function.
  - The total number of partitions is the same as the number of reduce tasks for the job.
    - This controls which of the R reduce tasks the intermediate key (and hence the record) is sent to for reduction.
- System uses HashPartitioner by default:
  - hash(key) mod R
- Sometimes useful to override the hash function:
  - E.g., hash(hostname(URL)) mod R ensures URLs from a host end up in the same output file
- Job sets Partitioner implementation (in Main)
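The hostname override can be illustrated outside Hadoop. In this sketch `stable_hash`, `default_partition` and `host_partition` are hypothetical helpers, CRC32 merely stands in for the framework's hash function, and the URLs are invented.

```python
import zlib
from urllib.parse import urlparse

def stable_hash(text):
    # CRC32 is used only because it is deterministic across runs.
    return zlib.crc32(text.encode("utf-8"))

def default_partition(url, num_reducers):
    """hash(key) mod R on the full URL."""
    return stable_hash(url) % num_reducers

def host_partition(url, num_reducers):
    """hash(hostname(URL)) mod R: only the host decides the partition."""
    return stable_hash(urlparse(url).hostname) % num_reducers

R = 4
urls = ["http://example.org/a", "http://example.org/b/c", "http://example.com/"]
by_url = {u: default_partition(u, R) for u in urls}
by_host = {u: host_partition(u, R) for u in urls}
print(by_url)
print(by_host)
```

With `host_partition`, both `example.org` URLs are guaranteed to share a reducer; with the default they may or may not.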

A Brief View of MapReduce

MapReduce Data Flow

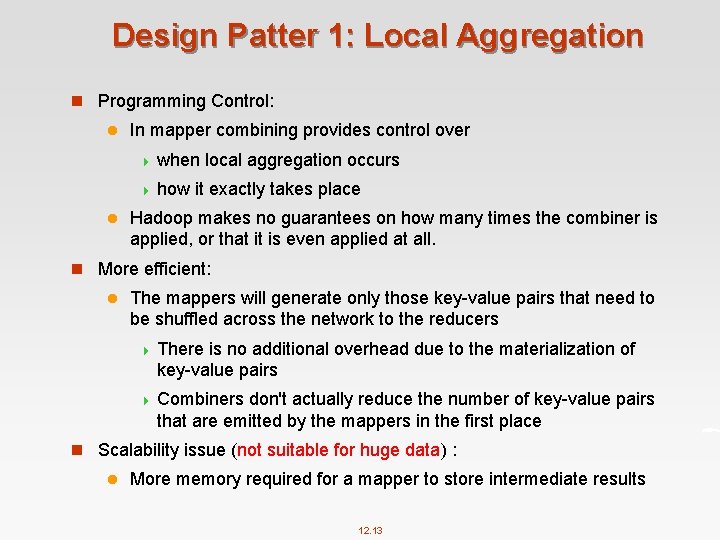

Design Pattern 1: Local Aggregation

- Programming control:
  - In-mapper combining provides control over
    - when local aggregation occurs
    - how it exactly takes place
  - Hadoop makes no guarantees on how many times the combiner is applied, or that it is even applied at all.
- More efficient:
  - The mappers will generate only those key-value pairs that need to be shuffled across the network to the reducers
    - There is no additional overhead due to the materialization of key-value pairs
    - By contrast, combiners don't actually reduce the number of key-value pairs that are emitted by the mappers in the first place
- Scalability issue (not suitable for huge data):
  - More memory required for a mapper to store intermediate results
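The difference in emitted pairs is easy to see in a toy word count. This is a sketch in plain Python rather than Hadoop code: `plain_mapper` and `in_mapper_combining` are illustrative names, and the final loop plays the role of the mapper's end-of-task flush.

```python
from collections import Counter

def plain_mapper(lines):
    """Emit (word, 1) for every token; a combiner may or may not run later."""
    for line in lines:
        for word in line.split():
            yield (word, 1)

def in_mapper_combining(lines):
    """Aggregate counts in memory and emit once per distinct word at the end."""
    counts = Counter()              # state kept across all input records
    for line in lines:
        counts.update(line.split())
    # End-of-task flush: emit the buffered aggregates.
    for word, count in counts.items():
        yield (word, count)

split = ["a b a", "b a c"]          # hypothetical input split
plain_pairs = list(plain_mapper(split))
combined_pairs = list(in_mapper_combining(split))
print(len(plain_pairs), len(combined_pairs))
```

The `counts` buffer is exactly where the scalability issue comes from: it grows with the number of distinct keys the mapper sees.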

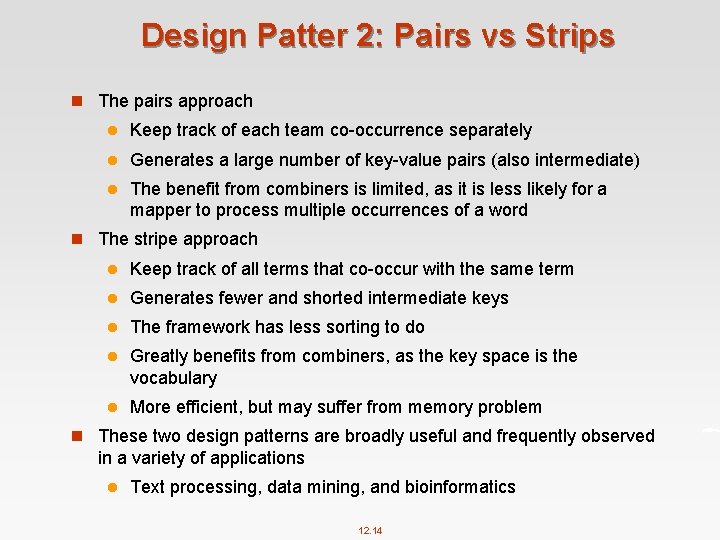

Design Pattern 2: Pairs vs Stripes

- The pairs approach
  - Keeps track of each term co-occurrence separately
  - Generates a large number of key-value pairs (also intermediate)
  - The benefit from combiners is limited, as it is less likely for a mapper to process multiple occurrences of the same word pair
- The stripes approach
  - Keeps track of all terms that co-occur with the same term
  - Generates fewer and shorter intermediate keys
    - The framework has less sorting to do
  - Greatly benefits from combiners, as the key space is the vocabulary
  - More efficient, but may suffer from memory problems
- These two design patterns are broadly useful and frequently observed in a variety of applications
  - Text processing, data mining, and bioinformatics
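Both approaches can be compared on a tiny co-occurrence count. The sketch below assumes that two words co-occur when they appear in the same sentence; the sentences and all function names are illustrative.

```python
from collections import Counter, defaultdict

def cooccurring(words):
    """Yield (w, u) for every ordered pair of distinct positions in a sentence."""
    for i, w in enumerate(words):
        for j, u in enumerate(words):
            if i != j:
                yield w, u

def pairs_mapper(sentence):
    # One record per co-occurrence: key is the pair, value is 1.
    return [((w, u), 1) for w, u in cooccurring(sentence.split())]

def stripes_mapper(sentence):
    # One record per term: key is the term, value is a map of its neighbours.
    stripes = defaultdict(Counter)
    for w, u in cooccurring(sentence.split()):
        stripes[w][u] += 1
    return list(stripes.items())

def reduce_pairs(emitted):
    totals = Counter()
    for key, value in emitted:
        totals[key] += value
    return totals

def reduce_stripes(emitted):
    merged = defaultdict(Counter)
    for w, stripe in emitted:
        merged[w].update(stripe)    # element-wise sum of the stripes
    return merged

sentences = ["a b c", "a b"]        # hypothetical corpus
pair_records = [kv for s in sentences for kv in pairs_mapper(s)]
stripe_records = [kv for s in sentences for kv in stripes_mapper(s)]
pair_totals = reduce_pairs(pair_records)
stripe_totals = reduce_stripes(stripe_records)
print(len(pair_records), len(stripe_records))
```

Both reducers arrive at the same counts; the stripes mapper simply ships fewer, larger records.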

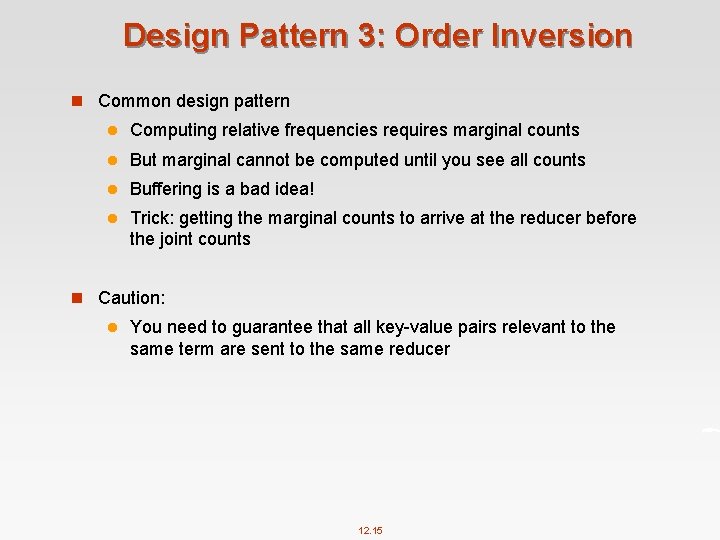

Design Pattern 3: Order Inversion

- Common design pattern
  - Computing relative frequencies requires marginal counts
  - But marginals cannot be computed until you see all counts
  - Buffering is a bad idea!
  - Trick: get the marginal counts to arrive at the reducer before the joint counts
- Caution:
  - You need to guarantee that all key-value pairs relevant to the same term are sent to the same reducer
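The trick can be sketched with a special marker that sorts before every real word. Everything here is an assumption for illustration: the empty-string marker, the sample pairs, and the use of `sorted()` in place of the framework's shuffle (with all keys for a term going to one reducer).

```python
from itertools import groupby

MARGINAL = ""   # assumed special marker: sorts before every non-empty word

def mapper(pairs):
    for w, u in pairs:
        yield (w, MARGINAL), 1    # contributes to the marginal count of w
        yield (w, u), 1           # contributes to the joint count of (w, u)

def reducer(sorted_records):
    """Stream over records sorted by key; only one marginal is kept in memory."""
    marginal = 0
    for (w, u), group in groupby(sorted_records, key=lambda kv: kv[0]):
        total = sum(v for _, v in group)
        if u == MARGINAL:
            marginal = total      # arrives before any joint count for w
        else:
            yield (w, u), total / marginal

observed = [("dog", "cat"), ("dog", "bone"), ("dog", "cat"), ("cat", "dog")]
rel_freq = dict(reducer(sorted(mapper(observed))))
print(rel_freq)
```

No joint counts are buffered: by the time the first (w, u) record is processed, the marginal for w is already known.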

Design Pattern 4: Value-to-key Conversion

- Put the value as part of the key, and make Hadoop do the sorting for us
- Provides a scalable solution for secondary sorting.
- Caution:
  - You need to guarantee that all key-value pairs relevant to the same term are sent to the same reducer
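A minimal sketch of secondary sorting, assuming made-up sensor readings of the form (sensor, timestamp, value). The function names are illustrative, and `sorted()` stands in for the framework's sort on the composite key.

```python
from itertools import groupby

# Hypothetical input records: (sensor id, timestamp, measured value).
readings = [("s1", 3, 0.7), ("s2", 1, 0.1), ("s1", 1, 0.4), ("s1", 2, 0.9)]

def mapper(record):
    sensor, timestamp, value = record
    # Value-to-key conversion: the timestamp moves from the value into the key.
    return (sensor, timestamp), value

def partition(composite_key, num_reducers):
    sensor, _ = composite_key
    # Partition on the natural key only, so one sensor stays on one reducer.
    return sum(sensor.encode("utf-8")) % num_reducers

def reduce_sorted(records):
    """Records arrive sorted by (sensor, timestamp); group by sensor alone."""
    for sensor, group in groupby(records, key=lambda kv: kv[0][0]):
        yield sensor, [(ts, value) for (_, ts), value in group]

shuffled = sorted(mapper(r) for r in readings)   # the framework's sort
series = dict(reduce_sorted(shuffled))
print(series)
```

The reducer never sorts anything itself; it receives each sensor's readings already in timestamp order, and the custom `partition` is what enforces the caution above.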

Topic 2: Spark (Chapter 7)

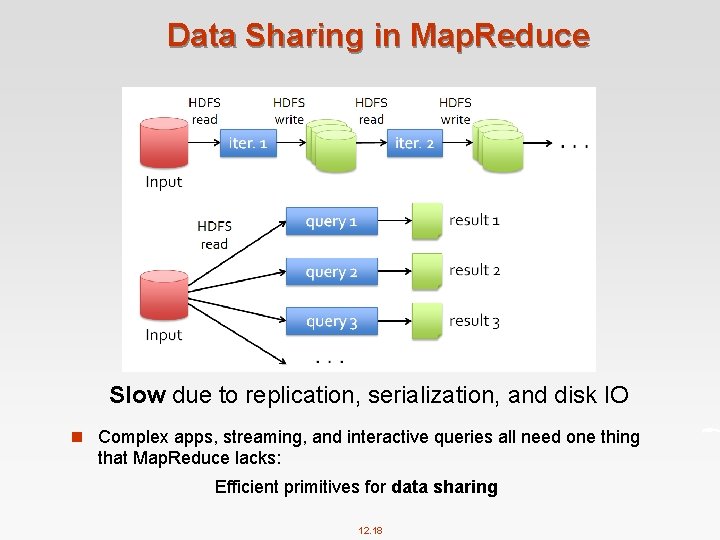

Data Sharing in MapReduce

Slow due to replication, serialization, and disk IO

- Complex apps, streaming, and interactive queries all need one thing that MapReduce lacks: efficient primitives for data sharing

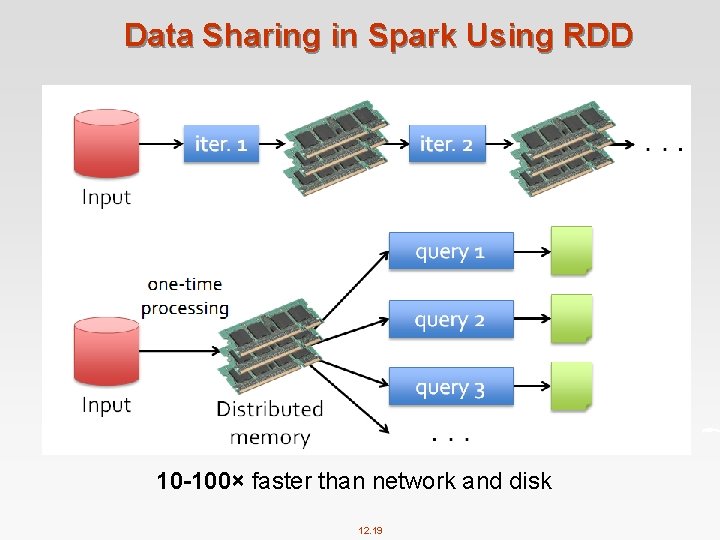

Data Sharing in Spark Using RDD

10-100× faster than network and disk

What is RDD

- Resilient Distributed Datasets: A Fault-Tolerant Abstraction for In-Memory Cluster Computing. Matei Zaharia, et al. NSDI'12
  - RDD is a distributed memory abstraction that lets programmers perform in-memory computations on large clusters in a fault-tolerant manner.
- Resilient
  - Fault-tolerant: able to recompute missing or damaged partitions due to node failures.
- Distributed
  - Data residing on multiple nodes in a cluster.
- Dataset
  - A collection of partitioned elements, e.g. tuples or other objects (that represent records of the data you work with).
- RDD is the primary data abstraction in Apache Spark and the core of Spark. It enables operations on a collection of elements in parallel.

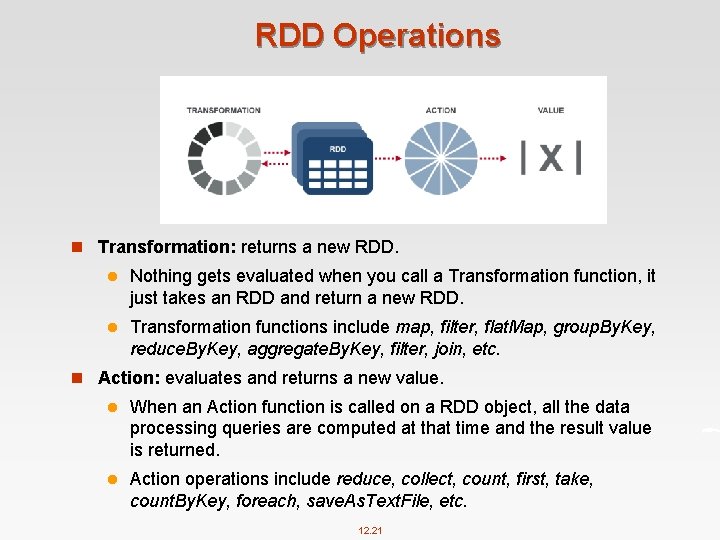

RDD Operations

- Transformation: returns a new RDD.
  - Nothing gets evaluated when you call a Transformation function; it just takes an RDD and returns a new RDD.
  - Transformation functions include map, filter, flatMap, groupByKey, reduceByKey, aggregateByKey, join, etc.
- Action: evaluates and returns a new value.
  - When an Action function is called on an RDD object, all the data processing queries are computed at that time and the result value is returned.
  - Action operations include reduce, collect, count, first, take, countByKey, foreach, saveAsTextFile, etc.
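The lazy behaviour can be mimicked without Spark. The following is only an analogy, not Spark code: Python's built-in `map` and `filter` return lazy iterators, so they play the role of transformations, while `list` plays the role of an action. The `calls` list is a made-up tracing aid.

```python
calls = []

def traced_square(x):
    calls.append(x)         # record that the function really ran
    return x * x

data = [1, 2, 3, 4]

# "Transformations": building the pipeline runs nothing yet.
squared = map(traced_square, data)
evens = filter(lambda v: v % 2 == 0, squared)
calls_before_action = len(calls)

# "Action": asking for the result forces the whole pipeline to run.
result = list(evens)
calls_after_action = len(calls)

print(calls_before_action, result, calls_after_action)
```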

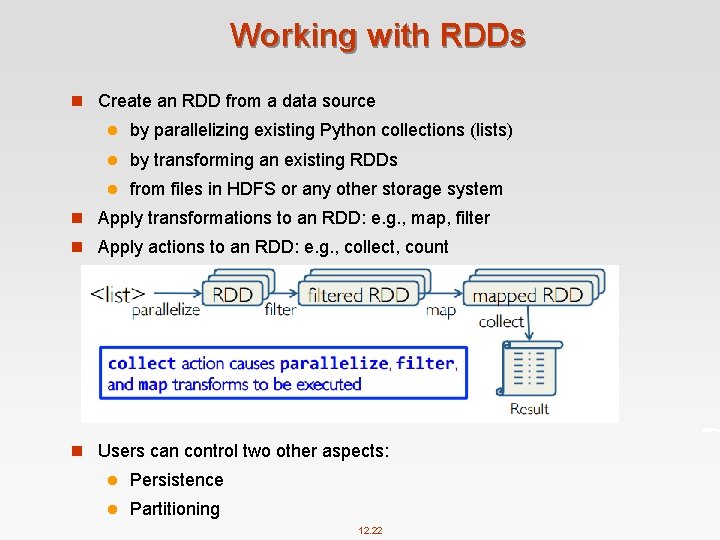

Working with RDDs

- Create an RDD from a data source
  - by parallelizing existing Python collections (lists)
  - by transforming an existing RDD
  - from files in HDFS or any other storage system
- Apply transformations to an RDD: e.g., map, filter
- Apply actions to an RDD: e.g., collect, count
- Users can control two other aspects:
  - Persistence
  - Partitioning

RDD Operations

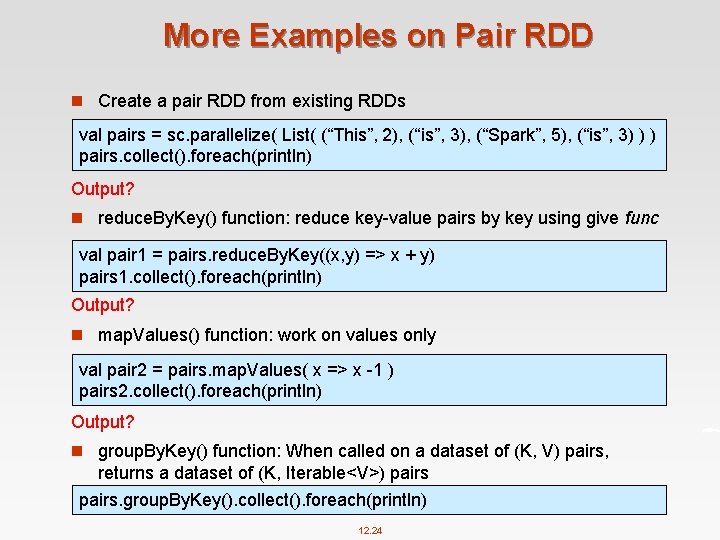

More Examples on Pair RDD

- Create a pair RDD from existing RDDs

    val pairs = sc.parallelize(List(("This", 2), ("is", 3), ("Spark", 5), ("is", 3)))
    pairs.collect().foreach(println)

  Output?

- reduceByKey() function: reduce key-value pairs by key using the given function

    val pairs1 = pairs.reduceByKey((x, y) => x + y)
    pairs1.collect().foreach(println)

  Output?

- mapValues() function: work on values only

    val pairs2 = pairs.mapValues(x => x - 1)
    pairs2.collect().foreach(println)

  Output?

- groupByKey() function: when called on a dataset of (K, V) pairs, returns a dataset of (K, Iterable<V>) pairs

    pairs.groupByKey().collect().foreach(println)
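To check answers to the "Output?" prompts without a cluster, the three operations can be emulated on an ordinary list. This is a plain-Python stand-in, not the Spark API: `reduce_by_key`, `map_values` and `group_by_key` are illustrative helpers, and a real Spark job may return the keys in a different order.

```python
from collections import defaultdict

pairs = [("This", 2), ("is", 3), ("Spark", 5), ("is", 3)]

def reduce_by_key(records, func):
    """Merge the values of each key with func, like reduceByKey."""
    merged = {}
    for key, value in records:
        merged[key] = func(merged[key], value) if key in merged else value
    return list(merged.items())

def map_values(records, func):
    """Apply func to every value and leave the keys untouched, like mapValues."""
    return [(key, func(value)) for key, value in records]

def group_by_key(records):
    """Collect all values of each key into one list, like groupByKey."""
    groups = defaultdict(list)
    for key, value in records:
        groups[key].append(value)
    return list(groups.items())

pairs1 = reduce_by_key(pairs, lambda x, y: x + y)
pairs2 = map_values(pairs, lambda x: x - 1)
grouped = group_by_key(pairs)
print(pairs1)
print(pairs2)
print(grouped)
```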

Topic 3: Link Analysis (Chapter 8)

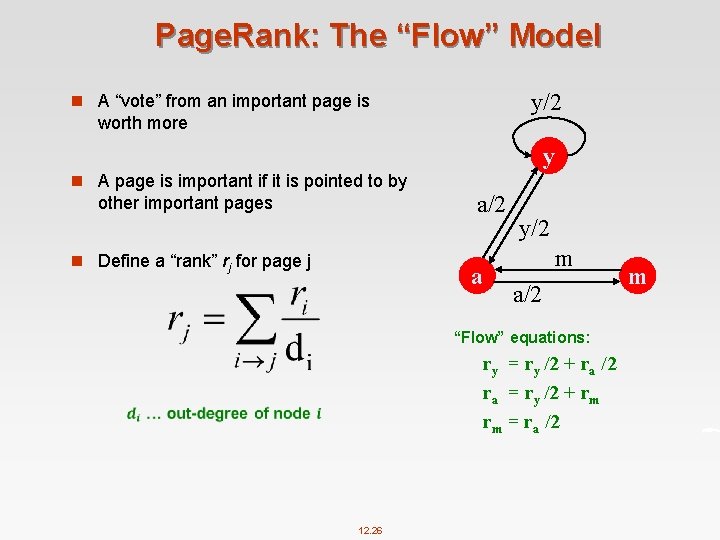

PageRank: The “Flow” Model

- A “vote” from an important page is worth more
- A page is important if it is pointed to by other important pages
- Define a “rank” r_j for page j

“Flow” equations (graph with nodes y, a, m: y links to y and a, a links to y and m, m links to a):

$$r_y = \frac{r_y}{2} + \frac{r_a}{2}, \qquad r_a = \frac{r_y}{2} + r_m, \qquad r_m = \frac{r_a}{2}$$

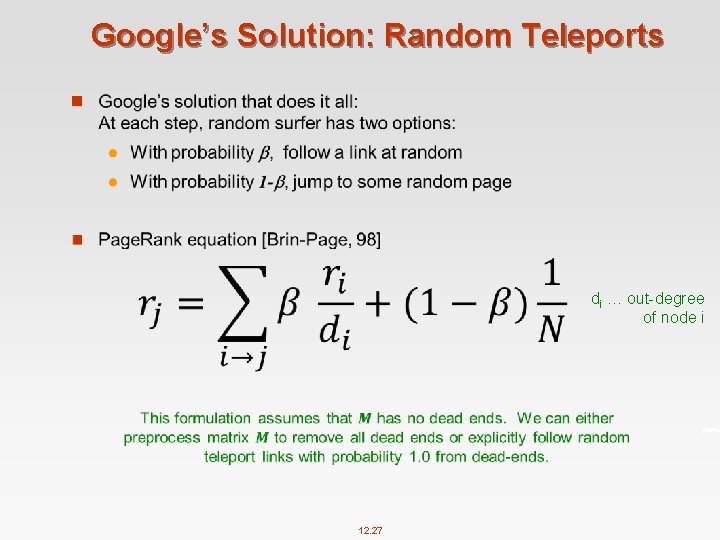

Google’s Solution: Random Teleports

- d_i … out-degree of node i

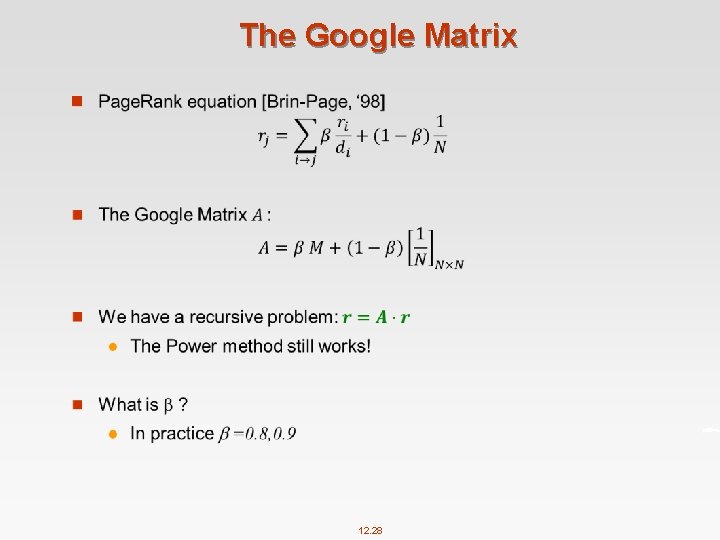

The Google Matrix
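As a reminder of what these two slides build on, here is a sketch of the usual formulation, assuming a graph without dead ends, a teleport probability of 1 − β, and N pages; M is the column-stochastic link matrix and d_i the out-degree of node i. The symbols follow the Random Teleports example in this chapter, but the exact layout on the original slides is not recoverable from the text.

$$r_j = \sum_{i \to j} \beta \, \frac{r_i}{d_i} + (1 - \beta) \, \frac{1}{N}$$

$$A = \beta M + (1 - \beta) \left[ \frac{1}{N} \right]_{N \times N}, \qquad r = A \cdot r$$

The first line is the per-page form; the second collects the same update into a single matrix A, so that PageRank is the stationary vector of A.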

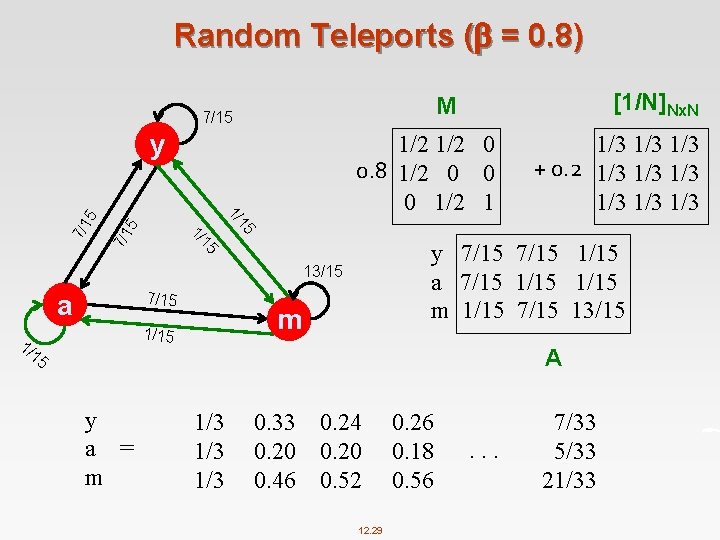

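Random Teleports (β = 0.8)

Rows and columns ordered y, a, m:

$$A = 0.8 \begin{bmatrix} 1/2 & 1/2 & 0 \\ 1/2 & 0 & 0 \\ 0 & 1/2 & 1 \end{bmatrix} + 0.2 \begin{bmatrix} 1/3 & 1/3 & 1/3 \\ 1/3 & 1/3 & 1/3 \\ 1/3 & 1/3 & 1/3 \end{bmatrix} = \begin{bmatrix} 7/15 & 7/15 & 1/15 \\ 7/15 & 1/15 & 1/15 \\ 1/15 & 7/15 & 13/15 \end{bmatrix}$$

The first matrix is M, the second is [1/N]_{N×N}.

Power iteration r ← A·r starting from (1/3, 1/3, 1/3):

- y: 1/3, 0.33, 0.28, 0.26, …, 7/33
- a: 1/3, 0.20, 0.20, 0.18, …, 5/33
- m: 1/3, 0.47, 0.52, 0.56, …, 21/33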
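The iteration on this slide can be reproduced with exact fractions. This is a minimal sketch: the matrix is the one from the example (m is a spider trap with a self-loop), while `step`, `history` and the choice of 60 iterations are illustrative.

```python
from fractions import Fraction as F

BETA = F(4, 5)
# Column-stochastic link matrix M, rows and columns ordered y, a, m.
M = [[F(1, 2), F(1, 2), F(0)],
     [F(1, 2), F(0),    F(0)],
     [F(0),    F(1, 2), F(1)]]
N = len(M)

# Google matrix: A = beta * M + (1 - beta) * [1/N] (N x N).
A = [[BETA * M[i][j] + (1 - BETA) * F(1, N) for j in range(N)] for i in range(N)]

def step(matrix, r):
    """One power-iteration step: r <- matrix * r."""
    return [sum(matrix[i][j] * r[j] for j in range(N)) for i in range(N)]

r = [F(1, N)] * N
history = [r]
for _ in range(60):
    r = step(A, r)
    history.append(r)

# First few iterates, then the (numerically) converged vector.
print([[round(float(x), 2) for x in vec] for vec in history[:4]])
print([round(float(x), 4) for x in r])
```

Because A is column-stochastic, every iterate still sums to 1, which is a quick sanity check on hand calculations.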

Topic-Specific PageRank

- Random walker has a small probability of teleporting at any step
- Teleport can go to:
  - Standard PageRank: Any page with equal probability
    - To avoid dead-end and spider-trap problems
  - Topic-Specific PageRank: A topic-specific set of “relevant” pages (teleport set)
- Idea: Bias the random walk
  - When the walker teleports, she picks a page from a set S
  - S contains only pages that are relevant to the topic
    - E.g., Open Directory (DMOZ) pages for a given topic/query
  - For each teleport set S, we get a different vector r_S
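The effect of the teleport set can be seen on a three-node graph. This sketch assumes the graph from the “Flow” model slide (y links to y and a, a links to y and m, m links to a), β = 0.8, and a teleport set containing only y; the function name and the iteration count are illustrative.

```python
def pagerank(M, teleport_set, beta=0.8, iterations=100):
    """Power iteration where teleports land uniformly on teleport_set."""
    n = len(M)
    # Teleport vector: uniform over the teleport set, zero elsewhere.
    v = [1.0 / len(teleport_set) if i in teleport_set else 0.0 for i in range(n)]
    r = [1.0 / n] * n
    for _ in range(iterations):
        r = [beta * sum(M[i][j] * r[j] for j in range(n)) + (1 - beta) * v[i]
             for i in range(n)]
    return r

# Column-stochastic link matrix, rows and columns ordered y, a, m.
M = [[0.5, 0.5, 0.0],
     [0.5, 0.0, 1.0],
     [0.0, 0.5, 0.0]]

standard = pagerank(M, teleport_set={0, 1, 2})   # S = all pages
biased_to_y = pagerank(M, teleport_set={0})      # S = {y}
print([round(x, 3) for x in standard])
print([round(x, 3) for x in biased_to_y])
```

Standard PageRank is the special case where S is the set of all pages; shrinking S to {y} shifts rank toward y and the pages close to it.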

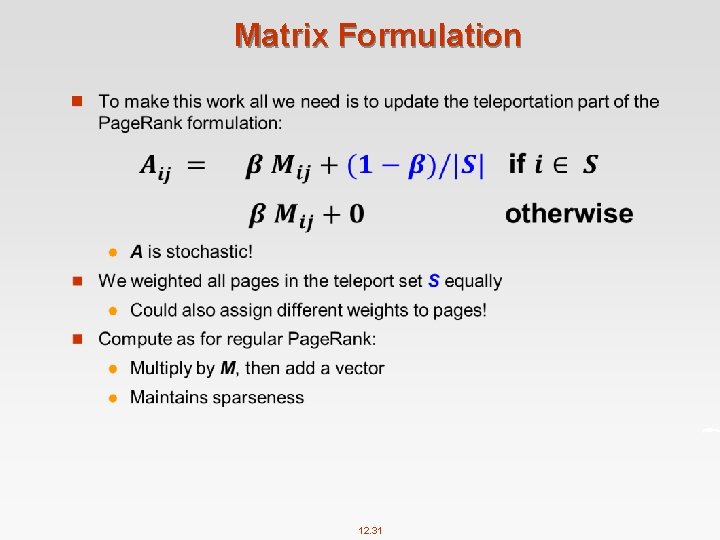

Matrix Formulation

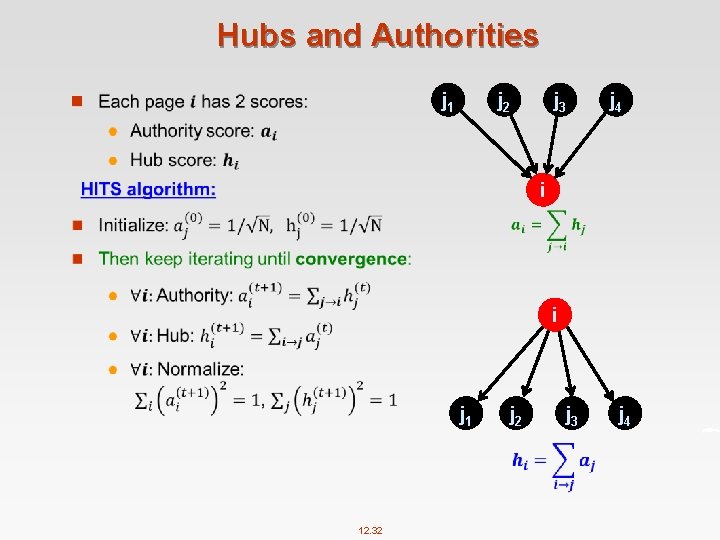

Hubs and Authorities

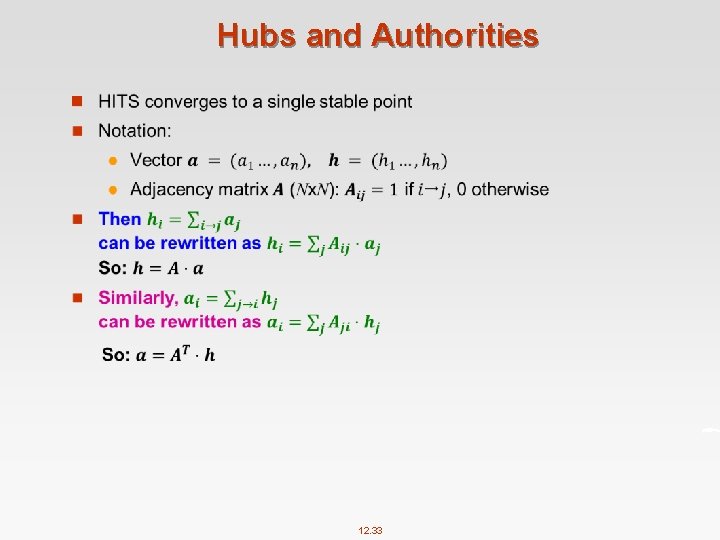

Hubs and Authorities

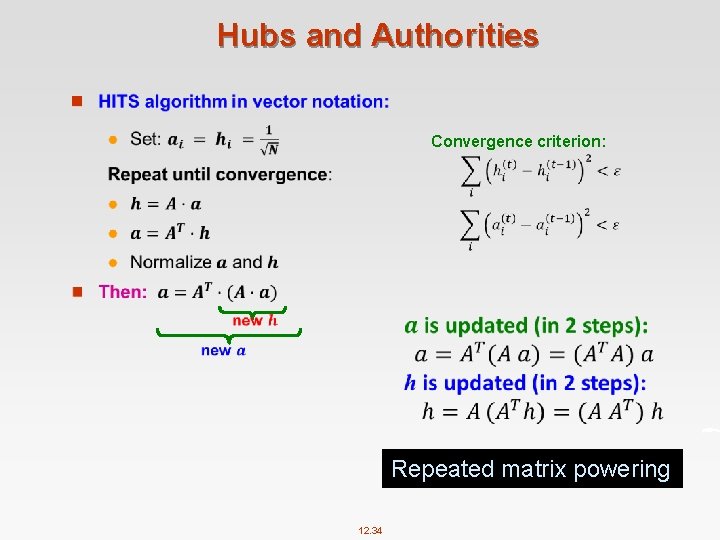

Hubs and Authorities

- Convergence criterion
- Repeated matrix powering

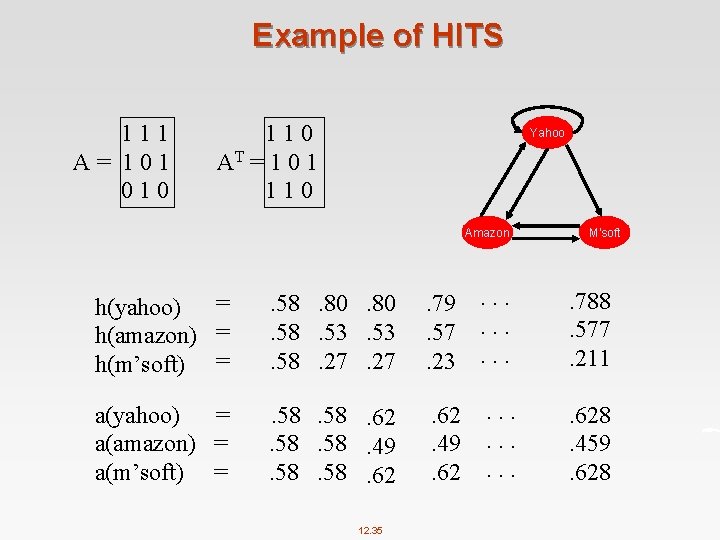

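Example of HITS

Rows and columns ordered Yahoo, Amazon, M'soft:

$$A = \begin{bmatrix} 1 & 1 & 1 \\ 1 & 0 & 1 \\ 0 & 1 & 0 \end{bmatrix}, \qquad A^T = \begin{bmatrix} 1 & 1 & 0 \\ 1 & 0 & 1 \\ 1 & 1 & 0 \end{bmatrix}$$

Hub scores over successive iterations:

- h(yahoo) = .58, .80, .79, …, .788
- h(amazon) = .58, .53, .57, …, .577
- h(m'soft) = .58, .27, .23, …, .211

Authority scores over successive iterations:

- a(yahoo) = .58, .62, …, .628
- a(amazon) = .58, .49, …, .459
- a(m'soft) = .58, .62, …, .628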
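The scores in this example can be recomputed with a few lines of Python. This sketch assumes the usual update a = Aᵀh, h = Aa with each vector scaled to unit length after every step; the iteration count of 50 is arbitrary.

```python
from math import sqrt

A = [[1, 1, 1],   # Yahoo  links to Yahoo, Amazon, M'soft
     [1, 0, 1],   # Amazon links to Yahoo, M'soft
     [0, 1, 0]]   # M'soft links to Amazon
n = len(A)

def normalize(vec):
    """Scale a vector to unit Euclidean length."""
    norm = sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

h = normalize([1.0] * n)
for _ in range(50):
    # Authority of j: sum of hub scores of pages linking to j (a = A^T h).
    a = normalize([sum(A[i][j] * h[i] for i in range(n)) for j in range(n)])
    # Hub score of i: sum of authority scores of pages i links to (h = A a).
    h = normalize([sum(A[i][j] * a[j] for j in range(n)) for i in range(n)])

print([round(x, 3) for x in h])
print([round(x, 3) for x in a])
```

The hub vector converges to the principal eigenvector of AAᵀ and the authority vector to that of AᵀA, which is what "repeated matrix powering" refers to.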

Topic 4: Graph Data Processing

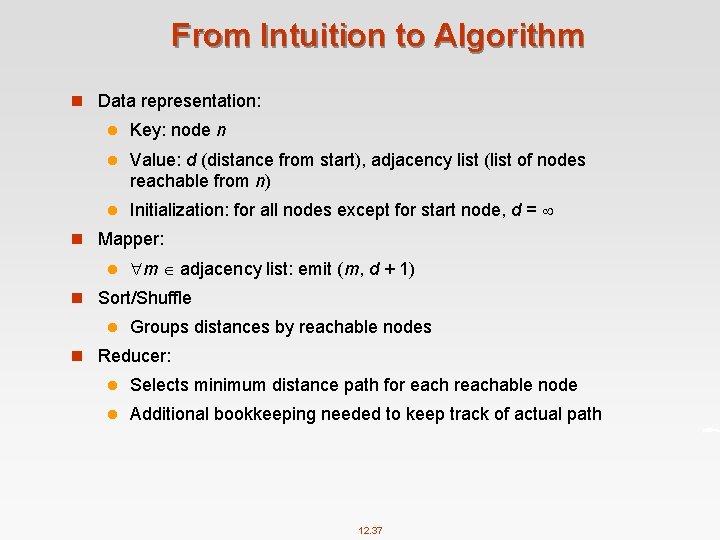

From Intuition to Algorithm

- Data representation:
  - Key: node n
  - Value: d (distance from start), adjacency list (list of nodes reachable from n)
  - Initialization: for all nodes except for the start node, d = ∞
- Mapper:
  - ∀m ∈ adjacency list: emit (m, d + 1)
- Sort/Shuffle
  - Groups distances by reachable nodes
- Reducer:
  - Selects minimum distance path for each reachable node
  - Additional bookkeeping needed to keep track of actual path

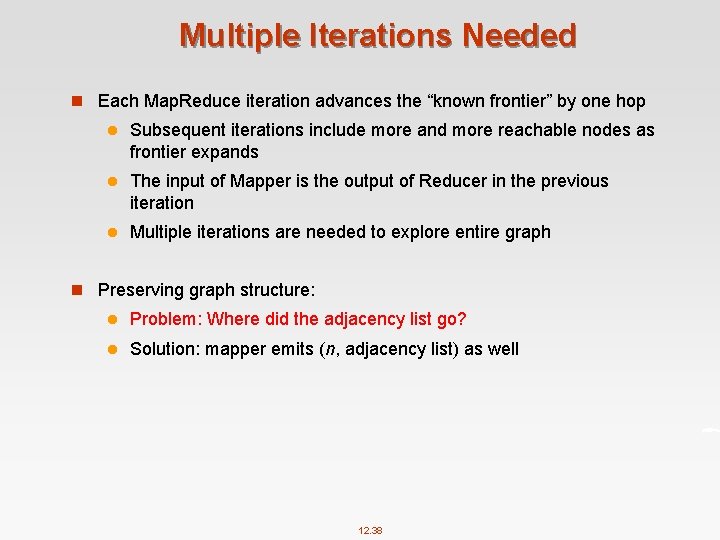

Multiple Iterations Needed

- Each MapReduce iteration advances the “known frontier” by one hop
  - Subsequent iterations include more and more reachable nodes as the frontier expands
  - The input of the Mapper is the output of the Reducer in the previous iteration
  - Multiple iterations are needed to explore the entire graph
- Preserving graph structure:
  - Problem: Where did the adjacency list go?
  - Solution: mapper emits (n, adjacency list) as well

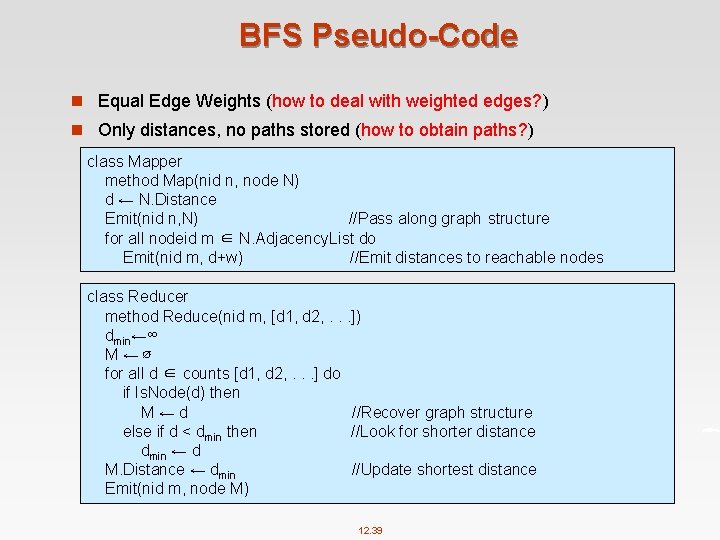

BFS Pseudo-Code

- Equal edge weights (how to deal with weighted edges?)
- Only distances, no paths stored (how to obtain paths?)

    class Mapper
        method Map(nid n, node N)
            d ← N.Distance
            Emit(nid n, N)                      // Pass along graph structure
            for all nodeid m ∈ N.AdjacencyList do
                Emit(nid m, d + 1)              // Emit distances to reachable nodes

    class Reducer
        method Reduce(nid m, [d1, d2, ...])
            dmin ← ∞
            M ← ∅
            for all d ∈ [d1, d2, ...] do
                if IsNode(d) then
                    M ← d                       // Recover graph structure
                else if d < dmin then           // Look for shorter distance
                    dmin ← d
            M.Distance ← dmin                   // Update shortest distance
            Emit(nid m, node M)
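A runnable single-machine version of this pseudo-code might look as follows. It is a sketch under stated assumptions: node records are `(distance, adjacency_list)` tuples, the five-node graph is invented, and, unlike the pseudo-code, the reducer also keeps the node's existing distance so that the start node's distance of 0 is not overwritten.

```python
INF = float("inf")

def bfs_map(nid, node):
    """node is a (distance, adjacency_list) tuple."""
    distance, adjacency = node
    yield nid, node                       # pass along graph structure
    if distance < INF:
        for m in adjacency:
            yield m, distance + 1         # distance to each reachable node

def bfs_reduce(nid, values):
    d_min, adjacency = INF, []
    for value in values:
        if isinstance(value, tuple):      # the node record itself
            d_min = min(d_min, value[0])  # assumption: keep the known distance
            adjacency = value[1]
        else:                             # a candidate distance
            d_min = min(d_min, value)
    return nid, (d_min, adjacency)

def bfs_iteration(graph):
    grouped = {}                          # stands in for sort/shuffle
    for nid, node in graph.items():
        for key, value in bfs_map(nid, node):
            grouped.setdefault(key, []).append(value)
    return dict(bfs_reduce(nid, values) for nid, values in grouped.items())

def parallel_bfs(graph):
    iterations = 0
    while True:
        updated = bfs_iteration(graph)
        iterations += 1
        if updated == graph:              # no distance changed: stop
            return graph, iterations
        graph = updated

graph = {"s": (0, ["a", "b"]), "a": (INF, ["c"]),
         "b": (INF, ["c"]), "c": (INF, ["d"]), "d": (INF, [])}
result, iterations = parallel_bfs(graph)
distances = {nid: node[0] for nid, node in result.items()}
print(distances, iterations)
```

In this example the farthest node is three hops away, so three iterations change distances and a fourth is spent detecting that nothing changed.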

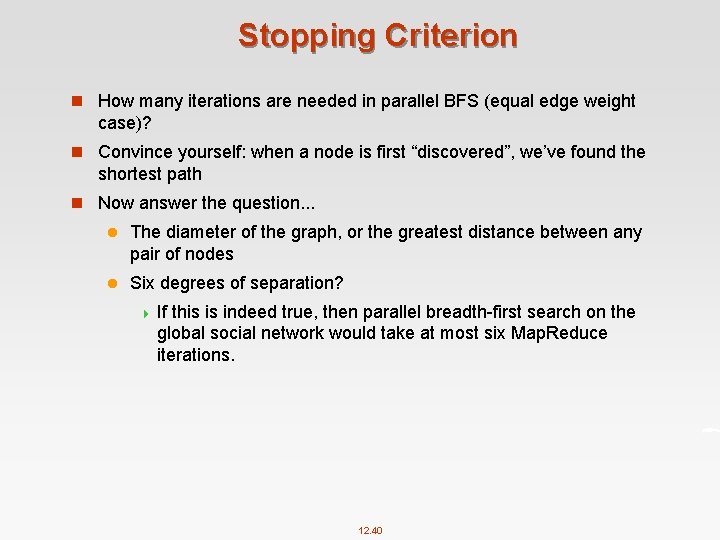

Stopping Criterion

- How many iterations are needed in parallel BFS (equal edge weight case)?
- Convince yourself: when a node is first “discovered”, we've found the shortest path
- Now answer the question...
  - The diameter of the graph, or the greatest distance between any pair of nodes
  - Six degrees of separation?
    - If this is indeed true, then parallel breadth-first search on the global social network would take at most six MapReduce iterations.

BFS Pseudo-Code (Weighted Edges)

- The adjacency lists, which were previously lists of node ids, must now encode the edge distances as well
  - Positive weights!
- In line 6 of the mapper code, instead of emitting d + 1 as the value, we must now emit d + w, where w is the edge distance
- The termination behaviour is very different!
  - How many iterations are needed in parallel BFS (positive edge weight case)?
  - Convince yourself: when a node is first “discovered”, we've found the shortest path. Not true!

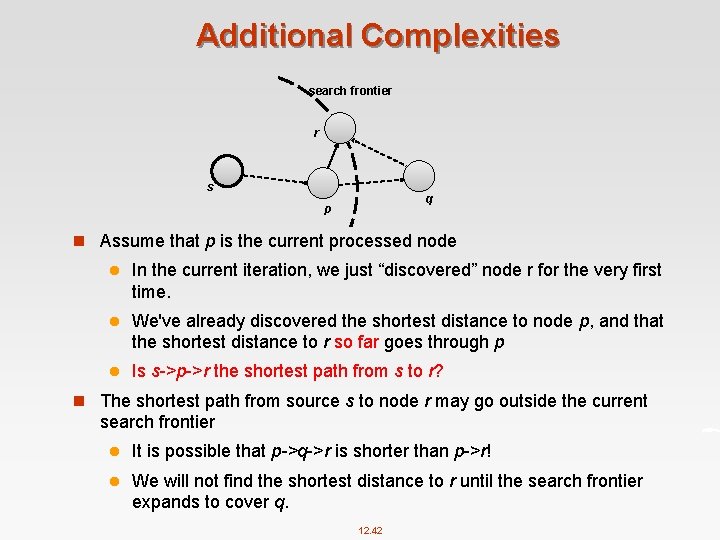

Additional Complexities

- Assume that p is the currently processed node
  - In the current iteration, we just “discovered” node r for the very first time.
  - We've already discovered the shortest distance to node p, and the shortest distance to r found so far goes through p
  - Is s->p->r the shortest path from s to r?
- The shortest path from source s to node r may go outside the current search frontier
  - It is possible that p->q->r is shorter than p->r!
  - We will not find the shortest distance to r until the search frontier expands to cover q.
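The situation can be reproduced on a four-node graph. The edge weights below are made up so that p->q->r (1 + 1) beats p->r (10); `iterate` is an illustrative single-machine stand-in for one MapReduce round.

```python
INF = float("inf")

# Adjacency lists now carry (neighbour, weight) pairs; weights are positive.
edges = {"s": [("p", 1)], "p": [("r", 10), ("q", 1)], "q": [("r", 1)], "r": []}

def iterate(dist):
    """One MapReduce-style round: relax every edge out of a reached node."""
    candidates = {n: [d] for n, d in dist.items()}     # keep the current value
    for n, d in dist.items():
        if d < INF:
            for m, w in edges[n]:
                candidates[m].append(d + w)            # emit d + w
    return {n: min(values) for n, values in candidates.items()}

dist = {n: (0 if n == "s" else INF) for n in edges}
history = [dist]
while True:
    updated = iterate(dist)
    if updated == dist:        # stop only when no distance changed
        break
    dist = updated
    history.append(dist)

print([h["r"] for h in history])
```

Node r is first discovered in round 2 with distance 11 and only reaches its true distance of 3 in round 3, so the iteration has to continue until no distance changes.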

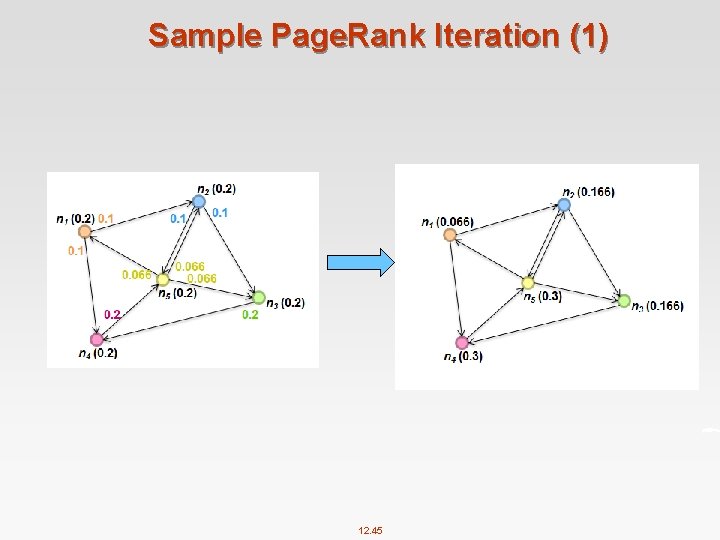

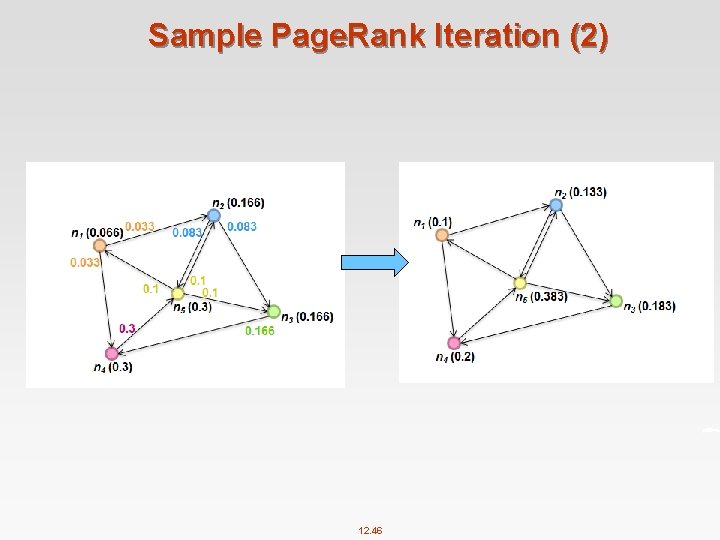

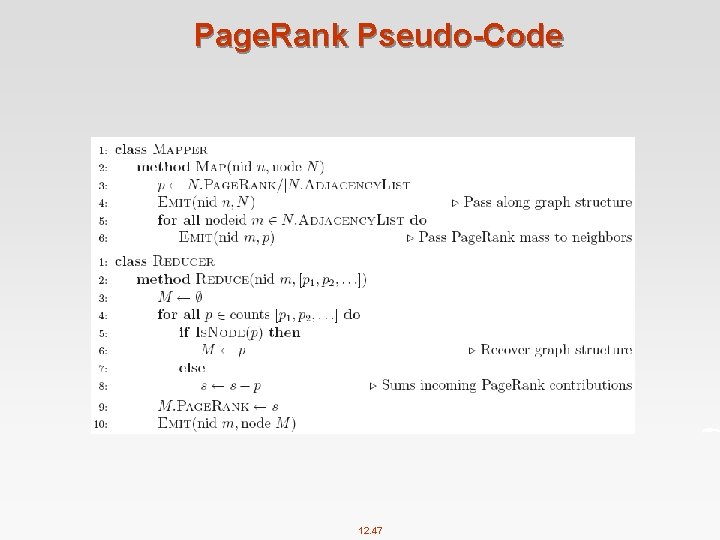

Computing PageRank

- Properties of PageRank
  - Can be computed iteratively
  - Effects at each iteration are local
- Sketch of algorithm:
  - Start with seed r_i values
  - Each page distributes r_i “credit” to all pages it links to
  - Each target page t_j adds up “credit” from multiple in-bound links to compute r_j
  - Iterate until values converge

Simplified PageRank

- First, tackle the simple case:
  - No teleport
  - No dangling nodes (dead ends)
- Then, factor in these complexities…
  - How to deal with the teleport probability?
  - How to deal with dangling nodes?

Sample PageRank Iteration (1)

Sample PageRank Iteration (2)

PageRank Pseudo-Code

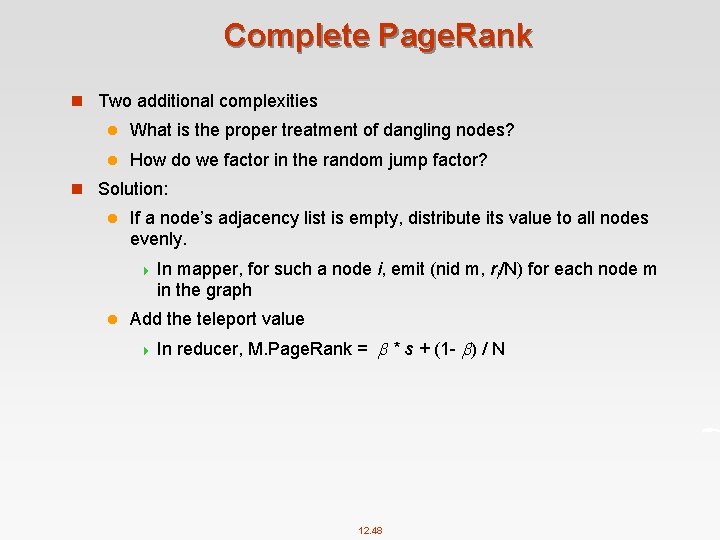

Complete PageRank

- Two additional complexities
  - What is the proper treatment of dangling nodes?
  - How do we factor in the random jump factor?
- Solution:
  - If a node's adjacency list is empty, distribute its value to all nodes evenly.
    - In mapper, for such a node i, emit (nid m, r_i/N) for each node m in the graph
  - Add the teleport value
    - In reducer, M.PageRank = β * s + (1 - β) / N
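Both fixes fit into one iteration function. This is a single-machine sketch, not the course's reference code: it assumes s denotes the summed credit a node receives, uses β = 0.8, and runs on a made-up three-node graph in which m is a dangling node.

```python
def pagerank_iteration(graph, ranks, beta=0.8):
    """graph: node -> adjacency list; ranks: node -> current PageRank."""
    n = len(graph)
    received = {node: 0.0 for node in graph}
    # Map phase: every node distributes its rank as "credit".
    for node, adjacency in graph.items():
        if adjacency:
            for m in adjacency:
                received[m] += ranks[node] / len(adjacency)
        else:
            # Dangling node: spread its rank evenly over all nodes.
            for m in graph:
                received[m] += ranks[node] / n
    # Reduce phase: s is the summed credit; then add the teleport value.
    return {node: beta * s + (1 - beta) / n for node, s in received.items()}

graph = {"y": ["y", "a"], "a": ["y", "m"], "m": []}   # m is a dead end
ranks = {node: 1 / len(graph) for node in graph}
for _ in range(50):
    ranks = pagerank_iteration(graph, ranks)

print({node: round(value, 4) for node, value in ranks.items()})
```

Because dangling nodes hand their rank back to the graph, the ranks keep summing to 1 after every iteration; without that step, rank would leak out through m.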

Topic 5: Streaming Data Processing

- Types of queries one wants to answer on a data stream: (we'll learn these today)
  - Sampling data from a stream
    - Construct a random sample
  - Queries over sliding windows
    - Number of items of type x in the last k elements of the stream
  - Filtering a data stream
    - Select elements with property x from the stream
  - Counting distinct elements (Not required)
    - Number of distinct elements in the last k elements of the stream

Topic 6: Machine Learning

- Recommender systems
  - User-user collaborative filtering
  - Item-item collaborative filtering
- SVM
  - Given the training dataset, find the optimal solution

CATEI and myExperience Survey

End of Chapter 12
