COMP 9313 Big Data Management Lecturer Xin Cao

Course web site: http://www.cse.unsw.edu.au/~cs9313/

Chapter 1: Course Information and Introduction to Big Data Management

Part 1: Course Information

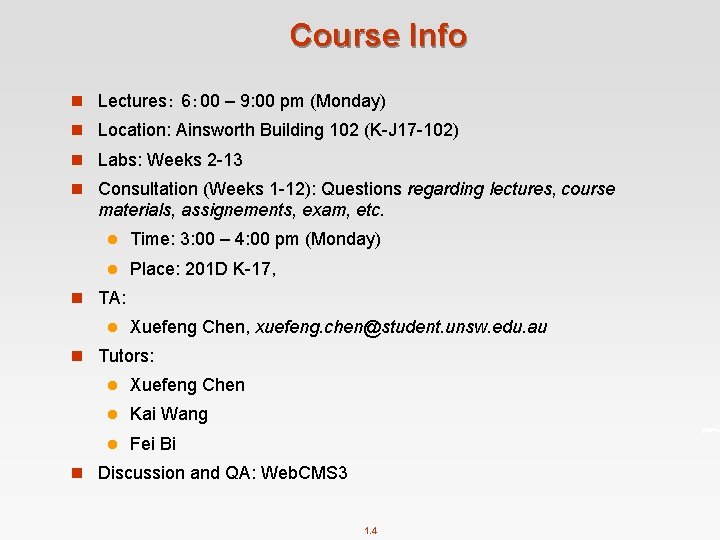

Course Info
- Lectures: 6:00–9:00 pm (Monday)
- Location: Ainsworth Building 102 (K-J17-102)
- Labs: Weeks 2–13
- Consultation (Weeks 1–12): questions regarding lectures, course materials, assignments, exam, etc.
  - Time: 3:00–4:00 pm (Monday)
  - Place: 201D K17
- TA:
  - Xuefeng Chen, xuefeng.chen@student.unsw.edu.au
- Tutors:
  - Xuefeng Chen
  - Kai Wang
  - Fei Bi
- Discussion and QA: WebCMS3

Lecturer in Charge
- Lecturer: Xin Cao
  - Office: 201D K17 (turn left after leaving the lift)
  - Email: xin.cao@unsw.edu.au
  - Ext: 55932
- Research interests
  - Database
  - Data Mining
  - Big Data Technologies
- My homepage: http://www.cse.unsw.edu.au/~z3515164/
- My publications list at Google Scholar: https://scholar.google.com.au/citations?user=kJIkUagAAAAJ&hl=en

Course Aims
- This course aims to introduce you to the concepts behind Big Data, the core technologies used in managing large-scale data sets, and a range of technologies for developing solutions to large-scale data analytics problems.
- This course is intended for students who want to understand modern large-scale data analytics systems. It covers a wide range of topics and technologies, and will prepare students to build such systems as well as to use them efficiently and effectively to address challenges in big data management.
- It is not possible to cover every aspect of big data management.

Lectures
- Lectures focus on frontier technologies in big data management and their typical applications
- We try to run lectures in a more interactive mode and provide more examples
- A few lectures may run in a more practical manner (e.g., like a lab/demo) to cover the applied aspects
- Lecture length varies slightly depending on the progress (of that lecture)
- Note: attendance at every lecture is assumed

Resources
- Text Books
  - Hadoop: The Definitive Guide. Tom White. 4th Edition - O'Reilly Media
  - Mining of Massive Datasets. Jure Leskovec, Anand Rajaraman, Jeff Ullman. 2nd edition - Cambridge University Press
  - Data-Intensive Text Processing with MapReduce. Jimmy Lin and Chris Dyer. University of Maryland, College Park.
- Reference Books and other readings
  - Learning Spark. Matei Zaharia, Holden Karau, Andy Konwinski, Patrick Wendell. O'Reilly Media
  - Apache MapReduce Tutorial
  - Apache Spark Quick Start
  - Many other online tutorials …
- Big Data is a relatively new topic (so there is no fixed syllabus)

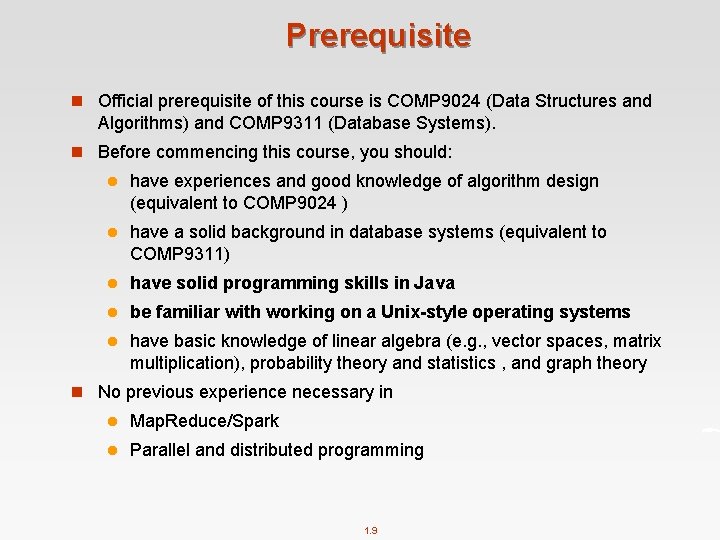

Prerequisite
- The official prerequisites of this course are COMP9024 (Data Structures and Algorithms) and COMP9311 (Database Systems).
- Before commencing this course, you should:
  - have experience and good knowledge of algorithm design (equivalent to COMP9024)
  - have a solid background in database systems (equivalent to COMP9311)
  - have solid programming skills in Java
  - be familiar with working on Unix-style operating systems
  - have basic knowledge of linear algebra (e.g., vector spaces, matrix multiplication), probability theory and statistics, and graph theory
- No previous experience is necessary in:
  - MapReduce/Spark
  - Parallel and distributed programming

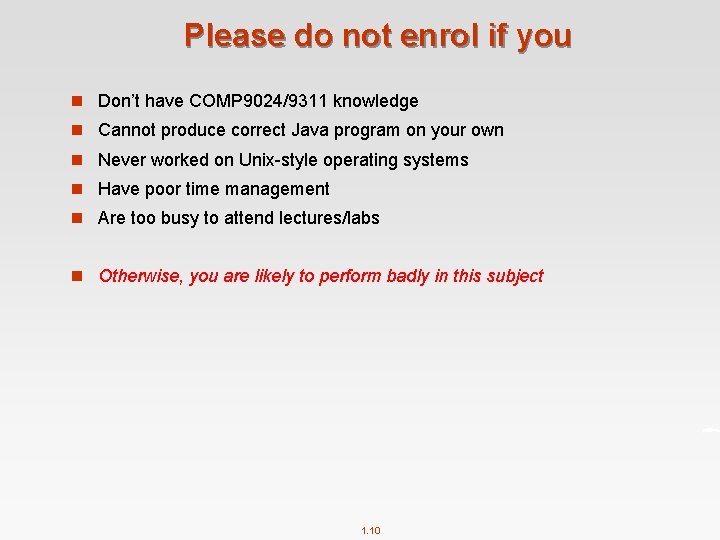

Please do not enrol if you
- Don’t have COMP9024/9311 knowledge
- Cannot produce a correct Java program on your own
- Have never worked on Unix-style operating systems
- Have poor time management
- Are too busy to attend lectures/labs

If any of these apply, you are likely to perform badly in this subject.

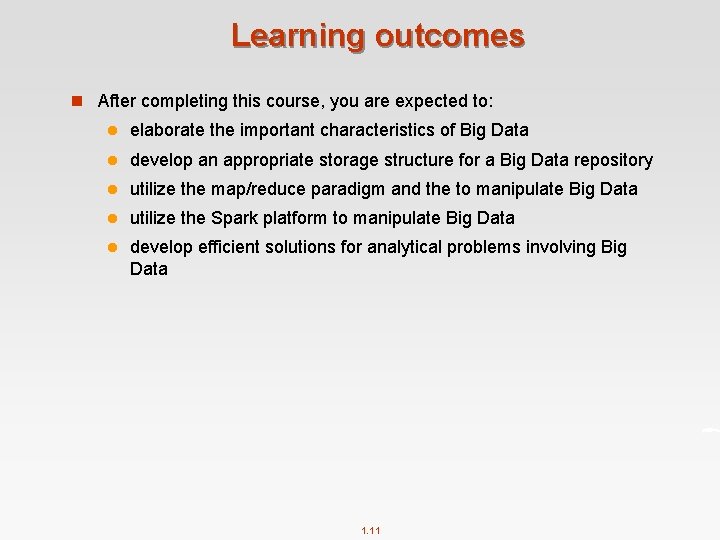

Learning outcomes
- After completing this course, you are expected to:
  - elaborate the important characteristics of Big Data
  - develop an appropriate storage structure for a Big Data repository
  - utilize the map/reduce paradigm to manipulate Big Data
  - utilize the Spark platform to manipulate Big Data
  - develop efficient solutions for analytical problems involving Big Data

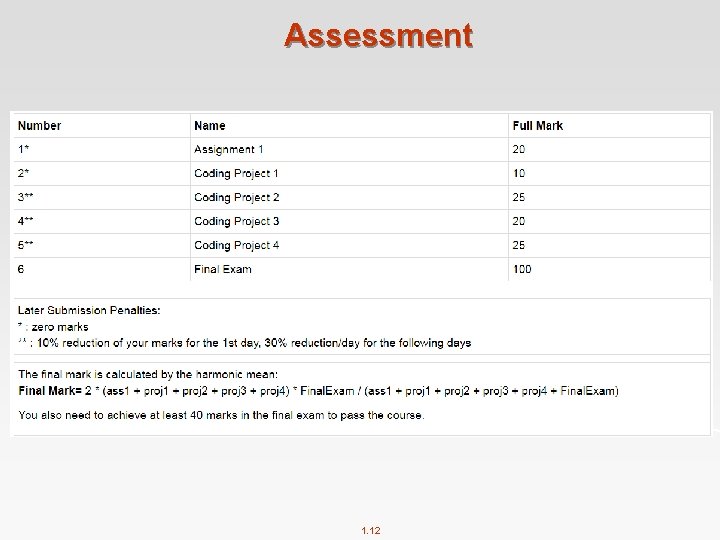

Assessment

Projects and Assignments
- Projects:
  - 1 warm-up programming project on Hadoop MapReduce
  - 1 harder project on Hadoop MapReduce
  - 1 project on Spark
  - 1 project on AWS (MapReduce/Spark) [tentative]
- Assignment:
  - 1 assignment on big data applications
- Both results and source code will be checked.
  - If your code cannot run due to bugs, you will not lose all marks.

CSE Computing Environment
- Use Linux/command line (a virtual machine image will be provided)
  - Projects are marked on Linux servers
  - You need to be able to upload, run, and test your program under Linux
- Assignment submission
  - Use Give to submit (either command line or web page)
  - Classrun: check your submission, marks, etc. Read https://wiki.cse.unsw.edu.au/give/Classrun

Final exam
- Final written exam (100 pts)
- If you are ill on the day of the exam, do not attend the exam. I will not accept any medical special consideration claims from people who have already attempted the exam.
- You need to achieve at least 40 marks in the final exam
- No supplementary exam will be given

You May Fail Because …
- *Plagiarism*
- Code failed to compile due to some mistakes
- Late submission
  - 1 sec late = 1 day late
  - submitting the wrong files
- Program did not follow the spec
- I am unlikely to accept the following excuses:
  - “Too busy”
  - “It took longer than I thought it would take”
  - “It was harder than I initially thought”
  - “My dog ate my homework” and modern variants thereof

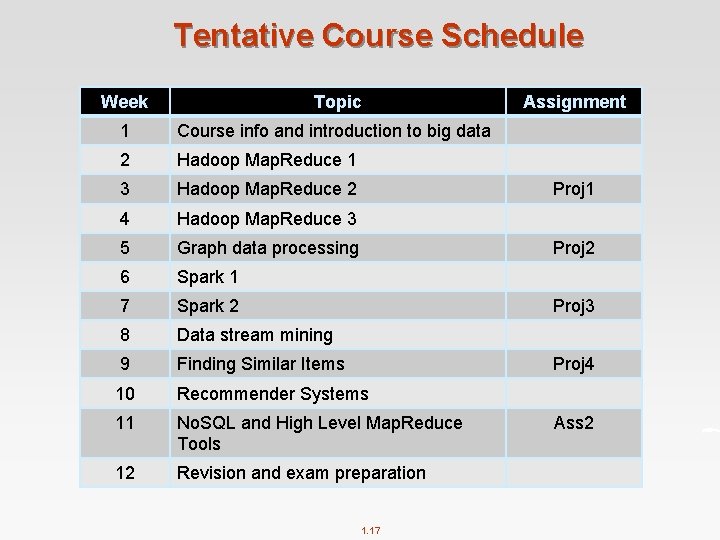

Tentative Course Schedule

| Week | Topic |
|---|---|
| 1 | Course info and introduction to big data |
| 2 | Hadoop MapReduce 1 |
| 3 | Hadoop MapReduce 2 |
| 4 | Hadoop MapReduce 3 |
| 5 | Graph data processing |
| 6 | Spark 1 |
| 7 | Spark 2 |
| 8 | Data stream mining |
| 9 | Finding Similar Items |
| 10 | Recommender Systems |
| 11 | NoSQL and High Level MapReduce Tools |
| 12 | Revision and exam preparation |

Assignments: Proj 1, Proj 2, Proj 3, Proj 4, Ass 2

Labs
- 5 labs on MapReduce
- 3 labs on Spark
- 1 lab on high-level MapReduce tools
- 1 lab on AWS
- 1 lab on a big data machine learning platform [tentative]

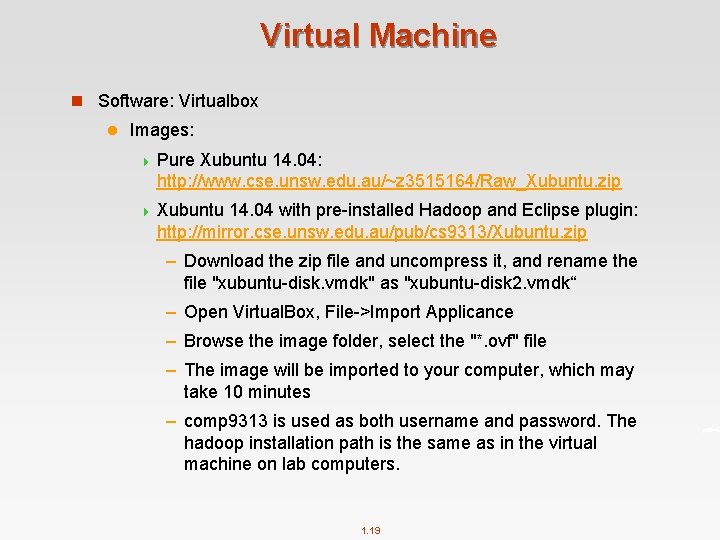

Virtual Machine
- Software: VirtualBox
  - Images:
    - Pure Xubuntu 14.04: http://www.cse.unsw.edu.au/~z3515164/Raw_Xubuntu.zip
    - Xubuntu 14.04 with pre-installed Hadoop and Eclipse plugin: http://mirror.cse.unsw.edu.au/pub/cs9313/Xubuntu.zip
  - Download the zip file, uncompress it, and rename the file "xubuntu-disk.vmdk" to "xubuntu-disk2.vmdk"
  - Open VirtualBox, File -> Import Appliance
  - Browse to the image folder and select the "*.ovf" file
  - The image will be imported to your computer, which may take 10 minutes
  - comp9313 is used as both username and password. The Hadoop installation path is the same as in the virtual machine on lab computers.

Your Feedback Is Important
- Big data is a new topic, and thus the course is tentative
- The technologies keep evolving, and the course materials need to be updated correspondingly
- Please advise where I can improve after each lecture, on the discussion and QA website
- myExperience system

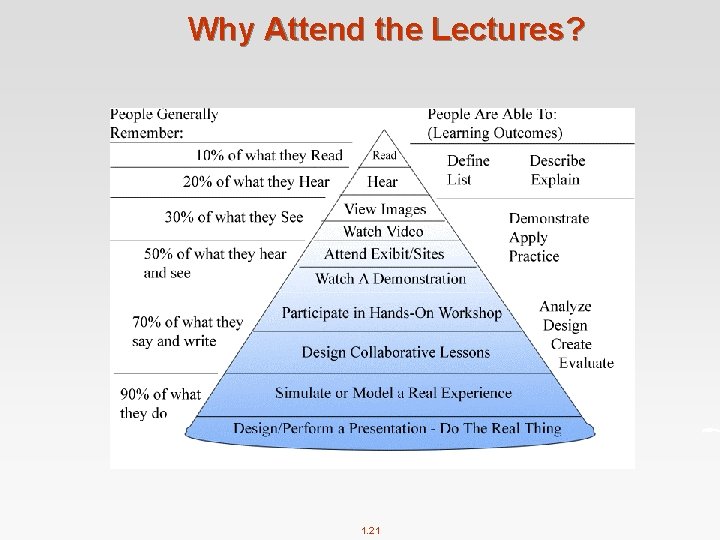

Why Attend the Lectures?

Part 2: Introduction to Big Data

What is Big Data?
- Big data is like teenage sex:
  - everyone talks about it
  - nobody really knows how to do it
  - everyone thinks everyone else is doing it
  - so everyone claims they are doing it...

-- Dan Ariely, Professor at Duke University

What is Big Data?
- There is no standard definition! Here is one from Wikipedia:
  - Big data is a term for data sets that are so large or complex that traditional data processing application software is inadequate to deal with them.
  - Challenges include capture, storage, analysis, data curation, search, sharing, transfer, visualization, querying, updating and information privacy.
  - The term "big data" often refers simply to the use of predictive analytics, user behaviour analytics, or certain other advanced data analytics methods that extract value from data, and seldom to a particular size of data set.
  - Analysis of data sets can find new correlations to "spot business trends, prevent diseases, combat crime and so on."

Instead of Talking about “Big Data”…
- Let’s talk about a crowded application ecosystem:
  - Hadoop MapReduce
  - Spark
  - NoSQL (e.g., HBase, MongoDB, Neo4j)
  - Storm
  - Pregel
  - …
- Let’s talk about data science and data management:
  - Finding similar items
  - Graph data processing
  - Streaming data processing
  - Machine learning technologies
  - …

Who is generating Big Data?
- Social
- eCommerce
- User Tracking & Engagement
- Financial Services
- Homeland Security
- Real Time Search

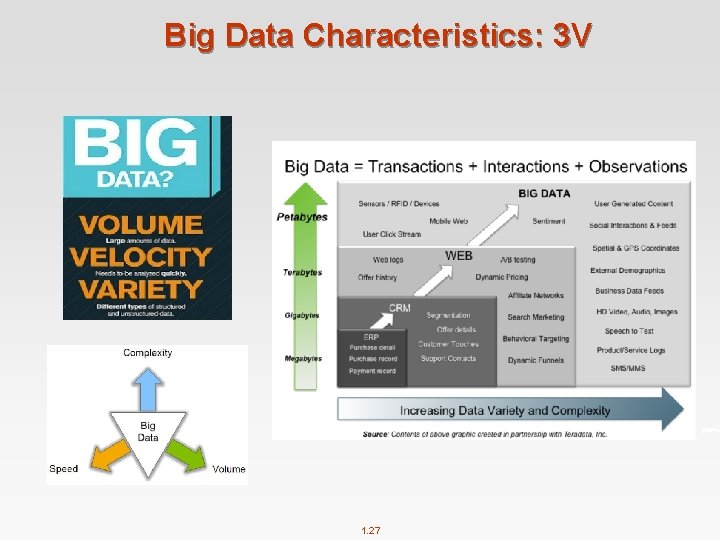

Big Data Characteristics: 3V

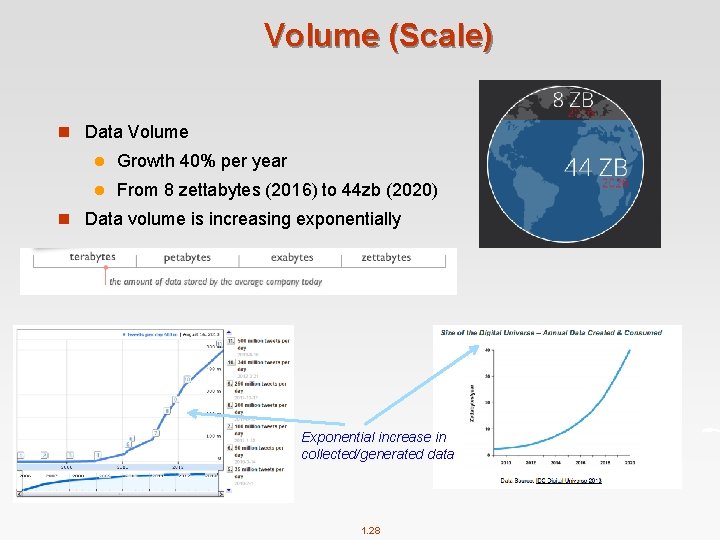

Volume (Scale)
- Data volume
  - Growth 40% per year
  - From 8 zettabytes (2016) to 44 ZB (2020)
- Data volume is increasing exponentially

Figure: Exponential increase in collected/generated data
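The two volume figures can be cross-checked with compound-growth arithmetic. The sketch below assumes smooth annual compounding between the quoted endpoints; the helper names `project` and `implied_rate` are hypothetical.

```python
def project(volume: float, rate: float, years: int) -> float:
    """Volume after `years` of compound growth at `rate` per year."""
    return volume * (1 + rate) ** years


def implied_rate(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` in `years`."""
    return (end / start) ** (1 / years) - 1


# 8 ZB in 2016 growing at 40% per year for 4 years (to 2020)
print(round(project(8, 0.40, 4), 1))        # 30.7
# Annual rate needed to go from 8 ZB (2016) to 44 ZB (2020)
print(round(implied_rate(8, 44, 4) * 100))  # 53
```

So the two figures are only roughly consistent: 40% per year yields about 31 ZB by 2020, while reaching 44 ZB implies roughly 53% per year. Either way, the growth is exponential.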

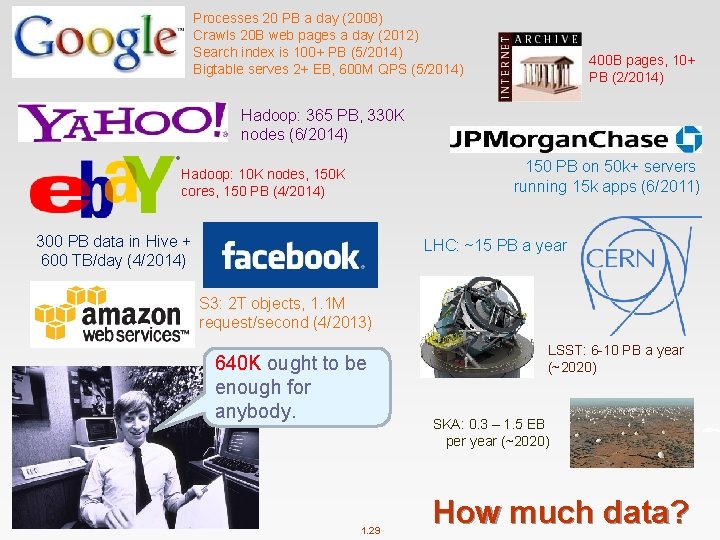

How much data?
- Processes 20 PB a day (2008)
- Crawls 20B web pages a day (2012)
- Search index is 100+ PB (5/2014)
- Bigtable serves 2+ EB, 600M QPS (5/2014)
- 400B pages, 10+ PB (2/2014)
- Hadoop: 365 PB, 330K nodes (6/2014)
- 150 PB on 50k+ servers running 15k apps (6/2011)
- Hadoop: 10K nodes, 150K cores, 150 PB (4/2014)
- 300 PB data in Hive + 600 TB/day (4/2014)
- LHC: ~15 PB a year
- S3: 2T objects, 1.1M requests/second (4/2013)
- LSST: 6–10 PB a year (~2020)
- SKA: 0.3–1.5 EB per year (~2020)

“640K ought to be enough for anybody.”

Variety (Complexity)
- Different types:
  - Relational data (tables/transactions/legacy data)
  - Text data (Web)
  - Semi-structured data (XML)
  - Graph data
    - Social network, Semantic Web (RDF), …
  - Streaming data
    - You can only scan the data once
  - A single application can be generating/collecting many types of data
- Different sources:
  - Movie reviews from IMDB and Rotten Tomatoes
  - Product reviews from different provider websites

To extract knowledge, all these types of data need to be linked together.
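Because streaming data can be scanned only once, an algorithm has to keep a small running summary instead of the raw items. As one illustration (not a technique the slide names), here is a sketch of Welford's one-pass mean and variance; the class name `RunningStats` and the sample readings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RunningStats:
    """Single-scan mean and variance using Welford's update rule."""
    n: int = 0
    mean: float = 0.0
    m2: float = 0.0  # running sum of squared deviations from the mean

    def update(self, x: float) -> None:
        # Each item is seen exactly once and then discarded
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    @property
    def variance(self) -> float:
        # Population variance; 0.0 before any item has arrived
        return self.m2 / self.n if self.n else 0.0


stats = RunningStats()
for reading in [2, 4, 4, 4, 5, 5, 7, 9]:  # items arrive one at a time
    stats.update(reading)
print(stats.n, round(stats.mean, 6), round(stats.variance, 6))  # 8 5.0 4.0
```

The summary uses constant memory no matter how long the stream runs, which is the property single-scan processing needs.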

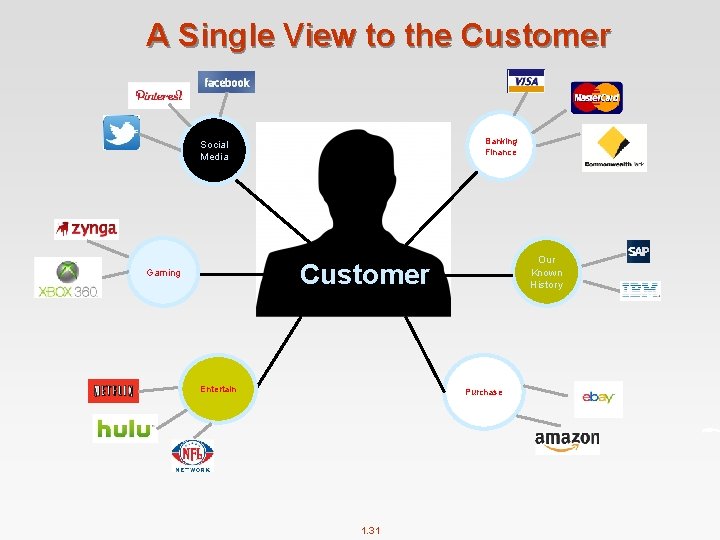

A Single View to the Customer

Figure: the customer linked to Banking, Finance, Social Media, Gaming, Entertainment, Purchase, and Our Known History

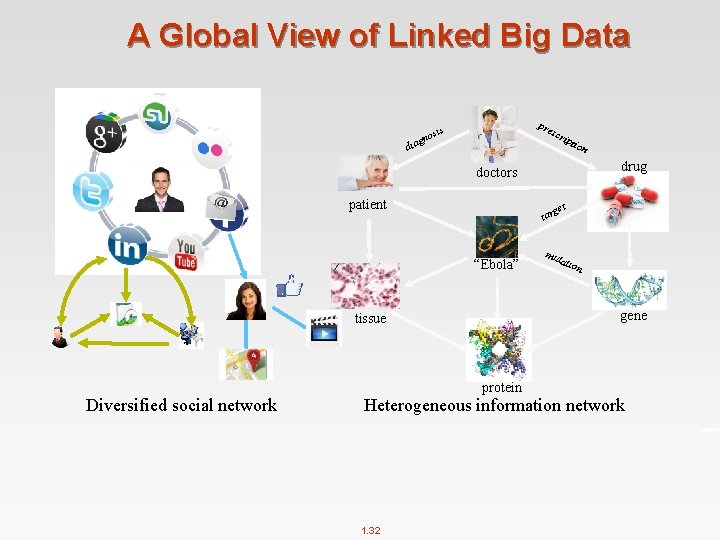

A Global View of Linked Big Data

Figure: a diversified social network and a heterogeneous information network linking doctors, patients, diagnoses, prescriptions, drugs, targets, mutations, genes, tissues, and proteins (example query: “Ebola”)

Velocity (Speed)
- Data is being generated fast and needs to be processed fast
- Online data analytics
- Late decisions mean missed opportunities
- Examples
  - E-Promotions: based on your current location, your purchase history, and what you like, send promotions right now for the store next to you
  - Healthcare monitoring: sensors monitor your activities and body, and any abnormal measurement requires an immediate reaction
  - Disaster management and response

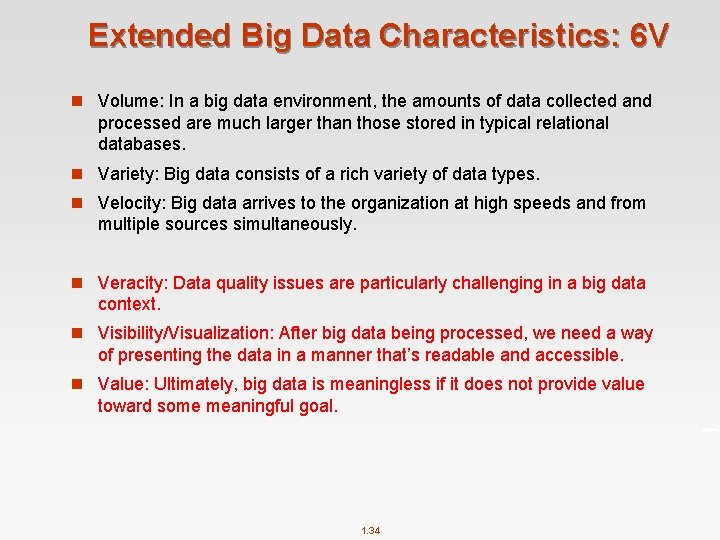

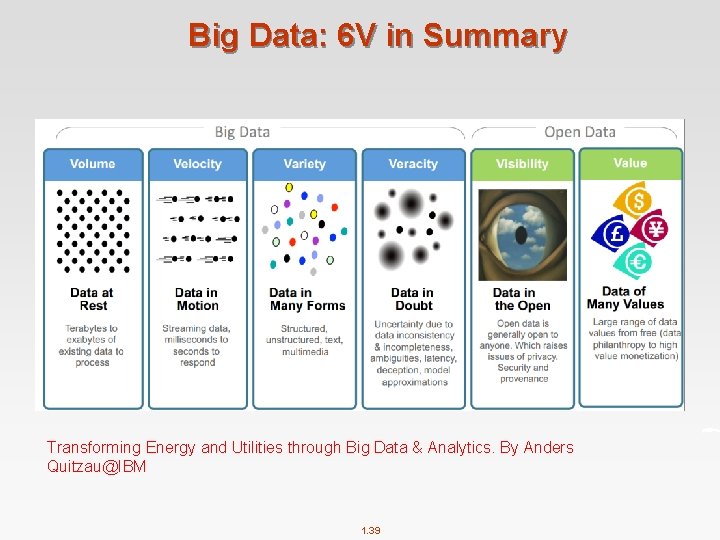

Extended Big Data Characteristics: 6V
- Volume: In a big data environment, the amounts of data collected and processed are much larger than those stored in typical relational databases.
- Variety: Big data consists of a rich variety of data types.
- Velocity: Big data arrives at the organization at high speeds and from multiple sources simultaneously.
- Veracity: Data quality issues are particularly challenging in a big data context.
- Visibility/Visualization: After big data has been processed, we need a way of presenting it in a manner that’s readable and accessible.
- Value: Ultimately, big data is meaningless if it does not provide value toward some meaningful goal.

Veracity (Quality & Trust)
- Data = quantity + quality
- When we talk about big data, we typically mean its quantity:
  - What capacity does a system provide to cope with the sheer size of the data?
  - Is a query feasible on big data within our available resources?
  - How can we make our queries tractable on big data?
  - …
- Can we trust the answers to our queries?
  - Dirty data routinely leads to misleading financial reports and strategic business planning decisions, loss of revenue, credibility and customers, and disastrous consequences
- The study of data quality is as important as data quantity

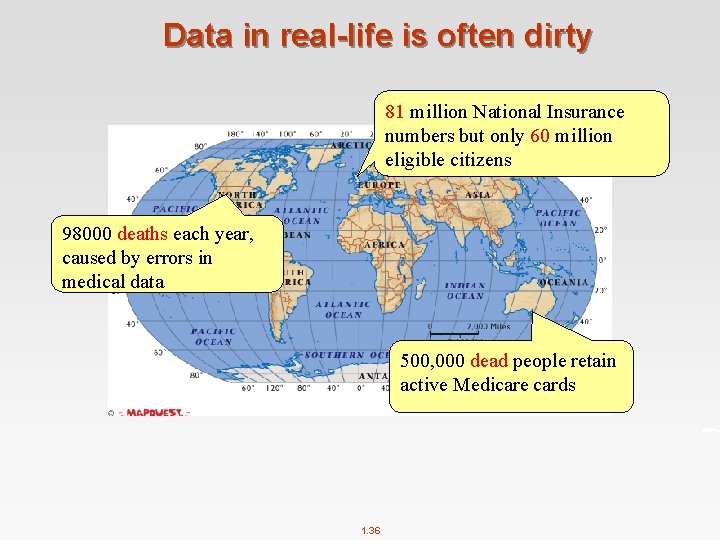

Data in real life is often dirty
- 81 million National Insurance numbers but only 60 million eligible citizens
- 98,000 deaths each year caused by errors in medical data
- 500,000 dead people retain active Medicare cards

Visibility/Visualization
- Visible to the process of big data management
- Big Data – visibility = Black Hole?
- Big data visualization tools

Figure: A visualization of Divvy bike rides across Chicago

Value
- Big data is meaningless if it does not provide value toward some meaningful goal

Big Data: 6V in Summary

Source: Transforming Energy and Utilities through Big Data & Analytics, by Anders Quitzau, IBM

Other V’s
- Variability
  - Variability refers to data whose meaning is constantly changing. This is particularly the case when gathering data relies on language processing.
- Viscosity
  - This term is sometimes used to describe the latency or lag time in the data relative to the event being described. We found that this is just as easily understood as an element of Velocity.
- Virality
  - Defined by some users as the rate at which the data spreads: how often it is picked up and repeated by other users or events.
- Volatility
  - Big data volatility refers to how long data is valid and how long it should be stored. You need to determine at what point data is no longer relevant to the current analysis.
- More V’s in the future …

Big Data Tag Cloud

Why Study Big Data Technologies?
- The hottest topic in both research and industry
- Highly demanded in the real world
- A promising future career
  - Research and development of big data systems: distributed systems (e.g., Hadoop), visualization tools, data warehouse, OLAP, data integration, data quality control, …
  - Big data applications: social marketing, healthcare, …
  - Data analysis: getting value out of big data by discovering and applying patterns, predictive analysis, business intelligence, privacy and security, …
- Graduate from UNSW

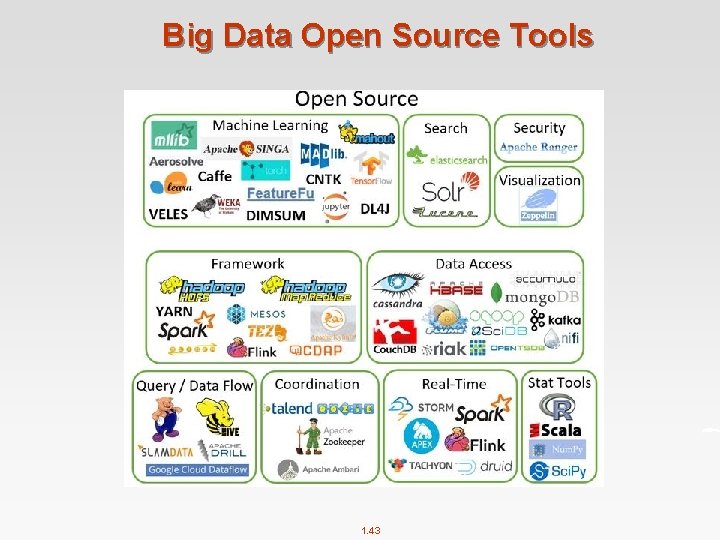

Big Data Open Source Tools

What will the course cover
- Topic 1. Big data management tools
  - Apache Hadoop
    - MapReduce
    - YARN/HDFS/HBase/Hive/Pig (briefly introduced)
  - Spark
  - AWS platform
  - Mahout [tentative]
- Topic 2. Big data typical applications
  - Finding similar items
  - Graph data processing
  - Data stream mining
  - Some machine learning topics

Distributed processing is non-trivial
- How to assign tasks to different workers in an efficient way?
- What happens if tasks fail?
- How do workers exchange results?
- How to synchronize distributed tasks allocated to different workers?
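One common answer to "what happens if tasks fail?" is to re-execute the failed task, the idea behind the automatic retries that Hadoop MapReduce provides. The sketch below is a sequential, single-machine illustration of that idea, not Hadoop's scheduler; `run_with_retries`, `flaky_worker`, and the retry limit are hypothetical.

```python
from typing import Callable, Dict, List


def run_with_retries(tasks: List[int],
                     worker: Callable[[int, int], int],
                     max_attempts: int = 3) -> Dict[int, int]:
    """Run each task, re-executing it after a failure, up to max_attempts times."""
    results: Dict[int, int] = {}
    for task in tasks:
        for attempt in range(1, max_attempts + 1):
            try:
                results[task] = worker(task, attempt)
                break  # success: stop retrying this task
            except RuntimeError as err:
                print(f"task {task}, attempt {attempt} failed: {err}")
        else:  # loop ended without break: every attempt failed
            raise RuntimeError(f"task {task} failed {max_attempts} times")
    return results


def flaky_worker(task: int, attempt: int) -> int:
    """Hypothetical worker: odd-numbered tasks crash on their first attempt."""
    if task % 2 == 1 and attempt == 1:
        raise RuntimeError("worker lost")
    return task * task


print(run_with_retries([0, 1, 2, 3], flaky_worker))
```

Re-execution is safe here only because each task is deterministic and has no side effects; a real framework would also move the retry to a healthy machine.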

Big data storage is challenging
- Data volumes are massive
- Reliability of storing PBs of data is challenging
- All kinds of failures: disk/hardware/network failures
- The probability of failures simply increases with the number of machines …
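The last point can be made concrete: if each machine independently fails with probability p in some time window, then the chance that at least one of n machines fails is 1 - (1 - p)^n. The per-machine rate of 0.001 below is a hypothetical value chosen only for illustration.

```python
def prob_any_failure(p: float, n: int) -> float:
    """P(at least one of n machines fails), assuming independent failures,
    each with probability p over the same time window."""
    return 1 - (1 - p) ** n


# Hypothetical per-machine failure probability of 0.1% per day
for n in (1, 100, 1000, 10000):
    print(n, round(prob_any_failure(0.001, n), 3))
```

With these assumed numbers the daily chance of some failure grows from 0.1% for one machine to about 9.5% for 100 machines and about 63% for 1,000, so at cluster scale failure is the normal case, not the exception.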

What is Hadoop
- Open-source data storage and processing platform
- Before the advent of Hadoop, storage and processing of big data was a big challenge
- Massively scalable, automatically parallelizable
  - Based on work from Google
    - Google: GFS + MapReduce + BigTable (not open)
    - Hadoop: HDFS + Hadoop MapReduce + HBase (open source)
- Named by Doug Cutting in 2006 (who worked at Yahoo! at that time), after his son's toy elephant.

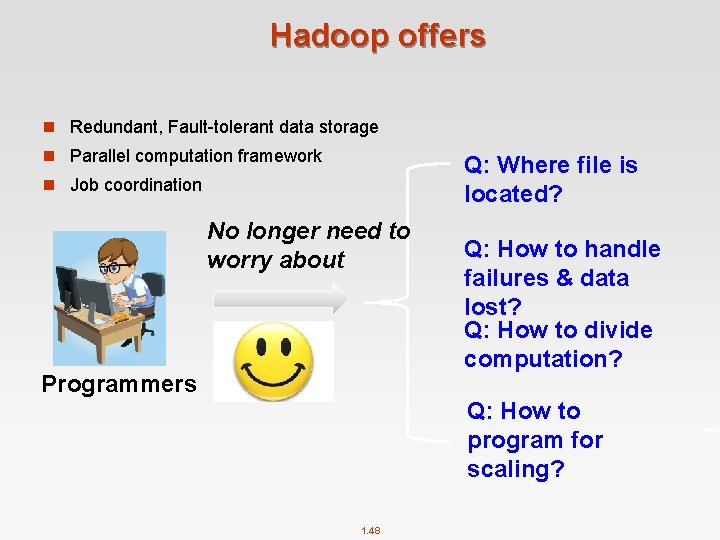

Hadoop offers
- Redundant, fault-tolerant data storage
- Parallel computation framework
- Job coordination

Programmers no longer need to worry about:
- Q: Where is a file located?
- Q: How to handle failures & data loss?
- Q: How to divide computation?
- Q: How to program for scaling?

Why Use Hadoop?
- Cheaper
  - Scales to petabytes or more easily
- Faster
  - Parallel data processing
- Better
  - Suited for particular types of big data problems

Companies Using Hadoop

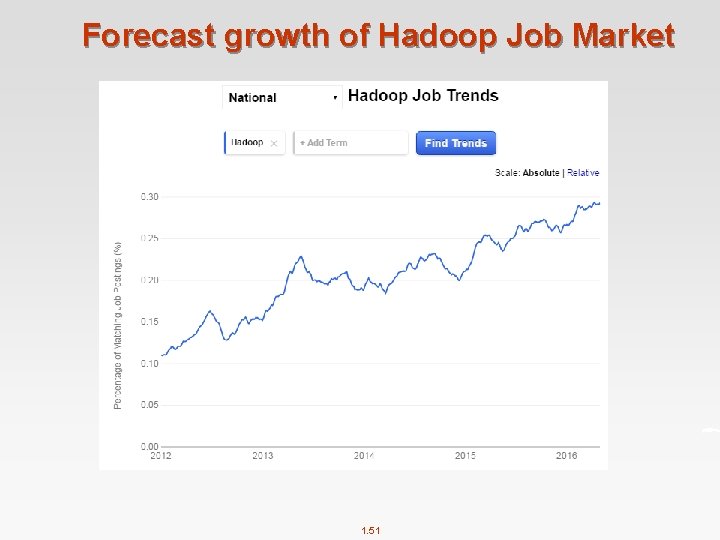

Forecast growth of Hadoop Job Market

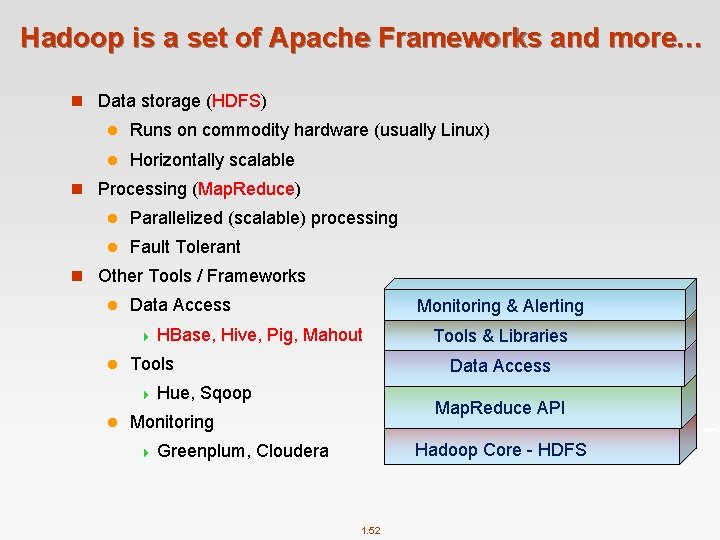

Hadoop is a set of Apache Frameworks and more…
- Data storage (HDFS)
  - Runs on commodity hardware (usually Linux)
  - Horizontally scalable
- Processing (MapReduce)
  - Parallelized (scalable) processing
  - Fault tolerant
- Other Tools / Frameworks
  - Data Access
    - HBase, Hive, Pig, Mahout
  - Tools
    - Hue, Sqoop
  - Monitoring
    - Greenplum, Cloudera

Figure: layered stack of Monitoring & Alerting, Tools & Libraries, Data Access, MapReduce API, and Hadoop Core - HDFS

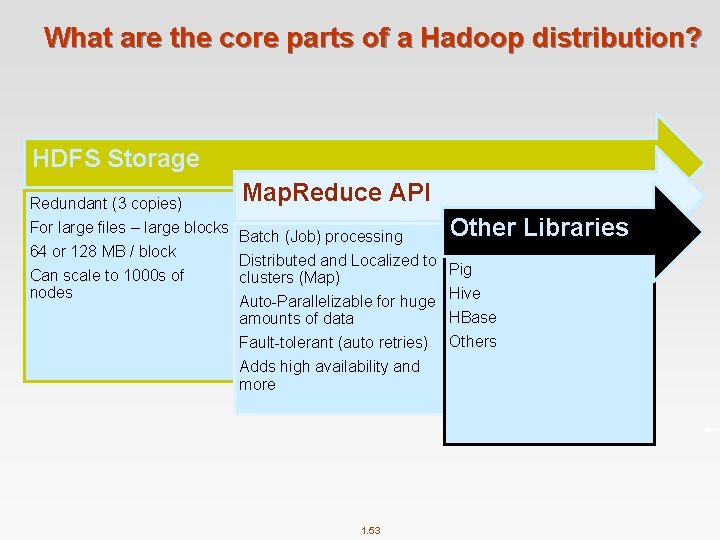

What are the core parts of a Hadoop distribution?
- HDFS Storage
  - Redundant (3 copies)
  - For large files: large blocks (64 or 128 MB / block)
  - Can scale to 1000s of nodes
- MapReduce API
  - Batch (job) processing
  - Distributed and localized to clusters (Map)
  - Auto-parallelizable for huge amounts of data
  - Fault-tolerant (auto retries)
  - Adds high availability and more
- Other Libraries
  - Pig, Hive, HBase, others
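A worked example of what "3 copies" and "64 or 128 MB / block" mean for a single file. The sketch assumes HDFS-style accounting, where the last block only occupies the space it actually needs; the function name and the 1,000 MB file size are hypothetical.

```python
import math


def hdfs_footprint(file_mb: float, block_mb: int = 128, replication: int = 3):
    """Return (blocks, block replicas, raw MB stored) for one file.

    Assumes the file is split into fixed-size blocks, every block is
    replicated `replication` times, and a partially filled last block
    uses only its actual size on disk.
    """
    blocks = math.ceil(file_mb / block_mb)
    return blocks, blocks * replication, file_mb * replication


print(hdfs_footprint(1000))      # 1,000 MB file, 128 MB blocks -> (8, 24, 3000)
print(hdfs_footprint(1000, 64))  # same file, 64 MB blocks -> (16, 48, 3000)
```

Larger blocks mean fewer blocks (and less metadata) per file, while replication triples the raw storage regardless of block size.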

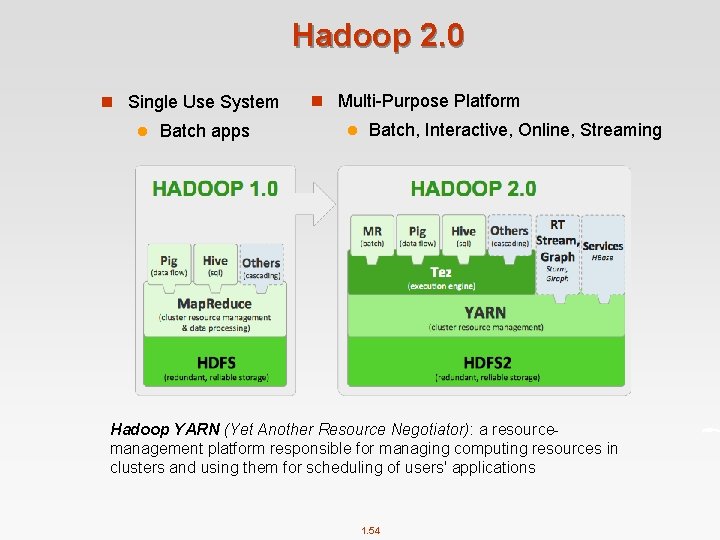

Hadoop 2.0
- Hadoop 1.0: single-use system
  - Batch apps
- Hadoop 2.0: multi-purpose platform
  - Batch, interactive, online, streaming

Hadoop YARN (Yet Another Resource Negotiator): a resource-management platform responsible for managing computing resources in clusters and using them to schedule users' applications

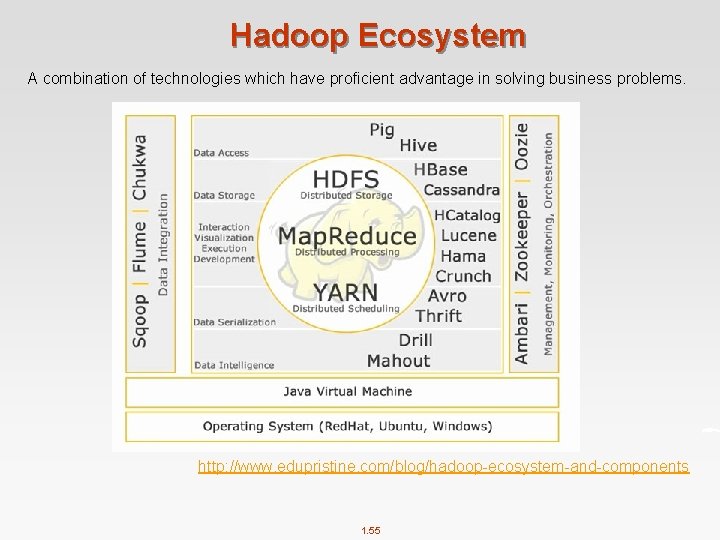

Hadoop Ecosystem

A combination of technologies that together are well suited to solving business problems.

Source: http://www.edupristine.com/blog/hadoop-ecosystem-and-components

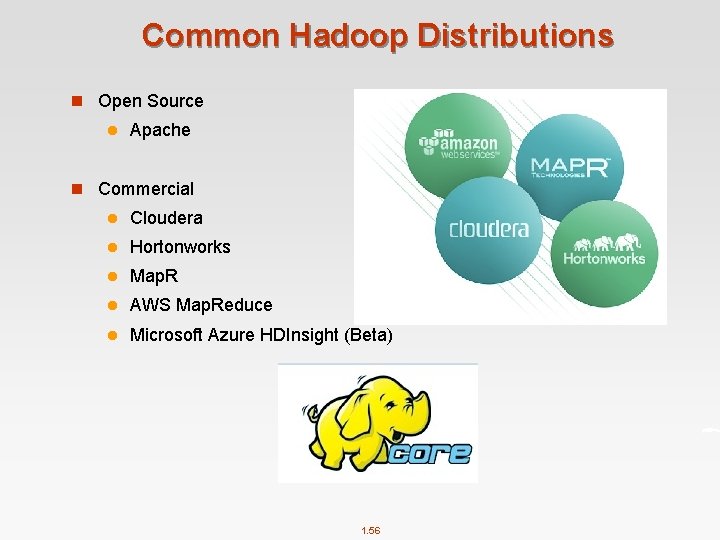

Common Hadoop Distributions
- Open Source
  - Apache
- Commercial
  - Cloudera
  - Hortonworks
  - MapR
  - AWS MapReduce
  - Microsoft Azure HDInsight (Beta)

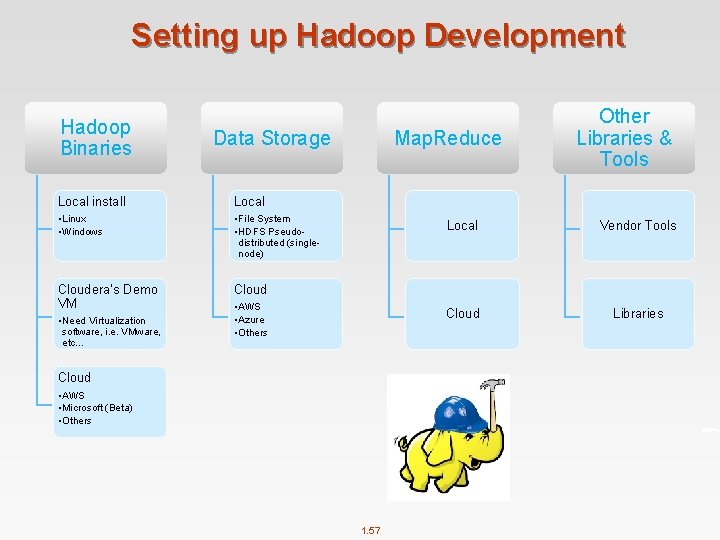

Setting up Hadoop Development
- Hadoop Binaries
  - Local install: Linux, Windows
  - Cloudera’s Demo VM: needs virtualization software, e.g., VMware, etc.
  - Cloud: AWS, Microsoft (Beta), others
- Data Storage
  - Local: file system, HDFS pseudo-distributed (single-node)
  - Cloud: AWS, Azure, others
- MapReduce
  - Local
  - Cloud
- Other Libraries & Tools
  - Local: vendor tools
  - Cloud: libraries

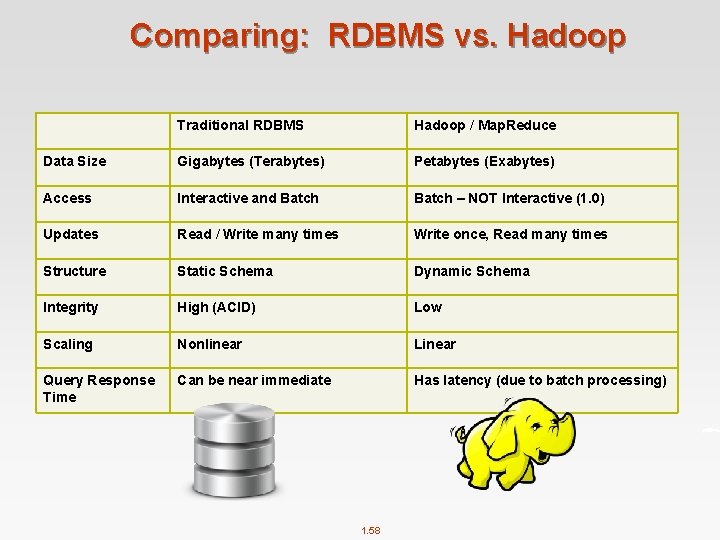

Comparing: RDBMS vs. Hadoop

| | Traditional RDBMS | Hadoop / MapReduce |
|---|---|---|
| Data Size | Gigabytes (Terabytes) | Petabytes (Exabytes) |
| Access | Interactive and Batch | Batch, NOT Interactive (1.0) |
| Updates | Read / Write many times | Write once, Read many times |
| Structure | Static Schema | Dynamic Schema |
| Integrity | High (ACID) | Low |
| Scaling | Nonlinear | Linear |
| Query Response Time | Can be near immediate | Has latency (due to batch processing) |

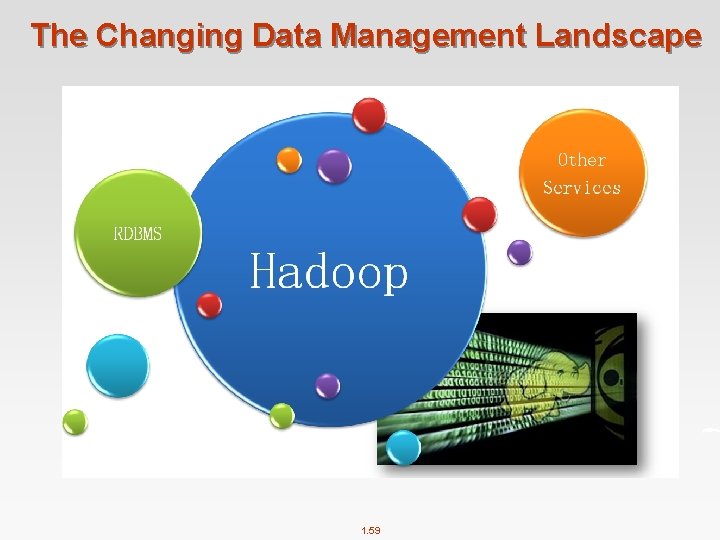

The Changing Data Management Landscape

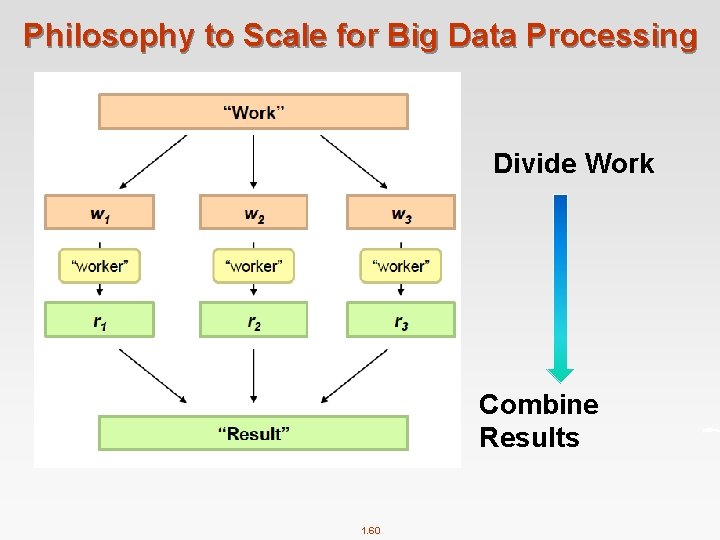

Philosophy to Scale for Big Data Processing

Figure: Divide Work, then Combine Results
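The divide-and-combine philosophy can be sketched on one machine: split the input into chunks, let independent workers process each chunk, then merge the partial results. This is an illustrative sketch only; threads from Python's `concurrent.futures` stand in for cluster machines (in CPython they do not speed up CPU-bound work), and `split`, `partial_sum`, and `divide_and_combine` are hypothetical names.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List


def split(data: List[int], n_parts: int) -> List[List[int]]:
    """Divide the input into at most n_parts roughly equal chunks."""
    size = max(1, -(-len(data) // n_parts))  # ceiling division, at least 1
    return [data[i:i + size] for i in range(0, len(data), size)]


def partial_sum(chunk: List[int]) -> int:
    """Work done independently by one worker on its own chunk."""
    return sum(chunk)


def divide_and_combine(data: List[int], workers: int = 4) -> int:
    chunks = split(data, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(partial_sum, chunks))  # divide work
    return sum(partials)                                 # combine results


print(divide_and_combine(list(range(1, 101))))  # 5050
```

Summation works here because the partial results can be combined in any grouping; operations that cannot be merged this way need a different combine step.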

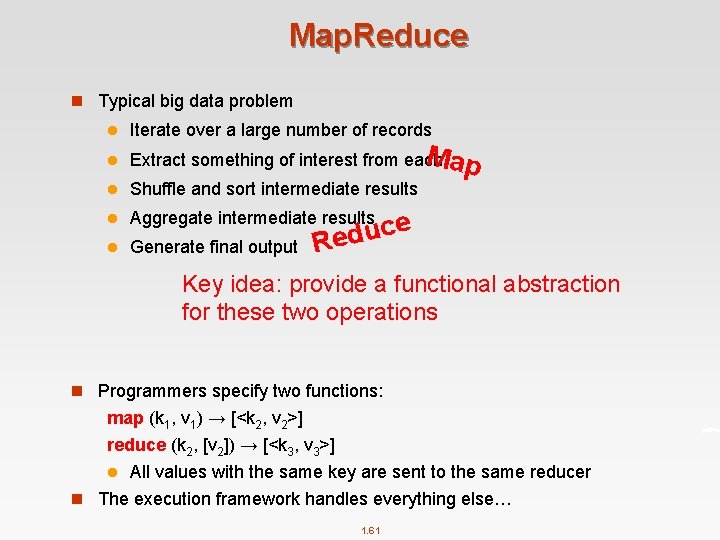

MapReduce
- Typical big data problem
  - Iterate over a large number of records (Map)
  - Extract something of interest from each (Map)
  - Shuffle and sort intermediate results
  - Aggregate intermediate results (Reduce)
  - Generate final output (Reduce)
- Key idea: provide a functional abstraction for these two operations
- Programmers specify two functions:
  - map (k1, v1) → [<k2, v2>]
  - reduce (k2, [v2]) → [<k3, v3>]
  - All values with the same key are sent to the same reducer
- The execution framework handles everything else…
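A single-process Python sketch of the contract above: the programmer supplies `map (k1, v1) → [<k2, v2>]` and `reduce (k2, [v2]) → [<k3, v3>]`, and the "framework" (here, one hypothetical function `run_mapreduce`) does the shuffle and sort. The max-temperature example and its records are made up for illustration; a real framework runs mappers and reducers on many machines.

```python
from collections import defaultdict
from typing import Any, Callable, Iterable, List, Tuple

Pair = Tuple[Any, Any]


def run_mapreduce(records: Iterable[Pair],
                  mapper: Callable[[Any, Any], Iterable[Pair]],
                  reducer: Callable[[Any, List[Any]], Iterable[Pair]]) -> List[Pair]:
    # Map: apply the mapper to every input record (k1, v1) -> [(k2, v2)]
    intermediate = [kv for k1, v1 in records for kv in mapper(k1, v1)]
    # Shuffle and sort: group all values with the same key together
    groups = defaultdict(list)
    for k2, v2 in intermediate:
        groups[k2].append(v2)
    # Reduce: each key with its value list (k2, [v2]) -> [(k3, v3)]
    output: List[Pair] = []
    for k2 in sorted(groups):  # keys must be mutually comparable
        output.extend(reducer(k2, groups[k2]))
    return output


# Hypothetical example: maximum temperature per year from "year,temp" lines
def max_temp_mapper(line_no, line):
    year, temp = line.split(",")
    yield year, int(temp)


def max_temp_reducer(year, temps):
    yield year, max(temps)


records = [(0, "2016,25"), (1, "2017,31"), (2, "2016,29"), (3, "2017,28")]
print(run_mapreduce(records, max_temp_mapper, max_temp_reducer))
# [('2016', 29), ('2017', 31)]
```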

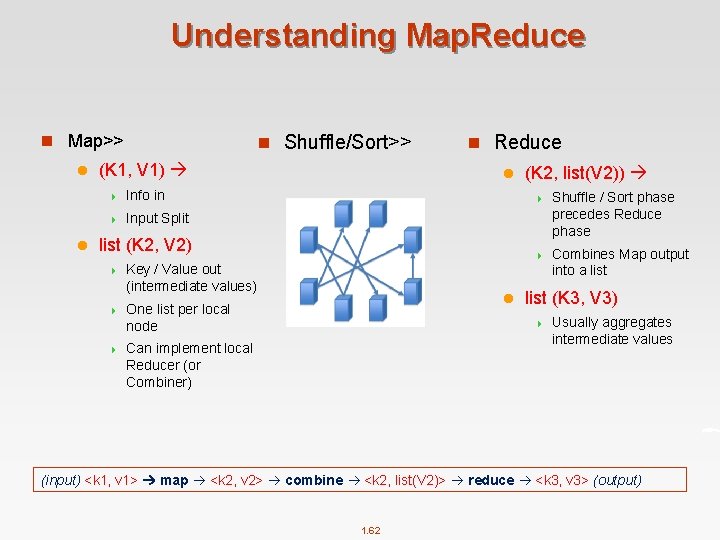

Understanding MapReduce
- Map >>
  - (K1, V1)
    - Info in
    - Input split
  - list (K2, V2)
    - Key / value out (intermediate values)
    - One list per local node
    - Can implement local Reducer (or Combiner)
- Shuffle/Sort >>
- Reduce >>
  - (K2, list(V2))
    - Shuffle / Sort phase precedes Reduce phase
    - Combines Map output into a list
  - list (K3, V3)
    - Usually aggregates intermediate values
- (input) <k1, v1> → map → <k2, v2> → combine (optional, still <k2, v2>) → shuffle/sort → <k2, list(v2)> → reduce → <k3, v3> (output)
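What a local Combiner buys can be shown with a small word count over two input splits: the combiner pre-sums counts on each mapper's node, so fewer intermediate pairs go through the shuffle, while the final totals stay the same. The splits and function names are hypothetical; the combiner is valid here because addition is associative and commutative.

```python
from collections import Counter
from typing import Dict, List, Tuple

Pair = Tuple[str, int]


def map_words(split_text: str) -> List[Pair]:
    """Mapper: emit (word, 1) for every word in one input split."""
    return [(word, 1) for word in split_text.lower().split()]


def combine(pairs: List[Pair]) -> List[Pair]:
    """Combiner: sum counts locally on the mapper's node, before the shuffle."""
    local: Counter = Counter()
    for word, count in pairs:
        local[word] += count
    return list(local.items())


def reduce_counts(pairs: List[Pair]) -> Dict[str, int]:
    """Reducer side: total the counts for each word across all splits."""
    totals: Counter = Counter()
    for word, count in pairs:
        totals[word] += count
    return dict(totals)


splits = ["a b a a", "b a b"]  # two input splits, e.g. processed on two nodes
plain = [p for s in splits for p in map_words(s)]
combined = [p for s in splits for p in combine(map_words(s))]

print(len(plain), len(combined))  # 7 4 pairs sent through the shuffle
print(reduce_counts(plain) == reduce_counts(combined))  # True: same final counts
```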

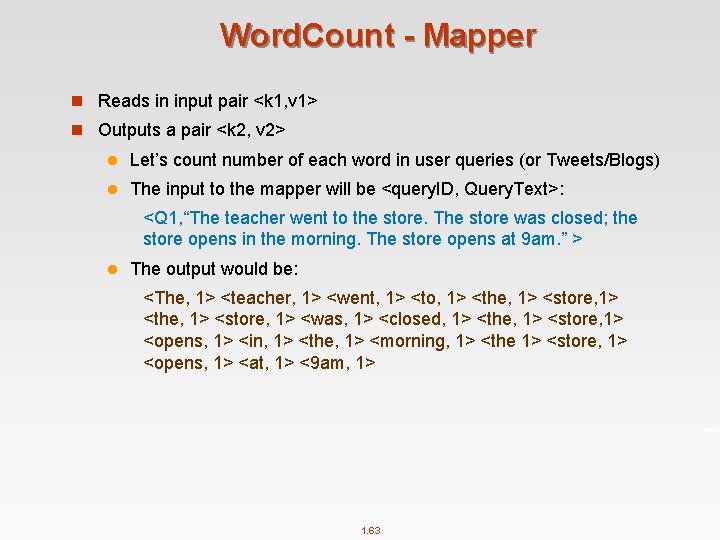

WordCount - Mapper
- Reads in an input pair <k1, v1>
- Outputs pairs <k2, v2>
  - Let’s count the number of occurrences of each word in user queries (or Tweets/Blogs)
  - The input to the mapper will be <queryID, QueryText>: <Q1, “The teacher went to the store. The store was closed; the store opens in the morning. The store opens at 9am.”>
  - The output would be (with words lower-cased and punctuation removed): <the, 1> <teacher, 1> <went, 1> <to, 1> <the, 1> <store, 1> <the, 1> <store, 1> <was, 1> <closed, 1> <the, 1> <store, 1> <opens, 1> <in, 1> <the, 1> <morning, 1> <the, 1> <store, 1> <opens, 1> <at, 1> <9am, 1>
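A minimal Python sketch of this mapper, written as a plain generator rather than against Hadoop's Java API. It assumes words are lower-cased and split on anything that is not a letter or digit. This is an assumption, but it matches the reducer's count of 6 for "the", which requires "The" and "the" to be treated as the same word. The function name `wordcount_map` is hypothetical.

```python
import re
from typing import Iterator, Tuple


def wordcount_map(query_id: str, query_text: str) -> Iterator[Tuple[str, int]]:
    """WordCount mapper: emit (word, 1) for each word in the query text.

    The key (query_id) is ignored; only the text is tokenized. Words are
    lower-cased and punctuation is dropped, so "store." becomes "store".
    """
    for word in re.findall(r"[a-z0-9]+", query_text.lower()):
        yield word, 1


text = ("The teacher went to the store. The store was closed; "
        "the store opens in the morning. The store opens at 9am.")
pairs = list(wordcount_map("Q1", text))
print(len(pairs), pairs[:3])  # 21 [('the', 1), ('teacher', 1), ('went', 1)]
```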

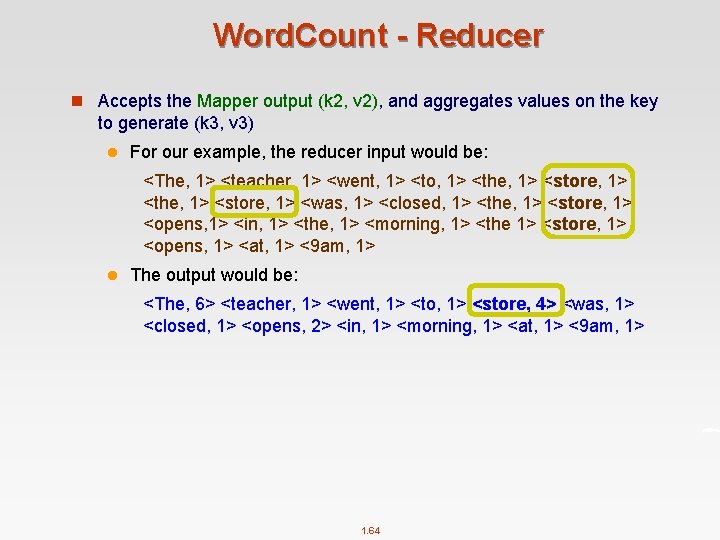

WordCount - Reducer
- Accepts the Mapper output (k2, v2), grouped by key, and aggregates the values for each key to generate (k3, v3)
  - For our example, the reducer input (after shuffle and sort) would be: <the, [1, 1, 1, 1, 1, 1]> <teacher, [1]> <went, [1]> <to, [1]> <store, [1, 1, 1, 1]> <was, [1]> <closed, [1]> <opens, [1, 1]> <in, [1]> <morning, [1]> <at, [1]> <9am, [1]>
  - The output would be: <the, 6> <teacher, 1> <went, 1> <to, 1> <store, 4> <was, 1> <closed, 1> <opens, 2> <in, 1> <morning, 1> <at, 1> <9am, 1>
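After shuffle and sort, the reducer receives each word together with the list of all the 1s emitted for it, and only has to sum them. A minimal Python sketch, with a hypothetical function name and a subset of the grouped input from this example:

```python
from typing import Iterable, Tuple


def wordcount_reduce(word: str, counts: Iterable[int]) -> Tuple[str, int]:
    """WordCount reducer: sum all the counts emitted for one word."""
    return word, sum(counts)


# Part of the reducer input after shuffle/sort: each word with its value list
grouped = {"the": [1] * 6, "store": [1] * 4, "opens": [1] * 2, "teacher": [1]}
print([wordcount_reduce(w, vs) for w, vs in grouped.items()])
# [('the', 6), ('store', 4), ('opens', 2), ('teacher', 1)]
```

Because the reducer just sums, it could also be reused as a local combiner on each mapper's node.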

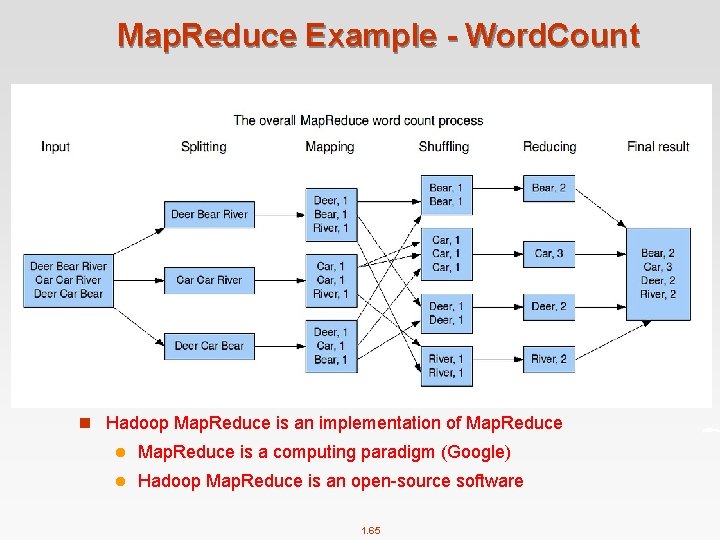

MapReduce Example - WordCount
- Hadoop MapReduce is an implementation of MapReduce
  - MapReduce is a computing paradigm (Google)
  - Hadoop MapReduce is open-source software

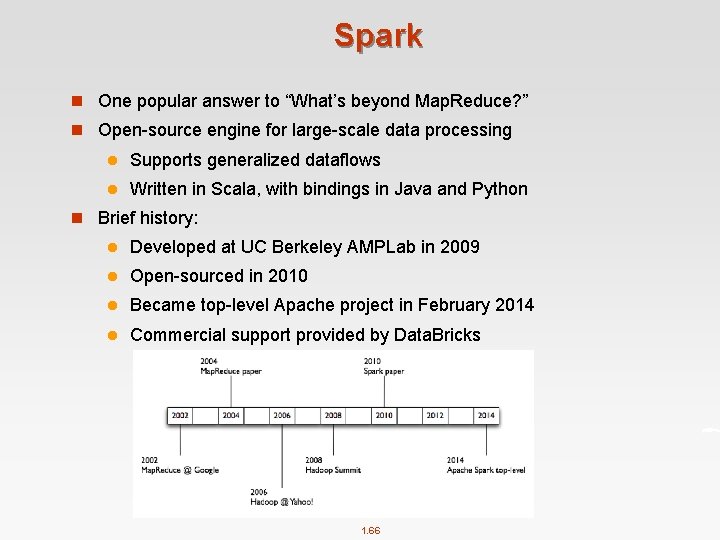

Spark
- One popular answer to “What’s beyond MapReduce?”
- Open-source engine for large-scale data processing
  - Supports generalized dataflows
  - Written in Scala, with bindings in Java and Python
- Brief history:
  - Developed at UC Berkeley AMPLab in 2009
  - Open-sourced in 2010
  - Became a top-level Apache project in February 2014
  - Commercial support provided by Databricks

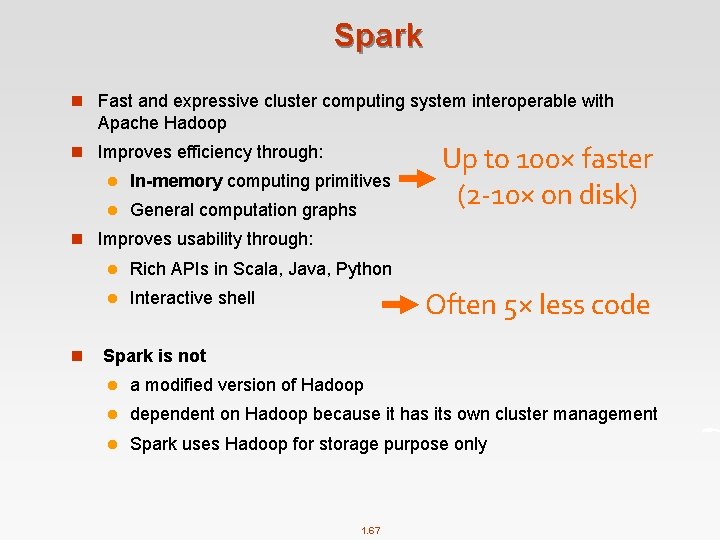

Spark
- Fast and expressive cluster computing system interoperable with Apache Hadoop
- Improves efficiency through:
  - In-memory computing primitives
  - General computation graphs
  - Up to 100× faster (2–10× on disk)
- Improves usability through:
  - Rich APIs in Scala, Java, Python
  - Interactive shell
  - Often 5× less code
- Spark is not:
  - a modified version of Hadoop
  - dependent on Hadoop, because it has its own cluster management
- Spark uses Hadoop for storage purposes only

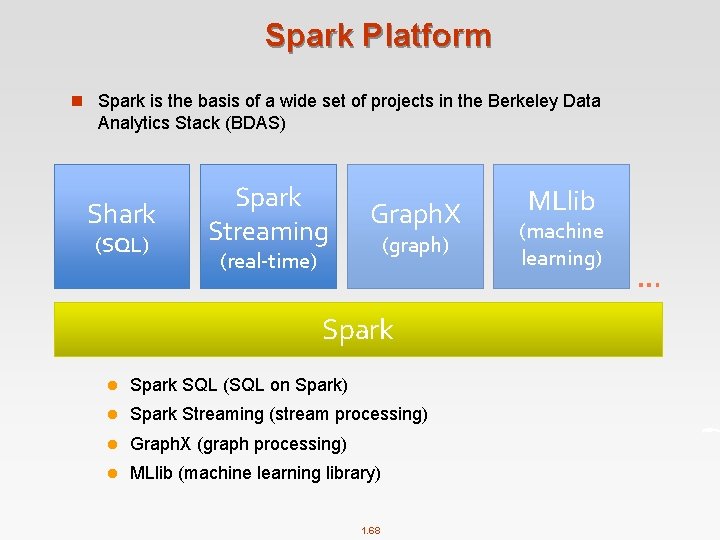

Spark Platform
- Spark is the basis of a wide set of projects in the Berkeley Data Analytics Stack (BDAS):
  - Spark SQL (SQL on Spark)
  - Spark Streaming (stream processing)
  - GraphX (graph processing)
  - MLlib (machine learning library)
  - …

Figure: the BDAS stack, with Shark (SQL), Spark Streaming (real-time), GraphX (graph), and MLlib (machine learning) built on Spark

Spark

AWS (Amazon Web Services)
- Amazon

Figure: Amazon as described on Wikipedia in 2006 and in 2017

AWS (Amazon Web Services)
- AWS is a subsidiary of Amazon.com that offers a suite of cloud computing services making up an on-demand computing platform.
- Amazon Web Services (AWS) provides a number of different services, including:
  - Amazon Elastic Compute Cloud (EC2): virtual machines for running custom software
  - Amazon Simple Storage Service (S3): simple key-value store, accessible as a web service
  - Amazon Elastic MapReduce (EMR): scalable MapReduce computation
  - Amazon DynamoDB: distributed NoSQL database, one of several in AWS
  - Amazon SimpleDB: simple NoSQL database
  - …

Cloud Computing Services in AWS
- IaaS
  - EC2, S3, …
  - Highlight: EC2 and S3 are two of the earliest products in AWS
- PaaS
  - Aurora, Redshift, …
  - Highlight: Aurora and Redshift are two of the fastest-growing products in AWS
- SaaS
  - WorkDocs, WorkMail
  - Highlight: may not be the main focus of AWS

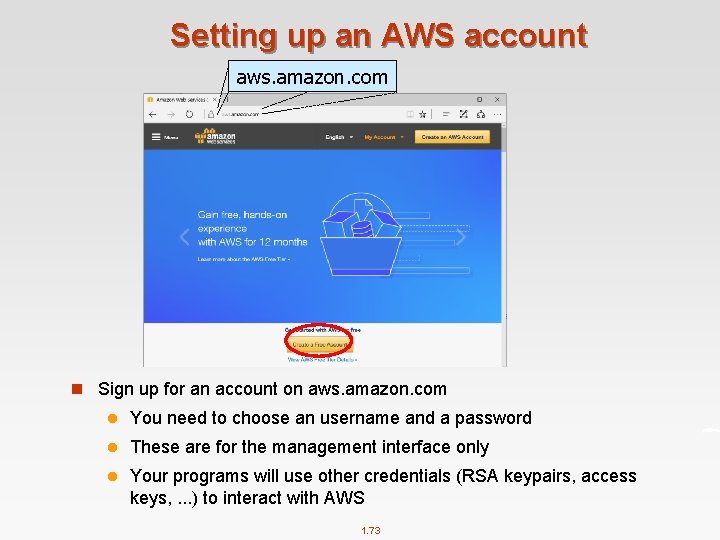

Setting up an AWS account
- Sign up for an account on aws.amazon.com
  - You need to choose a username and a password
  - These are for the management interface only
  - Your programs will use other credentials (RSA keypairs, access keys, …) to interact with AWS

Signing up for AWS Educate
- Complete the web form on https://aws.amazon.com/education/awseducate/
  - Assumes you already have an AWS account
  - Use your UNSW email address!
  - Amazon says it should only take 2–5 minutes (but don’t rely on this!!)
- This should give you $100/year in AWS credits. Be careful!!!

Big Data Applications
- Finding similar items
- Graph data processing
- Data stream mining
- Recommender Systems

End of Chapter 1

- Slides: 76