A tutorial about SVM

Omer Boehm, omerb@il.ibm.com

Neurocomputation Seminar, © 2011 IBM Corporation

IBM Haifa Labs

Outline
- Introduction
- Classification
- Perceptron
- SVM for linearly separable data
- SVM for almost linearly separable data
- SVM for non-linearly separable data

Introduction
- Machine learning is a branch of artificial intelligence, a scientific discipline concerned with the design and development of algorithms that allow computers to evolve behaviors based on empirical data.
- An important task of machine learning is classification.
- Classification is also referred to as pattern recognition.

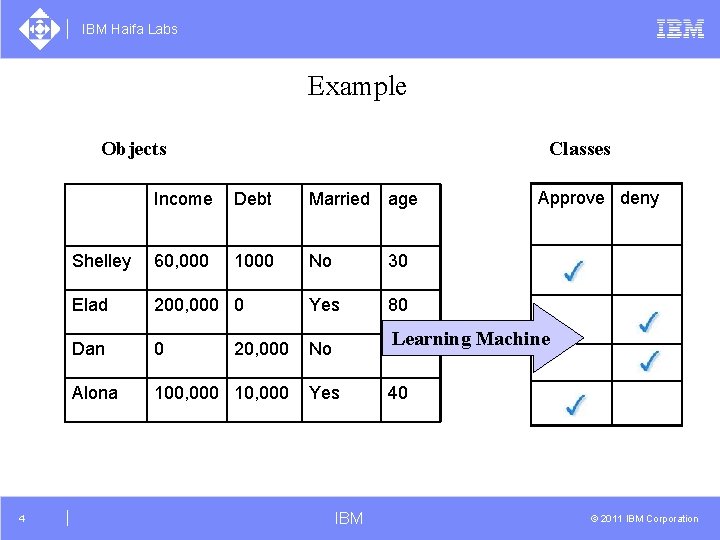

Example

Objects (Income, Debt, Married, Age):
- Shelley: 60,000, 1,000, No, 30
- Elad: 200,000, 0, Yes, 80
- Dan: 0 / 20,000 (income and debt), No, 25
- Alona: 100,000, 10,000, Yes, 40

Classes: Approve, Deny

Objects → Learning Machine → Classes

Types of learning problems
- Supervised learning (n classes, n > 1)
  - Classification
  - Regression
- Unsupervised learning (0 classes)
  - Clustering (building equivalence classes)
  - Density estimation

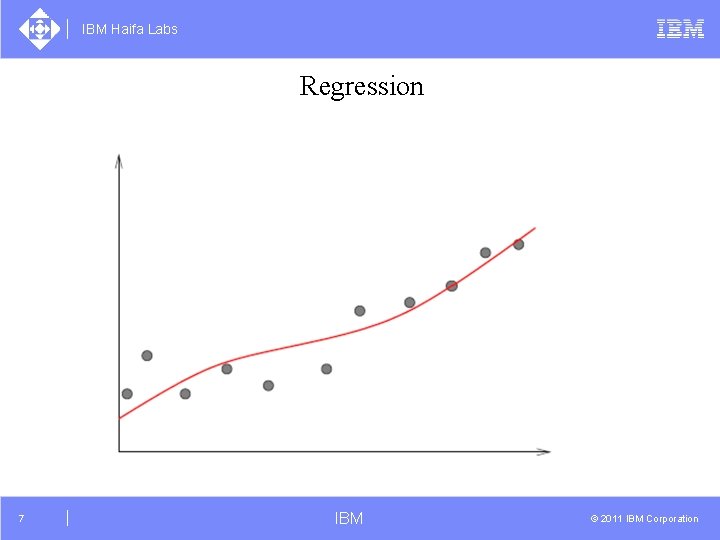

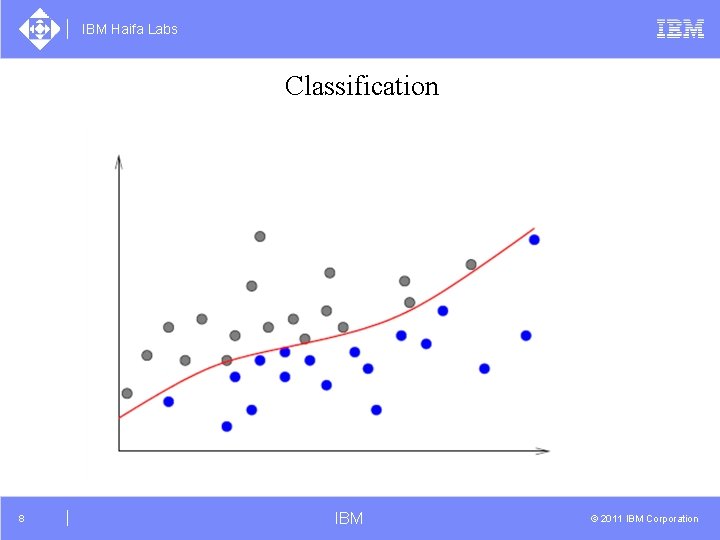

Supervised learning
- Regression
  - Learn a continuous function from input samples
  - Stock prediction
    - Input: future date
    - Output: stock price
    - Training: information on the stock price over the last period
- Classification
  - Learn a separation function from discrete inputs to classes
  - Optical Character Recognition (OCR)
    - Input: images of digits
    - Output: labels 0-9
    - Training: labeled images of digits
- In fact, these are approximation problems.

Regression

Classification

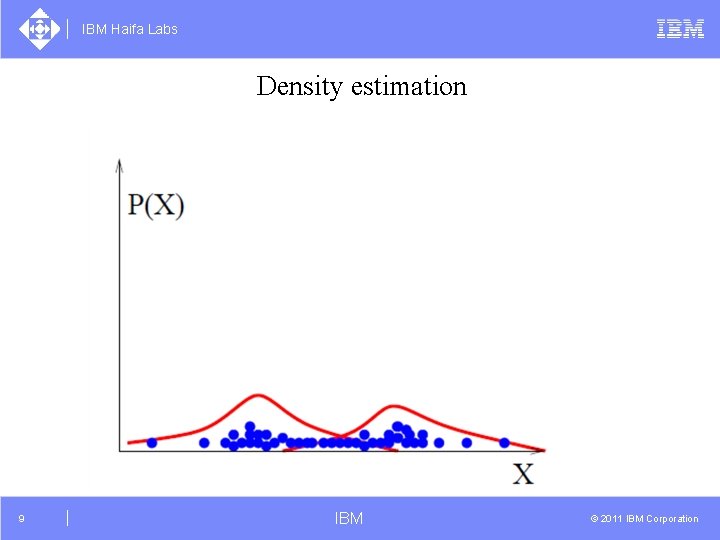

Density estimation

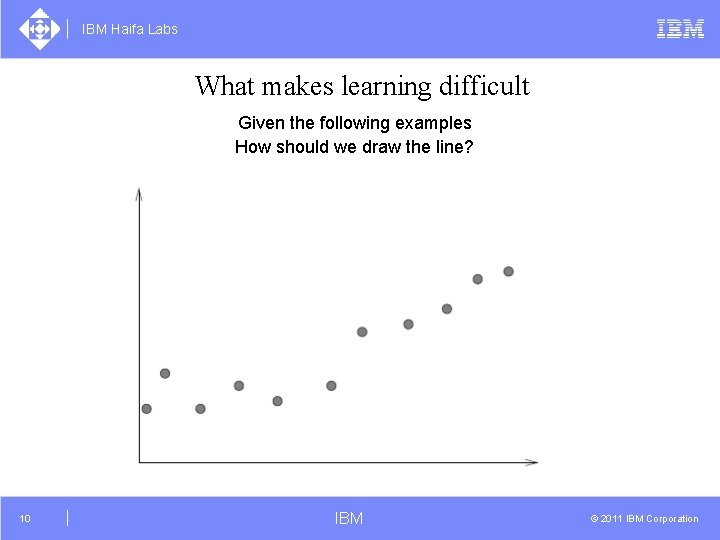

What makes learning difficult

Given the following examples, how should we draw the line?

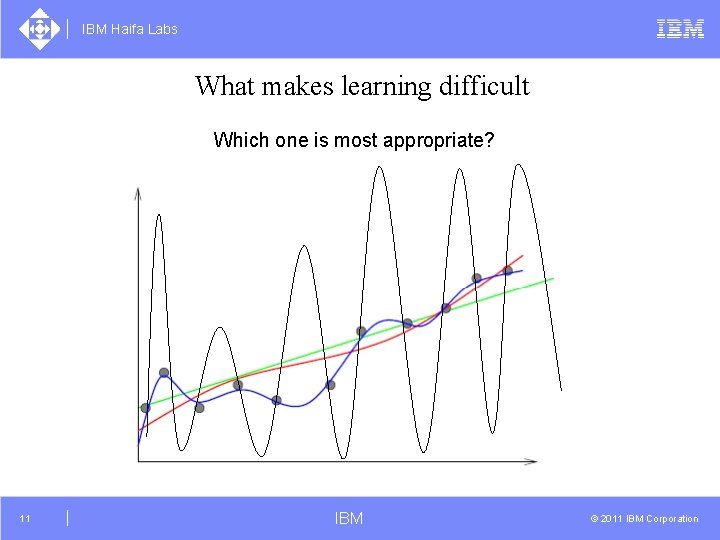

What makes learning difficult

Which one is most appropriate?

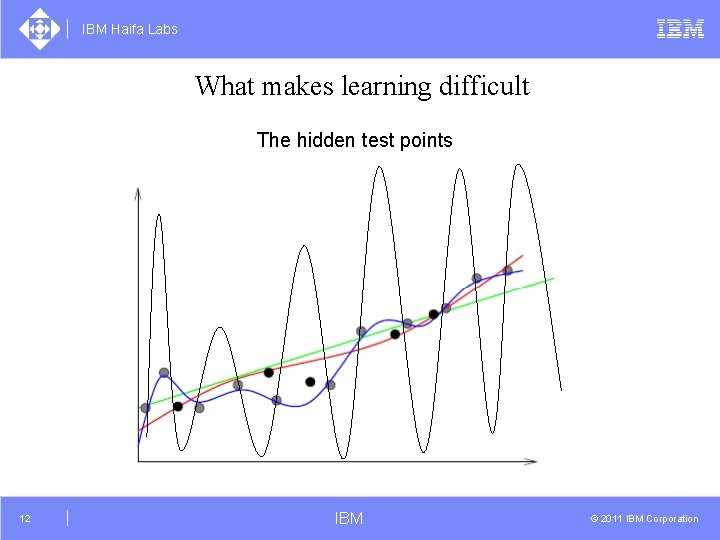

What makes learning difficult

The hidden test points

What is Learning (mathematically)?
- We would like to ensure that small changes in an input point relative to a learning point will not result in a jump to a different classification.
- Such an approximation is called a stable approximation.
- As a rule of thumb, small derivatives ensure stable approximation.

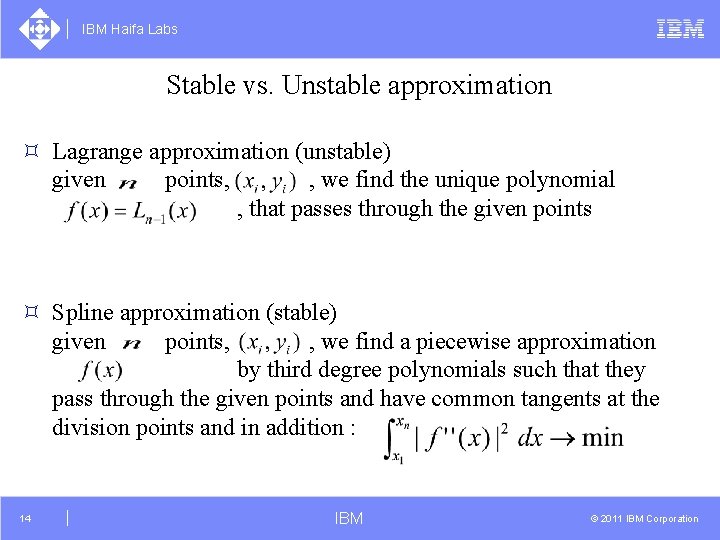

Stable vs. unstable approximation
- Lagrange approximation (unstable): given points $(x_i, y_i)$, $i = 0, \dots, n$, we find the unique polynomial $L_n(x)$ of degree at most $n$ that passes through the given points.
- Spline approximation (stable): given points $(x_i, y_i)$, $i = 0, \dots, n$, we find a piecewise approximation $S(x)$ by third-degree polynomials such that they pass through the given points, have common tangents at the division points, and satisfy one additional condition.

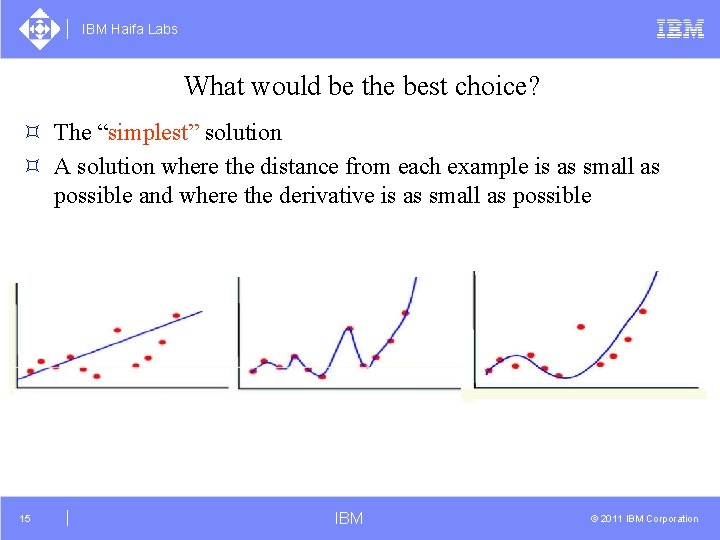

What would be the best choice?
- The "simplest" solution
- A solution where the distance from each example is as small as possible and where the derivative is as small as possible

Vector Geometry

Just in case...

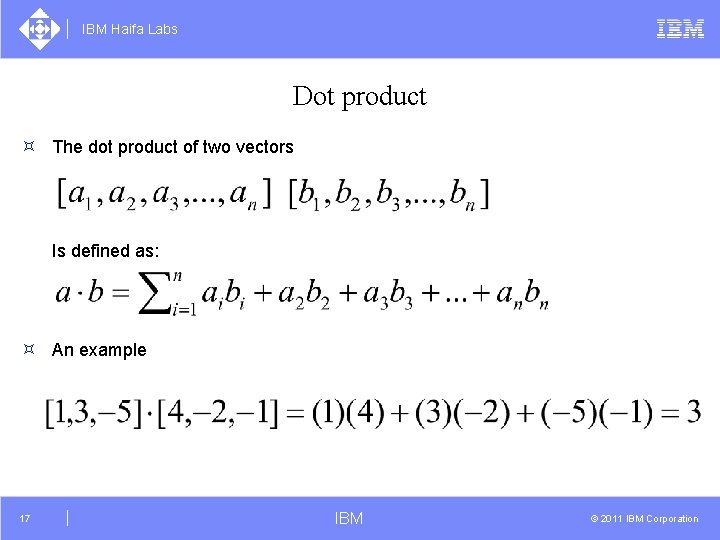

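Dot product
- The dot product of two vectors $a = (a_1, \dots, a_n)$ and $b = (b_1, \dots, b_n)$ is defined as: $a \cdot b = \sum_{i=1}^{n} a_i b_i = a_1 b_1 + \dots + a_n b_n$
- An example: $(1, 3, -5) \cdot (4, -2, -1) = 4 - 6 + 5 = 3$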

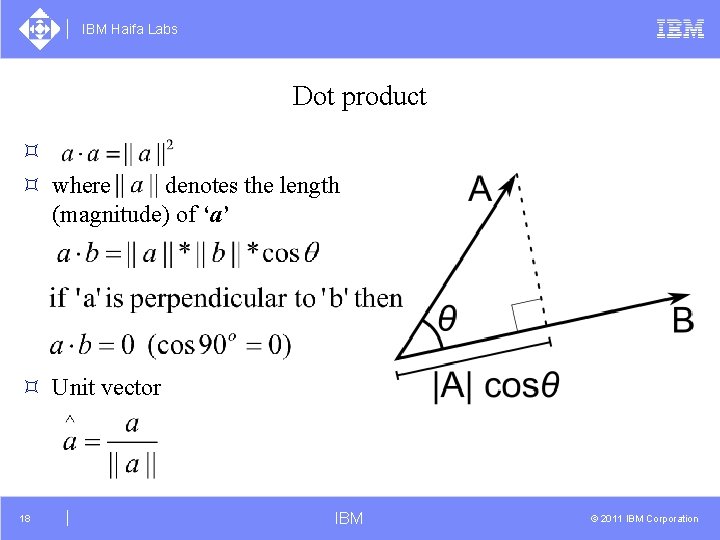

Dot product
- $a \cdot b = \|a\| \, \|b\| \cos\theta$, where $\theta$ is the angle between $a$ and $b$
- $a \cdot a = \|a\|^2$, where $\|a\|$ denotes the length (magnitude) of $a$
- Unit vector: $\hat{a} = a / \|a\|$
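
The dot product, length, and unit vector can be checked on a small example. This is a minimal sketch in plain Python; the helper names `dot`, `norm`, and `unit` and the two sample vectors are illustrative assumptions, not part of the slides.

```python
import math

def dot(a, b):
    """Dot product: sum of the component-wise products."""
    return sum(ai * bi for ai, bi in zip(a, b))

def norm(a):
    """Length (magnitude) of a, using a . a = ||a||^2."""
    return math.sqrt(dot(a, a))

def unit(a):
    """Unit vector pointing in the direction of a."""
    n = norm(a)
    return [ai / n for ai in a]

a = [3.0, 4.0]
b = [4.0, -3.0]

# cos(theta) follows from a . b = ||a|| ||b|| cos(theta)
cos_theta = dot(a, b) / (norm(a) * norm(b))

print(dot(a, b))   # 0.0, so a and b are perpendicular
print(norm(a))     # 5.0
print(unit(a))     # [0.6, 0.8]
```

A zero dot product is the perpendicularity test used later to define a hyperplane.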

Plane/Hyperplane
- A hyperplane can be defined by:
  - Three points
  - Two vectors
  - A normal vector and a point

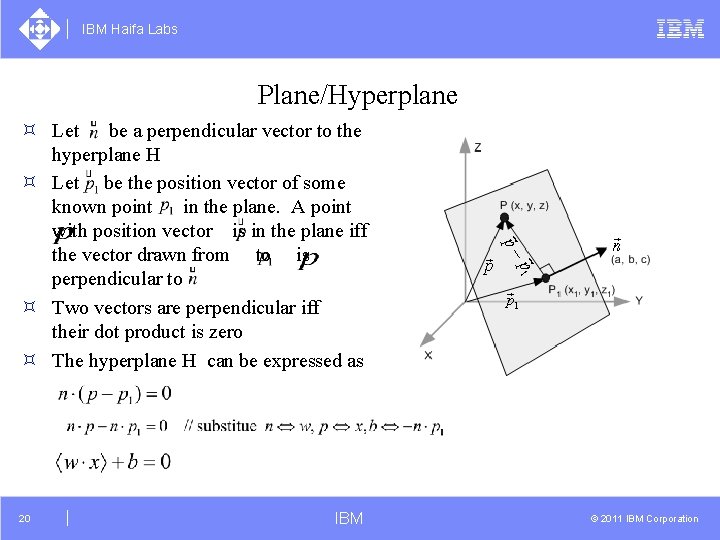

Plane/Hyperplane
- Let $n$ be a vector perpendicular to the hyperplane $H$.
- Let $r_0$ be the position vector of some known point $P_0$ in the plane. A point $P$ with position vector $r$ is in the plane iff the vector drawn from $P_0$ to $P$ is perpendicular to $n$.
- Two vectors are perpendicular iff their dot product is zero.
- The hyperplane $H$ can be expressed as $n \cdot (r - r_0) = 0$.

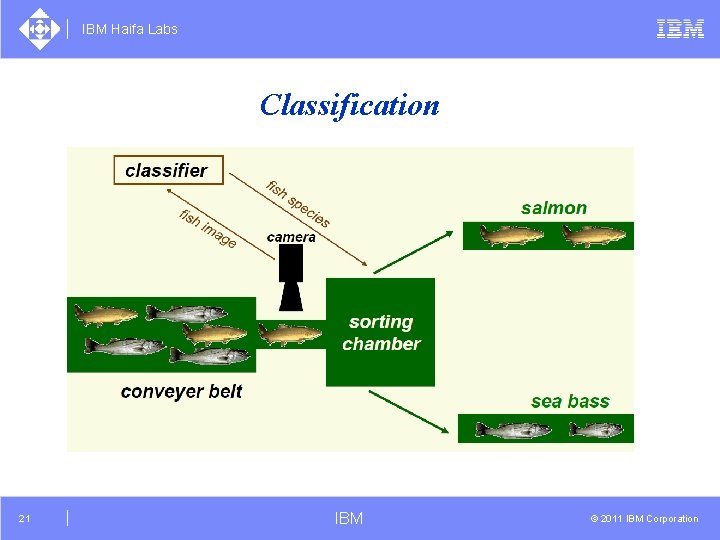

Classification

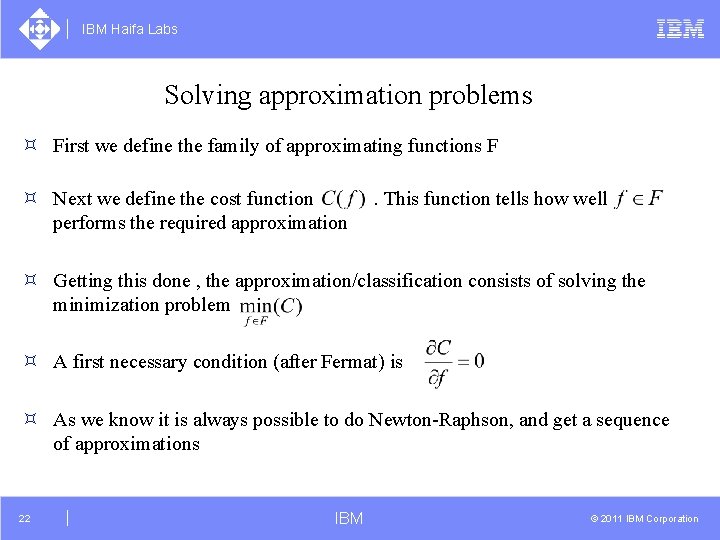

Solving approximation problems
- First we define the family of approximating functions $F$.
- Next we define the cost function $J(f)$. This function tells how well $f \in F$ performs the required approximation.
- With this done, the approximation/classification consists of solving the minimization problem $\min_{f \in F} J(f)$.
- A first necessary condition (after Fermat) is $\frac{\partial J}{\partial f} = 0$.
- As we know, it is always possible to apply Newton-Raphson and get a sequence of approximations.
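
The Newton-Raphson step can be sketched on a one-parameter cost. The cost $J(t) = (t-2)^4 + (t-2)^2$ below is a hypothetical stand-in chosen only because its minimizer ($t = 2$) is known; the function name `newton_minimize` is also an assumption. The iteration solves the first-order condition $J'(t) = 0$ by repeatedly applying $t \leftarrow t - J'(t)/J''(t)$.

```python
def newton_minimize(dJ, d2J, t0, tol=1e-12, max_iter=100):
    """Newton-Raphson on the stationarity condition dJ(t) = 0.

    Returns the final iterate and the whole sequence of approximations.
    """
    t = t0
    history = [t]
    for _ in range(max_iter):
        step = dJ(t) / d2J(t)
        t -= step
        history.append(t)
        if abs(step) < tol:
            break
    return t, history

# Hypothetical cost with a known minimizer at t = 2.
J = lambda t: (t - 2) ** 4 + (t - 2) ** 2
dJ = lambda t: 4 * (t - 2) ** 3 + 2 * (t - 2)
d2J = lambda t: 12 * (t - 2) ** 2 + 2

t_star, history = newton_minimize(dJ, d2J, t0=0.0)
print(t_star, len(history))
```

Because this cost is convex, the stationary point found is the global minimum; for a general cost the condition is only necessary.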

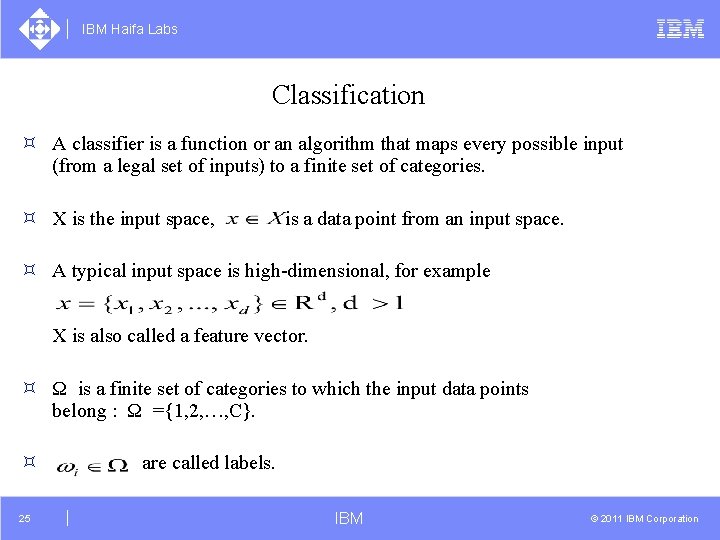

Classification
- A classifier is a function or an algorithm that maps every possible input (from a legal set of inputs) to a finite set of categories.
- $X$ is the input space; $x \in X$ is a data point from the input space.
- A typical input space is high-dimensional, for example $x = (x_1, \dots, x_d) \in \mathbb{R}^d$ with $d \gg 1$. $x$ is also called a feature vector.
- $\Omega$ is a finite set of categories to which the input data points belong: $\Omega = \{1, 2, \dots, C\}$.
- The elements $\omega_i \in \Omega$ are called labels.

Classification
- $Y$ is a finite set of decisions: the output set of the classifier.
- The classifier is a function $f: X \to Y$.

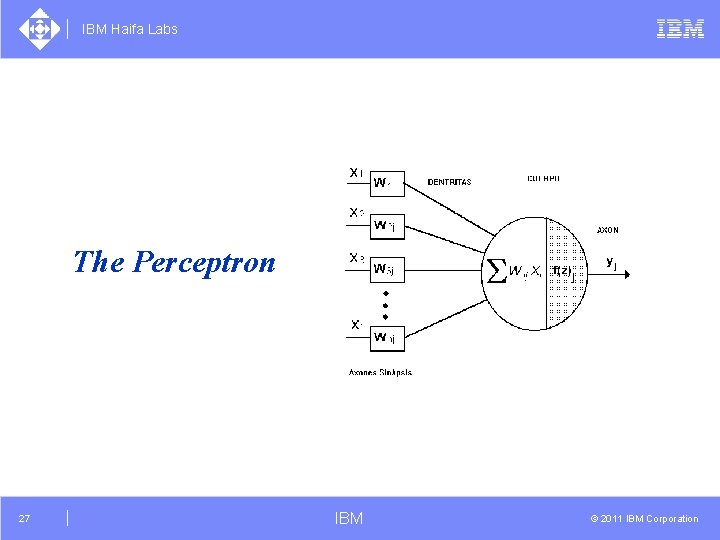

The Perceptron

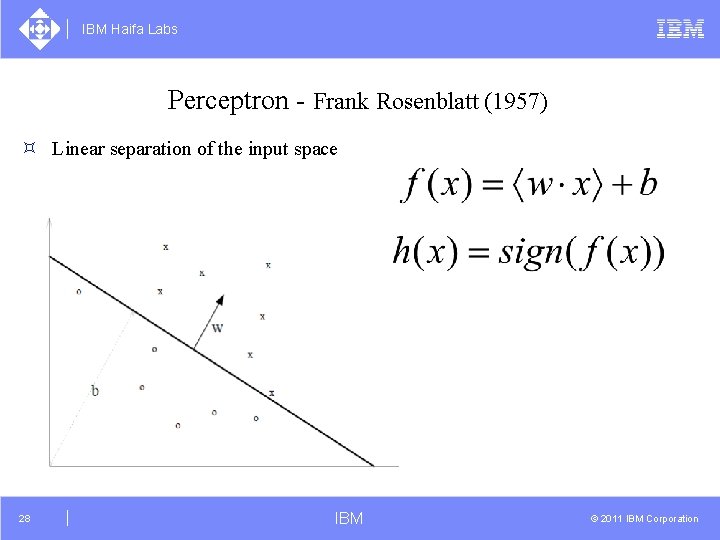

Perceptron - Frank Rosenblatt (1957)
- Linear separation of the input space

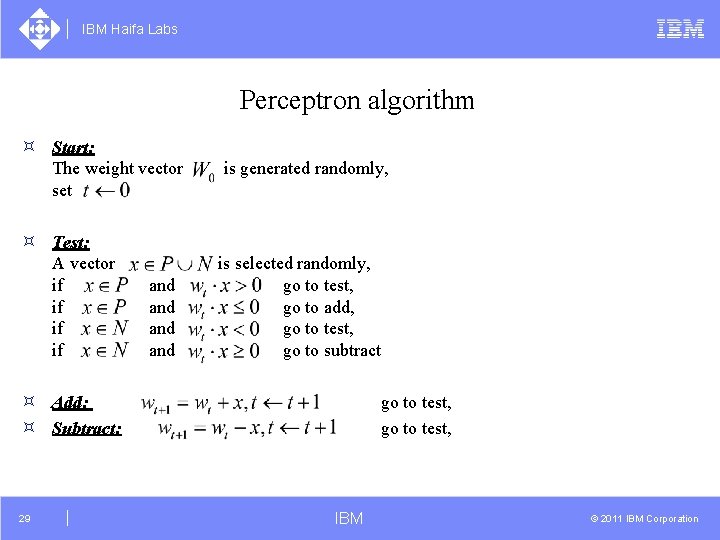

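Perceptron algorithm
- Start: The weight vector $w_0$ is generated randomly; set $t := 0$.
- Test: A vector $x \in P \cup N$ is selected randomly, where $P$ and $N$ are the sets of positive and negative examples.
  - if $x \in P$ and $w_t \cdot x > 0$, go to test
  - if $x \in P$ and $w_t \cdot x \le 0$, go to add
  - if $x \in N$ and $w_t \cdot x < 0$, go to test
  - if $x \in N$ and $w_t \cdot x \ge 0$, go to subtract
- Add: $w_{t+1} = w_t + x$, $t := t + 1$, go to test
- Subtract: $w_{t+1} = w_t - x$, $t := t + 1$, go to test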

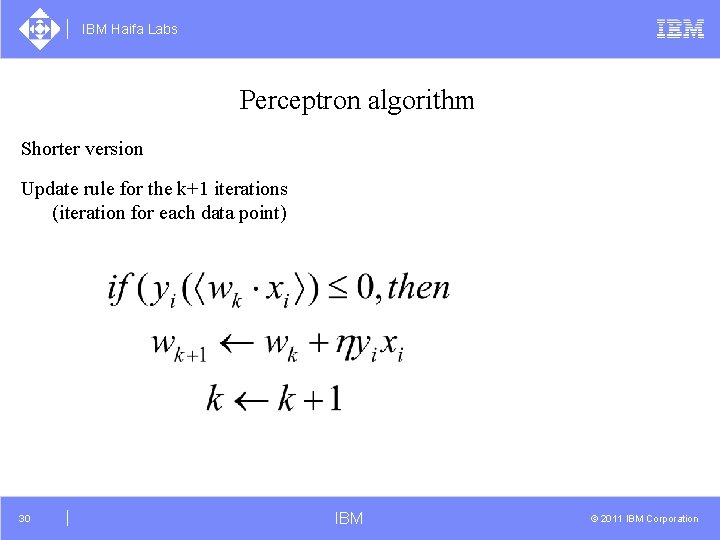

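Perceptron algorithm: shorter version

Update rule for the $(k+1)$-th iteration (one iteration per data point), with labels $y_i \in \{-1, +1\}$:

$$w_{k+1} = \begin{cases} w_k + y_i x_i & \text{if } y_i (w_k \cdot x_i) \le 0 \\ w_k & \text{otherwise} \end{cases}$$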
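
The mistake-driven update can be sketched in a few lines of Python. The assumptions here go beyond the slides: labels are coded as $\pm 1$, the bias is absorbed by appending a constant 1 to each input, the weights start at zero instead of random values, and training stops after an epoch with no mistakes or after `max_epochs` (a hypothetical safety cap). The function name `train_perceptron` is illustrative.

```python
import random

def train_perceptron(points, labels, max_epochs=1000, seed=0):
    """Perceptron with the update w <- w + y*x on every misclassified point.

    Returns (weights, converged); the last weight is the bias term.
    """
    rng = random.Random(seed)
    # Append a constant 1 so the bias is learned as an ordinary weight.
    data = [(list(x) + [1.0], y) for x, y in zip(points, labels)]
    w = [0.0] * len(data[0][0])
    for _ in range(max_epochs):
        rng.shuffle(data)
        mistakes = 0
        for x, y in data:
            if y * sum(wi * xi for wi, xi in zip(w, x)) <= 0:
                w = [wi + y * xi for wi, xi in zip(w, x)]
                mistakes += 1
        if mistakes == 0:
            return w, True       # every point is on the correct side
    return w, False              # cap reached without a clean epoch

points = [(0, 0), (0, 1), (1, 0), (1, 1)]
and_labels = [-1, -1, -1, 1]     # linearly separable
xor_labels = [-1, 1, 1, -1]      # not linearly separable

w_and, ok_and = train_perceptron(points, and_labels)
w_xor, ok_xor = train_perceptron(points, xor_labels, max_epochs=200)
print(ok_and, ok_xor)
```

On the AND labels the loop stops with a separating weight vector. On XOR no weight vector classifies all four points, so only the epoch cap ends the run.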

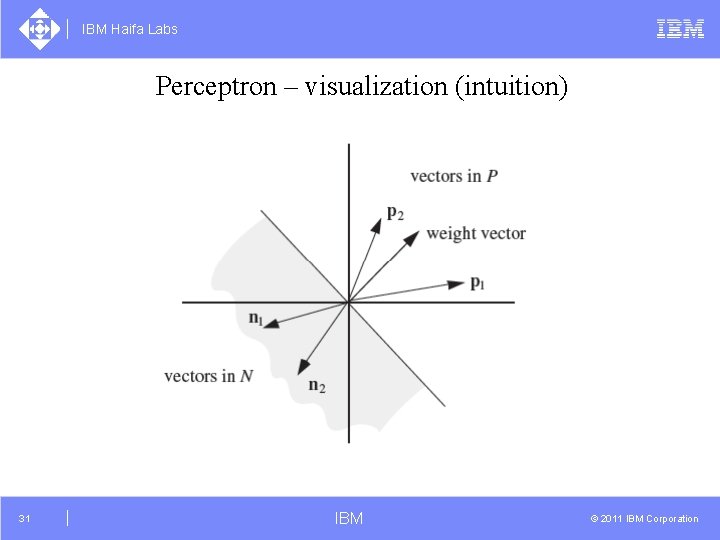

Perceptron – visualization (intuition)

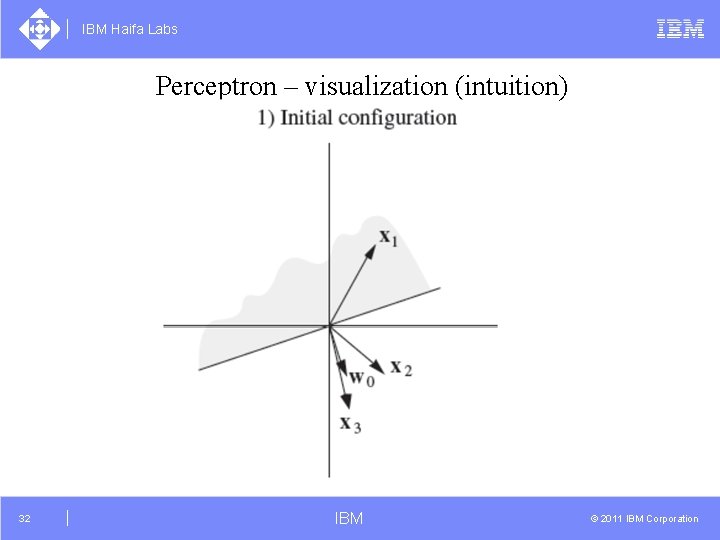

Perceptron – visualization (intuition)

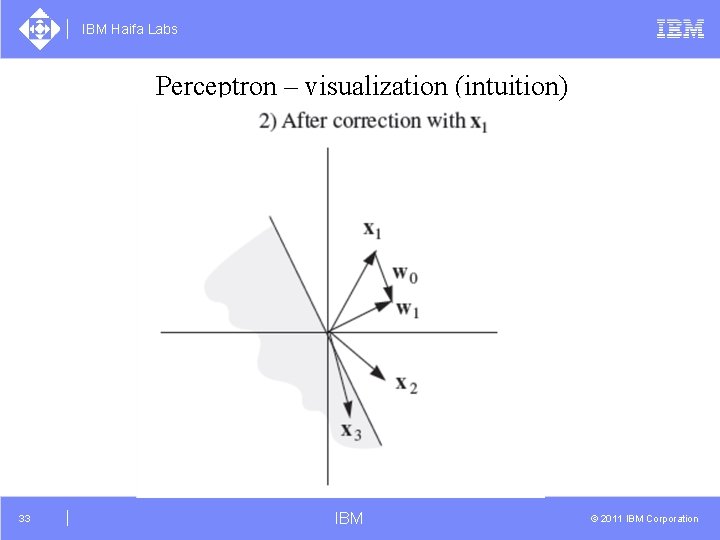

Perceptron – visualization (intuition)

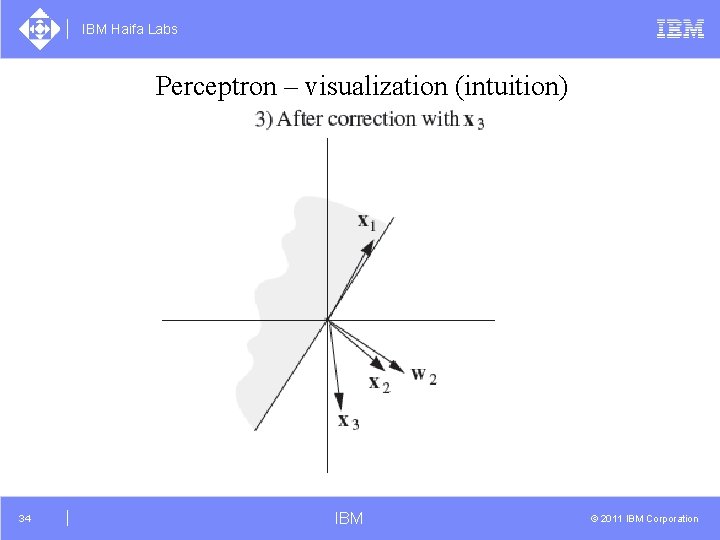

Perceptron – visualization (intuition)

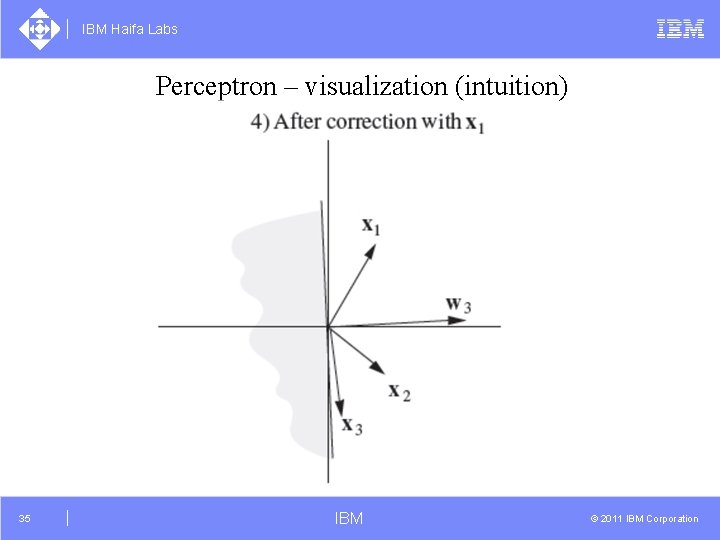

Perceptron – visualization (intuition)

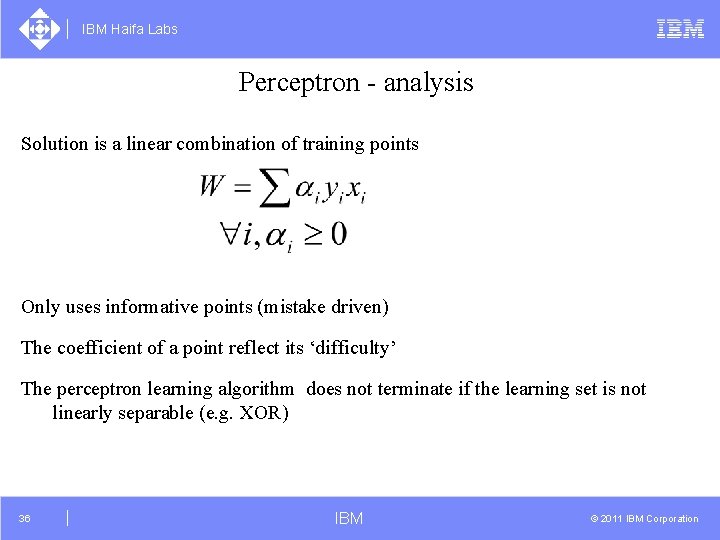

Perceptron - analysis
- The solution is a linear combination of training points.
- Only uses informative points (mistake driven).
- The coefficient of a point reflects its 'difficulty'.
- The perceptron learning algorithm does not terminate if the learning set is not linearly separable (e.g. XOR).

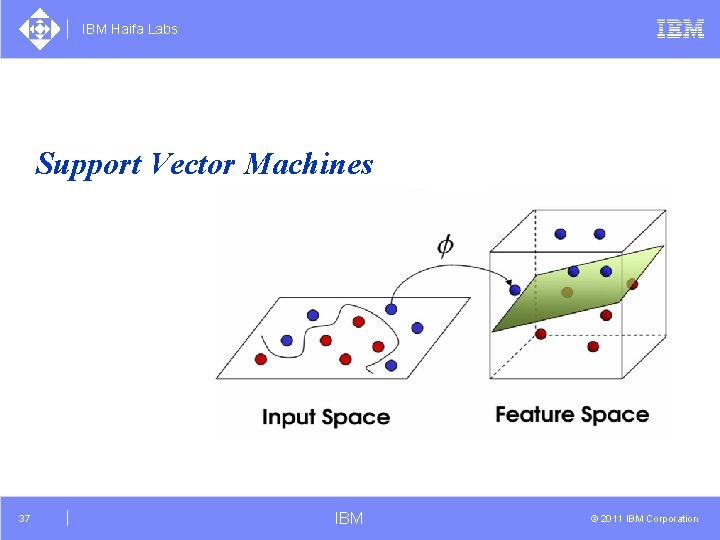

Support Vector Machines

Advantages of SVM (Vladimir Vapnik, 1979, 1998)
- Exhibit good generalization
- Can implement confidence measures, etc.
- Hypothesis has an explicit dependence on the data (via the support vectors)
- Learning involves optimization of a convex function (no false minima, unlike neural networks)
- Few parameters required for tuning the learning machine (unlike neural networks, where the architecture and various parameters must be found)

Advantages of SVM
- From the perspective of statistical learning theory, the motivation for considering binary classifier SVMs comes from theoretical bounds on the generalization error.
- These generalization bounds have two important features:

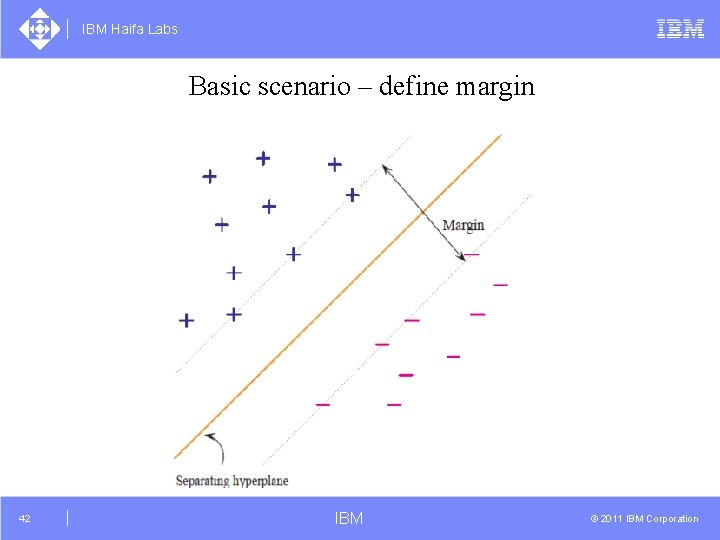

Advantages of SVM
- The upper bound on the generalization error does not depend on the dimensionality of the space.
- The bound is minimized by maximizing the margin, i.e. the minimal distance between the hyperplane separating the two classes and the closest data points of each class.

Basic scenario - Separable data set

Basic scenario – define margin

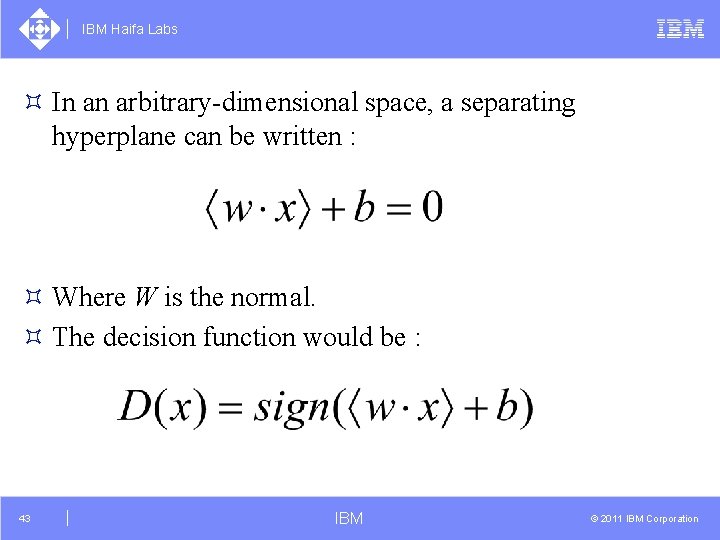

- In an arbitrary-dimensional space, a separating hyperplane can be written: $w \cdot x + b = 0$
- where $w$ is the normal to the hyperplane.
- The decision function would be: $f(x) = \operatorname{sign}(w \cdot x + b)$

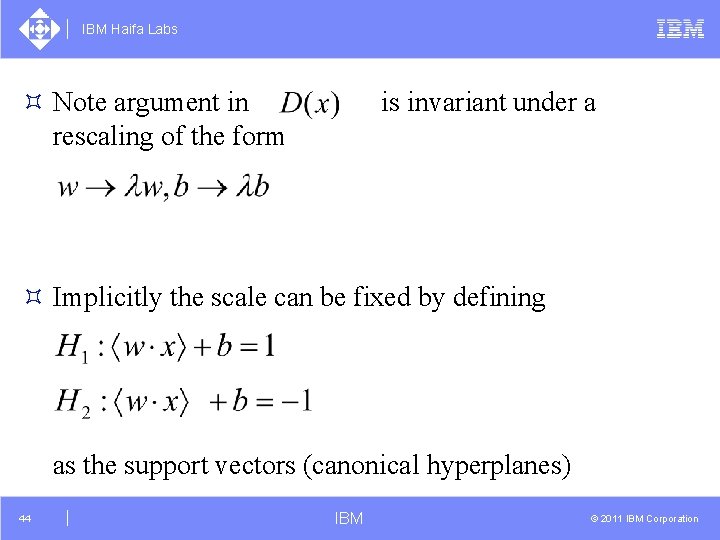

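- Note that the decision function $\operatorname{sign}(w \cdot x + b)$ is invariant under a rescaling of the form $w \to \lambda w$, $b \to \lambda b$ with $\lambda > 0$.
- Implicitly the scale can be fixed by defining $H_1: w \cdot x + b = +1$ and $H_2: w \cdot x + b = -1$ as the hyperplanes through the support vectors (canonical hyperplanes).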

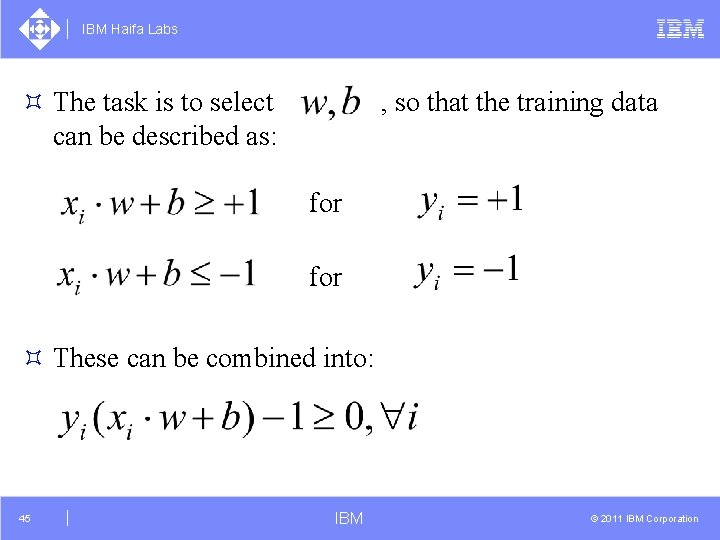

- The task is to select $w, b$ so that the training data can be described as:
  - $w \cdot x_i + b \ge +1$ for $y_i = +1$
  - $w \cdot x_i + b \le -1$ for $y_i = -1$
- These can be combined into: $y_i (w \cdot x_i + b) - 1 \ge 0$ for all $i$

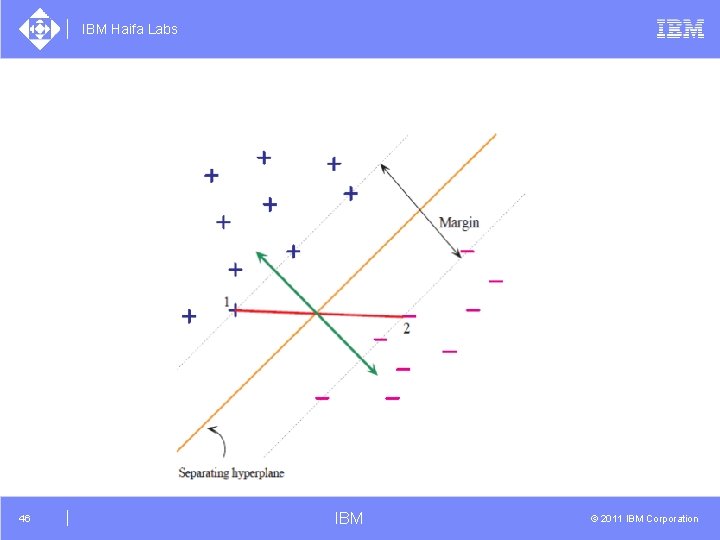

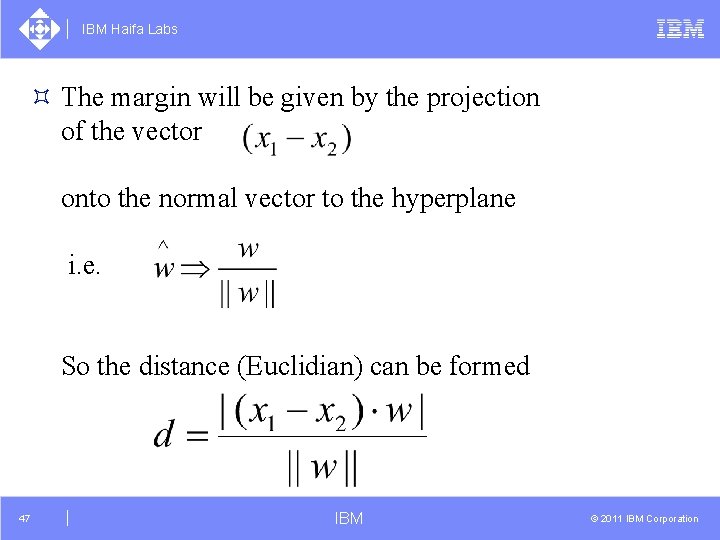

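- The margin will be given by the projection of the vector $(x_1 - x_2)$ onto the unit normal to the hyperplane, i.e. $w / \|w\|$, where $x_1$ and $x_2$ are support vectors on opposite sides of the hyperplane. So the (Euclidean) distance can be formed as $d = \frac{|(x_1 - x_2) \cdot w|}{\|w\|}$.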

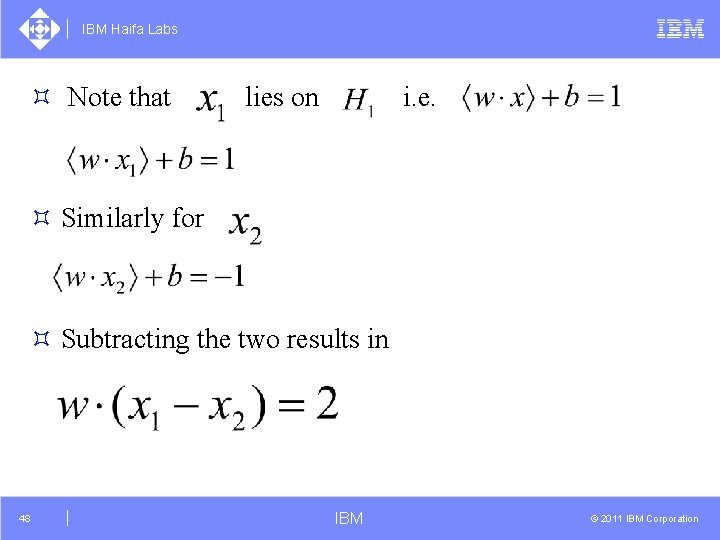

- Note that $x_1$ lies on the canonical hyperplane $H_1$, i.e. $w \cdot x_1 + b = 1$.
- Similarly for $x_2$ on $H_2$: $w \cdot x_2 + b = -1$.
- Subtracting the two results in $w \cdot (x_1 - x_2) = 2$.

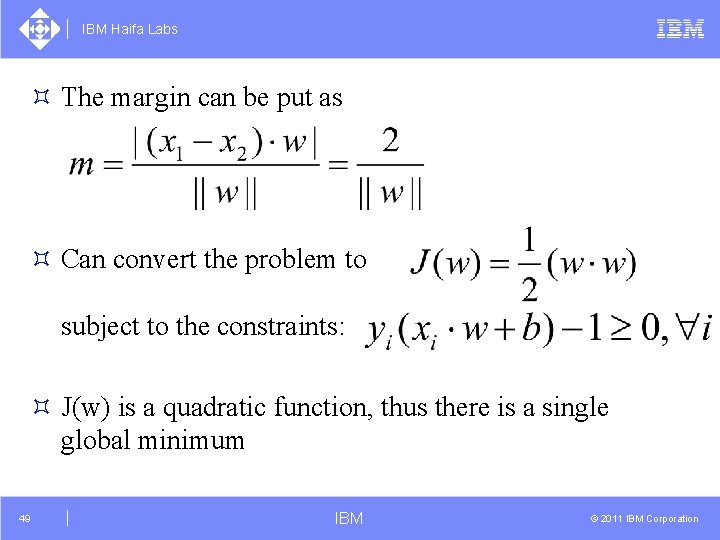

- The margin can be put as $m = \frac{2}{\|w\|}$.
- Can convert the problem to: minimize $J(w) = \frac{1}{2} \|w\|^2$ subject to the constraints $y_i (w \cdot x_i + b) \ge 1$ for all $i$.
- $J(w)$ is a quadratic function, thus there is a single global minimum.
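
The relation between the canonical constraints $y_i (w \cdot x_i + b) \ge 1$ and the margin $2 / \|w\|$ can be checked numerically. The four data points and the hyperplane $w = (1, 0)$, $b = -2$ below are a hand-picked toy example (an assumption, not data from the slides).

```python
import math

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

# Toy data: (point, label). Hyperplane chosen by hand: x1 = 2.
data = [([3.0, 0.0], 1), ([4.0, 1.0], 1), ([1.0, 0.0], -1), ([0.0, -1.0], -1)]
w, b = [1.0, 0.0], -2.0

# Canonical form: y_i (w . x_i + b) >= 1, with equality on the support vectors.
functional = [y * (dot(w, x) + b) for x, y in data]

norm_w = math.sqrt(dot(w, w))
margin = 2.0 / norm_w

# Euclidean distance of each point to the hyperplane w . x + b = 0.
distances = [abs(dot(w, x) + b) / norm_w for x, _ in data]

# Rescaling (w, b) by a positive factor leaves the geometry unchanged.
lam = 5.0
w2, b2 = [lam * wi for wi in w], lam * b
norm_w2 = math.sqrt(dot(w2, w2))
distances2 = [abs(dot(w2, x) + b2) / norm_w2 for x, _ in data]

print(functional)   # [1.0, 2.0, 1.0, 2.0]
print(margin)       # 2.0
print(distances)    # [1.0, 2.0, 1.0, 2.0]
```

The closest points on each side sit at distance $1 / \|w\|$, so the gap between the two classes is $2 / \|w\|$; minimizing $\|w\|$ therefore maximizes the margin.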

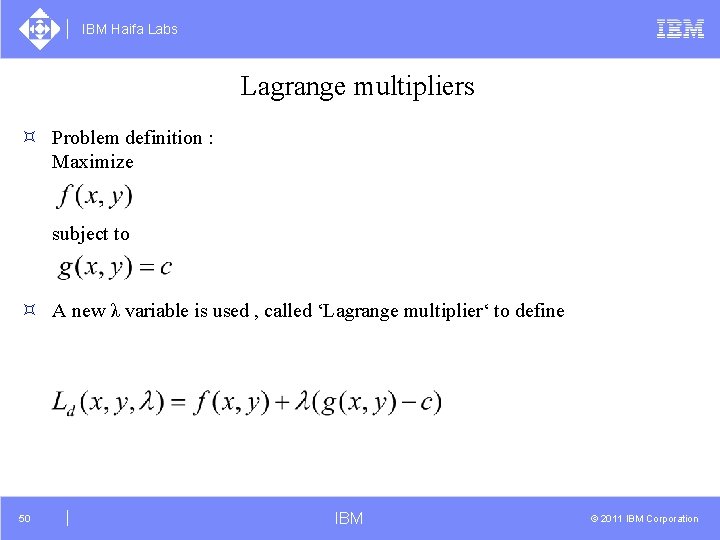

Lagrange multipliers
- Problem definition: maximize $f(x, y)$ subject to $g(x, y) = c$.
- A new variable $\lambda$, called a 'Lagrange multiplier', is used to define $\Lambda(x, y, \lambda) = f(x, y) + \lambda \, (g(x, y) - c)$.

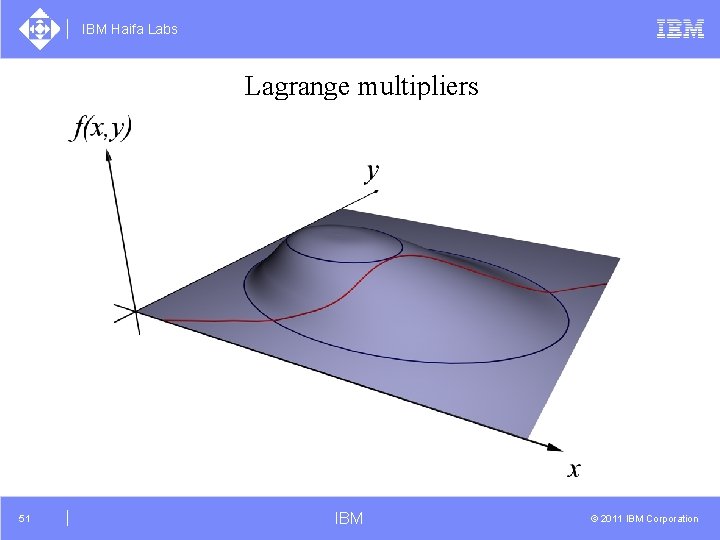

Lagrange multipliers

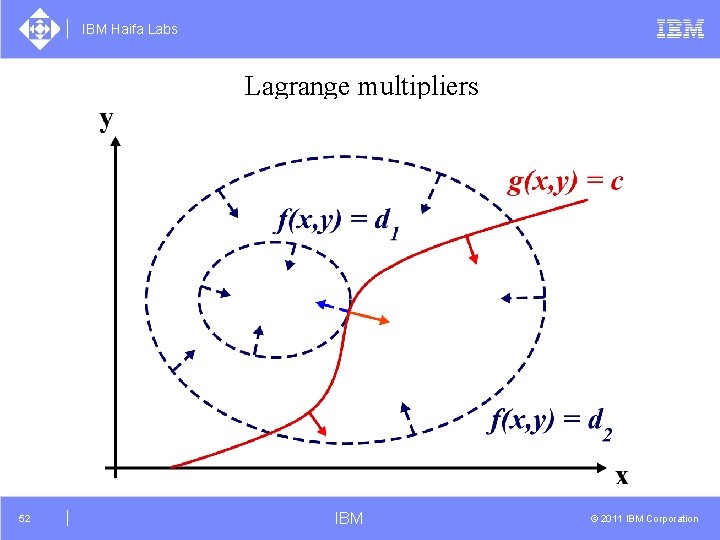

Lagrange multipliers

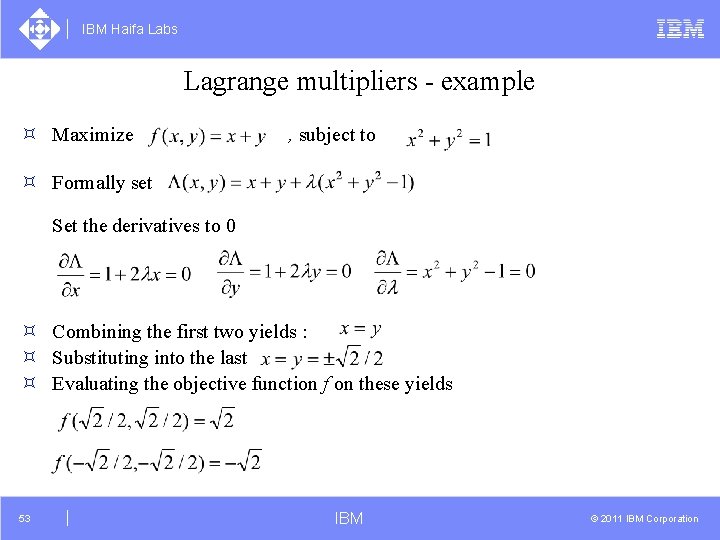

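Lagrange multipliers - example
- Maximize $f(x, y) = x + y$, subject to $x^2 + y^2 = 1$.
- Formally set $\Lambda(x, y, \lambda) = x + y + \lambda (x^2 + y^2 - 1)$ and set the derivatives to 0:
  - $\partial \Lambda / \partial x = 1 + 2 \lambda x = 0$
  - $\partial \Lambda / \partial y = 1 + 2 \lambda y = 0$
  - $\partial \Lambda / \partial \lambda = x^2 + y^2 - 1 = 0$
- Combining the first two yields: $x = y$
- Substituting into the last: $x = y = \pm \sqrt{2} / 2$
- Evaluating the objective function $f$ on these yields $f = \sqrt{2}$ and $f = -\sqrt{2}$, so the maximum is $\sqrt{2}$.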
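
A Lagrange-multiplier solution can be cross-checked by brute force. The sketch below assumes the instance "maximize $f(x, y) = x + y$ on the unit circle $x^2 + y^2 = 1$": it scans the circle on a grid of angles and compares the best value with the stationary point $x = y = \sqrt{2}/2$, $\lambda = -\sqrt{2}/2$ obtained from $\Lambda(x, y, \lambda) = x + y + \lambda (x^2 + y^2 - 1)$.

```python
import math

def f(x, y):
    return x + y

# Brute force: parametrize the constraint x^2 + y^2 = 1 by an angle t.
N = 3600
best_value, best_point = max(
    (f(math.cos(t), math.sin(t)), (math.cos(t), math.sin(t)))
    for t in (2 * math.pi * k / N for k in range(N))
)

# Candidate from the Lagrange conditions.
x_star = y_star = math.sqrt(2) / 2
lam = -1 / (2 * x_star)

# All three partial derivatives of Lambda should vanish there.
grad = (
    1 + 2 * lam * x_star,            # dLambda/dx
    1 + 2 * lam * y_star,            # dLambda/dy
    x_star ** 2 + y_star ** 2 - 1,   # dLambda/dlambda (the constraint)
)

print(best_value, f(x_star, y_star))
```

The grid search and the analytic stationary point agree up to the grid resolution.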

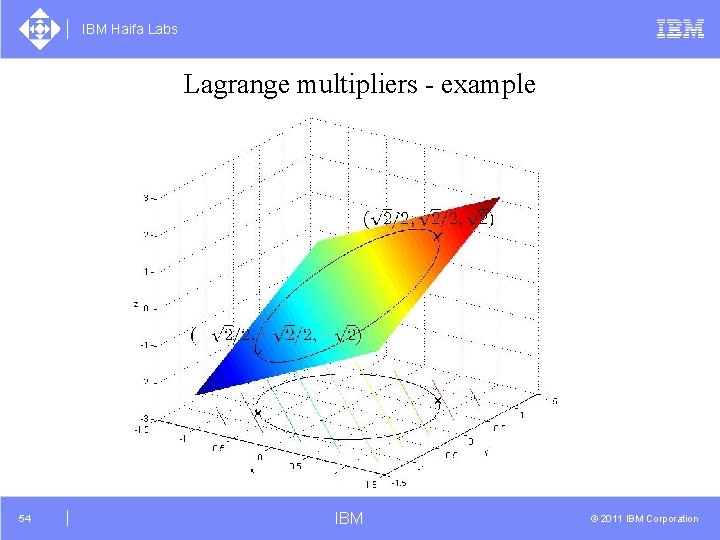

Lagrange multipliers - example

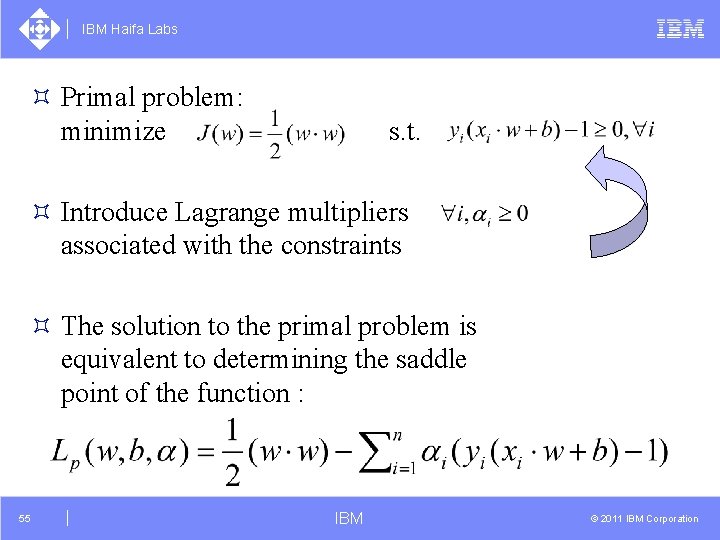

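- Primal problem: minimize $\frac{1}{2} \|w\|^2$ s.t. $y_i (w \cdot x_i + b) \ge 1$ for all $i$.
- Introduce Lagrange multipliers $\alpha_i \ge 0$ associated with the constraints.
- The solution to the primal problem is equivalent to determining the saddle point of the function: $L(w, b, \alpha) = \frac{1}{2} \|w\|^2 - \sum_i \alpha_i \left[ y_i (w \cdot x_i + b) - 1 \right]$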

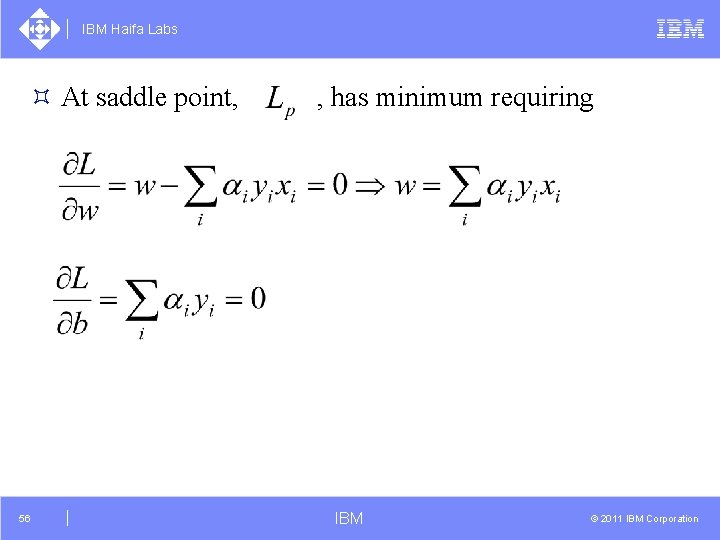

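- At the saddle point, $L(w, b, \alpha)$ has a minimum with respect to $w$ and $b$, requiring:
  - $\frac{\partial L}{\partial w} = w - \sum_i \alpha_i y_i x_i = 0$
  - $\frac{\partial L}{\partial b} = -\sum_i \alpha_i y_i = 0$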

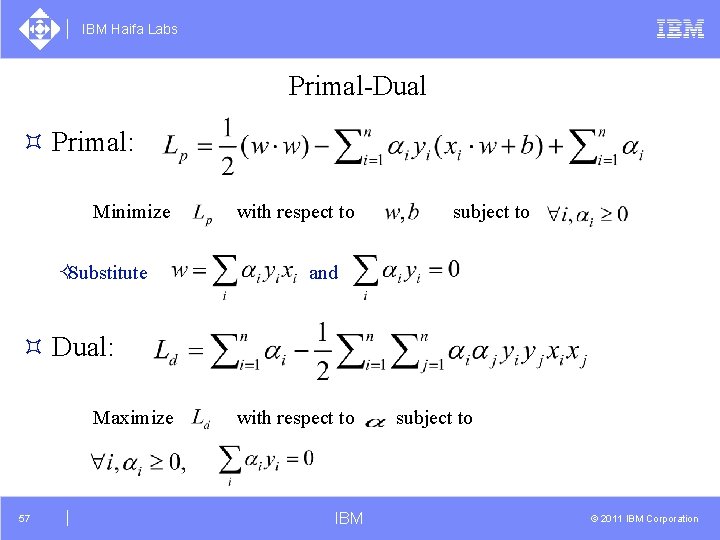

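Primal-Dual
- Primal: minimize $L_P = \frac{1}{2} \|w\|^2 - \sum_i \alpha_i \left[ y_i (w \cdot x_i + b) - 1 \right]$ with respect to $w, b$, subject to $\alpha_i \ge 0$.
  - Substitute $w = \sum_i \alpha_i y_i x_i$ and $\sum_i \alpha_i y_i = 0$.
- Dual: maximize $L_D = \sum_i \alpha_i - \frac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j \, (x_i \cdot x_j)$ with respect to $\alpha$, subject to $\alpha_i \ge 0$ and $\sum_i \alpha_i y_i = 0$.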

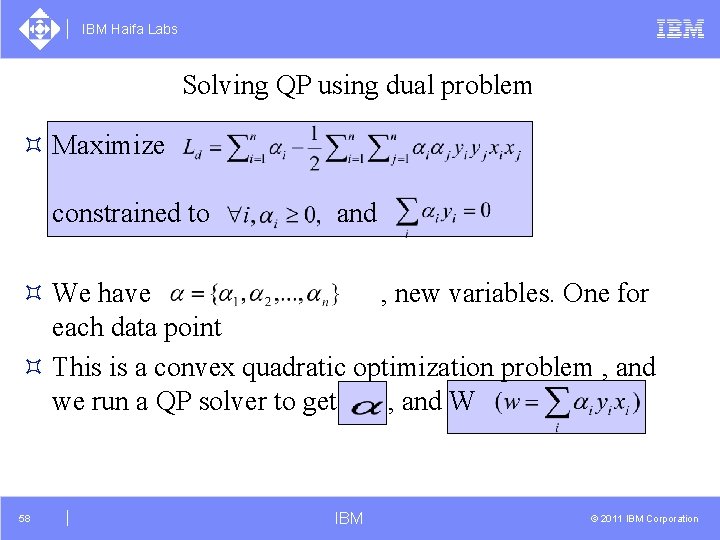

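Solving QP using dual problem
- Maximize $L_D(\alpha) = \sum_i \alpha_i - \frac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j \, (x_i \cdot x_j)$, constrained to $\alpha_i \ge 0$ and $\sum_i \alpha_i y_i = 0$.
- We have $n$ new variables $\alpha_1, \dots, \alpha_n$, one for each data point.
- This is a convex quadratic optimization problem, and we run a QP solver to get $\alpha$ and $w$.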

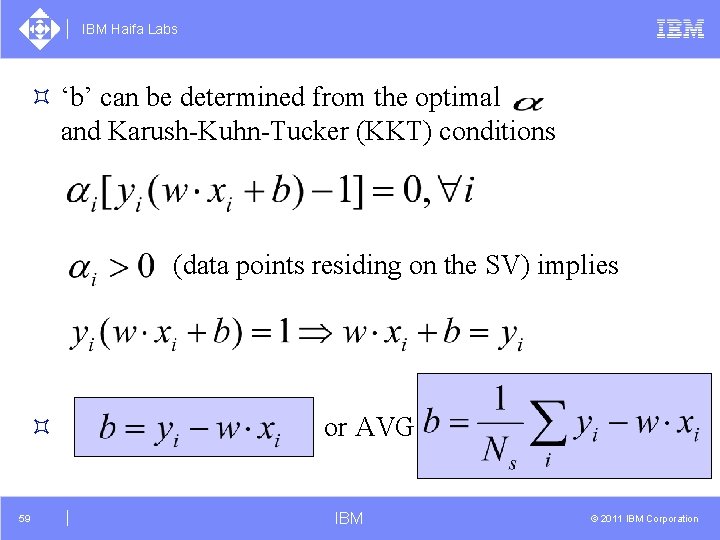

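- $b$ can be determined from the optimal $\alpha$ and the Karush-Kuhn-Tucker (KKT) conditions: $\alpha_i \left[ y_i (w \cdot x_i + b) - 1 \right] = 0$ for all $i$.
- $\alpha_i > 0$ (data points residing on the margin hyperplanes, i.e. support vectors) implies $y_i (w \cdot x_i + b) = 1$, so $b = y_i - w \cdot x_i$, or the average over all support vectors: $b = \frac{1}{N_{SV}} \sum_{i \in SV} (y_i - w \cdot x_i)$.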
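
For a training set with one point per class the dual can be solved by hand, which makes a useful sanity check before trusting a QP solver. With $x_+ $ labeled $+1$ and $x_-$ labeled $-1$, the constraint $\sum_i \alpha_i y_i = 0$ forces $\alpha_+ = \alpha_- = \alpha$, and the dual reduces to $L_D(\alpha) = 2\alpha - \frac{1}{2} \alpha^2 \|x_+ - x_-\|^2$, maximized at $\alpha = 2 / \|x_+ - x_-\|^2$. The two points below are an assumed toy example.

```python
import math

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

x_pos, x_neg = [3.0, 0.0], [1.0, 0.0]       # labels +1 and -1

# Closed-form maximizer of the two-point dual.
diff = [p - q for p, q in zip(x_pos, x_neg)]
alpha = 2.0 / dot(diff, diff)

# w = sum_i alpha_i y_i x_i = alpha * (x_pos - x_neg)
w = [alpha * d for d in diff]

# KKT: both points are support vectors, so y_i (w . x_i + b) = 1.
b_from_pos = 1 - dot(w, x_pos)
b_from_neg = -1 - dot(w, x_neg)
b = (b_from_pos + b_from_neg) / 2            # average over support vectors

margin = 2.0 / math.sqrt(dot(w, w))
print(alpha, w, b, margin)   # 0.5 [1.0, 0.0] -2.0 2.0
```

The resulting margin equals the distance between the two points, as expected when each class has a single point.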

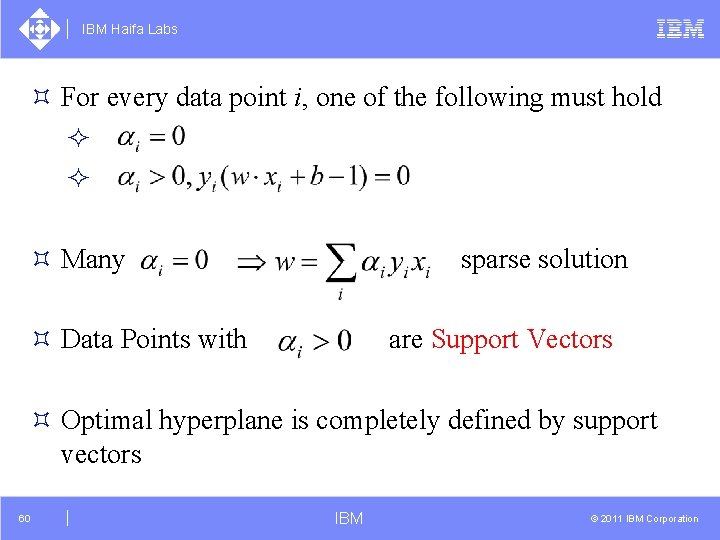

- For every data point $i$, one of the following must hold:
  - $\alpha_i = 0$
  - $\alpha_i > 0$ and $y_i (w \cdot x_i + b) = 1$
- Many $\alpha_i = 0$: sparse solution
- Data points with $\alpha_i > 0$ are support vectors
- The optimal hyperplane is completely defined by the support vectors

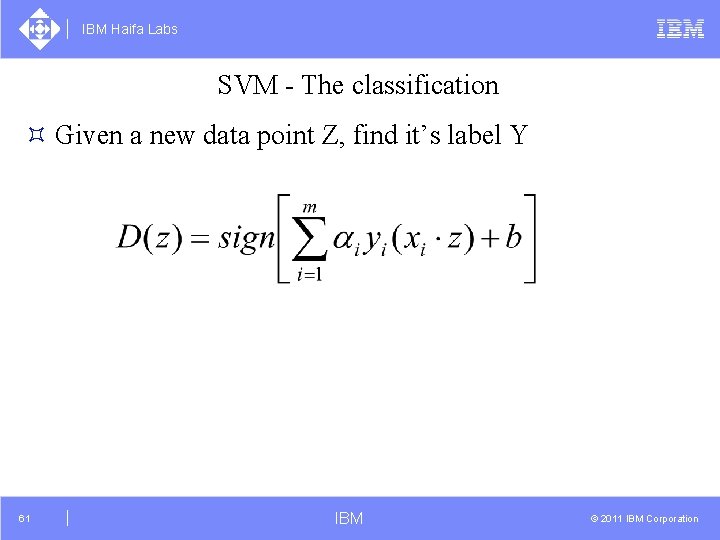

SVM - The classification
- Given a new data point $z$, find its label $y$: $y = \operatorname{sign}\left( \sum_{i \in SV} \alpha_i y_i \, (x_i \cdot z) + b \right)$
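
Classifying a new point needs only the support vectors, their multipliers and labels, and the offset $b$. The sketch below evaluates $\operatorname{sign}\left(\sum_{i \in SV} \alpha_i y_i (x_i \cdot z) + b\right)$; the support vectors and multipliers are assumed values for a toy two-point problem, and the function name `classify` is illustrative.

```python
def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def classify(z, support_vectors, alphas, labels, b):
    """Label of z from the dual representation of the hyperplane."""
    score = sum(a * y * dot(x, z)
                for x, a, y in zip(support_vectors, alphas, labels)) + b
    return 1 if score >= 0 else -1

# Assumed solution of a toy problem (equivalent to w = (1, 0), b = -2).
support_vectors = [[3.0, 0.0], [1.0, 0.0]]
alphas = [0.5, 0.5]
labels = [1, -1]
b = -2.0

print(classify([4.0, 1.0], support_vectors, alphas, labels, b))   # 1
print(classify([0.0, 5.0], support_vectors, alphas, labels, b))   # -1
```

The weight vector $w$ is never formed explicitly, which is what later allows the dot product to be swapped for a kernel.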

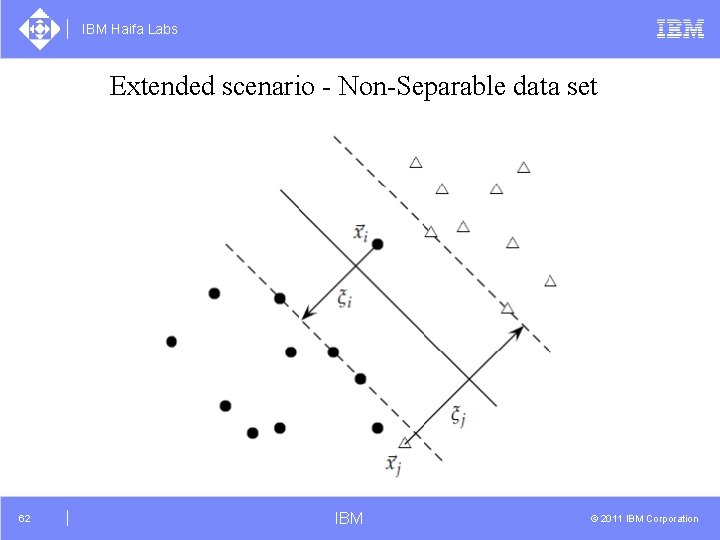

Extended scenario - Non-Separable data set

- Data is most likely not separable (inconsistencies, outliers, noise), but a linear classifier may still be appropriate.
- Can apply SVM in the non-linearly separable case.
  - Data should be almost linearly separable.

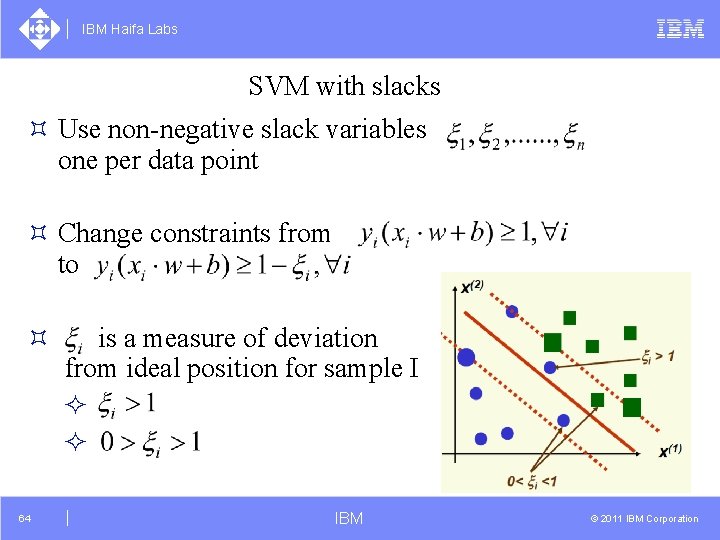

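SVM with slacks
- Use non-negative slack variables $\xi_i \ge 0$, one per data point.
- Change constraints from $y_i (w \cdot x_i + b) \ge 1$ to $y_i (w \cdot x_i + b) \ge 1 - \xi_i$.
- $\xi_i$ is a measure of deviation from the ideal position for sample $i$:
  - $\xi_i > 1$: sample $i$ is on the wrong side of the separating hyperplane
  - $0 < \xi_i < 1$: sample $i$ is on the right side of the separating hyperplane but within the margin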

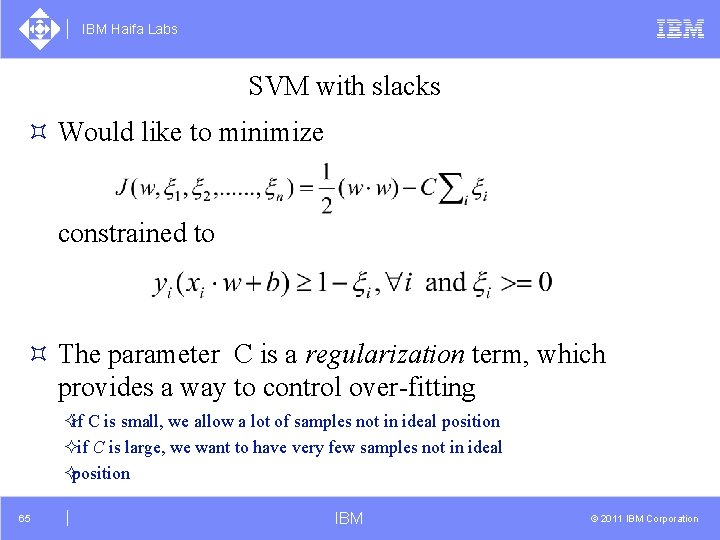

SVM with slacks
- Would like to minimize $J(w, \xi) = \frac{1}{2} \|w\|^2 + C \sum_i \xi_i$, constrained to $y_i (w \cdot x_i + b) \ge 1 - \xi_i$ and $\xi_i \ge 0$ for all $i$.
- The parameter $C$ is a regularization term, which provides a way to control over-fitting:
  - if $C$ is small, we allow a lot of samples not in ideal position
  - if $C$ is large, we want to have very few samples not in ideal position
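
For a fixed hyperplane, the smallest slack that satisfies $y_i (w \cdot x_i + b) \ge 1 - \xi_i$ and $\xi_i \ge 0$ is $\xi_i = \max(0,\, 1 - y_i (w \cdot x_i + b))$. The sketch below computes these slacks and the soft-margin objective $\frac{1}{2}\|w\|^2 + C \sum_i \xi_i$ for two values of $C$; the hyperplane and the four samples are assumed toy values.

```python
def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def slacks(w, b, data):
    """Smallest feasible slack per sample for a fixed (w, b)."""
    return [max(0.0, 1.0 - y * (dot(w, x) + b)) for x, y in data]

def objective(w, b, data, C):
    """Soft-margin cost: 0.5 * ||w||^2 + C * sum of slacks."""
    return 0.5 * dot(w, w) + C * sum(slacks(w, b, data))

w, b = [1.0, 0.0], -2.0
data = [
    ([4.0, 1.0], 1),    # outside the margin: slack 0
    ([2.5, 0.0], 1),    # inside the margin, correct side: 0 < slack < 1
    ([1.5, 0.0], 1),    # wrong side of the hyperplane: slack > 1
    ([0.0, 0.0], -1),   # outside the margin: slack 0
]

print(slacks(w, b, data))            # [0.0, 0.5, 1.5, 0.0]
print(objective(w, b, data, C=1))    # 2.5
print(objective(w, b, data, C=10))   # 20.5
```

A larger $C$ makes the same violations far more expensive, which pushes the optimizer toward hyperplanes with fewer samples out of position.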

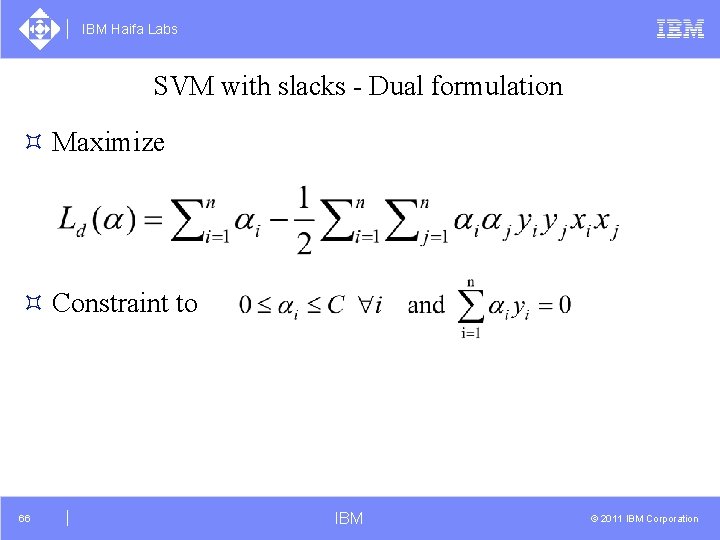

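SVM with slacks - Dual formulation
- Maximize $L_D(\alpha) = \sum_i \alpha_i - \frac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j \, (x_i \cdot x_j)$
- Constrained to $0 \le \alpha_i \le C$ and $\sum_i \alpha_i y_i = 0$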

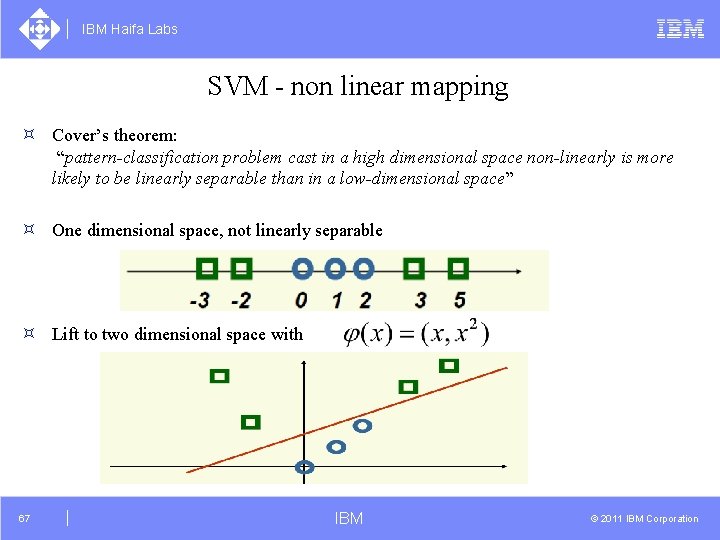

SVM - non linear mapping
- Cover’s theorem: “a pattern-classification problem cast non-linearly in a high-dimensional space is more likely to be linearly separable than in a low-dimensional space”
- One-dimensional space, not linearly separable
- Lift to two-dimensional space with $\varphi(x) = (x, x^2)$

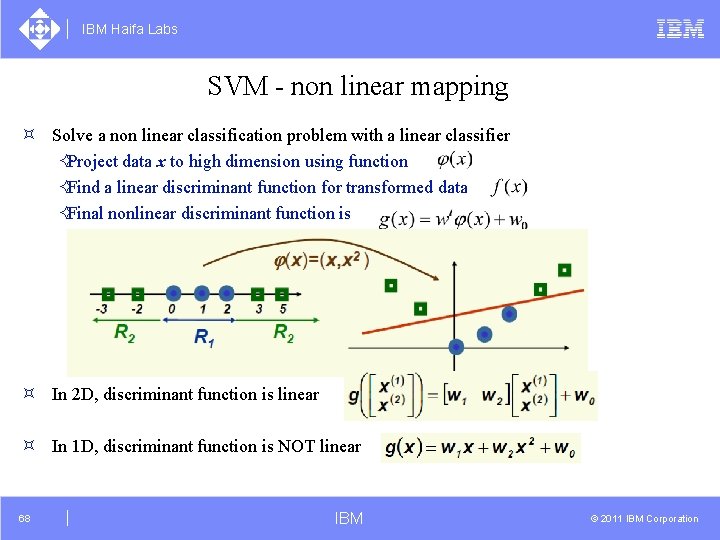

SVM - non linear mapping
- Solve a non-linear classification problem with a linear classifier:
  - Project data $x$ to a high dimension using a function $\varphi(x)$
  - Find a linear discriminant function for the transformed data $\varphi(x)$
  - The final non-linear discriminant function is $g(x) = w \cdot \varphi(x) + b$
- In 2D, the discriminant function is linear
- In 1D, the discriminant function is NOT linear
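
The lifting idea can be tried on a one-dimensional toy set in which the negative class sits between two groups of positives. The sketch assumes the map $\varphi(x) = (x, x^2)$ and a hand-picked separating line $x^2 = 2.5$ in the lifted space; the data and the helper names are illustrative.

```python
def lift(x):
    """Assumed feature map from 1-D to 2-D: phi(x) = (x, x^2)."""
    return (x, x * x)

def classify_lifted(x, w=(0.0, 1.0), b=-2.5):
    """Linear rule in the lifted space; non-linear in the original x."""
    z = lift(x)
    return 1 if w[0] * z[0] + w[1] * z[1] + b > 0 else -1

def separable_by_threshold(xs, ys):
    """True if some 1-D rule sign(s * (x - t)) labels every point correctly."""
    pts = sorted(xs)
    thresholds = ([pts[0] - 1.0]
                  + [(p + q) / 2 for p, q in zip(pts, pts[1:])]
                  + [pts[-1] + 1.0])
    for t in thresholds:
        for s in (1, -1):
            if all((1 if s * (x - t) > 0 else -1) == y for x, y in zip(xs, ys)):
                return True
    return False

xs = [-3.0, -2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
ys = [1, 1, -1, -1, -1, 1, 1]

print(separable_by_threshold(xs, ys))             # False
print([classify_lifted(x) for x in xs] == ys)     # True
```

No single threshold works in 1-D, yet one straight line in the $(x, x^2)$ plane classifies every point.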

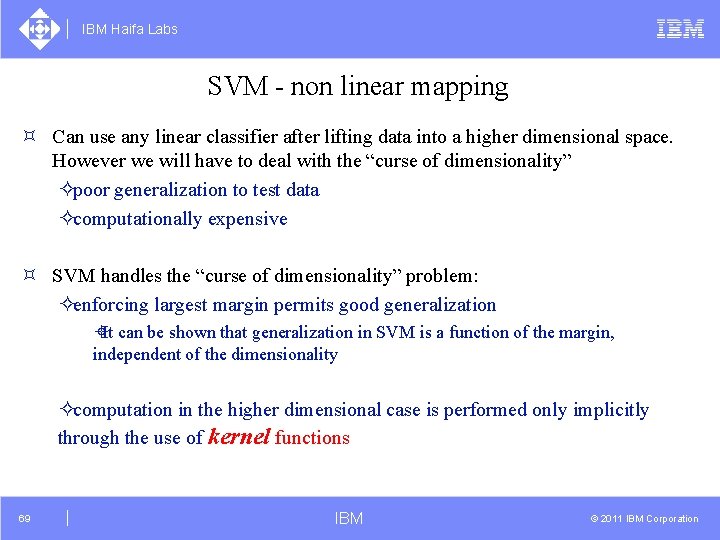

SVM - non linear mapping
- Can use any linear classifier after lifting data into a higher-dimensional space. However, we will have to deal with the “curse of dimensionality”:
  - poor generalization to test data
  - computationally expensive
- SVM handles the “curse of dimensionality” problem:
  - enforcing the largest margin permits good generalization
    - It can be shown that generalization in SVM is a function of the margin, independent of the dimensionality
  - computation in the higher-dimensional case is performed only implicitly through the use of kernel functions

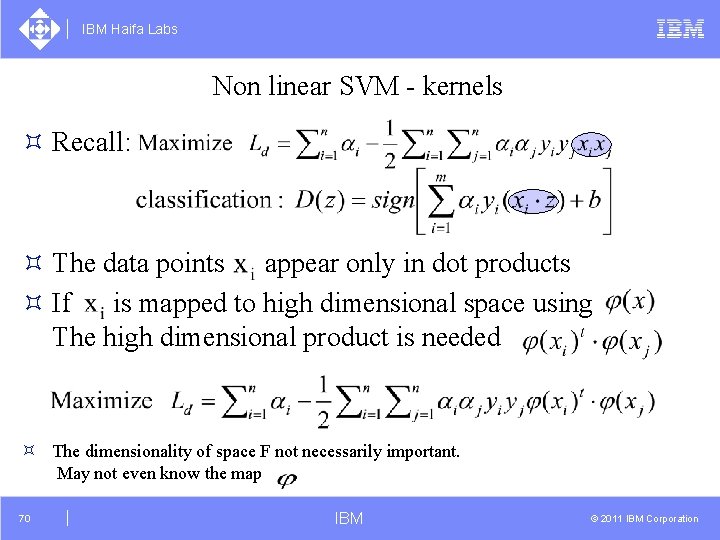

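Non linear SVM - kernels
- Recall: $L_D(\alpha) = \sum_i \alpha_i - \frac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j \, (x_i \cdot x_j)$
- The data points $x_i$ appear only in dot products.
- If $x_i$ is mapped to a high-dimensional space $F$ using $\varphi$, the high-dimensional product $\varphi(x_i) \cdot \varphi(x_j)$ is needed.
- The dimensionality of the space $F$ is not necessarily important. We may not even know the map $\varphi$.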

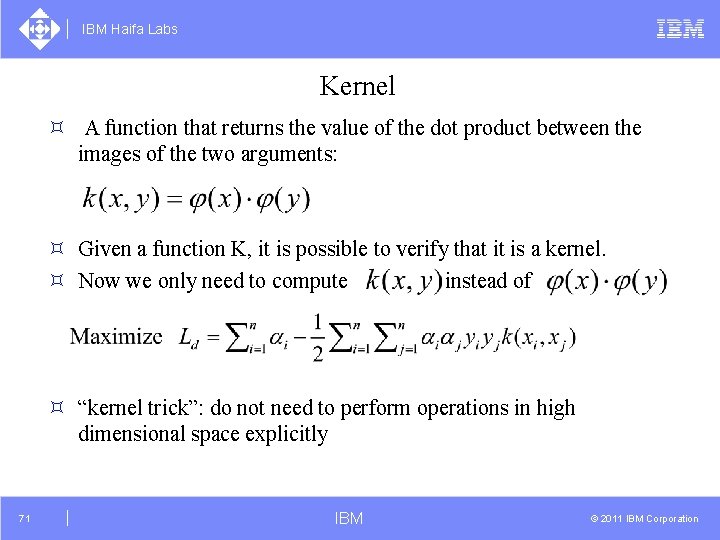

Kernel
- A function that returns the value of the dot product between the images of the two arguments: $K(x, y) = \varphi(x) \cdot \varphi(y)$
- Given a function $K$, it is possible to verify that it is a kernel.
- Now we only need to compute $K(x_i, x_j)$ instead of $\varphi(x_i) \cdot \varphi(x_j)$.
- “Kernel trick”: no need to perform operations in the high-dimensional space explicitly.
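
The kernel trick can be verified on a small case where the feature map is known. The sketch assumes the homogeneous quadratic kernel $K(x, y) = (x \cdot y)^2$ on 2-D inputs, whose explicit map is $\varphi(x) = (x_1^2, \sqrt{2}\, x_1 x_2, x_2^2)$; this specific kernel and the sample points are illustrative choices.

```python
import math

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def phi(x):
    """Explicit 3-D feature map matching the quadratic kernel below."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2)

def quad_kernel(x, y):
    """K(x, y) = (x . y)^2, computed entirely in the 2-D input space."""
    return dot(x, y) ** 2

x, y = (1.0, 2.0), (3.0, 4.0)

explicit = dot(phi(x), phi(y))    # dot product in the lifted space
implicit = quad_kernel(x, y)      # same value, no lifting needed

print(explicit, implicit)
```

Both routes give 121 (up to rounding), but the kernel needs one 2-D dot product and a square, regardless of how large the lifted space is.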

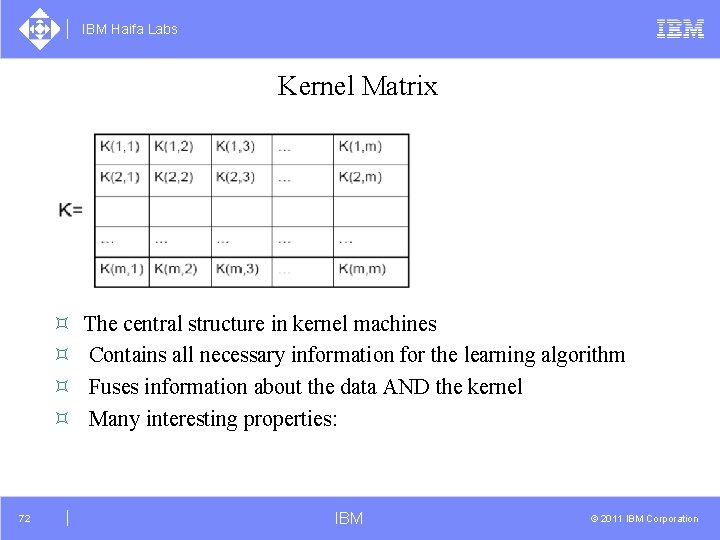

Kernel Matrix
- $K_{ij} = K(x_i, x_j)$
- The central structure in kernel machines
- Contains all necessary information for the learning algorithm
- Fuses information about the data AND the kernel
- Many interesting properties:

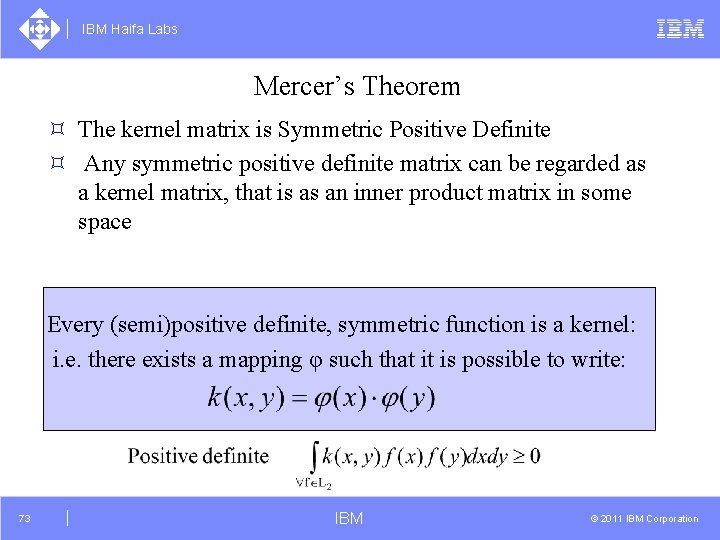

Mercer’s Theorem
- The kernel matrix is symmetric positive semi-definite.
- Any symmetric positive semi-definite matrix can be regarded as a kernel matrix, that is, as an inner product matrix in some space.
- Every symmetric positive (semi-)definite function is a kernel, i.e. there exists a mapping $\varphi$ such that it is possible to write $K(x, y) = \varphi(x) \cdot \varphi(y)$.

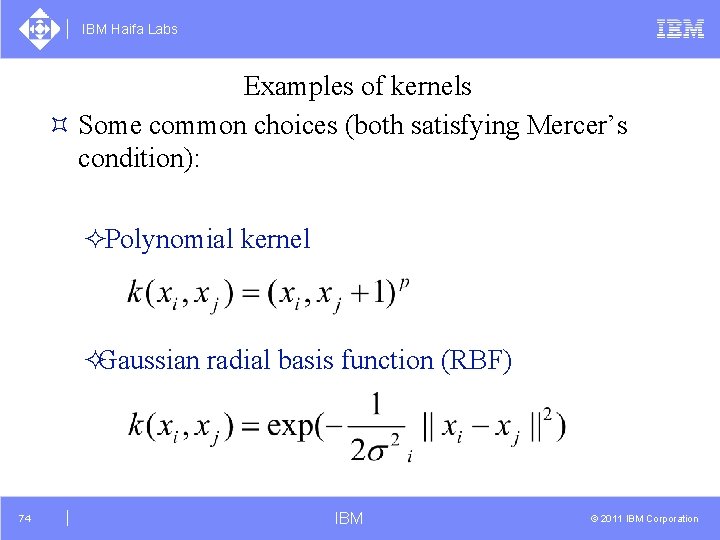

Examples of kernels
- Some common choices (both satisfying Mercer’s condition):
  - Polynomial kernel: $K(x, y) = (x \cdot y + 1)^p$
  - Gaussian radial basis function (RBF): $K(x, y) = \exp\left( -\frac{\|x - y\|^2}{2 \sigma^2} \right)$
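
The two kernels and their kernel (Gram) matrices can be sketched as follows. The parameterizations $(x \cdot y + 1)^p$ and $\exp(-\|x - y\|^2 / 2\sigma^2)$ are common forms assumed here, as are the sample points. The check of $z^\top K z \ge 0$ on random vectors $z$ is only a spot test of positive semi-definiteness, not a proof.

```python
import math
import random

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def poly_kernel(x, y, p=2):
    """Polynomial kernel (x . y + 1)^p."""
    return (dot(x, y) + 1.0) ** p

def rbf_kernel(x, y, sigma=1.0):
    """Gaussian RBF kernel exp(-||x - y||^2 / (2 sigma^2))."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))

def gram(points, kernel):
    """Kernel matrix K[i][j] = kernel(x_i, x_j)."""
    return [[kernel(a, b) for b in points] for a in points]

def quad_form(K, z):
    """z^T K z, non-negative for a positive semi-definite K."""
    n = len(z)
    return sum(z[i] * K[i][j] * z[j] for i in range(n) for j in range(n))

points = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (2.0, -1.0)]
K_poly = gram(points, poly_kernel)
K_rbf = gram(points, rbf_kernel)

rng = random.Random(0)
trials = [[rng.uniform(-1, 1) for _ in points] for _ in range(200)]
min_poly = min(quad_form(K_poly, z) for z in trials)
min_rbf = min(quad_form(K_rbf, z) for z in trials)
print(min_poly >= -1e-9, min_rbf >= -1e-9)
```

The RBF matrix always has ones on its diagonal, since every point is at distance zero from itself.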

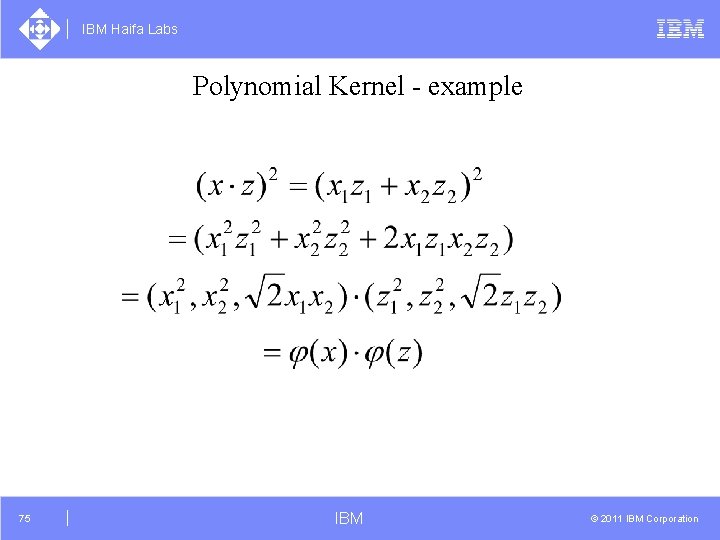

Polynomial Kernel - example

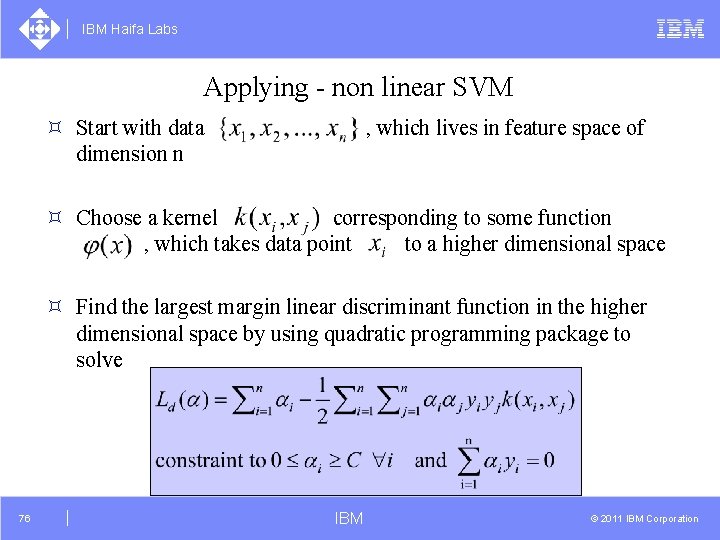

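Applying - non linear SVM
- Start with data $x_1, \dots, x_n$, which lives in a feature space of dimension $d$.
- Choose a kernel $K(x_i, x_j)$ corresponding to some function $\varphi(x_i)$, which takes a data point $x_i$ to a higher-dimensional space.
- Find the largest-margin linear discriminant function in the higher-dimensional space by using a quadratic programming package to solve: maximize $L_D(\alpha) = \sum_i \alpha_i - \frac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j K(x_i, x_j)$, constrained to $0 \le \alpha_i \le C$ and $\sum_i \alpha_i y_i = 0$.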

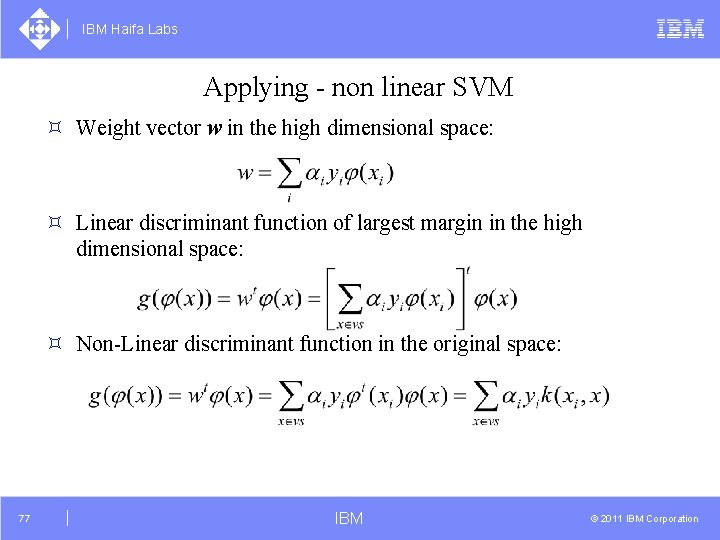

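Applying - non linear SVM
- Weight vector $w$ in the high-dimensional space: $w = \sum_{i \in SV} \alpha_i y_i \varphi(x_i)$
- Linear discriminant function of largest margin in the high-dimensional space: $g(\varphi(x)) = w \cdot \varphi(x) + b = \sum_{i \in SV} \alpha_i y_i \, \varphi(x_i) \cdot \varphi(x) + b$
- Non-linear discriminant function in the original space: $g(x) = \sum_{i \in SV} \alpha_i y_i K(x_i, x) + b$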
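
The XOR problem, which a linear classifier cannot solve, gives a compact test of the kernelized discriminant $g(x) = \sum_i \alpha_i y_i K(x_i, x) + b$. The sketch assumes inputs coded as $\pm 1$, the kernel $K(x, y) = (1 + x \cdot y)^2$, and the multipliers $\alpha_i = 1/8$ with $b = 0$, a textbook solution for this configuration that is taken here as given, not derived. The code only verifies that these values satisfy the constraint $\sum_i \alpha_i y_i = 0$ and put every training point exactly on the margin.

```python
def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def kernel(x, y):
    """Assumed kernel: inhomogeneous polynomial of degree 2."""
    return (1.0 + dot(x, y)) ** 2

# XOR with inputs coded as +/-1: label is -1 when the signs agree.
X = [(-1.0, -1.0), (-1.0, 1.0), (1.0, -1.0), (1.0, 1.0)]
y = [-1, 1, 1, -1]

# Assumed dual solution: all four points are support vectors.
alphas = [0.125, 0.125, 0.125, 0.125]
b = 0.0

def g(x):
    """Non-linear discriminant in the original 2-D space."""
    return sum(a * yi * kernel(xi, x) for a, yi, xi in zip(alphas, y, X)) + b

print([g(x) for x in X])                       # [-1.0, 1.0, 1.0, -1.0]
print(sum(a * yi for a, yi in zip(alphas, y))) # 0.0
```

For these values the discriminant reduces to $g(x) = -x_1 x_2$, a curved decision boundary in the input plane obtained without ever building the lifted feature vectors.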

Applying - non linear SVM

SVM summary
- Advantages:
  - Based on nice theory
  - Excellent generalization properties
  - Objective function has no local minima
  - Can be used to find non-linear discriminant functions
  - Complexity of the classifier is characterized by the number of support vectors rather than the dimensionality of the transformed space
- Disadvantages:
  - It’s not clear how to select a kernel function in a principled manner
  - Tends to be slower than other methods (in the non-linear case)
