The Cocktail Party Problem: A Case Study in Deep Learning
DeLiang Wang
Perception & Neurodynamics Lab
Ohio State University & Northwestern Polytechnical University

Outline of primer
• What is the cocktail party problem?
• Ideal binary mask and speech intelligibility
• Speech separation as DNN based mask estimation
  • Speech intelligibility tests on hearing impaired listeners
  • Generalization to new noises
  • Ideal ratio mask
• Reverberant speech separation
• Speaker separation
  • Talker-dependent
  • Talker-independent

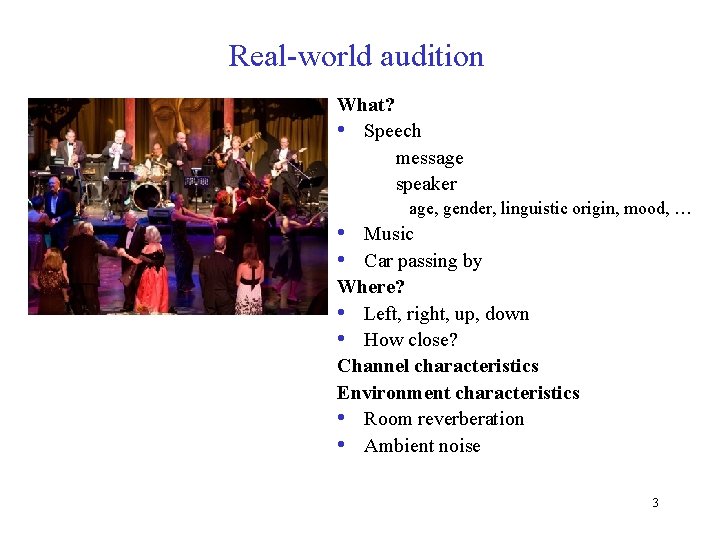

Real-world audition
What?
• Speech
  • message
  • speaker: age, gender, linguistic origin, mood, …
• Music
• Car passing by
Where?
• Left, right, up, down
• How close?
Channel characteristics
Environment characteristics
• Room reverberation
• Ambient noise

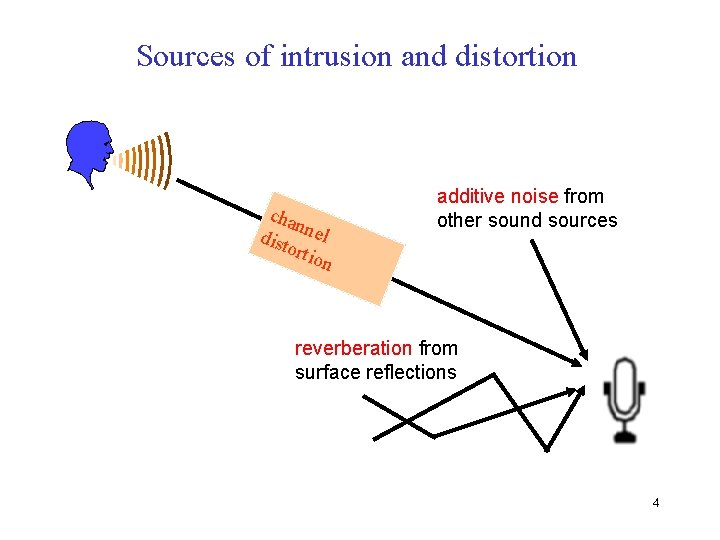

Sources of intrusion and distortion
• Channel distortion
• Additive noise from other sound sources
• Reverberation from surface reflections

Cocktail party problem
• Term coined by Cherry
  • “One of our most important faculties is our ability to listen to, and follow, one speaker in the presence of others. This is such a common experience that we may take it for granted; we may call it ‘the cocktail party problem.’ No machine has been constructed to do just that.” (Cherry, 1957)
• Speech separation problem
  • Speech enhancement: speech-nonspeech separation
  • Speaker separation: multi-talker separation

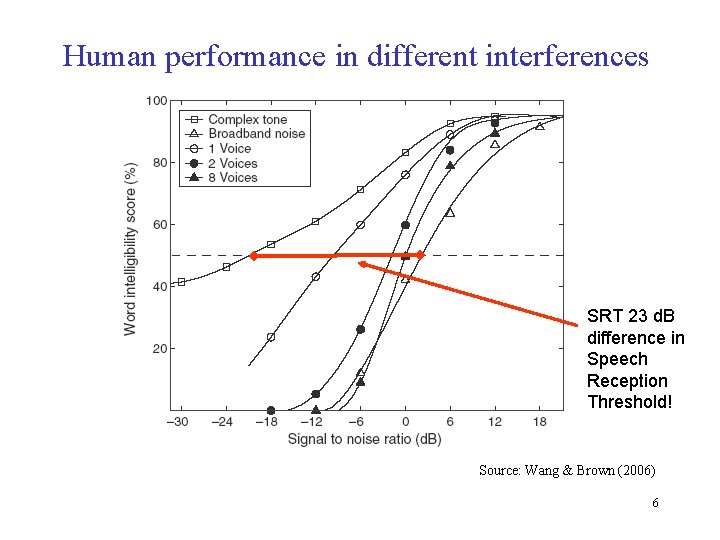

Human performance in different interferences
• 23 dB difference in speech reception threshold (SRT)!
Source: Wang & Brown (2006)

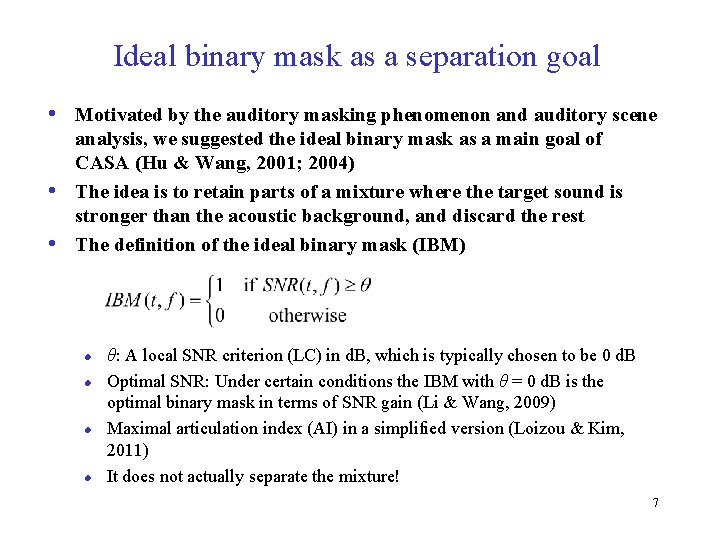

Ideal binary mask as a separation goal
• Motivated by the auditory masking phenomenon and auditory scene analysis, we suggested the ideal binary mask as a main goal of CASA (Hu & Wang, 2001; 2004)
• The idea is to retain parts of a mixture where the target sound is stronger than the acoustic background, and discard the rest
• The definition of the ideal binary mask (IBM)
  • θ: A local SNR criterion (LC) in dB, which is typically chosen to be 0 dB
  • Optimal SNR: Under certain conditions the IBM with θ = 0 dB is the optimal binary mask in terms of SNR gain (Li & Wang, 2009)
  • Maximal articulation index (AI) in a simplified version (Loizou & Kim, 2011)
  • It does not actually separate the mixture!

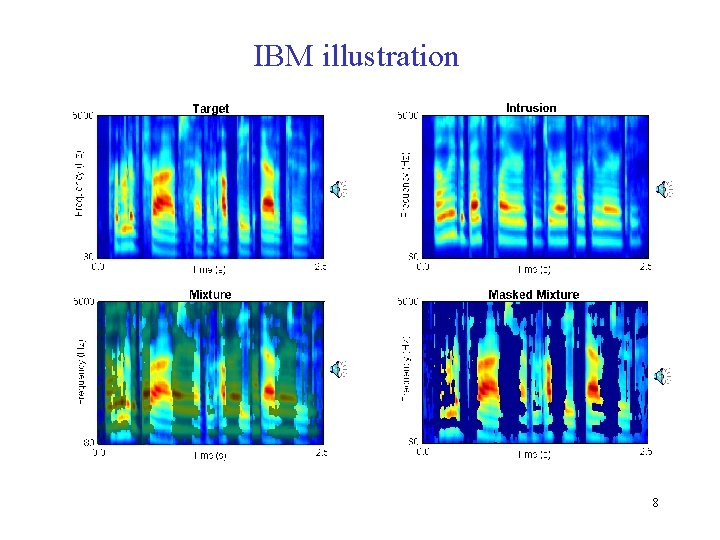
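The IBM rule described above (keep a T-F unit when its local SNR exceeds the criterion θ) can be sketched in a few lines. This is a minimal illustration, not the author's implementation: the function name `ideal_binary_mask`, the nested-list layout, and the strict `>` comparison are assumptions, and the toy energies are made up. Note that the function needs the premixed target and noise energies, which is why the IBM is a goal for separation rather than a separation method.

```python
import math

def ideal_binary_mask(target_energy, noise_energy, lc_db=0.0):
    """Return a 0/1 mask: 1 where the local SNR (dB) exceeds lc_db, else 0.

    Both inputs are 2-D lists (frequency channels x time frames) holding the
    energies of the premixed target and noise in each T-F unit.
    """
    mask = []
    for s_row, n_row in zip(target_energy, noise_energy):
        row = []
        for s, n in zip(s_row, n_row):
            if s == 0.0:
                local_snr_db = -math.inf   # no target energy in this unit
            elif n == 0.0:
                local_snr_db = math.inf    # no noise energy in this unit
            else:
                local_snr_db = 10.0 * math.log10(s / n)
            row.append(1 if local_snr_db > lc_db else 0)
        mask.append(row)
    return mask

# Hypothetical toy energies: 2 channels x 3 frames.
target = [[4.0, 1.0, 0.0], [0.5, 9.0, 2.0]]
noise = [[1.0, 1.0, 3.0], [2.0, 1.0, 2.0]]
ibm = ideal_binary_mask(target, noise)  # theta = 0 dB
```

Lowering `lc_db` retains more units; with `lc_db=-10.0` every unit that contains any target energy in this toy example is kept.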

IBM illustration

Subject tests of ideal binary masking
• IBM separation leads to dramatic speech intelligibility improvements
  • Improvement for stationary noise is above 7 dB for normal-hearing (NH) listeners (Brungart et al.’06; Li & Loizou’08; Ahmadi et al.’13; Chen’16), and above 9 dB for hearing-impaired (HI) listeners (Anzalone et al.’06; Wang et al.’09)
  • Improvement for modulated noise is significantly larger than for stationary noise
• With the IBM as the goal, the speech separation problem becomes a binary classification problem
  • This new formulation opens the problem to a variety of pattern classification methods

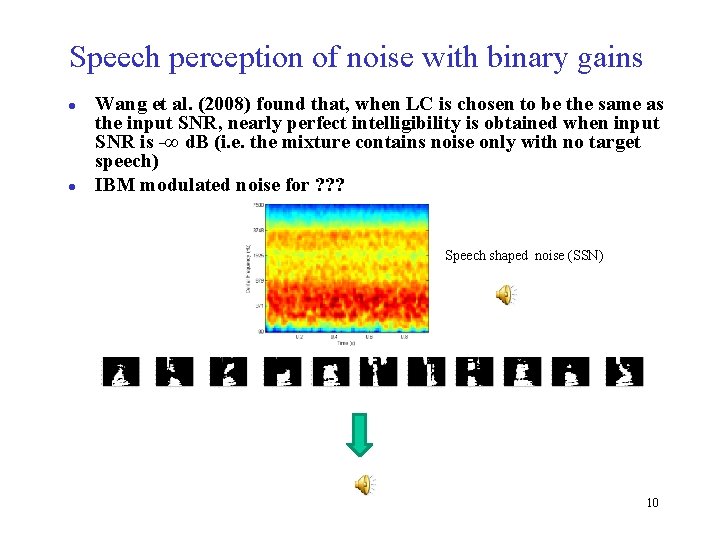

Speech perception of noise with binary gains
• Wang et al. (2008) found that, when LC is chosen to be the same as the input SNR, nearly perfect intelligibility is obtained when input SNR is -∞ dB (i.e. the mixture contains noise only with no target speech)
IBM modulated noise for ???
Speech shaped noise (SSN)

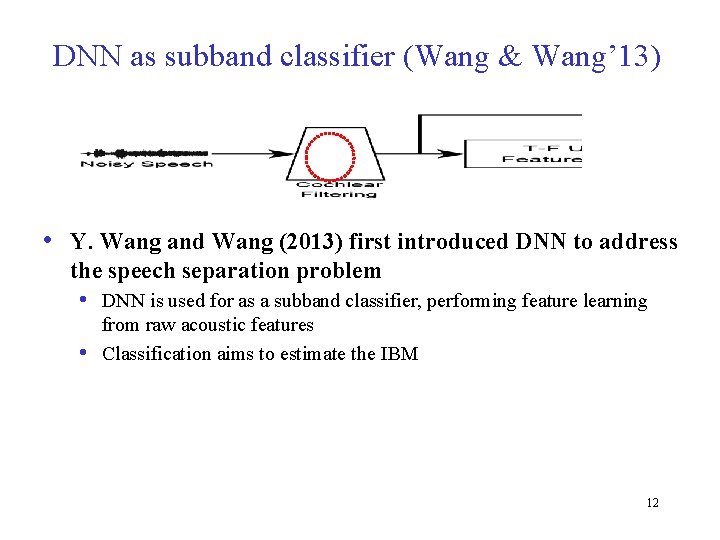

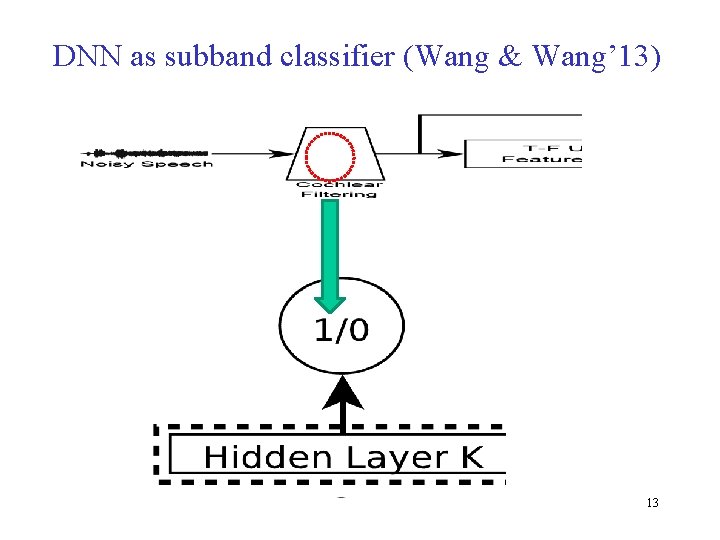

DNN as subband classifier (Wang & Wang’13)
• Y. Wang and Wang (2013) first introduced DNN to address the speech separation problem
• DNN is used as a subband classifier, performing feature learning from raw acoustic features
• Classification aims to estimate the IBM

DNN as subband classifier (Wang & Wang’13)

Extensive training with DNN
• Training on 200 randomly chosen utterances from both male and female IEEE speakers, mixed with 100 environmental noises at 0 dB (~17 hours long)
• Six million fully dense training samples in each channel, with 64 channels in total
• Evaluated on 20 unseen speakers mixed with 20 unseen noises at 0 dB
• DNN based classifier produced the state-of-the-art separation results at the time

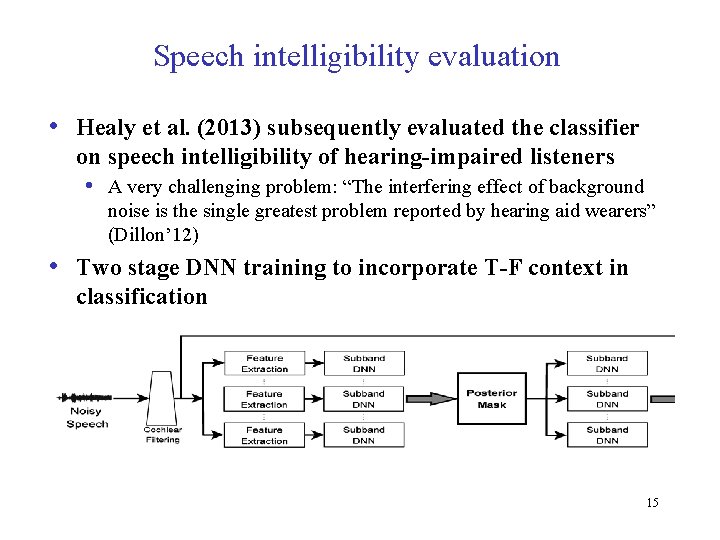

Speech intelligibility evaluation
• Healy et al. (2013) subsequently evaluated the classifier on speech intelligibility of hearing-impaired listeners
  • A very challenging problem: “The interfering effect of background noise is the single greatest problem reported by hearing aid wearers” (Dillon’12)
• Two stage DNN training to incorporate T-F context in classification

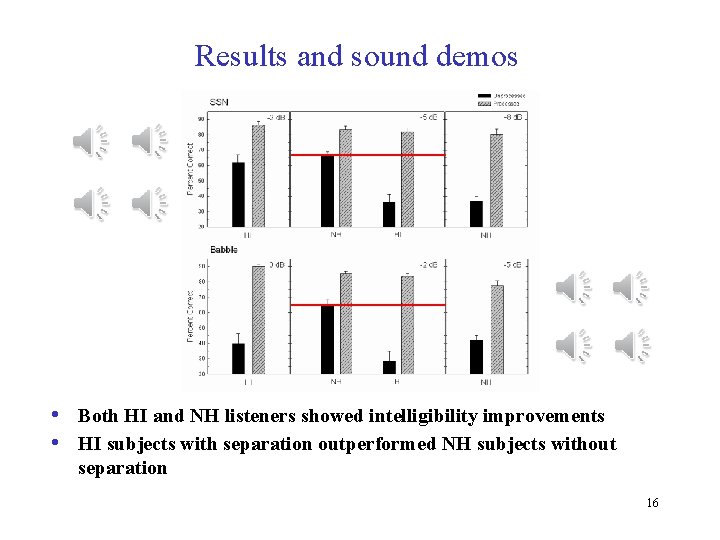

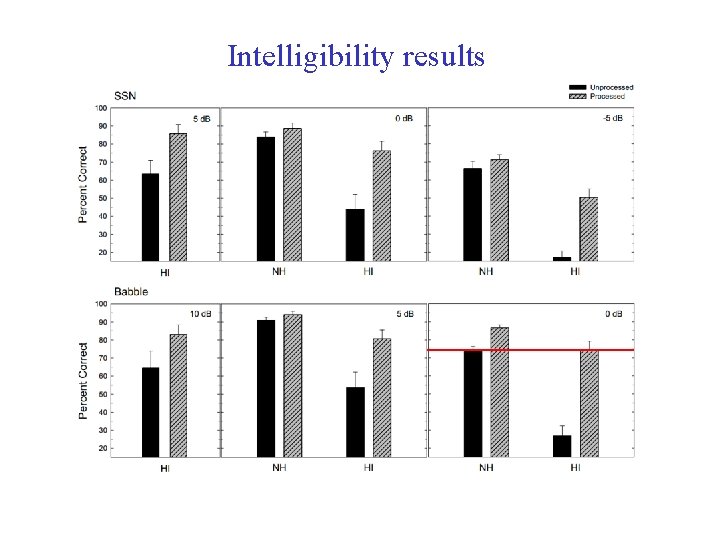

Results and sound demos
• Both HI and NH listeners showed intelligibility improvements
• HI subjects with separation outperformed NH subjects without separation

Generalization to new noises
• While previous speech intelligibility results are impressive, a major limitation is that training and test noise samples were drawn from the same noise segments
  • Speech utterances were different
  • Noise samples were randomized
• This limitation can be addressed through large-scale training for IRM estimation (Chen et al.’16)
  • IRM can be viewed as a soft version of the IBM
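To make "soft version of the IBM" concrete, the sketch below computes a ratio mask from premixed energies. The slides do not give the formula; the form used here, (S / (S + N))^β with β = 0.5, is a commonly used definition and is an assumption, as are the function name and the toy values.

```python
def ideal_ratio_mask(target_energy, noise_energy, beta=0.5):
    """Return a mask in [0, 1]: (S / (S + N)) ** beta per T-F unit.

    Inputs are 2-D lists (channels x frames) of premixed target and noise
    energies. Units with no energy at all are assigned 0.
    """
    mask = []
    for s_row, n_row in zip(target_energy, noise_energy):
        row = []
        for s, n in zip(s_row, n_row):
            total = s + n
            row.append((s / total) ** beta if total > 0.0 else 0.0)
        mask.append(row)
    return mask

# Hypothetical toy energies: 1 channel x 3 frames.
irm = ideal_ratio_mask([[4.0, 1.0, 0.0]], [[1.0, 1.0, 3.0]])
```

Where the IBM would output a hard 1, 0, 0 for these three units at θ = 0 dB, the ratio mask grades them continuously, which avoids an all-or-nothing decision for units with comparable target and noise energy.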

Large-scale training
• Training set consisted of 560 IEEE sentences mixed with 10,000 (10K) non-speech noises (a total of 640,000 mixtures)
  • The total duration of the noises is about 125 h, and the total duration of training mixtures is about 380 h
• Training SNR is fixed to -2 dB
• The only feature used is the simple T-F unit energy
• DNN architecture consists of 5 hidden layers, each with 2048 units
• Test utterances and noises are both different from those used in training
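Creating training mixtures at a fixed SNR comes down to scaling the noise before adding it to the speech. The following is a minimal sketch of that arithmetic, assuming equal-length sample lists and nonzero noise power; `mix_at_snr` and the toy signals are hypothetical and not taken from the study.

```python
import math

def mix_at_snr(speech, noise, snr_db):
    """Scale noise so that speech power / noise power equals snr_db, then add.

    speech and noise are equal-length lists of samples. Returns the mixture
    and the gain that was applied to the noise.
    """
    speech_power = sum(x * x for x in speech) / len(speech)
    noise_power = sum(x * x for x in noise) / len(noise)
    # Noise power required for the requested SNR.
    wanted_noise_power = speech_power / (10.0 ** (snr_db / 10.0))
    gain = math.sqrt(wanted_noise_power / noise_power)
    mixture = [s + gain * n for s, n in zip(speech, noise)]
    return mixture, gain

# Hypothetical toy signals mixed at the -2 dB training SNR.
speech = [1.0, -1.0, 1.0, -1.0]
noise = [0.5, 0.5, -0.5, -0.5]
mixture, gain = mix_at_snr(speech, noise, snr_db=-2.0)

# Check the SNR that was actually achieved.
scaled_noise_power = sum((gain * n) ** 2 for n in noise) / len(noise)
speech_power = sum(s * s for s in speech) / len(speech)
achieved_snr_db = 10.0 * math.log10(speech_power / scaled_noise_power)
```

At a negative SNR such as -2 dB the scaled noise carries more power than the speech, which is part of what makes the training condition demanding.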

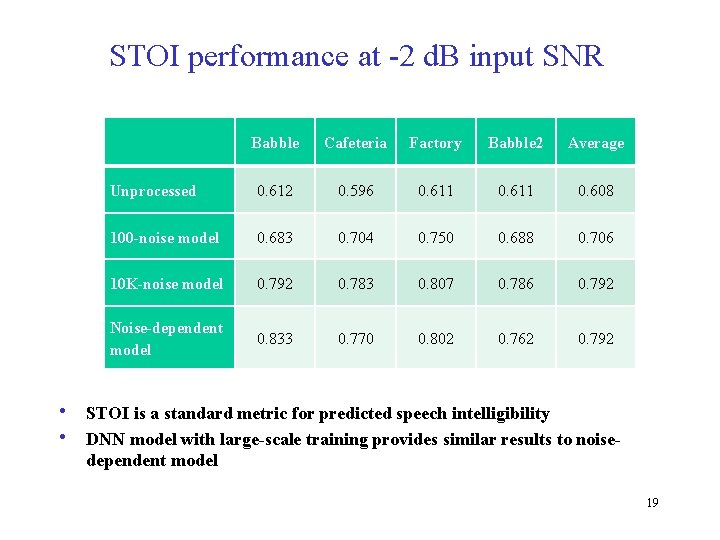

STOI performance at -2 dB input SNR

| | Babble | Cafeteria | Factory | Babble 2 | Average |
|---|---|---|---|---|---|
| Unprocessed | 0.612 | 0.596 | 0.611 | 0.608 | |
| 100-noise model | 0.683 | 0.704 | 0.750 | 0.688 | 0.706 |
| 10K-noise model | 0.792 | 0.783 | 0.807 | 0.786 | 0.792 |
| Noise-dependent model | 0.833 | 0.770 | 0.802 | 0.762 | 0.792 |

• STOI is a standard metric for predicted speech intelligibility
• DNN model with large-scale training provides similar results to noise-dependent model

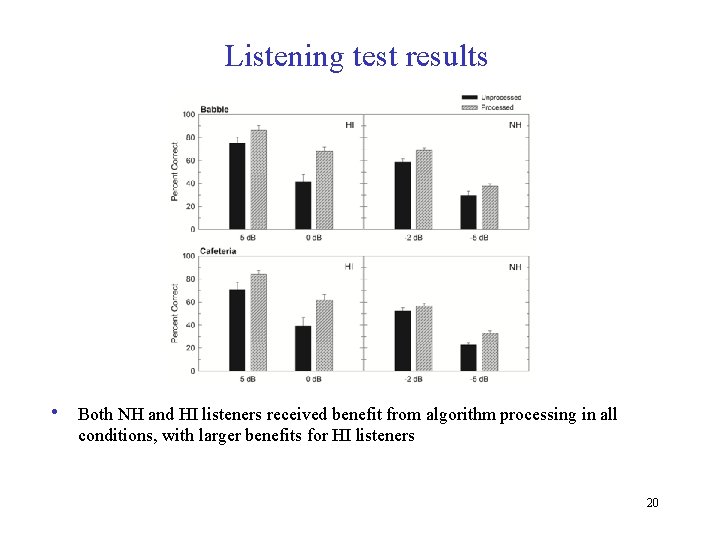

Listening test results
• Both NH and HI listeners received benefit from algorithm processing in all conditions, with larger benefits for HI listeners

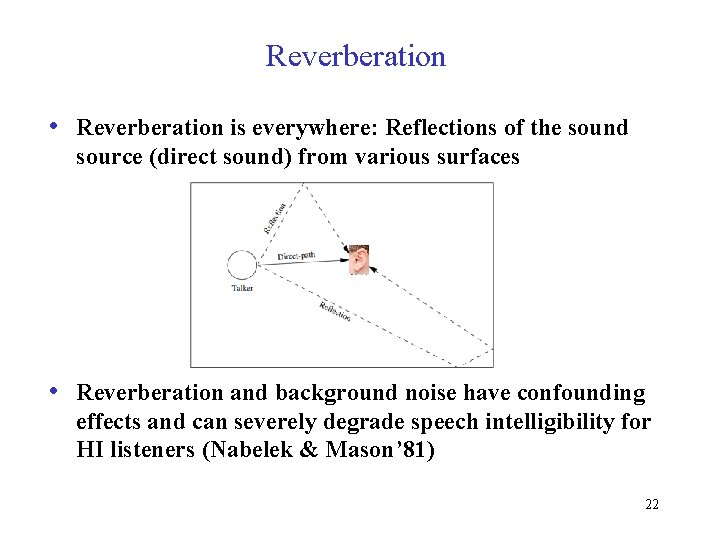

Reverberation
• Reverberation is everywhere: Reflections of the sound source (direct sound) from various surfaces
• Reverberation and background noise have confounding effects and can severely degrade speech intelligibility for HI listeners (Nabelek & Mason’81)

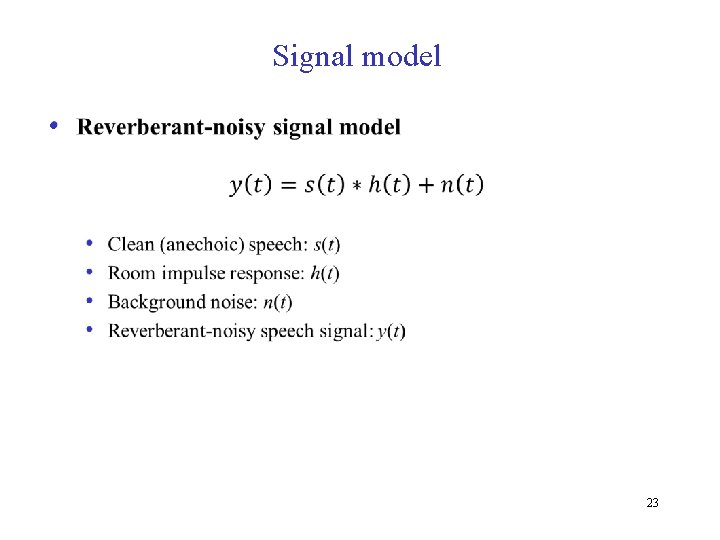

Signal model
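A commonly used model for reverberant-noisy speech is sketched below. It is an assumption about the general setting rather than the exact notation used in this talk: $s(t)$ denotes anechoic speech, $h(t)$ the room impulse response, $n(t)$ the background noise at the microphone, and $*$ convolution.

$$
y(t) = h(t) * s(t) + n(t)
$$

Under this model, dereverberation and denoising together aim to recover $s(t)$, or the direct-sound part of $h(t) * s(t)$, from the observed $y(t)$.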

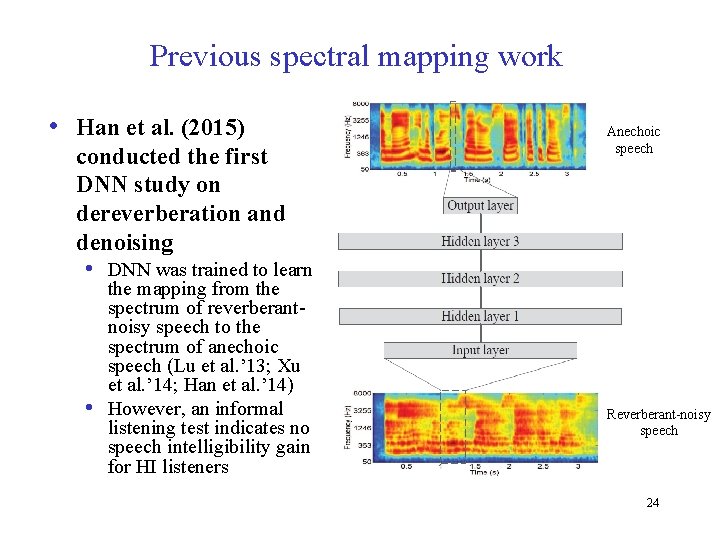

Previous spectral mapping work
• Han et al. (2015) conducted the first DNN study on dereverberation and denoising
  • DNN was trained to learn the mapping from the spectrum of reverberant-noisy speech to the spectrum of anechoic speech (Lu et al.’13; Xu et al.’14; Han et al.’14)
• However, an informal listening test indicates no speech intelligibility gain for HI listeners
Anechoic speech
Reverberant-noisy speech

Enhancement of reverberant-noisy speech as mask estimation
• Zhao et al. (2018) recently approached dereverberation and denoising as IRM estimation
• The input is a set of complementary features
• DNN architecture
  • Feedforward network with four hidden layers, each with 2048 units
• Speech intelligibility evaluation of enhanced speech on HI and NH listeners
  • Reverberation time (T60) is 0.6 s

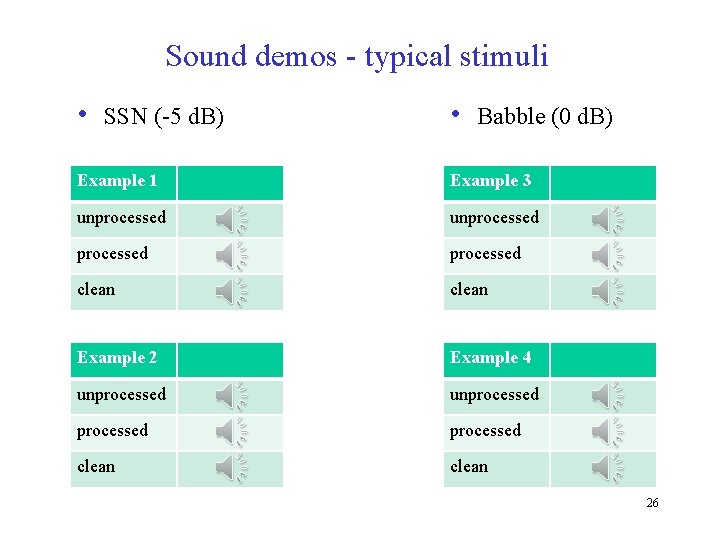

Sound demos - typical stimuli
• SSN (-5 dB)
• Babble (0 dB)
Demo clips: Examples 1 to 4, with unprocessed and clean versions

Intelligibility results

Speaker separation
• Earlier work shows that DNN based IBM/IRM estimation remains an effective approach to address speaker separation (Huang et al.’14; Du et al.’14)
• We recently addressed reverberant two-talker separation as DNN-based IRM estimation (Healy et al.’19)

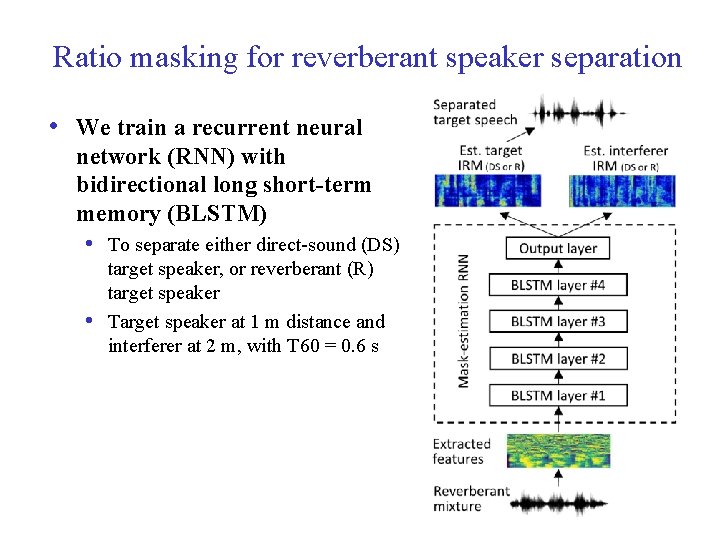

Ratio masking for reverberant speaker separation
• We train a recurrent neural network (RNN) with bidirectional long short-term memory (BLSTM)
• To separate either direct-sound (DS) target speaker, or reverberant (R) target speaker
• Target speaker at 1 m distance and interferer at 2 m, with T60 = 0.6 s

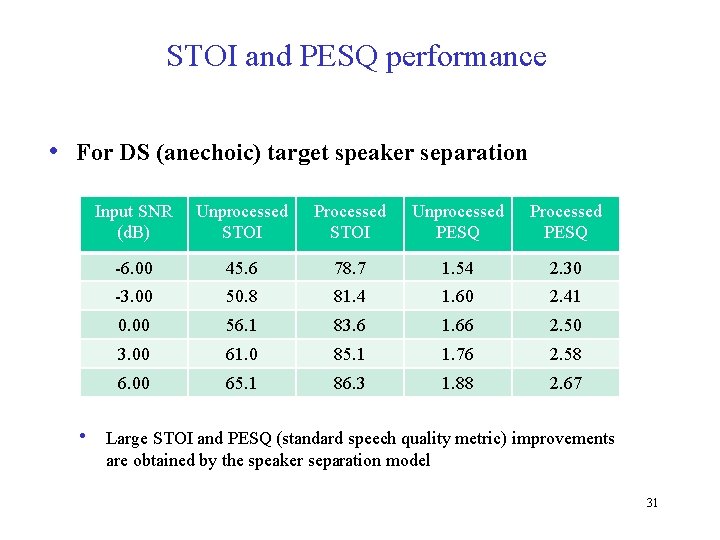

STOI and PESQ performance
• For DS (anechoic) target speaker separation

| Input SNR (dB) | Unprocessed STOI (%) | Processed STOI (%) | Unprocessed PESQ | Processed PESQ |
|---|---|---|---|---|
| -6.00 | 45.6 | 78.7 | 1.54 | 2.30 |
| -3.00 | 50.8 | 81.4 | 1.60 | 2.41 |
| 0.00 | 56.1 | 83.6 | 1.66 | 2.50 |
| 3.00 | 61.0 | 85.1 | 1.76 | 2.58 |
| 6.00 | 65.1 | 86.3 | 1.88 | 2.67 |

• Large STOI and PESQ (standard speech quality metric) improvements are obtained by the speaker separation model

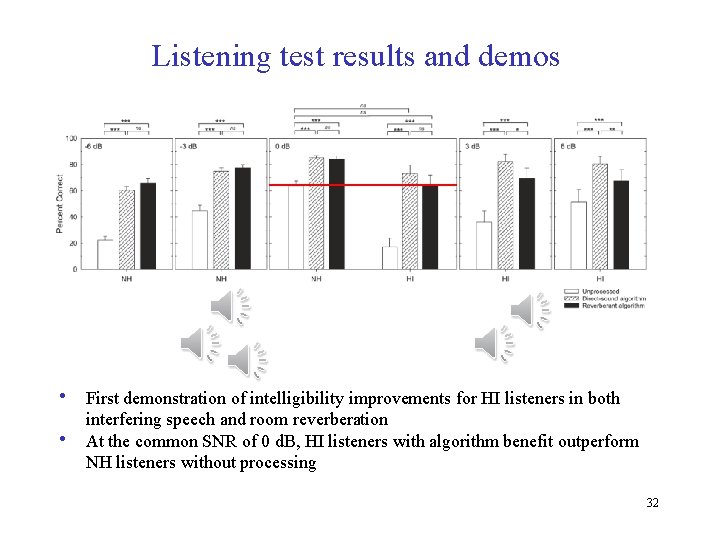

Listening test results and demos
• First demonstration of intelligibility improvements for HI listeners in both interfering speech and room reverberation
• At the common SNR of 0 dB, HI listeners with algorithm benefit outperform NH listeners without processing

Talker-independent speaker separation
• This is the most general case, and it cannot be adequately addressed by training with many speaker pairs
• Talker-independent separation can be treated as unsupervised clustering (Bach & Jordan’06; Hu & Wang’13)
  • Such clustering, however, does not benefit from discriminant information utilized in supervised training
• Deep clustering (Hershey et al.’16) is the first approach to talker-independent separation by combining DNN based supervised feature learning and clustering

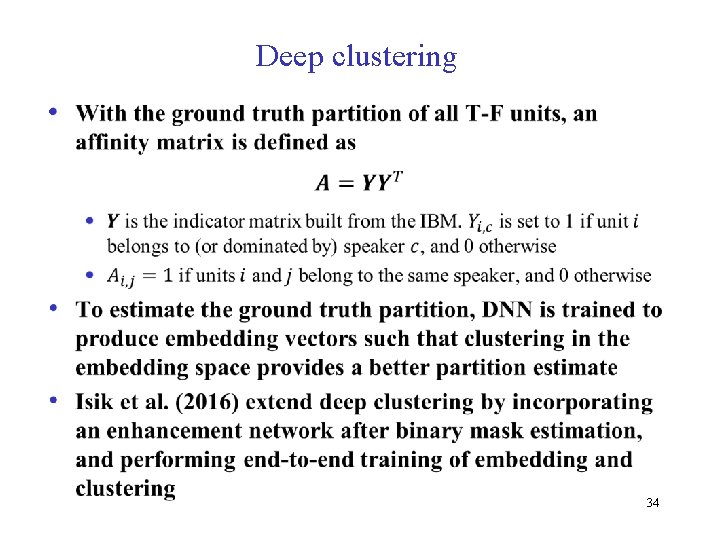

Deep clustering
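As a rough sketch of how supervised feature learning and clustering combine, the training objective of deep clustering as formulated by Hershey et al. (2016) can be written as below. The notation is an assumption for illustration: $V \in \mathbb{R}^{N \times D}$ stacks the DNN embedding vectors of the $N$ T-F units, and $Y \in \{0,1\}^{N \times C}$ indicates which of the $C$ speakers dominates each unit.

$$
\mathcal{L}(V) = \left\lVert V V^{\mathsf{T}} - Y Y^{\mathsf{T}} \right\rVert_F^2
$$

Because $Y Y^{\mathsf{T}}$ does not change when the speaker columns of $Y$ are reordered, the objective is independent of speaker labeling. At test time the embeddings are clustered, for example with K-means, and each cluster yields a binary mask for one speaker.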

Permutation invariant training (PIT)
• Recognizing that talker-dependent separation ties each DNN output to a specific speaker (permutation variant), PIT seeks to untie DNN outputs from speakers in order to achieve talker independence (Kolbaek et al.’17)
• Specifically, for a pair of speakers, there are two possible assignments, each of which is associated with a mean squared error (MSE). The assignment with the lower MSE is chosen and the DNN is trained to minimize the corresponding MSE
• Two versions of PIT
  • Frame-level PIT (tPIT): Permutation can vary from frame to frame, hence needs speaker tracing (sequential grouping) for speaker separation
  • Utterance-level PIT (uPIT): Permutation is fixed for a whole utterance, hence needs no speaker tracing
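The two-assignment rule and the difference between utterance-level and frame-level PIT can be sketched as follows. This is an illustrative loss computation only, with no network or gradient step; the function names and the toy spectra are hypothetical.

```python
def mse(estimate, reference):
    """Mean squared error between two 2-D lists (frames x frequency bins)."""
    total, count = 0.0, 0
    for est_row, ref_row in zip(estimate, reference):
        for e, r in zip(est_row, ref_row):
            total += (e - r) ** 2
            count += 1
    return total / count

def pit_loss(out_a, out_b, ref_1, ref_2):
    """Two-speaker PIT: evaluate both output-to-speaker assignments, keep the lower MSE."""
    keep = 0.5 * (mse(out_a, ref_1) + mse(out_b, ref_2))
    swap = 0.5 * (mse(out_a, ref_2) + mse(out_b, ref_1))
    return (keep, (0, 1)) if keep <= swap else (swap, (1, 0))

def upit_loss(out_a, out_b, ref_1, ref_2):
    """Utterance-level PIT: one assignment for the whole utterance."""
    return pit_loss(out_a, out_b, ref_1, ref_2)

def tpit_loss(out_a, out_b, ref_1, ref_2):
    """Frame-level PIT: the assignment is chosen independently in every frame."""
    losses, perms = [], []
    for fa, fb, r1, r2 in zip(out_a, out_b, ref_1, ref_2):
        loss, perm = pit_loss([fa], [fb], [r1], [r2])
        losses.append(loss)
        perms.append(perm)
    return sum(losses) / len(losses), perms

# Hypothetical toy case: two frames, two bins; the outputs swap speakers in frame 2.
ref_1 = [[1.0, 0.0], [1.0, 0.0]]
ref_2 = [[0.0, 1.0], [0.0, 1.0]]
out_a = [[0.9, 0.1], [0.1, 0.9]]
out_b = [[0.1, 0.9], [0.9, 0.1]]

u_loss, u_perm = upit_loss(out_a, out_b, ref_1, ref_2)
t_loss, t_perms = tpit_loss(out_a, out_b, ref_1, ref_2)
```

In this toy case the frame-level loss is much lower than the utterance-level loss, but the per-frame assignments in `t_perms` differ between the two frames, so the outputs still have to be traced across time to form speaker streams.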

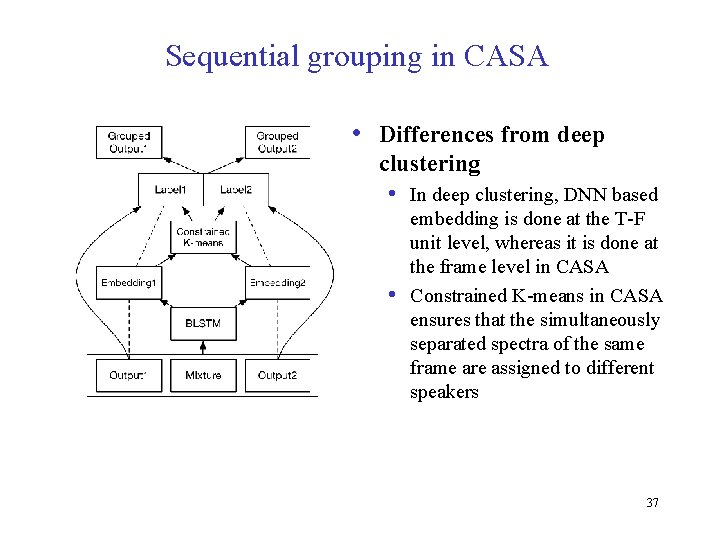

CASA based approach
• Limitations of deep clustering and PIT
  • In deep clustering, embedding vectors for T-F units with similar energies from underlying speakers tend to be ambiguous
  • uPIT does not work as well as tPIT at the frame level, particularly for same-gender speakers, but tPIT requires speaker tracing
• Speaker separation in CASA is talker-independent
  • CASA performs simultaneous (spectral) grouping first, and then sequential grouping across time
• Liu & Wang (2018) proposed a CASA based approach by leveraging PIT and deep clustering
  • For simultaneous grouping, tPIT is trained to predict the spectra of underlying speakers at each frame
  • For sequential grouping, DNN is trained to predict embedding vectors for simultaneously grouped spectra

Sequential grouping in CASA
• Differences from deep clustering
  • In deep clustering, DNN based embedding is done at the T-F unit level, whereas it is done at the frame level in CASA
  • Constrained K-means in CASA ensures that the simultaneously separated spectra of the same frame are assigned to different speakers
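The constraint can be illustrated with the assignment step alone. The sketch below assumes two speakers, two frame-level embeddings per frame, and centroids that are already available; it is not the published algorithm, and `constrained_assign` and the toy vectors are hypothetical.

```python
def sq_dist(u, v):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))

def constrained_assign(frame_embeddings, centroids):
    """Assign the two embeddings of each frame to different centroids.

    frame_embeddings: list of (emb_a, emb_b) pairs, one pair per frame.
    centroids: (c0, c1), one centroid per speaker.
    Returns one (label_a, label_b) pair per frame, always with distinct labels,
    chosen to minimize the summed distance within the frame.
    """
    c0, c1 = centroids
    labels = []
    for emb_a, emb_b in frame_embeddings:
        keep = sq_dist(emb_a, c0) + sq_dist(emb_b, c1)
        swap = sq_dist(emb_a, c1) + sq_dist(emb_b, c0)
        labels.append((0, 1) if keep <= swap else (1, 0))
    return labels

# Hypothetical 2-D embeddings for three frames.
centroids = ([1.0, 0.0], [0.0, 1.0])
frames = [
    ([0.9, 0.1], [0.2, 0.8]),
    ([0.1, 0.9], [0.8, 0.2]),
    ([0.6, 0.4], [0.7, 0.3]),  # both embeddings lie closer to centroid 0
]
labels = constrained_assign(frames, centroids)
```

In the third frame an unconstrained nearest-centroid rule would give both spectra to speaker 0; the constraint forces them apart and keeps the better of the two joint assignments. A full K-means loop would alternate this step with centroid updates.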

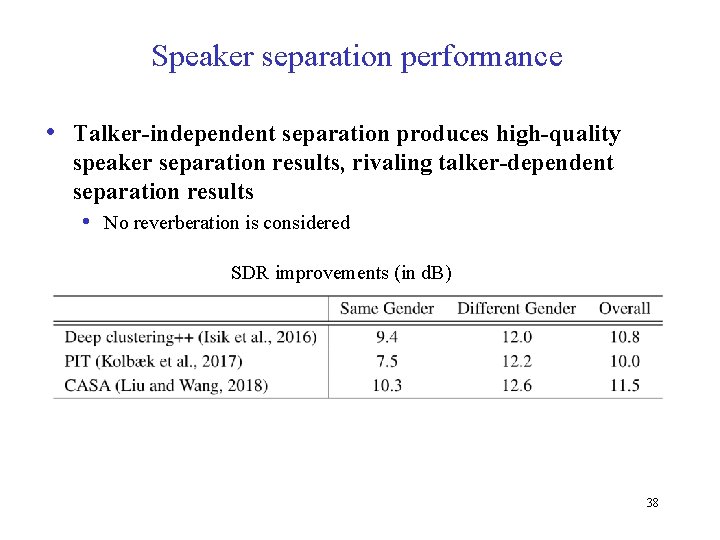

Speaker separation performance
• Talker-independent separation produces high-quality speaker separation results, rivaling talker-dependent separation results
• No reverberation is considered
SDR improvements (in dB)

Talker-independent speaker separation demos
New pair of male-male speaker mixture
• Speaker 1 - uPIT, Speaker 2 - uPIT
• Speaker 1 - DC++, Speaker 2 - DC++
• Speaker 1 - CASA, Speaker 2 - CASA
• Speaker 1 - clean, Speaker 2 - clean

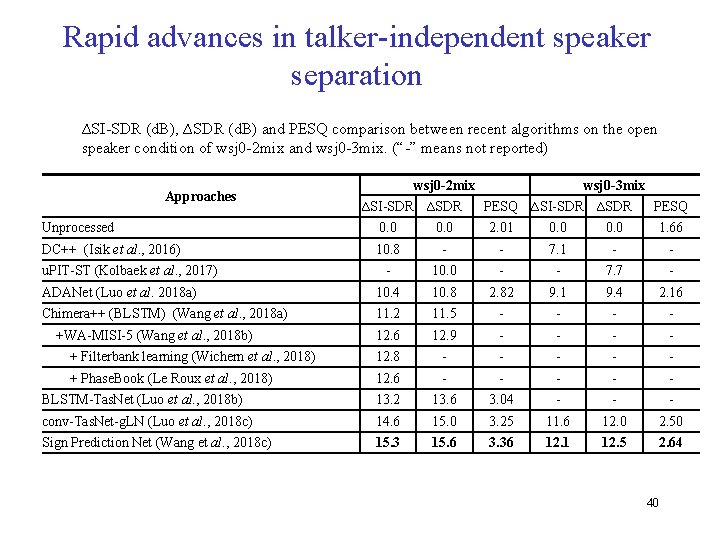

Rapid advances in talker-independent speaker separation
∆SI-SDR (dB), ∆SDR (dB) and PESQ comparison between recent algorithms on the open speaker condition of wsj0-2mix and wsj0-3mix (“-” means not reported)

| Approaches | wsj0-2mix ∆SI-SDR | wsj0-2mix ∆SDR | wsj0-2mix PESQ | wsj0-3mix ∆SI-SDR | wsj0-3mix ∆SDR | wsj0-3mix PESQ |
|---|---|---|---|---|---|---|
| Unprocessed | 0.0 | 0.0 | 2.01 | 0.0 | 0.0 | 1.66 |
| DC++ (Isik et al., 2016) | 10.8 | - | - | 7.1 | - | - |
| uPIT-ST (Kolbaek et al., 2017) | - | 10.0 | - | - | 7.7 | - |
| ADANet (Luo et al., 2018a) | 10.4 | 10.8 | 2.82 | 9.1 | 9.4 | 2.16 |
| Chimera++ (BLSTM) (Wang et al., 2018a) | 11.2 | 11.5 | - | - | - | - |
| + WA-MISI-5 (Wang et al., 2018b) | 12.6 | 12.9 | - | - | - | - |
| + Filterbank learning (Wichern et al., 2018) | 12.8 | - | - | - | - | - |
| + PhaseBook (Le Roux et al., 2018) | 12.6 | - | - | - | - | - |
| BLSTM-TasNet (Luo et al., 2018b) | 13.2 | 13.6 | 3.04 | - | - | - |
| conv-TasNet-gLN (Luo et al., 2018c) | 14.6 | 15.0 | 3.25 | 11.6 | 12.0 | 2.50 |
| Sign Prediction Net (Wang et al., 2018c) | 15.3 | 15.6 | 3.36 | 12.1 | 12.5 | 2.64 |

A solution in sight for the cocktail party problem?
• What does a solution to the cocktail party problem look like?
  • A system that achieves human auditory analysis performance in all listening situations (Wang & Brown’06)
  • An automatic speech recognition (ASR) system that matches the human speech recognition performance in all noisy environments
    • Dependency on ASR

A solution in sight (cont.)?
  • A speech separation system that helps hearing-impaired listeners to achieve the same level of speech intelligibility as normal-hearing listeners in all noisy environments
    • This is my current working definition; see my IEEE Spectrum cover story in March 2017

Conclusion
• Formulation of the cocktail party problem as mask estimation enables the use of supervised learning
• Supervised separation has yielded the first demonstrations of speech intelligibility improvement in noise
• Large-scale training with DNN is a promising direction to make speech separation perform in a variety of conditions
• Reverberant-noisy speech separation can be effectively addressed in the same framework
• Major advances in both talker-dependent and talker-independent speaker separation
• The cocktail party problem is within reach
