Relational Transfer in Reinforcement Learning

Lisa Torrey, University of Wisconsin – Madison. Doctoral Defense, May 2009.

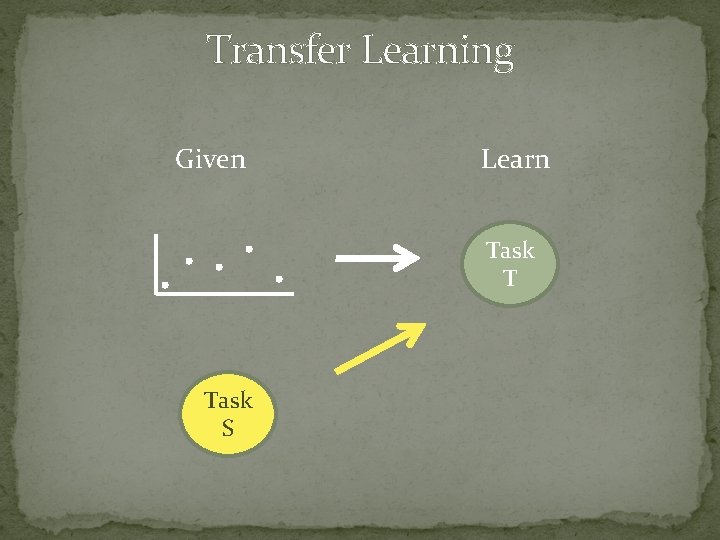

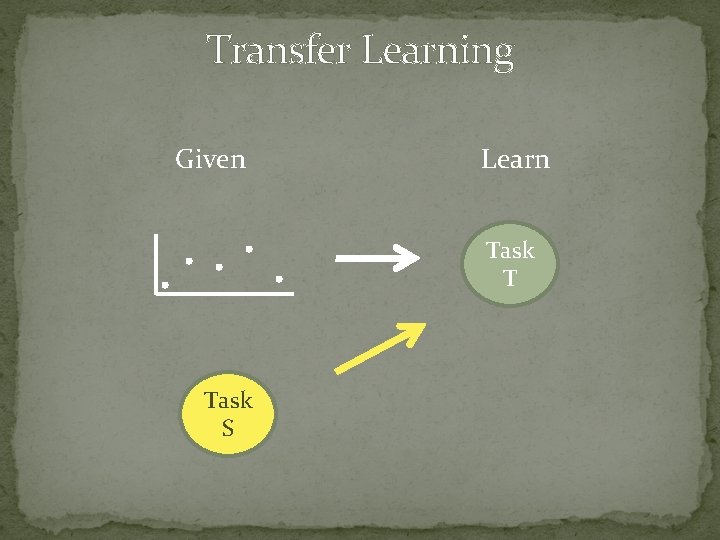

Transfer Learning

Given Task S, learn Task T.

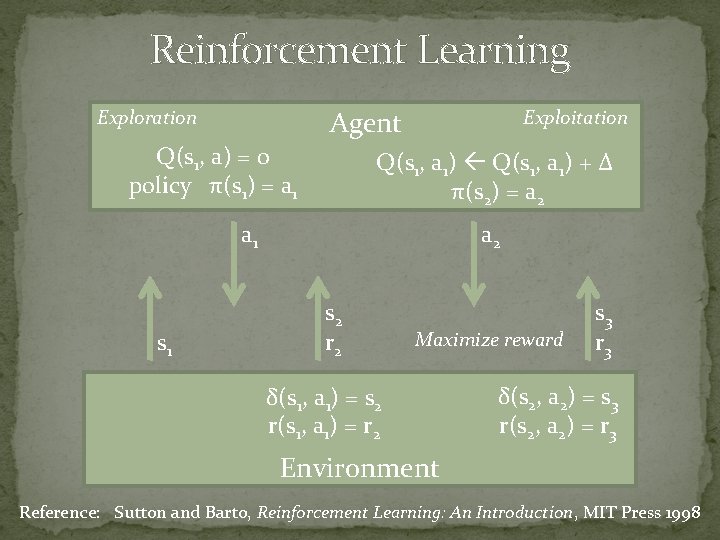

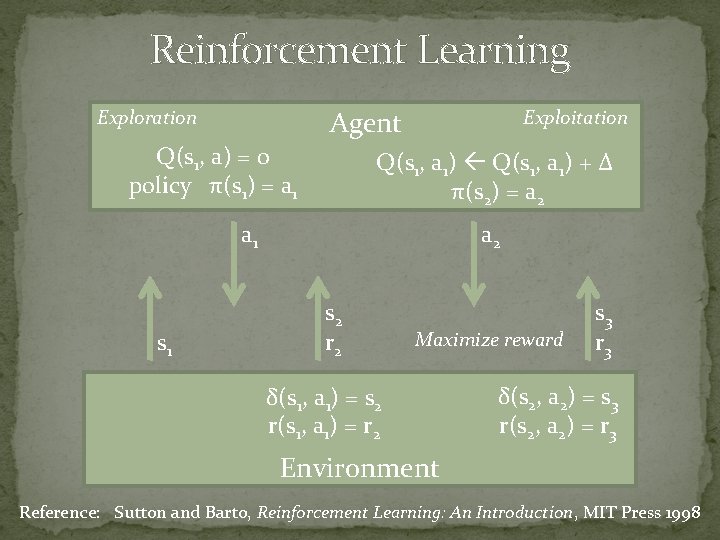

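Reinforcement Learning

The agent starts with $Q(s_1, a) = 0$ and follows a policy $\pi(s_1) = a_1$. When it takes action $a_1$ in state $s_1$, the environment returns the next state $\delta(s_1, a_1) = s_2$ and the reward $r(s_1, a_1) = r_2$. The agent updates $Q(s_1, a_1) \leftarrow Q(s_1, a_1) + \Delta$ and chooses $\pi(s_2) = a_2$, which yields $\delta(s_2, a_2) = s_3$ and $r(s_2, a_2) = r_3$.

- The agent alternates between exploration and exploitation.
- The goal is to maximize reward.

Reference: Sutton and Barto, Reinforcement Learning: An Introduction, MIT Press 1998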
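The loop on this slide can be sketched as tabular Q-learning. This is a generic textbook sketch, not the learner used in the thesis: the names `delta` and `reward` follow the slide's notation, while the toy chain environment, the step size `alpha`, the discount `gamma`, and the exploration rate `epsilon` are illustrative assumptions.

```python
import random
from collections import defaultdict

def q_learning(delta, reward, actions, start, is_terminal,
               episodes=200, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning on a deterministic environment (illustrative)."""
    rng = random.Random(seed)
    Q = defaultdict(float)  # every Q(s, a) starts at 0
    for _ in range(episodes):
        s = start
        while not is_terminal(s):
            if rng.random() < epsilon:
                a = rng.choice(actions)  # exploration
            else:
                # exploitation, breaking ties at random
                a = max(actions, key=lambda act: (Q[(s, act)], rng.random()))
            s_next, r = delta(s, a), reward(s, a)
            future = 0.0 if is_terminal(s_next) else max(Q[(s_next, b)] for b in actions)
            # the "+ delta" step: move Q(s, a) toward the observed return
            Q[(s, a)] += alpha * (r + gamma * future - Q[(s, a)])
            s = s_next
    return Q

# Hypothetical toy chain 0 - 1 - 2 - 3; reaching state 3 pays reward 1.
ACTIONS = ["left", "right"]

def delta(s, a):
    return min(s + 1, 3) if a == "right" else max(s - 1, 0)

def reward(s, a):
    return 1.0 if delta(s, a) == 3 else 0.0

Q = q_learning(delta, reward, ACTIONS, start=0, is_terminal=lambda s: s == 3)
policy = {s: max(ACTIONS, key=lambda a: Q[(s, a)]) for s in range(3)}
print(policy)
```

On this chain the greedy policy ends up moving right in every state, because moving left only delays the single reward.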

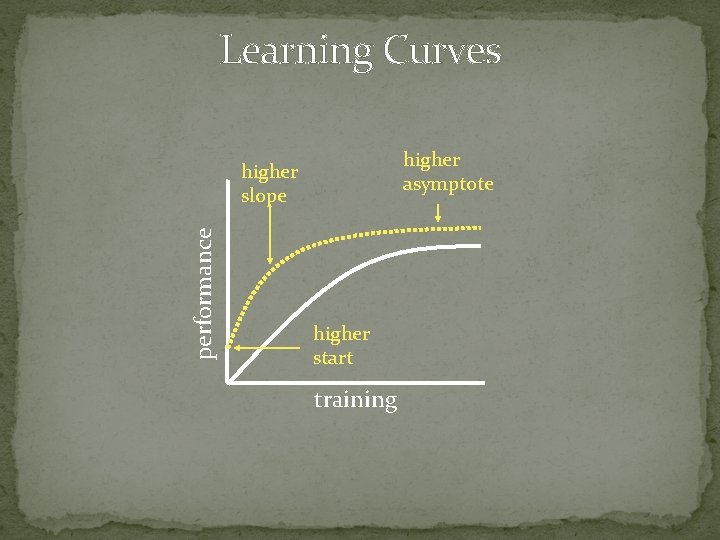

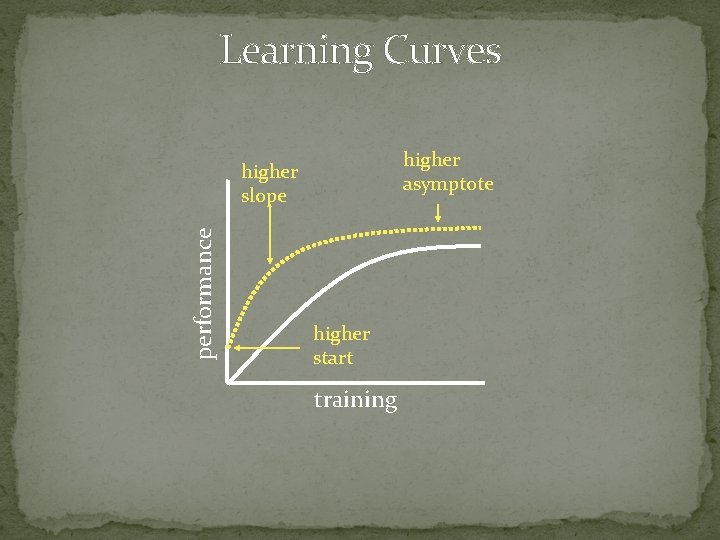

Learning Curves

Plot of performance against training. Transfer can produce a higher start, a higher slope, or a higher asymptote.

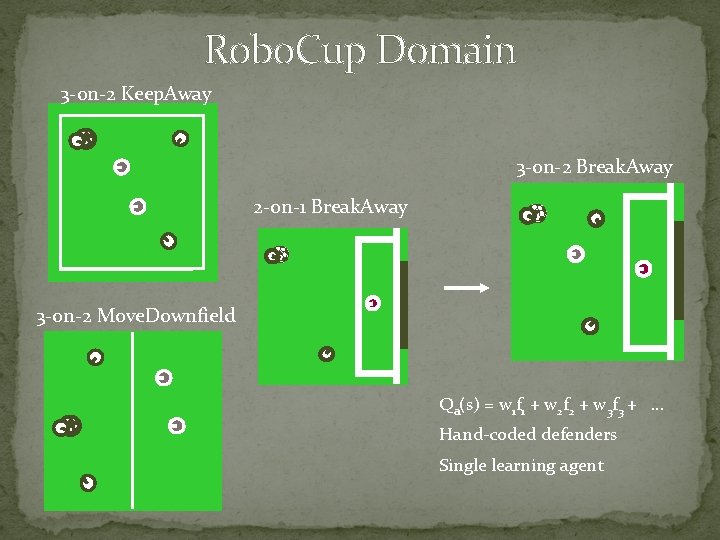

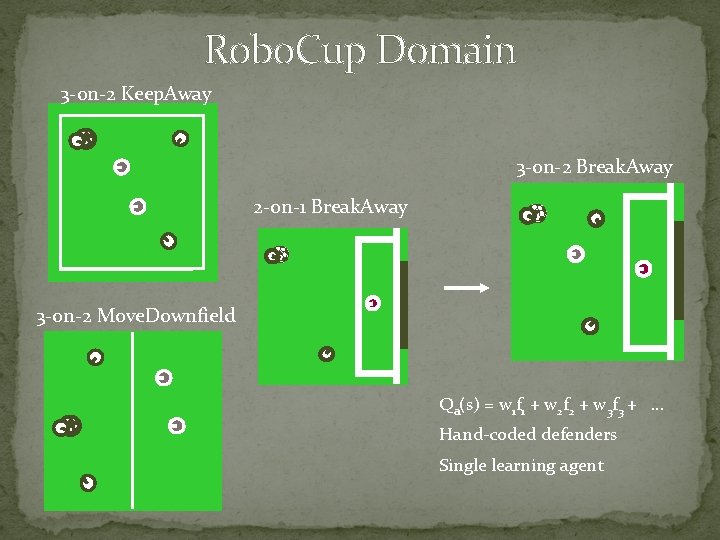

RoboCup Domain

- Tasks: 3-on-2 KeepAway, 3-on-2 BreakAway, 2-on-1 BreakAway, 3-on-2 MoveDownfield
- Q-function for each action $a$: $Q_a(s) = w_1 f_1 + w_2 f_2 + w_3 f_3 + \dots$
- Hand-coded defenders
- Single learning agent
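The linear form of the Q-function on this slide can be illustrated with a few lines of Python. The feature values, weights, and action names below are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def q_value(weights, features):
    """Q_a(s) = w1*f1 + w2*f2 + ... for a single action a."""
    if len(weights) != len(features):
        raise ValueError("weights and features must have the same length")
    return sum(w * f for w, f in zip(weights, features))

def best_action(weights_by_action, features):
    """Pick the action whose linear Q-function scores the state highest."""
    return max(weights_by_action,
               key=lambda a: q_value(weights_by_action[a], features))

# Hypothetical state features (f1, f2, f3) and per-action weights.
state_features = [10.0, 25.0, 4.0]
weights_by_action = {
    "pass": [0.02, 0.01, 0.05],
    "hold": [0.01, 0.00, 0.10],
}
print(best_action(weights_by_action, state_features))
```

Each action keeps its own weight vector, so the same state features can rank actions differently.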

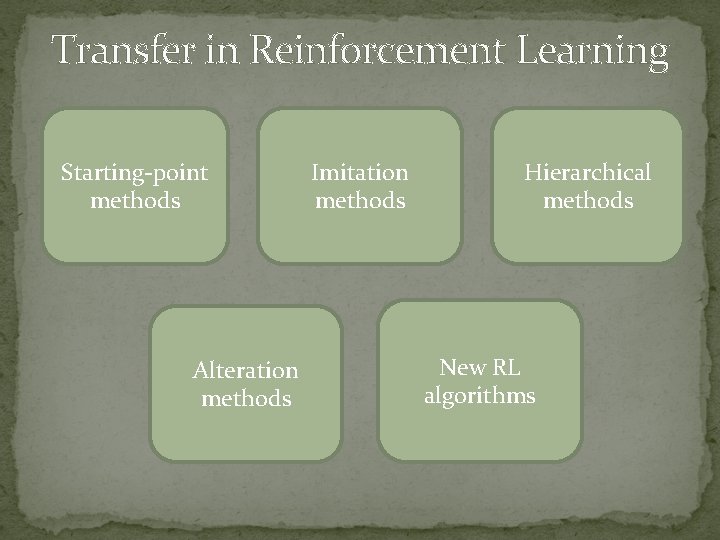

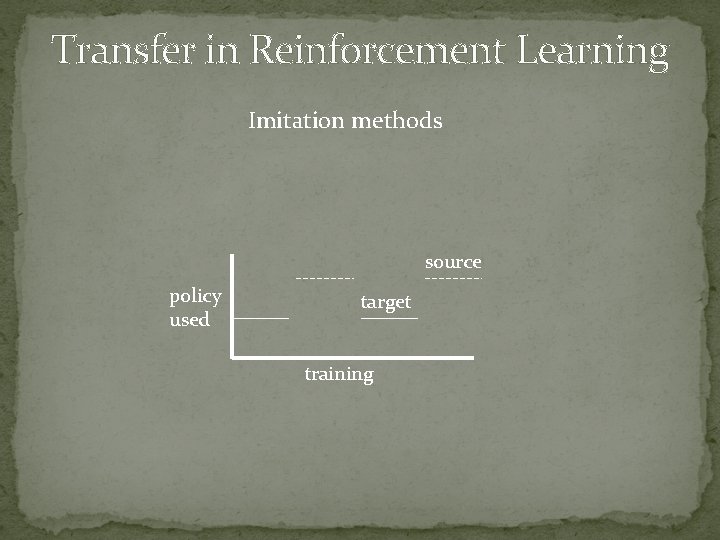

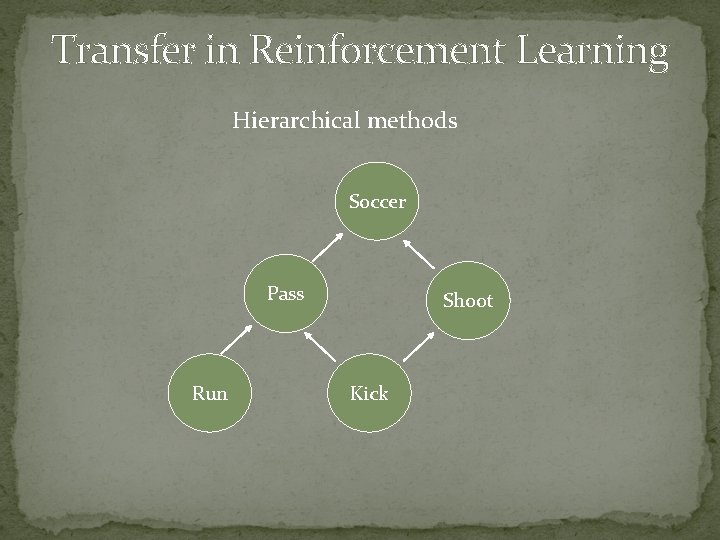

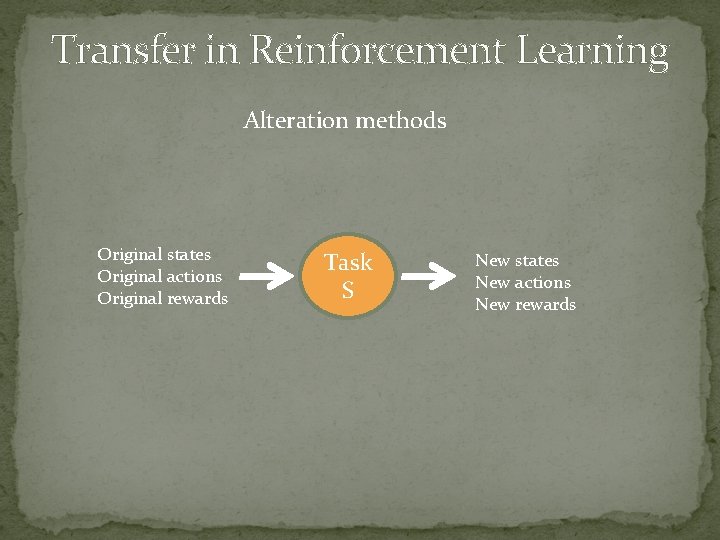

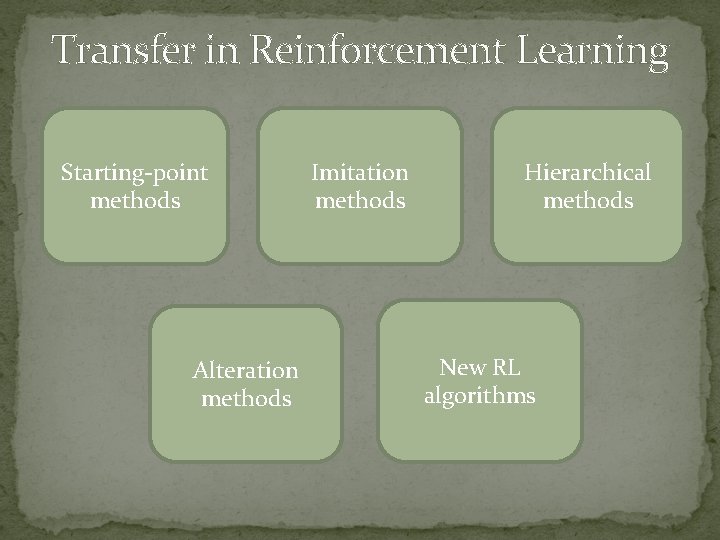

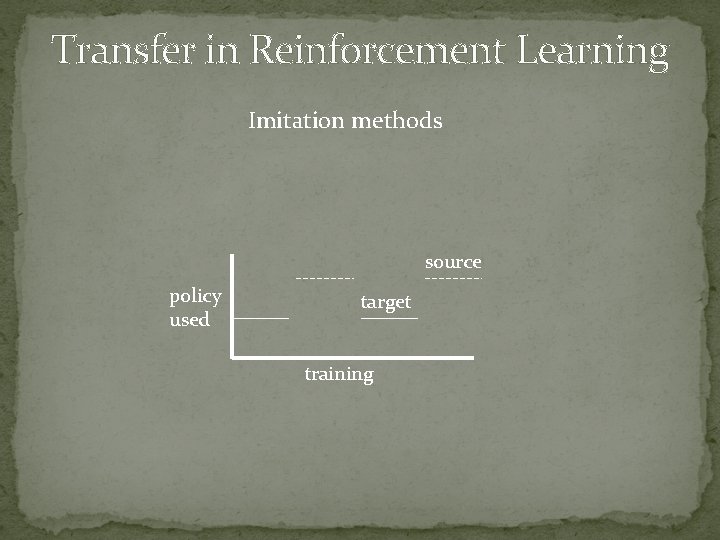

Transfer in Reinforcement Learning

- Starting-point methods
- Alteration methods
- Imitation methods
- Hierarchical methods
- New RL algorithms

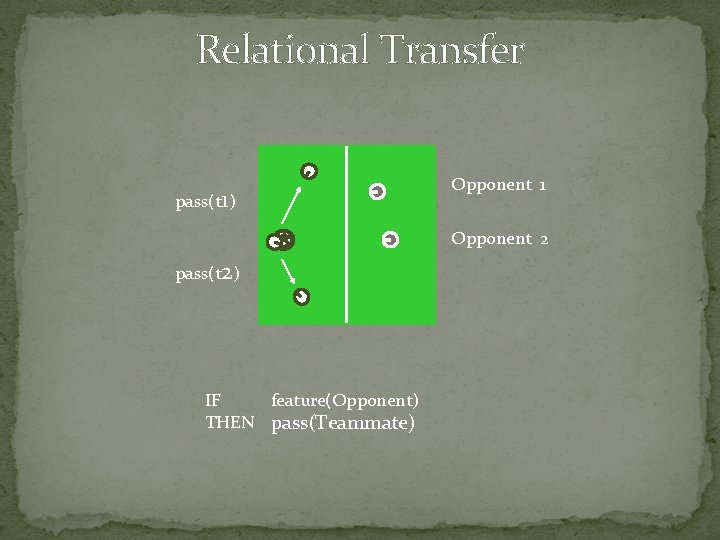

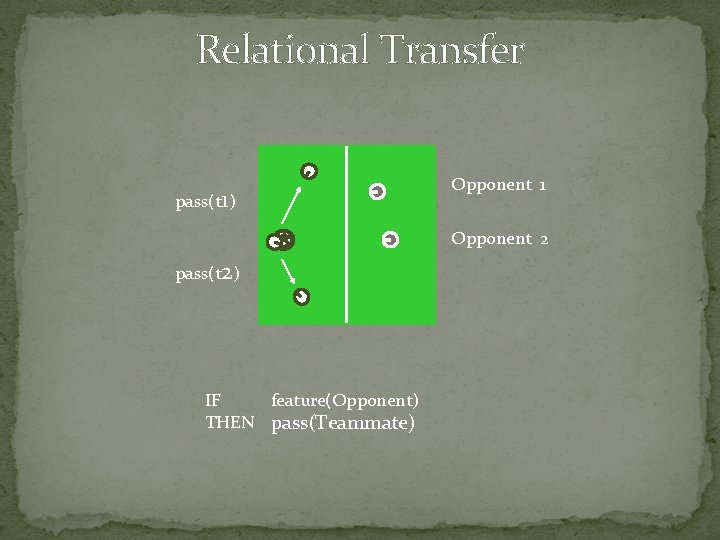

Relational Transfer

Ground actions and objects: pass(t1), pass(t2), Opponent 1, Opponent 2

Relational rule: IF feature(Opponent) THEN pass(Teammate)

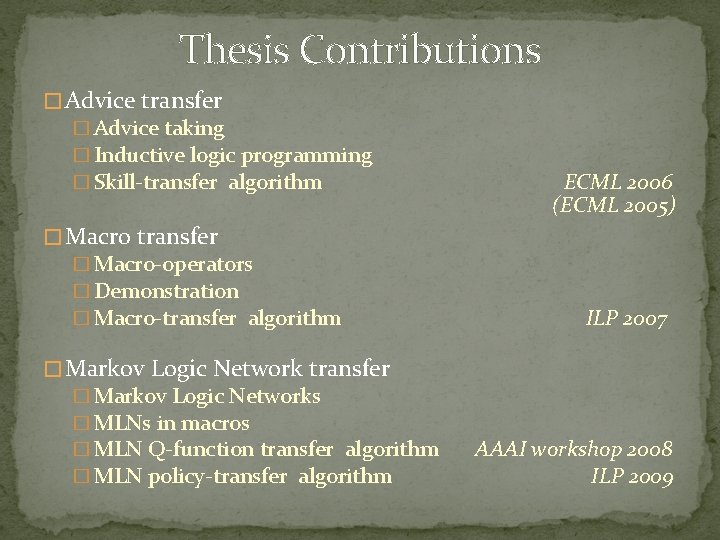

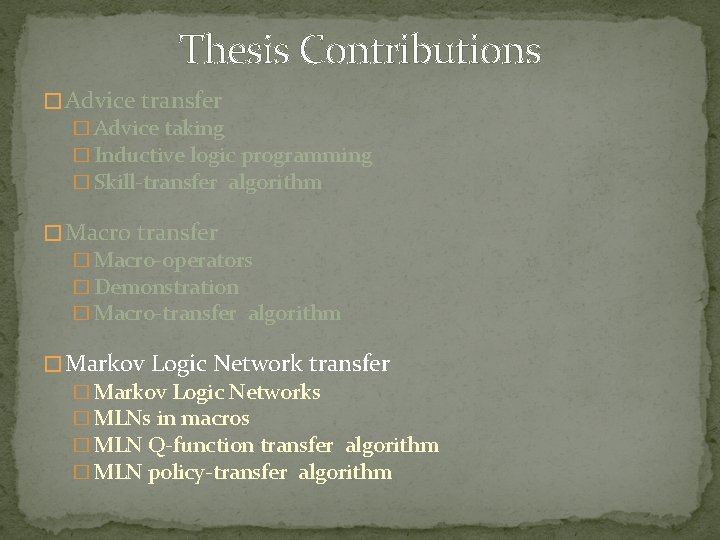

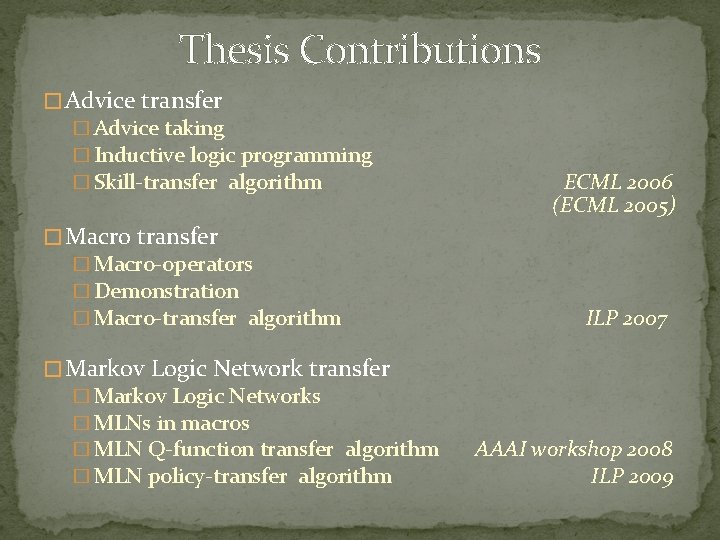

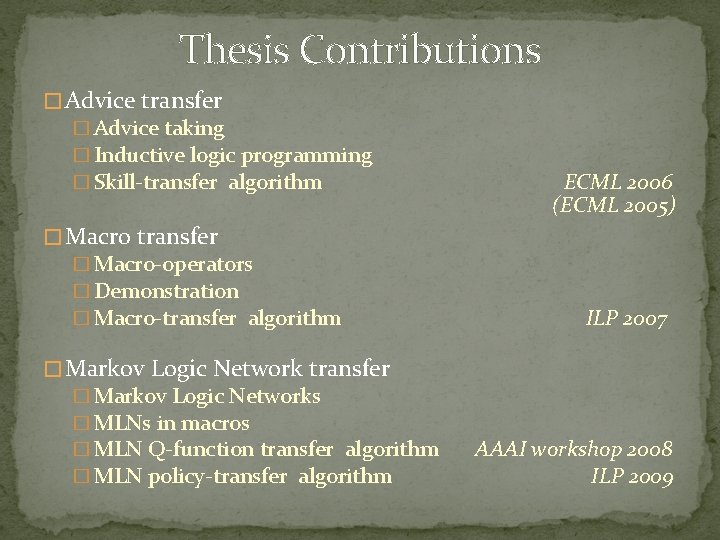

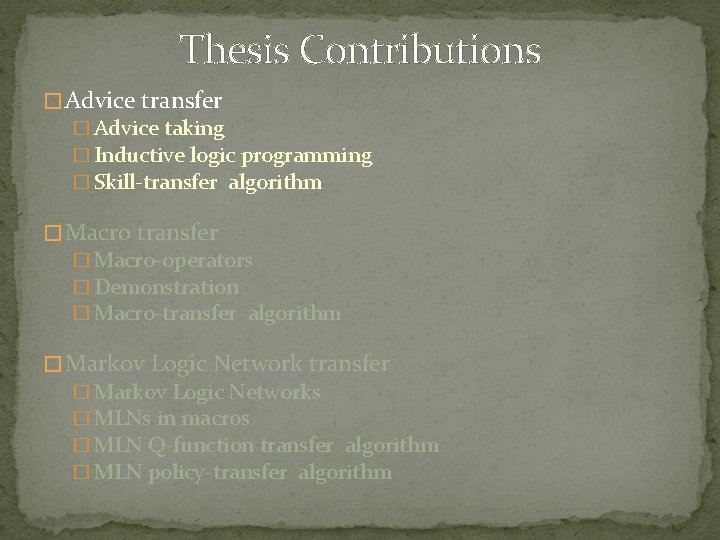

Thesis Contributions

- Advice transfer
  - Advice taking
  - Inductive logic programming
  - Skill-transfer algorithm: ECML 2006 (ECML 2005)
- Macro transfer
  - Macro-operators
  - Demonstration
  - Macro-transfer algorithm: ILP 2007
- Markov Logic Network transfer
  - Markov Logic Networks
  - MLNs in macros
  - MLN Q-function transfer algorithm: AAAI workshop 2008
  - MLN policy-transfer algorithm: ILP 2009

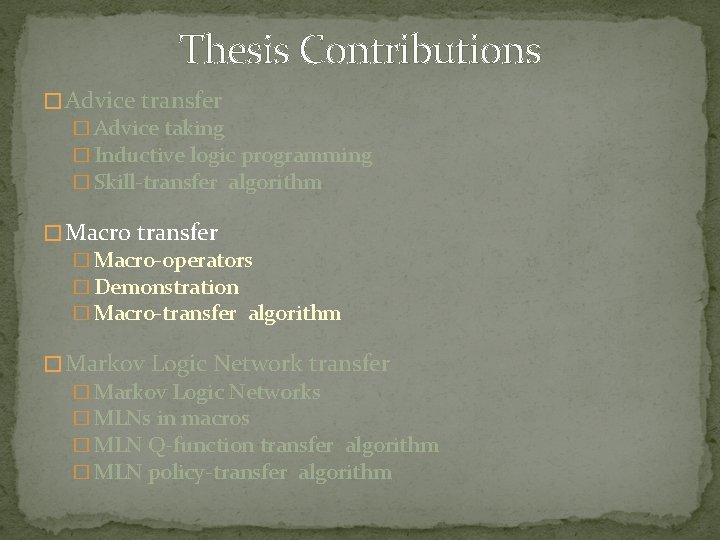

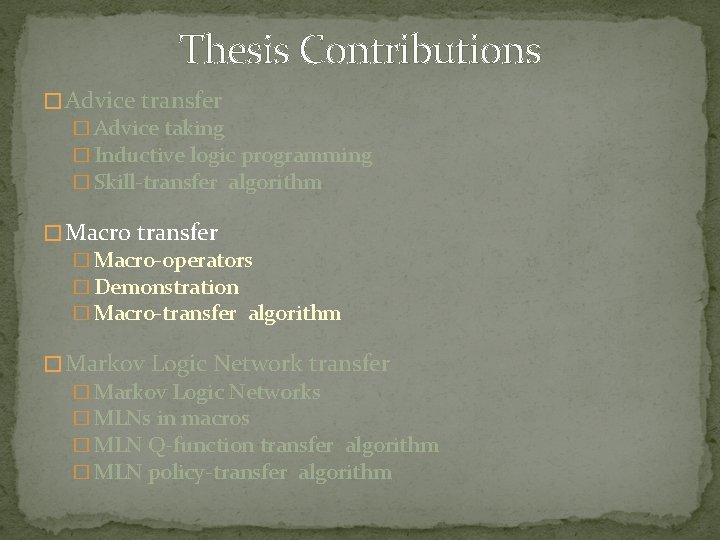

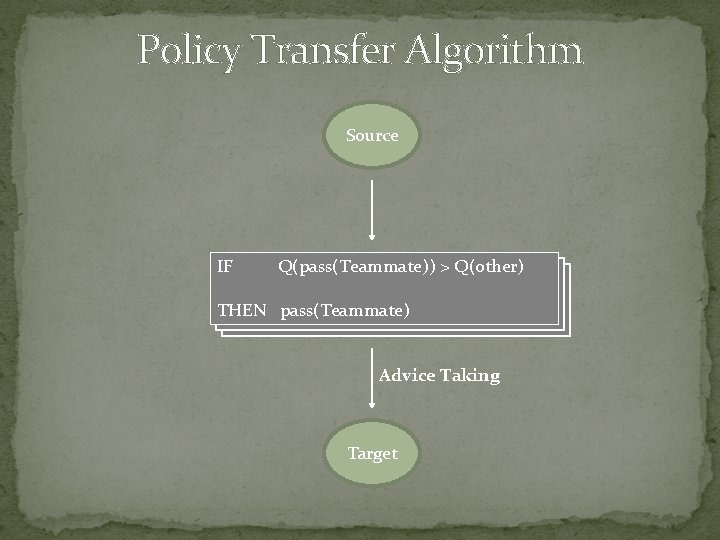

Advice

IF these conditions hold THEN pass is the best action

Transfer via Advice

Try what worked in a previous task!

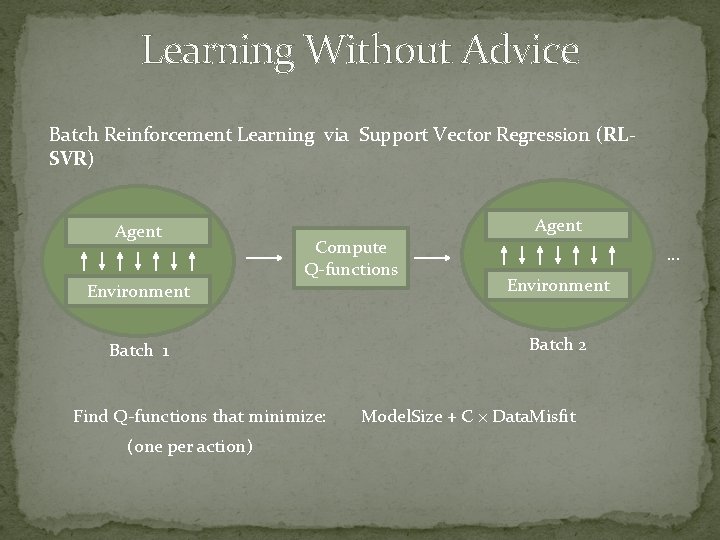

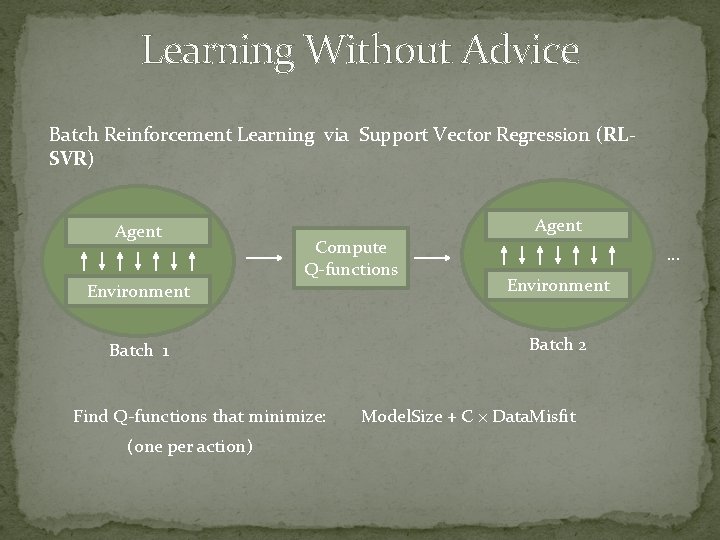

Learning Without Advice

Batch Reinforcement Learning via Support Vector Regression (RLSVR)

The agent interacts with the environment in batches (Batch 1, Batch 2, …) and recomputes its Q-functions after each batch.

Find Q-functions (one per action) that minimize: ModelSize + C × DataMisfit

Learning With Advice

Batch Reinforcement Learning with Advice (KBKR)

The batch loop is the same, but advice is an additional input when the Q-functions are computed.

Find Q-functions that minimize: ModelSize + C × DataMisfit + µ × AdviceMisfit

Robust to negative transfer!
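A minimal sketch of the advice objective, to show how the three terms trade off. The concrete definitions are assumptions and not the thesis formulation: ModelSize is taken as the L1 norm of a linear model, DataMisfit as the summed absolute error on a batch, and each advice item `(state, min_q)` asks for `Q(state) >= min_q` and is penalized by its shortfall.

```python
def objective(w, b, states, targets, advice, C=1.0, mu=1.0):
    """ModelSize + C * DataMisfit + mu * AdviceMisfit for one linear Q-function.

    All three term definitions are illustrative assumptions.
    """
    def q(x):
        return sum(wi * xi for wi, xi in zip(w, x)) + b

    model_size = sum(abs(wi) for wi in w) + abs(b)
    data_misfit = sum(abs(q(x) - y) for x, y in zip(states, targets))
    # Advice is soft: violating it costs something but is not forbidden.
    advice_misfit = sum(max(0.0, min_q - q(x)) for x, min_q in advice)
    return model_size + C * data_misfit + mu * advice_misfit

# Hypothetical batch and a single piece of advice.
states, targets = [[1.0], [2.0]], [1.0, 3.0]
advice = [([3.0], 5.0)]  # "Q should be at least 5 in state [3.0]"
print(objective([1.0], 0.0, states, targets, advice, C=2.0, mu=0.5))
```

Because the advice term is only a penalty weighted by µ, enough contradicting data can outweigh bad advice, which is one way to read the slide's robustness claim.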

![Inductive Logic Programming](https://slidetodoc.com/presentation_image_h2/0acfa55da860ad11194cbcea13ed9d50/image-14.jpg)

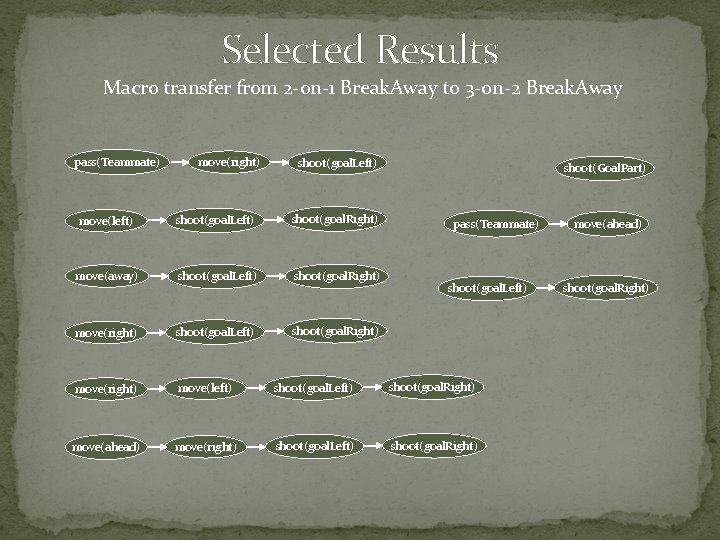

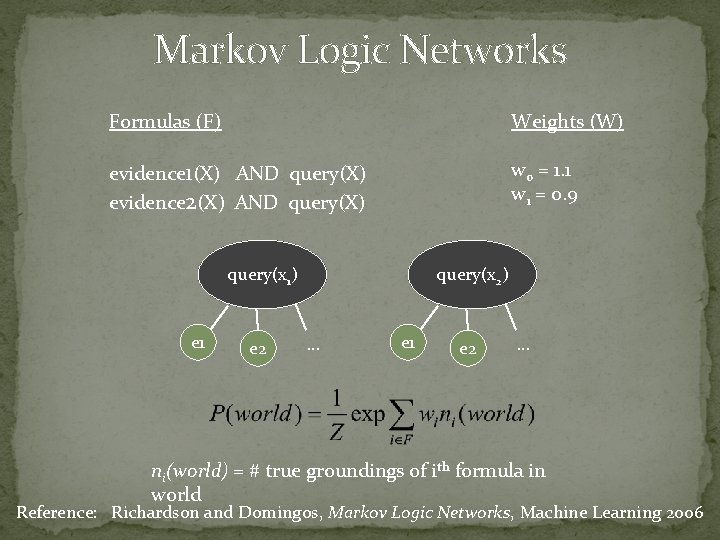

Inductive Logic Programming

Rule search, from the empty rule to more specific rules:

- IF [ ] THEN pass(Teammate)
- IF distance(Teammate) ≤ 5 THEN pass(Teammate)
- IF distance(Teammate) ≤ 10 THEN pass(Teammate)
- …
- IF distance(Teammate) ≤ 5 AND angle(Teammate, Opponent) ≥ 15 THEN pass(Teammate)
- IF distance(Teammate) ≤ 5 AND angle(Teammate, Opponent) ≥ 30 THEN pass(Teammate)
- …

$$F(\beta) = \frac{(1 + \beta^2) \times \text{Precision} \times \text{Recall}}{(\beta^2 \times \text{Precision}) + \text{Recall}}$$

Reference: De Raedt, Logical and Relational Learning, Springer 2008
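The F(β) score on this slide is easy to compute directly. The sketch below follows the slide's formula; returning 0 when the denominator is 0 is an added convention, not something the slide states.

```python
def f_beta(precision, recall, beta=1.0):
    """F(beta) = (1 + beta^2) * P * R / (beta^2 * P + R)."""
    b2 = beta * beta
    denom = b2 * precision + recall
    if denom == 0:
        return 0.0  # assumed convention for a rule that covers nothing
    return (1 + b2) * precision * recall / denom

# With a large beta the score tracks recall much more closely than precision.
print(f_beta(1.0, 0.5, beta=10))  # precise rule with low recall
print(f_beta(0.5, 1.0, beta=10))  # imprecise rule with full recall
```

With β = 1 the measure is the usual harmonic mean of precision and recall; larger β values favor recall.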

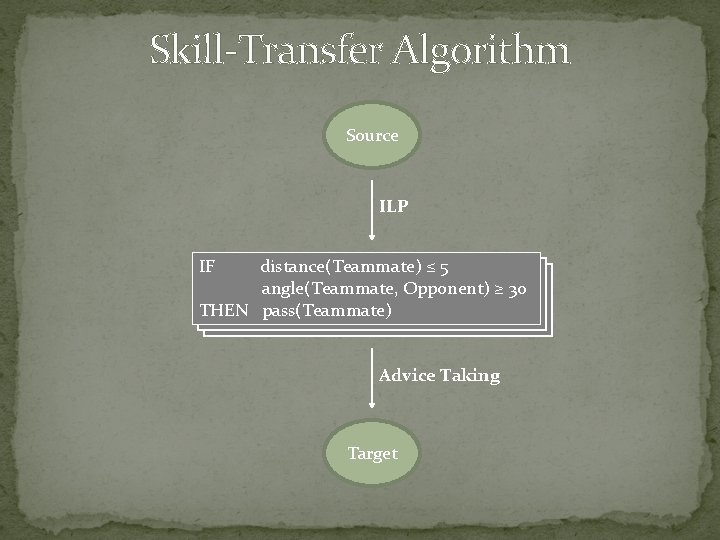

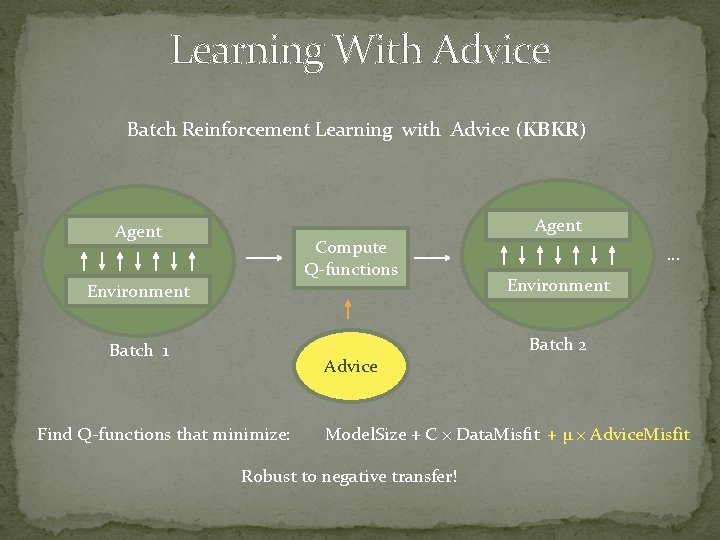

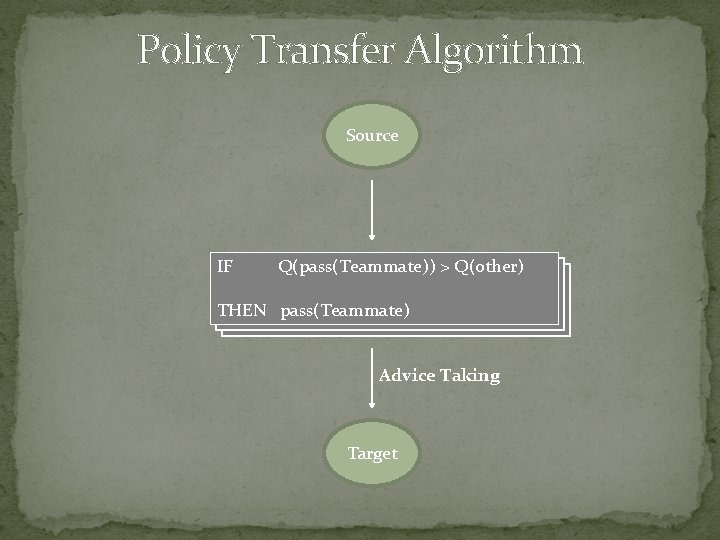

Skill-Transfer Algorithm

Source → ILP → Advice Taking → Target

Example of a rule learned by ILP and passed on as advice:

IF distance(Teammate) ≤ 5 AND angle(Teammate, Opponent) ≥ 30 THEN pass(Teammate)

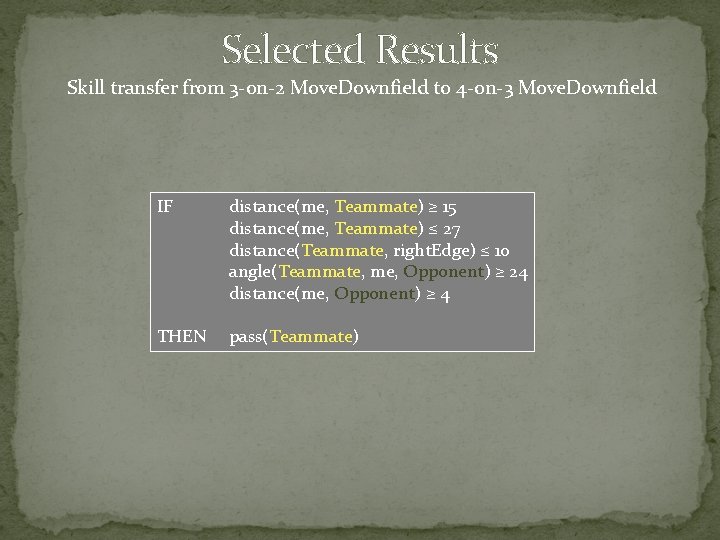

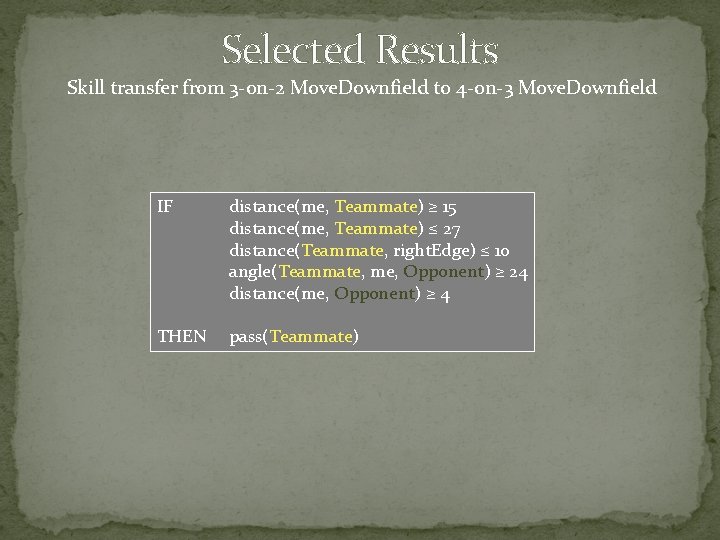

Selected Results

Skill transfer from 3-on-2 MoveDownfield to 4-on-3 MoveDownfield

IF distance(me, Teammate) ≥ 15 AND distance(me, Teammate) ≤ 27 AND distance(Teammate, rightEdge) ≤ 10 AND angle(Teammate, me, Opponent) ≥ 24 AND distance(me, Opponent) ≥ 4 THEN pass(Teammate)

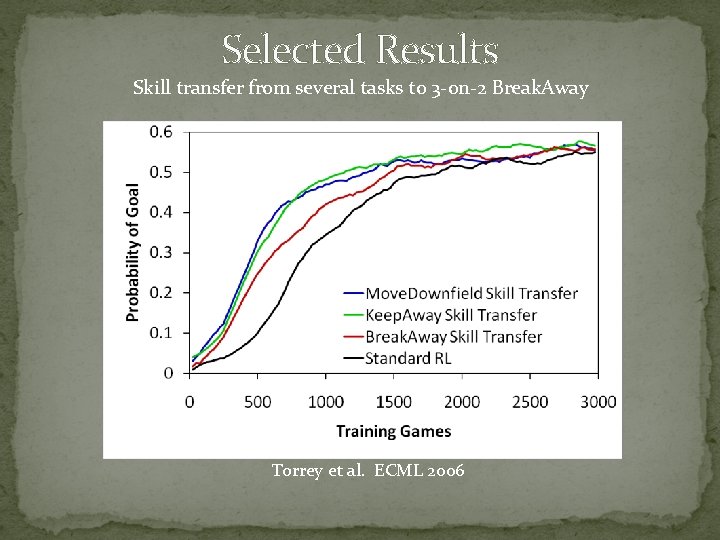

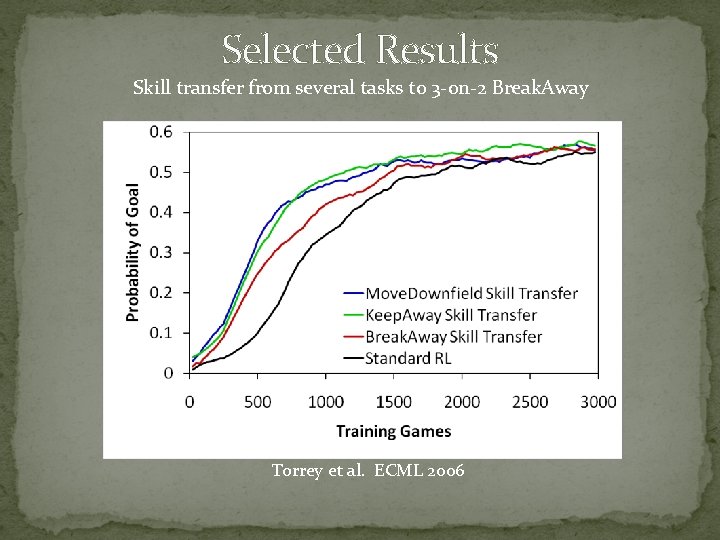

Selected Results

Skill transfer from several tasks to 3-on-2 BreakAway (Torrey et al., ECML 2006)

![Macro-Operators](https://slidetodoc.com/presentation_image_h2/0acfa55da860ad11194cbcea13ed9d50/image-19.jpg)

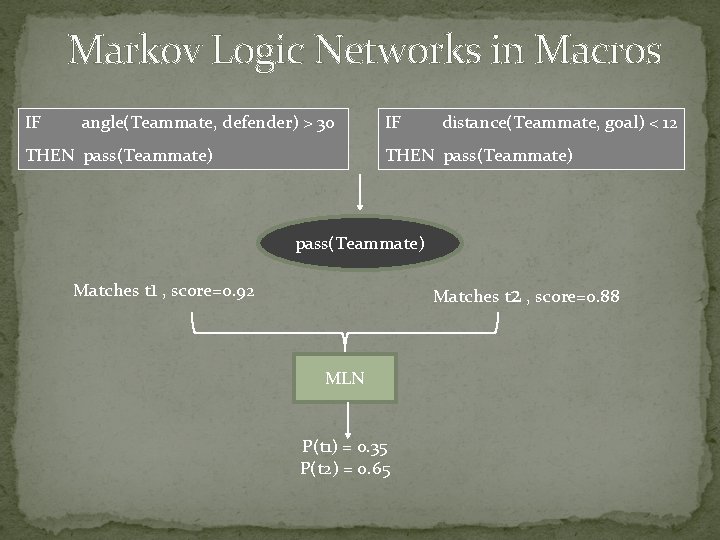

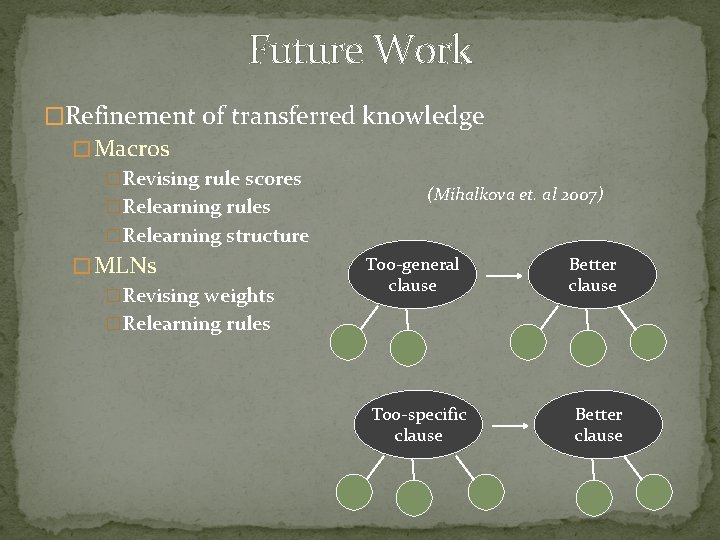

Macro-Operators

A macro is a sequence of action nodes, for example pass(Teammate) → move(Direction) → shoot(goalRight) → shoot(goalLeft), with rules attached to it:

- IF [...] THEN pass(Teammate)
- IF [...] THEN move(left)
- IF [...] THEN move(ahead)
- IF [...] THEN shoot(goalRight)
- IF [...] THEN shoot(goalLeft)

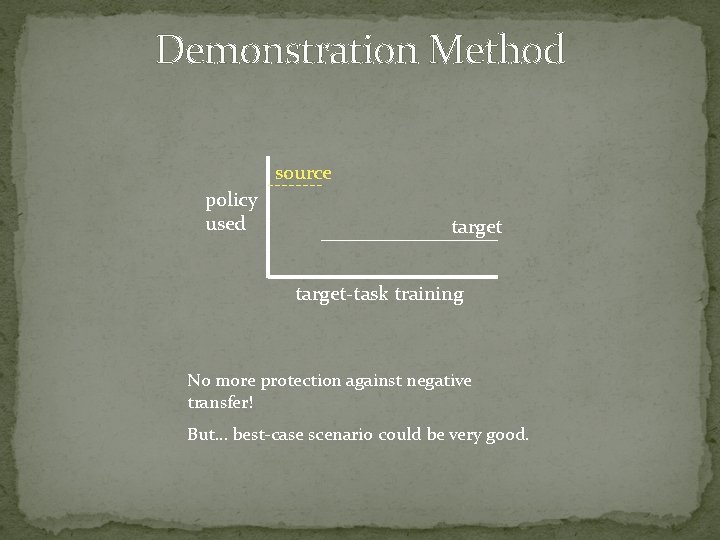

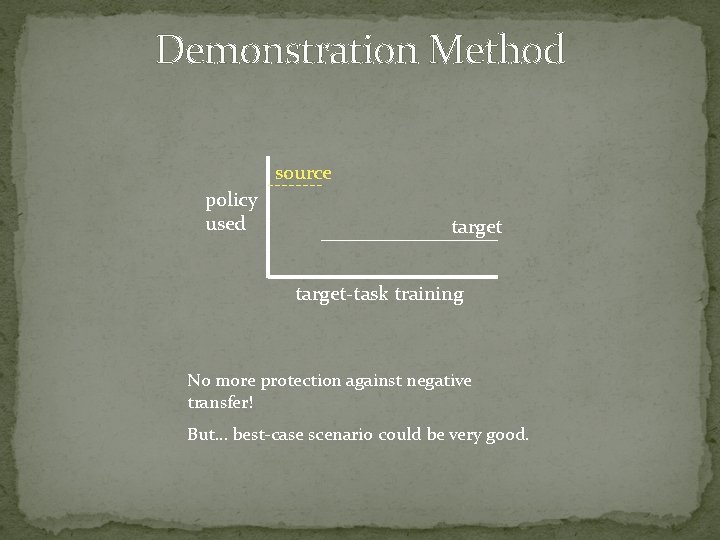

Demonstration Method

Timeline: the source policy is used first, followed by target-task training.

- No more protection against negative transfer!
- But the best-case scenario could be very good.

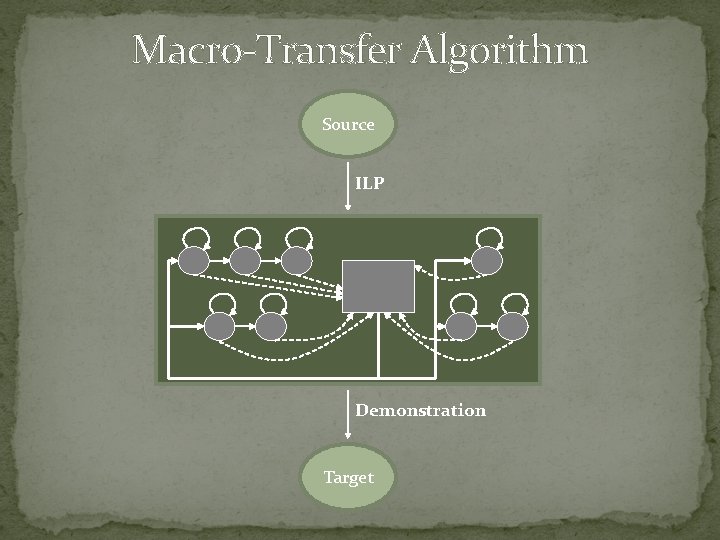

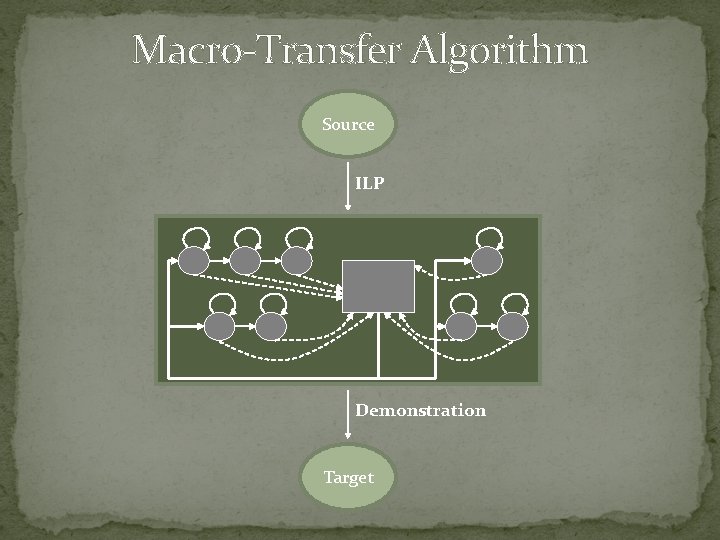

Macro-Transfer Algorithm

Source → ILP → Demonstration → Target

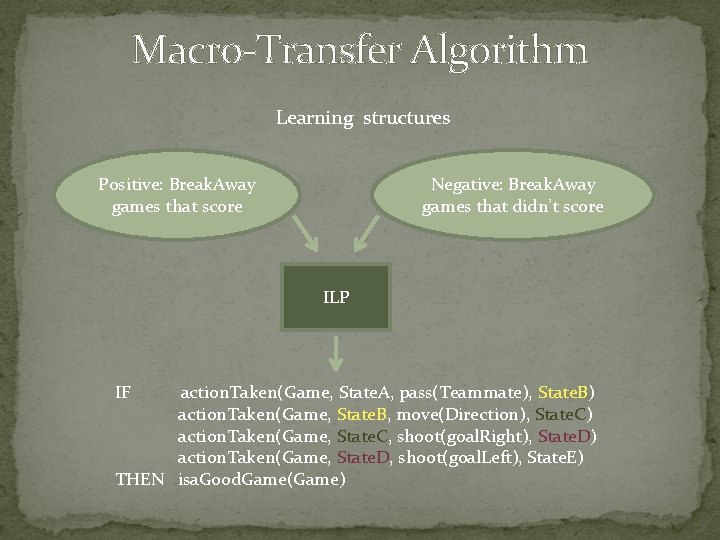

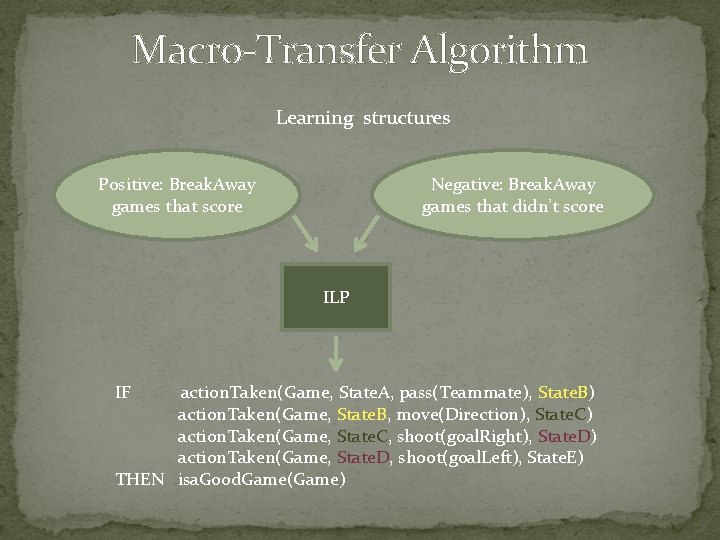

Macro-Transfer Algorithm

Learning structures

- Positive: BreakAway games that score
- Negative: BreakAway games that didn't score

ILP learns a structure rule such as:

IF actionTaken(Game, StateA, pass(Teammate), StateB) AND actionTaken(Game, StateB, move(Direction), StateC) AND actionTaken(Game, StateC, shoot(goalRight), StateD) AND actionTaken(Game, StateD, shoot(goalLeft), StateE) THEN isaGoodGame(Game)

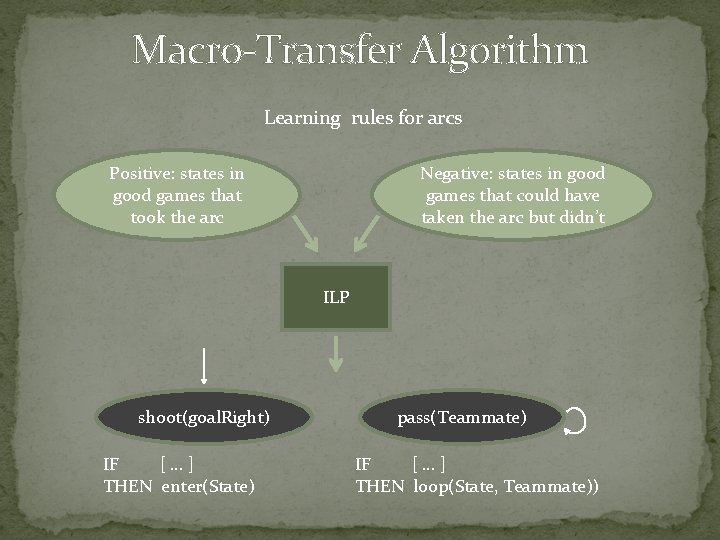

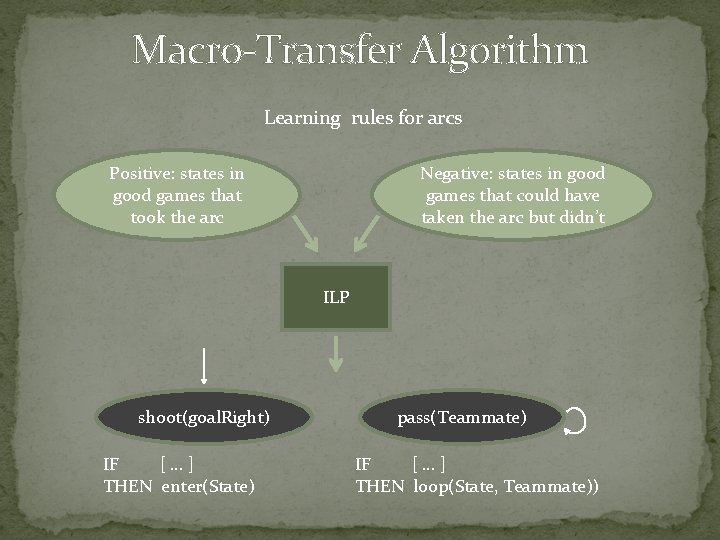

Macro-Transfer Algorithm

Learning rules for arcs

- Positive: states in good games that took the arc
- Negative: states in good games that could have taken the arc but didn't

ILP learns a rule for each arc, for example:

- Arc into shoot(goalRight): IF […] THEN enter(State)
- Self-arc on pass(Teammate): IF […] THEN loop(State, Teammate)

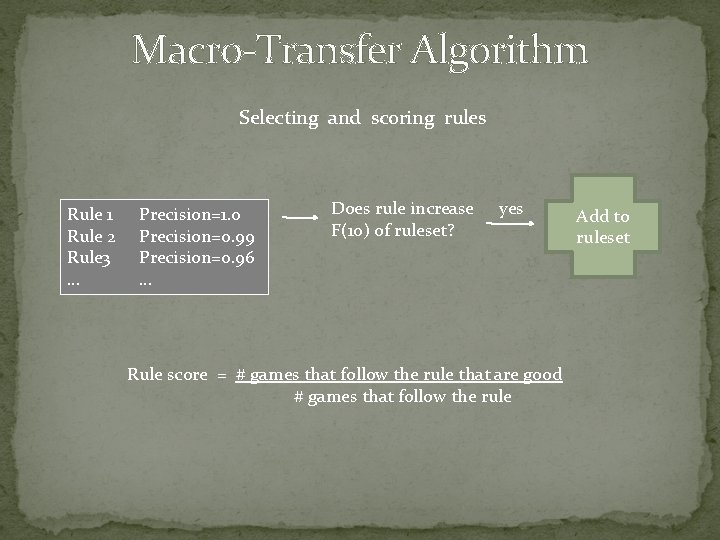

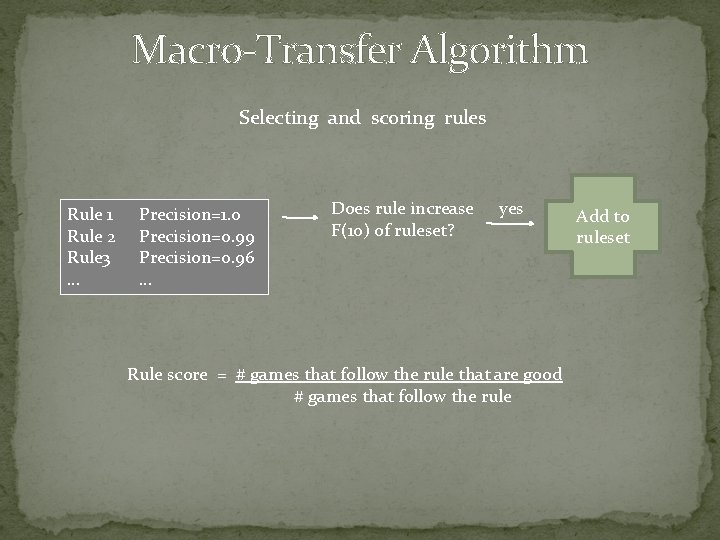

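Macro-Transfer Algorithm

Selecting and scoring rules

Candidate rules are considered in order of precision:

- Rule 1: Precision = 1.0
- Rule 2: Precision = 0.99
- Rule 3: Precision = 0.96
- …

For each rule: does the rule increase F(10) of the ruleset? If yes, add it to the ruleset.

$$\text{Rule score} = \frac{\text{number of games that follow the rule that are good}}{\text{number of games that follow the rule}}$$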
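The selection loop on this slide can be sketched as a greedy pass over rules sorted by precision. Several details are assumptions made for illustration: rules are plain Python predicates, a ruleset matches an example when any of its rules matches, and the integer examples stand in for states or games.

```python
def f_beta(precision, recall, beta):
    b2 = beta * beta
    denom = b2 * precision + recall
    return (1 + b2) * precision * recall / denom if denom else 0.0

def coverage(rules, examples):
    """Number of examples matched by at least one rule."""
    return sum(1 for ex in examples if any(rule(ex) for rule in rules))

def precision(rules, positives, negatives):
    tp, fp = coverage(rules, positives), coverage(rules, negatives)
    return tp / (tp + fp) if tp + fp else 0.0

def ruleset_f(rules, positives, negatives, beta):
    recall = coverage(rules, positives) / len(positives) if positives else 0.0
    return f_beta(precision(rules, positives, negatives), recall, beta)

def select_rules(candidates, positives, negatives, beta=10.0):
    """Keep a rule only if it raises F(beta) of the ruleset built so far."""
    ranked = sorted(candidates,
                    key=lambda r: precision([r], positives, negatives),
                    reverse=True)
    ruleset, best = [], 0.0
    for rule in ranked:
        score = ruleset_f(ruleset + [rule], positives, negatives, beta)
        if score > best:
            ruleset.append(rule)
            best = score
    return ruleset

def rule_score(rule, games, is_good):
    """Fraction of the games following the rule that are good."""
    followers = [g for g in games if rule(g)]
    if not followers:
        return 0.0
    return sum(1 for g in followers if is_good(g)) / len(followers)

# Hypothetical examples: 1-4 are positive, 5-6 are negative.
positives, negatives = [1, 2, 3, 4], [5, 6]

def narrow(x): return x <= 2
def wide(x): return x <= 4
def loose(x): return x <= 5

selected = select_rules([loose, narrow, wide], positives, negatives)
print([r.__name__ for r in selected])
print(rule_score(loose, positives + negatives, lambda g: g <= 4))
```

In this toy run `loose` is rejected because adding it lowers the ruleset's F(10), even though it would not reduce recall.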

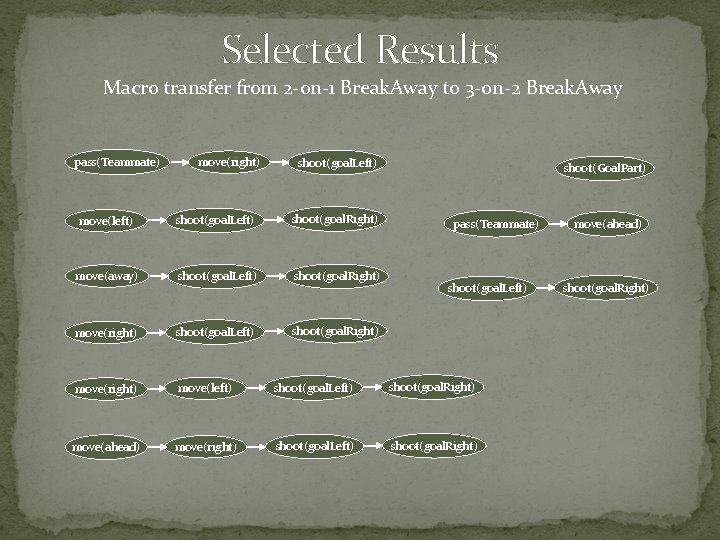

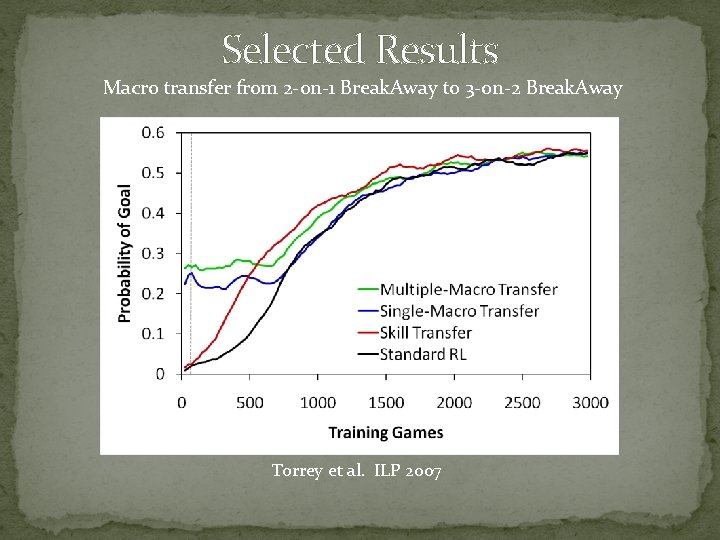

Selected Results

Macro transfer from 2-on-1 BreakAway to 3-on-2 BreakAway

Action nodes in the learned macros, in the order they appear in the diagram: pass(Teammate), move(right), shoot(goalLeft), shoot(GoalPart), move(left), shoot(goalLeft), shoot(goalRight), move(away), shoot(goalLeft), shoot(goalRight), move(right), move(left), shoot(goalLeft), shoot(goalRight), move(ahead), move(right), shoot(goalLeft), shoot(goalRight), pass(Teammate), shoot(goalLeft), move(ahead), shoot(goalRight)

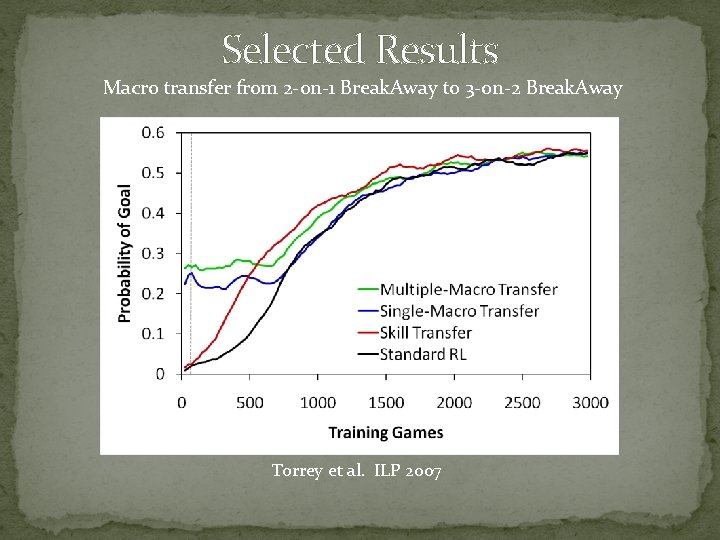

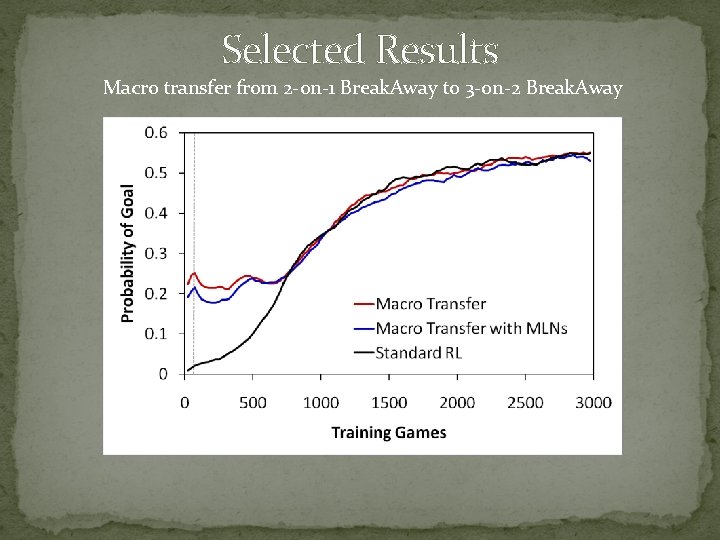

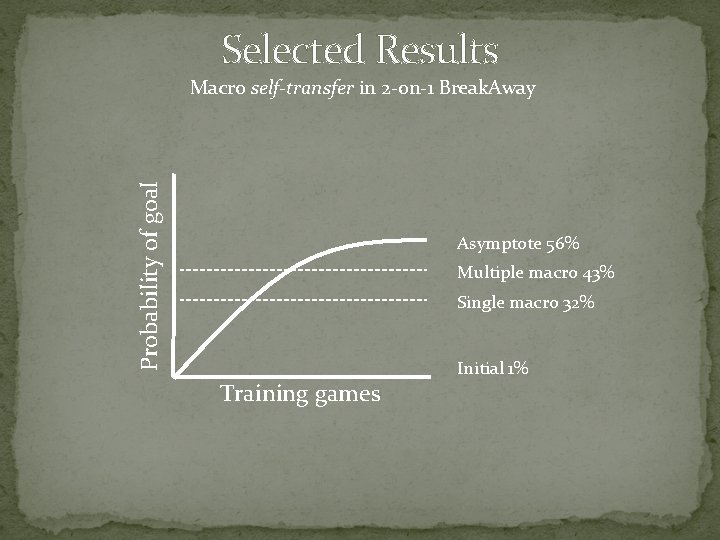

Selected Results

Macro transfer from 2-on-1 BreakAway to 3-on-2 BreakAway (Torrey et al., ILP 2007)

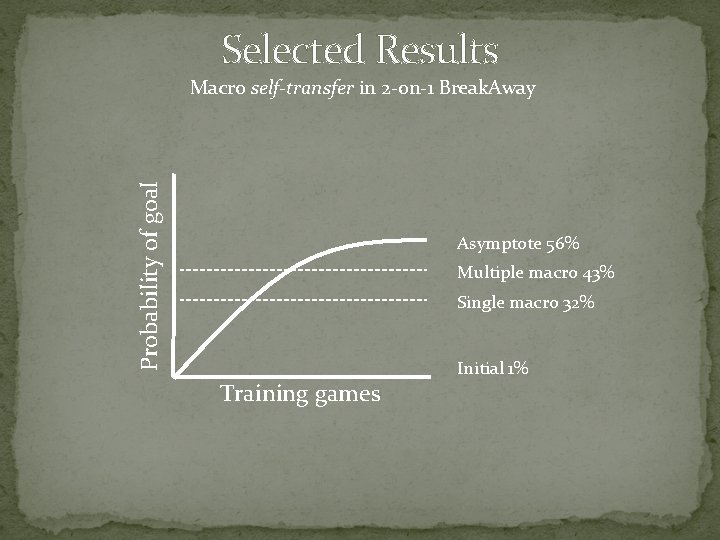

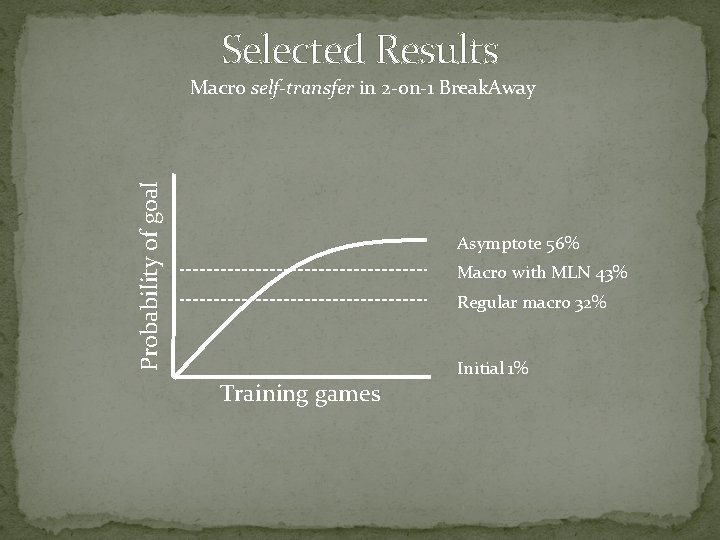

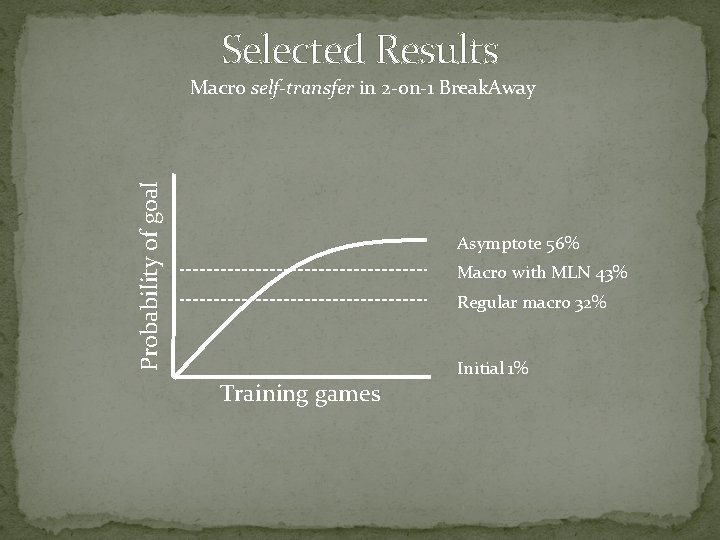

Selected Results

Macro self-transfer in 2-on-1 BreakAway (probability of goal against training games):

- Asymptote: 56%
- Multiple macro: 43%
- Single macro: 32%
- Initial: 1%

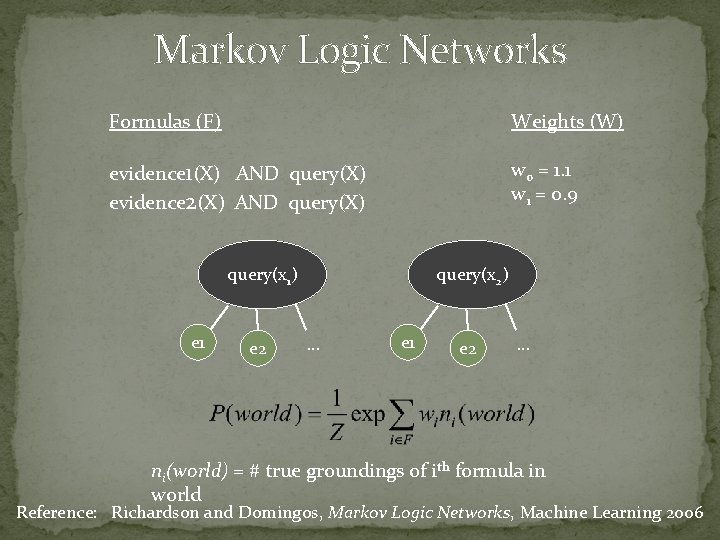

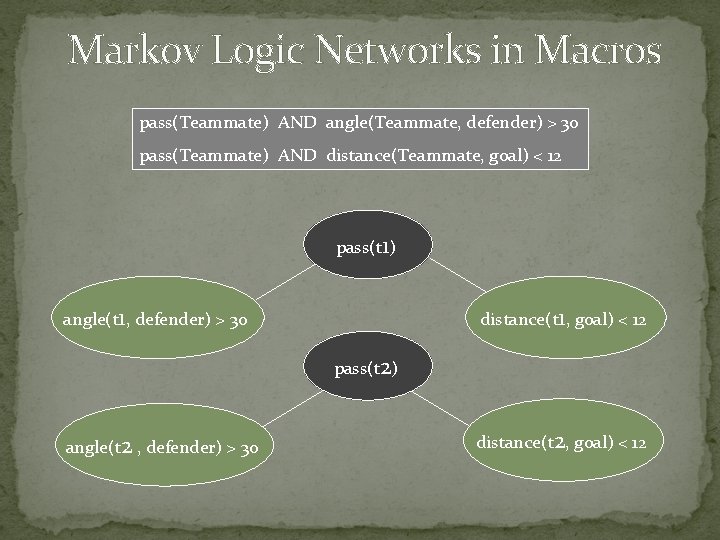

Markov Logic Networks

Formulas (F) and weights (W):

- evidence1(X) AND query(X), with weight $w_0 = 1.1$
- evidence2(X) AND query(X), with weight $w_1 = 0.9$

Ground network: nodes query(x1), query(x2), …, each linked to evidence nodes e1, e2, …

$n_i(\text{world})$ = number of true groundings of the $i$th formula in world

Reference: Richardson and Domingos, Markov Logic Networks, Machine Learning 2006
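For reference, the standard Markov Logic Network distribution from the cited Richardson and Domingos paper uses the counts $n_i$ defined on this slide. It is reproduced here from that paper rather than from the slide text, with $Z$ as the usual normalizing constant over all possible worlds:

```latex
P(\text{world}) = \frac{1}{Z} \exp\Big( \sum_i w_i \, n_i(\text{world}) \Big),
\qquad
Z = \sum_{\text{world}'} \exp\Big( \sum_i w_i \, n_i(\text{world}') \Big)
```

A formula with a larger weight $w_i$ makes worlds with more true groundings of that formula proportionally more probable.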

![MLN Weight Learning](https://slidetodoc.com/presentation_image_h2/0acfa55da860ad11194cbcea13ed9d50/image-30.jpg)

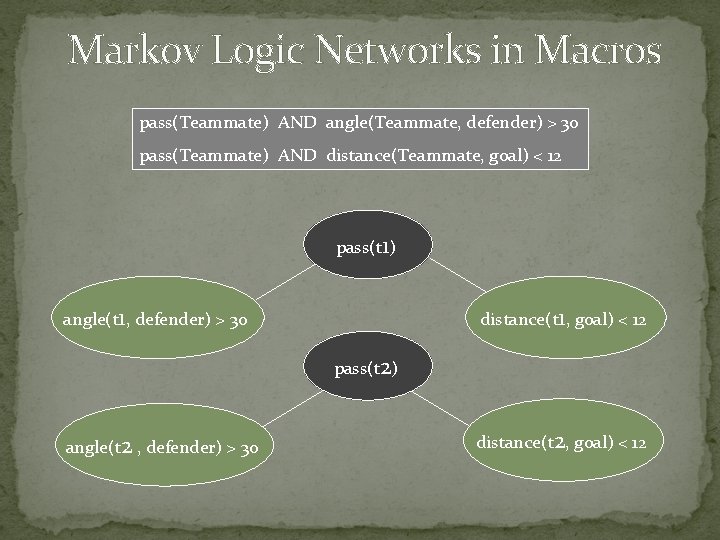

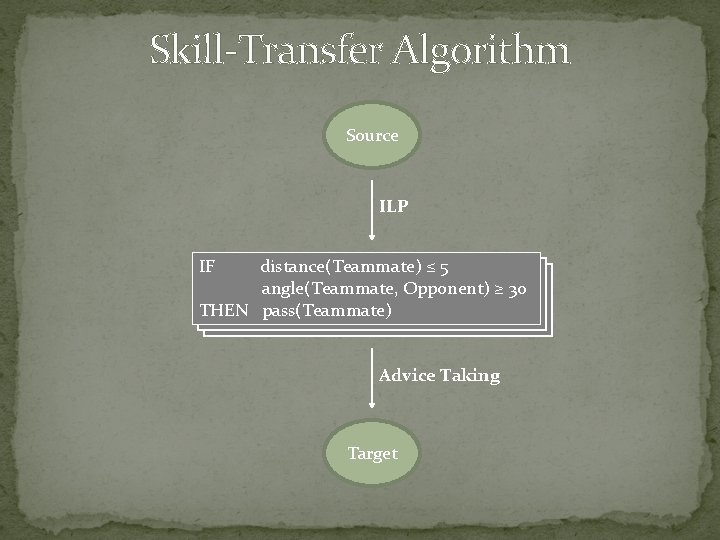

MLN Weight Learning

From ILP: IF [...] THEN … → Alchemy weight learning → MLN: formula with weight $w_0 = 1.1$

Reference: http://alchemy.cs.washington.edu

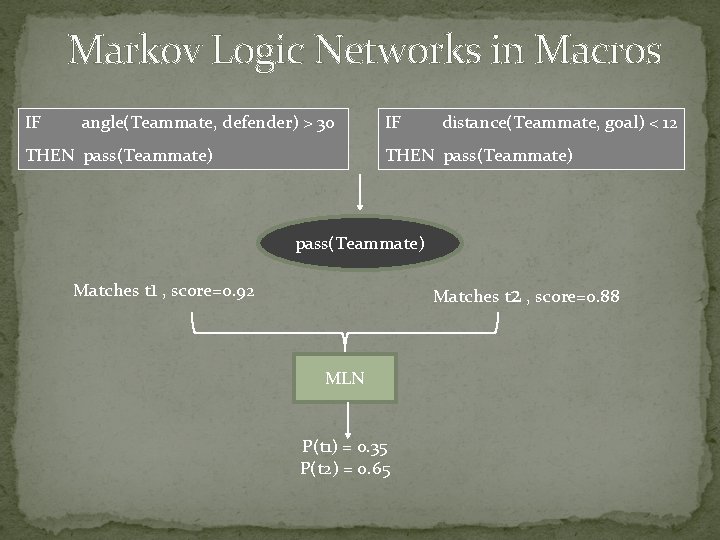

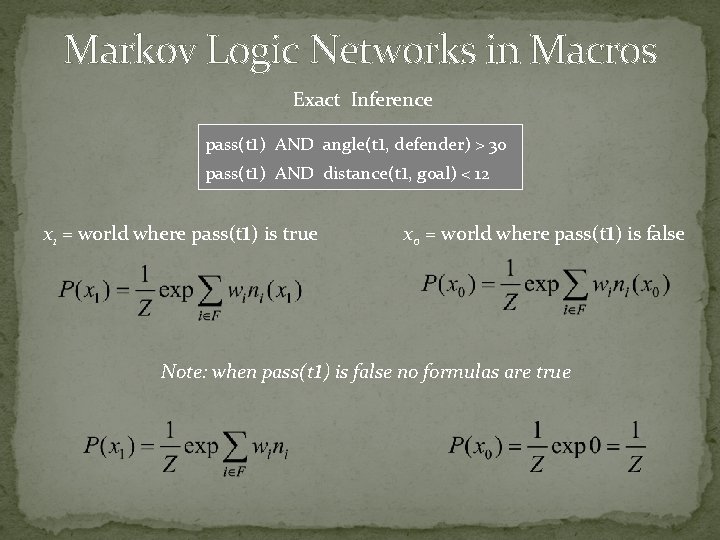

Markov Logic Networks in Macros

Rules with scores:

- IF angle(Teammate, defender) > 30 THEN pass(Teammate): matches t1, score = 0.92
- IF distance(Teammate, goal) < 12 THEN pass(Teammate): matches t2, score = 0.88

With an MLN instead: P(t1) = 0.35, P(t2) = 0.65

Markov Logic Networks in Macros

Formulas:

- pass(Teammate) AND angle(Teammate, defender) > 30
- pass(Teammate) AND distance(Teammate, goal) < 12

Ground network:

- pass(t1), linked to angle(t1, defender) > 30 and distance(t1, goal) < 12
- pass(t2), linked to angle(t2, defender) > 30 and distance(t2, goal) < 12

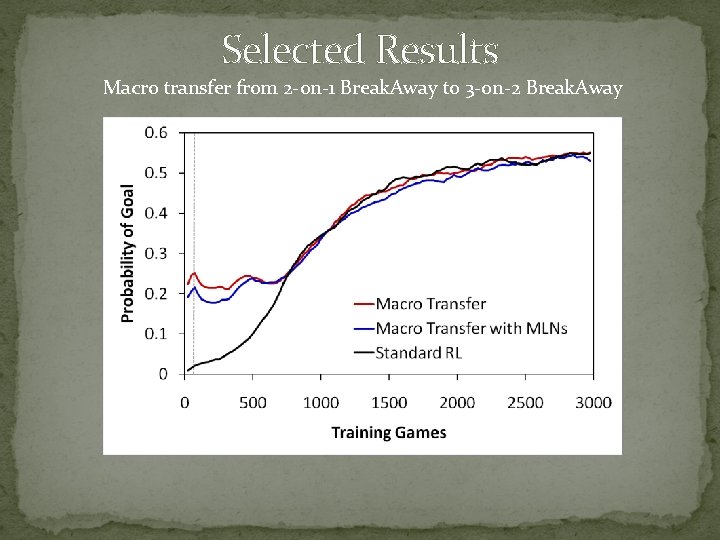

Selected Results

Macro transfer from 2-on-1 BreakAway to 3-on-2 BreakAway

Selected Results

Macro self-transfer in 2-on-1 BreakAway (probability of goal against training games):

- Asymptote: 56%
- Macro with MLN: 43%
- Regular macro: 32%
- Initial: 1%

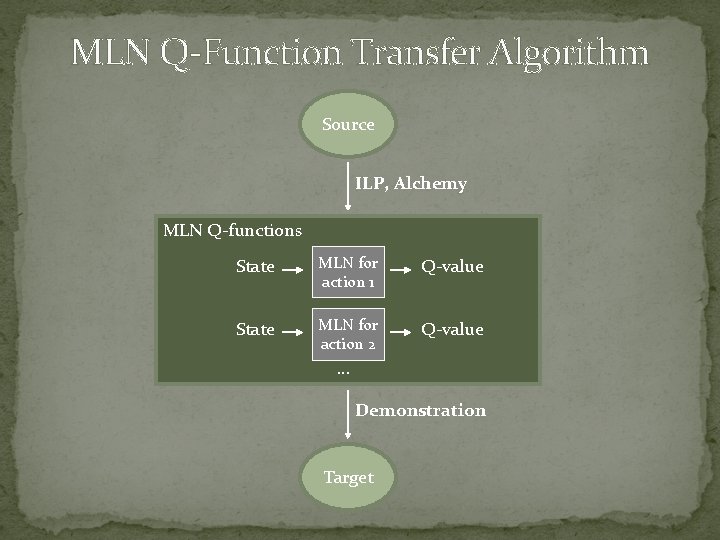

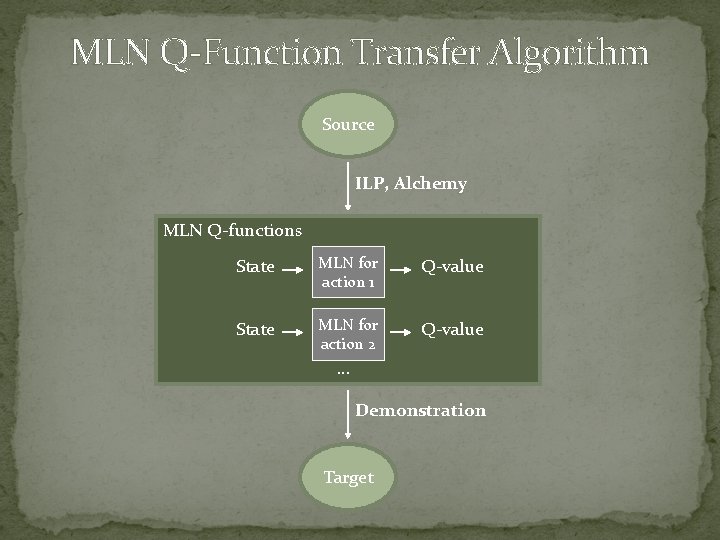

MLN Q-Function Transfer Algorithm

Source → ILP, Alchemy → MLN Q-functions → Demonstration → Target

MLN Q-functions:

- State → MLN for action 1 → Q-value
- State → MLN for action 2 → Q-value
- …

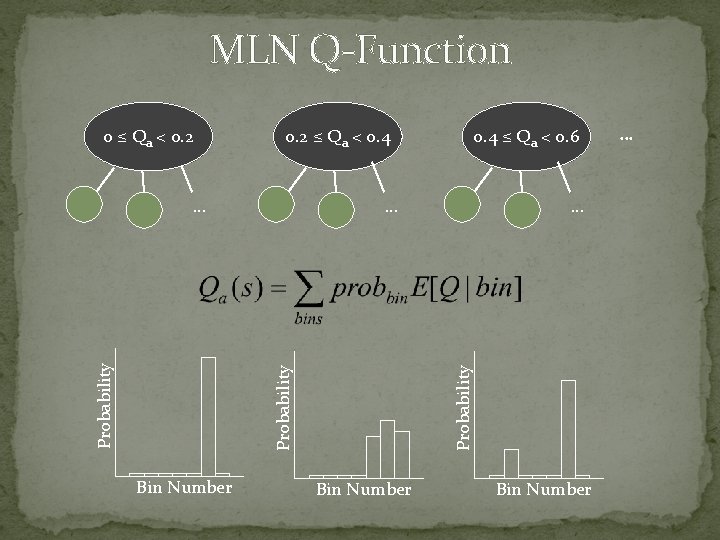

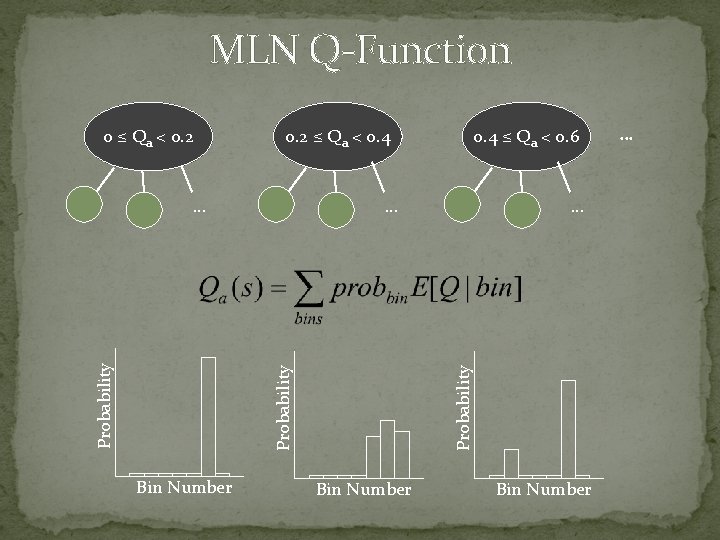

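MLN Q-Function

The Q-value range of each action is divided into bins, for example $0 \le Q_a < 0.2$, $0.2 \le Q_a < 0.4$, $0.4 \le Q_a < 0.6$, … The MLN assigns a probability to each bin (histograms of probability against bin number).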
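One plausible way to turn per-bin probabilities into a single Q-value is a probability-weighted average of a representative value for each bin. The sketch below is an assumption about that step, not a statement of the thesis method: it uses bin midpoints as representatives, and the probabilities are hypothetical.

```python
def expected_q(bin_probs, bin_values):
    """Probability-weighted average of representative bin values."""
    if len(bin_probs) != len(bin_values):
        raise ValueError("need one representative value per bin")
    total = sum(bin_probs)
    if total <= 0:
        raise ValueError("bin probabilities must have a positive sum")
    # Dividing by the total tolerates probabilities that do not sum to 1.
    return sum(p * v for p, v in zip(bin_probs, bin_values)) / total

# Bins [0, 0.2), [0.2, 0.4), [0.4, 0.6) from the slide, midpoints assumed.
midpoints = [0.1, 0.3, 0.5]
probs = [0.2, 0.5, 0.3]  # hypothetical MLN outputs
print(expected_q(probs, midpoints))
```

Whatever representative value is chosen, collapsing a distribution over bins to one number discards the shape of that distribution.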

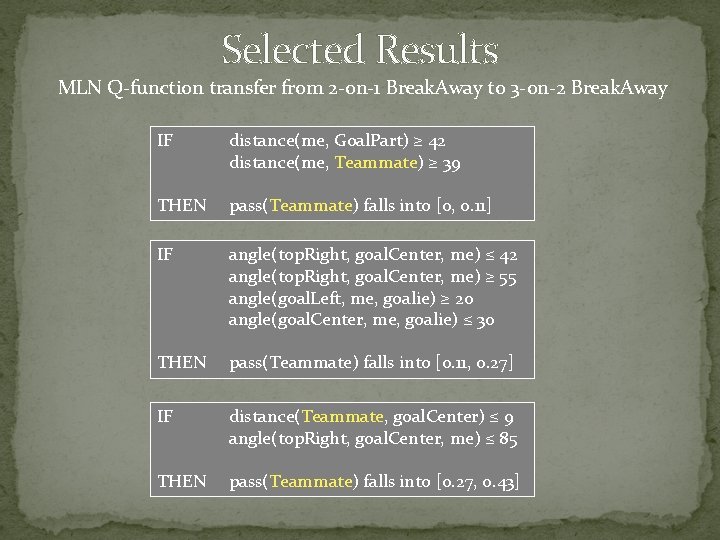

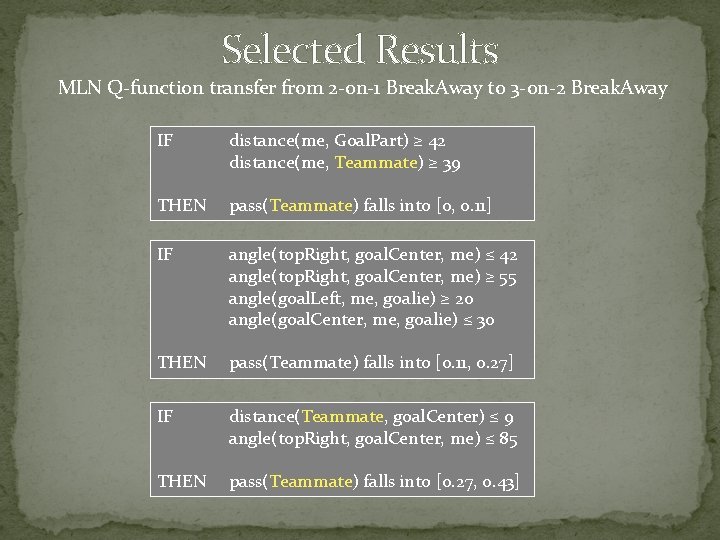

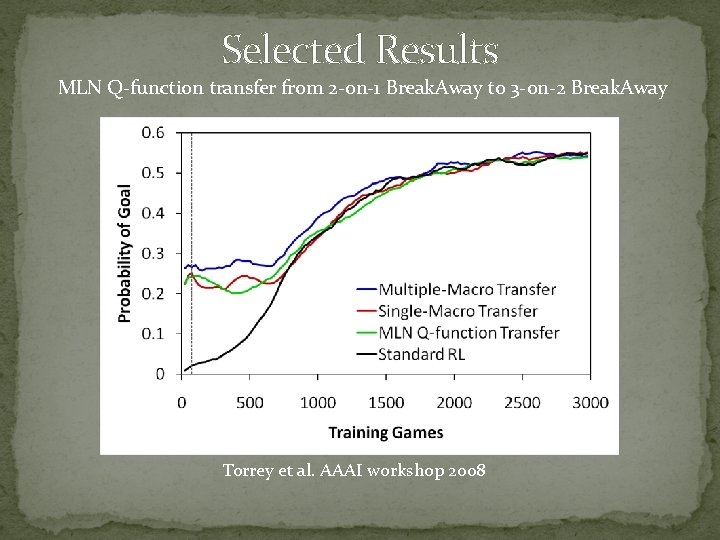

Selected Results

MLN Q-function transfer from 2-on-1 BreakAway to 3-on-2 BreakAway

- IF distance(me, GoalPart) ≥ 42 AND distance(me, Teammate) ≥ 39 THEN pass(Teammate) falls into [0, 0.11]
- IF angle(topRight, goalCenter, me) ≤ 42 AND angle(topRight, goalCenter, me) ≥ 55 AND angle(goalLeft, me, goalie) ≥ 20 AND angle(goalCenter, me, goalie) ≤ 30 THEN pass(Teammate) falls into [0.11, 0.27]
- IF distance(Teammate, goalCenter) ≤ 9 AND angle(topRight, goalCenter, me) ≤ 85 THEN pass(Teammate) falls into [0.27, 0.43]

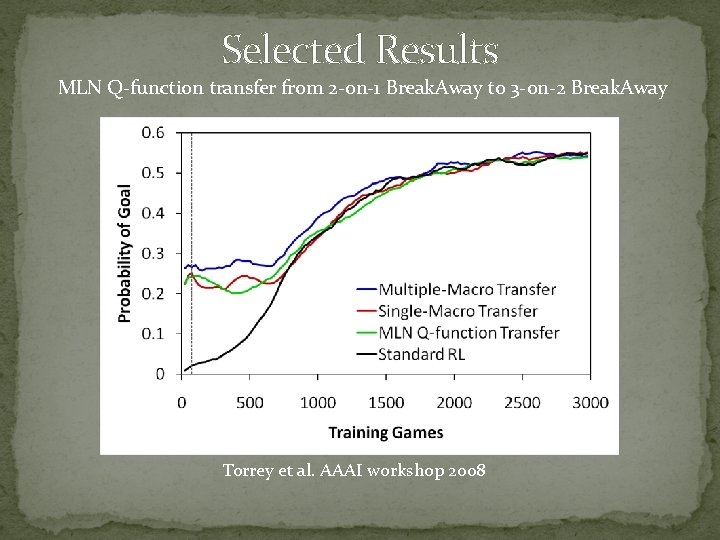

Selected Results

MLN Q-function transfer from 2-on-1 BreakAway to 3-on-2 BreakAway (Torrey et al., AAAI workshop 2008)

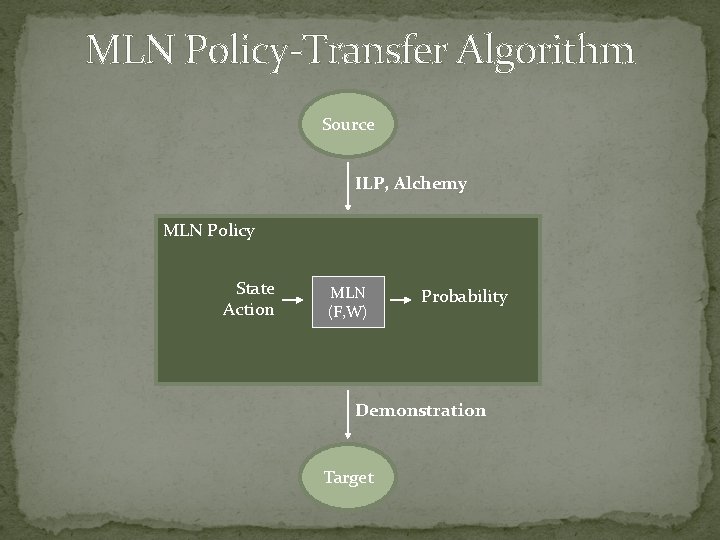

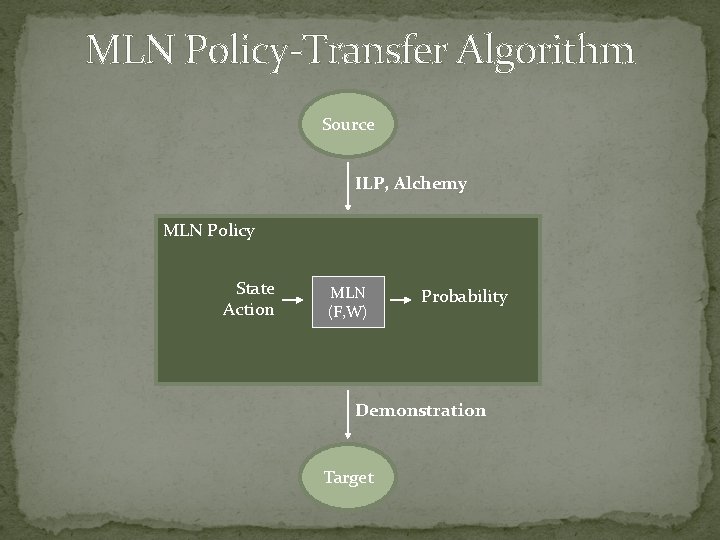

MLN Policy-Transfer Algorithm

Source → ILP, Alchemy → MLN Policy → Demonstration → Target

MLN policy: State and Action → MLN (F, W) → Probability

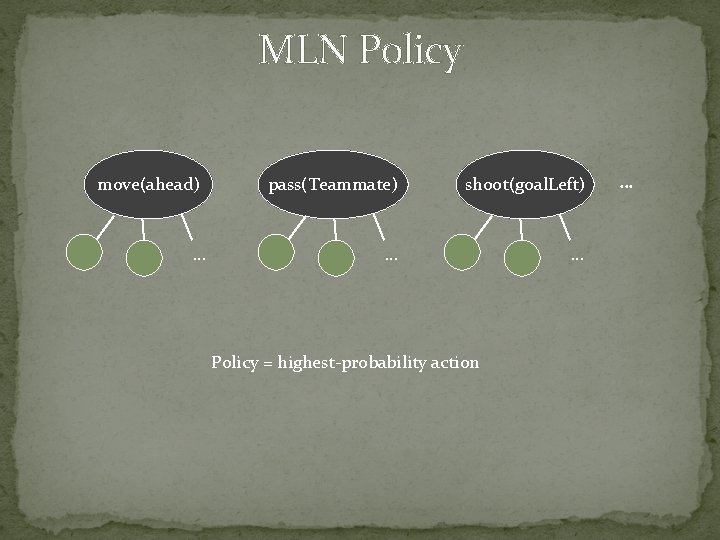

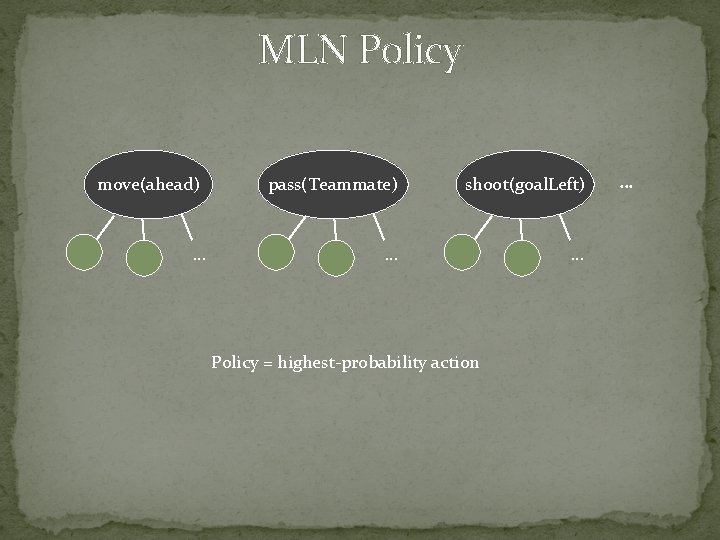

MLN Policy

The MLN assigns a probability to each action: move(ahead), …, pass(Teammate), …, shoot(goalLeft), …

Policy = highest-probability action

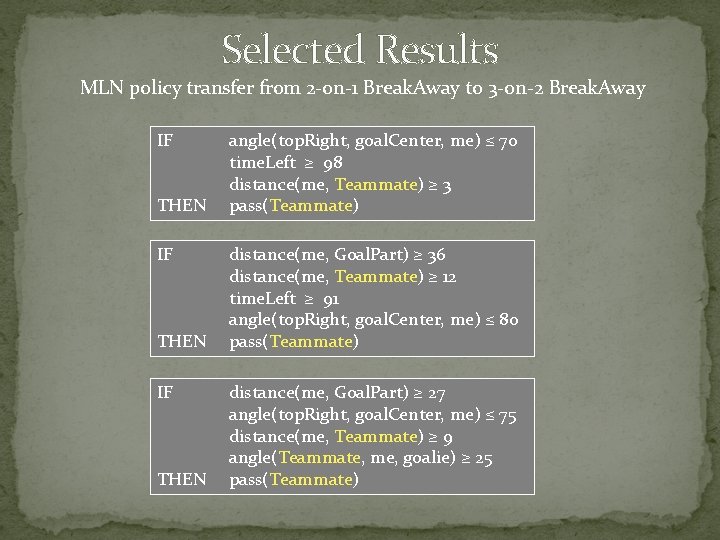

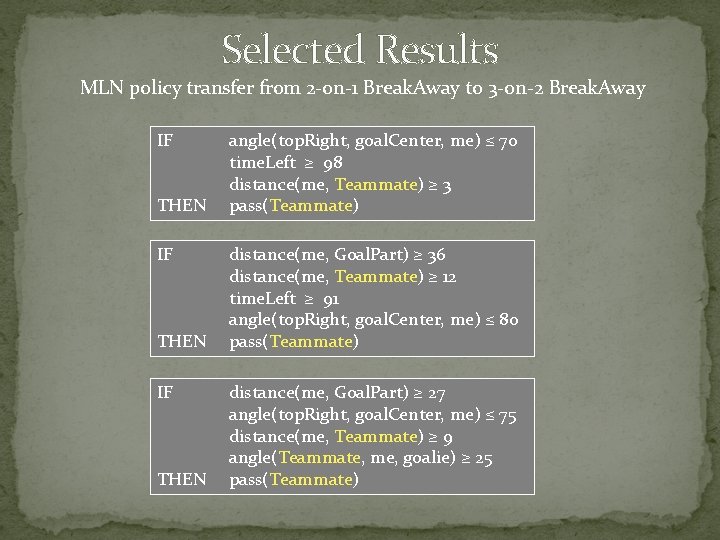

Selected Results

MLN policy transfer from 2-on-1 BreakAway to 3-on-2 BreakAway

- IF angle(topRight, goalCenter, me) ≤ 70 AND timeLeft ≥ 98 AND distance(me, Teammate) ≥ 3 THEN pass(Teammate)
- IF distance(me, GoalPart) ≥ 36 AND distance(me, Teammate) ≥ 12 AND timeLeft ≥ 91 AND angle(topRight, goalCenter, me) ≤ 80 THEN pass(Teammate)
- IF distance(me, GoalPart) ≥ 27 AND angle(topRight, goalCenter, me) ≤ 75 AND distance(me, Teammate) ≥ 9 AND angle(Teammate, me, goalie) ≥ 25 THEN pass(Teammate)

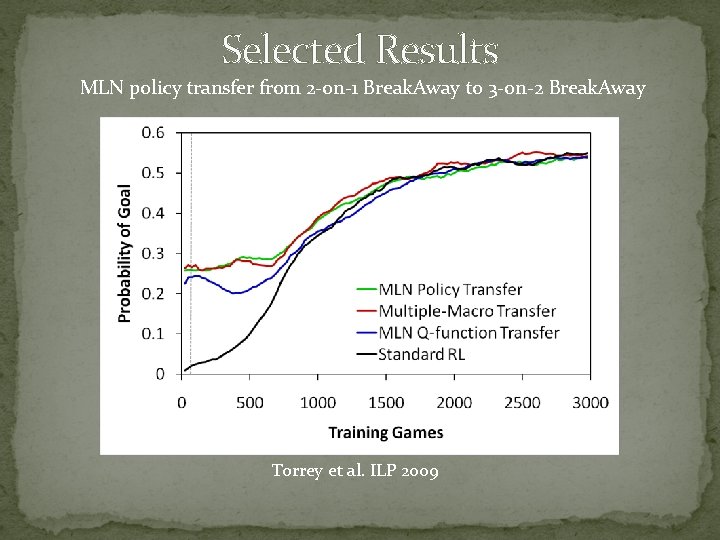

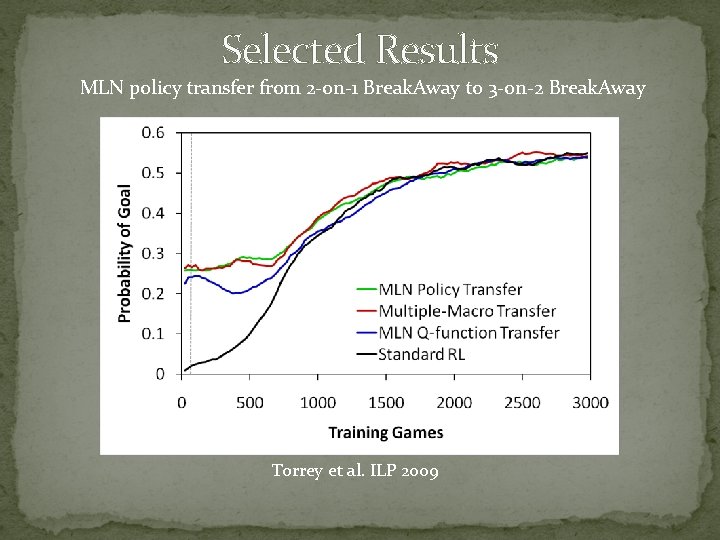

Selected Results

MLN policy transfer from 2-on-1 BreakAway to 3-on-2 BreakAway (Torrey et al., ILP 2009)

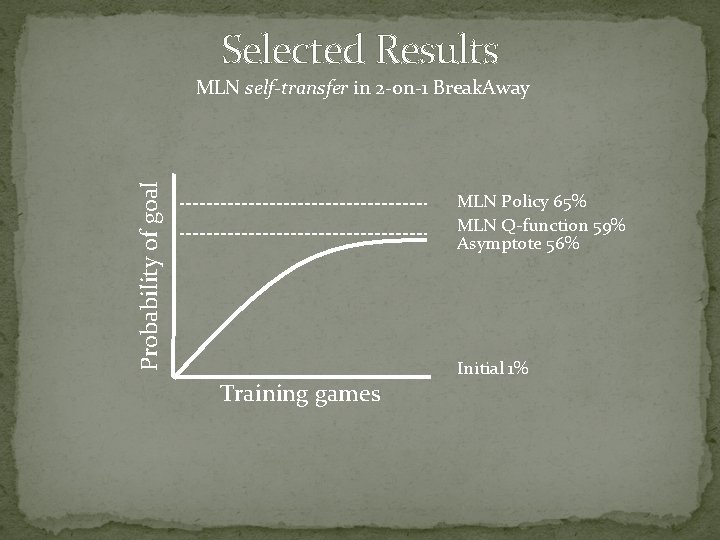

Selected Results

MLN self-transfer in 2-on-1 BreakAway (probability of goal against training games):

- MLN Policy: 65%
- MLN Q-function: 59%
- Asymptote: 56%
- Initial: 1%

Related Work

- Starting-point: Taylor et al. 2005, value-function transfer
- Imitation: Fernandez and Veloso 2006, policy reuse
- Hierarchical: Mehta et al. 2008, MaxQ transfer
- Alteration: Walsh et al. 2006, aggregate states
- New algorithms: Sharma et al. 2007, case-based RL

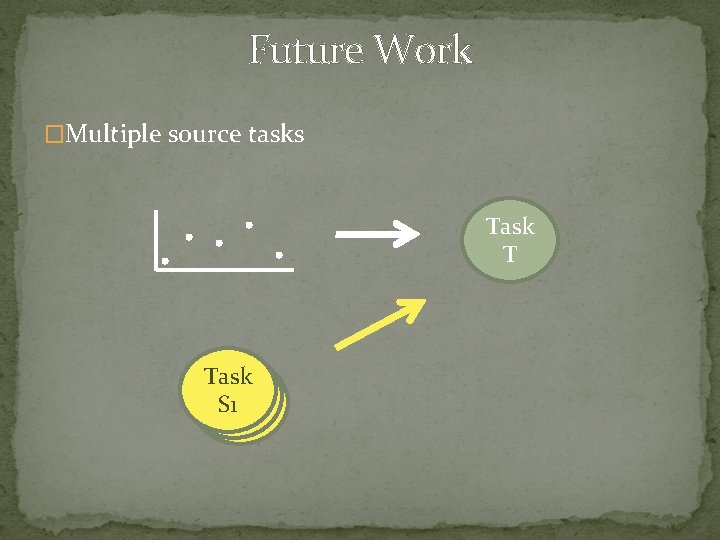

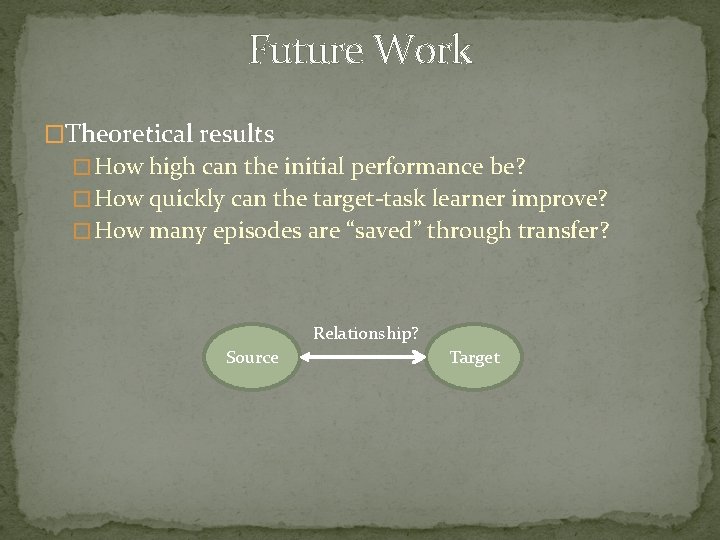

Conclusions

- Transfer can improve reinforcement learning
  - Initial performance
  - Learning speed
- Advice transfer
  - Low initial performance
  - Steep learning curves
  - Robust to negative transfer
- Macro transfer and MLN transfer
  - High initial performance
  - Shallow learning curves
  - Vulnerable to negative transfer

Conclusions

- Close-transfer scenarios: Multiple Macro ≥ Single Macro = MLN Policy = MLN Q-Function ≥ Skill Transfer
- Distant-transfer scenarios: Skill Transfer ≥ Multiple Macro ≥ Single Macro = MLN Policy = MLN Q-Function

Future Work

- Multiple source tasks (diagram: Task S1 and further source tasks feeding Task T)

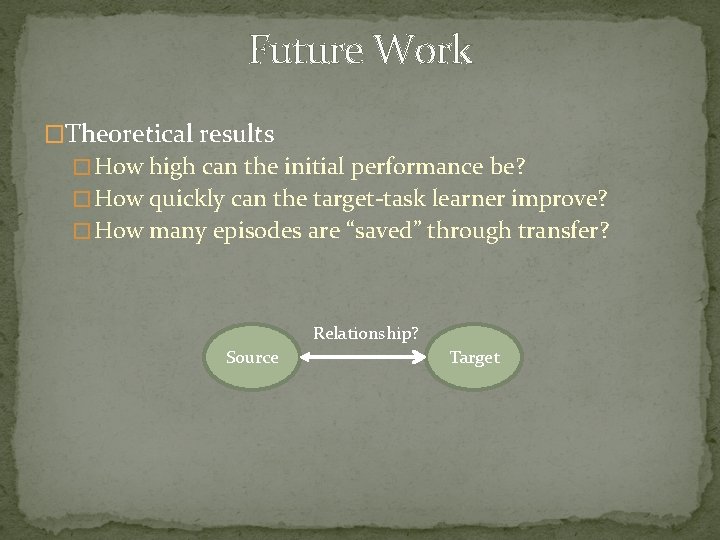

Future Work

- Theoretical results
  - How high can the initial performance be?
  - How quickly can the target-task learner improve?
  - How many episodes are “saved” through transfer?

Diagram: Source and Target, with the relationship between them marked as an open question.

Future Work

- Joint learning and inference in macros
  - Single search
  - Combined rule/weight learning

Diagram: macro nodes pass(Teammate) and move(Direction).

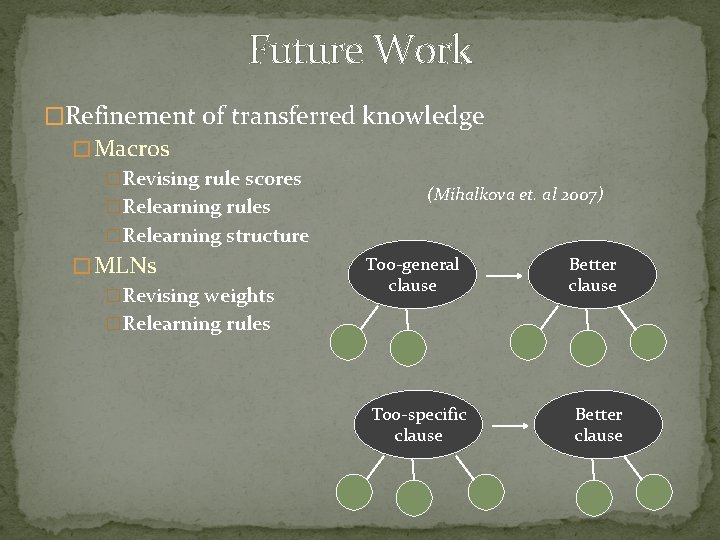

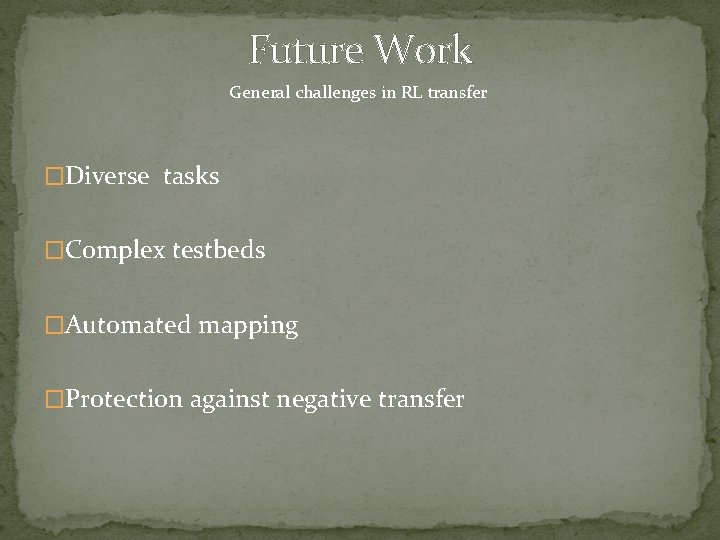

Future Work

- Refinement of transferred knowledge
  - Macros
    - Revising rule scores
    - Relearning rules
    - Relearning structure
  - MLNs
    - Revising weights
    - Relearning rules (Mihalkova et al. 2007)

Diagram labels: too-general clause, too-specific clause, better clause.

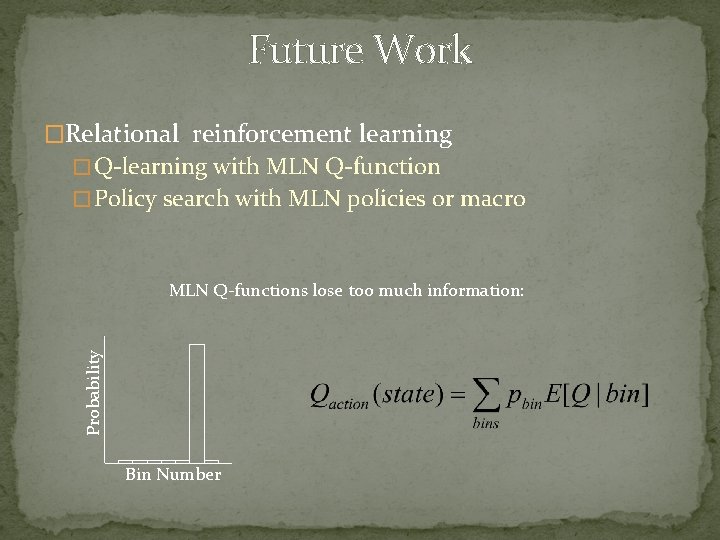

Future Work

- Relational reinforcement learning
  - Q-learning with MLN Q-function
  - Policy search with MLN policies or macro

MLN Q-functions lose too much information (histogram of probability against bin number).

Future Work

General challenges in RL transfer:

- Diverse tasks
- Complex testbeds
- Automated mapping
- Protection against negative transfer

Acknowledgements

- Advisor: Jude Shavlik
- Collaborators: Trevor Walker and Richard Maclin
- Committee
  - David Page
  - Mark Craven
  - Jerry Zhu
  - Michael Coen
- UW Machine Learning Group
- Grants
  - DARPA HR0011-04-1-0007
  - NRL N00173-06-1-G002
  - DARPA FA8650-06-C-7606

Backup Slides

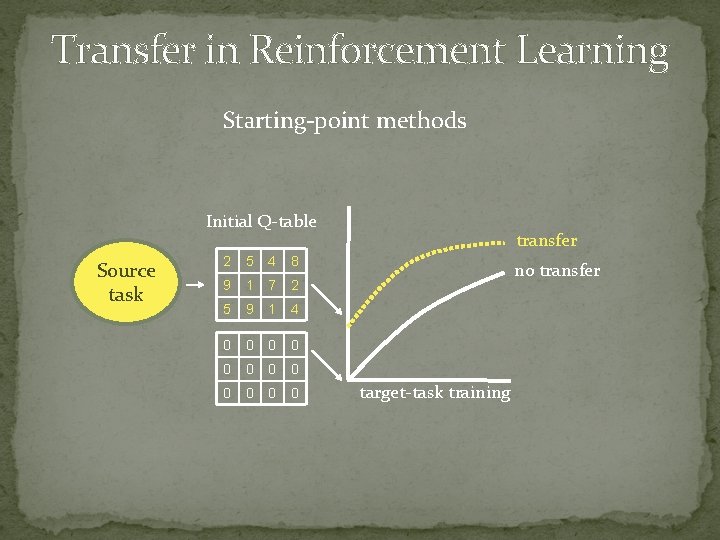

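Transfer in Reinforcement Learning

Starting-point methods

With transfer, the initial Q-table for the target task is filled with values from the source task (2, 5, 4, 8, 9, 1, 7, 2, 5, 9, 1, 4 in the slide's example). With no transfer, the initial Q-table is all zeros. Target-task training follows in both cases.

Transfer in Reinforcement Learning

Imitation methods

Timeline: the source policy is used during target training.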

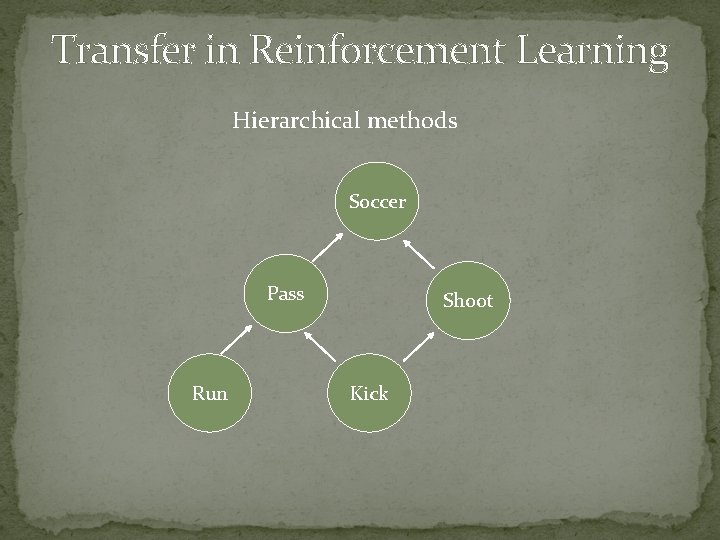

Transfer in Reinforcement Learning

Hierarchical methods

Example task hierarchy with nodes Soccer, Pass, Run, Shoot, Kick.

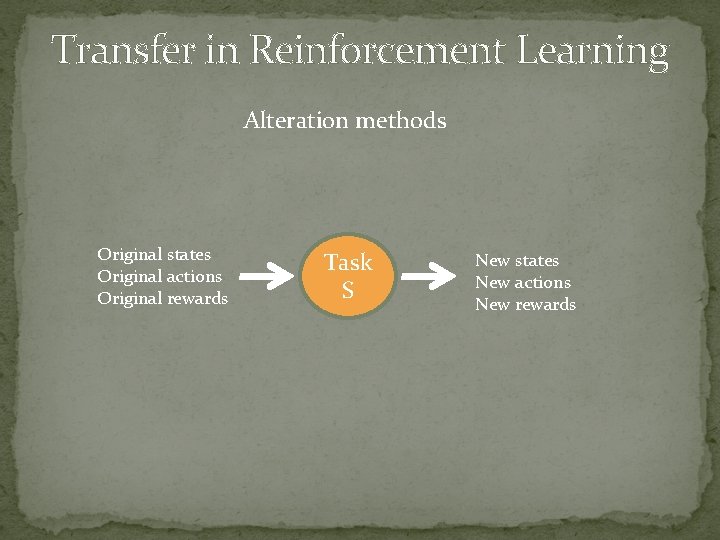

Transfer in Reinforcement Learning

Alteration methods

Task S alters the task description: original states, original actions, and original rewards become new states, new actions, and new rewards.

Policy Transfer Algorithm

Source → Advice Taking → Target

Advice: IF Q(pass(Teammate)) > Q(other) THEN pass(Teammate)

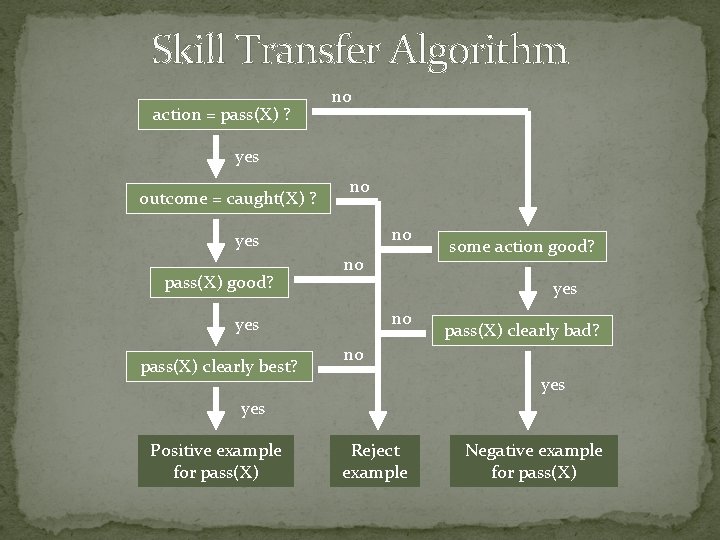

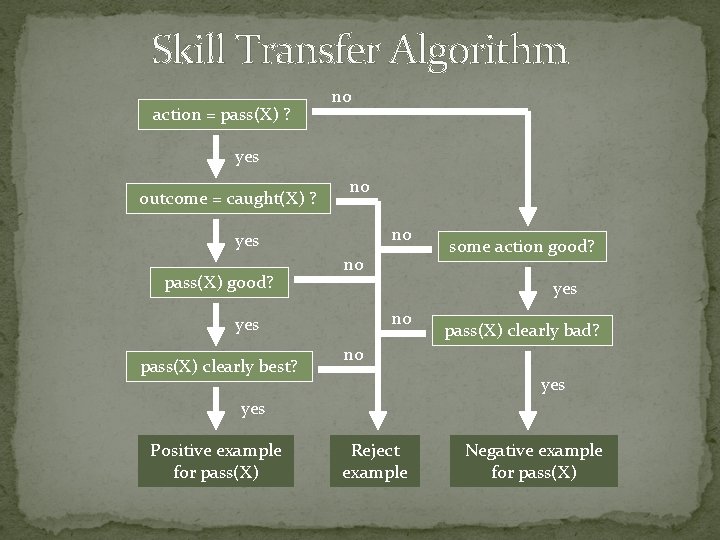

Skill Transfer Algorithm

Flowchart for turning a source-task state into a training example for pass(X). Decision nodes, each with yes and no branches:

- action = pass(X)?
- outcome = caught(X)?
- pass(X) good?
- pass(X) clearly best?
- some action good?
- pass(X) clearly bad?

Outcomes:

- Positive example for pass(X)
- Negative example for pass(X)
- Reject example

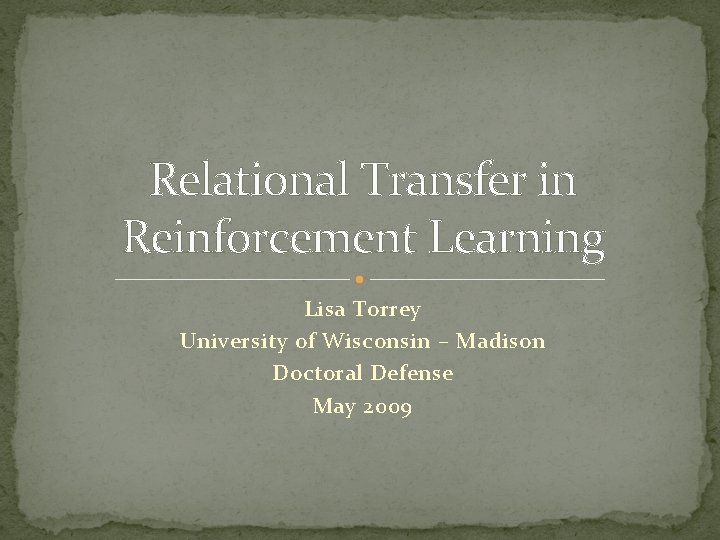

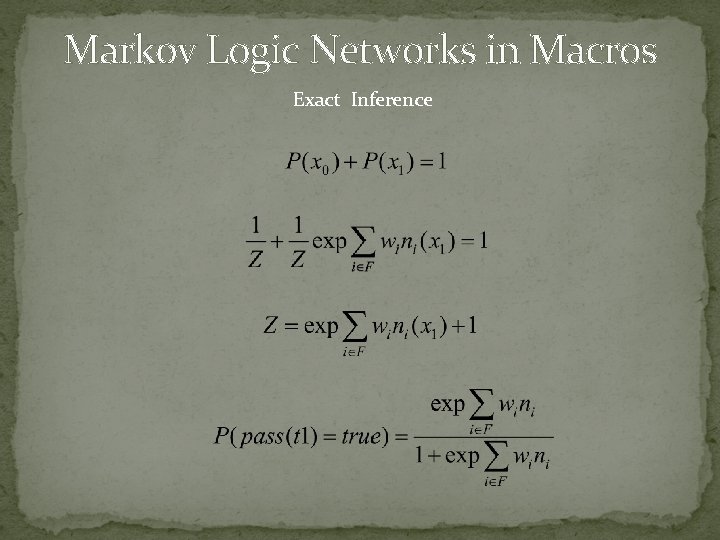

Markov Logic Networks in Macros

Exact Inference

Ground formulas:

- pass(t1) AND angle(t1, defender) > 30
- pass(t1) AND distance(t1, goal) < 12

Worlds:

- $x_1$ = world where pass(t1) is true
- $x_0$ = world where pass(t1) is false

Note: when pass(t1) is false, no formulas are true.
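With only two worlds to compare, exact inference reduces to a two-term normalization. The sketch below assumes the standard MLN form, in which a world's unnormalized probability is the exponential of its weighted count of true formulas. Since no formula is true in $x_0$, that world contributes exp(0) = 1. The weights are hypothetical.

```python
import math

def prob_pass_true(weights, true_in_x1):
    """P(x1) = exp(S) / (exp(S) + 1), where S sums the weights of the
    formulas that are true in x1 and x0 satisfies no formula."""
    s = sum(w for w, is_true in zip(weights, true_in_x1) if is_true)
    return 1.0 / (1.0 + math.exp(-s))  # same value, numerically stable form

# Hypothetical weights for the two ground formulas on the slide.
weights = [1.1, 0.9]
print(prob_pass_true(weights, [True, True]))   # both conditions hold for t1
print(prob_pass_true(weights, [True, False]))  # only the angle condition holds
print(prob_pass_true(weights, [False, False])) # neither holds: both worlds tie
```

When neither evidence condition holds, the two worlds have equal weight and the probability is exactly one half.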

Markov Logic Networks in Macros Exact Inference