Reinforcement Learning: An Introduction (from Sutton & Barto)

R. S. Sutton and A. G. Barto: Reinforcement Learning: An Introduction

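DP Value Iteration

Recall the full policy-evaluation backup:

$$V_{k+1}(s) \leftarrow \sum_a \pi(s,a) \sum_{s'} P^a_{ss'} \left[ R^a_{ss'} + \gamma V_k(s') \right]$$

Here is the full value-iteration backup:

$$V_{k+1}(s) \leftarrow \max_a \sum_{s'} P^a_{ss'} \left[ R^a_{ss'} + \gamma V_k(s') \right]$$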
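
A minimal sketch of the value-iteration backup, applied in full sweeps until the values stop changing. The three-state chain, the `P[s][a]` layout of `(probability, next_state, reward)` triples, and the names `backup` and `value_iteration` are all assumptions made for illustration; they are not taken from the slides.

```python
# Hypothetical 3-state chain used only for illustration; state 2 is terminal.
# P[s][a] is a list of (probability, next_state, reward) triples.
P = {
    0: {"left": [(1.0, 0, 0.0)], "right": [(1.0, 1, 0.0)]},
    1: {"left": [(1.0, 0, 0.0)], "right": [(1.0, 2, 1.0)]},
    2: {},  # terminal: no actions available
}
GAMMA = 0.9


def backup(V, s, a):
    """Expected one-step return of action a in state s under estimate V."""
    return sum(p * (r + GAMMA * V[s2]) for p, s2, r in P[s][a])


def value_iteration(theta=1e-10):
    """Sweep all states with the max-backup until the largest change < theta."""
    V = {s: 0.0 for s in P}
    while True:
        # Synchronous sweep: every new value is computed from the old V.
        V_new = {s: max((backup(V, s, a) for a in P[s]), default=0.0) for s in P}
        delta = max(abs(V_new[s] - V[s]) for s in P)
        V = V_new
        if delta < theta:
            return V


V_star = value_iteration()
```

Replacing `max` with a policy-weighted sum over actions would turn the same loop into the policy-evaluation backup.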

Asynchronous DP

- All the DP methods described so far require exhaustive sweeps of the entire state set.
- Asynchronous DP does not use sweeps. Instead it works like this:
  - Repeat until the convergence criterion is met:
    - Pick a state at random and apply the appropriate backup
- Still needs lots of computation, but does not get locked into hopelessly long sweeps
- Can you select states to back up intelligently? YES: an agent’s experience can act as a guide.
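
The random-state procedure above can be sketched as follows. This is an illustration under assumptions: the toy chain and the name `async_value_iteration` are hypothetical, and a fixed budget of backups stands in for the unspecified convergence criterion.

```python
import random

# Hypothetical 3-state chain used only for illustration; state 2 is terminal.
# P[s][a] is a list of (probability, next_state, reward) triples.
P = {
    0: {"left": [(1.0, 0, 0.0)], "right": [(1.0, 1, 0.0)]},
    1: {"left": [(1.0, 0, 0.0)], "right": [(1.0, 2, 1.0)]},
    2: {},
}
GAMMA = 0.9


def async_value_iteration(num_backups=2000, seed=0):
    """Back up one randomly chosen state at a time, updating V in place."""
    rng = random.Random(seed)
    nonterminal = [s for s in P if P[s]]
    V = {s: 0.0 for s in P}
    for _ in range(num_backups):  # assumption: fixed budget, not a real stopping test
        s = rng.choice(nonterminal)
        # In-place max-backup: later backups immediately see this new value.
        V[s] = max(
            sum(p * (r + GAMMA * V[s2]) for p, s2, r in P[s][a]) for a in P[s]
        )
    return V


V_async = async_value_iteration()
```

Swapping `rng.choice` for states taken from an agent's actual trajectory is one way to let experience guide which states get backed up.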

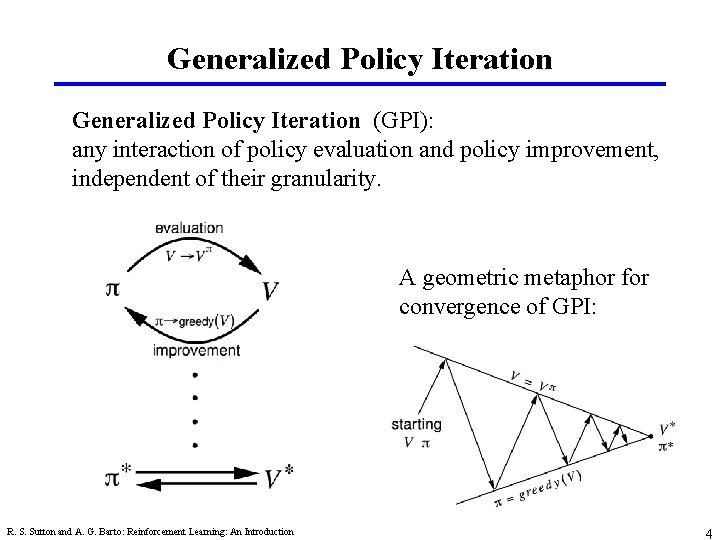

Generalized Policy Iteration (GPI): any interaction of policy evaluation and policy improvement, independent of their granularity.

A geometric metaphor for convergence of GPI:

Efficiency of DP

- Finding an optimal policy takes time polynomial in the number of states…
- BUT, the number of states is often astronomical, e.g., often growing exponentially with the number of state variables (what Bellman called “the curse of dimensionality”).
- In practice, classical DP can be applied to problems with a few million states.
- Asynchronous DP can be applied to larger problems, and is well suited to parallel computation.
- It is surprisingly easy to come up with MDPs for which DP methods are not practical.

DP - Summary

- Policy evaluation: backups without a max
- Policy improvement: form a greedy policy, if only locally
- Policy iteration: alternate the above two processes
- Value iteration: backups with a max
- Full backups (to be contrasted later with sample backups)
- Generalized Policy Iteration (GPI)
- Asynchronous DP: a way to avoid exhaustive sweeps
- Bootstrapping: updating estimates based on other estimates
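
To make the alternation of evaluation and improvement concrete, here is a small sketch in which the hypothetical parameter `eval_sweeps` sets the granularity of evaluation: many sweeps per round approximates classical policy iteration, while a single sweep per round is much closer to value iteration. The toy chain and all function names are assumptions for illustration only.

```python
# Hypothetical 3-state chain used only for illustration; state 2 is terminal.
# P[s][a] is a list of (probability, next_state, reward) triples.
P = {
    0: {"left": [(1.0, 0, 0.0)], "right": [(1.0, 1, 0.0)]},
    1: {"left": [(1.0, 0, 0.0)], "right": [(1.0, 2, 1.0)]},
    2: {},
}
GAMMA = 0.9


def q_value(V, s, a):
    """Expected one-step return of taking a in s, then relying on V."""
    return sum(p * (r + GAMMA * V[s2]) for p, s2, r in P[s][a])


def generalized_policy_iteration(eval_sweeps=1, rounds=50):
    nonterminal = [s for s in P if P[s]]
    V = {s: 0.0 for s in P}
    policy = {s: "left" for s in nonterminal}  # arbitrary starting policy
    for _ in range(rounds):
        # Evaluation: backups without a max, following the current policy.
        for _ in range(eval_sweeps):
            for s in nonterminal:
                V[s] = q_value(V, s, policy[s])
        # Improvement: make the policy greedy with respect to the current V.
        policy = {s: max(P[s], key=lambda a: q_value(V, s, a)) for s in nonterminal}
    return V, policy


V_coarse, pi_coarse = generalized_policy_iteration(eval_sweeps=20)
V_fine, pi_fine = generalized_policy_iteration(eval_sweeps=1)
```

On this toy problem both granularities settle on the same greedy policy, which is the point of the GPI view.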

Chapter 5: Monte Carlo Methods

- Monte Carlo methods learn from complete sample returns
  - Only defined for episodic tasks
- Monte Carlo methods learn directly from experience
  - On-line: No model necessary and still attains optimality
  - Simulated: No need for a full model

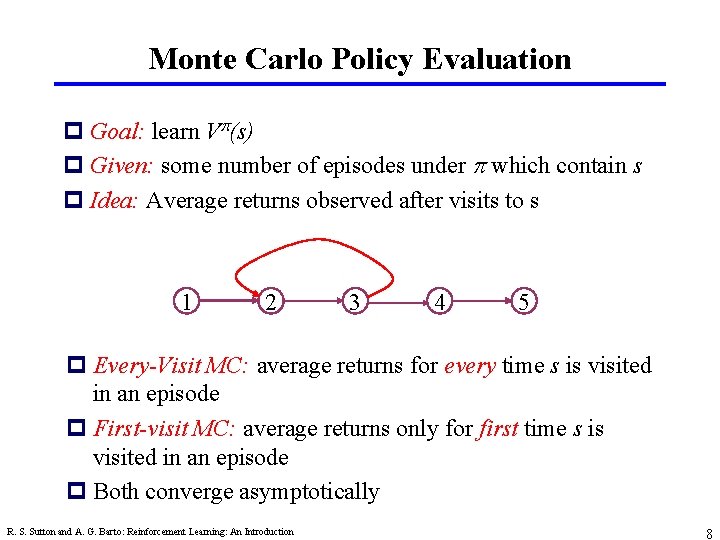

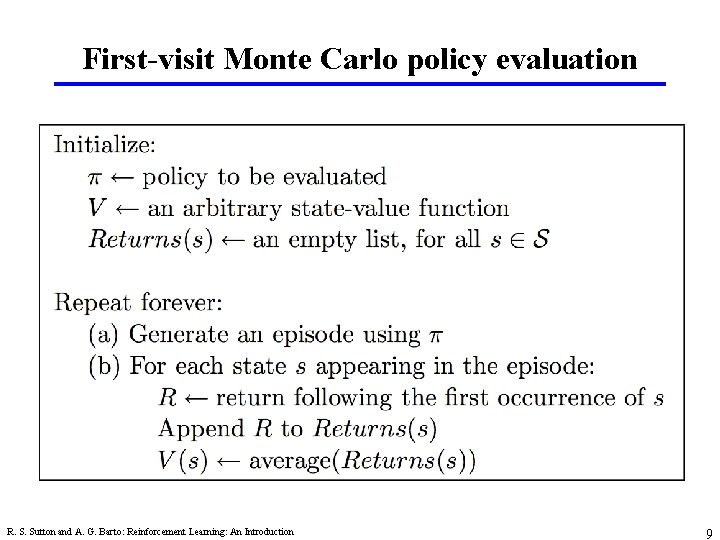

Monte Carlo Policy Evaluation

- Goal: learn $V^\pi(s)$
- Given: some number of episodes under $\pi$ which contain s
- Idea: Average returns observed after visits to s
- Every-visit MC: average returns for every time s is visited in an episode
- First-visit MC: average returns only for the first time s is visited in an episode
- Both converge asymptotically

First-visit Monte Carlo policy evaluation
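
A minimal sketch of Monte Carlo policy evaluation by averaging returns. It assumes each episode is already recorded as a list of `(state, reward)` pairs, where `reward` is the reward received on leaving that state; the function name, the `first_visit` flag, and the example episodes are hypothetical.

```python
from collections import defaultdict


def mc_policy_evaluation(episodes, gamma=1.0, first_visit=True):
    """Estimate V(s) as the average return observed after visits to s."""
    returns = defaultdict(list)
    for episode in episodes:
        # Work backwards to get the return that follows each time step.
        G = 0.0
        returns_at = [0.0] * len(episode)
        for t in range(len(episode) - 1, -1, -1):
            _, reward = episode[t]
            G = reward + gamma * G
            returns_at[t] = G
        seen = set()
        for t, (state, _) in enumerate(episode):
            if first_visit and state in seen:
                continue  # first-visit MC ignores later visits in the same episode
            seen.add(state)
            returns[state].append(returns_at[t])
    return {s: sum(gs) / len(gs) for s, gs in returns.items()}


# Made-up episodes: state "A" is visited twice in the first one.
episodes = [
    [("A", 1.0), ("B", 0.0), ("A", 2.0)],
    [("B", 1.0)],
]
V_first = mc_policy_evaluation(episodes, first_visit=True)
V_every = mc_policy_evaluation(episodes, first_visit=False)
```

In the first episode the returns following the two visits to "A" are 3 and 2, so first-visit averaging uses only the 3 while every-visit averaging uses both.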

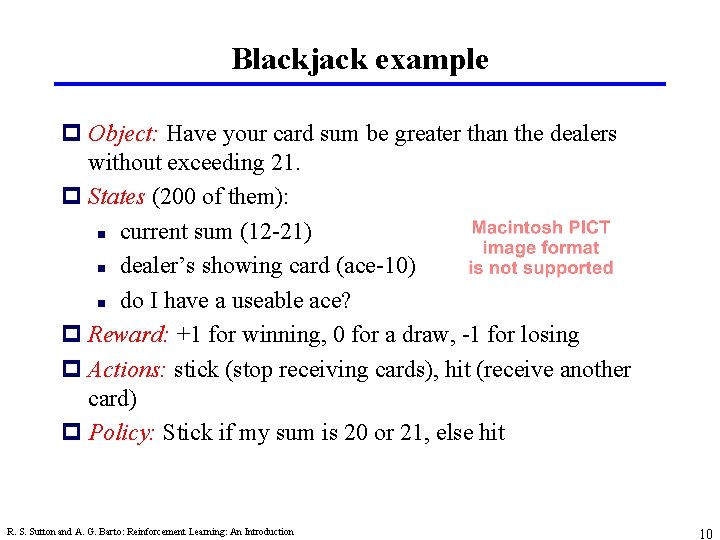

Blackjack example

- Object: Have your card sum be greater than the dealer’s without exceeding 21.
- States (200 of them):
  - current sum (12-21)
  - dealer’s showing card (ace-10)
  - do I have a usable ace?
- Reward: +1 for winning, 0 for a draw, -1 for losing
- Actions: stick (stop receiving cards), hit (receive another card)
- Policy: Stick if my sum is 20 or 21, else hit
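
As a quick check of the state count, the three state components can be enumerated directly: 10 sums times 10 dealer cards times 2 ace flags gives 200. The tuple encoding (ace represented as 1) and the names below are assumptions for illustration.

```python
from itertools import product

PLAYER_SUMS = range(12, 22)    # current sum: 12 through 21
DEALER_CARDS = range(1, 11)    # dealer's showing card: ace (encoded as 1) through 10
USABLE_ACE = (False, True)     # does the player hold a usable ace?

STATES = list(product(PLAYER_SUMS, DEALER_CARDS, USABLE_ACE))


def policy(state):
    """The fixed policy from the slide: stick on 20 or 21, otherwise hit."""
    player_sum, _dealer_card, _usable_ace = state
    return "stick" if player_sum >= 20 else "hit"


num_states = len(STATES)
num_stick_states = sum(1 for s in STATES if policy(s) == "stick")
```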

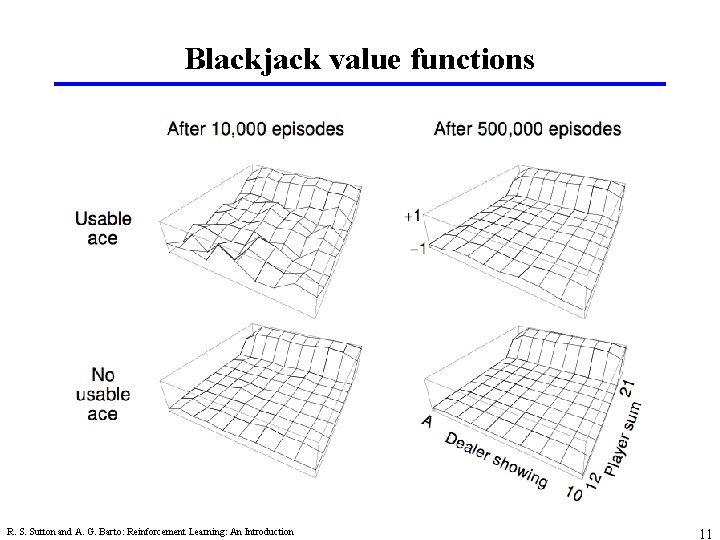

Blackjack value functions

Backup diagram for Monte Carlo

- Entire episode included
- Only one choice at each state (unlike DP)
- MC does not bootstrap
- Time required to estimate one state does not depend on the total number of states

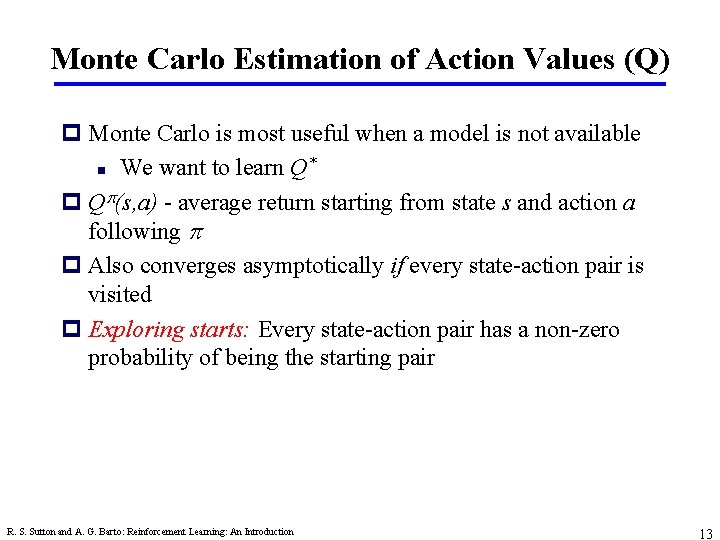

Monte Carlo Estimation of Action Values (Q)

- Monte Carlo is most useful when a model is not available
  - We want to learn $Q^*$
- $Q^\pi(s, a)$: average return starting from state s and action a, then following $\pi$
- Also converges asymptotically if every state-action pair is visited
- Exploring starts: Every state-action pair has a non-zero probability of being the starting pair

Monte Carlo Control

- MC policy iteration: Policy evaluation using MC methods followed by policy improvement
- Policy improvement step: greedify with respect to value (or action-value) function

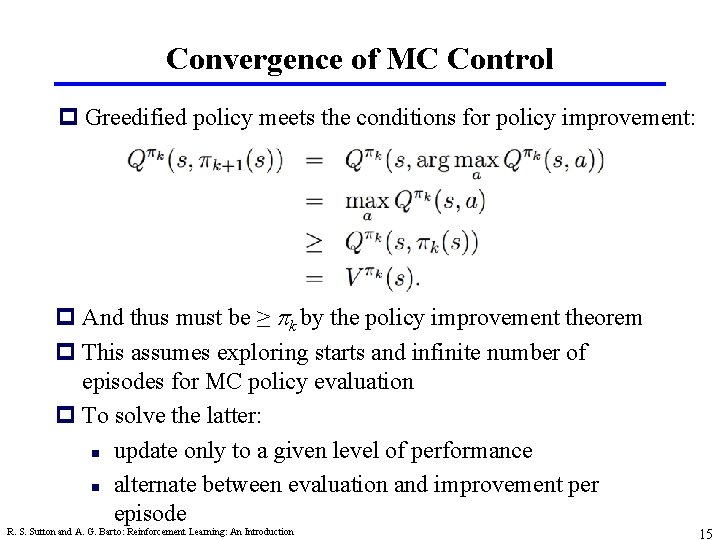

Convergence of MC Control

- Greedified policy meets the conditions for policy improvement:

$$Q^{\pi_k}(s, \pi_{k+1}(s)) = Q^{\pi_k}\big(s, \arg\max_a Q^{\pi_k}(s,a)\big) = \max_a Q^{\pi_k}(s,a) \ge Q^{\pi_k}(s, \pi_k(s)) = V^{\pi_k}(s)$$

- And thus $\pi_{k+1}$ must be ≥ $\pi_k$ by the policy improvement theorem
- This assumes exploring starts and an infinite number of episodes for MC policy evaluation
- To solve the latter:
  - update only to a given level of performance
  - alternate between evaluation and improvement per episode

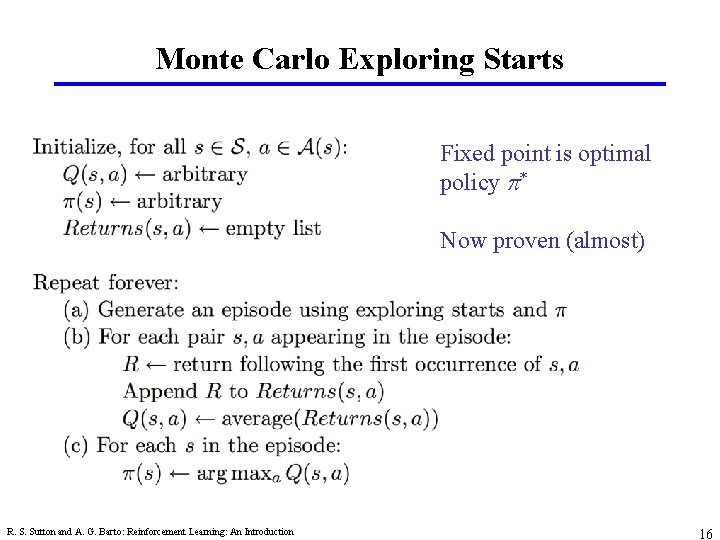

Monte Carlo Exploring Starts

- Fixed point is the optimal policy $\pi^*$
- Now proven (almost)
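
A minimal sketch of Monte Carlo control with exploring starts, alternating evaluation and improvement after every episode. The two-state deterministic task in `STEP`, the undiscounted returns, and all names are assumptions chosen so that every episode terminates. In this toy task no state-action pair can repeat within an episode, so first-visit and every-visit averaging coincide.

```python
import random
from collections import defaultdict

# Hypothetical episodic task: STEP[(state, action)] = (next_state, reward),
# where next_state None means the episode ends.
STEP = {
    (0, "a"): (1, 0.0),
    (0, "b"): (None, 0.5),
    (1, "a"): (None, 1.0),
    (1, "b"): (None, 0.0),
}
STATES = (0, 1)
ACTIONS = ("a", "b")


def monte_carlo_es(num_episodes=500, seed=0):
    rng = random.Random(seed)
    Q = defaultdict(float)       # running average return per (state, action)
    counts = defaultdict(int)
    policy = {s: "b" for s in STATES}  # arbitrary initial policy
    for _ in range(num_episodes):
        # Exploring start: every state-action pair can begin an episode.
        s, a = rng.choice(STATES), rng.choice(ACTIONS)
        episode = []
        while s is not None:
            s_next, r = STEP[(s, a)]
            episode.append((s, a, r))
            s = s_next
            if s is not None:
                a = policy[s]    # after the start, follow the current policy
        G = 0.0
        for s, a, r in reversed(episode):
            G += r               # undiscounted return from this step onward
            counts[(s, a)] += 1
            Q[(s, a)] += (G - Q[(s, a)]) / counts[(s, a)]
            # Improvement: greedify at each state visited in the episode.
            policy[s] = max(ACTIONS, key=lambda act: Q[(s, act)])
    return Q, policy


Q_es, pi_es = monte_carlo_es()
```

The early returns collected under the poor initial policy stay in the averages, which is one reason the convergence argument for this scheme is more delicate than for full policy iteration.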

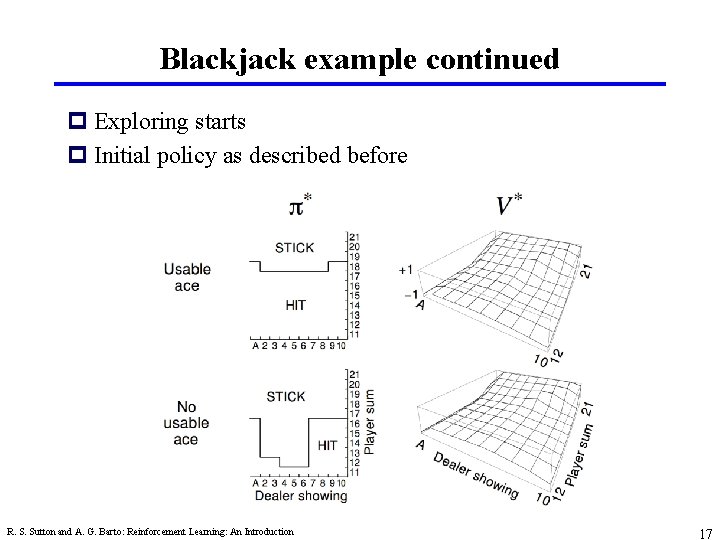

Blackjack example continued

- Exploring starts
- Initial policy as described before

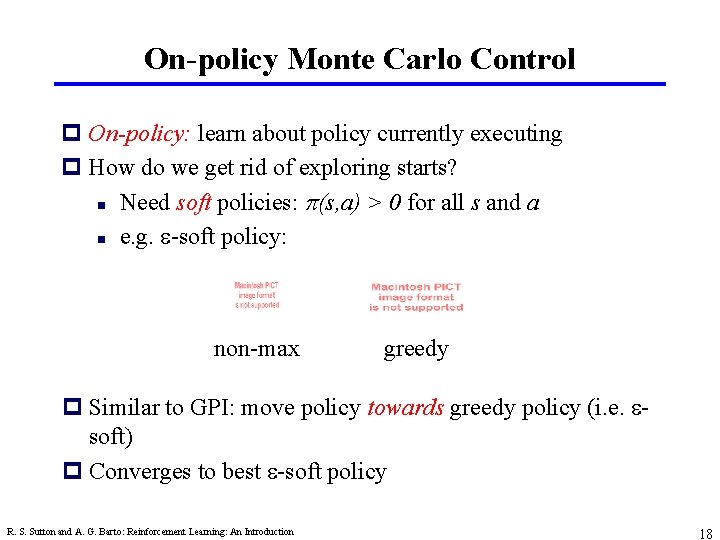

On-policy Monte Carlo Control

- On-policy: learn about the policy currently executing
- How do we get rid of exploring starts?
  - Need soft policies: $\pi(s, a) > 0$ for all s and a
  - e.g. ε-soft policy: probability $\epsilon / |A(s)|$ for each non-max action and $1 - \epsilon + \epsilon / |A(s)|$ for the greedy action
- Similar to GPI: move policy towards greedy policy (i.e. ε-soft)
- Converges to best ε-soft policy
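
One common ε-soft policy is ε-greedy, where every action gets probability ε/|A(s)| and the greedy action receives the remaining 1 − ε on top. A small sketch, with a hypothetical function name and made-up action values:

```python
def epsilon_greedy_probs(q_values, epsilon):
    """Return action probabilities for an epsilon-greedy (hence epsilon-soft) policy.

    q_values maps each action available in one state to its estimated value.
    """
    n = len(q_values)
    greedy = max(q_values, key=q_values.get)
    probs = {a: epsilon / n for a in q_values}  # every action keeps epsilon/|A(s)|
    probs[greedy] += 1.0 - epsilon              # greedy action gets the rest
    return probs


# Made-up action values for a single state.
probs = epsilon_greedy_probs({"hit": 0.2, "stick": 0.5}, epsilon=0.1)
```

With two actions and ε = 0.1, the non-greedy action keeps probability 0.05 and the greedy one gets about 0.95, so no action is ever ruled out.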

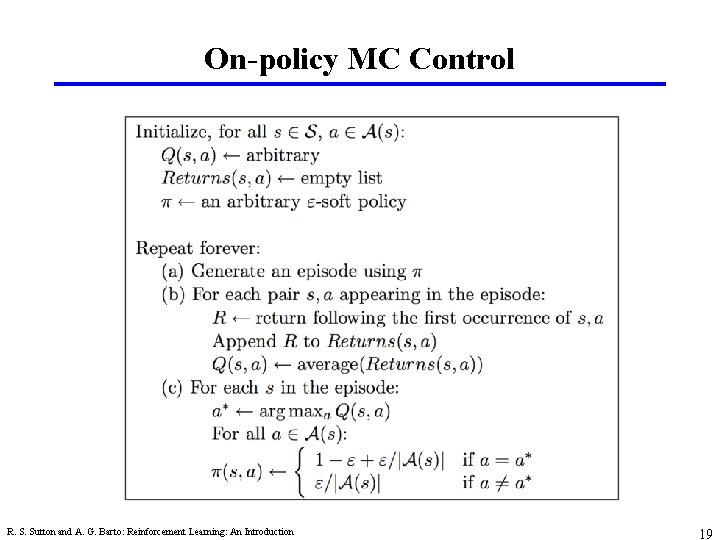

On-policy MC Control

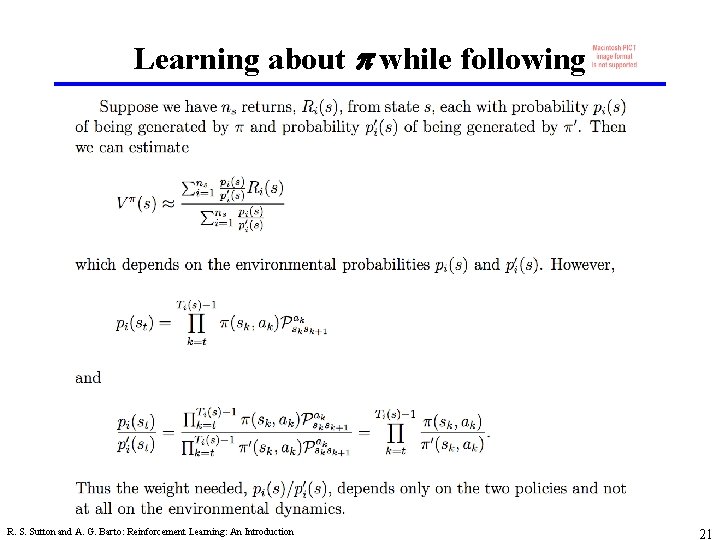

Off-policy Monte Carlo control

- Behavior policy generates behavior in the environment
- Estimation policy is the policy being learned about
- Average returns from the behavior policy, weighting each by its relative probability of occurring under the estimation policy versus the behavior policy

Learning about $\pi$ while following $\pi'$
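
A minimal sketch of the weighting idea, using the normalized (weighted) importance-sampling average. It assumes each recorded episode is a pair of a `(state, action)` trajectory and its observed return, and that both policies are given as nested dictionaries of action probabilities; these data layouts and names are hypothetical.

```python
def importance_ratio(trajectory, target, behavior):
    """Relative probability of the trajectory under target vs. behavior policy."""
    ratio = 1.0
    for state, action in trajectory:
        ratio *= target[state][action] / behavior[state][action]
    return ratio


def weighted_is_estimate(episodes, target, behavior):
    """Weighted average of returns; weights are the importance ratios."""
    numerator = 0.0
    denominator = 0.0
    for trajectory, G in episodes:
        w = importance_ratio(trajectory, target, behavior)
        numerator += w * G
        denominator += w
    return numerator / denominator if denominator > 0 else 0.0


# Made-up example: the behavior policy is uniform, the estimation policy always picks "a".
behavior = {"s": {"a": 0.5, "b": 0.5}}
target = {"s": {"a": 1.0, "b": 0.0}}
episodes = [
    ([("s", "a")], 1.0),
    ([("s", "b")], 5.0),  # impossible under the target policy, so its weight is 0
    ([("s", "a")], 3.0),
]
estimate = weighted_is_estimate(episodes, target, behavior)
```

The behavior policy must give non-zero probability to every action the estimation policy might take, otherwise the ratio is undefined.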

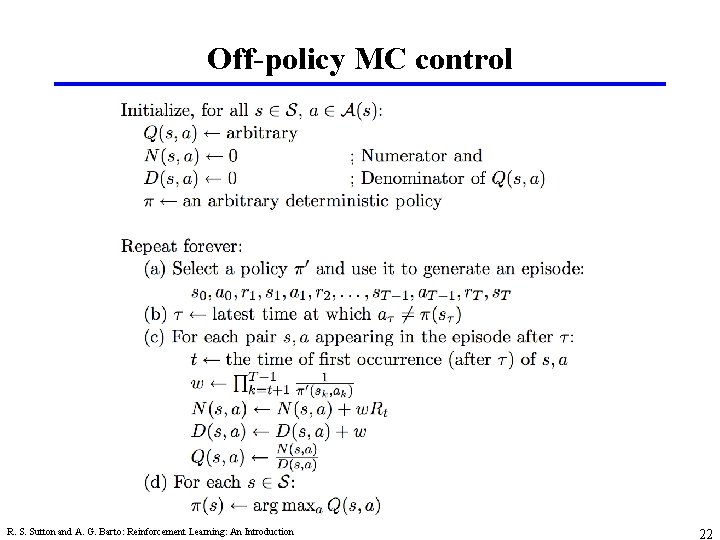

Off-policy MC control

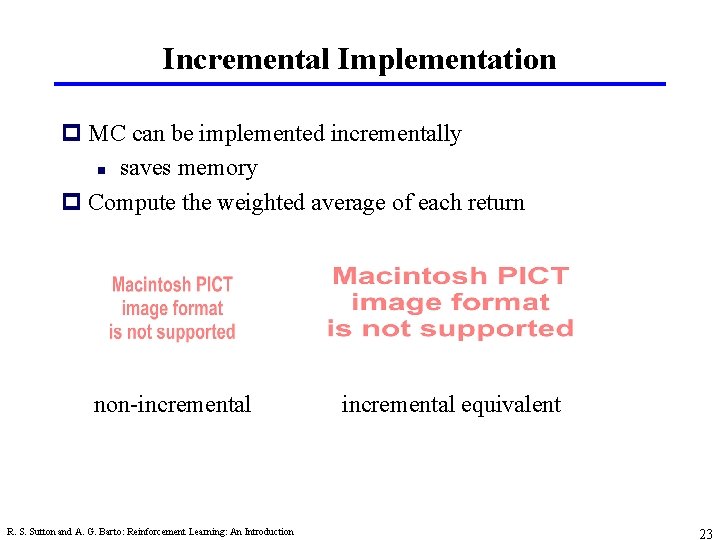

Incremental Implementation

- MC can be implemented incrementally
  - saves memory
- Compute the weighted average of each return

Non-incremental:

$$V_n = \frac{\sum_{k=1}^{n} w_k R_k}{\sum_{k=1}^{n} w_k}$$

Incremental equivalent:

$$V_{n+1} = V_n + \frac{w_{n+1}}{W_{n+1}} \left[ R_{n+1} - V_n \right], \qquad W_{n+1} = W_n + w_{n+1}, \qquad V_0 = W_0 = 0$$
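
A short sketch comparing the two forms of the weighted average: the batch version stores every return, while the incremental version keeps only the running estimate and the cumulative weight. Function names and the sample numbers are made up for illustration.

```python
def batch_weighted_average(returns, weights):
    """Non-incremental form: needs all returns and weights in memory."""
    return sum(w * R for R, w in zip(returns, weights)) / sum(weights)


def incremental_weighted_average(returns, weights):
    """Incremental form: stores only the estimate V and cumulative weight W."""
    V = 0.0
    W = 0.0
    for R, w in zip(returns, weights):
        if w == 0:
            continue  # a zero-weight return leaves the estimate unchanged
        W += w
        V += (w / W) * (R - V)
    return V


returns = [1.0, 5.0, 3.0]
weights = [2.0, 0.0, 2.0]
batch = batch_weighted_average(returns, weights)
incremental = incremental_weighted_average(returns, weights)
```

Setting every weight to 1 reduces the incremental rule to the ordinary running mean used in on-policy Monte Carlo.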

MC - Summary

- MC has several advantages over DP:
  - Can learn directly from interaction with environment
  - No need for full models
  - No need to learn about ALL states
  - Less harmed by violations of the Markov property (later in book)
- MC methods provide an alternate policy evaluation process
- One issue to watch for: maintaining sufficient exploration
  - exploring starts, soft policies
- Introduced distinction between on-policy and off-policy methods
- No bootstrapping (as opposed to DP)

Monte Carlo is important in practice

- Absolutely
- When there are just a few possibilities to value, out of a large state space, Monte Carlo is a big win
- Backgammon, Go, …
