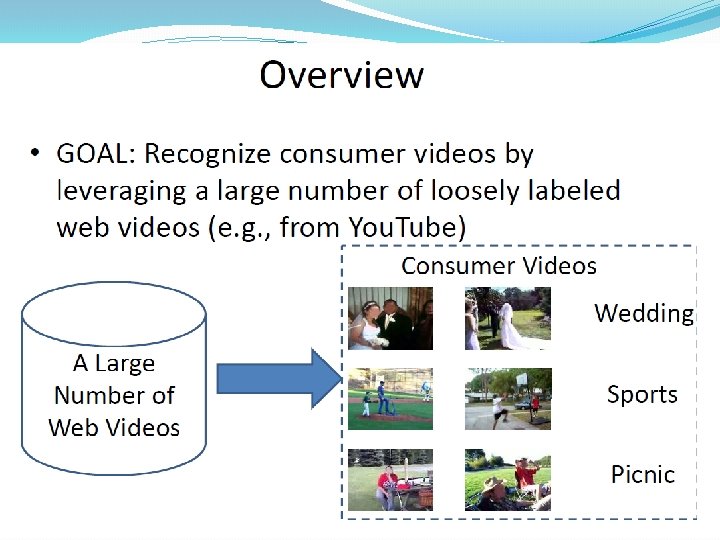


- Slides: 45

Introduction to Transfer Learning

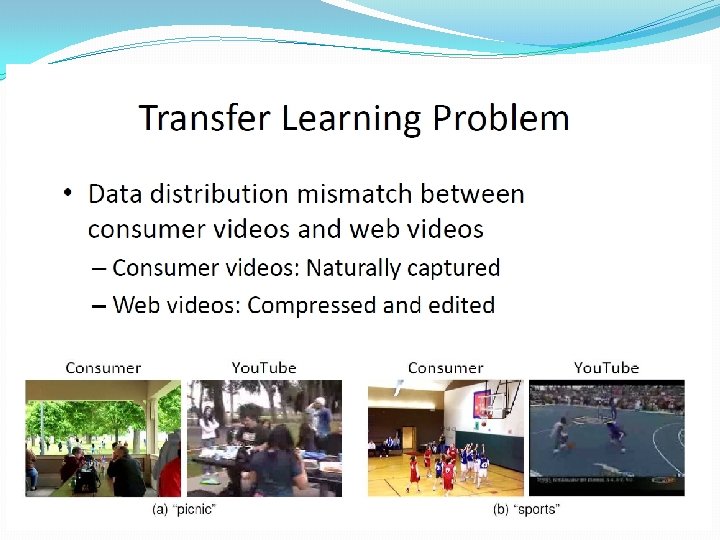

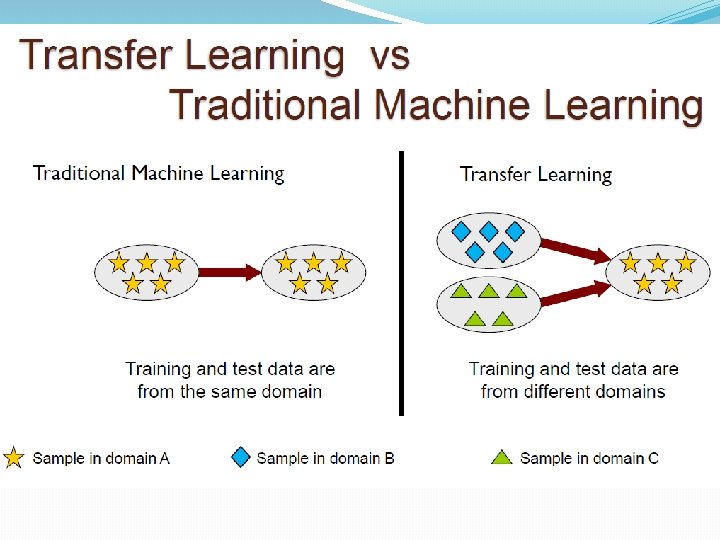

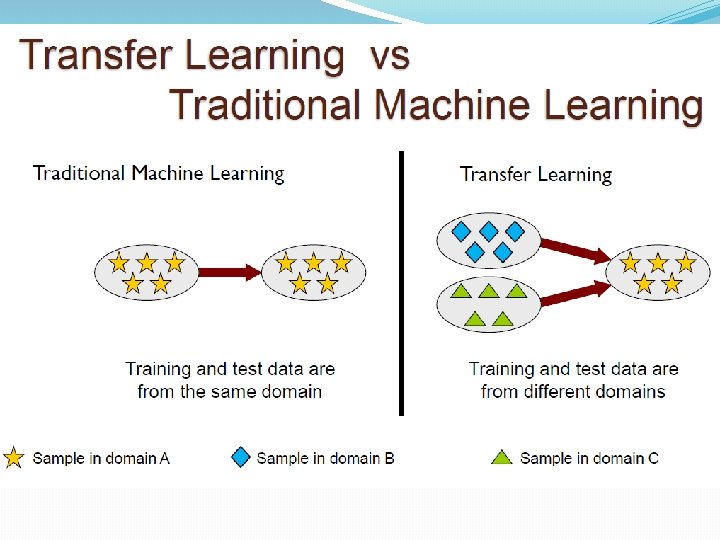

A Major Assumption in Traditional Machine Learning

Training and future (test) data follow the same distribution and are in the same feature space.

When distributions are different

- Part-of-Speech tagging
- Named-Entity Recognition
- Classification

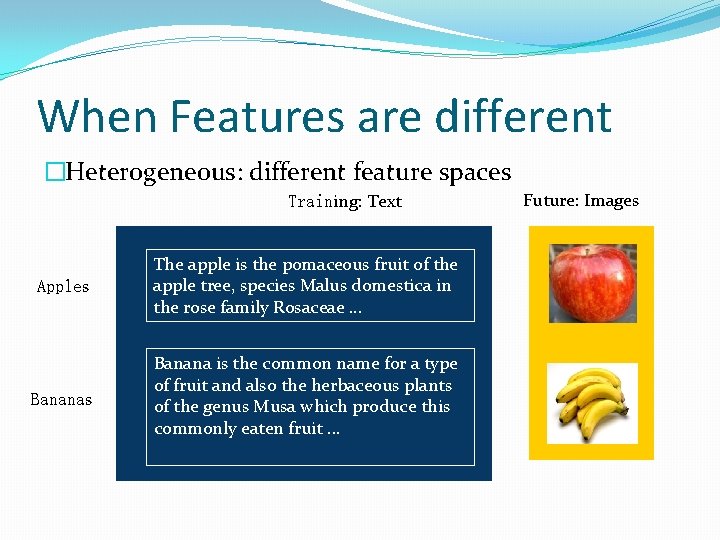

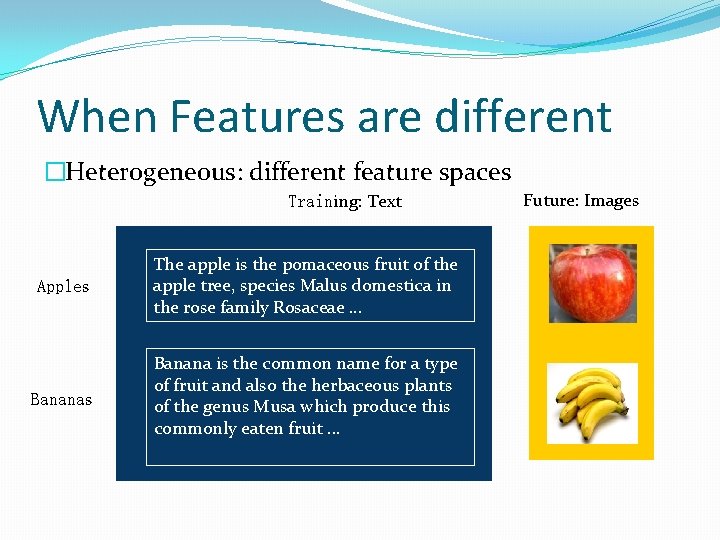

When features are different

- Heterogeneous: different feature spaces
- Training: text
  - Apples: "The apple is the pomaceous fruit of the apple tree, species Malus domestica in the rose family Rosaceae..."
  - Bananas: "Banana is the common name for a type of fruit and also the herbaceous plants of the genus Musa which produce this commonly eaten fruit..."
- Future: images

Motivating Example: Sentiment Classification

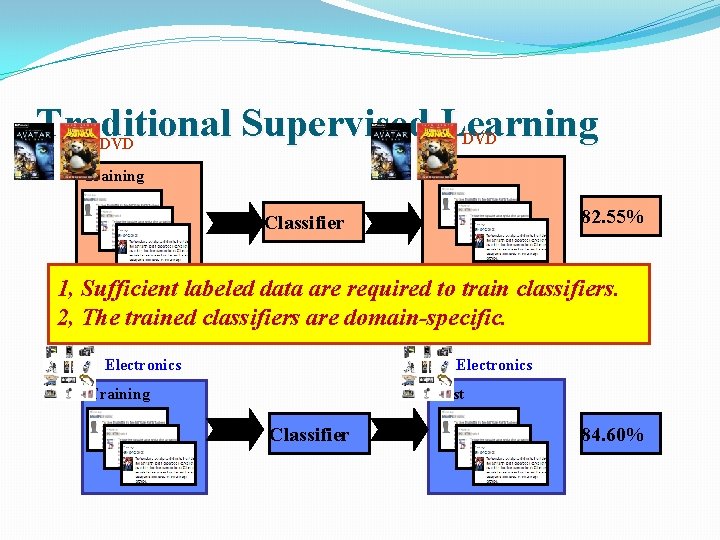

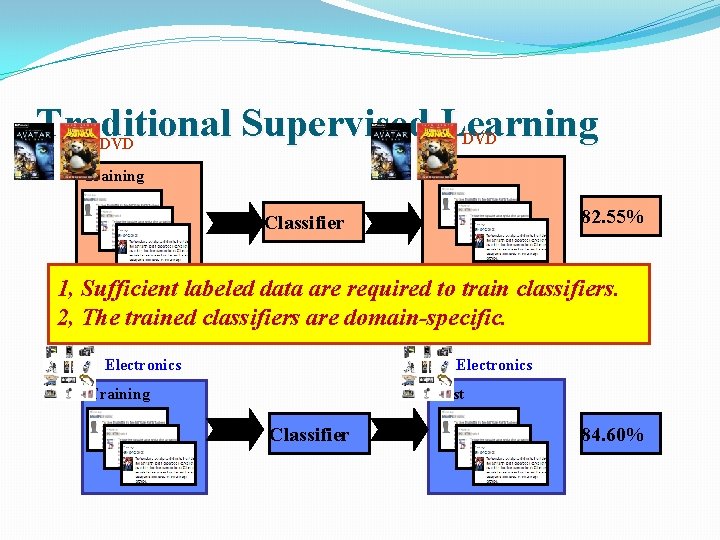

Traditional Supervised Learning

| Training | Test | Classifier accuracy |
| --- | --- | --- |
| DVD | DVD | 82.55% |
| Electronics | Electronics | 84.60% |

1. Sufficient labeled data are required to train classifiers.
2. The trained classifiers are domain-specific.

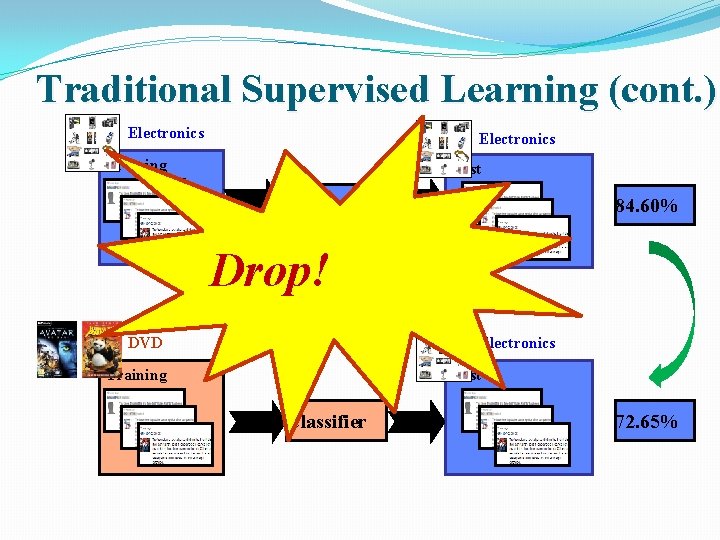

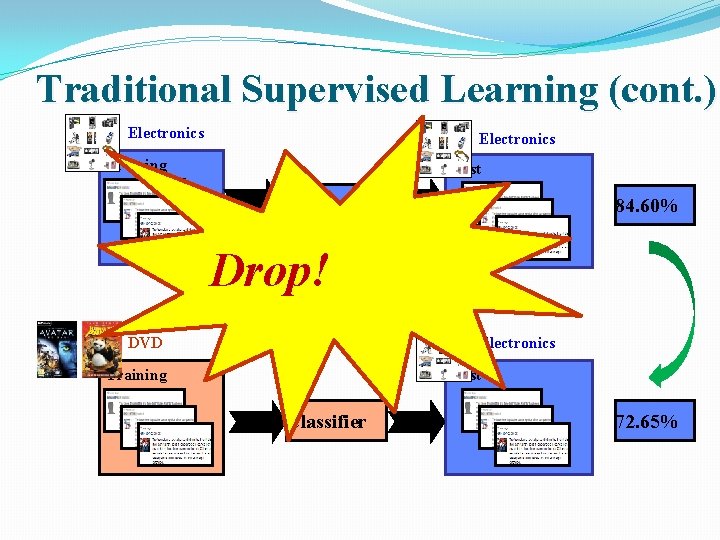

Traditional Supervised Learning (cont.)

| Training | Test | Classifier accuracy |
| --- | --- | --- |
| Electronics | Electronics | 84.60% |
| DVD | Electronics | 72.65% (drop!) |

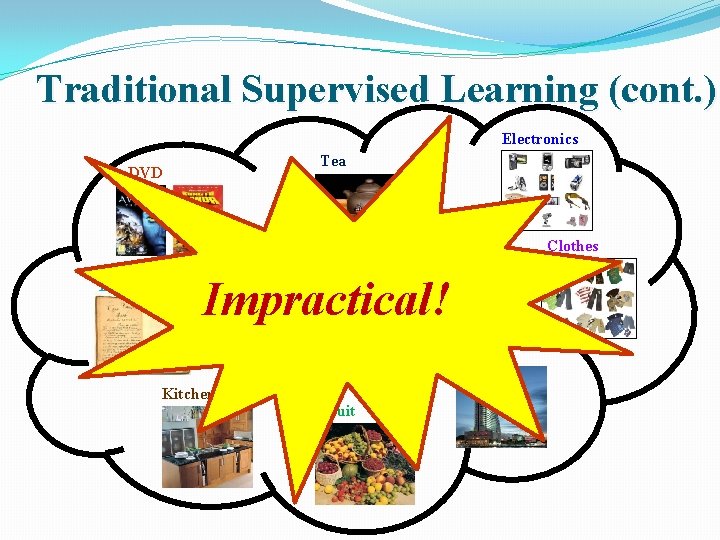

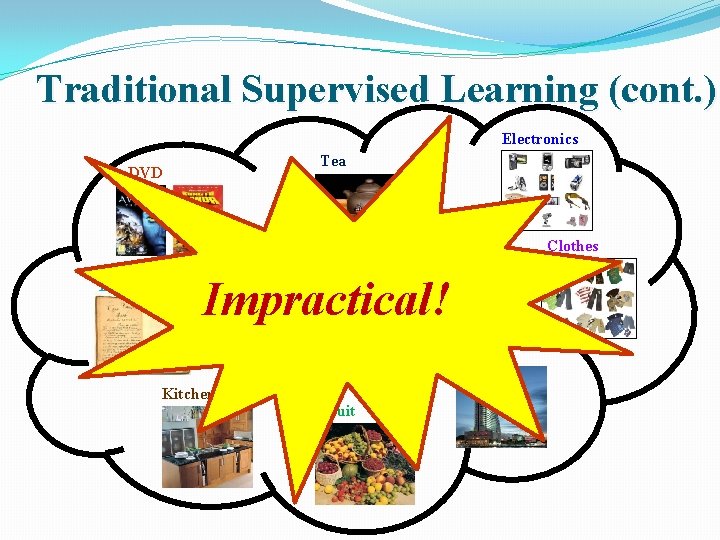

Traditional Supervised Learning (cont.)

Domains: Electronics, Tea, DVD, Clothes, Book, Video game, Hotel, Kitchen, Fruit.

Collecting labeled data and training a classifier for every domain: impractical!

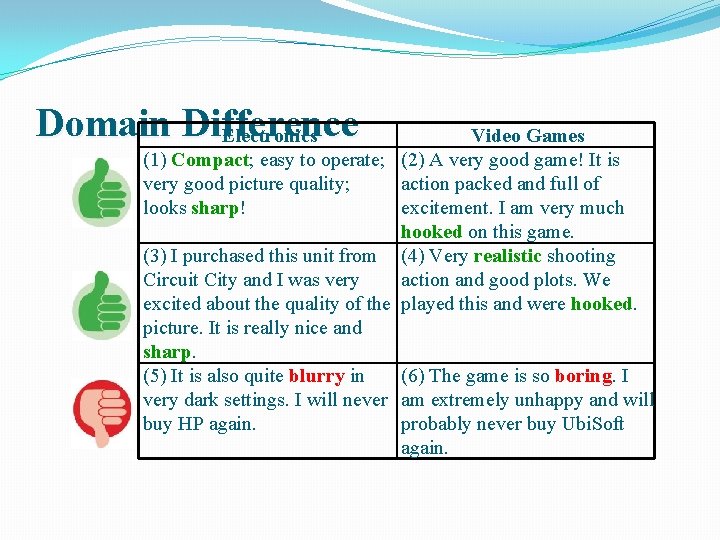

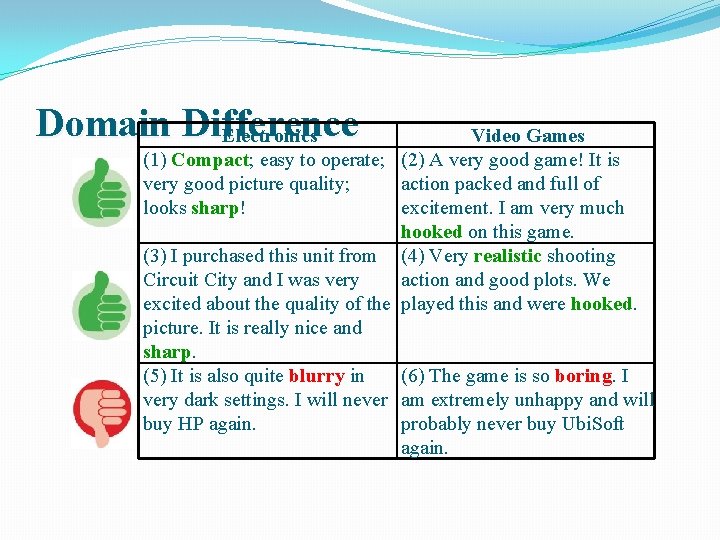

Domain Difference

| Electronics | Video Games |
| --- | --- |
| (1) Compact; easy to operate; very good picture quality; looks sharp! | (2) A very good game! It is action packed and full of excitement. I am very much hooked on this game. |
| (3) I purchased this unit from Circuit City and I was very excited about the quality of the picture. It is really nice and sharp. | (4) Very realistic shooting action and good plots. We played this and were hooked. |
| (5) It is also quite blurry in very dark settings. I will never buy HP again. | (6) The game is so boring. I am extremely unhappy and will probably never buy UbiSoft again. |
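The domain difference on this slide can be made concrete with a small vocabulary comparison. The sketch below is only an illustration, not part of the original deck: it takes the six example reviews from the slide and measures how many words of the Video Games reviews never occur in the Electronics reviews. The helper name `vocabulary` and the simple regex tokenizer are assumptions made for this example.

```python
import re

# Example reviews copied from the two columns of the slide.
electronics_reviews = [
    "Compact; easy to operate; very good picture quality; looks sharp!",
    "I purchased this unit from Circuit City and I was very excited about "
    "the quality of the picture. It is really nice and sharp.",
    "It is also quite blurry in very dark settings. I will never buy HP again.",
]
video_game_reviews = [
    "A very good game! It is action packed and full of excitement. "
    "I am very much hooked on this game.",
    "Very realistic shooting action and good plots. We played this and were hooked.",
    "The game is so boring. I am extremely unhappy and will probably never "
    "buy UbiSoft again.",
]


def vocabulary(reviews):
    """Return the set of lowercase word tokens used in a list of reviews."""
    words = set()
    for text in reviews:
        words.update(re.findall(r"[a-z]+", text.lower()))
    return words


source_vocab = vocabulary(electronics_reviews)
target_vocab = vocabulary(video_game_reviews)

# Words a classifier trained only on Electronics has never seen.
unseen_in_source = target_vocab - source_vocab
coverage = 1.0 - len(unseen_in_source) / len(target_vocab)

print(sorted(unseen_in_source))
print(f"share of target words seen in source: {coverage:.2f}")
```

Sentiment-bearing words such as "hooked" and "boring" appear only in the Video Games reviews, while "sharp" and "blurry" appear only in the Electronics reviews, which is one plausible reason a classifier trained in one domain degrades in the other.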

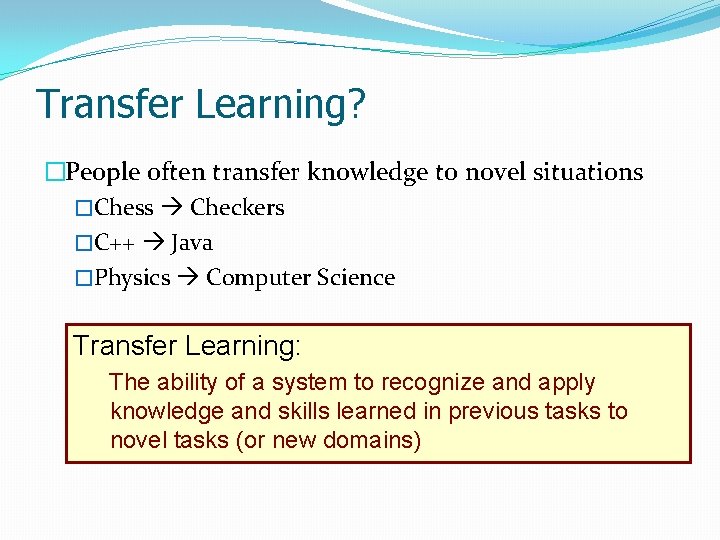

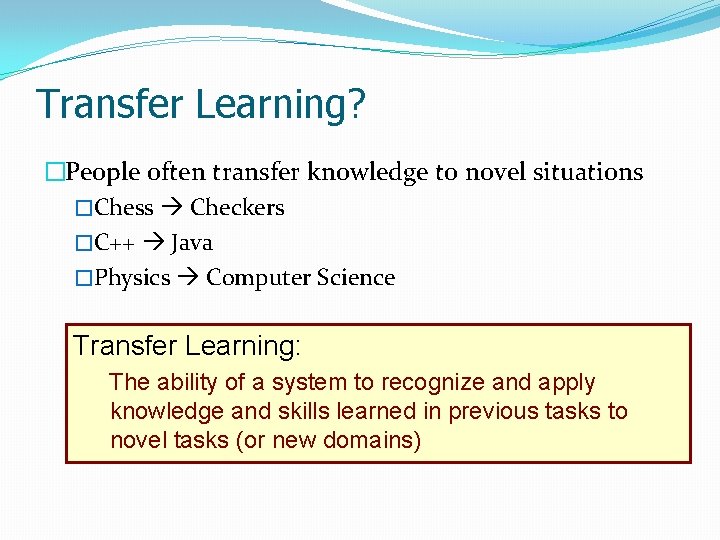

Transfer Learning?

- People often transfer knowledge to novel situations
  - Chess → Checkers
  - C++ → Java
  - Physics → Computer Science

Transfer Learning: the ability of a system to recognize and apply knowledge and skills learned in previous tasks to novel tasks (or new domains).

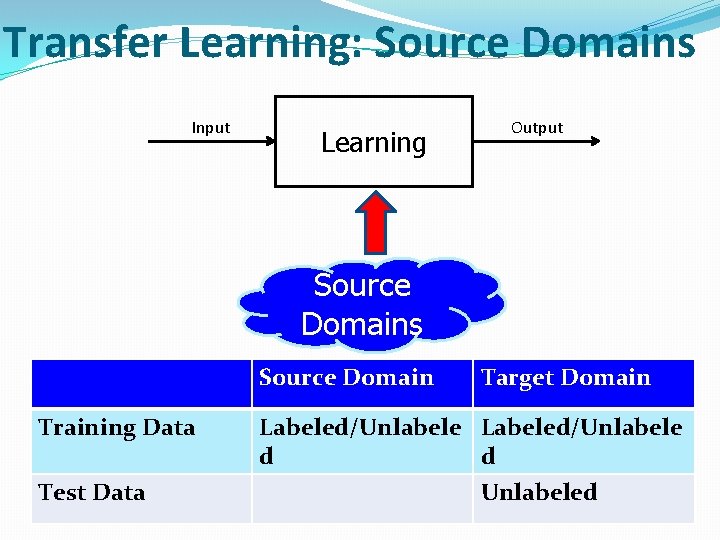

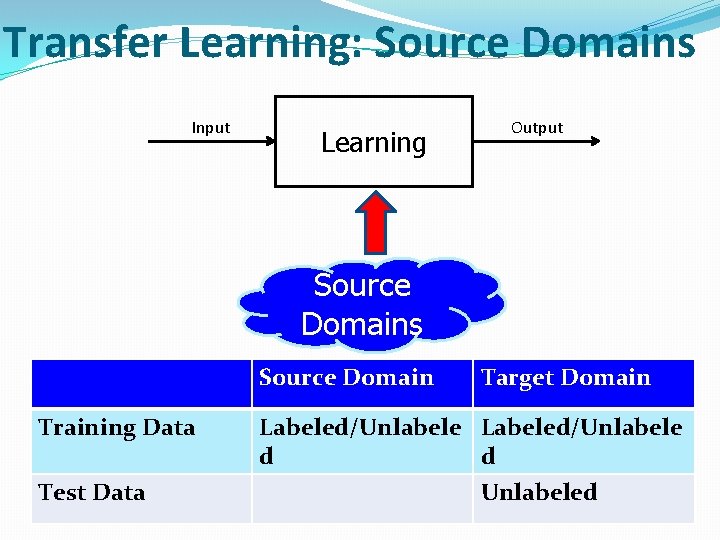

Transfer Learning

[Figure: data from the source domains are the input to learning, which produces the output. Training data: source domain (labeled/unlabeled) and target domain (labeled/unlabeled); test data: target domain (unlabeled).]

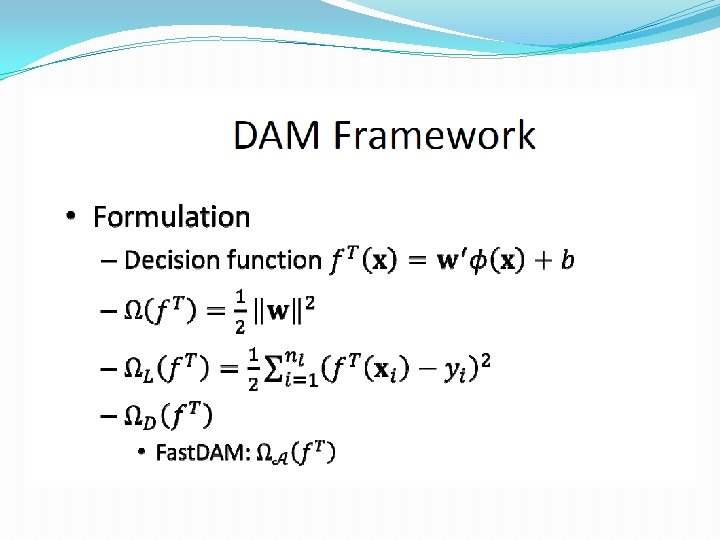

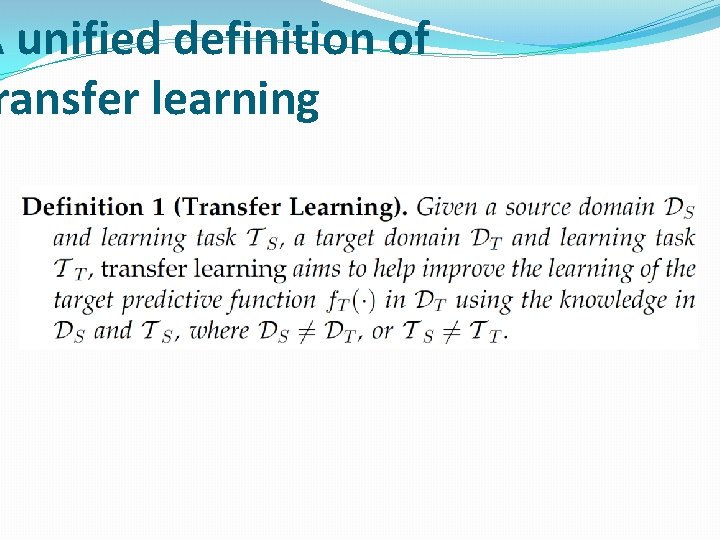

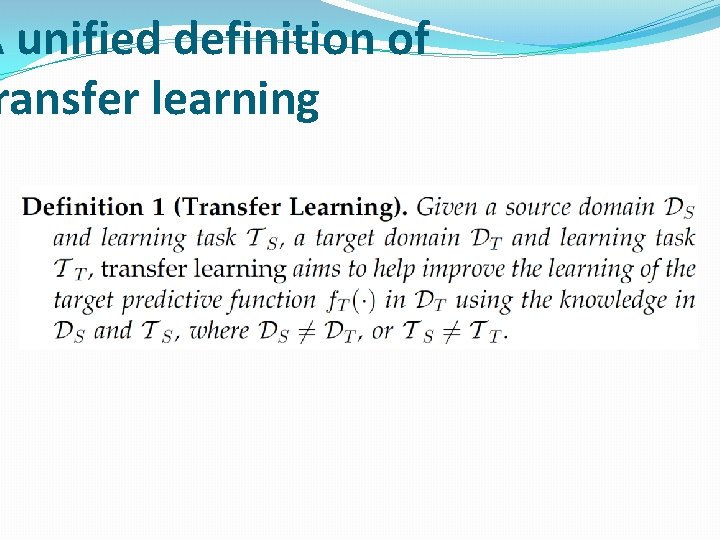

A unified definition of transfer learning
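The text of the definition did not survive extraction. For reference, the formulation most widely cited in the literature (from Pan and Yang's survey) can be written as below. It is an assumption that the slide shows this same definition; the notation $\mathcal{D}$, $\mathcal{T}$, $f_T$ follows the survey rather than anything recoverable from the slide.

```latex
\begin{aligned}
&\text{Domain: } \mathcal{D} = \{\mathcal{X}, P(X)\}, \qquad
 \text{Task: } \mathcal{T} = \{\mathcal{Y}, f(\cdot)\} \\
&\text{Given a source pair } (\mathcal{D}_S, \mathcal{T}_S)
 \text{ and a target pair } (\mathcal{D}_T, \mathcal{T}_T), \\
&\text{improve the learning of the target predictive function } f_T(\cdot)
 \text{ in } \mathcal{D}_T \\
&\text{using the knowledge in } \mathcal{D}_S \text{ and } \mathcal{T}_S,
 \text{ where } \mathcal{D}_S \neq \mathcal{D}_T \text{ or } \mathcal{T}_S \neq \mathcal{T}_T .
\end{aligned}
```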

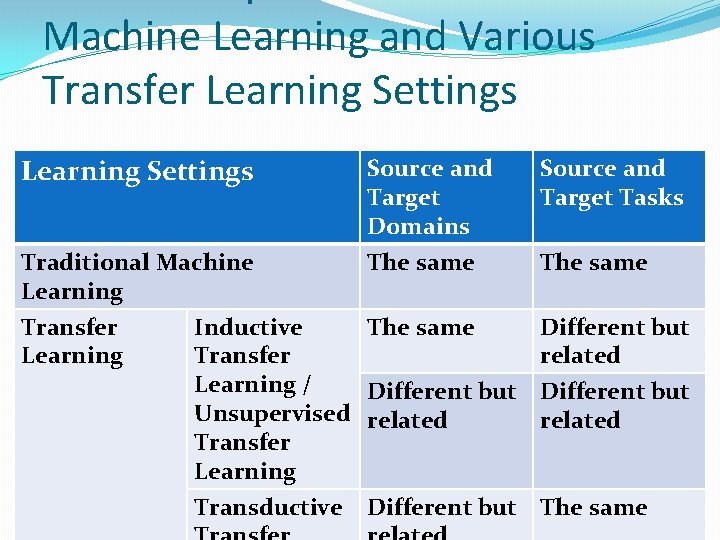

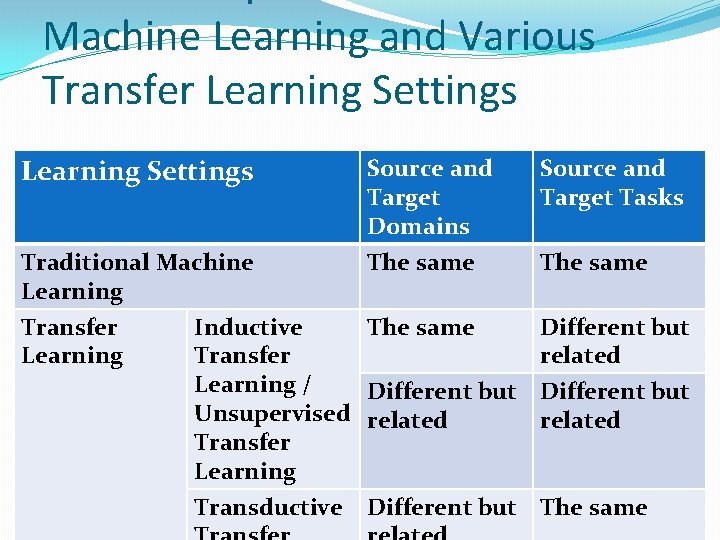

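Machine Learning and Various Transfer Learning Settings

| Learning Settings | Source and Target Domains | Source and Target Tasks |
| --- | --- | --- |
| Traditional Machine Learning | The same | The same |
| Transfer Learning: Inductive Transfer Learning / Unsupervised Transfer Learning | The same, or different but related | Different but related |
| Transfer Learning: Transductive Transfer Learning | Different but related | The same |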

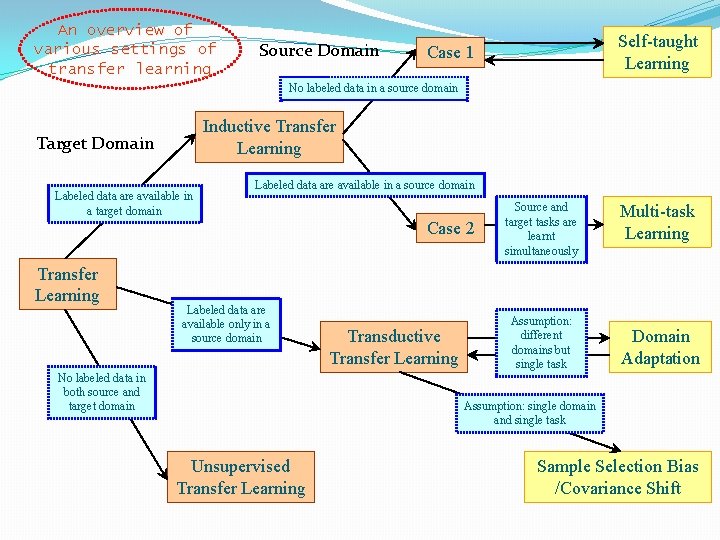

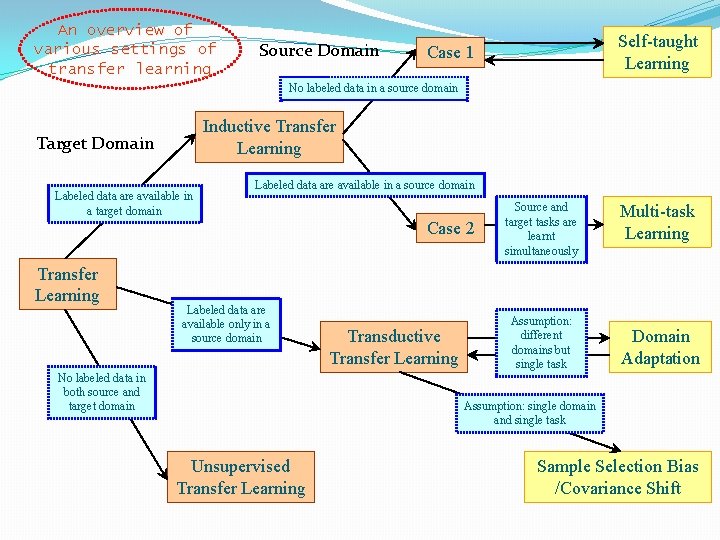

An overview of various settings of transfer learning

- Transfer Learning
  - Labeled data are available in a target domain → Inductive Transfer Learning
    - Case 1: no labeled data in a source domain → Self-taught Learning
    - Case 2: labeled data are available in a source domain; source and target tasks are learnt simultaneously → Multi-task Learning
  - Labeled data are available only in a source domain → Transductive Transfer Learning
    - Assumption: different domains but single task → Domain Adaptation
    - Assumption: single domain and single task → Sample Selection Bias / Covariate Shift
  - No labeled data in both source and target domain → Unsupervised Transfer Learning

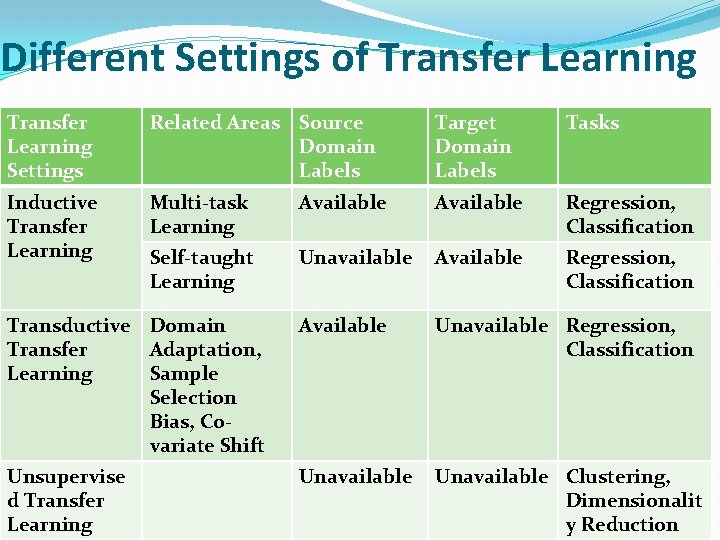

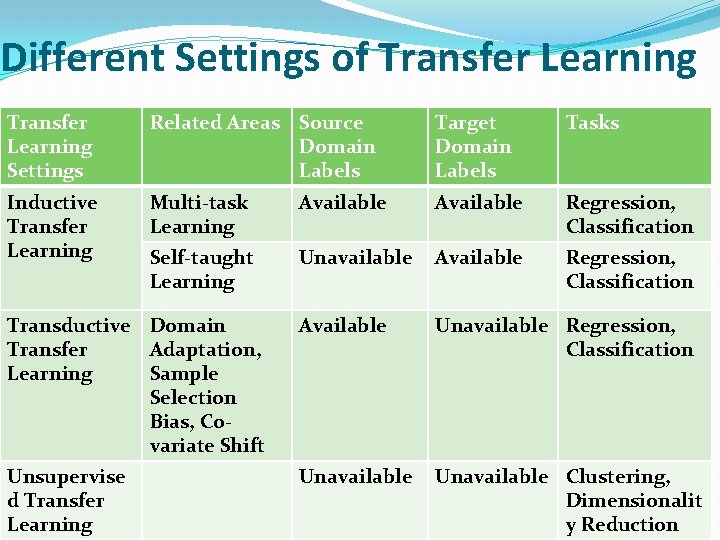

Different Settings of Transfer Learning

| Settings | Related Areas | Source Domain Labels | Target Domain Labels | Tasks |
| --- | --- | --- | --- | --- |
| Inductive Transfer Learning | Multi-task Learning | Available | Available | Regression, Classification |
| Inductive Transfer Learning | Self-taught Learning | Unavailable | Available | Regression, Classification |
| Transductive Transfer Learning | Domain Adaptation, Sample Selection Bias, Covariate Shift | Available | Unavailable | Regression, Classification |
| Unsupervised Transfer Learning | | Unavailable | Unavailable | Clustering, Dimensionality Reduction |
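The label-availability columns of this table amount to a small decision rule, sketched below. The function name `transfer_setting` is hypothetical, and the rule is a simplification: it looks only at label availability and ignores whether the domains or tasks themselves differ, which the formal definitions also take into account.

```python
from typing import Tuple


def transfer_setting(source_labeled: bool, target_labeled: bool) -> Tuple[str, str]:
    """Map label availability to (setting, related area) as in the table."""
    if target_labeled:
        # Labeled target data: inductive transfer learning in both cases.
        related = "multi-task learning" if source_labeled else "self-taught learning"
        return "inductive transfer learning", related
    if source_labeled:
        # Labels only in the source domain.
        return (
            "transductive transfer learning",
            "domain adaptation, sample selection bias, covariate shift",
        )
    # No labels on either side.
    return "unsupervised transfer learning", "none listed"


for src in (True, False):
    for tgt in (True, False):
        print(src, tgt, transfer_setting(src, tgt))
```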

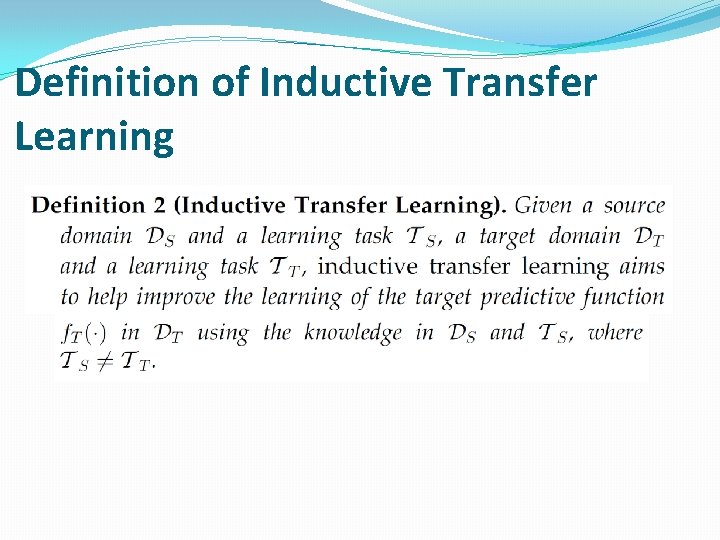

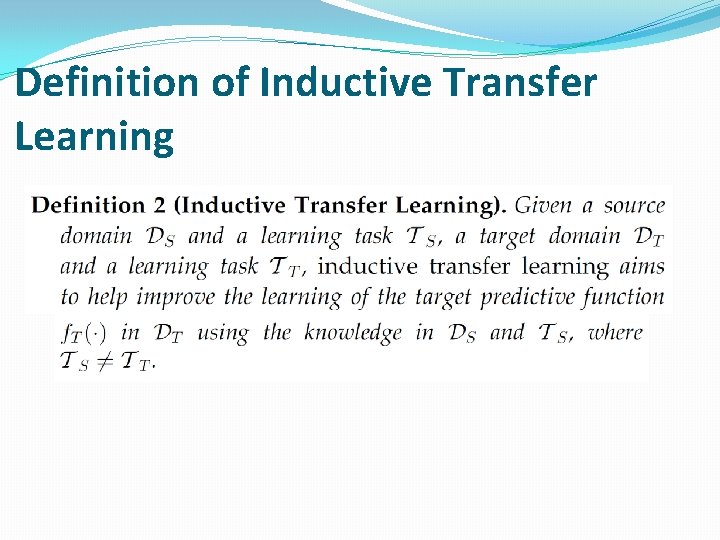

Definition of Inductive Transfer Learning

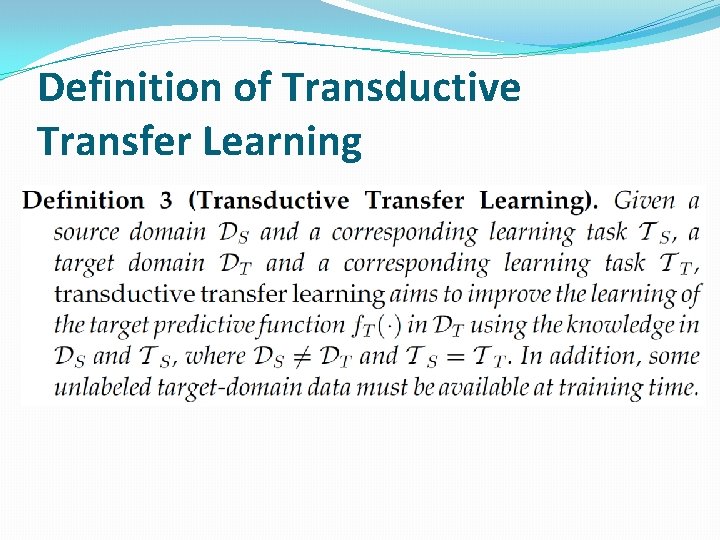

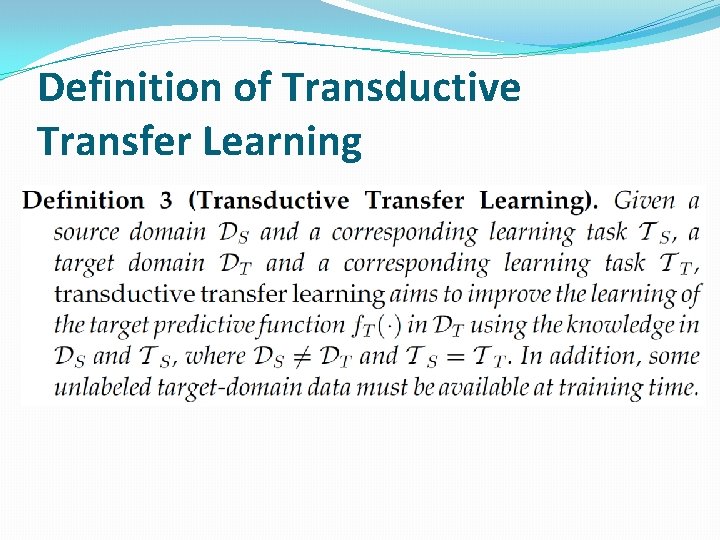

Definition of Transductive Transfer Learning

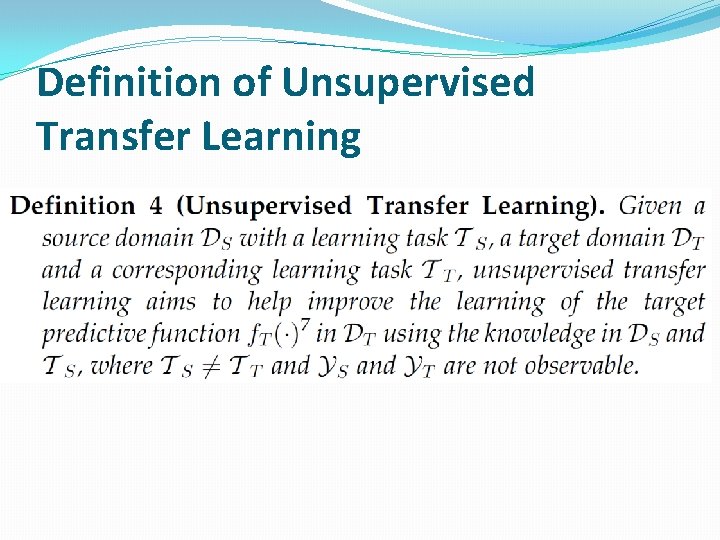

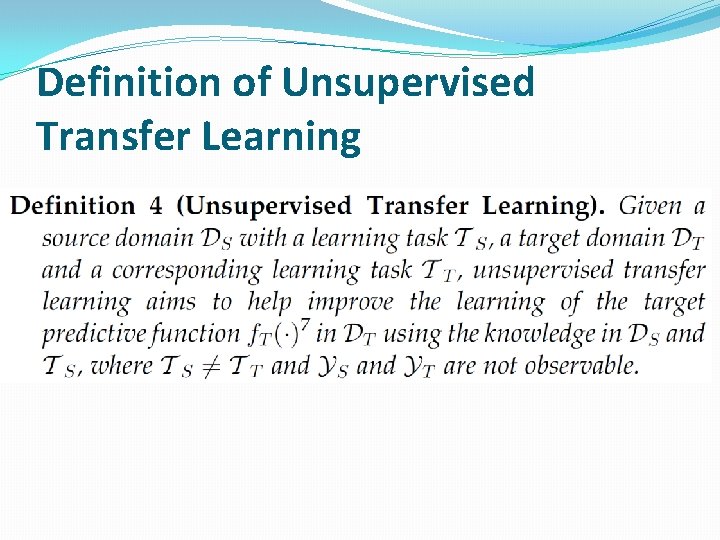

Definition of Unsupervised Transfer Learning

Different approaches

- Based on “what to transfer”
- Four cases
  - Instance-transfer
  - Feature-representation-transfer
  - Parameter-transfer
  - Relational-knowledge-transfer

Instance-transfer

- Re-weight some labeled data in the source domain for use in the target domain.
- Instance sampling and importance sampling are the two major techniques in instance-based transfer learning methods.
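The re-weighting idea can be sketched with importance weights, the ratio of target density to source density at each source instance. The example below is a toy illustration under strong assumptions: both input densities are known Gaussians (source N(0, 1), target N(1, 1)), and the helper names `gaussian_pdf` and `importance_weights` are made up for this sketch. In practice the density ratio is unknown and has to be estimated.

```python
import numpy as np


def gaussian_pdf(x, mean, std):
    """Density of a univariate normal distribution evaluated at x."""
    return np.exp(-0.5 * ((x - mean) / std) ** 2) / (std * np.sqrt(2.0 * np.pi))


def importance_weights(x_source, src_mean, src_std, tgt_mean, tgt_std):
    """Weight each source instance by p_target(x) / p_source(x)."""
    return gaussian_pdf(x_source, tgt_mean, tgt_std) / gaussian_pdf(
        x_source, src_mean, src_std
    )


rng = np.random.default_rng(0)

# Source inputs follow N(0, 1); the target domain is assumed to follow N(1, 1).
x_src = rng.normal(0.0, 1.0, size=50_000)
y_src = x_src ** 2  # the same labeling function is assumed in both domains

w = importance_weights(x_src, 0.0, 1.0, 1.0, 1.0)

# Estimate the target-domain mean of y using source data only.
naive = y_src.mean()                         # ignores the shift
reweighted = np.sum(w * y_src) / np.sum(w)   # self-normalized importance weighting

print(f"naive estimate:      {naive:.3f}")
print(f"reweighted estimate: {reweighted:.3f}")
print("true target value:   2.000")  # E[x^2] under N(1, 1) is 1 + 1
```

The unweighted average stays close to the source-domain value of 1, while the re-weighted average moves toward the target-domain value of 2 without using any target labels.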

Feature-representation-transfer

- Learn a “good” feature representation for the target domain.
- The knowledge used to transfer across domains is encoded into the learned feature representation.
- With the new feature representation, the performance of the target task is expected to improve significantly.
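One very simple way to picture this: learn a low-dimensional basis from plentiful source data, then encode the scarce target data in that basis. The sketch below uses plain PCA (via SVD) as a stand-in for whatever representation learner a real method would use; PCA is a swapped-in technique chosen for brevity, and the names `learn_basis` and `encode` and the synthetic data are assumptions of this example.

```python
import numpy as np


def learn_basis(X_source, k):
    """Learn a k-dimensional PCA basis (mean and components) from source data."""
    mean = X_source.mean(axis=0)
    _, _, vt = np.linalg.svd(X_source - mean, full_matrices=False)
    return mean, vt[:k]


def encode(X, mean, basis):
    """Project data onto the learned basis to obtain the new features."""
    return (X - mean) @ basis.T


rng = np.random.default_rng(0)

# Synthetic data: both domains share the same 2-D latent structure in 10-D space.
mixing = rng.normal(size=(2, 10))
X_src = rng.normal(size=(300, 2)) @ mixing + 0.01 * rng.normal(size=(300, 10))
X_tgt = rng.normal(size=(20, 2)) @ mixing + 0.01 * rng.normal(size=(20, 10))

mean, basis = learn_basis(X_src, k=2)   # knowledge extracted from the source
Z_tgt = encode(X_tgt, mean, basis)      # target data in the transferred representation

# How well does the source-learned representation describe the target data?
reconstruction = Z_tgt @ basis + mean
rel_err = np.linalg.norm(reconstruction - X_tgt) / np.linalg.norm(X_tgt)
print(Z_tgt.shape, f"relative reconstruction error: {rel_err:.4f}")
```

A target model would then be trained on `Z_tgt` instead of the raw features. The low reconstruction error here depends entirely on the two synthetic domains sharing structure, which is exactly the assumption that needs checking on real data.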

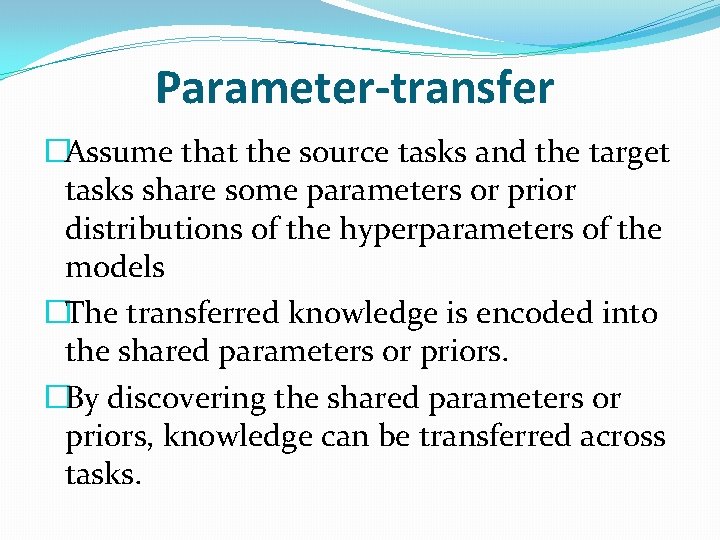

Parameter-transfer

- Assume that the source tasks and the target tasks share some parameters or prior distributions of the hyperparameters of the models.
- The transferred knowledge is encoded into the shared parameters or priors.
- By discovering the shared parameters or priors, knowledge can be transferred across tasks.
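A minimal way to illustrate a shared prior is ridge regression whose penalty pulls the target weights toward the source weights instead of toward zero: minimize ||Xw - y||² + λ||w - w_prior||², which has the closed form w = (XᵀX + λI)⁻¹(Xᵀy + λ w_prior). This is a sketch under assumed synthetic data, not a method taken from the slides; `ridge_toward_prior` is a hypothetical helper name.

```python
import numpy as np


def ridge_toward_prior(X, y, w_prior, lam):
    """Solve min_w ||Xw - y||^2 + lam * ||w - w_prior||^2 in closed form."""
    d = X.shape[1]
    A = X.T @ X + lam * np.eye(d)
    b = X.T @ y + lam * w_prior
    return np.linalg.solve(A, b)


rng = np.random.default_rng(0)

# Assumed setup: the target weights are a small perturbation of the source weights.
w_true_src = np.array([2.0, -1.0, 0.5])
w_true_tgt = w_true_src + np.array([0.1, -0.1, 0.0])

X_src = rng.normal(size=(500, 3))                      # plenty of source data
y_src = X_src @ w_true_src + 0.1 * rng.normal(size=500)
X_tgt = rng.normal(size=(5, 3))                        # very little target data
y_tgt = X_tgt @ w_true_tgt + 0.1 * rng.normal(size=5)

w_src = ridge_toward_prior(X_src, y_src, np.zeros(3), lam=1e-3)

# Same regularization strength, different prior: zero versus the source weights.
w_scratch = ridge_toward_prior(X_tgt, y_tgt, np.zeros(3), lam=10.0)
w_transfer = ridge_toward_prior(X_tgt, y_tgt, w_src, lam=10.0)

err_scratch = np.linalg.norm(w_scratch - w_true_tgt)
err_transfer = np.linalg.norm(w_transfer - w_true_tgt)
print(f"error without transfer: {err_scratch:.3f}")
print(f"error with transfer:    {err_transfer:.3f}")
```

The strength `lam` controls how much the target model trusts the source parameters. If the tasks were unrelated, a large `lam` would pull the target weights toward a bad prior.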

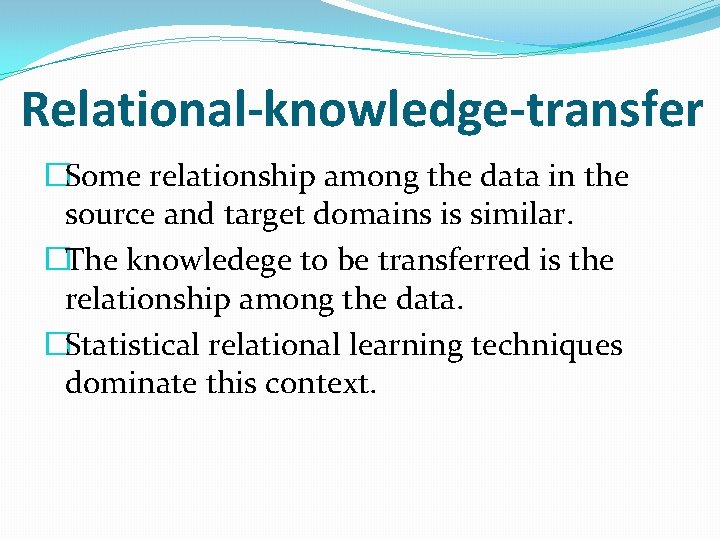

Relational-knowledge-transfer

- Some relationships among the data in the source and target domains are similar.
- The knowledge to be transferred is the relationship among the data.
- Statistical relational learning techniques dominate this context.

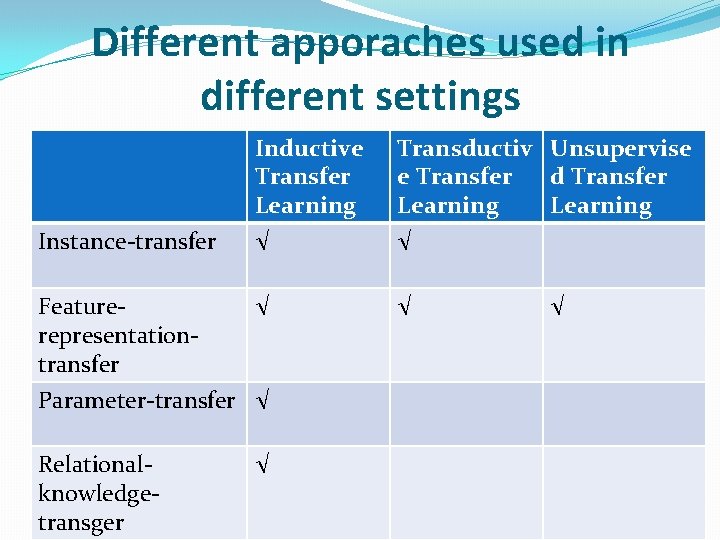

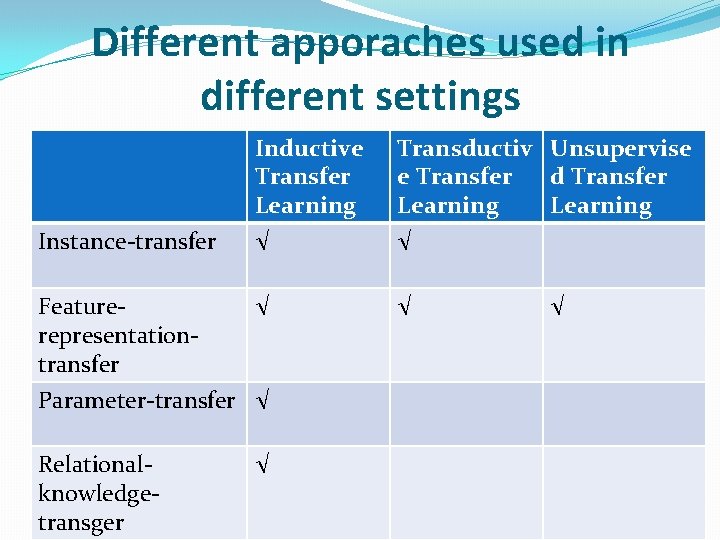

Different approaches used in different settings

| | Inductive Transfer Learning | Transductive Transfer Learning | Unsupervised Transfer Learning |
| --- | --- | --- | --- |
| Instance-transfer | √ | √ | |
| Feature-representation-transfer | √ | √ | √ |
| Parameter-transfer | √ | | |
| Relational-knowledge-transfer | √ | | |

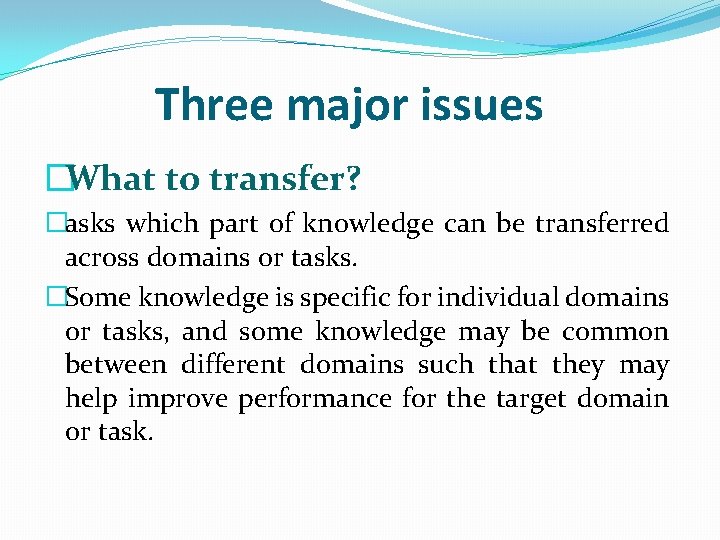

Three major issues

- What to transfer?
  - Asks which part of the knowledge can be transferred across domains or tasks.
  - Some knowledge is specific to individual domains or tasks, while some is common to different domains and may help improve performance in the target domain or task.

- How to transfer?
  - After discovering which knowledge can be transferred, learning algorithms need to be developed to transfer it; this is the “how to transfer” issue.

- When to transfer?
  - Asks in which situations transferring skills should be done, and in which situations knowledge should not be transferred.
  - When the source domain and target domain are not related to each other, brute-force transfer may be unsuccessful.
  - In the worst case, it may even hurt learning performance in the target domain, a situation often referred to as negative transfer.
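One pragmatic guard against negative transfer is to compare the transferred model with a target-only baseline on held-out target data and keep the transfer only if it helps. The sketch below is a simple heuristic offered as an illustration, not a technique described in the slides; the function name `choose_model` and the `margin` parameter are assumptions.

```python
def choose_model(baseline_error: float, transfer_error: float, margin: float = 0.0) -> str:
    """Pick the model to deploy from held-out target-domain errors.

    Transfer is kept only if it beats the target-only baseline by more
    than `margin`; otherwise it is treated as potential negative transfer.
    """
    if transfer_error + margin < baseline_error:
        return "transfer"
    return "target-only"


# Related source domain: transfer lowers the held-out error.
print(choose_model(baseline_error=0.27, transfer_error=0.18))
# Unrelated source domain: transfer hurts, so fall back to the baseline.
print(choose_model(baseline_error=0.27, transfer_error=0.35))
```

The error values in the usage lines are made-up numbers for illustration. The check also assumes that at least a small labeled validation set exists in the target domain.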

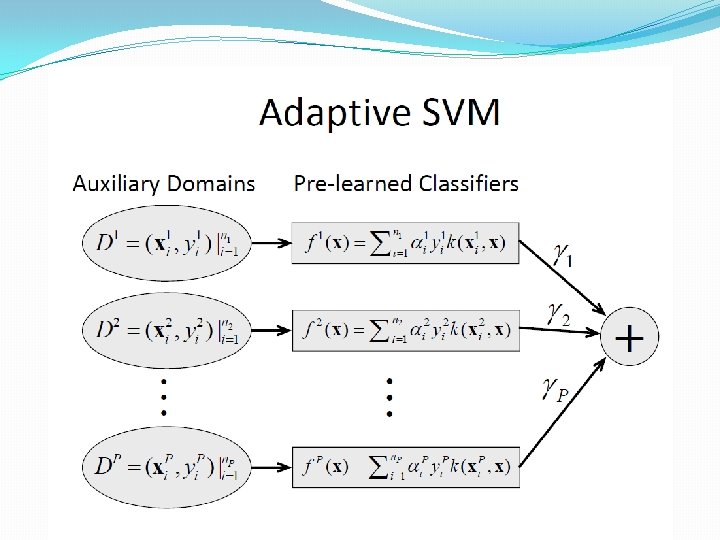

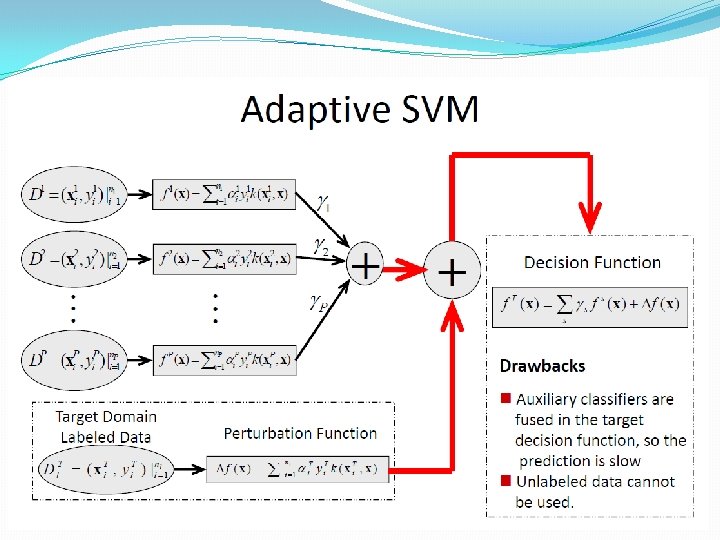

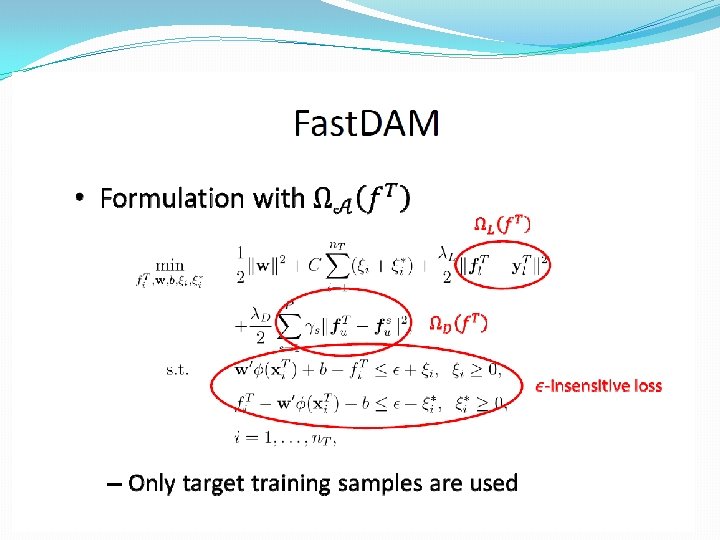

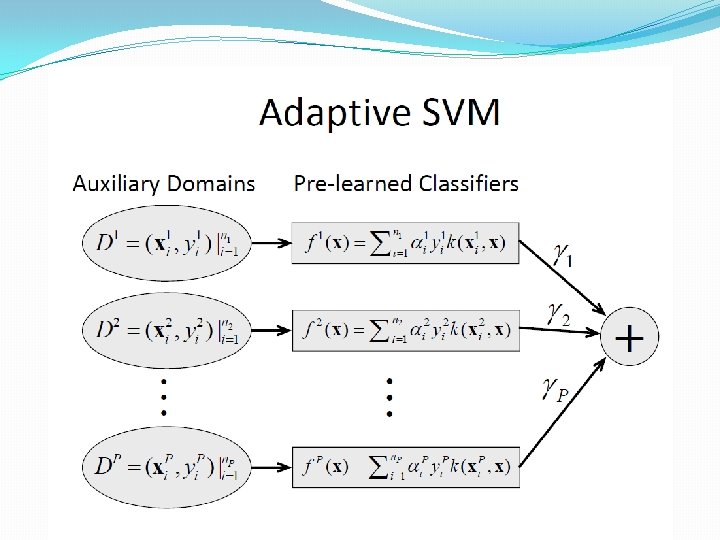

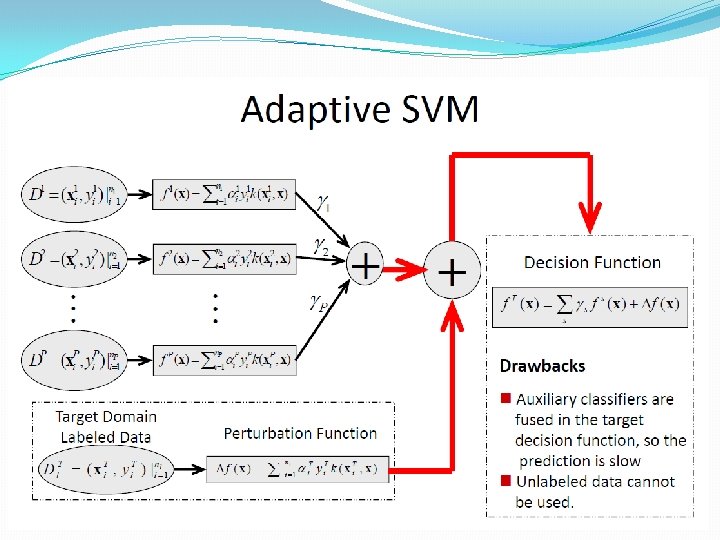

Some SVM-based transfer learning methods